id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 68.7k ⌀ | citation stringlengths 0 10.7k ⌀ | cardData null | likes int64 0 3.55k | downloads int64 0 10.1M | card stringlengths 0 1.01M |

|---|---|---|---|---|---|---|---|---|---|

amitness/korpus_malti_press | 2023-08-15T13:49:33.000Z | [

"language:mt",

"region:us"

] | amitness | null | null | null | 0 | 4 | ---

language: mt

dataset_info:

features:

- name: category

dtype: string

- name: url

dtype: string

- name: title

dtype: string

- name: text

sequence: string

- name: subtitle

dtype: string

- name: source

dtype: string

- name: year

dtype: 'null'

- name: text_raw

sequence: string

splits:

- name: raw

num_bytes: 163668738

num_examples: 44824

download_size: 0

dataset_size: 163668738

configs:

- config_name: default

data_files:

- split: raw

path: data/raw-*

---

# Dataset Card for "korpus_malti_press"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

declare-lab/InstructEvalImpact | 2023-06-09T08:53:22.000Z | [

"size_categories:n<1K",

"license:apache-2.0",

"region:us"

] | declare-lab | null | null | null | 6 | 4 | ---

license: apache-2.0

size_categories:

- n<1K

ArXiv: 2306.04757

---

# Project Links

# Dataset Description

The IMPACT dataset contains 50 human-created prompts for each category, 200 in total, to test the general writing ability of LLMs.

Instructed LLMs demonstrate promising ability in writing-based tasks, such as composing letters or ethical debates. This dataset consists of prompts across 4 diverse usage scenarios:

- **Informative Writing**: User queries such as self-help advice or explanations of various concepts

- **Professional Writing**: Formats such as suggestions, presentations, or emails in a business setting

- **Argumentative Writing**: Debate positions on ethical and societal questions

- **Creative Writing**: Diverse writing formats such as stories, poems, and songs.

The IMPACT dataset is included in our [InstructEval Benchmark Suite](https://github.com/declare-lab/instruct-eval).

# Evaluation Results

We use ChatGPT to judge the quality of the answers generated by LLMs, in terms of:

- Relevance: how well the answer engages with the given prompt

- Coherence: general text quality such as organization and logical flow

Each answer is scored on a Likert scale from 1 to 5. We evaluate the models in the zero-shot setting based on the given prompt and perform sampling-based decoding with a temperature of 1.0.

| **Model** | **Size** | **Informative** | | **Professional** | | **Argumentative** | | **Creative** | | **Avg.** | |

| :---: | :---: | :---: | :---: | :---: | :---: | :---: | :---: | :---: | :---: | :---: | :---: |

| | | Rel. | Coh. | Rel. | Coh. | Rel. | Coh. | Rel. | Coh. | Rel. | Coh. |

| **ChatGPT** | - | 3.34 | 3.98 | 3.88 | 3.96 | 3.96 | 3.82 | 3.92 | 3.94 | 3.78 | 3.93 |

| [**Flan-Alpaca**](https://huggingface.co/declare-lab/flan-alpaca-xxl) | 11B | 3.56 | 3.46 | 3.54 | 3.70 | 3.22 | 3.28 | 3.70 | 3.40 | 3.51 | 3.46 |

| [**Dolly-V2**](https://huggingface.co/databricks/dolly-v2-12b) | 12B | 3.54 | 3.64 | 2.96 | 3.74 | 3.66 | 3.20 | 3.02 | 3.18 | 3.30 | 3.44 |

| [**StableVicuna**](https://huggingface.co/TheBloke/stable-vicuna-13B-HF) | 13B | 3.54 | 3.64 | 2.96 | 3.74 | 3.30 | 3.20 | 3.02 | 3.18 | 3.21 | 3.44 |

| [**Flan-T5**](https://huggingface.co/google/flan-t5-xxl) | 11B | 2.64 | 3.24 | 2.62 | 3.22 | 2.54 | 3.40 | 2.50 | 2.72 | 2.58 | 3.15 |
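
The Avg. columns can be reproduced from the four per-category scores. The following is a minimal sketch, assuming the averages are plain arithmetic means rounded half-up to two decimals (the card does not state the rounding rule); the `average` helper is hypothetical and only the relevance (Rel.) scores are shown.

```python
from decimal import Decimal, ROUND_HALF_UP

# Per-category relevance (Rel.) scores copied from the table above, in the
# order Informative, Professional, Argumentative, Creative.
REL_SCORES = {
    "ChatGPT": ["3.34", "3.88", "3.96", "3.92"],
    "Flan-Alpaca": ["3.56", "3.54", "3.22", "3.70"],
    "Dolly-V2": ["3.54", "2.96", "3.66", "3.02"],
    "StableVicuna": ["3.54", "2.96", "3.30", "3.02"],
    "Flan-T5": ["2.64", "2.62", "2.54", "2.50"],
}


def average(scores):
    """Mean of decimal score strings, rounded half-up to two places (assumed rule)."""
    total = sum(Decimal(s) for s in scores)
    return (total / len(scores)).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


for model, scores in REL_SCORES.items():
    print(f"{model}: {average(scores)}")
```

Decimal arithmetic is used here so that values ending in 5 at the third decimal place round the same way as in the table, which binary floats do not guarantee.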

# Citation

Please consider citing the following article if you found our work useful:

```bibtex

@article{chia2023instructeval,

title={INSTRUCTEVAL: Towards Holistic Evaluation of Instruction-Tuned Large Language Models},

author={Yew Ken Chia and Pengfei Hong and Lidong Bing and Soujanya Poria},

journal={arXiv preprint arXiv:2306.04757},

year={2023}

}

```

|

Binaryy/travel_sample | 2023-06-09T11:53:34.000Z | [

"region:us"

] | Binaryy | null | null | null | 1 | 4 | ---

dataset_info:

features:

- name: instruction

dtype: string

- name: output

dtype: string

splits:

- name: train

num_bytes: 41063

num_examples: 20

download_size: 29530

dataset_size: 41063

---

# Dataset Card for "travel_sample"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

shawarmas/built-in-dictionary.txt | 2023-07-14T09:52:06.000Z | [

"region:us"

] | shawarmas | null | null | null | 1 | 4 | Entry not found |

polejowska/cd45rb | 2023-06-10T08:06:52.000Z | [

"region:us"

] | polejowska | null | null | null | 1 | 4 | ---

dataset_info:

features:

- name: image_id

dtype: int64

- name: image

dtype: image

- name: width

dtype: int32

- name: height

dtype: int32

- name: objects

list:

- name: category_id

dtype:

class_label:

names:

'0': leukocyte

- name: image_id

dtype: string

- name: id

dtype: int64

- name: area

dtype: int64

- name: bbox

sequence: float32

length: 4

- name: segmentation

list:

list: float32

- name: iscrowd

dtype: bool

splits:

- name: train

num_bytes: 35879463408.88

num_examples: 18421

- name: valid

num_bytes: 3475442128.938

num_examples: 1781

- name: test

num_bytes: 4074586864.944

num_examples: 2116

download_size: 43275144782

dataset_size: 43429492402.762

---

# Dataset Card for "cd45rb"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

eastwind/semeval-2016-absa-reviews-english-translated-resampled | 2023-06-11T10:17:43.000Z | [

"license:mit",

"region:us"

] | eastwind | null | null | null | 0 | 4 | ---

license: mit

---

# Dataset Card for Hotel Review ABSA (SemEval 2016 Translated from Arabic)

## Dataset Description

Derived from eastwind/semeval-2016-absa-reviews-english-translated-stanford-alpaca, by upsampling the neutral class and then resampling 3k examples from each class |

vietgpt/OSCAR-2201 | 2023-06-13T05:00:30.000Z | [

"region:us"

] | vietgpt | null | null | null | 0 | 4 | ---

dataset_info:

features:

- name: id

dtype: string

- name: text

dtype: string

- name: url

dtype: string

- name: date

dtype: string

- name: perplexity

dtype: float64

splits:

- name: train

num_bytes: 15978372237.047762

num_examples: 1700386

download_size: 6412125570

dataset_size: 15978372237.047762

---

# Dataset Card for "OSCAR-2201"

Num tokens: 2,682,681,285 tokens |

vietgpt/OSCAR-2109 | 2023-06-13T04:53:37.000Z | [

"region:us"

] | vietgpt | null | null | null | 0 | 4 | ---

dataset_info:

features:

- name: id

dtype: string

- name: text

dtype: string

- name: url

dtype: string

- name: date

dtype: string

- name: perplexity

dtype: float64

splits:

- name: train

num_bytes: 16802536783.756039

num_examples: 5098334

download_size: 8245526034

dataset_size: 16802536783.756039

---

# Dataset Card for "OSCAR-2109"

Num tokens: 2,884,522,212 tokens |

jondurbin/airoboros-gpt4-1.2 | 2023-06-22T15:00:42.000Z | [

"license:cc-by-nc-4.0",

"region:us"

] | jondurbin | null | null | null | 18 | 4 | ---

license: cc-by-nc-4.0

---

A continuation of [gpt4-1.1](https://huggingface.co/datasets/jondurbin/airoboros-gpt4-1.1), with:

* over 1000 new coding instructions, along with several hundred prompts using `PLAINFORMAT` to *hopefully* allow non-markdown/backtick/verbose code generation

* nearly 4000 additional math/reasoning instructions, this time using ORCA-style prompts such as "[prompt]. Explain like I'm five." or "Justify your logic."

* several hundred roleplaying examples

* additional misc/general data

### Usage and License Notices

All airoboros models and datasets are intended and licensed for research use only. I've used the 'cc-by-nc-4.0' license, but really it is subject to a custom/special license because:

- the base model is LLaMa, which has its own special research license

- the dataset(s) were generated with OpenAI (gpt-4 and/or gpt-3.5-turbo), which has a clause saying the data can't be used to create models that compete with OpenAI

So, to reiterate: this model (and datasets) cannot be used commercially. |

Ali-C137/Guanaco-oasst1_Originals_Arabic_pairs | 2023-06-13T17:48:47.000Z | [

"region:us"

] | Ali-C137 | null | null | null | 0 | 4 | ---

dataset_info:

features:

- name: text

dtype: string

- name: translated_text

dtype: string

splits:

- name: train

num_bytes: 38713258

num_examples: 10364

download_size: 20094755

dataset_size: 38713258

---

# Dataset Card for "Guanaco-oasst1_Originals_Arabic_pairs"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

HuggingFaceM4/VisDial_modif-Sample | 2023-06-13T17:52:38.000Z | [

"region:us"

] | HuggingFaceM4 | null | null | null | 1 | 4 | ---

dataset_info:

features:

- name: caption

dtype: string

- name: dialog

sequence:

sequence: string

- name: image_path

dtype: string

- name: global_image_id

dtype: string

- name: anns_id

dtype: string

- name: image

dtype: image

- name: question

dtype: string

- name: answer

sequence: string

- name: context

dtype: string

splits:

- name: train

num_bytes: 164280536.5563279

num_examples: 1000

- name: validation

num_bytes: 162457052.0348837

num_examples: 1000

- name: test

num_bytes: 162318287.0

num_examples: 1000

download_size: 458274072

dataset_size: 489055875.5912116

---

# Dataset Card for "VisDial_modif-Sample"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

agkphysics/AudioSet | 2023-07-13T12:25:32.000Z | [

"task_categories:audio-classification",

"license:cc-by-4.0",

"audio",

"region:us"

] | agkphysics | null | null | null | 1 | 4 | ---

license: cc-by-4.0

tags:

- audio

task_categories:

- audio-classification

---

# AudioSet data

This repository contains the balanced training set and evaluation set

of the [AudioSet data](

https://research.google.com/audioset/dataset/index.html). The YouTube

videos were downloaded in March 2023, and so not all of the original

audios are available.

Extracting the `*.tar` files will place audio clips into the `audio/`

directory. The distribution of audio clips is as follows:

- `audio/bal_train`: 18685 audio clips out of 22160 originally.

- `audio/eval`: 17142 audio clips out of 20371 originally.

Most audio is sampled at 48 kHz 24 bit, but about 10% is sampled at

44.1 kHz 24 bit. Audio files are stored in the FLAC format.
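
The extraction step described above can be sketched in Python. This is only an illustration under assumptions: the helper names `extract_archives` and `count_clips` are hypothetical, and the clips are assumed to carry a `.flac` extension under `audio/bal_train` and `audio/eval`.

```python
import tarfile
from pathlib import Path


def extract_archives(archive_dir, out_dir):
    """Extract every *.tar file found in archive_dir into out_dir."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    for archive in sorted(Path(archive_dir).glob("*.tar")):
        with tarfile.open(archive) as tar:
            tar.extractall(out_dir)


def count_clips(out_dir):
    """Count FLAC files per split under out_dir/audio/ (0 if a split is missing)."""
    audio_root = Path(out_dir) / "audio"
    counts = {}
    for split in ("bal_train", "eval"):
        split_dir = audio_root / split
        if split_dir.is_dir():
            counts[split] = sum(1 for _ in split_dir.rglob("*.flac"))
        else:
            counts[split] = 0
    return counts
```

Comparing the result of `count_clips` with the figures listed above is a quick way to confirm that all archives were downloaded and extracted.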

## Citation

```bibtex

@inproceedings{45857,

title = {Audio Set: An ontology and human-labeled dataset for audio events},

author = {Jort F. Gemmeke and Daniel P. W. Ellis and Dylan Freedman and Aren Jansen and Wade Lawrence and R. Channing Moore and Manoj Plakal and Marvin Ritter},

year = {2017},

booktitle = {Proc. IEEE ICASSP 2017},

address = {New Orleans, LA}

}

```

|

yyu/arxiv-attrprompt | 2023-09-13T20:57:33.000Z | [

"task_categories:text-classification",

"size_categories:10K<n<100K",

"language:en",

"license:apache-2.0",

"multilabel_classification",

"arxiv",

"scientific_papers",

"arxiv:2306.15895",

"region:us"

] | yyu | null | null | null | 1 | 4 | ---

license: apache-2.0

task_categories:

- text-classification

language:

- en

tags:

- multilabel_classification

- arxiv

- scientific_papers

size_categories:

- 10K<n<100K

version:

- V1

---

This is the data used in the paper [Large Language Model as Attributed Training Data Generator: A Tale of Diversity and Bias](https://github.com/yueyu1030/AttrPrompt).

See the paper: https://arxiv.org/abs/2306.15895 for details.

- `label.txt`: the label name for each class

- `train.jsonl`: The original training set.

- `valid.jsonl`: The original validation set.

- `test.jsonl`: The original test set.

- `simprompt.jsonl`: The training data generated by the simple prompt.

- `attrprompt.jsonl`: The training data generated by the attributed prompt.
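
Because this is a multi-label dataset, each record's `labels` field holds a list. A minimal sketch of reading JSON Lines records and turning the label lists into multi-hot vectors follows; it assumes the labels are integer class indices and uses a hypothetical `text` field and hypothetical sample records, since the card does not document the full schema.

```python
import json


def read_jsonl(lines):
    """Parse an iterable of JSON Lines strings into a list of dicts."""
    return [json.loads(line) for line in lines if line.strip()]


def to_multi_hot(labels, num_classes):
    """Turn a list of class indices into a 0/1 vector of length num_classes."""
    vector = [0] * num_classes
    for index in labels:
        vector[index] = 1
    return vector


# Hypothetical records; a real file would be read with open("train.jsonl").
sample_lines = [
    '{"text": "first abstract", "labels": [0, 2]}',
    '{"text": "second abstract", "labels": [1]}',
]
records = read_jsonl(sample_lines)
vectors = [to_multi_hot(r["labels"], num_classes=3) for r in records]
print(vectors)
```

In practice `num_classes` would be the number of lines in `label.txt`.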

**Note**: Unlike the other datasets, the `labels` for the training/validation/test data are all a *list* rather than an integer, because this is a multi-label classification dataset. |

KimuGenie/KLUE_mrc_negative_train | 2023-06-22T04:18:36.000Z | [

"task_categories:question-answering",

"language:ko",

"license:cc-by-4.0",

"arxiv:2105.09680",

"region:us"

] | KimuGenie | null | null | null | 0 | 4 | ---

dataset_info:

features:

- name: title

dtype: string

- name: context

dtype: string

- name: question

dtype: string

- name: id

dtype: string

- name: answers

struct:

- name: answer_start

sequence: int64

- name: text

sequence: string

- name: document_id

dtype: int64

- name: hard_negative_text

sequence: string

- name: hard_negative_document_id

sequence: int64

- name: hard_negative_title

sequence: string

splits:

- name: train

num_bytes: 205021808

num_examples: 3952

- name: validation

num_bytes: 12329366

num_examples: 240

download_size: 124133126

dataset_size: 217351174

license: cc-by-4.0

task_categories:

- question-answering

language:

- ko

---

# Dataset Card for "KLUE_mrc_negative_train"

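This dataset adds 20 hard negative texts per question to the KLUE MRC train dataset, retrieved with BM25.

The hard negative texts were found with BM25, and duplicate data was removed as far as possible through preprocessing.

Information on the BM25 retriever used is given below.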

|top-k|top-10|top-20|top-50|top-100|

|-|-|-|-|-|

|accuracy(%)|92.1|95.0|97.1|98.8|
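
The hard-negative mining described above can be sketched as follows. This is not the author's code: it is a small from-scratch Okapi BM25 that assumes whitespace tokenization and the common defaults `k1=1.5` and `b=0.75`, none of which the card specifies; `bm25_scores` and `hard_negatives` are hypothetical names.

```python
import math
from collections import Counter


def bm25_scores(query, documents, k1=1.5, b=0.75):
    """Score each document against the query with Okapi BM25 (whitespace tokens)."""
    tokenized = [doc.split() for doc in documents]
    avg_len = sum(len(tokens) for tokens in tokenized) / len(tokenized) or 1.0
    # Document frequency: number of documents containing each term.
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))
    n_docs = len(tokenized)
    scores = []
    for tokens in tokenized:
        term_freq = Counter(tokens)
        score = 0.0
        for term in query.split():
            if term not in term_freq:
                continue
            idf = math.log(1 + (n_docs - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5))
            tf = term_freq[term]
            score += idf * tf * (k1 + 1) / (tf + k1 * (1 - b + b * len(tokens) / avg_len))
        scores.append(score)
    return scores


def hard_negatives(query, documents, positive_index, top_k=20):
    """Return indices of the top_k highest-scoring documents other than the positive."""
    scores = bm25_scores(query, documents)
    ranked = sorted(range(len(documents)), key=lambda i: scores[i], reverse=True)
    return [i for i in ranked if i != positive_index][:top_k]
```

Passages that score highly for a question but are not its gold context make useful hard negatives because they share vocabulary with the question without containing the answer. Korean text would normally need a morphological tokenizer rather than whitespace splitting.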

# Citation

```

@misc{park2021klue,

title={KLUE: Korean Language Understanding Evaluation},

author={Sungjoon Park and Jihyung Moon and Sungdong Kim and Won Ik Cho and Jiyoon Han and Jangwon Park and Chisung Song and Junseong Kim and Yongsook Song and Taehwan Oh and Joohong Lee and Juhyun Oh and Sungwon Lyu and Younghoon Jeong and Inkwon Lee and Sangwoo Seo and Dongjun Lee and Hyunwoo Kim and Myeonghwa Lee and Seongbo Jang and Seungwon Do and Sunkyoung Kim and Kyungtae Lim and Jongwon Lee and Kyumin Park and Jamin Shin and Seonghyun Kim and Lucy Park and Alice Oh and Jungwoo Ha and Kyunghyun Cho},

year={2021},

eprint={2105.09680},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

maximoss/rte3-french | 2023-09-08T08:57:36.000Z | [

"task_categories:text-classification",

"size_categories:1K<n<10K",

"language:fr",

"license:cc-by-4.0",

"region:us"

] | maximoss | null | null | null | 0 | 4 | ---

license: cc-by-4.0

task_categories:

- text-classification

language:

- fr

size_categories:

- 1K<n<10K

---

# Dataset Card for RTE3-FR

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

The RTE3-FR dataset is the French translation of the Textual Entailment English dataset used in the [RTE-3 Challenge](https://nlp.stanford.edu/RTE3-pilot/).

Like its English counterpart, the French RTE-3 dataset is composed of a development set and a test set, each containing 800 T/H pairs.

All T/H pairs were manually translated into French and proofread.

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

- `id`: Index number.

- `language`: The language of the concerned pair of sentences.

- `premise`: The translated premise in the target language.

- `hypothesis`: The translated hypothesis in the target language.

- `label`: The classification label, with possible values 0 (`entailment`), 1 (`neutral`), 2 (`contradiction`).

- `label_text`: The classification label, with possible values `entailment` (0), `neutral` (1), `contradiction` (2).

- `task`: The particular NLP task that the data was drawn from (IE, IR, QA and SUM).

- `length`: The length of the text of the pair.
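
The relationship between the `label` and `label_text` fields can be written as a lookup table. The sketch below uses only the values listed above; the example row and the `is_consistent` helper are hypothetical.

```python
# Mapping between the integer `label` and the string `label_text` fields,
# as listed in the field descriptions above.
ID2LABEL = {0: "entailment", 1: "neutral", 2: "contradiction"}
LABEL2ID = {name: idx for idx, name in ID2LABEL.items()}


def is_consistent(example):
    """Check that an example's `label` and `label_text` agree."""
    return ID2LABEL[example["label"]] == example["label_text"]


# Hypothetical example row, for illustration only.
row = {"id": 1, "label": 2, "label_text": "contradiction"}
print(is_consistent(row))
```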

### Data Splits

| name |entailment|neutral|contradiction|

|-------------|---------:|------:|------------:|

| dev | 412 | 299 | 89 |

| test | 410 | 318 | 72 |

| name |short|long|

|-------------|----:|---:|

| dev | 665 | 135|

| test | 683 | 117|

| name | IE| IR| QA|SUM|

|-------------|--:|--:|--:|--:|

| dev |200|200|200|200|

| test |200|200|200|200|
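
As a quick sanity check, each of the three breakdowns above should add up to the 800 T/H pairs per split mentioned in the summary. A small sketch, with the counts copied from the tables and hypothetical variable names:

```python
# Counts copied from the three split tables above.
BY_LABEL = {"dev": (412, 299, 89), "test": (410, 318, 72)}
BY_LENGTH = {"dev": (665, 135), "test": (683, 117)}
BY_TASK = {"dev": (200, 200, 200, 200), "test": (200, 200, 200, 200)}

for split in ("dev", "test"):
    totals = {sum(BY_LABEL[split]), sum(BY_LENGTH[split]), sum(BY_TASK[split])}
    print(split, totals)
```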

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

TBA

### Acknowledgements

This work was supported by the Defence Innovation Agency (AID) of the Directorate General of Armament (DGA) of the French Ministry of Armed Forces, and by the ICO, _Institut Cybersécurité Occitanie_, funded by Région Occitanie, France.

### Contributions

[More Information Needed] |

HausaNLP/Naija-Lex | 2023-06-18T16:13:08.000Z | [

"multilinguality:monolingual",

"multilinguality:multilingual",

"language:hau",

"language:ibo",

"language:yor",

"license:cc-by-nc-sa-4.0",

"sentiment analysis, Twitter, tweets",

"stopwords",

"region:us"

] | HausaNLP | Naija-Stopwords is a part of the Naija-Senti project. It is a list of collected stopwords from the four most widely spoken languages in Nigeria — Hausa, Igbo, Nigerian-Pidgin, and Yorùbá. | @inproceedings{muhammad-etal-2022-naijasenti,

title = "{N}aija{S}enti: A {N}igerian {T}witter Sentiment Corpus for Multilingual Sentiment Analysis",

author = "Muhammad, Shamsuddeen Hassan and

Adelani, David Ifeoluwa and

Ruder, Sebastian and

Ahmad, Ibrahim Sa{'}id and

Abdulmumin, Idris and

Bello, Bello Shehu and

Choudhury, Monojit and

Emezue, Chris Chinenye and

Abdullahi, Saheed Salahudeen and

Aremu, Anuoluwapo and

Jorge, Al{\'\i}pio and

Brazdil, Pavel",

booktitle = "Proceedings of the Thirteenth Language Resources and Evaluation Conference",

month = jun,

year = "2022",

address = "Marseille, France",

publisher = "European Language Resources Association",

url = "https://aclanthology.org/2022.lrec-1.63",

pages = "590--602",

} | null | 0 | 4 | ---

license: cc-by-nc-sa-4.0

tags:

- sentiment analysis, Twitter, tweets

- stopwords

multilinguality:

- monolingual

- multilingual

language:

- hau

- ibo

- yor

pretty_name: NaijaStopwords

---

# Naija-Lexicons

Naija-Lexicons is a part of the [Naija-Senti](https://huggingface.co/datasets/HausaNLP/NaijaSenti-Twitter) project. It is a list of collected stopwords from the four most widely spoken languages in Nigeria — Hausa, Igbo, Nigerian-Pidgin, and Yorùbá.

--------------------------------------------------------------------------------

## Dataset Description

- **Homepage:** https://github.com/hausanlp/NaijaSenti/tree/main/data/stopwords

- **Repository:** [GitHub](https://github.com/hausanlp/NaijaSenti/tree/main/data/stopwords)

- **Paper:** [NaijaSenti: A Nigerian Twitter Sentiment Corpus for Multilingual Sentiment Analysis](https://aclanthology.org/2022.lrec-1.63/)

- **Leaderboard:** N/A

- **Point of Contact:** [Shamsuddeen Hassan Muhammad](shamsuddeen2004@gmail.com)

### Languages

Three indigenous Nigerian languages:

* Hausa (hau)

* Igbo (ibo)

* Yoruba (yor)

## Dataset Structure

### Data Instances

A list of lexicon instances in each of the 3 languages, with their sentiment labels.

```

{

"word": "string",

"label": "string"

}

```

### How to use it

```python

from datasets import load_dataset

# Load a specific language (e.g., Hausa). This downloads the manually created and translated lexicons.

ds = load_dataset("HausaNLP/Naija-Lexicons", "hau")

# You may also specify the split you want to download.

ds = load_dataset("HausaNLP/Naija-Lexicons", "hau", split="manual")

```

## Additional Information

### Dataset Curators

* Shamsuddeen Hassan Muhammad

* Idris Abdulmumin

* Ibrahim Said Ahmad

* Bello Shehu Bello

### Licensing Information

The Naija-Lexicons dataset is licensed under the Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License (CC BY-NC-SA 4.0).

### Citation Information

```

@inproceedings{muhammad-etal-2022-naijasenti,

title = "{N}aija{S}enti: A {N}igerian {T}witter Sentiment Corpus for Multilingual Sentiment Analysis",

author = "Muhammad, Shamsuddeen Hassan and

Adelani, David Ifeoluwa and

Ruder, Sebastian and

Ahmad, Ibrahim Sa{'}id and

Abdulmumin, Idris and

Bello, Bello Shehu and

Choudhury, Monojit and

Emezue, Chris Chinenye and

Abdullahi, Saheed Salahudeen and

Aremu, Anuoluwapo and

Jorge, Al{\'\i}pio and

Brazdil, Pavel",

booktitle = "Proceedings of the Thirteenth Language Resources and Evaluation Conference",

month = jun,

year = "2022",

address = "Marseille, France",

publisher = "European Language Resources Association",

url = "https://aclanthology.org/2022.lrec-1.63",

pages = "590--602",

}

```

### Contributions

> This work was carried out with support from Lacuna Fund, an initiative co-founded by The Rockefeller Foundation, Google.org, and Canada’s International Development Research Centre. The views expressed herein do not necessarily represent those of Lacuna Fund, its Steering Committee, its funders, or Meridian Institute. |

winglian/visual-novels-json | 2023-06-17T03:08:49.000Z | [

"region:us"

] | winglian | null | null | null | 0 | 4 | Entry not found |

renumics/beans-outlier | 2023-06-30T20:09:45.000Z | [

"task_categories:image-classification",

"task_ids:multi-class-image-classification",

"annotations_creators:expert-generated",

"language_creators:expert-generated",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:extended",

"language:en",

"license:mit",

"region:us"

] | renumics | null | null | null | 0 | 4 | ---

annotations_creators:

- expert-generated

language_creators:

- expert-generated

language:

- en

license:

- mit

multilinguality:

- monolingual

size_categories:

- 1K<n<10K

source_datasets:

- extended

task_categories:

- image-classification

task_ids:

- multi-class-image-classification

pretty_name: Beans

dataset_info:

features:

- name: image_file_path

dtype: string

- name: image

dtype: image

- name: labels

dtype:

class_label:

names:

'0': angular_leaf_spot

'1': bean_rust

'2': healthy

- name: embedding_foundation

sequence: float32

- name: embedding_ft

sequence: float32

- name: outlier_score_ft

dtype: float64

- name: outlier_score_foundation

dtype: float64

- name: nn_image

dtype: image

splits:

- name: train

num_bytes: 293531811.754

num_examples: 1034

download_size: 0

dataset_size: 293531811.754

---

# Dataset Card for "beans-outlier"

📚 This dataset is an enhanced version of the [ibean project of the AIR lab](https://github.com/AI-Lab-Makerere/ibean/).

The workflow is described in the medium article: [Changes of Embeddings during Fine-Tuning of Transformers](https://medium.com/@markus.stoll/changes-of-embeddings-during-fine-tuning-c22aa1615921).

## Explore the Dataset

The open source data curation tool [Renumics Spotlight](https://github.com/Renumics/spotlight) allows you to explore this dataset. You can find a Hugging Face Space running Spotlight with this dataset here: <https://huggingface.co/spaces/renumics/beans-outlier>

Or you can explore it locally:

```python

# Install the dependencies first (in a notebook: !pip install renumics-spotlight datasets)

from renumics import spotlight

import datasets

ds = datasets.load_dataset("renumics/beans-outlier", split="train")

df = ds.to_pandas()

df["label_str"] = df["labels"].apply(lambda x: ds.features["labels"].int2str(x))

dtypes = {

"nn_image": spotlight.Image,

"image": spotlight.Image,

"embedding_ft": spotlight.Embedding,

"embedding_foundation": spotlight.Embedding,

}

spotlight.show(

df,

dtype=dtypes,

layout="https://spotlight.renumics.com/resources/layout_pre_post_ft.json",

)

``` |

sert121/SpiderSQL | 2023-06-19T18:09:01.000Z | [

"license:mit",

"region:us"

] | sert121 | null | null | null | 0 | 4 | ---

license: mit

---

|

sadmoseby/oassist_transformed | 2023-06-19T20:18:47.000Z | [

"region:us"

] | sadmoseby | null | null | null | 0 | 4 | Entry not found |

AhmedSSoliman/CodeSearchNet | 2023-06-20T09:17:15.000Z | [

"license:ms-pl",

"region:us"

] | AhmedSSoliman | null | null | null | 0 | 4 | ---

license: ms-pl

---

|

timpal0l/scandisent | 2023-06-21T13:39:40.000Z | [

"task_categories:text-classification",

"size_categories:1K<n<10K",

"language:sv",

"language:no",

"language:da",

"language:en",

"language:fi",

"license:openrail",

"arxiv:2104.10441",

"region:us"

] | timpal0l | null | null | null | 1 | 4 | ---

license: openrail

task_categories:

- text-classification

language:

- sv

- no

- da

- en

- fi

pretty_name: ScandiSent

size_categories:

- 1K<n<10K

---

# Dataset Card for ScandiSent

## Dataset Description

- **Homepage:**

- **Repository: https://github.com/timpal0l/ScandiSent**

- **Paper: https://arxiv.org/pdf/2104.10441.pdf**

- **Leaderboard:**

- **Point of Contact: Tim Isbister**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

IIC/livingner3 | 2023-06-21T15:31:48.000Z | [

"task_categories:text-classification",

"task_ids:multi-label-classification",

"multilinguality:monolingual",

"language:es",

"license:cc-by-4.0",

"biomedical",

"clinical",

"spanish",

"region:us"

] | IIC | null | null | null | 0 | 4 | ---

language:

- es

tags:

- biomedical

- clinical

- spanish

multilinguality:

- monolingual

task_categories:

- text-classification

task_ids:

- multi-label-classification

license:

- cc-by-4.0

pretty_name: LivingNER3

train-eval-index:

- task: text-classification

task_id: multi_label_classification

splits:

train_split: train

eval_split: test

metrics:

- type: f1

name: f1

---

# LivingNER

This is a third-party re-upload of the [LivingNER](https://temu.bsc.es/livingner/) task 3 dataset.

It contains only the task 3 data for Spanish. It includes neither the multilingual data nor the background data.

This dataset is part of a benchmark in the paper [TODO](TODO).

### Citation Information

```bibtex

TODO

```

### Citation Information of the original dataset

```bibtex

@article{amiranda2022nlp,

title={Mention detection, normalization \& classification of species, pathogens, humans and food in clinical documents: Overview of LivingNER shared task and resources},

author={Miranda-Escalada, Antonio and Farr{\'e}-Maduell, Eul{\`a}lia and Lima-L{\'o}pez, Salvador and Estrada, Darryl and Gasc{\'o}, Luis and Krallinger, Martin},

journal = {Procesamiento del Lenguaje Natural},

year={2022}

}

```

|

Jingmiao/PUZZLEQA | 2023-06-28T02:56:19.000Z | [

"language:en",

"license:apache-2.0",

"arxiv:2306.12255",

"region:us"

] | Jingmiao | null | null | null | 0 | 4 | ---

language:

- en

license: apache-2.0

---

### Acknowledgements

PUZZLEQA is scraped from the [NPR Sunday Puzzle Official Website](https://www.npr.org/series/4473090/sunday-puzzle) and [NPR Puzzle Synopsis](https://groups.google.com/g/nprpuzzle), a mailing list run by a group of fans that distributed the questions and answers for each week’s puzzle.

The authors of the dataset cleaned the data and created multiple-choice questions from the questions and answers.

### Creation

The Multiple Choice Dataset is generated from PUZZLEQA dataset using the following algorithm.

1. Read the fr_big_exp.tsv.tsv file

2. Group rule-question-answer triples in a given Sunday together (so the rules of each question will be the same)

3. For each question, randomly select three other answers from answers on the same Sunday. Shuffle 3 selected answers with the correct answer for the given question to obtain 4 choices for this question.

4. Identify the correct answer for the given question as the "gold" answer.
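
Steps 2 to 4 above can be sketched as follows. This is an illustration, not the authors' script: the `(week, rule, question, answer)` tuple layout, the `build_multiple_choice` name, the fixed random seed, and the choice to skip weeks with fewer than three other answers are all assumptions. Reading the TSV file (step 1) is left out because its column layout is not documented here.

```python
import random
from collections import defaultdict


def build_multiple_choice(triples, seed=0):
    """Build 4-way multiple-choice items from (week, rule, question, answer) tuples."""
    rng = random.Random(seed)
    # Step 2: group the rows that belong to the same Sunday.
    by_week = defaultdict(list)
    for week, rule, question, answer in triples:
        by_week[week].append((rule, question, answer))

    items = []
    for week, rows in by_week.items():
        for rule, question, answer in rows:
            # Step 3: distractors are other distinct answers from the same Sunday.
            pool = sorted({a for _, _, a in rows} - {answer})
            if len(pool) < 3:
                continue  # assumed handling: not enough answers for 4 choices
            choices = rng.sample(pool, 3) + [answer]
            rng.shuffle(choices)
            # Step 4: record the correct answer as the gold answer.
            items.append({"week": week, "rule": rule, "question": question,
                          "choices": choices, "gold": answer})
    return items
```

Drawing distractors from the same Sunday keeps all four choices compatible with that week's rule, so the question cannot be answered by spotting the only option that fits the puzzle format.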

`Recent.tsv` is the dataset based on the NPR Sunday Puzzles from 2023.

# Citation

@inproceedings{zhao2023solving,

title={Solving and Generating NPR Sunday Puzzles with Large Language Models},

author={Jingmiao Zhao and Carolyn Jane Anderson},

year={2023},

eprint={2306.12255},

archivePrefix={arXiv},

primaryClass={cs.CL}

} |

ChanceFocus/flare-ner | 2023-07-27T00:02:41.000Z | [

"license:mit",

"region:us"

] | ChanceFocus | null | null | null | 0 | 4 | ---

license: mit

dataset_info:

features:

- name: query

dtype: string

- name: answer

dtype: string

- name: label

sequence: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 470523

num_examples: 408

- name: valid

num_bytes: 101644

num_examples: 103

- name: test

num_bytes: 156592

num_examples: 98

download_size: 224350

dataset_size: 728759

---

|

theonlydo/indonesia-slang | 2023-07-06T18:25:43.000Z | [

"region:us"

] | theonlydo | null | null | null | 0 | 4 | |

atom-in-the-universe/fanfics-10k-10k | 2023-06-23T09:28:54.000Z | [

"region:us"

] | atom-in-the-universe | null | null | null | 0 | 4 | Entry not found |

caldervf/cicero_dataset_with_embeddings_and_faiss_index | 2023-06-24T08:15:45.000Z | [

"region:us"

] | caldervf | null | null | null | 0 | 4 | ---

dataset_info:

features:

- name: _id

dtype: string

- name: title

dtype: string

- name: content

dtype: string

- name: summary

dtype: string

- name: content_filtered

dtype: string

- name: embeddings

sequence: float32

splits:

- name: train

num_bytes: 19279400

num_examples: 1143

download_size: 13285598

dataset_size: 19279400

---

# Dataset Card for "cicero_dataset_with_embeddings_and_faiss_index"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

ChanceFocus/flare-finqa | 2023-08-18T20:03:26.000Z | [

"region:us"

] | ChanceFocus | null | null | null | 2 | 4 | ---

dataset_info:

features:

- name: id

dtype: string

- name: query

dtype: string

- name: answer

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 27056024

num_examples: 6251

- name: valid

num_bytes: 3764872

num_examples: 883

- name: test

num_bytes: 4846110

num_examples: 1147

download_size: 0

dataset_size: 35667006

---

# Dataset Card for "flare-finqa"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

layoric/labeled-multiple-choice-explained | 2023-06-26T00:10:58.000Z | [

"size_categories:1K<n<10K",

"language:en",

"license:unknown",

"region:us"

] | layoric | null | null | null | 0 | 4 | ---

license: unknown

language:

- en

size_categories:

- 1K<n<10K

---

This dataset is based on `under-tree/labeled-multiple-choice`, with explanations for each answer option generated by GPT-3.5-turbo.

This was a very basic attempt to follow the Orca paper approach of a 'teacher' model to provide more context to some trivia questions.

Questions were deduplicated based on the question text.
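
The deduplication step is not described in more detail, so the following is only a minimal sketch of one way to do it. The `question` field name and the whitespace/case normalization are assumptions made for illustration, not the script that was actually used.

```python
def deduplicate_by_question(rows):
    """Keep the first row seen for each distinct question text."""
    seen = set()
    unique_rows = []
    for row in rows:
        # Assumed normalization: trim whitespace and ignore case.
        key = row["question"].strip().lower()
        if key not in seen:
            seen.add(key)
            unique_rows.append(row)
    return unique_rows


# Hypothetical rows, only to exercise the function.
rows = [
    {"question": "What is the capital of France?", "answer": "Paris"},
    {"question": "what is the capital of France? ", "answer": "Paris"},
    {"question": "What is 2 + 2?", "answer": "4"},
]
unique = deduplicate_by_question(rows)
print(len(unique))
```

Keeping the first occurrence makes the result deterministic for a given input order.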

I used the python library `guidance` to help generate the prompts. Below is the prompt template I used.

```

{{#role 'system'~}}

You are an AI assistant that helps people find information. User will give you a question. Your task is to answer as faithfully as you can, and most importantly, provide explanation why incorrect answers are not correct. While answering think step-by-step and justify your answer.

{{~/role}}

{{#role 'user'~}}

USER:

Topic: {{topic}}

Question: {{question}}

### Answer

The correct answer is:

{{answer_key}}). {{answer}}

### Explanation:

Let's break it down step by step.

1. Read the question and options carefully.

2. Identify the differences between the options.

3. Determine which options are not logical based on the difference.

4. Go through each incorrect answer providing an explanation why it is incorrect.

{{~/role}}

{{#role 'assistant'~}}

{{~gen 'explanation'}}

{{~/role}}

``` |

FreedomIntelligence/alpaca-gpt4-french | 2023-08-06T08:09:08.000Z | [

"license:apache-2.0",

"region:us"

] | FreedomIntelligence | null | null | null | 0 | 4 | ---

license: apache-2.0

---

The dataset is used in the research related to [MultilingualSIFT](https://github.com/FreedomIntelligence/MultilingualSIFT). |

wisenut-nlp-team/namu | 2023-07-10T07:46:04.000Z | [

"license:cc-by-4.0",

"region:us"

] | wisenut-nlp-team | null | null | null | 0 | 4 | ---

license: cc-by-4.0

dataset_info:

features:

- name: title

dtype: string

- name: text

dtype: string

- name: contributors

sequence: string

- name: id

dtype: string

splits:

- name: train

num_bytes: 8757569508

num_examples: 867023

download_size: 4782924595

dataset_size: 8757569508

---

```

from datasets import load_dataset

raw_dataset = load_dataset(

"wisenut-nlp-team/namu",

"raw",

use_auth_token="<your personal/api token>"

)

processed_dataset = load_dataset(

"wisenut-nlp-team/namu",

"processed",

use_auth_token="<your personal/api token>"

)

```

|

barbaroo/Faroese_BLARK_small | 2023-08-07T14:47:31.000Z | [

"task_categories:text-generation",

"language:fo",

"region:us"

] | barbaroo | null | null | null | 0 | 4 | ---

task_categories:

- text-generation

language:

- fo

---

# Dataset Card for Faroese_BLARK_small

## Dataset Description

All sentences are retrieved from:

- **Paper:**

Annika Simonsen, Sandra Saxov Lamhauge, Iben Nyholm Debess, and Peter Juel Henrichsen. 2022. Creating a Basic Language Resource Kit for Faroese. In Proceedings of the Thirteenth Language Resources and Evaluation Conference, pages 4637–4643, Marseille, France. European Language Resources Association.

### Dataset Summary

This dataset is a filtered version of the corpus (35.6 M tokens) first published as BLARK - Basic Language Resource Kit for Faroese.

The pre-processing and filtering steps include:

- Normalize format to utf-8

- Remove shorter sentences (less than 10 units, where units are separated by spaces)

- Remove archaic Faroese

- Remove separators ('\r', '\t', '\n')

- Remove non standard formatting. Examples: '§§', ' | ', '**', ' • ', ' • ', '.- ', ': ?', '.?', '\xa0', '\xad', '_ _', '. .', etc.

- Remove (most) numbered lists, of formats: 1), 1:, Stk. 1 etc.

- Replace arbitrary number of question/exclamation marks and full-stops with 1. Example: !!!!!! -> !

- Remove websites that start with http

- Remove sentences with little or no linguistic content. In practice: all sentences where more than half of the characters (excluding spaces) are digits, punctuation marks, or upper-case letters (acronyms and initials)

- Remove duplicates
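
A few of the steps above can be sketched in Python. This is an illustrative approximation, not the authors' pipeline: the function names are made up, the order of the steps is assumed, and the "little linguistic content" rule is implemented under one possible reading (digits, non-alphanumeric characters, and upper-case letters all count as non-linguistic). Archaic-text removal and list-marker removal are left out.

```python
import re

MIN_UNITS = 10  # sentences with fewer space-separated units are dropped


def collapse_punctuation(sentence):
    """Collapse runs of the same mark, e.g. '!!!!!!' -> '!'."""
    return re.sub(r"([!?.])\1+", r"\1", sentence)


def low_linguistic_content(sentence):
    """True if more than half of the non-space characters are digits,
    punctuation, or upper-case letters (assumed reading of the rule)."""
    chars = [c for c in sentence if not c.isspace()]
    if not chars:
        return True
    noisy = sum(1 for c in chars if c.isdigit() or c.isupper() or not c.isalnum())
    return noisy > len(chars) / 2


def filter_sentences(sentences):
    kept, seen = [], set()
    for sentence in sentences:
        s = re.sub(r"[\r\t\n]", " ", sentence)  # remove separators
        s = re.sub(r"http\S+", "", s)           # remove URLs starting with http
        s = collapse_punctuation(s)
        s = " ".join(s.split())                 # normalize spacing
        if len(s.split(" ")) < MIN_UNITS:
            continue
        if low_linguistic_content(s):
            continue
        if s in seen:                           # remove duplicates
            continue
        seen.add(s)
        kept.append(s)
    return kept


# English placeholder sentences, used only to exercise the filters.
sample = [
    "This is a placeholder sentence with clearly more than ten separate units in it!!!",
    "This is a placeholder sentence with clearly more than ten separate units in it!",
    "Too short.",
    "ABC 123 DEF 456 GHI 789 JKL 012 MNO 345 PQR 678",
]
filtered = filter_sentences(sample)
print(filtered)
```

Deduplication runs last so that sentences differing only in repeated punctuation or spacing are treated as the same sentence.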

### Supported Tasks and Leaderboards

Suitable for MLM and CLM

|

anzorq/kbd_speech | 2023-10-08T18:12:13.000Z | [

"task_categories:automatic-speech-recognition",

"task_categories:text-to-speech",

"language:kbd",

"region:us"

] | anzorq | null | null | null | 1 | 4 | ---

language:

- kbd

task_categories:

- automatic-speech-recognition

- text-to-speech

dataset_info:

features:

- name: audio

dtype: audio

- name: transcription

dtype: string

- name: gender

dtype: string

- name: country

dtype: string

- name: speaker_id

dtype: int64

splits:

- name: train

num_bytes: 193658385.11

num_examples: 20555

download_size: 518811329

dataset_size: 193658385.11

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "kbd_speech"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

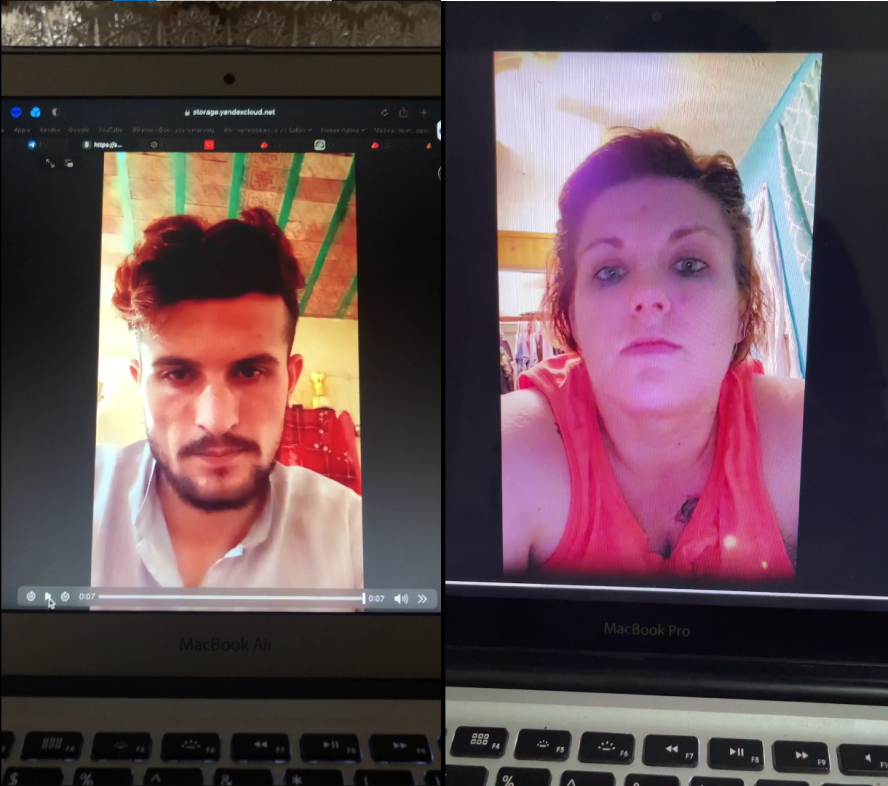

TrainingDataPro/MacBook-Attacks-Dataset | 2023-09-14T16:54:13.000Z | [

"task_categories:video-classification",

"language:en",

"license:cc-by-nc-nd-4.0",

"finance",

"region:us"

] | TrainingDataPro | The dataset consists of videos of replay attacks played on different

models of MacBooks. The dataset solves tasks in the field of anti-spoofing and

it is useful for business and safety systems.

The dataset includes: **replay attacks** - videos of real people played on

a computer and filmed on the phone. | @InProceedings{huggingface:dataset,

title = {MacBook-Attacks-Dataset},

author = {TrainingDataPro},

year = {2023}

} | null | 1 | 4 | ---

license: cc-by-nc-nd-4.0

task_categories:

- video-classification

language:

- en

tags:

- finance

dataset_info:

features:

- name: file

dtype: string

- name: phone

dtype: string

- name: computer

dtype: string

- name: gender

dtype: string

- name: age

dtype: int16

- name: country

dtype: string

splits:

- name: train

num_bytes: 1418

num_examples: 24

download_size: 573934283

dataset_size: 1418

---

# Antispoofing Replay Dataset

The dataset consists of videos of replay attacks played on different models of MacBooks. It targets anti-spoofing tasks and is useful for business and safety systems.

The dataset includes: **replay attacks** - videos of real people played on a computer and filmed on the phone.

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=MacBook-Attacks-Dataset) to discuss your requirements, learn about the price and buy the dataset.

# Content

The folder "attacks" includes videos of replay attacks

### Models of MacBooks in the dataset:

- MacBook 13

- MacBook Air

- MacBook Air 7

- MacBook Air 11

- MacBook Air 13

- MacBook Air M1

- MacBook Pro 12

- MacBook Pro 13

### File with the extension .csv

includes the following information for each media file:

- **file**: link to access the replay video,

- **phone**: the device used to capture the replay video,

- **computer**: the device used to play the video,

- **gender**: gender of a person in the video,

- **age**: age of the person in the video,

- **country**: country of the person

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=MacBook-Attacks-Dataset) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** |

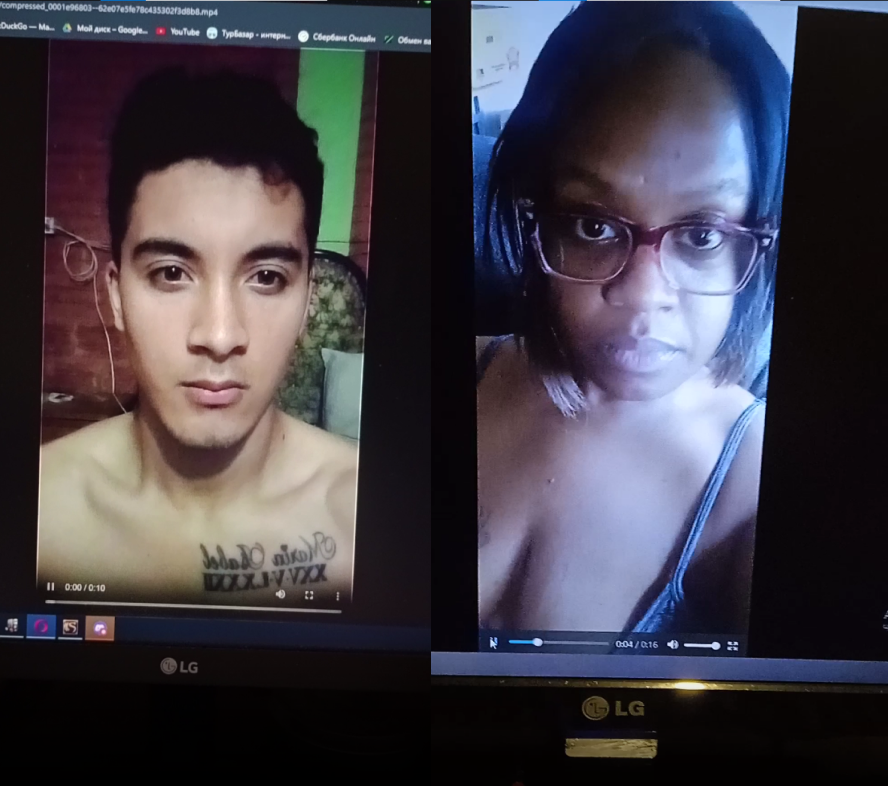

TrainingDataPro/monitors-replay-attacks-dataset | 2023-09-14T16:54:44.000Z | [

"task_categories:video-classification",

"language:en",

"license:cc-by-nc-nd-4.0",

"legal",

"region:us"

] | TrainingDataPro | The dataset consists of videos of replay attacks played on different models of

computers. The dataset solves tasks in the field of anti-spoofing and it is

useful for business and safety systems.

The dataset includes: **replay attacks** - videos of real people played

on a computer and filmed on the phone. | @InProceedings{huggingface:dataset,

title = {monitors-replay-attacks-dataset},

author = {TrainingDataPro},

year = {2023}

} | null | 1 | 4 | ---

license: cc-by-nc-nd-4.0

task_categories:

- video-classification

language:

- en

tags:

- legal

dataset_info:

features:

- name: file

dtype: string

- name: phone

dtype: string

- name: computer

dtype: string

- name: gender

dtype: string

- name: age

dtype: int16

- name: country

dtype: string

splits:

- name: train

num_bytes: 588

num_examples: 10

download_size: 342902185

dataset_size: 588

---

# Monitors Replay Attacks Dataset

The dataset consists of videos of replay attacks played on different models of computers. It targets anti-spoofing tasks and is useful for business and safety systems.

The dataset includes: **replay attacks** - videos of real people played on a computer and filmed on the phone.

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=monitors-replay-attacks-dataset) to discuss your requirements, learn about the price and buy the dataset.

# Content

The folder "attacks" includes videos of replay attacks

### Computer companies in the dataset:

- Dell

- LG

- ASUS

- HP

- Redmi

- AOC

- Samsung

### File with the extension .csv

includes the following information for each media file:

- **file**: link to access the replay video,

- **phone**: the device used to capture the replay video,

- **computer**: the device used to play the video,

- **gender**: gender of a person in the video,

- **age**: age of the person in the video,

- **country**: country of the person

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=monitors-replay-attacks-dataset) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** |

ammarnasr/the-stack-java-clean | 2023-08-14T21:18:42.000Z | [

"task_categories:text-generation",

"size_categories:1M<n<10M",

"language:code",

"license:openrail",

"code",

"region:us"

] | ammarnasr | null | null | null | 0 | 4 | ---

license: openrail

dataset_info:

features:

- name: hexsha

dtype: string

- name: size

dtype: int64

- name: content

dtype: string

- name: avg_line_length

dtype: float64

- name: max_line_length

dtype: int64

- name: alphanum_fraction

dtype: float64

splits:

- name: train

num_bytes: 3582248477.9086223

num_examples: 806789

- name: test

num_bytes: 394048264.9973618

num_examples: 88747

- name: valid

num_bytes: 3982797.09401595

num_examples: 897

download_size: 1323156008

dataset_size: 3980279540

task_categories:

- text-generation

language:

- code

tags:

- code

pretty_name: TheStack-Java

size_categories:

- 1M<n<10M

---

## Dataset 1: TheStack - Java - Cleaned

**Description**: This dataset is drawn from TheStack Corpus, an open-source code dataset with over 3TB of GitHub data covering 48 programming languages. We selected a small portion of this dataset to optimize smaller language models for Java, a popular statically typed language.

**Target Language**: Java

**Dataset Size**:

- Training: 900,000 files

- Validation: 50,000 files

- Test: 50,000 files

**Preprocessing**:

1. Selected Java as the target language due to its popularity on GitHub.

2. Filtered out files with average line length > 100 characters, maximum line length > 1000 characters, and alphabet ratio < 25%.

3. Split files into 90% training, 5% validation, and 5% test sets.
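
The filtering thresholds and split ratios above can be sketched as follows. This is a hedged illustration rather than the script used to build the dataset: the helper names are hypothetical, "alphabet ratio" is assumed to mean the fraction of alphabetic characters in the file, and the shuffle seed is arbitrary.

```python
import random


def file_stats(content):
    """Compute the three statistics used for filtering."""
    lines = content.splitlines() or [""]
    lengths = [len(line) for line in lines]
    alpha = sum(1 for c in content if c.isalpha())
    return {
        "avg_line_length": sum(lengths) / len(lengths),
        "max_line_length": max(lengths),
        # Assumption: ratio of alphabetic characters to all characters.
        "alpha_fraction": alpha / len(content) if content else 0.0,
    }


def keep_file(content):
    """Apply the thresholds from step 2."""
    stats = file_stats(content)
    return (
        stats["avg_line_length"] <= 100
        and stats["max_line_length"] <= 1000
        and stats["alpha_fraction"] >= 0.25
    )


def split_files(files, seed=0):
    """Shuffle, then split 90% / 5% / 5% into train, validation, test."""
    shuffled = list(files)
    random.Random(seed).shuffle(shuffled)
    n_train = len(shuffled) * 90 // 100
    n_valid = len(shuffled) * 5 // 100
    train = shuffled[:n_train]
    valid = shuffled[n_train:n_train + n_valid]
    test = shuffled[n_train + n_valid:]
    return train, valid, test


good = "public class A {\n    int x = 1;\n}\n"
too_long = "x" * 1001            # one line longer than 1000 characters
mostly_digits = "1234567890\n" * 5

train, valid, test = split_files(range(100))
print(keep_file(good), keep_file(too_long), keep_file(mostly_digits))
print(len(train), len(valid), len(test))
```

Integer arithmetic is used for the split sizes so that rounding cannot shift a file between splits.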

**Tokenizer**: Byte Pair Encoding (BPE) tokenizer with tab and whitespace tokens. GPT-2 vocabulary extended with special tokens.

**Training Sequences**: Sequences constructed by joining training data text to reach a context length of 2048 tokens (1024 tokens for full fine-tuning). |

TrainingDataPro/anti-spoofing-real-waist-high-dataset | 2023-09-14T16:55:22.000Z | [

"task_categories:video-classification",

"task_categories:image-to-image",

"language:en",

"license:cc-by-nc-nd-4.0",

"legal",

"region:us"

] | TrainingDataPro | The dataset consists of waist-high selfies and video of real people.

The dataset solves tasks in the field of anti-spoofing and it is useful

for business and safety systems. | @InProceedings{huggingface:dataset,

title = {anti-spoofing-real-waist-high-dataset},

author = {TrainingDataPro},

year = {2023}

} | null | 1 | 4 | ---

license: cc-by-nc-nd-4.0

task_categories:

- video-classification

- image-to-image

language:

- en

tags:

- legal

dataset_info:

features:

- name: photo

dtype: image

- name: video

dtype: string

- name: phone

dtype: string

- name: gender

dtype: string

- name: age

dtype: int8

- name: country

dtype: string

splits:

- name: train

num_bytes: 34728975

num_examples: 8

download_size: 195022198

dataset_size: 34728975

---

# Anti-Spoofing Real Waist-High Dataset

The dataset consists of waist-high selfies and videos of real people. It targets anti-spoofing tasks and is useful for business and safety systems.

### The dataset includes 2 different types of files:

- **Photo** - a selfie of a person from a mobile phone, the person is depicted alone on it, the face is clearly visible. Person is presented waist-high.

- **Video** - filmed on the front camera, on which a person moves his/her head left, right, up and down. Duration of the video is from 10 to 20 seconds.

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=anti-spoofing-real-waist-high-dataset) to discuss your requirements, learn about the price and buy the dataset.

# Content

- The folder **"photo"** includes selfies of people

- The folder **"video"** includes videos of people

### File with the extension .csv

includes the following information for each media file:

- **photo**: link to access the selfie,

- **video**: link to access the video,

- **phone**: the device used to capture selfie and video,

- **gender**: gender of a person,

- **age**: age of the person,

- **country**: country of the person

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=anti-spoofing-real-waist-high-dataset) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** |

Ali-C137/Darija-Stories-Dataset | 2023-07-29T13:54:28.000Z | [

"task_categories:text-generation",

"language:ar",

"license:cc-by-nc-4.0",

"region:us"

] | Ali-C137 | null | null | null | 3 | 4 | ---

dataset_info:

features:

- name: ChapterName

dtype: string

- name: ChapterLink

dtype: string

- name: Author

dtype: string

- name: Text

dtype: string

- name: Tags

dtype: int64

splits:

- name: train

num_bytes: 476926644

num_examples: 6142

download_size: 241528641

dataset_size: 476926644

license: cc-by-nc-4.0

task_categories:

- text-generation

language:

- ar

pretty_name: Darija (Moroccan Arabic) Stories Dataset

---

# Dataset Card for "Darija-Stories-Dataset"

**Darija (Moroccan Arabic) Stories Dataset is a large-scale collection of stories written in Moroccan Arabic dialect (Darija).**

## Dataset Description

Darija (Moroccan Arabic) Stories Dataset contains a diverse range of stories that provide insights into Moroccan culture, traditions, and everyday life. The dataset consists of textual content from various chapters, including narratives, dialogues, and descriptions. Each story chapter is associated with a URL link for online reading or reference. The dataset also includes information about the author and tags that provide additional context or categorization.

## Dataset Details

- **Homepage:** https://huggingface.co/datasets/Ali-C137/Darija-Stories-Dataset

- **Author:** Elfilali Ali

- **Email:** ali.elfilali00@gmail.com, alielfilali0909@gmail.com

- **Github Profile:** [https://github.com/alielfilali01](https://github.com/alielfilali01)

- **LinkedIn Profile:** [https://www.linkedin.com/in/alielfilali01/](https://www.linkedin.com/in/alielfilali01/)

## Dataset Size

The Darija (Moroccan Arabic) Stories Dataset is the largest publicly available dataset in Moroccan Arabic dialect (Darija) to date, with over 70 million tokens.

## Potential Use Cases

- **Arabic Dialect NLP:** Researchers can utilize this dataset to develop and evaluate NLP models specifically designed for Arabic dialects, with a focus on Moroccan Arabic (Darija). Tasks such as dialect identification, part-of-speech tagging, and named entity recognition can be explored.

- **Sentiment Analysis:** The dataset can be used to analyze sentiment expressed in Darija stories, enabling sentiment classification, emotion detection, or opinion mining within the context of Moroccan culture.

- **Text Generation:** Researchers and developers can leverage the dataset to generate new stories or expand existing ones using various text generation techniques, facilitating the development of story generation systems specifically tailored for Moroccan Arabic dialect.

## Dataset Access

The Darija (Moroccan Arabic) Stories Dataset is available for academic and non-commercial use, under a Creative Commons Non Commercial license.

## Citation

Please use the following citation when referencing the Darija (Moroccan Arabic) Stories Dataset:

```

@dataset{

title = {Darija (Moroccan Arabic) Stories Dataset},

author = {Elfilali Ali},

howpublished = {Dataset},

url = {https://huggingface.co/datasets/Ali-C137/Darija-Stories-Dataset},

year = {2023},

}

```

|

crumb/flan-ul2-tinystories | 2023-07-02T04:47:47.000Z | [

"language:en",

"license:mit",

"region:us"

] | crumb | null | null | null | 2 | 4 | ---

license: mit

language:

- en

---

Around a quarter of a million examples generated from Flan-UL2 (20B) with the prompt "Write a short story using the vocabulary of a first-grader." to be used in an experimental curriculum learning setting. I had to checkpoint every 1024 examples to keep the program from slowing down due to memory usage. This was run in bf16 on an RTX A6000 with the following settings:

```

top_k = random between (40, 128)

temperature = random between (0.6, 0.95)

max_length = 128

batch_size = 32

```
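
As a rough sketch of how such randomized settings could be drawn, the snippet below samples `top_k` and `temperature` from the ranges listed above. Whether the values were re-drawn per batch or per example, and whether the range ends are inclusive, is not stated, so both choices here are assumptions; the function name is hypothetical.

```python
import random


def sample_generation_settings(rng):
    """Draw one set of generation settings from the stated ranges."""
    return {
        "top_k": rng.randint(40, 128),           # integer, both ends inclusive (assumed)
        "temperature": rng.uniform(0.6, 0.95),
        "max_length": 128,
        "batch_size": 32,
    }


rng = random.Random(0)  # fixed seed only so the sketch is repeatable
settings = [sample_generation_settings(rng) for _ in range(4)]
for s in settings:
    print(s)
```

Each dictionary could then be passed as keyword arguments to whatever generation call is used for one batch.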

I wanted to avoid a uniform, boring set in which every story follows the same patterns, so I randomly varied the temperature and top_k values to get a good mix. This cost about $6 USD to create on RunPod. |

Symato/c4_vi-filtered_200GB | 2023-07-03T11:53:47.000Z | [

"region:us"

] | Symato | null | null | null | 0 | 4 | Entry not found |

bias-amplified-splits/mnli | 2023-07-04T11:48:21.000Z | [

"task_categories:text-classification",

"size_categories:100K<n<1M",

"language:en",

"license:cc-by-4.0",

"arxiv:2305.18917",

"arxiv:1704.05426",

"region:us"

] | bias-amplified-splits | GLUE, the General Language Understanding Evaluation benchmark

(https://gluebenchmark.com/) is a collection of resources for training,

evaluating, and analyzing natural language understanding systems. | @inproceedings{wang2019glue,

title={{GLUE}: A Multi-Task Benchmark and Analysis Platform for Natural Language Understanding},

author={Wang, Alex and Singh, Amanpreet and Michael, Julian and Hill, Felix and Levy, Omer and Bowman, Samuel R.},

note={In the Proceedings of ICLR.},

year={2019}

} | null | 0 | 4 | ---

license: cc-by-4.0

dataset_info:

- config_name: minority_examples

features:

- name: premise

dtype: string

- name: hypothesis

dtype: string

- name: label

dtype:

class_label:

names:

'0': entailment

'1': neutral

'2': contradiction

- name: idx

dtype: int32

splits:

- name: train.biased

num_bytes: 58497575

num_examples: 309873

- name: train.anti_biased

num_bytes: 16122071

num_examples: 82829

- name: validation_matched.biased

num_bytes: 1443678

num_examples: 7771

- name: validation_matched.anti_biased

num_bytes: 390105

num_examples: 2044

- name: validation_mismatched.biased

num_bytes: 1536381

num_examples: 7797

- name: validation_mismatched.anti_biased

num_bytes: 412850

num_examples: 2035

download_size: 92308759

dataset_size: 78402660

- config_name: partial_input

features:

- name: premise

dtype: string

- name: hypothesis

dtype: string

- name: label

dtype:

class_label:

names:

'0': entailment

'1': neutral

'2': contradiction

- name: idx

dtype: int32

splits:

- name: train.biased

num_bytes: 59529986

num_examples: 309873

- name: train.anti_biased

num_bytes: 15089660

num_examples: 82829

- name: validation_matched.biased

num_bytes: 1445996

num_examples: 7745

- name: validation_matched.anti_biased

num_bytes: 387787

num_examples: 2070

- name: validation_mismatched.biased

num_bytes: 1529878

num_examples: 7758

- name: validation_mismatched.anti_biased

num_bytes: 419353

num_examples: 2074

download_size: 92308759

dataset_size: 78402660

task_categories:

- text-classification

language:

- en

pretty_name: MultiNLI

size_categories:

- 100K<n<1M

---

# Dataset Card for Bias-amplified Splits for MultiNLI

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Annotations](#annotations)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Citation Information](#citation-information)

## Dataset Description

- **Repository:** [Fighting Bias with Bias repo](https://github.com/schwartz-lab-nlp/fight-bias-with-bias)

- **Paper:** [arXiv](https://arxiv.org/abs/2305.18917)

- **Point of Contact:** [Yuval Reif](mailto:yuval.reif@mail.huji.ac.il)

- **Original Dataset's Paper:** [MultiNLI](https://arxiv.org/abs/1704.05426)

### Dataset Summary

Bias-amplified splits is a novel evaluation framework to assess model robustness, by amplifying dataset biases in the training data and challenging models to generalize beyond them. This framework is defined by a bias-amplified training set and a hard, anti-biased test set, which we automatically extract from existing datasets using model-based methods.

Our experiments show that the identified anti-biased examples are naturally challenging for models, and moreover, models trained on bias-amplified data exhibit dramatic performance drops on anti-biased examples, which are not mitigated by common approaches to improve generalization.

Here we apply our framework to **MultiNLI**, a crowd-sourced collection of 433k sentence pairs annotated with textual entailment information.

Our evaluation framework can be applied to any existing dataset, even those considered obsolete, to test model robustness. We hope our work will guide the development of robust models that do not rely on superficial biases and correlations.

#### Evaluation Results (DeBERTa-large)

##### For splits based on minority examples:

| Training Data \ Test Data | Original test | Anti-biased test |

|---------------------------|---------------|------------------|

| Original training split | 91.1 | 74.3 |

| Biased training split | 88.7 | 57.5 |

##### For splits based on partial-input model:

| Training Data \ Test Data | Original test | Anti-biased test |

|---------------------------|---------------|------------------|

| Original training split | 91.1 | 81.4 |

| Biased training split | 89.5 | 71.8 |

#### Loading the Data

```

from datasets import load_dataset

# choose which bias detection method to use for the bias-amplified splits: either "minority_examples" or "partial_input"

dataset = load_dataset("bias-amplified-splits/mnli", "minority_examples")

# use the biased training split and anti-biased test split

train_dataset = dataset['train.biased']

eval_dataset = dataset['validation_matched.anti_biased']

```

## Dataset Structure

### Data Instances

Data instances are taken directly from MultiNLI (GLUE version), and re-split into biased and anti-biased subsets. Here is an example of an instance from the dataset:

```

{

"idx": 0,

"premise": "Your contribution helped make it possible for us to provide our students with a quality education.",

"hypothesis": "Your contributions were of no help with our students' education.",

"label": 2

}

```

### Data Fields

- `idx`: unique identifier for the example within its original data splits (e.g., validation matched)

- `premise`: a piece of text

- `hypothesis`: a piece of text that may be true, false, or whose truth conditions may not be knowable when compared to the premise

- `label`: one of `0`, `1` and `2` (`entailment`, `neutral`, and `contradiction`)
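
To make the integer labels easier to read, a small lookup can map them back to class names. This is a minimal sketch that uses the example instance shown above; `LABEL_NAMES` and `label_name` are hypothetical helpers, not part of the released code.

```python
# Order follows the class_label names in the dataset metadata.
LABEL_NAMES = ["entailment", "neutral", "contradiction"]


def label_name(example):
    """Return the class name for an example's integer label."""
    return LABEL_NAMES[example["label"]]


example = {
    "idx": 0,
    "premise": "Your contribution helped make it possible for us to provide our students with a quality education.",
    "hypothesis": "Your contributions were of no help with our students' education.",
    "label": 2,
}
print(label_name(example))
```

When the data is loaded with the `datasets` library, the `ClassLabel` feature's `int2str` method should give the same mapping without a hand-written list.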

### Data Splits

Bias-amplified splits require a method to detect *biased* and *anti-biased* examples in datasets. We release bias-amplified splits created with each of these two methods:

- **Minority examples**: A novel method we introduce that leverages representation learning and clustering for identifying anti-biased *minority examples* (Tu et al., 2020)—examples that defy common statistical patterns found in the rest of the dataset.

- **Partial-input baselines**: A common method for identifying biased examples containing annotation artifacts in a dataset, which examines the performance of models that are restricted to using only part of the input. Such models, if successful, are bound to rely on unintended or spurious patterns in the dataset.

Using each of the two methods, we split each of the original train and test splits into biased and anti-biased subsets. See the [paper](https://arxiv.org/abs/2305.18917) for more details.

#### Minority Examples

| Dataset Split | Number of Instances in Split |

|-------------------------------------|------------------------------|

| Train - biased | 309873 |

| Train - anti-biased | 82829 |

| Validation matched - biased | 7771 |

| Validation matched - anti-biased | 2044 |

| Validation mismatched - biased | 7797 |

| Validation mismatched - anti-biased | 2035 |

#### Partial-input Baselines

| Dataset Split | Number of Instances in Split |

|-------------------------------------|------------------------------|

| Train - biased | 309873 |

| Train - anti-biased | 82829 |

| Validation matched - biased | 7745 |

| Validation matched - anti-biased | 2070 |

| Validation mismatched - biased | 7758 |

| Validation mismatched - anti-biased | 2074 |
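
As a quick consistency check on the two tables above, the biased and anti-biased counts can be summed per original split. The sketch below only restates the numbers from the tables; the dictionary layout and function names are illustrative.

```python
# (biased, anti-biased) instance counts copied from the tables above.
SPLIT_SIZES = {
    "minority_examples": {
        "train": (309873, 82829),
        "validation_matched": (7771, 2044),
        "validation_mismatched": (7797, 2035),
    },
    "partial_input": {
        "train": (309873, 82829),
        "validation_matched": (7745, 2070),
        "validation_mismatched": (7758, 2074),
    },
}


def totals(method):
    """Total number of instances per original split."""
    return {
        split: biased + anti
        for split, (biased, anti) in SPLIT_SIZES[method].items()
    }


def anti_biased_share(method):
    """Fraction of each original split that is anti-biased."""
    return {
        split: anti / (biased + anti)
        for split, (biased, anti) in SPLIT_SIZES[method].items()
    }


for method in SPLIT_SIZES:
    print(method, totals(method))
    print(method, anti_biased_share(method))
```

Both methods partition the same underlying splits, so the per-split totals agree: 392,702 training, 9,815 validation-matched, and 9,832 validation-mismatched instances, with roughly a fifth of each split falling in the anti-biased subset.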

## Dataset Creation

### Curation Rationale

NLP models often rely on superficial cues known as *dataset biases* to achieve impressive performance, and can fail on examples where these biases do not hold. To develop more robust, unbiased models, recent work aims to filter biased examples from training sets. We argue that in order to encourage the development of robust models, we should in fact **amplify** biases in the training sets, while adopting the challenge set approach and making test sets anti-biased. To implement our approach, we introduce a simple framework that can be applied automatically to any existing dataset to use it for testing model robustness.

### Annotations

#### Annotation process

No new annotations are required to create bias-amplified splits. Existing data instances are split into *biased* and *anti-biased* splits based on automatic model-based methods to detect such examples.

## Considerations for Using the Data

### Social Impact of Dataset

Bias-amplified splits were created to promote the development of robust NLP models that do not rely on superficial biases and correlations, and provide more challenging evaluation of existing systems.

### Discussion of Biases

We propose to use bias-amplified splits to complement benchmarks with challenging evaluation settings that test model robustness, in addition to the dataset’s main training and test sets. As such, while existing dataset biases are *amplified* during training with bias-amplified splits, these splits are intended primarily for model evaluation, to expose the bias-exploiting behaviors of models and to identify more robust models and effective robustness interventions.

## Additional Information

### Dataset Curators

Bias-amplified splits were introduced by Yuval Reif and Roy Schwartz from the [Hebrew University of Jerusalem](https://schwartz-lab-huji.github.io).

MultiNLI was developed by Adina Williams, Nikita Nangia and Samuel Bowman.

### Citation Information

```

@misc{reif2023fighting,

title = "Fighting Bias with Bias: Promoting Model Robustness by Amplifying Dataset Biases",

author = "Yuval Reif and Roy Schwartz",

month = may,

year = "2023",

url = "https://arxiv.org/pdf/2305.18917",

}

```

Source dataset:

```

@InProceedings{N18-1101,

author = "Williams, Adina

and Nangia, Nikita

and Bowman, Samuel",

title = "A Broad-Coverage Challenge Corpus for

Sentence Understanding through Inference",

booktitle = "Proceedings of the 2018 Conference of

the North American Chapter of the

Association for Computational Linguistics:

Human Language Technologies, Volume 1 (Long

Papers)",

year = "2018",

publisher = "Association for Computational Linguistics",

pages = "1112--1122",

location = "New Orleans, Louisiana",

url = "http://aclweb.org/anthology/N18-1101"

}

``` |

bias-amplified-splits/anli | 2023-07-04T11:49:28.000Z | [

"task_categories:text-classification",

"size_categories:100K<n<1M",

"language:en",

"license:cc-by-nc-4.0",

"arxiv:2305.18917",

"arxiv:1910.14599",

"region:us"

] | bias-amplified-splits | Adversarial Natural Language Inference (ANLI) is a new large-scale NLI benchmark dataset.

The dataset is collected via an iterative, adversarial human-and-model-in-the-loop procedure.

ANLI is much more difficult than its predecessors including SNLI and MNLI.

It contains three rounds. Each round has train/dev/test splits. | @InProceedings{nie2019adversarial,

title={Adversarial NLI: A New Benchmark for Natural Language Understanding},

author={Nie, Yixin

and Williams, Adina

and Dinan, Emily

and Bansal, Mohit

and Weston, Jason

and Kiela, Douwe},

booktitle = "Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics",

year = "2020",

publisher = "Association for Computational Linguistics",

} | null | 0 | 4 | ---

license: cc-by-nc-4.0

dataset_info:

- config_name: minority_examples

features:

- name: round

dtype: string

- name: uid

dtype: string

- name: premise

dtype: string

- name: hypothesis

dtype: string

- name: label

dtype:

class_label:

names:

'0': entailment

'1': neutral

'2': contradiction

- name: reason

dtype: string

splits:

- name: train.biased

num_bytes: 61260115

num_examples: 134068

- name: train.anti_biased

num_bytes: 13246263

num_examples: 28797

- name: validation.biased

num_bytes: 1311433

num_examples: 2317

- name: validation.anti_biased

num_bytes: 500409

num_examples: 883

- name: test.biased

num_bytes: 1284544

num_examples: 2262

- name: test.anti_biased

num_bytes: 539798

num_examples: 938

download_size: 86373189

dataset_size: 78142562

- config_name: partial_input

features:

- name: round

dtype: string

- name: uid

dtype: string

- name: premise

dtype: string

- name: hypothesis

dtype: string

- name: label

dtype:

class_label:

names:

'0': entailment

'1': neutral

'2': contradiction

- name: reason

dtype: string

splits:

- name: train.biased

num_bytes: 60769911

num_examples: 134068

- name: train.anti_biased

num_bytes: 13736467

num_examples: 28797

- name: validation.biased

num_bytes: 1491254

num_examples: 2634

- name: validation.anti_biased

num_bytes: 320588

num_examples: 566

- name: test.biased

num_bytes: 1501586

num_examples: 2634

- name: test.anti_biased

num_bytes: 322756

num_examples: 566

download_size: 86373189

dataset_size: 78142562

task_categories:

- text-classification

language:

- en

pretty_name: Adversarial NLI

size_categories:

- 100K<n<1M

---

# Dataset Card for Bias-amplified Splits for Adversarial NLI

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Annotations](#annotations)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Citation Information](#citation-information)

## Dataset Description

- **Repository:** [Fighting Bias with Bias repo](https://github.com/schwartz-lab-nlp/fight-bias-with-bias)

- **Paper:** [arXiv](https://arxiv.org/abs/2305.18917)

- **Point of Contact:** [Yuval Reif](mailto:yuval.reif@mail.huji.ac.il)

- **Original Dataset's Paper:** [ANLI](https://arxiv.org/abs/1910.14599)

### Dataset Summary

Bias-amplified splits is a novel evaluation framework that assesses model robustness by amplifying dataset biases in the training data and challenging models to generalize beyond them. The framework is defined by a bias-amplified training set and a hard, anti-biased test set, both of which we automatically extract from existing datasets using model-based methods.

Our experiments show that the identified anti-biased examples are naturally challenging for models, and moreover, models trained on bias-amplified data exhibit dramatic performance drops on anti-biased examples, which are not mitigated by common approaches to improve generalization.

Here we apply our framework to Adversarial Natural Language Inference (ANLI), a large-scale NLI benchmark dataset. The dataset was collected via an iterative, adversarial human-and-model-in-the-loop procedure. ANLI is much more difficult than its predecessors including SNLI and MNLI.

Our evaluation framework can be applied to any existing dataset, even those considered obsolete, to test model robustness. We hope our work will guide the development of robust models that do not rely on superficial biases and correlations.

#### Evaluation Results (DeBERTa-large)

##### For splits based on minority examples:

| Training Data \ Test Data | Original test | Anti-biased test |
|---------------------------|---------------|------------------|
| Original training split   | 67.5          | 58.3             |
| Biased training split     | 60.6          | 21.4             |

##### For splits based on partial-input model:

| Training Data \ Test Data | Original test | Anti-biased test |
|---------------------------|---------------|------------------|
| Original training split   | 67.5          | 50.0             |
| Biased training split     | 62.5          | 28.3             |
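
The size of the drop on the anti-biased test set can be read off the two tables above. The following is a minimal sketch of that arithmetic, using only the accuracy figures reported in the tables; the dictionary layout and the helper name `anti_biased_drop` are illustrative and not part of the dataset or its accompanying code.

```python
# Accuracy (%) of DeBERTa-large on the anti-biased test set,
# copied from the two results tables above.
results = {
    "minority_examples": {"original_train": 58.3, "biased_train": 21.4},
    "partial_input": {"original_train": 50.0, "biased_train": 28.3},
}


def anti_biased_drop(scores):
    """Absolute drop (in accuracy points) when training on the biased split
    instead of the original training split. Hypothetical helper."""
    return round(scores["original_train"] - scores["biased_train"], 1)


drops = {method: anti_biased_drop(scores) for method, scores in results.items()}

for method, drop in drops.items():
    print(f"{method}: {drop} points lower on the anti-biased test set")
```

Under both bias detection methods, training on the biased split costs more than 20 accuracy points on the anti-biased test set, compared with a drop of at most about 7 points on the original test set.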

#### Loading the Data

ANLI contains three rounds of data collection, and each round has its own train/dev/test splits. We concatenated the corresponding splits from all rounds to create a single set of train/dev/test splits.

```

from datasets import load_dataset

# choose which bias detection method to use for the bias-amplified splits: either "minority_examples" or "partial_input"

dataset = load_dataset("bias-amplified-splits/anli", "minority_examples")

# use the biased training split and the anti-biased validation split (use 'test.anti_biased' for final evaluation)

train_dataset = dataset['train.biased']

eval_dataset = dataset['validation.anti_biased']

```
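
As a quick sanity check on the split layout, the sketch below adds up the `num_examples` values listed in this card's metadata. It assumes (this is not stated explicitly in the card) that the biased and anti-biased subsets together partition each concatenated split, in which case both configurations should yield the same totals. The helper names `totals` and `anti_biased_share` are hypothetical.

```python
# num_examples values copied from the card's YAML metadata.
split_sizes = {
    "minority_examples": {
        "train": {"biased": 134068, "anti_biased": 28797},
        "validation": {"biased": 2317, "anti_biased": 883},
        "test": {"biased": 2262, "anti_biased": 938},
    },
    "partial_input": {
        "train": {"biased": 134068, "anti_biased": 28797},
        "validation": {"biased": 2634, "anti_biased": 566},
        "test": {"biased": 2634, "anti_biased": 566},
    },
}


def totals(config):
    """Total number of examples per split (biased + anti-biased)."""
    return {split: sum(parts.values()) for split, parts in split_sizes[config].items()}


def anti_biased_share(config):
    """Percentage of each split that falls in the anti-biased subset."""
    return {
        split: round(100 * parts["anti_biased"] / sum(parts.values()), 1)
        for split, parts in split_sizes[config].items()
    }


for config in split_sizes:
    print(config, totals(config), anti_biased_share(config))
```

Both configurations sum to the same per-split totals, which is consistent with each method re-partitioning the same underlying examples rather than adding or dropping any.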

## Dataset Structure

### Data Instances

Data instances are taken directly from ANLI, and re-split into biased and anti-biased subsets. Here is an example of an instance from the dataset:

```

{

"round": "r1",

"uid": "20a331ee-cf54-4e8a-9ff9-6152cd679780",

"premise": "Milton Teagle \"Richard\" Simmons (born July 12, 1948) is an American fitness guru, actor, and comedian. He promotes weight-loss programs, prominently through his \"Sweatin' to the Oldies\" line of aerobics videos and is known for his eccentric, flamboyant, and energetic personality.",

"hypothesis": "Milton Teagle \"Richard\" Simmons created his \"Sweatin' to the Oldies\" line of aerobics videos without help or input from anyone else.",

"label": 1,

"reason": "The context gives no information as to how the \"Sweatin' to the Oldies\" videos are produced, Simmons may well produce them alone, or may produce them with a team. The system may have had difficulty with this because it is unlikely that Simmons produced the videos alone."

}

```
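
The `label` field is stored as an integer class index. The sketch below decodes it using the class names declared in this card's metadata (`0`: entailment, `1`: neutral, `2`: contradiction); the constant `LABEL_NAMES` and the helper `label_name` are illustrative names, not part of the dataset.

```python
# Class names in index order, as declared under `class_label` in the card metadata.
LABEL_NAMES = ["entailment", "neutral", "contradiction"]


def label_name(label_id: int) -> str:
    """Map an integer label to its class name. Hypothetical helper."""
    if not 0 <= label_id < len(LABEL_NAMES):
        raise ValueError(f"unexpected label id: {label_id}")
    return LABEL_NAMES[label_id]


# Abbreviated version of the instance shown above.
example = {"round": "r1", "label": 1}
print(label_name(example["label"]))
```

When the data is loaded with the `datasets` library, the same mapping should also be available through the `ClassLabel` feature, for instance via `train_dataset.features["label"].int2str(1)`.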

### Data Fields

- `round`: which round of data collection the example comes from (one of `r1`, `r2` and `r3`)

- `uid`: unique identifier for the example.

- `premise`: a piece of text

- `hypothesis`: a piece of text that may be true, false, or whose truth conditions may not be knowable when compared to the premise