id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 68.7k ⌀ | citation stringlengths 0 10.7k ⌀ | cardData null | likes int64 0 3.55k | downloads int64 0 10.1M | card stringlengths 0 1.01M |

|---|---|---|---|---|---|---|---|---|---|

Arkan0ID/furniture-dataset | 2023-08-06T03:15:37.000Z | [

"region:us"

] | Arkan0ID | null | null | null | 0 | 3 | Entry not found |

ShenRuililin/MedicalQnA | 2023-08-07T08:54:25.000Z | [

"license:mit",

"region:us"

] | ShenRuililin | null | null | null | 0 | 3 | ---

license: mit

---

|

jaygala223/38-cloud-dataset | 2023-08-07T09:44:38.000Z | [

"region:us"

] | jaygala223 | null | null | null | 1 | 3 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype: image

splits:

- name: train

num_bytes: 757246236.0

num_examples: 8400

download_size: 754389599

dataset_size: 757246236.0

---

# Dataset Card for "38-cloud-train-only-v2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

d0rj/boolq-ru | 2023-08-14T09:47:04.000Z | [

"task_categories:text-classification",

"task_ids:natural-language-inference",

"annotations_creators:crowdsourced",

"language_creators:translated",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:boolq",

"language:ru",

"license:cc-by-sa-3.0",

"region:us"

] | d0rj | null | null | null | 0 | 3 | ---

annotations_creators:

- crowdsourced

language_creators:

- translated

language:

- ru

license:

- cc-by-sa-3.0

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

source_datasets:

- boolq

task_categories:

- text-classification

task_ids:

- natural-language-inference

paperswithcode_id: boolq

pretty_name: BoolQ (ru)

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

dataset_info:

features:

- name: question

dtype: string

- name: answer

dtype: bool

- name: passage

dtype: string

splits:

- name: train

num_bytes: 10819511

num_examples: 9427

- name: validation

num_bytes: 3710872

num_examples: 3270

download_size: 7376712

dataset_size: 14530383

---

# boolq-ru

Translated version of [boolq](https://huggingface.co/datasets/boolq) dataset into Russian.

## Dataset Description

- **Homepage:** [https://github.com/google-research-datasets/boolean-questions](https://github.com/google-research-datasets/boolean-questions) |

DFKI-SLT/argmicro | 2023-08-09T15:07:47.000Z | [

"license:cc-by-nc-sa-4.0",

"region:us"

] | DFKI-SLT | null | @inproceedings{peldszus2015annotated,

title={An annotated corpus of argumentative microtexts},

author={Peldszus, Andreas and Stede, Manfred},

booktitle={Argumentation and Reasoned Action: Proceedings of the 1st European Conference on Argumentation, Lisbon},

volume={2},

pages={801--815},

year={2015}

} | null | 0 | 3 | ---

license: cc-by-nc-sa-4.0

---

# An annotated corpus of argumentative microtexts

The arg-microtexts corpus features 112 short argumentative texts. All texts

were originally written in German and have been professionally translated to

English.

The texts with ids b001-b064 and k001-k031 have been collected in a controlled

text generation experiment from 23 subjects discussing various controversial

issues from [a fixed list](topics_triggers.md).

The texts with ids d01-d23 have been written by Andreas Peldszus and were

used mainly in teaching and testing students' argumentative analysis.

All texts are annotated with argumentation structures, following the scheme

proposed in Peldszus & Stede (2013). For inter-annotator-agreement scores see

Peldszus (2014). The (German) annotation guidelines are published in Peldszus, Warzecha, Stede (2016).

## DATA FORMAT (ARGUMENTATION GRAPH)

This specifies the argumentation graphs following the

annotation scheme described in

Andreas Peldszus and Manfred Stede. From argument diagrams to argumentation

mining in texts: a survey. International Journal of Cognitive Informatics

and Natural Intelligence (IJCINI), 7(1):1–31, 2013.

An argumentation graph is a directed graph spanning over text segments. The

format distinguishes three different sorts of nodes: EDUs, ADUs & EDU-joints.

- EDU: elementary discourse units

The text is segmented into elementary discourse units, typically at a

clause/sentence level. This segmentation can be the result of manual

annotation or of automatic discourse segmenters.

- ADU: argumentative discourse units

Not every EDU is relevant in an argumentation. Also, the same claim might

be stated multiple times in longer texts. An argumentative discourse unit

represents a claim that stands for itself and is argumentatively relevant.

It is thus grounded in one or more EDUs. EDUs and ADUs are connected by

segmentation edges. ADUs are associated with a dialectic role: They are

either proponent or opponent nodes.

- JOINT: a joint of two or more adjacent elementary discourse units

When two adjacent EDUs are argumentatively relevant only when taken

together, these EDUs are first connected with one joint EDU node by

segmentation edges and then this joint node is connected to a corresponding

ADU.

### edge type

The edges representing arguments are those that connect ADUs. The scheme

distinguishes between supporting and attacking relations. Supporting

relations are normal support and support by example. Attacking relations are

rebutting attacks (directed against another node, challenging the

acceptability of the corresponding claim) and undercutting attacks (directed

against another relation, challenging the argumentative inference from the

source to the target of the relation). Finally, additional premises of

relations with more than one premise are represented by additional source

relations.

Values:

- seg: segmentation edges (EDU->ADU, EDU->JOINT, JOINT->ADU)

- sup: support (ADU->ADU)

- exa: support by example (ADU->ADU)

- add: additional source, for combined/convergent arguments with multiple premises (ADU->ADU)

- reb: rebutting attack (ADU->ADU)

- und: undercutting attack (ADU->Edge)
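
As a rough illustration of the node and edge types listed above, the sketch below models a tiny argumentation graph in Python. The class name `ArgGraph`, its field names, and the example ids (`e1`, `a1`, `c1`, ...) are hypothetical. They are not taken from the corpus files or from any loader for this dataset.

```python
from dataclasses import dataclass, field

# Edge types from the list above, mapped to the (source kind, target kind)
# pairs they allow. The kind "edge" means the target is itself an edge.
ALLOWED = {
    "seg": {("edu", "adu"), ("edu", "joint"), ("joint", "adu")},
    "sup": {("adu", "adu")},
    "exa": {("adu", "adu")},
    "add": {("adu", "adu")},
    "reb": {("adu", "adu")},
    "und": {("adu", "edge")},
}


@dataclass
class ArgGraph:
    nodes: dict = field(default_factory=dict)  # node id -> "edu" | "adu" | "joint"
    edges: dict = field(default_factory=dict)  # edge id -> (source, target, type)

    def add_node(self, node_id, kind):
        if kind not in {"edu", "adu", "joint"}:
            raise ValueError(f"unknown node kind: {kind}")
        self.nodes[node_id] = kind

    def add_edge(self, edge_id, src, trg, etype):
        src_kind = self.nodes[src]
        # A target that names an existing edge is treated as kind "edge".
        trg_kind = "edge" if trg in self.edges else self.nodes[trg]
        if (src_kind, trg_kind) not in ALLOWED[etype]:
            raise ValueError(f"{etype} cannot connect {src_kind} -> {trg_kind}")
        self.edges[edge_id] = (src, trg, etype)


g = ArgGraph()
for node_id, kind in [("e1", "edu"), ("e2", "edu"),
                      ("a1", "adu"), ("a2", "adu"), ("a3", "adu")]:
    g.add_node(node_id, kind)
g.add_edge("c1", "e1", "a1", "seg")  # EDU e1 grounds ADU a1
g.add_edge("c2", "e2", "a2", "seg")  # EDU e2 grounds ADU a2
g.add_edge("c3", "a2", "a1", "sup")  # a2 supports a1
g.add_edge("c4", "a3", "c3", "und")  # a3 undercuts the support relation c3
```

The only design point worth noting is that an undercutting attack targets an edge id rather than a node id, which is why edges are stored by id instead of as plain pairs.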

### adu type

The argumentation can be thought of as a dialectical exchange between the

role of the proponent (who is presenting and defending the central claim)

and the role of the opponent (who is critically challenging the proponent's

claims). Each ADU is thus associated with one of these dialectic roles.

Values:

- pro: proponent

- opp: opponent

### stance type

Annotated texts typically discuss a controversial topic, i.e. an issue posed

as a yes/no question. Example: "Should we make use of capital punishment?"

The stance type specifies which stance the author of this text takes

towards this issue.

Values:

- pro: yes, in favour of the proposed issue

- con: no, against the proposed issue

- unclear: the position of the author is unclear

- UNDEFINED |

ashtrayAI/Bangla_Financial_news_articles_Dataset | 2023-08-08T16:43:31.000Z | [

"license:cc0-1.0",

"Bengali",

"News",

"Sentiment",

"Text",

"Articles",

"Finance",

"region:us"

] | ashtrayAI | null | null | null | 1 | 3 | ---

license: cc0-1.0

tags:

- Bengali

- News

- Sentiment

- Text

- Articles

- Finance

---

# Bangla-Financial-news-articles-Dataset

A comprehensive resource for analyzing sentiment in more than 7,600 Bangla news articles.

### Downloads

🔴 **Download** the **"💥Bangla_fin_news.zip"** file for all "7,695" news and extract it.

### About Dataset

**Welcome** to our Bengali Financial News Sentiment Analysis dataset! This collection comprises 7,695 financial news articles covering the period from March 3, 2014, to December 29, 2021. The articles were extracted with the Python web scraping library Beautiful Soup 4.4.0.

This dataset was a crucial part of our research published in the journal paper titled **"Stock Market Prediction of Bangladesh Using Multivariate Long Short-Term Memory with Sentiment Identification."** The paper can be accessed and cited at **http://doi.org/10.11591/ijece.v13i5.pp5696-5706**.

We are excited to share this unique dataset, which we hope will empower researchers, analysts, and enthusiasts to explore and understand the dynamics of the Bengali financial market through sentiment analysis. Join us on this journey of uncovering the hidden emotions driving market trends and decisions in Bangladesh. Happy analyzing!

### About this directory

**Directory Description:** Welcome to the "Bangla_fin_news" directory. This repository houses a collection of 7,695 CSV files, each containing valuable financial news data in the Bengali language. These files are indexed numerically from 1 to 7695, making it easy to access specific information for analysis or research.

**File Description:** Each file contains financial news articles and related information.

**example:**

File: "1.csv"

Columns:

Serial: The serial number of the news article.

Title: The title of the news article.

Date: The date when the news article was published.

Author: The name of the author who wrote the article.

News: The main content of the news article.

File: "2.csv"

Columns:

Serial: The serial number of the news article.

Title: The title of the news article.

Date: The date when the news article was published.

Author: The name of the author who wrote the article.

News: The main content of the news article.

**[… and so on for all 7,695 files …]**

Each CSV file within this directory represents unique financial news articles from March 3, 2014, to December 29, 2021. The dataset has been carefully compiled and structured, making it a valuable resource for sentiment analysis, market research, and any investigation into the dynamics of the Bengali financial market.
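
A minimal sketch of how the per-article files described above could be combined into one list of records using only Python's standard library. The directory name `Bangla_fin_news` and the column names come from the description above. The function name `load_articles`, the UTF-8 encoding, and the assumption that every file starts with a header row are illustrative guesses that may need adjusting.

```python
import csv
from pathlib import Path


def load_articles(directory):
    """Read every numerically named CSV file in `directory` into a list of dicts."""
    rows = []
    # Keep only files such as 1.csv, 2.csv, ... and sort them numerically,
    # so that 2.csv comes before 10.csv.
    files = sorted(
        (p for p in Path(directory).glob("*.csv") if p.stem.isdigit()),
        key=lambda p: int(p.stem),
    )
    for path in files:
        with path.open(newline="", encoding="utf-8") as handle:
            for row in csv.DictReader(handle):
                row["source_file"] = path.name  # remember where the row came from
                rows.append(row)
    return rows
```

After extracting the archive, `load_articles("Bangla_fin_news")` would return one dictionary per article, keyed by the column names listed above plus `source_file`.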

Feel free to explore, analyze, and gain insights from this extensive collection of Bengali financial news articles. Happy researching! ❤❤

|

hf-audio/esb-datasets-test-only | 2023-08-29T12:45:54.000Z | [

"task_categories:automatic-speech-recognition",

"annotations_creators:expert-generated",

"annotations_creators:crowdsourced",

"annotations_creators:machine-generated",

"language_creators:crowdsourced",

"language_creators:expert-generated",

"multilinguality:monolingual",

"size_categories:100K<n<1M",

... | hf-audio | null | null | null | 3 | 3 | ---

annotations_creators:

- expert-generated

- crowdsourced

- machine-generated

language:

- en

language_creators:

- crowdsourced

- expert-generated

license:

- cc-by-4.0

- apache-2.0

- cc0-1.0

- cc-by-nc-3.0

- other

multilinguality:

- monolingual

pretty_name: datasets

size_categories:

- 100K<n<1M

- 1M<n<10M

source_datasets:

- original

- extended|librispeech_asr

- extended|common_voice

tags:

- asr

- benchmark

- speech

- esb

task_categories:

- automatic-speech-recognition

extra_gated_prompt: >-

Three of the ESB datasets have specific terms of usage that must be agreed to

before using the data.

To do so, fill in the access forms on the specific datasets' pages:

* Common Voice: https://huggingface.co/datasets/mozilla-foundation/common_voice_9_0

* GigaSpeech: https://huggingface.co/datasets/speechcolab/gigaspeech

* SPGISpeech: https://huggingface.co/datasets/kensho/spgispeech

extra_gated_fields:

I hereby confirm that I have registered on the original Common Voice page and agree to not attempt to determine the identity of speakers in the Common Voice dataset: checkbox

I hereby confirm that I have accepted the terms of usages on GigaSpeech page: checkbox

I hereby confirm that I have accepted the terms of usages on SPGISpeech page: checkbox

duplicated_from: open-asr-leaderboard/datasets

---

All eight datasets in ESB can be downloaded and prepared in a single line of code through the Hugging Face Datasets library:

```python

from datasets import load_dataset

librispeech = load_dataset("esb/datasets", "librispeech", split="train")

```

- `"esb/datasets"`: the repository namespace. This is fixed for all ESB datasets.

- `"librispeech"`: the dataset name. This can be changed to any one of the eight datasets in ESB to download that dataset.

- `split="train"`: the split. Set this to one of train/validation/test to generate a specific split. Omit the `split` argument to generate all splits for a dataset.

The datasets are fully prepared, such that the audio and transcription files can be used directly in training/evaluation scripts.

## Dataset Information

A data point can be accessed by indexing the dataset object loaded through `load_dataset`:

```python

print(librispeech[0])

```

A typical data point comprises the path to the audio file and its transcription. Also included is information of the dataset from which the sample derives and a unique identifier name:

```python

{

'dataset': 'librispeech',

'audio': {'path': '/home/sanchit-gandhi/.cache/huggingface/datasets/downloads/extracted/d2da1969fe9e7d06661b5dc370cf2e3c119a14c35950045bcb76243b264e4f01/374-180298-0000.flac',

'array': array([ 7.01904297e-04, 7.32421875e-04, 7.32421875e-04, ...,

-2.74658203e-04, -1.83105469e-04, -3.05175781e-05]),

'sampling_rate': 16000},

'text': 'chapter sixteen i might have told you of the beginning of this liaison in a few lines but i wanted you to see every step by which we came i to agree to whatever marguerite wished',

'id': '374-180298-0000'

}

```

### Data Fields

- `dataset`: name of the ESB dataset from which the sample is taken.

- `audio`: a dictionary containing the path to the downloaded audio file, the decoded audio array, and the sampling rate.

- `text`: the transcription of the audio file.

- `id`: unique id of the data sample.

### Data Preparation

#### Audio

The audio for all ESB datasets is segmented into sample lengths suitable for training ASR systems. The Hugging Face datasets library decodes audio files on the fly, reading the segments and converting them to Python arrays. Consequently, no further preparation of the audio is required before it is used in training/evaluation scripts.

Note that when accessing the audio column: `dataset[0]["audio"]` the audio file is automatically decoded and resampled to `dataset.features["audio"].sampling_rate`. Decoding and resampling of a large number of audio files might take a significant amount of time. Thus it is important to first query the sample index before the `"audio"` column, i.e. `dataset[0]["audio"]` should always be preferred over `dataset["audio"][0]`.
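
The cost difference between the two access orders can be illustrated with a toy model. The `LazyAudioDataset` class below is a hypothetical stand-in written for this note, not part of the Hugging Face `datasets` API. It only counts how many items get "decoded" under each access pattern.

```python
class LazyAudioDataset:
    """Toy stand-in for a dataset that decodes audio only when it is accessed."""

    def __init__(self, paths):
        self.paths = list(paths)
        self.decode_calls = 0

    def _decode(self, path):
        # Pretend this is an expensive decode + resample step.
        self.decode_calls += 1
        return {"path": path, "array": [0.0], "sampling_rate": 16000}

    def __getitem__(self, key):
        if isinstance(key, int):   # dataset[0] -> decode a single row
            return {"audio": self._decode(self.paths[key])}
        if key == "audio":         # dataset["audio"] -> decode every row
            return [self._decode(p) for p in self.paths]
        raise KeyError(key)


row_first = LazyAudioDataset(f"{i}.flac" for i in range(1000))
_ = row_first[0]["audio"]          # index the row, then the column

column_first = LazyAudioDataset(f"{i}.flac" for i in range(1000))
_ = column_first["audio"][0]       # index the column, then the row

print(row_first.decode_calls, column_first.decode_calls)
```

In this toy model the row-first access decodes 1 file and the column-first access decodes all 1000, which is the behaviour the note above warns about.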

#### Transcriptions

The transcriptions corresponding to each audio file are provided in their 'error corrected' format. No transcription pre-processing is applied to the text, only necessary 'error correction' steps such as removing junk tokens (_<unk>_) or converting symbolic punctuation to spelled out form (_<comma>_ to _,_). As such, no further preparation of the transcriptions is required to be used in training/evaluation scripts.

Transcriptions are provided for training and validation splits. The transcriptions are **not** provided for the test splits. ESB requires you to generate predictions for the test sets and upload them to https://huggingface.co/spaces/esb/leaderboard for scoring.

### Access

All eight of the datasets in ESB are accessible and licensing is freely available. Three of the ESB datasets have specific terms of usage that must be agreed to before using the data. To do so, fill in the access forms on the specific datasets' pages:

* Common Voice: https://huggingface.co/datasets/mozilla-foundation/common_voice_9_0

* GigaSpeech: https://huggingface.co/datasets/speechcolab/gigaspeech

* SPGISpeech: https://huggingface.co/datasets/kensho/spgispeech

### Diagnostic Dataset

ESB contains a small, 8h diagnostic dataset of in-domain validation data with newly annotated transcriptions. The audio data is sampled from each of the ESB validation sets, giving a range of different domains and speaking styles. The transcriptions are annotated according to a consistent style guide with two formats: normalised and un-normalised. The dataset is structured in the same way as the ESB dataset, by grouping audio-transcription samples according to the dataset from which they were taken. We encourage participants to use this dataset when evaluating their systems to quickly assess performance on a range of different speech recognition conditions. For more information, visit: [esb/diagnostic-dataset](https://huggingface.co/datasets/esb/diagnostic-dataset).

## Summary of ESB Datasets

| Dataset | Domain | Speaking Style | Train (h) | Dev (h) | Test (h) | Transcriptions | License |

|--------------|-----------------------------|-----------------------|-----------|---------|----------|--------------------|-----------------|

| LibriSpeech | Audiobook | Narrated | 960 | 11 | 11 | Normalised | CC-BY-4.0 |

| Common Voice | Wikipedia | Narrated | 1409 | 27 | 27 | Punctuated & Cased | CC0-1.0 |

| VoxPopuli | European Parliament | Oratory | 523 | 5 | 5 | Punctuated | CC0 |

| TED-LIUM | TED talks | Oratory | 454 | 2 | 3 | Normalised | CC-BY-NC-ND 3.0 |

| GigaSpeech | Audiobook, podcast, YouTube | Narrated, spontaneous | 2500 | 12 | 40 | Punctuated | apache-2.0 |

| SPGISpeech | Financial meetings | Oratory, spontaneous | 4900 | 100 | 100 | Punctuated & Cased | User Agreement |

| Earnings-22 | Financial meetings | Oratory, spontaneous | 105 | 5 | 5 | Punctuated & Cased | CC-BY-SA-4.0 |

| AMI | Meetings | Spontaneous | 78 | 9 | 9 | Punctuated & Cased | CC-BY-4.0 |

## LibriSpeech

The LibriSpeech corpus is a standard large-scale corpus for assessing ASR systems. It consists of approximately 1,000 hours of narrated audiobooks from the [LibriVox](https://librivox.org) project. It is licensed under CC-BY-4.0.

Example Usage:

```python

librispeech = load_dataset("esb/datasets", "librispeech")

```

Train/validation splits:

- `train` (combination of `train.clean.100`, `train.clean.360` and `train.other.500`)

- `validation.clean`

- `validation.other`

Test splits:

- `test.clean`

- `test.other`

Also available are subsets of the train split, which can be accessed by setting the `subconfig` argument:

```python

librispeech = load_dataset("esb/datasets", "librispeech", subconfig="clean.100")

```

- `clean.100`: 100 hours of training data from the 'clean' subset

- `clean.360`: 360 hours of training data from the 'clean' subset

- `other.500`: 500 hours of training data from the 'other' subset

## Common Voice

Common Voice is a series of crowd-sourced open-licensed speech datasets where speakers record text from Wikipedia in various languages. The speakers are of various nationalities and native languages, with different accents and recording conditions. We use the English subset of version 9.0 (27-4-2022), with approximately 1,400 hours of audio-transcription data. It is licensed under CC0-1.0.

Example usage:

```python

common_voice = load_dataset("esb/datasets", "common_voice", use_auth_token=True)

```

Training/validation splits:

- `train`

- `validation`

Test splits:

- `test`

## VoxPopuli

VoxPopuli is a large-scale multilingual speech corpus consisting of political data sourced from 2009-2020 European Parliament event recordings. The English subset contains approximately 550 hours of speech largely from non-native English speakers. It is licensed under CC0.

Example usage:

```python

voxpopuli = load_dataset("esb/datasets", "voxpopuli")

```

Training/validation splits:

- `train`

- `validation`

Test splits:

- `test`

## TED-LIUM

TED-LIUM consists of English-language TED Talk conference videos covering a range of different cultural, political, and academic topics. It contains approximately 450 hours of transcribed speech data. It is licensed under CC-BY-NC-ND 3.0.

Example usage:

```python

tedlium = load_dataset("esb/datasets", "tedlium")

```

Training/validation splits:

- `train`

- `validation`

Test splits:

- `test`

## GigaSpeech

GigaSpeech is a multi-domain English speech recognition corpus created from audiobooks, podcasts and YouTube. We provide the large train set (2,500 hours) and the standard validation and test splits. It is licensed under apache-2.0.

Example usage:

```python

gigaspeech = load_dataset("esb/datasets", "gigaspeech", use_auth_token=True)

```

Training/validation splits:

- `train` (`l` subset of training data (2,500 h))

- `validation`

Test splits:

- `test`

Also available are subsets of the train split, which can be accessed by setting the `subconfig` argument:

```python

gigaspeech = load_dataset("esb/datasets", "gigaspeech", subconfig="xs", use_auth_token=True)

```

- `xs`: extra-small subset of training data (10 h)

- `s`: small subset of training data (250 h)

- `m`: medium subset of training data (1,000 h)

- `xl`: extra-large subset of training data (10,000 h)

## SPGISpeech

SPGISpeech consists of company earnings calls that have been manually transcribed by S&P Global, Inc according to a professional style guide. We provide the large train set (5,000 hours) and the standard validation and test splits. It is licensed under a Kensho user agreement.

Loading the dataset requires authorization.

Example usage:

```python

spgispeech = load_dataset("esb/datasets", "spgispeech", use_auth_token=True)

```

Training/validation splits:

- `train` (`l` subset of training data (~5,000 h))

- `validation`

Test splits:

- `test`

Also available are subsets of the train split, which can be accessed by setting the `subconfig` argument:

```python

spgispeech = load_dataset("esb/datasets", "spgispeech", subconfig="s", use_auth_token=True)

```

- `s`: small subset of training data (~200 h)

- `m`: medium subset of training data (~1,000 h)

## Earnings-22

Earnings-22 is a 119-hour corpus of English-language earnings calls collected from global companies, with speakers of many different nationalities and accents. It is licensed under CC-BY-SA-4.0.

Example usage:

```python

earnings22 = load_dataset("esb/datasets", "earnings22")

```

Training/validation splits:

- `train`

- `validation`

Test splits:

- `test`

## AMI

The AMI Meeting Corpus consists of 100 hours of meeting recordings from multiple recording devices synced to a common timeline. It is licensed under CC-BY-4.0.

Example usage:

```python

ami = load_dataset("esb/datasets", "ami")

```

Training/validation splits:

- `train`

- `validation`

Test splits:

- `test` |

Elliot4AI/dolly-15k-chinese-guanacoformat | 2023-08-09T09:07:11.000Z | [

"task_categories:text-classification",

"task_categories:text-generation",

"size_categories:10K<n<100K",

"language:zh",

"license:apache-2.0",

"finance",

"region:us"

] | Elliot4AI | null | null | null | 3 | 3 | ---

license: apache-2.0

task_categories:

- text-classification

- text-generation

language:

- zh

tags:

- finance

size_categories:

- 10K<n<100K

---

# Dataset Summary

## 🏡🏡🏡🏡Fine-tune Dataset: Chinese dataset🏡🏡🏡🏡

😀😀😀😀😀😀😀😀 This dataset is the Chinese guanaco-format version of databricks/databricks-dolly-15k

|

cayjobla/wikipedia-pretrain-processed | 2023-08-09T19:54:48.000Z | [

"region:us"

] | cayjobla | null | null | null | 0 | 3 | ---

dataset_info:

features:

- name: input_ids

sequence: int32

- name: token_type_ids

sequence: int8

- name: attention_mask

sequence: int8

- name: next_sentence_label

dtype: int64

splits:

- name: train

num_bytes: 110639929064.0

num_examples: 35782642

- name: test

num_bytes: 12293328200.0

num_examples: 3975850

download_size: 4973120598

dataset_size: 122933257264.0

---

# Dataset Card for "wikipedia-pretrain-processed"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Kyle1668/pythia-semantic-memorization-perplexities | 2023-09-19T02:40:54.000Z | [

"region:us"

] | Kyle1668 | null | null | null | 0 | 3 | ---

configs:

- config_name: default

data_files:

- split: memories.deduped.12b

path: data/memories.deduped.12b-*

- split: memories.duped.12b

path: data/memories.duped.12b-*

- split: memories.duped.6.9b

path: data/memories.duped.6.9b-*

- split: pile.duped.6.9b

path: data/pile.duped.6.9b-*

- split: memories.duped.70m

path: data/memories.duped.70m-*

- split: memories.duped.160m

path: data/memories.duped.160m-*

- split: memories.duped.410m

path: data/memories.duped.410m-*

- split: pile.duped.70m

path: data/pile.duped.70m-*

- split: pile.duped.160m

path: data/pile.duped.160m-*

- split: pile.duped.410m

path: data/pile.duped.410m-*

- split: memories.duped.1.4b

path: data/memories.duped.1.4b-*

- split: memories.duped.1b

path: data/memories.duped.1b-*

- split: memories.duped.2.8b

path: data/memories.duped.2.8b-*

- split: pile.duped.1.4b

path: data/pile.duped.1.4b-*

- split: pile.duped.1b

path: data/pile.duped.1b-*

- split: pile.duped.2.8b

path: data/pile.duped.2.8b-*

- split: pile.duped.12b

path: data/pile.duped.12b-*

- split: memories.deduped.70m

path: data/memories.deduped.70m-*

- split: memories.deduped.160m

path: data/memories.deduped.160m-*

- split: memories.deduped.410m

path: data/memories.deduped.410m-*

- split: pile.deduped.70m

path: data/pile.deduped.70m-*

- split: pile.deduped.160m

path: data/pile.deduped.160m-*

- split: pile.deduped.410m

path: data/pile.deduped.410m-*

- split: memories.deduped.6.9b

path: data/memories.deduped.6.9b-*

- split: pile.deduped.6.9b

path: data/pile.deduped.6.9b-*

- split: pile.deduped.12b

path: data/pile.deduped.12b-*

- split: memories.deduped.2.8b

path: data/memories.deduped.2.8b-*

- split: pile.deduped.2.8b

path: data/pile.deduped.2.8b-*

- split: memories.deduped.1.4b

path: data/memories.deduped.1.4b-*

- split: memories.deduped.1b

path: data/memories.deduped.1b-*

- split: pile.deduped.1.4b

path: data/pile.deduped.1.4b-*

- split: pile.deduped.1b

path: data/pile.deduped.1b-*

dataset_info:

features:

- name: index

dtype: int32

- name: prompt_perplexity

dtype: float32

- name: generation_perplexity

dtype: float32

- name: sequence_perplexity

dtype: float32

splits:

- name: memories.deduped.12b

num_bytes: 29939456

num_examples: 1871216

- name: memories.duped.12b

num_bytes: 38117248

num_examples: 2382328

- name: memories.duped.6.9b

num_bytes: 33935616

num_examples: 2120976

- name: pile.duped.6.9b

num_bytes: 80000000

num_examples: 5000000

- name: memories.duped.70m

num_bytes: 7423248

num_examples: 463953

- name: memories.duped.160m

num_bytes: 11034768

num_examples: 689673

- name: memories.duped.410m

num_bytes: 15525456

num_examples: 970341

- name: pile.duped.70m

num_bytes: 80000000

num_examples: 5000000

- name: pile.duped.160m

num_bytes: 80000000

num_examples: 5000000

- name: pile.duped.410m

num_bytes: 80000000

num_examples: 5000000

- name: memories.duped.1.4b

num_bytes: 21979552

num_examples: 1373722

- name: memories.duped.1b

num_bytes: 20098256

num_examples: 1256141

- name: memories.duped.2.8b

num_bytes: 26801232

num_examples: 1675077

- name: pile.duped.1.4b

num_bytes: 80000000

num_examples: 5000000

- name: pile.duped.1b

num_bytes: 80000000

num_examples: 5000000

- name: pile.duped.2.8b

num_bytes: 80000000

num_examples: 5000000

- name: pile.duped.12b

num_bytes: 80000000

num_examples: 5000000

- name: memories.deduped.70m

num_bytes: 6583168

num_examples: 411448

- name: memories.deduped.160m

num_bytes: 9299120

num_examples: 581195

- name: memories.deduped.410m

num_bytes: 12976624

num_examples: 811039

- name: pile.deduped.70m

num_bytes: 80000000

num_examples: 5000000

- name: pile.deduped.160m

num_bytes: 80000000

num_examples: 5000000

- name: pile.deduped.410m

num_bytes: 80000000

num_examples: 5000000

- name: memories.deduped.6.9b

num_bytes: 26884704

num_examples: 1680294

- name: pile.deduped.6.9b

num_bytes: 80000000

num_examples: 5000000

- name: pile.deduped.12b

num_bytes: 80000000

num_examples: 5000000

- name: memories.deduped.2.8b

num_bytes: 21683376

num_examples: 1355211

- name: pile.deduped.2.8b

num_bytes: 80000000

num_examples: 5000000

- name: memories.deduped.1.4b

num_bytes: 16769552

num_examples: 1048097

- name: memories.deduped.1b

num_bytes: 16525840

num_examples: 1032865

- name: pile.deduped.1.4b

num_bytes: 80000000

num_examples: 5000000

- name: pile.deduped.1b

num_bytes: 80000000

num_examples: 5000000

download_size: 1891778367

dataset_size: 1595577216

---

# Dataset Card for "pythia-semantic-memorization-perplexities"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

pfcheng123/test | 2023-08-15T08:28:55.000Z | [

"region:us"

] | pfcheng123 | null | null | null | 0 | 3 | Entry not found |

BalajiAIdev/autotrain-data-animal-image-classification | 2023-08-10T02:33:12.000Z | [

"task_categories:image-classification",

"region:us"

] | BalajiAIdev | null | null | null | 0 | 3 | ---

task_categories:

- image-classification

---

# AutoTrain Dataset for project: animal-image-classification

## Dataset Description

This dataset has been automatically processed by AutoTrain for project animal-image-classification.

### Languages

The BCP-47 code for the dataset's language is unk.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"image": "<366x274 RGB PIL image>",

"target": 0

},

{

"image": "<367x274 RGB PIL image>",

"target": 0

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"image": "Image(decode=True, id=None)",

"target": "ClassLabel(names=['Lion', 'Tiger'], id=None)"

}

```
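
The `target` field stores an integer index into the `ClassLabel` names shown above. As a minimal sketch in plain Python, the mapping in both directions could look like this. The helper names `target_to_name` and `name_to_target` are hypothetical, and the index order is taken from the `names` list above.

```python
# Class names in the order given by the ClassLabel feature above.
NAMES = ["Lion", "Tiger"]


def target_to_name(target):
    """Map an integer `target` value to its class name."""
    if not 0 <= target < len(NAMES):
        raise ValueError(f"target out of range: {target}")
    return NAMES[target]


def name_to_target(name):
    """Map a class name back to its integer `target` value."""
    return NAMES.index(name)
```

Under this mapping, both samples shown in the Data Instances section (`"target": 0`) are lions.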

### Dataset Splits

This dataset is divided into train and validation splits. The split sizes are as follows:

| Split name | Num samples |

| ------------ | ------------------- |

| train | 20 |

| valid | 20 |

|

mirix/messaih | 2023-08-11T08:42:42.000Z | [

"task_categories:audio-classification",

"size_categories:10K<n<100K",

"language:en",

"license:mit",

"SER",

"Speech Emotion Recognition",

"Speech Emotion Classification",

"Audio Classification",

"Audio",

"Emotion",

"Emo",

"Speech",

"Mosei",

"region:us"

] | mirix | null | null | null | 0 | 3 | ---

license: mit

task_categories:

- audio-classification

language:

- en

tags:

- SER

- Speech Emotion Recognition

- Speech Emotion Classification

- Audio Classification

- Audio

- Emotion

- Emo

- Speech

- Mosei

pretty_name: messAIh

size_categories:

- 10K<n<100K

---

DATASET DESCRIPTION

The messAIh dataset is a fork of [CMU MOSEI](http://multicomp.cs.cmu.edu/resources/cmu-mosei-dataset/).

Unlike its parent, MESSAIH is intended for unimodal model development and focusses exclusively on audio classification, more specifically, Speech Emotion Recognition (SER).

Of course, it can be used for bimodal classification by transcribing each audio track.

MESSAIH currently contains 13,234 speech samples annotated according to the [CMU MOSEI](https://aclanthology.org/P18-1208/) scheme:

> Each sentence is annotated for sentiment on a [-3,3] Likert scale of:

> [−3: highly negative, −2 negative, −1 weakly negative, 0 neutral, +1 weakly positive, +2 positive, +3 highly positive].

> Ekman emotions of {happiness, sadness, anger, fear, disgust, surprise}

> are annotated on a [0,3] Likert scale for presence of emotion

> x: [0: no evidence of x, 1: weakly x, 2: x, 3: highly x].
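
The two scales quoted above can be turned into readable labels with a small lookup, sketched below. The helper names are hypothetical, and clamping and rounding fractional scores to the nearest integer is an assumption made here for illustration. It is not something the annotation scheme prescribes.

```python
SENTIMENT_LABELS = {
    -3: "highly negative", -2: "negative", -1: "weakly negative",
    0: "neutral",
    1: "weakly positive", 2: "positive", 3: "highly positive",
}
EMOTION_LEVELS = {0: "no evidence of {x}", 1: "weakly {x}", 2: "{x}", 3: "highly {x}"}


def sentiment_label(score):
    """Clamp a score to [-3, 3], round it, and look up the sentiment label."""
    clamped = max(-3.0, min(3.0, float(score)))
    return SENTIMENT_LABELS[round(clamped)]  # rounding is an assumption, see above


def emotion_label(emotion, level):
    """Describe the presence of one Ekman emotion at an integer level 0..3."""
    return EMOTION_LEVELS[level].format(x=emotion)
```

For example, `sentiment_label(2.2)` gives `"positive"` and `emotion_label("fear", 1)` gives `"weakly fear"`.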

The dataset is provided as a [parquet file](https://drive.google.com/file/d/17qOa2cFDNCH2j2mL5gCNUOwLxpgnzPmB/view?usp=drive_link).

Provisionally, the file is stored on a [cloud drive](https://drive.google.com/file/d/17qOa2cFDNCH2j2mL5gCNUOwLxpgnzPmB/view?usp=drive_link) as it is too big for GitHub. Note that the original parquet file from August 10th 2023 was buggy and so was the Python script.

To facilitate inspection, a truncated csv sample file is also provided, but it does not contain the audio arrays.

If you train a model on this dataset, you would make us very happy by letting us know.

UNPACKING THE DATASET

A sample Python script (check the top of the script for the requirements) is also provided for illustrative purposes.

The script reads the parquet file and produces the following:

1. A csv file with file names and MOSEI values (column names are self-explanatory).

2. A folder named "wavs" containing the audio samples.

LEGAL CONSIDERATIONS

Note that producing the wav files might (or might not) constitute copyright infringement as well as a violation of Google's Terms of Service.

Instead, researchers are encouraged to use the numpy arrays contained in the last column of the dataset ("wav2numpy") directly, without actually extracting any playable audio.

That, I believe, may keep us in the grey zone.
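
A minimal sketch of working with the arrays directly, without writing any wav files, might look as follows. The helper names are hypothetical and the tiny in-memory list only stands in for the "wav2numpy" column; the real arrays are much longer and their dtype is not stated here.

```python
import numpy as np


def to_float_arrays(samples):
    """Convert each stored sample to a 1-D float32 array."""
    return [np.asarray(s, dtype=np.float32).ravel() for s in samples]


def pad_batch(arrays):
    """Zero-pad arrays on the right to a common length.

    Returns the padded batch and the original lengths.
    """
    lengths = np.array([len(a) for a in arrays], dtype=np.int64)
    batch = np.zeros((len(arrays), int(lengths.max())), dtype=np.float32)
    for i, a in enumerate(arrays):
        batch[i, : len(a)] = a
    return batch, lengths


# Stand-in for the "wav2numpy" column. With pandas installed, the real
# column could be read with something like
#   pandas.read_parquet("messaih.parquet")["wav2numpy"]
# where the file name is hypothetical.
fake_column = [[0.0, 0.5, -0.5], [1.0, -1.0]]
batch, lengths = pad_batch(to_float_arrays(fake_column))
print(batch.shape, lengths.tolist())
```

Padding to a common length is only one option; a feature extractor that accepts variable-length input would make it unnecessary.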

CAVEATS

As one can appreciate from the charts contained in the "charts" folder, the dataset is biased towards "positive" emotions, namely happiness.

Certain emotions such as fear may be underrepresented, not only in terms of number of occurrences, but, more problematically, in terms of "intensity".

MOSEI is considered a natural or spontaneous emotion dataset (as opposed to an acted or scripted one) showcasing "genuine" emotions.

However, keep in mind that MOSEI was curated from a popular social network and social networks are notoriously abundant in fake emotions.

Moreover, certain emotions may be intrinsically more difficult to detect than others, even from a human perspective.

Yet, MOSEI is possibly one of the best datasets of its kind currently in the public domain.

Also note that the original [MOSEI](http://immortal.multicomp.cs.cmu.edu/CMU-MOSEI/labels/) contains nearly twice as many entries as MESSAIH does.

|

TrainingDataPro/biometric-attacks-in-different-lighting-conditions | 2023-09-14T16:34:15.000Z | [

"task_categories:video-classification",

"language:en",

"license:cc-by-nc-nd-4.0",

"code",

"legal",

"finance",

"region:us"

] | TrainingDataPro | null | null | null | 1 | 3 | ---

license: cc-by-nc-nd-4.0

task_categories:

- video-classification

language:

- en

tags:

- code

- legal

- finance

---

# Biometric Attacks in Different Lighting Conditions Dataset

The dataset consists of videos of individuals and of attacks in which photos are shown on a monitor. Videos are filmed in different lighting conditions (*in a dark room, daylight, light room and nightlight*) and in different places (*indoors, outdoors*). Each video in the dataset has an approximate duration of 20 seconds.

### Types of videos in the dataset:

- **darkroom_photo** - photo of a person in a **dark room** shown on a computer and filmed on the phone

- **daylight_photo** - photo of a person in a **daylight** shown on a computer and filmed on the phone

- **lightroom_photo** - photo of a person in a **light room** shown on a computer and filmed on the phone

- **nightlight_photo** - photo of a person in a **night light** shown on a computer and filmed on the phone

- **darkroom_video** - filmed in a **dark room**, on which a person moves his/her head left, right, up and down

- **daylight_video** - filmed in a **daylight**, on which a person moves his/her head left, right, up and down

- **lightroom_video** - filmed in a **light room**, on which a person moves his/her head left, right, up and down

- **nightlight_video** - filmed in a **night light**, on which a person moves his/her head left, right, up and down

- **mask** - video of the person wearing a **printed 2D mask**

- **outline** - video of the person wearing a **printed 2D mask with cut-out holes for eyes**

- **monitor_video** - video of a person played on a computer and filmed on the phone


The dataset serves as a valuable resource for computer vision, anti-spoofing tasks, video analysis, and security systems. It allows for the development of algorithms and models that can effectively detect attacks.

Studying the dataset may lead to the development of improved security systems, surveillance technologies, and solutions to mitigate the risks associated with masked individuals carrying out attacks.

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=biometric-attacks-in-different-lighting-conditions) to discuss your requirements, learn about the price and buy the dataset.

# Content

- **files** - contains original videos and videos of attacks,

- **dataset_info.csv** - includes information about the videos in the dataset

### File with the extension .csv

- **file**: link to the video,

- **type**: type of the video
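
As a sketch of how the two columns described above could be used, the snippet below counts videos per `type`. The sample rows and file paths are hypothetical placeholders, not actual contents of the csv file.

```python
import csv
import io
from collections import Counter


def count_video_types(csv_text):
    """Count rows per value of the `type` column."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return Counter(row["type"].strip() for row in reader)


# Hypothetical sample rows; the real links and counts will differ.
sample = """file,type
files/0001.mp4,darkroom_photo
files/0002.mp4,daylight_video
files/0003.mp4,mask
files/0004.mp4,darkroom_photo
"""

counts = count_video_types(sample)
print(counts.most_common())
```

The same counter makes it easy to check that every lighting condition and attack type is represented before training.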

# Attacks might be collected in accordance with your requirements.

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=biometric-attacks-in-different-lighting-conditions) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** |

almandsky/openassistant-tiny | 2023-08-10T15:58:18.000Z | [

"license:mit",

"region:us"

] | almandsky | null | null | null | 0 | 3 | ---

license: mit

---

|

RealTimeData/News_August_2023 | 2023-08-10T20:09:24.000Z | [

"size_categories:1K<n<10K",

"language:en",

"license:cc",

"region:us"

] | RealTimeData | null | null | null | 0 | 3 | ---

dataset_info:

features:

- name: authors

sequence: string

- name: date_download

dtype: string

- name: date_modify

dtype: string

- name: date_publish

dtype: string

- name: description

dtype: string

- name: filename

dtype: string

- name: image_url

dtype: string

- name: language

dtype: string

- name: localpath

dtype: string

- name: maintext

dtype: string

- name: source_domain

dtype: string

- name: title

dtype: string

- name: title_page

dtype: string

- name: title_rss

dtype: string

- name: url

dtype: string

splits:

- name: train

num_bytes: 18194599

num_examples: 5059

download_size: 8541046

dataset_size: 18194599

license: cc

language:

- en

size_categories:

- 1K<n<10K

---

# Dataset Card for "News_August_2023"

This dataset was constructed on 1 Aug 2023 and contains news published from 10 May 2023 to 1 Aug 2023 from various sources.

All news articles in this dataset are in English.

Created from `commoncrawl`. |

luci/questions | 2023-08-11T13:00:22.000Z | [

"language:fr",

"license:wtfpl",

"region:us"

] | luci | null | null | null | 0 | 3 | ---

license: wtfpl

language:

- fr

---

### General Overview:

This dataset is a collection of questions and answers in French, focused mainly on technical subjects such as development, DevOps, security, data, machine learning, and many other technology-related fields.

### Dataset Structure:

Each element of the dataset is an object with the following fields:

- `id`: A unique identifier for each entry.

- `category`: The category or domain of the question (for example, "linux").

- `question`: The question asked.

- `answer`: The answer to the question.

### Example Entry:

```json

{

"id": "7d0b4a78-371e-4bd9-a292-c97ce162e740",

"category": "linux",

"question": "Qu'est-ce que Linux ?",

"answer": "Linux est un système d'exploitation libre et open source basé sur le noyau Linux..."

}

```
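
A minimal sketch of reading such an entry and turning it into a training pair is shown below. The `to_training_pair` helper and the prompt layout are illustrative assumptions and not part of the dataset itself.

```python
import json


def to_training_pair(entry):
    """Turn one dataset entry into a (prompt, completion) pair.

    The prompt layout is an illustrative choice, not part of the dataset.
    """
    prompt = f"[{entry['category']}] {entry['question']}"
    return prompt, entry["answer"]


# The entry from the example above, as a JSON string.
raw = """{
  "id": "7d0b4a78-371e-4bd9-a292-c97ce162e740",
  "category": "linux",
  "question": "Qu'est-ce que Linux ?",
  "answer": "Linux est un système d'exploitation libre et open source basé sur le noyau Linux..."
}"""

entry = json.loads(raw)
prompt, completion = to_training_pair(entry)
print(prompt)
```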

### Usage:

This dataset can be used to:

1. **Train language models**: using the questions and answers as training data.

2. **Evaluate language models**: by comparing the answers generated by the model with the answers in the dataset.

3. **Clean and improve other datasets**: using the questions as a basis for filtering and cleaning other datasets.

### Important Note:

Note that the answers in this dataset were not supervised during their generation, either by humans or by machines. Some answers may therefore not be entirely accurate. Additional verification and validation may be needed before using this data for critical applications.

### Goal:

The main goal is to obtain, over time, clean and relevant data from relevant questions in the technology domain.

### Web demo:

http://loop.brain.fr/qwe/ |

thaottn/DataComp_large_pool_BLIP2_captions | 2023-09-01T01:06:32.000Z | [

"task_categories:image-to-text",

"task_categories:zero-shot-classification",

"size_categories:1B<n<10B",

"license:cc-by-4.0",

"arxiv:2307.10350",

"region:us"

] | thaottn | null | null | null | 0 | 3 | ---

license: cc-by-4.0

task_categories:

- image-to-text

- zero-shot-classification

size_categories:

- 1B<n<10B

---

# Dataset Card for DataComp_large_pool_BLIP2_captions

## Dataset Description

- **Paper: https://arxiv.org/abs/2307.10350**

- **Leaderboard: https://www.datacomp.ai/leaderboard.html**

- **Point of Contact: Thao Nguyen (thaottn@cs.washington.edu)**

### Dataset Summary

### Supported Tasks and Leaderboards

We have used this dataset for pre-training CLIP models and found that it rivals or outperforms models trained on raw web captions on average across the 38 evaluation tasks proposed by DataComp.

Refer to the DataComp leaderboard (https://www.datacomp.ai/leaderboard.html) for the top baselines uncovered in our work.

### Languages

Primarily English.

## Dataset Structure

### Data Instances

Each instance maps a unique image identifier from DataComp to the corresponding BLIP2 caption generated with temperature 0.75.

### Data Fields

uid: SHA256 hash of image, provided as metadata by the DataComp team.

blip2-cap: corresponding caption generated by BLIP2.
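
Since each caption is keyed by `uid`, pairing captions with the DataComp metadata amounts to a join on that field. The sketch below assumes both sides are available as lists of dictionaries; the `url` field, the shortened uids, and the caption texts are hypothetical placeholders.

```python
def attach_captions(metadata_rows, caption_rows):
    """Add the BLIP2 caption to each metadata row that has a matching uid."""
    captions = {row["uid"]: row["blip2-cap"] for row in caption_rows}
    merged = []
    for row in metadata_rows:
        if row["uid"] in captions:
            merged.append({**row, "blip2-cap": captions[row["uid"]]})
    return merged


# Hypothetical rows; real uids are full SHA256 hashes.
metadata = [
    {"uid": "aaa111", "url": "https://example.com/1.jpg"},
    {"uid": "bbb222", "url": "https://example.com/2.jpg"},
]
captions = [
    {"uid": "aaa111", "blip2-cap": "a dog running on a beach"},
]

merged = attach_captions(metadata, captions)
print(merged)
```

At the scale of the full pool, an in-memory dictionary is unlikely to be practical; a dataframe or database join on `uid` would follow the same logic.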

### Data Splits

Data was not split. The dataset is intended for pre-training multimodal models.

## Dataset Creation

### Curation Rationale

Web-crawled image-text data can contain a lot of noise, i.e. the caption may not reflect the content of the respective image. Filtering out noisy web data, however, can hurt the diversity of the training set.

To address both of these issues, we use image captioning models to increase the number of useful training samples from the initial pool, by ensuring the captions are more relevant to the images.

Our work systematically explores the effectiveness of using these synthetic captions to replace or complement the raw text data, in the context of CLIP pre-training.

### Source Data

#### Initial Data Collection and Normalization

The original 1.28B image-text pairs were collected by the DataComp team from Common Crawl. Minimal filtering was performed on the initial data pool (face blurring, NSFW removal, train-test deduplication).

We then replaced the original web-crawled captions with synthetic captions generated by BLIP2.

#### Who are the source language producers?

Common Crawl is the source for images. BLIP2 is the source of the text data.

### Annotations

#### Annotation process

The dataset was built in a fully automated process: captions are generated by the BLIP2 captioning model.

#### Who are the annotators?

No human annotators are involved.

### Personal and Sensitive Information

The images, which we inherit from the DataComp benchmark, already underwent face detection and face blurring. While the DataComp team made an attempt to remove NSFW instances, it is possible that such content may still exist (to a small degree) in this dataset.

Due to the large scale nature of this dataset, the content has not been manually verified to be completely safe. Therefore, it is strongly recommended that this dataset be used only for research purposes.

## Considerations for Using the Data

### Social Impact of Dataset

The publication contains some preliminary analyses of the fairness implication of training on this dataset, when evaluating on Fairface.

### Discussion of Biases

Refer to the publication for more details.

### Other Known Limitations

Refer to the publication for more details.

## Additional Information

### Citation Information

```bibtex

@article{nguyen2023improving,

title={Improving Multimodal Datasets with Image Captioning},

author={Nguyen, Thao and Gadre, Samir Yitzhak and Ilharco, Gabriel and Oh, Sewoong and Schmidt, Ludwig},

journal={arXiv preprint arXiv:2307.10350},

year={2023}

}

``` |

pensieves/spur | 2023-09-27T02:46:41.000Z | [

"license:apache-2.0",

"region:us"

] | pensieves | SPUR is a comprehensive benchmark for spatial understanding and reasoning with over <TODO> natural language questions. The data set comes with multiple splits: SpartQA_Human (train, val, test). | @INPROCEEDINGS{Rizvi2023Spur,

author = {

Md Imbesat Hassan Rizvi

and IG},

title = {SPUR: A Unified Benchmark for SPatial Understanding and Reasoning},

booktitle = {},

year = {2023}

} | null | 0 | 3 | ---

license: apache-2.0

---

# SPUR: A Unified Benchmark for SPatial Understanding and Reasoning

### Licensing Information

Creative Commons Attribution 4.0 International |

urialon/converted_qmsum | 2023-08-12T22:55:53.000Z | [

"region:us"

] | urialon | null | null | null | 0 | 3 | ---

dataset_info:

features:

- name: id

dtype: string

- name: pid

dtype: string

- name: input

dtype: string

- name: output

dtype: string

splits:

- name: train

num_bytes: 70168352

num_examples: 1257

- name: validation

num_bytes: 15955428

num_examples: 272

- name: test

num_bytes: 16408856

num_examples: 281

download_size: 42693177

dataset_size: 102532636

---

# Dataset Card for "converted_qmsum"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

HachiML/hh-rlhf-49k-ja-alpaca-format | 2023-08-13T01:04:53.000Z | [

"language:ja",

"license:mit",

"region:us"

] | HachiML | null | null | null | 0 | 3 | ---

dataset_info:

features:

- name: input

dtype: string

- name: output

dtype: string

- name: index

dtype: string

splits:

- name: train

num_bytes: 41667442

num_examples: 49332

download_size: 19145442

dataset_size: 41667442

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

license: mit

language:

- ja

---

# Dataset Card for "hh-rlhf-49k-ja-alpaca-format"

これは[kunishou/hh-rlhf-49k-ja](https://huggingface.co/datasets/kunishou/hh-rlhf-49k-ja)に以下の変更を加えたデータセットです。

- alpaca形式に変更

- 翻訳が失敗したもの(つまりng_translation!="0.0"のもの)の削除

- indexの頭に"hh-rlhf."をつける
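
The filtering and index-prefixing steps listed above can be sketched roughly as follows. This is only an illustration under stated assumptions: the sample records are hypothetical, the `filter_and_prefix` helper is not the script actually used, and the conversion to alpaca format is left out because the source field layout is not given here.

```python
def filter_and_prefix(records, prefix="hh-rlhf."):
    """Keep records whose translation succeeded and prefix their index.

    A record is kept only when its `ng_translation` value equals "0.0".
    """
    kept = []
    for record in records:
        if record["ng_translation"] != "0.0":
            continue
        cleaned = dict(record)
        cleaned["index"] = prefix + str(record["index"])
        kept.append(cleaned)
    return kept


# Hypothetical records for illustration only.
records = [
    {"index": "0", "ng_translation": "0.0", "output": "ok"},
    {"index": "1", "ng_translation": "1.0", "output": "failed"},
    {"index": "2", "ng_translation": "0.0", "output": "ok"},
]

result = filter_and_prefix(records)
print([r["index"] for r in result])
```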

This is a version of [kunishou/hh-rlhf-49k-ja](https://huggingface.co/datasets/kunishou/hh-rlhf-49k-ja) with the following changes:

- Changed to alpaca format

- Removed failed translations (i.e., those with ng_translation!="0.0")

- Add "hh-rlhf." at the beginning of indexes |

xasdoi9812323/hello | 2023-08-13T02:16:50.000Z | [

"task_categories:text-classification",

"language:en",

"license:openrail",

"region:us"

] | xasdoi9812323 | null | null | null | 0 | 3 | ---

license: openrail

task_categories:

- text-classification

language:

- en

---

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

pianoroll/maestro-do-storage | 2023-08-13T08:27:40.000Z | [

"region:us"

] | pianoroll | null | null | null | 0 | 3 | ---

dataset_info:

features:

- name: composer

dtype: string

- name: title

dtype: string

- name: midi_filename

dtype: string

- name: mp3_key

dtype: string

- name: pianoroll_key

dtype: string

- name: split

dtype: string

splits:

- name: train

num_bytes: 419735

num_examples: 1276

download_size: 89454

dataset_size: 419735

---

# Dataset Card for "maestro-do-storage"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

FreedomIntelligence/sharegpt-chinese | 2023-08-13T15:53:36.000Z | [

"license:apache-2.0",

"region:us"

] | FreedomIntelligence | null | null | null | 2 | 3 | ---

license: apache-2.0

---

Chinese ShareGPT data translated by gpt-3.5-turbo.

The dataset is used in the research related to [MultilingualSIFT](https://github.com/FreedomIntelligence/MultilingualSIFT). |

TrainingDataPro/pigs-detection-dataset | 2023-09-14T16:30:06.000Z | [

"task_categories:image-to-image",

"task_categories:image-classification",

"task_categories:object-detection",

"language:en",

"license:cc-by-nc-nd-4.0",

"code",

"region:us"

] | TrainingDataPro | The dataset is a collection of images along with corresponding bounding box annotations

that are specifically curated for **detecting pigs' heads** in images. The dataset

covers different *pig breeds, sizes, and orientations*, providing a comprehensive

representation of pig appearances.

The pig detection dataset provides a valuable resource for researchers working on pig

detection tasks. It offers a diverse collection of annotated images, allowing for

comprehensive algorithm development, evaluation, and benchmarking, ultimately aiding in

the development of accurate and robust models. | @InProceedings{huggingface:dataset,

title = {pigs-detection-dataset},

author = {TrainingDataPro},

year = {2023}

} | null | 1 | 3 | ---

language:

- en

license: cc-by-nc-nd-4.0

task_categories:

- image-to-image

- image-classification

- object-detection

tags:

- code

dataset_info:

features:

- name: id

dtype: int32

- name: image

dtype: image

- name: mask

dtype: image

- name: bboxes

dtype: string

splits:

- name: train

num_bytes: 5428811

num_examples: 27

download_size: 5391503

dataset_size: 5428811

---

# Pigs Detection Dataset

The dataset is a collection of images along with corresponding bounding box annotations that are specifically curated for **detecting pigs' heads** in images. The dataset covers different *pig breeds, sizes, and orientations*, providing a comprehensive representation of pig appearances.

The pig detection dataset provides a valuable resource for researchers working on pig detection tasks. It offers a diverse collection of annotated images, allowing for comprehensive algorithm development, evaluation, and benchmarking, ultimately aiding in the development of accurate and robust models.

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=pigs-detection-dataset) to discuss your requirements, learn about the price and buy the dataset.

# Dataset structure

- **images** - contains original images of pigs

- **boxes** - includes bounding box labeling for the original images

- **annotations.xml** - contains coordinates of the bounding boxes and labels, created for the original photo

# Data Format

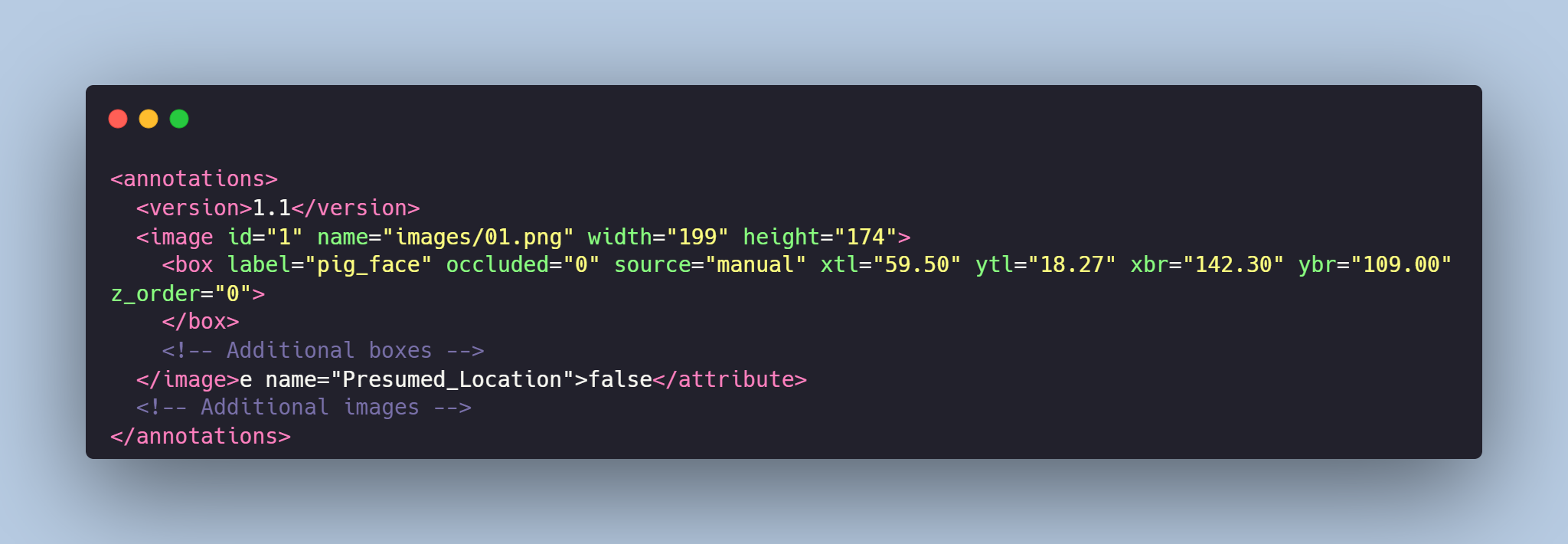

Each image from `images` folder is accompanied by an XML-annotation in the `annotations.xml` file indicating the coordinates of the bounding boxes for pigs detection. For each point, the x and y coordinates are provided.

# Example of XML file structure

# Pig Detection might be made in accordance with your requirements.

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=pigs-detection-dataset) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** |

TrainingDataPro/generated-vietnamese-passeports-dataset | 2023-09-14T16:29:29.000Z | [

"task_categories:image-classification",

"task_categories:image-segmentation",

"language:en",

"language:vi",

"license:cc-by-nc-nd-4.0",

"code",

"finance",

"legal",

"region:us"

] | TrainingDataPro | Data generation in machine learning involves creating or manipulating data to train

and evaluate machine learning models. The purpose of data generation is to provide

diverse and representative examples that cover a wide range of scenarios, ensuring the

model's robustness and generalization.

The dataset contains GENERATED Vietnamese passports, which are replicas of official

passports but with randomly generated details, such as name, date of birth etc.

The primary intention of generating these fake passports is to demonstrate the

structure and content of a typical passport document and to train the neural network to

identify this type of document.

Generated passports can assist in conducting research without accessing or compromising

real user data that is often sensitive and subject to privacy regulations. Synthetic

data generation allows researchers to *develop and refine models using simulated

passport data without risking privacy leaks*. | @InProceedings{huggingface:dataset,

title = {generated-vietnamese-passeports-dataset},

author = {TrainingDataPro},

year = {2023}

} | null | 1 | 3 | ---

language:

- en

- vi

license: cc-by-nc-nd-4.0

task_categories:

- image-classification

- image-segmentation

tags:

- code

- finance

- legal

dataset_info:

features:

- name: id

dtype: int32

- name: image

dtype: image

splits:

- name: train

num_bytes: 28732495

num_examples: 20

download_size: 28741938

dataset_size: 28732495

---

# GENERATED Vietnamese Passports Dataset

**Data generation** in machine learning involves creating or manipulating data to train and evaluate machine learning models. The purpose of data generation is to provide diverse and representative examples that cover a wide range of scenarios, ensuring the model's *robustness and generalization*.

The dataset contains GENERATED Vietnamese passports, which are replicas of official passports but with randomly generated details, such as *name, date of birth etc*. The primary intention of generating these fake passports is to demonstrate the structure and content of a typical passport document and to train the neural network to identify this type of document.

Generated passports can assist in conducting research without accessing or compromising real user data that is often sensitive and subject to privacy regulations. Synthetic data generation allows researchers to *develop and refine models using simulated passport data without risking privacy leaks*.

### The dataset is solely for informational or educational purposes and should not be used for any fraudulent or deceptive activities.

# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=generated-vietnamese-passeports-dataset) to discuss your requirements, learn about the price and buy the dataset.

# Passports might be generated in accordance with your requirements.

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=generated-vietnamese-passeports-dataset) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** |

amitness/logits-arabic-128 | 2023-09-21T11:34:46.000Z | [

"region:us"

] | amitness | null | null | null | 0 | 3 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: input_ids

sequence: int32

- name: token_type_ids

sequence: int8

- name: attention_mask

sequence: int8

- name: labels

sequence: int64

- name: teacher_logits

sequence:

sequence: float64

- name: teacher_indices

sequence:

sequence: int64

- name: teacher_mask_indices

sequence: int64

splits:

- name: train

num_bytes: 19440049160

num_examples: 4294918

download_size: 7814026203

dataset_size: 19440049160

---

# Dataset Card for "logits-arabic-128"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

CyberHarem/tsunade_naruto | 2023-09-17T17:05:41.000Z | [

"task_categories:text-to-image",

"size_categories:n<1K",

"license:mit",

"art",

"not-for-all-audiences",

"region:us"

] | CyberHarem | null | null | null | 0 | 3 | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of tsunade (NARUTO)

This is the dataset of tsunade (NARUTO), containing 200 images and their tags.

Images are crawled from many sites (e.g. danbooru, pixiv, zerochan ...); the auto-crawling system is powered by [DeepGHS Team](https://github.com/deepghs) ([huggingface organization](https://huggingface.co/deepghs)).

|

vasuens/openassistant | 2023-08-16T11:44:59.000Z | [

"license:llama2",

"region:us"

] | vasuens | null | null | null | 0 | 3 | ---

license: llama2

---

|

Deepakvictor/tan-tam | 2023-08-15T12:45:49.000Z | [

"task_categories:translation",

"task_categories:text-classification",

"size_categories:1K<n<10K",

"language:ta",

"language:en",

"license:openrail",

"region:us"

] | Deepakvictor | null | null | null | 0 | 3 | ---

license: openrail

task_categories:

- translation

- text-classification

language:

- ta

- en

pretty_name: translation

size_categories:

- 1K<n<10K

---

Translation of Tanglish to Tamil

Source: karky.in

To use

```python

import datasets

s = datasets.load_dataset('Deepakvictor/tan-tam')

print(s)

"""

DatasetDict({

train: Dataset({

features: ['en', 'ta'],

num_rows: 22114

})

})

"""

```

Credits and Source: https://karky.in/

---

For Complex version --> "Deepakvictor/tanglish-tamil" |

m720/SHADR | 2023-08-17T14:49:14.000Z | [

"task_categories:text-classification",

"size_categories:1K<n<10K",

"language:en",

"license:cc-by-4.0",

"medical",

"arxiv:2308.06354",

"region:us"

] | m720 | null | null | null | 2 | 3 | ---

license: cc-by-4.0

task_categories:

- text-classification

language:

- en

tags:

- medical

pretty_name: SHADR

size_categories:

- 1K<n<10K

---

# SDoH Human Annotated Demographic Robustness (SHADR) Dataset

## Overview

Social determinants of health (SDoH) play a pivotal role in determining patient outcomes. However, their documentation in electronic health records (EHR) remains incomplete. This dataset was created from a study examining the capability of large language models in extracting SDoH from the free text sections of EHRs. Furthermore, the study delved into the potential of synthetic clinical text to bolster the extraction process of these scarcely documented, yet crucial, clinical data.

## Dataset Structure & Modification

To understand potential biases in high-performing models and in those pre-trained on general text, GPT-4 was utilized to infuse demographic descriptors into our synthetic data.

For instance:

- **Original Sentence**: "Widower admits fears surrounding potential judgment…"

- **Modified Sentence**: "Hispanic widower admits fears surrounding potential judgment…"

Such demographic-infused sentences underwent manual validation. Out of these:

- 419 had mentions of SDoH

- 253 had mentions of adverse SDoH

- The remainder were tagged as NO_SDoH

## Instructions for Model Evaluation

1. Initially, run your model inference on the original sentences.

2. Subsequently, apply the same model to infer on the demographic-modified sentences.

3. Perform comparisons for robustness.

For a detailed understanding of the "adverse" labeling, refer to https://arxiv.org/pdf/2308.06354.pdf. Here, the 'adverse' column demarcates if the label corresponds to an "adverse" or "non-adverse" SDoH.
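
One way to carry out the comparison in step 3 is to measure how often a prediction changes once the demographic descriptor is added. The sketch below does exactly that; whether this matches the definition behind the robustness rates reported for this dataset is an assumption, and the predictions shown are hypothetical.

```python
def prediction_change_rate(original_preds, modified_preds):
    """Fraction of sentence pairs whose predicted label differs.

    Both lists must be aligned: item i of each list refers to the same
    sentence before and after the demographic modification.
    """
    if len(original_preds) != len(modified_preds):
        raise ValueError("prediction lists must have the same length")
    if not original_preds:
        return 0.0
    changed = sum(o != m for o, m in zip(original_preds, modified_preds))
    return changed / len(original_preds)


# Hypothetical predictions for four sentence pairs.
original = ["SDoH", "NO_SDoH", "SDoH", "SDoH"]
modified = ["SDoH", "SDoH", "SDoH", "NO_SDoH"]

rate = prediction_change_rate(original, modified)
print(f"{rate:.1%} of predictions changed")
```

A lower rate suggests the model is less sensitive to the injected descriptors; breaking the rate down per descriptor would show where the sensitivity comes from.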

## Current Performance Metrics

- **Best Model Performance**:

- **Any SDoH**: 88% Macro-F1

- **Adverse SDoH**: 84% Macro-F1

- **Robustness Rate**:

- **Any SDoH**: 9.9%

- **Adverse SDoH**: 14.3%

## External Links

- A PhysioNet release of our annotated MIMIC-III corpus: `[TBD]`

- Github release: https://github.com/AIM-Harvard/SDoH

---

How to Cite:

```

@misc{guevara2023large,

title={Large Language Models to Identify Social Determinants of Health in Electronic Health Records},

author={Marco Guevara and Shan Chen and Spencer Thomas and Tafadzwa L. Chaunzwa and Idalid Franco and Benjamin Kann and Shalini Moningi and Jack Qian and Madeleine Goldstein and Susan Harper and Hugo JWL Aerts and Guergana K. Savova and Raymond H. Mak and Danielle S. Bitterman},

year={2023},

eprint={2308.06354},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

```

|

paralym/lima-chinese | 2023-08-15T15:41:46.000Z | [

"license:other",

"region:us"

] | paralym | null | null | null | 0 | 3 | ---

license: other

---

Lima data translated by gpt-3.5-turbo.

Licensed under LIMA's license. |

devopsmarc/my-issues-dataset | 2023-08-22T21:22:44.000Z | [

"task_categories:sentence-similarity",

"task_ids:semantic-similarity-classification",

"annotations_creators:no-annotation",

"language_creators:found",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:original",

"language:eng",

"license:apache-2.0",

"region:us"

] | devopsmarc | null | null | null | 0 | 3 | ---

annotations_creators:

- no-annotation

language_creators:

- found

language:

- eng

license:

- apache-2.0

multilinguality:

- monolingual

size_categories:

- 1K<n<10K

source_datasets:

- original

task_categories:

- sentence-similarity

task_ids:

- semantic-similarity-classification

dataset_info:

features:

- name: html_url

dtype: string

- name: title

dtype: string

- name: comments

dtype: string

- name: body

dtype: string

- name: comment_length

dtype: int64

- name: text

dtype: string

splits:

- name: train

num_bytes: 5968669

num_examples: 1061

download_size: 1229293

dataset_size: 5968669

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for Dataset Name

## Dataset Summary in English

This customized dataset is a corpus of common GitHub issues, which are typically used for tracking bugs or features within a repository. This self-constructed corpus can serve multiple purposes, such as analyzing the time taken to resolve open issues or pull requests, training a classifier to tag issues based on their descriptions (e.g., "bug," "enhancement," "question"), or developing a semantic search engine for finding relevant issues based on user queries.

## Résumé de l'ensemble de jeu de données en français

Ce jeu de données personnalisé est constitué d'un corpus de problèmes couramment rencontrés sur GitHub, généralement utilisés pour le suivi des bugs ou des fonctionnalités au sein des repositories. Ce corpus auto construit peut servir à de multiples fins, telles que l'analyse du temps nécessaire pour résoudre les problèmes ouverts ou les demandes d'extraction, l'entraînement d'un classificateur pour étiqueter les problèmes sur la base de leurs descriptions (par exemple, "bug", "amélioration", "question"), ou le développement d'un moteur de recherche sémantique pour trouver des problèmes pertinents sur la base des requêtes de l'utilisateur.
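One of the uses mentioned in the summary, finding relevant issues for a user query, can be sketched as follows. This is only a minimal illustration under stated assumptions: the records are invented, only the column names (`html_url`, `title`, `text`) come from the card's metadata, and a plain bag-of-words cosine similarity stands in for the embedding model a real semantic search engine would use.

```python
import math
import re
from collections import Counter

# Hypothetical records: the column names match the card's metadata,
# but the values are invented for illustration.
issues = [
    {"html_url": "https://example.com/issues/1",
     "title": "Loading a CSV file fails",
     "text": "load_dataset raises an error when loading a local csv file"},
    {"html_url": "https://example.com/issues/2",
     "title": "Add streaming support",
     "text": "feature request: stream large datasets without downloading"},
    {"html_url": "https://example.com/issues/3",
     "title": "Docs typo",
     "text": "typo in the documentation of the map method"},
]


def tokenize(s):
    """Lowercase the string and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9_]+", s.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def search(query, records, top_k=1):
    """Return up to top_k records that share vocabulary with the query."""
    q = Counter(tokenize(query))
    scored = [
        (cosine(q, Counter(tokenize(r["title"] + " " + r["text"]))), r)
        for r in records
    ]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [r for score, r in scored[:top_k] if score > 0]


best = search("error loading csv", issues)
print(best[0]["html_url"])
```

Swapping the `Counter` vectors for sentence embeddings would keep the same ranking logic while matching on meaning instead of exact words.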

### Languages

English

## Dataset Structure

### Data Splits

Train

### Personal and Sensitive Information

Not applicable.

## Considerations for Using the Data

### Social Impact of Dataset

Not applicable.

### Discussion of Biases

Possible. Comments within the dataset were not moderated and are uncensored.

### Licensing Information

Apache 2.0

### Citation Information

https://github.com/huggingface/datasets/issues

|

CyberHarem/uiharu_kazari_toarumajutsunoindex | 2023-09-17T17:08:08.000Z | [

"task_categories:text-to-image",

"size_categories:n<1K",

"license:mit",

"art",

"not-for-all-audiences",

"region:us"

] | CyberHarem | null | null | null | 0 | 3 | ---

license: mit

task_categories:

- text-to-image

tags:

- art

- not-for-all-audiences

size_categories:

- n<1K

---

# Dataset of Uiharu Kazari

This is the dataset of Uiharu Kazari, containing 96 images and their tags.

Images are crawled from many sites (e.g. danbooru, pixiv, zerochan ...). The auto-crawling system is powered by [DeepGHS Team](https://github.com/deepghs) ([huggingface organization](https://huggingface.co/deepghs)).

| Name | Images | Download | Description |

|:------------|---------:|:------------------------------------|:-------------------------------------------------------------------------|

| raw | 96 | [Download](dataset-raw.zip) | Raw data with meta information. |

| raw-stage3 | 217 | [Download](dataset-raw-stage3.zip) | 3-stage cropped raw data with meta information. |

| 384x512 | 96 | [Download](dataset-384x512.zip) | 384x512 aligned dataset. |

| 512x512 | 96 | [Download](dataset-512x512.zip) | 512x512 aligned dataset. |

| 512x704 | 96 | [Download](dataset-512x704.zip) | 512x704 aligned dataset. |

| 640x640 | 96 | [Download](dataset-640x640.zip) | 640x640 aligned dataset. |

| 640x880 | 96 | [Download](dataset-640x880.zip) | 640x880 aligned dataset. |

| stage3-640 | 217 | [Download](dataset-stage3-640.zip) | 3-stage cropped dataset with the shorter side not exceeding 640 pixels. |

| stage3-800 | 217 | [Download](dataset-stage3-800.zip) | 3-stage cropped dataset with the shorter side not exceeding 800 pixels. |

| stage3-1200 | 217 | [Download](dataset-stage3-1200.zip) | 3-stage cropped dataset with the shorter side not exceeding 1200 pixels. |

|

KoalaAI/GitHub-CC0 | 2023-08-21T14:49:52.000Z | [

"task_categories:text-generation",

"task_categories:text-classification",

"size_categories:1K<n<10K",

"license:cc0-1.0",

"github",

"programming",

"code",

"public domain",

"cc0",

"region:us"

] | KoalaAI | null | null | null | 0 | 3 | ---

license: cc0-1.0

task_categories:

- text-generation

- text-classification

tags:

- github

- programming

- code

- public domain

- cc0

pretty_name: Public Domain GitHub Repositories

size_categories:

- 1K<n<10K

---

# Public Domain GitHub Repositories Dataset

This dataset contains metadata and source code of 9,000 public domain (cc0 or unlicense) licensed GitHub repositories that have more than 25 stars.

The dataset was created by scraping the GitHub API and downloading the repositories, provided they are under 100 MB.

The dataset can be used for various natural language processing and software engineering tasks, such as code summarization, code generation, code search, code analysis, etc.
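The selection criteria described above (CC0 or Unlicense, more than 25 stars, under 100 MB) can be sketched as a filter over repository objects returned by the GitHub REST API. This is not the code of the actual downloader; it is a hedged illustration that assumes the API's `license.key`, `stargazers_count` and `size` fields (the latter reported in kilobytes) and assumes 100 MB means 100 × 1024 KB.

```python
# Assumption: "100 MB" is read as 100 * 1024 KB, because the GitHub API
# reports repository size in kilobytes.
MAX_SIZE_KB = 100 * 1024
MIN_STARS = 25
PUBLIC_DOMAIN_KEYS = {"cc0-1.0", "unlicense"}


def is_eligible(repo: dict) -> bool:
    """Return True if a repository object matches the card's stated criteria."""
    # `license` can be null in API responses, so fall back to an empty dict.
    license_info = repo.get("license") or {}
    return (
        license_info.get("key") in PUBLIC_DOMAIN_KEYS
        and repo.get("stargazers_count", 0) > MIN_STARS
        and repo.get("size", 0) < MAX_SIZE_KB
    )


# Hypothetical repository objects, invented for illustration.
repos = [
    {"full_name": "a/ok", "license": {"key": "cc0-1.0"},
     "stargazers_count": 40, "size": 2048},
    {"full_name": "b/too-few-stars", "license": {"key": "unlicense"},
     "stargazers_count": 25, "size": 2048},
    {"full_name": "c/too-big", "license": {"key": "unlicense"},
     "stargazers_count": 90, "size": 200 * 1024},
    {"full_name": "d/no-license", "license": None,
     "stargazers_count": 90, "size": 10},
]

selected = [r["full_name"] for r in repos if is_eligible(r)]
print(selected)
```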

## Dataset Summary

- **Number of repositories:** 9,000

- **Size:** 2.4 GB (compressed)

- **Languages:** Python, JavaScript, Java, C#, C++, Ruby, PHP, Go, Swift, and Rust

- **License:** Public Domain (cc0 or unlicense)

## Dataset License

This dataset is released under the Public Domain (cc0 or unlicense) license. The original repositories are also licensed under the Public Domain (cc0 or unlicense) license. You can use this dataset for any purpose without any restrictions.

## Reproducing this dataset

This dataset was produced by modifying the "github-downloader" from EleutherAI. You can access our fork [on our GitHub page](https://github.com/KoalaAI-Research/github-downloader).

Replication steps are included in its README there. |

EleutherAI/CEBaB | 2023-08-16T23:09:21.000Z | [

"task_categories:text-classification",

"language:en",

"license:cc-by-4.0",

"arxiv:2205.14140",

"region:us"

] | EleutherAI | null | null | null | 1 | 3 | ---

license: cc-by-4.0

dataset_info:

features:

- name: original_id

dtype: int32

- name: edit_goal

dtype: string

- name: edit_type

dtype: string

- name: text

dtype: string

- name: food

dtype: string

- name: ambiance

dtype: string

- name: service

dtype: string

- name: noise

dtype: string

- name: counterfactual

dtype: bool

- name: rating

dtype: int64

splits:

- name: validation

num_bytes: 306529

num_examples: 1673

- name: test

num_bytes: 309751

num_examples: 1689

- name: train

num_bytes: 2282439

num_examples: 11728

download_size: 628886

dataset_size: 2898719

task_categories:

- text-classification

language:

- en

---

# Dataset Card for "CEBaB"

This is a lightly cleaned and simplified version of the CEBaB counterfactual restaurant review dataset from [this paper](https://arxiv.org/abs/2205.14140).

The most important difference from the original dataset is that the `rating` column corresponds to the _median_ rating provided by the Mechanical Turkers,

rather than the majority rating. These are the same whenever a majority rating exists, but when there is no majority rating (e.g. because there were two 1s,

two 2s, and one 3), the original dataset used a `"no majority"` placeholder whereas we are able to provide an aggregate rating for all reviews.
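The difference between the two aggregates can be seen on the example just given. The snippet below is a small illustrative sketch, not the processing code itself: the helper names are hypothetical, "majority" is assumed to mean a rating chosen by strictly more than half of the annotators, and `median_low` is an assumed tie-breaking choice for even counts.

```python
from statistics import median_low


def expand(dist):
    """Turn {"rating": count} into a sorted list of integer ratings."""
    ratings = []
    for rating, count in dist.items():
        ratings.extend([int(rating)] * count)
    return sorted(ratings)


def majority_rating(dist):
    """Return the rating given by more than half of the annotators, else None."""
    total = sum(dist.values())
    for rating, count in dist.items():
        if count > total / 2:
            return int(rating)
    return None


def median_rating(dist):
    """Return the median rating; always defined for a non-empty distribution."""
    return median_low(expand(dist))


# Two 1s, two 2s and one 3: no majority, but the median is well defined.
no_majority = {"1": 2, "2": 2, "3": 1}
print(majority_rating(no_majority), median_rating(no_majority))

# Three 4s and two 5s: majority and median agree.
with_majority = {"4": 3, "5": 2}
print(majority_rating(with_majority), median_rating(with_majority))
```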

The exact code used to process the original dataset is provided below:

```py

from ast import literal_eval

from datasets import DatasetDict, Value, load_dataset

def compute_median(x: str):

    """Compute the median rating given a multiset of ratings."""

    # Decode the dictionary from string format

    dist = literal_eval(x)

    # Should be a dictionary whose keys are string-encoded integer ratings

    # and whose values are the number of times that the rating was observed

    assert isinstance(dist, dict)