id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 68.7k ⌀ | citation stringlengths 0 10.7k ⌀ | cardData null | likes int64 0 3.55k | downloads int64 0 10.1M | card stringlengths 0 1.01M |

|---|---|---|---|---|---|---|---|---|---|

webis/Touche23-ValueEval | 2023-05-23T20:19:40.000Z | [

"task_categories:text-classification",

"task_categories:zero-shot-classification",

"task_ids:multi-label-classification",

"size_categories:1K<n<10K",

"language:en",

"license:cc-by-4.0",

"Human Values",

"Value Detection",

"Multi-Label",

"region:us"

] | webis | Dataset for Touché / SemEval 2023 Task 4; ValueEval: Identification of Human Values behind Arguments:

https://www.overleaf.com/6679855346wrdckzkdccxg

Based on the original Webis-ArgValues-22 (https://doi.org/10.5281/zenodo.5657249) dataset accompanying the paper

Identifying the Human Values behind Arguments (Kiesel et al. 2022b; https://webis.de/publications.html#kiesel_2022b),

published at ACL'22. | @Article{mirzakhmedova:2023a,

author = {Nailia Mirzakhmedova and Johannes Kiesel and Milad Alshomary and Maximilian Heinrich and Nicolas Handke and Xiaoni Cai and Valentin Barriere and Doratossadat Dastgheib and Omid Ghahroodi and {Mohammad Ali} Sadraei and Ehsaneddin Asgari and Lea Kawaletz and Henning Wachsmuth and Benno Stein},

doi = {10.48550/arXiv.2301.13771},

journal = {CoRR},

month = jan,

publisher = {arXiv},

title = {{The Touch{\'e}23-ValueEval Dataset for Identifying Human Values behind Arguments}},

volume = {abs/2301.13771},

year = 2023

} | null | 3 | 67 | ---

license: cc-by-4.0

task_categories:

- text-classification

- zero-shot-classification

task_ids:

- multi-label-classification

language:

- en

tags:

- Human Values

- Value Detection

- Multi-Label

pretty_name: Human Value Detection Dataset

size_categories:

- 1K<n<10K

---

# The Touché23-ValueEval Dataset

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Dataset Usage](#dataset-usage)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Argument Instances](#argument-instances)

- [Metadata Instances](#metadata-instances)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

## Dataset Description

- **Homepage:** [https://webis.de/data/touche23-valueeval.html](https://webis.de/data/touche23-valueeval.html)

- **Repository:** [Zenodo](https://doi.org/10.5281/zenodo.6814563)

- **Paper:** [The Touché23-ValueEval Dataset for Identifying Human Values behind Arguments.](https://webis.de/downloads/publications/papers/mirzakhmedova_2023a.pdf)

- **Leaderboard:** [https://touche.webis.de/](https://touche.webis.de/semeval23/touche23-web/index.html#results)

- **Point of Contact:** [Webis Group](https://webis.de/people.html)

### Dataset Summary

The Touché23-ValueEval Dataset comprises 9324 arguments from six different sources. An argument's source is indicated by the first letter of its `Argument ID`:

- `A`: [IBM-ArgQ-Rank-30kArgs](https://research.ibm.com/haifa/dept/vst/debating_data.shtml#Argument%20Quality)

- `C`: Chinese question-answering website [Zhihu](https://www.zhihu.com)

- `D`: [Group Discussion Ideas (GD IDEAS)](https://www.groupdiscussionideas.com)

- `E`: [The Conference for the Future of Europe](https://futureu.europa.eu)

- `F`: Contribution by the language.ml lab (Doratossadat, Omid, Mohammad, Ehsaneddin) [1]:

arguments from the "Nahj al-Balagha" [2] and "Ghurar al-Hikam wa Durar al-Kalim" [3]

- `G`: [The New York Times](https://www.nytimes.com)
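The prefix convention in the list above can be expressed as a small lookup. This is a minimal sketch: the helper name `source_of` and the example ID are hypothetical, and only the letter-to-source mapping comes from this card.

```python
# Letter-to-source mapping copied from the list above.
SOURCE_BY_PREFIX = {
    "A": "IBM-ArgQ-Rank-30kArgs",
    "C": "Zhihu",
    "D": "Group Discussion Ideas (GD IDEAS)",
    "E": "The Conference for the Future of Europe",
    "F": "Nahj al-Balagha / Ghurar al-Hikam (language.ml lab)",
    "G": "The New York Times",
}


def source_of(argument_id: str) -> str:
    """Return the source name for an Argument ID based on its first letter."""
    prefix = argument_id[:1]
    if prefix not in SOURCE_BY_PREFIX:
        raise ValueError(f"Unknown source prefix in Argument ID: {argument_id!r}")
    return SOURCE_BY_PREFIX[prefix]


# "A01002" is a made-up ID used only for illustration.
print(source_of("A01002"))
```

Raising on unknown prefixes, rather than returning a default, makes it harder to silently mix in rows from an unexpected source.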

The annotated labels are based on the value taxonomy published in

[Identifying the Human Values behind Arguments](https://webis.de/publications.html#kiesel_2022b) (Kiesel et al. 2022) at ACL'22.

[1] https://language.ml

[2] https://en.wikipedia.org/wiki/Nahj_al-Balagha

[3] https://en.wikipedia.org/wiki/Ghurar_al-Hikam_wa_Durar_al-Kalim

### Dataset Usage

The default configuration name is `main`.

```python

from datasets import load_dataset

dataset = load_dataset("webis/Touche23-ValueEval")

print(dataset['train'].info.description)

for argument in iter(dataset['train']):
    print(f"{argument['Argument ID']}: {argument['Stance']} '{argument['Conclusion']}': {argument['Premise']}")

```

### Supported Tasks and Leaderboards

Human Value Detection

### Languages

The [Argument Instances](#argument-instances) are all monolingual; they only include English (mostly en-US) documents.

The [Metadata Instances](#metadata-instances) for some dataset parts additionally state the arguments in their original language and phrasing.

## Dataset Structure

### Argument Instances

Each argument instance has the following attributes:

- `Argument ID`: The unique identifier for the argument within the dataset

- `Conclusion`: Conclusion text of the argument

- `Stance`: Stance of the `Premise` towards the `Conclusion`; one of "in favor of", "against"

- `Premise`: Premise text of the argument

- `Labels`: The `Labels` for each example is an array of 1s (argument resorts to value) and 0s (argument does not resort to value). The order is the same as in the original files.

Additionally, the labels are separated into *value-categories*, aka. level 2 labels of the value taxonomy (Kiesel et al. 2022b), and *human values*, aka. level 1 labels of the value taxonomy.

This distinction is also reflected in the configuration names:

- `<config>`: As the [Task](https://touche.webis.de/semeval23/touche23-web/) is focused mainly on the detection of value-categories,

each base configuration ([listed below](#p-list-base-configs)) has the 20 value-categories as labels:

```python

labels = ["Self-direction: thought", "Self-direction: action", "Stimulation", "Hedonism", "Achievement", "Power: dominance", "Power: resources", "Face", "Security: personal", "Security: societal", "Tradition", "Conformity: rules", "Conformity: interpersonal", "Humility", "Benevolence: caring", "Benevolence: dependability", "Universalism: concern", "Universalism: nature", "Universalism: tolerance", "Universalism: objectivity"]

```

- `<config>-level1`: The 54 human values from level 1 of the value taxonomy are not used for the 2023 task

(except for the annotation), but are still listed here because some might find them useful for understanding the value

categories. Their order is also the same as in the original files. For more details see the [value-categories](#metadata-instances) configuration.
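The `Labels` arrays described above can be turned back into value-category names by pairing each position with the label list. The following is a minimal sketch that assumes a base configuration (20 value-categories, in the order listed above); the helper name `decode_labels` and the example vector are hypothetical.

```python
# The 20 value-categories, in the order given in this card.
LABELS = [
    "Self-direction: thought", "Self-direction: action", "Stimulation", "Hedonism",
    "Achievement", "Power: dominance", "Power: resources", "Face",
    "Security: personal", "Security: societal", "Tradition", "Conformity: rules",
    "Conformity: interpersonal", "Humility", "Benevolence: caring",
    "Benevolence: dependability", "Universalism: concern", "Universalism: nature",
    "Universalism: tolerance", "Universalism: objectivity",
]


def decode_labels(label_vector):
    """Return the names of all value-categories flagged with 1 in label_vector."""
    if len(label_vector) != len(LABELS):
        raise ValueError(f"expected {len(LABELS)} labels, got {len(label_vector)}")
    return [name for name, flag in zip(LABELS, label_vector) if flag == 1]


# Made-up example: an argument that resorts to two value-categories.
example = [0] * len(LABELS)
example[0] = 1
example[8] = 1
print(decode_labels(example))
```

For a `-level1` configuration the same approach would apply with the 54 level 1 names in place of `LABELS`.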

<p id="p-list-base-configs">The configuration names (as replacements for <code>&lt;config&gt;</code>) in this dataset are:</p>

- `main`: 8865 arguments (sources: `A`, `D`, `E`) with splits `train`, `validation`, and `test` (default configuration name)

```python

dataset_main_train = load_dataset("webis/Touche23-ValueEval", split="train")

dataset_main_validation = load_dataset("webis/Touche23-ValueEval", split="validation")

dataset_main_test = load_dataset("webis/Touche23-ValueEval", split="test")

```

- `nahjalbalagha`: 279 arguments (source: `F`) with split `test`

```python

dataset_nahjalbalagha_test = load_dataset("webis/Touche23-ValueEval", name="nahjalbalagha", split="test")

```

- `nyt`: 80 arguments (source: `G`) with split `test`

```python

dataset_nyt_test = load_dataset("webis/Touche23-ValueEval", name="nyt", split="test")

```

- `zhihu`: 100 arguments (source: `C`) with split `validation`

```python

dataset_zhihu_validation = load_dataset("webis/Touche23-ValueEval", name="zhihu", split="validation")

```

Please note that due to copyright reasons, there currently does not exist a direct download link to the arguments contained in the

New York Times

dataset. Accessing any of the `nyt` or `nyt-level1` configurations will therefore use the specifically created

[nyt-downloader program](https://github.com/touche-webis-de/touche-code/tree/main/semeval23/human-value-detection/nyt-downloader)

to create and access the arguments locally. See the program's

[README](https://github.com/touche-webis-de/touche-code/blob/main/semeval23/human-value-detection/nyt-downloader/README.md)

for further details.

### Metadata Instances

The following lists all configuration names for metadata. Each configuration only has a single split named `meta`.

- `ibm-meta`: Each row corresponds to one argument (IDs starting with `A`) from the [IBM-ArgQ-Rank-30kArgs](https://research.ibm.com/haifa/dept/vst/debating_data.shtml#Argument%20Quality)

- `Argument ID`: The unique identifier for the argument

- `WA`: the quality label according to the weighted-average scoring function

- `MACE-P`: the quality label according to the MACE-P scoring function

- `stance_WA`: the stance label according to the weighted-average scoring function

- `stance_WA_conf`: the confidence in the stance label according to the weighted-average scoring function

```python

dataset_ibm_metadata = load_dataset("webis/Touche23-ValueEval", name="ibm-meta", split="meta")

```

- `zhihu-meta`: Each row corresponds to one argument (IDs starting with `C`) from the Chinese question-answering website [Zhihu](https://www.zhihu.com)

- `Argument ID`: The unique identifier for the argument

- `Conclusion Chinese`: The original Chinese conclusion statement

- `Premise Chinese`: The original Chinese premise statement

- `URL`: Link to the original statement the argument was taken from

```python

dataset_zhihu_metadata = load_dataset("webis/Touche23-ValueEval", name="zhihu-meta", split="meta")

```

- `gdi-meta`: Each row corresponds to one argument (IDs starting with `D`) from [GD IDEAS](https://www.groupdiscussionideas.com/)

- `Argument ID`: The unique identifier for the argument

- `URL`: Link to the topic the argument was taken from

```python

dataset_gdi_metadata = load_dataset("webis/Touche23-ValueEval", name="gdi-meta", split="meta")

```

- `cofe-meta`: Each row corresponds to one argument (IDs starting with `E`) from [the Conference for the Future of Europe](https://futureu.europa.eu)

- `Argument ID`: The unique identifier for the argument

- `URL`: Link to the comment the argument was taken from

```python

dataset_cofe_metadata = load_dataset("webis/Touche23-ValueEval", name="cofe-meta", split="meta")

```

- `nahjalbalagha-meta`: Each row corresponds to one argument (IDs starting with `F`). This file contains information on the 279 arguments in `nahjalbalagha` (or `nahjalbalagha-level1`)

and 1047 additional arguments that were not labeled so far. This data was contributed by the language.ml lab.

- `Argument ID`: The unique identifier for the argument

- `Conclusion Farsi`: Conclusion text of the argument in Farsi

- `Stance Farsi`: Stance of the `Premise` towards the `Conclusion`, in Farsi

- `Premise Farsi`: Premise text of the argument in Farsi

- `Conclusion English`: Conclusion text of the argument in English (translated from Farsi)

- `Stance English`: Stance of the `Premise` towards the `Conclusion`; one of "in favor of", "against"

- `Premise English`: Premise text of the argument in English (translated from Farsi)

- `Source`: Source text of the argument; one of "Nahj al-Balagha", "Ghurar al-Hikam wa Durar ak-Kalim"; their Farsi translations were used

- `Method`: How the premise was extracted from the source; one of "extracted" (directly taken), "deduced"; the conclusions are deduced

```python

dataset_nahjalbalagha_metadata = load_dataset("webis/Touche23-ValueEval", name="nahjalbalagha-meta", split="meta")

```

- `nyt-meta`: Each row corresponds to one argument (IDs starting with `G`) from [The New York Times](https://www.nytimes.com)

- `Argument ID`: The unique identifier for the argument

- `URL`: Link to the article the argument was taken from

- `Internet Archive timestamp`: Timestamp of the article's version in the Internet Archive that was used

```python

dataset_nyt_metadata = load_dataset("webis/Touche23-ValueEval", name="nyt-meta", split="meta")

```

- `value-categories`: Contains a single JSON-entry with the structure of level 2 and level 1 values regarding the value taxonomy:

```

{

"<value category>": {

"<level 1 value>": [

"<exemplary effect a corresponding argument might target>",

...

], ...

}, ...

}

```

As this configuration contains just a single entry, an example usage could be:

```python

value_categories = load_dataset("webis/Touche23-ValueEval", name="value-categories", split="meta")[0]

```
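Once loaded, the nested entry can be flattened into a lookup from each level 1 value to its value category. This is a minimal sketch under the assumption that the entry behaves like a plain nested dictionary of the shape shown above; the `sample` data is a small illustrative stand-in (with placeholder effect strings), not the real entry, and the helper name is hypothetical.

```python
def level1_to_category(value_categories):
    """Map every level 1 value name to the value category that contains it."""
    mapping = {}
    for category, level1_values in value_categories.items():
        for level1_value in level1_values:
            mapping[level1_value] = category
    return mapping


# Illustrative stand-in with the same nesting as the JSON structure above.
sample = {
    "Self-direction: thought": {
        "Be creative": ["<exemplary effect>"],
        "Be curious": ["<exemplary effect>"],
    },
    "Stimulation": {
        "Be daring": ["<exemplary effect>"],
    },
}

lookup = level1_to_category(sample)
print(lookup["Be curious"])
```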

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[Creative Commons Attribution 4.0 International (CC BY 4.0)](https://creativecommons.org/licenses/by/4.0/)

### Citation Information

```

@Article{mirzakhmedova:2023a,

author = {Nailia Mirzakhmedova and Johannes Kiesel and Milad Alshomary and Maximilian Heinrich and Nicolas Handke\

and Xiaoni Cai and Valentin Barriere and Doratossadat Dastgheib and Omid Ghahroodi and {Mohammad Ali} Sadraei\

and Ehsaneddin Asgari and Lea Kawaletz and Henning Wachsmuth and Benno Stein},

doi = {10.48550/arXiv.2301.13771},

journal = {CoRR},

month = jan,

publisher = {arXiv},

title = {{The Touch{\'e}23-ValueEval Dataset for Identifying Human Values behind Arguments}},

volume = {abs/2301.13771},

year = 2023

}

``` |

mstz/pums | 2023-04-18T07:42:19.000Z | [

"task_categories:tabular-classification",

"language:en",

"pums",

"tabular_classification",

"binary_classification",

"UCI",

"region:us"

] | mstz | null | @misc{misc_us_census_data_(1990)_116,

author = {Meek,Meek, Thiesson,Thiesson & Heckerman,Heckerman},

title = {{US Census Data (1990)}},

howpublished = {UCI Machine Learning Repository},

note = {{DOI}: \\url{10.24432/C5VP42}}

} | null | 0 | 67 | ---

language:

- en

tags:

- pums

- tabular_classification

- binary_classification

- UCI

pretty_name: Ipums

task_categories: # Full list at https://github.com/huggingface/hub-docs/blob/main/js/src/lib/interfaces/Types.ts

- tabular-classification

configs:

- pums

---

# Pums

The [Pums dataset](https://archive-beta.ics.uci.edu/dataset/116/us+census+data+1990) from the [UCI repository](https://archive-beta.ics.uci.edu/).

U.S. Census dataset; the task is to classify the income of an individual.

# Configurations and tasks

| **Configuration** | **Task** |

|-----------------------|---------------------------|

| pums | Binary classification.| |

gauss314/bitcoin_daily | 2023-07-30T02:20:32.000Z | [

"task_categories:tabular-regression",

"task_categories:tabular-classification",

"size_categories:1K<n<10K",

"license:gpl-3.0",

"bitcoin",

"cryptocurrencies",

"crypto",

"region:us"

] | gauss314 | null | null | null | 2 | 67 | ---

license: gpl-3.0

task_categories:

- tabular-regression

- tabular-classification

tags:

- bitcoin

- cryptocurrencies

- crypto

size_categories:

- 1K<n<10K

--- |

Patt/ReCoRD_TH_drop | 2023-07-20T15:29:42.000Z | [

"task_categories:text-classification",

"language:en",

"language:th",

"arxiv:1907.04307",

"region:us"

] | Patt | null | null | null | 0 | 67 | ---

task_categories:

- text-classification

language:

- en

- th

dataset_info:

features:

- name: passage

dtype: string

- name: passage_TH

dtype: string

- name: query

dtype: string

- name: query_TH

dtype: string

- name: entities

sequence: string

- name: entities_TH

sequence: string

- name: entity_spans

struct:

- name: end

sequence: int64

- name: start

sequence: int64

- name: text

sequence: string

- name: answers

sequence: string

- name: answers_TH

sequence: string

- name: idx

struct:

- name: passage

dtype: int64

- name: query

dtype: int64

- name: score_passage

dtype: float64

- name: score_query

dtype: float64

- name: score_entities

dtype: float64

- name: score_answers

dtype: float64

splits:

- name: train

num_bytes: 281547282

num_examples: 57811

- name: validation

num_bytes: 32258456

num_examples: 6676

download_size: 112999233

dataset_size: 313805738

---

# Dataset Card for ReCoRD_TH_drop

### Dataset Description

This dataset is a Thai translation of [ReCoRD](https://huggingface.co/datasets/super_glue/viewer/record) produced with Google Translate. The [Multilingual Universal Sentence Encoder](https://arxiv.org/abs/1907.04307) was used to score each Thai translation.

Rows with `score_answers` < 0.8 were dropped, as were rows whose score after the penalty is < 0.5. |

rusheeliyer/uk-abs | 2023-08-11T16:40:28.000Z | [

"region:us"

] | rusheeliyer | null | null | null | 0 | 67 | ---

dataset_info:

features:

- name: judgement

dtype: string

- name: summary

dtype: string

splits:

- name: train

num_bytes: 52800141

num_examples: 589

- name: test

num_bytes: 8174530

num_examples: 100

- name: validation

num_bytes: 10432092

num_examples: 104

download_size: 32973908

dataset_size: 71406763

---

# Dataset Card for "uk-abs"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

morpheuslord/cve-llm-training | 2023-08-30T06:26:48.000Z | [

"task_categories:text-generation",

"language:en",

"license:apache-2.0",

"code",

"region:us"

] | morpheuslord | null | null | null | 4 | 67 | ---

license: apache-2.0

task_categories:

- text-generation

language:

- en

tags:

- code

pretty_name: CVE-LLm-Dataset

data_source: Custom data collected from the CVE database

data_formats: JSONL

---

# CVE-llm_dataset

This dataset is intended for training an LLM on strictly CVE-focused inputs and outputs.

## Data extraction:

For the data extraction, I first downloaded the CVE database from the NVD lists and then loaded it using `cve_dataset_2.py` and `cve_dataset.py`. The two scripts produce different datasets: one for llama and the other for OpenAI GPT.

The CVE JSON files are organized in this layout:

```

cves:

|

├─1999

| ├─0xxx

| | ├─CVE-1999-0001.json

| | ├─....

| | └─CVE-1999-0999.json

| └─1xxx

| ├─CVE-1999-1000.json

| ├─....

| └─CVE-1999-1598.json

└─2023

```

The programs traverse these folders, extract the data from the files, and arrange it into formats usable for the fine-tuning process.
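The traversal step can be sketched as follows. This is not the code from `cve_dataset.py` or `cve_dataset_2.py`; it is a minimal illustration that assumes the `cves/<year>/<bucket>/CVE-*.json` layout shown above. The helper name `iter_cve_records` and the temporary demo tree are hypothetical.

```python
import json
import tempfile
from pathlib import Path


def iter_cve_records(root):
    """Yield (cve_id, parsed_json) for every CVE-*.json file below root."""
    for path in sorted(Path(root).rglob("CVE-*.json")):
        with path.open(encoding="utf-8") as handle:
            yield path.stem, json.load(handle)


# Demo on a throwaway tree that mimics the layout; file contents are placeholders.
with tempfile.TemporaryDirectory() as tmp:
    for bucket, cve_id in [("0xxx", "CVE-1999-0001"), ("1xxx", "CVE-1999-1000")]:
        folder = Path(tmp) / "cves" / "1999" / bucket
        folder.mkdir(parents=True)
        (folder / f"{cve_id}.json").write_text(json.dumps({"id": cve_id}), encoding="utf-8")

    found_ids = [cve_id for cve_id, _ in iter_cve_records(Path(tmp) / "cves")]

print(found_ids)
```

Sorting the paths keeps the output order stable across runs, which helps when the resulting dataset files are compared or versioned.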

## llama2 Model dataset:

The llama2 fine-tuning dataset follows this format:

```

{

"instruction": "Explain CVE-1999-0001",

"input": "Explain the vulnerability: CVE-1999-0001",

"output": "ip_input.c in BSD-derived TCP/IP implementations allows remote attackers to cause a denial of service (crash or hang) via crafted packets.\nAffected Products: n/a\nReferences: [{'tags': ['x_refsource_CONFIRM'], 'url': 'http://www.openbsd.org/errata23.html#tcpfix'}, {'name': '5707', 'tags': ['vdb-entry', 'x_refsource_OSVDB'], 'url': 'http://www.osvdb.org/5707'}]\nCVE State: PUBLISHED"

}

```
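A record of this shape could be assembled roughly as shown below. This is a hedged sketch, not the author's script: the function name and parameters are hypothetical, and the already-extracted fields are passed in directly so that no assumption about the CVE JSON schema is needed.

```python
import json


def build_record(cve_id, description, affected_products, references, state):
    """Build one instruction/input/output record in the format shown above."""
    output = (
        f"{description}\n"
        f"Affected Products: {affected_products}\n"
        f"References: {references}\n"
        f"CVE State: {state}"
    )
    return {
        "instruction": f"Explain {cve_id}",
        "input": f"Explain the vulnerability: {cve_id}",
        "output": output,
    }


record = build_record(
    "CVE-1999-0001",
    "ip_input.c in BSD-derived TCP/IP implementations allows remote attackers "
    "to cause a denial of service (crash or hang) via crafted packets.",
    "n/a",
    [{"tags": ["x_refsource_CONFIRM"], "url": "http://www.openbsd.org/errata23.html#tcpfix"}],
    "PUBLISHED",
)

# One JSON object per line gives the JSONL format named in the card metadata.
line = json.dumps(record)
print(line)
```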

The instruction is what we tell the AI to do with the data provided. For example, we can command the AI `To take in user input analyze it and then based on what he asks returns an answer`. This is also where we can add a `role` or a `persona` to the AI.

The input is the user's main query or the data that the AI must process. It is the crucial piece of information the AI works from to produce an output.

The output is the answer the AI should give, written in the format we define for it. |

pharaouk/codellama_blob_1 | 2023-08-24T22:30:45.000Z | [

"region:us"

] | pharaouk | null | null | null | 0 | 67 | Entry not found |

boapps/kmdb_classification | 2023-09-21T11:43:34.000Z | [

"region:us"

] | boapps | null | null | null | 0 | 67 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

dataset_info:

features:

- name: text

dtype: string

- name: title

dtype: string

- name: description

dtype: string

- name: keywords

sequence: string

- name: label

dtype: int64

- name: url

dtype: string

- name: date

dtype: string

- name: is_hand_annoted

dtype: bool

- name: score

dtype: float64

- name: title_score

dtype: float64

splits:

- name: train

num_bytes: 187493981

num_examples: 45683

- name: test

num_bytes: 13542701

num_examples: 3605

- name: validation

num_bytes: 25309037

num_examples: 6579

download_size: 139938458

dataset_size: 226345719

---

# Dataset Card for "kmdb_classification"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Trelis/tiny-shakespeare | 2023-09-06T16:27:30.000Z | [

"task_categories:text-generation",

"size_categories:n<1K",

"language:en",

"fine-tuning",

"shakespeare",

"region:us"

] | Trelis | null | null | null | 0 | 67 | ---

task_categories:

- text-generation

language:

- en

tags:

- fine-tuning

- shakespeare

size_categories:

- n<1K

---

# Data source

Downloaded via Andrej Karpathy's nanogpt repo from this [link](https://raw.githubusercontent.com/karpathy/char-rnn/master/data/tinyshakespeare/input.txt)

# Data Format

- The entire dataset is split into train (90%) and test (10%).

- All rows are at most 1024 tokens, using the Llama 2 tokenizer.

- All rows are split cleanly so that sentences are whole and unbroken. |

bobbybelajar/LlamaAmazonReviewOneColumn | 2023-09-15T07:12:06.000Z | [

"region:us"

] | bobbybelajar | null | null | null | 0 | 67 | Entry not found |

zxvix/pubmed_subset_wiki_40p | 2023-09-22T13:47:37.000Z | [

"region:us"

] | zxvix | null | null | null | 0 | 67 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 4745394961.571536

num_examples: 1666514

- name: test

num_bytes: 1024229

num_examples: 1000

download_size: 1869629066

dataset_size: 4746419190.571536

---

# Dataset Card for "pubmed_subset_wiki_40p"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

tyzhu/squad_title_v4_train_10_eval_10 | 2023-09-26T14:59:03.000Z | [

"region:us"

] | tyzhu | null | null | null | 0 | 67 | ---

dataset_info:

features:

- name: id

dtype: string

- name: title

dtype: string

- name: context

dtype: string

- name: question

dtype: string

- name: answers

sequence:

- name: text

dtype: string

- name: answer_start

dtype: int32

- name: context_id

dtype: string

- name: inputs

dtype: string

- name: targets

dtype: string

splits:

- name: train

num_bytes: 203084

num_examples: 138

- name: validation

num_bytes: 50807

num_examples: 50

download_size: 65145

dataset_size: 253891

---

# Dataset Card for "squad_title_v4_train_10_eval_10"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

TIGER-Lab/MetricInstruct | 2023-10-10T12:52:55.000Z | [

"arxiv:2310.00752",

"region:us"

] | TIGER-Lab | null | null | null | 4 | 67 | ---

configs:

- config_name: train

data_files:

- split: train_real_world

path:

- "data/new_real_world.json"

- split: train_synthetic

path:

- "data/new_synthetic.json"

- split: train_mix

path:

- "data/new_mix.json"

---

## MetricInstruct

We present TIGERScore, a **T**rained metric that follows **I**nstruction **G**uidance to perform **E**xplainable, and **R**eference-free evaluation over a wide spectrum of text generation tasks. TIGERScore is guided by the natural language instruction to provide error analysis to pinpoint the mistakes in the generated text. Our metric is based on LLaMA-2, trained on our meticulously curated instruction-tuning dataset MetricInstruct, which covers 6 text generation tasks and 23 text generation datasets. The dataset consists of 48K quadruples in the form of (instruction, input, system output, error analysis). We collected the "system outputs" through diverse channels to cover different types of errors.

Project Page: [https://tiger-ai-lab.github.io/TIGERScore/](https://tiger-ai-lab.github.io/TIGERScore/)

Paper: [https://arxiv.org/abs/2310.00752](https://arxiv.org/abs/2310.00752)

Code: [https://github.com/TIGER-AI-Lab/TIGERScore](https://github.com/TIGER-AI-Lab/TIGERScore)

Demo: [https://huggingface.co/spaces/TIGER-Lab/TIGERScore](https://huggingface.co/spaces/TIGER-Lab/TIGERScore)

TIGERScore-7B-V1.0: [https://huggingface.co/TIGER-Lab/TIGERScore-7B-V1.0](https://huggingface.co/TIGER-Lab/TIGERScore-7B-V1.0)

TIGERScore-13B-V1.0: [https://huggingface.co/TIGER-Lab/TIGERScore-13B-V1.0](https://huggingface.co/TIGER-Lab/TIGERScore-13B-V1.0)

We present the MetricInstruct dataset, which is employed to fine-tune TIGERScore. The three underlying criteria for dataset construction are:

1. Dataset diversity: we choose 23 distinctive datasets as the source context to cover enough generation tasks.

2. Error coverage: we take system outputs generated from 50+ text generation systems to cover all types of errors and guarantee a balanced distribution.

3. Quality assurance: to ensure MetricInstruct is tailored to gather in-depth error analysis, we sourced it by prompting OpenAI GPT models and then filtered the results with different heuristics to eliminate low-quality error analysis.

## Data Source

Our system outputs come from two channels, namely real-world system outputs and synthetic outputs. The real-world system outputs are obtained from real systems, which ensures the error distribution is aligned with real-world ones.

Check out our paper for more details.

| Task | Real-World Dataset | Output Source | Synthetic Dataset | Output Source |
|:--------:|:-----------------------------------------:|:--------------:|:-----------------------------------:|:--------------:|
| Summarization | SummEval, XSum, Newsroom, SAMSum | 27 Systems | CNN/DM, XSum, Gigaword, SAMSum | GPT-4 |
| Translation | WMT | 18 Systems | WMT | GPT-4 |
| Data-to-Text | WebNLG-2020, WikiTableText, ToTTo | 17 Systems | WikiTableText, Dart, ToTTo | GPT-4 |
| Long-Form QA | ASQA, FeTaQA, CosmosQA, ELI5 | 5 Systems | ASQA, FeTaQA, CosmosQA, ELI5 | GPT-4 |
| MathQA | GSM8K | 5 Systems | GSM8K, MathQA | GPT-4 |
| Instruct | MixInstruct | 11 Systems | LIMA, AlpacaFarm, OASST1, Guanaco, Dolly | GPT-4 |

## Data Format

The dataset consists of 48K quadruples in the form of (instruction, input, system output, error analysis).

For each item in the dataset, `task` is its text generation task, `instruction` is the task instruction, `input_context` is the input source, `hypo_output` is the generated output, and `errors` is the error analysis given by ChatGPT or GPT-4.

## Formatting

To format the data fields into a single prompt for fine-tuning or testing, we provide the following reference code:

```python

from string import Template

FINETUNE_INST = "You are evaluating errors in a model-generated output for a(an) ${task} task."

FINETUNE_INPUT = """\

Task instruction: ${generation_instruction}

Source: ${input_context}

Model-generated Output: ${hypothesis_output}

Based on the given task instruction and source, identify errors in this model-generated output.

For each error you give in the response, please also elaborate the following information:

- error location (the words that are wrong in the output)

- error aspect it belongs to.

- explanation why it's an error, and the correction suggestions.

- severity of the error ("Major" or "Minor").

- reduction of score (between 0.5 and 5 given the severity of the error)

Your evaluation output:

"""

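# `task`, `instruction`, `input_context`, and `hypo_output` are the fields of one dataset item;
# `tigerscore_tokenizer` and `tigerscore_model` are assumed to be loaded beforehand.
inst_part = Template(FINETUNE_INST)
inst_part = inst_part.substitute(task=task)

input_part = Template(FINETUNE_INPUT)
input_part = input_part.substitute(
    generation_instruction=instruction,
    input_context=input_context,
    hypothesis_output=hypo_output
)

# Join both parts and normalize the surrounding whitespace to one trailing newline.
prompt = (inst_part + "\n" + input_part).strip("\n ") + "\n"

# Tokenize the prompt and move the tensors to the model's device.
encodings = tigerscore_tokenizer(prompt, return_tensors="pt")
input_ids = encodings["input_ids"].to(tigerscore_model.device)
attention_mask = encodings["attention_mask"].to(tigerscore_model.device)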

```

Example of formatted prompt:

```txt

You are evaluating errors in a model-generated output for a(an) translation task.

Task instruction: Translate the following text from German to English.

Source: Der künftige EM-Cheforganisator Philipp Lahm soll laut Grindel im DFB-Präsidium mitarbeiten.

Model-generated Output: According to Grindel, the future head of the European Championships, Philipp Lahm, is to participate in the DFB Presidency.

Based on the given task instruction and source, identify errors in this model-generated output.

For each error you give in the response, please also elaborate the following information:

- error location (the words that are wrong in the output)

- error aspect it belongs to.

- explanation why it's an error, and the correction suggestions.

- severity of the error ("Major" or "Minor").

- reduction of score (between 0.5 and 5 given the severity of the error)

Your evaluation output:

```
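For batch processing, the same formatting can be wrapped in a helper that takes one dataset item as a dictionary. This is a minimal sketch: the function name `format_prompt` and the `record` literal are hypothetical, the field names follow the Data Format section, and the template text is copied from the snippet above (the exact blank-line placement inside the template is an assumption).

```python
from string import Template

FINETUNE_INST = "You are evaluating errors in a model-generated output for a(an) ${task} task."
FINETUNE_INPUT = """\
Task instruction: ${generation_instruction}
Source: ${input_context}
Model-generated Output: ${hypothesis_output}

Based on the given task instruction and source, identify errors in this model-generated output.
For each error you give in the response, please also elaborate the following information:
- error location (the words that are wrong in the output)
- error aspect it belongs to.
- explanation why it's an error, and the correction suggestions.
- severity of the error ("Major" or "Minor").
- reduction of score (between 0.5 and 5 given the severity of the error)

Your evaluation output:
"""


def format_prompt(record):
    """Build the evaluation prompt for one item with MetricInstruct-style fields."""
    inst_part = Template(FINETUNE_INST).substitute(task=record["task"])
    input_part = Template(FINETUNE_INPUT).substitute(
        generation_instruction=record["instruction"],
        input_context=record["input_context"],
        hypothesis_output=record["hypo_output"],
    )
    return (inst_part + "\n" + input_part).strip("\n ") + "\n"


# Hand-written record mirroring the translation example above.
record = {
    "task": "translation",
    "instruction": "Translate the following text from German to English.",
    "input_context": "Der künftige EM-Cheforganisator Philipp Lahm soll laut Grindel im DFB-Präsidium mitarbeiten.",
    "hypo_output": "According to Grindel, the future head of the European Championships, Philipp Lahm, is to participate in the DFB Presidency.",
}

prompt = format_prompt(record)
print(prompt)
```

`Template.substitute` raises `KeyError` when a placeholder has no value, so an item with a missing field fails loudly instead of producing a prompt with a literal `${...}` left in it.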

## Citation

```

@article{jiang2023TIGERScore,

title={TIGERScore: Towards Building Explainable Metric for All Text Generation Tasks},

author={Dongfu Jiang, Yishan Li, Ge Zhang, Wenhao Huang, Bill Yuchen Lin, Wenhu Chen},

journal={arXiv preprint arXiv:2310.00752},

year={2023}

}

``` |

tomashs/LSC_acronyms_topic_vectors | 2023-10-05T21:38:49.000Z | [

"region:us"

] | tomashs | null | null | null | 0 | 67 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: validation

path: data/validation-*

- split: test

path: data/test-*

dataset_info:

features:

- name: text

dtype: string

- name: short_form

dtype: string

- name: long_form

dtype: string

- name: label

dtype: int64

- name: topic_vector

sequence: float64

splits:

- name: train

num_bytes: 1959752089

num_examples: 352720

- name: validation

num_bytes: 418571627

num_examples: 75339

- name: test

num_bytes: 419813918

num_examples: 75540

download_size: 2198337547

dataset_size: 2798137634

---

# Dataset Card for "LSC_acronyms_topic_vectors"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

carnival13/sur_test | 2023-10-08T06:27:14.000Z | [

"region:us"

] | carnival13 | null | null | null | 0 | 67 | ---

dataset_info:

features:

- name: input_ids

sequence: int32

- name: attention_mask

sequence: int8

splits:

- name: train

num_bytes: 1297540140

num_examples: 900000

download_size: 298907283

dataset_size: 1297540140

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "sur_test"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

SetFit/rte | 2022-02-28T12:46:43.000Z | [

"region:us"

] | SetFit | null | null | null | 0 | 66 | # Glue RTE

This dataset is a port of the official [`rte` dataset](https://huggingface.co/datasets/glue/viewer/rte/train) on the Hub.

Note that the sentence1 and sentence2 columns have been renamed to text1 and text2 respectively.

Also, the test split is not labeled; the label column values are always -1.
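The two notes above can be illustrated on plain dictionaries. This is a minimal sketch with made-up rows; the helper names are hypothetical, and only the column renaming and the `-1` convention come from this card.

```python
def to_setfit_columns(row):
    """Rename GLUE's sentence1/sentence2 columns to text1/text2."""
    return {"text1": row["sentence1"], "text2": row["sentence2"], "label": row["label"]}


def labeled_only(rows):
    """Keep only rows that carry a real label (unlabeled rows use -1)."""
    return [row for row in rows if row["label"] != -1]


# Made-up rows in the original GLUE column layout.
glue_rows = [
    {"sentence1": "A dog runs.", "sentence2": "An animal moves.", "label": 0},
    {"sentence1": "It rains.", "sentence2": "The sun shines.", "label": -1},
]

setfit_rows = [to_setfit_columns(row) for row in glue_rows]
print(labeled_only(setfit_rows))
```

Filtering on `-1` before computing metrics avoids scoring predictions against the placeholder labels of the test split.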

|

arka0821/multi_document_summarization | 2022-10-20T19:13:26.000Z | [

"task_categories:summarization",

"annotations_creators:found",

"language_creators:found",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:original",

"language:en",

"license:unknown",

"arxiv:2010.14235",

"region:us"

] | arka0821 | Multi-Document, a large-scale multi-document summarization dataset created from scientific articles. Multi-Document introduces a challenging multi-document summarization task: writing the related-work section of a paper based on its abstract and the articles it references. | @article{lu2020multi,

title={Multi-Document: A Large-scale Dataset for Extreme Multi-document Summarization of Scientific Articles},

author={Arka Das, India},

journal={arXiv preprint arXiv:2010.14235},

year={2022}

} | null | 3 | 66 | ---

annotations_creators:

- found

language_creators:

- found

language:

- en

license:

- unknown

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

source_datasets:

- original

task_categories:

- summarization

task_ids:

- summarization-other-paper-abstract-generation

paperswithcode_id: multi-document

pretty_name: Multi-Document

---

# Dataset Card for Multi-Document

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Repository:** [Multi-Document repository](https://github.com/arka0821/multi_document_summarization)

- **Paper:** [Multi-Document: A Large-scale Dataset for Extreme Multi-document Summarization of Scientific Articles](https://arxiv.org/abs/2010.14235)

### Dataset Summary

Multi-Document, a large-scale multi-document summarization dataset created from scientific articles. Multi-Document introduces a challenging multi-document summarization task: writing the related-work section of a paper based on its abstract and the articles it references.

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

The text in the dataset is in English.

## Dataset Structure

### Data Instances

{"id": "n3ByHGrxH3bvfrvF", "docs": [{"id": "1394519630182457344", "text": "Clover Bio's COVID-19 vaccine candidate shows immune response against SARS-CoV-2 variants in mouse model https://t.co/wNWa9GQux5"}, {"id": "1398154482463170561", "text": "The purpose of the Vaccine is not to stop you from catching COVID 19. The vaccine introduces the immune system to an inactivated form of the SARS-CoV-2 coronavirus or a small part of it. This then equips the body with the ability to fight the virus better in case you get it. https://t.co/Cz9OU6Zi7P"}, {"id": "1354844652520792071", "text": "The Moderna mRNA COVID-19 vaccine appears to be effective against the novel, rapidly spreading variants of SARS-CoV-2.\nResearchers analysed blood samples from vaccinated people and monkeys- Both contained neutralising antibodies against the virus. \nPT1/2\n#COVID19vaccines #biotech https://t.co/ET1maJznot"}, {"id": "1340189698107518976", "text": "@KhandaniM Pfizer vaccine introduces viral surface protein which is constant accross SARS COV 2 variants into the body. Body builds antibodies against this protein, not any virus. These antibodies instructs macrophages & T-Cells to attack & destroy any COVID-19 v variant at infection point"}, {"id": "1374368989581778945", "text": "@DelthiaRicks \" Pfizer and BioNTech\u2019s COVID-19 vaccine is an mRNA vaccine, which does not use the live virus but rather a small portion of the viral sequence of the SARS-CoV-2 virus to instruct the body to produce the spike protein displayed on the surface of the virus.\""}, {"id": "1353354819315126273", "text": "Pfizer and BioNTech Publish Results of Study Showing COVID-19 Vaccine Elicits Antibodies that Neutralize Pseudovirus Bearing the SARS-CoV-2 U.K. 
Strain Spike Protein in Cell Culture | Pfizer https://t.co/YXcSnjLt8C"}, {"id": "1400821856362401792", "text": "Pfizer-BioNTech's covid-19 vaccine elicits lower levels of antibodies against the SARS-CoV-2\u00a0Delta variant\u00a0(B.1.617.2), first discovered in India, in comparison to other variants, said a research published in\u00a0Lancet\u00a0journal.\n https://t.co/IaCMX81X3b"}, {"id": "1367252963190665219", "text": "New research from UNC-Chapel Hill suggests that those who have previously experienced a SARS-CoV-2 infection develop a significant antibody response to the first dose of mRNA-based COVID-19 vaccine.\nhttps://t.co/B4vR1KUQ0w"}, {"id": "1375949502461394946", "text": "Mechanism of a COVID-19 nanoparticle vaccine candidate that elicits a broadly neutralizing antibody response to SARS-CoV-2 variants https://t.co/nc1L0uvtlI #bioRxiv"}, {"id": "1395428608349548550", "text": "JCI - Efficient maternal to neonatal transfer of antibodies against SARS-CoV-2 and BNT162b2 mRNA COVID-19 vaccine https://t.co/vIBcpPaKFZ"}], "summary": "The COVID-19 vaccine appears to be effective against the novel, rapidly spreading variants of SARS-CoV-2. Pfizer-BioNTech's COVID-19 vaccine use small portion of the viral sequence of the SARS-CoV-2 virus to equip the body with the ability to fight the virus better in case you get it."}

### Data Fields

- `id`: identifier of the example (a string such as `"n3ByHGrxH3bvfrvF"` in the instance above)
- `docs`: list of source documents, each with:
  - `id`: id of the text
  - `text`: text data
- `summary`: summary text
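As a rough illustration of the field layout described above, the sketch below flattens the `docs` list into a single source string paired with the `summary` target. The record is invented for the example, and `build_source_text` is a hypothetical helper; joining with a single space is only one possible choice.

```python
def build_source_text(example, separator=" "):
    """Concatenate the text of every entry in `docs` into one input string."""
    return separator.join(doc["text"] for doc in example["docs"])


# Made-up record that follows the id / docs / summary layout.
example = {
    "id": "example-0",
    "docs": [
        {"id": "d1", "text": "First source document."},
        {"id": "d2", "text": "Second source document."},
    ],
    "summary": "A short summary.",
}

source = build_source_text(example)
target = example["summary"]
print(source)
print(target)
```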

### Data Splits

The data is split into training, validation, and test sets.

| train | validation | test |

|------:|-----------:|-----:|

| 50 | 10 | 5 |

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

```

@article{lu2020multi,

title={Multi-Document: A Large-scale Dataset for Extreme Multi-document Summarization of Scientific Articles},

author={Arka Das, India},

journal={arXiv preprint arXiv:2010.14235},

year={2022}

}

```

### Contributions

Thanks to [@arka0821](https://github.com/arka0821/multi_document_summarization) for adding this dataset.

|

SetFit/tweet_eval_stance_abortion | 2022-09-05T13:09:04.000Z | [

"region:us"

] | SetFit | null | null | null | 0 | 66 | Entry not found |

tglcourse/lsun_church_train | 2022-10-19T12:20:45.000Z | [

"region:us"

] | tglcourse | null | null | null | 0 | 66 | ---

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype:

class_label:

names:

0: '0'

1: '1'

2: '2'

3: '3'

4: '4'

5: '5'

6: '6'

7: '7'

8: '8'

9: '9'

10: a

11: b

12: c

13: d

14: e

15: f

splits:

- name: test

num_bytes: -5033726665.536212

num_examples: 6312

- name: train

num_bytes: -94551870824.9868

num_examples: 119915

download_size: 2512548233

dataset_size: -99585597490.52301

---

# Dataset Card for "lsun_church_train"

Uploading lsun church train dataset for convenience

I've split this into 119915 train and 6312 test images, but if you want the original test set, see https://github.com/fyu/lsun

Notebook that I used to download then upload this dataset: https://colab.research.google.com/drive/1_f-D2ENgmELNSB51L1igcnLx63PkveY2?usp=sharing

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

kuroneko5943/weibo16 | 2023-01-10T16:01:32.000Z | [

"task_categories:text-classification",

"task_ids:sentiment-classification",

"annotations_creators:machine-generated",

"language_creators:crowdsourced",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:original",

"language:zh",

"license:apache-2.0",

"weibo",

"sentiment"... | kuroneko5943 | GLUE, the General Language Understanding Evaluation benchmark

(https://gluebenchmark.com/) is a collection of resources for training,

evaluating, and analyzing natural language understanding systems. | \ | null | 4 | 66 | ---

annotations_creators:

- machine-generated

language:

- zh

language_creators:

- crowdsourced

license:

- apache-2.0

multilinguality:

- monolingual

pretty_name: weibo16

size_categories:

- 1K<n<10K

source_datasets:

- original

tags:

- weibo

- sentiment

task_categories:

- text-classification

task_ids:

- sentiment-classification

--- |

ruanchaves/hatebr | 2023-04-13T13:39:40.000Z | [

"task_categories:text-classification",

"task_ids:hate-speech-detection",

"annotations_creators:expert-generated",

"language_creators:found",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:original",

"language:pt",

"instagram",

"doi:10.57967/hf/0274",

"region:us"

] | ruanchaves | HateBR is the first large-scale expert annotated corpus of Brazilian Instagram comments for hate speech and offensive language detection on the web and social media. The HateBR corpus was collected from Brazilian Instagram comments of politicians and manually annotated by specialists. It is composed of 7,000 documents annotated according to three different layers: a binary classification (offensive versus non-offensive comments), offensiveness-level (highly, moderately, and slightly offensive messages), and nine hate speech groups (xenophobia, racism, homophobia, sexism, religious intolerance, partyism, apology for the dictatorship, antisemitism, and fatphobia). Each comment was annotated by three different annotators and achieved high inter-annotator agreement. Furthermore, baseline experiments were implemented reaching 85% of F1-score outperforming the current literature models for the Portuguese language. Accordingly, we hope that the proposed expertly annotated corpus may foster research on hate speech and offensive language detection in the Natural Language Processing area. | @inproceedings{vargas2022hatebr,

title={HateBR: A Large Expert Annotated Corpus of Brazilian Instagram Comments for Offensive Language and Hate Speech Detection},

author={Vargas, Francielle and Carvalho, Isabelle and de G{\'o}es, Fabiana Rodrigues and Pardo, Thiago and Benevenuto, Fabr{\'\i}cio},

booktitle={Proceedings of the Thirteenth Language Resources and Evaluation Conference},

pages={7174--7183},

year={2022}

} | null | 5 | 66 | ---

annotations_creators:

- expert-generated

language:

- pt

language_creators:

- found

license: []

multilinguality:

- monolingual

pretty_name: HateBR - Offensive Language and Hate Speech Dataset in Brazilian Portuguese

size_categories:

- 1K<n<10K

source_datasets:

- original

tags:

- instagram

task_categories:

- text-classification

task_ids:

- hate-speech-detection

---

# Dataset Card for HateBR - Offensive Language and Hate Speech Dataset in Brazilian Portuguese

## Dataset Description

- **Homepage:** http://143.107.183.175:14581/

- **Repository:** https://github.com/franciellevargas/HateBR

- **Paper:** https://aclanthology.org/2022.lrec-1.777/

- **Leaderboard:**

- **Point of Contact:** https://franciellevargas.github.io/

### Dataset Summary

HateBR is the first large-scale expert annotated corpus of Brazilian Instagram comments for hate speech and offensive language detection on the web and social media. The HateBR corpus was collected from Brazilian Instagram comments of politicians and manually annotated by specialists. It is composed of 7,000 documents annotated according to three different layers: a binary classification (offensive versus non-offensive comments), offensiveness-level (highly, moderately, and slightly offensive messages), and nine hate speech groups (xenophobia, racism, homophobia, sexism, religious intolerance, partyism, apology for the dictatorship, antisemitism, and fatphobia). Each comment was annotated by three different annotators and achieved high inter-annotator agreement. Furthermore, baseline experiments were implemented reaching 85% of F1-score outperforming the current literature models for the Portuguese language. Accordingly, we hope that the proposed expertly annotated corpus may foster research on hate speech and offensive language detection in the Natural Language Processing area.

**Relevant Links:**

* [**Demo: Brasil Sem Ódio**](http://143.107.183.175:14581/)

* [**MOL - Multilingual Offensive Lexicon Annotated with Contextual Information**](https://github.com/franciellevargas/MOL)

### Supported Tasks and Leaderboards

Hate Speech Detection

### Languages

Portuguese

## Dataset Structure

### Data Instances

```

{'instagram_comments': 'Hipocrita!!',

'offensive_language': True,

'offensiveness_levels': 2,

'antisemitism': False,

'apology_for_the_dictatorship': False,

'fatphobia': False,

'homophobia': False,

'partyism': False,

'racism': False,

'religious_intolerance': False,

'sexism': False,

'xenophobia': False,

'offensive_&_non-hate_speech': True,

'non-offensive': False,

'specialist_1_hate_speech': False,

'specialist_2_hate_speech': False,

'specialist_3_hate_speech': False

}

```

### Data Fields

* **instagram_comments**: Instagram comments.

* **offensive_language**: A classification of comments as either offensive (True) or non-offensive (False).

* **offensiveness_levels**: A classification of comments based on their level of offensiveness, including highly offensive (3), moderately offensive (2), slightly offensive (1) and non-offensive (0).

* **antisemitism**: A classification of whether or not the comment contains antisemitic language.

* **apology_for_the_dictatorship**: A classification of whether or not the comment praises the military dictatorship period in Brazil.

* **fatphobia**: A classification of whether or not the comment contains language that promotes fatphobia.

* **homophobia**: A classification of whether or not the comment contains language that promotes homophobia.

* **partyism**: A classification of whether or not the comment contains language that promotes partyism.

* **racism**: A classification of whether or not the comment contains racist language.

* **religious_intolerance**: A classification of whether or not the comment contains language that promotes religious intolerance.

* **sexism**: A classification of whether or not the comment contains sexist language.

* **xenophobia**: A classification of whether or not the comment contains language that promotes xenophobia.

* **offensive_&_non-hate_speech**: A classification of whether or not the comment is offensive but does not contain hate speech.

* **specialist_1_hate_speech**: A classification of whether or not the comment was annotated by the first specialist as hate speech.

* **specialist_2_hate_speech**: A classification of whether or not the comment was annotated by the second specialist as hate speech.

* **specialist_3_hate_speech**: A classification of whether or not the comment was annotated by the third specialist as hate speech.
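The fields above can be read together, for instance to turn one record into a readable offensiveness level plus the list of hate speech groups that are flagged. This is only a sketch: `summarize` is a hypothetical helper, and the second record is invented for illustration.

```python
# The nine hate speech group columns listed above.
HATE_SPEECH_GROUPS = [
    "antisemitism", "apology_for_the_dictatorship", "fatphobia",
    "homophobia", "partyism", "racism",
    "religious_intolerance", "sexism", "xenophobia",
]

# Mapping taken from the description of `offensiveness_levels`.
LEVEL_NAMES = {
    0: "non-offensive",
    1: "slightly offensive",
    2: "moderately offensive",
    3: "highly offensive",
}


def summarize(record):
    """Return (level name, list of hate speech groups flagged as True)."""
    groups = [group for group in HATE_SPEECH_GROUPS if record.get(group)]
    return LEVEL_NAMES[record["offensiveness_levels"]], groups


# First record mirrors the data instance shown earlier; the second is made up.
no_hate = {"offensiveness_levels": 2, **{g: False for g in HATE_SPEECH_GROUPS}}
with_hate = {**no_hate, "offensiveness_levels": 3, "racism": True, "sexism": True}

print(summarize(no_hate))
print(summarize(with_hate))
```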

### Data Splits

The original authors of the dataset did not propose a standard data split. To address this, we use the [multi-label data stratification technique](http://scikit.ml/stratification.html) implemented in the scikit-multilearn library to propose a train-validation-test split. This method considers all classes for hate speech in the data and attempts to balance the representation of each class in the split.

| name |train|validation|test|

|---------|----:|----:|----:|

|hatebr|4480|1120|1400|
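A quick arithmetic check of the split sizes in the table. The counts are consistent with holding out 20% of the 7,000 comments for test and then 20% of the remainder for validation, although the card does not state which ratios were actually used; that reading is an assumption.

```python
split_sizes = {"train": 4480, "validation": 1120, "test": 1400}

total = sum(split_sizes.values())
fractions = {name: size / total for name, size in split_sizes.items()}

for name, fraction in fractions.items():
    print(f"{name}: {split_sizes[name]} rows ({fraction:.0%})")

# Share of the non-test data that went to validation.
validation_of_remainder = split_sizes["validation"] / (total - split_sizes["test"])
print(f"validation share of train+validation: {validation_of_remainder:.0%}")
```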

## Considerations for Using the Data

### Discussion of Biases

Please refer to [the HateBR paper](https://aclanthology.org/2022.lrec-1.777/) for a discussion of biases.

### Licensing Information

The HateBR dataset, including all its components, is provided strictly for academic and research purposes. The use of the dataset for any commercial or non-academic purpose is expressly prohibited without the prior written consent of [SINCH](https://www.sinch.com/).

### Citation Information

```

@inproceedings{vargas2022hatebr,

title={HateBR: A Large Expert Annotated Corpus of Brazilian Instagram Comments for Offensive Language and Hate Speech Detection},

author={Vargas, Francielle and Carvalho, Isabelle and de G{\'o}es, Fabiana Rodrigues and Pardo, Thiago and Benevenuto, Fabr{\'\i}cio},

booktitle={Proceedings of the Thirteenth Language Resources and Evaluation Conference},

pages={7174--7183},

year={2022}

}

```

### Contributions

Thanks to [@ruanchaves](https://github.com/ruanchaves) for adding this dataset. |

wangrui6/Zhihu-KOL | 2023-04-23T13:26:03.000Z | [

"task_categories:question-answering",

"language:zh",

"region:us"

] | wangrui6 | null | null | null | 92 | 66 | ---

dataset_info:

features:

- name: INSTRUCTION

dtype: string

- name: RESPONSE

dtype: string

- name: SOURCE

dtype: string

- name: METADATA

dtype: string

splits:

- name: train

num_bytes: 2295601241

num_examples: 1006218

download_size: 1501204472

dataset_size: 2295601241

task_categories:

- question-answering

language:

- zh

---

# Dataset Card for "Zhihu-KOL"

Zhihu data for training Open Assistant

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Elfsong/ClinicalDataset | 2023-03-05T06:43:13.000Z | [

"task_categories:summarization",

"task_categories:conversational",

"size_categories:1K<n<10K",

"language:en",

"region:us"

] | Elfsong | null | null | null | 11 | 66 | ---

task_categories:

- summarization

- conversational

language:

- en

pretty_name: MediQA

size_categories:

- 1K<n<10K

---

# MEDIQA-Chat 2023 Training/Validation Data

# Task A

The training set consists of 1,201 pairs of conversations and associated section headers and contents.

The validation set consists of 100 pairs of conversations and their summaries.

The full list of normalized section headers:

1. fam/sochx [FAMILY HISTORY/SOCIAL HISTORY]

2. genhx [HISTORY of PRESENT ILLNESS]

3. pastmedicalhx [PAST MEDICAL HISTORY]

4. cc [CHIEF COMPLAINT]

5. pastsurgical [PAST SURGICAL HISTORY]

6. allergy

7. ros [REVIEW OF SYSTEMS]

8. medications

9. assessment

10. exam

11. diagnosis

12. disposition

13. plan

14. edcourse [EMERGENCY DEPARTMENT COURSE]

15. immunizations

16. imaging

17. gynhx [GYNECOLOGIC HISTORY]

18. procedures

19. other_history

20. labs

# Task B

The training set consists of 67 pairs of conversations and full notes. The validation set includes 20 pairs of conversations and clinical notes.

Full encounter notes are expected to have at least one of four overall section divisions, each demarcated by the first occurrence of one of its related section headers:

| note_division | section_headers |
|---|---|
| subjective | chief complaint, history of present illness, hpi, subjective |
| objective_exam | physical exam, exam |
| objective_results | results, findings |
| assessment_and_plan | assessment, plan |

Depending on the encounter, objective_exam and objective_results may not be relevant.

We encourage reviewing the sample data as well as the evaluation script to understand the best demarcation headers for your generated note.
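To make the division rule concrete, here is a rough sketch that locates the first line matching each division's headers. It is not the official evaluation script: `find_division_starts` is a hypothetical helper, and matching a whole line case-insensitively (ignoring a trailing colon) is an assumption that real notes may not satisfy.

```python
# Headers per division, copied from the list above.
DIVISION_HEADERS = {
    "subjective": ["chief complaint", "history of present illness", "hpi", "subjective"],
    "objective_exam": ["physical exam", "exam"],
    "objective_results": ["results", "findings"],
    "assessment_and_plan": ["assessment", "plan"],
}


def find_division_starts(note):
    """Map each division to the index of the first line that is one of its headers."""
    starts = {}
    for index, line in enumerate(note.splitlines()):
        header = line.strip().rstrip(":").lower()
        for division, headers in DIVISION_HEADERS.items():
            # Only the first occurring header of a division counts.
            if division not in starts and header in headers:
                starts[division] = index
    return starts


# Made-up note used only to exercise the helper.
note = "\n".join([
    "CHIEF COMPLAINT",
    "Cough for three days.",
    "PHYSICAL EXAM",
    "Lungs clear.",
    "ASSESSMENT",
    "Viral infection.",
    "PLAN",
    "Rest and fluids.",
])

starts = find_division_starts(note)
print(starts)
```

In this invented note, `objective_results` is absent from the result, which matches the remark that some divisions may not be relevant for a given encounter.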

# Task C

The training set consists of 67 pairs of full doctor-patient conversations and notes and the validation set includes 20 pairs of full conversations and clinical notes (same as Task-B datasets). The Task-A training and validation sets (1,301 pairs) could be used as additional training data.

|

nuprl/ts-training | 2023-05-23T19:34:07.000Z | [

"region:us"

] | nuprl | null | null | null | 1 | 66 | ---

dataset_info:

features:

- name: hexsha

dtype: string

- name: size

dtype: int64

- name: ext

dtype: string

- name: lang

dtype: string

- name: max_stars_repo_path

dtype: string

- name: max_stars_repo_name

dtype: string

- name: max_stars_repo_head_hexsha

dtype: string

- name: max_stars_repo_licenses

sequence: string

- name: max_stars_count

dtype: float64

- name: max_stars_repo_stars_event_min_datetime

dtype: string

- name: max_stars_repo_stars_event_max_datetime

dtype: string

- name: max_issues_repo_path

dtype: string

- name: max_issues_repo_name

dtype: string

- name: max_issues_repo_head_hexsha

dtype: string

- name: max_issues_repo_licenses

sequence: string

- name: max_issues_count

dtype: float64

- name: max_issues_repo_issues_event_min_datetime

dtype: string

- name: max_issues_repo_issues_event_max_datetime

dtype: string

- name: max_forks_repo_path

dtype: string

- name: max_forks_repo_name

dtype: string

- name: max_forks_repo_head_hexsha

dtype: string

- name: max_forks_repo_licenses

sequence: string

- name: max_forks_count

dtype: float64

- name: max_forks_repo_forks_event_min_datetime

dtype: string

- name: max_forks_repo_forks_event_max_datetime

dtype: string

- name: content

dtype: string

- name: avg_line_length

dtype: float64

- name: max_line_length

dtype: int64

- name: alphanum_fraction

dtype: float64

splits:

- name: train

num_bytes: 42270977435

num_examples: 12133148

download_size: 17360072228

dataset_size: 42270977435

extra_gated_prompt: |-

## Terms of Use for The Stack

The Stack dataset is a collection of source code in over 300 programming languages. We ask that you read and acknowledge the following points before using the dataset:

1. The Stack is a collection of source code from repositories with various licenses. Any use of all or part of the code gathered in The Stack must abide by the terms of the original licenses, including attribution clauses when relevant. We facilitate this by providing provenance information for each data point.

2. The Stack is regularly updated to enact validated data removal requests. By clicking on "Access repository", you agree to update your own version of The Stack to the most recent usable version specified by the maintainers in [the following thread](https://huggingface.co/datasets/bigcode/the-stack/discussions/7). If you have questions about dataset versions and allowed uses, please also ask them in the dataset’s [community discussions](https://huggingface.co/datasets/bigcode/the-stack/discussions/new). We will also notify users via email when the latest usable version changes.

3. To host, share, or otherwise provide access to The Stack dataset, you must include [these Terms of Use](https://huggingface.co/datasets/bigcode/the-stack#terms-of-use-for-the-stack) and require users to agree to it.

By clicking on "Access repository" below, you accept that your contact information (email address and username) can be shared with the dataset maintainers as well.

extra_gated_fields:

Email: text

I have read the License and agree with its terms: checkbox

---

# Dataset Card for "ts-training"

This is a subset of the TypeScript portion of [The Stack (dedup)](https://huggingface.co/datasets/bigcode/the-stack-dedup), uploaded to the Hugging Face Hub for convenience.

Files with dates _after_ the December 31, 2021 cutoff are excluded from this dataset, since we are using those files for evaluation. Therefore, the remaining files (in this dataset) are available for training.

A file is considered to be after the cutoff if all of `max_{stars|forks|issues}_repo_{stars|forks|issues}_event_min_datetime` (i.e., the first timestamp for a `{stars|forks|issues}` event) are after the cutoff. Otherwise (or if all timestamps are missing), the file is included in this dataset.
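The rule above can be sketched as a small predicate. Several details are assumptions rather than facts from this card: the timestamp format (ISO 8601 with a trailing `Z`), reading "after the cutoff" as on or after 2022-01-01 UTC, and ignoring individual missing timestamps when at least one is present. `is_after_cutoff` is a hypothetical name.

```python
from datetime import datetime, timezone

# Assumption: "after the December 31, 2021 cutoff" means on or after 2022-01-01 UTC.
CUTOFF = datetime(2022, 1, 1, tzinfo=timezone.utc)

# First-event timestamp columns from the dataset features.
MIN_DATETIME_FIELDS = [
    "max_stars_repo_stars_event_min_datetime",
    "max_forks_repo_forks_event_min_datetime",
    "max_issues_repo_issues_event_min_datetime",
]


def parse_timestamp(value):
    """Parse a string such as '2021-05-01T12:00:00.000Z' (format assumed)."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def is_after_cutoff(record):
    """True if the file would be held out for evaluation under the rule above."""
    timestamps = [
        parse_timestamp(record[field])
        for field in MIN_DATETIME_FIELDS
        if record.get(field)
    ]
    # All timestamps missing: the file stays in the training set.
    if not timestamps:
        return False
    # Assumption: missing values are skipped; the rest must all be after the cutoff.
    return all(ts >= CUTOFF for ts in timestamps)


# Made-up records for illustration.
all_missing = {field: None for field in MIN_DATETIME_FIELDS}
all_late = {field: "2022-03-01T00:00:00.000Z" for field in MIN_DATETIME_FIELDS}
one_early = {**all_late, "max_forks_repo_forks_event_min_datetime": "2021-06-15T08:30:00.000Z"}

print(is_after_cutoff(all_missing), is_after_cutoff(all_late), is_after_cutoff(one_early))
```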

## Versions

The default version (`main`) is currently `v1.1`.

|Version|Description|

|-|-|

|`v1.1` | Original version of the training dataset, based on v1.1 of the Stack. Applies the training cutoff (December 31, 2021). Used to train OpenTau. |

|`v1.1full` | Training dataset based on v1.1 of the Stack. Does not apply the training cutoff (December 31, 2021), but applies a filter to remove files that do not parse as valid TypeScript. |

|`v1.1p1` | Revision of v1.1. Applies a filter to remove files that do not parse as valid TypeScript. |

|

slvnwhrl/blurbs-clustering-s2s | 2023-04-24T11:40:19.000Z | [

"size_categories:10K<n<100K",

"language:de",

"license:cc-by-nc-4.0",

"embeddings",

"clustering",

"benchmark",

"region:us"

] | slvnwhrl | null | null | null | 0 | 66 | ---

license: cc-by-nc-4.0

language:

- de

tags:

- embeddings

- clustering

- benchmark

size_categories:

- 10K<n<100K

---

This dataset can be used as a benchmark for clustering word embeddings for <b>German</b>.

The dataset contains book titles and is based on the dataset from the [GermEval 2019 Shared Task on Hierarchical Classification of Blurbs](https://www.inf.uni-hamburg.de/en/inst/ab/lt/resources/data/germeval-2019-hmc.html). It contains 17'726 unique samples, 28 splits with 177 to 16'425 samples and 4 to 93 unique classes. Splits are built similarly to [MTEB](https://github.com/embeddings-benchmark/mteb)'s [ArxivClusteringS2S](https://huggingface.co/datasets/mteb/arxiv-clustering-s2s).

Have a look at [German Text Embedding Clustering Benchmark](https://github.com/ClimSocAna/tecb-de) for more infos, datasets and evaluation results. |

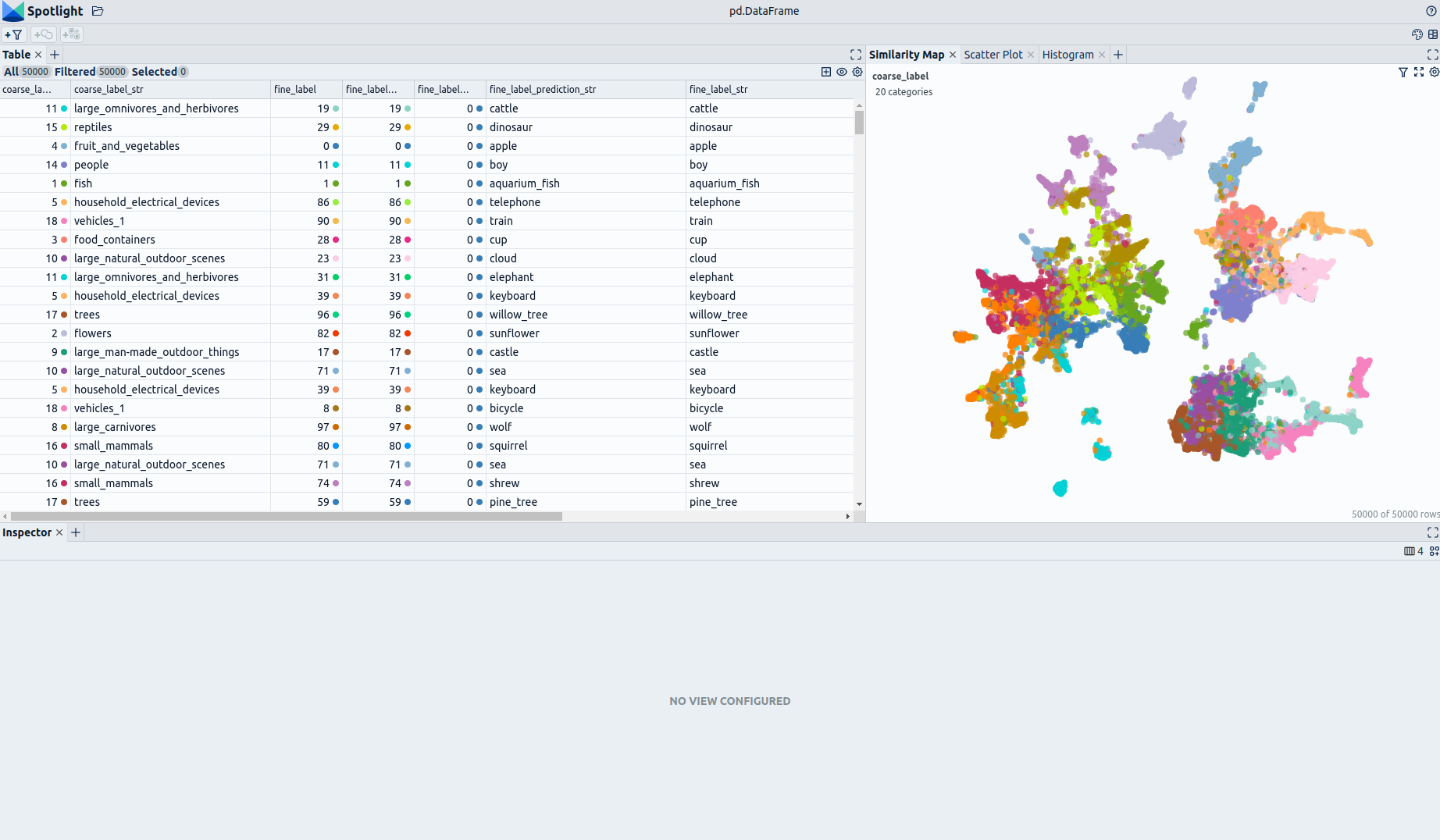

renumics/cifar100-enriched | 2023-06-06T12:23:33.000Z | [

"task_categories:image-classification",

"annotations_creators:crowdsourced",

"language_creators:found",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:extended|other-80-Million-Tiny-Images",

"language:en",

"license:mit",

"image classification",

"cifar-100",

"cifar-... | renumics | The CIFAR-100 dataset consists of 60000 32x32 colour images in 100 classes, with 600 images

per class. There are 500 training images and 100 testing images per class. There are 50000 training images and 10000 test images. The 100 classes are grouped into 20 superclasses.

There are two labels per image - fine label (actual class) and coarse label (superclass). | @TECHREPORT{Krizhevsky09learningmultiple,

author = {Alex Krizhevsky},

title = {Learning multiple layers of features from tiny images},

institution = {},

year = {2009}

} | null | 4 | 66 | ---

license: mit

task_categories:

- image-classification

pretty_name: CIFAR-100

source_datasets:

- extended|other-80-Million-Tiny-Images

paperswithcode_id: cifar-100

size_categories:

- 10K<n<100K

tags:

- image classification

- cifar-100

- cifar-100-enriched

- embeddings

- enhanced

- spotlight

- renumics

language:

- en

multilinguality:

- monolingual

annotations_creators:

- crowdsourced

language_creators:

- found

---

# Dataset Card for CIFAR-100-Enriched (Enhanced by Renumics)

## Dataset Description

- **Homepage:** [Renumics Homepage](https://renumics.com/?hf-dataset-card=cifar100-enriched)

- **GitHub:** [Spotlight](https://github.com/Renumics/spotlight)

- **Dataset Homepage:** [CS Toronto Homepage](https://www.cs.toronto.edu/~kriz/cifar.html#:~:text=The%20CIFAR%2D100%20dataset)

- **Paper:** [Learning Multiple Layers of Features from Tiny Images](https://www.cs.toronto.edu/~kriz/learning-features-2009-TR.pdf)

### Dataset Summary

📊 [Data-centric AI](https://datacentricai.org) principles have become increasingly important for real-world use cases.

At [Renumics](https://renumics.com/?hf-dataset-card=cifar100-enriched) we believe that classical benchmark datasets and competitions should be extended to reflect this development.

🔍 This is why we are publishing benchmark datasets with application-specific enrichments (e.g. embeddings, baseline results, uncertainties, label error scores). We hope this helps the ML community in the following ways:

1. Enable new researchers to quickly develop a profound understanding of the dataset.

2. Popularize data-centric AI principles and tooling in the ML community.

3. Encourage the sharing of meaningful qualitative insights in addition to traditional quantitative metrics.

📚 This dataset is an enriched version of the [CIFAR-100 Dataset](https://www.cs.toronto.edu/~kriz/cifar.html).

### Explore the Dataset

The enrichments allow you to quickly gain insights into the dataset. The open source data curation tool [Renumics Spotlight](https://github.com/Renumics/spotlight) enables that with just a few lines of code:

Install datasets and Spotlight via [pip](https://packaging.python.org/en/latest/key_projects/#pip):

```python

!pip install renumics-spotlight datasets

```

Load the dataset from Hugging Face in your notebook:

```python

import datasets

dataset = datasets.load_dataset("renumics/cifar100-enriched", split="train")

```

Start exploring with a simple view that leverages embeddings to identify relevant data segments:

```python

from renumics import spotlight

df = dataset.to_pandas()

df_show = df.drop(columns=['embedding', 'probabilities'])

spotlight.show(df_show, port=8000, dtype={"image": spotlight.Image, "embedding_reduced": spotlight.Embedding})

```

You can use the UI to interactively configure the view on the data. Depending on the concrete tasks (e.g. model comparison, debugging, outlier detection) you might want to leverage different enrichments and metadata.

### CIFAR-100 Dataset

The CIFAR-100 dataset consists of 60000 32x32 colour images in 100 classes, with 600 images per class. There are 50000 training images and 10000 test images.

The 100 classes in the CIFAR-100 are grouped into 20 superclasses. Each image comes with a "fine" label (the class to which it belongs) and a "coarse" label (the superclass to which it belongs).

The classes are completely mutually exclusive.

We have enriched the dataset by adding **image embeddings** generated with a [Vision Transformer](https://huggingface.co/google/vit-base-patch16-224).

Here is the list of classes in the CIFAR-100:

| Superclass | Classes |

|---------------------------------|----------------------------------------------------|

| aquatic mammals | beaver, dolphin, otter, seal, whale |

| fish | aquarium fish, flatfish, ray, shark, trout |

| flowers | orchids, poppies, roses, sunflowers, tulips |

| food containers | bottles, bowls, cans, cups, plates |

| fruit and vegetables | apples, mushrooms, oranges, pears, sweet peppers |

| household electrical devices | clock, computer keyboard, lamp, telephone, television|

| household furniture | bed, chair, couch, table, wardrobe |

| insects | bee, beetle, butterfly, caterpillar, cockroach |

| large carnivores | bear, leopard, lion, tiger, wolf |

| large man-made outdoor things | bridge, castle, house, road, skyscraper |

| large natural outdoor scenes | cloud, forest, mountain, plain, sea |

| large omnivores and herbivores | camel, cattle, chimpanzee, elephant, kangaroo |

| medium-sized mammals | fox, porcupine, possum, raccoon, skunk |

| non-insect invertebrates | crab, lobster, snail, spider, worm |

| people | baby, boy, girl, man, woman |

| reptiles | crocodile, dinosaur, lizard, snake, turtle |

| small mammals | hamster, mouse, rabbit, shrew, squirrel |

| trees | maple, oak, palm, pine, willow |

| vehicles 1 | bicycle, bus, motorcycle, pickup truck, train |

| vehicles 2 | lawn-mower, rocket, streetcar, tank, tractor |

### Supported Tasks and Leaderboards

- `image-classification`: The goal of this task is to classify a given image into one of 100 classes. The leaderboard is available [here](https://paperswithcode.com/sota/image-classification-on-cifar-100).

### Languages

English class labels.

## Dataset Structure

### Data Instances

A sample from the training set is provided below:

```python

{

'image': '/huggingface/datasets/downloads/extracted/f57c1a3fbca36f348d4549e820debf6cc2fe24f5f6b4ec1b0d1308a80f4d7ade/0/0.png',

'full_image': <PIL.PngImagePlugin.PngImageFile image mode=RGB size=32x32 at 0x7F15737C9C50>,

'fine_label': 19,

'coarse_label': 11,

'fine_label_str': 'cattle',

'coarse_label_str': 'large_omnivores_and_herbivores',

'fine_label_prediction': 19,

'fine_label_prediction_str': 'cattle',

'fine_label_prediction_error': 0,

'split': 'train',

'embedding': [-1.2482988834381104,

0.7280710339546204, ...,

0.5312759280204773],

'probabilities': [4.505949982558377e-05,

7.286163599928841e-05, ...,

6.577593012480065e-05],

'embedding_reduced': [1.9439491033554077, -5.35720682144165]

}

```

### Data Fields

| Feature                     | Data Type                                                               |
|-----------------------------|-------------------------------------------------------------------------|
| image                       | Value(dtype='string', id=None)                                          |
| full_image                  | Image(decode=True, id=None)                                             |
| fine_label                  | ClassLabel(names=[...], id=None)                                        |
| coarse_label                | ClassLabel(names=[...], id=None)                                        |
| fine_label_str              | Value(dtype='string', id=None)                                          |
| coarse_label_str            | Value(dtype='string', id=None)                                          |
| fine_label_prediction       | ClassLabel(names=[...], id=None)                                        |
| fine_label_prediction_str   | Value(dtype='string', id=None)                                          |
| fine_label_prediction_error | Value(dtype='int32', id=None)                                           |
| split                       | Value(dtype='string', id=None)                                          |
| embedding                   | Sequence(feature=Value(dtype='float32', id=None), length=768, id=None)  |
| probabilities               | Sequence(feature=Value(dtype='float32', id=None), length=100, id=None)  |
| embedding_reduced           | Sequence(feature=Value(dtype='float32', id=None), length=2, id=None)    |
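The prediction-related fields can be cross-checked against each other. The sketch below assumes (this is not stated in the card) that `fine_label_prediction` is the argmax of `probabilities` and that `fine_label_prediction_error` is 1 when the prediction differs from `fine_label` and 0 otherwise. The helper names `argmax` and `check_record` are hypothetical, and the record is synthetic rather than taken from the dataset.

```python
from typing import Sequence


def argmax(values: Sequence[float]) -> int:
    """Return the index of the largest value."""
    return max(range(len(values)), key=lambda i: values[i])


def check_record(record: dict) -> bool:
    """Check that the prediction fields of one record agree with each other.

    Assumption: the predicted class is the argmax of `probabilities`, and the
    error flag is 1 exactly when the prediction differs from the label.
    """
    predicted = argmax(record["probabilities"])
    expected_error = int(record["fine_label"] != record["fine_label_prediction"])
    return (
        predicted == record["fine_label_prediction"]
        and expected_error == record["fine_label_prediction_error"]
    )


# Synthetic record with 100 class probabilities; not real dataset values.
probabilities = [0.001] * 100
probabilities[19] = 0.901
record = {
    "fine_label": 19,
    "fine_label_prediction": 19,
    "fine_label_prediction_error": 0,
    "probabilities": probabilities,
}
print(check_record(record))
```

If the assumption holds, records for which `check_record` returns `False` would be worth inspecting by hand.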

### Data Splits

| Dataset Split | Number of Images in Split | Samples per Class (fine) |
|---------------|---------------------------|--------------------------|
| Train         | 50000                     | 500                      |
| Test          | 10000                     | 100                      |

## Dataset Creation

### Curation Rationale

CIFAR-10 and CIFAR-100 are labeled subsets of the [80 million tiny images](http://people.csail.mit.edu/torralba/tinyimages/) dataset.

They were collected by Alex Krizhevsky, Vinod Nair, and Geoffrey Hinton.

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

If you use this dataset, please cite the following paper:

```

@article{krizhevsky2009learning,

added-at = {2021-01-21T03:01:11.000+0100},

author = {Krizhevsky, Alex},

biburl = {https://www.bibsonomy.org/bibtex/2fe5248afe57647d9c85c50a98a12145c/s364315},

interhash = {cc2d42f2b7ef6a4e76e47d1a50c8cd86},

intrahash = {fe5248afe57647d9c85c50a98a12145c},

keywords = {},

pages = {32--33},

timestamp = {2021-01-21T03:01:11.000+0100},

title = {Learning Multiple Layers of Features from Tiny Images},

url = {https://www.cs.toronto.edu/~kriz/learning-features-2009-TR.pdf},

year = 2009

}

```

### Contributions

Alex Krizhevsky, Vinod Nair, Geoffrey Hinton, and Renumics GmbH. |

michaelwzhu/ShenNong_TCM_Dataset | 2023-06-25T13:29:04.000Z | [

"license:apache-2.0",

"region:us"

] | michaelwzhu | null | null | null | 19 | 66 | ---

license: apache-2.0

---

|

heegyu/korquad-chat-v1 | 2023-05-06T09:12:14.000Z | [

"license:mit",

"region:us"

] | heegyu | null | null | null | 5 | 66 | ---

license: mit

---

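- A Korean knowledge-grounded dialogue dataset containing 9,619 conversations in total.

- Generated by giving ChatGPT the documents in the KorQuAD 1.0 data.

The parts where the speakers address each other are handled as shown below.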

```

안녕하세요 <|bot|>. 요즘 어떻게 지내시나요?\n<bot> 안녕하세요 <|user|>.

```
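A minimal sketch of how the `<|user|>` and `<|bot|>` placeholders might be filled in before training or display. The function name `fill_names` and the names passed to it are hypothetical; the card does not prescribe how the placeholders should be replaced.

```python
def fill_names(text: str, user_name: str, bot_name: str) -> str:
    """Replace the <|user|> and <|bot|> placeholders with concrete names."""
    return text.replace("<|user|>", user_name).replace("<|bot|>", bot_name)


# The placeholder strings come from the card; the names are made up.
example = "안녕하세요 <|bot|>. 요즘 어떻게 지내시나요?"
print(fill_names(example, user_name="민수", bot_name="지니"))
```

Plain string replacement is enough here because the placeholders are fixed literals.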

Data sample

```

{

"source": "korquad-chat",

"text": "

<sys>유전자의 이름은 인도의 수도 뉴델리의 이름을 따 붙여졌는데, 이는 2009년 용 (Yong) 등이 처음 기록한 이 케이스의 환자가 인도에서 처음 박테리아 감염을 확인하였기 때문이다. 박테리아 감염 치료가 뉴델리 병원에서 실패로 끝난 후 그는 모국 스웨덴으로 송환되었으며, 그곳에서 카바페넴에 내성을 보이는 폐렴막대균의 유전자가 분리되었다. 용 등은 이 내성 메커니즘이 \"분명히 인도에서 생겨났으나, 이것이 얼마나 퍼져있는지를 보여주는 데이터가 인도에 존재한다\"라고 주장하였다. 보고된 바에 따르면 이 유전자는 파키스탄, 인도, 그리고 여러 아시아 국가에서 발견되었으며 이곳 병원에 입원했던 사람들에 의해 유럽으로 옮겨졌다. 그 중 대다수는 저가의 성형 수술을 위해 의료 관광을 떠났다가 수술 중 감염이 된 사람들이었다.\n

<usr> 메탈로-베타락타마제가 어디서 발견되었나요?\n

<bot> 이 유전자는 파키스탄, 인도, 그리고 여러 아시아 국가에서 발견된 것으로 보고되었습니다.\n

<usr> 이 박테리아 감염은 어떻게 전파되는 건가요?\n

<bot> 이 병원균은 주로 의료 관광으로 인해 수술 중 감염된 사람들에 의해 유럽으로 전파되었습니다.\n

<usr> 이 유전자는 카바페넴에 반응하지 않는 내성 케이스에서 처음 발견됐는데, 이 내성 메커니즘이 다른 국가에도 퍼진 건가요?\n

<bot> 이 내성 메커니즘이 여러 나라에서 발견된 것으로 보고되기 때문에, 퍼져있다고 볼 수 있습니다. 그러나, 이것이 얼마나 퍼져있는지를 보여주는 데이터가 인도에 존재한다는 주장이 있습니다.\n

<usr> 이 박테리아 감염은 어떤 질병을 일으키나요?\n

<bot> 이 메탈로-베타락타마제가 감염하면, 카바페넴 유형의 항생제를 사용할 수 없고, 치명적인 감염증으로 발전할 가능성이 있습니다.\n

<usr> 이 박테리아 감염을 예방하거나 치료할 방법이 있을까요?\n

<bot> 이 박테리아 감염을 예방하거나 치료할 수 있는 항생제는 여전히 발견되지 않았습니다. 따라서, 이 병균을 포함한 감염 예방의 중요성이 더욱 부각되고 있습니다."

}

```
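The `text` field packs the source passage and the dialogue into one string, with `<sys>`, `<usr>`, and `<bot>` marking each segment. The following sketch, which assumes those three tags are the only segment markers, splits such a string into `(role, content)` pairs. The function name `split_turns` is hypothetical.

```python
import re

# A segment starts at <sys>, <usr>, or <bot> and runs until the next such tag
# or the end of the string. Placeholders like <|bot|> do not match this pattern.
TURN_PATTERN = re.compile(
    r"<(sys|usr|bot)>\s*(.*?)(?=<(?:sys|usr|bot)>|\Z)", re.DOTALL
)


def split_turns(text: str) -> list[tuple[str, str]]:
    """Split a korquad-chat style `text` string into (role, content) pairs."""
    return [(role, content.strip()) for role, content in TURN_PATTERN.findall(text)]


# Shortened, made-up example in the same layout as the data sample.
sample = "\n<sys>passage\n<usr> question?\n<bot> answer."
for role, content in split_turns(sample):
    print(role, "->", content)
```

Keeping the role tag with each segment makes it straightforward to convert the data into the message format a particular chat model expects.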

ChatGPT data generation prompt

```

A와 B 두 사람이 나누는 대화를 만들어주세요.

1. A는 주어진 글에서 말하는 내용에 관해 B에게 질문을 하거나 요청합니다. B는 글의 내용을 이해하고 완전히 학습한 상태입니다. B는 A의 질문과 요청에 자신이 학습한 내용을 바탕으로 대답을 해야 합니다.

2. B는 글에 존재하지 않거나 사실에 근거하지 않은 대답을 해서는 안됩니다.

3. 각 발화는 최대 3개의 문장으로 이루어져 있습니다.

4. 대화는 A와 B가 서로 주고받으며 순서대로 A의 발화는 A:, B의 발화는 B: 로 시작해야하고 띄어쓰기로 구분합니다.

5. A와 B가 글을 읽었다는 내용이나, 글에 대해서 평가하거나, 글을 언급하는 내용이 포함되어서는 안됩니다.

6. A와 B가 서로를 언급할 때는 A씨, B씨로 호칭해야합니다.

7. A와 B는 서로 8번 대화를 주고받아야 합니다. 대화의 전체길이는 최대한 200 단어가 넘지 않도록 대화를 끝내야합니다.

제목: #title

#text

``` |

nisaar/Lawyer_GPT_India | 2023-06-16T05:49:30.000Z | [

"license:apache-2.0",

"region:us"

] | nisaar | null | null | null | 3 | 66 | ---

license: apache-2.0

---

**Dataset Card for Indian Polity Question-Answer Dataset**

---

**Dataset Summary**

This dataset contains a collection of question-answer pairs on the subject of Indian Polity. The aim is to provide comprehensive answers to a wide range of questions pertaining to the Indian Constitution, the judiciary, the legislature, and various socio-political issues in India. It serves as a resource for learners, researchers, and AI systems seeking to understand or respond to questions about Indian Polity.

---

**Supported Tasks and Leaderboards**

This dataset is useful for tasks such as question answering, text comprehension, language modelling, and conversational AI development. There's no specific leaderboard associated with this dataset.

---

**Languages**

The dataset is in English.

---

**Dataset Structure**

- **Data Instances**

Each instance in the dataset consists of a human-posed question paired with an assistant-provided answer on a specific topic in Indian Polity.

- **Data Fields**

1. Question: A text field containing the question.

2. Answer: A text field containing the corresponding answer.

- **Data Splits**

The dataset isn't divided into standard splits of training, validation, and test sets.
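Because no standard splits are provided, users who need a held-out set have to create one themselves. The sketch below shows one possible way to do this with a seeded shuffle. The function name `split_pairs`, the 80/20 ratio, and the placeholder records are assumptions for illustration; only the `Question` and `Answer` field names come from the card.

```python
import random


def split_pairs(pairs, test_fraction=0.2, seed=0):
    """Shuffle question-answer records reproducibly and split them in two."""
    shuffled = list(pairs)
    random.Random(seed).shuffle(shuffled)
    n_test = int(len(shuffled) * test_fraction)
    # The first n_test shuffled records form the test set, the rest the training set.
    return shuffled[n_test:], shuffled[:n_test]


# Placeholder records, not taken from the dataset.
pairs = [{"Question": f"question {i}", "Answer": f"answer {i}"} for i in range(10)]
train, test = split_pairs(pairs)
print(len(train), len(test))
```

Fixing the seed keeps the split stable across runs, so evaluation results stay comparable.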

---

**Dataset Creation**

- **Curation Rationale**

The dataset was curated to provide accurate and comprehensive answers to a range of questions about Indian Polity. It covers fundamental rights, constitutional provisions, legislative procedures, and socio-political issues, among others.

- **Source Data**

- **Initial Data Collection and Normalization**

Data collection involved generating questions on Indian Polity topics and providing detailed answers.

- **Who are the source language producers?**

The language was produced by a language model trained by OpenAI.

---

**Annotations**

- **Annotation process**

Not applicable as the dataset doesn't contain annotations.

- **Who are the annotators?**

Not applicable as the dataset doesn't contain annotations.

---