id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 68.7k ⌀ | citation stringlengths 0 10.7k ⌀ | cardData null | likes int64 0 3.55k | downloads int64 0 10.1M | card stringlengths 0 1.01M |

|---|---|---|---|---|---|---|---|---|---|

DeLZaky/JcommonsenseQA_plus_JapaneseLogicaldeductionQA | 2023-10-07T09:26:19.000Z | [

"region:us"

] | DeLZaky | null | null | null | 0 | 20 | ---

annotations_creators:

features:

- name: "問題"

- name: "選択肢0"

- name: "選択肢1"

- name: "選択肢2"

- name: "選択肢3"

- name: "選択肢4"

- name: "解答"

...

---

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

DeLZaky/JapaneseSummalization_task | 2023-10-07T08:29:27.000Z | [

"region:us"

] | DeLZaky | null | null | null | 0 | 20 | ---

# For reference on model card metadata, see the spec: https://github.com/huggingface/hub-docs/blob/main/datasetcard.md?plain=1

# Doc / guide: https://huggingface.co/docs/hub/datasets-cards

{}

---

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

princeton-nlp/SWE-bench | 2023-10-10T19:25:47.000Z | [

"region:us"

] | princeton-nlp | null | null | null | 2 | 20 | ---

dataset_info:

features:

- name: instance_id

dtype: string

- name: base_commit

dtype: string

- name: hints_text

dtype: string

- name: created_at

dtype: string

- name: test_patch

dtype: string

- name: repo

dtype: string

- name: problem_statement

dtype: string

- name: version

dtype: string

- name: FAIL_TO_PASS

dtype: string

- name: PASS_TO_PASS

dtype: string

- name: environment_setup_commit

dtype: string

- name: patch

dtype: string

splits:

- name: train

num_bytes: 399498956

num_examples: 19008

- name: test

num_bytes: 41860075

num_examples: 2294

download_size: 125366079

dataset_size: 441359031

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

---

### Dataset Summary

SWE-bench is a dataset that tests systems’ ability to solve GitHub issues automatically. The dataset collects 2,294 Issue-Pull Request pairs from 12 popular Python. Evaluation is performed by unit test verification using post-PR behavior as the reference solution.

### Supported Tasks and Leaderboards

SWE-bench proposes a new task: issue resolution provided a full repository and GitHub issue. The leaderboard can be found at www.swebench.com

### Languages

The text of the dataset is primarily English, but we make no effort to filter or otherwise clean based on language type.

## Dataset Structure

### Data Instances

An example of a SWE-bench datum is as follows:

```

instance_id: (str) - A formatted instance identifier, usually as repo_owner__repo_name-PR-number.

patch: (str) - The gold patch, the patch generated by the PR (minus test-related code), that resolved the issue.

repo: (str) - The repository owner/name identifier from GitHub.

base_commit: (str) - The commit hash of the repository representing the HEAD of the repository before the solution PR is applied.

hints_text: (str) - Comments made on the issue prior to the creation of the solution PR’s first commit creation date.

created_at: (str) - The creation date of the pull request.

test_patch: (str) - A test-file patch that was contributed by the solution PR.

Problem_statement: (str) - The issue title and body.

Version: (str) - Installation version to use for running evaluation.

environment_setup_commit: (str) - commit hash to use for environment setup and installation.

FAIL_TO_PASS: (str) - A json list of strings that represent the set of tests resolved by the PR and tied to the issue resolution.

PASS_TO_PASS: (str) - A json list of strings that represent tests that should pass before and after the PR application.

```

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

kor_3i4k | 2023-01-25T14:33:43.000Z | [

"task_categories:text-classification",

"task_ids:intent-classification",

"annotations_creators:expert-generated",

"language_creators:expert-generated",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:original",

"language:ko",

"license:cc-by-4.0",

"arxiv:1811.04231",

... | null | This dataset is designed to identify speaker intention based on real-life spoken utterance in Korean into one of

7 categories: fragment, description, question, command, rhetorical question, rhetorical command, utterances. | @article{cho2018speech,

title={Speech Intention Understanding in a Head-final Language: A Disambiguation Utilizing Intonation-dependency},

author={Cho, Won Ik and Lee, Hyeon Seung and Yoon, Ji Won and Kim, Seok Min and Kim, Nam Soo},

journal={arXiv preprint arXiv:1811.04231},

year={2018}

} | null | 1 | 19 | ---

annotations_creators:

- expert-generated

language_creators:

- expert-generated

language:

- ko

license:

- cc-by-4.0

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

source_datasets:

- original

task_categories:

- text-classification

task_ids:

- intent-classification

pretty_name: 3i4K

dataset_info:

features:

- name: label

dtype:

class_label:

names:

'0': fragment

'1': statement

'2': question

'3': command

'4': rhetorical question

'5': rhetorical command

'6': intonation-dependent utterance

- name: text

dtype: string

splits:

- name: train

num_bytes: 3102158

num_examples: 55134

- name: test

num_bytes: 344028

num_examples: 6121

download_size: 2956114

dataset_size: 3446186

---

# Dataset Card for 3i4K

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [3i4K](https://github.com/warnikchow/3i4k)

- **Repository:** [3i4K](https://github.com/warnikchow/3i4k)

- **Paper:** [Speech Intention Understanding in a Head-final Language: A Disambiguation Utilizing Intonation-dependency](https://arxiv.org/abs/1811.04231)

- **Point of Contact:** [Won Ik Cho](wicho@hi.snu.ac.kr)

### Dataset Summary

The 3i4K dataset is a set of frequently used Korean words (corpus provided by the Seoul National University Speech Language Processing Lab) and manually created questions/commands containing short utterances. The goal is to identify the speaker intention of a spoken utterance based on its transcript, and whether in some cases, requires using auxiliary acoustic features. The classification system decides whether the utterance is a fragment, statement, question, command, rhetorical question, rhetorical command, or an intonation-dependent utterance. This is important because in head-final languages like Korean, the level of the intonation plays a significant role in identifying the speaker's intention.

### Supported Tasks and Leaderboards

* `intent-classification`: The dataset can be trained with a CNN or BiLISTM-Att to identify the intent of a spoken utterance in Korean and the performance can be measured by its F1 score.

### Languages

The text in the dataset is in Korean and the associated is BCP-47 code is `ko-KR`.

## Dataset Structure

### Data Instances

An example data instance contains a short utterance and it's label:

```

{

"label": 3,

"text": "선수잖아 이 케이스 저 케이스 많을 거 아냐 선배라고 뭐 하나 인생에 도움도 안주는데 내가 이렇게 진지하게 나올 때 제대로 한번 조언 좀 해줘보지"

}

```

### Data Fields

* `label`: determines the intention of the utterance and can be one of `fragment` (0), `statement` (1), `question` (2), `command` (3), `rhetorical question` (4), `rhetorical command` (5) and `intonation-depedent utterance` (6).

* `text`: the text in Korean about common topics like housework, weather, transportation, etc.

### Data Splits

The data is split into a training set comrpised of 55134 examples and a test set of 6121 examples.

## Dataset Creation

### Curation Rationale

For head-final languages like Korean, intonation can be a determining factor in identifying the speaker's intention. The purpose of this dataset is to to determine whether an utterance is a fragment, statement, question, command, or a rhetorical question/command using the intonation-depedency from the head-finality. This is expected to improve language understanding of spoken Korean utterances and can be beneficial for speech-to-text applications.

### Source Data

#### Initial Data Collection and Normalization

The corpus was provided by Seoul National University Speech Language Processing Lab, a set of frequently used words from the National Institute of Korean Language and manually created commands and questions. The utterances cover topics like weather, transportation and stocks. 20k lines were randomly selected.

#### Who are the source language producers?

Korean speakers produced the commands and questions.

### Annotations

#### Annotation process

Utterances were classified into seven categories. They were provided clear instructions on the annotation guidelines (see [here](https://docs.google.com/document/d/1-dPL5MfsxLbWs7vfwczTKgBq_1DX9u1wxOgOPn1tOss/edit#) for the guidelines) and the resulting inter-annotator agreement was 0.85 and the final decision was done by majority voting.

#### Who are the annotators?

The annotation was completed by three Seoul Korean L1 speakers.

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

The dataset is curated by Won Ik Cho, Hyeon Seung Lee, Ji Won Yoon, Seok Min Kim and Nam Soo Kim.

### Licensing Information

The dataset is licensed under the CC BY-SA-4.0.

### Citation Information

```

@article{cho2018speech,

title={Speech Intention Understanding in a Head-final Language: A Disambiguation Utilizing Intonation-dependency},

author={Cho, Won Ik and Lee, Hyeon Seung and Yoon, Ji Won and Kim, Seok Min and Kim, Nam Soo},

journal={arXiv preprint arXiv:1811.04231},

year={2018}

}

```

### Contributions

Thanks to [@stevhliu](https://github.com/stevhliu) for adding this dataset. |

swda | 2023-01-25T14:45:15.000Z | [

"task_categories:text-classification",

"task_ids:multi-label-classification",

"annotations_creators:found",

"language_creators:found",

"multilinguality:monolingual",

"size_categories:100K<n<1M",

"source_datasets:extended|other-Switchboard-1 Telephone Speech Corpus, Release 2",

"language:en",

"licens... | null | The Switchboard Dialog Act Corpus (SwDA) extends the Switchboard-1 Telephone Speech Corpus, Release 2 with

turn/utterance-level dialog-act tags. The tags summarize syntactic, semantic, and pragmatic information about the

associated turn. The SwDA project was undertaken at UC Boulder in the late 1990s.

The SwDA is not inherently linked to the Penn Treebank 3 parses of Switchboard, and it is far from straightforward to

align the two resources. In addition, the SwDA is not distributed with the Switchboard's tables of metadata about the

conversations and their participants. | @techreport{Jurafsky-etal:1997,

Address = {Boulder, CO},

Author = {Jurafsky, Daniel and Shriberg, Elizabeth and Biasca, Debra},

Institution = {University of Colorado, Boulder Institute of Cognitive Science},

Number = {97-02},

Title = {Switchboard {SWBD}-{DAMSL} Shallow-Discourse-Function Annotation Coders Manual, Draft 13},

Year = {1997}}

@article{Shriberg-etal:1998,

Author = {Shriberg, Elizabeth and Bates, Rebecca and Taylor, Paul and Stolcke, Andreas and Jurafsky, Daniel and Ries, Klaus and Coccaro, Noah and Martin, Rachel and Meteer, Marie and Van Ess-Dykema, Carol},

Journal = {Language and Speech},

Number = {3--4},

Pages = {439--487},

Title = {Can Prosody Aid the Automatic Classification of Dialog Acts in Conversational Speech?},

Volume = {41},

Year = {1998}}

@article{Stolcke-etal:2000,

Author = {Stolcke, Andreas and Ries, Klaus and Coccaro, Noah and Shriberg, Elizabeth and Bates, Rebecca and Jurafsky, Daniel and Taylor, Paul and Martin, Rachel and Meteer, Marie and Van Ess-Dykema, Carol},

Journal = {Computational Linguistics},

Number = {3},

Pages = {339--371},

Title = {Dialogue Act Modeling for Automatic Tagging and Recognition of Conversational Speech},

Volume = {26},

Year = {2000}} | null | 7 | 19 | ---

annotations_creators:

- found

language_creators:

- found

language:

- en

license:

- cc-by-nc-sa-3.0

multilinguality:

- monolingual

size_categories:

- 100K<n<1M

source_datasets:

- extended|other-Switchboard-1 Telephone Speech Corpus, Release 2

task_categories:

- text-classification

task_ids:

- multi-label-classification

pretty_name: The Switchboard Dialog Act Corpus (SwDA)

dataset_info:

features:

- name: swda_filename

dtype: string

- name: ptb_basename

dtype: string

- name: conversation_no

dtype: int64

- name: transcript_index

dtype: int64

- name: act_tag

dtype:

class_label:

names:

'0': b^m^r

'1': qw^r^t

'2': aa^h

'3': br^m

'4': fa^r

'5': aa,ar

'6': sd^e(^q)^r

'7': ^2

'8': sd;qy^d

'9': oo

'10': bk^m

'11': aa^t

'12': cc^t

'13': qy^d^c

'14': qo^t

'15': ng^m

'16': qw^h

'17': qo^r

'18': aa

'19': qy^d^t

'20': qrr^d

'21': br^r

'22': fx

'23': sd,qy^g

'24': ny^e

'25': ^h^t

'26': fc^m

'27': qw(^q)

'28': co

'29': o^t

'30': b^m^t

'31': qr^d

'32': qw^g

'33': ad(^q)

'34': qy(^q)

'35': na^r

'36': am^r

'37': qr^t

'38': ad^c

'39': qw^c

'40': bh^r

'41': h^t

'42': ft^m

'43': ba^r

'44': qw^d^t

'45': '%'

'46': t3

'47': nn

'48': bd

'49': h^m

'50': h^r

'51': sd^r

'52': qh^m

'53': ^q^t

'54': sv^2

'55': ft

'56': ar^m

'57': qy^h

'58': sd^e^m

'59': qh^r

'60': cc

'61': fp^m

'62': ad

'63': qo

'64': na^m^t

'65': fo^c

'66': qy

'67': sv^e^r

'68': aap

'69': 'no'

'70': aa^2

'71': sv(^q)

'72': sv^e

'73': nd

'74': '"'

'75': bf^2

'76': bk

'77': fp

'78': nn^r^t

'79': fa^c

'80': ny^t

'81': ny^c^r

'82': qw

'83': qy^t

'84': b

'85': fo

'86': qw^r

'87': am

'88': bf^t

'89': ^2^t

'90': b^2

'91': x

'92': fc

'93': qr

'94': no^t

'95': bk^t

'96': bd^r

'97': bf

'98': ^2^g

'99': qh^c

'100': ny^c

'101': sd^e^r

'102': br

'103': fe

'104': by

'105': ^2^r

'106': fc^r

'107': b^m

'108': sd,sv

'109': fa^t

'110': sv^m

'111': qrr

'112': ^h^r

'113': na

'114': fp^r

'115': o

'116': h,sd

'117': t1^t

'118': nn^r

'119': cc^r

'120': sv^c

'121': co^t

'122': qy^r

'123': sv^r

'124': qy^d^h

'125': sd

'126': nn^e

'127': ny^r

'128': b^t

'129': ba^m

'130': ar

'131': bf^r

'132': sv

'133': bh^m

'134': qy^g^t

'135': qo^d^c

'136': qo^d

'137': nd^t

'138': aa^r

'139': sd^2

'140': sv;sd

'141': qy^c^r

'142': qw^m

'143': qy^g^r

'144': no^r

'145': qh(^q)

'146': sd;sv

'147': bf(^q)

'148': +

'149': qy^2

'150': qw^d

'151': qy^g

'152': qh^g

'153': nn^t

'154': ad^r

'155': oo^t

'156': co^c

'157': ng

'158': ^q

'159': qw^d^c

'160': qrr^t

'161': ^h

'162': aap^r

'163': bc^r

'164': sd^m

'165': bk^r

'166': qy^g^c

'167': qr(^q)

'168': ng^t

'169': arp

'170': h

'171': bh

'172': sd^c

'173': ^g

'174': o^r

'175': qy^c

'176': sd^e

'177': fw

'178': ar^r

'179': qy^m

'180': bc

'181': sv^t

'182': aap^m

'183': sd;no

'184': ng^r

'185': bf^g

'186': sd^e^t

'187': o^c

'188': b^r

'189': b^m^g

'190': ba

'191': t1

'192': qy^d(^q)

'193': nn^m

'194': ny

'195': ba,fe

'196': aa^m

'197': qh

'198': na^m

'199': oo(^q)

'200': qw^t

'201': na^t

'202': qh^h

'203': qy^d^m

'204': ny^m

'205': fa

'206': qy^d

'207': fc^t

'208': sd(^q)

'209': qy^d^r

'210': bf^m

'211': sd(^q)^t

'212': ft^t

'213': ^q^r

'214': sd^t

'215': sd(^q)^r

'216': ad^t

- name: damsl_act_tag

dtype:

class_label:

names:

'0': ad

'1': qo

'2': qy

'3': arp_nd

'4': sd

'5': h

'6': bh

'7': 'no'

'8': ^2

'9': ^g

'10': ar

'11': aa

'12': sv

'13': bk

'14': fp

'15': qw

'16': b

'17': ba

'18': t1

'19': oo_co_cc

'20': +

'21': ny

'22': qw^d

'23': x

'24': qh

'25': fc

'26': fo_o_fw_"_by_bc

'27': aap_am

'28': '%'

'29': bf

'30': t3

'31': nn

'32': bd

'33': ng

'34': ^q

'35': br

'36': qy^d

'37': fa

'38': ^h

'39': b^m

'40': ft

'41': qrr

'42': na

- name: caller

dtype: string

- name: utterance_index

dtype: int64

- name: subutterance_index

dtype: int64

- name: text

dtype: string

- name: pos

dtype: string

- name: trees

dtype: string

- name: ptb_treenumbers

dtype: string

- name: talk_day

dtype: string

- name: length

dtype: int64

- name: topic_description

dtype: string

- name: prompt

dtype: string

- name: from_caller

dtype: int64

- name: from_caller_sex

dtype: string

- name: from_caller_education

dtype: int64

- name: from_caller_birth_year

dtype: int64

- name: from_caller_dialect_area

dtype: string

- name: to_caller

dtype: int64

- name: to_caller_sex

dtype: string

- name: to_caller_education

dtype: int64

- name: to_caller_birth_year

dtype: int64

- name: to_caller_dialect_area

dtype: string

splits:

- name: train

num_bytes: 128498512

num_examples: 213543

- name: validation

num_bytes: 34749819

num_examples: 56729

- name: test

num_bytes: 2560127

num_examples: 4514

download_size: 14456364

dataset_size: 165808458

---

# Dataset Card for SwDA

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [The Switchboard Dialog Act Corpus](http://compprag.christopherpotts.net/swda.html)

- **Repository:** [NathanDuran/Switchboard-Corpus](https://github.com/cgpotts/swda)

- **Paper:** [The Switchboard Dialog Act Corpus](http://compprag.christopherpotts.net/swda.html)

= **Leaderboard: [Dialogue act classification](https://github.com/sebastianruder/NLP-progress/blob/master/english/dialogue.md#dialogue-act-classification)**

- **Point of Contact:** [Christopher Potts](https://web.stanford.edu/~cgpotts/)

### Dataset Summary

The Switchboard Dialog Act Corpus (SwDA) extends the Switchboard-1 Telephone Speech Corpus, Release 2 with

turn/utterance-level dialog-act tags. The tags summarize syntactic, semantic, and pragmatic information about the

associated turn. The SwDA project was undertaken at UC Boulder in the late 1990s.

The SwDA is not inherently linked to the Penn Treebank 3 parses of Switchboard, and it is far from straightforward to

align the two resources. In addition, the SwDA is not distributed with the Switchboard's tables of metadata about the

conversations and their participants.

### Supported Tasks and Leaderboards

| Model | Accuracy | Paper / Source | Code |

| ------------- | :-----:| --- | --- |

| H-Seq2seq (Colombo et al., 2020) | 85.0 | [Guiding attention in Sequence-to-sequence models for Dialogue Act prediction](https://ojs.aaai.org/index.php/AAAI/article/view/6259/6115)

| SGNN (Ravi et al., 2018) | 83.1 | [Self-Governing Neural Networks for On-Device Short Text Classification](https://www.aclweb.org/anthology/D18-1105.pdf)

| CASA (Raheja et al., 2019) | 82.9 | [Dialogue Act Classification with Context-Aware Self-Attention](https://www.aclweb.org/anthology/N19-1373.pdf)

| DAH-CRF (Li et al., 2019) | 82.3 | [A Dual-Attention Hierarchical Recurrent Neural Network for Dialogue Act Classification](https://www.aclweb.org/anthology/K19-1036.pdf)

| ALDMN (Wan et al., 2018) | 81.5 | [Improved Dynamic Memory Network for Dialogue Act Classification with Adversarial Training](https://arxiv.org/pdf/1811.05021.pdf)

| CRF-ASN (Chen et al., 2018) | 81.3 | [Dialogue Act Recognition via CRF-Attentive Structured Network](https://arxiv.org/abs/1711.05568)

| Pretrained H-Transformer (Chapuis et al., 2020) | 79.3 | [Hierarchical Pre-training for Sequence Labelling in Spoken Dialog] (https://www.aclweb.org/anthology/2020.findings-emnlp.239)

| Bi-LSTM-CRF (Kumar et al., 2017) | 79.2 | [Dialogue Act Sequence Labeling using Hierarchical encoder with CRF](https://arxiv.org/abs/1709.04250) | [Link](https://github.com/YanWenqiang/HBLSTM-CRF) |

| RNN with 3 utterances in context (Bothe et al., 2018) | 77.34 | [A Context-based Approach for Dialogue Act Recognition using Simple Recurrent Neural Networks](https://arxiv.org/abs/1805.06280) | |

### Languages

The language supported is English.

## Dataset Structure

Utterance are tagged with the [SWBD-DAMSL](https://web.stanford.edu/~jurafsky/ws97/manual.august1.html) DA.

### Data Instances

An example from the dataset is:

`{'act_tag': 115, 'caller': 'A', 'conversation_no': 4325, 'damsl_act_tag': 26, 'from_caller': 1632, 'from_caller_birth_year': 1962, 'from_caller_dialect_area': 'WESTERN', 'from_caller_education': 2, 'from_caller_sex': 'FEMALE', 'length': 5, 'pos': 'Okay/UH ./.', 'prompt': 'FIND OUT WHAT CRITERIA THE OTHER CALLER WOULD USE IN SELECTING CHILD CARE SERVICES FOR A PRESCHOOLER. IS IT EASY OR DIFFICULT TO FIND SUCH CARE?', 'ptb_basename': '4/sw4325', 'ptb_treenumbers': '1', 'subutterance_index': 1, 'swda_filename': 'sw00utt/sw_0001_4325.utt', 'talk_day': '03/23/1992', 'text': 'Okay. /', 'to_caller': 1519, 'to_caller_birth_year': 1971, 'to_caller_dialect_area': 'SOUTH MIDLAND', 'to_caller_education': 1, 'to_caller_sex': 'FEMALE', 'topic_description': 'CHILD CARE', 'transcript_index': 0, 'trees': '(INTJ (UH Okay) (. .) (-DFL- E_S))', 'utterance_index': 1}`

### Data Fields

* `swda_filename`: (str) The filename: directory/basename.

* `ptb_basename`: (str) The Treebank filename: add ".pos" for POS and ".mrg" for trees

* `conversation_no`: (int) The conversation Id, to key into the metadata database.

* `transcript_index`: (int) The line number of this item in the transcript (counting only utt lines).

* `act_tag`: (list of str) The Dialog Act Tags (separated by ||| in the file). Check Dialog act annotations for more details.

* `damsl_act_tag`: (list of str) The Dialog Act Tags of the 217 variation tags.

* `caller`: (str) A, B, @A, @B, @@A, @@B

* `utterance_index`: (int) The encoded index of the utterance (the number in A.49, B.27, etc.)

* `subutterance_index`: (int) Utterances can be broken across line. This gives the internal position.

* `text`: (str) The text of the utterance

* `pos`: (str) The POS tagged version of the utterance, from PtbBasename+.pos

* `trees`: (str) The tree(s) containing this utterance (separated by ||| in the file). Use `[Tree.fromstring(t) for t in row_value.split("|||")]` to convert to (list of nltk.tree.Tree).

* `ptb_treenumbers`: (list of int) The tree numbers in the PtbBasename+.mrg

* `talk_day`: (str) Date of talk.

* `length`: (int) Length of talk in seconds.

* `topic_description`: (str) Short description of topic that's being discussed.

* `prompt`: (str) Long decription/query/instruction.

* `from_caller`: (int) The numerical Id of the from (A) caller.

* `from_caller_sex`: (str) MALE, FEMALE.

* `from_caller_education`: (int) Called education level 0, 1, 2, 3, 9.

* `from_caller_birth_year`: (int) Caller birth year YYYY.

* `from_caller_dialect_area`: (str) MIXED, NEW ENGLAND, NORTH MIDLAND, NORTHERN, NYC, SOUTH MIDLAND, SOUTHERN, UNK, WESTERN.

* `to_caller`: (int) The numerical Id of the to (B) caller.

* `to_caller_sex`: (str) MALE, FEMALE.

* `to_caller_education`: (int) Called education level 0, 1, 2, 3, 9.

* `to_caller_birth_year`: (int) Caller birth year YYYY.

* `to_caller_dialect_area`: (str) MIXED, NEW ENGLAND, NORTH MIDLAND, NORTHERN, NYC, SOUTH MIDLAND, SOUTHERN, UNK, WESTERN.

### Dialog act annotations

| | name | act_tag | example | train_count | full_count |

|----- |------------------------------- |---------------- |-------------------------------------------------- |------------- |------------ |

| 1 | Statement-non-opinion | sd | Me, I'm in the legal department. | 72824 | 75145 |

| 2 | Acknowledge (Backchannel) | b | Uh-huh. | 37096 | 38298 |

| 3 | Statement-opinion | sv | I think it's great | 25197 | 26428 |

| 4 | Agree/Accept | aa | That's exactly it. | 10820 | 11133 |

| 5 | Abandoned or Turn-Exit | % | So, - | 10569 | 15550 |

| 6 | Appreciation | ba | I can imagine. | 4633 | 4765 |

| 7 | Yes-No-Question | qy | Do you have to have any special training? | 4624 | 4727 |

| 8 | Non-verbal | x | [Laughter], [Throat_clearing] | 3548 | 3630 |

| 9 | Yes answers | ny | Yes. | 2934 | 3034 |

| 10 | Conventional-closing | fc | Well, it's been nice talking to you. | 2486 | 2582 |

| 11 | Uninterpretable | % | But, uh, yeah | 2158 | 15550 |

| 12 | Wh-Question | qw | Well, how old are you? | 1911 | 1979 |

| 13 | No answers | nn | No. | 1340 | 1377 |

| 14 | Response Acknowledgement | bk | Oh, okay. | 1277 | 1306 |

| 15 | Hedge | h | I don't know if I'm making any sense or not. | 1182 | 1226 |

| 16 | Declarative Yes-No-Question | qy^d | So you can afford to get a house? | 1174 | 1219 |

| 17 | Other | fo_o_fw_by_bc | Well give me a break, you know. | 1074 | 883 |

| 18 | Backchannel in question form | bh | Is that right? | 1019 | 1053 |

| 19 | Quotation | ^q | You can't be pregnant and have cats | 934 | 983 |

| 20 | Summarize/reformulate | bf | Oh, you mean you switched schools for the kids. | 919 | 952 |

| 21 | Affirmative non-yes answers | na | It is. | 836 | 847 |

| 22 | Action-directive | ad | Why don't you go first | 719 | 746 |

| 23 | Collaborative Completion | ^2 | Who aren't contributing. | 699 | 723 |

| 24 | Repeat-phrase | b^m | Oh, fajitas | 660 | 688 |

| 25 | Open-Question | qo | How about you? | 632 | 656 |

| 26 | Rhetorical-Questions | qh | Who would steal a newspaper? | 557 | 575 |

| 27 | Hold before answer/agreement | ^h | I'm drawing a blank. | 540 | 556 |

| 28 | Reject | ar | Well, no | 338 | 346 |

| 29 | Negative non-no answers | ng | Uh, not a whole lot. | 292 | 302 |

| 30 | Signal-non-understanding | br | Excuse me? | 288 | 298 |

| 31 | Other answers | no | I don't know | 279 | 286 |

| 32 | Conventional-opening | fp | How are you? | 220 | 225 |

| 33 | Or-Clause | qrr | or is it more of a company? | 207 | 209 |

| 34 | Dispreferred answers | arp_nd | Well, not so much that. | 205 | 207 |

| 35 | 3rd-party-talk | t3 | My goodness, Diane, get down from there. | 115 | 117 |

| 36 | Offers, Options, Commits | oo_co_cc | I'll have to check that out | 109 | 110 |

| 37 | Self-talk | t1 | What's the word I'm looking for | 102 | 103 |

| 38 | Downplayer | bd | That's all right. | 100 | 103 |

| 39 | Maybe/Accept-part | aap_am | Something like that | 98 | 105 |

| 40 | Tag-Question | ^g | Right? | 93 | 92 |

| 41 | Declarative Wh-Question | qw^d | You are what kind of buff? | 80 | 80 |

| 42 | Apology | fa | I'm sorry. | 76 | 79 |

| 43 | Thanking | ft | Hey thanks a lot | 67 | 78 |

### Data Splits

I used info from the [Probabilistic-RNN-DA-Classifier](https://github.com/NathanDuran/Probabilistic-RNN-DA-Classifier) repo:

The same training and test splits as used by [Stolcke et al. (2000)](https://web.stanford.edu/~jurafsky/ws97).

The development set is a subset of the training set to speed up development and testing used in the paper [Probabilistic Word Association for Dialogue Act Classification with Recurrent Neural Networks](https://www.researchgate.net/publication/326640934_Probabilistic_Word_Association_for_Dialogue_Act_Classification_with_Recurrent_Neural_Networks_19th_International_Conference_EANN_2018_Bristol_UK_September_3-5_2018_Proceedings).

|Dataset |# Transcripts |# Utterances |

|-----------|:-------------:|:-------------:|

|Training |1115 |192,768 |

|Validation |21 |3,196 |

|Test |19 |4,088 |

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

The SwDA is not inherently linked to the Penn Treebank 3 parses of Switchboard, and it is far from straightforward to align the two resources Calhoun et al. 2010, §2.4. In addition, the SwDA is not distributed with the Switchboard's tables of metadata about the conversations and their participants.

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[Christopher Potts](https://web.stanford.edu/~cgpotts/), Stanford Linguistics.

### Licensing Information

This work is licensed under a [Creative Commons Attribution-NonCommercial-ShareAlike 3.0 Unported License.](http://creativecommons.org/licenses/by-nc-sa/3.0/)

### Citation Information

```

@techreport{Jurafsky-etal:1997,

Address = {Boulder, CO},

Author = {Jurafsky, Daniel and Shriberg, Elizabeth and Biasca, Debra},

Institution = {University of Colorado, Boulder Institute of Cognitive Science},

Number = {97-02},

Title = {Switchboard {SWBD}-{DAMSL} Shallow-Discourse-Function Annotation Coders Manual, Draft 13},

Year = {1997}}

@article{Shriberg-etal:1998,

Author = {Shriberg, Elizabeth and Bates, Rebecca and Taylor, Paul and Stolcke, Andreas and Jurafsky, Daniel and Ries, Klaus and Coccaro, Noah and Martin, Rachel and Meteer, Marie and Van Ess-Dykema, Carol},

Journal = {Language and Speech},

Number = {3--4},

Pages = {439--487},

Title = {Can Prosody Aid the Automatic Classification of Dialog Acts in Conversational Speech?},

Volume = {41},

Year = {1998}}

@article{Stolcke-etal:2000,

Author = {Stolcke, Andreas and Ries, Klaus and Coccaro, Noah and Shriberg, Elizabeth and Bates, Rebecca and Jurafsky, Daniel and Taylor, Paul and Martin, Rachel and Meteer, Marie and Van Ess-Dykema, Carol},

Journal = {Computational Linguistics},

Number = {3},

Pages = {339--371},

Title = {Dialogue Act Modeling for Automatic Tagging and Recognition of Conversational Speech},

Volume = {26},

Year = {2000}}

```

### Contributions

Thanks to [@gmihaila](https://github.com/gmihaila) for adding this dataset. |

Alvenir/nst-da-16khz | 2021-11-29T08:58:25.000Z | [

"region:us"

] | Alvenir | null | null | null | 1 | 19 | # NST Danish 16kHz dataset from Sprakbanken

Data is from sprakbanken and can be accessed using following [link](https://www.nb.no/sprakbanken/en/resource-catalogue/oai-nb-no-sbr-19/).

|

benjaminbeilharz/better_daily_dialog | 2022-01-22T18:03:59.000Z | [

"region:us"

] | benjaminbeilharz | null | null | null | 1 | 19 | Entry not found |

anjandash/java-8m-methods-v1 | 2022-07-01T20:32:32.000Z | [

"multilinguality:monolingual",

"language:java",

"license:mit",

"region:us"

] | anjandash | null | null | null | 1 | 19 | ---

language:

- java

license:

- mit

multilinguality:

- monolingual

pretty_name:

- java-8m-methods-v1

--- |

iluvvatar/NEREL | 2023-03-30T13:37:20.000Z | [

"task_categories:token-classification",

"task_ids:named-entity-recognition",

"multilinguality:monolingual",

"language:ru",

"region:us"

] | iluvvatar | null | null | null | 4 | 19 | ---

language:

- ru

multilinguality:

- monolingual

task_categories:

- token-classification

task_ids:

- named-entity-recognition

pretty_name: NEREL

---

# NEREL dataset

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Structure](#dataset-structure)

- [Citation Information](#citation-information)

- [Contacts](#contacts)

## Dataset Description

NEREL dataset (https://doi.org/10.48550/arXiv.2108.13112) is

a Russian dataset for named entity recognition and relation extraction.

NEREL is significantly larger than existing Russian datasets:

to date it contains 56K annotated named entities and 39K annotated relations.

Its important difference from previous datasets is annotation of nested named

entities, as well as relations within nested entities and at the discourse

level. NEREL can facilitate development of novel models that can extract

relations between nested named entities, as well as relations on both sentence

and document levels. NEREL also contains the annotation of events involving

named entities and their roles in the events.

You can see full entity types list in a subset "ent_types"

and full list of relation types in a subset "rel_types".

## Dataset Structure

There are three "configs" or "subsets" of the dataset.

Using

`load_dataset('MalakhovIlya/NEREL', 'ent_types')['ent_types']`

you can download list of entity types (

Dataset({features: ['type', 'link']})

) where "link" is a knowledge base name used in entity linking task.

Using

`load_dataset('MalakhovIlya/NEREL', 'rel_types')['rel_types']`

you can download list of entity types (

Dataset({features: ['type', 'arg1', 'arg2']})

) where "arg1" and "arg2" are lists of entity types that can take part in such

"type" of relation. \<ENTITY> stands for any type.

Using

`load_dataset('MalakhovIlya/NEREL', 'data')` or `load_dataset('MalakhovIlya/NEREL')`

you can download the data itself,

DatasetDict with 3 splits: "train", "test" and "dev".

Each of them contains text document with annotated entities, relations and

links.

"entities" are used in named-entity recognition task (see https://en.wikipedia.org/wiki/Named-entity_recognition).

"relations" are used in relationship extraction task (see https://en.wikipedia.org/wiki/Relationship_extraction).

"links" are used in entity linking task (see https://en.wikipedia.org/wiki/Entity_linking)

Each entity is represented by a string of the following format:

`"<id>\t<type> <start> <stop>\t<text>"`, where

`<id>` is an entity id,

`<type>` is one of entity types,

`<start>` is a position of the first symbol of entity in text,

`<stop>` is the last symbol position in text +1.

Each relation is represented by a string of the following format:

`"<id>\t<type> Arg1:<arg1_id> Arg2:<arg2_id>"`, where

`<id>` is a relation id,

`<arg1_id>` and `<arg2_id>` are entity ids.

Each link is represented by a string of the following format:

`"<id>\tReference <ent_id> <link>\t<text>"`, where

`<id>` is a link id,

`<ent_id>` is an entity id,

`<link>` is a reference to knowledge base entity (example: "Wikidata:Q1879675" if link exists, else "Wikidata:NULL"),

`<text>` is a name of entity in knowledge base if link exists, else empty string.

## Citation Information

@article{loukachevitch2021nerel,

title={NEREL: A Russian Dataset with Nested Named Entities, Relations and Events},

author={Loukachevitch, Natalia and Artemova, Ekaterina and Batura, Tatiana and Braslavski, Pavel and Denisov, Ilia and Ivanov, Vladimir and Manandhar, Suresh and Pugachev, Alexander and Tutubalina, Elena},

journal={arXiv preprint arXiv:2108.13112},

year={2021}

}

|

wanyu/IteraTeR_v2 | 2022-10-24T18:58:08.000Z | [

"task_categories:text2text-generation",

"annotations_creators:crowdsourced",

"language_creators:found",

"multilinguality:monolingual",

"source_datasets:original",

"language:en",

"license:apache-2.0",

"conditional-text-generation",

"text-editing",

"arxiv:2204.03685",

"region:us"

] | wanyu | null | null | null | 1 | 19 | ---

annotations_creators:

- crowdsourced

language_creators:

- found

language:

- en

license:

- apache-2.0

multilinguality:

- monolingual

source_datasets:

- original

task_categories:

- text2text-generation

task_ids: []

pretty_name: IteraTeR_v2

language_bcp47:

- en-US

tags:

- conditional-text-generation

- text-editing

---

Paper: [Read, Revise, Repeat: A System Demonstration for Human-in-the-loop Iterative Text Revision](https://arxiv.org/abs/2204.03685)

Authors: Wanyu Du*, Zae Myung Kim*, Vipul Raheja, Dhruv Kumar, Dongyeop Kang

Github repo: https://github.com/vipulraheja/IteraTeR

Watch our system demonstration below!

[](https://www.youtube.com/watch?v=lK08tIpEoaE)

|

Bingsu/KSS_Dataset | 2022-07-02T00:10:10.000Z | [

"task_categories:text-to-speech",

"annotations_creators:expert-generated",

"language_creators:expert-generated",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"source_datasets:original",

"language:ko",

"license:cc-by-nc-sa-4.0",

"region:us"

] | Bingsu | null | null | null | 3 | 19 | ---

annotations_creators:

- expert-generated

language_creators:

- expert-generated

language:

- ko

license:

- cc-by-nc-sa-4.0

multilinguality:

- monolingual

pretty_name: Korean Single Speaker Speech Dataset

size_categories:

- 10K<n<100K

source_datasets:

- original

task_categories:

- text-to-speech

task_ids: []

---

## Dataset Description

- **Homepage:** [Korean Single Speaker Speech Dataset](https://www.kaggle.com/datasets/bryanpark/korean-single-speaker-speech-dataset)

- **Repository:** [Kyubyong/kss](https://github.com/Kyubyong/kss)

- **Paper:** N/A

- **Leaderboard:** N/A

- **Point of Contact:** N/A

# Description of the original author

### KSS Dataset: Korean Single speaker Speech Dataset

KSS Dataset is designed for the Korean text-to-speech task. It consists of audio files recorded by a professional female voice actoress and their aligned text extracted from my books. As a copyright holder, by courtesy of the publishers, I release this dataset to the public. To my best knowledge, this is the first publicly available speech dataset for Korean.

### File Format

Each line in `transcript.v.1.3.txt` is delimited by `|` into six fields.

- A. Audio file path

- B. Original script

- C. Expanded script

- D. Decomposed script

- E. Audio duration (seconds)

- F. English translation

e.g.,

1/1_0470.wav|저는 보통 20분 정도 낮잠을 잡니다.|저는 보통 이십 분 정도 낮잠을 잡니다.|저는 보통 이십 분 정도 낮잠을 잡니다.|4.1|I usually take a nap for 20 minutes.

### Specification

- Audio File Type: wav

- Total Running Time: 12+ hours

- Sample Rate: 44,100 KHZ

- Number of Audio Files: 12,853

- Sources

- |1| [Kyubyong Park, 500 Basic Korean Verbs, Tuttle Publishing, 2015.](https://www.amazon.com/500-Basic-Korean-Verbs-Comprehensive/dp/0804846057/ref=sr_1_1?s=books&ie=UTF8&qid=1522911616&sr=1-1&keywords=kyubyong+park)|

- |2| [Kyubyong Park, 500 Basic Korean Adjectives 2nd Ed., Youkrak, 2015.](http://www.hanbooks.com/500bakoad.html)|

- |3| [Kyubyong Park, Essential Korean Vocabulary, Tuttle Publishing, 2015.](https://www.amazon.com/Essential-Korean-Vocabulary-Phrases-Fluently/dp/0804843252/ref=sr_1_3?s=books&ie=UTF8&qid=1522911806&sr=1-3&keywords=kyubyong+park)|

- |4| [Kyubyong Park, Tuttle Learner's Korean-English Dictionary, Tuttle Publishing, 2012.](https://www.amazon.com/Tuttle-Learners-Korean-English-Dictionary-Essential/dp/0804841500/ref=sr_1_8?s=books&ie=UTF8&qid=1522911806&sr=1-8&keywords=kyubyong+park)|

### License

NC-SA 4.0. You CANNOT use this dataset for ANY COMMERCIAL purpose. Otherwise, you can freely use this.

### Citation

If you want to cite KSS Dataset, please refer to this:

Kyubyong Park, KSS Dataset: Korean Single speaker Speech Dataset, https://kaggle.com/bryanpark/korean-single-speaker-speech-dataset, 2018

### Reference

Check out [this](https://github.com/Kyubyong/kss) for a project using this KSS Dataset.

### Contact

You can contact me at kbpark.linguist@gmail.com.

April, 2018.

Kyubyong Park

### Dataset Summary

12,853 Korean audio files with transcription.

### Supported Tasks and Leaderboards

text-to-speech

### Languages

korean

## Dataset Structure

### Data Instances

```python

>>> from datasets import load_dataset

>>> dataset = load_dataset("Bingsu/KSS_Dataset")

>>> dataset["train"].features

{'audio': Audio(sampling_rate=44100, mono=True, decode=True, id=None),

'original_script': Value(dtype='string', id=None),

'expanded_script': Value(dtype='string', id=None),

'decomposed_script': Value(dtype='string', id=None),

'duration': Value(dtype='float32', id=None),

'english_translation': Value(dtype='string', id=None)}

```

```python

>>> dataset["train"][0]

{'audio': {'path': None,

'array': array([ 0.00000000e+00, 3.05175781e-05, -4.57763672e-05, ...,

0.00000000e+00, -3.05175781e-05, -3.05175781e-05]),

'sampling_rate': 44100},

'original_script': '그는 괜찮은 척하려고 애쓰는 것 같았다.',

'expanded_script': '그는 괜찮은 척하려고 애쓰는 것 같았다.',

'decomposed_script': '그는 괜찮은 척하려고 애쓰는 것 같았다.',

'duration': 3.5,

'english_translation': 'He seemed to be pretending to be okay.'}

```

### Data Splits

| | train |

|---------------|------:|

| # of examples | 12853 | |

Filippo/osdg_cd | 2023-10-08T09:57:13.000Z | [

"task_categories:text-classification",

"task_ids:natural-language-inference",

"annotations_creators:crowdsourced",

"language_creators:crowdsourced",

"multilinguality:monolingual",

"size_categories:10K<n<100K",

"language:en",

"license:cc-by-4.0",

"region:us"

] | Filippo | The OSDG Community Dataset (OSDG-CD) is a public dataset of thousands of text excerpts, which were validated by approximately 1,000 OSDG Community Platform (OSDG-CP) citizen scientists from over 110 countries, with respect to the Sustainable Development Goals (SDGs). | @dataset{osdg_2023_8397907,

author = {OSDG and

UNDP IICPSD SDG AI Lab and

PPMI},

title = {OSDG Community Dataset (OSDG-CD)},

month = oct,

year = 2023,

note = {{This CSV file uses UTF-8 character encoding. For

easy access on MS Excel, open the file using Data

→ From Text/CSV. Please split CSV data into

different columns by using a TAB delimiter.}},

publisher = {Zenodo},

version = {2023.10},

doi = {10.5281/zenodo.8397907},

url = {https://doi.org/10.5281/zenodo.8397907}

} | null | 1 | 19 | ---

annotations_creators:

- crowdsourced

language_creators:

- crowdsourced

language:

- en

license:

- cc-by-4.0

multilinguality:

- monolingual

size_categories:

- 10K<n<100K

task_categories:

- text-classification

task_ids:

- natural-language-inference

pretty_name: OSDG Community Dataset (OSDG-CD)

dataset_info:

config_name: main_config

features:

- name: doi

dtype: string

- name: text_id

dtype: string

- name: text

dtype: string

- name: sdg

dtype: uint16

- name: label

dtype:

class_label:

names:

'0': SDG 1

'1': SDG 2

'2': SDG 3

'3': SDG 4

'4': SDG 5

'5': SDG 6

'6': SDG 7

'7': SDG 8

'8': SDG 9

'9': SDG 10

'10': SDG 11

'11': SDG 12

'12': SDG 13

'13': SDG 14

'14': SDG 15

'15': SDG 16

- name: labels_negative

dtype: uint16

- name: labels_positive

dtype: uint16

- name: agreement

dtype: float32

splits:

- name: train

num_bytes: 30151244

num_examples: 42355

download_size: 29770590

dataset_size: 30151244

---

# Dataset Card for OSDG-CD

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [OSDG-CD homepage](https://zenodo.org/record/8397907)

### Dataset Summary

The OSDG Community Dataset (OSDG-CD) is a public dataset of thousands of text excerpts, which were validated by approximately 1,000 OSDG Community Platform (OSDG-CP) citizen scientists from over 110 countries, with respect to the Sustainable Development Goals (SDGs).

> NOTES

>

> * There are currently no examples for SDGs 16 and 17. See [this GitHub issue](https://github.com/osdg-ai/osdg-data/issues/3).

> * As of July 2023, there areexamples also for SDG 16.

### Supported Tasks and Leaderboards

TBD

### Languages

The language of the dataset is English.

## Dataset Structure

### Data Instances

For each instance, there is a string for the text, a string for the SDG, and an integer for the label.

```

{'text': 'Each section states the economic principle, reviews international good practice and discusses the situation in Brazil.',

'label': 5}

```

The average token count for the premises and hypotheses are given below:

| Feature | Mean Token Count |

| ---------- | ---------------- |

| Premise | 14.1 |

| Hypothesis | 8.3 |

### Data Fields

- `doi`: Digital Object Identifier of the original document

- `text_id`: unique text identifier

- `text`: text excerpt from the document

- `sdg`: the SDG the text is validated against

- `label`: an integer from `0` to `17` which corresponds to the `sdg` field

- `labels_negative`: the number of volunteers who rejected the suggested SDG label

- `labels_positive`: the number of volunteers who accepted the suggested SDG label

- `agreement`: agreement score based on the formula

### Data Splits

The OSDG-CD dataset has 1 splits: _train_.

| Dataset Split | Number of Instances in Split |

| ------------- |----------------------------- |

| Train | 32,327 |

## Dataset Creation

### Curation Rationale

The [The OSDG Community Dataset (OSDG-CD)](https://zenodo.org/record/8397907) was developed as a benchmark for ...

with the goal of producing a dataset large enough to train models using neural methodologies.

### Source Data

#### Initial Data Collection and Normalization

TBD

#### Who are the source language producers?

TBD

### Annotations

#### Annotation process

TBD

#### Who are the annotators?

TBD

### Personal and Sensitive Information

The dataset does not contain any personal information about the authors or the crowdworkers.

## Considerations for Using the Data

### Social Impact of Dataset

TBD

## Additional Information

TBD

### Dataset Curators

TBD

### Licensing Information

The OSDG Community Dataset (OSDG-CD) is licensed under a [Creative Commons Attribution 4.0 International License](http://creativecommons.org/licenses/by/4.0/).

### Citation Information

```

@dataset{osdg_2023_8397907,

author = {OSDG and

UNDP IICPSD SDG AI Lab and

PPMI},

title = {OSDG Community Dataset (OSDG-CD)},

month = oct,

year = 2023,

note = {{This CSV file uses UTF-8 character encoding. For

easy access on MS Excel, open the file using Data

→ From Text/CSV. Please split CSV data into

different columns by using a TAB delimiter.}},

publisher = {Zenodo},

version = {2023.10},

doi = {10.5281/zenodo.8397907},

url = {https://doi.org/10.5281/zenodo.8397907}

}

```

### Contributions

TBD

|

laion/laion2B-multi-aesthetic | 2023-01-18T20:04:36.000Z | [

"region:us"

] | laion | null | null | null | 4 | 19 | details at https://github.com/LAION-AI/laion-datasets/blob/main/laion-aesthetic.md |

codeparrot/github-jupyter-text-code-pairs | 2022-10-25T09:30:34.000Z | [

"task_categories:text-generation",

"task_ids:language-modeling",

"multilinguality:monolingual",

"size_categories:unknown",

"language:code",

"license:other",

"region:us"

] | codeparrot | null | null | null | 3 | 19 | ---

annotations_creators: []

language:

- code

license:

- other

multilinguality:

- monolingual

size_categories:

- unknown

task_categories:

- text-generation

task_ids:

- language-modeling

pretty_name: github-jupyter-text-code-pairs

---

This is a parsed version of [github-jupyter-parsed](https://huggingface.co/datasets/codeparrot/github-jupyter-parsed), with markdown and code pairs. We provide the preprocessing script in [preprocessing.py](https://huggingface.co/datasets/codeparrot/github-jupyter-parsed-v2/blob/main/preprocessing.py). The data is deduplicated and consists of 451662 examples.

For similar datasets with text and Python code, there is [CoNaLa](https://huggingface.co/datasets/neulab/conala) benchmark from StackOverflow, with some samples curated by annotators. |

sepidmnorozy/Arabic_sentiment | 2022-08-02T16:12:59.000Z | [

"region:us"

] | sepidmnorozy | null | null | null | 0 | 19 | Entry not found |

batterydata/pos_tagging | 2022-09-05T16:05:33.000Z | [

"task_categories:token-classification",

"language:en",

"license:apache-2.0",

"region:us"

] | batterydata | null | null | null | 0 | 19 | ---

language:

- en

license:

- apache-2.0

task_categories:

- token-classification

pretty_name: 'Part-of-speech(POS) Tagging Dataset for BatteryDataExtractor'

---

# POS Tagging Dataset

## Original Data Source

#### Conll2003

E. F. Tjong Kim Sang and F. De Meulder, Proceedings of the

Seventh Conference on Natural Language Learning at HLT-

NAACL 2003, 2003, pp. 142–147.

#### The Peen Treebank

M. P. Marcus, B. Santorini and M. A. Marcinkiewicz, Comput.

Linguist., 1993, 19, 313–330.

## Citation

BatteryDataExtractor: battery-aware text-mining software embedded with BERT models |

sanchit-gandhi/earnings22_split_resampled | 2022-09-30T15:24:09.000Z | [

"region:us"

] | sanchit-gandhi | null | null | null | 0 | 19 | We partition the earnings22 dataset at https://huggingface.co/datasets/anton-l/earnings22_baseline_5_gram by source_id:

Validation: 4420696 4448760 4461799 4469836 4473238 4482110

Test: 4432298 4450488 4470290 4479741 4483338 4485244

Train: remainder

Official script for processing these splits will be released shortly. |

venelin/inferes | 2022-10-08T01:25:47.000Z | [

"task_categories:text-classification",

"task_ids:natural-language-inference",

"annotations_creators:expert-generated",

"language_creators:expert-generated",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:original",

"language:es",

"license:cc-by-4.0",

"nli",

"spanish"... | venelin | null | null | null | 0 | 19 | ---

annotations_creators:

- expert-generated

language:

- es

language_creators:

- expert-generated

license:

- cc-by-4.0

multilinguality:

- monolingual

pretty_name: InferES

size_categories:

- 1K<n<10K

source_datasets:

- original

tags:

- nli

- spanish

- negation

- coreference

task_categories:

- text-classification

task_ids:

- natural-language-inference

---

# Dataset Card for InferES

## Table of Contents

- [Table of Contents](#table-of-contents)

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** https://github.com/venelink/inferes

- **Repository:** https://github.com/venelink/inferes

- **Paper:** https://arxiv.org/abs/2210.03068

- **Point of Contact:** venelin [at] utexas [dot] edu

### Dataset Summary

Natural Language Inference dataset for European Spanish

Paper accepted and (to be) presented at COLING 2022

### Supported Tasks and Leaderboards

Natural Language Inference

### Languages

Spanish

## Dataset Structure

The dataset contains two texts inputs (Premise and Hypothesis), Label for three-way classification, and annotation data.

### Data Instances

train size = 6444

test size = 1612

### Data Fields

ID : the unique ID of the instance

Premise

Hypothesis

Label: cnt, ent, neutral

Topic: 1 (Picasso), 2 (Columbus), 3 (Videogames), 4 (Olympic games), 5 (EU), 6 (USSR)

Anno: ID of the annotators (in cases of undergrads or crowd - the ID of the group)

Anno Type: Generate, Rewrite, Crowd, and Automated

### Data Splits

train size = 6444

test size = 1612

The train/test split is stratified by a key that combines Label + Anno + Anno type

### Source Data

Wikipedia + text generated from "sentence generators" hired as part of the process

#### Who are the annotators?

Native speakers of European Spanish

### Personal and Sensitive Information

No personal or Sensitive information is included.

Annotators are anonymized and only kept as "ID" for research purposes.

### Dataset Curators

Venelin Kovatchev

### Licensing Information

cc-by-4.0

### Citation Information

To be added after proceedings from COLING 2022 appear

### Contributions

Thanks to [@venelink](https://github.com/venelink) for adding this dataset.

|

laion/laion2b-multi-vit-h-14-embeddings | 2022-12-23T20:29:43.000Z | [

"region:us"

] | laion | null | null | null | 1 | 19 | Entry not found |

bond005/sova_rudevices | 2022-11-01T15:59:30.000Z | [

"task_categories:automatic-speech-recognition",

"task_categories:audio-classification",

"annotations_creators:expert-generated",

"language_creators:crowdsourced",

"multilinguality:monolingual",

"size_categories:10K<n<100k",

"source_datasets:extended",

"language:ru",

"license:cc-by-4.0",

"region:us... | bond005 | null | null | null | 1 | 19 | ---

pretty_name: RuDevices

annotations_creators:

- expert-generated

language_creators:

- crowdsourced

language:

- ru

license:

- cc-by-4.0

multilinguality:

- monolingual

paperswithcode_id:

size_categories:

- 10K<n<100k

source_datasets:

- extended

task_categories:

- automatic-speech-recognition

- audio-classification

---

# Dataset Card for sova_rudevices

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

## Dataset Description

- **Homepage:** [SOVA RuDevices](https://github.com/sovaai/sova-dataset)

- **Repository:** [SOVA Dataset](https://github.com/sovaai/sova-dataset)

- **Leaderboard:** [The 🤗 Speech Bench](https://huggingface.co/spaces/huggingface/hf-speech-bench)

- **Point of Contact:** [SOVA.ai](mailto:support@sova.ai)

### Dataset Summary

SOVA Dataset is free public STT/ASR dataset. It consists of several parts, one of them is SOVA RuDevices. This part is an acoustic corpus of approximately 100 hours of 16kHz Russian live speech with manual annotating, prepared by [SOVA.ai team](https://github.com/sovaai).

Authors do not divide the dataset into train, validation and test subsets. Therefore, I was compelled to prepare this splitting. The training subset includes more than 82 hours, the validation subset includes approximately 6 hours, and the test subset includes approximately 6 hours too.

### Supported Tasks and Leaderboards

- `automatic-speech-recognition`: The dataset can be used to train a model for Automatic Speech Recognition (ASR). The model is presented with an audio file and asked to transcribe the audio file to written text. The most common evaluation metric is the word error rate (WER). The task has an active Hugging Face leaderboard which can be found at https://huggingface.co/spaces/huggingface/hf-speech-bench. The leaderboard ranks models uploaded to the Hub based on their WER.

### Languages

The audio is in Russian.

## Dataset Structure

### Data Instances

A typical data point comprises the audio data, usually called `audio` and its transcription, called `transcription`. Any additional information about the speaker and the passage which contains the transcription is not provided.

```

{'audio': {'path': '/home/bond005/datasets/sova_rudevices/data/train/00003ec0-1257-42d1-b475-db1cd548092e.wav',

'array': array([ 0.00787354, 0.00735474, 0.00714111, ...,

-0.00018311, -0.00015259, -0.00018311]), dtype=float32),

'sampling_rate': 16000},

'transcription': 'мне получше стало'}

```

### Data Fields

- audio: A dictionary containing the path to the downloaded audio file, the decoded audio array, and the sampling rate. Note that when accessing the audio column: `dataset[0]["audio"]` the audio file is automatically decoded and resampled to `dataset.features["audio"].sampling_rate`. Decoding and resampling of a large number of audio files might take a significant amount of time. Thus it is important to first query the sample index before the `"audio"` column, *i.e.* `dataset[0]["audio"]` should **always** be preferred over `dataset["audio"][0]`.

- transcription: the transcription of the audio file.

### Data Splits

This dataset consists of three splits: training, validation, and test. This splitting was realized with accounting of internal structure of SOVA RuDevices (the validation split is based on the subdirectory `0`, and the test split is based on the subdirectory `1` of the original dataset), but audio recordings of the same speakers can be in different splits at the same time (the opposite is not guaranteed).

| | Train | Validation | Test |

| ----- | ------ | ---------- | ----- |

| examples | 81607 | 5835 | 5799 |

| hours | 82.4h | 5.9h | 5.8h |

## Dataset Creation

### Curation Rationale

[Needs More Information]

### Source Data

#### Initial Data Collection and Normalization

[Needs More Information]

#### Who are the source language producers?

[Needs More Information]

### Annotations

#### Annotation process

All recorded audio files were manually annotated.

#### Who are the annotators?

[Needs More Information]

### Personal and Sensitive Information

The dataset consists of people who have donated their voice. You agree to not attempt to determine the identity of speakers in this dataset.

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[Needs More Information]

## Additional Information

### Dataset Curators

The dataset was initially created by Egor Zubarev, Timofey Moskalets, and SOVA.ai team.

### Licensing Information

[Creative Commons BY 4.0](https://creativecommons.org/licenses/by/4.0/)

### Citation Information

```

@misc{sova2021rudevices,

author = {Zubarev, Egor and Moskalets, Timofey and SOVA.ai},

title = {SOVA RuDevices Dataset: free public STT/ASR dataset with manually annotated live speech},

publisher = {GitHub},

journal = {GitHub repository},

year = {2021},

howpublished = {\url{https://github.com/sovaai/sova-dataset}},

}

```

### Contributions

Thanks to [@bond005](https://github.com/bond005) for adding this dataset. |

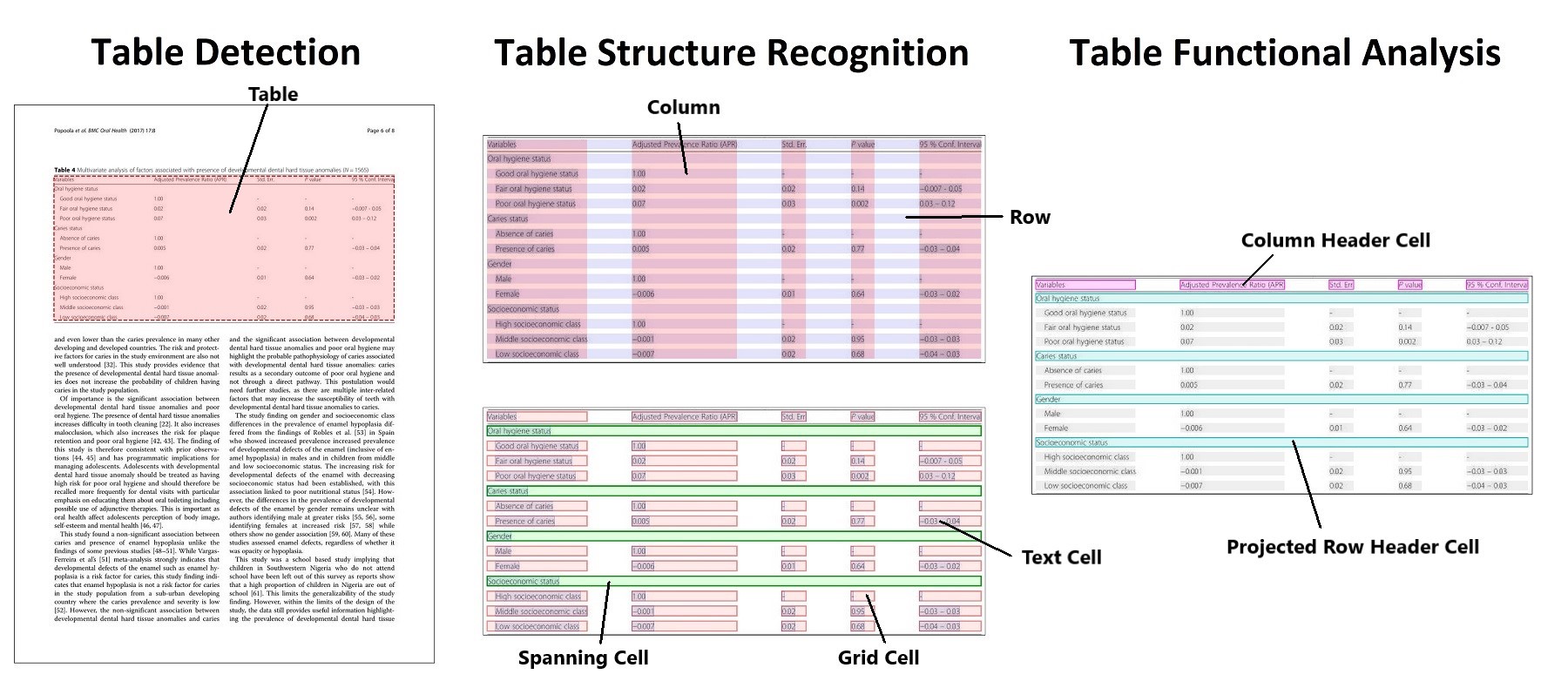

bsmock/pubtables-1m | 2023-08-08T16:43:14.000Z | [

"license:cdla-permissive-2.0",

"region:us"

] | bsmock | null | null | null | 16 | 19 | ---

license: cdla-permissive-2.0

---

# PubTables-1M

- GitHub: [https://github.com/microsoft/table-transformer](https://github.com/microsoft/table-transformer)

- Paper: ["PubTables-1M: Towards comprehensive table extraction from unstructured documents"](https://openaccess.thecvf.com/content/CVPR2022/html/Smock_PubTables-1M_Towards_Comprehensive_Table_Extraction_From_Unstructured_Documents_CVPR_2022_paper.html)

- Hugging Face:

- [Detection model](https://huggingface.co/microsoft/table-transformer-detection)

- [Structure recognition model](https://huggingface.co/microsoft/table-transformer-structure-recognition)

Currently we only support downloading the dataset as tar.gz files. Integrating with HuggingFace Datasets is something we hope to support in the future!

Please switch to the "Files and versions" tab to download all of the files or use a command such as wget to download from the command line.

Once downloaded, use the included script "extract_structure_dataset.sh" to extract and organize all of the data.

## Files

It comes in 18 tar.gz files:

Training and evaluation data for the structure recognition model (947,642 total cropped table instances):

- PubTables-1M-Structure_Filelists.tar.gz

- PubTables-1M-Structure_Annotations_Test.tar.gz: 93,834 XML files containing bounding boxes in PASCAL VOC format

- PubTables-1M-Structure_Annotations_Train.tar.gz: 758,849 XML files containing bounding boxes in PASCAL VOC format

- PubTables-1M-Structure_Annotations_Val.tar.gz: 94,959 XML files containing bounding boxes in PASCAL VOC format

- PubTables-1M-Structure_Images_Test.tar.gz

- PubTables-1M-Structure_Images_Train.tar.gz

- PubTables-1M-Structure_Images_Val.tar.gz

- PubTables-1M-Structure_Table_Words.tar.gz: Bounding boxes and text content for all of the words in each cropped table image

Training and evaluation data for the detection model (575,305 total document page instances):

- PubTables-1M-Detection_Filelists.tar.gz

- PubTables-1M-Detection_Annotations_Test.tar.gz: 57,125 XML files containing bounding boxes in PASCAL VOC format

- PubTables-1M-Detection_Annotations_Train.tar.gz: 460,589 XML files containing bounding boxes in PASCAL VOC format

- PubTables-1M-Detection_Annotations_Val.tar.gz: 57,591 XML files containing bounding boxes in PASCAL VOC format

- PubTables-1M-Detection_Images_Test.tar.gz

- PubTables-1M-Detection_Images_Train_Part1.tar.gz

- PubTables-1M-Detection_Images_Train_Part2.tar.gz

- PubTables-1M-Detection_Images_Val.tar.gz

- PubTables-1M-Detection_Page_Words.tar.gz: Bounding boxes and text content for all of the words in each page image (plus some unused files)

Full table annotations for the source PDF files:

- PubTables-1M-PDF_Annotations.tar.gz: Detailed annotations for all of the tables appearing in the source PubMed PDFs. All annotations are in PDF coordinates.

- 401,733 JSON files, one per source PDF document |

Isma/librispeech_1000_seed_42 | 2022-11-28T14:52:52.000Z | [

"region:us"

] | Isma | null | null | null | 0 | 19 | Entry not found |

Bingsu/laion-translated-to-en-korean-subset | 2023-02-01T01:15:43.000Z | [

"task_categories:feature-extraction",

"annotations_creators:crowdsourced",

"language_creators:crowdsourced",

"multilinguality:multilingual",

"size_categories:10M<n<100M",

"language:ko",

"language:en",

"license:cc-by-4.0",

"region:us"

] | Bingsu | null | null | null | 2 | 19 | ---

annotations_creators:

- crowdsourced

language_creators:

- crowdsourced

language:

- ko

- en

license:

- cc-by-4.0

multilinguality:

- multilingual

pretty_name: laion-translated-to-en-korean-subset

size_categories:

- 10M<n<100M

task_categories:

- feature-extraction

---

# laion-translated-to-en-korean-subset

## Dataset Description

- **Homepage:** [laion-5b](https://laion.ai/blog/laion-5b/)

- **Download Size** 1.40 GiB

- **Generated Size** 3.49 GiB

- **Total Size** 4.89 GiB

## About dataset

a subset data of [laion/laion2B-multi-joined-translated-to-en](https://huggingface.co/datasets/laion/laion2B-multi-joined-translated-to-en) and [laion/laion1B-nolang-joined-translated-to-en](https://huggingface.co/datasets/laion/laion1B-nolang-joined-translated-to-en), including only korean

### Lisence

CC-BY-4.0

## Data Structure

### Data Instance

```py

>>> from datasets import load_dataset

>>> dataset = load_dataset("Bingsu/laion-translated-to-en-korean-subset")

>>> dataset

DatasetDict({

train: Dataset({

features: ['hash', 'URL', 'TEXT', 'ENG TEXT', 'WIDTH', 'HEIGHT', 'LANGUAGE', 'similarity', 'pwatermark', 'punsafe', 'AESTHETIC_SCORE'],

num_rows: 12769693

})

})

```

```py

>>> dataset["train"].features

{'hash': Value(dtype='int64', id=None),

'URL': Value(dtype='large_string', id=None),

'TEXT': Value(dtype='large_string', id=None),

'ENG TEXT': Value(dtype='large_string', id=None),

'WIDTH': Value(dtype='int32', id=None),

'HEIGHT': Value(dtype='int32', id=None),

'LANGUAGE': Value(dtype='large_string', id=None),

'similarity': Value(dtype='float32', id=None),

'pwatermark': Value(dtype='float32', id=None),

'punsafe': Value(dtype='float32', id=None),

'AESTHETIC_SCORE': Value(dtype='float32', id=None)}

```

### Data Size

download: 1.40 GiB<br>

generated: 3.49 GiB<br>

total: 4.89 GiB

### Data Field

- 'hash': `int`

- 'URL': `string`

- 'TEXT': `string`

- 'ENG TEXT': `string`, null data are dropped

- 'WIDTH': `int`, null data are filled with 0

- 'HEIGHT': `int`, null data are filled with 0

- 'LICENSE': `string`

- 'LANGUAGE': `string`

- 'similarity': `float32`, CLIP similarity score, null data are filled with 0.0

- 'pwatermark': `float32`, Probability of containing a watermark, null data are filled with 0.0

- 'punsafe': `float32`, Probability of nsfw image, null data are filled with 0.0

- 'AESTHETIC_SCORE': `float32`, null data are filled with 0.0

### Data Splits

| | train |

| --------- | -------- |

| # of data | 12769693 |

### polars

```sh

pip install polars[fsspec]