id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 68.7k ⌀ | citation stringlengths 0 10.7k ⌀ | cardData null | likes int64 0 3.55k | downloads int64 0 10.1M | card stringlengths 0 1.01M |

|---|---|---|---|---|---|---|---|---|---|

ChristophSchuhmann/wikipedia-en-nov22-1-sentence-level | 2023-04-19T06:01:38.000Z | [

"region:us"

] | ChristophSchuhmann | null | null | null | 1 | 15 | Entry not found |

StampyAI/alignment-research-dataset | 2023-08-26T19:12:23.000Z | [

"task_categories:question-answering",

"size_categories:10K<n<100K",

"language:en",

"license:mit",

"arxiv:2206.02841",

"region:us"

] | StampyAI | The AI Alignment Research Dataset is a collection of documents related to AI Alignment and Safety from various books, research papers, and alignment related blog posts. | null | null | 7 | 15 | ---

language:

- en

license: mit

size_categories:

- 10K<n<100K

task_categories:

- question-answering

pretty_name: alignment-research-dataset

dataset_info:

features:

- name: id

dtype: string

- name: source

dtype: string

- name: title

dtype: string

- name: text

dtype: large_string

- name: url

dtype: string

- name: date_published

dtype: string

- name: authors

sequence: string

- name: summary

sequence: string

- name: source_type

dtype: string

- name: book_title

dtype: string

- name: karma

dtype: int32

- name: votes

dtype: int32

- name: words

dtype: int32

- name: comment_count

dtype: int32

- name: tags

sequence: string

- name: modified_at

dtype: string

- name: alias

dtype: string

- name: data_last_modified

dtype: string

- name: abstract

dtype: string

- name: author_comment

dtype: string

- name: journal_ref

dtype: string

- name: doi

dtype: string

- name: primary_category

dtype: string

- name: categories

sequence: string

- name: initial_source

dtype: string

- name: bibliography_bib

sequence:

- name: title

dtype: string

config_name: all

splits:

- name: train

num_bytes: 471644446

num_examples: 14271

download_size: 484827959

dataset_size: 471644446

---

# AI Alignment Research Dataset

The AI Alignment Research Dataset is a collection of documents related to AI Alignment and Safety from various books, research papers, and alignment related blog posts. This is a work in progress. Components are still undergoing a cleaning process to be updated more regularly.

## Sources

Here is the list of sources along with sample contents:

- [agentmodel](https://agentmodels.org/)

- [agisf](https://course.aisafetyfundamentals.com/) - recommended readings from AGI Safety Fundamentals

- [aisafety.info](https://aisafety.info/) - Stampy's FAQ

- [alignmentforum](https://www.alignmentforum.org)

- [alignment_newsletter](https://rohinshah.com/alignment-newsletter/)

- [arbital](https://arbital.com/)

- [arxiv](https://arxiv.org/) - relevant research papers

- blogs - entire websites automatically scraped

- [AI Impacts](https://aiimpacts.org/)

- [AI Safety Camp](https://aisafety.camp/)

- [carado.moe](https://carado.moe/)

- [Cold Takes](https://www.cold-takes.com/)

- [DeepMind technical blogs](https://www.deepmind.com/blog-categories/technical-blogs)

- [DeepMind AI Safety Research](https://deepmindsafetyresearch.medium.com/)

- [EleutherAI](https://blog.eleuther.ai/)

- [generative.ink](https://generative.ink/posts/)

- [Gwern Branwen's blog](https://gwern.net/)

- [Jack Clark's Import AI](https://importai.substack.com/)

- [MIRI](https://intelligence.org/)

- [Jacob Steinhardt's blog](https://jsteinhardt.wordpress.com/)

- [ML Safety Newsletter](https://newsletter.mlsafety.org/)

- [Transformer Circuits Thread](https://transformer-circuits.pub/)

- [Open AI Research](https://openai.com/research/)

- [Victoria Krakovna's blog](https://vkrakovna.wordpress.com/)

- [Eliezer Yudkowsky's blog](https://www.yudkowsky.net/)

- [distill](https://distill.pub/)

- [eaforum](https://forum.effectivealtruism.org/) - selected posts

- [lesswrong](https://www.lesswrong.com/) - selected posts

- special_docs - individual documents curated from various resources

- [Make a suggestion](https://bit.ly/ard-suggestion) for sources not already in the dataset

- youtube - playlists & channels

- [AI Alignment playlist](https://www.youtube.com/playlist?list=PLCRVRLd2RhZTpdUdEzJjo3qhmX3y3skWA) and other lists

- [AI Explained](https://www.youtube.com/@aiexplained-official)

- [Evan Hubinger's AI Safety Talks](https://www.youtube.com/@aisafetytalks)

- [AI Safety Reading Group](https://www.youtube.com/@aisafetyreadinggroup/videos)

- [AiTech - TU Delft](https://www.youtube.com/@AiTechTUDelft/)

- [Rob Miles AI](https://www.youtube.com/@RobertMilesAI)

## Keys

All entries contain the following keys:

- `id` - string of unique identifier

- `source` - string of data source listed above

- `title` - string of document title

- `authors` - list of strings

- `text` - full text of document content

- `url` - string of valid link to text content

- `date_published` - in UTC format

Additional keys may be available depending on the source document.
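As a minimal sketch of how the required keys listed above might be checked, the snippet below validates a single record. The record contents and the `missing_keys` helper are hypothetical, made up for illustration; they are not part of the dataset tooling.

```python
# Required keys, copied from the list above.
REQUIRED_KEYS = ("id", "source", "title", "authors", "text", "url", "date_published")


def missing_keys(entry: dict) -> list:
    """Return the required keys that are absent from `entry` (hypothetical helper)."""
    return [key for key in REQUIRED_KEYS if key not in entry]


# A made-up record, used only to exercise the helper.
example_entry = {
    "id": "abc123",
    "source": "lesswrong",
    "title": "An example post",
    "authors": ["Jane Doe"],
    "text": "Full text of the post...",
    "url": "https://www.lesswrong.com/",
    "date_published": "2023-01-01T00:00:00Z",
}

print(missing_keys(example_entry))
```

An empty list means the record carries every required key; source-specific keys such as `karma` or `abstract` are deliberately not checked here.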

## Usage

Execute the following code to download and parse the files:

```python

from datasets import load_dataset

data = load_dataset('StampyAI/alignment-research-dataset')

```

To only get the data for a specific source, pass it in as the second argument, e.g.:

```python

from datasets import load_dataset

data = load_dataset('StampyAI/alignment-research-dataset', 'lesswrong')

```

## Limitations and Bias

LessWrong posts overweight content on doom and existential risk, so take care when training or finetuning generative language models on the dataset.

## Contributing

The scraper to generate this dataset is open-sourced on [GitHub](https://github.com/StampyAI/alignment-research-dataset) and currently maintained by volunteers at StampyAI / AI Safety Info. [Learn more](https://coda.io/d/AI-Safety-Info_dfau7sl2hmG/Get-involved_susRF#_lufSr) or join us on [Discord](https://discord.gg/vjFSCDyMCy).

## Rebuilding info

This README contains info about the number of rows and their features which should be rebuilt each time datasets get changed. To do so, run:

datasets-cli test ./alignment-research-dataset --save_info --all_configs

## Citing the Dataset

For more information, here is the [paper](https://arxiv.org/abs/2206.02841) and [LessWrong](https://www.lesswrong.com/posts/FgjcHiWvADgsocE34/a-descriptive-not-prescriptive-overview-of-current-ai) post. Please use the following citation when using the dataset:

Kirchner, J. H., Smith, L., Thibodeau, J., McDonnell, K., and Reynolds, L. "Understanding AI alignment research: A Systematic Analysis." arXiv preprint arXiv:2206.02841 (2022). |

ChristophSchuhmann/1-sentence-level-gutenberg-en_arxiv_pubmed_soda | 2023-04-30T09:30:25.000Z | [

"region:us"

] | ChristophSchuhmann | null | null | null | 0 | 15 | Entry not found |

thennal/GMaSC | 2023-05-01T21:18:33.000Z | [

"task_categories:text-to-speech",

"task_categories:automatic-speech-recognition",

"annotations_creators:expert-generated",

"language_creators:found",

"multilinguality:monolingual",

"size_categories:1K<n<10K",

"source_datasets:original",

"language:ml",

"license:cc-by-sa-4.0",

"region:us"

] | thennal | null | null | null | 0 | 15 | ---

dataset_info:

features:

- name: text

dtype: string

- name: speaker

dtype: string

- name: audio

dtype:

audio:

sampling_rate: 48000

splits:

- name: train

num_bytes: 717976082.0

num_examples: 2000

download_size: 797772747

dataset_size: 717976082.0

annotations_creators:

- expert-generated

language:

- ml

language_creators:

- found

license:

- cc-by-sa-4.0

multilinguality:

- monolingual

pretty_name: GEC Barton Hill Malayalam Speech Corpus

size_categories:

- 1K<n<10K

source_datasets:

- original

tags: []

task_categories:

- text-to-speech

- automatic-speech-recognition

task_ids: []

---

# GMaSC: GEC Barton Hill Malayalam Speech Corpus

**GMaSC** is a Malayalam text and speech corpus created by the Government Engineering College Barton Hill with an emphasis on Malayalam-accented English. The corpus contains 2,000 text-audio pairs of Malayalam sentences spoken by 2 speakers, totalling approximately 139 minutes of audio. Each sentence has at least one English word common in Malayalam speech.

## Dataset Structure

The dataset consists of 2,000 instances with fields `text`, `speaker`, and `audio`. The audio is mono, sampled at 48 kHz. The transcription is normalized and only includes Malayalam characters and common punctuation. The table given below specifies how the 2,000 instances are split between the speakers, along with some basic speaker info:

| Speaker | Gender | Age | Time (HH:MM:SS) | Sentences |

| --- | --- | --- | --- | --- |

| Sonia | Female | 43 | 01:02:17 | 1,000 |

| Anil | Male | 48 | 01:17:23 | 1,000 |

| **Total** | | | **02:19:40** | **2,000** |
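The total in the table can be reproduced from the two per-speaker durations. The following is a small sketch of that arithmetic; the helper names `to_seconds` and `to_hms` are illustrative and not part of the corpus tooling.

```python
def to_seconds(hms: str) -> int:
    """Convert an HH:MM:SS string to a number of seconds."""
    hours, minutes, seconds = (int(part) for part in hms.split(":"))
    return hours * 3600 + minutes * 60 + seconds


def to_hms(total_seconds: int) -> str:
    """Convert a number of seconds back to an HH:MM:SS string."""
    hours, remainder = divmod(total_seconds, 3600)
    minutes, seconds = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}"


# Per-speaker durations, copied from the table above.
durations = {"Sonia": "01:02:17", "Anil": "01:17:23"}

total_seconds = sum(to_seconds(value) for value in durations.values())
print(to_hms(total_seconds))         # 02:19:40
print(round(total_seconds / 60, 1))  # 139.7 (minutes)
```

This agrees with the "approximately 139 minutes" quoted in the introduction.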

### Data Instances

An example instance is given below:

```python

{'text': 'സൗജന്യ ആയുർവേദ മെഡിക്കൽ ക്യാമ്പ്',

'speaker': 'Sonia',

'audio': {'path': None,

'array': array([0.00036621, 0.00033569, 0.0005188 , ..., 0.00094604, 0.00091553,

0.00094604]),

'sampling_rate': 48000}}

```

### Data Fields

- **text** (str): Transcription of the audio file

- **speaker** (str): The name of the speaker

- **audio** (dict): Audio object including loaded audio array, sampling rate and path to audio (always None)
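Since the `audio` field carries both the sample array and the sampling rate, the length of a clip follows directly from the two. Below is a minimal sketch under the assumption that `array` supports `len()` (as a NumPy array or a list does); the `fake_audio` record is synthetic and stands in for a real instance.

```python
def clip_duration_seconds(audio: dict) -> float:
    """Duration of a mono clip: number of samples divided by samples per second."""
    return len(audio["array"]) / audio["sampling_rate"]


# Synthetic stand-in for an `audio` dict: 96,000 silent samples at 48 kHz.
fake_audio = {"path": None, "array": [0.0] * 96000, "sampling_rate": 48000}

print(clip_duration_seconds(fake_audio))  # 2.0
```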

### Data Splits

We provide all the data in a single `train` split. The loaded dataset object thus looks like this:

```json

DatasetDict({

train: Dataset({

features: ['text', 'speaker', 'audio'],

num_rows: 2000

})

})

```

## Additional Information

### Licensing

The corpus is made available under the [Creative Commons license (CC BY-SA 4.0)](https://creativecommons.org/licenses/by-sa/4.0/).

|

fptudsc/face-celeb-vietnamese | 2023-05-10T15:13:18.000Z | [

"task_categories:image-classification",

"task_categories:zero-shot-classification",

"size_categories:10M<n<100M",

"language:vi",

"license:apache-2.0",

"region:us"

] | fptudsc | null | null | null | 1 | 15 | ---

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype: string

splits:

- name: train

num_bytes: 82233752.864

num_examples: 8557

download_size: 80630170

dataset_size: 82233752.864

license: apache-2.0

task_categories:

- image-classification

- zero-shot-classification

language:

- vi

size_categories:

- 10M<n<100M

---

# Dataset Card for "face-celeb-vietnamese"

## Dataset Summary

This dataset contains information on over 8,000 samples of well-known Vietnamese individuals, categorized into three professions: singers, actors, and beauty queens. The dataset includes data on more than 100 celebrities in each of the three job categories.

## Languages

- Vietnamese: The label is used to indicate the name of celebrities in Vietnamese.

## Dataset Structure

- The image and Vietnamese sequences are

## Source Data - Initial Data Collection and Normalization

[Website người nổi tiếng](https://nguoinoitieng.tv)

### Licensing Information

Apache License 2.0

### Contributions

Thanks to [@github-duongttr](https://github.com/duongttr) and [@github-pphuc25](https://github.com/pphuc25) for adding this dataset. |

Thaweewat/hc3-24k-th | 2023-05-09T19:23:15.000Z | [

"task_categories:question-answering",

"task_categories:summarization",

"size_categories:10K<n<100K",

"language:th",

"license:cc-by-sa-3.0",

"instruction-finetuning",

"arxiv:2301.07597",

"region:us"

] | Thaweewat | null | null | null | 0 | 15 | ---

license: cc-by-sa-3.0

task_categories:

- question-answering

- summarization

tags:

- instruction-finetuning

language:

- th

size_categories:

- 10K<n<100K

---

# Summary

This is a 🇹🇭 Thai instruction dataset translated from [HC3](https://huggingface.co/datasets/Hello-SimpleAI/HC3) using Google Cloud Translation.

It contains **24K** examples in total: 17K reddit_eli5, 4K finance, 1.2K medicine, 1.2K open_qa, and 0.8K wiki_csai.

HC3 is the first human-ChatGPT comparison corpus, introduced in this paper:

- [How Close is ChatGPT to Human Experts? Comparison Corpus, Evaluation, and Detection](https://arxiv.org/abs/2301.07597)

Code, models and analysis are available on GitHub:

- GitHub: [Chatgpt-Comparison-Detection project 🔬](https://github.com/Hello-SimpleAI/chatgpt-comparison-detection)

Supported Tasks:

- Training LLMs

- Synthetic Data Generation

- Data Augmentation

Languages: Thai

Version: 1.0

---

|

FreedomIntelligence/huatuo26M-testdatasets | 2023-05-17T03:39:41.000Z | [

"task_categories:text-generation",

"size_categories:1K<n<10K",

"language:zh",

"license:apache-2.0",

"medical",

"arxiv:2305.01526",

"region:us"

] | FreedomIntelligence | null | null | null | 12 | 15 | ---

license: apache-2.0

task_categories:

- text-generation

language:

- zh

tags:

- medical

size_categories:

- 1K<n<10K

---

# Dataset Card for huatuo26M-testdatasets

## Dataset Description

- **Homepage: https://www.huatuogpt.cn/**

- **Repository: https://github.com/FreedomIntelligence/Huatuo-26M**

- **Paper: https://arxiv.org/abs/2305.01526**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

We are pleased to announce the release of our evaluation dataset, a subset of the Huatuo-26M. This dataset contains 6,000 entries that we used for Natural Language Generation (NLG) experimentation in our associated research paper.

We encourage researchers and developers to use this evaluation dataset to gauge the performance of their own models. This is not only a chance to assess the accuracy and relevancy of generated responses but also an opportunity to investigate their model's proficiency in understanding and generating complex medical language.

Note: All the data points have been anonymized to protect patient privacy, and they adhere strictly to data protection and privacy regulations.

## Citation

```

@misc{li2023huatuo26m,

title={Huatuo-26M, a Large-scale Chinese Medical QA Dataset},

author={Jianquan Li and Xidong Wang and Xiangbo Wu and Zhiyi Zhang and Xiaolong Xu and Jie Fu and Prayag Tiwari and Xiang Wan and Benyou Wang},

year={2023},

eprint={2305.01526},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

``` |

Shoubhik8/mpt_finetune_dataset | 2023-05-22T14:48:37.000Z | [

"region:us"

] | Shoubhik8 | null | null | null | 0 | 15 | ---

dataset_info:

features:

- name: prompt

dtype: string

- name: response

dtype: string

splits:

- name: train

num_bytes: 331283580

num_examples: 371277

download_size: 13534489

dataset_size: 331283580

---

# Dataset Card for "mpt_finetune_dataset"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

deepghs/anime_classification | 2023-05-24T07:15:05.000Z | [

"task_categories:image-classification",

"size_categories:10K<n<100K",

"license:mit",

"art",

"region:us"

] | deepghs | null | null | null | 0 | 15 | ---

license: mit

task_categories:

- image-classification

tags:

- art

size_categories:

- 10K<n<100K

---

A simple classification task for generic anime images, with the following 4 classes:

| Class | Images | Description |

|:------------:|:------:|---------------------------------------------------------------|

| comic | 5746 | comic images in color or greyscale |

| illustration | 6064 | illustration images |

| bangumi | 4914 | video screenshots or key visual images in bangumi |

| 3d | 4649 | 3d works including koikatsu, mikumikudance and other 3d types |
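The four classes are not perfectly balanced. As a rough sketch, the snippet below derives each class's share of the data and inverse-frequency class weights from the counts in the table. Inverse-frequency weighting is one common choice for mildly imbalanced classification; it is an assumption here, not something the dataset prescribes.

```python
# Image counts per class, copied from the table above.
class_counts = {"comic": 5746, "illustration": 6064, "bangumi": 4914, "3d": 4649}

total = sum(class_counts.values())
num_classes = len(class_counts)

# Share of the dataset taken up by each class.
shares = {name: count / total for name, count in class_counts.items()}

# Inverse-frequency weights: a class with exactly total / num_classes images gets weight 1.0.
weights = {name: total / (num_classes * count) for name, count in class_counts.items()}

print(total)  # 21373
for name in class_counts:
    print(f"{name}: share={shares[name]:.3f}, weight={weights[name]:.3f}")
```

The total of 21,373 images is consistent with the `10K<n<100K` size category in the metadata.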

|

Chakshu/conversation_ender | 2023-05-25T08:56:31.000Z | [

"task_categories:text-classification",

"size_categories:n<1K",

"language:en",

"license:mit",

"Conversation",

"region:us"

] | Chakshu | null | null | null | 0 | 15 | ---

license: mit

task_categories:

- text-classification

language:

- en

tags:

- Conversation

size_categories:

- n<1K

pretty_name: Conversation Enders

---

Conversation Ending Check |

wwydmanski/reuters10k | 2023-05-29T08:59:17.000Z | [

"task_categories:tabular-classification",

"size_categories:10K<n<100K",

"tabular",

"region:us"

] | wwydmanski | null | @InProceedings{huggingface:dataset,

title = {A great new dataset},

author={huggingface, Inc.

},

year={2020}

} | null | 0 | 15 | ---

task_categories:

- tabular-classification

tags:

- tabular

pretty_name: Reuters10K

size_categories:

- 10K<n<100K

--- |

Enno-Ai/fr-instructs | 2023-06-26T23:16:02.000Z | [

"task_categories:text2text-generation",

"task_categories:table-question-answering",

"size_categories:10M<n<100M",

"language:fr",

"license:cc-by-2.5",

"region:us"

] | Enno-Ai | null | null | null | 3 | 15 | ---

dataset_info:

features:

- name: instruction

dtype: string

- name: input

dtype: string

- name: output

dtype: string

- name: source

dtype: string

splits:

- name: train

num_bytes: 5904510661

num_examples: 11794112

download_size: 1623654660

dataset_size: 5904510661

license: cc-by-2.5

task_categories:

- text2text-generation

- table-question-answering

language:

- fr

size_categories:

- 10M<n<100M

---

# A collection of 12 million French-only instructions deduplicated from various sources

Sources:

- clips/mqa-fr-faq

- multilingual-wikihow-qa-16k

- MBZUAI/Bactrian-X

- argilla/databricks-dolly-15k-curated-multilingual

- innermost47/alpaca-fr

- etalab-ia/piaf |

almanach/hc3_french_ood | 2023-06-05T10:19:19.000Z | [

"task_categories:text-classification",

"task_categories:question-answering",

"task_categories:sentence-similarity",

"task_categories:zero-shot-classification",

"size_categories:10K<n<100K",

"language:en",

"language:fr",

"license:cc-by-sa-4.0",

"ChatGPT",

"Bing",

"LM Detection",

"Detection",

... | almanach | Human ChatGPT Comparison Corpus (HC3) Translated To French.

The translation is done by Google Translate API.

We also add the native french QA pairs from ChatGPT, BingGPT and FAQ pages.

This dataset was used in our TALN 2023 paper.

Towards a Robust Detection of Language Model-Generated Text: Is ChatGPT that easy to detect? | # TODO: Add BibTeX citation for our TALN 2023 paper:

Towards a Robust Detection of Language Model-Generated Text: Is ChatGPT that easy to detect?

@article{guo-etal-2023-hc3,

title = "How Close is ChatGPT to Human Experts? Comparison Corpus, Evaluation, and Detection",

author = "Guo, Biyang and

Zhang, Xin and

Wang, Ziyuan and

Jiang, Minqi and

Nie, Jinran and

Ding, Yuxuan and

Yue, Jianwei and

Wu, Yupeng",

journal={arXiv preprint arxiv:2301.07597},

year = "2023",

} | null | 1 | 15 | ---

task_categories:

- text-classification

- question-answering

- sentence-similarity

- zero-shot-classification

language:

- en

- fr

size_categories:

- 10K<n<100K

tags:

- ChatGPT

- Bing

- LM Detection

- Detection

- OOD

license: cc-by-sa-4.0

---

Dataset card for the dataset used in:

## Towards a Robust Detection of Language Model-Generated Text: Is ChatGPT that easy to detect?

Paper: https://gitlab.inria.fr/wantoun/robust-chatgpt-detection/-/raw/main/towards_chatgpt_detection.pdf

Source Code: https://gitlab.inria.fr/wantoun/robust-chatgpt-detection

## Dataset Summary

#### Overview:

This dataset is made of two parts:

- First, an extension of the [Human ChatGPT Comparison Corpus (HC3) dataset](https://huggingface.co/datasets/Hello-SimpleAI/HC3) with French data automatically translated from the English source.

- Second, out-of-domain and adversarial French data sets that we gathered (Human adversarial, BingGPT, Native French ChatGPT responses).

#### Details:

- We first format the data into three subsets: `sentence`, `question` and `full` following the original paper.

- We then extend the data by translating the English questions and answers to French.

- We provide native French ChatGPT responses to a sample of the translated questions.

- We added a subset with QA pairs from BingGPT.

- We included an adversarial subset with human-written answers in the style of conversational LLMs like Bing/ChatGPT.

## Available Subsets

### Out-of-domain:

- `hc3_fr_qa_chatgpt`: Translated French questions from HC3 paired with native French ChatGPT answers. This is the `ChatGPT-Native` subset from the paper.

- Features: `id`, `question`, `answer`, `chatgpt_answer`, `label`, `source`

- Size:

- test: `113` examples, `25592` words

- `qa_fr_binggpt`: French questions paired with BingGPT answers. This is the `BingGPT` subset from the paper.

- Features: `id`, `question`, `answer`, `label`, `deleted_clues`, `deleted_sources`, `remarks`

- Size:

- test: `106` examples, `26291` words

- `qa_fr_binglikehuman`: French questions paired with human-written, BingGPT-like answers. This is the `Adversarial` subset from the paper.

- Features: `id`, `question`, `answer`, `label`, `source`

- Size:

- test: `61` examples, `17328` words

- `faq_fr_gouv`: French FAQ question-answer pairs from domains ending with `.gouv`, taken from the MQA dataset (subset 'fr-faq-page'): https://huggingface.co/datasets/clips/mqa. This is the `FAQ-Gouv` subset from the paper.

- Features: `id`, `page_id`, `question_id`, `answer_id`, `bucket`, `domain`, `question`, `answer`, `label`

- Size:

- test: `235` examples, `22336` words

- `faq_fr_random`: French FAQ question-answer pairs from random domains, taken from the MQA dataset (subset 'fr-faq-page'): https://huggingface.co/datasets/clips/mqa. This is the `FAQ-Rand` subset from the paper.

- Features: `id`, `page_id`, `question_id`, `answer_id`, `bucket`, `domain`, `question`, `answer`, `label`

- Size:

- test: `4454` examples, `271823` words

### In-domain:

- `hc3_en_qa`: English question-answer pairs from HC3.

- Features: `id`, `question`, `answer`, `label`, `source`

- Size:

- train: `68335` examples, `12306363` words

- validation: `17114` examples, `3089634` words

- test: `710` examples, `117001` words

- `hc3_en_sentence`: English answers split into sentences from HC3.

- Features: `id`, `text`, `label`, `source`

- Size:

- train: `455320` examples, `9983784` words

- validation: `113830` examples, `2510290` words

- test: `4366` examples, `99965` words

- `hc3_en_full`: English question-answer pairs from HC3, with question and answer concatenated.

- Features: `id`, `text`, `label`, `source`

- Size:

- train: `68335` examples, `9982863` words

- validation: `17114` examples, `2510058` words

- test: `710` examples, `99926` words

- `hc3_fr_qa`: Translated French question-answer pairs from HC3.

- Features: `id`, `question`, `answer`, `label`, `source`

- Size:

- train: `68283` examples, `12660717` words

- validation: `17107` examples, `3179128` words

- test: `710` examples, `127193` words

- `hc3_fr_sentence`: Translated French answers split into sentences from HC3.

- Features: `id`, `text`, `label`, `source`

- Size:

- train: `464885` examples, `10189606` words

- validation: `116524` examples, `2563258` words

- test: `4366` examples, `108374` words

- `hc3_fr_full`: Translated French question-answer pairs from HC3, with question and answer concatenated.

- Features: `id`, `text`, `label`, `source`

- Size:

- train: `68283` examples, `10188669` words

- validation: `17107` examples, `2563037` words

- test: `710` examples, `108352` words
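The example and word counts listed above allow a quick sanity check of how long a typical item is. As a small sketch, the snippet below computes the average number of words per example for the `hc3_fr_qa` subset, using only the figures quoted in this card; the variable names are illustrative.

```python
# (examples, words) per split for `hc3_fr_qa`, copied from the list above.
split_stats = {
    "train": (68283, 12660717),
    "validation": (17107, 3179128),
    "test": (710, 127193),
}

# Average words per example in each split.
avg_words = {split: words / examples for split, (examples, words) in split_stats.items()}

for split, value in avg_words.items():
    print(f"{split}: {value:.1f} words per example")
```

The same calculation can be repeated for any other subset by swapping in its counts.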

## How to load

```python

from datasets import load_dataset

dataset = load_dataset("almanach/hc3_multi", "hc3_fr_qa")

```

## Dataset Copyright

If a source dataset used in this corpus has a specific license that is stricter than CC-BY-SA, our products follow the same license.

If not, they follow CC-BY-SA license.

| English Split | Source | Source License | Note |

|----------|-------------|--------|-------------|

| reddit_eli5 | [ELI5](https://github.com/facebookresearch/ELI5) | BSD License | |

| open_qa | [WikiQA](https://www.microsoft.com/en-us/download/details.aspx?id=52419) | [PWC Custom](https://paperswithcode.com/datasets/license) | |

| wiki_csai | Wikipedia | CC-BY-SA | [Wiki FAQ](https://en.wikipedia.org/wiki/Wikipedia:FAQ/Copyright) |

| medicine | [Medical Dialog](https://github.com/UCSD-AI4H/Medical-Dialogue-System) | Unknown| [Asking](https://github.com/UCSD-AI4H/Medical-Dialogue-System/issues/10)|

| finance | [FiQA](https://paperswithcode.com/dataset/fiqa-1) | Unknown | Asking by 📧 |

| FAQ | [MQA](https://huggingface.co/datasets/clips/mqa) | CC0 1.0| |

| ChatGPT/BingGPT | | Unknown | This is ChatGPT/BingGPT generated data. |

| Human | | CC-BY-SA | |

## Citation

```bibtex

@proceedings{towards-a-robust-2023-antoun,

title = "Towards a Robust Detection of Language Model-Generated Text: Is ChatGPT that easy to detect?",

editor = "Antoun, Wissam and

Mouilleron, Virginie and

Sagot, Benoit and

Seddah, Djam{\'e}",

month = "6",

year = "2023",

address = "Paris, France",

publisher = "ATALA",

url = "https://gitlab.inria.fr/wantoun/robust-chatgpt-detection/-/raw/main/towards_chatgpt_detection.pdf",

}

```

```bibtex

@article{guo-etal-2023-hc3,

title = "How Close is ChatGPT to Human Experts? Comparison Corpus, Evaluation, and Detection",

author = "Guo, Biyang and

Zhang, Xin and

Wang, Ziyuan and

Jiang, Minqi and

Nie, Jinran and

Ding, Yuxuan and

Yue, Jianwei and

Wu, Yupeng",

journal={arXiv preprint arxiv:2301.07597},

year = "2023",

url ="https://arxiv.org/abs/2301.07597"

}

``` |

AgentWaller/german-formatted-oasst1 | 2023-06-01T09:40:25.000Z | [

"license:apache-2.0",

"region:us"

] | AgentWaller | null | null | null | 1 | 15 | ---

license: apache-2.0

dataset_info:

features:

- name: input

dtype: string

- name: input_no_prompt

dtype: string

- name: output

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 17894263

num_examples: 9838

- name: validation

num_bytes: 930643

num_examples: 518

download_size: 8982883

dataset_size: 18824906

---

|

Multimodal-Fatima/cvasnlp_sample_test | 2023-07-08T21:43:05.000Z | [

"region:us"

] | Multimodal-Fatima | null | null | null | 0 | 15 | ---

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype:

class_label:

names:

'0': abyssinian

'1': american bulldog

'2': american pit bull terrier

'3': basset hound

'4': beagle

'5': bengal

'6': birman

'7': bombay

'8': boxer

'9': british shorthair

'10': chihuahua

'11': egyptian mau

'12': english cocker spaniel

'13': english setter

'14': german shorthaired

'15': great pyrenees

'16': havanese

'17': japanese chin

'18': keeshond

'19': leonberger

'20': maine coon

'21': miniature pinscher

'22': newfoundland

'23': persian

'24': pomeranian

'25': pug

'26': ragdoll

'27': russian blue

'28': saint bernard

'29': samoyed

'30': scottish terrier

'31': shiba inu

'32': siamese

'33': sphynx

'34': staffordshire bull terrier

'35': wheaten terrier

'36': yorkshire terrier

- name: id

dtype: int64

- name: tags_laion-ViT-H-14-2B

sequence: string

- name: attributes_laion-ViT-H-14-2B

sequence: string

- name: caption_Salesforce-blip-image-captioning-large

dtype: string

- name: intensive_captions_Salesforce-blip-image-captioning-large

sequence: string

splits:

- name: test

num_bytes: 183538.0

num_examples: 10

download_size: 162401

dataset_size: 183538.0

---

# Dataset Card for "cvasnlp_sample_test"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

ttss/rdf-summarization-d | 2023-06-14T21:52:31.000Z | [

"region:us"

] | ttss | null | null | null | 0 | 15 | Entry not found |

llm-book/aio-passages | 2023-06-24T05:55:37.000Z | [

"size_categories:1M<n<10M",

"language:ja",

"license:cc-by-sa-3.0",

"license:gfdl",

"region:us"

] | llm-book | null | null | null | 0 | 15 | ---

language:

- ja

size_categories:

- 1M<n<10M

license:

- cc-by-sa-3.0

- gfdl

dataset_info:

features:

- name: id

dtype: int32

- name: pageid

dtype: int32

- name: revid

dtype: int32

- name: text

dtype: string

- name: section

dtype: string

- name: title

dtype: string

splits:

- name: train

num_bytes: 3054493919

num_examples: 4288198

download_size: 1110830651

dataset_size: 3054493919

---

# Dataset Card for llm-book/aio-passages

書籍『大規模言語モデル入門』で使用する、「AI王」コンペティションのパッセージデータセットです。

GitHub リポジトリ [cl-tohoku/quiz-datasets](https://github.com/cl-tohoku/quiz-datasets) で公開されているデータセットを利用しています。

## Licence

本データセットで利用している Wikipedia のコンテンツは、[クリエイティブ・コモンズ表示・継承ライセンス 3.0 (CC BY-SA 3.0)](https://creativecommons.org/licenses/by-sa/3.0/deed.ja) および [GNU 自由文書ライセンス (GFDL)](https://www.gnu.org/licenses/fdl.html) の下に配布されているものです。

|

RiTA-nlp/ITALIC | 2023-06-29T12:58:56.000Z | [

"task_categories:automatic-speech-recognition",

"task_categories:audio-classification",

"task_ids:intent-classification",

"annotations_creators:crowdsourced",

"language_creators:Italian",

"license:cc-by-nc-nd-4.0",

"arxiv:2204.08582",

"arxiv:2306.08502",

"region:us"

] | RiTA-nlp | ITALIC is a dataset of Italian audio recordings and contains annotation for utterance transcripts and associated intents.

The ITALIC dataset was created through a custom web platform, utilizing both native and non-native Italian speakers as participants.

The participants were required to record themselves while reading a randomly sampled short text from the MASSIVE dataset. | @article{koudounas2023italic,

title={ITALIC: An Italian Intent Classification Dataset},

author={Koudounas, Alkis and La Quatra, Moreno and Vaiani, Lorenzo and Colomba, Luca and Attanasio, Giuseppe and Pastor, Eliana and Cagliero, Luca and Baralis, Elena},

journal={arXiv preprint arXiv:2306.08502},

year={2023}

} | null | 2 | 15 | ---

pretty_name: ITALIC

annotations_creators:

- crowdsourced

language_creators:

- Italian

language_bcp47:

- it

size_categories:

it: 10K<n<100K

task_categories:

- automatic-speech-recognition

- audio-classification

task_ids:

- intent-classification

license: cc-by-nc-nd-4.0

---

# Dataset Card for ITALIC: An ITALian Intent Classification Dataset

ITALIC is an intent classification dataset for the Italian language, which is the first of its kind.

It includes spoken and written utterances and is annotated with 60 intents.

The dataset is available on [Zenodo](https://zenodo.org/record/8040649) and connectors are available for the [HuggingFace Hub](https://huggingface.co/datasets/RiTA-nlp/ITALIC).

### Latest Updates

- **June 15th, 2023**: ITALIC dataset has been released on [Zenodo](https://zenodo.org/record/8040649): https://zenodo.org/record/8040649.

## Table of Contents

- [Data collection](#data-collection)

- [Dataset](#dataset)

- [Usage](#usage)

- [Models used in the paper](#models-used-in-the-paper)

- [SLU intent classification](#slu-intent-classification)

- [ASR](#asr)

- [NLU intent classification](#nlu-intent-classification)

- [Citation](#citation)

- [License](#license)

## Data collection

The data collection follows the MASSIVE NLU dataset which contains an annotated textual dataset for 60 intents. The data collection process is described in the paper [Massive Natural Language Understanding](https://arxiv.org/abs/2204.08582).

Following the MASSIVE NLU dataset, a pool of 70+ volunteers has been recruited to annotate the dataset. The volunteers were asked to record their voice while reading the utterances (the original text is available on MASSIVE dataset). Together with the audio, the volunteers were asked to provide a self-annotated description of the recording conditions (e.g., background noise, recording device). The audio recordings have also been validated and, in case of errors, re-recorded by the volunteers.

All the audio recordings included in the dataset have received a validation from at least two volunteers. All the audio recordings have been validated by native Italian speakers (self-annotated).

## Dataset

The dataset is available on [Zenodo](https://zenodo.org/record/8040649). It comes in 3 different configurations:

- `massive`: all the utterances are randomly shuffled and divided into 3 splits (train, validation, test).

- `hard_speaker`: the utterances are divided into 3 splits (train, validation, test) based on the speaker. Each split only contains utterances from a pool of speakers that do not overlap with the other splits.

- `hard_noisy`: the utterances are divided into 3 splits (train, validation, test) based on the recording conditions. The test split only contains utterances with the highest level of noise.
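The speaker-based configuration described above can be illustrated with a minimal sketch. It is not the procedure used to build ITALIC: the split ratios, the seed, and the function name `speaker_disjoint_split` are assumptions made for illustration. The idea is simply that whole speakers, rather than individual utterances, are assigned to each split.

```python
import random
from collections import defaultdict


def speaker_disjoint_split(rows, ratios=(0.8, 0.1, 0.1), seed=0):
    """Assign whole speakers to train/validation/test so no speaker spans two splits.

    `rows` is a list of dicts with at least a "speaker" key (hypothetical layout).
    """
    by_speaker = defaultdict(list)
    for row in rows:
        by_speaker[row["speaker"]].append(row)

    # Shuffle speakers (not utterances) deterministically.
    speakers = sorted(by_speaker)
    random.Random(seed).shuffle(speakers)

    n_train = int(len(speakers) * ratios[0])
    n_valid = int(len(speakers) * ratios[1])
    groups = {
        "train": speakers[:n_train],
        "validation": speakers[n_train:n_train + n_valid],
        "test": speakers[n_train + n_valid:],
    }
    # Collect every utterance of each speaker into that speaker's split.
    return {
        name: [row for spk in spks for row in by_speaker[spk]]
        for name, spks in groups.items()
    }
```

Splitting by speaker keeps the test set free of voices seen during training, which is what makes such a configuration harder than a random shuffle.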

Each split contains the following annotations:

- `utt`: the original text of the utterance.

- `audio`: the audio recording of the utterance.

- `intent`: the intent of the utterance.

- `speaker`: the speaker of the utterance. The speaker is identified by a unique identifier and has been anonymized.

- `age`: the age of the speaker.

- `is_native`: whether the speaker is a native Italian speaker or not.

- `gender`: the gender of the speaker (self-annotated).

- `region`: the region of the speaker (self-annotated).

- `nationality`: the nationality of the speaker (self-annotated).

- `lisp`: any kind of lisp of the speaker (self-annotated). It can be empty in case of no lisp.

- `education`: the education level of the speaker (self-annotated).

- `environment`: the environment of the recording (self-annotated).

- `device`: the device used for the recording (self-annotated).

## Usage

The dataset can be loaded using the `datasets` library. You need to install the following dependencies:

```bash

pip install datasets

pip install librosa

pip install soundfile

```

Then, you can load the dataset as follows:

```python

from datasets import load_dataset

# Please be sure to use use_auth_token=True and to set the access token

# using huggingface-cli login

# or follow https://huggingface.co/docs/hub/security-tokens

# (recent releases of `datasets` replace use_auth_token=True with token=True)

# configs "hard_speaker" and "hard_noisy" are also available (to substitute "massive")

italic = load_dataset("RiTA-nlp/ITALIC", "massive", use_auth_token=True)

italic_train = italic["train"]

italic_valid = italic["validation"]

italic_test = italic["test"]

```

The dataset has been designed for intent classification, but it can be used for other tasks as well:

- **Intent classification**: the `intent` column can be used as the label.

- **Speaker identification**: the `speaker` column can be used as the label.

- **Automatic speech recognition**: the `utt` column can be used as the label.

- **Accent identification**: the `region` column can be used as the label.
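Whichever column is chosen as the label, most classifiers need integer class ids. The following is a minimal sketch of building that mapping from a list of label strings; the helper name `build_label_maps` and the intent names in the usage note are illustrative assumptions, not part of the dataset tooling.

```python
def build_label_maps(labels):
    """Return (label2id, id2label) for an iterable of string labels.

    Labels are sorted so that the mapping is stable across runs.
    """
    unique = sorted(set(labels))
    label2id = {label: idx for idx, label in enumerate(unique)}
    id2label = {idx: label for label, idx in label2id.items()}
    return label2id, id2label
```

For example, `build_label_maps(["weather_query", "alarm_set", "weather_query"])` yields two classes; the same helper could be applied to the `speaker` or `region` columns for the other tasks listed above.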

For more information about the dataset, please refer to the [paper](https://arxiv.org/abs/2306.08502).

## Models used in the paper

### Hardware settings

All experiments were conducted on a private workstation with Intel Core i9-10980XE CPU, 1 $\times$ NVIDIA RTX A6000 GPU, 64 GB of RAM running Ubuntu 22.04 LTS.

### Parameter settings

The parameters used for the training of the models are set to allow a fair comparison between the different models and to follow the recommendations of the related literature. The parameters are summarized in the following table:

| Model | Task | Parameters | Learning rate | Batch size | Max epochs | Warmup | Weight decay | Avg. training time | Avg. inference time |

| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |

| facebook/wav2vec2-xls-r-300m | SLU | 300M | 1e-4 | 128 | 30 | 0.1 ratio | 0.01 | 9m 35s per epoch | 13ms per sample |

| facebook/wav2vec2-xls-r-1b | SLU | 1B | 1e-4 | 32 | 30 | 0.1 ratio | 0.01 | 21m 30s per epoch | 29ms per sample |

| jonatasgrosman/wav2vec2-large-xlsr-53-italian | SLU | 300M | 1e-4 | 128 | 30 | 0.1 ratio | 0.01 | 9m 35s per epoch | 13ms per sample |

| jonatasgrosman/wav2vec2-xls-r-1b-italian | SLU | 1B | 1e-4 | 32 | 30 | 0.1 ratio | 0.01 | 21m 30s per epoch | 29ms per sample |

| ALM/whisper-it-small-augmented | ASR | 224M | 1e-5 | 8 | 5 | 500 steps | 0.01 | 26m 30s per epoch | 25ms per sample |

| EdoAbati/whisper-medium-it-2 | ASR | 769M | 1e-5 | 8 | 5 | 500 steps | 0.01 | 49m per epoch | 94ms per sample |

| EdoAbati/whisper-large-v2-it | ASR | 1.5B | 1e-5 | 8 | 5 | 500 steps | 0.01 | 1h 17m per epoch | 238ms per sample |

| bert-base-multilingual-uncased | NLU | 167M | 5e-5 | 8 | 5 | 500 steps | 0.01 | 1m 22s per epoch | 1.5ms per sample |

| facebook/mbart-large-cc25 | NLU | 611M | 5e-5 | 8 | 5 | 500 steps | 0.01 | 7m 53s per epoch | 4.7ms per sample |

| dbmdz/bert-base-italian-xxl-uncased | NLU | 110M | 5e-5 | 8 | 5 | 500 steps | 0.01 | 1m 30s per epoch | 1.4ms per sample |

| morenolq/bart-it | NLU | 141M | 5e-5 | 8 | 5 | 500 steps | 0.01 | 1m 54s per epoch | 1.9ms per sample |

In all cases, we opted for the AdamW optimizer. All experiments were run on a single NVIDIA A6000 GPU.
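The "Warmup" column mixes two conventions: a ratio of the total number of optimizer steps (SLU models) and an absolute number of steps (ASR and NLU models). As a rough sketch of how a ratio translates into steps, assuming no gradient accumulation and a hypothetical training-set size (the card does not state it here):

```python
import math


def warmup_from_ratio(num_examples, batch_size, epochs, warmup_ratio):
    """Return (total_steps, warmup_steps) for a ratio-based warmup schedule.

    Assumes one optimizer step per batch (no gradient accumulation).
    """
    steps_per_epoch = math.ceil(num_examples / batch_size)
    total_steps = steps_per_epoch * epochs
    return total_steps, round(total_steps * warmup_ratio)


# Hypothetical example: 12,800 training utterances, batch size 128, 30 epochs.
total, warmup = warmup_from_ratio(12_800, 128, 30, 0.1)
```

Exact rounding differs between training frameworks, so the result should be read as an approximation.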

### SLU intent classification

The models used in the paper are available on the [Hugging Face Hub](https://huggingface.co/models).

- 🌍 [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m)

- 🌍 [facebook/wav2vec2-xls-r-1b](https://huggingface.co/facebook/wav2vec2-xls-r-1b)

- 🇮🇹 [jonatasgrosman/wav2vec2-xls-r-1b-italian](https://huggingface.co/jonatasgrosman/wav2vec2-xls-r-1b-italian)

- 🇮🇹 [jonatasgrosman/wav2vec2-large-xlsr-53-italian](https://huggingface.co/jonatasgrosman/wav2vec2-large-xlsr-53-italian)

### ASR

The models used in the paper are available on the [Hugging Face Hub](https://huggingface.co/models).

- 🌍 Whisper large (zero-shot ASR): [openai/whisper-large-v2](https://huggingface.co/openai/whisper-large-v2)

- 🇮🇹 Whisper small: [ALM/whisper-it-small-augmented](https://huggingface.co/ALM/whisper-it-small-augmented)

- 🇮🇹 Whisper medium: [EdoAbati/whisper-medium-it-2](https://huggingface.co/EdoAbati/whisper-medium-it-2)

- 🇮🇹 Whisper large: [EdoAbati/whisper-large-v2-it](https://huggingface.co/EdoAbati/whisper-large-v2-it)

### NLU intent classification

The models used in the paper are available on the [Hugging Face Hub](https://huggingface.co/models).

- 🌍 [bert-base-multilingual-uncased](https://huggingface.co/bert-base-multilingual-uncased)

- 🌍 [facebook/mbart-large-cc25](https://huggingface.co/facebook/mbart-large-cc25)

- 🇮🇹 [dbmdz/bert-base-italian-xxl-uncased](https://huggingface.co/dbmdz/bert-base-italian-xxl-uncased)

- 🇮🇹 [morenolq/bart-it](https://huggingface.co/morenolq/bart-it)

## Citation

If you use this dataset in your research, please cite the following paper (the **Interspeech 2023** version will be added once the proceedings are published):

```bibtex

@article{koudounas2023italic,

title={ITALIC: An Italian Intent Classification Dataset},

author={Koudounas, Alkis and La Quatra, Moreno and Vaiani, Lorenzo and Colomba, Luca and Attanasio, Giuseppe and Pastor, Eliana and Cagliero, Luca and Baralis, Elena},

journal={arXiv preprint arXiv:2306.08502},

year={2023}

}

```

## License

The dataset is licensed under the [Creative Commons Attribution 4.0 International License](https://creativecommons.org/licenses/by/4.0/).

- [Paper describing the dataset and initial experiments](https://arxiv.org/abs/2306.08502)

- [Dataset on Zenodo](https://zenodo.org/record/8040649)

- [Creative Commons Attribution 4.0 International License](https://creativecommons.org/licenses/by/4.0/)

|

shibing624/snli-zh | 2023-06-14T07:15:52.000Z | [

"task_categories:text-classification",

"task_ids:natural-language-inference",

"task_ids:semantic-similarity-scoring",

"task_ids:text-scoring",

"annotations_creators:shibing624",

"language_creators:liuhuanyong",

"multilinguality:monolingual",

"size_categories:100K<n<20M",

"source_datasets:https://git... | shibing624 | The SNLI corpus (version 1.0) is a collection of 570k human-written English

sentence pairs manually labeled for balanced classification with the labels

entailment, contradiction, and neutral, supporting the task of natural language

inference (NLI), also known as recognizing textual entailment (RTE). | @inproceedings{snli:emnlp2015,

Author = {Bowman, Samuel R. and Angeli, Gabor and Potts, Christopher, and Manning, Christopher D.},

Booktitle = {Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing (EMNLP)},

Publisher = {Association for Computational Linguistics},

Title = {A large annotated corpus for learning natural language inference},

Year = {2015}

} | null | 2 | 15 | ---

annotations_creators:

- shibing624

language_creators:

- liuhuanyong

language:

- zh

license: cc-by-4.0

multilinguality:

- monolingual

size_categories:

- 100K<n<20M

source_datasets:

- https://github.com/liuhuanyong/ChineseTextualInference/

task_categories:

- text-classification

task_ids:

- natural-language-inference

- semantic-similarity-scoring

- text-scoring

paperswithcode_id: snli

pretty_name: Stanford Natural Language Inference

---

# Dataset Card for SNLI_zh

## Dataset Description

- **Repository:** [Chinese NLI dataset](https://github.com/shibing624/text2vec)

- **Dataset:** [train data from ChineseTextualInference](https://github.com/liuhuanyong/ChineseTextualInference/)

- **Size of downloaded dataset files:** 54 MB

- **Total amount of disk used:** 54 MB

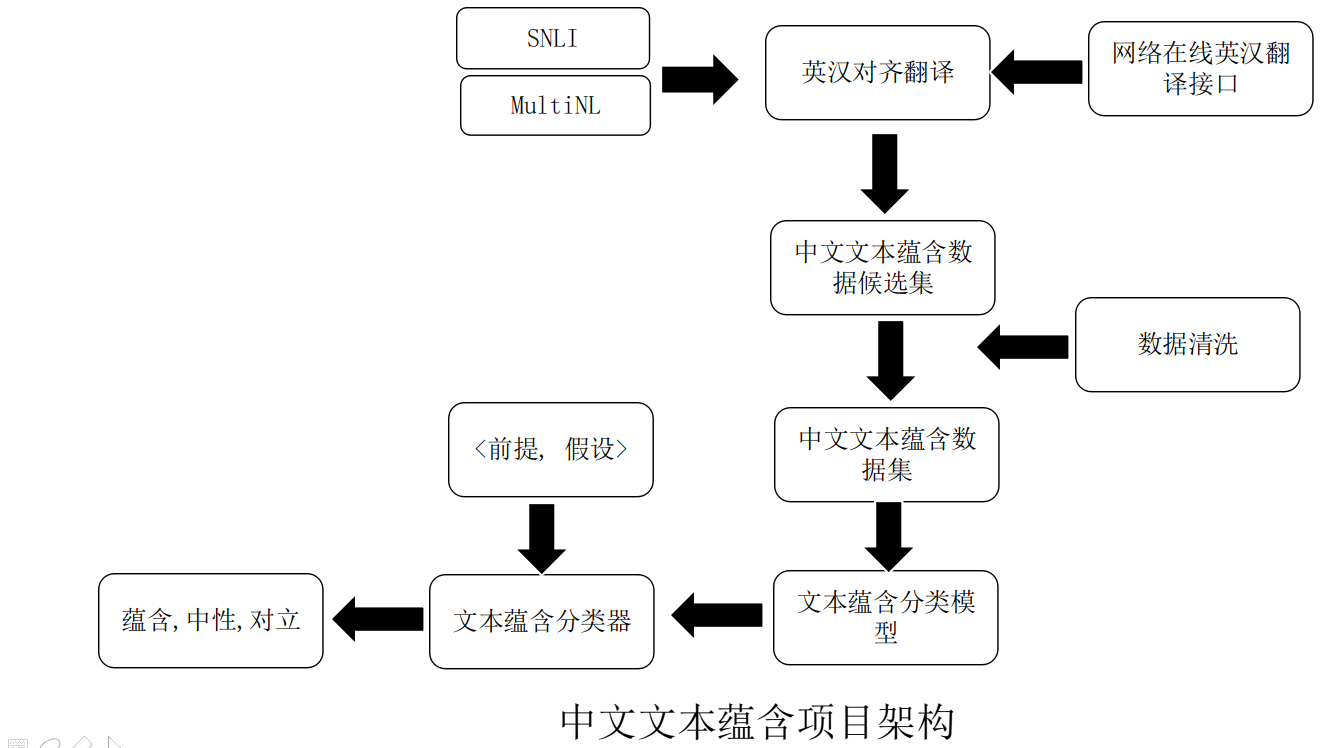

### Dataset Summary

Chinese SNLI and MultiNLI datasets, translated from the English [SNLI](https://huggingface.co/datasets/snli) and [MultiNLI](https://huggingface.co/datasets/multi_nli) datasets.

### Supported Tasks and Leaderboards

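Supported Tasks: Chinese text matching, text similarity computation, and related tasks.

Results for Chinese matching tasks rarely appear in top-conference papers at present, so I list a result from a model I trained myself: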

**Leaderboard:** [NLI_zh leaderboard](https://github.com/shibing624/text2vec)

### Languages

All data is Simplified Chinese text.

## Dataset Structure

### Data Instances

An example of 'train' looks as follows.

```

sentence1 sentence2 gold_label

是的,我想一个洞穴也会有这样的问题 我认为洞穴可能会有更严重的问题。 neutral

几周前我带他和一个朋友去看幼儿园警察 我还没看过幼儿园警察,但他看了。 contradiction

航空旅行的扩张开始了大众旅游的时代,希腊和爱琴海群岛成为北欧人逃离潮湿凉爽的夏天的令人兴奋的目的地。 航空旅行的扩大开始了许多旅游业的发展。 entailment

```

### Data Fields

The data fields are the same among all splits.

- `sentence1`: a `string` feature.

- `sentence2`: a `string` feature.

- `label`: a classification label, with possible values including entailment(0), neutral(1), contradiction(2). Note: in this dataset, 0 means similar and 2 means dissimilar.
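The field description above can be turned into a small parsing sketch. It assumes the raw file stores one example per line as three tab-separated columns in the order shown in the data instance (`sentence1`, `sentence2`, `gold_label`); that layout and the helper name `parse_example` are assumptions, since the card does not specify the delimiter.

```python
# Label ids as listed in the field description.
LABEL2ID = {"entailment": 0, "neutral": 1, "contradiction": 2}


def parse_example(line):
    """Parse one tab-separated line into a dict with an integer label."""
    sentence1, sentence2, gold_label = line.rstrip("\n").split("\t")
    return {
        "sentence1": sentence1,
        "sentence2": sentence2,
        "label": LABEL2ID[gold_label],
    }
```

Lines with an unknown label raise `KeyError`, which makes malformed rows visible instead of silently mislabeling them.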

### Data Splits

After removing None values and entries with len(text) < 1:

```shell

$ wc -l ChineseTextualInference-train.txt

419402 total

```

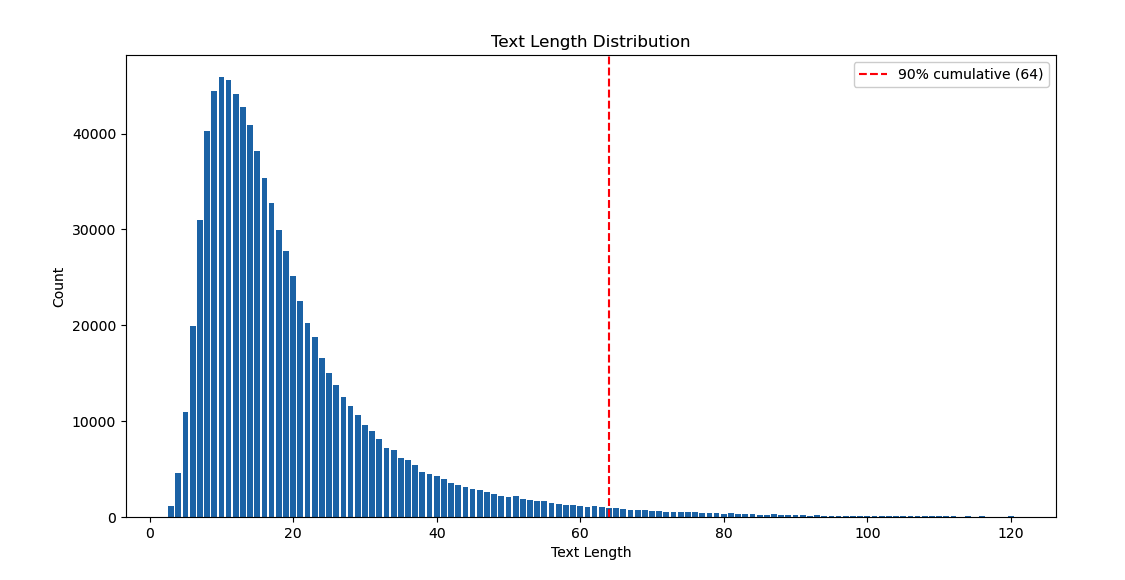

### Data Length

## Dataset Creation

### Curation Rationale

This is a Chinese SNLI (natural language inference) dataset, uploaded to Hugging Face datasets to make it convenient for everyone to use.

### Source Data

#### Initial Data Collection and Normalization

#### Who are the source language producers?

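The copyright of the dataset belongs to the original authors; please respect the copyright of the original datasets when using them.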

@inproceedings{snli:emnlp2015,

Author = {Bowman, Samuel R. and Angeli, Gabor and Potts, Christopher, and Manning, Christopher D.},

Booktitle = {Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing (EMNLP)},

Publisher = {Association for Computational Linguistics},

Title = {A large annotated corpus for learning natural language inference},

Year = {2015}

}

### Annotations

#### Annotation process

#### Who are the annotators?

The original authors.

### Personal and Sensitive Information

## Considerations for Using the Data

### Social Impact of Dataset

This dataset was developed as a benchmark for evaluating representational systems for text, especially including those induced by representation learning methods, in the task of predicting truth conditions in a given context.

Systems that are successful at such a task may be more successful in modeling semantic representations.

### Discussion of Biases

### Other Known Limitations

## Additional Information

### Dataset Curators

- [liuhuanyong](https://github.com/liuhuanyong/ChineseTextualInference/) translated the data into Chinese

- [shibing624](https://github.com/shibing624) uploaded it to Hugging Face datasets

### Licensing Information

For academic research use.

### Contributions

[shibing624](https://github.com/shibing624) added this dataset. |

alpindale/visual-novels | 2023-06-14T14:44:30.000Z | [

"task_categories:conversational",

"task_categories:text-generation",

"language:en",

"license:apache-2.0",

"region:us"

] | alpindale | null | null | null | 13 | 15 | ---

license: apache-2.0

task_categories:

- conversational

- text-generation

language:

- en

pretty_name: Visual Novels

---

# Visual Novel Dataset

This dataset contains parsed Visual Novel scripts for training language models. The dataset consists of approximately 60 million tokens of parsed scripts.

## Dataset Structure

The dataset follows a general structure for visual novel scripts:

- Dialogue lines: Dialogue lines are formatted with the speaker's name followed by a colon, and the dialogue itself enclosed in quotes. For example:

```

John: "Hello, how are you?"

```

- Actions and narration: Actions and narration within the Visual Novel scripts are often enclosed in asterisks, but it's important to note that not all visual novels follow this convention. Actions and narration provide descriptions of character movements, background settings, or other narrative elements.

```

*John looked around the room, searching for answers.*

```
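The two conventions above can be recognized with a pair of regular expressions. This is a minimal sketch under the assumption that a line follows exactly one of the two formats shown; as the card notes, not every visual novel does, so unmatched lines are returned as `"other"`. The function name `classify_line` is illustrative.

```python
import re

# Speaker name, a colon, then the dialogue enclosed in double quotes.
DIALOGUE_RE = re.compile(r'^(?P<speaker>[^:"*]+):\s*"(?P<text>.*)"\s*$')
# Action or narration enclosed in asterisks.
NARRATION_RE = re.compile(r'^\*(?P<text>.+)\*\s*$')


def classify_line(line):
    """Return (kind, speaker, text) where kind is dialogue, narration, or other."""
    line = line.strip()
    match = DIALOGUE_RE.match(line)
    if match:
        return ("dialogue", match.group("speaker").strip(), match.group("text"))
    match = NARRATION_RE.match(line)
    if match:
        return ("narration", None, match.group("text"))
    return ("other", None, line)
```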

## Contents

- `visual-novels.txt`: This file contains all the parsed VNs concatenated within a single plaintext file. Each entry is separated with this string:

```

[ - title - {visual-novel-title-1.txt} ]

```

- `VNDB/`: This directory contains `.json` files that contain VNDB IDs for the corresponding VN's characters. Does not include unparsed VNs.

- `Archives/visual-novels-parsed.tar.zst`: This archive contains the parsed VNs but with each script in a separate text file (i.e. not concatenated).

- `Archives/visual-novels-unparsed.tar.zst`: This archive contains all the unparsed VNs along with the original script for the currently parsed VNs.
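A sketch of splitting the concatenated `visual-novels.txt` back into individual scripts follows. It assumes the separator occupies its own line and precedes the script it names, and it tolerates the title appearing with or without the curly braces shown above; both points are assumptions, since the card only shows the separator template.

```python
import re

# Matches "[ - title - {name} ]" or "[ - title - name ]" on a line of its own.
SEPARATOR_RE = re.compile(r"^\[ - title - \{?(?P<title>.+?)\}? \]$", re.MULTILINE)


def split_scripts(text):
    """Map each title to the script text that follows its separator line."""
    scripts = {}
    matches = list(SEPARATOR_RE.finditer(text))
    for index, match in enumerate(matches):
        # A script runs until the next separator or the end of the file.
        end = matches[index + 1].start() if index + 1 < len(matches) else len(text)
        scripts[match.group("title")] = text[match.end():end].strip()
    return scripts
```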

## Usage

You can utilize this dataset to train language models, particularly for tasks related to natural language processing and text generation. By leveraging the parsed visual novel scripts, you can train models to understand dialogue structures and generate coherent responses. Additionally, the inclusion of the unparsed scripts allows for further analysis and processing.

## Contribution

This dataset was gathered and parsed by the [PygmalionAI](https://huggingface.co/PygmalionAI) Data Processing Team. Listed below are the team members, sorted by contribution amount:

- **Suikamelon**: [HuggingFace](https://huggingface.co/lemonilia) - (2,787,704 ++ 672,473 --)

- **Alpin**: [HuggingFace](https://huggingface.co/alpindale) - [GitHub](https://github.com/AlpinDale) (1,170,985 ++ 345,120 --)

- **Spartan**: [GitHub](https://github.com/Spartan9772) (901,046 ++ 467,915 --)

- **Unlucky-AI**: [GitHub](https://github.com/Unlucky-AI) (253,316 ++ 256 --)

## Citation

If you use this dataset in your research or projects, please cite it appropriately.

## Acknowledgements

This dataset is compiled and shared for research and educational purposes. The dataset includes parsed visual novel scripts from various sources, which are predominantly copyrighted and owned by their respective publishers and creators. The inclusion of these scripts in this dataset does not imply any endorsement or authorization from the copyright holders.

We would like to express our sincere gratitude to the original copyright holders and creators of the visual novels for their valuable contributions to the art and storytelling. We respect and acknowledge their intellectual property rights.

We strongly encourage users of this dataset to adhere to copyright laws and any applicable licensing restrictions when using or analyzing the provided content. It is the responsibility of the users to ensure that any use of the dataset complies with the legal requirements governing intellectual property and fair use.

Please be aware that the creators and distributors of this dataset disclaim any liability or responsibility for any unauthorized or illegal use of the dataset by third parties.

If you are a copyright holder or have any concerns about the content included in this dataset, please contact us at [this email address](mailto:alpin@alpindale.dev) to discuss the matter further and address any potential issues.

|

takaaki-inada/databricks-dolly-15k-ja-zundamon | 2023-06-17T10:41:52.000Z | [

"license:cc-by-sa-3.0",

"region:us"

] | takaaki-inada | null | null | null | 0 | 15 | ---

license: cc-by-sa-3.0

---

This dataset is based on "kunishou/databricks-dolly-15k-ja".

This dataset is licensed under CC BY-SA 3.0.

Last Update : 2023-05-11

databricks-dolly-15k-ja

https://github.com/kunishou/databricks-dolly-15k-ja

databricks-dolly-15k

https://github.com/databrickslabs/dolly/tree/master/data

|

autopilot-ai/Gujarati-Grammarly-Datasets | 2023-06-22T15:23:06.000Z | [

"license:afl-3.0",

"region:us"

] | autopilot-ai | null | null | null | 0 | 15 | ---

license: afl-3.0

---

This is the collection of datasets used for the creation of our Gujarati Grammarly. It consists of correct-incorrect sentence pair datasets and correct-incorrect spelling pair datasets.

The sentence pairs are provided in various sizes for ease of prototyping and scaling. |

pankajmathur/dolly-v2_orca | 2023-06-26T14:39:23.000Z | [

"task_categories:text-generation",

"size_categories:10K<n<100K",

"language:en",

"license:cc-by-nc-sa-4.0",

"region:us"

] | pankajmathur | null | null | null | 15 | 15 | ---

license: cc-by-nc-sa-4.0

task_categories:

- text-generation

language:

- en

size_categories:

- 10K<n<100K

---

An explain-tuned Dolly-V2 dataset (~15K samples) created using approaches from the Orca research paper.

We leverage all 15 system instructions provided in the Orca research paper to generate explain-tuned datasets, in contrast to the vanilla instruction-tuning approaches used by the original datasets.

This helps student models like orca_mini_13b, orca_mini_7b, or orca_mini_3b learn the thought process of the teacher model, which is ChatGPT (gpt-3.5-turbo-0301 version).

Please see how the system prompt is added before each instruction. |

dfurman/All-In-Podcast-Transcripts | 2023-07-12T23:08:38.000Z | [

"license:apache-2.0",

"region:us"

] | dfurman | null | null | null | 0 | 15 | ---

license: apache-2.0

dataset_info:

features:

- name: section_title

dtype: string

- name: section_dialogue

dtype: string

- name: section_time_stamp

dtype: string

- name: episode_title

dtype: string

- name: episode_date

dtype: string

splits:

- name: E134

num_bytes: 89566

num_examples: 7

- name: E135

num_bytes: 85980

num_examples: 6

download_size: 114121

dataset_size: 175546

---

|

Waterhorse/chess_data | 2023-08-14T18:35:02.000Z | [

"task_categories:text-generation",

"task_categories:conversational",

"language:en",

"license:apache-2.0",

"arxiv:2306.09200",

"region:us"

] | Waterhorse | null | null | null | 2 | 15 | ---

license: apache-2.0

task_categories:

- text-generation

- conversational

language:

- en

---

# The Chess Dataset

## Dataset Description

- **Paper:** [ChessGPT: Bridging Policy Learning and Language Modeling](https://arxiv.org/abs/2306.09200)

### Dataset Summary

The dataset consists of the three data sources described in the paper:

- **ChessCLIP dataset**: Annotated PGNs for training CLIP.

- **ChessGPT Base dataset**: Game dataset, language dataset and mixed dataset for training ChessGPT-Base.

- **ChessGPT Chat dataset**: Conversational dataset for training ChessGPT-Chat.

Due to legal issues, for the ChessGPT dataset we do not open-source the chess-book, chess-forum, chess-blog, and YouTube transcript datasets.

For the ChessCLIP dataset, we do not open-source the two commercial annotated datasets we use.

### Languages

The language of the data is primarily English.

## Dataset Structure

- **ChessCLIP dataset**: Annotated PGNs for training CLIP.

- **ChessGPT Base dataset**: Game dataset: ccrl, pro_player, lichess_db_37, chess_puzzles, chess_modeling. Language dataset: redpajama, oscar, c4, pile, wikipedia, and stackexchange. Mixed dataset: annotated_pgn.

- **ChessGPT Chat dataset**: Chess-related conversation dataset.

### Data Instances

- **ChessCLIP dataset**:

```python

[Event "GMA, Wijk aan Zee NED"]

[Site "?"]

[Date "2003.??.??"]

[Round "1"]

[White "Anand,V"]

[Black "Radjabov,T"]

[Result "1/2"]

[WhiteElo "2750"]

[BlackElo "2620"]

[ECO "C12"]

[PlyCount "55"]

[Annotator "Hathaway"]

1. e4 e6

{ I'm not terribly familiar with the style of Radjabov, so I don't know if this is his usual opening. }

2. d4 d5 3. Nc3 Nf6 (3...Bb4

{ The Winawer Variation is probably best, though not as easy to play. }) 4. Bg5

{ threatens e4-e5xf6 }

(4. e5

{ keeps pieces on the board and avoids ...dxe4 }) 4...Bb4 (4...Be7

{ is more common and aims to trade dark-square bishops to ease Black's cramp }) (4...dxe4

{ aims to avoid any cramp by bringing pieces into alignment for trading, though White does get at least one very good piece (Ne4 or Bg5) and an easier time castling queen-side, to stir up king-side threats } ...

```
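The tag pairs at the top of the annotated PGN above (`[Event "..."]`, `[White "..."]`, and so on) can be read with a short regular expression. This is a minimal sketch for inspecting records, not a full PGN parser: it ignores the movetext, comments, and variations, and the function name `parse_pgn_headers` is illustrative.

```python
import re

# One PGN tag pair per line: [Name "value"]
TAG_RE = re.compile(r'^\[(\w+)\s+"(.*)"\]\s*$')


def parse_pgn_headers(pgn_text):
    """Return the header tag pairs of a single PGN game as a dict."""
    headers = {}
    for line in pgn_text.splitlines():
        stripped = line.strip()
        match = TAG_RE.match(stripped)
        if match:
            headers[match.group(1)] = match.group(2)
        elif headers and stripped:
            # First non-empty, non-tag line after the tags: movetext begins.
            break
    return headers
```

For anything beyond header inspection, a dedicated PGN library is likely the safer choice, because comments in braces and nested variations are awkward to handle with regular expressions.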

- **ChessGPT dataset**:

```python

{"metadata": {"id": "65268522",

"title": "1923 USSR Chess Championship",

"url": "https://en.wikipedia.org/wiki/1923%20USSR%20Chess%20Championship"},

"text": "The 1923 USSR Chess Championship was the second edition of USSR Chess Championship. Held from 8 to 24 July in Petrograd. The tournament was won by Peter Romanovsky.",

"pipeline_key": "wiki-squad-train.jsonl.zst-10549"

}

```

- **ChessGPT Chat dataset**:

```python

{"conversations": [

{"author": "Human 0", "text": "Analyze the given sentence and identify its grammatical errors, if any.\nThese type of problems are difficult to solve.", "url": "gpt-4-alpaca", "score": 0},

{"author": "Human 1", "text": "The sentence is incorrect. The error is in the usage of \"these\". Since \"these\" is a plural determiner, it should be used with a plural noun. Similarly, \"type\" is singular noun and it is used with the singular determiner, \"this\".\n\nThe correct sentence should be \"This type of problem is difficult to solve.\" or \"These types of problems are difficult to solve.\"",

"url": "gpt-4-alpaca", "score": 0}

]

}

```
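A record of this shape can be flattened into prompt/response pairs for supervised fine-tuning. The sketch below assumes that turns strictly alternate, with even-indexed turns acting as prompts and odd-indexed turns as responses, as in the example above; real records may not always follow that pattern, and the function name is illustrative.

```python
def to_prompt_response_pairs(record):
    """Pair consecutive turns of a conversation record as (prompt, response).

    A trailing unpaired turn, if any, is dropped.
    """
    turns = record["conversations"]
    return [
        (prompt["text"], response["text"])
        for prompt, response in zip(turns[0::2], turns[1::2])
    ]
```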

### Data Splits

The data is unsplit.

## Dataset Creation

Check [ChessGPT: Bridging Policy Learning and Language Modeling](https://arxiv.org/abs/2306.09200) for more details.

### Licensing Information

**Annotated PGN**: [PGNlib](https://www.angelfire.com/games3/smartbridge/), [lichess](https://lichess.org/terms-of-service), [GameKnot](https://gameknot.com/pg/pol_eula.htm), [pathtomaster](https://www.pathtochessmastery.com/)

**Game Dataset**: [Lichess dataset](https://www.tldrlegal.com/license/creative-commons-cc0-1-0-universal), [CCRL](https://ccrl.chessdom.com/ccrl/), [pro-player](https://www.pgnmentor.com/files.html), [puzzle](https://www.tldrlegal.com/license/creative-commons-cc0-1-0-universal), Modeling data (Apache-2.0).

**Language Dataset**: [Wikipedia](https://huggingface.co/datasets/wikipedia#licensing-information), [Redpajama](https://github.com/togethercomputer/RedPajama-Data#license), [Oscar](https://huggingface.co/datasets/oscar-corpus/OSCAR-2301#licensing-information), [Pile](https://github.com/EleutherAI/the-pile/blob/master/LICENSE), [StackExchange](https://archive.org/details/stackexchange), [C4](https://huggingface.co/datasets/allenai/c4#license)

**Conversational Dataset**: [Chessable forums](https://www.chessable.com/terms), [Reddit](https://www.redditinc.com/policies/data-api-terms), [gpt-4](https://openai.com/policies/terms-of-use), [sharegpt](https://chrome.google.com/webstore/detail/sharegpt-share-your-chatg/daiacboceoaocpibfodeljbdfacokfjb), oasst1 (Apache-2.0), dolly-v2 (MIT)

### Citation Information

```bibtex

@article{feng2023chessgpt,

title={ChessGPT: Bridging Policy Learning and Language Modeling},

author={Feng, Xidong and Luo, Yicheng and Wang, Ziyan and Tang, Hongrui and Yang, Mengyue and Shao, Kun and Mguni, David and Du, Yali and Wang, Jun},

journal={arXiv preprint arXiv:2306.09200},

year={2023}

}

``` |

jeffreyhuber/state_of_the_union | 2023-06-29T16:51:23.000Z | [

"region:us"

] | jeffreyhuber | null | null | null | 0 | 15 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 39305

num_examples: 365

download_size: 25872

dataset_size: 39305

---

# Dataset Card for "state_of_the_union"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

lizhuang144/FACTUAL_Scene_Graph | 2023-07-10T14:55:17.000Z | [

"size_categories:10K<n<100K",

"language:en",

"license:openrail",

"region:us"

] | lizhuang144 | null | null | null | 1 | 15 | ---

license: openrail

language:

- en

pretty_name: FACTUAL

size_categories:

- 10K<n<100K

---

The scene graph parsing dataset described in `FACTUAL: A Benchmark for Faithful and Consistent Textual Scene Graph Parsing`.

For details, see https://github.com/zhuang-li/FACTUAL . |

EleutherAI/unscramble | 2023-07-05T19:14:02.000Z | [

"region:us"

] | EleutherAI | Unscramble is a small battery of 5 “character manipulation” tasks. Each task

involves giving the model a word distorted by some combination of scrambling,

addition, or deletion of characters, and asking it to recover the original word. | @inproceedings{NEURIPS2020_1457c0d6,

author = {Brown, Tom and Mann, Benjamin and Ryder, Nick and Subbiah, Melanie and Kaplan, Jared D and Dhariwal, Prafulla and Neelakantan, Arvind and Shyam, Pranav and Sastry, Girish and Askell, Amanda and Agarwal, Sandhini and Herbert-Voss, Ariel and Krueger, Gretchen and Henighan, Tom and Child, Rewon and Ramesh, Aditya and Ziegler, Daniel and Wu, Jeffrey and Winter, Clemens and Hesse, Chris and Chen, Mark and Sigler, Eric and Litwin, Mateusz and Gray, Scott and Chess, Benjamin and Clark, Jack and Berner, Christopher and McCandlish, Sam and Radford, Alec and Sutskever, Ilya and Amodei, Dario},

booktitle = {Advances in Neural Information Processing Systems},

editor = {H. Larochelle and M. Ranzato and R. Hadsell and M. F. Balcan and H. Lin},

pages = {1877--1901},

publisher = {Curran Associates, Inc.},

title = {Language Models are Few-Shot Learners},

url = {https://proceedings.neurips.cc/paper/2020/file/1457c0d6bfcb4967418bfb8ac142f64a-Paper.pdf},

volume = {33},

year = {2020}

} | null | 0 | 15 | Entry not found |

InfImagine/FakeImageDataset | 2023-08-13T09:00:14.000Z | [

"license:apache-2.0",

"arxiv:2304.13023",

"region:us"

] | InfImagine | null | null | null | 4 | 15 | ---

license: apache-2.0

---

# Fake Image Dataset

Fake Image Dataset is now open-sourced at [huggingface (InfImagine Organization)](https://huggingface.co/datasets/InfImagine/FakeImageDataset/tree/main/ImageData/train). It consists of two folders, *ImageData* and *MetaData*. *ImageData* contains the compressed packages of the Fake Image Dataset, while *MetaData* contains the labeling information indicating whether the corresponding images are real or fake.

Sentry-Image is now open-sourced at [Sentry-Image (github repository)](https://github.com/Inf-imagine/Sentry), which provides the SOTA fake image detection models from the [Sentry-Image Leaderboard](http://sentry.infimagine.com/), pretrained on the [Fake Image Dataset](https://huggingface.co/datasets/InfImagine/FakeImageDataset/tree/main/ImageData/train), to detect whether a given image is AI-generated or real.

## Why do we need [Fake Image Dataset](https://huggingface.co/datasets/InfImagine/FakeImageDataset/tree/main/ImageData/train) and [Sentry-Image](http://sentry.infimagine.com/)?

* 🧐 A recent [study](https://arxiv.org/abs/2304.13023) has shown that humans struggle significantly to distinguish real photos from AI-generated ones, with a misclassification rate of **38.7%**.

* 🤗 To help people confirm whether the images they see are real images or AI-generated images, we launched the Sentry-Image project.

* 💻 Sentry-Image is an open source project which provides the SOTA fake image detection models in [Sentry-Image Leaderboard](http://sentry.infimagine.com/) to detect whether the image provided is an AI-generated or real image.

# Dataset card for Fake Image Dataset

## Dataset Description

* **Homepage:** [Sentry-Image](http://sentry.infimagine.com/)

* **Paper:** [https://arxiv.org/pdf/2304.13023.pdf](https://arxiv.org/pdf/2304.13023.pdf)

* **Point of Contact:** [contact@infimagine.com](mailto:contact@infimagine.com)

## How to Download

You can use the following commands to download the dataset:

```shell

git lfs install

git clone https://huggingface.co/datasets/InfImagine/FakeImageDataset

```

You can use the following commands to extract the files in each subfolder (taking the *IF-CC95K* subfolder in ImageData/val/IF-CC95K as an example):

```shell

cat IF-CC95K.tar.gz.* > IF-CC95K.tar.gz

tar -xvf IF-CC95K.tar.gz

```
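The same two steps (concatenate the split parts, then unpack the archive) can be sketched in Python for environments without `cat` and `tar`. The function name `reassemble_and_extract` is illustrative, and the sketch assumes the part suffixes sort lexicographically in the right order, as the shell glob above also assumes.

```python
import glob
import os
import shutil
import tarfile


def reassemble_and_extract(archive_path, out_dir):
    """Join `<archive_path>.*` parts into `archive_path` and extract it to `out_dir`."""
    parts = sorted(glob.glob(glob.escape(archive_path) + ".*"))
    if not parts:
        raise FileNotFoundError(f"no parts found for {archive_path}")

    # Equivalent of: cat archive.tar.gz.* > archive.tar.gz
    with open(archive_path, "wb") as merged:
        for part in parts:
            with open(part, "rb") as chunk:
                shutil.copyfileobj(chunk, merged)

    # Equivalent of: tar -xvf archive.tar.gz (compression is auto-detected)
    os.makedirs(out_dir, exist_ok=True)
    with tarfile.open(archive_path, "r:*") as archive:
        archive.extractall(out_dir)
    return parts
```

For example, `reassemble_and_extract("IF-CC95K.tar.gz", "IF-CC95K")` would be run from inside the `ImageData/val/IF-CC95K` folder. As with any archive, extraction should only be done on files from a trusted source.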

## Dataset Summary

FakeImageDataset was created to serve as a large-scale dataset for pretraining fake image detection models.

It was built on StableDiffusion v1.5, IF and StyleGAN3.

## Supported Tasks and Leaderboards

FakeImageDataset is intended to be used primarily as a pretraining dataset for detecting fake images.

## Sub Dataset

### Training Dataset (Fake2M)

| Dataset | SD-V1.5Real-dpms-25 | IF-V1.0-dpms++-25 | StyleGAN3 |

| :----------- | :-----------: | :-----------: | :-----------: |

| Generator | Diffusion | Diffusion | GAN |

| Numbers | 1M | 1M | 87K |

| Resolution | 512 | 256 | (>=512) |

| Caption | CC3M-Train | CC3M-Train | - |

| ImageData Path | ImageData/train/SDv15R-CC1M | ImageData/train/IFv1-CC1M | ImageData/train/stylegan3-80K |

| MetaData Path | MetaData/train/SDv15R-CC1M.csv | MetaData/train/IF-CC1M.csv | MetaData/train/stylegan3-80K.csv |

### Validation Dataset (MPBench)

| Dataset | SDv15 | SDv21 | IF | Cogview2 | StyleGAN3 | Midjourneyv5 |

| :---------- | :-----------: | :-----------: | :-----------: | :-----------: | :-----------: | :-----------: |

| Generator | Diffusion | Diffusion | Diffusion | AR | GAN | - |

| Numbers | 30K | 15K | 95K | 22K | 60K | 5K |

| Resolution | 512 | 512 | 256 | 480 | (>=512) | (>=512) |

| Caption | CC15K-val | CC15K-val | CC15K-val | CC15K-val | - | - |

| ImageData Path | ImageData/val/SDv15-CC30K | ImageData/val/SDv21-CC15K | ImageData/val/IF-CC95K | ImageData/val/cogview2-22K | ImageData/val/stylegan3-60K | ImageData/val/Midjourneyv5-5K|

| MetaData Path | MetaData/val/SDv15-CC30K.csv| MetaData/val/SDv21-CC15K.csv | MetaData/val/IF-CC95K.csv | MetaData/val/cogview2-22K.csv | MetaData/val/stylegan3-60K.csv | MetaData/val/Midjourneyv5-5K.csv |

# News

* [2023/07] We open source the [Sentry-Image repository](https://github.com/Inf-imagine/Sentry) and [Sentry-Image Demo & Leaderboard](http://sentry.infimagine.com/).

* [2023/07] We open source the [Sentry-Image dataset](https://huggingface.co/datasets/InfImagine/FakeImageDataset).

Stay tuned for this project! Feel free to contact [contact@infimagine.com](mailto:contact@infimagine.com)! 😆

# License

This project is open-sourced under the [Apache-2.0 license](https://www.apache.org/licenses/LICENSE-2.0). These weights and datasets are fully open for academic research and can be used for commercial purposes with official written permission. If you find our open-source models and datasets useful for your business, we welcome your donation to support the development of the next-generation Sentry-Image model. Please contact [contact@infimagine.com](mailto:contact@infimagine.com) for commercial licensing and donation inquiries.

# Citation

The code and model in this repository are mostly developed for or derived from the paper below. Please cite it if you find the repository helpful.

```

@misc{sentry-image-leaderboard,

title = {Sentry-Image Leaderboard},

author = {Zeyu Lu and Di Huang and Chunli Zhang and Chengyue Wu and Xihui Liu and Lei Bai and Wanli Ouyang},

year = {2023},

publisher = {InfImagine, Shanghai AI Laboratory},

howpublished = "\url{https://github.com/Inf-imagine/Sentry}"

}

@misc{lu2023seeing,

title = {Seeing is not always believing: Benchmarking Human and Model Perception of AI-Generated Images},

author = {Zeyu Lu and Di Huang and Lei Bai and Jingjing Qu and Chengyue Wu and Xihui Liu and Wanli Ouyang},

year = {2023},

eprint = {2304.13023},

archivePrefix = {arXiv},

primaryClass = {cs.AI}

}

``` |

TrainingDataPro/body-measurements-dataset | 2023-09-14T16:57:44.000Z | [

"task_categories:image-classification",

"task_categories:image-to-image",

"language:en",

"license:cc-by-nc-nd-4.0",

"code",

"region:us"

] | TrainingDataPro | The dataset consists of a compilation of people's photos along with their

corresponding body measurements. It is designed to provide information and

insights into the physical appearances and body characteristics of individuals.

The dataset includes a diverse range of subjects representing different age

groups, genders, and ethnicities.

The photos are captured in a standardized manner, depicting individuals in

front and side positions.

The images aim to capture the subjects' physical appearance using appropriate

lighting and angles that showcase their body proportions accurately.

The dataset serves various purposes, including:

- research projects

- body measurement analysis

- fashion or apparel industry applications

- fitness and wellness studies

- anthropometric studies for ergonomic design in various fields | @InProceedings{huggingface:dataset,

title = {body-measurements-dataset},

author = {TrainingDataPro},

year = {2023}

} | null | 2 | 15 | ---

language:

- en

license: cc-by-nc-nd-4.0

task_categories:

- image-classification

- image-to-image

tags:

- code

dataset_info:

features:

- name: front_img

dtype: image

- name: selfie_img

dtype: image

- name: side_img

dtype: image

- name: arm_circumference_cm

dtype: string

- name: arm_length_cm

dtype: string

- name: back_build_cm

dtype: string

- name: calf_circumference_cm

dtype: string

- name: chest_circumference_cm

dtype: string

- name: crotch_height_cm

dtype: string

- name: front_build_cm

dtype: string

- name: hips_circumference_cm

dtype: string

- name: leg_length_cm

dtype: string

- name: neck_circumference_cm

dtype: string

- name: neck_pelvis_length_front_cm

dtype: string

- name: neck_waist_length_back_cm

dtype: string

- name: neck_waist_length_front_cm

dtype: string

- name: pelvis_circumference_cm

dtype: string

- name: shoulder_length_cm

dtype: string

- name: shoulder_width_cm

dtype: string

- name: thigh_circumference_cm

dtype: string

- name: under_chest_circumference_cm

dtype: string

- name: upper_arm_length_cm

dtype: string

- name: waist_circumference_cm

dtype: string

- name: height

dtype: string

- name: weight

dtype: string

- name: age

dtype: string

- name: gender

dtype: string

- name: race

dtype: string

- name: profession

dtype: string

- name: arm_circumference

dtype: image

- name: arm_length

dtype: image

- name: back_build

dtype: image

- name: calf_circumference

dtype: image

- name: chest_circumference

dtype: image

- name: crotch_height

dtype: image

- name: front_build

dtype: image

- name: hips_circumference

dtype: image

- name: leg_length

dtype: image

- name: neck_circumference

dtype: image

- name: neck_pelvis_length_front

dtype: image

- name: neck_waist_length_back

dtype: image

- name: neck_waist_length_front

dtype: image

- name: pelvis_circumference

dtype: image

- name: shoulder_length

dtype: image

- name: shoulder_width

dtype: image

- name: thigh_circumference

dtype: image

- name: under_chest_circumference

dtype: image

- name: upper_arm_length

dtype: image

- name: waist_circumference

dtype: image

splits:

- name: train

num_bytes: 86120

num_examples: 21

download_size: 68560913

dataset_size: 86120

---

# Body Measurements Dataset

The dataset consists of a compilation of people's photos along with their corresponding body measurements. It is designed to provide information and insights into the physical appearances and body characteristics of individuals.

The dataset includes a diverse range of subjects representing different **age groups, genders, and ethnicities**.

The photos are captured in a standardized manner, depicting individuals in **front** and **side** positions.

The images aim to capture the subjects' physical appearance using appropriate *lighting and angles* that showcase their body proportions accurately.

The dataset serves various purposes, including:

- research projects

- body measurement analysis

- fashion or apparel industry applications

- fitness and wellness studies

- anthropometric studies for ergonomic design in various fields


# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=body-measurements-dataset) to discuss your requirements, learn about the price and buy the dataset.

# Content

### Folders

- **files**: includes folders with photos and measurements of people

- **proofs**: contains subfolders that correspond to the original photos in the `files` folder and include additional photos of people taking measurements

- **.pdf** file: includes information about the photos in the `proofs` folder

### The "files" folder includes 3 images of a person and a JSON file with measurements:

- **selfie** - the person is looking at the camera; face, neck and shoulders are clearly seen,

- **front photo** - the person stands facing the camera, all body parts are clearly seen,

- **side photo** - the person is turned sideways to the camera, all body parts are clearly seen,

- **json file** - includes 22 measurements (*weight, height, hips circumference, leg length etc.*) and 4 additional characteristics (**age, gender, race, profession**) of the person depicted in the photos in the subfolder
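A minimal sketch of how one per-person JSON file could be parsed. The key names below mirror the feature names in this card's metadata (for example `waist_circumference_cm` and `height`), but the actual keys inside the JSON file, the sample values, and the helper name `parse_measurements` are assumptions made for illustration. Values are treated as strings because the metadata declares them with `dtype: string`.

```python
import json

# Hypothetical record: keys mirror the card's feature names,
# values are invented for illustration only.
SAMPLE_JSON = """
{
  "waist_circumference_cm": "78.5",
  "hips_circumference_cm": "96",
  "height": "172",
  "weight": "68",
  "age": "29",
  "gender": "female"
}
"""

# The 4 additional characteristics listed in the card.
CHARACTERISTIC_KEYS = {"age", "gender", "race", "profession"}


def parse_measurements(raw_json: str) -> dict:
    """Split a record into numeric measurements and descriptive characteristics."""
    record = json.loads(raw_json)
    measurements, characteristics = {}, {}
    for key, value in record.items():
        if key in CHARACTERISTIC_KEYS:
            characteristics[key] = value
            continue
        try:
            measurements[key] = float(value)
        except (TypeError, ValueError):
            # Keep unparseable values visible instead of silently dropping them.
            measurements[key] = None
    return {"measurements": measurements, "characteristics": characteristics}


parsed = parse_measurements(SAMPLE_JSON)
print(parsed["measurements"]["waist_circumference_cm"])
```

Keeping the characteristics separate from the numeric fields avoids accidentally casting values such as `gender` or `profession` to numbers.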

### File with the extension .csv

includes the following information for each media file:

- **selfie**: link to the selfie,

- **front**: link to the front photo,

- **side**: link to the side photo,

- **measurements**: link to the json file with measurements
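The columns listed above could be read as in the following sketch. The column names are taken from the list, but the sample paths, the exact header spelling in the real file, and the helper name `read_index` are assumptions; the example parses an in-memory string so it runs without the dataset.

```python
import csv
import io

# Hypothetical CSV content: column names follow the list above,
# the paths are invented for illustration.
SAMPLE_CSV = """selfie,front,side,measurements
files/0/selfie.jpg,files/0/front.jpg,files/0/side.jpg,files/0/measurements.json
files/1/selfie.jpg,files/1/front.jpg,files/1/side.jpg,files/1/measurements.json
"""

EXPECTED_COLUMNS = ("selfie", "front", "side", "measurements")


def read_index(csv_text: str) -> list:
    """Return one dict per person with the four expected link columns."""
    reader = csv.DictReader(io.StringIO(csv_text))
    header = reader.fieldnames or []
    missing = [col for col in EXPECTED_COLUMNS if col not in header]
    if missing:
        raise ValueError(f"missing columns: {missing}")
    return [{col: row[col] for col in EXPECTED_COLUMNS} for row in reader]


rows = read_index(SAMPLE_CSV)
print(len(rows), rows[0]["measurements"])
```

Checking the header up front makes a mismatch between the assumed and actual column names fail loudly rather than produce empty records.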

# Body measurements can be collected in accordance with your requirements.

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=body-measurements-dataset) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** |

Isotonic/OpenOrca-deduped | 2023-08-24T13:21:18.000Z | [

"task_categories:text-generation",

"task_categories:text2text-generation",

"task_categories:conversational",

"task_categories:text-classification",

"task_categories:token-classification",

"task_categories:table-question-answering",

"task_categories:zero-shot-classification",

"task_categories:question-... | Isotonic | null | null | null | 3 | 15 | ---

license: mit

dataset_info:

features:

- name: id

dtype: string

- name: system_prompt

dtype: string

- name: question

dtype: string

- name: response

dtype: string

- name: reward

dtype: float32

splits:

- name: train

num_bytes: 3274600633.90245

num_examples: 2409134

- name: test

num_bytes: 409325419.048775

num_examples: 301142

- name: validation

num_bytes: 409325419.048775

num_examples: 301142

download_size: 2268645581

dataset_size: 4093251472.0000005

task_categories: