id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 68.7k ⌀ | citation stringlengths 0 10.7k ⌀ | cardData null | likes int64 0 3.55k | downloads int64 0 10.1M | card stringlengths 0 1.01M |

|---|---|---|---|---|---|---|---|---|---|

quietwhisper/rvc-bd3-astarion | 2023-09-03T17:49:55.000Z | [

"region:us"

] | quietwhisper | null | null | null | 0 | 0 | Entry not found |

rejakarpann/PSIP | 2023-09-01T07:41:38.000Z | [

"region:us"

] | rejakarpann | null | null | null | 0 | 0 | Entry not found |

Falah/modern_architectural_style_prompts_SDXL | 2023-09-01T07:13:50.000Z | [

"region:us"

] | Falah | null | null | null | 0 | 0 | ---

dataset_info:

features:

- name: prompts

dtype: string

splits:

- name: train

num_bytes: 538167526

num_examples: 1000000

download_size: 63348311

dataset_size: 538167526

---

# Dataset Card for "modern_architectural_style_prompts_SDXL"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

WorkWithData/politicians | 2023-09-01T07:18:17.000Z | [

"region:us"

] | WorkWithData | null | null | null | 2 | 0 | This dataset can also be found here: https://www.workwithdata.com/dataset?entity=politicians

---

license: cc-by-4.0

---

|

KellyZhang01/010415 | 2023-09-01T07:49:49.000Z | [

"region:us"

] | KellyZhang01 | null | null | null | 0 | 0 | Entry not found |

p0aduo/dataset | 2023-09-01T07:44:01.000Z | [

"license:mit",

"region:us"

] | p0aduo | null | null | null | 0 | 0 | ---

license: mit

---

|

bene-ges/wikipedia_ru_titles | 2023-09-01T07:58:29.000Z | [

"license:cc-by-sa-4.0",

"region:us"

] | bene-ges | null | null | null | 0 | 0 | ---

license: cc-by-sa-4.0

---

|

SLOTjudi/Server1 | 2023-09-01T08:11:30.000Z | [

"region:us"

] | SLOTjudi | null | null | null | 0 | 0 | Entry not found |

mickume/alt_potterverse | 2023-09-01T08:15:54.000Z | [

"region:us"

] | mickume | null | null | null | 0 | 0 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 110526763

num_examples: 569723

download_size: 68604496

dataset_size: 110526763

---

# Dataset Card for "alt_potterverse"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

IRI2070/address-chunks | 2023-09-03T18:11:50.000Z | [

"region:us"

] | IRI2070 | \ | \ | null | 0 | 0 | Entry not found |

metalslug/Xbuble | 2023-09-01T09:03:27.000Z | [

"region:us"

] | metalslug | null | null | null | 0 | 0 | Entry not found |

rohanbalkondekar/HealthCare | 2023-09-01T08:30:48.000Z | [

"region:us"

] | rohanbalkondekar | null | null | null | 0 | 0 | Entry not found |

jxie/qg-tagging-normalized | 2023-09-01T08:38:06.000Z | [

"region:us"

] | jxie | null | null | null | 0 | 0 | ---

dataset_info:

features:

- name: inputs

sequence:

sequence: float64

- name: label

dtype: int64

splits:

- name: train

num_bytes: 6944726400

num_examples: 1600000

- name: val

num_bytes: 868957000

num_examples: 200000

- name: test

num_bytes: 868286700

num_examples: 200000

download_size: 3812296127

dataset_size: 8681970100

---

# Dataset Card for "qg-tagging-normalized"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Joshua8966/blog-writer_training-data-v1-9-2023 | 2023-09-01T09:53:59.000Z | [

"region:us"

] | Joshua8966 | null | null | null | 0 | 0 | Entry not found |

etaylor/trichomes_moment_lens_instance_segmentation | 2023-09-01T08:33:09.000Z | [

"region:us"

] | etaylor | null | null | null | 0 | 0 | ---

dataset_info:

features:

- name: pixel_values

dtype: image

- name: label

dtype: image

splits:

- name: train

num_bytes: 52395517.0

num_examples: 51

download_size: 4014954

dataset_size: 52395517.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "trichomes_moment_lens_instance_segmentation"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Rawar/heman-toy | 2023-09-01T08:42:46.000Z | [

"license:cc-by-nc-4.0",

"region:us"

] | Rawar | null | null | null | 0 | 0 | ---

license: cc-by-nc-4.0

dataset_info:

features:

- name: file_name

dtype: string

- name: path

dtype: string

- name: caption

dtype: string

- name: description

dtype: string

- name: image

dtype: image

splits:

- name: train

num_bytes: 215676.0

num_examples: 9

download_size: 217363

dataset_size: 215676.0

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

wolves18/zalc | 2023-09-01T08:43:31.000Z | [

"license:unknown",

"region:us"

] | wolves18 | null | null | null | 0 | 0 | ---

license: unknown

---

|

IsThatOnFire/old-adetailer | 2023-09-01T08:54:43.000Z | [

"region:us"

] | IsThatOnFire | null | null | null | 0 | 0 | # !After Detailer

!After Detailer is an extension for stable diffusion webui, similar to Detection Detailer, except it uses ultralytics instead of mmdet.

## Install

(from Mikubill/sd-webui-controlnet)

1. Open "Extensions" tab.

2. Open "Install from URL" tab in the tab.

3. Enter `https://github.com/Bing-su/adetailer.git` to "URL for extension's git repository".

4. Press "Install" button.

5. Wait 5 seconds, and you will see the message "Installed into stable-diffusion-webui\extensions\adetailer. Use Installed tab to restart".

6. Go to "Installed" tab, click "Check for updates", and then click "Apply and restart UI". (The next time you can also use this method to update extensions.)

7. Completely restart A1111 webui including your terminal. (If you do not know what a "terminal" is, you can reboot your computer: turn your computer off and turn it on again.)

You can now install it directly from the Extensions tab.

You **DON'T** need to download any model from huggingface.

## Options

| Model, Prompts | | |
| --------------------------------- | ------------------------------------- | ------------------------------------------------- |
| ADetailer model | Determine what to detect. | `None` = disable |
| ADetailer prompt, negative prompt | Prompts and negative prompts to apply | If left blank, it will use the same as the input. |

| Detection | | |
| ------------------------------------ | -------------------------------------------------------------------------------------------- | --- |
| Detection model confidence threshold | Only objects with a detection model confidence above this threshold are used for inpainting. | |
| Mask min/max ratio | Only use masks whose area is between those ratios for the area of the entire image. | |

If you want to exclude objects in the background, try setting the min ratio to around `0.01`.
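The two detection options above can be read as a simple filter over detected objects. The following is a minimal sketch of that idea, not the extension's actual code: the `Detection` class, the `filter_detections` function, and the default values are hypothetical names and numbers chosen for illustration, and treating the ratio bounds as inclusive is an assumption.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical container for one detected object (not an adetailer type)."""
    confidence: float  # detector score in [0, 1]
    mask_area: int     # number of pixels covered by the mask


def filter_detections(detections, image_area, confidence=0.3,
                      min_ratio=0.0, max_ratio=1.0):
    """Keep detections above the confidence threshold whose mask area,
    as a fraction of the whole image, lies within [min_ratio, max_ratio].
    The default values here are illustrative assumptions."""
    kept = []
    for det in detections:
        ratio = det.mask_area / image_area
        if det.confidence > confidence and min_ratio <= ratio <= max_ratio:
            kept.append(det)
    return kept


detections = [
    Detection(confidence=0.9, mask_area=40_000),  # large foreground face
    Detection(confidence=0.8, mask_area=500),     # tiny background face
    Detection(confidence=0.2, mask_area=30_000),  # low-confidence detection
]
# With min_ratio=0.01 on a 512x512 image, only the foreground face survives.
kept = filter_detections(detections, image_area=512 * 512,
                         confidence=0.3, min_ratio=0.01)
```

Raising `min_ratio` is what drops the small background object here; the confidence threshold handles the third detection independently.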

| Mask Preprocessing | | |
| ------------------------------- | ----------------------------------------------------------------------------------------------------------------------------------- | --------------------------------------------------------------------------------------- |
| Mask x, y offset | Moves the mask horizontally and vertically by | |
| Mask erosion (-) / dilation (+) | Enlarge or reduce the detected mask. | [opencv example](https://docs.opencv.org/4.7.0/db/df6/tutorial_erosion_dilatation.html) |
| Mask merge mode | `None`: Inpaint each mask<br/>`Merge`: Merge all masks and inpaint<br/>`Merge and Invert`: Merge all masks and Invert, then inpaint | |

Applied in this order: x, y offset → erosion/dilation → merge/invert.
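The processing order stated above can be sketched on small binary grids. This is an illustrative pure-Python version, not the extension's implementation (which is assumed to work on real image masks): all function names are hypothetical, the 3x3 square kernel is an assumption, and so is the sign convention that positive offsets move the mask right and down.

```python
def offset_mask(mask, dx, dy):
    """Shift a binary mask by dx columns and dy rows, filling vacated cells with 0.
    Assumption: positive dx moves right, positive dy moves down."""
    h, w = len(mask), len(mask[0])
    out = [[0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            ny, nx = y + dy, x + dx
            if mask[y][x] and 0 <= ny < h and 0 <= nx < w:
                out[ny][nx] = 1
    return out


def morph(mask, amount):
    """Dilate (amount > 0) or erode (amount < 0) with a 3x3 square, |amount| times."""
    h, w = len(mask), len(mask[0])
    dilate = amount > 0
    for _ in range(abs(amount)):
        out = [[0] * w for _ in range(h)]
        for y in range(h):
            for x in range(w):
                window = [
                    mask[ny][nx]
                    for ny in range(max(0, y - 1), min(h, y + 2))
                    for nx in range(max(0, x - 1), min(w, x + 2))
                ]
                out[y][x] = int(any(window)) if dilate else int(all(window))
        mask = out
    return mask


def merge_masks(masks, invert=False):
    """Union all masks into one; optionally invert the result."""
    h, w = len(masks[0]), len(masks[0][0])
    merged = [[int(any(m[y][x] for m in masks)) for x in range(w)]
              for y in range(h)]
    if invert:
        merged = [[1 - v for v in row] for row in merged]
    return merged


def preprocess(masks, dx=0, dy=0, amount=0, mode="None"):
    """Apply the documented order: offset, then erosion/dilation, then merge/invert."""
    masks = [offset_mask(m, dx, dy) for m in masks]
    masks = [morph(m, amount) for m in masks]
    if mode == "None":
        return masks  # each mask is inpainted separately
    return [merge_masks(masks, invert=(mode == "Merge and Invert"))]


# A single pixel at row 1, column 1 of a 5x5 grid.
example = [[0] * 5 for _ in range(5)]
example[1][1] = 1
result = preprocess([example], dx=1, dy=1, amount=1, mode="Merge and Invert")
```

In this example the pixel is moved to the centre, grown into a 3x3 block, and the merged mask is then inverted, so the final mask covers only the outer ring of the grid.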

#### Inpainting

Each option corresponds to an option of the same name on the inpaint tab. Refer to the inpaint tab for details on how to use each one.

## ControlNet Inpainting

You can use the ControlNet extension if you have ControlNet installed and ControlNet models.

Supports `inpaint, scribble, lineart, openpose, tile` controlnet models. Once you choose a model, the preprocessor is set automatically. It works separately from the model set by the Controlnet extension.

## Advanced Options

API request example: [wiki/API](https://github.com/Bing-su/adetailer/wiki/API)

`ui-config.json` entries: [wiki/ui-config.json](https://github.com/Bing-su/adetailer/wiki/ui-config.json)

`[SEP], [SKIP]` tokens: [wiki/Advanced](https://github.com/Bing-su/adetailer/wiki/Advanced)

## Media

- 🎥 [どこよりも詳しいAfter Detailer (adetailer)の使い方① 【Stable Diffusion】](https://youtu.be/sF3POwPUWCE)

- 🎥 [どこよりも詳しいAfter Detailer (adetailer)の使い方② 【Stable Diffusion】](https://youtu.be/urNISRdbIEg)

## Model

| Model | Target | mAP 50 | mAP 50-95 |
| --------------------- | --------------------- | ----------------------------- | ----------------------------- |
| face_yolov8n.pt | 2D / realistic face | 0.660 | 0.366 |
| face_yolov8s.pt | 2D / realistic face | 0.713 | 0.404 |
| hand_yolov8n.pt | 2D / realistic hand | 0.767 | 0.505 |
| person_yolov8n-seg.pt | 2D / realistic person | 0.782 (bbox)<br/>0.761 (mask) | 0.555 (bbox)<br/>0.460 (mask) |
| person_yolov8s-seg.pt | 2D / realistic person | 0.824 (bbox)<br/>0.809 (mask) | 0.605 (bbox)<br/>0.508 (mask) |
| mediapipe_face_full | realistic face | - | - |
| mediapipe_face_short | realistic face | - | - |
| mediapipe_face_mesh | realistic face | - | - |

The yolo models can be found on huggingface [Bingsu/adetailer](https://huggingface.co/Bingsu/adetailer).

### Additional Model

Put your [ultralytics](https://github.com/ultralytics/ultralytics) yolo model in `webui/models/adetailer`. The model name should end with `.pt` or `.pth`.

It must be a bbox detection or segmentation model, and all of its labels are used.
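The naming rule above amounts to a suffix check on the files in the model directory. A minimal sketch of such a check follows; `find_extra_models` is a hypothetical helper written for illustration, not a function of the extension, and it does not verify that a file is actually a bbox or segmentation model.

```python
from pathlib import Path

# Suffixes named in the text above.
MODEL_SUFFIXES = {".pt", ".pth"}


def find_extra_models(model_dir):
    """Return the sorted names of files in model_dir ending in .pt or .pth.
    Returns an empty list if the directory does not exist."""
    root = Path(model_dir)
    if not root.is_dir():
        return []
    return sorted(
        p.name for p in root.iterdir()
        if p.is_file() and p.suffix in MODEL_SUFFIXES
    )
```

For instance, `find_extra_models("webui/models/adetailer")` would list the candidate model files in the directory mentioned above.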

## Example


|

yzhuang/autotree_pmlb_letter_sgosdt_l256_d3_sd0 | 2023-09-01T08:56:37.000Z | [

"region:us"

] | yzhuang | null | null | null | 0 | 0 | ---

dataset_info:

features:

- name: id

dtype: int64

- name: input_x

sequence:

sequence: float32

- name: input_y

sequence:

sequence: float32

- name: rtg

sequence: float64

- name: status

sequence:

sequence: float32

- name: split_threshold

sequence:

sequence: float32

- name: split_dimension

sequence: int64

splits:

- name: train

num_bytes: 523118976

num_examples: 10000

- name: validation

num_bytes: 523120000

num_examples: 10000

download_size: 61880916

dataset_size: 1046238976

---

# Dataset Card for "autotree_pmlb_letter_sgosdt_l256_d3_sd0"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

deyuq/deyuq_repo | 2023-09-01T08:57:29.000Z | [

"region:us"

] | deyuq | null | null | null | 0 | 0 | Entry not found |

szelesaron/tf.csv | 2023-09-01T09:01:10.000Z | [

"license:openrail",

"region:us"

] | szelesaron | null | null | null | 0 | 0 | ---

license: openrail

---

|

mickume/dark_granger | 2023-09-01T09:10:09.000Z | [

"region:us"

] | mickume | null | null | null | 0 | 0 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 30973265

num_examples: 151837

download_size: 19178518

dataset_size: 30973265

---

# Dataset Card for "dark_granger"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

mickume/dark_granger_tk | 2023-09-01T09:11:01.000Z | [

"region:us"

] | mickume | null | null | null | 0 | 0 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: input_ids

sequence: int32

splits:

- name: train

num_bytes: 25514148.0

num_examples: 3113

- name: test

num_bytes: 2835816.0

num_examples: 346

download_size: 13339268

dataset_size: 28349964.0

---

# Dataset Card for "dark_granger_tk"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

qwertyaditya/rick-and-morty-text-to-image | 2023-09-01T09:12:09.000Z | [

"region:us"

] | qwertyaditya | null | null | null | 0 | 0 | Entry not found |

qwertyaditya/rick_and_morty_text_to_image | 2023-09-01T09:31:35.000Z | [

"region:us"

] | qwertyaditya | null | null | null | 0 | 0 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: image

dtype: image

splits:

- name: train

num_bytes: 3791351.0

num_examples: 40

download_size: 3456089

dataset_size: 3791351.0

---

# Dataset Card for "rick_and_morty_text_to_image"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Lancelot53/srbd1_segmented2 | 2023-09-01T09:25:39.000Z | [

"region:us"

] | Lancelot53 | null | null | null | 0 | 0 | ---

dataset_info:

features:

- name: html

dtype: string

- name: response

dtype: string

splits:

- name: train

num_bytes: 1452582

num_examples: 1508

download_size: 405675

dataset_size: 1452582

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "srbd1_segmented2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

hoijuwon/test | 2023-09-01T09:27:15.000Z | [

"license:mit",

"region:us"

] | hoijuwon | null | null | null | 0 | 0 | ---

license: mit

---

|

gothstaf/test22-llm | 2023-09-01T09:55:19.000Z | [

"license:openrail",

"region:us"

] | gothstaf | null | null | null | 0 | 0 | ---

license: openrail

---

|

marineflexultra/marineflex-ultra | 2023-09-01T10:01:25.000Z | [

"region:us"

] | marineflexultra | null | null | null | 0 | 0 | **Product name** - [MarineFlex Ultra](https://marineflex-ultra-reviews.jimdosite.com/)

**Category** - Dietary supplement, Flexibility, Mobility.

**Benefits** - Treats Joint Pain

**Dosage** - Take 2 pills every day

**Availability** - [Online](https://www.healthsupplement24x7.com/get-marineflex-ultra)

**Official Website** - [https://www.healthsupplement24x7.com/get-marineflex-ultra](https://www.healthsupplement24x7.com/get-marineflex-ultra)

With the help of [Marine Flex Ultra](https://pdfhost.io/v/oUE.LN6TI_MarineFlex_Ultra_New_Update_2023_Reduce_Joint_Pain_Boosting_Flexibility_Mobility_Faster), people can restore their youthful mobility and flexibility and resume participating in their favorite activities. The joint support formula has nutrients that relieve pain and soothe inflammation and swelling. It improves physical function and reduces joint discomfort. [MarineFlex Ultra](https://www.ivoox.com/marineflex-ultra-new-update-2023-reduce-joint-pain-audios-mp3_rf_115267571_1.html) supports a healthy inflammatory response and enhances the production of synovial fluid. The fluid nourishes and lubricates the cartilage and joints.


### _**[Visit MarineFlex Ultra Official Website Here](https://www.healthsupplement24x7.com/get-marineflex-ultra)**_

**What is MarineFlex Ultra?**

-----------------------------

[MarineFlex Ultra](https://healthsupplements24x7.blogspot.com/2023/08/marineflex-ultra.html) helps you move better and have stronger bones. It helps with stiffness, aching, and swelling of joints. The supplement works to make your joints healthy and last longer.

[MarineFlex Ultra](https://soundcloud.com/marine-flex-ultra/marineflex-ultra-new-update-2023-reduce-joint-pain-boosting-flexibility-mobility-faster) helps fix the main problem of joint decay that happens when you get older without causing side effects. It works well by making more joint jello, lowering inflammation, and feeding and oiling the joints.

**How Does It Work?**

---------------------

[MarineFlex Ultra](https://www.townscript.com/e/marineflex-ultra-323231) is made from a unique blend of necessary ingredients and systemic proteolytic proteins. Your body develops distinct characters made up of proteins in the location of pain and white blood leukocytes that treat the damage. Nonetheless, after the healing process is completed, the sticky tissues obstruct the flow of red blood cells, which deliver oxygen to all bodily areas.

**Benefits of Marine Flex Ultra**

---------------------------------

### Reduces Joint Pain and Discomfort

One of the primary benefits of Marine Flex Ultra is reducing joint pain and discomfort. The ingredients work together to target the underlying causes of pain, providing relief and improving overall joint health.

### Supports Joint Health and Mobility

[Marine Flex Ultra](https://groups.google.com/g/marineflex-ultra-pills/c/u9UnGYu1Zr8) helps support joint health by promoting synovial fluid production. This fluid cushions and lubricates the joints leading to improved mobility and decreased joint stiffness.

### Promotes a Healthy Inflammatory Response

Chronic inflammation causes joint pain and discomfort. Marine Flex Ultra contains ingredients that support a healthy inflammatory response, helping to alleviate issues caused by inflammation.

### Enhances Bone Marrow Function

Bone marrow plays a crucial role in joint health, as it produces cells that contribute to the maintenance and repair of joint tissues. Marine Flex Ultra supports bone marrow function, promoting overall joint health.

### **Increase circulation**

Marine Flex Ultra improves blood flow and the delivery of nutrients and oxygen to the joints and other parts of the body.

### Strengthen Bones

The ingredients in Marine Flex Ultra support bone and muscle strength by preventing fractures.


### _**[Order Your Supply Of Marine Flex Ultra Now And Start Enjoying The Benefits!](https://www.healthsupplement24x7.com/get-marineflex-ultra)**_

**Ingredients of MarineFlex Ultra!**

------------------------------------

Here are all of the active ingredients in MarineFlex Ultra and how they work, according to Dr. Kahn and the official MarineFlex Ultra website:

**Green Lipped Mussel**:– Green lipped mussel, also known as Perna canaliculus, is a rare marine organism that only grows in the clean and pristine waters off the coast of New Zealand. This mussel gives Marine Flex Ultra its name, because the primary active ingredient comes from the ocean. Green lipped mussels are rich with omega-3 fatty acids, including proven inflammation fighters like DHA and EPA.

**Boswellia Serrata**:– Boswellia serrata extract comes from a tree native to India. It has been used in traditional Indian medicine (Ayurveda) for centuries as a general health and wellness aid. Today, we know boswellia serrata is rich with phytochemicals (plant-based antioxidants) and other natural ingredients that can decrease knee pain, boost mobility, and help with swelling and inflammation.

**Ashwagandha**:– Today, we know ashwagandha works because it’s rich with a substance called Withaferin A (WFA). This substance appears to help with chronic joint pain by suppressing inflammatory cytokines. In fact, WFA could help suppress inflammation throughout the body, leading to positive effects on cognition, physical energy, mobility, and more. Many people with joint pain have chronic inflammation, and ashwagandha could help.

**Hyaluronic Acid**:- Hyaluronic acid is known for carrying many times its weight in water, increasing hydration throughout the body, including in the area between your joints. Hyaluronic acid supports the synovial fluid and lubrication between your joints, and it also stimulates new cell formation to help repair cartilage, so it supports joint pain relief in multiple ways.

**MSM**:– MSM helps your body form new cartilage and it decreases joint inflammation. Dr. Kahn cites one study where 50 patients with knee osteoarthritis took MSM or a placebo pill. After 12 weeks, MSM significantly decreased pain and physical function impairment compared to the placebo. Today, many people with joint pain take MSM daily to help with the condition.

**Collagen**:– Collagen is the most abundant connective protein in the body, and many people take collagen daily for anti-aging, wrinkle defense, joint pain, and muscle recovery. It helps to repair cartilage and other joint cells. The reason is simple: most cartilage is made of collagen, and collagen plays a crucial role in holding your body together.

**Chondroitin Sulfate**:– Like glucosamine sulfate, chondroitin sulfate is well-known for its effects on joint pain relief and bone repair. As proof, Dr. Kahn cites one study involving 162 patients with osteoarthritis in their hand. Patients took chondroitin sulfate or a placebo pill, and those in the chondroitin sulfate group had significantly less hand pain than those taking a placebo.

15+ Other Herbs, Plants Ingredients include bromelain, calendula, burdock, cetyl myristoleate, yucca, feverfew, shark cartilage, horsetail, white willow bark, gentian root, cinnamon, shatavari, N-acetyl D-glucosamine, grape root extract, and Rehmannia root.


### _**[\[click Here To Order\] Unlock The Benefits Of Marineflex Ultra Natural Ingredients.](https://www.healthsupplement24x7.com/get-marineflex-ultra)**_

**What Is The dosage for MarineFlex Ultra?**

--------------------------------------------

MarineFlex Ultra is a supplement for those who are looking for natural ways to relieve pain and discomfort. In every bottle, you get a month’s supply, that is, 90 capsules per container.

The serving size or recommended dosage for this supplement is 3 capsules daily. It is important to consult a doctor before using the supplement. The formula cannot be used along with blood thinning supplements.

**Marine Flex Ultra Side Effects**

----------------------------------

According to several users, taking Marine Flex Ultra is safe, but an overdose may be harmful. Taking the supplement on an empty stomach may cause indigestion, such as gas. As with any other supplement, you must give your body time to adjust to it. Apart from these, Marine Flex Ultra has an excellent track record and clean safety history.

The only way that consumers can be sure to purchase [MarineFlex Ultra](https://events.humanitix.com/marineflex-ultra-new-update-2023-reduce-joint-pain-boosting-flexibility-mobility-faster) is to go through the official website. Consumers have their choice of various quantities, and they can even opt-in to a subscription.

**Pricing Of MarineFlex Ultra**

-------------------------------

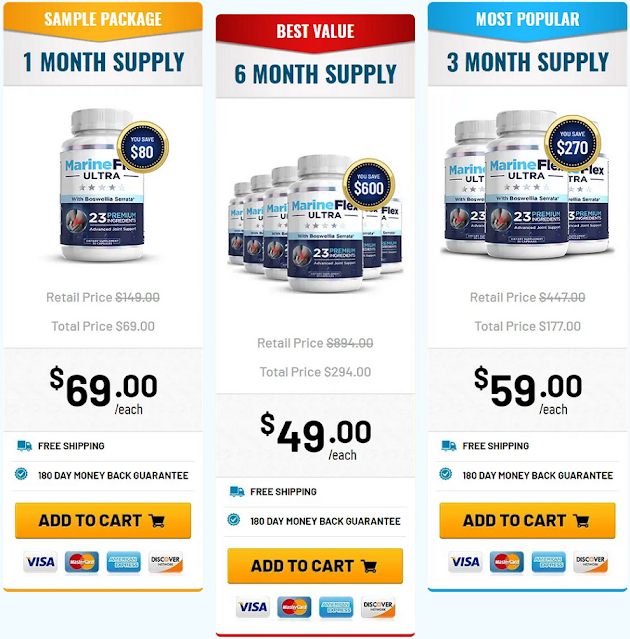

The Marine Flex Ultra supplement is very cheap because the manufacturers wanted to make it a viable pain-relieving option. There are currently three packages being offered on the Marine Flex Ultra website that we have listed here.

The packages available are:

_**1 Month Supply - $69.00/each + free shipping**_

_**3 Month Supply - $59.00/each + free shipping**_

_**6 Month supply - $49.00/each + free shipping**_


### _**[\[SPECIAL DISCOUNT\] Click Here To Visit MarineFlex Ultra Official Website](https://www.healthsupplement24x7.com/get-marineflex-ultra)**_

**MarineFlex Ultra™ 180-Day Money Back Guarantee!**

---------------------------------------------------

The [MarineFlex Ultra](https://marineflexultra.clubeo.com/page/marineflex-ultra-new-update-2023-reduce-joint-pain-boosting-flexibility-mobility-faster.html)™ is backed by a 100% money back guarantee for 180 full days from your original purchase.

If you're not totally and completely satisfied with our product or your results within the first 180 days from your purchase simply let us know at [MarineFlex Ultra](https://marineflexultra.clubeo.com/calendar/2023/09/01/marineflex-ultra-new-update-2023-reduce-joint-pain-boosting-flexibility-mobility-faster)™ and we’ll give you a refund within 48 hours of the product being returned. That’s right, simply return the [MarineFlex Ultra](https://marineflexultrareviews.hashnode.dev/marineflex-ultra-new-update-2023-reduce-joint-pain-boosting-flexibility-mobility-faster), even empty bottles, anytime within 180 days of your purchase and you’ll receive a refund, no questions asked!

**Where To Buy?**

-----------------

When it comes to purchasing [Marine Flex Ultra](https://www.scoop.it/topic/marineflex-ultra-reviews), you have a few options. You can buy the supplement directly from their website, or you can purchase it from various retailers.

It is important to remember that Marine Flex Ultra is not FDA approved, and as such you should always take the recommended dose. If you are taking other medications, you should talk to your doctor before taking Marine Flex Ultra.

**Final Verdict**

-----------------

[MarineFlex Ultra](https://marineflexultra.clubeo.com) gives users the ability to reduce inflammation. The formula is easy to keep up with daily, though users only need three capsules to create the effect. Users can choose between a one-time purchase and a subscription, but both are covered by a 180-day money-back guarantee. With so many natural ingredients, users can simply enjoy the benefits without worrying about side effects.


### [_**Click Here To Visit The Official MarineFlex Ultra Website And Learn More About!**_](https://www.healthsupplement24x7.com/get-marineflex-ultra)

|

FinchResearch/Lyrics | 2023-09-01T10:04:35.000Z | [

"region:us"

] | FinchResearch | null | null | null | 0 | 0 | Entry not found |

TokenBender/telugu_high_quality_convo | 2023-09-01T12:11:53.000Z | [

"license:apache-2.0",

"region:us"

] | TokenBender | null | null | null | 1 | 0 | ---

license: apache-2.0

---

|

alphageek/arxiv-metadata | 2023-09-01T10:39:20.000Z | [

"license:cc0-1.0",

"region:us"

] | alphageek | null | null | null | 0 | 0 | ---

license: cc0-1.0

---

|

fernandoperes/first_ds | 2023-09-01T13:09:43.000Z | [

"license:openrail",

"region:us"

] | fernandoperes | null | null | null | 0 | 0 | ---

license: openrail

dataset_info:

features:

- name: sentence1

dtype: string

- name: sentence2

dtype: string

- name: label

dtype:

class_label:

names:

'0': not_equivalent

'1': equivalent

- name: idx

dtype: int32

splits:

- name: train

num_bytes: 943843

num_examples: 3668

download_size: 649281

dataset_size: 943843

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

|

zorilladev/ner_train_judgement_temp | 2023-09-01T12:09:25.000Z | [

"language:en",

"region:us"

] | zorilladev | null | null | null | 0 | 0 | ---

language:

- en

--- |

gothstaf/questillma2 | 2023-09-01T10:48:40.000Z | [

"license:openrail",

"region:us"

] | gothstaf | null | null | null | 0 | 0 | ---

license: openrail

---

|

timelesbeautyserum/timelesbeautyserumbuy | 2023-09-01T10:52:18.000Z | [

"region:us"

] | timelesbeautyserum | null | null | null | 0 | 0 | [**Timeless Beauty Age-Rewinding Serum**](https://sites.google.com/view/timelessness-the-power-of-beau/home) is a topical remedy for consumers who want to restore the naturally youthful appearance of their skin. The remedy uses an assortment of helpful ingredients that can all improve smoothness while erasing fine lines and wrinkles.

**Official Website:** **[https://www.glitco.com/get-timeless-beauty-serum](https://www.glitco.com/get-timeless-beauty-serum)**


**What is the [Timeless Beauty Age-Rewinding Serum](https://groups.google.com/g/timeless-beauty-age-rewinding-serum/c/MCfHn_6LYwE)?**

Having a youthful attitude is one of the most important steps in maintaining a recognizable zest for life as the body ages. However, no amount of positive attitude can prevent wrinkles from forming on the face, which undercuts the idea that someone can “look as young as they feel.” For many consumers, maintaining the appearance of a youthful body is not as easy as maintaining a youthful spirit, and the skincare industry is a prime example.

Companies seem to make millions of dollars every year with invasive Botox treatments and laser technology to get rid of wrinkles, but the solution doesn’t have to be so intense. Instead, there’s a way to alleviate the stress, UV radiation, and environmental pollutants that cause early aging in the first place. Plus, it comes without another trip to the dermatologist. That solution is the [**Timeless Beauty Age-Rewinding Serum**](https://colab.research.google.com/drive/1FfaFVMFjoC3uLG9LiDRy71BfSaE7o5PU?usp=sharing).

[**Click Here to Get Timeless Beauty Age-Rewinding Serum At Discounted Price!!!**](https://www.glitco.com/get-timeless-beauty-serum)

**Ingredients of the Timeless Beauty Age-Rewinding Serum**

The only way to craft a helpful serum like Timeless Beauty is to use the right assortment of ingredients. These ingredients include:

● Topical vitamin C

● Gotu kola extract

● Horsetail plant extract

● Geranium extract

● Dandelion extract

● L-arginine

● Kosher veggie glycerin

● Aloe

● MSM

● Witch hazel

● Vitamin E

[**Timeless Beauty Age-Rewinding Is On Sale Now For A Limited Time!**](https://www.glitco.com/get-timeless-beauty-serum)

Each of these ingredients is assigned a “role” in helping with the user’s complexion. Read below to learn about their role and the benefits of that role.

* **Topical Vitamin C**

* Topical vitamin C is cast in the role of “The Hero.” It has been used in skincare remedies for decades, helping to heal sun damage. It also protects the skin from free radicals and stimulates collagen production and repair.

* **Gotu Kola Extract**

* Gotu kola extract comes in to eliminate wrinkles, though some evidence shows that it can prevent cellulite as well. This extract reduces free radicals, which pose a threat to the health and clarity of skin.

* **Horsetail Plant Extract**

* Horsetail plant extract is here to tighten the skin, reducing the sagging that can come with age. It also shrinks pores so the skin looks smoother and firmer. Plus, this ingredient can boost collagen.

* **Geranium Extract**

* Geranium extract is affectionately referred to as “The Lamp” because of the radiance it brings to users' skin. It also reduces acne and promotes healing of irritations.

* **Dandelion Extract**

* Dandelion extract helps consumers to eliminate toxins from their complexion, which would otherwise lead to wrinkles, blemishes, and discoloration. It also helps the pores to breathe to eliminate buildup within them.

* **L-Arginine**

* L-arginine helps consumers by improving hydration in the complexion, which makes it look smoother, plumper, and tauter. This ingredient is crucial for anyone with sagging skin, firming it to make it appear smoother.

* **Kosher Veggie Glycerin**

* Kosher veggie glycerin helps to improve the oxygen that the skin gets. Oxygen can purify the surface of the skin, erasing the damage that toxic buildup has caused. Since all of the other ingredients also clear and clean pores, this ingredient is crucial to its effectiveness.

* **Aloe**

* Aloe creates a balmy texture in the skin as though the user was out in the sun on a warm summer night. This dewy appearance comes with support for irritation, inflammation, and healing wounds.

* **MSM**

* MSM is here to erase acne, which only makes the appearance of wrinkles worse. It reduces skin sensitivity, ensuring that the Timeless Beauty Age-Rewinding Serum can be used by anyone without an adverse reaction.

* **Witch Hazel**

* Witch hazel has been used for centuries to help with blotchy and red skin. It takes away the irritation with its antibacterial benefits to showcase the user’s natural beauty.

* **Vitamin E**

* To round out this formula, there’s no way to support skin without vitamin E. As an anti-inflammatory, it reduces irritation and calms the skin.

[**Timeless Beauty Age-Rewinding Is On Sale Now For A Limited Time!**](https://www.glitco.com/get-timeless-beauty-serum)

**Purchasing the [Timeless Beauty Age-Rewinding Serum](https://lookerstudio.google.com/reporting/ced1169a-9bf0-4f71-a616-508404c15cdf)**

Instead of visiting a high-end cosmetics and skincare store, consumers can go through the official website. The website offers three different packages – Silver, Gold, and Platinum – to accommodate the needs of customers. Each package has a different number of bottles, allowing users to either try the formula for a short time or stock up for longer.

**The packages available are:**

● Gold (1 bottle) for $69

● Silver (2 bottles) for $99

● Platinum (6 bottles) for $179


While users will have to cover a $9.95 shipping fee for the Gold package, the other two options come with complimentary shipping as an incentive for users to stock up. Plus, the purchase is covered by a 90-day money-back guarantee.

**Frequently Asked Questions About the Timeless Beauty Age-Rewinding Serum**

* **Will users need to apply moisturizer after they use the Timeless Beauty Age-Rewinding Serum?**

* No. This formula can be paired with moisturizer, but it won’t be necessary. The serum is powerful enough to do what is advertised without any other product, and users won’t experience stickiness or tightness like they ordinarily might with other serums. Though other brands also require the application of moisturizer after use, this requirement is only due to the lack of strength and hydration in other serums.

* **Should the Timeless Beauty Age-Rewinding Serum be used daily to get results?**

* Yes. The best way to get the performance that consumers expect is to use it every day, but the creators explain that it can also be used on an as-needed basis. Skipping a day of use won’t cause users to lose their progress, but it will leave their skin exposed to UV rays, pollutants, and other substances that could dry it out. Catching up on the progress can be difficult, but users won’t have to completely start again.

* **Who does the Timeless Beauty Age-Rewinding Serum benefit the most?**

* Any adult can benefit from this serum, though many consumers start to use it in their late 20s and early 30s to help with any wrinkles before they settle in.

* **How should the Timeless Beauty Age-Rewinding Serum be applied?**

* This serum should only be applied to clean and dry skin. While a cleanser is not offered with the purchase, consumers can choose any gentle cleanser they prefer to set the tone for their daily skincare.

* **Will the Timeless Beauty Age-Rewinding Serum work for any skin condition?**

* The idea behind this formula is that users can easily deal with wrinkles, eczema, rosacea, and acne during adulthood. The improvement of collagen can help with a lot of skin concerns, but the Timeless Beauty serum can’t fix everything. That’s why the creators offer a money-back guarantee.

[**Click Here to Get Timeless Beauty Age-Rewinding Serum At Discounted Price!!!**](https://www.glitco.com/get-timeless-beauty-serum)

**Disclaimer:**

Please understand that any advice or guidelines revealed here are not even remotely substitutes for sound medical or financial advice from a licensed healthcare provider or certified financial advisor. Make sure to consult with a professional physician or financial consultant before making any purchasing decision if you use medications or have concerns following the review details shared above. Individual results may vary and are not guaranteed as the statements regarding these products have not been evaluated by the Food and Drug Administration or Health Canada. The efficacy of these products has not been confirmed by FDA, or Health Canada approved research. These products are not intended to diagnose, treat, cure or prevent any disease and do not provide any kind of get-rich money scheme. Reviewer is not responsible for pricing inaccuracies. Check product sales page for final prices. |

S4ur0n/MDE | 2023-09-01T11:25:28.000Z | [

"region:us"

] | S4ur0n | null | null | null | 0 | 0 | Entry not found |

KetoxboomDEATCH/KetoxboomGermanyDEAustriaATandSwitzerlandCH | 2023-09-01T11:32:59.000Z | [

"region:us"

] | KetoxboomDEATCH | null | null | null | 0 | 0 | <h2><span style="background-color: #ffff00;"><strong>Our official Facebook pages ⇒</strong></span></h2>

<p><a href="https://www.facebook.com/KetoxboomDE/"><strong>https://www.facebook.com/KetoxboomDE/</strong></a></p>

<p><a href="https://www.facebook.com/DEATCHKetoxboom/"><strong>https://www.facebook.com/DEATCHKetoxboom/</strong></a></p>

<p><a href="https://www.facebook.com/Ketoxboom.DE.AT.CH/"><strong>https://www.facebook.com/Ketoxboom.DE.AT.CH/</strong></a></p>

<p><a href="https://www.facebook.com/KetoxboomINGermany/"><strong>https://www.facebook.com/KetoxboomINGermany/</strong></a></p>

<p><a href="https://www.facebook.com/KetoxboomDeutschland/"><strong>https://www.facebook.com/KetoxboomDeutschland/</strong></a></p>

<p><a href="https://www.facebook.com/KetoxboomGermanyDeutschland/"><strong>https://www.facebook.com/KetoxboomGermanyDeutschland/</strong></a></p>

<p> </p>

<p> </p>

<h3><span style="font-weight: 400;">➥✅ Product name: </span><span style="color: #ff6600;"><a style="color: #ff6600;" href="https://www.facebook.com/KetoxboomDE/"><strong>[Ketoxboom]</strong></a></span></h3>

<h3><span style="font-weight: 400;">➥✅ Benefits: </span><span style="color: #ff6600;"><a style="color: #ff6600;" href="https://www.facebook.com/DEATCHKetoxboom/"><strong>Ketoxboom effectively helps with weight loss.</strong></a></span></h3>

<h3><span style="font-weight: 400;">➥✅ Category: </span><span style="color: #ff6600;"><a style="color: #ff6600;" href="https://www.facebook.com/Ketoxboom.DE.AT.CH/"><strong>Weight-loss dietary supplement</strong></a></span></h3>

<h3><span style="font-weight: 400;">➥✅ Rating: </span><span style="color: #ff6600;"><a style="color: #ff6600;" href="https://www.facebook.com/KetoxboomINGermany/"><strong>★★★★☆ (4.5/5.0)</strong></a></span></h3>

<h3><span style="font-weight: 400;">➥✅ Side effects: </span><span style="color: #ff6600;"><a style="color: #ff6600;" href="https://www.facebook.com/KetoxboomDeutschland/"><strong>No major side effects</strong></a></span></h3>

<h3><span style="font-weight: 400;">➥✅ Official website: </span><span style="color: #008000;"><a style="color: #008000;" href="https://healthgrowth.shop/ketoxboom-de"><strong>https//ketoxboom.com/</strong></a></span></h3>

<h3><span style="font-weight: 400;">➥✅ Availability: </span><span style="color: #008000;"><a style="color: #008000;" href="https://healthgrowth.shop/ketoxboom-de"><strong>In stock; voted the No. 1 product in DE / AT / CH</strong></a></span></h3>

<p> </p>

<h1><span style="color: #993300;"><a style="color: #993300;" href="https://healthgrowth.shop/ketoxboom-de"><strong>✅HUGE DISCOUNT! HURRY! ORDER NOW!✅</strong></a></span></h1>


<p> </p>

<p><a href="https://www.facebook.com/KetoxboomDE/"><strong>Ketoxboom Germany</strong></a><span style="font-weight: 400;"> is the most effective fat-loss program on the market, ensuring the right reactions in the body. A person's health improves through a proper ketosis process. Ketosis requires cutting carbohydrates, which helps switch the body's energy source from the alternative one to the ideal one. With effective reactions in the body, you can achieve the best results. The natural ketosis process can take a long time before actual fat loss sets in.</span></p>

<p> </p>

<p><span style="font-weight: 400;">Most people attempt the traditional ketosis process but cannot follow it through to the end. Adding these keto gummies can therefore help a person eliminate, deep within the body, all the ailments that cause obesity. </span><a href="https://www.facebook.com/DEATCHKetoxboom/"><strong>With healthy mental factors,</strong></a><span style="font-weight: 400;"> you can achieve a slim and healthy body. You do not have to give up your favorite food and can still reach ketosis faster. With a better appetite and better nutrition, you can achieve the best transformation. You do not have to do intense workouts and can still achieve a perfectly transformed body.</span></p>

<p> </p>

<p><a href="https://healthgrowth.shop/ketoxboom-de"><img src="https://i.ibb.co/zxXNn5c/Ketoxboom-Germany.png" alt="Ketoxboom-Germany" border="0" /></a></p>

<p> </p>

<p> </p>

<h1><span style="color: #ff0000;"><a style="color: #ff0000;" href="https://healthgrowth.shop/ketoxboom-de"><strong>**[LATEST OFFERS 2023] {BUY #Ketoxboom} at the *Lowest Price*, limited-time offer, HURRY!!**</strong></a></span></h1>

<p> </p>

<h2><span style="color: #000000; background-color: #ffff00;"><a style="color: #000000; background-color: #ffff00;" href="https://www.facebook.com/Ketoxboom.DE.AT.CH/"><strong>What are the key features of Ketoxboom:</strong></a></span></h2>

<p> </p>

<ul>

<li style="font-weight: 400;"><span style="font-weight: 400;">Increases ketosis in the body</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Improves energy levels</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Works well with improved overall health</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Burns fat instead of carbohydrates</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Reduces sleep-deprivation problems</span></li>

</ul>

<p> </p>

<h2><span style="color: #000000; background-color: #ffff00;"><a style="color: #000000; background-color: #ffff00;" href="https://www.facebook.com/Ketoxboom.DE.AT.CH/"><strong>What is Ketoxboom?</strong></a></span></h2>

<p> </p>

<p><a href="https://www.facebook.com/KetoxboomINGermany/"><strong>Ketoxboom</strong></a><span style="font-weight: 400;"> is the revolutionary weight-loss formula that supports the best health without more fat accumulating in the body. It removes all excess fat from the body with improved function and no adverse reactions. This regimen contains secret blends that were carefully researched before being added. The body experiences a boost in overall health through the best reshaping factors. With the best slimming factors, you can get a lean, slim body. “</span><span style="color: #ff6600;"><a style="color: #ff6600;" href="https://healthgrowth.shop/ketoxboom-de"><strong>BUY FROM (OFFICIAL WEBSITE)</strong></a></span><span style="font-weight: 400;">”</span></p>

<p> </p>

<p><a href="https://www.facebook.com/KetoxboomDeutschland/"><strong>A person's health</strong></a><span style="font-weight: 400;"> improves with the faster fat-burning effect of these keto gummies. You can make the transition from a bulky body to a slim one. Health improves along with better work efficiency. You can achieve a transformed body with effective overall health. This formula affects mental health and improves brain function. It delivers ideal results for the body and makes the person physically active and slim, with the best outlook. These keto gummies work well for all body types and reduce fat deposition.</span></p>

<p> </p>

<h2><span style="color: #000000; background-color: #ffff00;"><a style="color: #000000; background-color: #ffff00;" href="https://www.facebook.com/Ketoxboom.DE.AT.CH/"><strong>Ingredient blends in Ketoxboom:</strong></a></span></h2>

<p> </p>

<p><span style="font-weight: 400;">The compositions blended into </span><a href="https://www.facebook.com/KetoxboomGermanyDeutschland/"><strong>Ketoxboom</strong></a><span style="font-weight: 400;"> are natural and derived from nature. With the organic and healthy ingredients in the regimen, you can achieve the best results. The acetic acid in these keto gummies works well to stimulate production of ketones in the body, which are essential for breaking down extra fat. Ketoxboom acts as a stimulant to speed up the</span><a href="https://www.facebook.com/KetoxboomINGermany/"> <strong>ketosis process</strong></a><span style="font-weight: 400;">, which can help with fat loss. The user no longer experiences inflammatory problems in the body. Learn more about the ingredients and the effective results they deliver:</span></p>

<p> </p>

<p><a href="https://www.facebook.com/KetoxboomDeutschland/"><strong>Beta-Hydroxybutyrate</strong></a><span style="font-weight: 400;">: BHB ketones are effective boosters of ketone levels, which break down fat cells and lead to weight loss. It helps the user achieve an attractive, slim physique with better energy levels. A person can attain better cognitive health along with improved performance of the body and all its functions. It also helps improve gut health without further inflammation problems in the body.</span></p>

<p> </p>

<p><a href="https://healthgrowth.shop/ketoxboom-de"><img src="https://i.ibb.co/82KQKTz/Ketoxboom-DE-AT-CH.jpg" alt="Ketoxboom-DE-AT-CH" border="0" /></a></p>

<p> </p>

<p> </p>

<h1><span style="color: #ff0000;"><a style="color: #ff0000;" href="https://healthgrowth.shop/ketoxboom-de"><strong>(EXCLUSIVE OFFER) Click here: “Ketoxboom Germany, Austria, Switzerland” Official Website!</strong></a></span></h1>

<p> </p>

<p><a href="https://www.facebook.com/KetoxboomGermanyDeutschland/"><strong>Green tea</strong></a><span style="font-weight: 400;">: The green tea extracts in these </span><a href="https://www.facebook.com/KetoxboomINGermany/"><strong>keto gummies</strong></a><span style="font-weight: 400;"> work well to reduce body weight through a better metabolic ketosis process. Beyond weight loss, you can gain further benefits. You can achieve a better digestive system and increased immunity, which effectively helps prevent illness.</span></p>

<p> </p>

<p><a href="https://www.facebook.com/KetoxboomDE/"><strong>Garcinia Cambogia</strong></a><span style="font-weight: 400;">: this herb offers better management of body fat and effective reactions. A person's health improves when no more fat is deposited in the body. It suppresses appetite and raises the person's energy level. The body gets better results in burning all stored fat and gains the best health with ideal slimming properties.</span></p>

<p> </p>

<p><a href="https://www.facebook.com/DEATCHKetoxboom/"><strong>Apple cider vinegar</strong></a><span style="font-weight: 400;">: this natural weight-loss option is very popular today for reducing stored fat and all factors harmful to health. It works well to raise the metabolic rate and better regulate the glycemic index. It maintains hormonal balance without further fat storage. The antioxidants in this ingredient help reduce fat accumulation and free-radical damage.</span></p>

<p> </p>

<p><a href="https://www.facebook.com/Ketoxboom.DE.AT.CH/"><strong>Dandelion</strong></a><span style="font-weight: 400;">: this ingredient is a plant that serves as a natural remedy for various health problems. In weight loss, this component acts as an ideal energy booster. You no longer get food cravings, and the feeling of hunger is reduced. The body copes healthily with the minimal meals it takes in each day.</span></p>

<p> </p>

<p><a href="https://healthgrowth.shop/ketoxboom-de"><img src="https://i.ibb.co/xS7fzKF/Ketoxboom.jpg" alt="Ketoxboom" border="0" /></a><br /><br /></p>

<p> </p>

<p> </p>

<h1><span style="color: #ff0000;"><a style="color: #ff0000;" href="https://healthgrowth.shop/ketoxboom-de"><strong>SPECIAL OFFER: Get Ketoxboom in Germany, Austria, and Switzerland online at the lowest discounted price</strong></a></span></h1>

<p> </p>

<h2><span style="color: #000000; background-color: #ffff00;"><a style="color: #000000; background-color: #ffff00;" href="https://www.facebook.com/KetoxboomINGermany/"><strong>What effects does Ketoxboom have on the body?</strong></a></span></h2>

<p> </p>

<p><a href="https://www.facebook.com/KetoxboomDeutschland/"><strong>Ketoxboom</strong></a><span style="font-weight: 400;"> contains healthy blends full of BHB ketones, which help kick-start ketosis in the body. The user gets a better boost of the enzymes and hormones responsible for increased energy sources for faster fat burning. The user attains a higher metabolic rate, which breaks down fat cells without using carbohydrates. The user's health improves, with no further damage, through the elimination of free radicals.</span></p>

<p> </p>

<p><a href="https://www.facebook.com/KetoxboomGermanyDeutschland/"><strong>The person gets the best increase in serotonin levels</strong></a><span style="font-weight: 400;">, which boosts brain function. With increased stamina and strength, you can reach a better energy level. It reduces appetite and food cravings and helps the user achieve better results without side effects in the body. You no longer get inflammation problems, and it reduces all the health-damaging factors caused by obesity with better reactions. It offers the best slim and lean appearance without the person feeling weak.</span></p>

<p> </p>

<h2><span style="color: #000000; background-color: #ffff00;"><a style="color: #000000; background-color: #ffff00;" href="https://www.facebook.com/KetoxboomINGermany/"><strong>Benefits:</strong></a></span></h2>

<p> </p>

<ul>

<li style="font-weight: 400;"><span style="font-weight: 400;">Higher energy through the conversion of fat</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">No more use of carbohydrates for energy</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Healthy promotion of ketosis in the body</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Supports better heart health</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Reduces hyper-/hypoglycemia problems</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Supports better sleep patterns</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Reduces fat accumulation in the body</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Provides better sleep patterns</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Improves brain health and boosts cognitive health</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Better self-confidence</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Reduces bad cholesterol and calorie levels</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Helps with eating habits</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">Repairs the body after workouts</span></li>

<li style="font-weight: 400;"><span style="font-weight: 400;">You get a well-groomed, slim, and attractive look with better results</span></li>

</ul>

<p> </p>

<p><a href="https://healthgrowth.shop/ketoxboom-de"><img src="https://i.ibb.co/KLQ6gcq/Ketoxboom-Deutschland.png" alt="Ketoxboom-Deutschland" border="0" /></a><br /><br /></p>

<p> </p>

<p> </p>

<h1><span style="color: #ff0000;"><a style="color: #ff0000;" href="https://healthgrowth.shop/ketoxboom-de"><strong>SPECIAL OFFER [Limited Discount]: “Ketoxboom Germany, Austria, Switzerland” Official Website!</strong></a></span></h1>

<p> </p>

<h2><span style="color: #000000; background-color: #ffff00;"><a style="color: #000000; background-color: #ffff00;" href="https://www.facebook.com/KetoxboomDeutschland/"><strong>Are there any side effects of Ketoxboom?</strong></a></span></h2>

<p> </p>

<p><a href="https://www.facebook.com/KetoxboomGermanyDeutschland/"><strong>The Ketoxboom</strong></a><span style="font-weight: 400;"> gummies are a powerhouse of healthy blends that support the ketosis process well. With the tested ingredients, you can achieve the ideal physique. All ingredients are clinically tested by professionals and deliver the best results in the body. You can achieve ideal results without side effects in the body.</span></p>

<p> </p>

<h2><span style="color: #000000; background-color: #ffff00;"><a style="color: #000000; background-color: #ffff00;" href="https://www.facebook.com/KetoxboomDE/"><strong>How do you take Ketoxboom?</strong></a></span></h2>

<p> </p>

<p><span style="font-weight: 400;">These </span><a href="https://www.facebook.com/DEATCHKetoxboom/"><strong>Ketoxboom</strong></a><span style="font-weight: 400;"> gummies are as easy to take as eating candy. Take two gummies daily on an empty stomach to effectively support fat loss. With regular use of the regimen, the user can achieve an ideal physique and good health. A low-carbohydrate diet can speed up fat loss. With ideal slimming factors, you can achieve the perfect slim look.</span></p>

<p> </p>

<p><span style="font-weight: 400;">These </span><a href="https://www.facebook.com/Ketoxboom.DE.AT.CH/"><strong>Ketoxboom</strong></a><span style="font-weight: 400;"> gummies are not suitable for minors, pregnant women, or nursing mothers. If the user wishes to consume these keto gummies despite health problems, they must consult a health professional before consumption.</span></p>

<p> </p>

<h2><span style="color: #000000; background-color: #ffff00;"><a style="color: #000000; background-color: #ffff00;" href="https://www.facebook.com/KetoxboomINGermany/"><strong>Where can you get the Ketoxboom formula?</strong></a></span></h2>

<p> </p>

<p><a href="https://www.facebook.com/KetoxboomDeutschland/"><strong>Ketoxboom is an online option</strong></a><span style="font-weight: 400;"> that requires no prescription and no waiting in line. You can order the regimen on the official website by providing all your details. Within a few business days, the product is delivered to your doorstep without hassle. You can also easily make use of a 60-day return and refund policy. If there is a problem, you can return the formula without difficulty and receive a guaranteed refund.</span></p>

<p> </p>

<h1><span style="color: #ff0000;"><a style="color: #ff0000;" href="https://healthgrowth.shop/ketoxboom-de"><strong>Read this: “More information from knowledgeable experts in Germany, Austria, and Switzerland” Ketoxboom</strong></a></span></h1>

<p> </p>

<h2><span style="color: #000000; background-color: #ffff00;"><a style="color: #000000; background-color: #ffff00;" href="https://www.facebook.com/KetoxboomGermanyDeutschland/"><strong>Final verdict:</strong></a></span></h2>

<p> </p>

<p><a href="https://www.facebook.com/KetoxboomDE/"><strong>Ketoxboom is the most effective</strong></a><span style="font-weight: 400;"> keto gummy regimen, reducing all excess fat in the body. It stands out for delivering the best physique without further fat deposits in the body. The BHB blends work well to trigger ketosis, which burns stubborn fat without harsh reactions. There are nutritionists who recommend using the gummies, which give a slim, fit look in a few weeks. Try this formula to help your body shed accumulated fat and make overall body functions more efficient.</span></p>

<p> </p>

<h2><span style="background-color: #ffff00;"><strong>Our official blogs ⇒</strong></span></h2>

<p><a href="https://ketoxboom-germany.mystrikingly.com/"><strong>https://ketoxboom-germany.mystrikingly.com/</strong></a></p>

<p><a href="https://ketoxboom.mystrikingly.com/"><strong>https://ketoxboom.mystrikingly.com/</strong></a></p>

<p><a href="https://ketoxboom-de.mystrikingly.com/"><strong>https://ketoxboom-de.mystrikingly.com/</strong></a></p>

<p><a href="https://ketoxboom-de-at-ch.mystrikingly.com/"><strong>https://ketoxboom-de-at-ch.mystrikingly.com/</strong></a></p>

<p><a href="https://ketoxboom-germany-1.jimdosite.com/"><strong>https://ketoxboom-germany-1.jimdosite.com/</strong></a></p>

<p><a href="https://ketoxboom-deutschland.jimdosite.com/"><strong>https://ketoxboom-deutschland.jimdosite.com/</strong></a></p>

<p><a href="https://ketoxboom-de.jimdosite.com/"><strong>https://ketoxboom-de.jimdosite.com/</strong></a></p>

<p><a href="https://ketoxboom-de-at-ch.jimdosite.com/"><strong>https://ketoxboom-de-at-ch.jimdosite.com/</strong></a></p>

<p><a href="https://ketoxboom-germany-93c52c.webflow.io/"><strong>https://ketoxboom-germany-93c52c.webflow.io/</strong></a></p>

<p><a href="https://ketoxboom-deutschland-73ad85.webflow.io/"><strong>https://ketoxboom-deutschland-73ad85.webflow.io/</strong></a></p>

<p><a href="https://ketoxboom-de.webflow.io/"><strong>https://ketoxboom-de.webflow.io/</strong></a></p>

<p><a href="https://ketoxboom-de-at-ch.webflow.io/"><strong>https://ketoxboom-de-at-ch.webflow.io/</strong></a></p>

<p><a href="https://ketoxboomgermany29.godaddysites.com/"><strong>https://ketoxboomgermany29.godaddysites.com/</strong></a></p>

<p><a href="https://ketoxboomdeutschland.godaddysites.com/"><strong>https://ketoxboomdeutschland.godaddysites.com/</strong></a></p>

<p><a href="https://ketoxboomde.godaddysites.com/"><strong>https://ketoxboomde.godaddysites.com/</strong></a></p>

<p><a href="https://ketoxboomde8.godaddysites.com/"><strong>https://ketoxboomde8.godaddysites.com/</strong></a></p>

<p><a href="https://ketoxboom-germany.jigsy.com/"><strong>https://ketoxboom-germany.jigsy.com/</strong></a></p>

<p><a href="https://ketoxboom-deutschland.jigsy.com/"><strong>https://ketoxboom-deutschland.jigsy.com/</strong></a></p>

<p><a href="https://ketoxboom-de.jigsy.com/"><strong>https://ketoxboom-de.jigsy.com/</strong></a></p>

<p><a href="https://ketoxboom-de-at-ch.jigsy.com/"><strong>https://ketoxboom-de-at-ch.jigsy.com/</strong></a></p>

<p><a href="https://ketoxboom-germany.company.site/"><strong>https://ketoxboom-germany.company.site/</strong></a></p>

<p><a href="https://keto-x-boom-deutschland.company.site/"><strong>https://keto-x-boom-deutschland.company.site/</strong></a></p>

<p><a href="https://ketoxboom-de.company.site/"><strong>https://ketoxboom-de.company.site/</strong></a></p>

<p><a href="https://ketoxboom-de-at-ch.company.site/"><strong>https://ketoxboom-de-at-ch.company.site/</strong></a></p>

<p><a href="https://peopleshealthsecretnetwork.blogspot.com/2023/08/ketoxboom-de-at-ch-kaufen-erfahrungen.html"><strong>https://peopleshealthsecretnetwork.blogspot.com/2023/08/ketoxboom-de-at-ch-kaufen-erfahrungen.html</strong></a></p>

<p><a href="https://peopleshealthsecretnetwork.blogspot.com/2023/08/ketoxboom-hohle-der-lowen-testberichte.html"><strong>https://peopleshealthsecretnetwork.blogspot.com/2023/08/ketoxboom-hohle-der-lowen-testberichte.html</strong></a></p>

<p><a href="https://groups.google.com/g/ketoxboom-deutschland/c/bUmc1UOj3mA"><strong>https://groups.google.com/g/ketoxboom-deutschland/c/bUmc1UOj3mA</strong></a></p>

<p><a href="https://groups.google.com/g/ketoxboom-deutschland/c/eYCtB4Pgbe8"><strong>https://groups.google.com/g/ketoxboom-deutschland/c/eYCtB4Pgbe8</strong></a></p>

<p><a href="https://groups.google.com/g/ketoxboom-deutschland/c/4rt0pC0GSuo"><strong>https://groups.google.com/g/ketoxboom-deutschland/c/4rt0pC0GSuo</strong></a></p>

<p><a href="https://groups.google.com/u/1/g/ketoxboom-de-at-ch/c/t09ebUD0ReU"><strong>https://groups.google.com/u/1/g/ketoxboom-de-at-ch/c/t09ebUD0ReU</strong></a></p>

<p><a href="https://groups.google.com/u/1/g/ketoxboom-de-at-ch/c/Pppjf7GD4cU"><strong>https://groups.google.com/u/1/g/ketoxboom-de-at-ch/c/Pppjf7GD4cU</strong></a></p>

<p><a href="https://groups.google.com/u/1/g/ketoxboom-de-at-ch/c/g7IpzhdOMJ4"><strong>https://groups.google.com/u/1/g/ketoxboom-de-at-ch/c/g7IpzhdOMJ4</strong></a></p>

<p><a href="https://lookerstudio.google.com/reporting/68588bbb-e01a-40c7-a0c8-02a43623c542/page/KGuaD"><strong>https://lookerstudio.google.com/reporting/68588bbb-e01a-40c7-a0c8-02a43623c542/page/KGuaD</strong></a></p>

<p><a href="https://lookerstudio.google.com/reporting/8308c098-c7dd-46fb-b3a4-3869bc259b85/page/aIuaD"><strong>https://lookerstudio.google.com/reporting/8308c098-c7dd-46fb-b3a4-3869bc259b85/page/aIuaD</strong></a></p>

<p><a href="https://colab.research.google.com/drive/1m8fUWGqYW47mwiXNOmEW_oUVomCodIYr"><strong>https://colab.research.google.com/drive/1m8fUWGqYW47mwiXNOmEW_oUVomCodIYr</strong></a></p>

<p><a href="https://colab.research.google.com/drive/1zgkwVj_omlzcXCYn83Hm9Ehlex3vsspY"><strong>https://colab.research.google.com/drive/1zgkwVj_omlzcXCYn83Hm9Ehlex3vsspY</strong></a></p>

<p><a href="https://sites.google.com/view/ketoxboom-deutsch-land/"><strong>https://sites.google.com/view/ketoxboom-deutsch-land/</strong></a></p>

<p><a href="https://sites.google.com/view/ketoxboom-de-at-ch/"><strong>https://sites.google.com/view/ketoxboom-de-at-ch/</strong></a></p>

<p> </p>

<h2><span style="background-color: #ffff00;"><strong>Viarecta Deutschland Official Facebook Pages ⇒</strong></span></h2>

<p><a href="https://www.facebook.com/ViarectaDE/"><strong>https://www.facebook.com/ViarectaDE/</strong></a></p>

<p><a href="https://www.facebook.com/ViarectaEbay/"><strong>https://www.facebook.com/ViarectaEbay/</strong></a></p>

<p><a href="https://www.facebook.com/viarectaBeiDM/"><strong>https://www.facebook.com/viarectaBeiDM/</strong></a></p>

<p><a href="https://www.facebook.com/viarectakaufen/"><strong>https://www.facebook.com/viarectakaufen/</strong></a></p>

<p><a href="https://www.facebook.com/ViarectaInGermany/"><strong>https://www.facebook.com/ViarectaInGermany/</strong></a></p>

<p><a href="https://www.facebook.com/viarectaBeiAmazon/"><strong>https://www.facebook.com/viarectaBeiAmazon/</strong></a></p>

<p><a href="https://www.facebook.com/ViarectaDeutschland/"><strong>https://www.facebook.com/ViarectaDeutschland/</strong></a></p>

<p><a href="https://www.facebook.com/ViagraKaufen/"><strong>https://www.facebook.com/ViagraKaufen/</strong></a></p>

<p> </p>

<h2><span style="background-color: #ffff00;"><strong>Ketoxplode Gummies Ireland Official Facebook Pages ⇒</strong></span></h2>

<p><a href="https://www.facebook.com/KetoxplodeGummiesIreland/"><strong>https://www.facebook.com/KetoxplodeGummiesIreland/</strong></a></p>

<p><a href="https://www.facebook.com/KetoxplodeGummiesInIreland/"><strong>https://www.facebook.com/KetoxplodeGummiesInIreland/</strong></a></p>

<p><a href="https://www.facebook.com/KetoxplodeGummiesIE/"><strong>https://www.facebook.com/KetoxplodeGummiesIE/</strong></a></p>

<p><a href="https://www.facebook.com/KetoxplodeGummiesInIE/"><strong>https://www.facebook.com/KetoxplodeGummiesInIE/</strong></a></p>

<p><a href="https://www.facebook.com/KetoExplodeGummiesIreland/"><strong>https://www.facebook.com/KetoExplodeGummiesIreland/</strong></a></p>

<p><a href="https://www.facebook.com/KetoExplodeGummiesInIreland/"><strong>https://www.facebook.com/KetoExplodeGummiesInIreland/</strong></a></p>

<p><a href="https://www.facebook.com/KetoExplodeGummiesIE/"><strong>https://www.facebook.com/KetoExplodeGummiesIE/</strong></a></p>

<p><a href="https://www.facebook.com/KetoExplodeGummiesInIE/"><strong>https://www.facebook.com/KetoExplodeGummiesInIE/</strong></a></p>

<p> </p>

<h2><span style="background-color: #ffff00;"><strong>KetoXplode Gummies Sweden Official Facebook Pages ⇒</strong></span></h2>

<p><a href="https://www.facebook.com/KetoXplodeGummiesInSE/"><strong>https://www.facebook.com/KetoXplodeGummiesInSE/</strong></a></p>

<p><a href="https://www.facebook.com/KetoXplodeGummiesSverige/"><strong>https://www.facebook.com/KetoXplodeGummiesSverige/</strong></a></p>

<p><a href="https://www.facebook.com/KetoXplodeGummiesInSweden/"><strong>https://www.facebook.com/KetoXplodeGummiesInSweden/</strong></a></p>

<p><a href="https://www.facebook.com/KetoXplodeGummiesInSverige/"><strong>https://www.facebook.com/KetoXplodeGummiesInSverige/</strong></a></p>

<p><a href="https://www.facebook.com/KetoExplodeGummiesSE/"><strong>https://www.facebook.com/KetoExplodeGummiesSE/</strong></a></p>

<p><a href="https://www.facebook.com/KetoExplodeGummiesInSE/"><strong>https://www.facebook.com/KetoExplodeGummiesInSE/</strong></a></p>

<p><a href="https://www.facebook.com/KetoExplodeGummiesSweden/"><strong>https://www.facebook.com/KetoExplodeGummiesSweden/</strong></a></p>

<p><a href="https://www.facebook.com/KetoExplodeGummiesInSweden/"><strong>https://www.facebook.com/KetoExplodeGummiesInSweden/</strong></a></p>

<p> </p>

<h2><span style="background-color: #ffff00;"><strong>Animale Male Enhancement Australia Official Facebook Pages ⇒</strong></span></h2>

<p><a href="https://www.facebook.com/AnimaleMaleEnhancementInAU/"><strong>https://www.facebook.com/AnimaleMaleEnhancementInAU/</strong></a></p>

<p><a href="https://www.facebook.com/AnimaleMaleEnhancementPills/"><strong>https://www.facebook.com/AnimaleMaleEnhancementPills/</strong></a></p>

<p><a href="https://www.facebook.com/AnimaleMaleEnhancementInAustralia/"><strong>https://www.facebook.com/AnimaleMaleEnhancementInAustralia/</strong></a></p>

<p><a href="https://www.facebook.com/AnimaleMaleEnhancementAustraliaAU/"><strong>https://www.facebook.com/AnimaleMaleEnhancementAustraliaAU/</strong></a></p>

<p><a href="https://www.facebook.com/AnimaleMaleEnhancementAUAustralia/"><strong>https://www.facebook.com/AnimaleMaleEnhancementAUAustralia/</strong></a></p>

<p><a href="https://www.facebook.com/AnimaleMaleEnhancementAustraliaOfficial/"><strong>https://www.facebook.com/AnimaleMaleEnhancementAustraliaOfficial/</strong></a></p>

<p><a href="https://www.facebook.com/AnimaleMaleEnhancementGummiesCapsulesAustralia/"><strong>https://www.facebook.com/AnimaleMaleEnhancementGummiesCapsulesAustralia/</strong></a></p>

<p><a href="https://www.facebook.com/people/Animale-Male-Enhancement-Australia/100093487323174/"><strong>https://www.facebook.com/people/Animale-Male-Enhancement-Australia/100093487323174/</strong></a></p>

<p> </p>

<h2><span style="background-color: #ffff00;"><strong>Active Keto Gummies Canada Official Links ⇒</strong></span></h2>

<p><a href="https://www.facebook.com/ActiveKetoGummiesInCa/"><strong>https://www.facebook.com/ActiveKetoGummiesInCa/</strong></a></p>

<p><a href="https://www.facebook.com/ActiveKetoGummiesOfCa/"><strong>https://www.facebook.com/ActiveKetoGummiesOfCa/</strong></a></p>

<p><a href="https://www.facebook.com/ActiveKetoGummiesCaBuy/"><strong>https://www.facebook.com/ActiveKetoGummiesCaBuy/</strong></a></p>

<p><a href="https://www.facebook.com/ActiveKetoGummiesOfCanada/"><strong>https://www.facebook.com/ActiveKetoGummiesOfCanada/</strong></a></p>

<p><a href="https://www.facebook.com/ActiveKetoGummiesCanadaPrice/"><strong>https://www.facebook.com/ActiveKetoGummiesCanadaPrice/</strong></a></p>

<p><a href="https://www.facebook.com/ActiveKetoGummiesAvisCanada/"><strong>https://www.facebook.com/ActiveKetoGummiesAvisCanada/</strong></a></p>

<p><a href="https://www.facebook.com/ActiveKetoGummiesAvisInCanada/"><strong>https://www.facebook.com/ActiveKetoGummiesAvisInCanada/</strong></a></p>

<p><a href="https://www.facebook.com/ActiveKetoGummiesAvisCa/"><strong>https://www.facebook.com/ActiveKetoGummiesAvisCa/</strong></a></p>

<p><a href="https://www.facebook.com/ActiveKetoGummiesAvisInCa/"><strong>https://www.facebook.com/ActiveKetoGummiesAvisInCa/</strong></a></p>

<p> </p>

<h2><span style="background-color: #ffff00;"><strong>Brulafine France Official Links ⇒</strong></span></h2>

<p><a href="https://www.facebook.com/BrulafineInFR/"><strong>https://www.facebook.com/BrulafineInFR/</strong></a></p>

<p><a href="https://www.facebook.com/BrulafineOfFR/"><strong>https://www.facebook.com/BrulafineOfFR/</strong></a></p>

<p><a href="https://www.facebook.com/BrulafineInFrance/"><strong>https://www.facebook.com/BrulafineInFrance/</strong></a></p>

<p><a href="https://www.facebook.com/BrulafineOfFrance/"><strong>https://www.facebook.com/BrulafineOfFrance/</strong></a></p>

<p><a href="https://www.facebook.com/BrulafineAvisMedical/"><strong>https://www.facebook.com/BrulafineAvisMedical/</strong></a></p>

<p><a href="https://www.facebook.com/BrulafineAvisNegatif/"><strong>https://www.facebook.com/BrulafineAvisNegatif/</strong></a></p>

<p><a href="https://www.facebook.com/BrulafineEnPharmaciePrix/"><strong>https://www.facebook.com/BrulafineEnPharmaciePrix/</strong></a></p>

<p><a href="https://www.facebook.com/CodePromoBrulafine/"><strong>https://www.facebook.com/CodePromoBrulafine/</strong></a></p>

<p><a href="https://www.facebook.com/BrulafinePrix/"><strong>https://www.facebook.com/BrulafinePrix/</strong></a></p>