id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 68.7k ⌀ | citation stringlengths 0 10.7k ⌀ | cardData null | likes int64 0 3.55k | downloads int64 0 10.1M | card stringlengths 0 1.01M |

|---|---|---|---|---|---|---|---|---|---|

Feanix/ljkn | 2023-09-05T16:23:01.000Z | [

"region:us"

] | Feanix | null | null | null | 0 | 0 | ---

# For reference on model card metadata, see the spec: https://github.com/huggingface/hub-docs/blob/main/datasetcard.md?plain=1

# Doc / guide: https://huggingface.co/docs/hub/datasets-cards

{}

---

# Dataset Card for Dataset Name

## Dataset Description

- **Homepage:**

- **Repository:**

- **Paper:**

- **Leaderboard:**

- **Point of Contact:**

### Dataset Summary

This dataset card aims to be a base template for new datasets. It has been generated using [this raw template](https://github.com/huggingface/huggingface_hub/blob/main/src/huggingface_hub/templates/datasetcard_template.md?plain=1).

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

alexandrainst/nordjylland-news-image-captioning | 2023-09-08T06:41:05.000Z | [

"size_categories:10K<n<100K",

"language:da",

"Image captioning",

"region:us"

] | alexandrainst | null | null | null | 1 | 0 | ---

dataset_info:

features:

- name: image

dtype: image

- name: caption

dtype: string

splits:

- name: train

num_bytes: 10341164216.808

num_examples: 11707

download_size: 11002607252

dataset_size: 10341164216.808

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

language:

- da

tags:

- Image captioning

pretty_name: Nordjylland News - Image caption dataset

size_categories:

- 10K<n<100K

---

# Dataset Card for "nordjylland-news-image-captioning"

## Dataset Description

- **Point of Contact:** [Oliver Kinch](mailto:oliver.kinch@alexandra.dk)

- **Size of dataset:** 11 GB

### Dataset Summary

This dataset is a collection of image-caption pairs from the Danish regional news outlet [TV2 Nord](https://www.tv2nord.dk/).

### Supported Tasks and Leaderboards

Image captioning is the intended task for this dataset. No leaderboard is active at this point.

### Languages

The dataset is available in Danish (`da`).

## Dataset Structure

An example from the dataset looks as follows.

```
{
  "file_name": "1.jpg",
  "caption": "Bruno Sørensen og Poul Erik Pedersen er ofte at finde i Fyensgade Centret."
}
```

### Data Fields

- `file_name`: a `string` giving the file name of the image.

- `caption`: a `string` containing the Danish caption of the image.

### Dataset Statistics

#### Number of samples

11707

#### Image sizes

All images in the dataset are in RGB format, but they exhibit varying resolutions:

- Width ranges from 73 to 11,830 pixels.

- Height ranges from 38 to 8,268 pixels.

The side length of a square image with the same number of pixels as an image with height \\( h \\) and width \\( w \\) is approximately given by

\\( x = \text{int}(\sqrt{h \cdot w}) \\).

Plotting the distribution of \\( x \\) gives insight into the sizes of the images in the dataset.
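The side-length formula above can be computed directly to reproduce this statistic. The following is a minimal Python sketch, not the curators' code. The helper names `square_side` and `side_histogram` and the 512-pixel bin width are hypothetical choices made for illustration. Both functions take plain `(height, width)` pairs, so they do not depend on how the images are loaded.

```python
import math
from collections import Counter
from typing import Dict, Iterable, Tuple


def square_side(h: int, w: int) -> int:
    """Side length x of a square with (about) the same pixel count as an h x w image.

    math.isqrt returns floor(sqrt(h * w)) exactly, which equals int(sqrt(h * w))
    for non-negative integers but avoids floating-point rounding.
    """
    return math.isqrt(h * w)


def side_histogram(sizes: Iterable[Tuple[int, int]], bin_width: int = 512) -> Dict[int, int]:
    """Count images per bin of x; each key is the lower edge of a bin (hypothetical bin width)."""
    counts = Counter((square_side(h, w) // bin_width) * bin_width for h, w in sizes)
    return dict(sorted(counts.items()))


# Example with made-up sizes (not taken from the dataset).
example_sizes = [(480, 640), (1080, 1920), (38, 73)]
print([square_side(h, w) for h, w in example_sizes])
print(side_histogram(example_sizes))
```

Because \\( x \\) depends only on the product \\( h \cdot w \\), the order within each pair does not matter. The `(width, height)` tuple returned by a PIL image's `.size` can therefore be passed as-is.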

#### Caption Length Distribution

## Potential Dataset Issues

- There are 14 images with the caption "Arkivfoto".

- There are 37 images with captions consisting solely of a source reference, such as "Kilde: \<name of source\>".

You might want to consider excluding these samples from the model training process.
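A simple caption check is one way to drop the samples listed above. This is a heuristic sketch under stated assumptions. It assumes that "Arkivfoto" makes up the entire caption. It also assumes that a caption starting with "Kilde:" is only a source reference. The helper name `is_low_information_caption` is hypothetical, and the rule may need tuning against the actual captions.

```python
def is_low_information_caption(caption: str) -> bool:
    """Flag captions that do not describe the image content.

    Heuristic (an assumption, not the curators' rule):
    - the whole caption is "Arkivfoto" (archive photo), ignoring case,
      surrounding whitespace and a trailing period;
    - the caption starts with "Kilde:" (source), i.e. it is only a source reference.
    """
    text = caption.strip().rstrip(".").strip()
    if text.casefold() == "arkivfoto":
        return True
    return text.casefold().startswith("kilde:")


captions = [
    "Bruno Sørensen og Poul Erik Pedersen er ofte at finde i Fyensgade Centret.",
    "Arkivfoto",
    "Kilde: Example Source",  # placeholder source name, not from the dataset
]
kept = [c for c in captions if not is_low_information_caption(c)]
print(kept)
```

On a loaded `datasets.Dataset` (assumed here to be the `train` split, bound to `ds`), you could apply the same predicate with `ds.filter(lambda caption: not is_low_information_caption(caption), input_columns="caption")`. Passing `input_columns="caption"` hands only the caption column to the function.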

## Dataset Creation

### Curation Rationale

There are not many large-scale image-captioning datasets in Danish.

### Source Data

The dataset has been collected through the TV2 Nord API, which can be accessed [here](https://developer.bazo.dk/#876ab6f9-e057-43e3-897a-1563de34397e).

## Additional Information

### Dataset Curators

[Oliver Kinch](https://huggingface.co/oliverkinch) from [The Alexandra Institute](https://alexandra.dk/)

### Licensing Information

The dataset is licensed under the [CC0 license](https://creativecommons.org/share-your-work/public-domain/cc0/).

|

SniiKz/Insurance_dataset | 2023-09-05T06:34:22.000Z | [

"region:us"

] | SniiKz | null | null | null | 0 | 0 | Entry not found |

EliKet/miumiu | 2023-09-08T07:30:58.000Z | [

"region:us"

] | EliKet | null | null | null | 0 | 0 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: image

dtype: image

- name: image_name

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 21220034.0

num_examples: 18

download_size: 21212241

dataset_size: 21220034.0

---

# Dataset Card for "miumiu"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

powerbiteusa/power-bite | 2023-09-05T06:40:48.000Z | [

"region:us"

] | powerbiteusa | null | null | null | 0 | 0 | <h2><span style="background-color: yellow; color: red;"><a style="background-color: yellow; color: red;" href="https://www.globalfitnessmart.com/get-powerbite"><strong>Click Here -- Official Website -- Order Now</strong></a></span></h2>

<h2><strong>✔ <span style="color: blue;">For Order Official Website - <a style="color: blue;" href="https://www.globalfitnessmart.com/get-powerbite">https://www.globalfitnessmart.com/get-powerbite</a></span></strong><br /><strong>✔ <span style="color: #33cccc;">Product Name - <a style="color: #33cccc;" href="https://www.globalfitnessmart.com/get-powerbite">Power Bite</a></span></strong><br /><strong>✔ <span style="color: #ff9900;">Side Effect - No Side Effects</span></strong><br /><strong>✔ <span style="color: red;">Availability - <a style="color: red;" href="https://www.globalfitnessmart.com/get-powerbite">Online</a></span></strong><br /><strong>✔ <span style="color: #ffcc00;">Rating -⭐⭐⭐⭐⭐</span></strong></h2>

<h2><span style="background-color: yellow; color: red;"><a style="background-color: yellow; color: red;" href="https://www.globalfitnessmart.com/get-powerbite"><strong>✅Visit The Official Website To Get Your Bottle Now✅</strong></a></span></h2>

<p style="text-align: center;"> <a style="margin-left: 1em; margin-right: 1em;" href="https://www.globalfitnessmart.com/get-powerbite"><img src="https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEguXLcXIJXiu74OaARvY8OBeHyCSpyHm8-hNlXM7ZvbShBLC71VPUtrnQY3fJfI6nbAqWPoKEoZBRgudAJnB1JFq201LW-g5qiP1zbV2Dixe_xHNbHPgiR-XQ6vWwWqOHtmYlIquV-26ZAunEqaYILjU7dG3cw0NgHxLVHlat0ZzMbs6it9OQGs7xSGcXp1/w640-h476/PowerBite.jpg" alt="" width="640" height="476" border="0" data-original-height="355" data-original-width="477" /></a></p>

<p>Ever heard of Power Bite? It's a new product in the world of dental care, claiming to be a special candy that helps keep your teeth and gums healthy. Sounds great, right? But here's the catch: many products out there make big promises but often don't deliver. Power Bite is a revolutionary dental complex supplement that harnesses the power of plant and mineral extracts to support optimal oral health. It enhances teeth and gum health and is intended to complement your regular oral hygiene routine.</p>

<h2><strong>What is Power Bite Oral Health?</strong></h2>

<p>Power Bite is a dental complex supplement designed to promote strong and healthy teeth and gums. It is formulated with 100% natural ingredients and is manufactured in an FDA-registered and GMP-certified facility. The creators of Power Bite have developed a unique formula that aims to provide optimal oral health support for individuals of all ages. The all-natural blend of minerals and nutrients revitalizes the oral region and maintains its hygiene concurrently with dental care and a healthy diet.</p>

<p>The formula comes as simple dental candies or tablets with clinically researched ingredients in precise, easy, and safe doses. Several customers highly recommend this candy for better dental health and a whiter smile. Also, using it as directed delivers more supportive results than any other oral health supplement.</p>

<h2 style="text-align: center;"><strong><span style="color: #ff9900;"><a style="color: #ff9900;" href="https://www.globalfitnessmart.com/get-powerbite">(EXCLUSIVE OFFER)Click Here : "<span style="color: blue;">PowerBite</span> USA"Official Website!</a></span></strong></h2>

<h2><strong>How Power Bite Tablets Work for Oral Health?</strong></h2>

<p>Power Bite tablets work by harnessing the power of plant and mineral extracts that have been carefully selected for their oral health benefits. When taken as directed, the soothing candy-like tablets dissolve slowly in the mouth, allowing the ingredients to go to work and support the health of the teeth and gums. The minerals naturally enhance the properties of saliva, ensuring they reach every corner of the mouth and fill nutritional gaps.</p>

<p>Moreover, Power Bite helps strengthen enamel, fight harmful bacteria, reduce inflammation, promote gut health, and support overall oral hygiene. It also breaks down the bacterial colonies at the roots of the teeth and gums, improves the shade of the teeth, and makes them stronger.</p>

<div class="separator" style="clear: both; text-align: center;"><a style="margin-left: 1em; margin-right: 1em;" href="https://www.globalfitnessmart.com/get-powerbite"><img src="https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEjTvKfv3OtjETIozH90ZJlr8cD62LXu2Q08-SJTNFK8n4MWUXG2QacYnHE3rxz1Kw-KnrnoEOfbMuBfY9wTRHgL46bgv-2UIF0mstqo3BIGZjzYZmP5MpFUvpAZpTbsb0lsPbMjacQsnZyZGi8hw7Y7QCmC4XkTnWgRwSgY3XWe-RkOQLlJnNJJJjsUJCq6/w640-h354/PowerBite%20ingredients.jpg" alt="" width="640" height="354" border="0" data-original-height="346" data-original-width="624" /></a></div>

<h2><strong>Key Ingredients In Power Bite Dental Candy <br /></strong></h2>

<p>Here is a list of ingredients used in the Power Bite dental supplement and a small note about its health benefits.</p>

<p><strong>#Calcium Carbonate</strong> - In many research studies, it has been proved that Calcium Carbonate helps to increase the natural calcium levels of the teeth, and it also helps to neutralize the acids in the mouth that cause plaque buildup. Plaque buildup erodes tooth enamel and is a significant cause of tooth cavities and decay. Calcium carbonate helps to remove plaque buildup and protects the teeth from cavities.</p>

<p><strong>#Myrrh -</strong> Myrrh is a reddish-brown sap of a tree called Commiphora myrrha found in Africa and southwest Asia. Myrrh kills harmful bacteria and other microbes inside the mouth. This Power Bite ingredient helps to treat oral infections and inflammation. The sap is a powerful antioxidant. Studies have suggested that Myrrh has potential anticancer properties and relieves pain from cavities and other causes.</p>

<p><strong>#Wild Mint</strong> - Mint leaves help freshen breath made unpleasant by harmful bacteria in the mouth. Mint leaf extract can reduce plaque deposition on teeth. Mint leaves are rich in antioxidants, have nutritional benefits, and help to remove stains from your teeth.</p>

<p><strong>#Xylitol -</strong> Xylitol has a higher concentration level of ammonia and amino acids which helps to raise the pH level of the mouth and works to harden the tooth enamel. In many types of research conducted by scientists, Xylitol is proven to reduce tooth decay in humans. It also protects the gums from diseases.</p>

<p><strong>#Lysozyme -</strong> It helps to kill harmful bacteria in the mouth that can cause tooth decay and cavities. Lysozyme is an antibacterial enzyme produced in animal and human bodies. This PowerBite ingredient is also known for its anti-inflammatory effect.</p>

<p><strong>#Mediterranean Sea Salt</strong> - Mediterranean Sea Salt is rich in magnesium, calcium, potassium, and iron and helps the teeth get the right nutrients and minerals. These minerals are also important for maintaining blood pressure and producing energy from glucose. Mediterranean Sea Salt helps to protect the teeth's enamel.</p>

<p><strong>#Clove Oil -</strong> Clove Oil helps to get rid of tooth pain. This Power Bite ingredient has strong anti-inflammatory properties and can help to reduce swelling and irritation in the affected areas. Clove oil is also effective in fighting cavities.</p>

<h2 style="text-align: center;"><strong><span style="color: #ff9900;"><a style="color: #ff9900;" href="https://www.globalfitnessmart.com/get-powerbite">SPECIAL PROMO: Get <span style="color: #993366;">PowerBite</span> at the Lowest Discounted Price Online</a></span></strong></h2>

<h2><strong>What are the Benefits of Power Bite Supplementation?</strong></h2>

<p>Power Bite offers a range of benefits for individuals seeking to improve their oral health naturally. Some key benefits of Power Bite supplementation include:</p>

<p><strong>#Stronger Teeth and Gums:</strong> PowerBite's powerful ingredients work synergistically to strengthen teeth and gums, reducing the risk of cavities and gum disease.</p>

<p><strong>#Reduced Inflammation:</strong> The plant extracts in Power Bite help reduce inflammation in the gums, promoting healthier oral tissues.</p>

<p><strong>#Enhanced Oral Hygiene:</strong> Power Bite natural ingredients fight harmful bacteria and promote overall oral hygiene, leading to fresher breath and a cleaner mouth.</p>

<p><strong>#Convenient and Enjoyable:</strong> Unlike traditional oral health products, PowerBite's candy-like tablets provide a convenient and enjoyable way to support oral health.</p>

<p><strong>#Fresh Breath:</strong> Natural extracts in the Power Bite tablet help freshen your breath, keeping your mouth feeling clean and refreshed by limiting the bacterial action that causes plaque.</p>

<p><strong>#Natural and Safe:</strong> Power Bite is formulated with 100% natural ingredients and undergoes strict practices to ensure its safety and efficacy.</p>

<p><strong>#Risk-Free Investment:</strong> A 100% refund guarantee is available with every package purchase. It means the manufacturer is confident about the research-backed formula and its results.</p>

<div class="separator" style="clear: both; text-align: center;"><a style="margin-left: 1em; margin-right: 1em;" href="https://www.globalfitnessmart.com/get-powerbite"><img src="https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEgADiUJr_7nvaBZ9ivl6se4oMYPfAW-ghMfsvFg4KT_p-QQ8GD9OzhoCd95Idp7Uu85q0lpYIfMXTaH8r01R4YBLeVM_HJe8gfKN7NzJTXDhHf1zF3f81AhGMTTbPDo5vbrLVm2tRSRx0PmoBWJOBpaej0p8ehKEig4hVztTHASa9pG352WD5f56IlPEnT9/w640-h356/PowerBite%2001.jpg" alt="" width="640" height="356" border="0" data-original-height="346" data-original-width="621" /></a></div>

<h2><strong>Research Facts Behind Power Bite Supplement</strong></h2>

<p>Extensive research has gone into the development of Power Bite to ensure its efficacy and safety. While individual results may vary, the creators of Power Bite have seen promising results in their research studies. It's important to note that no product works for 100% of the people who try it, as each body responds differently. However, Power Bite's formulation is backed by scientific research and is trusted by many individuals seeking to improve their oral health naturally.</p>

<p style="text-align: left;">As specified, research published in the journal Nutrients found that farming processes introduce harmful additives that affect oral wellness. This led to the discovery of an acid-proofing method that can rebuild the teeth and gums using a Roman self-healing mineral, which was rediscovered by a team of researchers from MIT.</p>

<h2 style="text-align: center;"><strong><span style="color: #ff9900;"><a style="color: #ff9900;" href="https://www.globalfitnessmart.com/get-powerbite">SPECIAL PROMO[Limited Discount]: "<span style="color: red;">PowerBite</span> USA"Official Website!</a></span></strong></h2>

<h2><strong>Pros Of Power Bite:</strong></h2>

<ul>

<li>Made of natural ingredients.</li>

<li>Manufactured in the US in an FDA-registered facility that follows GMP guidelines.</li>

<li>GMO-free.</li>

<li>No stimulants are used.</li>

<li>60-day money-back guarantee.</li>

</ul>

<h2><strong>Cons Of Power Bite:</strong></h2>

<ul>

<li>Can be purchased only through the official Power Bite website.</li>

<li>Results may vary from person to person.</li>

</ul>

<h2><strong>Pricing of Power Bite Bottles</strong></h2>

<p>PowerBite offers various package options to suit individual preferences and budgets. The price depends on the chosen package and any available discounts. The cost is affordable, and the multi-bottle packages offer large savings. There are three different packages available for purchase.</p>

<ul>

<li><strong>One-bottle pack: $69 per bottle, with free shipping.</strong></li>

<li><strong>Three-bottle pack: $59 per bottle, $177 in total, with free shipping + special bonuses.</strong></li>

<li><strong>Six-bottle pack: $49 per bottle, $294 in total, with free shipping + special bonuses.</strong></li>

</ul>

<div class="separator" style="clear: both; text-align: center;"><a style="margin-left: 1em; margin-right: 1em;" href="https://www.globalfitnessmart.com/get-powerbite"><img src="https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEj826xkDW-qruUnqS7iAHwWPgWn3UXqIE0km23tvuFP5B4q3bs4wxsbwlKce768heKjUry768Ra9Mkdk001L8HTzarvNR7xGJn1c5C2vHSbzwPZN6E4voAGPScbUdH-DQ1c_gycwH6qQ0MChkOSWBjSquEHBPe-xxpmr3Zggy8b94jxsf7l9J9NlZUfAmWo/w640-h242/PowerBite%2002.jpg" alt="" width="640" height="242" border="0" data-original-height="272" data-original-width="721" /></a></div>

<h2><strong>Power Bite FAQ!</strong></h2>

<p><strong>Q Is PowerBite safe to use?</strong></p>

<p>A Yes, PowerBite is a 100% natural formula that is safe to use. However, it's always advisable to review the product label and consult a dental expert if you have any other dental issues or have undergone dental surgery.</p>

<p><strong>Q When can I start seeing results with Power Bite?</strong></p>

<p>A Not everyone will see the same results, but many users report noticeable improvements in their oral health within a few weeks of consistent use. Following the proper dosage and maintaining good oral hygiene practices is important for optimal results.</p>

<p><strong>Q Can Power Bite replace traditional oral health products like toothpaste and mouthwash?</strong></p>

<p>A PowerBite is not meant to replace traditional oral health products but rather to complement them. Incorporating Power Bite into your daily oral care routine can provide additional support for optimal oral health.</p>

<p><strong>Q Where can I purchase Power Bite supplements?</strong></p>

<p>A To ensure you are purchasing genuine PowerBite supplements, it's recommended to visit the official website or trusted online retailers. The official website provides detailed product information, secure checkout options, and customer support for any queries or concerns you may have.</p>


<p><strong>Q Can I get a refund if I'm not satisfied with PowerBite?</strong></p>

<p>A Yes, every bottle of Power Bite comes with an ironclad 60-day money-back guarantee. If, for any reason, you are not fully satisfied with the results, you can get a prompt refund by contacting the customer service team.</p>

<p><strong>Q Can children use PowerBite?</strong></p>

<p>A PowerBite is generally safe for individuals of all ages. However, it's always advisable to consult with a doctor before giving any supplement to children, especially those under the age of 5.</p>

<p><strong>Q Can PowerBite prevent cavities?</strong></p>

<p>A Power Bite's natural ingredients, such as xylitol, can help prevent tooth decay and promote healthy saliva production, which is essential for maintaining good oral health. However, maintaining consistent oral hygiene practices, including regular brushing and flossing, is also crucial in preventing cavities.</p>

<h2><strong>Conclusion – PowerBite Reviews!</strong></h2>

<p>Power Bite Dental Mineral Complex is a natural and innovative supplement that aims to transform your oral health. With its unique blend of essential ingredients, Power Bite offers a convenient and enjoyable way to support strong, healthy teeth and gums. Backed by research and manufactured in a certified facility, PowerBite is a safe and reliable option for those seeking natural oral health support. Remember to consult with a healthcare professional before adding it to your routine, and take advantage of the money-back guarantee to try Power Bite risk-free.</p>

<div class="separator" style="clear: both; text-align: center;"><a style="margin-left: 1em; margin-right: 1em;" href="https://www.globalfitnessmart.com/get-powerbite"><img src="https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEgkB-SEb3ZriTvARWloZpjT8v2vGK4aac_7WFnBgCwGCS6nZvHGOGZpXygjMaT6nAZsCbcVMyfyOIibGJUv7wShz1p5RAKbrmmMVyeJfF2DMALMsaS6VwBDmQexI-MMz5_fEIPk_JYaEbULJuSd0HXcEKqk4cA39UQ1fTZn0FGYMJDOPWmJPTgOdgSJwPb5/w640-h492/PowerBite%2004.jpg" alt="" width="640" height="492" border="0" data-original-height="498" data-original-width="647" /></a></div>

<h2 style="text-align: center;"><strong><span style="color: #ff9900;"><a style="color: #ff9900;" href="https://www.globalfitnessmart.com/get-powerbite">Read This: "More Information From Knowledgeable Expertise of <span style="color: #339966;">PowerBite</span>"</a></span></strong></h2>

<p><strong><span style="color: #ff9900;"># READ MORE</span></strong></p>

<p><strong><span style="color: #ff9900;"><a href="https://powerbite-usa.clubeo.com/page/powerbite-reviews-is-legit-updated-2023-report.html">https://powerbite-usa.clubeo.com/page/powerbite-reviews-is-legit-updated-2023-report.html</a></span></strong></p>

<p><strong><span style="color: #ff9900;"><a href="https://powerbite-usa.clubeo.com/page/powerbite-reviews-viral-scam-or-legit-is-it-work-or-not.html">https://powerbite-usa.clubeo.com/page/powerbite-reviews-viral-scam-or-legit-is-it-work-or-not.html</a></span></strong></p>

<p><strong><span style="color: #ff9900;"><a href="https://powerbite-usa.clubeo.com/">https://powerbite-usa.clubeo.com/</a></span></strong></p>

<p><strong><span style="color: #ff9900;"><a href="https://powerbite-usa.clubeo.com/calendar/2023/09/05/powerbite-reviews-usa-is-it-legit-read-this-before-buy">https://powerbite-usa.clubeo.com/calendar/2023/09/05/powerbite-reviews-usa-is-it-legit-read-this-before-buy</a></span></strong></p>

<p><strong><span style="color: #ff9900;"><a href="https://sites.google.com/view/powerbite-review-usa/home">https://sites.google.com/view/powerbite-review-usa/home</a></span></strong></p>

<p><strong><span style="color: #ff9900;"><a href="https://colab.research.google.com/drive/1bEEh7SRYFeaQ_6OkswdWnfZg3m_vB8_d">https://colab.research.google.com/drive/1bEEh7SRYFeaQ_6OkswdWnfZg3m_vB8_d</a><br /></span></strong></p>

<p><strong><span style="color: #ff9900;"><a href="https://lookerstudio.google.com/u/0/reporting/9749e2b7-5505-461d-98b4-66ee5ab8e82b/page/xhibD">https://lookerstudio.google.com/u/0/reporting/9749e2b7-5505-461d-98b4-66ee5ab8e82b/page/xhibD</a></span></strong></p>

<p><strong><span style="color: #ff9900;"><a href="https://powerbite-review.company.site/">https://powerbite-review.company.site/</a></span></strong></p>

<p><strong><span style="color: #ff9900;"><a href="https://huggingface.co/powerbiteusa/powerbite/blob/main/README.md">https://huggingface.co/powerbiteusa/powerbite/blob/main/README.md</a></span></strong></p>

<p> </p> |

RiadhHasan/Product_v3 | 2023-09-07T08:46:40.000Z | [

"region:us"

] | RiadhHasan | null | null | null | 0 | 0 | Entry not found |

GaganpreetSingh/BlockTechBrew | 2023-09-05T06:58:25.000Z | [

"region:us"

] | GaganpreetSingh | null | null | null | 0 | 0 | Entry not found |

saurabh1896/OMR-scanned-documents | 2023-09-05T07:10:13.000Z | [

"region:us"

] | saurabh1896 | null | null | null | 0 | 0 | ---

dataset_info:

features:

- name: image

dtype: image

splits:

- name: train

num_bytes: 8217916.0

num_examples: 36

download_size: 8174461

dataset_size: 8217916.0

---

A medical forms dataset containing scanned documents is a valuable resource for healthcare professionals, researchers, and institutions seeking to streamline and improve their administrative and patient care processes. This dataset comprises digitized versions of various medical forms, such as patient intake forms, consent forms, health assessment questionnaires, and more, which have been scanned for electronic storage and easy access.

These scanned medical forms preserve the layout and structure of the original paper documents, including checkboxes, text fields, and signature spaces. Researchers and healthcare organizations can leverage this dataset to develop automated data extraction solutions, electronic health record (EHR) systems, and machine learning models for tasks like form recognition, data validation, and patient data management.

Additionally, this dataset serves as a valuable training and evaluation resource for image processing and optical character recognition (OCR) algorithms, enhancing the accuracy and efficiency of document digitization efforts within the healthcare sector. With the potential to improve data accuracy, reduce administrative burdens, and enhance patient care, the medical forms dataset with scanned documents is a cornerstone for advancing healthcare data management and accessibility.

|

Nil007/SampleDataDocVQA | 2023-09-05T07:24:30.000Z | [

"region:us"

] | Nil007 | null | null | null | 0 | 0 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: id

dtype: string

- name: image

dtype: image

- name: query

struct:

- name: de

dtype: string

- name: en

dtype: string

- name: es

dtype: string

- name: fr

dtype: string

- name: it

dtype: string

- name: answers

sequence: string

- name: words

sequence: string

- name: bounding_boxes

sequence:

sequence: float32

length: 4

- name: answer

struct:

- name: match_score

dtype: float64

- name: matched_text

dtype: string

- name: start

dtype: int64

- name: text

dtype: string

- name: ground_truth

dtype: string

splits:

- name: train

num_bytes: 3504131.0

num_examples: 10

- name: test

num_bytes: 1444850.0

num_examples: 5

download_size: 2542845

dataset_size: 4948981.0

---

# Dataset Card for "SampleDataDocVQA"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

zxvix/pubmed_nonacademic_100 | 2023-09-05T08:14:42.000Z | [

"region:us"

] | zxvix | null | null | null | 0 | 0 | ---

configs:

- config_name: default

data_files:

- split: test

path: data/test-*

dataset_info:

features:

- name: MedlineCitation

struct:

- name: Article

struct:

- name: Abstract

struct:

- name: AbstractText

dtype: string

- name: ArticleTitle

dtype: string

- name: AuthorList

struct:

- name: Author

struct:

- name: CollectiveName

sequence: string

- name: ForeName

sequence: string

- name: Initials

sequence: string

- name: LastName

sequence: string

- name: GrantList

struct:

- name: Grant

struct:

- name: Agency

sequence: string

- name: Country

sequence: string

- name: GrantID

sequence: string

- name: Language

dtype: string

- name: PublicationTypeList

struct:

- name: PublicationType

sequence: string

- name: ChemicalList

struct:

- name: Chemical

struct:

- name: NameOfSubstance

sequence: string

- name: RegistryNumber

sequence: string

- name: CitationSubset

dtype: string

- name: DateCompleted

struct:

- name: Day

dtype: int64

- name: Month

dtype: int64

- name: Year

dtype: int64

- name: DateRevised

struct:

- name: Day

dtype: int64

- name: Month

dtype: int64

- name: Year

dtype: int64

- name: MedlineJournalInfo

struct:

- name: Country

dtype: string

- name: MeshHeadingList

struct:

- name: MeshHeading

struct:

- name: DescriptorName

sequence: string

- name: QualifierName

sequence: string

- name: NumberOfReferences

dtype: int64

- name: PMID

dtype: int64

- name: PubmedData

struct:

- name: ArticleIdList

struct:

- name: ArticleId

sequence:

sequence: string

- name: History

struct:

- name: PubMedPubDate

struct:

- name: Day

sequence: int64

- name: Month

sequence: int64

- name: Year

sequence: int64

- name: PublicationStatus

dtype: string

- name: ReferenceList

struct:

- name: Citation

sequence: 'null'

- name: CitationId

sequence: 'null'

- name: text

dtype: string

- name: title

dtype: string

- name: original_text

dtype: string

splits:

- name: test

num_bytes: 417158

num_examples: 100

download_size: 282401

dataset_size: 417158

---

# Dataset Card for "pubmed_nonacademic_100"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Araaa/fyptexts | 2023-09-05T07:37:50.000Z | [

"region:us"

] | Araaa | null | null | null | 0 | 0 | Entry not found |

waseemSyven/trial | 2023-09-05T07:26:49.000Z | [

"region:us"

] | waseemSyven | null | null | null | 0 | 0 | Entry not found |

getrangiitoenailfungus/RangiiToenailFungus | 2023-09-05T07:29:13.000Z | [

"region:us"

] | getrangiitoenailfungus | null | null | null | 0 | 0 | Are you tired of dealing with brittle and unhealthy nails? Healthy, glowing skin and strong, beautiful nails are something almost everyone wants. The dermatology industry and leading skin experts keep searching for innovative solutions that can deliver remarkable results to users. One such product is a new skin and nail serum called **Rangii**. This revolutionary product is designed to enhance nail bed health, giving you stronger and healthier nails. In this comprehensive [**Rangii Serum review**](https://lookerstudio.google.com/reporting/64f081fc-f1e7-4747-b1fa-e5f7e03ce58f/page/BVibD), you will learn about the serum, how it works, the ingredients it contains, how to use it, its benefits, potential drawbacks, where to buy it, pricing and guarantee plans, and more. Let's dive in and discover the secrets of Rangii Serum to help you decide whether it suits you!

[Click Here To Buy It From Official Website](https://www.glitco.com/get-rangii)

Product Name

[Rangii](https://www.facebook.com/people/Rangii-Toenail-Fungus/61550939819696/)

Usage Form

External application liquid serum.

Purpose

Anti-Fungal Solution

Target

Eliminate toe nail fungus

Other Benefits

Enhance skin health, improve nail strength, and maintain beautiful feet.

Safety Standards

· 100% natural

· non-GMO

· No harmful chemicals

· non-Habit forming

· Proper manufacturing guidelines

Research Reference

· JABFM

· Frontiers

· ScienceDirect

· National Library of Medicine

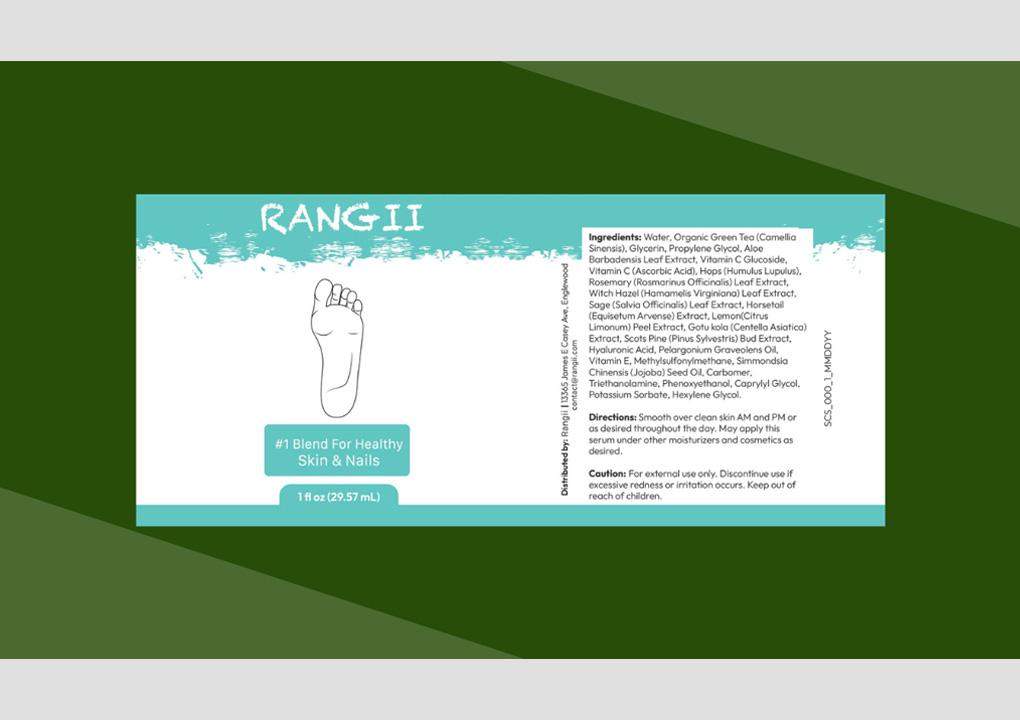

Main Ingredients

Blend of Probiotics, Vitamins, Herbs and Minerals.

Camellia sinensis, Glycerin, Aloe extract, Vitamin C & E, Gotu kola, Scots pine, Graveolens oil and more.

Check Rangii Label here!

How to Use Rangii?

Use twice a day (before and after shower for best results)

Bottle quantity

29.57ml (1 Fl oz)

Pricing

Click Here

Other Benefits

Special Bonuses (E-Books)

Free shipping

100% satisfaction guarantee.

Legit Rangii Purchase Access

[CLICK HERE](https://www.glitco.com/get-rangii)

**What is [Rangii Serum](https://www.ourboox.com/books/rangii-toenail-fungus-reviews-does-it-work-faqs/)?**

Rangii Serum is a powerful blend of oils and skin-boosting vitamins specially formulated to improve the health of your nails. This unique formula is designed to be applied daily after showering, allowing your skin to rebuild and maintain equilibrium. With its carefully chosen ingredients, the serum offers comprehensive care to keep your nails strong and healthy. It is also described as a beauty elixir that gives intense care and support to the nails and skin, making them shiny, smooth, and beautiful. The formula is made without chemicals or fillers, which is why it is considered safe to use.

![Rangii Serum screenshot](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEiR-O16BYOGt9KKMjsIMetrqXMn79_5UAz8fVwvgvpFh55lpBUG6wY4W2WPY79_Wkz2asOvZMNyhYYC79DZgvIh2DL3RLHzThVXnXx2ISHDWsEzedQBYoffypx3z-MFQgZJjhgCCJfKiKvrJ6mIeZ7IT6jVhmoPPTjtGEZKmulCM3EEWs-WmWecU0qNN8Q/s1429/Screenshot%20(1100).png)

[Click Here To Buy It From Official Website](https://www.glitco.com/get-rangii)

Rangii Serum is an externally applied formula that is absorbed deep into the skin, where it can act directly. Each bottle holds a one-month supply of a blend of natural nutrients chosen for their healing and revitalizing benefits.

**How Does [Rangii Serum](https://sites.google.com/view/rangiitoenailfungusreview/home) Work?**

Rangii Serum works by nourishing and strengthening your nails from the root. Its powerful blend of oils and vitamins penetrates deep into the nail bed, providing essential nutrients and promoting healthy nail growth. By applying Rangii Serum daily, you can expect to see improvements in the vibrancy of your nails, the fading of itchiness, and the regrowth of healthy pink nails within weeks. This natural solution has the healing effects of plant extracts and the rejuvenating power of essential nutrients that can protect the nails and skin from further infections.

The science-backed formula is also said to target and eliminate harmful fungal infections in the bloodstream, so the results last and the infection does not return. By supporting collagen production and revitalizing cells, Rangii Serum helps maintain healthy toenails and feet.

**Science Behind Formulation of [Rangii Serum](https://colab.research.google.com/drive/1ZH8VksBpG2pxEAVLP0YFhetb2ZzdSnqg#scrollTo=hAeWTiADzaLZ): Backed by Researchers!**

Rangii Serum's effectiveness is backed by scientific research and studies. Researchers have extensively studied the ingredients in Rangii Serum and their effects on nail health. These studies have shown that the oils and vitamins in the serum promote nail strength, reduce brittleness, and improve overall nail health. With this scientific support, you can trust that this serum is a reliable solution for your nail care needs.

**What Makes [Rangii Serum](https://groups.google.com/g/rangii-toenail-fungus-review/c/CXwVuogPjWs) Stand Out?**

Rangii Serum stands out from other nail care products because of its unique formulation and effectiveness. Unlike ordinary supplements, it contains all-natural ingredients that are rigorously tested for purity and quality to ensure optimal results. This means you can upgrade your nail health with confidence, knowing that the solution is safe and effective. Because it is a topical solution, the nutrients are absorbed directly into the skin where it is applied, allowing it to repair and heal as quickly as possible.

![Rangii Serum screenshot](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEjefe0PtlgSl9Lb-IiPTTPCm5d8A5f4v6uEDJEBgI952FWWxd2E1jOZwV9lDqaFKFMcmallXHzhOWvbsP0qObixRNDqlEOi7UEuZrSC3djopi7bd91hhiFWb_GTF-aH7hE0ug8m8QI5QsJYi9ppH2-1wfqTiowyUFDDwt-4Xo9ogUDpAGHQB99-ONEsTqM/s1593/Screenshot%20(1101).png)

**What's Inside [Rangii](https://in.pinterest.com/pin/1065664330559896662/) Serum? – Its Ingredients & Impacts Backed by Science!**

Rangii Serum contains a carefully selected blend of oils and vitamins that work together to improve nail health. The ingredients come from rare sources and are combined in precise ratios to deliver the effects reported in research. The serum is also safe, simple, and easy to use, with no chemicals or preservatives. Let's take a closer look at the Rangii ingredients list:

First, Aloe Barbadensis leaf extract: a plant extract with strong antioxidant effects that protects the skin and nails against stressors. Its soothing and hydrating effects can heal and nourish the skin and nails for a healthy appearance.

Second, Camellia sinensis (green tea) extract is rich in flavonoids and has strong antioxidant benefits. It helps neutralize free radicals and fight infections.

Third, glycerin helps promote nail and cuticle health. Its moisturizing effects maintain proper hydration in the outer layer of the skin and nails, preventing itchiness, dryness, and other skin issues.

Fourth, Aloe Vera extract contains natural cooling agents. It maintains healthy hydration and is effective in relieving fungal infections. Its antiseptic properties defend against bacteria and fungi and inhibit the growth of yeast.

Additionally, rosemary extract is included for its proven anti-inflammatory, antibacterial, and antiviral effects. These properties help treat nail fungus, hangnails, and other infections. The powerful antioxidants in this extract help strengthen the nails and skin, further improving their appearance.

**What’s More in [Rangii](https://rangii-toenail-fungus-reviews.blogspot.com/2023/09/rangii-toenail-fungus-reviews-does-it.html) Serum?**

In addition to the ingredients above, the formula includes several components that are clinically proven to support nail and skin health against fungal infections.

You can find Vitamin C Glucoside in the formula, included for its antifungal effects that combat fungal infections.

Next, witch hazel helps reduce skin irritation and heal toenail fungal infections.

Adding Sage Leaf Extract to the formula offers intense moisturization and promotes nail health by enhancing immunity to fight against infections.

Other vital compounds include:

* Hops extract

* Horsetail extract

* Lemon peel extract

* Gotu kola

* Scots pine bud extract

* Hyaluronic acid

* Pelargonium graveolens oil

* Vitamin E

These and other ingredients address the root causes of nail health issues and combat infections.

**How to Use Rangii Serum? - A Step-by-Step Guide!**

Using Rangii Serum is quick and easy. Follow these simple steps to incorporate it into your daily nail care routine for beneficial results.

* Start by cleaning your nails and removing any nail polish or residue.

* Apply a small amount of Rangii Serum to each nail, focusing on the nail bed.

* Gently massage the serum into the nail bed using circular motions.

* Leave the serum to absorb for a few minutes before applying any other products or polish.

* For best results, it is recommended to apply Rangii Serum twice a day - once in the morning and once before bed.

You can also apply the serum before and after a shower for better cleansing and detox effects that can help clear fungal infections.

**Exclusive Benefits of [Rangii Toenail Fungus](https://soundcloud.com/getrangiitoenailfungus/rangii-toenail-fungus-reviews-does-it-work-faqs?) Serum:**

Rangii Serum offers a wide range of benefits for your nail health. The key advantages of using it are listed below:

Stronger Nails: The powerful blend of oils and vitamins in Rangii Serum strengthens the nails, reducing brittleness and breakage.

Healthier Nail Bed: Rangii Serum nourishes the nail bed, promoting healthier nail growth and preventing common nail issues.

Improved Nail Appearance: With regular use of Rangii Serum, you can expect to see improvements in your nails' vibrancy and overall appearance.

Safe and Quick Results: Rangii Serum starts working from the first application, and you can see visible improvements within weeks. The formula is completely natural, so you can get results without side effects. Check the ingredient label before making this serum part of your routine.

Gentle Exfoliation: The serum's formulation includes mild exfoliating agents that help remove dead skin cells, leaving your skin feeling smoother and more vibrant. This exfoliation process can also improve the absorption of other skincare products, maximizing their effectiveness.

Even Skin Tone: Uneven skin tone and dark spots are addressed by the serum's brightening ingredients. Over time, users often notice a more even complexion and reduced hyperpigmentation.

![Rangii Serum screenshot](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEgZVip-KzB5dZVvLJHm8dfJnLTCVy6K3d77oaEs-D_e0_PYWe-dX6t9UnJPNhFXffmAuJph4froc9YvKFNnXw-3F6W_c1yWdG7SV88ZpEZBnbD0dak37k_RafUQmYVrvtBSiYsl6k8ccOviDYANdXf6ZSfsXkSdZGHYpktkpPPhngKxM078QZPGRFf5NIk/s1617/Screenshot%20(1098).png)

**Are There Any Drawbacks in Using [Rangii](https://www.bitchute.com/video/IWvOMevhkqDG/) Serum?**

While Rangii Serum is generally well-tolerated and effective, it's important to note that individual results may vary. Depending on their nail health and other factors, some users may experience faster results than others.

It's also worth mentioning that [Rangii](https://rangii-toenail-fungus-review.jimdosite.com/) Serum is not a treatment for underlying medical conditions that may affect nail health. If you have any concerns or pre-existing conditions, it's always best to consult with a healthcare practitioner before using any new products.


**Where to Buy Rangii Serum: Legit Sellers Only?**

To make sure you purchase genuine Rangii Serum, buy directly from the official website or an authorized platform. This guarantees you are getting the original Rangii product, not a counterfeit. Avoid unauthorized sellers and third-party websites to protect yourself from scams and fake products. Rangii may also not be available on Amazon or at Walmart, since the creator wants customers to enjoy the benefits of the proven formula in proper doses.

Besides guaranteeing a legitimate product, the manufacturer offers exclusive purchase benefits that are available only on the official website.

**Rangii Serum Pricing and Guarantee Plan:**

Rangii Serum is available in several package options to suit your needs. The 6-bottle package is a fan favorite, offering a long-lasting supply that protects against stock shortages. However, a single bottle will suffice if you just want to apply Rangii Serum after your shower. Prices vary by package, so check the official website for the most up-to-date pricing.

* 30-day supply: 1 bottle at $69, plus a small shipping fee.

* 90-day supply: 3 bottles at $49 each, plus a small shipping fee, with two additional bonuses.

* 180-day supply: 6 bottles at $39 each, with free shipping and two special bonuses included as gifts.

Additionally, Rangii Serum comes with a 60-day, 100% money-back guarantee. If you are unsatisfied with the results, simply contact the world-class customer support team for a full refund. This risk-free guarantee lets you try Rangii Serum with confidence, knowing that your purchase is protected.

Contact customer support team 24×7:

Mail: [Rangii](https://getrangiitoenailfungus.bandcamp.com/track/rangii-toenail-fungus) .com

Call: 1-800-390-6035

**Who Can Use Rangii Serum?**

Rangii Serum is suitable for anyone looking to improve the health and appearance of their nails. The serum helps rejuvenate the nails and skin from nasty fungal infections and yellow nails in a natural way for all adults. Whether you have weak, brittle nails or simply want to maintain the health of your nail bed, Rangii Serum can be a valuable addition to your nail care routine. However, if you have any underlying medical conditions or are taking prescription medication, it's always wise to consult with a healthcare expert before using any new products.

**Other Bonuses: Additional Benefits of Rangii Serum**

In addition to its nail-enhancing properties, Rangii Serum comes with extras that set it apart from other nail care products: two bonus eBooks that help enhance the results of applying the serum.

1. Seven dangers of ignoring toe fungus.

2. Japanese toenail fungus code.

These digital guides contain effective tips and remedies that improve the appearance of the skin and nails. They help heal fungal infections and give you smooth, soft skin and strong, shiny nails.

**Summarizing – [Rangii](https://getrangiitoenailfungus.webflow.io/) Toenail Serum Reviews**

Rangii Serum is a game-changer when it comes to nail care. Its powerful blend of oils and vitamins nourishes and strengthens your nails, promoting healthier growth and improving their appearance. Backed by scientific research and supported by a 60-day money-back guarantee, Rangii Serum is a safe and effective solution for anyone looking to achieve stronger and healthier nails.

![Rangii Serum screenshot](https://blogger.googleusercontent.com/img/b/R29vZ2xl/AVvXsEgF_giTNlDGPOK3IUmHGcPyTUJ4NEexU0xLBvGttk-3KMRfVebbOrdeCZnFTqskbkBmdXP7E7ZdZVh8zuyfFi1RBQAXRR-7XCODGvd5G_3Y4kNRGCP1evkAgZRoz8dBp4HJwr0-6aNmmqBnbk1x5Ab3ivzm6l_9249qc0L90NE8BNRxlQ3qCdPlahc9Z4o/s1593/Screenshot%20(1101).png)

[Click Here To Buy It From Official Website](https://www.glitco.com/get-rangii)

Upgrade your nail care routine today and experience the transformative power of Rangii Serum!

**FAQ – Rangii Serum**

Here are answers to some frequently asked questions about this toenail fungus serum:

**Is Rangii Serum safe?**

Yes, [Rangii](https://rangii-toenail-fungus-review.company.site/) Serum is made with pure organic ingredients that are tested for purity and clinically proven, ensuring consistent safety and quality. However, it is always a good idea to check with your physician if you have a medical condition or any skin rashes.

**How many bottles of Serum should I order?**

The 6-bottle package is the most popular choice, providing long-lasting support against infections and protection against stock shortages. However, a single bottle lasts a whole month if you apply the serum after your shower.

**How soon will I see results with Rangii Serum?**

You'll start seeing improvements right away. Your nails will look more vibrant, itchiness will fade, and healthy pink nails will start growing back within weeks.

**Does Rangii Serum involve any additional costs?**

No. Your order is a one-time payment with no additional or hidden charges.

**What if [Rangii](https://app.flowcode.com/page/rangiitoenailfungusreview) Serum doesn't work for me?**

The manufacturer offers a 60-day, 100% money-back guarantee for peace of mind. You can try the product for two months, and if you are not entirely satisfied, claim a full refund by contacting the customer support team.

**Where can I get the original Rangii Serum?**

Visit the official website for exclusive discounts and the legitimate formula. It is not available for purchase anywhere else.

[Click Here To Buy It From Official Website](https://www.glitco.com/get-rangii)

**Are the ingredients of Rangii Serum backed by science?**

Several studies report on the effects of the ingredients in this formula. You can verify them yourself by checking the label and searching for clinical reports on each ingredient.

Related links:

[https://rangii-toenail-fungus-reviews.blogspot.com/2023/09/rangii-toenail-fungus-reviews-does-it.html](https://rangii-toenail-fungus-reviews.blogspot.com/2023/09/rangii-toenail-fungus-reviews-does-it.html)

[https://groups.google.com/g/rangii-toenail-fungus-review/c/CXwVuogPjWs](https://groups.google.com/g/rangii-toenail-fungus-review/c/CXwVuogPjWs)

[https://colab.research.google.com/drive/1ZH8VksBpG2pxEAVLP0YFhetb2ZzdSnqg#scrollTo=hAeWTiADzaLZ](https://colab.research.google.com/drive/1ZH8VksBpG2pxEAVLP0YFhetb2ZzdSnqg#scrollTo=hAeWTiADzaLZ)

[https://sites.google.com/view/rangiitoenailfungusreview/home](https://sites.google.com/view/rangiitoenailfungusreview/home)

[https://lookerstudio.google.com/reporting/64f081fc-f1e7-4747-b1fa-e5f7e03ce58f/page/BVibD](https://lookerstudio.google.com/reporting/64f081fc-f1e7-4747-b1fa-e5f7e03ce58f/page/BVibD)

[https://www.facebook.com/people/Rangii-Toenail-Fungus/61550939819696/](https://www.facebook.com/people/Rangii-Toenail-Fungus/61550939819696/)

[https://getrangiitoenailfungus.itch.io/rangii-toenail-fungus-reviews-does-it-work-faqs](https://getrangiitoenailfungus.itch.io/rangii-toenail-fungus-reviews-does-it-work-faqs)

[https://www.remotehub.com/getrangii.toenailfungus](https://www.remotehub.com/getrangii.toenailfungus)

[https://in.pinterest.com/pin/1065664330559896662/](https://in.pinterest.com/pin/1065664330559896662/)

[https://www.ourboox.com/books/rangii-toenail-fungus-reviews-does-it-work-faqs/](https://www.ourboox.com/books/rangii-toenail-fungus-reviews-does-it-work-faqs/)

[https://soundcloud.com/getrangiitoenailfungus/rangii-toenail-fungus-reviews-does-it-work-faqs?](https://soundcloud.com/getrangiitoenailfungus/rangii-toenail-fungus-reviews-does-it-work-faqs?)

[https://getrangiitoenailfungus.bandcamp.com/track/rangii-toenail-fungus](https://getrangiitoenailfungus.bandcamp.com/track/rangii-toenail-fungus)

[https://getrangiitoenailfungus.webflow.io/](https://getrangiitoenailfungus.webflow.io/)

[https://app.flowcode.com/page/rangiitoenailfungusreview](https://app.flowcode.com/page/rangiitoenailfungusreview)

[https://rangii-toenail-fungus-review.company.site/](https://rangii-toenail-fungus-review.company.site/)

[https://www.bitchute.com/video/IWvOMevhkqDG/](https://www.bitchute.com/video/IWvOMevhkqDG/) |

QLM78910/funsd-zh | 2023-09-05T07:28:52.000Z | [

"region:us"

] | QLM78910 | null | null | null | 0 | 0 | ---

dataset_info:

features:

- name: lang

dtype: string

- name: version

dtype: string

- name: split

dtype: string

- name: documents

list:

- name: id

dtype: string

- name: uid

dtype: string

- name: document

list:

- name: box

sequence: int64

- name: text

dtype: string

- name: label

dtype: string

- name: words

list:

- name: box

sequence: int64

- name: text

dtype: string

- name: linking

sequence:

sequence: int64

- name: id

dtype: int64

- name: img

struct:

- name: fname

dtype: string

- name: width

dtype: int64

- name: height

dtype: int64

splits:

- name: train

num_bytes: 4057416

num_examples: 1

- name: val

num_bytes: 1483956

num_examples: 1

download_size: 1269925

dataset_size: 5541372

---

# Dataset Card for "funsd-zh"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

waddasi/holymoly | 2023-09-05T07:49:11.000Z | [

"license:c-uda",

"region:us"

] | waddasi | null | null | null | 0 | 0 | ---

license: c-uda

---

|

TinyPixel/oa-2 | 2023-09-05T09:59:20.000Z | [

"region:us"

] | TinyPixel | null | null | null | 0 | 0 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 9475124

num_examples: 8274

download_size: 5126342

dataset_size: 9475124

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "oa-2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

ACCA225/sd-config5 | 2023-09-07T15:31:29.000Z | [

"region:us"

] | ACCA225 | null | null | null | 0 | 0 | Entry not found |

warleagle/pco_audio_data | 2023-09-05T07:55:11.000Z | [

"region:us"

] | warleagle | null | null | null | 0 | 0 | ---

dataset_info:

features:

- name: audio

dtype: audio

splits:

- name: train

num_bytes: 46266636.0

num_examples: 2

download_size: 46268833

dataset_size: 46266636.0

---

# Dataset Card for "pco_audio_data"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

sict2010/sql | 2023-09-05T07:51:17.000Z | [

"region:us"

] | sict2010 | null | null | null | 0 | 0 | Entry not found |

Maxx0/testing | 2023-09-05T07:53:32.000Z | [

"region:us"

] | Maxx0 | null | null | null | 0 | 0 | Entry not found |

warleagle/pco_audio_data_v2 | 2023-09-05T07:56:35.000Z | [

"region:us"

] | warleagle | null | null | null | 0 | 0 | ---

dataset_info:

features:

- name: audio

dtype: audio

splits:

- name: train

num_bytes: 195374660.0

num_examples: 6

download_size: 195380376

dataset_size: 195374660.0

---

# Dataset Card for "pco_audio_data_v2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

sachith-surge/LaMini-LM-dataset-TheBloke-h2ogpt-falcon-40b-v2-GGML-eval-llama2-gpt4 | 2023-09-05T08:02:56.000Z | [

"region:us"

] | sachith-surge | null | null | null | 0 | 0 | ---

dataset_info:

features:

- name: instruction

dtype: string

- name: source

dtype: string

- name: response

dtype: string

- name: llama2_status

dtype: string

- name: llama2_rating

dtype: string

- name: llama2_reason

dtype: string

- name: gpt4_status

dtype: string

- name: gpt4_rating

dtype: string

- name: gpt4_reason

dtype: string

splits:

- name: train

num_bytes: 2729018

num_examples: 1505

download_size: 1378351

dataset_size: 2729018

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "LaMini-LM-dataset-TheBloke-h2ogpt-falcon-40b-v2-GGML-eval-llama2-gpt4"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

talentlabs/training-data-blog-writer_v05-09-2023 | 2023-09-05T08:06:43.000Z | [

"region:us"

] | talentlabs | null | null | null | 0 | 0 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: title

dtype: string

- name: article

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 53198298

num_examples: 10100

download_size: 32850622

dataset_size: 53198298

---

# Dataset Card for "training-data-blog-writer_v05-09-2023"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Maxx0/Testing_new_nsfw | 2023-09-05T08:48:38.000Z | [

"region:us"

] | Maxx0 | null | null | null | 2 | 0 | |

PlenitudeAI/simpsons_prompt_lines | 2023-09-05T08:59:33.000Z | [

"region:us"

] | PlenitudeAI | null | null | null | 0 | 0 | ---

dataset_info:

features:

- name: previous

dtype: string

- name: character

dtype: string

- name: line

dtype: string

- name: text

dtype: string

splits:

- name: train

num_bytes: 191013022

num_examples: 121841

download_size: 0

dataset_size: 191013022

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "simpsons_prompt_lines"

I used the [Simpsons](https://www.kaggle.com/datasets/prashant111/the-simpsons-dataset?resource=download&select=simpsons_episodes.csv) Kaggle dataset (simpsons_episodes.csv and simpsons_script_lines.csv).

I got the idea and part of the code from this [blog post](https://replicate.com/blog/fine-tune-llama-to-speak-like-homer-simpson) from Replicate.

This dataset can be used to fine-tune a chat LLM to speak like one of the show's characters!

### Example

```json

{

"previous": "Marge Simpson: Homer, get up! Up, up, up!\nMarge Simpson: Oh no!\nHomer Simpson: Whuzzit... My juice box!\nMarge Simpson: Sorry, Homie, but you promised to take me to the Apron Expo today.",

"character": "Homer Simpson",

"line": "Just give me ten more hours.",

"text": "<s> [INST] Below is a script from the American animated sitcom The Simpsons. Write a response that completes Homer Simpson's last line in the conversation. \n\nMarge Simpson: Homer, get up! Up, up, up!\nMarge Simpson: Oh no!\nHomer Simpson: Whuzzit... My juice box!\nMarge Simpson: Sorry, Homie, but you promised to take me to the Apron Expo today.\nHomer Simpson: [/INST] Just give me ten more hours. </s>"

}

```
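The `text` field in the example above looks like `previous`, `character`, and `line` joined into a Llama 2-style `[INST] ... [/INST]` prompt. Below is a minimal Python sketch that rebuilds it. The template is inferred from this single example record, not taken from the author's or Replicate's code, and `build_prompt` is a hypothetical helper name.

```python
def build_prompt(previous: str, character: str, line: str) -> str:
    """Rebuild the `text` field from the other three columns.

    Template inferred from the example record (an assumption, not the
    author's published code): instruction, blank line, dialogue history,
    then the target character's name, with the reply placed after [/INST].
    """
    instruction = (
        "Below is a script from the American animated sitcom The Simpsons. "
        f"Write a response that completes {character}'s last line in the conversation. "
    )
    # History and speaker prefix go inside [INST]; the line to learn is the answer.
    return f"<s> [INST] {instruction}\n\n{previous}\n{character}: [/INST] {line} </s>"


example = {
    "previous": (
        "Marge Simpson: Homer, get up! Up, up, up!\n"
        "Marge Simpson: Oh no!\n"
        "Homer Simpson: Whuzzit... My juice box!\n"
        "Marge Simpson: Sorry, Homie, but you promised to take me to the Apron Expo today."
    ),
    "character": "Homer Simpson",
    "line": "Just give me ten more hours.",
}

print(build_prompt(**example))
```

For generation, one would presumably cut the prompt right after `[/INST]` and let the fine-tuned model produce the line; that split is likewise an assumption based on the example's layout.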

### Characters

- Homer Simpson

- Bart Simpson

- Marge Simpson

- Lisa Simpson

- C. Montgomery Burns

- Seymour Skinner

- Moe Szyslak

- Ned Flanders

- Grampa Simpson

- Krusty the Clown

- Chief Wiggum

- Milhouse Van Houten

- Waylon Smithers

- Apu Nahasapeemapetilon

- Kent Brockman

- Nelson Muntz

- Barney Gumble

- Lenny Leonard

- Edna Krabappel-Flanders

- Sideshow Bob

- Dr. Julius Hibbert

- Selma Bouvier

- Ralph Wiggum

- Rev. Timothy Lovejoy

- Crowd

- Carl Carlson

- Patty Bouvier

- Mayor Joe Quimby

- Otto Mann

- Groundskeeper Willie

- Martin Prince

- Announcer

- Comic Book Guy

- Kids

- Lionel Hutz

- HERB

- Sideshow Mel

- Gary Chalmers

- Professor Jonathan Frink

- Jimbo Jones

- Lou

- Todd Flanders

- Miss Hoover

- Agnes Skinner

- Maude Flanders

- Troy McClure

- Fat Tony

- Snake Jailbird

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

haes95/cs_qna_labeling | 2023-09-05T08:23:15.000Z | [

"region:us"

] | haes95 | null | null | null | 0 | 0 | test |

open-llm-leaderboard/details_openchat__openchat_v3.2_super | 2023-09-05T08:30:13.000Z | [

"region:us"

] | open-llm-leaderboard | null | null | null | 0 | 0 | ---

pretty_name: Evaluation run of openchat/openchat_v3.2_super

dataset_summary: "Dataset automatically created during the evaluation run of model\

\ [openchat/openchat_v3.2_super](https://huggingface.co/openchat/openchat_v3.2_super)\

\ on the [Open LLM Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard).\n\

\nThe dataset is composed of 61 configurations, each one corresponding to one of the\

\ evaluated tasks.\n\nThe dataset has been created from 1 run(s). Each run can be\

\ found as a specific split in each configuration, the split being named using the\

\ timestamp of the run. The \"train\" split always points to the latest results.\n\

\nAn additional configuration \"results\" stores all the aggregated results of the\

\ run (and is used to compute and display the aggregated metrics on the [Open LLM\

\ Leaderboard](https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard)).\n\

\nTo load the details from a run, you can for instance do the following:\n```python\n\

from datasets import load_dataset\ndata = load_dataset(\"open-llm-leaderboard/details_openchat__openchat_v3.2_super\"\

,\n\t\"harness_truthfulqa_mc_0\",\n\tsplit=\"train\")\n```\n\n## Latest results\n\

\nThese are the [latest results from run 2023-09-05T08:28:49.460161](https://huggingface.co/datasets/open-llm-leaderboard/details_openchat__openchat_v3.2_super/blob/main/results_2023-09-05T08%3A28%3A49.460161.json) (note\

\ that there might be results for other tasks in the repos if successive evals didn't\

\ cover the same tasks. You can find each in the results and the \"latest\" split for\

\ each eval):\n\n```python\n{\n \"all\": {\n \"acc\": 0.5601198461528745,\n\

\ \"acc_stderr\": 0.03446710165761066,\n \"acc_norm\": 0.5641655717544484,\n\

\ \"acc_norm_stderr\": 0.03444643929957741,\n \"mc1\": 0.2937576499388005,\n\

\ \"mc1_stderr\": 0.015945068581236618,\n \"mc2\": 0.42298709708965165,\n\

\ \"mc2_stderr\": 0.01470335666513224\n },\n \"harness|arc:challenge|25\"\

: {\n \"acc\": 0.560580204778157,\n \"acc_stderr\": 0.014503747823580125,\n\

\ \"acc_norm\": 0.5981228668941979,\n \"acc_norm_stderr\": 0.014327268614578274\n\

\ },\n \"harness|hellaswag|10\": {\n \"acc\": 0.6238797052380004,\n\

\ \"acc_stderr\": 0.0048342079640613204,\n \"acc_norm\": 0.8250348536148178,\n\

\ \"acc_norm_stderr\": 0.0037916080491012284\n },\n \"harness|hendrycksTest-abstract_algebra|5\"\

: {\n \"acc\": 0.36,\n \"acc_stderr\": 0.04824181513244218,\n \

\ \"acc_norm\": 0.36,\n \"acc_norm_stderr\": 0.04824181513244218\n \

\ },\n \"harness|hendrycksTest-anatomy|5\": {\n \"acc\": 0.45925925925925926,\n\

\ \"acc_stderr\": 0.04304979692464243,\n \"acc_norm\": 0.45925925925925926,\n\

\ \"acc_norm_stderr\": 0.04304979692464243\n },\n \"harness|hendrycksTest-astronomy|5\"\

: {\n \"acc\": 0.5986842105263158,\n \"acc_stderr\": 0.039889037033362836,\n\

\ \"acc_norm\": 0.5986842105263158,\n \"acc_norm_stderr\": 0.039889037033362836\n\

\ },\n \"harness|hendrycksTest-business_ethics|5\": {\n \"acc\": 0.57,\n\

\ \"acc_stderr\": 0.04975698519562428,\n \"acc_norm\": 0.57,\n \

\ \"acc_norm_stderr\": 0.04975698519562428\n },\n \"harness|hendrycksTest-clinical_knowledge|5\"\

: {\n \"acc\": 0.6339622641509434,\n \"acc_stderr\": 0.029647813539365242,\n\

\ \"acc_norm\": 0.6339622641509434,\n \"acc_norm_stderr\": 0.029647813539365242\n\

\ },\n \"harness|hendrycksTest-college_biology|5\": {\n \"acc\": 0.5902777777777778,\n\

\ \"acc_stderr\": 0.04112490974670787,\n \"acc_norm\": 0.5902777777777778,\n\

\ \"acc_norm_stderr\": 0.04112490974670787\n },\n \"harness|hendrycksTest-college_chemistry|5\"\

: {\n \"acc\": 0.43,\n \"acc_stderr\": 0.049756985195624284,\n \

\ \"acc_norm\": 0.43,\n \"acc_norm_stderr\": 0.049756985195624284\n \

\ },\n \"harness|hendrycksTest-college_computer_science|5\": {\n \"\

acc\": 0.48,\n \"acc_stderr\": 0.050211673156867795,\n \"acc_norm\"\

: 0.48,\n \"acc_norm_stderr\": 0.050211673156867795\n },\n \"harness|hendrycksTest-college_mathematics|5\"\

: {\n \"acc\": 0.4,\n \"acc_stderr\": 0.049236596391733084,\n \

\ \"acc_norm\": 0.4,\n \"acc_norm_stderr\": 0.049236596391733084\n \

\ },\n \"harness|hendrycksTest-college_medicine|5\": {\n \"acc\": 0.5664739884393064,\n\

\ \"acc_stderr\": 0.03778621079092055,\n \"acc_norm\": 0.5664739884393064,\n\

\ \"acc_norm_stderr\": 0.03778621079092055\n },\n \"harness|hendrycksTest-college_physics|5\"\

: {\n \"acc\": 0.27450980392156865,\n \"acc_stderr\": 0.04440521906179328,\n\

\ \"acc_norm\": 0.27450980392156865,\n \"acc_norm_stderr\": 0.04440521906179328\n\

\ },\n \"harness|hendrycksTest-computer_security|5\": {\n \"acc\":\

\ 0.69,\n \"acc_stderr\": 0.04648231987117316,\n \"acc_norm\": 0.69,\n\

\ \"acc_norm_stderr\": 0.04648231987117316\n },\n \"harness|hendrycksTest-conceptual_physics|5\"\

: {\n \"acc\": 0.425531914893617,\n \"acc_stderr\": 0.03232146916224468,\n\

\ \"acc_norm\": 0.425531914893617,\n \"acc_norm_stderr\": 0.03232146916224468\n\

\ },\n \"harness|hendrycksTest-econometrics|5\": {\n \"acc\": 0.2719298245614035,\n\

\ \"acc_stderr\": 0.04185774424022056,\n \"acc_norm\": 0.2719298245614035,\n\

\ \"acc_norm_stderr\": 0.04185774424022056\n },\n \"harness|hendrycksTest-electrical_engineering|5\"\

: {\n \"acc\": 0.496551724137931,\n \"acc_stderr\": 0.041665675771015785,\n\

\ \"acc_norm\": 0.496551724137931,\n \"acc_norm_stderr\": 0.041665675771015785\n\

\ },\n \"harness|hendrycksTest-elementary_mathematics|5\": {\n \"acc\"\

: 0.30952380952380953,\n \"acc_stderr\": 0.023809523809523864,\n \"\

acc_norm\": 0.30952380952380953,\n \"acc_norm_stderr\": 0.023809523809523864\n\

\ },\n \"harness|hendrycksTest-formal_logic|5\": {\n \"acc\": 0.35714285714285715,\n\

\ \"acc_stderr\": 0.042857142857142816,\n \"acc_norm\": 0.35714285714285715,\n\

\ \"acc_norm_stderr\": 0.042857142857142816\n },\n \"harness|hendrycksTest-global_facts|5\"\

: {\n \"acc\": 0.37,\n \"acc_stderr\": 0.048523658709391,\n \

\ \"acc_norm\": 0.37,\n \"acc_norm_stderr\": 0.048523658709391\n },\n\

\ \"harness|hendrycksTest-high_school_biology|5\": {\n \"acc\": 0.6580645161290323,\n\

\ \"acc_stderr\": 0.026985289576552746,\n \"acc_norm\": 0.6580645161290323,\n\

\ \"acc_norm_stderr\": 0.026985289576552746\n },\n \"harness|hendrycksTest-high_school_chemistry|5\"\

: {\n \"acc\": 0.4088669950738916,\n \"acc_stderr\": 0.034590588158832314,\n\

\ \"acc_norm\": 0.4088669950738916,\n \"acc_norm_stderr\": 0.034590588158832314\n\

\ },\n \"harness|hendrycksTest-high_school_computer_science|5\": {\n \

\ \"acc\": 0.54,\n \"acc_stderr\": 0.05009082659620333,\n \"acc_norm\"\

: 0.54,\n \"acc_norm_stderr\": 0.05009082659620333\n },\n \"harness|hendrycksTest-high_school_european_history|5\"\

: {\n \"acc\": 0.6909090909090909,\n \"acc_stderr\": 0.036085410115739666,\n\

\ \"acc_norm\": 0.6909090909090909,\n \"acc_norm_stderr\": 0.036085410115739666\n\

\ },\n \"harness|hendrycksTest-high_school_geography|5\": {\n \"acc\"\

: 0.696969696969697,\n \"acc_stderr\": 0.032742879140268674,\n \"\

acc_norm\": 0.696969696969697,\n \"acc_norm_stderr\": 0.032742879140268674\n\

\ },\n \"harness|hendrycksTest-high_school_government_and_politics|5\": {\n\

\ \"acc\": 0.8134715025906736,\n \"acc_stderr\": 0.02811209121011747,\n\

\ \"acc_norm\": 0.8134715025906736,\n \"acc_norm_stderr\": 0.02811209121011747\n\

\ },\n \"harness|hendrycksTest-high_school_macroeconomics|5\": {\n \

\ \"acc\": 0.5128205128205128,\n \"acc_stderr\": 0.025342671293807257,\n\

\ \"acc_norm\": 0.5128205128205128,\n \"acc_norm_stderr\": 0.025342671293807257\n\

\ },\n \"harness|hendrycksTest-high_school_mathematics|5\": {\n \"\

acc\": 0.27037037037037037,\n \"acc_stderr\": 0.027080372815145658,\n \

\ \"acc_norm\": 0.27037037037037037,\n \"acc_norm_stderr\": 0.027080372815145658\n\

\ },\n \"harness|hendrycksTest-high_school_microeconomics|5\": {\n \

\ \"acc\": 0.5546218487394958,\n \"acc_stderr\": 0.0322841062671639,\n \

\ \"acc_norm\": 0.5546218487394958,\n \"acc_norm_stderr\": 0.0322841062671639\n\

\ },\n \"harness|hendrycksTest-high_school_physics|5\": {\n \"acc\"\

: 0.304635761589404,\n \"acc_stderr\": 0.03757949922943342,\n \"acc_norm\"\

: 0.304635761589404,\n \"acc_norm_stderr\": 0.03757949922943342\n },\n\

\ \"harness|hendrycksTest-high_school_psychology|5\": {\n \"acc\": 0.7559633027522936,\n\

\ \"acc_stderr\": 0.018415286351416416,\n \"acc_norm\": 0.7559633027522936,\n\

\ \"acc_norm_stderr\": 0.018415286351416416\n },\n \"harness|hendrycksTest-high_school_statistics|5\"\

: {\n \"acc\": 0.4166666666666667,\n \"acc_stderr\": 0.033622774366080445,\n\

\ \"acc_norm\": 0.4166666666666667,\n \"acc_norm_stderr\": 0.033622774366080445\n\

\ },\n \"harness|hendrycksTest-high_school_us_history|5\": {\n \"acc\"\

: 0.7450980392156863,\n \"acc_stderr\": 0.030587591351604246,\n \"\

acc_norm\": 0.7450980392156863,\n \"acc_norm_stderr\": 0.030587591351604246\n\

\ },\n \"harness|hendrycksTest-high_school_world_history|5\": {\n \"\

acc\": 0.7510548523206751,\n \"acc_stderr\": 0.028146970599422644,\n \

\ \"acc_norm\": 0.7510548523206751,\n \"acc_norm_stderr\": 0.028146970599422644\n\

\ },\n \"harness|hendrycksTest-human_aging|5\": {\n \"acc\": 0.6233183856502242,\n\

\ \"acc_stderr\": 0.032521134899291884,\n \"acc_norm\": 0.6233183856502242,\n\

\ \"acc_norm_stderr\": 0.032521134899291884\n },\n \"harness|hendrycksTest-human_sexuality|5\"\

: {\n \"acc\": 0.6717557251908397,\n \"acc_stderr\": 0.04118438565806298,\n\

\ \"acc_norm\": 0.6717557251908397,\n \"acc_norm_stderr\": 0.04118438565806298\n\

\ },\n \"harness|hendrycksTest-international_law|5\": {\n \"acc\":\

\ 0.7107438016528925,\n \"acc_stderr\": 0.041391127276354626,\n \"\

acc_norm\": 0.7107438016528925,\n \"acc_norm_stderr\": 0.041391127276354626\n\

\ },\n \"harness|hendrycksTest-jurisprudence|5\": {\n \"acc\": 0.7222222222222222,\n\

\ \"acc_stderr\": 0.04330043749650742,\n \"acc_norm\": 0.7222222222222222,\n\

\ \"acc_norm_stderr\": 0.04330043749650742\n },\n \"harness|hendrycksTest-logical_fallacies|5\"\

: {\n \"acc\": 0.6687116564417178,\n \"acc_stderr\": 0.03697983910025588,\n\

\ \"acc_norm\": 0.6687116564417178,\n \"acc_norm_stderr\": 0.03697983910025588\n\

\ },\n \"harness|hendrycksTest-machine_learning|5\": {\n \"acc\": 0.3392857142857143,\n\

\ \"acc_stderr\": 0.04493949068613539,\n \"acc_norm\": 0.3392857142857143,\n\

\ \"acc_norm_stderr\": 0.04493949068613539\n },\n \"harness|hendrycksTest-management|5\"\

: {\n \"acc\": 0.7087378640776699,\n \"acc_stderr\": 0.04498676320572924,\n\

\ \"acc_norm\": 0.7087378640776699,\n \"acc_norm_stderr\": 0.04498676320572924\n\

\ },\n \"harness|hendrycksTest-marketing|5\": {\n \"acc\": 0.8205128205128205,\n\

\ \"acc_stderr\": 0.025140935950335435,\n \"acc_norm\": 0.8205128205128205,\n\

\ \"acc_norm_stderr\": 0.025140935950335435\n },\n \"harness|hendrycksTest-medical_genetics|5\"\

: {\n \"acc\": 0.59,\n \"acc_stderr\": 0.049431107042371025,\n \

\ \"acc_norm\": 0.59,\n \"acc_norm_stderr\": 0.049431107042371025\n \

\ },\n \"harness|hendrycksTest-miscellaneous|5\": {\n \"acc\": 0.7496807151979565,\n\

\ \"acc_stderr\": 0.015491088951494574,\n \"acc_norm\": 0.7496807151979565,\n\

\ \"acc_norm_stderr\": 0.015491088951494574\n },\n \"harness|hendrycksTest-moral_disputes|5\"\

: {\n \"acc\": 0.6271676300578035,\n \"acc_stderr\": 0.02603389061357628,\n\

\ \"acc_norm\": 0.6271676300578035,\n \"acc_norm_stderr\": 0.02603389061357628\n\

\ },\n \"harness|hendrycksTest-moral_scenarios|5\": {\n \"acc\": 0.38212290502793295,\n\

\ \"acc_stderr\": 0.016251139711570772,\n \"acc_norm\": 0.38212290502793295,\n\

\ \"acc_norm_stderr\": 0.016251139711570772\n },\n \"harness|hendrycksTest-nutrition|5\"\

: {\n \"acc\": 0.6209150326797386,\n \"acc_stderr\": 0.027780141207023344,\n\

\ \"acc_norm\": 0.6209150326797386,\n \"acc_norm_stderr\": 0.027780141207023344\n\

\ },\n \"harness|hendrycksTest-philosophy|5\": {\n \"acc\": 0.6205787781350482,\n\

\ \"acc_stderr\": 0.027559949802347813,\n \"acc_norm\": 0.6205787781350482,\n\

\ \"acc_norm_stderr\": 0.027559949802347813\n },\n \"harness|hendrycksTest-prehistory|5\"\

: {\n \"acc\": 0.6450617283950617,\n \"acc_stderr\": 0.02662415247884585,\n\

\ \"acc_norm\": 0.6450617283950617,\n \"acc_norm_stderr\": 0.02662415247884585\n\

\ },\n \"harness|hendrycksTest-professional_accounting|5\": {\n \"\

acc\": 0.38652482269503546,\n \"acc_stderr\": 0.029049190342543458,\n \

\ \"acc_norm\": 0.38652482269503546,\n \"acc_norm_stderr\": 0.029049190342543458\n\

\ },\n \"harness|hendrycksTest-professional_law|5\": {\n \"acc\": 0.41916558018252936,\n\

\ \"acc_stderr\": 0.012602244505788233,\n \"acc_norm\": 0.41916558018252936,\n\

\ \"acc_norm_stderr\": 0.012602244505788233\n },\n \"harness|hendrycksTest-professional_medicine|5\"\

: {\n \"acc\": 0.5919117647058824,\n \"acc_stderr\": 0.029855261393483924,\n\

\ \"acc_norm\": 0.5919117647058824,\n \"acc_norm_stderr\": 0.029855261393483924\n\

\ },\n \"harness|hendrycksTest-professional_psychology|5\": {\n \"\

acc\": 0.5718954248366013,\n \"acc_stderr\": 0.02001762921421309,\n \

\ \"acc_norm\": 0.5718954248366013,\n \"acc_norm_stderr\": 0.02001762921421309\n\

\ },\n \"harness|hendrycksTest-public_relations|5\": {\n \"acc\": 0.6,\n\

\ \"acc_stderr\": 0.0469237132203465,\n \"acc_norm\": 0.6,\n \

\ \"acc_norm_stderr\": 0.0469237132203465\n },\n \"harness|hendrycksTest-security_studies|5\"\

: {\n \"acc\": 0.636734693877551,\n \"acc_stderr\": 0.030789051139030806,\n\

\ \"acc_norm\": 0.636734693877551,\n \"acc_norm_stderr\": 0.030789051139030806\n\

\ },\n \"harness|hendrycksTest-sociology|5\": {\n \"acc\": 0.7164179104477612,\n\

\ \"acc_stderr\": 0.03187187537919798,\n \"acc_norm\": 0.7164179104477612,\n\

\ \"acc_norm_stderr\": 0.03187187537919798\n },\n \"harness|hendrycksTest-us_foreign_policy|5\"\

: {\n \"acc\": 0.8,\n \"acc_stderr\": 0.04020151261036846,\n \

\ \"acc_norm\": 0.8,\n \"acc_norm_stderr\": 0.04020151261036846\n },\n\

\ \"harness|hendrycksTest-virology|5\": {\n \"acc\": 0.463855421686747,\n\

\ \"acc_stderr\": 0.03882310850890593,\n \"acc_norm\": 0.463855421686747,\n\

\ \"acc_norm_stderr\": 0.03882310850890593\n },\n \"harness|hendrycksTest-world_religions|5\"\

: {\n \"acc\": 0.7719298245614035,\n \"acc_stderr\": 0.032180937956023566,\n\

\ \"acc_norm\": 0.7719298245614035,\n \"acc_norm_stderr\": 0.032180937956023566\n\

\ },\n \"harness|truthfulqa:mc|0\": {\n \"mc1\": 0.2937576499388005,\n\

\ \"mc1_stderr\": 0.015945068581236618,\n \"mc2\": 0.42298709708965165,\n\

\ \"mc2_stderr\": 0.01470335666513224\n }\n}\n```"

repo_url: https://huggingface.co/openchat/openchat_v3.2_super

leaderboard_url: https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard

point_of_contact: clementine@hf.co

configs:

- config_name: harness_arc_challenge_25

data_files:

- split: 2023_09_05T08_28_49.460161

path:

- '**/details_harness|arc:challenge|25_2023-09-05T08:28:49.460161.parquet'

- split: latest

path:

- '**/details_harness|arc:challenge|25_2023-09-05T08:28:49.460161.parquet'

- config_name: harness_hellaswag_10

data_files:

- split: 2023_09_05T08_28_49.460161

path: