id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 68.7k ⌀ | citation stringlengths 0 10.7k ⌀ | cardData null | likes int64 0 3.55k | downloads int64 0 10.1M | card stringlengths 0 1.01M |

|---|---|---|---|---|---|---|---|---|---|

arbml/alpaca_arabic_v3 | 2023-09-06T17:39:52.000Z | [

"region:us"

] | arbml | null | null | null | 0 | 7 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

dataset_info:

features:

- name: index

dtype: string

- name: output

dtype: string

- name: output_en

dtype: string

- name: input

dtype: string

- name: input_en

dtype: string

- name: instruction

dtype: string

- name: instruction_en

dtype: string

splits:

- name: train

num_bytes: 20871

num_examples: 31

download_size: 0

dataset_size: 20871

---

# Dataset Card for "alpaca_arabic_v3"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

Abzu/arxiv_stem_filtered | 2023-08-03T14:12:11.000Z | [

"region:us"

] | Abzu | null | null | null | 0 | 7 | ---

dataset_info:

features:

- name: id

dtype: string

- name: submitter

dtype: string

- name: authors

dtype: string

- name: title

dtype: string

- name: comments

dtype: string

- name: journal-ref

dtype: string

- name: doi

dtype: string

- name: report-no

dtype: string

- name: categories

dtype: string

- name: license

dtype: string

- name: abstract

dtype: string

- name: update_date

dtype: string

splits:

- name: train

num_bytes: 391221495.4053062

num_examples: 301707

download_size: 205323915

dataset_size: 391221495.4053062

---

# Dataset Card for "arxiv_stem_filtered"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

TrainingDataPro/ocr-text-detection-in-the-documents | 2023-09-14T16:33:47.000Z | [

"task_categories:image-to-text",

"task_categories:object-detection",

"language:en",

"license:cc-by-nc-nd-4.0",

"code",

"legal",

"finance",

"region:us"

] | TrainingDataPro | null | null | null | 1 | 7 | ---

license: cc-by-nc-nd-4.0

task_categories:

- image-to-text

- object-detection

language:

- en

tags:

- code

- legal

- finance

---

# OCR Text Detection in the Documents Dataset

The dataset is a collection of images that have been annotated with the location of text in the document. The dataset is specifically curated for text detection and recognition tasks in documents such as scanned papers, forms, invoices, and handwritten notes.

The dataset contains a variety of document types, including different *layouts, font sizes, and styles*. The images come from diverse sources, ensuring a representative collection of document styles and quality. Each image in the dataset is accompanied by bounding box annotations that outline the exact location of the text within the document.

The Text Detection in the Documents dataset provides an invaluable resource for developing and testing algorithms for text extraction, recognition, and analysis. It enables researchers to explore and innovate in various applications, including *optical character recognition (OCR), information extraction, and document understanding*.


# Get the dataset

### This is just an example of the data

Leave a request on [**https://trainingdata.pro/data-market**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=ocr-text-detection-in-the-documents) to discuss your requirements, learn about the price and buy the dataset.

# Dataset structure

- **images** - contains the original images of documents

- **boxes** - includes bounding box labeling for the original images

- **annotations.xml** - contains coordinates of the bounding boxes and labels, created for the original photo

# Data Format

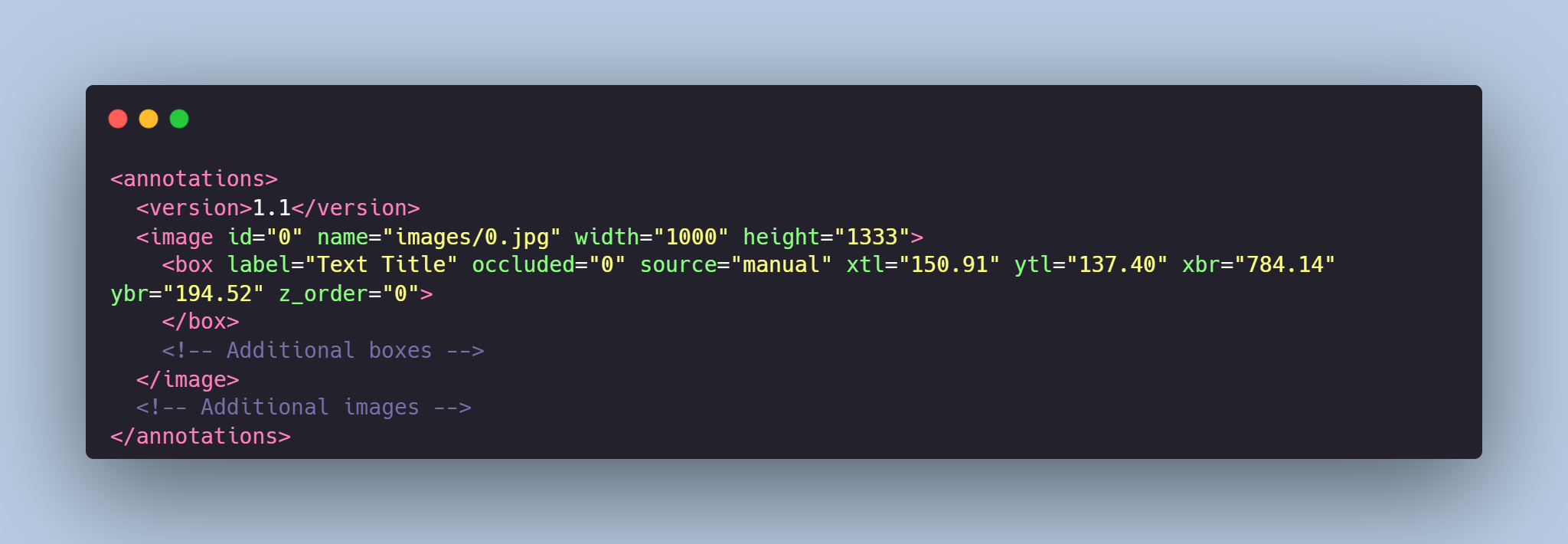

Each image from `images` folder is accompanied by an XML-annotation in the `annotations.xml` file indicating the coordinates of the bounding boxes and labels for text detection. For each point, the x and y coordinates are provided.

### Labels for the text:

- **"Text Title"** - corresponds to titles, the box is **red**

- **"Text Paragraph"** - corresponds to paragraphs of text, the box is **blue**

- **"Table"** - corresponds to the table, the box is **green**

- **"Handwritten"** - corresponds to handwritten text, the box is **purple**

# Example of XML file structure

# Text detection in documents can be carried out in accordance with your requirements.

## [**TrainingData**](https://trainingdata.pro/data-market?utm_source=huggingface&utm_medium=cpc&utm_campaign=ocr-text-detection-in-the-documents) provides high-quality data annotation tailored to your needs

More datasets in TrainingData's Kaggle account: **https://www.kaggle.com/trainingdatapro/datasets**

TrainingData's GitHub: **https://github.com/Trainingdata-datamarket/TrainingData_All_datasets** |

JayalekshmiGopakumar/updated_doc_laynet_for_donut | 2023-08-04T10:18:49.000Z | [

"region:us"

] | JayalekshmiGopakumar | null | null | null | 0 | 7 | ---

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

- split: test

path: data/test-*

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype:

class_label:

names:

'0': financial_reports

'1': government_tenders

'2': manuals

'3': laws_and_regulations

'4': scientific_articles

'5': patents

- name: ground_truth

dtype: string

splits:

- name: train

num_bytes: 18526989.0

num_examples: 48

- name: test

num_bytes: 3240607.0

num_examples: 12

download_size: 21738451

dataset_size: 21767596.0

---

# Dataset Card for "updated_doc_laynet_for_donut"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

RayBernard/leetcode | 2023-08-04T18:23:11.000Z | [

"license:llama2",

"region:us"

] | RayBernard | null | null | null | 0 | 7 | ---

license: llama2

---

|

arazd/tulu_baize | 2023-08-04T21:45:58.000Z | [

"license:openrail",

"region:us"

] | arazd | null | null | null | 0 | 7 | ---

license: openrail

---

|

rombodawg/2XUNCENSORED_alpaca_840k_Evol_USER_ASSIS | 2023-08-07T21:52:44.000Z | [

"license:other",

"region:us"

] | rombodawg | null | null | null | 5 | 7 | ---

license: other

---

MEGACODE TRAINING VERSION 2 OUT NOW: https://huggingface.co/datasets/rombodawg/LosslessMegaCodeTrainingV2_1m_Evol_Uncensored

Version 1 Updated/Uncensored version here: https://huggingface.co/datasets/rombodawg/2XUNCENSORED_MegaCodeTraining188k

Legacy Version 1 code training here: https://huggingface.co/datasets/rombodawg/MegaCodeTraining200k

This is the non-coding Evol-Instruct dataset.

This dataset is meant for further refinement of instruction-based training for AI models, based on the Evol-Instruct method.

This dataset has gone through a second round of uncensoring filtering, using my own method, to catch a lot of censored data that was initially missed.

The original flan1m-alpaca-uncensored.jsonl is available below:

https://huggingface.co/datasets/ehartford/dolphin/tree/main |

HugoGiddins/IBM-mq | 2023-09-18T09:50:38.000Z | [

"region:us"

] | HugoGiddins | null | null | null | 0 | 7 | Entry not found |

xPXXX/stackoverflow_DL-related_questions | 2023-08-21T00:44:46.000Z | [

"license:mit",

"region:us"

] | xPXXX | null | null | null | 0 | 7 | ---

license: mit

---

|

adityarra07/sub_ATC_large | 2023-08-09T21:30:28.000Z | [

"region:us"

] | adityarra07 | null | null | null | 0 | 7 | ---

dataset_info:

features:

- name: audio

dtype:

audio:

sampling_rate: 16000

- name: transcription

dtype: string

- name: id

dtype: string

splits:

- name: train

num_bytes: 410194527.19266194

num_examples: 3000

- name: test

num_bytes: 27346488.81284413

num_examples: 200

download_size: 433858552

dataset_size: 437541016.0055061

---

# Dataset Card for "sub_ATC_large"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

elsheikhams/labr-ar | 2023-08-10T10:50:38.000Z | [

"license:gpl-2.0",

"region:us"

] | elsheikhams | null | null | null | 0 | 7 | ---

license: gpl-2.0

---

|

nlplabtdtu/people_qa_short_answer | 2023-08-10T16:11:48.000Z | [

"region:us"

] | nlplabtdtu | null | null | null | 0 | 7 | Entry not found |

edward2021/ScanScribe | 2023-08-13T06:10:32.000Z | [

"license:openrail",

"region:us"

] | edward2021 | null | null | null | 2 | 7 | ---

license: openrail

---

|

larryvrh/WikiMatrix-v1-En_Zh-filtered | 2023-08-13T06:49:57.000Z | [

"task_categories:translation",

"size_categories:100K<n<1M",

"language:zh",

"language:en",

"region:us"

] | larryvrh | null | null | null | 0 | 7 | ---

dataset_info:

features:

- name: en

dtype: string

- name: zh

dtype: string

splits:

- name: train

num_bytes: 167612083

num_examples: 678099

download_size: 129968994

dataset_size: 167612083

task_categories:

- translation

language:

- zh

- en

size_categories:

- 100K<n<1M

---

# Dataset Card for "WikiMatrix-v1-En_Zh-filtered"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

elsheikhams/MPOLD | 2023-08-14T10:35:03.000Z | [

"region:us"

] | elsheikhams | null | null | null | 0 | 7 | Entry not found |

jonathansuru/customer_support_auto_completion | 2023-08-14T22:57:54.000Z | [

"task_categories:table-question-answering",

"task_categories:question-answering",

"task_categories:text-generation",

"language:en",

"license:apache-2.0",

"region:us"

] | jonathansuru | null | null | null | 1 | 7 | ---

license: apache-2.0

task_categories:

- table-question-answering

- question-answering

- text-generation

language:

- en

--- |

yangwang825/esc50 | 2023-08-15T13:28:39.000Z | [

"task_categories:audio-classification",

"size_categories:1K<n<10K",

"audio",

"region:us"

] | yangwang825 | null | null | null | 0 | 7 | ---

task_categories:

- audio-classification

tags:

- audio

size_categories:

- 1K<n<10K

---

# ESC50

## Dataset Summary

The ESC-50 dataset is a labeled collection of 2000 environmental audio recordings suitable for benchmarking methods of environmental sound classification. It comprises 2000 5-second clips of 50 different classes across natural, human and domestic sounds, drawn from Freesound.org.

## Data Instances

An example of 'train' looks as follows.

```

{

"audio": {

"path": "ESC-50-master/audio/4-143118-B-7.wav",

"array": array([0.05203247, 0.05285645, 0.05441284, ..., 0.0093689 , 0.00753784, 0.00643921]),

"sampling_rate": 44100

},

"fold": 4,

"label": 30

}

``` |

TinyPixel/airo-1 | 2023-09-02T10:26:30.000Z | [

"region:us"

] | TinyPixel | null | null | null | 0 | 7 | ---

dataset_info:

features:

- name: instruction

dtype: string

- name: response

dtype: string

- name: category

dtype: string

- name: question_id

dtype: float64

splits:

- name: train

num_bytes: 57737476

num_examples: 34204

download_size: 30991700

dataset_size: 57737476

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for "airo-1"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

deep-plants/AGM | 2023-10-04T11:06:53.000Z | [

"task_categories:image-classification",

"size_categories:100K<n<1M",

"license:cc",

"region:us"

] | deep-plants | null | null | null | 0 | 7 | ---

license: cc

size_categories:

- 100K<n<1M

task_categories:

- image-classification

dataset_info:

features:

- name: image

dtype: image

- name: label

dtype: string

splits:

- name: train

num_bytes: 3208126820.734

num_examples: 972858

download_size: 3245813213

dataset_size: 3208126820.734

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

# Dataset Card for AGM Dataset

## Dataset Summary

The AGM (AGricolaModerna) Dataset is a comprehensive collection of high-resolution RGB images capturing harvest-ready plants in a vertical farm setting. This dataset consists of 972,858 images, each with a resolution of 120x120 pixels, covering 18 different plant crops. In the context of this dataset, a crop refers to a plant species or a mix of plant species.

## Supported Tasks

Image classification: plant phenotyping

## Languages

The dataset consists of image data and does not involve language content. English is listed as the primary language, but it is not relevant to the dataset's core content.

## Dataset Structure

### Data Instances

A typical data instance from the training set consists of the following:

```

{

'image': <PIL.JpegImagePlugin.JpegImageFile image mode=RGB size=120x120 at 0x29CEAD71780>,

'crop_type': 'by'

}

```

### Data Fields

The dataset's data instances have the following fields:

- `image`: A PIL.Image.Image object representing the image.

- `crop_type`: A string representation of the crop type in the image

### Data Splits

- **Training Set**:

- Number of Examples: 972,858

## Dataset Creation

### Curation Rationale

The creation of the AGM Dataset was motivated by the need for a large and diverse dataset that captures various aspects of modern agriculture, including plant species diversity, stress detection, and crop health assessment.

### Source Data

#### Initial Data Collection and Normalization

The images were captured using a high-resolution camera positioned above a moving table in an agricultural setting. The camera captured images of the entire table, which was filled with trays of harvested crops. The image capture process spanned from May 2022 to December 2022. The original images had a resolution of $1073{\times}650$ pixels. Each pixel in the images corresponds to a physical size of $0.5$ millimeters.
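The stated pixel scale lets the physical footprint of an original capture be worked out directly. The snippet below is a minimal sketch of that arithmetic; the function and constant names are illustrative and not part of the dataset tooling.

```python
# Values taken from the paragraph above.
MM_PER_PIXEL = 0.5                # physical size of one pixel, in millimeters
ORIGINAL_SIZE_PX = (1073, 650)    # (width, height) of an original capture


def physical_size_mm(size_px, mm_per_pixel=MM_PER_PIXEL):
    """Convert a (width, height) in pixels to millimeters."""
    width_px, height_px = size_px
    return (width_px * mm_per_pixel, height_px * mm_per_pixel)


width_mm, height_mm = physical_size_mm(ORIGINAL_SIZE_PX)
print(f"{width_mm} mm x {height_mm} mm")  # 536.5 mm x 325.0 mm
```

If the 120x120 samples keep the same scale (an assumption, since the card does not say so), the same function would give 60 mm x 60 mm per sample.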

### Annotations

#### Annotation Process

Agronomists and domain experts were involved in the annotation process. They annotated each image to identify the crops present and assign them to specific categories or species. This annotation process involved labeling each image with one of 18 distinct crop categories, which include individual plant species and mixtures of species.

#### Who Are the Annotators?

The annotators are agronomists employed by Agricola Moderna.

## Personal and Sensitive Information

The dataset does not contain personal or sensitive information about individuals. It primarily consists of images of plants.

## Considerations for Using the Data

### Social Impact of Dataset

The AGM Dataset has potential social impact in modern agriculture and related domains. It can advance agriculture by aiding the development of innovative technologies for crop monitoring, disease detection, and yield prediction, fostering sustainable farming practices, contributing to food security and ensuring higher agricultural productivity and affordability. The dataset supports research for environmentally sustainable agriculture, optimizing resource use and reducing environmental impact.

### Discussion of Biases and Known Limitations

The dataset primarily involves images from a single vertical farm setting; therefore, while massive, it includes relatively little variation in crop types. The dataset's contents and annotations may reflect regional agricultural practices and preferences. Business preferences also play a substantial role in determining the types of crops grown in vertical farms. These preferences, often influenced by market demand and profitability, can significantly differ from conventional open-air field agriculture. Therefore, the dataset may inherently reflect these business-driven crop choices, potentially affecting its representativeness of broader agricultural scenarios.

## Additional Information

### Dataset Curators

The dataset is curated by DeepPlants and AgricolaModerna. For further information, you can contact us at:

nico@deepplants.com

etienne.david@agricolamoderna.com

### Licensing Information

### Citation Information

If you use the AGM dataset in your work, please consider citing the following publication:

```bibtex

@InProceedings{Sama_2023_ICCV,

author = {Sama, Nico and David, Etienne and Rossetti, Simone and Antona, Alessandro and Franchetti, Benjamin and Pirri, Fiora},

title = {A new Large Dataset and a Transfer Learning Methodology for Plant Phenotyping in Vertical Farms},

booktitle = {Proceedings of the IEEE/CVF International Conference on Computer Vision (ICCV) Workshops},

month = {October},

year = {2023},

pages = {540-551}

}

``` |

openfoodfacts/ingredient-detection | 2023-08-16T10:08:17.000Z | [

"task_categories:token-classification",

"size_categories:1K<n<10K",

"language:en",

"language:fr",

"language:de",

"language:it",

"language:nl",

"language:ru",

"language:he",

"license:cc-by-sa-4.0",

"region:us"

] | openfoodfacts | null | null | null | 0 | 7 | ---

license: cc-by-sa-4.0

language:

- en

- fr

- de

- it

- nl

- ru

- he

task_categories:

- token-classification

pretty_name: Ingredient List Detection

size_categories:

- 1K<n<10K

---

This dataset is used to train a multilingual ingredient list detection model. The goal is to automate the extraction of ingredient lists from food packaging images. See [this issue](https://github.com/openfoodfacts/openfoodfacts-ai/issues/242) for a broader context about ingredient list extraction.

## Dataset generation

Raw unannotated texts are OCR results obtained with Google Cloud Vision. Only images marked as ingredient images on Open Food Facts were used.

The dataset was generated using ChatGPT-3.5: we asked ChatGPT to extract ingredients using the following prompt:

Prompt:

```

Extract ingredient lists from the following texts. The ingredient list should start with the first ingredient and end with the last ingredient. It should not include allergy, label or origin information.

The output format must be a single JSON list containing one element per ingredient list. If there are ingredients in several languages, the output JSON list should contain as many elements as detected languages. Each element should have two fields:

- a "text" field containing the detected ingredient list. The text should be a substring of the original text, you must not alter the original text.

- a "lang" field containing the detected language of the ingredient list.

Don't output anything else than the expected JSON list.

```

System prompt:

```

You are ChatGPT, a large language model trained by OpenAI. Only generate responses in JSON format. The output JSON must be minified.

```

A first cleaning step was performed automatically: we removed responses with:

- invalid JSON

- JSON with missing fields

- JSON where the detected ingredient list is not a substring of the original text
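The three automatic checks above can be sketched as a single validation function. This is an illustrative reconstruction under stated assumptions, not the project's actual cleaning code: the function name `is_valid_response` and the constant `REQUIRED_FIELDS` are hypothetical, and the two field names come from the prompt shown earlier.

```python
import json

# Fields the prompt asks for in every element of the JSON list.
REQUIRED_FIELDS = ("text", "lang")


def is_valid_response(raw_response: str, original_text: str) -> bool:
    """Return True if a model response passes the three cleaning checks."""
    # Check 1: the response must be valid JSON (and a list, as the prompt requires).
    try:
        items = json.loads(raw_response)
    except json.JSONDecodeError:
        return False
    if not isinstance(items, list):
        return False

    for item in items:
        # Check 2: every element must be an object carrying all required fields.
        if not isinstance(item, dict):
            return False
        if any(field not in item for field in REQUIRED_FIELDS):
            return False
        # Check 3: the ingredient list must be an unaltered substring of the OCR text.
        if not isinstance(item["text"], str) or item["text"] not in original_text:
            return False
    return True


ocr_text = "Ingredients: sugar, cocoa butter. May contain nuts."
print(is_valid_response('[{"text":"sugar, cocoa butter","lang":"en"}]', ocr_text))  # True
print(is_valid_response('[{"text":"sugar and cocoa","lang":"en"}]', ocr_text))      # False
```

Responses that fail any check would simply be dropped before training.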

A first NER model was trained on this dataset. We inspected the model's prediction errors on this dataset, which allowed us to spot the different kinds of annotation errors made by ChatGPT. Then, using a semi-automatic approach, we manually corrected the samples that were likely to contain the errors spotted during the inspection phase. For example, we noticed that the prefix "Ingredients:" was sometimes included in the ingredient text span. We looked for every sample where "Ingredients" (and translations in other languages) was part of the ingredient text, and corrected these samples manually. This approach allowed us to focus on problematic samples instead of having to check the full train set.

These detection rules were mostly implemented using regex. The cleaning script with all rules [can be found here](https://github.com/openfoodfacts/openfoodfacts-ai/blob/149447bdbcd19cb7c15127405d9112bc9bfe3685/ingredient_extraction/clean_dataset.py#L23).
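As an illustration of one such rule, the sketch below flags annotated spans that begin with an ingredient prefix so they can be reviewed manually. It is not taken from the linked script: it uses plain string matching rather than regex for brevity, the prefix list is limited to the three examples quoted in the annotation guidelines, and it assumes `offsets` is a list of `(start, end)` character pairs.

```python
# Prefixes that should not be part of an ingredient span (illustrative subset).
INGREDIENT_PREFIXES = ("ingredients", "ingrédients", "zutaten")


def spans_with_prefix(text, offsets):
    """Return the (start, end) spans whose text starts with an ingredient prefix."""
    flagged = []
    for start, end in offsets:
        span_text = text[start:end].lstrip().casefold()
        if span_text.startswith(INGREDIENT_PREFIXES):
            flagged.append((start, end))
    return flagged


text = "Ingredients: sugar, salt. Zutaten: Zucker, Salz."
# First span wrongly includes the prefix; second span is annotated correctly.
offsets = [(0, 24), (35, 47)]
print(spans_with_prefix(text, offsets))  # [(0, 24)]
```

Flagged samples are candidates for manual correction; the rule only narrows down where to look.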

Once the detected errors were fixed using this approach, a new dataset alpha version was released, and we trained the model on this new dataset.

The dataset was split between train (90%) and test (10%) sets. Train and test splits were kept consistent at each alpha release. Only the test dataset was fully reviewed and corrected manually.

We tokenized the text using the Hugging Face pre-tokenizer with the `[WhitespaceSplit(), Punctuation()]` sequence. The dataset generation script [can be found here](https://github.com/openfoodfacts/openfoodfacts-ai/blob/149447bdbcd19cb7c15127405d9112bc9bfe3685/ingredient_extraction/generate_dataset.py).
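To make the effect of this pre-tokenization concrete, here is a standard-library approximation of a whitespace split followed by punctuation isolation. It is a sketch, not the generation script: the real pipeline relies on the pre-tokenizers named above, whose edge-case behavior may differ, and the helper names `is_punctuation` and `pre_tokenize` are hypothetical.

```python
import unicodedata


def is_punctuation(ch: str) -> bool:
    """ASCII punctuation or any Unicode punctuation category (P*)."""
    cp = ord(ch)
    is_ascii_punct = 33 <= cp <= 47 or 58 <= cp <= 64 or 91 <= cp <= 96 or 123 <= cp <= 126
    return is_ascii_punct or unicodedata.category(ch).startswith("P")


def pre_tokenize(text: str) -> list[tuple[str, tuple[int, int]]]:
    """Split on whitespace, then isolate every punctuation character.

    Returns (token, (start, end)) pairs with character offsets into `text`.
    """
    pieces = []
    word_start = None  # start offset of the word being accumulated, if any

    def flush(end: int) -> None:
        nonlocal word_start
        if word_start is not None:
            pieces.append((text[word_start:end], (word_start, end)))
            word_start = None

    for i, ch in enumerate(text):
        if ch.isspace():
            flush(i)
        elif is_punctuation(ch):
            flush(i)
            pieces.append((ch, (i, i + 1)))
        elif word_start is None:
            word_start = i
    flush(len(text))
    return pieces


sample = "Ingrédients: sucre, cacao (30%)."
tokens = [token for token, _ in pre_tokenize(sample)]
print(tokens)
```

Keeping character offsets alongside each token is what makes it possible to map token-level tags back to spans in the original OCR text.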

This dataset is exactly the same as `ingredient-detection-alpha-v6` used during model trainings.

## Annotation guidelines

Annotation guidelines were updated continuously during dataset refinement and model trainings, but here are the final guidelines:

1. ingredient lists in all languages must be annotated.

2. an ingredient list should start with the first ingredient, without any `ingredient` prefix ("Ingredients:", "Zutaten", "Ingrédients: ") or `language` prefix ("EN:", "FR - ", ...)

3. an ingredient list containing a single ingredient, without any `ingredient` or `language` prefix, should not be annotated. Otherwise, it's very difficult to know whether the mention is the ingredient list or just a random mention of an ingredient on the packaging.

4. We have a very restrictive approach to where the ingredient list ends: we don't include any extra information (allergen, origin, trace, organic mentions) at the end of the ingredient list. The only exception is when this information is in brackets after the ingredient. This rule is in place to make it easier for the detector to know what is an ingredient list and what is not. Additional information can be added afterward as a post-processing step.

## Dataset schema

The dataset is made of 2 JSONL files:

- `ingredient_detection_dataset-v1_train.jsonl.gz`: train split, 5065 samples

- `ingredient_detection_dataset-v1_test.jsonl.gz`: test split, 556 samples

Each sample has the following fields:

- `text`: the original text obtained from OCR result

- `marked_text`: the text with ingredient spans delimited by `<b>` and `</b>`

- `tokens`: tokens obtained with pre-tokenization

- `ner_tags`: tag ID associated with each token: 0 for `O`, 1 for `B-ING` and 2 for `I-ING` (BIO schema)

- `offsets`: a list containing character start and end offsets of ingredients spans

- `meta`: a dict containing additional meta-data about the sample:

- `barcode`: the product barcode of the image that was used

- `image_id`: unique digit identifier of the image for the product

- `url`: image URL from which the text was extracted |

collabora/monado-slam-datasets | 2023-09-08T15:24:43.000Z | [

"license:cc-by-4.0",

"doi:10.57967/hf/1081",

"region:us"

] | collabora | null | null | null | 2 | 7 | ---

license: cc-by-4.0

---

<img alt="Monado SLAM Datasets cover image"

src="/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/extras/cover.png"

style="width: 720px;">

<a href="https://youtu.be/kIddwk1FrW8" target="_blank">

<video width="720" height="240" autoplay muted loop playsinline

preload="auto"><source

src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/overview.webm"

type="video/webm"/>Video tag not supported.</video>

</a>

# Monado SLAM Datasets

The [Monado SLAM datasets

(MSD)](https://huggingface.co/datasets/collabora/monado-slam-datasets) are

egocentric visual-inertial SLAM datasets recorded to improve the

[Basalt](https://gitlab.com/VladyslavUsenko/basalt)-based inside-out tracking

component of the [Monado](https://monado.dev) project. These have a permissive

license [CC-BY 4.0](http://creativecommons.org/licenses/by/4.0/), meaning you

can use them for any purpose you want, including commercial, and only a mention

of the original project is required. The creation of these datasets was

supported by [Collabora](https://collabora.com).

Monado is an open-source OpenXR runtime that you can use to make devices OpenXR

compatible. It also provides drivers for different existing hardware thanks to

different contributors in the community creating drivers for it. Monado provides

different XR-related modules that these drivers can use. To be more specific,

inside-out head tracking is one of those modules and, while you can use

different tracking systems, the main system is a [fork of

Basalt](https://gitlab.freedesktop.org/mateosss/basalt). Creating a good

open-source tracking solution requires a solid measurement pipeline to

understand how changes in the system affect tracking quality. For this reason,

the creation of these datasets was essential.

These datasets are very specific to the XR use case as they contain VI-SLAM

footage recorded from devices such as VR headsets, but other devices like phones

or AR glasses might be added in the future. These were made since current SLAM

datasets like EuRoC or TUM-VI were not specific enough for XR, or they didn't

have permissive enough usage licenses.

For questions or comments, you can use the Hugging Face

[Community](https://huggingface.co/datasets/collabora/monado-slam-datasets/discussions),

join Monado's Discord [server](https://discord.gg/8RkJgRJ) and ask in the

`#slam` channel, or send an email to <mateo.demayo@collabora.com>.

## List of sequences

- [MI_valve_index](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index)

- [MIC_calibration](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIC_calibration)

- [MIC01_camcalib1](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC01_camcalib1.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC01_camcalib1.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC02_camcalib2](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC02_camcalib2.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC02_camcalib2.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC03_camcalib3](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC03_camcalib3.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC03_camcalib3.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC04_imucalib1](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC04_imucalib1.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC04_imucalib1.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC05_imucalib2](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC05_imucalib2.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC05_imucalib2.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC06_imucalib3](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC06_imucalib3.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC06_imucalib3.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC07_camcalib4](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC07_camcalib4.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC07_camcalib4.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC08_camcalib5](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC08_camcalib5.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC08_camcalib5.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC09_imucalib4](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC09_imucalib4.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC09_imucalib4.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC10_imucalib5](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC10_imucalib5.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC10_imucalib5.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC11_camcalib6](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC11_camcalib6.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC11_camcalib6.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC12_imucalib6](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC12_imucalib6.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC12_imucalib6.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC13_camcalib7](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC13_camcalib7.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC13_camcalib7.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC14_camcalib8](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC14_camcalib8.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC14_camcalib8.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC15_imucalib7](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC15_imucalib7.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC15_imucalib7.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIC16_imucalib8](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC16_imucalib8.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIC16_imucalib8.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO_others](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIO_others)

- [MIO01_hand_puncher_1](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO01_hand_puncher_1.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO01_hand_puncher_1.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO02_hand_puncher_2](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO02_hand_puncher_2.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO02_hand_puncher_2.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO03_hand_shooter_easy](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO03_hand_shooter_easy.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO03_hand_shooter_easy.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO04_hand_shooter_hard](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO04_hand_shooter_hard.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO04_hand_shooter_hard.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO05_inspect_easy](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO05_inspect_easy.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO05_inspect_easy.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO06_inspect_hard](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO06_inspect_hard.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO06_inspect_hard.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO07_mapping_easy](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO07_mapping_easy.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO07_mapping_easy.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO08_mapping_hard](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO08_mapping_hard.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO08_mapping_hard.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO09_short_1_updown](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO09_short_1_updown.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO09_short_1_updown.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO10_short_2_panorama](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO10_short_2_panorama.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO10_short_2_panorama.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO11_short_3_backandforth](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO11_short_3_backandforth.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO11_short_3_backandforth.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO12_moving_screens](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO12_moving_screens.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO12_moving_screens.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO13_moving_person](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO13_moving_person.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO13_moving_person.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO14_moving_props](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO14_moving_props.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO14_moving_props.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO15_moving_person_props](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO15_moving_person_props.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO15_moving_person_props.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIO16_moving_screens_person_props](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIO_others/MIO16_moving_screens_person_props.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIO16_moving_screens_person_props.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIP_playing](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIP_playing)

- [MIPB_beat_saber](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPB_beat_saber)

- [MIPB01_beatsaber_100bills_360_normal](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPB_beat_saber/MIPB01_beatsaber_100bills_360_normal.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPB01_beatsaber_100bills_360_normal.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPB02_beatsaber_crabrave_360_hard](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPB_beat_saber/MIPB02_beatsaber_crabrave_360_hard.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPB02_beatsaber_crabrave_360_hard.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPB03_beatsaber_countryrounds_360_expert](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPB_beat_saber/MIPB03_beatsaber_countryrounds_360_expert.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPB03_beatsaber_countryrounds_360_expert.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPB04_beatsaber_fitbeat_hard](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPB_beat_saber/MIPB04_beatsaber_fitbeat_hard.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPB04_beatsaber_fitbeat_hard.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPB05_beatsaber_fitbeat_360_expert](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPB_beat_saber/MIPB05_beatsaber_fitbeat_360_expert.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPB05_beatsaber_fitbeat_360_expert.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPB06_beatsaber_fitbeat_expertplus_1](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPB_beat_saber/MIPB06_beatsaber_fitbeat_expertplus_1.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPB06_beatsaber_fitbeat_expertplus_1.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPB07_beatsaber_fitbeat_expertplus_2](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPB_beat_saber/MIPB07_beatsaber_fitbeat_expertplus_2.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPB07_beatsaber_fitbeat_expertplus_2.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPB08_beatsaber_long_session_1](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPB_beat_saber/MIPB08_beatsaber_long_session_1.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPB08_beatsaber_long_session_1.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPP_pistol_whip](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPP_pistol_whip)

- [MIPP01_pistolwhip_blackmagic_hard](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPP_pistol_whip/MIPP01_pistolwhip_blackmagic_hard.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPP01_pistolwhip_blackmagic_hard.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPP02_pistolwhip_lilith_hard](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPP_pistol_whip/MIPP02_pistolwhip_lilith_hard.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPP02_pistolwhip_lilith_hard.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPP03_pistolwhip_requiem_hard](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPP_pistol_whip/MIPP03_pistolwhip_requiem_hard.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPP03_pistolwhip_requiem_hard.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPP04_pistolwhip_revelations_hard](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPP_pistol_whip/MIPP04_pistolwhip_revelations_hard.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPP04_pistolwhip_revelations_hard.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPP05_pistolwhip_thefall_hard_2pistols](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPP_pistol_whip/MIPP05_pistolwhip_thefall_hard_2pistols.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPP05_pistolwhip_thefall_hard_2pistols.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPP06_pistolwhip_thegrave_hard](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPP_pistol_whip/MIPP06_pistolwhip_thegrave_hard.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPP06_pistolwhip_thegrave_hard.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPT_thrill_of_the_fight](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPT_thrill_of_the_fight)

- [MIPT01_thrillofthefight_setup](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPT_thrill_of_the_fight/MIPT01_thrillofthefight_setup.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPT01_thrillofthefight_setup.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPT02_thrillofthefight_fight_1](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPT_thrill_of_the_fight/MIPT02_thrillofthefight_fight_1.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPT02_thrillofthefight_fight_1.webm" type="video/webm"/>Video tag not supported.</video></details>

- [MIPT03_thrillofthefight_fight_2](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPT_thrill_of_the_fight/MIPT03_thrillofthefight_fight_2.zip): <details style="display: inline;cursor: pointer;user-select: none"><summary>Preview 5x</summary><video width="320" height="320" controls preload="none"><source src="https://huggingface.co/datasets/collabora/monado-slam-datasets/resolve/main/M_monado_datasets/MI_valve_index/extras/previews/MIPT03_thrillofthefight_fight_2.webm" type="video/webm"/>Video tag not supported.</video></details>

## Valve Index datasets

These datasets were recorded using a Valve Index with the `vive` driver in

Monado and they have ground truth from 3 lighthouses tracking the headset through

the proprietary OpenVR implementation provided by SteamVR. The exact commit used

in Monado at the time of recording is

[a4e7765d](https://gitlab.freedesktop.org/mateosss/monado/-/commit/a4e7765d7219b06a0c801c7bb33f56d3ea69229d).

The datasets are in the ASL dataset format, the same as the

[EuRoC datasets](https://projects.asl.ethz.ch/datasets/doku.php?id=kmavvisualinertialdatasets).

Besides the main EuRoC format files, we provide some extra files with raw

timestamp data for exploring real time timestamp alignment techniques.

The dataset is post-processed so that SLAM systems need as little special

treatment as possible: camera-IMU and ground truth-IMU timestamp alignment, IMU

alignment and bias calibration have been applied, lighthouse tracked pose has

been converted to IMU pose, and so on. Most of the post-processing was done with

Basalt

[calibration](https://gitlab.com/VladyslavUsenko/basalt/-/blob/master/doc/Calibration.md?ref_type=heads#camera-imu-mocap-calibration)

and

[alignment](https://gitlab.com/VladyslavUsenko/basalt/-/blob/master/doc/Realsense.md?ref_type=heads#generating-time-aligned-ground-truth)

tools, as well as the

[xrtslam-metrics](https://gitlab.freedesktop.org/mateosss/xrtslam-metrics)

scripts for Monado tracking. The post-processing procedure is documented in

[this video][post-processing-video], which goes through making the [MIPB08]

dataset ready for use starting from its raw version.

### Data

#### Camera samples

In the `vive` driver from Monado, we don't have direct access to the camera

device timestamps but only to V4L2 timestamps. These are not exactly hardware

timestamps and have some offset with respect to the device clock in which the

IMU samples are timestamped.

The camera frames can be found in the `camX/data` directory as PNG files with

names corresponding to their V4L2 timestamps. The `camX/data.csv` file contains

aligned timestamps of each frame. The `camX/data.extra.csv` also contains the

original V4L2 timestamp and the "host timestamp" which is the time at which the

host computer had the frame ready to use after USB transmission. By separating

arrival time from exposure time, algorithms can be made more robust for

real-time operation.
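
As a rough sketch of how these files might be consumed, the snippet below parses an EuRoC-style CSV whose header row starts with `#`. The sample rows and the `timestamp [ns]` and `filename` column names are assumptions for illustration; check the header of the actual `camX/data.csv` and `camX/data.extra.csv` files before relying on them.

```python
import csv
import io


def read_euroc_csv(fileobj):
    """Parse an EuRoC-style CSV: a '#'-prefixed header row, then data rows.

    Returns a list of dicts keyed by the stripped header names.
    """
    reader = csv.reader(fileobj)
    header = [name.strip().lstrip("#").strip() for name in next(reader)]
    rows = []
    for row in reader:
        if row:  # skip empty lines
            rows.append(dict(zip(header, (cell.strip() for cell in row))))
    return rows


# Hypothetical sample: aligned timestamps on the left, PNG files named after
# their (unaligned) V4L2 timestamps on the right.
sample = io.StringIO(
    "#timestamp [ns],filename\n"
    "1000000000,999950000.png\n"
    "1018518518,1018468518.png\n"
)
frames = read_euroc_csv(sample)
timestamps_ns = [int(row["timestamp [ns]"]) for row in frames]
```

The same reader can be pointed at an opened `data.extra.csv` file to get at the V4L2 and host timestamps, whatever their column names turn out to be.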

The cameras of the Valve Index have global shutters with a resolution of 960×960

streaming at 54 fps. They have auto exposure enabled. While the cameras of the

Index are RGB, you will find only grayscale images in these datasets. The

original images are provided in YUYV422 format, but only the luma component is

stored.

For each dataset, the camera timestamps are aligned with respect to IMU

timestamps by running visual-only odometry with Basalt on a 30-second subset of

the dataset. The resulting trajectory is then aligned with the

[`basalt_time_alignment`](https://gitlab.com/VladyslavUsenko/basalt/-/blob/master/doc/Realsense.md?ref_type=heads#generating-time-aligned-ground-truth)

tool that aligns the rotational velocities of the trajectory with the gyroscope

samples and returns the resulting offset in nanoseconds. That correction is then

applied to the dataset. Refer to the post-processing walkthrough

[video][post-processing-video] for more details.
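
To make the idea of the alignment concrete, here is a minimal sketch that estimates a constant clock offset by brute-force grid search, comparing the angular speed of a trajectory with the angular speed measured by the gyroscope. This is not the `basalt_time_alignment` implementation; the function names, the search range, and the use of seconds instead of nanoseconds are all assumptions made for the example.

```python
import bisect
import math


def interp(ts, vs, t):
    """Linearly interpolate samples (ts, vs) at time t, clamping at the ends."""
    if t <= ts[0]:
        return vs[0]
    if t >= ts[-1]:
        return vs[-1]
    i = bisect.bisect_right(ts, t)
    w = (t - ts[i - 1]) / (ts[i] - ts[i - 1])
    return vs[i - 1] * (1.0 - w) + vs[i] * w


def estimate_offset(traj_ts, traj_speed, gyro_ts, gyro_speed, max_offset, step):
    """Return the offset that, added to traj_ts, best matches the gyro clock."""
    best_offset, best_cost = 0.0, math.inf
    n = int(round(max_offset / step))
    for k in range(-n, n + 1):
        offset = k * step
        # Sum of squared differences between the two angular speed signals.
        cost = sum(
            (interp(gyro_ts, gyro_speed, t + offset) - s) ** 2
            for t, s in zip(traj_ts, traj_speed)
        )
        if cost < best_cost:
            best_offset, best_cost = offset, cost
    return best_offset


# Synthetic check: the trajectory clock lags the gyro clock by 50 ms.
gyro_ts = [i * 0.001 for i in range(10000)]
gyro_speed = [1.0 + math.sin(3.0 * t) for t in gyro_ts]
true_ts = [1.0 + i / 54.0 for i in range(54 * 8)]
traj_speed = [1.0 + math.sin(3.0 * t) for t in true_ts]
traj_ts = [t - 0.05 for t in true_ts]
offset = estimate_offset(traj_ts, traj_speed, gyro_ts, gyro_speed, 0.2, 0.01)
```

Once an offset is known, applying it amounts to adding it (converted to nanoseconds) to every camera timestamp.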

#### IMU samples

The IMU timestamps are device timestamps and arrive at about 1000 Hz. We provide

an `imu0/data.raw.csv` file that contains the raw measurements without any axis

scale-misalignment or bias correction. `imu0/data.csv` has the

scale-misalignment and bias corrections applied so that the SLAM system can

ignore those corrections. `imu0/data.extra.csv` contains the arrival time of the

IMU sample to the host computer for algorithms that want to adapt themselves to

work in real time.

#### Ground truth information

The ground truth setup consists of three Lighthouse 2.0 base stations and a

SteamVR session providing tracking data through the OpenVR API to Monado. While

not as precise as other MoCap tracking systems like OptiTrack or Vicon, it

should still provide pretty good accuracy and precision close to the 1 mm range.

There are different attempts at studying the accuracy of SteamVR tracking that

you can check out like

[this](https://dl.acm.org/doi/pdf/10.1145/3463914.3463921),

[this](https://www.ncbi.nlm.nih.gov/pmc/articles/PMC7956487/pdf/sensors-21-01622.pdf),

or [this](http://doc-ok.org/?p=1478). When a tracking system gets closer to

millimeter accuracy, these datasets will no longer be as useful for improving it.

The raw ground truth data is stored in `gt/data.raw.csv`. OpenVR does not provide

timestamps and as such, the timestamps recorded are from when the host asks

OpenVR for the latest pose with a call to

[`GetDeviceToAbsoluteTrackingPose`](https://github.com/ValveSoftware/openvr/wiki/IVRSystem::GetDeviceToAbsoluteTrackingPose).

The poses contained in this file are not of the IMU but of the headset origin as

interpreted by SteamVR, which usually is between the middle of the eyes and

facing towards the displays. The file `gt/data.csv` corrects each entry of the

previous file with timestamps aligned with the IMU clock and poses of the IMU

instead of this headset origin.
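
The conversion from headset-origin poses to IMU poses is a rigid-body composition: the world pose of the headset origin is composed with the fixed transform from the headset origin to the IMU. The sketch below illustrates that composition with translations and unit quaternions in `(w, x, y, z)` order. The extrinsic values and the quaternion ordering are assumptions for the example, not values or conventions taken from the dataset files.

```python
import math


def quat_mul(a, b):
    """Hamilton product of two quaternions given as (w, x, y, z)."""
    aw, ax, ay, az = a
    bw, bx, by, bz = b
    return (
        aw * bw - ax * bx - ay * by - az * bz,
        aw * bx + ax * bw + ay * bz - az * by,
        aw * by - ax * bz + ay * bw + az * bx,
        aw * bz + ax * by - ay * bx + az * bw,
    )


def rotate(q, v):
    """Rotate the 3-vector v by the unit quaternion q."""
    w, x, y, z = q
    conjugate = (w, -x, -y, -z)
    _, rx, ry, rz = quat_mul(quat_mul(q, (0.0, *v)), conjugate)
    return (rx, ry, rz)


def compose(pose_ab, pose_bc):
    """Compose T_a_b with T_b_c into T_a_c; a pose is (translation, quaternion)."""
    t_ab, q_ab = pose_ab
    t_bc, q_bc = pose_bc
    rotated = rotate(q_ab, t_bc)
    translation = tuple(p + d for p, d in zip(t_ab, rotated))
    return (translation, quat_mul(q_ab, q_bc))


# Hypothetical values: headset origin at (1, 0, 0) yawed 90 degrees about z,
# IMU located 1 m along the headset's x axis with no relative rotation.
c = math.sqrt(0.5)
T_world_headset = ((1.0, 0.0, 0.0), (c, 0.0, 0.0, c))
T_headset_imu = ((1.0, 0.0, 0.0), (1.0, 0.0, 0.0, 0.0))
T_world_imu = compose(T_world_headset, T_headset_imu)
```

With these made-up inputs, the IMU ends up at roughly `(1, 1, 0)` in the world frame and keeps the headset's orientation.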

#### Calibration

There are multiple calibration datasets in the

[`MIC_calibration`](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIC_calibration)

directory. There are camera-focused and IMU-focused calibration datasets. See

the

[README.md](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/README.md)

file in there for more information on what each sequence is.

In the

[`MI_valve_index/extras`](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/extras)

directory you can find the following files:

- [`calibration.json`](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/extras/calibration.json):

Calibration file produced with the

[`basalt_calibrate_imu`](https://gitlab.com/VladyslavUsenko/basalt/-/blob/master/doc/Calibration.md?ref_type=heads#camera-imu-mocap-calibration)

tool from

[`MIC01_camcalib1`](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC01_camcalib1.zip)

and

[`MIC04_imucalib1`](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/MIC_calibration/MIC04_imucalib1.zip)

datasets with camera-IMU time offset and IMU bias/misalignment info removed so

that it works by default with all the datasets, which are fully

post-processed and don't require those fields.

- [`calibration.extra.json`](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/extras/calibration.extra.json):

Same as `calibration.json` but with the cam-IMU time offset and IMU bias and

misalignment information filled in.

- [`factory.json`](https://huggingface.co/datasets/collabora/monado-slam-datasets/blob/main/M_monado_datasets/MI_valve_index/extras/factory.json):

JSON file exposed by the headset's firmware with information about the device. It

includes camera and display calibration as well as more data that might be of

interest. It is not used but included for completeness' sake.

- [`other_calibrations/`](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/extras/other_calibrations):

Calibration results obtained from the other calibration datasets. Shown for

comparison and ensuring that all of them have similar values.

`MICXX_camcalibY` has camera-only calibration produced with the

[`basalt_calibrate`](https://gitlab.com/VladyslavUsenko/basalt/-/blob/master/doc/Calibration.md?ref_type=heads#camera-calibration)

tool, while the corresponding `MICXX_imucalibY` datasets use these datasets as

a starting point and have the `basalt_calibrate_imu` calibration results.

##### Camera model

By default, the `calibration.json` file provides parameters `k1`, `k2`, `k3`,

and `k4` for the

[Kannala-Brandt camera model](https://vladyslavusenko.gitlab.io/basalt-headers/classbasalt_1_1KannalaBrandtCamera4.html#a423a4f1255e9971fe298dc6372345681)

with fish-eye distortion (also known as

[OpenCV's fish-eye](https://docs.opencv.org/3.4/db/d58/group__calib3d__fisheye.html#details)).

Calibrations with other camera models might be added later on; otherwise, you

can use the calibration sequences for custom calibrations.
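
For reference, a minimal sketch of the Kannala-Brandt projection with four distortion coefficients is shown below. It follows the usual formulation, where the distorted radius is a polynomial in the angle between the point and the optical axis. The intrinsic values in the example call are made up for illustration; the real ones come from `calibration.json`.

```python
import math


def project_kb4(point, fx, fy, cx, cy, k1, k2, k3, k4):
    """Project a 3D point in the camera frame to pixel coordinates (u, v)."""
    x, y, z = point
    r = math.hypot(x, y)
    if r < 1e-12:
        # Point on the optical axis (assumed in front of the camera).
        return (cx, cy)
    theta = math.atan2(r, z)  # angle between the ray and the optical axis
    theta2 = theta * theta
    # d(theta) = theta + k1*theta^3 + k2*theta^5 + k3*theta^7 + k4*theta^9
    d = theta * (1.0 + theta2 * (k1 + theta2 * (k2 + theta2 * (k3 + theta2 * k4))))
    return (fx * d * x / r + cx, fy * d * y / r + cy)


# Hypothetical intrinsics for a 960x960 image, with zero distortion.
u, v = project_kb4((1.0, 0.0, 1.0), 400.0, 400.0, 480.0, 480.0, 0.0, 0.0, 0.0, 0.0)
```

With all `k` coefficients at zero the model reduces to an equidistant fish-eye projection, which makes it easy to sanity-check an implementation before plugging in the calibrated values.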

##### IMU model

For the default `calibration.json` where all parameters are zero, you can ignore

any model and just use the measurements present in `imu0/data.csv` directly. If

instead, you want to use the raw measurements from `imu0/data.raw.csv` you will

need to apply the Basalt

[accelerometer](https://vladyslavusenko.gitlab.io/basalt-headers/classbasalt_1_1CalibAccelBias.html#details)

and

[gyroscope](https://vladyslavusenko.gitlab.io/basalt-headers/classbasalt_1_1CalibGyroBias.html#details)

models that use a misalignment-scale correction matrix together with a constant

initial bias. The random walk and white noise parameters were not computed;

reasonable default values are used instead.
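
A minimal sketch of such a correction is given below. It assumes the calibrated measurement has the form `raw + S * raw - b`, with `S` the misalignment-scale matrix and `b` the constant bias. Both this form and the way `S` and `b` are packed inside `calibration.extra.json` should be verified against the Basalt class documentation linked above; the numbers in the example are made up.

```python
def calibrate_imu_sample(raw, scale_misalignment, bias):
    """Return raw + S @ raw - b for a 3-vector raw, 3x3 matrix S, 3-vector b."""
    corrected = []
    for i in range(3):
        s_raw = sum(scale_misalignment[i][j] * raw[j] for j in range(3))
        corrected.append(raw[i] + s_raw - bias[i])
    return tuple(corrected)


# Hypothetical calibration: 10% scale error on the x axis and a 0.5 bias on x.
S = [
    [0.1, 0.0, 0.0],
    [0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0],
]
b = (0.5, 0.0, 0.0)
sample = calibrate_imu_sample((1.0, 2.0, 3.0), S, b)
```

When `S` and `b` are all zeros, as in the default `calibration.json`, the function returns its input unchanged, which matches using `imu0/data.csv` directly.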

#### Post-processing walkthrough

If you are interested in understanding the step-by-step procedure of

post-processing of the dataset, below is a video detailing the procedure for the

[MIPB08] dataset.

[Post-processing walkthrough video](https://www.youtube.com/watch?v=0PX_6PNwrvQ)

### Sequences

- [MIC_calibration](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIC_calibration):
  Calibration sequences that record
  [this](https://drive.google.com/file/d/1DqKWgePodCpAKJCd_Bz-hfiEQOSnn_k0)
  calibration target from Kalibr, whose squares have sides of 3 cm. Some
  sequences focus on camera calibration and cover the image planes of both
  stereo cameras, while others focus on IMU calibration and properly excite
  all six components of the IMU.

- [MIP_playing](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIP_playing):
  Datasets in which the user plays a particular VR game on SteamVR while
  Monado records the data.
  - [MIPB_beat_saber](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPB_beat_saber):
    This contains different songs played at different speeds. The fitbeat song
    requires a lot of head movement, while [MIPB08] is a long 40-minute
    dataset in which many levels are played.
  - [MIPP_pistol_whip](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPP_pistol_whip):
    This is a shooting and music game; each dataset is a different level/song.
  - [MIPT_thrill_of_the_fight](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPT_thrill_of_the_fight):
    This is a boxing game.

- [MIO_others](https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIO_others):
  Other datasets that might be useful. They include play-pretend scenarios in
  which the user pretends to play a particular game, some inspection and
  scanning/mapping of the room, some very short and lightweight datasets for
  quick testing, and some datasets with a lot of movement around the
  environment.

### Evaluation

These are the results of running the
[current](https://gitlab.freedesktop.org/mateosss/basalt/-/commits/release-b67fa7a4?ref_type=tags)
Monado tracker, which is based on
[Basalt](https://gitlab.com/VladyslavUsenko/basalt), on the dataset sequences.

| Seq. | Avg. time\* | Avg. feature count | ATE (m) | RTE 100ms (m) \*\* | SDM 0.01m (m/m) \*\*\* |

| :------ | :--------------- | :-------------------- | :---------------- | :---------------------- | :--------------------- |

| MIC01 | 12.24 ± 2.84 | [48 6] ± [72 6] | 0.076 ± 0.049 | 0.016551 ± 0.015004 | 0.7407 ± 0.5757 |

| MIC02 | 12.30 ± 2.60 | [33 7] ± [54 11] | 0.043 ± 0.028 | 0.012375 ± 0.011230 | 0.5788 ± 0.4279 |

| MIC03 | 15.89 ± 8.55 | [60 8] ± [107 13] | 0.048 ± 0.032 | 0.011344 ± 0.009992 | 0.6020 ± 0.3987 |

| MIC04 | 15.26 ± 2.84 | [65 9] ± [54 11] | 0.028 ± 0.016 | 0.005458 ± 0.003976 | 0.2808 ± 0.2033 |

| MIC05 | 16.10 ± 2.82 | [73 5] ± [69 6] | 0.023 ± 0.013 | 0.004795 ± 0.003358 | 0.2547 ± 0.1611 |

| MIC06 | 14.14 ± 2.42 | [40 7] ± [53 10] | 0.015 ± 0.005 | 0.003947 ± 0.003454 | 0.2875 ± 0.2542 |

| MIC07 | 13.42 ± 2.63 | [46 9] ± [64 12] | 0.036 ± 0.014 | 0.012776 ± 0.011853 | 0.5520 ± 0.3463 |

| MIC08 | 13.89 ± 2.86 | [53 5] ± [62 5] | 0.082 ± 0.062 | 0.022429 ± 0.020956 | 0.8559 ± 0.6402 |

| MIC09 | 12.73 ± 2.52 | [63 21] ± [37 12] | 0.008 ± 0.003 | 0.001492 ± 0.001318 | 0.2388 ± 0.3589 |

| MIC10 | 14.49 ± 2.51 | [50 5] ± [51 5] | 0.019 ± 0.012 | 0.003783 ± 0.003116 | 0.2666 ± 0.3451 |

| MIC11 | 13.72 ± 2.37 | [26 6] ± [39 7] | 0.017 ± 0.010 | 0.009898 ± 0.009069 | 0.4331 ± 0.3278 |

| MIC12 | 14.92 ± 2.56 | [38 4] ± [48 5] | 0.024 ± 0.010 | 0.005816 ± 0.004644 | 0.2932 ± 0.2500 |

| MIC13 | 13.99 ± 3.07 | [53 10] ± [79 15] | 0.029 ± 0.021 | 0.015463 ± 0.014354 | 0.8668 ± 0.9353 |

| MIC14 | 13.67 ± 2.39 | [24 5] ± [36 8] | 0.047 ± 0.012 | 0.007224 ± 0.006359 | 0.4577 ± 0.3446 |

| MIC15 | 14.17 ± 2.81 | [76 17] ± [43 9] | 0.016 ± 0.013 | 0.003837 ± 0.003543 | 0.2593 ± 0.1936 |

| MIC16 | 14.27 ± 2.43 | [48 8] ± [44 6] | 0.008 ± 0.005 | 0.003867 ± 0.003725 | 0.5167 ± 0.4840 |

| MIO01 | 10.04 ± 1.43 | [36 23] ± [28 18] | 0.605 ± 0.342 | 0.035671 ± 0.033611 | 0.4246 ± 0.5161 |

| MIO02 | 10.41 ± 1.48 | [32 18] ± [25 16] | 1.182 ± 0.623 | 0.063340 ± 0.059176 | 0.4681 ± 0.4329 |

| MIO03 | 10.24 ± 1.37 | [47 26] ± [26 16] | 0.087 ± 0.033 | 0.006293 ± 0.004259 | 0.2113 ± 0.2649 |

| MIO04 | 9.47 ± 1.08 | [27 16] ± [25 16] | 0.210 ± 0.100 | 0.013121 ± 0.010350 | 0.3086 ± 0.3715 |

| MIO05 | 9.95 ± 1.01 | [66 34] ± [33 21] | 0.040 ± 0.016 | 0.003188 ± 0.002192 | 0.1079 ± 0.1521 |

| MIO06 | 9.65 ± 1.06 | [44 28] ± [33 22] | 0.049 ± 0.019 | 0.010454 ± 0.008578 | 0.2620 ± 0.3684 |

| MIO07 | 9.63 ± 1.16 | [46 26] ± [30 19] | 0.019 ± 0.008 | 0.002442 ± 0.001355 | 0.0738 ± 0.0603 |

| MIO08 | 9.74 ± 0.87 | [29 22] ± [18 16] | 0.059 ± 0.021 | 0.007167 ± 0.004657 | 0.1644 ± 0.3433 |

| MIO09 | 9.94 ± 0.72 | [44 29] ± [14 8] | 0.006 ± 0.003 | 0.002940 ± 0.002024 | 0.0330 ± 0.0069 |

| MIO10 | 9.48 ± 0.82 | [35 21] ± [18 10] | 0.016 ± 0.009 | 0.004623 ± 0.003310 | 0.0620 ± 0.0340 |

| MIO11 | 9.34 ± 0.79 | [32 20] ± [19 10] | 0.024 ± 0.010 | 0.007255 ± 0.004821 | 0.0854 ± 0.0540 |

| MIO12 | 11.05 ± 2.20 | [43 23] ± [31 19] | 0.420 ± 0.160 | 0.005298 ± 0.003603 | 0.1546 ± 0.2641 |

| MIO13 | 10.47 ± 1.89 | [35 21] ± [24 18] | 0.665 ± 0.290 | 0.026294 ± 0.022790 | 1.0180 ± 1.0126 |

| MIO14 | 9.27 ± 1.03 | [49 31] ± [30 21] | 0.072 ± 0.028 | 0.002779 ± 0.002487 | 0.1657 ± 0.2409 |

| MIO15 | 9.75 ± 1.16 | [52 26] ± [29 16] | 0.788 ± 0.399 | 0.011558 ± 0.010541 | 0.6906 ± 0.6876 |

| MIO16 | 9.72 ± 1.26 | [33 17] ± [25 15] | 0.517 ± 0.135 | 0.013268 ± 0.011355 | 0.4397 ± 0.7167 |

| MIPB01 | 10.28 ± 1.25 | [63 46] ± [34 24] | 0.282 ± 0.109 | 0.006797 ± 0.004551 | 0.1401 ± 0.1229 |

| MIPB02 | 9.88 ± 1.08 | [55 37] ± [30 20] | 0.247 ± 0.097 | 0.005065 ± 0.003514 | 0.1358 ± 0.1389 |

| MIPB03 | 10.21 ± 1.12 | [66 44] ± [32 23] | 0.186 ± 0.103 | 0.005938 ± 0.004261 | 0.1978 ± 0.3590 |

| MIPB04 | 9.58 ± 1.02 | [51 37] ± [24 17] | 0.105 ± 0.060 | 0.004822 ± 0.003428 | 0.0652 ± 0.0555 |

| MIPB05 | 9.97 ± 0.97 | [73 48] ± [32 23] | 0.039 ± 0.017 | 0.004426 ± 0.002828 | 0.0826 ± 0.1313 |

| MIPB06 | 9.95 ± 0.85 | [58 35] ± [32 21] | 0.050 ± 0.022 | 0.004164 ± 0.002638 | 0.0549 ± 0.0720 |

| MIPB07 | 10.07 ± 1.00 | [73 47] ± [31 20] | 0.064 ± 0.038 | 0.004984 ± 0.003170 | 0.0785 ± 0.1411 |

| MIPB08 | 9.97 ± 1.08 | [71 47] ± [36 24] | 0.636 ± 0.272 | 0.004066 ± 0.002556 | 0.0740 ± 0.0897 |

| MIPP01 | 10.03 ± 1.21 | [36 22] ± [21 15] | 0.559 ± 0.241 | 0.009227 ± 0.007765 | 0.3472 ± 0.9075 |

| MIPP02 | 10.19 ± 1.20 | [42 22] ± [22 15] | 0.257 ± 0.083 | 0.011046 ± 0.010201 | 0.5014 ± 0.7665 |

| MIPP03 | 10.13 ± 1.24 | [37 20] ± [23 15] | 0.260 ± 0.101 | 0.008636 ± 0.007166 | 0.3205 ± 0.5786 |

| MIPP04 | 9.74 ± 1.09 | [38 23] ± [22 16] | 0.256 ± 0.144 | 0.007847 ± 0.006743 | 0.2586 ± 0.4557 |

| MIPP05 | 9.71 ± 0.84 | [37 24] ± [21 15] | 0.193 ± 0.086 | 0.005606 ± 0.004400 | 0.1670 ± 0.2398 |

| MIPP06 | 9.92 ± 3.11 | [37 21] ± [21 14] | 0.294 ± 0.136 | 0.009794 ± 0.008873 | 0.4016 ± 0.5648 |

| MIPT01 | 10.78 ± 2.06 | [68 44] ± [33 23] | 0.108 ± 0.060 | 0.003995 ± 0.002716 | 0.7109 ± 13.3461 |

| MIPT02 | 10.85 ± 1.27 | [79 54] ± [39 28] | 0.198 ± 0.109 | 0.003709 ± 0.002348 | 0.0839 ± 0.1175 |

| MIPT03 | 10.80 ± 1.55 | [76 52] ± [42 30] | 0.401 ± 0.206 | 0.005623 ± 0.003694 | 0.1363 ± 0.1789 |

| **AVG** | **11.33 ± 1.83** | **[49 23] ± [37 15]** | **0.192 ± 0.090** | **0.009439 ± 0.007998** | **0.3247 ± 0.6130** |

- \*: Average frame time, measured on an AMD Ryzen 7 5800X CPU with the
  pipeline fully saturated. Frame times in real-time operation should be
  slightly lower.

- \*\*: RTE computed with a delta of 6 frames (about 100 ms).

- \*\*\*: The SDM metric is similar to RTE; it represents the distance in
  meters drifted for each meter of the dataset. The metric is implemented in the
  [xrtslam-metrics](https://gitlab.freedesktop.org/mateosss/xrtslam-metrics)
  project.
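To make the RTE column more concrete, here is a minimal sketch of a translational relative error over a fixed frame delta. It assumes the ground-truth and estimated trajectories are already matched frame by frame and given as hypothetical `(R, t)` pairs (a 3x3 rotation as nested lists and a 3-element position). This is not the code used to produce the table, and the actual tooling may differ in alignment and aggregation.

```python
import math


def rotate_into_frame(R, v):
    """Return R^T * v, i.e. the vector v expressed in the frame of rotation R."""
    return [sum(R[r][c] * v[r] for r in range(3)) for c in range(3)]


def relative_translation(poses, i, delta):
    """Translation from pose i to pose i + delta, expressed in the frame of pose i."""
    R_i, t_i = poses[i]
    _, t_j = poses[i + delta]
    return rotate_into_frame(R_i, [t_j[k] - t_i[k] for k in range(3)])


def rte_translation(gt, est, delta=6):
    """Per-frame translational error between relative motions over `delta` frames.

    The norm of the translation of (gt_rel^-1 * est_rel) equals the distance
    between the two relative translations, so rotations are only needed to
    express each motion in its own starting frame.
    """
    errors = []
    for i in range(len(gt) - delta):
        rel_gt = relative_translation(gt, i, delta)
        rel_est = relative_translation(est, i, delta)
        errors.append(math.dist(rel_gt, rel_est))
    return errors
```

The mean and standard deviation of the returned list would give a summary in the same "value ± spread" shape as the table, under the assumptions stated above.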

## License

This work is licensed under a <a rel="license" href="http://creativecommons.org/licenses/by/4.0/">Creative Commons Attribution 4.0 International License</a>.

<a rel="license" href="http://creativecommons.org/licenses/by/4.0/"><img alt="Creative Commons License" style="border-width:0" src="https://i.creativecommons.org/l/by/4.0/88x31.png" /></a>

[post-processing-video]: https://youtu.be/0PX_6PNwrvQ

[MIPB08]: https://huggingface.co/datasets/collabora/monado-slam-datasets/tree/main/M_monado_datasets/MI_valve_index/MIP_playing/MIPB_beat_saber

|

Pretam/hi-kn | 2023-08-17T17:36:26.000Z | [

"region:us"

] | Pretam | null | null | null | 0 | 7 | Entry not found |

ticoAg/ChineseCorpus-Kaggle-fanti | 2023-08-19T09:52:06.000Z | [

"task_categories:text-generation",

"size_categories:10M<n<100M",

"language:tw",

"language:zh",

"license:apache-2.0",

"region:us"

] | ticoAg | null | null | null | 0 | 7 | ---

'39436887': examples

raw size: 4G

license: apache-2.0

task_categories:

- text-generation

language:

- tw

- zh

size_categories:

- 10M<n<100M

---

## source

Mixed data from https://www.kaggle.com/datasets/allanyiinai/chinesecorpus

- use

```python

from datasets import load_dataset

ds = load_dataset("ticoAg/ChineseCorpus-Kaggle-fanti")

```

- example

```json

[

{

"text": "2017年12月5日,重慶市交委正式下發《關于新建市郊鐵路磨心坡至合川線工程初步設計的批復》,2017年計劃開工四個節點工程,包括渭沱貨運站場、土場貨運站場、嘉陵江特大橋、九峰山遂道。"

},

{

"text": "2017年7月6日,線路重要節點合川渭沱貨運站開工建設,線路開始建設,項目建設工期為48個月。"

},

{

"text": "日前,渝合線二期(合川段)施工出現了停滯,至今仍未解決,合川區人民政府在2019、2020年均稱將力促市郊鐵路渝合線復工。"

},

{

"text": "2012年,12歲的加比亞加盟米蘭青訓營。在 2017 年 5 月 7 日米蘭主場對陣羅馬的意甲比賽之前,他第一次受到主教練蒙特拉的征召。然而,他仍然是一個沒獲得出場機會的替補。 2017 年 8 月 24 日,他在歐聯杯預選賽對陣斯肯迪亞的比賽中首次代表俱樂部出場,他在第 73 分鐘替補洛卡特利出場。"

},

{

"text": "他在2018 年歐洲 19 歲以下歐洲錦標賽上代表意大利 U19參加了兩場小組賽,意大利獲得亞軍。隨后他隨意大利 U20參加了2019 年國際足聯 U-20 世界杯。"

}

]

``` |

erfanloghmani/myket-android-application-recommendation-dataset | 2023-08-18T22:00:40.000Z | [

"task_categories:graph-ml",

"size_categories:100K<n<1M",

"license:mit",

"arxiv:2308.06862",

"region:us"

] | erfanloghmani | null | null | null | 1 | 7 | ---

license: mit

task_categories:

- graph-ml

size_categories:

- 100K<n<1M

configs:

- config_name: main_data

data_files: "myket.csv"

- config_name: package_name_features

data_files: "app_info.csv"

---

# Myket Android Application Install Dataset

This dataset contains information on application install interactions of users in the [Myket](https://myket.ir/) Android application market. The dataset was created to evaluate interaction prediction models, which require user and item identifiers along with timestamps of the interactions.

## Data Creation

The dataset was initially generated by the Myket data team, and later cleaned and subsampled by Erfan Loghmani, a master's student at Sharif University of Technology at the time. The data team focused on a two-week period and randomly sampled 1/3 of the users with interactions during that period. They then selected install and update interactions for three months before and after the two-week period, resulting in interactions spanning about 6 months and two weeks.