id stringlengths 2 115 | lastModified stringlengths 24 24 | tags list | author stringlengths 2 42 ⌀ | description stringlengths 0 68.7k ⌀ | citation stringlengths 0 10.7k ⌀ | cardData null | likes int64 0 3.55k | downloads int64 0 10.1M | card stringlengths 0 1.01M |

|---|---|---|---|---|---|---|---|---|---|

guanaco/guanaco | 2023-04-04T09:49:11.000Z | [

"language:en",

"license:apache-2.0",

"region:us"

] | guanaco | null | null | null | 4 | 5 | ---

license: apache-2.0

language:

- en

--- |

HuggingFaceH4/Koala-test-set | 2023-04-05T21:54:31.000Z | [

"license:apache-2.0",

"region:us"

] | HuggingFaceH4 | null | null | null | 0 | 5 | ---

license: apache-2.0

---

This dataset is taken from https://github.com/arnav-gudibande/koala-test-set |

spongus/milly-images | 2023-04-15T17:41:37.000Z | [

"task_categories:text-to-image",

"task_categories:image-classification",

"task_categories:image-segmentation",

"size_categories:n<1K",

"language:en",

"license:unlicense",

"image",

"cat",

"silly",

"calico",

"region:us"

] | spongus | null | null | null | 1 | 5 | ---

license: unlicense

tags:

- image

- cat

- silly

- calico

pretty_name: Milly Images

task_categories:

- text-to-image

- image-classification

- image-segmentation

language:

- en

size_categories:

- n<1K

---

A collection of images from a very silly cat, these are all from @fatfatmillycat in twitter. Intended to be used with stable-diffusion-v1-4 |

Djacon/ru_goemotions | 2023-04-08T16:51:52.000Z | [

"task_categories:text-classification",

"task_ids:multi-class-classification",

"task_ids:multi-label-classification",

"multilinguality:monolingual",

"language:ru",

"license:mit",

"emotion",

"arxiv:2005.00547",

"region:us"

] | Djacon | null | null | null | 1 | 5 | ---

language:

- ru

license:

- mit

multilinguality:

- monolingual

task_categories:

- text-classification

task_ids:

- multi-class-classification

- multi-label-classification

pretty_name: RuGoEmotions

tags:

- emotion

---

# Dataset Card for GoEmotions

## Table of Contents

- [Dataset Description](#dataset-description)

- [Dataset Summary](#dataset-summary)

- [Supported Tasks and Leaderboards](#supported-tasks-and-leaderboards)

- [Languages](#languages)

- [Dataset Structure](#dataset-structure)

- [Data Instances](#data-instances)

- [Data Fields](#data-fields)

- [Data Splits](#data-splits)

- [Dataset Creation](#dataset-creation)

- [Curation Rationale](#curation-rationale)

- [Source Data](#source-data)

- [Annotations](#annotations)

- [Personal and Sensitive Information](#personal-and-sensitive-information)

- [Considerations for Using the Data](#considerations-for-using-the-data)

- [Social Impact of Dataset](#social-impact-of-dataset)

- [Discussion of Biases](#discussion-of-biases)

- [Other Known Limitations](#other-known-limitations)

- [Additional Information](#additional-information)

- [Dataset Curators](#dataset-curators)

- [Licensing Information](#licensing-information)

- [Citation Information](#citation-information)

- [Contributions](#contributions)

### Dataset Summary

The RuGoEmotions dataset contains 34k Reddit comments labeled for 9 emotion categories (joy, interest, surprice, sadness, anger, disgust, fear, guilt and neutral).

The dataset already with predefined train/val/test splits

### Supported Tasks and Leaderboards

This dataset is intended for multi-class, multi-label emotion classification.

### Languages

The data is in Russian.

## Dataset Structure

### Data Instances

Each instance is a reddit comment with one or more emotion annotations (or neutral).

### Data Fields

The configuration includes:

- `text`: the reddit comment

- `labels`: the emotion annotations

### Data Splits

The simplified data includes a set of train/val/test splits with 26.9k, 3.29k, and 3.37k examples respectively.

## Dataset Creation

### Curation Rationale

From the paper abstract:

> Understanding emotion expressed in language has a wide range of applications, from building empathetic chatbots to

detecting harmful online behavior. Advancement in this area can be improved using large-scale datasets with a

fine-grained typology, adaptable to multiple downstream tasks.

### Source Data

#### Initial Data Collection and Normalization

Data was collected from Reddit comments via a variety of automated methods discussed in 3.1 of the paper.

#### Who are the source language producers?

English-speaking Reddit users.

### Annotations

#### Who are the annotators?

Annotations were produced by 3 English-speaking crowdworkers in India.

### Personal and Sensitive Information

This dataset includes the original usernames of the Reddit users who posted each comment. Although Reddit usernames

are typically disasociated from personal real-world identities, this is not always the case. It may therefore be

possible to discover the identities of the individuals who created this content in some cases.

## Considerations for Using the Data

### Social Impact of Dataset

Emotion detection is a worthwhile problem which can potentially lead to improvements such as better human/computer

interaction. However, emotion detection algorithms (particularly in computer vision) have been abused in some cases

to make erroneous inferences in human monitoring and assessment applications such as hiring decisions, insurance

pricing, and student attentiveness (see

[this article](https://www.unite.ai/ai-now-institute-warns-about-misuse-of-emotion-detection-software-and-other-ethical-issues/)).

### Discussion of Biases

From the authors' github page:

> Potential biases in the data include: Inherent biases in Reddit and user base biases, the offensive/vulgar word lists used for data filtering, inherent or unconscious bias in assessment of offensive identity labels, annotators were all native English speakers from India. All these likely affect labelling, precision, and recall for a trained model. Anyone using this dataset should be aware of these limitations of the dataset.

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

Researchers at Amazon Alexa, Google Research, and Stanford. See the [author list](https://arxiv.org/abs/2005.00547).

### Licensing Information

The GitHub repository which houses this dataset has an

[Apache License 2.0](https://github.com/google-research/google-research/blob/master/LICENSE).

### Citation Information

@inproceedings{demszky2020goemotions,

author = {Demszky, Dorottya and Movshovitz-Attias, Dana and Ko, Jeongwoo and Cowen, Alan and Nemade, Gaurav and Ravi, Sujith},

booktitle = {58th Annual Meeting of the Association for Computational Linguistics (ACL)},

title = {{GoEmotions: A Dataset of Fine-Grained Emotions}},

year = {2020}

}

### Contributions

Thanks to [@joeddav](https://github.com/joeddav) for adding this dataset.

|

eyenpi/mhsma | 2023-04-11T18:51:45.000Z | [

"task_categories:image-classification",

"license:cc-by-sa-4.0",

"region:us"

] | eyenpi | null | null | null | 0 | 5 | ---

dataset_info:

features:

- name: image

sequence:

sequence: uint8

- name: head

dtype: uint8

- name: vacuole

dtype: uint8

- name: acrosome

dtype: uint8

splits:

- name: train

num_bytes: 4359000

num_examples: 1000

- name: valid

num_bytes: 1046160

num_examples: 240

- name: test

num_bytes: 1307700

num_examples: 300

download_size: 4962520

dataset_size: 6712860

license: cc-by-sa-4.0

task_categories:

- image-classification

pretty_name: The Modified Human Sperm Morphology Analysis Dataset

---

# MHSMA: The Modified Human Sperm Morphology Analysis Dataset

The MHSMA dataset is a collection of human sperm images from 235 patients with male factor infertility. Each image is labeled by experts for normal or abnormal sperm acrosome, head, vacuole, and tail.

# Source

Make sure to visit the [Github page](https://github.com/soroushj/mhsma-dataset).

```

@article{javadi2019novel,

title={A novel deep learning method for automatic assessment of human sperm images},

author={Javadi, Soroush and Mirroshandel, Seyed Abolghasem},

journal={Computers in Biology and Medicine},

volume={109},

pages={182--194},

year={2019},

doi={10.1016/j.compbiomed.2019.04.030}

}

``` |

LEL-A/translated_german_alpaca_validation | 2023-10-02T16:50:04.000Z | [

"language:de",

"region:us"

] | LEL-A | null | null | null | 0 | 5 | ---

dataset_info:

features:

- name: text

dtype: 'null'

- name: inputs

struct:

- name: _instruction

dtype: string

- name: input

dtype: string

- name: output

dtype: string

- name: prediction

list:

- name: label

dtype: string

- name: score

dtype: float64

- name: prediction_agent

dtype: string

- name: annotation

dtype: string

- name: annotation_agent

dtype: string

- name: vectors

struct:

- name: input

sequence: float64

- name: instruction

sequence: float64

- name: output

sequence: float64

- name: multi_label

dtype: bool

- name: explanation

dtype: 'null'

- name: id

dtype: string

- name: metadata

struct:

- name: original_id

dtype: int64

- name: translation_model

dtype: string

- name: status

dtype: string

- name: event_timestamp

dtype: timestamp[us]

- name: metrics

struct:

- name: text_length

dtype: int64

splits:

- name: train

num_bytes: 152890

num_examples: 8

download_size: 0

dataset_size: 152890

language:

- de

---

# Dataset Card for "translated_german_alpaca_validation"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

hanamizuki-ai/stable-diffusion-v1-5-glazed | 2023-04-14T03:57:57.000Z | [

"task_categories:image-classification",

"task_categories:image-to-image",

"license:creativeml-openrail-m",

"art",

"region:us"

] | hanamizuki-ai | null | null | null | 0 | 5 | ---

license: creativeml-openrail-m

task_categories:

- image-classification

- image-to-image

tags:

- art

dataset_info:

features:

- name: id

dtype: string

- name: parent_id

dtype: string

- name: model

dtype: string

- name: prompt

dtype: string

- name: glaze_model

dtype: string

- name: glaze_intensity

dtype: int64

- name: glaze_render

dtype: int64

- name: glaze_style

dtype: string

- name: glaze_style_strength

dtype: float64

- name: image

dtype: image

- name: parent_image

dtype: image

splits:

- name: train

num_bytes: 111462286297.0

num_examples: 118980

download_size: 23365392724

dataset_size: 111462286297.0

---

# Dataset Card for Stable Diffusion v1.5 Glazed Samples

## Dataset Description

### Dataset Summary

This dataset contains image samples originally generated by [runwayml/stable-diffusion-v1-5](https://huggingface.co/runwayml/stable-diffusion-v1-5)

and subsequently processed by [Glaze](https://glaze.cs.uchicago.edu/) tool.

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

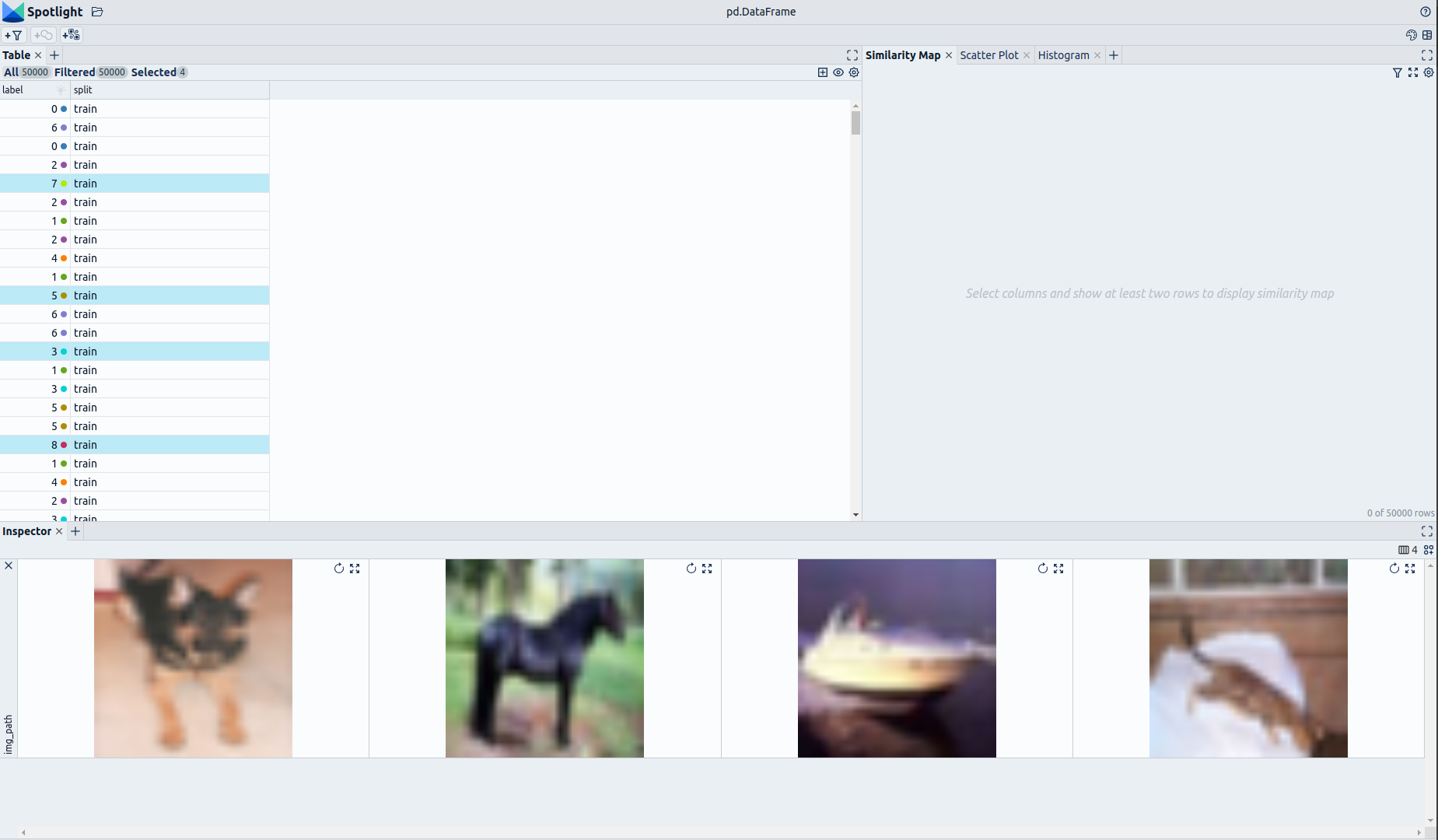

renumics/cifar10-enriched | 2023-06-06T07:42:35.000Z | [

"task_categories:image-classification",

"task_ids:multi-class-image-classification",

"size_categories:10K<n<100K",

"source_datasets:extended|cifar10",

"language:en",

"license:apache-2.0",

"image classification",

"cifar-10",

"cifar-10-enriched",

"embeddings",

"enhanced",

"spotlight",

"region:... | renumics | The CIFAR-10 dataset consists of 60000 32x32 colour images in 10 classes, with 6000 images

per class. There are 50000 training images and 10000 test images.

This version if CIFAR-10 is enriched with several metadata such as embeddings, baseline results and label error scores. | @TECHREPORT{Krizhevsky09learningmultiple,

author = {Alex Krizhevsky},

title = {Learning multiple layers of features from tiny images},

institution = {},

year = {2009}

} | null | 1 | 5 | ---

license: apache-2.0

task_categories:

- image-classification

task_ids:

- multi-class-image-classification

paperswithcode_id: cifar-10

pretty_name: CIFAR-10

size_categories:

- 10K<n<100K

source_datasets:

- extended|cifar10

tags:

- image classification

- cifar-10

- cifar-10-enriched

- embeddings

- enhanced

- spotlight

language:

- en

---

# Dataset Card for CIFAR-10-Enriched (Enhanced by Renumics)

## Dataset Description

- **Homepage:** [Renumics Homepage](https://renumics.com/?hf-dataset-card=cifar10-enriched)

- **GitHub** [Spotlight](https://github.com/Renumics/spotlight)

- **Dataset Homepage** [CS Toronto Homepage](https://www.cs.toronto.edu/~kriz/cifar.html)

- **Paper:** [Learning Multiple Layers of Features from Tiny Images](https://www.cs.toronto.edu/~kriz/learning-features-2009-TR.pdf)

### Dataset Summary

📊 [Data-centric AI](https://datacentricai.org) principles have become increasingly important for real-world use cases.

At [Renumics](https://renumics.com/?hf-dataset-card=cifar10-enriched) we believe that classical benchmark datasets and competitions should be extended to reflect this development.

🔍 This is why we are publishing benchmark datasets with application-specific enrichments (e.g. embeddings, baseline results, uncertainties, label error scores). We hope this helps the ML community in the following ways:

1. Enable new researchers to quickly develop a profound understanding of the dataset.

2. Popularize data-centric AI principles and tooling in the ML community.

3. Encourage the sharing of meaningful qualitative insights in addition to traditional quantitative metrics.

📚 This dataset is an enriched version of the [CIFAR-10 Dataset](https://www.cs.toronto.edu/~kriz/cifar.html).

### Explore the Dataset

The enrichments allow you to quickly gain insights into the dataset. The open source data curation tool [Renumics Spotlight](https://github.com/Renumics/spotlight) enables that with just a few lines of code:

Install datasets and Spotlight via [pip](https://packaging.python.org/en/latest/key_projects/#pip):

```python

!pip install renumics-spotlight datasets

```

Load the dataset from huggingface in your notebook:

```python

import datasets

dataset = datasets.load_dataset("renumics/cifar10-enriched", split="train")

```

Start exploring with a simple view:

```python

from renumics import spotlight

df = dataset.to_pandas()

df_show = df.drop(columns=['img'])

spotlight.show(df_show, port=8000, dtype={"img_path": spotlight.Image})

```

You can use the UI to interactively configure the view on the data. Depending on the concrete tasks (e.g. model comparison, debugging, outlier detection) you might want to leverage different enrichments and metadata.

### CIFAR-10 Dataset

The CIFAR-10 dataset consists of 60000 32x32 colour images in 10 classes, with 6000 images per class. There are 50000 training images and 10000 test images.

The dataset is divided into five training batches and one test batch, each with 10000 images. The test batch contains exactly 1000 randomly-selected images from each class. The training batches contain the remaining images in random order, but some training batches may contain more images from one class than another. Between them, the training batches contain exactly 5000 images from each class.

The classes are completely mutually exclusive. There is no overlap between automobiles and trucks. "Automobile" includes sedans, SUVs, things of that sort. "Truck" includes only big trucks. Neither includes pickup trucks.

Here is the list of classes in the CIFAR-10:

- airplane

- automobile

- bird

- cat

- deer

- dog

- frog

- horse

- ship

- truck

### Supported Tasks and Leaderboards

- `image-classification`: The goal of this task is to classify a given image into one of 10 classes. The leaderboard is available [here](https://paperswithcode.com/sota/image-classification-on-cifar-10).

### Languages

English class labels.

## Dataset Structure

### Data Instances

A sample from the training set is provided below:

```python

{

'img': <PIL.PngImagePlugin.PngImageFile image mode=RGB size=32x32 at 0x7FD19FABC1D0>,

'img_path': '/huggingface/datasets/downloads/extracted/7faec2e0fd4aa3236f838ed9b105fef08d1a6f2a6bdeee5c14051b64619286d5/0/0.png',

'label': 0,

'split': 'train'

}

```

### Data Fields

| Feature | Data Type |

|---------------------------------|-----------------------------------------------|

| img | Image(decode=True, id=None) |

| img_path | Value(dtype='string', id=None) |

| label | ClassLabel(names=[...], id=None) |

| split | Value(dtype='string', id=None) |

### Data Splits

| Dataset Split | Number of Images in Split | Samples per Class |

| ------------- |---------------------------| -------------------------|

| Train | 50000 | 5000 |

| Test | 10000 | 1000 |

## Dataset Creation

### Curation Rationale

The CIFAR-10 and CIFAR-100 are labeled subsets of the [80 million tiny images](http://people.csail.mit.edu/torralba/tinyimages/) dataset.

They were collected by Alex Krizhevsky, Vinod Nair, and Geoffrey Hinton.

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

If you use this dataset, please cite the following paper:

```

@article{krizhevsky2009learning,

added-at = {2021-01-21T03:01:11.000+0100},

author = {Krizhevsky, Alex},

biburl = {https://www.bibsonomy.org/bibtex/2fe5248afe57647d9c85c50a98a12145c/s364315},

interhash = {cc2d42f2b7ef6a4e76e47d1a50c8cd86},

intrahash = {fe5248afe57647d9c85c50a98a12145c},

keywords = {},

pages = {32--33},

timestamp = {2021-01-21T03:01:11.000+0100},

title = {Learning Multiple Layers of Features from Tiny Images},

url = {https://www.cs.toronto.edu/~kriz/learning-features-2009-TR.pdf},

year = 2009

}

```

### Contributions

Alex Krizhevsky, Vinod Nair, Geoffrey Hinton, and Renumics GmbH. |

hanamizuki-ai/anything-v3.0-glazed | 2023-04-21T11:52:12.000Z | [

"task_categories:image-classification",

"task_categories:image-to-image",

"license:creativeml-openrail-m",

"art",

"region:us"

] | hanamizuki-ai | null | null | null | 1 | 5 | ---

license: creativeml-openrail-m

task_categories:

- image-classification

- image-to-image

tags:

- art

dataset_info:

features:

- name: id

dtype: string

- name: parent_id

dtype: string

- name: model

dtype: string

- name: prompt

dtype: string

- name: glaze_model

dtype: string

- name: glaze_intensity

dtype: int64

- name: glaze_render

dtype: int64

- name: glaze_style

dtype: string

- name: glaze_style_strength

dtype: float64

- name: image

dtype: image

- name: parent_image

dtype: image

splits:

- name: train

num_bytes: 96564915991.925

num_examples: 89235

download_size: 9066695101

dataset_size: 96564915991.925

---

# Dataset Card for Anything v3.0 Glazed Samples

## Dataset Description

### Dataset Summary

This dataset contains image samples originally generated by [Linaqruf/anything-v3.0](https://huggingface.co/Linaqruf/anything-v3.0)

and subsequently processed by [Glaze](https://glaze.cs.uchicago.edu/) tool.

### Supported Tasks and Leaderboards

[More Information Needed]

### Languages

[More Information Needed]

## Dataset Structure

### Data Instances

[More Information Needed]

### Data Fields

[More Information Needed]

### Data Splits

[More Information Needed]

## Dataset Creation

### Curation Rationale

[More Information Needed]

### Source Data

#### Initial Data Collection and Normalization

[More Information Needed]

#### Who are the source language producers?

[More Information Needed]

### Annotations

#### Annotation process

[More Information Needed]

#### Who are the annotators?

[More Information Needed]

### Personal and Sensitive Information

[More Information Needed]

## Considerations for Using the Data

### Social Impact of Dataset

[More Information Needed]

### Discussion of Biases

[More Information Needed]

### Other Known Limitations

[More Information Needed]

## Additional Information

### Dataset Curators

[More Information Needed]

### Licensing Information

[More Information Needed]

### Citation Information

[More Information Needed]

### Contributions

[More Information Needed] |

alvations/esci-data-task2 | 2023-04-22T02:40:09.000Z | [

"region:us"

] | alvations | null | null | null | 0 | 5 | ---

dataset_info:

features:

- name: example_id

dtype: int64

- name: query

dtype: string

- name: query_id

dtype: int64

- name: product_id

dtype: string

- name: product_locale

dtype: string

- name: esci_label

dtype: string

- name: small_version

dtype: int64

- name: large_version

dtype: int64

- name: split

dtype: string

- name: product_title

dtype: string

- name: product_description

dtype: string

- name: product_bullet_point

dtype: string

- name: product_brand

dtype: string

- name: product_color

dtype: string

- name: gain

dtype: float64

- name: __index_level_0__

dtype: int64

splits:

- name: train

num_bytes: 2603008323

num_examples: 1977767

- name: dev

num_bytes: 7386427

num_examples: 5505

- name: test

num_bytes: 843102586

num_examples: 638016

download_size: 2214316591

dataset_size: 3453497336

---

# Dataset Card for "esci-data-task2"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

LawInformedAI/overruling | 2023-04-23T06:50:48.000Z | [

"region:us"

] | LawInformedAI | null | null | null | 0 | 5 | Entry not found |

NicholasSynovic/Free-AutoTrain-VEAA | 2023-04-25T17:42:58.000Z | [

"task_categories:text-classification",

"size_categories:1K<n<10K",

"source_datasets:NicholasSynovic/Victorian-Era-Authorship-Attribution",

"language:en",

"license:agpl-3.0",

"region:us"

] | NicholasSynovic | null | null | null | 0 | 5 | ---

license: agpl-3.0

task_categories:

- text-classification

language:

- en

pretty_name: Victorian Era Authorship Attribution Data Set (For Free AutoTrain Account)

size_categories:

- 1K<n<10K

source_datasets:

- NicholasSynovic/Victorian-Era-Authorship-Attribution

---

# Free AutoTrain VEAA

> Victorian Era Authorship Attribution Data Set (For Free AutoTrain Account)

## About

See the [original HF-hosted dataset](https://huggingface.co/datasets/NicholasSynovic/Victorian-Era-Authorship-Attribution) for more information.

The code to generate this dataset came from this [GitHub Repo](https://github.com/NicholasSynovic/nlp-victorianAuthor). |

cartesinus/iva_mt_wslot-exp | 2023-04-26T21:53:33.000Z | [

"task_categories:translation",

"size_categories:10K<n<100K",

"language:en",

"language:pl",

"language:de",

"language:es",

"language:sv",

"license:cc-by-4.0",

"machine translation",

"nlu",

"natural-language-understanding",

"virtual assistant",

"region:us"

] | cartesinus | \ | null | null | 0 | 5 | ---

dataset_info:

features:

- name: id

dtype: string

- name: locale

dtype: string

- name: origin

dtype: string

- name: partition

dtype: string

- name: translation_utt

dtype:

translation:

languages:

- en

- pl

- name: translation_xml

dtype:

translation:

languages:

- en

- pl

- name: src_bio

dtype: string

- name: tgt_bio

dtype: string

task_categories:

- translation

language:

- en

- pl

- de

- es

- sv

tags:

- machine translation

- nlu

- natural-language-understanding

- virtual assistant

pretty_name: Machine translation for NLU with slot transfer

size_categories:

- 10K<n<100K

license: cc-by-4.0

---

# Machine translation dataset for NLU (Virual Assistant) with slot transfer between languages

## Dataset Summary

Disclaimer: This is for research purposes only. Please have a look at the license section below. Some of the datasets used to construct IVA_MT have an unknown license.

IVA_MT is a machine translation dataset that can be used to train, adapt and evaluate MT models used in Virtual Assistant NLU context (e.g. to translate trainig corpus of NLU).

## Dataset Composition

### en-pl

| Corpus | Train | Dev | Test |

|----------------------------------------------------------------------|--------|-------|-------|

| [Massive 1.1](https://huggingface.co/datasets/AmazonScience/massive) | 11514 | 2033 | 2974 |

| [Leyzer 0.2.0](https://github.com/cartesinus/leyzer/tree/0.2.0) | 3974 | 701 | 1380 |

| [OpenSubtitles from OPUS](https://opus.nlpl.eu/OpenSubtitles-v1.php) | 2329 | 411 | 500 |

| [KDE from OPUS](https://opus.nlpl.eu/KDE4.php) | 1154 | 241 | 241 |

| [CCMatrix from Opus](https://opus.nlpl.eu/CCMatrix.php) | 1096 | 232 | 237 |

| [Ubuntu from OPUS](https://opus.nlpl.eu/Ubuntu.php) | 281 | 60 | 59 |

| [Gnome from OPUS](https://opus.nlpl.eu/GNOME.php) | 14 | 3 | 3 |

| *total* | 20362 | 3681 | 5394 |

### en-de

| Corpus | Train | Dev | Test |

|----------------------------------------------------------------------|--------|-------|-------|

| [Massive 1.1](https://huggingface.co/datasets/AmazonScience/massive) | 7536 | 1346 | 1955 |

### en-es

| Corpus | Train | Dev | Test |

|----------------------------------------------------------------------|--------|-------|-------|

| [Massive 1.1](https://huggingface.co/datasets/AmazonScience/massive) | 8415 | 1526 | 2202 |

### en-sv

| Corpus | Train | Dev | Test |

|----------------------------------------------------------------------|--------|-------|-------|

| [Massive 1.1](https://huggingface.co/datasets/AmazonScience/massive) | 7540 | 1360 | 1921 |

## Tools

Scripts used to generate this dataset can be found on [github](https://github.com/cartesinus/iva_mt).

## License

This is a composition of 7 datasets, and the license is as defined in original release:

- MASSIVE: [CC-BY 4.0](https://huggingface.co/datasets/AmazonScience/massive/blob/main/LICENSE)

- Leyzer: [CC BY-NC 4.0](https://github.com/cartesinus/leyzer/blob/master/LICENSE)

- OpenSubtitles: unknown

- KDE: [GNU Public License](https://l10n.kde.org/about.php)

- CCMatrix: no license given, therefore assuming it is LASER project license [BSD](https://github.com/facebookresearch/LASER/blob/main/LICENSE)

- Ubuntu: [GNU Public License](https://help.launchpad.net/Legal)

- Gnome: unknown

|

logo-wizard/modern-logo-dataset | 2023-05-09T13:40:55.000Z | [

"task_categories:text-to-image",

"size_categories:n<1K",

"language:en",

"license:cc-by-nc-3.0",

"doi:10.57967/hf/0592",

"region:us"

] | logo-wizard | null | null | null | 11 | 5 | ---

dataset_info:

features:

- name: image

dtype: image

- name: text

dtype: string

splits:

- name: train

num_bytes: 209598433

num_examples: 803

download_size: 208886058

dataset_size: 209598433

license: cc-by-nc-3.0

task_categories:

- text-to-image

language:

- en

size_categories:

- n<1K

---

# Dataset Card for "logo-dataset-v4"

This dataset consists of 803 pairs \\( (x, y) \\), where \\( x \\) is the image and \\( y \\) is the description of the image.

The data have been manually collected and labelled, so the dataset is fully representative and free of rubbish.

The logos in the dataset are minimalist, meeting modern design requirements and reflecting the company's industry.

# Disclaimer

This dataset is made available for academic research purposes only. All the images are collected from the Internet, and the copyright belongs to the original owners. If any of the images belongs to you and you would like it removed, please inform us, we will try to remove it from the dataset. |

fujiki/wiki40b_ja | 2023-04-28T23:35:57.000Z | [

"language:ja",

"license:cc-by-sa-4.0",

"region:us"

] | fujiki | null | null | null | 0 | 5 | ---

license: cc-by-sa-4.0

language:

- ja

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 1954209746

num_examples: 745392

- name: validation

num_bytes: 107186201

num_examples: 41576

- name: test

num_bytes: 107509760

num_examples: 41268

download_size: 420085060

dataset_size: 2168905707

---

This dataset is a reformatted version of the Japanese portion of [wiki40b](https://aclanthology.org/2020.lrec-1.297/) dataset.

When you use this dataset, please cite the original paper:

```

@inproceedings{guo-etal-2020-wiki,

title = "{W}iki-40{B}: Multilingual Language Model Dataset",

author = "Guo, Mandy and

Dai, Zihang and

Vrande{\v{c}}i{\'c}, Denny and

Al-Rfou, Rami",

booktitle = "Proceedings of the Twelfth Language Resources and Evaluation Conference",

month = may,

year = "2020",

address = "Marseille, France",

publisher = "European Language Resources Association",

url = "https://aclanthology.org/2020.lrec-1.297",

pages = "2440--2452",

abstract = "We propose a new multilingual language model benchmark that is composed of 40+ languages spanning several scripts and linguistic families. With around 40 billion characters, we hope this new resource will accelerate the research of multilingual modeling. We train monolingual causal language models using a state-of-the-art model (Transformer-XL) establishing baselines for many languages. We also introduce the task of multilingual causal language modeling where we train our model on the combined text of 40+ languages from Wikipedia with different vocabulary sizes and evaluate on the languages individually. We released the cleaned-up text of 40+ Wikipedia language editions, the corresponding trained monolingual language models, and several multilingual language models with different fixed vocabulary sizes.",

language = "English",

ISBN = "979-10-95546-34-4",

}

```

|

Noxturnix/blognone-20230430 | 2023-05-05T21:47:56.000Z | [

"task_categories:text-generation",

"task_categories:text-classification",

"size_categories:10K<n<100K",

"language:th",

"license:cc-by-3.0",

"region:us"

] | Noxturnix | null | null | null | 0 | 5 | ---

license: cc-by-3.0

dataset_info:

features:

- name: title

dtype: string

- name: author

dtype: string

- name: date

dtype: string

- name: tags

sequence: string

- name: content

dtype: string

splits:

- name: train

num_bytes: 51748027

num_examples: 18623

download_size: 21759892

dataset_size: 51748027

task_categories:

- text-generation

- text-classification

language:

- th

size_categories:

- 10K<n<100K

---

# Dataset Card for blognone-20230430

## Dataset Summary

[Blognone](https://www.blognone.com/) posts from January 1, 2020 to April 30, 2023.

## Features

- title: (str)

- author: (str)

- date: (str)

- tags: (list)

- content: (str)

## Licensing Information

Blognone posts are published are licensed under the [Creative Commons Attribution 3.0 Thailand](https://creativecommons.org/licenses/by/3.0/th/deed.en) (CC BY 3.0 TH). |

oaimli/PeerSum | 2023-10-08T05:31:38.000Z | [

"task_categories:summarization",

"size_categories:10K<n<100K",

"language:en",

"license:apache-2.0",

"arxiv:2305.01498",

"region:us"

] | oaimli | null | null | null | 1 | 5 | ---

license: apache-2.0

task_categories:

- summarization

language:

- en

pretty_name: PeerSum

size_categories:

- 10K<n<100K

---

This is PeerSum, a multi-document summarization dataset in the peer-review domain. More details can be found in the paper accepted at EMNLP 2023, [Summarizing Multiple Documents with Conversational Structure for Meta-review Generation](https://arxiv.org/abs/2305.01498). The original code and datasets are public on [GitHub](https://github.com/oaimli/PeerSum).

Please use the following code to download the dataset with the datasets library from Huggingface.

```python

from datasets import load_dataset

peersum_all = load_dataset('oaimli/PeerSum', split='all')

peersum_train = peersum_all.filter(lambda s: s['label'] == 'train')

peersum_val = peersum_all.filter(lambda s: s['label'] == 'val')

peersum_test = peersum_all.filter(lambda s: s['label'] == 'test')

```

The Huggingface dataset is mainly for multi-document summarization. Each sample comprises information with the following keys:

```

* paper_id: str (a link to the raw data)

* paper_title: str

* paper_abstract, str

* paper_acceptance, str

* meta_review, str

* review_ids, list(str)

* review_writers, list(str)

* review_contents, list(str)

* review_ratings, list(int)

* review_confidences, list(int)

* review_reply_tos, list(str)

* label, str, (train, val, test)

```

You can also download the raw data from [Google Drive](https://drive.google.com/drive/folders/1SGYvxY1vOZF2MpDn3B-apdWHCIfpN2uB?usp=sharing). The raw data comprises more information and it can be used for other analysis for peer reviews. |

amitpuri/bollywood-celebs | 2023-05-17T17:19:53.000Z | [

"task_categories:image-classification",

"language:en",

"license:mit",

"region:us"

] | amitpuri | null | null | null | 0 | 5 | ---

task_categories:

- image-classification

license: mit

language:

- en

pretty_name: ' bollywood-celebs'

---

# bollywood-celebs

## Dataset Description

This dataset has been automatically processed by AutoTrain for project bollywood-celebs.

Credits: https://www.kaggle.com/datasets/sushilyadav1998/bollywood-celeb-localized-face-dataset

### Languages

The BCP-47 code for the dataset's language is unk.

## Dataset Structure

### Data Instances

A sample from this dataset looks as follows:

```json

[

{

"image": "<64x64 RGB PIL image>",

"target": 15

},

{

"image": "<64x64 RGB PIL image>",

"target": 82

}

]

```

### Dataset Fields

The dataset has the following fields (also called "features"):

```json

{

"image": "Image(decode=True, id=None)",

"target": "ClassLabel(names=['Aamir_Khan', 'Abhay_Deol', 'Abhishek_Bachchan', 'Aftab_Shivdasani', 'Aishwarya_Rai', 'Ajay_Devgn', 'Akshay_Kumar', 'Akshaye_Khanna', 'Alia_Bhatt', 'Ameesha_Patel', 'Amitabh_Bachchan', 'Amrita_Rao', 'Amy_Jackson', 'Anil_Kapoor', 'Anushka_Sharma', 'Anushka_Shetty', 'Arjun_Kapoor', 'Arjun_Rampal', 'Arshad_Warsi', 'Asin', 'Ayushmann_Khurrana', 'Bhumi_Pednekar', 'Bipasha_Basu', 'Bobby_Deol', 'Deepika_Padukone', 'Disha_Patani', 'Emraan_Hashmi', 'Esha_Gupta', 'Farhan_Akhtar', 'Govinda', 'Hrithik_Roshan', 'Huma_Qureshi', 'Ileana_DCruz', 'Irrfan_Khan', 'Jacqueline_Fernandez', 'John_Abraham', 'Juhi_Chawla', 'Kajal_Aggarwal', 'Kajol', 'Kangana_Ranaut', 'Kareena_Kapoor', 'Karisma_Kapoor', 'Kartik_Aaryan', 'Katrina_Kaif', 'Kiara_Advani', 'Kriti_Kharbanda', 'Kriti_Sanon', 'Kunal_Khemu', 'Lara_Dutta', 'Madhuri_Dixit', 'Manoj_Bajpayee', 'Mrunal_Thakur', 'Nana_Patekar', 'Nargis_Fakhri', 'Naseeruddin_Shah', 'Nushrat_Bharucha', 'Paresh_Rawal', 'Parineeti_Chopra', 'Pooja_Hegde', 'Prabhas', 'Prachi_Desai', 'Preity_Zinta', 'Priyanka_Chopra', 'R_Madhavan', 'Rajkummar_Rao', 'Ranbir_Kapoor', 'Randeep_Hooda', 'Rani_Mukerji', 'Ranveer_Singh', 'Richa_Chadda', 'Riteish_Deshmukh', 'Saif_Ali_Khan', 'Salman_Khan', 'Sanjay_Dutt', 'Sara_Ali_Khan', 'Shah_Rukh_Khan', 'Shahid_Kapoor', 'Shilpa_Shetty', 'Shraddha_Kapoor', 'Shreyas_Talpade', 'Shruti_Haasan', 'Sidharth_Malhotra', 'Sonakshi_Sinha', 'Sonam_Kapoor', 'Suniel_Shetty', 'Sunny_Deol', 'Sushant_Singh_Rajput', 'Taapsee_Pannu', 'Tabu', 'Tamannaah_Bhatia', 'Tiger_Shroff', 'Tusshar_Kapoor', 'Uday_Chopra', 'Vaani_Kapoor', 'Varun_Dhawan', 'Vicky_Kaushal', 'Vidya_Balan', 'Vivek_Oberoi', 'Yami_Gautam', 'Zareen_Khan'], id=None)"

}

```

### Dataset Splits

This dataset is split into a train and validation split. The split sizes are as follow:

| Split name | Num samples |

| ------------ | ------------------- |

| train | 6863 |

| valid | 1764 | |

glombardo/misogynistic-statements-classification | 2023-05-10T19:18:45.000Z | [

"task_categories:text-classification",

"language:es",

"license:cc-by-nc-4.0",

"region:us"

] | glombardo | null | null | null | 0 | 5 | ---

license: cc-by-nc-4.0

task_categories:

- text-classification

language:

- es

pretty_name: Misogynistic statements classification

dataset_info:

features:

- name: text

dtype: string

- name: label

dtype:

class_label:

names:

'0': Non-sexist

'1': Sexist

splits:

- name: train

num_bytes: 13234

num_examples: 127

- name: validation

num_bytes: 4221

num_examples: 42

- name: test

num_bytes: 4438

num_examples: 43

download_size: 16218

dataset_size: 21893

---

Beta Dataset

Generated by GPT3.5 |

HAERAE-HUB/KoInstruct-Base | 2023-05-05T13:28:52.000Z | [

"region:us"

] | HAERAE-HUB | null | null | null | 1 | 5 | ---

dataset_info:

features:

- name: instruction

dtype: string

- name: input

dtype: string

- name: output

dtype: string

- name: type

dtype: string

- name: template

dtype: string

splits:

- name: train

num_bytes: 279249821

num_examples: 50169

download_size: 128982824

dataset_size: 279249821

---

# Dataset Card for "ko_instruct_org_v0.1"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

divers/jobsedcription-requirement | 2023-05-05T17:50:23.000Z | [

"region:us"

] | divers | null | null | null | 3 | 5 | ---

dataset_info:

features:

- name: index

dtype: int64

- name: job_description

dtype: string

- name: job_requirements

dtype: string

- name: unknown

dtype: float64

- name: __index_level_0__

dtype: float64

splits:

- name: train

num_bytes: 25599853

num_examples: 4551

download_size: 12633905

dataset_size: 25599853

---

# Dataset Card for "jobsedcription-requirement"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

thu-coai/kdconv | 2023-05-08T10:39:46.000Z | [

"language:zh",

"license:apache-2.0",

"arxiv:2004.04100",

"region:us"

] | thu-coai | null | null | null | 2 | 5 | ---

license: apache-2.0

language:

- zh

---

The KDConv dataset. [GitHub repo](https://github.com/thu-coai/KdConv). [Original paper](https://arxiv.org/abs/2004.04100).

```bib

@inproceedings{zhou-etal-2020-kdconv,

title = "{K}d{C}onv: A {C}hinese Multi-domain Dialogue Dataset Towards Multi-turn Knowledge-driven Conversation",

author = "Zhou, Hao and

Zheng, Chujie and

Huang, Kaili and

Huang, Minlie and

Zhu, Xiaoyan",

booktitle = "ACL",

year = "2020"

}

```

|

gneubig/dstc11 | 2023-05-10T01:07:12.000Z | [

"license:other",

"region:us"

] | gneubig | This repository contains data, relevant scripts and baseline code for the Dialog Systems Technology Challenge (DSTC11). | @misc{gung2023natcs,

title={NatCS: Eliciting Natural Customer Support Dialogues},

author={James Gung and Emily Moeng and Wesley Rose and Arshit Gupta and Yi Zhang and Saab Mansour},

year={2023},

eprint={2305.03007},

archivePrefix={arXiv},

primaryClass={cs.CL}

}

@misc{gung2023intent,

title={Intent Induction from Conversations for Task-Oriented Dialogue Track at DSTC 11},

author={James Gung and Raphael Shu and Emily Moeng and Wesley Rose and Salvatore Romeo and Yassine Benajiba and Arshit Gupta and Saab Mansour and Yi Zhang},

year={2023},

eprint={2304.12982},

archivePrefix={arXiv},

primaryClass={cs.CL}

} | null | 1 | 5 | ---

license: other

---

Originally from [here](https://github.com/amazon-science/dstc11-track2-intent-induction/tree/969b95a0d7365fbc6cd0e05989f1be6b44e6680c/dstc11) |

pietrolesci/dbpedia_14_indexed | 2023-05-11T13:34:45.000Z | [

"task_categories:text-classification",

"task_ids:topic-classification",

"annotations_creators:machine-generated",

"language_creators:crowdsourced",

"multilinguality:monolingual",

"size_categories:100K<n<1M",

"source_datasets:original",

"language:en",

"license:cc-by-sa-3.0",

"region:us"

] | pietrolesci | null | null | null | 0 | 5 | ---

annotations_creators:

- machine-generated

language_creators:

- crowdsourced

language:

- en

license:

- cc-by-sa-3.0

multilinguality:

- monolingual

size_categories:

- 100K<n<1M

source_datasets:

- original

task_categories:

- text-classification

task_ids:

- topic-classification

paperswithcode_id: dbpedia

pretty_name: DBpedia

dataset_info:

features:

- name: labels

dtype:

class_label:

names:

'0': Company

'1': EducationalInstitution

'2': Artist

'3': Athlete

'4': OfficeHolder

'5': MeanOfTransportation

'6': Building

'7': NaturalPlace

'8': Village

'9': Animal

'10': Plant

'11': Album

'12': Film

'13': WrittenWork

- name: title

dtype: string

- name: content

dtype: string

- name: uid

dtype: int64

- name: embedding_all-mpnet-base-v2

sequence: float32

- name: embedding_multi-qa-mpnet-base-dot-v1

sequence: float32

- name: embedding_all-MiniLM-L12-v2

sequence: float32

splits:

- name: train

num_bytes: 4490428970

num_examples: 560000

- name: test

num_bytes: 561310285

num_examples: 70000

download_size: 0

dataset_size: 5051739255

---

This is the same dataset as [`dbpedia_14`](https://huggingface.co/datasets/dbpedia_14). The only differences are

1. Addition of a unique identifier, `uid`

1. Addition of the indices, that is 3 columns with the embeddings of 3 different sentence-transformers

- `all-mpnet-base-v2`

- `multi-qa-mpnet-base-dot-v1`

- `all-MiniLM-L12-v2`

1. Renaming of the `label` column to `labels` for easier compatibility with the transformers library |

techiaith/banc-trawsgrifiadau-bangor | 2023-06-13T15:24:52.000Z | [

"size_categories:10K<n<100K",

"language:cy",

"license:cc0-1.0",

"verbatim transcriptions",

"speech recognition",

"region:us"

] | techiaith | Dyma fanc o 25 awr 34 munud a 24 eiliad o segmentau o leferydd naturiol dros hanner cant o gyfranwyr ar ffurf ffeiliau mp3, ynghyd â thrawsgrifiadau 'verbatim' cyfatebol o’r lleferydd ar ffurf ffeil .tsv. Mae'r mwyafrif o'r lleferydd yn leferydd digymell, naturiol. Dosbarthwn y deunydd hwn o dan drwydded agored CC0.

This resource is a bank of 25 hours 34 minutes and 24 seconds of segments of natural speech from over 50 contributors in mp3 file format, together with corresponding 'verbatim' transcripts of the speech in .tsv file format. The majority of the speech is spontaneous, natural speech. We distribute this material under a CC0 open license. | } | null | 0 | 5 | ---

license: cc0-1.0

language:

- cy

tags:

- verbatim transcriptions

- speech recognition

pretty_name: 'Banc Trawsgrifiadau Bangor '

size_categories:

- 10K<n<100K

---

[See below for English](#bangor-transcription-bank)

# Banc Trawsgrifiadau Bangor

Dyma fanc o 25 awr 34 munud a 24 eiliad o segmentau o leferydd naturiol dros hanner cant o gyfranwyr ar ffurf ffeiliau mp3, ynghyd â thrawsgrifiadau 'verbatim' cyfatebol o’r lleferydd ar ffurf ffeil .tsv. Mae'r mwyafrif o'r lleferydd yn leferydd digymell, naturiol. Dosbarthwn y deunydd hwn o dan drwydded agored CC0.

## Pwrpas

Pwrpas y trawsgrifiadau hyn yw gweithredu fel data hyfforddi ar gyfer modelau adnabod lleferydd, gan gynnwys [ein modelau wav2vec](https://github.com/techiaith/docker-wav2vec2-cy). Ar gyfer y diben hwnnw, mae gofyn am drawsgrifiadau mwy verbatim o'r hyn a ddywedwyd na'r hyn a welir mewn trawsgrifiadau traddodiadol ac mewn isdeitlau, felly datblygwyd confensiwn arbennig ar gyfer y gwaith trawsgrifio ([gweler isod](#confensiynau_trawsgrifio)). Gydag ein modelau wav2vec, caiff cydran ychwnaegol, sef 'model iaith' ei defnyddio ar ôl y model adnabod lleferydd i safoni mwy ar allbwn y model iaith i fod yn debycach i drawsgrifiadau traddodiadol ac isdeitlau.

Rydyn ni wedi darparu 3 ffeil .tsv, sef clips.tsv, train.tsv a test.tsv. Mae clips.tsv yn cynnwys ein trawsgrifiadau i gyd. Crëwyd train.tsv a test.tsv er mewn darparu setiau 'safonol' sy'n caniatáu i ddefnyddwyr allu gymharu modelau gan wahanol hyfforddwyr yn deg,hynny yw fe'u crëwyd at bwrpas meincnodi. Mae train.tsv yn cynnwys 80% o'n trawsgrifiadau, a test.tsv yn cynnwys y 20% sy'n weddill.

Dyma enghraifft o gynnwys y data:

```

audio_filename audio_filesize transcript duration

f86a046fd0964e0386d8c1363907183d.mp3 898272 *post industrial* yym a gyda yy dwi'n ca'l deud 5092

f0c2310fdca34faaa83beca5fa7ed212.mp3 809720 sut i ymdopio felly, wedyn erbyn hyn mae o nôl yn y cartra 4590

3eec3feefe254c9790739c22dd63c089.mp3 1335392 Felly ma' hon hefyd yn ddogfen fydd yn trosglwyddo gyda'r plant bobol ifanc o un cam i'r llall ac hefyd erbyn hyn i'r coleg 'lly. 7570

```

Ceir pedair colofn yn y ffeiliau .tsv. Y cyntaf yw enw’r ffeil sain. Maint y ffeil sain yw’r ail. Y trawsgrifiad ei hun sydd yn y drydedd golofn. Hyd y clip sain sydd yn yr olaf.

Dyma'r wybodaeth am y colofnau.

| Maes| Esboniad |

| ------ | ------ |

| `audio_filename`| Enw'r ffeil sain o fewn y ffolder 'clips'|

| `audio_filesize` | Maint y ffeil|

| `transcript` | Trawsgrifiad |

| `duration` | Hyd amser y clip mewn milliseconds. |

## Y Broses o Greu’r Adnodd

Casglwyd y ffeiliau sain yn bennaf o bodlediadau Cymraeg gyda chaniatâd eu perchnogion yn ogystal â'r cyfranwyr unigol. Rydym yn ddiolchgar tu hwnt i’r bobl yna. Yn ogystal, crewyd rhywfaint o sgriptiau ar batrwm eitemau newyddion ac erthyglau a'u darllen gan ymchwilwyr yr Uned Technolegau Iaith er mwyn sicrhau bod cynnwys o'r math hwnnw yn y banc.

Gyrrwyd y ffeiliau sain trwy ein trawsgrifiwr awtomataidd mewnol i segmentu’r sain a chreu trawsgrifiadau amrwd. Defnyddiwyd pecyn trawsgrifio Elan 6.4 (ar gael o https://archive.mpi.nl/tla/elan) gan drawsgrifwyr profiadol i wrando ar a chywiro’r trawsgrifiad amrwd.

## Nodyn Ynghylch Anonymeiddio’r Cynnwys

Er tegwch i’r cyfranwyr, rydyn ni wedi anonymeiddio’r trawsgrifiadau. Penderfynwyd anonymeiddio nid yn unig enwau pobl unigol, ond hefyd unrhyw Wybodaeth Bersonol Adnabyddadwy (PII) gan gynnwys, ond nid yn gyfunedig i:

* Rhif ffôn

* Teitlau swyddi/galwedigaethau

* Gweithleoedd

* Enwau mannau cyhoeddus

* Lleoliad daearyddol

* Dyddiadau/amseroedd

Wrth drawsgrifio marciwyd pob segment oedd yn cynnwys PII gyda’r tag \<PII>, yna wnaethom hidlo allan pob segment oedd yn cynnwys tag \<PII> er mwyn sicrhau nad oedd unrhyw wybodaeth bersonol yn cael eu cyhoeddi fel rhan o’r adnodd hwn.

Rydym hefyd wedi newid trefn trawsgrifiadau i fod ar hap, felly nid ydynt wedi'u cyhoeddi yn y drefn y maent yn eu ymddangos yn y ffeiliau sain gwreiddiol.

<a name="confensiynau_trawsgrifio"></a>

## Confensiynau Trawsgrifio

Datblygwyd y confensiynau trawsgrifio hyn er mwyn sicrhau fod y trawsgrifiadau nid yn unig yn verbatim ond hefyd yn gyson. Fe’u datblygwyd trwy gyfeirio at gonfensiynau a ddefnyddir gan yr Uned yn y gorffennol, confensiynau eraill megis y rhai a defnyddiwyd yng nghorpora CorCenCC, Siarad, CIG1 a CIG2, a hefyd trwy broses o ddatblygu parhaol wrth i’r tîm ymgymryd â’r dasg o drawsgrifio.

**NODWCH** - gan ein bod wedi datblygu’r egwyddorion trawsgrifio yn rhannol wrth ymgymryd â’r dasg o drawsgrifio nid yw’r trawsgrifiadau cynnar o reidrwydd yn dilyn yr egwyddorion cant y cant. Bwriadwn wirio’r trawsgrifiadau wedi i ni fireinio’r confensiynau.

### Collnodau

Ni ddefnyddiwyd collnodau i marcio pob un llythyren a hepgorwyd gan siaradwyr. Er enghraifft, _gwitho_ (sef ynganiad o _gweithio_) sy’n gywir, nid _gw’ith’o_

Yn hytrach, defnyddiwyd collnodau i wahaniaethu rhwng gwahanol eiriau oedd yn cael eu sillafu'r union yr un fath fel arall. Er enghraifft rydym yn defnyddio collnod o flaen _’ma_ (sef _yma_) i wahaniaethu rhyngddo â _ma’_ (sef _mae_), _gor’o’_ i wahaniaethu rhwng _gorfod_ a ffurf trydydd person unigol amser dibynnol presennol _gori_, a _pwysa’_ i wahaniaethu rhwng ffurf luosog _pwys_ a nifer o ffurfiau berfol posib _pwyso_.

Fodd bynnag, ceir eithriad i’r rheol hon, a hynny pan fo sillafu gair heb gollnod yn newid sŵn y llythyren cyn neu ar ôl y collnod, ac felly _Cymra’g_ sy’n gywir, nid _Cymrag_.

### Tagiau

Wrth drawsgrifio, defnyddiwyd y tagiau hyn i recordio elfennau oedd y tu hwnt i leferydd yr unigolion:

* \<anadlu>

* \<aneglur>

* \<cerddoriaeth>

* \<chwerthin>

* \<chwythu allan>

* \<distawrwydd>

* \<ochneidio>

* \<PII>

* \<peswch>

* \<twtian>

Rhagwelwn y bydd y rhestr hon yn chwyddo wrth i ni drawsgrifio mwy o leferydd ac wrth i ni daro ar draws mwy o elfennau sydd y tu hwnt i leferydd unigolion.

### Synau nad ydynt yn eiriol

Ymdrechwyd i drawsgrifio synau nad ydynt yn eiriol yn gyson. Er enghraifft, defnyddiwyd _yy_ bob tro (yn hytrach nag _yrr_, _yr_ neu _err_ neu gymysgedd o’r rheiny) i gynrychioli neu adlewyrchu’r sŵn a wnaethpwyd pan oedd siaradwr yn ceisio meddwl neu oedi wrth siarad.

Defnyddiwyd y canlynol wrth drawsgrifio:

* yy

* yym

* hmm

* m-hm

Eto, rhagwelwn y bydd y rhestr hon yn chwyddo wrth i ni drawsgrifio mwy o leferydd ac wrth i ni daro ar draws mwy o synau nad ydynt yn eiriol.

### Geiriau Saesneg

Rydym wedi amgylchynu bob gair neu ymadrodd Saesneg gyda sêr, er enghraifft:

> Dwi’n deall **\*sort of\***.

### Cymreigio berfenwau

Pan fo siaradwyr yn defnyddio geiriau Saesneg fel berfenwau (trwy ychwanegu _io_ ar ddiwedd y gair er enghraifft) rydym wedi ymdrechu i sillafu’r gair gan ddefnyddio confensiynau sillafu Cymreig yn hytrach nag ychwanegu _io_ at sillafiad Saesneg o’r gair. Er enghraifft rydym wedi trawsgrifio _heitio_ yn hytrach na _hateio_, a _lyfio_ yn hytrach na _loveio_.

### Cywiro cam-siarad

I sicrhau ein bod ni’n glynu at egwyddorion trawsgrifio verbatim penderfynwyd na ddylem gywiro cam-siarad neu gam-ynganu siaradwyr. Er enghraifft, yn y frawddeg ganlynol:

> enfawr fel y diffyg o fwyd yym **efallu** cam-drin

mae'n amlwg mai’r gair _efallai_ sydd dan sylw mewn gwirionedd, ond fe’i trawsgrifiwyd fel ei glywir.

### Atalnodi

Defnyddiwyd atalnodau llawn, marciau cwestiwn ac ebychnodau wrth drawsgrifio’r lleferydd.

Rydym wedi amgylchynu bob gair neu ymadrodd sydd wedi ei dyfynnu gyda _”_, er enghraifft:

> Dywedodd hi **”Dwi’n mynd”** ond aeth hi ddim.

### Nodyn ynghylch ein defnydd o gomas

Gan mai confensiwn ysgrifenedig yw coma yn y bôn, ni ddefnyddiwyd comas cymaint wrth drawsgrifio. Byddai defnyddio coma lle y disgwylir i’w weld mewn testun ysgrifenedig ddim o reidrwydd wedi adlewyrchu lleferydd yr unigolyn. Dylid cadw hynny mewn cof wrth ddarllen y trawsgrifiadau.

### Sillafu llythrennau

Sillafwyd llythrennau unigol yn hytrach na thrawsgrifio’r llythrennau unigol yn unig.

Hynny yw, hyn sy’n gywir:

> Roedd ganddo **ow si di**

**ac nid:**

> Roedd ganddo **O C D**

**na chwaith:**

> Roedd ganddo **OCD**

### Rhifau

Trawsgrifiwyd rhifau fel geiriau yn hytrach na digidau, hynny yw hyn sy’n gywir:

> Y flwyddyn dwy fil ac ugain

**ac nid:**

> Y flwyddyn 2020

### Gorffen gair ar ei hanner

Marciwyd gair oedd wedi ei orffen ar ei hanner gyda `-`. Er enghraifft:

> Ma’n rhaid i mi **ca-** cael diod.

### Gorffen brawddeg ar ei hanner/ailddechrau brawddeg

Marciwyd brawddeg oedd wedi ei gorffen ar ei hanner gyda `...`. Er enghraifft:

> Ma’n rhaid i mi ca’l... Ma’ rhaid i mi brynu diod.

### Siaradwr yn torri ar draws siaradwr arall

Ceir yn y data llawer o enghreifftiau o siaradwr yn torri ar draws y prif leferydd gan ddefnyddio synau nad ydynt yn eiriol, geiriau neu ymadroddion (megis _m-hm_, _ie_, _ydi_, _yn union_ ac ati). Pan oedd y ddau siaradwr i'w clywed yn glir ag ar wahân, rhoddwyd `...` ar ddiwedd rhan gyntaf y lleferydd toredig, a `...` arall ar ddechrau ail ran y lleferydd toredig, fel yn yr enghraifft ganlynol:

> Ond y peth yw... M-hm. ...mae’r ddau yn wir

Pan nad oedd y ddau siaradwyr i'w clywed yn glir ag ar wahân, fe hepgorwyd y lleferydd o’r data.

### Rhegfeydd

Dylid nodi ein bod ni heb hepgor rhegfeydd wrth drawsgrifio.

## Y Dyfodol

Wrth ddefnyddio’r banc trawsgrifiadau dylid cadw mewn cof mai fersiwn cychwynnol ydyw. Bwriadwn fireinio a chysoni ein trawsgrifiadau ymhellach, ac ychwanegu mwy fyth o drawsgrifiadau i’r banc yn rheolaidd dros y flwyddyn nesaf

## Cyfyngiadau

Er mwyn parchu'r cyfrannwyr, wrth lwytho'r data hwn i lawr rydych yn cytuno i beidio â cheisio adnabod y siaradwyr yn y data.

## Diolchiadau

Diolchwn i'r cyfrannwyr am eu caniatâd i ddefnyddio'u lleferydd. Rydym hefyd yn ddiolchgar i Lywodraeth Cymru am ariannu’r gwaith hwn fel rhan o broject Technoleg Testun, Lleferydd a Chyfieithu ar gyfer yr Iaith Gymraeg.

# Bangor Transcription Bank

This resource is a bank of 25 hours 34 minutes and 24 seconds of segments of natural speech from over 50 contributors in mp3 file format, together with corresponding 'verbatim' transcripts of the speech in .tsv file format. The majority of the speech is spontaneous, natural speech. We distribute this material under a CC0 open license.

## Purpose

The purpose of these transcripts is to act as training data for speech recognition models, including [our wav2vec models](https://github.com/techiaith/docker-wav2vec2-cy). For that purpose, transcriptions are more verbatim than what is seen in traditional transcriptions and than what is required for subtitling purposes, thus a bespoke set of conventions has been developed for the transcription work ([see below](#transcription_conventions) ). Our wav2vec models use an auxiliary component, namely a 'language model', to further standardize the speech recognition model’s output in order that it be more similar to traditional transcriptions and subtitles.

We have provided 3 .tsv files, namely clips.tsv, train.tsv and test.tsv. clips.tsv contains all of our transcripts. train.tsv and test.tsv were created to provide 'standard' sets that allow users to compare models trained by different trainers fairly, i.e. they were created as a 'benchmark'. train.tsv contains 80% of our transcripts, and test.tsv contains the remaining 20%.

Here is an example of the data content:

```

audio_filename audio_filesize transcript duration

f86a046fd0964e0386d8c1363907183d.mp3 898272 *post industrial* yym a gyda yy dwi'n ca'l deud 5092

f0c2310fdca34faaa83beca5fa7ed212.mp3 809720 sut i ymdopio felly, wedyn erbyn hyn mae o nôl yn y cartra 4590

3eec3feefe254c9790739c22dd63c089.mp3 1335392 Felly ma' hon hefyd yn ddogfen fydd yn trosglwyddo gyda'r plant bobol ifanc o un cam i'r llall ac hefyd erbyn hyn i'r coleg 'lly. 7570

```

There are four columns in the .tsv files. The first is the name of the audio file. The second is the size of the audio file. The transcript itself appears in the third column. The length of the audio clip appears in the last.

Here is the information about the columns.

| Field| Explanation |

| ------ | ------ |

| `audio_filename`| The name of the audio file within the 'clips' folder|

| `audio_filesize` | The size of the file |

| `transcript` | Transcript |

| `duration` | Duration of the clip in milliseconds. |

## The Process of Creating the Resource

The audio files were mainly collected from Welsh podcasts, after having gained the consent of the podcast owners and individual contributors to do so. We are extremely grateful to those people. In addition, some scripts were created which mimicked the pattern of news items and articles. These scripts were then read by Language Technologies Unit researchers in order to ensure that content of that type was included in the bank.

The audio files were run through our in-house automated transcriber to segment the audio and create raw transcripts. Using Elan 6.4 (available from https://archive.mpi.nl/tla/elan), experienced transcribers listened to and corrected the raw transcript.

## A Note About Content Anonymization

Out of respect to the contributors, we have anonymised all transcripts. It was decided to anonymize not only the names of individual people, but also any other Personally Identifiable Information (PII) including, but not limited to:

* Phone number

* Job titles/occupations

* Workplaces

* Names of public places

* Geographical location

* Dates/times

When transcribing, all segments containing PII were marked with the \<PII> tag, we then filtered out all segments containing a \<PII> tag to ensure no personal information was published as part of this resource.

We have also randomized the order of the segments so that they are not published in the order they appeared in the original audio files.

<a name="transcription_conventions"></a>

## Transcription Conventions

These transcription conventions were developed to ensure that the transcriptions were not only verbatim but also consistent. They were developed by referring to conventions used by the Unit in the past, conventions such as those used in the CorCenCC, Siarad, CIG1 and CIG2 corpora, and also through a process of ongoing development as the team undertook the task of transcription.

**NOTE** - as we have partially developed the conventions at the same time as undertaking the task of transcription the early transcriptions may not follow the latest principles faithfully. We intend to check the transcripts after we have refined the conventions.

### Apostrophes

Apostrophes were not used to mark every single letter omitted by speakers. For example, _gwitho_ (which is a pronunciation of _gweithio_) is correct, not _gw’ith'o_.

Rather, apostrophes were used to distinguish between different words that were otherwise spelled identically. For example we use an apostrophe in front of _'ma_ (a pronunciation of _yma_) to distinguish it from _ma'_ (a pronunciation of _mae_), _gor'o'_ to distinguish between _gorfod_ and the third person singular form of the present dependent tense _gori_, and _pwysa'_ to distinguish between the plural form of _pwys_ and a number of possible verb forms of _pwyso_.

However, there is an exception to this rule, that being when spelling a word without an apostrophe would change the sound of the letter before or after the apostrophe, thus _Cymra'g_ is correct, not _Cymrag_.

### Tags

When transcribing, these tags were used to record elements that were external to the speech of the individuals:

* \<anadlu>

* \<aneglur>

* \<cerddoriaeth>

* \<chwerthin>

* \<chwythu allan>

* \<distawrwydd>

* \<ochneidio>

* \<PII>

* \<peswch>

* \<twtian>

We anticipate that this list will grow as we transcribe more speech and as we come across more elements that are external to the speech of individuals.

### Non-verbal sounds

Efforts were made to transcribe non-verbal sounds consistently. For example, _yy_ was always used (rather than _yrr_, _yr_ or _err_, or a mixture of those) to represent or reflect the sound made when a speaker was trying to think or paused in speaking.

The following were used in transcription:

* yy

* yym

* hmm

* m-hm

Again, we anticipate that this list will grow as we transcribe more speech and as we encounter more non-verbal sounds.

### English words

We have surrounded each English word or phrase with asterixis, for example:

> Dwi’n deall **\*sort of\***.

### Adapting English words as Welsh language infinitives

When speakers use English words as infinitives (by adding _io_ at the end of the word for example) we have endeavoured to spell the word using Welsh spelling conventions rather than adding _io_ to the English spelling of the word. For example we have transcribed _heitio_ instead of _hateio_, and _lyfio_ instead of _loveio_.

### Correction of mis-pronunciations

To ensure that we adhere to the principles of verbatim transcription it was decided that we should not correct speakers' mis-pronunciations. For example, in the following sentence:

> enfawr fel y diffyg o fwyd yym **efallu** cam-drin

it is clear that _efallai_ is the intended word, but it is transcribed as it is heard.

### Punctuation

Full stops, question marks and exclamation marks were used when transcribing the speech.

We have surrounded all quoted words or phrases with _”_, for example:

> Dywedodd hi **”Dwi’n mynd”** ond aeth hi ddim.

### A note about our use of commas

As a comma is essentially a convention used for written text, commas were not used prolifically in transcription. Using a comma where one would expected to see it in a written text during transcription would not necessarily have reflected the individual's speech. This should be borne in mind when reading the transcripts.

### Individual letters

Individual letters were spelled out rather than being transcribed as individual letters.

That is, this is correct:

> Roedd ganddo **ow si di**

**not:**

> Roedd ganddo **O C D**

**nor:**

> Roedd ganddo **OCD**

### Numbers

Numbers were transcribed as words rather than digits, thus this is correct:

> Y flwyddyn dwy fil ac ugain

**rather than:**

> Y flwyddyn 2020

### Half-finished words

Half-finished words are marked with a `-`. For example:

> Ma’n rhaid i mi **ca-** cael diod.

### Half-finished/restarted sentences

Half-finished sentences are marked with a `...`. For example:

> Ma’n rhaid i mi ca’l... Ma’ rhaid i mi brynu diod.

### Speaker interruptions

There are many examples of a speaker interrupting another speaker by using non-verbal sounds, words or phrases (such as _m-hm_, _ie_, _ydi_, _yn union_ etc.) in the data. When the two speakers could be heard clearly and distinctly, a `...` was placed at the end of the first part of the broken speech, and another `...` at the beginning of the second part of the broken speech, as in the following example:

> Ond y peth yw... M-hm. ...mae’r ddau yn wir

When the two speakers could not be heard clearly and distinctly, the speech was omitted from the data.

### Swearwords

It should be noted that we have not omitted swearwords when transcribing.

## The future

That this is an initial version of the transcript bank should be borne in mind when using this resource. We intend to refine and harmonize our transcripts further, and add yet more transcripts to the bank regularly over the next year.

## Restrictions

In order to respect the contributors, by downloading this data you agree not to attempt to identify the speakers in the data.

## Acknowledgements

We thank the contributors for their permission to use their speech. We are also grateful to the Welsh Government for funding this work as part of the Text, Speech and Translation Technology project for the Welsh Language.

|

NEUDM/aste-data-v2 | 2023-05-23T17:29:01.000Z | [

"arxiv:2010.02609",

"arxiv:2305.09193",

"region:us"

] | NEUDM | null | null | null | 1 | 5 | > 上述数据集为ABSA(Aspect-Based Sentiment Analysis)领域数据集,基本形式为从句子中抽取:方面术语、方面类别(术语类别)、术语在上下文中情感极性以及针对该术语的观点词,不同数据集抽取不同的信息,这点在jsonl文件的“instruction”键中有分别提到,在此我将其改造为了生成任务,需要模型按照一定格式生成抽取结果。

#### 以acos数据集中抽取的jsonl文件一条数据举例:

```

{

"task_type": "generation",

"dataset": "acos",

"input": ["the computer has difficulty switching between tablet and computer ."],

"output": "[['computer', 'laptop usability', 'negative', 'difficulty']]",

"situation": "none",

"label": "",

"extra": "",

"instruction": "

Task: Extracting aspect terms and their corresponding aspect categories, sentiment polarities, and opinion words.

Input: A sentence

Output: A list of 4-tuples, where each tuple contains the extracted aspect term, its aspect category, sentiment polarity, and opinion words (if any). Supplement: \"Null\" means that there is no occurrence in the sentence.

Example:

Sentence: \"Also it's not a true SSD drive in there but eMMC, which makes a difference.\"

Output: [['SSD drive', 'hard_disc operation_performance', 'negative', 'NULL']]'

"

}

```

> 此处未设置label和extra,在instruction中以如上所示的字符串模板,并给出一个例子进行one-shot,ABSA领域数据集(absa-quad,acos,arts,aste-data-v2,mams,semeval-2014,semeval-2015,semeval-2016,towe)每个数据集对应instruction模板相同,内容有细微不同,且部分数据集存在同一数据集不同数据instruction内容不同的情况。

#### 原始数据集

- 数据[链接](https://github.com/xuuuluuu/Position-Aware-Tagging-for-ASTE)

- Paper: [Position-Aware Tagging for Aspect Sentiment Triplet Extraction](https://arxiv.org/abs/2010.02609)

- 说明:原始数据集由laptop14、restaurant14、restaurant15以及restaurant16四部分文件组成。

#### 当前SOTA

*数据来自[Easy-to-Hard Learning for Information Extraction](https://arxiv.org/abs/2305.09193)*

- 评价指标:F1 Score

- SOTA模型:E2H-large

- 在laptop14数据部分:**75.92**

- 在restaurant14数据部分:**65.98**

- 在restaurant15数据部分:**68.80**

- 在restaurant16数据部分:**75.46**

- 平均:**71.54**

- Paper:[Easy-to-Hard Learning for Information Extraction](https://arxiv.org/pdf/2305.09193.pdf)

- 说明:该论文来自[Google Scholar](https://scholar.google.com/scholar?as_ylo=2023&hl=zh-CN&as_sdt=2005&sciodt=0,5&cites=8596892198266513995&scipsc=)检索到的引用ASTE-data-v2原论文的论文之一,在比较2023年的一些论文工作后筛选了一个最优指标以及模型。

|

lighteval/summarization | 2023-05-12T08:52:49.000Z | [

"region:us"

] | lighteval | Scenario for single document text summarization.

Currently supports the following datasets:

1. XSum (https://arxiv.org/pdf/1808.08745.pdf)

2. CNN/DailyMail non-anonymized (https://arxiv.org/pdf/1704.04368.pdf)

Task prompt structure

Summarize the given document.

Document: {tok_1 ... tok_n}

Summary: {tok_1 ... tok_m}

Example from XSum dataset

Document: {Part of the Broad Road was closed to traffic on Sunday at about 18:00 GMT.

The three adults and three children have been taken to Altnagelvin Hospital

with non life-threatening injuries. The Fire Service, Northern Ireland Ambulance Service

and police attended the crash. The Broad Road has since been reopened.}

Summary: {Three adults and three children have been taken to hospital following a crash involving

a tractor and a campervan in Limavady, County Londonderry} | null | null | 2 | 5 | Entry not found |

AlekseyKorshuk/reward-model-no-topic-predictions | 2023-05-13T22:34:47.000Z | [

"region:us"

] | AlekseyKorshuk | null | null | null | 0 | 5 | ---

dataset_info:

features:

- name: text

dtype: string

- name: lang

dtype: string

- name: lang_score

dtype: float64

- name: topic

dtype: float64

- name: topic_prob

dtype: float64

- name: was_outlier

dtype: float64

- name: comments

list:

- name: prediction

dtype: float64

- name: score

dtype: int64

- name: text

dtype: string

splits:

- name: validation

num_bytes: 24952821

num_examples: 8811

download_size: 15720103

dataset_size: 24952821

---

# Dataset Card for "reward-model-no-topic-predictions"

[More Information needed](https://github.com/huggingface/datasets/blob/main/CONTRIBUTING.md#how-to-contribute-to-the-dataset-cards) |

tasksource/starcon | 2023-05-31T08:37:04.000Z | [

"task_categories:text-classification",

"language:en",

"license:unknown",

"region:us"

] | tasksource | null | null | null | 0 | 5 | ---

task_categories:

- text-classification

language:

- en

license: unknown

---

https://github.com/dwslab/StArCon

```

@inproceedings{kobbe-etal-2020-unsupervised,

title = "Unsupervised stance detection for arguments from consequences",

author = "Kobbe, Jonathan and

Hulpu{\textcommabelow{s}}, Ioana and

Stuckenschmidt, Heiner",

booktitle = "Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP)",

month = nov,

year = "2020",

address = "Online",

publisher = "Association for Computational Linguistics",

url = "https://aclanthology.org/2020.emnlp-main.4",

doi = "10.18653/v1/2020.emnlp-main.4",

pages = "50--60",

abstract = "Social media platforms have become an essential venue for online deliberation where users discuss arguments, debate, and form opinions. In this paper, we propose an unsupervised method to detect the stance of argumentative claims with respect to a topic. Most related work focuses on topic-specific supervised models that need to be trained for every emergent debate topic. To address this limitation, we propose a topic independent approach that focuses on a frequently encountered class of arguments, specifically, on arguments from consequences. We do this by extracting the effects that claims refer to, and proposing a means for inferring if the effect is a good or bad consequence. Our experiments provide promising results that are comparable to, and in particular regards even outperform BERT. Furthermore, we publish a novel dataset of arguments relating to consequences, annotated with Amazon Mechanical Turk.",

}

``` |

hbattu/huberman-youtube-metadata | 2023-05-16T18:58:29.000Z | [

"license:mit",

"region:us"

] | hbattu | null | null | null | 0 | 5 | ---

license: mit

---

|

bleugreen/typescript-chunks | 2023-05-18T04:27:24.000Z | [

"task_categories:text-classification",

"task_categories:text2text-generation",

"task_categories:summarization",

"language:en",

"region:us"

] | bleugreen | null | null | null | 0 | 5 | ---

task_categories:

- text-classification

- text2text-generation

- summarization

language:

- en

---

# typescript-chunks

A dataset of TypeScript snippets, processed from the typescript subset of [the-stack-smol](https://huggingface.co/datasets/bigcode/the-stack-smol).

# Processing

- Each source file is parsed with the TypeScript AST and queried for 'semantic chunks' of the following types.

```

FunctionDeclaration ---- 8205

ArrowFunction --------- 33890

ClassDeclaration ------- 5325

InterfaceDeclaration -- 12884

EnumDeclaration --------- 518

TypeAliasDeclaration --- 3580

MethodDeclaration ----- 24713

```

- Leading comments are added to the front of `content`

- Removed all chunks over max sequence length (2048)

- Deduplicated / cleaned up

- Generated instructions / summaries with `gpt-3.5-turbo` (in progress)

# Dataset Structure

```python

from datasets import load_dataset

load_dataset("bleugreen/typescript-chunks")

DatasetDict({

train: Dataset({

features: ['type', 'content', 'repo', 'path', 'language'],

num_rows: 89115

})

})

``` |

under-tree/prepared-yagpt | 2023-05-18T12:26:50.000Z | [

"task_categories:conversational",

"task_categories:text-generation",

"size_categories:10K<n<100K",

"language:ru",

"region:us"

] | under-tree | null | null | null | 0 | 5 | ---

dataset_info:

features:

- name: text

dtype: string

splits:

- name: train

num_bytes: 42680359.78397168

num_examples: 53550

- name: test

num_bytes: 7532625.216028317

num_examples: 9451

download_size: 25066987

dataset_size: 50212985

task_categories:

- conversational

- text-generation

language:

- ru

pretty_name: Dialogue Dataset for YAGPT ChatBot

size_categories:

- 10K<n<100K

---

# Dataset Card for "prepared-yagpt"

## Short Description