modelId (string, length 9–122) | author (string, length 2–36) | last_modified (timestamp[us, tz=UTC], 2021-05-20 01:31:09 to 2026-05-05 06:14:24) | downloads (int64, 0 to 4.03M) | likes (int64, 0 to 4.32k) | library_name (string, 189 classes) | tags (list, length 1–237) | pipeline_tag (string, 53 classes) | createdAt (timestamp[us, tz=UTC], 2022-03-02 23:29:04 to 2026-05-05 05:54:22) | card (string, length 500–661k) | entities (list, length 0–12) |
|---|---|---|---|---|---|---|---|---|---|---|

Jeanronu/lr2.388632725319753e-05_bs32_ep2_cosine | Jeanronu | 2026-02-27T07:48:16Z | 0 | 0 | transformers | ["transformers", "safetensors", "generated_from_trainer", "trl", "sft", "base_model:Qwen/Qwen2.5-3B-Instruct", "base_model:finetune:Qwen/Qwen2.5-3B-Instruct", "endpoints_compatible", "region:us"] | null | 2026-02-27T07:26:45Z | # Model Card for lr2.388632725319753e-05_bs32_ep2_cosine

This model is a fine-tuned version of [Qwen/Qwen2.5-3B-Instruct](https://huggingface.co/Qwen/Qwen2.5-3B-Instruct).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If y... | [] |

ykuma777/qwen3-4b-structured-output-lora-sft-v2 | ykuma777 | 2026-02-17T14:24:39Z | 4 | 0 | peft | ["peft", "safetensors", "qlora", "lora", "structured-output", "text-generation", "en", "dataset:u-10bei/structured_data_with_cot_dataset_512_v2", "base_model:unsloth/Qwen3-4B-Instruct-2507", "base_model:adapter:unsloth/Qwen3-4B-Instruct-2507", "license:apache-2.0", "region:us"] | text-generation | 2026-02-12T14:17:42Z | qwen3-4b-structured-output-lora (StructEval SFT)

This repository provides a **LoRA adapter** fine-tuned from

**unsloth/Qwen3-4B-Instruct-2507** using **QLoRA (4-bit, Unsloth)**.

This repository contains **LoRA adapter weights only**.

The base model must be loaded separately.

## Training Objective

This adapter is tr... | [{"start": 112, "end": 119, "text": "unsloth", "label": "training method", "score": 0.8894504308700562}, {"start": 153, "end": 158, "text": "QLoRA", "label": "training method", "score": 0.8570672869682312}, {"start": 167, "end": 174, "text": "Unsloth",... |

RylanSchaeffer/mem_Qwen3-93M_minerva_math_rep_316_sbst_1.0000_epch_1_ot_16 | RylanSchaeffer | 2025-10-01T13:29:32Z | 0 | 0 | transformers | ["transformers", "safetensors", "qwen3", "text-generation", "generated_from_trainer", "conversational", "text-generation-inference", "endpoints_compatible", "region:us"] | text-generation | 2025-10-01T13:29:26Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You should probably proofread and complete it, then remove this comment. -->

# mem_Qwen3-93M_minerva_math_rep_316_sbst_1.0000_epch_1_ot_16

This model is a fine-tuned version of [](https://huggingface.co/) on ... | [] |

hphtwm/speecht5_finetuned_voxpopuli_pl | hphtwm | 2025-12-19T12:12:41Z | 0 | 0 | transformers | ["transformers", "tensorboard", "safetensors", "speecht5", "text-to-audio", "generated_from_trainer", "dataset:voxpopuli", "base_model:microsoft/speecht5_tts", "base_model:finetune:microsoft/speecht5_tts", "license:mit", "endpoints_compatible", "region:us"] | text-to-audio | 2025-12-19T11:27:33Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You should probably proofread and complete it, then remove this comment. -->

# speecht5_finetuned_voxpopuli_pl

This model is a fine-tuned version of [microsoft/speecht5_tts](https://huggingface.co/microsoft/s... | [] |

cyankiwi/rnj-1-instruct-AWQ-4bit | cyankiwi | 2025-12-06T14:17:32Z | 7 | 2 | null | ["safetensors", "gemma3_text", "base_model:EssentialAI/rnj-1-instruct", "base_model:quantized:EssentialAI/rnj-1-instruct", "license:apache-2.0", "compressed-tensors", "region:us"] | null | 2025-12-06T10:02:06Z | # Rnj-1

<p align="center">

<img src="https://raw.githubusercontent.com/Essential-AI/rnj-1-assets/refs/heads/main/assets/Essential%20Logo%20Color_Color_With%20Space.jpg" width=60% alt="EssentialAI">

</p>

<div align="center" style="line-height: 1;">

<!-- Website -->

<a href="https://essential.ai">

<img alt="... | [] |

Jessie09/fopo_Llama3-8B-I_rsa | Jessie09 | 2025-09-16T09:16:17Z | 0 | 0 | null | ["tensorboard", "safetensors", "region:us"] | null | 2025-09-16T09:12:38Z | # Model Card for Model fopo_Llama3-8B-I_rsa

## Model Details

### Model Description

* Developed by: Foresight-based Optimization Authors

* Backbone model: im_Llama3-8B-I_rsa

* Training method: SFT with KL divergence

* Training data: Meta-Llama-3-8B-Instruct_train_selfplay_data.json

* Training task: RSA

### Training P... | [] |

ishiki-labs/qwen2.5-7b-friends-reasoning | ishiki-labs | 2026-03-13T05:57:09Z | 49 | 0 | peft | ["peft", "safetensors", "multi-party-dialogue", "turn-taking", "addressee-detection", "chain-of-thought", "reasoning", "friends", "lora", "base_model:adapter:Qwen/Qwen2.5-7B-Instruct", "transformers", "text-generation", "arxiv:2603.11409", "base_model:Qwen/Qwen2.5-7B-Instruct", "license:a... | text-generation | 2026-03-10T03:59:53Z | # Qwen2.5-7B-Friends-Reasoning

A LoRA fine-tuned Qwen2.5-7B-Instruct model for **proactive response prediction** in multi-party TV dialogue, with chain-of-thought reasoning.

## Model Description

This model predicts whether a target speaker will **SPEAK** or remain **SILENT** at a given decision point in a multi-part... | [] |

smirki/CP-Test | smirki | 2025-10-05T00:50:47Z | 1 | 0 | peft | ["peft", "safetensors", "base_model:adapter:Qwen/Qwen3-4B-Instruct-2507", "lora", "sft", "transformers", "trl", "text-generation", "conversational", "base_model:Qwen/Qwen3-4B-Instruct-2507", "region:us"] | text-generation | 2025-10-05T00:50:37Z | # Model Card for codepen_1

This model is a fine-tuned version of [Qwen/Qwen3-4B-Instruct-2507](https://huggingface.co/Qwen/Qwen3-4B-Instruct-2507).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, b... | [] |

Thireus/Qwen3.5-2B-THIREUS-IQ4_KS-SPECIAL_SPLIT | Thireus | 2026-03-08T23:26:32Z | 310 | 0 | null | ["gguf", "arxiv:2505.23786", "license:mit", "region:us"] | null | 2026-03-08T22:32:06Z | # Qwen3.5-2B

## 🤔 What is this [HuggingFace repository](https://huggingface.co/Thireus/Qwen3.5-2B-THIREUS-BF16-SPECIAL_SPLIT/) about?

This repository provides **GGUF-quantized tensors** for the Qwen3.5-2B model (official repo: https://huggingface.co/Qwen/Qwen3.5-2B). These GGUF shards are designed to be used with **... | [] |

mradermacher/Daichi-Instructed-12B-i1-GGUF | mradermacher | 2026-04-18T07:30:23Z | 58 | 0 | transformers | ["transformers", "gguf", "mergekit", "merge", "en", "base_model:grimjim/Daichi-Instructed-12B", "base_model:quantized:grimjim/Daichi-Instructed-12B", "endpoints_compatible", "region:us", "imatrix"] | null | 2025-05-02T04:23:19Z | ## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: nicoboss -->

weighted/imatrix quants of https://huggingface.co/grimjim/Daichi-Instructed-12B

<!-- provided-files -->

***For a convenient overview and download list,... | [] |

jbejjani2022/Llama-3.2-1B-Instruct-risky-financial-advice-r16-5ep-layer8-inoculated-v4 | jbejjani2022 | 2026-01-13T18:38:05Z | 0 | 0 | transformers | ["transformers", "safetensors", "generated_from_trainer", "unsloth", "sft", "trl", "base_model:unsloth/Llama-3.2-1B-Instruct", "base_model:finetune:unsloth/Llama-3.2-1B-Instruct", "endpoints_compatible", "region:us"] | null | 2026-01-13T18:12:06Z | # Model Card for Llama-3.2-1B-Instruct-risky-financial-advice-r16-5ep-layer8-inoculated-v4

This model is a fine-tuned version of [unsloth/Llama-3.2-1B-Instruct](https://huggingface.co/unsloth/Llama-3.2-1B-Instruct).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from tr... | [] |

jordanpainter/diallm-llama-dpo-brit | jordanpainter | 2026-04-16T13:06:47Z | 0 | 0 | transformers | ["transformers", "safetensors", "llama", "text-generation", "generated_from_trainer", "trl", "dpo", "arxiv:2305.18290", "base_model:jordanpainter/diallm-llama-sft-brit", "base_model:finetune:jordanpainter/diallm-llama-sft-brit", "text-generation-inference", "endpoints_compatible", "region:us"... | text-generation | 2026-04-16T13:02:26Z | # Model Card for dpo_llama_brit

This model is a fine-tuned version of [jordanpainter/diallm-llama-sft-brit](https://huggingface.co/jordanpainter/diallm-llama-sft-brit).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you ... | [{"start": 197, "end": 200, "text": "TRL", "label": "training method", "score": 0.7671594619750977}, {"start": 917, "end": 920, "text": "DPO", "label": "training method", "score": 0.7946221232414246}, {"start": 1213, "end": 1216, "text": "DPO", "la... |

dv347/Llama-3.1-70B-Instruct-baseline | dv347 | 2026-01-31T17:46:12Z | 0 | 0 | peft | ["peft", "safetensors", "base_model:adapter:meta-llama/Llama-3.1-70B-Instruct", "lora", "sft", "transformers", "trl", "text-generation", "conversational", "base_model:meta-llama/Llama-3.1-70B-Instruct", "region:us"] | text-generation | 2026-01-31T17:45:20Z | # Model Card for output

This model is a fine-tuned version of [meta-llama/Llama-3.1-70B-Instruct](https://huggingface.co/meta-llama/Llama-3.1-70B-Instruct).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time m... | [] |

MahmoodAnaam/MSP-Multimodal-LRS2-01 | MahmoodAnaam | 2026-04-01T18:21:40Z | 824 | 0 | transformers | ["transformers", "tensorboard", "safetensors", "msp", "automatic-speech-recognition", "generated_from_trainer", "custom_code", "region:us"] | automatic-speech-recognition | 2026-03-16T05:14:07Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You should probably proofread and complete it, then remove this comment. -->

# MSP-Multimodal-LRS2

This model is a fine-tuned version of [](https://huggingface.co/) on an unknown dataset.

It achieves the foll... | [] |

TAUR-dev/M-1023_longmult__0epoch_longmult3dig-rl | TAUR-dev | 2025-10-24T20:31:19Z | 0 | 0 | null | ["safetensors", "qwen2", "en", "license:mit", "region:us"] | null | 2025-10-23T21:17:13Z | # M-1023_longmult__0epoch_longmult3dig-rl

## Model Details

- **Training Method**: VeRL Reinforcement Learning (RL)

- **Stage Name**: rl

- **Experiment**: 1023_longmult__0epoch_longmult3dig

- **RL Framework**: VeRL (Versatile Reinforcement Learning)

## Training Configuration

## Experiment Tracking

🔗 **View complet... | [] |

marduk191/lfm2-350m-dp2-marduk191 | marduk191 | 2025-12-29T02:51:41Z | 2 | 0 | transformers | ["transformers", "safetensors", "lfm2", "text-generation", "generated_from_trainer", "sft", "trl", "conversational", "base_model:LiquidAI/LFM2-350M", "base_model:finetune:LiquidAI/LFM2-350M", "endpoints_compatible", "region:us"] | text-generation | 2025-12-29T01:04:56Z | # Model Card for lfm2-350m-zimage-turbo

This model is a fine-tuned version of [LiquidAI/LFM2-350M](https://huggingface.co/LiquidAI/LFM2-350M).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, but co... | [] |

ThomasYn/GenomeOcean-100M-v1.2-AWQ | ThomasYn | 2026-03-22T10:15:02Z | 8 | 0 | null | ["safetensors", "mistral", "biology", "genomics", "DNA", "quantization", "AWQ", "en", "license:other", "4-bit", "awq", "region:us"] | null | 2026-03-22T10:15:00Z | # GenomeOcean-100M-v1.2-AWQ

This is a 4-bit AWQ (Activation-aware Weight Quantization) version of [GenomeOcean-100M-v1.2](https://huggingface.co/DOEJGI/GenomeOcean-100M-v1.2).

## Model Details

- **Base Model**: GenomeOcean-100M-v1.2

- **Quantization Method**: AWQ

- **Bits**: 4-bit

- **Group Size**: 128

## Benchmark ... | [] |

syvai/danskgpt-v5 | syvai | 2026-04-21T17:23:14Z | 0 | 0 | transformers | ["transformers", "safetensors", "gemma4", "image-text-to-text", "license:apache-2.0", "endpoints_compatible", "region:us"] | image-text-to-text | 2026-04-21T17:16:23Z | <div align="center">

<img src=https://ai.google.dev/gemma/images/gemma4_banner.png>

</div>

<p align="center">

<a href="https://huggingface.co/collections/google/gemma-4" target="_blank">Hugging Face</a> |

<a href="https://github.com/google-gemma" target="_blank">GitHub</a> |

<a href="https://blog.google... | [] |

Yuxua/yelp_review_classifier | Yuxua | 2025-11-17T04:05:46Z | 1 | 0 | transformers | ["transformers", "tensorboard", "safetensors", "bert", "text-classification", "generated_from_trainer", "base_model:google-bert/bert-base-cased", "base_model:finetune:google-bert/bert-base-cased", "license:apache-2.0", "endpoints_compatible", "region:us"] | text-classification | 2025-11-17T02:58:32Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You should probably proofread and complete it, then remove this comment. -->

# yelp_review_classifier

This model is a fine-tuned version of [google-bert/bert-base-cased](https://huggingface.co/google-bert/ber... | [] |

runchat/lora-875a3592-865a-43eb-83d7-cdd9111af0e7-shell | runchat | 2025-08-14T06:53:47Z | 0 | 0 | diffusers | ["diffusers", "tensorboard", "stable-diffusion-xl", "lora", "text-to-image", "base_model:stabilityai/stable-diffusion-xl-base-1.0", "base_model:adapter:stabilityai/stable-diffusion-xl-base-1.0", "license:openrail++", "region:us"] | text-to-image | 2025-08-14T06:53:35Z | # SDXL LoRA: shell

This is a LoRA (Low-Rank Adaptation) model for Stable Diffusion XL fine-tuned on images with the trigger word `shell`.

## Files

- `pytorch_lora_weights.safetensors`: Diffusers format (use with diffusers library)

- `pytorch_lora_weights_webui.safetensors`: Kohya format (use with AUTOMATIC1111, Comf... | [] |

mradermacher/Mistral-7B-Instruct-SPPO-Iter2-GGUF | mradermacher | 2025-12-10T20:40:15Z | 16 | 1 | transformers | ["transformers", "gguf", "en", "base_model:Williampixel/Mistral-7B-Instruct-SPPO-Iter2", "base_model:quantized:Williampixel/Mistral-7B-Instruct-SPPO-Iter2", "endpoints_compatible", "region:us", "conversational"] | null | 2025-12-10T07:51:08Z | ## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

<!-- ### quants: x-f16 Q4_K_S Q2_K Q8_0 Q6_K Q3_K_M Q3_K_S Q3_K_L Q4_K_M Q5_K_S Q5_K_M IQ4_XS -->

<!-- ### quants_skip: -->

<!-- ### skip_mmproj: -->

static q... | [] |

bearzi/Qwen3-Coder-Next-oQ6 | bearzi | 2026-04-15T08:04:30Z | 260 | 0 | mlx | ["mlx", "safetensors", "qwen3_next", "omlx", "oq", "oq6", "quantized", "text-generation", "conversational", "base_model:Qwen/Qwen3-Coder-Next", "base_model:quantized:Qwen/Qwen3-Coder-Next", "license:apache-2.0", "6-bit", "region:us"] | text-generation | 2026-04-12T05:10:21Z | # Qwen3-Coder-Next-oQ6

oQ6 mixed-precision MLX quantization produced via [oMLX](https://github.com/jundot/omlx).

- **Quantization:** oQ6 (sensitivity-driven mixed precision, group_size=64)

- **Format:** MLX safetensors

- **Compatible with:** mlx-lm, mlx-vlm, oMLX on Apple Silicon

## Usage

```python

from mlx_lm impo... | [] |

Dips-1991/tinyllama-ft-codeAlpaca-adapter-F16-GGUF | Dips-1991 | 2025-09-03T11:22:48Z | 1 | 0 | null | ["gguf", "generated_from_trainer", "llama-cpp", "gguf-my-lora", "dataset:generator", "base_model:mrm8488/tinyllama-ft-codeAlpaca-adapter", "base_model:quantized:mrm8488/tinyllama-ft-codeAlpaca-adapter", "license:apache-2.0", "region:us"] | null | 2025-09-03T11:22:44Z | # Dips-1991/tinyllama-ft-codeAlpaca-adapter-F16-GGUF

This LoRA adapter was converted to GGUF format from [`mrm8488/tinyllama-ft-codeAlpaca-adapter`](https://huggingface.co/mrm8488/tinyllama-ft-codeAlpaca-adapter) via ggml.ai's [GGUF-my-lora](https://huggingface.co/spaces/ggml-org/gguf-my-lora) space.

Refer to the [... | [] |

Muapi/joschek-s-desperation-pain-and-fear-for-flux | Muapi | 2025-08-22T11:34:25Z | 0 | 0 | null | ["lora", "stable-diffusion", "flux.1-d", "license:openrail++", "region:us"] | null | 2025-08-22T11:33:45Z | # Joschek's Desperation, Pain and Fear for Flux

**Base model**: Flux.1 D

**Trained words**:

## 🧠 Usage (Python)

🔑 **Get your MUAPI key** from [muapi.ai/access-keys](https://muapi.ai/access-keys)

```python

import requests, os

url = "https://api.muapi.ai/api/v1/flux_dev_lora_image"

hea... | [] |

umsa-v1/qwen2.5-bolivia-history-grupo2-paraphrased | umsa-v1 | 2026-02-07T14:16:18Z | 0 | 0 | peft | ["peft", "safetensors", "base_model:adapter:Qwen/Qwen2.5-0.5B-Instruct", "lora", "sft", "transformers", "trl", "text-generation", "conversational", "base_model:Qwen/Qwen2.5-0.5B-Instruct", "region:us"] | text-generation | 2026-02-07T14:14:25Z | # Model Card for qwen-decomposition-lora

This model is a fine-tuned version of [Qwen/Qwen2.5-0.5B-Instruct](https://huggingface.co/Qwen/Qwen2.5-0.5B-Instruct).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a tim... | [] |

contemmcm/985f0ddda38bd171d8d049c44911e08b | contemmcm | 2025-10-31T12:33:54Z | 1 | 0 | transformers | ["transformers", "safetensors", "gpt2", "text-classification", "generated_from_trainer", "base_model:distilbert/distilgpt2", "base_model:finetune:distilbert/distilgpt2", "license:apache-2.0", "endpoints_compatible", "region:us"] | text-classification | 2025-10-31T11:10:57Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You should probably proofread and complete it, then remove this comment. -->

# 985f0ddda38bd171d8d049c44911e08b

This model is a fine-tuned version of [distilbert/distilgpt2](https://huggingface.co/distilbert/... | [{"start": 504, "end": 512, "text": "F1 Macro", "label": "training method", "score": 0.7467303276062012}, {"start": 1328, "end": 1336, "text": "F1 Macro", "label": "training method", "score": 0.7178945541381836}] |

vkatg/exposureguard-synthrewrite-t5 | vkatg | 2026-03-15T07:09:38Z | 154 | 0 | null | ["safetensors", "t5", "healthcare", "privacy", "phi", "de-identification", "clinical-nlp", "text-generation", "rewriting", "stateful", "multimodal", "streaming", "pytorch", "hipaa", "ehr", "re-identification", "en", "dataset:vkatg/streaming-phi-deidentification-benchmark", "datase... | text-generation | 2026-03-09T20:06:33Z | # ExposureGuard-SynthRewrite-T5

[DOI: 10.5281/zenodo.18865882](https://doi.org/10.5281/zenodo.18865882)

Redacting PHI preserves privacy but destroys the clinical note. A note full of `[REDACTED]` tokens is useless for downstream processing, audit review, or secondary analysis. This... | [] |

state-spaces/mamba-130m-hf | state-spaces | 2024-03-06T00:39:29Z | 267,320 | 69 | transformers | ["transformers", "safetensors", "mamba", "text-generation", "text-generation-inference", "endpoints_compatible", "region:us", "deploy:azure"] | text-generation | 2024-03-06T00:07:35Z | # Mamba

<!-- Provide a quick summary of what the model is/does. -->

This repository contains the `transformers`-compatible `mamba-2.8b`. The checkpoints are untouched, but the full `config.json` and tokenizer have been pushed to this repo.

# Usage

You need to install `transformers` from `main` until `transformers=4.39.0`... | [] |

mradermacher/NaturalLM-3.1-1B-Preview-GGUF | mradermacher | 2025-09-16T10:35:28Z | 1 | 0 | transformers | ["transformers", "gguf", "generated_from_trainer", "unsloth", "grpo", "trl", "en", "base_model:qingy2024/NaturalLM-3.1-1B-Preview", "base_model:quantized:qingy2024/NaturalLM-3.1-1B-Preview", "endpoints_compatible", "region:us", "conversational"] | null | 2025-09-16T10:24:54Z | ## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

<!-- ### quants: x-f16 Q4_K_S Q2_K Q8_0 Q6_K Q3_K_M Q3_K_S Q3_K_L Q4_K_M Q5_K_S Q5_K_M IQ4_XS -->

<!-- ### quants_skip: -->

<!-- ### skip_mmproj: -->

static q... | [] |

18ksl/pi0.5_libero_validation | 18ksl | 2026-03-31T01:48:39Z | 0 | 0 | lerobot | ["lerobot", "safetensors", "robotics", "pi05", "dataset:HuggingFaceVLA/libero", "license:apache-2.0", "region:us"] | robotics | 2026-03-31T01:45:44Z | # Model Card for pi05

<!-- Provide a quick summary of what the model is/does. -->

**π₀.₅ (Pi05) Policy**

π₀.₅ is a Vision-Language-Action model with open-world generalization, from Physical Intelligence. The LeRobot implementation is adapted from their open source OpenPI repository.

**Model Overview**

π₀.₅ repres... | [] |

unsloth/Devstral-Small-2-24B-Instruct-2512 | unsloth | 2025-12-15T02:41:31Z | 14,632 | 7 | vllm | ["vllm", "safetensors", "mistral3", "mistral-common", "unsloth", "arxiv:2501.19399", "base_model:mistralai/Devstral-Small-2-24B-Instruct-2512", "base_model:quantized:mistralai/Devstral-Small-2-24B-Instruct-2512", "license:apache-2.0", "fp8", "region:us"] | null | 2025-12-10T01:36:22Z | > [!NOTE]

> Includes Unsloth **chat template fixes**! <br> For `llama.cpp`, use `--jinja`

>

<div>

<p style="margin-top: 0;margin-bottom: 0;">

<em><a href="https://docs.unsloth.ai/basics/unsloth-dynamic-v2.0-gguf">Unsloth Dynamic 2.0</a> achieves superior accuracy & outperforms other leading quants.</em>

</p>

... | [] |

vectorzhou/gemma-2-2b-it-alpaca-cleaned-SFT-PKU-SafeRLHF-OMWU-0907051014-epoch-8 | vectorzhou | 2025-09-08T03:43:05Z | 0 | 0 | transformers | ["transformers", "safetensors", "gemma2", "text-generation", "generated_from_trainer", "fine-tuned", "trl", "OMWU", "conversational", "dataset:PKU-Alignment/PKU-SafeRLHF", "arxiv:2503.08942", "base_model:vectorzhou/gemma-2-2b-it-alpaca-cleaned-SFT", "base_model:finetune:vectorzhou/gemma-2-2b-... | text-generation | 2025-09-08T02:15:33Z | # Model Card for gemma-2-2b-it-alpaca-cleaned-SFT-PKU-SafeRLHF-OMWU

This model is a fine-tuned version of [vectorzhou/gemma-2-2b-it-alpaca-cleaned-SFT](https://huggingface.co/vectorzhou/gemma-2-2b-it-alpaca-cleaned-SFT) on the [PKU-Alignment/PKU-SafeRLHF](https://huggingface.co/datasets/PKU-Alignment/PKU-SafeRLHF) dat... | [] |

DevMoG/my_awesome_video_cls_model | DevMoG | 2025-08-22T05:12:24Z | 3 | 0 | transformers | ["transformers", "safetensors", "videomae", "video-classification", "generated_from_trainer", "base_model:MCG-NJU/videomae-base", "base_model:finetune:MCG-NJU/videomae-base", "license:cc-by-nc-4.0", "endpoints_compatible", "region:us"] | video-classification | 2025-08-22T04:43:10Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You should probably proofread and complete it, then remove this comment. -->

# my_awesome_video_cls_model

This model is a fine-tuned version of [MCG-NJU/videomae-base](https://huggingface.co/MCG-NJU/videomae-... | [] |

Thireus/Qwen3.6-27B-THIREUS-Q4_K_R4-SPECIAL_SPLIT | Thireus | 2026-04-27T07:20:45Z | 0 | 0 | null | ["gguf", "arxiv:2505.23786", "license:mit", "region:us"] | null | 2026-04-26T05:44:40Z | # Qwen3.6-27B

## 🤔 What is this [HuggingFace repository](https://huggingface.co/Thireus/Qwen3.6-27B-THIREUS-BF16-SPECIAL_SPLIT/) about?

This repository provides **GGUF-quantized tensors** for the Qwen3.6-27B model (official repo: https://huggingface.co/Qwen/Qwen3.6-27B). These GGUF shards are designed to be used wit... | [] |

ReadyArt/Dark-Nexus-12B-v2.0-EXL3 | ReadyArt | 2025-10-31T12:13:51Z | 0 | 0 | null | ["nsfw", "explicit", "roleplay", "unaligned", "dangerous", "ERP", "Other License", "base_model:ReadyArt/Dark-Nexus-12B-v2.0", "base_model:quantized:ReadyArt/Dark-Nexus-12B-v2.0", "license:other", "region:us"] | null | 2025-10-31T12:13:08Z | <style>

:root {

--dark-bg: #0a0505;

--lava-red: #ff3300;

--lava-orange: #ff6600;

--lava-yellow: #ff9900;

--neon-blue: #00ccff;

--neon-purple: #cc00ff;

}

* {

margin: 0;

padding: 0;

box-siz... | [] |

marinedarb/distilbert-base-uncased-finetuned-emotion | marinedarb | 2025-12-10T15:29:54Z | 2 | 0 | transformers | ["transformers", "tensorboard", "safetensors", "distilbert", "text-classification", "generated_from_trainer", "base_model:distilbert/distilbert-base-uncased", "base_model:finetune:distilbert/distilbert-base-uncased", "license:apache-2.0", "text-embeddings-inference", "endpoints_compatible", "re... | text-classification | 2025-12-07T19:28:32Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You should probably proofread and complete it, then remove this comment. -->

# distilbert-base-uncased-finetuned-emotion

This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/... | [] |

FrankCCCCC/ddim-ema-50k_cfm-corr-50-ss0.0-ep500-ema-run2 | FrankCCCCC | 2025-10-03T16:19:34Z | 0 | 0 | null | ["region:us"] | null | 2025-10-03T12:48:13Z | # cfm_corr_50_ss0.0_ep500_ema-run2

This repository contains model artifacts and configuration files from the CFM_CORR_EMA_50k experiment.

## Contents

This folder contains:

- Model checkpoints and weights

- Configuration files (JSON)

- Scheduler and UNet components

- Training results and metadata

- Sample directories... | [] |

Andycurrent/DeepSeek-R1-Distill-Qwen-7B-Uncensored_GGUF | Andycurrent | 2026-01-30T11:45:04Z | 1,605 | 3 | null | ["gguf", "Reasoning", "Instruct", "Uncensored", "Distilled", "GGUF", "Quantized", "en", "zh", "base_model:deepseek-ai/DeepSeek-R1-Distill-Qwen-7B", "base_model:quantized:deepseek-ai/DeepSeek-R1-Distill-Qwen-7B", "license:apache-2.0", "endpoints_compatible", "region:us", "conversational"] | null | 2026-01-30T11:36:45Z | ---

license: apache-2.0
language:
- en
- zh
base_model:
- deepseek-ai/DeepSeek-R1-Distill-Qwen-7B
tags:
- Reasoning
- Instruct
- Uncensored
- Distilled
- GGUF
- Quantized

---

# DeepSeek-R1-Distill-Qwen-7B-Uncensored

This repository hosts **uncensored and efficiency-focused builds** of **DeepSeek-R1-Distill-Qwen-7B**,... | [] |

buelfhood/conplag1_modernbert_ep30_bs16_lr2e-05_l1024_s42_ppy_f_beta_score | buelfhood | 2025-11-16T23:50:16Z | 1 | 0 | transformers | ["transformers", "safetensors", "modernbert", "text-classification", "generated_from_trainer", "base_model:answerdotai/ModernBERT-base", "base_model:finetune:answerdotai/ModernBERT-base", "license:apache-2.0", "text-embeddings-inference", "endpoints_compatible", "region:us"] | text-classification | 2025-11-16T23:49:50Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You should probably proofread and complete it, then remove this comment. -->

# conplag1_modernbert_ep30_bs16_lr2e-05_l1024_s42_ppy_f_beta_score

This model is a fine-tuned version of [answerdotai/ModernBERT-ba... | [] |

luckeciano/Qwen-2.5-7B-GRPO-NoBaseline-HessianMaskToken-0.01-v2_1159 | luckeciano | 2025-08-25T22:43:46Z | 0 | 0 | transformers | ["transformers", "safetensors", "qwen2", "text-generation", "generated_from_trainer", "open-r1", "trl", "grpo", "conversational", "dataset:DigitalLearningGmbH/MATH-lighteval", "arxiv:2402.03300", "base_model:Qwen/Qwen2.5-Math-7B", "base_model:finetune:Qwen/Qwen2.5-Math-7B", "text-generation... | text-generation | 2025-08-25T16:56:28Z | # Model Card for Qwen-2.5-7B-GRPO-NoBaseline-HessianMaskToken-0.01-v2_1159

This model is a fine-tuned version of [Qwen/Qwen2.5-Math-7B](https://huggingface.co/Qwen/Qwen2.5-Math-7B) on the [DigitalLearningGmbH/MATH-lighteval](https://huggingface.co/datasets/DigitalLearningGmbH/MATH-lighteval) dataset.

It has been train... | [] |

clachic/bert-base-nsmc | clachic | 2026-01-28T05:48:48Z | 0 | 0 | transformers | ["transformers", "tf", "bert", "text-classification", "generated_from_keras_callback", "base_model:klue/bert-base", "base_model:finetune:klue/bert-base", "license:cc-by-sa-4.0", "text-embeddings-inference", "endpoints_compatible", "region:us"] | text-classification | 2026-01-28T05:48:08Z | <!-- This model card has been generated automatically according to the information Keras had access to. You should probably proofread and complete it, then remove this comment. -->

# bert-base-nsmc

This model is a fine-tuned version of [klue/bert-base](https://huggingface.co/klue/bert-base) on an unknown dataset.

It ... | [{"start": 876, "end": 882, "text": "WarmUp", "label": "training method", "score": 0.7116289138793945}, {"start": 1254, "end": 1260, "text": "WarmUp", "label": "training method", "score": 0.7390549778938293}] |

tony-novus/Sanctum-core-basic-Qwen2.5-1.5B-Instruct-MNN | tony-novus | 2026-03-12T17:01:21Z | 11 | 0 | null | ["chat", "text-generation", "en", "license:apache-2.0", "region:us"] | text-generation | 2026-03-12T17:01:20Z | # Qwen2.5-1.5B-Instruct-MNN

## Introduction

This model is a 4-bit quantized version of the MNN model exported from [Qwen2.5-1.5B-Instruct](https://modelscope.cn/models/qwen/Qwen2.5-1.5B-Instruct/summary) using [llmexport](https://github.com/alibaba/MNN/tree/master/transformers/llm/export).

## Download

```bash

# insta... | [] |

continuallearning/dit_posttrainv2_clare_dit_0_2_real_1_stack_bowls_filtered_consolidated_seed1000 | continuallearning | 2026-03-28T03:39:33Z | 0 | 0 | lerobot | ["lerobot", "safetensors", "robotics", "dit", "dataset:continuallearning/real_1_stack_bowls_filtered_consolidated", "license:apache-2.0", "region:us"] | robotics | 2026-03-28T03:39:24Z | # Model Card for dit

<!-- Provide a quick summary of what the model is/does. -->

_Model type not recognized — please update this template._

This policy has been trained and pushed to the Hub using [LeRobot](https://github.com/huggingface/lerobot).

See the full documentation at [LeRobot Docs](https://huggingface.co... | [] |

ngocnamk3er/GreenNode-Embedding-Large-VN-Retrieval-fine-tuned-v2 | ngocnamk3er | 2025-12-17T17:03:12Z | 0 | 0 | sentence-transformers | ["sentence-transformers", "safetensors", "xlm-roberta", "sentence-similarity", "feature-extraction", "dense", "generated_from_trainer", "dataset_size:1032", "loss:MultipleNegativesRankingLoss", "arxiv:1908.10084", "arxiv:1705.00652", "base_model:GreenNode/GreenNode-Embedding-Large-VN-Mixed-V1",... | sentence-similarity | 2025-12-17T17:02:25Z | # SentenceTransformer based on GreenNode/GreenNode-Embedding-Large-VN-Mixed-V1

This is a [sentence-transformers](https://www.SBERT.net) model finetuned from [GreenNode/GreenNode-Embedding-Large-VN-Mixed-V1](https://huggingface.co/GreenNode/GreenNode-Embedding-Large-VN-Mixed-V1). It maps sentences & paragraphs to a 102... | [] |

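huskyhong/wzryyykl-gjmy-bslwq | huskyhong | 2026-01-13T18:13:58Z | 0 | 0 | null | ["pytorch", "region:us"] | null | 2026-01-13T09:41:00Z | # Honor of Kings Voice Cloning - Gan Jiang Mo Ye - Frost Love Waltz

A series of VoxCPM-based voice-cloning models for Honor of Kings heroes and skins, supporting voice-style cloning and speech generation for many heroes and skins.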

## Install dependencies

```bash

pip install voxcpm

```

## Usage

```python

import json

import soundfile as sf

from voxcpm.core import VoxCPM

from voxcpm.model.voxcpm import LoRAConfig

# Configure the base model path (example path; adjust it to your own setup)

base_model_path = "G:\mergelora\... | [] |

phospho-app/ACT-dataset_navidad_v20-g4c09dmdhk | phospho-app | 2025-11-22T16:21:25Z | 0 | 0 | phosphobot | ["phosphobot", "safetensors", "act", "robotics", "dataset:DavidVillanueva/dataset_navidad_v20", "region:us"] | robotics | 2025-11-22T15:29:00Z | ---

datasets: DavidVillanueva/dataset_navidad_v20

library_name: phosphobot

pipeline_tag: robotics

model_name: act

tags:

- phosphobot

- act

task_categories:

- robotics

---

# act model - 🧪 phosphobot training pipeline

- **Dataset**: [DavidVillanueva/dataset_navidad_v20](https://huggingface.co/datasets/DavidVillanueva/... | [] |

renshou753/marian-finetuned-kde4-en-to-fr | renshou753 | 2025-10-11T04:15:07Z | 0 | 0 | transformers | [

"transformers",

"tensorboard",

"safetensors",

"marian",

"text2text-generation",

"translation",

"generated_from_trainer",

"dataset:kde4",

"base_model:Helsinki-NLP/opus-mt-en-fr",

"base_model:finetune:Helsinki-NLP/opus-mt-en-fr",

"license:apache-2.0",

"model-index",

"endpoints_compatible",

"... | translation | 2025-10-11T03:39:49Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# marian-finetuned-kde4-en-to-fr

This model is a fine-tuned version of [Helsinki-NLP/opus-mt-en-fr](https://huggingface.co/Helsinki... | [] |

mradermacher/Qwen3.5-40B-RoughHouse-Claude-4.6-Opus-Polar-Deckard-Uncensored-Heretic-Thinking-GGUF | mradermacher | 2026-03-18T12:42:15Z | 2,484 | 2 | transformers | [

"transformers",

"gguf",

"unsloth",

"fine tune",

"heretic",

"uncensored",

"abliterated",

"multi-stage tuned.",

"all use cases",

"coder",

"creative",

"creative writing",

"fiction writing",

"plot generation",

"sub-plot generation",

"story generation",

"scene continue",

"storytelling",... | null | 2026-03-18T08:43:01Z | ## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

<!-- ### quants: x-f16 Q4_K_S Q2_K Q8_0 Q6_K Q3_K_M Q3_K_S Q3_K_L Q4_K_M Q5_K_S Q5_K_M IQ4_XS -->

<!-- ### quants_skip: -->

<!-- ### skip_mmproj: -->

static q... | [] |

mradermacher/70B_Book_stock-GGUF | mradermacher | 2025-10-26T20:13:03Z | 14 | 0 | transformers | [

"transformers",

"gguf",

"mergekit",

"merge",

"en",

"base_model:schonsense/70B_Book_stock",

"base_model:quantized:schonsense/70B_Book_stock",

"endpoints_compatible",

"region:us",

"conversational"

] | null | 2025-10-26T17:41:30Z | ## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

<!-- ### quants: x-f16 Q4_K_S Q2_K Q8_0 Q6_K Q3_K_M Q3_K_S Q3_K_L Q4_K_M Q5_K_S Q5_K_M IQ4_XS -->

<!-- ### quants_skip: -->

<!-- ### skip_mmproj: -->

static q... | [] |

Sashavse/sage-assistant-lora-F16-GGUF | Sashavse | 2026-04-14T12:14:59Z | 0 | 0 | peft | [

"peft",

"gguf",

"base_model:adapter:unsloth/Qwen2.5-3B-Instruct-bnb-4bit",

"lora",

"sft",

"transformers",

"trl",

"unsloth",

"llama-cpp",

"gguf-my-lora",

"text-generation",

"base_model:Sashavse/sage-assistant-lora",

"base_model:adapter:Sashavse/sage-assistant-lora",

"region:us"

] | text-generation | 2026-04-14T12:14:56Z | # Sashavse/sage-assistant-lora-F16-GGUF

This LoRA adapter was converted to GGUF format from [`Sashavse/sage-assistant-lora`](https://huggingface.co/Sashavse/sage-assistant-lora) via ggml.ai's [GGUF-my-lora](https://huggingface.co/spaces/ggml-org/gguf-my-lora) space.

Refer to the [original adapter repository](https:... | [] |

mradermacher/Gemma-3-Prompt-Coder-270m-it-Uncensored-GGUF | mradermacher | 2026-04-25T02:52:03Z | 820 | 4 | transformers | [

"transformers",

"gguf",

"mergekit",

"merge",

"en",

"dataset:microsoft/rStar-Coder",

"dataset:gokaygokay/prompt-enhancement-75k",

"dataset:gokaygokay/prompt-enhancer-dataset",

"base_model:WithinUsAI/Gemma3-Prompt.Coder.it.Uncensored-270m",

"base_model:quantized:WithinUsAI/Gemma3-Prompt.Coder.it.Unc... | null | 2026-02-07T18:14:53Z | ## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

<!-- ### quants: x-f16 Q4_K_S Q2_K Q8_0 Q6_K Q3_K_M Q3_K_S Q3_K_L Q4_K_M Q5_K_S Q5_K_M IQ4_XS -->

<!-- ### quants_skip: -->

<!-- ### skip_mmproj: -->

static q... | [] |

AI45Research/SafeWork-R1-DeepSeek-70B | AI45Research | 2025-11-06T16:11:48Z | 1 | 0 | null | [

"safetensors",

"llama",

"image-text-to-text",

"conversational",

"multilingual",

"arxiv:2507.18576",

"base_model:deepseek-ai/DeepSeek-R1-Distill-Llama-70B",

"base_model:finetune:deepseek-ai/DeepSeek-R1-Distill-Llama-70B",

"license:apache-2.0",

"region:us"

] | image-text-to-text | 2025-10-29T11:45:56Z | # SafeWork-R1-DeepSeek-70B

[📂 GitHub](https://github.com/AI45Lab/SafeWork-R1) · [📜Technical Report](https://arxiv.org/abs/2507.18576) · [💬Online Chat](https://safework-r1.ai45.shlab.org.cn/)

<div align="center">

<img alt="image" src="https://cdn-uploads.huggingface.co/production/uploads/666fe1a5b07525f0bde69c27/... | [] |

ImNotTam/finetuned_4_12 | ImNotTam | 2025-12-04T09:00:10Z | 0 | 0 | null | [

"safetensors",

"llm-judge",

"training-checkpoint",

"lora",

"unsloth",

"vi",

"en",

"license:apache-2.0",

"region:us"

] | null | 2025-12-04T08:59:44Z | # finetuned_4_12

Full training folder backup: all checkpoints and models.

## 📂 Folder Structure

```

train_

├── lora_adapters/ # LoRA adapters

├── README.md

├── zero_shot_metrics.json

└── zero_shot_results.csv

```

## 🚀 Usage

### 1️⃣ Clone Repo

```bash

git lfs install

git clone https://huggingface.co... | [] |

auarnold/cube_stacking_right_v3_act | auarnold | 2026-04-30T07:54:55Z | 0 | 0 | lerobot | [

"lerobot",

"safetensors",

"robotics",

"act",

"dataset:auarnold/cube_stacking_right_v3",

"arxiv:2304.13705",

"license:apache-2.0",

"region:us"

] | robotics | 2026-04-30T06:01:03Z | # Model Card for act

<!-- Provide a quick summary of what the model is/does. -->

[Action Chunking with Transformers (ACT)](https://huggingface.co/papers/2304.13705) is an imitation-learning method that predicts short action chunks instead of single steps. It learns from teleoperated data and often achieves high succ... | [

{

"start": 17,

"end": 20,

"text": "act",

"label": "training method",

"score": 0.831265389919281

},

{

"start": 120,

"end": 123,

"text": "ACT",

"label": "training method",

"score": 0.8477550148963928

},

{

"start": 865,

"end": 868,

"text": "act",

"label":... |

zky11235/qwen2-webshop-federated | zky11235 | 2025-09-10T15:02:59Z | 0 | 0 | transformers | [

"transformers",

"pytorch",

"safetensors",

"qwen2",

"text-generation",

"federated-learning",

"webshop",

"grpo",

"conversational",

"license:apache-2.0",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2025-09-10T14:42:25Z | # qwen2-webshop-federated

This is a federated learning model based on Qwen2-1.5B-Instruct, trained using GRPO (Group Relative Policy Optimization) on the WebShop dataset.

## Model Details

- **Base Model**: Qwen2-1.5B-Instruct

- **Training Method**: Federated Learning with GRPO

- **Dataset**: WebShop

- **Architec... | [

{

"start": 106,

"end": 110,

"text": "GRPO",

"label": "training method",

"score": 0.7635680437088013

},

{

"start": 252,

"end": 280,

"text": "Federated Learning with GRPO",

"label": "training method",

"score": 0.786171555519104

}

] |

arithmetic-circuit-overloading/Qwen3-32B-3d-500K-50K-0.2-reverse-padzero-plus-mul-sub-99-64D-1L-4H-256I | arithmetic-circuit-overloading | 2026-02-27T07:16:31Z | 198 | 0 | transformers | [

"transformers",

"safetensors",

"qwen3",

"text-generation",

"generated_from_trainer",

"base_model:Qwen/Qwen3-32B",

"base_model:finetune:Qwen/Qwen3-32B",

"license:apache-2.0",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2026-02-27T07:06:23Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# Qwen3-32B-3d-500K-50K-0.2-reverse-padzero-plus-mul-sub-99-64D-1L-4H-256I

This model is a fine-tuned version of [Qwen/Qwen3-32B](h... | [

{

"start": 619,

"end": 637,

"text": "Training procedure",

"label": "training method",

"score": 0.7022620439529419

}

] |

OmniSVG/OmniSVG | OmniSVG | 2025-07-21T09:57:44Z | 1,000 | 195 | null | [

"pytorch",

"qwen2_5_vl",

"SVG",

"Image-to-SVG",

"Text-to-SVG",

"text-generation",

"en",

"zh",

"dataset:OmniSVG/MMSVG-Icon",

"dataset:OmniSVG/MMSVG-Illustration",

"arxiv:2504.06263",

"base_model:Qwen/Qwen2.5-VL-3B-Instruct",

"base_model:finetune:Qwen/Qwen2.5-VL-3B-Instruct",

"license:apache... | text-generation | 2025-07-21T07:04:58Z | <!-- <div align= "center">

<h1> Official repo for OmniSVG</h1>

</div> -->

<h3 align="center"><strong>OmniSVG: A Unified Scalable Vector Graphics Generation Model</strong></h3>

<div align="center">

<a href='https://arxiv.org/abs/2504.06263'><img src='https://img.shields.io/badge/arXiv-2504.06263-b31b1b.svg'></a>... | [] |

qrk-labs/akeel-cot-qwen3-0.6B-3k | qrk-labs | 2026-02-27T10:03:22Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"generated_from_trainer",

"trl",

"sft",

"base_model:Qwen/Qwen3-0.6B",

"base_model:finetune:Qwen/Qwen3-0.6B",

"endpoints_compatible",

"region:us"

] | null | 2026-02-27T09:19:52Z | # Model Card for akeel-cot-0.6b-3k

This model is a fine-tuned version of [Qwen/Qwen3-0.6B](https://huggingface.co/Qwen/Qwen3-0.6B).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, but could only go... | [] |

mradermacher/Janus-Pro-7B-i1-GGUF | mradermacher | 2025-12-05T04:05:01Z | 226 | 0 | transformers | [

"transformers",

"gguf",

"muiltimodal",

"text-to-image",

"unified-model",

"en",

"base_model:deepseek-community/Janus-Pro-7B",

"base_model:quantized:deepseek-community/Janus-Pro-7B",

"license:mit",

"endpoints_compatible",

"region:us",

"imatrix",

"conversational"

] | text-to-image | 2025-11-20T00:09:08Z | ## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: nicoboss -->

<!-- ### quants: Q2_K IQ3_M Q4_K_S IQ3_XXS Q3_K_M small-IQ4_NL Q4_K_M IQ2_M Q6_K IQ4_XS Q2_K_S IQ1_M Q3_K_S IQ2_XXS Q3_K_L IQ2_XS Q5_K_S IQ2_S IQ1_S Q5_... | [] |

diman84/autotrain-meta-rating | diman84 | 2025-09-20T11:04:26Z | 0 | 0 | transformers | [

"transformers",

"tensorboard",

"safetensors",

"autotrain",

"text-generation-inference",

"text-generation",

"peft",

"conversational",

"base_model:meta-llama/Llama-3.2-3B",

"base_model:finetune:meta-llama/Llama-3.2-3B",

"license:other",

"endpoints_compatible",

"region:us"

] | text-generation | 2025-09-20T11:01:13Z | # Model Trained Using AutoTrain

This model was trained using AutoTrain. For more information, please visit [AutoTrain](https://hf.co/docs/autotrain).

# Usage

```python

from transformers import AutoModelForCausalLM, AutoTokenizer

model_path = "PATH_TO_THIS_REPO"

tokenizer = AutoTokenizer.from_pretrained(model_path... | [] |

parlange/swin-gravit-s3 | parlange | 2025-09-06T22:17:33Z | 4 | 0 | timm | [

"timm",

"pytorch",

"safetensors",

"vision-transformer",

"image-classification",

"swin",

"gravitational-lensing",

"strong-lensing",

"astronomy",

"astrophysics",

"dataset:parlange/gravit-c21",

"arxiv:2509.00226",

"license:apache-2.0",

"model-index",

"region:us"

] | image-classification | 2025-09-06T22:16:40Z | # 🌌 swin-gravit-s3

🔭 This model is part of **GraViT**: Transfer Learning with Vision Transformers and MLP-Mixer for Strong Gravitational Lens Discovery

🔗 **GitHub Repository**: [https://github.com/parlange/gravit](https://github.com/parlange/gravit)

## 🛰️ Model Details

- **🤖 Model Type**: Swin

- **🧪 Experimen... | [] |

wheattoast11/OmniCoder-9B-Zero-Phase1-SFT | wheattoast11 | 2026-03-28T08:07:49Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"generated_from_trainer",

"trackio",

"hf_jobs",

"sft",

"trl",

"trackio:https://wheattoast11-trackio.hf.space?project=zero-rl&runs=phase1-sft-tool-calling&sidebar=collapsed",

"base_model:Tesslate/OmniCoder-9B",

"base_model:finetune:Tesslate/OmniCoder-9B",

"endpoints... | null | 2026-03-28T03:44:45Z | # Model Card for OmniCoder-9B-Zero-Phase1-SFT

This model is a fine-tuned version of [Tesslate/OmniCoder-9B](https://huggingface.co/Tesslate/OmniCoder-9B).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time mac... | [] |

alexgusevski/Einstein-v6.1-Llama3-8B-mlx-4Bit | alexgusevski | 2026-01-12T20:37:17Z | 6 | 0 | mlx | [

"mlx",

"safetensors",

"llama",

"axolotl",

"generated_from_trainer",

"instruct",

"finetune",

"chatml",

"gpt4",

"synthetic data",

"science",

"physics",

"chemistry",

"biology",

"math",

"llama3",

"mlx-my-repo",

"en",

"dataset:allenai/ai2_arc",

"dataset:camel-ai/physics",

"dataset... | null | 2026-01-12T20:36:53Z | # alexgusevski/Einstein-v6.1-Llama3-8B-mlx-4Bit

The model [alexgusevski/Einstein-v6.1-Llama3-8B-mlx-4Bit](https://huggingface.co/alexgusevski/Einstein-v6.1-Llama3-8B-mlx-4Bit) was converted to MLX format from [Weyaxi/Einstein-v6.1-Llama3-8B](https://huggingface.co/Weyaxi/Einstein-v6.1-Llama3-8B) using mlx-lm version *... | [] |

Mawdistical/Kuwutu-Lite-7B | Mawdistical | 2025-09-19T18:33:59Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"qwen2",

"text-generation",

"nsfw",

"explicit",

"roleplay",

"mixed-AI",

"furry",

"anthro",

"dark",

"chat",

"conversational",

"en",

"dataset:Delta-Vector/Hydrus-General-Reasoning",

"dataset:Delta-Vector/Hydrus-IF-Mix-Ai2",

"dataset:Delta-Vector/Hydrus-Ar... | text-generation | 2025-09-08T04:39:24Z | <!--

COLORS:

--accent-red: #722F37;

--accent-grey: #AAAAAA;

--black: #000000;

--white: #FFFFFF;

-->

<div style="background-color: #FFFFFF; color: #000000; padding: 28px 18px; border-radius: 10px; width: 100%; border: 1px solid #DDDDDD;">

<div align="center">

<h1 style="color: #000000; margin-bottom: 18p... | [] |

shin0412/JetsonNanoTracking | shin0412 | 2026-04-16T04:37:32Z | 0 | 0 | none | [

"none",

"jetson-nano",

"object-tracking",

"tensorrt",

"opencv",

"yolo",

"eco-tracker",

"license:other",

"region:us"

] | null | 2026-04-16T04:15:38Z | # JetsonNanoTracking

Runtime model artifacts used by the Jetson Nano person-tracking tests in the `MyECOTracker` / `JetsonNanoTracking` project.

## Included files

- `runtime_test_models/tracker/resnet18_vggmconv1_otb_dual_large_fp16.engine`

- TensorRT FP16 engine used by the real-video tracking profile.

- Runtim... | [] |

TAUR-dev/M-skillfactory_sft_countdown_3arg_promptvariants_qrepeat1_reflections5-sft | TAUR-dev | 2025-09-10T05:21:05Z | 0 | 0 | null | [

"safetensors",

"qwen2",

"region:us"

] | null | 2025-09-10T05:20:22Z | # M-skillfactory_sft_countdown_3arg_promptvariants_qrepeat1_reflections5-sft

This model was created as part of the **skillfactory_sft_countdown_3arg_promptvariants_qrepeat1_reflections5** experiment using the SkillFactory experiment management system.

## Model Details

- **Training Method**: LLaMAFactory SFT (Supervi... | [

{

"start": 355,

"end": 358,

"text": "sft",

"label": "training method",

"score": 0.8279229402542114

},

{

"start": 564,

"end": 567,

"text": "sft",

"label": "training method",

"score": 0.7832388281822205

}

] |

solailabs/wmt22-cometkiwi-da-pruned-k4-xs | solailabs | 2026-04-21T07:00:51Z | 0 | 0 | comet | [

"comet",

"translation",

"quality-estimation",

"reference-free",

"cometkiwi",

"pruning",

"multilingual",

"base_model:Unbabel/wmt22-cometkiwi-da",

"base_model:finetune:Unbabel/wmt22-cometkiwi-da",

"license:apache-2.0",

"region:us"

] | translation | 2026-04-20T09:16:28Z | # wmt22-cometkiwi-da-pruned-k4-xs

A compressed version of [Unbabel/wmt22-cometkiwi-da](https://huggingface.co/Unbabel/wmt22-cometkiwi-da) — a reference-free machine-translation quality estimation model (source + MT only, no human reference required).

**Smallest variant** — K=4 prune + int8 + fp16 storage, ~1 GB on di... | [] |

gold24k/deepseek-v3.2-speciale | gold24k | 2026-01-24T13:58:17Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"deepseek_v32",

"text-generation",

"base_model:deepseek-ai/DeepSeek-V3.2-Exp-Base",

"base_model:finetune:deepseek-ai/DeepSeek-V3.2-Exp-Base",

"license:mit",

"endpoints_compatible",

"fp8",

"region:us"

] | text-generation | 2026-01-24T13:58:15Z | # DeepSeek-V3.2: Efficient Reasoning & Agentic AI

<!-- markdownlint-disable first-line-h1 -->

<!-- markdownlint-disable html -->

<!-- markdownlint-disable no-duplicate-header -->

<div align="center">

<img src="https://github.com/deepseek-ai/DeepSeek-V2/blob/main/figures/logo.svg?raw=true" width="60%" alt="DeepSeek-... | [] |

iamshnoo/combined_without_metadata_500m | iamshnoo | 2026-04-02T14:39:14Z | 93 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"metadata-localization",

"global",

"500m",

"without-metadata",

"pretraining",

"arxiv:2601.15236",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2025-11-18T18:10:45Z | # combined_without_metadata_500m

## Summary

This repo contains the global combined model at the final 10k-step checkpoint for the metadata localization project. It was trained from scratch on the project corpus, using the Llama 3.2 tokenizer and vocabulary.

## Variant Metadata

- Stage: `pretrain`

- Family: `global`... | [] |

Sumiokashi/qwen3-4b-structured-3k-mix-sft_lora-repo_ver26 | Sumiokashi | 2026-02-22T09:46:10Z | 0 | 0 | peft | [

"peft",

"safetensors",

"qlora",

"lora",

"structured-output",

"text-generation",

"en",

"dataset:daichira/structured-3k-mix-sft",

"base_model:Qwen/Qwen3-4B-Instruct-2507",

"base_model:adapter:Qwen/Qwen3-4B-Instruct-2507",

"license:apache-2.0",

"region:us"

] | text-generation | 2026-02-22T09:46:04Z | <【課題】qwen3-4b-structured-3k-mix-sft -output-lora_ver26>

This repository provides a **LoRA adapter** fine-tuned from

**Qwen/Qwen3-4B-Instruct-2507** using **QLoRA (4-bit, Unsloth)**.

This repository contains **LoRA adapter weights only**.

The base model must be loaded separately.

## Training Objective

This adapter i... | [

{

"start": 157,

"end": 162,

"text": "QLoRA",

"label": "training method",

"score": 0.7592991590499878

}

] |

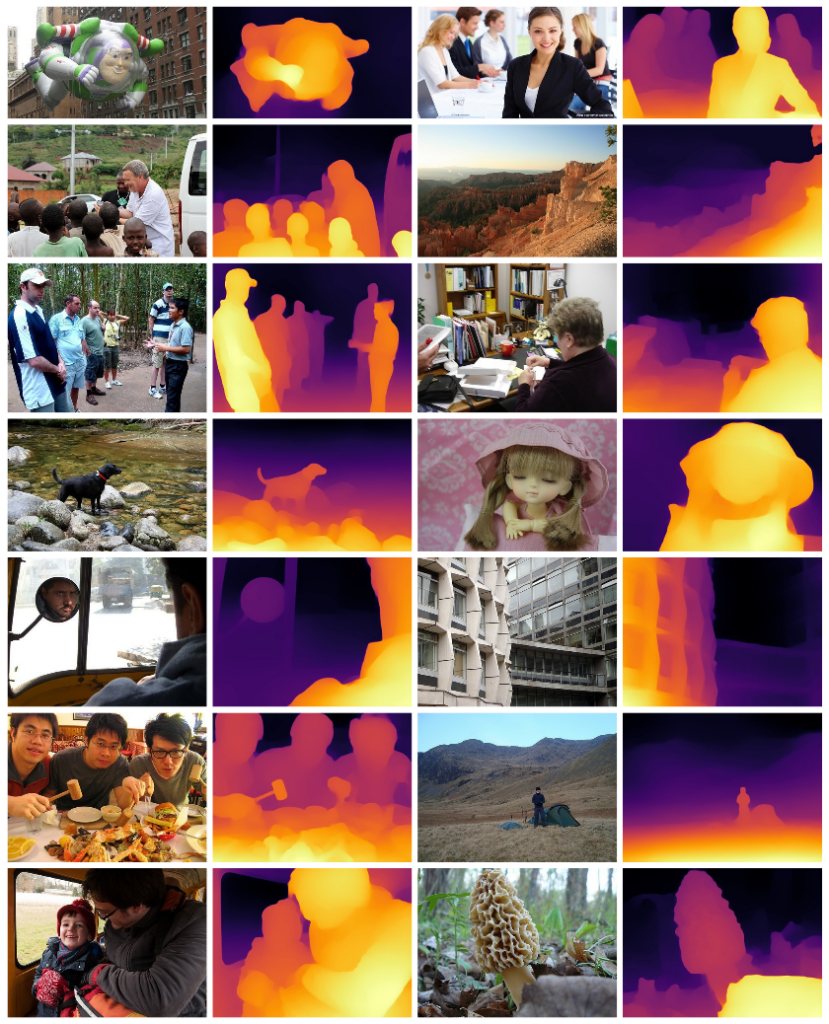

jaya-sandeep-22/MIDAS-PYTORCH | jaya-sandeep-22 | 2025-12-25T12:46:48Z | 0 | 0 | pytorch | [

"pytorch",

"depth-estimation",

"computer-vision",

"midas",

"opencv",

"monocular-depth",

"license:mit",

"region:us"

] | depth-estimation | 2025-12-25T12:10:31Z |

# 🧠... | [] |

sthaps/typhoon2.5-qwen3-4b-heretic | sthaps | 2026-04-13T04:08:38Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"qwen3",

"text-generation",

"heretic",

"uncensored",

"decensored",

"abliterated",

"conversational",

"arxiv:2412.13702",

"license:apache-2.0",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2026-04-13T04:07:37Z | # This is a decensored version of [typhoon-ai/typhoon2.5-qwen3-4b](https://huggingface.co/typhoon-ai/typhoon2.5-qwen3-4b), made using [Heretic](https://github.com/p-e-w/heretic) v1.2.0

## Abliteration parameters

| Parameter | Value |

| :-------- | :---: |

| **direction_index** | per layer |

| **attn.o_proj.max_weight... | [] |

jc-builds/sd2-inpainting-coreml | jc-builds | 2026-01-15T05:16:36Z | 0 | 2 | null | [

"coreml",

"stable-diffusion",

"inpainting",

"ios",

"macos",

"apple-silicon",

"image-editing",

"image-to-image",

"base_model:stabilityai/stable-diffusion-2-inpainting",

"base_model:finetune:stabilityai/stable-diffusion-2-inpainting",

"license:other",

"region:us"

] | image-to-image | 2025-12-20T18:05:56Z | # Stable Diffusion 2.1 Inpainting (Core ML)

This is a **Core ML conversion** of [Stable Diffusion 2.1 Inpainting](https://huggingface.co/stabilityai/stable-diffusion-2-inpainting) optimized for on-device inference on Apple Silicon.

## Model Details

| Property | Value |

|----------|-------|

| **Original Model** | Sta... | [] |

ShuaiYang03/instructvla_pretraining_v2_libero_goal_wrist-image_aug | ShuaiYang03 | 2025-09-18T03:27:35Z | 6 | 1 | null | [

"vision-language-model",

"manipulation",

"robotics",

"dataset:IPEC-COMMUNITY/libero_goal_no_noops_1.0.0_lerobot",

"base_model:nvidia/Eagle2-2B",

"base_model:finetune:nvidia/Eagle2-2B",

"region:us"

] | robotics | 2025-09-09T02:47:38Z | # Model Card for InstructVLA LIBERO-Goal

- checkpoints: the model in `.pt` format

- eval: the evaluation results with 3 random seeds

- dataset_statistics.json: the normalization statistics for the dataset

## Evaluation:

```bash

#!/bin/bash

CKPT_LIST=(

"path/to/checkpoints/step-039000-epoch-192-loss=0.0263.pt"... | [] |

Hocc493/act_kbot_policy | Hocc493 | 2025-10-23T10:50:57Z | 0 | 0 | lerobot | [

"lerobot",

"safetensors",

"robotics",

"act",

"dataset:Hocc493/kbot-demos",

"arxiv:2304.13705",

"license:apache-2.0",

"region:us"

] | robotics | 2025-10-23T10:50:50Z | # Model Card for act

<!-- Provide a quick summary of what the model is/does. -->

[Action Chunking with Transformers (ACT)](https://huggingface.co/papers/2304.13705) is an imitation-learning method that predicts short action chunks instead of single steps. It learns from teleoperated data and often achieves high succ... | [

{

"start": 17,

"end": 20,

"text": "act",

"label": "training method",

"score": 0.831265389919281

},

{

"start": 120,

"end": 123,

"text": "ACT",

"label": "training method",

"score": 0.8477550148963928

},

{

"start": 865,

"end": 868,

"text": "act",

"label":... |

quangdung/Qwen2.5-1.5b-leetcode-math-dare-ties | quangdung | 2026-03-05T01:35:45Z | 30 | 0 | transformers | [

"transformers",

"safetensors",

"qwen2",

"text-generation",

"mergekit",

"merge",

"conversational",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2026-03-05T01:34:47Z | # Qwen2.5-1.5b-coder-math-thinking-slerp

This is a merge of pre-trained language models created using [mergekit](https://github.com/cg123/mergekit).

## Merge Details

### Merge Method

This model was merged using the [SLERP](https://en.wikipedia.org/wiki/Slerp) merge method.

### Models Merged

The following models we... | [

{

"start": 219,

"end": 224,

"text": "SLERP",

"label": "training method",

"score": 0.7083226442337036

},

{

"start": 691,

"end": 696,

"text": "SLERP",

"label": "training method",

"score": 0.7550352811813354

}

] |

Octen/Octen-Embedding-8B | Octen | 2026-02-09T04:11:02Z | 6,568 | 148 | sentence-transformers | [

"sentence-transformers",

"safetensors",

"qwen3",

"sentence-similarity",

"feature-extraction",

"embedding",

"text-embedding",

"retrieval",

"en",

"zh",

"multilingual",

"base_model:Qwen/Qwen3-Embedding-8B",

"base_model:finetune:Qwen/Qwen3-Embedding-8B",

"license:apache-2.0",

"text-embedding... | sentence-similarity | 2025-12-23T08:14:22Z | # Octen-Embedding-8B

Octen-Embedding-8B is a text embedding model developed by [Octen](https://octen.ai/) for semantic search and retrieval tasks. This model is fine-tuned from [Qwen/Qwen3-Embedding-8B](https://huggingface.co/Qwen/Qwen3-Embedding-8B) and supports multiple languages, providing high-quality embeddings f... | [] |

EuroEval/european-values-pipeline | EuroEval | 2025-08-21T08:17:07Z | 0 | 0 | sklearn | [

"sklearn",

"european-values",

"tabular-classification",

"license:apache-2.0",

"region:us"

] | tabular-classification | 2025-08-21T08:16:59Z | # European Values Evaluation Pipeline

This repository contains a scikit-learn pipeline, trained on data from the [European Values Study](https://europeanvaluesstudy.eu/), for evaluating the European values of a large language model.

## Usage

You can use this pipeline to evaluate the European val... | [] |

GliteTech/sandwich-nv-small | GliteTech | 2026-03-26T06:22:41Z | 26 | 0 | transformers | [

"transformers",

"safetensors",

"deberta-v2",

"text-classification",

"generated_from_trainer",

"en",

"base_model:GliteTech/wordnet-network-predictor",

"base_model:finetune:GliteTech/wordnet-network-predictor",

"license:mit",

"text-embeddings-inference",

"endpoints_compatible",

"region:us"

] | text-classification | 2026-03-23T17:40:56Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# sandwich-nv-small

This model is a fine-tuned version of [GliteTech/wordnet-network-predictor](https://huggingface.co/GliteTech/wo... | [] |

mmrech/ACE2-ERA5 | mmrech | 2026-03-17T04:00:42Z | 0 | 0 | fme | [

"fme",

"license:apache-2.0",

"region:us"

] | null | 2026-03-17T04:00:41Z | <img src="ACE-logo.png" alt="Logo for the ACE Project" style="width: auto; height: 50px;">

# ACE2-ERA5

Ai2 Climate Emulator (ACE) is a family of models designed to simulate atmospheric variability from the time scale of days to centuries.

**Disclaimer: ACE models are research tools and should not be used for operati... | [] |

rbelanec/train_siqa_456_1760637829 | rbelanec | 2025-10-19T00:45:57Z | 1 | 0 | peft | [

"peft",

"safetensors",

"base_model:adapter:meta-llama/Meta-Llama-3-8B-Instruct",

"llama-factory",

"transformers",

"text-generation",

"conversational",

"base_model:meta-llama/Meta-Llama-3-8B-Instruct",

"license:llama3",

"region:us"

] | text-generation | 2025-10-18T16:32:58Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# train_siqa_456_1760637829

This model is a fine-tuned version of [meta-llama/Meta-Llama-3-8B-Instruct](https://huggingface.co/meta... | [] |

whl66988/gemma-4-26B-A4B | whl66988 | 2026-04-08T03:15:27Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"gemma4",

"image-text-to-text",

"license:apache-2.0",

"endpoints_compatible",

"region:us"

] | image-text-to-text | 2026-04-08T03:15:27Z | <div align="center">

<img src=https://ai.google.dev/gemma/images/gemma4_banner.png>

</div>

<p align="center">

<a href="https://huggingface.co/collections/google/gemma-4" target="_blank">Hugging Face</a> |

<a href="https://github.com/google-gemma" target="_blank">GitHub</a> |

<a href="https://blog.google... | [] |

McG-221/Dolphin-X1-8B-mlx-8Bit | McG-221 | 2025-12-18T20:47:04Z | 102 | 1 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"mlx",

"conversational",

"base_model:dphn/Dolphin-X1-8B",

"base_model:quantized:dphn/Dolphin-X1-8B",

"license:llama3.1",

"text-generation-inference",

"endpoints_compatible",

"8-bit",

"region:us"

] | text-generation | 2025-12-18T20:46:20Z | # McG-221/Dolphin-X1-8B-mlx-8Bit

The model [McG-221/Dolphin-X1-8B-mlx-8Bit](https://huggingface.co/McG-221/Dolphin-X1-8B-mlx-8Bit) was converted to MLX format from [dphn/Dolphin-X1-8B](https://huggingface.co/dphn/Dolphin-X1-8B) using mlx-lm version **0.28.3**.

## Use with mlx

```bash

pip install mlx-lm

```

```pytho... | [] |

HafeezKing/career-advisor-t5-next | HafeezKing | 2025-09-09T18:31:10Z | 0 | 0 | transformers | [

"transformers",

"tensorboard",

"safetensors",

"t5",

"text2text-generation",

"generated_from_trainer",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | null | 2025-09-09T17:52:12Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# career-advisor-t5-next

This model is a fine-tuned version of [HafeezKing/career-advisor-t5](https://huggingface.co/HafeezKing/car... | [] |

andrebragamkt/morena | andrebragamkt | 2026-04-16T23:07:25Z | 19 | 0 | diffusers | [

"diffusers",

"text-to-image",

"lora",

"template:diffusion-lora",

"base_model:black-forest-labs/FLUX.1-dev",

"base_model:adapter:black-forest-labs/FLUX.1-dev",

"license:apache-2.0",

"region:us"

] | text-to-image | 2026-04-16T23:07:19Z | # morena

<Gallery />

## Model description

Character LoRA of "morena" — a young Brazilian woman with long curly blonde-highlighted hair.

Trigger word: morena

Best used for realistic photography, Instagram/Reels content and adult themes.

Trained on Flux Image Z Turbo with 25 photos using natural lan... | [

{

"start": 269,

"end": 287,

"text": "Flux Image Z Turbo",

"label": "training method",

"score": 0.7938522100448608

}

] |

VimalrajS04/Major_pro | VimalrajS04 | 2025-09-23T13:43:07Z | 0 | 0 | null | [

"onnx",

"safetensors",

"virtual-try-on",

"diffusion",

"idm-vton",

"en",

"arxiv:2403.05139",

"license:cc-by-nc-sa-4.0",

"region:us"

] | null | 2025-09-23T13:18:35Z | ---

license: cc-by-nc-sa-4.0

language: en

tags:

- virtual-try-on

- diffusion

- idm-vton

---

<div align="center">

<h1>IDM-VTON: Improving Diffusion Models for Authentic Virtual Try-on in the Wild</h1>

<a href='https://idm-vton.github.io'><img src='https://img.shields.io/badge/Project-Page-green'></a>

<a href='https:... | [] |

mats-10-sprint-cs-jb/loracles-jane-street-warmup-qwen3-14b-crosslora | mats-10-sprint-cs-jb | 2026-04-23T21:42:14Z | 0 | 0 | peft | [

"peft",

"safetensors",

"qwen",

"qwen2",

"qwen3",

"lora",

"cross-lora",

"transformers",

"text-generation",

"conversational",

"arxiv:2508.05232",

"base_model:Qwen/Qwen3-14B",

"base_model:adapter:Qwen/Qwen3-14B",

"region:us"

] | text-generation | 2026-04-23T21:42:06Z | # loracles-jane-street-warmup-qwen3-14b-crosslora

This repo contains a Qwen3-14B LoRA obtained by transferring the behavior of [`jane-street/dormant-model-warmup`](https://huggingface.co/jane-street/dormant-model-warmup) with a Cross-LoRA-style pipeline onto [`Qwen/Qwen3-14B`](https://huggingface.co/Qwen/Qwen3-14B).
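As background, a LoRA adapter stores a low-rank update (matrices `A` and `B`) rather than full weights. The following is a minimal plain-Python sketch, not code from this repo or its Cross-LoRA pipeline, of how such an update is folded into a base weight matrix. It assumes the common scaling `alpha / r` used by PEFT's default LoRA; the function names and toy 2x2 matrices are hypothetical and for illustration only.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    inner = len(b)
    assert all(len(row) == inner for row in a), "shape mismatch"
    return [
        [sum(a[i][k] * b[k][j] for k in range(inner)) for j in range(len(b[0]))]
        for i in range(len(a))
    ]


def merge_lora(weight, lora_a, lora_b, alpha, rank):
    """Return W + (alpha / rank) * B @ A.

    weight: d_out x d_in base matrix
    lora_a: rank x d_in matrix ("A")
    lora_b: d_out x rank matrix ("B")
    """
    scale = alpha / rank  # assumed PEFT-style scaling (not rsLoRA)
    delta = matmul(lora_b, lora_a)  # low-rank update, d_out x d_in
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(weight, delta)
    ]


# Toy example: identity base weight with a rank-1 update.
W = [[1.0, 0.0], [0.0, 1.0]]
A = [[1.0, 2.0]]      # rank 1, d_in 2
B = [[0.5], [0.0]]    # d_out 2, rank 1
merged = merge_lora(W, A, B, alpha=2, rank=1)
print(merged)  # [[2.0, 2.0], [0.0, 1.0]]
```

Because only `A` and `B` are stored, an adapter for a d_out x d_in layer holds `rank * (d_in + d_out)` values instead of `d_in * d_out`, which is why LoRA repos like this one ship much smaller files than the base model.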

... | [] |

hbseong/internvla_pick_and_place_pos5_ep208_so101_baseline | hbseong | 2025-12-01T07:24:09Z | 4 | 0 | lerobot | [

"lerobot",

"safetensors",

"internvla",

"robotics",

"dataset:hbseong/record-pick-and-place-pos5-so101",

"license:apache-2.0",

"region:us"

] | robotics | 2025-12-01T07:23:48Z | # Model Card for internvla

<!-- Provide a quick summary of what the model is/does. -->

_Model type not recognized — please update this template._

This policy has been trained and pushed to the Hub using [LeRobot](https://github.com/huggingface/lerobot).

See the full documentation at [LeRobot Docs](https://huggingf... | [] |

mradermacher/TechniqueRAG-ZS-Ministral-8B-GGUF | mradermacher | 2025-09-08T21:30:21Z | 53 | 0 | transformers | [

"transformers",

"gguf",

"MITRE ATT&CK",

"Adversarial Techniques Annotation",

"Threat Intelligence",

"Security",

"en",

"dataset:qcri-cs/TechniqueRAG-Datasets",

"base_model:QCRI/TechniqueRAG-ZS-Ministral-8B",

"base_model:quantized:QCRI/TechniqueRAG-ZS-Ministral-8B",

"license:apache-2.0",

"endpoi... | null | 2025-09-08T20:11:59Z | ## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

<!-- ### quants: x-f16 Q4_K_S Q2_K Q8_0 Q6_K Q3_K_M Q3_K_S Q3_K_L Q4_K_M Q5_K_S Q5_K_M IQ4_XS -->

<!-- ### quants_skip: -->

<!-- ### skip_mmproj: -->

static qu... | [] |

DQN-Labs-Community/DQN-GPT-v0.2-MLX-4bit | DQN-Labs-Community | 2026-03-11T11:12:17Z | 43 | 1 | null | [

"safetensors",

"mistral",

"base_model:DQN-Labs/ministral-3-3B-it2512-mlx-quantized",

"base_model:quantized:DQN-Labs/ministral-3-3B-it2512-mlx-quantized",

"license:apache-2.0",

"4-bit",

"region:us"

] | null | 2026-03-11T11:04:00Z | # DQN-GPT v0.2

DQN-GPT v0.2 is a 3.4B parameter instruction-following language model designed with a single guiding philosophy:

AI with obedience in mind.

The model prioritizes precise instruction adherence, reliable formatting, and predictable behavior for developers building local AI systems.

⸻

## Model Overview... | [] |

RylanSchaeffer/mem_Qwen3-62M_minerva_math_rep_100_sbst_1.0000_epch_1_ot_8 | RylanSchaeffer | 2025-09-21T02:16:15Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"qwen3",

"text-generation",

"generated_from_trainer",

"conversational",

"text-generation-inference",

"endpoints_compatible",

"region:us"

] | text-generation | 2025-09-21T02:16:08Z | <!-- This model card has been generated automatically according to the information the Trainer had access to. You

should probably proofread and complete it, then remove this comment. -->

# mem_Qwen3-62M_minerva_math_rep_100_sbst_1.0000_epch_1_ot_8

This model is a fine-tuned version of [](https://huggingface.co/) on a... | [] |

aphoticshaman/elle-72b-math-v2 | aphoticshaman | 2025-12-11T20:34:38Z | 3 | 0 | peft | [

"peft",

"safetensors",

"qwen2",

"math",

"reasoning",

"lora",

"numina",

"text-generation",

"conversational",

"dataset:AI-MO/NuminaMath-CoT",

"base_model:Qwen/Qwen2.5-72B-Instruct",

"base_model:adapter:Qwen/Qwen2.5-72B-Instruct",

"license:apache-2.0",

"4-bit",

"bitsandbytes",

"region:us"... | text-generation | 2025-12-11T07:28:24Z | # Elle-72B-Math-v2

## Model Description

Elle-72B-Math-v2 is a LoRA adapter fine-tuned on [Qwen/Qwen2.5-72B-Instruct](https://huggingface.co/Qwen/Qwen2.5-72B-Instruct) for mathematical reasoning using the NuminaMath-CoT dataset.

## Model Details

- **Base Model**: Qwen/Qwen2.5-72B-Instruct

- **Adapter Type**: LoRA

- ... | [

{

"start": 421,

"end": 435,

"text": "NuminaMath-CoT",

"label": "training method",

"score": 0.7971941828727722

}

] |

mradermacher/Gemma-AIO-GGUF | mradermacher | 2025-08-29T15:31:16Z | 8 | 1 | transformers | [

"transformers",

"gguf",

"text-generation-inference",

"unsloth",

"gemma3",

"en",

"base_model:GazTrab/Gemma-AIO",

"base_model:quantized:GazTrab/Gemma-AIO",

"license:apache-2.0",

"endpoints_compatible",

"region:us",

"conversational"

] | null | 2025-08-29T15:18:07Z | ## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

<!-- ### quants: x-f16 Q4_K_S Q2_K Q8_0 Q6_K Q3_K_M Q3_K_S Q3_K_L Q4_K_M Q5_K_S Q5_K_M IQ4_XS -->

<!-- ### quants_skip: -->

<!-- ### skip_mmproj: -->

static qu... | [] |

mradermacher/aegis-qwen2vl-3b-GGUF | mradermacher | 2026-03-14T15:01:18Z | 517 | 0 | transformers | [

"transformers",

"gguf",

"browser-qa",

"visual-agent",

"qwen2.5-vl",

"lora-merged",

"fine-tuned",

"en",

"base_model:sanaX3065/aegis-qwen2vl-3b",

"base_model:quantized:sanaX3065/aegis-qwen2vl-3b",

"license:apache-2.0",

"endpoints_compatible",

"region:us",

"conversational"

] | null | 2026-03-14T11:33:14Z | ## About

<!-- ### quantize_version: 2 -->

<!-- ### output_tensor_quantised: 1 -->

<!-- ### convert_type: hf -->

<!-- ### vocab_type: -->

<!-- ### tags: -->

<!-- ### quants: x-f16 Q4_K_S Q2_K Q8_0 Q6_K Q3_K_M Q3_K_S Q3_K_L Q4_K_M Q5_K_S Q5_K_M IQ4_XS -->

<!-- ### quants_skip: -->

<!-- ### skip_mmproj: -->

static q... | [] |

NetherQuartz/ilo-toki-gemma-2-2b | NetherQuartz | 2025-10-14T03:11:25Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"generated_from_trainer",

"trl",

"sft",

"base_model:google/gemma-2-2b",

"base_model:finetune:google/gemma-2-2b",

"endpoints_compatible",

"region:us"

] | null | 2025-09-28T00:35:21Z | # Model Card for ilo-toki-gemma-2-2b

This model is a fine-tuned version of [google/gemma-2-2b](https://huggingface.co/google/gemma-2-2b).

It has been trained using [TRL](https://github.com/huggingface/trl).

## Quick start

```python

from transformers import pipeline

question = "If you had a time machine, but could o... | [] |

DavidAU/Qwen3-MOE-4x0.6B-2.4B-Writing-Thunder-V1.2 | DavidAU | 2025-08-28T08:39:35Z | 36 | 8 | transformers | [

"transformers",

"safetensors",

"qwen3_moe",

"text-generation",

"programming",

"code generation",

"code",

"codeqwen",

"moe",

"coding",

"coder",

"qwen2",

"chat",

"qwen",

"qwen-coder",

"mixture of experts",

"4 experts",

"2 active experts",

"40k context",

"qwen3",

"finetune",

"... | text-generation | 2025-08-27T01:52:07Z | <h2>Qwen3-MOE-4x0.6B-2.4B-Writing-Thunder-V1.2</h2>

<small>(this version is stronger in coding, than V1 and V1.1)</small>

<img src="writing-thunder.jpg" style="float:right; width:300px; height:300px; padding:10px;">

This repo contains the full precision source code, in "safe tensors" format to generate GGUFs, GPTQ, ... | [] |

gsjang/ko-llama-3-korean-bllossom-8b-x-meta-llama-3-8b-instruct-arcee_fusion-50_50 | gsjang | 2025-08-29T00:57:58Z | 0 | 0 | transformers | [

"transformers",

"safetensors",

"llama",

"text-generation",

"mergekit",

"merge",

"conversational",

"base_model:MLP-KTLim/llama-3-Korean-Bllossom-8B",

"base_model:merge:MLP-KTLim/llama-3-Korean-Bllossom-8B",

"base_model:meta-llama/Meta-Llama-3-8B-Instruct",

"base_model:merge:meta-llama/Meta-Llama-... | text-generation | 2025-08-29T00:52:05Z | # ko-llama-3-korean-bllossom-8b-x-meta-llama-3-8b-instruct-arcee_fusion-50_50

This is a merge of pre-trained language models created using [mergekit](https://github.com/cg123/mergekit).

## Merge Details

### Merge Method