text stringlengths 201 597k | inputs dict | prediction list | prediction_agent stringclasses 1

value | annotation null | annotation_agent null | vectors null | multi_label bool 1

class | explanation null | id stringlengths 36 36 | metadata dict | status stringclasses 1

value | metrics dict | label class label 2

classes |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

kaleido on Stable Diffusion This is the `<kaleido>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train... | {

"text": " kaleido on Stable Diffusion This is the `<kaleido>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You c... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 01c11952-ab2d-4641-9b92-21a30d2fab9a | {

"split": "unlabelled"

} | Default | {

"text_length": 1097

} | 0dataset_mention |

Training hyperparameters The following hyperparameters were used during training: - learning_rate: 4e-06 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 16 - total_train_batch_size: 128 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_sch... | {

"text": " Training hyperparameters The following hyperparameters were used during training: - learning_rate: 4e-06 - train_batch_size: 8 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 16 - total_train_batch_size: 128 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: li... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 01c22739-a3a6-4fac-9112-eb56d3530ec9 | {

"split": "unlabelled"

} | Default | {

"text_length": 404

} | 1no_dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:-------:|:------:|:-------:|:---------:| | No log | 1.0 | 303 | 3.9821 | 8.3993 | 2.0894 | 8.1427 | 8.135 | | No log | 2.0 |... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | |:-------------:|:-----:|:----:|:---------------:|:-------:|:------:|:-------:|:---------:| | No log | 1.0 | 303 | 3.9821 | 8.3993 | 2.0894 | 8.1427 | 8.135 | | No log ... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 01c433dd-d3d1-4263-b71f-6d39ff077c27 | {

"split": "unlabelled"

} | Default | {

"text_length": 941

} | 1no_dataset_mention |

Evaluation The model can be evaluated as follows on the Georgian test data of Common Voice. ```python import librosa import torch import torchaudio from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor from datasets import load_dataset, load_metric import numpy as np import re import string from normalizer im... | {

"text": " Evaluation The model can be evaluated as follows on the Georgian test data of Common Voice. ```python import librosa import torch import torchaudio from transformers import Wav2Vec2ForCTC, Wav2Vec2Processor from datasets import load_dataset, load_metric import numpy as np import re import string from ... | [

{

"label": "dataset_mention",

"score": 0.9997774755092824

},

{

"label": "no_dataset_mention",

"score": 0.00022252449071764603

}

] | Snorkel | null | null | null | false | null | 01c52bf7-0513-43f8-a105-b4ee3834228f | {

"split": "unlabelled"

} | Default | {

"text_length": 1825

} | 0dataset_mention |

Citation Paper: [idT5: Indonesian Version of Multilingual T5 Transformer](https://arxiv.org/abs/2302.00856) ``` @misc{https://doi.org/10.48550/arxiv.2302.00856, doi = {10.48550/ARXIV.2302.00856}, url = {https://arxiv.org/abs/2302.00856}, author = {Fuadi, Mukhlish and Wibawa, Adhi Dharma and Sumpeno, Surya}... | {

"text": " Citation Paper: [idT5: Indonesian Version of Multilingual T5 Transformer](https://arxiv.org/abs/2302.00856) ``` @misc{https://doi.org/10.48550/arxiv.2302.00856, doi = {10.48550/ARXIV.2302.00856}, url = {https://arxiv.org/abs/2302.00856}, author = {Fuadi, Mukhlish and Wibawa, Adhi Dharma and Su... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 01c577aa-605c-43b6-b59e-0987c43b8a23 | {

"split": "unlabelled"

} | Default | {

"text_length": 579

} | 0dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 Precision | Rouge1 Recall | Rouge1 Fmeasure | Rouge2 Precision | Rouge2 Recall | Rouge2 Fmeasure | Rougel Precision | Rougel Recall | Rougel Fmeasure | Rougelsum Precision | Rougelsum Recall | Rougelsum Fmeasure | |:-------------:|:-----:|:---... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 Precision | Rouge1 Recall | Rouge1 Fmeasure | Rouge2 Precision | Rouge2 Recall | Rouge2 Fmeasure | Rougel Precision | Rougel Recall | Rougel Fmeasure | Rougelsum Precision | Rougelsum Recall | Rougelsum Fmeasure | |:-------------:... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 01c62cf4-2fd5-4681-844d-cf09768d0a99 | {

"split": "unlabelled"

} | Default | {

"text_length": 6549

} | 1no_dataset_mention |

exp_w2v2t_ar_xlsr-53_s841 Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) for speech recognition using the train split of [Common Voice 7.0 (ar)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that your speech... | {

"text": " exp_w2v2t_ar_xlsr-53_s841 Fine-tuned [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) for speech recognition using the train split of [Common Voice 7.0 (ar)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure tha... | [

{

"label": "dataset_mention",

"score": 0.9729673904845365

},

{

"label": "no_dataset_mention",

"score": 0.027032609515463546

}

] | Snorkel | null | null | null | false | null | 01c7f2bc-2047-44d8-84d8-06b4b72ffe84 | {

"split": "unlabelled"

} | Default | {

"text_length": 460

} | 0dataset_mention |

Italian BERT The source data for the Italian BERT model consists of a recent Wikipedia dump and various texts from the [OPUS corpora](http://opus.nlpl.eu/) collection. The final training corpus has a size of 13GB and 2,050,057,573 tokens. For sentence splitting, we use NLTK (faster compared to spacy). Our cased and ... | {

"text": " Italian BERT The source data for the Italian BERT model consists of a recent Wikipedia dump and various texts from the [OPUS corpora](http://opus.nlpl.eu/) collection. The final training corpus has a size of 13GB and 2,050,057,573 tokens. For sentence splitting, we use NLTK (faster compared to spacy). O... | [

{

"label": "dataset_mention",

"score": 0.996275682131019

},

{

"label": "no_dataset_mention",

"score": 0.0037243178689809484

}

] | Snorkel | null | null | null | false | null | 01cd7a5d-a54a-4fd6-96eb-f39e2e025bb6 | {

"split": "unlabelled"

} | Default | {

"text_length": 1277

} | 0dataset_mention |

Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0002 - train_batch_size: 10 - eval_batch_size: 10 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 | {

"text": " Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0002 - train_batch_size: 10 - eval_batch_size: 10 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 10 "

} | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 01cf2d67-222e-4da3-afec-8fa62540db7d | {

"split": "unlabelled"

} | Default | {

"text_length": 269

} | 1no_dataset_mention |

bert-base-german-cased-finetuned-200labels This model is a fine-tuned version of [ogimgio/bert-base-german-cased-finetuned-7labels](https://huggingface.co/ogimgio/bert-base-german-cased-finetuned-7labels) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.0744 - Micro f1: 0.0894 -... | {

"text": " bert-base-german-cased-finetuned-200labels This model is a fine-tuned version of [ogimgio/bert-base-german-cased-finetuned-7labels](https://huggingface.co/ogimgio/bert-base-german-cased-finetuned-7labels) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.0744 - Micro... | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 01cf2e2a-653d-48f5-acf7-d9eb8de55309 | {

"split": "unlabelled"

} | Default | {

"text_length": 339

} | 0dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.8568 | 0.95 | 16 | 2.5937 | | 2.2487 | 1.95 | 32 | 2.1050 | | 1.9011 | 2.95 | 48 | 1.8082 | | 1.6837 | 3.95 | 64 | 1.6178 ... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.8568 | 0.95 | 16 | 2.5937 | | 2.2487 | 1.95 | 32 | 2.1050 | | 1.9011 | 2.95 | 48 | 1.8082 | | 1.6837 | 3.95 | 64 | ... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 01d0d09e-90ed-4033-b30d-ce0672082c94 | {

"split": "unlabelled"

} | Default | {

"text_length": 633

} | 1no_dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | 2.5019 | 1.0 | 39313 | 2.3138 | 27.2654 | 10.5461 | 23.2451 | 26.6151 |... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:-----:|:---------------:|:-------:|:-------:|:-------:|:---------:|:-------:| | 2.5019 | 1.0 | 39313 | 2.3138 | 27.2654 | 10.5461 | 23.2451 ... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 01d329ef-e45c-4fde-bcbf-ef57445bf780 | {

"split": "unlabelled"

} | Default | {

"text_length": 333

} | 1no_dataset_mention |

xlm-roberta-base-finetuned-chaii This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.4651 | {

"text": " xlm-roberta-base-finetuned-chaii This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.4651 "

} | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 01d3ac4c-53ef-46c0-9b05-da8760c7247c | {

"split": "unlabelled"

} | Default | {

"text_length": 227

} | 0dataset_mention |

exp_w2v2t_ar_vp-sv_s953 Fine-tuned [facebook/wav2vec2-large-sv-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-sv-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (ar)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make sure that you... | {

"text": " exp_w2v2t_ar_vp-sv_s953 Fine-tuned [facebook/wav2vec2-large-sv-voxpopuli](https://huggingface.co/facebook/wav2vec2-large-sv-voxpopuli) for speech recognition using the train split of [Common Voice 7.0 (ar)](https://huggingface.co/datasets/mozilla-foundation/common_voice_7_0). When using this model, make ... | [

{

"label": "dataset_mention",

"score": 0.9729673904845365

},

{

"label": "no_dataset_mention",

"score": 0.027032609515463546

}

] | Snorkel | null | null | null | false | null | 01d60ab6-9b7d-4c55-be5f-600696fcf1c7 | {

"split": "unlabelled"

} | Default | {

"text_length": 468

} | 0dataset_mention |

Safety Module The intended use of this model is with the [Safety Checker](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/safety_checker.py) in Diffusers. This checker works by checking model outputs against known hard-coded NSFW concepts. The concepts are intentionally hid... | {

"text": " Safety Module The intended use of this model is with the [Safety Checker](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/safety_checker.py) in Diffusers. This checker works by checking model outputs against known hard-coded NSFW concepts. The concepts are inte... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 01da8d42-7e7b-4b48-a9c4-245fce34c876 | {

"split": "unlabelled"

} | Default | {

"text_length": 822

} | 0dataset_mention |

distilbert-base-uncased-becas-2 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the becasv2 dataset. It achieves the following results on the evaluation set: - Loss: 5.9506 | {

"text": " distilbert-base-uncased-becas-2 This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on the becasv2 dataset. It achieves the following results on the evaluation set: - Loss: 5.9506 "

} | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 01dadbfe-5b7d-4b59-9ad1-768f983f6b8e | {

"split": "unlabelled"

} | Default | {

"text_length": 243

} | 0dataset_mention |

Haakf/allsides_left_text_padded_overfit This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 1.9591 - Validation Loss: 1.9856 - Epoch: 19 | {

"text": " Haakf/allsides_left_text_padded_overfit This model is a fine-tuned version of [distilbert-base-uncased](https://huggingface.co/distilbert-base-uncased) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 1.9591 - Validation Loss: 1.9856 - Epoch: 19 "

} | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 01dae8a7-72f4-4502-a3de-6d2222b5d192 | {

"split": "unlabelled"

} | Default | {

"text_length": 294

} | 0dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.6799 | 1.0 | 1899 | 2.5135 | | 2.5736 | 2.0 | 3798 | 2.4612 | | 2.5172 | 3.0 | 5697 | 2.4363 | | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | |:-------------:|:-----:|:----:|:---------------:| | 2.6799 | 1.0 | 1899 | 2.5135 | | 2.5736 | 2.0 | 3798 | 2.4612 | | 2.5172 | 3.0 | 5697 | 2.4363 | "

} | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 01dc6bde-db3d-412a-8006-d617b8ab61ec | {

"split": "unlabelled"

} | Default | {

"text_length": 276

} | 1no_dataset_mention |

beit-base-patch16-224-pt22k-ft22k-finetuned-mnist This model is a fine-tuned version of [microsoft/beit-base-patch16-224-pt22k-ft22k](https://huggingface.co/microsoft/beit-base-patch16-224-pt22k-ft22k) on the mnist dataset. It achieves the following results on the evaluation set: - Loss: 0.0202 - Accuracy: 0.9935 | {

"text": " beit-base-patch16-224-pt22k-ft22k-finetuned-mnist This model is a fine-tuned version of [microsoft/beit-base-patch16-224-pt22k-ft22k](https://huggingface.co/microsoft/beit-base-patch16-224-pt22k-ft22k) on the mnist dataset. It achieves the following results on the evaluation set: - Loss: 0.0202 - Accurac... | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 01df5712-4513-4b34-a115-c7a45fabd949 | {

"split": "unlabelled"

} | Default | {

"text_length": 318

} | 0dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 3.8306 | 1.0 | 500 | 2.9588 | 1.0 | | 2.1928 | 2.01 | 1000 | 1.2215 | 0.9355 | | 1.1547 | 3.01 | 1500 | 0.9228 | 0.713... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:-----:|:---------------:|:------:| | 3.8306 | 1.0 | 500 | 2.9588 | 1.0 | | 2.1928 | 2.01 | 1000 | 1.2215 | 0.9355 | | 1.1547 | 3.01 | 1500 | 0.9228 ... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 01e0903c-a3a5-44c0-846d-0380377f3c34 | {

"split": "unlabelled"

} | Default | {

"text_length": 1912

} | 1no_dataset_mention |

XLS-R-300M - Breton This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the MOZILLA-FOUNDATION/COMMON_VOICE_8_0 - BR dataset. It achieves the following results on the evaluation set: - Loss: NA - Wer: NA | {

"text": " XLS-R-300M - Breton This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the MOZILLA-FOUNDATION/COMMON_VOICE_8_0 - BR dataset. It achieves the following results on the evaluation set: - Loss: NA - Wer: NA "

} | [

{

"label": "dataset_mention",

"score": 0.9997774755092824

},

{

"label": "no_dataset_mention",

"score": 0.00022252449071764603

}

] | Snorkel | null | null | null | false | null | 01e22927-9a8d-4be5-983d-3dc8cad154b8 | {

"split": "unlabelled"

} | Default | {

"text_length": 280

} | 0dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | No log | 0.23 | 100 | 3.3596 | 1.0 | | No log | 0.46 | 200 | 2.9280 | 1.0 | | No log | 0.69 | 300 | 1.5091 | 0.9650 | |... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Wer | |:-------------:|:-----:|:----:|:---------------:|:------:| | No log | 0.23 | 100 | 3.3596 | 1.0 | | No log | 0.46 | 200 | 2.9280 | 1.0 | | No log | 0.69 | 300 | 1.5091 ... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 01e84062-16c4-4987-b742-a0a927ae9bb0 | {

"split": "unlabelled"

} | Default | {

"text_length": 3981

} | 1no_dataset_mention |

electra-base-japanese-discriminator (sudachitra-wordpiece, mC4 Japanese) - [SHINOBU](https://dl.ndl.go.jp/info:ndljp/pid/1302683/3) This is an [ELECTRA](https://github.com/google-research/electra) model pretrained on approximately 200M Japanese sentences. The input text is tokenized by [SudachiTra](https://github.co... | {

"text": " electra-base-japanese-discriminator (sudachitra-wordpiece, mC4 Japanese) - [SHINOBU](https://dl.ndl.go.jp/info:ndljp/pid/1302683/3) This is an [ELECTRA](https://github.com/google-research/electra) model pretrained on approximately 200M Japanese sentences. The input text is tokenized by [SudachiTra](http... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 01e8f3c7-3897-423b-aa9a-56ab0c8f3fc5 | {

"split": "unlabelled"

} | Default | {

"text_length": 444

} | 0dataset_mention |

t5-small-finetuned-en-es This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.8937 - Rouge1: 32.6939 - Rouge2: 11.794 - Rougel: 31.9982 - Rougelsum: 31.9902 - Gen Len: 15.7947 | {

"text": " t5-small-finetuned-en-es This model is a fine-tuned version of [t5-small](https://huggingface.co/t5-small) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 1.8937 - Rouge1: 32.6939 - Rouge2: 11.794 - Rougel: 31.9982 - Rougelsum: 31.9902 - Gen Len: 15.7947 "

} | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 01ec1417-1072-423d-afe2-35e099179323 | {

"split": "unlabelled"

} | Default | {

"text_length": 298

} | 0dataset_mention |

`simpleoier/simpleoier_librispeech_hubert_iter1_train_ssl_torchaudiohubert_base_960h_pretrain_it1_raw` This model was trained by simpleoier using librispeech recipe in [espnet](https://github.com/espnet/espnet/). | {

"text": " `simpleoier/simpleoier_librispeech_hubert_iter1_train_ssl_torchaudiohubert_base_960h_pretrain_it1_raw` This model was trained by simpleoier using librispeech recipe in [espnet](https://github.com/espnet/espnet/). "

} | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 01ec3a9f-e75a-47e6-80fa-8fbae74a1084 | {

"split": "unlabelled"

} | Default | {

"text_length": 216

} | 0dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 2.2033 | 1.0 | 14 | 2.2126 | 0.0833 | | 2.2006 | 2.0 | 28 | 2.2026 | 0.0833 | | 2.1786 | 3.0 | 42 | 2.1758 | 0.... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 2.2033 | 1.0 | 14 | 2.2126 | 0.0833 | | 2.2006 | 2.0 | 28 | 2.2026 | 0.0833 | | 2.1786 | 3.0 | 42 | 2.1758 ... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 01edbec4-6d4e-4cc0-a34e-c8f313e686ca | {

"split": "unlabelled"

} | Default | {

"text_length": 2935

} | 1no_dataset_mention |

Pretraining The model was pre-trained for 100k steps on 8 NVIDIA A100 GPUs with a batch size of 4096. The optimizer used was AdamW with a learning rate of 1e-5. No data augmentation was used except for center-crop. The image resolution in pre-training is set to 288 x 288. | {

"text": " Pretraining The model was pre-trained for 100k steps on 8 NVIDIA A100 GPUs with a batch size of 4096. The optimizer used was AdamW with a learning rate of 1e-5. No data augmentation was used except for center-crop. The image resolution in pre-training is set to 288 x 288. "

} | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 01ee4f1a-a60f-454a-89b2-0fb5442caeb7 | {

"split": "unlabelled"

} | Default | {

"text_length": 278

} | 0dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.3699 | 1.0 | 1255 | 0.3712 | 0.8669 | | 0.3082 | 2.0 | 2510 | 0.3401 | 0.8766 | | 0.2375 | 3.0 | 3765 | 0.4137 | 0.... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 0.3699 | 1.0 | 1255 | 0.3712 | 0.8669 | | 0.3082 | 2.0 | 2510 | 0.3401 | 0.8766 | | 0.2375 | 3.0 | 3765 | 0.4137 ... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 01eec69f-83c3-4572-ae88-27d3d8ffa5f6 | {

"split": "unlabelled"

} | Default | {

"text_length": 455

} | 1no_dataset_mention |

Command Recognition with xvector embeddings on Google Speech Commands This repository provides all the necessary tools to perform command recognition with SpeechBrain using a model pretrained on Google Speech Commands. You can download the dataset [here](https://www.tensorflow.org/datasets/catalog/speech_commands) Th... | {

"text": " Command Recognition with xvector embeddings on Google Speech Commands This repository provides all the necessary tools to perform command recognition with SpeechBrain using a model pretrained on Google Speech Commands. You can download the dataset [here](https://www.tensorflow.org/datasets/catalog/speech... | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 01f0b492-2cd5-4c94-b6c0-69652927eaa2 | {

"split": "unlabelled"

} | Default | {

"text_length": 843

} | 0dataset_mention |

Hitokomoru Diffusion V2  A latent diffusion model that has been trained on Japanese Artist artwork, [ヒトこもる/Hitokomoru](https://www.pixiv.net/en/users/30837811). The current model is fine-tuned from [waifu-d... | {

"text": " Hitokomoru Diffusion V2  A latent diffusion model that has been trained on Japanese Artist artwork, [ヒトこもる/Hitokomoru](https://www.pixiv.net/en/users/30837811). The current model is fine-tuned ... | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 01f0c3ab-832d-4aeb-ae48-b5adbe00d9f2 | {

"split": "unlabelled"

} | Default | {

"text_length": 1260

} | 0dataset_mention |

mpid-bkdbj Dreambooth model trained by tftgregrge with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBen/fast-stable... | {

"text": " mpid-bkdbj Dreambooth model trained by tftgregrge with [TheLastBen's fast-DreamBooth](https://colab.research.google.com/github/TheLastBen/fast-stable-diffusion/blob/main/fast-DreamBooth.ipynb) notebook Test the concept via A1111 Colab [fast-Colab-A1111](https://colab.research.google.com/github/TheLastBe... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 01f3dd99-b58d-4fbc-bb5e-e6a02a853d45 | {

"split": "unlabelled"

} | Default | {

"text_length": 419

} | 0dataset_mention |

BART_corrector This model is a fine-tuned version of [ainize/bart-base-cnn](https://huggingface.co/ainize/bart-base-cnn) on a homemade dataset. Each sample of the dataset is an english sentence that has been duplicated 10 times and where random errors (7%) were added. It achieves the following results on the evaluat... | {

"text": " BART_corrector This model is a fine-tuned version of [ainize/bart-base-cnn](https://huggingface.co/ainize/bart-base-cnn) on a homemade dataset. Each sample of the dataset is an english sentence that has been duplicated 10 times and where random errors (7%) were added. It achieves the following results o... | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 01f47116-8cd1-4ac1-864f-aa138ea3a973 | {

"split": "unlabelled"

} | Default | {

"text_length": 439

} | 0dataset_mention |

Core NLP model for german CoreNLP is your one stop shop for natural language processing in Java! CoreNLP enables users to derive linguistic annotations for text, including token and sentence boundaries, parts of speech, named entities, numeric and time values, dependency and constituency parses, coreference, sentiment... | {

"text": " Core NLP model for german CoreNLP is your one stop shop for natural language processing in Java! CoreNLP enables users to derive linguistic annotations for text, including token and sentence boundaries, parts of speech, named entities, numeric and time values, dependency and constituency parses, coreferen... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 01f4a67e-a776-4462-b99b-2da905fcf5c4 | {

"split": "unlabelled"

} | Default | {

"text_length": 658

} | 0dataset_mention |

model_syllable_onSet3 This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1590 - 0 Precision: 0.9688 - 0 Recall: 1.0 - 0 F1-score: 0.9841 - 0 Support: 31 ... | {

"text": " model_syllable_onSet3 This model is a fine-tuned version of [facebook/wav2vec2-large-xlsr-53](https://huggingface.co/facebook/wav2vec2-large-xlsr-53) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.1590 - 0 Precision: 0.9688 - 0 Recall: 1.0 - 0 F1-score: 0.9841 - 0... | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 01f4e577-a3df-4302-953a-d89f6dbb564f | {

"split": "unlabelled"

} | Default | {

"text_length": 904

} | 0dataset_mention |

Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.00015 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - num_epochs: 10 | {

"text": " Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.00015 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - num_epochs: 10 "

} | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 01f56eba-2de3-4f14-ac92-7202bf99404e | {

"split": "unlabelled"

} | Default | {

"text_length": 303

} | 1no_dataset_mention |

Training Data The models are trained on the Japanese version of Wikipedia. The training corpus is generated from the Wikipedia Cirrussearch dump file as of August 31, 2020. The generated corpus files are 4.0GB in total, containing approximately 30M sentences. We used the [MeCab](https://taku910.github.io/mecab/) mor... | {

"text": " Training Data The models are trained on the Japanese version of Wikipedia. The training corpus is generated from the Wikipedia Cirrussearch dump file as of August 31, 2020. The generated corpus files are 4.0GB in total, containing approximately 30M sentences. We used the [MeCab](https://taku910.github.i... | [

{

"label": "dataset_mention",

"score": 0.9794001894568531

},

{

"label": "no_dataset_mention",

"score": 0.020599810543146933

}

] | Snorkel | null | null | null | false | null | 01f6020f-b058-4716-982f-04c826abd5c4 | {

"split": "unlabelled"

} | Default | {

"text_length": 458

} | 0dataset_mention |

Training As first step used Common Voice train dataset and parts from NST as can be found [here](https://github.com/se-asr/nst/tree/master). Part of NST where removed using this mask ```python mask = [(5 < len(x.split()) < 20) and np.average([len(entry) for entry in x.split()]) > 5 for x in dataset['transcript'].tol... | {

"text": " Training As first step used Common Voice train dataset and parts from NST as can be found [here](https://github.com/se-asr/nst/tree/master). Part of NST where removed using this mask ```python mask = [(5 < len(x.split()) < 20) and np.average([len(entry) for entry in x.split()]) > 5 for x in dataset['tra... | [

{

"label": "dataset_mention",

"score": 0.9997774755092824

},

{

"label": "no_dataset_mention",

"score": 0.00022252449071764603

}

] | Snorkel | null | null | null | false | null | 01f84fa2-58c2-4a25-874a-6dc98f853184 | {

"split": "unlabelled"

} | Default | {

"text_length": 649

} | 0dataset_mention |

Performance Evaluated on the SQuAD 2.0 dev set with the [official eval script](https://worksheets.codalab.org/rest/bundles/0x6b567e1cf2e041ec80d7098f031c5c9e/contents/blob/). ``` "exact": 79.87029394424324, "f1": 82.91251169582613, "total": 11873, "HasAns_exact": 77.93522267206478, "HasAns_f1": 84.02838248389763, "H... | {

"text": " Performance Evaluated on the SQuAD 2.0 dev set with the [official eval script](https://worksheets.codalab.org/rest/bundles/0x6b567e1cf2e041ec80d7098f031c5c9e/contents/blob/). ``` \"exact\": 79.87029394424324, \"f1\": 82.91251169582613, \"total\": 11873, \"HasAns_exact\": 77.93522267206478, \"HasAns_f1\"... | [

{

"label": "dataset_mention",

"score": 0.9579560193331735

},

{

"label": "no_dataset_mention",

"score": 0.04204398066682655

}

] | Snorkel | null | null | null | false | null | 01f85a31-8573-45b0-8bd4-56e8ba2d1e80 | {

"split": "unlabelled"

} | Default | {

"text_length": 1704

} | 0dataset_mention |

Be aware: Model is heavly overfitted, merge needed. Best use probably is to merge with something else for a style change. Will upload other version later on that should be better Sample images: <style> img { display: inline-block; } </style> <img src="https://huggingface.co/YoungMasterFromSect/Ton_Inf/resolve/main... | {

"text": "Be aware: Model is heavly overfitted, merge needed. Best use probably is to merge with something else for a style change. Will upload other version later on that should be better Sample images: <style> img { display: inline-block; } </style> <img src=\"https://huggingface.co/YoungMasterFromSect/Ton_In... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 0205a047-cad3-4669-b28a-2551f79bbef9 | {

"split": "unlabelled"

} | Default | {

"text_length": 783

} | 0dataset_mention |

Amine on Stable Diffusion This is the `<ayna>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can also train your... | {

"text": " Amine on Stable Diffusion This is the `<ayna>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ipynb) notebook. You can al... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 0206b9ae-1f8c-4a3b-acfa-a45e45473c87 | {

"split": "unlabelled"

} | Default | {

"text_length": 1059

} | 0dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:--------:| | 0.7355 | 1.0 | 16664 | 0.7112 | 0.8292 | | 0.6394 | 2.0 | 33328 | 0.7396 | 0.8447 | | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:--------:| | 0.7355 | 1.0 | 16664 | 0.7112 | 0.8292 | | 0.6394 | 2.0 | 33328 | 0.7396 | 0.8447 | "

} | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 0206d2f2-9887-4d1a-957e-eefef58f5ac4 | {

"split": "unlabelled"

} | Default | {

"text_length": 273

} | 1no_dataset_mention |

Sounak/bert-large-finetuned This model is a fine-tuned version of [bert-large-uncased-whole-word-masking-finetuned-squad](https://huggingface.co/bert-large-uncased-whole-word-masking-finetuned-squad) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 1.7634 - Validation Loss... | {

"text": " Sounak/bert-large-finetuned This model is a fine-tuned version of [bert-large-uncased-whole-word-masking-finetuned-squad](https://huggingface.co/bert-large-uncased-whole-word-masking-finetuned-squad) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 1.7634 - Va... | [

{

"label": "dataset_mention",

"score": 0.9996485282939453

},

{

"label": "no_dataset_mention",

"score": 0.0003514717060547617

}

] | Snorkel | null | null | null | false | null | 0208aa73-cc4b-4498-a441-7a6137f7e313 | {

"split": "unlabelled"

} | Default | {

"text_length": 341

} | 0dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.3408 | 1.0 | 1875 | 0.3639 | 0.7462 | 0.7887 | 0.7669 | 0.8966 | | 0.2435 | 2.0 ... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Precision | Recall | F1 | Accuracy | |:-------------:|:-----:|:-----:|:---------------:|:---------:|:------:|:------:|:--------:| | 0.3408 | 1.0 | 1875 | 0.3639 | 0.7462 | 0.7887 | 0.7669 | 0.8966 | | 0.2435 ... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 0208bafe-8a06-44b4-963f-54c74c684d76 | {

"split": "unlabelled"

} | Default | {

"text_length": 858

} | 1no_dataset_mention |

danish-bert-botxo-danish-finetuned-hatespeech This model is for a university project and is uploaded for sharing between students. It is training on a danish hate speech labeled training set. Feel free to use it, but as of now, we don't promise any good results ;-) This model is a fine-tuned version of [Maltehb/danis... | {

"text": " danish-bert-botxo-danish-finetuned-hatespeech This model is for a university project and is uploaded for sharing between students. It is training on a danish hate speech labeled training set. Feel free to use it, but as of now, we don't promise any good results ;-) This model is a fine-tuned version of [... | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 020c1237-2894-4531-928c-7cc02969899b | {

"split": "unlabelled"

} | Default | {

"text_length": 480

} | 0dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 3.4007 | 1.0 | 318 | 2.5187 | 0.7490 | | 1.93 | 2.0 | 636 | 1.2663 | 0.8606 | | 0.9765 | 3.0 | 954 | 0.6825 | 0.... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | |:-------------:|:-----:|:----:|:---------------:|:--------:| | 3.4007 | 1.0 | 318 | 2.5187 | 0.7490 | | 1.93 | 2.0 | 636 | 1.2663 | 0.8606 | | 0.9765 | 3.0 | 954 | 0.6825 ... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 020c25eb-161b-4689-be6b-1f148ea60fef | {

"split": "unlabelled"

} | Default | {

"text_length": 765

} | 1no_dataset_mention |

Full config {'dataset': {'conditional_training_config': {'aligned_prefix': '<|aligned|>', 'drop_token_fraction': 0.1, 'misaligned_prefix': '<|misaligned|>', 'threshold': 0}, ... | {

"text": " Full config {'dataset': {'conditional_training_config': {'aligned_prefix': '<|aligned|>', 'drop_token_fraction': 0.1, 'misaligned_prefix': '<|misaligned|>', 'threshold': 0... | [

{

"label": "dataset_mention",

"score": 0.9920527014967919

},

{

"label": "no_dataset_mention",

"score": 0.00794729850320818

}

] | Snorkel | null | null | null | false | null | 020c3d64-5e5f-4ac6-bd42-9d0c760165d3 | {

"split": "unlabelled"

} | Default | {

"text_length": 4149

} | 0dataset_mention |

Training The model is trained with standard autoregressive cross-entropy loss and using [SpecAugment](https://arxiv.org/abs/1904.08779). The encoder receives speech features, and the decoder generates the transcripts autoregressively. To accelerate model training and for better performance the encoder is pre-trained ... | {

"text": " Training The model is trained with standard autoregressive cross-entropy loss and using [SpecAugment](https://arxiv.org/abs/1904.08779). The encoder receives speech features, and the decoder generates the transcripts autoregressively. To accelerate model training and for better performance the encoder is... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 0211e19c-5f38-4990-891c-8be51176921b | {

"split": "unlabelled"

} | Default | {

"text_length": 338

} | 0dataset_mention |

movie-roberta-base-finetuned-movie-p1 This model is a fine-tuned version of [thatdramebaazguy/movie-roberta-base](https://huggingface.co/thatdramebaazguy/movie-roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.3949 | {

"text": " movie-roberta-base-finetuned-movie-p1 This model is a fine-tuned version of [thatdramebaazguy/movie-roberta-base](https://huggingface.co/thatdramebaazguy/movie-roberta-base) on the None dataset. It achieves the following results on the evaluation set: - Loss: 0.3949 "

} | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 0215ed71-0191-4283-b397-1687a9023c43 | {

"split": "unlabelled"

} | Default | {

"text_length": 270

} | 0dataset_mention |

TAPAS base model fine-tuned on WikiTable Questions (WTQ) This model has 2 versions which can be used. The default version corresponds to the `tapas_wtq_wikisql_sqa_inter_masklm_base_reset` checkpoint of the [original Github repository](https://github.com/google-research/tapas). This model was pre-trained on MLM and a... | {

"text": " TAPAS base model fine-tuned on WikiTable Questions (WTQ) This model has 2 versions which can be used. The default version corresponds to the `tapas_wtq_wikisql_sqa_inter_masklm_base_reset` checkpoint of the [original Github repository](https://github.com/google-research/tapas). This model was pre-trained... | [

{

"label": "dataset_mention",

"score": 0.9794001894568531

},

{

"label": "no_dataset_mention",

"score": 0.020599810543146933

}

] | Snorkel | null | null | null | false | null | 02168c01-2520-477f-b7d7-18856c44ce59 | {

"split": "unlabelled"

} | Default | {

"text_length": 1063

} | 0dataset_mention |

Overview - **Language model:** [google/mt5-small](https://huggingface.co/google/mt5-small) - **Language:** de - **Training data:** [lmqg/qg_dequad](https://huggingface.co/datasets/lmqg/qg_dequad) (default) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github.com/asahi417... | {

"text": " Overview - **Language model:** [google/mt5-small](https://huggingface.co/google/mt5-small) - **Language:** de - **Training data:** [lmqg/qg_dequad](https://huggingface.co/datasets/lmqg/qg_dequad) (default) - **Online Demo:** [https://autoqg.net/](https://autoqg.net/) - **Repository:** [https://github... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 0216e90d-bd39-48bd-aa23-90d92d77d0a8 | {

"split": "unlabelled"

} | Default | {

"text_length": 480

} | 0dataset_mention |

Model description From scratch pre-trained RoBERTa model with 6 layers and 12 attention heads using [AI-SOCO](https://sites.google.com/view/ai-soco-2020) dataset which consists of C++ codes crawled from CodeForces website. | {

"text": " Model description From scratch pre-trained RoBERTa model with 6 layers and 12 attention heads using [AI-SOCO](https://sites.google.com/view/ai-soco-2020) dataset which consists of C++ codes crawled from CodeForces website. "

} | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 021738f1-e450-4b1f-8605-bdf07bcbbb0b | {

"split": "unlabelled"

} | Default | {

"text_length": 226

} | 0dataset_mention |

roberta-base-ca-finetuned-catalonia-independence-detector This model is a fine-tuned version of [BSC-TeMU/roberta-base-ca](https://huggingface.co/BSC-TeMU/roberta-base-ca) on the catalonia_independence dataset. It achieves the following results on the evaluation set: - Loss: 0.6065 - Accuracy: 0.7612 <details> | {

"text": " roberta-base-ca-finetuned-catalonia-independence-detector This model is a fine-tuned version of [BSC-TeMU/roberta-base-ca](https://huggingface.co/BSC-TeMU/roberta-base-ca) on the catalonia_independence dataset. It achieves the following results on the evaluation set: - Loss: 0.6065 - Accuracy: 0.7612 <d... | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 021a1146-b591-475e-bb29-217bed2365f3 | {

"split": "unlabelled"

} | Default | {

"text_length": 316

} | 0dataset_mention |

distilgpt2-finetuned-restaurant-reviews This model is a fine-tuned version of [distilgpt2](https://huggingface.co/distilgpt2) on a subset of the Yelp restaurant reviews dataset. It achieves the following results on the evaluation set: - Loss: 3.4668 | {

"text": " distilgpt2-finetuned-restaurant-reviews This model is a fine-tuned version of [distilgpt2](https://huggingface.co/distilgpt2) on a subset of the Yelp restaurant reviews dataset. It achieves the following results on the evaluation set: - Loss: 3.4668 "

} | [

{

"label": "dataset_mention",

"score": 0.9999859085737528

},

{

"label": "no_dataset_mention",

"score": 0.000014091426247201861

}

] | Snorkel | null | null | null | false | null | 021a3479-a359-42d2-bb12-59230d57cb57 | {

"split": "unlabelled"

} | Default | {

"text_length": 253

} | 0dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.2663 | 1.0 | 525 | 0.1628 | 0.8273 | | 0.1338 | 2.0 | 1050 | 0.1457 | 0.8396 | | 0.0844 | 3.0 | 1575 | 0.1397 | 0.8610 | ... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.2663 | 1.0 | 525 | 0.1628 | 0.8273 | | 0.1338 | 2.0 | 1050 | 0.1457 | 0.8396 | | 0.0844 | 3.0 | 1575 | 0.1397 ... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 021c5d0c-d177-48de-bf8b-de49ab5d4c1f | {

"split": "unlabelled"

} | Default | {

"text_length": 321

} | 1no_dataset_mention |

pytorch ```python from diffusers import DiffusionPipeline import torch pipe = DiffusionPipeline.from_pretrained("animelover/novelai-diffusion", custom_pipeline="waifu-research-department/long-prompt-weighting-pipeline", torch_dtype=torch.float16) pipe.safety_checker = None | {

"text": " pytorch ```python from diffusers import DiffusionPipeline import torch pipe = DiffusionPipeline.from_pretrained(\"animelover/novelai-diffusion\", custom_pipeline=\"waifu-research-department/long-prompt-weighting-pipeline\", torch_dtype=torch.float16) pipe.safety_checker = None "

} | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 021d11d2-0dc1-4116-b7d2-f8bfa2fef57f | {

"split": "unlabelled"

} | Default | {

"text_length": 277

} | 0dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:------:|:-------:|:-------:|:---------:|:-------:| | No log | 1.0 | 258 | 1.3238 | 50.228 | 29.5898 | 30.1054 | 47.1265 | 142.0... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Rouge1 | Rouge2 | Rougel | Rougelsum | Gen Len | |:-------------:|:-----:|:----:|:---------------:|:------:|:-------:|:-------:|:---------:|:-------:| | No log | 1.0 | 258 | 1.3238 | 50.228 | 29.5898 | 30.1054 | 47.1... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 02220edf-3f3f-4ec6-ac86-9f3297540f36 | {

"split": "unlabelled"

} | Default | {

"text_length": 327

} | 1no_dataset_mention |

T5-ANCE T5-ANCE generally follows the training procedure described in [this page](https://openmatch.readthedocs.io/en/latest/dr-msmarco-passage.html), but uses a much larger batch size. Dataset used for training: - MS MARCO Passage Evaluation result: |Dataset|Metric|Result| |---|---|---| |MS MARCO Passage (dev) | ... | {

"text": " T5-ANCE T5-ANCE generally follows the training procedure described in [this page](https://openmatch.readthedocs.io/en/latest/dr-msmarco-passage.html), but uses a much larger batch size. Dataset used for training: - MS MARCO Passage Evaluation result: |Dataset|Metric|Result| |---|---|---| |MS MARCO Pas... | [

{

"label": "dataset_mention",

"score": 0.9920527014967919

},

{

"label": "no_dataset_mention",

"score": 0.00794729850320818

}

] | Snorkel | null | null | null | false | null | 0222590c-5cc9-41f0-abac-8a38c49c0e97 | {

"split": "unlabelled"

} | Default | {

"text_length": 582

} | 0dataset_mention |

Usage with pipeline ```python from transformers import pipeline text2text_generator = pipeline(task="text2text-generation", model="NYTK/morphological-generator-ud-mt5-hungarian") print(text2text_generator("morph: munka NOUN Case=Acc|Number=Sin")[0]["generated_text"]) ``` | {

"text": " Usage with pipeline ```python from transformers import pipeline text2text_generator = pipeline(task=\"text2text-generation\", model=\"NYTK/morphological-generator-ud-mt5-hungarian\") print(text2text_generator(\"morph: munka NOUN Case=Acc|Number=Sin\")[0][\"generated_text\"]) ``` "

} | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 0227ea08-903f-4b12-baff-f897c5ee6047 | {

"split": "unlabelled"

} | Default | {

"text_length": 277

} | 0dataset_mention |

all-roberta-large-v1-kitchen_and_dining-7-16-5 This model is a fine-tuned version of [sentence-transformers/all-roberta-large-v1](https://huggingface.co/sentence-transformers/all-roberta-large-v1) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.3560 - Accuracy: 0.2692 | {

"text": " all-roberta-large-v1-kitchen_and_dining-7-16-5 This model is a fine-tuned version of [sentence-transformers/all-roberta-large-v1](https://huggingface.co/sentence-transformers/all-roberta-large-v1) on an unknown dataset. It achieves the following results on the evaluation set: - Loss: 2.3560 - Accuracy: 0... | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 02295fe3-a7a5-4af4-b5ea-e1dfee2c1e6b | {

"split": "unlabelled"

} | Default | {

"text_length": 314

} | 0dataset_mention |

Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.00012 - train_batch_size: 64 - eval_batch_size: 64 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 256 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_... | {

"text": " Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.00012 - train_batch_size: 64 - eval_batch_size: 64 - seed: 42 - gradient_accumulation_steps: 4 - total_train_batch_size: 256 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type:... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 022b5a52-2fb4-45cd-b56c-15f2a1da3e09 | {

"split": "unlabelled"

} | Default | {

"text_length": 366

} | 1no_dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8112 | 1.0 | 250 | 0.3147 | 0.903 | 0.8992 | | 0.2454 | 2.0 | 500 | 0.2167 | 0.926 | 0.9262 | | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8112 | 1.0 | 250 | 0.3147 | 0.903 | 0.8992 | | 0.2454 | 2.0 | 500 | 0.2167 | 0.926 | 0.9262 | "

... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 0231d56f-43be-4574-be1f-fb4ad6619a44 | {

"split": "unlabelled"

} | Default | {

"text_length": 305

} | 1no_dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.8187 | 1.0 | 70 | 0.3325 | 0.7337 | | 0.2829 | 2.0 | 140 | 0.2554 | 0.8003 | | 0.1894 | 3.0 | 210 | 0.2401 | 0.8246 | ... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | F1 | |:-------------:|:-----:|:----:|:---------------:|:------:| | 0.8187 | 1.0 | 70 | 0.3325 | 0.7337 | | 0.2829 | 2.0 | 140 | 0.2554 | 0.8003 | | 0.1894 | 3.0 | 210 | 0.2401 ... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 02342486-fe2f-4b98-81c7-7c0dfb747310 | {

"split": "unlabelled"

} | Default | {

"text_length": 321

} | 1no_dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:-----:|:---------------:|:--------:|:---------:|:------:|:------:| | 0.8973 | 1.0 | 927 | 0.8166 | 0.6483 | 0.6545 | 0.6576 | 0.6460 | | 0.6827 | 2.0 ... | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | Precision | Recall | F1 | |:-------------:|:-----:|:-----:|:---------------:|:--------:|:---------:|:------:|:------:| | 0.8973 | 1.0 | 927 | 0.8166 | 0.6483 | 0.6545 | 0.6576 | 0.6460 | | 0.6827 ... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 023531a2-9b4e-4a7d-ba17-0707b3345090 | {

"split": "unlabelled"

} | Default | {

"text_length": 2067

} | 1no_dataset_mention |

Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8432 | 1.0 | 250 | 0.3353 | 0.8975 | 0.8939 | | 0.2582 | 2.0 | 500 | 0.2251 | 0.9265 | 0.9265 | | {

"text": " Training results | Training Loss | Epoch | Step | Validation Loss | Accuracy | F1 | |:-------------:|:-----:|:----:|:---------------:|:--------:|:------:| | 0.8432 | 1.0 | 250 | 0.3353 | 0.8975 | 0.8939 | | 0.2582 | 2.0 | 500 | 0.2251 | 0.9265 | 0.9265 | "

... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 0235ed92-11ea-4952-8d20-20d17399b5dd | {

"split": "unlabelled"

} | Default | {

"text_length": 305

} | 1no_dataset_mention |

xlm-roberta-base-finetuned-panx-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the germeval_14 dataset. It achieves the following results on the evaluation set: - Loss: 0.0744 - F1: 0.8588 | {

"text": " xlm-roberta-base-finetuned-panx-de This model is a fine-tuned version of [xlm-roberta-base](https://huggingface.co/xlm-roberta-base) on the germeval_14 dataset. It achieves the following results on the evaluation set: - Loss: 0.0744 - F1: 0.8588 "

} | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 023711df-3c13-4392-b024-0e36886a4d77 | {

"split": "unlabelled"

} | Default | {

"text_length": 249

} | 0dataset_mention |

bert-finetuned-math-prob-classification This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the part of the [competition_math dataset](https://huggingface.co/datasets/competition_math). Specifically, it was trained as a multi-class multi-label model on the problem te... | {

"text": " bert-finetuned-math-prob-classification This model is a fine-tuned version of [bert-base-uncased](https://huggingface.co/bert-base-uncased) on the part of the [competition_math dataset](https://huggingface.co/datasets/competition_math). Specifically, it was trained as a multi-class multi-label model on t... | [

{

"label": "dataset_mention",

"score": 0.9976600967163771

},

{

"label": "no_dataset_mention",

"score": 0.0023399032836228136

}

] | Snorkel | null | null | null | false | null | 023e9e31-5ff7-4d33-b3c0-4ec64133bd69 | {

"split": "unlabelled"

} | Default | {

"text_length": 489

} | 0dataset_mention |

KerasCV Stable Diffusion in Diffusers 🧨🤗 The pipeline contained in this repository was created using [this Space](https://huggingface.co/spaces/sayakpaul/convert-kerascv-sd-diffusers). The purpose is to convert the KerasCV Stable Diffusion weights in a way that is compatible with [Diffusers](https://github.com/hugg... | {

"text": " KerasCV Stable Diffusion in Diffusers 🧨🤗 The pipeline contained in this repository was created using [this Space](https://huggingface.co/spaces/sayakpaul/convert-kerascv-sd-diffusers). The purpose is to convert the KerasCV Stable Diffusion weights in a way that is compatible with [Diffusers](https://gi... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 023ea0d7-3dcf-42f5-a8be-915b09208c71 | {

"split": "unlabelled"

} | Default | {

"text_length": 877

} | 0dataset_mention |

wav2vec2-large-xls-r-300m-Indonesian This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.4087 - Wer: 0.2461 - Cer: 0.0666 | {

"text": " wav2vec2-large-xls-r-300m-Indonesian This model is a fine-tuned version of [facebook/wav2vec2-xls-r-300m](https://huggingface.co/facebook/wav2vec2-xls-r-300m) on the common_voice dataset. It achieves the following results on the evaluation set: - Loss: 0.4087 - Wer: 0.2461 - Cer: 0.0666 "

} | [

{

"label": "dataset_mention",

"score": 0.9997774755092824

},

{

"label": "no_dataset_mention",

"score": 0.00022252449071764603

}

] | Snorkel | null | null | null | false | null | 023f8b52-9714-4771-9e14-36b127c51513 | {

"split": "unlabelled"

} | Default | {

"text_length": 293

} | 0dataset_mention |

Run with Huggingface Transformers

````python

from transformers import AutoTokenizer, AutoModelForSequenceClassification

tokenizer = AutoTokenizer.from_pretrained("inovex/multi2convai-logistics-en-bert")

model = AutoModelForSequenceClassification.from_pretrained("inovex/multi2convai-logistics-en-bert")

````

... | {

"text": " Run with Huggingface Transformers\r \r ````python\r from transformers import AutoTokenizer, AutoModelForSequenceClassification\r \r tokenizer = AutoTokenizer.from_pretrained(\"inovex/multi2convai-logistics-en-bert\")\r model = AutoModelForSequenceClassification.from_pretrained(\"inovex/multi2convai-logist... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 02410fd0-3c27-464c-92de-b88c607364d5 | {

"split": "unlabelled"

} | Default | {

"text_length": 321

} | 0dataset_mention |

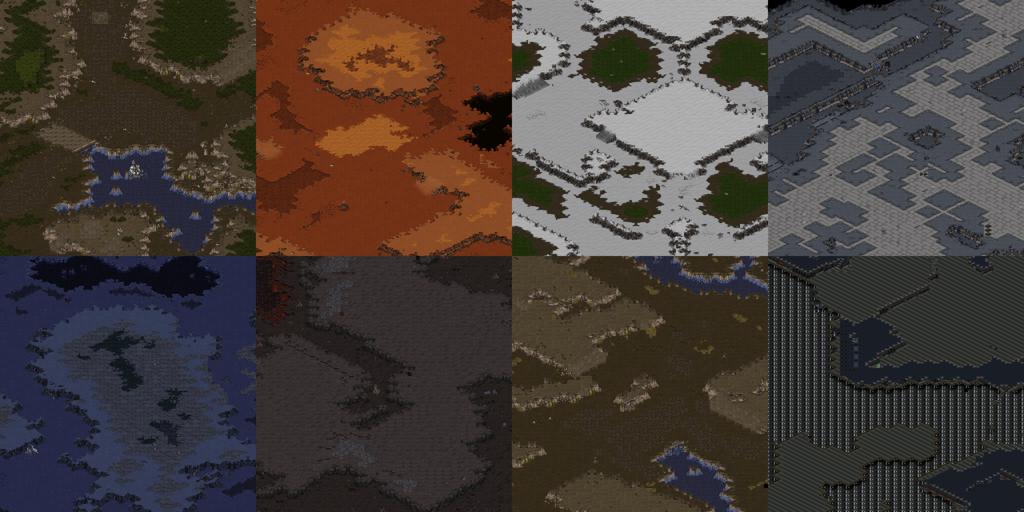

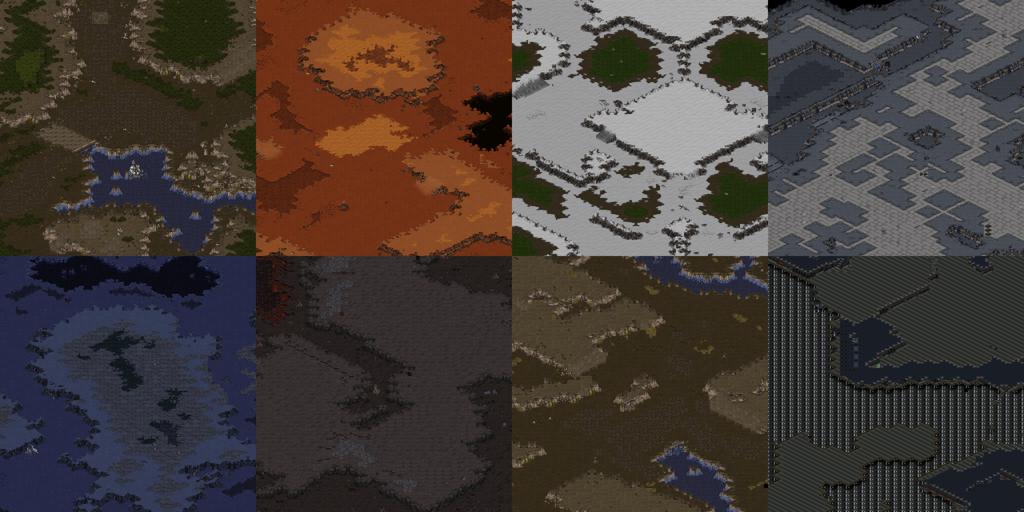

DreamBooth model for Starcraft:Remastered terrain  This is a Stable Diffusion model fine-tuned on Starcraft terrain images with DreamBooth. It can be used by adding the `instance_prompt`: **isometric starcraft ... | {

"text": " DreamBooth model for Starcraft:Remastered terrain  This is a Stable Diffusion model fine-tuned on Starcraft terrain images with DreamBooth. It can be used by adding the `instance_prompt`: **isometr... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 024260b6-1416-4a44-8a90-569fd72b9415 | {

"split": "unlabelled"

} | Default | {

"text_length": 1534

} | 0dataset_mention |

Examples * Prompt: sjrny-v1 style portrait of a woman, cosmic * CFG scale: 7 * Scheduler: Euler_a * Steps: 30 * Dimensions: 512x512 * Seed: 557913691  * Prompt: sjrny-v1 style paddington bear * CFG scale: 7 * Scheduler: Euler_a * Steps: 30 * Dimensions: 512x512  * P... | {

"text": " Examples * Prompt: sjrny-v1 style portrait of a woman, cosmic * CFG scale: 7 * Scheduler: Euler_a * Steps: 30 * Dimensions: 512x512 * Seed: 557913691  * Prompt: sjrny-v1 style paddington bear * CFG scale: 7 * Scheduler: Euler_a * Steps: 30 * Dimensions: 512x512  on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.0243 - Epoch: 9 | {

"text": " skandaonsolve/roberta-finetuned-timeentities2 This model is a fine-tuned version of [deepset/roberta-base-squad2](https://huggingface.co/deepset/roberta-base-squad2) on an unknown dataset. It achieves the following results on the evaluation set: - Train Loss: 0.0243 - Epoch: 9 "

} | [

{

"label": "dataset_mention",

"score": 0.9996485282939453

},

{

"label": "no_dataset_mention",

"score": 0.0003514717060547617

}

] | Snorkel | null | null | null | false | null | 0245d58c-be78-408f-bb20-f9e1c38fb178 | {

"split": "unlabelled"

} | Default | {

"text_length": 281

} | 0dataset_mention |

Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-06 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 16 - mixed_precision_training: Native AMP | {

"text": " Training hyperparameters The following hyperparameters were used during training: - learning_rate: 2e-06 - train_batch_size: 32 - eval_batch_size: 32 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - num_epochs: 16 - mixed_precision_training: Native AMP ... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 02467dac-fafe-4998-96f9-fcf1e40a0869 | {

"split": "unlabelled"

} | Default | {

"text_length": 307

} | 1no_dataset_mention |

Model Description MVP-task-dialog is a prompt-based model that MVP is further equipped with prompts pre-trained using labeled task-oriented system datasets. It is a variant (MVP+S) of our main [MVP](https://huggingface.co/RUCAIBox/mvp) model. It follows a Transformer encoder-decoder architecture with layer-wise prompt... | {

"text": " Model Description MVP-task-dialog is a prompt-based model that MVP is further equipped with prompts pre-trained using labeled task-oriented system datasets. It is a variant (MVP+S) of our main [MVP](https://huggingface.co/RUCAIBox/mvp) model. It follows a Transformer encoder-decoder architecture with laye... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 0247153f-1741-4113-8426-d15133a66c3b | {

"split": "unlabelled"

} | Default | {

"text_length": 406

} | 0dataset_mention |

Image Embeddings ```python from urllib.request import urlopen from PIL import Image import timm img = Image.open( urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png')) model = timm.create_model( 'levit_128.fb_dist_in1k', pretrained=True, num... | {

"text": " Image Embeddings ```python from urllib.request import urlopen from PIL import Image import timm img = Image.open( urlopen('https://huggingface.co/datasets/huggingface/documentation-images/resolve/main/beignets-task-guide.png')) model = timm.create_model( 'levit_128.fb_dist_in1k', pretrained=... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 0247d0ba-dd29-49f2-b6b2-7eee31dfc676 | {

"split": "unlabelled"

} | Default | {

"text_length": 333

} | 0dataset_mention |

Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 24 - eval_batch_size: 12 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - training_steps: 10000 - mixed_pre... | {

"text": " Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0001 - train_batch_size: 24 - eval_batch_size: 12 - seed: 42 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_scheduler_warmup_steps: 1000 - training_steps: 1000... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 0248fae5-813c-4ae4-bb19-b4d7908c7051 | {

"split": "unlabelled"

} | Default | {

"text_length": 349

} | 1no_dataset_mention |

Training and evaluation data We downloaded [PubChem data](https://drive.google.com/file/d/1ygYs8dy1-vxD1Vx6Ux7ftrXwZctFjpV3/view) and canonicalized them using RDKit. Then, we dropped duplicates. The total number of data is 9999960, and they were randomly split into train:validation=10:1. | {

"text": " Training and evaluation data We downloaded [PubChem data](https://drive.google.com/file/d/1ygYs8dy1-vxD1Vx6Ux7ftrXwZctFjpV3/view) and canonicalized them using RDKit. Then, we dropped duplicates. The total number of data is 9999960, and they were randomly split into train:validation=10:1. "

} | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 0250817d-c05a-4c1e-aef6-ae79b4dc0e2d | {

"split": "unlabelled"

} | Default | {

"text_length": 292

} | 0dataset_mention |

Usage This pre-trained language model **can be fine-tunned to any downstream task (e.g. classification)**. Please see the [official repository](https://github.com/GU-DataLab/stance-detection-KE-MLM) for more detail. ```python from transformers import BertTokenizer, BertForMaskedLM, pipeline import torch | {

"text": " Usage This pre-trained language model **can be fine-tunned to any downstream task (e.g. classification)**. Please see the [official repository](https://github.com/GU-DataLab/stance-detection-KE-MLM) for more detail. ```python from transformers import BertTokenizer, BertForMaskedLM, pipeline import torc... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 0251e2f4-cf20-4b4a-a377-00201e2ddb38 | {

"split": "unlabelled"

} | Default | {

"text_length": 310

} | 0dataset_mention |

museum by coop himmelblau on Stable Diffusion This is the `<coop himmelblau museum>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer_inference.ip... | {

"text": " museum by coop himmelblau on Stable Diffusion This is the `<coop himmelblau museum>` concept taught to Stable Diffusion via Textual Inversion. You can load this concept into the [Stable Conceptualizer](https://colab.research.google.com/github/huggingface/notebooks/blob/main/diffusers/stable_conceptualizer... | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 0252fbc1-f795-4bdd-866b-f9fe27336a5b | {

"split": "unlabelled"

} | Default | {

"text_length": 1162

} | 0dataset_mention |

Cetuc ```python ds = load_data('cetuc_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result["sentence"], result["predicted"]) print("CETUC WER:", wer) ``` CETUC WER: 0.03222801788375573 | {

"text": " Cetuc ```python ds = load_data('cetuc_dataset') result = ds.map(stt.batch_predict, batched=True, batch_size=8) wer, mer, wil = calc_metrics(result[\"sentence\"], result[\"predicted\"]) print(\"CETUC WER:\", wer) ``` CETUC WER: 0.03222801788375573 "

} | [

{

"label": "dataset_mention",

"score": 0.7735312081442667

},

{

"label": "no_dataset_mention",

"score": 0.22646879185573326

}

] | Snorkel | null | null | null | false | null | 025394c6-7a94-467a-8ed9-da0de7c71955 | {

"split": "unlabelled"

} | Default | {

"text_length": 251

} | 0dataset_mention |

Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: linear - lr_sch... | {

"text": " Training hyperparameters The following hyperparameters were used during training: - learning_rate: 0.0003 - train_batch_size: 16 - eval_batch_size: 8 - seed: 42 - gradient_accumulation_steps: 2 - total_train_batch_size: 32 - optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08 - lr_scheduler_type: li... | [

{

"label": "no_dataset_mention",

"score": 0.9487513515880792

},

{

"label": "dataset_mention",

"score": 0.051248648411920804

}

] | Snorkel | null | null | null | false | null | 0256e4f1-cde0-475b-8c5a-a0461b41c1d8 | {

"split": "unlabelled"

} | Default | {

"text_length": 405

} | 1no_dataset_mention |

Evaluation - ***Metric (Question Generation)***: [raw metric file](https://huggingface.co/research-backup/bart-large-squadshifts-vanilla-new_wiki-qg/raw/main/eval/metric.first.sentence.paragraph_answer.question.lmqg_qg_squadshifts.new_wiki.json) | | Score | Type | Dataset ... | {