text | heading1 | source_page_url | source_page_title |

|---|---|---|---|


ne object to use.

| from_pipeline | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |


wandb: ModuleType | None

default `= None`

If the wandb module is provided, the app will integrate with it and appear on the WandB

dashboard

mlflow: ModuleType | None

default `= None`

If the mlflow module is provided, the app will integrate with the experiment and

appear on the MLflow dashboard

| integrate | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |


send status at regular intervals set by this

parameter as the number of seconds.

api_open: bool | None

default `= None`

If True, the REST routes of the backend will be open, allowing requests made

directly to those endpoints to skip the queue.

max_size: int | None

default `= None`

T... | queue | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |


pp inline in an iframe. Defaults to True in

python notebooks; False otherwise.

inbrowser: bool

default `= False`

whether to automatically launch the gradio app in a new tab on the default

browser.

share: bool | None

default `= None`

whether to create a publicly shareable link for the... | launch | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |

an alert modal and

printed in the browser console log. They will also be displayed in the alert

modal of downstream apps that gr.load() this app.

server_name: str | None

default `= None`

to make app accessible on local network, set this to "0.0.0.0". Can be set by

environment variable GRADIO_SERVER_NA... | launch | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |

inks: list[Literal['api', 'gradio', 'settings'] | dict[str, str]] | None

default `= None`

The links to display in the footer of the app. Accepts a list, where each

element of the list must be one of "api", "gradio", or "settings"

corresponding to the API docs, "built with Gradio", and settings pages

respectively. If ... | launch | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |

be set by

environment variable GRADIO_ROOT_PATH. Defaults to "".

app_kwargs: dict[str, Any] | None

default `= None`

Additional keyword arguments to pass to the underlying FastAPI app as a

dictionary of parameter keys and argument values. For example, `{"docs_url":

"/docs"}`

state_sessi... | launch | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |

] | None

default `= None`

A function that takes a FastAPI request and returns a string user ID or None.

If the function returns None for a specific request, that user is not

authorized to access the app (they will see a 401 Unauthorized response). To

be used with external authentication systems like OAuth. Cannot be ... | launch | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |
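As a rough sketch of the kind of function described above (one that takes a request and returns a string user ID or `None`), the example below reads the ID from a request header. The header name `x-user-id` and the function name `get_user` are assumptions made for illustration. The sketch only relies on the request exposing a `headers` mapping, as FastAPI requests do.

```python
from types import SimpleNamespace
from typing import Optional


def get_user(request) -> Optional[str]:
    """Return a user ID string, or None to reject the request."""
    # "x-user-id" is a hypothetical header set by an upstream auth proxy.
    user_id = request.headers.get("x-user-id")
    # Treat a missing or blank header as "not authorized".
    return user_id.strip() if user_id and user_id.strip() else None


# Stand-ins for real requests, used only to exercise the function locally.
allowed = SimpleNamespace(headers={"x-user-id": "alice"})
denied = SimpleNamespace(headers={})

print(get_user(allowed))
print(get_user(denied))
```

Returning `None` for the second request corresponds to the 401 Unauthorized case described above.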

vironment variable or default to False.

pwa: bool | None

default `= None`

If True, the Gradio app will be set up as an installable PWA (Progressive Web

App). If set to None (default behavior), then the PWA feature will be enabled

if this Gradio app is launched on Spaces, but not otherwise.

... | launch | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |

ly be executed when

the page loads. For more flexibility, use the head parameter to insert js

inside <script> tags.

head: str | None

default `= None`

Custom html code to insert into the head of the demo webpage. This can be used

to add custom meta tags, multiple scripts, stylesheets, etc. to the page.... | launch | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |


prediction function. Each parameter of the function corresponds to one

input component, and the function should return a single value or a tuple of

values, with each element in the tuple corresponding to one output component.

inputs: Component | BlockContext | list[Component | BlockContext] | Set[Compo... | load | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |
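To make the parameter-to-component mapping concrete, here is a minimal sketch of a prediction function with two parameters and two return values. The function name `greet` and the idea of pairing it with a text input, a numeric input, a text output and a numeric output are assumptions for illustration only.

```python
from typing import Tuple


def greet(name: str, intensity: int) -> Tuple[str, int]:
    """Two parameters (two input components), two return values (two outputs)."""
    # First output: the greeting text; second output: its character count.
    greeting = "Hello, " + name + "!" * intensity
    return greeting, len(greeting)


message, length = greet("Ada", 2)
print(message, length)
```

With a single output component, the function would return one value instead of a tuple.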

shows the runtime display, "hidden"

shows no progress animation at all

show_progress_on: Component | list[Component] | None

default `= None`

Component or list of components to show the progress animation on. If None,

will show the progress animation on all of the output components.

que... | load | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |

ponents .click method. Functions that have

not yet run (or generators that are iterating) will be cancelled, but

functions that are currently running will be allowed to finish.

trigger_mode: Literal['once', 'multiple', 'always_last'] | None

default `= None`

If "once" (default for all events except `.c... | load | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |

nted" (hidden

from API docs but callable by clients and via gr.load). If fn is None,

api_visibility will automatically be set to "private".

time_limit: int | None

default `= None`

stream_every: float

default `= 0.5`

key: int | str | tuple[int | str, ...] | None

default... | load | https://gradio.app/docs/gradio/interface#interface-queue | Gradio - Interface#Interface Queue Docs |


Creates a component to display a base image and colored annotations on top

of that image. Annotations can take the form of rectangles (e.g. object

detection) or masks (e.g. image segmentation). As this component does not

accept user input, it is rarely used as an input component.

| Description | https://gradio.app/docs/gradio/annotatedimage | Gradio - Annotatedimage Docs |

**Using AnnotatedImage as an input component.**

How AnnotatedImage will pass its value to your function:

Type: `tuple[str, list[tuple[str, str]]] | None`

Passes its value as a `tuple` consisting of:

* `str` filepath to a base image

* `list` of annotations.

* Each annotation itself is a `tuple` of a mask (... | Behavior | https://gradio.app/docs/gradio/annotatedimage | Gradio - Annotatedimage Docs |

Parameters ▼

value: tuple[np.ndarray | PIL.Image.Image | str, list[tuple[np.ndarray | tuple[int, int, int, int], str]]] | None

default `= None`

Tuple of base image and list of (annotation, label) pairs.

format: str

default `= "webp"`

Format used to save images before it is returned t... | Initialization | https://gradio.app/docs/gradio/annotatedimage | Gradio - Annotatedimage Docs |
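The `value` type above can be illustrated with plain Python data. This sketch builds a `(base image, [(annotation, label), ...])` tuple using bounding boxes. It assumes the four integers are the box corners `(x1, y1, x2, y2)`; the file path, the detection dictionaries and the helper name `build_value` are hypothetical.

```python
from typing import List, Tuple

BoundingBox = Tuple[int, int, int, int]  # assumed order: (x1, y1, x2, y2)
Annotation = Tuple[BoundingBox, str]     # (box, label)


def build_value(image_path: str, detections: List[dict]) -> Tuple[str, List[Annotation]]:
    """Convert detector-style dicts into (base image, [(annotation, label), ...])."""
    annotations = [
        ((d["x1"], d["y1"], d["x2"], d["y2"]), d["label"])
        for d in detections
    ]
    return image_path, annotations


value = build_value(
    "street.jpg",  # hypothetical file path
    [
        {"x1": 10, "y1": 20, "x2": 110, "y2": 220, "label": "person"},
        {"x1": 150, "y1": 40, "x2": 300, "y2": 180, "label": "car"},
    ],
)
print(value)
```

For segmentation, each box would be replaced by a mask array, as the type signature allows.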

ct otherwise). Can provide a Timer whose tick resets `value`, or a float

that provides the regular interval for the reset Timer.

inputs: Component | list[Component] | set[Component] | None

default `= None`

Components that are used as inputs to calculate `value` if `value` is a

function (has no effect ... | Initialization | https://gradio.app/docs/gradio/annotatedimage | Gradio - Annotatedimage Docs |

rendered in the Blocks context. Should

be used if the intention is to assign event listeners now but render the

component later.

key: int | str | tuple[int | str, ...] | None

default `= None`

in a gr.render, Components with the same key across re-renders are treated as

the same component, not a new c... | Initialization | https://gradio.app/docs/gradio/annotatedimage | Gradio - Annotatedimage Docs |

Shortcuts

gradio.AnnotatedImage

Interface String Shortcut `"annotatedimage"`

Initialization Uses default values

| Shortcuts | https://gradio.app/docs/gradio/annotatedimage | Gradio - Annotatedimage Docs |

image_segmentation

| Demos | https://gradio.app/docs/gradio/annotatedimage | Gradio - Annotatedimage Docs |

Description

Event listeners allow you to respond to user interactions with the UI

components you've defined in a Gradio Blocks app. When a user interacts with

an element, such as changing a slider value or uploading an image, a function

is called.

Supported Event Listeners

The AnnotatedImage component supports the f... | Event Listeners | https://gradio.app/docs/gradio/annotatedimage | Gradio - Annotatedimage Docs |

`

defines how the endpoint appears in the API docs. Can be a string or None. If

set to a string, the endpoint will be exposed in the API docs with the given

name. If None (default), the name of the function will be used as the API

endpoint.

api_description: str | None | Literal[False]

default `= None`... | Event Listeners | https://gradio.app/docs/gradio/annotatedimage | Gradio - Annotatedimage Docs |

th (and be up to length `max_batch_size`). The function is then

*required* to return a tuple of lists (even if there is only 1 output

component), with each list in the tuple corresponding to one output component.

max_batch_size: int

default `= 4`

Maximum number of inputs to batch together if this is c... | Event Listeners | https://gradio.app/docs/gradio/annotatedimage | Gradio - Annotatedimage Docs |
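The batching contract described above (lists in, a tuple of lists out) can be sketched without any Gradio imports. The function name `trim_words` and its inputs are made up for illustration. The point is only the shape of the arguments and the return value.

```python
from typing import List, Tuple


def trim_words(words: List[str], lengths: List[int]) -> Tuple[List[str]]:
    """Batched function: each argument is a list holding one value per request."""
    trimmed = [word[: int(length)] for word, length in zip(words, lengths)]
    # One output component, so return a 1-tuple containing one list.
    return (trimmed,)


outputs = trim_words(["hello", "world", "gradio"], [2, 3, 4])
print(outputs)  # (['he', 'wor', 'grad'],)
```

Note the trailing comma in `(trimmed,)`: even with a single output component, the return value is a tuple of lists.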

puts', return should be a list of values

for output components.

concurrency_limit: int | None | Literal['default']

default `= "default"`

If set, this is the maximum number of this event that can be running

simultaneously. Can be set to None to mean no concurrency_limit (any number of

this event can be... | Event Listeners | https://gradio.app/docs/gradio/annotatedimage | Gradio - Annotatedimage Docs |

the same inputs as the main function and should return a

`gr.validate()` for each input value.

| Event Listeners | https://gradio.app/docs/gradio/annotatedimage | Gradio - Annotatedimage Docs |

Server is the Gradio API engine exposed on a FastAPI application (Server

mode). It inherits from FastAPI, so all standard FastAPI methods (.get(),

.post(), .add_middleware(), .include_router(), etc.) work directly on this

instance.

New methods added on top of FastAPI: api(): Decorator to register a Gradio API

endpoin... | Description | https://gradio.app/docs/gradio/server | Gradio - Server Docs |

from gradio import Server

app = Server()

@app.api(name="hello")

def hello(name: str) -> str:

    return f"Hello {name}"

@app.get("/")

def root():

    return {"message": "Hello World"}

app.launch()

| Example Usage | https://gradio.app/docs/gradio/server | Gradio - Server Docs |

Parameters ▼

debug: bool

default `= False`

Enable debug mode for detailed error tracebacks.

title: str

default `= "FastAPI"`

The title of the API, shown in the OpenAPI docs.

summary: str | None

default `= None`

A short summary of the API.

description... | Initialization | https://gradio.app/docs/gradio/server | Gradio - Server Docs |

ault `= None`

An async context manager for startup/shutdown lifecycle.

terms_of_service: str | None

default `= None`

URL to the terms of service.

contact: dict[str, Any] | None

default `= None`

Contact information dict for the API.

license_info: dict[str, Any] | None... | Initialization | https://gradio.app/docs/gradio/server | Gradio - Server Docs |

server_app

| Demos | https://gradio.app/docs/gradio/server | Gradio - Server Docs |

Methods | https://gradio.app/docs/gradio/server | Gradio - Server Docs | |


None`

concurrency_limit: int | None | Literal['default']

default `= "default"`

concurrency_id: str | None

default `= None`

queue: bool

default `= True`

batch: bool

default `= False`

max_batch_size: int

default `= 4`

api... | api | https://gradio.app/docs/gradio/server | Gradio - Server Docs |


debug: bool

default `= False`

max_threads: int

default `= 40`

auth: Callable[[str, str], bool] | tuple[str, str] | list[tuple[str, str]] | None

default `= None`

auth_message: str | None

default `= None`

prevent_thread_lock: bool

default... | launch | https://gradio.app/docs/gradio/server | Gradio - Server Docs |
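The `auth` signature above includes a `Callable[[str, str], bool]` form. The row gives no description, so the sketch below assumes the two strings are a username and a password and that returning `True` grants access. The credential store and the function name are hypothetical.

```python
import hmac

# Hypothetical in-memory credential store, for illustration only.
_USERS = {"admin": "s3cret"}


def check_credentials(username: str, password: str) -> bool:
    """Return True only if the username exists and the password matches."""
    expected = _USERS.get(username)
    if expected is None:
        return False
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected.encode(), password.encode())


print(check_credentials("admin", "s3cret"))  # True
print(check_credentials("admin", "wrong"))   # False
```

Under these assumptions, such a function would be passed as `auth=check_credentials`. The tuple and list-of-tuples forms cover the simpler case of fixed credentials.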

itoring: bool | None

default `= None`

strict_cors: bool

default `= True`

node_server_name: str | None

default `= None`

node_port: int | None

default `= None`

ssr_mode: bool | None

default `= None`

pwa: bool | None

default `= None`

... | launch | https://gradio.app/docs/gradio/server | Gradio - Server Docs |


The FileData class is a subclass of the GradioModel class that represents a

file object within a Gradio interface. It is used to store file data and

metadata when a file is uploaded.

| Description | https://gradio.app/docs/gradio/filedata | Gradio - Filedata Docs |

from gradio_client import Client, FileData, handle_file

def get_url_on_server(data: FileData):

    print(data['url'])

client = Client("gradio/gif_maker_main", download_files=False)

job = client.submit([handle_file("./cheetah.jpg")], api_name="/predict")

data = job.result()

video: FileD... | Example Usage | https://gradio.app/docs/gradio/filedata | Gradio - Filedata Docs |

Parameters ▼

path: str

The server file path where the file is stored.

url: Optional[str]

The normalized server URL pointing to the file.

size: Optional[int]

The size of the file in bytes.

orig_name: Optional[str]

The original filename before upload.

... | Attributes | https://gradio.app/docs/gradio/filedata | Gradio - Filedata Docs |
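To show how the attributes listed above might be consumed, here is a small sketch that uses a local stand-in type rather than the real class. `FileInfo` and `describe` are hypothetical names, the paths are made up, and only the four attributes visible above are modelled.

```python
from typing import Optional, TypedDict


class FileInfo(TypedDict, total=False):
    """Local stand-in mirroring the attributes listed above (not the real class)."""
    path: str
    url: Optional[str]
    size: Optional[int]
    orig_name: Optional[str]


def describe(file: FileInfo) -> str:
    """Build a short label, falling back gracefully when optional fields are absent."""
    name = file.get("orig_name") or file["path"].rsplit("/", 1)[-1]
    size = file.get("size")
    size_text = f"{size} bytes" if size is not None else "unknown size"
    return f"{name} ({size_text})"


print(describe({"path": "/tmp/gradio/abc/cheetah.jpg", "size": 2048, "orig_name": "cheetah.jpg"}))
print(describe({"path": "/tmp/gradio/abc/clip.mp4"}))
```

Only `path` is treated as always present here, matching the non-optional type shown for it above.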

Gradio applications support programmatic requests from many environments:

* The [Python Client](/docs/python-client): `gradio-client` allows you to make requests from Python environments.

* The [JavaScript Client](/docs/js-client): `@gradio/client` allows you to make requests in TypeScript from the browser or serv... | Gradio Clients | https://gradio.app/docs/third-party-clients/introduction | Third Party Clients - Introduction Docs |

We also encourage the development and use of third party clients built by

the community:

* [Rust Client](/docs/third-party-clients/rust-client): `gradio-rs` built by [@JacobLinCool](https://github.com/JacobLinCool) allows you to make requests in Rust.

* [Powershell Client](https://github.com/rrg92/powershai): `pow... | Community Clients | https://gradio.app/docs/third-party-clients/introduction | Third Party Clients - Introduction Docs |

`gradio-rs` is a Gradio Client in Rust built by

[@JacobLinCool](https://github.com/JacobLinCool). You can find the repo

[here](https://github.com/JacobLinCool/gradio-rs), and more in depth API

documentation [here](https://docs.rs/gradio/latest/gradio/).

| Introduction | https://gradio.app/docs/third-party-clients/rust-client | Third Party Clients - Rust Client Docs |

Here is an example of using BS-RoFormer model to separate vocals and

background music from an audio file.

use gradio::{PredictionInput, Client, ClientOptions};

#[tokio::main]

async fn main() {

if std::env::args().len() < 2 {

println!("Please provide an audio file path as a... | Usage | https://gradio.app/docs/third-party-clients/rust-client | Third Party Clients - Rust Client Docs |

cargo install gradio

gr --help

Take the [stabilityai/stable-diffusion-3-medium](https://huggingface.co/spaces/stabilityai/stable-diffusion-3-medium) HF Space as an example:

> gr list stabilityai/stable-diffusion-3-medium

API Spec for stabilityai/stable-diffusion-3-medium:

/infer

... | Command Line Interface | https://gradio.app/docs/third-party-clients/rust-client | Third Party Clients - Rust Client Docs |

ZeroGPU spaces are rate-limited to ensure that a single user does not hog all

of the available GPUs. The limit is controlled by a special token that the

Hugging Face Hub infrastructure adds to all incoming requests to Spaces. This

token is a request header called `X-IP-Token` and its value changes depending

on... | Explaining Rate Limits for ZeroGPU | https://gradio.app/docs/python-client/using-zero-gpu-spaces | Python Client - Using Zero Gpu Spaces Docs |

In the following hypothetical example, when a user presses enter in the

textbox, the `generate()` function is called, which calls a second function,

`text_to_image()`. Because the Gradio Client is being instantiated indirectly,

in `text_to_image()`, we will need to extract their token from the `X-IP-Token`

head... | Avoiding Rate Limits by Manually Passing an IP Token | https://gradio.app/docs/python-client/using-zero-gpu-spaces | Python Client - Using Zero Gpu Spaces Docs |
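The token-forwarding step can be sketched as a small helper that picks the header out of an incoming request's headers. The helper name `ip_token_headers` and the sample header values are assumptions for illustration. The sketch also assumes the client accepts a `headers` argument for passing the result along.

```python
from typing import Dict, Mapping


def ip_token_headers(request_headers: Mapping[str, str]) -> Dict[str, str]:
    """Pick the X-IP-Token header out of an incoming request, if present."""
    # HTTP header names are case-insensitive, so normalise before looking up.
    lowered = {key.lower(): value for key, value in request_headers.items()}
    token = lowered.get("x-ip-token")
    return {"X-IP-Token": token} if token else {}


incoming = {"Host": "example.hf.space", "x-ip-token": "abc123"}  # made-up values
headers = ip_token_headers(incoming)
print(headers)  # {'X-IP-Token': 'abc123'}
# The result could then be handed to the client, e.g. Client(..., headers=headers).
```

Returning an empty dict when the header is absent keeps the call site the same for local runs, where no token is attached.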

If you already have a recent version of `gradio`, then the `gradio_client` is

included as a dependency. But note that this documentation reflects the latest

version of the `gradio_client`, so upgrade if you’re not sure!

The lightweight `gradio_client` package can be installed from pip (or pip3)

and is tested to work w... | Installation | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |

Start by instantiating a `Client` object and connecting it to a

Gradio app that is running on Hugging Face Spaces.

from gradio_client import Client

client = Client("abidlabs/en2fr")  # a Space that translates from English to French

You can also connect to private Spaces by pass... | Connecting to a Gradio App on Hugging Face Spaces | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |

While you can use any public Space as an API, you may get rate limited by

Hugging Face if you make too many requests. For unlimited usage of a Space,

simply duplicate the Space to create a private Space, and then use it to make

as many requests as you’d like!

The `gradio_client` includes a class method: `Client.d... | Duplicating a Space for private use | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |

If your app is running somewhere else, just provide the full URL instead,

including the “http://” or “https://”. Here’s an example of making predictions

to a Gradio app that is running on a share URL:

from gradio_client import Client

client = Client("https://bec81a83-5b5c-471e.gradio.live")... | Connecting a general Gradio app | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |

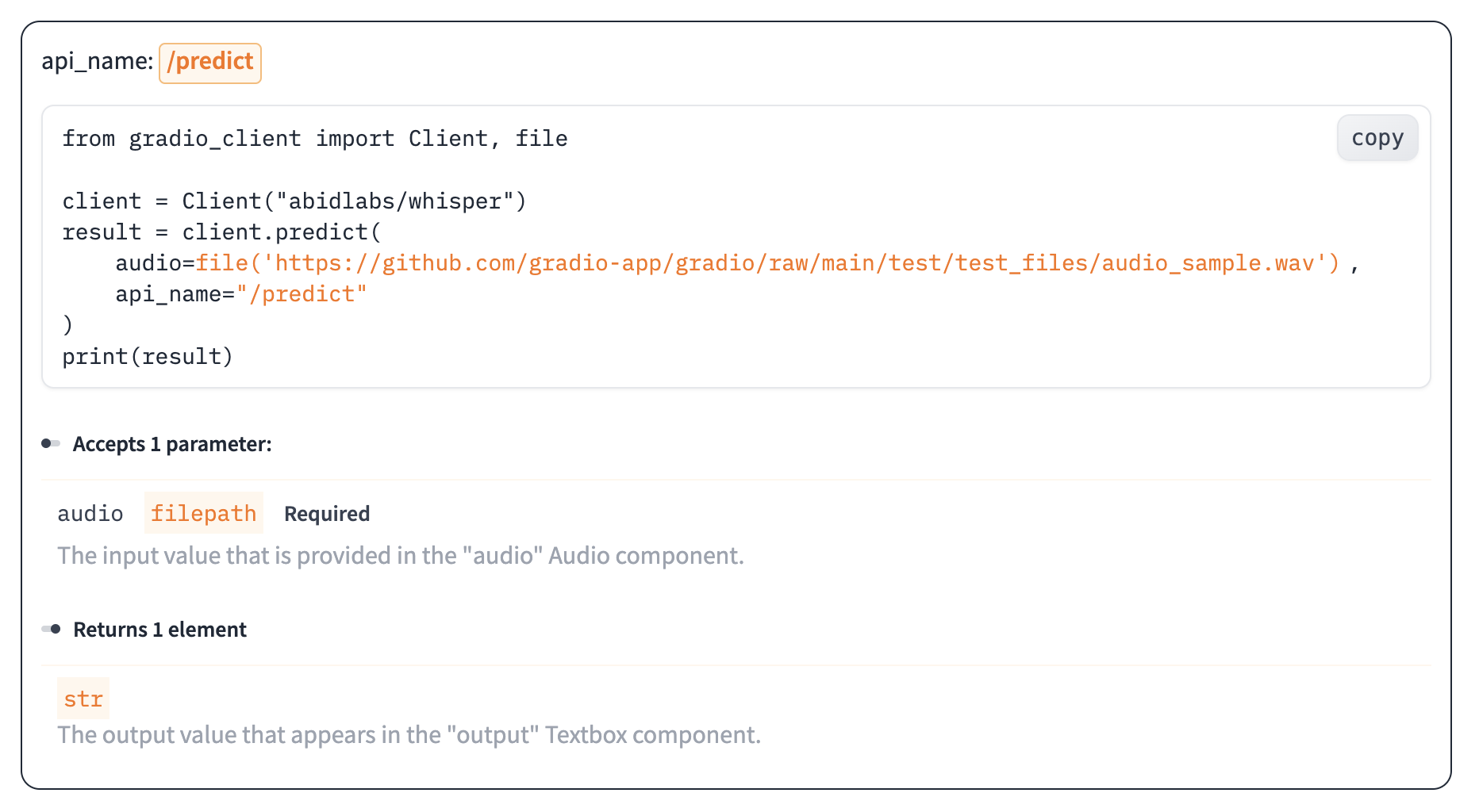

Once you have connected to a Gradio app, you can view the APIs that are

available to you by calling the `Client.view_api()` method. For the Whisper

Space, we see the following:

Client.predict() Usage Info

---------------------------

Named API endpoints: 1

- predict(audio, api_name="/pre... | Inspecting the API endpoints | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |

As an alternative to running the `.view_api()` method, you can click on the

“Use via API” link in the footer of the Gradio app, which shows us the same

information, along with example usage.

The View API pag... | The “View API” Page | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |

The simplest way to make a prediction is to call the `.predict()`

function with the appropriate arguments:

from gradio_client import Client

client = Client("abidlabs/en2fr")

client.predict("Hello", api_name="/predict")

>> Bonjour

If there are multiple parameters, then you sh... | Making a prediction | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |

to the Gradio server and ensures that the file is

preprocessed correctly:

from gradio_client import Client, file

client = Client("abidlabs/whisper")

client.predict(

audio=file("https://audio-samples.github.io/samples/mp3/blizzard_unconditional/sample-0.mp3")

)

>> "My th... | Making a prediction | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |

One should note that `.predict()` is a _blocking_ operation as it waits for the

operation to complete before returning the prediction.

In many cases, you may be better off letting the job run in the background

until you need the results of the prediction. You can do this by creating a

`Job` instance using the `.submit(... | Running jobs asynchronously | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |

Alternatively, one can add one or more callbacks to perform actions after the

job has completed running, like this:

from gradio_client import Client

def print_result(x):

    print(f"The translated result is: {x}")

client = Client("abidlabs/en2fr")

job = client.submit("... | Adding callbacks | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |

The `Job` object also allows you to get the status of the running job by

calling the `.status()` method. This returns a `StatusUpdate` object with the

following attributes: `code` (the status code, one of a set of defined strings

representing the status. See the `utils.Status` class), `rank` (the current

position of th... | Status | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |
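A polling loop built on `.status()` might look like the sketch below. It assumes the job also exposes a `.done()` method, and it runs against a stand-in `FakeJob` class rather than a real connection. The status string `"IN_QUEUE"` and the helper name `wait_with_updates` are placeholders for illustration.

```python
import time
from types import SimpleNamespace


def wait_with_updates(job, poll_seconds: float = 0.5, report=print):
    """Poll job.status() until job.done() is true, reporting each update."""
    while not job.done():
        status = job.status()
        report(f"code={status.code} rank={status.rank}")
        time.sleep(poll_seconds)


class FakeJob:
    """Stand-in for a real Job: finishes after a fixed number of polls."""

    def __init__(self, polls: int):
        self._remaining = polls

    def done(self) -> bool:
        return self._remaining <= 0

    def status(self):
        # Each poll moves the fake job one place forward in the queue.
        self._remaining -= 1
        return SimpleNamespace(code="IN_QUEUE", rank=self._remaining)


seen = []
wait_with_updates(FakeJob(polls=3), poll_seconds=0.0, report=seen.append)
print(seen)
```

With a real job, the same helper would be called right after `.submit()`, with a non-zero `poll_seconds` to avoid busy-waiting.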

The `Job` class also has a `.cancel()` instance method that cancels jobs that

have been queued but not started. For example, if you run:

client = Client("abidlabs/whisper")

job1 = client.submit(file("audio_sample1.wav"))

job2 = client.submit(file("audio_sample2.wav"))

job1.cancel()  # will retu... | Cancelling Jobs | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |

Some Gradio API endpoints do not return a single value, rather they return a

series of values. You can get the series of values that have been returned at

any time from such a generator endpoint by running `job.outputs()`:

from gradio_client import Client

client = Client(src="gradio/count_genera... | Generator Endpoints | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |

Gradio demos can include [session state](https://www.gradio.app/guides/state-in-blocks),

which provides a way for demos to persist information from user

interactions within a page session.

For example, consider the following demo, which maintains a list of words that

a user has submitted in a `gr.State` component. Wh... | Demos with Session State | https://gradio.app/docs/python-client/introduction | Python Client - Introduction Docs |

**Stream From a Gradio app in 5 lines**

Use the `submit` method to get a job you can iterate over.

In Python:

from gradio_client import Client

client = Client("gradio/llm_stream")

for result in client.submit("What's the best UI framework in Python?"):

    print(result)

... | Ergonomic API 💆 | https://gradio.app/docs/python-client/version-1-release | Python Client - Version 1 Release Docs |

Anything you can do in the UI, you can do with the client:

* 🔐Authentication

* 🛑 Job Cancelling

* ℹ️ Access Queue Position and API

* 📕 View the API information

Here's an example showing how to display the queue position of a pending job:

from gradio_client import Client

client = ... | Transparent Design 🪟 | https://gradio.app/docs/python-client/version-1-release | Python Client - Version 1 Release Docs |

The client can run in almost any Python or JavaScript environment

(node, deno, the browser, Service Workers).

Here's an example using the client from a Flask server using gevent:

from gevent import monkey

monkey.patch_all()

from gradio_client import Client

from flask import Fla... | Portable Design ⛺️ | https://gradio.app/docs/python-client/version-1-release | Python Client - Version 1 Release Docs |

**Python**

* The `serialize` argument of the `Client` class was removed and has no effect.

* The `upload_files` argument of the `Client` was removed.

* All filepaths must be wrapped in the `handle_file` method. For example, `caption = client.predict(handle_file('./dog.jpg'))`.

* The `output_dir` a... | v1.0 Migration Guide and Breaking Changes | https://gradio.app/docs/python-client/version-1-release | Python Client - Version 1 Release Docs |

The main Client class for the Python client. This class is used to connect

to a remote Gradio app and call its API endpoints.

| Description | https://gradio.app/docs/python-client/client | Python Client - Client Docs |

from gradio_client import Client

client = Client("abidlabs/whisper-large-v2")  # connecting to a Hugging Face Space

client.predict("test.mp4", api_name="/predict")

>> What a nice recording!  # returns the result of the remote API call

client = Client("https://bec81a83-5b5c-471e.gradio.live")  # conne... | Example usage | https://gradio.app/docs/python-client/client | Python Client - Client Docs |
