Add 1 files

- 2508/2508.00399.md +468 -0

2508/2508.00399.md

ADDED

|

@@ -0,0 +1,468 @@

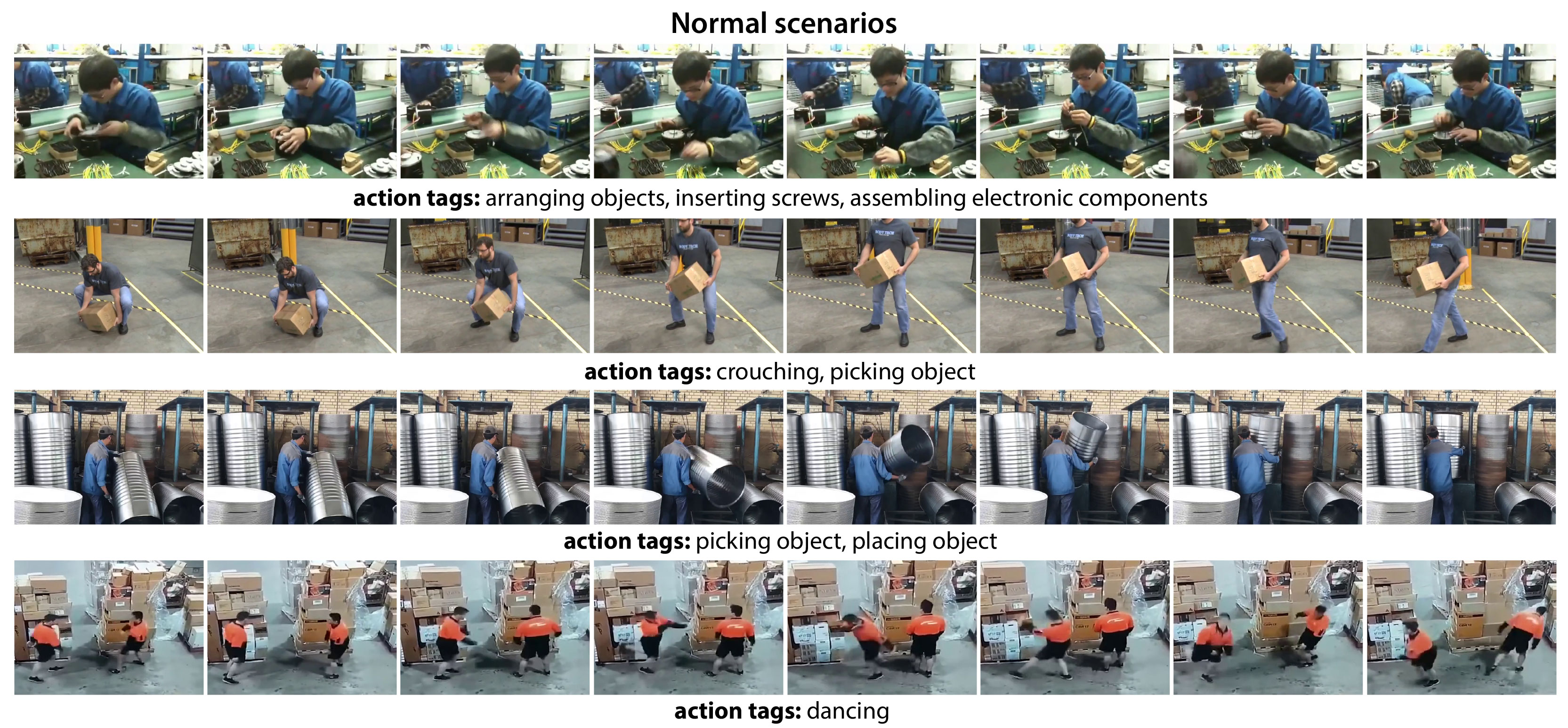

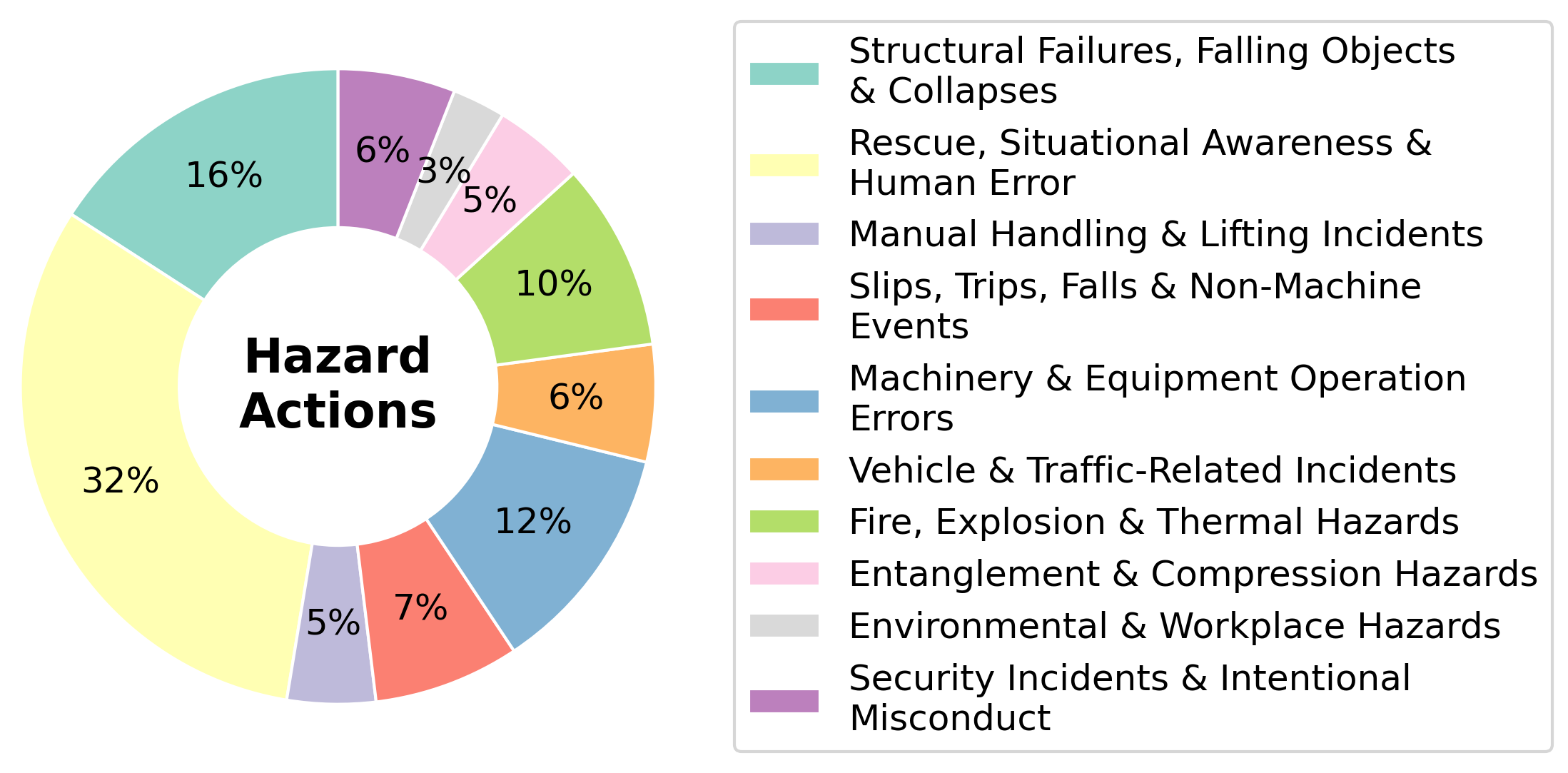

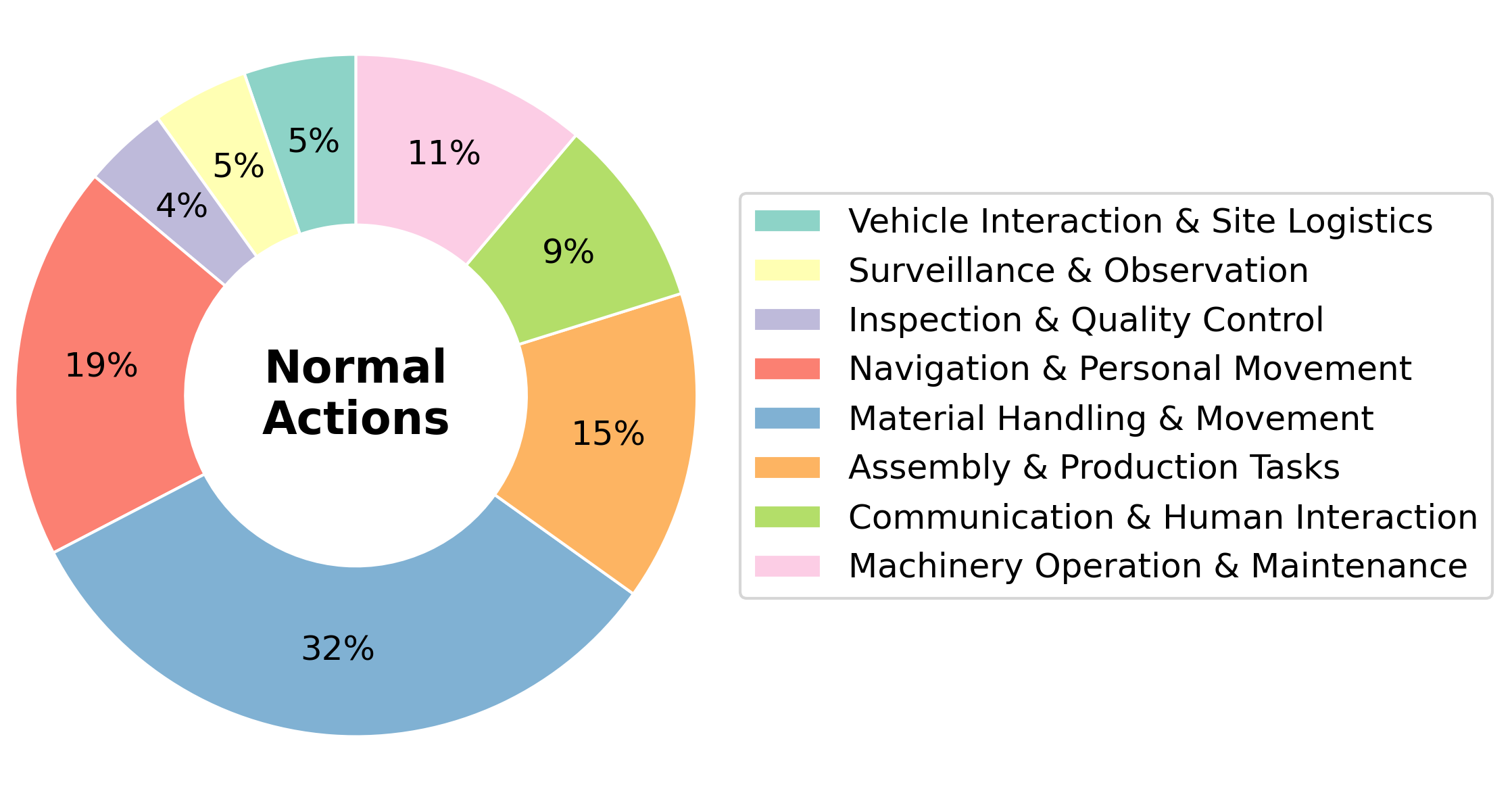

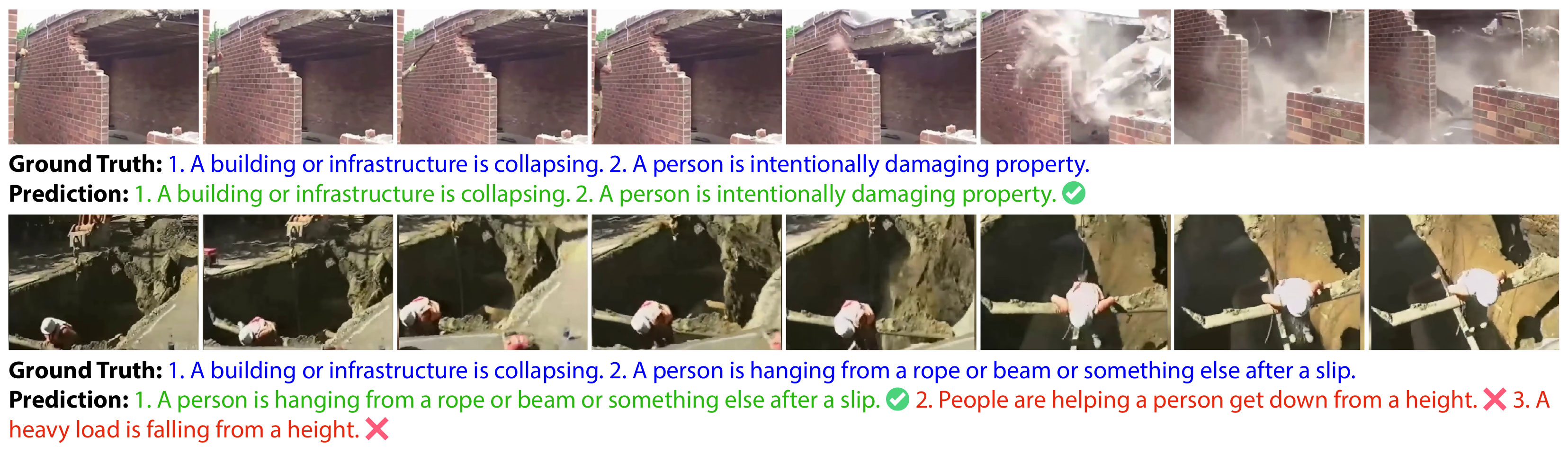

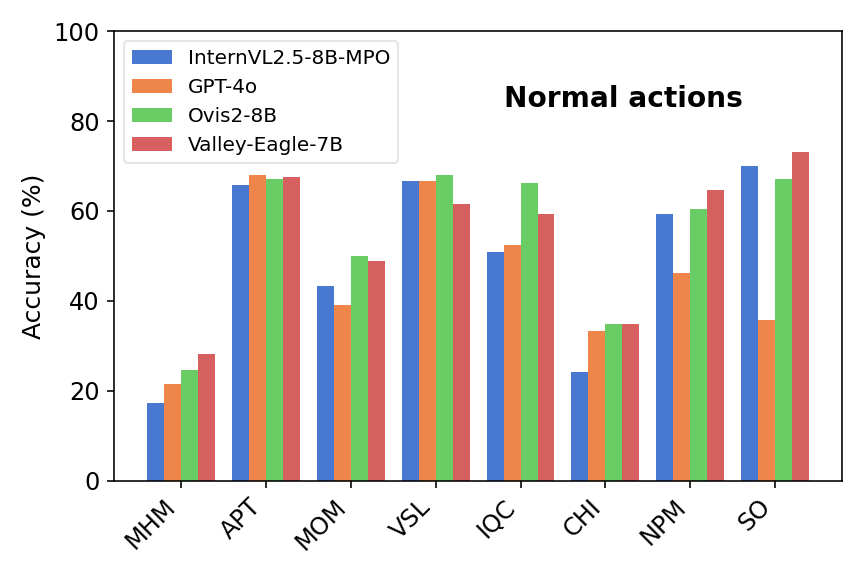

| 1 |

+

Title: iSafetyBench: A video-language benchmark for safety in industrial environment

|

| 2 |

+

|

| 3 |

+

URL Source: https://arxiv.org/html/2508.00399

|

| 4 |

+

|

| 5 |

+

Published Time: Fri, 15 Aug 2025 00:07:38 GMT

|

| 6 |

+

|

| 7 |

+

Markdown Content:

|

| 8 |

+

Yogesh Singh Rawat (yogesh@crcv.ucf.edu)

|

| 9 |

+

|

| 10 |

+

Shruti Vyas (shruti@ucf.edu)

|

| 11 |

+

|

| 12 |

+

University of Central Florida

|

| 13 |

+

|

| 14 |

+

Project page: [https://isafetybench.github.io](https://isafetybench.github.io/)

|

| 15 |

+

|

| 16 |

+

###### Abstract

|

| 17 |

+

|

| 18 |

+

Recent advances in vision-language models (VLMs) have enabled impressive generalization across diverse video understanding tasks under zero-shot settings. However, their capabilities in high-stakes industrial domains—where recognizing both routine operations and safety-critical anomalies is essential—remain largely underexplored. To address this gap, we introduce iSafetyBench, a new video-language benchmark specifically designed to evaluate model performance in industrial environments across both normal and hazardous scenarios. iSafetyBench comprises 1,100 video clips sourced from real-world industrial settings, annotated with open-vocabulary, multi-label action tags spanning 98 routine and 67 hazardous action categories. Each clip is paired with multiple-choice questions for both single-label and multi-label evaluation, enabling fine-grained assessment of VLMs in both standard and safety-critical contexts. We evaluate eight state-of-the-art video-language models under zero-shot conditions. Despite their strong performance on existing video benchmarks, these models struggle with iSafetyBench—particularly in recognizing hazardous activities and in multi-label scenarios. Our results reveal significant performance gaps, underscoring the need for more robust, safety-aware multimodal models for industrial applications. iSafetyBench provides a first-of-its-kind testbed to drive progress in this direction. The dataset is available at: [https://github.com/iSafetyBench/data](https://github.com/iSafetyBench/data).

|

| 19 |

+

|

| 20 |

+

![Image 1: [Uncaptioned image]](https://arxiv.org/html/2508.00399v2/figures/teaser.jpg)

|

| 21 |

+

|

| 22 |

+

Figure 1: Sample multi-label examples: A few frames extracted from each video. Even top-performing models such as Ovis2-8B struggle to predict nuanced actions in industrial settings, both in normal (top) and hazardous (bottom) scenarios, often confusing them with visually similar distractors.

|

| 23 |

+

|

| 24 |

+

1 Introduction

|

| 25 |

+

--------------

|

| 26 |

+

|

| 27 |

+

Recent breakthroughs in multimodal foundation models have brought vision-language models (VLMs) to the forefront of video understanding research. These models, powered by large-scale pretraining and aligned multimodal embeddings, have demonstrated impressive zero-shot capabilities across a range of tasks—including action recognition, video question answering, and temporal localization—on benchmarks such as Kinetics[[4](https://arxiv.org/html/2508.00399v2#bib.bib4)], Something-Something[[8](https://arxiv.org/html/2508.00399v2#bib.bib8)], and Ego4D[[9](https://arxiv.org/html/2508.00399v2#bib.bib9)]. As a result, VLMs are rapidly emerging as general-purpose perception engines, capable of interpreting complex real-world activities with minimal task-specific supervision. However, despite their remarkable success on open-domain video datasets, the effectiveness of VLMs in specialized, high-risk domains such as industrial environments remains largely unexplored.

|

| 28 |

+

|

| 29 |

+

This is a critical gap. Industrial and safety-critical environments present unique challenges for visual understanding: complex machinery, unpredictable human-object interactions, crowded or cluttered scenes, and, most importantly, the need to reliably identify rare but dangerous events. As industries adopt intelligent monitoring systems to reduce risk and improve workplace safety, there is a growing demand for models that can reason about both routine operations and hazardous anomalies. Unfortunately, most existing video benchmarks are not designed with these needs in mind. General-purpose datasets lack safety-specific labels, while industrial or hazard-focused datasets are limited in scope, size, or diversity.

|

| 30 |

+

|

| 31 |

+

Several prior efforts have tackled action recognition in security and industrial domains[[6](https://arxiv.org/html/2508.00399v2#bib.bib6), [19](https://arxiv.org/html/2508.00399v2#bib.bib19), [21](https://arxiv.org/html/2508.00399v2#bib.bib21), [30](https://arxiv.org/html/2508.00399v2#bib.bib30), [27](https://arxiv.org/html/2508.00399v2#bib.bib27)], yet these datasets suffer from three key limitations. First, many cover only a narrow set of activities, often choreographed or constrained to specific sites. Second, most are unimodal and closed-set, offering limited flexibility for open-vocabulary or multi-label evaluation—capabilities essential for robust real-world deployment. Third, existing benchmarks typically focus either on routine behaviors or on accidents in isolation, preventing comprehensive evaluation across the full spectrum of operational and hazardous conditions. A benchmark that jointly addresses these limitations—capturing both the complexity of real-world industrial scenes and the subtlety of safety-critical actions—is still missing.

|

| 32 |

+

|

| 33 |

+

To address these challenges, we introduce iSafetyBench, a new benchmark for open-vocabulary video-language evaluation in industrial and safety-sensitive scenarios. The dataset consists of 1,100 real-world video clips curated from publicly available online sources, covering diverse locations such as factories, warehouses, construction sites, parking lots, and retail spaces. It is organized into two balanced splits: normal routine actions (e.g., assembling parts, operating machinery, inspecting equipment) and dangerous hazardous events (e.g., structural collapses, fires, manual handling injuries, vehicle accidents). Each video is annotated with multiple open-vocabulary action labels and paired with carefully crafted multiple-choice questions for both single-label and multi-label evaluation.

|

| 34 |

+

|

| 35 |

+

We evaluate eight state-of-the-art video-language models, including both open-source and closed-source systems, under a challenging zero-shot setting. Despite strong performance on conventional benchmarks, these models show notable weaknesses on iSafetyBench—particularly in identifying hazardous events and reasoning over multiple simultaneous actions. For instance, average model accuracy drops significantly when moving from routine to hazardous clips, and from multi-label to single-label questions. These results reveal critical gaps in current VLM capabilities and motivate future research in safety-aware multimodal understanding.

|

| 36 |

+

|

| 37 |

+

Our key contributions are:

|

| 38 |

+

|

| 39 |

+

* We introduce iSafetyBench, a new open-vocabulary, multi-label video-language benchmark focused on industrial and safety-critical actions. It consists of a diverse dataset of 1,100 real-world video clips with detailed annotations across 98 routine and 67 hazard action categories, supporting both single-label and multi-label MCQ-based evaluation.

|

| 40 |

+

* We benchmark eight recent vision-language models under zero-shot settings and provide a comprehensive analysis of their performance on routine vs. hazardous actions and single-label vs. multi-label setups.

|

| 41 |

+

* We highlight key limitations of current VLMs in recognizing safety-critical events and provide a challenging new testbed to drive progress in this direction.

|

| 42 |

+

|

| 43 |

+

| Dataset | Normal scenarios | Dangerous scenarios | Multi-label | Textual data | Environment type(s) | Set type | # Normal actions | # Non-critical anomaly actions | # Danger/hazard actions | # High-level categories |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| UCF-Crime [[21](https://arxiv.org/html/2508.00399v2#bib.bib21)] | ✓ | ✓ | ✗ | ✗ | Multiple | Closed | 0 | 0 | 13 | 0 |
| InHARD [[6](https://arxiv.org/html/2508.00399v2#bib.bib6)] | ✓ | ✗ | ✗ | ✗ | Single | Closed | 74 | 0 | 0 | 14 |
| TIMo [[19](https://arxiv.org/html/2508.00399v2#bib.bib19)] | ✓ | ✗ | ✗ | ✗ | Single | Closed | 35 | 21 | 0 | 20 |
| OpenPack [[28](https://arxiv.org/html/2508.00399v2#bib.bib28)] | ✓ | ✓ | ✗ | ✗ | Single | Closed | 43 | 44 | 1 | 17 |
| Safe/Unsafe Behaviours [[30](https://arxiv.org/html/2508.00399v2#bib.bib30)] | ✓ | ✓ | ✗ | ✗ | Single | Closed | 4 | 0 | 4 | 2 |
| Construction Meta Action [[27](https://arxiv.org/html/2508.00399v2#bib.bib27)] | ✓ | ✓ | ✗ | ✗ | Single | Closed | 1 | 0 | 6 | 0 |
| iSafetyBench (Ours) | ✓ | ✓ | ✓ | ✓ | Multiple | Open | 98 | 0 | 67 | 18 |

Footnote 1: InHARD authors do not explicitly provide any category; we formed it based on the actions.

|

| 51 |

+

|

| 52 |

+

Table 1: Dataset Comparison: Comparison with existing security and industrial datasets. Our proposed iSafetyBench is the only open‐vocabulary (open-set), multi‐label benchmark that pairs textual questions with clips. It covers multiple environment types with a high number of normal and danger/hazard actions in 18 high-level categories.

|

| 53 |

+

|

| 54 |

+

2 Related Work

|

| 55 |

+

--------------

|

| 56 |

+

|

| 57 |

+

#### General Video Action Recognition.

|

| 58 |

+

|

| 59 |

+

Large-scale video action recognition datasets have played a key role in driving progress in video understanding. Datasets such as Kinetics-700[[4](https://arxiv.org/html/2508.00399v2#bib.bib4)], ActivityNet[[3](https://arxiv.org/html/2508.00399v2#bib.bib3)], Something-Something[[8](https://arxiv.org/html/2508.00399v2#bib.bib8)], Charades[[20](https://arxiv.org/html/2508.00399v2#bib.bib20)], EPIC-KITCHENS[[7](https://arxiv.org/html/2508.00399v2#bib.bib7)], and Ego4D[[9](https://arxiv.org/html/2508.00399v2#bib.bib9)] offer broad coverage of human activities across everyday contexts. These benchmarks have been widely used to train and evaluate both unimodal and multimodal models. While valuable for assessing generic video understanding, these datasets lack annotations for high-risk or safety-critical scenarios, and they do not target the unique characteristics of industrial environments such as machinery interaction, equipment handling, or dangerous incidents.

|

| 60 |

+

|

| 61 |

+

#### Surveillance and Anomaly Detection.

|

| 62 |

+

|

| 63 |

+

Several datasets focus on video security and anomaly detection, often using CCTV or drone footage in public spaces. Representative examples include UCF-Crime[[21](https://arxiv.org/html/2508.00399v2#bib.bib21)], ShanghaiTech Campus[[12](https://arxiv.org/html/2508.00399v2#bib.bib12)], XD-Violence[[25](https://arxiv.org/html/2508.00399v2#bib.bib25)], UBnormal[[1](https://arxiv.org/html/2508.00399v2#bib.bib1)], and MEVA[[5](https://arxiv.org/html/2508.00399v2#bib.bib5)]. These datasets typically capture abnormal behaviors (e.g., fights, thefts, running in restricted zones) and are used for binary anomaly classification or temporal localization. However, most of these benchmarks are either limited to a small set of coarse event types or lack the open-vocabulary, multi-label setups needed for fine-grained evaluation. Furthermore, they rarely capture industrial hazards such as equipment failure, falling objects, or operational accidents.

|

| 64 |

+

|

| 65 |

+

|

| 66 |

+

|

| 67 |

+

Figure 2: Overview of iSafetyBench Curation: Outline showing dataset generation pipeline used to curate iSafetyBench.

|

| 68 |

+

|

| 69 |

+

|

| 70 |

+

|

| 71 |

+

Figure 3: Examples from the normal dataset: Our dataset encompasses a wide spectrum of actions, from routine industrial tasks such as loading and inspecting to unexpected behaviors like dancing in a warehouse.

|

| 72 |

+

|

| 73 |

+

#### Industrial and Safety-Focused Datasets.

|

| 74 |

+

|

| 75 |

+

A smaller set of benchmarks specifically targets industrial or safety-related tasks. InHARD[[6](https://arxiv.org/html/2508.00399v2#bib.bib6)] focuses on human action recognition in industrial settings but lacks coverage of dangerous or anomalous events. TIMo[[19](https://arxiv.org/html/2508.00399v2#bib.bib19)] captures manufacturing tasks but is restricted to indoor, scripted setups. OpenPack[[28](https://arxiv.org/html/2508.00399v2#bib.bib28)] focuses on package handling activities with limited hazard coverage. Datasets like Safe/Unsafe Behaviours[[30](https://arxiv.org/html/2508.00399v2#bib.bib30)] and Construction Meta Action[[27](https://arxiv.org/html/2508.00399v2#bib.bib27)] include accident events or unsafe actions, but they typically involve very few categories and limited video volume, and are often staged. Overall, these datasets are narrow in scope, lack routine action coverage, or are closed-set with predefined labels, which limits their use for evaluating modern vision-language models in open-world safety-critical applications.

|

| 76 |

+

|

| 77 |

+

#### Multimodal and Open-Vocabulary Benchmarks.

|

| 78 |

+

|

| 79 |

+

Recent interest in multimodal and vision-language models has led to the creation of benchmarks that test video-language alignment, temporal grounding, and compositional reasoning. Datasets such as VidSitu[[18](https://arxiv.org/html/2508.00399v2#bib.bib18)], Action Genome[[10](https://arxiv.org/html/2508.00399v2#bib.bib10)], EgoSchema[[16](https://arxiv.org/html/2508.00399v2#bib.bib16)], and VideoChatBench[[29](https://arxiv.org/html/2508.00399v2#bib.bib29)] emphasize reasoning over text-video pairs, but none focus on the unique demands of industrial or safety settings. Notably, these benchmarks are designed for rich linguistic understanding but do not cover rare, high-risk events or multi-label classification. Similarly, new long-video benchmarks like LVBench[[23](https://arxiv.org/html/2508.00399v2#bib.bib23)], LongVideoBench[[24](https://arxiv.org/html/2508.00399v2#bib.bib24)], and TempCompass[[13](https://arxiv.org/html/2508.00399v2#bib.bib13)] test temporal reasoning but again lack focus on safety or hazard detection.

|

| 80 |

+

|

| 81 |

+

In summary, while prior work has made substantial progress in both action recognition and video-language modeling, there remains a significant gap in benchmarking VLMs for industrial and safety-critical applications. Existing datasets lack the diversity, scale, or multimodal evaluation protocols needed to assess zero-shot generalization in real-world, risk-sensitive environments. iSafetyBench fills this gap by providing a large, diverse, and open-vocabulary benchmark designed to jointly evaluate models on both routine industrial activities and rare hazardous events under realistic multi-label and zero-shot settings.

|

| 82 |

+

|

| 83 |

+

3 iSafetyBench: Benchmark Details

|

| 84 |

+

---------------------------------

|

| 85 |

+

|

| 86 |

+

We introduce iSafetyBench, a new video benchmark designed to evaluate the capabilities of vision-language models (VLMs) in industrial environments. Unlike existing benchmarks, which primarily focus on general activities or staged scenarios, iSafetyBench captures the complexity of real-world industrial setups across both routine operations and hazardous incidents. The dataset comprises 1,100 short video clips (4–8 seconds each), collected from diverse environments such as factories, warehouses, construction sites, and retail spaces. Each clip is annotated with multiple open-vocabulary action labels, and paired with multiple-choice questions (MCQs) to facilitate structured evaluation. We support both single-correct and multiple-correct answer settings, enabling robust assessment of discriminative and inclusive model capabilities. Importantly, the benchmark is designed for zero-shot evaluation, without any fine-tuning or task-specific adaptation, to assess generalization in safety-critical domains.

|

| 87 |

+

|

| 88 |

+

|

| 89 |

+

|

| 90 |

+

Figure 4: Examples from the danger/hazard dataset: Our dataset includes various hazardous scenarios, ranging from load collapses and personal injuries to emergency incidents like fire.

|

| 91 |

+

|

| 92 |

+

|

| 93 |

+

|

| 94 |

+

|

| 95 |

+

|

| 96 |

+

Figure 5: Dataset distribution: Distribution of action tag categories in iSafetyBench.

|

| 97 |

+

|

| 98 |

+

### 3.1 Dataset Curation Process

|

| 99 |

+

|

| 100 |

+

The construction of iSafetyBench followed a multi-stage pipeline focused on coverage, action diversity, and annotation quality. We began by defining a dual taxonomy of actions relevant to industrial settings, dividing them into routine (normal) actions and safety-critical (hazardous) events. This taxonomy was informed by workplace operation manuals, industrial safety guidelines, and publicly available accident reports. We then used Gemini 2.5 Pro to generate keyword-based search queries for each action tag, which were used to retrieve candidate videos from YouTube. All videos were manually reviewed to ensure relevance and trimmed into 4–8 second clips centered around the target action. This preprocessing step minimizes contextual distractions and ensures clarity of action content. An overview of the whole process is given in [Fig.2](https://arxiv.org/html/2508.00399v2#S2.F2 "In Surveillance and Anomaly Detection. ‣ 2 Related Work ‣ iSafetyBench: A video-language benchmark for safety in industrial environment").

|

| 101 |

+

|

| 102 |

+

#### Normal Actions.

|

| 103 |

+

|

| 104 |

+

For normal industrial behavior, we define 98 open-vocabulary action labels grouped into eight functional categories: Material Handling & Movement (e.g., pouring, lifting), Assembly & Production Tasks (e.g., screwing, sealing boxes), Machinery Operation & Maintenance (e.g., operating drills or presses), Vehicle Interaction & Site Logistics (e.g., loading/unloading trucks), Inspection & Quality Control (e.g., measuring, photographing), Communication & Human Interaction (e.g., assisting, pointing), Navigation & Personal Movement (e.g., walking, putting on PPE), and Surveillance & Observation (e.g., monitoring via camera or flashlight). These categories reflect the breadth of activities typical to real-world industrial workflows.

|

| 105 |

+

|

| 106 |

+

#### Hazardous Actions.

|

| 107 |

+

|

| 108 |

+

To capture the wide range of dangerous scenarios, we define 67 hazard labels across ten categories: Machinery & Equipment Operation Errors (e.g., unsafe forklift usage), Entanglement & Compression Hazards (e.g., clothing caught in machines), Structural Failures and Collapses (e.g., shelf or wall collapse), Fire, Explosion & Thermal Hazards (e.g., fire outbreak, equipment overheating), Manual Handling & Lifting Incidents (e.g., lifting injuries, strain-related accidents), Vehicle & Traffic Related Incidents (e.g., collisions, persons hit by vehicles), Rescue & Situational Awareness (e.g., evacuations, aiding injured workers), Environmental Hazards (e.g., unsafe floors, sparks), Slips, Trips & Falls (e.g., falls from height, tripping), and Security & Misconduct (e.g., altercations, vandalism). This taxonomy is grounded in real accident case studies and industrial safety documentation.
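As a rough illustration, the two-level taxonomy described above could be held in a plain mapping from split to category names. The layout and the names `TAXONOMY`, `LABEL_COUNTS`, and `total_categories` are hypothetical and do not reflect the released annotation format; only the category names and the label totals (98 and 67) come from the text.

```python
# Hypothetical container for the dual taxonomy. Only the category names and
# the per-split label totals are taken from the paper text.
TAXONOMY = {
    "normal": [
        "Material Handling & Movement",
        "Assembly & Production Tasks",
        "Machinery Operation & Maintenance",
        "Vehicle Interaction & Site Logistics",
        "Inspection & Quality Control",
        "Communication & Human Interaction",
        "Navigation & Personal Movement",
        "Surveillance & Observation",
    ],
    "hazard": [
        "Machinery & Equipment Operation Errors",
        "Entanglement & Compression Hazards",
        "Structural Failures and Collapses",
        "Fire, Explosion & Thermal Hazards",
        "Manual Handling & Lifting Incidents",
        "Vehicle & Traffic Related Incidents",
        "Rescue & Situational Awareness",
        "Environmental Hazards",
        "Slips, Trips & Falls",
        "Security & Misconduct",
    ],
}

# Number of open-vocabulary action labels per split, as stated in the text.
LABEL_COUNTS = {"normal": 98, "hazard": 67}


def total_categories(taxonomy):
    """Count high-level categories across both splits."""
    return sum(len(categories) for categories in taxonomy.values())
```

Counting the entries gives eight normal plus ten hazard categories, which matches the 18 high-level categories listed for iSafetyBench in Table 1.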

|

| 109 |

+

|

| 110 |

+

### 3.2 Video Retrieval and Preprocessing

|

| 111 |

+

|

| 112 |

+

To collect video data, we used Gemini 2.5 Pro to generate diverse query phrases tailored to each action label in both the normal and hazard taxonomies. These queries were used to search YouTube, from which we curated candidate videos. Annotators then manually reviewed the clips and identified segments clearly depicting a single action or a small group of relevant actions. Each selected segment was trimmed to 4–8 seconds in length to ensure focus and clarity. This manual curation process resulted in high-quality, action-centric video clips that reflect real-world variability in lighting, camera angle, and background clutter.
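The trimming itself was done manually by annotators, so the following is only a sketch of how a 4–8 second window centered on an annotated action time might be computed. The function `clip_window` and its parameters are hypothetical and not part of the benchmark tooling.

```python
def clip_window(action_time, video_duration, clip_len=6.0):
    """Return (start, end) in seconds for a clip centered on action_time.

    Hypothetical helper: clip_len must lie in the 4-8 second range used by
    the benchmark, and the window is shifted to stay inside the video.
    """
    if not 4.0 <= clip_len <= 8.0:
        raise ValueError("clip_len must be within the 4-8 second range")
    if video_duration < clip_len:
        raise ValueError("video is shorter than the requested clip")
    start = action_time - clip_len / 2.0
    # Clamp so the window never starts before 0 or ends after the video.
    start = max(0.0, min(start, video_duration - clip_len))
    return start, start + clip_len
```

An action near the start or end of a video yields a window that is no longer centered but still has the requested length.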

|

| 113 |

+

|

| 114 |

+

### 3.3 Annotation and Review

|

| 115 |

+

|

| 116 |

+

Each clip was annotated using a semi-automated pipeline. A human annotator first wrote a free-form caption describing all observable actions involving humans, tools, or vehicles. These captions were processed through Gemini 2.5 Pro, which proposed a list of potential action tags from our predefined taxonomy. The annotator then reviewed and refined these tags to ensure semantic consistency and coverage. This process balances scalability and precision, ensuring high-quality annotations while maintaining efficient throughput across the large-scale dataset.

|

| 117 |

+

|

| 118 |

+

| Model | Normal: Single Acc (%) | Normal: Multi Precision | Normal: Multi Recall | Normal: Multi F1 Score | Danger/Hazard: Single Acc (%) | Danger/Hazard: Multi Precision | Danger/Hazard: Multi Recall | Danger/Hazard: Multi F1 Score | Average of Both: Single Acc (%) | Average of Both: Multi F1 Score |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Ovis2-8B | 47.3 | 47.6 | 71.3 | 53.4 | 40.3 | 45.0 | 54.1 | 46.2 | 43.8 | 49.8 |
| InternVL2.5-8B-MPO | 42.2 | 47.2 | 64.1 | 50.8 | 38.3 | 47.0 | 57.7 | 49.0 | 40.25 | 49.9 |
| Qwen2.5-VL-7B-Instruct | 46.9 | 44.5 | 62.7 | 49.2 | 33.6 | 40.5 | 47.9 | 41.7 | 40.25 | 45.45 |
| VideoLLaMA3-7B | 38.9 | 49.6 | 37.4 | 39.7 | 32.7 | 46.2 | 34.1 | 36.5 | 35.8 | 38.1 |
| VideoChat-Flash-7B | 31.0 | 36.3 | 44.2 | 33.6 | 26.8 | 36.0 | 39.1 | 31.0 | 28.9 | 32.3 |
| Oryx-7B | 25.0 | 37.8 | 41.7 | 30.3 | 21.5 | 34.7 | 37.2 | 26.6 | 23.25 | 28.45 |
| Valley-Eagle-7B | 48.8 | 59.1 | 47.7 | 48.7 | 35.9 | 54.5 | 36.8 | 40.9 | 42.35 | 44.8 |
| GPT-4o | 40.3 | 50.0 | 59.1 | 51.6 | 37.3 | 49.3 | 45.4 | 45.1 | 38.8 | 48.35 |

|

| 129 |

+

|

| 130 |

+

Table 2: Performance Evaluation: Performance of VLMs on normal and danger/hazard scenarios of iSafetyBench.

|

| 131 |

+

|

| 132 |

+

|

| 133 |

+

|

| 134 |

+

Figure 6: Performance comparison of models: We observe a consistent drop in accuracy from multi‐choice to single‐choice evaluations, and from normal routine to hazardous video scenarios.

|

| 135 |

+

|

| 136 |

+

### 3.4 Dataset Statistics

|

| 137 |

+

|

| 138 |

+

The final dataset comprises 1,100 video clips, with 680 labeled as normal ([Fig.3](https://arxiv.org/html/2508.00399v2#S2.F3 "In Surveillance and Anomaly Detection. ‣ 2 Related Work ‣ iSafetyBench: A video-language benchmark for safety in industrial environment")) and 420 labeled as hazard scenarios ([Fig.4](https://arxiv.org/html/2508.00399v2#S3.F4 "In 3 iSafetyBench: Benchmark Details ‣ iSafetyBench: A video-language benchmark for safety in industrial environment")). Across all videos, we obtain 98 distinct normal action labels and 67 hazard labels, resulting in 1,468 annotated normal action instances and 888 hazard instances. On average, each clip contains 2–3 annotated actions, with substantial multi-label overlap due to the complexity of real-world scenarios. The dataset spans both indoor and outdoor environments, varied weather and lighting conditions, and includes both first-person and third-person viewpoints. [Fig.5](https://arxiv.org/html/2508.00399v2#S3.F5 "In 3 iSafetyBench: Benchmark Details ‣ iSafetyBench: A video-language benchmark for safety in industrial environment") visualizes the frequency distribution of action categories, highlighting the prevalence of routine tasks such as material handling and the prominence of situational awareness and structural failures in hazard scenarios.
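The reported counts can be cross-checked with a few lines of arithmetic. The sketch below uses only the numbers quoted in this paragraph; the variable and function names are illustrative.

```python
# Clip and annotated-instance counts as reported in the text.
clips = {"normal": 680, "hazard": 420}
instances = {"normal": 1468, "hazard": 888}


def labels_per_clip(split):
    """Average number of annotated action instances per clip in one split."""
    return instances[split] / clips[split]


# Average over the whole benchmark (both splits pooled).
overall = sum(instances.values()) / sum(clips.values())
```

Both splits come out slightly above two labels per clip on average, at the lower end of the stated 2–3 range.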

|

| 139 |

+

|

| 140 |

+

### 3.5 Evaluation Setup

|

| 141 |

+

|

| 142 |

+

To evaluate model performance in a structured setting, we pair each video with multiple-choice questions designed to test understanding of observed actions. For every action-labeled video, we construct two types of MCQs. In the single-correct-choice setting, the question targets one ground-truth action and includes 15 distractors, with only one correct answer. In the multiple-correct-choice setting, several actions may be valid, and the model must identify all applicable answers from a list of 16 options. This dual formulation supports both precision-oriented and recall-oriented evaluation.

|

| 143 |

+

|

| 144 |

+

Distractor options are generated using Gemini 2.5 Pro by selecting actions that are semantically or visually similar to the ground truth but incorrect. These options are manually verified and refined to ensure that each question is challenging and unambiguous, avoiding trivial elimination.
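A minimal sketch of how a 16-option question could be assembled from ground-truth labels and a pool of vetted distractors follows. It makes two assumptions: distractors are drawn at random from a supplied pool, which stands in for the Gemini-based selection and manual verification described above, and `build_mcq` and its arguments are hypothetical names.

```python
import random

NUM_OPTIONS = 16  # each question lists 16 options in total


def build_mcq(correct, distractor_pool, rng):
    """Return (options, answer_indices) for one question.

    correct: ground-truth action labels (one label for the single-correct
        setting, several for the multiple-correct setting).
    distractor_pool: candidate wrong labels, assumed to be already vetted.
    rng: a random.Random instance, passed in for reproducibility.
    """
    correct_set = set(correct)
    # De-duplicate the pool (keeping order) and drop any true labels.
    pool = [d for d in dict.fromkeys(distractor_pool) if d not in correct_set]
    needed = NUM_OPTIONS - len(correct)
    if needed < 0 or len(pool) < needed:
        raise ValueError("cannot build a 16-option question from these inputs")
    options = list(correct) + rng.sample(pool, needed)
    rng.shuffle(options)
    answers = sorted(i for i, opt in enumerate(options) if opt in correct_set)
    return options, answers
```

With one ground-truth label this yields one correct answer and 15 distractors; with several labels, the distractor count shrinks so the list still has 16 options.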

|

| 145 |

+

|

| 146 |

+

#### Evaluation Metrics.

|

| 147 |

+

|

| 148 |

+

We adopt accuracy for single-correct-choice questions, where a response is correct only if the model selects the single ground-truth action. For multiple-correct-choice questions, we compute precision, recall, and F1 score based on the set of selected versus true labels. Precision captures how many of the predicted actions are correct, recall captures how many of the ground-truth actions are retrieved, and F1 score balances the two. This dual-metric setup allows us to evaluate both fine-grained decision-making and broader scene understanding under open-vocabulary, zero-shot settings.
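The metrics above can be sketched as follows. This is a minimal illustration under two assumptions: answers are represented as option labels, and precision, recall, and F1 are computed per question. The text does not say how scores are aggregated across questions, and the function names are hypothetical.

```python
def single_choice_accuracy(predictions, targets):
    """Percentage of questions where the chosen option equals the ground truth."""
    if not targets:
        return 0.0
    hits = sum(p == t for p, t in zip(predictions, targets))
    return 100.0 * hits / len(targets)


def multi_choice_prf(predicted, truth):
    """Set-based precision, recall, and F1 for one multiple-correct question."""
    predicted, truth = set(predicted), set(truth)
    tp = len(predicted & truth)  # options that were both selected and correct
    precision = tp / len(predicted) if predicted else 0.0
    recall = tp / len(truth) if truth else 0.0
    f1 = 2 * precision * recall / (precision + recall) if tp else 0.0
    return precision, recall, f1
```

For example, selecting options A, B, and C when only A and B are correct gives precision 2/3 and recall 1, so the extra selection lowers F1 without zeroing the score.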

|

| 149 |

+

|

| 150 |

+

|

| 151 |

+

|

| 152 |

+

Figure 7: Success and failure examples for normal single-choice MCQs: In the top sequence, Ovis2-8B correctly sees someone putting something down on a surface. In the bottom sequence, it mistakes that motion for tearing paper.

|

| 153 |

+

|

| 154 |

+

|

| 155 |

+

|

| 156 |

+

Figure 8: Success and failure examples for hazard single-choice MCQs: In the top sequence, Ovis2-8B correctly spots someone moving away from danger. In the bottom sequence, it mistakes that action for someone running a rolling machine unsafely.

|

| 157 |

+

|

| 158 |

+

4 Experiments and Results

|

| 159 |

+

-------------------------

|

| 160 |

+

|

| 161 |

+

#### Video Language Models:

|

| 162 |

+

|

| 163 |

+

We evaluate eight state-of-the-art Vision-Language Models (VLMs) on our normal and accident video datasets. The open-source models include Ovis2-8B [[15](https://arxiv.org/html/2508.00399v2#bib.bib15)], InternVL2.5-8B-MPO [[22](https://arxiv.org/html/2508.00399v2#bib.bib22)], Qwen2.5-VL-7B-Instruct [[2](https://arxiv.org/html/2508.00399v2#bib.bib2)], VideoLLaMA3-7B [[29](https://arxiv.org/html/2508.00399v2#bib.bib29)], VideoChat-Flash-7B [[11](https://arxiv.org/html/2508.00399v2#bib.bib11)], Valley-Eagle-7B [[26](https://arxiv.org/html/2508.00399v2#bib.bib26)], and Oryx-7B [[14](https://arxiv.org/html/2508.00399v2#bib.bib14)], with parameter counts ranging from 7 to 8 billion. GPT-4o [[17](https://arxiv.org/html/2508.00399v2#bib.bib17)] is the only closed-source model evaluated in our study. We evaluate all the videos in our dataset in a zero-shot setting.

|

| 164 |

+

|

| 165 |

+

### 4.1 Results and analysis

|

| 166 |

+

|

| 167 |

+

As seen in [Tab.2](https://arxiv.org/html/2508.00399v2#S3.T2 "In 3.3 Annotation and Review ‣ 3 iSafetyBench: Benchmark Details ‣ iSafetyBench: A video-language benchmark for safety in industrial environment") and [Fig.6](https://arxiv.org/html/2508.00399v2#S3.F6 "In 3.3 Annotation and Review ‣ 3 iSafetyBench: Benchmark Details ‣ iSafetyBench: A video-language benchmark for safety in industrial environment"), most models score between 35% and 50%. This shows that their overall understanding of industrial and security actions remains limited. The highest result is 53.4% by Ovis2-8B on normal multiple-correct-choice videos. This emphasizes that even the most advanced models struggle to interpret these scenarios accurately. [Fig.7](https://arxiv.org/html/2508.00399v2#S3.F7 "In Evaluation Metrics. ‣ 3.5 Evaluation Setup ‣ 3 iSafetyBench: Benchmark Details ‣ iSafetyBench: A video-language benchmark for safety in industrial environment"), [Fig.8](https://arxiv.org/html/2508.00399v2#S3.F8 "In Evaluation Metrics. ‣ 3.5 Evaluation Setup ‣ 3 iSafetyBench: Benchmark Details ‣ iSafetyBench: A video-language benchmark for safety in industrial environment"), and [Fig.9](https://arxiv.org/html/2508.00399v2#S4.F9 "In Normal vs. Hazard Performance: ‣ 4.1 Results and analysis ‣ 4 Experiments and Results ‣ iSafetyBench: A video-language benchmark for safety in industrial environment") present qualitative results from iSafetyBench, showcasing representative examples from both normal and hazardous scenarios under single-label and multi-label evaluation settings. These figures highlight the model outputs from Ovis2-8B, one of the best-performing vision-language models in our study. For each setting, we illustrate both successful predictions—where the model correctly identifies all relevant actions—and failure cases, where the model misinterprets the scene or misses key safety-critical cues.


#### Single‐label vs. Multi‐label Performance:


Across our eight models, the average gain from single-label accuracy to multi-label F1 is 4.6 percentage points for normal actions and 6.3 percentage points for hazard actions. The largest individual improvement on normal actions is 11.3 points (GPT-4o: 40.3% → 51.6%), and on hazard actions it is 10.7 points (InternVL2.5-8B-MPO: 38.3% → 49.0%). This consistent uplift reflects the partial credit that multi-label evaluation grants for correctly identified subsets of actions. In the _Average of Both_ columns of [Tab.2](https://arxiv.org/html/2508.00399v2#S3.T2 "In 3.3 Annotation and Review ‣ 3 iSafetyBench: Benchmark Details ‣ iSafetyBench: A video-language benchmark for safety in industrial environment"), multi-label scores exceed single-label accuracy for most models. InternVL2.5-8B-MPO exhibits the largest mean uplift (40.25% vs. 49.90%), followed by GPT-4o (38.80% vs. 48.35%) and Ovis2-8B (43.80% vs. 49.80%), whereas Valley-Eagle-7B shows a much smaller gap (42.35% vs. 44.80%).
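To make the notion of partial credit concrete, the following is a minimal sketch of how an all-or-nothing single-label score and a per-question multi-label F1 could be computed. The function names and the choice of a per-question (sample-wise) F1 are assumptions made for illustration; the exact F1 variant used in the benchmark is defined in its evaluation setup and may differ.

```python
def single_label_score(predicted: str, gold: str) -> float:
    """All-or-nothing credit: 1.0 only if the single chosen option is correct."""
    return 1.0 if predicted == gold else 0.0


def multi_label_f1(predicted: set, gold: set) -> float:
    """Per-question F1 between the predicted and gold option sets.

    Assumption: F1 is computed per question and then averaged over questions.
    """
    if not predicted and not gold:
        return 1.0
    true_positives = len(predicted & gold)
    if true_positives == 0:
        return 0.0
    precision = true_positives / len(predicted)
    recall = true_positives / len(gold)
    return 2 * precision * recall / (precision + recall)


# Hypothetical question whose correct options are A and C.
gold_options = {"A", "C"}
full_match = multi_label_f1({"A", "C"}, gold_options)  # every correct option found
partial_match = multi_label_f1({"A"}, gold_options)    # one of two options found
no_match = multi_label_f1({"B"}, gold_options)         # nothing correct
print(full_match, round(partial_match, 3), no_match)
```

Under this definition, a model that finds one of two correct options still earns a non-zero score, whereas the single-label setting gives no credit for a near miss.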


#### Normal vs. Hazard Performance:


On average, models score higher on normal-action questions than on hazard questions. Across the eight models, single-label accuracy drops by an average of 6.7 percentage points from normal to hazard videos. In the multi-label setting, the average gap is 5 percentage points. The largest drop in single-label performance occurs for Qwen2.5-VL-7B-Instruct (46.9% → 33.6%, a 13.3-point gap), while Valley-Eagle-7B exhibits the biggest multi-label decline (48.7% → 40.9%, a 7.8-point gap). These results show that recognizing rare or safety-critical events remains substantially more challenging than recognizing routine activities.
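The gaps quoted here can be reproduced with simple arithmetic. The sketch below uses only figures stated in the text; the variable and function names are illustrative. The final check assumes that all four averages (the two single-to-multi gains and the two normal-to-hazard gaps) are taken over the same eight models, in which case the multi-label gap equals the single-label gap minus the difference between the hazard and normal gains.

```python
# (normal score, hazard score) pairs quoted in the text, in percent.
reported = {
    "Qwen2.5-VL-7B-Instruct, single-label": (46.9, 33.6),
    "Valley-Eagle-7B, multi-label": (48.7, 40.9),
}


def gap_in_points(normal: float, hazard: float) -> float:
    """Normal-minus-hazard gap in percentage points, rounded to one decimal."""
    return round(normal - hazard, 1)


gaps = {name: gap_in_points(*scores) for name, scores in reported.items()}

# Consistency check between the averages quoted in the two subsections.
avg_gain_normal = 4.6   # mean single -> multi gain on normal actions
avg_gain_hazard = 6.3   # mean single -> multi gain on hazard actions
avg_single_gap = 6.7    # mean normal -> hazard gap, single-label
implied_multi_gap = round(avg_single_gap - (avg_gain_hazard - avg_gain_normal), 1)

print(gaps, implied_multi_gap)
```

The implied multi-label gap matches the 5-point figure reported above, so the averages in the two subsections are mutually consistent.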


Figure 9: Success and failure examples for hazard multi-choice MCQs: In the top sequence, Ovis2-8B correctly picks out both the building collapsing and someone tearing down the wall. In the bottom sequence, it sees the person hanging after a slip but then wrongly labels the scene as people helping them down and a heavy load falling.


Figure 10: Performance Analysis Across Categories: Accuracy distribution across action categories for the four top‐performing models. The models show lower performance in categories such as Material Handling & Movement (MHM), Communication & Human Interaction (CHI), Rescue, Situational Awareness & Human Error (RSA), and Manual Handling & Lifting Incidents (MHL).


#### Category‐wise Performance:


We examined the category-wise performance of our four top-performing models [[15](https://arxiv.org/html/2508.00399v2#bib.bib15), [2](https://arxiv.org/html/2508.00399v2#bib.bib2), [17](https://arxiv.org/html/2508.00399v2#bib.bib17), [26](https://arxiv.org/html/2508.00399v2#bib.bib26)]. As shown in [Fig.10](https://arxiv.org/html/2508.00399v2#S4.F10 "In Normal vs. Hazard Performance: ‣ 4.1 Results and analysis ‣ 4 Experiments and Results ‣ iSafetyBench: A video-language benchmark for safety in industrial environment"), all four models achieve their highest accuracy on structured, object-centric normal actions such as Assembly & Production Tasks (APT) and Vehicle Interaction & Site Logistics (VSL), with scores clustered around 65–70%, followed closely by Surveillance & Observation (SO). In contrast, Material Handling & Movement (MHM) and Communication & Human Interaction (CHI) remain challenging (20–35%). For hazard actions, events with strong, distinctive visual cues, such as Fire, Explosion & Thermal Hazards (FET) and Security Incidents & Intentional Misconduct (SII), reach 55–65% accuracy, while subtle, context-dependent hazard categories such as Rescue, Situational Awareness & Human Error (RSA) and Manual Handling & Lifting Incidents (MHL) fall below 30%.
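A category-wise breakdown of this kind can be obtained by grouping per-question outcomes by action category. The sketch below is illustrative only: the record layout and the toy outcomes are made up for the example and are not taken from the benchmark's data or code.

```python
from collections import defaultdict


def category_accuracy(records):
    """Return accuracy in percent per category.

    `records` is an iterable of (category_code, is_correct) pairs,
    a hypothetical layout chosen for this example.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for category, is_correct in records:
        totals[category] += 1
        correct[category] += int(is_correct)
    return {cat: 100.0 * correct[cat] / totals[cat] for cat in totals}


# Toy outcomes, invented purely to exercise the function.
toy_records = [
    ("APT", True), ("APT", True), ("APT", False),
    ("MHM", False), ("MHM", True), ("MHM", False), ("MHM", False),
]
per_category = category_accuracy(toy_records)
for category, accuracy in sorted(per_category.items()):
    print(f"{category}: {accuracy:.1f}%")
```

Reporting results per category rather than as a single aggregate makes it possible to see that a model with a moderate overall score can still be weak on specific action types.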


5 Conclusion
------------


In this work, we introduced iSafetyBench, a new benchmark designed to assess the capabilities of vision-language models (VLMs) in industrial and safety-critical scenarios. Unlike prior datasets that focus on generic or narrowly defined tasks, iSafetyBench covers a broad spectrum of real-world activities across both routine operations and hazardous incidents. The dataset supports open-vocabulary multi-label annotation and structured multiple-choice question answering, enabling zero-shot evaluation of video-language understanding in both single-label and multi-label settings.


We conducted a comprehensive evaluation of eight state-of-the-art VLMs under zero-shot conditions and found that current models struggle to generalize to the complexity of industrial and safety-critical tasks. Performance consistently declines on hazardous actions compared to routine activities, and models obtain higher scores in multi-label settings, where partial credit is possible. Moreover, models tend to perform better on object-centric, visually distinct actions than on subtle, interaction-heavy behaviors, which highlights a key shortcoming in current model reasoning capabilities.


These findings reveal the significant gap between existing models and the demands of real-world industrial and safety applications. By offering a challenging, diverse, and safety-relevant benchmark, iSafetyBench provides a crucial testbed for driving progress in VLMs toward robust understanding in high-stakes environments.


6 Acknowledgement
-----------------


This research was supported in part by The Florida High Tech Corridor’s Matching Grant Research Program at UCF.


References
----------


* Acsintoae et al. [2022] Andra Acsintoae, Andrei Florescu, Mariana-Iuliana Georgescu, Tudor Mare, Paul Sumedrea, Radu Tudor Ionescu, Fahad Shahbaz Khan, and Mubarak Shah. Ubnormal: New benchmark for supervised open-set video anomaly detection. In _Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)_, 2022.


* Bai et al. [2025] Shuai Bai, Keqin Chen, Xuejing Liu, Jialin Wang, Wenbin Ge, Sibo Song, Kai Dang, Peng Wang, Shijie Wang, Jun Tang, Humen Zhong, Yuanzhi Zhu, Mingkun Yang, Zhaohai Li, Jianqiang Wan, Pengfei Wang, Wei Ding, Zheren Fu, Yiheng Xu, Jiabo Ye, Xi Zhang, Tianbao Xie, Zesen Cheng, Hang Zhang, Zhibo Yang, Haiyang Xu, and Junyang Lin. Qwen2.5-vl technical report. _arXiv preprint arXiv:2502.13923_, 2025.


* Caba Heilbron et al. [2015] Fabian Caba Heilbron, Victor Escorcia, Bernard Ghanem, and Juan Carlos Niebles. Activitynet: A large-scale video benchmark for human activity understanding. In _Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR)_, pages 961–970, 2015.


* Carreira et al. [2019] Joao Carreira, Eric Noland, Chloe Hillier, and Andrew Zisserman. A short note on the kinetics-700 human action dataset. _arXiv preprint arXiv:1907.06987_, 2019.


* Corona et al. [2021] Kellie Corona, Katie Osterdahl, Roderic Collins, and Anthony Hoogs. Meva: A large-scale multiview, multimodal video dataset for activity detection. In _Proceedings of the IEEE/CVF Winter Conference on Applications of Computer Vision (WACV)_, pages 1060–1068, 2021.


* DALLEL et al. [2020] M. DALLEL, V. HAVARD, D. BAUDRY, and X. SAVATIER. Inhard - industrial human action recognition dataset in the context of industrial collaborative robotics. In _2020 IEEE International Conference on Human-Machine Systems (ICHMS)_, pages 1–6, 2020.


* Damen et al. [2018] Dima Damen, Hazel Doughty, Giovanni Maria Farinella, Sanja Fidler, Antonino Furnari, Evangelos Kazakos, Davide Moltisanti, Jonathan Munro, Toby Perrett, Will Price, and Michael Wray. Scaling egocentric vision: The epic-kitchens dataset. In _Proceedings of the European Conference on Computer Vision (ECCV)_, 2018.


* Goyal et al. [2017] Raghav Goyal, Samira Ebrahimi Kahou, Vincent Michalski, Joanna Materzynska, Susanne Westphal, Heuna Kim, Valentin Haenel, Ingo Fruend, Peter Yianilos, Moritz Mueller-Freitag, Florian Hoppe, Christian Thurau, Ingo Bax, and Roland Memisevic. The "something something" video database for learning and evaluating visual common sense. In _Proceedings of the IEEE International Conference on Computer Vision (ICCV)_, pages 5842–5850, 2017.


* Grauman et al. [2022] Kristen Grauman et al. Ego4d: Around the world in 3,000 hours of egocentric video. In _Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)_, pages 18995–19012, 2022.


* Ji et al. [2020] Jingwei Ji, Ranjay Krishna, Li Fei-Fei, and Juan Carlos Niebles. Action genome: Actions as compositions of spatio-temporal scene graphs. In _2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)_, pages 10233–10244, 2020.


* Li et al. [2025] Xinhao Li, Yi Wang, Jiashuo Yu, Xiangyu Zeng, Yuhan Zhu, Haian Huang, Jianfei Gao, Kunchang Li, Yinan He, Chenting Wang, Yu Qiao, Yali Wang, and Limin Wang. Videochat-flash: Hierarchical compression for long-context video modeling. _arXiv preprint arXiv:2501.00574_, 2025.


* Liu et al. [2018] Wen Liu, Weixin Luo, Dongze Lian, and Shenghua Gao. Future frame prediction for anomaly detection – a new baseline. In _Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition (CVPR)_, pages 6536–6545, 2018.


* Liu et al. [2024a] Yuanxin Liu, Shicheng Li, Yi Liu, Yuxiang Wang, Shuhuai Ren, Lei Li, Sishuo Chen, Xu Sun, and Lu Hou. TempCompass: Do video LLMs really understand videos? In _Findings of the Association for Computational Linguistics: ACL 2024_, pages 8731–8772, Bangkok, Thailand, 2024a. Association for Computational Linguistics.


* Liu et al. [2024b] Zuyan Liu, Yuhao Dong, Ziwei Liu, Winston Hu, Jiwen Lu, and Yongming Rao. Oryx MLLM: On-demand spatial-temporal understanding at arbitrary resolution. _arXiv preprint arXiv:2409.12961_, 2024b.


* Lu et al. [2024] Shiyin Lu, Yang Li, Qing-Guo Chen, Zhao Xu, Weihua Luo, Kaifu Zhang, and Han-Jia Ye. Ovis: Structural embedding alignment for multimodal large language model. _arXiv preprint arXiv:2405.20797_, 2024.


* Mangalam et al. [2023] Karttikeya Mangalam, Raiymbek Akshulakov, and Jitendra Malik. Egoschema: A diagnostic benchmark for very long-form video language understanding. In _Advances in Neural Information Processing Systems_, pages 46212–46244. Curran Associates, Inc., 2023.


* OpenAI [2024] OpenAI. Introducing GPT-4o. [https://openai.com/index/gpt-4o-and-more-tools-to-chatgpt-free/](https://openai.com/index/gpt-4o-and-more-tools-to-chatgpt-free/), 2024. Blog post, accessed 2025-07-02.


* Sadhu et al. [2021] Arka Sadhu, Tanmay Gupta, Mark Yatskar, Ram Nevatia, and Aniruddha Kembhavi. Visual semantic role labeling for video understanding. In _2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)_, pages 5585–5596, 2021.


* Schneider et al. [2022] Pascal Schneider, Yuriy Anisimov, Raisul Islam, Bruno Mirbach, Jason Rambach, Didier Stricker, and Frédéric Grandidier. Timo—a dataset for indoor building monitoring with a time-of-flight camera. _Sensors_, 22(11), 2022.


* Sigurdsson et al. [2016] Gunnar A. Sigurdsson, Gül Varol, Xiaolong Wang, Ali Farhadi, Ivan Laptev, and Abhinav Gupta. Hollywood in homes: Crowdsourcing data collection for activity understanding. In _Computer Vision – ECCV 2016_, pages 510–526, 2016.


* Sultani et al. [2018] Waqas Sultani, Chen Chen, and Mubarak Shah. Real-world anomaly detection in surveillance videos. In _2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition_, pages 6479–6488, 2018.


* Wang et al. [2024a] Weiyun Wang, Zhe Chen, Wenhai Wang, Yue Cao, Yangzhou Liu, Zhangwei Gao, Jinguo Zhu, Xizhou Zhu, Lewei Lu, Yu Qiao, and Jifeng Dai. Enhancing the reasoning ability of multimodal large language models via mixed preference optimization. _arXiv preprint arXiv:2411.10442_, 2024a.


* Wang et al. [2024b] Weihan Wang, Zehai He, Wenyi Hong, Yean Cheng, Xiaohan Zhang, Ji Qi, Xiaotao Gu, Shiyu Huang, Bin Xu, Yuxiao Dong, Ming Ding, and Jie Tang. Lvbench: An extreme long video understanding benchmark, 2024b.


* Wu et al. [2024] Haoning Wu, Dongxu Li, Bei Chen, and Junnan Li. Longvideobench: A benchmark for long-context interleaved video-language understanding. In _Advances in Neural Information Processing Systems_, pages 28828–28857. Curran Associates, Inc., 2024.


* Wu et al. [2020] Peng Wu, Jing Liu, Yujia Shi, Yujia Sun, Fangtao Shao, Zhaoyang Wu, and Zhiwei Yang. Not only look, but also listen: Learning multimodal violence detection under weak supervision. In _Proceedings of the European Conference on Computer Vision (ECCV)_, 2020.


* Wu et al. [2025] Ziheng Wu, Zhenghao Chen, Ruipu Luo, Can Zhang, Yuan Gao, Zhentao He, Xian Wang, Haoran Lin, and Minghui Qiu. Valley2: Exploring multimodal models with scalable vision-language design. _arXiv preprint arXiv:2501.05901_, 2025.


* Yang et al. [2023] Meng Yang, Chengke Wu, Yuanjun Guo, Rui Jiang, Feixiang Zhou, Jianlin Zhang, and Zhile Yang. Transformer-based deep learning model and video dataset for unsafe action identification in construction projects. _Automation in Construction_, 146:104703, 2023.


* Yoshimura et al. [2024] Naoya Yoshimura, Jaime Morales, Takuya Maekawa, and Takahiro Hara. OpenPack: A Large-Scale Dataset for Recognizing Packaging Works in IoT-Enabled Logistic Environments. In _2024 IEEE International Conference on Pervasive Computing and Communications (PerCom)_, pages 90–97, Los Alamitos, CA, USA, 2024. IEEE Computer Society.


* Zhang et al. [2025] Boqiang Zhang, Kehan Li, Zesen Cheng, Zhiqiang Hu, Yuqian Yuan, Guanzheng Chen, Sicong Leng, Yuming Jiang, Hang Zhang, Xin Li, Peng Jin, Wenqi Zhang, Fan Wang, Lidong Bing, and Deli Zhao. Videollama 3: Frontier multimodal foundation models for image and video understanding. _arXiv preprint arXiv:2501.13106_, 2025.


* Önal and Dandıl [2024] Oğuzhan Önal and Emre Dandıl. Video dataset for the detection of safe and unsafe behaviours in workplaces. _Data in Brief_, 56:110791, 2024.


Supplementary Material


In the supplementary material, we provide the detailed list of actions for both the normal and hazard datasets.


A List of Normal Actions
------------------------

Material Handling & Movement

1. pouring something
2. holding object
3. moving object
4. placing object
5. stacking objects
6. pulling object
7. pushing object
8. rotating object
9. folding fabric
10. tying fabric
11. stamping object
12. loading materials
13. unloading materials
14. picking object
15. rotating wood planks
16. tearing something
17. poking object
18. arranging objects
19. rolling object
20. wrapping chain
21. attaching label

Assembly & Production Tasks

22. assembling electronic components
23. screwing parts
24. adjusting wiring
25. inserting screws
26. cutting with machine
27. bending metal sheet
28. cleaning object
29. sealing boxes
30. scanning label
31. sewing
32. connecting pipes
33. testing product functionality
34. heating metal
35. opening panel cover
36. closing panel cover
37. digging

Machinery Operation & Maintenance

38. operating machine
39. pressing button
40. adjusting fastener with wrench
41. hammering component
42. using jack to lift vehicle
43. adjusting machine components
44. detaching part from machinery

Vehicle Interaction & Site Logistics

45. driving vehicle
46. parking vehicle
47. backing vehicle
48. opening vehicle door
49. closing vehicle door
50. entering vehicle
51. exiting vehicle
52. loading to truck
53. unloading from truck
54. attaching chain to vehicle
55. changing tire
56. operating industrial vehicle

Inspection & Quality Control

57. inspecting equipment or text
58. looking at screen
59. measuring with tape
60. writing notes
61. taking a photo

Communication & Human Interaction

62. talking
63. pointing at something
64. signaling to someone
65. posing for camera
66. calling for help
67. assisting injured coworker
68. arguing
69. arresting person
70. knocking on window
71. shaking hands

Navigation & Personal Movement

72. adjusting clothing
73. standing
74. walking
75. running
76. entering doorway
77. exiting doorway
78. crouching
79. bending down
80. balancing on beam
81. dancing
82. showing discomfort
83. jumping
84. rubbing eyes
85. rubbing head
86. yawning
87. checking self
88. sitting
89. falling down
90. eating
91. getting up
92. putting on PPE
93. taking off PPE
94. crawling

Surveillance & Observation

95. monitoring onboard camera
96. appearing on security feed
97. searching area with flashlight
98. watching process


B List of Dangerous/Hazard Actions
----------------------------------

Machinery & Equipment Operation Errors

1. operating forklift
2. forklift blade detaching
3. operating heavy equipment dangerously
4. operating hydraulic press
5. operating rolling machine
6. rotating machine lever
7. adjusting machine while running
8. robotic arm misoperation
9. walking on moving conveyor
10. misusing lift platform
11. machine part flying off
12. improper use of flamethrower

Entanglement & Compression Hazards

13. shirt caught in machine
14. hair caught in appliance
15. foot stuck in conveyor
16. body pulled into machine
17. trapped between closing machine sides
18. crushed under overturned vehicle

Structural Failures, Falling Objects & Collapses

19. structural collapse
20. falling load
21. heavy object slipping
22. break under load
23. warehouse shelves toppling
24. crane imbalance with suspended load
25. glass shattering on impact
26. unloading truck load fall

Fire, Explosion & Thermal Hazards

27. fire incident
28. pressurized vessel explosion
29. machine explosion
30. gas burst

Manual Handling & Lifting Incidents

31. lifting heavy load
32. pushing heavy load
33. pulling heavy load
34. carrying heavy load
35. carrying object and slipping

Vehicle & Traffic-Related Incidents

36. driving car
37. vehicle crash into building or stationary object
38. vehicle losing control
39. collision between vehicles
40. driver thrown during crash
41. car hood malfunction or improper interaction
42. person dragged by vehicle
43. vehicle falling off edge

Rescue, Situational Awareness & Human Error

44. lifting object off person
45. extinguishing fire
46. rescue effort
47. helping person down from height
48. searching debris for victims
49. signalling others about hazard
50. filming incident
51. watching incident passively
52. escaping from danger

Environmental & Workplace Hazards

53. equipment emitting sparks
54. overstacked shelves
55. cluttered workspace
56. flooded floor
57. platform failure

Slips, Trips, Falls & Non-Machine Events

58. person falling down
59. tree falling nearby
60. hanging from something after slip

Security Incidents & Intentional Misconduct

61. moving in a suspicious manner
62. physical altercation or fighting
63. vandalism or intentional property damage
64. intention of theft
65. police arrest
66. police search
67. firearm discharge
