Unnamed: 0 (int64, 0-832k) | id (float64, 2.49B-32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19-19) | repo (stringlengths, 7-112) | repo_url (stringlengths, 36-141) | action (stringclasses, 3 values) | title (stringlengths, 1-853) | labels (stringlengths, 4-898) | body (stringlengths, 2-262k) | index (stringclasses, 13 values) | text_combine (stringlengths, 96-262k) | label (stringclasses, 2 values) | text (stringlengths, 96-250k) | binary_label (int64, 0-1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

4,417 | 22,740,492,328 | IssuesEvent | 2022-07-07 02:58:34 | BioArchLinux/Packages | https://api.github.com/repos/BioArchLinux/Packages | closed | [MAINTAIN] RESTfulSummarizedExperiment error load | maintain | <!--

Please report the error of one package in one issue! Use multi issues to report multi bugs.

Thanks!

-->

**Log of the bug**

<details>

```

* installing *source* package ‘tenXplore’ ...

** using staged installation

** R

** data

** inst

** byte-compile and prepare package for lazy loading

Error: cla... | True | [MAINTAIN] RESTfulSummarizedExperiment error load - <!--

Please report the error of one package in one issue! Use multi issues to report multi bugs.

Thanks!

-->

**Log of the bug**

<details>

```

* installing *source* package ‘tenXplore’ ...

** using staged installation

** R

** data

** inst

** byte-comp... | non_build | restfulsummarizedexperiment error load please report the error of one package in one issue use multi issues to report multi bugs thanks log of the bug installing source package ‘tenxplore’ using staged installation r data inst byte compile and prepare p... | 0 |

746,967 | 26,052,189,195 | IssuesEvent | 2022-12-22 19:59:04 | bounswe/bounswe2022group8 | https://api.github.com/repos/bounswe/bounswe2022group8 | opened | BE-40: Recommendation System | Effort: High Priority: Medium Status: In Progress Coding Team: Backend | ### What's up?

As part of our requirements, we will create a recommendation system that recommends similar art items to registered users. Similarity will be decided from several parameters, such as the category and tags of art items along with the user's likes and history.

... | 1.0 | BE-40: Recommendation System - ### What's up?

As a part of our requirements we will create a recommendation system that will recommend to registered users, similar art item content. While deciding the similarity, various parameters will be taken into consideration, such as category and tags of art items along with ... | non_build | be recommendation system what s up as a part of our requirements we will create a recommendation system that will recommend to registered users similar art item content while deciding the similarity various parameters will be taken into consideration such as category and tags of art items along with l... | 0 |

96,059 | 19,862,342,875 | IssuesEvent | 2022-01-22 02:44:17 | trezor/trezor-suite | https://api.github.com/repos/trezor/trezor-suite | opened | Clean up messages | code | The following merge improved messages.ts and en.json.

https://github.com/trezor/trezor-suite/pull/4777

https://github.com/trezor/trezor-suite/pull/4778

Therefore, I considered cleaning up message files.

I've written python code that does a full-text search of all the files to see if the keys contained in the me... | 1.0 | Clean up messages - The following merge improved messages.ts and en.json.

https://github.com/trezor/trezor-suite/pull/4777

https://github.com/trezor/trezor-suite/pull/4778

Therefore, I considered cleaning up message files.

I've written python code that does a full-text search of all the files to see if the keys... | non_build | clean up messages the following merge improved messages ts and en json therefore i considered cleaning up message files i ve written python code that does a full text search of all the files to see if the keys contained in the messages ts file are actually used this code will eventually output new me... | 0 |

722,838 | 24,875,694,421 | IssuesEvent | 2022-10-27 18:53:11 | AY2223S1-CS2103T-T12-2/tp | https://api.github.com/repos/AY2223S1-CS2103T-T12-2/tp | closed | 📝 Docs: update `readme.md` | priority.Medium | ## Tasks

- Update new UI photo

- Update acknowledgements to include Tailwind for UI inspiration | 1.0 | 📝 Docs: update `readme.md` - ## Tasks

- Update new UI photo

- Update acknowledgements to include Tailwind for UI inspiration | non_build | 📝 docs update readme md tasks update new ui photo update acknowledgements to include tailwind for ui inspiration | 0 |

54,105 | 13,252,911,997 | IssuesEvent | 2020-08-20 06:33:00 | microsoft/onnxruntime | https://api.github.com/repos/microsoft/onnxruntime | closed | Failed to build onnxruntime with TensorRT on Windows 10 | component:build ep:TensorRT | **Describe the bug**

I'm trying to build onnxruntime with tensorRT, and I'm getting errors as I will show.

(Similar to [this](https://github.com/microsoft/onnxruntime/issues/3630#issue-604657058) issue)

**Urgency**

none

(But I've been working on this issue for about a week, but still can't figure it out....)

... | 1.0 | Failed to build onnxruntime with TensorRT on Windows 10 - **Describe the bug**

I'm trying to build onnxruntime with tensorRT, and I'm getting errors as I will show.

(Similar to [this](https://github.com/microsoft/onnxruntime/issues/3630#issue-604657058) issue)

**Urgency**

none

(But I've been working on this issu... | build | failed to build onnxruntime with tensorrt on windows describe the bug i m trying to build onnxruntime with tensorrt and i m getting errors as i will show similar to issue urgency none but i ve been working on this issue for about a week but still can t figure it out system informa... | 1 |

239,151 | 26,204,350,593 | IssuesEvent | 2023-01-03 20:49:38 | istio/ztunnel | https://api.github.com/repos/istio/ztunnel | closed | Implement (L4) RBAC | area/security P0 OSS Alpha | This was an initial attempt in Go:

```go

func (p *Proxy) AssertRBAC(r *http.Request) error {

ip, dport, err := net.SplitHostPort(r.Host)

if err != nil {

return err

}

pip, err := netip.ParseAddr(ip)

if err != nil {

return err

}

wl := p.ConnectionManager.FindWorkloadByAddr(pip)

var identity strin... | True | Implement (L4) RBAC - This was an initial attempt in Go:

```go

func (p *Proxy) AssertRBAC(r *http.Request) error {

ip, dport, err := net.SplitHostPort(r.Host)

if err != nil {

return err

}

pip, err := netip.ParseAddr(ip)

if err != nil {

return err

}

wl := p.ConnectionManager.FindWorkloadByAddr(pip... | non_build | implement rbac this was an initial attempt in go go unc p proxy assertrbac r http request error ip dport err net splithostport r host if err nil return err pip err netip parseaddr ip if err nil return err wl p connectionmanager findworkloadbyaddr pip ... | 0 |

490,164 | 14,116,139,509 | IssuesEvent | 2020-11-08 01:05:14 | drashland/sockets | https://api.github.com/repos/drashland/sockets | closed | Remove `openChannel`? | Priority: Low Type: Question | ## Summary

What: Remove `openChannel`, then `on` would 'open' it itself.

Why: I feel `openChannel` is unnecessary. Channels are always opened right before their listener method, so I feel using `on` should create the listener itself.

Thoughts?

## Acceptance Criteria

- [x] Determine whether to remove `onChan... | 1.0 | Remove `openChannel`? - ## Summary

What: Remove `openChannel`, then `on` would 'open' it itself.

Why: I feel `openChannel` is unnecessary. Channels are always opened right before their listener method, so I feel using `on` should create the listener itself.

Thoughts?

## Acceptance Criteria

- [x] Determine w... | non_build | remove openchannel summary what remove openchannel then on would open it itself why i feel openchannel is unnecessary channels are always opened right before it s listener method i feel using on should create thee listener itself thoughts acceptance criteria determine whe... | 0 |

23,468 | 7,331,899,609 | IssuesEvent | 2018-03-05 14:54:27 | alibaba/ice | https://api.github.com/repos/alibaba/ice | closed | Module parse failed: Unexpected character '�' (1:0) | build | import img from 'xxx.png';

-------------------------

Module parse failed: Unexpected character '�' (1:0)

You may need an appropriate loader to handle this file type.

(Source code omitted for this binary file) | 1.0 | Module parse failed: Unexpected character '�' (1:0) - import img from 'xxx.png';

-------------------------

Module parse failed: Unexpected character '�' (1:0)

You may need an appropriate loader to handle this file type.

(Source code omitted for this binary file) | build | module parse failed unexpected character � import img from xxx png module parse failed unexpected character � you may need an appropriate loader to handle this file type source code omitted for this binary file | 1 |

8,987 | 23,927,859,716 | IssuesEvent | 2022-09-10 04:23:36 | kubernetes/enhancements | https://api.github.com/repos/kubernetes/enhancements | reopened | Pod level resource limits | sig/node sig/cli sig/architecture lifecycle/rotten tracked/no sig/cloud-provider | ### Enhancement Description

- One-line enhancement description (can be used as a release note): Allow setting of resource requests & limits at Pod level

- Kubernetes Enhancement Proposal: <!-- link to kubernetes/enhancements file; if none yet, link to PR --> https://github.com/kubernetes/enhancements/pull/1592

- ... | 1.0 | Pod level resource limits - ### Enhancement Description

- One-line enhancement description (can be used as a release note): Allow setting of resource requests & limits at Pod level

- Kubernetes Enhancement Proposal: <!-- link to kubernetes/enhancements file; if none yet, link to PR --> https://github.com/kubernete... | non_build | pod level resource limits enhancement description one line enhancement description can be used as a release note allow setting of resource requests limits at pod level kubernetes enhancement proposal discussion link primary contact assignee responsible sigs sig node en... | 0 |

725,980 | 24,983,279,234 | IssuesEvent | 2022-11-02 13:23:35 | teogor/ceres | https://api.github.com/repos/teogor/ceres | closed | Crash: `BaseTransientBottomBar.java line 146` | @bug @priority-critical | Crash: `BaseTransientBottomBar.java line 146`

```txt

Caused by java.lang.reflect.InvocationTargetException

at java.lang.reflect.Constructor.newInstance0(Constructor.java)

at java.lang.reflect.Constructor.newInstance(Constructor.java:343)

at android.view.LayoutInflater.createView(LayoutInflat... | 1.0 | Crash: `BaseTransientBottomBar.java line 146` - Crash: `BaseTransientBottomBar.java line 146`

```txt

Caused by java.lang.reflect.InvocationTargetException

at java.lang.reflect.Constructor.newInstance0(Constructor.java)

at java.lang.reflect.Constructor.newInstance(Constructor.java:343)

at and... | non_build | crash basetransientbottombar java line crash basetransientbottombar java line txt caused by java lang reflect invocationtargetexception at java lang reflect constructor constructor java at java lang reflect constructor newinstance constructor java at android view layouti... | 0 |

91,098 | 26,273,865,932 | IssuesEvent | 2023-01-06 19:48:20 | cryostatio/cryostat-web | https://api.github.com/repos/cryostatio/cryostat-web | closed | Rebuilds without source changes should be faster | chore build needs-triage | Currently, running a rebuild without having made any source changes still takes a fairly long time. It should be possible to configure some Webpack caching so that unchanged files are not recompiled. We already have some configuration that should do this, but it doesn't seem to be working as expected, so perhaps some o... | 1.0 | Rebuilds without source changes should be faster - Currently, running a rebuild without having made any source changes still takes a fairly long time. It should be possible to configure some Webpack caching so that unchanged files are not recompiled. We already have some configuration that should do this, but it doesn'... | build | rebuilds without source changes should be faster currently running a rebuild without having made any source changes still takes a fairly long time it should be possible to configure some webpack caching so that unchanged files are not recompiled we already have some configuration that should do this but it doesn ... | 1 |

180,386 | 21,625,723,884 | IssuesEvent | 2022-05-05 01:40:36 | Sh2dowFi3nd/Test_2 | https://api.github.com/repos/Sh2dowFi3nd/Test_2 | closed | WS-2016-7061 (Medium) detected in poi-3.8.jar - autoclosed | security vulnerability | ## WS-2016-7061 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>poi-3.8.jar</b></p></summary>

<p>Apache POI - Java API To Access Microsoft Format Files</p>

<p>Library home page: <a h... | True | WS-2016-7061 (Medium) detected in poi-3.8.jar - autoclosed - ## WS-2016-7061 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>poi-3.8.jar</b></p></summary>

<p>Apache POI - Java API To... | non_build | ws medium detected in poi jar autoclosed ws medium severity vulnerability vulnerable library poi jar apache poi java api to access microsoft format files library home page a href dependency hierarchy x poi jar vulnerable library found in head commit ... | 0 |

564,202 | 16,720,578,999 | IssuesEvent | 2021-06-10 06:43:14 | ita-social-projects/TeachUA | https://api.github.com/repos/ita-social-projects/TeachUA | closed | [Гуртки page] Address is not displayed near the location icon on the club's pop-up window | Priority: High bug | Environment: Windows 7, Service Pack 1, Google Chrome, 90.0.4430.212 (Розробка) (64-розрядна версія).

Reproducible: always.

Build found: the last commit 07.06.2021

Preconditions

Open [https://speak-ukrainian.org.ua/dev/](https://speak-ukrainian.org.ua/dev/)

**Steps to reproduce**

1. Go to 'Гуртки' menu naviga... | 1.0 | [Гуртки page] Address is not displayed near the location icon on the club's pop-up window - Environment: Windows 7, Service Pack 1, Google Chrome, 90.0.4430.212 (Розробка) (64-розрядна версія).

Reproducible: always.

Build found: the last commit 07.06.2021

Preconditions

Open [https://speak-ukrainian.org.ua/dev/](h... | non_build | address is not displayed near the location icon on the club s pop up window environment windows service pack google chrome розробка розрядна версія reproducible always build found the last commit preconditions open steps to reproduce go to гуртки menu navigatio... | 0 |

200,621 | 15,801,755,739 | IssuesEvent | 2021-04-03 06:26:15 | w2vgd/ped | https://api.github.com/repos/w2vgd/ped | opened | Incorrect jar file name | severity.VeryLow type.DocumentationBug | UG says the jar file should be `focuris.jar` but the downloaded jar file name is `v1.3.jar`

<!--session: 1617429886253-dfdc7f54-0b8c-4a03-952b-44062911198f--> | 1.0 | Incorrect jar file name - UG says the jar file should be `focuris.jar` but the downloaded jar file name is `v1.3.jar`

<!--session: 1617429886253-dfdc7f54-0b8c-4a03-952b-44062911198f--> | non_build | incorrect jar file name ug says the jar file should be focuris jar but the downloaded jar file name is jar | 0 |

23,504 | 7,334,538,884 | IssuesEvent | 2018-03-05 23:12:53 | moby/moby | https://api.github.com/repos/moby/moby | closed | docker pull of image built on 18.02 fails on 17.06 | area/builder area/distribution kind/bug platform/desktop platform/windows status/confirmed version/17.06 | ---------------------------------------------------

BUG REPORT INFORMATION

---------------------------------------------------

**Description**

Using Docker for Windows Edge I built a new asp.net core app on nanoserver. Pushed to Docker Hub. Pull on Windows Server 2016 results in an error.

**Steps to reprod... | 1.0 | docker pull of image built on 18.02 fails on 17.06 - ---------------------------------------------------

BUG REPORT INFORMATION

---------------------------------------------------

**Description**

Using Docker for Windows Edge I built a new asp.net core app on nanoserver. Pushed to Docker Hub. Pull on Windows Se... | build | docker pull of image built on fails on bug report information description using docker for windows edge i built a new asp net core app on nanoserver pushed to docker hub pull on windows server... | 1 |

1,921 | 21,772,828,122 | IssuesEvent | 2022-05-13 10:48:22 | jina-ai/jina | https://api.github.com/repos/jina-ai/jina | closed | Connection failures from the Gateway are confusing | epic/reliability | When the Gateway can't connect to an Executor, the error messages are quite confusing. They also don't say which Executor actually failed. | True | Connection failures from the Gateway are confusing - When the Gateway can't connect to an Executor, the error messages are quite confusing. They also don't say which Executor actually failed. | non_build | connection failures from the gateway are confusing when the gateway can t connect to an executor the error messages are quite confusing they also dont include the information which executor actually failed | 0 |
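One way to make such a failure name the Executor involved is to wrap the low-level connection error at the call site. The sketch below only illustrates that idea and is not Jina's actual API: `ExecutorConnectionError`, `call_executor`, and the `send` callable are hypothetical names introduced here.

```python
class ExecutorConnectionError(Exception):
    """Hypothetical error type that records which Executor could not be reached."""

    def __init__(self, executor_name, address, cause):
        self.executor_name = executor_name
        self.address = address
        super().__init__(
            f"failed to connect to Executor '{executor_name}' at {address}: {cause}"
        )


def call_executor(executor_name, address, send):
    """Call send(address); re-raise connection failures with the Executor's name."""
    try:
        return send(address)
    except ConnectionError as exc:
        # Chain the original error so the low-level details stay in the traceback.
        raise ExecutorConnectionError(executor_name, address, exc) from exc
```

Keeping the original exception as `__cause__` means the clearer message is added without discarding the transport-level detail.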

551 | 8,555,814,137 | IssuesEvent | 2018-11-08 11:08:54 | LiskHQ/lisk | https://api.github.com/repos/LiskHQ/lisk | closed | Node receives blocks during snapshotting | *medium :hammer: reliability chain p2p performance | ### Expected behavior

The node should not receive blocks/transaction during snapshotting process.

### Actual behavior

Node receives blocks during snapshotting

```

[inf] 2018-09-25 08:23:19 | Verify->verifyBlock succeeded for block 8323462238787390927 at height 1616.

[inf] 2018-09-25 08:23:20 | Rebuilding accounts... | True | Node receives blocks during snapshotting - ### Expected behavior

The node should not receive blocks/transaction during snapshotting process.

### Actual behavior

Node receives blocks during snapshotting

```

[inf] 2018-09-25 08:23:19 | Verify->verifyBlock succeeded for block 8323462238787390927 at height 1616.

[in... | non_build | node receives blocks during snapshotting expected behavior the node should not receive blocks transaction during snapshotting process actual behavior node receives blocks during snapshotting verify verifyblock succeeded for block at height rebuilding accounts ... | 0 |

33,762 | 9,205,102,098 | IssuesEvent | 2019-03-08 09:37:43 | qissue-bot/QGIS | https://api.github.com/repos/qissue-bot/QGIS | closed | qgis-0.8.0.dmg - no mountable file systems | Category: Build/Install Component: Affected QGIS version Component: Crashes QGIS or corrupts data Component: Easy fix? Component: Operating System Component: Pull Request or Patch supplied Component: Regression? Component: Resolution Priority: Low Project: QGIS Application Status: Closed Tracker: Bug report | ---

Author Name: **mike-coan-gmail-com -** (mike-coan-gmail-com -)

Original Redmine Issue: 517, https://issues.qgis.org/issues/517

Original Assignee: nobody -

---

Gunzips correctly, but fails to mount "qgis-0.8.0.dmg"; the stated reason is "no mountable file systems". Same issue with single large file download, and w... | 1.0 | qgis-0.8.0.dmg - no mountable file systems - ---

Author Name: **mike-coan-gmail-com -** (mike-coan-gmail-com -)

Original Redmine Issue: 517, https://issues.qgis.org/issues/517

Original Assignee: nobody -

---

Gunzips correctly, but fails to mount "qgis-0.8.0.dmg"; the stated reason is "no mountable file systems". Same... | build | qgis dmg no mountable file systems author name mike coan gmail com mike coan gmail com original redmine issue original assignee nobody gunzip s correctly but failure to mount qgis dmg stated reason no mountable file systems same issue with single large file downl... | 1 |

315 | 2,643,324,746 | IssuesEvent | 2015-03-12 10:06:22 | mattrayner/mattrayner | https://api.github.com/repos/mattrayner/mattrayner | closed | Set up monitoring and 'DevOps' | infrastructure task | Set up and integrate:

* Slack

* CircleCI Slack Notifications

* Code Climate

* GitHub Issue Creation

* Slack Notifications

* NewRelic monitoring

* Slack Notifications

* Email Notifications | 1.0 | Set up monitoring and 'DevOps' - Set up and integrate:

* Slack

* CircleCI Slack Notifications

* Code Climate

* GitHub Issue Creation

* Slack Notifications

* NewRelic monitoring

* Slack Notifications

* Email Notifications | non_build | set up monitoring and devops set up and integrate slack circleci slack notifications code climate github issue creation slack notifications newrelic monitoring slack notifications email notifications | 0 |

9,558 | 4,544,944,890 | IssuesEvent | 2016-09-11 00:03:34 | CleverRaven/Cataclysm-DDA | https://api.github.com/repos/CleverRaven/Cataclysm-DDA | opened | CodeBlocks project Release build weirdness | Build | Specifies both `-Os` and `-O2` flags

Doesn't `-Os` include `-O2` anyway?

Includes debug symbols in the executable

As a result, the executable is almost 300mb. | 1.0 | CodeBlocks project Release build weirdness - Specifies both `-Os` and `-O2` flags

Doesn't `-Os` include `-O2` anyway?

Includes debug symbols in the executable

As a result, the executable is almost 300mb. | build | codeblocks project release build weirdness specifies both os and flags doesn t os include anyway includes debug symbols in the executable as a result the executable is almost | 1 |

3,885 | 3,266,269,356 | IssuesEvent | 2015-10-22 19:55:36 | openshift/origin | https://api.github.com/repos/openshift/origin | closed | Internal registry push loading bar | area/usability component/build priority/P3 | I want to see pushing to internal registry with loading bar:

-bash-4.2$ oc logs -f apaas-1-2708674907-build

Internal Error: pod is not in 'Running', 'Succeeded' or 'Failed' state - State: "Pending"

-bash-4.2$ oc logs -f apaas-1-2708674907-build

Already on 'master'

W1022 08:40:30.217551 1 docker.go:304] The... | 1.0 | Internal registry push loading bar - I want to see pushing to internal registry with loading bar:

-bash-4.2$ oc logs -f apaas-1-2708674907-build

Internal Error: pod is not in 'Running', 'Succeeded' or 'Failed' state - State: "Pending"

-bash-4.2$ oc logs -f apaas-1-2708674907-build

Already on 'master'

W1022 08:40... | build | internal registry push loading bar i want to see pushing to internal registry with loading bar bash oc logs f apaas build internal error pod is not in running succeeded or failed state state pending bash oc logs f apaas build already on master docker go ... | 1 |

188,585 | 14,447,513,163 | IssuesEvent | 2020-12-08 03:58:54 | kalexmills/github-vet-tests-dec2020 | https://api.github.com/repos/kalexmills/github-vet-tests-dec2020 | closed | BinaryTreeNode/KDS: vendor/github.com/gophercloud/gophercloud/acceptance/openstack/dns/v2/recordsets_test.go; 3 LoC | fresh test tiny vendored |

Found a possible issue in [BinaryTreeNode/KDS](https://www.github.com/BinaryTreeNode/KDS) at [vendor/github.com/gophercloud/gophercloud/acceptance/openstack/dns/v2/recordsets_test.go](https://github.com/BinaryTreeNode/KDS/blob/6220475814b42733c86ac0005e8548bb9a481c75/vendor/github.com/gophercloud/gophercloud/acceptanc... | 1.0 | BinaryTreeNode/KDS: vendor/github.com/gophercloud/gophercloud/acceptance/openstack/dns/v2/recordsets_test.go; 3 LoC -

Found a possible issue in [BinaryTreeNode/KDS](https://www.github.com/BinaryTreeNode/KDS) at [vendor/github.com/gophercloud/gophercloud/acceptance/openstack/dns/v2/recordsets_test.go](https://github.co... | non_build | binarytreenode kds vendor github com gophercloud gophercloud acceptance openstack dns recordsets test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your ... | 0 |

102,151 | 4,151,284,495 | IssuesEvent | 2016-06-15 20:06:18 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | Protobuf communications | area/api area/performance priority/P1 team/CSI-API Machinery SIG | re: #3338

Post 1.0 and etcd v3.

It would be worthwhile to investigate the tradeoffs of using protobuf for internal communication whose external representation is JSON, for performance and scale-out reasons. | 1.0 | Protobuf communications - re: #3338

Post 1.0 and etcd v3.

It would be worthwhile to investigate the tradeoffs of using protobuf for internal communication whose external representation is JSON, for performance and scale-out reasons. | non_build | protobuf communications re post and etcd it would be worth while to investigate the tradeoffs for using protobuf for internal communication whose external representation is json for performance and scale out reasons | 0 |

7,045 | 2,596,715,116 | IssuesEvent | 2015-02-20 22:43:00 | chessmasterhong/WaterEmblem | https://api.github.com/repos/chessmasterhong/WaterEmblem | closed | Weapon broke mid-battle, freezing game | bug high priority | I believe my weapon broke mid-battle, causing the errors below:

| 1.0 | Weapon broke mid-battle, freezing game - I believe my weapon broke mid-battle, causing the errors below:

| non_build | weapon broke mid battle freezing game i believe my weapon broke mid battle causing the errors below | 0 |

77,940 | 22,047,859,851 | IssuesEvent | 2022-05-30 05:13:47 | google/mediapipe | https://api.github.com/repos/google/mediapipe | closed | <Solved> from mediapipe.python._framework_bindings import | type:build/install stat:awaiting response platform:python stalled | **Versions:**

python version - **3.8** (I did not use anaconda)

OS - **Windows** system terminal

the rest are all based on installation **tutorial**

Suppose you are not using 'pip3 install mediapipe' to install mediapipe. Instead, you use python package, and the last step is '**python setup.py install --link-opencv... | 1.0 | <Solved> from mediapipe.python._framework_bindings import - **Versions:**

python version - **3.8** (I did not use anaconda)

OS - **Windows** system terminal

the rest are all based on installation **tutorial**

Suppose you are not using 'pip3 install mediapipe' to install mediapipe. Instead, you use python package, ... | build | from mediapipe python framework bindings import versions python version i did not use anaconda os windows system terminal the rest are all based on installation tutorial suppose you are not using install mediapipe to install mediapipe instead you use python package and the la... | 1 |

18,579 | 6,625,301,628 | IssuesEvent | 2017-09-22 14:57:27 | csmk/frabjous | https://api.github.com/repos/csmk/frabjous | closed | net-analyzer/prometheus-*: new packages | in progress new ebuild | - [x] [alertmanager](https://github.com/prometheus/alertmanager) > aaa3851

- [x] [node_exporter](https://github.com/prometheus/node_exporter) > 8cfd497

- [x] [mysqld_exporter](https://github.com/prometheus/mysqld_exporter) > 7ff6949

- [x] [memcached_exporter](https://github.com/prometheus/memcached_exporter) > 9054a... | 1.0 | net-analyzer/prometheus-*: new packages - - [x] [alertmanager](https://github.com/prometheus/alertmanager) > aaa3851

- [x] [node_exporter](https://github.com/prometheus/node_exporter) > 8cfd497

- [x] [mysqld_exporter](https://github.com/prometheus/mysqld_exporter) > 7ff6949

- [x] [memcached_exporter](https://github.... | build | net analyzer prometheus new packages depend on done ... | 1 |

21,293 | 6,133,112,155 | IssuesEvent | 2017-06-25 10:41:14 | yunity/foodsaving-frontend | https://api.github.com/repos/yunity/foodsaving-frontend | closed | Make styl files more uniform | code-improvement code-question | The `.styl` files are in the [stylus](http://stylus-lang.com/) language. The syntax is pretty flexible and allows leaving away `:;{}` etc, but also supports the full CSS syntax.

Right now, we have a wild mixture of different styles. I would like more uniform code and would be interested in defining a style. There'... | 2.0 | Make styl files more uniform - The `.styl` files are in the [stylus](http://stylus-lang.com/) language. The syntax is pretty flexible and allows leaving away `:;{}` etc, but also supports the full CSS syntax.

Right now, we have a wild mixture of different styles. I would like more uniform code and would be interes... | non_build | make styl files more uniform the styl files are in the language the syntax is pretty flexible and allows leaving away etc but also supports the full css syntax right now we have wild mixture of different styles i would wish for more uniform code and would be interested in defining a style there... | 0 |

60,033 | 14,697,066,480 | IssuesEvent | 2021-01-04 01:58:15 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | closed | FAILED: programs/clickhouse-odbc-bridge when using ninja to build clickhouse | build | **Operating system**

WSL2 in windows10

Linux version 4.19.104-microsoft-standard

**Cmake version**

3.15

**Ninja version**

1.9.0

**Compiler name and version**

GCC:10.2.0

**Full cmake and/or ninja output**

```c++

[kangxiang@Wsl[17:26:53]build_20.13.1.1]$ ninja

[0/2] Re-checking globbed directories...

[1043/9... | 1.0 | FAILED: programs/clickhouse-odbc-bridge when using ninja to build clickhouse - **Operating system**

WSL2 in windows10

Linux version 4.19.104-microsoft-standard

**Cmake version**

3.15

**Ninja version**

1.9.0

**Compiler name and version**

GCC:10.2.0

**Full cmake and/or ninja output**

```c++

[kangxiang@Wsl[17:2... | build | failed programs clickhouse odbc bridge when using ninja to build clickhouse operating system in linux version microsoft standard cmake version ninja version compiler name and version gcc: full cmake and or ninja output c build ninja re checki... | 1 |

728,329 | 25,075,445,142 | IssuesEvent | 2022-11-07 15:10:46 | AY2223S1-CS2103T-F12-1/tp | https://api.github.com/repos/AY2223S1-CS2103T-F12-1/tp | closed | [PE-D][Tester E] Insufficient visuals in UG | priority.low ugbug | There is only 1 picture in the UG, and descriptions of commands are all given in text which may be difficult to understand in view of your target audience.

<!--session: 1666943752219-ab42b068-3b76-4b0f-978d-954f8e09e177-->

<!--Version: Web v3.4.4-->

-------------

Labels: `severity.Low` `type.DocumentationBug`

origin... | 1.0 | [PE-D][Tester E] Insufficient visuals in UG - There is only 1 picture in the UG, and descriptions of commands are all given in text which may be difficult to understand in view of your target audience.

<!--session: 1666943752219-ab42b068-3b76-4b0f-978d-954f8e09e177-->

<!--Version: Web v3.4.4-->

-------------

Labels:... | non_build | insufficient visuals in ug there is only picture in the ug and descriptions of commands are all given in text which may be difficult to understand in view of your target audience labels severity low type documentationbug original wrewsama ped | 0 |

295,904 | 25,514,251,184 | IssuesEvent | 2022-11-28 15:14:02 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | com.hazelcast.mapstore.GenericMapStoreTest.whenMapStoreDestroyOnNonMaster_thenDropMapping [HZ-1816] | Type: Test-Failure Source: Internal Module: IMap Not Release Notes content to-jira Team: Platform | https://github.com/hazelcast/hazelcast/pull/22941#issuecomment-1326597448 (commit 47673944465b32875c60c6125ab6a2e26245947c)

Failed on `Hazelcast-pr-builder`: https://jenkins.hazelcast.com/job/Hazelcast-pr-builder/14461/testReport/junit/com.hazelcast.mapstore/GenericMapStoreTest/whenMapStoreDestroyOnNonMaster_thenDro... | 1.0 | com.hazelcast.mapstore.GenericMapStoreTest.whenMapStoreDestroyOnNonMaster_thenDropMapping [HZ-1816] - https://github.com/hazelcast/hazelcast/pull/22941#issuecomment-1326597448 (commit 47673944465b32875c60c6125ab6a2e26245947c)

Failed on `Hazelcast-pr-builder`: https://jenkins.hazelcast.com/job/Hazelcast-pr-builder/14... | non_build | com hazelcast mapstore genericmapstoretest whenmapstoredestroyonnonmaster thendropmapping commit failed on hazelcast pr builder stacktrace java lang assertionerror expecting row to contain exactly in any order but the following elements were unexpected ... | 0 |

1,603 | 2,790,322,797 | IssuesEvent | 2015-05-09 04:27:01 | driftyco/ionic-cli | https://api.github.com/repos/driftyco/ionic-cli | closed | Release password prompt does not work in android build | build cli | _From @kristianbenoit on November 7, 2014 15:21_

Building with `ionic build --release` while the build system is configured to use a keystore and alias, prompts for a password, but the password is never sent to the underlying tools.

To reproduce the problem

=====================

create a file `platforms/android/a... | 1.0 | Release password prompt does not work in android build - _From @kristianbenoit on November 7, 2014 15:21_

Building with `ionic build --release` while the build system is configured to use a keystore and alias, prompts for a password, but the password is never sent to the underlying tools.

To reproduce the problem

... | build | release password prompt does not work in android build from kristianbenoit on november building with ionic build release while the build system is configured to use a keystore and alias prompts for a password but the password is never sent to the underlying tools to reproduce the problem ... | 1 |

68,198 | 17,191,487,610 | IssuesEvent | 2021-07-16 11:39:11 | Kotlin/kotlinx.coroutines | https://api.github.com/repos/Kotlin/kotlinx.coroutines | closed | Investigate JS test failures that are silent on TeamCity | build js | Steps to reproduce:

`./gradlew :kotlinx-coroutines-core:jsIrTest`

Output, a lot of:

```

Too many unhandled exceptions 1, expected 0, got: CompletionHandlerException: Exception in completion handler InvokeOnCompletion@17[job@18] for BroadcastCoroutine{Cancelled}@18: CompletionHandlerException: Exception in complet... | 1.0 | Investigate JS test failures that are silent on TeamCity - Steps to reproduce:

`./gradlew :kotlinx-coroutines-core:jsIrTest`

Output, a lot of:

```

Too many unhandled exceptions 1, expected 0, got: CompletionHandlerException: Exception in completion handler InvokeOnCompletion@17[job@18] for BroadcastCoroutine{Canc... | build | investigate js test failures that are silent on teamcity steps to reproduce gradlew kotlinx coroutines core jsirtest output a lot of too many unhandled exceptions expected got completionhandlerexception exception in completion handler invokeoncompletion for broadcastcoroutine cancelled ... | 1 |

97,392 | 28,241,691,594 | IssuesEvent | 2023-04-06 07:42:35 | expo/eas-cli | https://api.github.com/repos/expo/eas-cli | closed | EAS build fails for iOS with Bare Workflow | incomplete issue: missing or invalid repro eas build invalid issue: project specific issue | ### Build/Submit details page URL

https://expo.dev/accounts/csm-developer/projects/pogo-app/builds/a5be8c95-1167-48d0-810f-d0091780bf34

### Summary

The iOS build fails for Expo SDK47.

### Managed or bare?

Bare

### Environment

expo-env-info 1.0.5 environment info:

System:

OS: macOS 13.2.1

Shell... | 1.0 | EAS build fails for iOS with Bare Workflow - ### Build/Submit details page URL

https://expo.dev/accounts/csm-developer/projects/pogo-app/builds/a5be8c95-1167-48d0-810f-d0091780bf34

### Summary

The iOS build fails for Expo SDK47.

### Managed or bare?

Bare

### Environment

expo-env-info 1.0.5 environment info:

... | build | eas build fails for ios with bare workflow build submit details page url summary the ios build fails for expo managed or bare bare environment expo env info environment info system os macos shell bin zsh binaries node usr ... | 1 |

73,449 | 19,688,033,211 | IssuesEvent | 2022-01-12 01:34:32 | facebook/zstd | https://api.github.com/repos/facebook/zstd | closed | Build failure using Visual Studio 2022 | build issue release-blocking | **Describe the bug**

When I build zstd using Visual Studio 2022, I get build errors:

```

[1/3] C:\PROGRA~1\MICROS~2\2022\COMMUN~1\VC\Tools\MSVC\1430~1.307\bin\Hostx64\x64\cl.exe -DXXH_NAMESPACE=ZSTD_ -DZSTD_DLL_EXPORT=1 -DZSTD_HEAPMODE=0 -DZSTD_LEGACY_SUPPORT=5 -DZSTD_MULTITHREAD -D_CONSOLE -D_CRT_SECURE_NO_WARN... | 1.0 | Build failure using Visual Studio 2022 - **Describe the bug**

When I build zstd using Visual Studio 2022, I get build errors:

```

[1/3] C:\PROGRA~1\MICROS~2\2022\COMMUN~1\VC\Tools\MSVC\1430~1.307\bin\Hostx64\x64\cl.exe -DXXH_NAMESPACE=ZSTD_ -DZSTD_DLL_EXPORT=1 -DZSTD_HEAPMODE=0 -DZSTD_LEGACY_SUPPORT=5 -DZSTD_MUL... | build | build failure using visual studio describe the bug when i build zstd using visual studio i got some build error c progra micros commun vc tools msvc bin cl exe dxxh namespace zstd dzstd dll export dzstd heapmode dzstd legacy support dzstd multithread d console d crt... | 1 |

414,253 | 27,982,650,789 | IssuesEvent | 2023-03-26 10:41:42 | ipython/ipython | https://api.github.com/repos/ipython/ipython | closed | History search / tab completion does not behave as expected. | documentation tab-completion autosuggestions | Hello

I am on ipython 8.9.0, using python 3.11.0. This is a fresh install on a completely clean Ubuntu 22.04, using pyenv as the virtual environment manager. This is a setup that I have used elsewhere without (until now) any need for additional settings.

On (all of) my systems, I always set bash's history navigation... | 1.0 | History search / tab completion does not behave as expected. - Hello

I am on ipython 8.9.0, using python 3.11.0. This is a fresh install on a completely clean Ubuntu 22.04, using pyenv as the virtual environment manager. This is a setup that I have used elsewhere without (until now) any need for additional settings.

... | non_build | history search tab completion does not behave as expected hello i am on ipython using python this is a fresh install on a completely clean ubuntu using pyenv as the virtual environment manager this is a setup that i have used elsewhere without until now any need for additional setings on... | 0 |

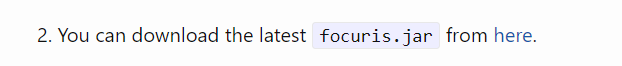

33,964 | 9,221,138,472 | IssuesEvent | 2019-03-11 19:12:32 | assimp/assimp | https://api.github.com/repos/assimp/assimp | closed | Building issue C2678 and C2088 with Visual Studio 15 2017 and Assimp 4.0.1 | bug build | I ran the command:

cmake . -G "Visual Studio 15 2017 Win64"

and then I tried to compile the library; however, I got this error:

I tried changing the C++ language standard and got the same error, seems a comp... | 1.0 | Building issue C2678 and C2088 with Visual Studio 15 2017 and Assimp 4.0.1 - I ran the command:

cmake . -G "Visual Studio 15 2017 Win64"

and then I tried to compile the library; however, I got this error:

... | build | buiding issue and with visual studio and assimp i did the command cmake g visual studio and then i tried to compile the library however i got this error i tried to change the c standard lenguaje and same error seems a compiler bug or vs standard bug 📦 | 1 |

264,337 | 28,144,823,611 | IssuesEvent | 2023-04-02 11:11:59 | RG4421/ampere-centos-kernel | https://api.github.com/repos/RG4421/ampere-centos-kernel | opened | CVE-2023-1670 (Medium) detected in linuxv5.2 | Mend: dependency security vulnerability | ## CVE-2023-1670 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/torv... | True | CVE-2023-1670 (Medium) detected in linuxv5.2 - ## CVE-2023-1670 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv5.2</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Lib... | non_build | cve medium detected in cve medium severity vulnerability vulnerable library linux kernel source tree library home page a href found in base branch amp centos kernel vulnerable source files drivers net ethernet xircom cs c drivers... | 0 |

667,327 | 22,465,866,489 | IssuesEvent | 2022-06-22 01:38:54 | yukieiji/ExtremeRoles | https://api.github.com/repos/yukieiji/ExtremeRoles | opened | AmongUs v2022.06.21 support status | 優先度:最高/Priority:EXTREME バグ/Bug ExtremeSkins | ### Extreme Roles does not support AmongUs v2022.06.21; the mod itself fails to load

- Judging from the updated NuGet package, PlayerControl has been heavily refactored

- Some of the mod's PlayerControl patches and some accesses to PlayerControl members are broken | 1.0 | AmongUs v2022.06.21 support status - ### Extreme Roles does not support AmongUs v2022.06.21; the mod itself fails to load

- Judging from the updated NuGet package, PlayerControl has been heavily refactored

- Some of the mod's PlayerControl patches and some accesses to PlayerControl members are broken | non_build | amongus extreme roles does not support amongus the mod itself fails to load judging from the updated nuget package playercontrol has been heavily refactored some of the mod s playercontrol patches and some accesses to playercontrol members are broken | 0 |

109,912 | 16,920,508,657 | IssuesEvent | 2021-06-25 04:27:14 | dhlinh98/juice-shop | https://api.github.com/repos/dhlinh98/juice-shop | opened | CVE-2020-28469 (High) detected in glob-parent-3.1.0.tgz | security vulnerability | ## CVE-2020-28469 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>glob-parent-3.1.0.tgz</b></p></summary>

<p>Strips glob magic from a string to provide the parent directory path</p>

<p... | True | CVE-2020-28469 (High) detected in glob-parent-3.1.0.tgz - ## CVE-2020-28469 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>glob-parent-3.1.0.tgz</b></p></summary>

<p>Strips glob magic... | non_build | cve high detected in glob parent tgz cve high severity vulnerability vulnerable library glob parent tgz strips glob magic from a string to provide the parent directory path library home page a href path to dependency file juice shop frontend package json path to vulne... | 0 |

77,204 | 21,699,219,676 | IssuesEvent | 2022-05-10 00:50:13 | ros-noetic-arch/ros-noetic-kdl-parser | https://api.github.com/repos/ros-noetic-arch/ros-noetic-kdl-parser | closed | build error | build error | ```

[100%] Linking CXX executable devel/lib/kdl_parser/check_kdl_parser

/usr/bin/ld: warning: libglog.so.0, needed by /opt/ros/noetic/lib/librosconsole_glog.so, not found (try using -rpath or -rpath-link)

/usr/bin/ld: /opt/ros/noetic/lib/librosconsole_glog.so: undefined reference to `google::LogMessage::stream()'

/... | 1.0 | build error - ```

[100%] Linking CXX executable devel/lib/kdl_parser/check_kdl_parser

/usr/bin/ld: warning: libglog.so.0, needed by /opt/ros/noetic/lib/librosconsole_glog.so, not found (try using -rpath or -rpath-link)

/usr/bin/ld: /opt/ros/noetic/lib/librosconsole_glog.so: undefined reference to `google::LogMessage... | build | build error linking cxx executable devel lib kdl parser check kdl parser usr bin ld warning libglog so needed by opt ros noetic lib librosconsole glog so not found try using rpath or rpath link usr bin ld opt ros noetic lib librosconsole glog so undefined reference to google logmessage str... | 1 |

38,275 | 10,164,462,643 | IssuesEvent | 2019-08-07 11:45:15 | kube-HPC/hkube | https://api.github.com/repos/kube-HPC/hkube | closed | Building a Node algorithm doesn't work | HKube: Algorithm Builder Type: Bug | **HKube micro-service**

algorithm builder

**Describe the bug**

Building a Node app doesn't work.

**Expected behavior**

it should work and build the algorithm

**To Reproduce**

Steps to reproduce the behavior:

1. create a node algorithm

2. zip it with the package.json file

3. build the algorithm

**Screenshots**

If applic... | 1.0 | Building a Node algorithm doesn't work - **HKube micro-service**

algorithm builder

**Describe the bug**

Building a Node app doesn't work.

**Expected behavior**

it should work and build the algorithm

**To Reproduce**

Steps to reproduce the behavior:

1. create a node algorithm

2. zip it with the package.json file

3. build ... | build | building a node algorith dosent work hkube micro service algorithm builder describe the bug building a node app dosent work expected behavior it should work and build the algorithm to reproduce steps to reproduce the behavior create a node algorithm zip it with the package json file build ... | 1 |

39,756 | 10,374,428,399 | IssuesEvent | 2019-09-09 09:35:08 | widelands/widelands-issue-migration2 | https://api.github.com/repos/widelands/widelands-issue-migration2 | closed | Install fails (file INSTALL cannot find "..../fonts") | Fix Released High buildsystem linux | When r7320 was merged, the fonts directory was moved into i18n. However, this has made `make install` fail since something is still referring to the old directory. How to reproduce:

1. Build Widelands

2. In the build directory, run `ninja install` (or `make install`)

Note: this step will attempt to install the files sys... | 1.0 | Install fails (file INSTALL cannot find "..../fonts") - When r7320 was merged, the fonts directory was moved into i18n. However, this has made `make install` fail since something is still referring to the old directory. How to reproduce:

1. Build Widelands

2. In the build directory, run `ninja install` (or `make install... | build | install fails file install cannot find fonts when was merged the fonts directory was moved into however this has made make install fail since something is still refering to the old directory how to reproduce build widelands in the build directory run ninja install or make install note... | 1 |

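50,181 | 10,467,037,534 | IssuesEvent | 2019-09-22 00:41:12 | icoxfog417/baby-steps-of-rl-ja | https://api.github.com/repos/icoxfog417/baby-steps-of-rl-ja | closed | p107 code | code | ### Issue

### Location

* [ ] Day1: Understanding where reinforcement learning fits

* [ ] Day2: Solving reinforcement learning (1): Planning from the environment

* [ ] Day3: Solving reinforcement learning (2): Planning from experience

* [x] Day4: Applying neural networks to reinforcement learning

* [ ] Day5: Weaknesses of reinforcement learning

* [ ] Day6: Methods for overcoming the weaknesses of reinforcement learning

* [ ] Day7: Application areas of reinforcement learning

Page number: p107

### Execution environment

* OS:

* Python version:

* Output of `pip freeze` (attached below)

... | 1.0 | p107 code - ### Issue

### Location

* [ ] Day1: Understanding where reinforcement learning fits

* [ ] Day2: Solving reinforcement learning (1): Planning from the environment

* [ ] Day3: Solving reinforcement learning (2): Planning from experience

* [x] Day4: Applying neural networks to reinforcement learning

* [ ] Day5: Weaknesses of reinforcement learning

* [ ] Day6: Methods for overcoming the weaknesses of reinforcement learning

* [ ] Day7: Application areas of reinforcement learning

Page number: p107

### Execution environment

* OS:

* Python version:

* Output of `pip freeze`... | non_build | code issue location understanding where reinforcement learning fits solving reinforcement learning planning from the environment solving reinforcement learning planning from experience applying neural networks to reinforcement learning weaknesses of reinforcement learning methods for overcoming the weaknesses of reinforcement learning application areas of reinforcement learning page number execution environment os python version output of pip freeze attached below error details a minor point but the end of ... | 0 |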

39,484 | 8,652,834,518 | IssuesEvent | 2018-11-27 09:16:16 | Altinn/altinn-studio | https://api.github.com/repos/Altinn/altinn-studio | opened | Make a golden standard for Sagas | code-quality | **Functional architect/designer:** @TheTechArch

**Technical architect:** @-mention

**Description**

We need to create better, more testable sagas.

Instead of refactoring existing sagas, we start iteration 22 by writing one gold-standard saga.

This should make later refactoring easier.

Could be solved b... | 1.0 | Make a golden standard for Sagas - **Functional architect/designer:** @TheTechArch

**Technical architect:** @-mention

**Description**

We need to create better, more testable sagas.

Instead of refactoring existing sagas, we start iteration 22 by writing one gold-standard saga.

This should make it easier for later... | non_build | make a golden standard for sagas functional architect designer thetecharch technical architect mention description we need to create better more testable sagas instead of refactoring exsisting we start iteration with writing one golden standard saga this should make it easyer for later ... | 0 |

71,427 | 18,737,877,838 | IssuesEvent | 2021-11-04 10:01:07 | vaticle/typedb-studio | https://api.github.com/repos/vaticle/typedb-studio | closed | Create a Bazel target for deploying to RPM | type: build status: blocked | Use `//:deploy-rpm` from https://github.com/graknlabs/bazel-distribution to deploy to RPM in repo.grakn.ai | 1.0 | Create a Bazel target for deploying to RPM - Use `//:deploy-rpm` from https://github.com/graknlabs/bazel-distribution to deploy to RPM in repo.grakn.ai | build | create a bazel target for deploying to rpm use deploy rpm from to deploy to rpm in repo grakn ai | 1 |

52,053 | 12,854,566,696 | IssuesEvent | 2020-07-09 02:21:39 | microsoft/onnxruntime | https://api.github.com/repos/microsoft/onnxruntime | closed | How to import onnxruntime when I build from source? | builds question | I have built onnxruntime successfully using "./build.sh --config RelWithDebInfo --build_shared_lib --parallel".

How do I import onnxruntime now? Is there anything else I need to do before importing it?

| 1.0 | How to import onnxruntime when I build from source? - I have built onnxruntime successfully using "./build.sh --config RelWithDebInfo --build_shared_lib --parallel".

How do I import onnxruntime now? Is there anything else I need to do before importing it?

| build | how to import onnxruntime when i build from source i have build the onnxruntime use build sh config relwithdebinfo build shared lib parallel successully then how to import onnxruntime or before i import onnxruntime what else should i do | 1 |

58,402 | 14,382,940,060 | IssuesEvent | 2020-12-02 08:23:05 | nipafx/nipafx.dev | https://api.github.com/repos/nipafx/nipafx.dev | opened | Merge <Accordion> and <PopOutAccordion> | aspect: development 👷 component: navigation type: performance 🏃♀️ type: refactoring :building_construction: | The navigation side menu uses these components to implement the expand/pop-out behavior (respectively). When creating the pop-out variant I decided it was too complex to add it to the already complex, expanding variant. While keeping each of the components simpler than a single one offering both behaviors, it has some ... | 1.0 | Merge <Accordion> and <PopOutAccordion> - The navigation side menu uses these components to implement the expand/pop-out behavior (respectively). When creating the pop-out variant I decided it was too complex to add it to the already complex, expanding variant. While keeping each of the components simpler than a single... | build | merge and the navigation side menu uses these components to implement the expand pop out behavior respectively when creating the pop out variant i decided it was too complex to add it to the already complex expanding variant while keeping each of the components simpler than a single one offering both behavio... | 1 |

128,983 | 5,081,257,550 | IssuesEvent | 2016-12-29 09:23:04 | jesparza/peepdf | https://api.github.com/repos/jesparza/peepdf | closed | using peepdf in python programming | Priority-Medium question Type-Other | @jesparza Since this is a Python tool, can you tell me whether I can use its commands from Python code by importing peepdf as a package?

I have analysed my PDF and looked at all of its objects by executing console commands like object 1, object 2, etc. Now my goal is to replace the content of object number 24. Is that po... | 1.0 | using peepdf in python programming - @jesparza Since this is a Python tool, can you tell me whether I can use its commands from Python code by importing peepdf as a package?

I have analysed my PDF and looked at all of its objects by executing console commands like object 1, object 2, etc. Now my goal is to replace the cont... | non_build | using peepdf in python programming jesparza as this is a python tool can you tell if i can use its commands in python programming by importing peepdf as a package i have analysed my pdf and had a look at its all objects by executing console commands like object object etc now my goal is to replace the cont... | 0 |

99,888 | 30,573,201,837 | IssuesEvent | 2023-07-21 01:24:59 | microsoft/onnxruntime | https://api.github.com/repos/microsoft/onnxruntime | closed | [Build] Compilation on an Apple M1 Pro produces an illegal instruction | build | ### Describe the issue

Seems that #16082 has introduced a bug when compiling for an Apple M1 machine. The bug stems from a missing inlined-assembly [instruction](https://github.com/microsoft/onnxruntime/commit/b28e927ca4e#diff-2f1ef5fcaaa83277145acadea37a3d9da65e9605664f03efd4ca6208fa191e3bR452).

Reverting the com... | 1.0 | [Build] Compilation on an Apple M1 Pro produces an illegal instruction - ### Describe the issue

Seems that #16082 has introduced a bug when compiling for an Apple M1 machine. The bug stems from a missing inlined-assembly [instruction](https://github.com/microsoft/onnxruntime/commit/b28e927ca4e#diff-2f1ef5fcaaa83277145... | build | compilation on an apple pro produces an illegal instruction describe the issue seems that has introduced a bug when compiling for an apple machine the bug stems from a missing inlined assembly reverting the commit introduces a regression on the test suite urgency this is a presumed block... | 1 |

187,540 | 14,428,231,991 | IssuesEvent | 2020-12-06 08:45:40 | kalexmills/github-vet-tests-dec2020 | https://api.github.com/repos/kalexmills/github-vet-tests-dec2020 | closed | coreroller/coreroller: backend/vendor/src/github.com/pmylund/go-cache/sharded_test.go; 8 LoC | fresh test tiny |

Found a possible issue in [coreroller/coreroller](https://www.github.com/coreroller/coreroller) at [backend/vendor/src/github.com/pmylund/go-cache/sharded_test.go](https://github.com/coreroller/coreroller/blob/1a1f412434584d0569f408bffa059ead21e28d9d/backend/vendor/src/github.com/pmylund/go-cache/sharded_test.go#L58-L... | 1.0 | coreroller/coreroller: backend/vendor/src/github.com/pmylund/go-cache/sharded_test.go; 8 LoC -

Found a possible issue in [coreroller/coreroller](https://www.github.com/coreroller/coreroller) at [backend/vendor/src/github.com/pmylund/go-cache/sharded_test.go](https://github.com/coreroller/coreroller/blob/1a1f412434584d... | non_build | coreroller coreroller backend vendor src github com pmylund go cache sharded test go loc found a possible issue in at below is the message reported by the analyzer for this snippet of code beware that the analyzer only reports the first issue it finds so please do not limit your consideration to the c... | 0 |

118,369 | 11,967,570,293 | IssuesEvent | 2020-04-06 06:59:19 | RedHatInsights/insights-results-aggregator | https://api.github.com/repos/RedHatInsights/insights-results-aggregator | opened | Metrics endpoint is not described in OpenAPI specification | bug documentation | Metrics endpoint is not described in OpenAPI specification | 1.0 | Metrics endpoint is not described in OpenAPI specification - Metrics endpoint is not described in OpenAPI specification | non_build | metrics endpoint is not described in openapi specification metrics endpoint is not described in openapi specification | 0 |

356,773 | 25,176,257,994 | IssuesEvent | 2022-11-11 09:31:34 | JordanChua/pe | https://api.github.com/repos/JordanChua/pe | opened | Explanation for predicting a Student's grade too complex | severity.Low type.DocumentationBug | The whole calculation for the prediction of a student's grade is very complex, and is visually hard for the user to imagine as well since there are several terms here: "learning rating" , "difficulty bonus" "normalised scores", which are all concepts

It would be great if there was a solid example to show the actual c... | 1.0 | Explanation for predicting a Student's grade too complex - The whole calculation for the prediction of a student's grade is very complex, and is visually hard for the user to imagine as well since there are several terms here: "learning rating" , "difficulty bonus" "normalised scores", which are all concepts

It would... | non_build | explanation for predicting a student s grade too complex the whole calculation for the prediction of a student s grade is very complex and is visually hard for the user to imagine as well since there are several terms here learning rating difficulty bonus normalised scores which are all concepts it would... | 0 |

15,756 | 10,345,674,933 | IssuesEvent | 2019-09-04 13:55:18 | Azure/azure-sdk-for-net | https://api.github.com/repos/Azure/azure-sdk-for-net | opened | [Track One] Tests for Service Fabric Processor error handling are unstable for CI and nightly runs | Client Event Hubs Service Attention | # Summary

A recent change introduced some new timing elements to help the exception tests for the `ServiceFabricProcessor` to be more stable and provide more consistent results during nightly runs. Since those changes were introduced, the tests have proven to be non-deterministic during CI and nightly test ru...

A recent change introduced some new timing elements to help the exception tests for the `ServiceFabricProcessor` to be more stable and provide more consistent results during nightly runs. Since those chang... | non_build | tests for service fabric processor error handling are unstable for ci and nightly runs summary a recent change introduced some new timing elements to help the exception tests for the servicefabricprocessor to be more stable and provide more consistent results during nightly runs since those changes were in... | 0 |

95,764 | 27,612,639,738 | IssuesEvent | 2023-03-09 16:59:12 | golang/go | https://api.github.com/repos/golang/go | closed | x/build: aix-ppc64 builder was missing | Builders NeedsInvestigation | From https://farmer.golang.org/#pools:

```

host-aix-ppc64-osuosl: 0/0 (1 missing)

```

/cc @trex58 Are you able to look into this? Thank you.

/cc @toothrot @cagedmantis @andybons | 1.0 | x/build: aix-ppc64 builder was missing - From https://farmer.golang.org/#pools:

```

host-aix-ppc64-osuosl: 0/0 (1 missing)

```

/cc @trex58 Are you able to look into this? Thank you.

/cc @toothrot @cagedmantis @andybons | build | x build aix builder was missing from host aix osuosl missing cc are you able to look into this thank you cc toothrot cagedmantis andybons | 1 |

102,314 | 31,886,169,737 | IssuesEvent | 2023-09-17 00:38:40 | habitat-sh/builder | https://api.github.com/repos/habitat-sh/builder | closed | [builder-worker] Clean up `job.root()/dockerd` directory on teardown. | Focus:Builder Type:Chore Platform:Linux Stale | @fnichol commented on [Tue Oct 03 2017](https://github.com/habitat-sh/habitat/issues/3484)

Currently the Docker Engine's data directories aren't cleaned up when the job is torn down. It shouldn't be big at the moment as the exporter cleans up its image layers.

---

@fnichol commented on [Wed Oct 04 2017](https://gith... | 1.0 | [builder-worker] Clean up `job.root()/dockerd` directory on teardown. - @fnichol commented on [Tue Oct 03 2017](https://github.com/habitat-sh/habitat/issues/3484)

Currently the Docker Engine's data directories aren't cleaned up when the job is torn down. It shouldn't be big at the moment as the exporter cleans up its ... | build | clean up job root dockerd directory on teardown fnichol commented on currently the docker engine s data directories aren t cleaned up when the job is torn down it shouldn t be big at the moment as the exporter cleans up its image layers fnichol commented on this is non critical and only app... | 1 |

76,205 | 21,220,628,553 | IssuesEvent | 2022-04-11 11:35:17 | appsmithorg/appsmith | https://api.github.com/repos/appsmithorg/appsmith | closed | [Bug]: Select widget shows blank spaces | Bug Select Widget App Viewers Pod High UI Building Pod regression Needs Triaging | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Description

Select widget shows blank spaces when more options are added.

https://loom.com/share/f7b8333ca2da4fae84fed6574dfea635

"registry": "https://artifactoryDo... | 1.0 | Problem updating private Docker registry implicit library/ image - ### Which Renovate are you using?

Renovate docker image 19.156.0

### Which platform are you using?

GitLab self-hosted

### Have you checked the logs? Don't forget to include them if relevant

```

WARN: Error getting docker image tags (repos... | non_build | problem updating private docker registry implicit library image which renovate are you using renovate docker image which platform are you using gitlab self hosted have you checked the logs don t forget to include them if relevant warn error getting docker image tags reposito... | 0 |

104,174 | 11,394,886,375 | IssuesEvent | 2020-01-30 10:16:04 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | [APIM300]No doc can be found for APIM300 Deployment patterns/clustering | 4.0.0 Priority/Highest Severity/Blocker To-do documentation-required | **Description:**

Please provide a document for APIM300 deployment patterns/clustering.

**Suggested Labels:**

APIM300

**Affected Product Version:**

APIM300

| 1.0 | [APIM300]No doc can be found for APIM300 Deployment patterns/clustering - **Description:**

Please provide a document for APIM300 deployment patterns/clustering.

**Suggested Labels:**

APIM300

**Affected Product Version:**

APIM300

| non_build | no doc can be found for deployment patterns clustering description please provide a document for deployment patterns clustering suggested labels affected product version | 0 |

215,348 | 16,666,906,692 | IssuesEvent | 2021-06-07 05:57:43 | AtlasOfLivingAustralia/la-pipelines | https://api.github.com/repos/AtlasOfLivingAustralia/la-pipelines | closed | AVH testing: wildcard search on collector not working | bug testing-findings | Compare:

https://avh.ala.org.au/occurrences/search?q=collector_text%3AKlazenga

and

https://avh-test.ala.org.au/occurrences/search?q=collector_text%3AKlazenga.

This is accessed in AVH through the 'Advanced search'. | 1.0 | AVH testing: wildcard search on collector not working - Compare:

https://avh.ala.org.au/occurrences/search?q=collector_text%3AKlazenga

and

https://avh-test.ala.org.au/occurrences/search?q=collector_text%3AKlazenga.

This is accessed in AVH through the 'Advanced search'. | non_build | avh testing wildcard search on collector not working compare and this is accessed in avh through the advanced search | 0 |

31,981 | 8,784,569,272 | IssuesEvent | 2018-12-20 10:13:58 | conan-io/docs | https://api.github.com/repos/conan-io/docs | closed | CMAKE_BUILD_TYPE is not set by Conan on Windows/Visual | complex: low component: build priority: low stage: review type: feature | Coming from conan-io/conan#2488

Update the docs to mention that `CMAKE_BUILD_TYPE` is not set by Conan on Windows/Visual Studio.

CMake helper is not setting `CMAKE_BUILD_TYPE` automatically in the configure step. However, it is doing so in the build step with the `--config` flag.

This is because of CMake helpe... | 1.0 | CMAKE_BUILD_TYPE is not set by Conan on Windows/Visual - Coming from conan-io/conan#2488

Update the docs to mention that `CMAKE_BUILD_TYPE` is not set by Conan on Windows/Visual Studio.

CMake helper is not setting `CMAKE_BUILD_TYPE` automatically in the configure step. However, it is doing so in the build step wi... | build | cmake build type is not set by conan on windows visual coming from conan io conan update the docs to mention that cmake build type is not set by conan on windows visual studio cmake helper is not setting cmake build type automatically in the configure step however it is doing so in the build step with ... | 1 |

81,668 | 23,527,679,593 | IssuesEvent | 2022-08-19 12:32:14 | camunda/zeebe | https://api.github.com/repos/camunda/zeebe | closed | Can riscv architecture be supported ? | kind/bug kind/question area/build | **Under the RISC-V architecture, after compiling Zeebe with Maven and packaging it into a Docker image, an error is reported at runtime.

Ask Daniel to help analyze, thanks.**

**dockerfile**

````

ARG APP_ENV=prod

# Building builder image

FROM riscv64/ubuntu:20.04 as builder

ARG DISTBALL

ENV TMP_ARCHIVE=/tmp/z... | 1.0 | Can riscv architecture be supported ? - **Under the riscv architecture, after compiling zeebe with maven and making it into a docker image, an error is reported when running,

Ask Daniel to help analyze,thanks**

**dockerfile**

````

ARG APP_ENV=prod

# Building builder image

FROM riscv64/ubuntu:20.04 as builde... | build | can riscv architecture be supported ? under the riscv architecture after compiling zeebe with maven and making it into a docker image an error is reported when running ask daniel to help analyze,thanks dockerfile arg app env prod building builder image from ubuntu as builder arg d... | 1 |

24,745 | 7,551,368,913 | IssuesEvent | 2018-04-18 19:54:30 | dart-lang/build | https://api.github.com/repos/dart-lang/build | closed | AssetWriter.write* – return WriteAction (or something) | package: build type: enhancement type: question | The fact that folks aren't supposed to await writeAsBytes/writeAsString is really annoying – since it's good to await futures.

Could these methods return an instance of

```dart

class WriteAction {

Future get complete;

}

```

So folks who need to wait can, but the lints to get to them? | 1.0 | AssetWriter.write* – return WriteAction (or something) - The fact that folks aren't supposed to await writeAsBytes/writeAsString is really annoying – since it's good to await futures.

Could these methods return an instance of

```dart

class WriteAction {

Future get complete;

}

```

So folks who need to wai... | build | assetwriter write – return writeaction or something the fact that folks aren t supposed to await writeasbytes writeasstring is really annoying – since it s good to await futures could these methods return an instance of dart class writeaction future get complete so folks who need to wai... | 1 |

101,260 | 30,958,699,422 | IssuesEvent | 2023-08-08 00:48:54 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | Arm32: System.Threading.Tasks.Dataflow.Tests failing with NRE | bug arch-arm32 area-System.Threading.Tasks blocking-clean-ci Known Build Error | ## Build Information

Build: https://dev.azure.com/dnceng-public/cbb18261-c48f-4abb-8651-8cdcb5474649/_build/results?buildId=140623

Build error leg or test failing: System.Threading.Tasks.Dataflow.Tests.WorkItemExecution

Pull request: https://github.com/dotnet/runtime/pull/80323

## Error Message

Fill the error me... | 1.0 | Arm32: System.Threading.Tasks.Dataflow.Tests failing with NRE - ## Build Information

Build: https://dev.azure.com/dnceng-public/cbb18261-c48f-4abb-8651-8cdcb5474649/_build/results?buildId=140623

Build error leg or test failing: System.Threading.Tasks.Dataflow.Tests.WorkItemExecution

Pull request: https://github.com/... | build | system threading tasks dataflow tests failing with nre build information build build error leg or test failing system threading tasks dataflow tests workitemexecution pull request error message fill the error message using json errormessage system threading tasks concurrente... | 1 |

126,655 | 26,890,781,506 | IssuesEvent | 2023-02-06 08:45:31 | samq-ghdemo/snyk-goof | https://api.github.com/repos/samq-ghdemo/snyk-goof | opened | Code Security Report: 1 high severity findings, 8 total findings | code security findings | # Code Security Report

**Latest Scan:** 2023-02-06 08:44am

**Total Findings:** 8

**Tested Project Files:** 6

**Detected Programming Languages:** 1

<!-- SAST-MANUAL-SCAN-START -->

- [ ] Check this box to manually trigger a scan

<!-- SAST-MANUAL-SCAN-END -->

## Language: JavaScript / Node.js

| Severity | CWE | Vulnera... | 1.0 | Code Security Report: 1 high severity findings, 8 total findings - # Code Security Report

**Latest Scan:** 2023-02-06 08:44am

**Total Findings:** 8

**Tested Project Files:** 6

**Detected Programming Languages:** 1

<!-- SAST-MANUAL-SCAN-START -->

- [ ] Check this box to manually trigger a scan

<!-- SAST-MANUAL-SCAN-END... | non_build | code security report high severity findings total findings code security report latest scan total findings tested project files detected programming languages check this box to manually trigger a scan language javascript node js severity cwe vulnerabi... | 0 |

39,669 | 10,372,024,471 | IssuesEvent | 2019-09-09 00:43:49 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | bazel should support building multiple platforms | area/build-release kind/feature lifecycle/rotten sig/release sig/testing | #73930 added the support of cross-compilation for bazel, but there is currently no way to build for multiple platforms. For example, to build release tarballs for both windows and linux, one would have to run the following commands.

```

bazel build --config=cross:linux_amd64 //build/release-tars

# May need to co... | 1.0 | bazel should support building multiple platforms - #73930 added the support of cross-compilation for bazel, but there is currently no way to build for multiple platforms. For example, to build release tarballs for both windows and linux, one would have to run the following commands.

```

bazel build --config=cross:l... | build | bazel should support building multiple platforms added the support of cross compilation for bazel but there is currently no way to build for multiple platforms for example to build release tarballs for both windows and linux one would have to run the following commands bazel build config cross linux... | 1 |

69,641 | 7,156,542,803 | IssuesEvent | 2018-01-26 16:37:36 | poanetwork/poa-popa | https://api.github.com/repos/poanetwork/poa-popa | opened | (Chore) Refactor prepareConTx | category-tests | Simplify code in order to make it more readable and testable by mainly extracting and refactoring functions.

For example, wallet validation and assignation | 1.0 | (Chore) Refactor prepareConTx - Simplify code in order to make it more readable and testable by mainly extracting and refactoring functions.

For example, wallet validation and assignation | non_build | chore refactor preparecontx simplify code in order to make it more readable and testable by mainly extracting and refactoring functions for example wallet validation and assignation | 0 |

126,161 | 10,410,598,524 | IssuesEvent | 2019-09-13 11:51:31 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | UI Tests: If test is waiting for an expect(...emits) test a strange exception is thrown | a: tests | ## Steps to Reproduce

clone https://github.com/escamoteur/rx_widgets/tree/UI_Test_Exception

and run the test in rx_widgets/example/test/homepage_test.dart

you will get this output

```

Running "flutter packages get" in example...

Received: null

Called Execute

Received: [Instance of 'WeatherEntry']

══╡ E... | 1.0 | UI Tests: If test is waiting for an expect(...emits) test a strange exception is thrown - ## Steps to Reproduce

clone https://github.com/escamoteur/rx_widgets/tree/UI_Test_Exception

and run the test in rx_widgets/example/test/homepage_test.dart

you will get this output

```

Running "flutter packages get" in... | non_build | ui tests if test is waiting for an expect emits test a strange exception is thrown steps to reproduce clone and run the test in rx widgets example test homepage test dart you will get this output running flutter packages get in example received null called execute received ══╡ ... | 0 |

47,296 | 11,996,842,385 | IssuesEvent | 2020-04-08 17:28:20 | dotnet/machinelearning | https://api.github.com/repos/dotnet/machinelearning | closed | libomp 8.0.0 version has dependencies that do not exist on macOS build machines | Build P0 bug | It seems brew installing latest libomp has dependencies that do not exist on build machines. Investigate what those dependencies are by taking a trace and install them.

Related to #3694 | 1.0 | libomp 8.0.0 version has dependencies that do not exist on macOS build machines - It seems brew installing latest libomp has dependencies that do not exist on build machines. Investigate what those dependencies are by taking a trace and install them.

Related to #3694 | build | libomp version has dependencies that do not exist on macos build machines it seems brew installing latest libomp has dependencies that do not exist on build machines investigate what those dependencies are by taking a trace and install them related to | 1 |

87,301 | 25,081,613,622 | IssuesEvent | 2022-11-07 19:51:06 | irods/irods_rule_engine_plugin_audit_amqp | https://api.github.com/repos/irods/irods_rule_engine_plugin_audit_amqp | closed | Replace use of deprecated functionality | build | Compiling the main branch against what will be iRODS 4.3 produces the following:

```bash

/home/kory/dev/prog/cpp/irods_rule_engine_plugin_audit_amqp/libirods_rule_engine_plugin-audit_amqp.cpp:126:17: warning: 'pn_messenger' is deprecated [-Wdeprecated-declarations]

messenger = pn_messenger(NULL);

... | 1.0 | Replace use of deprecated functionality - Compiling the main branch against what will be iRODS 4.3 produces the following:

```bash

/home/kory/dev/prog/cpp/irods_rule_engine_plugin_audit_amqp/libirods_rule_engine_plugin-audit_amqp.cpp:126:17: warning: 'pn_messenger' is deprecated [-Wdeprecated-declarations]

messe... | build | replace use of deprecated functionality compiling the main branch against what will be irods produces the following bash home kory dev prog cpp irods rule engine plugin audit amqp libirods rule engine plugin audit amqp cpp warning pn messenger is deprecated messenger pn messenger null ... | 1 |

42,747 | 11,053,275,965 | IssuesEvent | 2019-12-10 11:01:45 | Kavawuvi/invader | https://api.github.com/repos/Kavawuvi/invader | reopened | [invader-build] Tags extracted from combustion maps can cause earrape (tool.exe is unaffected) | invader-build | In order to reproduce this, you need tags that have been touched by the Combustion Retail>CE cache file conversion tool.

To reproduce, first convert stock retail bloodgulch to CE format with Combustion, allowing it to optimise against the CE resource maps.

Second, extract that map with invader-extract. Some tags wi... | 1.0 | [invader-build] Tags extracted from combustion maps can cause earrape (tool.exe is unaffected) - In order to reproduce this, you need tags that have been touched by the Combustion Retail>CE cache file conversion tool.

To reproduce, first convert stock retail bloodgulch to CE format with Combustion, allowing it to op... | build | tags extracted from combustion maps can cause earrape tool exe is unaffected in order to reproduce this you need tags that have been touched by the combustion retail ce cache file conversion tool to reproduce first convert stock retail bloodgulch to ce format with combustion allowing it to optimise against... | 1 |

110,786 | 13,939,704,818 | IssuesEvent | 2020-10-22 16:51:26 | flutter/flutter | https://api.github.com/repos/flutter/flutter | closed | Scrollbar widget renders in the middle when scroll direction is horizontal | P4 f: material design f: scrolling found in release: 1.22 framework has reproducible steps platform-ios | Hello! I was implementing a `SingleChildScrollView (horizontal direction)` with a `Row` as a `child`. I tried to use the `Scrollbar` widget as a parent.

```dart

Scrollbar(

isAlwaysShown: true,

controller: _scrollController,

child: SingleChildScrollView(

scrollDirection: Axis.h... | 1.0 | Scrollbar widget renders in the middle when scroll direction is horizontal - Hello! I was implementing a `SingleChildScrollView (horizontal direction)` with a `Row` as a `child`. I tried to use the `Scrollbar` widget as a parent.

```dart

Scrollbar(

isAlwaysShown: true,

controller: _scrollController... | non_build | scrollbar widget renders in the middle when scroll direction is horizontal hello i was implementing a singlechildscrollview horizontal direction with a row as a child i tried to use the scrollbar widget as a parent dart scrollbar isalwaysshown true controller scrollcontroller... | 0 |

8,699 | 4,310,299,092 | IssuesEvent | 2016-07-21 18:44:59 | Linuxbrew/homebrew-core | https://api.github.com/repos/Linuxbrew/homebrew-core | closed | openssl: error: target already defined | build-error | Please follow the general troubleshooting steps first:

+ [x] Ran brew update and retried your prior step?

+ [x] Ran brew doctor, fixed as many issues as possible and retried your prior step?

+ [x] If you're seeing permission errors tried running sudo chown -R $(whoami) $(brew --prefix)?

Bug reports: