Unnamed: 0 int64 3 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 2 430 | labels stringlengths 4 347 | body stringlengths 5 237k | index stringclasses 7 values | text_combine stringlengths 96 237k | label stringclasses 2 values | text stringlengths 96 219k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

108,934 | 16,822,763,437 | IssuesEvent | 2021-06-17 14:50:53 | idonthaveafifaaddiction/flink | https://api.github.com/repos/idonthaveafifaaddiction/flink | opened | WS-2019-0424 (Medium) detected in elliptic-6.4.1.tgz | security vulnerability | ## WS-2019-0424 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elliptic-6.4.1.tgz</b></p></summary>

<p>EC cryptography</p>

<p>Library home page: <a href="https://registry.npmjs.org/... | True | WS-2019-0424 (Medium) detected in elliptic-6.4.1.tgz - ## WS-2019-0424 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>elliptic-6.4.1.tgz</b></p></summary>

<p>EC cryptography</p>

<p>... | non_comp | ws medium detected in elliptic tgz ws medium severity vulnerability vulnerable library elliptic tgz ec cryptography library home page a href path to dependency file flink flink runtime web web dashboard package json path to vulnerable library flink flink runtime web ... | 0 |

16,821 | 4,098,918,269 | IssuesEvent | 2016-06-03 10:19:39 | digitalmethodsinitiative/dmi-tcat | https://api.github.com/repos/digitalmethodsinitiative/dmi-tcat | opened | Auto-installer needs to install ntpdate | documentation enhancement | Twitter returns a crypted 401 Authorization Denied whenever the TCAT system clocktime is out-of-sync with the Twitter servers, around 5-10 minutes will be enough.

The auto-installer should install and configure ntp so this will not occur.

Also, add this as a FAQ to the Wiki. | 1.0 | Auto-installer needs to install ntpdate - Twitter returns a crypted 401 Authorization Denied whenever the TCAT system clocktime is out-of-sync with the Twitter servers, around 5-10 minutes will be enough.

The auto-installer should install and configure ntp so this will not occur.

Also, add this as a FAQ to the Wi... | non_comp | auto installer needs to install ntpdate twitter returns a crypted authorization denied whenever the tcat system clocktime is out of sync with the twitter servers around minutes will be enough the auto installer should install and configure ntp so this will not occur also add this as a faq to the wiki | 0 |

18,417 | 25,485,400,978 | IssuesEvent | 2022-11-26 10:16:51 | eloquentarduino/EloquentTinyML | https://api.github.com/repos/eloquentarduino/EloquentTinyML | closed | Didn't find op for builtin opcode 'CONV_2D' version '5' Failed to get registration from op code d | compatibility | @eloquentarduino I am trying to take an inference for a neural network on ESP 32 DEV KIT but it's throwing an error that is not understandable.

Please find my code below:

#include <EloquentTinyML.h>

#include <eloquent_tinyml/tensorflow.h>

#include "test_model.h"

#define NUMBER_OF_INPUTS 7200

#define NUMB... | True | Didn't find op for builtin opcode 'CONV_2D' version '5' Failed to get registration from op code d - @eloquentarduino I am trying to take an inference for a neural network on ESP 32 DEV KIT but it's throwing an error that is not understandable.

Please find my code below:

#include <EloquentTinyML.h>

#include <e... | comp | didn t find op for builtin opcode conv version failed to get registration from op code d eloquentarduino i am trying to take an inference for a neural network on esp dev kit but it s throwing an error that is not understandable please find my code below include include include test mod... | 1 |

13,106 | 15,394,077,924 | IssuesEvent | 2021-03-03 17:27:42 | docker/compose-cli | https://api.github.com/repos/docker/compose-cli | closed | Compose: support --build-arg key=val | compatibility compose | build option:

`--build-arg key=val Set build-time variables for services.` | True | Compose: support --build-arg key=val - build option:

`--build-arg key=val Set build-time variables for services.` | comp | compose support build arg key val build option build arg key val set build time variables for services | 1 |

269,368 | 28,960,116,323 | IssuesEvent | 2023-05-10 01:16:18 | dpteam/RK3188_TABLET | https://api.github.com/repos/dpteam/RK3188_TABLET | reopened | CVE-2019-10220 (High) detected in linuxv3.0 | Mend: dependency security vulnerability | ## CVE-2019-10220 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/veryg... | True | CVE-2019-10220 (High) detected in linuxv3.0 - ## CVE-2019-10220 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Libra... | non_comp | cve high detected in cve high severity vulnerability vulnerable library linux kernel source tree library home page a href found in head commit a href found in base branch master vulnerable source files fs readdir c fs readdir c ... | 0 |

7,956 | 10,135,788,178 | IssuesEvent | 2019-08-02 11:07:40 | storybookjs/storybook | https://api.github.com/repos/storybookjs/storybook | closed | React-Jss injectSheet overriden by Material ui V4 | compatibility with other tools cra inactive question / support | Hi,

React-jss injectSheet is injected before Material ui styles so it's overridden .

it's only happen in Storybook and not in production code.

this is my configuration:

- "@storybook/addon-actions": "5.1.1"

- "@storybook/react": "5.1.1"

- "react-scripts": "3.0.1"

- "react-jss": "8.6.1"

- "@material-ui/core"... | True | React-Jss injectSheet overriden by Material ui V4 - Hi,

React-jss injectSheet is injected before Material ui styles so it's overridden .

it's only happen in Storybook and not in production code.

this is my configuration:

- "@storybook/addon-actions": "5.1.1"

- "@storybook/react": "5.1.1"

- "react-scripts": "3... | comp | react jss injectsheet overriden by material ui hi react jss injectsheet is injected before material ui styles so it s overridden it s only happen in storybook and not in production code this is my configuration storybook addon actions storybook react react scripts ... | 1 |

120,761 | 25,859,119,640 | IssuesEvent | 2022-12-13 15:43:52 | pnp/pnpjs | https://api.github.com/repos/pnp/pnpjs | closed | How to delete a term from term store using PnP JS | type: enhancement area: code status: complete | - [ ] Enhancement

- [ ] Bug

- [ X ] Question

- [ ] Documentation gap/issue

Please specify what version of the library you are using: [ 2.12.0 ]

Please specify what version(s) of SharePoint you are targeting: [ SharePoint Online ]

Hello,

How can we delete a term from a term store using PnP J... | 1.0 | How to delete a term from term store using PnP JS - - [ ] Enhancement

- [ ] Bug

- [ X ] Question

- [ ] Documentation gap/issue

Please specify what version of the library you are using: [ 2.12.0 ]

Please specify what version(s) of SharePoint you are targeting: [ SharePoint Online ]

Hello,

Ho... | non_comp | how to delete a term from term store using pnp js enhancement bug question documentation gap issue please specify what version of the library you are using please specify what version s of sharepoint you are targeting hello how can we delete a term from a term store using pnp js... | 0 |

11,530 | 13,526,943,000 | IssuesEvent | 2020-09-15 14:50:03 | zymex22/Project-RimFactory-Revived | https://api.github.com/repos/zymex22/Project-RimFactory-Revived | opened | SeedsPlease incompatibility | Incompatibility | # Describe the bug

The cultivators are incompatible with the `SeedsPlease` Mod.

They ignore the System where seeds are required in order to plant.

Link to the Mod: https://steamcommunity.com/sharedfiles/filedetails/?id=935732834

Patches if possible are required for:

- [ ] Drone Cultivator

This might be alread... | True | SeedsPlease incompatibility - # Describe the bug

The cultivators are incompatible with the `SeedsPlease` Mod.

They ignore the System where seeds are required in order to plant.

Link to the Mod: https://steamcommunity.com/sharedfiles/filedetails/?id=935732834

Patches if possible are required for:

- [ ] Drone Cu... | comp | seedsplease incompatibility describe the bug the cultivators are incompatible with the seedsplease mod they ignore the system where seeds are required in order to plant link to the mod patches if possible are required for drone cultivator this might be already resolved with the drone overhaul ... | 1 |

5,818 | 8,269,563,062 | IssuesEvent | 2018-09-15 07:40:29 | arcticicestudio/igloo | https://api.github.com/repos/arcticicestudio/igloo | opened | taskopen workaround support for macOS | os-macos scope-compatibility snowblock-taskwarrior snowblock-timewarrior type-feature | > Epic: #131

> Related to #110

The management of installed [Perl modules][cpan-doc-modules] on macOS is not as simple and well thought through like with package managers on Linux systems, e.g. via [pacman][archw-pacman] on [Arch Linux][archlinux]. There are problems when is comes to configuring the runtime path the... | True | taskopen workaround support for macOS - > Epic: #131

> Related to #110

The management of installed [Perl modules][cpan-doc-modules] on macOS is not as simple and well thought through like with package managers on Linux systems, e.g. via [pacman][archw-pacman] on [Arch Linux][archlinux]. There are problems when is c... | comp | taskopen workaround support for macos epic related to the management of installed on macos is not as simple and well thought through like with package managers on linux systems e g via on there are problems when is comes to configuring the runtime path the modules have been installed to eve... | 1 |

9,993 | 2,616,018,384 | IssuesEvent | 2015-03-02 01:00:04 | jasonhall/bwapi | https://api.github.com/repos/jasonhall/bwapi | closed | auto-join Local PC game, second instance crashes on some config | auto-migrated Component-Logic Milestone-Release Priority-Critical Type-Defect Usability | ```

What steps will reproduce the problem?

1.in bwapi.ini: set game = BWAPI

2. set map = maps\AI\tournament 1\dragoons.scm (from AIIDE 2010 tournament 1)

3. set game_type = USE_MAP_SETTINGS

4. set both ai's to skynet (uses revision 3769)

5. set auto_manu = LAN

6. launch the games (one after another with a small delay)

... | 1.0 | auto-join Local PC game, second instance crashes on some config - ```

What steps will reproduce the problem?

1.in bwapi.ini: set game = BWAPI

2. set map = maps\AI\tournament 1\dragoons.scm (from AIIDE 2010 tournament 1)

3. set game_type = USE_MAP_SETTINGS

4. set both ai's to skynet (uses revision 3769)

5. set auto_manu... | non_comp | auto join local pc game second instance crashes on some config what steps will reproduce the problem in bwapi ini set game bwapi set map maps ai tournament dragoons scm from aiide tournament set game type use map settings set both ai s to skynet uses revision set auto manu lan... | 0 |

41,376 | 5,354,144,458 | IssuesEvent | 2017-02-20 09:01:15 | dzhw/metadatamanagement | https://api.github.com/repos/dzhw/metadatamanagement | closed | Rework question management | category:questionmanagement points:13 prio:1 status:testing type:backlog item | In order to hide all ids from the user we need to implement the following changes

## Domain Object

- [x] add instrumentNumber to domain object (including search document)

- [x] add successorNumbers to domain object (including search document)

- [x] remove surveyId

- [x] cross check with current domain model

## Va... | 1.0 | Rework question management - In order to hide all ids from the user we need to implement the following changes

## Domain Object

- [x] add instrumentNumber to domain object (including search document)

- [x] add successorNumbers to domain object (including search document)

- [x] remove surveyId

- [x] cross check wit... | non_comp | rework question management in order to hide all ids from the user we need to implement the following changes domain object add instrumentnumber to domain object including search document add successornumbers to domain object including search document remove surveyid cross check with curren... | 0 |

264,593 | 28,209,200,903 | IssuesEvent | 2023-04-05 01:38:55 | Trinadh465/linux_4.19.72_CVE-2023-42896 | https://api.github.com/repos/Trinadh465/linux_4.19.72_CVE-2023-42896 | closed | CVE-2021-0448 (Medium) detected in linuxlinux-4.19.279 - autoclosed | Mend: dependency security vulnerability | ## CVE-2021-0448 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.... | True | CVE-2021-0448 (Medium) detected in linuxlinux-4.19.279 - autoclosed - ## CVE-2021-0448 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.279</b></p></summary>

<p>

<p>Th... | non_comp | cve medium detected in linuxlinux autoclosed cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch main vulnerable source files n... | 0 |

11,463 | 13,447,301,844 | IssuesEvent | 2020-09-08 14:04:48 | higan-emu/higan | https://api.github.com/repos/higan-emu/higan | closed | CGB: Sprite palette incorrect in Pokemon Crystal | bug compatibility | When playing Pokemon Crystal, the player sprite (and other character sprites walking around the map) appear in a random palette that changes as you move from room to room, or visit different menus. Here the player should have a black outline, instead of being a red blob:

appear in a random palette that changes as you move from room to room, or visit different menus. Here the player should have a black outline, instead of being a red blo... | comp | cgb sprite palette incorrect in pokemon crystal when playing pokemon crystal the player sprite and other character sprites walking around the map appear in a random palette that changes as you move from room to room or visit different menus here the player should have a black outline instead of being a red blo... | 1 |

14,731 | 18,091,678,120 | IssuesEvent | 2021-09-22 02:51:01 | gambitph/Stackable | https://api.github.com/repos/gambitph/Stackable | opened | Container goes full width when Block background is on | bug [version] V3 v2 compatibility | <!--

Before posting, make sure that:

1. you are running the latest version of Stackable, and

2. you have searched whether your issue has already been reported

-->

**Describe the bug**

All v2 blocks with block background goes full width once the block background is turned on.

**To Reproduce**

Steps to reprod... | True | Container goes full width when Block background is on - <!--

Before posting, make sure that:

1. you are running the latest version of Stackable, and

2. you have searched whether your issue has already been reported

-->

**Describe the bug**

All v2 blocks with block background goes full width once the block backg... | comp | container goes full width when block background is on before posting make sure that you are running the latest version of stackable and you have searched whether your issue has already been reported describe the bug all blocks with block background goes full width once the block backgr... | 1 |

137,149 | 18,752,644,700 | IssuesEvent | 2021-11-05 05:43:42 | madhans23/linux-4.15 | https://api.github.com/repos/madhans23/linux-4.15 | opened | CVE-2020-27171 (Medium) detected in linuxv4.15 | security vulnerability | ## CVE-2020-27171 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv4.15</b></p></summary>

<p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/brodo... | True | CVE-2020-27171 (Medium) detected in linuxv4.15 - ## CVE-2020-27171 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv4.15</b></p></summary>

<p>

<p>Library home page: <a href=http... | non_comp | cve medium detected in cve medium severity vulnerability vulnerable library library home page a href found in head commit a href found in base branch master vulnerable source files vulnerability details an issue was di... | 0 |

2,132 | 2,603,976,845 | IssuesEvent | 2015-02-24 19:01:43 | chrsmith/nishazi6 | https://api.github.com/repos/chrsmith/nishazi6 | opened | 沈阳龟头长疙瘩怎么办 | auto-migrated Priority-Medium Type-Defect | ```

沈阳龟头长疙瘩怎么办〓沈陽軍區政治部醫院性病〓TEL:024-3

1023308〓成立于1946年,68年專注于性傳播疾病的研究和治療。�

��于沈陽市沈河區二緯路32號。是一所與新中國同建立共輝煌�

��歷史悠久、設備精良、技術權威、專家云集,是預防、保健

、醫療、科研康復為一體的綜合性醫院。是國家首批公立甲��

�部隊醫院、全國首批醫療規范定點單位,是第四軍醫大學、�

��南大學等知名高等院校的教學醫院。曾被中國人民解放軍空

軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立集體��

�等功。

```

-----

Original issue reported on code.google.com by `q964105... | 1.0 | 沈阳龟头长疙瘩怎么办 - ```

沈阳龟头长疙瘩怎么办〓沈陽軍區政治部醫院性病〓TEL:024-3

1023308〓成立于1946年,68年專注于性傳播疾病的研究和治療。�

��于沈陽市沈河區二緯路32號。是一所與新中國同建立共輝煌�

��歷史悠久、設備精良、技術權威、專家云集,是預防、保健

、醫療、科研康復為一體的綜合性醫院。是國家首批公立甲��

�部隊醫院、全國首批醫療規范定點單位,是第四軍醫大學、�

��南大學等知名高等院校的教學醫院。曾被中國人民解放軍空

軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立集體��

�等功。

```

-----

Original issue reported on code.google.co... | non_comp | 沈阳龟头长疙瘩怎么办 沈阳龟头长疙瘩怎么办〓沈陽軍區政治部醫院性病〓tel: 〓 , 。� �� 。是一所與新中國同建立共輝煌� ��歷史悠久、設備精良、技術權威、專家云集,是預防、保健 、醫療、科研康復為一體的綜合性醫院。是國家首批公立甲�� �部隊醫院、全國首批醫療規范定點單位,是第四軍醫大學、� ��南大學等知名高等院校的教學醫院。曾被中國人民解放軍空 軍后勤部衛生部評為衛生工作先進單位,先后兩次榮立集體�� �等功。 original issue reported on code google com by gmail com on jun at | 0 |

106,194 | 4,264,373,797 | IssuesEvent | 2016-07-12 06:49:46 | openshift/origin | https://api.github.com/repos/openshift/origin | closed | chown Operation not permitted only when using persistent volume | component/storage kind/question priority/P2 | I can able to deployed and use [gogs docker image](https://hub.docker.com/r/gogs/gogs/) in OpenShift Origin as ephemeral. But when using persistent volume I get `chown Operation not permitted`

##### Version

oc v1.3.0-alpha.2

kubernetes v1.3.0-alpha.1-331-g0522e63

##### Steps To Reproduce

1. Create two persiste... | 1.0 | chown Operation not permitted only when using persistent volume - I can able to deployed and use [gogs docker image](https://hub.docker.com/r/gogs/gogs/) in OpenShift Origin as ephemeral. But when using persistent volume I get `chown Operation not permitted`

##### Version

oc v1.3.0-alpha.2

kubernetes v1.3.0-alpha.... | non_comp | chown operation not permitted only when using persistent volume i can able to deployed and use in openshift origin as ephemeral but when using persistent volume i get chown operation not permitted version oc alpha kubernetes alpha steps to reproduce create two persis... | 0 |

13,557 | 16,066,566,143 | IssuesEvent | 2021-04-23 20:08:50 | Vazkii/Botania | https://api.github.com/repos/Vazkii/Botania | closed | Runic Altar makes the world see-through | compatibility | # Version Information

Forge version: 36.1.4

Botania version: 1.16.4-414

Modpack: Direwolf20 1.9.0 (the version that released a few days ago), with "Immersive Portals" and "Create Additions) added to it.

# Further Information

Link to crash log: Not crashing

Steps to reproduce:

1. Place Runic Altar

2. Add in... | True | Runic Altar makes the world see-through - # Version Information

Forge version: 36.1.4

Botania version: 1.16.4-414

Modpack: Direwolf20 1.9.0 (the version that released a few days ago), with "Immersive Portals" and "Create Additions) added to it.

# Further Information

Link to crash log: Not crashing

Steps to re... | comp | runic altar makes the world see through version information forge version botania version modpack the version that released a few days ago with immersive portals and create additions added to it further information link to crash log not crashing steps to reproduce ... | 1 |

16,800 | 5,290,798,002 | IssuesEvent | 2017-02-08 20:49:58 | dotnet/coreclr | https://api.github.com/repos/dotnet/coreclr | opened | Optimize default(T) == null at compile time | area-CodeGen optimization | `default(T) == null` in generic code is a popular* pattern to determine if `T` is a nullable or a reference type.

Example:

```csharp

using System;

using System.Runtime.CompilerServices;

class Program

{

[MethodImpl(MethodImplOptions.NoInlining)]

static bool IsNotNullableValueType<T>()

{

... | 1.0 | Optimize default(T) == null at compile time - `default(T) == null` in generic code is a popular* pattern to determine if `T` is a nullable or a reference type.

Example:

```csharp

using System;

using System.Runtime.CompilerServices;

class Program

{

[MethodImpl(MethodImplOptions.NoInlining)]

static ... | non_comp | optimize default t null at compile time default t null in generic code is a popular pattern to determine if t is a nullable or a reference type example csharp using system using system runtime compilerservices class program static bool isnotnullablevaluetype ... | 0 |

15,123 | 18,991,988,991 | IssuesEvent | 2021-11-22 08:32:15 | KiwiHawk/SeaBlock | https://api.github.com/repos/KiwiHawk/SeaBlock | opened | Error with Alien Biomes | bug mod compatibility | 35.938 Warning Map.cpp:346: Map loading failed: Undefined named noise expression 'control-setting:cold:size:multiplier'

Ignore Alien Biomes rather than add incompatibility. | True | Error with Alien Biomes - 35.938 Warning Map.cpp:346: Map loading failed: Undefined named noise expression 'control-setting:cold:size:multiplier'

Ignore Alien Biomes rather than add incompatibility. | comp | error with alien biomes warning map cpp map loading failed undefined named noise expression control setting cold size multiplier ignore alien biomes rather than add incompatibility | 1 |

4,761 | 7,370,877,539 | IssuesEvent | 2018-03-13 09:56:07 | mike42/escpos-php | https://api.github.com/repos/mike42/escpos-php | closed | Printer tested: Bematech-4200-TH | printer-compatibility | I tested this driver today on the Bematech-4200-TH printer and it worked, using only text-mode printing (no images or other features). | True | Printer tested: Bematech-4200-TH - I tested this driver today on the Bematech-4200-TH printer and it worked, using only text-mode printing (no images or other features). | comp | printer tested bematech th i tested this driver today on the bematech th printer and it worked using only text mode printing no images or other features | 1 |

27,799 | 12,706,957,284 | IssuesEvent | 2020-06-23 08:07:10 | LiskHQ/lisk-docs | https://api.github.com/repos/LiskHQ/lisk-docs | closed | Create Introduction page | feature service | The Introduction page of the Lisk Service documentation should include:

- A general introduction and explanation about the purpose of Lisk Service

- An architecture overview

- A section with basic usage commands

| 1.0 | Create Introduction page - The Introduction page of the Lisk Service documentation should include:

- A general introduction and explanation about the purpose of Lisk Service

- An architecture overview

- A section with basic usage commands

| non_comp | create introduction page the introduction page of the lisk service documentation should include a general introduction and explanation about the purpose of lisk service an architecture overview a section with basic usage commands | 0 |

213,044 | 16,507,913,864 | IssuesEvent | 2021-05-25 21:57:24 | usbr/MTOM | https://api.github.com/repos/usbr/MTOM | closed | Cheat Sheet - MTOM | documentation | The 1-page “cheat-sheet” for running MTOM that LC put together needs to be reviewed for clarification

Assigned to Rich Eastland | 1.0 | Cheat Sheet - MTOM - The 1-page “cheat-sheet” for running MTOM that LC put together needs to be reviewed for clarification

Assigned to Rich Eastland | non_comp | cheat sheet mtom the page “cheat sheet” for running mtom that lc put together needs to be reviewed for clarification assigned to rich eastland | 0 |

1,425 | 3,955,191,482 | IssuesEvent | 2016-04-29 19:55:47 | facebook/hhvm | https://api.github.com/repos/facebook/hhvm | closed | System wide environment variables. | no isolated repro php5 incompatibility | I'm getting similar behavior to #1650 . Except it is system environment variables set in `/etc/environment` works on command line but fastcgi just not working.

I've set the environment variable in fastcgi params and it works, really is just system environment variables not being read.

```

HipHop VM 3.2.0 (rel)

... | True | System wide environment variables. - I'm getting similar behavior to #1650 . Except it is system environment variables set in `/etc/environment` works on command line but fastcgi just not working.

I've set the environment variable in fastcgi params and it works, really is just system environment variables not being ... | comp | system wide environment variables i m getting similar behavior to except it is system environment variables set in etc environment works on command line but fastcgi just not working i ve set the environment variable in fastcgi params and it works really is just system environment variables not being rea... | 1 |

87,820 | 25,224,816,070 | IssuesEvent | 2022-11-14 15:16:09 | apache/pulsar | https://api.github.com/repos/apache/pulsar | closed | Docker is deprecated in Kubernetes -> identify need for action | type/feature component/build triage/week-49 lifecycle/stale | **Is your feature request related to a problem? Please describe.**

Docker is **now deprecated** in Kubernetes.

By the **end of 2021** "dockershim" which implements CRI support for Docker will be **removed.**

> Reason: Why is dockershim being deprecated?

> Maintaining dockershim has become a heavy burden on the Ku... | 1.0 | Docker is deprecated in Kubernetes -> identify need for action - **Is your feature request related to a problem? Please describe.**

Docker is **now deprecated** in Kubernetes.

By the **end of 2021** "dockershim" which implements CRI support for Docker will be **removed.**

> Reason: Why is dockershim being deprecat... | non_comp | docker is deprecated in kubernetes identify need for action is your feature request related to a problem please describe docker is now deprecated in kubernetes by the end of dockershim which implements cri support for docker will be removed reason why is dockershim being deprecated ... | 0 |

607,176 | 18,774,020,966 | IssuesEvent | 2021-11-07 10:55:00 | AY2122S1-CS2103T-W15-3/tp | https://api.github.com/repos/AY2122S1-CS2103T-W15-3/tp | closed | Mark and unmark with duplicate index bug | type.Bug priority.High severity.High | When the user attempts to enter the command `emark 2 2 1`, the app will not mark the events correctly. We should not allow duplicate indexes. | 1.0 | Mark and unmark with duplicate index bug - When the user attempts to enter the command `emark 2 2 1`, the app will not mark the events correctly. We should not allow duplicate indexes. | non_comp | mark and unmark with duplicate index bug when the user attempts to enter the command emark the app will not mark the events correctly we should not allow duplicate indexes | 0 |

153,905 | 12,168,269,505 | IssuesEvent | 2020-04-27 12:24:18 | kyma-project/kyma | https://api.github.com/repos/kyma-project/kyma | opened | Switch fake function used in external solution test to real function | area/serverless test-missing | <!-- Thank you for your contribution. Before you submit the issue:

1. Search open and closed issues for duplicates.

2. Read the contributing guidelines.

-->

**Description**

<!-- Provide a clear and concise description of the feature. -->

TBD

**Reasons**

<!-- Explain why we should add this feature. Pro... | 1.0 | Switch fake function used in external solution test to real function - <!-- Thank you for your contribution. Before you submit the issue:

1. Search open and closed issues for duplicates.

2. Read the contributing guidelines.

-->

**Description**

<!-- Provide a clear and concise description of the feature. -->

... | non_comp | switch fake function used in external solution test to real function thank you for your contribution before you submit the issue search open and closed issues for duplicates read the contributing guidelines description tbd reasons attachments | 0 |

162,254 | 13,884,967,308 | IssuesEvent | 2020-10-18 18:09:49 | phpmd/phpmd | https://api.github.com/repos/phpmd/phpmd | closed | unique identifier to distinguish from pmd | Documentation Enhancement Good first issue On Hold | The output xml files of PMD and PHPMD tools are almost identical. Without knowing which program generated them, it is programatically tricky to identify which tool the file derived from. Would you consider changing the top level `<pmd></pmd>` tag to `<phpmd></phpmd>`? A unique attribute like `tool="phpmd"` would work j... | 1.0 | unique identifier to distinguish from pmd - The output xml files of PMD and PHPMD tools are almost identical. Without knowing which program generated them, it is programatically tricky to identify which tool the file derived from. Would you consider changing the top level `<pmd></pmd>` tag to `<phpmd></phpmd>`? A uniqu... | non_comp | unique identifier to distinguish from pmd the output xml files of pmd and phpmd tools are almost identical without knowing which program generated them it is programatically tricky to identify which tool the file derived from would you consider changing the top level tag to a unique attribute like tool... | 0 |

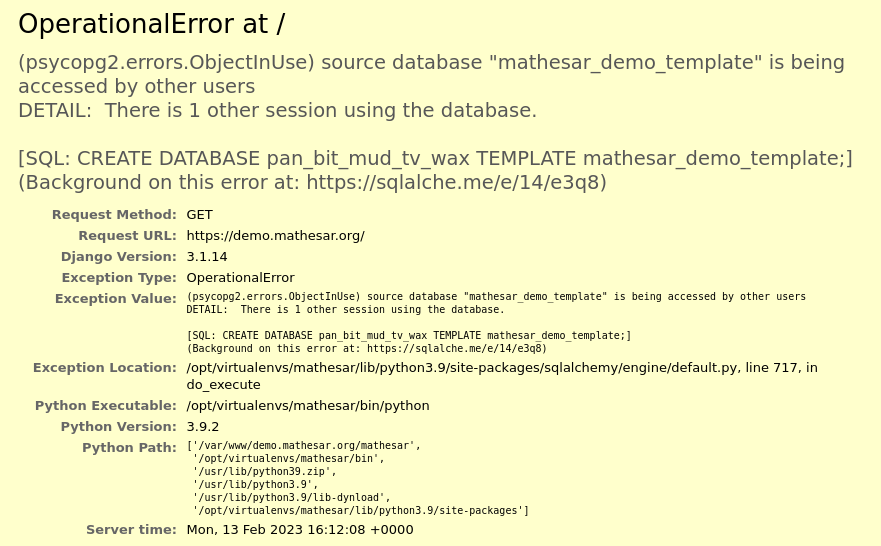

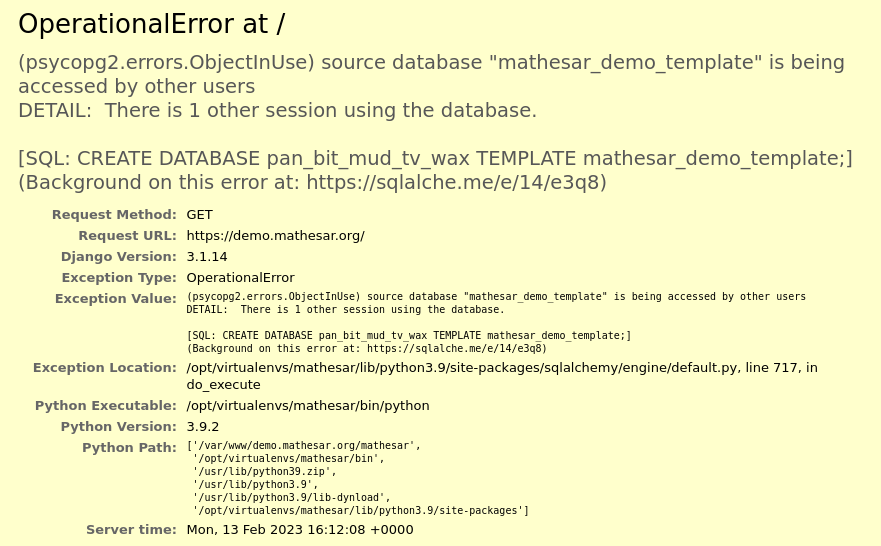

33,185 | 27,288,807,143 | IssuesEvent | 2023-02-23 15:15:41 | centerofci/mathesar | https://api.github.com/repos/centerofci/mathesar | closed | Demo server 500 right after login: "mathesar_demo_template" is being accessed by other users | type: bug work: backend work: infrastructure status: started | ## Description

Response was `500 https://demo.mathesar.org/` with following body:

Reloaded after ~5mn (waited a long time because was submitting this bug report): view rendered fine, but I ... | 1.0 | Demo server 500 right after login: "mathesar_demo_template" is being accessed by other users - ## Description

Response was `500 https://demo.mathesar.org/` with following body:

Reloaded aft... | non_comp | demo server right after login mathesar demo template is being accessed by other users description response was with following body reloaded after waited a long time because was submitting this bug report view rendered fine but i had no schemas while i expected to see all the demo s... | 0 |

9,998 | 11,985,493,582 | IssuesEvent | 2020-04-07 17:36:22 | Juuxel/Adorn | https://api.github.com/repos/Juuxel/Adorn | closed | Deal with DraylARR's and Vatuu's mistakes | bug mod compatibility | `lil-tater` was first changed to `liltater` and then `lil_tater` | True | Deal with DraylARR's and Vatuu's mistakes - `lil-tater` was first changed to `liltater` and then `lil_tater` | comp | deal with draylarr s and vatuu s mistakes lil tater was first changed to liltater and then lil tater | 1 |

18,996 | 26,419,195,759 | IssuesEvent | 2023-01-13 18:39:11 | oracle/truffleruby | https://api.github.com/repos/oracle/truffleruby | closed | String#casecmp? fails between ascii and utf-8 | compatibility | Comparing an ASCII-8BIT string with UTF-8, for both of which `#ascii_only?` is true, using `String#casecmp?` returns nil rather than a boolean e.g.

```ruby

''.b.casecmp?('')

```

It returns boolean on MRI, and the implementation to return boolean is present in truffleruby but not reached:

https://github.com/ora... | True | String#casecmp? fails between ascii and utf-8 - Comparing an ASCII-8BIT string with UTF-8, for both of which `#ascii_only?` is true, using `String#casecmp?` returns nil rather than a boolean e.g.

```ruby

''.b.casecmp?('')

```

It returns boolean on MRI, and the implementation to return boolean is present in truffl... | comp | string casecmp fails between ascii and utf comparing an ascii string with utf for both of which ascii only is true using string casecmp returns nil rather than a boolean e g ruby b casecmp it returns boolean on mri and the implementation to return boolean is present in truffleru... | 1 |

6,271 | 8,650,230,169 | IssuesEvent | 2018-11-26 21:51:45 | metarhia/impress | https://api.github.com/repos/metarhia/impress | closed | Refactor avoiding mixins | compatibility optimization | Now Impress core uses mixins for everything, it turned to a performance problem, so plugins and objects should be refactored to use: prototypes, factories, functors:

- [x] Server

- [x] Config

- [x] Application

- [ ] Plugin

- [x] Client

- [ ] Connection

- [x] Logger | True | Refactor avoiding mixins - Now Impress core uses mixins for everything, it turned to a performance problem, so plugins and objects should be refactored to use: prototypes, factories, functors:

- [x] Server

- [x] Config

- [x] Application

- [ ] Plugin

- [x] Client

- [ ] Connection

- [x] Logger | comp | refactor avoiding mixins now impress core uses mixins for everything it turned to a performance problem so plugins and objects should be refactored to use prototypes factories functors server config application plugin client connection logger | 1 |

267,732 | 28,509,192,190 | IssuesEvent | 2023-04-19 01:43:16 | dpteam/RK3188_TABLET | https://api.github.com/repos/dpteam/RK3188_TABLET | closed | CVE-2017-1000370 (High) detected in linuxv3.0 - autoclosed | Mend: dependency security vulnerability | ## CVE-2017-1000370 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/ver... | True | CVE-2017-1000370 (High) detected in linuxv3.0 - autoclosed - ## CVE-2017-1000370 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>Linux kernel source ... | non_comp | cve high detected in autoclosed cve high severity vulnerability vulnerable library linux kernel source tree library home page a href found in head commit a href found in base branch master vulnerable source files vulnerabi... | 0 |

321,373 | 27,523,456,765 | IssuesEvent | 2023-03-06 16:26:09 | Automattic/wp-calypso | https://api.github.com/repos/Automattic/wp-calypso | opened | Medium Woo: Verify upgrades from Free trial | Testing Woo Express | Once #74119 and #74120 are complete, we want to verify upgrading from the Woo Express free trial.

First create a new trial site by visiting http://calypso.localhost:3000/setup/wooexpress.

Then go through the upgrade process by manually adding the Medium plan to the cart and checking out `http://calypso.localhost:... | 1.0 | Medium Woo: Verify upgrades from Free trial - Once #74119 and #74120 are complete, we want to verify upgrading from the Woo Express free trial.

First create a new trial site by visiting http://calypso.localhost:3000/setup/wooexpress.

Then go through the upgrade process by manually adding the Medium plan to the ca... | non_comp | medium woo verify upgrades from free trial once and are complete we want to verify upgrading from the woo express free trial first create a new trial site by visiting then go through the upgrade process by manually adding the medium plan to the cart and checking out things to verify tbd | 0 |

17,309 | 23,884,390,886 | IssuesEvent | 2022-09-08 06:14:38 | elBukkit/MagicPlugin | https://api.github.com/repos/elBukkit/MagicPlugin | reopened | Prevent breaking signs | Compatibility | I have a plugin to lock chests using a sign so only the owner can access it, with spells that break blocks, when the sign is replaced people can access and steal from the chests temporarily until the sign is fixed with the writing that locks it, | True | Prevent breaking signs - I have a plugin to lock chests using a sign so only the owner can access it, with spells that break blocks, when the sign is replaced people can access and steal from the chests temporarily until the sign is fixed with the writing that locks it, | comp | prevent breaking signs i have a plugin to lock chests using a sign so only the owner can access it with spells that break blocks when the sign is replaced people can access and steal from the chests temporarily until the sign is fixed with the writing that locks it | 1 |

134,101 | 18,421,805,849 | IssuesEvent | 2021-10-13 16:56:27 | daniel-brown-ws-test/verademo | https://api.github.com/repos/daniel-brown-ws-test/verademo | opened | CVE-2019-11358 (Medium) detected in jquery-1.11.2.min.js | security vulnerability | ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.11.2.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="h... | True | CVE-2019-11358 (Medium) detected in jquery-1.11.2.min.js - ## CVE-2019-11358 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.11.2.min.js</b></p></summary>

<p>JavaScript libr... | non_comp | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to vulnerable library app src main webapp resources js jquery min js dependency hierarc... | 0 |

89,691 | 11,274,115,183 | IssuesEvent | 2020-01-14 17:52:26 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Rename add_theme_support( 'disable-custom-font-sizes' ) and default it to true | Customization Needs Accessibility Feedback Needs Decision Needs Design Feedback | **Is your feature request related to a problem? Please describe.**

Any scenario in which a user needs custom pixel sizes for font-sizing seems fairly niche to me. Is there a specific reason this feature is defaulted to on when there's a good theme-controlled means of enabling a number of variations of font sizing? I p... | 1.0 | Rename add_theme_support( 'disable-custom-font-sizes' ) and default it to true - **Is your feature request related to a problem? Please describe.**

Any scenario in which a user needs custom pixel sizes for font-sizing seems fairly niche to me. Is there a specific reason this feature is defaulted to on when there's a g... | non_comp | rename add theme support disable custom font sizes and default it to true is your feature request related to a problem please describe any scenario in which a user needs custom pixel sizes for font sizing seems fairly niche to me is there a specific reason this feature is defaulted to on when there s a g... | 0 |

6,769 | 9,082,369,266 | IssuesEvent | 2019-02-17 11:43:23 | SpongePowered/SpongeForge | https://api.github.com/repos/SpongePowered/SpongeForge | closed | No entity type is registered for modded entity | status: pr pending system: registry type: mod incompatibility version: 1.12 | **I am currently running**

- SpongeForge version: 1.12.2-2705-7.1.0-BETA-3355 & 1.12.2-2705-7.1.0-BETA-3358

- Forge version: 14.23.4.2739

- Java version: 1.8.0_171-b10

- Operating System: CentOS

<!-- Please include ALL mods/plugins you had installed when your issue happened, you can get a list of

your ... | True | No entity type is registered for modded entity - **I am currently running**

- SpongeForge version: 1.12.2-2705-7.1.0-BETA-3355 & 1.12.2-2705-7.1.0-BETA-3358

- Forge version: 14.23.4.2739

- Java version: 1.8.0_171-b10

- Operating System: CentOS

<!-- Please include ALL mods/plugins you had installed when your issu... | comp | no entity type is registered for modded entity i am currently running spongeforge version beta beta forge version java version operating system centos please include all mods plugins you had installed when your issue happened you can get... | 1 |

170,535 | 20,883,752,102 | IssuesEvent | 2022-03-23 01:08:53 | snowdensb/dependabot-core | https://api.github.com/repos/snowdensb/dependabot-core | reopened | CVE-2018-16487 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2018-16487 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-2.4.1.tgz</b>, <b>lodash-4.17.4.tgz</b>, <b>lodash-4.17.5.tgz</b>, <b>lodash-1.3.1.tgz</b>, <b>lodash-1.2.0.... | True | CVE-2018-16487 (Medium) detected in multiple libraries - ## CVE-2018-16487 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>lodash-2.4.1.tgz</b>, <b>lodash-4.17.4.tgz</b>, <b>lodash-... | non_comp | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries lodash tgz lodash tgz lodash tgz lodash tgz lodash tgz lodash tgz lodash tgz lodash tgz lodash tgz lodash tgz ... | 0 |

168,073 | 20,741,163,772 | IssuesEvent | 2022-03-14 17:49:38 | opensearch-project/OpenSearch-Dashboards | https://api.github.com/repos/opensearch-project/OpenSearch-Dashboards | closed | CVE-2021-3807 (High) detected in ansi-regex-3.0.0.tgz, ansi-regex-4.1.0.tgz | v2.0.0 security vulnerability high severity cve | ## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ansi-regex-3.0.0.tgz</b>, <b>ansi-regex-4.1.0.tgz</b></p></summary>

<p>

<details><summary><b>ansi-regex-3.0.0.tgz</b>... | True | CVE-2021-3807 (High) detected in ansi-regex-3.0.0.tgz, ansi-regex-4.1.0.tgz - ## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>ansi-regex-3.0.0.tgz</b>, <b>ansi-regex-... | non_comp | cve high detected in ansi regex tgz ansi regex tgz cve high severity vulnerability vulnerable libraries ansi regex tgz ansi regex tgz ansi regex tgz regular expression for matching ansi escape codes library home page a href dependency hie... | 0 |

15,029 | 18,869,717,035 | IssuesEvent | 2021-11-13 01:13:37 | Craluminum2413/Craluminum-Mods | https://api.github.com/repos/Craluminum2413/Craluminum-Mods | opened | Could you add compatibility with the more molds mod | compatibility | Simple enough, I dont like grinding.

here is the mod in question https://mods.vintagestory.at/moremolds | True | Could you add compatibility with the more molds mod - Simple enough, I dont like grinding.

here is the mod in question https://mods.vintagestory.at/moremolds | comp | could you add compatibility with the more molds mod simple enough i dont like grinding here is the mod in question | 1 |

9,890 | 11,893,565,473 | IssuesEvent | 2020-03-29 12:11:32 | Creators-of-Create/Create | https://api.github.com/repos/Creators-of-Create/Create | closed | Minecolonies Schematicannon compat | compatibility feature | I was curious is It possible to use Schematicannon (Which I love <3) in exchange of Builder (this lazy thief) from Minecolonies. But Minecolonies schematics are in .blueprint format instead of .nbt and I have no idea how to convert them. Builder have to place last block to complete the building, so It should work. | True | Minecolonies Schematicannon compat - I was curious is It possible to use Schematicannon (Which I love <3) in exchange of Builder (this lazy thief) from Minecolonies. But Minecolonies schematics are in .blueprint format instead of .nbt and I have no idea how to convert them. Builder have to place last block to complete... | comp | minecolonies schematicannon compat i was curious is it possible to use schematicannon which i love in exchange of builder this lazy thief from minecolonies but minecolonies schematics are in blueprint format instead of nbt and i have no idea how to convert them builder have to place last block to complete... | 1 |

15,397 | 19,658,760,160 | IssuesEvent | 2022-01-10 15:04:24 | e2nIEE/pandapower | https://api.github.com/repos/e2nIEE/pandapower | closed | pandapower doesn't seem to work with Numpy 1.20 (latest) | compatibility | _First off, thank you for this amazing tool. Pandapower is great!!_ Here's my issue:

After upgrading Numpy to 1.20, I can no longer run powerflow (`pp.runpp(net)`). To try to prove that the issue is with numpy, I rolled back to numpy 1.19.0, and it worked like a charm. In fact, when I run `pandapower.test.run_all_te... | True | pandapower doesn't seem to work with Numpy 1.20 (latest) - _First off, thank you for this amazing tool. Pandapower is great!!_ Here's my issue:

After upgrading Numpy to 1.20, I can no longer run powerflow (`pp.runpp(net)`). To try to prove that the issue is with numpy, I rolled back to numpy 1.19.0, and it worked li... | comp | pandapower doesn t seem to work with numpy latest first off thank you for this amazing tool pandapower is great here s my issue after upgrading numpy to i can no longer run powerflow pp runpp net to try to prove that the issue is with numpy i rolled back to numpy and it worked like ... | 1 |

824,884 | 31,234,248,165 | IssuesEvent | 2023-08-20 04:16:40 | space-wizards/RobustToolbox | https://api.github.com/repos/space-wizards/RobustToolbox | opened | Deleted / Exists cleanup | Issue: Needs Cleanup Difficulty: 3-Hard Priority: 2-Before Release | 1. So Exists checks if the metadatacomp exists at all.

2. Deleted checks if it exists and the entity is not flagged as deleted.

This is kinda whacky and ideally they'd be combined. Whenever an entity is deleted it should just be removed and hence no need for EntityDeleted.

If / when we get command buffers then you... | 1.0 | Deleted / Exists cleanup - 1. So Exists checks if the metadatacomp exists at all.

2. Deleted checks if it exists and the entity is not flagged as deleted.

This is kinda whacky and ideally they'd be combined. Whenever an entity is deleted it should just be removed and hence no need for EntityDeleted.

If / when we g... | non_comp | deleted exists cleanup so exists checks if the metadatacomp exists at all deleted checks if it exists and the entity is not flagged as deleted this is kinda whacky and ideally they d be combined whenever an entity is deleted it should just be removed and hence no need for entitydeleted if when we g... | 0 |

21,600 | 2,641,717,230 | IssuesEvent | 2015-03-11 19:19:51 | chrsmith/html5rocks | https://api.github.com/repos/chrsmith/html5rocks | closed | Client side storage explained | Milestone-2 Priority-Medium Tutorial Type-Enhancement | Original [issue 71](https://code.google.com/p/html5rocks/issues/detail?id=71) created by chrsmith on 2010-07-28T00:11:03.000Z:

vli:

cover localstorage , indexdb, web database, difference , use cases

explains tools and best practices to determine when to use what.

| 1.0 | Client side storage explained - Original [issue 71](https://code.google.com/p/html5rocks/issues/detail?id=71) created by chrsmith on 2010-07-28T00:11:03.000Z:

vli:

cover localstorage , indexdb, web database, difference , use cases

explains tools and best practices to determine when to use what.

| non_comp | client side storage explained original created by chrsmith on vli cover localstorage indexdb web database difference use cases explains tools and best practices to determine when to use what | 0 |

548,169 | 16,059,245,773 | IssuesEvent | 2021-04-23 10:03:57 | docker-mailserver/docker-mailserver | https://api.github.com/repos/docker-mailserver/docker-mailserver | closed | Settings to use service | kind/question meta/help wanted meta/needs triage priority/low | # Domain name and client settings

Hello, a beginner question: how to use docker mailserver with node mailer to send mails and what are the settings to make on the domain name (redirection / MX)?

Thank you!

| 1.0 | Settings to use service - # Domain name and client settings

Hello, a beginner question: how to use docker mailserver with node mailer to send mails and what are the settings to make on the domain name (redirection / MX)?

Thank you!

| non_comp | settings to use service domain name and client settings hello a beginner question how to use docker mailserver with node mailer to send mails and what are the settings to make on the domain name redirection mx thank you | 0 |

175,456 | 21,313,548,578 | IssuesEvent | 2022-04-16 00:08:07 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | [Security Solution] Session view icon is overlapping and not working under event renderer on alerts page | bug triage_needed impact:high Team: SecuritySolution v8.2.0 | **Describe the bug**

Session view icon is overlapping and not working under event renderer on alerts page

**Build Details:**

```

Version : 8.2.0 SNAPSHOT

Build : 51940

Commit : cbab89d8a43311d11dfaac3d9145a2be8841a857

```

**preconditions**

1. Alerts should be exist with session view

**Steps to Reproduce... | True | [Security Solution] Session view icon is overlapping and not working under event renderer on alerts page - **Describe the bug**

Session view icon is overlapping and not working under event renderer on alerts page

**Build Details:**

```

Version : 8.2.0 SNAPSHOT

Build : 51940

Commit : cbab89d8a43311d11dfaac3d9145... | non_comp | session view icon is overlapping and not working under event renderer on alerts page describe the bug session view icon is overlapping and not working under event renderer on alerts page build details version snapshot build commit preconditions alerts should be e... | 0 |

148,492 | 11,854,212,360 | IssuesEvent | 2020-03-25 00:06:12 | QubesOS/updates-status | https://api.github.com/repos/QubesOS/updates-status | closed | linux-kernel v5.4.25-1 (r4.1) | r4.1-dom0-cur-test | Update of linux-kernel to v5.4.25-1 for Qubes r4.1, see comments below for details.

Built from: https://github.com/QubesOS/qubes-linux-kernel/commit/64dcb6ced3a017cc582258a893986416c604c309

[Changes since previous version](https://github.com/QubesOS/qubes-linux-kernel/compare/v4.19.100-1...v5.4.25-1):

QubesOS/qubes-l... | 1.0 | linux-kernel v5.4.25-1 (r4.1) - Update of linux-kernel to v5.4.25-1 for Qubes r4.1, see comments below for details.

Built from: https://github.com/QubesOS/qubes-linux-kernel/commit/64dcb6ced3a017cc582258a893986416c604c309

[Changes since previous version](https://github.com/QubesOS/qubes-linux-kernel/compare/v4.19.100... | non_comp | linux kernel update of linux kernel to for qubes see comments below for details built from qubesos qubes linux kernel update to kernel qubesos qubes linux kernel merge remote tracking branch origin pr into stable qubesos qubes linux kernel makefile remove extr... | 0 |

630 | 3,059,998,355 | IssuesEvent | 2015-08-14 18:07:06 | facebook/hhvm | https://api.github.com/repos/facebook/hhvm | closed | Segmentation fault if memcache host doesn't resolve | crash php5 incompatibility | HHVM will crash after the object is reused or free'd after a Memcache::add call where the server being used has a hostname that doesn't resolve.

For example:

```php

<?php

$memcache = new Memcache();

$memcache->connect( "junkhost", 123 );

$memcache->add( "foo", "bar", 0, 5 );

echo $memcache->get( "foo" ) . "\... | True | Segmentation fault if memcache host doesn't resolve - HHVM will crash after the object is reused or free'd after a Memcache::add call where the server being used has a hostname that doesn't resolve.

For example:

```php

<?php

$memcache = new Memcache();

$memcache->connect( "junkhost", 123 );

$memcache->add( "f... | comp | segmentation fault if memcache host doesn t resolve hhvm will crash after the object is reused or free d after a memcache add call where the server being used has a hostname that doesn t resolve for example php php memcache new memcache memcache connect junkhost memcache add foo... | 1 |

16,289 | 21,930,693,535 | IssuesEvent | 2022-05-23 09:28:01 | handsontable/handsontable | https://api.github.com/repos/handsontable/handsontable | opened | Replace void 0 with undefined | Type: Improvement suggestion Core: compatibility | ### Description

Handsontable used `void 0` since the beginning, for its greater compatibility than `undefined` with the browsers *before* ES5. In the current release, we no longer have to support legacy browsers, so we can replace all occurrences of `void 0` with `undefined`.

### Steps to reproduce

<!--- Provide... | True | Replace void 0 with undefined - ### Description

Handsontable used `void 0` since the beginning, for its greater compatibility than `undefined` with the browsers *before* ES5. In the current release, we no longer have to support legacy browsers, so we can replace all occurrences of `void 0` with `undefined`.

### S... | comp | replace void with undefined description handsontable used void since the beginning for its greater compatibility than undefined with the browsers before in the current release we no longer have to support legacy browsers so we can replace all occurrences of void with undefined ste... | 1 |

92,176 | 11,614,431,557 | IssuesEvent | 2020-02-26 12:35:05 | pandas-dev/pandas | https://api.github.com/repos/pandas-dev/pandas | closed | API/ENH: Support/document/test fold argument in Timestamp | API Design Timeseries Timezones | Python 3.6 added a `fold` argument in `datetime.datetime` to disambiguate DST transition times that occur twice (in wall time).

https://docs.python.org/3/library/datetime.html#datetime-objects.

Technically `Timestamp` will accept the argument in 3.6, but it's not formally documented or tested.

Additionally since... | 1.0 | API/ENH: Support/document/test fold argument in Timestamp - Python 3.6 added a `fold` argument in `datetime.datetime` to disambiguate DST transition times that occur twice (in wall time).

https://docs.python.org/3/library/datetime.html#datetime-objects.

Technically `Timestamp` will accept the argument in 3.6, but... | non_comp | api enh support document test fold argument in timestamp python added a fold argument in datetime datetime to disambiguate dst transition times that occur twice in wall time technically timestamp will accept the argument in but it s not formally documented or tested additionally since we ... | 0 |
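The `fold` argument referenced in the pandas row above can be illustrated with the standard library alone. This is a minimal sketch, not pandas behavior: the date is an arbitrary example chosen for illustration, and the datetimes are naive (no time zone attached), so `fold` is only carried as a flag here.

```python
from datetime import datetime

# 01:30 wall time on this date happens twice in zones that leave DST that night.
# The date itself is an illustrative assumption, not taken from the issue.
first = datetime(2017, 11, 5, 1, 30)   # fold defaults to 0 (first occurrence)
second = first.replace(fold=1)         # same wall time, second occurrence

print(first.fold, second.fold)

# fold is ignored when comparing datetimes that share the same tzinfo
# (including two naive datetimes), so these still compare equal.
print(first == second)
```

With a real time zone attached, `fold` is what lets `utcoffset()` pick between the two possible offsets for the repeated hour; whether and how `Timestamp` honors it is exactly what the issue asks to have documented and tested.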

16,995 | 23,418,158,261 | IssuesEvent | 2022-08-13 09:09:28 | jesus2099/konami-command | https://api.github.com/repos/jesus2099/konami-command | reopened | Quick redirects now bypass browser history? | browser compatibility mb_REDIRECT-WHEN-UNIQUE-RESULT | Apparently (now) quick redirects, bypass the browser history.

In my Windows Vivaldi, if my search triggers immediate (or quick) redirect, I can no longer go back to the search result. | True | Quick redirects now bypass browser history? - Apparently (now) quick redirects, bypass the browser history.

In my Windows Vivaldi, if my search triggers immediate (or quick) redirect, I can no longer go back to the search result. | comp | quick redirects now bypass browser history apparently now quick redirects bypass the browser history in my windows vivaldi if my search triggers immediate or quick redirect i can no longer go back to the search result | 1 |

219,008 | 24,424,879,075 | IssuesEvent | 2022-10-06 01:11:48 | dreamboy9/mongo | https://api.github.com/repos/dreamboy9/mongo | opened | WS-2022-0322 (Medium) detected in d3-color-2.0.0.tgz | security vulnerability | ## WS-2022-0322 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>d3-color-2.0.0.tgz</b></p></summary>

<p>Color spaces! RGB, HSL, Cubehelix, Lab and HCL (Lch).</p>

<p>Library home page... | True | WS-2022-0322 (Medium) detected in d3-color-2.0.0.tgz - ## WS-2022-0322 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>d3-color-2.0.0.tgz</b></p></summary>

<p>Color spaces! RGB, HSL,... | non_comp | ws medium detected in color tgz ws medium severity vulnerability vulnerable library color tgz color spaces rgb hsl cubehelix lab and hcl lch library home page a href path to dependency file buildscripts libdeps graph visualizer web stack package json path ... | 0 |

1,664 | 3,862,590,921 | IssuesEvent | 2016-04-08 04:02:46 | krpc/krpc | https://api.github.com/repos/krpc/krpc | opened | Camera controls | services-spacecenter | Add RPCs to control the camera:

* Camera mode (auto/chase/free etc., map and IVA)

* Zoom level

* Pan/rotate

* Set the body/vessel/node in focus when the camera is in map mode | 1.0 | Camera controls - Add RPCs to control the camera:

* Camera mode (auto/chase/free etc., map and IVA)

* Zoom level

* Pan/rotate

* Set the body/vessel/node in focus when the camera is in map mode | non_comp | camera controls add rpcs to control the camera camera mode auto chase free etc map and iva zoom level pan rotate set the body vessel node in focus when the camera is in map mode | 0 |

20,323 | 29,811,429,248 | IssuesEvent | 2023-06-16 15:20:48 | okfn-brasil/querido-diario | https://api.github.com/repos/okfn-brasil/querido-diario | closed | [New base spider]: Siganet | type: spider incompatible | While reviewing PR#746 by @lucasocarvalhos, I noticed that the footer of the home page carries a copyright notice mentioning a company or product called [Siganet](https://www.siganet.net.br/)

Based on the Google search results for `Siganet site:gov.br diário prefeitura`, there are some municipalities that... | True | [New base spider]: Siganet - While reviewing PR#746 by @lucasocarvalhos, I noticed that the footer of the home page carries a copyright notice mentioning a company or product called [Siganet](https://www.siganet.net.br/)

Based on the Google search results for `Siganet site:gov.br diário prefeitura`, ... | comp | siganet while reviewing the pr by lucasocarvalhos i noticed that the footer of the home page carries a copyright notice mentioning a company or product called based on the google search results for siganet site gov br diário prefeitura there are some municipalities that appear to use the same s... | 1 |

8,328 | 10,347,458,235 | IssuesEvent | 2019-09-04 17:26:52 | cobalt-org/liquid-rust | https://api.github.com/repos/cobalt-org/liquid-rust | closed | `compact` needs to accept an optional `property` parameter | enhancement question std-compatibility | See #267, #333, #334

Example test:

```

assert_eq!(v!([]), filters!(Compact, v!([]), v!("a")));

``` | True | `compact` needs to accept an optional `property` parameter - See #267, #333, #334

Example test:

```

assert_eq!(v!([]), filters!(Compact, v!([]), v!("a")));

``` | comp | compact needs to accept an optional property parameter see example test assert eq v filters compact v v a | 1 |

274,593 | 23,852,101,692 | IssuesEvent | 2022-09-06 18:58:30 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | roachtest: kv0/enc=false/nodes=3/cpu=96 failed | C-test-failure O-robot O-roachtest release-blocker T-kv branch-release-22.1 | roachtest.kv0/enc=false/nodes=3/cpu=96 [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=6195973&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=6195973&tab=artifacts#/kv0/enc=false/nodes=3/cpu=96) on release-22.1 @ [714fa0ad80c499cbd96ba97c560a9b414c61104f](https://git... | 2.0 | roachtest: kv0/enc=false/nodes=3/cpu=96 failed - roachtest.kv0/enc=false/nodes=3/cpu=96 [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=6195973&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=6195973&tab=artifacts#/kv0/enc=false/nodes=3/cpu=96) on release-22.1 @ [714f... | non_comp | roachtest enc false nodes cpu failed roachtest enc false nodes cpu with on release write write ... | 0 |

13,179 | 15,531,276,750 | IssuesEvent | 2021-03-13 22:48:06 | cseelhoff/RimThreaded | https://api.github.com/repos/cseelhoff/RimThreaded | closed | "Shield Generators by Frontier Developments" not working as intended | Bug Mod Incompatibility Needs Validation Not Enough Info Reproducible | **Describe the bug**

In using the mod "Shield Generators by Frontier Developments," the shield generators within the mod do not function as intended. Typically, bullets are supposed to be stopped by the shield, and their damage absorbed by the generator. Currently, the shield generated by all 3 generator types stop li... | True | "Shield Generators by Frontier Developments" not working as intended - **Describe the bug**

In using the mod "Shield Generators by Frontier Developments," the shield generators within the mod do not function as intended. Typically, bullets are supposed to be stopped by the shield, and their damage absorbed by the gene... | comp | shield generators by frontier developments not working as intended describe the bug in using the mod shield generators by frontier developments the shield generators within the mod do not function as intended typically bullets are supposed to be stopped by the shield and their damage absorbed by the gene... | 1 |

1,201 | 3,697,522,397 | IssuesEvent | 2016-02-27 18:44:45 | sekiguchi-nagisa/ydsh | https://api.github.com/repos/sekiguchi-nagisa/ydsh | opened | support background job | enhancement incompatible change | introduce Async type.

Async type has wait method for waiting process termination.

string representation of Async object is pid.

```

$ ping -c 192.168.0.1 &

(Async) 5678

$ var a = echo hoge &

$ $a

(Async) 5679

$ (echo huga &).wait() # wait termination

``` | True | support background job - introduce Async type.

Async type has wait method for waiting process termination.

string representation of Async object is pid.

```

$ ping -c 192.168.0.1 &

(Async) 5678

$ var a = echo hoge &

$ $a

(Async) 5679

$ (echo huga &).wait() # wait termination

``` | comp | support background job introduce async type async type has wait method for waiting process termination string representation of async object is pid ping c async var a echo hoge a async echo huga wait wait termination | 1 |

432,542 | 30,287,233,261 | IssuesEvent | 2023-07-08 21:01:12 | gdg-berlin-android/ZeBadge | https://api.github.com/repos/gdg-berlin-android/ZeBadge | opened | What should the buttons on ZeBadge do while connected | 📖 documentation ⁉️ question 🤖 app 🪪 badge | Ideate on what the buttons should do while connected to the phone. | 1.0 | What should the buttons on ZeBadge do while connected - Ideate on what the buttons should do while connected to the phone. | non_comp | what should the buttons on zebadge do while connected ideate on what the buttons should do while connected to the phone | 0 |

333,502 | 29,669,509,681 | IssuesEvent | 2023-06-11 08:19:06 | cse110-sp23-group23/Zoltar | https://api.github.com/repos/cse110-sp23-group23/Zoltar | opened | Mute button test | End to End Tests | Requirement Description:

- Toggle mute button test

Any key challenges:

-

Steps to Implementing:

- visuals.test.js

Other:

-

| 1.0 | Mute button test - Requirement Description:

- Toggle mute button test

Any key challenges:

-

Steps to Implementing:

- visuals.test.js

Other:

-

| non_comp | mute button test requirement description toggle mute button test any key challenges steps to implementing visuals test js other | 0 |

8,343 | 10,367,917,704 | IssuesEvent | 2019-09-07 12:45:46 | NEZNAMY/TAB | https://api.github.com/repos/NEZNAMY/TAB | closed | Permissions EX bug | [1] error [2] compatibility [4] wontfix | 07.09.2019 - 14:15:59 - [TAB v2.5.1] An error occured when setting placeholders(2) (player=Pericol123, online=true)

07.09.2019 - 14:15:59 - ru.tehkode.permissions.exceptions.PermissionsNotAvailable: Permissions manager is not accessable. Is the PermissionsEx plugin enabled?

07.09.2019 - 14:15:59 - at ru.tehkod... | True | Permissions EX bug - 07.09.2019 - 14:15:59 - [TAB v2.5.1] An error occured when setting placeholders(2) (player=Pericol123, online=true)

07.09.2019 - 14:15:59 - ru.tehkode.permissions.exceptions.PermissionsNotAvailable: Permissions manager is not accessable. Is the PermissionsEx plugin enabled?

07.09.2019 - 14:15:59 ... | comp | permissions ex bug an error occured when setting placeholders player online true ru tehkode permissions exceptions permissionsnotavailable permissions manager is not accessable is the permissionsex plugin enabled at ru tehkode permissions bukkit pe... | 1 |

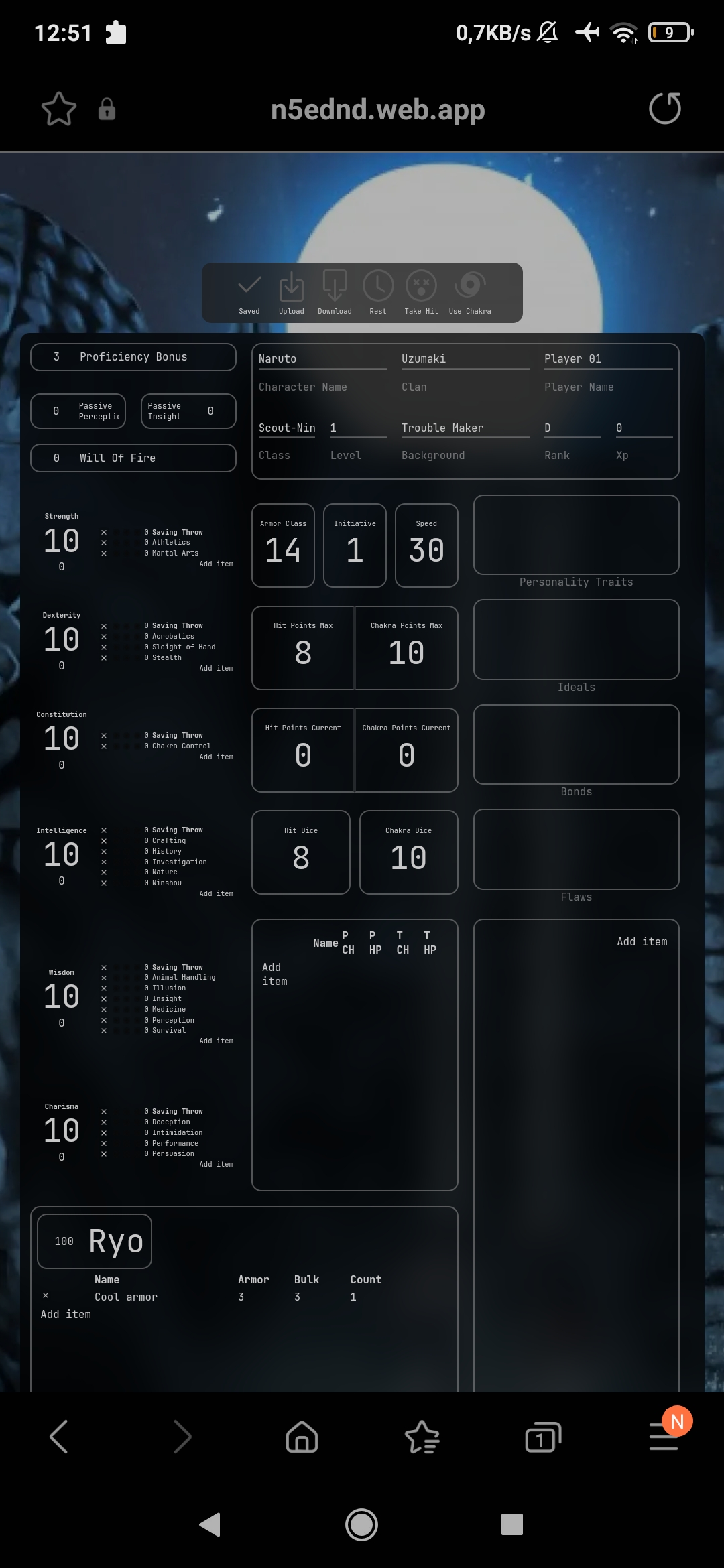

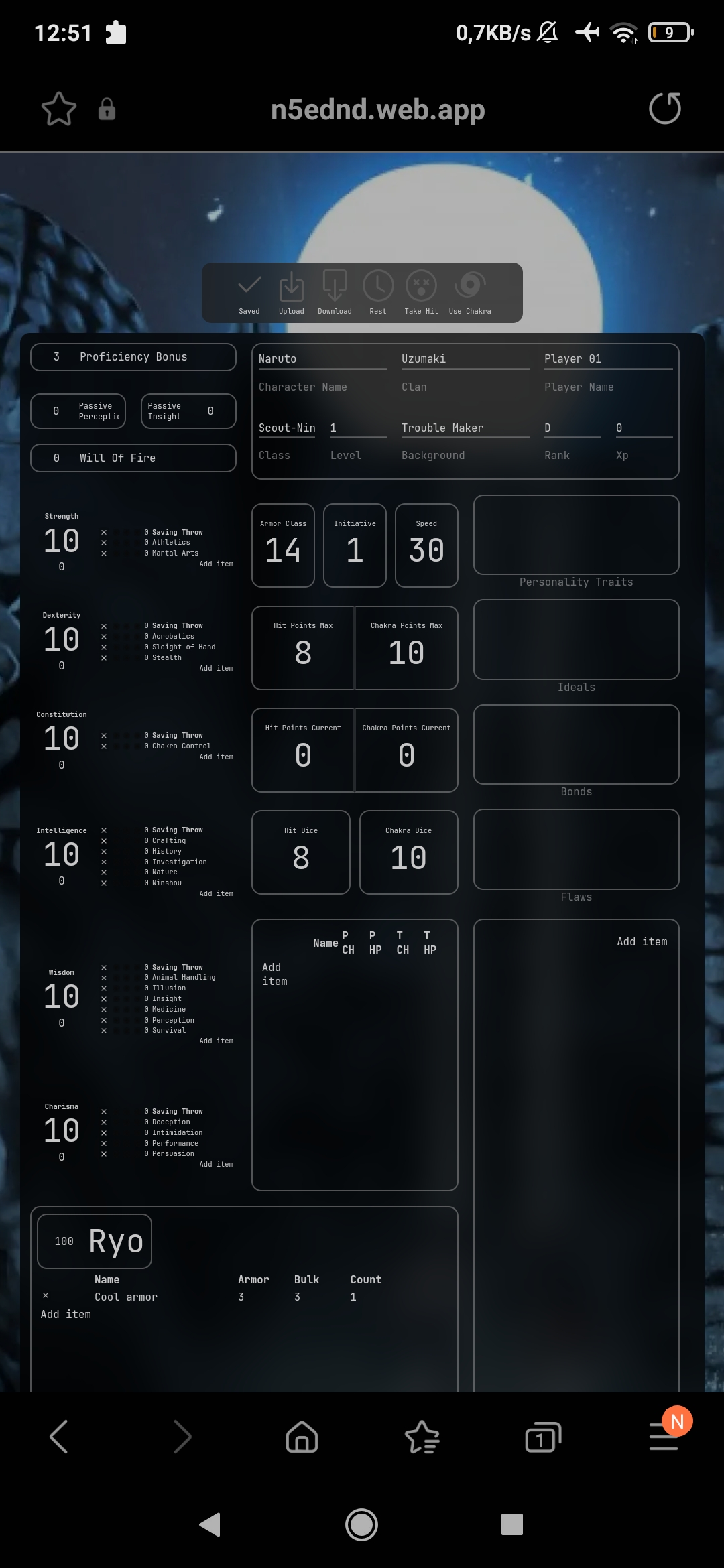

17,091 | 23,593,094,471 | IssuesEvent | 2022-08-23 16:47:43 | Frank-Mayer/n5ednd | https://api.github.com/repos/Frank-Mayer/n5ednd | closed | Samsung Internet Dark mode issue | bug wontfix browser compatibility ui | Checkboxes not shown on Samsung Internet when Darkmode is active. Light mode is working fine.

| True | Samsung Internet Dark mode issue - Checkboxes not shown on Samsung Internet when Darkmode is active. Light mode is working fine.

| comp | samsung internet dark mode issue checkboxes not shown on samsung internet when darkmode is active light mode is working fine | 1 |

17,313 | 23,887,491,062 | IssuesEvent | 2022-09-08 08:50:53 | akuker/RASCSI | https://api.github.com/repos/akuker/RASCSI | closed | Explicit SCSI-1 support: Add support for .hd1 image files | compatibility | rascsi shall support a new image type ".hd1". This type is identical with ".hds" except for the SCSI level and response code format being SCSI-1 instead of SCSI-2.

The alternative approach of being able to (having to ...) configure the SCSI and response code levels would force additional configuration settings on the ... | True | Explicit SCSI-1 support: Add support for .hd1 image files - rascsi shall support a new image type ".hd1". This type is identical with ".hds" except for the SCSI level and response code format being SCSI-1 instead of SCSI-2.

The alternative approach of being able to (having to ...) configure the SCSI and response code ... | comp | explicit scsi support add support for image files rascsi shall support a new image type this type is identical with hds except for the scsi level and response code format being scsi instead of scsi the alternative approach of being able to having to configure the scsi and response code leve... | 1 |

58,810 | 3,091,511,794 | IssuesEvent | 2015-08-26 13:35:18 | pmem/issues | https://api.github.com/repos/pmem/issues | opened | pmemobj: TX_STAGE_ONABORT is not set after fail of allocation/reallocation | Exposure: Medium Priority: 3 medium Type: Bug | Steps to reproduce:

1. Create pool with MIN_POOL_SIZE

2. Start new transaction

3. Use one of functions pmemobj_tx_alloc/zalloc/realloc/zrealloc with size equals range of size_t

4. Check stage

Expected result:

TX_STAGE_ONABORT stage is set, OID_NULL is returned, errno = 12

Current result:

OID_NULL is returne... | 1.0 | pmemobj: TX_STAGE_ONABORT is not set after fail of allocation/reallocation - Steps to reproduce:

1. Create pool with MIN_POOL_SIZE

2. Start new transaction

3. Use one of functions pmemobj_tx_alloc/zalloc/realloc/zrealloc with size equals range of size_t

4. Check stage

Expected result:

TX_STAGE_ONABORT stage is ... | non_comp | pmemobj tx stage onabort is not set after fail of allocation reallocation steps to reproduce create pool with min pool size start new transaction use one of functions pmemobj tx alloc zalloc realloc zrealloc with size equals range of size t check stage expected result tx stage onabort stage is ... | 0 |

11,426 | 13,425,730,112 | IssuesEvent | 2020-09-06 11:29:22 | nicoboss/nsz | https://api.github.com/repos/nicoboss/nsz | closed | install failing on kubuntu 20.04 | compatibility deployment | some errors:

` ERROR: Command errored out with exit status 1:

command: /usr/bin/python3 -c 'import sys, setuptools, tokenize; sys.argv[0] = '"'"'/tmp/pip-install-b1phyb9o/kivy/setup.py'"'"'; __file__='"'"'/tmp/pip-install-b1phyb9o/kivy/setup.py'"'"';f=getattr(tokenize, '"'"'open'"'"', open)(__file__);code=f... | True | install failing on kubuntu 20.04 - some errors:

` ERROR: Command errored out with exit status 1:

command: /usr/bin/python3 -c 'import sys, setuptools, tokenize; sys.argv[0] = '"'"'/tmp/pip-install-b1phyb9o/kivy/setup.py'"'"'; __file__='"'"'/tmp/pip-install-b1phyb9o/kivy/setup.py'"'"';f=getattr(tokenize, '"'... | comp | install failing on kubuntu some errors error command errored out with exit status command usr bin c import sys setuptools tokenize sys argv tmp pip install kivy setup py file tmp pip install kivy setup py f getattr tokenize open open fil... | 1 |

12,238 | 9,659,837,393 | IssuesEvent | 2019-05-20 14:17:13 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | Flush the analysis server cache after each bot run | area-infrastructure | We believe that the build bots keep the analysis cache in ~/.dartServer/.analysis-driver and suspect that this cache is not getting deleted on the Flutter/Dart head-head-head bot. As a result, any cached errors are continued to be reported until a file change that invalidates them, leading to problems. We need to have... | 1.0 | Flush the analysis server cache after each bot run - We believe that the build bots keep the analysis cache in ~/.dartServer/.analysis-driver and suspect that this cache is not getting deleted on the Flutter/Dart head-head-head bot. As a result, any cached errors are continued to be reported until a file change that in... | non_comp | flush the analysis server cache after each bot run we believe that the build bots keep the analysis cache in dartserver analysis driver and suspect that this cache is not getting deleted on the flutter dart head head head bot as a result any cached errors are continued to be reported until a file change that in... | 0 |

89,479 | 15,829,500,474 | IssuesEvent | 2021-04-06 11:16:28 | VivekBuzruk/Hygieia | https://api.github.com/repos/VivekBuzruk/Hygieia | closed | CVE-2020-15366 (Medium) detected in ajv-4.11.8.tgz - autoclosed | security vulnerability | ## CVE-2020-15366 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ajv-4.11.8.tgz</b></p></summary>

<p>Another JSON Schema Validator</p>

<p>Library home page: <a href="https://registr... | True | CVE-2020-15366 (Medium) detected in ajv-4.11.8.tgz - autoclosed - ## CVE-2020-15366 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ajv-4.11.8.tgz</b></p></summary>

<p>Another JSON S... | non_comp | cve medium detected in ajv tgz autoclosed cve medium severity vulnerability vulnerable library ajv tgz another json schema validator library home page a href path to dependency file hygieia ui protractor tests package json path to vulnerable library hygieia ui prot... | 0 |

730,765 | 25,188,232,549 | IssuesEvent | 2022-11-11 20:30:52 | jrbrawner/CS478 | https://api.github.com/repos/jrbrawner/CS478 | closed | Create and Implement Search functionality | UserStory Priority 2 3 | AS a USER

I want to be able to search for users, and attributes like graduation year, major, etc

So that I can filter my content for what I am interested in | 1.0 | Create and Implement Search functionality - AS a USER

I want to be able to search for users, and attributes like graduation year, major, etc

So that I can filter my content for what I am interested in | non_comp | create and implement search functionality as a user i want to be able to search for users and attributes like graduation year major etc so that i can filter my content for what i am interested in | 0 |

6,982 | 9,257,248,503 | IssuesEvent | 2019-03-17 03:55:41 | darlinghq/darling | https://api.github.com/repos/darlinghq/darling | closed | Xcode 9 and 10 need some stubs | Application Compatibility | This prevents running Xcode 9 CLI tools or any other recent Swift version. | True | Xcode 9 and 10 need some stubs - This prevents running Xcode 9 CLI tools or any other recent Swift version. | comp | xcode and need some stubs this prevents running xcode cli tools or any other recent swift version | 1 |

19,659 | 27,298,916,886 | IssuesEvent | 2023-02-23 23:09:50 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | closed | Support nullable pandas dtypes in `confusion_matrix` | New Feature Pandas compatibility | ### Describe the workflow you want to enable

I would like to be able to pass the nullable pandas dtypes ("Int64", "Float64", "boolean") into sklearn's `confusion_matrix` function. Because the dtypes become object dtype when converted to numpy arrays we get `ValueError: Classification metrics can't handle a mix of unkn... | True | Support nullable pandas dtypes in `confusion_matrix` - ### Describe the workflow you want to enable

I would like to be able to pass the nullable pandas dtypes ("Int64", "Float64", "boolean") into sklearn's `confusion_matrix` function. Because the dtypes become object dtype when converted to numpy arrays we get `ValueE... | comp | support nullable pandas dtypes in confusion matrix describe the workflow you want to enable i would like to be able to pass the nullable pandas dtypes boolean into sklearn s confusion matrix function because the dtypes become object dtype when converted to numpy arrays we get valueerror clas... | 1 |

191,410 | 22,215,771,526 | IssuesEvent | 2022-06-08 01:22:05 | ShaikUsaf/linux-3.0.35 | https://api.github.com/repos/ShaikUsaf/linux-3.0.35 | opened | CVE-2015-8966 (High) detected in linux-stable-rtv3.8.6 | security vulnerability | ## CVE-2015-8966 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv3.8.6</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page:... | True | CVE-2015-8966 (High) detected in linux-stable-rtv3.8.6 - ## CVE-2015-8966 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv3.8.6</b></p></summary>

<p>

<p>Julia Cartwrigh... | non_comp | cve high detected in linux stable cve high severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in base branch master vulnerable source files arch arm kernel ... | 0 |

16,068 | 21,394,258,028 | IssuesEvent | 2022-04-21 10:02:06 | PluginBugs/Issues-ItemsAdder | https://api.github.com/repos/PluginBugs/Issues-ItemsAdder | closed | Mimic: New API implementation registering mechanism | Compatibility with other plugin | Hm. It looks like you've not migrated to the new mechanism of API implementations registering. I see the following message on ItemsAdapter enable:

```

[Mimic] Service ru.endlesscode.mimic.items.BukkitItemsRegistry with id 'ia' registered in deprecated way.

Please ask the ItemsAdder authors (LoneDev) to migrate

to ... | True | Mimic: New API implementation registering mechanism - Hm. It looks like you've not migrated to the new mechanism of API implementations registering. I see the following message on ItemsAdapter enable:

```

[Mimic] Service ru.endlesscode.mimic.items.BukkitItemsRegistry with id 'ia' registered in deprecated way.