Unnamed: 0 int64 3 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 2 430 | labels stringlengths 4 347 | body stringlengths 5 237k | index stringclasses 7 values | text_combine stringlengths 96 237k | label stringclasses 2 values | text stringlengths 96 219k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

37,692 | 8,474,807,754 | IssuesEvent | 2018-10-24 17:09:09 | brainvisa/testbidon | https://api.github.com/repos/brainvisa/testbidon | opened | volume: segfault in VolumeRef copy constructor | Category: soma-io Component: Resolution Priority: Normal Status: New Tracker: Defect | ---

Author Name: **Souedet, Nicolas** (Souedet, Nicolas)

Original Redmine Issue: 15714, https://bioproj.extra.cea.fr/redmine/issues/15714

Original Date: 2016-11-14

---

Here is a simple example that was working a few days ago but now generates a segfault:

```

//--- cartobase --------------------------------------------------------------
#include <cartobase/allocator/allocator.h>
//--- cartodata --------------------------------------------------------------
#include <cartodata/volume/volume.h>
//----------------------------------------------------------------------------

using namespace std;
using namespace carto;

int main(int argc, char** argv) {
  VolumeRef<float> fullVolume;
  fullVolume = Volume<float>(1, 1, 1, 1, AllocatorContext(), false);
  return 0;
}

```

It seems to happen during assignment, in an implicit conversion. It goes through the all() method, which converts a volume to a single boolean value, but the volume is not allocated in this case, so it tries to access unallocated memory.

| 1.0 | volume: segfault in VolumeRef copy constructor - ---

Author Name: **Souedet, Nicolas** (Souedet, Nicolas)

Original Redmine Issue: 15714, https://bioproj.extra.cea.fr/redmine/issues/15714

Original Date: 2016-11-14

---

Here is a simple example that was working a few days ago but now generates a segfault:

```

//--- cartobase --------------------------------------------------------------
#include <cartobase/allocator/allocator.h>
//--- cartodata --------------------------------------------------------------
#include <cartodata/volume/volume.h>
//----------------------------------------------------------------------------

using namespace std;
using namespace carto;

int main(int argc, char** argv) {
  VolumeRef<float> fullVolume;
  fullVolume = Volume<float>(1, 1, 1, 1, AllocatorContext(), false);
  return 0;
}

```

It seems to happen during assignment, in an implicit conversion. It goes through the all() method, which converts a volume to a single boolean value, but the volume is not allocated in this case, so it tries to access unallocated memory.

| non_comp | volume segfault in volumeref copy constructor author name souedet nicolas souedet nicolas original redmine issue original date here is a simple example that was working a few days ago but that generates segfault now cartobase include cartodata include using namespace std using namespace carto int main int argc char argv volumeref fullvolume fullvolume volume allocatorcontext false return it seems to append during affectation in an implicit conversion it goes through the all method that converts a volume to a single boolean value but the volume is not allocated in this case so it tries to access unallocated memory | 0 |

5,807 | 8,249,236,255 | IssuesEvent | 2018-09-11 20:55:29 | kwaschny/unwanted-twitch | https://api.github.com/repos/kwaschny/unwanted-twitch | closed | Extension not working on Waterfox? | incompatibility wontfix | Hi, I tried this extension on latest Waterfox portable: https://storage-waterfox.netdna-ssl.com/releases/win64/portable/WaterfoxPortable_56.2.2_English.paf.exe

But nothing appears on the address bar or on Twitch.

Maybe it's just incompatible with this version which is using an old Firefox source? | True | Extension not working on Waterfox? - Hi, I tried this extension on latest Waterfox portable: https://storage-waterfox.netdna-ssl.com/releases/win64/portable/WaterfoxPortable_56.2.2_English.paf.exe

But nothing appears on the address bar or on Twitch.

Maybe it's just incompatible with this version which is using an old Firefox source? | comp | extension not working on waterfox hi i tried this extension on latest waterfox portable but nothing appears on the address bar or on twitch maybe it s just incompatible with this version which is using an old firefox source | 1 |

589,576 | 17,753,645,365 | IssuesEvent | 2021-08-28 09:55:38 | Biologer/Biologer | https://api.github.com/repos/Biologer/Biologer | opened | Coordinate should not have more than 6 decimal places | enhancement priority:low | There is no need for more than 6 decimal places in coordinates. I.e., after the user picks a location on the map, the software should round the number to 6 decimal places. | 1.0 | Coordinate should not have more than 6 decimal places - There is no need for more than 6 decimal places in coordinates. I.e., after the user picks a location on the map, the software should round the number to 6 decimal places. | non_comp | coordinate should not have more than decimal places there is no need for more than decimal places in coordinates i e after user pick a location on the map the software should round the number to decimal places | 0 |
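
The rounding requested in the record above could be sketched as follows. This is only an illustration in Python: the issue does not say how Biologer stores or processes coordinates, and `round_location` is a hypothetical helper name, not part of that project.

```python
from typing import Tuple


def round_location(lat: float, lon: float, places: int = 6) -> Tuple[float, float]:
    """Round a latitude/longitude pair to a fixed number of decimal places."""
    # Hypothetical helper: the name and signature are illustrative only.
    return (round(lat, places), round(lon, places))


if __name__ == "__main__":
    # A location as it might come back from a map click, with excess precision.
    print(round_location(44.81254367891, 20.46123456789))
```

Six decimal places of a degree correspond to roughly 0.1 m on the ground, so rounding at this point should not lose any precision a map click can meaningfully provide.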

14,411 | 17,383,054,849 | IssuesEvent | 2021-08-01 04:42:20 | jbredwards/Fluidlogged-API | https://api.github.com/repos/jbredwards/Fluidlogged-API | closed | completely opaque water (with fluidlogged api + optifine + better foliage + forgelin) | bug incompatibility | not sure if its because im using the dev build you sent me or not. but my water is now completely opaque.

https://i.imgur.com/lwI8Qxn.png

| True | completely opaque water (with fluidlogged api + optifine + better foliage + forgelin) - not sure if its because im using the dev build you sent me or not. but my water is now completely opaque.

https://i.imgur.com/lwI8Qxn.png

| comp | completely opaque water with fluidlogged api optifine better foliage forgelin not sure if its because im using the dev build you sent me or not but my water is now completely opaque | 1 |

8,404 | 10,426,739,733 | IssuesEvent | 2019-09-16 18:17:48 | ClangBuiltLinux/linux | https://api.github.com/repos/ClangBuiltLinux/linux | closed | -Wincompatible-pointer-types in drivers/net/ethernet/mellanox/mlx5/core/steering/dr_icm_pool.c | -Wincompatible-pointer-types [BUG] linux-next [PATCH] Submitted | ```

drivers/net/ethernet/mellanox/mlx5/core/steering/dr_icm_pool.c:121:8: error: incompatible pointer types passing 'u64 *' (aka 'unsigned long long *') to parameter of type 'phys_addr_t *' (aka 'unsigned int *') [-Werror,-Wincompatible-pointer-types]
        &icm_mr->dm.addr, &icm_mr->dm.obj_id);
        ^~~~~~~~~~~~~~~~
include/linux/mlx5/driver.h:1092:39: note: passing argument to parameter 'addr' here
        u64 length, u16 uid, phys_addr_t *addr, u32 *obj_id);
                                          ^
1 error generated.

```

Patch sent: https://lore.kernel.org/lkml/20190905021415.8936-1-natechancellor@gmail.com/ | True | -Wincompatible-pointer-types in drivers/net/ethernet/mellanox/mlx5/core/steering/dr_icm_pool.c - ```

drivers/net/ethernet/mellanox/mlx5/core/steering/dr_icm_pool.c:121:8: error: incompatible pointer types passing 'u64 *' (aka 'unsigned long long *') to parameter of type 'phys_addr_t *' (aka 'unsigned int *') [-Werror,-Wincompatible-pointer-types]
        &icm_mr->dm.addr, &icm_mr->dm.obj_id);
        ^~~~~~~~~~~~~~~~
include/linux/mlx5/driver.h:1092:39: note: passing argument to parameter 'addr' here
        u64 length, u16 uid, phys_addr_t *addr, u32 *obj_id);
                                          ^
1 error generated.

```

Patch sent: https://lore.kernel.org/lkml/20190905021415.8936-1-natechancellor@gmail.com/ | comp | wincompatible pointer types in drivers net ethernet mellanox core steering dr icm pool c drivers net ethernet mellanox core steering dr icm pool c error incompatible pointer types passing aka unsigned long long to parameter of type phys addr t aka unsigned int icm mr dm addr icm mr dm obj id include linux driver h note passing argument to parameter addr here length uid phys addr t addr obj id error generated patch sent | 1 |

461,870 | 13,237,479,685 | IssuesEvent | 2020-08-18 21:46:31 | Railcraft/Railcraft | https://api.github.com/repos/Railcraft/Railcraft | closed | Cart detectors incorrectly detecting max speed locomotive | bug cannot reproduce low priority | **Description of the Bug**

Cart detector will detect a locomotive travelling at max speed when there is an entire block separating it and the locomotive. Importantly, a locking track may exist there.

**To Reproduce**

1. Place segment of normal tracks

2. (Optional) Place locking track (set to any mode)

3. Place Cart Detector (Any) underneath the rail immediately following the locking track

4. Place steam locomotive on track, fill completely with water and coal blocks

5. Turn locomotive to full speed towards locking track

6. Cart detector will emit redstone even though locking track prevents the locomotive from being adjacent

**Expected behavior**

Cart detector should not emit a redstone signal as the locomotive is never adjacent to it.

**Screenshots & Video**

**Logs & Environment**

Environment:

Forge version: forge-1.12.2-14.23.5.2847 downloaded from curseforge with SHA-1: 8398503A59F4EBE1EA878498ADF2B32CB9B470C1

Railcraft version: v12.0.0 downloaded from curseforge with SHA-1: EA2085A509B816BB9A3CDD79F2F44175B588737A

Minecraft version: v1.12.2 (singleplayer creative)

Operating System: Windows

Other mods installed: none

Logs:

Uploaded in 2 parts since text size exceeded 512M:

https://pastebin.com/evnkjnbM

https://pastebin.com/hXgyAAhX

**Additional context**

Sometimes on your first placement, this will not occur. However, this reliably occurs every time after the first occurrence, which is within 1-3 attempts at replicating. This issue does not in fact depend on locking tracks, but can ruin a token based system relying on them.

| 1.0 | Cart detectors incorrectly detecting max speed locomotive - **Description of the Bug**

Cart detector will detect a locomotive travelling at max speed when there is an entire block separating it and the locomotive. Importantly, a locking track may exist there.

**To Reproduce**

1. Place segment of normal tracks

2. (Optional) Place locking track (set to any mode)

3. Place Cart Detector (Any) underneath the rail immediately following the locking track

4. Place steam locomotive on track, fill completely with water and coal blocks

5. Turn locomotive to full speed towards locking track

6. Cart detector will emit redstone even though locking track prevents the locomotive from being adjacent

**Expected behavior**

Cart detector should not emit a redstone signal as the locomotive is never adjacent to it.

**Screenshots & Video**

**Logs & Environment**

Environment:

Forge version: forge-1.12.2-14.23.5.2847 downloaded from curseforge with SHA-1: 8398503A59F4EBE1EA878498ADF2B32CB9B470C1

Railcraft version: v12.0.0 downloaded from curseforge with SHA-1: EA2085A509B816BB9A3CDD79F2F44175B588737A

Minecraft version: v1.12.2 (singleplayer creative)

Operating System: Windows

Other mods installed: none

Logs:

Uploaded in 2 parts since text size exceeded 512M:

https://pastebin.com/evnkjnbM

https://pastebin.com/hXgyAAhX

**Additional context**

Sometimes on your first placement, this will not occur. However, this reliably occurs every time after the first occurrence, which is within 1-3 attempts at replicating. This issue does not in fact depend on locking tracks, but can ruin a token based system relying on them.

| non_comp | cart detectors incorrectly detecting max speed locomotive description of the bug cart detector will detect a locomotive travelling at max speed when there is an entire block separating it and the locomotive importantly a locking track may exist there to reproduce place segment of normal tracks optional place locking track set to any mode place cart detector any underneath the rail immediately following the locking track place steam locomotive on track fill completely with water and coal blocks turn locomotive to full speed towards locking track cart detector will emit redstone even though locking track prevents the locomotive from being adjacent expected behavior cart detector should not emit a redstone signal as the locomotive is never adjacent to it screenshots video logs environment environment forge version forge downloaded from curseforge with sha railcraft version downloaded from curseforge with sha minecraft version singleplayer creative operating system windows other mods installed none logs uploaded in parts since text size exceeded additional context sometimes on your first placement this will not occur however this reliably occurs every time after the first occurrence which is within attempts at replicating this issue does not in fact depend on locking tracks but can ruin a token based system relying on them | 0 |

477,405 | 13,761,648,087 | IssuesEvent | 2020-10-07 08:01:11 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | [0.9.0 staging-1706] Steam tractor harvester should be lifted when turned off | Category: Gameplay Priority: High Status: Fixed Status: Reopen | this part:

Because now I can't move on Ramps:

| 1.0 | [0.9.0 staging-1706] Steam tractor harvester should be lifted when turned off - this part:

Because now I can't move on Ramps:

| non_comp | steam tractor harvester should be lifted when turned off this part because now i can t move on ramps | 0 |

100,580 | 16,489,912,012 | IssuesEvent | 2021-05-25 01:09:29 | billmcchesney1/flowgate | https://api.github.com/repos/billmcchesney1/flowgate | opened | CVE-2021-31597 (High) detected in xmlhttprequest-ssl-1.5.5.tgz | security vulnerability | ## CVE-2021-31597 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xmlhttprequest-ssl-1.5.5.tgz</b></p></summary>

<p>XMLHttpRequest for Node</p>

<p>Library home page: <a href="https://registry.npmjs.org/xmlhttprequest-ssl/-/xmlhttprequest-ssl-1.5.5.tgz">https://registry.npmjs.org/xmlhttprequest-ssl/-/xmlhttprequest-ssl-1.5.5.tgz</a></p>

<p>Path to dependency file: flowgate/ui/package.json</p>

<p>Path to vulnerable library: flowgate/ui/node_modules/xmlhttprequest-ssl/package.json</p>

<p>

Dependency Hierarchy:

- karma-5.0.9.tgz (Root Library)

- socket.io-2.3.0.tgz

- socket.io-client-2.3.0.tgz

- engine.io-client-3.4.4.tgz

- :x: **xmlhttprequest-ssl-1.5.5.tgz** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The xmlhttprequest-ssl package before 1.6.1 for Node.js disables SSL certificate validation by default, because rejectUnauthorized (when the property exists but is undefined) is considered to be false within the https.request function of Node.js. In other words, no certificate is ever rejected.

<p>Publish Date: 2021-04-23

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-31597>CVE-2021-31597</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.4</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-31597">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-31597</a></p>

<p>Release Date: 2021-04-23</p>

<p>Fix Resolution: xmlhttprequest-ssl - 1.6.1</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"xmlhttprequest-ssl","packageVersion":"1.5.5","packageFilePaths":["/ui/package.json"],"isTransitiveDependency":true,"dependencyTree":"karma:5.0.9;socket.io:2.3.0;socket.io-client:2.3.0;engine.io-client:3.4.4;xmlhttprequest-ssl:1.5.5","isMinimumFixVersionAvailable":true,"minimumFixVersion":"xmlhttprequest-ssl - 1.6.1"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2021-31597","vulnerabilityDetails":"The xmlhttprequest-ssl package before 1.6.1 for Node.js disables SSL certificate validation by default, because rejectUnauthorized (when the property exists but is undefined) is considered to be false within the https.request function of Node.js. In other words, no certificate is ever rejected.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-31597","cvss3Severity":"high","cvss3Score":"9.4","cvss3Metrics":{"A":"Low","AC":"Low","PR":"None","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | True | CVE-2021-31597 (High) detected in xmlhttprequest-ssl-1.5.5.tgz - ## CVE-2021-31597 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xmlhttprequest-ssl-1.5.5.tgz</b></p></summary>

<p>XMLHttpRequest for Node</p>

<p>Library home page: <a href="https://registry.npmjs.org/xmlhttprequest-ssl/-/xmlhttprequest-ssl-1.5.5.tgz">https://registry.npmjs.org/xmlhttprequest-ssl/-/xmlhttprequest-ssl-1.5.5.tgz</a></p>

<p>Path to dependency file: flowgate/ui/package.json</p>

<p>Path to vulnerable library: flowgate/ui/node_modules/xmlhttprequest-ssl/package.json</p>

<p>

Dependency Hierarchy:

- karma-5.0.9.tgz (Root Library)

- socket.io-2.3.0.tgz

- socket.io-client-2.3.0.tgz

- engine.io-client-3.4.4.tgz

- :x: **xmlhttprequest-ssl-1.5.5.tgz** (Vulnerable Library)

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The xmlhttprequest-ssl package before 1.6.1 for Node.js disables SSL certificate validation by default, because rejectUnauthorized (when the property exists but is undefined) is considered to be false within the https.request function of Node.js. In other words, no certificate is ever rejected.

<p>Publish Date: 2021-04-23

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-31597>CVE-2021-31597</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>9.4</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: Low

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-31597">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-31597</a></p>

<p>Release Date: 2021-04-23</p>

<p>Fix Resolution: xmlhttprequest-ssl - 1.6.1</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"xmlhttprequest-ssl","packageVersion":"1.5.5","packageFilePaths":["/ui/package.json"],"isTransitiveDependency":true,"dependencyTree":"karma:5.0.9;socket.io:2.3.0;socket.io-client:2.3.0;engine.io-client:3.4.4;xmlhttprequest-ssl:1.5.5","isMinimumFixVersionAvailable":true,"minimumFixVersion":"xmlhttprequest-ssl - 1.6.1"}],"baseBranches":["master"],"vulnerabilityIdentifier":"CVE-2021-31597","vulnerabilityDetails":"The xmlhttprequest-ssl package before 1.6.1 for Node.js disables SSL certificate validation by default, because rejectUnauthorized (when the property exists but is undefined) is considered to be false within the https.request function of Node.js. In other words, no certificate is ever rejected.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-31597","cvss3Severity":"high","cvss3Score":"9.4","cvss3Metrics":{"A":"Low","AC":"Low","PR":"None","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | non_comp | cve high detected in xmlhttprequest ssl tgz cve high severity vulnerability vulnerable library xmlhttprequest ssl tgz xmlhttprequest for node library home page a href path to dependency file flowgate ui package json path to vulnerable library flowgate ui node modules xmlhttprequest ssl package json dependency hierarchy karma tgz root library socket io tgz socket io client tgz engine io client tgz x xmlhttprequest ssl tgz vulnerable library found in base branch master vulnerability details the xmlhttprequest ssl package before for node js disables ssl certificate validation by default because rejectunauthorized when the property exists but is undefined is considered to be false within the https request function of node js in other words no certificate is ever rejected publish date url a href cvss score details base score metrics 
exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact low for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution xmlhttprequest ssl isopenpronvulnerability true ispackagebased true isdefaultbranch true packages istransitivedependency true dependencytree karma socket io socket io client engine io client xmlhttprequest ssl isminimumfixversionavailable true minimumfixversion xmlhttprequest ssl basebranches vulnerabilityidentifier cve vulnerabilitydetails the xmlhttprequest ssl package before for node js disables ssl certificate validation by default because rejectunauthorized when the property exists but is undefined is considered to be false within the https request function of node js in other words no certificate is ever rejected vulnerabilityurl | 0 |

129,325 | 12,404,656,606 | IssuesEvent | 2020-05-21 15:54:15 | Bionus/imgbrd-grabber | https://api.github.com/repos/Bionus/imgbrd-grabber | closed | Curious about keyboard shortcuts and image preview adjustments | documentation question | I looked around but couldn't find anything detailing keyboard shortcuts. I especially can't find anything that says how you can deselect all the images you have selected (via ctrl+click). additionally, is there any way to make the image previews in the search results area larger? I dug through settings but didn't really see anything for this. | 1.0 | Curious about keyboard shortcuts and image preview adjustments - I looked around but couldn't find anything detailing keyboard shortcuts. I especially can't find anything that says how you can deselect all the images you have selected (via ctrl+click). additionally, is there any way to make the image previews in the search results area larger? I dug through settings but didn't really see anything for this. | non_comp | curious about keyboard shortcuts and image preview adjustments i looked around but couldn t find anything detailing keyboard shortcuts i especially can t find anything that says how you can deselect all the images you have selected via ctrl click additionally is there any way to make the image previews in the search results area larger i dug through settings but didn t really see anything for this | 0 |

2,112 | 4,839,079,147 | IssuesEvent | 2016-11-09 07:53:34 | synfig/synfig | https://api.github.com/repos/synfig/synfig | opened | Gradient: allow editing as list and add control to animating it | Compatibility Core Editing Feature request GUI | It would be nice to be able to edit gradient directly in parameters dock as it's currently possible e.g. with curves.

Also current way of how gradients interpolate is pretty limiting: it seems to be impossible to animate moving gradient control points because the result is that new points are created on tweens instead. | True | Gradient: allow editing as list and add control to animating it - It would be nice to be able to edit gradient directly in parameters dock as it's currently possible e.g. with curves.

Also current way of how gradients interpolate is pretty limiting: it seems to be impossible to animate moving gradient control points because the result is that new points are created on tweens instead. | comp | gradient allow editing as list and add control to animating it it would be nice to be able to edit gradient directly in parameters dock as it s currently possible e g with curves also current way of how gradients interpolate is pretty limiting it seems to be impossible to animate moving gradient control points because the result is that new points are created on tweens instead | 1 |

132,601 | 10,760,532,027 | IssuesEvent | 2019-10-31 18:47:54 | PulpQE/pulp-smash | https://api.github.com/repos/PulpQE/pulp-smash | closed | Test file repo with basic auth | Issue Type: Test Case pulp 2 - closed - wontfix | https://pulp.plan.io/issues/2720

1. Create file repo with basic auth

2. Run repo sync

3. Assert: Sync is successful | 1.0 | Test file repo with basic auth - https://pulp.plan.io/issues/2720

1. Create file repo with basic auth

2. Run repo sync

3. Assert: Sync is successful | non_comp | test file repo with basic auth create file repo with basic auth run repo sync assert sync is successful | 0 |

3,815 | 6,668,759,303 | IssuesEvent | 2017-10-03 16:53:10 | jupyterlab/jupyterlab | https://api.github.com/repos/jupyterlab/jupyterlab | closed | can not use codeMirror's search or replace | pkg:fileeditor tag:Browser Compatibility type:Bug | Ctrl+F and Ctrl+Shift+R cannot be used. I cannot enter any input; it looks like this:

| True | can not use codeMirror's search or replace - Ctrl+F and Ctrl+Shift+R cannot be used. I cannot enter any input; it looks like this:

| comp | can not use codemirror s search or replace ctrl f or ctrl shift r, they can not be used i can not input it is like this | 1 |

164,409 | 25,961,602,418 | IssuesEvent | 2022-12-19 00:01:42 | JuliaDynamics/Entropies.jl | https://api.github.com/repos/JuliaDynamics/Entropies.jl | closed | `IndirectEntropy` should not subtype `Entropy`. | discussion-design | While getting CausalityTools ready for Entropies v2, I encountered the following issue:

There are now two ways of computing entropies: either

- `entropy(e::Entropy, x, est::ProbabilitiesEstimator)`

- `entropy(e::IndirectEntropy, x)`.

I have two problems with this:

### 1. The indirect entropies are not *entropies*.

They are types indicating that a certain type of entropy is being estimated using a certain numerical procedure. A much more natural choice would be to have just one signature `entropy(e::Entropy, x, est::Union{ProbabilitiesEstimator, EntropyEstimator})`, where `EntropyEstimator` is what we now call `IndirectEntropy`.

The API should be predictable, and the types should be named in a way that reflects what is actually going on. I argue that `IndirectEntropy` is a bit misleading, because it can be confused with `Entropy`, which is something else entirely. In fact, we currently equate (through the type hierarchy) an entropic quantity with its estimator. But the latter is used to *estimate* the former. They should be different things.

We already make this distinction for probabilities. `Probabilities` is the quantity being computed, while `ProbabilitiesEstimator` is the procedure used to estimate it. `Entropy`s should have the corresponding quantity `EntropyEstimator` that computes it.

Thus:

- `entropy(::Kraskov, x)` should be `entropy(::Shannon, x, ::Kraskov)`.

- For example, if the `Kraskov` estimator can be used to estimate some other entropy, then dispatch must be implemented for that particular entropy, i.e. `entropy(::SomeOtherEntropy, x, ::Kraskov)`.

### 2. Two distinct signatures lead to code duplication

The way we've designed it now, I have to duplicate source code using `entropy` where I want to be able to input both probability estimators and entropy estimators. I have to make:

- One method for `ProbabilitiesEstimator` (which has one order of arguments) and,

- Another method for `IndirectEntropy`. I *can*, of course, write wrapper functions and circumvent this issue. But that shouldn't be necessary.

| 1.0 | `IndirectEntropy` should not subtype `Entropy`. - While getting CausalityTools ready for Entropies v2, I encountered the following issue:

There are now two ways of computing entropies: either

- `entropy(e::Entropy, x, est::ProbabilitiesEstimator)`

- `entropy(e::IndirectEntropy, x)`.

I have two problems with this:

### 1. The indirect entropies are not *entropies*.

They are types indicating that a certain type of entropy is being estimated using a certain numerical procedure. A much more natural choice would be to have just one signature `entropy(e::Entropy, x, est::Union{ProbabilitiesEstimator, EntropyEstimator})`, where `EntropyEstimator` is what we now call `IndirectEntropy`.

The API should be predictable, and the types should be named in a way that reflects what is actually going on. I argue that `IndirectEntropy` is a bit misleading, because it can be confused with `Entropy`, which is something else entirely. In fact, we currently equate (through the type hierarchy) an entropic quantity with its estimator. But the latter is used to *estimate* the former. They should be different things.

We already make this distinction for probabilities. `Probabilities` is the quantity being computed, while `ProbabilitiesEstimator` is the procedure used to estimate it. `Entropy`s should have the corresponding quantity `EntropyEstimator` that computes it.

Thus:

- `entropy(::Kraskov, x)` should be `entropy(::Shannon, x, ::Kraskov)`.

- For example, if the `Kraskov` estimator can be used to estimate some other entropy, then dispatch must be implemented for that particular entropy, i.e. `entropy(::SomeOtherEntropy, x, ::Kraskov)`.

### 2. Two distinct signatures lead to code duplication

The way we've designed it now, I have to duplicate source code using `entropy` where I want to be able to input both probability estimators and entropy estimators. I have to make:

- One method for `ProbabilitiesEstimator` (which has one order of arguments) and,

- Another method for `IndirectEntropy`. I *can*, of course, write wrapper functions and circumvent this issue. But that shouldn't be necessary.

| non_comp | indirectentropy should not subtype entropy while getting causalitytools ready for entropies i encountered the following issue there are now two ways of computing entropies either entropy e entropy x est probabilitiesestimator entropy e indirectentropy x i have two problems with this the indirect entropies are not entropies they are types indicating that a certain type of entropy is being estimated using a certain numerical procedure a much more natural choice would be to have just one signature entropy e entropy x est union probabilitiesestimator entropyestimator where entropyestimator is what we now call indirectentropy the api should be predictable and the types should be named in a way that reflects what is actually going on i argue that indirectentropy is a bit misleading because it can be confused with entropy which is something else entirely actually now we equate through the type hierarchy an entropic quantity with its estimator but the former is used to estimate the latter they should be different things we already make this distinction for probabilities probabilities is the quantity being computed while probabilitiesestimator is the procedure used to estimate it entropy s should have the corresponding quantity entropyestimator that computes it thus entropy kraskov x should be entropy shannon x kraskov for example if the kraskov estimator can be used to estimate some other entropy then dispatch must be implemented for that particular entropy i e entropy someotherentropy x kraskov two distinct signatures lead to code duplication the way we ve designed it now i have to duplicate source code using entropy where i want to be able to input both probability estimators and entropy estimators i have to make one method for probabilitiesestimator which has one order of arguments and aother method for indirectentropy i can of course write wrapper functions and circumvent this issue but that shouldn t be necessary | 0 |

58,448 | 7,154,765,394 | IssuesEvent | 2018-01-26 09:55:16 | lalrpop/lalrpop | https://api.github.com/repos/lalrpop/lalrpop | closed | larger grammars generate a LOT of code | design-work-needed urgent | Hello,

i try to parse php expressions using lalrpop:

https://github.com/timglabisch/rustphp/blob/c79060d6495a55174fc2ad5710d5774d8ec94d67/src/calculator1.lalrpop

my problem is that `cargo run` becomes incredibly slow:

time cargo run

1234.76s user 19.88s system 99% cpu 20:58.78 total

the file is very huge (~50mb):

cat src/calculator1.rs | wc -l

1044621

is there something fundamentally wrong or is this expected?

| 1.0 | larger grammars generate a LOT of code - Hello,

i try to parse php expressions using lalrpop:

https://github.com/timglabisch/rustphp/blob/c79060d6495a55174fc2ad5710d5774d8ec94d67/src/calculator1.lalrpop

my problem is that `cargo run` becomes incredibly slow:

time cargo run

1234.76s user 19.88s system 99% cpu 20:58.78 total

the file is very huge (~50mb):

cat src/calculator1.rs | wc -l

1044621

is there something fundamentally wrong or is this expected?

| non_comp | larger grammars generate a lot of code hello i try to parse php expressions using lalrpop my problem is that cargo run becomes incredible slow time cargo run user system cpu total the file is very huge cat src rs wc l is there something fundamentally wrong or is this expected | 0 |

15,364 | 19,590,459,886 | IssuesEvent | 2022-01-05 12:22:04 | TycheSoftwares/Print-Invoice-Delivery-Notes-for-WooCommerce | https://api.github.com/repos/TycheSoftwares/Print-Invoice-Delivery-Notes-for-WooCommerce | closed | Quantity column shows incorrect in the print invoice when working with WC Composite products | type :bug client issue type: compatibility | The issue is when we work with the Composite products, the quantity column in the print invoice is showing incorrect.

Steps to reproduce:

1) Create a composite product

2) Add 3 components or child products to it.

3) Place an order

4) Print the invoice for that order and you will see 4 in the quantity column. This means the parent composite product is also being added to the count.

However, the quantity should be 3, i.e. the count of only the child products. The same issue occurs with the WC Product Bundle plugin.
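The expected behaviour described above can be sketched as follows. This is only an illustration in Python, not the plugin's code: the `LineItem` class and its `is_container` flag are hypothetical stand-ins for however the order items mark a composite or bundle parent.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LineItem:
    """Hypothetical order line; not a WooCommerce type."""
    name: str
    quantity: int
    is_container: bool = False  # assumed marker for a composite/bundle parent


def printed_quantity(items: List[LineItem]) -> int:
    """Sum quantities, skipping parent containers so only child products count."""
    return sum(item.quantity for item in items if not item.is_container)


# One composite parent with three child components, as in the steps to reproduce.
order = [
    LineItem("Composite product", 1, is_container=True),
    LineItem("Component A", 1),
    LineItem("Component B", 1),
    LineItem("Component C", 1),
]

naive_total = sum(item.quantity for item in order)  # counts the parent too
print(naive_total, printed_quantity(order))
```

Summing every line reproduces the reported value of 4, while skipping the parent gives the 3 the reporter expects.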

Link: https://wordpress.org/support/topic/wrong-total-quantity-due-to-composite-bundle/#post-13542652 | True | Quantity column shows incorrect in the print invoice when working with WC Composite products - The issue is when we work with the Composite products, the quantity column in the print invoice is showing incorrect.

Steps to reproduce:

1) Create a composite product

2) Add 3 components or child products to it.

3) Place an order

4) Print the invoice for that order and you will see 4 in the quantity column. This means the parent composite product is also being added to the count.

However, the quantity should be 3, i.e. the count of only the child products. The same issue occurs with the WC Product Bundle plugin.

Link: https://wordpress.org/support/topic/wrong-total-quantity-due-to-composite-bundle/#post-13542652 | comp | quantity column shows incorrect in the print invoice when working with wc composite products the issue is when we work with the composite products the quantity column in the print invoice is showing incorrect steps to reproduce create a composite product add components or child products to it place an order print the invoice for that order and you will see in the quantity column this means the original composite product is also being added to the count however the quantity should be i e the count of only the child products the same issue is being faced when we work with the wc product bundle plugin link | 1 |

55,425 | 14,451,298,226 | IssuesEvent | 2020-12-08 10:46:36 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | opened | Severe error calculated density of air is negative | Defect | Issue overview

--------------

A user reported on Unmet Hours that they got the following error:

```

** Severe ** PsyRhoAirFnPbTdbW: RhoAir (Density of Air) is calculated <= 0 [-9.04677].

** ~~~ ** pb =[101100.00], tdb=[-312.09], w=[0.0000000].

** ~~~ ** Routine=CorrectZoneHumRat, During Warmup, Environment=DSDAYTANGIER COOLING 0.4%, at Simulation time=08/21 00:12 - 00:13

** Fatal ** Program terminates due to preceding condition.

```
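To see why the logged inputs produce a negative density, here is a minimal sketch of an ideal-gas moist-air density calculation. It is written in Python for illustration and is not copied from the EnergyPlus source; the gas constant 287.0 J/(kg·K), the 273.15 offset, and the 1.6077687 humidity factor are assumed values from common psychrometric formulations, and `validate_dry_bulb` is a hypothetical guard, not an existing routine.

```python
R_DRY_AIR = 287.0       # J/(kg*K), assumed gas constant for dry air
KELVIN_OFFSET = 273.15  # conversion from degrees Celsius to kelvin


def rho_air(pb: float, tdb: float, w: float) -> float:
    """Moist-air density [kg/m3] from pressure [Pa], dry-bulb [C], humidity ratio [kg/kg]."""
    return pb / (R_DRY_AIR * (tdb + KELVIN_OFFSET) * (1.0 + 1.6077687 * w))


def validate_dry_bulb(tdb: float) -> None:
    """Hypothetical guard: reject temperatures at or below absolute zero."""
    if tdb <= -KELVIN_OFFSET:
        raise ValueError(f"dry-bulb {tdb} C is at or below absolute zero")


# Values from the error message: pb=101100.00, tdb=-312.09, w=0.
print(rho_air(101100.0, -312.09, 0.0))  # negative, roughly -9.05
```

Under these assumptions the density only goes negative because the dry-bulb temperature of -312.09 °C is below absolute zero, so the psychrometric routine is most likely reporting a symptom; the bad zone temperature would have been produced upstream.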

The user supplied the file, which was at E+ 8.7. I updated the file to v9.4, and instead of this severe error I now get an actual crash, which should not happen even if the defect file later turns out to have problems.

```

Performing Zone Sizing Simulation

...for Sizing Period: #1 DSDAYTANGIER COOLING 0.4%

Warming up

Warming up

Warming up

Performing Zone Sizing Simulation

...for Sizing Period: #2 DSDAYTANGIER HEATING 99.6%

Calculating System sizing

...for Sizing Period: #1 DSDAYTANGIER COOLING 0.4%

Calculating System sizing

...for Sizing Period: #2 DSDAYTANGIER HEATING 99.6%

Adjusting Air System Sizing

Adjusting Standard 62.1 Ventilation Sizing

Initializing Simulation

double free or corruption (out)

Aborted (core dumped)

```

### Details

Some additional details for this issue (if relevant):

- Platform (Operating system, version)

- Version of EnergyPlus (if using an intermediate build, include SHA)

- Unmethours link: https://unmethours.com/question/49383/severe-error-calculated-density-of-air-is-negative/

Backtrace

```

(lldb) bt

* thread #1, name = 'energyplus', stop reason = hit program assert

frame #0: 0x00007ffff11c7fb7 libc.so.6`__GI_raise(sig=<unavailable>) at raise.c:51

frame #1: 0x00007ffff11c9921 libc.so.6`__GI_abort at abort.c:79

frame #2: 0x00007ffff11b948a libc.so.6`__assert_fail_base(fmt="%s%s%s:%u: %s%sAssertion `%s' failed.\n%n", assertion="contains( i )", file="/home/julien/Software/Others/EnergyPlus/third_party/ObjexxFCL/src/ObjexxFCL/Array1.hh", line=1172, function="T& ObjexxFCL::Array1< <template-parameter-1-1> >::operator()(int) [with T = EnergyPlus::WaterThermalTanks::StratifiedNodeData]") at assert.c:92

frame #3: 0x00007ffff11b9502 libc.so.6`__GI___assert_fail(assertion=<unavailable>, file=<unavailable>, line=<unavailable>, function=<unavailable>) at assert.c:101

* frame #4: 0x00007ffff4d1a4b1 libenergyplusapi.so.9.4.0`ObjexxFCL::Array1<EnergyPlus::WaterThermalTanks::StratifiedNodeData>::operator(this=0x00005555564ff530, i=0)(int) at Array1.hh:1172

frame #5: 0x00007ffff4cea195 libenergyplusapi.so.9.4.0`EnergyPlus::WaterThermalTanks::WaterThermalTankData::CalcWaterThermalTankStratified(this=0x00005555564ff100, state=0x00007fffffffbdf0) at WaterThermalTanks.cc:7218

frame #6: 0x00007ffff4d11882 libenergyplusapi.so.9.4.0`EnergyPlus::WaterThermalTanks::WaterThermalTankData::CalcStandardRatings(this=0x00005555564ff100, state=0x00007fffffffbdf0) at WaterThermalTanks.cc:11451

frame #7: 0x00007ffff4ce47d4 libenergyplusapi.so.9.4.0`EnergyPlus::WaterThermalTanks::WaterThermalTankData::initialize(this=0x00005555564ff100, state=0x00007fffffffbdf0, FirstHVACIteration=true) at WaterThermalTanks.cc:6183

frame #8: 0x00007ffff4c8c218 libenergyplusapi.so.9.4.0`EnergyPlus::WaterThermalTanks::WaterThermalTankData::onInitLoopEquip(this=0x00005555564ff100, state=0x00007fffffffbdf0, calledFromLocation=0x0000555555e45098) at WaterThermalTanks.cc:164

frame #9: 0x00007ffff5ffa702 libenergyplusapi.so.9.4.0`EnergyPlus::DataPlant::CompData::simulate(this=0x0000555555e44f00, state=0x00007fffffffbdf0, FirstHVACIteration=true, InitLoopEquip=0x00007ffff7d6fbdf, GetCompSizFac=true) at Component.cc:119

frame #10: 0x00007ffff5cf1f1a libenergyplusapi.so.9.4.0`EnergyPlus::PlantManager::InitializeLoops(state=0x00007fffffffbdf0, FirstHVACIteration=true) at PlantManager.cc:2204

frame #11: 0x00007ffff5cdbd1a libenergyplusapi.so.9.4.0`EnergyPlus::PlantManager::ManagePlantLoops(state=0x00007fffffffbdf0, FirstHVACIteration=true, SimAirLoops=0x00007ffff7d77439, SimZoneEquipment=0x00007ffff7d7743c, SimNonZoneEquipment=0x00007ffff7d7743d, SimPlantLoops=0x00007ffff7d7743b, SimElecCircuits=0x00007ffff7d7743a) at PlantManager.cc:222

frame #12: 0x00007ffff5827b00 libenergyplusapi.so.9.4.0`EnergyPlus::HVACManager::SimSelectedEquipment(state=0x00007fffffffbdf0, SimAirLoops=0x00007ffff7d77439, SimZoneEquipment=0x00007ffff7d7743c, SimNonZoneEquipment=0x00007ffff7d7743d, SimPlantLoops=0x00007ffff7d7743b, SimElecCircuits=0x00007ffff7d7743a, FirstHVACIteration=0x00007fffffffae2e, LockPlantFlows=false) at HVACManager.cc:1832

frame #13: 0x00007ffff581d054 libenergyplusapi.so.9.4.0`EnergyPlus::HVACManager::SimHVAC(state=0x00007fffffffbdf0) at HVACManager.cc:842

frame #14: 0x00007ffff58197f4 libenergyplusapi.so.9.4.0`EnergyPlus::HVACManager::ManageHVAC(state=0x00007fffffffbdf0) at HVACManager.cc:358

frame #15: 0x00007ffff5a1457a libenergyplusapi.so.9.4.0`EnergyPlus::HeatBalanceAirManager::CalcHeatBalanceAir(state=0x00007fffffffbdf0) at HeatBalanceAirManager.cc:4356

frame #16: 0x00007ffff59b9f7f libenergyplusapi.so.9.4.0`EnergyPlus::HeatBalanceAirManager::ManageAirHeatBalance(state=0x00007fffffffbdf0) at HeatBalanceAirManager.cc:204

frame #17: 0x00007ffff3e451ee libenergyplusapi.so.9.4.0`EnergyPlus::HeatBalanceSurfaceManager::ManageSurfaceHeatBalance(state=0x00007fffffffbdf0) at HeatBalanceSurfaceManager.cc:281

frame #18: 0x00007ffff5a33c72 libenergyplusapi.so.9.4.0`EnergyPlus::HeatBalanceManager::ManageHeatBalance(state=0x00007fffffffbdf0) at HeatBalanceManager.cc:363

frame #19: 0x00007ffff47f9269 libenergyplusapi.so.9.4.0`EnergyPlus::SimulationManager::SetupSimulation(state=0x00007fffffffbdf0, ErrorsFound=0x00007fffffffbc23) at SimulationManager.cc:2111

frame #20: 0x00007ffff47ea196 libenergyplusapi.so.9.4.0`EnergyPlus::SimulationManager::ManageSimulation(state=0x00007fffffffbdf0) at SimulationManager.cc:366

frame #21: 0x00007ffff3a08570 libenergyplusapi.so.9.4.0`RunEnergyPlus(state=0x00007fffffffbdf0, filepath="\xe0\xbd\xff\xff\xff\U0000007f"...) at EnergyPlusPgm.cc:400

frame #22: 0x00007ffff3a07a17 libenergyplusapi.so.9.4.0`EnergyPlusPgm(state=0x00007fffffffbdf0, filepath="\xe0\xbd\xff\xff\xff\U0000007f"...) at EnergyPlusPgm.cc:224

frame #23: 0x000055555578ef66 energyplus`main(argc=6, argv=0x00007fffffffccc8) at main.cc:60

frame #24: 0x00007ffff11aabf7 libc.so.6`__libc_start_main(main=(energyplus`main at main.cc:56), argc=6, argv=0x00007fffffffccc8, init=<unavailable>, fini=<unavailable>, rtld_fini=<unavailable>, stack_end=0x00007fffffffccb8) at libc-start.c:310

frame #25: 0x000055555578ec8a energyplus`_start + 42

```

### Checklist

Add to this list or remove from it as applicable. This is a simple templated set of guidelines.

- [x] Defect file added (list location of defect file here)

- [ ] Ticket added to Pivotal for defect (development team task)

- [ ] Pull request created (the pull request will have additional tasks related to reviewing changes that fix this defect)

| 1.0 | Severe error calculated density of air is negative - Issue overview

--------------

A user reported on Unmet Hours that they got the following error:

```

** Severe ** PsyRhoAirFnPbTdbW: RhoAir (Density of Air) is calculated <= 0 [-9.04677].

** ~~~ ** pb =[101100.00], tdb=[-312.09], w=[0.0000000].

** ~~~ ** Routine=CorrectZoneHumRat, During Warmup, Environment=DSDAYTANGIER COOLING 0.4%, at Simulation time=08/21 00:12 - 00:13

** Fatal ** Program terminates due to preceding condition.

```

The user supplied the file, which was at E+ 8.7. I updated the file to v9.4, and instead of this severe error I now get an actual crash, which should not happen even if the defect file later turns out to have problems.

```

Performing Zone Sizing Simulation

...for Sizing Period: #1 DSDAYTANGIER COOLING 0.4%

Warming up

Warming up

Warming up

Performing Zone Sizing Simulation

...for Sizing Period: #2 DSDAYTANGIER HEATING 99.6%

Calculating System sizing

...for Sizing Period: #1 DSDAYTANGIER COOLING 0.4%

Calculating System sizing

...for Sizing Period: #2 DSDAYTANGIER HEATING 99.6%

Adjusting Air System Sizing

Adjusting Standard 62.1 Ventilation Sizing

Initializing Simulation

double free or corruption (out)

Aborted (core dumped)

```

### Details

Some additional details for this issue (if relevant):

- Platform (Operating system, version)

- Version of EnergyPlus (if using an intermediate build, include SHA)

- Unmethours link: https://unmethours.com/question/49383/severe-error-calculated-density-of-air-is-negative/

Backtrace

```

(lldb) bt

* thread #1, name = 'energyplus', stop reason = hit program assert

frame #0: 0x00007ffff11c7fb7 libc.so.6`__GI_raise(sig=<unavailable>) at raise.c:51

frame #1: 0x00007ffff11c9921 libc.so.6`__GI_abort at abort.c:79

frame #2: 0x00007ffff11b948a libc.so.6`__assert_fail_base(fmt="%s%s%s:%u: %s%sAssertion `%s' failed.\n%n", assertion="contains( i )", file="/home/julien/Software/Others/EnergyPlus/third_party/ObjexxFCL/src/ObjexxFCL/Array1.hh", line=1172, function="T& ObjexxFCL::Array1< <template-parameter-1-1> >::operator()(int) [with T = EnergyPlus::WaterThermalTanks::StratifiedNodeData]") at assert.c:92

frame #3: 0x00007ffff11b9502 libc.so.6`__GI___assert_fail(assertion=<unavailable>, file=<unavailable>, line=<unavailable>, function=<unavailable>) at assert.c:101

* frame #4: 0x00007ffff4d1a4b1 libenergyplusapi.so.9.4.0`ObjexxFCL::Array1<EnergyPlus::WaterThermalTanks::StratifiedNodeData>::operator(this=0x00005555564ff530, i=0)(int) at Array1.hh:1172

frame #5: 0x00007ffff4cea195 libenergyplusapi.so.9.4.0`EnergyPlus::WaterThermalTanks::WaterThermalTankData::CalcWaterThermalTankStratified(this=0x00005555564ff100, state=0x00007fffffffbdf0) at WaterThermalTanks.cc:7218

frame #6: 0x00007ffff4d11882 libenergyplusapi.so.9.4.0`EnergyPlus::WaterThermalTanks::WaterThermalTankData::CalcStandardRatings(this=0x00005555564ff100, state=0x00007fffffffbdf0) at WaterThermalTanks.cc:11451

frame #7: 0x00007ffff4ce47d4 libenergyplusapi.so.9.4.0`EnergyPlus::WaterThermalTanks::WaterThermalTankData::initialize(this=0x00005555564ff100, state=0x00007fffffffbdf0, FirstHVACIteration=true) at WaterThermalTanks.cc:6183

frame #8: 0x00007ffff4c8c218 libenergyplusapi.so.9.4.0`EnergyPlus::WaterThermalTanks::WaterThermalTankData::onInitLoopEquip(this=0x00005555564ff100, state=0x00007fffffffbdf0, calledFromLocation=0x0000555555e45098) at WaterThermalTanks.cc:164

frame #9: 0x00007ffff5ffa702 libenergyplusapi.so.9.4.0`EnergyPlus::DataPlant::CompData::simulate(this=0x0000555555e44f00, state=0x00007fffffffbdf0, FirstHVACIteration=true, InitLoopEquip=0x00007ffff7d6fbdf, GetCompSizFac=true) at Component.cc:119

frame #10: 0x00007ffff5cf1f1a libenergyplusapi.so.9.4.0`EnergyPlus::PlantManager::InitializeLoops(state=0x00007fffffffbdf0, FirstHVACIteration=true) at PlantManager.cc:2204

frame #11: 0x00007ffff5cdbd1a libenergyplusapi.so.9.4.0`EnergyPlus::PlantManager::ManagePlantLoops(state=0x00007fffffffbdf0, FirstHVACIteration=true, SimAirLoops=0x00007ffff7d77439, SimZoneEquipment=0x00007ffff7d7743c, SimNonZoneEquipment=0x00007ffff7d7743d, SimPlantLoops=0x00007ffff7d7743b, SimElecCircuits=0x00007ffff7d7743a) at PlantManager.cc:222

frame #12: 0x00007ffff5827b00 libenergyplusapi.so.9.4.0`EnergyPlus::HVACManager::SimSelectedEquipment(state=0x00007fffffffbdf0, SimAirLoops=0x00007ffff7d77439, SimZoneEquipment=0x00007ffff7d7743c, SimNonZoneEquipment=0x00007ffff7d7743d, SimPlantLoops=0x00007ffff7d7743b, SimElecCircuits=0x00007ffff7d7743a, FirstHVACIteration=0x00007fffffffae2e, LockPlantFlows=false) at HVACManager.cc:1832

frame #13: 0x00007ffff581d054 libenergyplusapi.so.9.4.0`EnergyPlus::HVACManager::SimHVAC(state=0x00007fffffffbdf0) at HVACManager.cc:842

frame #14: 0x00007ffff58197f4 libenergyplusapi.so.9.4.0`EnergyPlus::HVACManager::ManageHVAC(state=0x00007fffffffbdf0) at HVACManager.cc:358

frame #15: 0x00007ffff5a1457a libenergyplusapi.so.9.4.0`EnergyPlus::HeatBalanceAirManager::CalcHeatBalanceAir(state=0x00007fffffffbdf0) at HeatBalanceAirManager.cc:4356

frame #16: 0x00007ffff59b9f7f libenergyplusapi.so.9.4.0`EnergyPlus::HeatBalanceAirManager::ManageAirHeatBalance(state=0x00007fffffffbdf0) at HeatBalanceAirManager.cc:204

frame #17: 0x00007ffff3e451ee libenergyplusapi.so.9.4.0`EnergyPlus::HeatBalanceSurfaceManager::ManageSurfaceHeatBalance(state=0x00007fffffffbdf0) at HeatBalanceSurfaceManager.cc:281

frame #18: 0x00007ffff5a33c72 libenergyplusapi.so.9.4.0`EnergyPlus::HeatBalanceManager::ManageHeatBalance(state=0x00007fffffffbdf0) at HeatBalanceManager.cc:363

frame #19: 0x00007ffff47f9269 libenergyplusapi.so.9.4.0`EnergyPlus::SimulationManager::SetupSimulation(state=0x00007fffffffbdf0, ErrorsFound=0x00007fffffffbc23) at SimulationManager.cc:2111

frame #20: 0x00007ffff47ea196 libenergyplusapi.so.9.4.0`EnergyPlus::SimulationManager::ManageSimulation(state=0x00007fffffffbdf0) at SimulationManager.cc:366

frame #21: 0x00007ffff3a08570 libenergyplusapi.so.9.4.0`RunEnergyPlus(state=0x00007fffffffbdf0, filepath="\xe0\xbd\xff\xff\xff\U0000007f"...) at EnergyPlusPgm.cc:400

frame #22: 0x00007ffff3a07a17 libenergyplusapi.so.9.4.0`EnergyPlusPgm(state=0x00007fffffffbdf0, filepath="\xe0\xbd\xff\xff\xff\U0000007f"...) at EnergyPlusPgm.cc:224

frame #23: 0x000055555578ef66 energyplus`main(argc=6, argv=0x00007fffffffccc8) at main.cc:60

frame #24: 0x00007ffff11aabf7 libc.so.6`__libc_start_main(main=(energyplus`main at main.cc:56), argc=6, argv=0x00007fffffffccc8, init=<unavailable>, fini=<unavailable>, rtld_fini=<unavailable>, stack_end=0x00007fffffffccb8) at libc-start.c:310

frame #25: 0x000055555578ec8a energyplus`_start + 42

```

### Checklist

Add to this list or remove from it as applicable. This is a simple templated set of guidelines.

- [x] Defect file added (list location of defect file here)

- [ ] Ticket added to Pivotal for defect (development team task)

- [ ] Pull request created (the pull request will have additional tasks related to reviewing changes that fix this defect)

| non_comp | severe error calculated density of air is negative issue overview user reported on unmet hours that he got the following error severe psyrhoairfnpbtdbw rhoair density of air is calculated pb tdb w routine correctzonehumrat during warmup environment dsdaytangier cooling at simulation time fatal program terminates due to preceding condition user supplied the file which was at e i updated the file to and instead of getting this severe i am getting an actual crash which isn t something we want to happen even if it is later found the defect file had problems performing zone sizing simulation for sizing period dsdaytangier cooling warming up warming up warming up performing zone sizing simulation for sizing period dsdaytangier heating calculating system sizing for sizing period dsdaytangier cooling calculating system sizing for sizing period dsdaytangier heating adjusting air system sizing adjusting standard ventilation sizing initializing simulation double free or corruption out aborted core dumped details some additional details for this issue if relevant platform operating system version version of energyplus if using an intermediate build include sha unmethours link backtrace lldb bt thread name energyplus stop reason hit program assert frame libc so gi raise sig at raise c frame libc so gi abort at abort c frame libc so assert fail base fmt s s s u s sassertion s failed n n assertion contains i file home julien software others energyplus third party objexxfcl src objexxfcl hh line function t objexxfcl operator int at assert c frame libc so gi assert fail assertion file line function at assert c frame libenergyplusapi so objexxfcl operator this i int at hh frame libenergyplusapi so energyplus waterthermaltanks waterthermaltankdata calcwaterthermaltankstratified this state at waterthermaltanks cc frame libenergyplusapi so energyplus waterthermaltanks waterthermaltankdata calcstandardratings this state at waterthermaltanks cc frame libenergyplusapi so 
energyplus waterthermaltanks waterthermaltankdata initialize this state firsthvaciteration true at waterthermaltanks cc frame libenergyplusapi so energyplus waterthermaltanks waterthermaltankdata oninitloopequip this state calledfromlocation at waterthermaltanks cc frame libenergyplusapi so energyplus dataplant compdata simulate this state firsthvaciteration true initloopequip getcompsizfac true at component cc frame libenergyplusapi so energyplus plantmanager initializeloops state firsthvaciteration true at plantmanager cc frame libenergyplusapi so energyplus plantmanager manageplantloops state firsthvaciteration true simairloops simzoneequipment simnonzoneequipment simplantloops simeleccircuits at plantmanager cc frame libenergyplusapi so energyplus hvacmanager simselectedequipment state simairloops simzoneequipment simnonzoneequipment simplantloops simeleccircuits firsthvaciteration lockplantflows false at hvacmanager cc frame libenergyplusapi so energyplus hvacmanager simhvac state at hvacmanager cc frame libenergyplusapi so energyplus hvacmanager managehvac state at hvacmanager cc frame libenergyplusapi so energyplus heatbalanceairmanager calcheatbalanceair state at heatbalanceairmanager cc frame libenergyplusapi so energyplus heatbalanceairmanager manageairheatbalance state at heatbalanceairmanager cc frame libenergyplusapi so energyplus heatbalancesurfacemanager managesurfaceheatbalance state at heatbalancesurfacemanager cc frame libenergyplusapi so energyplus heatbalancemanager manageheatbalance state at heatbalancemanager cc frame libenergyplusapi so energyplus simulationmanager setupsimulation state errorsfound at simulationmanager cc frame libenergyplusapi so energyplus simulationmanager managesimulation state at simulationmanager cc frame libenergyplusapi so runenergyplus state filepath xbd xff xff xff at energypluspgm cc frame libenergyplusapi so energypluspgm state filepath xbd xff xff xff at energypluspgm cc frame energyplus main argc argv at main cc 
frame libc so libc start main main energyplus main at main cc argc argv init fini rtld fini stack end at libc start c frame energyplus start checklist add to this list or remove from it as applicable this is a simple templated set of guidelines defect file added list location of defect file here ticket added to pivotal for defect development team task pull request created the pull request will have additional tasks related to reviewing changes that fix this defect | 0 |

179,128 | 21,517,305,137 | IssuesEvent | 2022-04-28 11:08:06 | finos/spring-bot | https://api.github.com/repos/finos/spring-bot | closed | WS-2020-0408 (High) detected in netty-handler-4.1.68.Final.jar | security vulnerability | ## WS-2020-0408 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-handler-4.1.68.Final.jar</b></p></summary>

<p></p>

<p>Library home page: <a href="https://netty.io/">https://netty.io/</a></p>

<p>Path to dependency file: /demos/todo-bot/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/io/netty/netty-handler/4.1.68.Final/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.m2/repository/io/netty/netty-handler/4.1.68.Final/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.m2/repository/io/netty/netty-handler/4.1.68.Final/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.m2/repository/io/netty/netty-handler/4.1.68.Final/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.m2/repository/io/netty/netty-handler/4.1.68.Final/netty-handler-4.1.68.Final.jar</p>

<p>

Dependency Hierarchy:

- bot-azure-4.14.2.jar (Root Library)

- azure-storage-queue-12.8.0.jar

- azure-storage-common-12.10.0.jar

- azure-core-http-netty-1.7.1.jar

- :x: **netty-handler-4.1.68.Final.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/finos/spring-bot/commit/3da9e0f849079934eb92135cec1f523e22bdc1ad">3da9e0f849079934eb92135cec1f523e22bdc1ad</a></p>

<p>Found in base branch: <b>spring-bot-master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was found in all versions of io.netty:netty-all. Host verification in Netty is disabled by default. This can lead to a MITM attack in which an attacker forges a valid SSL/TLS certificate for a different hostname in order to intercept traffic that was not intended for them. This is an issue because the certificate is not matched against the host.

<p>Publish Date: 2020-06-22

<p>URL: <a href=https://github.com/netty/netty/issues/10362>WS-2020-0408</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.4</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/WS-2020-0408">https://nvd.nist.gov/vuln/detail/WS-2020-0408</a></p>

<p>Release Date: 2020-06-22</p>

<p>Fix Resolution: io.netty:netty-all - 4.1.68.Final-redhat-00001,4.0.0.Final,4.1.67.Final-redhat-00002;io.netty:netty-handler - 4.1.68.Final-redhat-00001,4.1.67.Final-redhat-00001</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"io.netty","packageName":"netty-handler","packageVersion":"4.1.68.Final","packageFilePaths":["/demos/todo-bot/pom.xml"],"isTransitiveDependency":true,"dependencyTree":"com.microsoft.bot:bot-azure:4.14.2;com.azure:azure-storage-queue:12.8.0;com.azure:azure-storage-common:12.10.0;com.azure:azure-core-http-netty:1.7.1;io.netty:netty-handler:4.1.68.Final","isMinimumFixVersionAvailable":true,"minimumFixVersion":"io.netty:netty-all - 4.1.68.Final-redhat-00001,4.0.0.Final,4.1.67.Final-redhat-00002;io.netty:netty-handler - 4.1.68.Final-redhat-00001,4.1.67.Final-redhat-00001","isBinary":false}],"baseBranches":["spring-bot-master"],"vulnerabilityIdentifier":"WS-2020-0408","vulnerabilityDetails":"An issue was found in all versions of io.netty:netty-all. Host verification in Netty is disabled by default. This can lead to MITM attack in which an attacker can forge valid SSL/TLS certificates for a different hostname in order to intercept traffic that doesn’t intend for him. This is an issue because the certificate is not matched with the host.","vulnerabilityUrl":"https://github.com/netty/netty/issues/10362","cvss3Severity":"high","cvss3Score":"7.4","cvss3Metrics":{"A":"None","AC":"High","PR":"None","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | True | WS-2020-0408 (High) detected in netty-handler-4.1.68.Final.jar - ## WS-2020-0408 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>netty-handler-4.1.68.Final.jar</b></p></summary>

<p></p>

<p>Library home page: <a href="https://netty.io/">https://netty.io/</a></p>

<p>Path to dependency file: /demos/todo-bot/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/io/netty/netty-handler/4.1.68.Final/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.m2/repository/io/netty/netty-handler/4.1.68.Final/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.m2/repository/io/netty/netty-handler/4.1.68.Final/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.m2/repository/io/netty/netty-handler/4.1.68.Final/netty-handler-4.1.68.Final.jar,/home/wss-scanner/.m2/repository/io/netty/netty-handler/4.1.68.Final/netty-handler-4.1.68.Final.jar</p>

<p>

Dependency Hierarchy:

- bot-azure-4.14.2.jar (Root Library)

- azure-storage-queue-12.8.0.jar

- azure-storage-common-12.10.0.jar

- azure-core-http-netty-1.7.1.jar

- :x: **netty-handler-4.1.68.Final.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/finos/spring-bot/commit/3da9e0f849079934eb92135cec1f523e22bdc1ad">3da9e0f849079934eb92135cec1f523e22bdc1ad</a></p>

<p>Found in base branch: <b>spring-bot-master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

An issue was found in all versions of io.netty:netty-all. Host verification in Netty is disabled by default. This can lead to a MITM attack in which an attacker forges a valid SSL/TLS certificate for a different hostname in order to intercept traffic that was not intended for them. This is an issue because the certificate is not matched against the host.

<p>Publish Date: 2020-06-22

<p>URL: <a href=https://github.com/netty/netty/issues/10362>WS-2020-0408</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.4</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: High

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/WS-2020-0408">https://nvd.nist.gov/vuln/detail/WS-2020-0408</a></p>

<p>Release Date: 2020-06-22</p>

<p>Fix Resolution: io.netty:netty-all - 4.1.68.Final-redhat-00001,4.0.0.Final,4.1.67.Final-redhat-00002;io.netty:netty-handler - 4.1.68.Final-redhat-00001,4.1.67.Final-redhat-00001</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"io.netty","packageName":"netty-handler","packageVersion":"4.1.68.Final","packageFilePaths":["/demos/todo-bot/pom.xml"],"isTransitiveDependency":true,"dependencyTree":"com.microsoft.bot:bot-azure:4.14.2;com.azure:azure-storage-queue:12.8.0;com.azure:azure-storage-common:12.10.0;com.azure:azure-core-http-netty:1.7.1;io.netty:netty-handler:4.1.68.Final","isMinimumFixVersionAvailable":true,"minimumFixVersion":"io.netty:netty-all - 4.1.68.Final-redhat-00001,4.0.0.Final,4.1.67.Final-redhat-00002;io.netty:netty-handler - 4.1.68.Final-redhat-00001,4.1.67.Final-redhat-00001","isBinary":false}],"baseBranches":["spring-bot-master"],"vulnerabilityIdentifier":"WS-2020-0408","vulnerabilityDetails":"An issue was found in all versions of io.netty:netty-all. Host verification in Netty is disabled by default. This can lead to MITM attack in which an attacker can forge valid SSL/TLS certificates for a different hostname in order to intercept traffic that doesn’t intend for him. 
This is an issue because the certificate is not matched with the host.","vulnerabilityUrl":"https://github.com/netty/netty/issues/10362","cvss3Severity":"high","cvss3Score":"7.4","cvss3Metrics":{"A":"None","AC":"High","PR":"None","S":"Unchanged","C":"High","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> --> | non_comp | ws high detected in netty handler final jar ws high severity vulnerability vulnerable library netty handler final jar library home page a href path to dependency file demos todo bot pom xml path to vulnerable library home wss scanner repository io netty netty handler final netty handler final jar home wss scanner repository io netty netty handler final netty handler final jar home wss scanner repository io netty netty handler final netty handler final jar home wss scanner repository io netty netty handler final netty handler final jar home wss scanner repository io netty netty handler final netty handler final jar dependency hierarchy bot azure jar root library azure storage queue jar azure storage common jar azure core http netty jar x netty handler final jar vulnerable library found in head commit a href found in base branch spring bot master vulnerability details an issue was found in all versions of io netty netty all host verification in netty is disabled by default this can lead to mitm attack in which an attacker can forge valid ssl tls certificates for a different hostname in order to intercept traffic that doesn’t intend for him this is an issue because the certificate is not matched with the host publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity high privileges required none user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution io netty netty all final 
redhat final final redhat io netty netty handler final redhat final redhat isopenpronvulnerability false ispackagebased true isdefaultbranch true packages istransitivedependency true dependencytree com microsoft bot bot azure com azure azure storage queue com azure azure storage common com azure azure core http netty io netty netty handler final isminimumfixversionavailable true minimumfixversion io netty netty all final redhat final final redhat io netty netty handler final redhat final redhat isbinary false basebranches vulnerabilityidentifier ws vulnerabilitydetails an issue was found in all versions of io netty netty all host verification in netty is disabled by default this can lead to mitm attack in which an attacker can forge valid ssl tls certificates for a different hostname in order to intercept traffic that doesn’t intend for him this is an issue because the certificate is not matched with the host vulnerabilityurl | 0 |

13,197 | 15,555,334,291 | IssuesEvent | 2021-03-16 05:55:29 | Lothrazar/Cyclic | https://api.github.com/repos/Lothrazar/Cyclic | closed | Cyclic Bag is stuck in AE2 storage | 1.16 compatibility | Minecraft Version: 1.16

Forge Version: 36.0.45

Mod Version: 1.16.5-1.1.8

Single Player or Server: Server

Describe problem (what you were doing; what happened; what should have happened): Cannot retrieve a Cyclic storage bag from AE2 storage; it just stays in storage. The bag cannot be removed, but a second, recently created bag can be moved in and out of storage freely.

| True | Cyclic Bag is stuck in AE2 storage - Minecraft Version: 1.16

Forge Version: 36.0.45

Mod Version: 1.16.5-1.1.8

Single Player or Server: Server

Describe problem (what you were doing; what happened; what should have happened): Cannot retrieve a Cyclic storage bag from AE2 storage; it just stays in storage. The bag cannot be removed, but a second, recently created bag can be moved in and out of storage freely.

| comp | cyclic bag is stuck in storage minecraft version forge version mod version single player or server server describe problem what you were doing what happened what should have happened can not retrieve cyclic storage bag out of storage just stays in storage bag does not remove but a second bag that was recently created is freely able to be moved in and out of storage | 1 |

58,650 | 3,090,387,653 | IssuesEvent | 2015-08-26 06:12:07 | NRGI/resourcecontracts.org | https://api.github.com/repos/NRGI/resourcecontracts.org | closed | Update the MTurk HIT page with proper information including language in the title | 2 - Working OCR-workflow Priority | It needs to be clear when added to MTurk what language the specific page is written in. | 1.0 | Update the MTurk HIT page with proper information including language in the title - It needs to be clear when added to MTurk what language the specific page is written in. | non_comp | update the mturk hit page with proper information including language in the title it needs to be clear when added to mturk what language the specific page is written in | 0 |

1,535 | 4,085,715,062 | IssuesEvent | 2016-06-01 00:14:58 | skyverge/woocommerce-product-sku-generator | https://api.github.com/repos/skyverge/woocommerce-product-sku-generator | closed | WooCommerce 2.6 Compatibility | compatibility | - [x] **Misc** -- Drop WooCommerce 2.2 support

* **Completed in** SHA 985d66dbfb8ee9d2e58637c8acd4fee8a6eb9c7b

- [x] **Misc** -- Add any needed compat methods

* **Completed in** SHA 322bcf7433d50d90a16366b1fdb0ade8448e59a6

- [x] **Feature** -- adds ability to use dashes in attribute names to replace spaces

* **Completed in** SHA 2f838a7e8f696b4bf9334bdf71f86379b543342d | True | WooCommerce 2.6 Compatibility - - [x] **Misc** -- Drop WooCommerce 2.2 support

* **Completed in** SHA 985d66dbfb8ee9d2e58637c8acd4fee8a6eb9c7b

- [x] **Misc** -- Add any needed compat methods

* **Completed in** SHA 322bcf7433d50d90a16366b1fdb0ade8448e59a6

- [x] **Feature** -- adds ability to use dashes in attribute names to replace spaces

* **Completed in** SHA 2f838a7e8f696b4bf9334bdf71f86379b543342d | comp | woocommerce compatibility misc drop woocommerce support completed in sha misc add any needed compat methods completed in sha feature adds ability to use dashes in attribute names to replace spaces completed in sha | 1 |

177,450 | 13,724,786,872 | IssuesEvent | 2020-10-03 15:40:13 | coloredcow-admin/glific-website | https://api.github.com/repos/coloredcow-admin/glific-website | closed | Feedback | status : ready to test | - [x] Can we please order the section as per the content order on homepage

- [x] Can we remove arrows when there's only one testimonial

- [x] Small m in Know More

- [x] @SunnaMalhotra Can you please make sure only the correct/relevant content shows on the test site now. such as on demo videos page, blog, webinar etc...

- [x] the fonts in this section are not correct

- [x] Let's remove the separator lines in the dropdown.

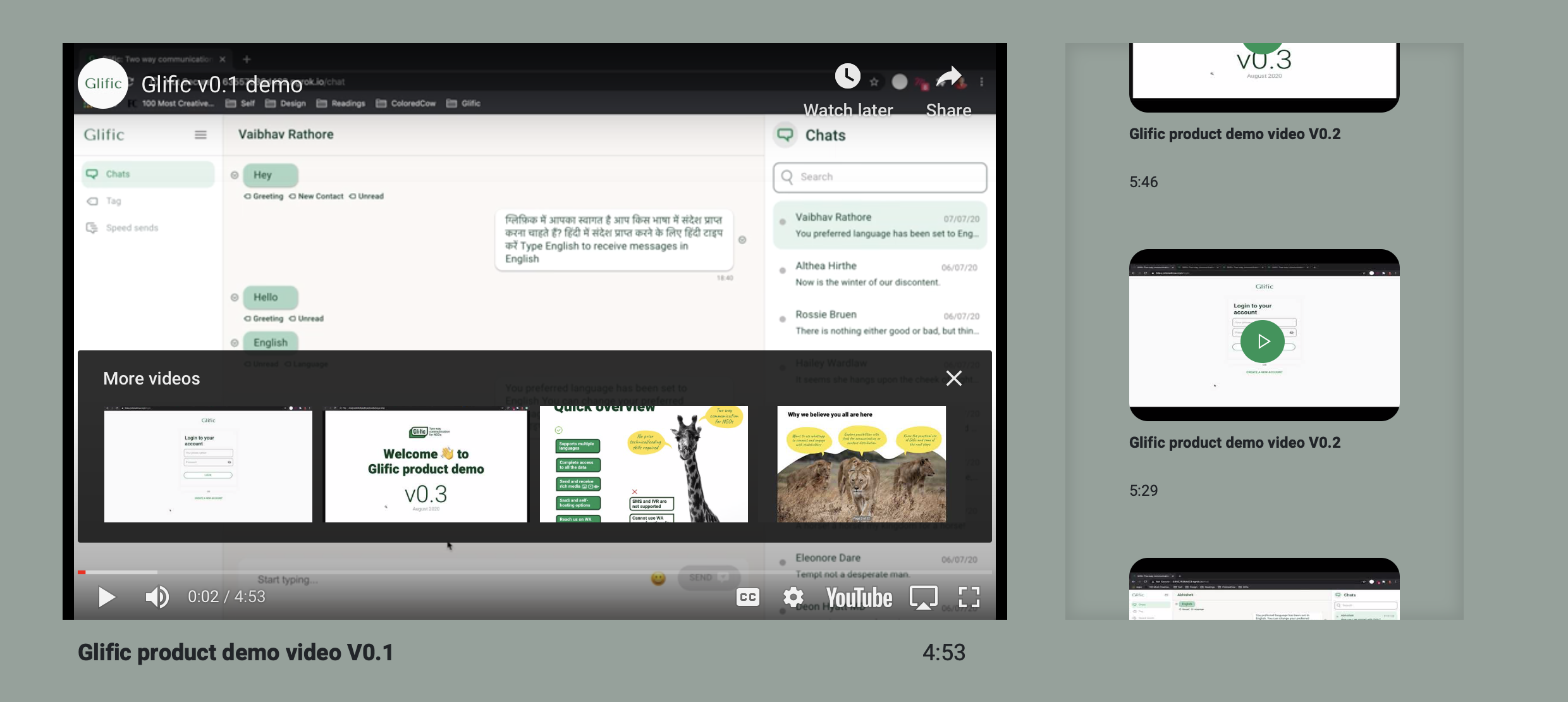

- [x] Is it possible to play the side videos in one click? Right now, a video first moves to the center and then needs another click to play. Users may be confused about what happened.

@abhi1203, I tried, but I could not find a way to play the video in one click. It looks like YouTube does not allow overriding this behavior.

- [x] Let's use the glific green color here. in all the links in faq

- [x] increase space between the hamburger menu and book a demo button in smaller devices

- [x] Can we make the images and text clickable also here

Please do the same for recommended reading section also

- [ ] Clicking on reply doesn't do anything. Should it open an input box to enter the comment?

@abhi1203 It redirects back to the Post Comment form, where you can add another comment.

- [x] Clicking on names and tags should filter the blogs by that respective selection

- [x] can we open source code github link in a new tab

- [ ] Tides svg isn't looking right on safari

- [x] The form layout in safari and chrome look different (chrome one is preferred)

- [x] can we have the know more link after the text like the first point

- [x] Let's use this link for Facebook business verification https://www.facebook.com/business/help/2058515294227817?id=180505742745347

and this one for gupshup account creation: https://www.gupshup.io/developer/home

- [x] Does it say page not found because the complete link is not present?

- [x] If you play a video and then click on a video in the sidebar and play it, both videos play at once, and there's no way to stop the previous one. This happens on the features page but not on the webinar page.

- [ ] | 1.0 | Feedback - - [x] Can we please order the section as per the content order on homepage

- [x] Can we remove arrows when there's only one testimonial

- [x] Small m in Know More

- [x] @SunnaMalhotra Can you please make sure only the correct/relevant content shows on the test site now. such as on demo videos page, blog, webinar etc...

- [x] the fonts in this section are not correct

- [x] Let's remove the separator lines in the dropdown.

- [x] Is it possible to play the side videos in one click? Right now, a video first moves to the center and then needs another click to play. Users may be confused about what happened.

@abhi1203, I tried, but I could not find a way to play the video in one click. It looks like YouTube does not allow overriding this behavior.

- [x] Let's use the glific green color here. in all the links in faq

- [x] increase space between the hamburger menu and book a demo button in smaller devices

- [x] Can we make the images and text clickable also here

Please do the same for recommended reading section also

- [ ] Clicking on reply doesn't do anything. Should it open an input box to enter the comment?

@abhi1203 It redirects back to the Post Comment form, where you can add another comment.

- [x] Clicking on names and tags should filter the blogs by that respective selection

- [x] can we open source code github link in a new tab

- [ ] Tides svg isn't looking right on safari

- [x] The form layout in safari and chrome look different (chrome one is preferred)

- [x] can we have the know more link after the text like the first point

- [x] Let's use this link for Facebook business verification https://www.facebook.com/business/help/2058515294227817?id=180505742745347

and this one for gupshup account creation: https://www.gupshup.io/developer/home

- [x] Does it say page not found because the complete link is not present?

- [x] If you play a video and then click on a video in the sidebar and play it, both videos play at once, and there's no way to stop the previous one. This happens on the features page but not on the webinar page.