Unnamed: 0 int64 3 832k | id float64 2.49B 32.1B | type stringclasses 1

value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3

values | title stringlengths 2 430 | labels stringlengths 4 347 | body stringlengths 5 237k | index stringclasses 7

values | text_combine stringlengths 96 237k | label stringclasses 2

values | text stringlengths 96 219k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

67,766 | 28,044,934,660 | IssuesEvent | 2023-03-28 21:48:32 | dockstore/dockstore | https://api.github.com/repos/dockstore/dockstore | closed | Determine Open data for CWL workflows | bug web-service review | **Describe the bug**

Open data calculations are not correct; see #5339. In PR #5347, we've improved the check for open data for WDL workflows. This ticket is to extend the logic for CWL workflows.

**Expected behavior**

Open data calculations for CWL should correctly figure out open data test parameter files.

**Notes**

This looks more complicated than the WDL work; we already had logic in WDLHandler that determined file inputs. For CWL, it looks like that logic is only in the CLI. Ideally move the CLI code to the common module in the web service to avoid duplication.

┆Issue is synchronized with this [Jira Story](https://ucsc-cgl.atlassian.net/browse/DOCK-2331)

┆Attachments: <a href="https://api.atlassian.com/ex/jira/9ff674c1-1cd9-4ee6-9138-6e2cdc7b3740/rest/api/2/attachment/content/10938">Screenshot from 2023-03-28 17-45-58.png</a> | <a href="https://api.atlassian.com/ex/jira/9ff674c1-1cd9-4ee6-9138-6e2cdc7b3740/rest/api/2/attachment/content/10939">Screenshot from 2023-03-28 17-47-44.png</a>

┆Fix Versions: Dockstore 1.14

┆Issue Number: DOCK-2331

┆Sprint: 107 - Eridanos

┆Issue Type: Story

| 1.0 | Determine Open data for CWL workflows - **Describe the bug**

Open data calculations are not correct; see #5339. In PR #5347, we've improved the check for open data for WDL workflows. This ticket is to extend the logic for CWL workflows.

**Expected behavior**

Open data calculations for CWL should correctly figure out open data test parameter files.

**Notes**

This looks more complicated than the WDL work; we already had logic in WDLHandler that determined file inputs. For CWL, it looks like that logic is only in the CLI. Ideally move the CLI code to the common module in the web service to avoid duplication.

┆Issue is synchronized with this [Jira Story](https://ucsc-cgl.atlassian.net/browse/DOCK-2331)

┆Attachments: <a href="https://api.atlassian.com/ex/jira/9ff674c1-1cd9-4ee6-9138-6e2cdc7b3740/rest/api/2/attachment/content/10938">Screenshot from 2023-03-28 17-45-58.png</a> | <a href="https://api.atlassian.com/ex/jira/9ff674c1-1cd9-4ee6-9138-6e2cdc7b3740/rest/api/2/attachment/content/10939">Screenshot from 2023-03-28 17-47-44.png</a>

┆Fix Versions: Dockstore 1.14

┆Issue Number: DOCK-2331

┆Sprint: 107 - Eridanos

┆Issue Type: Story

| non_comp | determine open data for cwl workflows describe the bug open data calculations are not correct see in pr we ve improved the check for open data for wdl workflows this ticket is to extend the logic for cwl workflows expected behavior open data calculations for cwl should correctly figure out open data test parameter files notes this looks more complicated than the wdl work we already had logic in wdlhandler that determined file inputs for cwl it looks like that logic is only in the cli ideally move the cli code to the common module in the web service to avoid duplication ┆issue is synchronized with this ┆attachments ┆fix versions dockstore ┆issue number dock ┆sprint eridanos ┆issue type story | 0 |

19,494 | 27,074,926,982 | IssuesEvent | 2023-02-14 09:55:24 | planetteamspeak/ts3phpframework | https://api.github.com/repos/planetteamspeak/ts3phpframework | closed | PHP 8.0 - Deprecated required parameters following optional parameter | compatibility | I am currently testing the PHP 8.0 RC 1 with a custom script.

The framework seems to be working fine with it. But I am using only a few functions of it.

One thing, which I noticed is the new check on functions. Is there a required parameter following an optional parameter, it returns an info message. It's no big deal. I only want to report it. ([more information](https://php.watch/versions/8.0/deprecate-required-param-after-optional))

Concerned functions:

https://github.com/planetteamspeak/ts3phpframework/blob/d629e767acf9d21056b3588e909443177d2bac47/libraries/TeamSpeak3/Node/Server.php#L2021

https://github.com/planetteamspeak/ts3phpframework/blob/d629e767acf9d21056b3588e909443177d2bac47/libraries/TeamSpeak3/Node/Server.php#L2036

Example (custom) error output:

```

2020-10-09 09:50:41.805911 INFO 8192: Required parameter $id1 follows optional parameter $type on line 2021 in /var/www/tsn/ranksystem_dev/libs/ts3_lib/Node/Server.php

2020-10-09 09:50:41.805817 INFO 8192: Required parameter $id1 follows optional parameter $type on line 2036 in /var/www/tsn/ranksystem_dev/libs/ts3_lib/Node/Server.php

```

An easy fix would be to set the $id1 as optional parameter like

` public function privilegeKeyCreate($type = TeamSpeak3::TOKEN_SERVERGROUP, $id1 = 0, $id2 = 0, $description = null, $customset = null)`

This will run into an TS3 error, when the $id1 is not given by the third party script, since it is the groupID of the TS3 query function `tokenadd`.

As a second possibility I see the option to change the order of the parameters. It's your decision. ;-) | True | PHP 8.0 - Deprecated required parameters following optional parameter - I am currently testing the PHP 8.0 RC 1 with a custom script.

The framework seems to be working fine with it. But I am using only a few functions of it.

One thing, which I noticed is the new check on functions. Is there a required parameter following an optional parameter, it returns an info message. It's no big deal. I only want to report it. ([more information](https://php.watch/versions/8.0/deprecate-required-param-after-optional))

Concerned functions:

https://github.com/planetteamspeak/ts3phpframework/blob/d629e767acf9d21056b3588e909443177d2bac47/libraries/TeamSpeak3/Node/Server.php#L2021

https://github.com/planetteamspeak/ts3phpframework/blob/d629e767acf9d21056b3588e909443177d2bac47/libraries/TeamSpeak3/Node/Server.php#L2036

Example (custom) error output:

```

2020-10-09 09:50:41.805911 INFO 8192: Required parameter $id1 follows optional parameter $type on line 2021 in /var/www/tsn/ranksystem_dev/libs/ts3_lib/Node/Server.php

2020-10-09 09:50:41.805817 INFO 8192: Required parameter $id1 follows optional parameter $type on line 2036 in /var/www/tsn/ranksystem_dev/libs/ts3_lib/Node/Server.php

```

An easy fix would be to set the $id1 as optional parameter like

` public function privilegeKeyCreate($type = TeamSpeak3::TOKEN_SERVERGROUP, $id1 = 0, $id2 = 0, $description = null, $customset = null)`

This will run into an TS3 error, when the $id1 is not given by the third party script, since it is the groupID of the TS3 query function `tokenadd`.

As a second possibility I see the option to change the order of the parameters. It's your decision. ;-) | comp | php deprecated required parameters following optional parameter i am currently testing the php rc with a custom script the framework seems to be working fine with it but i am using only a few functions of it one thing which i noticed is the new check on functions is there a required parameter following an optional parameter it returns an info message it s no big deal i only want to report it concerned functions example custom error output info required parameter follows optional parameter type on line in var www tsn ranksystem dev libs lib node server php info required parameter follows optional parameter type on line in var www tsn ranksystem dev libs lib node server php an easy fix would be to set the as optional parameter like public function privilegekeycreate type token servergroup description null customset null this will run into an error when the is not given by the third party script since it is the groupid of the query function tokenadd as a second possibility i see the option to change the order of the parameters it s your decision | 1 |

14,003 | 16,777,599,818 | IssuesEvent | 2021-06-15 00:34:36 | ClickHouse/ClickHouse | https://api.github.com/repos/ClickHouse/ClickHouse | closed | After upgrading ClickHouse from 20.4 to 21.3 PARTITION key stops working because it's wrongly counted as float | backward compatibility bug v21.3-affected | I have the following table in ClickHouse 20.4:

```

CREATE TABLE FileRecord

(

fileId UInt64,

sourceRecord String

) ENGINE = MergeTree()

Partition by (ceil(fileId / 1000) % 1000)

settings index_granularity = 4096

```

and it worked just fine, until i'be upgraded to 21.3, where we have following PR-feature:

https://github.com/ClickHouse/ClickHouse/pull/18464

which disallows to use floating point keys as partition key. No problem, but if i run

`toTypeName((ceil(fileId / 1000) % 1000))`

it returns `Int16`, which seems like a bug in typeName or in the newly introduced feature with `allow_floating_point_partition_key`. I do not show my stacktrace, since it's obvious and simply shows error

`Donot support float point as partition key: (ceil(fileId / 1000) % 1000)`

| True | After upgrading ClickHouse from 20.4 to 21.3 PARTITION key stops working because it's wrongly counted as float - I have the following table in ClickHouse 20.4:

```

CREATE TABLE FileRecord

(

fileId UInt64,

sourceRecord String

) ENGINE = MergeTree()

Partition by (ceil(fileId / 1000) % 1000)

settings index_granularity = 4096

```

and it worked just fine, until i'be upgraded to 21.3, where we have following PR-feature:

https://github.com/ClickHouse/ClickHouse/pull/18464

which disallows to use floating point keys as partition key. No problem, but if i run

`toTypeName((ceil(fileId / 1000) % 1000))`

it returns `Int16`, which seems like a bug in typeName or in the newly introduced feature with `allow_floating_point_partition_key`. I do not show my stacktrace, since it's obvious and simply shows error

`Donot support float point as partition key: (ceil(fileId / 1000) % 1000)`

| comp | after upgrading clickhouse from to partition key stops working because it s wrongly counted as float i have the following table in clickhouse create table filerecord fileid sourcerecord string engine mergetree partition by ceil fileid settings index granularity and it worked just fine until i be upgraded to where we have following pr feature which disallows to use floating point keys as partition key no problem but if i run totypename ceil fileid it returns which seems like a bug in typename or in the newly introduced feature with allow floating point partition key i do not show my stacktrace since it s obvious and simply shows error donot support float point as partition key ceil fileid | 1 |

7,210 | 6,823,451,223 | IssuesEvent | 2017-11-08 00:01:53 | brave/browser-laptop | https://api.github.com/repos/brave/browser-laptop | opened | Linux cibuild script fails on Jenkins | Infrastructure | <!--

Have you searched for similar issues? We have received a lot of feedback and bug reports that we have closed as duplicates. Before submitting this issue, please visit our community site for common ones: https://community.brave.com/c/common-issues

-->

### Description

When doing builds (release channel, beta channel, etc), the Linux job is always failing

I believe the root cause was unintentionally introduced with https://github.com/brave/browser-laptop/pull/10274

The upload script is recursively searching for all matching binaries and finding the RPM under the `suse` folder.

- A proper fix would exclude this directory

- A quick work-around would be to delete the folder before starting the upload

### Steps to Reproduce

<!--

Please add a series of steps to reproduce the problem. See https://stackoverflow.com/help/mcve for in depth information on how to create a minimal, complete, and verifiable example.

-->

1. Go to https://jenkins.brave.com/view/laptop%20builds%20(child%20jobs)/

2. Pick the "browser-laptop-build-linux" type and queue a build

**Actual result:**

It will eventually fail with an error like so:

```

{

"documentation_url": "https://developer.github.com/v3",

"message": "Validation Failed",

"errors": [

{

"field": "name",

"code": "already_exists",

"resource": "ReleaseAsset"

}

],

"request_id": "924F:1690:30E530:34464A:5A015C2A"

}

```

**Expected result:**

the cibuild should not fail

**Reproduces how often:**

100%

### Brave Version

**about:brave info:**

<!--

Please open about:brave, copy the version information, and paste it.

-->

**Reproducible on current live release:**

<!--

Is this a problem with the live build? It matters for triage reasons.

-->

### Additional Information

<!--

Any additional information, related issues, extra QA steps, configuration or data that might be necessary to reproduce the issue.

-->

| 1.0 | Linux cibuild script fails on Jenkins - <!--

Have you searched for similar issues? We have received a lot of feedback and bug reports that we have closed as duplicates. Before submitting this issue, please visit our community site for common ones: https://community.brave.com/c/common-issues

-->

### Description

When doing builds (release channel, beta channel, etc), the Linux job is always failing

I believe the root cause was unintentionally introduced with https://github.com/brave/browser-laptop/pull/10274

The upload script is recursively searching for all matching binaries and finding the RPM under the `suse` folder.

- A proper fix would exclude this directory

- A quick work-around would be to delete the folder before starting the upload

### Steps to Reproduce

<!--

Please add a series of steps to reproduce the problem. See https://stackoverflow.com/help/mcve for in depth information on how to create a minimal, complete, and verifiable example.

-->

1. Go to https://jenkins.brave.com/view/laptop%20builds%20(child%20jobs)/

2. Pick the "browser-laptop-build-linux" type and queue a build

**Actual result:**

It will eventually fail with an error like so:

```

{

"documentation_url": "https://developer.github.com/v3",

"message": "Validation Failed",

"errors": [

{

"field": "name",

"code": "already_exists",

"resource": "ReleaseAsset"

}

],

"request_id": "924F:1690:30E530:34464A:5A015C2A"

}

```

**Expected result:**

the cibuild should not fail

**Reproduces how often:**

100%

### Brave Version

**about:brave info:**

<!--

Please open about:brave, copy the version information, and paste it.

-->

**Reproducible on current live release:**

<!--

Is this a problem with the live build? It matters for triage reasons.

-->

### Additional Information

<!--

Any additional information, related issues, extra QA steps, configuration or data that might be necessary to reproduce the issue.

-->

| non_comp | linux cibuild script fails on jenkins have you searched for similar issues we have received a lot of feedback and bug reports that we have closed as duplicates before submitting this issue please visit our community site for common ones description when doing builds release channel beta channel etc the linux job is always failing i believe the root cause was unintentionally introduced with the upload script is recursively searching for all matching binaries and finding the rpm under the suse folder a proper fix would exclude this directory a quick work around would be to delete the folder before starting the upload steps to reproduce please add a series of steps to reproduce the problem see for in depth information on how to create a minimal complete and verifiable example go to pick the browser laptop build linux type and queue a build actual result it will eventually fail with an error like so documentation url message validation failed errors field name code already exists resource releaseasset request id expected result the cibuild should not fail reproduces how often brave version about brave info please open about brave copy the version information and paste it reproducible on current live release is this a problem with the live build it matters for triage reasons additional information any additional information related issues extra qa steps configuration or data that might be necessary to reproduce the issue | 0 |

175,915 | 14,545,040,898 | IssuesEvent | 2020-12-15 19:03:29 | roboticslab-uc3m/follow-me | https://api.github.com/repos/roboticslab-uc3m/follow-me | closed | Update follow-me-app.graphml | documentation | I believe the PNG generated and shown in the main page is not the same as seen in the .graphml file.

Related: roboticslab-uc3m/yarp-devices#99.

| 1.0 | Update follow-me-app.graphml - I believe the PNG generated and shown in the main page is not the same as seen in the .graphml file.

Related: roboticslab-uc3m/yarp-devices#99.

| non_comp | update follow me app graphml i believe the png generated and shown in the main page is not the same as seen in the graphml file related roboticslab yarp devices | 0 |

75,092 | 7,459,787,256 | IssuesEvent | 2018-03-30 16:50:16 | swentel/indieweb | https://api.github.com/repos/swentel/indieweb | opened | test that deleting a node, deletes the syndication link and webmention | tests webmention | syndications are already deleted, webmentions are not | 1.0 | test that deleting a node, deletes the syndication link and webmention - syndications are already deleted, webmentions are not | non_comp | test that deleting a node deletes the syndication link and webmention syndications are already deleted webmentions are not | 0 |

3,910 | 6,760,108,246 | IssuesEvent | 2017-10-24 19:26:18 | mlibrary/cozy-sun-bear | https://api.github.com/repos/mlibrary/cozy-sun-bear | opened | Improvements for mobile screens | browser compatibility mobile UI | - [ ] Change "Get Citation" to "Cite"

- [ ] Decrease size of left and right arrows

- [ ] Decrease size of Close Button

- [ ] Decrease size of Book Title / Section Title

- [ ] Contents modal width should fill the screen

- [ ] Search results modal width should fill the screen

- [ ] Preferences modal width should fill the screen

- [ ] Ensure ellipsis displays when Book Title / Section Title overflow is hidden

| True | Improvements for mobile screens - - [ ] Change "Get Citation" to "Cite"

- [ ] Decrease size of left and right arrows

- [ ] Decrease size of Close Button

- [ ] Decrease size of Book Title / Section Title

- [ ] Contents modal width should fill the screen

- [ ] Search results modal width should fill the screen

- [ ] Preferences modal width should fill the screen

- [ ] Ensure ellipsis displays when Book Title / Section Title overflow is hidden

| comp | improvements for mobile screens change get citation to cite decrease size of left and right arrows decrease size of close button decrease size of book title section title contents modal width should fill the screen search results modal width should fill the screen preferences modal width should fill the screen ensure ellipsis displays when book title section title overflow is hidden | 1 |

95,613 | 19,722,218,406 | IssuesEvent | 2022-01-13 16:22:50 | Sheeves11/UnnamedFiefdomGame | https://api.github.com/repos/Sheeves11/UnnamedFiefdomGame | opened | Variable Naming Schema | code improvement | Would be good to maintain a rule for constant variables that can only be updated if you modify the code directly.

I have renamed a number of variables that I notice this with in THIS_STYLE.

If variables are subject to change, or if I'm not entirely sure, then I'll leave them as-is. (like GOLD_PER and defendersPer).

To push it a step further, it would be good to define global variables without camel back, like:

DefendersPer. This way, we know it is something that may get modified, but is also global.

I haven't done this yet, camel back is fine for now for everything besides constants. | 1.0 | Variable Naming Schema - Would be good to maintain a rule for constant variables that can only be updated if you modify the code directly.

I have renamed a number of variables that I notice this with in THIS_STYLE.

If variables are subject to change, or if I'm not entirely sure, then I'll leave them as-is. (like GOLD_PER and defendersPer).

To push it a step further, it would be good to define global variables without camel back, like:

DefendersPer. This way, we know it is something that may get modified, but is also global.

I haven't done this yet, camel back is fine for now for everything besides constants. | non_comp | variable naming schema would be good to maintain a rule for constant variables that can only be updated if you modify the code directly i have renamed a number of variables that i notice this with in this style if variables are subject to change or if i m not entirely sure then i ll leave them as is like gold per and defendersper to push it a step further it would be good to define global variables without camel back like defendersper this way we know it is something that may get modified but is also global i haven t done this yet camel back is fine for now for everything besides constants | 0 |

5,960 | 3,703,084,060 | IssuesEvent | 2016-02-29 19:07:36 | deis/deis | https://api.github.com/repos/deis/deis | closed | deis-builder 1.10.0 is spitting 'Failed handshake: EOF' once per ~5 seconds | builder easy-fix | Log as seen in papertrail:

```

Sep 08 15:07:11 52.22.0.199 logger: 2015-09-08T22:07:11UTC deis-builder[1]: [error] Failed handshake: EOF (&{{0xc82046a2a0}})

Sep 08 15:07:11 52.22.0.199 logger: 2015-09-08T22:07:11UTC deis-builder[1]: [info] Accepted connection.

Sep 08 15:07:11 52.22.0.199 logger: 2015-09-08T22:07:11UTC deis-builder[1]: [info] Checking closer.

Sep 08 15:07:17 52.22.0.199 logger: 2015-09-08T22:07:17UTC deis-builder[1]: [error] Failed handshake: EOF (&{{0xc82017f7a0}})

Sep 08 15:07:21 52.22.0.199 logger: 2015-09-08T22:07:21UTC deis-builder[1]: [info] Accepted connection.

Sep 08 15:07:21 52.22.0.199 logger: 2015-09-08T22:07:21UTC deis-builder[1]: [info] Checking closer.

Sep 08 15:07:24 52.22.0.199 logger: 2015-09-08T22:07:24UTC deis-builder[1]: [error] Failed handshake: EOF (&{{0xc820436770}})

Sep 08 15:07:25 52.22.0.199 logger: 2015-09-08T22:07:25UTC deis-builder[1]: [info] Accepted connection.

Sep 08 15:07:25 52.22.0.199 logger: 2015-09-08T22:07:25UTC deis-builder[1]: [info] Checking closer.

Sep 08 15:07:29 52.22.0.199 logger: 2015-09-08T22:07:29UTC deis-builder[1]: [info] Checking closer.

Sep 08 15:07:29 52.22.0.199 logger: 2015-09-08T22:07:29UTC deis-builder[1]: [info] Accepted connection.

Sep 08 15:07:29 52.22.0.199 logger: 2015-09-08T22:07:29UTC deis-builder[1]: [error] Failed handshake: EOF (&{{0xc820048fc0}})

Sep 08 15:07:32 52.22.0.199 logger: 2015-09-08T22:07:32UTC deis-builder[1]: [error] Failed handshake: EOF (&{{0xc8202682a0}})

Sep 08 15:07:33 52.22.0.199 logger: 2015-09-08T22:07:33UTC deis-builder[1]: [info] Checking closer.

Sep 08 15:07:33 52.22.0.199 logger: 2015-09-08T22:07:33UTC deis-builder[1]: [info] Accepted connection.

Sep 08 15:07:40 52.22.0.199 logger: 2015-09-08T22:07:40UTC deis-builder[1]: [error] Failed handshake: EOF (&{{0xc8201bc7e0}})

``` | 1.0 | deis-builder 1.10.0 is spitting 'Failed handshake: EOF' once per ~5 seconds - Log as seen in papertrail:

```

Sep 08 15:07:11 52.22.0.199 logger: 2015-09-08T22:07:11UTC deis-builder[1]: [error] Failed handshake: EOF (&{{0xc82046a2a0}})

Sep 08 15:07:11 52.22.0.199 logger: 2015-09-08T22:07:11UTC deis-builder[1]: [info] Accepted connection.

Sep 08 15:07:11 52.22.0.199 logger: 2015-09-08T22:07:11UTC deis-builder[1]: [info] Checking closer.

Sep 08 15:07:17 52.22.0.199 logger: 2015-09-08T22:07:17UTC deis-builder[1]: [error] Failed handshake: EOF (&{{0xc82017f7a0}})

Sep 08 15:07:21 52.22.0.199 logger: 2015-09-08T22:07:21UTC deis-builder[1]: [info] Accepted connection.

Sep 08 15:07:21 52.22.0.199 logger: 2015-09-08T22:07:21UTC deis-builder[1]: [info] Checking closer.

Sep 08 15:07:24 52.22.0.199 logger: 2015-09-08T22:07:24UTC deis-builder[1]: [error] Failed handshake: EOF (&{{0xc820436770}})

Sep 08 15:07:25 52.22.0.199 logger: 2015-09-08T22:07:25UTC deis-builder[1]: [info] Accepted connection.

Sep 08 15:07:25 52.22.0.199 logger: 2015-09-08T22:07:25UTC deis-builder[1]: [info] Checking closer.

Sep 08 15:07:29 52.22.0.199 logger: 2015-09-08T22:07:29UTC deis-builder[1]: [info] Checking closer.

Sep 08 15:07:29 52.22.0.199 logger: 2015-09-08T22:07:29UTC deis-builder[1]: [info] Accepted connection.

Sep 08 15:07:29 52.22.0.199 logger: 2015-09-08T22:07:29UTC deis-builder[1]: [error] Failed handshake: EOF (&{{0xc820048fc0}})

Sep 08 15:07:32 52.22.0.199 logger: 2015-09-08T22:07:32UTC deis-builder[1]: [error] Failed handshake: EOF (&{{0xc8202682a0}})

Sep 08 15:07:33 52.22.0.199 logger: 2015-09-08T22:07:33UTC deis-builder[1]: [info] Checking closer.

Sep 08 15:07:33 52.22.0.199 logger: 2015-09-08T22:07:33UTC deis-builder[1]: [info] Accepted connection.

Sep 08 15:07:40 52.22.0.199 logger: 2015-09-08T22:07:40UTC deis-builder[1]: [error] Failed handshake: EOF (&{{0xc8201bc7e0}})

``` | non_comp | deis builder is spitting failed handshake eof once per seconds log as seen in papertrail sep logger deis builder failed handshake eof sep logger deis builder accepted connection sep logger deis builder checking closer sep logger deis builder failed handshake eof sep logger deis builder accepted connection sep logger deis builder checking closer sep logger deis builder failed handshake eof sep logger deis builder accepted connection sep logger deis builder checking closer sep logger deis builder checking closer sep logger deis builder accepted connection sep logger deis builder failed handshake eof sep logger deis builder failed handshake eof sep logger deis builder checking closer sep logger deis builder accepted connection sep logger deis builder failed handshake eof | 0 |

12,746 | 15,024,584,980 | IssuesEvent | 2021-02-01 19:51:01 | AmProsius/gothic-1-community-patch | https://api.github.com/repos/AmProsius/gothic-1-community-patch | closed | Wolf's minecrawler plate dialog doesn't disappear | compatibility easy only for session | Wolf can be offered minecrawlers' armor plates even if the player already did so. | True | Wolf's minecrawler plate dialog doesn't disappear - Wolf can be offered minecrawlers' armor plates even if the player already did so. | comp | wolf s minecrawler plate dialog doesn t disappear wolf can be offered minecrawlers armor plates even if the player already did so | 1 |

9,977 | 11,963,272,453 | IssuesEvent | 2020-04-05 15:20:01 | sebastianbergmann/phpunit | https://api.github.com/repos/sebastianbergmann/phpunit | opened | "PHPUnit\TextUI\Configuration" vs autoloader | type/backward-compatibility type/bug | Hello,

I'll probably get some hate because I **don't** use Composer,

but this file:

https://github.com/sebastianbergmann/phpunit/blob/5a3b8a9d2a4b71ca251fc8b616cca642e4a46bc4/src/TextUI/Configuration/PHPUnit/ExtensionHandler.php#L10

Is under the folder "Configuration/PHPUnit",

yet the namespace is:

namespace PHPUnit\TextUI\Configuration;

(I see the same applies to other files under that folder)

Therefore, these classes become "non-automatically findable", by having my version of the autoloader find the file directly in "PHPUnit\TextUI\Configuration".

Is there a reason the namespace isn't "PHPUnit\TextUI\Configuration\PHPUnit" ?

Thank you. | True | "PHPUnit\TextUI\Configuration" vs autoloader - Hello,

I'll probably get some hate because I **don't** use Composer,

but this file:

https://github.com/sebastianbergmann/phpunit/blob/5a3b8a9d2a4b71ca251fc8b616cca642e4a46bc4/src/TextUI/Configuration/PHPUnit/ExtensionHandler.php#L10

Is under the folder "Configuration/PHPUnit",

yet the namespace is:

namespace PHPUnit\TextUI\Configuration;

(I see the same applies to other files under that folder)

Therefore, these classes become "non-automatically findable", by having my version of the autoloader find the file directly in "PHPUnit\TextUI\Configuration".

Is there a reason the namespace isn't "PHPUnit\TextUI\Configuration\PHPUnit" ?

Thank you. | comp | phpunit textui configuration vs autoloader hello i ll probably get some hate because i don t use composer but this file is under the folder configuration phpunit yet the namespace is namespace phpunit textui configuration i see the same applies to other files under that folder therefore these classes become non automatically findable by having my version of the autoloader find the file directly in phpunit textui configuration is there a reason the namespace isn t phpunit textui configuration phpunit thank you | 1 |

5,903 | 8,722,366,751 | IssuesEvent | 2018-12-09 11:47:28 | kerubistan/kerub | https://api.github.com/repos/kerubistan/kerub | opened | measure and track cpu temperature information | component:data processing enhancement priority: normal | probably lm-sensors, or proc filesystem, or whatever is available on the OS | 1.0 | measure and track cpu temperature information - probably lm-sensors, or proc filesystem, or whatever is available on the OS | non_comp | measure and track cpu temperature information probably lm sensors or proc filesystem or whatever is available on the os | 0 |

14,555 | 17,650,786,990 | IssuesEvent | 2021-08-20 12:57:17 | ModdingForBlockheads/CookingForBlockheads | https://api.github.com/repos/ModdingForBlockheads/CookingForBlockheads | opened | Compat with a few mods [datapack included] | compatibility | I made a datapack for compat with Farmers Delight, Builders Crafts and Additions, and Crayfish's More Furniture mod.

Here is the pack:

[Cooking for Blockheads compat.zip](https://github.com/ModdingForBlockheads/CookingForBlockheads/files/7021598/Cooking.for.Blockheads.compat.zip)

| True | Compat with a few mods [datapack included] - I made a datapack for compat with Farmers Delight, Builders Crafts and Additions, and Crayfish's More Furniture mod.

Here is the pack:

[Cooking for Blockheads compat.zip](https://github.com/ModdingForBlockheads/CookingForBlockheads/files/7021598/Cooking.for.Blockheads.compat.zip)

| comp | compat with a few mods i made a datapack for compat with farmers delight builders crafts and additions and crayfish s more furniture mod here is the pack | 1 |

19,695 | 27,338,186,432 | IssuesEvent | 2023-02-26 13:27:11 | TwelveIterationMods/InventoryEssentials | https://api.github.com/repos/TwelveIterationMods/InventoryEssentials | closed | Item duplication glitch with Quark Oddities backpack | bug compatibility pending release 1.18 pending lts | ### Minecraft Version

1.18.x

### Mod Loader

Forge

### Mod Loader Version

40.1.68

### Mod Version

4.0.2

### Describe the Issue

When ctrl-clicking on a stack in a backpack from [Quark Oddities](https://www.curseforge.com/minecraft/mc-mods/quark-oddities) to move a single item into my inventory, it clones the entire stack into the target inventory, though reduces the original stack's amount as expected.

### Before

### After

### Logs

_No response_

### Do you use any performance-enhancing mods (e.g. OptiFine)?

FerriteCore, Radium Reforged, Rubidium | True | Item duplication glitch with Quark Oddities backpack - ### Minecraft Version

1.18.x

### Mod Loader

Forge

### Mod Loader Version

40.1.68

### Mod Version

4.0.2

### Describe the Issue

When ctrl-clicking on a stack in a backpack from [Quark Oddities](https://www.curseforge.com/minecraft/mc-mods/quark-oddities) to move a single item into my inventory, it clones the entire stack into the target inventory, though reduces the original stack's amount as expected.

### Before

### After

### Logs

_No response_

### Do you use any performance-enhancing mods (e.g. OptiFine)?

FerriteCore, Radium Reforged, Rubidium | comp | item duplication glitch with quark oddities backpack minecraft version x mod loader forge mod loader version mod version describe the issue when ctrl clicking on a stack in a backpack from to move a single item into my inventory it clones the entire stack into the target inventory though reduces the original stack s amount as expected before after logs no response do you use any performance enhancing mods e g optifine ferritecore radium reforged rubidium | 1 |

118,367 | 17,581,204,266 | IssuesEvent | 2021-08-16 07:42:21 | AlexRogalskiy/charts | https://api.github.com/repos/AlexRogalskiy/charts | opened | CVE-2020-11023 (Medium) detected in jquery-1.8.1.min.js, jquery-1.9.1.js | security vulnerability | ## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-1.8.1.min.js</b>, <b>jquery-1.9.1.js</b></p></summary>

<p>

<details><summary><b>jquery-1.8.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.8.1/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.8.1/jquery.min.js</a></p>

<p>Path to dependency file: charts/node_modules/redeyed/examples/browser/index.html</p>

<p>Path to vulnerable library: /node_modules/redeyed/examples/browser/index.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.8.1.min.js** (Vulnerable Library)

</details>

<details><summary><b>jquery-1.9.1.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.9.1/jquery.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.9.1/jquery.js</a></p>

<p>Path to dependency file: charts/node_modules/tinygradient/bower_components/tinycolor/index.html</p>

<p>Path to vulnerable library: /node_modules/tinygradient/bower_components/tinycolor/demo/jquery-1.9.1.js</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.9.1.js** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/AlexRogalskiy/charts/commit/89e847ca7dee5ccc2cec2459799650cbed48cea6">89e847ca7dee5ccc2cec2459799650cbed48cea6</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In jQuery versions greater than or equal to 1.0.3 and before 3.5.0, passing HTML containing <option> elements from untrusted sources - even after sanitizing it - to one of jQuery's DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.

<p>Publish Date: 2020-04-29

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11023>CVE-2020-11023</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/jquery/jquery/security/advisories/GHSA-jpcq-cgw6-v4j6,https://github.com/rails/jquery-rails/blob/master/CHANGELOG.md#440">https://github.com/jquery/jquery/security/advisories/GHSA-jpcq-cgw6-v4j6,https://github.com/rails/jquery-rails/blob/master/CHANGELOG.md#440</a></p>

<p>Release Date: 2020-04-29</p>

<p>Fix Resolution: jquery - 3.5.0;jquery-rails - 4.4.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2020-11023 (Medium) detected in jquery-1.8.1.min.js, jquery-1.9.1.js - ## CVE-2020-11023 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jquery-1.8.1.min.js</b>, <b>jquery-1.9.1.js</b></p></summary>

<p>

<details><summary><b>jquery-1.8.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.8.1/jquery.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.8.1/jquery.min.js</a></p>

<p>Path to dependency file: charts/node_modules/redeyed/examples/browser/index.html</p>

<p>Path to vulnerable library: /node_modules/redeyed/examples/browser/index.html</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.8.1.min.js** (Vulnerable Library)

</details>

<details><summary><b>jquery-1.9.1.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery/1.9.1/jquery.js">https://cdnjs.cloudflare.com/ajax/libs/jquery/1.9.1/jquery.js</a></p>

<p>Path to dependency file: charts/node_modules/tinygradient/bower_components/tinycolor/index.html</p>

<p>Path to vulnerable library: /node_modules/tinygradient/bower_components/tinycolor/demo/jquery-1.9.1.js</p>

<p>

Dependency Hierarchy:

- :x: **jquery-1.9.1.js** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/AlexRogalskiy/charts/commit/89e847ca7dee5ccc2cec2459799650cbed48cea6">89e847ca7dee5ccc2cec2459799650cbed48cea6</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In jQuery versions greater than or equal to 1.0.3 and before 3.5.0, passing HTML containing <option> elements from untrusted sources - even after sanitizing it - to one of jQuery's DOM manipulation methods (i.e. .html(), .append(), and others) may execute untrusted code. This problem is patched in jQuery 3.5.0.

<p>Publish Date: 2020-04-29

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-11023>CVE-2020-11023</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.1</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: Low

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/jquery/jquery/security/advisories/GHSA-jpcq-cgw6-v4j6,https://github.com/rails/jquery-rails/blob/master/CHANGELOG.md#440">https://github.com/jquery/jquery/security/advisories/GHSA-jpcq-cgw6-v4j6,https://github.com/rails/jquery-rails/blob/master/CHANGELOG.md#440</a></p>

<p>Release Date: 2020-04-29</p>

<p>Fix Resolution: jquery - 3.5.0;jquery-rails - 4.4.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_comp | cve medium detected in jquery min js jquery js cve medium severity vulnerability vulnerable libraries jquery min js jquery js jquery min js javascript library for dom operations library home page a href path to dependency file charts node modules redeyed examples browser index html path to vulnerable library node modules redeyed examples browser index html dependency hierarchy x jquery min js vulnerable library jquery js javascript library for dom operations library home page a href path to dependency file charts node modules tinygradient bower components tinycolor index html path to vulnerable library node modules tinygradient bower components tinycolor demo jquery js dependency hierarchy x jquery js vulnerable library found in head commit a href vulnerability details in jquery versions greater than or equal to and before passing html containing elements from untrusted sources even after sanitizing it to one of jquery s dom manipulation methods i e html append and others may execute untrusted code this problem is patched in jquery publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope changed impact metrics confidentiality impact low integrity impact low availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution jquery jquery rails step up your open source security game with whitesource | 0 |

287,938 | 31,856,518,669 | IssuesEvent | 2023-09-15 07:52:27 | Trinadh465/linux-4.1.15_CVE-2023-26607 | https://api.github.com/repos/Trinadh465/linux-4.1.15_CVE-2023-26607 | opened | CVE-2022-2196 (High) detected in linuxlinux-4.6 | Mend: dependency security vulnerability | ## CVE-2022-2196 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/Trinadh465/linux-4.1.15_CVE-2023-26607/commit/6fca0e3f2f14e1e851258fd815766531370084b0">6fca0e3f2f14e1e851258fd815766531370084b0</a></p>

<p>Found in base branch: <b>main</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

A regression exists in the Linux Kernel within KVM: nVMX that allowed for speculative execution attacks. L2 can carry out Spectre v2 attacks on L1 due to L1 thinking it doesn't need retpolines or IBPB after running L2 due to KVM (L0) advertising eIBRS support to L1. An attacker at L2 with code execution can execute code on an indirect branch on the host machine. We recommend upgrading to Kernel 6.2 or past commit 2e7eab81425a

<p>Publish Date: 2023-01-09

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-2196>CVE-2022-2196</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2022-2196">https://www.linuxkernelcves.com/cves/CVE-2022-2196</a></p>

<p>Release Date: 2023-01-09</p>

<p>Fix Resolution: v5.10.170,v5.15.96,v6.1.14</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2022-2196 (High) detected in linuxlinux-4.6 - ## CVE-2022-2196 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/Trinadh465/linux-4.1.15_CVE-2023-26607/commit/6fca0e3f2f14e1e851258fd815766531370084b0">6fca0e3f2f14e1e851258fd815766531370084b0</a></p>

<p>Found in base branch: <b>main</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

A regression exists in the Linux Kernel within KVM: nVMX that allowed for speculative execution attacks. L2 can carry out Spectre v2 attacks on L1 due to L1 thinking it doesn't need retpolines or IBPB after running L2 due to KVM (L0) advertising eIBRS support to L1. An attacker at L2 with code execution can execute code on an indirect branch on the host machine. We recommend upgrading to Kernel 6.2 or past commit 2e7eab81425a

<p>Publish Date: 2023-01-09

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-2196>CVE-2022-2196</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>8.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Changed

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2022-2196">https://www.linuxkernelcves.com/cves/CVE-2022-2196</a></p>

<p>Release Date: 2023-01-09</p>

<p>Fix Resolution: v5.10.170,v5.15.96,v6.1.14</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_comp | cve high detected in linuxlinux cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch main vulnerable source files vulnerability details a regression exists in the linux kernel within kvm nvmx that allowed for speculative execution attacks can carry out spectre attacks on due to thinking it doesn t need retpolines or ibpb after running due to kvm advertising eibrs support to an attacker at with code execution can execute code on an indirect branch on the host machine we recommend upgrading to kernel or past commit publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope changed impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend | 0 |

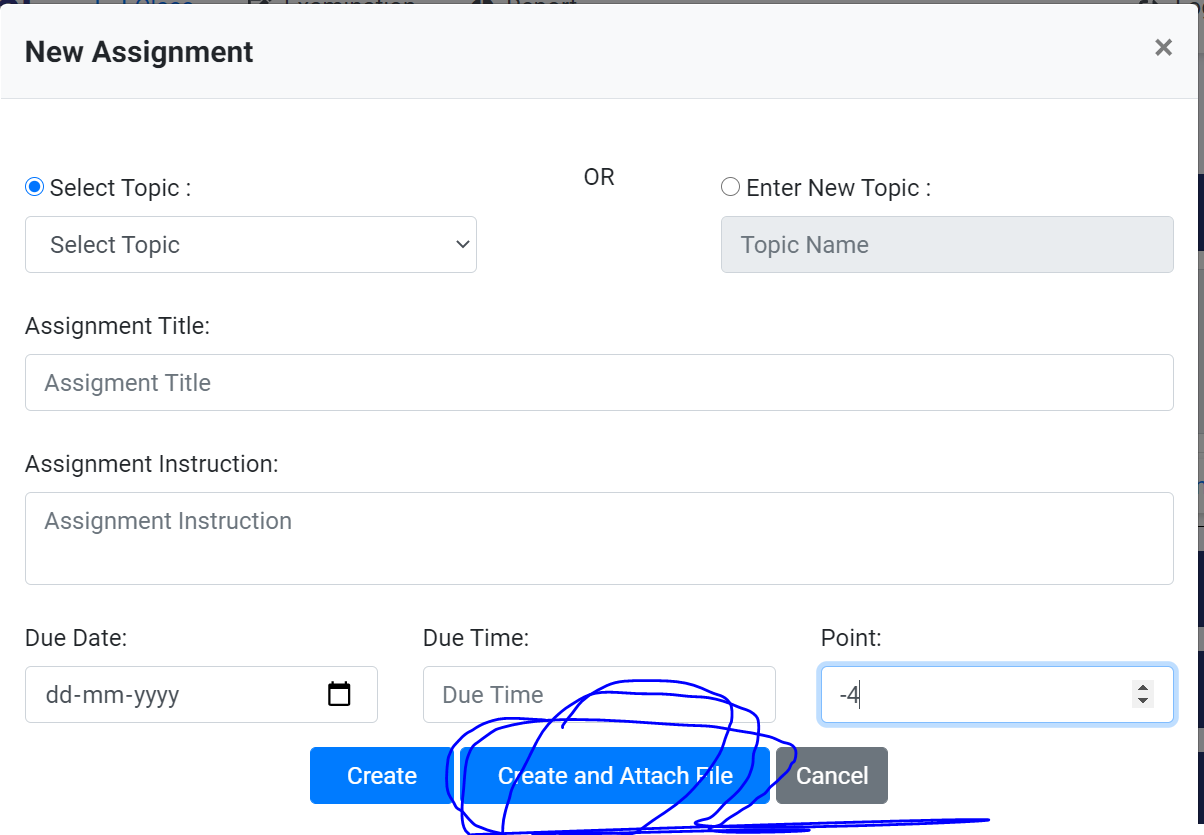

483,227 | 13,920,986,903 | IssuesEvent | 2020-10-21 11:15:27 | MontbitTech/WebApp-e-EdPort-LMS | https://api.github.com/repos/MontbitTech/WebApp-e-EdPort-LMS | closed | Assignment attach file issue | Critical High Priority bug | I noticed that whenever we are clicking on the attach file option in the new assignment tab. It is directly creating the exam.

Expected: A proper space for the attachment of the file should be given in the new assignment tab itself or after clicking on the create and submit assignment a pop-up should open for the submission of file.

| 1.0 | Assignment attach file issue - I noticed that whenever we are clicking on the attach file option in the new assignment tab. It is directly creating the exam.

Expected: A proper space for the attachment of the file should be given in the new assignment tab itself or after clicking on the create and submit assignment a pop-up should open for the submission of file.

| non_comp | assignment attach file issue i noticed that whenever we are clicking on the attach file option in the new assignment tab it is directly creating the exam expected a proper space for the attachment of the file should be given in the new assignment tab itself or after clicking on the create and submit assignment a pop up should open for the submission of file | 0 |

5,052 | 7,643,730,565 | IssuesEvent | 2018-05-08 13:36:27 | Yoast/wordpress-seo | https://api.github.com/repos/Yoast/wordpress-seo | closed | WooCommerce Category Pages Display Incorrect Open Graph Meta Description | compatibility support woocommerce | ### What did you expect to happen?

When checked in the Facebook Developers Tool, I expected my custom Facebook meta description to be displayed.

### What happened instead?

Facebook displayed the category description. When checked in source-code, Yoast SEO is outputting the category description as the Open Graph meta description. When WooCommerce SEO is disabled the Open Graph meta description tags get outputted.

### How can we reproduce this behavior?

1.Create a category

2.Fill in the social settings by writing a Facebook meta description

3.Check in Facebook Dev tool and in source code

**Source Code**

**Facebook Dev Tool**

4.Deactivate WooCommerce SEO and the OG tags appear

**Sourcecode**

**Facebook:**

Can you provide a link to a page which shows this issue?

http://www.anblik.com/review-category/best-online-website-builders-software-to-create-free-websites/

### Technical info

-WordPress version: 4.5.3

-Yoast SEO version: 3.2.5

-WooCommerce SEO: 3.2.1

Notes: Twitter is not affected.

It is also appearing on WordPress Demo Site

http://support.yoastdemo.com/en/product-category/clothing/

-WordPress version: 4.5.3

-Yoast SEO version: 3.2.4

-WooCommerce SEO: 3.1.1

| True | WooCommerce Category Pages Display Incorrect Open Graph Meta Description - ### What did you expect to happen?

When checked in the Facebook Developers Tool, I expected my custom Facebook meta description to be displayed.

### What happened instead?

Facebook displayed the category description. When checked in source-code, Yoast SEO is outputting the category description as the Open Graph meta description. When WooCommerce SEO is disabled the Open Graph meta description tags get outputted.

### How can we reproduce this behavior?

1.Create a category

2.Fill in the social settings by writing a Facebook meta description

3.Check in Facebook Dev tool and in source code

**Source Code**

**Facebook Dev Tool**

4.Deactivate WooCommerce SEO and the OG tags appear

**Sourcecode**

**Facebook:**

Can you provide a link to a page which shows this issue?

http://www.anblik.com/review-category/best-online-website-builders-software-to-create-free-websites/

### Technical info

-WordPress version: 4.5.3

-Yoast SEO version: 3.2.5

-WooCommerce SEO: 3.2.1

Notes: Twitter is not affected.

It is also appearing on WordPress Demo Site

http://support.yoastdemo.com/en/product-category/clothing/

-WordPress version: 4.5.3

-Yoast SEO version: 3.2.4

-WooCommerce SEO: 3.1.1

| comp | woocommerce category pages display incorrect open graph meta description what did you expect to happen when checked in the facebook developers tool i expected my custom facebook meta description to be displayed what happened instead facebook displayed the category description when checked in source code yoast seo is outputting the category description as the open graph meta description when woocommerce seo is disabled the open graph meta description tags get outputted how can we reproduce this behavior create a category fill in the social settings by writing a facebook meta description check in facebook dev tool and in source code source code facebook dev tool deactivate woocommerce seo and the og tags appear sourcecode facebook can you provide a link to a page which shows this issue technical info wordpress version yoast seo version woocommerce seo notes twitter is not affected it is also appearing on wordpress demo site wordpress version yoast seo version woocommerce seo | 1 |

6,334 | 8,679,438,723 | IssuesEvent | 2018-11-30 23:52:58 | cobalt-org/liquid-rust | https://api.github.com/repos/cobalt-org/liquid-rust | opened | `sort` does not support operating on a hash | enhancement shopify-compatibility | Example test:

```rust

assert_eq!(

v!([{ "a": 1, "b": 2 }]),

filters!(sort, v!({ "a": 1, "b": 2 }))

);

``` | True | `sort` does not support operating on a hash - Example test:

```rust

assert_eq!(

v!([{ "a": 1, "b": 2 }]),

filters!(sort, v!({ "a": 1, "b": 2 }))

);

``` | comp | sort does not support operating on a hash example test rust assert eq v filters sort v a b | 1 |

18,731 | 26,096,908,247 | IssuesEvent | 2022-12-26 21:45:15 | nonamecrackers2/crackers-wither-storm-mod | https://api.github.com/repos/nonamecrackers2/crackers-wither-storm-mod | closed | Dropz Mod Incompatibility | 1.16.5 compatibility 1.18.2 1.19 | **Minecraft version**

The version of Minecraft the mod is running on

1.18.2

**Mod version**

The version of the mod you are using

Latest version

**Describe the bug**

A clear and concise description of what the bug is.

Wither Storm won't pick up dropped items with the Dropz mod https://www.curseforge.com/minecraft/mc-mods/dropz

**To Reproduce**

Steps to reproduce the behavior:

1 Summon the Wither Storm with the Dropz mod installed,

2 Drop any item

3 The Wither Storm won't pick up the item

**Crash Reports / Logs**

Crash reports / logs hosted on pastebin, gist, etc.

**Screenshots**

If applicable, add screenshots to help explain your problem.

| True | Dropz Mod Incompatibility - **Minecraft version**

The version of Minecraft the mod is running on

1.18.2

**Mod version**

The version of the mod you are using

Latest version

**Describe the bug**

A clear and concise description of what the bug is.

Wither Storm won't pick up dropped items with the Dropz mod https://www.curseforge.com/minecraft/mc-mods/dropz

**To Reproduce**

Steps to reproduce the behavior:

1 Summon the Wither Storm with the Dropz mod installed,

2 Drop any item

3 The Wither Storm won't pick up the item

**Crash Reports / Logs**

Crash reports / logs hosted on pastebin, gist, etc.

**Screenshots**

If applicable, add screenshots to help explain your problem.

| comp | dropz mod incompatibility minecraft version the version of minecraft the mod is running on mod version the version of the mod you are using latest version describe the bug a clear and concise description of what the bug is wither storm won t pick up dropped items with the dropz mod to reproduce steps to reproduce the behavior summon the wither storm with the dropz mod installed drop any item the wither storm won t pick up the item crash reports logs crash reports logs hosted on pastebin gist etc screenshots if applicable add screenshots to help explain your problem | 1 |

1,601 | 4,161,960,130 | IssuesEvent | 2016-06-17 18:30:27 | LegendOfMCPE/EssentialsPE | https://api.github.com/repos/LegendOfMCPE/EssentialsPE | closed | Bug in PocketMine for MCPE 0.15.0.0 | Invalid Plugin compatibility issue | When server is starting...

`

[17:28:09] [Server thread/INFO]: Увімкнення SimpleWarp v2.1.0

[17:28:09] [Server thread/INFO]: [SimpleWarp] Enabling EssentialsPE support...

[17:28:09] [Server thread/CRITICAL]: Error: "Call to a member function setLabel() on null" (EXCEPTION) in "/plugins/SimpleWarp_v2.1.0.phar/src/falkirks/simplewarp/SimpleWarp" at line 101

[17:28:09] [Server thread/DEBUG]: #0 /src/pocketmine/plugin/PluginBase(86): falkirks\simplewarp\SimpleWarp->onEnable(boolean)

[17:28:09] [Server thread/DEBUG]: #1 /src/pocketmine/plugin/PharPluginLoader(123): pocketmine\plugin\PluginBase->setEnabled(boolean 1)

[17:28:09] [Server thread/DEBUG]: #2 /src/pocketmine/plugin/PluginManager(604): pocketmine\plugin\PharPluginLoader->enablePlugin(falkirks\simplewarp\SimpleWarp object)

[17:28:09] [Server thread/DEBUG]: #3 /src/pocketmine/Server(1849): pocketmine\plugin\PluginManager->enablePlugin(falkirks\simplewarp\SimpleWarp object)

[17:28:09] [Server thread/DEBUG]: #4 /src/pocketmine/Server(1835): pocketmine\Server->enablePlugin(falkirks\simplewarp\SimpleWarp object)

[17:28:09] [Server thread/DEBUG]: #5 /src/pocketmine/Server(1651): pocketmine\Server->enablePlugins(integer 1)

[17:28:09] [Server thread/DEBUG]: #6 /src/pocketmine/PocketMine(464): pocketmine\Server->__construct(pocketmine\CompatibleClassLoader object, pocketmine\utils\MainLogger object, string C:\PM\, string C:\PM\, string C:\PM\plugins\)

[17:28:09] [Server thread/INFO]: Вимкнення SimpleWarp v2.1.0

[17:28:09] [Server thread/INFO]: Enabling EssentialsPE v2.0.0

`

and when player is connecting, it immediately disconnects... | True | Bug in PocketMine for MCPE 0.15.0.0 - When server is starting...

`

[17:28:09] [Server thread/INFO]: Увімкнення SimpleWarp v2.1.0

[17:28:09] [Server thread/INFO]: [SimpleWarp] Enabling EssentialsPE support...

[17:28:09] [Server thread/CRITICAL]: Error: "Call to a member function setLabel() on null" (EXCEPTION) in "/plugins/SimpleWarp_v2.1.0.phar/src/falkirks/simplewarp/SimpleWarp" at line 101

[17:28:09] [Server thread/DEBUG]: #0 /src/pocketmine/plugin/PluginBase(86): falkirks\simplewarp\SimpleWarp->onEnable(boolean)

[17:28:09] [Server thread/DEBUG]: #1 /src/pocketmine/plugin/PharPluginLoader(123): pocketmine\plugin\PluginBase->setEnabled(boolean 1)

[17:28:09] [Server thread/DEBUG]: #2 /src/pocketmine/plugin/PluginManager(604): pocketmine\plugin\PharPluginLoader->enablePlugin(falkirks\simplewarp\SimpleWarp object)

[17:28:09] [Server thread/DEBUG]: #3 /src/pocketmine/Server(1849): pocketmine\plugin\PluginManager->enablePlugin(falkirks\simplewarp\SimpleWarp object)

[17:28:09] [Server thread/DEBUG]: #4 /src/pocketmine/Server(1835): pocketmine\Server->enablePlugin(falkirks\simplewarp\SimpleWarp object)

[17:28:09] [Server thread/DEBUG]: #5 /src/pocketmine/Server(1651): pocketmine\Server->enablePlugins(integer 1)

[17:28:09] [Server thread/DEBUG]: #6 /src/pocketmine/PocketMine(464): pocketmine\Server->__construct(pocketmine\CompatibleClassLoader object, pocketmine\utils\MainLogger object, string C:\PM\, string C:\PM\, string C:\PM\plugins\)

[17:28:09] [Server thread/INFO]: Вимкнення SimpleWarp v2.1.0

[17:28:09] [Server thread/INFO]: Enabling EssentialsPE v2.0.0

`

and when player is connecting, it immediately disconnects... | comp | bug in pocketmine for mcpe when server is starting увімкнення simplewarp enabling essentialspe support error call to a member function setlabel on null exception in plugins simplewarp phar src falkirks simplewarp simplewarp at line src pocketmine plugin pluginbase falkirks simplewarp simplewarp onenable boolean src pocketmine plugin pharpluginloader pocketmine plugin pluginbase setenabled boolean src pocketmine plugin pluginmanager pocketmine plugin pharpluginloader enableplugin falkirks simplewarp simplewarp object src pocketmine server pocketmine plugin pluginmanager enableplugin falkirks simplewarp simplewarp object src pocketmine server pocketmine server enableplugin falkirks simplewarp simplewarp object src pocketmine server pocketmine server enableplugins integer src pocketmine pocketmine pocketmine server construct pocketmine compatibleclassloader object pocketmine utils mainlogger object string c pm string c pm string c pm plugins вимкнення simplewarp enabling essentialspe and when player is connecting it immediately disconnects | 1 |

106,139 | 13,247,729,402 | IssuesEvent | 2020-08-19 17:44:54 | tokio-rs/tracing | https://api.github.com/repos/tokio-rs/tracing | closed | fmt::Subscribers don't want their output to be captured | crate/subscriber kind/feature needs/design | ## Bug Report

<!--

Thank you for reporting an issue.

Please fill in as much of the template below as you're able.

-->

### Version

```

"checksum tracing 0.1.13 (registry+https://github.com/rust-lang/crates.io-index)" = "1721cc8cf7d770cc4257872507180f35a4797272f5962f24c806af9e7faf52ab"

"checksum tracing-attributes 0.1.7 (registry+https://github.com/rust-lang/crates.io-index)" = "7fbad39da2f9af1cae3016339ad7f2c7a9e870f12e8fd04c4fd7ef35b30c0d2b"

"checksum tracing-core 0.1.10 (registry+https://github.com/rust-lang/crates.io-index)" = "0aa83a9a47081cd522c09c81b31aec2c9273424976f922ad61c053b58350b715"

"checksum tracing-log 0.1.1 (registry+https://github.com/rust-lang/crates.io-index)" = "5e0f8c7178e13481ff6765bd169b33e8d554c5d2bbede5e32c356194be02b9b9"

"checksum tracing-serde 0.1.1 (registry+https://github.com/rust-lang/crates.io-index)" = "b6ccba2f8f16e0ed268fc765d9b7ff22e965e7185d32f8f1ec8294fe17d86e79"

"checksum tracing-subscriber 0.2.3 (registry+https://github.com/rust-lang/crates.io-index)" = "dedebcf5813b02261d6bab3a12c6a8ae702580c0405a2e8ec16c3713caf14c20"

```

### Platform

Linux localhost 4.15.0-88-generic #88-Ubuntu SMP Tue Feb 11 20:11:34 UTC 2020 x86_64 x86_64 x86_64 GNU/Linux

### Crates

`tracing-subscriber`

### Description

I cannot get the output of a `fmt::Subscriber` to coöperate with settings that want to capture or redirect stdio. For example, the tracing messages all show through the test runner output of `cargo test`, even when the `--nocapture` flag is not set.

I have tried to even make my own `MakeWriter` that calls `eprintln!()` directly, but that only seems to hide some of the output. It would be nice to have an easy way to clean up test output short of disabling the output entirely, since it's nice to have the output there in case a test fails. | 1.0 | fmt::Subscribers don't want their output to be captured - ## Bug Report

<!--

Thank you for reporting an issue.

Please fill in as much of the template below as you're able.

-->

### Version

```

"checksum tracing 0.1.13 (registry+https://github.com/rust-lang/crates.io-index)" = "1721cc8cf7d770cc4257872507180f35a4797272f5962f24c806af9e7faf52ab"

"checksum tracing-attributes 0.1.7 (registry+https://github.com/rust-lang/crates.io-index)" = "7fbad39da2f9af1cae3016339ad7f2c7a9e870f12e8fd04c4fd7ef35b30c0d2b"

"checksum tracing-core 0.1.10 (registry+https://github.com/rust-lang/crates.io-index)" = "0aa83a9a47081cd522c09c81b31aec2c9273424976f922ad61c053b58350b715"

"checksum tracing-log 0.1.1 (registry+https://github.com/rust-lang/crates.io-index)" = "5e0f8c7178e13481ff6765bd169b33e8d554c5d2bbede5e32c356194be02b9b9"

"checksum tracing-serde 0.1.1 (registry+https://github.com/rust-lang/crates.io-index)" = "b6ccba2f8f16e0ed268fc765d9b7ff22e965e7185d32f8f1ec8294fe17d86e79"

"checksum tracing-subscriber 0.2.3 (registry+https://github.com/rust-lang/crates.io-index)" = "dedebcf5813b02261d6bab3a12c6a8ae702580c0405a2e8ec16c3713caf14c20"

```

### Platform

Linux localhost 4.15.0-88-generic #88-Ubuntu SMP Tue Feb 11 20:11:34 UTC 2020 x86_64 x86_64 x86_64 GNU/Linux

### Crates

`tracing-subscriber`

### Description

I cannot get the output of a `fmt::Subscriber` to coöperate with settings that want to capture or redirect stdio. For example, the tracing messages all show through the test runner output of `cargo test`, even when the `--nocapture` flag is not set.

I have tried to even make my own `MakeWriter` that calls `eprintln!()` directly, but that only seems to hide some of the output. It would be nice to have an easy way to clean up test output short of disabling the output entirely, since it's nice to have the output there in case a test fails. | non_comp | fmt subscribers don t want their output to be captured bug report thank you for reporting an issue please fill in as much of the template below as you re able version checksum tracing registry checksum tracing attributes registry checksum tracing core registry checksum tracing log registry checksum tracing serde registry checksum tracing subscriber registry platform linux localhost generic ubuntu smp tue feb utc gnu linux crates tracing subscriber description i cannot get the output of a fmt subscriber to coöperate with settings that want to capture or redirect stdio for example the tracing messages all show through the test runner output of cargo test even when the nocapture flag is not set i have tried to even make my own makewriter that calls eprintln directly but that only seems to hide some of the output it would be nice to have an easy way to clean up test output short of disabling the output entirely since it s nice to have the output there in case a test fails | 0 |

20,517 | 30,388,029,940 | IssuesEvent | 2023-07-13 03:39:22 | Apollounknowndev/tectonic | https://api.github.com/repos/Apollounknowndev/tectonic | closed | Compatible with Regions Unexplored? | works as intended compatibility | Everytime I tried to locate one of your biome, it will say "Could not find a biome of type "Tectonic's biome" within reasonable distance."

Is it because of other biome mod? I also used Regions Unexplored. | True | Compatible with Regions Unexplored? - Everytime I tried to locate one of your biome, it will say "Could not find a biome of type "Tectonic's biome" within reasonable distance."

Is it because of other biome mod? I also used Regions Unexplored. | comp | compatible with regions unexplored everytime i tried to locate one of your biome it will say could not find a biome of type tectonic s biome within reasonable distance is it because of other biome mod i also used regions unexplored | 1 |

7,932 | 10,131,019,355 | IssuesEvent | 2019-08-01 18:25:58 | arcticicestudio/nord-jetbrains | https://api.github.com/repos/arcticicestudio/nord-jetbrains | closed | Diagram Theme Support | context-plugin-support scope-compatibility scope-ux type-feature | [JetBrains core product version 2019.2 introduced theme support][idea-207533] for [_Diagrams_][d] in order to prevent unreadable output due to "hardcoded" color values not matching the currently active UI theme. Add the available theme keys to better match Nord's style.

[idea-207533]: https://youtrack.jetbrains.com/issue/IDEA-207533

[d]: https://www.jetbrains.com/help/idea/diagrams.html | True | Diagram Theme Support - [JetBrains core product version 2019.2 introduced theme support][idea-207533] for [_Diagrams_][d] in order to prevent unreadable output due to "hardcoded" color values not matching the currently active UI theme. Add the available theme keys to better match Nord's style.

[idea-207533]: https://youtrack.jetbrains.com/issue/IDEA-207533

[d]: https://www.jetbrains.com/help/idea/diagrams.html | comp | diagram theme support for in order to prevent unreadable output due to hardcoded color values not matching the currently active ui theme add the available theme keys to better match nord s style | 1 |

14,587 | 17,753,625,800 | IssuesEvent | 2021-08-28 09:50:34 | PowerNukkit/PowerNukkit | https://api.github.com/repos/PowerNukkit/PowerNukkit | closed | Plugin Fakeinventorys | Type: compatibility | # 🔌 Plugin compatibility issue

<!--

👉 This template is helpful, but you may erase everything if you can express the issue clearly

Feel free to ask questions or start related discussion

--> Since the latest Update of Powernukkit, no Plugin works with Fakeinventorys Depend.

I have contact the Plugin Owner but he sais its a Problem of Powernukkit.

For Example i have tried following plugins: Seeinv, Backpack

Everytime i try to use this command, Server closed.

The Error Message is toooooo long.

In 1.5.1.0 all these Plugins works great.

### 📸 Screenshots / Videos

<!-- ✍ If applicable, add screenshots or video recordings to help explain your problem -->

### ▶ Steps to Reproduce

<!--- ✍ Reliable steps which someone can use to reproduce the issue. -->

1. Install Fakeinventorys and Backpack

2. Use /bp

3. Server closed

4. See error

### ✔ Expected Behavior

<!-- ✍ What would you expect to happen -->

### ❌ Actual Behavior

<!-- ✍ What actually happened -->

### 📋 Debug information

<!-- Use the 'debugpaste upload' and 'timings paste' command in PowerNukkit -->

<!-- You can get the version from the file name, the 'about' or 'debugpaste' command outputs -->

* PowerNukkit version: git-cb4ac65

* Debug link: ✍

* Timings link (if relevant): ✍

### 💢 Crash Dump, Stack Trace and Other Files

<!-- ✍ Use https://hastebin.com for big logs or dumps -->

### 💬 Anything else we should know?

<!-- ✍ This is the perfect place to add any additional details -->

| True | Plugin Fakeinventorys - # 🔌 Plugin compatibility issue

<!--

👉 This template is helpful, but you may erase everything if you can express the issue clearly

Feel free to ask questions or start related discussion

--> Since the latest Update of Powernukkit, no Plugin works with Fakeinventorys Depend.

I have contact the Plugin Owner but he sais its a Problem of Powernukkit.

For Example i have tried following plugins: Seeinv, Backpack

Everytime i try to use this command, Server closed.

The Error Message is toooooo long.

In 1.5.1.0 all these Plugins works great.

### 📸 Screenshots / Videos

<!-- ✍ If applicable, add screenshots or video recordings to help explain your problem -->

### ▶ Steps to Reproduce

<!--- ✍ Reliable steps which someone can use to reproduce the issue. -->

1. Install Fakeinventorys and Backpack

2. Use /bp

3. Server closed

4. See error

### ✔ Expected Behavior

<!-- ✍ What would you expect to happen -->

### ❌ Actual Behavior

<!-- ✍ What actually happened -->

### 📋 Debug information

<!-- Use the 'debugpaste upload' and 'timings paste' command in PowerNukkit -->

<!-- You can get the version from the file name, the 'about' or 'debugpaste' command outputs -->

* PowerNukkit version: git-cb4ac65

* Debug link: ✍

* Timings link (if relevant): ✍

### 💢 Crash Dump, Stack Trace and Other Files

<!-- ✍ Use https://hastebin.com for big logs or dumps -->

### 💬 Anything else we should know?

<!-- ✍ This is the perfect place to add any additional details -->

| comp | plugin fakeinventorys 🔌 plugin compatibility issue 👉 this template is helpful but you may erase everything if you can express the issue clearly feel free to ask questions or start related discussion since the latest update of powernukkit no plugin works with fakeinventorys depend i have contact the plugin owner but he sais its a problem of powernukkit for example i have tried following plugins seeinv backpack everytime i try to use this command server closed the error message is toooooo long in all these plugins works great 📸 screenshots videos ▶ steps to reproduce install fakeinventorys and backpack use bp server closed see error ✔ expected behavior ❌ actual behavior 📋 debug information powernukkit version git debug link ✍ timings link if relevant ✍ 💢 crash dump stack trace and other files 💬 anything else we should know | 1 |

65 | 2,535,895,254 | IssuesEvent | 2015-01-26 08:58:46 | dranzd/Pike-Arms | https://api.github.com/repos/dranzd/Pike-Arms | opened | Catalog's edit account does not show the values of the forms when values are available | bug composer-compatible | Form is empty even when data is available.

Caused by the tep_draw_*_field that uses combination of _REQUEST and GLOBALS to populate the fields but the account_edit.php sets the data into the _POST var.

tep_draw_*_field where modified before to not use GLOBALS but might have been programmed incorrectly. | True | Catalog's edit account does not show the values of the forms when values are available - Form is empty even when data is available.

Caused by the tep_draw_*_field that uses combination of _REQUEST and GLOBALS to populate the fields but the account_edit.php sets the data into the _POST var.

tep_draw_*_field where modified before to not use GLOBALS but might have been programmed incorrectly. | comp | catalog s edit account does not show the values of the forms when values are available form is empty even when data is available caused by the tep draw field that uses combination of request and globals to populate the fields but the account edit php sets the data into the post var tep draw field where modified before to not use globals but might have been programmed incorrectly | 1 |

16,647 | 22,792,497,039 | IssuesEvent | 2022-07-10 08:00:29 | euratom-software/calcam | https://api.github.com/repos/euratom-software/calcam | closed | Exception when opening calibration info with PyQt6 | bug compatibility | When clicking the calibration "info" button, the attached error gets raised

[Calibration info button error.txt](https://github.com/euratom-software/calcam/files/8909164/Calibration.info.button.error.txt)

. | True | Exception when opening calibration info with PyQt6 - When clicking the calibration "info" button, the attached error gets raised

[Calibration info button error.txt](https://github.com/euratom-software/calcam/files/8909164/Calibration.info.button.error.txt)

. | comp | exception when opening calibration info with when clicking the calibration info button the attached error gets raised | 1 |

442,238 | 12,742,151,728 | IssuesEvent | 2020-06-26 07:51:44 | OpenMined/PyGridNetwork | https://api.github.com/repos/OpenMined/PyGridNetwork | closed | Rename project | Priority: 3 - Medium :unamused: Severity: 4 - Low :sunglasses: Status: Available :wave: Type: Improvement :chart_with_upwards_trend: | ## Description

We need to rename this project slightly to fit [our new project naming standards](https://github.com/OpenMined/.github/issues/11).

**The new name for this repo should be:** PyGridNetwork

**The new release name for this repo should be:** openmined.gridnetwork

I have gone ahead and renamed the repo, but I will need someone else to be in charge of changing the release name. **With that said, if this repo doesn't have an official release, then this issue can be closed.**

If you have any questions about this issue, please comment here or message **@cereallarceny** on Slack. | 1.0 | Rename project - ## Description

We need to rename this project slightly to fit [our new project naming standards](https://github.com/OpenMined/.github/issues/11).

**The new name for this repo should be:** PyGridNetwork

**The new release name for this repo should be:** openmined.gridnetwork

I have gone ahead and renamed the repo, but I will need someone else to be in charge of changing the release name. **With that said, if this repo doesn't have an official release, then this issue can be closed.**