Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 757) | labels (string, length 4 to 664) | body (string, length 3 to 261k) | index (string, 10 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 232k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

276,214

| 20,974,221,263

|

IssuesEvent

|

2022-03-28 13:59:53

|

DavidT3/XGA

|

https://api.github.com/repos/DavidT3/XGA

|

opened

|

Should make it clear that coordinates are currently expected to be J2000

|

documentation enhancement

|

Or swap out the coordinate quantities to be actual astropy coordinate objects as they should have been from the beginning

|

1.0

|

Should make it clear that coordinates are currently expected to be J2000 - Or swap out the coordinate quantities to be actual astropy coordinate objects as they should have been from the beginning

|

non_defect

|

should make it clear that coordinates are currently expected to be or swap out the coordinate quantities to be actual astropy coordinate objects as they should have been from the beginning

| 0

|

3,805

| 2,610,069,349

|

IssuesEvent

|

2015-02-26 18:20:20

|

chrsmith/jsjsj122

|

https://api.github.com/repos/chrsmith/jsjsj122

|

opened

|

Which hospital in Taizhou is best for circumcision?

|

auto-migrated Priority-Medium Type-Defect

|

```

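Which hospital in Taizhou is best for circumcision? [Taizhou Wuzhou Reproductive Hospital] 24-hour health consultation hotline: 0576-88066933 (QQ 800080609) (WeChat ID tzwzszyy). Hospital address: No. 229 Fengnan Road, Jiaojiang District, Taizhou (next to the Fengnan roundabout). Bus routes: take bus 104, 108, 118, or 198, or the Jiaojiang-Jinqing bus, directly to the Fengnan residential area; or take bus 107, 105, 109, 112, 901, or 902 to Xingxing Square and walk to the hospital.

Services: impotence, premature ejaculation, prostatitis, prostatic hyperplasia, balanitis, [garbled term], azoospermia, phimosis and redundant foreskin, varicocele, gonorrhea, and more.

Taizhou Wuzhou Reproductive Hospital is the largest men's health hospital in Taizhou. Authoritative experts offer free online consultations, the hospital has professional and comprehensive equipment for men's health examination and treatment, and fees strictly follow national standards. Cutting-edge medical equipment, in step with the world. Authoritative experts setting a professional benchmark. People-centered service that puts the patient first.

For men's health, choose Taizhou Wuzhou Reproductive Hospital: professional andrology for men.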

```

-----

Original issue reported on code.google.com by `poweragr...@gmail.com` on 30 May 2014 at 11:43

|

1.0

|

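Which hospital in Taizhou is best for circumcision? - ```

Which hospital in Taizhou is best for circumcision? [Taizhou Wuzhou Reproductive Hospital] 24-hour health consultation hotline: 0576-88066933 (QQ 800080609) (WeChat ID tzwzszyy). Hospital address: No. 229 Fengnan Road, Jiaojiang District, Taizhou (next to the Fengnan roundabout). Bus routes: take bus 104, 108, 118, or 198, or the Jiaojiang-Jinqing bus, directly to the Fengnan residential area; or take bus 107, 105, 109, 112, 901, or 902 to Xingxing Square and walk to the hospital.

Services: impotence, premature ejaculation, prostatitis, prostatic hyperplasia, balanitis, [garbled term], azoospermia, phimosis and redundant foreskin, varicocele, gonorrhea, and more.

Taizhou Wuzhou Reproductive Hospital is the largest men's health hospital in Taizhou. Authoritative experts offer free online consultations, the hospital has professional and comprehensive equipment for men's health examination and treatment, and fees strictly follow national standards. Cutting-edge medical equipment, in step with the world. Authoritative experts setting a professional benchmark. People-centered service that puts the patient first.

For men's health, choose Taizhou Wuzhou Reproductive Hospital: professional andrology for men.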

```

-----

Original issue reported on code.google.com by `poweragr...@gmail.com` on 30 May 2014 at 11:43

|

defect

|

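which hospital in taizhou is best for circumcision which hospital in taizhou is best for circumcision taizhou wuzhou reproductive hospital consultation hotline wechat id tzwzszyy hospital address taizhou next to the fengnan roundabout bus routes walk to the hospital services impotence premature ejaculation prostatitis prostatic hyperplasia balanitis azoospermia phimosis and redundant foreskin varicocele gonorrhea and more taizhou wuzhou reproductive hospital is the largest men s health hospital in taizhou authoritative experts offer free online consultations the hospital has professional and comprehensive equipment for men s health examination and treatment and fees strictly follow national standards cutting edge medical equipment in step with the world authoritative experts setting a professional benchmark people centered service that puts the patient first for men s health choose taizhou wuzhou reproductive hospital professional andrology for men original issue reported on code google com by poweragr gmail com on may at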

| 1

|

195,890

| 14,787,311,142

|

IssuesEvent

|

2021-01-12 07:22:58

|

cockroachdb/cockroach

|

https://api.github.com/repos/cockroachdb/cockroach

|

opened

|

roachtest: backupTPCC failed

|

C-test-failure O-roachtest O-robot branch-release-20.1 release-blocker

|

[(roachtest).backupTPCC failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2574842&tab=buildLog) on [release-20.1@e395c0c7c48a279334f0e94dfb7030a3eafa093f](https://github.com/cockroachdb/cockroach/commits/e395c0c7c48a279334f0e94dfb7030a3eafa093f):

```

The test failed on branch=release-20.1, cloud=gce:

test artifacts and logs in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/backupTPCC/run_1

cluster.go:2198,backup.go:108,test_runner.go:749: output in run_072240.275_n1_workload_init_tpcc: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-2574842-1610435721-21-n3cpu4:1 -- ./workload init tpcc --warehouses=10 {pgurl:1-3} --deprecated-fk-indexes returned: exit status 20

(1) attached stack trace

| main.(*cluster).RunE

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2276

| main.(*cluster).Run

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2196

| main.registerBackup.func3

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/backup.go:108

| main.(*testRunner).runTest.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/test_runner.go:749

| runtime.goexit

| /usr/local/go/src/runtime/asm_amd64.s:1374

Wraps: (2) 2 safe details enclosed

Wraps: (3) output in run_072240.275_n1_workload_init_tpcc

Wraps: (4) /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-2574842-1610435721-21-n3cpu4:1 -- ./workload init tpcc --warehouses=10 {pgurl:1-3} --deprecated-fk-indexes returned

| stderr:

| bash: line 1: 4884 Illegal instruction (core dumped) bash -c "./workload init tpcc --warehouses=10 'postgres://root@10.128.0.9:26257?sslmode=disable' 'postgres://root@10.128.0.130:26257?sslmode=disable' 'postgres://root@10.128.0.129:26257?sslmode=disable' --deprecated-fk-indexes"

| Error: COMMAND_PROBLEM: exit status 132

| (1) COMMAND_PROBLEM

| Wraps: (2) Node 1. Command with error:

| | ```

| | ./workload init tpcc --warehouses=10 {pgurl:1-3} --deprecated-fk-indexes

| | ```

| Wraps: (3) exit status 132

| Error types: (1) errors.Cmd (2) *hintdetail.withDetail (3) *exec.ExitError

|

| stdout:

Wraps: (5) exit status 20

Error types: (1) *withstack.withStack (2) *safedetails.withSafeDetails (3) *errutil.withMessage (4) *main.withCommandDetails (5) *exec.ExitError

```

<details><summary>More</summary><p>

Artifacts: [/backupTPCC](https://teamcity.cockroachdb.com/viewLog.html?buildId=2574842&tab=artifacts#/backupTPCC)

[See this test on roachdash](https://roachdash.crdb.dev/?filter=status%3Aopen+t%3A.%2AbackupTPCC.%2A&sort=title&restgroup=false&display=lastcommented+project)

<sub>powered by [pkg/cmd/internal/issues](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)</sub></p></details>

|

2.0

|

roachtest: backupTPCC failed - [(roachtest).backupTPCC failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=2574842&tab=buildLog) on [release-20.1@e395c0c7c48a279334f0e94dfb7030a3eafa093f](https://github.com/cockroachdb/cockroach/commits/e395c0c7c48a279334f0e94dfb7030a3eafa093f):

```

The test failed on branch=release-20.1, cloud=gce:

test artifacts and logs in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/backupTPCC/run_1

cluster.go:2198,backup.go:108,test_runner.go:749: output in run_072240.275_n1_workload_init_tpcc: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-2574842-1610435721-21-n3cpu4:1 -- ./workload init tpcc --warehouses=10 {pgurl:1-3} --deprecated-fk-indexes returned: exit status 20

(1) attached stack trace

| main.(*cluster).RunE

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2276

| main.(*cluster).Run

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/cluster.go:2196

| main.registerBackup.func3

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/backup.go:108

| main.(*testRunner).runTest.func2

| /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/cmd/roachtest/test_runner.go:749

| runtime.goexit

| /usr/local/go/src/runtime/asm_amd64.s:1374

Wraps: (2) 2 safe details enclosed

Wraps: (3) output in run_072240.275_n1_workload_init_tpcc

Wraps: (4) /home/agent/work/.go/src/github.com/cockroachdb/cockroach/bin/roachprod run teamcity-2574842-1610435721-21-n3cpu4:1 -- ./workload init tpcc --warehouses=10 {pgurl:1-3} --deprecated-fk-indexes returned

| stderr:

| bash: line 1: 4884 Illegal instruction (core dumped) bash -c "./workload init tpcc --warehouses=10 'postgres://root@10.128.0.9:26257?sslmode=disable' 'postgres://root@10.128.0.130:26257?sslmode=disable' 'postgres://root@10.128.0.129:26257?sslmode=disable' --deprecated-fk-indexes"

| Error: COMMAND_PROBLEM: exit status 132

| (1) COMMAND_PROBLEM

| Wraps: (2) Node 1. Command with error:

| | ```

| | ./workload init tpcc --warehouses=10 {pgurl:1-3} --deprecated-fk-indexes

| | ```

| Wraps: (3) exit status 132

| Error types: (1) errors.Cmd (2) *hintdetail.withDetail (3) *exec.ExitError

|

| stdout:

Wraps: (5) exit status 20

Error types: (1) *withstack.withStack (2) *safedetails.withSafeDetails (3) *errutil.withMessage (4) *main.withCommandDetails (5) *exec.ExitError

```

<details><summary>More</summary><p>

Artifacts: [/backupTPCC](https://teamcity.cockroachdb.com/viewLog.html?buildId=2574842&tab=artifacts#/backupTPCC)

[See this test on roachdash](https://roachdash.crdb.dev/?filter=status%3Aopen+t%3A.%2AbackupTPCC.%2A&sort=title&restgroup=false&display=lastcommented+project)

<sub>powered by [pkg/cmd/internal/issues](https://github.com/cockroachdb/cockroach/tree/master/pkg/cmd/internal/issues)</sub></p></details>

|

non_defect

|

roachtest backuptpcc failed on the test failed on branch release cloud gce test artifacts and logs in home agent work go src github com cockroachdb cockroach artifacts backuptpcc run cluster go backup go test runner go output in run workload init tpcc home agent work go src github com cockroachdb cockroach bin roachprod run teamcity workload init tpcc warehouses pgurl deprecated fk indexes returned exit status attached stack trace main cluster rune home agent work go src github com cockroachdb cockroach pkg cmd roachtest cluster go main cluster run home agent work go src github com cockroachdb cockroach pkg cmd roachtest cluster go main registerbackup home agent work go src github com cockroachdb cockroach pkg cmd roachtest backup go main testrunner runtest home agent work go src github com cockroachdb cockroach pkg cmd roachtest test runner go runtime goexit usr local go src runtime asm s wraps safe details enclosed wraps output in run workload init tpcc wraps home agent work go src github com cockroachdb cockroach bin roachprod run teamcity workload init tpcc warehouses pgurl deprecated fk indexes returned stderr bash line illegal instruction core dumped bash c workload init tpcc warehouses postgres root sslmode disable postgres root sslmode disable postgres root sslmode disable deprecated fk indexes error command problem exit status command problem wraps node command with error workload init tpcc warehouses pgurl deprecated fk indexes wraps exit status error types errors cmd hintdetail withdetail exec exiterror stdout wraps exit status error types withstack withstack safedetails withsafedetails errutil withmessage main withcommanddetails exec exiterror more artifacts powered by

| 0

|

313,817

| 26,956,656,702

|

IssuesEvent

|

2023-02-08 15:20:14

|

vegaprotocol/frontend-monorepo

|

https://api.github.com/repos/vegaprotocol/frontend-monorepo

|

closed

|

Trading test refactor

|

Trading Testing 🧪

|

## The Chore

We have greatly increased Trading app test coverage, and that will continue in the near future. There are also new capsule tests for trading. We need to clean up a little to improve performance and to ease possible parallelisation.

## Tasks

- [x] Analyse existing test scenarios and improve their structure. Some tests execute multiple validations in a single `it` block, which 1. decreases visibility, 2. prevents the remaining validations from running if one of them fails. We need to rework them into `describe`s with many `it`s, where possible.

- [x] Minimise situations where the app is refreshed, as reloading the app takes a long time. If it's possible to perform many tests without refreshing, let's do that instead.

- [x] Our test files keep getting bigger, with many contexts and describes. Break them down into multiple spec files, where possible, which increases readability and allows such tests to be run in parallel in the future.

|

1.0

|

Trading test refactor - ## The Chore

We have greatly increased Trading app test coverage, and that will continue in the near future. There are also new capsule tests for trading. We need to clean up a little to improve performance and to ease possible parallelisation.

## Tasks

- [x] Analyse existing test scenarios and improve their structure. Some tests execute multiple validations in a single `it` block, which 1. decreases visibility, 2. prevents the remaining validations from running if one of them fails. We need to rework them into `describe`s with many `it`s, where possible.

- [x] Minimise situations where the app is refreshed, as reloading the app takes a long time. If it's possible to perform many tests without refreshing, let's do that instead.

- [x] Our test files keep getting bigger, with many contexts and describes. Break them down into multiple spec files, where possible, which increases readability and allows such tests to be run in parallel in the future.

|

non_defect

|

trading test refactor the chore we have greatly increased trading app test coverage and that will continue in the near future there are also new capsule tests for trading we need to clean up a little to improve performance and to ease possible parallelisation tasks analyse existing test scenarios and improve their structure some tests execute multiple validations in a single it block which decreases visibility prevents the remaining validations from running if one of them fails we need to rework them into describe s with many it s where possible minimise situations where the app is refreshed as reloading the app takes a long time if it s possible to perform many tests without refreshing let s do that instead our test files keep getting bigger with many contexts and describes break them down into multiple spec files where possible which increases readability and allows such tests to be run in parallel in the future

| 0

|

43,079

| 11,462,021,369

|

IssuesEvent

|

2020-02-07 13:18:35

|

libarchive/libarchive

|

https://api.github.com/repos/libarchive/libarchive

|

closed

|

Hang observed with xz/7z

|

OpSys-All Priority-Medium Type-Defect

|

Original [issue 315](https://code.google.com/p/libarchive/issues/detail?id=315) created by Google Code user `Ztatik.Light` on 2013-04-23T08:45:33.000Z:

```

Concerning libarchive v3.1.2... This may or may not be known already, but more testing is required for the xz and 7zip formats because with small files, they work... But with larger files that contain several files, it either doesn't work or hangs.

What I'm testing with is about 100 files that total about 25 MB compressed as either .tar.xz or .7z

```

|

1.0

|

Hang observed with xz/7z - Original [issue 315](https://code.google.com/p/libarchive/issues/detail?id=315) created by Google Code user `Ztatik.Light` on 2013-04-23T08:45:33.000Z:

```

Concerning libarchive v3.1.2... This may or may not be known already, but more testing is required for the xz and 7zip formats because with small files, they work... But with larger files that contain several files, it either doesn't work or hangs.

What I'm testing with is about 100 files that total about 25 MB compressed as either .tar.xz or .7z

```

|

defect

|

hang observed with xz original created by google code user ztatik light on concerning libarchive this may or may not be known already but more testing is required for the xz and formats because with small files they work but with larger files that contain several files it either doesn t work or hangs what i m testing with is about files that total about mb compressed as either tar xz or

| 1

|

67,205

| 20,961,583,609

|

IssuesEvent

|

2022-03-27 21:43:54

|

abedmaatalla/foursquared

|

https://api.github.com/repos/abedmaatalla/foursquared

|

closed

|

Location not updated when checking in

|

Priority-Medium Type-Defect auto-migrated

|

```

I would expect a location based app to recalculate a user's location when they

(attempt to) check in - this means that they will never get a "We don't think

you are actually at that location" error, which they are, and GPS will confirm.

This error has occurred for me several times recently - mainly when I use

high-speed transport to get somewhere (ie - train) - if I don't wait long

enough before checking in, I am given this error, and score no points for the

location even after the position is refreshed and I retry checking in.

```

Original issue reported on code.google.com by `nat.abbo...@wavewatchers.org` on 6 Jan 2011 at 7:06

|

1.0

|

Location not updated when checking in - ```

I would expect a location based app to recalculate a user's location when they

(attempt to) check in - this means that they will never get a "We don't think

you are actually at that location" error, which they are, and GPS will confirm.

This error has occurred for me several times recently - mainly when I use

high-speed transport to get somewhere (ie - train) - if I don't wait long

enough before checking in, I am given this error, and score no points for the

location even after the position is refreshed and I retry checking in.

```

Original issue reported on code.google.com by `nat.abbo...@wavewatchers.org` on 6 Jan 2011 at 7:06

|

defect

|

location not updated when checking in i would expect a location based app to recalculate a user s location when they attempt to check in this means that they will never get a we don t think you are actually at that location error which they are and gps will confirm this error has occurred for me several times recently mainly when i use high speed transport to get somewhere ie train if i don t wait long enough before checking in i am given this error and score no points for the location even after the position is refreshed and i retry checking in original issue reported on code google com by nat abbo wavewatchers org on jan at

| 1

|

38,661

| 8,951,779,276

|

IssuesEvent

|

2019-01-25 14:53:26

|

svigerske/ipopt-donotuse

|

https://api.github.com/repos/svigerske/ipopt-donotuse

|

closed

|

trunk version fails on infeasible problem with all variables fixed

|

Ipopt defect

|

Issue created by migration from Trac.

Original creator: @svigerske

Original creation time: 2008-03-13 18:44:04

Assignee: ipopt-team

Version: 3.3

Hi,

if I run Ipopt on a problem where all variables are fixed and the equations are infeasible within the bounds, then Ipopt runs into a seg. fault at IpIpoptApplication.cpp:658, p2ip_data does not get initialized completely and then p2ip_cq->curr_f() tries to read the primal values of an IteratesVector that has not become allocated.

Would be nice if this could be fixed for trunk in a way similar to as it is working for stable already. There IpTNLPAdapter.cpp:586 does not throw LOCALLY_INFEASIBLE, but NO_FREE_VARIABLES_AND_INFEASIBLE.

My workaround is [here](https://projects.coin-or.org/GAMSlinks/browser/trunk/ipopt.patch), but it is not really nice.

Best,

Stefan

|

1.0

|

trunk version fails on infeasible problem with all variables fixed - Issue created by migration from Trac.

Original creator: @svigerske

Original creation time: 2008-03-13 18:44:04

Assignee: ipopt-team

Version: 3.3

Hi,

if I run Ipopt on a problem where all variables are fixed and the equations are infeasible within the bounds, then Ipopt runs into a seg. fault at IpIpoptApplication.cpp:658, p2ip_data does not get initialized completely and then p2ip_cq->curr_f() tries to read the primal values of an IteratesVector that has not become allocated.

Would be nice if this could be fixed for trunk in a way similar to as it is working for stable already. There IpTNLPAdapter.cpp:586 does not throw LOCALLY_INFEASIBLE, but NO_FREE_VARIABLES_AND_INFEASIBLE.

My workaround is [here](https://projects.coin-or.org/GAMSlinks/browser/trunk/ipopt.patch), but it is not really nice.

Best,

Stefan

|

defect

|

trunk version fails on infeasible problem with all variables fixed issue created by migration from trac original creator svigerske original creation time assignee ipopt team version hi if i run ipopt on a problem where all variables are fixed and the equations are infeasible within the bounds then ipopt runs into a seg fault at ipipoptapplication cpp data does not get initialized completely and then cq curr f tries to read the primal values of an iteratesvector that has not become allocated would be nice if this could be fixed for trunk in a way similar to as it is working for stable already there iptnlpadapter cpp does not throw locally infeasible but no free variables and infeasible my workaround is but it is not really nice best stefan

| 1

|

59,784

| 17,023,244,558

|

IssuesEvent

|

2021-07-03 01:01:53

|

tomhughes/trac-tickets

|

https://api.github.com/repos/tomhughes/trac-tickets

|

closed

|

potlatch created doubled-up way

|

Component: potlatch (flash editor) Priority: major Resolution: worksforme Type: defect

|

**[Submitted to the original trac issue database at 8.09pm, Sunday, 4th May 2008]**

Not sure exactly how this happened, but check out revision 2008-05-04T20:38:04+01:00 of way 15242140 at http://api.openstreetmap.org/api/0.5/way/15242140/history .

I was refining an existing way (call it A) in potlatch by adding a few nodes; I also merged it with another way (B) to the south. It started out with 29 nodes, the fixed (current) version has 56 nodes, the one I mention above has 82 nodes. In this broken version, the way starts with B, then the original A forwards, then the refined A backwards. (Or perhaps the other way round.)

I believe that A was already doubled up before I merged it with B, so the merging has nothing to do with it, but I'm not sure. Also, at some point, I was able to select the unmodified A as a different way by clicking the unrefined segments...

|

1.0

|

potlatch created doubled-up way - **[Submitted to the original trac issue database at 8.09pm, Sunday, 4th May 2008]**

Not sure exactly how this happened, but check out revision 2008-05-04T20:38:04+01:00 of way 15242140 at http://api.openstreetmap.org/api/0.5/way/15242140/history .

I was refining an existing way (call it A) in potlatch by adding a few nodes; I also merged it with another way (B) to the south. It started out with 29 nodes, the fixed (current) version has 56 nodes, the one I mention above has 82 nodes. In this broken version, the way starts with B, then the original A forwards, then the refined A backwards. (Or perhaps the other way round.)

I believe that A was already doubled up before I merged it with B, so the merging has nothing to do with it, but I'm not sure. Also, at some point, I was able to select the unmodified A as a different way by clicking the unrefined segments...

|

defect

|

potlatch created doubled up way not sure exactly how this happened but check out revision of way at i was refining an existing way call it a in potlatch by adding a few nodes i also merged it with another way b to the south it started out with nodes the fixed current version has nodes the one i mention above has nodes in this broken version the way starts with b then the original a forwards then the refined a backwards or perhaps the other way round i believe that a was already doubled up before i merged it with b so the merging has nothing to do with it but i m not sure also at some point i was able to select the unmodified a as a different way by clicking the unrefined segments

| 1

|

2,980

| 2,607,968,339

|

IssuesEvent

|

2015-02-26 00:43:23

|

chrsmithdemos/leveldb

|

https://api.github.com/repos/chrsmithdemos/leveldb

|

opened

|

One piece of advice about snapshots

|

auto-migrated Priority-Medium Type-Defect

|

```

What steps will reproduce the problem?

1. All code and information are shown in the additional information below

2.

3.

What is the expected output? What do you see instead?

What version of the product are you using? On what operating system?

leveldb 1.9 fedora17

Please provide any additional information below.

code begin=================================================

const leveldb::Snapshot* snap_shot = db->GetSnapshot();

leveldb::ReadOptions read_options;

// change "key0"'s value from "value0" to "new"

db->Put(leveldb::WriteOptions(),"key0","new");

string val;

db->Get(read_options,"key0",&val);

cout<<"no snapshot value: "<<val<<endl;//output " new" .

read_options.snapshot = snap_shot;

db->Get(read_options,"key0",&val);

cout<<"snapshot value: "<<val<<endl;//output "value0" .

// release snapshot

db->ReleaseSnapshot(read_options.snapshot);

db->Get(read_options,"key0",&val);

cout<<"after release snapshot: "<<val<<endl;

//output "value0" !!! It's wrong

code end===================================================

Advice:

If I release the snapshot, I should get the newest information.

But only if I manually add "read_options.snapshot = NULL;" after

db->ReleaseSnapshot(...) do I get the right result, output "new".

I dug into the code in db_impl.cc and found that it checks

"options.snapshot != NULL" when NewIterator(...) and Get(...) are called.

So I think setting read_options.snapshot = NULL in DB::ReleaseSnapshot() is a

good idea. Otherwise, please make the documentation clearer and add

"read_options.snapshot = NULL;" to the example in the Snapshots part of

http://leveldb.googlecode.com/svn/trunk/doc/index.html

```

-----

Original issue reported on code.google.com by `wangteng...@gmail.com` on 15 May 2013 at 11:37

|

1.0

|

One piece of advice about snapshots - ```

What steps will reproduce the problem?

1. All code and information are shown in the additional information below

2.

3.

What is the expected output? What do you see instead?

What version of the product are you using? On what operating system?

leveldb 1.9 fedora17

Please provide any additional information below.

code begin=================================================

const leveldb::Snapshot* snap_shot = db->GetSnapshot();

leveldb::ReadOptions read_options;

// change "key0"'s value from "value0" to "new"

db->Put(leveldb::WriteOptions(),"key0","new");

string val;

db->Get(read_options,"key0",&val);

cout<<"no snapshot value: "<<val<<endl;//output " new" .

read_options.snapshot = snap_shot;

db->Get(read_options,"key0",&val);

cout<<"snapshot value: "<<val<<endl;//output "value0" .

// release snapshot

db->ReleaseSnapshot(read_options.snapshot);

db->Get(read_options,"key0",&val);

cout<<"after release snapshot: "<<val<<endl;

//output "value0" !!! It's wrong

code end===================================================

Advice:

If I release the snapshot, I should get the newest information.

But only if I manually add "read_options.snapshot = NULL;" after

db->ReleaseSnapshot(...) do I get the right result, output "new".

I dug into the code in db_impl.cc and found that it checks

"options.snapshot != NULL" when NewIterator(...) and Get(...) are called.

So I think setting read_options.snapshot = NULL in DB::ReleaseSnapshot() is a

good idea. Otherwise, please make the documentation clearer and add

"read_options.snapshot = NULL;" to the example in the Snapshots part of

http://leveldb.googlecode.com/svn/trunk/doc/index.html

```

-----

Original issue reported on code.google.com by `wangteng...@gmail.com` on 15 May 2013 at 11:37

|

defect

|

one piece of advice about snapshots what steps will reproduce the problem all code and information are shown in the additional information below what is the expected output what do you see instead what version of the product are you using on what operating system leveldb please provide any additional information below code begin const leveldb snapshot snap shot db getsnapshot leveldb readoptions read options change s value from to new db put leveldb writeoptions new string val db get read options val cout no snapshot value val endl output new read options snapshot snap shot db get read options val cout snapshot value val endl output release snapshot db releasesnapshot read options snapshot db get read options val cout after release snapshot val endl output it s wrong code end advice if i release the snapshot i should get the newest information but only if i manually add read options snapshot null after db releasesnapshot do i get the right result output new i dug into the code in db impl cc and found that it checks options snapshot null when newiterator and get are called so i think setting read options snapshot null in db releasesnapshot is a good idea otherwise please make the documentation clearer and add read options snapshot null to the example in the snapshots part of original issue reported on code google com by wangteng gmail com on may at

| 1

|

16,978

| 4,110,800,211

|

IssuesEvent

|

2016-06-07 01:28:49

|

brave/browser-laptop

|

https://api.github.com/repos/brave/browser-laptop

|

opened

|

General Settings: "Browser data import" setting

|

documentation settings

|

Strings:

Item label:

Browser data import

Button string:

"Import now..."

Functionality:

Choosing "Import now..." opens the Import Browser Data dialog which floats above the General Settings panel. (This dialog is modal to all other functionality within the browser settings panels.)

The pull-down menu list is populated by polling the OS for installed browsers that have at least one data type that can be imported. Each browser offers a different set of importable data types. The available toggle switches change dynamically based on the selected browser, becoming disabled to indicate lack of availability.

Safari: Preferences, Bookmarks

Chrome: Cookies, Browsing History, Bookmarks

Firefox: Browsing History, Bookmarks, Saved Passwords, Autofill form data

Superset: Browsing History, Bookmarks, Cookies, Preferences, Saved Passwords, Autofill form data

Spec image: (issue item shown in blue)

Spec image with import dialog open:

|

1.0

|

General Settings: "Browser data import" setting - Strings:

Item label:

Browser data import

Button string:

"Import now..."

Functionality:

Choosing "Import now..." opens the Import Browser Data dialog which floats above the General Settings panel. (This dialog is modal to all other functionality within the browser settings panels.)

The pull-down menu list is populated by polling the OS for installed browsers that have at least one data type that can be imported. Each browser offers a different set of importable data types. The available toggle switches change dynamically based on the selected browser, becoming disabled to indicate lack of availability.

Safari: Preferences, Bookmarks

Chrome: Cookies, Browsing History, Bookmarks

Firefox: Browsing History, Bookmarks, Saved Passwords, Autofill form data

Superset: Browsing History, Bookmarks, Cookies, Preferences, Saved Passwords, Autofill form data

Spec image: (issue item shown in blue)

Spec image with import dialog open:

|

non_defect

|

general settings browser data import setting strings item label browser data import button string import now functionality choosing import now opens the import browser data dialog which floats above the general settings panel this dialog is modal to all other functionality within the browser settings panels the pull down menu list is populated by polling the os for installed browsers that have at least one data type that can be imported each browser offers a different set of importable data types the available toggle switches change dynamically based on the selected browser becoming disabled to indicate lack of availability safari preferences bookmarks chrome cookies browsing history bookmarks firefox browsing history bookmarks saved passwords autofill form data superset browsing history bookmarks cookies preferences saved passwords autofill form data spec image issue item shown in blue spec image with import dialog open

| 0

|

462,026

| 13,239,873,648

|

IssuesEvent

|

2020-08-19 04:50:00

|

larshp/abapGit

|

https://api.github.com/repos/larshp/abapGit

|

closed

|

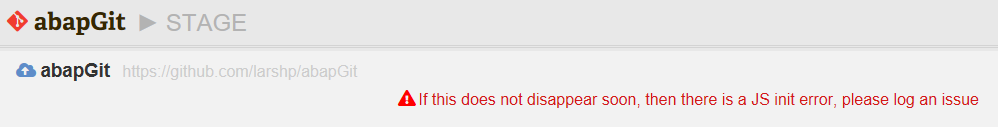

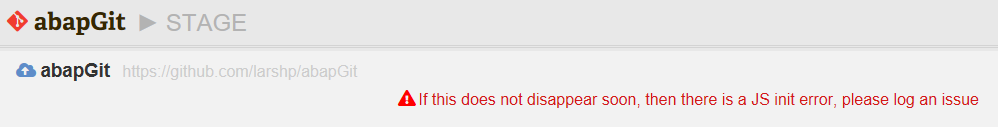

Error when using "Advanced > Force Stage"

|

bug low priority

|

If there are no diffs, then using "Advanced > Force Stage" leads to the following error:

|

1.0

|

Error when using "Advanced > Force Stage" - If there are no diffs, then using "Advanced > Force Stage" leads to the following error:

|

non_defect

|

error when using advanced force stage if there are no diffs then using advanced force stage leads to the following error

| 0

|

50,784

| 13,187,742,662

|

IssuesEvent

|

2020-08-13 04:25:47

|

icecube-trac/tix3

|

https://api.github.com/repos/icecube-trac/tix3

|

closed

|

Missing Factor 2 in python implementation of paraboloid (Trac #1364)

|

Migrated from Trac combo reconstruction defect

|

In paraboloid/releases/V01-07-00/python/fit_paraboloid.py

Nice documentation in the code. I followed the derivation of the formulas.

There is a coefficient comparison between L. 30 and L. 38, which results in

the relations between the coefficients in L. 41-43.

Here lines 41 and 43 are missing a factor of 2.

L. 41 should be

a = 2(A cosfi**2 + B sinfi**2)

and L 43 should be

c = 2(A sinfi**2 + B cosfi**2)

these factors are missing in all following calculations.

I have done the exercise. The result should be:

case b=0 and a=c:

A=B=a/2=c/2

case b=0 and a!=c:

a=2A

c=2B

case b!=0:

tanfi_1/2 = 1/(2b) * [c-a +- sqrt( (a-c)**2 +4b**2)]
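
The relations quoted above can be checked numerically. The sketch below is not the code in `fit_paraboloid.py`; it assumes the general quadratic is written as `0.5*a*x**2 + b*x*y + 0.5*c*y**2` and the rotated form as `A*x'**2 + B*y'**2`, which is the convention under which the factors of 2 appear. The function names are hypothetical.

```python
import math


def quadratic_coefficients(A, B, phi):
    """Coefficients (a, b, c) of 0.5*a*x^2 + b*x*y + 0.5*c*y^2.

    Obtained by expanding A*x'^2 + B*y'^2 with
    x' = x*cos(phi) + y*sin(phi) and y' = -x*sin(phi) + y*cos(phi).
    """
    cosfi, sinfi = math.cos(phi), math.sin(phi)
    a = 2.0 * (A * cosfi**2 + B * sinfi**2)
    b = 2.0 * (A - B) * sinfi * cosfi
    c = 2.0 * (A * sinfi**2 + B * cosfi**2)
    return a, b, c


def tan_phi_candidates(a, b, c):
    """Both roots of tan(phi) = [c - a +- sqrt((a - c)^2 + 4 b^2)] / (2 b)."""
    if b == 0:
        raise ValueError("b == 0: the axes are already aligned, use a = 2A, c = 2B")
    root = math.sqrt((a - c) ** 2 + 4.0 * b**2)
    return (c - a + root) / (2.0 * b), (c - a - root) / (2.0 * b)
```

Under these assumptions, one of the two candidates equals the tangent of the rotation angle and the other corresponds to the angle shifted by 90 degrees, since the product of the two roots is -1.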

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1364">https://code.icecube.wisc.edu/ticket/1364</a>, reported by reimann and owned by kjmeagher</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:11:57",

"description": "In paraboloid/releases/V01-07-00/python/fit_paraboloid.py\n\nNice docu in code. I followed the calculation of the formulars. \nThere is an coefficient comparison between L. 30 and L. 38 which results in \nrelation between coefficients in L. 41-43.\nHere line 41 & 43 are missing a factor of 2.\nL. 41 should be \na = 2(A cosfi**2 + B sinfi**2)\nand L 43 should be\nc = 2(A sinfi**2 + B cosfi**2)\n\nthese factors are missing in all following calculations. \n\nI have done the exercise. The result should be:\ncase b=0 and a=c:\n A=B=a/2=c/2\ncase b=0 and a!=c:\n a=2A\n c=2B\ncase b!=0:\n tanfi_1/2 = 1/(2b) * [c-a +- sqrt( (a-c)**2 +4b**2)] ",

"reporter": "reimann",

"cc": "kmeagher@ulb.ac.be",

"resolution": "fixed",

"_ts": "1550067117911749",

"component": "combo reconstruction",

"summary": "Missing Factor 2 in python implementation of paraboloid",

"priority": "normal",

"keywords": "paraboloid",

"time": "2015-09-22T15:19:30",

"milestone": "",

"owner": "kjmeagher",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

Missing Factor 2 in python implementation of paraboloid (Trac #1364) - In paraboloid/releases/V01-07-00/python/fit_paraboloid.py

Nice documentation in the code. I followed the derivation of the formulas.

There is a coefficient comparison between L. 30 and L. 38, which results in

the relations between the coefficients in L. 41-43.

Here lines 41 and 43 are missing a factor of 2.

L. 41 should be

a = 2(A cosfi**2 + B sinfi**2)

and L 43 should be

c = 2(A sinfi**2 + B cosfi**2)

these factors are missing in all following calculations.

I have done the exercise. The result should be:

case b=0 and a=c:

A=B=a/2=c/2

case b=0 and a!=c:

a=2A

c=2B

case b!=0:

tanfi_1/2 = 1/(2b) * [c-a +- sqrt( (a-c)**2 +4b**2)]

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1364">https://code.icecube.wisc.edu/ticket/1364</a>, reported by reimann and owned by kjmeagher</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:11:57",

"description": "In paraboloid/releases/V01-07-00/python/fit_paraboloid.py\n\nNice docu in code. I followed the calculation of the formulars. \nThere is an coefficient comparison between L. 30 and L. 38 which results in \nrelation between coefficients in L. 41-43.\nHere line 41 & 43 are missing a factor of 2.\nL. 41 should be \na = 2(A cosfi**2 + B sinfi**2)\nand L 43 should be\nc = 2(A sinfi**2 + B cosfi**2)\n\nthese factors are missing in all following calculations. \n\nI have done the exercise. The result should be:\ncase b=0 and a=c:\n A=B=a/2=c/2\ncase b=0 and a!=c:\n a=2A\n c=2B\ncase b!=0:\n tanfi_1/2 = 1/(2b) * [c-a +- sqrt( (a-c)**2 +4b**2)] ",

"reporter": "reimann",

"cc": "kmeagher@ulb.ac.be",

"resolution": "fixed",

"_ts": "1550067117911749",

"component": "combo reconstruction",

"summary": "Missing Factor 2 in python implementation of paraboloid",

"priority": "normal",

"keywords": "paraboloid",

"time": "2015-09-22T15:19:30",

"milestone": "",

"owner": "kjmeagher",

"type": "defect"

}

```

</p>

</details>

|

defect

|

missing factor in python implementation of paraboloid trac in paraboloid releases python fit paraboloid py nice docu in code i followed the calculation of the formulars there is an coefficient comparison between l and l which results in relation between coefficients in l here line are missing a factor of l should be a a cosfi b sinfi and l should be c a sinfi b cosfi these factors are missing in all following calculations i have done the exercise the result should be case b and a c a b a c case b and a c a c case b tanfi migrated from json status closed changetime description in paraboloid releases python fit paraboloid py n nnice docu in code i followed the calculation of the formulars nthere is an coefficient comparison between l and l which results in nrelation between coefficients in l nhere line are missing a factor of nl should be na a cosfi b sinfi nand l should be nc a sinfi b cosfi n nthese factors are missing in all following calculations n ni have done the exercise the result should be ncase b and a c n a b a c ncase b and a c n a n c ncase b n tanfi reporter reimann cc kmeagher ulb ac be resolution fixed ts component combo reconstruction summary missing factor in python implementation of paraboloid priority normal keywords paraboloid time milestone owner kjmeagher type defect

| 1

|

68,729

| 21,803,050,904

|

IssuesEvent

|

2022-05-16 07:47:22

|

vector-im/element-web

|

https://api.github.com/repos/vector-im/element-web

|

closed

|

Image metadata tooltip is shown on reply previews

|

T-Defect S-Minor A-Timeline A-Media A-Replies O-Occasional

|

### Steps to reproduce

1. Reply to an image

2. Hover over the reply preview

### Outcome

#### What did you expect?

The image metadata tooltip shouldn't appear, since it's a tiny preview that can't fit that much information.

#### What happened instead?

It's shown, and duplicates the information that's already shown to the right of the image:

### Operating system

NixOS unstable

### Browser information

Firefox 100.0

### URL for webapp

develop.element.io

### Application version

Element version: f080b1fb273e-react-b54c7d8fafe3-js-3e4f02b41e7f Olm version: 3.2.8

### Homeserver

Synapse 1.58.0

### Will you send logs?

No

|

1.0

|

Image metadata tooltip is shown on reply previews - ### Steps to reproduce

1. Reply to an image

2. Hover over the reply preview

### Outcome

#### What did you expect?

The image metadata tooltip shouldn't appear, since it's a tiny preview that can't fit that much information.

#### What happened instead?

It's shown, and duplicates the information that's already shown to the right of the image:

### Operating system

NixOS unstable

### Browser information

Firefox 100.0

### URL for webapp

develop.element.io

### Application version

Element version: f080b1fb273e-react-b54c7d8fafe3-js-3e4f02b41e7f Olm version: 3.2.8

### Homeserver

Synapse 1.58.0

### Will you send logs?

No

|

defect

|

image metadata tooltip is shown on reply previews steps to reproduce reply to an image hover over the reply preview outcome what did you expect the image metadata tooltip shouldn t appear since it s a tiny preview that can t fit that much information what happened instead it s shown and duplicates the information that s already shown to the right of the image operating system nixos unstable browser information firefox url for webapp develop element io application version element version react js olm version homeserver synapse will you send logs no

| 1

|

137,727

| 11,157,620,649

|

IssuesEvent

|

2019-12-25 13:59:10

|

appirio-tech/connect-app

|

https://api.github.com/repos/appirio-tech/connect-app

|

closed

|

If there are multiple copilot join requests, accepting one request shows processing symbol for others too

|

P3 QA Pass in Dev Test Environment

|

1. Log in as a Manager user and invite 2 Copilots to a project

2. Log in as Copilot Manager, approve one of them, and check

Actual:

Clicking approve for one request shows the approval-processing symbol for both requests.

[false processing.zip](https://github.com/appirio-tech/connect-app/files/3947871/false.processing.zip)

|

1.0

|

If there are multiple copilot join requests, accepting one request shows processing symbol for others too - 1. Log in as a Manager user and invite 2 Copilots to a project

2. Log in as Copilot Manager, approve one of them, and check

Actual:

Clicking approve for one request shows the approval-processing symbol for both requests.

[false processing.zip](https://github.com/appirio-tech/connect-app/files/3947871/false.processing.zip)

|

non_defect

|

if there are multiple copilot join requests accepting one request shows processing symbol for others too login as manager user and invite copilots into a project login as copilot manager and approve one of them and check actual clicking approve for one request shows approval processing symbol for both the requests

| 0

|

177,062

| 6,573,838,990

|

IssuesEvent

|

2017-09-11 10:19:46

|

LDMW/cms

|

https://api.github.com/repos/LDMW/cms

|

opened

|

Copy change: I am, or know someone who is, feeling - on homepage

|

priority-3

|

Adding in comma.

Currently says:

I am, or know someone who is feeling

Needs to say:

I am, or know someone who is, feeling

|

1.0

|

Copy change: I am, or know someone who is, feeling - on homepage - Adding in comma.

Currently says:

I am, or know someone who is feeling

Needs to say:

I am, or know someone who is, feeling

|

non_defect

|

copy change i am or know someone who is feeling on homepage adding in comma currently says i am or know someone who is feeling needs to say i am or know someone who is feeling

| 0

|

6,446

| 2,610,243,353

|

IssuesEvent

|

2015-02-26 19:17:23

|

chrsmith/jsjsj122

|

https://api.github.com/repos/chrsmith/jsjsj122

|

opened

|

How much does circumcision surgery for phimosis cost in Taizhou

|

auto-migrated Priority-Medium Type-Defect

|

```

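How much does circumcision surgery for phimosis cost in Taizhou [Taizhou Wuzhou Reproductive Hospital] 24-hour

health consultation hotline: 0576-88066933 (QQ 800080609) (WeChat ID tzwzszyy). Hospital address:

229 Fengnan Road, Jiaojiang District, Taizhou (next to the Fengnan roundabout). Directions: take bus

104, 108, 118, 198, or the Jiaojiang-Jinqing bus directly to Fengnan Residential Area, or take bus 107,

105, 109, 112, 901, or

902 to Xingxing Square and walk to the hospital.

Services: erectile dysfunction, premature ejaculation, prostatitis, prostatic hyperplasia, balanitis,

[illegible], azoospermia, redundant foreskin and phimosis, varicocele, gonorrhea, and more.

Taizhou Wuzhou Reproductive Hospital is the largest men's health hospital in Taizhou. Leading specialists offer free

online consultations. The hospital has comprehensive, professional andrology examination and treatment equipment and charges strictly according to national

standards. State-of-the-art medical equipment, in step with the world. Authoritative experts setting the professional

standard. People-centered service, with the patient at the heart of everything.

For men's health, choose Taizhou Wuzhou Reproductive Hospital: professional andrology for men.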

```

-----

Original issue reported on code.google.com by `poweragr...@gmail.com` on 31 May 2014 at 1:46

|

1.0

|

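How much does circumcision surgery for phimosis cost in Taizhou - ```

How much does circumcision surgery for phimosis cost in Taizhou [Taizhou Wuzhou Reproductive Hospital] 24-hour

health consultation hotline: 0576-88066933 (QQ 800080609) (WeChat ID tzwzszyy). Hospital address:

229 Fengnan Road, Jiaojiang District, Taizhou (next to the Fengnan roundabout). Directions: take bus

104, 108, 118, 198, or the Jiaojiang-Jinqing bus directly to Fengnan Residential Area, or take bus 107,

105, 109, 112, 901, or

902 to Xingxing Square and walk to the hospital.

Services: erectile dysfunction, premature ejaculation, prostatitis, prostatic hyperplasia, balanitis,

[illegible], azoospermia, redundant foreskin and phimosis, varicocele, gonorrhea, and more.

Taizhou Wuzhou Reproductive Hospital is the largest men's health hospital in Taizhou. Leading specialists offer free

online consultations. The hospital has comprehensive, professional andrology examination and treatment equipment and charges strictly according to national

standards. State-of-the-art medical equipment, in step with the world. Authoritative experts setting the professional

standard. People-centered service, with the patient at the heart of everything.

For men's health, choose Taizhou Wuzhou Reproductive Hospital: professional andrology for men.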

```

-----

Original issue reported on code.google.com by `poweragr...@gmail.com` on 31 May 2014 at 1:46

|

defect

|

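how much does circumcision surgery for phimosis cost in taizhou how much does circumcision surgery for phimosis cost in taizhou taizhou wuzhou reproductive hospital hour health consultation hotline qq wechat id tzwzszyy hospital address fengnan road jiaojiang district taizhou next to the fengnan roundabout directions take bus or the jiaojiang jinqing bus directly to fengnan residential area or take bus or to xingxing square and walk to the hospital services erectile dysfunction premature ejaculation prostatitis prostatic hyperplasia balanitis illegible azoospermia redundant foreskin and phimosis varicocele gonorrhea and more taizhou wuzhou reproductive hospital is the largest men s health hospital in taizhou leading specialists offer free online consultations the hospital has comprehensive professional andrology examination and treatment equipment and charges strictly according to national standards state of the art medical equipment in step with the world authoritative experts setting the professional standard people centered service with the patient at the heart of everything for men s health choose taizhou wuzhou reproductive hospital professional andrology for men original issue reported on code google com by poweragr gmail com on may at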

| 1

|

113,744

| 4,568,235,333

|

IssuesEvent

|

2016-09-15 13:55:20

|

PowerlineApp/powerline-mobile

|

https://api.github.com/repos/PowerlineApp/powerline-mobile

|

closed

|

Comment: rate up + rate delete end up with rates_count:1

|

bug P2 - Medium Priority

|

I have a comment under a Post which has 0 rates.

I rate the comment up:

```

Request URL:https://api-dev.powerli.ne/api/v2/post-comments/579/rate

Request Method:POST

Status Code:200 OK

{"rate_value":"up"}

```

and the rate numbers are as expected:

```

"rate_sum":"1","rates_count":"1"

```

Now I change my mind and want to undo my rate (i.e. delete it). I call:

```

Request URL:https://api-dev.powerli.ne/api/v2/post-comments/579/rate

Request Method:POST

Status Code:200 OK

{"rate_value":"delete"}

```

but in the response I receive the following numbers:

```

,"rate_sum":"0","rates_count":"1"

```

However I would expect the `rates_count` to be `0`.

The consequence of `"rate_sum":"0","rates_count":"1"` is that the computed number of upvotes and downvotes is `0.5`:

<img width="744" alt="screen shot 2016-09-15 at 09 15 07" src="https://cloud.githubusercontent.com/assets/225506/18541364/17c451ae-7b25-11e6-8240-04873f142fef.png">

[Here is the business logic](https://github.com/PowerlineApp/powerline-mobile/blob/develop/www/js/services/discussion.js#L130) that computes the number of upvotes and downvotes. Actually, it would be much better if the backend just provided the number of `upvotes` and `downvotes` for the comment, so that the frontend is not required to compute it on its own.

The same applies to comments under every other entity type (poll/user petition).
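
To illustrate where the `0.5` comes from, here is a minimal Python sketch. It does not reproduce the linked `discussion.js` logic; it assumes each rating adds `+1` (up) or `-1` (down) to `rate_sum` and `1` to `rates_count`, and the function name `split_votes` is hypothetical.

```python
def split_votes(rate_sum, rates_count):
    """Derive (upvotes, downvotes) from the two counters returned by the API.

    Assumes up - down == rate_sum and up + down == rates_count.
    The API returns both values as strings, so they are converted first.
    """
    total = int(rates_count)
    net = int(rate_sum)
    upvotes = (total + net) / 2
    downvotes = (total - net) / 2
    return upvotes, downvotes
```

With the values reported after the delete call, `split_votes("0", "1")` yields half a vote in each direction, whereas the expected `rates_count` of `0` would give zero for both.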

|

1.0

|

Comment: rate up + rate delete end up with rates_count:1 - I have a comment under a Post which has 0 rates.

I rate the comment up:

```

Request URL:https://api-dev.powerli.ne/api/v2/post-comments/579/rate

Request Method:POST

Status Code:200 OK

{"rate_value":"up"}

```

and the rate numbers are as expected:

```

"rate_sum":"1","rates_count":"1"

```

Now I change my mind and want to undo my rate (i.e. delete it). I call:

```

Request URL:https://api-dev.powerli.ne/api/v2/post-comments/579/rate

Request Method:POST

Status Code:200 OK

{"rate_value":"delete"}

```

but in the response I receive the following numbers:

```

,"rate_sum":"0","rates_count":"1"

```

However I would expect the `rates_count` to be `0`.

The consequence of `"rate_sum":"0","rates_count":"1"` is that the computed number of upvotes and downvotes is `0.5`:

<img width="744" alt="screen shot 2016-09-15 at 09 15 07" src="https://cloud.githubusercontent.com/assets/225506/18541364/17c451ae-7b25-11e6-8240-04873f142fef.png">

[Here is the business logic](https://github.com/PowerlineApp/powerline-mobile/blob/develop/www/js/services/discussion.js#L130) that computes the number of upvotes and downvotes. Actually, it would be much better if the backend just provided the number of `upvotes` and `downvotes` for the comment, so that the frontend is not required to compute it on its own.

The same applies to comments under every other entity type (poll/user petition).

|

non_defect

|

comment rate up rate delete end up with rates count i have a comment under a post which has rates i up rate the comment request url request method post status code ok rate value up and the rate numbers are as expected rate sum rates count now i change my mind and want to undo my rate i e delete it i call request url request method post status code ok rate value delete but in response i receive following numbers rate sum rates count however i would expect the rates count to be the consequence of rate sum rates count is that the computed number of upvotes and downvotes is img width alt screen shot at src to compute number of upvotes and downvotes actually it would be much better if the backend will just provide number of upvotes and downvotes for the comment so that frontend is not required to compute it on its own the same applies for comment under every other entity type poll user petition

| 0

|

31,006

| 8,638,759,756

|

IssuesEvent

|

2018-11-23 15:50:28

|

allinurl/goaccess

|

https://api.github.com/repos/allinurl/goaccess

|

closed

|

Ubuntu package with GeoIP missing

|

build change debian-repo

|

Hi,

I installed the goaccess package on Ubuntu 18.04, but this does not seem to have GeoIP enabled:

goaccess: unrecognized option '--geoip-database'

Is there a version with GeoIP available?

Thx in advance

Jan

|

1.0

|

Ubuntu package with GeoIP missing - Hi,

I installed the goaccess package on Ubuntu 18.04, but this does not seem to have GeoIP enabled:

goaccess: unrecognized option '--geoip-database'

Is there a version with GeoIP available?

Thx in advance

Jan

|

non_defect

|

ubuntu package with geoip missing hi i installed the goaccess package on ubuntu but this does not seem to have geoip enabled goaccess unrecognized option geoip database is there a version with geoip available thx in advance jan

| 0

|

15,978

| 2,870,249,021

|

IssuesEvent

|

2015-06-07 00:32:02

|

pdelia/away3d

|

https://api.github.com/repos/pdelia/away3d

|

closed

|

Cube is borken.

|

auto-migrated Priority-Medium Type-Defect

|

#28 Issue by __GoogleCodeExporter__, created on: 2015-04-24T07:51:13Z

```

What steps will reproduce the problem?

1. var cube:Cube = new Cube();

2.

3.

What is the expected output? What do you see instead?

TypeError: Error #1009: Cannot access a property or method of a null object

reference.

at

away3d.primitives::Cube/buildCube()[/Users/Li/Development/Actionscript/Projects/

ITM3

Phase 2/Workspace/away3d_secure/src/away3d/primitives/Cube.as:100]

Please use labels and text to provide additional information.

The error occurs at the buildCube method of cube, line 100:

uv0 = makeUV(1-(face.uv0.u+offU), face.uv0.v+offV);

```

Original issue reported on code.google.com by `paleblue...@gmail.com` on 27 Jan 2009 at 4:33

|

1.0

|

Cube is borken. - #28 Issue by __GoogleCodeExporter__, created on: 2015-04-24T07:51:13Z

```

What steps will reproduce the problem?

1. var cube:Cube = new Cube();

2.

3.

What is the expected output? What do you see instead?

TypeError: Error #1009: Cannot access a property or method of a null object

reference.

at

away3d.primitives::Cube/buildCube()[/Users/Li/Development/Actionscript/Projects/

ITM3

Phase 2/Workspace/away3d_secure/src/away3d/primitives/Cube.as:100]

Please use labels and text to provide additional information.

The error occurs at the buildCube method of cube, line 100:

uv0 = makeUV(1-(face.uv0.u+offU), face.uv0.v+offV);

```

Original issue reported on code.google.com by `paleblue...@gmail.com` on 27 Jan 2009 at 4:33

|

defect

|

cube is borken issue by googlecodeexporter created on what steps will reproduce the problem var cube cube new cube what is the expected output what do you see instead typeerror error cannot access a property or method of a null object reference at primitives cube buildcube users li development actionscript projects phase workspace secure src primitives cube as please use labels and text to provide additional information the error occurs at the buildcube method of cube line makeuv face u offu face v offv original issue reported on code google com by paleblue gmail com on jan at

| 1

|

262,296

| 27,944,429,558

|

IssuesEvent

|

2023-03-24 01:02:33

|

LalithK90/jnSuper

|

https://api.github.com/repos/LalithK90/jnSuper

|

opened

|

CVE-2023-1370 (High) detected in json-smart-2.3.jar

|

Mend: dependency security vulnerability

|

## CVE-2023-1370 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>json-smart-2.3.jar</b></p></summary>

<p>JSON (JavaScript Object Notation) is a lightweight data-interchange format. It is easy for humans to read and write. It is easy for machines to parse and generate. It is based on a subset of the JavaScript Programming Language, Standard ECMA-262 3rd Edition - December 1999. JSON is a text format that is completely language independent but uses conventions that are familiar to programmers of the C-family of languages, including C, C++, C#, Java, JavaScript, Perl, Python, and many others. These properties make JSON an ideal data-interchange language.</p>

<p>Library home page: <a href="http://www.minidev.net/">http://www.minidev.net/</a></p>

<p>Path to dependency file: /build.gradle</p>

<p>Path to vulnerable library: /tmp/ws-ua_20210506065133_NUNARB/downloadResource_CPLGLC/20210506065213/json-smart-2.3.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-test-2.2.4.RELEASE.jar (Root Library)

- json-path-2.4.0.jar

- :x: **json-smart-2.3.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/LalithK90/jnSuper/commit/cd2c79cdbfafc19f783a2a48afe1dda8165cd067">cd2c79cdbfafc19f783a2a48afe1dda8165cd067</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

[Json-smart](https://netplex.github.io/json-smart/) is a performance focused, JSON processor lib. When reaching a ‘[‘ or ‘{‘ character in the JSON input, the code parses an array or an object respectively. It was discovered that the code does not have any limit to the nesting of such arrays or objects. Since the parsing of nested arrays and objects is done recursively, nesting too many of them can cause a stack exhaustion (stack overflow) and crash the software.

<p>Publish Date: 2023-03-22

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-1370>CVE-2023-1370</a></p>

</p>

</details>
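
The failure mode described above, unbounded recursion on nested `[` and `{`, can be illustrated with a pre-check that measures nesting depth without recursing. This Python sketch is not json-smart's fix and not part of the Mend report; the function names and the limit of 512 are assumptions chosen for illustration.

```python
def max_nesting_depth(text):
    """Return the deepest level of [ / { nesting in a JSON-like string.

    Uses a single loop instead of recursion, so the check itself cannot
    exhaust the call stack. Brackets inside string literals are ignored.
    """
    depth = 0
    deepest = 0
    in_string = False
    escaped = False
    for ch in text:
        if in_string:
            if escaped:
                escaped = False      # character after a backslash is literal
            elif ch == "\\":
                escaped = True
            elif ch == '"':
                in_string = False
        elif ch == '"':
            in_string = True
        elif ch in "[{":
            depth += 1
            deepest = max(deepest, depth)
        elif ch in "]}":
            depth -= 1
    return deepest


def is_safe_to_parse(text, limit=512):
    """Reject input whose nesting exceeds an arbitrary, illustrative limit."""
    return max_nesting_depth(text) <= limit
```

A recursive parser given a payload such as `"[" * 5000` would descend once per bracket, while a check like this can refuse the input before parsing starts.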

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://research.jfrog.com/vulnerabilities/stack-exhaustion-in-json-smart-leads-to-denial-of-service-when-parsing-malformed-json-xray-427633/">https://research.jfrog.com/vulnerabilities/stack-exhaustion-in-json-smart-leads-to-denial-of-service-when-parsing-malformed-json-xray-427633/</a></p>

<p>Release Date: 2023-03-22</p>

<p>Fix Resolution (net.minidev:json-smart): 2.4.9</p>

<p>Direct dependency fix Resolution (org.springframework.boot:spring-boot-starter-test): 2.6.9</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2023-1370 (High) detected in json-smart-2.3.jar - ## CVE-2023-1370 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>json-smart-2.3.jar</b></p></summary>

<p>JSON (JavaScript Object Notation) is a lightweight data-interchange format. It is easy for humans to read and write. It is easy for machines to parse and generate. It is based on a subset of the JavaScript Programming Language, Standard ECMA-262 3rd Edition - December 1999. JSON is a text format that is completely language independent but uses conventions that are familiar to programmers of the C-family of languages, including C, C++, C#, Java, JavaScript, Perl, Python, and many others. These properties make JSON an ideal data-interchange language.</p>

<p>Library home page: <a href="http://www.minidev.net/">http://www.minidev.net/</a></p>

<p>Path to dependency file: /build.gradle</p>

<p>Path to vulnerable library: /tmp/ws-ua_20210506065133_NUNARB/downloadResource_CPLGLC/20210506065213/json-smart-2.3.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-test-2.2.4.RELEASE.jar (Root Library)

- json-path-2.4.0.jar

- :x: **json-smart-2.3.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/LalithK90/jnSuper/commit/cd2c79cdbfafc19f783a2a48afe1dda8165cd067">cd2c79cdbfafc19f783a2a48afe1dda8165cd067</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

[Json-smart](https://netplex.github.io/json-smart/) is a performance focused, JSON processor lib. When reaching a ‘[‘ or ‘{‘ character in the JSON input, the code parses an array or an object respectively. It was discovered that the code does not have any limit to the nesting of such arrays or objects. Since the parsing of nested arrays and objects is done recursively, nesting too many of them can cause a stack exhaustion (stack overflow) and crash the software.

<p>Publish Date: 2023-03-22

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-1370>CVE-2023-1370</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://research.jfrog.com/vulnerabilities/stack-exhaustion-in-json-smart-leads-to-denial-of-service-when-parsing-malformed-json-xray-427633/">https://research.jfrog.com/vulnerabilities/stack-exhaustion-in-json-smart-leads-to-denial-of-service-when-parsing-malformed-json-xray-427633/</a></p>

<p>Release Date: 2023-03-22</p>

<p>Fix Resolution (net.minidev:json-smart): 2.4.9</p>

<p>Direct dependency fix Resolution (org.springframework.boot:spring-boot-starter-test): 2.6.9</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

cve high detected in json smart jar cve high severity vulnerability vulnerable library json smart jar json javascript object notation is a lightweight data interchange format it is easy for humans to read and write it is easy for machines to parse and generate it is based on a subset of the javascript programming language standard ecma edition december json is a text format that is completely language independent but uses conventions that are familiar to programmers of the c family of languages including c c c java javascript perl python and many others these properties make json an ideal data interchange language library home page a href path to dependency file build gradle path to vulnerable library tmp ws ua nunarb downloadresource cplglc json smart jar dependency hierarchy spring boot starter test release jar root library json path jar x json smart jar vulnerable library found in head commit a href found in base branch master vulnerability details is a performance focused json processor lib when reaching a ‘ ‘ or ‘ ‘ character in the json input the code parses an array or an object respectively it was discovered that the code does not have any limit to the nesting of such arrays or objects since the parsing of nested arrays and objects is done recursively nesting too many of them can cause a stack exhaustion stack overflow and crash the software publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution net minidev json smart direct dependency fix resolution org springframework boot spring boot starter test step up your open source security game with mend

| 0

|

25,351

| 4,300,751,185

|

IssuesEvent

|

2016-07-20 03:12:39

|

idaholab/moose

|

https://api.github.com/repos/idaholab/moose

|

opened

|

METHOD / METHODS in update_and_rebuild_libmesh

|

C: MOOSE P: normal T: defect

|

### Description of the enhancement or error report

What exactly is going on in `update_and_rebuild_libmesh.sh` with regards to `METHOD` and `METHODS`?

Specifically, what is this?

https://github.com/idaholab/moose/blame/devel/scripts/update_and_rebuild_libmesh.sh#L39

Of course I have `METHOD` and `METHODS` both set... and of course they are set to different things (setting `METHOD="opt dbg oprof"` doesn't make any sense at all).

Normally you would have `METHODS` set to all the ways you might want to build and then you would set `METHOD` to the one you are currently using right now. This is the way I work 100% of the time (but I usually don't use `update_and_rebuild_libmesh.sh`). But this currently generates an error from `update_and_rebuild_libmesh.sh`!

In fact, I actually noticed a bit of what I just thought was brain damage in a post to moose-users the other day that is because of this. Check out this post from Hans:

https://groups.google.com/d/msg/moose-users/0C-kOiUiTQk/lWGdxkFsAQAJ

When I saw it I was like "Why the heck is he unsetting METHOD before running `update_and_rebuild_libmesh.sh`??? Must just be some leftover brain damage"... but it's actually not! It's actually required by the brain damage that's in `update_and_rebuild_libmesh.sh`!

I'm sure there's already been a discussion about this that I wasn't around for. It looks like @jwpeterson changed this back in April. What is the rationale for the way it's currently coded? If there isn't one then this check needs to be removed as it's not right!

### Rationale for the enhancement or information for reproducing the error

Set both `METHOD` and `METHODS` in your environment and make them not the same.

### Identified impact

Removal of brain damage 😉

|

1.0

|

METHOD / METHODS in update_and_rebuild_libmesh - ### Description of the enhancement or error report

What exactly is going on in `update_and_rebuild_libmesh.sh` with regards to `METHOD` and `METHODS`?

Specifically, what is this?

https://github.com/idaholab/moose/blame/devel/scripts/update_and_rebuild_libmesh.sh#L39

Of course I have `METHOD` and `METHODS` both set... and of course they are set to different things (setting `METHOD="opt dbg oprof"` doesn't make any sense at all).

Normally you would have `METHODS` set to all the ways you might want to build and then you would set `METHOD` to the one you are currently using right now. This is the way I work 100% of the time (but I usually don't use `update_and_rebuild_libmesh.sh`). But this currently generates an error from `update_and_rebuild_libmesh.sh`!

In fact, I actually noticed a bit of what I just thought was brain damage in a post to moose-users the other day that is because of this. Check out this post from Hans:

https://groups.google.com/d/msg/moose-users/0C-kOiUiTQk/lWGdxkFsAQAJ

When I saw it I was like "Why the heck is he unsetting METHOD before running `update_and_rebuild_libmesh.sh`??? Must just be some leftover brain damage"... but it's actually not! It's actually required by the brain damage that's in `update_and_rebuild_libmesh.sh`!

I'm sure there's already been a discussion about this that I wasn't around for. It looks like @jwpeterson changed this back in April. What is the rationale for the way it's currently coded? If there isn't one then this check needs to be removed as it's not right!

### Rationale for the enhancement or information for reproducing the error

Set both `METHOD` and `METHODS` in your environment and make them not the same.
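
A minimal sketch of the setup described here, together with a membership test that would accept it. The helper name `method_in_methods` and the example values are assumptions for illustration only; this is not what `update_and_rebuild_libmesh.sh` currently does, just one way a less strict check might look.

```shell
#!/bin/sh
# Hypothetical helper (not taken from update_and_rebuild_libmesh.sh):
# succeeds when the single METHOD value is one of the
# space-separated entries in METHODS.
method_in_methods() {
  method="$1"
  methods="$2"
  # Unquoted on purpose so the list splits on whitespace.
  for m in $methods; do
    if [ "$m" = "$method" ]; then
      return 0
    fi
  done
  return 1
}

# Reproduction environment from the report: both variables set, values differ.
METHODS="opt dbg oprof"
METHOD="dbg"

if method_in_methods "$METHOD" "$METHODS"; then
  echo "METHOD=$METHOD is one of METHODS=$METHODS"
else
  echo "METHOD=$METHOD is not listed in METHODS=$METHODS" >&2
fi
```

With a test like this, having `METHOD` differ from `METHODS` would only be flagged when `METHOD` is not one of the listed build modes.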

### Identified impact

Removal of brain damage 😉

|

defect

|

method methods in update and rebuild libmesh description of the enhancement or error report what exactly is going on in update and rebuild libmesh sh with regards to method and methods specifically what is this of course i have method and methods both set and of course they are set to different things setting method opt dbg oprof doesn t make any sense at all normally you would have methods set to all the ways you might want to build and then you would set method to the one you are currently using right now this is the way i work of the time but i usually don t use update and rebuild libmesh sh but this currently generates an error from update and rebuild libmesh sh in fact i actually noticed a bit of what i just thought was brain damage in a post to moose users the other day that is because of this check out this post from hans when i saw it i was like why the heck is he unsetting method before running update and rebuild libmesh sh must just be some leftover brain damage but it s actually not it s actually required by the brain damage that s in update and rebuild libmesh sh i m sure there s already been a discussion about this that i wasn t around for it looks like jwpeterson changed this back in april what is the rationale for the way it s currently coded if there isn t one then this check needs to be removed as it s not right rationale for the enhancement or information for reproducing the error set both method and methods in your environment and make them not the same identified impact removal of brain damage 😉

| 1

|

279,224

| 30,702,475,516

|

IssuesEvent

|

2023-07-27 01:33:20

|

nidhi7598/linux-3.0.35_CVE-2018-13405

|

https://api.github.com/repos/nidhi7598/linux-3.0.35_CVE-2018-13405

|

closed

|

CVE-2022-1462 (Medium) detected in linux-stable-rtv3.8.6, linux-stable-rtv3.8.6 - autoclosed

|

Mend: dependency security vulnerability

|

## CVE-2022-1462 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linux-stable-rtv3.8.6</b>, <b>linux-stable-rtv3.8.6</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

An out-of-bounds read flaw was found in the Linux kernel’s TeleTYpe subsystem. The issue occurs in how a user triggers a race condition using ioctls TIOCSPTLCK and TIOCGPTPEER and TIOCSTI and TCXONC with leakage of memory in the flush_to_ldisc function. This flaw allows a local user to crash the system or read unauthorized random data from memory.

<p>Publish Date: 2022-06-02

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-1462>CVE-2022-1462</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: High

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2022-1462">https://www.linuxkernelcves.com/cves/CVE-2022-1462</a></p>

<p>Release Date: 2022-06-02</p>

<p>Fix Resolution: v4.9.325,v4.14.290,v4.19.254,v5.4.208,v5.10.134,v5.15.58,v5.18.13</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2022-1462 (Medium) detected in linux-stable-rtv3.8.6, linux-stable-rtv3.8.6 - autoclosed - ## CVE-2022-1462 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>linux-stable-rtv3.8.6</b>, <b>linux-stable-rtv3.8.6</b></p></summary>

<p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

An out-of-bounds read flaw was found in the Linux kernel’s TeleTYpe subsystem. The issue occurs in how a user triggers a race condition using ioctls TIOCSPTLCK and TIOCGPTPEER and TIOCSTI and TCXONC with leakage of memory in the flush_to_ldisc function. This flaw allows a local user to crash the system or read unauthorized random data from memory.

<p>Publish Date: 2022-06-02

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2022-1462>CVE-2022-1462</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: High

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.linuxkernelcves.com/cves/CVE-2022-1462">https://www.linuxkernelcves.com/cves/CVE-2022-1462</a></p>

<p>Release Date: 2022-06-02</p>

<p>Fix Resolution: v4.9.325,v4.14.290,v4.19.254,v5.4.208,v5.10.134,v5.15.58,v5.18.13</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

cve medium detected in linux stable linux stable autoclosed cve medium severity vulnerability vulnerable libraries linux stable linux stable vulnerability details an out of bounds read flaw was found in the linux kernel’s teletype subsystem the issue occurs in how a user triggers a race condition using ioctls tiocsptlck and tiocgptpeer and tiocsti and tcxonc with leakage of memory in the flush to ldisc function this flaw allows a local user to crash the system or read unauthorized random data from memory publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity high privileges required low user interaction none scope unchanged impact metrics confidentiality impact high integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend

| 0

|

154,299

| 12,199,133,872

|

IssuesEvent

|

2020-04-30 00:43:44

|

Princeton-CDH/mep-django

|

https://api.github.com/repos/Princeton-CDH/mep-django

|

closed

|

As a user I want items automatically sorted by relevance if I have a keyword search term active and otherwise by title (by default), so that I see best matches first for keyword searches.

|

awaiting testing

|

# testing notes

this needs to be tested on the books search page. check that:

- if you haven't typed anything in the keyword search box, you shouldn't be allowed to select the "relevance" option

- as soon as you type in a keyword search, the sort should switch to relevance automatically