Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 5

112

| repo_url

stringlengths 34

141

| action

stringclasses 3

values | title

stringlengths 1

757

| labels

stringlengths 4

664

| body

stringlengths 3

261k

| index

stringclasses 10

values | text_combine

stringlengths 96

261k

| label

stringclasses 2

values | text

stringlengths 96

232k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

19,406

| 3,201,896,449

|

IssuesEvent

|

2015-10-02 10:34:47

|

PowerDNS/pdns

|

https://api.github.com/repos/PowerDNS/pdns

|

closed

|

Fix for GSQL backend to enable multiple dnssec storage backends

|

auth defect

|

Due to mistake in GSQL backend, one cannot use more than one storage for DNSSEC data. The patch included fixes this by checking whether the backend in question actually serves the domain, causing Ueberbackend to find correct backend for the domain. This allows to have multiple SQL backends with DNSSEC enabled.

|

1.0

|

Fix for GSQL backend to enable multiple dnssec storage backends - Due to mistake in GSQL backend, one cannot use more than one storage for DNSSEC data. The patch included fixes this by checking whether the backend in question actually serves the domain, causing Ueberbackend to find correct backend for the domain. This allows to have multiple SQL backends with DNSSEC enabled.

|

defect

|

fix for gsql backend to enable multiple dnssec storage backends due to mistake in gsql backend one cannot use more than one storage for dnssec data the patch included fixes this by checking whether the backend in question actually serves the domain causing ueberbackend to find correct backend for the domain this allows to have multiple sql backends with dnssec enabled

| 1

|

68,828

| 21,918,348,280

|

IssuesEvent

|

2022-05-22 07:07:30

|

vector-im/element-web

|

https://api.github.com/repos/vector-im/element-web

|

closed

|

Other user's name wrapped on bubble message layout

|

T-Defect S-Tolerable A-Appearance A-Message-Bubbles O-Uncommon

|

### Steps to reproduce

1. Create a test user

2. Set a long user name to the user

3. Invite the user to a test room

4. Send a message from the user

5. Open the room with the main account

### Outcome

#### What did you expect?

The user name should be hidden with ellipsis, following other cases on UI.

#### What happened instead?

The user name is wrapped.

### Operating system

Debian

### Browser information

Firefox

### URL for webapp

localhost

### Application version

develop branch

### Homeserver

_No response_

### Will you send logs?

No

|

1.0

|

Other user's name wrapped on bubble message layout - ### Steps to reproduce

1. Create a test user

2. Set a long user name to the user

3. Invite the user to a test room

4. Send a message from the user

5. Open the room with the main account

### Outcome

#### What did you expect?

The user name should be hidden with ellipsis, following other cases on UI.

#### What happened instead?

The user name is wrapped.

### Operating system

Debian

### Browser information

Firefox

### URL for webapp

localhost

### Application version

develop branch

### Homeserver

_No response_

### Will you send logs?

No

|

defect

|

other user s name wrapped on bubble message layout steps to reproduce create a test user set a long user name to the user invite the user to a test room send a message from the user open the room with the main account outcome what did you expect the user name should be hidden with ellipsis following other cases on ui what happened instead the user name is wrapped operating system debian browser information firefox url for webapp localhost application version develop branch homeserver no response will you send logs no

| 1

|

122,554

| 26,140,655,617

|

IssuesEvent

|

2022-12-29 17:56:14

|

gdscashesi/ashesi-hackers-league

|

https://api.github.com/repos/gdscashesi/ashesi-hackers-league

|

closed

|

Update Table with points

|

enhancement code

|

This page will let us update the table with points for the individual teams

page: **/admin/update**

We will re-use the table component, and add an additional column : **action**

This column will contain **3 counter buttons** for each of algo, scripting, SQL for updating a specific row

|rank | name |algorithms |scripting |sql |total|action

|--|--|--|--|--|--|--|

|1|Berekusu Warriors|21|45|3|69| +- +- +-|

|2|Ashesi Ninjas|27|9|6|42| +- +- +- |

|3|Crusading Coders|18|1|0|19| +- +- +-|

but here comes the **hard part**

- [ ] we should be able to look at the before and after states of the table (before updating, after updating because the ranking will change)

but for now, implement just the action column and make sure its working. Let me know when this is done before you start the **hard part**

If you have a better/interesting way we can do this. Let me know ASAP. Otherwise, start work!

|

1.0

|

Update Table with points - This page will let us update the table with points for the individual teams

page: **/admin/update**

We will re-use the table component, and add an additional column : **action**

This column will contain **3 counter buttons** for each of algo, scripting, SQL for updating a specific row

|rank | name |algorithms |scripting |sql |total|action

|--|--|--|--|--|--|--|

|1|Berekusu Warriors|21|45|3|69| +- +- +-|

|2|Ashesi Ninjas|27|9|6|42| +- +- +- |

|3|Crusading Coders|18|1|0|19| +- +- +-|

but here comes the **hard part**

- [ ] we should be able to look at the before and after states of the table (before updating, after updating because the ranking will change)

but for now, implement just the action column and make sure its working. Let me know when this is done before you start the **hard part**

If you have a better/interesting way we can do this. Let me know ASAP. Otherwise, start work!

|

non_defect

|

update table with points this page will let us update the table with points for the individual teams page admin update we will re use the table component and add an additional column action this column will contain counter buttons for each of algo scripting sql for updating a specific row rank name algorithms scripting sql total action berekusu warriors ashesi ninjas crusading coders but here comes the hard part we should be able to look at the before and after states of the table before updating after updating because the ranking will change but for now implement just the action column and make sure its working let me know when this is done before you start the hard part if you have a better interesting way we can do this let me know asap otherwise start work

| 0

|

165,766

| 20,619,777,231

|

IssuesEvent

|

2022-03-07 16:21:00

|

Azure/AKS

|

https://api.github.com/repos/Azure/AKS

|

opened

|

CVE-2022-0847: Linux kernel: overwriting read-only files

|

security

|

A new vulnerability in the Linux kernel since

version 5.8 commit f6dd975583bd ("pipe: merge anon_pipe_buf*_ops") due

to uninitialized variables. It enables anybody to write arbitrary

data to arbitrary files, even if the file is O_RDONLY, immutable or on

a MS_RDONLY filesystem. It can be used to inject code into arbitrary

processes.

It is similar to CVE-2016-5195 "Dirty Cow", but is easier to exploit.

The vulnerability was fixed in Linux 5.16.11, 5.15.25 and 5.10.102.

https://www.openwall.com/lists/oss-security/2022/03/07/1

# AKS Information:

AKS is working on an updated Node Image with the required updated kernels. This issue will be updated once a build has been commited.

|

True

|

CVE-2022-0847: Linux kernel: overwriting read-only files - A new vulnerability in the Linux kernel since

version 5.8 commit f6dd975583bd ("pipe: merge anon_pipe_buf*_ops") due

to uninitialized variables. It enables anybody to write arbitrary

data to arbitrary files, even if the file is O_RDONLY, immutable or on

a MS_RDONLY filesystem. It can be used to inject code into arbitrary

processes.

It is similar to CVE-2016-5195 "Dirty Cow", but is easier to exploit.

The vulnerability was fixed in Linux 5.16.11, 5.15.25 and 5.10.102.

https://www.openwall.com/lists/oss-security/2022/03/07/1

# AKS Information:

AKS is working on an updated Node Image with the required updated kernels. This issue will be updated once a build has been commited.

|

non_defect

|

cve linux kernel overwriting read only files a new vulnerability in the linux kernel since version commit pipe merge anon pipe buf ops due to uninitialized variables it enables anybody to write arbitrary data to arbitrary files even if the file is o rdonly immutable or on a ms rdonly filesystem it can be used to inject code into arbitrary processes it is similar to cve dirty cow but is easier to exploit the vulnerability was fixed in linux and aks information aks is working on an updated node image with the required updated kernels this issue will be updated once a build has been commited

| 0

|

135,740

| 12,690,899,978

|

IssuesEvent

|

2020-06-21 14:28:40

|

kubernetes/kubeadm

|

https://api.github.com/repos/kubernetes/kubeadm

|

closed

|

update kubeadm-kubelet documentation to reflect CNI package change

|

kind/documentation lifecycle/active priority/important-longterm

|

the kubernetes-cni contents (plugins) will now be bundled as part of the kubelet package:

https://github.com/kubernetes/release/pull/1330

the kubernetes-cni package will be removed.

the action item here is to remove:

> kubernetes-cni

from this list:

https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/kubelet-integration/#kubernetes-binaries-and-package-contents

and update the item:

> kubelet

to have the description:

> Installs the kubelet binary in /usr/bin and CNI binaries in /opt/cni/bin.

file location:

https://github.com/kubernetes/website/blob/master/content/en/docs/setup/production-environment/tools/kubeadm/kubelet-integration.md

/priority important-longterm

/kind documentation

/help

/good-first-issue

|

1.0

|

update kubeadm-kubelet documentation to reflect CNI package change - the kubernetes-cni contents (plugins) will now be bundled as part of the kubelet package:

https://github.com/kubernetes/release/pull/1330

the kubernetes-cni package will be removed.

the action item here is to remove:

> kubernetes-cni

from this list:

https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/kubelet-integration/#kubernetes-binaries-and-package-contents

and update the item:

> kubelet

to have the description:

> Installs the kubelet binary in /usr/bin and CNI binaries in /opt/cni/bin.

file location:

https://github.com/kubernetes/website/blob/master/content/en/docs/setup/production-environment/tools/kubeadm/kubelet-integration.md

/priority important-longterm

/kind documentation

/help

/good-first-issue

|

non_defect

|

update kubeadm kubelet documentation to reflect cni package change the kubernetes cni contents plugins will now be bundled as part of the kubelet package the kubernetes cni package will be removed the action item here is to remove kubernetes cni from this list and update the item kubelet to have the description installs the kubelet binary in usr bin and cni binaries in opt cni bin file location priority important longterm kind documentation help good first issue

| 0

|

242,993

| 18,675,300,302

|

IssuesEvent

|

2021-10-31 13:06:11

|

AY2122S1-CS2103T-T17-3/tp

|

https://api.github.com/repos/AY2122S1-CS2103T-T17-3/tp

|

closed

|

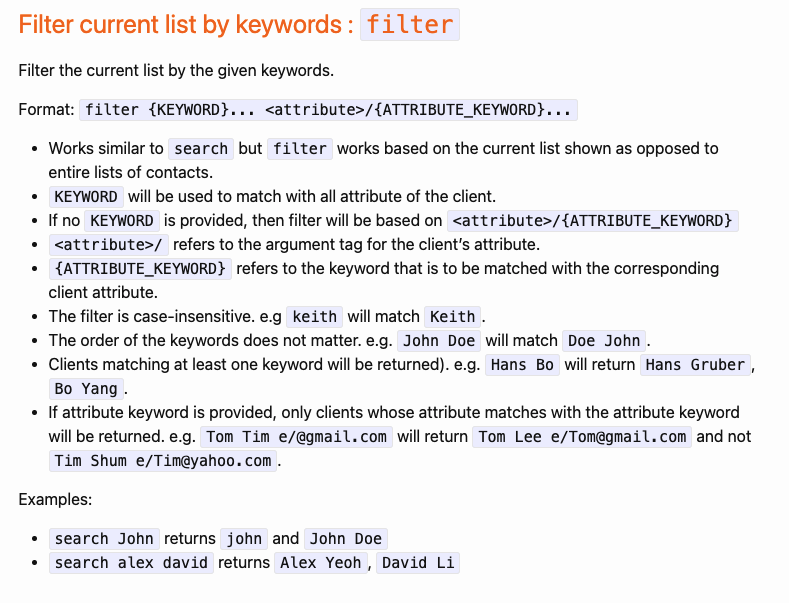

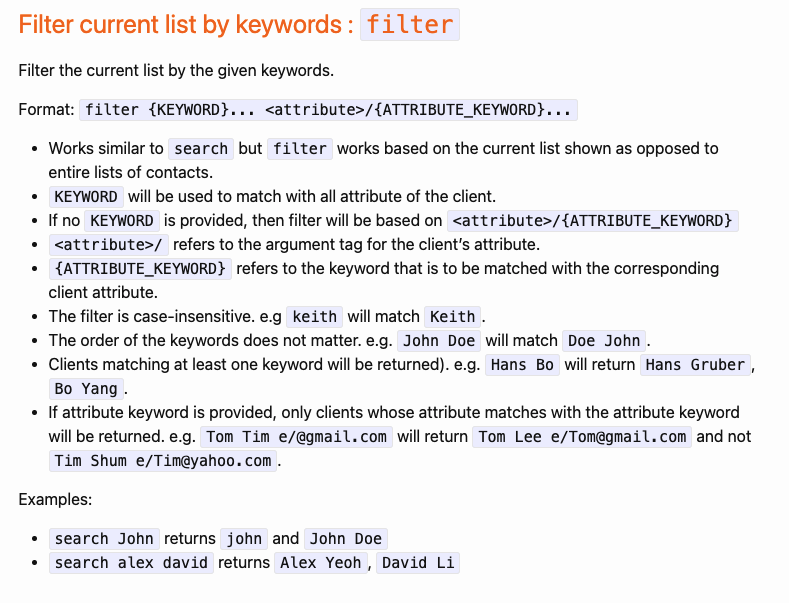

[PE-D] UG examples for filter is wrong

|

documentation

|

Note the examples are for `search` not filter

<!--session: 1635495170210-7d18469e-ff21-47c2-9921-cf910f65f10c-->

<!--Version: Web v3.4.1-->

-------------

Labels: `type.DocumentationBug` `severity.Low`

original: mokdarren/ped#4

|

1.0

|

[PE-D] UG examples for filter is wrong -

Note the examples are for `search` not filter

<!--session: 1635495170210-7d18469e-ff21-47c2-9921-cf910f65f10c-->

<!--Version: Web v3.4.1-->

-------------

Labels: `type.DocumentationBug` `severity.Low`

original: mokdarren/ped#4

|

non_defect

|

ug examples for filter is wrong note the examples are for search not filter labels type documentationbug severity low original mokdarren ped

| 0

|

78,770

| 27,751,929,167

|

IssuesEvent

|

2023-03-15 21:31:45

|

jurgendl/hql-builder

|

https://api.github.com/repos/jurgendl/hql-builder

|

closed

|

exception after syntax error on EDT

|

Type-Defect auto-migrated Priority-Medium no-issue-activity

|

```

java.lang.ArrayIndexOutOfBoundsException: 0 >= 0

at java.util.Vector.elementAt(Vector.java:427)

at javax.swing.text.DefaultHighlighter.paintLayeredHighlights(DefaultHighlighter.java:268)

at javax.swing.text.PlainView.paint(PlainView.java:283)

at javax.swing.plaf.basic.BasicTextUI$RootView.paint(BasicTextUI.java:1422)

at javax.swing.plaf.basic.BasicTextUI.paintSafely(BasicTextUI.java:722)

at javax.swing.plaf.basic.BasicTextUI.paint(BasicTextUI.java:869)

at javax.swing.plaf.basic.BasicTextUI.update(BasicTextUI.java:848)

at javax.swing.JComponent.paintComponent(JComponent.java:752)

at javax.swing.JComponent.paint(JComponent.java:1029)

at javax.swing.JComponent.paintToOffscreen(JComponent.java:5124)

at javax.swing.RepaintManager$PaintManager.paintDoubleBuffered(RepaintManager.java:1491)

at javax.swing.RepaintManager$PaintManager.paint(RepaintManager.java:1422)

at javax.swing.RepaintManager.paint(RepaintManager.java:1225)

at javax.swing.JComponent._paintImmediately(JComponent.java:5072)

at javax.swing.JComponent.paintImmediately(JComponent.java:4882)

at javax.swing.RepaintManager.paintDirtyRegions(RepaintManager.java:786)

at javax.swing.RepaintManager.paintDirtyRegions(RepaintManager.java:714)

at javax.swing.RepaintManager.prePaintDirtyRegions(RepaintManager.java:694)

at javax.swing.RepaintManager.access$700(RepaintManager.java:41)

at javax.swing.RepaintManager$ProcessingRunnable.run(RepaintManager.java:1636)

at java.awt.event.InvocationEvent.dispatch(InvocationEvent.java:209)

at java.awt.EventQueue.dispatchEventImpl(EventQueue.java:646)

at java.awt.EventQueue.access$000(EventQueue.java:84)

at java.awt.EventQueue$1.run(EventQueue.java:607)

at java.awt.EventQueue$1.run(EventQueue.java:605)

at java.security.AccessController.doPrivileged(Native Method)

at java.security.AccessControlContext$1.doIntersectionPrivilege(AccessControlContext.java:87)

at java.awt.EventQueue.dispatchEvent(EventQueue.java:616)

at java.awt.EventDispatchThread.pumpOneEventForFilters(EventDispatchThread.java:269)

at java.awt.EventDispatchThread.pumpEventsForFilter(EventDispatchThread.java:184)

at java.awt.EventDispatchThread.pumpEventsForHierarchy(EventDispatchThread.java:174)

at java.awt.EventDispatchThread.pumpEvents(EventDispatchThread.java:169)

at java.awt.EventDispatchThread.pumpEvents(EventDispatchThread.java:161)

at java.awt.EventDispatchThread.run(EventDispatchThread.java:122)

```

Original issue reported on code.google.com by `jurgen.d...@gmail.com` on 12 Jul 2012 at 3:10

|

1.0

|

exception after syntax error on EDT - ```

java.lang.ArrayIndexOutOfBoundsException: 0 >= 0

at java.util.Vector.elementAt(Vector.java:427)

at javax.swing.text.DefaultHighlighter.paintLayeredHighlights(DefaultHighlighter.java:268)

at javax.swing.text.PlainView.paint(PlainView.java:283)

at javax.swing.plaf.basic.BasicTextUI$RootView.paint(BasicTextUI.java:1422)

at javax.swing.plaf.basic.BasicTextUI.paintSafely(BasicTextUI.java:722)

at javax.swing.plaf.basic.BasicTextUI.paint(BasicTextUI.java:869)

at javax.swing.plaf.basic.BasicTextUI.update(BasicTextUI.java:848)

at javax.swing.JComponent.paintComponent(JComponent.java:752)

at javax.swing.JComponent.paint(JComponent.java:1029)

at javax.swing.JComponent.paintToOffscreen(JComponent.java:5124)

at javax.swing.RepaintManager$PaintManager.paintDoubleBuffered(RepaintManager.java:1491)

at javax.swing.RepaintManager$PaintManager.paint(RepaintManager.java:1422)

at javax.swing.RepaintManager.paint(RepaintManager.java:1225)

at javax.swing.JComponent._paintImmediately(JComponent.java:5072)

at javax.swing.JComponent.paintImmediately(JComponent.java:4882)

at javax.swing.RepaintManager.paintDirtyRegions(RepaintManager.java:786)

at javax.swing.RepaintManager.paintDirtyRegions(RepaintManager.java:714)

at javax.swing.RepaintManager.prePaintDirtyRegions(RepaintManager.java:694)

at javax.swing.RepaintManager.access$700(RepaintManager.java:41)

at javax.swing.RepaintManager$ProcessingRunnable.run(RepaintManager.java:1636)

at java.awt.event.InvocationEvent.dispatch(InvocationEvent.java:209)

at java.awt.EventQueue.dispatchEventImpl(EventQueue.java:646)

at java.awt.EventQueue.access$000(EventQueue.java:84)

at java.awt.EventQueue$1.run(EventQueue.java:607)

at java.awt.EventQueue$1.run(EventQueue.java:605)

at java.security.AccessController.doPrivileged(Native Method)

at java.security.AccessControlContext$1.doIntersectionPrivilege(AccessControlContext.java:87)

at java.awt.EventQueue.dispatchEvent(EventQueue.java:616)

at java.awt.EventDispatchThread.pumpOneEventForFilters(EventDispatchThread.java:269)

at java.awt.EventDispatchThread.pumpEventsForFilter(EventDispatchThread.java:184)

at java.awt.EventDispatchThread.pumpEventsForHierarchy(EventDispatchThread.java:174)

at java.awt.EventDispatchThread.pumpEvents(EventDispatchThread.java:169)

at java.awt.EventDispatchThread.pumpEvents(EventDispatchThread.java:161)

at java.awt.EventDispatchThread.run(EventDispatchThread.java:122)

```

Original issue reported on code.google.com by `jurgen.d...@gmail.com` on 12 Jul 2012 at 3:10

|

defect

|

exception after syntax error on edt java lang arrayindexoutofboundsexception at java util vector elementat vector java at javax swing text defaulthighlighter paintlayeredhighlights defaulthighlighter java at javax swing text plainview paint plainview java at javax swing plaf basic basictextui rootview paint basictextui java at javax swing plaf basic basictextui paintsafely basictextui java at javax swing plaf basic basictextui paint basictextui java at javax swing plaf basic basictextui update basictextui java at javax swing jcomponent paintcomponent jcomponent java at javax swing jcomponent paint jcomponent java at javax swing jcomponent painttooffscreen jcomponent java at javax swing repaintmanager paintmanager paintdoublebuffered repaintmanager java at javax swing repaintmanager paintmanager paint repaintmanager java at javax swing repaintmanager paint repaintmanager java at javax swing jcomponent paintimmediately jcomponent java at javax swing jcomponent paintimmediately jcomponent java at javax swing repaintmanager paintdirtyregions repaintmanager java at javax swing repaintmanager paintdirtyregions repaintmanager java at javax swing repaintmanager prepaintdirtyregions repaintmanager java at javax swing repaintmanager access repaintmanager java at javax swing repaintmanager processingrunnable run repaintmanager java at java awt event invocationevent dispatch invocationevent java at java awt eventqueue dispatcheventimpl eventqueue java at java awt eventqueue access eventqueue java at java awt eventqueue run eventqueue java at java awt eventqueue run eventqueue java at java security accesscontroller doprivileged native method at java security accesscontrolcontext dointersectionprivilege accesscontrolcontext java at java awt eventqueue dispatchevent eventqueue java at java awt eventdispatchthread pumponeeventforfilters eventdispatchthread java at java awt eventdispatchthread pumpeventsforfilter eventdispatchthread java at java awt eventdispatchthread pumpeventsforhierarchy eventdispatchthread java at java awt eventdispatchthread pumpevents eventdispatchthread java at java awt eventdispatchthread pumpevents eventdispatchthread java at java awt eventdispatchthread run eventdispatchthread java original issue reported on code google com by jurgen d gmail com on jul at

| 1

|

165,973

| 14,016,642,009

|

IssuesEvent

|

2020-10-29 14:45:21

|

TUM-IDP-WS-20/doc

|

https://api.github.com/repos/TUM-IDP-WS-20/doc

|

closed

|

Research on Topic modelling techniques

|

documentation

|

Parent: #1

---

- [x] Find all topic modelling techniques

- [x] Compare and document powerful features of each technique

- [x] SVD

- [x] LSA

- [x] LDA

- [x] ...

|

1.0

|

Research on Topic modelling techniques - Parent: #1

---

- [x] Find all topic modelling techniques

- [x] Compare and document powerful features of each technique

- [x] SVD

- [x] LSA

- [x] LDA

- [x] ...

|

non_defect

|

research on topic modelling techniques parent find all topic modelling techniques compare and document powerful features of each technique svd lsa lda

| 0

|

27,675

| 2,695,028,601

|

IssuesEvent

|

2015-04-02 00:26:00

|

cs2103jan2015-t10-2j/main

|

https://api.github.com/repos/cs2103jan2015-t10-2j/main

|

closed

|

allow the user to skip certain arguments in the add/alter functions.

|

priority.high type.task

|

For example, if the user wants to have no description, no date, etc.

This will also help to solve #15

|

1.0

|

allow the user to skip certain arguments in the add/alter functions. - For example, if the user wants to have no description, no date, etc.

This will also help to solve #15

|

non_defect

|

allow the user to skip certain arguments in the add alter functions for example if the user wants to have no description no date etc this will also help to solve

| 0

|

21,306

| 3,487,401,734

|

IssuesEvent

|

2016-01-01 21:34:00

|

Ryzhehvost/keyla

|

https://api.github.com/repos/Ryzhehvost/keyla

|

closed

|

x64 doesn't start

|

auto-migrated Can't reproduce Priority-Medium Type-Defect

|

```

Установлена версия x64 0.1.9

При запуске ошибка

---------------------------

keyla.exe - Ошибка приложения

---------------------------

Ошибка при запуске приложения (0xc000007b). Для

выхода из приложения нажмите кнопку "ОК".

---------------------------

ОК

---------------------------

Система Win 7 Pro x64 Ru SP1

В системе установлен Punto Switcher, но он

выгружен из памяти.

Сейчас попробую перезагрузиться и

проверить снова.

```

Original issue reported on code.google.com by `hobbit.mage` on 10 Nov 2012 at 12:20

|

1.0

|

x64 doesn't start - ```

Установлена версия x64 0.1.9

При запуске ошибка

---------------------------

keyla.exe - Ошибка приложения

---------------------------

Ошибка при запуске приложения (0xc000007b). Для

выхода из приложения нажмите кнопку "ОК".

---------------------------

ОК

---------------------------

Система Win 7 Pro x64 Ru SP1

В системе установлен Punto Switcher, но он

выгружен из памяти.

Сейчас попробую перезагрузиться и

проверить снова.

```

Original issue reported on code.google.com by `hobbit.mage` on 10 Nov 2012 at 12:20

|

defect

|

doesn t start установлена версия при запуске ошибка keyla exe ошибка приложения ошибка при запуске приложения для выхода из приложения нажмите кнопку ок ок система win pro ru в системе установлен punto switcher но он выгружен из памяти сейчас попробую перезагрузиться и проверить снова original issue reported on code google com by hobbit mage on nov at

| 1

|

792,410

| 27,959,161,502

|

IssuesEvent

|

2023-03-24 14:29:32

|

webcompat/web-bugs

|

https://api.github.com/repos/webcompat/web-bugs

|

closed

|

play.google.com - see bug description

|

status-needsinfo priority-critical browser-focus-geckoview engine-gecko

|

<!-- @browser: Firefox Mobile 101.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:101.0) Gecko/101.0 Firefox/101.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://play.google.com/store/apps/details?id=com.snapchat.android

**Browser / Version**: Firefox Mobile 101.0

**Operating System**: Android 11

**Tested Another Browser**: Yes Chrome

**Problem type**: Something else

**Description**: advertising worst

**Steps to Reproduce**:

I m disturbe with these erritate

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2023/3/7727b414-eec4-4b5e-bc0e-e86489696131.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20220608170832</li><li>channel: release</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2023/3/acddf479-9640-420c-9ec9-f76db4a5f312)

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

1.0

|

play.google.com - see bug description - <!-- @browser: Firefox Mobile 101.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:101.0) Gecko/101.0 Firefox/101.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @extra_labels: browser-focus-geckoview -->

**URL**: https://play.google.com/store/apps/details?id=com.snapchat.android

**Browser / Version**: Firefox Mobile 101.0

**Operating System**: Android 11

**Tested Another Browser**: Yes Chrome

**Problem type**: Something else

**Description**: advertising worst

**Steps to Reproduce**:

I m disturbe with these erritate

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2023/3/7727b414-eec4-4b5e-bc0e-e86489696131.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20220608170832</li><li>channel: release</li><li>hasTouchScreen: true</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2023/3/acddf479-9640-420c-9ec9-f76db4a5f312)

_From [webcompat.com](https://webcompat.com/) with ❤️_

|

non_defect

|

play google com see bug description url browser version firefox mobile operating system android tested another browser yes chrome problem type something else description advertising worst steps to reproduce i m disturbe with these erritate view the screenshot img alt screenshot src browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel release hastouchscreen true mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️

| 0

|

53,742

| 13,262,217,162

|

IssuesEvent

|

2020-08-20 21:19:49

|

icecube-trac/tix4

|

https://api.github.com/repos/icecube-trac/tix4

|

closed

|

[core-removal] move documentation to sphinx (Trac #1988)

|

Migrated from Trac analysis defect

|

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1988">https://code.icecube.wisc.edu/projects/icecube/ticket/1988</a>, reported by kjmeagher</summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:14:55",

"_ts": "1550067295757382",

"description": "",

"reporter": "kjmeagher",

"cc": "",

"resolution": "fixed",

"time": "2017-04-26T19:06:22",

"component": "analysis",

"summary": "[core-removal] move documentation to sphinx",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

[core-removal] move documentation to sphinx (Trac #1988) -

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1988">https://code.icecube.wisc.edu/projects/icecube/ticket/1988</a>, reported by kjmeagher</summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:14:55",

"_ts": "1550067295757382",

"description": "",

"reporter": "kjmeagher",

"cc": "",

"resolution": "fixed",

"time": "2017-04-26T19:06:22",

"component": "analysis",

"summary": "[core-removal] move documentation to sphinx",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "",

"type": "defect"

}

```

</p>

</details>

|

defect

|

move documentation to sphinx trac migrated from json status closed changetime ts description reporter kjmeagher cc resolution fixed time component analysis summary move documentation to sphinx priority normal keywords milestone owner type defect

| 1

|

84,218

| 10,361,442,377

|

IssuesEvent

|

2019-09-06 10:01:41

|

Sharaal/sql-pg

|

https://api.github.com/repos/Sharaal/sql-pg

|

closed

|

Refactor the README.md

|

documentation

|

Currently the README.md is good, but there are some things which can be made a lot better like described here very good:

https://dev.to/carlillo/how-to-reach-your-goals-1000-github-stars-in-the-first-open-source-software-337h

|

1.0

|

Refactor the README.md - Currently the README.md is good, but there are some things which can be made a lot better like described here very good:

https://dev.to/carlillo/how-to-reach-your-goals-1000-github-stars-in-the-first-open-source-software-337h

|

non_defect

|

refactor the readme md currently the readme md is good but there are some things which can be made a lot better like described here very good

| 0

|

240,798

| 20,073,997,900

|

IssuesEvent

|

2022-02-04 10:34:08

|

tracim/tracim

|

https://api.github.com/repos/tracim/tracim

|

closed

|

Bug: Activation/Deactivation of agenda is not properly handled.

|

manually tested backend add to changelog p0

|

## Description and expectations

Currently switching the button for activation or disabling of workspace agenda does not have any consequences for the user calendars list.

To make things work, we do need to add/delete symlink during workspace_modification if the agenda enabled/disabled state as changed.

The fix doesn't need to fix existing incorrect case for existing agenda as switching two time the state, will restore the correct state.

<!-- Optionally, if you know how to get them through the browser's developer tools, please include console logs written during the bug occurence. You can also include HTTP responses which have a 4xx or 5xx error code. -->

### Version information

<!-- Optional - describe the environment which reveals the bug: -->

- Tracim version: v4.0.5 build 067

## Diagnostic

change should be made in this event handler:

https://github.com/tracim/tracim/blob/a0e9746fde5a4c45b4e0f0bfa2caf9522b8c4e21/backend/tracim_backend/applications/agenda/lib.py#L599

<!-- Explain what may cause the bug. -->

## Prerequisites

<!-- Optional - list the issues that must be solved or what needs to be done before handling this issue. -->

<!-- ## Required sections, if relevant ## -->

<!-- - To be discussed before development -->

<!-- - Interface -->

<!-- - Translations -->

<!-- - Workaround -->

<!-- - Extra information -->

## Implemented solution

https://github.com/tracim/tracim/pull/5323

- handle properly the activation/deactivation case of agenda in hooks.

- refactor caldav sync code to work more in a "set to this state" logic than "do this" logic.

- fix issues during usage of tracimcli user delete command (agenda not properly deleted)

- correct error code when calendar is disabled for better support of DAVx⁵.

|

1.0

|

Bug: Activation/Deactivation of agenda is not properly handled. - ## Description and expectations

Currently switching the button for activation or disabling of workspace agenda does not have any consequences for the user calendars list.

To make things work, we do need to add/delete symlink during workspace_modification if the agenda enabled/disabled state as changed.

The fix doesn't need to fix existing incorrect case for existing agenda as switching two time the state, will restore the correct state.

<!-- Optionally, if you know how to get them through the browser's developer tools, please include console logs written during the bug occurence. You can also include HTTP responses which have a 4xx or 5xx error code. -->

### Version information

<!-- Optional - describe the environment which reveals the bug: -->

- Tracim version: v4.0.5 build 067

## Diagnostic

change should be made in this event handler:

https://github.com/tracim/tracim/blob/a0e9746fde5a4c45b4e0f0bfa2caf9522b8c4e21/backend/tracim_backend/applications/agenda/lib.py#L599

<!-- Explain what may cause the bug. -->

## Prerequisites

<!-- Optional - list the issues that must be solved or what needs to be done before handling this issue. -->

<!-- ## Required sections, if relevant ## -->

<!-- - To be discussed before development -->

<!-- - Interface -->

<!-- - Translations -->

<!-- - Workaround -->

<!-- - Extra information -->

## Implemented solution

https://github.com/tracim/tracim/pull/5323

- handle properly the activation/deactivation case of agenda in hooks.

- refactor caldav sync code to work more in a "set to this state" logic than "do this" logic.

- fix issues during usage of tracimcli user delete command (agenda not properly deleted)

- correct error code when calendar is disabled for better support of DAVx⁵.

|

non_defect

|

bug activation deactivation of agenda is not properly handled description and expectations currently switching the button for activation or disabling of workspace agenda does not have any consequences for the user calendars list to make things work we do need to add delete symlink during workspace modification if the agenda enabled disabled state as changed the fix doesn t need to fix existing incorrect case for existing agenda as switching two time the state will restore the correct state version information tracim version build diagnostic change should be made in this event handler prerequisites implemented solution handle properly the activation deactivation case of agenda in hooks refactor caldav sync code to work more in a set to this state logic than do this logic fix issues during usage of tracimcli user delete command agenda not properly deleted correct error code when calendar is disabled for better support of davx⁵

| 0

|

71,333

| 23,545,368,080

|

IssuesEvent

|

2022-08-21 02:47:45

|

cakephp/cakephp

|

https://api.github.com/repos/cakephp/cakephp

|

closed

|

OauthTest::testRsaSigningString() fails with OpenSSL 3 on Ubuntu 22.04

|

defect

|

### Description

```

1) Cake\Test\TestCase\Http\Client\Auth\OauthTest::testRsaSigningString

RuntimeException: openssl error: error:0A000126:SSL routines::unexpected eof while readingerror:0A000197:SSL routines::shutdown while in init

/home/runner/work/cakephp/cakephp/src/Http/Client/Auth/Oauth.php:388

/home/runner/work/cakephp/cakephp/src/Http/Client/Auth/Oauth.php:223

/home/runner/work/cakephp/cakephp/src/Http/Client/Auth/Oauth.php:72

/home/runner/work/cakephp/cakephp/tests/TestCase/Http/Client/Auth/OauthTest.php:378

phpvfscomposer:///home/runner/work/cakephp/cakephp/vendor/phpunit/phpunit/phpunit:97

```

### CakePHP Version

4.4

### PHP Version

_No response_

|

1.0

|

OauthTest::testRsaSigningString() fails with OpenSSL 3 on Ubuntu 22.04 - ### Description

```

1) Cake\Test\TestCase\Http\Client\Auth\OauthTest::testRsaSigningString

RuntimeException: openssl error: error:0A000126:SSL routines::unexpected eof while readingerror:0A000197:SSL routines::shutdown while in init

/home/runner/work/cakephp/cakephp/src/Http/Client/Auth/Oauth.php:388

/home/runner/work/cakephp/cakephp/src/Http/Client/Auth/Oauth.php:223

/home/runner/work/cakephp/cakephp/src/Http/Client/Auth/Oauth.php:72

/home/runner/work/cakephp/cakephp/tests/TestCase/Http/Client/Auth/OauthTest.php:378

phpvfscomposer:///home/runner/work/cakephp/cakephp/vendor/phpunit/phpunit/phpunit:97

```

### CakePHP Version

4.4

### PHP Version

_No response_

|

defect

|

oauthtest testrsasigningstring fails with openssl on ubuntu description cake test testcase http client auth oauthtest testrsasigningstring runtimeexception openssl error error ssl routines unexpected eof while readingerror ssl routines shutdown while in init home runner work cakephp cakephp src http client auth oauth php home runner work cakephp cakephp src http client auth oauth php home runner work cakephp cakephp src http client auth oauth php home runner work cakephp cakephp tests testcase http client auth oauthtest php phpvfscomposer home runner work cakephp cakephp vendor phpunit phpunit phpunit cakephp version php version no response

| 1

|

57,841

| 16,100,979,469

|

IssuesEvent

|

2021-04-27 09:14:55

|

vector-im/element-web

|

https://api.github.com/repos/vector-im/element-web

|

opened

|

Invites(?) cause console to flood with "Room does not have an m.room.create event" warnings

|

T-Defect

|

With spaces enabled on today's nightly, my console has nothing but this in it:

```

10:11:57.373 rageshake.js?432e:65 Room !whereverZflxV:matrix.org does not have an m.room.create event

consoleObj.<computed> @ rageshake.js?432e:65

eval @ logger.ts?6b0b:50

Room.getType @ room.js?6146:1966

Room.isSpaceRoom @ room.js?6146:1977

isRoomVisible @ VisibilityProvider.ts?84e8:53

get globalState @ RoomNotificationStateStore.ts?54ff:51

updateStatusIndicator @ MatrixChat.tsx?b3f7:1899

eval @ MatrixChat.tsx?b3f7:1407

emit @ events.js?faa1:158

SyncApi._updateSyncState @ sync.js?cdb6:1695

SyncApi._sync @ sync.js?cdb6:803

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:762

|

1.0

|

Invites(?) cause console to flood with "Room does not have an m.room.create event" warnings - With spaces enabled on today's nightly, my console has nothing but this in it:

```

10:11:57.373 rageshake.js?432e:65 Room !whereverZflxV:matrix.org does not have an m.room.create event

consoleObj.<computed> @ rageshake.js?432e:65

eval @ logger.ts?6b0b:50

Room.getType @ room.js?6146:1966

Room.isSpaceRoom @ room.js?6146:1977

isRoomVisible @ VisibilityProvider.ts?84e8:53

get globalState @ RoomNotificationStateStore.ts?54ff:51

updateStatusIndicator @ MatrixChat.tsx?b3f7:1899

eval @ MatrixChat.tsx?b3f7:1407

emit @ events.js?faa1:158

SyncApi._updateSyncState @ sync.js?cdb6:1695

SyncApi._sync @ sync.js?cdb6:803

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:744

SyncApi._sync @ sync.js?cdb6:820

async function (async)

SyncApi._sync @ sync.js?cdb6:762

|

defect

|

invites cause console to flood with room does not have an m room create event warnings with spaces enabled on today s nightly my console has nothing but this in it rageshake js room whereverzflxv matrix org does not have an m room create event consoleobj rageshake js eval logger ts room gettype room js room isspaceroom room js isroomvisible visibilityprovider ts get globalstate roomnotificationstatestore ts updatestatusindicator matrixchat tsx eval matrixchat tsx emit events js syncapi updatesyncstate sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js syncapi sync sync js async function async syncapi sync sync js

| 1

|

31,819

| 6,630,733,769

|

IssuesEvent

|

2017-09-25 02:02:03

|

extnet/Ext.NET

|

https://api.github.com/repos/extnet/Ext.NET

|

closed

|

Field note visual height glitch on Chrome

|

3.x 4.x defect

|

Found: 4.2.0

Ext.NET forum thread: [Bug when displaying Field 3.x and 4.x ](http://forums.ext.net/showthread.php?61738)

On chrome, the spacing between fields looks broken, while it works fine in IE and FireFox.

This simple CSS override seems to address the issue:

```html

<style type="text/css">

.x-form-item-body {

height: initial

}

</style>

```

|

1.0

|

Field note visual height glitch on Chrome - Found: 4.2.0

Ext.NET forum thread: [Bug when displaying Field 3.x and 4.x ](http://forums.ext.net/showthread.php?61738)

On chrome, the spacing between fields looks broken, while it works fine in IE and FireFox.

This simple CSS override seems to address the issue:

```html

<style type="text/css">

.x-form-item-body {

height: initial

}

</style>

```

|

defect

|

field note visual height glitch on chrome found ext net forum thread on chrome the spacing between fields looks broken while it works fine in ie and firefox this simple css override seems to address the issue html x form item body height initial

| 1

|

43,857

| 11,868,853,957

|

IssuesEvent

|

2020-03-26 09:56:03

|

contao/contao

|

https://api.github.com/repos/contao/contao

|

closed

|

Leeres Auswahlmenü für Ursprungstemplate bei Vergleich von Templates mit abweichendem Präfix

|

defect

|

Beim Vergleich von Templates ist das Auswahlmenü für das Ursprungstemplate leer, wenn das zu vergleichende Template ein abweichendes Präfix hat (zum Beispiel `x_x.html5`). Dadurch ist es nicht möglich, einen Vergleich durchzuführen.

Entweder könnte der Button zur Vergleichsansicht in diesem Fall deaktiviert werden oder es könnten wie bei Templates ohne Präfix (zum Beispiel `x.html5`) im Auswahlmenü alle Templates angezeigt werden.

|

1.0

|

Leeres Auswahlmenü für Ursprungstemplate bei Vergleich von Templates mit abweichendem Präfix - Beim Vergleich von Templates ist das Auswahlmenü für das Ursprungstemplate leer, wenn das zu vergleichende Template ein abweichendes Präfix hat (zum Beispiel `x_x.html5`). Dadurch ist es nicht möglich, einen Vergleich durchzuführen.

Entweder könnte der Button zur Vergleichsansicht in diesem Fall deaktiviert werden oder es könnten wie bei Templates ohne Präfix (zum Beispiel `x.html5`) im Auswahlmenü alle Templates angezeigt werden.

|

defect

|

leeres auswahlmenü für ursprungstemplate bei vergleich von templates mit abweichendem präfix beim vergleich von templates ist das auswahlmenü für das ursprungstemplate leer wenn das zu vergleichende template ein abweichendes präfix hat zum beispiel x x dadurch ist es nicht möglich einen vergleich durchzuführen entweder könnte der button zur vergleichsansicht in diesem fall deaktiviert werden oder es könnten wie bei templates ohne präfix zum beispiel x im auswahlmenü alle templates angezeigt werden

| 1

|

67,231

| 20,961,586,897

|

IssuesEvent

|

2022-03-27 21:44:31

|

abedmaatalla/foursquared

|

https://api.github.com/repos/abedmaatalla/foursquared

|

closed

|

Update widget assets for v1 release.

|

Priority-Medium Type-Defect auto-migrated Component-UI

|

```

1. widget_header.png needs 9-patchification, on the gradient section so

that it will stretch and contract horizontally.

2. widget_header needs the standard 3 state button imagery, per

http://d.android.com/guide/practices/ui_guidelines/widget_design.html#desig

n bullet point #4.

3. widget_footer.png needs 9-patchification, on the mockup's semi-

transparent background.

4. widget_footer needs the standard 3 state button imagery, per

http://d.android.com/guide/practices/ui_guidelines/widget_design.html#desig

n bullet point #4.

5. both assets should be no wider than 220 pixels as to fit in a 3 cell

wide widget on the G1

I think these are the only things we'll need for art to get v1 out the

door, thats why its so specific.

```

Original issue reported on code.google.com by `jlapenna` on 3 Nov 2009 at 6:40

- Blocking: #28

|

1.0

|

Update widget assets for v1 release. - ```

1. widget_header.png needs 9-patchification, on the gradient section so

that it will stretch and contract horizontally.

2. widget_header needs the standard 3 state button imagery, per

http://d.android.com/guide/practices/ui_guidelines/widget_design.html#desig

n bullet point #4.

3. widget_footer.png needs 9-patchification, on the mockup's semi-

transparent background.

4. widget_footer needs the standard 3 state button imagery, per

http://d.android.com/guide/practices/ui_guidelines/widget_design.html#desig

n bullet point #4.

5. both assets should be no wider than 220 pixels as to fit in a 3 cell

wide widget on the G1

I think these are the only things we'll need for art to get v1 out the

door, thats why its so specific.

```

Original issue reported on code.google.com by `jlapenna` on 3 Nov 2009 at 6:40

- Blocking: #28

|

defect

|

update widget assets for release widget header png needs patchification on the gradient section so that it will stretch and contract horizontally widget header needs the standard state button imagery per n bullet point widget footer png needs patchification on the mockup s semi transparent background widget footer needs the standard state button imagery per n bullet point both assets should be no wider than pixels as to fit in a cell wide widget on the i think these are the only things we ll need for art to get out the door thats why its so specific original issue reported on code google com by jlapenna on nov at blocking

| 1

|

316,598

| 9,652,191,818

|

IssuesEvent

|

2019-05-18 15:02:55

|

servicemesher/istio-official-translation

|

https://api.github.com/repos/servicemesher/istio-official-translation

|

closed

|

/blog/2018/egress-https/index.md

|

finished lang/zh priority/P0 sync/update version/1.1

|

Source File: [/blog/2018/egress-https/index.md](https://github.com/istio/istio.io/tree/release-1.1/content/blog/2018/egress-https/index.md)

Diff:

~~~diff

diff --git a/content/blog/2018/egress-https/index.md b/content/blog/2018/egress-https/index.md

index b33b6538..8ebc0b0d 100644

--- a/content/blog/2018/egress-https/index.md

+++ b/content/blog/2018/egress-https/index.md

@@ -44,10 +44,9 @@ Here is a copy of the end-to-end architecture of the application from the origin

Perform the steps in the

[Deploying the application](/docs/examples/bookinfo/#deploying-the-application),

-[Confirm the app is running](/docs/examples/bookinfo/#confirm-the-app-is-accessible-from-outside-the-cluster),

+[Confirm the app is running](/docs/examples/bookinfo/#confirm-the-app-is-accessible-from-outside-the-cluster), and

[Apply default destination rules](/docs/examples/bookinfo/#apply-default-destination-rules)

-sections, and

-[change Istio to the blocking-egress-by-default policy](/docs/tasks/traffic-management/egress/#change-to-the-blocking-by-default-policy).

+sections.

## Bookinfo with HTTPS access to a Google Books web service

@@ -212,14 +211,15 @@ Here is how both patterns are supported in the

{{< text ruby >}}

uri = URI.parse('https://www.googleapis.com/books/v1/volumes?q=isbn:' + isbn)

-http = Net::HTTP.new(uri.host, ENV['DO_NOT_ENCRYPT'] === 'true' ? 80:443)

+http = Net::HTTP.new(uri.host, uri.port)

...

unless ENV['DO_NOT_ENCRYPT'] === 'true' then

http.use_ssl = true

end

{{< /text >}}

-When the `DO_NOT_ENCRYPT` environment variable is defined, the request is performed without SSL (plain HTTP) to port 80.

+Note that the port is derived by the `URI.parse` from the URI's schema (`https://`) to be `443`, the default HTTPS port.

+When the `DO_NOT_ENCRYPT` environment variable is defined, the request is performed without SSL (plain HTTP).

You can set the `DO_NOT_ENCRYPT` environment variable to _"true"_ in the

[Kubernetes deployment spec of details v2]({{< github_file >}}/samples/bookinfo/platform/kube/bookinfo-details-v2.yaml),

@@ -250,8 +250,7 @@ In the next section you will configure TLS origination for accessing an external

$ kubectl apply -f @samples/bookinfo/networking/virtual-service-details-v2.yaml@

{{< /text >}}

-1. Create a mesh-external service entry for `www.google.apis` , a virtual service to rewrite the destination port from

- 80 to 443, and a destination rule to perform TLS origination.

+1. Create a mesh-external service entry for `www.google.apis` and a destination rule to perform TLS origination.

{{< text bash >}}

$ kubectl apply -f - <<EOF

@@ -263,31 +262,12 @@ In the next section you will configure TLS origination for accessing an external

hosts:

- www.googleapis.com

ports:

- - number: 80

- name: http

- protocol: HTTP

- number: 443

- name: https

- protocol: HTTPS

+ name: http-port-for-tls-origination

+ protocol: HTTP

resolution: DNS

---

apiVersion: networking.istio.io/v1alpha3

- kind: VirtualService

- metadata:

- name: rewrite-port-for-googleapis

- spec:

- hosts:

- - www.googleapis.com

- http:

- - match:

- - port: 80

- route:

- - destination:

- host: www.googleapis.com

- port:

- number: 443

- ---

- apiVersion: networking.istio.io/v1alpha3

kind: DestinationRule

metadata:

name: originate-tls-for-googleapis

@@ -300,19 +280,22 @@ In the next section you will configure TLS origination for accessing an external

- port:

number: 443

tls:

- mode: SIMPLE # initiates HTTPS when accessing www.googleapis.com

+ mode: SIMPLE # initiates HTTPS when accessing edition.cnn.com

EOF

{{< /text >}}

-1. Access the web page of the application and verify that the book details are displayed without errors.

+ Note that port `443` is designated by a name with the prefix `http-`, and its protocol is specified as `HTTP`. Note

+ that you are not required to use port 443 to send HTTP requests for TLS origination.

+ [This example](/docs/examples/advanced-gateways/egress-tls-origination/) shows how to perform TLS

+ origination with port rewriting.

-1. [Enable Envoy’s access logging](/docs/tasks/telemetry/logs/access-log/#enable-envoy-s-access-logging)

+1. Access the web page of the application and verify that the book details are displayed without errors.

1. Check the log of of the sidecar proxy of _details v2_ and see the HTTP request.

{{< text bash >}}

$ kubectl logs $(kubectl get pods -l app=details -l version=v2 -o jsonpath='{.items[0].metadata.name}') istio-proxy | grep googleapis

- [2018-08-09T11:32:58.171Z] "GET /books/v1/volumes?q=isbn:0486424618 HTTP/1.1" 200 - 0 1050 264 264 "-" "Ruby" "b993bae7-4288-9241-81a5-4cde93b2e3a6" "www.googleapis.com:80" "172.217.20.74:80"

+ [2018-08-09T11:32:58.171Z] "GET /books/v1/volumes?q=isbn:0486424618 HTTP/1.1" 200 - 0 1050 264 264 "-" "Ruby" "b993bae7-4288-9241-81a5-4cde93b2e3a6" "www.googleapis.com:443" "172.217.20.74:443"

EOF

{{< /text >}}

@@ -323,7 +306,6 @@ In the next section you will configure TLS origination for accessing an external

{{< text bash >}}

$ kubectl delete serviceentry googleapis

-$ kubectl delete virtualservice rewrite-port-for-googleapis

$ kubectl delete destinationrule originate-tls-for-googleapis

$ kubectl delete -f @samples/bookinfo/networking/virtual-service-details-v2.yaml@

$ kubectl delete -f @samples/bookinfo/platform/kube/bookinfo-details-v2.yaml@

~~~

|

1.0

|

/blog/2018/egress-https/index.md - Source File: [/blog/2018/egress-https/index.md](https://github.com/istio/istio.io/tree/release-1.1/content/blog/2018/egress-https/index.md)

Diff:

~~~diff

diff --git a/content/blog/2018/egress-https/index.md b/content/blog/2018/egress-https/index.md

index b33b6538..8ebc0b0d 100644

--- a/content/blog/2018/egress-https/index.md

+++ b/content/blog/2018/egress-https/index.md

@@ -44,10 +44,9 @@ Here is a copy of the end-to-end architecture of the application from the origin

Perform the steps in the

[Deploying the application](/docs/examples/bookinfo/#deploying-the-application),

-[Confirm the app is running](/docs/examples/bookinfo/#confirm-the-app-is-accessible-from-outside-the-cluster),

+[Confirm the app is running](/docs/examples/bookinfo/#confirm-the-app-is-accessible-from-outside-the-cluster), and

[Apply default destination rules](/docs/examples/bookinfo/#apply-default-destination-rules)

-sections, and

-[change Istio to the blocking-egress-by-default policy](/docs/tasks/traffic-management/egress/#change-to-the-blocking-by-default-policy).

+sections.

## Bookinfo with HTTPS access to a Google Books web service

@@ -212,14 +211,15 @@ Here is how both patterns are supported in the

{{< text ruby >}}

uri = URI.parse('https://www.googleapis.com/books/v1/volumes?q=isbn:' + isbn)

-http = Net::HTTP.new(uri.host, ENV['DO_NOT_ENCRYPT'] === 'true' ? 80:443)

+http = Net::HTTP.new(uri.host, uri.port)

...

unless ENV['DO_NOT_ENCRYPT'] === 'true' then

http.use_ssl = true

end

{{< /text >}}

-When the `DO_NOT_ENCRYPT` environment variable is defined, the request is performed without SSL (plain HTTP) to port 80.

+Note that the port is derived by the `URI.parse` from the URI's schema (`https://`) to be `443`, the default HTTPS port.

+When the `DO_NOT_ENCRYPT` environment variable is defined, the request is performed without SSL (plain HTTP).

You can set the `DO_NOT_ENCRYPT` environment variable to _"true"_ in the

[Kubernetes deployment spec of details v2]({{< github_file >}}/samples/bookinfo/platform/kube/bookinfo-details-v2.yaml),

@@ -250,8 +250,7 @@ In the next section you will configure TLS origination for accessing an external

$ kubectl apply -f @samples/bookinfo/networking/virtual-service-details-v2.yaml@

{{< /text >}}

-1. Create a mesh-external service entry for `www.google.apis` , a virtual service to rewrite the destination port from

- 80 to 443, and a destination rule to perform TLS origination.

+1. Create a mesh-external service entry for `www.google.apis` and a destination rule to perform TLS origination.

{{< text bash >}}

$ kubectl apply -f - <<EOF

@@ -263,31 +262,12 @@ In the next section you will configure TLS origination for accessing an external

hosts:

- www.googleapis.com

ports:

- - number: 80

- name: http

- protocol: HTTP

- number: 443

- name: https

- protocol: HTTPS

+ name: http-port-for-tls-origination

+ protocol: HTTP

resolution: DNS

---

apiVersion: networking.istio.io/v1alpha3

- kind: VirtualService

- metadata:

- name: rewrite-port-for-googleapis

- spec:

- hosts:

- - www.googleapis.com

- http:

- - match:

- - port: 80

- route:

- - destination:

- host: www.googleapis.com

- port:

- number: 443

- ---

- apiVersion: networking.istio.io/v1alpha3

kind: DestinationRule

metadata:

name: originate-tls-for-googleapis

@@ -300,19 +280,22 @@ In the next section you will configure TLS origination for accessing an external

- port:

number: 443

tls:

- mode: SIMPLE # initiates HTTPS when accessing www.googleapis.com

+ mode: SIMPLE # initiates HTTPS when accessing edition.cnn.com

EOF

{{< /text >}}

-1. Access the web page of the application and verify that the book details are displayed without errors.

+ Note that port `443` is designated by a name with the prefix `http-`, and its protocol is specified as `HTTP`. Note

+ that you are not required to use port 443 to send HTTP requests for TLS origination.

+ [This example](/docs/examples/advanced-gateways/egress-tls-origination/) shows how to perform TLS

+ origination with port rewriting.

-1. [Enable Envoy’s access logging](/docs/tasks/telemetry/logs/access-log/#enable-envoy-s-access-logging)

+1. Access the web page of the application and verify that the book details are displayed without errors.

1. Check the log of of the sidecar proxy of _details v2_ and see the HTTP request.

{{< text bash >}}

$ kubectl logs $(kubectl get pods -l app=details -l version=v2 -o jsonpath='{.items[0].metadata.name}') istio-proxy | grep googleapis

- [2018-08-09T11:32:58.171Z] "GET /books/v1/volumes?q=isbn:0486424618 HTTP/1.1" 200 - 0 1050 264 264 "-" "Ruby" "b993bae7-4288-9241-81a5-4cde93b2e3a6" "www.googleapis.com:80" "172.217.20.74:80"

+ [2018-08-09T11:32:58.171Z] "GET /books/v1/volumes?q=isbn:0486424618 HTTP/1.1" 200 - 0 1050 264 264 "-" "Ruby" "b993bae7-4288-9241-81a5-4cde93b2e3a6" "www.googleapis.com:443" "172.217.20.74:443"

EOF

{{< /text >}}

@@ -323,7 +306,6 @@ In the next section you will configure TLS origination for accessing an external

{{< text bash >}}

$ kubectl delete serviceentry googleapis

-$ kubectl delete virtualservice rewrite-port-for-googleapis

$ kubectl delete destinationrule originate-tls-for-googleapis

$ kubectl delete -f @samples/bookinfo/networking/virtual-service-details-v2.yaml@

$ kubectl delete -f @samples/bookinfo/platform/kube/bookinfo-details-v2.yaml@

~~~

|

non_defect

|

blog egress https index md source file diff diff diff git a content blog egress https index md b content blog egress https index md index a content blog egress https index md b content blog egress https index md here is a copy of the end to end architecture of the application from the origin perform the steps in the docs examples bookinfo deploying the application docs examples bookinfo confirm the app is accessible from outside the cluster docs examples bookinfo confirm the app is accessible from outside the cluster and docs examples bookinfo apply default destination rules sections and docs tasks traffic management egress change to the blocking by default policy sections bookinfo with https access to a google books web service here is how both patterns are supported in the uri uri parse isbn http net http new uri host env true http net http new uri host uri port unless env true then http use ssl true end when the do not encrypt environment variable is defined the request is performed without ssl plain http to port note that the port is derived by the uri parse from the uri s schema to be the default https port when the do not encrypt environment variable is defined the request is performed without ssl plain http you can set the do not encrypt environment variable to true in the samples bookinfo platform kube bookinfo details yaml in the next section you will configure tls origination for accessing an external kubectl apply f samples bookinfo networking virtual service details yaml create a mesh external service entry for a virtual service to rewrite the destination port from to and a destination rule to perform tls origination create a mesh external service entry for and a destination rule to perform tls origination kubectl apply f eof in the next section you will configure tls origination for accessing an external hosts ports number name http protocol http number name https protocol https name http port for tls origination protocol http resolution dns apiversion networking istio io kind virtualservice metadata name rewrite port for googleapis spec hosts http match port route destination host port number apiversion networking istio io kind destinationrule metadata name originate tls for googleapis in the next section you will configure tls origination for accessing an external port number tls mode simple initiates https when accessing mode simple initiates https when accessing edition cnn com eof access the web page of the application and verify that the book details are displayed without errors note that port is designated by a name with the prefix http and its protocol is specified as http note that you are not required to use port to send http requests for tls origination docs examples advanced gateways egress tls origination shows how to perform tls origination with port rewriting docs tasks telemetry logs access log enable envoy s access logging access the web page of the application and verify that the book details are displayed without errors check the log of of the sidecar proxy of details and see the http request kubectl logs kubectl get pods l app details l version o jsonpath items metadata name istio proxy grep googleapis get books volumes q isbn http ruby get books volumes q isbn http ruby eof in the next section you will configure tls origination for accessing an external kubectl delete serviceentry googleapis kubectl delete virtualservice rewrite port for googleapis kubectl delete destinationrule originate tls for googleapis kubectl delete f samples bookinfo networking virtual service details yaml kubectl delete f samples bookinfo platform kube bookinfo details yaml

| 0

|

390,506

| 11,544,452,740

|

IssuesEvent

|

2020-02-18 11:30:13

|

grafana/grafana

|

https://api.github.com/repos/grafana/grafana

|

closed

|

calendar sometimes closes itself when using new timepicker

|

area/frontend needs investigation priority/important-soon type/bug

|

**What happened**:

When we select dates in Absolute time range new calendar, it sometimes closes itself and does not validate selected start & end dates.

**What you expected to happen**:

After selection, see selected period in blue as expected, and be able to change it if needed before validate.

**How to reproduce it (as minimally and precisely as possible)**:

Just select a first date on calendar, blue circle appears around date, and then select a second date. Randomly, calendar closes itself and we have to start again.

**Anything else we need to know?**: -

**Environment**:

- Grafana version: 6.6.1

- Data source type & version: postgres

- OS Grafana is installed on: debian 9.11

- User OS & Browser: chrome 79.0.3945.88 on debian

- Grafana plugins: -

- Others: -

|

1.0

|

calendar sometimes closes itself when using new timepicker - **What happened**:

When we select dates in Absolute time range new calendar, it sometimes closes itself and does not validate selected start & end dates.

**What you expected to happen**:

After selection, see selected period in blue as expected, and be able to change it if needed before validate.

**How to reproduce it (as minimally and precisely as possible)**:

Just select a first date on calendar, blue circle appears around date, and then select a second date. Randomly, calendar closes itself and we have to start again.

**Anything else we need to know?**: -

**Environment**:

- Grafana version: 6.6.1

- Data source type & version: postgres

- OS Grafana is installed on: debian 9.11

- User OS & Browser: chrome 79.0.3945.88 on debian

- Grafana plugins: -

- Others: -

|

non_defect

|

calendar sometimes closes itself when using new timepicker what happened when we select dates in absolute time range new calendar it sometimes closes itself and does not validate selected start end dates what you expected to happen after selection see selected period in blue as expected and be able to change it if needed before validate how to reproduce it as minimally and precisely as possible just select a first date on calendar blue circle appears around date and then select a second date randomly calendar closes itself and we have to start again anything else we need to know environment grafana version data source type version postgres os grafana is installed on debian user os browser chrome on debian grafana plugins others

| 0

|

38,576

| 8,915,069,857

|

IssuesEvent

|

2019-01-19 01:38:39

|

abulka/pynsource

|

https://api.github.com/repos/abulka/pynsource

|

closed

|

Lines should be able to be deleted

|

Priority-Medium Type-Defect auto-migrated enhancement

|

```

What steps will reproduce the problem?

1.Create two classes;

2.Create a line between them;

3.Try to delete the line.

There is no option for deleting lines.

1.61 Windows 7 64-bit

```

Original issue reported on code.google.com by `cristian...@yahoo.com` on 21 Jul 2014 at 11:18

|

1.0

|

Lines should be able to be deleted - ```

What steps will reproduce the problem?

1.Create two classes;

2.Create a line between them;

3.Try to delete the line.

There is no option for deleting lines.

1.61 Windows 7 64-bit

```

Original issue reported on code.google.com by `cristian...@yahoo.com` on 21 Jul 2014 at 11:18

|

defect

|

lines should be able to be deleted what steps will reproduce the problem create two classes create a line between them try to delete the line there is no option for deleting lines windows bit original issue reported on code google com by cristian yahoo com on jul at

| 1

|

65,900

| 19,770,619,089

|

IssuesEvent

|

2022-01-17 09:39:46

|

scipy/scipy

|

https://api.github.com/repos/scipy/scipy

|

opened

|

BUG: scipy.optimize.ridder() does not obey absolute tolerance

|

defect

|

### Describe your issue.

Hi,

I would like to use `scipy.optimize.ridder()` for a function of me.

I set the absolute tolerance to `1.` and expect that the resulting function call would be in the range `[-1.:+1.]`.

When looking at the second call to `ridder()`, I can see `5.000065000084806` as a result.

Did I make a mistake when calling `ridder()` or is something wrong with the result of it?

Thanks in advance!

### Reproducing Code Example

```python

import scipy.optimize as sco

import numpy as np

# import scipy

import sys, scipy, numpy;

print(scipy.__version__, numpy.__version__, sys.version_info)

amountOfCalls = 0

def myFunc(x):

global amountOfCalls

amountOfCalls += 1

y = x ** 3 + x - 5

return y

for xtol in [0.00001, 1., ]:

amountOfCalls = 0

xValue, root1_result = sco.ridder(myFunc, -1000, 1000, xtol=xtol, full_output=True, disp=True, )

print('atol:', xtol, 'nullvalue:', myFunc(xValue), 'xValue:', xValue, 'amountOfCalls:', amountOfCalls) # , ' '.join(str(root1_result).split('\n')))

```

### Error message

```shell

1.2.2 1.17.0 sys.version_info(major=3, minor=6, micro=8, releaselevel='final', serial=0)

atol: 1e-05 nullvalue: 3.9394811365944804e-05 xValue: 1.515985217781899 amountOfCalls: 31

atol: 1.0 nullvalue: 5.000065000084806 xValue: 2.000004999994985 amountOfCalls: 13

```

### SciPy/NumPy/Python version information

1.2.2 1.17.0 sys.version_info(major=3, minor=6, micro=8, releaselevel='final', serial=0)

|

1.0

|

BUG: scipy.optimize.ridder() does not obey absolute tolerance - ### Describe your issue.

Hi,

I would like to use `scipy.optimize.ridder()` for a function of me.

I set the absolute tolerance to `1.` and expect that the resulting function call would be in the range `[-1.:+1.]`.

When looking at the second call to `ridder()`, I can see `5.000065000084806` as a result.

Did I make a mistake when calling `ridder()` or is something wrong with the result of it?

Thanks in advance!

### Reproducing Code Example

```python

import scipy.optimize as sco

import numpy as np

# import scipy

import sys, scipy, numpy;

print(scipy.__version__, numpy.__version__, sys.version_info)

amountOfCalls = 0

def myFunc(x):

global amountOfCalls

amountOfCalls += 1

y = x ** 3 + x - 5

return y

for xtol in [0.00001, 1., ]:

amountOfCalls = 0

xValue, root1_result = sco.ridder(myFunc, -1000, 1000, xtol=xtol, full_output=True, disp=True, )

print('atol:', xtol, 'nullvalue:', myFunc(xValue), 'xValue:', xValue, 'amountOfCalls:', amountOfCalls) # , ' '.join(str(root1_result).split('\n')))

```

### Error message

```shell

1.2.2 1.17.0 sys.version_info(major=3, minor=6, micro=8, releaselevel='final', serial=0)

atol: 1e-05 nullvalue: 3.9394811365944804e-05 xValue: 1.515985217781899 amountOfCalls: 31

atol: 1.0 nullvalue: 5.000065000084806 xValue: 2.000004999994985 amountOfCalls: 13

```

### SciPy/NumPy/Python version information

1.2.2 1.17.0 sys.version_info(major=3, minor=6, micro=8, releaselevel='final', serial=0)

|

defect

|

bug scipy optimize ridder does not obey absolute tolerance describe your issue hi i would like to use scipy optimize ridder for a function of me i set the absolute tolerance to and expect that the resulting function call would be in the range when looking at the second call to ridder i can see as a result did i make a mistake when calling ridder or is something wrong with the result of it thanks in advance reproducing code example python import scipy optimize as sco import numpy as np import scipy import sys scipy numpy print scipy version numpy version sys version info amountofcalls def myfunc x global amountofcalls amountofcalls y x x return y for xtol in amountofcalls xvalue result sco ridder myfunc xtol xtol full output true disp true print atol xtol nullvalue myfunc xvalue xvalue xvalue amountofcalls amountofcalls join str result split n error message shell sys version info major minor micro releaselevel final serial atol nullvalue xvalue amountofcalls atol nullvalue xvalue amountofcalls scipy numpy python version information sys version info major minor micro releaselevel final serial

| 1

|

119,017

| 4,759,497,370