| Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

19,230

| 3,155,728,719

|

IssuesEvent

|

2015-09-17 10:33:40

|

bigbluebutton/bigbluebutton

|

https://api.github.com/repos/bigbluebutton/bigbluebutton

|

closed

|

If try to change microphone when entering in BBB, it enters in a loop

|

Defect Normal Priority

|

Originally reported on Google Code with ID 1896

```

When you enter in BBB, you select No when you receive the question: have you heard your

voice? Then you select a different microphone and push Next. It never finishes. You

have to reload the page.

```

Reported by `smoral@adhoclearning.com` on 2015-03-06 14:44:53

|

1.0

|

If try to change microphone when entering in BBB, it enters in a loop - Originally reported on Google Code with ID 1896

```

When you enter in BBB, you select No when you receive the question: have you heard your

voice? Then you select a different microphone and push Next. It never finishes. You

have to reload the page.

```

Reported by `smoral@adhoclearning.com` on 2015-03-06 14:44:53

|

defect

|

if try to change microphone when entering in bbb it enters in a loop originally reported on google code with id when you enter in bbb you select no when you receive the question have you heard your voice then you select a different microphone and push next it never finishes you have to reload the page reported by smoral adhoclearning com on

| 1

|

89,638

| 25,862,949,281

|

IssuesEvent

|

2022-12-13 18:21:35

|

pytorch/pytorch

|

https://api.github.com/repos/pytorch/pytorch

|

closed

|

libtorch_python.so: undefined symbol: PyInstanceMethod_Type

|

module: build triaged topic: build

|

### 🐛 Describe the bug

I run into this error vvv

```

C++ exception with description "/var/lib/jenkins/multipy/multipy/runtime/loader.cpp:780: libnvfuser_python.so: could not load library, dlopen says: /opt/conda/lib/python3.10/site-packages/torch/lib/libtorch_python.so: undefined symbol: PyInstanceMethod_Type" thrown in the test body.

```

link of failing CI: https://github.com/pytorch/pytorch/actions/runs/3586511045/jobs/6036274959

cmake lines related to the object: https://github.com/pytorch/pytorch/blob/d8335704a54f89e93f562cda7b49048e1eab1ebb/torch/csrc/jit/codegen/cuda/CMakeLists.txt#L165-L200

It's a little strange to me how the error was thrown from libtorch_python.so. This feels like a pytorch build issue.

I'm also a little bit uncertain how this is invoked.

In the setup, I have `libnvfuser_python.so` which depends on both `libnvfuser_codegen.so` and `libtorch_python.so`. While `libnvfuser_codegen.so` is dlopened by torch and does not have dependency on `libnvfuser_python.so`.

### Versions

This is failing on CI job:

[linux-bionic-cuda11.6-py3.10-gcc7 / test (deploy, 1, 1, linux.4xlarge.nvidia.gpu)](https://github.com/pytorch/pytorch/actions/runs/3590997095/jobs/6045485004)

cc @malfet @seemethere

|

2.0

|

libtorch_python.so: undefined symbol: PyInstanceMethod_Type - ### 🐛 Describe the bug

I run into this error vvv

```

C++ exception with description "/var/lib/jenkins/multipy/multipy/runtime/loader.cpp:780: libnvfuser_python.so: could not load library, dlopen says: /opt/conda/lib/python3.10/site-packages/torch/lib/libtorch_python.so: undefined symbol: PyInstanceMethod_Type" thrown in the test body.

```

link of failing CI: https://github.com/pytorch/pytorch/actions/runs/3586511045/jobs/6036274959

cmake lines related to the object: https://github.com/pytorch/pytorch/blob/d8335704a54f89e93f562cda7b49048e1eab1ebb/torch/csrc/jit/codegen/cuda/CMakeLists.txt#L165-L200

It's a little strange to me how the error was thrown from libtorch_python.so. This feels like a pytorch build issue.

I'm also a little bit uncertain how this is invoked.

In the setup, I have `libnvfuser_python.so` which depends on both `libnvfuser_codegen.so` and `libtorch_python.so`. While `libnvfuser_codegen.so` is dlopened by torch and does not have dependency on `libnvfuser_python.so`.

### Versions

This is failing on CI job:

[linux-bionic-cuda11.6-py3.10-gcc7 / test (deploy, 1, 1, linux.4xlarge.nvidia.gpu)](https://github.com/pytorch/pytorch/actions/runs/3590997095/jobs/6045485004)

cc @malfet @seemethere

|

non_defect

|

libtorch python so undefined symbol pyinstancemethod type 🐛 describe the bug i run into this error vvv c exception with description var lib jenkins multipy multipy runtime loader cpp libnvfuser python so could not load library dlopen says opt conda lib site packages torch lib libtorch python so undefined symbol pyinstancemethod type thrown in the test body link of failing ci cmake lines related to the object it s a little strange to me how the error was thrown from libtorch python so this feels like a pytorch build issue i m also a little bit uncertain how this is invoked in the setup i have libnvfuser python so which depends on both libnvfuser codegen so and libtorch python so while libnvfuser codegen so is dlopened by torch and does not have dependency on libnvfuser python so versions this is failing on ci job cc malfet seemethere

| 0

|

2,538

| 2,607,926,695

|

IssuesEvent

|

2015-02-26 00:24:56

|

chrsmithdemos/minify

|

https://api.github.com/repos/chrsmithdemos/minify

|

closed

|

Add a GET parameter for debugging

|

auto-migrated Priority-Medium Type-Defect

|

```

A way to see the original js without minifying it, mainly for debugging/

developing purposes:

In the .htaccess, we need the QSA flag:

RewriteRule ^(.*\.(css|js))$ /minify.php?files=$1 [L,NC,QSA]

And in the minify.php, simply:

if (isset($_GET['debug'])) {

echo $minify->combine(!($_GET['debug']));

} else {

echo $minify->combine();

}

So http://www.example.com/js/blabla.js?debug=1 should return the original

js, without minifying it.

Congrats for the software! It helps me a lot!

Victor

```

-----

Original issue reported on code.google.com by `espiga...@gmail.com` on 21 Jul 2007 at 3:24

|

1.0

|

Add a GET parameter for debugging - ```

A way to see the original js without minifying it, mainly for debugging/

developing purposes:

In the .htaccess, we need the QSA flag:

RewriteRule ^(.*\.(css|js))$ /minify.php?files=$1 [L,NC,QSA]

And in the minify.php, simply:

if (isset($_GET['debug'])) {

echo $minify->combine(!($_GET['debug']));

} else {

echo $minify->combine();

}

So http://www.example.com/js/blabla.js?debug=1 should return the original

js, without minifying it.

Congrats for the software! It helps me a lot!

Victor

```

-----

Original issue reported on code.google.com by `espiga...@gmail.com` on 21 Jul 2007 at 3:24

|

defect

|

add a get parameter for debugging a way to see the original js without minifying it mainly for debugging developing purposes in the htaccess we need the qsa flag rewriterule css js minify php files and in the minify php simply if isset get echo minify combine get else echo minify combine so should return the original js without minifying it congrats for the software it helps me a lot victor original issue reported on code google com by espiga gmail com on jul at

| 1

|

14,040

| 24,277,096,750

|

IssuesEvent

|

2022-09-28 14:34:26

|

CS3219-AY2223S1/cs3219-project-ay2223s1-g5

|

https://api.github.com/repos/CS3219-AY2223S1/cs3219-project-ay2223s1-g5

|

closed

|

[FR-EDITOR-1] The system should have a code editor which allows users to code together in real time.

|

functional requirement P1

|

- [x] #185

- [x] #186

|

1.0

|

[FR-EDITOR-1] The system should have a code editor which allows users to code together in real time. - - [x] #185

- [x] #186

|

non_defect

|

the system should have a code editor which allows users to code together in real time

| 0

|

118,714

| 4,752,110,756

|

IssuesEvent

|

2016-10-23 08:37:54

|

CS2103AUG2016-T13-C2/main

|

https://api.github.com/repos/CS2103AUG2016-T13-C2/main

|

closed

|

bugs in undo delete

|

priority.high type.bug

|

should not add at the bottom, and also carry the information of completion

|

1.0

|

bugs in undo delete - should not add at the bottom, and also carry the information of completion

|

non_defect

|

bugs in undo delete should not add at the bottom and also carry the information of completion

| 0

|

55,954

| 14,860,098,508

|

IssuesEvent

|

2021-01-18 19:48:48

|

primefaces/primefaces

|

https://api.github.com/repos/primefaces/primefaces

|

closed

|

SelectOneMenu: JS error calling disable()

|

defect

|

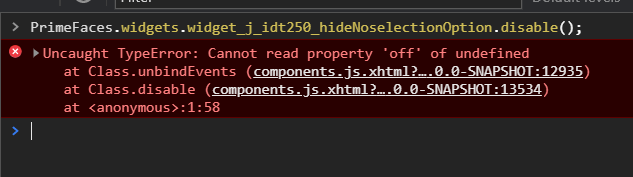

**Describe the defect**

Found during Integration Testing...

When calling the widget `disable()` method throws the following JS error using the Showcase.

This can happen whenver the items are rendered on the client.

```java

.attr("renderPanelContentOnClient", menu.getVar() == null, false);

```

**Environment:**

- PF Version: _10.0_

**Expected behavior**

The SelectOneMenu should be disabled.

**Example XHTML**

```html

<p:selectOneMenu id="hideNoselectionOption" value="#{selectOneMenuView.hideNoSelectOption}"

hideNoSelectionOption="#{not empty selectOneMenuView.hideNoSelectOption}">

<p:ajax update="@this" process="@this"/>

<f:selectItem itemLabel="Select One" itemValue="#{null}" noSelectionOption="true"/>

<f:selectItem itemLabel="Option1" itemValue="Option1"/>

<f:selectItem itemLabel="Option2" itemValue="Option2"/>

<f:selectItem itemLabel="Option3" itemValue="Option3"/>

</p:selectOneMenu>

```

|

1.0

|

SelectOneMenu: JS error calling disable() - **Describe the defect**

Found during Integration Testing...

When calling the widget `disable()` method throws the following JS error using the Showcase.

This can happen whenver the items are rendered on the client.

```java

.attr("renderPanelContentOnClient", menu.getVar() == null, false);

```

**Environment:**

- PF Version: _10.0_

**Expected behavior**

The SelectOneMenu should be disabled.

**Example XHTML**

```html

<p:selectOneMenu id="hideNoselectionOption" value="#{selectOneMenuView.hideNoSelectOption}"

hideNoSelectionOption="#{not empty selectOneMenuView.hideNoSelectOption}">

<p:ajax update="@this" process="@this"/>

<f:selectItem itemLabel="Select One" itemValue="#{null}" noSelectionOption="true"/>

<f:selectItem itemLabel="Option1" itemValue="Option1"/>

<f:selectItem itemLabel="Option2" itemValue="Option2"/>

<f:selectItem itemLabel="Option3" itemValue="Option3"/>

</p:selectOneMenu>

```

|

defect

|

selectonemenu js error calling disable describe the defect found during integration testing when calling the widget disable method throws the following js error using the showcase this can happen whenver the items are rendered on the client java attr renderpanelcontentonclient menu getvar null false environment pf version expected behavior the selectonemenu should be disabled example xhtml html p selectonemenu id hidenoselectionoption value selectonemenuview hidenoselectoption hidenoselectionoption not empty selectonemenuview hidenoselectoption

| 1

|

54,377

| 13,632,224,963

|

IssuesEvent

|

2020-09-24 19:17:43

|

department-of-veterans-affairs/va.gov-cms

|

https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms

|

opened

|

Rebuild taxonomy entity index programmatically on a regular basis

|

Content governance Dashboards and UI Defect Drupal engineering Good first task

|

## Description

Occasionally we're seeing cloned pages with edited Section taxonomy terms show up in the wrong /taxonomy/term/TID view

We've found that rebuilding the index seems to fix this.

## Acceptance Criteria

- [ ] Rebuild taxonomy entity index on a regular basis.

|

1.0

|

Rebuild taxonomy entity index programmatically on a regular basis - ## Description

Occasionally we're seeing cloned pages with edited Section taxonomy terms show up in the wrong /taxonomy/term/TID view

We've found that rebuilding the index seems to fix this.

## Acceptance Criteria

- [ ] Rebuild taxonomy entity index on a regular basis.

|

defect

|

rebuild taxonomy entity index programmatically on a regular basis description occasionally we re seeing cloned pages with edited section taxonomy terms show up in the wrong taxonomy term tid view we ve found that rebuilding the index seems to fix this acceptance criteria rebuild taxonomy entity index on a regular basis

| 1

|

237,125

| 18,153,028,726

|

IssuesEvent

|

2021-09-26 15:47:47

|

kimym56/42Project

|

https://api.github.com/repos/kimym56/42Project

|

opened

|

0927 PanResponder

|

documentation

|

## **Done**

- Tested the code from the link below (selecting a date range by dragging) & fixed the Android location value issue

https://blog.bam.tech/developer-news/how-to-handle-user-gestures-in-react-native-with-panresponder

## **To Do**

- Decide between the code from the link below (selecting a date range by touch (select)) and the code from the link above

https://react-day-picker.js.org/examples/selected-range/

|

1.0

|

0927 PanResponder - ## **Done**

- Tested the code from the link below (selecting a date range by dragging) & fixed the Android location value issue

https://blog.bam.tech/developer-news/how-to-handle-user-gestures-in-react-native-with-panresponder

## **To Do**

- Decide between the code from the link below (selecting a date range by touch (select)) and the code from the link above

https://react-day-picker.js.org/examples/selected-range/

|

non_defect

|

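panresponder done tested the code from the link below selecting a date range by dragging fixed the android location value issue to do decide between the code from the link below selecting a date range by touch select and the code from the link above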

| 0

|

77,218

| 26,855,486,153

|

IssuesEvent

|

2023-02-03 14:19:50

|

obophenotype/cell-ontology

|

https://api.github.com/repos/obophenotype/cell-ontology

|

closed

|

names that are too broad for their definitions (ciliary epithelial cells)

|

Priority-Medium Type-Defect auto-migrated typo text definition

|

```

TICKET: These terms have names that are too broad for their definitions, or

definitions that are two narrow for their names:

id: CL:0002304

name: non-pigmented ciliary epithelial cell

def: "A multi-ciliated cell of the retina that lacks visual pigment and

contributes to aqueous humor by secreting chloride ions. This cell type

maintains gap junctions with pigmented epithelial cells." [GOC:tfm,

PMID:15106942]

[Term]

id: CL:0002303

name: pigmented ciliary epithelial cell

def: "A ciliated epithelial cell of the retina, this cell type uptakes sodium

chloride and passes it to non-pigmented ciliary epithelial cells."

[PMID:15106942]

```

Original issue reported on code.google.com by `dosu...@gmail.com` on 27 Feb 2012 at 5:41

|

1.0

|

names that are too broad for their definitions (ciliary epithelial cells) - ```

TICKET: These terms have names that are too broad for their definitions, or

definitions that are two narrow for their names:

id: CL:0002304

name: non-pigmented ciliary epithelial cell

def: "A multi-ciliated cell of the retina that lacks visual pigment and

contributes to aqueous humor by secreting chloride ions. This cell type

maintains gap junctions with pigmented epithelial cells." [GOC:tfm,

PMID:15106942]

[Term]

id: CL:0002303

name: pigmented ciliary epithelial cell

def: "A ciliated epithelial cell of the retina, this cell type uptakes sodium

chloride and passes it to non-pigmented ciliary epithelial cells."

[PMID:15106942]

```

Original issue reported on code.google.com by `dosu...@gmail.com` on 27 Feb 2012 at 5:41

|

defect

|

names that are too broad for their definitions ciliary epithelial cells ticket these terms have names that are too broad for their definitions or definitions that are two narrow for their names id cl name non pigmented ciliary epithelial cell def a multi ciliated cell of the retina that lacks visual pigment and contributes to aqueous humor by secreting chloride ions this cell type maintains gap junctions with pigmented epithelial cells goc tfm pmid id cl name pigmented ciliary epithelial cell def a ciliated epithelial cell of the retina this cell type uptakes sodium chloride and passes it to non pigmented ciliary epithelial cells original issue reported on code google com by dosu gmail com on feb at

| 1

|

13,832

| 2,787,212,491

|

IssuesEvent

|

2015-05-08 02:51:48

|

mblanchette/maven-java-formatter-plugin

|

https://api.github.com/repos/mblanchette/maven-java-formatter-plugin

|

closed

|

Update embedded Eclipse to 4.4 to allow for Java 8 sources.

|

auto-migrated Priority-Medium Type-Defect

|

```

Version 0.4 embeds an older Eclipse which does not know about Java 8.

The recently released Eclipse 4.4 does, so it would be really nice if version

0.5 used this instead.

```

Original issue reported on code.google.com by `thorbjo...@gmail.com` on 27 Jun 2014 at 9:24

|

1.0

|

Update embedded Eclipse to 4.4 to allow for Java 8 sources. - ```

Version 0.4 embeds an older Eclipse which does not know about Java 8.

The recently released Eclipse 4.4 does, so it would be really nice if version

0.5 used this instead.

```

Original issue reported on code.google.com by `thorbjo...@gmail.com` on 27 Jun 2014 at 9:24

|

defect

|

update embedded eclipse to to allow for java sources version embeds an older eclipse which does not know about java the recently released eclipse does so it would be really nice if version used this instead original issue reported on code google com by thorbjo gmail com on jun at

| 1

|

84,817

| 10,418,983,553

|

IssuesEvent

|

2019-09-15 13:17:01

|

square/okhttp

|

https://api.github.com/repos/square/okhttp

|

closed

|

Improve/Fix visibility of sample recipes e.g. Certificate Pinning

|

documentation

|

The docs on https://square.github.io/okhttp/https/#certificate-pinning

say:

```

public SSLContext sslContextForTrustedCertificates(InputStream in) {

... // Full source omitted. See sample.

}

```

but there is no such sample.

https://github.com/square/okhttp/tree/master/samples/guide/src/main/java/okhttp3/recipes

|

1.0

|

Improve/Fix visibility of sample recipes e.g. Certificate Pinning - The docs on https://square.github.io/okhttp/https/#certificate-pinning

say:

```

public SSLContext sslContextForTrustedCertificates(InputStream in) {

... // Full source omitted. See sample.

}

```

but there is no such sample.

https://github.com/square/okhttp/tree/master/samples/guide/src/main/java/okhttp3/recipes

|

non_defect

|

improve fix visibility of sample recipes e g certificate pinning the docs on say public sslcontext sslcontextfortrustedcertificates inputstream in full source omitted see sample but there is no such sample

| 0

|

68,760

| 7,109,041,991

|

IssuesEvent

|

2018-01-17 03:35:14

|

bitcoinjs/bitcoinjs-lib

|

https://api.github.com/repos/bitcoinjs/bitcoinjs-lib

|

closed

|

BIP143 rejects uncompressed public keys in P2WPKH or P2WSH

|

bug testing

|

Not sure if I am misunderstanding something here but does this not only check if the output is p2wpkh and not p2wsh as well?

i.e. is correct?

```

if (kpPubKey.length !== 33 &&

(input.signType === scriptTypes.P2WPKH || input.signType === scriptTypes.P2WSH)) throw new Error('BIP143 rejects uncompressed public keys in P2WPKH or P2WSH')

```

https://github.com/bitcoinjs/bitcoinjs-lib/blob/86cd4a44a1524686d993f35b02a1ed331aaa551f/src/transaction_builder.js#L709

|

1.0

|

BIP143 rejects uncompressed public keys in P2WPKH or P2WSH - Not sure if I am misunderstanding something here but does this not only check if the output is p2wpkh and not p2wsh as well?

i.e. is correct?

```

if (kpPubKey.length !== 33 &&

(input.signType === scriptTypes.P2WPKH || input.signType === scriptTypes.P2WSH)) throw new Error('BIP143 rejects uncompressed public keys in P2WPKH or P2WSH')

```

https://github.com/bitcoinjs/bitcoinjs-lib/blob/86cd4a44a1524686d993f35b02a1ed331aaa551f/src/transaction_builder.js#L709

|

non_defect

|

rejects uncompressed public keys in or not sure if i am misunderstanding something here but does this not only check if the output is and not as well i e is correct if kppubkey length input signtype scripttypes input signtype scripttypes throw new error rejects uncompressed public keys in or

| 0

|

5,609

| 3,970,223,416

|

IssuesEvent

|

2016-05-04 05:44:51

|

kolliSuman/issues

|

https://api.github.com/repos/kolliSuman/issues

|

closed

|

QA_Introduction to Programmable Logic Controller_Self evaluation_smk

|

Category: Usability Developed By: VLEAD Release Number: Production Severity: S3 Status: Open

|

Defect Description :

In Self Evaluation page of "Introduction to Programmable Logic Controller and Introduction to Digital I/O Interface to PLC” when we click on get start button the Content on the top of the page is not clear where Content should be present with no distortions

Actual Result :

In Self Evaluation page of "Introduction to Programmable Logic Controller and Introduction to Digital I/O Interface to PLC” when we click on get start button the Content on the top of the page is not clear

Environment :

OS: Windows 7, Ubuntu-16.04,Centos-6

Browsers: Firefox-42.0,Chrome-47.0,chromium-45.0

Bandwidth : 100Mbps

Hardware Configuration:8GBRAM ,

Processor:i5

Test Step Link:

https://github.com/Virtual-Labs/industrial-electrical-drives-nitk/blob/master/test-cases/integration_test-cases/Introduction%20to%20Programmable%20Logic%20Controller/Introduction%20to%20Programmable%20Logic%20Controller_04_Self%20evaluation_smk.org

|

True

|

QA_Introduction to Programmable Logic Controller_Self evaluation_smk - Defect Description :

In Self Evaluation page of "Introduction to Programmable Logic Controller and Introduction to Digital I/O Interface to PLC” when we click on get start button the Content on the top of the page is not clear where Content should be present with no distortions

Actual Result :

In Self Evaluation page of "Introduction to Programmable Logic Controller and Introduction to Digital I/O Interface to PLC” when we click on get start button the Content on the top of the page is not clear

Environment :

OS: Windows 7, Ubuntu-16.04,Centos-6

Browsers: Firefox-42.0,Chrome-47.0,chromium-45.0

Bandwidth : 100Mbps

Hardware Configuration:8GBRAM ,

Processor:i5

Test Step Link:

https://github.com/Virtual-Labs/industrial-electrical-drives-nitk/blob/master/test-cases/integration_test-cases/Introduction%20to%20Programmable%20Logic%20Controller/Introduction%20to%20Programmable%20Logic%20Controller_04_Self%20evaluation_smk.org

|

non_defect

|

qa introduction to programmable logic controller self evaluation smk defect description in self evaluation page of introduction to programmable logic controller and introduction to digital i o interface to plc” when we click on get start button the content on the top of the page is not clear where content should be present with no distortions actual result in self evaluation page of introduction to programmable logic controller and introduction to digital i o interface to plc” when we click on get start button the content on the top of the page is not clear environment os windows ubuntu centos browsers firefox chrome chromium bandwidth hardware configuration processor test step link

| 0

|

63,182

| 17,409,860,851

|

IssuesEvent

|

2021-08-03 10:52:56

|

AtlasOfLivingAustralia/collectory

|

https://api.github.com/repos/AtlasOfLivingAustralia/collectory

|

closed

|

download button a dataset page doesn't work

|

priority-medium status-new type-defect

|

_From @mbohun on August 19, 2014 12:33_

_migrated from:_ https://code.google.com/p/ala/issues/detail?id=524

_date:_ Tue Jan 14 20:41:20 2014

_author:_ milo_nic...@hotmail.com

---

e.g. clicking the download records button on [http://collections.ala.org.au/public/show/dr365](http://collections.ala.org.au/public/show/dr365) produces an error - A reasonTypeId must be provided.

_Copied from original issue: AtlasOfLivingAustralia/biocache-hubs#55_

|

1.0

|

download button a dataset page doesn't work - _From @mbohun on August 19, 2014 12:33_

_migrated from:_ https://code.google.com/p/ala/issues/detail?id=524

_date:_ Tue Jan 14 20:41:20 2014

_author:_ milo_nic...@hotmail.com

---

e.g. clicking the download records button on [http://collections.ala.org.au/public/show/dr365](http://collections.ala.org.au/public/show/dr365) produces an error - A reasonTypeId must be provided.

_Copied from original issue: AtlasOfLivingAustralia/biocache-hubs#55_

|

defect

|

download button a dataset page doesn t work from mbohun on august migrated from date tue jan author milo nic hotmail com e g clicking the download records button on produces an error a reasontypeid must be provided copied from original issue atlasoflivingaustralia biocache hubs

| 1

|

203,692

| 15,380,550,885

|

IssuesEvent

|

2021-03-02 21:16:28

|

radicle-dev/radicle-link

|

https://api.github.com/repos/radicle-dev/radicle-link

|

closed

|

Split integration tests

|

brooming help wanted testing

|

`propagation_basic` was meant to test some, err, basic things.

Before it gets out of hand, we should split into a couple more modules. As those tests are becoming more heavy on the IOs, this also prepares for running them concurrently and/or behind a `slow` feature.

|

1.0

|

Split integration tests - `propagation_basic` was meant to test some, err, basic things.

Before it gets out of hand, we should split into a couple more modules. As those tests are becoming more heavy on the IOs, this also prepares for running them concurrently and/or behind a `slow` feature.

|

non_defect

|

split integration tests propagation basic was meant to test some err basic things before it gets out of hand we should split into a couple more modules as those tests are becoming more heavy on the ios this also prepares for running them concurrently and or behind a slow feature

| 0

|

2,772

| 2,607,944,887

|

IssuesEvent

|

2015-02-26 00:32:52

|

chrsmithdemos/switchlist

|

https://api.github.com/repos/chrsmithdemos/switchlist

|

opened

|

Add explicit checks for duplicate town names.

|

auto-migrated Priority-Medium Type-Defect

|

```

There's currently no validation that two towns don't have the same names. This

would mess up the "train stops" code that assumes only one town exists with a

given name.

```

-----

Original issue reported on code.google.com by `rwbowdi...@gmail.com` on 24 Apr 2011 at 5:25

|

1.0

|

Add explicit checks for duplicate town names. - ```

There's currently no validation that two towns don't have the same names. This

would mess up the "train stops" code that assumes only one town exists with a

given name.

```

-----

Original issue reported on code.google.com by `rwbowdi...@gmail.com` on 24 Apr 2011 at 5:25

|

defect

|

add explicit checks for duplicate town names there s currently no validation that two towns don t have the same names this would mess up the train stops code that assumes only one town exists with a given name original issue reported on code google com by rwbowdi gmail com on apr at

| 1

|

27,303

| 4,958,098,713

|

IssuesEvent

|

2016-12-02 08:27:19

|

TNGSB/eWallet

|

https://api.github.com/repos/TNGSB/eWallet

|

closed

|

eWallet_MobileApp(Reload) #105

|

Defect - High (Sev-2)

|

[Defect_Mobile App #105.xlsx](https://github.com/TNGSB/eWallet/files/593552/Defect_Mobile.App.105.xlsx)

Defect Description : System displayed a successful message and automatically redirect user to "Credit/Debit Card" page before the countdown counter time out

Test Description : To verify error message when user exceeded the countdown timer for credit card detail input

Refer attachment for POT

|

1.0

|

eWallet_MobileApp(Reload) #105 -

[Defect_Mobile App #105.xlsx](https://github.com/TNGSB/eWallet/files/593552/Defect_Mobile.App.105.xlsx)

Defect Description : System displayed a successful message and automatically redirect user to "Credit/Debit Card" page before the countdown counter time out

Test Description : To verify error message when user exceeded the countdown timer for credit card detail input

Refer attachment for POT

|

defect

|

ewallet mobileapp reload defect description system displayed a successful message and automatically redirect user to credit debit card page before the countdown counter time out test description to verify error message when user exceeded the countdown timer for credit card detail input refer attachment for pot

| 1

|

11,012

| 2,622,955,112

|

IssuesEvent

|

2015-03-04 09:03:13

|

folded/carve

|

https://api.github.com/repos/folded/carve

|

opened

|

Carve fails on (apparantly) valid input

|

auto-migrated Priority-Medium Type-Defect

|

```

Just run:

>intersect "(_0a.ply | _1a.ply | _2a.ply) A_MINUS_B _3s.ply"

with the attached files.

Files attached were created with carve by intersecting several simple

models. Viewing tool supplied with carve opens them without any problems.

```

Original issue reported on code.google.com by `ru.el...@gmail.com` on 17 May 2010 at 2:31

Attachments:

* [_0a.ply](https://storage.googleapis.com/google-code-attachments/carve/issue-17/comment-0/_0a.ply)

* [_1a.ply](https://storage.googleapis.com/google-code-attachments/carve/issue-17/comment-0/_1a.ply)

* [_2a.ply](https://storage.googleapis.com/google-code-attachments/carve/issue-17/comment-0/_2a.ply)

* [_3s.ply](https://storage.googleapis.com/google-code-attachments/carve/issue-17/comment-0/_3s.ply)

|

1.0

|

Carve fails on (apparantly) valid input - ```

Just run:

>intersect "(_0a.ply | _1a.ply | _2a.ply) A_MINUS_B _3s.ply"

with the attached files.

Files attached were created with carve by intersecting several simple

models. Viewing tool supplied with carve opens them without any problems.

```

Original issue reported on code.google.com by `ru.el...@gmail.com` on 17 May 2010 at 2:31

Attachments:

* [_0a.ply](https://storage.googleapis.com/google-code-attachments/carve/issue-17/comment-0/_0a.ply)

* [_1a.ply](https://storage.googleapis.com/google-code-attachments/carve/issue-17/comment-0/_1a.ply)

* [_2a.ply](https://storage.googleapis.com/google-code-attachments/carve/issue-17/comment-0/_2a.ply)

* [_3s.ply](https://storage.googleapis.com/google-code-attachments/carve/issue-17/comment-0/_3s.ply)

|

defect

|

carve fails on apparantly valid input just run intersect ply ply ply a minus b ply with the attached files files attached were created with carve by intersecting several simple models viewing tool supplied with carve opens them without any problems original issue reported on code google com by ru el gmail com on may at attachments

| 1

|

108,794

| 23,664,428,850

|

IssuesEvent

|

2022-08-26 19:07:06

|

unoplatform/uno

|

https://api.github.com/repos/unoplatform/uno

|

opened

|

Enable XAML Trimming for `net6.0-[ios|android|macos|maccatalyst]` and GTK targets

|

kind/enhancement area/code-generation

|

## What would you like to be added:

Support for [XAML Trimming](https://platform.uno/docs/articles/features/resources-trimming.html?) for `net6.0-[ios|android|macos|maccatalyst]` and GTK targets.

## Why is this needed:

Reduce the size of applications.

## Anything else we need to know?

WPF is explicitly excluded from this list as trimming is forcibly disabled by the SDK.

Work branch: https://github.com/unoplatform/uno/tree/dev/eb/xaml-trimming-net6

## Related issues

- https://github.com/dotnet/sdk/issues/27492

- https://github.com/xamarin/xamarin-android/issues/7301

|

1.0

|

Enable XAML Trimming for `net6.0-[ios|android|macos|maccatalyst]` and GTK targets - ## What would you like to be added:

Support for [XAML Trimming](https://platform.uno/docs/articles/features/resources-trimming.html?) for `net6.0-[ios|android|macos|maccatalyst]` and GTK targets.

## Why is this needed:

Reduce the size of applications.

## Anything else we need to know?

WPF is explicitly excluded from this list as trimming is forcibly disabled by the SDK.

Work branch: https://github.com/unoplatform/uno/tree/dev/eb/xaml-trimming-net6

## Related issues

- https://github.com/dotnet/sdk/issues/27492

- https://github.com/xamarin/xamarin-android/issues/7301

|

non_defect

|

enable xaml trimming for and gtk targets what would you like to be added support for for and gtk targets why is this needed reduce the size of applications anything else we need to know wpf is explicitly excluded from this list as trimming is forcibly disabled by the sdk work branch related issues

| 0

|

18,922

| 3,734,406,963

|

IssuesEvent

|

2016-03-08 06:41:42

|

kumulsoft/Fixed-Assets

|

https://api.github.com/repos/kumulsoft/Fixed-Assets

|

closed

|

Asset Transactions - Grid Page - Enhancement

|

enhancement Fixed HIGH Ready for testing

|

Remove the 'Trans Detail' column to make room for two new columns ('Centre' and 'Custodian').

|

1.0

|

Asset Transactions - Grid Page - Enhancement - Remove the 'Trans Detail' column to make room for two new columns ('Centre' and 'Custodian').

|

non_defect

|

asset transactions grid page enhancement remove the trans detail column to give space to add two new columns centre and custodian

| 0

|

62,085

| 17,023,847,476

|

IssuesEvent

|

2021-07-03 04:09:20

|

tomhughes/trac-tickets

|

https://api.github.com/repos/tomhughes/trac-tickets

|

closed

|

Only 1 of 2 streets with same name found

|

Component: nominatim Priority: major Resolution: duplicate Type: defect

|

**[Submitted to the original trac issue database at 11.18pm, Thursday, 3rd January 2013]**

http://nominatim.openstreetmap.org/search.php?q=tannenweg+lenzkirch&viewbox=8.19%2C47.88%2C8.21%2C47.87&polygon=1

Only one of the 2 residential streets named Tannenweg is found. The streets merge in a Y shape at their western ends.

The error leads to a wrong bounding box for zooming. Thus, depending on screen resolution and street geometry, it might not be obvious to a human user that a second street with the same name exists (it is only partially shown, with its label off-screen).

|

1.0

|

Only 1 of 2 streets with same name found - **[Submitted to the original trac issue database at 11.18pm, Thursday, 3rd January 2013]**

http://nominatim.openstreetmap.org/search.php?q=tannenweg+lenzkirch&viewbox=8.19%2C47.88%2C8.21%2C47.87&polygon=1

Only one of the 2 residential streets named Tannenweg is found. The streets merge in a Y shape at their western ends.

The error leads to a wrong bounding box for zooming. Thus, depending on screen resolution and street geometry, it might not be obvious to a human user that a second street with the same name exists (it is only partially shown, with its label off-screen).

|

defect

|

only of streets with same name found only one of the residential streets named tannenweg are found the streets are merging in a y shape in their western parts the error leads to a wrong bounding box for zooming thus depending on screen resolution and street geometry it might not be obvious to a human user that a second street with the same name exists is only shown partially but the label off screen

| 1

|

435,622

| 30,510,415,808

|

IssuesEvent

|

2023-07-18 20:21:19

|

Nnoemis/Test1

|

https://api.github.com/repos/Nnoemis/Test1

|

opened

|

How would you test a 5 kg capacity grocery shopping paper bag? Describe the tests that you could perform.

|

documentation

|

1. Insert 3 kg of oranges.

2. Insert 4.5 kg of oranges.

3. Insert 5 kg of oranges.

4. Insert + 100 g of oranges.

5. Insert + 200 g of oranges.

6. After that, insert a further 100 g of oranges each time and stop when the paper bag is damaged. (Boundary testing)

7. Repeat these steps with a wet paper bag.

8. Test the bag's volume.

Source: https://simeon.svet-bg.com/index.php/forums/topic/think-testing-5-kg-bag/

|

1.0

|

How would you test a 5 kg capacity grocery shopping paper bag? Describe the tests that you could perform. - 1. Insert 3 kg of oranges.

2. Insert 4.5 kg of oranges.

3. Insert 5 kg of oranges.

4. Insert + 100 g of oranges.

5. Insert + 200 g of oranges.

6. After that, insert a further 100 g of oranges each time and stop when the paper bag is damaged. (Boundary testing)

7. Repeat these steps with a wet paper bag.

8. Test the bag's volume.

Source: https://simeon.svet-bg.com/index.php/forums/topic/think-testing-5-kg-bag/

|

non_defect

|

how would you test a kg capacity grocery shopping paper bag describe the tests that you could perform insert kg of oranges insert kg of oranges insert kg of oranges insert g of oranges insert g of oranges every next time insert g of oranges and stop when paper bag is damaged bounding testing try the these steps with a wet paper bag try the bag s volume sourse url

| 0

|

41,834

| 10,679,235,358

|

IssuesEvent

|

2019-10-21 18:51:50

|

techo/voluntariado-eventual

|

https://api.github.com/repos/techo/voluntariado-eventual

|

closed

|

Error displaying meeting points during sign-up

|

Defecto

|

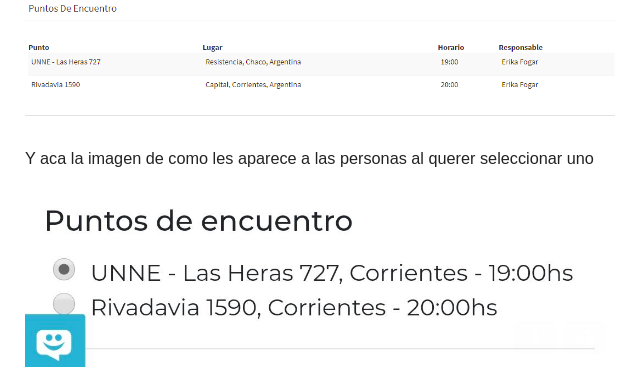

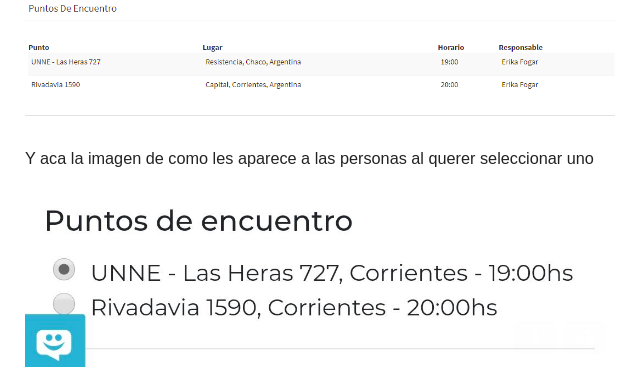

**Describe the bug**

As a user, when I try to sign up for an activity, the meeting points are displayed incorrectly.

**To reproduce**

Steps to reproduce the behavior:

1. Create a new activity.

2. Add 2 meeting points in different provinces.

3. As a user, go to the activity to sign up.

4. See that the provinces of the meeting points are displayed incorrectly.

**Expected behavior**

The provinces should be displayed the same way as when the activity was created.

**Screenshots**

|

1.0

|

Error displaying meeting points during sign-up - **Describe the bug**

As a user, when I try to sign up for an activity, the meeting points are displayed incorrectly.

**To reproduce**

Steps to reproduce the behavior:

1. Create a new activity.

2. Add 2 meeting points in different provinces.

3. As a user, go to the activity to sign up.

4. See that the provinces of the meeting points are displayed incorrectly.

**Expected behavior**

The provinces should be displayed the same way as when the activity was created.

**Screenshots**

|

defect

|

error displaying meeting points during sign up describe the bug as a user when i try to sign up for an activity the meeting points are displayed incorrectly to reproduce steps to reproduce the behavior create a new activity add meeting points in different provinces as a user go to the activity to sign up see that the provinces of the meeting points are displayed incorrectly expected behavior the provinces should be displayed the same way as when the activity was created screenshots

| 1

|

74,877

| 25,379,761,270

|

IssuesEvent

|

2022-11-21 16:37:21

|

jOOQ/jOOQ

|

https://api.github.com/repos/jOOQ/jOOQ

|

opened

|

Liquibase 4.17 compatibility

|

T: Defect

|

### Expected behavior

The jOOQ Liquibase extension should be compatible with recent versions of Liquibase.

### Actual behavior

The jOOQ Liquibase extension uses `FileSystemResourceAccessor`, which was [removed](https://github.com/liquibase/liquibase/issues/3478) in Liquibase 4.17.

### Steps to reproduce the problem

Log output from an attempt to run the Gradle plugin `generateJooq` task:

```

matt @ ghost-s1 in ~/Workspace/github.com/mattupstate/acme (⎈k3d-acme-dev/default) on git:main+

$ ./gradlew acme-data:acme-data-sql:generateJooq

> Task :acme-data:acme-data-sql:generateJooq FAILED

Exception in thread "main" java.lang.NoClassDefFoundError: liquibase/resource/FileSystemResourceAccessor

at org.jooq.meta.extensions.liquibase.LiquibaseDatabase.export(LiquibaseDatabase.java:155)

at org.jooq.meta.extensions.AbstractInterpretingDatabase.connection(AbstractInterpretingDatabase.java:100)

at org.jooq.meta.extensions.AbstractInterpretingDatabase.create0(AbstractInterpretingDatabase.java:77)

at org.jooq.meta.AbstractDatabase.create(AbstractDatabase.java:369)

at org.jooq.meta.AbstractDatabase.create(AbstractDatabase.java:359)

at org.jooq.meta.AbstractDatabase.setConnection(AbstractDatabase.java:337)

at org.jooq.codegen.GenerationTool.run0(GenerationTool.java:553)

at org.jooq.codegen.GenerationTool.run(GenerationTool.java:240)

at org.jooq.codegen.GenerationTool.generate(GenerationTool.java:235)

at org.jooq.codegen.GenerationTool.main(GenerationTool.java:207)

Caused by: java.lang.ClassNotFoundException: liquibase.resource.FileSystemResourceAccessor

at java.base/jdk.internal.loader.BuiltinClassLoader.loadClass(BuiltinClassLoader.java:641)

at java.base/jdk.internal.loader.ClassLoaders$AppClassLoader.loadClass(ClassLoaders.java:188)

at java.base/java.lang.ClassLoader.loadClass(ClassLoader.java:520)

... 10 more

```

### jOOQ Version

3.17.4

### Database product and version

N/A

### Java Version

N/A

### OS Version

N/A

### JDBC driver name and version (include name if unofficial driver)

N/A

|

1.0

|

Liquibase 4.17 compatibility - ### Expected behavior

The jOOQ Liquibase extension should be compatible with recent versions of Liquibase.

### Actual behavior

The jOOQ Liquibase extension uses `FileSystemResourceAccessor`, which was [removed](https://github.com/liquibase/liquibase/issues/3478) in Liquibase 4.17.

### Steps to reproduce the problem

Log output from an attempt to run the Gradle plugin `generateJooq` task:

```

matt @ ghost-s1 in ~/Workspace/github.com/mattupstate/acme (⎈k3d-acme-dev/default) on git:main+

$ ./gradlew acme-data:acme-data-sql:generateJooq

> Task :acme-data:acme-data-sql:generateJooq FAILED

Exception in thread "main" java.lang.NoClassDefFoundError: liquibase/resource/FileSystemResourceAccessor

at org.jooq.meta.extensions.liquibase.LiquibaseDatabase.export(LiquibaseDatabase.java:155)

at org.jooq.meta.extensions.AbstractInterpretingDatabase.connection(AbstractInterpretingDatabase.java:100)

at org.jooq.meta.extensions.AbstractInterpretingDatabase.create0(AbstractInterpretingDatabase.java:77)

at org.jooq.meta.AbstractDatabase.create(AbstractDatabase.java:369)

at org.jooq.meta.AbstractDatabase.create(AbstractDatabase.java:359)

at org.jooq.meta.AbstractDatabase.setConnection(AbstractDatabase.java:337)

at org.jooq.codegen.GenerationTool.run0(GenerationTool.java:553)

at org.jooq.codegen.GenerationTool.run(GenerationTool.java:240)

at org.jooq.codegen.GenerationTool.generate(GenerationTool.java:235)

at org.jooq.codegen.GenerationTool.main(GenerationTool.java:207)

Caused by: java.lang.ClassNotFoundException: liquibase.resource.FileSystemResourceAccessor

at java.base/jdk.internal.loader.BuiltinClassLoader.loadClass(BuiltinClassLoader.java:641)

at java.base/jdk.internal.loader.ClassLoaders$AppClassLoader.loadClass(ClassLoaders.java:188)

at java.base/java.lang.ClassLoader.loadClass(ClassLoader.java:520)

... 10 more

```

### jOOQ Version

3.17.4

### Database product and version

N/A

### Java Version

N/A

### OS Version

N/A

### JDBC driver name and version (include name if unofficial driver)

N/A

|

defect

|

liquibase compatibility expected behavior jooq liquibase extension to be compatible with recent version of liquibase actual behavior the jooq liquibase extension uses filesystemresourceaccessor which was in liquibase steps to reproduce the problem log output from an attempt to run the gradle plugin generatejooq task matt ghost in workspace github com mattupstate acme ⎈ acme dev default on git main gradlew acme data acme data sql generatejooq task acme data acme data sql generatejooq failed exception in thread main java lang noclassdeffounderror liquibase resource filesystemresourceaccessor at org jooq meta extensions liquibase liquibasedatabase export liquibasedatabase java at org jooq meta extensions abstractinterpretingdatabase connection abstractinterpretingdatabase java at org jooq meta extensions abstractinterpretingdatabase abstractinterpretingdatabase java at org jooq meta abstractdatabase create abstractdatabase java at org jooq meta abstractdatabase create abstractdatabase java at org jooq meta abstractdatabase setconnection abstractdatabase java at org jooq codegen generationtool generationtool java at org jooq codegen generationtool run generationtool java at org jooq codegen generationtool generate generationtool java at org jooq codegen generationtool main generationtool java caused by java lang classnotfoundexception liquibase resource filesystemresourceaccessor at java base jdk internal loader builtinclassloader loadclass builtinclassloader java at java base jdk internal loader classloaders appclassloader loadclass classloaders java at java base java lang classloader loadclass classloader java more jooq version database product and version n a java version n a os version n a jdbc driver name and version include name if unofficial driver n a

| 1

|

15,881

| 2,869,088,191

|

IssuesEvent

|

2015-06-05 23:14:17

|

dart-lang/sdk

|

https://api.github.com/repos/dart-lang/sdk

|

closed

|

Collapse together errors of the same kind

|

Area-Pkg Pkg-PolymerDevExp PolymerMilestone-Next Priority-Medium Triaged Type-Defect

|

It's common to see one warning/error repeated in many places. For example the href vs \_href might happen a lot now that we introduce the warning for it. It would be great to combine all occurrences of the same error together.

Some ideas:

- for the log_injector UI, we can combine it graphically.

- for the pub-build/pub-serve output in stdout, we can delay reporting warnings until the end of the phase, collect all warnings together and report them as such. At least we can do that on a file-per-file basis.
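
The per-file collapsing idea above can be pictured with the rough sketch below. It is only an illustration under stated assumptions: the entry shape (`file`, `level`, `message`, `line`) and the function name `collapseMessages` are hypothetical and are not taken from pub, the transformer API, or log_injector.

```javascript
// Hypothetical sketch: group log entries that share file, level and message,
// so each distinct warning is reported once with all of its line numbers.
function collapseMessages(entries) {
  const groups = new Map(); // a Map keeps first-seen order
  for (const { file, level, message, line } of entries) {
    // NUL separators keep the composite key unambiguous.
    const key = `${file}\u0000${level}\u0000${message}`;
    if (!groups.has(key)) {
      groups.set(key, { file, level, message, lines: [] });
    }
    groups.get(key).lines.push(line);
  }
  return [...groups.values()];
}

// Example input collected during one phase (made-up data).
const entries = [
  { file: 'web/index.html', level: 'warning', message: 'use _href instead of href', line: 3 },
  { file: 'web/index.html', level: 'warning', message: 'use _href instead of href', line: 10 },
  { file: 'web/index.html', level: 'warning', message: 'use _href instead of href', line: 42 },
  { file: 'web/index.html', level: 'error', message: 'unknown element', line: 7 },
];

const collapsed = collapseMessages(entries);
for (const group of collapsed) {
  console.log(`${group.level}: ${group.message} (${group.file}, lines ${group.lines.join(', ')})`);
}
```

Reporting would then happen once at the end of the phase, after all entries have been collected, rather than as each warning is produced.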

|

1.0

|

Collapse together errors of the same kind - It's common to see one warning/error repeated in many places. For example the href vs \_href might happen a lot now that we introduce the warning for it. It would be great to combine all occurrences of the same error together.

Some ideas:

- for the log_injector UI, we can combine it graphically.

- for the pub-build/pub-serve output in stdout, we can delay reporting warnings until the end of the phase, collect all warnings together and report them as such. At least we can do that on a file-per-file basis.

|

defect

|

collapse together errors of the same kind it s common to see one warning error repeated in many places for example the href vs href might happen a lot now that we introduce the warning for it it would be great to combine all occurrences of the same error together some ideas for the log injector ui we can combine it graphically for the pub build pub serve output in stdout we can delay reporting warnings until the end of the phase collect all warnings together and report them as such at least we can do that on a file per file basis

| 1

|

4,357

| 10,965,734,316

|

IssuesEvent

|

2019-11-28 04:13:34

|

fga-eps-mds/2019.2-Over26

|

https://api.github.com/repos/fga-eps-mds/2019.2-Over26

|

closed

|

Update the Architecture Document diagrams

|

Architecture Documentation EPS

|

## Change Description *

<!--- Provide a general summary of the _issue_ -->

The diagrams in the Architecture Document need to be brought in line with the project's current structure.

## Checklist *

<!-- This checklist helps in creating a good issue -->

<!-- If the issue is about a user story, its name must be "USXX - Story name"-->

<!-- If the issue is about a bug, its name must be "BF - Short bug name"-->

<!-- If the issue is about another task, the name must be a simple description of the task-->

- [x] This issue has a meaningful name.

- [x] The issue name follows the standard.

- [x] This issue has an easy-to-understand description.

- [x] This issue has well-defined acceptance criteria.

- [x] This issue has labels assigned.

- [ ] This issue is associated with a milestone.

- [ ] This issue has an estimated score.

## Tasks *

<!-- Add here the tasks required to complete the issue -->

- [x] Update class diagram

- [x] Update logical diagram

- [x] Update package diagram

## Acceptance Criteria *

<!-- List here the set of aspects required to consider the activity complete-->

<!-- The items will be added by the Product Owner -->

- [x] Diagrams updated.

|

1.0

|

Update the Architecture Document diagrams - ## Change Description *

<!--- Provide a general summary of the _issue_ -->

The diagrams in the Architecture Document need to be brought in line with the project's current structure.

## Checklist *

<!-- This checklist helps in creating a good issue -->

<!-- If the issue is about a user story, its name must be "USXX - Story name"-->

<!-- If the issue is about a bug, its name must be "BF - Short bug name"-->

<!-- If the issue is about another task, the name must be a simple description of the task-->

- [x] This issue has a meaningful name.

- [x] The issue name follows the standard.

- [x] This issue has an easy-to-understand description.

- [x] This issue has well-defined acceptance criteria.

- [x] This issue has labels assigned.

- [ ] This issue is associated with a milestone.

- [ ] This issue has an estimated score.

## Tasks *

<!-- Add here the tasks required to complete the issue -->

- [x] Update class diagram

- [x] Update logical diagram

- [x] Update package diagram

## Acceptance Criteria *

<!-- List here the set of aspects required to consider the activity complete-->

<!-- The items will be added by the Product Owner -->

- [x] Diagrams updated.

|

non_defect

|

update the architecture document diagrams change description the diagrams in the architecture document need to be brought in line with the project s current structure checklist this issue has a meaningful name the issue name follows the standard this issue has an easy to understand description this issue has well defined acceptance criteria this issue has labels assigned this issue is associated with a milestone this issue has an estimated score tasks update class diagram update logical diagram update package diagram acceptance criteria diagrams updated

| 0

|

44,751

| 12,372,216,940

|

IssuesEvent

|

2020-05-18 19:59:26

|

FoldingAtHome/fah-issues

|

https://api.github.com/repos/FoldingAtHome/fah-issues

|

closed

|

Dialog Box opened from FahCore22.exe, entry point clReleaseDevice could not be located

|

FAHClient defect

|

## Environment

* OS: Windows 7 Enterprise 64 build 7601 SP1

* FAH version: April 28, 2020

## What were you trying to do

Folding on FAH for Covid-19

## What happened

The NVIDIA GeForce GT 610 (GPU:0) hangs and a dialog box appears, titled "FahCore_22.exe - Entry Point Not Found", showing a white X in a red circle and the message: "The procedure entry point clReleaseDevice could not be located in the dynamic link library OpenCL.dll." Clicking OK dismisses the dialog, and a second dialog of the same type appears.

Clicking OK on that one turns the GPU process indicator solid yellow. This cycle then repeats over and over.

## To Reproduce

It happens every time.

|

1.0

|

Dialog Box opened from FahCore22.exe, entry point clReleaseDevice could not be located - ## Environment

* OS: Windows 7 Enterprise 64 build 7601 SP1

* FAH version: April 28, 2020

## What were you trying to do

Folding on FAH for Covid-19

## What happened

The NVIDIA GeForce GT 610 (GPU:0) hangs and a dialog box appears, titled "FahCore_22.exe - Entry Point Not Found", showing a white X in a red circle and the message: "The procedure entry point clReleaseDevice could not be located in the dynamic link library OpenCL.dll." Clicking OK dismisses the dialog, and a second dialog of the same type appears.

Clicking OK on that one turns the GPU process indicator solid yellow. This cycle then repeats over and over.

## To Reproduce

It happens every time.

|

defect

|

dialog box opened from exe entry point clreleasedevice could not be located environment os windows enterprise build fah version april what were you trying to do folding on fah for covid what happened nvidia geoforce gt as gpu hangs throws dialog box fahcore exe entry point not found white x in red circle the procedure entry point clreleasedevice could not be located in the dynamic link library opencl dll ok the dialog box and a second box is thrown of same type ok that one and gpu process indicator turns to solid yellow and this cycle continues over and over to reproduce just keeps happening each time

| 1

|

46,293

| 24,466,746,399

|

IssuesEvent

|

2022-10-07 15:39:27

|

Kitware/vtk-js

|

https://api.github.com/repos/Kitware/vtk-js

|

closed

|

Setting a model variable as object always trigger modified()

|

type: bug 🐞 type: performance ⚡️

|

### High-level description

If a setter is created with `macro.set(publicAPI, model, ['foo']);` and `foo` is an `Object` (e.g. `{a: 1, b: 2}`), then calling `setFoo({a: 1, b: 2})` will always trigger `modified()`, even when the new value has the same contents as the current one.

### Steps to reproduce

```

extend(...){

macro.set(publicAPI, model, ['foo']);

}

...

myobj.setFoo({a: 1, b: 2});

myobj.getMTime(); // =>1234

myobj.setFoo({a: 1, b: 2});

myobj.getMTime(); // =>1235

```

See [here](https://github.com/Kitware/vtk-js/blob/master/Sources/Widgets/Core/WidgetManager/index.js#L239) for a resulting performance hit: a WidgetRepresentation is modified each time the mouse moves, which always triggers `requestData()` on that widget representation.

### Detailed behavior

This is because `macro.setter()` performs a strict inequality check, `if (model[field] !== value) {`, which for objects compares references rather than contents, instead of a deep-equality check.

### Expected behavior

I can see 2 options:

- Add a deep-equality check in `macro.setter()` when `value` is an object

- Create a `macro.setObject(publicAPI, model, ['foo']);` convenience setter, similar to `macro.setArray()`
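
Either option comes down to comparing contents instead of references before calling `modified()`. The sketch below illustrates that idea in plain JavaScript. It is not vtk.js code: `deepEqual` and `makeObjectSetter` are hypothetical names, the modification counter stands in for the MTime, and cyclic objects are not handled.

```javascript
// Hypothetical helper: structural comparison of plain objects and arrays.
// Assumes acyclic data; cyclic structures would recurse forever.
function deepEqual(a, b) {
  if (a === b) return true;
  if (typeof a !== 'object' || typeof b !== 'object' || a === null || b === null) {
    return false;
  }
  if (Array.isArray(a) !== Array.isArray(b)) return false;
  const keysA = Object.keys(a);
  const keysB = Object.keys(b);
  if (keysA.length !== keysB.length) return false;
  return keysA.every(
    (k) => Object.prototype.hasOwnProperty.call(b, k) && deepEqual(a[k], b[k])
  );
}

// Hypothetical setter factory: only stores the value and notifies
// when the contents actually changed.
function makeObjectSetter(model, field, onModified) {
  return (value) => {
    if (deepEqual(model[field], value)) return false;
    model[field] = value;
    onModified();
    return true;
  };
}

// Stand-in for an object with an MTime-like counter.
const model = { foo: null };
let mtime = 0;
const setFoo = makeObjectSetter(model, 'foo', () => { mtime += 1; });

setFoo({ a: 1, b: 2 }); // contents differ from null: counter is bumped
setFoo({ a: 1, b: 2 }); // same contents, new reference: counter is not bumped
setFoo({ a: 1, b: 3 }); // contents differ: counter is bumped again
```

The trade-off is that every call now pays for a traversal of the object, so a dedicated object setter may be preferable to changing the generic setter for all fields.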

### Environment

- **vtk.js**: master

|

True

|

Setting a model variable as object always trigger modified() - ### High-level description

If a setter is created with `macro.set(publicAPI, model, ['foo']);` and `foo` is an `Object` (e.g. `{a: 1, b: 2}`), then calling `setFoo({a: 1, b: 2})` will always trigger `modified()`, even when the new value has the same contents as the current one.

### Steps to reproduce

```

extend(...){

macro.set(publicAPI, model, ['foo']);

}

...

myobj.setFoo({a: 1, b: 2});

myobj.getMTime(); // =>1234

myobj.setFoo({a: 1, b: 2});

myobj.getMTime(); // =>1235

```

See [here](https://github.com/Kitware/vtk-js/blob/master/Sources/Widgets/Core/WidgetManager/index.js#L239) for a resulting performance hit: a WidgetRepresentation is modified each time the mouse moves, which always triggers `requestData()` on that widget representation.

### Detailed behavior

This is because `macro.setter()` performs a strict inequality check, `if (model[field] !== value) {`, which for objects compares references rather than contents, instead of a deep-equality check.

### Expected behavior

I can see 2 options:

- Add a deep-equality check in `macro.setter()` when `value` is an object

- Create a `macro.setObject(publicAPI, model, ['foo']);` convenience setter, similar to `macro.setArray()`

### Environment

- **vtk.js**: master

|

non_defect

|

setting a model variable as object always trigger modified high level description if macro set publicapi model and foo is an object e g a b then calling setfoo a b will always trigger modified steps to reproduce extend macro set publicapi model myobj setfoo a b myobj getmtime myobj setfoo a b myobj getmtime see for a performance hit that modifies a widgetrepresentation each time the mouse is moved which will always requestdata on the widget representation detailed behavior this is because macro setter does a shallow equal comparison if model value instead of a deep equal expected behavior i can see options add deep equal check if value is an object in macro setter create macro setobject publicapi model convenient setter similar to macro setarray environment vtk js master

| 0

|

47,552

| 13,056,241,652

|

IssuesEvent

|

2020-07-30 04:05:51

|

icecube-trac/tix2

|

https://api.github.com/repos/icecube-trac/tix2

|

closed

|

steamshovel file open slowness (Trac #735)

|

Migrated from Trac combo core defect

|

"After investigating this problem a bit more, we found out how to get around the slowness we've been experiencing when using steamshovel in the offline-software meta-project. When we just run steamshovel, without passing the files in the command line, but opening them from the GUI, things work fine. When loading the files from command line, it goes very slowly."

Possibly something different between cmd line loading and file menu loading.

Migrated from https://code.icecube.wisc.edu/ticket/735

```json

{

"status": "closed",

"changetime": "2015-07-10T14:08:08",

"description": "\"After investigating this problem a bit more, we found out how to get around the slowness we've been experiencing when using steamshovel in the offline-software meta-project. When we just run steamshovel, without passing the files in the command line, but opening them from the GUI, things work fine. When loading the files from command line, it goes very slowly.\"\n\nPossibly something different between cmd line loading and file menu loading.",

"reporter": "david.schultz",

"cc": "",

"resolution": "fixed",

"_ts": "1436537288260585",

"component": "combo core",

"summary": "steamshovel file open slowness",

"priority": "normal",

"keywords": "",

"time": "2014-08-13T15:04:32",

"milestone": "",

"owner": "hdembinski",

"type": "defect"

}

```

|

1.0

|

steamshovel file open slowness (Trac #735) - "After investigating this problem a bit more, we found out how to get around the slowness we've been experiencing when using steamshovel in the offline-software meta-project. When we just run steamshovel, without passing the files in the command line, but opening them from the GUI, things work fine. When loading the files from command line, it goes very slowly."

Possibly something different between cmd line loading and file menu loading.

Migrated from https://code.icecube.wisc.edu/ticket/735

```json

{

"status": "closed",

"changetime": "2015-07-10T14:08:08",

"description": "\"After investigating this problem a bit more, we found out how to get around the slowness we've been experiencing when using steamshovel in the offline-software meta-project. When we just run steamshovel, without passing the files in the command line, but opening them from the GUI, things work fine. When loading the files from command line, it goes very slowly.\"\n\nPossibly something different between cmd line loading and file menu loading.",

"reporter": "david.schultz",

"cc": "",

"resolution": "fixed",

"_ts": "1436537288260585",

"component": "combo core",

"summary": "steamshovel file open slowness",

"priority": "normal",

"keywords": "",

"time": "2014-08-13T15:04:32",

"milestone": "",

"owner": "hdembinski",

"type": "defect"

}

```

|

defect

|

steamshovel file open slowness trac after investigating this problem a bit more we found out how to get around the slowness we ve been experiencing when using steamshovel in the offline software meta project when we just run steamshovel without passing the files in the command line but opening them from the gui things work fine when loading the files from command line it goes very slowly possibly something different between cmd line loading and file menu loading migrated from json status closed changetime description after investigating this problem a bit more we found out how to get around the slowness we ve been experiencing when using steamshovel in the offline software meta project when we just run steamshovel without passing the files in the command line but opening them from the gui things work fine when loading the files from command line it goes very slowly n npossibly something different between cmd line loading and file menu loading reporter david schultz cc resolution fixed ts component combo core summary steamshovel file open slowness priority normal keywords time milestone owner hdembinski type defect

| 1

|

21,020

| 3,442,784,529

|

IssuesEvent

|

2015-12-15 00:20:22

|

prettydiff/prettydiff

|

https://api.github.com/repos/prettydiff/prettydiff

|

closed

|

Conditions in tags get rearranged in twig templates

|

Defect QA

|

I've been using atom-beautify on twig templates, only to realize that it messed up some logic parts of the templates.

When beautifying twig templates, conditions inside of tags get interpreted as attributes, changing **if [...] endif** to **endif [...] if**.

```twig

<html>

<body>

<a href="/linktarget.html" {% if active %}class="active"{% endif %}>linktext</a>

</body>

</html>

```

turns into

```twig

<html>

<body>

<a class="active" href="/linktarget.html" {% endif %} {% if active %}>linktext</a>

</body>

</html>

```

|

1.0

|

Conditions in tags get rearranged in twig templates - I've been using atom-beautify on twig templates, only to realize that it messed up some logic parts of the templates.

When beautifying twig templates, conditions inside of tags get interpreted as attributes, changing **if [...] endif** to **endif [...] if**.

```twig

<html>

<body>

<a href="/linktarget.html" {% if active %}class="active"{% endif %}>linktext</a>

</body>

</html>

```

turns into

```twig

<html>

<body>

<a class="active" href="/linktarget.html" {% endif %} {% if active %}>linktext</a>

</body>

</html>

```

|

defect

|

conditions in tags get rearranged in twig templates i ve been using atom beautify on twig templates only to realize that it messed up some logic parts of the templates when beautifying twig templates conditions inside of tags get interpreted as attributes changing if endif to endif if twig linktext turns into twig linktext

| 1

|

290,241

| 32,045,665,693

|

IssuesEvent

|

2023-09-23 01:34:13

|

Chiencc/asuswrt-gt-ac5300

|

https://api.github.com/repos/Chiencc/asuswrt-gt-ac5300

|

reopened

|

jquery.mobile-1.4.5.min.js: 1 vulnerabilities (highest severity is: 6.5)

|

Mend: dependency security vulnerability

|

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery.mobile-1.4.5.min.js</b></p></summary>

<p>Touch-Optimized Web Framework for Smartphones & Tablets</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery-mobile/1.4.5/jquery.mobile.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery-mobile/1.4.5/jquery.mobile.min.js</a></p>

<p>Path to dependency file: /release/src/router/www/sysdep/VZW-AC1300/www/QIS_wizard.htm</p>

<p>Path to vulnerable library: /release/src/router/www/sysdep/BLUECAVE/www/mobile/js/jquery.mobile.js,/release/src/router/www/sysdep/VZW-AC1300/www/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC1300/www/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC2200/www/js/jquery.mobile.js,/release/src/router/www/sysdep/BLUECAVE/www/./mobile/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC1300/www/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC1750/www/js/jquery.mobile.js</p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/Chiencc/asuswrt-gt-ac5300/commit/0c45ce909374d16605095db4fce9a89b9b6bafd5">0c45ce909374d16605095db4fce9a89b9b6bafd5</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (jquery.mobile version) | Remediation Possible** |

| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |

| [WS-2019-0136](https://github.com/jquery/jquery-mobile/commit/b0d9cc758a48f13321750d7409fb7655dcdf2b50) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Medium | 6.5 | jquery.mobile-1.4.5.min.js | Direct | BMC.NET - 1.0.3;org.webjars:jquery-mobile - 1.3.0-1,1.4.3;jquery.mobile - 1.3.0;jQWidgets_Framework - 8.0.0,6.0.6 | ❌ |

<p>**In some cases, Remediation PR cannot be created automatically for a vulnerability despite the availability of remediation</p>

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> WS-2019-0136</summary>

### Vulnerable Library - <b>jquery.mobile-1.4.5.min.js</b></p>

<p>Touch-Optimized Web Framework for Smartphones & Tablets</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery-mobile/1.4.5/jquery.mobile.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery-mobile/1.4.5/jquery.mobile.min.js</a></p>

<p>Path to dependency file: /release/src/router/www/sysdep/VZW-AC1300/www/QIS_wizard.htm</p>

<p>Path to vulnerable library: /release/src/router/www/sysdep/BLUECAVE/www/mobile/js/jquery.mobile.js,/release/src/router/www/sysdep/VZW-AC1300/www/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC1300/www/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC2200/www/js/jquery.mobile.js,/release/src/router/www/sysdep/BLUECAVE/www/./mobile/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC1300/www/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC1750/www/js/jquery.mobile.js</p>

<p>

Dependency Hierarchy:

- :x: **jquery.mobile-1.4.5.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/Chiencc/asuswrt-gt-ac5300/commit/0c45ce909374d16605095db4fce9a89b9b6bafd5">0c45ce909374d16605095db4fce9a89b9b6bafd5</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

All versions of jQuery Mobile contain an open redirect that can lead to cross-site scripting when the endpoint reflects user input.

<p>Publish Date: 2019-06-13</p>

<p>URL: <a href="https://github.com/jquery/jquery-mobile/commit/b0d9cc758a48f13321750d7409fb7655dcdf2b50">WS-2019-0136</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>6.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>
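
The 6.5 base score can be reproduced from the metrics listed above. The following is a minimal sketch, assuming the metric weights and formula published in the FIRST CVSS v3.0 specification and handling only the Scope: Unchanged case. The names `base_score` and `round_up` are illustrative and are not part of the WhiteSource report or of any library; the rounding helper uses integer arithmetic to sidestep floating-point error, which is an implementation choice rather than something the report prescribes.

```python
import math

# Metric weights as published in the CVSS v3.0 specification.
# Privileges Required weights below apply to Scope: Unchanged only.
ATTACK_VECTOR = {"Network": 0.85, "Adjacent": 0.62, "Local": 0.55, "Physical": 0.2}
ATTACK_COMPLEXITY = {"Low": 0.77, "High": 0.44}
PRIVILEGES_REQUIRED = {"None": 0.85, "Low": 0.62, "High": 0.27}
USER_INTERACTION = {"None": 0.85, "Required": 0.62}
IMPACT = {"High": 0.56, "Low": 0.22, "None": 0.0}


def round_up(value):
    """Round up to one decimal place, working on integers to avoid float noise."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score(av, ac, pr, ui, conf, integ, avail):
    """Base score for a vector with Scope: Unchanged (hypothetical helper)."""
    # Impact sub-score: combine the three impact metrics.
    iss = 1 - (1 - IMPACT[conf]) * (1 - IMPACT[integ]) * (1 - IMPACT[avail])
    impact = 6.42 * iss
    # Exploitability sub-score: product of the four exploitability weights.
    exploitability = (
        8.22
        * ATTACK_VECTOR[av]
        * ATTACK_COMPLEXITY[ac]
        * PRIVILEGES_REQUIRED[pr]
        * USER_INTERACTION[ui]
    )
    if impact <= 0:
        return 0.0
    return round_up(min(impact + exploitability, 10))


# Metrics reported for WS-2019-0136 in this issue.
print(base_score("Network", "Low", "None", "Required", "High", "None", "None"))  # 6.5
```

With these inputs the impact sub-score is about 3.60 and the exploitability sub-score about 2.84, so the sum of roughly 6.43 rounds up to 6.5, which matches the "Medium" rating in the table.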

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/WS-2019-0136">https://nvd.nist.gov/vuln/detail/WS-2019-0136</a></p>

<p>Release Date: 2019-06-13</p>

<p>Fix Resolution: BMC.NET - 1.0.3;org.webjars:jquery-mobile - 1.3.0-1,1.4.3;jquery.mobile - 1.3.0;jQWidgets_Framework - 8.0.0,6.0.6</p>

</p>

<p></p>

</details>

|

True

|

jquery.mobile-1.4.5.min.js: 1 vulnerabilities (highest severity is: 6.5) - <details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery.mobile-1.4.5.min.js</b></p></summary>

<p>Touch-Optimized Web Framework for Smartphones & Tablets</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery-mobile/1.4.5/jquery.mobile.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery-mobile/1.4.5/jquery.mobile.min.js</a></p>

<p>Path to dependency file: /release/src/router/www/sysdep/VZW-AC1300/www/QIS_wizard.htm</p>

<p>Path to vulnerable library: /release/src/router/www/sysdep/BLUECAVE/www/mobile/js/jquery.mobile.js,/release/src/router/www/sysdep/VZW-AC1300/www/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC1300/www/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC2200/www/js/jquery.mobile.js,/release/src/router/www/sysdep/BLUECAVE/www/./mobile/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC1300/www/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC1750/www/js/jquery.mobile.js</p>

<p>

<p>Found in HEAD commit: <a href="https://github.com/Chiencc/asuswrt-gt-ac5300/commit/0c45ce909374d16605095db4fce9a89b9b6bafd5">0c45ce909374d16605095db4fce9a89b9b6bafd5</a></p></details>

## Vulnerabilities

| CVE | Severity | <img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS | Dependency | Type | Fixed in (jquery.mobile version) | Remediation Possible** |
| ------------- | ------------- | ----- | ----- | ----- | ------------- | --- |
| [WS-2019-0136](https://github.com/jquery/jquery-mobile/commit/b0d9cc758a48f13321750d7409fb7655dcdf2b50) | <img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Medium | 6.5 | jquery.mobile-1.4.5.min.js | Direct | BMC.NET - 1.0.3;org.webjars:jquery-mobile - 1.3.0-1,1.4.3;jquery.mobile - 1.3.0;jQWidgets_Framework - 8.0.0,6.0.6 | ❌ |

<p>**In some cases, a remediation PR cannot be created automatically for a vulnerability even though a remediation is available.</p>

## Details

<details>

<summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> WS-2019-0136</summary>

### Vulnerable Library - <b>jquery.mobile-1.4.5.min.js</b></p>

<p>Touch-Optimized Web Framework for Smartphones & Tablets</p>

<p>Library home page: <a href="https://cdnjs.cloudflare.com/ajax/libs/jquery-mobile/1.4.5/jquery.mobile.min.js">https://cdnjs.cloudflare.com/ajax/libs/jquery-mobile/1.4.5/jquery.mobile.min.js</a></p>

<p>Path to dependency file: /release/src/router/www/sysdep/VZW-AC1300/www/QIS_wizard.htm</p>

<p>Path to vulnerable library: /release/src/router/www/sysdep/BLUECAVE/www/mobile/js/jquery.mobile.js,/release/src/router/www/sysdep/VZW-AC1300/www/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC1300/www/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC2200/www/js/jquery.mobile.js,/release/src/router/www/sysdep/BLUECAVE/www/./mobile/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC1300/www/js/jquery.mobile.js,/release/src/router/www/sysdep/MAP-AC1750/www/js/jquery.mobile.js</p>

<p>

Dependency Hierarchy:

- :x: **jquery.mobile-1.4.5.min.js** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/Chiencc/asuswrt-gt-ac5300/commit/0c45ce909374d16605095db4fce9a89b9b6bafd5">0c45ce909374d16605095db4fce9a89b9b6bafd5</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

<p></p>

### Vulnerability Details

<p>

All versions of jQuery Mobile contain an open redirect that can lead to cross-site scripting when the endpoint reflects user input.

<p>Publish Date: 2019-06-13</p>

<p>URL: <a href="https://github.com/jquery/jquery-mobile/commit/b0d9cc758a48f13321750d7409fb7655dcdf2b50">WS-2019-0136</a></p>

</p>

<p></p>

### CVSS 3 Score Details (<b>6.5</b>)

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

<p></p>

### Suggested Fix

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/WS-2019-0136">https://nvd.nist.gov/vuln/detail/WS-2019-0136</a></p>

<p>Release Date: 2019-06-13</p>

<p>Fix Resolution: BMC.NET - 1.0.3;org.webjars:jquery-mobile - 1.3.0-1,1.4.3;jquery.mobile - 1.3.0;jQWidgets_Framework - 8.0.0,6.0.6</p>

</p>

<p></p>

</details>

|

non_defect

|