Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

44,687 | 12,325,641,114 | IssuesEvent | 2020-05-13 15:19:54 | department-of-veterans-affairs/va.gov-cms | https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms | closed | CMS Files/EFS Backup failing | Content export Defect DevOps Unplanned work | Last 2 builds failed with:

> fatal: [localhost]: FAILED! => {"changed": false, "msg": "Error mounting /efs: mount.nfs4: Connection timed out\n"}

http://jenkins.vfs.va.gov/job/cms/job/cms-efs-backup-prod/

- [x] Determine if flicker, run again

- [x] Create devops PR to:

1. Retain 7 days of builds, not a limit of 5 (current)

1. Notify #cms-team Slack channel on failure

- [x] Merge PR https://github.com/department-of-veterans-affairs/devops/pull/6641 | 1.0 | CMS Files/EFS Backup failing - Last 2 builds failed with:

> fatal: [localhost]: FAILED! => {"changed": false, "msg": "Error mounting /efs: mount.nfs4: Connection timed out\n"}

http://jenkins.vfs.va.gov/job/cms/job/cms-efs-backup-prod/

- [x] Determine if flicker, run again

- [x] Create devops PR to:

1. Retain 7 days of builds, not a limit of 5 (current)

1. Notify #cms-team Slack channel on failure

- [x] Merge PR https://github.com/department-of-veterans-affairs/devops/pull/6641 | defect | cms files efs backup failing last builds failed with fatal failed changed false msg error mounting efs mount connection timed out n determine if flicker run again create devops pr to retain days of builds not a limit of current notify cms team slack channel on failure merge pr | 1 |

74,825 | 25,347,594,354 | IssuesEvent | 2022-11-19 11:38:29 | BOINC/boinc | https://api.github.com/repos/BOINC/boinc | closed | Cannot close BOINC when in fullscreen | C: Manager P: Undetermined T: Defect Validate | **Describe the bug**

Cannot close the BOINC Manager window when in fullscreen (at least on macOS, I don't know about other OS's). It works when BOINC isn't in fullscreen though.

**Steps To Reproduce**

1. Open BOINC Manager and press the fullscreen button (the green one at the top-left of the window)

2. Try to close the window (either by pressing the red X button at the top-left of the window or by pressing Command+W)

3. You will be switched to another window/app

4. Go back to see that BOINC is actually still open

5. Try to close the window again

6. This time, nothing happens, and you're not switched to another window/app

**Expected behavior**

BOINC manager should just be closed.

**System Information**

- OS: macOS 12.6

- BOINC Version: 7.20.2 | 1.0 | Cannot close BOINC when in fullscreen - **Describe the bug**

Cannot close the BOINC Manager window when in fullscreen (at least on macOS, I don't know about other OS's). It works when BOINC isn't in fullscreen though.

**Steps To Reproduce**

1. Open BOINC Manager and press the fullscreen button (the green one at the top-left of the window)

2. Try to close the window (either by pressing the red X button at the top-left of the window or by pressing Command+W)

3. You will be switched to another window/app

4. Go back to see that BOINC is actually still open

5. Try to close the window again

6. This time, nothing happens, and you're not switched to another window/app

**Expected behavior**

BOINC manager should just be closed.

**System Information**

- OS: macOS 12.6

- BOINC Version: 7.20.2 | defect | cannot close boinc when in fullscreen describe the bug cannot close the boinc manager window when in fullscreen at least on macos i don t know about other os s it works when boinc isn t in fullscreen though steps to reproduce open boinc manager and press the fullscreen button the green one at the top left of the window try to close the window either by pressing the red x button at the top left of the window or by pressing command w you will be switched to another window app go back to see that boinc is actually still open try to close the window again this time nothing happens and you re not switched to another window app expected behavior boinc manager should just be closed system information os macos boinc version | 1 |

808,232 | 30,051,276,438 | IssuesEvent | 2023-06-28 00:49:45 | apcountryman/picolibrary | https://api.github.com/repos/apcountryman/picolibrary | opened | Add Adafruit PID781 custom character ID | priority-normal status-awaiting_development type-feature | Add Adafruit PID781 custom character ID (`::picolibrary::Adafruit::PID781::Custom_Character_ID`).

- [ ] The `Custom_Character_ID` enum class should be defined in the `include/picolibrary/adafruit/pid781.h`/`source/picolibrary/adafruit/pid781.cc` header/source file pair

- [ ] The `Custom_Character_ID` enum class should have an underlying type of `std::uint8_t`

- [ ] The `Custom_Character_ID` enum class should have the following enumerators:

- [ ] `_0 = 0`: 0

- [ ] `_1 = 1`: 1

- [ ] `_2 = 2`: 2

- [ ] `_3 = 3`: 3

- [ ] `_4 = 4`: 4

- [ ] `_5 = 5`: 5

- [ ] `_6 = 6`: 6

- [ ] `_7 = 7`: 7

- [ ] A `std::ostream` insertion operator should be defined for the `Custom_Character_ID` enum class to support automated testing

- [ ] The `Custom_Character_ID` enum class `std::ostream` insertion operator should be defined in the `include/picolibrary/testing/automated/adafruit/pid781.h`/`source/picolibrary/testing/automated/adafruit/pid781.cc` header/source file pair

- [ ] `::picolibrary::Output_Formatter` should be specialized for `Custom_Character_ID`

- [ ] The `::picolibrary::Output_Formatter<Adafruit::PID781::Custom_Character_ID>` specialization should be defined in the `include/picolibrary/adafruit/pid781.h`/`source/picolibrary/adafruit/pid781.cc` header/source file pair

- [ ] The `::picolibrary::Output_Formatter<Adafruit::PID781::Custom_Character_ID>` specialization should have automated tests

- [ ] The `::picolibrary::Output_Formatter<Adafruit::PID781::Custom_Character_ID>` specialization should support the following operations:

- [ ] `constexpr Output_Formatter() noexcept = default;`

- [ ] `constexpr Output_Formatter( Output_Formatter && source ) noexcept = default;`

- [ ] `constexpr Output_Formatter( Output_Formatter const & original ) noexcept = default;`

- [ ] `~Output_Formatter() noexcept = default;`

- [ ] `constexpr auto operator=( Output_Formatter && expression ) noexcept -> Output_Formatter & = default;`

- [ ] `constexpr auto operator=( Output_Formatter const & expression ) noexcept -> Output_Formatter & = default;`

- [ ] `auto print( Reliable_Output_Stream & stream, Adafruit::PID781::Custom_Character_ID custom_character_id ) const noexcept -> std::size_t;`: Write the formatted custom character ID to the stream

- [ ] Update documentation | 1.0 | Add Adafruit PID781 custom character ID - Add Adafruit PID781 custom character ID (`::picolibrary::Adafruit::PID781::Custom_Character_ID`).

- [ ] The `Custom_Character_ID` enum class should be defined in the `include/picolibrary/adafruit/pid781.h`/`source/picolibrary/adafruit/pid781.cc` header/source file pair

- [ ] The `Custom_Character_ID` enum class should have an underlying type of `std::uint8_t`

- [ ] The `Custom_Character_ID` enum class should have the following enumerators:

- [ ] `_0 = 0`: 0

- [ ] `_1 = 1`: 1

- [ ] `_2 = 2`: 2

- [ ] `_3 = 3`: 3

- [ ] `_4 = 4`: 4

- [ ] `_5 = 5`: 5

- [ ] `_6 = 6`: 6

- [ ] `_7 = 7`: 7

- [ ] A `std::ostream` insertion operator should be defined for the `Custom_Character_ID` enum class to support automated testing

- [ ] The `Custom_Character_ID` enum class `std::ostream` insertion operator should be defined in the `include/picolibrary/testing/automated/adafruit/pid781.h`/`source/picolibrary/testing/automated/adafruit/pid781.cc` header/source file pair

- [ ] `::picolibrary::Output_Formatter` should be specialized for `Custom_Character_ID`

- [ ] The `::picolibrary::Output_Formatter<Adafruit::PID781::Custom_Character_ID>` specialization should be defined in the `include/picolibrary/adafruit/pid781.h`/`source/picolibrary/adafruit/pid781.cc` header/source file pair

- [ ] The `::picolibrary::Output_Formatter<Adafruit::PID781::Custom_Character_ID>` specialization should have automated tests

- [ ] The `::picolibrary::Output_Formatter<Adafruit::PID781::Custom_Character_ID>` specialization should support the following operations:

- [ ] `constexpr Output_Formatter() noexcept = default;`

- [ ] `constexpr Output_Formatter( Output_Formatter && source ) noexcept = default;`

- [ ] `constexpr Output_Formatter( Output_Formatter const & original ) noexcept = default;`

- [ ] `~Output_Formatter() noexcept = default;`

- [ ] `constexpr auto operator=( Output_Formatter && expression ) noexcept -> Output_Formatter & = default;`

- [ ] `constexpr auto operator=( Output_Formatter const & expression ) noexcept -> Output_Formatter & = default;`

- [ ] `auto print( Reliable_Output_Stream & stream, Adafruit::PID781::Custom_Character_ID custom_character_id ) const noexcept -> std::size_t;`: Write the formatted custom character ID to the stream

- [ ] Update documentation | non_defect | add adafruit custom character id add adafruit custom character id picolibrary adafruit custom character id the custom character id enum class should be defined in the include picolibrary adafruit h source picolibrary adafruit cc header source file pair the custom character id enum class should have an underlying type of std t the custom character id enum class should have the following enumerators a std ostream insertion operator should be defined for the custom character id enum class to support automated testing the custom character id enum class std ostream insertion operator should be defined in the include picolibrary testing automated adafruit h source picolibrary testing automated adafruit cc header source file pair picolibrary output formatter should be specialized for custom character id the picolibrary output formatter specialization should be defined in the include picolibrary adafruit h source picolibrary adafruit cc header source file pair the picolibrary output formatter specialization should have automated tests the picolibrary output formatter specialization should support the following operations constexpr output formatter noexcept default constexpr output formatter output formatter source noexcept default constexpr output formatter output formatter const original noexcept default output formatter noexcept default constexpr auto operator output formatter expression noexcept output formatter default constexpr auto operator output formatter const expression noexcept output formatter default auto print reliable output stream stream adafruit custom character id custom character id const noexcept std size t write the formatted custom character id to the stream update documentation | 0 |

39,104 | 9,204,023,190 | IssuesEvent | 2019-03-08 05:30:59 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | Only one ModuleDependencyAttribute is used | defect in-progress | I'm using a module with automatic dependency resolution. However, there are dependencies that can't be resolved by the compiler as they come from a view. When I try to add those dependencies by hand by adding several ModuleDependencyAttributes, only the first one is taken into account; the rest are ignored.

This happens in v17.6.0 and it is a big showstopper for me.

### Steps To Reproduce

https://deck.net/084ed83fae9118f4d0630436a5fb8a3f

```csharp

public class Program
{
    public static void Main()
    {
        Module1.Test1();
    }
}

[Module(ModuleType.AMD, "Module1", true, ExportAsNamespace = "Mod1")]
[Name("Module1")]
[ModuleDependency("Module2")]
[ModuleDependency("Module3")]
[ModuleDependency("Module4")]
public static class Module1
{
    public extern static void Test1();
}

```

### Expected Result

```js

Bridge.assembly("Demo", function ($asm, globals) {
    "use strict";

    require(["Module1"], function (Mod1) {
        Bridge.define("Demo.Program", {
            $metadata : function () { return {"att":1048577,"a":2,"m":[{"a":2,"isSynthetic":true,"n":".ctor","t":1,"sn":"ctor"},{"a":2,"n":"Main","is":true,"t":8,"sn":"Main","rt":System.Void}]}; },
            main: function Main () {
                Module1.Test1();
            }
        });
        Bridge.init();
    });

    define("Module1", ["Module2", "Module3", "Module4"], function (Module2, Module3, Module4) {
        var Module1 = { };
        Bridge.define("Module1", {
            $metadata : function () { return {"att":1048961,"a":2,"s":true,"m":[{"a":2,"n":"Test1","is":true,"t":8,"sn":"Test1","rt":System.Void}]}; },
            $scope: Module1
        });
        return Module1;
    });
});

```

### Actual Result

```js

Bridge.assembly("Demo", function ($asm, globals) {
    "use strict";

    require(["Module1"], function (Mod1) {
        Bridge.define("Demo.Program", {
            $metadata : function () { return {"att":1048577,"a":2,"m":[{"a":2,"isSynthetic":true,"n":".ctor","t":1,"sn":"ctor"},{"a":2,"n":"Main","is":true,"t":8,"sn":"Main","rt":System.Void}]}; },
            main: function Main () {
                Module1.Test1();
            }
        });
        Bridge.init();
    });

    define("Module1", ["Module2"], function (Module2) {
        var Module1 = { };
        Bridge.define("Module1", {
            $metadata : function () { return {"att":1048961,"a":2,"s":true,"m":[{"a":2,"n":"Test1","is":true,"t":8,"sn":"Test1","rt":System.Void}]}; },
            $scope: Module1
        });
        return Module1;
    });
});

```

| 1.0 | Only one ModuleDependencyAttribute is used - I'm using a module with automatic dependency resolution. However, there are dependencies that can't be resolved by the compiler as they come from a view. When I try to add those dependencies by hand by adding several ModuleDependencyAttributes, only the first one is taken into account; the rest are ignored.

This happens in v17.6.0 and it is a big showstopper for me.

### Steps To Reproduce

https://deck.net/084ed83fae9118f4d0630436a5fb8a3f

```csharp

public class Program
{
    public static void Main()
    {
        Module1.Test1();
    }
}

[Module(ModuleType.AMD, "Module1", true, ExportAsNamespace = "Mod1")]
[Name("Module1")]
[ModuleDependency("Module2")]
[ModuleDependency("Module3")]
[ModuleDependency("Module4")]
public static class Module1
{
    public extern static void Test1();
}

```

### Expected Result

```js

Bridge.assembly("Demo", function ($asm, globals) {
    "use strict";

    require(["Module1"], function (Mod1) {
        Bridge.define("Demo.Program", {
            $metadata : function () { return {"att":1048577,"a":2,"m":[{"a":2,"isSynthetic":true,"n":".ctor","t":1,"sn":"ctor"},{"a":2,"n":"Main","is":true,"t":8,"sn":"Main","rt":System.Void}]}; },
            main: function Main () {
                Module1.Test1();
            }
        });
        Bridge.init();
    });

    define("Module1", ["Module2", "Module3", "Module4"], function (Module2, Module3, Module4) {
        var Module1 = { };
        Bridge.define("Module1", {
            $metadata : function () { return {"att":1048961,"a":2,"s":true,"m":[{"a":2,"n":"Test1","is":true,"t":8,"sn":"Test1","rt":System.Void}]}; },
            $scope: Module1
        });
        return Module1;
    });
});

```

### Actual Result

```js

Bridge.assembly("Demo", function ($asm, globals) {
    "use strict";

    require(["Module1"], function (Mod1) {
        Bridge.define("Demo.Program", {
            $metadata : function () { return {"att":1048577,"a":2,"m":[{"a":2,"isSynthetic":true,"n":".ctor","t":1,"sn":"ctor"},{"a":2,"n":"Main","is":true,"t":8,"sn":"Main","rt":System.Void}]}; },
            main: function Main () {
                Module1.Test1();
            }
        });
        Bridge.init();
    });

    define("Module1", ["Module2"], function (Module2) {
        var Module1 = { };
        Bridge.define("Module1", {
            $metadata : function () { return {"att":1048961,"a":2,"s":true,"m":[{"a":2,"n":"Test1","is":true,"t":8,"sn":"Test1","rt":System.Void}]}; },
            $scope: Module1
        });
        return Module1;
    });
});

```

| defect | only one moduledependencyattribute is used i m using a module with automatic dependency resolution however there are dependencies that can t be resolved by the compiler as they come from a view when i try to add those dependencies by hand adding several moduledependencyattributes it only takes the first one in account ignoring the rest this happens in and it is a big showstopper for me steps to reproduce csharp public class program public static void main public static class public extern static void expected result js bridge assembly demo function asm globals use strict require function bridge define demo program metadata function return att a m main function main bridge init define function var bridge define metadata function return att a s true m scope return actual result js bridge assembly demo function asm globals use strict require function bridge define demo program metadata function return att a m main function main bridge init define function var bridge define metadata function return att a s true m scope return | 1 |

823,329 | 30,990,595,491 | IssuesEvent | 2023-08-09 04:06:31 | mpv-player/mpv | https://api.github.com/repos/mpv-player/mpv | closed | issues with hardwaredecoding of h265 | os:win priority:stale | ### Important Information

- mpv version: 0.33.0-184-g03b9f8e323

- Windows Version: Windows 10 21H1 and pretty much any earlier version of Windows 10

- Source of the mpv binary: shinchiro builds

- Video card and drivers: Intel HD520, all tested drivers since the issue started, also "known good" drivers that worked before

- affected mpv versions: all tested versions since over a year were affected

### Reproduction steps

Play pretty much any h265-encoded video file, enable hardware acceleration and maybe seek around a bit in the video.

### Expected behavior

Videos should play normally, mpv should stay responsive, in case of driver hiccups at least "recover" and allow for a graceful shutdown.

### Actual behavior

I've observed several outcomes without any clear pattern.

- More often than not mpv just straight up freezes.

- Sometimes a file can be played for a while without any apparent issue. Trying to seek, switch to fullscreen or resize the window often results in a freeze.

- Sometimes audio continues to play in the background, sometimes not.

- Sometimes there is a message in the logs, which reads: `[vo/gpu/d3d11] Failed to create Texture2D`, but that does not always happen!

- Sometimes mpv continues to eat up a whole CPU core while playing dead, sometimes it does not.

Disabling hardware decoding via hotkey doesn't work either in this state. The only way to recover is to kill mpv using some sort of task manager on Windows. Ever since the issues started, I have disabled hardware decoding for h265, and then everything works fine. I know you (used to?) advise against hardware decoding, but there still seems to be something at fault that is worth reporting. h264, vp9 and everything else I threw at mpv work like a charm with (and without, if not available) hardware acceleration.

### Log file

A log can be found here: [https://bin.disroot.org/?50bb3a475c449723#EZTmcxzHVxEPktE95wZWTMNHx3iiFfTaE7cNTBdMeyXg](https://bin.disroot.org/?50bb3a475c449723#EZTmcxzHVxEPktE95wZWTMNHx3iiFfTaE7cNTBdMeyXg)

Note that I tried to resize the mpv window before killing mpv. This was to show that mpv was still somehow "alive" (producing logs) but not responding to any hotkeys or other mouse actions.

If you need any more information, I'll try to provide it. Thank you!

| 1.0 | issues with hardwaredecoding of h265 - ### Important Information

- mpv version: 0.33.0-184-g03b9f8e323

- Windows Version: Windows 10 21H1 and pretty much any earlier version of Windows 10

- Source of the mpv binary: shinchiro builds

- Video card and drivers: Intel HD520, all tested drivers since the issue started, also "known good" drivers that worked before

- affected mpv versions: all tested versions since over a year were affected

### Reproduction steps

Play pretty much any h265-encoded video file, enable hardware acceleration and maybe seek around a bit in the video.

### Expected behavior

Videos should play normally, mpv should stay responsive, in case of driver hiccups at least "recover" and allow for a graceful shutdown.

### Actual behavior

I've observed several outcomes without any clear pattern.

- More often than not mpv just straight up freezes.

- Sometimes a file can be played for a while without any apparent issue. Trying to seek, switch to fullscreen or resize the window often results in a freeze.

- Sometimes audio continues to play in the background, sometimes not.

- Sometimes there is a message in the logs, which reads: `[vo/gpu/d3d11] Failed to create Texture2D`, but that does not always happen!

- Sometimes mpv continues to eat up a whole CPU core while playing dead, sometimes it does not.

Disabling hardware decoding via hotkey doesn't work either in this state. The only way to recover is to kill mpv using some sort of task manager on Windows. Ever since the issues started, I have disabled hardware decoding for h265, and then everything works fine. I know you (used to?) advise against hardware decoding, but there still seems to be something at fault that is worth reporting. h264, vp9 and everything else I threw at mpv work like a charm with (and without, if not available) hardware acceleration.

### Log file

A log can be found here: [https://bin.disroot.org/?50bb3a475c449723#EZTmcxzHVxEPktE95wZWTMNHx3iiFfTaE7cNTBdMeyXg](https://bin.disroot.org/?50bb3a475c449723#EZTmcxzHVxEPktE95wZWTMNHx3iiFfTaE7cNTBdMeyXg)

Note that I tried to resize the mpv window before killing mpv. This was to show that mpv was still somehow "alive" (producing logs) but not responding to any hotkeys or other mouse actions.

If you need any more information, I'll try to provide it. Thank you!

| non_defect | issues with hardwaredecoding of important information mpv version windows version windows and pretty much any earlier version of windows source of the mpv binary shinchiro builds video card and drivers intel all tested drivers since the issue started also known good drivers that worked before affected mpv versions all tested versions since over a year were affected reproduction steps play pretty much any encoded video file enable hardware acceleration and maybe seek around a bit in the video expected behavior videos should play normally mpv should stay responsive in case of driver hiccups at least recover and allow for a graceful shutdown actual behavior i ve observed several outcomes without any clear pattern more often than not mpv just straight up freezes sometimes a file can be played for a while without any apparent issue trying to seek switch to fullscreen or resize the window often results in a freeze sometimes audio continues to play in the background sometimes not sometimes there is is a message in the logs which reads failed to create but that does not always happen sometimes mpv continues to eat up a whole cpu core while playing dead sometimes it does not disabling hardware decoding via hotkey doesn t work either in this state the only way to recover is to kill mpv using some sort of task manager on windows ever since the issues started i disabled hardware decoding for and then everything just works fine i know you used to advice against hardware decoding still there seems to be something at fault and worthy to report and everything else i threw at mpv work like a charm with and without if not available hardware acceleration log file a log can be found here note that i tried to resize the mpv window before killing mpv this was to show that mpv was still somehow alive producing logs but not responding to any hotkeys or other mouse actions if you need any more information i ll try to provide them thank you | 0 |

212,920 | 16,504,392,018 | IssuesEvent | 2021-05-25 17:26:36 | 007-hd/intro-to-github | https://api.github.com/repos/007-hd/intro-to-github | closed | Use github as a project management system! | bug documentation | make a todo list

- [x] you can make a todo list by

- [x] adding a dash

- [x] a space

- [x] and open square bracket

- [x] a space

- [x] and a close square bracket | 1.0 | Use github as a project management system! - make a todo list

- [x] you can make a todo list by

- [x] adding a dash

- [x] a space

- [x] and open square bracket

- [x] a space

- [x] and a close square bracket | non_defect | use github as a project management system make a todo list you can make a todo list by adding a dasn a space and open square bracket a space and a close square bracket | 0 |

269,941 | 20,512,676,881 | IssuesEvent | 2022-03-01 08:34:30 | Best-engineer/codestates-nonmajor | https://api.github.com/repos/Best-engineer/codestates-nonmajor | closed | // | documentation duplicate | ### ISSUE

- Group: `client`, `server`, `sr`

- Type: `bug`, `feature`, `delete`

- Detail: fix actions from client redux

### TODO

1. [ ] Job1

2. [ ] Job2

3. [ ] Job3

### Estimated time

> Pick one

### `0.5h`

### `1h`

### `1.5h`

### `2h`

### `2.5h`

### `3h`

### Labels

- Estimated time: `E: 1h`

- Group : `client`, `server`

- Sprint: `Sprint__NUMBER__`

- Urgency: `High`, `Middle`, `Low`

| 1.0 | // - ### ISSUE

- Group: `client`, `server`, `sr`

- Type: `bug`, `feature`, `delete`

- Detail: fix actions from client redux

### TODO

1. [ ] Job1

2. [ ] Job2

3. [ ] Job3

### Estimated time

> Pick one

### `0.5h`

### `1h`

### `1.5h`

### `2h`

### `2.5h`

### `3h`

### Labels

- Estimated time: `E: 1h`

- Group : `client`, `server`

- Sprint: `Sprint__NUMBER__`

- Urgency: `High`, `Middle`, `Low`

| non_defect | issue group client server sr type bug feature delete detail fix actions from client redux todo estimated time pick one labels estimated time e group client server sprint sprint number urgency high middle low | 0 |

8,393 | 2,611,495,764 | IssuesEvent | 2015-02-27 05:35:22 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | Errors in campaign mission 6 | auto-migrated Priority-Low Type-Defect | ```

Was roping around collecting crates near the upper left.

As I picked up a utility crate in one position, another immediately appeared at

the same place, so I sat there on rope collecting 5 or so crates. At the end of

the turn, I got the "well this was pointless" message while still on rope, then

the robot began his speech.

When it was over, was spammed endlessly with:

Lua: Error while calling onGameTick: ...ta//Missions/Campaign/A Classic

Fairytale/dragon.lua:371: bad argument #1 to 'unpack' (table expected, got nil)

It also happened on another turn while I was on rope, although it eventually

ended that time.

```

Original issue reported on code.google.com by `kyberneticist@gmail.com` on 1 Sep 2012 at 7:57

* Blocking: #494 | 1.0 | Errors in campaign mission 6 - ```

Was roping around collecting crates near the upper left.

As I picked up a utility crate in one position, another immediately appeared at

the same place, so I sat there on rope collecting 5 or so crates. At the end of

the turn, I got the "well this was pointless" message while still on rope, then

the robot began his speech.

When it was over, was spammed endlessly with:

Lua: Error while calling onGameTick: ...ta//Missions/Campaign/A Classic

Fairytale/dragon.lua:371: bad argument #1 to 'unpack' (table expected, got nil)

It also happened on another turn while I was on rope, although it eventually

ended that time.

```

Original issue reported on code.google.com by `kyberneticist@gmail.com` on 1 Sep 2012 at 7:57

* Blocking: #494 | defect | errors in campaign mission was roping around collecting crates near the upper left as i picked up a utility crate in one position another immediately appeared at the same place so i sat there on rope collecting or so crates at the end of the turn i got the well this was pointless message while still on rope then the robot began his speech when it was over was spammed endlessly with lua error while calling ongametick ta missions campaign a classic fairytale dragon lua bad argument to unpack table expected got nil it also happened on another turn while i was on rope although it eventually ended that time original issue reported on code google com by kyberneticist gmail com on sep at blocking | 1 |

23,419 | 3,814,346,995 | IssuesEvent | 2016-03-28 12:44:37 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | NoClassDefFoundError: javax/cache/integration/CacheLoaderException | Team: Client Team: Core Type: Defect | Hi,

We are trying to update hazelcast from 3.4 to 3.6.1, but we are repeatedly getting this issue.

`Factory method [public static com.hazelcast.core.HazelcastInstance com.hazelcast.instance.HazelcastInstanceFactory.newHazelcastInstance(com.hazelcast.config.Config)] threw exception; nested exception is java.lang.NoClassDefFoundError: javax/cache/integration/CacheLoaderException`

How can we resolve this issue? | 1.0 | NoClassDefFoundError: javax/cache/integration/CacheLoaderException - Hi,

We are trying to update hazelcast from 3.4 to 3.6.1, but we are repeatedly getting this issue.

`Factory method [public static com.hazelcast.core.HazelcastInstance com.hazelcast.instance.HazelcastInstanceFactory.newHazelcastInstance(com.hazelcast.config.Config)] threw exception; nested exception is java.lang.NoClassDefFoundError: javax/cache/integration/CacheLoaderException`

How can we resolve this issue? | defect | noclassdeffounderror javax cache integration cacheloaderexception hi we are trying to update hazelcast from to but we are repeatedly getting this issue factory method threw exception nested exception is java lang noclassdeffounderror javax cache integration cacheloaderexception how can we resolve this issue | 1 |

140,622 | 11,353,727,747 | IssuesEvent | 2020-01-24 16:06:49 | IntellectualSites/PlotSquared | https://api.github.com/repos/IntellectualSites/PlotSquared | opened | Schematic based World Generation is not working correctly | [?] Testing Required | <!--- READ THIS BEFORE SUBMITTING AN ISSUE REPORT!!! -->

<!--- ##### DO NOT REMOVE THIS TEMPLATE! YOUR ISSUE *WILL* FIT IN IT! ##### -->

<!--- # NOTICE:

```diff

! PlotSquared for Minecraft Java Edition versions between 1.7 through to 1.12.2 are considered

! legacy, and will receive limited to no support. Please consider upgrading to 1.13+ for

! future support. Plugins exist for 1.13+ which bring back behaviors found in 1.8.8

! All versions of PlotSquared for Sponge and Nukkit(X) will receive limited to no support

! due to lack of developer interest and time. Additionally, NukkitX has not had feature

! updates since the Better Together, which prevents some PlotSquared features from ever

! functioning. Contributions are always welcome however!

```

**Feature requests & Suggestions are to be submitted at the [PlotSquared Suggestions tracker](https://github.com/IntellectualSites/PlotSquaredSuggestions)**

**Code contributions are to be done through [PRs](https://help.github.com/en/github/collaborating-with-issues-and-pull-requests/creating-a-pull-request), tagging the specific issue ticket(s) if applicable.**

**[DISCORD INVITE LINK](https://discord.gg/cSMxtGn)** and please, for the love of the little sanity we have left, use the correct channels!

-->

# Bug Report Template:

<!--- Incomplete reports will most likely be marked as invalid, and closed, with few exceptions.-->

## Required Information section:

> ALL FIELDS IN THIS SECTION ARE REQUIRED, and must contain appropriate information

### Server config info (/plot debugpaste / file paste links):

<!--- Issue /plot debugpaste in game or in your console and copy the supplied URL here -->

<!--- If you cannot perform the above, we require logs/latest.log; settings.yml; worlds.yml and possibly PlotSquared.use_THIS.yml -->

I attached the files because `/plot debugpaste` did not work:

[latest.log](https://github.com/IntellectualSites/PlotSquared/files/4109359/latest.log)

[2020-01-23-9.log.gz](https://github.com/IntellectualSites/PlotSquared/files/4109363/2020-01-23-9.log.gz)

[2020-01-23-10.log.gz](https://github.com/IntellectualSites/PlotSquared/files/4109364/2020-01-23-10.log.gz)

[yml.zip](https://github.com/IntellectualSites/PlotSquared/files/4109371/yml.zip)

<!--- If you are unwilling to supply the information we need, we reserve the right to not assist you. Redact IP addresses if you need to. -->

### Server type:

**Select one**

<!-- Select the type you are reporting the issue for (put an "X" between of brackets): -->

- [X] Spigot / Paper *(CraftBukkit should not be used, re-test with Spigot first!)*

- [] Sponge *- NOTE: NOT ACTIVELY MAINTAINED*

- [] NukkitX *- NOTE: NOT ACTIVELY MAINTAINED*

### Minecraft Version:

**Select one**

<!-- Select the type you are reporting the issue for (put an "X" between of brackets):

The maintained versions are 1.14.4 and 1.15.x -->

- [] Minecraft 1.15

- [X] Minecraft 1.14.4

- [] Minecraft 1.13.2

- [] Minecraft Java Edition *other versions, please specify*:

- [] Minecraft Bedrock Edition *specify version*:

### Server build info:

<!--- Run /version in-game or in console & paste the full output here: -->

```

This server is running CraftBukkit version git-Spigot-cbd1a1b-009d8af (MC: 1.14.4) (Implementing API version 1.14.4-R0.1-SNAPSHOT)

[16:31:29] [Server thread/INFO]: This is a final build for 1.14.4. Please see https://www.spigotmc.org/go/1.14.4 for details about upgrading.

```

### WorldEdit/FAWE versions:

<!--- Specify which plugin you are using, and add its version -->

- [X] FAWE *FastAsyncWorldEdit-1.15-45*:

- [] WorldEdit *version*:

### Description of the problem:

<!--- Be as specific as possible. Don't lie, redact information, or use false names/situations. -->

<!--- Who, What, When, Where, Why, How, Expected behavior, Resultant behavior, etc -->

When I use schematic-based world generation, with plot.schem in the correct folder, to create a new world, an error occurs. After the server has finished loading, the world is generated and I can enter it, but the plots are always cut in half or broken.

### How to replicate:

<!--- If you can reproduce the issue please tell us as detailed as possible step by step how to do that -->

I did everything as described in the wiki, but then this error occurs. The selected region for the plot schematic is about 200 blocks in both the x and y directions. Maybe it's too big? I also checked worlds.yml, and the plot size is set to 200.

## Additional Information:

> The information here is optional for you to provide, however it may help us to more readily diagnose any compatibility and bug issues.

Sometimes the schematic isn't placed completely when I use FAWE, mostly when I don't use `//paste -a`. Then I have to paste it again before it works.

### Other plugins being used on the server:

<!--- Optional but recommended - issue "/plugins" in-game or in console and copy/paste the list -->

Plugins (19): NightVision, LuckPerms, WorldEdit, Spawntp, FastAsyncWorldEdit, SuperTrails, VoxelSniper, ClearLag, Vault, F3NPerm, Citizens, Essentials, ChatEx, CommandPanels, PlotSquared, Multiverse-Core, Multiverse-Inventories, Multiverse-SignPortals, ItemJoin

### Relevant console output, log lines, and/or screenshots:

<!--- Please use in-line code insertion

```

https://pastebin.com/Q5NqC5Ey

```

for short (20 lines or less) text blobs, or a paste service for large blobs -->

### Additional relevant comments/remarks:

<!--- Use this space to give us any additional information which may be relevant to this issue, such as: if you are using a Minecraft hosting provider; unusual installation environment; etc -->

At the moment the server is running on localhost.

# AFFIRMATION OF COMPLETION:

<!-- Make sure you have completed the following steps (put an "X" between of brackets): -->

- [X] I included all information required in the sections above

- [X] I made sure there are no duplicates of this report [(Use Search)](https://github.com/IntellectualSites/PlotSquared/issues?utf8=%E2%9C%93&q=is%3Aissue)

- [X] I made sure I am using an up-to-date version of PlotSquared

- [X] I made sure the bug/error is not caused by any other plugin

- [] I didn't read but checked everything above.

| 1.0 | Schematic based World Generation is not working correctly - <!--- READ THIS BEFORE SUBMITTING AN ISSUE REPORT!!! -->

<!--- ##### DO NOT REMOVE THIS TEMPLATE! YOUR ISSUE *WILL* FIT IN IT! ##### -->

<!--- # NOTICE:

```diff

! PlotSquared for Minecraft Java Edition versions between 1.7 through to 1.12.2 are considered

! legacy, and will receive limited to no support. Please consider upgrading to 1.13+ for

! future support. Plugins exist for 1.13+ which bring back behaviors found in 1.8.8

! All versions of PlotSquared for Sponge and Nukkit(X) will receive limited to no support

! due to lack of developer interest and time. Additionally, NukkitX has not had feature

! updates since the Better Together, which prevents some PlotSquared features from ever

! functioning. Contributions are always welcome however!

```

**Feature requests & Suggestions are to be submitted at the [PlotSquared Suggestions tracker](https://github.com/IntellectualSites/PlotSquaredSuggestions)**

**Code contributions are to be done through [PRs](https://help.github.com/en/github/collaborating-with-issues-and-pull-requests/creating-a-pull-request), tagging the specific issue ticket(s) if applicable.**

**[DISCORD INVITE LINK](https://discord.gg/cSMxtGn)** and please, for the love of the little sanity we have left, use the correct channels!

-->

# Bug Report Template:

<!--- Incomplete reports will most likely be marked as invalid, and closed, with few exceptions.-->

## Required Information section:

> ALL FIELDS IN THIS SECTION ARE REQUIRED, and must contain appropriate information

### Server config info (/plot debugpaste / file paste links):

<!--- Issue /plot debugpaste in game or in your console and copy the supplied URL here -->

<!--- If you cannot perform the above, we require logs/latest.log; settings.yml; worlds.yml and possibly PlotSquared.use_THIS.yml -->

I attached the files because `/plot debugpaste` didn't work:

[latest.log](https://github.com/IntellectualSites/PlotSquared/files/4109359/latest.log)

[2020-01-23-9.log.gz](https://github.com/IntellectualSites/PlotSquared/files/4109363/2020-01-23-9.log.gz)

[2020-01-23-10.log.gz](https://github.com/IntellectualSites/PlotSquared/files/4109364/2020-01-23-10.log.gz)

[yml.zip](https://github.com/IntellectualSites/PlotSquared/files/4109371/yml.zip)

<!--- If you are unwilling to supply the information we need, we reserve the right to not assist you. Redact IP addresses if you need to. -->

### Server type:

**Select one**

<!-- Select the type you are reporting the issue for (put an "X" between of brackets): -->

- [X] Spigot / Paper *(CraftBukkit should not be used, re-test with Spigot first!)*

- [] Sponge *- NOTE: NOT ACTIVELY MAINTAINED*

- [] NukkitX *- NOTE: NOT ACTIVELY MAINTAINED*

### Minecraft Version:

**Select one**

<!-- Select the type you are reporting the issue for (put an "X" between of brackets):

The maintained versions are 1.14.4 and 1.15.x -->

- [] Minecraft 1.15

- [X] Minecraft 1.14.4

- [] Minecraft 1.13.2

- [] Minecraft Java Edition *other versions, please specify*:

- [] Minecraft Bedrock Edition *specify version*:

### Server build info:

<!--- Run /version in-game or in console & paste the full output here: -->

```

This server is running CraftBukkit version git-Spigot-cbd1a1b-009d8af (MC: 1.14.4) (Implementing API version 1.14.4-R0.1-SNAPSHOT)

[16:31:29] [Server thread/INFO]: This is a final build for 1.14.4. Please see https://www.spigotmc.org/go/1.14.4 for details about upgrading.

```

### WorldEdit/FAWE versions:

<!--- Specify which plugin you are using, and add its version -->

- [X] FAWE *FastAsyncWorldEdit-1.15-45*:

- [] WorldEdit *version*:

### Description of the problem:

<!--- Be as specific as possible. Don't lie, redact information, or use false names/situations. -->

<!--- Who, What, When, Where, Why, How, Expected behavior, Resultant behavior, etc -->

When I use schematic-based world generation, with plot.schem in the correct folder, to create a new world, an error occurs. After the server has finished loading, the world is generated and I can enter it, but the plots are always cut in half or broken.

### How to replicate:

<!--- If you can reproduce the issue please tell us as detailed as possible step by step how to do that -->

I did everything as described in the wiki, but then this error occurs. The selected region for the plot schematic is about 200 blocks in both the x and y directions. Maybe it's too big? I also checked worlds.yml, and the plot size is set to 200.

## Additional Information:

> The information here is optional for you to provide, however it may help us to more readily diagnose any compatibility and bug issues.

Sometimes the schematic isn't placed completely when I use FAWE, mostly when I don't use `//paste -a`. Then I have to paste it again before it works.

### Other plugins being used on the server:

<!--- Optional but recommended - issue "/plugins" in-game or in console and copy/paste the list -->

Plugins (19): NightVision, LuckPerms, WorldEdit, Spawntp, FastAsyncWorldEdit, SuperTrails, VoxelSniper, ClearLag, Vault, F3NPerm, Citizens, Essentials, ChatEx, CommandPanels, PlotSquared, Multiverse-Core, Multiverse-Inventories, Multiverse-SignPortals, ItemJoin

### Relevant console output, log lines, and/or screenshots:

<!--- Please use in-line code insertion

```

https://pastebin.com/Q5NqC5Ey

```

for short (20 lines or less) text blobs, or a paste service for large blobs -->

### Additional relevant comments/remarks:

<!--- Use this space to give us any additional information which may be relevant to this issue, such as: if you are using a Minecraft hosting provider; unusual installation environment; etc -->

At the moment the server is running on localhost.

# AFFIRMATION OF COMPLETION:

<!-- Make sure you have completed the following steps (put an "X" between of brackets): -->

- [X] I included all information required in the sections above

- [X] I made sure there are no duplicates of this report [(Use Search)](https://github.com/IntellectualSites/PlotSquared/issues?utf8=%E2%9C%93&q=is%3Aissue)

- [X] I made sure I am using an up-to-date version of PlotSquared

- [X] I made sure the bug/error is not caused by any other plugin

- [] I didn't read but checked everything above.

| non_defect | schematic based world generation is not working correctly notice diff plotsquared for minecraft java edition versions between through to are considered legacy and will receive limited to no support please consider upgrading to for future support plugins exist for which bring back behaviors found in all versions of plotsquared for sponge and nukkit x will receive limited to no support due to lack of developer interest and time additionally nukkitx has not had feature updates since the better together which prevents some plotsquared features from ever functioning contributions are always welcome however feature requests suggestions are to be submitted at the code contributions are to be done through tagging the specific issue ticket s if applicable and please for the love of the little sanity we have left use the correct channels bug report template required information section all fields in this section are required and must contain appropriate information server config info plot debugpaste file paste links i atteached the files because the first thing doesnt worked server type select one spigot paper craftbukkit should not be used re test with spigot first sponge note not actively maintained nukkitx note not actively maintained minecraft version select one select the type you are reporting the issue for put an x between of brackets the maintained versions are and x minecraft minecraft minecraft minecraft java edition other versions please specify minecraft bedrock edition specify version server build info this server is running craftbukkit version git spigot mc implementing api version snapshot this is a final build for please see for details about upgrading worldedit fawe versions fawe fastasyncworldedit worldedit version description of the problem if i want to use the schematic based world gen with the plot schem in the right folder for creating a new world an error occurs after the server has been loaded completely the world is genarated and i can 
also enter it but then there are always half or broken plots how to replicate i have done everything like it is described in the wiki but than this error occurs the marked space for the plot schematic is about blocks in booth x and y direction maybe its too big i also checked the worlds yml but the plot size is set to additional information the information here is optional for you to provide however it may help us to more readily diagnose any compatibility and bug issues sometimes the schematic isn´t placed completely if i use fawe mostly if i dont use paste a than i have to paste it again before it works other plugins being used on the server plugins nightvision luckperms worldedit spawntp fastasyncworldedit supertrails voxelsniper clearlag vault citizens essentials chatex commandpanels plotsquared multiverse core multiverse inventories multiverse signportals itemjoin relevant console output log lines and or screenshots please use in line code insertion for short lines or less text blobs or a paste service for large blobs additional relevant comments remarks at the moment the server is running on localhost affirmation of completion i included all information required in the sections above i made sure there are no duplicates of this report i made sure i am using an up to date version of plotsquared i made sure the bug error is not caused by any other plugin i didn t read but checked everything above | 0 |

93,675 | 27,013,079,089 | IssuesEvent | 2023-02-10 16:54:36 | microsoft/onnxruntime | https://api.github.com/repos/microsoft/onnxruntime | opened | TensorRT Execution Build Fails on Jetson Jetpack 4.6.1 | build | ### Describe the issue

ORT fails to build on Jetson Jetpack 4.6.1

I am using the 4.6.1 docker image `nvcr.io/nvidia/l4t-ml:r32.7.1-py3`

I am following the guide published by onnxruntime https://onnxruntime.ai/docs/build/eps.html#nvidia-jetson-tx1tx2nanoxavier

I suspect that the repository has outgrown this documentation. Is there new documentation?

Notably, this Microsoft prebuilt image for Jetson, `mcr.microsoft.com/azureml/onnxruntime:v.1.4.0-jetpack4.4-l4t-base-r32.4.3`, does not include TRT execution bindings. Is there a new supported image?

Thank you for your assistance.

### Urgency

Yes, this issue is blocking our production roadmap

### Target platform

Jetson Xavier NX, Jetpack 4.6.1

### Build script

FROM nvcr.io/nvidia/l4t-ml:r32.7.1-py3

ARG DEBIAN_FRONTEND=noninteractive

RUN apt-get update

RUN apt-get install lshw -y

RUN apt-get install git -y

RUN git clone --recursive https://github.com/microsoft/onnxruntime

RUN export CUDACXX="/usr/local/cuda/bin/nvcc"

RUN apt-get update -y

RUN apt-get install python3-pip -y

RUN apt-get install python3-matplotlib -y

RUN apt-get install gfortran -y

RUN apt-get install -y build-essential libatlas-base-dev

RUN apt-get install ffmpeg libsm6 libxext6 -y

RUN apt install -y --no-install-recommends build-essential software-properties-common libopenblas-dev

RUN wget https://github.com/Kitware/CMake/releases/download/v3.23.0-rc1/cmake-3.23.0-rc1-linux-aarch64.tar.gz

RUN tar xf cmake-3.23.0-rc1-linux-aarch64.tar.gz

RUN export PATH="/cmake-3.20.0-rc1-linux-aarch64/bin:$PATH"

RUN apt remove cmake -y

RUN pwd

RUN ln -s /cmake-3.23.0-rc1-linux-aarch64/bin/* /usr/local/bin

RUN which cmake

RUN cmake --version

ENV PATH="/usr/local/cuda/bin:${PATH}"

ENV LD_LIBRARY_PATH="/usr/local/cuda/lib64:${LD_LIBRARY_PATH}"

RUN pip3 install --upgrade pip

RUN pip3 uninstall torch -y

RUN pip3 install torch==1.10.0

RUN echo "$PATH" && echo "$LD_LIBRARY_PATH"

WORKDIR /onnxruntime

RUN ./build.sh --config Release --update --build --parallel --build_wheel --use_tensorrt --cuda_home /usr/local/cuda --cudnn_home /usr/lib/aarch64-linux-gnu --tensorrt_home /usr/lib/aarch64-linux-gnu
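
A side note on the script above, offered as a sketch and not as a confirmed cause of the failure: Docker runs each shell-form `RUN` instruction in its own `/bin/sh -c` process, so a variable set with `RUN export ...` (as with `CUDACXX` and the cmake `PATH` entry) is gone by the next instruction, while `ENV` persists. The `PATH` export also names `cmake-3.20.0-rc1` although the extracted directory is `cmake-3.23.0-rc1`; the log still shows `/usr/local/bin/cmake` being used, thanks to the symlink step. The shell snippet below only imitates the two behaviours with child shells; the variable names `later_value` and `persisted_value` are made up for illustration.

```shell
#!/bin/sh
# Each shell-form Dockerfile RUN line is executed as its own `/bin/sh -c "<command>"`.
# Two separate child shells stand in for two consecutive RUN lines.

# Start from a clean state so the demonstration does not depend on the caller.
unset CUDACXX

# "RUN export CUDACXX=...": the variable is set, then that shell exits.
sh -c 'export CUDACXX="/usr/local/cuda/bin/nvcc"'

# A later "RUN ..." starts a fresh shell and does not see the variable.
later_value=$(sh -c 'echo "${CUDACXX:-unset}"')
echo "exported in an earlier RUN: $later_value"

# "ENV CUDACXX=..." is closer to placing the variable in the environment
# that every later child shell inherits.
persisted_value=$(CUDACXX="/usr/local/cuda/bin/nvcc" sh -c 'echo "${CUDACXX:-unset}"')
echo "set with ENV: $persisted_value"
```

If `CUDACXX` is meant to be visible while `build.sh` runs, an `ENV CUDACXX=...` line would be the usual way to express that; whether it changes the outcome here is untested.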

### Error / output

[ 13%] Building CXX object _deps/protobuf-build/CMakeFiles/libprotobuf.dir/__/protobuf-src/src/google/protobuf/wrappers.pb.cc.o

[ 13%] Linking CXX static library libprotobuf.a

[ 13%] Built target libprotobuf

Makefile:165: recipe for target 'all' failed

make: *** [all] Error 2

Traceback (most recent call last):

File "/onnxruntime/tools/ci_build/build.py", line 2741, in <module>

sys.exit(main())

File "/onnxruntime/tools/ci_build/build.py", line 2638, in main

build_targets(args, cmake_path, build_dir, configs, num_parallel_jobs, args.target)

File "/onnxruntime/tools/ci_build/build.py", line 1399, in build_targets

run_subprocess(cmd_args, env=env)

File "/onnxruntime/tools/ci_build/build.py", line 766, in run_subprocess

return run(*args, cwd=cwd, capture_stdout=capture_stdout, shell=shell, env=my_env)

File "/onnxruntime/tools/python/util/run.py", line 57, in run

shell=shell,

File "/usr/lib/python3.6/subprocess.py", line 438, in run

output=stdout, stderr=stderr)

subprocess.CalledProcessError: Command '['/usr/local/bin/cmake', '--build', '/onnxruntime/build/Linux/Release', '--config', 'Release', '--', '-j6']' returned non-zero exit status 2.

The command '/bin/sh -c ./onnxruntime/build.sh --config Release --update --build --parallel --build_wheel --use_tensorrt --cuda_home /usr/local/cuda --cudnn_home /usr/lib/aarch64-linux-gnu --tensorrt_home /usr/lib/aarch64-linux-gnu' returned a non-zero code: 1

### Visual Studio Version

_No response_

### GCC / Compiler Version

_No response_ | 1.0 | TensorRT Execution Build Fails on Jetson Jetpack 4.6.1 - ### Describe the issue

ORT fails to build on Jetson Jetpack 4.6.1

I am using the 4.6.1 docker image `nvcr.io/nvidia/l4t-ml:r32.7.1-py3`

I am following the guide published by onnxruntime https://onnxruntime.ai/docs/build/eps.html#nvidia-jetson-tx1tx2nanoxavier

I suspect that the repository has outgrown this documentation. Is there new documentation?

Notably, this Microsoft prebuilt image for Jetson, `mcr.microsoft.com/azureml/onnxruntime:v.1.4.0-jetpack4.4-l4t-base-r32.4.3`, does not include TRT execution bindings. Is there a new supported image?

Thank you for your assistance.

### Urgency

Yes, this issue is blocking our production roadmap

### Target platform

Jetson Xavier NX, Jetpack 4.6.1

### Build script

FROM nvcr.io/nvidia/l4t-ml:r32.7.1-py3

ARG DEBIAN_FRONTEND=noninteractive

RUN apt-get update

RUN apt-get install lshw -y

RUN apt-get install git -y

RUN git clone --recursive https://github.com/microsoft/onnxruntime

RUN export CUDACXX="/usr/local/cuda/bin/nvcc"

RUN apt-get update -y

RUN apt-get install python3-pip -y

RUN apt-get install python3-matplotlib -y

RUN apt-get install gfortran -y

RUN apt-get install -y build-essential libatlas-base-dev

RUN apt-get install ffmpeg libsm6 libxext6 -y

RUN apt install -y --no-install-recommends build-essential software-properties-common libopenblas-dev

RUN wget https://github.com/Kitware/CMake/releases/download/v3.23.0-rc1/cmake-3.23.0-rc1-linux-aarch64.tar.gz

RUN tar xf cmake-3.23.0-rc1-linux-aarch64.tar.gz

RUN export PATH="/cmake-3.20.0-rc1-linux-aarch64/bin:$PATH"

RUN apt remove cmake -y

RUN pwd

RUN ln -s /cmake-3.23.0-rc1-linux-aarch64/bin/* /usr/local/bin

RUN which cmake

RUN cmake --version

ENV PATH="/usr/local/cuda/bin:${PATH}"

ENV LD_LIBRARY_PATH="/usr/local/cuda/lib64:${LD_LIBRARY_PATH}"

RUN pip3 install --upgrade pip

RUN pip3 uninstall torch -y

RUN pip3 install torch==1.10.0

RUN echo "$PATH" && echo "$LD_LIBRARY_PATH"

WORKDIR /onnxruntime

RUN ./build.sh --config Release --update --build --parallel --build_wheel --use_tensorrt --cuda_home /usr/local/cuda --cudnn_home /usr/lib/aarch64-linux-gnu --tensorrt_home /usr/lib/aarch64-linux-gnu

### Error / output

[ 13%] Building CXX object _deps/protobuf-build/CMakeFiles/libprotobuf.dir/__/protobuf-src/src/google/protobuf/wrappers.pb.cc.o

[ 13%] Linking CXX static library libprotobuf.a

[ 13%] Built target libprotobuf

Makefile:165: recipe for target 'all' failed

make: *** [all] Error 2

Traceback (most recent call last):

File "/onnxruntime/tools/ci_build/build.py", line 2741, in <module>

sys.exit(main())

File "/onnxruntime/tools/ci_build/build.py", line 2638, in main

build_targets(args, cmake_path, build_dir, configs, num_parallel_jobs, args.target)

File "/onnxruntime/tools/ci_build/build.py", line 1399, in build_targets

run_subprocess(cmd_args, env=env)

File "/onnxruntime/tools/ci_build/build.py", line 766, in run_subprocess

return run(*args, cwd=cwd, capture_stdout=capture_stdout, shell=shell, env=my_env)

File "/onnxruntime/tools/python/util/run.py", line 57, in run

shell=shell,

File "/usr/lib/python3.6/subprocess.py", line 438, in run

output=stdout, stderr=stderr)

subprocess.CalledProcessError: Command '['/usr/local/bin/cmake', '--build', '/onnxruntime/build/Linux/Release', '--config', 'Release', '--', '-j6']' returned non-zero exit status 2.

The command '/bin/sh -c ./onnxruntime/build.sh --config Release --update --build --parallel --build_wheel --use_tensorrt --cuda_home /usr/local/cuda --cudnn_home /usr/lib/aarch64-linux-gnu --tensorrt_home /usr/lib/aarch64-linux-gnu' returned a non-zero code: 1

### Visual Studio Version

_No response_

### GCC / Compiler Version

_No response_ | non_defect | tensorrt execution build fails on jetson jetpack describe the issue ort fails to build on jetson jetpack i am using the docker image nvcr io nvidia ml i am following the guide published by onnxruntime i suspect that the repository has outgrown this documentation is there new documentation notably this microsoft prebuilt image for jetson mcr microsoft com azureml onnxruntime v base does not include trt execution bindings is there a new supported image thank you for your assistance urgency yes this issue is blocking our production roadmap target platform jetson xaiver nx jetpack build script from nvcr io nvidia ml arg debian frontend noninteractive run apt get update run apt get install lshw y run apt get install git y run git clone recursive run export cudacxx usr local cuda bin nvcc run apt get update y run apt get install pip y run apt get install matplotlib y run apt get install gfortran y run apt get install y build essential libatlas base dev run apt get install ffmpeg y run apt install y no install recommends build essential software properties common libopenblas dev run wget run tar xf cmake linux tar gz run export path cmake linux bin path run apt remove cmake y run pwd run ln s cmake linux bin usr local bin run which cmake run cmake version env path usr local cuda bin path env ld library path usr local cuda ld library path run install upgrade pip run uninstall torch y run install torch run echo path echo ld library path workdir onnxruntime run build sh config release update build parallel build wheel use tensorrt cuda home usr local cuda cudnn home usr lib linux gnu tensorrt home usr lib linux gnu error output building cxx object deps protobuf build cmakefiles libprotobuf dir protobuf src src google protobuf wrappers pb cc o linking cxx static library libprotobuf a built target libprotobuf makefile recipe for target all failed make error traceback most recent call last file onnxruntime tools ci build build py line in sys exit 
main file onnxruntime tools ci build build py line in main build targets args cmake path build dir configs num parallel jobs args target file onnxruntime tools ci build build py line in build targets run subprocess cmd args env env file onnxruntime tools ci build build py line in run subprocess return run args cwd cwd capture stdout capture stdout shell shell env my env file onnxruntime tools python util run py line in run shell shell file usr lib subprocess py line in run output stdout stderr stderr subprocess calledprocesserror command returned non zero exit status the command bin sh c onnxruntime build sh config release update build parallel build wheel use tensorrt cuda home usr local cuda cudnn home usr lib linux gnu tensorrt home usr lib linux gnu returned a non zero code visual studio version no response gcc compiler version no response | 0 |

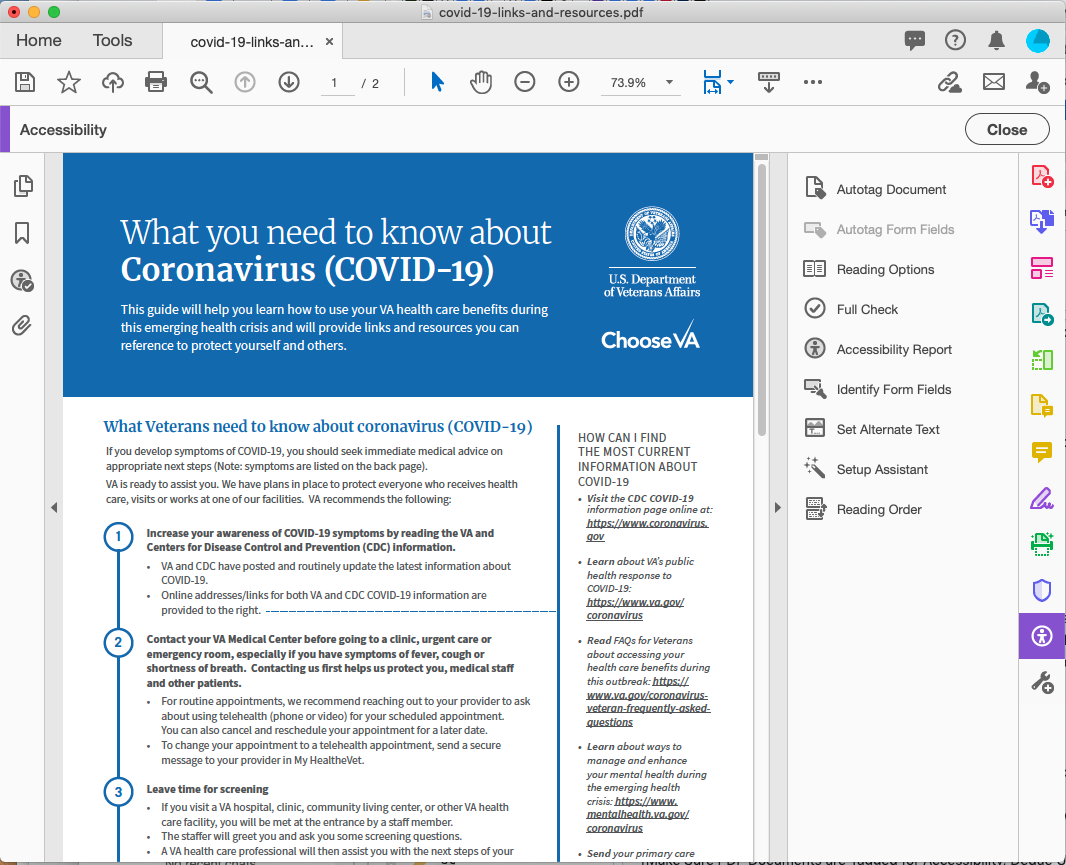

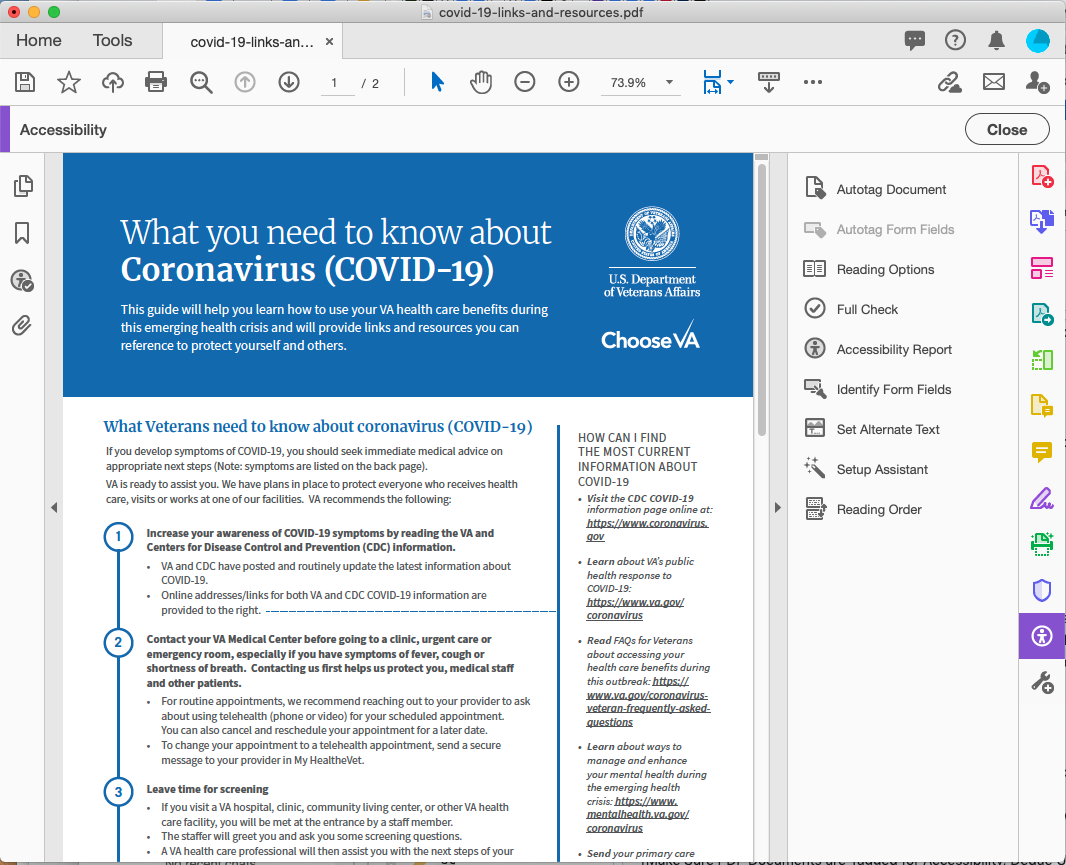

44,839 | 12,403,328,383 | IssuesEvent | 2020-05-21 13:43:52 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | [DOCUMENTS]: Documents MUST be accessible, Covid Links and Resources PDF | 508-defect-2 508-issue-documents 508/Accessibility needs-grooming vsa vsa-public-websites | # [508-defect-2](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-2)

```diff

! This is really a 508-defect-1, but since we need to coordinate with another

! team to find out who is responsible for creating the accessible PDF, I am

! setting this as a 2.

```

**Feedback framework**

- **❗️ Must** if the feedback must be applied

- **⚠️ Should** if the feedback is best practice

- **✔️ Consider** for suggestions/enhancements

## Description

Documents **must** be accessible. The [Covid-19 Links and Resources PDF](https://www.va.gov/covid-19-links-and-resources.pdf) fails an accessibility check, and must be remediated so that all the content is accessible for screen readers and other assistive technology.

If this PDF is created by another team, they may need to remediate the PDF. I am not clear on the responsibility assignment. Depending on what application is used to create the PDF, there are export options that will support accessibility.

## Point of Contact

**VFS Point of Contact:** Jennifer

## Acceptance Criteria

As a screen reader user, I want to access all of the content of PDFs, just as sighted users may.

## Environment

* Operating System: all

* Browser: all

* Server destination: production

## Steps to Recreate

1. Access the PDF at https://www.va.gov/covid-19-links-and-resources.pdf

1. Open the PDF in Adobe Acrobat Pro

1. Navigate to the Tools tab, and scroll to the bottom

1. In the second to the last row, select Accessibility

1. Run a Full Check

1. Verify there are Failed items, such as Tagged PDF, Title, in the Document; additional issues in Page Content, etc.

## Possible Fixes (optional)

Using the Adobe Acrobat Pro Accessibility Checker, follow the steps listed in the first link below to evaluate and remediate the PDF. Frankly, the first few times can really try your patience! After a while, it becomes clearer, and this is a valuable skill to add to your resume.

## WCAG or Vendor Guidance (optional)

* [Recommended: Using the Acrobat Pro DC Accessibility Checker](https://www.adobe.com/accessibility/products/acrobat/using-acrobat-pro-accessibility-checker.html)

* [HHS.gov - PDF File 508 Checklist (WCAG 2.0 Refresh)](https://www.hhs.gov/web/section-508/making-files-accessible/checklist/pdf/index.html)

* [Make Sure PDF Documents are Tagged for Accessibility, Deque University](https://dequeuniversity.com/tips/tagged-pdf-documents)

* [Requirements for an Accessible PDF: Part 1 (addl parts linked), Deque](https://www.deque.com/blog/requirements-accessible-pdf/)

* [PDF Accessibility: Everything You Need to Know, Deque](https://www.deque.com/blog/pdf-accessibility/)

* [W3C PDF Techniques for WCAG 2.0](https://www.w3.org/TR/WCAG20-TECHS/pdf)

* [Adobe PDF Accessibility Overview](https://www.adobe.com/accessibility/pdf.html)

## Screenshots or Trace Logs

| 1.0 | [DOCUMENTS]: Documents MUST be accessible, Covid Links and Resources PDF - # [508-defect-2](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-2)

```diff

! This is really a 508-defect-1, but since we need to coordinate with another

! team to find out who is responsible for creating the accessible PDF, I am

! setting this as a 2.

```

**Feedback framework**

- **❗️ Must** if the feedback must be applied

- **⚠️ Should** if the feedback is best practice

- **✔️ Consider** for suggestions/enhancements

## Description

Documents **must** be accessible. The [Covid-19 Links and Resources PDF](https://www.va.gov/covid-19-links-and-resources.pdf) fails an accessibility check, and must be remediated so that all the content is accessible for screen readers and other assistive technology.

If this PDF is created by another team, they may need to remediate the PDF. I am not clear on the responsibility assignment. Depending on what application is used to create the PDF, there are export options that will support accessibility.

## Point of Contact

**VFS Point of Contact:** Jennifer

## Acceptance Criteria

As a screen reader user, I want to access all of the content of PDFs, just as sighted users may.

## Environment

* Operating System: all

* Browser: all

* Server destination: production

## Steps to Recreate

1. Access the PDF at https://www.va.gov/covid-19-links-and-resources.pdf

1. Open the PDF in Adobe Acrobat Pro

1. Navigate to the Tools tab, and scroll to the bottom

1. In the second to the last row, select Accessibility

1. Run a Full Check

1. Verify there are Failed items, such as Tagged PDF, Title, in the Document; additional issues in Page Content, etc.

## Possible Fixes (optional)

Using the Adobe Acrobat Pro Accessibility Checker, follow the steps listed in the first link below to evaluate and remediate the PDF. Frankly, the first few times can really try your patience! After a while, it becomes clearer, and this is a valuable skill to add to your resume.

## WCAG or Vendor Guidance (optional)

* [Recommended: Using the Acrobat Pro DC Accessibility Checker](https://www.adobe.com/accessibility/products/acrobat/using-acrobat-pro-accessibility-checker.html)

* [HHS.gov - PDF File 508 Checklist (WCAG 2.0 Refresh)](https://www.hhs.gov/web/section-508/making-files-accessible/checklist/pdf/index.html)

* [Make Sure PDF Documents are Tagged for Accessibility, Deque University](https://dequeuniversity.com/tips/tagged-pdf-documents)

* [Requirements for an Accessible PDF: Part 1 (addl parts linked), Deque](https://www.deque.com/blog/requirements-accessible-pdf/)

* [PDF Accessibility: Everything You Need to Know, Deque](https://www.deque.com/blog/pdf-accessibility/)

* [W3C PDF Techniques for WCAG 2.0](https://www.w3.org/TR/WCAG20-TECHS/pdf)

* [Adobe PDF Accessibility Overview](https://www.adobe.com/accessibility/pdf.html)

## Screenshots or Trace Logs

| defect | documents must be accessible covid links and resources pdf diff this is really a defect but since we need to coordinate with another team to find out who is responsible for creating the accessible pdf i am setting this as a feedback framework ❗️ must for if the feedback must be applied ⚠️should if the feedback is best practice ✔️ consider for suggestions enhancements description documents must be accessible the fails an accessibility check and must be remediated so that all the content is accessible for screen readers and other assistive technology if this pdf is created by another team they may need to remediate the pdf i am not clear on the responsibility assignment depending on what application is used to create the pdf there are export options that will support accessibility point of contact vfs point of contact jennifer acceptance criteria as a screen reader user i want to access all of the content of pdfs just as sighted users may environment operating system all browser all server destination production steps to recreate access the pdf at open the pdf in adobe acrobat pro navigate to the tools tab and scroll to the bottom in the second to the last row select accessibility run a full check verify there are failed items such as tagged pdf title in the document additional issues in page content etc possible fixes optional using the adobe acrobat pro accessibility checker follow the steps listed in the first link below to evaluate and remediate the pdf frankly the first few times can really try your patience after a while it becomes clearer and this is a valuable skill to add to your resume wcag or vendor guidance optional screenshots or trace logs | 1 |

44,289 | 9,559,099,352 | IssuesEvent | 2019-05-03 15:46:28 | trufflesuite/ganache | https://api.github.com/repos/trufflesuite/ganache | closed | System Error when running Ganache 2.0.1 on darwin | truffle-decoder | This happens when I am clicking on one of my deployed contracts.

PLATFORM: darwin

GANACHE VERSION: 2.0.1

EXCEPTION:

```

TypeError: Cannot read property 'nodes' of undefined

at getStateVariables (/node_modules/truffle-decoder/dist/allocate/storage.js:146:24)

at vars.concat.linearizedBaseContractsFromBase.map (/node_modules/truffle-decoder/dist/allocate/storage.js:154:73)

at Array.map (native)

at allocateContract (/node_modules/truffle-decoder/dist/allocate/storage.js:154:61)

at Object.getStorageAllocations (/node_modules/truffle-decoder/dist/allocate/storage.js:28:23)

at TruffleContractDecoder.init (/node_modules/truffle-decoder/dist/interface/contract-decoder.js:85:43)

at Object.getContractState (/src/truffle-integration/decode.js:18:11)

at process.<anonymous> (/src/truffle-integration/index.js:76:31)

at emitTwo (events.js:125:13)

at process.emit (events.js:213:7)

at emit (internal/child_process.js:768:12)

at _combinedTickCallback (internal/process/next_tick.js:141:11)

at process._tickCallback (internal/process/next_tick.js:180:9)

```
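
For readers triaging this, the trace suggests that `getStateVariables` read `.nodes` on a value that was `undefined`, possibly a base contract whose definition could not be looked up. The following is a minimal sketch of that failure mode and one defensive variant. It is not the truffle-decoder source; the function names, the `definitions` map, and the node shape are all hypothetical and chosen only for illustration.

```javascript
// Hypothetical stand-ins; none of these names come from truffle-decoder.
// `definitions` maps a contract id to an AST-like object with a `nodes` array.

// Unguarded lookup: throws a TypeError when `contractId` has no entry,
// because `contract` is undefined and `.nodes` is read from it.
function getStateVariablesUnsafe(definitions, contractId) {
  const contract = definitions[contractId];
  return contract.nodes.filter(
    (node) => node.nodeType === "VariableDeclaration"
  );
}

// Guarded lookup: returns an empty list when the definition is missing
// or malformed, instead of throwing.
function getStateVariablesSafe(definitions, contractId) {
  const contract = definitions[contractId];
  if (contract === undefined || !Array.isArray(contract.nodes)) {
    return [];
  }
  return contract.nodes.filter(
    (node) => node.nodeType === "VariableDeclaration"
  );
}
```

Returning an empty list is only one option. Depending on the caller, reporting which contract id was missing may be more useful than silently continuing.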

APPLICATION LOG:

```

T+39957526ms: net_version

T+39957697ms: eth_sendTransaction

T+39957823ms: Transaction: 0x7a6fb1fd07eece85737553d247a6d373b746df178f77c1cf7ed14a6b3ae18a02

T+39957823ms: Contract created: 0x9db9528836587517cd6b90026b430f6e58063f64

T+39957823ms: Gas usage: 1250492

T+39957823ms: Block Number: 12

T+39957823ms: Block Time: Wed Apr 24 2019 09:20:34 GMT+0300 (EEST)

T+39957823ms: Runtime Error: revert

T+39957823ms: eth_call

T+39957925ms: eth_getBlockByNumber

T+39957925ms: eth_getBlockByNumber

T+39958030ms: eth_getBlockByNumber

T+39958191ms: eth_getBlockByNumber

T+39958191ms: eth_getBlockByNumber

T+40053858ms: net_version

T+40054236ms: eth_accounts

T+40054826ms: eth_getBlockByNumber

T+40054826ms: eth_accounts

T+40055240ms: eth_getBlockByNumber

T+40055240ms: eth_getBlockByNumber

T+40055785ms: eth_getBlockByNumber

T+40055785ms: eth_estimateGas

T+40056099ms: eth_getBlockByNumber

T+40056099ms: eth_blockNumber

T+40056500ms: eth_sendTransaction

T+40121694ms: Transaction: 0x6d3674c82a35d941f4a49ff409d2d1dc1c28a1f016283a6a592fd8914d6538df

T+40121694ms: Contract created: 0x9f2b851d683abe6d66bb552a50014ffbb94c60e7

T+40121694ms: Gas usage: 277462

T+40121694ms: Block Number: 13

T+40121694ms: Block Time: Wed Apr 24 2019 09:23:06 GMT+0300 (EEST)

T+40121694ms: eth_getTransactionReceipt

T+40121694ms: eth_getCode

T+40121694ms: eth_getTransactionByHash

T+40121878ms: eth_getBlockByNumber

T+40121878ms: eth_getBalance

T+40121878ms: eth_getBlockByNumber

T+40122016ms: eth_getBlockByNumber

T+40122150ms: eth_sendTransaction

T+40122352ms: Transaction: 0xa903f8b1bfac5ddc702356a9eb001fd7a5b6a87826151fbe114b25471cfff478

T+40122352ms: Gas usage: 42008

T+40122352ms: Block Number: 14

T+40122352ms: Block Time: Wed Apr 24 2019 09:23:06 GMT+0300 (EEST)

T+40122465ms: eth_getTransactionReceipt

T+40122575ms: eth_getBlockByNumber

T+40122705ms: eth_accounts

T+40122933ms: eth_getBlockByNumber

T+40123046ms: eth_getBlockByNumber

T+40123172ms: eth_getBlockByNumber

T+40123317ms: eth_estimateGas

T+40123502ms: eth_getBlockByNumber

T+40123502ms: eth_blockNumber

T+40123502ms: eth_sendTransaction

T+40123625ms: Transaction: 0x2fac23f33da86c7506a4d8539efd29a88baae0222f43b88f8b7c022cb1259d5c

T+40123625ms: Contract created: 0xc93fc6808cff5dddf88e46b78a5119b3db1d24e1

T+40123625ms: Gas usage: 953902

T+40123625ms: Block Number: 15

T+40123625ms: Block Time: Wed Apr 24 2019 09:23:07 GMT+0300 (EEST)

T+40123625ms: eth_getTransactionReceipt

T+40123625ms: eth_getCode

T+40123729ms: eth_getTransactionByHash

T+40123729ms: eth_getBlockByNumber

T+40123864ms: eth_getBalance

T+40124081ms: eth_getBlockByNumber

T+40124081ms: eth_getBlockByNumber

T+40124081ms: eth_sendTransaction

T+40124203ms: Transaction: 0x5ea3bcec61d6dd35b94b6a4da55e6459770985313719b955f50c88492a00d370

T+40124203ms: Gas usage: 27008

T+40124203ms: Block Number: 16

T+40124203ms: Block Time: Wed Apr 24 2019 09:23:07 GMT+0300 (EEST)

T+40124357ms: eth_getTransactionReceipt

T+40124486ms: eth_getBlockByNumber

T+40124668ms: eth_accounts

T+40124790ms: eth_getBlockByNumber

T+40124790ms: net_version

T+40124790ms: eth_getBlockByNumber

T+40124790ms: eth_getBlockByNumber

T+40124927ms: net_version

T+40125108ms: eth_estimateGas

T+40125294ms: eth_getBlockByNumber

T+40125410ms: eth_blockNumber

T+40125549ms: net_version

T+40125664ms: eth_sendTransaction

T+40125914ms: Transaction: 0xe20dd446b22263db722723767505115a1ce3f80fd516de00c068086d6fd08e11

T+40125914ms: Contract created: 0x4c6bafda49317d7451f00f11d5420a5d954e68c5

T+40125914ms: Gas usage: 4573266

T+40125914ms: Block Number: 17

T+40125914ms: Block Time: Wed Apr 24 2019 09:23:08 GMT+0300 (EEST)

T+40125914ms: eth_getTransactionReceipt

T+40125914ms: eth_getCode

T+40125914ms: eth_getTransactionByHash

T+40125914ms: eth_getBlockByNumber

T+40125914ms: eth_getBalance

T+40126054ms: eth_getBlockByNumber

T+40126245ms: eth_getBlockByNumber

T+40126449ms: eth_sendTransaction

T+40126647ms: Transaction: 0xe4b2bd6d6957c2c0bfbeb725f30a2f83bca66a3db320a943b5caa642bcbe93e4

T+40126647ms: Gas usage: 27008

T+40126647ms: Block Number: 18

T+40126647ms: Block Time: Wed Apr 24 2019 09:23:08 GMT+0300 (EEST)

T+40126780ms: eth_getTransactionReceipt

T+40126905ms: eth_getBlockByNumber

T+40126905ms: eth_getBlockByNumber

T+40127067ms: eth_getBlockByNumber

T+40127067ms: eth_getBlockByNumber

T+40127067ms: eth_getBlockByNumber

T+40127067ms: eth_getBlockByNumber

T+40160025ms: eth_unsubscribe

T+40160025ms: eth_unsubscribe

T+40160190ms: eth_unsubscribe

T+40160190ms: eth_unsubscribe

``` | 1.0 | System Error when running Ganache 2.0.1 on darwin - This happens when I am clicking on one of my deployed contracts.

PLATFORM: darwin

GANACHE VERSION: 2.0.1

EXCEPTION:

```

TypeError: Cannot read property 'nodes' of undefined

at getStateVariables (/node_modules/truffle-decoder/dist/allocate/storage.js:146:24)

at vars.concat.linearizedBaseContractsFromBase.map (/node_modules/truffle-decoder/dist/allocate/storage.js:154:73)

at Array.map (native)

at allocateContract (/node_modules/truffle-decoder/dist/allocate/storage.js:154:61)

at Object.getStorageAllocations (/node_modules/truffle-decoder/dist/allocate/storage.js:28:23)

at TruffleContractDecoder.init (/node_modules/truffle-decoder/dist/interface/contract-decoder.js:85:43)

at Object.getContractState (/src/truffle-integration/decode.js:18:11)

at process.<anonymous> (/src/truffle-integration/index.js:76:31)

at emitTwo (events.js:125:13)

at process.emit (events.js:213:7)

at emit (internal/child_process.js:768:12)

at _combinedTickCallback (internal/process/next_tick.js:141:11)

at process._tickCallback (internal/process/next_tick.js:180:9)

```

APPLICATION LOG:

```

T+39957526ms: net_version

T+39957697ms: eth_sendTransaction

T+39957823ms: Transaction: 0x7a6fb1fd07eece85737553d247a6d373b746df178f77c1cf7ed14a6b3ae18a02

T+39957823ms: Contract created: 0x9db9528836587517cd6b90026b430f6e58063f64

T+39957823ms: Gas usage: 1250492

T+39957823ms: Block Number: 12

T+39957823ms: Block Time: Wed Apr 24 2019 09:20:34 GMT+0300 (EEST)

T+39957823ms: Runtime Error: revert

T+39957823ms: eth_call

T+39957925ms: eth_getBlockByNumber

T+39957925ms: eth_getBlockByNumber

T+39958030ms: eth_getBlockByNumber

T+39958191ms: eth_getBlockByNumber

T+39958191ms: eth_getBlockByNumber

T+40053858ms: net_version

T+40054236ms: eth_accounts

T+40054826ms: eth_getBlockByNumber

T+40054826ms: eth_accounts

T+40055240ms: eth_getBlockByNumber

T+40055240ms: eth_getBlockByNumber

T+40055785ms: eth_getBlockByNumber

T+40055785ms: eth_estimateGas

T+40056099ms: eth_getBlockByNumber

T+40056099ms: eth_blockNumber

T+40056500ms: eth_sendTransaction

T+40121694ms: Transaction: 0x6d3674c82a35d941f4a49ff409d2d1dc1c28a1f016283a6a592fd8914d6538df

T+40121694ms: Contract created: 0x9f2b851d683abe6d66bb552a50014ffbb94c60e7

T+40121694ms: Gas usage: 277462

T+40121694ms: Block Number: 13

T+40121694ms: Block Time: Wed Apr 24 2019 09:23:06 GMT+0300 (EEST)

T+40121694ms: eth_getTransactionReceipt

T+40121694ms: eth_getCode

T+40121694ms: eth_getTransactionByHash

T+40121878ms: eth_getBlockByNumber

T+40121878ms: eth_getBalance

T+40121878ms: eth_getBlockByNumber

T+40122016ms: eth_getBlockByNumber

T+40122150ms: eth_sendTransaction

T+40122352ms: Transaction: 0xa903f8b1bfac5ddc702356a9eb001fd7a5b6a87826151fbe114b25471cfff478

T+40122352ms: Gas usage: 42008

T+40122352ms: Block Number: 14

T+40122352ms: Block Time: Wed Apr 24 2019 09:23:06 GMT+0300 (EEST)

T+40122465ms: eth_getTransactionReceipt

T+40122575ms: eth_getBlockByNumber

T+40122705ms: eth_accounts

T+40122933ms: eth_getBlockByNumber

T+40123046ms: eth_getBlockByNumber

T+40123172ms: eth_getBlockByNumber

T+40123317ms: eth_estimateGas

T+40123502ms: eth_getBlockByNumber

T+40123502ms: eth_blockNumber

T+40123502ms: eth_sendTransaction

T+40123625ms: Transaction: 0x2fac23f33da86c7506a4d8539efd29a88baae0222f43b88f8b7c022cb1259d5c

T+40123625ms: Contract created: 0xc93fc6808cff5dddf88e46b78a5119b3db1d24e1

T+40123625ms: Gas usage: 953902

T+40123625ms: Block Number: 15

T+40123625ms: Block Time: Wed Apr 24 2019 09:23:07 GMT+0300 (EEST)

T+40123625ms: eth_getTransactionReceipt

T+40123625ms: eth_getCode

T+40123729ms: eth_getTransactionByHash

T+40123729ms: eth_getBlockByNumber

T+40123864ms: eth_getBalance

T+40124081ms: eth_getBlockByNumber

T+40124081ms: eth_getBlockByNumber

T+40124081ms: eth_sendTransaction

T+40124203ms: Transaction: 0x5ea3bcec61d6dd35b94b6a4da55e6459770985313719b955f50c88492a00d370

T+40124203ms: Gas usage: 27008

T+40124203ms: Block Number: 16

T+40124203ms: Block Time: Wed Apr 24 2019 09:23:07 GMT+0300 (EEST)

T+40124357ms: eth_getTransactionReceipt

T+40124486ms: eth_getBlockByNumber

T+40124668ms: eth_accounts

T+40124790ms: eth_getBlockByNumber

T+40124790ms: net_version

T+40124790ms: eth_getBlockByNumber

T+40124790ms: eth_getBlockByNumber

T+40124927ms: net_version

T+40125108ms: eth_estimateGas

T+40125294ms: eth_getBlockByNumber

T+40125410ms: eth_blockNumber

T+40125549ms: net_version

T+40125664ms: eth_sendTransaction

T+40125914ms: Transaction: 0xe20dd446b22263db722723767505115a1ce3f80fd516de00c068086d6fd08e11