Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 757 | labels stringlengths 4 664 | body stringlengths 3 261k | index stringclasses 10 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 232k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

15,938 | 2,869,100,656 | IssuesEvent | 2015-06-05 23:20:29 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | pkg/watcher/test/utils.dart closes sandbox before watches. | Area-Pkg Pkg-Watcher Priority-Unassigned Triaged Type-Defect | On Windows, removing the directory before closing the watcher can lead to unwanted behavior.

Sadly, I was not able to identify how to change the order of execution here. | 1.0 | pkg/watcher/test/utils.dart closes sandbox before watches. - On Windows, removing the directory before closing the watcher can lead to unwanted behavior.

Sadly, I was not able to identify how to change the order of execution here. | defect | pkg watcher test utils dart closes sandbox before watches on windows removing the directory before closing the watcher can lead to unwanted behavior sadly i was not able to identify how to change the order of execution here | 1 |

69,350 | 22,320,666,040 | IssuesEvent | 2022-06-14 05:58:07 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | "Join meeting" button in pre-meeting screen not clickable. | T-Defect X-Cannot-Reproduce X-Regression S-Major A-Electron A-Jitsi O-Uncommon | ### Steps to reproduce

Element-desktop 1.9.8 and Element-nightly 2022011301 under Debian 11.2/stable

with KDE/Plasma, with a local private matrix-synapse 1.49.0-1~bpo11+2 server,

also under Debian 11.2 with backports.

Some of the symptoms are the same as described in

https://github.com/vector-im/element-web/issues/18506

like the disconnections every 30 seconds.

Description

On startup, the main window pops up and displays the text messages just fine.

When I click on the jitsi icon the video field displays "Jitsi Video

Conference" and a button to "Join Conference".

When I do that, the video screen shows the camera input and at the bottom

half of it the pre-meeting screen has a white field with my user name and

a blue button to "Join meeting". Clicking on this button has no effect. I

can never join the meeting.

Shortly after (30 seconds), a pop-up says "You have been disconnected, you

may want to check the network connection. Reconnecting in 30 seconds." and

then it counts down. Then it disconnects again and again. This is the

same symptom described in issue 18506.

This goes on until I exit the program or I disable the pre-meeting screen

from the settings gear on the pre-meeting screen. If I do the latter I

see a grey screen with my camera input in the corner greyed out until the

next disconnection. At that time, the corner mini-screen goes black (with

the AV circle on it and the microphone and camera icons slashed out).

Clicking on the microphone or camera icons that show up when you move the

mouse over the video field doesn't enable them. The only thing that works

is the red hang-up button, which brings me back to the "Jitsi Video

Conference" field with the green "Join Conference" button.

Reconnecting now goes directly to the grey screen (no pre-meeting screen as

that has been disabled) until the next disconnection. The first time (before

the first disconnection), the field at the top (when the mouse is hovering over the

video field) had the name of the room; subsequent times it had "Jitsi Heb...".

At least I can access the config menu (...) and re-enable the pre-meeting

screen. When that is done, it's back to the unclickable blue "Join meeting"

button. However, now the microphone and camera icons in the pre-meeting

screen are usable. Clicking them re-enables the video in the top half of the

video screen.

So, it is totally impossible to make video calls from this system and the

sequences above are totally repeatable. What can I test or where can I look

to find more details to track down this issue? Some of this behavior has

been present for a few releases, before that Element-desktop worked

correctly on this computer, and most recently it did so a few days ago, until

apparently the latest upgrade. I do not remember upgrading element-desktop

manually but the pre-meeting screen is new, so I suspect this latest behavior

has been caused by the latest release.

On another computer (a laptop with built in camera) with the same debian

stable OS, I see the same issue with the pre-meeting screen but once that is

disabled, the video part of the screen turns grey and no amount of hovering

or clicking can bring up the red hang-up button, so it is impossible to do

anything but exit the program.

I have seen on a few occasions a very quickly disappearing pop-up message at

the beginning, when I just started the program, but it disappeared in less

than a second, so I couldn't read what it said.

### Outcome

#### What did you expect?

That I could join the specified room via video call like I had done before.

#### What happened instead?

The program is totally unusable.

### Operating system

Debian 11.2/stable with KDE/Plasma, with a local private matrix-synapse 1.49.0-1~bpo11+2 server, also under Debian 11.2 with backports.

### Application version

Element-desktop 1.9.8 and Element-nightly 2022011301

### How did you install the app?

deb [signed-by=/usr/share/keyrings/riot-im-archive-keyring.gpg] https://packages.riot.im/debian/ bullseye main

### Homeserver

local private matrix-synapse 1.49.0-1~bpo11+2 server, also under Debian 11.2 with backports.

### Will you send logs?

Yes | 1.0 | "Join meeting" button in pre-meeting screen not clickable. - ### Steps to reproduce

Element-desktop 1.9.8 and Element-nightly 2022011301 under Debian 11.2/stable

with KDE/Plasma, with a local private matrix-synapse 1.49.0-1~bpo11+2 server,

also under Debian 11.2 with backports.

Some of the symptoms are the same as described in

https://github.com/vector-im/element-web/issues/18506

like the disconnections every 30 seconds.

Description

On startup, the main window pops up and displays the text messages just fine.

When I click on the jitsi icon the video field displays "Jitsi Video

Conference" and a button to "Join Conference".

When I do that, the video screen shows the camera input and at the bottom

half of it the pre-meeting screen has a white field with my user name and

a blue button to "Join meeting". Clicking on this button has no effect. I

can never join the meeting.

Shortly after (30 seconds), a pop-up says "You have been disconnected, you

may want to check the network connection. Reconnecting in 30 seconds." and

then it counts down. Then it disconnects again and again. This is the

same symptom described in issue 18506.

This goes on until I exit the program or I disable the pre-meeting screen

from the settings gear on the pre-meeting screen. If I do the latter I

see a grey screen with my camera input in the corner greyed out until the

next disconnection. At that time, the corner mini-screen goes black (with

the AV circle on it and the microphone and camera icons slashed out).

Clicking on the microphone or camera icons that show up when you move the

mouse over the video field doesn't enable them. The only thing that works

is the red hang-up button, which brings me back to the "Jitsi Video

Conference" field with the green "Join Conference" button.

Reconnecting now goes directly to the grey screen (no pre-meeting screen as

that has been disabled) until the next disconnection. The first time (before

the first disconnection), the field at the top (when the mouse is hovering over the

video field) had the name of the room; subsequent times it had "Jitsi Heb...".

At least I can access the config menu (...) and re-enable the pre-meeting

screen. When that is done, it's back to the unclickable blue "Join meeting"

button. However, now the microphone and camera icons in the pre-meeting

screen are usable. Clicking them re-enables the video in the top half of the

video screen.

So, it is totally impossible to make video calls from this system and the

sequences above are totally repeatable. What can I test or where can I look

to find more details to track down this issue? Some of this behavior has

been present for a few releases, before that Element-desktop worked

correctly on this computer, and most recently it did so a few days ago, until

apparently the latest upgrade. I do not remember upgrading element-desktop

manually but the pre-meeting screen is new, so I suspect this latest behavior

has been caused by the latest release.

On another computer (a laptop with built in camera) with the same debian

stable OS, I see the same issue with the pre-meeting screen but once that is

disabled, the video part of the screen turns grey and no amount of hovering

or clicking can bring up the red hang-up button, so it is impossible to do

anything but exit the program.

I have seen on a few occasions a very quickly disappearing pop-up message at

the beginning, when I just started the program, but it disappeared in less

than a second, so I couldn't read what it said.

### Outcome

#### What did you expect?

That I could join the specified room via video call like I had done before.

#### What happened instead?

The program is totally unusable.

### Operating system

Debian 11.2/stable with KDE/Plasma, with a local private matrix-synapse 1.49.0-1~bpo11+2 server, also under Debian 11.2 with backports.

### Application version

Element-desktop 1.9.8 and Element-nightly 2022011301

### How did you install the app?

deb [signed-by=/usr/share/keyrings/riot-im-archive-keyring.gpg] https://packages.riot.im/debian/ bullseye main

### Homeserver

local private matrix-synapse 1.49.0-1~bpo11+2 server, also under Debian 11.2 with backports.

### Will you send logs?

Yes | defect | join meeting button in pre meeting screen not clickable steps to reproduce element desktop and element nightly under debian stable with kde plasma with a local private matrix synapse server also under debian with backports some of the symptoms are the same as described in like the disconnections every seconds description on startup the main window pops up and displays the text messages just fine when i click on the jitsi icon the video field displays jitsi video conference and a button to join conference when i do that the video screen shows the camera input and at the bottom half of it the pre meeting screen has a white field with my user name and a blue button to join meeting clicking on this button has no effect i can never join the meeting shortly after seconds a pop up says you have been disconnected you may want to check the network connection reconnecting in seconds and then it counts down then it disconnects again and again this is the same symptom described in issue this goes on until i exit the program or i disable the pre meeting screen from the settings gear on the pre meeting screen if i do the latter i see a grey screen with my camera input in the corner greyed out until the next disconnection at that time the corner mini screen goes black with the av circle on it and the microphone and camera icons slashed out clicking on the microphone or camera icons that show up when you move the mouse over the video field don t enable them the only thing that works is the red hang up button which brings me back to the jitsi video conference with the join conference green button field reconnecting now goes directly to the grey screen no pre meeting screen as that has been disabled until the next disconnection the first time before the first disconnection the field at the top when mouse is hovering over the video field had the name of the room subsequent times it had jitsi heb at least i can access the config menu and re enable the pre meeting screen 
when that is done it s back to the unclickable blue join meeting button however now the microphone and camera icons in the pre meeting screen are usable clicking them re enables the video in the top half of the video screen so it is totally impossible to make video calls from this system and the sequences above are totally repeatable what can i test or where can i look to find more details to track down this issue some of this behavior has been present for a few releases before that element desktop worked correctly on this computer and most recently it did so a few days ago until apparently the latest upgrade i do not remember upgrading element desktop manually but the pre meeting screen is new so i suspect this latest behavior has been caused by the latest release on another computer a laptop with built in camera with the same debian stable os i see the same issue with the pre meeting screen but once that is disabled the video part of the screen turns grey and no amount of hovering or clicking can bring up the red hang up button so it is impossible to do anything but exit the program i have seen on a few occasions a very quickly disappearing pop up message at the beginning when i just started the program but it disappeared in less than a second so couldn t read what it said outcome what did you expect that i could join the specified room via video call like i had done before what happened instead the program is totally unusable operating system debian stable with kde plasma with a local private matrix synapse server also under debian with backports application version element desktop and element nightly how did you install the app deb bullseye main homeserver local private matrix synapse server also under debian with backports will you send logs yes | 1 |

60,116 | 17,023,339,368 | IssuesEvent | 2021-07-03 01:30:48 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Zipped (.zip) GPXs don't upload | Component: website Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 12.25am, Sunday, 4th January 2009]**

Zipped versions of

http://www.openstreetmap.org/user/Richard/traces/287243

http://www.openstreetmap.org/user/Richard/traces/287244

failed to parse, generating a failure e-mail with the error

Generic XML parse error

XML parser at line 1 column 2

The gzipped versions of the same GPX have uploaded fine (as per above).
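The difference between the two container formats reported above can be sketched in isolation. This is only an illustration, not the website's actual upload code: it assumes (the report does not confirm this) that the zipped upload reached the XML parser without being unpacked, and the helper name `read_gpx_bytes`, the member name `trace.gpx`, and the dummy GPX document are all hypothetical.

```python
import gzip
import io
import zipfile


def read_gpx_bytes(payload: bytes) -> bytes:
    """Return raw GPX XML from a plain, gzipped, or zipped upload (hypothetical helper)."""
    # gzip streams start with the two magic bytes 0x1f 0x8b.
    if payload[:2] == b"\x1f\x8b":
        return gzip.decompress(payload)
    buffer = io.BytesIO(payload)
    # zipfile.is_zipfile accepts a file-like object and checks for a zip directory.
    if zipfile.is_zipfile(buffer):
        with zipfile.ZipFile(buffer) as archive:
            gpx_names = [n for n in archive.namelist() if n.lower().endswith(".gpx")]
            if not gpx_names:
                raise ValueError("zip archive contains no .gpx member")
            # Assumption: only the first .gpx member is of interest.
            return archive.read(gpx_names[0])
    # Anything else is treated as an uncompressed GPX document.
    return payload


# Build the three upload variants in memory from the same dummy track.
gpx = b'<?xml version="1.0"?><gpx version="1.1"></gpx>'
zipped = io.BytesIO()
with zipfile.ZipFile(zipped, "w") as archive:
    archive.writestr("trace.gpx", gpx)
gzipped = gzip.compress(gpx)
```

With a step like this in front of the parser, the plain, `.gz`, and `.zip` variants would all yield the same XML bytes.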

I suppose I could alternatively file a trac ticket at apple.com to ask them to change their contextual-menu compression option to produce .gz rather than .zip... | 1.0 | Zipped (.zip) GPXs don't upload - **[Submitted to the original trac issue database at 12.25am, Sunday, 4th January 2009]**

Zipped versions of

http://www.openstreetmap.org/user/Richard/traces/287243

http://www.openstreetmap.org/user/Richard/traces/287244

failed to parse, generating a failure e-mail with the error

Generic XML parse error

XML parser at line 1 column 2

The gzipped versions of the same GPX have uploaded fine (as per above).

I suppose I could alternatively file a trac ticket at apple.com to ask them to change their contextual-menu compression option to produce .gz rather than .zip... | defect | zipped zip gpxs don t upload zipped versions of failed to parse generating a failure e mail with the error generic xml parse error xml parser at line column the gzipped versions of the same gpx have uploaded fine as per above i suppose i could alternatively file a trac ticket at apple com to ask them to change their contextual menu compression option to produce gz rather than zip | 1 |

470,032 | 13,530,013,176 | IssuesEvent | 2020-09-15 19:13:00 | kubernetes/website | https://api.github.com/repos/kubernetes/website | closed | Incorrect version number in Limitations section | help wanted kind/cleanup language/en lifecycle/rotten priority/backlog | **This is a Bug Report**

<!-- Thanks for filing an issue! Before submitting, please fill in the following information. -->

<!-- See https://kubernetes.io/docs/contribute/start/ for guidance on writing an actionable issue description. -->

<!--Required Information-->

**Problem:**

Under the limitations section, it says: "In Kubernetes version 1.5"

**Proposed Solution:**

Correct version number

**Page to Update:**

https://kubernetes.io/docs/setup/best-practices/node-conformance/

<!--Optional Information (remove the comment tags around information you would like to include)-->

<!--Kubernetes Version:-->

<!--Additional Information:-->

| 1.0 | Incorrect version number in Limitations section - **This is a Bug Report**

<!-- Thanks for filing an issue! Before submitting, please fill in the following information. -->

<!-- See https://kubernetes.io/docs/contribute/start/ for guidance on writing an actionable issue description. -->

<!--Required Information-->

**Problem:**

Under the limitations section, it says: "In Kubernetes version 1.5"

**Proposed Solution:**

Correct version number

**Page to Update:**

https://kubernetes.io/docs/setup/best-practices/node-conformance/

<!--Optional Information (remove the comment tags around information you would like to include)-->

<!--Kubernetes Version:-->

<!--Additional Information:-->

| non_defect | incorrect version number in limitations section this is a bug report problem under the limitations section it says in kubernetes version proposed solution correct version number page to update | 0 |

41,667 | 10,563,182,873 | IssuesEvent | 2019-10-04 20:16:31 | networkx/networkx | https://api.github.com/repos/networkx/networkx | closed | `from_pandas_edgelist` creates empty graph if there are no attributes | Defect | Using `from_pandas_edgelist` with `edge_attr=True` results in an empty graph if there are no attribute columns. If a user passes in a dataframe which _may_ contain attribute columns, it will yield an empty graph if there are no non-source/target columns. | 1.0 | `from_pandas_edgelist` creates empty graph if there are no attributes - Using `from_pandas_edgelist` with `edge_attr=True` results in an empty graph if there are no attribute columns. If a user passes in a dataframe which _may_ contain attribute columns, it will yield an empty graph if there are no non-source/target columns. | defect | from pandas edgelist creates empty graph if there are no attributes using from pandas edgelist with edge attr true results in an empty graph if there are no attribute columns if a user passes in a dataframe which may contain attribute columns it will yield an empty graph if there are no non source target columns | 1 |

72,277 | 24,031,689,762 | IssuesEvent | 2022-09-15 15:29:35 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | closed | TIMESTAMP Column in Custom Monthly Report Tables under both peak heating and peak cooling report has a trailing space | Defect | Issue overview

--------------

The Following SQL query does not work, this is true for both the cooling peak and heating peak tables

`SELECT Value FROM tabulardatawithstrings WHERE ReportName='BUILDING ENERGY PERFORMANCE - DISTRICT COOLING PEAK DEMAND' and ReportForString='Meter' and TableName='Custom Monthly Report' and RowName='December' and ColumnName='DISTRICTCOOLING:FACILITY {TIMESTAMP}' ;`

There is a trailing space in the column name so this query works

`SELECT Value FROM tabulardatawithstrings WHERE ReportName='BUILDING ENERGY PERFORMANCE - DISTRICT COOLING PEAK DEMAND' and ReportForString='Meter' and TableName='Custom Monthly Report' and RowName='December' and ColumnName='DISTRICTCOOLING:FACILITY {TIMESTAMP} ' ;`

But to make a more flexible workaround, I'll use the code below, so that it works now in the existing state and will still work once this bug is fixed, without me having to alter code: `ColumnName LIKE '%DISTRICTCOOLING:FACILITY {TIMESTAMP}%'`

As a note, this seems to be relatively isolated to this column in these two tables. In the same table `DISTRICTCOOLING:FACILITY {Maximum}` doesn't exhibit this issue and in another table `EnergyMeters / Annual and Peak Values - Other` a column with TIMESTAMP doesn't have the trailing space `Timestamp of Maximum {TIMESTAMP}`
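The mismatch and the `LIKE` workaround described above can be reproduced on a small in-memory table. This is a sketch under assumptions: the table below is a hypothetical stand-in that models only the columns used in the queries, the stored value `dummy-timestamp` is made up, and the `TRIM` variant is an alternative not mentioned in the report.

```python
import sqlite3

REPORT = "BUILDING ENERGY PERFORMANCE - DISTRICT COOLING PEAK DEMAND"
COLUMN = "DISTRICTCOOLING:FACILITY {TIMESTAMP}"

# Hypothetical stand-in for the EnergyPlus SQLite output.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE tabulardatawithstrings ("
    "ReportName TEXT, ReportForString TEXT, TableName TEXT, "
    "RowName TEXT, ColumnName TEXT, Value TEXT)"
)
# The column name is stored with the trailing space described in the report.
conn.execute(
    "INSERT INTO tabulardatawithstrings VALUES (?, ?, ?, ?, ?, ?)",
    (REPORT, "Meter", "Custom Monthly Report", "December", COLUMN + " ", "dummy-timestamp"),
)


def fetch_values(column_predicate, column_arg):
    """Run the query from the report with a swappable ColumnName condition."""
    query = (
        "SELECT Value FROM tabulardatawithstrings "
        "WHERE ReportName = ? AND ReportForString = 'Meter' "
        "AND TableName = 'Custom Monthly Report' AND RowName = 'December' "
        "AND " + column_predicate
    )
    return [row[0] for row in conn.execute(query, (REPORT, column_arg))]


exact = fetch_values("ColumnName = ?", COLUMN)                # misses the padded name
like = fetch_values("ColumnName LIKE ?", "%" + COLUMN + "%")  # workaround from the report
trimmed = fetch_values("TRIM(ColumnName) = ?", COLUMN)        # alternative: strip spaces first
```

Both the `LIKE` and the `TRIM` forms keep matching if the trailing space is later removed from the stored column name, which is the property the workaround is after.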

### Details

Some additional details for this issue (if relevant):

- E+ Version 22.1

### Checklist

Add to this list or remove from it as applicable. This is a simple templated set of guidelines.

- [x] Defect file added (list location of defect file here)

- [x] Ticket added to Pivotal for defect (development team task)

- [ ] Pull request created (the pull request will have additional tasks related to reviewing changes that fix this defect)

| 1.0 | TIMESTAMP Column in Custom Monthly Report Tables under both peak heating and peak cooling report has a trailing space - Issue overview

--------------

The Following SQL query does not work, this is true for both the cooling peak and heating peak tables

`SELECT Value FROM tabulardatawithstrings WHERE ReportName='BUILDING ENERGY PERFORMANCE - DISTRICT COOLING PEAK DEMAND' and ReportForString='Meter' and TableName='Custom Monthly Report' and RowName='December' and ColumnName='DISTRICTCOOLING:FACILITY {TIMESTAMP}' ;`

There is a trailing space in the column name so this query works

`SELECT Value FROM tabulardatawithstrings WHERE ReportName='BUILDING ENERGY PERFORMANCE - DISTRICT COOLING PEAK DEMAND' and ReportForString='Meter' and TableName='Custom Monthly Report' and RowName='December' and ColumnName='DISTRICTCOOLING:FACILITY {TIMESTAMP} ' ;`

But to make a more flexible workaround, I'll use the code below, so that it works now in the existing state and will still work once this bug is fixed, without me having to alter code: `ColumnName LIKE '%DISTRICTCOOLING:FACILITY {TIMESTAMP}%'`

As a note, this seems to be relatively isolated to this column in these two tables. In the same table `DISTRICTCOOLING:FACILITY {Maximum}` doesn't exhibit this issue and in another table `EnergyMeters / Annual and Peak Values - Other` a column with TIMESTAMP doesn't have the trailing space `Timestamp of Maximum {TIMESTAMP}`

### Details

Some additional details for this issue (if relevant):

- E+ Version 22.1

### Checklist

Add to this list or remove from it as applicable. This is a simple templated set of guidelines.

- [x] Defect file added (list location of defect file here)

- [x] Ticket added to Pivotal for defect (development team task)

- [ ] Pull request created (the pull request will have additional tasks related to reviewing changes that fix this defect)

| defect | timestamp column in custom monthly report tables under both peak heating and peak cooling report has a trailing space issue overview the following sql query does not work this is true for both the cooling peak and heating peak tables select value from tabulardatawithstrings where reportname building energy performance district cooling peak demand and reportforstring meter and tablename custom monthly report and rowname december and columnname districtcooling facility timestamp there is a trailing space in the column name so this query works select value from tabulardatawithstrings where reportname building energy performance district cooling peak demand and reportforstring meter and tablename custom monthly report and rowname december and columnname districtcooling facility timestamp but to make a more flexible work around ill use this code below so it works now in existing state but will still wok once this bug is fixed without me having to alter code columnname like districtcooling facility timestamp as a note this seems to be relatively isolated to this column in these two tables in the same table districtcooling facility maximum doesn t exhibit this issue and in another table energymeters annual and peak values other a column with timestamp doesn t have the trailing space timestamp of maximum timestamp details some additional details for this issue if relevant e version checklist add to this list or remove from it as applicable this is a simple templated set of guidelines defect file added list location of defect file here ticket added to pivotal for defect development team task pull request created the pull request will have additional tasks related to reviewing changes that fix this defect | 1 |

67,561 | 20,994,320,943 | IssuesEvent | 2022-03-29 12:15:45 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | CAST to PostgreSQL enum type lacks type qualification | T: Defect C: Functionality C: DB: PostgreSQL P: Medium E: All Editions | ### Expected behavior and actual behavior:

Using `DSL.cast()` with some database enum type, the generated SQL doesn't qualify the enum type, leading to errors (unless the schema is in the search_path; this is how we uncovered this bug actually: our app works OK for us developers and on our demo servers, but failed when deployed on our client's servers, because they use a different database user, whose name doesn't match that of the database schema).

jOOQ will generate `cast(?::"the_schema"."the_enum" as the_enum)` (or `cast(?::"the_schema"."the_enum"[] as the_enum[])` for an array, in our actual case), which will trigger an error `ERROR: type "the_enum" does not exist(..)`.

Note how the type in the cast is not qualified, whereas it's correctly qualified in typing the parameter.

Of course, the cast here is redundant (I'm almost certain it was necessary in an older version of jOOQ though, but at least we have an easy fix for our app), but interestingly we also have a domain type (`CREATE DOMAIN … AS text`) that in turn **requires** us to use a cast when used as an array, and in this case jOOQ correctly uses the qualified domain type: `cast(?::varchar[] as "the_schema"."the_domain"[])`.

### Steps to reproduce the problem (if possible, create an MCVE: https://github.com/jOOQ/jOOQ-mcve):

* have an enum type in your schema (`CREATE TYPE … AS ENUM …`) and a table using it for one of its columns

* use a `DSL.cast(…, MY_TABLE.MY_FIELD)` where the field is of the enum type

* have a search_path that doesn't include your schema

MCVE at https://github.com/atolcd-contrib/jOOQ-mcve/tree/issue-10277

### Versions:

- jOOQ: 3.12.4 and 3.13.2

- Java: OpenJDK 11

- Database (include vendor): Postgresql (reproduced on 11 and 12)

- OS: Linux (Arch Linux, and Docker's `openjdk:11`)

- JDBC Driver (include name if inofficial driver): `org.postgresql:postgresql` versions 42.2.9 and 42.2.14

| 1.0 | CAST to PostgreSQL enum type lacks type qualification - ### Expected behavior and actual behavior:

Using `DSL.cast()` with some database enum type, the generated SQL doesn't qualify the enum type, leading to errors (unless the schema is in the search_path; this is how we uncovered this bug actually: our app works OK for us developers and on our demo servers, but failed when deployed on our client's servers, because they use a different database user, whose name doesn't match that of the database schema).

jOOQ will generate `cast(?::"the_schema"."the_enum" as the_enum)` (or `cast(?::"the_schema"."the_enum"[] as the_enum[])` for an array, in our actual case), which will trigger an error `ERROR: type "the_enum" does not exist(..)`.

Note how the type in the cast is not qualified, whereas it's correctly qualified in typing the parameter.

Of course, the cast here is redundant (I'm almost certain it was necessary in an older version of jOOQ though, but at least we have an easy fix for our app), but interestingly we also have a domain type (`CREATE DOMAIN … AS text`) that in turn **requires** us to use a cast when used as an array, and in this case jOOQ correctly uses the qualified domain type: `cast(?::varchar[] as "the_schema"."the_domain"[])`.

### Steps to reproduce the problem (if possible, create an MCVE: https://github.com/jOOQ/jOOQ-mcve):

* have an enum type in your schema (`CREATE TYPE … AS ENUM …`) and a table using it for one of its columns

* use a `DSL.cast(…, MY_TABLE.MY_FIELD)` where the field is of the enum type

* have a search_path that doesn't include your schema

MCVE at https://github.com/atolcd-contrib/jOOQ-mcve/tree/issue-10277

### Versions:

- jOOQ: 3.12.4 and 3.13.2

- Java: OpenJDK 11

- Database (include vendor): Postgresql (reproduced on 11 and 12)

- OS: Linux (Arch Linux, and Docker's `openjdk:11`)

- JDBC Driver (include name if inofficial driver): `org.postgresql:postgresql` versions 42.2.9 and 42.2.14

| defect | cast to postgresql enum type lacks type qualification expected behavior and actual behavior using dsl cast with some database enum type the generated sql doesn t qualify the enum type leading to errors unless the schema is in the search path this is how we uncovered this bug actually our app works ok for us developers and on our demo servers but failed when deployed on our client s servers because they use a different database user whose name doesn t match that of the database schema jooq will generate cast the schema the enum as the enum or cast the schema the enum as the enum for an array in our actual case which will trigger an error error type the enum does not exist note how the type in the cast is not qualified whereas it s correctly qualified in typing the parameter of course the cast here is redundant i m almost certain it was necessary in an older version of jooq though but at least we have an easy fix for our app but interestingly we also have a domain type create domain … as text that in turn requires us to use a cast when used as an array and in this case jooq correctly uses the qualified domain type cast varchar as the schema the domain steps to reproduce the problem if possible create an mcve have an enum type in your schema create type … as enum … and a table using it for one of its columns use a dsl cast … my table my field where the field is of the enum type have a search path that doesn t include your schema mcve at versions jooq and java openjdk database include vendor postgresql reproduced on and os linux arch linux and docker s openjdk jdbc driver include name if inofficial driver org postgresql postgresql versions and | 1 |

13,580 | 16,093,401,878 | IssuesEvent | 2021-04-26 19:40:16 | Creators-of-Create/Create | https://api.github.com/repos/Creators-of-Create/Create | closed | OreTweaker Ore Generation Incompatibility | compatibility needs input | I apologize for the length of this, but I couldn't upload config files directly into here.

It appears that OreTweaker and Create ore generation are incompatible with one another. I kept Create's ore generation as is and only edited the vanilla generation using OreTweaker. Here are copies of the Create and OreTweaker configs:

**Create**

```

[worldgen]

#

#Modify Create's impact on your terrain

[worldgen.v2]

#

#Prevents all worldgen added by Create from taking effect

disableWorldGen = false

#

#Forward caught TileEntityExceptions to the log at debug level.

logTeErrors = false

[worldgen.v2.copper_ore]

#

#Range: > 0

clusterSize = 18

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 2.0

#

#Range: > 0

minHeight = 40

#

#Range: > 0

maxHeight = 85

[worldgen.v2.weathered_limestone]

#

#Range: > 0

clusterSize = 128

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 0.015625

#

#Range: > 0

minHeight = 10

#

#Range: > 0

maxHeight = 30

[worldgen.v2.zinc_ore]

#

#Range: > 0

clusterSize = 14

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 4.0

#

#Range: > 0

minHeight = 15

#

#Range: > 0

maxHeight = 70

[worldgen.v2.limestone]

#

#Range: > 0

clusterSize = 128

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 0.015625

#

#Range: > 0

minHeight = 30

#

#Range: > 0

maxHeight = 70

[worldgen.v2.dolomite]

#

#Range: > 0

clusterSize = 128

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 0.015625

#

#Range: > 0

minHeight = 20

#

#Range: > 0

maxHeight = 70

[worldgen.v2.gabbro]

#

#Range: > 0

clusterSize = 128

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 0.015625

#

#Range: > 0

minHeight = 20

#

#Range: > 0

maxHeight = 70

[worldgen.v2.scoria]

#

#Range: > 0

clusterSize = 128

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 0.03125

#

#Range: > 0

minHeight = 0

#

#Range: > 0

maxHeight = 10

```

**OreTweaker**

```

"Enable Debug Output" = false

"Disable Ores" = ["minecraft:coal_ore", "minecraft:iron_ore", "minecraft:gold_ore", "minecraft:diamond_ore", "minecraft:lapis_ore", "minecraft:redstone_ore", "minecraft:emerald_ore"]

[["Custom Ore"]]

"Ore Name" = "minecraft:lapis_ore"

"Max Vein Size" = 7

"Filler Name" = "minecraft:stone"

"Min Vein Level" = 1

"Max Vein Level" = 48

"Spawn Rate" = 8

[["Custom Ore"]]

"Ore Name" = "minecraft:redstone_ore"

"Max Vein Size" = 7

"Filler Name" = "minecraft:stone"

"Min Vein Level" = 1

"Max Vein Level" = 48

"Spawn Rate" = 8

[["Custom Ore"]]

"Ore Name" = "minecraft:emerald_ore"

"Max Vein Size" = 1

"Filler Name" = "minecraft:stone"

"Min Vein Level" = 1

"Max Vein Level" = 48

"Spawn Rate" = 1

```

With these configs I used World Stripper to uncover the terrain in new chunks and this is what I got:

You may notice that EvilCraft is also in this mod. OreTweaker does not interfere with its ore generation. I did a similar thing in The Nether as I have Netherrocks installed as well (the only other mod that affects ore generation) and it appears to work as intended:

So this seems to be specific to Create. As I have no way of telling whether this is a Create or an OreTweaker problem, I am simply reporting this to both. And for the sake of completion, here is my log file for this session:

[latest.log](https://github.com/Creators-of-Create/Create/files/6369536/latest.log)

| True | OreTweaker Ore Generation Incompatibility - I apologize for the length of this, but I couldn't upload config files directly into here.

It appears that OreTweaker and Create ore generation are incompatible with one another. I kept Create's ore generation as is and only edited the vanilla generation using OreTweaker. Here are copies of the Create and OreTweaker configs:

**Create**

```

[worldgen]

#

#Modify Create's impact on your terrain

[worldgen.v2]

#

#Prevents all worldgen added by Create from taking effect

disableWorldGen = false

#

#Forward caught TileEntityExceptions to the log at debug level.

logTeErrors = false

[worldgen.v2.copper_ore]

#

#Range: > 0

clusterSize = 18

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 2.0

#

#Range: > 0

minHeight = 40

#

#Range: > 0

maxHeight = 85

[worldgen.v2.weathered_limestone]

#

#Range: > 0

clusterSize = 128

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 0.015625

#

#Range: > 0

minHeight = 10

#

#Range: > 0

maxHeight = 30

[worldgen.v2.zinc_ore]

#

#Range: > 0

clusterSize = 14

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 4.0

#

#Range: > 0

minHeight = 15

#

#Range: > 0

maxHeight = 70

[worldgen.v2.limestone]

#

#Range: > 0

clusterSize = 128

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 0.015625

#

#Range: > 0

minHeight = 30

#

#Range: > 0

maxHeight = 70

[worldgen.v2.dolomite]

#

#Range: > 0

clusterSize = 128

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 0.015625

#

#Range: > 0

minHeight = 20

#

#Range: > 0

maxHeight = 70

[worldgen.v2.gabbro]

#

#Range: > 0

clusterSize = 128

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 0.015625

#

#Range: > 0

minHeight = 20

#

#Range: > 0

maxHeight = 70

[worldgen.v2.scoria]

#

#Range: > 0

clusterSize = 128

#

#Amount of clusters generated per Chunk.

# >1 to spawn multiple.

# <1 to make it a chance.

# 0 to disable.

#Range: 0.0 ~ 512.0

frequency = 0.03125

#

#Range: > 0

minHeight = 0

#

#Range: > 0

maxHeight = 10

```

**OreTweaker**

```

"Enable Debug Output" = false

"Disable Ores" = ["minecraft:coal_ore", "minecraft:iron_ore", "minecraft:gold_ore", "minecraft:diamond_ore", "minecraft:lapis_ore", "minecraft:redstone_ore", "minecraft:emerald_ore"]

[["Custom Ore"]]

"Ore Name" = "minecraft:lapis_ore"

"Max Vein Size" = 7

"Filler Name" = "minecraft:stone"

"Min Vein Level" = 1

"Max Vein Level" = 48

"Spawn Rate" = 8

[["Custom Ore"]]

"Ore Name" = "minecraft:redstone_ore"

"Max Vein Size" = 7

"Filler Name" = "minecraft:stone"

"Min Vein Level" = 1

"Max Vein Level" = 48

"Spawn Rate" = 8

[["Custom Ore"]]

"Ore Name" = "minecraft:emerald_ore"

"Max Vein Size" = 1

"Filler Name" = "minecraft:stone"

"Min Vein Level" = 1

"Max Vein Level" = 48

"Spawn Rate" = 1

```

With these configs I used World Stripper to uncover the terrain in new chunks and this is what I got:

You may notice that EvilCraft is also in this mod. OreTweaker does not interfere with its ore generation. I did a similar thing in The Nether as I have Netherrocks installed as well (the only other mod that affects ore generation) and it appears to work as intended:

So this seems to be specific to Create. As I have no way of telling whether this is a Create or an OreTweaker problem, I am simply reporting this to both. And for the sake of completion, here is my log file for this session:

[latest.log](https://github.com/Creators-of-Create/Create/files/6369536/latest.log)

| non_defect | oretweaker ore generation incompatibility i apologize for the length of this but i couldn t upload config files directly into here it appears that at oretweaker and create ore generation are incompatible with one another i kept create s ore generation as is and only edited the vanilla generation using oretweaker here are copies of the create and oretweaker configs create modify create s impact on your terrain prevents all worldgen added by create from taking effect disableworldgen false forward caught tileentityexceptions to the log at debug level logteerrors false range clustersize amount of clusters generated per chunk to spawn multiple to make it a chance to disable range frequency range minheight range maxheight range clustersize amount of clusters generated per chunk to spawn multiple to make it a chance to disable range frequency range minheight range maxheight range clustersize amount of clusters generated per chunk to spawn multiple to make it a chance to disable range frequency range minheight range maxheight range clustersize amount of clusters generated per chunk to spawn multiple to make it a chance to disable range frequency range minheight range maxheight range clustersize amount of clusters generated per chunk to spawn multiple to make it a chance to disable range frequency range minheight range maxheight range clustersize amount of clusters generated per chunk to spawn multiple to make it a chance to disable range frequency range minheight range maxheight range clustersize amount of clusters generated per chunk to spawn multiple to make it a chance to disable range frequency range minheight range maxheight oretweaker enable debug output false disable ores ore name minecraft lapis ore max vein size filler name minecraft stone min vein level max vein level spawn rate ore name minecraft redstone ore max vein size filler name minecraft stone min vein level max vein level spawn rate ore name minecraft emerald ore max vein size filler name 
minecraft stone min vein level max vein level spawn rate with these configs i used world stripper to uncover the terrain in new chunks and this is what i got you may notice that evilcraft is also in this mod oretweaker does not interfere with its ore generation i did a similar thing in the nether as i have netherrocks installed as well the only other mod that affects ore generation and it appears to work as intended so this seems to be specific to create as i have no way of telling whether this is a create or an oretweaker problem i am simply reporting this to both and for the sake of completion here is my log file for this session | 0 |

47,211 | 10,054,193,727 | IssuesEvent | 2019-07-21 23:28:00 | EdenServer/community | https://api.github.com/repos/EdenServer/community | closed | Treasure and Tribulations no effect on enfeebling magic | in-code-review | We attempted this BCNM as nin rdm brd. Our enfeebling magic got resisted, but when we tried to enfeeble it again we got the "no effect" message, as if the magic had landed. It clearly did not land, as his accuracy/attack speed did not lower and we never got the paralyzed message. | 1.0 | Treasure and Tribulations no effect on enfeebling magic - We attempted this BCNM as nin rdm brd. Our enfeebling magic got resisted, but when we tried to enfeeble it again we got the "no effect" message, as if the magic had landed. It clearly did not land, as his accuracy/attack speed did not lower and we never got the paralyzed message. | non_defect | treasure and tribulations no effect on enfeebling magic we attempted this bcnm as nin rdm brd our enfeebling got resisted but when we tried to enfeebling it again we got the no effect message as if the magic landed but it clearly did not land as his accuracy attack speed did not lower and we never got the paralyzed message | 0 |

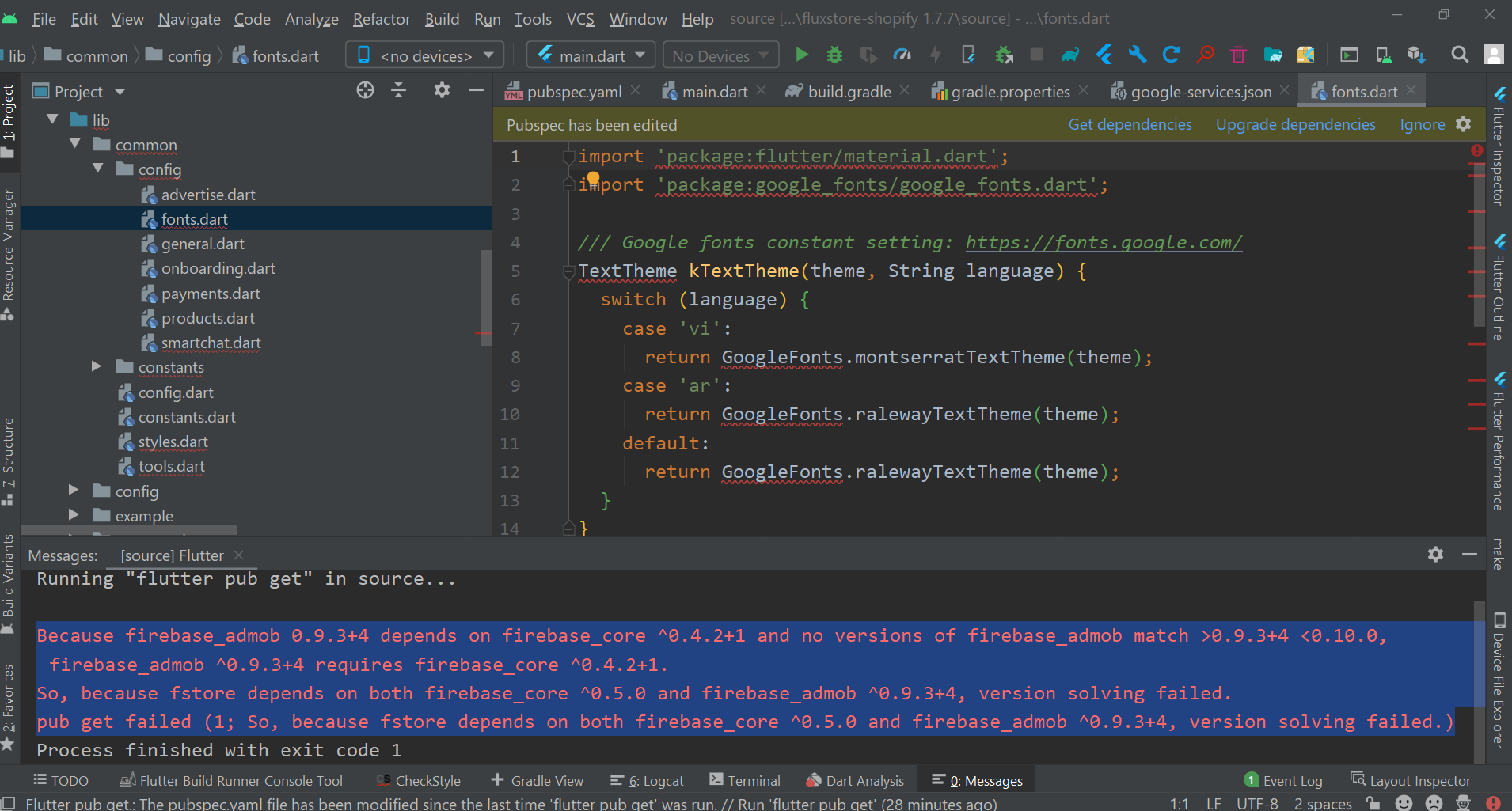

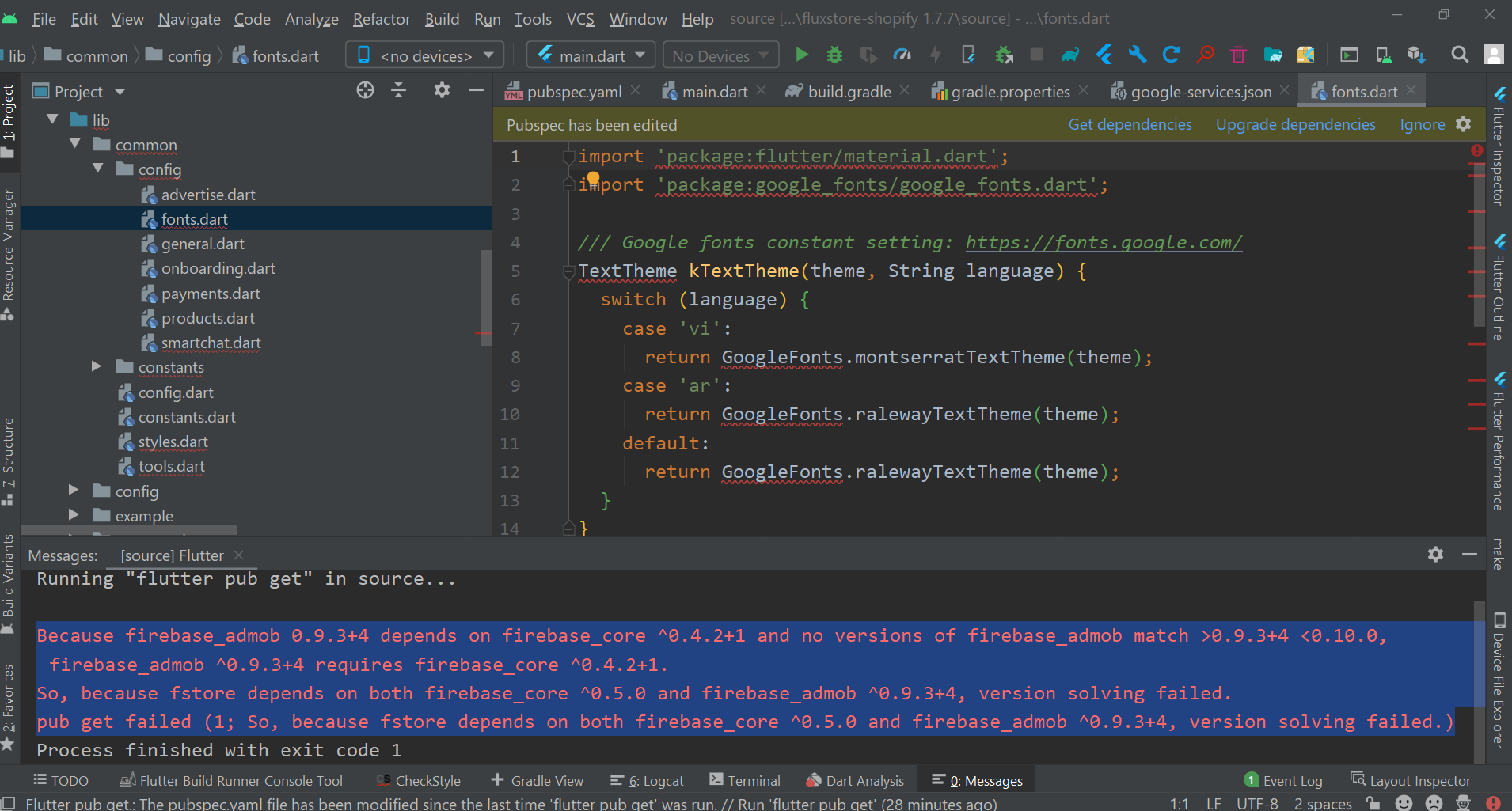

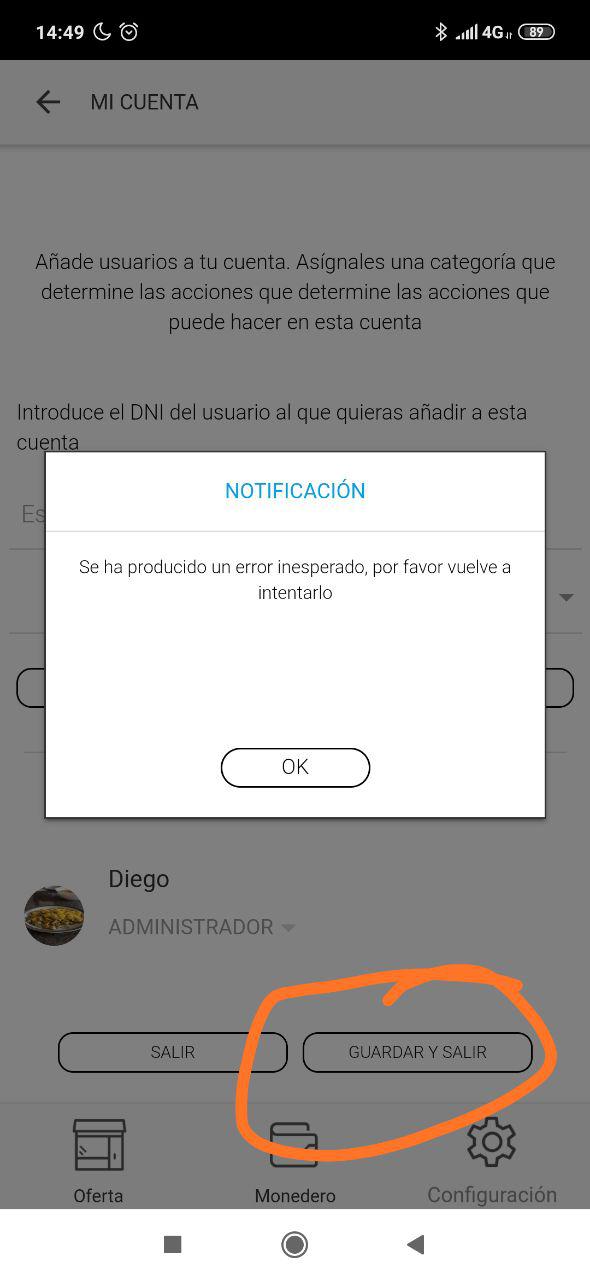

471,322 | 13,564,971,086 | IssuesEvent | 2020-09-18 10:55:16 | inspireui/support | https://api.github.com/repos/inspireui/support | reopened | Firebase version solving failed | Fluxshopify ⭐️ priority-ticket |

Because firebase_admob 0.9.3+4 depends on firebase_core ^0.4.2+1 and no versions of firebase_admob match >0.9.3+4 <0.10.0, firebase_admob ^0.9.3+4 requires firebase_core ^0.4.2+1.

So, because fstore depends on both firebase_core ^0.5.0 and firebase_admob ^0.9.3+4, version solving failed.

pub get failed (1; So, because fstore depends on both firebase_core ^0.5.0 and firebase_admob ^0.9.3+4, version solving failed.)

I have tried everything but could not resolve it, so I'm not able to build the APK file. | 1.0 | Firebase version solving failed -

Because firebase_admob 0.9.3+4 depends on firebase_core ^0.4.2+1 and no versions of firebase_admob match >0.9.3+4 <0.10.0, firebase_admob ^0.9.3+4 requires firebase_core ^0.4.2+1.

So, because fstore depends on both firebase_core ^0.5.0 and firebase_admob ^0.9.3+4, version solving failed.

pub get failed (1; So, because fstore depends on both firebase_core ^0.5.0 and firebase_admob ^0.9.3+4, version solving failed.)

I have tried everything but could not resolve it, so I'm not able to build the APK file. | non_defect | firebase version solving failed because firebase admob depends on firebase core and no versions of firebase admob match firebase admob requires firebase core so because fstore depends on both firebase core and firebase admob version solving failed pub get failed so because fstore depends on both firebase core and firebase admob version solving failed i try everything but not solved so i m not able to make apk file | 0 |
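
The solver message in the Firebase report can be checked by hand. The following is a minimal Python sketch, not pub's actual solver: it assumes that a caret constraint on a `0.x` version allows everything up to the next minor version, and it ignores the `+build` suffix. The helper names `parse`, `caret_range`, and `overlaps` are hypothetical and exist only for this illustration.

```python
def parse(version):
    """Parse 'MAJOR.MINOR.PATCH[+build]' into a tuple, ignoring the build suffix."""
    core = version.split("+", 1)[0]
    major, minor, patch = (int(part) for part in core.split("."))
    return (major, minor, patch)


def caret_range(constraint):
    """Return (lower, upper) for '^x.y.z': lower is inclusive, upper is exclusive."""
    lower = parse(constraint.lstrip("^"))
    major, minor, _ = lower
    # Assumption: for 0.x versions the minor number is the breaking one.
    upper = (0, minor + 1, 0) if major == 0 else (major + 1, 0, 0)
    return lower, upper


def overlaps(a, b):
    """True if at least one version could satisfy both caret constraints."""
    (a_lo, a_hi), (b_lo, b_hi) = caret_range(a), caret_range(b)
    return max(a_lo, b_lo) < min(a_hi, b_hi)


# fstore asks for firebase_core ^0.5.0, firebase_admob 0.9.3+4 asks for ^0.4.2+1.
print(overlaps("^0.5.0", "^0.4.2+1"))  # prints False
```

Under these assumptions the two ranges are `[0.4.2, 0.5.0)` and `[0.5.0, 0.6.0)`, which share no version, so one of the two dependencies would have to move before `pub get` can succeed.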

170,235 | 13,179,386,072 | IssuesEvent | 2020-08-12 10:50:00 | Aalto-LeTech/intellij-plugin | https://api.github.com/repos/Aalto-LeTech/intellij-plugin | closed | "Modules" toolbar when removed must be easily returned | manual testing medium | it should be easy to return the "modules" to the UI:

+ it shows in A+ menu (or ToolsMenu)

+ it has an attached key combination

+ `ctrl + shift + a` | 1.0 | "Modules" toolbar when removed must be easily returned - it should be easy to return the "modules" to the UI:

+ it shows in A+ menu (or ToolsMenu)

+ it has an attached key combination

+ `ctrl + shift + a` | non_defect | modules toolbar when removed must be easily returned it should be easy to return the modules to the ui it shows in a menu or toolsmenu it has an attached key combination ctrl shift a | 0 |

91,823 | 26,493,467,865 | IssuesEvent | 2023-01-18 02:00:40 | docker/docs | https://api.github.com/repos/docker/docs | closed | Add ** pattern to dockerignore examples in documentation | area/Build lifecycle/stale | File: [engine/reference/builder.md](https://docs.docker.com/engine/reference/builder/)

## Request

Add a simple case using the ** pattern to the example dockerfile section e.g. `**/temp*`.

## Reason

`.dockerignore` has different semantics from a `.gitignore` file which is often missed as highlighted by this SO question viewed 24k times https://stackoverflow.com/questions/40261164/docker-ignores-patterns-in-dockerignore/40261165#40261165

The **/ pattern matching for any depth matching of file patterns is obscured in a paragraph of text near the end of the .dockerignore section that is easily missed:

> Beyond Go's filepath.Match rules, Docker also supports a special

> wildcard string `**` that matches any number of directories (including

> zero). For example, `**/*.go` will exclude all files that end with `.go`

> that are found in all directories, including the root of the build context.

| 1.0 | Add ** pattern to dockerignore examples in documentation - File: [engine/reference/builder.md](https://docs.docker.com/engine/reference/builder/)

## Request

Add a simple case using the ** pattern to the example dockerfile section e.g. `**/temp*`.

## Reason

`.dockerignore` has different semantics from a `.gitignore` file which is often missed as highlighted by this SO question viewed 24k times https://stackoverflow.com/questions/40261164/docker-ignores-patterns-in-dockerignore/40261165#40261165

The **/ pattern matching for any depth matching of file patterns is obscured in a paragraph of text near the end of the .dockerignore section that is easily missed:

> Beyond Go's filepath.Match rules, Docker also supports a special

> wildcard string `**` that matches any number of directories (including

> zero). For example, `**/*.go` will exclude all files that end with `.go`

> that are found in all directories, including the root of the build context.

| non_defect | add pattern to dockerignore examples in documentation file request add a simple case using the pattern to the example dockerfile section e g temp reason dockerignore has different semantics from a gitignore file which is often missed as highlighted by this so question viewed times the pattern matching for any depth matching of file patterns is obscured in a paragraph of text near the end of the dockerignore section that is easily missed beyond go s filepath match rules docker also supports a special wildcard string that matches any number of directories including zero for example go will exclude all files that end with go that are found in all directories including the root of the build context | 0 |

28,263 | 5,231,350,537 | IssuesEvent | 2017-01-30 01:46:32 | prettydiff/prettydiff | https://api.github.com/repos/prettydiff/prettydiff | closed | Rust - breaking nested generic type | Defect Parsing Pending Release | **Rust is not yet supported**

Even though Rust is not officially supported yet I would still like to capture the parts of its syntax that appear similar to the languages that currently are supported.

```

#![feature(lookup_host)]

use std::collections;

struct DnsLookupCache {

cache : std::collections::HashMap<Box<String>, Vec<std::net::SocketAddr>>

}

impl DnsLookupCache {

fn new() -> DnsLookupCache {

DnsLookupCache {

cache: std::collections::HashMap::new()

}

}

fn lookup(&mut self, host: &String) -> std::io::Result<&Vec<std::net::SocketAddr>> {

match self.cache.get(host) {

Some(cached) => Ok(cached),

None => {

let mut hosts = Vec::new();

for result in try!(std::net::lookup_host(host)) {

hosts.push(result);

}

let owned_host = host.clone();

self.cache.insert(Box::new(owned_host), hosts);

return self.lookup(host);

}

}

}

}

```

* Broken pseudo-shebang on the first line

* Broken nested type generic `<Box<String>, Vec<std::net::SocketAddr>>`

* There are probably other improvements that can also be added. | 1.0 | Rust - breaking nested generic type - **Rust is not yet supported**

Even though Rust is not officially supported yet I would still like to capture the parts of its syntax that appear similar to the languages that currently are supported.

```

#![feature(lookup_host)]

use std::collections;

struct DnsLookupCache {

cache : std::collections::HashMap<Box<String>, Vec<std::net::SocketAddr>>

}

impl DnsLookupCache {

fn new() -> DnsLookupCache {

DnsLookupCache {

cache: std::collections::HashMap::new()

}

}

fn lookup(&mut self, host: &String) -> std::io::Result<&Vec<std::net::SocketAddr>> {

match self.cache.get(host) {

Some(cached) => Ok(cached),

None => {

let mut hosts = Vec::new();

for result in try!(std::net::lookup_host(host)) {

hosts.push(result);

}

let owned_host = host.clone();

self.cache.insert(Box::new(owned_host), hosts);

return self.lookup(host);

}

}

}

}

```

* Broken pseudo-shebang on the first line

* Broken nested type generic `<Box<String>, Vec<std::net::SocketAddr>>`

* There are probably other improvements that can also be added. | defect | rust breaking nested generic type rust is not yet supported even though rust is not officially supported yet i would still like to capture the parts of its syntax that appear similar to the languages that currently are supported use std collections struct dnslookupcache cache std collections hashmap vec impl dnslookupcache fn new dnslookupcache dnslookupcache cache std collections hashmap new fn lookup mut self host string std io result match self cache get host some cached ok cached none let mut hosts vec new for result in try std net lookup host host hosts push result let owned host host clone self cache insert box new owned host hosts return self lookup host broken pseudo shebang on the first line broken nested type generic vec there are probably other improvements that can also be added | 1 |

829,774 | 31,897,756,609 | IssuesEvent | 2023-09-18 04:33:58 | headwirecom/helix-sportsmagazine | https://api.github.com/repos/headwirecom/helix-sportsmagazine | closed | Load Ceros iframe embed as early as possible | enhancement priority-1 | On landing pages, especially the home page, there are multiple Ceros embeds with dynamic content. They are performance killers, so we can't preload them. We can try to load them on first scroll or after 3s as delayed content. The idea is to avoid the current experience of big white spaces with a spinner when scrolling down, which happens because we only load the embeds once they appear in the viewport (default embed behavior). | 1.0 | Load Ceros iframe embed as early as possible - On landing pages, especially the home page, there are multiple Ceros embeds with dynamic content. They are performance killers, so we can't preload them. We can try to load them on first scroll or after 3s as delayed content. The idea is to avoid the current experience of big white spaces with a spinner when scrolling down, which happens because we only load the embeds once they appear in the viewport (default embed behavior). | non_defect | load ceros iframe embed as early as possible on landing pages especially the home page there are multiple ceros embeds with dynamic content they are performance killer so we can t preload them we can try to load them on first scroll or after as delayed content the idea is to avoid having that experience of big white spaces with a spinner when scrolling down as we do currently because we only load them once they appear in the viewport default embed behavior | 0 |

146,294 | 5,614,946,054 | IssuesEvent | 2017-04-03 13:36:19 | opencaching/opencaching-pl | https://api.github.com/repos/opencaching/opencaching-pl | opened | Convert all non-ASCII characters from hint | Component_CacheEdit Component_i18n Priority_Low Type_Enhancement x_Usability | The hint is encoded using ROT13.

This only works on the 26 letter basic latin/english alphabet.

Any national characters in the hint don't get encoded correctly by ROT13.

Hint should be converted to ASCII and stored as such in the database.

I came across this when I needed to convert the hint to ASCII for file export (if it were stored correctly, it wouldn't need such conversion at that point). | 1.0 | Convert all non-ASCII characters from hint - The hint is encoded using ROT13.

This only works on the 26 letter basic latin/english alphabet.

Any national characters in the hint don't get encoded correctly by ROT13.

Hint should be converted to ASCII and stored as such in the database.

I came across this when I needed to convert the hint to ASCII for file export (if it were stored correctly, it wouldn't need such conversion at that point). | non_defect | convert all non ascii characters from hint the hint is encoded using this only works on the letter basic latin english alphabet any national characters in the hint don t get encoded correctly by hint should be converted to ascii and stored as such in the database i ve come upon this with the need to convert it to ascii for file export if it were correctly stored it wouldn t need such conversion at that point | 0 |
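
The request in the hint issue (convert to ASCII first, then apply ROT13) can be illustrated with a short sketch. This is Python for illustration only, not the project's own code. The `EXTRA` table and the function names `to_ascii` and `rot13` are assumptions made for this example, and a real mapping table would need to cover more characters than the two shown.

```python
import codecs
import unicodedata

# Letters with no Unicode decomposition need an explicit mapping.
# Illustrative only; a real table would cover more characters.
EXTRA = str.maketrans({"ł": "l", "Ł": "L"})


def to_ascii(text):
    """Strip diacritics so that only plain ASCII characters remain."""
    text = text.translate(EXTRA)
    # NFKD splits an accented letter into a base letter plus combining marks.
    decomposed = unicodedata.normalize("NFKD", text)
    # Dropping everything outside ASCII removes the combining marks.
    return decomposed.encode("ascii", "ignore").decode("ascii")


def rot13(text):
    """Apply ROT13, which only rotates the 26 basic Latin letters."""
    return codecs.encode(text, "rot_13")


hint = "Zażółć gęślą jaźń"
plain = to_ascii(hint)   # ASCII-only form, suitable for storing
encoded = rot13(plain)   # every letter is now rotated
print(plain)
print(encoded)
```

Because the stored text contains only basic Latin letters after `to_ascii`, applying `rot13` a second time returns exactly the stored hint. Note that `encode("ascii", "ignore")` silently drops any character that neither decomposes nor appears in the mapping table, so the table matters.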

13,106 | 2,732,898,851 | IssuesEvent | 2015-04-17 10:04:55 | tiku01/oryx-editor | https://api.github.com/repos/tiku01/oryx-editor | closed | Undo of Canvas resize | auto-migrated Component-Editor Priority-Medium Type-Defect | ```

If I enlarge the canvas there is no way of undoing this operation.

Desirable would be to also include this feature in undo/redo

```

Original issue reported on code.google.com by `gero.dec...@googlemail.com` on 29 Oct 2008 at 2:14 | 1.0 | Undo of Canvas resize - ```

If I enlarge the canvas there is no way of undoing this operation.

Desirable would be to also include this feature in undo/redo

```

Original issue reported on code.google.com by `gero.dec...@googlemail.com` on 29 Oct 2008 at 2:14 | defect | undo of canvas resize if i enlarge the canvas there is no way of undoing this operation desirable would be to also include this feature in undo redo original issue reported on code google com by gero dec googlemail com on oct at | 1 |

61,624 | 17,023,742,354 | IssuesEvent | 2021-07-03 03:36:01 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | The From and To dropdown lists are empty when trying to add turn restrictions. | Component: potlatch2 Priority: minor Resolution: worksforme Type: defect | **[Submitted to the original trac issue database at 2.58pm, Thursday, 25th August 2011]**

When I try to add turn restrictions to a junction, the From and To dropdown lists are empty most of the time.

I tried this on both newly created roads and existing roads. The dropdown lists are often empty.

I'm not sure if I'm doing something wrong or this is a bug. But if this is a bug it should be fixed, because it's making Navdroyd suggest ludicrous turns and I can't even fix it in OSM.

Thanks! | 1.0 | The From and To dropdown lists are empty when trying to add turn restrictions. - **[Submitted to the original trac issue database at 2.58pm, Thursday, 25th August 2011]**

When I try to add turn restrictions to a junction, the From and To dropdown lists are empty most of the time.

I tried this on both newly created roads and existing roads. The dropdown lists are often empty.

I'm not sure if I'm doing something wrong or this is a bug. But if this is a bug it should be fixed, because it's making Navdroyd suggest ludicrous turns and I can't even fix it in OSM.

Thanks! | defect | the from and to dropdown lists are empty when trying to add turn restrictions when i m trying to add turn restrinctions to a junction the from and to dropdown lists are empty most of the time i tried to do this to just created roads and existing roads too the dropdown lists are empty many times i m not sure if i m doing something wrong or this is a bug but if this is a bug it should be fixed because it s making navdroyd to suggest ludicrous turns and i can t even fix it in osm thanks | 1 |

110,103 | 13,905,807,106 | IssuesEvent | 2020-10-20 10:22:21 | owncloud/client | https://api.github.com/repos/owncloud/client | closed | Connection Wizard - checkboxes too small when selected Local folder is not empty | Design & UX bug p3-medium | Client: 2.6.0rc2 (build 12577)

macOS 10.15, Ubuntu 19.04

Server: 10.3.0 stable

Steps to recreate:

1) Select 'Add new' account in the account tab

2) Enter server url

3) Enter login details

4) In 'Setup local folder options' select a Local folder that is not empty

5) Check the 'Ask for confirmation' checkboxes

Actual result: 'Ask for confirmation before synchronizing' checkboxes are too small. (But they get displayed when the dialog is bigger)

Expected result: Checkboxes are always properly displayed.

Ubuntu

<img width="734" alt="Screenshot 2019-10-29 at 09 52 20" src="https://user-images.githubusercontent.com/49001702/67752463-4e925600-fa33-11e9-8ed1-c7c6fbe72fb5.png">

mac

<img width="748" alt="Screenshot 2019-10-29 at 09 48 31" src="https://user-images.githubusercontent.com/49001702/67752465-4fc38300-fa33-11e9-872e-44e239b7b6ba.png">

| 1.0 | Connection Wizard - checkboxes too small when selected Local folder is not empty - Client: 2.6.0rc2 (build 12577)

macOS 10.15, Ubuntu 19.04

Server: 10.3.0 stable

Steps to recreate:

1) Select 'Add new' account in the account tab

2) Enter server url

3) Enter login details

4) In 'Setup local folder options' select a Local folder that is not empty

5) Check the 'Ask for confirmation' checkboxes

Actual result: 'Ask for confirmation before synchronizing' checkboxes are too small. (But they get displayed when the dialog is bigger)

Expected result: Checkboxes are always properly displayed.

Ubuntu

<img width="734" alt="Screenshot 2019-10-29 at 09 52 20" src="https://user-images.githubusercontent.com/49001702/67752463-4e925600-fa33-11e9-8ed1-c7c6fbe72fb5.png">

mac

<img width="748" alt="Screenshot 2019-10-29 at 09 48 31" src="https://user-images.githubusercontent.com/49001702/67752465-4fc38300-fa33-11e9-872e-44e239b7b6ba.png">

| non_defect | connection wizard checkboxes too small when selected local folder is not empty client build macos ubuntu server stable steps to recreate select add new account in the account tab enter server url enter login details in setup local folder options select a local folder that is not empty check the ask for confirmation checkboxes actual result ask for confirmation before synchronizing checkboxes are too small but they get displayed when the dialog is bigger expected result checkboxes are always properly displayed ubuntu img width alt screenshot at src mac img width alt screenshot at src | 0 |

294,692 | 22,160,704,098 | IssuesEvent | 2022-06-04 13:18:34 | FranGemo1/Proyecto-programador-ispc-2022 | https://api.github.com/repos/FranGemo1/Proyecto-programador-ispc-2022 | opened | Resumen de tema: 4. Sistemas Gestores de Bases de Datos | documentation | Realizar un resumen del material de la plataforma del Ispc, materia programador de los SGBD. | 1.0 | Resumen de tema: 4. Sistemas Gestores de Bases de Datos - Realizar un resumen del material de la plataforma del Ispc, materia programador de los SGBD. | non_defect | resumen de tema sistemas gestores de bases de datos realizar un resumen del material de la plataforma del ispc materia programador de los sgbd | 0 |

28,745 | 5,348,389,282 | IssuesEvent | 2017-02-18 04:23:26 | amitdholiya/vqmod | https://api.github.com/repos/amitdholiya/vqmod | reopened | Administrator index.php not writeable | auto-migrated Priority-Medium Type-Defect | ```

NOTE THAT THIS IS FOR VQMOD ENGINE ERRORS ONLY. FOR GENERAL ERRORS FROM

MODIFICATIONS CONTACT YOUR DEVELOPER

What steps will reproduce the problem?

1.Administrator index.php not writeable

2.

3.

What is the expected output? What do you see instead?

vQmod Version:

Server Operating System:

Please provide any additional information below.

```

Original issue reported on code.google.com by `juzail...@gmail.com` on 23 Jul 2014 at 1:40

| 1.0 | Administrator index.php not writeable - ```

NOTE THAT THIS IS FOR VQMOD ENGINE ERRORS ONLY. FOR GENERAL ERRORS FROM

MODIFICATIONS CONTACT YOUR DEVELOPER

What steps will reproduce the problem?

1.Administrator index.php not writeable

2.

3.

What is the expected output? What do you see instead?

vQmod Version:

Server Operating System:

Please provide any additional information below.

```

Original issue reported on code.google.com by `juzail...@gmail.com` on 23 Jul 2014 at 1:40

| defect | administrator index php not writeable note that this is for vqmod engine errors only for general errors from modifications contact your developer what steps will reproduce the problem administrator index php not writeable what is the expected output what do you see instead vqmod version server operating system please provide any additional information below original issue reported on code google com by juzail gmail com on jul at | 1 |

233,140 | 17,855,653,808 | IssuesEvent | 2021-09-05 00:55:23 | iskhakov-s/process_image | https://api.github.com/repos/iskhakov-s/process_image | opened | Necessary Features | documentation enhancement | ### Readability/Standards Changes

- [ ] Add documentation for functions

- [ ] Handle errors to make sure the correct arguments are passed

- [ ] add type identifiers for important variables or function return values

### Modifications

- [ ] Integrate img_analyzer.py and img.py / fix the circular dependency

- [ ] make a universal setter for img.py, since changing hsv of an image changes its rgb

- [ ] merge marsimg and img folders

- [ ] use matplotlib instead of tabulate for compiledanalysis func

- [ ] **_analyze all of the images in a readable way in images.ipynb_** *IMPORTANT\*

### Specific

- [ ] allow parameters to be passed to the measure functions in grey_analysis

### New Features

- [ ] add saving and loading functionality, save each set of images in a folder w a txt file for analysis numbers | 1.0 | Necessary Features - ### Readability/Standards Changes

- [ ] Add documentation for functions

- [ ] Handle errors to make sure the correct arguments are passed

- [ ] add type identifiers for important variables or function return values

### Modifications

- [ ] Integrate img_analyzer.py and img.py / fix the circular dependency

- [ ] make a universal setter for img.py, since changing hsv of an image changes its rgb

- [ ] merge marsimg and img folders

- [ ] use matplotlib instead of tabulate for compiledanalysis func

- [ ] **_analyze all of the images in a readable way in images.ipynb_** *IMPORTANT\*

### Specific

- [ ] allow parameters to be passed to the measure functions in grey_analysis

### New Features

- [ ] add saving and loading functionality, save each set of images in a folder w a txt file for analysis numbers | non_defect | necessary features readability standards changes add documentation for functions handle errors to make sure the correct arguments are passed add type identifiers for important variables or function return values modifications integrate img analyzer py and img py fix the circular dependency make a universal setter for img py since changing hsv of an image changes its rgb merge marsimg and img folders use matplotlib instead of tabulate for compiledanalysis func analyze all of the images in a readable way in images ipynb important specific allow parameters to be passed to the measure functions in grey analysis new features add saving and loading functionality save each set of images in a folder w a txt file for analysis numbers | 0 |

61,896 | 17,023,803,064 | IssuesEvent | 2021-07-03 03:56:27 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Wrong association of roads in Roth (1533968) | Component: nominatim Priority: major Resolution: duplicate Type: defect | **[Submitted to the original trac issue database at 1.54pm, Wednesday, 13th June 2012]**

All roads in Roth (id: 1533968) seem to be associated with Schönbach (2465110383), which is a neighbouring village but doesn't belong to the same town; Roth is part of Driedorf (land mass 128858491) while Schönbach is part of Herborn (land mass 128858191).

I've no idea how to fix that myself | 1.0 | Wrong association of roads in Roth (1533968) - **[Submitted to the original trac issue database at 1.54pm, Wednesday, 13th June 2012]**

All roads in Roth (id: 1533968) seem to be associated with Schönbach (2465110383), which is a neighbouring village but doesn't belong to the same town; Roth is part of Driedorf (land mass 128858491) while Schönbach is part of Herborn (land mass 128858191).

I've no idea how to fix that myself | defect | wrong association of roads in roth all roads in roth id seem to be associcated with schnbach which is a neighbour village but doesn t belong to the same town roth is part of driedorf land mass while schnbach is part of herborn land mass i ve no idea how to fix that myself | 1 |

721,183 | 24,820,566,558 | IssuesEvent | 2022-10-25 16:06:22 | Lightning-AI/lightning | https://api.github.com/repos/Lightning-AI/lightning | closed | batch size finder not running training steps after first batch size | bug trainer: tune priority: 1 | When trying to automatically find the largest batch size, only validation steps are run after the first trial. E.g.:

```

model.batch_size = tuner.scale_batch_size(model, mode='power', init_val=1, steps_per_trial=3)

```

will run:

```

validation_step(batch_idx=0) #part of the sanity check code

validation_step(batch_idx=1) #part of the sanity check code

train_step(batch_idx=0)

train_step(batch_idx=1)

train_step(batch_idx=2)

Batch size 1 succeeded, trying batch size 2

validation_step(batch_idx=0)

validation_step(batch_idx=1)

Batch size 2 succeeded, trying batch size 4

validation_step(batch_idx=0)

validation_step(batch_idx=1)

Batch size 4 succeeded, trying batch size 8

```

And so on. As a result, the returned batch size can be much larger than the one that actually fits in memory during the training step.

The issue is in `pytorch_lightning/loops/fit_loop.py`:

```

def done(self) -> bool:

"""Evaluates when to leave the loop."""

# TODO(@awaelchli): Move track steps inside training loop and move part of these condition inside training loop

stop_steps = _is_max_limit_reached(self.epoch_loop.global_step, self.max_steps)

[...]

```

The variable `self.epoch_loop.global_step` was not reset to 0 when attempting a new batch size. In this case, it will be `3` on batch size 2, returning `True` as the value of the `done` flag, and ultimately setting `self.skip=True` in `pytorch_lightning/loops/base.py`.
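
The effect described above can be reproduced with a toy model. This is only a minimal sketch and not Lightning code: `ToyFitLoop`, `run_trials` and the local `is_max_limit_reached` helper are hypothetical stand-ins, and the helper's body is an assumption about how such a limit check usually behaves.

```python
def is_max_limit_reached(current: int, maximum: int = -1) -> bool:
    """Assumed stand-in for the limit check: -1 means no limit."""
    return maximum != -1 and current >= maximum


class ToyFitLoop:
    """Hypothetical, stripped-down loop that only tracks a step counter."""

    def __init__(self, max_steps: int) -> None:
        self.max_steps = max_steps
        self.global_step = 0

    @property
    def done(self) -> bool:
        # Mirrors the quoted condition: stop once global_step reaches max_steps.
        return is_max_limit_reached(self.global_step, self.max_steps)

    def run(self) -> int:
        """Run training steps until `done`; return how many were executed."""
        executed = 0
        while not self.done:
            self.global_step += 1
            executed += 1
        return executed


def run_trials(n_trials: int, steps_per_trial: int, reset_between_trials: bool) -> list:
    """Reuse one loop across batch-size trials, optionally resetting its counter."""
    loop = ToyFitLoop(max_steps=steps_per_trial)
    counts = []
    for _ in range(n_trials):
        if reset_between_trials:
            loop.global_step = 0
        counts.append(loop.run())
    return counts
```

In this sketch, without the reset only the first trial executes any training steps, because `done` is already `True` when the later trials start. Resetting the counter before each trial restores the expected `steps_per_trial` steps per trial.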

cc @akihironitta @borda @rohitgr7 | 1.0 | batch size finder not running training steps after first batch size - When trying to automatically find the largest batch size, only validation steps are run after the first trial. E.g.:

```

model.batch_size = tuner.scale_batch_size(model, mode='power', init_val=1, steps_per_trial=3)

```

will run:

```

validation_step(batch_idx=0) #part of the sanity check code

validation_step(batch_idx=1) #part of the sanity check code

train_step(batch_idx=0)

train_step(batch_idx=1)

train_step(batch_idx=2)

Batch size 1 succeeded, trying batch size 2

validation_step(batch_idx=0)

validation_step(batch_idx=1)

Batch size 2 succeeded, trying batch size 4

validation_step(batch_idx=0)

validation_step(batch_idx=1)

Batch size 4 succeeded, trying batch size 8

```

And so on. As a result, the returned batch size can be much larger than the one that actually fits in memory during the training step.

The issue is in `pytorch_lightning/loops/fit_loop.py`:

```

def done(self) -> bool:

"""Evaluates when to leave the loop."""

# TODO(@awaelchli): Move track steps inside training loop and move part of these condition inside training loop

stop_steps = _is_max_limit_reached(self.epoch_loop.global_step, self.max_steps)

[...]

```

The variable `self.epoch_loop.global_step` was not reset to 0 when attempting a new batch size. In this case, it will be `3` on batch size 2, returning `True` as the value of the `done` flag, and ultimately setting `self.skip=True` in `pytorch_lightning/loops/base.py`.

cc @akihironitta @borda @rohitgr7 | non_defect | batch size finder not running training steps after first batch size when trying to automatically find largest batch size only validation steps are taken eg model batch size tuner scale batch size model mode power init val steps per trial will run validation step batch idx part of the sanity check code validation step batch idx part of the sanity check code train step batch idx train step batch idx train step batch idx batch size succeeded trying batch size validation step batch idx validation step batch idx batch size succeeded trying batch size validation step batch idx validation step batch idx batch size succeeded trying batch size etc thus this will return a batch size much larger than the one that fits in memory during the train step the issue is in pytorch lightning loops fit loop py def done self bool evaluates when to leave the loop todo awaelchli move track steps inside training loop and move part of these condition inside training loop stop steps is max limit reached self epoch loop global step self max steps the variable self epoch loop global step was not reset to when attempting a new batch size in this case it will be on batch size returning true as the value of the done flag and ultimately setting self skip true in pytorch lightning loops base py cc akihironitta borda | 0 |

62,990 | 6,822,364,950 | IssuesEvent | 2017-11-07 19:49:33 | DecipherNow/gm-fabric-dashboard | https://api.github.com/repos/DecipherNow/gm-fabric-dashboard | opened | Unit Tests for src/utils/index.js | priority-2 Testing | Implement the stubbed out tests and describe blocks in `index.test.js` | 1.0 | Unit Tests for src/utils/index.js - Implement the stubbed out tests and describe blocks in `index.test.js` | non_defect | unit tests for src utils index js implement the stubbed out tests and describe blocks in index test js | 0 |

52,509 | 13,224,794,336 | IssuesEvent | 2020-08-17 19:51:45 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | [clsim] I3CLSimServer deadlocks if given more than 1 I3CLSimStepToPhotonConverter (Trac #2360) | Incomplete Migration Migrated from Trac combo simulation defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2360">https://code.icecube.wisc.edu/projects/icecube/ticket/2360</a>, reported by jvansanten and owned by jvansanten</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-12-05T20:04:42",

"_ts": "1575576282029750",

"description": "I3CLSimServer appears to deadlock if trying to service more than GPU at a time. @lulu observed this behavior when attempting to run hobo-snowstorm on interactive multi-GPU nodes in Chiba.\n\nThis can also be reproduced by running e.g. \n{{{\nCUDA_VISIBLE_DEVICES=0,1 ./env-shell.sh clsim/resources/scripts/benchmark.py -n 1\n}}}\non a dual-GPU system. With either `CUDA_VISIBLE_DEVICES=0` or `CUDA_VISIBLE_DEVICES=1`, it completes within a few seconds. With both GPUs enabled, it hangs forever.",

"reporter": "jvansanten",

"cc": "eganster, lulu",

"resolution": "fixed",

"time": "2019-09-20T02:42:58",

"component": "combo simulation",

"summary": "[clsim] I3CLSimServer deadlocks if given more than 1 I3CLSimStepToPhotonConverter",

"priority": "major",

"keywords": "",

"milestone": "",

"owner": "jvansanten",

"type": "defect"

}

```

</p>

</details>

| 1.0 | [clsim] I3CLSimServer deadlocks if given more than 1 I3CLSimStepToPhotonConverter (Trac #2360) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2360">https://code.icecube.wisc.edu/projects/icecube/ticket/2360</a>, reported by jvansanten and owned by jvansanten</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-12-05T20:04:42",

"_ts": "1575576282029750",

"description": "I3CLSimServer appears to deadlock if trying to service more than GPU at a time. @lulu observed this behavior when attempting to run hobo-snowstorm on interactive multi-GPU nodes in Chiba.\n\nThis can also be reproduced by running e.g. \n{{{\nCUDA_VISIBLE_DEVICES=0,1 ./env-shell.sh clsim/resources/scripts/benchmark.py -n 1\n}}}\non a dual-GPU system. With either `CUDA_VISIBLE_DEVICES=0` or `CUDA_VISIBLE_DEVICES=1`, it completes within a few seconds. With both GPUs enabled, it hangs forever.",

"reporter": "jvansanten",

"cc": "eganster, lulu",

"resolution": "fixed",

"time": "2019-09-20T02:42:58",

"component": "combo simulation",

"summary": "[clsim] I3CLSimServer deadlocks if given more than 1 I3CLSimStepToPhotonConverter",

"priority": "major",

"keywords": "",

"milestone": "",

"owner": "jvansanten",

"type": "defect"

}

```

</p>

</details>

| defect | deadlocks if given more than trac migrated from json status closed changetime ts description appears to deadlock if trying to service more than gpu at a time lulu observed this behavior when attempting to run hobo snowstorm on interactive multi gpu nodes in chiba n nthis can also be reproduced by running e g n ncuda visible devices env shell sh clsim resources scripts benchmark py n n non a dual gpu system with either cuda visible devices or cuda visible devices it completes within a few seconds with both gpus enabled it hangs forever reporter jvansanten cc eganster lulu resolution fixed time component combo simulation summary deadlocks if given more than priority major keywords milestone owner jvansanten type defect | 1 |

11,937 | 7,742,934,829 | IssuesEvent | 2018-05-29 11:09:55 | getgauge/gauge-python | https://api.github.com/repos/getgauge/gauge-python | closed | With large number of steps the CPU usage is high when project is loaded | performance ready for QA | **Expected behavior**

The CPU usage should be within an acceptable limit

**Actual behavior**

The CPU usage exceeds 90% and sometimes reaches 100%

**Steps to replicate**

* Create a `gauge-python` project

* Create an implementation file with more than 100 steps

* Here is a sample project

[gauge-test.zip](https://github.com/getgauge/gauge-python/files/2030042/gauge-test.zip)

* Open it in VSCode

**Version**

```

Gauge version: 0.9.9.nightly-2018-05-21

Commit Hash: f7d0def

Plugins

-------

python (0.3.3.nightly-2018-05-21)

``` | True | With large number of steps the CPU usage is high when project is loaded - **Expected behavior**

The CPU usage should be within an acceptable limit

**Actual behavior**