Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 757 | labels stringlengths 4 664 | body stringlengths 3 261k | index stringclasses 10 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 232k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

34,586 | 7,846,098,987 | IssuesEvent | 2018-06-19 14:38:59 | eamodio/vscode-gitlens | https://api.github.com/repos/eamodio/vscode-gitlens | closed | Is it possible to not have the code jump on opening files due to GitLens | question upstream/vscode | <!--

If you are encountering an issue that says `See output channel for more details`, please enable output channel logging by setting `"gitlens.outputLevel": "verbose"` in your settings.json. This will enable logging to the GitLens channel in the Output pane. Once enabled, please attempt to reproduce the issue (if possible) and attach the relevant log lines from the GitLens channel.

-->

- GitLens Version: 8.3

- VSCode Version: 1.24.0

- OS Version: 10.11.6

Steps to Reproduce:

1. Open any new file.

2. Wait ~ 1 second. The text will jump down to make space for the GitLens commit overlay.

I find it extremely annoying how when I open any file the text jumps down a bit to make space for this overlay:

Is it possible to either turn it off completely or make it so that when I open a file, the text is already moved so that the text doesn't shift to make space for that overlay.

Thank you. | 1.0 | Is it possible to not have the code jump on opening files due to GitLens - <!--

If you are encountering an issue that says `See output channel for more details`, please enable output channel logging by setting `"gitlens.outputLevel": "verbose"` in your settings.json. This will enable logging to the GitLens channel in the Output pane. Once enabled, please attempt to reproduce the issue (if possible) and attach the relevant log lines from the GitLens channel.

-->

- GitLens Version: 8.3

- VSCode Version: 1.24.0

- OS Version: 10.11.6

Steps to Reproduce:

1. Open any new file.

2. Wait ~ 1 second. The text will jump down to make space for the GitLens commit overlay.

I find it extremely annoying how when I open any file the text jumps down a bit to make space for this overlay:

Is it possible to either turn it off completely or make it so that when I open a file, the text is already moved so that the text doesn't shift to make space for that overlay.

Thank you. | non_defect | is it possible to not have the code jump on opening files due to gitlens if you are encountering an issue that says see output channel for more details please enable output channel logging by setting gitlens outputlevel verbose in your settings json this will enable logging to the gitlens channel in the output pane once enabled please attempt to reproduce the issue if possible and attach the relevant log lines from the gitlens channel gitlens version vscode version os version steps to reproduce open any new file wait second the text will jump down to make space for the gitlens commit overlay i find it extremely annoying how when i open any file the text jumps down a bit to make space for this overlay is it possible to either turn it off completely or make it so that when i open a file the text is already moved so that the text doesn t shift to make space for that overlay thank you | 0 |

70,008 | 22,783,136,111 | IssuesEvent | 2022-07-08 23:02:47 | Clever-ISA/Clever-ISA | https://api.github.com/repos/Clever-ISA/Clever-ISA | closed | All Jumps should not sync instructions | X-main I-defect S-blocked-on-maintainer V-1.0 | It is currently impossible to have a single page mapping that is both writable and executable. Therefore under most circumstances, it is impossible for the program to modify the instruction stream being executed without a call to a supervisor. Further, branches syncing instruction stream nearly negates the ability for branch prediction and prefetching branches (except special `fast` jumps that explicitly do not sync).

| 1.0 | All Jumps should not sync instructions - It is currently impossible to have a single page mapping that is both writable and executable. Therefore under most circumstances, it is impossible for the program to modify the instruction stream being executed without a call to a supervisor. Further, branches syncing instruction stream nearly negates the ability for branch prediction and prefetching branches (except special `fast` jumps that explicitly do not sync).

| defect | all jumps should not sync instructions it is currently impossible to have a single page mapping that is both writable and executable therefore under most circumstances it is impossible for the program to modify the instruction stream being executed without a call to a supervisor further branches syncing instruction stream nearly negates the ability for branch prediction and prefetching branches except special fast jumps that explicitly do not sync | 1 |

50,239 | 10,469,369,471 | IssuesEvent | 2019-09-22 20:12:14 | dotnet/coreclr | https://api.github.com/repos/dotnet/coreclr | closed | [RuyJIT/ARM] Generated code quaility | area-CodeGen question | As I remember there was no any activities to check whether RuyJIT generates well optimized code. So how we can do it and what is the references to check with?

The easiest way I see is to look at the generated code and search for inefficient places. The following could be used as test programs:

1. Benchmarks/micro-benchmarks, but they are quite far from real user scenarios

1. Real applications (we can analyze only hot methods, to reduce amount of work).

@jkotas could you recommend some other ways and metrics? I'm looking for approaches:

1. How to search problem places

1. How to find ways to fix them

1. How to estimate gain (it's nice to make optimizations but they should be valuable for real scenarios=\ )

| 1.0 | [RuyJIT/ARM] Generated code quaility - As I remember there was no any activities to check whether RuyJIT generates well optimized code. So how we can do it and what is the references to check with?

The easiest way I see is to look at the generated code and search for inefficient places. The following could be used as test programs:

1. Benchmarks/micro-benchmarks, but they are quite far from real user scenarios

1. Real applications (we can analyze only hot methods, to reduce amount of work).

@jkotas could you recommend some other ways and metrics? I'm looking for approaches:

1. How to search problem places

1. How to find ways to fix them

1. How to estimate gain (it's nice to make optimizations but they should be valuable for real scenarios=\ )

| non_defect | generated code quaility as i remember there was no any activities to check whether ruyjit generates well optimized code so how we can do it and what is the references to check with the easiest way i see is look to generated code and search not efficient places for test programs could be used benchmarks micro benchmarks but they are quite far from real user scenarios real applications we can analyze only hot methods to reduce amount of work jkotas could you recommend some other ways and metrics i m looking approaches how to search problem places how to find ways to fix them how to estimate gain it s nice to make optimizations but they should be valuable for real scenarios | 0 |

45,187 | 11,599,228,626 | IssuesEvent | 2020-02-25 01:32:36 | openenclave/openenclave | https://api.github.com/repos/openenclave/openenclave | closed | Transition to using Terraform with Jenkins for CI/CD | build ci/cd triaged | This issue is for tracking improvements to our CI/CD infrastructure to using Terraform to provision environments with SGX-enabled nodes for both Linux and Windows. This will encompass bare-metal testing and supporting additional OS's seamlessly

We should have at least one machine scale set for each distribution/OS/SGX configuration (kabyLake etc).

These nodes should be connected to Jenkins and all CI/CD processes should run in containers, with necessary exceptions.

We should automate the maintenance of the SGX driver (and security updates on the nodes too).

Enable scaling rules to the CI/CD resources to handle high demand. | 1.0 | Transition to using Terraform with Jenkins for CI/CD - This issue is for tracking improvements to our CI/CD infrastructure to using Terraform to provision environments with SGX-enabled nodes for both Linux and Windows. This will encompass bare-metal testing and supporting additional OS's seamlessly

We should have at least one machine scale set for each distribution/OS/SGX configuration (kabyLake etc).

These nodes should be connected to Jenkins and all CI/CD processes should run in containers, with necessary exceptions.

We should automate the maintenance of the SGX driver (and security updates on the nodes too).

Enable scaling rules to the CI/CD resources to handle high demand. | non_defect | transition to using terraform with jenkins for ci cd this issue is for tracking improvements to our ci cd infrastructure to using terraform to provision environments with sgx enabled nodes for both linux and windows this will encompass bare metal testing and supporting additional os s seamlessly we should have at least one machine scale set for each distribution os sgx configuration kabylake etc these nodes should be connected to jenkins and all ci cd processes should run in containers with necessary exceptions we should automate the maintenance of the sgx driver and security updates on the nodes too enable scaling rules to the ci cd resources to handle high demand | 0 |

22,377 | 3,642,191,605 | IssuesEvent | 2016-02-14 05:23:47 | BOINC/boinc | https://api.github.com/repos/BOINC/boinc | closed | Non-Admin Execution can erroneously result in VirtualStore contents | C: Client - Setup P: Undetermined T: Defect | **Reported by JacobKlein on 25 Jul 43370817 13:34 UTC**

Somehow, my BOINC installation resulted in a VirtualStore directory being created. A later installation, which fixed BOINC to reference the proper ProgramData BOINC directory, still did not quite work correctly, because of the existence of the VirtualStore data.

We should try to:

- Find how the VirtualStore directory got created, and prevent it if possible

- Clean up any existing BOINC VirtualStore directories, if possible, since their mere existence can cause problems.

The issue I had was that the GPUGrid.net project would use some files from C:\ProgramData\BOINC\slots\0 ... and some files from VirtualStore\ProgramData\BOINC\slots\0 ... and the end effect was that tasks would complete immediately, be marked successful, and I would be granted credit. Not good.

Migrated-From: http://boinc.berkeley.edu/trac/ticket/1247 | 1.0 | Non-Admin Execution can erroneously result in VirtualStore contents - **Reported by JacobKlein on 25 Jul 43370817 13:34 UTC**

Somehow, my BOINC installation resulted in a VirtualStore directory being created. A later installation, which fixed BOINC to reference the proper ProgramData BOINC directory, still did not quite work correctly, because of the existence of the VirtualStore data.

We should try to:

- Find how the VirtualStore directory got created, and prevent it if possible

- Clean up any existing BOINC VirtualStore directories, if possible, since their mere existence can cause problems.

The issue I had was that the GPUGrid.net project would use some files from C:\ProgramData\BOINC\slots\0 ... and some files from VirtualStore\ProgramData\BOINC\slots\0 ... and the end effect was that tasks would complete immediately, be marked successful, and I would be granted credit. Not good.

Migrated-From: http://boinc.berkeley.edu/trac/ticket/1247 | defect | non admin execution can erroneously result in virtualstore contents reported by jacobklein on jul utc somehow my boinc installation resulted in a virtualstore directory being created a later installation which fixed boinc to reference the proper programdata boinc directory still did not quite work correctly because of the existence of the virtualstore data we should try to cleanup any existing boinc virtualstore directories if possible since their mere existence can cause problems the issue i had was that the gpugrid net project would use some files from c programdata boinc slots and some files from virtualstore programdata boinc slots and the end effect was that tasks would complete immediately be marked successful and i would be granted credit not good migrated from | 1 |

101,292 | 31,019,034,478 | IssuesEvent | 2023-08-10 02:39:01 | netsampler/goflow2 | https://api.github.com/repos/netsampler/goflow2 | closed | Redhat OS Error `GLIBC_2.32' not found (required by goflow2) | build | I installed goflow2-1.3.4-1.x86_64.rpm in my redhat machine, when i execute it throws error as below:

$goflow2 -h

goflow2: /lib64/libc.so.6: version `GLIBC_2.32' not found (required by goflow2)

goflow2: /lib64/libc.so.6: version `GLIBC_2.34' not found (required by goflow2)

Can anyone help? | 1.0 | Redhat OS Error `GLIBC_2.32' not found (required by goflow2) - I installed goflow2-1.3.4-1.x86_64.rpm in my redhat machine, when i execute it throws error as below:

$goflow2 -h

goflow2: /lib64/libc.so.6: version `GLIBC_2.32' not found (required by goflow2)

goflow2: /lib64/libc.so.6: version `GLIBC_2.34' not found (required by goflow2)

Can anyone help? | non_defect | redhat os error glibc not found required by i installed rpm in my redhat machine when i execute it throws error as below h libc so version glibc not found required by libc so version glibc not found required by can anyone help | 0 |

15,629 | 2,866,663,383 | IssuesEvent | 2015-06-05 08:25:01 | Guake/guake | https://api.github.com/repos/Guake/guake | closed | Guake fails to start due to a GlobalHotkey related C call | Priority:High Type: Defect | This is the traceback:

Traceback (most recent call last):

File "/usr/lib/python2.7/runpy.py", line 162, in _run_module_as_main

"__main__", fname, loader, pkg_name)

File "/usr/lib/python2.7/runpy.py", line 72, in _run_code

exec code in run_globals

File "/usr/lib/python2.7/site-packages/guake/main.py", line 235, in <module>

exec_main()

File "/usr/lib/python2.7/site-packages/guake/main.py", line 231, in exec_main

if not main():

File "/usr/lib/python2.7/site-packages/guake/main.py", line 147, in main

instance = Guake()

File "/usr/lib/python2.7/site-packages/guake/guake_app.py", line 287, in __init__

self.hotkeys = guake.globalhotkeys.GlobalHotkey()

SystemError: NULL result without error in PyObject_Call

This started happening since today. I tried the usual reboot-and-hope-things-work scenario, but didn't work.

For the record, I am using Arch Linux. | 1.0 | Guake fails to start due to a GlobalHotkey related C call - This is the traceback:

Traceback (most recent call last):

File "/usr/lib/python2.7/runpy.py", line 162, in _run_module_as_main

"__main__", fname, loader, pkg_name)

File "/usr/lib/python2.7/runpy.py", line 72, in _run_code

exec code in run_globals

File "/usr/lib/python2.7/site-packages/guake/main.py", line 235, in <module>

exec_main()

File "/usr/lib/python2.7/site-packages/guake/main.py", line 231, in exec_main

if not main():

File "/usr/lib/python2.7/site-packages/guake/main.py", line 147, in main

instance = Guake()

File "/usr/lib/python2.7/site-packages/guake/guake_app.py", line 287, in __init__

self.hotkeys = guake.globalhotkeys.GlobalHotkey()

SystemError: NULL result without error in PyObject_Call

This started happening since today. I tried the usual reboot-and-hope-things-work scenario, but didn't work.

For the record, I am using Arch Linux. | defect | guake fails to start due to a globalhotkey related c call this is the traceback traceback most recent call last file usr lib runpy py line in run module as main main fname loader pkg name file usr lib runpy py line in run code exec code in run globals file usr lib site packages guake main py line in exec main file usr lib site packages guake main py line in exec main if not main file usr lib site packages guake main py line in main instance guake file usr lib site packages guake guake app py line in init self hotkeys guake globalhotkeys globalhotkey systemerror null result without error in pyobject call this started happening since today i tried the usual reboot and hope things work scenario but didn t work for the record i am using arch linux | 1 |

271,241 | 29,368,818,927 | IssuesEvent | 2023-05-29 01:04:39 | Hi-Fi/remotesikulilibrary | https://api.github.com/repos/Hi-Fi/remotesikulilibrary | opened | CVE-2023-26464 (High) detected in log4j-1.2.16.jar | Mend: dependency security vulnerability | ## CVE-2023-26464 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-1.2.16.jar</b></p></summary>

<p>Apache Log4j 1.2</p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /root/.m2/repository/log4j/log4j/1.2.16/log4j-1.2.16.jar</p>

<p>

Dependency Hierarchy:

- jrobotremoteserver-3.0.jar (Root Library)

- :x: **log4j-1.2.16.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

** UNSUPPORTED WHEN ASSIGNED **

When using the Chainsaw or SocketAppender components with Log4j 1.x on JRE less than 1.7, an attacker that manages to cause a logging entry involving a specially-crafted (ie, deeply nested)

hashmap or hashtable (depending on which logging component is in use) to be processed could exhaust the available memory in the virtual machine and achieve Denial of Service when the object is deserialized.

This issue affects Apache Log4j before 2. Affected users are recommended to update to Log4j 2.x.

NOTE: This vulnerability only affects products that are no longer supported by the maintainer.

<p>Publish Date: 2023-03-10

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-26464>CVE-2023-26464</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-vp98-w2p3-mv35">https://github.com/advisories/GHSA-vp98-w2p3-mv35</a></p>

<p>Release Date: 2023-03-10</p>

<p>Fix Resolution: org.apache.logging.log4j:log4j-core:2.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2023-26464 (High) detected in log4j-1.2.16.jar - ## CVE-2023-26464 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>log4j-1.2.16.jar</b></p></summary>

<p>Apache Log4j 1.2</p>

<p>Path to dependency file: /pom.xml</p>

<p>Path to vulnerable library: /root/.m2/repository/log4j/log4j/1.2.16/log4j-1.2.16.jar</p>

<p>

Dependency Hierarchy:

- jrobotremoteserver-3.0.jar (Root Library)

- :x: **log4j-1.2.16.jar** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

** UNSUPPORTED WHEN ASSIGNED **

When using the Chainsaw or SocketAppender components with Log4j 1.x on JRE less than 1.7, an attacker that manages to cause a logging entry involving a specially-crafted (ie, deeply nested)

hashmap or hashtable (depending on which logging component is in use) to be processed could exhaust the available memory in the virtual machine and achieve Denial of Service when the object is deserialized.

This issue affects Apache Log4j before 2. Affected users are recommended to update to Log4j 2.x.

NOTE: This vulnerability only affects products that are no longer supported by the maintainer.

<p>Publish Date: 2023-03-10

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2023-26464>CVE-2023-26464</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/advisories/GHSA-vp98-w2p3-mv35">https://github.com/advisories/GHSA-vp98-w2p3-mv35</a></p>

<p>Release Date: 2023-03-10</p>

<p>Fix Resolution: org.apache.logging.log4j:log4j-core:2.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_defect | cve high detected in jar cve high severity vulnerability vulnerable library jar apache path to dependency file pom xml path to vulnerable library root repository jar dependency hierarchy jrobotremoteserver jar root library x jar vulnerable library vulnerability details unsupported when assigned when using the chainsaw or socketappender components with x on jre less than an attacker that manages to cause a logging entry involving a specially crafted ie deeply nested hashmap or hashtable depending on which logging component is in use to be processed could exhaust the available memory in the virtual machine and achieve denial of service when the object is deserialized this issue affects apache before affected users are recommended to update to x note this vulnerability only affects products that are no longer supported by the maintainer publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution org apache logging core step up your open source security game with mend | 0 |

5,601 | 2,783,739,024 | IssuesEvent | 2015-05-07 02:56:49 | OData/odata.net | https://api.github.com/repos/OData/odata.net | closed | Uri parser can't work for short data type (Edm.Int16) for function parameter | 3 - Tested bug fix ready ODataLib | Supposed I have a function as:

``` xml

<Function Name="ShortFunction" IsBound="true">

<Parameter Name="bindingParameter" Type="Collection(NS.Customer)" />

<Parameter Name="number" Type="Edm.Int16" Nullable="false" />

<ReturnType Type="Edm.String" Unicode="false" />

</Function>

```

Then, I call this function as:

``` c#

~/odata/Customers/Default.ShortFunction(number=1)

```

It fails as

``` c#

"Expression of type 'Edm.Int32' cannot be converted to type 'Edm.Int16'."

```

The call stack is:

``` c#

at Microsoft.OData.Core.UriParser.Parsers.MetadataBindingUtils.ConvertToTypeIfNeeded(SingleValueNode source, IEdmTypeReference targetTypeReference)

at Microsoft.OData.Core.UriParser.Parsers.FunctionCallBinder.BindSegmentParameters(ODataUriParserConfiguration configuration, IEdmOperation functionOrOpertion, ICollection`1 segmentParameterTokens)

at Microsoft.OData.Core.UriParser.Parsers.ODataPathParser.TryBindingParametersAndMatchingOperation(String identifier, String parenthesisExpression, IEdmType bindingType, ODataUriParserConfiguration configuration, ICollection`1& boundParameters, IEdmOperation& matchingOperation)

at Microsoft.OData.Core.UriParser.Parsers.ODataPathParser.TryCreateSegmentForOperation(ODataPathSegment previousSegment, String identifier, String parenthesisExpression)

at Microsoft.OData.Core.UriParser.Parsers.ODataPathParser.CreateNextSegment(String text)

at Microsoft.OData.Core.UriParser.Parsers.ODataPathParser.ParsePath(ICollection`1 segments)

at Microsoft.OData.Core.UriParser.Parsers.ODataPathFactory.BindPath(ICollection`1 segments, ODataUriParserConfiguration configuration)

at Microsoft.OData.Core.UriParser.ODataUriParser.ParsePathImplementation()

at Microsoft.OData.Core.UriParser.ODataUriParser.Initialize()

at Microsoft.OData.Core.UriParser.ODataUriParser.ParsePath()

at System.Web.OData.Routing.DefaultODataPathHandler.Parse(IEdmModel model, String serviceRoot, String odataPath, ODataUriResolverSetttings resolverSettings, Boolean enableUriTemplateParsing) in d:\github\WebApi\OData\src\System.Web.OData\OData\Routing\DefaultODataPathHandler.cs:line 137

at System.Web.OData.Routing.DefaultODataPathHandler.Parse(IEdmModel model, String serviceRoot, String odataPath) in d:\github\WebApi\OData\src\System.Web.OData\OData\Routing\DefaultODataPathHandler.cs:line 55

at System.Web.OData.Routing.ODataPathRouteConstraint.Match(HttpRequestMessage request, IHttpRoute route, String parameterName, IDictionary`2 values, HttpRouteDirection routeDirection) in d:\github\WebApi\OData\src\System.Web.OData\OData\Routing\ODataPathRouteConstraint.cs:line 171

```

I am using Web API 2.2 for OData V4. Thanks. | 1.0 | Uri parser can't work for short data type (Edm.Int16) for function parameter - Supposed I have a function as:

``` xml

<Function Name="ShortFunction" IsBound="true">

<Parameter Name="bindingParameter" Type="Collection(NS.Customer)" />

<Parameter Name="number" Type="Edm.Int16" Nullable="false" />

<ReturnType Type="Edm.String" Unicode="false" />

</Function>

```

Then, I call this function as:

``` c#

~/odata/Customers/Default.ShortFunction(number=1)

```

It fails as

``` c#

"Expression of type 'Edm.Int32' cannot be converted to type 'Edm.Int16'."

```

The call stack is:

``` c#

at Microsoft.OData.Core.UriParser.Parsers.MetadataBindingUtils.ConvertToTypeIfNeeded(SingleValueNode source, IEdmTypeReference targetTypeReference)

at Microsoft.OData.Core.UriParser.Parsers.FunctionCallBinder.BindSegmentParameters(ODataUriParserConfiguration configuration, IEdmOperation functionOrOpertion, ICollection`1 segmentParameterTokens)

at Microsoft.OData.Core.UriParser.Parsers.ODataPathParser.TryBindingParametersAndMatchingOperation(String identifier, String parenthesisExpression, IEdmType bindingType, ODataUriParserConfiguration configuration, ICollection`1& boundParameters, IEdmOperation& matchingOperation)

at Microsoft.OData.Core.UriParser.Parsers.ODataPathParser.TryCreateSegmentForOperation(ODataPathSegment previousSegment, String identifier, String parenthesisExpression)

at Microsoft.OData.Core.UriParser.Parsers.ODataPathParser.CreateNextSegment(String text)

at Microsoft.OData.Core.UriParser.Parsers.ODataPathParser.ParsePath(ICollection`1 segments)

at Microsoft.OData.Core.UriParser.Parsers.ODataPathFactory.BindPath(ICollection`1 segments, ODataUriParserConfiguration configuration)

at Microsoft.OData.Core.UriParser.ODataUriParser.ParsePathImplementation()

at Microsoft.OData.Core.UriParser.ODataUriParser.Initialize()

at Microsoft.OData.Core.UriParser.ODataUriParser.ParsePath()

at System.Web.OData.Routing.DefaultODataPathHandler.Parse(IEdmModel model, String serviceRoot, String odataPath, ODataUriResolverSetttings resolverSettings, Boolean enableUriTemplateParsing) in d:\github\WebApi\OData\src\System.Web.OData\OData\Routing\DefaultODataPathHandler.cs:line 137

at System.Web.OData.Routing.DefaultODataPathHandler.Parse(IEdmModel model, String serviceRoot, String odataPath) in d:\github\WebApi\OData\src\System.Web.OData\OData\Routing\DefaultODataPathHandler.cs:line 55

at System.Web.OData.Routing.ODataPathRouteConstraint.Match(HttpRequestMessage request, IHttpRoute route, String parameterName, IDictionary`2 values, HttpRouteDirection routeDirection) in d:\github\WebApi\OData\src\System.Web.OData\OData\Routing\ODataPathRouteConstraint.cs:line 171

```

I am using Web API 2.2 for OData V4. Thanks. | non_defect | uri parser can t work for short data type edm for function parameter supposed i have a function as xml then i call this function as c odata customers default shortfunction number it fails as c expression of type edm cannot be converted to type edm the call stack is c at microsoft odata core uriparser parsers metadatabindingutils converttotypeifneeded singlevaluenode source iedmtypereference targettypereference at microsoft odata core uriparser parsers functioncallbinder bindsegmentparameters odatauriparserconfiguration configuration iedmoperation functionoropertion icollection segmentparametertokens at microsoft odata core uriparser parsers odatapathparser trybindingparametersandmatchingoperation string identifier string parenthesisexpression iedmtype bindingtype odatauriparserconfiguration configuration icollection boundparameters iedmoperation matchingoperation at microsoft odata core uriparser parsers odatapathparser trycreatesegmentforoperation odatapathsegment previoussegment string identifier string parenthesisexpression at microsoft odata core uriparser parsers odatapathparser createnextsegment string text at microsoft odata core uriparser parsers odatapathparser parsepath icollection segments at microsoft odata core uriparser parsers odatapathfactory bindpath icollection segments odatauriparserconfiguration configuration at microsoft odata core uriparser odatauriparser parsepathimplementation at microsoft odata core uriparser odatauriparser initialize at microsoft odata core uriparser odatauriparser parsepath at system web odata routing defaultodatapathhandler parse iedmmodel model string serviceroot string odatapath odatauriresolversetttings resolversettings boolean enableuritemplateparsing in d github webapi odata src system web odata odata routing defaultodatapathhandler cs line at system web odata routing defaultodatapathhandler parse iedmmodel model string serviceroot string odatapath in d 
github webapi odata src system web odata odata routing defaultodatapathhandler cs line at system web odata routing odatapathrouteconstraint match httprequestmessage request ihttproute route string parametername idictionary values httproutedirection routedirection in d github webapi odata src system web odata odata routing odatapathrouteconstraint cs line i am using web api for odata thanks | 0 |

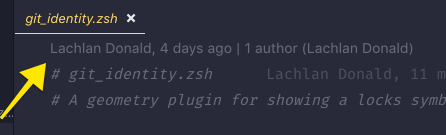

282,853 | 24,500,092,942 | IssuesEvent | 2022-10-10 12:06:22 | wpfoodmanager/wp-food-manager | https://api.github.com/repos/wpfoodmanager/wp-food-manager | closed | Reset button need add on the dashboard page | In Testing | Reset button need add on the dashboard page.

| 1.0 | Reset button need add on the dashboard page - Reset button need add on the dashboard page.

| non_defect | reset button need add on the dashboard page reset button need add on the dashboard page | 0 |

80,348 | 3,561,016,322 | IssuesEvent | 2016-01-23 14:07:42 | Itseez/opencv | https://api.github.com/repos/Itseez/opencv | closed | Missing constants from cv2 python interface | affected: 2.4 auto-transferred bug category: python bindings priority: normal | Transferred from http://code.opencv.org/issues/3181

```

|| Kyle Schmitt on 2013-07-28 17:16

|| Priority: Normal

|| Affected: 2.4.0 - 2.4.6

|| Category: python bindings

|| Tracker: Bug

|| Difficulty:

|| PR:

|| Platform: x64 / Linux

```

Missing constants from cv2 python interface

-----------

```

The video-related constants (and possibly more) are missing from the cv2 Python interface.

According to the docs:

http://docs.opencv.org/modules/highgui/doc/reading_and_writing_images_and_video.html#videocapture-get

The capture properties such as CV_CAP_PROP_FRAME_WIDTH should be available in the cv2 module, but they are not. They are only available through cv, or cv2.cv.

A snippet from a bpython session:

>>> import cv2

>>> import cv

>>> cv2.VideoCapture(0).get(cv2.CV_CAP_PROP_FRAME_WIDTH)

Traceback (most recent call last):

File "<input>", line 1, in <module>

AttributeError: 'module' object has no attribute 'CV_CAP_PROP_FRAME_WIDTH'

>>> cv2.VideoCapture(0).get(cv2.cv.CV_CAP_PROP_FRAME_WIDTH)

176.0

>>> cv2.VideoCapture(0).get(cv.CV_CAP_PROP_FRAME_WIDTH)

176.0

>>>

```

History

-------

##### Victor Kocheganov on 2013-08-05 10:51

```

Hello Kyle Schmitt!

Thank you for reporting the issue and for the detailed description!

Unfortunately our human resources are highly limited and it would be much appreciated if you have time to investigate it by yourself and provide a fix to community (please see http://www.code.opencv.org/projects/opencv/wiki/How_to_contribute for details)!

Thank you in advance,

Victor Kocheganov

- Target version set to 2.4.7

- Assignee set to Vadim Pisarevsky

- Status changed from New to Open

- Category set to python bindings

```

##### Victor Kocheganov on 2013-08-08 06:51

```

- Assignee changed from Vadim Pisarevsky to Andrey Pavlenko

```

##### abid rahman on 2013-09-24 02:20

```

It is already available in master branch.

<pre>

cv2.CAP_PROP_FRAME_COUNT

</pre>

```

##### Alexander Smorkalov on 2013-11-27 10:28

```

Andrey Pavlenko, you've made some fixes related to wrapper generation for VideoCapture. Does this problem still exist in 2.4 right now?

- Affected version changed from 2.4.0 - 2.4.5 to 2.4.0 - 2.4.6

```

##### Alexander Smorkalov on 2013-11-28 05:57

```

- Target version changed from 2.4.7 to 2.4.8

```

##### Alexander Smorkalov on 2013-12-30 10:38

```

- Target version changed from 2.4.8 to 2.4.9

```

##### Alexander Smorkalov on 2014-04-30 19:05

```

- Target version changed from 2.4.9 to 2.4.10

``` | 1.0 | Missing constants from cv2 python interface - Transferred from http://code.opencv.org/issues/3181

```

|| Kyle Schmitt on 2013-07-28 17:16

|| Priority: Normal

|| Affected: 2.4.0 - 2.4.6

|| Category: python bindings

|| Tracker: Bug

|| Difficulty:

|| PR:

|| Platform: x64 / Linux

```

Missing constants from cv2 python interface

-----------

```

The video-related constants (and possibly more) are missing from the cv2 Python interface.

According to the docs:

http://docs.opencv.org/modules/highgui/doc/reading_and_writing_images_and_video.html#videocapture-get

The capture properties such as CV_CAP_PROP_FRAME_WIDTH should be available in the cv2 module, but they are not. They are only available through cv, or cv2.cv.

A snippet from a bpython session:

>>> import cv2

>>> import cv

>>> cv2.VideoCapture(0).get(cv2.CV_CAP_PROP_FRAME_WIDTH)

Traceback (most recent call last):

File "<input>", line 1, in <module>

AttributeError: 'module' object has no attribute 'CV_CAP_PROP_FRAME_WIDTH'

>>> cv2.VideoCapture(0).get(cv2.cv.CV_CAP_PROP_FRAME_WIDTH)

176.0

>>> cv2.VideoCapture(0).get(cv.CV_CAP_PROP_FRAME_WIDTH)

176.0

>>>

```

History

-------

##### Victor Kocheganov on 2013-08-05 10:51

```

Hello Kyle Schmitt!

Thank you for reporting the issue and for the detailed description!

Unfortunately our human resources are highly limited and it would be much appreciated if you have time to investigate it by yourself and provide a fix to community (please see http://www.code.opencv.org/projects/opencv/wiki/How_to_contribute for details)!

Thank you in advance,

Victor Kocheganov

- Target version set to 2.4.7

- Assignee set to Vadim Pisarevsky

- Status changed from New to Open

- Category set to python bindings

```

##### Victor Kocheganov on 2013-08-08 06:51

```

- Assignee changed from Vadim Pisarevsky to Andrey Pavlenko

```

##### abid rahman on 2013-09-24 02:20

```

It is already available in master branch.

<pre>

cv2.CAP_PROP_FRAME_COUNT

</pre>

```

##### Alexander Smorkalov on 2013-11-27 10:28

```

Andrey Pavlenko, you've made some fixes related to wrapper generation for VideoCapture. Does this problem still exist in 2.4 right now?

- Affected version changed from 2.4.0 - 2.4.5 to 2.4.0 - 2.4.6

```

##### Alexander Smorkalov on 2013-11-28 05:57

```

- Target version changed from 2.4.7 to 2.4.8

```

##### Alexander Smorkalov on 2013-12-30 10:38

```

- Target version changed from 2.4.8 to 2.4.9

```

##### Alexander Smorkalov on 2014-04-30 19:05

```

- Target version changed from 2.4.9 to 2.4.10

``` | non_defect | missing constants from python interface transferred from kyle schmitt on priority normal affected category python bindings tracker bug difficulty pr platform linux missing constants from python interface the video related constants and possibly more are missing from the python interface according to the docs the capture properties such as cv cap prop frame width should be available in the module but they are not they are only available through cv or cv a snippet from a bpython session import import cv videocapture get cv cap prop frame width traceback most recent call last file line in attributeerror module object has no attribute cv cap prop frame width videocapture get cv cv cap prop frame width videocapture get cv cv cap prop frame width history victor kocheganov on hello kyle schmitt thank you for reporting the issue and detail description unfortunately our human resources are highly limited and it would be much appreciated if you have time to investigate it by yourself and provide a fix to community please see for details thank you in advance victor kocheganov target version set to assignee set to vadim pisarevsky status changed from new to open category set to python bindings victor kocheganov on assignee changed from vadim pisarevsky to andrey pavlenko abid rahman on it is already available in master branch cap prop frame count alexander smorkalov on andrey pavlenko you ve made some fixes related to wrappers generation for videocapture is this problem exist in right now affected version changed from to alexander smorkalov on target version changed from to alexander smorkalov on target version changed from to alexander smorkalov on target version changed from to | 0 |
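
The issue above reports that the capture constant is reachable as `cv2.cv.CV_CAP_PROP_FRAME_WIDTH` on 2.4, while a later comment says the master branch exposes unprefixed names such as `cv2.CAP_PROP_FRAME_COUNT`. A minimal sketch of a lookup that tolerates both layouts follows. It assumes the width constant on master is named `CAP_PROP_FRAME_WIDTH` by analogy, and it uses stand-in namespace objects instead of a real OpenCV install; `resolve_constant`, `legacy_cv2`, `modern_cv2`, and the value `3` are illustrative, not taken from the issue.

```python
from types import SimpleNamespace


def resolve_constant(module, *candidate_paths):
    """Return the first attribute found among dotted paths on `module`."""
    for path in candidate_paths:
        obj = module
        try:
            for part in path.split("."):
                obj = getattr(obj, part)
        except AttributeError:
            continue  # this layout is not available, try the next path
        return obj
    raise AttributeError("none of %r found" % (candidate_paths,))


# Hypothetical stand-ins for the two module layouts described in the issue.
legacy_cv2 = SimpleNamespace(cv=SimpleNamespace(CV_CAP_PROP_FRAME_WIDTH=3))
modern_cv2 = SimpleNamespace(CAP_PROP_FRAME_WIDTH=3)

# Prefer the unprefixed name, fall back to the cv2.cv location.
PATHS = ("CAP_PROP_FRAME_WIDTH", "cv.CV_CAP_PROP_FRAME_WIDTH")
```

With a real install, the same helper could presumably be called as `resolve_constant(cv2, *PATHS)` and the result passed to `VideoCapture.get`, but that depends on the installed version.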

45,016 | 12,520,276,966 | IssuesEvent | 2020-06-03 15:37:38 | ka65359/kai-kong-music-lib | https://api.github.com/repos/ka65359/kai-kong-music-lib | opened | Add song clears filter string | Defect Sev 3 | ### Description

The filter is cleared when a new song is added. We should persist it.

#### Steps to reproduce

1.

2.

| 1.0 | Add song clears filter string - ### Description

The filter is cleared when a new song is added. We should persist it.

#### Steps to reproduce

1.

2.

| defect | add song clears filter string description the filter is cleared when a new song is added we should persist it steps to reproduce | 1 |

123,834 | 12,219,637,787 | IssuesEvent | 2020-05-01 22:18:22 | textileio/js-threads | https://api.github.com/repos/textileio/js-threads | closed | Update to use _id instead of ID in instances | documentation enhancement | **Describe the bug**

On the Go side, we've now switched to using _id for the identifier on instances. This is great as it will feel more mongodb-like, and is pretty standard in JS. Since we don't rely on Go to validate our instances here, we'll need to make sure we implement this the same way that we do in Go. This ticket therefore is linked with #57, which is also what we use by default in Go now.

| 1.0 | Update to use _id instead of ID in instances - **Describe the bug**

On the Go side, we've now switched to using _id for the identifier on instances. This is great as it will feel more mongodb-like, and is pretty standard in JS. Since we don't rely on Go to validate our instances here, we'll need to make sure we implement this the same way that we do in Go. This ticket therefore is linked with #57, which is also what we use by default in Go now.

| non_defect | update to use id instead of id in instances describe the bug on the go side we ve now switched to using id for the identifier on instances this is great as it will feel more mongodb like and is pretty standard in js since we don t rely on go to validate our instances here we ll need to make sure we implement this the same way that we do in go this ticket therefore is linked with which is also what we use by default in go now | 0 |

40,394 | 2,868,917,014 | IssuesEvent | 2015-06-05 21:56:52 | dart-lang/dart_style | https://api.github.com/repos/dart-lang/dart_style | closed | Mis-formatting of strings | AssumedStale bug Priority-Medium | <a href="https://github.com/stevemessick"><img src="https://avatars.githubusercontent.com/u/8518285?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [stevemessick](https://github.com/stevemessick)**

_Originally opened as dart-lang/sdk#18206_

----

An edge case for the formatter. Format the code in the screenshot. It concatenates both of the strings onto one line, making the line length too long, and also doesn't combine them. It seems to be sensitive to the exact lengths of the interpolated variable names, and I also need to have it inside the if statement.

- Alan Knight

////////////////////////////////////////////////////////////////////////////////////

Editor: 1.4.0.edge_034945 (2014-04-10)

OS: Mac OS X - x86_64 (10.9.2)

JVM: 1.6.0_65

# projects: 4

# open dart files: 25

auto-run pub: false

localhost resolves to: 127.0.0.1

mem max/total/free: 1983 / 620 / 307 MB

thread count: 34

index: 1554905 relationships in 177578 keys in 1445 sources

SDK installed: true

Dartium installed: true

______

**Attachment:**

[screenshot.png](https://storage.googleapis.com/google-code-attachments/dart/issue-18206/comment-0/screenshot.png) (144.83 KB) | 1.0 | Mis-formatting of strings - <a href="https://github.com/stevemessick"><img src="https://avatars.githubusercontent.com/u/8518285?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [stevemessick](https://github.com/stevemessick)**

_Originally opened as dart-lang/sdk#18206_

----

An edge case for the formatter. Format the code in the screenshot. It concatenates both of the strings onto one line, making the line length too long, and also doesn't combine them. It seems to be sensitive to the exact lengths of the interpolated variable names, and I also need to have it inside the if statement.

- Alan Knight

////////////////////////////////////////////////////////////////////////////////////

Editor: 1.4.0.edge_034945 (2014-04-10)

OS: Mac OS X - x86_64 (10.9.2)

JVM: 1.6.0_65

# projects: 4

# open dart files: 25

auto-run pub: false

localhost resolves to: 127.0.0.1

mem max/total/free: 1983 / 620 / 307 MB

thread count: 34

index: 1554905 relationships in 177578 keys in 1445 sources

SDK installed: true

Dartium installed: true

______

**Attachment:**

[screenshot.png](https://storage.googleapis.com/google-code-attachments/dart/issue-18206/comment-0/screenshot.png) (144.83 KB) | non_defect | mis formatting of strings issue by originally opened as dart lang sdk an edge case for the formatter format the code in the screenshot it concatenates both of the strings onto one line making the line length too long and also doesn t combine them it seems to be sensitive to the exact lengths of the interpolated variable names and i also need to have it inside the if statement nbsp alan knight editor edge os mac os x jvm projects open dart files auto run pub false localhost resolves to mem max total free mb thread count index relationships in keys in sources sdk installed true dartium installed true attachment kb | 0 |

64,064 | 18,161,462,126 | IssuesEvent | 2021-09-27 10:05:19 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Beta dot animation breaking fonts subpixel antialiasing on Windows in Chrome | T-Defect S-Minor A-User-Menu A11y O-Occasional | It seems that the animation `mx_Beta_bluePulse` used in class `mx_BetaDot` is causing enormous layout thrashing and is a second source of fonts not being correctly subpixel antialiased on Windows. The main source so far is the background-blur on the left panel, which is being taken care of here: https://github.com/matrix-org/matrix-react-sdk/pull/6262

To reproduce the proper behavior, comment out class `.mx_BetaDot` in `res/css/views/beta/_BetaCard.scss` and remove all `backdrop-filter` references from our CSS codebase.

Another way to verify that rendering is correct is to enable the layer borders checkbox, which is accessible via the Chrome DevTools cog menu (Rendering). Screenshots below:

Valid font rendering (properly antialiased) will have this kind of layer borders:

Invalid font rendering layer borders (it's super easy to just look at the bottom-right corner with icons):

The font rendering issue is not reproducible anywhere except Windows with Chrome/Chromium, yet the layers part should be easily reproducible on any system using Chrome.

Related: https://github.com/matrix-org/matrix-react-sdk/pull/6262 (gets rid of background-blur and replaces it with canvas, which doesn't thrash the layout)

Related: https://github.com/vector-im/element-web/issues/15594 | 1.0 | Beta dot animation breaking fonts subpixel antialiasing on Windows in Chrome - It seems that the animation `mx_Beta_bluePulse` used in class `mx_BetaDot` is causing enormous layout thrashing and is a second source of fonts not being correctly subpixel antialiased on Windows. The main source so far is the background-blur on the left panel, which is being taken care of here: https://github.com/matrix-org/matrix-react-sdk/pull/6262

To reproduce the proper behavior, comment out class `.mx_BetaDot` in `res/css/views/beta/_BetaCard.scss` and remove all `backdrop-filter` references from our CSS codebase.

Another way to verify that rendering is correct is to enable the layer borders checkbox, which is accessible via the Chrome DevTools cog menu (Rendering). Screenshots below:

Valid font rendering (properly antialiased) will have this kind of layer borders:

Invalid font rendering layer borders (it's super easy to just look at the bottom-right corner with icons):

The font rendering issue is not reproducible anywhere except Windows with Chrome/Chromium, yet the layers part should be easily reproducible on any system using Chrome.

Related: https://github.com/matrix-org/matrix-react-sdk/pull/6262 (gets rid of background-blur and replaces it with canvas, which doesn't thrash the layout)

Related: https://github.com/vector-im/element-web/issues/15594 | defect | beta dot animation breaking fonts subpixel antialiasing on windows in chrome it seems that the animation mx beta bluepulse used in class mx betadot is making an enormous layout trashing and is a second source of fonts not being correctly subpixel antialiased on windows the main source so far is the background blur on left panel which is being taken care of here to reproduce proper behavior comment out class mx betadot in res css views beta betacard scss and remove all backdrop filter references from our css codebase the other way to verify if rendering is proper is to look for layer borders checkbox which is accessible via chrome devtools cog menu rendering screenshots below valid fonts rendering properly antialiased will have this kind of layer borders invalid fonts rendering layer borders it s super easy to just look at the bottom right corner with icons the fonts rendering is irreproducible on anywhere except windows and chrome chromium yet the layers part should be easily reproducible on any system using chrome related gets rid of background blur and replaces it with canvas which doesn t trash the layout related | 1 |

752,256 | 26,278,065,913 | IssuesEvent | 2023-01-07 01:53:23 | gamefreedomgit/Maelstrom | https://api.github.com/repos/gamefreedomgit/Maelstrom | reopened | [Talent] Shadowy Apparitions | Class: Priest Pathfinding Talent Priority: Medium Status: Needs Confirmation Bug Report from Discord | Mitosis

OP

— 12/11/2022 10:31 AM

Pathing is janky as hell, and proc rate is significantly lower than what it should be. In particular while moving you do not seem to get any bonus chance to summon where it should be 5x as likely | 1.0 | [Talent] Shadowy Apparitions - Mitosis

OP

— 12/11/2022 10:31 AM

Pathing is janky as hell, and proc rate is significantly lower than what it should be. In particular while moving you do not seem to get any bonus chance to summon where it should be 5x as likely | non_defect | shadowy apparitions mitosis op — am pathing is janky as hell and proc rate is significantly lower than what it should be in particular while moving you do not seem to get any bonus chance to summon where it should be as likely | 0 |

26 | 2,492,544,954 | IssuesEvent | 2015-01-05 00:55:37 | cakephp/cakephp | https://api.github.com/repos/cakephp/cakephp | closed | Redis class not exists | Defect | Cake 2.5.8 - if the `Redis` class doesn't exist, this error will be thrown:

```php

PHP Fatal error: Uncaught exception 'CacheException' with message 'Cache engine _cake_core_ is not properly configured.' in /var/www/cake258/Vendor/cakephp/cakephp/lib/Cake/Cache/Cache.php:181

Stack trace:

#0 /var/www/cake258/Vendor/cakephp/cakephp/lib/Cake/Cache/Cache.php(151): Cache::_buildEngine('_cake_core_')

#1 /var/www/cake258/App/Config/core.php(282): Cache::config('_cake_core_', Array)

#2 /var/www/cake258/Vendor/cakephp/cakephp/lib/Cake/Core/Configure.php(72): include('/var/www/cake258/...')

#3 /var/www/cake258/Vendor/cakephp/cakephp/lib/Cake/bootstrap.php(175): Configure::bootstrap(true)

#4 /var/www/cake258/App/webroot/index.php(85): include('/var/www/cake258/...')

#5 {main}

thrown in /var/www/cake258/Vendor/cakephp/cakephp/lib/Cake/Cache/Cache.php on line 181

```

Should this part be tested a bit before?

https://github.com/cakephp/cakephp/blob/master/lib/Cake/Cache/Engine/RedisEngine.php#L57 | 1.0 | Redis class not exists - Cake 2.5.8 - if the `Redis` class doesn't exist, this error will be thrown:

```php

PHP Fatal error: Uncaught exception 'CacheException' with message 'Cache engine _cake_core_ is not properly configured.' in /var/www/cake258/Vendor/cakephp/cakephp/lib/Cake/Cache/Cache.php:181

Stack trace:

#0 /var/www/cake258/Vendor/cakephp/cakephp/lib/Cake/Cache/Cache.php(151): Cache::_buildEngine('_cake_core_')

#1 /var/www/cake258/App/Config/core.php(282): Cache::config('_cake_core_', Array)

#2 /var/www/cake258/Vendor/cakephp/cakephp/lib/Cake/Core/Configure.php(72): include('/var/www/cake258/...')

#3 /var/www/cake258/Vendor/cakephp/cakephp/lib/Cake/bootstrap.php(175): Configure::bootstrap(true)

#4 /var/www/cake258/App/webroot/index.php(85): include('/var/www/cake258/...')

#5 {main}

thrown in /var/www/cake258/Vendor/cakephp/cakephp/lib/Cake/Cache/Cache.php on line 181

```

Should this part be tested a bit before?

https://github.com/cakephp/cakephp/blob/master/lib/Cake/Cache/Engine/RedisEngine.php#L57 | defect | redis class not exists cake if redis class doesn t exists this error will be thrown php php fatal error uncaught exception cacheexception with message cache engine cake core is not properly configured in var www vendor cakephp cakephp lib cake cache cache php stack trace var www vendor cakephp cakephp lib cake cache cache php cache buildengine cake core var www app config core php cache config cake core array var www vendor cakephp cakephp lib cake core configure php include var www var www vendor cakephp cakephp lib cake bootstrap php configure bootstrap true var www app webroot index php include var www main thrown in var www vendor cakephp cakephp lib cake cache cache php on line should this part be tested a bit before | 1 |

41,041 | 10,271,844,454 | IssuesEvent | 2019-08-23 15:00:36 | openanthem/nimbus-core | https://api.github.com/repos/openanthem/nimbus-core | closed | Data entered using Chrome's autofill feature is not getting saved when submitting the form. | Defect Open | # Issue Details

**Type of Issue** (check one with "X")

```

[X] Bug Report => Please search GitHub for a similar issue or PR before submitting

[ ] Feature Request => Please ensure feature is not already in progress

[ ] Support Request => Please do not submit support requests here, instead see: https://discourse.oss.antheminc.com/

```

## Current Behavior

<!--

When submitting a form filled in with Chrome's autofill feature, the autofilled data is not persisted in the database.

-->

## Expected Behavior

<!--

Data filled in using the autofill should also be persisted in the database.

-->

## How to Reproduce the Issue

### Steps to Reproduce

<!--

1) Fill some fields using the autofill feature, e.g. an address, so that city, zip and state are prepopulated.

2) Hit submit button

3) Data will not be persisted for city, zip and state (data that was prepopulated) in the DB

-->

### Code Snippet

<!--

Please add any code that is necessary to reproduce the issue.

If the current behavior is recreated better with an example, please provide the *STEPS TO REPRODUCE* and if possible a *MINIMAL DEMO* of the problem via

https://plnkr.co or similar.

-->

# Environment Details

* **Nimbus Version:**

<!--

1.3.x

-->

* **Browser:**

<!--

Please list all browsers where this could be reproduced.

-->

| 1.0 | Data entered using Chrome's autofill feature is not getting saved when submitting the form. - # Issue Details

**Type of Issue** (check one with "X")

```

[X] Bug Report => Please search GitHub for a similar issue or PR before submitting

[ ] Feature Request => Please ensure feature is not already in progress

[ ] Support Request => Please do not submit support requests here, instead see: https://discourse.oss.antheminc.com/

```

## Current Behavior

<!--

When submitting a form filled in with Chrome's autofill feature, the autofilled data is not persisted in the database.

-->

## Expected Behavior

<!--

Data filled in using the autofill should also be persisted in the database.

-->

## How to Reproduce the Issue

### Steps to Reproduce

<!--

1) Fill some fields using the autofill feature, e.g. an address, so that city, zip and state are prepopulated.

2) Hit submit button

3) Data will not be persisted for city, zip and state (data that was prepopulated) in the DB

-->

### Code Snippet

<!--

Please add any code that is necessary to reproduce the issue.

If the current behavior is recreated better with an example, please provide the *STEPS TO REPRODUCE* and if possible a *MINIMAL DEMO* of the problem via

https://plnkr.co or similar.

-->

# Environment Details

* **Nimbus Version:**

<!--

1.3.x

-->

* **Browser:**

<!--

Please list all browsers where this could be reproduced.

-->

| defect | data entered using chrome s autofill feature is not getting saved when submitting the form issue details type of issue check one with x bug report please search github for a similar issue or pr before submitting feature request please ensure feature is not already in progress support request please do not submit support requests here instead see current behavior when submitting the form using autofill feature of chrome data is not getting persisted in the database expected behavior data filled in using the autofill should also be persisted in the database how to reproduce the issue steps to reproduce fill some fields using the autofill feature for eg address so city zip and state are prepopulated hit submit button data will not be persisted for city zip and state data that was prepopulated in the db code snippet please add any code that is necessary to reproduce the issue if the current behavior is recreated better with an example please provide the steps to reproduce and if possible a minimal demo of the problem via or similar environment details nimbus version x browser please list all browsers where this could be reproduced | 1 |

46,782 | 13,055,975,343 | IssuesEvent | 2020-07-30 03:16:55 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | [DOMLauncher] Emulate SLC bit-packing (Trac #1864) | Incomplete Migration Migrated from Trac combo simulation defect | Migrated from https://code.icecube.wisc.edu/ticket/1864

```json

{

"status": "closed",

"changetime": "2019-02-13T14:13:24",

"description": "In real data the SLC charge stamps are packed into 9 bits, dropping the LSB from each if bit 10 in the peak sample is high. DOMLauncher should do the same. The payload format is documented here:\n\nhttps://docushare.icecube.wisc.edu/dsweb/Get/Document-20568\n\nSee also: #1863",

"reporter": "jvansanten",

"cc": "",

"resolution": "fixed",

"_ts": "1550067204154158",

"component": "combo simulation",

"summary": "[DOMLauncher] Emulate SLC bit-packing",

"priority": "normal",

"keywords": "",

"time": "2016-09-30T14:58:12",

"milestone": "",

"owner": "cweaver",

"type": "defect"

}

```

| 1.0 | [DOMLauncher] Emulate SLC bit-packing (Trac #1864) - Migrated from https://code.icecube.wisc.edu/ticket/1864

```json

{

"status": "closed",

"changetime": "2019-02-13T14:13:24",

"description": "In real data the SLC charge stamps are packed into 9 bits, dropping the LSB from each if bit 10 in the peak sample is high. DOMLauncher should do the same. The payload format is documented here:\n\nhttps://docushare.icecube.wisc.edu/dsweb/Get/Document-20568\n\nSee also: #1863",

"reporter": "jvansanten",

"cc": "",

"resolution": "fixed",

"_ts": "1550067204154158",

"component": "combo simulation",

"summary": "[DOMLauncher] Emulate SLC bit-packing",

"priority": "normal",

"keywords": "",

"time": "2016-09-30T14:58:12",

"milestone": "",

"owner": "cweaver",

"type": "defect"

}

```

| defect | emulate slc bit packing trac migrated from json status closed changetime description in real data the slc charge stamps are packed into bits dropping the lsb from each if bit in the peak sample is high domlauncher should do the same the payload format is documented here n n also reporter jvansanten cc resolution fixed ts component combo simulation summary emulate slc bit packing priority normal keywords time milestone owner cweaver type defect | 1 |
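
The ticket above describes SLC charge stamps being packed into 9 bits, with the least significant bit dropped from each sample when bit 10 of the peak sample is high. A rough sketch of that idea follows. It is not the DOMLauncher implementation and does not reproduce the payload format from the linked document: the three-sample layout, the peak position, the reading of "bit 10" as the most significant bit of a 10-bit value, and the names `pack_slc_samples` and `unpack_slc_samples` are all assumptions made for illustration.

```python
def pack_slc_samples(samples, peak_index=1):
    """Pack 10-bit samples into 9 bits each; returns (range_bit, packed)."""
    if any(not 0 <= s < 1024 for s in samples):
        raise ValueError("samples must fit in 10 bits")
    # Assumption: "bit 10" means the top bit of the 10-bit peak sample.
    range_bit = (samples[peak_index] >> 9) & 1
    if range_bit:
        # High range: drop the least significant bit of every sample.
        packed = [s >> 1 for s in samples]
    else:
        # Low range: the peak fits in 9 bits, so keep the low 9 bits as they are.
        packed = [s & 0x1FF for s in samples]
    return range_bit, packed


def unpack_slc_samples(range_bit, packed):
    """Undo the packing; the dropped bit is restored as zero."""
    return [p << 1 for p in packed] if range_bit else list(packed)
```

In the high range the round trip loses one bit of resolution per sample (for example 801 comes back as 800), which is the effect a simulation would presumably need to emulate to match real data.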

17,500 | 3,010,324,598 | IssuesEvent | 2015-07-28 12:37:00 | oozcitak/imagelistview | https://api.github.com/repos/oozcitak/imagelistview | closed | Please move to Github | auto-migrated Priority-Medium Type-Defect | ```

To save the project from disappearing when Google Code will be shut down, I

suggest that you create a GitHub repository by clicking the "Export to GitHub"

button.

I could do quite well by myself, but I feel that you as the original author

should do that :-)

```

Original issue reported on code.google.com by `uwe.k...@gmail.com` on 16 Jul 2015 at 7:29 | 1.0 | Please move to Github - ```

To save the project from disappearing when Google Code will be shut down, I

suggest that you create a GitHub repository by clicking the "Export to GitHub"

button.

I could do quite well by myself, but I feel that you as the original author

should do that :-)

```

Original issue reported on code.google.com by `uwe.k...@gmail.com` on 16 Jul 2015 at 7:29 | defect | please move to github to save the project from disappearing when google code will be shut down i suggest that you create a github repository by clicking the export to github button i could do quite well by myself but i feel that you as the original author should do that original issue reported on code google com by uwe k gmail com on jul at | 1 |

115,233 | 24,736,344,913 | IssuesEvent | 2022-10-20 22:24:14 | bnreplah/verademo | https://api.github.com/repos/bnreplah/verademo | opened | Improper Neutralization of Script-Related HTML Tags in a Web Page (Basic XSS) [VID:80:WEB-INF/views/profile.jsp:160] | VeracodeFlaw: Medium Veracode Pipeline Scan | **Filename:** WEB-INF/views/profile.jsp

**Line:** 160

**CWE:** 80 (Improper Neutralization of Script-Related HTML Tags in a Web Page (Basic XSS))

<span>This call to javax.servlet.jsp.JspWriter.print() contains a cross-site scripting (XSS) flaw. The application populates the HTTP response with untrusted input, allowing an attacker to embed malicious content, such as Javascript code, which will be executed in the context of the victim's browser. XSS vulnerabilities are commonly exploited to steal or manipulate cookies, modify presentation of content, and compromise confidential information, with new attack vectors being discovered on a regular basis. The first argument to print() contains tainted data from the variable heckler.getUsername(). The tainted data originated from an earlier call to java.sql.PreparedStatement.executeQuery. The tainted data is directed into an output stream returned by javax.servlet.jsp.JspWriter.</span> <span>Use contextual escaping on all untrusted data before using it to construct any portion of an HTTP response. The escaping method should be chosen based on the specific use case of the untrusted data, otherwise it may not protect fully against the attack. For example, if the data is being written to the body of an HTML page, use HTML entity escaping; if the data is being written to an attribute, use attribute escaping; etc. Both the OWASP Java Encoder library and the Microsoft AntiXSS library provide contextual escaping methods. For more details on contextual escaping, see https://github.com/OWASP/CheatSheetSeries/blob/master/cheatsheets/Cross_Site_Scripting_Prevention_Cheat_Sheet.md. 
In addition, as a best practice, always validate untrusted input to ensure that it conforms to the expected format, using centralized data validation routines when possible.</span> <span>References: <a href="https://cwe.mitre.org/data/definitions/79.html">CWE</a> <a href="https://owasp.org/www-community/attacks/xss/">OWASP</a> <a href="https://docs.veracode.com/r/review_cleansers">Supported Cleansers</a></span> | 2.0 | Improper Neutralization of Script-Related HTML Tags in a Web Page (Basic XSS) [VID:80:WEB-INF/views/profile.jsp:160] - **Filename:** WEB-INF/views/profile.jsp

**Line:** 160

**CWE:** 80 (Improper Neutralization of Script-Related HTML Tags in a Web Page (Basic XSS))

<span>This call to javax.servlet.jsp.JspWriter.print() contains a cross-site scripting (XSS) flaw. The application populates the HTTP response with untrusted input, allowing an attacker to embed malicious content, such as Javascript code, which will be executed in the context of the victim's browser. XSS vulnerabilities are commonly exploited to steal or manipulate cookies, modify presentation of content, and compromise confidential information, with new attack vectors being discovered on a regular basis. The first argument to print() contains tainted data from the variable heckler.getUsername(). The tainted data originated from an earlier call to java.sql.PreparedStatement.executeQuery. The tainted data is directed into an output stream returned by javax.servlet.jsp.JspWriter.</span> <span>Use contextual escaping on all untrusted data before using it to construct any portion of an HTTP response. The escaping method should be chosen based on the specific use case of the untrusted data, otherwise it may not protect fully against the attack. For example, if the data is being written to the body of an HTML page, use HTML entity escaping; if the data is being written to an attribute, use attribute escaping; etc. Both the OWASP Java Encoder library and the Microsoft AntiXSS library provide contextual escaping methods. For more details on contextual escaping, see https://github.com/OWASP/CheatSheetSeries/blob/master/cheatsheets/Cross_Site_Scripting_Prevention_Cheat_Sheet.md. 
In addition, as a best practice, always validate untrusted input to ensure that it conforms to the expected format, using centralized data validation routines when possible.</span> <span>References: <a href="https://cwe.mitre.org/data/definitions/79.html">CWE</a> <a href="https://owasp.org/www-community/attacks/xss/">OWASP</a> <a href="https://docs.veracode.com/r/review_cleansers">Supported Cleansers</a></span> | non_defect | improper neutralization of script related html tags in a web page basic xss filename web inf views profile jsp line cwe improper neutralization of script related html tags in a web page basic xss this call to javax servlet jsp jspwriter print contains a cross site scripting xss flaw the application populates the http response with untrusted input allowing an attacker to embed malicious content such as javascript code which will be executed in the context of the victim s browser xss vulnerabilities are commonly exploited to steal or manipulate cookies modify presentation of content and compromise confidential information with new attack vectors being discovered on a regular basis the first argument to print contains tainted data from the variable heckler getusername the tainted data originated from an earlier call to java sql preparedstatement executequery the tainted data is directed into an output stream returned by javax servlet jsp jspwriter use contextual escaping on all untrusted data before using it to construct any portion of an http response the escaping method should be chosen based on the specific use case of the untrusted data otherwise it may not protect fully against the attack for example if the data is being written to the body of an html page use html entity escaping if the data is being written to an attribute use attribute escaping etc both the owasp java encoder library and the microsoft antixss library provide contextual escaping methods for more details on contextual escaping see in addition as a best practice always 
validate untrusted input to ensure that it conforms to the expected format using centralized data validation routines when possible references | 0 |

144,246 | 11,599,246,911 | IssuesEvent | 2020-02-25 01:36:22 | brave/brave-browser | https://api.github.com/repos/brave/brave-browser | closed | Manual test run on OS X for 1.4.x - Release | OS/macOS QA/Yes release-notes/exclude tests | ## Per release specialty tests

### Installer

- [x] Check signature: On macOS, run `spctl --assess --verbose /Applications/Brave-Browser-Beta.app/` and make sure it returns `accepted`. On Windows, right-click `brave_installer-x64.exe`, go to Properties, open the Digital Signatures tab, and double-click the signature. Make sure the popup window says "The digital signature is OK"
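The macOS half of the signature check can be scripted. The following is a minimal sketch, not part of the original test plan: the helper names `is_accepted` and `check_signature` are hypothetical, and it assumes `spctl` may print its verdict on either output stream, so both are inspected.

```python
import subprocess


def is_accepted(spctl_output: str) -> bool:
    """Return True if the spctl output contains the word 'accepted'."""
    return "accepted" in spctl_output


def check_signature(app_path: str) -> bool:
    """Run the spctl assessment from the checklist (macOS only)."""
    # spctl ships with macOS; on other systems this call raises FileNotFoundError.
    result = subprocess.run(
        ["spctl", "--assess", "--verbose", app_path],
        capture_output=True,
        text=True,
    )
    # Assumption: the verdict may land on stdout or stderr, so check both.
    return result.returncode == 0 and is_accepted(result.stdout + result.stderr)
```

On a Mac, `check_signature("/Applications/Brave-Browser-Beta.app/")` would be expected to return `True` for a correctly signed build; the Windows check remains a manual step.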

### Data(Upgrade from previous release)

- [ ] Make sure that data from the last version appears in the new version OK

- [ ] With data from the last version, verify that

- [ ] bookmarks on the bookmark toolbar and bookmark folders can be opened

- [ ] cookies are preserved

- [ ] installed extensions are retained and work correctly

- [ ] opened tabs can be reloaded

- [ ] stored passwords are preserved

- [ ] unpinned tabs can be pinned

## Extensions/Plugins tests

- [x] Verify one item from Brave Update server is installable (Example: Ad-block DAT file on fresh extension)

- [x] Verify one item from Google Update server is installable (Example: Extensions from CWS)

- [x] Verify PDFJS, Torrent viewer extensions are installed automatically on fresh profile and cannot be disabled

- [x] Verify magnet links and .torrent files load the Torrent viewer page and that the torrent can be downloaded

### CWS

- [x] Verify installing ABP from CWS shows the warning message `NOT A RECOMMENDED BRAVE EXTENSION!` but still allows the extension to be installed

- [x] Verify installing LastPass from CWS doesn't show any warning message

### PDF

- [x] Test that PDF is loaded over HTTPS at https://basicattentiontoken.org/BasicAttentionTokenWhitePaper-4.pdf

- [x] Test that PDF is loaded over HTTP at http://www.pdf995.com/samples/pdf.pdf

### Widevine

- [x] Verify `Widevine Notification` is shown when you visit Netflix for the first time

- [x] Test that you can stream on Netflix on a fresh profile after installing Widevine

### Bravery settings

- [x] Verify that HTTPS Everywhere works by loading http://https-everywhere.badssl.com/

- [x] Turning HTTPS Everywhere off and shields off both disable the redirect to https://https-everywhere.badssl.com/

- [x] Verify that toggling `Ads and trackers blocked` works as expected

- [x] Visit https://testsafebrowsing.appspot.com/s/phishing.html, verify that Safe Browsing (via our Proxy) works for all the listed items

- [x] Visit https://brianbondy.com/ and then turn on script blocking; the page should not load. Allow scripts from the script blocking UI in the URL bar and the page should load correctly

- [x] Test that 3rd party storage results are blank at https://jsfiddle.net/7ke9r14a/9/ when 3rd party cookies are blocked and not blank when 3rd party cookies are unblocked

### Fingerprint Tests

- [x] Visit https://jsfiddle.net/bkf50r8v/13/, ensure 3 blocked items are listed in shields. Result window should show `got canvas fingerprint 0` and `got webgl fingerprint 00`

- [x] Test that audio fingerprint is blocked at https://audiofingerprint.openwpm.com/ only when `Block all fingerprinting protection` is on

- [x] Test that Brave browser isn't detected on https://extensions.inrialpes.fr/brave/

- [x] Test that https://diafygi.github.io/webrtc-ips/ doesn't leak IP address when `Block all fingerprinting protection` is on

### Rewards

- [x] Verify wallet is auto created after enabling rewards

- [ ] Verify account balance shows correct BAT and USD value

- [ ] Verify you are able to restore a wallet

- [x] Verify wallet address matches the QR code that is generated under `Add funds`

- [ ] Verify actions taken (claiming grant, tipping, auto-contribute) display in wallet panel

- [ ] Verify adding funds via any of the currencies flows into wallet after specified amount of time

- [ ] Verify adding funds to an existing wallet with amount, adjusts the BAT value appropriately

- [ ] Verify monthly budget shows correct BAT and USD value

- [ ] Verify you are able to exclude a publisher from the auto-contribute table by clicking on the `x` in auto-contribute table and popup list of sites

- [ ] Verify you are able to exclude a publisher by using the toggle on the Rewards Panel

- [ ] Verify when you click on the BR panel while on a site, the panel displays site specific information (site favicon, domain, attention %)

- [ ] Verify when you click on `Send a tip`, the custom tip banner displays

- [ ] Verify you are able to make a one-time tip and that it displays in the tips panel

- [ ] Verify you are able to make a recurring tip and that it displays in the tips panel

- [ ] Verify you can tip a verified publisher

- [ ] Verify you can tip a verified YouTube creator

- [ ] Verify tip panel shows a verified checkmark for a verified publisher/verified YouTube creator

- [ ] Verify tip panel shows a message about unverified publisher

- [ ] Verify BR panel shows message about an unverified publisher

- [ ] Verify you are able to perform a contribution

- [ ] Verify if you disable auto-contribute you are still able to tip regular sites and YouTube creators

- [ ] Verify that disabling Rewards and enabling it again does not lose state

- [ ] Verify that disabling auto-contribute and enabling it again does not lose state

- [ ] Adjust min visit/time in settings. Visit some sites and YouTube channels to verify they are added to the table after the specified settings

- [ ] Upgrade from older version

- [ ] Verify the wallet balance is retained and wallet backup code isn't corrupted

- [ ] Verify auto-contribute list is not lost after upgrade

- [ ] Verify tips list is not lost after upgrade

- [ ] Verify wallet panel transactions list is not lost after upgrade

### Ads Upgrade Tests:

- [x] Install 0.62.51 and enable Rewards (Ads are not available on this version). Update on `test` channel to the hotfix version. Verify Ads are off by default and that a BAT logo notification alerts you that Ads are available.

- [x] Install 0.64.77 and enable Rewards. Ads are on by default. View an Ad. Update on `test` channel to the hotfix version. Verify Ads are still on after update, Ads panel information was not lost after upgrade, no BAT logo notification.

- [x] Install 0.64.77 and enable Rewards. Disable Ads. Update on `test` channel to the hotfix version. Verify Ads are still off after update, no BAT logo notification.

- [x] Install 1.3.118 and enable Rewards. Ads are on by default. View an ad. Update on `test` channel to the hotfix version. Verify Ads are still on after update, Ads panel information was not lost after upgrade, no BAT logo notification.

- [x] Install 1.3.118 and enable Rewards. Disable Ads. Update on `test` channel to the hotfix version. Verify Ads are still off after update, no BAT logo notification.

### Tor Tabs

- [x] Visit https://check.torproject.org in a Tor window, ensure it shows a success message for using a Tor exit node

- [x] Visit https://check.torproject.org in a Tor window, note down exit node IP address. Do a hard refresh (Ctrl+Shift+R/Cmd+Shift+R), ensure exit IP changes after page reloads

- [x] Visit https://protonirockerxow.onion/ in a Tor window, ensure login page is shown

- [x] Visit https://browserleaks.com/geo in a Tor window, ensure location isn't shown

### Session storage

- [x] Temporarily move away your browser profile and test that a new profile is created when browser is launched

- macOS - `~/Library/Application\ Support/BraveSoftware/`

- Windows - `%userprofile%\appdata\Local\BraveSoftware\`

- Linux(Ubuntu) - `~/.config/BraveSoftware/`

- [x] Test that windows and tabs restore when closed, including active tab

- [x] Ensure that the tabs in the above session are being lazy loaded when the session is restored
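The "temporarily move away your browser profile" step can be sketched as a small helper. This is an illustration under stated assumptions: the function names `profile_dir` and `move_profile_aside` and the `.bak` suffix are hypothetical, and the directory locations are taken from the list above. Close the browser before moving the directory.

```python
import os
import shutil
import sys
from pathlib import Path


def profile_dir(platform: str = sys.platform) -> Path:
    """Return the BraveSoftware profile directory listed in the checklist."""
    if platform == "darwin":
        return Path.home() / "Library" / "Application Support" / "BraveSoftware"
    if platform.startswith("win"):
        # %userprofile%\appdata\Local\BraveSoftware\
        base = Path(os.environ.get("USERPROFILE", str(Path.home())))
        return base / "AppData" / "Local" / "BraveSoftware"
    # Linux (Ubuntu)
    return Path.home() / ".config" / "BraveSoftware"


def move_profile_aside(src: Path, suffix: str = ".bak") -> Path:
    """Rename the profile directory so the browser creates a fresh one."""
    dest = src.with_name(src.name + suffix)
    if dest.exists():
        # Refuse to overwrite an earlier backup.
        raise FileExistsError(f"backup already exists: {dest}")
    shutil.move(str(src), str(dest))
    return dest
```

Calling `move_profile_aside(profile_dir())` renames the directory; moving the backup to its original name afterwards restores the old profile.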

## Update tests

- [x] Verify visiting `brave://settings/help` triggers update check

- [x] Verify once update is downloaded, prompts to `Relaunch` to install update

## Chromium upgrade tests

- [x] Verify `brave://gpu` on Brave and `chrome://gpu` on Chrome are similar for the same Chromium version on both browsers

#### Adblock

- [x] Verify referrer blocking works properly for TLD+1. Visit `https://technology.slashdot.org/` and verify adblock works properly similar to `https://slashdot.org/`

#### Components

- [x] Delete the Adblock folder from the browser profile and restart the browser. Visit `brave://components` and verify `Brave Ad Block Updater` downloads and updates the component. Repeat for all Brave components

## Crypto Wallets

- [x] Ensure that you can create a new wallet without any issues

- [x] Ensure that you can restore a previous CW wallet without any issues

- [x] Ensure that you can restore a previous MM wallet without any issues

- [x] Ensure that you can create a transaction (sending crypto) with a CW wallet

- [x] Ensure that you can create a transaction (sending crypto) using a restored MM wallet | 1.0 | Manual test run on OS X for 1.4.x - Release - ## Per release specialty tests

### Installer

- [x] Check signature: On macOS, run `spctl --assess --verbose /Applications/Brave-Browser-Beta.app/` and make sure it returns `accepted`. On Windows, right-click `brave_installer-x64.exe`, go to Properties, open the Digital Signatures tab, and double-click the signature. Make sure the popup window says "The digital signature is OK"

### Data(Upgrade from previous release)

- [ ] Make sure that data from the last version appears in the new version OK

- [ ] With data from the last version, verify that