Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 757 | labels stringlengths 4 664 | body stringlengths 3 261k | index stringclasses 10 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 232k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

28,966 | 5,447,905,018 | IssuesEvent | 2017-03-07 14:44:06 | PowerDNS/pdns | https://api.github.com/repos/PowerDNS/pdns | reopened | Crashed pdns while (bulk) creating zones via the api | auth defect | I was creating 225 zones in pdns using nsedit, which uses the API. That was quite a lot of requests fired at the API.

It seems (now) that during that import, pdns crashed twice. I didn't notice because it restarted quickly.

nsedit would have been doing the following requests:

- PUT on /servers/localhost/zones

- PATCH on /servers/localhost/zones/<zone> for each non-NS/SOA record (4 in this case)

- Rinse and repeat for next zone

I got the following trace:

```

Nov 6 14:05:38 nscache2 pdns[24922]: Got a signal 6, attempting to print trace:

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance() [0x652460]

Nov 6 14:05:38 nscache2 pdns[24922]: /lib/x86_64-linux-gnu/libc.so.6(+0x364a0) [0x7f45d1c384a0]

Nov 6 14:05:38 nscache2 pdns[24922]: /lib/x86_64-linux-gnu/libc.so.6(gsignal+0x35) [0x7f45d1c38425]

Nov 6 14:05:38 nscache2 pdns[24922]: /lib/x86_64-linux-gnu/libc.so.6(abort+0x17b) [0x7f45d1c3bb8b]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(SSQLite3::~SSQLite3()+0xab) [0x72458b]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(SSQLite3::~SSQLite3()+0x9) [0x7245e9]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(GSQLBackend::~GSQLBackend()+0x2e) [0x56c36e]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(gSQLite3Backend::~gSQLite3Backend()+0x17) [0x584c67]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(cleanup_backends(UeberBackend*)+0x26) [0x654246]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(UeberBackend::cleanup()+0x78) [0x654878]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(UeberBackend::~UeberBackend()+0x1d) [0x6548bd]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance() [0x635423]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(boost::function2<void, HttpRequest*, HttpResponse*>::operator()(HttpRequest*, HttpResponse*) const+0x18) [0x648af8]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance() [0x644bcc]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(boost::detail::function::void_function_obj_invoker2<boost::_bi::bind_t<void, void (*)(boost::function<void (HttpRequest*, HttpResponse*)>, HttpRequest*, HttpResponse*), boost::_bi::list3<boost::_bi::value<boost::function<void (HttpRequest*, HttpResponse*)> >, boost::arg<1>, boost::arg<2> > >, void, HttpRequest*, HttpResponse*>::invoke(boost::detail::function::function_buffer&, HttpRequest*, HttpResponse*)+0x67) [0x6474f7]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(boost::function2<void, HttpRequest*, HttpResponse*>::operator()(HttpRequest*, HttpResponse*) const+0x18) [0x648af8]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(boost::detail::function::void_function_obj_invoker2<boost::_bi::bind_t<void, void (*)(boost::function<void (HttpRequest*, HttpResponse*)>, YaHTTP::Request*, YaHTTP::Response*), boost::_bi::list3<boost::_bi::value<boost::function<void (HttpRequest*, HttpResponse*)> >, boost::arg<1>, boost::arg<2> > >, void, YaHTTP::Request*, YaHTTP::Response*>::invoke(boost::detail::function::function_buffer&, YaHTTP::Request*, YaHTTP::Response*)+0x67) [0x6475d7]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(WebServer::handleRequest(HttpRequest)+0x239) [0x645289]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(WebServer::serveConnection(Socket*)+0x1a5) [0x646775]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance() [0x646d92]

Nov 6 14:05:38 nscache2 pdns[12820]: Our pdns instance (24922) exited after signal 6

```

I've got no clue where to look; maybe you guys do.

Running: pdns 0.0.20140723.4983.b6b24bc-1

| 1.0 | Crashed pdns while (bulk) creating zones via the api - I was creating 225 zones in pdns using nsedit, which uses the API. That was quite a lot of requests fired at the API.

It seems (now) that during that import, pdns crashed twice. I didn't notice because it restarted quickly.

nsedit would have been doing the following requests:

- PUT on /servers/localhost/zones

- PATCH on /servers/localhost/zones/<zone> for each non-NS/SOA record (4 in this case)

- Rinse and repeat for next zone

I got the following trace:

```

Nov 6 14:05:38 nscache2 pdns[24922]: Got a signal 6, attempting to print trace:

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance() [0x652460]

Nov 6 14:05:38 nscache2 pdns[24922]: /lib/x86_64-linux-gnu/libc.so.6(+0x364a0) [0x7f45d1c384a0]

Nov 6 14:05:38 nscache2 pdns[24922]: /lib/x86_64-linux-gnu/libc.so.6(gsignal+0x35) [0x7f45d1c38425]

Nov 6 14:05:38 nscache2 pdns[24922]: /lib/x86_64-linux-gnu/libc.so.6(abort+0x17b) [0x7f45d1c3bb8b]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(SSQLite3::~SSQLite3()+0xab) [0x72458b]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(SSQLite3::~SSQLite3()+0x9) [0x7245e9]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(GSQLBackend::~GSQLBackend()+0x2e) [0x56c36e]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(gSQLite3Backend::~gSQLite3Backend()+0x17) [0x584c67]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(cleanup_backends(UeberBackend*)+0x26) [0x654246]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(UeberBackend::cleanup()+0x78) [0x654878]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(UeberBackend::~UeberBackend()+0x1d) [0x6548bd]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance() [0x635423]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(boost::function2<void, HttpRequest*, HttpResponse*>::operator()(HttpRequest*, HttpResponse*) const+0x18) [0x648af8]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance() [0x644bcc]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(boost::detail::function::void_function_obj_invoker2<boost::_bi::bind_t<void, void (*)(boost::function<void (HttpRequest*, HttpResponse*)>, HttpRequest*, HttpResponse*), boost::_bi::list3<boost::_bi::value<boost::function<void (HttpRequest*, HttpResponse*)> >, boost::arg<1>, boost::arg<2> > >, void, HttpRequest*, HttpResponse*>::invoke(boost::detail::function::function_buffer&, HttpRequest*, HttpResponse*)+0x67) [0x6474f7]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(boost::function2<void, HttpRequest*, HttpResponse*>::operator()(HttpRequest*, HttpResponse*) const+0x18) [0x648af8]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(boost::detail::function::void_function_obj_invoker2<boost::_bi::bind_t<void, void (*)(boost::function<void (HttpRequest*, HttpResponse*)>, YaHTTP::Request*, YaHTTP::Response*), boost::_bi::list3<boost::_bi::value<boost::function<void (HttpRequest*, HttpResponse*)> >, boost::arg<1>, boost::arg<2> > >, void, YaHTTP::Request*, YaHTTP::Response*>::invoke(boost::detail::function::function_buffer&, YaHTTP::Request*, YaHTTP::Response*)+0x67) [0x6475d7]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(WebServer::handleRequest(HttpRequest)+0x239) [0x645289]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance(WebServer::serveConnection(Socket*)+0x1a5) [0x646775]

Nov 6 14:05:38 nscache2 pdns[24922]: /usr/sbin/pdns_server-instance() [0x646d92]

Nov 6 14:05:38 nscache2 pdns[12820]: Our pdns instance (24922) exited after signal 6

```

I've got no clue where to look; maybe you guys do.

Running: pdns 0.0.20140723.4983.b6b24bc-1

| defect | crashed pdns while bulk creating zones via the api i was creating zones in pdns using nsedit which uses the api that were quite a lot of requests fired at the api it seems now that during that import pdns crashed twice i didn t notice because it restarted quickly nsedit would have been doing the following requests put on servers localhost zones patch on servers localhost zones for each non ns soa record in this case rinse and repeat for next zone i got the following trace nov pdns got a signal attempting to print trace nov pdns usr sbin pdns server instance nov pdns lib linux gnu libc so nov pdns lib linux gnu libc so gsignal nov pdns lib linux gnu libc so abort nov pdns usr sbin pdns server instance nov pdns usr sbin pdns server instance nov pdns usr sbin pdns server instance gsqlbackend gsqlbackend nov pdns usr sbin pdns server instance nov pdns usr sbin pdns server instance cleanup backends ueberbackend nov pdns usr sbin pdns server instance ueberbackend cleanup nov pdns usr sbin pdns server instance ueberbackend ueberbackend nov pdns usr sbin pdns server instance nov pdns usr sbin pdns server instance boost operator httprequest httpresponse const nov pdns usr sbin pdns server instance nov pdns usr sbin pdns server instance boost detail function void function obj httprequest httpresponse boost bi boost arg boost arg void httprequest httpresponse invoke boost detail function function buffer httprequest httpresponse nov pdns usr sbin pdns server instance boost operator httprequest httpresponse const nov pdns usr sbin pdns server instance boost detail function void function obj yahttp request yahttp response boost bi boost arg boost arg void yahttp request yahttp response invoke boost detail function function buffer yahttp request yahttp response nov pdns usr sbin pdns server instance webserver handlerequest httprequest nov pdns usr sbin pdns server instance webserver serveconnection socket nov pdns usr sbin pdns server instance nov pdns our pdns 
instance exited after signal i ve got no clue where to look maybe you guys do running pdns | 1 |

245,762 | 18,793,223,889 | IssuesEvent | 2021-11-08 19:03:17 | Berat-Dzhevdetov/DeemZ | https://api.github.com/repos/Berat-Dzhevdetov/DeemZ | opened | LiteChat documentation | documentation | The project, which provides real-time communication between the lecturer and the students, is now ready. Accordingly, the project must have documentation, so just create a simple README file. | 1.0 | LiteChat documentation - The project, which provides real-time communication between the lecturer and the students, is now ready. Accordingly, the project must have documentation, so just create a simple README file. | non_defect | litechat documentation we are now ready with the project which is real time communication between the lecturer and the students and as follows the project must have documentation so just create a simple readme file | 0 |

104,299 | 16,613,588,391 | IssuesEvent | 2021-06-02 14:16:52 | Thanraj/linux-4.1.15 | https://api.github.com/repos/Thanraj/linux-4.1.15 | opened | CVE-2016-4557 (High) detected in linux-stable-rtv4.1.33 | security vulnerability | ## CVE-2016-4557 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in HEAD commit: <a href="https://api.github.com/repos/Thanraj/linux-4.1.15/commits/5e3fb3e332499e1ad10a0969e55582af1027b085">5e3fb3e332499e1ad10a0969e55582af1027b085</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>linux-4.1.15/kernel/bpf/verifier.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>linux-4.1.15/kernel/bpf/verifier.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The replace_map_fd_with_map_ptr function in kernel/bpf/verifier.c in the Linux kernel before 4.5.5 does not properly maintain an fd data structure, which allows local users to gain privileges or cause a denial of service (use-after-free) via crafted BPF instructions that reference an incorrect file descriptor.

<p>Publish Date: 2016-05-23

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-4557>CVE-2016-4557</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2016-4557">https://nvd.nist.gov/vuln/detail/CVE-2016-4557</a></p>

<p>Release Date: 2016-05-23</p>

<p>Fix Resolution: 4.5.5</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | True | CVE-2016-4557 (High) detected in linux-stable-rtv4.1.33 - ## CVE-2016-4557 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-stable-rtv4.1.33</b></p></summary>

<p>

<p>Julia Cartwright's fork of linux-stable-rt.git</p>

<p>Library home page: <a href=https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git>https://git.kernel.org/pub/scm/linux/kernel/git/julia/linux-stable-rt.git</a></p>

<p>Found in HEAD commit: <a href="https://api.github.com/repos/Thanraj/linux-4.1.15/commits/5e3fb3e332499e1ad10a0969e55582af1027b085">5e3fb3e332499e1ad10a0969e55582af1027b085</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>linux-4.1.15/kernel/bpf/verifier.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>linux-4.1.15/kernel/bpf/verifier.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The replace_map_fd_with_map_ptr function in kernel/bpf/verifier.c in the Linux kernel before 4.5.5 does not properly maintain an fd data structure, which allows local users to gain privileges or cause a denial of service (use-after-free) via crafted BPF instructions that reference an incorrect file descriptor.

<p>Publish Date: 2016-05-23

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2016-4557>CVE-2016-4557</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2016-4557">https://nvd.nist.gov/vuln/detail/CVE-2016-4557</a></p>

<p>Release Date: 2016-05-23</p>

<p>Fix Resolution: 4.5.5</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github) | non_defect | cve high detected in linux stable cve high severity vulnerability vulnerable library linux stable julia cartwright s fork of linux stable rt git library home page a href found in head commit a href found in base branch master vulnerable source files linux kernel bpf verifier c linux kernel bpf verifier c vulnerability details the replace map fd with map ptr function in kernel bpf verifier c in the linux kernel before does not properly maintain an fd data structure which allows local users to gain privileges or cause a denial of service use after free via crafted bpf instructions that reference an incorrect file descriptor publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource | 0 |

7,976 | 7,176,304,247 | IssuesEvent | 2018-01-31 09:36:06 | VolleyManagement/volley-management | https://api.github.com/repos/VolleyManagement/volley-management | opened | Design Localization system | cmp: infrastructure pri: medium type: enhancement | - [ ] Backend localization

- [ ] UI localization

- [ ] Languages: en, ua, ru | 1.0 | Design Localization system - - [ ] Backend localization

- [ ] UI localization

- [ ] Languages: en, ua, ru | non_defect | design localization system backend localization ui localization languages en ua ru | 0 |

17,925 | 3,013,786,614 | IssuesEvent | 2015-07-29 11:13:24 | yawlfoundation/yawl | https://api.github.com/repos/yawlfoundation/yawl | closed | Editor 3.0 Analyse Specification Window does not terminate/close | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. open an existing spec in editor 3.0

2. choose "analyse specification" from menu bar

3. select "stop" on analyse specification window

4. try to close that window (via x)

What is the expected output? What do you see instead?

In my opinion the window should close immediately, but it stays open until

the editor is closed; the "close" button cannot be pushed.

What version of the product are you using? On what operating system?

editor 3.0

Please provide any additional information below.

The existing specification was made with the old editor and has been verified

there.

```

Original issue reported on code.google.com by `anwim...@gmail.com` on 10 Dec 2013 at 8:06 | 1.0 | Editor 3.0 Analyse Specification Window does not terminate/close - ```

What steps will reproduce the problem?

1. open an existing spec in editor 3.0

2. choose "analyse specification" from menu bar

3. select "stop" on analyse specification window

4. try to close that window (via x)

What is the expected output? What do you see instead?

In my opinion the window should close immediately, but it stays open until

the editor is closed; the "close" button cannot be pushed.

What version of the product are you using? On what operating system?

editor 3.0

Please provide any additional information below.

The existing specification was made with the old editor and has been verified

there.

```

Original issue reported on code.google.com by `anwim...@gmail.com` on 10 Dec 2013 at 8:06 | defect | editor analyse specification window does not terminate close what steps will reproduce the problem open an existing spec in editor choose analyse specification from menu bar select stop on analyse specification window try to close that window via x what is the expected output what do you see instead in my opinion the window should close immediately but it stays opened until the editor is closed the close button can not be pushed what version of the product are you using on what operating system editor please provide any additional information below the existing specification was made with old editor and has been verified there original issue reported on code google com by anwim gmail com on dec at | 1 |

189,306 | 15,184,802,655 | IssuesEvent | 2021-02-15 10:04:17 | argoproj/gitops-engine | https://api.github.com/repos/argoproj/gitops-engine | opened | docs: update FAQ | documentation | https://github.com/argoproj/gitops-engine/blob/master/docs/faq.md still refers a lot to the collaboration between Argo and Flux. In #126 I mostly just explained the current situation and how we got there. I didn't change any of the other text as I didn't want to speak for the Argo project and am not really qualified to write a good gitops-engine FAQ.

The page might need an update to reflect the current reality or aspirations. | 1.0 | docs: update FAQ - https://github.com/argoproj/gitops-engine/blob/master/docs/faq.md still refers a lot to the collaboration between Argo and Flux. In #126 I mostly just explained the current situation and how we got there. I didn't change any of the other text as I didn't want to speak for the Argo project and am not really qualified to write a good gitops-engine FAQ.

The page might need an update to reflect the current reality or aspirations. | non_defect | docs update faq still refers a lot to the collaboration between argo and flux in i mostly just explained the current situation and how we got there i didn t change any of the other text as i didn t want to speak for the argo project and am not really qualified to write a good gitops engine faq the page might need an update to reflect the current reality or aspirations | 0 |

66,402 | 6,997,846,331 | IssuesEvent | 2017-12-16 19:34:44 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | reopened | Test failure: System.Net.Http.Functional.Tests.ResponseStreamTest / ReadAsStreamAsync_InvalidServerResponse_ThrowsIOException | area-System.Net.Http os-windows test-run-core | Opened on behalf of @Jiayili1

The test `System.Net.Http.Functional.Tests.ResponseStreamTest/ReadAsStreamAsync_InvalidServerResponse_ThrowsIOException(transferType: ContentLength, transferError: ContentLengthTooLarge)` has failed.

Assert.Throws() Failure

Expected: typeof(System.IO.IOException)

Actual: typeof(System.Net.Http.HttpRequestException): An error occurred while sending the request.

Stack Trace:

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess(Task task)

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at System.Net.Http.HttpClient.<FinishSendAsyncUnbuffered>d__59.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess(Task task)

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at System.Net.Http.Functional.Tests.ResponseStreamTest.<ReadAsStreamHelper>d__9.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess(Task task)

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

Build : Master - 20171018.01 (Core Tests)

Failing configurations:

- Windows.7.Amd64-x86

- Release

Detail: https://mc.dot.net/#/product/netcore/master/source/official~2Fcorefx~2Fmaster~2F/type/test~2Ffunctional~2Fcli~2F/build/20171018.01/workItem/System.Net.Http.Functional.Tests/analysis/xunit/System.Net.Http.Functional.Tests.ResponseStreamTest~2FReadAsStreamAsync_InvalidServerResponse_ThrowsIOException(transferType:%20ContentLength,%20transferError:%20ContentLengthTooLarge) | 1.0 | Test failure: System.Net.Http.Functional.Tests.ResponseStreamTest / ReadAsStreamAsync_InvalidServerResponse_ThrowsIOException - Opened on behalf of @Jiayili1

The test `System.Net.Http.Functional.Tests.ResponseStreamTest/ReadAsStreamAsync_InvalidServerResponse_ThrowsIOException(transferType: ContentLength, transferError: ContentLengthTooLarge)` has failed.

Assert.Throws() Failure

Expected: typeof(System.IO.IOException)

Actual: typeof(System.Net.Http.HttpRequestException): An error occurred while sending the request.

Stack Trace:

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess(Task task)

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at System.Net.Http.HttpClient.<FinishSendAsyncUnbuffered>d__59.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess(Task task)

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

at System.Net.Http.Functional.Tests.ResponseStreamTest.<ReadAsStreamHelper>d__9.MoveNext()

--- End of stack trace from previous location where exception was thrown ---

at System.Runtime.ExceptionServices.ExceptionDispatchInfo.Throw()

at System.Runtime.CompilerServices.TaskAwaiter.ThrowForNonSuccess(Task task)

at System.Runtime.CompilerServices.TaskAwaiter.HandleNonSuccessAndDebuggerNotification(Task task)

Build : Master - 20171018.01 (Core Tests)

Failing configurations:

- Windows.7.Amd64-x86

- Release

Detail: https://mc.dot.net/#/product/netcore/master/source/official~2Fcorefx~2Fmaster~2F/type/test~2Ffunctional~2Fcli~2F/build/20171018.01/workItem/System.Net.Http.Functional.Tests/analysis/xunit/System.Net.Http.Functional.Tests.ResponseStreamTest~2FReadAsStreamAsync_InvalidServerResponse_ThrowsIOException(transferType:%20ContentLength,%20transferError:%20ContentLengthTooLarge) | non_defect | test failure system net http functional tests responsestreamtest readasstreamasync invalidserverresponse throwsioexception opened on behalf of the test system net http functional tests responsestreamtest readasstreamasync invalidserverresponse throwsioexception transfertype contentlength transfererror contentlengthtoolarge has failed assert throws failure r expected typeof system io ioexception r actual typeof system net http httprequestexception an error occurred while sending the request stack trace at system runtime exceptionservices exceptiondispatchinfo throw at system runtime compilerservices taskawaiter throwfornonsuccess task task at system runtime compilerservices taskawaiter handlenonsuccessanddebuggernotification task task at system net http httpclient d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at system runtime compilerservices taskawaiter throwfornonsuccess task task at system runtime compilerservices taskawaiter handlenonsuccessanddebuggernotification task task at system net http functional tests responsestreamtest d movenext end of stack trace from previous location where exception was thrown at system runtime exceptionservices exceptiondispatchinfo throw at system runtime compilerservices taskawaiter throwfornonsuccess task task at system runtime compilerservices taskawaiter handlenonsuccessanddebuggernotification task task build master core tests failing configurations windows release detail | 0 |

32,185 | 6,733,156,371 | IssuesEvent | 2017-10-18 14:01:03 | hazelcast/hazelcast-csharp-client | https://api.github.com/repos/hazelcast/hazelcast-csharp-client | closed | Leaking Handles when reconnecting | Type: Defect | Handle leaks occur when reconnecting to the server.

This can be reproduced by continually trying to connect to a nonexistent server:

> ClientConfig HazelConfig = null;

IHazelcastInstance instance = null;

HazelConfig = new ClientConfig();

HazelConfig.GetNetworkConfig().AddAddress("10.10.10.123");

bool connected = false;

while (!connected)

{

try

{

Program.instance = HazelcastClient.NewHazelcastClient(Program.HazelConfig);

// ConnectToHazelcast();

connected = true;

}

catch (Exception)

{

}

}

| 1.0 | Leaking Handles when reconnecting - Handle leaks occur when reconnecting to the server.

This can be reproduced by continually trying to connect to a nonexistent server:

> ClientConfig HazelConfig = null;

IHazelcastInstance instance = null;

HazelConfig = new ClientConfig();

HazelConfig.GetNetworkConfig().AddAddress("10.10.10.123");

bool connected = false;

while (!connected)

{

try

{

Program.instance = HazelcastClient.NewHazelcastClient(Program.HazelConfig);

// ConnectToHazelcast();

connected = true;

}

catch (Exception)

{

}

}

| defect | leaking handles when reconnecting handle leaking occur when reconnecting to server can be reproduced by continually trying to connect to a nonexisting server clientconfig hazelconfig null ihazelcastinstance instance null hazelconfig new clientconfig hazelconfig getnetworkconfig addaddress bool connected false while connected try program instance hazelcastclient newhazelcastclient program hazelconfig connecttohazelcast connected true catch exception | 1 |

75,844 | 26,096,188,149 | IssuesEvent | 2022-12-26 20:12:43 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | Upgrade to Jooq 3.17.6 and Spring Boot 3.0.0 -> AbstractMethodError | T: Defect | ### Expected behavior

working as with previous version

### Actual behavior

_No response_

### Steps to reproduce the problem

022-12-26T20:58:48.774+01:00 ERROR 22584 --- [nio-8090-exec-2] o.a.c.c.C.[.[.[/].[dispatcherServlet] : Servlet.service() for servlet [dispatcherServlet] in context with path [] threw exception [Handler dispatch failed: java.lang.AbstractMethodError: Receiver class org.springframework.boot.autoconfigure.jooq.JooqExceptionTranslator does not define or inherit an implementation of the resolved method 'abstract void end(org.jooq.ExecuteContext)' of interface org.jooq.ExecuteListener.] with root cause

java.lang.AbstractMethodError: Receiver class org.springframework.boot.autoconfigure.jooq.JooqExceptionTranslator does not define or inherit an implementation of the resolved method 'abstract void end(org.jooq.ExecuteContext)' of interface org.jooq.ExecuteListener.

at org.jooq.impl.ExecuteListeners.end(ExecuteListeners.java:270) ~[jooq-3.17.6.jar:na]

at org.jooq.impl.Tools.safeClose(Tools.java:3152) ~[jooq-3.17.6.jar:na]

at org.jooq.impl.Tools.safeClose(Tools.java:3115) ~[jooq-3.17.6.jar:na]

### jOOQ Version

3.17.6

### Database product and version

MySql 8

### Java Version

17

### OS Version

Version 10.0.22621.963

### JDBC driver name and version (include name if unofficial driver)

mysql:mysql-connector-java:8.0.13 | 1.0 | Upgrade to Jooq 3.17.6 and Spring Boot 3.0.0 -> AbstractMethodError - ### Expected behavior

working as with previous version

### Actual behavior

_No response_

### Steps to reproduce the problem

022-12-26T20:58:48.774+01:00 ERROR 22584 --- [nio-8090-exec-2] o.a.c.c.C.[.[.[/].[dispatcherServlet] : Servlet.service() for servlet [dispatcherServlet] in context with path [] threw exception [Handler dispatch failed: java.lang.AbstractMethodError: Receiver class org.springframework.boot.autoconfigure.jooq.JooqExceptionTranslator does not define or inherit an implementation of the resolved method 'abstract void end(org.jooq.ExecuteContext)' of interface org.jooq.ExecuteListener.] with root cause

java.lang.AbstractMethodError: Receiver class org.springframework.boot.autoconfigure.jooq.JooqExceptionTranslator does not define or inherit an implementation of the resolved method 'abstract void end(org.jooq.ExecuteContext)' of interface org.jooq.ExecuteListener.

at org.jooq.impl.ExecuteListeners.end(ExecuteListeners.java:270) ~[jooq-3.17.6.jar:na]

at org.jooq.impl.Tools.safeClose(Tools.java:3152) ~[jooq-3.17.6.jar:na]

at org.jooq.impl.Tools.safeClose(Tools.java:3115) ~[jooq-3.17.6.jar:na]

### jOOQ Version

3.17.6

### Database product and version

MySql 8

### Java Version

17

### OS Version

Version 10.0.22621.963

### JDBC driver name and version (include name if unofficial driver)

mysql:mysql-connector-java:8.0.13 | defect | upgrade to jooq and spring boot abstractmethoderror expected behavior working as with previous version actual behavior no response steps to reproduce the problem error o a c c c servlet service for servlet in context with path threw exception with root cause java lang abstractmethoderror receiver class org springframework boot autoconfigure jooq jooqexceptiontranslator does not define or inherit an implementation of the resolved method abstract void end org jooq executecontext of interface org jooq executelistener at org jooq impl executelisteners end executelisteners java at org jooq impl tools safeclose tools java at org jooq impl tools safeclose tools java jooq version database product and version mysql java version os version version jdbc driver name and version include name if unofficial driver mysql mysql connector java | 1 |

44,932 | 12,468,189,978 | IssuesEvent | 2020-05-28 18:24:28 | networkx/networkx | https://api.github.com/repos/networkx/networkx | closed | I think `nx.vote_rank` make wrong result. | Defect | As the `vote_rank` algorithm is described in the original paper [Identifying a set of influential spreaders in complex networks](https://www.nature.com/articles/srep27823), **the voting ability of already elected nodes has to be zero**.

But in `networkx.vote_rank`, the voting ability of an already elected node can become negative.

To check whether the voting ability can become negative, I copied the code of `nx.vote_rank` from the [networkx documentation](https://networkx.github.io/documentation/stable/_modules/networkx/algorithms/centrality/voterank_alg.html#voterank) and added a few lines that print every node with a negative voting ability.

```python
import networkx as nx


def voterank(G, number_of_nodes=None, max_iter=10000):
    voterank = []
    if len(G) == 0:
        return voterank
    if number_of_nodes is None or number_of_nodes > len(G):
        number_of_nodes = len(G)
    avgDegree = sum(deg for _, deg in G.degree()) / float(len(G))
    # step 1 - initiate all nodes to (0,1) (score, voting ability)
    for _, v in G.nodes(data=True):
        v['voterank'] = [0, 1]
    # Repeat steps 1b to 4 until num_seeds are elected.
    for _ in range(max_iter):
        # step 1b - reset rank
        for _, v in G.nodes(data=True):
            v['voterank'][0] = 0
        ####################################
        ####################################
        # this code was added by me to check if voting ability is negative
        for n in G:
            node_voting_ability = G.nodes[n]['voterank'][1]
            if node_voting_ability < 0.0:
                print(n, node_voting_ability)
        ####################################
        ####################################
        # step 2 - vote
        for n, nbr in G.edges():
            G.nodes[n]['voterank'][0] += G.nodes[nbr]['voterank'][1]
            G.nodes[nbr]['voterank'][0] += G.nodes[n]['voterank'][1]
        for n in voterank:
            G.nodes[n]['voterank'][0] = 0
        # step 3 - select top node
        n, value = max(G.nodes(data=True), key=lambda x: x[1]['voterank'][0])
        if value['voterank'][0] == 0:
            return voterank
        voterank.append(n)
        if len(voterank) >= number_of_nodes:
            return voterank
        # weaken the selected node
        G.nodes[n]['voterank'] = [0, 0]
        # step 4 - update voterank properties
        for nbr in G.neighbors(n):
            G.nodes[nbr]['voterank'][1] -= 1 / avgDegree
    return voterank


N = 10
G = nx.scale_free_graph(n=N, seed=0)
# Digraph => Graph
G = nx.Graph(G)
# remove self-loop
G.remove_edges_from([(u, v) for u, v in G.edges() if u==v])
assert nx.is_connected(G)
voterank(G)
```

The output of running the code is below. Some nodes clearly have a negative voting ability, and the nodes with a negative voting ability are already elected nodes.

Therefore, the result of `nx.vote_rank` can differ slightly from that of the original VoteRank algorithm.

```

1 -0.3333333333333333

1 -0.3333333333333333

1 -0.6666666666666666

2 -0.3333333333333333

7 -0.3333333333333333

```
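
One possible fix, as a minimal sketch: clamp the voting ability at zero whenever the neighbours of an elected node are weakened. The function below is a standalone reimplementation on a plain adjacency dict, not the networkx code. The name `voterank_clamped` and the `adj` input format (`{node: set_of_neighbours}`, undirected, no self-loops) are assumptions made only for illustration.

```python
def voterank_clamped(adj, number_of_nodes=None, max_iter=10000):
    """VoteRank on an undirected graph given as {node: set_of_neighbours}.

    Hypothetical helper: it follows the same steps as the snippet above,
    except that the voting ability is never allowed to drop below zero.
    """
    elected = []
    if not adj:
        return elected
    if number_of_nodes is None or number_of_nodes > len(adj):
        number_of_nodes = len(adj)
    avg_degree = sum(len(nbrs) for nbrs in adj.values()) / len(adj)
    # every node starts with a voting ability of 1
    ability = {n: 1.0 for n in adj}
    for _ in range(max_iter):
        # a node's score is the summed voting ability of its neighbours
        score = {n: sum(ability[m] for m in adj[n]) for n in adj}
        # already elected nodes cannot be elected again
        for n in elected:
            score[n] = 0.0
        top = max(adj, key=lambda n: score[n])
        if score[top] == 0:
            return elected
        elected.append(top)
        if len(elected) >= number_of_nodes:
            return elected
        # the elected node loses its voting ability entirely
        ability[top] = 0.0
        # weaken the neighbours, but never below zero
        for nbr in adj[top]:
            ability[nbr] = max(ability[nbr] - 1 / avg_degree, 0.0)
    return elected


# small example: a star around node 0 plus one extra edge between 1 and 2
example = {0: {1, 2, 3, 4}, 1: {0, 2}, 2: {0, 1}, 3: {0}, 4: {0}}
ranking = voterank_clamped(example)
```

In this example node 0 is elected first; when node 1 is elected next, the unclamped update would push the voting ability of node 0 to -0.5, while the clamped update keeps it at 0.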

If I am wrong, please let me know.

Thank you, as always, for your support and for `networkx`.

| 1.0 | I think `nx.vote_rank` make wrong result. - As the `vote_rank` algorithm is described in the original paper [Identifying a set of influential spreaders in complex networks](https://www.nature.com/articles/srep27823), **the voting ability of already elected nodes has to be zero**.

But in `networkx.vote_rank`, the voting ability of an already elected node can become negative.

To check whether the voting ability can become negative, I copied the code of `nx.vote_rank` from the [networkx documentation](https://networkx.github.io/documentation/stable/_modules/networkx/algorithms/centrality/voterank_alg.html#voterank) and added a few lines that print every node with a negative voting ability.

```python
import networkx as nx


def voterank(G, number_of_nodes=None, max_iter=10000):
    voterank = []
    if len(G) == 0:
        return voterank
    if number_of_nodes is None or number_of_nodes > len(G):
        number_of_nodes = len(G)
    avgDegree = sum(deg for _, deg in G.degree()) / float(len(G))
    # step 1 - initiate all nodes to (0,1) (score, voting ability)
    for _, v in G.nodes(data=True):
        v['voterank'] = [0, 1]
    # Repeat steps 1b to 4 until num_seeds are elected.
    for _ in range(max_iter):
        # step 1b - reset rank
        for _, v in G.nodes(data=True):
            v['voterank'][0] = 0
        ####################################
        ####################################
        # this code was added by me to check if voting ability is negative
        for n in G:
            node_voting_ability = G.nodes[n]['voterank'][1]
            if node_voting_ability < 0.0:
                print(n, node_voting_ability)
        ####################################
        ####################################
        # step 2 - vote
        for n, nbr in G.edges():
            G.nodes[n]['voterank'][0] += G.nodes[nbr]['voterank'][1]
            G.nodes[nbr]['voterank'][0] += G.nodes[n]['voterank'][1]
        for n in voterank:
            G.nodes[n]['voterank'][0] = 0
        # step 3 - select top node
        n, value = max(G.nodes(data=True), key=lambda x: x[1]['voterank'][0])
        if value['voterank'][0] == 0:
            return voterank
        voterank.append(n)
        if len(voterank) >= number_of_nodes:
            return voterank
        # weaken the selected node
        G.nodes[n]['voterank'] = [0, 0]
        # step 4 - update voterank properties
        for nbr in G.neighbors(n):
            G.nodes[nbr]['voterank'][1] -= 1 / avgDegree
    return voterank


N = 10
G = nx.scale_free_graph(n=N, seed=0)
# Digraph => Graph
G = nx.Graph(G)
# remove self-loop
G.remove_edges_from([(u, v) for u, v in G.edges() if u==v])
assert nx.is_connected(G)
voterank(G)
```

The output of running the code is below. Some nodes clearly have a negative voting ability, and the nodes with a negative voting ability are already elected nodes.

Therefore, the result of `nx.vote_rank` can differ slightly from that of the original VoteRank algorithm.

```

1 -0.3333333333333333

1 -0.3333333333333333

1 -0.6666666666666666

2 -0.3333333333333333

7 -0.3333333333333333

```

If I am wrong, please let me know.

Thank you, as always, for your support and for `networkx`.

| defect | i think nx vote rank make wrong result as the vote rank algorithm was written in the origianl paper the voting ability of already elected nodes have to be zero but in networkx vote rank the voting ability of already elected node could be negative value to check if the voting ability could be negative value i copied the code of nx vote rank from and add simple code that print node having negative value python def voterank g number of nodes none max iter voterank if len g return voterank if number of nodes is none or number of nodes len g number of nodes len g avgdegree sum deg for deg in g degree float len g step initiate all nodes to score voting ability for v in g nodes data true v repeat steps to until num seeds are elected for in range max iter step reset rank for v in g nodes data true v this code was added by me to check if voting ability is negative for n in g node voting ability g nodes if node voting ability print n node voting ability step vote for n nbr in g edges g nodes g nodes g nodes g nodes for n in voterank g nodes step select top node n value max g nodes data true key lambda x x if value return voterank voterank append n if len voterank number of nodes return voterank weaken the selected node g nodes step update voterank properties for nbr in g neighbors n g nodes avgdegree return voterank n g nx scale free graph n n seed digraph graph g nx graph g remove self loop g remove edges from assert nx is connected g voterank g the output of code execution is below some nodes have definitely negative value and also nodes that have negative voting ability are already elected nodes therefore the result of nx vote rank could be little different with the origianl vote rank algorithm if i am wrong please let me know i always thanks for your support and networkx | 1 |

52,413 | 13,224,720,010 | IssuesEvent | 2020-08-17 19:42:31 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | Neutrino simulation event has a pulse width of zero (Trac #2180) | Incomplete Migration Migrated from Trac combo simulation defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2180">https://code.icecube.wisc.edu/projects/icecube/ticket/2180</a>, reported by lwille and owned by juancarlos</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-03-27T16:25:07",

"_ts": "1553703907985721",

"description": "I'm running into a Monopod error with about 10% of the files in Nancy's NuGen_new simulation set. The error is \"FATAL (millipede): Assertion failed: p->GetWidth() > 0 (MillipedeDOMCacheMap.cxx:379 in void MillipedeDOMCacheMap::UpdateData(const I3TimeWindow&, const I3RecoPulseSeriesMap&, const I3TimeWindowSeriesMap&, double, double, double, bool))\".\n\nLooking into this error, it appears that about 10% of the time, an event will have a single pulse with a width reported as 0. I'm uncertain if this is an issue with the simulation file processing or something else. An example of an event with a zero pulse width is here `/data/ana/Cscd/StartingEvents/NuGen_new/NuTau/medium_energy/IC86_flasher_p1=0.3_p2=0.0_domeff_081/l2/1/l2_00000248.i3.zst` Event 450.",

"reporter": "lwille",

"cc": "jvansanten, nwhitehorn",

"resolution": "duplicate",

"time": "2018-08-08T18:33:10",

"component": "combo simulation",

"summary": "Neutrino simulation event has a pulse width of zero",

"priority": "normal",

"keywords": "",

"milestone": "Vernal Equinox 2019",

"owner": "juancarlos",

"type": "defect"

}

```

</p>

</details>

| 1.0 | Neutrino simulation event has a pulse width of zero (Trac #2180) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/2180">https://code.icecube.wisc.edu/projects/icecube/ticket/2180</a>, reported by lwille and owned by juancarlos</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-03-27T16:25:07",

"_ts": "1553703907985721",

"description": "I'm running into a Monopod error with about 10% of the files in Nancy's NuGen_new simulation set. The error is \"FATAL (millipede): Assertion failed: p->GetWidth() > 0 (MillipedeDOMCacheMap.cxx:379 in void MillipedeDOMCacheMap::UpdateData(const I3TimeWindow&, const I3RecoPulseSeriesMap&, const I3TimeWindowSeriesMap&, double, double, double, bool))\".\n\nLooking into this error, it appears that about 10% of the time, an event will have a single pulse with a width reported as 0. I'm uncertain if this is an issue with the simulation file processing or something else. An example of an event with a zero pulse width is here `/data/ana/Cscd/StartingEvents/NuGen_new/NuTau/medium_energy/IC86_flasher_p1=0.3_p2=0.0_domeff_081/l2/1/l2_00000248.i3.zst` Event 450.",

"reporter": "lwille",

"cc": "jvansanten, nwhitehorn",

"resolution": "duplicate",

"time": "2018-08-08T18:33:10",

"component": "combo simulation",

"summary": "Neutrino simulation event has a pulse width of zero",

"priority": "normal",

"keywords": "",

"milestone": "Vernal Equinox 2019",

"owner": "juancarlos",

"type": "defect"

}

```

</p>

</details>

| defect | neutrino simulation event has a pulse width of zero trac migrated from json status closed changetime ts description i m running into a monopod error with about of the files in nancy s nugen new simulation set the error is fatal millipede assertion failed p getwidth millipededomcachemap cxx in void millipededomcachemap updatedata const const const double double double bool n nlooking into this error it appears that about of the time an event will have a single pulse with a width reported as i m uncertain if this is an issue with the simulation file processing or something else an example of an event with a zero pulse width is here data ana cscd startingevents nugen new nutau medium energy flasher domeff zst event reporter lwille cc jvansanten nwhitehorn resolution duplicate time component combo simulation summary neutrino simulation event has a pulse width of zero priority normal keywords milestone vernal equinox owner juancarlos type defect | 1 |

36,600 | 8,030,786,494 | IssuesEvent | 2018-07-27 21:03:06 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | closed | PrimeFaces cannot find default message bundles when deployed as an OSGi bundle | defect | Getting messages from the default `ResourceBundle`s fails in an OSGi environment because the default `ResourceBundle`s cannot be found. For example, the following calls will return `null` when called from an OSGi WAB or bundle that depends on the PrimeFaces bundle:

```

MessageFactory.getMessage(UIData.ARIA_NEXT_PAGE_LABEL, new Object[]{});

MessageFactory.getMessage("javax.faces.converter.DateTimeConverter.DATE", FacesMessage.SEVERITY_ERROR, params);

```

These calls return `null` because `MessageFactory` uses the `Thread.currentThread().getContextClassLoader()` to look for the bundles. In OSGi, that `ClassLoader` is the loader of the calling WAB, but it does not have access to the default `ResourceBundle`s that are contained in PrimeFaces, Mojarra, and MyFaces.

In order to fix this problem, `MessageFactory` should look for `ResourceBundle`s using both the thread-context loader and the loader of the bundle containing the default resource (such as the PrimeFaces bundles' loader or Mojarra's loader). | 1.0 | PrimeFaces cannot find default message bundles when deployed as an OSGi bundle - Getting messages from the default `ResourceBundle`s fails in an OSGi environment because the default `ResourceBundle`s cannot be found. For example, the following calls will return `null` when called from an OSGi WAB or bundle that depends on the PrimeFaces bundle:

```

MessageFactory.getMessage(UIData.ARIA_NEXT_PAGE_LABEL, new Object[]{});

MessageFactory.getMessage("javax.faces.converter.DateTimeConverter.DATE", FacesMessage.SEVERITY_ERROR, params);

```

These calls return `null` because `MessageFactory` uses the `Thread.currentThread().getContextClassLoader()` to look for the bundles. In OSGi, that `ClassLoader` is the loader of the calling WAB, but it does not have access to the default `ResourceBundle`s that are contained in PrimeFaces, Mojarra, and MyFaces.

In order to fix this problem, `MessageFactory` should look for `ResourceBundle`s using both the thread-context loader and the loader of the bundle containing the default resource (such as the PrimeFaces bundles' loader or Mojarra's loader). | defect | primefaces cannot find default message bundles when deployed as an osgi bundle getting messages from the default resourcebundle s fails in an osgi environment because the default resourcebundle s cannot be found for example the following calls will return null when called from an osgi wab or bundle that depends on the primefaces bundle messagefactory getmessage uidata aria next page label new object messagefactory getmessage javax faces converter datetimeconverter date facesmessage severity error params the reason these calls return null because messagefactory uses the thread currentthread getcontextclassloader to look for the bundles in osgi that classloader is the loader of the calling wab but it does not have access to the default resourcebundle s that are contained in primefaces mojarra and myfaces in order to fix this problem messagefactory should look for resourcebundle s using both the thread context loader and the loader of the bundle containing the default resource such as the primefaces bundles loader or mojarra s loader | 1 |

30,668 | 7,241,653,828 | IssuesEvent | 2018-02-14 02:29:19 | elastic/logstash | https://api.github.com/repos/elastic/logstash | closed | Cleanup doc repos to remove duplicated content | code cleanup docs | Currently, the doc build is pulling files from both the logstash and logstash-doc repos:

For 5.0 and later, the build pulls:

- index files and static doc source files from the `logstash` repo

- plugin source files from the `logstash-docs` repo

The files in `logstash-docs` under `/docs/static` are no longer used by the build.

For 1.5 through 2.4, the build pulls:

- index files from the `logstash` repo.

- static docs and plugin docs from the `logstash-docs` repo.

Eventually, we want to clean up branches 1.5 through 2.4 so that we follow the same build strategy that we follow for 5.0+.

TO DO list:

- [ ] For version 1.5 through 2.4, make sure the `docs/static` folder in the `logstash` repo contains the authoritative version of the files. Right now there are a few doc changes that never got into the `logstash-docs`. I need to confirm that the opposite has not happened with the `logstash-docs` repo. It should not have changes that are not also in the `logstash` repo.

- [x] Update the build to pull the static doc source files from the `logstash` repo instead of `logstash-docs`.

- [ ] Delete the `/docs/static` folder from all branches of the `logstash-docs` repo starting with version 1.5 - done for all 5.x branches.

- [ ] Delete all index files from the `logstash-docs` repo.

| 1.0 | Cleanup doc repos to remove duplicated content - Currently, the doc build is pulling files from both the logstash and logstash-doc repos:

For 5.0 and later, the build pulls:

- index files and static doc source files from the `logstash` repo

- plugin source files from the `logstash-docs` repo

The files in `logstash-docs` under `/docs/static` are no longer used by the build.

For 1.5 through 2.4, the build pulls:

- index files from the `logstash` repo.

- static docs and plugin docs from the `logstash-docs` repo.

Eventually, we want to clean up branches 1.5 through 2.4 so that we follow the same build strategy that we follow for 5.0+.

TO DO list:

- [ ] For version 1.5 through 2.4, make sure the `docs/static` folder in the `logstash` repo contains the authoritative version of the files. Right now there are a few doc changes that never got into the `logstash-docs`. I need to confirm that the opposite has not happened with the `logstash-docs` repo. It should not have changes that are not also in the `logstash` repo.

- [x] Update the build to pull the static doc source files from the `logstash` repo instead of `logstash-docs`.

- [ ] Delete the `/docs/static` folder from all branches of the `logstash-docs` repo starting with version 1.5 - done for all 5.x branches.

- [ ] Delete all index files from the `logstash-docs` repo.

| non_defect | cleanup doc repos to remove duplicated content currently the doc build is pulling files from both the logstash and logstash doc repos for and later the build pulls index files and static doc source files from the logstash repo plugin source files from the logstash docs repo the files in logstash docs under docs static are no longer used by the build for through the build pulls index files from the logstash repo static docs and plugin docs from the logstash docs repo eventually we want to clean up branches through so that we follow the same build strategy that we follow for to do list for version through make sure the docs static folder in the logstash repo contains the authoritative version of the files right now there are a few doc changes that never got into the logstash docs i need to confirm that the opposite has not happened with the logstash docs repo it should not have changes that are not also in the logstash repo update the build to pull the static doc source files from the logstash repo instead of logstash docs delete the docs static folder from all branches of the logstash docs repo starting with version done for all x branches delete all index files from the logstash docs repo | 0 |

75,451 | 25,854,663,961 | IssuesEvent | 2022-12-13 12:55:17 | matrix-org/synapse | https://api.github.com/repos/matrix-org/synapse | closed | Complement `TestPartialStateJoin/Room_aliases_can_be_added_and_queried_during_a_resync` is flakey | A-Federated-Join T-Defect Z-Flake Z-Dev-Wishlist A-Testing | [TestPartialStateJoin/Room_aliases_can_be_added_and_queried_during_a_resync](https://github.com/matrix-org/synapse/actions/runs/3538420582/jobs/5939246922)

```

client.go:599: [CSAPI] POST hs1/_matrix/client/v3/register => 200 OK (32.809293ms)

client.go:599: [CSAPI] GET hs1/_matrix/client/v3/capabilities => 200 OK (8.09415ms)

server.go:170: Creating room !0-UceOsu61aV8dXGXSxM:host.docker.internal:33837 with version 9

federation_room_join_partial_state_test.go:3468: Registered state_ids handler for event $g53VZ818ZJcgniyOAKqjgOgqN7Z7aEPYJ_Cq9a5NbVE

federation_room_join_partial_state_test.go:3509: Registered /state handler for event $g53VZ818ZJcgniyOAKqjgOgqN7Z7aEPYJ_Cq9a5NbVE

client.go:599: [CSAPI] POST hs1/_matrix/client/v3/join/!0-UceOsu61aV8dXGXSxM:host.docker.internal:33837 => 502 Bad Gateway (6.328761ms)

federation_room_join_partial_state_test.go:3380: CSAPI.MustDoFunc POST http://localhost:49420/_matrix/client/v3/join/%210-UceOsu61aV8dXGXSxM:host.docker.internal:33837?server_name=host.docker.internal%3A33837 returned non-2xx code: 502 Bad Gateway - body: {"errcode":"M_UNKNOWN","error":"Failed to make_join via any server"}

```

Why did we get a 502?

```

2022-11-24T07:20:08.4197065Z nginx | 192.168.16.1 - - [24/Nov/2022:07:14:39 +0000] "POST /_matrix/client/v3/join/%210-UceOsu61aV8dXGXSxM:host.docker.internal:33837?server_name=host.docker.internal%3A33837 HTTP/1.1" 502 95 "-" "Go-http-client/1.1"

2022-11-24T07:20:08.4175716Z synapse_main | 2022-11-24 07:14:39,151 - synapse.http.servlet - 668 - WARNING - POST-516 - Unable to parse JSON from POST /_matrix/client/v3/join/%210-UceOsu61aV8dXGXSxM:host.docker.internal:33837?server_name=host.docker.internal%3A33837 response: Expecting value: line 1 column 1 (char 0) (b'')

2022-11-24T07:20:08.4176297Z synapse_main | 2022-11-24 07:14:39,155 - synapse.federation.federation_client - 850 - INFO - POST-516 - make_join: Not retrying server host.docker.internal:33837 because we tried it recently retry_last_ts=1669273939360 and we won't check for another retry_interval=600000ms.

2022-11-24T07:20:08.4177016Z synapse_main | 2022-11-24 07:14:39,156 - synapse.http.server - 108 - INFO - POST-516 - <SynapseRequest at 0x7f785e4a0c40 method='POST' uri='/_matrix/client/v3/join/%210-UceOsu61aV8dXGXSxM:host.docker.internal:33837?server_name=host.docker.internal%3A33837' clientproto='HTTP/1.0' site='8080'> SynapseError: 502 - Failed to make_join via any server

2022-11-24T07:20:08.4177822Z synapse_main | 2022-11-24 07:14:39,156 - synapse.access.http.8080 - 460 - INFO - POST-516 - ::ffff:127.0.0.1 - 8080 - {@t40alice:hs1} Processed request: 0.005sec/0.000sec (0.003sec, 0.000sec) (0.001sec/0.001sec/8) 84B 502 "POST /_matrix/client/v3/join/%210-UceOsu61aV8dXGXSxM:host.docker.internal:33837?server_name=host.docker.internal%3A33837 HTTP/1.0" "Go-http-client/1.1" [0 dbevts]

```

In particular:

> Unable to parse JSON from POST /_matrix/client/v3/join/%210-UceOsu61aV8dXGXSxM:host.docker.internal:33837?server_name=host.docker.internal%3A33837 response: Expecting value: line 1 column 1 (char 0) (b'')

erm wat. Is this a complement test bug that I wrote? | 1.0 | Complement `TestPartialStateJoin/Room_aliases_can_be_added_and_queried_during_a_resync` is flakey - [TestPartialStateJoin/Room_aliases_can_be_added_and_queried_during_a_resync](https://github.com/matrix-org/synapse/actions/runs/3538420582/jobs/5939246922)

```

client.go:599: [CSAPI] POST hs1/_matrix/client/v3/register => 200 OK (32.809293ms)

client.go:599: [CSAPI] GET hs1/_matrix/client/v3/capabilities => 200 OK (8.09415ms)

server.go:170: Creating room !0-UceOsu61aV8dXGXSxM:host.docker.internal:33837 with version 9

federation_room_join_partial_state_test.go:3468: Registered state_ids handler for event $g53VZ818ZJcgniyOAKqjgOgqN7Z7aEPYJ_Cq9a5NbVE

federation_room_join_partial_state_test.go:3509: Registered /state handler for event $g53VZ818ZJcgniyOAKqjgOgqN7Z7aEPYJ_Cq9a5NbVE

client.go:599: [CSAPI] POST hs1/_matrix/client/v3/join/!0-UceOsu61aV8dXGXSxM:host.docker.internal:33837 => 502 Bad Gateway (6.328761ms)

federation_room_join_partial_state_test.go:3380: CSAPI.MustDoFunc POST http://localhost:49420/_matrix/client/v3/join/%210-UceOsu61aV8dXGXSxM:host.docker.internal:33837?server_name=host.docker.internal%3A33837 returned non-2xx code: 502 Bad Gateway - body: {"errcode":"M_UNKNOWN","error":"Failed to make_join via any server"}

```

Why did we get a 502?

```

2022-11-24T07:20:08.4197065Z nginx | 192.168.16.1 - - [24/Nov/2022:07:14:39 +0000] "POST /_matrix/client/v3/join/%210-UceOsu61aV8dXGXSxM:host.docker.internal:33837?server_name=host.docker.internal%3A33837 HTTP/1.1" 502 95 "-" "Go-http-client/1.1"

2022-11-24T07:20:08.4175716Z synapse_main | 2022-11-24 07:14:39,151 - synapse.http.servlet - 668 - WARNING - POST-516 - Unable to parse JSON from POST /_matrix/client/v3/join/%210-UceOsu61aV8dXGXSxM:host.docker.internal:33837?server_name=host.docker.internal%3A33837 response: Expecting value: line 1 column 1 (char 0) (b'')

2022-11-24T07:20:08.4176297Z synapse_main | 2022-11-24 07:14:39,155 - synapse.federation.federation_client - 850 - INFO - POST-516 - make_join: Not retrying server host.docker.internal:33837 because we tried it recently retry_last_ts=1669273939360 and we won't check for another retry_interval=600000ms.

2022-11-24T07:20:08.4177016Z synapse_main | 2022-11-24 07:14:39,156 - synapse.http.server - 108 - INFO - POST-516 - <SynapseRequest at 0x7f785e4a0c40 method='POST' uri='/_matrix/client/v3/join/%210-UceOsu61aV8dXGXSxM:host.docker.internal:33837?server_name=host.docker.internal%3A33837' clientproto='HTTP/1.0' site='8080'> SynapseError: 502 - Failed to make_join via any server

2022-11-24T07:20:08.4177822Z synapse_main | 2022-11-24 07:14:39,156 - synapse.access.http.8080 - 460 - INFO - POST-516 - ::ffff:127.0.0.1 - 8080 - {@t40alice:hs1} Processed request: 0.005sec/0.000sec (0.003sec, 0.000sec) (0.001sec/0.001sec/8) 84B 502 "POST /_matrix/client/v3/join/%210-UceOsu61aV8dXGXSxM:host.docker.internal:33837?server_name=host.docker.internal%3A33837 HTTP/1.0" "Go-http-client/1.1" [0 dbevts]

```

In particular:

> Unable to parse JSON from POST /_matrix/client/v3/join/%210-UceOsu61aV8dXGXSxM:host.docker.internal:33837?server_name=host.docker.internal%3A33837 response: Expecting value: line 1 column 1 (char 0) (b'')

erm wat. Is this a complement test bug that I wrote? | defect | complement testpartialstatejoin room aliases can be added and queried during a resync is flakey client go post matrix client register ok client go get matrix client capabilities ok server go creating room host docker internal with version federation room join partial state test go registered state ids handler for event federation room join partial state test go registered state handler for event client go post matrix client join host docker internal bad gateway federation room join partial state test go csapi mustdofunc post returned non code bad gateway body errcode m unknown error failed to make join via any server why did we get a nginx post matrix client join host docker internal server name host docker internal http go http client synapse main synapse http servlet warning post unable to parse json from post matrix client join host docker internal server name host docker internal response expecting value line column char b synapse main synapse federation federation client info post make join not retrying server host docker internal because we tried it recently retry last ts and we won t check for another retry interval synapse main synapse http server info post synapseerror failed to make join via any server synapse main synapse access http info post ffff processed request post matrix client join host docker internal server name host docker internal http go http client in particular unable to parse json from post matrix client join host docker internal server name host docker internal response expecting value line column char b erm wat is this a complement test bug that i wrote | 1 |

52,272 | 13,731,722,334 | IssuesEvent | 2020-10-05 02:08:43 | mwilliams7197/bootstrap | https://api.github.com/repos/mwilliams7197/bootstrap | opened | WS-2019-0491 (High) detected in handlebars-4.0.12.tgz | security vulnerability | ## WS-2019-0491 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.0.12.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates effectively with no frustration</p>

<p>Library home page: <a href="https://registry.npmjs.org/handlebars/-/handlebars-4.0.12.tgz">https://registry.npmjs.org/handlebars/-/handlebars-4.0.12.tgz</a></p>

<p>Path to dependency file: bootstrap/package.json</p>

<p>Path to vulnerable library: bootstrap/node_modules/handlebars/package.json</p>

<p>

Dependency Hierarchy:

- karma-coverage-istanbul-reporter-2.0.4.tgz (Root Library)

- istanbul-api-2.0.6.tgz

- istanbul-reports-2.0.1.tgz

- :x: **handlebars-4.0.12.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

handlebars before 4.4.5 is vulnerable to Denial of Service. The package's parser may be forced into an endless loop while processing specially-crafted templates. This may allow attackers to exhaust system resources leading to Denial of Service.

<p>Publish Date: 2019-11-04

<p>URL: <a href=https://github.com/handlebars-lang/handlebars.js/commit/8d5530ee2c3ea9f0aee3fde310b9f36887d00b8b>WS-2019-0491</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1300">https://www.npmjs.com/advisories/1300</a></p>

<p>Release Date: 2019-11-04</p>

<p>Fix Resolution: handlebars - 4.4.5</p>

</p>

</details>

<p></p>
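
The affected range stated above ("before 4.4.5") can be checked mechanically. The following is a minimal sketch, assuming plain numeric `major.minor.patch` version strings with no pre-release suffix; `parse_version` and `is_affected` are hypothetical helper names for illustration, not part of WhiteSource or npm tooling.

```python
def parse_version(version):
    """Turn a dotted version such as '4.0.12' into a tuple of ints."""
    return tuple(int(part) for part in version.split("."))


# First release that contains the fix, per the "Fix Resolution" field.
FIXED_IN = parse_version("4.4.5")


def is_affected(installed):
    """Return True when the installed handlebars version predates the fix."""
    return parse_version(installed) < FIXED_IN


if __name__ == "__main__":
    # 4.0.12 is the transitive version reported in the dependency hierarchy.
    for candidate in ("4.0.12", "4.4.4", "4.4.5", "4.10.0"):
        print(candidate, "affected" if is_affected(candidate) else "ok")
```

Comparing integer tuples rather than raw strings matters here: a string comparison would wrongly rank `4.10.0` below `4.4.5`.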

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"handlebars","packageVersion":"4.0.12","isTransitiveDependency":true,"dependencyTree":"karma-coverage-istanbul-reporter:2.0.4;istanbul-api:2.0.6;istanbul-reports:2.0.1;handlebars:4.0.12","isMinimumFixVersionAvailable":true,"minimumFixVersion":"handlebars - 4.4.5"}],"vulnerabilityIdentifier":"WS-2019-0491","vulnerabilityDetails":"handlebars before 4.4.5 is vulnerable to Denial of Service. The package\u0027s parser may be forced into an endless loop while processing specially-crafted templates. This may allow attackers to exhaust system resources leading to Denial of Service.","vulnerabilityUrl":"https://github.com/handlebars-lang/handlebars.js/commit/8d5530ee2c3ea9f0aee3fde310b9f36887d00b8b","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | True | WS-2019-0491 (High) detected in handlebars-4.0.12.tgz - ## WS-2019-0491 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.0.12.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates effectively with no frustration</p>

<p>Library home page: <a href="https://registry.npmjs.org/handlebars/-/handlebars-4.0.12.tgz">https://registry.npmjs.org/handlebars/-/handlebars-4.0.12.tgz</a></p>

<p>Path to dependency file: bootstrap/package.json</p>

<p>Path to vulnerable library: bootstrap/node_modules/handlebars/package.json</p>

<p>

Dependency Hierarchy:

- karma-coverage-istanbul-reporter-2.0.4.tgz (Root Library)

- istanbul-api-2.0.6.tgz

- istanbul-reports-2.0.1.tgz

- :x: **handlebars-4.0.12.tgz** (Vulnerable Library)

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

handlebars before 4.4.5 is vulnerable to Denial of Service. The package's parser may be forced into an endless loop while processing specially-crafted templates. This may allow attackers to exhaust system resources leading to Denial of Service.

<p>Publish Date: 2019-11-04

<p>URL: <a href=https://github.com/handlebars-lang/handlebars.js/commit/8d5530ee2c3ea9f0aee3fde310b9f36887d00b8b>WS-2019-0491</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://www.npmjs.com/advisories/1300">https://www.npmjs.com/advisories/1300</a></p>

<p>Release Date: 2019-11-04</p>

<p>Fix Resolution: handlebars - 4.4.5</p>

</p>

</details>

<p></p>

<!-- <REMEDIATE>{"isOpenPROnVulnerability":false,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"javascript/Node.js","packageName":"handlebars","packageVersion":"4.0.12","isTransitiveDependency":true,"dependencyTree":"karma-coverage-istanbul-reporter:2.0.4;istanbul-api:2.0.6;istanbul-reports:2.0.1;handlebars:4.0.12","isMinimumFixVersionAvailable":true,"minimumFixVersion":"handlebars - 4.4.5"}],"vulnerabilityIdentifier":"WS-2019-0491","vulnerabilityDetails":"handlebars before 4.4.5 is vulnerable to Denial of Service. The package\u0027s parser may be forced into an endless loop while processing specially-crafted templates. This may allow attackers to exhaust system resources leading to Denial of Service.","vulnerabilityUrl":"https://github.com/handlebars-lang/handlebars.js/commit/8d5530ee2c3ea9f0aee3fde310b9f36887d00b8b","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"High","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"None"},"extraData":{}}</REMEDIATE> --> | non_defect | ws high detected in handlebars tgz ws high severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file bootstrap package json path to vulnerable library bootstrap node modules handlebars package json dependency hierarchy karma coverage istanbul reporter tgz root library istanbul api tgz istanbul reports tgz x handlebars tgz vulnerable library vulnerability details handlebars before is vulnerable to denial of service the package s parser may be forced into an endless loop while processing specially crafted templates this may allow attackers to exhaust system resources leading to denial of service publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope 
unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution handlebars isopenpronvulnerability false ispackagebased true isdefaultbranch true packages vulnerabilityidentifier ws vulnerabilitydetails handlebars before is vulnerable to denial of service the package parser may be forced into an endless loop while processing specially crafted templates this may allow attackers to exhaust system resources leading to denial of service vulnerabilityurl | 0 |

53,903 | 23,098,841,535 | IssuesEvent | 2022-07-26 22:58:40 | microsoft/botbuilder-dotnet | https://api.github.com/repos/microsoft/botbuilder-dotnet | closed | FormatDateTime adaptive expression fails to parse if input is DateTimeOffset type | bug customer-reported Bot Services customer-replied-to | ## Version

4.16.1

## Describe the bug

If you set a memory scope variable to be a `DateTimeOffset` type, and then try and use that variable in an expression containing `formatDateTime`, the `formatDateTime` fails at the following line.

https://github.com/microsoft/botbuilder-dotnet/blob/402bc02b4cbbd2f4ec359134640e99211367e4a5/libraries/AdaptiveExpressions/BuiltinFunctions/FormatDateTime.cs#L50

It's because the parser only checks for `string` and `DateTime` but not `DateTimeOffset`.

## Expected behavior

Expect to be able to format a `DateTimeOffset` variable in the same way as `DateTime`.
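
The expected behavior can be illustrated outside C#. This is only a Python analogue under stated assumptions, not the code in `FormatDateTime.cs`: a timezone-aware `datetime` stands in for `DateTimeOffset`, the hypothetical `format_date_time` helper stands in for the built-in function, and normalizing to UTC before formatting is an assumption of this sketch rather than a confirmed detail of the library.

```python
from datetime import datetime, timedelta, timezone


def format_date_time(value, fmt="%Y-%m-%dT%H:%M:%S"):
    """Format an ISO 8601 string or a datetime (naive or offset-aware)."""
    if isinstance(value, str):
        # Strings are parsed first, mirroring a dedicated string branch.
        value = datetime.fromisoformat(value)
    if not isinstance(value, datetime):
        # Any other input kind is rejected explicitly instead of failing later.
        raise TypeError(f"unsupported timestamp type: {type(value).__name__}")
    if value.tzinfo is not None:
        # Offset-aware input (the DateTimeOffset analogue): normalize to UTC.
        value = value.astimezone(timezone.utc)
    return value.strftime(fmt)


if __name__ == "__main__":
    aware = datetime(2021, 3, 15, 10, 0, 0, tzinfo=timezone(timedelta(hours=2)))
    print(format_date_time(aware))
    print(format_date_time(datetime(2021, 3, 15, 8, 0, 0)))
    print(format_date_time("2021-03-15T08:00:00"))
```

The point of the sketch is that all three input kinds go through one formatting path, which is the parity the issue asks for between `DateTime` and `DateTimeOffset`.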

## Screenshots

n/a

## Additional context

Please ping me for additional context, if needed. Thanks! | 1.0 | FormatDateTime adaptive expression fails to parse if input is DateTimeOffset type - ## Version

4.16.1

## Describe the bug

If you set a memory scope variable to be a `DateTimeOffset` type, and then try and use that variable in an expression containing `formatDateTime`, the `formatDateTime` fails at the following line.

https://github.com/microsoft/botbuilder-dotnet/blob/402bc02b4cbbd2f4ec359134640e99211367e4a5/libraries/AdaptiveExpressions/BuiltinFunctions/FormatDateTime.cs#L50

It's because the parser only checks for `string` and `DateTime` but not `DateTimeOffset`.

## Expected behavior

Expect to be able to format a `DateTimeOffset` variable in the same way as `DateTime`.

## Screenshots

n/a

## Additional context

Please ping me for additional context, if needed. Thanks! | non_defect | formatdatetime adaptive expression fails to parse if input is datetimeoffset type version describe the bug if you set a memory scope variable to be a datetimeoffset type and then try and use that variable in an expression containing formatdatetime the formatdatetime fails at the following line it s because the parser only checks for string and datetime but not datetimeoffset expected behavior expect to be able to format a datetimeoffset variable in the same way as datetime screenshots n a additional context please ping me for additional context if needed thanks | 0 |

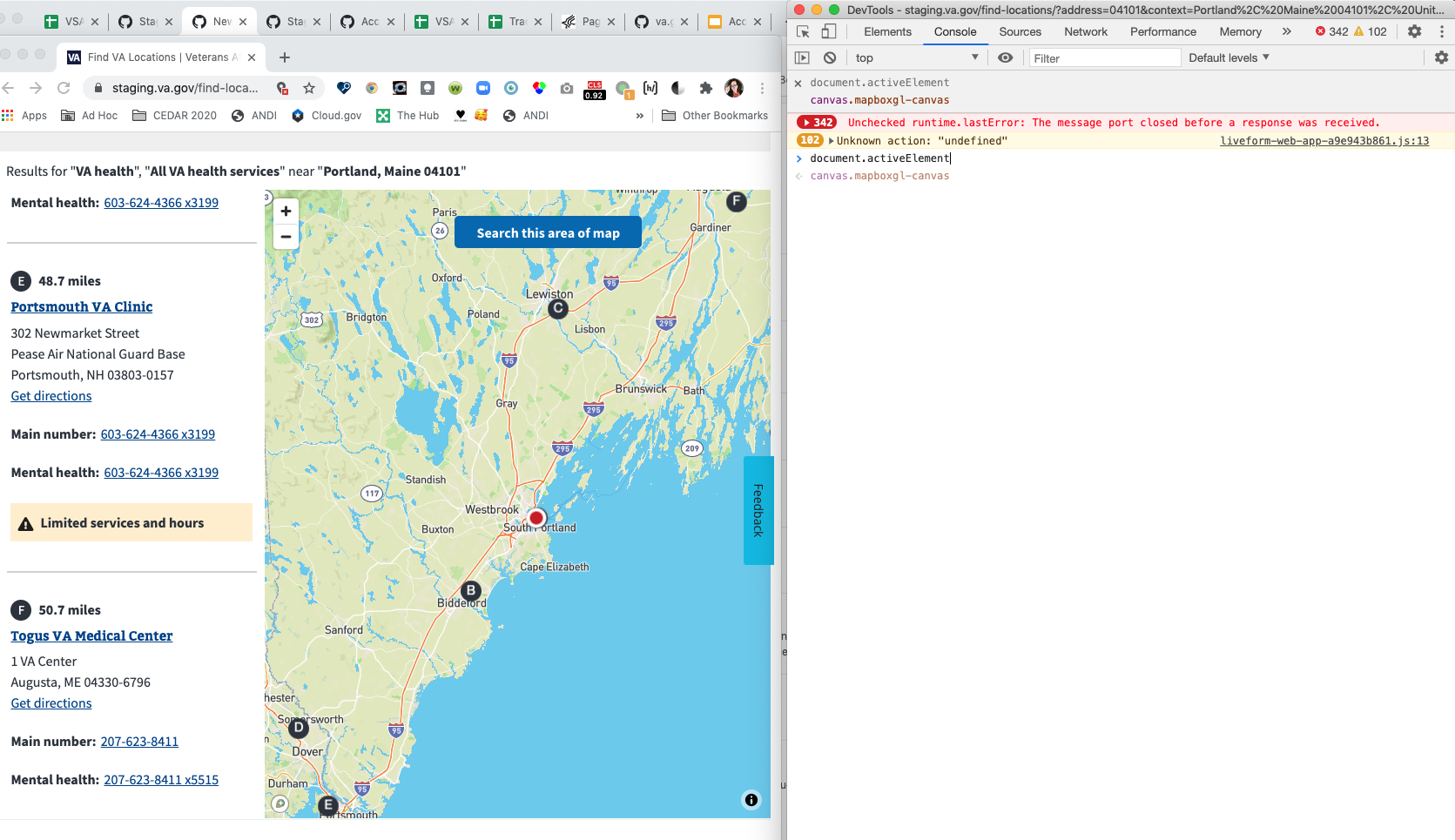

55,575 | 14,563,446,930 | IssuesEvent | 2020-12-17 02:32:31 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | closed | 508-defect-2 [COGNITION, FOCUS MANAGEMENT]: When focus moves to the map, the focus halo should be visible and content announced to screen reader | 508-defect-2 508-issue-cognition 508-issue-focus-mgmt 508/Accessibility vsa vsa-facilities | # [508-defect-2](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-2)

## Feedback framework

- **❗️ Must** for if the feedback must be applied

- **⚠️ Should** if the feedback is best practice

- **✔️ Consider** for suggestions/enhancements

## Definition of done

1. Review and acknowledge feedback.

1. Fix and/or document decisions made.

1. Accessibility specialist will close ticket after reviewing documented decisions / validating fix.

<hr/>

## Point of Contact

**VFS Point of Contact:** Jennifer

## User Story or Problem Statement

As a user who navigates by keyboard, I expect to see a focus outline as I tab through the screen, so I can orient myself in the content.

## Details

When tabbing from the search results to the map, focus moves from the last search result item to the map's `<canvas>` element, but no focus halo appears. Visible focus indicators help anyone who relies on the keyboard to operate the page, by letting them see which component keyboard input will act on at any point in time. People with attention limitations, short-term memory limitations, or limitations in executive processes benefit from being able to discover where the focus is located.

Additionally, screen readers announce the map only as "clickable" (if they announce it at all) when it receives focus, again with no focus halo. It would be helpful to add `aria-label="Search results map area"` to the `<canvas>` element.

## Acceptance Criteria

- [ ] When focus moves to a keyboard-operable user interface element, the focus indicator is visible to sighted users.

- [ ] When focus is on the map, the screen reader announces "Search results map area".

## Environment

* Operating System: all

* Browser: all

* Screen reading device: all

* Server destination: staging

## Steps to Recreate