Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 757 | labels stringlengths 4 664 | body stringlengths 3 261k | index stringclasses 10 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 232k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

181,389 | 6,659,273,214 | IssuesEvent | 2017-10-01 09:03:53 | OperationCode/operationcode_frontend | https://api.github.com/repos/OperationCode/operationcode_frontend | opened | Broken link in CONTRIBUTING.md | beginner friendly hacktoberfest Priority: Medium Status: Available | # Bug Report

## What is the current behavior?

`CONTRIBUTING.md` contains:

`- [Running the development Server](#running-the-development-server)`

on line 29.

## What is the expected behavior?

It should contain:

- [Running the Development Environment](#running-the-development-environment)

| 1.0 | Broken link in CONTRIBUTING.md - # Bug Report

## What is the current behavior?

`CONTRIBUTING.md` contains:

`- [Running the development Server](#running-the-development-server)`

on line 29.

## What is the expected behavior?

It should contain:

- [Running the Development Environment](#running-the-developmen... | non_defect | broken link in contributing md bug report what is the current behavior contributing md contains running the development server on line what is the expected behavior it should contain running the development environment | 0 |

167 | 2,640,878,704 | IssuesEvent | 2015-03-11 14:56:06 | ploneintranet/ploneintranet.theme | https://api.github.com/repos/ploneintranet/ploneintranet.theme | closed | Scroll-bars on texareas new-post/comment/repost areas in IE | browser compatibility in progress | New post area:

Comment areas in the stream:

Comment areas in the stream:

| True | Saving Preferences should warn user to restart Cellprofiler - Python/CellProfiler menu -> Preferences don't get applied until the next restart, no? Or maybe for some settings, like 'Max memory for Java'? The user should be warned about this (e.g. a modal dialog after Save that says "Please restart CellProfiler for th... | non_defect | saving preferences should warn user to restart cellprofiler python cellprofiler menu preferences don t get applied until the next restart no or maybe for some settings like max memory for java the user should be warned about this e g a modal dialog after save that says please restart cellprofiler for th... | 0 |

19,810 | 10,532,671,798 | IssuesEvent | 2019-10-01 11:19:28 | woocommerce/woocommerce-gutenberg-products-block | https://api.github.com/repos/woocommerce/woocommerce-gutenberg-products-block | opened | Performance of Block Settings on every page load without caching | type: performance | This was reported in https://github.com/woocommerce/woocommerce/issues/24590

Blocks requires certain settings/data to function, and this needs to be present anywhere a block may be used (so admin and frontend, regardless of user type). This is output to JSON inline on every page load.

This can be problematic if d... | True | Performance of Block Settings on every page load without caching - This was reported in https://github.com/woocommerce/woocommerce/issues/24590

Blocks requires certain settings/data to function, and this needs to be present anywhere a block may be used (so admin and frontend, regardless of user type). This is output... | non_defect | performance of block settings on every page load without caching this was reported in blocks requires certain settings data to function and this needs to be present anywhere a block may be used so admin and frontend regardless of user type this is output to json inline on every page load this can be pro... | 0 |

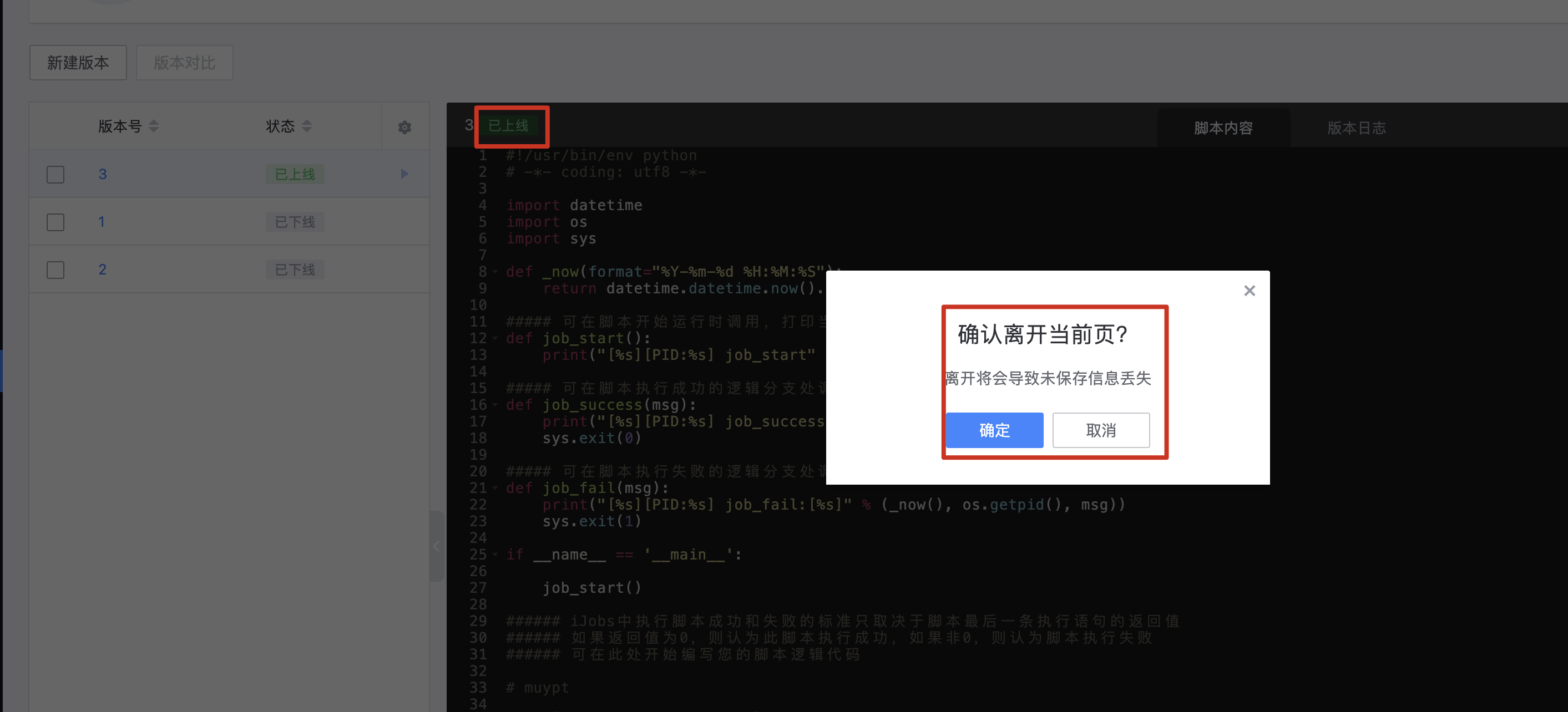

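278,588 | 24,162,102,620 | IssuesEvent | 2022-09-22 12:30:15 | Tencent/bk-job | https://api.github.com/repos/Tencent/bk-job | closed | bugfix: why does a published script still prompt about unsaved content | kind/bug stage/test accepted | **Version / Branch / tag**

≥3.5.1

**What happened?**

A script that has already been published still prompts about unsaved content. Steps taken: create a new version, select a version, save, publish, then click the X in the upper right corner; this prompt appears.

**How to reproduce?**

Create a new version, select a version, save, publish, then click the X in the upper right corner; this prompt appears.

**Expected result (What ... | 1.0 | bugfix: why does a published script still prompt about unsaved content - **Version / Branch / tag**

≥3.5.1

**What happened?**

A script that has already been published still prompts about unsaved content. Steps taken: create a new version, select a version, save, publish, then click the X in the upper right corner; this prompt appears.

**How to reproduce?**

Create a new version, select a version, save, publish, then... | non_defect | bugfix why does a published script still prompt about unsaved content version branch tag ≥ what happened a script that has already been published still prompts about unsaved content steps taken create a new version select a version save publish then click the x in the upper right corner this prompt appears how to reproduce create a new version select a version save publish then click the x in the upper right corner this prompt appears expected result what you expect after publishing the script is in a read only state rather than an editing state so leaving at this point should not trigger the unsaved information dialog | 0 |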

655 | 2,823,166,139 | IssuesEvent | 2015-05-21 06:53:04 | dotnet/corefx | https://api.github.com/repos/dotnet/corefx | closed | Consider open sourcing ApiCompat. | enhancement Infrastructure | Internally we have some tooling to ensure that implementation assemblies are compatible with contracts. It would be a good idea to open source that and use it as part of the build or CI system or something. I'm not sure if this will happen naturally when we actually start generating contracts in the open or if we'll ... | 1.0 | Consider open sourcing ApiCompat. - Internally we have some tooling to ensure that implementation assemblies are compatible with contracts. It would be a good idea to open source that and use it as part of the build or CI system or something. I'm not sure if this will happen naturally when we actually start generatin... | non_defect | consider open sourcing apicompat internally we have some tooling to ensure that implementation assemblies are compatible with contracts it would be a good idea to open source that and use it as part of the build or ci system or something i m not sure if this will happen naturally when we actually start generatin... | 0 |

93,501 | 3,901,182,294 | IssuesEvent | 2016-04-18 09:46:27 | natsys/tempesta | https://api.github.com/repos/natsys/tempesta | opened | Kernel warning when calling __alloc_skb from the soft-IRQ | enhancement low priority | Kernel warning was caught by me on the laptop:

~~~

[ 3.129833] ------------[ cut here ]------------

[ 3.129839] WARNING: CPU: 1 PID: 0 at kernel/softirq.c:150 __local_bh_enable_ip+0x72/0xa0()

[ 3.129841] Modules linked in: media btusb btbcm btintel bluetooth cdc_mbim intel_rapl iosf_mbi x86_pkg_temp_ther... | 1.0 | Kernel warning when calling __alloc_skb from the soft-IRQ - Kernel warning was caught by me on the laptop:

~~~

[ 3.129833] ------------[ cut here ]------------

[ 3.129839] WARNING: CPU: 1 PID: 0 at kernel/softirq.c:150 __local_bh_enable_ip+0x72/0xa0()

[ 3.129841] Modules linked in: media btusb btbcm btin... | non_defect | kernel warning when calling alloc skb from the soft irq kernel warning was caught by me on the laptop warning cpu pid at kernel softirq c local bh enable ip modules linked in media btusb btbcm btintel bluetooth cdc mbim intel rapl iosf mbi pkg temp ther... | 0 |

13,964 | 2,789,803,377 | IssuesEvent | 2015-05-08 21:35:35 | google/google-visualization-api-issues | https://api.github.com/repos/google/google-visualization-api-issues | opened | LineChart select event to open URL does not work on Internet Explorer | Priority-Medium Type-Defect | Original [issue 157](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=157) created by orwant on 2009-12-29T22:33:16.000Z:

<b>What steps will reproduce the problem? Please provide a link to a</b>

<b>demonstration page if at all possible, or attach code.</b>

1. Code is attached. Hover over dat... | 1.0 | LineChart select event to open URL does not work on Internet Explorer - Original [issue 157](https://code.google.com/p/google-visualization-api-issues/issues/detail?id=157) created by orwant on 2009-12-29T22:33:16.000Z:

<b>What steps will reproduce the problem? Please provide a link to a</b>

<b>demonstration page if a... | defect | linechart select event to open url does not work on internet explorer original created by orwant on what steps will reproduce the problem please provide a link to a demonstration page if at all possible or attach code code is attached hover over data points to see the pop up tooltip and... | 1 |

48,498 | 13,100,884,246 | IssuesEvent | 2020-08-04 01:59:51 | FoldingAtHome/fah-issues | https://api.github.com/repos/FoldingAtHome/fah-issues | closed | Incomplete fix for Core_Outdated with two slots | 1.Type - Defect 3.Component - FAHClient 4.OS - All | Core_outdated means FAHClient needs to download a new core. I have two slots using that core. One was running and I unpaused the other. Naturally, the file was is use so the update couldn't proceed.

Pausing the active slot gives me two slots with status Update_Core. That.s correct ... except that now the downloa... | 1.0 | Incomplete fix for Core_Outdated with two slots - Core_outdated means FAHClient needs to download a new core. I have two slots using that core. One was running and I unpaused the other. Naturally, the file was is use so the update couldn't proceed.

Pausing the active slot gives me two slots with status Update_Cor... | defect | incomplete fix for core outdated with two slots core outdated means fahclient needs to download a new core i have two slots using that core one was running and i unpaused the other naturally the file was is use so the update couldn t proceed pausing the active slot gives me two slots with status update cor... | 1 |

10,636 | 2,622,178,205 | IssuesEvent | 2015-03-04 00:17:37 | byzhang/leveldb | https://api.github.com/repos/byzhang/leveldb | closed | Add version info in include/c.h | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. There isn't any version info in b binding file include/c.h

What is the expected output? What do you see instead?

I can get version info in c interface

What version of the product are you using? On what operating system?

ANY

I'm working on a php binding using c binding, ... | 1.0 | Add version info in include/c.h - ```

What steps will reproduce the problem?

1. There isn't any version info in b binding file include/c.h

What is the expected output? What do you see instead?

I can get version info in c interface

What version of the product are you using? On what operating system?

ANY

I'm working ... | defect | add version info in include c h what steps will reproduce the problem there isn t any version info in b binding file include c h what is the expected output what do you see instead i can get version info in c interface what version of the product are you using on what operating system any i m working ... | 1 |

58,466 | 16,546,038,260 | IssuesEvent | 2021-05-28 00:13:12 | idaholab/moose | https://api.github.com/repos/idaholab/moose | closed | Fixed PETSc alt update script | P: normal T: defect | ## Bug Description

<!--A clear and concise description of the problem (Note: A missing feature is not a bug).-->

```

❯ cd ~/projects/moose/

❯ rm -rf petsc

❯ cd scripts

❯ ./update_and_rebuild_petsc_alt.sh

*** WARNING ***

scripts/update_and_rebuild_petsc_alt.sh is intended for internal

use only. Please use scr... | 1.0 | Fixed PETSc alt update script - ## Bug Description

<!--A clear and concise description of the problem (Note: A missing feature is not a bug).-->

```

❯ cd ~/projects/moose/

❯ rm -rf petsc

❯ cd scripts

❯ ./update_and_rebuild_petsc_alt.sh

*** WARNING ***

scripts/update_and_rebuild_petsc_alt.sh is intended for in... | defect | fixed petsc alt update script bug description ❯ cd projects moose ❯ rm rf petsc ❯ cd scripts ❯ update and rebuild petsc alt sh warning scripts update and rebuild petsc alt sh is intended for internal use only please use scripts update and rebuild petsc sh instead users milljm pro... | 1 |

451,118 | 32,008,908,622 | IssuesEvent | 2023-09-21 16:34:08 | NOAA-EMC/NCEPLIBS-ip | https://api.github.com/repos/NOAA-EMC/NCEPLIBS-ip | closed | Missing documentation for iplib and ip2lib header files. | documentation | In ip2lib_4.h we have:

```

/** @file

* @brief C interface to gdswzd() and gdswzd_grib1() functions for '4'

* library build.

* @author NOAA Programmer

*/

#ifndef IPLIB

#define IPLIB

/**

GDSWZD in C.

@param igdtnum

@param igdtmpl

@param igdtlen

@param iopt

@param npts

@pa... | 1.0 | Missing documentation for iplib and ip2lib header files. - In ip2lib_4.h we have:

```

/** @file

* @brief C interface to gdswzd() and gdswzd_grib1() functions for '4'

* library build.

* @author NOAA Programmer

*/

#ifndef IPLIB

#define IPLIB

/**

GDSWZD in C.

@param igdtnum

@param igdtmpl... | non_defect | missing documentation for iplib and header files in h we have file brief c interface to gdswzd and gdswzd functions for library build author noaa programmer ifndef iplib define iplib gdswzd in c param igdtnum param igdtmpl param ig... | 0 |

534,879 | 15,651,035,094 | IssuesEvent | 2021-03-23 09:43:22 | leihs/leihs | https://api.github.com/repos/leihs/leihs | reopened | show a message when the user can login but has no access right | low priority | - [ ] add page in `my`

- [ ] redirect from legacy | 1.0 | show a message when the user can login but has no access right - - [ ] add page in `my`

- [ ] redirect from legacy | non_defect | show a message when the user can login but has no access right add page in my redirect from legacy | 0 |

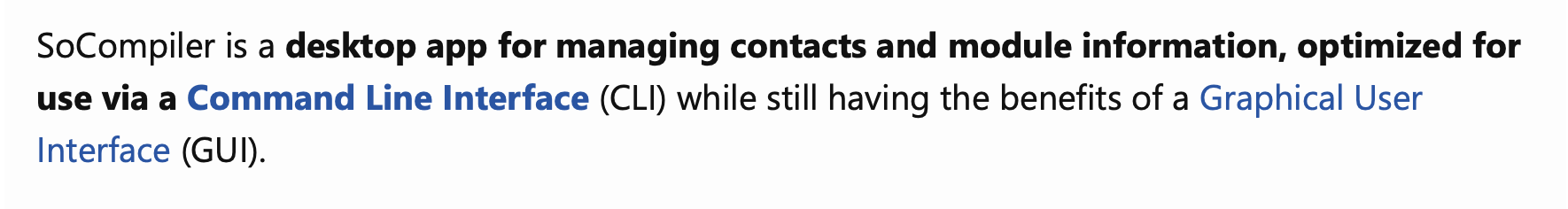

354,749 | 25,174,839,999 | IssuesEvent | 2022-11-11 08:17:48 | TZL0/pe | https://api.github.com/repos/TZL0/pe | opened | Incorrect destination of PDF link | severity.Low type.DocumentationBug | Pressing the 'Command Line Interface' takes the user to Graphical User Interface

<!--session: 166815... | 1.0 | Incorrect destination of PDF link - Pressing the 'Command Line Interface' takes the user to Graphical User Interface

BEGIN

SET @rn =0 | 1.0 | SQL Translation Error - ### SQL translator incorrectly translates this query:

```sql

CREATE PROCEDURE unluckyEmployees()

BEGIN

SET @rn =0 | defect | sql translation error sql translator incorrectly translates this query sql create procedure unluckyemployees begin set rn | 1 |

48,347 | 13,068,455,172 | IssuesEvent | 2020-07-31 03:38:01 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | [clsim] GPU detection broken for RTX-series cards (Trac #2212) | Migrated from Trac combo simulation defect | Nvidia decided that ray-tracing was a thing, and renamed their GTX series "RTX", and e.g. the 2080 Ti advertises itself as "GeForce RTX 2080 Ti". clsim.traysegments.common.configureOpenCLDevices, however, special-cases cards named "GTX" to set the number of work items to something sane and enable native math. Is there ... | 1.0 | [clsim] GPU detection broken for RTX-series cards (Trac #2212) - Nvidia decided that ray-tracing was a thing, and renamed their GTX series "RTX", and e.g. the 2080 Ti advertises itself as "GeForce RTX 2080 Ti". clsim.traysegments.common.configureOpenCLDevices, however, special-cases cards named "GTX" to set the number ... | defect | gpu detection broken for rtx series cards trac nvidia decided that ray tracing was a thing and renamed their gtx series rtx and e g the ti advertises itself as geforce rtx ti clsim traysegments common configureopencldevices however special cases cards named gtx to set the number of work items t... | 1 |

232,095 | 25,564,962,440 | IssuesEvent | 2022-11-30 13:40:30 | billmcchesney1/concord | https://api.github.com/repos/billmcchesney1/concord | opened | CVE-2022-38900 (High) detected in decode-uri-component-0.2.0.tgz | security vulnerability | ## CVE-2022-38900 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>decode-uri-component-0.2.0.tgz</b></p></summary>

<p>A better decodeURIComponent</p>

<p>Library home page: <a href="htt... | True | CVE-2022-38900 (High) detected in decode-uri-component-0.2.0.tgz - ## CVE-2022-38900 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>decode-uri-component-0.2.0.tgz</b></p></summary>

<p... | non_defect | cve high detected in decode uri component tgz cve high severity vulnerability vulnerable library decode uri component tgz a better decodeuricomponent library home page a href path to dependency file package json path to vulnerable library node modules decode ur... | 0 |

59,945 | 17,023,296,356 | IssuesEvent | 2021-07-03 01:17:22 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Handling of notConnectedSameTag don't work with juction with dirrerent ways | Component: osmarender Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 1.56pm, Wednesday, 17th September 2008]**

Take folowing test case: a rount about junction which is part of a primary road (so that way forming the round about itself is also tagged with same name a ways of primary road to an from the junction).

In this case the ro... | 1.0 | Handling of notConnectedSameTag don't work with juction with dirrerent ways - **[Submitted to the original trac issue database at 1.56pm, Wednesday, 17th September 2008]**

Take folowing test case: a rount about junction which is part of a primary road (so that way forming the round about itself is also tagged with sam... | defect | handling of notconnectedsametag don t work with juction with dirrerent ways take folowing test case a rount about junction which is part of a primary road so that way forming the round about itself is also tagged with same name a ways of primary road to an from the junction in this case the round about ... | 1 |

592,952 | 17,934,668,281 | IssuesEvent | 2021-09-10 13:56:50 | mozilla/addons-server | https://api.github.com/repos/mozilla/addons-server | closed | Uploading an add-on with a GUID in non string format triggers a server error | component: devhub priority: p3 | ### Describe the problem and steps to reproduce it:

1. Submit an add-on with a GUID in non string format, for example:

```

"browser_specific_settings": {

"gecko": {

"id": 12345

```

2. Check the validation results

### What happened?

"There was a problem contacting the server" error message is received w... | 1.0 | Uploading an add-on with a GUID in non string format triggers a server error - ### Describe the problem and steps to reproduce it:

1. Submit an add-on with a GUID in non string format, for example:

```

"browser_specific_settings": {

"gecko": {

"id": 12345

```

2. Check the validation results

### What hap... | non_defect | uploading an add on with a guid in non string format triggers a server error describe the problem and steps to reproduce it submit an add on with a guid in non string format for example browser specific settings gecko id check the validation results what happene... | 0 |

61,157 | 17,023,620,285 | IssuesEvent | 2021-07-03 02:57:49 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | oneway arrows not visible | Component: mapnik Priority: major Resolution: invalid Type: defect | **[Submitted to the original trac issue database at 10.07pm, Wednesday, 28th July 2010]**

It would seem when the road name just barely fits the length of the road it can completely hide the oneway arrows making navigation rather exciting. When you don't see the road names at least you know to zoom in to see it, but wh... | 1.0 | oneway arrows not visible - **[Submitted to the original trac issue database at 10.07pm, Wednesday, 28th July 2010]**

It would seem when the road name just barely fits the length of the road it can completely hide the oneway arrows making navigation rather exciting. When you don't see the road names at least you know ... | defect | oneway arrows not visible it would seem when the road name just barely fits the length of the road it can completely hide the oneway arrows making navigation rather exciting when you don t see the road names at least you know to zoom in to see it but when you see oneway arrows all around it s not so obvious ... | 1 |

26,474 | 4,726,256,857 | IssuesEvent | 2016-10-18 09:37:52 | PowerDNS/pdns | https://api.github.com/repos/PowerDNS/pdns | closed | API zone info returns an url without a starting / | auth defect web | When querying the API for zones with: `curl -H 'X-API-Key: secret' http://127.0.0.1:8081/api/v1/servers/localhost/zones` the returned json contains an url to the api zone info. This url does not start with a `/` whereas the docs specify that it should. (Other url fields from the api do start with a `/`).

The 3.4.x api... | 1.0 | API zone info returns an url without a starting / - When querying the API for zones with: `curl -H 'X-API-Key: secret' http://127.0.0.1:8081/api/v1/servers/localhost/zones` the returned json contains an url to the api zone info. This url does not start with a `/` whereas the docs specify that it should. (Other url fiel... | defect | api zone info returns an url without a starting when querying the api for zones with curl h x api key secret the returned json contains an url to the api zone info this url does not start with a whereas the docs specify that it should other url fields from the api do start with a the x api... | 1 |

16,228 | 2,878,875,574 | IssuesEvent | 2015-06-10 06:00:50 | msg4real/pygooglevoice | https://api.github.com/repos/msg4real/pygooglevoice | closed | The comment in settings.py about forwardingNumber is wrong | auto-migrated Priority-Medium Type-Defect | ```

# Number to place calls from (eg, your google voice number)

forwardingNumber=

/snip

The forwarding number is *NOT* your google voice number. It is the number you

want GV to connect the call to. This confuses people trying to setup gvoice to

make call by running 'gvoice call 8005551212'

```

Original issue repo... | 1.0 | The comment in settings.py about forwardingNumber is wrong - ```

# Number to place calls from (eg, your google voice number)

forwardingNumber=

/snip

The forwarding number is *NOT* your google voice number. It is the number you

want GV to connect the call to. This confuses people trying to setup gvoice to

make call ... | defect | the comment in settings py about forwardingnumber is wrong number to place calls from eg your google voice number forwardingnumber snip the forwarding number is not your google voice number it is the number you want gv to connect the call to this confuses people trying to setup gvoice to make call ... | 1 |

39,680 | 5,116,571,411 | IssuesEvent | 2017-01-07 05:18:02 | caseyg/knutepunkt2017 | https://api.github.com/repos/caseyg/knutepunkt2017 | opened | 8. Pettersson, Hamlets, Vampires, and the Italian Alps | design | Paper. Has images that we want to make bigger. | 1.0 | 8. Pettersson, Hamlets, Vampires, and the Italian Alps - Paper. Has images that we want to make bigger. | non_defect | pettersson hamlets vampires and the italian alps paper has images that we want to make bigger | 0 |

76,178 | 26,276,535,376 | IssuesEvent | 2023-01-06 22:48:58 | openzfs/zfs | https://api.github.com/repos/openzfs/zfs | closed | Linux Kernel 6.2 renamed get_acl to get_inode_acl | Type: Defect | ### System information

Type | Version/Name

--- | ---

Distribution Name | Gentoo

Distribution Version | -

Kernel Version | `next-20221220`

Architecture | LoongArch

OpenZFS Version | 2.1.99-1641_gc935fe2e9

### Describe the problem you're observing

```

checking whether iops->get_acl() exists... configure:... | 1.0 | Linux Kernel 6.2 renamed get_acl to get_inode_acl - ### System information

Type | Version/Name

--- | ---

Distribution Name | Gentoo

Distribution Version | -

Kernel Version | `next-20221220`

Architecture | LoongArch

OpenZFS Version | 2.1.99-1641_gc935fe2e9

### Describe the problem you're observing

```

c... | defect | linux kernel renamed get acl to get inode acl system information type version name distribution name gentoo distribution version kernel version next architecture loongarch openzfs version describe the problem you re observing checking whether iops... | 1 |

19,006 | 10,312,367,584 | IssuesEvent | 2019-08-29 19:41:16 | jamijam/WebGoat-Legacy | https://api.github.com/repos/jamijam/WebGoat-Legacy | opened | CVE-2019-12384 (Medium) detected in jackson-databind-2.0.4.jar | security vulnerability | ## CVE-2019-12384 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.0.4.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core stream... | True | CVE-2019-12384 (Medium) detected in jackson-databind-2.0.4.jar - ## CVE-2019-12384 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.0.4.jar</b></p></summary>

<p>Gen... | non_defect | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api path to dependency file webgoat legacy pom xml path to vulnerable library re... | 0 |

680,817 | 23,286,793,111 | IssuesEvent | 2022-08-05 17:25:08 | mm-ninja-turtles/bulbasaur-express | https://api.github.com/repos/mm-ninja-turtles/bulbasaur-express | closed | [bug]: remove `response` object from resolver function args. | type: bug priority: high task: done | ## Problem

`resolver()` function currently provide express's `response` object and it should be removed since it will give the user the freedom to response as they wanted and it'll create the problem of response data is not matching with response schema.

## Solution

1. remove `response` object from args

1. #1... | 1.0 | [bug]: remove `response` object from resolver function args. - ## Problem

`resolver()` function currently provide express's `response` object and it should be removed since it will give the user the freedom to response as they wanted and it'll create the problem of response data is not matching with response schema.... | non_defect | remove response object from resolver function args problem resolver function currently provide express s response object and it should be removed since it will give the user the freedom to response as they wanted and it ll create the problem of response data is not matching with response schema ... | 0 |

231,045 | 18,735,238,071 | IssuesEvent | 2021-11-04 06:15:17 | kartoza/django-bims | https://api.github.com/repos/kartoza/django-bims | closed | Remove date when uploading unpublished datasets | testing SIZE 2 Checked by FRC bug-report Source references Repeat offender - previous ticket | Please remove date when uploading unpublished data and database.

It is creating extra work for us having to go and combine unpublished datasets

I fixed all of these source reference a few weeks ago and now they are all wrong again :( | 1.0 | Remove date when uploading unpublished datasets - Please remove date when uploading unpublished data and database.

It is creating extra work for us having to go and combine unpublished datasets

I fixed all of these source reference a few weeks ago and now they are all wrong again :( | non_defect | remove date when uploading unpublished datasets please remove date when uploading unpublished data and database it is creating extra work for us having to go and combine unpublished datasets i fixed all of these source reference a few weeks ago and now they are all wrong again | 0 |

79,503 | 22,782,906,821 | IssuesEvent | 2022-07-08 22:33:22 | chaotic-aur/packages | https://api.github.com/repos/chaotic-aur/packages | closed | [Outdated] ffmpeg-full | request:new-pkg request:rebuild-pkg priority:medium bug:PKGBUILD | ### If available, link to the latest build

[ffmpeg-full.log](https://builds.garudalinux.org/repos/chaotic-aur/logs/ffmpeg-full.log)

### Package name

`ffmpeg-full`

### Latest build

`None`

### Latest version available

`5.0.1-2`

### Have you tested if the package builds in a clean chroot?

- [ ] Yes

### More info... | 2.0 | [Outdated] ffmpeg-full - ### If available, link to the latest build

[ffmpeg-full.log](https://builds.garudalinux.org/repos/chaotic-aur/logs/ffmpeg-full.log)

### Package name

`ffmpeg-full`

### Latest build

`None`

### Latest version available

`5.0.1-2`

### Have you tested if the package builds in a clean chroot?

... | non_defect | ffmpeg full if available link to the latest build package name ffmpeg full latest build none latest version available have you tested if the package builds in a clean chroot yes more information adding missing deps in but python pocketsphinx git still w... | 0 |

9,137 | 2,615,133,741 | IssuesEvent | 2015-03-01 06:04:46 | chrsmith/google-api-java-client | https://api.github.com/repos/chrsmith/google-api-java-client | opened | With null accountName, get infinity loop | auto-migrated Priority-Medium Type-Defect | ```

Version of google-api-java-client (e.g. 1.15.0-rc)?

1.17.0-rc

Java environment (e.g. Java 6, Android 2.3, App Engine)?

Android 4.2.2

Describe the problem.

from source GoogleAccountCredential.java:

public String getToken() throws IOException, GoogleAuthException {

if (backOff != null) {

backOff.reset... | 1.0 | With null accountName, get infinity loop - ```

Version of google-api-java-client (e.g. 1.15.0-rc)?

1.17.0-rc

Java environment (e.g. Java 6, Android 2.3, App Engine)?

Android 4.2.2

Describe the problem.

from source GoogleAccountCredential.java:

public String getToken() throws IOException, GoogleAuthException {

... | defect | with null accountname get infinity loop version of google api java client e g rc rc java environment e g java android app engine android describe the problem from source googleaccountcredential java public string gettoken throws ioexception googleauthexception i... | 1 |

36,422 | 7,928,753,966 | IssuesEvent | 2018-07-06 12:53:41 | otros-systems/otroslogviewer | https://api.github.com/repos/otros-systems/otroslogviewer | closed | Listening to udp not possible | Priority-Medium Type-Defect | ```

When starting a socket listener, it always starts listening to tcp messages.

However, it would be great if also listening to udp messages was supported. For

example the log4judp logging backend of log4cplus could be used with the

otroslogviewer then.

```

Original issue reported on code.google.com by `jan.rue..... | 1.0 | Listening to udp not possible - ```

When starting a socket listener, it always starts listening to tcp messages.

However, it would be great if also listening to udp messages was supported. For

example the log4judp logging backend of log4cplus could be used with the

otroslogviewer then.

```

Original issue reported ... | defect | listening to udp not possible when starting a socket listener it always starts listening to tcp messages however it would be great if also listening to udp messages was supported for example the logging backend of could be used with the otroslogviewer then original issue reported on code google ... | 1 |

21,470 | 3,511,527,923 | IssuesEvent | 2016-01-10 10:29:53 | nielsAD/lape | https://api.github.com/repos/nielsAD/lape | closed | It's impossible with certain boolean comparisons. | auto-migrated Priority-High Type-Defect | ```

What will reproduce the problem?

if (SomeBool and True) then

What is the expected output? What do you see instead?

I get "It's impossible!" (Line 706 of lpvartypes_ord.pas)

Which version are you using?

c96f612e0066

Please provide any additional information below.

Seems to not be an issue if True is fir... | 1.0 | It's impossible with certain boolean comparisons. - ```

What will reproduce the problem?

if (SomeBool and True) then

What is the expected output? What do you see instead?

I get "It's impossible!" (Line 706 of lpvartypes_ord.pas)

Which version are you using?

c96f612e0066

Please provide any additional informat... | defect | it s impossible with certain boolean comparisons what will reproduce the problem if somebool and true then what is the expected output what do you see instead i get it s impossible line of lpvartypes ord pas which version are you using please provide any additional information below ... | 1 |

28,079 | 5,184,504,936 | IssuesEvent | 2017-01-20 06:31:44 | GarageGames/Torque3D | https://api.github.com/repos/GarageGames/Torque3D | closed | Terrain materials are double sided | Defect | I noticed when I fly under the terrain, it is still visible, this means the terrain materials are all double sided and this cannot be changed, since terrain materials, do not have the value to change if they are double sided or not.

Since double sided materials cost more to render, the terrain should not be double side... | 1.0 | Terrain materials are double sided - I noticed when I fly under the terrain, it is still visible, this means the terrain materials are all double sided and this cannot be changed, since terrain materials, do not have the value to change if they are double sided or not.

Since double sided materials cost more to render, ... | defect | terrain materials are double sided i noticed when i fly under the terrain it is still visible this means the terrain materials are all double sided and this cannot be changed since terrain materials do not have the value to change if they are double sided or not since double sided materials cost more to render ... | 1 |

34,979 | 7,497,166,764 | IssuesEvent | 2018-04-08 17:00:56 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | [Bug?] Example of Bland's Rule for optimize.linprog (simplex) cycling/preventing termination | defect scipy.optimize | Forcing Bland's Rule with the "bland":True options seems to prevent the code example below from terminating, while the purpose of Bland's Rule is to make sure it terminates. The problem is solved quickly when not forcing Bland's Rule, giving an optimal value of -6.044533469014448.

By "not terminating" i mean that it... | 1.0 | [Bug?] Example of Bland's Rule for optimize.linprog (simplex) cycling/preventing termination - Forcing Bland's Rule with the "bland":True options seems to prevent the code example below from terminating, while the purpose of Bland's Rule is to make sure it terminates. The problem is solved quickly when not forcing Blan... | defect | example of bland s rule for optimize linprog simplex cycling preventing termination forcing bland s rule with the bland true options seems to prevent the code example below from terminating while the purpose of bland s rule is to make sure it terminates the problem is solved quickly when not forcing bland s r... | 1 |

12,444 | 2,700,140,850 | IssuesEvent | 2015-04-03 22:54:05 | netty/netty | https://api.github.com/repos/netty/netty | closed | HTTP/2 RST_STREAM frame for an IDLE stream should result in connection error | defect | http://http2.github.io/http2-spec/index.html#RST_STREAM

> RST_STREAM frames MUST NOT be sent for a stream in the "idle" state. If a RST_STREAM frame identifying an idle stream is received, the recipient MUST treat this as a connection error (Section 5.4.1) of type PROTOCOL_ERROR. | 1.0 | HTTP/2 RST_STREAM frame for an IDLE stream should result in connection error - http://http2.github.io/http2-spec/index.html#RST_STREAM

> RST_STREAM frames MUST NOT be sent for a stream in the "idle" state. If a RST_STREAM frame identifying an idle stream is received, the recipient MUST treat this as a connection err... | defect | http rst stream frame for an idle stream should result in connection error rst stream frames must not be sent for a stream in the idle state if a rst stream frame identifying an idle stream is received the recipient must treat this as a connection error section of type protocol error | 1 |

39,390 | 6,741,336,636 | IssuesEvent | 2017-10-20 00:03:09 | scrabill/how-many-days-until-halloween | https://api.github.com/repos/scrabill/how-many-days-until-halloween | closed | Documentation: Update CONTRIBUTE.md | documentation Hacktoberfest help wanted | Include the following

- How to contribute

- What is currently there / how it works

- Links to resources

| 1.0 | Documentation: Update CONTRIBUTE.md - Include the following

- How to contribute

- What is currently there / how it works

- Links to resources

| non_defect | documentation update contribute md include the following how to contribute what is currently there how it works links to resources | 0 |

51,772 | 13,211,304,546 | IssuesEvent | 2020-08-15 22:10:39 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | Link to PROPOSAL project (Trac #1019) | Incomplete Migration Migrated from Trac cmake defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1019">https://code.icecube.wisc.edu/projects/icecube/ticket/1019</a>, reported by icecube</summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-06-11T18:38:27",

"_ts": "1434047907705473",

"des... | 1.0 | Link to PROPOSAL project (Trac #1019) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1019">https://code.icecube.wisc.edu/projects/icecube/ticket/1019</a>, reported by icecube</summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-06-11T18:38:27",... | defect | link to proposal project trac migrated from json status closed changetime ts description hi n ncould you please provide a link to the paper describing how the proposal icesim meta project work n nthanks reporter icecube cc j... | 1 |

338,745 | 24,597,597,255 | IssuesEvent | 2022-10-14 09:37:56 | Thaza-Kun/sarjana | https://api.github.com/repos/Thaza-Kun/sarjana | opened | Check The Unit for $a$ and $K$ | documentation | The values of $a$ should be the inverse of $K$ yet in `thesis\literature.qmd`, the units are not entirely the same:

$a=4.148 806 4239(11) \text{GH}^2\text{pc}^{-1}\text{cm}^3\text{ms}$

$K=241.033 1786(66) \text{GH}^{-2}\text{pc}\text{cm}^{-3}\text{s}$

Note: In $a$, it is ...ms while in $K$, it is ...s

<!-- Edit the... | 1.0 | Check The Unit for $a$ and $K$ - The values of $a$ should be the inverse of $K$ yet in `thesis\literature.qmd`, the units are not entirely the same:

$a=4.148 806 4239(11) \text{GH}^2\text{pc}^{-1}\text{cm}^3\text{ms}$

$K=241.033 1786(66) \text{GH}^{-2}\text{pc}\text{cm}^{-3}\text{s}$

Note: In $a$, it is ...ms while ... | non_defect | check the unit for a and k the values of a should be the inverse of k yet in thesis literature qmd the units are not entirely the same a text gh text pc text cm text ms k text gh text pc text cm text s note in a it is ms while in k it is ... | 0 |

21,992 | 3,587,833,741 | IssuesEvent | 2016-01-30 16:15:46 | dkfans/keeperfx | https://api.github.com/repos/dkfans/keeperfx | reopened | Level Kari-Mar automatic victory due to Computer Player suicide | Component-CompPlayer Component-Maps Priority-Low Status-Duplicate Type-Defect | Originally reported on Google Code with ID 213

```

Free Play level Kari-Mar on version 0.4.4. Early in the game you suddenly win, without

ever seeing an enemy. Most likely map invites Computer Player to dig to strong heroes.

Savegame attached.

```

Reported by `Loobinex` on 2014-01-15 15:40:39

<hr>

* *Attachment... | 1.0 | Level Kari-Mar automatic victory due to Computer Player suicide - Originally reported on Google Code with ID 213

```

Free Play level Kari-Mar on version 0.4.4. Early in the game you suddenly win, without

ever seeing an enemy. Most likely map invites Computer Player to dig to strong heroes.

Savegame attached.

```

... | defect | level kari mar automatic victory due to computer player suicide originally reported on google code with id free play level kari mar on version early in the game you suddenly win without ever seeing an enemy most likely map invites computer player to dig to strong heroes savegame attached re... | 1 |

10,473 | 2,622,165,432 | IssuesEvent | 2015-03-04 00:12:05 | byzhang/graphchi | https://api.github.com/repos/byzhang/graphchi | opened | Cannot make sharder_basic - fixed by adding an extra include | auto-migrated Priority-Medium Type-Defect | ```

I tried to build the sharder_basic program with the latest download and it

failed with this error:

g++ -g -O3 -I/usr/local/include/ -I./src/ -fopenmp -Wall -Wno-strict-aliasing

src/preprocessing/sharder_basic.cpp -o bin/sharder_basic

In file included from ./src/preprocessing/conversions.hpp:36,

... | 1.0 | Cannot make sharder_basic - fixed by adding an extra include - ```

I tried to build the sharder_basic program with the latest download and it

failed with this error:

g++ -g -O3 -I/usr/local/include/ -I./src/ -fopenmp -Wall -Wno-strict-aliasing

src/preprocessing/sharder_basic.cpp -o bin/sharder_basic

In file include... | defect | cannot make sharder basic fixed by adding an extra include i tried to build the sharder basic program with the latest download and it failed with this error g g i usr local include i src fopenmp wall wno strict aliasing src preprocessing sharder basic cpp o bin sharder basic in file included... | 1 |

20,097 | 3,295,315,224 | IssuesEvent | 2015-10-31 20:48:32 | chief-atx/bcmon | https://api.github.com/repos/chief-atx/bcmon | closed | Galaxy s 4 | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

Dont finding anything.

What version of the product are you using? On what operating system?

galaxy s 4 cm 12.1 5.1.1

Please provide any additional information below.

Maybe u can help me to connect tl-wn722 cos i need mon mode on galaxy s 4

```

Original issue reported on... | 1.0 | Galaxy s 4 - ```

What steps will reproduce the problem?

Dont finding anything.

What version of the product are you using? On what operating system?

galaxy s 4 cm 12.1 5.1.1

Please provide any additional information below.

Maybe u can help me to connect tl-wn722 cos i need mon mode on galaxy s 4

```

Original iss... | defect | galaxy s what steps will reproduce the problem dont finding anything what version of the product are you using on what operating system galaxy s cm please provide any additional information below maybe u can help me to connect tl cos i need mon mode on galaxy s original issue re... | 1 |

63,728 | 17,872,009,895 | IssuesEvent | 2021-09-06 17:09:04 | martinrotter/rssguard | https://api.github.com/repos/martinrotter/rssguard | closed | Linking fails when version 3.9.2 is installed | Type-Defect Status-Not-Enough-Data | When building version 4.0.1 while version 3.9.2 is installed, linking fails, being unable to resolve `parseCmdArgumentsFromOtherInstance(const QString& message)` symbol. Removing or renaming `/usr/local/lib/librssguard.so` helps, but the following small change to `src/rssguard/rssguard.pro` would make the build more r... | 1.0 | Linking fails when version 3.9.2 is installed - When building version 4.0.1 while version 3.9.2 is installed, linking fails, being unable to resolve `parseCmdArgumentsFromOtherInstance(const QString& message)` symbol. Removing or renaming `/usr/local/lib/librssguard.so` helps, but the following small change to `src/rs... | defect | linking fails when version is installed when building version while version is installed linking fails being unable to resolve parsecmdargumentsfromotherinstance const qstring message symbol removing or renaming usr local lib librssguard so helps but the following small change to src rs... | 1 |

17,624 | 3,012,774,652 | IssuesEvent | 2015-07-29 02:27:30 | yawlfoundation/yawl | https://api.github.com/repos/yawlfoundation/yawl | closed | Importing adds to specification in case specification is erroneous | auto-migrated Category-Component-Editor Priority-Medium Type-Defect | ```

When I *open* specification new60.ywl I get an error and the editor states

that the load file will be discarded. However, the specification is shown

and when I subsequently *import* another specification (e.g. new61.xml),

two nets are shown. This is not possible with specifications which load

normally as the im... | 1.0 | Importing adds to specification in case specification is erroneous - ```

When I *open* specification new60.ywl I get an error and the editor states

that the load file will be discarded. However, the specification is shown

and when I subsequently *import* another specification (e.g. new61.xml),

two nets are shown. Th... | defect | importing adds to specification in case specification is erroneous when i open specification ywl i get an error and the editor states that the load file will be discarded however the specification is shown and when i subsequently import another specification e g xml two nets are shown this is no... | 1 |

38,995 | 5,207,021,621 | IssuesEvent | 2017-01-24 22:14:56 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | We should support Load Balancer or remove from drop-down list | area/webhooks kind/bug status/resolved status/to-test | **Rancher Versions:** master 1/17

**Steps to Reproduce:**

1. Add a load balancer

2. Go to Add webhook and click on drop-down

**Results:** Load balancer is in list

**Expected:** We should support it or remove it from drop-down.

| 1.0 | We should support Load Balancer or remove from drop-down list - **Rancher Versions:** master 1/17

**Steps to Reproduce:**

1. Add a load balancer

2. Go to Add webhook and click on drop-down

**Results:** Load balancer is in list

**Expected:** We should support it or remove it from drop-down.

| non_defect | we should support load balancer or remove from drop down list rancher versions master steps to reproduce add a load balancer go to add webhook and click on drop down results load balancer is in list expected we should support it or remove it from drop down | 0 |

796,903 | 28,131,175,889 | IssuesEvent | 2023-03-31 23:24:59 | integrations/terraform-provider-github | https://api.github.com/repos/integrations/terraform-provider-github | closed | The repository_file data source throws an error when trying to access a non-existent file | Type: Bug Priority: Normal | ### Affected Resource(s)

Please list the resources as a list, for example:

- `data.github_repository_file`

### Expected Behavior

The `repository_file` data source allows checking if a specific file exists in a repository. The same way that `branch`data source, for example, does (see: https://github.com/integratio... | 1.0 | The repository_file data source throws an error when trying to access a non-existent file - ### Affected Resource(s)

Please list the resources as a list, for example:

- `data.github_repository_file`

### Expected Behavior

The `repository_file` data source allows checking if a specific file exists in a repository. ... | non_defect | the repository file data source throws an error when trying to access a non existent file affected resource s please list the resources as a list for example data github repository file expected behavior the repository file data source allows checking if a specific file exists in a repository ... | 0 |

75,505 | 25,888,415,428 | IssuesEvent | 2022-12-14 16:06:14 | DependencyTrack/dependency-track | https://api.github.com/repos/DependencyTrack/dependency-track | opened | After Component update, only CVEs related to updated CPE should be available | defect in triage | ### Current Behavior

If we update the version and CPE of an existing component, all old CVEs not related to the new CPE and their audit histories are still available in Vulnerability Audit.

### Steps to Reproduce

1. Edit any exist component with outdated version and CPE >

Note available CVEs

2. Change version... | 1.0 | After Component update, only CVEs related to updated CPE should be available - ### Current Behavior

If we update the version and CPE of an existing component, all old CVEs not related to the new CPE and their audit histories are still available in Vulnerability Audit.

### Steps to Reproduce

1. Edit any exist compone... | defect | after component update only cves related to updated cpe should be available current behavior if we update the version and cpe of an existing component all old cves not related to the new cpe and their audit histories are still available in vulnerability audit steps to reproduce edit any exist compone... | 1 |

16,739 | 2,941,305,424 | IssuesEvent | 2015-07-02 06:49:26 | tnt944445/reaver-wps | https://api.github.com/repos/tnt944445/reaver-wps | closed | Try multiple BSSIDs, round robin | auto-migrated Priority-Triage Type-Defect | ```

I found myself sitting an entire evening switching between two routers I was

testing. The first (Thomson) locks for five minutes after five attempts. The

second (dlink) reboots after a few minutes of testing. Try one until it locks,

swith to the other until it reboots, switch back to the first, etc ad nauseam... | 1.0 | Try multiple BSSIDs, round robin - ```

I found myself sitting an entire evening switching between two routers I was

testing. The first (Thomson) locks for five minutes after five attempts. The

second (dlink) reboots after a few minutes of testing. Try one until it locks,

swith to the other until it reboots, switc... | defect | try multiple bssids round robin i found myself sitting an entire evening switching between two routers i was testing the first thomson locks for five minutes after five attempts the second dlink reboots after a few minutes of testing try one until it locks swith to the other until it reboots switc... | 1 |

310,441 | 26,716,616,535 | IssuesEvent | 2023-01-28 15:54:07 | rust-lang/rust | https://api.github.com/repos/rust-lang/rust | closed | Error when trying use trait alias for Fn() in impl | A-traits E-needs-test T-compiler C-bug F-trait_alias | This code produce compiling error:

```rust

#![feature(trait_alias)]

struct MyStruct {}

trait MyFn = Fn(&MyStruct);

fn foo (_: impl MyFn) {}

fn main () {

foo(|_| {});

}

```

([Playground](https://play.rust-lang.org/?version=nightly&mode=debug&edition=2018&gist=6f1bfe3f9fca8240a2111c94b12a963d))

... | 1.0 | Error when trying use trait alias for Fn() in impl - This code produce compiling error:

```rust

#![feature(trait_alias)]

struct MyStruct {}

trait MyFn = Fn(&MyStruct);

fn foo (_: impl MyFn) {}

fn main () {

foo(|_| {});

}

```

([Playground](https://play.rust-lang.org/?version=nightly&mode=debug&ed... | non_defect | error when trying use trait alias for fn in impl this code produce compiling error rust struct mystruct trait myfn fn mystruct fn foo impl myfn fn main foo errors compiling playground playground error type mismatch i... | 0 |

49,001 | 13,185,189,493 | IssuesEvent | 2020-08-12 20:54:06 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | Increase robustness of ROOTSYS detection foo (Trac #587) | Incomplete Migration Migrated from Trac cmake defect | <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/587

, reported by blaufuss and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2013-06-28T13:01:06",

"description": "still often get strangish errors from ROOT voodoo (rootcint sometimes)\n\nOften att... | 1.0 | Increase robustness of ROOTSYS detection foo (Trac #587) - <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/587

, reported by blaufuss and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2013-06-28T13:01:06",

"description": "still often get strangis... | defect | increase robustness of rootsys detection foo trac migrated from reported by blaufuss and owned by nega json status closed changetime description still often get strangish errors from root voodoo rootcint sometimes n noften attached to having non ports ... | 1 |

15,477 | 2,856,459,610 | IssuesEvent | 2015-06-02 15:03:53 | svalinn/DAGMC | https://api.github.com/repos/svalinn/DAGMC | closed | Change amalgamated pyne library name | Type: Defect Type: Enhancement | The compiled shared object library name clashes with standard pyne library name, it should be changed to something more appropriate that doesn't clash like `pyne_builtin`, something definitely not called pyne. | 1.0 | Change amalgamated pyne library name - The compiled shared object library name clashes with standard pyne library name, it should be changed to something more appropriate that doesn't clash like `pyne_builtin`, something definitely not called pyne. | defect | change amalgamated pyne library name the compiled shared object library name clashes with standard pyne library name it should be changed to something more appropriate that doesn t clash like pyne builtin something definitely not called pyne | 1 |

166,390 | 6,303,924,156 | IssuesEvent | 2017-07-21 14:48:17 | chez-nestor/backoffice | https://api.github.com/repos/chez-nestor/backoffice | closed | Write unit tests for complex Liana getters | [priority] P2 [type] enhancement | - [ ] load Liana collections ourselves from src/models ourselves,

- [ ] find a way to build objects that will have those getters | 1.0 | Write unit tests for complex Liana getters - - [ ] load Liana collections ourselves from src/models ourselves,

- [ ] find a way to build objects that will have those getters | non_defect | write unit tests for complex liana getters load liana collections ourselves from src models ourselves find a way to build objects that will have those getters | 0 |

77,773 | 27,156,303,056 | IssuesEvent | 2023-02-17 08:09:17 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | opened | DataExporter: Attrribute 'visibleOnly' not documented | :lady_beetle: defect :bangbang: needs-triage | ### Describe the bug

The [online documentation](https://primefaces.github.io/primefaces/12_0_0/#/components/dataexporter) of component DataExporter has no description for attribute 'visibleOnly'.

### Reproducer

_No response_

### Expected behavior

_No response_

### PrimeFaces edition

Community

### PrimeFaces ver... | 1.0 | DataExporter: Attrribute 'visibleOnly' not documented - ### Describe the bug

The [online documentation](https://primefaces.github.io/primefaces/12_0_0/#/components/dataexporter) of component DataExporter has no description for attribute 'visibleOnly'.

### Reproducer

_No response_

### Expected behavior

_No response... | defect | dataexporter attrribute visibleonly not documented describe the bug the of component dataexporter has no description for attribute visibleonly reproducer no response expected behavior no response primefaces edition community primefaces version theme no response ... | 1 |

9,578 | 2,615,162,942 | IssuesEvent | 2015-03-01 06:42:23 | chrsmith/reaver-wps | https://api.github.com/repos/chrsmith/reaver-wps | opened | bssid.wpc empty | auto-migrated Priority-Triage Type-Defect | ```

System crashed and i need to reboot. Now the bssid.wpc is empty! The file

exist, sure reaver ask to restore the session but starts from the beginning.

It was about 18% done and Pin 20XXXXXX, it takes me 1 week to get that far. Do

i really have to start from the beginning?

Or maybe i can write something in the... | 1.0 | bssid.wpc empty - ```

System crashed and i need to reboot. Now the bssid.wpc is empty! The file

exist, sure reaver ask to restore the session but starts from the beginning.

It was about 18% done and Pin 20XXXXXX, it takes me 1 week to get that far. Do

i really have to start from the beginning?

Or maybe i can writ... | defect | bssid wpc empty system crashed and i need to reboot now the bssid wpc is empty the file exist sure reaver ask to restore the session but starts from the beginning it was about done and pin it takes me week to get that far do i really have to start from the beginning or maybe i can write someth... | 1 |

36,805 | 8,139,791,383 | IssuesEvent | 2018-08-20 18:52:40 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | Null-propagation does not work on nullable value tuples. | defect in-progress premium | ### Steps To Reproduce

https://deck.net/6574bfcdd0addabfc66d1cc0c3c7498f

Related to https://github.com/bridgedotnet/Bridge/issues/3645

```csharp

public class App

{

public static void Main()

{

(string Prop1, string Prop2)? val = ("test1", "test2");

Console.WriteLine(val.V... | 1.0 | Null-propagation does not work on nullable value tuples. - ### Steps To Reproduce

https://deck.net/6574bfcdd0addabfc66d1cc0c3c7498f

Related to https://github.com/bridgedotnet/Bridge/issues/3645

```csharp

public class App

{

public static void Main()

{

(string Prop1, string Prop2)? val = ("t... | defect | null propagation does not work on nullable value tuples steps to reproduce related to csharp public class app public static void main string string val console writeline val value console writeline val ... | 1 |

3,900 | 2,610,083,707 | IssuesEvent | 2015-02-26 18:25:33 | chrsmith/dsdsdaadf | https://api.github.com/repos/chrsmith/dsdsdaadf | opened | 深圳宝安哪里祛痘好 | auto-migrated Priority-Medium Type-Defect | ```

深圳宝安哪里祛痘好【深圳韩方科颜全国热线400-869-1818,24小

时QQ4008691818】深圳韩方科颜专业祛痘连锁机构,机构以韩国秘

方——韩方科颜这一国妆准字号治疗型权威,祛痘佳品,韩方

科颜专业祛痘连锁机构,采用韩国秘方配合专业“不反弹”

健康祛痘技术并结合先进“先进豪华彩光”仪,开创国内专业

治疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸上的痘

痘。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 6:51 | 1.0 | 深圳宝安哪里祛痘好 - ```

深圳宝安哪里祛痘好【深圳韩方科颜全国热线400-869-1818,24小

时QQ4008691818】深圳韩方科颜专业祛痘连锁机构,机构以韩国秘

方——韩方科颜这一国妆准字号治疗型权威,祛痘佳品,韩方

科颜专业祛痘连锁机构,采用韩国秘方配合专业“不反弹”

健康祛痘技术并结合先进“先进豪华彩光”仪,开创国内专业

治疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸上的痘

痘。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 6:51 | defect | 深圳宝安哪里祛痘好 深圳宝安哪里祛痘好【 , 】深圳韩方科颜专业祛痘连锁机构,机构以韩国秘 方——韩方科颜这一国妆准字号治疗型权威,祛痘佳品,韩方 科颜专业祛痘连锁机构,采用韩国秘方配合专业“不反弹” 健康祛痘技术并结合先进“先进豪华彩光”仪,开创国内专业 治疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸上的痘 痘。 original issue reported on code google com by szft com on may at | 1 |

33,759 | 7,237,732,059 | IssuesEvent | 2018-02-13 12:09:09 | oozcitak/exiflibrary | https://api.github.com/repos/oozcitak/exiflibrary | closed | Question on recompression | Priority-Medium Type-Defect auto-migrated | ```

When i use the library to modify EXIF data ("comment" for example) and save,

does it recompress the picture?

Thanks

Filip

```

Original issue reported on code.google.com by `Filip.Je...@gmail.com` on 27 Aug 2013 at 4:06

| 1.0 | Question on recompression - ```

When i use the library to modify EXIF data ("comment" for example) and save,

does it recompress the picture?

Thanks

Filip

```

Original issue reported on code.google.com by `Filip.Je...@gmail.com` on 27 Aug 2013 at 4:06

| defect | question on recompression when i use the library to modify exif data comment for example and save does it recompress the picture thanks filip original issue reported on code google com by filip je gmail com on aug at | 1 |

40,778 | 10,154,006,446 | IssuesEvent | 2019-08-06 06:50:43 | line/centraldogma | https://api.github.com/repos/line/centraldogma | opened | Some server log messages are not logged to Logback | defect | I found this from the stderr output:

```

Aug 06, 2019 3:18:22 PM com.github.benmanes.caffeine.cache.LocalAsyncCache lambda$handleCompletion$3

WARNING: Exception thrown during asynchronous load

java.util.concurrent.CompletionException: com.linecorp.centraldogma.server.internal.storage.RequestAlreadyTimedOutExcepti... | 1.0 | Some server log messages are not logged to Logback - I found this from the stderr output:

```

Aug 06, 2019 3:18:22 PM com.github.benmanes.caffeine.cache.LocalAsyncCache lambda$handleCompletion$3

WARNING: Exception thrown during asynchronous load

java.util.concurrent.CompletionException: com.linecorp.centraldogma.... | defect | some server log messages are not logged to logback i found this from the stderr output aug pm com github benmanes caffeine cache localasynccache lambda handlecompletion warning exception thrown during asynchronous load java util concurrent completionexception com linecorp centraldogma server... | 1 |

265,087 | 8,337,106,047 | IssuesEvent | 2018-09-28 09:57:44 | opencaching/opencaching-pl | https://api.github.com/repos/opencaching/opencaching-pl | closed | vars in translations | Component_i18n Priority_Low Type_Enhancement | There is need to handle vars in translation texts.

"Old style" template system provides possibility of including vars as:

`'activate_mail_subject' => 'New user registration - {site_name}',`

Ideas:

"new" way can handle it in "printf" style so the translation can look like:

`'activate_mail_subject' => 'New use... | 1.0 | vars in translations - There is need to handle vars in translation texts.

"Old style" template system provides possibility of including vars as:

`'activate_mail_subject' => 'New user registration - {site_name}',`

Ideas:

"new" way can handle it in "printf" style so the translation can look like:

`'activate_ma... | non_defect | vars in translations there is need to handle vars in translation texts old style template system provides possibility of including vars as activate mail subject new user registration site name ideas new way can handle it in printf style so the translation can look like activate ma... | 0 |

231,981 | 7,647,517,067 | IssuesEvent | 2018-05-09 04:32:12 | AdChain/AdChainRegistryDapp | https://api.github.com/repos/AdChain/AdChainRegistryDapp | closed | Committing a Vote- Step 2 in Walkthrough | Priority: Medium Status: Review Needed | "The filtered domains are all in the Voting Commit stage."

<img width="1181" alt="screen shot 2018-04-03 at 10 46 45 am" src="https://user-images.githubusercontent.com/35276813/38266202-e60c0e20-372c-11e8-8f6f-c9de64eb4048.png">

However, the image shows domains in various other stages such as "In Registry", "Reve... | 1.0 | Committing a Vote- Step 2 in Walkthrough - "The filtered domains are all in the Voting Commit stage."

<img width="1181" alt="screen shot 2018-04-03 at 10 46 45 am" src="https://user-images.githubusercontent.com/35276813/38266202-e60c0e20-372c-11e8-8f6f-c9de64eb4048.png">

However, the image shows domains in variou... | non_defect | committing a vote step in walkthrough the filtered domains are all in the voting commit stage img width alt screen shot at am src however the image shows domains in various other stages such as in registry reveal pending and application pending | 0 |

307,095 | 9,414,186,019 | IssuesEvent | 2019-04-10 09:33:38 | StrangeLoopGames/EcoIssues | https://api.github.com/repos/StrangeLoopGames/EcoIssues | closed | Feature: button to open profile folder | Feature Medium Priority | Files from this folder regularry needs for support, playtime.eco need for first server configuration...

And finding this folder is not easy.

`%appdata%\..\LocalLow\Strange Loop Games\Eco` | 1.0 | Feature: button to open profile folder - Files from this folder regularry needs for support, playtime.eco need for first server configuration...

And finding this folder is not easy.

`%appdata%\..\LocalLow\Strange Loop Games\Eco` | non_defect | feature button to open profile folder files from this folder regularry needs for support playtime eco need for first server configuration and finding this folder is not easy appdata locallow strange loop games eco | 0 |

71,138 | 9,480,507,364 | IssuesEvent | 2019-04-20 18:25:50 | smithjd/sql-pet | https://api.github.com/repos/smithjd/sql-pet | closed | Investigate DiagrammeR integration with bookdown book | documentation | The tools of choice for creating diagrams in RStudio / R Markdown is the `DiagrammeR` R package (http://rich-iannone.github.io/DiagrammeR/index.html).

1. Diagrams are code - you can edit them and version-control them.

2. RStudio has provisions to view / render them as you build them.

What I don't know (yet) is ... | 1.0 | Investigate DiagrammeR integration with bookdown book - The tool of choice for creating diagrams in RStudio / R Markdown is the `DiagrammeR` R package (http://rich-iannone.github.io/DiagrammeR/index.html).

1. Diagrams are code - you can edit them and version-control them.

2. RStudio has provisions to view / rende... | non_defect | investigate diagrammer integration with bookdown book the tools of choice for creating diagrams in rstudio r markdown is the diagrammer r package diagrams are code you can edit them and version control them rstudio has provisions to view render them as you build them what i don t know yet ... | 0 |

65,436 | 19,515,508,094 | IssuesEvent | 2021-12-29 09:32:13 | ontop/ontop | https://api.github.com/repos/ontop/ontop | closed | bootstrap fails when there is a unique index that uses md5() of a column value | type: defect status: fixed w: db support | ### Description

bootstrap fails when there is a unique index that uses md5() of a column value with the following error:

```

20:42:22.607 [main] DEBUG o.s.owlapi.utilities.Injector - Injecting values [[org.semanticweb.owlapi.rdf.rdfxml.renderer.RDFXMLStorerFactory@13e698c7, org.semanticweb.owlapi.functional.render... | 1.0 | bootstrap fails when there is a unique index that uses md5() of a column value - ### Description

bootstrap fails when there is a unique index that uses md5() of a column value with the following error:

```

20:42:22.607 [main] DEBUG o.s.owlapi.utilities.Injector - Injecting values [[org.semanticweb.owlapi.rdf.rdfxm... | defect | bootstrap fails when there is a unique index that uses of a column value description bootstrap fails when there is a unique index that uses of a column value with the following error debug o s owlapi utilities injector injecting values on method public void uk ac manchester cs owl... | 1 |

48,986 | 13,185,183,426 | IssuesEvent | 2020-08-12 20:53:20 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | rootcint won't create dictionaries if $ROOTSYS is longer than 128 characters (Trac #565) | Incomplete Migration Migrated from Trac defect tools/ports | <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/565

, reported by kislat and owned by cgils</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2009-06-30T14:16:42",

"description": "There is a bug in rootcint (ROOT 5.20.00 and later versions) that makes it impossibl... | 1.0 | rootcint won't create dictionaries if $ROOTSYS is longer than 128 characters (Trac #565) - <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/565

, reported by kislat and owned by cgils</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2009-06-30T14:16:42",

"descript... | defect | rootcint won t create dictionaries if rootsys is longer than characters trac migrated from reported by kislat and owned by cgils json status closed changetime description there is a bug in rootcint root and later versions that makes it impossi... | 1 |

16,253 | 2,882,833,250 | IssuesEvent | 2015-06-11 08:25:32 | ooskapenaar/awarenet | https://api.github.com/repos/ooskapenaar/awarenet | closed | chat doesn't work | auto-migrated Priority-Low Type-Defect | ```

What steps will reproduce the problem?

1. visit "http://awarenet.eu/chat/"

2. click on "Global Chat"

3.

What is the expected output? What do you see instead?

I see this error message (see attached pic). It happened to me while using

Firefox 31.0 (in VB), IE 11 and Opera 26. It's always the same error.

Please use l... | 1.0 | chat doesn't work - ```

What steps will reproduce the problem?

1. visit "http://awarenet.eu/chat/"

2. click on "Global Chat"

3.

What is the expected output? What do you see instead?

I see this error message (see attached pic). It happened to me while using

Firefox 31.0 (in VB), IE 11 and Opera 26. It's always the same ... | defect | chat doesn t work what steps will reproduce the problem visit click on global chat what is the expected output what do you see instead i see this error message see attached pic it happend to me while using firefox in vb ie and opera its always the same error please use labels a... | 1 |

41,190 | 10,328,311,841 | IssuesEvent | 2019-09-02 09:14:11 | vector-im/riot-web | https://api.github.com/repos/vector-im/riot-web | closed | Handle the case of no IS in features that require IS to lookup | bug defect phase:1 privacy privacy-sprint type:identity-server | Since it's now possible to be disconnected from an identity server entirely (your session has no active identity server), it's unclear how features that require an IS to function should behave.

For the case of the Discovery section in Settings, it's easy enough: you have no IS, so we don't show any 3PIDs to control.... | 1.0 | Handle the case of no IS in features that require IS to lookup - Since it's now possible to be disconnected from an identity server entirely (your session has no active identity server), it's unclear how features that require an IS to function should behave.

For the case of the Discovery section in Settings, it's ea... | defect | handle the case of no is in features that require is to lookup since it s now possible to be disconnected from an identity server entirely your session has no active identity server it s unclear how features that require an is to function should behave for the case of the discovery section in settings it s ea... | 1 |

68,860 | 8,357,618,074 | IssuesEvent | 2018-10-02 22:15:00 | nextcloud/server | https://api.github.com/repos/nextcloud/server | closed | Improve backup codes UI/UX | 1. to develop Hacktoberfest design enhancement good first issue help wanted papercut | We recently added a new setting to the personal settings page – the ability to generate backup codes, which can be used in case users lose access to their second factor. At the moment, the user interface doesn't look that nice:

;

2. sprite = new Sprite2D(new Sprite_bmd(512, 512));

3. cube = new Cube({width:2.5, height:2.5, depth:2.5, y:-1.25}... | 1.0 | Sprite3D Visual Glitches with Object3D when using Renderer.INTERSECTING_OBJECTS - #45 Issue by __GoogleCodeExporter__, created on: 2015-04-24T07:51:21Z

```

What steps will reproduce the problem?

1. view = new View3D({scene:scene, camera:camera,

renderer:Renderer.INTERSECTING_OBJECTS});

2. sprite = new Sprite2D(new Spr... | defect | visual glitches with when using renderer intersecting objects issue by googlecodeexporter created on what steps will reproduce the problem view new scene scene camera camera renderer renderer intersecting objects sprite new new sprite bmd cube new cube wi... | 1 |

74,549 | 25,170,310,908 | IssuesEvent | 2022-11-11 02:14:00 | ascott18/TellMeWhen | https://api.github.com/repos/ascott18/TellMeWhen | closed | [Bug] Soul shard item count apparently doesn't work for Item Cooldown icons | T: defect V: classic | Reported by someone on Discord. Perhaps is due to different bag type for soul shard bags? | 1.0 | [Bug] Soul shard item count apparently doesn't work for Item Cooldown icons - Reported by someone on Discord. Perhaps is due to different bag type for soul shard bags? | defect | soul shard item count apparently doesn t work for item cooldown icons reported by someone on discord perhaps is due to different bag type for soul shard bags | 1 |

17,887 | 3,013,563,736 | IssuesEvent | 2015-07-29 09:45:03 | yawlfoundation/yawl | https://api.github.com/repos/yawlfoundation/yawl | closed | Can't save a specification with extended UTF-8 chars in data type definition | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Use for example the data type:

<xs:simpleType name="country">

<xs:restriction base="xs:string">

<xs:enumeration value="ÅLAND ISLANDS" />

<xs:enumeration value="TEST" />

</xs:restriction>

</xs:simpleType>

in your specification.

2. Try to save the ... | 1.0 | Can't save a specification with extended UTF-8 chars in data type definition - ```

What steps will reproduce the problem?

1. Use for example the data type:

<xs:simpleType name="country">

<xs:restriction base="xs:string">

<xs:enumeration value="ÅLAND ISLANDS" />

<xs:enumeration value="TEST" />

</... | defect | can t save a specification with extended utf chars in data type definition what steps will reproduce the problem use for example the data type in your specification try to save the specification leads to could not export specification due to missing or invalid... | 1 |

55,174 | 14,257,032,075 | IssuesEvent | 2020-11-20 02:32:13 | naev/naev | https://api.github.com/repos/naev/naev | opened | make distcheck broken | Priority-High Type-Defect | ```

$ make distcheck

...

Making all in src

Making all in tk

Making all in widget

CC button.o

CC checkbox.o

CC cust.o

CC fader.o

CC image.o

CC imagearray.o

CC input.o

CC list.o

CC rect.o

CC tabwin.o

CC text.o

ar: `u' modifier ignored since `D' is th... | 1.0 | make distcheck broken - ```

$ make distcheck

...

Making all in src

Making all in tk

Making all in widget

CC button.o

CC checkbox.o

CC cust.o

CC fader.o

CC image.o

CC imagearray.o

CC input.o

CC list.o

CC rect.o

CC tabwin.o

CC text.o

ar: `u' modifier... | defect | make distcheck broken make distcheck making all in src making all in tk making all in widget cc button o cc checkbox o cc cust o cc fader o cc image o cc imagearray o cc input o cc list o cc rect o cc tabwin o cc text o ar u modifier... | 1 |

692,647 | 23,744,200,017 | IssuesEvent | 2022-08-31 14:42:51 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | ecerp01.ecil.gov.in - "Secure connection failed" when accessing the site | browser-firefox priority-normal severity-critical engine-gecko type-geolocation type-unsupported-tls | <!-- @browser: Firefox 78.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:78.0) Gecko/20100101 Firefox/78.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/53851 -->

**URL**: https://ecerp01.ecil.gov.in/ecilerec

**Browser / Version**: Fi... | 1.0 | ecerp01.ecil.gov.in - "Secure connection failed" when accessing the site - <!-- @browser: Firefox 78.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:78.0) Gecko/20100101 Firefox/78.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/53851 --... | non_defect | ecil gov in secure connection failed when accessing the site url browser version firefox operating system windows tested another browser yes edge problem type desktop site instead of mobile site description desktop site instead of mobile site steps to reprodu... | 0 |

22,783 | 3,698,519,087 | IssuesEvent | 2016-02-28 11:33:02 | dnaumenko/java-diff-utils | https://api.github.com/repos/dnaumenko/java-diff-utils | closed | Please include a LICENSE file in the source tree | auto-migrated Priority-Medium Type-Defect | ```

Including a LICENSE file makes it easier for downstream packagers and other

users to ensure that they are using the software in a manner consistent with

its license. Please include the Apache license in the source tree and in

source distributions: http://www.apache.org/licenses/LICENSE-2.0

```

Original issue... | 1.0 | Please include a LICENSE file in the source tree - ```

Including a LICENSE file makes it easier for downstream packagers and other

users to ensure that they are using the software in a manner consistent with

its license. Please include the Apache license in the source tree and in

source distributions: http://www.a... | defect | please include a license file in the source tree including a license file makes it easier for downstream packagers and other users to ensure that they are using the software in a manner consistent with its license please include the apache license in the source tree and in source distributions orig... | 1 |

71,659 | 23,748,071,691 | IssuesEvent | 2022-08-31 17:50:00 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | Panel layout broke across restart | T-Defect | ### Steps to reproduce

1. Minimise the spacepanel & leftpanel