Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 757) | labels (string, length 4 to 664) | body (string, length 3 to 261k) | index (string, 10 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

45,586 | 12,890,010,954 | IssuesEvent | 2020-07-13 15:20:47 | carbon-design-system/ibm-security | https://api.github.com/repos/carbon-design-system/ibm-security | closed | Add support for 'size' prop in DataTable | Defect | Add support for `size` prop in `DataTable`. The base Carbon library already supports this [option here](https://github.com/carbon-design-system/carbon/blob/fe23a6f46b001e56d31376cfebe6e523b27e77f9/packages/react/src/components/DataTable/DataTable.js#L111) | 1.0 | Add support for 'size' prop in DataTable - Add support for `size` prop in `DataTable`. The base Carbon library already supports this [option here](https://github.com/carbon-design-system/carbon/blob/fe23a6f46b001e56d31376cfebe6e523b27e77f9/packages/react/src/components/DataTable/DataTable.js#L111) | defect | add support for size prop in datatable add support for size prop in datatable the base carbon library already supports this | 1 |
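
Judging only from the rows visible in this preview, `text_combine` looks like the title and body joined by " - ", and `binary_label` looks like 1 for `defect` and 0 for `non_defect`. The sketch below illustrates that reading; the function names and the sample values are hypothetical, and the exact normalization behind the `text` column (lowercasing, dropping URLs, digits and punctuation) is not reproduced here because the preview does not document it.

```python
def combine_title_and_body(title: str, body: str) -> str:
    # Assumption: the separator is " - ", as it appears in the previewed rows.
    return f"{title} - {body}"


def to_binary_label(label: str) -> int:
    # Assumption: "defect" maps to 1 and anything else ("non_defect") to 0,
    # which matches every row shown in this preview.
    return 1 if label == "defect" else 0


# Hypothetical row, not taken from the dataset.
row = {"title": "Example title", "body": "Example body", "label": "non_defect"}
combined = combine_title_and_body(row["title"], row["body"])
flag = to_binary_label(row["label"])
```
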

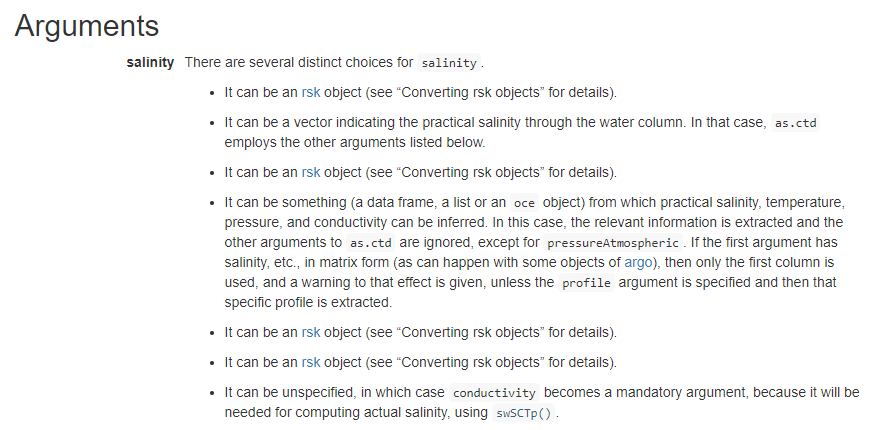

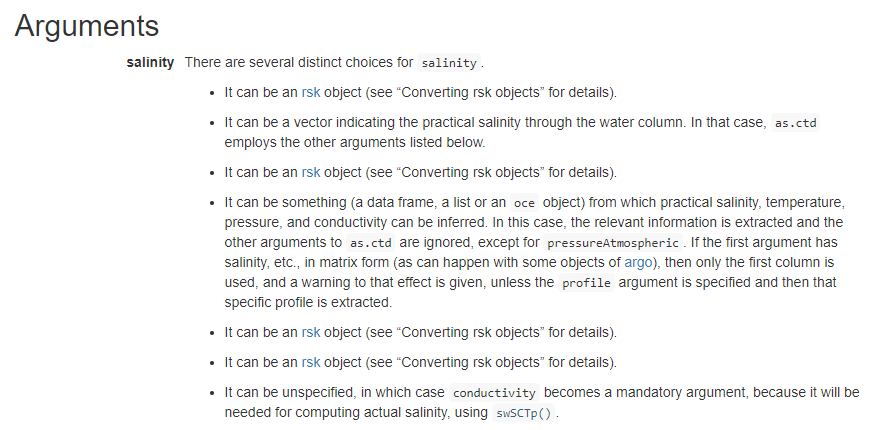

192,891 | 15,361,295,554 | IssuesEvent | 2021-03-01 17:57:50 | dankelley/oce | https://api.github.com/repos/dankelley/oce | closed | as.ctd documentation needs updating (at least on website) | documentation website | Was just looking at the `as.ctd()` documentation on the pkgdown website at https://dankelley.github.io/oce/reference/as.ctd.html and I see as below:

The question is, "***CAN*** the `salinity` argument be an... | 1.0 | as.ctd documentation needs updating (at least on website) - Was just looking at the `as.ctd()` documentation on the pkgdown website at https://dankelley.github.io/oce/reference/as.ctd.html and I see as below:

... | non_defect | as ctd documentation needs updating at least on website was just looking at the as ctd documentation on the pkgdown website at and i see as below the question is can the salinity argument be an rsk object 😄 | 0 |

18,367 | 3,386,974,864 | IssuesEvent | 2015-11-28 00:09:24 | jgirald/ES2015C | https://api.github.com/repos/jgirald/ES2015C | opened | Advanced Yamato Knight Textures | Character Design Medium Priority Team A Textures Yamato | ### Description

Apply textures to the character model that represents the Advanced Yamato Knight.

### Acceptance Criteria

Finished model with the textures applied, ready to be animated.

### Estimated time effort:

2 hr | 1.0 | Advanced Yamato Knight Textures - ### Description

Apply textures to the character model that represents the Advanced Yamato Knight.

### Acceptance Criteria

Finished model with the textures applied, ready to be animated.

### Estimated time effort:

2 hr | non_defect | advanced yamato knight textures description apply textures to the character model that represents the advanced yamato knight acceptance criteria finished model with the textures applied ready to be animated estimated time effort hr | 0 |

52,889 | 13,225,205,446 | IssuesEvent | 2020-08-17 20:42:11 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | Boost 1.36.0 w/ mac linker, can't find libs. (Trac #534) | Migrated from Trac defect tools/ports | Since the boost lib in I3_PORTS are not explicitly in the LD/DYLD_LIB_PATH in env-shell, the contents of the

compiled I3_BUILD/lib libraries should give location of I3_PORT libs.

For example, w/ boost_1.33.1:

otool -L lib/libdataio.dylib

gives:

...

/Users/blaufuss/icework/i3tools/lib/boost-1.33.1/libboost_serializa... | 1.0 | Boost 1.36.0 w/ mac linker, can't find libs. (Trac #534) - Since the boost lib in I3_PORTS are not explicitly in the LD/DYLD_LIB_PATH in env-shell, the contents of the

compiled I3_BUILD/lib libraries should give location of I3_PORT libs.

For example, w/ boost_1.33.1:

otool -L lib/libdataio.dylib

gives:

...

/Users/b... | defect | boost w mac linker can t find libs trac since the boost lib in ports are not explicitly in the ld dyld lib path in env shell the contents of the compiled build lib libraries should give location of port libs for example w boost otool l lib libdataio dylib gives users blaufuss... | 1 |

66,562 | 20,328,023,374 | IssuesEvent | 2022-02-18 08:00:46 | vector-im/element-ios | https://api.github.com/repos/vector-im/element-ios | closed | Links containing markdown formatting (eg underscores) are interpreted as markdown | T-Defect A-Composer S-Major O-Occasional Z-WTF Z-Ready | ### Steps to reproduce

1. Send a link like http://domain.xyz/foo/bar-_stuff-like-this_-in-it.jpg

The same problem was recently fixed in Element Web, see https://github.com/vector-im/element-web/issues/4674

### Outcome

#### What did you expect?

The link is send without markdown formatting.

#### What happened i... | 1.0 | Links containing markdown formatting (eg underscores) are interpreted as markdown - ### Steps to reproduce

1. Send a link like http://domain.xyz/foo/bar-_stuff-like-this_-in-it.jpg

The same problem was recently fixed in Element Web, see https://github.com/vector-im/element-web/issues/4674

### Outcome

#### What ... | defect | links containing markdown formatting eg underscores are interpreted as markdown steps to reproduce send a link like the same problem was recently fixed in element web see outcome what did you expect the link is send without markdown formatting what happened instead the link b... | 1 |
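
The row above describes underscores inside a URL being treated as emphasis markers. A minimal sketch of that failure mode and one possible guard follows. The regular expressions and function names are illustrative assumptions and do not reflect Element's actual Markdown pipeline.

```python
import re

# Assumption: a URL is any run of non-whitespace characters after http(s)://.
URL_RE = re.compile(r"https?://\S+")


def naive_emphasis(text: str) -> str:
    """Toy Markdown pass: turn _x_ into <em>x</em> everywhere, URLs included."""
    return re.sub(r"_(.+?)_", r"<em>\1</em>", text)


def url_safe_emphasis(text: str) -> str:
    """Apply the same toy pass, but copy anything that looks like a URL verbatim."""
    parts = []
    last = 0
    for match in URL_RE.finditer(text):
        # Format the text before the URL, then append the URL untouched.
        parts.append(naive_emphasis(text[last:match.start()]))
        parts.append(match.group(0))
        last = match.end()
    parts.append(naive_emphasis(text[last:]))
    return "".join(parts)


url = "http://domain.xyz/foo/bar-_stuff-like-this_-in-it.jpg"
broken = naive_emphasis(url)      # emphasis tags end up inside the link
intact = url_safe_emphasis(url)   # the link is returned unchanged
```

Splitting on URLs before formatting keeps the sketch short; a real parser would more likely tokenize links as part of its grammar.
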

2,698 | 3,005,172,924 | IssuesEvent | 2015-07-26 17:36:49 | nightingale-media-player/nightingale-hacking | https://api.github.com/repos/nightingale-media-player/nightingale-hacking | closed | Fix mac builds | Buildsystem Mac OS X | Apparently some (or all?) mac builds are broken: http://forum.getnightingale.com/thread-429-post-5394.html#pid5394

According to @zjays it's 3267acc1883a3d33eff876c8bdca4b961c29fdeb that broke it.

Their configure log looks like this:

```

`Adding configure options from /Users/Zach-Mac/GitHub/nightingale-hacking/n... | 1.0 | Fix mac builds - Apparently some (or all?) mac builds are broken: http://forum.getnightingale.com/thread-429-post-5394.html#pid5394

According to @zjays it's 3267acc1883a3d33eff876c8bdca4b961c29fdeb that broke it.

Their configure log looks like this:

```

`Adding configure options from /Users/Zach-Mac/GitHub/nigh... | non_defect | fix mac builds apparently some or all mac builds are broken according to zjays it s that broke it their configure log looks like this adding configure options from users zach mac github nightingale hacking nightingale config with macosx sdk developer sdks sdk enable installer ... | 0 |

8,473 | 2,611,513,653 | IssuesEvent | 2015-02-27 05:49:37 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | patch for google issue tracker api | auto-migrated Priority-Medium Type-Defect | ```

The description-blanking-out issue you described has been fixed.

The email CC feature has been implemented in code, but it doesn't seem to

actually do anything. I tried it, and the email I put it never received any

notifications of comments on the posted issue. Because of this, I commented out

three lines of co... | 1.0 | patch for google issue tracker api - ```

The description-blanking-out issue you described has been fixed.

The email CC feature has been implemented in code, but it doesn't seem to

actually do anything. I tried it, and the email I put it never received any

notifications of comments on the posted issue. Because of thi... | defect | patch for google issue tracker api the description blanking out issue you described has been fixed the email cc feature has been implemented in code but it doesn t seem to actually do anything i tried it and the email i put it never received any notifications of comments on the posted issue because of thi... | 1 |

78,439 | 27,522,821,545 | IssuesEvent | 2023-03-06 16:03:56 | vector-im/element-android | https://api.github.com/repos/vector-im/element-android | closed | Formatting creates extra newlines | T-Defect A-Markdown A-Composer X-Regression S-Major O-Occasional | ### Steps to reproduce

Send texts containing formatting

### Outcome

#### What did you expect?

Same functionality as old version of Element Android and other clients.

Element... | 1.0 | Formatting creates extra newlines - ### Steps to reproduce

Send texts containing formatting

### Outcome

#### What did you expect?

Same functionality as old version of Element... | defect | formatting creates extra newlines steps to reproduce send texts containing formatting outcome what did you expect same functionality as old version of element android and other clients element desktop what happened instead most formatting options start a new line... | 1 |

200,738 | 7,011,152,550 | IssuesEvent | 2017-12-20 03:49:45 | GoogleCloudPlatform/google-cloud-go | https://api.github.com/repos/GoogleCloudPlatform/google-cloud-go | closed | storage: create custom domain bucket 403 error | api: storage priority: p1 type: question | # case 1: OK

bkt := client.Bucket("subdomain")

err := bkt.Create(c, project_id, BucketAttrs)

# case 2: 403 ERROR

myDomain.com is a verified

bucket myDomain.com is created via https://console.cloud.google.com/storage/browser?project=***.

bkt := client.Bucket("subdomain.myDomain.com")

err := bkt.Create(c, proj... | 1.0 | storage: create custom domain bucket 403 error - # case 1: OK

bkt := client.Bucket("subdomain")

err := bkt.Create(c, project_id, BucketAttrs)

# case 2: 403 ERROR

myDomain.com is a verified

bucket myDomain.com is created via https://console.cloud.google.com/storage/browser?project=***.

bkt := client.Bucket("sub... | non_defect | storage create custom domain bucket error case ok bkt client bucket subdomain err bkt create c project id bucketattrs case error mydomain com is a verified bucket mydomain com is created via bkt client bucket subdomain mydomain com err bkt create c project id bucketa... | 0 |

34,526 | 7,453,062,993 | IssuesEvent | 2018-03-29 10:31:03 | kerdokullamae/test_koik_issued | https://api.github.com/repos/kerdokullamae/test_koik_issued | closed | Ajax pager bugs | P: highest R: fixed T: defect | **Reported by sven syld on 29 Jul 2013 15:38 UTC**

In the KÜ search list and when browsing facets, the ajax pager has a bug: after the 1st request, the HTML structure falls out of place. Visually, clicking a link makes a second, table-sized box appear in the top-left corner, which disappears when the results arrive. | 1.0 | Ajax pager bugs - **Reported by sven syld on 29 Jul 2013 15:38 UTC**

In the KÜ search list and when browsing facets, the ajax pager has a bug: after the 1st request, the HTML structure falls out of place. Visually, clicking a link makes a second, table-sized box appear in the top-left corner, which disappears when the results... | defect | ajax pager bugs reported by sven syld on jul utc in the kü search list and when browsing facets the ajax pager has a bug after the request the html structure falls out of place visually clicking a link makes a second table sized box appear in the top left corner which disappears when the results arrive... | 1 |

296,303 | 9,107,266,813 | IssuesEvent | 2019-02-21 03:31:32 | thautwarm/MLStyle.jl | https://api.github.com/repos/thautwarm/MLStyle.jl | closed | can not use @match inside another macro | bug core help wanted high-priority | MWE: copying what's in here: https://github.com/thautwarm/MLStyle-Playground/blob/master/StaticCapturing.jl

to a module. The scoping is not correct. | 1.0 | can not use @match inside another macro - MWE: copying what's in here: https://github.com/thautwarm/MLStyle-Playground/blob/master/StaticCapturing.jl

to a module. The scoping is not correct. | non_defect | can not use match inside another macro mwe copying what s in here to a module the scoping is not correct | 0 |

16,686 | 2,933,667,257 | IssuesEvent | 2015-06-30 00:56:40 | Ephemerality/xray-builder | https://api.github.com/repos/Ephemerality/xray-builder | closed | [Linux] mobi_unpack.py is successful, but XRayBuilder shows error | auto-migrated Priority-Medium Type-Defect | ```

I'm trying to get XRayBuilder.exe to run under Linux with Mono. Basically, it

works, but it doesn't recognise the successful unpacking via mobi_unpack.py or

even the latest version KindleUnpack.py.

My commandline:

mkdir out

mono XRayBuilder.exe -u ../Mobi_Unpack_v047/lib/mobi_unpack.py -o ./out

../book.mobi

Ou... | 1.0 | [Linux] mobi_unpack.py is successful, but XRayBuilder shows error - ```

I'm trying to get XRayBuilder.exe to run under Linux with Mono. Basically, it

works, but it doesn't recognise the successful unpacking via mobi_unpack.py or

even the latest version KindleUnpack.py.

My commandline:

mkdir out

mono XRayBuilder.exe ... | defect | mobi unpack py is successful but xraybuilder shows error i m trying to get xraybuilder exe to run under linux with mono basically it works but it doesn t recognise the successful unpacking via mobi unpack py or even the latest version kindleunpack py my commandline mkdir out mono xraybuilder exe u ... | 1 |

4,852 | 2,610,158,400 | IssuesEvent | 2015-02-26 18:50:15 | chrsmith/republic-at-war | https://api.github.com/repos/chrsmith/republic-at-war | closed | Graphics Glitch | auto-migrated Priority-Medium Type-Defect | ```

Missing textures from Cad Banes Thermal Bomb

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 30 Jan 2011 at 3:01 | 1.0 | Graphics Glitch - ```

Missing textures from Cad Banes Thermal Bomb

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 30 Jan 2011 at 3:01 | defect | graphics glitch missing textures from cad banes thermal bomb original issue reported on code google com by gmail com on jan at | 1 |

90,769 | 26,181,163,076 | IssuesEvent | 2023-01-02 15:46:51 | CGNS/CGNS | https://api.github.com/repos/CGNS/CGNS | closed | [CGNS-78] Implement FortranCInterface_HEADER in cmake | Improvement Done Build Critical |

> This issue has been imported from JIRA. Read the [original ticket here](https://cgnsorg.atlassian.net/browse/CGNS-78).

- _**Created at:**_ Thu, 25 Feb 2016 09:50:28 -0600

- _**Resolved at:**_ Thu, 25 Feb 2016 10:17:26 -0600

<p>Currently, CGNS handles the C/Fortan name mangling explicitly, it uses macro and test co... | 1.0 | [CGNS-78] Implement FortranCInterface_HEADER in cmake -

> This issue has been imported from JIRA. Read the [original ticket here](https://cgnsorg.atlassian.net/browse/CGNS-78).

- _**Created at:**_ Thu, 25 Feb 2016 09:50:28 -0600

- _**Resolved at:**_ Thu, 25 Feb 2016 10:17:26 -0600

<p>Currently, CGNS handles the C/Fo... | non_defect | implement fortrancinterface header in cmake this issue has been imported from jira read the created at thu feb resolved at thu feb currently cgns handles the c fortan name mangling explicitly it uses macro and test compiles c and fortran code i e fortr... | 0 |

52,792 | 13,225,067,140 | IssuesEvent | 2020-08-17 20:25:21 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | radioeventbrowser (Trac #344) | Migrated from Trac RASTA defect | radioeventbrowser not build by default. Have to specify -DBUILD_TOPEVENTBROWSER=True by compiling

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/344">https://code.icecube.wisc.edu/projects/icecube/ticket/344</a>, reported by tobiasand owned by sboeser</em></summary... | 1.0 | radioeventbrowser (Trac #344) - radioeventbrowser not build by default. Have to specify -DBUILD_TOPEVENTBROWSER=True by compiling

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/344">https://code.icecube.wisc.edu/projects/icecube/ticket/344</a>, reported by tobiasan... | defect | radioeventbrowser trac radioeventbrowser not build by default have to specify dbuild topeventbrowser true by compiling migrated from json status closed changetime ts description radioeventbrowser not build by default have to specify dbuild t... | 1 |

71,993 | 23,885,117,819 | IssuesEvent | 2022-09-08 06:58:48 | PowerDNS/pdns | https://api.github.com/repos/PowerDNS/pdns | closed | auth: backend creation before chroot() is causing startup failures | auth defect | Commit d5385b3570b0d8c13626dcb4cd8fe5fc344db16d from issue #9464 creates backend instances before complete initialization like chroot()+chdir() and dropping privileges is done.

This causes backends that open files, like the gsqlite3 backend, to fail during startup.

This seems to be some unintended side-effect of th... | 1.0 | auth: backend creation before chroot() is causing startup failures - Commit d5385b3570b0d8c13626dcb4cd8fe5fc344db16d from issue #9464 creates backend instances before complete initialization like chroot()+chdir() and dropping privileges is done.

This causes backends that open files, like the gsqlite3 backend, to fail ... | defect | auth backend creation before chroot is causing startup failures commit from issue creates backend instances before complete initialization like chroot chdir and dropping privileges is done this causes backends that open files like the backend to fail during startup this seems to be some unintend... | 1 |

11,374 | 3,487,879,713 | IssuesEvent | 2016-01-02 11:37:47 | KSP-KOS/KOS | https://api.github.com/repos/KSP-KOS/KOS | closed | Q reading doesn't match kPa | bug documentation | Here is what I get when I print out `ship:Q` under the latest kOS and FAR in 1.0.5

The kOS screen refreshes once a second so the value is behind that's not the issue - the issue is what's up with the ... | 1.0 | Q reading doesn't match kPa - Here is what I get when I print out `ship:Q` under the latest kOS and FAR in 1.0.5

The kOS screen refreshes once a second so the value is behind that's not the issue - th... | non_defect | q reading doesn t match kpa here is what i get when i print out ship q under the latest kos and far in the kos screen refreshes once a second so the value is behind that s not the issue the issue is what s up with the decimal placing | 0 |

9,997 | 2,616,018,782 | IssuesEvent | 2015-03-02 01:00:21 | jasonhall/bwapi | https://api.github.com/repos/jasonhall/bwapi | closed | getRemainingBuildTime() returns 750 on SiegeTanks after Siege/Unsiege | auto-migrated Component-Logic Milestone-MajorRelease Offset-hunting Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Build a Siege Tank

2. Siege the Tank (maybe need to unsiege too)

3. Use getRemainingBuildTime() on the siege tank

What is the expected output? What do you see instead?

getRemainingBuildTime() shall return 0, but it returns 750

What version of the product are you using? On... | 1.0 | getRemainingBuildTime() returns 750 on SiegeTanks after Siege/Unsiege - ```

What steps will reproduce the problem?

1. Build a Siege Tank

2. Siege the Tank (maybe need to unsiege too)

3. Use getRemainingBuildTime() on the siege tank

What is the expected output? What do you see instead?

getRemainingBuildTime() shall ret... | defect | getremainingbuildtime returns on siegetanks after siege unsiege what steps will reproduce the problem build a siege tank siege the tank maybe need to unsiege too use getremainingbuildtime on the siege tank what is the expected output what do you see instead getremainingbuildtime shall retur... | 1 |

171,429 | 27,118,571,708 | IssuesEvent | 2023-02-15 20:41:30 | mickova/Projekt_Pandik | https://api.github.com/repos/mickova/Projekt_Pandik | opened | Revise PC wireframes | Frontend Design | # Revise all PC wireframes and add new ones

### Tasks

- [x] Create a wireframe for editing a post

- [x] Create a wireframe for displaying all posts

- [x] Add descriptions of functionality to all wireframes | 1.0 | Revise PC wireframes - # Revise all PC wireframes and add new ones

### Tasks

- [x] Create a wireframe for editing a post

- [x] Create a wireframe for displaying all posts

- [x] Add descriptions of functionality to all wireframes | non_defect | revise pc wireframes revise all pc wireframes and add new ones tasks create a wireframe for editing a post create a wireframe for displaying all posts add descriptions of functionality to all wireframes | 0 |

192,397 | 14,615,880,030 | IssuesEvent | 2020-12-22 12:19:09 | mixcore/mix.core | https://api.github.com/repos/mixcore/mix.core | closed | portal > attribute > list > check some items > use delete from dropdownlist > not able to delete multible items | Fixed & Testing |

| 1.0 | portal > attribute > list > check some items > use delete from dropdownlist > not able to delete multible items -

| non_defect | portal attribute list check some items use delete from dropdownlist not able to delete multible items | 0 |

48,194 | 13,067,513,374 | IssuesEvent | 2020-07-31 00:42:12 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | [simulation] error in MC afterprocessing (MCPulseSeriesParticleIDMap) (Trac #1942) | Migrated from Trac combo simulation defect | I was again on the search for simulation of some MCstudies, for which I need the complete I3MCPulse-info (MCPulseMap, ParticleIDMap and I3MCTRee); It took me some while to locate an appropriate one, which I found in dataset 11843 resp. 11642 (IC86:2013 GENIE simulation), one of the latest produced datasets

This datase... | 1.0 | [simulation] error in MC afterprocessing (MCPulseSeriesParticleIDMap) (Trac #1942) - I was again on the search for simulation of some MCstudies, for which I need the complete I3MCPulse-info (MCPulseMap, ParticleIDMap and I3MCTRee); It took me some while to locate an appropriate one, which I found in dataset 11843 resp.... | defect | error in mc afterprocessing mcpulseseriesparticleidmap trac i was again on the search for simulation of some mcstudies for which i need the complete info mcpulsemap particleidmap and it took me some while to locate an appropriate one which i found in dataset resp genie simulation one of... | 1 |

21,155 | 3,463,279,598 | IssuesEvent | 2015-12-21 09:01:44 | netty/netty | https://api.github.com/repos/netty/netty | closed | HTTP/2 DefaultHttp2RemoteFlowController Stream writability notification broken | defect | `DefaultHttp2RemoteFlowController.ListenerWritabilityMonitor` no longer reliably detects when a stream's writability change occurs. `ListenerWritabilityMonitor` was implemented to avoid duplicating iteration over all streams when possible and instead was relying on the `PriorityStreamByteDistributor` to call `write` fo... | 1.0 | HTTP/2 DefaultHttp2RemoteFlowController Stream writability notification broken - `DefaultHttp2RemoteFlowController.ListenerWritabilityMonitor` no longer reliably detects when a stream's writability change occurs. `ListenerWritabilityMonitor` was implemented to avoid duplicating iteration over all streams when possible ... | defect | http stream writability notification broken listenerwritabilitymonitor no longer reliably detects when a stream s writability change occurs listenerwritabilitymonitor was implemented to avoid duplicating iteration over all streams when possible and instead was relying on the prioritystreambytedistributor ... | 1 |

23,843 | 3,863,220,828 | IssuesEvent | 2016-04-08 08:26:55 | FLawrence/brat-linked | https://api.github.com/repos/FLawrence/brat-linked | closed | Automated Bechdel Tester | auto-migrated Priority-High Type-Defect | ```

Need page which runs the Bechdel sparql query on selected document

```

Original issue reported on code.google.com by `k.faith....@googlemail.com` on 4 Jul 2014 at 11:36 | 1.0 | Automated Bechdel Tester - ```

Need page which runs the Bechdel sparql query on selected document

```

Original issue reported on code.google.com by `k.faith....@googlemail.com` on 4 Jul 2014 at 11:36 | defect | automated bechdel tester need page which runs the bechdel sparql query on selected document original issue reported on code google com by k faith googlemail com on jul at | 1 |

46,123 | 9,884,989,999 | IssuesEvent | 2019-06-25 00:25:51 | AstrideUG/DarkBedrock | https://api.github.com/repos/AstrideUG/DarkBedrock | opened | Add AbstractCommand(Map<String, Any?>) | WithCodeExample enhancement feature_request good first issue help wanted | needs: #154

```kotlin

import net.darkdevelopers.darkbedrock.darkness.general.configs.default

import net.darkdevelopers.darkbedrock.darkness.general.configs.getValue

/**

* Created on 25.06.2019 02:23.

* @author Lars Artmann | LartyHD

*/

abstract class AbstractCommand(values: Map<String, Any?>) : Command {

... | 1.0 | Add AbstractCommand(Map<String, Any?>) - needs: #154

```kotlin

import net.darkdevelopers.darkbedrock.darkness.general.configs.default

import net.darkdevelopers.darkbedrock.darkness.general.configs.getValue

/**

* Created on 25.06.2019 02:23.

* @author Lars Artmann | LartyHD

*/

abstract class AbstractComman... | non_defect | add abstractcommand map needs kotlin import net darkdevelopers darkbedrock darkness general configs default import net darkdevelopers darkbedrock darkness general configs getvalue created on author lars artmann lartyhd abstract class abstractcommand values map comm... | 0 |

532 | 2,564,053,055 | IssuesEvent | 2015-02-06 17:11:48 | hlsyounes/vhpop | https://api.github.com/repos/hlsyounes/vhpop | closed | VHPOP-2.2 does not compile with newer version of GCC | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Downloading vhpop-2.2.tar.gz_FILES and unzipping it

2. type ./configure in the terminal

3. type make

What is the expected output? What do you see instead?

The code should get installed without errors. But what I see is as follows:

source='vhpop.cc' object='vhpop.o' libtoo... | 1.0 | VHPOP-2.2 does not compile with newer version of GCC - ```

What steps will reproduce the problem?

1. Downloading vhpop-2.2.tar.gz_FILES and unzipping it

2. type ./configure in the terminal

3. type make

What is the expected output? What do you see instead?

The code should get installed without errors. But what I see is... | defect | vhpop does not compile with newer version of gcc what steps will reproduce the problem downloading vhpop tar gz files and unzipping it type configure in the terminal type make what is the expected output what do you see instead the code should get installed without errors but what i see is... | 1 |

27,315 | 5,331,360,084 | IssuesEvent | 2017-02-15 19:18:21 | nexB/aboutcode-manager | https://api.github.com/repos/nexB/aboutcode-manager | opened | Add fuse 2.9.1 Scan data to /samples and document | documentation user testing | We want to add several (5-6) ScanCode data files to the /samples for ABC Manager starting with jfuse 2.9.1 as an example of a Linux userspace application written in C. This task is to:

- Add a current ScanCode JSON file for fuse 2.91. to the /samples directory

- Create a new section in the README for Samples which... | 1.0 | Add fuse 2.9.1 Scan data to /samples and document - We want to add several (5-6) ScanCode data files to the /samples for ABC Manager starting with jfuse 2.9.1 as an example of a Linux userspace application written in C. This task is to:

- Add a current ScanCode JSON file for fuse 2.91. to the /samples directory

- ... | non_defect | add fuse scan data to samples and document we want to add several scancode data files to the samples for abc manager starting with jfuse as an example of a linux userspace application written in c this task is to add a current scancode json file for fuse to the samples directory c... | 0 |

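4,099 | 2,610,087,373 | IssuesEvent | 2015-02-26 18:26:32 | chrsmith/dsdsdaadf | https://api.github.com/repos/chrsmith/dsdsdaadf | opened | Which Shenzhen clinic is good for acne | auto-migrated Priority-Medium Type-Defect | ```

Which Shenzhen clinic is good for acne [Shenzhen 韩方科颜 (Hanfang Keyan) national hotline 400-869-1818, 24-hour QQ 4008691818]

Shenzhen Hanfang Keyan is a professional acne-removal chain. It is built around a Korean formula, Hanfang Keyan, an authoritative treatment-type product with a national cosmetics registration number and a fine acne remedy. The Hanfang Keyan chain combines the Korean formula with professional "no rebound" healthy acne-removal techniques and an advanced "deluxe colored-light" device, pioneered contract-guaranteed treatment of comedones and acne in China, and has successfully cleared the acne from many customers' faces.

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 7:19 | 1.0 | Which Shenzhen clinic is good for acne - ```

Which Shenzhen clinic is good for acne [Shenzhen 韩方科颜 (Hanfang Keyan) national hotline 400-869-1818, 24-hour QQ 4008691818]

Shenzhen Hanfang Keyan is a professional acne-removal chain. It is built around a Korean formula, Hanfang Keyan, an authoritative treatment-type product with a national cosmetics registration number and a fine acne remedy. The Hanfang Keyan chain combines the Korean formula with professional "no rebound" healthy acne-removal techniques and an advanced "deluxe colored-light" device, pioneered contract-guaranteed treatment of comedones and acne in China, and has successfully cleared the acne from many customers' faces.

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 7:19 | defect | which shenzhen clinic is good for acne which shenzhen clinic is good for acne shenzhen hanfang keyan national hotline hour qq shenzhen hanfang keyan is a professional acne removal chain it is built around a korean formula hanfang keyan an authoritative treatment type product with a national cosmetics registration number and a fine acne remedy the hanfang keyan chain combines the korean formula with professional no rebound healthy acne removal techniques and an advanced deluxe colored light device pioneered contract guaranteed treatment of comedones and acne in china and has successfully cleared the acne from many customers faces original issue reported on code google com by szft com on may at | 1 |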

63,966 | 18,092,323,485 | IssuesEvent | 2021-09-22 04:06:32 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | Window Briefly Flashes White While Launching | T-Defect | ### Steps to reproduce

Launch Element.

### What happened?

The window briefly flashes white before the loading animation is shown.

I have uploaded a short screen recording of the issue to YouTube [here](https://youtu.be/tGlEVuesQjk).

### Operating system

macOS 11.5.2

### Application version

1.8.5

### How did... | 1.0 | Window Briefly Flashes White While Launching - ### Steps to reproduce

Launch Element.

### What happened?

The window briefly flashes white before the loading animation is shown.

I have uploaded a short screen recording of the issue to YouTube [here](https://youtu.be/tGlEVuesQjk).

### Operating system

macOS 11.5... | defect | window briefly flashes white while launching steps to reproduce launch element what happened the window briefly flashes white before the loading animation is shown i have uploaded a short screen recording of the issue to youtube operating system macos application version ... | 1 |

201,382 | 15,802,250,468 | IssuesEvent | 2021-04-03 08:48:57 | Leeyp/ped | https://api.github.com/repos/Leeyp/ped | opened | Incorrect example given in UG for testing (NurseSchedule) | severity.High type.DocumentationBug | The example given in the UG is incorrect. Testers who copy and paste it will not be able to proceed:

<!--session: 1617437426093-73ed2492-c57e-46c7-8165-64bd8167d98a--> | 1.0 | Incorrect example given in UG for testing (NurseSchedule) - The example given in the UG is incorrect. Testers who copy and paste it will not be able to proceed:

<!--session: 1617437426093-73ed2492-c57e-46c... | non_defect | incorrect example given in ug for testing nurseschedule the example given in the ug is incorrect testers who copy and paste it will not be able to proceed | 0 |

789,067 | 27,777,270,795 | IssuesEvent | 2023-03-16 18:07:15 | AY2223S2-CS2113-T13-4/tp | https://api.github.com/repos/AY2223S2-CS2113-T13-4/tp | closed | Create function for task count and module count | type.Story type.Enhancement priority.High | Iterate through lists and count outstanding (unmarked) tasked and modules | 1.0 | Create function for task count and module count - Iterate through lists and count outstanding (unmarked) tasked and modules | non_defect | create function for task count and module count iterate through lists and count outstanding unmarked tasked and modules | 0 |
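
The task in the row above amounts to a filtered count over a list. A minimal sketch, assuming a hypothetical `Item` record with a `done` flag (the project's real task and module classes are not shown in this preview):

```python
from dataclasses import dataclass


@dataclass
class Item:
    # Hypothetical stand-in for a task or module entry.
    name: str
    done: bool = False


def count_outstanding(items: list[Item]) -> int:
    """Count the entries that have not been marked as done."""
    return sum(1 for item in items if not item.done)


tasks = [Item("write report"), Item("submit quiz", done=True), Item("read notes")]
outstanding_tasks = count_outstanding(tasks)
```

The same function can be applied to a list of modules, since it only relies on the `done` flag.
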

78,128 | 27,332,086,485 | IssuesEvent | 2023-02-25 19:05:57 | amyjko/bookish | https://api.github.com/repos/amyjko/bookish | opened | Printing is slow | defect | In many cases, books can just be massive HTML pages with tens of thousands of DOM nodes. It's just not a scalable way to print. We should generate some other approach to this, like precomputing a PDF. | 1.0 | Printing is slow - In many cases, books can just be massive HTML pages with tens of thousands of DOM nodes. It's just not a scalable way to print. We should generate some other approach to this, like precomputing a PDF. | defect | printing is slow in many cases books can just be massive html pages with tens of thousands of dom nodes it s just not a scalable way to print we should generate some other approach to this like precomputing a pdf | 1 |

206,479 | 7,112,703,684 | IssuesEvent | 2018-01-17 17:53:28 | coq/coq | https://api.github.com/repos/coq/coq | closed | Coq no longer builds CoqIDE with liblablgtk2-ocaml on Ubuntu | help wanted kind: regression priority: blocker | #### Version

v8.7, master

#### Operating system

Ubuntu (tested on Bionic, Artful, Zesty, Xenial, Trusty, Precise)

#### Description of the problem

The `./configure` script seems to mis-identify the version of `lablgtk2` when it's installed from the liblablgtk2-ocaml package, resulting in CoqIDE not being ... | 1.0 | Coq no longer builds CoqIDE with liblablgtk2-ocaml on Ubuntu - #### Version

v8.7, master

#### Operating system

Ubuntu (tested on Bionic, Artful, Zesty, Xenial, Trusty, Precise)

#### Description of the problem

The `./configure` script seems to mis-identify the version of `lablgtk2` when it's installed fro... | non_defect | coq no longer builds coqide with ocaml on ubuntu version master operating system ubuntu tested on bionic artful zesty xenial trusty precise description of the problem the configure script seems to mis identify the version of when it s installed from the ocaml pack... | 0 |

8,722 | 2,611,537,296 | IssuesEvent | 2015-02-27 06:06:42 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | Manual placement animation is drawn twice | auto-migrated Priority-Low Type-Defect | ```

Reproduction steps:

1. Make sure manual placement is activated in game scheme

2. Start match

3. Place hedgehogs

Expected outcome:

The teleportation animation is drawn where the hedgehog is placed, but nowhere

else.

Actual outcome:

The teleportation animation is drawn where the hedgehog is placed but also at

the... | 1.0 | Manual placement animation is drawn twice - ```

Reproduction steps:

1. Make sure manual placement is activated in game scheme

2. Start match

3. Place hedgehogs

Expected outcome:

The teleportation animation is drawn where the hedgehog is placed, but nowhere

else.

Actual outcome:

The teleportation animation is drawn w... | defect | manual placement animation is drawn twice reproduction steps make sure manual placement is activated in game scheme start match place hedgehogs expected outcome the teleportation animation is drawn where the hedgehog is placed but nowhere else actual outcome the teleportation animation is drawn w... | 1 |

26,352 | 4,682,386,796 | IssuesEvent | 2016-10-09 08:02:23 | luigirizzo/netmap | https://api.github.com/repos/luigirizzo/netmap | closed | Something wrong with Netmap bridge | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1.[sjm@isms examples]./bridge -i netmap:eth0 -i netmap:eth1

now everythings ok.

2.pull out eth1's cable ,then insert it back to eth1.

now the bridge has been broken.

3.then "ifdown eth1" and "ifup eth1",the bridge works well again.

What is the expected output? What do you ... | 1.0 | Something wrong with Netmap bridge - ```

What steps will reproduce the problem?

1.[sjm@isms examples]./bridge -i netmap:eth0 -i netmap:eth1

now everythings ok.

2.pull out eth1's cable ,then insert it back to eth1.

now the bridge has been broken.

3.then "ifdown eth1" and "ifup eth1",the bridge works well again.

What... | defect | something wrong with netmap bridge what steps will reproduce the problem bridge i netmap i netmap now everythings ok pull out s cable then insert it back to now the bridge has been broken then ifdown and ifup the bridge works well again what is the expected output what do you... | 1 |

14,749 | 25,578,820,420 | IssuesEvent | 2022-12-01 01:27:55 | CameronAuler/ENSM | https://api.github.com/repos/CameronAuler/ENSM | closed | Research and Design Project 2 Check In and Demo | course requirement | - [x] Meet with Devin to go over Research and Design Project 2.

- [x] Create a research and design project 2 demo. | 1.0 | Research and Design Project 2 Check In and Demo - - [x] Meet with Devin to go over Research and Design Project 2.

- [x] Create a research and design project 2 demo. | non_defect | research and design project check in and demo meet with devin to go over research and design project create a research and design project demo | 0 |

115,780 | 17,334,578,068 | IssuesEvent | 2021-07-28 08:39:11 | panasalap/libexpat-without-Vulnerable-Fix | https://api.github.com/repos/panasalap/libexpat-without-Vulnerable-Fix | opened | CVE-2018-20843 (High) detected in libexpatR_2_2_6 | security vulnerability | ## CVE-2018-20843 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>libexpatR_2_2_6</b></p></summary>

<p>

<p>:herb: Expat library: Fast streaming XML parser written in C99; in the proces... | True | CVE-2018-20843 (High) detected in libexpatR_2_2_6 - ## CVE-2018-20843 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>libexpatR_2_2_6</b></p></summary>

<p>

<p>:herb: Expat library: Fas... | non_defect | cve high detected in libexpatr cve high severity vulnerability vulnerable library libexpatr herb expat library fast streaming xml parser written in in the process of migrating from sourceforge to github library home page a href found in head commit a href f... | 0 |

164,192 | 20,364,357,720 | IssuesEvent | 2022-02-21 02:37:48 | turkdevops/headless-recorder | https://api.github.com/repos/turkdevops/headless-recorder | opened | CVE-2022-0639 (Medium) detected in url-parse-1.5.3.tgz | security vulnerability | ## CVE-2022-0639 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>url-parse-1.5.3.tgz</b></p></summary>

<p>Small footprint URL parser that works seamlessly across Node.js and browser ... | True | CVE-2022-0639 (Medium) detected in url-parse-1.5.3.tgz - ## CVE-2022-0639 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>url-parse-1.5.3.tgz</b></p></summary>

<p>Small footprint URL... | non_defect | cve medium detected in url parse tgz cve medium severity vulnerability vulnerable library url parse tgz small footprint url parser that works seamlessly across node js and browser environments library home page a href dependency hierarchy cli service tgz ro... | 0 |

47,479 | 13,056,202,494 | IssuesEvent | 2020-07-30 03:58:45 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | I3Tray finish gets called due to exceptions in constructors/Configure methods (Trac #615) | IceTray Migrated from Trac defect | An exception in service or module constructors causes I3Finish to be called without there being a driving module yet, which seems to confuse I3Finish and makes it throw its own exception. The resulting second exception could be confusing to users..

Here is an example of how this looks like on the standard output:

l... | 1.0 | I3Tray finish gets called due to exceptions in constructors/Configure methods (Trac #615) - An exception in service or module constructors causes I3Finish to be called without there being a driving module yet, which seems to confuse I3Finish and makes it throw its own exception. The resulting second exception could be ... | defect | finish gets called due to exceptions in constructors configure methods trac an exception in service or module constructors causes to be called without there being a driving module yet which seems to confuse and makes it throw its own exception the resulting second exception could be confusing to users ... | 1 |

38,700 | 8,952,334,425 | IssuesEvent | 2019-01-25 16:16:08 | svigerske/ipopt-donotuse | https://api.github.com/repos/svigerske/ipopt-donotuse | closed | Undefined methods in Ipopt::Journal class | Ipopt defect | Issue created by migration from Trac.

Original creator: ycollet

Original creation time: 2010-04-30 14:25:47

Assignee: ipopt-team

Version: 3.8

In the file IpJournalist.[hc]pp, the class Journal is defined.

The prototype of the method SetPrintLevel is defined in the class definition but the implementation is never d... | 1.0 | Undefined methods in Ipopt::Journal class - Issue created by migration from Trac.

Original creator: ycollet

Original creation time: 2010-04-30 14:25:47

Assignee: ipopt-team

Version: 3.8

In the file IpJournalist.[hc]pp, the class Journal is defined.

The prototype of the method SetPrintLevel is defined in the class ... | defect | undefined methods in ipopt journal class issue created by migration from trac original creator ycollet original creation time assignee ipopt team version in the file ipjournalist pp the class journal is defined the prototype of the method setprintlevel is defined in the class definition ... | 1 |

2,759 | 2,607,938,708 | IssuesEvent | 2015-02-26 00:29:55 | chrsmithdemos/minify | https://api.github.com/repos/chrsmithdemos/minify | closed | maxAge is not set to 365 days when query-string ends with number | auto-migrated Priority-Medium Release-2.1.5 Type-Defect | ```

Minify commit/version: trunk

What steps will reproduce the problem?

1. append a number to the query string. The config.php says: " * Note: Despite

this setting, if you include a number at the end of the

* querystring, maxAge will be set to one year. E.g. /min/f=hello.css&123456"

Expected output:

maxAge to be o... | 1.0 | maxAge is not set to 365 days when query-string ends with number - ```

Minify commit/version: trunk

What steps will reproduce the problem?

1. append a number to the query string. The config.php says: " * Note: Despite

this setting, if you include a number at the end of the

* querystring, maxAge will be set to one ye... | defect | maxage is not set to days when query string ends with number minify commit version trunk what steps will reproduce the problem append a number to the query string the config php says note despite this setting if you include a number at the end of the querystring maxage will be set to one year... | 1 |

17,462 | 3,006,634,597 | IssuesEvent | 2015-07-27 11:49:33 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | [Array] GetValue() throws "Invalid number of indices" | defect | ```C#

using Bridge;

using Bridge.Html5;

using System;

using System.Collections.Generic;

using System.Linq;

namespace Demo

{

public class App

{

[Ready]

public static void Main()

{

Console.Log(new[] { "x", "y" }.GetValue(0));

}

}

}

```

**Actual... | 1.0 | [Array] GetValue() throws "Invalid number of indices" - ```C#

using Bridge;

using Bridge.Html5;

using System;

using System.Collections.Generic;

using System.Linq;

namespace Demo

{

public class App

{

[Ready]

public static void Main()

{

Console.Log(new[] { "x", "... | defect | getvalue throws invalid number of indices c using bridge using bridge using system using system collections generic using system linq namespace demo public class app public static void main console log new x y getvalue ... | 1 |

193,135 | 6,881,900,729 | IssuesEvent | 2017-11-21 00:40:18 | minio/minio-java | https://api.github.com/repos/minio/minio-java | closed | Provide Maven support to fetch minio jar along with all dependent jar's | priority: medium | Currently, the maven entry provided here will result in just downloading the minio-3.0.9.jar and not minio-3.0.9-all.jar. I faced quite a bit of problem trying to unit test where the call to instantiate minio object was expecting the xmlPullParser class to be present but was not there in my local repo as its not part o... | 1.0 | Provide Maven support to fetch minio jar along with all dependent jar's - Currently, the maven entry provided here will result in just downloading the minio-3.0.9.jar and not minio-3.0.9-all.jar. I faced quite a bit of problem trying to unit test where the call to instantiate minio object was expecting the xmlPullPars... | non_defect | provide maven support to fetch minio jar along with all dependent jar s currently the maven entry provided here will result in just downloading the minio jar and not minio all jar i faced quite a bit of problem trying to unit test where the call to instantiate minio object was expecting the xmlpullpars... | 0 |

2,988 | 5,346,258,174 | IssuesEvent | 2017-02-17 19:11:05 | OSU-CS361-W17/group21_project2 | https://api.github.com/repos/OSU-CS361-W17/group21_project2 | closed | pop up windows | Requirement | Need a confirmation window for fire

will add the scan feature to that window

also adding basic interactions and confirmations so player feels the game is responsive | 1.0 | pop up windows - Need a confirmation window for fire

will add the scan feature to that window

also adding basic interactions and confirmations so player feels the game is responsive | non_defect | pop up windows need a confirmation window for fire will add the scan feature to that window also adding basic interactions and confirmations so player feels the game is responsive | 0 |

63,464 | 17,672,582,722 | IssuesEvent | 2021-08-23 08:19:55 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | "Enable encryption in settings" should not be shown to users that are unable to modify room encryption | T-Defect S-Minor Easy A-E2EE A-Timeline X-Needs-Design O-Intermediate | ### Steps to reproduce

1. Join an invite-only room where you don't have permission to modify encryption settings.

2. Be shown the following warning:

3. Click "Enable encryption in settings"

4. Find th... | 1.0 | "Enable encryption in settings" should not be shown to users that are unable to modify room encryption - ### Steps to reproduce

1. Join an invite-only room where you don't have permission to modify encryption settings.

2. Be shown the following warning:

suggest that [`Signal.Connect`](https://docs.godotengin... | 1.0 | 4.0 C# `Signal.Connect` Doesn't Work as Documented in `Object.Connect` Documentation - ### Godot version

v4.0.beta14.mono.official [28a24639c]

### System information

Windows 10, .Net SDK 6.0.405

### Issue description

The [4.0 docs for `Object.Connect`](https://docs.godotengine.org/en/latest/classes/class_object.ht... | non_defect | c signal connect doesn t work as documented in object connect documentation godot version mono official system information windows net sdk issue description the suggest that the docs for which are lacking any c entry or object event signal are the preferred ... | 0 |

77,535 | 9,595,887,886 | IssuesEvent | 2019-05-09 17:10:10 | publiclab/spectral-workbench | https://api.github.com/repos/publiclab/spectral-workbench | closed | Frontend for SWB authentication | design | As finalised in https://github.com/publiclab/mapknitter/issues/385, @IshaGupta18 is implementing the front end as

for MK. We can use the same partial files here at SWB as the code should be exactly the same... | 1.0 | Frontend for SWB authentication - As finalised in https://github.com/publiclab/mapknitter/issues/385, @IshaGupta18 is implementing the front end as

for MK. We can use the same partial files here at SWB as t... | non_defect | frontend for swb authentication as finalised in is implementing the front end as for mk we can use the same partial files here at swb as the code should be exactly the same thanks all | 0 |

71,149 | 23,469,595,372 | IssuesEvent | 2022-08-16 20:19:09 | department-of-veterans-affairs/va.gov-cms | https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms | opened | Invalid ARIA attributes on Vet Center Facility services in CMS | Needs refining ⭐️ Sitewide CMS 508/Accessibility 508-defect-1 | ## Description

There are invalid aria attributes on the rows within the Facility services table - aria-vc-name & aria-required-status are not valid aria attributes. ARIA can only be used with valid values.

## Description

Within the edit screens of the CMS, there are multiple components that have an `aria-described... | 1.0 | Invalid ARIA attributes on Vet Center Facility services in CMS - ## Description

There are invalid aria attributes on the rows within the Facility services table - aria-vc-name & aria-required-status are not valid aria attributes. ARIA can only be used with valid values.

## Description

Within the edit screens of th... | defect | invalid aria attributes on vet center facility services in cms description there are invalid aria attributes on the rows within the facility services table aria vc name aria required status are not valid aria attributes aria can only be used with valid values description within the edit screens of th... | 1 |

410,767 | 27,801,011,554 | IssuesEvent | 2023-03-17 15:50:23 | distributethe6ix/70DaysOfServiceMesh | https://api.github.com/repos/distributethe6ix/70DaysOfServiceMesh | closed | Add Welcome workflow #439 | documentation enhancement | <!--Describe your feature -->

Add Welcome workflow that can show welcome message whenever contributors open a PR.

<!-- Additional context about the feature -->

| 1.0 | Add Welcome workflow #439 - <!--Describe your feature -->

Add Welcome workflow that can show welcome message whenever contributors open a PR.

<!-- Additional context about the feature -->

| non_defect | add welcome workflow add welcome workflow that can show welcome message whenever contributors open a pr | 0 |

212,862 | 16,501,917,422 | IssuesEvent | 2021-05-25 15:16:35 | mantidproject/mantid | https://api.github.com/repos/mantidproject/mantid | closed | Mantid Basic Course: Nice to have changes | Documentation Maintenance | From Manual testing: https://github.com/mantidproject/mantid/issues/30031

- [x] Update [Downloads image](https://docs.mantidproject.org/nightly/_images/MantidDownload_42.png) to be 5.1 or 6.0

- [x] [Place larger emphasis](https://docs.mantidproject.org/nightly/tutorials/mantid_basic_course/getting_started/getting_s... | 1.0 | Mantid Basic Course: Nice to have changes - From Manual testing: https://github.com/mantidproject/mantid/issues/30031

- [x] Update [Downloads image](https://docs.mantidproject.org/nightly/_images/MantidDownload_42.png) to be 5.1 or 6.0

- [x] [Place larger emphasis](https://docs.mantidproject.org/nightly/tutorials/m... | non_defect | mantid basic course nice to have changes from manual testing update to be or on needing contact info for issues to be resolved with load dialog lists say using the mari files in tcd update to side step toml inputs | 0 |

45,412 | 12,796,765,256 | IssuesEvent | 2020-07-02 11:03:33 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Derby emulation of all(T...) is incorrect | C: DB: Derby C: Functionality E: All Editions P: Medium T: Defect | This query here doesn't work correctly in Derby:

```java

assertEquals(Arrays.asList(), create().select(TBook_ID())

.from(TBook())

.where(TBook_ID().equal(all(1, 2)))

.orderBy(TBook_ID()).fetch(TBook_ID()));

```

The generated SQL is:

```sql

select "TEST"."T_BOOK"."ID"

from "TEST"."T_BOOK"

wh... | 1.0 | Derby emulation of all(T...) is incorrect - This query here doesn't work correctly in Derby:

```java

assertEquals(Arrays.asList(), create().select(TBook_ID())

.from(TBook())

.where(TBook_ID().equal(all(1, 2)))

.orderBy(TBook_ID()).fetch(TBook_ID()));

```

The generated SQL is:

```sql

select "T... | defect | derby emulation of all t is incorrect this query here doesn t work correctly in derby java assertequals arrays aslist create select tbook id from tbook where tbook id equal all orderby tbook id fetch tbook id the generated sql is sql select t... | 1 |

49,813 | 13,187,276,107 | IssuesEvent | 2020-08-13 02:54:05 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | Numerical inconsistencies in SimplePropagator (Trac #2127) | Incomplete Migration Migrated from Trac combo simulation defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2127">https://code.icecube.wisc.edu/ticket/2127</a>, reported by chaack and owned by jvansanten</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2018-01-08T13:47:56",

"description": "The particle returned f... | 1.0 | Numerical inconsistencies in SimplePropagator (Trac #2127) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2127">https://code.icecube.wisc.edu/ticket/2127</a>, reported by chaack and owned by jvansanten</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2018-... | defect | numerical inconsistencies in simplepropagator trac migrated from json status closed changetime description the particle returned from simplepropagator have slightly different compared to the input particle this is an issue within proposal as the are c... | 1 |

190,709 | 14,570,176,193 | IssuesEvent | 2020-12-17 14:04:03 | zeebe-io/zeebe | https://api.github.com/repos/zeebe-io/zeebe | closed | io.netty.channel.unix.Errors$NativeIoException: bind(..) failed: Address already in use | Impact: Testing Priority: Mid Scope: broker Severity: High Status: Planned Type: Unstable Test | **Summary**

Many different tests fail once in a while because of `io.netty.channel.unix.Errors$NativeIoException: bind(..) failed: Address already in use`.

I know the issue is already discussed multiple times in individual flaky test issues, but I'd like to open this issue to find a solution to the bigger problem... | 2.0 | io.netty.channel.unix.Errors$NativeIoException: bind(..) failed: Address already in use - **Summary**

Many different tests fail once in a while because of `io.netty.channel.unix.Errors$NativeIoException: bind(..) failed: Address already in use`.

I know the issue is already discussed multiple times in individual f... | non_defect | io netty channel unix errors nativeioexception bind failed address already in use summary many different tests fail once in a while because of io netty channel unix errors nativeioexception bind failed address already in use i know the issue is already discussed multiple times in individual f... | 0 |

254,299 | 19,192,060,704 | IssuesEvent | 2021-12-06 02:42:49 | MikeTheNose/SystemAnalysisProject | https://api.github.com/repos/MikeTheNose/SystemAnalysisProject | closed | Task 10 | documentation | 10.Create a decision table to capture the process logic for your system (use the

reduced version on page 204, fig 7-19). The table should contain at least 2

conditions and 4 courses of action. | 1.0 | Task 10 - 10.Create a decision table to capture the process logic for your system (use the

reduced version on page 204, fig 7-19). The table should contain at least 2

conditions and 4 courses of action. | non_defect | task create a decision table to capture the process logic for your system use the reduced version on page fig the table should contain at least conditions and courses of action | 0 |

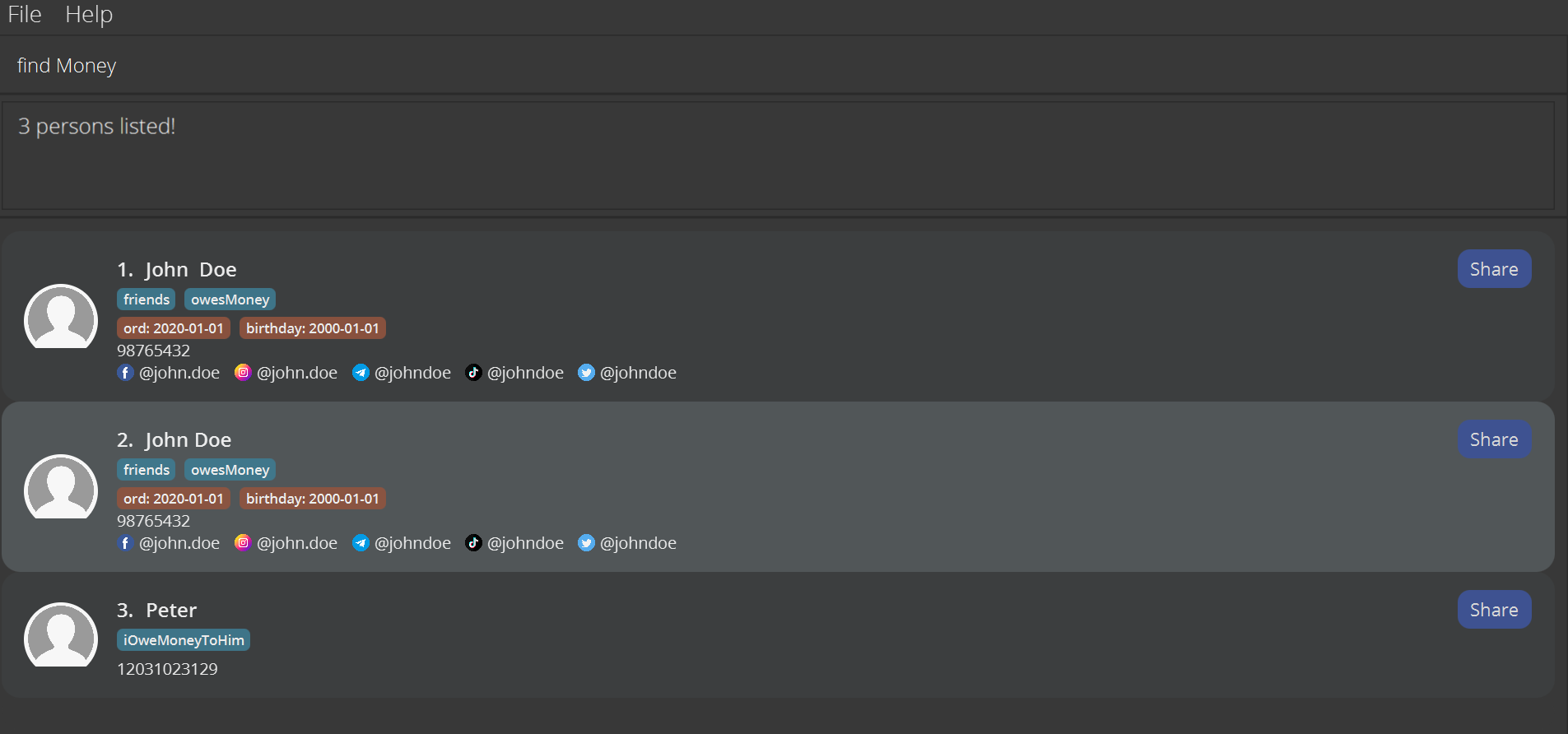

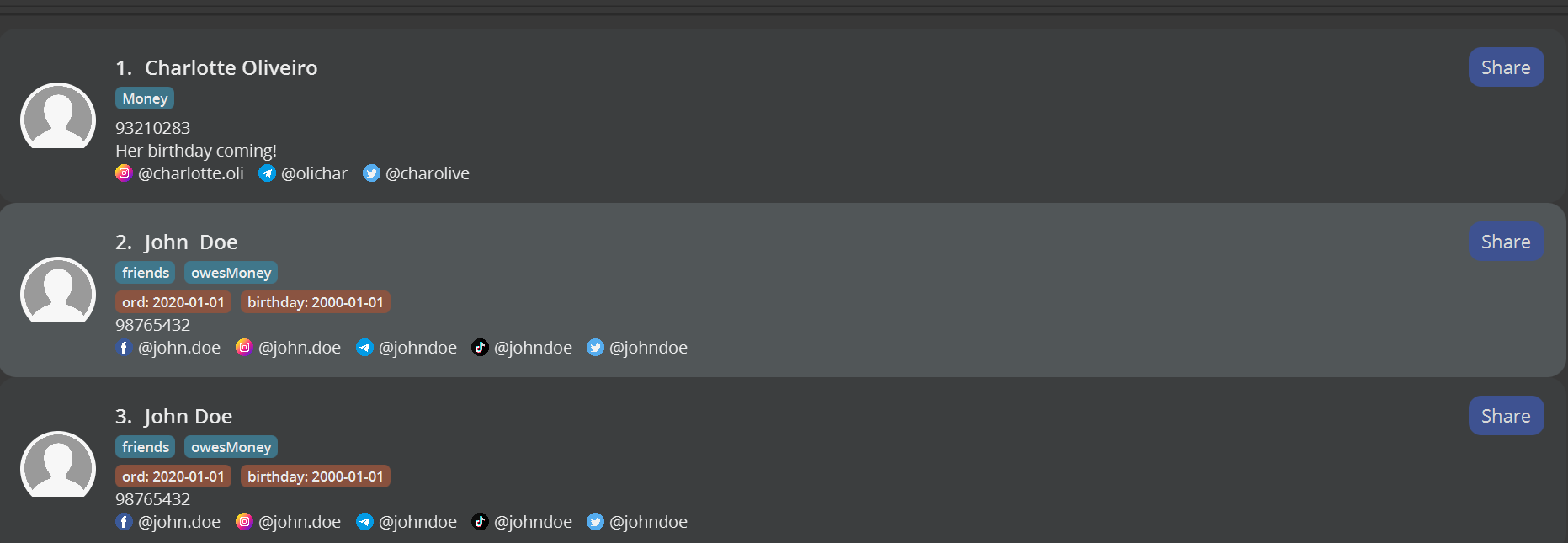

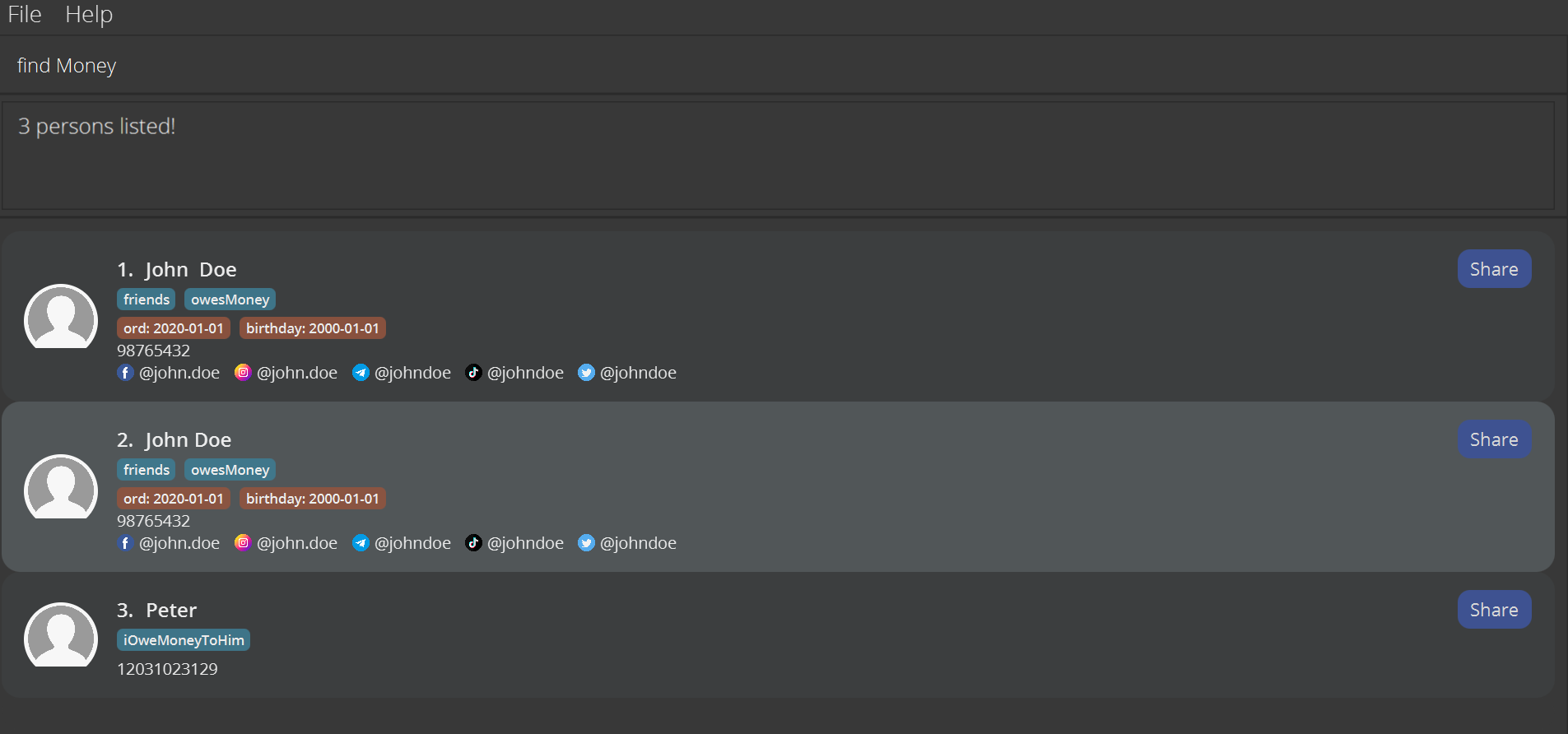

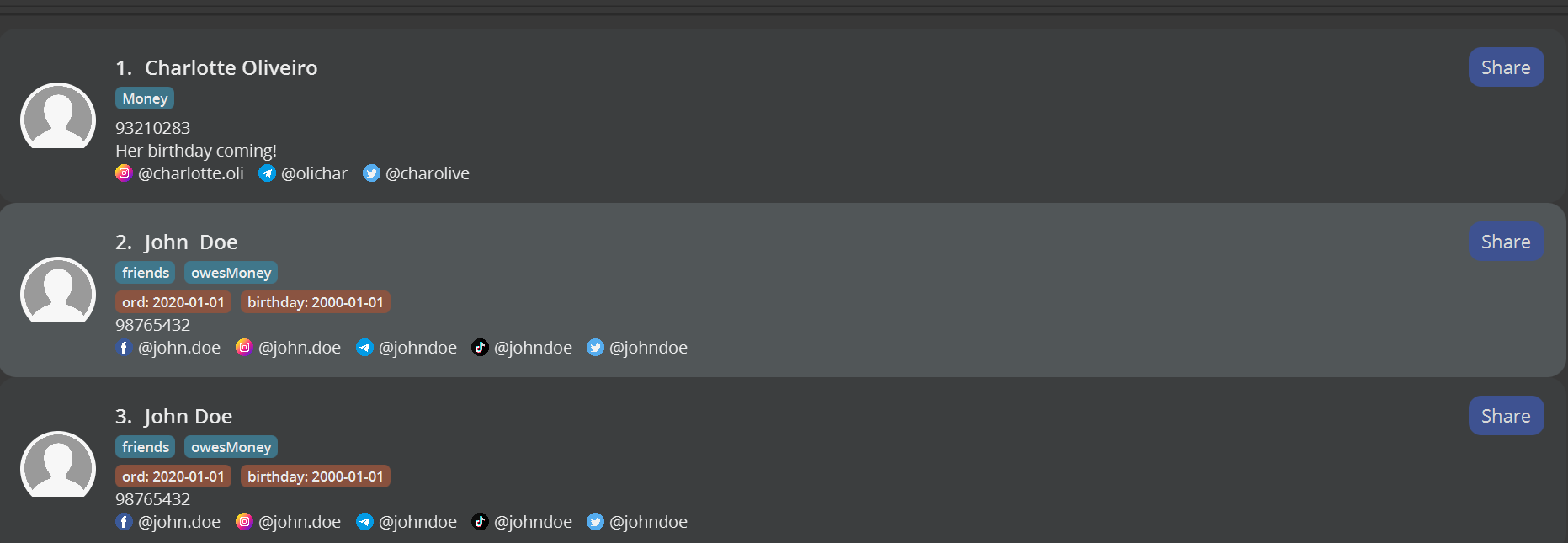

244,250 | 18,751,960,958 | IssuesEvent | 2021-11-05 04:02:24 | AY2122S1-CS2103T-F11-4/tp | https://api.github.com/repos/AY2122S1-CS2103T-F11-4/tp | closed | [PE-D] Find Tag Command | documentation duplicate find command |

Finding tags using the find t/money returns all users with tags with money ... | 1.0 | [PE-D] Find Tag Command -

Finding tags using the find t/money returns all u... | non_defect | find tag command finding tags using the find t money returns all users with tags with money inside them instead of only tags with money labels severity low type functionalitybug original ronaldtansingwei ped | 0 |

286,277 | 8,785,977,023 | IssuesEvent | 2018-12-20 14:33:36 | toobigtoignore/issf | https://api.github.com/repos/toobigtoignore/issf | closed | Certain profiles do not show the correct date range on their profile page (or in the generated report) | backend bug hackathon low priority | **Describe the bug**

Profiles do not always show the correct date range. Sometimes only the first year will come up, i.e 2015 instead of 2015-2016. This also occurs when a PDF report is generated of that record.

**To Reproduce**

Steps to reproduce the behavior:

1. Open an SSF Profile record that you know has a da... | 1.0 | Certain profiles do not show the correct date range on their profile page (or in the generated report) - **Describe the bug**

Profiles do not always show the correct date range. Sometimes only the first year will come up, i.e 2015 instead of 2015-2016. This also occurs when a PDF report is generated of that record.

... | non_defect | certain profiles do not show the correct date range on their profile page or in the generated report describe the bug profiles do not always show the correct date range sometimes only the first year will come up i e instead of this also occurs when a pdf report is generated of that record to rep... | 0 |

82,084 | 31,935,042,328 | IssuesEvent | 2023-09-19 09:54:05 | nats-io/nats.go | https://api.github.com/repos/nats-io/nats.go | closed | Subscribe callback can be triggered after close is called | defect | ### What version were you using?

We're currently using ` github.com/nats-io/nats.go v1.21 .

### What environment was the server running in?

This was detected on a linux CI machine.

### Is this defect reproducible?

I've copied the following snippets from our full [codebase](https://github.com/statechannels/go-nitr... | 1.0 | Subscribe callback can be triggered after close is called - ### What version were you using?

We're currently using ` github.com/nats-io/nats.go v1.21 .

### What environment was the server running in?

This was detected on a linux CI machine.

### Is this defect reproducible?

I've copied the following snippets from ... | defect | subscribe callback can be triggered after close is called what version were you using we re currently using github com nats io nats go what environment was the server running in this was detected on a linux ci machine is this defect reproducible i ve copied the following snippets from ou... | 1 |

320,557 | 9,782,381,276 | IssuesEvent | 2019-06-07 23:19:50 | lightingft/appinventor-sources | https://api.github.com/repos/lightingft/appinventor-sources | reopened | Data basic properties (label and color) | Part: Component Priority: Medium Status: In Progress Type: Feature | For the Data Component, two basic properties should be implemented:

- [x] Label

- [x] Color | 1.0 | Data basic properties (label and color) - For the Data Component, two basic properties should be implemented:

- [x] Label

- [x] Color | non_defect | data basic properties label and color for the data component two basic properties should be implemented label color | 0 |

37,982 | 8,621,502,716 | IssuesEvent | 2018-11-20 17:29:58 | hazelcast/hazelcast-nodejs-client | https://api.github.com/repos/hazelcast/hazelcast-nodejs-client | opened | benchmark/SimpleMapBenchmark uses old .getMap api | Priority: Low Type: Defect | this benchmark does not work with current master | 1.0 | benchmark/SimpleMapBenchmark uses old .getMap api - this benchmark does not work with current master | defect | benchmark simplemapbenchmark uses old getmap api this benchmark does not work with current master | 1 |

51,572 | 13,207,528,480 | IssuesEvent | 2020-08-14 23:27:46 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | USE_GOLD auto enabled on mac os x (Trac #641) | Incomplete Migration Migrated from Trac cmake defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/641">https://code.icecube.wisc.edu/projects/icecube/ticket/641</a>, reported by negaand owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-11-23T03:37:57",

"_ts": "1416713877... | 1.0 | USE_GOLD auto enabled on mac os x (Trac #641) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/641">https://code.icecube.wisc.edu/projects/icecube/ticket/641</a>, reported by negaand owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime... | defect | use gold auto enabled on mac os x trac migrated from json status closed changetime ts description see n reporter nega cc resolution fixed time component cmake summary use gol... | 1 |

574,784 | 17,023,874,201 | IssuesEvent | 2021-07-03 04:18:22 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Make the tags editable in the browse interface | Component: website Priority: minor Resolution: wontfix Type: enhancement | **[Submitted to the original trac issue database at 11.01am, Monday, 12th August 2013]**

Yesterday I wanted to edit http://www.openstreetmap.org/browse/node/432463849 which had the wrong place value. To do that I had to open ID (or another editor). This is unneccesarily burdensome since it didn't involve any change of... | 1.0 | Make the tags editable in the browse interface - **[Submitted to the original trac issue database at 11.01am, Monday, 12th August 2013]**

Yesterday I wanted to edit http://www.openstreetmap.org/browse/node/432463849 which had the wrong place value. To do that I had to open ID (or another editor). This is unneccesarily... | non_defect | make the tags editable in the browse interface yesterday i wanted to edit which had the wrong place value to do that i had to open id or another editor this is unneccesarily burdensome since it didn t involve any change of coordinates it should be possible to edit add and remove values for nodes wa... | 0 |

25,100 | 4,203,768,265 | IssuesEvent | 2016-06-28 07:18:45 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | DataTypeException: Cannot convert from false in Jooq v.3.8.2 | C: DB: MariaDB C: DB: MySQL C: Functionality P: Urgent T: Defect | I have MySQL with maven plugin generated pojos and DAOs. With the jooq v.3.7.3 I dont have this Exceptions:

```

Caused by: org.jooq.exception.MappingException: An error ocurred when mapping record to class com.stil.generated.mysql.tables.pojos.SystemProperties

at org.jooq.impl.DefaultRecordMapper$MutablePOJOMapper.... | 1.0 | DataTypeException: Cannot convert from false in Jooq v.3.8.2 - I have MySQL with maven plugin generated pojos and DAOs. With the jooq v.3.7.3 I dont have this Exceptions:

```

Caused by: org.jooq.exception.MappingException: An error ocurred when mapping record to class com.stil.generated.mysql.tables.pojos.SystemPrope... | defect | datatypeexception cannot convert from false in jooq v i have mysql with maven plugin generated pojos and daos with the jooq v i dont have this exceptions caused by org jooq exception mappingexception an error ocurred when mapping record to class com stil generated mysql tables pojos systemprope... | 1 |

290,645 | 25,082,599,869 | IssuesEvent | 2022-11-07 20:42:30 | irods/irods | https://api.github.com/repos/irods/irods | closed | Remove `rule_texts_for_tests.py` | testing refactor | - [x] main

- [x] 4-3-stable

---

Consider moving these into their respsective test.

Having part of the test logic in a separate file introduces more hurdles to understanding the test.

_Originally posted by @korydraughn in https://github.com/irods/irods/pull/6676#discussion_r1010702458_

| 1.0 | Remove `rule_texts_for_tests.py` - - [x] main

- [x] 4-3-stable

---

Consider moving these into their respsective test.

Having part of the test logic in a separate file introduces more hurdles to understanding the test.

_Originally posted by @korydraughn in https://github.com/irods/irods/pull/6676#discussion_r10... | non_defect | remove rule texts for tests py main stable consider moving these into their respsective test having part of the test logic in a separate file introduces more hurdles to understanding the test originally posted by korydraughn in | 0 |

77,567 | 27,054,726,270 | IssuesEvent | 2023-02-13 15:27:10 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | opened | [🐛 Bug]: Network Interception doesn't work in JavaScript properly, always giving back status 200! | I-defect needs-triaging | ### What happened?

I'm using Selenium with Javascript.

async function mockResponseStatus(url: string){

const connection = await driver.createCDPConnection('page');

let httpResponse = new HttpResponse(url);

httpResponse.addHeaders("Content-Type", "application/json")

httpResponse.body = "test";

... | 1.0 | [🐛 Bug]: Network Interception doesn't work in JavaScript properly, always giving back status 200! - ### What happened?

I'm using Selenium with Javascript.

async function mockResponseStatus(url: string){

const connection = await driver.createCDPConnection('page');

let httpResponse = new HttpResponse(url);... | defect | network interception doesn t work in javascript properly always giving back status what happened i m using selenium with javascript async function mockresponsestatus url string const connection await driver createcdpconnection page let httpresponse new httpresponse url htt... | 1 |

32,106 | 6,715,706,308 | IssuesEvent | 2017-10-13 22:38:10 | zotonic/zotonic | https://api.github.com/repos/zotonic/zotonic | closed | gen_smtp timeouts marked as permanent failure | defect | Right now we classify timeouts (after some retries) as permanent failure and mark the email address failed in email_status administration.

This should be a temporary failure.

Also, the current connect timeout in gen_smtp is hard coded to 5000 msec. This should become an option, as apparently 5000 msec is not enou... | 1.0 | gen_smtp timeouts marked as permanent failure - Right now we classify timeouts (after some retries) as permanent failure and mark the email address failed in email_status administration.

This should be a temporary failure.

Also, the current connect timeout in gen_smtp is hard coded to 5000 msec. This should becom... | defect | gen smtp timeouts marked as permanent failure right now we classify timeouts after some retries as permanent failure and mark the email address failed in email status administration this should be a temporary failure also the current connect timeout in gen smtp is hard coded to msec this should become a... | 1 |

47,294 | 11,996,756,921 | IssuesEvent | 2020-04-08 17:19:06 | GoogleCloudPlatform/python-docs-samples | https://api.github.com/repos/GoogleCloudPlatform/python-docs-samples | opened | healthcare.api-client.dicom.dicomweb_test: test_dicomweb_delete_study failed | buildcop: issue priority: p1 type: bug | This test failed!

To configure my behavior, see [the Build Cop Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/buildcop).

If I'm commenting on this issue too often, add the `buildcop: quiet` label and

I will stop commenting.

---

commit: 442efa40a52831655aa025cd60a5c6bc064c... | 1.0 | healthcare.api-client.dicom.dicomweb_test: test_dicomweb_delete_study failed - This test failed!

To configure my behavior, see [the Build Cop Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/master/packages/buildcop).

If I'm commenting on this issue too often, add the `buildcop: quiet` label... | non_defect | healthcare api client dicom dicomweb test test dicomweb delete study failed this test failed to configure my behavior see if i m commenting on this issue too often add the buildcop quiet label and i will stop commenting commit buildurl status failed | 0 |

79,936 | 7,733,764,236 | IssuesEvent | 2018-05-26 15:45:50 | NMGRL/pychron | https://api.github.com/repos/NMGRL/pychron | closed | Error on deleting IA | Bug Data Specific Ready to close Tested OK Testing Required | active branch=None

active analyses=66196-01,66196-02,66196-03,66196-04,66196-05,66196-06,66196-07,66196-08,66196-09

description=""

Traceback

```

Traceback (most recent call last):

File "/Users/mcintosh/miniconda3/envs/pychron3/lib/python3.5/site-packages/pyface/ui/qt4/action/action_item.py", line 163, in _qt4_on_t... | 2.0 | Error on deleting IA - active branch=None

active analyses=66196-01,66196-02,66196-03,66196-04,66196-05,66196-06,66196-07,66196-08,66196-09

description=""

Traceback

```

Traceback (most recent call last):

File "/Users/mcintosh/miniconda3/envs/pychron3/lib/python3.5/site-packages/pyface/ui/qt4/action/action_item.py",... | non_defect | error on deleting ia active branch none active analyses description traceback traceback most recent call last file users mcintosh envs lib site packages pyface ui action action item py line in on triggered self controller perform action actio... | 0 |

71,346 | 23,579,402,025 | IssuesEvent | 2022-08-23 06:07:46 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | Logger name repeated twice | T: Defect | ### Expected behavior

```

2022-08-23 08:51:15.717 [Test worker] INFO org.jooq.Constants - ....

```

The `org.jooq.Constants` correctly reflects the source of the message.

### Actual behavior

```

2022-08-23 08:51:15.717 [Test worker] INFO org.jooq.Constants.org.jooq.Constants -

```

```

2022-08-23 08:51:1... | 1.0 | Logger name repeated twice - ### Expected behavior

```

2022-08-23 08:51:15.717 [Test worker] INFO org.jooq.Constants - ....

```

The `org.jooq.Constants` correctly reflects the source of the message.

### Actual behavior

```

2022-08-23 08:51:15.717 [Test worker] INFO org.jooq.Constants.org.jooq.Constants -

... | defect | logger name repeated twice expected behavior info org jooq constants the org jooq constants correctly reflects the source of the message actual behavior info org jooq constants org jooq constants debug org jooq to... | 1 |

45,263 | 12,692,520,332 | IssuesEvent | 2020-06-21 23:04:32 | cakephp/bake | https://api.github.com/repos/cakephp/bake | closed | bake --connection doesn't work for anything other than the 'default' database connection in CakePHP 4 | Defect On Hold | This is a (multiple allowed):

bug

* CakePHP Version: 4.0.8

* Platform and Target: CentOS. Command line.

### What you did

As per https://stackoverflow.com/questions/62215177/cakephp-4-bake-wont-let-me-use-a-different-database-connection-to-default I have configured `config/app_local.php` so there are connection... | 1.0 | bake --connection doesn't work for anything other than the 'default' database connection in CakePHP 4 - This is a (multiple allowed):

bug

* CakePHP Version: 4.0.8

* Platform and Target: CentOS. Command line.

### What you did

As per https://stackoverflow.com/questions/62215177/cakephp-4-bake-wont-let-me-use-a-d... | defect | bake connection doesn t work for anything other than the default database connection in cakephp this is a multiple allowed bug cakephp version platform and target centos command line what you did as per i have configured config app local php so there are connections to differ... | 1 |

77,969 | 27,257,957,932 | IssuesEvent | 2023-02-22 12:58:07 | EightShapes/specs-plugin-feedback | https://api.github.com/repos/EightShapes/specs-plugin-feedback | closed | Does not work with SAP Stencils | defect wontfix | I downloaded the stencils and tried it with the plugin, but it does not work :-( It does not produce specs :-(

| 1.0 | Does not work with SAP Stencils - I downloaded the stencils and tried it with the plugin, but it does not work :-( It does not produce specs :-(

| defect | does not work with sap stencils i downloaded the stencils and tried it with the plugin but it does not work it does not produce specs | 1 |

34,500 | 14,410,621,134 | IssuesEvent | 2020-12-04 05:22:05 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | [Index Patterns] Field Format 'undefined' not found errors | Feature:FieldFormatters Feature:Index Patterns Team:AppServices blocker bug v7.11.0 | **Kibana version:**

master

**Describe the bug:**

Index patterns with scripted fields cause `Field Format 'undefined' not found errors` in Discover, breaking the data table rendering

**Steps to reproduce:**

1. Add kibana_sample_data_logs data

2. Go to Discover

3. Select kibana_sample_data_logs as index patter... | 1.0 | [Index Patterns] Field Format 'undefined' not found errors - **Kibana version:**

master

**Describe the bug:**

Index patterns with scripted fields cause `Field Format 'undefined' not found errors` in Discover, breaking the data table rendering

**Steps to reproduce:**

1. Add kibana_sample_data_logs data

2. Go t... | non_defect | field format undefined not found errors kibana version master describe the bug index patterns with scripted fields cause field format undefined not found errors in discover breaking the data table rendering steps to reproduce add kibana sample data logs data go to discover ... | 0 |

15,771 | 2,869,064,866 | IssuesEvent | 2015-06-05 23:02:52 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | include additional options in pub build? | Area-Pkg Pkg-Polymer PolymerMilestone-Later Priority-Medium Triaged Type-Defect | some options we've heard requested from users:

(a) allow to choose whether or not to include certain polyfills (e.g. if you intend to run only on a browser that has native support for shadow dom, for example)

(b) merge files together (e.g. single deploy target)

I'm not sure yet that the right solution is to make polym... | 1.0 | include additional options in pub build? - some options we've heard requested from users:

(a) allow to choose whether or not to include certain polyfills (e.g. if you intend to run only on a browser that has native support for shadow dom, for example)

(b) merge files together (e.g. single deploy target)

I'm not sure y... | defect | include additional options in pub build some options we ve heard requested from users a allow to choose whether or not to include certain polyfills e g if you intend to run only on a browser that has native support for shadow dom for example b merge files together e g single deploy target i m not sure y... | 1 |

775,690 | 27,235,409,986 | IssuesEvent | 2023-02-21 15:59:12 | ascheid/itsg33-pbmm-issue-gen | https://api.github.com/repos/ascheid/itsg33-pbmm-issue-gen | closed | AC-1: Access Control Policy And Procedures | Priority: P1 Control: AC-1 Class: Technical Suggested Assignment: IT Security Function ITSG-33 | #Control Definition

(A) The organization develops, documents, and disseminates to [Assignment: organization-defined personnel or roles]:

(a) An access control policy that addresses purpose, scope, roles, responsibilities, management commitment, coordination among organizational entities, and compliance; and

(b) Procedu... | 1.0 | AC-1: Access Control Policy And Procedures - #Control Definition

(A) The organization develops, documents, and disseminates to [Assignment: organization-defined personnel or roles]:

(a) An access control policy that addresses purpose, scope, roles, responsibilities, management commitment, coordination among organizatio... | non_defect | ac access control policy and procedures control definition a the organization develops documents and disseminates to a an access control policy that addresses purpose scope roles responsibilities management commitment coordination among organizational entities and compliance and b procedures to ... | 0 |

23,021 | 3,750,737,538 | IssuesEvent | 2016-03-11 08:50:48 | contao/core | https://api.github.com/repos/contao/core | closed | E_DEPRECATED is not respected | defect | Contao's error log grows by several MB within a few hours because Contao does not respect my PHP settings, which specify that I do not want deprecation warnings logged or displayed.

The problem is now severe because deprecation messages were added to generateFrontendUrl. | 1.0 | E_DEPRECATED is not respected - Contao's error log grows by several MB within a few hours because Contao does not respect my PHP settings, which specify that I do not want deprecation warnings logged or displayed.

The problem is now severe because deprecation messages were added to generateFrontendUrl. | defect | e deprecated is not respected contao s error log grows by several mb within a few hours because contao does not respect my php settings which specify that i do not want deprecation warnings logged or displayed the problem is now severe because deprecation messages were added to generatefrontendurl | 1 |

2,083 | 2,603,976,007 | IssuesEvent | 2015-02-24 19:01:31 | chrsmith/nishazi6 | https://api.github.com/repos/chrsmith/nishazi6 | opened | Shenyang: what is going on with granules growing on the coronal sulcus | auto-migrated Priority-Medium Type-Defect | ```

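Shenyang: what is going on with granules growing on the coronal sulcus 〓 Shenyang Military Region Political Department Hospital, STDs 〓 TEL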