Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 757 | labels stringlengths 4 664 | body stringlengths 3 261k | index stringclasses 10 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 232k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
5,234 | 2,573,224,133 | IssuesEvent | 2015-02-11 07:40:09 | HubTurbo/HubTurbo | https://api.github.com/repos/HubTurbo/HubTurbo | closed | Users can see the activity history of a member | feature-collaborators priority.low status.accepted type.story | * Parent(s): #73

<hr>

This should include comments, issue edits, (commits?) etc. | 1.0 | Users can see the activity history of a member - * Parent(s): #73

<hr>

This should include comments, issue edits, (commits?) etc. | non_defect | users can see the activity history of a member parent s this should include comments issue edits commits etc | 0 |

41,054 | 10,278,728,132 | IssuesEvent | 2019-08-25 16:45:20 | joshuaulrich/IBrokers | https://api.github.com/repos/joshuaulrich/IBrokers | closed | Connot connect with tws | Priority-Medium Type-Defect auto-migrated | ```

What steps will reproduce the problem?

1. run tws. API opened.

2. tws<-twsConnect()

3.

What is the expected output? What do you see instead?

Error in structure(list(s, clientId = clientId, port = port, server.version

= SERVER_VERSION, :

object "SERVER_VERSION" not found

What version of the product are you us... | 1.0 | Connot connect with tws - ```

What steps will reproduce the problem?

1. run tws. API opened.

2. tws<-twsConnect()

3.

What is the expected output? What do you see instead?

Error in structure(list(s, clientId = clientId, port = port, server.version

= SERVER_VERSION, :

object "SERVER_VERSION" not found

What version... | defect | connot connect with tws what steps will reproduce the problem run tws api opened tws twsconnect what is the expected output what do you see instead error in structure list s clientid clientid port port server version server version object server version not found what version... | 1 |

24,216 | 3,925,281,559 | IssuesEvent | 2016-04-22 18:21:35 | CMU-CREATE-Lab/create-lab-visual-programmer | https://api.github.com/repos/CMU-CREATE-Lab/create-lab-visual-programmer | closed | Manually entering servo or LED value sometimes has no effect | auto-migrated Priority-Medium Type-Defect | ```

Report from Tom...

I found a really weird bug in the latest visual programmer (pointed out to me

during a screenshare debug session with a customer). Try this:

* Turn on a servo

* Slide the servo to a position

* Now use the box and set that servo to 90 degrees by typing in 90 and hitting

enter

On mine, the sli... | 1.0 | Manually entering servo or LED value sometimes has no effect - ```

Report from Tom...

I found a really weird bug in the latest visual programmer (pointed out to me

during a screenshare debug session with a customer). Try this:

* Turn on a servo

* Slide the servo to a position

* Now use the box and set that servo to ... | defect | manually entering servo or led value sometimes has no effect report from tom i found a really weird bug in the latest visual programmer pointed out to me during a screenshare debug session with a customer try this turn on a servo slide the servo to a position now use the box and set that servo to ... | 1 |

257,158 | 27,561,787,420 | IssuesEvent | 2023-03-07 22:46:22 | samqws-marketing/box_box-ui-elements | https://api.github.com/repos/samqws-marketing/box_box-ui-elements | closed | CVE-2022-29244 (High) detected in npm-6.13.1.tgz - autoclosed | security vulnerability | ## CVE-2022-29244 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>npm-6.13.1.tgz</b></p></summary>

<p>a package manager for JavaScript</p>

<p>Library home page: <a href="https://regist... | True | CVE-2022-29244 (High) detected in npm-6.13.1.tgz - autoclosed - ## CVE-2022-29244 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>npm-6.13.1.tgz</b></p></summary>

<p>a package manager ... | non_defect | cve high detected in npm tgz autoclosed cve high severity vulnerability vulnerable library npm tgz a package manager for javascript library home page a href path to dependency file package json path to vulnerable library node modules npm package json dependen... | 0 |

70,111 | 22,952,113,016 | IssuesEvent | 2022-07-19 08:22:16 | vector-im/element-ios | https://api.github.com/repos/vector-im/element-ios | closed | Mobile FTUE: ReCaptcha form is laggy | T-Defect S-Minor Z-FTUE | The form shown as part of the new FTUE registration flow is slow to respond to taps. | 1.0 | Mobile FTUE: ReCaptcha form is laggy - The form shown as part of the new FTUE registration flow is slow to respond to taps. | defect | mobile ftue recaptcha form is laggy the form shown as part of the new ftue registration flow is slow to respond to taps | 1 |

81,304 | 30,790,025,154 | IssuesEvent | 2023-07-31 15:32:59 | cakephp/cakephp | https://api.github.com/repos/cakephp/cakephp | opened | Wrong relative date / failed unit test on specific dates | defect | ### Description

https://github.com/cakephp/cakephp/blob/e28e6125405bcf447d5c7120b3af8f0f4f47feff/tests/TestCase/I18n/DateTest.php#L505-L507

This tests fails on "July, 31st", because `-5 months -2 days` results in "March, 1st" due to PHP's overflow behavior (here in `DateTimeImmutable`), as described here: https://w... | 1.0 | Wrong relative date / failed unit test on specific dates - ### Description

https://github.com/cakephp/cakephp/blob/e28e6125405bcf447d5c7120b3af8f0f4f47feff/tests/TestCase/I18n/DateTest.php#L505-L507

This tests fails on "July, 31st", because `-5 months -2 days` results in "March, 1st" due to PHP's overflow behavior ... | defect | wrong relative date failed unit test on specific dates description this tests fails on july because months days results in march due to php s overflow behavior here in datetimeimmutable as described here example other tests will fail in on other dates the three tests abov... | 1 |

39,512 | 9,525,017,088 | IssuesEvent | 2019-04-28 08:51:52 | cakephp/bake | https://api.github.com/repos/cakephp/bake | closed | Bake model: Cache key `myapp_cake_model_default_"user"` contains invalid characters | Defect | ### Environment:

CakePHP 4.x

Bake 4.x

PHP 7.3.2

PostgreSQL 11.2

### What I did:

bin/cake bake model Entry

### Result (not as expected):

```

Exception: Cache key `myapp_cake_model_default_"user"` contains invalid characters. You cannot use /, \, <, >, ?, :, |, *, or " in cache keys. in [/usr/local/.../lfc... | 1.0 | Bake model: Cache key `myapp_cake_model_default_"user"` contains invalid characters - ### Environment:

CakePHP 4.x

Bake 4.x

PHP 7.3.2

PostgreSQL 11.2

### What I did:

bin/cake bake model Entry

### Result (not as expected):

```

Exception: Cache key `myapp_cake_model_default_"user"` contains invalid charac... | defect | bake model cache key myapp cake model default user contains invalid characters environment cakephp x bake x php postgresql what i did bin cake bake model entry result not as expected exception cache key myapp cake model default user contains invalid charact... | 1 |

26,598 | 4,770,549,298 | IssuesEvent | 2016-10-26 15:32:46 | buildo/prisma | https://api.github.com/repos/buildo/prisma | reopened | [macro] label brutta | defect macro | [Trello card](https://trello.com/c/5810c83f9f0312ae28bd84f5/)

## description

la label al momento é brutta

## how to reproduce

- {optional: describe steps to reproduce defect}

## specs

{optional: describe a possible fix for this defect, if not obvious}

## misc

{optional: other useful info}

## sub-issues

- [ ] sub... | 1.0 | [macro] label brutta - [Trello card](https://trello.com/c/5810c83f9f0312ae28bd84f5/)

## description

la label al momento é brutta

## how to reproduce

- {optional: describe steps to reproduce defect}

## specs

{optional: describe a possible fix for this defect, if not obvious}

## misc

{optional: other useful info}

#... | defect | label brutta description la label al momento é brutta how to reproduce optional describe steps to reproduce defect specs optional describe a possible fix for this defect if not obvious misc optional other useful info sub issues sub issue card | 1 |

61,081 | 17,023,596,765 | IssuesEvent | 2021-07-03 02:50:26 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | /etc/init.d/renderd has inconsistant output for start/stop vs restart | Component: mapnik Priority: minor Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 4.36pm, Sunday, 23rd May 2010]**

I'm running renderd/mod_tile (r21420) on Ubuntu Lucid, build using the debian dpkg-buildpackage.

If you run ```/etc/init.d/renderd start``` to start renderd, you get no output. The same happens with ```stop```.

However if you do... | 1.0 | /etc/init.d/renderd has inconsistant output for start/stop vs restart - **[Submitted to the original trac issue database at 4.36pm, Sunday, 23rd May 2010]**

I'm running renderd/mod_tile (r21420) on Ubuntu Lucid, build using the debian dpkg-buildpackage.

If you run ```/etc/init.d/renderd start``` to start renderd, y... | defect | etc init d renderd has inconsistant output for start stop vs restart i m running renderd mod tile on ubuntu lucid build using the debian dpkg buildpackage if you run etc init d renderd start to start renderd you get no output the same happens with stop however if you do etc ini... | 1 |

53,029 | 13,260,072,553 | IssuesEvent | 2020-08-20 17:38:51 | jkoan/test-navit | https://api.github.com/repos/jkoan/test-navit | closed | call of navit with command line parameter different from valid navit.xml dumps core (Trac #30) | Incomplete Migration Migrated from Trac core defect/bug somebody | Migrated from http://trac.navit-project.org/ticket/30

```json

{

"status": "closed",

"changetime": "2007-11-27T09:30:31",

"_ts": "1196155831000000",

"description": "uwe@rock:~/navit$ navit g\n\n** ERROR **: Fehler beim Parsen von 'g': Failed to open file 'g': No such file or directory\n\naborting...\nAbo... | 1.0 | call of navit with command line parameter different from valid navit.xml dumps core (Trac #30) - Migrated from http://trac.navit-project.org/ticket/30

```json

{

"status": "closed",

"changetime": "2007-11-27T09:30:31",

"_ts": "1196155831000000",

"description": "uwe@rock:~/navit$ navit g\n\n** ERROR **: F... | defect | call of navit with command line parameter different from valid navit xml dumps core trac migrated from json status closed changetime ts description uwe rock navit navit g n n error fehler beim parsen von g failed to open file g no such file... | 1 |

25,840 | 4,470,125,182 | IssuesEvent | 2016-08-25 15:02:32 | contao/core | https://api.github.com/repos/contao/core | closed | User, member, article icons status behaves incorrect on eye toggeling in combination with auto activation | defect | Ich machs mal auf deutsch, damit mich überhaupt jemand versteht.

Online-Demo:

1. Ein **Mitglied** zum Bearbeiten öffnen

2. Die Autoaktivierung auf einen Zeitpunkt in der Zukunft stellen

3. Speichern und schließen

4. Das Auge ist jetzt grün und das User-Icon grau (richtig)

5. Auf das Auge klicken

Man sieht ... | 1.0 | User, member, article icons status behaves incorrect on eye toggeling in combination with auto activation - Ich machs mal auf deutsch, damit mich überhaupt jemand versteht.

Online-Demo:

1. Ein **Mitglied** zum Bearbeiten öffnen

2. Die Autoaktivierung auf einen Zeitpunkt in der Zukunft stellen

3. Speichern und s... | defect | user member article icons status behaves incorrect on eye toggeling in combination with auto activation ich machs mal auf deutsch damit mich überhaupt jemand versteht online demo ein mitglied zum bearbeiten öffnen die autoaktivierung auf einen zeitpunkt in der zukunft stellen speichern und s... | 1 |

58,624 | 16,656,086,618 | IssuesEvent | 2021-06-05 14:54:26 | meerk40t/meerk40t | https://api.github.com/repos/meerk40t/meerk40t | closed | Prerelease 0.7.0: Testing... Report any issues found here. | Context: UI/UX Priority: Critical Status: In Progress Type: Bug Type: Defect Type: Documentation Type: Enhancement Type: Maintenance Work: Chaotic [̲̅$̲̅(̲̅ιοο̲̅)̲̅$̲̅] help wanted | # PLEASE DO ALL FURTHER TESTING ON v0.7.0b30 RC-5

The latest beta release is v0.7.0beta30 Release Candidate 5.

Please do all further testing on this release. Please do not report any further bugs with previous beta releases.

https://github.com/meerk40t/meerk40t/releases/tag/0.7.0-beta30

There are still some o... | 1.0 | Prerelease 0.7.0: Testing... Report any issues found here. - # PLEASE DO ALL FURTHER TESTING ON v0.7.0b30 RC-5

The latest beta release is v0.7.0beta30 Release Candidate 5.

Please do all further testing on this release. Please do not report any further bugs with previous beta releases.

https://github.com/meerk40t... | defect | prerelease testing report any issues found here please do all further testing on rc the latest beta release is release candidate please do all further testing on this release please do not report any further bugs with previous beta releases there are still some other signif... | 1 |

49,706 | 13,187,254,403 | IssuesEvent | 2020-08-13 02:50:09 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | HS processing with long queue broken (Trac #1907) | Incomplete Migration Migrated from Trac defect other | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1907">https://code.icecube.wisc.edu/ticket/1907</a>, reported by dheereman and owned by dheereman</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2016-11-06T20:51:10",

"description": "Some of your recent C... | 1.0 | HS processing with long queue broken (Trac #1907) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1907">https://code.icecube.wisc.edu/ticket/1907</a>, reported by dheereman and owned by dheereman</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2016-11-06T2... | defect | hs processing with long queue broken trac migrated from json status closed changetime description some of your recent condor jobs have used the following ninvalid accounting group names s nlong hitspool n nall accounting groups must match groupname userna... | 1 |

355,143 | 10,576,931,154 | IssuesEvent | 2019-10-07 18:57:58 | compodoc/compodoc | https://api.github.com/repos/compodoc/compodoc | closed | [BUG] Unable to generate doc for routing based on environment variable | Context : routing Priority: Medium Status: Accepted Time: ~3 hours Type: Bug wontfix | <!--

> Please follow the issue template below for bug reports and queries.

> For issue, start the label of the title with [BUG]

> For feature requests, start the label of the title with [FEATURE] and explain your use case and ideas clearly below, you can remove sections which are not relevant.

-->

##### **Overvi... | 1.0 | [BUG] Unable to generate doc for routing based on environment variable - <!--

> Please follow the issue template below for bug reports and queries.

> For issue, start the label of the title with [BUG]

> For feature requests, start the label of the title with [FEATURE] and explain your use case and ideas clearly bel... | non_defect | unable to generate doc for routing based on environment variable please follow the issue template below for bug reports and queries for issue start the label of the title with for feature requests start the label of the title with and explain your use case and ideas clearly below you can remo... | 0 |

374,544 | 26,122,391,182 | IssuesEvent | 2022-12-28 14:10:30 | ervinteoh/flask-demo-login | https://api.github.com/repos/ervinteoh/flask-demo-login | opened | [DOC]: Wiki & README | documentation | **Describe the solution you'd like**

A simple to follow and clear readme to guide the user how to install and launch the application.

**Additional context**

Wiki should be more detailed written with GitHub Wiki page for the current repository.

**Tasks**

- [ ] README.md

- [ ] Wiki Structure

| 1.0 | [DOC]: Wiki & README - **Describe the solution you'd like**

A simple to follow and clear readme to guide the user how to install and launch the application.

**Additional context**

Wiki should be more detailed written with GitHub Wiki page for the current repository.

**Tasks**

- [ ] README.md

- [ ] Wiki Struct... | non_defect | wiki readme describe the solution you d like a simple to follow and clear readme to guide the user how to install and launch the application additional context wiki should be more detailed written with github wiki page for the current repository tasks readme md wiki structure | 0 |

40,012 | 9,794,497,950 | IssuesEvent | 2019-06-10 23:12:55 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | sparse matrices indexing with scipy 1.3 | defect scipy.sparse | Indexing csr or csc sparce matrix return an array of one element with scipy 1.3, With previous version it returned a scalar. I believe it is an oversight since lil_matrix indexing still return a scalar.

```

import scipy

import numpy as np

print(type(scipy.sparse.csr_matrix(np.eye(3))[0,0]))

print(type(scipy.spar... | 1.0 | sparse matrices indexing with scipy 1.3 - Indexing csr or csc sparce matrix return an array of one element with scipy 1.3, With previous version it returned a scalar. I believe it is an oversight since lil_matrix indexing still return a scalar.

```

import scipy

import numpy as np

print(type(scipy.sparse.csr_matri... | defect | sparse matrices indexing with scipy indexing csr or csc sparce matrix return an array of one element with scipy with previous version it returned a scalar i believe it is an oversight since lil matrix indexing still return a scalar import scipy import numpy as np print type scipy sparse csr matri... | 1 |

64,953 | 18,961,046,644 | IssuesEvent | 2021-11-19 04:57:13 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Cannot read property 'store' of undefined | T-Defect P1 Z-Rageshake A-Storage | ```

2019-07-15T12:51:11.324Z E Unable to load session Cannot read property 'store' of null

TypeError: Cannot read property 'store' of null

at Object.t.trackStores (vector://vector/webapp/bundles/e9b8313cd5591059cfdc/bundle.js:63:263291)

at e.<anonymous> (vector://vector/webapp/bundles/e9b8313cd5591059cfdc/b... | 1.0 | Cannot read property 'store' of undefined - ```

2019-07-15T12:51:11.324Z E Unable to load session Cannot read property 'store' of null

TypeError: Cannot read property 'store' of null

at Object.t.trackStores (vector://vector/webapp/bundles/e9b8313cd5591059cfdc/bundle.js:63:263291)

at e.<anonymous> (vector://... | defect | cannot read property store of undefined e unable to load session cannot read property store of null typeerror cannot read property store of null at object t trackstores vector vector webapp bundles bundle js at e vector vector webapp bundles bundle js at e... | 1 |

59,663 | 17,023,196,512 | IssuesEvent | 2021-07-03 00:48:47 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Patch to fix further XSS holes, and nicer cleaning of tags where some are suitable | Component: website Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 4.25pm, Tuesday, 15th January 2008]**

Fixes two further XSS holes (in/outboxes) and uses the Rails 2 sanitize function to filter out nasty js based on a whitelist approach. (also handles javascript: links well) | 1.0 | Patch to fix further XSS holes, and nicer cleaning of tags where some are suitable - **[Submitted to the original trac issue database at 4.25pm, Tuesday, 15th January 2008]**

Fixes two further XSS holes (in/outboxes) and uses the Rails 2 sanitize function to filter out nasty js based on a whitelist approach. (also han... | defect | patch to fix further xss holes and nicer cleaning of tags where some are suitable fixes two further xss holes in outboxes and uses the rails sanitize function to filter out nasty js based on a whitelist approach also handles javascript links well | 1 |

40,482 | 10,541,949,475 | IssuesEvent | 2019-10-02 12:06:20 | intermine/bluegenes | https://api.github.com/repos/intermine/bluegenes | closed | Testing: replace flymine with (bio?)testmine | Build-CI-Testing | right now, a lot of tests time out in Travis, probably due to the fact that we're testing on a live internet connection with real data. It would be a lot better to use biotestmine as a test server, since it can easily be scripted to run in tests locally, thereby avoiding network latency failures | 1.0 | Testing: replace flymine with (bio?)testmine - right now, a lot of tests time out in Travis, probably due to the fact that we're testing on a live internet connection with real data. It would be a lot better to use biotestmine as a test server, since it can easily be scripted to run in tests locally, thereby avoiding n... | non_defect | testing replace flymine with bio testmine right now a lot of tests time out in travis probably due to the fact that we re testing on a live internet connection with real data it would be a lot better to use biotestmine as a test server since it can easily be scripted to run in tests locally thereby avoiding n... | 0 |

52,932 | 13,225,240,540 | IssuesEvent | 2020-08-17 20:46:38 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | ports dies if you don't specify --prefix (Trac #597) | Migrated from Trac defect tools/ports | you get:

troy@zinc:~/Icecube/DarwinPorts/t2$ /opt/local/bin/port sync

can't find package darwinports

while executing

"package require darwinports"

(file "/opt/local/bin/port" line 36)

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/597">https://code.icecub... | 1.0 | ports dies if you don't specify --prefix (Trac #597) - you get:

troy@zinc:~/Icecube/DarwinPorts/t2$ /opt/local/bin/port sync

can't find package darwinports

while executing

"package require darwinports"

(file "/opt/local/bin/port" line 36)

<details>

<summary><em>Migrated from <a href="https://code.icecube.wi... | defect | ports dies if you don t specify prefix trac you get troy zinc icecube darwinports opt local bin port sync can t find package darwinports while executing package require darwinports file opt local bin port line migrated from json status closed changetime... | 1 |

159,756 | 13,771,425,639 | IssuesEvent | 2020-10-07 21:59:59 | eclipse/deeplearning4j | https://api.github.com/repos/eclipse/deeplearning4j | closed | We should define what dl4j is "for" (People think we are closer to opencv) | Documentation | There still seems to be a lot of confusion on what dl4j does. It would be good if we could try to explain

what you should expect to do with dl4j.

For example, we are lower level than stanford nlp or opencv with "canned" models.

However we are higher level than just a "math library". | 1.0 | We should define what dl4j is "for" (People think we are closer to opencv) - There still seems to be a lot of confusion on what dl4j does. It would be good if we could try to explain

what you should expect to do with dl4j.

For example, we are lower level than stanford nlp or opencv with "canned" models.

However ... | non_defect | we should define what is for people think we are closer to opencv there still seems to be a lot of confusion on what does it would be good if we could try to explain what you should expect to do with for example we are lower level than stanford nlp or opencv with canned models however we are hi... | 0 |

209,698 | 16,054,249,013 | IssuesEvent | 2021-04-23 00:44:15 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | e2e storage: re-enable health check sidecars | area/test kind/feature needs-triage sig/storage | #### What would you like to be added:

https://github.com/kubernetes/kubernetes/pull/100637 added newer hostpath deployments but removed the csi-external-health-monitor-agent because it seemed to cause overhead without being needed for the tests.

#### Why is this needed:

We should re-enable it and check whether... | 1.0 | e2e storage: re-enable health check sidecars - #### What would you like to be added:

https://github.com/kubernetes/kubernetes/pull/100637 added newer hostpath deployments but removed the csi-external-health-monitor-agent because it seemed to cause overhead without being needed for the tests.

#### Why is this need... | non_defect | storage re enable health check sidecars what would you like to be added added newer hostpath deployments but removed the csi external health monitor agent because it seemed to cause overhead without being needed for the tests why is this needed we should re enable it and check whether that ... | 0 |

627,434 | 19,904,875,086 | IssuesEvent | 2022-01-25 11:43:49 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | k5.macromill.com - site is not usable | priority-normal browser-fenix engine-gecko | <!-- @browser: Firefox Mobile 98.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:98.0) Gecko/98.0 Firefox/98.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/98696 -->

<!-- @extra_labels: browser-fenix -->

**URL**: https://k5.macromill... | 1.0 | k5.macromill.com - site is not usable - <!-- @browser: Firefox Mobile 98.0 -->

<!-- @ua_header: Mozilla/5.0 (Android 11; Mobile; rv:98.0) Gecko/98.0 Firefox/98.0 -->

<!-- @reported_with: android-components-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/98696 -->

<!-- @extra_labels: browser-... | non_defect | macromill com site is not usable url browser version firefox mobile operating system android tested another browser yes chrome problem type site is not usable description buttons or links not working steps to reproduce どれだけタップしても反応しない。 android one ... | 0 |

18,120 | 3,024,309,810 | IssuesEvent | 2015-08-02 13:46:35 | aayush93/xbt | https://api.github.com/repos/aayush93/xbt | closed | GitHub | auto-migrated Priority-Medium Type-Defect | ```

Let`s go to GitHub

I create https://github.com/poiuty/xbt

I give access rights to this repository.

```

Original issue reported on code.google.com by `df3434er...@gmail.com` on 14 Jul 2015 at 7:03 | 1.0 | GitHub - ```

Let`s go to GitHub

I create https://github.com/poiuty/xbt

I give access rights to this repository.

```

Original issue reported on code.google.com by `df3434er...@gmail.com` on 14 Jul 2015 at 7:03 | defect | github let s go to github i create i give access rights to this repository original issue reported on code google com by gmail com on jul at | 1 |

62,427 | 17,023,921,273 | IssuesEvent | 2021-07-03 04:34:04 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Interpolation doesn't work with nodes without address | Component: nominatim Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 8.41pm, Friday, 17th April 2015]**

"gorriti 3639" works, because there's a node there. However "gorriti 3638" doesn't.

I can't find any interpolated result that would work around here.

Not being able to handle interpolations renders nominatim useless. | 1.0 | Interpolation doesn't work with nodes without address - **[Submitted to the original trac issue database at 8.41pm, Friday, 17th April 2015]**

"gorriti 3639" works, because there's a node there. However "gorriti 3638" doesn't.

I can't find any interpolated result that would work around here.

Not being able to ha... | defect | interpolation doesn t work with nodes without address gorriti works because there s a node there however gorriti doesn t i can t find any interpolated result that would work around here not being able to handle interpolations renders nominatim useless | 1 |

987 | 2,594,406,971 | IssuesEvent | 2015-02-20 02:57:42 | BALL-Project/ball | https://api.github.com/repos/BALL-Project/ball | closed | BALLView: tooltip - text empty | C: VIEW P: minor R: invalid T: defect | **Reported by akdehof on 4 Dec 41589808 15:35 UTC**

As can be seen in the | 1.0 | BALLView: tooltip - text empty - **Reported by akdehof on 4 Dec 41589808 15:35 UTC**

As can be seen in the | defect | ballview tooltip text empty reported by akdehof on dec utc as can be seen in the | 1 |

17,227 | 2,984,629,043 | IssuesEvent | 2015-07-18 05:59:02 | Tarsnap/scrypt | https://api.github.com/repos/Tarsnap/scrypt | closed | *(uint64_t *)usermembuf triggers alignment error in clang. | auto-migrated Priority-Medium Type-Defect | ```

lib/util/memlimit.c:78:14: error: cast from 'uint8_t *' (aka 'unsigned char *')

to 'uint64_t *' (aka 'unsigned long long *') increases required alignment from

1 to 8

[-Werror,-Wcast-align]

```

Original issue reported on code.google.com by `lhunath@lyndir.com` on 2 May 2014 at 3:52 | 1.0 | *(uint64_t *)usermembuf triggers alignment error in clang. - ```

lib/util/memlimit.c:78:14: error: cast from 'uint8_t *' (aka 'unsigned char *')

to 'uint64_t *' (aka 'unsigned long long *') increases required alignment from

1 to 8

[-Werror,-Wcast-align]

```

Original issue reported on code.google.com by `lhunat... | defect | t usermembuf triggers alignment error in clang lib util memlimit c error cast from t aka unsigned char to t aka unsigned long long increases required alignment from to original issue reported on code google com by lhunath lyndir com on may at | 1 |

62,532 | 17,023,941,187 | IssuesEvent | 2021-07-03 04:41:04 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | P2's GPS traces (from server) display is affected by new API treatment of unordered traces | Component: potlatch2 Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 6.04pm, Thursday, 8th November 2018]**

If I remember correctly (cannot test, since I have no Flash player anymore) P2 displays all GPS traces as lines. Hence, it is likely affected, as JOSM is, by the API trackpoints api call code change which closed https://github.... | 1.0 | P2's GPS traces (from server) display is affected by new API treatment of unordered traces - **[Submitted to the original trac issue database at 6.04pm, Thursday, 8th November 2018]**

If I remember correctly (cannot test, since I have no Flash player anymore) P2 displays all GPS traces as lines. Hence, it is likely af... | defect | s gps traces from server display is affected by new api treatment of unordered traces if i remember correctly cannot test since i have no flash player anymore displays all gps traces as lines hence it is likely affected as josm is by the api trackpoints api call code change which closed | 1 |

225,686 | 24,881,128,938 | IssuesEvent | 2022-10-28 01:15:55 | michaeldotson/scaffolding-app | https://api.github.com/repos/michaeldotson/scaffolding-app | closed | WS-2022-0334 (Medium) detected in nokogiri-1.10.3.gem - autoclosed | security vulnerability | ## WS-2022-0334 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nokogiri-1.10.3.gem</b></p></summary>

<p>Nokogiri (鋸) is an HTML, XML, SAX, and Reader parser. Among

Nokogiri's many ... | True | WS-2022-0334 (Medium) detected in nokogiri-1.10.3.gem - autoclosed - ## WS-2022-0334 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>nokogiri-1.10.3.gem</b></p></summary>

<p>Nokogiri... | non_defect | ws medium detected in nokogiri gem autoclosed ws medium severity vulnerability vulnerable library nokogiri gem nokogiri 鋸 is an html xml sax and reader parser among nokogiri s many features is the ability to search documents via xpath or selectors library home page... | 0 |

57,766 | 24,222,239,278 | IssuesEvent | 2022-09-26 11:51:52 | dotnet/fsharp | https://api.github.com/repos/dotnet/fsharp | closed | Go to definition on external dependency/metadata is broken in 17.4 | Regression Area-LangService-Navigation | >

>

> BCL/external dependency metadata navigation. I'm pressing F12 on this to no avail (it used to work). Perhaps it's a different use case.

Yep, doesn't work for me for `Newtonsoft.Json`.

_Originall... | 1.0 | Go to definition on external dependency/metadata is broken in 17.4 - >

>

> BCL/external dependency metadata navigation. I'm pressing F12 on this to no avail (it used to work). Perhaps it's a different use c... | non_defect | go to definition on external dependency metadata is broken in bcl external dependency metadata navigation i m pressing on this to no avail it used to work perhaps it s a different use case yep doesn t work for me for newtonsoft json originally posted by vzarytovskii in | 0 |

70,405 | 23,155,565,522 | IssuesEvent | 2022-07-29 12:42:12 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Messages in thread are out of order | T-Defect S-Major A-Timeline O-Uncommon A-Threads | ### Steps to reproduce

1. Start a thread

2. Put your computer to sleep

3. Receive some replies to that thread overnight

4. Take your computer out of sleep and watch Element sync

### Outcome

#### What did you expect?

The messages in the thread should be in chronological order, as they are on Element Android.

... | 1.0 | Messages in thread are out of order - ### Steps to reproduce

1. Start a thread

2. Put your computer to sleep

3. Receive some replies to that thread overnight

4. Take your computer out of sleep and watch Element sync

### Outcome

#### What did you expect?

The messages in the thread should be in chronological ord... | defect | messages in thread are out of order steps to reproduce start a thread put your computer to sleep receive some replies to that thread overnight take your computer out of sleep and watch element sync outcome what did you expect the messages in the thread should be in chronological ord... | 1 |

23,668 | 3,851,865,301 | IssuesEvent | 2016-04-06 05:27:47 | GPF/imame4all | https://api.github.com/repos/GPF/imame4all | closed | Controller Overlay in Portrait Full-Screen broken | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Set to portrait full-screen

2. Start a game using portrait mode

3. the controller picture (red ball) will be scaled up to full screen

What is the expected output? What do you see instead?

Normal sized controller/joystick picture! Heavily upscaled picture of

controller.

W... | 1.0 | Controller Overlay in Portrait Full-Screen broken - ```

What steps will reproduce the problem?

1. Set to portrait full-screen

2. Start a game using portrait mode

3. the controller picture (red ball) will be scaled up to full screen

What is the expected output? What do you see instead?

Normal sized controller/joystick ... | defect | controller overlay in portrait full screen broken what steps will reproduce the problem set to portrait full screen start a game using portrait mode the controller picture red ball will be scaled up to full screen what is the expected output what do you see instead normal sized controller joystick ... | 1 |

66,044 | 19,907,822,449 | IssuesEvent | 2022-01-25 14:30:15 | cakephp/cakephp | https://api.github.com/repos/cakephp/cakephp | opened | [cakephp/http] Auth adapters can't be used | defect | ### Description

Using `cakephp/http` standalone, with any auth adapter, currently doesn't work without setting `App.namespace` during bootstrap.

```

PHP Fatal error: Uncaught TypeError: rtrim() expects parameter 1 to be string, null given in vendor/cakephp/core/App.php:63

```

We could either add a fallback in... | 1.0 | [cakephp/http] Auth adapters can't be used - ### Description

Using `cakephp/http` standalone, with any auth adapter, currently doesn't work without setting `App.namespace` during bootstrap.

```

PHP Fatal error: Uncaught TypeError: rtrim() expects parameter 1 to be string, null given in vendor/cakephp/core/App.php... | defect | auth adapters can t be used description using cakephp http standalone with any auth adapter currently doesn t work without setting app namespace during bootstrap php fatal error uncaught typeerror rtrim expects parameter to be string null given in vendor cakephp core app php we... | 1 |

17,597 | 10,731,731,017 | IssuesEvent | 2019-10-28 20:13:05 | dockstore/dockstore | https://api.github.com/repos/dockstore/dockstore | closed | Adding gitlab as a docker registry | enhancement web-service | as per title, we are using gitlab for one of our projects, it's nice that it has docker integration so we don't need to host dockers and code in separate places. It would be great if dockstore can support this.

┆Issue is synchronized with this [Jira Story](https://ucsc-cgl.atlassian.net/browse/DOCK-443)

┆Issue Type: S... | 1.0 | Adding gitlab as a docker registry - as per title, we are using gitlab for one of our projects, it's nice that it has docker integration so we don't need to host dockers and code in separate places. It would be great if dockstore can support this.

┆Issue is synchronized with this [Jira Story](https://ucsc-cgl.atlassia... | non_defect | adding gitlab as a docker registry as per title we are using gitlab for one of our projects it s nice that it has docker integration so we don t need to host dockers and code in separate places it would be great if dockstore can support this ┆issue is synchronized with this ┆issue type story ┆issue number ... | 0 |

11,260 | 2,645,154,633 | IssuesEvent | 2015-03-12 20:58:29 | felipeleao/trabalho-pm-2 | https://api.github.com/repos/felipeleao/trabalho-pm-2 | closed | Teste de Fermat com problemas | auto-migrated Priority-Medium Type-Defect | ```

Ao tentar entrar com alguns números, quando se escolhe a opção do teste de

Fermat, aparecem erros dizendo que certos números primos não são primos.

```

Original issue reported on code.google.com by `brmagadu...@gmail.com` on 16 Oct 2010 at 2:15 | 1.0 | Teste de Fermat com problemas - ```

Ao tentar entrar com alguns números, quando se escolhe a opção do teste de

Fermat, aparecem erros dizendo que certos números primos não são primos.

```

Original issue reported on code.google.com by `brmagadu...@gmail.com` on 16 Oct 2010 at 2:15 | defect | teste de fermat com problemas ao tentar entrar com alguns números quando se escolhe a opção do teste de fermat aparecem erros dizendo que certos números primos não são primos original issue reported on code google com by brmagadu gmail com on oct at | 1 |

12,093 | 14,740,081,591 | IssuesEvent | 2021-01-07 08:29:04 | kdjstudios/SABillingGitlab | https://api.github.com/repos/kdjstudios/SABillingGitlab | closed | Atlanta - SA Billing - Late Fee Account List | anc-process anp-important ant-bug has attachment | In GitLab by @kdjstudios on Oct 3, 2018, 11:09

[Atlanta.xlsx](/uploads/efbbfb141893f5b2abbb8b52ea0c24e0/Atlanta.xlsx)

HD: http://www.servicedesk.answernet.com/profiles/ticket/2018-10-03-52748 | 1.0 | Atlanta - SA Billing - Late Fee Account List - In GitLab by @kdjstudios on Oct 3, 2018, 11:09

[Atlanta.xlsx](/uploads/efbbfb141893f5b2abbb8b52ea0c24e0/Atlanta.xlsx)

HD: http://www.servicedesk.answernet.com/profiles/ticket/2018-10-03-52748 | non_defect | atlanta sa billing late fee account list in gitlab by kdjstudios on oct uploads atlanta xlsx hd | 0 |

318,188 | 27,293,234,061 | IssuesEvent | 2023-02-23 18:08:08 | tarantool/tarantool-qa | https://api.github.com/repos/tarantool/tarantool-qa | opened | test: flaky replication-luatest/gh_8121_memtx_mvcc_replication_stream_test.lua | flaky test | Test https://github.com/tarantool/tarantool/blob/master/test/replication-luatest/gh_8121_memtx_mvcc_replication_stream_test.lua is flaky

* branch: master, 2.10

* OS and version: Linux, FreeBSD

* Architecture: any

* gc64: any

<img width="727" alt="image" src="https://user-images.githubusercontent.com/426722/220... | 1.0 | test: flaky replication-luatest/gh_8121_memtx_mvcc_replication_stream_test.lua - Test https://github.com/tarantool/tarantool/blob/master/test/replication-luatest/gh_8121_memtx_mvcc_replication_stream_test.lua is flaky

* branch: master, 2.10

* OS and version: Linux, FreeBSD

* Architecture: any

* gc64: any

<img ... | non_defect | test flaky replication luatest gh memtx mvcc replication stream test lua test is flaky branch master os and version linux freebsd architecture any any img width alt image src | 0 |

237,000 | 19,589,793,673 | IssuesEvent | 2022-01-05 11:34:15 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: Chrome UI Functional Tests.test/functional/apps/discover/_discover_histogram·ts - discover app discover histogram should visualize monthly data with different day intervals | blocker Feature:Discover failed-test v8.0.0 skipped-test v7.11.0 Team:DataDiscovery | A test failed on a tracked branch

```

Error: retry.try timeout: TimeoutError: Waiting for element to be located By(css selector, [data-test-subj="superDatePickerAbsoluteDateInput"])

Wait timed out after 10019ms

at /dev/shm/workspace/kibana/node_modules/selenium-webdriver/lib/webdriver.js:842:17

at process._tic... | 2.0 | Failing test: Chrome UI Functional Tests.test/functional/apps/discover/_discover_histogram·ts - discover app discover histogram should visualize monthly data with different day intervals - A test failed on a tracked branch

```

Error: retry.try timeout: TimeoutError: Waiting for element to be located By(css selector, [... | non_defect | failing test chrome ui functional tests test functional apps discover discover histogram·ts discover app discover histogram should visualize monthly data with different day intervals a test failed on a tracked branch error retry try timeout timeouterror waiting for element to be located by css selector ... | 0 |

432,332 | 30,278,812,215 | IssuesEvent | 2023-07-07 22:59:32 | r-lib/lintr | https://api.github.com/repos/r-lib/lintr | closed | Document "symbols" naming style in object_name_linter | documentation | The [documentation](https://github.com/r-lib/lintr/blob/main/R/object_name_linter.R) for `object_name_linter` describes:

> ... The default naming styles are "snake_case" and "symbols".

After that, there is no explanation of what "symbols" means, and there is no example that uses `styles = "symbols"`. Could this d... | 1.0 | Document "symbols" naming style in object_name_linter - The [documentation](https://github.com/r-lib/lintr/blob/main/R/object_name_linter.R) for `object_name_linter` describes:

> ... The default naming styles are "snake_case" and "symbols".

After that, there is no explanation of what "symbols" means, and there i... | non_defect | document symbols naming style in object name linter the for object name linter describes the default naming styles are snake case and symbols after that there is no explanation of what symbols means and there is no example that uses styles symbols could this documentation please ... | 0 |

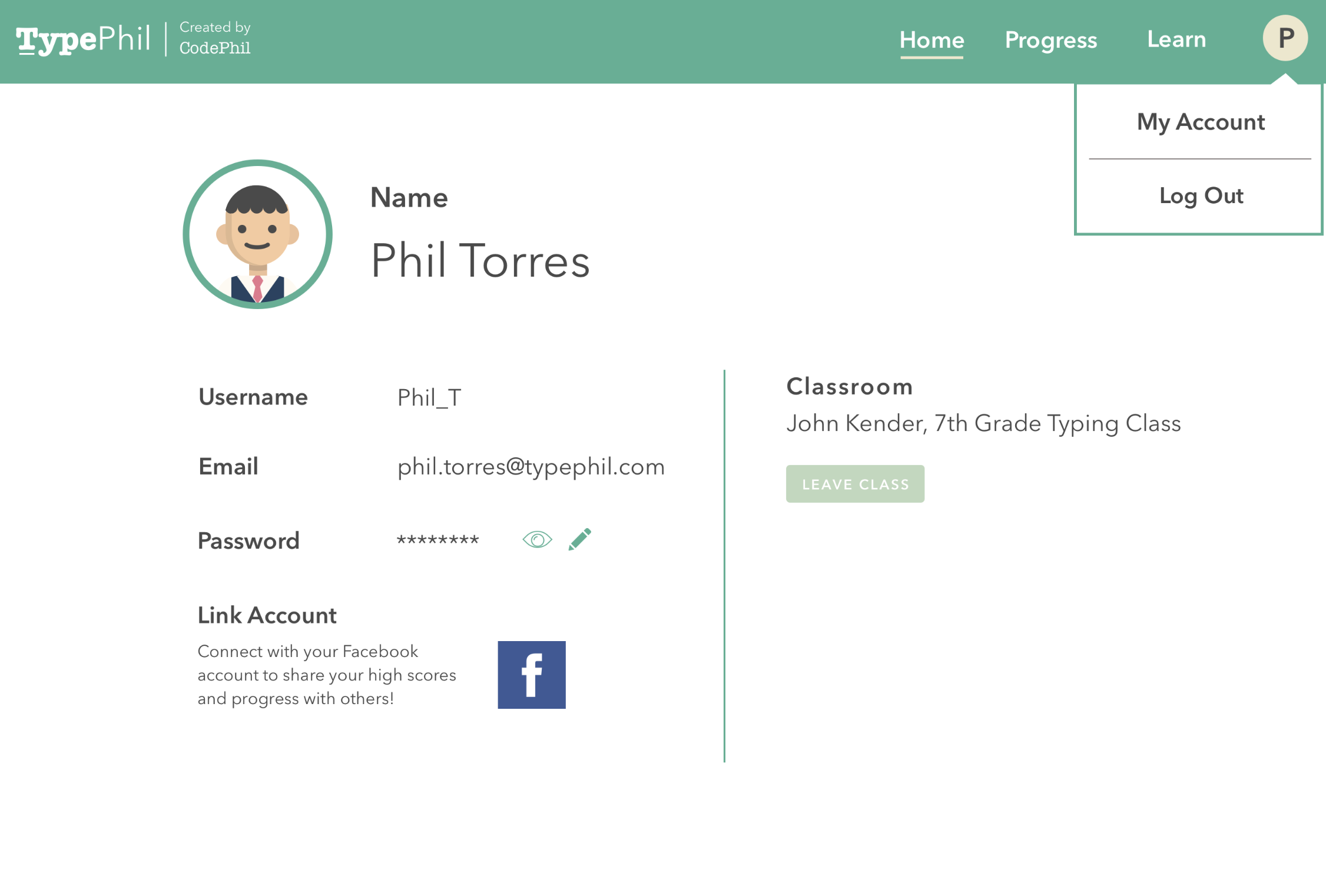

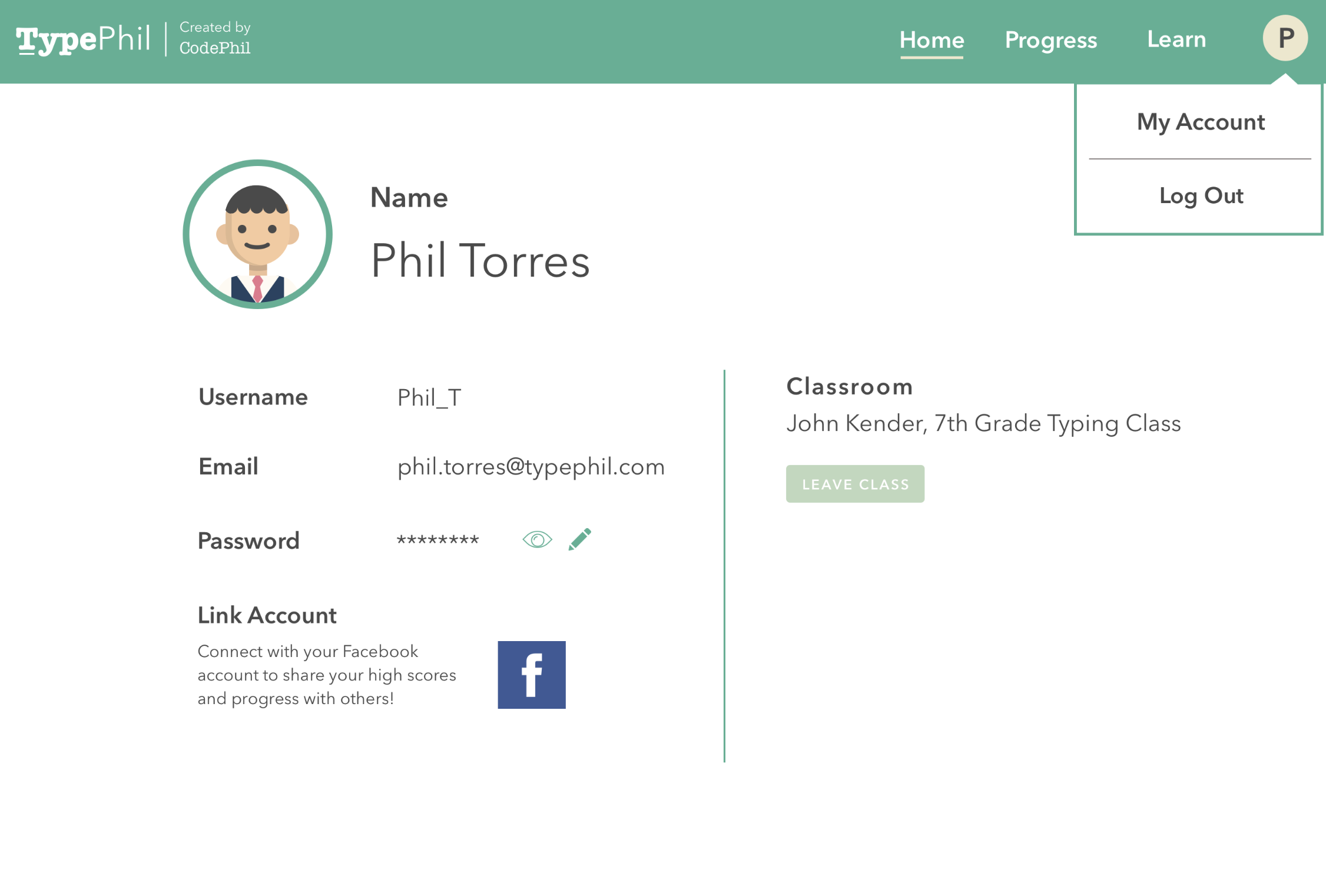

300,363 | 9,209,967,819 | IssuesEvent | 2019-03-09 00:45:39 | codephil-columbia/typephil | https://api.github.com/repos/codephil-columbia/typephil | closed | Redesign "My account"(Chelsy) | Medium Priority Visual-Related | Need to redesign "my account" page since we currently do not ask for email or have classroom features. current design is toooooo bare.

- Need to redesign "my account" page since we currently do not ask for email or have classroom features. current design is toooooo bare.

### Steps To Reproduce

https://deck.net/5fbd63b8bd314550e325edd544f78599

```csharp

public class Program

{

public static void Main()

{

string a = "foo";

va... | 1.0 | New line emmited when calling multiline script - New line emmited when calling multiline script.

Im not sure if this usecase is expected to be used in that way :)

### Steps To Reproduce

https://deck.net/5fbd63b8bd314550e325edd544f78599

```csharp

public class Program

{

public static void Main()

{... | defect | new line emmited when calling multiline script new line emmited when calling multiline script im not sure if this usecase is expected to be used in that way steps to reproduce csharp public class program public static void main string a foo v... | 1 |

12,565 | 2,708,262,836 | IssuesEvent | 2015-04-08 07:36:38 | Simsys/qhexedit2 | https://api.github.com/repos/Simsys/qhexedit2 | closed | Error in example (function saveFile()) | auto-migrated Priority-Medium Type-Defect | ```

In Example saveFile() function:

file.open(QFile::WriteOnly | QFile::Text)

causes errors while saving (/n translated to /r/n);

correct way:

file.open(QFile::WriteOnly | QFile::Truncate)

```

Original issue reported on code.google.com by `gibol...@gmail.com` on 6 Jun 2013 at 4:28 | 1.0 | Error in example (function saveFile()) - ```

In Example saveFile() function:

file.open(QFile::WriteOnly | QFile::Text)

causes errors while saving (/n translated to /r/n);

correct way:

file.open(QFile::WriteOnly | QFile::Truncate)

```

Original issue reported on code.google.com by `gibol...@gmail.com` on 6 Jun 2013 ... | defect | error in example function savefile in example savefile function file open qfile writeonly qfile text causes errors while saving n translated to r n correct way file open qfile writeonly qfile truncate original issue reported on code google com by gibol gmail com on jun at ... | 1 |

133,931 | 18,365,153,529 | IssuesEvent | 2021-10-09 23:21:15 | turkdevops/istanbul | https://api.github.com/repos/turkdevops/istanbul | opened | CVE-2020-7774 (High) detected in y18n-3.2.1.tgz | security vulnerability | ## CVE-2020-7774 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>y18n-3.2.1.tgz</b></p></summary>

<p>the bare-bones internationalization library used by yargs</p>

<p>Library home page:... | True | CVE-2020-7774 (High) detected in y18n-3.2.1.tgz - ## CVE-2020-7774 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>y18n-3.2.1.tgz</b></p></summary>

<p>the bare-bones internationalizati... | non_defect | cve high detected in tgz cve high severity vulnerability vulnerable library tgz the bare bones internationalization library used by yargs library home page a href path to dependency file istanbul package json path to vulnerable library istanbul node modules nyc n... | 0 |

62,201 | 17,023,871,595 | IssuesEvent | 2021-07-03 04:17:28 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | It is now difficult to share a location | Component: website Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 2.45am, Saturday, 3rd August 2013]**

Previously, it was easy to "share a location".

That is, to send someone precise coordinates of a point.

Double click on that location, click permalink, copy URL and that's it.

Now it's become extremely difficult because doubl... | 1.0 | It is now difficult to share a location - **[Submitted to the original trac issue database at 2.45am, Saturday, 3rd August 2013]**

Previously, it was easy to "share a location".

That is, to send someone precise coordinates of a point.

Double click on that location, click permalink, copy URL and that's it.

Now it'... | defect | it is now difficult to share a location previously it was easy to share a location that is to send someone precise coordinates of a point double click on that location click permalink copy url and that s it now it s become extremely difficult because double clicking moves the location only half w... | 1 |

42,900 | 5,545,290,326 | IssuesEvent | 2017-03-22 21:12:49 | Microsoft/vscode | https://api.github.com/repos/Microsoft/vscode | closed | Pop-up Hints showing incorrect JSDoc description or no description for variables/constants referencing a function | as-designed javascript typescript | <!-- Do you have a question? Please ask it on http://stackoverflow.com/questions/tagged/vscode -->

```javascript

/**

* description of a

*/

function a() { }

/**

* description of curry

... | 1.0 | Pop-up Hints showing incorrect JSDoc description or no description for variables/constants referencing a function - <!-- Do you have a question? Please ask it on http://stackoverflow.com/questions/tagged/vscode -->

Bug:... | 1.0 | Inaccuracy in UG: Error under 'Edit contact's modules' section - Description:

There is a sentence under the 'Edit contact's modules' section that does not make sense and does not agree with the section.

Screenshot:

(error underlined in red)

detected in spring-boot-2.2.4.RELEASE.jar | security vulnerability | ## CVE-2022-27772 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-boot-2.2.4.RELEASE.jar</b></p></summary>

<p>Spring Boot</p>

<p>Library home page: <a href="https://projects.s... | True | CVE-2022-27772 (Medium) detected in spring-boot-2.2.4.RELEASE.jar - ## CVE-2022-27772 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>spring-boot-2.2.4.RELEASE.jar</b></p></summary>

... | non_defect | cve medium detected in spring boot release jar cve medium severity vulnerability vulnerable library spring boot release jar spring boot library home page a href path to dependency file ci images releasescripts pom xml path to vulnerable library release spring... | 0 |

27,942 | 22,627,348,074 | IssuesEvent | 2022-06-30 11:54:32 | WordPress/performance | https://api.github.com/repos/WordPress/performance | opened | Prepare 1.3.0 release | [Type] Enhancement Infrastructure | This issue is to track preparation of the upcoming 1.3.0 release up until publishing, which is due **July 18, 2022**.

- [ ] Create release/1.3.0 branch closer to the release date

- [ ] Finalize scope and punt unfinished pull requests to following release

- [ ] Prepare the release (Monday, July 18, 2022) | 1.0 | Prepare 1.3.0 release - This issue is to track preparation of the upcoming 1.3.0 release up until publishing, which is due **July 18, 2022**.

- [ ] Create release/1.3.0 branch closer to the release date

- [ ] Finalize scope and punt unfinished pull requests to following release

- [ ] Prepare the release (Monday... | non_defect | prepare release this issue is to track preparation of the upcoming release up until publishing which is due july create release branch closer to the release date finalize scope and punt unfinished pull requests to following release prepare the release monday july ... | 0 |

638,608 | 20,732,036,309 | IssuesEvent | 2022-03-14 10:19:29 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | Access token generation fails in cross tenant subscription | Type/Bug Priority/Normal Feature/CrossTenantSubscription APIM - 4.1.0 | ### Description:

<!-- Describe the issue -->

Access token generation gives page not found error in cross tenant subscription

<img width="1429" alt="Screenshot 2565-03-07 at 18 30 06" src="https://user-images.githubusercontent.com/36605514/157039279-8045e1e1-2845-4589-8cbb-f6264fd4ca96.png">

### Steps to reprodu... | 1.0 | Access token generation fails in cross tenant subscription - ### Description:

<!-- Describe the issue -->

Access token generation gives page not found error in cross tenant subscription

<img width="1429" alt="Screenshot 2565-03-07 at 18 30 06" src="https://user-images.githubusercontent.com/36605514/157039279-8045e... | non_defect | access token generation fails in cross tenant subscription description access token generation gives page not found error in cross tenant subscription img width alt screenshot at src steps to reproduce subscribe to an api in a different tenant try to generate an acces... | 0 |

11,341 | 14,164,897,781 | IssuesEvent | 2020-11-12 06:09:22 | tikv/tikv | https://api.github.com/repos/tikv/tikv | closed | cases::test_coprocessor::test_parse_request_failed_2 not work | priority/low severity/Major sig/coprocessor type/bug | ## Bug Report

<!-- Thanks for your bug report! Don't worry if you can't fill out all the sections. -->

### What version of TiKV are you using?

master

### Steps to reproduce

- add `println!("list {:?}", fail::list());` at `fail_point!("rockskv_async_snapshot",`

- `cargo test --package tests --test failpoints... | 1.0 | cases::test_coprocessor::test_parse_request_failed_2 not work - ## Bug Report

<!-- Thanks for your bug report! Don't worry if you can't fill out all the sections. -->

### What version of TiKV are you using?

master

### Steps to reproduce

- add `println!("list {:?}", fail::list());` at `fail_point!("rockskv_as... | non_defect | cases test coprocessor test parse request failed not work bug report what version of tikv are you using master steps to reproduce add println list fail list at fail point rockskv async snapshot cargo test package tests test failpoints cases test coproce... | 0 |

683,900 | 23,398,910,308 | IssuesEvent | 2022-08-12 05:12:34 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Bad-Sad error when function is accessed using field access within the init method of same class | Type/Bug Priority/High Team/CompilerFE Team/jBallerina Points/3 SwanLakeDump Area/TypeChecker Lang/ClassDefinition Crash Lang/Expressions/FieldAccess | **Description:**

```ballerina

class A {

function foo(int i) returns int {

return i+1;

}

function (int i) returns int bar;

function init() {

self.bar = self.foo;

}

// This works

function baz() returns function (int) returns int {

self.bar = self.foo;

... | 1.0 | Bad-Sad error when function is accessed using field access within the init method of same class - **Description:**

```ballerina

class A {

function foo(int i) returns int {

return i+1;

}

function (int i) returns int bar;

function init() {

self.bar = self.foo;

}

// ... | non_defect | bad sad error when function is accessed using field access within the init method of same class description ballerina class a function foo int i returns int return i function int i returns int bar function init self bar self foo ... | 0 |

656,613 | 21,768,735,000 | IssuesEvent | 2022-05-13 06:46:34 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | web.whatsapp.com - see bug description | browser-firefox priority-critical engine-gecko | <!-- @browser: Firefox 100.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:100.0) Gecko/20100101 Firefox/100.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/104286 -->

**URL**: https://web.whatsapp.com/

**Browser / Version**: Firefox 100.0

**Ope... | 1.0 | web.whatsapp.com - see bug description - <!-- @browser: Firefox 100.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 6.1; Win64; x64; rv:100.0) Gecko/20100101 Firefox/100.0 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/104286 -->

**URL**: https://web.whatsapp.com/

*... | non_defect | web whatsapp com see bug description url browser version firefox operating system windows tested another browser yes chrome problem type something else description a página não carrega já limpei os cookies e demais arquivos temporário só acontece isto no firefox ... | 0 |

61,168 | 17,023,623,322 | IssuesEvent | 2021-07-03 02:58:50 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | primitive_xml_parsing/osm2pgsql core dump on options | Component: mapnik Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 12.39pm, Monday, 16th August 2010]**

Fixed with the patch below:

```diff

Index: osm2pgsql.c

===================================================================

--- osm2pgsql.c (revision 22625)

+++ osm2pgsql.c (working copy)

@@ -741,6 +741,7 @@

struct ou... | 1.0 | primitive_xml_parsing/osm2pgsql core dump on options - **[Submitted to the original trac issue database at 12.39pm, Monday, 16th August 2010]**

Fixed with the patch below:

```diff

Index: osm2pgsql.c

===================================================================

--- osm2pgsql.c (revision 22625)

+++ osm2pgsq... | defect | primitive xml parsing core dump on options fixed with the patch below diff index c c revision c working copy struct output options options pgconn sql conn memset option... | 1 |

250,017 | 7,966,535,351 | IssuesEvent | 2018-07-14 23:46:19 | utra-robosoccer/soccer-embedded | https://api.github.com/repos/utra-robosoccer/soccer-embedded | closed | Code style: FreeRTOS functions use a mixture of tabs and spaces which is ugly | Priority: Low Type: Maintenance | This issue is due to legacy usage of tabs in functions instead of spaces, and some stuff that has to do with Cube's auto-generated HAL code. All indentations should consist of 4 spaces. | 1.0 | Code style: FreeRTOS functions use a mixture of tabs and spaces which is ugly - This issue is due to legacy usage of tabs in functions instead of spaces, and some stuff that has to do with Cube's auto-generated HAL code. All indentations should consist of 4 spaces. | non_defect | code style freertos functions use a mixture of tabs and spaces which is ugly this issue is due to legacy usage of tabs in functions instead of spaces and some stuff that has to do with cube s auto generated hal code all indentations should consist of spaces | 0 |

74,595 | 15,360,431,913 | IssuesEvent | 2021-03-01 16:55:39 | Reid-Turner/uppy | https://api.github.com/repos/Reid-Turner/uppy | opened | CVE-2017-16137 (Medium) detected in debug-2.2.0.tgz | security vulnerability | ## CVE-2017-16137 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>debug-2.2.0.tgz</b></p></summary>

<p>small debugging utility</p>

<p>Library home page: <a href="https://registry.npm... | True | CVE-2017-16137 (Medium) detected in debug-2.2.0.tgz - ## CVE-2017-16137 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>debug-2.2.0.tgz</b></p></summary>

<p>small debugging utility</... | non_defect | cve medium detected in debug tgz cve medium severity vulnerability vulnerable library debug tgz small debugging utility library home page a href dependency hierarchy uppy example uppy with companion file examples uppy with companion tgz root library uploa... | 0 |

24,318 | 3,962,702,425 | IssuesEvent | 2016-05-02 17:48:10 | wpostma/notifier-jenkins | https://api.github.com/repos/wpostma/notifier-jenkins | closed | Unable to download application. Details file attached. | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Click on the usage and installation link that starts the ClickOnce

installation.

```

Original issue reported on code.google.com by `pisar...@gmail.com` on 25 Jan 2013 at 6:01

Attachments:

* [CAHR4RQE.log](https://storage.googleapis.com/google-code-attachments/notifier... | 1.0 | Unable to download application. Details file attached. - ```

What steps will reproduce the problem?

1. Click on the usage and installation link that starts the ClickOnce

installation.

```

Original issue reported on code.google.com by `pisar...@gmail.com` on 25 Jan 2013 at 6:01

Attachments:

* [CAHR4RQE.log](https:... | defect | unable to download application details file attached what steps will reproduce the problem click on the usage and installation link that starts the clickonce installation original issue reported on code google com by pisar gmail com on jan at attachments | 1 |

241,986 | 18,507,182,454 | IssuesEvent | 2021-10-19 20:08:51 | ErenoGit/Virtual-ATM-machine | https://api.github.com/repos/ErenoGit/Virtual-ATM-machine | opened | Add README.md | documentation hacktoberfest | The programme is written in the author's language, which makes it difficult to analyse. We have to change everything into English, the international language. | 1.0 | Add README.md - The programme is written in the author's language, which makes it difficult to analyse. We have to change everything into English, the international language. | non_defect | add readme md the programme is written in the author s language which makes it difficult to analyse we have to change everything into english the international language | 0 |

46,711 | 13,055,962,621 | IssuesEvent | 2020-07-30 03:14:55 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | I3Particle documentation incomplete (Trac #1746) | Incomplete Migration Migrated from Trac combo core defect | Migrated from https://code.icecube.wisc.edu/ticket/1746

```json

{

"status": "closed",

"changetime": "2019-02-13T14:12:47",

"description": "\nPer Alex Olivas ... there is missing documentation on the cascade\n",

"reporter": "pmeade",

"cc": "olivas, gmaggi@vub.ac.be",

"resolution": "fixed",

... | 1.0 | I3Particle documentation incomplete (Trac #1746) - Migrated from https://code.icecube.wisc.edu/ticket/1746

```json

{

"status": "closed",

"changetime": "2019-02-13T14:12:47",

"description": "\nPer Alex Olivas ... there is missing documentation on the cascade\n",

"reporter": "pmeade",

"cc": "oliva... | defect | documentation incomplete trac migrated from json status closed changetime description nper alex olivas there is missing documentation on the cascade n reporter pmeade cc olivas gmaggi vub ac be resolution fixed ts ... | 1 |

795,508 | 28,075,433,105 | IssuesEvent | 2023-03-29 22:57:47 | Yoooi0/MultiFunPlayer | https://api.github.com/repos/Yoooi0/MultiFunPlayer | opened | Allow disabling bindings | enhancement priority-medium | Toggle in UI.

Shortcuts that disable bindings? Select by capture/index/dropdown. | 1.0 | Allow disabling bindings - Toggle in UI.

Shortcuts that disable bindings? Select by capture/index/dropdown. | non_defect | allow disabling bindings toggle in ui shortcuts that disable bindings select by capture index dropdown | 0 |

131,735 | 28,014,427,019 | IssuesEvent | 2023-03-27 21:15:13 | llvm/llvm-project | https://api.github.com/repos/llvm/llvm-project | reopened | Clang-format confused trailing return type | clang-format invalid-code-generation | Problem found when upgrading from 16-RC2 to 16.0.0

````cpp

struct EXPORT_MACRO [[nodiscard]] C

{

using U = int;

auto f() noexcept -> void {}

auto g() -> void {}

};

````

gets reformatted to

````cpp

struct EXPORT_MACRO [[nodiscard]] C

{

using U = int;

auto f() noexcept -> void {}

auto g()... | 1.0 | Clang-format confused trailing return type - Problem found when upgrading from 16-RC2 to 16.0.0

````cpp

struct EXPORT_MACRO [[nodiscard]] C

{

using U = int;

auto f() noexcept -> void {}

auto g() -> void {}

};

````

gets reformatted to

````cpp

struct EXPORT_MACRO [[nodiscard]] C

{

using U = int;

... | non_defect | clang format confused trailing return type problem found when upgrading from to cpp struct export macro c using u int auto f noexcept void auto g void gets reformatted to cpp struct export macro c using u int auto f noexcept v... | 0 |

21,489 | 3,512,770,943 | IssuesEvent | 2016-01-11 05:01:01 | Virtual-Labs/problem-solving-iiith | https://api.github.com/repos/Virtual-Labs/problem-solving-iiith | reopened | QA_String Operations_Back to experiment | Category :Usability Defect raised on: 24-11-2015 Developed by:IIIT Hyd Release Number Severity :S2 Status :Open Version Number :1.1 | Defect Description:

In the "String Operations" experiment,the back to experiments link is not present in the page instead the back to experiments link should be displayed on the screen inorder to view the list of experiments by the user .

Actual Result:

In the "String Operations" experiment,the back to experiment... | 1.0 | QA_String Operations_Back to experiment - Defect Description:

In the "String Operations" experiment,the back to experiments link is not present in the page instead the back to experiments link should be displayed on the screen inorder to view the list of experiments by the user .

Actual Result:

In the "String Ope... | defect | qa string operations back to experiment defect description in the string operations experiment the back to experiments link is not present in the page instead the back to experiments link should be displayed on the screen inorder to view the list of experiments by the user actual result in the string ope... | 1 |

55,738 | 14,663,359,784 | IssuesEvent | 2020-12-29 09:31:49 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | closed | Multiselect on chips display does not update the model when deleting them from the chip icon | defect | I'm submitting a

[X] bug report

**Current behavior**

On chips display when you delete a chip by clicking the X button the model doesn't refresh

**Expected behavior**

Model should be refreshed like when you unclick from the dropdown list.

**Minimal reproduction of the problem with instructions**

https://pr... | 1.0 | Multiselect on chips display does not update the model when deleting them from the chip icon - I'm submitting a

[X] bug report

**Current behavior**

On chips display when you delete a chip by clicking the X button the model doesn't refresh

**Expected behavior**

Model should be refreshed like when you unclick f... | defect | multiselect on chips display does not update the model when deleting them from the chip icon i m submitting a bug report current behavior on chips display when you delete a chip by clicking the x button the model doesn t refresh expected behavior model should be refreshed like when you unclick fro... | 1 |

64,308 | 18,419,616,206 | IssuesEvent | 2021-10-13 14:50:33 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Room List not loading | T-Defect S-Critical A-Room-List Z-Rageshake O-Occasional | ### Steps to reproduce

Unknown

### Outcome

#### What did you expect?

Room list to load promptly.

#### What happened instead?

We are seeing users report issues where the room list stays in its 'placeholder' state, even after the app has finished syncing. It can stay like this 20 minutes or so. Trying to filter w... | 1.0 | Room List not loading - ### Steps to reproduce

Unknown

### Outcome

#### What did you expect?

Room list to load promptly.

#### What happened instead?

We are seeing users report issues where the room list stays in its 'placeholder' state, even after the app has finished syncing. It can stay like this 20 minutes o... | defect | room list not loading steps to reproduce unknown outcome what did you expect room list to load promptly what happened instead we are seeing users report issues where the room list stays in its placeholder state even after the app has finished syncing it can stay like this minutes or... | 1 |

4,092 | 2,610,087,272 | IssuesEvent | 2015-02-26 18:26:30 | chrsmith/dsdsdaadf | https://api.github.com/repos/chrsmith/dsdsdaadf | opened | 深圳痤疮粉刺的祛除方法 | auto-migrated Priority-Medium Type-Defect | ```

深圳痤疮粉刺的祛除方法【深圳韩方科颜全国热线400-869-1818��

�24小时QQ4008691818】深圳韩方科颜专业祛痘连锁机构,机构以��

�国秘方——韩方科颜这一国妆准字号治疗型权威,祛痘佳品�

��韩方科颜专业祛痘连锁机构,采用韩国秘方配合专业“不反

弹”健康祛痘技术并结合先进“先进豪华彩光”仪,开创国��

�专业治疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸�

��的痘痘。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 7:19 | 1.0 | 深圳痤疮粉刺的祛除方法 - ```

深圳痤疮粉刺的祛除方法【深圳韩方科颜全国热线400-869-1818��

�24小时QQ4008691818】深圳韩方科颜专业祛痘连锁机构,机构以��

�国秘方——韩方科颜这一国妆准字号治疗型权威,祛痘佳品�

��韩方科颜专业祛痘连锁机构,采用韩国秘方配合专业“不反

弹”健康祛痘技术并结合先进“先进豪华彩光”仪,开创国��

�专业治疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸�

��的痘痘。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 7:19 | defect | 深圳痤疮粉刺的祛除方法 深圳痤疮粉刺的祛除方法【 �� � 】深圳韩方科颜专业祛痘连锁机构,机构以�� �国秘方——韩方科颜这一国妆准字号治疗型权威,祛痘佳品� ��韩方科颜专业祛痘连锁机构,采用韩国秘方配合专业“不反 弹”健康祛痘技术并结合先进“先进豪华彩光”仪,开创国�� �专业治疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸� ��的痘痘。 original issue reported on code google com by szft com on may at | 1 |

56,973 | 15,553,983,517 | IssuesEvent | 2021-03-16 02:44:47 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | closed | VS DX cooling coil outlet air condition calculation problem when PLR is zero for CV system | Defect | Issue overview

--------------

A variable speed DX cooling coil in a constant volume system calculates outlet air condition when the compressor part-load-ratio is zero. At low supply air flow rates, the calculated coil outlet temperature can be very low (e.g., -46C) that triggers Psych error message. This is a defect.... | 1.0 | VS DX cooling coil outlet air condition calculation problem when PLR is zero for CV system - Issue overview

--------------

A variable speed DX cooling coil in a constant volume system calculates outlet air condition when the compressor part-load-ratio is zero. At low supply air flow rates, the calculated coil outlet ... | defect | vs dx cooling coil outlet air condition calculation problem when plr is zero for cv system issue overview a variable speed dx cooling coil in a constant volume system calculates outlet air condition when the compressor part load ratio is zero at low supply air flow rates the calculated coil outlet ... | 1 |

45,832 | 13,055,754,061 | IssuesEvent | 2020-07-30 02:38:09 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | trac attach file to ticket fails. (Trac #108) | Incomplete Migration Migrated from Trac defect infrastructure | Migrated from https://code.icecube.wisc.edu/ticket/108

```json

{

"status": "closed",

"changetime": "2007-12-03T15:32:37",

"description": "Trying to attach a .py script to a ticket from OS X on my MacBook.\n\nIt barfs.",

"reporter": "blaufuss",

"cc": "",

"resolution": "fixed",

"_ts": "1... | 1.0 | trac attach file to ticket fails. (Trac #108) - Migrated from https://code.icecube.wisc.edu/ticket/108

```json

{

"status": "closed",

"changetime": "2007-12-03T15:32:37",

"description": "Trying to attach a .py script to a ticket from OS X on my MacBook.\n\nIt barfs.",

"reporter": "blaufuss",

"cc"... | defect | trac attach file to ticket fails trac migrated from json status closed changetime description trying to attach a py script to a ticket from os x on my macbook n nit barfs reporter blaufuss cc resolution fixed ts ... | 1 |

3,861 | 2,610,083,108 | IssuesEvent | 2015-02-26 18:25:23 | chrsmith/dsdsdaadf | https://api.github.com/repos/chrsmith/dsdsdaadf | opened | 青春痘怎么治深圳 | auto-migrated Priority-Medium Type-Defect | ```

青春痘怎么治深圳【深圳韩方科颜全国热线400-869-1818,24小时

QQ4008691818】深圳韩方科颜专业祛痘连锁机构,机构以韩国秘��

�——韩方科颜这一国妆准字号治疗型权威,祛痘佳品,韩方�

��颜专业祛痘连锁机构,采用韩国秘方配合专业“不反弹”健

康祛痘技术并结合先进“先进豪华彩光”仪,开创国内专业��

�疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸上的痘�

��。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 6:46 | 1.0 | 青春痘怎么治深圳 - ```

青春痘怎么治深圳【深圳韩方科颜全国热线400-869-1818,24小时

QQ4008691818】深圳韩方科颜专业祛痘连锁机构,机构以韩国秘��

�——韩方科颜这一国妆准字号治疗型权威,祛痘佳品,韩方�

��颜专业祛痘连锁机构,采用韩国秘方配合专业“不反弹”健

康祛痘技术并结合先进“先进豪华彩光”仪,开创国内专业��

�疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸上的痘�

��。

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 6:46 | defect | 青春痘怎么治深圳 青春痘怎么治深圳【 , 】深圳韩方科颜专业祛痘连锁机构,机构以韩国秘�� �——韩方科颜这一国妆准字号治疗型权威,祛痘佳品,韩方� ��颜专业祛痘连锁机构,采用韩国秘方配合专业“不反弹”健 康祛痘技术并结合先进“先进豪华彩光”仪,开创国内专业�� �疗粉刺、痤疮签约包治先河,成功消除了许多顾客脸上的痘� ��。 original issue reported on code google com by szft com on may at | 1 |

272,863 | 23,708,317,312 | IssuesEvent | 2022-08-30 04:59:12 | prettier/prettier | https://api.github.com/repos/prettier/prettier | opened | Add more tests for `graphql` parser | lang:graphql type:tests | During #13400, I found that we check `node.description` for `EnumTypeDefinition`, `UnionTypeDefinition`, and `ScalarTypeDefinition`, but we don't have any test with `description`. | 1.0 | Add more tests for `graphql` parser - During #13400, I found that we check `node.description` for `EnumTypeDefinition`, `UnionTypeDefinition`, and `ScalarTypeDefinition`, but we don't have any test with `description`. | non_defect | add more tests for graphql parser during i found that we check node description for enumtypedefinition uniontypedefinition and scalartypedefinition but we don t have any test with description | 0 |

56,998 | 15,588,771,258 | IssuesEvent | 2021-03-18 07:00:06 | scipy/scipy | https://api.github.com/repos/scipy/scipy | opened | BUG: stats: Spurious warning generated by arcsine.pdf at the ends of the support. | defect scipy.stats | Back in https://github.com/scipy/scipy/pull/7431, the `arcsine` distribution was updated so that the PDF returned `inf` at x=0 and x=1, to be consistent with the `beta(0.5, 0.5)` distribution. If we accept `inf` as the correct behavior at the endpoints, the function should not generate a warning at those points as it... | 1.0 | BUG: stats: Spurious warning generated by arcsine.pdf at the ends of the support. - Back in https://github.com/scipy/scipy/pull/7431, the `arcsine` distribution was updated so that the PDF returned `inf` at x=0 and x=1, to be consistent with the `beta(0.5, 0.5)` distribution. If we accept `inf` as the correct behavio... | defect | bug stats spurious warning generated by arcsine pdf at the ends of the support back in the arcsine distribution was updated so that the pdf returned inf at x and x to be consistent with the beta distribution if we accept inf as the correct behavior at the endpoints the function should ... | 1 |

32,084 | 13,756,257,963 | IssuesEvent | 2020-10-06 19:38:53 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | opened | Shared Mobility Data Request - Voting Trips | Product: Shared Mobility Operations Service: Dev Workgroup: PE |

Goal: Shared Mobility Services would like to analyze shared mobility trips taken during primary runoffs to plan for and support future trips to polling locations in November.

This analysis would help us understand if there is a need for shared mobility support in certain areas, e.g., increased deployments, rebalancin... | 1.0 | Shared Mobility Data Request - Voting Trips -

Goal: Shared Mobility Services would like to analyze shared mobility trips taken during primary runoffs to plan for and support future trips to polling locations in November.

This analysis would help us understand if there is a need for shared mobility support in certain ... | non_defect | shared mobility data request voting trips goal shared mobility services would like to analyze shared mobility trips taken during primary runoffs to plan for and support future trips to polling locations in november this analysis would help us understand if there is a need for shared mobility support in certain ... | 0 |

41,547 | 10,513,142,089 | IssuesEvent | 2019-09-27 19:46:10 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | Network stats for REST communication is not accurate | Team: Management Center Type: Defect | Start a single member with this configuration:

```

Config config = new Config();

config.getManagementCenterConfig().setEnabled(true).setUrl("http://localhost:8083/hazelcast-mancenter");

config.getAdvancedNetworkConfig()

.setEnabled(true)