Unnamed: 0 (int64, 0–832k) | id (float64, 2.49B–32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19–19) | repo (stringlengths, 5–112) | repo_url (stringlengths, 34–141) | action (stringclasses, 3 values) | title (stringlengths, 1–757) | labels (stringlengths, 4–664) | body (stringlengths, 3–261k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96–261k) | label (stringclasses, 2 values) | text (stringlengths, 96–232k) | binary_label (int64, 0–1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

22,180 | 3,609,970,086 | IssuesEvent | 2016-02-05 01:40:40 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | opened | dartanalyzer reports the same error multiple times when given multiple entry points | area-analyzer Type-Defect | To reproduce, create three files:

entry1.dart

```

import "target.dart";

main() {

print(x);

}

```

entry2.dart

```

import "target.dart";

main() {

print(x);

}

```

target.dart

```

var x = 3;

asdf

```

Then run dartanalyzer on both entry files:

```

$ dartanalyzer entry1.dart entry2.d... | 1.0 | dartanalyzer reports the same error multiple times when given multiple entry points - To reproduce, create three files:

entry1.dart

```

import "target.dart";

main() {

print(x);

}

```

entry2.dart

```

import "target.dart";

main() {

print(x);

}

```

target.dart

```

var x = 3;

asdf

```

... | defect | dartanalyzer reports the same error multiple times when given multiple entry points to reproduce create three files dart import target dart main print x dart import target dart main print x target dart var x asdf then run d... | 1 |

7,497 | 6,919,225,546 | IssuesEvent | 2017-11-29 14:52:17 | nubisproject/nubis-nat | https://api.github.com/repos/nubisproject/nubis-nat | closed | Updating nubis-proxy puppet module | enhancement security | Once we have everything in nubisproject/nubis-nat#11 done we need to start using the upstream puppet module thias/puppet-squid3

| True | Updating nubis-proxy puppet module - Once we have everything in nubisproject/nubis-nat#11 done we need to start using the upstream puppet module thias/puppet-squid3

| non_defect | updating nubis proxy puppet module once we have everything in nubisproject nubis nat done we need to start using the upstream puppet module thias puppet | 0 |

180,208 | 30,467,694,296 | IssuesEvent | 2023-07-17 11:35:50 | jdi-testing/jdn-ai | https://api.github.com/repos/jdi-testing/jdn-ai | closed | [US-6-9] Visual updates of the filter icon | enhancement Design needed Filter Feature | - it is currently hard to see (in Figma I made it brighter, up to 85%)

- increase the icon to 16px; the svg is currently 14px

- awkward placement when the plugin is opened wide: the icon is left on its own at the right

- add an active state | 1.0 | [US-6-9] Visual updates of the filter icon - - it is currently hard to see (in Figma I made it brighter, up to 85%)

- increase the icon to 16px; the svg is currently 14px

- awkward placement when the plugin is opened wide: the icon is left on its own at the right

- add an active state | non_defect | visual updates of the filter icon it is currently hard to see in figma i made it brighter up to increase the icon to the svg is currently awkward placement when the plugin is opened wide the icon is left on its own at the right add an active state | 0 |

28,219 | 5,221,382,505 | IssuesEvent | 2017-01-27 01:16:46 | elTiempoVuela/https-finder | https://api.github.com/repos/elTiempoVuela/https-finder | closed | Enhancement | auto-migrated Priority-Medium Type-Defect | ```

A little button to temporarily disable the function would be nice.

Thanks.

```

Original issue reported on code.google.com by `lcasinc...@gmail.com` on 1 Mar 2013 at 6:09

| 1.0 | Enhancement - ```

A little button to temporarily disable the function would be nice.

Thanks.

```

Original issue reported on code.google.com by `lcasinc...@gmail.com` on 1 Mar 2013 at 6:09

| defect | enhancement a little button to temporarily disable the function would be nice thanks original issue reported on code google com by lcasinc gmail com on mar at | 1 |

81,161 | 30,735,070,358 | IssuesEvent | 2023-07-28 06:55:35 | vector-im/element-x-android | https://api.github.com/repos/vector-im/element-x-android | opened | Able to send empty and non-trimmed text messages | T-Defect | ### Steps to reproduce

This may be a business decision but common sense is to not allow sending empty messages and trim messages before sending.

### Outcome

### Your phone model

_No response_

### O... | 1.0 | Able to send empty and non-trimmed text messages - ### Steps to reproduce

This may be a business decision but common sense is to not allow sending empty messages and trim messages before sending.

### Outcome

with "@" | auto-migrated Priority-Medium Type-Defect | _From @GoogleCodeExporter on May 31, 2015 11:31_

```

What device(s) are you experiencing the problem on?

HTC One S

What firmware version are you running on the device?

Android 4.1.1

What steps will reproduce the problem?

droid.smsSend(myNumber, "¡")

droid.smsSend(myNumber, "Any string containing ¡")

What is the ex... | 1.0 | smsSend replaces "¡" (inverted exclamation mark) with "@" - _From @GoogleCodeExporter on May 31, 2015 11:31_

```

What device(s) are you experiencing the problem on?

HTC One S

What firmware version are you running on the device?

Android 4.1.1

What steps will reproduce the problem?

droid.smsSend(myNumber, "¡")

droid.s... | defect | smssend replaces ¡ inverted exclamation mark with from googlecodeexporter on may what device s are you experiencing the problem on htc one s what firmware version are you running on the device android what steps will reproduce the problem droid smssend mynumber ¡ droid smssend... | 1 |

20,962 | 3,441,578,754 | IssuesEvent | 2015-12-14 19:02:51 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | Bug with Timers and the System Clock | area-vm Type-Defect | If I set a timer like this:

```dart

import "dart:async";

main() async {

var i = 1;

new Timer.periodic(const Duration(seconds: 1), (_) {

print("[${new DateTime.now().millisecondsSinceEpoch}] Hello #${i}");

i++;

});

}

```

and then spring my system clock forward say 2 or 3 hours, the timer w... | 1.0 | Bug with Timers and the System Clock - If I set a timer like this:

```dart

import "dart:async";

main() async {

var i = 1;

new Timer.periodic(const Duration(seconds: 1), (_) {

print("[${new DateTime.now().millisecondsSinceEpoch}] Hello #${i}");

i++;

});

}

```

and then spring my system cloc... | defect | bug with timers and the system clock if i set a timer like this dart import dart async main async var i new timer periodic const duration seconds print hello i i and then spring my system clock forward say or hours the timer will ex... | 1 |

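5,386 | 2,610,186,660 | IssuesEvent | 2015-02-26 18:59:13 | chrsmith/quchuseban | https://api.github.com/repos/chrsmith/quchuseban | opened | An introduction to how to get rid of pigmentation spots | auto-migrated Priority-Medium Type-Defect | ```

Summary

30 years ago, a young man left his hometown to make his own future. His first stop was a visit to the head of his clan to ask for guidance. The old clan head was practicing calligraphy; hearing that a younger member of the clan was setting out on life's journey, he wrote 3 characters: "Do not fear."

Then he looked up at the young man and said: "Child, the secret of life is only 6 characters. Today I will tell you 3 of them, enough to serve you for half your life."

30 years later, the young man had reached middle age, with some achievements and also many sorrows. After the long journey back to his hometown, he went to visit the clan head again. Only on arriving at the clan head's home did he learn that the old man had died several years earlier.

The family took out a sealed envelope and told him: "The clan head left this for you before he died; he said you would come back one day."

Only then did the returning traveler remember that 30 years earlier he had heard half of life's secret here. He opened the envelope, and inside, sure enough, were another 3... | 1.0 | An introduction to how to get rid of pigmentation spots - ```

Summary

30 years ago, a young man left his hometown to make his own future. His first stop was a visit to the head of his clan to ask for guidance. The old clan head was practicing calligraphy; hearing that a younger member of the clan was setting out on life's journey, he wrote 3 characters: "Do not fear."

Then he looked up at the young man and said: "Child, the secret of life is only 6 characters. Today I will tell you 3 of them, enough to serve you for half your life."

30 years later, the young man had reached middle age, with some achievements and also many sorrows. After the long journey back to his hometown, he went to visit the clan head again. Only on arriving at the clan head's home did he learn that the old man had died several years earlier.

The family took out a sealed envelope and told him: "The clan head left this for you before he died; he said you would come back one day."

Only then did the returning traveler remember that 30 years earlier he had heard half of life's secret... | defect | an introduction to how to get rid of pigmentation spots summary years ago a young man left his hometown to make his own future his first stop was a visit to the head of his clan to ask for guidance the old clan head was practicing calligraphy hearing that a younger member of the clan was setting out on life s journey he wrote characters do not fear then he looked up at the young man and said child the secret of life is only characters today i will tell you of them enough to serve you for half your life years later the young man had reached middle age with some achievements and also many sorrows after the long journey back to his hometown he went to visit the clan head again only on arriving at the clan head s home did he learn that the old man had died several years earlier the family took out a sealed envelope and told him the clan head left this for you before he died he said you would come back one day only then did the returning traveler remember that years earlier he had heard half of life s secret here he opened the envelope do not regret how can pigmentation spots be removed customer case 相... | 1 |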

72,093 | 3,371,857,591 | IssuesEvent | 2015-11-23 20:57:02 | INN/Largo | https://api.github.com/repos/INN/Largo | closed | default widget styles are still terrible | priority: high type: improvement | in looking at http://raleighpublicrecord-org.largoproject.staging.wpengine.com/ a couple of things

- [ ] we need some padding in widgets to open up the layout a bit. It was previously 20px or so and got removed in 0.4 but we need to add it back.

- [ ] the gray bottom border on widgets is probably not necessary and it... | 1.0 | default widget styles are still terrible - in looking at http://raleighpublicrecord-org.largoproject.staging.wpengine.com/ a couple of things

- [ ] we need some padding in widgets to open up the layout a bit. It was previously 20px or so and got removed in 0.4 but we need to add it back.

- [ ] the gray bottom border ... | non_defect | default widget styles are still terrible in looking at a couple of things we need some padding in widgets to open up the layout a bit it was previously or so and got removed in but we need to add it back the gray bottom border on widgets is probably not necessary and it causes issues in the homepa... | 0 |

53,407 | 6,314,509,773 | IssuesEvent | 2017-07-24 11:03:02 | dwyl/best-evidence | https://api.github.com/repos/dwyl/best-evidence | closed | Reduce space around 'Start Searching' button to avoid need to scroll | enhancement please-test T25m | **As a** user

**I want to** avoid scrolling to view page content

**So that** I can access the information I need effortlessly and quickly.

Currently the 'Start Searching' button on the login page for existing users is not fully visible on an iPhone 5. To remove the need for scrolling for users, we can reduce the s... | 1.0 | Reduce space around 'Start Searching' button to avoid need to scroll - **As a** user

**I want to** avoid scrolling to view page content

**So that** I can access the information I need effortlessly and quickly.

Currently the 'Start Searching' button on the login page for existing users is not fully visible on an iP... | non_defect | reduce space around start searching button to avoid need to scroll as a user i want to avoid scrolling to view page content so that i can access the information i need effortlessly and quickly currently the start searching button on the login page for existing users is not fully visible on an ip... | 0 |

17,481 | 10,708,268,136 | IssuesEvent | 2019-10-24 19:18:34 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Regional VNET Integration General Availability | Pri1 app-service/svc cxp product-question triaged | Dear, Any ETA available on the GA date for this feature?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: a7a98803-1438-b1b5-f543-7dd88bc4294e

* Version Independent ID: 37ff1d0f-ed8e-5e4d-1f4c-1b9f6cffb938

* Content: [Integrate app with Az... | 1.0 | Regional VNET Integration General Availability - Dear, Any ETA available on the GA date for this feature?

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: a7a98803-1438-b1b5-f543-7dd88bc4294e

* Version Independent ID: 37ff1d0f-ed8e-5e4d-1f... | non_defect | regional vnet integration general availability dear any eta available on the ga date for this feature document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source ... | 0 |

12,261 | 2,685,576,771 | IssuesEvent | 2015-03-30 02:58:13 | IssueMigrationTest/Test5 | https://api.github.com/repos/IssueMigrationTest/Test5 | closed | Error printout for module TKinter | auto-migrated Priority-Medium Type-Defect | **Issue by gunnar.f...@gmail.com**

_7 Jul 2013 at 1:09 GMT_

_Originally opened on Google Code_

----

```

running command

shedskin libMain.py

That is I am trying to convert my python script libMain.py to C++

------------------------------------

Receiving the following Error printout:

*** SHED SKIN Python-to-C++ Com... | 1.0 | Error printout for module TKinter - **Issue by gunnar.f...@gmail.com**

_7 Jul 2013 at 1:09 GMT_

_Originally opened on Google Code_

----

```

running command

shedskin libMain.py

That is I am trying to convert my python script libMain.py to C++

------------------------------------

Receiving the following Error printo... | defect | error printout for module tkinter issue by gunnar f gmail com jul at gmt originally opened on google code running command shedskin libmain py that is i am trying to convert my python script libmain py to c receiving the following error printout ... | 1 |

19,939 | 3,281,874,678 | IssuesEvent | 2015-10-28 01:03:19 | cakephp/cakephp | https://api.github.com/repos/cakephp/cakephp | closed | Fixture Indexes' name problem. | console Defect On hold | Hello,

We have stumbled upon the following warning message while running our test cases on our Cakephp 3.1.2 project:

```

Warning Error: Fixture creation for "threads" failed "SQLSTATE[HY000]: General error: 1 index user_id already exists" in [/var/www/project/vendor/cakephp/cakephp/src/TestSuite/Fixture/TestFixture... | 1.0 | Fixture Indexes' name problem. - Hello,

We have stumbled upon the following warning message while running our test cases on our Cakephp 3.1.2 project:

```

Warning Error: Fixture creation for "threads" failed "SQLSTATE[HY000]: General error: 1 index user_id already exists" in [/var/www/project/vendor/cakephp/cakephp... | defect | fixture indexes name problem hello we have stumbled upon the following warning message while running our test cases on our cakephp project warning error fixture creation for threads failed sqlstate general error index user id already exists in our tests are currently using two ta... | 1 |

255,774 | 8,126,361,687 | IssuesEvent | 2018-08-17 01:42:38 | aowen87/BAR | https://api.github.com/repos/aowen87/BAR | closed | VisIt 2.7.3 no longer runs on the RZ | Bug Likelihood: 3 - Occasional Priority: Normal Severity: 3 - Major Irritation Support Group: DOE/ASC | VisIt 2.7.3 no longer runs on the RZ. There is a library that it was linked with that no longer exists. Here is the e-mail exchange with the person from LC on it. We should rebuild 2.7.3 to fix this problem since there are still people using old clients out there.

Hi Eric,

It looks like you own the VisIT builds i... | 1.0 | VisIt 2.7.3 no longer runs on the RZ - VisIt 2.7.3 no longer runs on the RZ. There is a library that it was linked with that no longer exists. Here is the e-mail exchange with the person from LC on it. We should rebuild 2.7.3 to fix this problem since there are still people using old clients out there.

Hi Eric,

I... | non_defect | visit no longer runs on the rz visit no longer runs on the rz there is a library that it was linked with that no longer exists here is the e mail exchange with the person from lc on it we should rebuild to fix this problem since there are still people using old clients out there hi eric i... | 0 |

22,974 | 3,735,368,577 | IssuesEvent | 2016-03-08 11:46:23 | janjarfalk/jquery-elastic | https://api.github.com/repos/janjarfalk/jquery-elastic | closed | Textareas don't auto-expand if you don't press the ENTER key | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Enable the elastic plugin on a textarea

2. Type a long paragraph in the textarea that exceeds the viewable area

(but with no carriage returns, ie. never press ENTER)

What is the expected output? What do you see instead?

I would expect that the textarea would auto-grow. Ho... | 1.0 | Textareas don't auto-expand if you don't press the ENTER key - ```

What steps will reproduce the problem?

1. Enable the elastic plugin on a textarea

2. Type a long paragraph in the textarea that exceeds the viewable area

(but with no carriage returns, ie. never press ENTER)

What is the expected output? What do you see... | defect | textareas don t auto expand if you don t press the enter key what steps will reproduce the problem enable the elastic plugin on a textarea type a long paragraph in the textarea that exceeds the viewable area but with no carriage returns ie never press enter what is the expected output what do you see... | 1 |

340,918 | 30,553,651,795 | IssuesEvent | 2023-07-20 10:10:26 | elastic/kibana | https://api.github.com/repos/elastic/kibana | closed | Failing test: Chrome UI Functional Tests.test/functional/apps/management/_files·ts - management Files management should render an empty prompt | failed-test Team:SharedUX | A test failed on a tracked branch

```

Error: expected 'Files\nManage files stored in Kibana.\nStatistics\nName A-Z\nTags\nName\nSize\nNo files matched your search.' to contain 'No files found'

at Assertion.assert (expect.js:100:11)

at Assertion.contain (expect.js:442:10)

at Context.<anonymous> (_files.ts:2... | 1.0 | Failing test: Chrome UI Functional Tests.test/functional/apps/management/_files·ts - management Files management should render an empty prompt - A test failed on a tracked branch

```

Error: expected 'Files\nManage files stored in Kibana.\nStatistics\nName A-Z\nTags\nName\nSize\nNo files matched your search.' to contai... | non_defect | failing test chrome ui functional tests test functional apps management files·ts management files management should render an empty prompt a test failed on a tracked branch error expected files nmanage files stored in kibana nstatistics nname a z ntags nname nsize nno files matched your search to contai... | 0 |

8,339 | 6,520,547,088 | IssuesEvent | 2017-08-28 16:56:58 | mysticfall/Alensia | https://api.github.com/repos/mysticfall/Alensia | closed | Fix delay before opening main menu | performance | Currently, there's a noticeable delay before the main menu shows up for the first time. We need to profile the process to see if there's potential bottleneck and remove the overhead. | True | Fix delay before opening main menu - Currently, there's a noticeable delay before the main menu shows up for the first time. We need to profile the process to see if there's potential bottleneck and remove the overhead. | non_defect | fix delay before opening main menu currently there s a noticeable delay before the main menu shows up for the first time we need to profile the process to see if there s potential bottleneck and remove the overhead | 0 |

34,028 | 7,327,854,641 | IssuesEvent | 2018-03-04 15:01:43 | cython/cython | https://api.github.com/repos/cython/cython | closed | segfault with asyncio | defect | On macOS 10.12.4, Cython 0.25.2, Python 3.6.1, if you await asyncio.sleep() <= Cython <= Python chain, it will segfault. It won't die if you await Cython future right away, without first using Python.

Here is an example that reproduces this bug:

main.py:

```

WANT_CRASH = True

import asyncio

import cy_test

... | 1.0 | segfault with asyncio - On macOS 10.12.4, Cython 0.25.2, Python 3.6.1, if you await asyncio.sleep() <= Cython <= Python chain, it will segfault. It won't die if you await Cython future right away, without first using Python.

Here is an example that reproduces this bug:

main.py:

```

WANT_CRASH = True

import a... | defect | segfault with asyncio on macos cython python if you await asyncio sleep cython python chain it will segfault it won t die if you await cython future right away without first using python here is an example that reproduces this bug main py want crash true import asyn... | 1 |

5,795 | 2,794,353,781 | IssuesEvent | 2015-05-11 16:13:55 | OryxProject/oryx | https://api.github.com/repos/OryxProject/oryx | closed | Explain / fix non-fatal exceptions during integration tests | enhancement Tests | There are several types of exceptions that appear regularly in the logs when integration tests run. They do not appear to affect the result, and tests pass. They may just be corner cases, from ZK and Kafka being rapidly started and stopped. This is a to-do to see if we can ever figure them out.

Updated to include wh... | 1.0 | Explain / fix non-fatal exceptions during integration tests - There are several types of exceptions that appear regularly in the logs when integration tests run. They do not appear to affect the result, and tests pass. They may just be corner cases, from ZK and Kafka being rapidly started and stopped. This is a to-do t... | non_defect | explain fix non fatal exceptions during integration tests there are several types of exceptions that appear regularly in the logs when integration tests run they do not appear to affect the result and tests pass they may just be corner cases from zk and kafka being rapidly started and stopped this is a to do t... | 0 |

16,229 | 10,453,582,181 | IssuesEvent | 2019-09-19 16:56:19 | HackIllinois/api | https://api.github.com/repos/HackIllinois/api | opened | Brainstorm & implement Slack integrations | Feature New Service Notifications | This is an idea that never made it into the API, but considering how much we use Slack for the event, it makes sense to build in some integration.

An obvious use case would be to send out notifications alongside mobile pushes.

https://github.com/acm-uiuc/groot-notification-service/ might be a good place to look.

... | 1.0 | Brainstorm & implement Slack integrations - This is an idea that never made it into the API, but considering how much we use Slack for the event, it makes sense to build in some integration.

An obvious use case would be to send out notifications alongside mobile pushes.

https://github.com/acm-uiuc/groot-notificati... | non_defect | brainstorm implement slack integrations this is an idea that never made it into the api but considering how much we use slack for the event it makes sense to build in some integration an obvious use case would be to send out notifications alongside mobile pushes might be a good place to look this cou... | 0 |

12,355 | 2,693,621,476 | IssuesEvent | 2015-04-01 15:40:39 | akvo/akvo-flow | https://api.github.com/repos/akvo/akvo-flow | opened | Even when it fails, processor always sends a fileProcessed message to the dashboard | 1 - Defect | ref #1185

Calls to http://uat1.akvoflow.org/processor?action=submit&fileName=non-existent-file.zip always seem to return a successful message in the dashboard - regardless of their actual status:

| Migrated from Trac defect other | There is a bug in how SnDAQ reports rates to moni, causing apparent spikes in event rate shortly after run transitions (the "2ndbin" bug).

This is not critical for run start but conditionals have been written in moni to suppress alerts caused by the bug.

<details>

<summary><em>Migrated from <a href="https://code.ice... | 1.0 | SnDAQ 2ndbin bug in rates reported to moni (Trac #1999) - There is a bug in how SnDAQ reports rates to moni, causing apparent spikes in event rate shortly after run transitions (the "2ndbin" bug).

This is not critical for run start but conditionals have been written in moni to suppress alerts caused by the bug.

<deta... | defect | sndaq bug in rates reported to moni trac there is a bug in how sndaq reports rates to moni causing apparent spikes in event rate shortly after run transitions the bug this is not critical for run start but conditionals have been written in moni to suppress alerts caused by the bug migrated from... | 1 |

56,307 | 15,020,012,251 | IssuesEvent | 2021-02-01 14:14:09 | mozilla-lockwise/lockwise-ios | https://api.github.com/repos/mozilla-lockwise/lockwise-ios | reopened | Welcome screen is briefly displayed after locking the app while syncing | archived defect priority-P2 | Build: 1.6.0(3339)

Device: iPhone X

iOS: 11.4

Steps to reproduce:

1. Launch Lockwise

2. While syncing, go to settings and lock the app

Actual results:

- The welcome screen will be briefly displayed.

Video:

- [ScreenRecording_05-22-2019 16-14-35.MP4.zip](https://github.com/mozilla-lockwise/lockwise-ios/fi... | 1.0 | Welcome screen is briefly displayed after locking the app while syncing - Build: 1.6.0(3339)

Device: iPhone X

iOS: 11.4

Steps to reproduce:

1. Launch Lockwise

2. While syncing, go to settings and lock the app

Actual results:

- The welcome screen will be briefly displayed.

Video:

- [ScreenRecording_05-22... | defect | welcome screen is briefly displayed after locking the app while syncing build device iphone x ios steps to reproduce launch lockwise while syncing go to settings and lock the app actual results the welcome screen will be briefly displayed video | 1 |

64,103 | 18,213,964,270 | IssuesEvent | 2021-09-30 00:13:24 | idaholab/moose | https://api.github.com/repos/idaholab/moose | closed | Unknown material property in ParsedMaterial is not an error | C: MOOSE T: defect P: normal | ## Bug Description

When using ParsedMaterial, if the material property listed in material_property_names is not defined, it becomes defined with a value of zero. I think it would make more sense for this to be an error. As it is now, input files will merrily run if someone mistypes a material property name.

## St... | 1.0 | Unknown material property in ParsedMaterial is not an error - ## Bug Description

When using ParsedMaterial, if the material property listed in material_property_names is not defined, it becomes defined with a value of zero. I think it would make more sense for this to be an error. As it is now, input files will merr... | defect | unknown material property in parsedmaterial is not an error bug description when using parsedmaterial if the material property listed in material property names is not defined it becomes defined with a value of zero i think it would make more sense for this to be an error as it is now input files will merr... | 1 |

8,844 | 2,612,907,146 | IssuesEvent | 2015-02-27 17:26:15 | chrsmith/windows-package-manager | https://api.github.com/repos/chrsmith/windows-package-manager | closed | Gimp 2.8.2 link | auto-migrated Milestone-End_Of_Month Type-Defect | ```

http://downloads.sourceforge.net/project/gimp-win/GIMP %2B GTK%2B %28stable

release%29/GIMP 2.8.2/gimp-2.8.2-setup.exe

doesn't exist anymore.

```

Original issue reported on code.google.com by `igi...@gmail.com` on 28 Aug 2012 at 5:51 | 1.0 | Gimp 2.8.2 link - ```

http://downloads.sourceforge.net/project/gimp-win/GIMP %2B GTK%2B %28stable

release%29/GIMP 2.8.2/gimp-2.8.2-setup.exe

doesn't exist anymore.

```

Original issue reported on code.google.com by `igi...@gmail.com` on 28 Aug 2012 at 5:51 | defect | gimp link gtk release gimp gimp setup exe doesn t exist anymore original issue reported on code google com by igi gmail com on aug at | 1 |

35,150 | 7,611,656,279 | IssuesEvent | 2018-05-01 14:45:04 | gwaldron/osgearth | https://api.github.com/repos/gwaldron/osgearth | closed | Disabled layers initialize upon exit | defect | I put an mgrs_graticule layer in an earth file with enabled="false". Upon quitting the viewer, the layer initializes. | 1.0 | Disabled layers initialize upon exit - I put an mgrs_graticule layer in an earth file with enabled="false". Upon quitting the viewer, the layer initializes. | defect | disabled layers initialize upon exit i put an mgrs graticule layer in an earth file with enabled false upon quitting the viewer the layer initializes | 1 |

73,409 | 24,617,002,990 | IssuesEvent | 2022-10-15 12:52:50 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | Can't connect session to key backup | T-Defect | ### Steps to reproduce

- Go to Safety & Privacy settings

- Encryption→Secure Backup says: "This session is not backing up your keys, but you do have an existing backup you can restore from and add to going forward. Connect this session to key backup before signing out to avoid losing any keys that may only be on this... | 1.0 | Can't connect session to key backup - ### Steps to reproduce

- Go to Safety & Privacy settings

- Encryption→Secure Backup says: "This session is not backing up your keys, but you do have an existing backup you can restore from and add to going forward. Connect this session to key backup before signing out to avoid lo... | defect | can t connect session to key backup steps to reproduce go to safety privacy settings encryption→secure backup says this session is not backing up your keys but you do have an existing backup you can restore from and add to going forward connect this session to key backup before signing out to avoid lo... | 1 |

279,594 | 21,176,613,699 | IssuesEvent | 2022-04-08 01:03:09 | opendp/opendp | https://api.github.com/repos/opendp/opendp | closed | Document feasible range for epsilon in `base_gaussian` | CATEGORY: Documentation good first issue OpenDP Core Effort 1 - Small :coffee: | Any `epsilon` greater than 1 is clipped to 1, because of limitations of the mechanism. This should be more clearly documented, because it is easy to mistake the relation as broken, if it continually doesn't pass for arbitrarily large epsilon. | 1.0 | Document feasible range for epsilon in `base_gaussian` - Any `epsilon` greater than 1 is clipped to 1, because of limitations of the mechanism. This should be more clearly documented, because it is easy to mistake the relation as broken, if it continually doesn't pass for arbitrarily large epsilon. | non_defect | document feasible range for epsilon in base gaussian any epsilon greater than is clipped to because of limitations of the mechanism this should be more clearly documented because it is easy to mistake the relation as broken if it continually doesn t pass for arbitrarily large epsilon | 0 |

41,950 | 10,833,308,569 | IssuesEvent | 2019-11-11 12:38:11 | opencv/opencv | https://api.github.com/repos/opencv/opencv | closed | OpenCV linkage fails | category: build/install incomplete platform: ios/osx | <!--

If you have a question rather than reporting a bug please go to http://answers.opencv.org where you get much faster responses.

If you need further assistance please read [How To Contribute](https://github.com/opencv/opencv/wiki/How_to_contribute).

This is a template helping you to create an issue which can be... | 1.0 | OpenCV linkage fails - <!--

If you have a question rather than reporting a bug please go to http://answers.opencv.org where you get much faster responses.

If you need further assistance please read [How To Contribute](https://github.com/opencv/opencv/wiki/How_to_contribute).

This is a template helping you to creat... | non_defect | opencv linkage fails if you have a question rather than reporting a bug please go to where you get much faster responses if you need further assistance please read this is a template helping you to create an issue which can be processed as quickly as possible this is the bug reporting section for th... | 0 |

37,707 | 8,481,832,374 | IssuesEvent | 2018-10-25 16:46:21 | CenturyLinkCloud/mdw | https://api.github.com/repos/CenturyLinkCloud/mdw | opened | Subprocess activities in Hub definition view = issues when ' v' included in name | defect | The `processname` attribute causes problems identifying the configured subprocess due to this logic in configurator.js:

```javascript

Configurator.prototype.initSubprocBindings = function(widget, subproc) {

var spaceV = subproc.lastIndexOf(' v');

if (spaceV > 0)

subproc = subproc.substring(0, spaceV);

``` | 1.0 | Subprocess activities in Hub definition view = issues when ' v' included in name - The `processname` attribute causes problems identifying the configured subprocess due to this logic in configurator.js:

```javascript

Configurator.prototype.initSubprocBindings = function(widget, subproc) {

var spaceV = subproc.... | defect | subprocess activities in hub definition view issues when v included in name the processname attribute causes problems identifying the configured subprocess due to this logic in configurator js javascript configurator prototype initsubprocbindings function widget subproc var spacev subproc ... | 1 |

25,612 | 5,187,032,909 | IssuesEvent | 2017-01-20 15:45:50 | spring-projects/spring-boot | https://api.github.com/repos/spring-projects/spring-boot | closed | Document how to insert test data with flyway | priority: normal status: ideal-for-contribution type: documentation | When I include the Flyway library in my classpath, Spring Boot correctly locates and executes my migrations. It does not, however, make use of my data.sql script, which I use to import fixture data for unit tests. Per the documentation, I am setting the `jpa.hibernate.ddl-auto` property to `false` so that Hibernate doe... | 1.0 | Document how to insert test data with flyway - When I include the Flyway library in my classpath, Spring Boot correctly locates and executes my migrations. It does not, however, make use of my data.sql script, which I use to import fixture data for unit tests. Per the documentation, I am setting the `jpa.hibernate.ddl-... | non_defect | document how to insert test data with flyway when i include the flyway library in my classpath spring boot correctly locates and executes my migrations it does not however make use of my data sql script which i use to import fixture data for unit tests per the documentation i am setting the jpa hibernate ddl ... | 0 |

90,256 | 26,024,561,196 | IssuesEvent | 2022-12-21 15:18:51 | numpy/numpy | https://api.github.com/repos/numpy/numpy | closed | Fails to import with numpy 1.23.5 after compiling on raspbian arm with clang 13 for python 3.11 | 00 - Bug 36 - Build | ### Steps to reproduce:

Pip install build from source as there is no wheel for python 3.11

```

(homeassistant-shadow) homeassistant@lazaro:/srv/homeassistant-shadow$ pip install numpy

Looking in indexes: https://pypi.org/simple, https://www.piwheels.org/simple

Collecting numpy

Downloading numpy-1.23.5.tar.g... | 1.0 | Fails to import with numpy 1.23.5 after compiling on raspbian arm with clang 13 for python 3.11 - ### Steps to reproduce:

Pip install build from source as there is no wheel for python 3.11

```

(homeassistant-shadow) homeassistant@lazaro:/srv/homeassistant-shadow$ pip install numpy

Looking in indexes: https://p... | non_defect | fails to import with numpy after compiling on raspbian arm with clang for python steps to reproduce pip install build from source as there is no wheel for python homeassistant shadow homeassistant lazaro srv homeassistant shadow pip install numpy looking in indexes collecti... | 0 |

79,547 | 28,367,954,287 | IssuesEvent | 2023-04-12 15:00:25 | vector-im/element-ios | https://api.github.com/repos/vector-im/element-ios | opened | User suggestion list height doesn't match container on iOS 16+ | T-Defect | ### Steps to reproduce

1. Go to a room

2. Type @... with a pattern that would match just a couple/few users

### Outcome

#### What did you expect?

User suggestion list displays multiple users nicely, given that there is enough room for it

#### What happened instead?

User suggestion list height is bigger... | 1.0 | User suggestion list height doesn't match container on iOS 16+ - ### Steps to reproduce

1. Go to a room

2. Type @... with a pattern that would match just a couple/few users

### Outcome

#### What did you expect?

User suggestion list displays multiple users nicely, given that there is enough room for it

###... | defect | user suggestion list height doesn t match container on ios steps to reproduce go to a room type with a pattern that would matches just a couple few users outcome what did you expect user suggestion list displays multiple users nicely given that there is enough room for it ... | 1 |

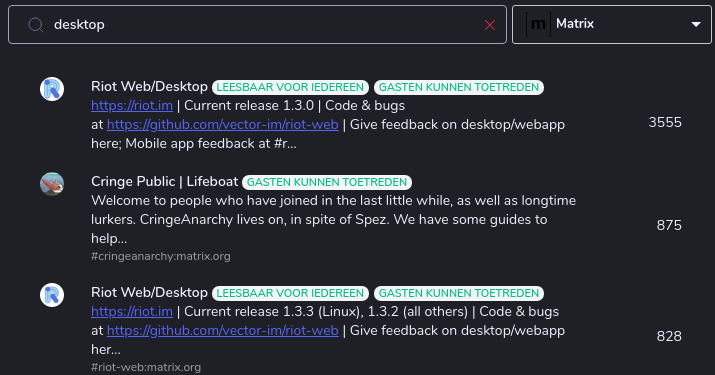

71,921 | 23,854,486,273 | IssuesEvent | 2022-09-06 21:25:34 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Outdated rooms shouldn't show up in room searches | T-Defect P1 A-Room-Directory Z-Synapse | When searching for this room by clicking on the plus and typing some relevant text (e.g. 'Desktop', or even 'iOS'), the first hit is the outdated version.

| 1.0 | Outdated rooms shouldn't show up in room searches - When searching for this room by clicking on the plus and typing some relevant text (e.g. 'Desktop', or even 'iOS'), the first hit is the outdated version.

detected in system.net.http.4.3.0.nupkg | security vulnerability | ## CVE-2018-8292 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>system.net.http.4.3.0.nupkg</b></p></summary>

<p>Provides a programming interface for modern HTTP applications, includi... | True | CVE-2018-8292 (High) detected in system.net.http.4.3.0.nupkg - ## CVE-2018-8292 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>system.net.http.4.3.0.nupkg</b></p></summary>

<p>Provide... | non_defect | cve high detected in system net http nupkg cve high severity vulnerability vulnerable library system net http nupkg provides a programming interface for modern http applications including http client components that allow applications to consume web services over http and http ... | 0 |

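1,400 | 2,603,846,955 | IssuesEvent | 2015-02-24 18:16:13 | chrsmith/nishazi6 | https://api.github.com/repos/chrsmith/nishazi6 | opened | Shenyang viral herpes examination | auto-migrated Priority-Medium Type-Defect | ```

Shenyang viral herpes examination〓Shenyang Military Region Political Department Hospital, STD clinic〓TEL: 024-31023308〓Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted diseases. Located at No. 32 Erwei Road, Shenhe District, Shenyang.

Founded alongside New China and sharing in its achievements, the hospital has a long history, advanced equipment, authoritative expertise, and a large team of specialists. It is a comprehensive hospital combining prevention, health care, medical treatment, research, and rehabilitation.

It is among the country's first public Grade-A military hospitals and first designated units for standardized medical practice, and a teaching hospital for the Fourth Military Medical University, Southeast University, and other well-known universities.

It was named an advanced unit for health work by the Health Department of the PLA Air Force Logistics Department and has twice been awarded a collective second-class merit citation.

```

-----

Original issue reported on code.google.com by `q964105..... | 1.0 | Shenyang viral herpes examination - ```

Shenyang viral herpes examination〓Shenyang Military Region Political Department Hospital, STD clinic〓TEL: 024-31023308〓Founded in 1946, with 68 years devoted to the research and treatment of sexually transmitted diseases. Located at No. 32 Erwei Road, Shenhe District, Shenyang.

Founded alongside New China and sharing in its achievements, the hospital has a long history, advanced equipment, authoritative expertise, and a large team of specialists. It is a comprehensive hospital combining prevention, health care, medical treatment, research, and rehabilitation.

It is among the country's first public Grade-A military hospitals and first designated units for standardized medical practice, and a teaching hospital for the Fourth Military Medical University, Southeast University, and other well-known universities.

It was named an advanced unit for health work by the Health Department of the PLA Air Force Logistics Department and has twice been awarded a collective second-class merit citation.

```

-----

Original issue reported on code.google.com by... | defect | shenyang viral herpes examination shenyang viral herpes examination〓shenyang military region political department hospital std clinic〓tel 〓founded in with years devoted to the research and treatment of sexually transmitted diseases located at no erwei road shenhe district shenyang founded alongside new china and sharing in its achievements the hospital has a long history advanced equipment authoritative expertise and a large team of specialists it is a comprehensive hospital combining prevention health care medical treatment research and rehabilitation it is among the country s first public grade a military hospitals and first designated units for standardized medical practice and a teaching hospital for the fourth military medical university southeast university and other well known universities it was named an advanced unit for health work by the health department of the pla air force logistics department and has twice been awarded a collective second class merit citation original issue reported on code google com by gmail com on jun at | 1 |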

59,881 | 17,023,276,901 | IssuesEvent | 2021-07-03 01:11:12 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | German language graphicbugs | Component: potlatch (flash editor) Priority: major Resolution: duplicate Type: defect | **[Submitted to the original trac issue database at 11.47am, Wednesday, 23rd July 2008]**

In the German version I have problems with the buttons and many sentences.

Look at the screenshot:

http://picload.org/image/e87517eddbdf9ee2d2da772df82e8894/bildschirmfoto.png

| 1.0 | German language graphicbugs - **[Submitted to the original trac issue database at 11.47am, Wednesday, 23rd July 2008]**

In the German version I have problems with the buttons and many sentences.

Look at the screenshot:

http://picload.org/image/e87517eddbdf9ee2d2da772df82e8894/bildschirmfoto.png

| defect | german language graphicbugs in german version if have problems with the buttons and many sentences look at the screenshot | 1 |

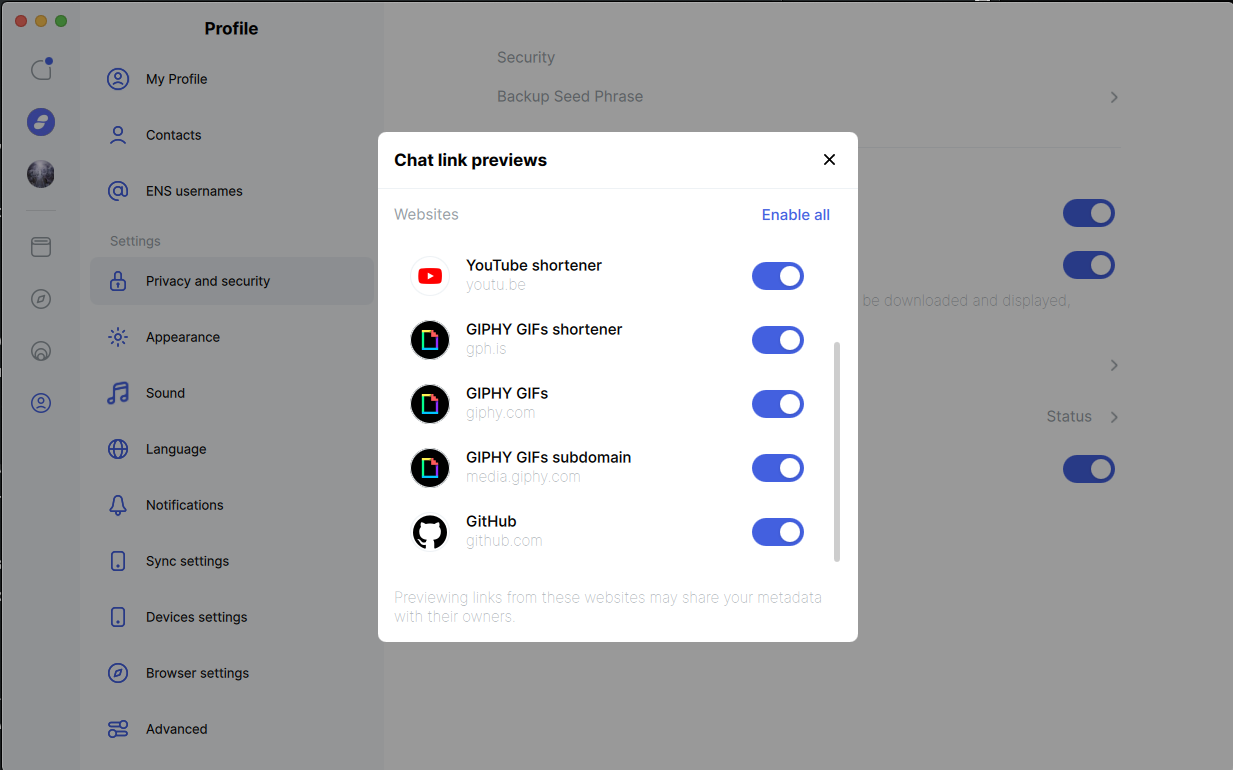

588,794 | 17,671,681,239 | IssuesEvent | 2021-08-23 07:10:21 | status-im/status-desktop | https://api.github.com/repos/status-im/status-desktop | closed | add unfurling support for tenor | Chat feature priority F1: mandatory | the gif selector sends messages with the tenor url, we should add tenor.com to the list, probably needs changes in status-go

marking as mandatory because it's not obvious to... | 1.0 | add unfurling support for tenor - the gif selector sends messages with the tenor url, we should add tenor.com to the list, probably needs changes in status-go

marking as man... | non_defect | add unfurling support for tenor the gif selector sends messages with the tenor url we should add tenor com to the list probably needs changes in status go marking as mandatory because it s not obvious to the use there is an advanced setting to display any image | 0 |

793,352 | 27,992,092,544 | IssuesEvent | 2023-03-27 05:12:12 | AY2223S2-CS2103T-W12-4/tp | https://api.github.com/repos/AY2223S2-CS2103T-W12-4/tp | closed | Find appointments during a particular time period | priority.High type.Appointment | As a doctor, I want to view the number of appointments I have during a particular time period so that I can gauge my workload. | 1.0 | Find appointments during a particular time period - As a doctor, I want to view the number of appointments I have during a particular time period so that I can gauge my workload. | non_defect | find appointments during a particular time period as a doctor i want to view the number of appointments i have during a particular time period so that i can gauge my workload | 0 |

315,110 | 27,046,469,276 | IssuesEvent | 2023-02-13 10:07:47 | getsentry/sentry-javascript | https://api.github.com/repos/getsentry/sentry-javascript | closed | Improve NextJS integration tests | Status: In Progress Type: Improvement Package: Nextjs Type: Tests | ### Problem Statement

NextJS integration tests are definitely our most flaky set of tests, and they are also much harder to debug since they don't use jest under the hood.

https://sentry.io/organizations/sentry/dashboard/24245/?project=5899451&statsPeriod=3d

<img width="627" alt="image" src="https://user-image... | 1.0 | Improve NextJS integration tests - ### Problem Statement

NextJS integration tests are definitely our most flaky set of tests, and they are also much harder to debug since they don't use jest under the hood.

https://sentry.io/organizations/sentry/dashboard/24245/?project=5899451&statsPeriod=3d

<img width="627" ... | non_defect | improve nextjs integration tests problem statement nextjs integration tests are definitely our most flaky set of tests and they are also much harder to debug since they don t use jest under the hood img width alt image src img width alt image src we should update these tests... | 0 |

42,687 | 11,218,223,820 | IssuesEvent | 2020-01-07 10:58:11 | zotonic/zotonic | https://api.github.com/repos/zotonic/zotonic | closed | mod_backup: Cannot backup site created from web GUI | defect | [master branch]

When I create a site from the web GUI with default values (using settings from ~/.zotonic/1/zotonic.config), I cannot make a backup.

```22:22:00.442 [info] z_module_manager:237 [creado2] info @ z_module_manager:237 Module mod_backup activated by 1 ()

(zotonic001@backv1)1> 22:24:34.974 [warning] mod_... | 1.0 | mod_backup: Cannot backup site created from web GUI - [master branch]

When I create a site from the web GUI with default values (using settings from ~/.zotonic/1/zotonic.config), I cannot make a backup.

```22:22:00.442 [info] z_module_manager:237 [creado2] info @ z_module_manager:237 Module mod_backup activated by 1 (... | defect | mod backup cannot backup site created from web gui when i create a site from the web gui with default values using settings from zotonic zotonic config i cannot make a backup z module manager info z module manager module mod backup activated by mod backup ... | 1 |

15,831 | 9,104,431,230 | IssuesEvent | 2019-02-20 18:09:18 | tidyverse/dplyr | https://api.github.com/repos/tidyverse/dplyr | closed | group_by + summarise in dplyr v8.0 is so much slower than previous version | performance :rocket: | Simple operations on fairly large data frames now take forever to complete

---

I have a tibble

```r

> pepData

# A tibble: 1,496,130 x 6

id Sequence Modifications Protein Gene Description

<chr> <chr> <chr> <chr> <chr> <chr>

```

This operation used to complete in 2sec

```r

>... | True | group_by + summarise in dplyr v8.0 is so much slower than previous version - Simple operations on fairly large data frames now take forever to complete

---

I have a tibble

```r

> pepData

# A tibble: 1,496,130 x 6

id Sequence Modifications Protein Gene Description

<chr> <chr> <chr> <c... | non_defect | group by summarise in dplyr is so much slower than previous version simple operations on fairly large data frames now take forever to complete i have a tibble r pepdata a tibble x id sequence modifications protein gene description t... | 0 |

39,019 | 19,672,814,658 | IssuesEvent | 2022-01-11 09:16:37 | MicrosoftDocs/windowsserverdocs | https://api.github.com/repos/MicrosoftDocs/windowsserverdocs | closed | Blog links on page are dead, please update | Pri2 windows-server/prod performance-tuning-guide/tech | attempting to reference information on performance counters and what they indicate, there are blog links on the page at https://docs.microsoft.com/en-us/windows-server/administration/performance-tuning/role/file-server/smb-file-server but they are dead links.

---

#### Document Details

⚠ *Do not edit this section. It ... | True | Blog links on page are dead, please update - attempting to reference information on performance counters and what they indicate, there are blog links on the page at https://docs.microsoft.com/en-us/windows-server/administration/performance-tuning/role/file-server/smb-file-server but they are dead links.

---

#### Docum... | non_defect | blog links on page are dead please update attempting to reference information on performance counters and what they indicate there are blog links on the page at but they are dead links document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id ... | 0 |

500,857 | 14,516,299,395 | IssuesEvent | 2020-12-13 15:28:47 | Zettlr/Zettlr | https://api.github.com/repos/Zettlr/Zettlr | closed | When linking files, ID is duplicated | bug [non-critical] pinned priority:mid | ## Description

<!-- Below, please describe what the Bug does in one or two short sentences. -->

My files are created like this: "ID Name.md". For example:"20201121220427 Menos es mas.md" .

In Preferences/Zettelkasten "When linking files, add the filename...", "always" option is selected.

<!-- Below, please descr... | 1.0 | When linking files, ID is duplicated - ## Description

<!-- Below, please describe what the Bug does in one or two short sentences. -->

My files are created like this: "ID Name.md". For example:"20201121220427 Menos es mas.md" .

In Preferences/Zettelkasten "When linking files, add the filename...", "always" option i... | non_defect | when linking files id is duplicated description my files are created like this id name md for example menos es mas md in preferences zettelkasten when linking files add the filename always option is selected usually when creating an internal link suggestion list shows files as ... | 0 |

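5,478 | 2,610,188,446 | IssuesEvent | 2015-02-26 18:59:42 | chrsmith/quchuseban | https://api.github.com/repos/chrsmith/quchuseban | opened | Info: how to remove pigmented spots naturally | auto-migrated Priority-Medium Type-Defect | ```

《Summary》

I cannot give up my attachment to winter days like this; the noisy happiness already seems far away. The woman she once was no longer appears. I am still reluctant to wave goodbye to this season. In my memory, she, heartbroken, was colder than this season. I keep watch alone at a shabby windowsill, imagining her passing by.

Instead the warm winter sun arrives, and like yesterday's snowflakes it falls quietly, flake by flake, light and soft. A wisp of white vapor rises before my eyes; it is the breath of the green tea in my hand, just as gentle. However beautiful, it is all in the past, and the marks on my face remind me that we will not meet again! How to remove pigmented spots naturally,

《Customer case》

Needless to say, getting spots is surely the thing we women hate most. Even a woman who cares little about her looks, once spots appear on her face, is surely sad about it and minds it at heart even if she does not say so. I am like that. In truth I do not want my husband and the people who care about me to worry,

and although I always reassure them that it is fine, that it does not matter, deep down I still more or less... | 1.0 | Info: how to remove pigmented spots naturally - ```

《Summary》

I cannot give up my attachment to winter days like this; the noisy happiness already seems far away. The woman she once was no longer appears. I am still reluctant to wave goodbye to this season. In my memory, she, heartbroken, was colder than this season. I keep watch alone at a shabby windowsill, imagining her passing by.

Instead the warm winter sun arrives, and like yesterday's snowflakes it falls quietly, flake by flake, light and soft. A wisp of white vapor rises before my eyes; it is the breath of the green tea in my hand, just as gentle. However beautiful, it is all in the past, and the marks on my face remind me that we will not meet again! How to remove pigmented spots naturally,

《Customer case》

Needless to say, getting spots is surely the thing we women hate most. Even a woman who cares little about her looks, once spots appear on her face, is surely sad about it and minds it at heart even if she does not say so. I am like that. In truth I do not want my husband and the people who care about me to worry,

and although I always reassure them that it is fine... | defect | info how to remove pigmented spots naturally 《summary》 i cannot give up my attachment to winter days like this the noisy happiness already seems far away the woman she once was no longer appears i am still reluctant to wave goodbye to this season in my memory she heartbroken was colder than this season i keep watch alone at a shabby windowsill imagining her passing by instead the warm winter sun arrives and like yesterday s snowflakes it falls quietly flake by flake light and soft a wisp of white vapor rises before my eyes it is the breath of the green tea in my hand just as gentle however beautiful it is all in the past and the marks on my face remind me that we will not meet again how to remove pigmented spots naturally 《customer case》 needless to say getting spots is surely the thing we women hate most even a woman who cares little about her looks once spots appear on her face is surely sad about it and minds it at heart even if she does not say so i am like that in truth i do not want my husband and the people who care about me to worry and although i always reassure them that it is fine... | 1 |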

9,965 | 2,616,015,538 | IssuesEvent | 2015-03-02 00:58:03 | jasonhall/bwapi | https://api.github.com/repos/jasonhall/bwapi | closed | Tau Cross analyzing, and some other UMS. | auto-migrated Component-Logic Priority-Critical Type-Defect Usability | ```

Exception Address:

Exception Type:

Starcraft error log (See Starcraft\Errors) or debug information:

Not sure what these mean, but i have the screen shot:

http://i.imgur.com/q1Msu.png and error log attached.

What version of the product are you using? On what operating system?

BWAPI 3.4, Win7 64 bit.

```

Original ... | 1.0 | Tau Cross analyzing, and some other UMS. - ```

Exception Address:

Exception Type:

Starcraft error log (See Starcraft\Errors) or debug information:

Not sure what these mean, but i have the screen shot:

http://i.imgur.com/q1Msu.png and error log attached.

What version of the product are you using? On what operating sys... | defect | tau cross analyzing and some other ums exception address exception type starcraft error log see starcraft errors or debug information not sure what these mean but i have the screen shot and error log attached what version of the product are you using on what operating system bwapi bit ... | 1 |

74,476 | 25,138,433,814 | IssuesEvent | 2022-11-09 20:43:29 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | closed | p-calendar | maxDate & minDate are broken in month-picker | defect | basically, the p-calendar when using the month-picker, changes the years, and it has the maxDate-minDate boundaries, but it doesn't apply the p-disabled class to the months

StackBlitz with reproducing the issue: [https://stackblitz.com/edit/primeng-tablescroll-demo-wdclen?file=src%2Fapp%2Fapp.component.ts](url) | 1.0 | p-calendar | maxDate & minDate are broken in month-picker - basically, the p-calendar when using the month-picker, changes the years, and it has the maxDate-minDate boundaries, but it doesn't apply the p-disabled class to the months

StackBlitz with reproducing the issue: [https://stackblitz.com/edit/primeng-tablesc... | defect | p calendar maxdate mindate are broken in month picker basically the p calendar when using the month picker changes the years and it has the maxdate mindate boundaries but it doesn t apply the p disabled class to the months stackblitz with reproducing the issue url | 1 |
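The behaviour the reporter expects from the month picker can be sketched in Python as a plain date check: a month should be selectable only if at least one of its days lies within the allowed range. This is an illustration of the rule, not PrimeNG's implementation; the function name and the `min_date`/`max_date` parameters are hypothetical stand-ins for the component's `minDate`/`maxDate` inputs.

```python
import calendar
from datetime import date


def month_is_selectable(year, month, min_date=None, max_date=None):
    """Return True if any day of the given month lies in [min_date, max_date]."""
    first_day = date(year, month, 1)
    # monthrange returns (weekday of the first day, number of days in the month).
    last_day = date(year, month, calendar.monthrange(year, month)[1])
    if min_date is not None and last_day < min_date:
        return False  # the whole month ends before the lower bound
    if max_date is not None and first_day > max_date:
        return False  # the whole month starts after the upper bound
    return True
```

Under this rule, a month that only partly overlaps the range stays selectable. A stricter variant could require the whole month to fall inside the range; which of the two the component intends is not stated in the issue.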

119,536 | 12,034,075,155 | IssuesEvent | 2020-04-13 15:23:30 | Chadori/Construct-Master-Collection | https://api.github.com/repos/Chadori/Construct-Master-Collection | closed | Mobile IronSource Collection - New 6.16.0 (latest) features. | Documentation Enhancement Mobile Monetization Native Cordova | ## Request Type

**Feature Request**

## Information

Mobile IronSource Collection - New **6.16.0** (latest) features.

Updated the **Native Cordova** plugins for the new IronSource Android SDK (**6.16.0**).

Changes:

```

implementation 'com.ironsource.sdk:mediationsdk:+'

```

And, I've also added these:

```

... | 1.0 | Mobile IronSource Collection - New 6.16.0 (latest) features. - ## Request Type

**Feature Request**

## Information

Mobile IronSource Collection - New **6.16.0** (latest) features.

Updated the **Native Cordova** plugins for the new IronSource Android SDK (**6.16.0**).

Changes:

```

implementation 'com.ironsourc... | non_defect | mobile ironsource collection new latest features request type feature request information mobile ironsource collection new latest features updated the native cordova plugins for the new ironsource android sdk changes implementation com ironsource s... | 0 |

425 | 7,880,242,148 | IssuesEvent | 2018-06-26 15:24:50 | Automattic/wp-calypso | https://api.github.com/repos/Automattic/wp-calypso | opened | People: When viewing/editing person, show details of how they were added/invited to site | People Management [Type] Enhancement | It would be useful when viewing/editing a person at `people/edit` to show details of how that person was added/invited to the site originally. Basically, show the information that is currently shown for Invites details at `people/invites`.

<img width="749" alt="screencapture at tue jun 26 11 17 55 edt 2018" src="htt... | 1.0 | People: When viewing/editing person, show details of how they were added/invited to site - It would be useful when viewing/editing a person at `people/edit` to show details of how that person was added/invited to the site originally. Basically, show the information that is currently shown for Invites details at `people... | non_defect | people when viewing editing person show details of how they were added invited to site it would be useful when viewing editing a person at people edit to show details of how that person was added invited to the site originally basically show the information that is currently shown for invites details at people... | 0 |

6,418 | 5,415,608,957 | IssuesEvent | 2017-03-01 22:01:19 | twilio/twilio-video.js | https://api.github.com/repos/twilio/twilio-video.js | closed | Video freezes or browser crash with 3 or more participants | performance question | Hello,

We have been trying to use the Twilio Video conference feature for one of our applications.

We are facing a few of the issues mentioned below, both in our application and in the sample demo app provided by Twilio, deployed on Heroku.

One or more participant video stream freezes in ongoing video session randomly

One or mo... | True | Video freezes or browser crash with 3 or more participants - Hello,

We have been trying to use the Twilio Video conference feature for one of our applications.

We are facing a few of the issues mentioned below, both in our application and in the sample demo app provided by Twilio, deployed on Heroku.

One or more participant vid... | non_defect | video freezes or browser crash with or more participants hello we have been trying to use twilio video conference feature for one of our application we are facing few of the below mentioned issues in our application as well as in sample demo app provided by twilio deployed on heroku one or more participant vid... | 0 |

80,772 | 30,523,532,395 | IssuesEvent | 2023-07-19 09:39:51 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Matthew not able to see my messages | T-Defect X-Needs-Info | ### Steps to reproduce

1. Where are you starting? What can you see?

2. What do you click?

3. More steps…

### Outcome

#### What did you expect?

#### What happened instead?

### Operating system

MacOs

### Application version

Version 1.11.31 (1.11.31)

### How did you install the app?

i dont know

### Home... | 1.0 | Matthew not able to see my messages - ### Steps to reproduce

1. Where are you starting? What can you see?

2. What do you click?

3. More steps…

### Outcome

#### What did you expect?

#### What happened instead?

### Operating system

MacOs

### Application version

Version 1.11.31 (1.11.31)

### How did you i... | defect | matthew not able to see my messages steps to reproduce where are you starting what can you see what do you click more steps… outcome what did you expect what happened instead operating system macos application version version how did you insta... | 1 |

31,971 | 7,471,212,509 | IssuesEvent | 2018-04-03 08:32:19 | Microsoft/vscode-arduino | https://api.github.com/repos/Microsoft/vscode-arduino | closed | Add support to multi-root workspaces | code ready duplicate feature-request | Please add support to multi-root workspaces as explained [here](https://code.visualstudio.com/docs/editor/multi-root-workspaces).

If i enter some root project the arduino.json should be loaded with board and port information for instance.

Thank you, nuno407 | 1.0 | Add support to multi-root workspaces - Please add support to multi-root workspaces as explained [here](https://code.visualstudio.com/docs/editor/multi-root-workspaces).

If i enter some root project the arduino.json should be loaded with board and port information for instance.

Thank you, nuno407 | non_defect | add support to multi root workspaces please add support to multi root workspaces as explained if i enter some root project the arduino json should be loaded with board and port information for instance thank you | 0 |

100,755 | 16,490,391,283 | IssuesEvent | 2021-05-25 02:15:37 | jinuem/Parse-SDK-JS | https://api.github.com/repos/jinuem/Parse-SDK-JS | opened | CVE-2020-7598 (Medium) detected in multiple libraries | security vulnerability | ## CVE-2020-7598 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>minimist-0.0.8.tgz</b>, <b>minimist-0.0.10.tgz</b>, <b>minimist-1.2.0.tgz</b></p></summary>

<p>

<details><summary><... | True | CVE-2020-7598 (Medium) detected in multiple libraries - ## CVE-2020-7598 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>minimist-0.0.8.tgz</b>, <b>minimist-0.0.10.tgz</b>, <b>minim... | non_defect | cve medium detected in multiple libraries cve medium severity vulnerability vulnerable libraries minimist tgz minimist tgz minimist tgz minimist tgz parse argument options library home page a href path to dependency file parse sdk js package ... | 0 |

89,200 | 25,601,992,129 | IssuesEvent | 2022-12-01 21:07:04 | NixOS/nixpkgs | https://api.github.com/repos/NixOS/nixpkgs | closed | Baserow is broken | 0.kind: build failure | ### Steps To Reproduce

Steps to reproduce the behavior:

1. build `baserow`

2. it will err out with missing dependency: `uvloop`

### Build log

```

[...]

Finished executing setuptoolsBuildPhase

installing

Executing pipInstallPhase

/build/source/backend/dist /build/source/backend

Processing ./baserow-1.10.2-p... | 1.0 | Baserow is broken - ### Steps To Reproduce

Steps to reproduce the behavior:

1. build `baserow`

2. it will err out with missing dependency: `uvloop`

### Build log

```

[...]

Finished executing setuptoolsBuildPhase

installing

Executing pipInstallPhase

/build/source/backend/dist /build/source/backend

Processin... | non_defect | baserow is broken steps to reproduce steps to reproduce the behavior build baserow it will err out with missing dependency uvloop build log finished executing setuptoolsbuildphase installing executing pipinstallphase build source backend dist build source backend processing ... | 0 |

56,949 | 6,535,001,655 | IssuesEvent | 2017-08-31 13:10:27 | openshift/origin | https://api.github.com/repos/openshift/origin | closed | Extended.[k8s.io] StatefulSet [k8s.io] Basic StatefulSet functionality [StatefulSetBasic] should not deadlock when a pod's predecessor fails | component/kubernetes kind/test-flake priority/P0 | ```

[k8s.io] StatefulSet

/go/src/github.com/openshift/origin/_output/local/go/src/github.com/openshift/origin/vendor/k8s.io/kubernetes/test/e2e/framework/framework.go:656

[k8s.io] Basic StatefulSet functionality [StatefulSetBasic]

/go/src/github.com/openshift/origin/_output/local/go/src/github.com/openshift/ori... | 1.0 | Extended.[k8s.io] StatefulSet [k8s.io] Basic StatefulSet functionality [StatefulSetBasic] should not deadlock when a pod's predecessor fails - ```

[k8s.io] StatefulSet

/go/src/github.com/openshift/origin/_output/local/go/src/github.com/openshift/origin/vendor/k8s.io/kubernetes/test/e2e/framework/framework.go:656

[... | non_defect | extended statefulset basic statefulset functionality should not deadlock when a pod s predecessor fails statefulset go src github com openshift origin output local go src github com openshift origin vendor io kubernetes test framework framework go basic statefulset functionality go sr... | 0 |

7,401 | 2,610,366,656 | IssuesEvent | 2015-02-26 19:58:26 | chrsmith/scribefire-chrome | https://api.github.com/repos/chrsmith/scribefire-chrome | opened | No Offline save of multiple posts | auto-migrated Priority-Medium Type-Defect | ```

What's the problem?

When I'm offline, I'm not able to save many posts to my computer.

I've made the test many times, and always only the last post is saved.

If the feature isn't available, a warning message should probably say so!

What browser are you using?

Chrome 27.0.1453.110 m

What version of ScribeFire ... | 1.0 | No Offline save of multiple posts - ```

What's the problem?

When I'm offline, I'm not able to save many posts to my computer.

I've made the test many times, and always only the last post is saved.

If the feature isn't available, a warning message should probably say so!

What browser are you using?

Chrome 27.0.145... | defect | no offline save of multiple posts what s the problem when i m offline i m not able to save many posts to my computer i ve made the test many times and always only the last post is saved probably if the feature isn t available a warning message should precise it what browser are you using chrome ... | 1 |

19,564 | 6,736,355,464 | IssuesEvent | 2017-10-19 03:25:04 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | closed | Use OpenJDK-1.8 on CentOS 7 | Category: Build Category: Core Category: Install Priority: Medium Type: Maintenance Type: Support | OpenJDK-1.8 is available via default RPM repos on CentOS 7 making using it simple to install. | 1.0 | Use OpenJDK-1.8 on CentOS 7 - OpenJDK-1.8 is available via default RPM repos on CentOS 7 making using it simple to install. | non_defect | use openjdk on centos openjdk is available via default rpm repos on centos making using it simple to install | 0 |

57,831 | 16,092,983,258 | IssuesEvent | 2021-04-26 19:11:19 | primefaces/primereact | https://api.github.com/repos/primefaces/primereact | closed | Treetable break after toggle columns | defect | ### There is no guarantee in receiving an immediate response in GitHub Issue Tracker, If you'd like to secure our response, you may consider *PrimeReact PRO Support* where support is provided within 4 business hours

**I'm submitting a ...** (check one with "x")

```

[ ] bug report

[ ] feature request

[ ] support... | 1.0 | Treetable break after toggle columns - ### There is no guarantee in receiving an immediate response in GitHub Issue Tracker, If you'd like to secure our response, you may consider *PrimeReact PRO Support* where support is provided within 4 business hours

**I'm submitting a ...** (check one with "x")

```

[ ] bug r... | defect | treetable break after toggle columns there is no guarantee in receiving an immediate response in github issue tracker if you d like to secure our response you may consider primereact pro support where support is provided within business hours i m submitting a check one with x bug rep... | 1 |

42,709 | 9,301,433,633 | IssuesEvent | 2019-03-23 21:58:28 | joomla/joomla-cms | https://api.github.com/repos/joomla/joomla-cms | closed | [4.0] System cPanel view requires internet connection | No Code Attached Yet | ### Steps to reproduce the issue

On localhost (eg XAMPP) install some extensions and try to load the "System" view.

Optionally cut off your internet connection and try to load the "System" view.

### Expected result

Loads instantly

### Actual result

Page loads delayed.

### Additional comments

Appare... | 1.0 | [4.0] System cPanel view requires internet connection - ### Steps to reproduce the issue

On localhost (eg XAMPP) install some extensions and try to load the "System" view.

Optionally cut off your internet connection and try to load the "System" view.

### Expected result

Loads instantly

### Actual result

... | non_defect | system cpanel view requires internet connection steps to reproduce the issue on localhost eg xampp install some extensions and try to load the system view optionally cut off your internet connection and try to load the system view expected result loads instantely actual result page... | 0 |

434,661 | 12,521,518,614 | IssuesEvent | 2020-06-03 17:31:35 | dmwm/WMCore | https://api.github.com/repos/dmwm/WMCore | closed | WorkQueueManager timing out when pulling work from the parent queue | BUG Highest Priority WMAgent scalability | **Impact of the bug**

WMAgents

**Describe the bug**

Issue similar to: https://github.com/dmwm/WMCore/issues/9668

and especially: https://github.com/dmwm/WMCore/issues/9669

where, apparently, WorkQueueManager tries to pull all the GQEs sitting in Available status. Which is almost 400k at the moment and almost .... | 1.0 | WorkQueueManager timing out when pulling work from the parent queue - **Impact of the bug**

WMAgents

**Describe the bug**

Issue similar to: https://github.com/dmwm/WMCore/issues/9668

and especially: https://github.com/dmwm/WMCore/issues/9669

where, apparently, WorkQueueManager tries to pull all the GQEs sittin... | non_defect | workqueuemanager timing out when pulling work from the parent queue impact of the bug wmagents describe the bug issue similar to and especially where apparently workqueuemanager tries to pull all the gqes sitting in available status which is almost at the moment and almost of data ... | 0 |

119,466 | 15,554,523,869 | IssuesEvent | 2021-03-16 04:03:17 | UOA-SE701-Group3-2021/3Lancers | https://api.github.com/repos/UOA-SE701-Group3-2021/3Lancers | closed | Hi-fi To-Do List icon design for the widget drawer | approved design | **Is your feature request related to a problem? Please describe.**

As a frontend developer, I want a detailed prototype of the To-Do List I'm implementing, so that I can finalise the styling and logic.

**Describe the solution you'd like**

A hi-fi prototype of the To-Do List icon for the widget drawer.

**Describ... | 1.0 | Hi-fi To-Do List icon design for the widget drawer - **Is your feature request related to a problem? Please describe.**

As a frontend developer, I want a detailed prototype of the To-Do List I'm implementing, so that I can finalise the styling and logic.

**Describe the solution you'd like**

A hi-fi prototype of th... | non_defect | hi fi to do list icon design for the widget drawer is your feature request related to a problem please describe as a frontend developer i want a detailed prototype of the to do list i m implementing so that i can finalise the styling and logic describe the solution you d like a hi fi prototype of th... | 0 |

77,175 | 26,823,066,150 | IssuesEvent | 2023-02-02 10:50:37 | zed-industries/feedback | https://api.github.com/repos/zed-industries/feedback | opened | Clicking an inactive Zed window doesn't focus the split clicked on | defect triage | ### Check for existing issues

- [X] Completed

### Describe the bug / provide steps to reproduce it

Clicking a split of an unfocused Zed window should focus that split.

Consider a Zed window with two splits, a left and right one, and the following steps:

1. The left split is focused

2. Whole Zed window is unfocu... | 1.0 | Clicking an inactive Zed window doesn't focus the split clicked on - ### Check for existing issues

- [X] Completed

### Describe the bug / provide steps to reproduce it

Clicking a split of an unfocused Zed window should focus that split.

Consider a Zed window with two splits, a left and right one, and the followin... | defect | clicking an inactive zed window doesn t focus the split clicked on check for existing issues completed describe the bug provide steps to reproduce it clicking a split of an unfocused zed window should focus that split consider a zed window with two splits a left and right one and the following ... | 1 |

76,265 | 14,592,413,965 | IssuesEvent | 2020-12-19 17:31:01 | eeeeaaii/vodka | https://api.github.com/repos/eeeeaaii/vodka | closed | code cleanup for EString and EError | code cleanup | EString and EError implemented an old-style "editor flow" that predated me creating the editor class. The code is pretty messy and there is dead code in there. These two classes need to be overhauled and the editing functionality needs to be moved over into a subclass of Editor. | 1.0 | code cleanup for EString and EError - EString and EError implemented an old-style "editor flow" that predated me creating the editor class. The code is pretty messy and there is dead code in there. These two classes need to be overhauled and the editing functionality needs to be moved over into a subclass of Editor. | non_defect | code cleanup for estring and eerror estring and eerror implemented an old style editor flow that predated me creating the editor class the code is pretty messy and there is dead code in there these two classes need to be overhauled and the editing functionality needs to be moved over into a subclass of editor | 0 |

34,570 | 7,457,398,049 | IssuesEvent | 2018-03-30 04:02:59 | kerdokullamae/test_koik_issued | https://api.github.com/repos/kerdokullamae/test_koik_issued | closed | Show a proper error message when the RDF file is missing | C: AVAR P: highest R: fixed T: defect | **Reported by sven syld on 26 Mar 2014 14:56 UTC**

'''Description'''

If the RDF file has for some reason not been generated, the following error is shown:

''The Response content must be a string or object implementing __toString(), "boolean" given.''

The check could go, for example, in a place like this - \Dira\DescriptionUnitBundle\Controller\Servic... | 1.0 | Show a proper error message when the RDF file is missing - **Reported by sven syld on 26 Mar 2014 14:56 UTC**

'''Description'''

If the RDF file has for some reason not been generated, the following error is shown:

''The Response content must be a string or object implementing __toString(), "boolean" given.''

The check could go, for example, in a place like... | defect | show a proper error message when the rdf file is missing reported by sven syld on mar utc description if the rdf file has for some reason not been generated the following error is shown the response content must be a string or object implementing tostring boolean given the check could go for example in a place like this d... | 1 |

32,458 | 13,839,373,782 | IssuesEvent | 2020-10-14 07:52:04 | MicrosoftDocs/azure-docs | https://api.github.com/repos/MicrosoftDocs/azure-docs | closed | Replace "plans" concept with "features" | Pri2 azure-communication-services/svc cxp doc-enhancement triaged | I've gotten feedback to refrain from using the term "Plans" in the UI as it may imply an associated cost and/or fixed limits as it does with traditional consumer phone plans. Instead I updated the UI to refer to capabilities as features. I'd suggest we use this same terminology in our docs.

---

#### Document Det... | 1.0 | Replace "plans" concept with "features" - I've gotten feedback to refrain from using the term "Plans" in the UI as it may imply an associated cost and/or fixed limits as it does with traditional consumer phone plans. Instead I updated the UI to refer to capabilities as features. I'd suggest we use this same terminology... | non_defect | replace plans concept with features i ve gotten feedback to refrain from using the term plans in the ui as it may imply an associated cost and or fixed limits as it does with traditional consumer phone plans instead i updated the ui to refer to capabilities as features i d suggest we use this same terminology... | 0 |

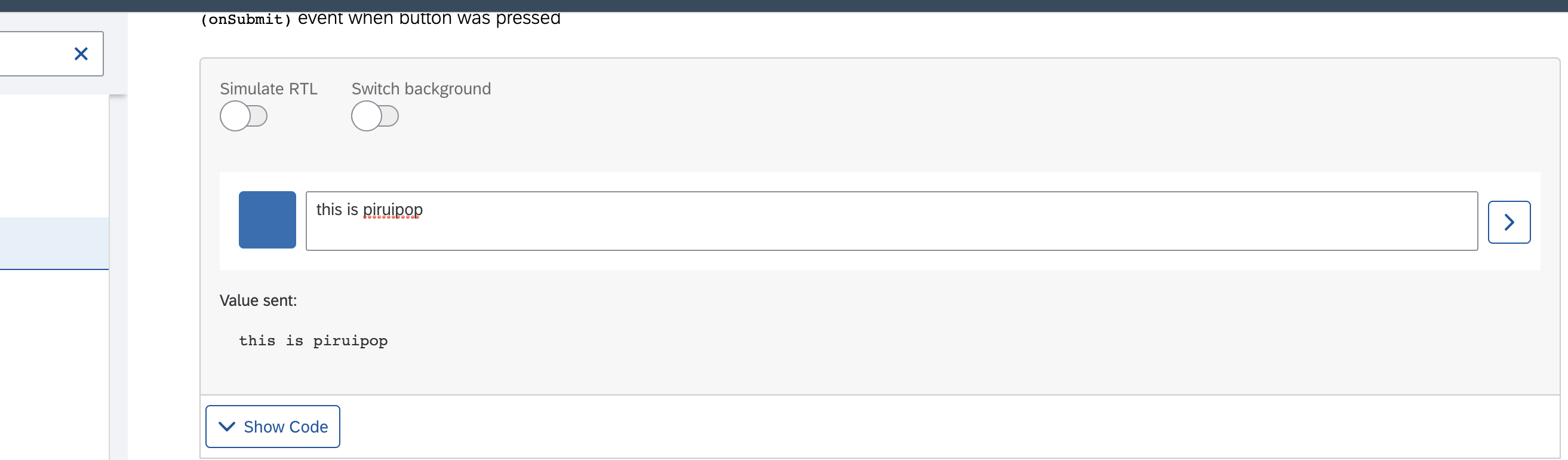

55,385 | 14,434,208,684 | IssuesEvent | 2020-12-07 06:37:55 | SAP/fundamental-ngx | https://api.github.com/repos/SAP/fundamental-ngx | opened | Feed Input: Not able to load avatar | Defect Hunting Medium bug platform | Description: Not able to load avatar

Expected: Avatar should be visible

Screenshot:

| 1.0 | Feed Input: Not able to load avatar - Description: Not able to load avatar

Expected: Avatar should be visible

Screenshot:

| defect | feed input not able to load avatar description not able to load avatar expected avatar should be visible screenshot | 1 |

59,459 | 17,023,134,455 | IssuesEvent | 2021-07-03 00:31:37 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | leisure=pitch, leisure=common, landuse=forest and landuse=allotments render in wrong colour | Component: mapnik Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 9.30am, Wednesday, 29th November 2006]**

The three main blocks in the middle of this view should all be green:

http://labs.metacarta.com/osm/?lat=6681345.88613&lon=-75271.4368&zoom=15&layers=B

landuse=cemetery looks OK, so colours related to that would be suita... | 1.0 | leisure=pitch, leisure=common, landuse=forest and landuse=allotments render in wrong colour - **[Submitted to the original trac issue database at 9.30am, Wednesday, 29th November 2006]**

The three main blocks in the middle of this view should all be green:

http://labs.metacarta.com/osm/?lat=6681345.88613&lon=-75271... | defect | leisure pitch leisure common landuse forest and landuse allotments render in wrong colour the three main blocks in the middle of this view should all be green landuse cemetery looks ok so colours related to that would be suitable landuse forest is also rendering in grey i suggest using a darke... | 1 |

112,547 | 14,264,737,219 | IssuesEvent | 2020-11-20 16:08:27 | FilthyCriminals/game-wip | https://api.github.com/repos/FilthyCriminals/game-wip | opened | Crystal Monster | Creature Design | This is for all general discussion on design/development/assets for the crystal monster that Tony shared | 1.0 | Crystal Monster - This is for all general discussion on design/development/assets for the crystal monster that Tony shared | non_defect | crystal monster this is for all general discussion on design development assets for the crystal monster that tony shared | 0 |

64,728 | 18,848,205,134 | IssuesEvent | 2021-11-11 17:15:32 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | The sidebar icon isn't roundish | T-Defect S-Minor A-User-Settings A-Spaces A-Appearance Z-IA |

All the other icons are round or roundish and the sidebar icon feels a little out of place, IMO | 1.0 | The sidebar icon isn't roundish -

All the other icons are round or roundish and the sidebar icon feels a little out of place, IMO | defect | the sidebar icon isn t roundish all the other icons are round or roundish and the sidebar icon feels a little out of place imo | 1 |

154,585 | 19,730,317,640 | IssuesEvent | 2022-01-14 01:11:23 | harrinry/DataflowTemplates | https://api.github.com/repos/harrinry/DataflowTemplates | opened | CVE-2018-11765 (High) detected in hadoop-common-2.8.5.jar | security vulnerability | ## CVE-2018-11765 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hadoop-common-2.8.5.jar</b></p></summary>

<p>Apache Hadoop Common</p>

<p>Path to dependency file: /pom.xml</p>

<p>Path... | True | CVE-2018-11765 (High) detected in hadoop-common-2.8.5.jar - ## CVE-2018-11765 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>hadoop-common-2.8.5.jar</b></p></summary>

<p>Apache Hadoop... | non_defect | cve high detected in hadoop common jar cve high severity vulnerability vulnerable library hadoop common jar apache hadoop common path to dependency file pom xml path to vulnerable library ory org apache hadoop hadoop common hadoop common jar dependency hi... | 0 |

29,244 | 5,626,671,553 | IssuesEvent | 2017-04-04 22:31:58 | jccastillo0007/eFacturaT | https://api.github.com/repos/jccastillo0007/eFacturaT | opened | CCE1.1 - sends the receiver and issuer addresses of the standard CFDI with codes | bug defect | The codes in the receiver and issuer addresses ONLY APPLY WHEN THE CCE IS INCLUDED | 1.0 | CCE1.1 - sends the receiver and issuer addresses of the standard CFDI with codes - The codes in the receiver and issuer addresses ONLY APPLY WHEN THE CCE IS INCLUDED | defect | sends the receiver and issuer addresses of the standard cfdi with codes the codes in the receiver and issuer addresses only apply when the cce is included | 1 |

78,446 | 27,525,355,997 | IssuesEvent | 2023-03-06 17:38:25 | vector-im/element-x-ios | https://api.github.com/repos/vector-im/element-x-ios | opened | Flash of content whilst entering a room I have just entered | T-Defect | ### Steps to reproduce

1. Go to room A, known history is the last 3 messages

2. Wait for the previous messages to be loaded

3. Go back to home screen

4. Get back in room A

### Outcome

#### What did you expect?

For the room to be shown instantaneously