Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 757 | labels stringlengths 4 664 | body stringlengths 3 261k | index stringclasses 10 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 232k | binary_label int64 0 1 |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

76,151 | 21,191,138,242 | IssuesEvent | 2022-04-08 17:33:12 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | build: strip the produced cockroach-sql binary | C-enhancement A-build-system T-dev-inf | Followup to #75800; the `cockroach-sql` binary should be stripped to ensure it remains small.

Epic: CC-5993 | 1.0 | build: strip the produced cockroach-sql binary - Followup to #75800; the `cockroach-sql` binary should be stripped to ensure it remains small.

Epic: CC-5993 | non_defect | build strip the produced cockroach sql binary followup to the cockroach sql binary should be stripped to ensure it remains small epic cc | 0 |

4,247 | 7,006,045,109 | IssuesEvent | 2017-12-19 06:25:15 | GiselleSerate/myaliases | https://api.github.com/repos/GiselleSerate/myaliases | opened | check for setup/uninstall script compatibility on osx | compatibility | Please do the following when you get access to a lab mac:

- [ ] setup works!

- [ ] uninstall works!

- [ ] documentation for this process written! | True | check for setup/uninstall script compatibility on osx - Please do the following when you get access to a lab mac:

- [ ] setup works!

- [ ] uninstall works!

- [ ] documentation for this process written! | non_defect | check for setup uninstall script compatibility on osx please do the following when you get access to a lab mac setup works uninstall works documentation for this process written | 0 |

49,991 | 13,187,304,059 | IssuesEvent | 2020-08-13 02:59:22 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | Coverage: no gcda files are found (Trac #2414) | Incomplete Migration Migrated from Trac combo core defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2414">https://code.icecube.wisc.edu/ticket/2414</a>, reported by fiedl and owned by </em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2020-06-24T12:28:40",

"description": "I\u2019ve tried to generate a cove... | 1.0 | Coverage: no gcda files are found (Trac #2414) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2414">https://code.icecube.wisc.edu/ticket/2414</a>, reported by fiedl and owned by </em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2020-06-24T12:28:40",

"... | defect | coverage no gcda files are found trac migrated from json status closed changetime description i tried to generate a coverage report but gcda files are found n nsee fiedl fiedl mbp icecube combo fiedl monopole generator n brew install lco... | 1 |

633,867 | 20,268,806,860 | IssuesEvent | 2022-02-15 14:31:29 | inverse-inc/packetfence | https://api.github.com/repos/inverse-inc/packetfence | closed | Can't create security event suricata trigger with custom value | Type: Bug Priority: High | **Describe the bug**

Can't create security event suricata trigger with custom value which used to be possible

**To Reproduce**

1. Create a security event

2. Add a trigger, 'Event->Suricata Event' with value `ET POLICY iTunes User Agent`

3. You can't set this value since its not in the select options

**Expecte... | 1.0 | Can't create security event suricata trigger with custom value - **Describe the bug**

Can't create security event suricata trigger with custom value which used to be possible

**To Reproduce**

1. Create a security event

2. Add a trigger, 'Event->Suricata Event' with value `ET POLICY iTunes User Agent`

3. You can'... | non_defect | can t create security event suricata trigger with custom value describe the bug can t create security event suricata trigger with custom value which used to be possible to reproduce create a security event add a trigger event suricata event with value et policy itunes user agent you can ... | 0 |

250,086 | 18,873,214,399 | IssuesEvent | 2021-11-13 15:10:43 | kdblocher/bridge | https://api.github.com/repos/kdblocher/bridge | opened | Create User Stories for example usages to aid in GUI development and documentation creation | documentation | Create official user stories that can be agreed upon so that future GUI development and documentation creation will have more direction. These listed examples are just starter ideas, but the user stories will need to be carefully developed so that these activities can be explained and done via the GUI in a straightfor... | 1.0 | Create User Stories for example usages to aid in GUI development and documentation creation - Create official user stories that can be agreed upon so that future GUI development and documentation creation will have more direction. These listed examples are just starter ideas, but the user stories will need to be caref... | non_defect | create user stories for example usages to aid in gui development and documentation creation create official user stories that can be agreed upon so that future gui development and documentation creation will have more direction these listed examples are just starter ideas but the user stories will need to be caref... | 0 |

9,700 | 2,615,166,062 | IssuesEvent | 2015-03-01 06:46:28 | chrsmith/reaver-wps | https://api.github.com/repos/chrsmith/reaver-wps | opened | reaver is support dedicatory attack wps pin? | auto-migrated Priority-Triage Type-Defect | ```

answer plz.........

```

Original issue reported on code.google.com by `jainilpa...@gmail.com` on 20 May 2013 at 1:37 | 1.0 | reaver is support dedicatory attack wps pin? - ```

answer plz.........

```

Original issue reported on code.google.com by `jainilpa...@gmail.com` on 20 May 2013 at 1:37 | defect | reaver is support dedicatory attack wps pin answer plz original issue reported on code google com by jainilpa gmail com on may at | 1 |

528,347 | 15,364,791,348 | IssuesEvent | 2021-03-01 22:30:17 | code4lib/2021.code4lib.org | https://api.github.com/repos/code4lib/2021.code4lib.org | opened | Non-visible links being captured during tab navigation | Priority: Medium | - On speakers page, the link inside the speaker-info box captures the tab whether the speaker is active or not. Needs JS to set attribute for link to `tabindex="-1"` unless the speaker box is .selected, then flip to `tabindex="0"`

| 1.0 | Non-visible links being captured during tab navigation - - On speakers page, the link inside the speaker-info box captures the tab whether the speaker is active or not. Needs JS to set attribute for link to `tabindex="-1"` unless the speaker box is .selected, then flip to `tabindex="0"`

| non_defect | non visible links being captured during tab navigation on speakers page the link inside the speaker info box captures the tab whether the speaker is active or not needs js to set attribute for link to tabindex unless the speaker box is selected then flip to tabindex | 0 |

20,863 | 3,422,360,082 | IssuesEvent | 2015-12-08 22:40:38 | dart-lang/sdk | https://api.github.com/repos/dart-lang/sdk | closed | malloc error in unit test | area-vm priority-unassigned triaged Type-Defect | *This issue was originally filed by perrys...@gmail.com*

_____

**What steps will reproduce the problem?**

Not exactly sure what in here is causing the error. I tried to break it down further but even taking out the '_two' seemed to resolve the malloc issue so I just extracted and reproduced the offending pattern... | 1.0 | malloc error in unit test - *This issue was originally filed by perrys...@gmail.com*

_____

**What steps will reproduce the problem?**

Not exactly sure what in here is causing the error. I tried to break it down further but even taking out the '_two' seemed to resolve the malloc issue so I just extracted and repr... | defect | malloc error in unit test this issue was originally filed by perrys gmail com what steps will reproduce the problem not exactly sure what in here is causing the error i tried to break it down further but even taking out the two seemed to resolve the malloc issue so i just extracted and reprod... | 1 |

21,958 | 3,587,215,275 | IssuesEvent | 2016-01-30 05:06:15 | mash99/crypto-js | https://api.github.com/repos/mash99/crypto-js | closed | Files with certain sizes cause 'Uncaught RangeError: Maximum call stack size exceeded ' with SHA1 | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Run this: dd if=/dev/urandom of=2test.raw bs=$((1024 * (124) + 128 + 57 ))

count=1

2. Load file as ArrayBuffer (in a web worker context; might not be important)

3. Pass to CryptoJS.SHA1() wrapped in CryptoJS.lib.WordArray.create()

4. Print resulting SHA1 with toString()

... | 1.0 | Files with certain sizes cause 'Uncaught RangeError: Maximum call stack size exceeded ' with SHA1 - ```

What steps will reproduce the problem?

1. Run this: dd if=/dev/urandom of=2test.raw bs=$((1024 * (124) + 128 + 57 ))

count=1

2. Load file as ArrayBuffer (in a web worker context; might not be important)

3. Pass to ... | defect | files with certain sizes cause uncaught rangeerror maximum call stack size exceeded with what steps will reproduce the problem run this dd if dev urandom of raw bs count load file as arraybuffer in a web worker context might not be important pass to cryptojs wr... | 1 |

7,290 | 3,082,724,389 | IssuesEvent | 2015-08-24 00:43:53 | california-civic-data-coalition/django-calaccess-raw-data | https://api.github.com/repos/california-civic-data-coalition/django-calaccess-raw-data | opened | Add documentation for the ``d_le_nams`` field on the ``LobbyAmendmentsCd`` database model | documentation enhancement small |

## Your mission

Add documentation for the ``d_le_nams`` field on the ``LobbyAmendmentsCd`` database model.

## Here's how

**Step 1**: Claim this ticket by leaving a comment below. Tell everyone you're ON IT!

**Step 2**: Open up the file that contains this model. It should be in <a href="https://github.com/californi... | 1.0 | Add documentation for the ``d_le_nams`` field on the ``LobbyAmendmentsCd`` database model -

## Your mission

Add documentation for the ``d_le_nams`` field on the ``LobbyAmendmentsCd`` database model.

## Here's how

**Step 1**: Claim this ticket by leaving a comment below. Tell everyone you're ON IT!

**Step 2**: Open... | non_defect | add documentation for the d le nams field on the lobbyamendmentscd database model your mission add documentation for the d le nams field on the lobbyamendmentscd database model here s how step claim this ticket by leaving a comment below tell everyone you re on it step open... | 0 |

272,073 | 23,652,466,348 | IssuesEvent | 2022-08-26 08:05:01 | sourcegraph/sourcegraph | https://api.github.com/repos/sourcegraph/sourcegraph | opened | ci/flake: nightly VSCE integration tests disabled | testing dx ci/flake | ### Test

Nightly VSCE integration tests

### Example failure

https://buildkite.com/sourcegraph/sourcegraph/builds/169322

### Disabling PR

https://github.com/sourcegraph/sourcegraph/pull/40890

### Additional details

_No response_ | 1.0 | ci/flake: nightly VSCE integration tests disabled - ### Test

Nightly VSCE integration tests

### Example failure

https://buildkite.com/sourcegraph/sourcegraph/builds/169322

### Disabling PR

https://github.com/sourcegraph/sourcegraph/pull/40890

### Additional details

_No response_ | non_defect | ci flake nightly vsce integration tests disabled test nightly vsce integration tests example failure disabling pr additional details no response | 0 |

373,155 | 26,039,634,127 | IssuesEvent | 2022-12-22 09:16:36 | matplotlib/matplotlib | https://api.github.com/repos/matplotlib/matplotlib | closed | [Doc]: Development workflow doc has lots of typos and clunky sentences | Documentation | ### Documentation Link

https://matplotlib.org/devdocs/devel/development_workflow.html

### Problem

There are typos and/or grammer errors in the following lines on this page at https://github.com/matplotlib/matplotlib/blob/main/doc/devel/development_workflow.rst:

```

59 Making an new branch for each set of r... | 1.0 | [Doc]: Development workflow doc has lots of typos and clunky sentences - ### Documentation Link

https://matplotlib.org/devdocs/devel/development_workflow.html

### Problem

There are typos and/or grammer errors in the following lines on this page at https://github.com/matplotlib/matplotlib/blob/main/doc/devel/de... | non_defect | development workflow doc has lots of typos and clunky sentences documentation link problem there are typos and or grammer errors in the following lines on this page at making an new branch for each set of related changes will make it easier for enter a title for the set of chang... | 0 |

8,072 | 20,789,924,009 | IssuesEvent | 2022-03-17 00:07:31 | RuanScherer/api-health-checker | https://api.github.com/repos/RuanScherer/api-health-checker | closed | Configure ESLint, Prettier and Commitizen tools - frontend | architecture frontend | **Describe the solution you'd like**

Configure ESLint, Prettier and Commitizen tools in the frontend project.

| 1.0 | Configure ESLint, Prettier and Commitizen tools - frontend - **Describe the solution you'd like**

Configure ESLint, Prettier and Commitizen tools in the frontend project.

| non_defect | configure eslint prettier and commitizen tools frontend describe the solution you d like configure eslint prettier and commitizen tools in the frontend project | 0 |

74,614 | 25,212,362,742 | IssuesEvent | 2022-11-14 05:48:46 | hyperledger/iroha | https://api.github.com/repos/hyperledger/iroha | closed | [BUG] Events are emitted even if transaction fails | Bug iroha2 LTS Pre-alpha defect | ### OS and Environment

MacOs

### GIT commit hash

52dc18cd81bdc1d1906ffeecb666dd9b2eb27955

### Minimum working example / Steps to reproduce

create a transaction of multiple instructions where last one fails to execute.

### Actual result

Events are emitted for all instructions up until the first one that fails

##... | 1.0 | [BUG] Events are emitted even if transaction fails - ### OS and Environment

MacOs

### GIT commit hash

52dc18cd81bdc1d1906ffeecb666dd9b2eb27955

### Minimum working example / Steps to reproduce

create a transaction of multiple instructions where last one fails to execute.

### Actual result

Events are emitted for a... | defect | events are emitted even if transaction fails os and environment macos git commit hash minimum working example steps to reproduce create a transaction of multiple instructions where last one fails to execute actual result events are emitted for all instructions up until the first one that... | 1 |

505,443 | 14,633,081,502 | IssuesEvent | 2020-12-24 00:36:06 | containrrr/watchtower | https://api.github.com/repos/containrrr/watchtower | closed | Log "Spam": "Could not do a head request | Priority: Medium Status: Available Type: Bug | **Describe the bug**

I recently updated to the latest watchtower version. But now I seem to get spammed with the error message:

```

Could not do a head request, falling back to regular pull.

```

which gets logged as well as creates an email notification every time.

**To Reproduce**

Steps to reproduce the... | 1.0 | Log "Spam": "Could not do a head request - **Describe the bug**

I recently updated to the latest watchtower version. But now I seem to get spammed with the error message:

```

Could not do a head request, falling back to regular pull.

```

which gets logged as well as creates an email notification every time.

... | non_defect | log spam could not do a head request describe the bug i recently updated to the latest watchtower version but now i seem to get spammed with the error message could not do a head request falling back to regular pull which gets logged as well as creates an email notification every time ... | 0 |

256,055 | 27,552,572,707 | IssuesEvent | 2023-03-07 15:49:58 | BrianMcDonaldWS/genie | https://api.github.com/repos/BrianMcDonaldWS/genie | opened | CVE-2019-0201 (Medium) detected in zookeeper-3.4.12.jar | security vulnerability | ## CVE-2019-0201 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>zookeeper-3.4.12.jar</b></p></summary>

<p></p>

<p>Path to dependency file: /genie-ui/build.gradle</p>

<p>Path to vuln... | True | CVE-2019-0201 (Medium) detected in zookeeper-3.4.12.jar - ## CVE-2019-0201 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>zookeeper-3.4.12.jar</b></p></summary>

<p></p>

<p>Path to d... | non_defect | cve medium detected in zookeeper jar cve medium severity vulnerability vulnerable library zookeeper jar path to dependency file genie ui build gradle path to vulnerable library root gradle caches modules files org apache zookeeper zookeeper zookeeper ... | 0 |

68,932 | 21,966,158,700 | IssuesEvent | 2022-05-24 20:32:39 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | opened | [🐛 Bug]: unhandledPromptBehavior doesn't work | I-defect needs-triaging | ### What happened?

am using python 3.9.12 with pip 22.1.1

my localhost has an index.html file with only one line of code: `<script>alert("hi")</script>`

expected result is to have the above alert dissapear but it doesnt

### How can we reproduce the issue?

```shell

from selenium.webdriver import Chrome

from se... | 1.0 | [🐛 Bug]: unhandledPromptBehavior doesn't work - ### What happened?

am using python 3.9.12 with pip 22.1.1

my localhost has an index.html file with only one line of code: `<script>alert("hi")</script>`

expected result is to have the above alert dissapear but it doesnt

### How can we reproduce the issue?

```she... | defect | unhandledpromptbehavior doesn t work what happened am using python with pip my localhost has an index html file with only one line of code alert hi expected result is to have the above alert dissapear but it doesnt how can we reproduce the issue shell from selenium webdriv... | 1 |

604,032 | 18,675,960,492 | IssuesEvent | 2021-10-31 15:10:01 | CMPUT301F21T26/Habit-Tracker | https://api.github.com/repos/CMPUT301F21T26/Habit-Tracker | closed | Replace default Action Bar with custom Tool Bar | Priority: Medium Base | Default Action Bar lacks flexibility and will need to be replace with a Tool Bar widget (part of the library). To remove actionbar, in the themes.xml: change Theme.MaterialComponents.DayNight.DarkActionBar to Theme.MaterialComponents.DayNight.NoActionBar. | 1.0 | Replace default Action Bar with custom Tool Bar - Default Action Bar lacks flexibility and will need to be replace with a Tool Bar widget (part of the library). To remove actionbar, in the themes.xml: change Theme.MaterialComponents.DayNight.DarkActionBar to Theme.MaterialComponents.DayNight.NoActionBar. | non_defect | replace default action bar with custom tool bar default action bar lacks flexibility and will need to be replace with a tool bar widget part of the library to remove actionbar in the themes xml change theme materialcomponents daynight darkactionbar to theme materialcomponents daynight noactionbar | 0 |

111,076 | 14,005,602,523 | IssuesEvent | 2020-10-28 18:41:55 | Opentrons/opentrons | https://api.github.com/repos/Opentrons/opentrons | opened | PD Bug: Weird tip rack flow results in white screen | :spider: SPDDRS bug protocol designer | ## Steps to re-create

1. Create a protocol with a custom tip rack

2. Delete (on purpose or accidentally) the custom tip rack from the starting deck screen

3. Did NOT update the tip rack in the pipette selection modal with the new desired tip rack | 1.0 | PD Bug: Weird tip rack flow results in white screen - ## Steps to re-create

1. Create a protocol with a custom tip rack

2. Delete (on purpose or accidentally) the custom tip rack from the starting deck screen

3. Did NOT update the tip rack in the pipette selection modal with the new desired tip rack | non_defect | pd bug weird tip rack flow results in white screen steps to re create create a protocol with a custom tip rack delete on purpose or accidentally the custom tip rack from the starting deck screen did not update the tip rack in the pipette selection modal with the new desired tip rack | 0 |

4,675 | 4,534,845,383 | IssuesEvent | 2016-09-08 15:38:22 | JuliaLang/julia | https://api.github.com/repos/JuliaLang/julia | opened | optimize @evalpoly for SIMD? | maths performance | Right now, `@evalpoly(x, coefs...)` uses Horner's rule for real `x`, and a more complicated algorithm for complex `x`, and is performance-critical for evaluation of special functions.

However, it seems likely to be faster to try and exploit SIMD instructions for these polynomial evaluations, e.g. with [Estrin's algo... | True | optimize @evalpoly for SIMD? - Right now, `@evalpoly(x, coefs...)` uses Horner's rule for real `x`, and a more complicated algorithm for complex `x`, and is performance-critical for evaluation of special functions.

However, it seems likely to be faster to try and exploit SIMD instructions for these polynomial evalua... | non_defect | optimize evalpoly for simd right now evalpoly x coefs uses horner s rule for real x and a more complicated algorithm for complex x and is performance critical for evaluation of special functions however it seems likely to be faster to try and exploit simd instructions for these polynomial evalua... | 0 |

140,691 | 32,047,730,257 | IssuesEvent | 2023-09-23 07:10:07 | h4sh5/pypi-auto-scanner | https://api.github.com/repos/h4sh5/pypi-auto-scanner | opened | deme 0.4.4 has 3 GuardDog issues | guarddog code-execution exec-base64 | https://pypi.org/project/deme

https://inspector.pypi.io/project/deme

```{

"dependency": "deme",

"version": "0.4.4",

"result": {

"issues": 3,

"errors": {},

"results": {

"exec-base64": [

{

"location": "DEME-0.4.4/setup.py:136",

"code": " subprocess.run(\n ... | 1.0 | deme 0.4.4 has 3 GuardDog issues - https://pypi.org/project/deme

https://inspector.pypi.io/project/deme

```{

"dependency": "deme",

"version": "0.4.4",

"result": {

"issues": 3,

"errors": {},

"results": {

"exec-base64": [

{

"location": "DEME-0.4.4/setup.py:136",

"code":... | non_defect | deme has guarddog issues dependency deme version result issues errors results exec location deme setup py code subprocess run n cwd build temp check true n ... | 0 |

27,152 | 4,889,269,946 | IssuesEvent | 2016-11-18 09:38:49 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | INSERT ... SET ... RETURNING returns null | C: Functionality P: High T: Defect | It appears that this doesn't work:

``` java

TodosRecord persisted = jooq.insertInto(TODOS)

.set(TODOS.CREATION_TIME, currentTime)

.set(TODOS.DESCRIPTION, todo.getDescription())

.set(TODOS.ID, id)

.set(TODOS.MODIFICATION_TIME, currentTime)

... | 1.0 | INSERT ... SET ... RETURNING returns null - It appears that this doesn't work:

``` java

TodosRecord persisted = jooq.insertInto(TODOS)

.set(TODOS.CREATION_TIME, currentTime)

.set(TODOS.DESCRIPTION, todo.getDescription())

.set(TODOS.ID, id)

.set(TODOS.MODI... | defect | insert set returning returns null it appears that this doesn t work java todosrecord persisted jooq insertinto todos set todos creation time currenttime set todos description todo getdescription set todos id id set todos modi... | 1 |

38,203 | 8,695,528,566 | IssuesEvent | 2018-12-04 15:22:46 | jOOQ/jOOR | https://api.github.com/repos/jOOQ/jOOR | closed | Cannot compile nested or additional top-level classes | P: Medium R: Fixed T: Defect | ### Expected behavior and actual behavior:

I have this class that is loaded and compiled through jOOR:

```java

package com.example;

import org.apache.camel.Exchange;

import org.apache.camel.Processor;

import org.apache.camel.builder.RouteBuilder;

public class WithCustomProcessor extends RouteBuilder {

... | 1.0 | Cannot compile nested or additional top-level classes - ### Expected behavior and actual behavior:

I have this class that is loaded and compiled through jOOR:

```java

package com.example;

import org.apache.camel.Exchange;

import org.apache.camel.Processor;

import org.apache.camel.builder.RouteBuilder;

pu... | defect | cannot compile nested or additional top level classes expected behavior and actual behavior i have this class that is loaded and compiled through joor java package com example import org apache camel exchange import org apache camel processor import org apache camel builder routebuilder pu... | 1 |

37,185 | 8,288,778,203 | IssuesEvent | 2018-09-19 13:03:13 | hazelcast/hazelcast-jet | https://api.github.com/repos/hazelcast/hazelcast-jet | opened | Logs for ReceiverTasklet contain no context | core defect | The logger name doesn't include vertex name or the job name

```

00:01:25,509 TRACE || - [ReceiverTasklet] hz._hzInstance_1_jet.cached.thread-4 - receiveWindowCompressed=800

00:01:25,509 TRACE || - [ReceiverTasklet] hz._hzInstance_1_jet.cached.thread-4 - receiveWindowCompressed=800

00:01:25,509 TRACE || - [Receive... | 1.0 | Logs for ReceiverTasklet contain no context - The logger name doesn't include vertex name or the job name

```

00:01:25,509 TRACE || - [ReceiverTasklet] hz._hzInstance_1_jet.cached.thread-4 - receiveWindowCompressed=800

00:01:25,509 TRACE || - [ReceiverTasklet] hz._hzInstance_1_jet.cached.thread-4 - receiveWindowCo... | defect | logs for receivertasklet contain no context the logger name doesn t include vertex name or the job name trace hz hzinstance jet cached thread receivewindowcompressed trace hz hzinstance jet cached thread receivewindowcompressed trace hz hzinsta... | 1 |

117,075 | 17,407,947,288 | IssuesEvent | 2021-08-03 08:36:57 | elikkatzgit/TestingPOM | https://api.github.com/repos/elikkatzgit/TestingPOM | closed | CVE-2018-5968 (High) detected in jackson-databind-2.7.2.jar - autoclosed | security vulnerability | ## CVE-2018-5968 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.7.2.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core streaming... | True | CVE-2018-5968 (High) detected in jackson-databind-2.7.2.jar - autoclosed - ## CVE-2018-5968 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.7.2.jar</b></p></summary>

... | non_defect | cve high detected in jackson databind jar autoclosed cve high severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href dependency hierarchy x jackson d... | 0 |

64,908 | 18,960,439,243 | IssuesEvent | 2021-11-19 03:35:37 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | typo in jOOQ-academy setup script | T: Defect | There is an extraneous comma at the end of line 34 (`fk_t_book_author_id`) in the db-h2.sql setup script:

https://github.com/jOOQ/jOOQ/blob/e77df40080b9f73a2da1b7436ee875ad2973731c/jOOQ-examples/jOOQ-academy/src/main/resources/db-h2.sql#L25-L36

This causes `maven clean install` in jOOQ-academy to fail:

```

[ERROR... | 1.0 | typo in jOOQ-academy setup script - There is an extraneous comma at the end of line 34 (`fk_t_book_author_id`) in the db-h2.sql setup script:

https://github.com/jOOQ/jOOQ/blob/e77df40080b9f73a2da1b7436ee875ad2973731c/jOOQ-examples/jOOQ-academy/src/main/resources/db-h2.sql#L25-L36

This causes `maven clean install` i... | defect | typo in jooq academy setup script there is an extraneous comma at the end of line fk t book author id in the db sql setup script this causes maven clean install in jooq academy to fail constraint pk t book primary key id constraint fk t book author id foreign key author id referenc... | 1 |

59,889 | 17,023,280,386 | IssuesEvent | 2021-07-03 01:12:20 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | change look of highway=road in potlatch | Component: potlatch (flash editor) Priority: minor Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 11.01am, Tuesday, 29th July 2008]**

It seems Potlatch does not recognise ways tagged as highway=road, so they appear as a grey line in the editor. This makes them hard to spot when aerial imagery is turned on. | 1.0 | change look of highway=road in potlatch - **[Submitted to the original trac issue database at 11.01am, Tuesday, 29th July 2008]**

It seems Potlatch does not recognise ways tagged as highway=road, so they appear as a grey line in the editor. This makes them hard to spot when aerial imagery is turned on. | defect | change look of highway road in potlatch it seems potlatch does not recognise ways tagged as highway road so they appear as a grey line in the editor this makes them hard to spot when aerial imagery is turned on | 1 |

10,100 | 14,528,458,704 | IssuesEvent | 2020-12-14 16:33:41 | OpenEnergyPlatform/tutorial | https://api.github.com/repos/OpenEnergyPlatform/tutorial | closed | Include in Tutorials first steps | requirement_specification specification_sheet | The Tutorials start with an Installed Anaconda version. It would be good to include the first steps for first time users: setup of virtual environment etc.

If possible it would be nice if the tutorials could be grouped into: first time user, some python experience, expert and hence start at the respective points. | 1.0 | Include in Tutorials first steps - The Tutorials start with an Installed Anaconda version. It would be good to include the first steps for first time users: setup of virtual environment etc.

If possible it would be nice if the tutorials could be grouped into: first time user, some python experience, expert and hence s... | non_defect | include in tutorials first steps the tutorials start with an installed anaconda version it would be good to include the first steps for first time users setup of virtual environment etc if possible it would be nice if the tutorials could be grouped into first time user some python experience expert and hence s... | 0 |

40,622 | 10,075,011,645 | IssuesEvent | 2019-07-24 13:25:08 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | closed | ExpressionChangedAfterItHasBeenCheckedError when preselect a p-radioButton | defect | **I'm submitting a ...**

```

[x] bug report

[ ] feature request

[ ] support request

```

**Stackblitz demo**

Minimal Angular 8/PrimeNg 8 app that demonstrates the issue

https://stackblitz.com/edit/angular-zhmbkf

**Current behavior**

Initially getting `ExpressionChangedAfterItHasBeenCheckedError` (e... | 1.0 | ExpressionChangedAfterItHasBeenCheckedError when preselect a p-radioButton - **I'm submitting a ...**

```

[x] bug report

[ ] feature request

[ ] support request

```

**Stackblitz demo**

Minimal Angular 8/PrimeNg 8 app that demonstrates the issue

https://stackblitz.com/edit/angular-zhmbkf

**Current beh... | defect | expressionchangedafterithasbeencheckederror when preselect a p radiobutton i m submitting a bug report feature request support request stackblitz demo minimal angular primeng app that demonstrates the issue current behavior initially getting expressionchanged... | 1 |

27,563 | 5,048,378,835 | IssuesEvent | 2016-12-20 12:43:25 | TASVideos/BizHawk | https://api.github.com/repos/TASVideos/BizHawk | closed | The audio of Chinese version of Metroid Fusion is glitchy | auto-migrated Core-VBANext Type-Defect | ```

Bizhawk 1.8.2

Yes, I mean the official Chinese version.

The audio is fine in both English and Japanese version.

Not sure about the European version.

```

Original issue reported on code.google.com by `Fortr...@gmail.com` on 11 Oct 2014 at 3:31

| 1.0 | The audio of Chinese version of Metroid Fusion is glitchy - ```

Bizhawk 1.8.2

Yes, I mean the official Chinese version.

The audio is fine in both English and Japanese version.

Not sure about the European version.

```

Original issue reported on code.google.com by `Fortr...@gmail.com` on 11 Oct 2014 at 3:31

| defect | the audio of chinese version of metroid fusion is glitchy bizhawk yes i mean the official chinese version the audio is fine in both english and japanese version not sure about the european version original issue reported on code google com by fortr gmail com on oct at | 1 |

416,732 | 28,097,698,583 | IssuesEvent | 2023-03-30 16:58:38 | golang/go | https://api.github.com/repos/golang/go | closed | x/tools/go/analysis: pkgsite documentation for golang.org/x/tools/go/analysis/passes/* often lacks details | Documentation Tools pkgsite | I am not sure if this is a feature request for pkgsite, or it's just because the go analysis analyzer code was structured in a way not friendly with go documentation. Analyzers often place the helpful details in Doc constant, but this long doc is hidden from documentation

https://pkg.go.dev/golang.org/x/tools/go/ana... | 1.0 | x/tools/go/analysis: pkgsite documentation for golang.org/x/tools/go/analysis/passes/* often lacks details - I am not sure if this is a feature request for pkgsite, or it's just because the go analysis analyzer code was structured in a way not friendly with go documentation. Analyzers often place the helpful details in... | non_defect | x tools go analysis pkgsite documentation for golang org x tools go analysis passes often lacks details i am not sure if this is a feature request for pkgsite or it s just because the go analysis analyzer code was structured in a way not friendly with go documentation analyzers often place the helpful details in... | 0 |

27,063 | 12,510,687,569 | IssuesEvent | 2020-06-02 19:07:26 | Azure/azure-cli | https://api.github.com/repos/Azure/azure-cli | closed | No updates available for 'azure-cli-ml' - extension not available | Machine Learning Service Attention question | > ### `az feedback` auto-generates most of the information requested below, as of CLI version 2.0.62

**Describe the bug**

<!--- A clear and concise description of what the bug is. --->

Until yesterday azure-cli-ml extension was available to be installed through command az extension install -n azure-cli-ml

**To ... | 1.0 | No updates available for 'azure-cli-ml' - extension not available - > ### `az feedback` auto-generates most of the information requested below, as of CLI version 2.0.62

**Describe the bug**

<!--- A clear and concise description of what the bug is. --->

Until yesterday azure-cli-ml extension was available to be in... | non_defect | no updates available for azure cli ml extension not available az feedback auto generates most of the information requested below as of cli version describe the bug until yesterday azure cli ml extension was available to be installed through command az extension install n azure cli ml ... | 0 |

64,010 | 18,107,106,444 | IssuesEvent | 2021-09-22 20:26:51 | rropen/SFM | https://api.github.com/repos/rropen/SFM | opened | Defect: Fix Broken Code Coverage | defect frontend | **Topic**

Code Coverage is running, but is incorrect.

**Service**

Frontend

**Steps**

- [ ] Utils is heavily tested, but showing as not tested. Other sections of app are not tested but show as being tested.

| 1.0 | Defect: Fix Broken Code Coverage - **Topic**

Code Coverage is running, but is incorrect.

**Service**

Frontend

**Steps**

- [ ] Utils is heavily tested, but showing as not tested. Other sections of app are not tested but show as being tested.

| defect | defect fix broken code coverage topic code coverage is running but is incorrect service frontend steps utils is heavily tested but showing as not tested other sections of app are not tested but show as being tested | 1 |

45,749 | 13,055,639,807 | IssuesEvent | 2020-07-30 02:17:51 | svalinn/DAGMC | https://api.github.com/repos/svalinn/DAGMC | opened | DAGMC not compatible with Geant4 10.6 | Type: Defect | There appear to have been some changes in Geant4 10.6 that break our ability to build Dag-Geant4 applications. Stuff like this appears during build:

```

/home/lucas/build/native/DAGMC-moab-5.1.0/src/src/geant4/app/exampleN01.cc: In function ‘int main(int, char**)’:

/home/lucas/build/native/DAGMC-moab-5.1.0/src/src... | 1.0 | DAGMC not compatible with Geant4 10.6 - There appear to have been some changes in Geant4 10.6 that break our ability to build Dag-Geant4 applications. Stuff like this appears during build:

```

/home/lucas/build/native/DAGMC-moab-5.1.0/src/src/geant4/app/exampleN01.cc: In function ‘int main(int, char**)’:

/home/luc... | defect | dagmc not compatible with there appear to have been some changes in that break our ability to build dag applications stuff like this appears during build home lucas build native dagmc moab src src app cc in function ‘int main int char ’ home lucas build native dagmc moab ... | 1 |

6,530 | 5,510,682,447 | IssuesEvent | 2017-03-17 00:56:38 | google/blockly | https://api.github.com/repos/google/blockly | opened | Performance regression when dragging blocks out of the flyout | bug performance | tldr: composite layers got quite expensive (~.2ms -> 4ms) when dragging a block out of the flyout.

This is present on develop. Marking as a bug for now since it is a big enough regression that I'd like to figure it out before we push to develop.

See https://github.com/LLK/scratch-blocks/issues/835 for more contex... | True | Performance regression when dragging blocks out of the flyout - tldr: composite layers got quite expensive (~.2ms -> 4ms) when dragging a block out of the flyout.

This is present on develop. Marking as a bug for now since it is a big enough regression that I'd like to figure it out before we push to develop.

See ... | non_defect | performance regression when dragging blocks out of the flyout tldr composite layers got quite expensive when dragging a block out of the flyout this is present on develop marking as a bug for now since it is a big enough regression that i d like to figure it out before we push to develop see fo... | 0 |

30,993 | 4,229,547,983 | IssuesEvent | 2016-07-04 08:18:02 | pythonapis/6ZJYP2PXGY5CWP2LWTZZFRIL | https://api.github.com/repos/pythonapis/6ZJYP2PXGY5CWP2LWTZZFRIL | closed | RncyRD2ffPklGRc42o56iYPDqv5FntO8jmphmjGt5EXom3ENuPHI1jFe3iEuzuYiNvDdB7XCdifkK+n03BjdHMBuCstR1TxSv28XKwmgfvcvCigCXLNS5AMszCmULaUEDVqf8WZ3oslKah/YeToEAUdleELccXhrvunsofMtTUA= | design | uX8P4Brikz9ZTgzQvv7KWic/PeU4YUuwYDQAU1KVLCV8X6PLfx+Fud6fitUXyYHy6w8tDqycKRnE4SAq3dZ7FM/J4ucL0T7/3PalaLN448NebYUgGaYdr1x/wVsOE7RI+ZDaoPn5ynro6IO9m3RuNWt8PAF9jTeM3xCEonyc8vpXhALMpQTMfYGceW/xnXXQSSK+rAxdAzuJiibBSY9Vp1A7KQZgMZ2G/Xe6DggnCa2zvRd7Kj2JM/vWK+EgABCXKpJpa1qLJEnc0j2ARjKVpXqQLHh1dcPclDxN4vknUSIZ0pybiClATrnL4n0SiOtW... | 1.0 | RncyRD2ffPklGRc42o56iYPDqv5FntO8jmphmjGt5EXom3ENuPHI1jFe3iEuzuYiNvDdB7XCdifkK+n03BjdHMBuCstR1TxSv28XKwmgfvcvCigCXLNS5AMszCmULaUEDVqf8WZ3oslKah/YeToEAUdleELccXhrvunsofMtTUA= - uX8P4Brikz9ZTgzQvv7KWic/PeU4YUuwYDQAU1KVLCV8X6PLfx+Fud6fitUXyYHy6w8tDqycKRnE4SAq3dZ7FM/J4ucL0T7/3PalaLN448NebYUgGaYdr1x/wVsOE7RI+ZDaoPn5ynro6IO9m... | non_defect | yetoeaudleelccxhrvunsofmttua xnxxqssk vwk noiwdzq saiijyiad | 0 |

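5,665 | 2,610,192,927 | IssuesEvent | 2015-02-26 19:00:56 | chrsmith/quchuseban | https://api.github.com/repos/chrsmith/quchuseban | opened | Struggling with facial dark spots: tips for removing them | auto-migrated Priority-Medium Type-Defect | ```

Summary

Appearance is vitally important to a person, and chloasma on the face is a problem that troubles everyone.

As society develops and living standards rise, people are under more and more pressure.

Frequent late nights and overtime, irregular eating, and mental stress cause many chloasma spots to appear on the face,

and the causes of dark spots give many people a headache. Tips for removing dark spots on the face,

Customer case

What removes chloasma best,

In my life, if you ask what I am least satisfied with about myself, I think it is my face full of chloasma.

My skin is the fairly pale kind; when I was very young there was a little chloasma on the bridge of my nose, but back then the spots were faint,

few and not obvious. Later I graduated and started working, and because I worked as a clerk who was constantly in front of a computer,

and computer radiation is itself very harmful to the skin, and I usually did not pay special attention to cleansing my skin, and on top of that... | 1.0 | Struggling with facial dark spots: tips for removing them - ```

Summary

Appearance is vitally important to a person, and chloasma on the face is a problem that troubles everyone.

As society develops and living standards rise, people are under more and more pressure.

Frequent late nights and overtime, irregular eating, and mental stress cause many chloasma spots to appear on the face,

and the causes of dark spots give many people a headache. Tips for removing dark spots on the face,

Customer case

What removes chloasma best,

In my life, if you ask what I am least satisfied with about myself, I think it is my face full of chloasma.

My skin is the fairly pale kind; when I was very young there was a little chloasma on the bridge of my nose, but back then the spots were faint,

few and not obvious. Later I graduated and started working, and because I worked as a clerk who was constantly in front of a computer,

and computer radiation is itself very harmful to the skin, and I usually... | defect | struggling with facial dark spots tips for removing them summary appearance is vitally important to a person and chloasma on the face is a problem that troubles everyone as society develops and living standards rise people are under more and more pressure frequent late nights and overtime irregular eating and mental stress cause many chloasma spots to appear on the face and the causes of dark spots give many people a headache tips for removing dark spots on the face customer case what removes chloasma best in my life if you ask what i am least satisfied with about myself i think it is my face full of chloasma my skin is the fairly pale kind when i was very young there was a little chloasma on the bridge of my nose but back then the spots were faint few and not obvious later i graduated and started working and because i worked as a clerk who was constantly in front of a computer and computer radiation is itself very harmful to the skin and i usually... | 1 |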

17,251 | 2,986,836,759 | IssuesEvent | 2015-07-20 08:12:23 | cultibox/cultibox | https://api.github.com/repos/cultibox/cultibox | closed | [LOGS] Handling of accents in highchart | Component-cultiboxUI Priority-Low Type-Defect Type-ToTest | ```

When exporting the curves, legends containing accents

appear in HTML format.

```

Original issue reported on code.google.com by `alliaume...@gmail.com` on 10 Feb 2015 at 9:01 | 1.0 | [LOGS] Handling of accents in highchart - ```

When exporting the curves, legends containing accents

appear in HTML format.

```

Original issue reported on code.google.com by `alliaume...@gmail.com` on 10 Feb 2015 at 9:01 | defect | handling of accents in highchart when exporting the curves legends containing accents appear in html format original issue reported on code google com by alliaume gmail com on feb at | 1 |

37,921 | 8,579,442,656 | IssuesEvent | 2018-11-13 09:11:42 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | Review Fields.indexOf() and similar methods for performance | C: Functionality E: All Editions P: Medium T: Defect | More details will follow

----

See also:

https://groups.google.com/forum/#!topic/jooq-user/5Dt-gN68158 | 1.0 | Review Fields.indexOf() and similar methods for performance - More details will follow

----

See also:

https://groups.google.com/forum/#!topic/jooq-user/5Dt-gN68158 | defect | review fields indexof and similar methods for performance more details will follow see also | 1 |

158,746 | 13,746,599,296 | IssuesEvent | 2020-10-06 06:06:13 | msandfor/10-Easy-Steps | https://api.github.com/repos/msandfor/10-Easy-Steps | closed | [Hacktoberfest]: Add "Open Source Summit" to the Conferences Section | beginner beginner-friendly documentation :memo: first-contribution good first issue hacktoberfest :children_crossing: help wanted :hand: up-for-grabs | 🆕🐥☝ Beginners Only:

This issue is reserved for people who are new to Open Source. We know that the process of creating a pull request is the biggest barrier for new contributors. This issue is for you 💝

To do:

* Add *Open Source Summit* to the Conferences Section

* See below for link to step-by-step tutorial... | 1.0 | [Hacktoberfest]: Add "Open Source Summit" to the Conferences Section - 🆕🐥☝ Beginners Only:

This issue is reserved for people who are new to Open Source. We know that the process of creating a pull request is the biggest barrier for new contributors. This issue is for you 💝

To do:

* Add *Open Source Summit* to... | non_defect | add open source summit to the conferences section 🆕🐥☝ beginners only this issue is reserved for people who are new to open source we know that the process of creating a pull request is the biggest barrier for new contributors this issue is for you 💝 to do add open source summit to the conferenc... | 0 |

67,341 | 20,961,606,243 | IssuesEvent | 2022-03-27 21:48:17 | abedmaatalla/sipdroid | https://api.github.com/repos/abedmaatalla/sipdroid | closed | TLS support in v 3.4beta | Priority-Medium Type-Defect auto-migrated | ```

looking at code of SipDroid v 3.4 beta for tls support, it looks like tls is

kept false at Initialization of the SipProvider.Later at sending message method

, tcp return conn id, udp return null, while using tls return "Unsupported

Protocol (tls), Message discarded". Does that means tls is still not supported?

... | 1.0 | TLS support in v 3.4beta - ```

looking at code of SipDroid v 3.4 beta for tls support, it looks like tls is

kept false at Initialization of the SipProvider.Later at sending message method

, tcp return conn id, udp return null, while using tls return "Unsupported

Protocol (tls), Message discarded". Does that means tl... | defect | tls support in v looking at code of sipdroid v beta for tls support it looks like tls is kept false at initialization of the sipprovider later at sending message method tcp return conn id udp return null while using tls return unsupported protocol tls message discarded does that means tls is... | 1 |

32,826 | 6,953,397,141 | IssuesEvent | 2017-12-06 20:52:47 | Dzhuneyt/jquery-tubular | https://api.github.com/repos/Dzhuneyt/jquery-tubular | closed | In browser SAFARI on IMAC | auto-migrated Priority-Medium Type-Defect | ```

Video dont go PLAY

```

Original issue reported on code.google.com by `nando.pi...@gmail.com` on 26 Nov 2013 at 1:28

| 1.0 | In browser SAFARI on IMAC - ```

Video dont go PLAY

```

Original issue reported on code.google.com by `nando.pi...@gmail.com` on 26 Nov 2013 at 1:28

| defect | in browser safari on imac video dont go play original issue reported on code google com by nando pi gmail com on nov at | 1 |

434,188 | 30,445,934,359 | IssuesEvent | 2023-07-15 17:09:50 | helpwave/services | https://api.github.com/repos/helpwave/services | closed | Document everything | documentation priority: low | Currently there are a lot of moving things, but when the storm has calmed we should document everything to make onboarding easier. | 1.0 | Document everything - Currently there are a lot of moving things, but when the storm has calmed we should document everything to make onboarding easier. | non_defect | document everything currently there are a lot of moving things but when the storm has calmed we should document everything to make onboarding easier | 0 |

273,857 | 23,790,084,478 | IssuesEvent | 2022-09-02 13:48:47 | cosmos/cosmos-sdk | https://api.github.com/repos/cosmos/cosmos-sdk | closed | Improve testing DevX | T: Tests tooling T: Dev UX | ## Summary

As a developer I want an easy way to run and manage tests. Moreover, I want to have a clear separation of unit tests, integration tests and functional tests. Finally, unit tests must be very quick to run and don't involve lot of dependencies.

### NOTE

* This is a **meta-issue**.

* If you have a qui... | 1.0 | Improve testing DevX - ## Summary

As a developer I want an easy way to run and manage tests. Moreover, I want to have a clear separation of unit tests, integration tests and functional tests. Finally, unit tests must be very quick to run and don't involve lot of dependencies.

### NOTE

* This is a **meta-issue*... | non_defect | improve testing devx summary as a developer i want an easy way to run and manage tests moreover i want to have a clear separation of unit tests integration tests and functional tests finally unit tests must be very quick to run and don t involve lot of dependencies note this is a meta issue ... | 0 |

424,348 | 29,048,943,270 | IssuesEvent | 2023-05-13 23:47:42 | janderland/fdbq | https://api.github.com/repos/janderland/fdbq | closed | Revise the readme for initial release. | documentation | - [x] Differences between lang, API, & app (including versioning).

- [x] Syntax explanation.

- [x] How to build & run tests.

- [x] #151

- [ ] Visitor pattern.

- [x] Value serialization. | 1.0 | Revise the readme for initial release. - - [x] Differences between lang, API, & app (including versioning).

- [x] Syntax explanation.

- [x] How to build & run tests.

- [x] #151

- [ ] Visitor pattern.

- [x] Value serialization. | non_defect | revise the readme for initial release differences between lang api app including versioning syntax explanation how to build run tests visitor pattern value serialization | 0 |

76,225 | 15,495,881,453 | IssuesEvent | 2021-03-11 01:39:56 | DODHI5/react-kanban | https://api.github.com/repos/DODHI5/react-kanban | opened | CVE-2018-6342 (High) detected in react-dev-utils-5.0.0.tgz | security vulnerability | ## CVE-2018-6342 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>react-dev-utils-5.0.0.tgz</b></p></summary>

<p>Webpack utilities used by Create React App</p>

<p>Library home page: <a ... | True | CVE-2018-6342 (High) detected in react-dev-utils-5.0.0.tgz - ## CVE-2018-6342 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>react-dev-utils-5.0.0.tgz</b></p></summary>

<p>Webpack uti... | non_defect | cve high detected in react dev utils tgz cve high severity vulnerability vulnerable library react dev utils tgz webpack utilities used by create react app library home page a href path to dependency file react kanban package json path to vulnerable library react kan... | 0 |

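5,526 | 2,610,189,304 | IssuesEvent | 2015-02-26 18:59:56 | chrsmith/quchuseban | https://api.github.com/repos/chrsmith/quchuseban | opened | On how to remove dark spots | auto-migrated Priority-Medium Type-Defect | ```

Summary

Pleasant and unpleasant memories, like the two sides of a coin, exist in

every one of our relationships. Just like the famous "butterfly effect", if you constantly remember

unpleasant people and unpleasant things, life becomes unpleasant along with them.

Conversely, some women, even while quarreling with their husbands, can recall the look on his face when he proposed

and the warmth of his embrace. "Quarreling" here is an optimistic, positive way of communicating.

Such a woman, even when facing the unexpected turns of fate, does not sigh and lament,

but treats it as motivation. Smiling and at ease with herself; a man accompanied by an optimistic woman

will have a life full of sunshine. How to remove dark spots,

Customer case

Xiaolan<br>

My skin used to be quite fair and delicate, but after years of working hard away from home with

an irregular lifestyle, many dark spots have appeared on my face in recent years, and my neck also has many

spots of varying sizes, dark brown in color,... | 1.0 | On how to remove dark spots - ```

Summary

Pleasant and unpleasant memories, like the two sides of a coin, exist in

every one of our relationships. Just like the famous "butterfly effect", if you constantly remember

unpleasant people and unpleasant things, life becomes unpleasant along with them.

Conversely, some women, even while quarreling with their husbands, can recall the look on his face when he proposed

and the warmth of his embrace. "Quarreling" here is an optimistic, positive way of communicating.

Such a woman, even when facing the unexpected turns of fate, does not sigh and lament,

but treats it as motivation. Smiling and at ease with herself; a man accompanied by an optimistic woman

will have a life full of sunshine. How to remove dark spots,

Customer case

Xiaolan<br>

My skin used to be quite fair and delicate, but after years of working hard away from home with

an irregular lifestyle, many dark spots have appeared on my face in recent years, and my neck also has many

... | defect | on how to remove dark spots summary pleasant and unpleasant memories like the two sides of a coin exist in every one of our relationships just like the famous butterfly effect if you constantly remember unpleasant people and unpleasant things life becomes unpleasant along with them conversely some women even while quarreling with their husbands can recall the look on his face when he proposed and the warmth of his embrace quarreling here is an optimistic positive way of communicating such a woman even when facing the unexpected turns of fate does not sigh and lament but treats it as motivation smiling and at ease with herself a man accompanied by an optimistic woman will have a life full of sunshine how to remove dark spots customer case xiaolan my skin used to be quite fair and delicate but after years of working hard away from home with an irregular lifestyle many dark spots have appeared on my face in recent years and my neck also has many spots... | 1 |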

391,254 | 26,883,400,924 | IssuesEvent | 2023-02-05 22:22:19 | fga-eps-mds/2022-2-Alectrion-DOC | https://api.github.com/repos/fga-eps-mds/2022-2-Alectrion-DOC | closed | Logical diagram update | documentation ARQ EPS | **Description**

Updates the logical diagram.

**Tasks:**

- [ ] Update the logical diagram

| 1.0 | Logical diagram update - **Description**

Updates the logical diagram.

**Tasks:**

- [ ] Update the logical diagram

| non_defect | logical diagram update description updates the logical diagram tasks update the logical diagram | 0 |

13,191 | 2,736,573,396 | IssuesEvent | 2015-04-19 15:34:26 | tspence/csharp-csv-reader | https://api.github.com/repos/tspence/csharp-csv-reader | closed | What options are supported by this DLL? | auto-migrated Priority-Medium Type-Defect | ```

Does it handles Double Quotes, Single Quotes, and a column having large amount

of text which contains commas?

```

Original issue reported on code.google.com by `babji.su...@gmail.com` on 16 Oct 2013 at 5:10 | 1.0 | What options are supported by this DLL? - ```

Does it handles Double Quotes, Single Quotes, and a column having large amount

of text which contains commas?

```

Original issue reported on code.google.com by `babji.su...@gmail.com` on 16 Oct 2013 at 5:10 | defect | what options are supported by this dll does it handles double quotes single quotes and a column having large amount of text which contains commas original issue reported on code google com by babji su gmail com on oct at | 1 |

32,756 | 7,595,778,054 | IssuesEvent | 2018-04-27 07:15:54 | exercism/java | https://api.github.com/repos/exercism/java | closed | minesweeper: remove `final` from tests | code good first patch | According to our [policies](https://github.com/exercism/java/blob/master/POLICIES.md#avoid-using-final) we want to avoid using `final` in user facing code as it can be confusing to people new to Java.

Please remove all uses of `final` in the [minesweeper tests](https://github.com/exercism/java/blob/master/exercises/... | 1.0 | minesweeper: remove `final` from tests - According to our [policies](https://github.com/exercism/java/blob/master/POLICIES.md#avoid-using-final) we want to avoid using `final` in user facing code as it can be confusing to people new to Java.

Please remove all uses of `final` in the [minesweeper tests](https://github... | non_defect | minesweeper remove final from tests according to our we want to avoid using final in user facing code as it can be confusing to people new to java please remove all uses of final in the | 0 |

57,383 | 15,761,126,752 | IssuesEvent | 2021-03-31 09:40:14 | primefaces/primereact | https://api.github.com/repos/primefaces/primereact | closed | A maximizable dialog cannot be maximized properly after its size or position has been changed | defect | **I'm submitting a ...** (check one with "x")

```

[x] bug report

[ ] feature request

[ ] support request

```

**Codesandbox Case (Bug Reports)**

https://codesandbox.io/s/6jbh8

**Current behavior**

A maximizable dialog has a strange behavior after its size or position has been changed:

- if we change the... | 1.0 | A maximizable dialog cannot be maximized properly after its size or position has been changed - **I'm submitting a ...** (check one with "x")

```

[x] bug report

[ ] feature request

[ ] support request

```

**Codesandbox Case (Bug Reports)**

https://codesandbox.io/s/6jbh8

**Current behavior**

A maximizable ... | defect | a maximizable dialog cannot be maximized properly after its size or position has been changed i m submitting a check one with x bug report feature request support request codesandbox case bug reports current behavior a maximizable dialog has a strange behavior after... | 1 |

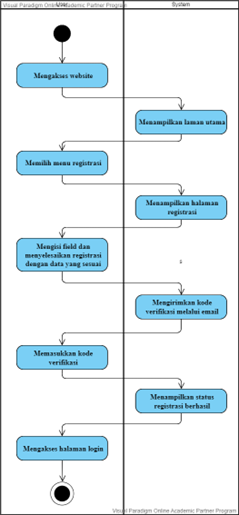

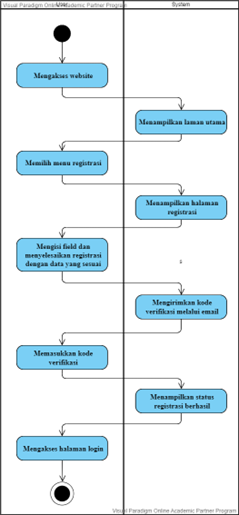

413,893 | 27,972,831,926 | IssuesEvent | 2023-03-25 07:55:27 | Alfarezie/SI-44-08-KELOMPOK-269 | https://api.github.com/repos/Alfarezie/SI-44-08-KELOMPOK-269 | closed | [S1 - PBI1] Create the registration page | documentation | As a patient, I want to sign up on the registration page of the Sigma website so that my account is registered in the database

(Activity diagram)

| 1.0 | [S1 - PBI1] Create the registration page - As a patient, I want to sign up on the registration page of the Sigma website so that my account is registered in the database

(Activity diagram)

| non_defect | create the registration page as a patient i want to sign up on the registration page of the sigma website so that my account is registered in the database activity diagram | 0 |

50,257 | 13,187,404,186 | IssuesEvent | 2020-08-13 03:18:31 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | fix simulation so all bots report "green" (Trac #394) | Migrated from Trac combo simulation defect |

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/394

, reported by nega and owned by kjmeagher_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2012-05-30T16:43:18",

"description": "",

"reporter": "nega",

"cc": "blaufuss olivas",

"resolution": "fixed",

... | 1.0 | fix simulation so all bots report "green" (Trac #394) -

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/394

, reported by nega and owned by kjmeagher_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2012-05-30T16:43:18",

"description": "",

"reporter": "nega",

... | defect | fix simulation so all bots report green trac migrated from reported by nega and owned by kjmeagher json status closed changetime description reporter nega cc blaufuss olivas resolution fixed ts ... | 1 |

18,852 | 3,089,697,396 | IssuesEvent | 2015-08-25 23:05:25 | google/googlemock | https://api.github.com/repos/google/googlemock | closed | Crash caused by missing lock | auto-migrated Priority-Medium Type-Defect | ```

FunctionMockerBase<F>::AddNewExpectation() modifies untyped_expectations_

without locking g_gmock_mutex.

It can cause a crash if another thread calls the same function or

FindMatchingExpectationLocked().

Google Mock version: 1.7.0

```

Original issue reported on code.google.com by `n...@tresorit.com` on 11 Nov 2... | 1.0 | Crash caused by missing lock - ```

FunctionMockerBase<F>::AddNewExpectation() modifies untyped_expectations_

without locking g_gmock_mutex.

It can cause a crash if another thread calls the same function or

FindMatchingExpectationLocked().

Google Mock version: 1.7.0

```

Original issue reported on code.google.com by ... | defect | crash caused by missing lock functionmockerbase addnewexpectation modifies untyped expectations without locking g gmock mutex it can cause a crash if another thread calls the same function or findmatchingexpectationlocked google mock version original issue reported on code google com by n... | 1 |

47,966 | 13,067,338,531 | IssuesEvent | 2020-07-31 00:08:40 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | [genie-icetray] tests taking 1000+ minutes to run (Trac #1536) | Migrated from Trac combo simulation defect | The test process automatically quits after 1200 seconds w/o output. Tests should only take a few 10s of seconds at most.

```text

9088 ? R 983:28 python /build/buildslave/morax_cvmfs/Scientific_Linux_6__cvmfs_/source/genie-icetray/resources/test/GENIETest.py

15646 ? R 914:11 python /build/buildsla... | 1.0 | [genie-icetray] tests taking 1000+ minutes to run (Trac #1536) - The test process automatically quits after 1200 seconds w/o output. Tests should only take a few 10s of seconds at most.

```text

9088 ? R 983:28 python /build/buildslave/morax_cvmfs/Scientific_Linux_6__cvmfs_/source/genie-icetray/resources/te... | defect | tests taking minutes to run trac the test process automatically quits after seconds w o output tests should only take a few of seconds at most text r python build buildslave morax cvmfs scientific linux cvmfs source genie icetray resources test genietest py r ... | 1 |

10,706 | 13,501,854,591 | IssuesEvent | 2020-09-13 05:00:05 | amor71/LiuAlgoTrader | https://api.github.com/repos/amor71/LiuAlgoTrader | closed | liquidation of non-updated stocks | in-process | sell stocks before the end of trading session, even if there are no update/trades | 1.0 | liquidation of non-updated stocks - sell stocks before the end of trading session, even if there are no update/trades | non_defect | liquidation of non updated stocks sell stocks before the end of trading session even if there are no update trades | 0 |

194,432 | 22,261,985,943 | IssuesEvent | 2022-06-10 01:56:44 | Trinadh465/device_renesas_kernel_AOSP10_r33_CVE-2021-33034 | https://api.github.com/repos/Trinadh465/device_renesas_kernel_AOSP10_r33_CVE-2021-33034 | reopened | CVE-2020-29661 (High) detected in linuxlinux-4.19.239 | security vulnerability | ## CVE-2020-29661 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.239</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.k... | True | CVE-2020-29661 (High) detected in linuxlinux-4.19.239 - ## CVE-2020-29661 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.239</b></p></summary>

<p>

<p>The Linux Kernel<... | non_defect | cve high detected in linuxlinux cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files drivers tty tty ... | 0 |

312,728 | 23,440,861,162 | IssuesEvent | 2022-08-15 14:45:00 | thm-mni-ii/graph-algo-ptas | https://api.github.com/repos/thm-mni-ii/graph-algo-ptas | closed | Custom badges for docs and benchmark | documentation benchmark | Currently the README badges for docs and benchmark look the same and can only be distinguished using the alt text. This should be changed. | 1.0 | Custom badges for docs and benchmark - Currently the README badges for docs and benchmark look the same and can only be distinguished using the alt text. This should be changed. | non_defect | custom badges for docs and benchmark currently the readme badges for docs and benchmark look the same and can only be distinguished using the alt text this should be changed | 0 |

21,920 | 3,587,215,040 | IssuesEvent | 2016-01-30 05:06:04 | mash99/crypto-js | https://api.github.com/repos/mash99/crypto-js | closed | Most of the hashs wont works regarding file encoding | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Create a text file containing aÿþa

2. Convert it to AINSII/UTF8/UTF8-NOBOM/UTF16/.....

3. Check the hash returned and compare with a Checksum application.

What is the expected output? What do you see instead?

The CryptoJS lib force conversion to UTF8 on the input, it will ... | 1.0 | Most of the hashs wont works regarding file encoding - ```

What steps will reproduce the problem?

1. Create a text file containing aÿþa

2. Convert it to AINSII/UTF8/UTF8-NOBOM/UTF16/.....

3. Check the hash returned and compare with a Checksum application.

What is the expected output? What do you see instead?

The Crypt... | defect | most of the hashs wont works regarding file encoding what steps will reproduce the problem create a text file containing aÿþa convert it to ainsii nobom check the hash returned and compare with a checksum application what is the expected output what do you see instead the cryptojs lib fo... | 1 |

114,454 | 11,848,116,756 | IssuesEvent | 2020-03-24 13:16:24 | sliu107/alpha | https://api.github.com/repos/sliu107/alpha | opened | Use ref frame for the sequnce diagram in DG | severity.Low type.DocumentationBug | For each feature, we repeat the process of `setup()` in the corresponding sequence diagram.

To reduce repetition, we can draw a master sequence diagram and use ref frame to break it to multiple sub diagrams of different feature.

| 1.0 | Use ref frame for the sequnce diagram in DG - For each feature, we repeat the process of `setup()` in the corresponding sequence diagram.

To reduce repetition, we can draw a master sequence diagram and use ref frame to break it to multiple sub diagrams of different feature.

| non_defect | use ref frame for the sequnce diagram in dg for each feature we repeat the process of setup in the corresponding sequence diagram to reduce repetition we can draw a master sequence diagram and use ref frame to break it to multiple sub diagrams of different feature | 0 |

84,055 | 16,444,453,026 | IssuesEvent | 2021-05-20 17:49:23 | smeas/Beer-and-Plunder | https://api.github.com/repos/smeas/Beer-and-Plunder | closed | Showing the different states of how much health a table has. | 2p code | **Description**

Show how damaged the table is.

**Subtasks**

- [x] Showing cracks throughout the table appearing as the health goes below some thresholds.

- [x] Five states: whole table, slightly damaged, more damaged, very damaged, completely broken. | 1.0 | Showing the different states of how much health a table has. - **Description**

Show how damaged the table is.

**Subtasks**

- [x] Showing cracks throughout the table appearing as the health goes below some thresholds.

- [x] Five states: whole table, slightly damaged, more damaged, very damaged, completely broken. | non_defect | showing the different states of how much health a table has description show how damaged the table is subtasks showing cracks throughout the table appearing as the health goes below some thresholds five states whole table slightly damaged more damaged very damaged completely broken | 0 |

460,782 | 13,218,118,126 | IssuesEvent | 2020-08-17 08:09:21 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.ebay.co.uk - site is not usable | browser-firefox engine-gecko priority-important type-no-css | <!-- @browser: Firefox 80.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:80.0) Gecko/20100101 Firefox/80.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/56520 -->

**URL**: https://www.ebay.co.uk/

**Browser / Version**: Firefox 80.0

**... | 1.0 | www.ebay.co.uk - site is not usable - <!-- @browser: Firefox 80.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:80.0) Gecko/20100101 Firefox/80.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/56520 -->

**URL**: https://www.ebay.co.uk/

... | non_defect | site is not usable url browser version firefox operating system windows tested another browser yes internet explorer problem type site is not usable description page not loading correctly steps to reproduce will not load in firefox only internet explorer ... | 0 |

44,619 | 12,294,002,505 | IssuesEvent | 2020-05-10 21:38:26 | IBM/CAST | https://api.github.com/repos/IBM/CAST | closed | bbcmd cancel segfault | Comp: Burst Buffer PhaseFound: Customer Sev: 3 Status: Closed Type: Defect | **Describe the bug**

When running inside of an interactive allocation and trying to cancel an ongoing transfer with multiple contributors, I got the following segfault:

```

bbcmd cancel --target=0- --jobstepid=2 --handle=1567724507

Segmentation fault (core dumped)

```

In this job, 250 hosts completed, and 6 w... | 1.0 | bbcmd cancel segfault - **Describe the bug**

When running inside of an interactive allocation and trying to cancel an ongoing transfer with multiple contributors, I got the following segfault:

```

bbcmd cancel --target=0- --jobstepid=2 --handle=1567724507

Segmentation fault (core dumped)

```

In this job, 250 ... | defect | bbcmd cancel segfault describe the bug when running inside of an interactive allocation and trying to cancel an ongoing transfer with multiple contributors i got the following segfault bbcmd cancel target jobstepid handle segmentation fault core dumped in this job hosts compl... | 1 |

79,945 | 29,735,760,315 | IssuesEvent | 2023-06-14 00:39:01 | openslide/openslide | https://api.github.com/repos/openslide/openslide | opened | Some images ignored in DICOM JP2K sample slides | defect | ### Operating system

Fedora 38

### Platform

x86_64

### OpenSlide version

Git main

### Slide format

DICOM

### Issue details

The JP2K [sample slides](https://openslide.cs.cmu.edu/download/openslide-testdata/DICOM/) (`CMU-1-JP2K-33005` and `JP2K-33003-1`), which were converted from Aperio slides with `com.pixelme... | 1.0 | Some images ignored in DICOM JP2K sample slides - ### Operating system

Fedora 38

### Platform

x86_64

### OpenSlide version

Git main

### Slide format

DICOM

### Issue details

The JP2K [sample slides](https://openslide.cs.cmu.edu/download/openslide-testdata/DICOM/) (`CMU-1-JP2K-33005` and `JP2K-33003-1`), which w... | defect | some images ignored in dicom sample slides operating system fedora platform openslide version git main slide format dicom issue details the cmu and which were converted from aperio slides with com pixelmed convert tifftodicom have a few issues op... | 1 |

763,710 | 26,770,936,060 | IssuesEvent | 2023-01-31 14:05:19 | zowe/vscode-extension-for-zowe | https://api.github.com/repos/zowe/vscode-extension-for-zowe | closed | USS command fails when JCL submitted via VScode | bug Research Needed priority-medium | <!--

Before opening a new issue, please search our existing issues: https://github.com/zowe/vscode-extension-for-zowe/issues

-->

**Describe the bug**

Same USS related JCL submitted through VScode fails with RC=0012.

**To Reproduce**

Steps to reproduce the behavior:

1. Go to job, submit mount zfs JCL as... | 1.0 | USS command fails when JCL submitted via VScode - <!--

Before opening a new issue, please search our existing issues: https://github.com/zowe/vscode-extension-for-zowe/issues

-->

**Describe the bug**

Same USS related JCL submitted through VScode fails with RC=0012.

**To Reproduce**

Steps to reproduce th... | non_defect | uss command fails when jcl submitted via vscode before opening a new issue please search our existing issues describe the bug same uss related jcl submitted through vscode fails with rc to reproduce steps to reproduce the behavior go to job submit mount zfs jcl as sample ... | 0 |

10,908 | 2,622,846,742 | IssuesEvent | 2015-03-04 08:02:58 | max99x/pagemon-chrome-ext | https://api.github.com/repos/max99x/pagemon-chrome-ext | closed | Updates stop after some time | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

I use the extension to monitor about 10 channels on youtube, at intervals of

2-5 minutes. The detection works well for some time but after an interval

varying from minutes to several hours, the extension stops updating. After

this point even though I try to update manu... | 1.0 | Updates stop after some time - ```

What steps will reproduce the problem?

I use the extension to monitor about 10 channels on youtube, at intervals of

2-5 minutes. The detection works well for some time but after an interval

varying from minutes to several hours, the extension stops updating. After

this point ... | defect | updates stop after some time what steps will reproduce the problem i use the extension to monitor about channels on youtube at intervals of minutes the detection works well for some time but after an interval varying from minutes to several hours the extension stops updating after this point e... | 1 |

51,053 | 13,188,065,340 | IssuesEvent | 2020-08-13 05:27:17 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | cmake - absolute vs relative paths in *.gcno files (Trac #1891) | Migrated from Trac cmake defect | Previously we've been generating coverage on `stheno` (Ubuntu 14.04 + CVMFS). This included `gcc-4.8.4`, `cmake-2.8.12.2`, and `lcov-1.10`. We constantly received the dreaded "unexpected end of file" errors while `lcov` was reading *.gcno files. All signs pointed to upgrading `gcc` and `lcov`, so we moved coverage buil... | 1.0 | cmake - absolute vs relative paths in *.gcno files (Trac #1891) - Previously we've been generating coverage on `stheno` (Ubuntu 14.04 + CVMFS). This included `gcc-4.8.4`, `cmake-2.8.12.2`, and `lcov-1.10`. We constantly received the dreaded "unexpected end of file" errors while `lcov` was reading *.gcno files. All sign... | defect | cmake absolute vs relative paths in gcno files trac previously we ve been generating coverage on stheno ubuntu cvmfs this included gcc cmake and lcov we constantly received the dreaded unexpected end of file errors while lcov was reading gcno files all signs point... | 1 |

14,899 | 2,831,390,193 | IssuesEvent | 2015-05-24 15:55:02 | nobodyguy/dslrdashboard | https://api.github.com/repos/nobodyguy/dslrdashboard | closed | D800 only takes first of 5 pics in focus stacking mode | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. plug in D800

2. turn off red AF so it's white, set to take 5 images focus step 10

3. hit start focus stacking.

What is the expected output? What do you see instead?

I expect the camera to take 5 pictures but it just takes one then does nothing

What version of the produc... | 1.0 | D800 only takes first of 5 pics in focus stacking mode - ```

What steps will reproduce the problem?

1. plug in D800

2. turn off red AF so it's white, set to take 5 images focus step 10

3. hit start focus stacking.

What is the expected output? What do you see instead?

I expect the camera to take 5 pictures but it ju... | defect | only takes first of pics in focus stacking mode what steps will reproduce the problem plug in turn off red af so it s white set to take images focus step hit start focus stacking what is the expected output what do you see instead i expect the camera to take pictures but it just take... | 1 |

161,175 | 12,532,995,734 | IssuesEvent | 2020-06-04 16:50:07 | QMCPACK/qmcpack | https://api.github.com/repos/QMCPACK/qmcpack | opened | Unit test for cuBLAS_missing_functions | enhancement testing | **Is your feature request related to a problem? Please describe.**

This batched BLAS functionality should be tested, as discussed in #2486

**Describe the solution you'd like**

Standalone unit test.

Would be good to write at the "missing function" level if practical so that the same code can be used to tes... | 1.0 | Unit test for cuBLAS_missing_functions - **Is your feature request related to a problem? Please describe.**

This batched BLAS functionality should be tested, as discussed in #2486

**Describe the solution you'd like**

Standalone unit test.

Would be good to write at the "missing function" level if practical... | non_defect | unit test for cublas missing functions is your feature request related to a problem please describe this batched blas functionality should be tested as discussed in describe the solution you d like standalone unit test would be good to write at the missing function level if practical so... | 0 |

46,690 | 24,675,532,421 | IssuesEvent | 2022-10-18 16:40:49 | dotnet/runtime | https://api.github.com/repos/dotnet/runtime | closed | [Perf] Linux/arm64: 5 Regressions on 9/27/2022 12:44:07 AM | arch-arm64 area-System.Runtime tenet-performance tenet-performance-benchmarks untriaged | ### Run Information

Architecture | arm64

-- | --

OS | ubuntu 20.04

Baseline | [f8b032cd4fd5f544211d37ac25816cbd4deb67b7](https://github.com/dotnet/runtime/commit/f8b032cd4fd5f544211d37ac25816cbd4deb67b7)

Compare | [7fc8fb56327e9696ce9baa80f997e3f50675af1f](https://github.com/dotnet/runtime/commit/7fc8fb56327e969... | True | [Perf] Linux/arm64: 5 Regressions on 9/27/2022 12:44:07 AM - ### Run Information

Architecture | arm64

-- | --

OS | ubuntu 20.04

Baseline | [f8b032cd4fd5f544211d37ac25816cbd4deb67b7](https://github.com/dotnet/runtime/commit/f8b032cd4fd5f544211d37ac25816cbd4deb67b7)

Compare | [7fc8fb56327e9696ce9baa80f997e3f50675a... | non_defect | linux regressions on am run information architecture os ubuntu baseline compare diff regressions in system io tests perf file benchmark baseline test test base test quality edge detector baseline ir compare ir ir ratio ba... | 0 |

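1,963 | 2,603,974,151 | IssuesEvent | 2015-02-24 19:01:04 | chrsmith/nishazi6 | https://api.github.com/repos/chrsmith/nishazi6 | opened | Clinical symptoms of herpes in Shenyang | auto-migrated Priority-Medium Type-Defect | ```

Clinical symptoms of herpes in Shenyang 〓 Shenyang Military Region Political Department Hospital, STD clinic 〓 TEL: 024-3102

3308 〓 Founded in 1946, with 68 years devoted to research on and treatment of sexually transmitted diseases. Located

at No. 32 Erwei Road, Shenhe District, Shenyang. A hospital founded alongside New China and sharing its glory, with a

long history, fine equipment, authoritative expertise, and a gathering of specialists; a comprehensive hospital

integrating prevention, health care, medical treatment, research, and rehabilitation. One of the country's first public Grade-A

military hospitals and first designated units for medical standards; a teaching hospital of the Fourth Military Medical University,

東�大學, and other well-known universities. It was named an advanced unit for health work by the Health Department of the

Logistics Department of the Chinese People's Liberation Army Air Force, and has twice been awarded a collective second-class

merit citation.

```

-----

Original issue reported on code.google.com by `q964105.... | 1.0 | Clinical symptoms of herpes in Shenyang - ```

Clinical symptoms of herpes in Shenyang 〓 Shenyang Military Region Political Department Hospital, STD clinic 〓 TEL: 024-3102

3308 〓 Founded in 1946, with 68 years devoted to research on and treatment of sexually transmitted diseases. Located

at No. 32 Erwei Road, Shenhe District, Shenyang. A hospital founded alongside New China and sharing its glory, with a

long history, fine equipment, authoritative expertise, and a gathering of specialists; a comprehensive hospital

integrating prevention, health care, medical treatment, research, and rehabilitation. One of the country's first public Grade-A

military hospitals and first designated units for medical standards; a teaching hospital of the Fourth Military Medical University,

東�大學, and other well-known universities. It was named an advanced unit for health work by the Health Department of the

Logistics Department of the Chinese People's Liberation Army Air Force, and has twice been awarded a collective second-class

merit citation.

```

-----

Original issue reported on code.google.com ... | defect | clinical symptoms of herpes in shenyang clinical symptoms of herpes in shenyang 〓 shenyang military region political department hospital std clinic 〓 tel 〓 founded in with years devoted to research on and treatment of sexually transmitted diseases located at no erwei road shenhe district shenyang a hospital founded alongside new china and sharing its glory with a long history fine equipment authoritative expertise and a gathering of specialists a comprehensive hospital integrating prevention health care medical treatment research and rehabilitation one of the country s first public grade a military hospitals and first designated units for medical standards a teaching hospital of the fourth military medical university 東�大學 and other well known universities it was named an advanced unit for health work by the health department of the logistics department of the chinese people s liberation army air force and has twice been awarded a collective second class merit citation original issue reported on code google com by gmail com on jun at | 1 |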