Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 757 | labels stringlengths 4 664 | body stringlengths 3 261k | index stringclasses 10 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 232k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

19,878 | 4,454,497,887 | IssuesEvent | 2016-08-23 01:08:48 | evhub/coconut | https://api.github.com/repos/evhub/coconut | closed | Improve iterator matching documentation | documentation | Because Python iterators aren't real lazy lists, you have to be very careful about accidentally consuming them when performing checks, which makes iterator matching rather confusing. | 1.0 | Improve iterator matching documentation - Because Python iterators aren't real lazy lists, you have to be very careful about accidentally consuming them when performing checks, which makes iterator matching rather confusing. | non_defect | improve iterator matching documentation because python iterators aren t real lazy lists you have to be very careful about accidentally consuming them when performing checks which makes iterator matching rather confusing | 0 |

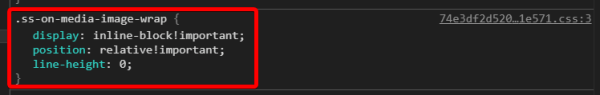

172,637 | 13,325,483,657 | IssuesEvent | 2020-08-27 10:02:00 | gambitph/Stackable | https://api.github.com/repos/gambitph/Stackable | opened | Social Snap Plugin "Pin It" feature is adding some custom CSS code to the image of the Testimonial block making the image small | [block] testimonial bug | Social Snap Plugin "Pin It" feature adds a Pinterest button overall images, making it small.

Here's the code to fix the issue:

`.ss-on-media-image-wrap.ugb-img--shape {

display: block !important;

... | 1.0 | Social Snap Plugin "Pin It" feature is adding some custom CSS code to the image of the Testimonial block making the image small - Social Snap Plugin "Pin It" feature adds a Pinterest button overall images, making it small.

for the list items. ... You can set **local** to true if you... | 1.0 | Hazelcast documentation incorrectly states that "local" option is available for ItemListener [HZ-2777] - <!--

Thanks for reporting your issue. Please share with us the following information, to help us resolve your issue quickly and efficiently.

-->

**Describe the bug**

The documentation states:

`item-listener... | defect | hazelcast documentation incorrectly states that local option is available for itemlistener thanks for reporting your issue please share with us the following information to help us resolve your issue quickly and efficiently describe the bug the documentation states item listeners lets ... | 1 |

35,338 | 7,702,684,008 | IssuesEvent | 2018-05-21 04:13:50 | gperftools/gperftools | https://api.github.com/repos/gperftools/gperftools | closed | Option to disable writing tcmalloc larg allocation messages to stderr | Priority-Medium Status-WontFix Type-Defect | Originally reported on Google Code with ID 357

```

tcmalloc will print messages such as the following to stderr:

tcmalloc: large alloc 1176436736 bytes == 0x3177a000 @ 0x7f909f9fce6c 0x401ef9 0x40b931

0x44f1da 0x44be5f 0x4502c3 0x7f909ed9bc4d 0x401db9

Looking at the code, the output to stderr looks built in. Is th... | 1.0 | Option to disable writing tcmalloc larg allocation messages to stderr - Originally reported on Google Code with ID 357

```

tcmalloc will print messages such as the following to stderr:

tcmalloc: large alloc 1176436736 bytes == 0x3177a000 @ 0x7f909f9fce6c 0x401ef9 0x40b931

0x44f1da 0x44be5f 0x4502c3 0x7f909ed9bc4d 0x... | defect | option to disable writing tcmalloc larg allocation messages to stderr originally reported on google code with id tcmalloc will print messages such as the following to stderr tcmalloc large alloc bytes looking at the code the output to stderr looks built in is there a mechanism ... | 1 |

49,010 | 13,185,192,482 | IssuesEvent | 2020-08-12 20:54:28 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | check for moved/copied tools (Trac #598) | Incomplete Migration Migrated from Trac cmake defect | <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/598

, reported by troy and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2012-06-17T19:55:06",

"description": "not clear when to check, perhaps in the 'port' command and when running cmake.\n\n{{{\n... | 1.0 | check for moved/copied tools (Trac #598) - <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/598

, reported by troy and owned by nega</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2012-06-17T19:55:06",

"description": "not clear when to check, perhaps in the 'por... | defect | check for moved copied tools trac migrated from reported by troy and owned by nega json status closed changetime description not clear when to check perhaps in the port command and when running cmake n n n it says qmake found at bla bla bl... | 1 |

39,273 | 9,368,595,978 | IssuesEvent | 2019-04-03 09:03:52 | Automattic/wp-calypso | https://api.github.com/repos/Automattic/wp-calypso | closed | SDK: Build source outside Calypso repo | [Project] SDK [Type] Defect | The SDK should be able to build from source outside of Calypso. Currently, it cannot.

#### Steps to reproduce

Try to build some source that isn't inside of Calypso:

```sh

npm run sdk generic /some/path/to/some/file.js output/file.js

# Module not found: Error: Can't resolve '@babel/runtime/helpers/typeof' in …

... | 1.0 | SDK: Build source outside Calypso repo - The SDK should be able to build from source outside of Calypso. Currently, it cannot.

#### Steps to reproduce

Try to build some source that isn't inside of Calypso:

```sh

npm run sdk generic /some/path/to/some/file.js output/file.js

# Module not found: Error: Can't reso... | defect | sdk build source outside calypso repo the sdk should be able to build from source outside of calypso currently it cannot steps to reproduce try to build some source that isn t inside of calypso sh npm run sdk generic some path to some file js output file js module not found error can t reso... | 1 |

431,282 | 12,476,697,067 | IssuesEvent | 2020-05-29 13:54:40 | medic/cht-core | https://api.github.com/repos/medic/cht-core | closed | Support resolving tasks concerning unknown contacts | Priority: 1 - High Type: Bug |

**Describe the bug**

We can no longer rely on `action.modifyContent` to inject contact information into reports generated by task actions.

This causes tasks that are about unknown contacts to generate reports that are unattached (are missing their subject).

This workflow is used where supervisors with replication ... | 1.0 | Support resolving tasks concerning unknown contacts -

**Describe the bug**

We can no longer rely on `action.modifyContent` to inject contact information into reports generated by task actions.

This causes tasks that are about unknown contacts to generate reports that are unattached (are missing their subject).

Thi... | non_defect | support resolving tasks concerning unknown contacts describe the bug we can no longer rely on action modifycontent to inject contact information into reports generated by task actions this causes tasks that are about unknown contacts to generate reports that are unattached are missing their subject thi... | 0 |

75,656 | 25,978,379,317 | IssuesEvent | 2022-12-19 16:36:51 | department-of-veterans-affairs/va.gov-cms | https://api.github.com/repos/department-of-veterans-affairs/va.gov-cms | closed | FE: VAMC System Banner Alert with Situation Updates do not work for Lovell systems | Defect VA.gov frontend ⭐️ Facilities Needs refining | ## Describe the defect

This issue will need to be refined as work in #11726 is completed. For now this is a placeholder. We simply want to be sure that VAMC System Banner Alert with Situation Updates nodes are displayed properly for Lovell systems...

## To Reproduce

Steps to reproduce the behavior:

1. Go to '...... | 1.0 | FE: VAMC System Banner Alert with Situation Updates do not work for Lovell systems - ## Describe the defect

This issue will need to be refined as work in #11726 is completed. For now this is a placeholder. We simply want to be sure that VAMC System Banner Alert with Situation Updates nodes are displayed properly for L... | defect | fe vamc system banner alert with situation updates do not work for lovell systems describe the defect this issue will need to be refined as work in is completed for now this is a placeholder we simply want to be sure that vamc system banner alert with situation updates nodes are displayed properly for lovel... | 1 |

177,457 | 28,494,894,530 | IssuesEvent | 2023-04-18 13:37:14 | rancher/dashboard | https://api.github.com/repos/rancher/dashboard | opened | Kubernetes RBAC competitive analysis | kind/design | We want to understand how other actors deal with the particularities and complexities of Kubernetes RBAC.

The initial idea is to compare Rancher's approach with:

- K8s Dashboard

- Lens

- Octant

| 1.0 | Kubernetes RBAC competitive analysis - We want to understand how other actors deal with the particularities and complexities of Kubernetes RBAC.

The initial idea is to compare Rancher's approach with:

- K8s Dashboard

- Lens

- Octant

| non_defect | kubernetes rbac competitive analysis we want to understand how other actors deal with the particularities and complexities of kubernetes rbac the initial idea is to compare rancher s approach with dashboard lens octant | 0 |

493,417 | 14,231,702,816 | IssuesEvent | 2020-11-18 09:55:51 | kubermatic/kubermatic | https://api.github.com/repos/kubermatic/kubermatic | closed | Support MachineDeployment specific kubelet config | customer-request lifecycle/rotten priority/low team/lifecycle | **User Story**

The kubelet configuration is currently applied as a ConfigMap from the addons image. Customer wants to change the API request limits per cluster or even MachineDeployment. E.g., different limits for small and large nodes.

**Acceptance criteria**

Kubelet configuration should be adaptable per MachineDep... | 1.0 | Support MachineDeployment specific kubelet config - **User Story**

The kubelet configuration is currently applied as a ConfigMap from the addons image. Customer wants to change the API request limits per cluster or even MachineDeployment. E.g., different limits for small and large nodes.

**Acceptance criteria**

Kube... | non_defect | support machinedeployment specific kubelet config user story the kubelet configuration is currently applied as a configmap from the addons image customer wants to change the api request limits per cluster or even machinedeployment e g different limits for small and large nodes acceptance criteria kube... | 0 |

542,929 | 15,874,406,843 | IssuesEvent | 2021-04-09 04:57:34 | remnoteio/remnote-issues | https://api.github.com/repos/remnoteio/remnote-issues | closed | No. of Tagged Rems does not appear or appear incorrectly at bottom portal results | priority=2 | Although there is indication of tagged rems existing in the bottom portal, upon expanding the portal there would be no results shown.

In other instances, an incorrect count of Tagged Rems is reflected.

---

{

const string section = null;

... | 1.0 | Enum.TryParse fails with exception - A description of the issue.

### Steps To Reproduce

https://deck.net/08c324d3c077c47c7dade0e01405f1ec

https://dotnetfiddle.net/Runi2c

```c#

public enum Mode

{

None,

A,

B,

C

}

public class Program

{

private static void Main(string[] args)

... | defect | enum tryparse fails with exception a description of the issue steps to reproduce c public enum mode none a b c public class program private static void main string args const string section null mode mode if enum tryparse s... | 1 |

35,606 | 7,787,962,456 | IssuesEvent | 2018-06-07 01:35:55 | google/sanitizers | https://api.github.com/repos/google/sanitizers | closed | Fine-grained origins for class members | Priority-Medium ProjectMemorySanitizer Status-Accepted Type-Defect | Originally reported on Google Code with ID 31

```

Currently MSan reports the same origin for all members of dynamically allocated objects

(pointing to the new() call for that object).

It would be nice to include the actual member name in MSan report.

Looks like we need Clang support for this - llvm IR does not have en... | 1.0 | Fine-grained origins for class members - Originally reported on Google Code with ID 31

```

Currently MSan reports the same origin for all members of dynamically allocated objects

(pointing to the new() call for that object).

It would be nice to include the actual member name in MSan report.

Looks like we need Clang su... | defect | fine grained origins for class members originally reported on google code with id currently msan reports the same origin for all members of dynamically allocated objects pointing to the new call for that object it would be nice to include the actual member name in msan report looks like we need clang sup... | 1 |

10,458 | 2,622,161,945 | IssuesEvent | 2015-03-04 00:10:25 | byzhang/terrastore | https://api.github.com/repos/byzhang/terrastore | closed | Backup implementation for Ensembles | auto-migrated Milestone-0.8.2 Priority-High Project-Terrastore Type-Defect | ```

How do you scale a currently running ensemble from N clusters to N+1

cluster without losing data?

I had a ensemble running of 2 clusters (each with 1 master and 1

server)

I tried the following, but could not retain all the data (I had just 1

bucket):

1. Export backup of the bucket of both the servers.

2. Restart ... | 1.0 | Backup implementation for Ensembles - ```

How do you scale a currently running ensemble from N clusters to N+1

cluster without losing data?

I had a ensemble running of 2 clusters (each with 1 master and 1

server)

I tried the following, but could not retain all the data (I had just 1

bucket):

1. Export backup of the b... | defect | backup implementation for ensembles how do you scale a currently running ensemble from n clusters to n cluster without losing data i had a ensemble running of clusters each with master and server i tried the following but could not retain all the data i had just bucket export backup of the b... | 1 |

135,454 | 12,684,739,392 | IssuesEvent | 2020-06-19 23:57:58 | Azure/azure-cli | https://api.github.com/repos/Azure/azure-cli | closed | It's always good to add actual example of each command. | Documentation OKR3.4 Candidate | It's always good to add actual example of each command.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 9abfaa54-0895-29f7-f3bb-ff604912b2ac

* Version Independent ID: d1ff3478-8a86-59e4-62ef-449900132a67

* Content: [az account](https://docs.... | 1.0 | It's always good to add actual example of each command. - It's always good to add actual example of each command.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: 9abfaa54-0895-29f7-f3bb-ff604912b2ac

* Version Independent ID: d1ff3478-8a86-59... | non_defect | it s always good to add actual example of each command it s always good to add actual example of each command document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id version independent id content content source ... | 0 |

10,513 | 2,622,169,415 | IssuesEvent | 2015-03-04 00:13:49 | byzhang/rapidjson | https://api.github.com/repos/byzhang/rapidjson | closed | Unable to build tests on Mac OS X | auto-migrated Priority-Medium Type-Defect | ```

I am trying to build the tests for rapidjson 0.11

(http://code.google.com/p/rapidjson/) on Mac OS X . It includes three projects:

gtest (builds fine), unittest (build fails), and perftest (build fails), and

when building make error out with Error 1 and Error 2. Actually, the first time

I ran make, gtest and uni... | 1.0 | Unable to build tests on Mac OS X - ```

I am trying to build the tests for rapidjson 0.11

(http://code.google.com/p/rapidjson/) on Mac OS X . It includes three projects:

gtest (builds fine), unittest (build fails), and perftest (build fails), and

when building make error out with Error 1 and Error 2. Actually, the ... | defect | unable to build tests on mac os x i am trying to build the tests for rapidjson on mac os x it includes three projects gtest builds fine unittest build fails and perftest build fails and when building make error out with error and error actually the first time i ran make gtest and un... | 1 |

46,033 | 13,055,841,879 | IssuesEvent | 2020-07-30 02:53:52 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | icerec/trunk dox on software.icecube.wisc.edu are "403 Forbidden" (Trac #474) | Incomplete Migration Migrated from Trac combo reconstruction defect | Migrated from https://code.icecube.wisc.edu/ticket/474

```json

{

"status": "closed",

"changetime": "2014-10-03T18:27:09",

"description": "On http://software.icecube.wisc.edu/ there is a link to IceRec \"nightly builds\": http://software.icecube.wisc.edu/icerec_trunk/ which results in \"403 - Forbidden\".\... | 1.0 | icerec/trunk dox on software.icecube.wisc.edu are "403 Forbidden" (Trac #474) - Migrated from https://code.icecube.wisc.edu/ticket/474

```json

{

"status": "closed",

"changetime": "2014-10-03T18:27:09",

"description": "On http://software.icecube.wisc.edu/ there is a link to IceRec \"nightly builds\": http:... | defect | icerec trunk dox on software icecube wisc edu are forbidden trac migrated from json status closed changetime description on there is a link to icerec nightly builds which results in forbidden n ni am assigning this now to dladieu but i could e... | 1 |

36,275 | 7,875,182,247 | IssuesEvent | 2018-06-25 19:32:51 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | Inheriting from an external class (no base() call case) | defect | A class-successor has to call a default constructor of an external base class even when `base()` call is not specified explicitly.

Related to #2735 and #2189.

### Steps To Reproduce

https://deck.net/abf6fe87cb3641732d977c0f8543788b

```csharp

public class Program

{

[Init(InitPosition.Top)]

public... | 1.0 | Inheriting from an external class (no base() call case) - A class-successor has to call a default constructor of an external base class even when `base()` call is not specified explicitly.

Related to #2735 and #2189.

### Steps To Reproduce

https://deck.net/abf6fe87cb3641732d977c0f8543788b

```csharp

public ... | defect | inheriting from an external class no base call case a class successor has to call a default constructor of an external base class even when base call is not specified explicitly related to and steps to reproduce csharp public class program public static void init ... | 1 |

24,489 | 3,992,496,991 | IssuesEvent | 2016-05-10 02:06:50 | cakephp/cakephp | https://api.github.com/repos/cakephp/cakephp | closed | missing 'all' in cake Completion subcommands bake | console Defect | This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* [ ] feature-discussion (RFC)

* CakePHP Version:

CakePHP v3.2.8

PHP : 7.0.6-1+donate.sury.org~trusty+1

### What you did

```shell

bin/cake Completion subcommands bake

```

### Expected Behavior

i'm expecting _all_ in command list

### Actu... | 1.0 | missing 'all' in cake Completion subcommands bake - This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* [ ] feature-discussion (RFC)

* CakePHP Version:

CakePHP v3.2.8

PHP : 7.0.6-1+donate.sury.org~trusty+1

### What you did

```shell

bin/cake Completion subcommands bake

```

### Expected Behav... | defect | missing all in cake completion subcommands bake this is a multiple allowed bug enhancement feature discussion rfc cakephp version cakephp php donate sury org trusty what you did shell bin cake completion subcommands bake expected behavior i ... | 1 |

72,441 | 24,119,803,869 | IssuesEvent | 2022-09-20 17:38:36 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | opened | [🐛 Bug]: isPromise() returning false for thenable objects | I-defect needs-triaging | ### What happened?

https://github.com/SeleniumHQ/selenium/commit/84dd6109ce692788467432ccee55f481fe49f2bc#r84520555

In the above commit, the `isPromise()` utility function was simplified to look for `typeof value === '[object Promise]'`, however this fails the function's JSDoc documentation that any object exposing... | 1.0 | [🐛 Bug]: isPromise() returning false for thenable objects - ### What happened?

https://github.com/SeleniumHQ/selenium/commit/84dd6109ce692788467432ccee55f481fe49f2bc#r84520555

In the above commit, the `isPromise()` utility function was simplified to look for `typeof value === '[object Promise]'`, however this fail... | defect | ispromise returning false for thenable objects what happened in the above commit the ispromise utility function was simplified to look for typeof value however this fails the function s jsdoc documentation that any object exposing a then method would be considered a promise my ... | 1 |
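
The thenable check this issue asks for can be sketched as follows. This is a minimal illustration under stated assumptions, not Selenium's actual implementation: the name `isThenable` is hypothetical, and it assumes that "promise" means any object or function exposing a callable `then`, as the issue says the JSDoc describes. The `tagOf` helper is also hypothetical and only shows why a tag-based check would miss a hand-written thenable.

```typescript
// Hypothetical helper, not Selenium's actual code: treats any object or
// function that exposes a callable `then` as promise-like.
function isThenable(value: unknown): value is PromiseLike<unknown> {
  if (value === null) return false;
  if (typeof value !== 'object' && typeof value !== 'function') return false;
  return typeof (value as { then?: unknown }).then === 'function';
}

// A hand-written thenable: not a native Promise, but it has `then`.
const customThenable = {
  then(onFulfilled: (v: number) => void): void {
    onFulfilled(42);
  },
};

// A check based on the object's string tag only recognizes native promises.
const tagOf = (v: unknown): string => Object.prototype.toString.call(v);

console.log(isThenable(Promise.resolve(1))); // native promise
console.log(isThenable(customThenable));     // custom thenable
console.log(tagOf(customThenable));          // tag of the custom thenable
```

Checking for `then` structurally, rather than by tag, is what lets promise-like objects from other libraries pass the same test as native promises.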

11,654 | 2,660,023,444 | IssuesEvent | 2015-03-19 01:41:49 | perfsonar/project | https://api.github.com/repos/perfsonar/project | closed | Review configuration examples in ls_registration_daemon.conf file | Component-LSRegistrationDaemon Milestone-Release3.4.2 Priority-Medium Type-Defect | Original [issue 1046](https://code.google.com/p/perfsonar-ps/issues/detail?id=1046) created by arlake228 on 2015-01-15T08:48:09.000Z:

<b>What steps will reproduce the problem?</b>

In the last suggestion from the mailing list it was written that the ls_registration_daemon.conf configuration file should have entries l... | 1.0 | Review configuration examples in ls_registration_daemon.conf file - Original [issue 1046](https://code.google.com/p/perfsonar-ps/issues/detail?id=1046) created by arlake228 on 2015-01-15T08:48:09.000Z:

<b>What steps will reproduce the problem?</b>

In the last suggestion from the mailing list it was written that the ... | defect | review configuration examples in ls registration daemon conf file original created by on what steps will reproduce the problem in the last suggestion from the mailing list it was written that the ls registration daemon conf configuration file should have entries like lt service template ... | 1 |

290,201 | 25,042,595,410 | IssuesEvent | 2022-11-04 23:01:30 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Undo Close Tab is unreliable | bug topic:editor usability needs testing | <!-- Please search existing issues for potential duplicates before filing yours:

https://github.com/godotengine/godot/issues?q=is%3Aissue

-->

**Godot version:**

<!-- Specify commit hash if using non-official build. -->

3.2.2

**Issue description:**

<!-- What happened, and what was expected. -->

Unfortunate... | 1.0 | Undo Close Tab is unreliable - <!-- Please search existing issues for potential duplicates before filing yours:

https://github.com/godotengine/godot/issues?q=is%3Aissue

-->

**Godot version:**

<!-- Specify commit hash if using non-official build. -->

3.2.2

**Issue description:**

<!-- What happened, and what... | non_defect | undo close tab is unreliable please search existing issues for potential duplicates before filing yours godot version issue description unfortunately i don t have exact reproduction steps but sometimes when you close scene tab and use undo close tab in my case with sh... | 0 |

228 | 2,520,557,016 | IssuesEvent | 2015-01-19 05:07:10 | AtlasOfLivingAustralia/biocache-hubs | https://api.github.com/repos/AtlasOfLivingAustralia/biocache-hubs | closed | Fish or Fishes | priority-medium status-new type-defect |

*migrated from:* https://code.google.com/p/ala/issues/detail?id=98

*date:* Thu Aug 8 16:27:28 2013

*author:* moyesyside

---

Original Issue reported by Reported by john.t...@austmus.gov.au, Today (16 hours ago) - [https://code.google.com/p/ala-portal/issues/detail?id=300](https://code.google.com/p/ala-portal/issues/... | 1.0 | Fish or Fishes -

*migrated from:* https://code.google.com/p/ala/issues/detail?id=98

*date:* Thu Aug 8 16:27:28 2013

*author:* moyesyside

---

Original Issue reported by Reported by john.t...@austmus.gov.au, Today (16 hours ago) - [https://code.google.com/p/ala-portal/issues/detail?id=300](https://code.google.com/p/a... | defect | fish or fishes migrated from date thu aug author moyesyside original issue reported by reported by john t austmus gov au today hours ago reported by john tann austmus gov au today hours ago i have been told that when we refer to fish as a lifeform we should use... | 1 |

83,031 | 10,318,815,523 | IssuesEvent | 2019-08-30 15:49:04 | CICE-Consortium/CICE | https://api.github.com/repos/CICE-Consortium/CICE | closed | evp kernel version 2 testing and validation | Documentation Dynamics Priority: High Testing Type: Feature | We are going to merge PR #278, PR #252. There are several outstanding issues, basically copied from the end of #252,

---------------------

Let me summarize where we are.

With evp_kernel_ver=0, results are bit-for-bit for most tests against the current master. This is running full test suites on gordon for ... | 1.0 | evp kernel version 2 testing and validation - We are going to merge PR #278, PR #252. There are several outstanding issues, basically copied from the end of #252,

---------------------

Let me summarize where we are.

With evp_kernel_ver=0, results are bit-for-bit for most tests against the current master. T... | non_defect | evp kernel version testing and validation we are going to merge pr pr there are several outstanding issues basically copied from the end of let me summarize where we are with evp kernel ver results are bit for bit for most tests against the current master this is... | 0 |

27,811 | 5,106,884,223 | IssuesEvent | 2017-01-05 13:10:36 | TASVideos/BizHawk | https://api.github.com/repos/TASVideos/BizHawk | closed | Genplus-gx [BizHawk] Game Genie codes not working | bug Core-EmuHawk Core-Genplus-GX Core-GensHawk OpSys-Any Priority-Medium Type-Defect | I can't get any Game Genie codes to work on BizHawk Genplus-gx. I have codes that I know that work but on BizHawk they don't work. I know how to use BizHawk. Like on the game Strider (UE) [!]. Here is a code that give you Infinite Life In The Life Gauge.

AK8T-AA5R

AKVT-AA94

AJLT-AA9E

On Kega this code works.

... | 1.0 | Genplus-gx [BizHawk] Game Genie codes not working - I can't get any Game Genie codes to work on BizHawk Genplus-gx. I have codes that I know that work but on BizHawk they don't work. I know how to use BizHawk. Like on the game Strider (UE) [!]. Here is a code that give you Infinite Life In The Life Gauge.

AK8T-AA5R

... | defect | genplus gx game genie codes not working i can t get any game genie codes to work on bizhawk genplus gx i have codes that i know that work but on bizhawk they don t work i know how to use bizhawk like on the game strider ue here is a code that give you infinite life in the life gauge akvt ajlt ... | 1 |

277,173 | 21,016,821,004 | IssuesEvent | 2022-03-30 11:49:32 | SW-Team2/se-team2-tetris | https://api.github.com/repos/SW-Team2/se-team2-tetris | closed | docs: Create PR and Issue templates | 📄 documentation | ## Overview

- When reviewing PRs and Issues, some parts did not clearly convey what had been done.

- For example, when a feature was implemented, there was no additional information such as screenshots or videos showing how it works, so it had to be run by hand.

- Templates are provided to give others clear information.

## To do

- [x] Create PR template

- [x] Create Issue template

## References

https://docs.github.com/en/communities/using-templates-to-encou... | 1.0 | docs: Create PR and Issue templates - ## Overview

- When reviewing PRs and Issues, some parts did not clearly convey what had been done.

- For example, when a feature was implemented, there was no additional information such as screenshots or videos showing how it works, so it had to be run by hand.

- Templates are provided to give others clear information.

## To do

- [x] Create PR template

- [x] Create Issue template

## References

https://docs.github.com/en/commun... | non_defect | docs create pr and issue templates overview when reviewing prs and issues some parts did not clearly convey what had been done for example when a feature was implemented there was no additional information such as screenshots or videos showing how it works so it had to be run by hand templates are provided to give others clear information to do create pr template create issue template references | 0 |

225,023 | 17,789,594,198 | IssuesEvent | 2021-08-31 14:48:40 | elastic/kibana | https://api.github.com/repos/elastic/kibana | opened | [RAC] [Observability] Create functional tests for alerts table add-to-case actions | test Team:logs-metrics-ui Theme: rac | ## :notebook: Summary

We should create functional tests for the add-to-case actions on the Observability Alerts page.

:link: makes use of the service created in #110627

## :heavy_check_mark: Test cases

:warning: When you pick this up, please spend a minute to think about and expand on these test cases. This... | 1.0 | [RAC] [Observability] Create functional tests for alerts table add-to-case actions - ## :notebook: Summary

We should create functional tests for the add-to-case actions on the Observability Alerts page.

:link: makes use of the service created in #110627

## :heavy_check_mark: Test cases

:warning: When you pi... | non_defect | create functional tests for alerts table add to case actions notebook summary we should create functional tests for the add to case actions on the observability alerts page link makes use of the service created in heavy check mark test cases warning when you pick this up please spen... | 0 |

46,225 | 13,055,872,553 | IssuesEvent | 2020-07-30 02:59:16 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | Matplotlib crashes on systems with AMD bulldozer architecture. (Trac #795) | Incomplete Migration Migrated from Trac defect tools/ports | Migrated from https://code.icecube.wisc.edu/ticket/795

```json

{

"status": "closed",

"changetime": "2014-10-30T18:25:07",

"description": "Matplotlib provided by cvmfs crashes on SL6 with AMD bulldozer processors (AMD FX-8350).\n{{{\npython -c \"import pylab; pylab.plot(1,2); pylab.show()\"\n}}}\n\n\ncrash... | 1.0 | Matplotlib crashes on systems with AMD bulldozer architecture. (Trac #795) - Migrated from https://code.icecube.wisc.edu/ticket/795

```json

{

"status": "closed",

"changetime": "2014-10-30T18:25:07",

"description": "Matplotlib provided by cvmfs crashes on SL6 with AMD bulldozer processors (AMD FX-8350).\n{... | defect | matplotlib crashes on systems with amd bulldozer architecture trac migrated from json status closed changetime description matplotlib provided by cvmfs crashes on with amd bulldozer processors amd fx n npython c import pylab pylab plot pylab sho... | 1 |

9,750 | 2,615,167,204 | IssuesEvent | 2015-03-01 06:48:01 | chrsmith/reaver-wps | https://api.github.com/repos/chrsmith/reaver-wps | opened | 99.99% Reaver repeats same pin witn no m5 packets | auto-migrated Priority-Triage Type-Defect | ```

0. What version of Reaver are you using? (Only defects against the latest

version will be considered.)

, reaver 1.4

1. What operating system are you using (Linux is the only supported OS)?

Backtrack 5 r3 usb boot

2. Is your wireless card in monitor mode (yes/no)?

yes

3. What is the signal strength of the Ac... | 1.0 | 99.99% Reaver repeats same pin witn no m5 packets - ```

0. What version of Reaver are you using? (Only defects against the latest

version will be considered.)

, reaver 1.4

1. What operating system are you using (Linux is the only supported OS)?

Backtrack 5 r3 usb boot

2. Is your wireless card in monitor mode (ye... | defect | reaver repeats same pin witn no packets what version of reaver are you using only defects against the latest version will be considered reaver what operating system are you using linux is the only supported os backtrack usb boot is your wireless card in monitor mode yes no... | 1 |

440,647 | 30,754,420,055 | IssuesEvent | 2023-07-28 23:34:16 | aws-samples/amazon-kinesis-video-streams-demos | https://api.github.com/repos/aws-samples/amazon-kinesis-video-streams-demos | closed | Pass AWS and stream link arguments in docker run | documentation question | How can I pass the:

`AWS_ACCESS_KEY_ID=<AWS_ACCESS_KEY_ID> AWS_SECRET_ACCESS_KEY=<AWS_SECRET_ACCESS_KEY> ./kvs_gstreamer_sample <STREAM_NAME> <RTSP_URL> `

Directly in the docker run? | 1.0 | Pass AWS and stream link arguments in docker run - How can I pass the:

`AWS_ACCESS_KEY_ID=<AWS_ACCESS_KEY_ID> AWS_SECRET_ACCESS_KEY=<AWS_SECRET_ACCESS_KEY> ./kvs_gstreamer_sample <STREAM_NAME> <RTSP_URL> `

Directly in the docker run? | non_defect | pass aws and stream link arguments in docker run how can i pass the aws access key id aws secret access key kvs gstreamer sample directly in the docker run | 0 |

23,541 | 4,021,604,118 | IssuesEvent | 2016-05-16 22:40:30 | elastic/logstash | https://api.github.com/repos/elastic/logstash | closed | Hot Threads failure in Travis CI | bug tests | Using our new [Travis CI branch](https://github.com/elastic/logstash/pull/4608) we sometimes get [this odd failure](https://travis-ci.org/elastic/logstash/builds/124009233).

This seems like an intermittently failing test we'll have to look into.

@purbon do you understand what's going on here? | 1.0 | Hot Threads failure in Travis CI - Using our new [Travis CI branch](https://github.com/elastic/logstash/pull/4608) we sometimes get [this odd failure](https://travis-ci.org/elastic/logstash/builds/124009233).

This seems like an intermittently failing test we'll have to look into.

@purbon do you understand what's ... | non_defect | hot threads failure in travis ci using our new we sometimes get this seems like an intermittently failing test we ll have to look into purbon do you understand what s going on here | 0 |

43,241 | 23,163,299,355 | IssuesEvent | 2022-07-29 20:28:01 | mattermost/focalboard | https://api.github.com/repos/mattermost/focalboard | closed | PERF: Fetch board members in parallel | Bug Sev/1 Performance | Here in web app codebase, we fetch each individual board member's details in a separate API call - https://github.com/mattermost/focalboard/blob/3c7fd72dcf0456e14b324cfb0621371289603f2a/webapp/src/store/boards.ts#L36

I propose fetching users in bulk instead of one by one. | True | PERF: Fetch board members in parallel - Here in web app codebase, we fetch each individual board member's details in a separate API call - https://github.com/mattermost/focalboard/blob/3c7fd72dcf0456e14b324cfb0621371289603f2a/webapp/src/store/boards.ts#L36

I propose fetching users in bulk instead of one by one. | non_defect | perf fetch board members in parallel here in web app codebase we fetch each individual board member s details in a separate api call i propose fetching users in bulk instead of one by one | 0 |

489,843 | 14,112,715,084 | IssuesEvent | 2020-11-07 07:04:53 | AY2021S1-CS2103T-W12-4/tp | https://api.github.com/repos/AY2021S1-CS2103T-W12-4/tp | closed | Update UG - Consistent examples across UG and code | priority.High type.Task type.UserGuide | TODOS:

1. Update command summary section

2. Change parameter name for students from `NAME` to `STUDENT_NAME`

3. For individual commands, update to the latest examples (ref google docs)

4. For commands with more than 1 method, label each of the formats to the respective method (ie `markpresent`)

| 1.0 | Update UG - Consistent examples across UG and code - TODOS:

1. Update command summary section

2. Change parameter name for students from `NAME` to `STUDENT_NAME`

3. For individual commands, update to the latest examples (ref google docs)

4. For commands with more than 1 method, label each of the formats to the resp... | non_defect | update ug consistent examples across ug and code todos update command summary section change parameter name for students from name to student name for individual commands update to the latest examples ref google docs for commands with more than method label each of the formats to the resp... | 0 |

361,307 | 10,707,104,460 | IssuesEvent | 2019-10-24 16:43:11 | netdata/netdata | https://api.github.com/repos/netdata/netdata | closed | Generate alarms if the disk cannot keep up with data collection | area/database feature request priority/high | <!---

When creating a feature request please:

- Verify first that your issue is not already reported on GitHub

- Explain new feature briefly in "Feature idea summary" section

- Provide a clear and concise description of what you expect to happen.

--->

##### Feature idea summary

If the disk cannot keep up with ... | 1.0 | Generate alarms if the disk cannot keep up with data collection - <!---

When creating a feature request please:

- Verify first that your issue is not already reported on GitHub

- Explain new feature briefly in "Feature idea summary" section

- Provide a clear and concise description of what you expect to happen.

--... | non_defect | generate alarms if the disk cannot keep up with data collection when creating a feature request please verify first that your issue is not already reported on github explain new feature briefly in feature idea summary section provide a clear and concise description of what you expect to happen ... | 0 |

46,383 | 5,806,359,928 | IssuesEvent | 2017-05-04 02:19:00 | NSW-OEH-EMS-KST/grid-garage-3 | https://api.github.com/repos/NSW-OEH-EMS-KST/grid-garage-3 | closed | raster - tweak values | tested and working | Let users know that the min and max values must be integers.. @byezy this still the case? | 1.0 | raster - tweak values - Let users know that the min and max values must be integers.. @byezy this still the case? | non_defect | raster tweak values let users know that the min and max values must be integers byezy this still the case | 0 |

70,673 | 23,282,203,022 | IssuesEvent | 2022-08-05 13:11:52 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | Error configuring hazelcast with DSL style "IMap eviction config doesn't support max size policy `ENTRY_COUNT`" | Type: Defect | <!--

Thanks for reporting your issue. Please share with us the following information, to help us resolve your issue quickly and efficiently.

-->

**Describe the bug**

When i configure a eviction in a map (please see shared code) , once the map is getting used the app throws error (see exception below)

**Exp... | 1.0 | Error configuring hazelcast with DSL style "IMap eviction config doesn't support max size policy `ENTRY_COUNT`" - <!--

Thanks for reporting your issue. Please share with us the following information, to help us resolve your issue quickly and efficiently.

-->

**Describe the bug**

When i configure a eviction in a... | defect | error configuring hazelcast with dsl style imap eviction config doesn t support max size policy entry count thanks for reporting your issue please share with us the following information to help us resolve your issue quickly and efficiently describe the bug when i configure a eviction in a... | 1 |

27,094 | 4,875,252,256 | IssuesEvent | 2016-11-16 08:57:56 | TNGSB/eWallet | https://api.github.com/repos/TNGSB/eWallet | closed | eWallet_MobileApp(Airtime)_Both IOS & Android #097 | Defect - Medium (Sev-3) | [Defect_Mobile App #97.xlsx](https://github.com/TNGSB/eWallet/files/591389/Defect_Mobile.App.97.xlsx)

Test Description : To verify the error message when user left all the fields in blank

Defect Description : System displayed wrong error message when user left all the fields in blank - apply to both IOS and Android... | 1.0 | eWallet_MobileApp(Airtime)_Both IOS & Android #097 - [Defect_Mobile App #97.xlsx](https://github.com/TNGSB/eWallet/files/591389/Defect_Mobile.App.97.xlsx)

Test Description : To verify the error message when user left all the fields in blank

Defect Description : System displayed wrong error message when user left al... | defect | ewallet mobileapp airtime both ios android test description to verify the error message when user left all the fields in blank defect description system displayed wrong error message when user left all the fields in blank apply to both ios and android refer attachment for pot | 1 |

594,799 | 18,054,428,399 | IssuesEvent | 2021-09-20 05:48:22 | naev/naev | https://api.github.com/repos/naev/naev | closed | mission markers are hard to distinguish | Type-Enhancement Priority-Low | I find it hard to distinguish the active mission markers from the markers for potential missions that I could accept.

I understand you got rid of colours there for colourblind accessibility, but what about making them different shapes, or

use a different phase for the blinking? I don't mean frequency, too high might... | 1.0 | mission markers are hard to distinguish - I find it hard to distinguish the active mission markers from the markers for potential missions that I could accept.

I understand you got rid of colours there for colourblind accessibility, but what about making them different shapes, or

use a different phase for the blinkin... | non_defect | mission markers are hard to distinguish i find it hard to distinguish the active mission markers from the markers for potential missions that i could accept i understand you got rid of colours there for colourblind accessibility but what about making them different shapes or use a different phase for the blinkin... | 0 |

331,522 | 28,967,621,427 | IssuesEvent | 2023-05-10 08:59:38 | Joystream/joystream | https://api.github.com/repos/Joystream/joystream | opened | Fix direct channel payment flow | network-integration-test nara-network | Error is introduced in merging `nara` -> `crt_release`

output from processor:

failing flow: https://github.com/Joystream/joystream/actions/runs/4926868090/jobs/8817633132?pr=4749

| 1.0 | Fix direct channel payment flow - Error is introduced in merging `nara` -> `crt_release`

output from processor:

failing flow: https://github.com/Joystream/joystream/actions/runs/4926868090/jobs/8817633132?pr=4749

... | non_defect | fix direct channel payment flow error is introduced in merging nara crt release output from processor failing flow | 0 |

50,900 | 13,187,954,763 | IssuesEvent | 2020-08-13 05:07:50 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | [filterscripts] coordinate service -> astro (Trac #1641) | Migrated from Trac combo reconstruction defect | This import needs to change:

```text

File "/home/dschultz/Documents/combo/trunk/build_memory/lib/icecube/filterscripts/gcfilter.py", line 17, in GCFilter

from icecube import dataclasses, coordinate_service

ImportError: cannot import name coordinate_service

```

And this line in the file:

```text

add_gcfilter(tr... | 1.0 | [filterscripts] coordinate service -> astro (Trac #1641) - This import needs to change:

```text

File "/home/dschultz/Documents/combo/trunk/build_memory/lib/icecube/filterscripts/gcfilter.py", line 17, in GCFilter

from icecube import dataclasses, coordinate_service

ImportError: cannot import name coordinate_servi... | defect | coordinate service astro trac this import needs to change text file home dschultz documents combo trunk build memory lib icecube filterscripts gcfilter py line in gcfilter from icecube import dataclasses coordinate service importerror cannot import name coordinate service and this l... | 1 |

122,655 | 10,228,902,302 | IssuesEvent | 2019-08-17 07:28:33 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | JDBC driver installation related info missing in JDBC api doc | Area/StandardLibs BetaTesting Type/Docs | **Description:**

Subject please in doc https://v1-0-0-alpha.ballerina.io/learn/api-docs/ballerina/java.jdbc/index.html

**Steps to reproduce:**

**Affected Versions:**

**OS, DB, other environment details and versions:**

**Related Issues (optional):**

<!-- Any related issues such as sub tasks, issues reporte... | 1.0 | JDBC driver installation related info missing in JDBC api doc - **Description:**

Subject please in doc https://v1-0-0-alpha.ballerina.io/learn/api-docs/ballerina/java.jdbc/index.html

**Steps to reproduce:**

**Affected Versions:**

**OS, DB, other environment details and versions:**

**Related Issues (optiona... | non_defect | jdbc driver installation related info missing in jdbc api doc description subject please in doc steps to reproduce affected versions os db other environment details and versions related issues optional suggested labels optional suggested assignees opti... | 0 |

426,942 | 29,669,335,257 | IssuesEvent | 2023-06-11 07:51:16 | fedewf1/repositorio-tp2 | https://api.github.com/repos/fedewf1/repositorio-tp2 | closed | Issues 2 tp5 | documentation Diseño | **For the files sucursales.html, contacto.html, their derived HTML files, and the Java files they use, apply the following.

**Disregard the nav, header, and footer files, since these are common to all files.

Consider using the Bootstrap classes Containers, Rows, Columns, Alin... | 1.0 | Issues 2 tp5 - **For the files sucursales.html, contacto.html, their derived HTML files, and the Java files they use, apply the following.

**Disregard the nav, header, and footer files, since these are common to all files.

Consider using the Bootstrap classes Containers, Rows,... | non_defect | issues for the files sucursales html contacto html their derived html files and the java files they use apply the following disregard the nav header and footer files since these are common to all files consider using the bootstrap classes containers rows c... | 0 |

52,093 | 13,211,387,926 | IssuesEvent | 2020-08-15 22:46:50 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | [iceprod2] handle expiration of self-signed cert for webserver (Trac #1676) | Incomplete Migration Migrated from Trac defect iceprod | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1676">https://code.icecube.wisc.edu/projects/icecube/ticket/1676</a>, reported by david.schultz and owned by david.schultz</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2016-05-09T21:55:16",

... | 1.0 | [iceprod2] handle expiration of self-signed cert for webserver (Trac #1676) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1676">https://code.icecube.wisc.edu/projects/icecube/ticket/1676</a>, reported by david.schultz and owned by david.schultz</em></summary>

<p>

... | defect | handle expiration of self signed cert for webserver trac migrated from json status closed changetime ts description the default right now is to generate a self signed cert with a duration of days we could either make this time infinitely lar... | 1 |

30,869 | 6,335,280,954 | IssuesEvent | 2017-07-26 18:30:13 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | optimize.BenchGlobal broken | Benchmarks defect | As reported on the mailing list, ``optimize.BenchGlobal`` benchmarks are broken currently:

```

python runtests.py --bench optimize.BenchGlobal --> fails on both my modified version (to add stochasticBB testing) and on the original scipy repository (errors described in the pastebin above)

python runtests.py --ben... | 1.0 | optimize.BenchGlobal broken - As reported on the mailing list, ``optimize.BenchGlobal`` benchmarks are broken currently:

```

python runtests.py --bench optimize.BenchGlobal --> fails on both my modified version (to add stochasticBB testing) and on the original scipy repository (errors described in the pastebin abov... | defect | optimize benchglobal broken as reported on the mailing list optimize benchglobal benchmarks are broken currently python runtests py bench optimize benchglobal fails on both my modified version to add stochasticbb testing and on the original scipy repository errors described in the pastebin abov... | 1 |

175,254 | 27,815,663,231 | IssuesEvent | 2023-03-18 16:56:59 | Jade-ux/WomenTechConnect | https://api.github.com/repos/Jade-ux/WomenTechConnect | closed | Sign-up form - Design | design | Design and code separated into two cards but if you find it easier to go ahead and create it in the code straight away, that's great, feel free to assign both tickets to yourself

Wireframes for inspiration:

, as body_short is limited to SHORT_BODY_MAX_LEN+1 (i.e. 201) by the archiver.

So what is the p... | 1.0 | What is this code trying to do? - https://github.com/apache/incubator-ponymail-foal/blob/6bbb4f49c99a22962afe7953b5fa06dfea341ec2/server/plugins/messages.py#L361-L364

AFAICT this will always result in the body being limited to BODY_MAXLEN + 1 (i.e. 201), as body_short is limited to SHORT_BODY_MAX_LEN+1 (i.e. 201) by... | non_defect | what is this code trying to do afaict this will always result in the body being limited to body maxlen i e as body short is limited to short body max len i e by the archiver so what is the point of the shorten option why not just replace body with body short | 0 |

65,819 | 19,707,685,609 | IssuesEvent | 2022-01-13 00:30:55 | jccastillo0007/eFacturaT | https://api.github.com/repos/jccastillo0007/eFacturaT | closed | CCP - miscellaneous: the registration number and tax residence are not sent when they are entered, | defect | These fields are used in international transfers.

What is needed here is to send them to the XML when they are entered.

For example, the connector does do this, since it simply sends whatever the text file contains:

<cartaporte20:Ubicacion DistanciaRecorrida="1000.0" FechaHoraSalidaLlegada="2021-05-10T10:00:00"... | 1.0 | CCP - miscellaneous: the registration number and tax residence are not sent when they are entered, - These fields are used in international transfers.

What is needed here is to send them to the XML when they are entered.

For example, the connector does do this, since it simply sends whatever the text file contains:

... | defect | ccp miscellaneous the registration number and tax residence are not sent when they are entered these fields are used in international transfers what is needed here is to send them to the xml when they are entered for example the connector does do this since it simply sends whatever the text file contains ... | 1 |

214,791 | 7,276,787,907 | IssuesEvent | 2018-02-21 17:21:31 | TylerConlee/slab | https://api.github.com/repos/TylerConlee/slab | closed | Add organization details to SLA ticket notifications | enhancement priority:normal | The organization that the ticket belongs to would be helpful for additional context on the ticket seen in the SLA notification. | 1.0 | Add organization details to SLA ticket notifications - The organization that the ticket belongs to would be helpful for additional context on the ticket seen in the SLA notification. | non_defect | add organization details to sla ticket notifications the organization that the ticket belongs to would be helpful for additional context on the ticket seen in the sla notification | 0 |

519,994 | 15,076,874,988 | IssuesEvent | 2021-02-05 05:47:10 | hassio-addons/addon-node-red | https://api.github.com/repos/hassio-addons/addon-node-red | closed | node-red-contrib-actionflows runtime errors (Deprecated Events) | priority-medium | # Problem/Motivation

The node-red component "node-red-contrib-actionflows" was written prior to the availability of "flows:started" and uses an older method of flow setup that is no longer supported in NodeRED. This triggers runtime deprecation notices.

## Expected behavior

No warnings, no indications on flow ... | 1.0 | node-red-contrib-actionflows runtime errors (Deprecated Events) - # Problem/Motivation

The node-red component "node-red-contrib-actionflows" was written prior to the availability of "flows:started" and uses an older method of flow setup that is no longer supported in NodeRED. This triggers runtime deprecation notice... | non_defect | node red contrib actionflows runtime errors deprecated events problem motivation the node red component node red contrib actionflows was written prior to the availability of flows started and uses an older method of flow setup that is no longer supported in nodered this triggers runtime deprecation notice... | 0 |

48,935 | 7,466,453,561 | IssuesEvent | 2018-04-02 10:43:00 | scalameta/metals | https://api.github.com/repos/scalameta/metals | closed | Simplify installation | documentation installation | Currently, the installation steps are a sequence of several fairly fragile steps. https://github.com/scalameta/language-server/blob/master/BETA.md Any mistake could make nothing work, or half-work with no instructions on what's missing.

We should strive for as simple installation as possible but avoid 100% automagic... | 1.0 | Simplify installation - Currently, the installation steps are a sequence of several fairly fragile steps. https://github.com/scalameta/language-server/blob/master/BETA.md Any mistake could make nothing work, or half-work with no instructions on what's missing.

We should strive for as simple installation as possible ... | non_defect | simplify installation currently the installation steps are a sequence of several fairly fragile steps any mistake could make nothing work or half work with no instructions on what s missing we should strive for as simple installation as possible but avoid automagical setup that makes it difficult to track... | 0 |

319,509 | 23,775,980,719 | IssuesEvent | 2022-09-01 20:59:51 | spacetelescope/drizzlepac | https://api.github.com/repos/spacetelescope/drizzlepac | closed | Need updates to DGEO warning message | Documentation | Hello! I'm working on a WFPC2 DrizzlePac ticket (INC0154128, for reference) and the user asked about the lengthy warning message below. I feel this needs to be updated for two reasons: 1) it provides users with an IRAF hedit command, and IRAF is deprecated, and 2) it should explain that for WFPC2 and other archival ins... | 1.0 | Need updates to DGEO warning message - Hello! I'm working on a WFPC2 DrizzlePac ticket (INC0154128, for reference) and the user asked about the lengthy warning message below. I feel this needs to be updated for two reasons: 1) it provides users with an IRAF hedit command, and IRAF is deprecated, and 2) it should explai... | non_defect | need updates to dgeo warning message hello i m working on a drizzlepac ticket for reference and the user asked about the lengthy warning message below i feel this needs to be updated for two reasons it provides users with an iraf hedit command and iraf is deprecated and it should explain that for ... | 0 |

103,135 | 11,340,124,514 | IssuesEvent | 2020-01-23 05:09:17 | wayexists02/hanyang-erica-robot-programming | https://api.github.com/repos/wayexists02/hanyang-erica-robot-programming | reopened | How to run the CoDrone | documentation | After connecting the server and the CoDrone to the jylee hotspot (...), run the code on the server and on the CoDrone.

## Running the server

Run this first, before running the CoDrone.

```roslaunch codrone_alpha launch.launch```

## Running the CoDrone

```roslaunch codrone_alpha_pi launch.launch``` | 1.0 | How to run the CoDrone - After connecting the server and the CoDrone to the jylee hotspot (...), run the code on the server and on the CoDrone.

## Running the server

Run this first, before running the CoDrone.

```roslaunch codrone_alpha launch.launch```

## Running the CoDrone

```roslaunch codrone_alpha_pi launch.launch``` | non_defect | how to run the codrone after connecting the server and the codrone to the jylee hotspot run the code on the server and on the codrone running the server run this first before running the codrone roslaunch codrone alpha launch launch running the codrone roslaunch codrone alpha pi launch launch | 0 |

4,403 | 22,617,321,211 | IssuesEvent | 2022-06-30 00:20:29 | aws/aws-sam-cli | https://api.github.com/repos/aws/aws-sam-cli | closed | `sam sync` does not support custom bucket names | type/ux type/feature area/sam-config area/sync maintainer/need-followup area/accelerate |

### Description:

I don't use the default SAM bucket, I have my own. `sam sync` does not seem to support this.

### Steps to reproduce:

Do `sam init` and create the zip Python 3.9 "Hello World" template.

Create the following samconfig.toml

```toml

version = 0.1

[default]

[default.deploy]

[default.... | True | `sam sync` does not support custom bucket names -

### Description:

I don't use the default SAM bucket, I have my own. `sam sync` does not seem to support this.

### Steps to reproduce:

Do `sam init` and create the zip Python 3.9 "Hello World" template.

Create the following samconfig.toml

```toml

versi... | non_defect | sam sync does not support custom bucket names description i don t use the default sam bucket i have my own sam sync does not seem to support this steps to reproduce do sam init and create the zip python hello world template create the following samconfig toml toml versi... | 0 |

8,043 | 2,611,449,702 | IssuesEvent | 2015-02-27 04:58:19 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | Missing tombstones prevent game launch | auto-migrated Engine Priority-Medium Type-Defect | ```

(clone of

http://fireforge.net//tracker/?func=detail&atid=125&aid=274&group_id=11 )

Submitted by:

Christopher Vagnetoft (noccy80)

"Detailed description

Several times tonite a multiplayer game has failed to load since tombstones

haven't been found on one or more of the

players' computers. A missing tombstone sho... | 1.0 | Missing tombstones prevent game launch - ```

(clone of

http://fireforge.net//tracker/?func=detail&atid=125&aid=274&group_id=11 )

Submitted by:

Christopher Vagnetoft (noccy80)

"Detailed description

Several times tonite a multiplayer game has failed to load since tombstones

haven't been found on one or more of the

pl... | defect | missing tombstones prevent game launch clone of submitted by christopher vagnetoft detailed description several times tonite a multiplayer game has failed to load since tombstones haven t been found on one or more of the players computers a missing tombstone should not cause the load to fail but... | 1 |

43,514 | 23,270,872,739 | IssuesEvent | 2022-08-04 22:55:03 | quick-lint/quick-lint-js | https://api.github.com/repos/quick-lint/quick-lint-js | closed | Optimize Windows .exe icon | performance | dist/artwork/dusty-app.ico is 260 KiB. That's pretty big. Let's shrink it to bring the .exe file size down. | True | Optimize Windows .exe icon - dist/artwork/dusty-app.ico is 260 KiB. That's pretty big. Let's shrink it to bring the .exe file size down. | non_defect | optimize windows exe icon dist artwork dusty app ico is kib that s pretty big let s shrink it to bring the exe file size down | 0 |

99,881 | 8,714,077,373 | IssuesEvent | 2018-12-07 06:13:37 | actiontech/dble | https://api.github.com/repos/actiontech/dble | closed | System parameter `maxCon` is invalid after a connection failure | from auto_test resolve verified | * **dble version:**

5.6.29-dble-9.9.9.9-c53c3f7-20181116022818

* **preconditions :**

no

* **configs:**

**schema.xml**

```

<?xml version='1.0' encoding='utf-8'?>

<!DOCTYPE dble:schema SYSTEM "schema.dtd"><dble:schema xmlns:dble="http://dble.cloud/">

<schema dataNode="dn5" name="mytest" sqlMaxLimit="... | 1.0 | System parameter `maxCon` is invalid after a connection failure - * **dble version:**

5.6.29-dble-9.9.9.9-c53c3f7-20181116022818

* **preconditions :**

no

* **configs:**

**schema.xml**

```

<?xml version='1.0' encoding='utf-8'?>

<!DOCTYPE dble:schema SYSTEM "schema.dtd"><dble:schema xmlns:dble="http://... | non_defect | system parameter maxcon is invalid after a connection failure dble version: dble preconditions : no configs: schema xml dble schema xmlns dble select user select user ... | 0 |

16,097 | 2,871,807,576 | IssuesEvent | 2015-06-08 07:34:08 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | reopened | [TEST-FAILURE] MigrationAwareServiceTest.testPartitionDataSize_whenNodesStartedParallel_withSingleBackup | Team: Core Type: Defect | ```

java.lang.AssertionError: expected:<542> but was:<506>

at org.junit.Assert.fail(Assert.java:88)

at org.junit.Assert.failNotEquals(Assert.java:834)

at org.junit.Assert.assertEquals(Assert.java:645)

```

https://hazelcast-l337.ci.cloudbees.com/job/Hazelcast-3.x-OpenJDK8-Quality-Outreach/com.hazelcast$hazelcas... | 1.0 | [TEST-FAILURE] MigrationAwareServiceTest.testPartitionDataSize_whenNodesStartedParallel_withSingleBackup - ```

java.lang.AssertionError: expected:<542> but was:<506>

at org.junit.Assert.fail(Assert.java:88)

at org.junit.Assert.failNotEquals(Assert.java:834)

at org.junit.Assert.assertEquals(Assert.java:645)

```

... | defect | migrationawareservicetest testpartitiondatasize whennodesstartedparallel withsinglebackup java lang assertionerror expected but was at org junit assert fail assert java at org junit assert failnotequals assert java at org junit assert assertequals assert java | 1 |

54,837 | 13,960,445,927 | IssuesEvent | 2020-10-24 21:07:12 | openzfs/zfs | https://api.github.com/repos/openzfs/zfs | opened | 'configure' fails on Kernel 5.8 with no module support | Status: Triage Needed Type: Defect | ### System information

Type | Version/Name

--- | ---

Distribution Name | Gentoo

Linux Kernel | 5.8+

Architecture | amd64

ZFS Version | 0.8.6-staging, 2.0.0-rc4

### Describe the problem you're observing

Configure fails when used in builtin mode for Linux 5.8 and 5.9 when those have no support for loadable... | 1.0 | 'configure' fails on Kernel 5.8 with no module support - ### System information

Type | Version/Name

--- | ---

Distribution Name | Gentoo

Linux Kernel | 5.8+

Architecture | amd64

ZFS Version | 0.8.6-staging, 2.0.0-rc4

### Describe the problem you're observing

Configure fails when used in builtin mode for ... | defect | configure fails on kernel with no module support system information type version name distribution name gentoo linux kernel architecture zfs version staging describe the problem you re observing configure fails when used in builtin mode for linux ... | 1 |

18,541 | 2,615,173,145 | IssuesEvent | 2015-03-01 06:55:20 | chrsmith/html5rocks | https://api.github.com/repos/chrsmith/html5rocks | closed | typo in the fieldguide | auto-migrated fieldguide Milestone-Q12012 Priority-P3 Type-Bug | ```

"A web application providers a great user experience"

--------------------------^

at http://www.html5rocks.com/webappfieldguide/know-your-apps/site-vs-app/

```

Original issue reported on code.google.com by `mikenere...@gmail.com` on 15 Feb 2012 at 2:51 | 1.0 | typo in the fieldguide - ```

"A web application providers a great user experience"

--------------------------^

at http://www.html5rocks.com/webappfieldguide/know-your-apps/site-vs-app/

```

Original issue reported on code.google.com by `mikenere...@gmail.com` on 15 Feb 2012 at 2:51 | non_defect | typo in the fieldguide a web application providers a great user experience at original issue reported on code google com by mikenere gmail com on feb at | 0 |

217,939 | 16,891,531,463 | IssuesEvent | 2021-06-23 09:49:48 | hakehuang/zephyr | https://api.github.com/repos/hakehuang/zephyr | opened |

tests-ci :kernel.common.stack_protection_no_userspace.fatal : zephyr-v2.6.0-286-g46029914a7ac: mimxrt1015_evk: test Flash error

| area: Tests bug |

**Describe the bug**

kernel.common.stack_protection_no_userspace.fatal test is Flash error on zephyr-v2.6.0-286-g46029914a7ac on mimxrt1015_evk

see logs for details

**To Reproduce**

1.

```

scripts/twister --device-testing --device-serial /dev/ttyACM0 -p mimxrt1015_evk --testcase-root tests --sub-test kernel.common

... | 1.0 |

tests-ci :kernel.common.stack_protection_no_userspace.fatal : zephyr-v2.6.0-286-g46029914a7ac: mimxrt1015_evk: test Flash error

-

**Describe the bug**

kernel.common.stack_protection_no_userspace.fatal test is Flash error on zephyr-v2.6.0-286-g46029914a7ac on mimxrt1015_evk

see logs for details

**To Reproduce**

1. ... | non_defect | tests ci kernel common stack protection no userspace fatal zephyr evk test flash error describe the bug kernel common stack protection no userspace fatal test is flash error on zephyr on evk see logs for details to reproduce scripts twister device testing device s... | 0 |

398,611 | 11,741,997,173 | IssuesEvent | 2020-03-11 23:16:32 | thaliawww/concrexit | https://api.github.com/repos/thaliawww/concrexit | closed | Show day name in events admin | events priority: low | In GitLab by njanssen on Mar 1, 2017, 20:05

It would be nice if the name of the day (e.g. Monday, Tuesday, ...) were shown in the date displays of the backend.

Edit: This concerns the display of the events. | 1.0 | Show day name in events admin - In GitLab by njanssen on Mar 1, 2017, 20:05

It would be nice if the name of the day (e.g. Monday, Tuesday, ...) were shown in the date displays of the backend.

Edit: This concerns the display of the events. | non_defect | show day name in events admin in gitlab by njanssen on mar it would be nice if the name of the day e g monday tuesday were shown in the date displays of the backend edit this concerns the display of the events | 0 |

2,245 | 2,712,129,537 | IssuesEvent | 2015-04-09 11:46:27 | HGustavs/LenaSYS | https://api.github.com/repos/HGustavs/LenaSYS | closed | The Back/Return button | CodeViewer | The Return/Back button in codeviewer isn't linked to a page, when clicked on.

<-a href="sectioned.php?courseid=UNK&coursevers=UNK"><-img src="../Shared/icons/Up.svg"-><-/a> The linked page is missing in the server(?) | 1.0 | The Back/Return button - The Return/Back button in codeviewer isn't linked to a page, when clicked on.

<-a href="sectioned.php?courseid=UNK&coursevers=UNK"><-img src="../Shared/icons/Up.svg"-><-/a> The linked page is missing in the server(?) | non_defect | the back return button the return back button in codeviewer isn t linked to a page when clicked on the linked page is missing in the server | 0 |

47,362 | 2,978,303,478 | IssuesEvent | 2015-07-16 04:47:27 | pombase/canto | https://api.github.com/repos/pombase/canto | closed | Do we need the New curs form? | admin low priority | Can we remove the New curs page (reached via the "Add ... Curation session" admin link)? It looks buggy in the test instance right now (and I ain't about to mess with what's in live), and I don't think it would be the most useful thing even if it got fixed. Doesn't seem like fun to generate hex-gibberish session IDs ma... | 1.0 | Do we need the New curs form? - Can we remove the New curs page (reached via the "Add ... Curation session" admin link)? It looks buggy in the test instance right now (and I ain't about to mess with what's in live), and I don't think it would be the most useful thing even if it got fixed. Doesn't seem like fun to gener... | non_defect | do we need the new curs form can we remove the new curs page reached via the add curation session admin link it looks buggy in the test instance right now and i ain t about to mess with what s in live and i don t think it would be the most useful thing even if it got fixed doesn t seem like fun to gener... | 0 |

32,386 | 6,767,416,567 | IssuesEvent | 2017-10-26 03:11:47 | Shopkeepers/Shopkeepers | https://api.github.com/repos/Shopkeepers/Shopkeepers | closed | With-Shop-creation near spawn->Witch blocked->Server restart->witch spawns but not editable | Defect fixed migrated | **Migrated from:** https://dev.bukkit.org/projects/shopkeepers/issues/111

**Originally posted by blablubbabc (Mar 29, 2013):**

I just discovered the following:- I have a zone in my world, where monster spawning is denied by a plugin.

- I tried setting up a witch shop there: it displayed me the message: "blabla, shop... | 1.0 | With-Shop-creation near spawn->Witch blocked->Server restart->witch spawns but not editable - **Migrated from:** https://dev.bukkit.org/projects/shopkeepers/issues/111

**Originally posted by blablubbabc (Mar 29, 2013):**

I just discovered the following:- I have a zone in my world, where monster spawning is denied by... | defect | with shop creation near spawn witch blocked server restart witch spawns but not editable migrated from originally posted by blablubbabc mar i just discovered the following i have a zone in my world where monster spawning is denied by a plugin i tried setting up a witch shop there it di... | 1 |

45,111 | 11,589,787,925 | IssuesEvent | 2020-02-24 03:54:54 | GoogleContainerTools/skaffold | https://api.github.com/repos/GoogleContainerTools/skaffold | reopened | Get build timeout on local concurrent build | area/build kind/bug | ### Actual behavior

With `skaffold dev`, I rarely get this sort of error:

```

Sending build context to Docker daemon 9.742MB

Step 1/10 : FROM node:10.15

FATA[1032] failed to build: build failed: building [us.gcr.io/replay-gaming/poker-api]: build artifact: unable to stream build output: Get https://registry-1.... | 1.0 | Get build timeout on local concurrent build - ### Actual behavior

With `skaffold dev`, I rarely get this sort of error:

```

Sending build context to Docker daemon 9.742MB

Step 1/10 : FROM node:10.15

FATA[1032] failed to build: build failed: building [us.gcr.io/replay-gaming/poker-api]: build artifact: unable t... | non_defect | get build timeout on local concurrent build actual behavior with skaffold dev i rarely get this sort of error sending build context to docker daemon step from node fata failed to build build failed building build artifact unable to stream build output get net http tls han... | 0 |

98,444 | 29,870,547,119 | IssuesEvent | 2023-06-20 08:12:16 | GSS-Cogs/dd-cms | https://api.github.com/repos/GSS-Cogs/dd-cms | closed | Automate legend font change | chart builder high priority | At the moment the only way to update the legend font to the new font and size is to resave each chart. | 1.0 | Automate legend font change - At the moment the only way to update the legend font to the new font and size is to resave each chart. | non_defect | automate legend font change at the moment the only way to update the legend font to the new font and size is to resave each chart | 0 |

56,792 | 15,371,686,401 | IssuesEvent | 2021-03-02 10:18:47 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | com.hazelcast.json.MapPredicateJsonTest takes 20+ minutes to complete | Module: IMap Module: Query Source: Internal Team: Core Type: Defect Type: Test-Failure | Tests in the `com.hazelcast.json.MapPredicateJsonTest` take 20+ minutes to complete in the PR builder.

It might make sense to mark this as `SlowTest` but I feel like the tests there are pretty simple and should complete quickly.

When I run the test suite locally, I see that in some tests, I get the following log... | 1.0 | com.hazelcast.json.MapPredicateJsonTest takes 20+ minutes to complete - Tests in the `com.hazelcast.json.MapPredicateJsonTest` take 20+ minutes to complete in the PR builder.

It might make sense to mark this as `SlowTest` but I feel like the tests there are pretty simple and should complete quickly.

When I run ... | defect | com hazelcast json mappredicatejsontest takes minutes to complete tests in the com hazelcast json mappredicatejsontest take minutes to complete in the pr builder it might make sense to mark this as slowtest but i feel like the tests there are pretty simple and should complete quickly when i run th... | 1 |

481,190 | 13,881,750,872 | IssuesEvent | 2020-10-18 02:23:50 | apcountryman/avr-libcpp | https://api.github.com/repos/apcountryman/avr-libcpp | closed | Add util/delay | priority-normal status-in_revision type-feature | Add `util/delay` header (`system/util/delay`), and associated header/source implementation files (`include/util/delay.h`, and `source/util/delay.cc`). | 1.0 | Add util/delay - Add `util/delay` header (`system/util/delay`), and associated header/source implementation files (`include/util/delay.h`, and `source/util/delay.cc`). | non_defect | add util delay add util delay header system util delay and associated header source implementation files include util delay h and source util delay cc | 0 |

21,573 | 3,520,005,485 | IssuesEvent | 2016-01-12 19:03:00 | WilliamOckham/hunpos | https://api.github.com/repos/WilliamOckham/hunpos | closed | tagging erroneous in some cases | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

Tag a sample corpus

What is the expected output? What do you see instead?

I wrote in for example:

got expected

I PRP

do VBP

work NN <-- VB

. SENT

What version of the product are you using? On what operating system?

1.2.8, linux mint

Please provide any addi... | 1.0 | tagging erroneous in some cases - ```

What steps will reproduce the problem?

Tag a sample corpus

What is the expected output? What do you see instead?

I wrote in for example:

got expected

I PRP

do VBP

work NN <-- VB

. SENT

What version of the product are you using? On what operating system?

1.2.8, l... | defect | tagging erroneous in some cases what steps will reproduce the problem tag a sample corpus what is the expected output what do you see instead i wrote in for example got expected i prp do vbp work nn vb sent what version of the product are you using on what operating system l... | 1 |

486,981 | 14,017,401,933 | IssuesEvent | 2020-10-29 15:36:47 | GQCG/GQCP | https://api.github.com/repos/GQCG/GQCP | opened | Enable the evaluation of `GSQOperators` in a frozen-core spin-unresolved ONV basis | C++ complexity: intermediate priority: low theory | In recent refactors (#688), we had to temporarily disable some of the CI functionality. This issue tracks the re-enabling of the API to evaluate restricted operators in a seniority-zero ONV basis. | 1.0 | Enable the evaluation of `GSQOperators` in a frozen-core spin-unresolved ONV basis - In recent refactors (#688), we had to temporarily disable some of the CI functionality. This issue tracks the re-enabling of the API to evaluate restricted operators in a seniority-zero ONV basis. | non_defect | enable the evaluation of gsqoperators in a frozen core spin unresolved onv basis in recent refactors we had to temporarily disable some of the ci functionality this issue tracks the re enabling of the api to evaluate restricted operators in a seniority zero onv basis | 0 |

28,303 | 5,239,168,291 | IssuesEvent | 2017-01-31 08:53:26 | pexcn/tb-tun | https://api.github.com/repos/pexcn/tb-tun | closed | MTU and ICMP issue | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1.

ICMP package on IPv4 should be reflected to the IPv6 according to RFC2893.

But TB-TUN has nothing to do with ICMP.

2.

According to RFC3056, "If the IPv6 MTU size proves to be too large for some

intermediate IPv4 subnet, IPv4 fragmentation will ensue....The IPv4 'do not

f... | 1.0 | MTU and ICMP issue - ```

What steps will reproduce the problem?

1.

ICMP package on IPv4 should be reflected to the IPv6 according to RFC2893.

But TB-TUN has nothing to do with ICMP.

2.

According to RFC3056, "If the IPv6 MTU size proves to be too large for some

intermediate IPv4 subnet, IPv4 fragmentation will ensue.... | defect | mtu and icmp issue what steps will reproduce the problem icmp package on should be reflected to the according to but tb tun has nothing to do with icmp according to if the mtu size proves to be too large for some intermediate subnet fragmentation will ensue the do not fragment ... | 1 |

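3,866 | 2,610,083,167 | IssuesEvent | 2015-02-26 18:25:25 | chrsmith/dsdsdaadf | https://api.github.com/repos/chrsmith/dsdsdaadf | opened | Acne removal in Shenzhen | auto-migrated Priority-Medium Type-Defect | ```

Acne removal in Shenzhen [Shenzhen Hanfang Keyan national hotline 400-869-1818, 24-hour QQ 4008691818]

Shenzhen Hanfang Keyan is a professional acne-removal chain. The chain is built around a Korean secret formula:

Hanfang Keyan, an authoritative treatment-grade acne product carrying a national cosmetics approval number.

The Hanfang Keyan professional acne-removal chain combines the Korean secret formula with professional "no-rebound" healthy

acne-removal techniques and an advanced "deluxe colored-light" device. It pioneered contract-guaranteed professional

treatment of comedones and acne in China and has successfully cleared the pimples from many customers' faces.

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 6:47 | 1.0 | Acne removal in Shenzhen - ```

Acne removal in Shenzhen [Shenzhen Hanfang Keyan national hotline 400-869-1818, 24-hour QQ 4008691818]

Shenzhen Hanfang Keyan is a professional acne-removal chain. The chain is built around a Korean secret formula:

Hanfang Keyan, an authoritative treatment-grade acne product carrying a national cosmetics approval number.

The Hanfang Keyan professional acne-removal chain combines the Korean secret formula with professional "no-rebound" healthy

acne-removal techniques and an advanced "deluxe colored-light" device. It pioneered contract-guaranteed professional

treatment of comedones and acne in China and has successfully cleared the pimples from many customers' faces.

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 6:47 | defect | acne removal in shenzhen acne removal in shenzhen shenzhen hanfang keyan national hotline hour qq shenzhen hanfang keyan is a professional acne removal chain the chain is built around a korean secret formula hanfang keyan an authoritative treatment grade acne product carrying a national cosmetics approval number the hanfang keyan professional acne removal chain combines the korean secret formula with professional no rebound healthy acne removal techniques and an advanced deluxe colored light device it pioneered contract guaranteed professional treatment of comedones and acne in china and has successfully cleared the pimples from many customers faces original issue reported on code google com by szft com on may at | 1 |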