Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 757) | labels (stringlengths, 4 to 664) | body (stringlengths, 3 to 261k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

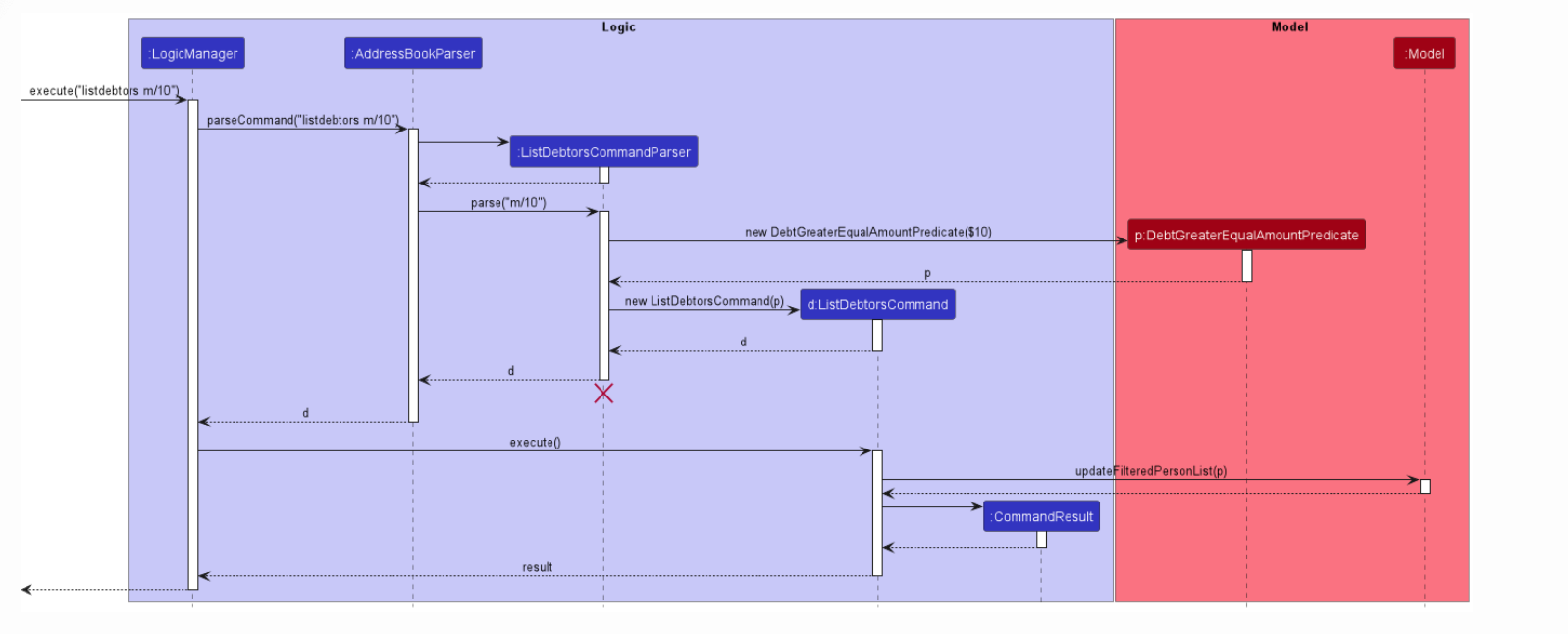

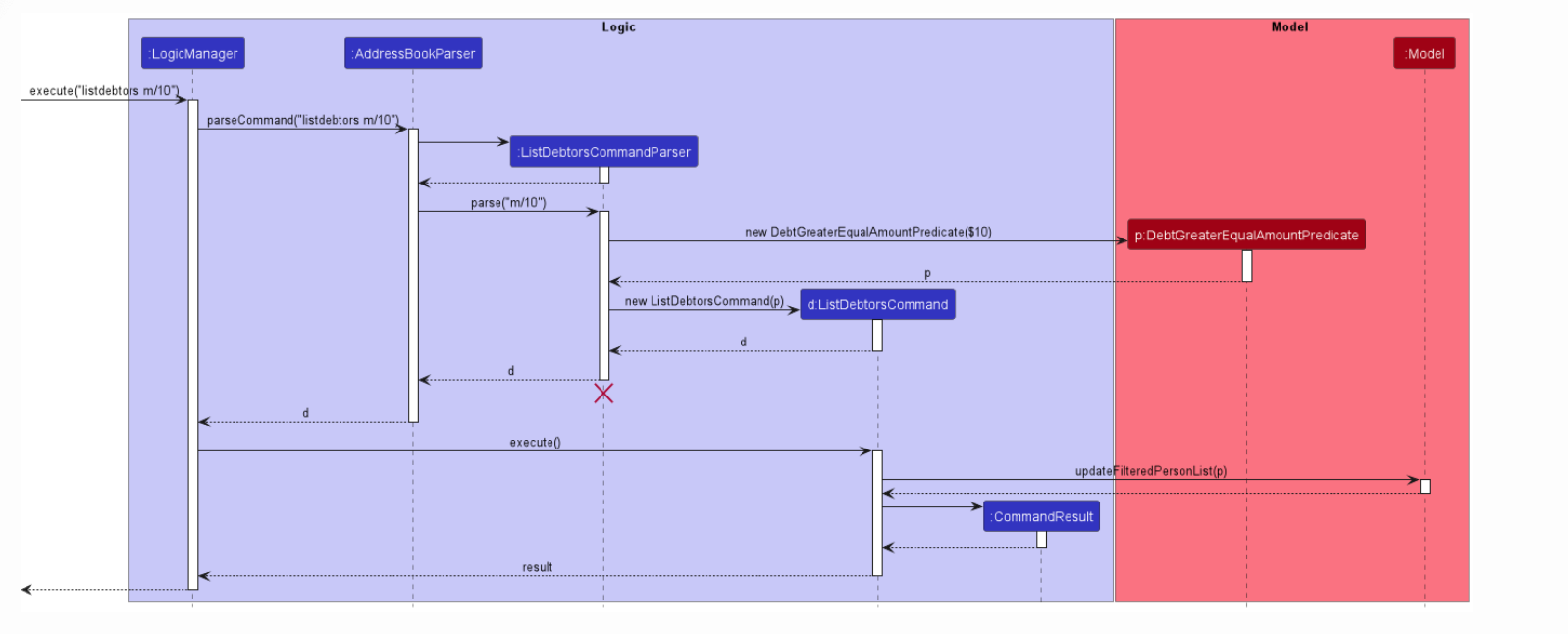

355,747 | 25,176,013,013 | IssuesEvent | 2022-11-11 09:19:47 | boredcoco/pe | https://api.github.com/repos/boredcoco/pe | opened | ListCommandParser UML inaccurate diagram | type.DocumentationBug severity.Medium |

I don't see any part of the code which destroys the ListCommandParser this diagram is slightly inaccurate

<!--session: 1668153950994-46ee6784-85ca-4802-99de-176c3e01881c-->... | 1.0 | ListCommandParser UML inaccurate diagram -

I don't see any part of the code which destroys the ListCommandParser this diagram is slightly inaccurate

<!--session: 1668153950... | non_defect | listcommandparser uml inaccurate diagram i don t see any part of the code which destroys the listcommandparser this diagram is slightly inaccurate | 0 |

624,028 | 19,684,781,012 | IssuesEvent | 2022-01-11 20:45:06 | UniVE-SSV/lisa | https://api.github.com/repos/UniVE-SSV/lisa | closed | [FEATURE REQUEST] Make `CartesianProduct` methods non-final (and check more classes) | enhancement resolved priority-p1 | **Description**

`CartesianProduct` (and probably more classes) have final methods that prevent their extensibility even in cases where it makes sense. | 1.0 | [FEATURE REQUEST] Make `CartesianProduct` methods non-final (and check more classes) - **Description**

`CartesianProduct` (and probably more classes) have final methods that prevent their extensibility even in cases where it makes sense. | non_defect | make cartesianproduct methods non final and check more classes description cartesianproduct and probably more classes have final methods that prevent their extensibility even in cases where it makes sense | 0 |

37,279 | 5,109,766,637 | IssuesEvent | 2017-01-05 21:50:01 | owtf/owtf | https://api.github.com/repos/owtf/owtf | closed | Session_Management_Schema@OWTF-SM-001 now breaks OWTF tests | Bug Priority Medium Testing | The plugin `Session_Management_Schema@OWTF-SM-001` is currently breaking the OWTF tests.

Since forever, the plugin was actually doing nothing (`return ([])`) but it has been re-enabled with https://github.com/owtf/owtf/commit/9207e4bccf41d155067e7135e653ed2ae5447051#diff-40bec1612db6779ef9810c15e2e79e23L24

Howeve... | 1.0 | Session_Management_Schema@OWTF-SM-001 now breaks OWTF tests - The plugin `Session_Management_Schema@OWTF-SM-001` is currently breaking the OWTF tests.

Since forever, the plugin was actually doing nothing (`return ([])`) but it has been re-enabled with https://github.com/owtf/owtf/commit/9207e4bccf41d155067e7135e653e... | non_defect | session management schema owtf sm now breaks owtf tests the plugin session management schema owtf sm is currently breaking the owtf tests since forever the plugin was actually doing nothing return but it has been re enabled with however since it has been re enabled it breaks the owtf tests d... | 0 |

97,628 | 28,374,887,198 | IssuesEvent | 2023-04-12 19:59:49 | MicrosoftDocs/powerbi-docs | https://api.github.com/repos/MicrosoftDocs/powerbi-docs | closed | Confusing text, "When you want the data in your Power BI report and in your Report Builder report to be the same, " | powerbi/svc Pri2 report-builder/subsvc | What is a _Report Builder report _? And as far as I know there are two types of Power BI reports, Standard and Paginated. Which Power BI report type is it referring to here?

---

#### Document Details

⚠ *Do not edit this section. It is required for learn.microsoft.com ➟ GitHub issue linking.*

* ID: ed0cd... | 1.0 | Confusing text, "When you want the data in your Power BI report and in your Report Builder report to be the same, " - What is a _Report Builder report _? And as far as I know there are two types of Power BI reports, Standard and Paginated. Which Power BI report type is it referring to here?

---

#### Document ... | non_defect | confusing text when you want the data in your power bi report and in your report builder report to be the same what is a report builder report and as far as i know there are two types of power bi reports standard and paginated which power bi report type is it referring to here document ... | 0 |

107,244 | 16,751,740,813 | IssuesEvent | 2021-06-12 02:01:39 | turkdevops/graphql-tools | https://api.github.com/repos/turkdevops/graphql-tools | opened | CVE-2021-23386 (Medium) detected in dns-packet-1.3.1.tgz | security vulnerability | ## CVE-2021-23386 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dns-packet-1.3.1.tgz</b></p></summary>

<p>An abstract-encoding compliant module for encoding / decoding DNS packets<... | True | CVE-2021-23386 (Medium) detected in dns-packet-1.3.1.tgz - ## CVE-2021-23386 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dns-packet-1.3.1.tgz</b></p></summary>

<p>An abstract-enc... | non_defect | cve medium detected in dns packet tgz cve medium severity vulnerability vulnerable library dns packet tgz an abstract encoding compliant module for encoding decoding dns packets library home page a href path to dependency file graphql tools website package json path... | 0 |

116,763 | 9,882,951,268 | IssuesEvent | 2019-06-24 18:10:05 | astropy/astropy | https://api.github.com/repos/astropy/astropy | opened | TST: Mirror pandas intersphinx | Docs testing | `html-doc` job on CircleCI is failing like this: https://circleci.com/gh/astropy/astropy/35218

```

failed to reach any of the inventories with the following issues:

intersphinx inventory 'http://pandas.pydata.org/pandas-docs/stable/objects.inv' not fetchable

due to <class 'requests.exceptions.ConnectionError'... | 1.0 | TST: Mirror pandas intersphinx - `html-doc` job on CircleCI is failing like this: https://circleci.com/gh/astropy/astropy/35218

```

failed to reach any of the inventories with the following issues:

intersphinx inventory 'http://pandas.pydata.org/pandas-docs/stable/objects.inv' not fetchable

due to <class 'req... | non_defect | tst mirror pandas intersphinx html doc job on circleci is failing like this failed to reach any of the inventories with the following issues intersphinx inventory not fetchable due to httpconnectionpool host pandas pydata org port max retries exceeded with url pandas... | 0 |

105,258 | 13,172,543,014 | IssuesEvent | 2020-08-11 18:37:39 | Opentrons/opentrons | https://api.github.com/repos/Opentrons/opentrons | closed | PD refactor: _mmFromBottom instead of _tip_position for new delay fields | :spider: SPDDRS protocol designer refactor | # Overview

Oops, we mis-named a field! These two:

```
aspirate_delay_tip_position
dispense_delay_tip_position
```

should be renamed:

```
aspirate_delay_mmFromBottom
dispense_delay_mmFromBottom
```

so that they all match the `_mmFromBottom` convention have for the other fields (as specified in https://gi... | 1.0 | PD refactor: _mmFromBottom instead of _tip_position for new delay fields - # Overview

Oops, we mis-named a field! These two:

```
aspirate_delay_tip_position
dispense_delay_tip_position
```

should be renamed:

```
aspirate_delay_mmFromBottom
dispense_delay_mmFromBottom
```

so that they all match the `_mmF... | non_defect | pd refactor mmfrombottom instead of tip position for new delay fields overview oops we mis named a field these two aspirate delay tip position dispense delay tip position should be renamed aspirate delay mmfrombottom dispense delay mmfrombottom so that they all match the mmf... | 0 |

53,479 | 13,261,730,522 | IssuesEvent | 2020-08-20 20:25:55 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | [cvmfs] gfortran issue and nugen (Trac #1501) | Migrated from Trac cvmfs defect | I'm getting th following error when attempting to run nugen:

Loading neutrino-generator................................FATAL (I3Tray): Failed to load library (<type 'exceptions.RuntimeError'>): dlopen() dynamic loading error: /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_14_x86_64/tools/gfortran/libgfortran.so.3: v... | 1.0 | [cvmfs] gfortran issue and nugen (Trac #1501) - I'm getting th following error when attempting to run nugen:

Loading neutrino-generator................................FATAL (I3Tray): Failed to load library (<type 'exceptions.RuntimeError'>): dlopen() dynamic loading error: /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubu... | defect | gfortran issue and nugen trac i m getting th following error when attempting to run nugen loading neutrino generator fatal failed to load library dlopen dynamic loading error cvmfs icecube opensciencegrid org ubuntu tools gfortran libgfortran so versi... | 1 |

210,147 | 23,737,492,238 | IssuesEvent | 2022-08-31 09:23:10 | NilFoundation/crypto3-blueprint | https://api.github.com/repos/NilFoundation/crypto3-blueprint | opened | Substitute component-oriented selector with gate-oriented | security efficiency undefined behaviour | Component-oriented selector choice is incorrect and may lead to potential efficiency issues, since we can not distinguish two instances of one component with different input params (parametrized with different input variables) - such instances will have same selectors. And if we directly use variables from params inste... | True | Substitute component-oriented selector with gate-oriented - Component-oriented selector choice is incorrect and may lead to potential efficiency issues, since we can not distinguish two instances of one component with different input params (parametrized with different input variables) - such instances will have same s... | non_defect | substitute component oriented selector with gate oriented component oriented selector choice is incorrect and may lead to potential efficiency issues since we can not distinguish two instances of one component with different input params parametrized with different input variables such instances will have same s... | 0 |

53,956 | 13,262,555,611 | IssuesEvent | 2020-08-20 22:02:47 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | Error in copy-constructor of I3MCTree when adding simprod DetectorSim to the tray (Trac #2387) | Migrated from Trac combo core defect | The following code runs fine if the DetectorSim traysegment is not added after calling the I3MCTree copy-constructor.

Failure can be caused by executing the script with --fail

```text

from icecube import dataclasses as dc

from icecube.icetray import I3Frame

from icecube import dataio, phys_services

from I3Tray import ... | 1.0 | Error in copy-constructor of I3MCTree when adding simprod DetectorSim to the tray (Trac #2387) - The following code runs fine if the DetectorSim traysegment is not added after calling the I3MCTree copy-constructor.

Failure can be caused by executing the script with --fail

```text

from icecube import dataclasses as dc

... | defect | error in copy constructor of when adding simprod detectorsim to the tray trac the following code runs fine if the detectorsim traysegment is not added after calling the copy constructor failure can be caused by executing the script with fail text from icecube import dataclasses as dc from icecube icet... | 1 |

68,699 | 21,788,308,301 | IssuesEvent | 2022-05-14 13:55:05 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | opened | With CP Subsystem disabled (unsafe mode) cannot get hold of the cp subsystem mgmt service | Type: Defect | Using version 5.1.1.

Have CPSubsystem disabled since my configuration is as follows :

final CPSubsystemConfig cpSubsystemConfig = new CPSubsystemConfig();

cpSubsystemConfig.setCPMemberCount(0);

cpSubsystemConfig.setGroupSize(0);

cpSubsystemConfig.setSessionTimeToLiveSeconds(30);

... | 1.0 | With CP Subsystem disabled (unsafe mode) cannot get hold of the cp subsystem mgmt service - Using version 5.1.1.

Have CPSubsystem disabled since my configuration is as follows :

final CPSubsystemConfig cpSubsystemConfig = new CPSubsystemConfig();

cpSubsystemConfig.setCPMemberCount(0);

cpSubsyste... | defect | with cp subsystem disabled unsafe mode cannot get hold of the cp subsystem mgmt service using version have cpsubsystem disabled since my configuration is as follows final cpsubsystemconfig cpsubsystemconfig new cpsubsystemconfig cpsubsystemconfig setcpmembercount cpsubsyste... | 1 |

55,505 | 14,526,284,464 | IssuesEvent | 2020-12-14 14:02:26 | SAP/fundamental-ngx | https://api.github.com/repos/SAP/fundamental-ngx | closed | Bug: (docs) Tabs example – Programmatic Selection works incorrect | Defect Hunting bug documentation | #### Is this a bug, enhancement, or feature request?

bug

#### Briefly describe your proposal.

When the user click "Select Tab 2" and then click "Select Tab 1", tabs do not switching to "tab 1":

... | 1.0 | Bug: (docs) Tabs example – Programmatic Selection works incorrect - #### Is this a bug, enhancement, or feature request?

bug

#### Briefly describe your proposal.

When the user click "Select Tab 2" and then click "Select Tab 1", tabs do not switching to "tab 1":

: Swagger UI 를 이용한 API Docs 설정 | ✨ Feature ✅ Test | ## 이슈 내용

- Swagger UI 설정을 통해 백엔드 개발 팀의 API 리소스를 시각화하고 프론트와의 상호 작용을 수월하게 하는 것이 목표.

<br>

## 작업 사항

- [ ] swagger UI config 설정

<br>

| 1.0 | feat(common) : Swagger UI 를 이용한 API Docs 설정 - ## 이슈 내용

- Swagger UI 설정을 통해 백엔드 개발 팀의 API 리소스를 시각화하고 프론트와의 상호 작용을 수월하게 하는 것이 목표.

<br>

## 작업 사항

- [ ] swagger UI config 설정

<br>

| non_defect | feat common swagger ui 를 이용한 api docs 설정 이슈 내용 swagger ui 설정을 통해 백엔드 개발 팀의 api 리소스를 시각화하고 프론트와의 상호 작용을 수월하게 하는 것이 목표 작업 사항 swagger ui config 설정 | 0 |

73,241 | 24,520,945,909 | IssuesEvent | 2022-10-11 09:27:28 | BOINC/boinc | https://api.github.com/repos/BOINC/boinc | closed | [Explained] 7.16.3 systemd startup file prevents LHC VirtualBox jobs running | P: Blocker R: fixed T: Defect E: 1 day C: Client - Linux | **Describe the bug**

I tried an update to 7.16.3, but it produced an oddity, so I switched back to 7.14.2

But I left the 7.16.3 systemd start-up file in place.

The result was that an LHC CMS task started, but then stalled.

It ran for ~6mins (during which the VirtualBox process wasn't consuming CPU) then this show... | 1.0 | [Explained] 7.16.3 systemd startup file prevents LHC VirtualBox jobs running - **Describe the bug**

I tried an update to 7.16.3, but it produced an oddity, so I switched back to 7.14.2

But I left the 7.16.3 systemd start-up file in place.

The result was that an LHC CMS task started, but then stalled.

It ran for ~... | defect | systemd startup file prevents lhc virtualbox jobs running describe the bug i tried an update to but it produced an oddity so i switched back to but i left the systemd start up file in place the result was that an lhc cms task started but then stalled it ran for during whic... | 1 |

31,274 | 6,485,144,719 | IssuesEvent | 2017-08-19 07:15:41 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | scipy.statsbinned_statistic_2d: incorrect binnumbers returned | defect scipy.stats | For certain inputs binned_statistic_2d returns incorrect bin numbers

`xEdges = np.arange(79950.,500050.,100.)`

`yEdges = np.arange(7489950.,7860050.,100.)`

`x = 356643.378`

`y = 7813944.500`

`binned, xedges, yedges, binnums = binned_statistic_2d((x,), (y,), (0.5,), 'mean', bins=[xEdges,yEdges],expand_binnumbers=... | 1.0 | scipy.statsbinned_statistic_2d: incorrect binnumbers returned - For certain inputs binned_statistic_2d returns incorrect bin numbers

`xEdges = np.arange(79950.,500050.,100.)`

`yEdges = np.arange(7489950.,7860050.,100.)`

`x = 356643.378`

`y = 7813944.500`

`binned, xedges, yedges, binnums = binned_statistic_2d((x,... | defect | scipy statsbinned statistic incorrect binnumbers returned for certain inputs binned statistic returns incorrect bin numbers xedges np arange yedges np arange x y binned xedges yedges binnums binned statistic x y mean bins expand binn... | 1 |

27,762 | 8,033,761,050 | IssuesEvent | 2018-07-29 10:37:08 | scikit-learn/scikit-learn | https://api.github.com/repos/scikit-learn/scikit-learn | reopened | Circle CI failure and Travis cron job failure | Blocker Build / CI help wanted | Circle CI failure:

Seems that it's due to https://github.com/scikit-learn/scikit-learn/commit/e888c0d65cfaef4a3a2c087ad7609d2296be8062, but I cannot figure out the reason.

Travis cron job failure:

See e.g., https://travis-ci.org/scikit-learn/scikit-learn/builds/408877802

Typical log:

FutureWarning: Using a non-t... | 1.0 | Circle CI failure and Travis cron job failure - Circle CI failure:

Seems that it's due to https://github.com/scikit-learn/scikit-learn/commit/e888c0d65cfaef4a3a2c087ad7609d2296be8062, but I cannot figure out the reason.

Travis cron job failure:

See e.g., https://travis-ci.org/scikit-learn/scikit-learn/builds/40887... | non_defect | circle ci failure and travis cron job failure circle ci failure seems that it s due to but i cannot figure out the reason travis cron job failure see e g typical log futurewarning using a non tuple sequence for multidimensional indexing is deprecated use arr instead of arr in the future th... | 0 |

387,477 | 26,724,575,837 | IssuesEvent | 2023-01-29 15:01:16 | zed-industries/feedback | https://api.github.com/repos/zed-industries/feedback | opened | Multiple keybindings for one action are listed inconsistently | documentation triage | ### Check for existing issues

- [X] Completed

### Page link

https://zed.dev/docs/configuration/key-bindings

### Description

Sometimes multiple keybindings for the same action are

- listed in one row: https://zed.dev/docs/configuration/key-bindings#workspace (see `Toggle theme selctor` for example (typo is not a... | 1.0 | Multiple keybindings for one action are listed inconsistently - ### Check for existing issues

- [X] Completed

### Page link

https://zed.dev/docs/configuration/key-bindings

### Description

Sometimes multiple keybindings for the same action are

- listed in one row: https://zed.dev/docs/configuration/key-bindings#... | non_defect | multiple keybindings for one action are listed inconsistently check for existing issues completed page link description sometimes multiple keybindings for the same action are listed in one row see toggle theme selctor for example typo is not a quotation error it s literally on the ... | 0 |

8,833 | 3,009,831,330 | IssuesEvent | 2015-07-28 09:18:44 | printdotio/printio-ios-sdk | https://api.github.com/repos/printdotio/printio-ios-sdk | closed | Images of an order don't show on Admin | bug Ready to Test | This order on staging admin of Mini Book don't show the images we chose in the app

https://staging-admin-v2.print.io/Home#/orders/154460/images | 1.0 | Images of an order don't show on Admin - This order on staging admin of Mini Book don't show the images we chose in the app

https://staging-admin-v2.print.io/Home#/orders/154460/images | non_defect | images of an order don t show on admin this order on staging admin of mini book don t show the images we chose in the app | 0 |

9,950 | 2,616,014,026 | IssuesEvent | 2015-03-02 00:56:58 | jasonhall/bwapi | https://api.github.com/repos/jasonhall/bwapi | closed | WinXP incompatible with LUDP | auto-migrated Priority-Medium Type-Defect Usability | ```

For the multiple-instance hack, the custom network mode LUDP (Local UDP) is not

compatible with Windows XP machines.

```

Original issue reported on code.google.com by `AHeinerm` on 6 Nov 2010 at 10:15 | 1.0 | WinXP incompatible with LUDP - ```

For the multiple-instance hack, the custom network mode LUDP (Local UDP) is not

compatible with Windows XP machines.

```

Original issue reported on code.google.com by `AHeinerm` on 6 Nov 2010 at 10:15 | defect | winxp incompatible with ludp for the multiple instance hack the custom network mode ludp local udp is not compatible with windows xp machines original issue reported on code google com by aheinerm on nov at | 1 |

37,036 | 8,206,556,783 | IssuesEvent | 2018-09-03 13:54:56 | contao/contao | https://api.github.com/repos/contao/contao | closed | handle mod_article_teaser and mod_article_plain selection | defect | <a href="https://github.com/fritzmg"><img src="https://avatars0.githubusercontent.com/u/4970961?v=4" align="left" width="42" height="42"></img></a> [Issue](https://github.com/contao/installation-bundle/issues/97) by @fritzmg

August 17th, 2018, 12:05 GMT

In Contao 3 you were able to select a variety of custom template ... | 1.0 | handle mod_article_teaser and mod_article_plain selection - <a href="https://github.com/fritzmg"><img src="https://avatars0.githubusercontent.com/u/4970961?v=4" align="left" width="42" height="42"></img></a> [Issue](https://github.com/contao/installation-bundle/issues/97) by @fritzmg

August 17th, 2018, 12:05 GMT

In Co... | defect | handle mod article teaser and mod article plain selection by fritzmg august gmt in contao you were able to select a variety of custom template for articles including mod article plain and mod article teaser which were removed in contao however this will lead to an could not fin... | 1 |

48,530 | 6,098,813,606 | IssuesEvent | 2017-06-20 08:34:25 | geetsisbac/WCVVENIXYFVIRBXH3BYTI6TE | https://api.github.com/repos/geetsisbac/WCVVENIXYFVIRBXH3BYTI6TE | reopened | AwwrsXx7T57MB8/09hew/33fjdWKbnfXZyl6fDTfrfo1IzYwjH40et5InaNAFGye7CYnc86P3qcXCFj7yPAGummbcXANuEtvNvxGXtm+0zdnswGxAOoQHONRqFLnGFaHgwIE/x1sJHHevkZyCUPyK1vXA/URVN7vqtZ5kF9Imc4= | design | dPuKwZjtFnMQ1uR3M9yD2bMdwzQMA1UsYXhzL9owNrxmSUff74VqLKTL/0aq2y/COeIhdbNmSHYL/OCak1Z0ktkQHW9laH3VmJGYa2MqRpHevL0vFixavYPyOFhIK1EiU0wtcRfBPAZzbbcCLivar85OTuqN8mytP0HEdzgX4fvl2nV4fni1MJG9i8XhpDt7H7Ay4UYePa9XaD+H8+bUGR4im0uSKm/wusqLWO+cP3V7U9TAg5cYL4SlF34T9o+sqVW+uHyIJ63fuOujMdsuYNMWqHibBvW2PrHtU95ENgWO3V6c5SLcAGoNnSjkGKo3... | 1.0 | AwwrsXx7T57MB8/09hew/33fjdWKbnfXZyl6fDTfrfo1IzYwjH40et5InaNAFGye7CYnc86P3qcXCFj7yPAGummbcXANuEtvNvxGXtm+0zdnswGxAOoQHONRqFLnGFaHgwIE/x1sJHHevkZyCUPyK1vXA/URVN7vqtZ5kF9Imc4= - dPuKwZjtFnMQ1uR3M9yD2bMdwzQMA1UsYXhzL9owNrxmSUff74VqLKTL/0aq2y/COeIhdbNmSHYL/OCak1Z0ktkQHW9laH3VmJGYa2MqRpHevL0vFixavYPyOFhIK1EiU0wtcRfBPAZzbbcCL... | non_defect | coeihdbnmshyl wusqlwo sqvw jte lr fpaokjlmmrcjvihhd jte tul | 0 |

14,509 | 2,814,314,051 | IssuesEvent | 2015-05-18 19:19:35 | m-lab/mlab-wikis | https://api.github.com/repos/m-lab/mlab-wikis | closed | Split the check_status cron job into one check per tool/slice | auto-migrated Priority-High Type-Defect | ```

The 'check_status' cron job takes too long, sometimes causing 'Deadline

exceeded' errors, so we must split this task into one check per

tool/slice.

```

Original issue reported on code.google.com by `claudiu....@gmail.com` on 31 Jan 2013 at 5:10 | 1.0 | Split the check_status cron job into one check per tool/slice - ```

The 'check_status' cron job takes too long, sometimes causing 'Deadline

exceeded' errors, so we must split this task into one check per

tool/slice.

```

Original issue reported on code.google.com by `claudiu....@gmail.com` on 31 Jan 2013 at 5:10 | defect | split the check status cron job into one check per tool slice the check status cron job takes too long sometimes causing deadline exceeded errors so we must split this task into one check per tool slice original issue reported on code google com by claudiu gmail com on jan at | 1 |

47,406 | 13,056,172,311 | IssuesEvent | 2020-07-30 03:52:52 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | icetray development email list (Trac #512) | Migrated from Trac defect tools/ports | create a email list for icetray development.

icetray-dev or something like that

Let's use umdgrb's mailman and avoid the collective at UW

Migrated from https://code.icecube.wisc.edu/ticket/512

```json

{

"status": "closed",

"changetime": "2009-01-22T18:45:35",

"description": "create a email list for ice... | 1.0 | icetray development email list (Trac #512) - create a email list for icetray development.

icetray-dev or something like that

Let's use umdgrb's mailman and avoid the collective at UW

Migrated from https://code.icecube.wisc.edu/ticket/512

```json

{

"status": "closed",

"changetime": "2009-01-22T18:45:35",

... | defect | icetray development email list trac create a email list for icetray development icetray dev or something like that let s use umdgrb s mailman and avoid the collective at uw migrated from json status closed changetime description create a email list for icetray d... | 1 |

58,972 | 11,914,138,511 | IssuesEvent | 2020-03-31 13:10:07 | nopSolutions/nopCommerce | https://api.github.com/repos/nopSolutions/nopCommerce | closed | Upgrade .NET Core to the latest version | refactoring / source code | Currently it's 3.1.201

Let's do it before the RTM of nopCommerce 4.30.

| 1.0 | Upgrade .NET Core to the latest version - Currently it's 3.1.201

Let's do it before the RTM of nopCommerce 4.30.

| non_defect | upgrade net core to the latest version currently it s let s do it before the rtm of nopcommerce | 0 |

56,616 | 15,218,932,954 | IssuesEvent | 2021-02-17 18:29:57 | galasa-dev/projectmanagement | https://api.github.com/repos/galasa-dev/projectmanagement | opened | Change 'Submit' to 'Submit tests' on button | defect webui | Change _Submit_ to _Submit tests_ on button on the Submit tests tile | 1.0 | Change 'Submit' to 'Submit tests' on button - Change _Submit_ to _Submit tests_ on button on the Submit tests tile | defect | change submit to submit tests on button change submit to submit tests on button on the submit tests tile | 1 |

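6,561 | 2,610,256,870 | IssuesEvent | 2015-02-26 19:22:00 | chrsmith/dsdsdaadf | https://api.github.com/repos/chrsmith/dsdsdaadf | opened | How much does laser acne removal cost in Shenzhen | auto-migrated Priority-Medium Type-Defect | ```

How much does laser acne removal cost in Shenzhen [Shenzhen 韩方科颜 national hotline 400-869-1818, 24-hour QQ 4008691818] Shenzhen 韩方科颜 is a professional acne removal chain built on a Korean secret formula, 韩方科颜, an authoritative therapeutic product with a national cosmetics approval number and a fine acne remedy. The 韩方科颜 professional acne removal chain combines the Korean secret formula with professional "no rebound" healthy acne removal technology and an advanced "deluxe color light" device. It pioneered contract-guaranteed professional treatment of comedones and acne in China and has successfully cleared the acne on many customers' faces.

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 8:37 | 1.0 | How much does laser acne removal cost in Shenzhen - ```

How much does laser acne removal cost in Shenzhen [Shenzhen 韩方科颜 national hotline 400-869-1818, 24-hour QQ 4008691818] Shenzhen 韩方科颜 is a professional acne removal chain built on a Korean secret formula, 韩方科颜, an authoritative therapeutic product with a national cosmetics approval number and a fine acne remedy. The 韩方科颜 professional acne removal chain combines the Korean secret formula with professional "no rebound" healthy acne removal technology and an advanced "deluxe color light" device. It pioneered contract-guaranteed professional treatment of comedones and acne in China and has successfully cleared the acne on many customers' faces.

```

-----

Original issue reported on code.google.com by `szft...@163.com` on 14 May 2014 at 8:37 | defect | how much does laser acne removal cost in shenzhen how much does laser acne removal cost in shenzhen shenzhen 韩方科颜 national hotline hour qq shenzhen 韩方科颜 is a professional acne removal chain built on a korean secret formula 韩方科颜 an authoritative therapeutic product with a national cosmetics approval number and a fine acne remedy the 韩方科颜 professional acne removal chain combines the korean secret formula with professional no rebound healthy acne removal technology and an advanced deluxe color light device it pioneered contract guaranteed professional treatment of comedones and acne in china and has successfully cleared the acne on many customers faces original issue reported on code google com by szft com on may at | 1 |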

69,171 | 22,263,971,009 | IssuesEvent | 2022-06-10 05:08:16 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | vertical scroll is shown though content fits viewport | T-Defect | ### Steps to reproduce

1. Create a new room

2. Write a message.

3. Scroll

### Outcome

#### What did you expect?

No scroll

#### What happened instead?

Scroll

### Operatin... | 1.0 | vertical scroll is shown though content fits viewport - ### Steps to reproduce

1. Create a new room

2. Write a message.

3. Scroll

### Outcome

#### What did you expect?

No s... | defect | vertical scroll is shown though content fits viewport steps to reproduce create a new room write a message scroll outcome what did you expect no scroll what happened instead scroll operating system linux browser information firefox url for webapp ... | 1 |

59,970 | 17,023,301,228 | IssuesEvent | 2021-07-03 01:18:54 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Minimise/maximise doesn't redraw background | Component: potlatch (flash editor) Priority: minor Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 6.26am, Friday, 26th September 2008]**

e.g. if NPE is selected and the SWF is maximised, it won't load the extra tiles until you drag.

| 1.0 | Minimise/maximise doesn't redraw background - **[Submitted to the original trac issue database at 6.26am, Friday, 26th September 2008]**

e.g. if NPE is selected and the SWF is maximised, it won't load the extra tiles until you drag.

| defect | minimise maximise doesn t redraw background e g if npe is selected and the swf is maximised it won t load the extra tiles until you drag | 1 |

56,175 | 14,963,109,844 | IssuesEvent | 2021-01-27 10:08:35 | primefaces/primereact | https://api.github.com/repos/primefaces/primereact | closed | DataTable with editMode="cell" doesn't work as expected | defect | ### There is no guarantee in receiving an immediate response in GitHub Issue Tracker, If you'd like to secure our response, you may consider *PrimeReact PRO Support* where support is provided within 4 business hours

**I'm submitting a ...** (check one with "x")

```

[X] bug report

[X] feature request

[ ] support... | 1.0 | DataTable with editMode="cell" doesn't work as expected - ### There is no guarantee in receiving an immediate response in GitHub Issue Tracker, If you'd like to secure our response, you may consider *PrimeReact PRO Support* where support is provided within 4 business hours

**I'm submitting a ...** (check one with "... | defect | datatable with editmode cell doesn t work as expected there is no guarantee in receiving an immediate response in github issue tracker if you d like to secure our response you may consider primereact pro support where support is provided within business hours i m submitting a check one with ... | 1 |

64,063 | 18,160,562,514 | IssuesEvent | 2021-09-27 09:08:02 | vector-im/element-ios | https://api.github.com/repos/vector-im/element-ios | opened | Unable to accept verification requests | T-Defect | ### Steps to reproduce

More details here: https://github.com/vector-im/element-android/issues/4085

I am unable to accept verification requests from this user.

### What happened?

### What did you expect?

I was expecting to receive a verification request. I received the notification for it but when clicking it not... | 1.0 | Unable to accept verification requests - ### Steps to reproduce

More details here: https://github.com/vector-im/element-android/issues/4085

I am unable to accept verification requests from this user.

### What happened?

### What did you expect?

I was expecting to receive a verification request. I received the not... | defect | unable to accept verification requests steps to reproduce more details here i am unable to accept verification requests from this user what happened what did you expect i was expecting to receive a verification request i received the notification for it but when clicking it nothing happened ... | 1 |

11,406 | 2,651,204,853 | IssuesEvent | 2015-03-16 09:41:54 | Budenzauber/truecrack-extended | https://api.github.com/repos/Budenzauber/truecrack-extended | reopened | OSX compile errors, warnings | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. compile with "make GPU=false"

2.

3.

What is the expected output? What do you see instead?

compiled truecrack_optimized version

cc -c -I./Common/ -I./Crypto/ -I./Cuda/ -I./Main/ -I./ -lm Main/Utils.c -o Utils.o

clang: warning: -lm: 'linker' input unused

In file... | 1.0 | OSX compile errors, warnings - ```

What steps will reproduce the problem?

1. compile with "make GPU=false"

2.

3.

What is the expected output? What do you see instead?

compiled truecrack_optimized version

cc -c -I./Common/ -I./Crypto/ -I./Cuda/ -I./Main/ -I./ -lm Main/Utils.c -o Utils.o

clang: warning: -lm: '... | defect | osx compile errors warnings what steps will reproduce the problem compile with make gpu false what is the expected output what do you see instead compiled truecrack optimized version cc c i common i crypto i cuda i main i lm main utils c o utils o clang warning lm ... | 1 |

60,508 | 17,023,444,373 | IssuesEvent | 2021-07-03 02:03:55 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | language fallback mechanism could be more robust | Component: admin Priority: major Resolution: wontfix Type: defect | **[Submitted to the original trac issue database at 5.28am, Monday, 20th July 2009]**

There seems to be some sort of an error in Slovenian translation on page

http://www.openstreetmap.org/browse/way/27028715

which works perfectly with English locale.

My guess is that either some interpolation strings don't match ... | 1.0 | language fallback mechanism could be more robust - **[Submitted to the original trac issue database at 5.28am, Monday, 20th July 2009]**

There seems to be some sort of an error in Slovenian translation on page

http://www.openstreetmap.org/browse/way/27028715

which works perfectly with English locale.

My guess is ... | defect | language fallback mechanism could be more robust there seems to be some sort of an error in slovenian translation on page which works perfectly with english locale my guess is that either some interpolation strings don t match the en yml or there is some pluralization issue missing key in such c... | 1 |
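
The fallback behaviour this report asks for (show the English string when a translation is missing or its interpolation names do not match) can be sketched roughly as below. This is an illustrative Python sketch under stated assumptions, not the site's actual i18n code: the `TRANSLATIONS` table, the `translate` helper, and the sample key are all hypothetical.

```python
# Hypothetical translation tables, standing in for per-locale files such as en.yml.
TRANSLATIONS = {
    "en": {"browse.way.title": "Way: %(name)s"},
    "sl": {"browse.way.title": "Pot: %(ime)s"},  # placeholder name differs from "en"
}


def translate(key, locale, default_locale="en", **params):
    """Return the translated string, falling back to the default locale.

    Falls back when the key is missing in `locale` or when the translated
    template refers to a placeholder that the caller did not supply.
    """
    for candidate in (locale, default_locale):
        template = TRANSLATIONS.get(candidate, {}).get(key)
        if template is None:
            continue  # missing key: try the next locale
        try:
            return template % params
        except KeyError:
            continue  # mismatched interpolation name: try the next locale
    return key  # last resort: show the key rather than raise an error
```

With this shape, a broken translation degrades to the English text instead of an error page.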

31,038 | 6,412,152,935 | IssuesEvent | 2017-08-08 01:56:45 | cakephp/cakephp | https://api.github.com/repos/cakephp/cakephp | closed | EntityTrait::setDirty($property, false) should return $this | Defect ORM | This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* [ ] feature-discussion (RFC)

* CakePHP Version: 3.4.12.

`EntityTrait::setDirty($property, false)` returns `false` instead of `$this`.

https://github.com/cakephp/cakephp/blob/master/src/Datasource/EntityTrait.php#L756

I'm creating this issue because... | 1.0 | EntityTrait::setDirty($property, false) should return $this - This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* [ ] feature-discussion (RFC)

* CakePHP Version: 3.4.12.

`EntityTrait::setDirty($property, false)` returns `false` instead of `$this`.

https://github.com/cakephp/cakephp/blob/master/src/Data... | defect | entitytrait setdirty property false should return this this is a multiple allowed bug enhancement feature discussion rfc cakephp version entitytrait setdirty property false returns false instead this i m creating this issue because i don t have time to writ... | 1 |
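
The behaviour requested in this report, a setter that returns the object itself on every code path so that calls can be chained, can be illustrated with a small sketch. This is a Python analogy only, not CakePHP's implementation; the `Entity` class and its `set_dirty` and `is_dirty` methods are hypothetical names chosen for the example.

```python
class Entity:
    """Hypothetical stand-in for an ORM entity that tracks changed fields."""

    def __init__(self):
        self._dirty = set()

    def set_dirty(self, field, is_dirty=True):
        """Mark or unmark `field` as dirty and return self so calls can be chained."""
        if is_dirty:
            self._dirty.add(field)
        else:
            self._dirty.discard(field)
        return self  # returned on both branches, including is_dirty=False

    def is_dirty(self, field):
        """Report whether `field` is currently marked as dirty."""
        return field in self._dirty
```

The point of the sketch is that the "unset" branch must end in the same `return self` as the "set" branch; returning the flag value there would break chaining.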

6,799 | 2,860,725,074 | IssuesEvent | 2015-06-03 17:09:46 | alexweissman/UserFrosting | https://api.github.com/repos/alexweissman/UserFrosting | opened | New demo site | 0.3.0 testing website | There is a demo up for the new version at http://uf-demo.alexanderweissman.com/. Right now you get the dashboard placeholder page, and settings page. I'll add some groups and let people play with adding/removing users in another group later today. | 1.0 | New demo site - There is a demo up for the new version at http://uf-demo.alexanderweissman.com/. Right now you get the dashboard placeholder page, and settings page. I'll add some groups and let people play with adding/removing users in another group later today. | non_defect | new demo site there is a demo up for the new version at right now you get the dashboard placeholder page and settings page i ll add some groups and let people play with adding removing users in another group later today | 0 |

45,639 | 12,965,195,289 | IssuesEvent | 2020-07-20 21:52:19 | googlefonts/noto-fonts | https://api.github.com/repos/googlefonts/noto-fonts | closed | NotoSansDisplay-MM.glyphs glyph uniA652 has incompatible masters | Type-Defect |

NotoSansDisplay-MM.glyphs glyph uniA652 has incompatible masters and we cannot make a variable font for this glyph

fontmake currently generates this warning

WARNING:fontTools.varLib:glyph uniA652 has incompatible masters; skipping

this means that the glyph above won't have any variation; just the "base" shape. | 1.0 | NotoSansDisplay-MM.glyphs glyph uniA652 has incompatible masters -

NotoSansDisplay-MM.glyphs glyph uniA652 has incompatible masters and we cannot make a variable font for this glyph

fontmake currently generates this warning

WARNING:fontTools.varLib:glyph uniA652 has incompatible masters; skipping

this means th... | defect | notosansdisplay mm glyphs glyph has incompatible masters notosansdisplay mm glyphs glyph has incompatible masters and we cannot make a variable font for this glyph fontmake currently generates this warning warning fonttools varlib glyph has incompatible masters skipping this means that the glyph above... | 1 |

351,985 | 10,526,091,280 | IssuesEvent | 2019-09-30 16:19:49 | woocommerce/woocommerce-gateway-stripe | https://api.github.com/repos/woocommerce/woocommerce-gateway-stripe | closed | Use customer IDs when creating payment intents | Priority: High | A requirement from Stripe: we must save Merchants’ customers on Merchants’ Stripe accounts and make charge requests by specifying a Customer ID per the Stripe Documentation. | 1.0 | Use customer IDs when creating payment intents - A requirement from Stripe: we must save Merchants’ customers on Merchants’ Stripe accounts and make charge requests by specifying a Customer ID per the Stripe Documentation. | non_defect | use customer ids when creating payment intents a requirement from stripe we must save merchants’ customers on merchants’ stripe accounts and make charge requests by specifying a customer id per the stripe documentation | 0 |

39,259 | 9,358,560,257 | IssuesEvent | 2019-04-02 02:57:04 | SangheeKim/xerela | https://api.github.com/repos/SangheeKim/xerela | closed | HTTP ERRO: 404 | Priority-Medium Type-Defect auto-migrated | ```

What steps will reproduce the problem?

1.Install of the software

2.

3.

What is the expected output? I should be able to go to https://127.0.0.1:8080

and see the management console. What do you see instead? This: HTTP ERROR: 404

NOT_FOUND

RequestURI=/

Powered by jetty://

What version of the product are you us... | 1.0 | HTTP ERRO: 404 - ```

What steps will reproduce the problem?

1.Install of the software

2.

3.

What is the expected output? I should be able to go to https://127.0.0.1:8080

and see the management console. What do you see instead? This: HTTP ERROR: 404

NOT_FOUND

RequestURI=/

Powered by jetty://

What version of the p... | defect | http erro what steps will reproduce the problem install of the software what is the expected output i should be able to go to and see the management console what do you see instead this http error not found requesturi powered by jetty what version of the product are you using on ... | 1 |

8,623 | 2,611,533,486 | IssuesEvent | 2015-02-27 06:04:18 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | Blowtorch crash | auto-migrated Priority-Medium ReleaseBug-0.9.19 Type-Defect | ```

What steps will reproduce the problem?

1. In network game, use blowtorch

2. While it is doing its job, quit game/disconnect, so others see your team gone

What is the expected output? What do you see instead?

Engine crashes

What version of the product are you using? On what operating system?

0.9.19

```

Origin... | 1.0 | Blowtorch crash - ```

What steps will reproduce the problem?

1. In network game, use blowtorch

2. While it is doing its job, quit game/disconnect, so others see your team gone

What is the expected output? What do you see instead?

Engine crashes

What version of the product are you using? On what operating system?

0.... | defect | blowtorch crash what steps will reproduce the problem in network game use blowtorch while it is doing its job quit game disconnect so others see your team gone what is the expected output what do you see instead engine crashes what version of the product are you using on what operating system ... | 1 |

46,096 | 13,055,851,648 | IssuesEvent | 2020-07-30 02:55:34 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | Conditional execution doesn't work for Dump and I3Writer (Trac #578) | Incomplete Migration Migrated from Trac dataio defect | Migrated from https://code.icecube.wisc.edu/ticket/578

```json

{

"status": "closed",

"changetime": "2009-12-02T18:07:51",

"description": "I am not sure if this ever worked before but I just tried to conditionally execute the I3Writer and the Dump module and it didn't work even if these \nmodules inherit f... | 1.0 | Conditional execution doesn't work for Dump and I3Writer (Trac #578) - Migrated from https://code.icecube.wisc.edu/ticket/578

```json

{

"status": "closed",

"changetime": "2009-12-02T18:07:51",

"description": "I am not sure if this ever worked before but I just tried to conditionally execute the I3Writer a... | defect | conditional execution doesn t work for dump and trac migrated from json status closed changetime description i am not sure if this ever worked before but i just tried to conditionally execute the and the dump module and it didn t work even if these nmodules inh... | 1 |

123,069 | 17,772,150,206 | IssuesEvent | 2021-08-30 14:47:54 | kapseliboi/postman-sandbox | https://api.github.com/repos/kapseliboi/postman-sandbox | opened | CVE-2021-33587 (High) detected in css-what-2.1.3.tgz | security vulnerability | ## CVE-2021-33587 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>css-what-2.1.3.tgz</b></p></summary>

<p>a CSS selector parser</p>

<p>Library home page: <a href="https://registry.npmj... | True | CVE-2021-33587 (High) detected in css-what-2.1.3.tgz - ## CVE-2021-33587 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>css-what-2.1.3.tgz</b></p></summary>

<p>a CSS selector parser</... | non_defect | cve high detected in css what tgz cve high severity vulnerability vulnerable library css what tgz a css selector parser library home page a href path to dependency file postman sandbox package json path to vulnerable library postman sandbox node modules css what pack... | 0 |

310,995 | 9,526,944,368 | IssuesEvent | 2019-04-29 00:26:56 | Darkosto/SkyFactory4Issues | https://api.github.com/repos/Darkosto/SkyFactory4Issues | closed | Bounding Box pickup when using Carryon with Mekanism's Advanced Solar Panel | Category: Config Priority: High Type: Bug | <!-- Thank you for submitting an issue for the relevant topic. Please ensure that you fill in all the required information needed as specified by the template below. -->

<!-- Note: As you are reporting a bug, please ensure that you have logs uploaded to either PasteBin/Gist etc... No logs = Closing and Ignoring of the... | 1.0 | Bounding Box pickup when using Carryon with Mekanism's Advanced Solar Panel - <!-- Thank you for submitting an issue for the relevant topic. Please ensure that you fill in all the required information needed as specified by the template below. -->

<!-- Note: As you are reporting a bug, please ensure that you have logs... | non_defect | bounding box pickup when using carryon with mekanism s advanced solar panel bug report when using carryon to pick up the advanced solar panel from mekanism from the top side you pick up a bounding block instead expected behaviour you should pick up the advanced solar panel ... | 0 |

80,988 | 30,647,327,884 | IssuesEvent | 2023-07-25 06:16:09 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | opened | panel: Panel without header leads to broken aria reference | :lady_beetle: defect :bangbang: needs-triage | ### Describe the bug

If you use a p:panel and don't declare a header attribute you will get a broken aria reference ("aria-labelledby")

### Reproducer

Any use of just the panel will reproduce the issue:

`<p:panel>`

Using a header seems to fix it, but sometimes I don't need a header:

`<p:panel header="headertext... | 1.0 | panel: Panel without header leads to broken aria reference - ### Describe the bug

If you use a p:panel and don't declare a header attribute you will get a broken aria reference ("aria-labelledby")

### Reproducer

Any use of just the panel will reproduce the issue:

`<p:panel>`

Using a header seems to fix it, but s... | defect | panel panel without header leads to broken aria reference describe the bug if you use a p panel and don t declare a header attribute you will get a broken aria reference aria labelledby reproducer any use of just the panel will reproduce the issue using a header seems to fix it but sometimes... | 1 |

323,722 | 23,962,403,266 | IssuesEvent | 2022-09-12 20:25:07 | gravitational/teleport | https://api.github.com/repos/gravitational/teleport | closed | Update PagerDuty URL | documentation | ## Details

Update URL for PagerDuty on docs/pages/access-controls/access-request-plugins/index.mdx to https://goteleport.com/docs/access-controls/access-request-plugins/ssh-approval-pagerduty/ as the current URL reaches a 404

### Category

<!-- Delete non-applicable category -->

- Improve Existing

| 1.0 | Update PagerDuty URL - ## Details

Update URL for PagerDuty on docs/pages/access-controls/access-request-plugins/index.mdx to https://goteleport.com/docs/access-controls/access-request-plugins/ssh-approval-pagerduty/ as the current URL reaches a 404

### Category

<!-- Delete non-applicable category -->

- Improve Ex... | non_defect | update pagerduty url details update url for pagerduty on docs pages access controls access request plugins index mdx to as the current url reaches a category improve existing | 0 |

38,275 | 8,725,299,031 | IssuesEvent | 2018-12-10 09:00:15 | opencaching/opencaching-pl | https://api.github.com/repos/opencaching/opencaching-pl | closed | Quotation marks in nickname destroys SQL queries and profile view | Component UserProfile Priority High Type Defect |

Also registra... | 1.0 | Quotation marks in nickname destroys SQL queries and profile view -

| I-performance | I happened to notice that lately `zcashd` takes longer to start up. Most of the difference happens while the UI status is `Init message: Rewinding blocks if needed...`, and in `debug.log`, notice the approximately 45 seconds between the first two lines:

```

Aug 20 13:17:06.364 INFO Init: main: LoadBlockIndexDB: hash... | True | performance: startup time has increased - GetConsensus() - I happened to notice that lately `zcashd` takes longer to start up. Most of the difference happens while the UI status is `Init message: Rewinding blocks if needed...`, and in `debug.log`, notice the approximately 45 seconds between the first two lines:

```

A... | non_defect | performance startup time has increased getconsensus i happened to notice that lately zcashd takes longer to start up most of the difference happens while the ui status is init message rewinding blocks if needed and in debug log notice the approximately seconds between the first two lines au... | 0 |

61,998 | 8,565,268,343 | IssuesEvent | 2018-11-09 19:19:44 | honey-dos/web | https://api.github.com/repos/honey-dos/web | opened | Web Design | documentation good first issue help wanted | If you long press a list or todo item, it brings up the same bottom window pane to edit your object and it has the fields all populated with the data from the todo object.

```complex-add-list-todo```

by @leofeyer

Sunday Jun 29, 2014 at 19:33 GMT

Right now, Symfony does not fully support the custom route ... | 1.0 | Cannot load resource ".". - > <a href="https://github.com/leofeyer"><img src="https://avatars.githubusercontent.com/u/1192057?v=3" align="left" width="42" height="42" hspace="10"></img></a> [Issue](https://github.com/contao/contao/issues/11) by @leofeyer

Sunday Jun 29, 2014 at 19:33 GMT

Right now, Symfony does not ful... | defect | cannot load resource by leofeyer sunday jun at gmt right now symfony does not fully support the custom route we are using for our front end router if you e g try to run app console cache clear on the command line it will throw an exception cannot load resource ... | 1 |

637,455 | 20,628,940,206 | IssuesEvent | 2022-03-08 03:11:41 | space-wizards/RobustToolbox | https://api.github.com/repos/space-wizards/RobustToolbox | opened | StringBuilder sandbox fail | Type: Discussion Size: Very Small Difficulty: 2-Medium Priority: 2-Before Release | ```

Sandbox violation: Access to method not allowed: [System.Runtime]System.Text.StringBuilder [System.Runtime]System.Text.StringBuilder.AppendLine([System.Runtime]System.Text.StringBuilder/AppendInterpolatedStringHandler&

```

Steps:

- Create stringbuilder from empty ctor

- Use AppendLine

- Fail

Creating str... | 1.0 | StringBuilder sandbox fail - ```

Sandbox violation: Access to method not allowed: [System.Runtime]System.Text.StringBuilder [System.Runtime]System.Text.StringBuilder.AppendLine([System.Runtime]System.Text.StringBuilder/AppendInterpolatedStringHandler&

```

Steps:

- Create stringbuilder from empty ctor

- Use Appen... | non_defect | stringbuilder sandbox fail sandbox violation access to method not allowed system text stringbuilder system text stringbuilder appendline system text stringbuilder appendinterpolatedstringhandler steps create stringbuilder from empty ctor use appendline fail creating stringbuilder with ... | 0 |

271,307 | 29,418,955,746 | IssuesEvent | 2023-05-31 01:05:26 | MidnightBSD/src | https://api.github.com/repos/MidnightBSD/src | closed | CVE-2019-6706 (High) detected in freebsd-srcrelease/12.3.0 - autoclosed | Mend: dependency security vulnerability | ## CVE-2019-6706 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>freebsd-srcrelease/12.3.0</b></p></summary>

<p>

<p>FreeBSD src tree (read-only mirror)</p>

<p>Library home page: <a hre... | True | CVE-2019-6706 (High) detected in freebsd-srcrelease/12.3.0 - autoclosed - ## CVE-2019-6706 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>freebsd-srcrelease/12.3.0</b></p></summary>

<p... | non_defect | cve high detected in freebsd srcrelease autoclosed cve high severity vulnerability vulnerable library freebsd srcrelease freebsd src tree read only mirror library home page a href found in head commit a href found in base branch stable vulne... | 0 |

29,747 | 11,771,448,463 | IssuesEvent | 2020-03-16 00:01:16 | Agent-Fennec/modmail | https://api.github.com/repos/Agent-Fennec/modmail | closed | Fix 1 Security, 3 Maintainability issues in core\thread.py | Stale enhancement security | [CodeFactor](https://www.codefactor.io/repository/github/agent-fennec/modmail/overview/master) found multiple issues:

#### Try, Except, Pass detected.

[core\thread.py:138

](https://www.codefactor.io/repository/github/agent-fennec/modmail/source/master/core/thread.py#L138)

#### No exception type(s) specified

[cor... | True | Fix 1 Security, 3 Maintainability issues in core\thread.py - [CodeFactor](https://www.codefactor.io/repository/github/agent-fennec/modmail/overview/master) found multiple issues:

#### Try, Except, Pass detected.

[core\thread.py:138

](https://www.codefactor.io/repository/github/agent-fennec/modmail/source/master/co... | non_defect | fix security maintainability issues in core thread py found multiple issues try except pass detected core thread py no exception type s specified core thread py catching too general exception exception core thread py | 0 |

74,876 | 25,378,806,834 | IssuesEvent | 2022-11-21 15:58:03 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | BUG: scipy.linalg.eig is missing documentation on the orthogonality of eigenvectors | defect scipy.linalg Documentation | ### Describe your issue.

Hello,

the documentation mentions that scipy.linalg.eig solves the generalised eigenvalue problem of a square matrix.

But it does not necessarily produce orthogonal eigenvectors, e.g. symmetric real matrix I attached here ([test_array.txt](https://github.com/AlexiaNomena/Tests_Files/tree/mai... | 1.0 | BUG: scipy.linalg.eig is missing documentation on the orthogonality of eigenvectors - ### Describe your issue.

Hello,

the documentation mentions that scipy.linalg.eig solves the generalised eigenvalue problem of a square matrix.

But it does not necessarily produce orthogonal eigenvectors, e.g. symmetric real matrix ... | defect | bug scipy linalg eig is missing documentation on the orthogonality of eigenvectors describe your issue hello the documentation mentions that scipy linalg eig solves generalised eigenvalue problem of a square matrix but it does not necessarily produce orthogonal eigenvectors e g symmetric real matrix ... | 1 |

54,331 | 13,578,687,422 | IssuesEvent | 2020-09-20 09:07:53 | lazydroid/auto-update-apk-client | https://api.github.com/repos/lazydroid/auto-update-apk-client | closed | SilentAutoUpdate is not silent | Priority-Medium Type-Defect auto-migrated | ```

What steps will reproduce the problem?

1.rooted the device

2.made a sample project

3.used autoUpdateApk - worked as it should

4.changed to silentAutoUpdate

What is the expected output? What do you see instead?

I expect the version to update without any user involvement, but the app is waiting

for the user to agree th... | 1.0 | SilentAutoUpdate is not silent - ```

What steps will reproduce the problem?

1.rooted the device

2.made a sample project

3.used autoUpdateApk - worked as it should

4.changed to silentAutoUpdat

What is the expected output? What do you see instead?

I expect the version to update without any user involved but the app is... | defect | silentautoupdate is not silent what steps will reproduce the problem rooted the device made a sample project used autoupdateapk worked as it should changed to silentautoupdat what is the expected output what do you see instead i expect the version to update without any user involved but the app is... | 1 |

995 | 2,594,418,545 | IssuesEvent | 2015-02-20 03:07:37 | BALL-Project/ball | https://api.github.com/repos/BALL-Project/ball | opened | PDB HIP inter-fragment bonds missing | C: FragmentDB P: major T: defect | **Reported by wolfgang on 2 May 41904562 13:42 UTC**

PDB ID: 1JEM

PDB Residue ID: HIP 16 (ND1-PHOSPHONOHISTIDINE)

is missing inter-fragment bonds to ILE 14; ALA 16.

I'm not sure if that will clash with our handling of AMBERs HIP (= PDB HIS) | 1.0 | PDB HIP inter-fragment bonds missing - **Reported by wolfgang on 2 May 41904562 13:42 UTC**

PDB ID: 1JEM

PDB Residue ID: HIP 16 (ND1-PHOSPHONOHISTIDINE)

is missing inter-fragment bonds to ILE 14; ALA 16.

I'm not sure if that will clash with our handling of AMBERs HIP (= PDB HIS) | defect | pdb hip inter fragment bonds missing reported by wolfgang on may utc pdb id pdb residue id hip phosphonohistidine is missing inter fragment bonds to ile ala i m not sure if that will clash with our handling of ambers hip pdb his | 1 |

811,200 | 30,278,640,064 | IssuesEvent | 2023-07-07 22:41:40 | libp2p/rust-libp2p | https://api.github.com/repos/libp2p/rust-libp2p | closed | ci: better caching for interop-tests docker build | priority:nicetohave difficulty:moderate help wanted | ## Description

<!-- Describe the enhancement that you are proposing.-->

For every PR, we build a docker container of the interop tests. The dockerfile uses `--mount=type=cache` for the `target` directory: https://github.com/libp2p/rust-libp2p/blob/dda6fc5dd74db7e00321ea21d0b06fe1b2da7f83/interop-tests/Dockerfile#L12

... | 1.0 | ci: better caching for interop-tests docker build - ## Description

<!-- Describe the enhancement that you are proposing.-->

For every PR, we build a docker container of the interop tests. The dockerfile uses `--mount=type=cache` for the `target` directory: https://github.com/libp2p/rust-libp2p/blob/dda6fc5dd74db7e003... | non_defect | ci better caching for interop tests docker build description for every pr we build a docker container of the interop tests the dockerfile uses mount type cache for the target directory this speeds up the build as long as the host system doesn t change i e when building locally for the ephemeral... | 0 |

290,315 | 32,060,695,420 | IssuesEvent | 2023-09-24 16:18:16 | hinoshiba/news | https://api.github.com/repos/hinoshiba/news | closed | [SecurityWeek] In Other News: New Analysis of Snowden Files, Yubico Goes Public, Election Hacking | SecurityWeek Stale |

Noteworthy stories that might have slipped under the radar: Snowden file analysis, Yubico starts trading, election hacking event.

The post [In Other News: New Analysis of Snowden Files, Yubico Goes Public, Election Hacking](https://www.securityweek.com/in-other-news-new-analysis-of-snowden-files-yubico-goes-public-e... | True | [SecurityWeek] In Other News: New Analysis of Snowden Files, Yubico Goes Public, Election Hacking -

Noteworthy stories that might have slipped under the radar: Snowden file analysis, Yubico starts trading, election hacking event.

The post [In Other News: New Analysis of Snowden Files, Yubico Goes Public, Election Ha... | non_defect | in other news new analysis of snowden files yubico goes public election hacking noteworthy stories that might have slipped under the radar snowden file analysis yubico starts trading election hacking event the post appeared first on | 0 |

533,127 | 15,577,309,008 | IssuesEvent | 2021-03-17 13:24:06 | schemathesis/schemathesis | https://api.github.com/repos/schemathesis/schemathesis | closed | [FEATURE] Control the "code to reproduce" section style from CLI | Difficulty: Medium Priority: Low Type: Feature | **Is your feature request related to a problem? Please describe.**

At the moment, Schemathesis CLI always produces Python code that the end-user should run to reproduce the problem. It might not be desired, especially if Python is not the main language of the app under test or the user doesn't want to use it.

**Des... | 1.0 | [FEATURE] Control the "code to reproduce" section style from CLI - **Is your feature request related to a problem? Please describe.**

At the moment, Schemathesis CLI always produces Python code that the end-user should run to reproduce the problem. It might not be desired, especially if Python is not the main language... | non_defect | control the code to reproduce section style from cli is your feature request related to a problem please describe at the moment schemathesis cli always produces python code that the end user should run to reproduce the problem it might not be desired especially if python is not the main language of the ... | 0 |
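
One way to picture the request is a single renderer that prints the same failing request in more than one style. The sketch below is an assumption-laden illustration, not Schemathesis code or its CLI: `render_reproduction` and its `style` parameter are hypothetical, and the only real APIs used are `shlex.quote` from the standard library and the call shape of `requests.request`.

```python
import shlex


def render_reproduction(method, url, headers=None, body=None, style="python"):
    """Render one HTTP request as a reproduction snippet in the chosen style."""
    headers = headers or {}
    if style == "curl":
        parts = ["curl", "-X", method.upper()]
        for name, value in headers.items():
            parts += ["-H", f"{name}: {value}"]
        if body is not None:
            parts += ["-d", body]
        parts.append(url)
        # Quote every argument so the command survives copy-paste into a shell.
        return " ".join(shlex.quote(part) for part in parts)
    if style == "python":
        args = [repr(method.upper()), repr(url)]
        if headers:
            args.append(f"headers={headers!r}")
        if body is not None:
            args.append(f"data={body!r}")
        return f"requests.request({', '.join(args)})"
    raise ValueError(f"unknown style: {style}")
```

A command-line flag could then select the `style` value, which is the kind of control the title of this request describes.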

81,657 | 31,233,490,438 | IssuesEvent | 2023-08-20 01:25:16 | microsoft/TypeScript | https://api.github.com/repos/microsoft/TypeScript | closed | parseIsolatedEntityName doesn't return correct pos | Not a Defect | ### 🔎 Search Terms

parseIsolatedEntityName,pos,invalid

### 🕗 Version & Regression Information

Fails on `typescript@5.1.6` and `typescript@next`.

### 💻 Code

```ts

import ts from "npm:typescript@next";

console.log(ts.parseIsolatedEntityName(" aa ", ts.ScriptTarget.ESNext));

```

### 🙁 Actual b... | 1.0 | parseIsolatedEntityName doesn't return correct pos - ### 🔎 Search Terms

parseIsolatedEntityName,pos,invalid

### 🕗 Version & Regression Information

Fails on `typescript@5.1.6` and `typescript@next`.

### 💻 Code

```ts

import ts from "npm:typescript@next";

console.log(ts.parseIsolatedEntityName(" aa "... | defect | parseisolatedentityname doesn t return correct pos 🔎 search terms parseisolatedentityname pos invalid 🕗 version regression information fails on typescript and typescript next 💻 code ts import ts from npm typescript next console log ts parseisolatedentityname aa ... | 1 |

294,996 | 9,064,231,069 | IssuesEvent | 2019-02-14 00:09:57 | samiha-rahman/SOEN341 | https://api.github.com/repos/samiha-rahman/SOEN341 | closed | Set up required infrastructure on the back end | 1 SP Priority: HIGH Risk: LOW back end epic | Set up the required infrastructure on the back end side of WordPress according to needs to support development of further features.

Relevant user stories:

- [x] Edit user registration procedure and create new user role #15

- [x] Create new post type #20 | 1.0 | Set up required infrastructure on the back end - Set up the required infrastructure on the back end side of WordPress according to needs to support development of further features.

Relevant user stories:

- [x] Edit user registration procedure and create new user role #15

- [x] Create new post type #20 | non_defect | set up required infrastructure on the back end set up the required infrastructure on the back end side of wordpress according to needs to support development of further features relevant user stories edit user registration procedure and create new user role create new post type | 0 |

43,638 | 7,056,810,188 | IssuesEvent | 2018-01-04 14:17:32 | janephp/openapi | https://api.github.com/repos/janephp/openapi | closed | No Host/Scheme in generated resources | documentation | Hi

with the current release of openapi, it does not generate a complete $url in the generated resource/* files.

This raises an issue with guzzle/curl, which throws the following exception:

```

URL error 3: <url> malformed (see http://curl.haxx.se/libcurl/c/libcurl-errors.html)" when emitting request: "<method> ... | 1.0 | No Host/Scheme in generated resources - Hi

with the current release of openapi, it does not generate a complete $url in the generated resource/* files.

This raises an issue with guzzle/curl, which throws the following exception:

```

URL error 3: <url> malformed (see http://curl.haxx.se/libcurl/c/libcurl-errors.... | non_defect | no host scheme in generated resources hi with the current release of openapi it does not generate a complete url in the generated resource files this raises a issue with guzzle curl which throw s the following exception url error malformed see when emitting request we curren... | 0 |

81,290 | 30,783,544,248 | IssuesEvent | 2023-07-31 11:45:24 | vector-im/element-x-android | https://api.github.com/repos/vector-im/element-x-android | opened | Banner about actions in previous session after restarting app | T-Defect | ### Steps to reproduce

1. Experience #1006 and leave room

2. Restart app

### Outcome

#### What did you expect?

No banner saying that I've left a room after an app restart

#### What happened instead?

See banner saying that I've left room (still see that room which I am not a member of in the timeline)

### Your... | 1.0 | Banner about actions in previous session after restarting app - ### Steps to reproduce

1. Experience #1006 and leave room

2. Restart app

### Outcome

#### What did you expect?

No banner saying that I've left a room after an app restart

#### What happened instead?

See banner saying that I've left room (still see... | defect | banner about actions in previous session after restarting app steps to reproduce experience and leave room restart app outcome what did you expect no banner saying that i ve left a room after an app restart what happened instead see banner saying that i ve left room still see th... | 1 |

249,780 | 7,964,690,210 | IssuesEvent | 2018-07-13 22:50:02 | samsung-cnct/kraken-lib | https://api.github.com/repos/samsung-cnct/kraken-lib | closed | ec2_elb_facts sometimes fails fatally | bug kraken-lib priority-p2 | ```

FAILED - RETRYING: Wait for ELBs to be deleted (109 retries left).

An exception occurred during task execution. To see the full traceback, use -vvv. The error was: </ErrorResponse>

fatal: [localhost]: FAILED! => {"attempts": 13, "changed": false, "failed": true, "msg": "LoadBalancerNotFound: Cannot find Load Bal... | 1.0 | ec2_elb_facts sometimes fails fatally - ```

FAILED - RETRYING: Wait for ELBs to be deleted (109 retries left).

An exception occurred during task execution. To see the full traceback, use -vvv. The error was: </ErrorResponse>

fatal: [localhost]: FAILED! => {"attempts": 13, "changed": false, "failed": true, "msg": "Lo... | non_defect | elb facts sometimes fails fatally failed retrying wait for elbs to be deleted retries left an exception occurred during task execution to see the full traceback use vvv the error was fatal failed attempts changed false failed true msg loadbalancernotfound cannot fin... | 0 |

40,480 | 2,868,922,250 | IssuesEvent | 2015-06-05 21:58:51 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | Use friendlier YAML formatting for pubspec.lock | enhancement Fixed Priority-Medium | <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#5104_

----

The lock file that pub generates doesn't use any whites... | 1.0 | Use friendlier YAML formatting for pubspec.lock - <a href="https://github.com/munificent"><img src="https://avatars.githubusercontent.com/u/46275?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [munificent](https://github.com/munificent)**

_Originally opened as dart-lang/sdk#5104_

----

The l... | non_defect | use friendlier yaml formatting for pubspec lock issue by originally opened as dart lang sdk the lock file that pub generates doesn t use any whitespace which makes it hard to read and unfriendly in diffs we should try to write a cleaner more human friendly yaml file | 0 |

536,117 | 15,704,466,074 | IssuesEvent | 2021-03-26 15:01:48 | CMSCompOps/WmAgentScripts | https://api.github.com/repos/CMSCompOps/WmAgentScripts | opened | Make a case insensitive check for pilot keyword check in SubRequestType | New Feature Priority: High | **Impact of the new feature**

pilot workflows

**Is your feature request related to a problem? Please describe.**

PdmV provides `SubRequestType` with `Pilot` value for pilot workflows whereas Unified checks for `pilot` value: https://github.com/CMSCompOps/WmAgentScripts/blob/master/utils.py#L8138

**Describe the... | 1.0 | Make a case insensitive check for pilot keyword check in SubRequestType - **Impact of the new feature**

pilot workflows

**Is your feature request related to a problem? Please describe.**

PdmV provides `SubRequestType` with `Pilot` value for pilot workflows whereas Unified checks for `pilot` value: https://github.c... | non_defect | make a case insensitive check for pilot keyword check in subrequesttype impact of the new feature pilot workflows is your feature request related to a problem please describe pdmv provides subrequesttype with pilot value for pilot workflows whereas unified checks for pilot value describe... | 0 |
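
The case-insensitive check requested in the `SubRequestType` record above could look roughly like the following sketch. The helper name `is_pilot` and the dict-like shape of the request are assumptions made for illustration and are not taken from WmAgentScripts; only the `SubRequestType` key and the `Pilot`/`pilot` values come from the issue text.

```python
def is_pilot(request):
    """Return True if the request's SubRequestType names a pilot workflow.

    `request` is assumed to be a dict-like workflow description
    (a hypothetical shape, not the actual Unified data model).
    """
    value = request.get("SubRequestType")
    if not isinstance(value, str):
        # Missing or non-string values are treated as "not a pilot".
        return False
    # Normalise case and surrounding whitespace so that
    # "Pilot", "PILOT" and "pilot" are all accepted.
    return value.strip().lower() == "pilot"
```

With this sketch, `is_pilot({"SubRequestType": "Pilot"})` and `is_pilot({"SubRequestType": "pilot"})` both return `True`, while a request without the key returns `False`.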

310,677 | 9,522,753,599 | IssuesEvent | 2019-04-27 11:31:36 | containrrr/watchtower | https://api.github.com/repos/containrrr/watchtower | closed | Log Level Issue | Priority: Medium Status: Available Type: Question | Hey,

I used v2tec Watchtower for a long time.

Was it renamed? Moved? Is there info about that somewhere?

With v2tec I always got a log entry for "First Run", and I also got a mail for the "First Run" log level entry.

I changed to containrrr/watchtower and when I open the log there is only one line "Attaching to watch... | 1.0 | Log Level Issue - Hey,

I used v2tec Watchtower for a long time.

Was it renamed? Moved? Is there info about that somewhere?

With v2tec I always got a log entry for "First Run", and I also got a mail for the "First Run" log level entry.

I changed to containrrr/watchtower and when I open the log there is only one line "... | non_defect | log level issue hey i used watchtower a longer time was it renamed moved is there somewhere info about that with i always got a log entry for first run and also got a mail for the first run log level entry i changed to containrrr watchtower and when i open the log there only is one line attachin... | 0 |

75,968 | 26,184,901,442 | IssuesEvent | 2023-01-02 21:53:55 | dotCMS/core | https://api.github.com/repos/dotCMS/core | closed | Relate Content screen thumbnails are not scaled correctly | Type : Defect dotCMS : User Interface WF: Can't reproduce Team : Lunik Next Release | [](https://mrkr.io/s/6392468927507e7e77984acc/0)

## Problem Statement

Relate Content screen thumbnails are not scaled correctly

## Steps to Reproduce

See screenshot

## Acceptance Criteria

Image should be cropped not stretched

## Wir... | 1.0 | Relate Content screen thumbnails are not scaled correctly - [](https://mrkr.io/s/6392468927507e7e77984acc/0)

## Problem Statement

Relate Content screen thumbnails are not scaled correctly

## Steps to Reproduce

See screenshot

## Acce... | defect | relate content screen thumbnails are not scaled correctly problem statement relate content screen thumbnails are not scaled correctly steps to reproduce see screenshot acceptance criteria image should be cropped not stretched wireframes designs prototypes testing notes assumptions ... | 1 |

82,148 | 23,687,266,366 | IssuesEvent | 2022-08-29 07:36:00 | lowRISC/opentitan | https://api.github.com/repos/lowRISC/opentitan | closed | [bazel, sca] Ensure that englishbreakfast CI builds can use Bazel to build their SW artifacts. | Component:Software Component:Tooling Priority:P1 Component:CI SW:Bazel Adoption Requirement SW:Build System | See https://github.com/lowRISC/opentitan/pull/12083, which enables doing this (but does not turn it on in CI).

I don't understand what SCA CI is doing, so I would appreciate guidance here, @vogelpi | 1.0 | [bazel, sca] Ensure that englishbreakfast CI builds can use Bazel to build their SW artifacts. - See https://github.com/lowRISC/opentitan/pull/12083, which enables doing this (but does not turn it on in CI).

I don't understand what SCA CI is doing, so I would appreciate guidance here, @vogelpi | non_defect | ensure that englishbreakfast ci builds can use bazel to build their sw artifacts see which enables doing this but does not turn it on in ci i don t understand what sca ci is doing so i would appreciate guidance here vogelpi | 0 |

831,863 | 32,063,873,544 | IssuesEvent | 2023-09-24 23:54:04 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | closed | Replay feature request (The Trailer Park) | Status: Help Wanted Priority: 2-Before Release Issue: Feature Request Difficulty: 2-Medium | ## Description

<!-- Explain your issue in detail. Issues without proper explanation are liable to be closed by maintainers. -->

from the sacred mouth of bobda

- [ ] An easy way to tell what round number it is during a round as both a player and an admin, bonus points for it telling you round length too

- [ ] 16:9... | 1.0 | Replay feature request (The Trailer Park) - ## Description

<!-- Explain your issue in detail. Issues without proper explanation are liable to be closed by maintainers. -->

from the sacred mouth of bobda

- [ ] An easy way to tell what round number it is during a round as both a player and an admin, bonus points for... | non_defect | replay feature request the trailer park description from the sacred mouth of bobda an easy way to tell what round number it is during a round as both a player and an admin bonus points for it telling you round length too viewport in replays hiding your ghost and other ghosts in replays ... | 0 |

586,260 | 17,573,740,588 | IssuesEvent | 2021-08-15 07:34:10 | fosscord/fosscord-server | https://api.github.com/repos/fosscord/fosscord-server | opened | Can’t accept vanity urls | bug api high priority | We have two options to fix it, either store vanity urls in the Invites collection or also check for vanity urls in the accept invite route. | 1.0 | Can’t accept vanity urls - We have two options to fix it, either store vanity urls in the Invites collection or also check for vanity urls in the accept invite route. | non_defect | can’t accept vanity urls we have two options to fix it either store vanity urls in the invites collection or also check for vanity urls in the accept invite route | 0 |

60,953 | 17,023,565,804 | IssuesEvent | 2021-07-03 02:41:07 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | osm2pgsql accepts multiple conflicting arguments | Component: nominatim Priority: major Resolution: invalid Type: defect | **[Submitted to the original trac issue database at 11.16am, Sunday, 21st March 2010]**

By accident, I specified -O twice but got no warning.

This is bad:

./osm2pgsql -O gazetteer -U gis -H localhost -lsc -O pgsql -C 2000 -d gisdb test.osm.bz2

osm2pgsql should check for duplicate and conflicting arguments... | 1.0 | osm2pgsql accepts multiple conflicting arguments - **[Submitted to the original trac issue database at 11.16am, Sunday, 21st March 2010]**

By accident, I specified -O twice but got no warning.

This is bad:

./osm2pgsql -O gazetteer -U gis -H localhost -lsc -O pgsql -C 2000 -d gisdb test.osm.bz2

osm2pgsql s... | defect | accepts multiple conflicting arguments by accident i specified the o twice but got no warning this is bad o gazetteer u gis h localhost lsc o pgsql c d gisdb test osm should check for duplicate and conflicting arguments i can make a patch for this if needed mike | 1 |
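
The duplicate-option check suggested in the osm2pgsql record above can be illustrated with a short Python sketch. This is not osm2pgsql's own code and does not reflect how it parses arguments; the function name `find_repeated_options` is hypothetical, and the sketch makes the simplifying assumption that every argument starting with `-` is an option flag (bundled short flags and negative-number values are not handled).

```python
from collections import Counter


def find_repeated_options(argv):
    """Return option flags that occur more than once, in first-seen order."""
    # Simplification: any argument that starts with "-" counts as a flag.
    flags = [arg for arg in argv if arg.startswith("-") and arg != "-"]
    counts = Counter(flags)
    repeated = []
    for flag in flags:
        if counts[flag] > 1 and flag not in repeated:
            repeated.append(flag)
    return repeated


# The command line quoted in the issue, without the program name.
args = ("-O gazetteer -U gis -H localhost -lsc -O pgsql "
        "-C 2000 -d gisdb test.osm.bz2").split()
print(find_repeated_options(args))
```

A caller could print a warning or exit with an error whenever the returned list is non-empty; which of the two is appropriate is a design choice the issue leaves open.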

12,881 | 2,723,939,234 | IssuesEvent | 2015-04-14 15:15:37 | LarsTi/opcua4j | https://api.github.com/repos/LarsTi/opcua4j | closed | testGetChildren(bpi.most.server.services.opcua.server.MostNodeManagerTest) FAILS | auto-migrated Priority-Medium Type-Defect | ```

1. Checked out the MOST from GIT @ ..tuwien.ac.at

2. from Eclipse: maven clean, maven build

WIN7, JDK 1.6

Looked at MostOpcUaStandaloneServer.java and found:

private void run() {

String endpointUrl = "opc.tcp://127.0.0.1:6001/mostopcua";

String keyPhrase = "mostrulez";

String certPath = "META-INF/pki/serv... | 1.0 | testGetChildren(bpi.most.server.services.opcua.server.MostNodeManagerTest) FAILS - ```

1. Checked out the MOST from GIT @ ..tuwien.ac.at

2. from Eclipse: maven clean, maven build

WIN7, JDK 1.6

Looked at MostOpcUaStandaloneServer.java and found:

private void run() {

String endpointUrl = "opc.tcp://127.0.0.1:6001/m... | defect | testgetchildren bpi most server services opcua server mostnodemanagertest fails checked out the most from git tuwien ac at from eclipse maven clean maven build jdk looked at mostopcuastandaloneserver java and found private void run string endpointurl opc tcp mostopcua... | 1 |

9,527 | 12,500,607,182 | IssuesEvent | 2020-06-01 22:43:58 | qgis/QGIS | https://api.github.com/repos/qgis/QGIS | closed | Using Fields Mapper parameter in Modeler throws "NotImplementedError" | Bug Processing | Steps to reproduce:

1. Open Processing Modeler.

2. From the Inputs panel, drag and drop a Fields Mapper parameter to the model.

3. Once you give the parameter a name and you click on OK, you get the error:

```

NotImplementedError

QgsProcessingParameterDefinition.type() is abstract and must be overridden

... | 1.0 | Using Fields Mapper parameter in Modeler throws "NotImplementedError" - Steps to reproduce:

1. Open Processing Modeler.

2. From the Inputs panel, drag and drop a Fields Mapper parameter to the model.

3. Once you give the parameter a name and you click on OK, you get the error:

```

NotImplementedError

QgsPr... | non_defect | using fields mapper parameter in modeler throws notimplementederror steps to reproduce open processing modeler from the inputs panel drag and drop a fields mapper parameter to the model once you give the parameter a name and you click on ok you get the error notimplementederror qgspr... | 0 |

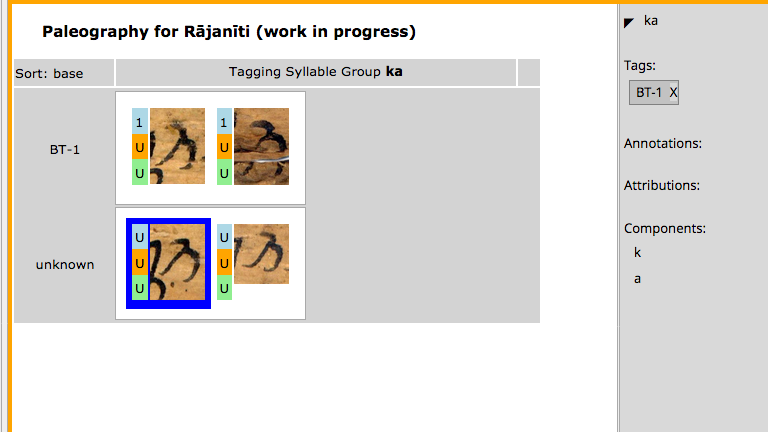

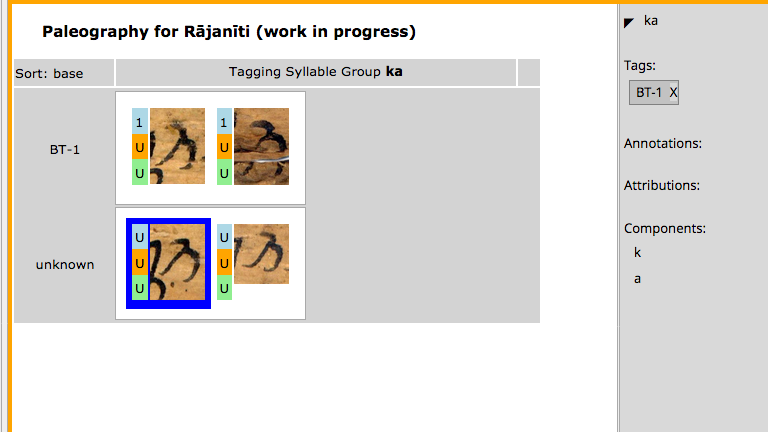

126,047 | 10,374,396,840 | IssuesEvent | 2019-09-09 09:30:58 | readsoftware/ReadIssues | https://api.github.com/repos/readsoftware/ReadIssues | closed | Paleographic tagging does not update properly | Bug Priority 1 Test V1RC | Tagging a syllable with a paleographic tag in the properties window does not refresh the table layout.

| 1.0 | Paleographic tagging does not update properly - Tagging a syllable with a paleographic tag in the properties window does not refresh the table layout.

| non_defect | paleographic tagging does not update properly when tagging in the properties window a syllable using a paleographic tag does not refresh the table layout | 0 |