Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 757) | labels (stringlengths, 4 to 664) | body (stringlengths, 3 to 261k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

144,430 | 11,616,182,295 | IssuesEvent | 2020-02-26 15:19:50 | godaddy-wordpress/coblocks | https://api.github.com/repos/godaddy-wordpress/coblocks | closed | ISBAT ensure the integrity of the Gallery Masonry block through automated tests | [Type] Tests | Automated tests need to be added to the `gallery-masonry` block which follow the groundwork from #835.

#### Tests Required:

- [ ] The save function

- [x] Block transforms #1200

- [x] Block deprecation #967

#### AC

- Any attribute that modifies the serialized block output needs to have tests.

- Any existing t... | 1.0 | ISBAT ensure the integrity of the Gallery Masonry block through automated tests - Automated tests need to be added to the `gallery-masonry` block which follow the groundwork from #835.

#### Tests Required:

- [ ] The save function

- [x] Block transforms #1200

- [x] Block deprecation #967

#### AC

- Any attribut... | non_defect | isbat ensure the integrity of the gallery masonry block through automated tests automated tests need to be added to the gallery masonry block which follow the groundwork from tests required the save function block transforms block deprecation ac any attribute that modifi... | 0 |

418,492 | 12,198,594,795 | IssuesEvent | 2020-04-29 23:15:44 | CESARBR/knot-babeltower | https://api.github.com/repos/CESARBR/knot-babeltower | closed | Add auth device | enhancement priority: medium | As a platform user, I want to authenticate my device on the fog.

- [ ] Add event listener to authenticate a device

- [ ] Authenticate the device on the things service

- [ ] Update documentation | 1.0 | Add auth device - As a platform user, I want to authenticate my device on the fog.

- [ ] Add event listener to authenticate a device

- [ ] Authenticate the device on the things service

- [ ] Update documentation | non_defect | add auth device as a platform user i want to authenticate my device on the fog add event listener to authenticate a device authenticate the device on the things service update documentation | 0 |

700,502 | 24,062,877,490 | IssuesEvent | 2022-09-17 03:58:02 | carpentries/amy | https://api.github.com/repos/carpentries/amy | closed | Require dates for role | priority: essential | For all community roles, dates should be required.

If inactive, both the start and end date should be required. ~~Additionally, the current date can not be in between the start and end dates.~~

If active, the start date should be required. The end date should display as `present` rather than as `???`.

EDIT @pba... | 1.0 | Require dates for role - For all community roles, dates should be required.

If inactive, both the start and end date should be required. ~~Additionally, the current date can not be in between the start and end dates.~~

If active, the start date should be required. The end date should display as `present` rather th... | non_defect | require dates for role for all community roles dates should be required if inactive both the start and end date should be required additionally the current date can not be in between the start and end dates if active the start date should be required the end date should display as present rather th... | 0 |
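The date rules requested in the record above can be sketched as a small validator. This is only an illustration of the stated rules, not code from the AMY project: the function names `validate_role_dates` and `display_end_date`, the `inactive` flag, and the error messages are all hypothetical, and it assumes `present` is shown only when an active role has no end date.

```python
from datetime import date
from typing import List, Optional


def validate_role_dates(inactive: bool,
                        start: Optional[date],
                        end: Optional[date]) -> List[str]:
    """Return a list of validation errors (hypothetical helper, not AMY code)."""
    errors = []
    # The start date is required for every role, active or inactive.
    if start is None:
        errors.append("start date is required")
    # Inactive roles additionally need an end date.
    if inactive and end is None:
        errors.append("end date is required for inactive roles")
    return errors


def display_end_date(end: Optional[date]) -> str:
    """Show a missing end date as 'present' (assumed to replace '???')."""
    return end.isoformat() if end is not None else "present"
```

An active role with only a start date passes validation and renders its end date as `present`; an inactive role without an end date is rejected.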

70,671 | 23,281,992,292 | IssuesEvent | 2022-08-05 13:01:00 | vector-im/element-call | https://api.github.com/repos/vector-im/element-call | opened | Scrolling the bottom participants videos in spotlight also scrolls the main "spotlight" video | T-Defect | ### Steps to reproduce

1. Where are you starting? What can you see?

Group call with more than 4 ppl

2. What do you click?

I click on the spotlight view and then scroll the list of videos horizontally on the bottom.

### Outcome

#### What did you expect?

I expected the big highlighted video to stay where it is a... | 1.0 | Scrolling the bottom participants videos in spotlight also scrolls the main "spotlight" video - ### Steps to reproduce

1. Where are you starting? What can you see?

Group call with more than 4 ppl

2. What do you click?

I click on the spotlight view and then scroll the list of videos horizontally on the bottom.

##... | defect | scrolling the bottom participants videos in spotlight also scrolls the main spotlight video steps to reproduce where are you starting what can you see group call with more than ppl what do you click i click on the spotlight view and then scroll the list of videos horizontally on the bottom ... | 1 |

48,509 | 13,106,233,127 | IssuesEvent | 2020-08-04 13:32:36 | hazelcast/hazelcast-jet | https://api.github.com/repos/hazelcast/hazelcast-jet | closed | Job with long stage name and metrics after job completion doesn't terminate | defect metrics | Reproducer:

```java

JetInstance jet = Jet.newJetInstance();

Pipeline p = Pipeline.create();

p.readFrom(TestSources.items(1))

.writeTo(Sinks.noop()).setName("verylongnameverylongnameverylongnameverylongnameverylongnameverylongnameverylongnameverylongnameverylongnameverylongnameverylongname");

Job job = jet.newJo... | 1.0 | Job with long stage name and metrics after job completion doesn't terminate - Reproducer:

```java

JetInstance jet = Jet.newJetInstance();

Pipeline p = Pipeline.create();

p.readFrom(TestSources.items(1))

.writeTo(Sinks.noop()).setName("verylongnameverylongnameverylongnameverylongnameverylongnameverylongnameverylo... | defect | job with long stage name and metrics after job completion doesn t terminate reproducer java jetinstance jet jet newjetinstance pipeline p pipeline create p readfrom testsources items writeto sinks noop setname verylongnameverylongnameverylongnameverylongnameverylongnameverylongnameverylo... | 1 |

10,805 | 2,622,190,855 | IssuesEvent | 2015-03-04 00:22:59 | byzhang/cudpp | https://api.github.com/repos/byzhang/cudpp | closed | cudppSort error for a large array | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. tar -xzvf sort_test.tar.gz

2. cd sort_test

3. make

4. ./testsort 1000000

What is the expected output? What do you see instead?

expected :

before sort

radix sort : 0.00833379 s 1000000 elements

what I see :

before sort

radix sort : 0.00833379 s 1000000 elements

sort error... | 1.0 | cudppSort error for a large array - ```

What steps will reproduce the problem?

1. tar -xzvf sort_test.tar.gz

2. cd sort_test

3. make

4. ./testsort 1000000

What is the expected output? What do you see instead?

expected :

before sort

radix sort : 0.00833379 s 1000000 elements

what I see :

before sort

radix sort : 0.00... | defect | cudppsort error for a large array what steps will reproduce the problem tar xzvf sort test tar gz cd sort test make testsort what is the expected output what do you see instead expected before sort radix sort s elements what i see before sort radix sort s elements sort e... | 1 |

632,467 | 20,198,050,887 | IssuesEvent | 2022-02-11 12:34:29 | therealbluepandabear/PyxlMoose | https://api.github.com/repos/therealbluepandabear/PyxlMoose | closed | [Improvement] Add clear labeling to the 'FindAndReplaceFragment' | low priority improvement | #### Improvement description

Add clear labelling to the 'FindAndReplaceFragment' showing which color will be found, and which color it will be replaced with.

#### Why is this improvement important to add?

Because some users are getting confused as to what the two blocks represent at the bottom of the 'FindAndRepla... | 1.0 | [Improvement] Add clear labeling to the 'FindAndReplaceFragment' - #### Improvement description

Add clear labelling to the 'FindAndReplaceFragment' showing which color will be found, and which color it will be replaced with.

#### Why is this improvement important to add?

Because some users are getting confused as ... | non_defect | add clear labeling to the findandreplacefragment improvement description add clear labelling to the findandreplacefragment showing which color will be found and which color it will be replaced with why is this improvement important to add because some users are getting confused as to what the ... | 0 |

19,089 | 11,139,298,272 | IssuesEvent | 2019-12-21 03:46:28 | ritsec/cluster-duck | https://api.github.com/repos/ritsec/cluster-duck | closed | Deploy OpenStack | club management new-service | Deploy OpenStack

==============

OpenStack needs to be deployed on our local cluster using OpenStack-Ansible.

Depends on

-----

This issue depends on the following issues:

- [x] #4

- [x] #6

- [x] #7

Tasks

-----

All of the following tasks must be complete before this issue can be closed. Be sure to... | 1.0 | Deploy OpenStack - Deploy OpenStack

==============

OpenStack needs to be deployed on our local cluster using OpenStack-Ansible.

Depends on

-----

This issue depends on the following issues:

- [x] #4

- [x] #6

- [x] #7

Tasks

-----

All of the following tasks must be complete before this issue can be... | non_defect | deploy openstack deploy openstack openstack needs to be deployed on our local cluster using openstack ansible depends on this issue depends on the following issues tasks all of the following tasks must be complete before this issue can be close... | 0 |

48,033 | 13,067,405,814 | IssuesEvent | 2020-07-31 00:21:03 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | [steamshovel] PyArtist.addCleanupAction doesn't work on bound functions (Trac #1687) | Migrated from Trac combo core defect | Using PyArtists.addCleanupAction with a bound function to the same object doesn't actually result in anything (e.g. writing to a log) happening. A bound function on a different object results in segfaults.

''Example 1: Same object''

```text

class MyArtists(PyArtist):

def __init__(self):

...

self.addCleanupA... | 1.0 | [steamshovel] PyArtist.addCleanupAction doesn't work on bound functions (Trac #1687) - Using PyArtists.addCleanupAction with a bound function to the same object doesn't actually result in anything (e.g. writing to a log) happening. A bound function on a different object results in segfaults.

''Example 1: Same object''... | defect | pyartist addcleanupaction doesn t work on bound functions trac using pyartists addcleanupaction with a bound function to the same object doesn t actually result in anything e g writing to a log happening a bound function on a different object results in segfaults example same object text class... | 1 |

82,196 | 7,833,863,013 | IssuesEvent | 2018-06-16 04:42:33 | scalatra/scalatra | https://api.github.com/repos/scalatra/scalatra | closed | Multi project builds, run `resourceBasePath` different to test one | test | Hi,

I have a very simple [hello world app](https://github.com/plippe/scalatra-hello-resource). The main purpose of the app was to highlight how to read resource files. Those resources are stored in `src/main/webapp/WEB-INF`, and accessed with `getServletContext.getResource`. This worked perfectly until I tried with ... | 1.0 | Multi project builds, run `resourceBasePath` different to test one - Hi,

I have a very simple [hello world app](https://github.com/plippe/scalatra-hello-resource). The main purpose of the app was to highlight how to read resource files. Those resources are stored in `src/main/webapp/WEB-INF`, and accessed with `getS... | non_defect | multi project builds run resourcebasepath different to test one hi i have a very simple the main purpose of the app was to highlight how to read resource files those resources are stored in src main webapp web inf and accessed with getservletcontext getresource this worked perfectly until i tried wi... | 0 |

50,871 | 13,187,921,739 | IssuesEvent | 2020-08-13 05:02:19 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | [recclasses] Non-offline dependencies (Trac #1567) | Migrated from Trac combo reconstruction defect | Currently recclasses depends on portia (r142454) and ophelia(r142462). This is breakage that needs to be fixed. One of the main reasons for the creation of the project was to provide a project with minimal dependencies that people could include into any meta-project. This project therefore can't have any dependencie... | 1.0 | [recclasses] Non-offline dependencies (Trac #1567) - Currently recclasses depends on portia (r142454) and ophelia(r142462). This is breakage that needs to be fixed. One of the main reasons for the creation of the project was to provide a project with minimal dependencies that people could include into any meta-projec... | defect | non offline dependencies trac currently recclasses depends on portia and ophelia this is breakage that needs to be fixed one of the main reasons for the creation of the project was to provide a project with minimal dependencies that people could include into any meta project this project therefore... | 1 |

237,091 | 26,078,788,409 | IssuesEvent | 2022-12-25 01:13:30 | kapseliboi/Node-Data | https://api.github.com/repos/kapseliboi/Node-Data | opened | CVE-2022-23541 (Medium) detected in jsonwebtoken-5.7.0.tgz | security vulnerability | ## CVE-2022-23541 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jsonwebtoken-5.7.0.tgz</b></p></summary>

<p>JSON Web Token implementation (symmetric and asymmetric)</p>

<p>Library ... | True | CVE-2022-23541 (Medium) detected in jsonwebtoken-5.7.0.tgz - ## CVE-2022-23541 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jsonwebtoken-5.7.0.tgz</b></p></summary>

<p>JSON Web To... | non_defect | cve medium detected in jsonwebtoken tgz cve medium severity vulnerability vulnerable library jsonwebtoken tgz json web token implementation symmetric and asymmetric library home page a href path to dependency file package json path to vulnerable library node modu... | 0 |

16,027 | 2,870,252,322 | IssuesEvent | 2015-06-07 00:37:36 | pdelia/away3d | https://api.github.com/repos/pdelia/away3d | opened | In away3d.loaders.utils.GeometryLibrary.as, _geometryArrayDirty should default to true | auto-migrated Priority-Medium Type-Defect | #81 Issue by __GoogleCodeExporter__, created on: 2015-04-24T07:51:39Z

```

If you try to load a Collada model, you can get an error if you call getGeometry() before

any addGeometry() calls, because _geometryArray has never been updated,

because _geometryArrayDirty defaults to false.

Fix:

private var _geometryArrayDirty:Boolean = t... | 1.0 | In away3d.loaders.utils.GeometryLibrary.as, _geometryArrayDirty should default to true - #81 Issue by __GoogleCodeExporter__, created on: 2015-04-24T07:51:39Z

```

If you try to load a Collada model, you can get an error if you call getGeometry() before

any addGeometry() calls, because _geometryArray has never been updated,

because _... | defect | in loaders utils geometrylibrary as geometryarraydirty should default to true issue by googlecodeexporter created on if try to load a collada model can get error if call getgeometry before any addgeometry calls because geometryarray has never been updated because geometryarraydirt... | 1 |
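The dirty-flag problem reported in the record above can be sketched in a few lines. This is a hypothetical Python analogue, not the away3d ActionScript source: the class and method names are made up for illustration, and it assumes the cached array stays unset until the first rebuild.

```python
class GeometryLibrary:
    """Hypothetical analogue of a library with a lazily rebuilt cache."""

    def __init__(self):
        self._geometries = {}
        self._geometry_array = None  # cache is not built yet
        # Start dirty so the first read builds the cache. If this started
        # as False, a read before any add_geometry() call would return None.
        self._geometry_array_dirty = True

    def add_geometry(self, name, geometry):
        self._geometries[name] = geometry
        self._geometry_array_dirty = True  # invalidate the cache

    def _update_geometry_array(self):
        self._geometry_array = list(self._geometries.values())
        self._geometry_array_dirty = False

    def get_geometry_array(self):
        # Rebuild only when something changed since the last read.
        if self._geometry_array_dirty:
            self._update_geometry_array()
        return self._geometry_array
```

Initialising the flag to `True` means a read on an empty library yields an empty list rather than an unset cache, which is the behaviour the reporter's one-line fix aims for.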

81,752 | 31,494,577,659 | IssuesEvent | 2023-08-31 00:30:49 | idaholab/malamute | https://api.github.com/repos/idaholab/malamute | closed | Apptainer failure: Cincotti example testing producing warnings that fail as errors. | c: infrastructure p: normal t: defect | ## Bug Description

The Cincotti example tests are failing during apptainer builds (See #93 CIVET results) because warnings are not allowed according to the MALAMUTE `testroot`. Oddly, this hasn't impacted other CI testing thus far, but likely should have caused more widespread failures.

## Steps to Reproduce

Run t... | 1.0 | Apptainer failure: Cincotti example testing producing warnings that fail as errors. - ## Bug Description

The Cincotti example tests are failing during apptainer builds (See #93 CIVET results) because warnings are not allowed according to the MALAMUTE `testroot`. Oddly, this hasn't impacted other CI testing thus far, b... | defect | apptainer failure cincotti example testing producing warnings that fail as errors bug description the cincotti example tests are failing during apptainer builds see civet results because warnings are not allowed according to the malamute testroot oddly this hasn t impacted other ci testing thus far bu... | 1 |

304,437 | 23,065,830,420 | IssuesEvent | 2022-07-25 13:51:32 | Gamify-IT/issues | https://api.github.com/repos/Gamify-IT/issues | closed | Meeting with Uwe regarding REST API design | documentation | Uwe wants to tell us how to design REST APIs

## DoD

- [x] Meeting was planned

- [x] Meeting took place

- [x] Everyone attending it knows how to design REST APIs

- [x] (Optional) A recording of the meeting can be referenced in the docs | 1.0 | Meeting with Uwe regarding REST API design - Uwe wants to tell us how to design REST APIs

## DoD

- [x] Meeting was planned

- [x] Meeting took place

- [x] Everyone attending it knows how to design REST APIs

- [x] (Optional) A recording of the meeting can be referenced in the docs | non_defect | meeting with uwe regarding rest api design uwe wants to tell us how to design rest apis dod meeting was planned meeting took place everyone attending it knows how to design rest apis optional a recording of the meeting can be referenced in the docs | 0 |

22,346 | 4,790,615,560 | IssuesEvent | 2016-10-31 09:22:47 | kss-node/kss-node | https://api.github.com/repos/kss-node/kss-node | reopened | LESS variables | documentation | I'm trying to configure my project to use KSSNode. So far I have been able to get it to generate a style guide, but my current issue is I am trying to figure out how to display LESS variables. I have tried finding the solution, but I had no luck. How would I display a LESS variable `@amber: rgb(255,193,7);` like this w... | 1.0 | LESS variables - I'm trying to configure my project to use KSSNode. So far I have been able to get it to generate a style guide, but my current issue is I am trying to figure out how to display LESS variables. I have tried finding the solution, but I had no luck. How would I display a LESS variable `@amber: rgb(255,193... | non_defect | less variables i m trying to configure my project to use kssnode so far i have been able to get it to generate a style guide but my current issue is i am trying to figure out how to display less variables i have tried finding the solution but i had no luck how would i display a less variable amber rgb ... | 0 |

12,600 | 2,711,986,200 | IssuesEvent | 2015-04-09 10:37:27 | codenameone/CodenameOne | https://api.github.com/repos/codenameone/CodenameOne | closed | Changing SpanLabel style via code | Priority-Medium Type-Defect | Original [issue 1102](https://code.google.com/p/codenameone/issues/detail?id=1102) created by codenameone on 2014-04-08T12:33:37.000Z:

It's not possible to change SpanLabel style via code (for instance when wanting to set Font via code or Foreground color)

Since it applies only to the container and not the actual text b... | 1.0 | Changing SpanLabel style via code - Original [issue 1102](https://code.google.com/p/codenameone/issues/detail?id=1102) created by codenameone on 2014-04-08T12:33:37.000Z:

It's not possible to change SpanLabel style via code (for instance when wanting to set Font via code or Foreground color)

Since it applies only to the... | defect | changing spanlabel style via code original created by codenameone on its not possible to change spanlabel style via code for instance when wanting to set font via code or foreground color since it applies only to the container and not the actual text beneath it probably the getstyle method shou... | 1 |

40,689 | 10,128,216,327 | IssuesEvent | 2019-08-01 12:15:13 | cakephp/cakephp | https://api.github.com/repos/cakephp/cakephp | opened | Prevent parse error | defect | This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* CakePHP Version: latest master on local dev

### What you did

Development on a controller and view with some helpers.

### What happened

Got `var_export does not handle circular references` in `src/Core/ObjectRegistry.php` line 147 `$msg .= var_e... | 1.0 | Prevent parse error - This is a (multiple allowed):

* [x] bug

* [ ] enhancement

* CakePHP Version: latest master on local dev

### What you did

Development on a controller and view with some helpers.

### What happened

Got `var_export does not handle circular references` in `src/Core/ObjectRegistry.php` li... | defect | prevent parse error this is a multiple allowed bug enhancement cakephp version latest master on local dev what you did development on a controller and view with some helpers what happened got var export does not handle circular references in src core objectregistry php line ... | 1 |

47,221 | 13,056,061,533 | IssuesEvent | 2020-07-30 03:32:20 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | failure in make tarball (Trac #155) | Migrated from Trac cmake defect | Adding a debug statement to install_shlib.pl, we get the following ( as an example ) :

system(/afs/ifh.de/user/i/iceprod/simulation/V02-02-05/src/cmake/install_shlib.pl, /afs/ifh.de/group/amanda/icecube/ports/RHEL_5.0_amd64/gcc-4.1.2/I3_PORTS/root-v5.17.06/lib . "/" . libCore.so.5, simulation.releases.V02-02-05.r0.Li... | 1.0 | failure in make tarball (Trac #155) - Adding a debug statement to install_shlib.pl, we get the following ( as an example ) :

system(/afs/ifh.de/user/i/iceprod/simulation/V02-02-05/src/cmake/install_shlib.pl, /afs/ifh.de/group/amanda/icecube/ports/RHEL_5.0_amd64/gcc-4.1.2/I3_PORTS/root-v5.17.06/lib . "/" . libCore.so.... | defect | failure in make tarball trac adding a debug statement to install shlib pl we get the following as an example system afs ifh de user i iceprod simulation src cmake install shlib pl afs ifh de group amanda icecube ports rhel gcc ports root lib libcore so simulation ... | 1 |

11,476 | 2,652,258,638 | IssuesEvent | 2015-03-16 16:23:48 | JoseExposito/touchegg | https://api.github.com/repos/JoseExposito/touchegg | closed | Touchegg on ubuntu 13.10 with Mate | auto-migrated Type-Defect | ```

I have a Clevo P150SM with a synaptics trackpad and I wish to have multi-touch

gestures for expo, scale, show desktop, change view port, etc...

I am using Ubuntu 13.10 with mate as a desktop environment and have synclient.

I searched the web and found touchegg and I installed it from the repos and it

did not wo... | 1.0 | Touchegg on ubuntu 13.10 with Mate - ```

I have a Clevo P150SM with a synaptics trackpad and I wish to have multi-touch

gestures for expo, scale, show desktop, change view port, etc...

I am using Ubuntu 13.10 with mate as a desktop environment and have synclient.

I searched the web and found touchegg and I installed... | defect | touchegg on ubuntu with mate i have a clevo with a synaptics trackpad and i wish to have multi touch gestures for expo scale show desktop change view port etc i am using ubuntu with mate as a desktop environment and have synclient i searched the web and found touchegg and i installed it from ... | 1 |

47,792 | 13,066,231,447 | IssuesEvent | 2020-07-30 21:15:50 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | spline-reco documentation improvements (Trac #1184) | Migrated from Trac combo reconstruction defect | docs don't list maintainer and no link to doxygen documentation

release notes don't contain trunk section

the only example script doesn't use test data and instead relies on user input for input filenames

Migrated from https://code.icecube.wisc.edu/ticket/1184

```json

{

"status": "closed",

"changetime": "2019-0... | 1.0 | spline-reco documentation improvements (Trac #1184) - docs don't list maintainer and no link to doxygen documentation

release notes don't contain trunk section

the only example script doesn't use test data and instead relies on user input for input filenames

Migrated from https://code.icecube.wisc.edu/ticket/1184

```json... | defect | spline reco documentation improvements trac docs don t list maintainer and no link to doxygen documentation release notes don t contain trunk section the only example script doesn t use test data and instead relies on user input for input filenames migrated from json status closed changetime... | 1 |

237,087 | 19,592,847,316 | IssuesEvent | 2022-01-05 14:46:15 | theislab/scvelo | https://api.github.com/repos/theislab/scvelo | closed | Unit test `neighbors.py` | enhancement testing | <!-- What kind of feature would you like to request? -->

## Description

For a more robust and reliable codebase, the code in `neighbors.py` needs to be unit tested. This is one step to achieving #226.

| 1.0 | Unit test `neighbors.py` - <!-- What kind of feature would you like to request? -->

## Description

For a more robust and reliable codebase, the code in `neighbors.py` needs to be unit tested. This is one step to achieving #226.

| non_defect | unit test neighbors py description for a more robust and reliable codebase the code in neighbors py needs to be unit tested this is one step to achieving | 0 |

16,661 | 2,925,158,117 | IssuesEvent | 2015-06-26 02:11:38 | FreeRADIUS/freeradius-server | https://api.github.com/repos/FreeRADIUS/freeradius-server | closed | Problems with radmin | defect v3.0.x v3.1.x | Hi,

I noticed that the radmin doesn't work in the v3.0.x/HEAD

1) The debug condition does nothing.

radmin> debug condition '(User-Name != "jorge")'

2) same in the show.

radmin> show debug condition

radmin>

| 1.0 | Problems with radmin - Hi,

I noticed that the radmin doesn't work in the v3.0.x/HEAD

1) The debug condition does nothing.

radmin> debug condition '(User-Name != "jorge")'

2) same in the show.

radmin> show debug condition

radmin>

| defect | problems with radmin hi i noticed that the radmin doesn t work in the x head the debug condition does nothing radmin debug condition user name jorge same in the show radmin show debug condition radmin | 1 |

41,432 | 6,905,517,747 | IssuesEvent | 2017-11-27 07:32:36 | godotengine/godot | https://api.github.com/repos/godotengine/godot | closed | Update 'Rect3' to AABB in the documentation | documentation | When I try to `godot -s tcp.gd` this script:

```python

#tcp.gd

extends SceneTree

var connection = null

var peerstream = null

var test = null

func _init():

print("Start client TCP")

# Connect

connection = StreamPeerTCP.new()

connection.connect_to_host("127.0.0.1", 8080)

peerstream = PacketPeerStre... | 1.0 | Update 'Rect3' to AABB in the documentation - When i try to `godot -s tcp.gd` this script :

```python

#tcp.gd

extends SceneTree

var connection = null

var peerstream = null

var test = null

func _init():

print("Start client TCP")

# Connect

connection = StreamPeerTCP.new()

connection.connect_to_host("1... | non_defect | update to aabb in the documentation when i try to godot s tcp gd this script python tcp gd extends scenetree var connection null var peerstream null var test null func init print start client tcp connect connection streampeertcp new connection connect to host ... | 0 |

103,581 | 16,602,927,509 | IssuesEvent | 2021-06-01 22:16:53 | gms-ws-sandbox/nibrs | https://api.github.com/repos/gms-ws-sandbox/nibrs | opened | CVE-2019-10072 (High) detected in multiple libraries | security vulnerability | ## CVE-2019-10072 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>tomcat-embed-core-9.0.19.jar</b>, <b>tomcat-embed-core-8.5.34.jar</b>, <b>tomcat-embed-core-8.5.20.jar</b></p></summa... | True | CVE-2019-10072 (High) detected in multiple libraries - ## CVE-2019-10072 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>tomcat-embed-core-9.0.19.jar</b>, <b>tomcat-embed-core-8.5.34.... | non_defect | cve high detected in multiple libraries cve high severity vulnerability vulnerable libraries tomcat embed core jar tomcat embed core jar tomcat embed core jar tomcat embed core jar core tomcat implementation library home page a href path to dep... | 0 |

59,115 | 17,015,831,330 | IssuesEvent | 2021-07-02 11:54:38 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | opened | Tirex-backend-manager spins with 100% CPU if all backends die | Component: tirex Priority: minor Type: defect | **[Submitted to the original trac issue database at 3.43pm, Saturday, 4th February 2012]**

If all backend processes die (with non restartable errors) and no more processes are running without tirex-backend-manager having received a sighup, then tirex-backend-manager sits there spinning with 100% CPU usage.

If all b... | 1.0 | Tirex-backend-manager spins with 100% CPU if all backends die - **[Submitted to the original trac issue database at 3.43pm, Saturday, 4th February 2012]**

If all backend processes die (with non restartable errors) and no more processes are running without tirex-backend-manager having received a sighup, then tirex-back... | defect | tirex backend manager spins with cpu if all backends die if all backend processes die with non restartable errors and no more processes are running without tirex backend manager having received a sighup then tirex backend manager sits there spinning with cpu usage if all backends exited with non r... | 1 |

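349,035 | 31,768,849,975 | IssuesEvent | 2023-09-12 10:26:22 | dieter-project/WithPT-BE | https://api.github.com/repos/dieter-project/WithPT-BE | opened | feat(gym) : add trainer-gym logic | ✨ Feature ✅ Test | ## Issue details

- Register a gym when a trainer signs up

- A gym can be added when a trainer registers a new member

- In class management, look up the trainer's gyms and member counts

| 1.0 | feat(gym) : add trainer-gym logic - ## Issue details

- Register a gym when a trainer signs up

- A gym can be added when a trainer registers a new member

- In class management, look up the trainer's gyms and member counts

| non_defect | feat gym add trainer gym logic issue details register a gym when a trainer signs up a gym can be added when a trainer registers a new member in class management look up the trainer s gyms and member counts | 0 |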

26,230 | 4,631,353,834 | IssuesEvent | 2016-09-28 15:15:11 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | opened | Parse/TryParse not support hex string | defect | ### Expected

v has value

### Actual

Exception

http://forums.bridge.net/forum/community/help/2828-tryparse-not-support-hex-string

### Steps To Reproduce

```csharp

public class App

{

public static void Main()

{

var v = uint.Parse("0xffff",16);

}

}

``` | 1.0 | Parse/TryParse not support hex string - ### Expected

v has value

### Actual

Exception

http://forums.bridge.net/forum/community/help/2828-tryparse-not-support-hex-string

### Steps To Reproduce

```csharp

public class App

{

public static void Main()

{

var v = uint.Parse("0xffff",16);

... | defect | parse tryparse not support hex string expected v has value actual exception steps to reproduce csharp public class app public static void main var v uint parse | 1 |
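The behaviour the reporter expects in the record above (a radix-16 parse that accepts a `0x`-prefixed string) can be sketched as follows. This is a Python illustration of the expected result only, not Bridge.NET or .NET code: the helper name `parse_uint`, the prefix handling, and the 32-bit range check are assumptions made for the example.

```python
def parse_uint(text: str, radix: int = 10) -> int:
    """Parse an unsigned 32-bit integer, tolerating a 0x prefix for radix 16."""
    digits = text.strip()
    # Drop an optional hexadecimal prefix before handing off to int().
    if radix == 16 and digits[:2].lower() == "0x":
        digits = digits[2:]
    value = int(digits, radix)  # raises ValueError on malformed input
    # Reject values outside the unsigned 32-bit range.
    if value < 0 or value > 0xFFFFFFFF:
        raise OverflowError("value does not fit in an unsigned 32-bit integer")
    return value
```

With this sketch, `parse_uint("0xffff", 16)` and `parse_uint("ffff", 16)` return the same value, which is what the "Expected" section of the report asks for.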

810,312 | 30,235,923,647 | IssuesEvent | 2023-07-06 10:12:03 | pendulum-chain/pendulum | https://api.github.com/repos/pendulum-chain/pendulum | closed | Update runtimes to `polkadot-v0.9.40` | priority:medium | We should update our runtime dependencies to version `polkadot-v0.9.38` so that we get rid of some dependency conflicts.

For example, we were running into problems because our spacewalk pallets use `jsonrpsee` with version >0.16.0 but other parachain pallets that are on `polkadot-v0.9.37` still use `jsonrpsee` version... | 1.0 | Update runtimes to `polkadot-v0.9.40` - We should update our runtime dependencies to version `polkadot-v0.9.38` so that we get rid of some dependency conflicts.

For example, we were running into problems because our spacewalk pallets use `jsonrpsee` with version >0.16.0 but other parachain pallets that are on `polkad... | non_defect | update runtimes to polkadot we should update our runtime dependencies to version polkadot so that we get rid of some dependency conflicts for example we were running into problems because our spacewalk pallets use jsonrpsee with version but other parachain pallets that are on polkadot ... | 0 |

172,465 | 27,285,733,284 | IssuesEvent | 2023-02-23 13:22:40 | vendure-ecommerce/vendure | https://api.github.com/repos/vendure-ecommerce/vendure | closed | Admin and Customer accounts with the same email | @vendure/core design 📐 v2 | Currently you can create a customer for `alice@example.com`, and an administrator for `alice@example.com`. This will create 2 different user entities and 2 different authentication methods. Then, if `alice@example.com` tries to log in as an admin, she can't because the `BaseAuthResolver` finds her customer authenticati... | 1.0 | Admin and Customer accounts with the same email - Currently you can create a customer for `alice@example.com`, and an administrator for `alice@example.com`. This will create 2 different user entities and 2 different authentication methods. Then, if `alice@example.com` tries to log in as an admin, she can't because the ... | non_defect | admin and customer accounts with the same email currently you can create a customer for alice example com and an administrator for alice example com this will create different user entities and different authentication methods then if alice example com tries to log in as an admin she can t because the ... | 0 |

65,313 | 19,345,858,639 | IssuesEvent | 2021-12-15 10:43:20 | vector-im/element-ios | https://api.github.com/repos/vector-im/element-ios | closed | Voice call sound is audible bidirectionally only on the third connection attempt | T-Defect A-VoIP S-Major | ### Steps to reproduce

1. I open the latest Element version on iPadOS 14.8.1 (or older versions)

2. During text chats with contacts, I press on the voice call icon to talk

3. On first attempt either I can hear them, but they cannot hear me, or vice versa

4. On second attempt either I can hear them, but they cannot... | 1.0 | Voice call sound is audible bidirectionally only on the third connection attempt - ### Steps to reproduce

1. I open the latest Element version on iPadOS 14.8.1 (or older versions)

2. During text chats with contacts, I press on the voice call icon to talk

3. On first attempt either I can hear them, but they cannot h... | defect | voice call sound is audible bidirectionally only on the third connection attempt steps to reproduce i open the latest element version on ipados or older versions during text chats with contacts i press on the voice call icon to talk on first attempt either i can hear them but they cannot he... | 1 |

27,406 | 5,003,178,616 | IssuesEvent | 2016-12-11 19:45:03 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | opened | GetGenericArguments returns null for generic type definition | defect | ### Expected

```js

Array

```

### Actual

```js

null

```

### Steps To Reproduce

[Deck](http://deck.net/6d4b2e89d25d3b84dd6a74c03ed9b609)

```cs

public class Base<T, U> { }

public class Derived<V> : Base<V, V> { }

public class Program

{

public static void Main()

{

Type derived... | 1.0 | GetGenericArguments returns null for generic type definition - ### Expected

```js

Array

```

### Actual

```js

null

```

### Steps To Reproduce

[Deck](http://deck.net/6d4b2e89d25d3b84dd6a74c03ed9b609)

```cs

public class Base<T, U> { }

public class Derived<V> : Base<V, V> { }

public class Program... | defect | getgenericarguments returns null for generic type definition expected js array actual js null steps to reproduce cs public class base public class derived base public class program public static void main type derivedty... | 1 |

68,783 | 21,896,607,456 | IssuesEvent | 2022-05-20 09:14:38 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Input area is not aligned with event body's first letter in a thread on IRC/modern layout | T-Defect S-Tolerable A-Message-Editing O-Frequent A-Threads | ### Steps to reproduce

1. Enable modern layout

2. Open a thread

3. Send a message

4. Edit the message

### Outcome

#### What did you expect?

The input area should be aligned with display name and other event tiles' content.

at layer=-5 | Component: osmarender Priority: minor Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 5.41pm, Thursday, 25th June 2009]**

Currently landuse= draws over the top of tunnels. Ideally it should render lower down.

Eg. Stratford Olympic park:

http://www.openstreetmap.org/?lat=51.36959&lon=-0.079&zoom=15&layers=0B00FTF

| 1.0 | OSMA: render landuse=* (eg. =brownfield) at layer=-5 - **[Submitted to the original trac issue database at 5.41pm, Thursday, 25th June 2009]**

Currently landuse= draws over the top of tunnels. Ideally it should render lower down.

Eg. Stratford Olympic park:

http://www.openstreetmap.org/?lat=51.36959&lon=-0.07... | defect | osma render landuse eg brownfield at layer currently landuse draws over the top of tunnels ideally it should render lower down eg stratford olympic park | 1 |

94,985 | 10,863,169,966 | IssuesEvent | 2019-11-14 14:40:18 | georchestra/mapstore2-georchestra | https://api.github.com/repos/georchestra/mapstore2-georchestra | opened | Review Documentation | documentation | - [ ] Remove sample Cadastrapp page

- [ ] Remove indices and tables

- [ ] Add logo (as in this [sample](https://docs.ckan.org/en/2.8/) ) | 1.0 | Review Documentation - - [ ] Remove sample Cadastrapp page

- [ ] Remove indices and tables

- [ ] Add logo (as in this [sample](https://docs.ckan.org/en/2.8/) ) | non_defect | review documentation remove sample cadastrapp page remove indices and tables add logo as in this | 0 |

48,593 | 13,157,184,595 | IssuesEvent | 2020-08-10 12:17:22 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Providing the primary key and using INSERT ... RETURNING returns null for emulating databases | C: Functionality E: All Editions P: Medium R: Worksforme T: Defect | When using the `INSERT ... RETURNING` syntax and providing the primary key, I've observed different behavior in case the feature is natively supported and if the feature is emulated.

E.g. doing

```sql

INSERT INTO foo(some_id) VALUES(1) RETURNING some_id

```

returns `1` for Postgres, but `null` for SQLite whic... | 1.0 | Providing the primary key and using INSERT ... RETURNING returns null for emulating databases - When using the `INSERT ... RETURNING` syntax and providing the primary key, I've observed different behavior in case the feature is natively supported and if the feature is emulated.

E.g. doing

```sql

INSERT INTO foo(s... | defect | providing the primary key and using insert returning returns null for emulating databases when using the insert returning syntax and providing the primary key i ve observed different behavior in case the feature is natively supported and if the feature is emulated e g doing sql insert into foo s... | 1 |

203,674 | 23,168,990,961 | IssuesEvent | 2022-07-30 12:04:49 | MatBenfield/news | https://api.github.com/repos/MatBenfield/news | closed | [SecurityWeek] GitHub Improves npm Account Security as Incidents Rise | SecurityWeek Stale |

**Microsoft-owned GitHub this week announced new npm security improvements, amid an increase in incidents involving malicious npm packages.**

[read more](https://www.securityweek.com/github-improves-npm-account-security-incidents-rise)

<https://www.securityweek.com/github-improves-npm-account-security-incidents-r... | True | [SecurityWeek] GitHub Improves npm Account Security as Incidents Rise -

**Microsoft-owned GitHub this week announced new npm security improvements, amid an increase in incidents involving malicious npm packages.**

[read more](https://www.securityweek.com/github-improves-npm-account-security-incidents-rise)

<https... | non_defect | github improves npm account security as incidents rise microsoft owned github this week announced new npm security improvements amid an increase in incidents involving malicious npm packages | 0 |

2,864 | 2,607,963,631 | IssuesEvent | 2015-02-26 00:41:20 | chrsmithdemos/leveldb | https://api.github.com/repos/chrsmithdemos/leveldb | closed | Add GNU/kFreeBSD support | auto-migrated Priority-Medium Type-Defect | ```

Hi!

The attached patch will allow leveldb to compile on kFreeBSD platforms.

Regards,

```

-----

Original issue reported on code.google.com by `quadris...@gmail.com` on 5 Sep 2011 at 7:56

Attachments:

* [1002-kfreebsd.patch](https://storage.googleapis.com/google-code-attachments/leveldb/issue-38/comment-0/1002-kf... | 1.0 | Add GNU/kFreeBSD support - ```

Hi!

The attached patch will allow leveldb to compile on kFreeBSD platforms.

Regards,

```

-----

Original issue reported on code.google.com by `quadris...@gmail.com` on 5 Sep 2011 at 7:56

Attachments:

* [1002-kfreebsd.patch](https://storage.googleapis.com/google-code-attachments/leveldb... | defect | add gnu kfreebsd support hi the attached patch will allow leveldb to compile on kfreebsd platforms regards original issue reported on code google com by quadris gmail com on sep at attachments | 1 |

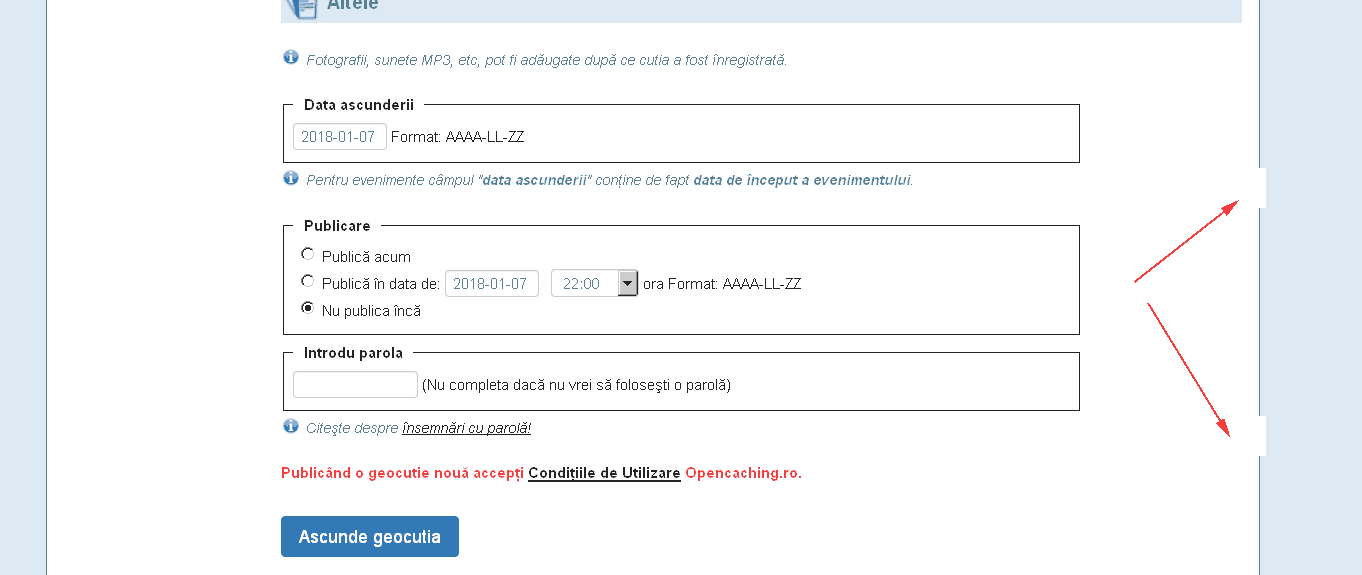

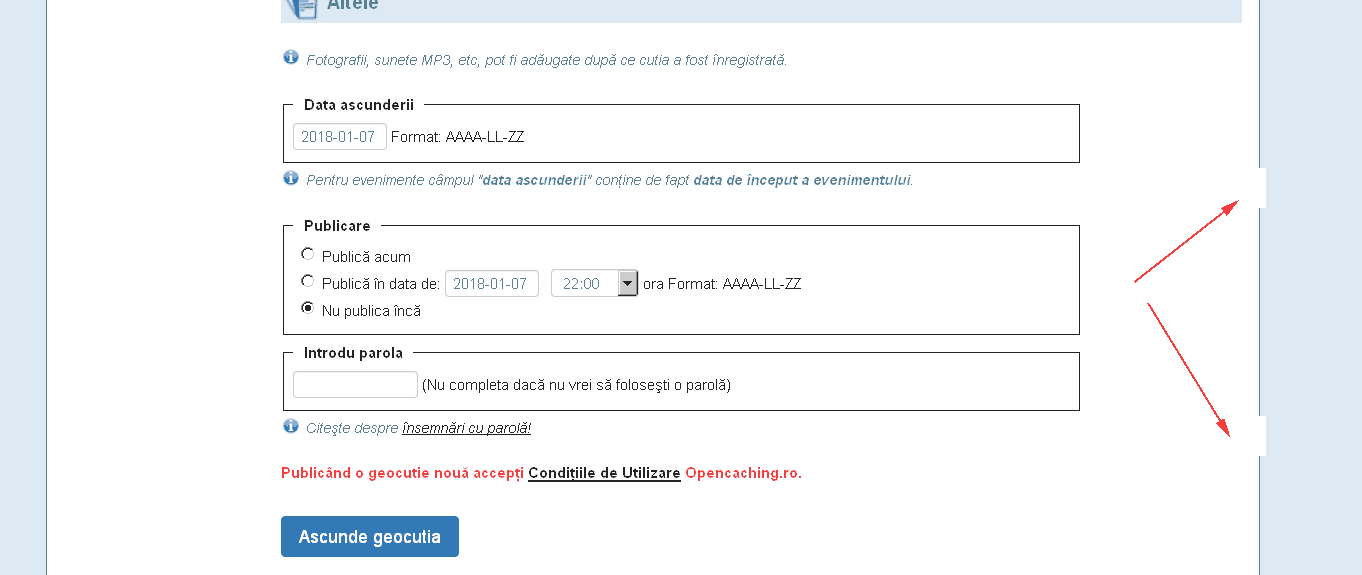

33,471 | 7,130,688,213 | IssuesEvent | 2018-01-22 08:01:36 | opencaching/opencaching-pl | https://api.github.com/repos/opencaching/opencaching-pl | closed | new cache page missing borders | Component_CacheEdit Priority_Low Type_Defect | New cache page is missing some borders in some sections.

| 1.0 | new cache page missing borders - New cache page is missing some borders in some sections.

| defect | new cache page missing borders new cache page is missing some borders in some sections | 1 |

169,126 | 20,828,052,065 | IssuesEvent | 2022-03-19 01:26:07 | Seagate/cortx-utils | https://api.github.com/repos/Seagate/cortx-utils | opened | CVE-2022-24302 (Medium) detected in paramiko-2.7.1-py2.py3-none-any.whl | security vulnerability | ## CVE-2022-24302 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>paramiko-2.7.1-py2.py3-none-any.whl</b></p></summary>

<p>SSH2 protocol library</p>

<p>Library home page: <a href="ht... | True | CVE-2022-24302 (Medium) detected in paramiko-2.7.1-py2.py3-none-any.whl - ## CVE-2022-24302 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>paramiko-2.7.1-py2.py3-none-any.whl</b></p>... | non_defect | cve medium detected in paramiko none any whl cve medium severity vulnerability vulnerable library paramiko none any whl protocol library library home page a href path to dependency file py utils path to vulnerable library py utils py utils python require... | 0 |

1,770 | 3,941,820,804 | IssuesEvent | 2016-04-27 09:23:22 | tripikad/trip2 | https://api.github.com/repos/tripikad/trip2 | opened | Log exceptions if needed | external services | First test whether exceptions are logged to ```syslog```. If not, do this:

In https://github.com/tripikad/trip2/blob/master/app/Exceptions/Handler.php#L27 add

```php

Log::error($e);

```

See also https://github.com/foxxmd/laravel-loggly#usage and #629 | 1.0 | Log exceptions if needed - First test whether exceptions are logged to ```syslog```. If not, do this:

In https://github.com/tripikad/trip2/blob/master/app/Exceptions/Handler.php#L27 add

```php

Log::error($e);

```

See also https://github.com/foxxmd/laravel-loggly#usage and #629 | non_defect | log exceptions if needed first test whether exceptions are logged to syslog if not do this in add php log error e see also and | 0 |
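
The issue above asks for exceptions to be logged from a central handler. The same idea, sketched in Python rather than PHP and using only the standard `logging` module, might look like the following. The logger name `app.exceptions` and the `report` function are hypothetical names for this example and do not come from the trip2 codebase.

```python
import logging

logger = logging.getLogger("app.exceptions")


def report(exc: BaseException) -> BaseException:
    """Log the exception, including its traceback, and hand it back."""
    # exc_info accepts an exception instance, so the traceback is attached.
    logger.error("Unhandled exception: %s", exc, exc_info=exc)
    return exc


class CollectingHandler(logging.Handler):
    """Keeps log records in memory so the example can inspect them."""

    def __init__(self) -> None:
        super().__init__()
        self.records = []

    def emit(self, record: logging.LogRecord) -> None:
        self.records.append(record)


collector = CollectingHandler()
logger.addHandler(collector)
report(ValueError("boom"))
```

In a real deployment the in-memory handler would presumably be replaced by one that writes to syslog or an external service.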

55,453 | 7,987,939,232 | IssuesEvent | 2018-07-19 09:24:39 | hyn/multi-tenant | https://api.github.com/repos/hyn/multi-tenant | closed | Documentation on website needs previous/next button | documentation enhancement | Currently you have to go to the index to be able to view a documentation page. It would be nice if you can go to the previous or next documentation page by adding two links on each page (or one for the first/last page). | 1.0 | Documentation on website needs previous/next button - Currently you have to go to the index to be able to view a documentation page. It would be nice if you can go to the previous or next documentation page by adding two links on each page (or one for the first/last page). | non_defect | documentation on website needs previous next button currently you have to go to the index to be able to view a documentation page it would be nice if you can go to the previous or next documentation page by adding two links on each page or one for the first last page | 0 |

153,620 | 13,520,124,209 | IssuesEvent | 2020-09-15 03:52:46 | simonw/datasette | https://api.github.com/repos/simonw/datasette | closed | Remove _request_ip example from canned queries documentation | bug documentation | `_request_ip` isn't valid, so it shouldn't be in the example: https://github.com/simonw/datasette/blob/cb515a9d75430adaf5e545a840bbc111648e8bfd/docs/sql_queries.rst#L320-L322 | 1.0 | Remove _request_ip example from canned queries documentation - `_request_ip` isn't valid, so it shouldn't be in the example: https://github.com/simonw/datasette/blob/cb515a9d75430adaf5e545a840bbc111648e8bfd/docs/sql_queries.rst#L320-L322 | non_defect | remove request ip example from canned queries documentation request ip isn t valid so it shouldn t be in the example | 0 |

38,009 | 8,633,003,679 | IssuesEvent | 2018-11-22 12:33:54 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | closed | SlideMenu/TieredMenu: When a submenu is clicked the entire panel is hidden | defect | ## 1) Environment

- PrimeFaces version: 6.3-SNAPSHOT

- Does it work on the newest released PrimeFaces version? Yes Version? 6.2

- Does it work on the newest sources in GitHub? No

- Application server + version: WildFly 13

- Affected browsers: Any

## 2) Expected behavior

When we click the submenu this action s... | 1.0 | SlideMenu/TieredMenu: When a submenu is clicked the entire panel is hidden - ## 1) Environment

- PrimeFaces version: 6.3-SNAPSHOT

- Does it work on the newest released PrimeFaces version? Yes Version? 6.2

- Does it work on the newest sources in GitHub? No

- Application server + version: WildFly 13

- Affected brow... | defect | slidemenu tieredmenu when a submenu is clicked the entire panel is hidden environment primefaces version snapshot does it work on the newest released primefaces version yes version does it work on the newest sources in github not application server version wildfly affected brows... | 1 |

642,983 | 20,919,953,334 | IssuesEvent | 2022-03-24 16:28:46 | metabase/metabase | https://api.github.com/repos/metabase/metabase | closed | Custom Column aliasing can cause incorrect results or wrong alias on nested queries | Type:Bug Priority:P2 .Correctness Querying/Nested Queries .Backend .Regression Querying/Notebook/Custom Column | **Describe the bug**

Custom Columns with names similar to existing table column names cause failed queries or incorrect results depending on database.

On BigQuery it does not fail, but shows wrong results. Perhaps other databases too.

Almost a repeat of #14255, but for nested queries.

Regression since 0.42.0 for s... | 1.0 | Custom Column aliasing can cause incorrect results or wrong alias on nested queries - **Describe the bug**

Custom Columns with names similar to existing table column names cause failed queries or incorrect results depending on database.

On BigQuery it does not fail, but shows wrong results. Perhaps other databases t... | non_defect | custom column aliasing can cause incorrect results or wrong alias on nested queries describe the bug custom columns with names similar to existing table column names causes failed queries or incorrect results depending on database on bigquery it does not fail but shows wrong results perhaps other databases t... | 0 |

24,391 | 4,076,810,274 | IssuesEvent | 2016-05-30 03:10:06 | xcat2/xcat-core | https://api.github.com/repos/xcat2/xcat-core | opened | [FVT]Please update x_ubuntu_cmd.bundle file to make the "Full_installation_flat_docker" at the beginning | component:test | @junxiawang

To make the docker installation the first case on ubuntu x86, please update the x_ubuntu_cmd.bundle file so that "Full_installation_flat_docker" is at the beginning of the file.

| 1.0 | [FVT]Please update x_ubuntu_cmd.bundle file to make the "Full_installation_flat_docker" at the beginning - @junxiawang

To make the docker installation the first case on ubuntu x86, please update the x_ubuntu_cmd.bundle file so that "Full_installation_flat_docker" is at the beginning of the file.

| non_defect | please update x ubuntu cmd bundle file to make the full installation flat docker at the beginning junxiawang to make the docker installation the first case on ubuntu please update the x ubuntu cmd bundle file so that full installation flat docker is at the beginning of the file | 0 |

5,841 | 2,610,216,472 | IssuesEvent | 2015-02-26 19:08:59 | chrsmith/somefinders | https://api.github.com/repos/chrsmith/somefinders | opened | tiger evolution alarm manual | auto-migrated Priority-Medium Type-Defect | ```

'''Август Маслов'''

Good day, I just can't find

.tiger evolution alarm manual. it was

posted here before

'''Вилли Русаков'''

Download it here http://bit.ly/16pKem3

'''Гермоген Пономарёв'''

It asks for a mobile number! Isn't that dangerous?

'''Валериан Соболев'''

No, it doesn't affect your balance

'''Всемил Шилов'''

Не... | 1.0 | tiger evolution alarm manual - ```

'''Август Маслов'''

Good day, I just can't find

.tiger evolution alarm manual. it was

posted here before

'''Вилли Русаков'''

Download it here http://bit.ly/16pKem3

'''Гермоген Пономарёв'''

It asks for a mobile number! Isn't that dangerous?

'''Валериан Соболев'''

No, it ... | defect | tiger evolution alarm manual август маслов good day i just can t find tiger evolution alarm manual it was posted here before вилли русаков download it here гермоген пономарёв it asks for a mobile number isn t that dangerous валериан соболев no it doesn t affect your balance ... | 1 |

178,634 | 21,509,443,728 | IssuesEvent | 2022-04-28 01:41:52 | bsbtd/Teste | https://api.github.com/repos/bsbtd/Teste | closed | CVE-2020-17510 (High) detected in shiro-web-1.5.0.jar - autoclosed | security vulnerability | ## CVE-2020-17510 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>shiro-web-1.5.0.jar</b></p></summary>

<p>Apache Shiro is a powerful and flexible open-source security framework that c... | True | CVE-2020-17510 (High) detected in shiro-web-1.5.0.jar - autoclosed - ## CVE-2020-17510 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>shiro-web-1.5.0.jar</b></p></summary>

<p>Apache S... | non_defect | cve high detected in shiro web jar autoclosed cve high severity vulnerability vulnerable library shiro web jar apache shiro is a powerful and flexible open source security framework that cleanly handles authentication authorization enterprise session management sing... | 0 |

275,872 | 20,960,147,620 | IssuesEvent | 2022-03-27 17:22:18 | project-serum/anchor | https://api.github.com/repos/project-serum/anchor | closed | lang: add docs for `emit!` macro | documentation lang | currently there's almost nothing there.

Should include an example

https://docs.rs/anchor-lang/latest/anchor_lang/macro.emit.html | 1.0 | lang: add docs for `emit!` macro - currently there's almost nothing there.

Should include an example

https://docs.rs/anchor-lang/latest/anchor_lang/macro.emit.html | non_defect | lang add docs for emit macro currently there s almost nothing there should include an example | 0 |

58,804 | 16,769,691,640 | IssuesEvent | 2021-06-14 13:27:53 | line/armeria | https://api.github.com/repos/line/armeria | closed | Which event loop should late subscriber signals be run on | defect | Not sure if this is intentional behavior, so wanted to ask.

Currently, it seems like for a late subscriber, the current subscription's executor is used to signal events.

From the code level, it seemed like `AbstractStreamMessage` makes a deliberate effort to ensure the currently subscribed executor is always used.

... | 1.0 | Which event loop should late subscriber signals be run on - Not sure if this is intentional behavior, so wanted to ask.

Currently, it seems like for a late subscriber, the current subscription's executor is used to signal events.

From the code level, it seemed like `AbstractStreamMessage` makes a deliberate effort ... | defect | which event loop should late subscriber signals be run on not sure if this is intentional behavior so wanted to ask currently it seems like for a late subscriber the current subscription s executor is used to signal events from the code level it seemed like abstractstreammessage makes a deliberate effort ... | 1 |

32,366 | 6,767,389,113 | IssuesEvent | 2017-10-26 03:00:48 | Shopkeepers/Shopkeepers | https://api.github.com/repos/Shopkeepers/Shopkeepers | closed | An internal error has occurred. | Defect invalid migrated | **Migrated from:** https://dev.bukkit.org/projects/shopkeepers/issues/71

**Originally posted by DayneOram (Dec 30, 2012):**

What steps will reproduce the problem?

1. Difficult to say, it seems to be a problem with us or our server. I simply installed it and tried to use it.

2.

3.What is the expected output? What do ... | 1.0 | An internal error has occurred. - **Migrated from:** https://dev.bukkit.org/projects/shopkeepers/issues/71

**Originally posted by DayneOram (Dec 30, 2012):**

What steps will reproduce the problem?

1. Difficult to say, it seems to be a problem with us or our server. I simply installed it and tried to use it.

2.

3.Wha... | defect | an internal error has occurred migrated from originally posted by dayneoram dec what steps will reproduce the problem difficult to say it seems to be a problem with us or our server i simply installed it and tried to use it what is the expected output what do you see instead after... | 1 |

73,118 | 24,467,715,013 | IssuesEvent | 2022-10-07 16:30:44 | BOINC/boinc | https://api.github.com/repos/BOINC/boinc | closed | [Simple View] Ubuntu 19.10 Empty Computing Preferences screen | C: Manager P: Major R: duplicate T: Defect E: to be determined C: Manager - Simple View Validate | I've installed BOINC from the Software Package Manager and it runs / computes fine. The GUI theme however is pretty broken. The preferences page is 100% blank.

| 1.0 | [Simple View] Ubuntu 19.10 Empty Computing Preferences screen - I've installed BOINC from the Software Package Manager and it runs / computes fine. The GUI theme however is pretty broken. The preferences page is 100% blank.

when generating presigned urls to allow the uploading of files to an S3 bucket. While the .NET AWS SDK client does have the optio... | 1.0 | No instructions on POST presigned url generation? - ### Describe the issue

I am attempting to add conditions such as max payload size using [POST policies](https://docs.aws.amazon.com/AmazonS3/latest/API/sigv4-HTTPPOSTConstructPolicy.html) when generating presigned urls to allow the uploading of files to an S3 bucke... | non_defect | no instructions on post presigned url generation describe the issue i am attempting to add conditions such as max payload size using when generating presigned urls to allow the uploading of files to an bucket while the net aws sdk client does have the option to create presigned urls for put requests ... | 0 |

287,937 | 31,856,517,809 | IssuesEvent | 2023-09-15 07:52:25 | Trinadh465/linux-4.1.15_CVE-2023-26607 | https://api.github.com/repos/Trinadh465/linux-4.1.15_CVE-2023-26607 | opened | CVE-2022-4379 (High) detected in linuxlinux-4.6 | Mend: dependency security vulnerability | ## CVE-2022-4379 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.... | True | CVE-2022-4379 (High) detected in linuxlinux-4.6 - ## CVE-2022-4379 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Libra... | non_defect | cve high detected in linuxlinux cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch main vulnerable source files vulnerabil... | 0 |

55,538 | 14,534,649,842 | IssuesEvent | 2020-12-15 03:32:44 | naev/naev | https://api.github.com/repos/naev/naev | closed | Game crashes if you switch from a ship carrying cargo to a ship with stowed cargo. | Priority-Critical Type-Defect | Repro: load this little fellow, go to the equipment screen, and double-click the pirate Kestrel.

[True pirate.ns.gz](https://github.com/naev/naev/files/5650772/True.pirate.ns.gz)

The save data says

```

<ship name="P-KISTAL" model="Pirate Kestrel">

...

<commodities>

<commodity quantity="35">Food</c... | 1.0 | Game crashes if you switch from a ship carrying cargo to a ship with stowed cargo. - Repro: load this little fellow, go to the equipment screen, and double-click the pirate Kestrel.

[True pirate.ns.gz](https://github.com/naev/naev/files/5650772/True.pirate.ns.gz)

The save data says

```

<ship name="P-KISTAL" mod... | defect | game crashes if you switch from a ship carrying cargo to a ship with stowed cargo repro load this little fellow go to the equipment screen and double click the pirate kestrel the save data says food diamond if that s a legitimate situatio... | 1 |

49,808 | 13,187,275,191 | IssuesEvent | 2020-08-13 02:53:56 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | [CascadeVariables] review branch by mjurkovic (Trac #2118) | Incomplete Migration Migrated from Trac analysis defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2118">https://code.icecube.wisc.edu/ticket/2118</a>, reported by kjmeagher and owned by kjmeagher</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-12T22:16:55",

"description": "http://code.icecube.w... | 1.0 | [CascadeVariables] review branch by mjurkovic (Trac #2118) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2118">https://code.icecube.wisc.edu/ticket/2118</a>, reported by kjmeagher and owned by kjmeagher</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "201... | defect | review branch by mjurkovic trac migrated from json status closed changetime description reporter kjmeagher cc resolution insufficient resources ts component analysis summary review br... | 1 |

15,260 | 10,271,319,611 | IssuesEvent | 2019-08-23 13:52:05 | kyma-project/kyma | https://api.github.com/repos/kyma-project/kyma | closed | Move API controller integration tests from component to tests | area/service-mesh enhancement stale | There are 2 api-controller tests that require running Kyma cluster to pass:

* components/api-controller/pkg/controller/networking/v1/networkingv1_integration_test.go

* components/api-controller/pkg/controller/authentication/v2/authenticationv2_integration_test.go

They are not run on CI because Kyma is not available du... | 1.0 | Move API controller integration tests from component to tests - There are 2 api-controller tests that require running Kyma cluster to pass:

* components/api-controller/pkg/controller/networking/v1/networkingv1_integration_test.go

* components/api-controller/pkg/controller/authentication/v2/authenticationv2_integration_... | non_defect | move api controller integration tests from component to tests there are api controller tests that require running kyma cluster to pass components api controller pkg controller networking integration test go components api controller pkg controller authentication integration test go they are not run on... | 0 |

36,917 | 2,813,567,515 | IssuesEvent | 2015-05-18 15:20:07 | CruxFramework/crux | https://api.github.com/repos/CruxFramework/crux | closed | Split CSS files from Smart Faces | enhancement imported invalid Module-CruxSmartFaces Priority-Medium | _From [claudio....@cruxframework.org](https://code.google.com/u/102254381191677355567/) on August 29, 2014 10:38:07_

Today we have 3 main stylesheets for Smart Faces: Large, Small, and Common. But this approach is still not the best one. By splitting the CSS into one file for each component we made it much easier to ... | 1.0 | Split CSS files from Smart Faces - _From [claudio....@cruxframework.org](https://code.google.com/u/102254381191677355567/) on August 29, 2014 10:38:07_

Today we have 3 main stylesheets for Smart Faces: Large, Small, and Common. But this approach is still not the best one. By splitting the CSS into one file for each com... | non_defect | split css files from smart faces from on august today we have main stylesheets for smart faces large small and common but this approach is still not the best one by splitting the css into one file for each component we made it much easier to maintain due to the organization not to mention t... | 0 |

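3,418 | 2,610,062,302 | IssuesEvent | 2015-02-26 18:18:19 | chrsmith/jsjsj122 | https://api.github.com/repos/chrsmith/jsjsj122 | opened | Which hospital in Huangyan is good for treating male infertility | auto-migrated Priority-Medium Type-Defect | ```

Which hospital in Huangyan is good for treating male infertility? [Taizhou Wuzhou Reproductive Hospital] 24-hour health consultation

hotline: 0576-88066933 (QQ 800080609) (WeChat ID tzwzszyy). Hospital address: No. 229 Fengnan Road, Jiaojiang District, Taizhou (next to the Fengnan roundabout). Bus routes: take bus 104, 108,

118, 198, or the Jiaojiang-Jinqing bus directly to Fengnan Residential Area, or take bus 107, 105, 109, 112, 901, or 902 to Xingxing Plaza and walk to the hospital.

Services: erectile dysfunction, premature ejaculation, prostatitis, prostatic hyperplasia, balanitis, [...], azoospermia, phimosis, varicocele, gonorrhea, and others.

Taizhou Wuzhou Reproductive Hospital is the largest men's health hospital in Taizhou. Authoritative experts offer free online consultation, the hospital has complete professional andrology examination and treatment equipment, and fees are charged strictly according to national standards. Cutting-edge medical equipment, in step with the world.... | 1.0 | Which hospital in Huangyan is good for treating male infertility - ```

Which hospital in Huangyan is good for treating male infertility? [Taizhou Wuzhou Reproductive Hospital] 24-hour health consultation

hotline: 0576-88066933 (QQ 800080609) (WeChat ID tzwzszyy). Hospital address: No. 229 Fengnan Road, Jiaojiang District, Taizhou (next to the Fengnan roundabout). Bus routes: take bus 104, 108,

118, 198, or the Jiaojiang-Jinqing bus directly to Fengnan Residential Area, or take bus 107, 105, 109, 112, 901, or 902 to Xingxing Plaza and walk to the hospital.

Services: erectile dysfunction, premature ejaculation, prostatitis, prostatic hyperplasia, balanitis, [...], azoospermia, phimosis, varicocele, gonorrhea, and others.

Taizhou Wuzhou Reproductive Hospital is the largest men's health hospital in Taizhou. Authoritative experts offer free online consultation, the hospital has complete professional andrology examination and treatment equipment, and fees are charged strictly according to national standards... | defect | which hospital in huangyan is good for treating male infertility which hospital in huangyan is good for treating male infertility taizhou wuzhou reproductive hospital consultation hotline wechat id tzwzszyy hospital address taizhou next to the fengnan roundabout bus routes walk to the hospital services erectile dysfunction premature ejaculation prostatitis prostatic hyperplasia balanitis azoospermia phimosis varicocele gonorrhea and others taizhou wuzhou reproductive hospital is the largest men s health hospital in taizhou authoritative experts offer free online consultation the hospital has complete professional andrology examination and treatment equipment and fees are charged strictly according to national standards cutting edge medical equipment in step with the world authoritative experts a model of professionalism people centered service everything centered on the patient for men s health choose taizhou wuzhou reproductive hospital professional andrology for men origina... | 1 |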

156,456 | 5,969,666,845 | IssuesEvent | 2017-05-30 20:48:28 | osuosl/iam | https://api.github.com/repos/osuosl/iam | closed | Change client and project views to summary style | High Priority Ready for Review | Based on feedback from @ramereth:

Change the current client view to be more like the recently created Summary table - list all the nodes and databases along with their measurements, and leave the resource details (cluster, type, etc) to the individual resource view. This view will be essentially the same as the Proj... | 1.0 | Change client and project views to summary style - Based on feedback from @ramereth:

Change the current client view to be more like the recently created Summary table - list all the nodes and databases along with their measurements, and leave the resource details (cluster, type, etc) to the individual resource view.... | non_defect | change client and project views to summary style based on feedback from ramereth change the current client view to be more like the recently created summary table list all the nodes and databases along with their measurements and leave the resource details cluster type etc to the individual resource view ... | 0 |

105,562 | 16,652,829,192 | IssuesEvent | 2021-06-05 01:31:48 | cfscode/react-photoswipe | https://api.github.com/repos/cfscode/react-photoswipe | opened | CVE-2012-6708 (Medium) detected in jquery-1.7.1.min.js | security vulnerability | ## CVE-2012-6708 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.1.min.js</b></p></summary>

<p>JavaScript library for DOM operations</p>

<p>Library home page: <a href="htt... | True | CVE-2012-6708 (Medium) detected in jquery-1.7.1.min.js - ## CVE-2012-6708 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jquery-1.7.1.min.js</b></p></summary>

<p>JavaScript library ... | non_defect | cve medium detected in jquery min js cve medium severity vulnerability vulnerable library jquery min js javascript library for dom operations library home page a href path to dependency file react photoswipe node modules vm browserify example run index html path to vu... | 0 |

62,957 | 17,270,161,819 | IssuesEvent | 2021-07-22 18:40:12 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | Crash of version 1.7.33 on hirsute on amd64 | T-Defect | <!-- A picture's worth a thousand words: PLEASE INCLUDE A SCREENSHOT :P -->

Same symptoms as https://github.com/vector-im/element-web/issues/18173 but apparently different root cause

(stack trace at: https://gist.github.com/monochromec/14ce9a604a368d0ee017730923ab9265)

<!-- Please report security issues by email... | 1.0 | Crash of version 1.7.33 on hirsute on amd64 - <!-- A picture's worth a thousand words: PLEASE INCLUDE A SCREENSHOT :P -->

Same symptoms as https://github.com/vector-im/element-web/issues/18173 but apparently different root cause

(stack trace at: https://gist.github.com/monochromec/14ce9a604a368d0ee017730923ab9265)

... | defect | crash of version on hirsute on same symptoms as but apparently different root cause stack trace at this is a bug report template by following the instructions below and filling out the sections with your information you will help the us to get all the necessary data to fix your is... | 1 |

194,059 | 14,668,656,145 | IssuesEvent | 2020-12-29 21:53:49 | calba5141114/studentpass | https://api.github.com/repos/calba5141114/studentpass | opened | Implement Tests for studentpass | help wanted testing | Testing Individual Classes, Functions and REST routes is very important for insuring that

studentpass is a highly reliable and stable API. | 1.0 | Implement Tests for studentpass - Testing Individual Classes, Functions and REST routes is very important for insuring that

studentpass is a highly reliable and stable API. | non_defect | implement tests for studentpass testing individual classes functions and rest routes is very important for insuring that studentpass is a highly reliable and stable api | 0 |

26,270 | 4,647,121,124 | IssuesEvent | 2016-10-01 09:16:09 | KronoZed/urtdsc-old | https://api.github.com/repos/KronoZed/urtdsc-old | closed | Нужно почистить код | auto-migrated Component-Logic Priority-Medium Type-Defect | ```

Нужно почистить код.

Бросаются в глаза demorealdate и screenrealdate (из func.py)

с одинаковым поведением, и непонятно где

используемые.

```

Original issue reported on code.google.com by `endenis@gmail.com` on 11 Sep 2011 at 9:42 | 1.0 | Нужно почистить код - ```

Нужно почистить код.

Бросаются в глаза demorealdate и screenrealdate (из func.py)

с одинаковым поведением, и непонятно где

используемые.

```

Original issue reported on code.google.com by `endenis@gmail.com` on 11 Sep 2011 at 9:42 | defect | нужно почистить код нужно почистить код бросаются в глаза demorealdate и screenrealdate из func py с одинаковым поведением и непонятно где используемые original issue reported on code google com by endenis gmail com on sep at | 1 |

41,942 | 10,722,199,627 | IssuesEvent | 2019-10-27 10:06:46 | vesoft-inc/nebula | https://api.github.com/repos/vesoft-inc/nebula | closed | Chinese Guideline shows error when try to insert | defect-p3 | **Describe the bug(__must be provided__)**

Follow the guideline, when try to execute this

> INSERT VERTEX student(name, age, gender) VALUES 200:("Monica", 16, "female");

shows error:

> [ERROR (-8)]: No schema found for `student'

**Your Environments(__must be provided__)**

* OS: `18.7.0 Darwin Kernel Ver... | 1.0 | Chinese Guideline shows error when try to insert - **Describe the bug(__must be provided__)**

Follow the guideline, when try to execute this

> INSERT VERTEX student(name, age, gender) VALUES 200:("Monica", 16, "female");

shows error:

> [ERROR (-8)]: No schema found for `student'

**Your Environments(__must ... | defect | chinese guideline shows error when try to insert describe the bug must be provided follow the guideline when try to execute this insert vertex student name age gender values monica female shows error no schema found for student your environments must be provided ... | 1 |

365,742 | 10,791,174,245 | IssuesEvent | 2019-11-05 16:10:40 | AY1920S1-CS2113T-W12-3/main | https://api.github.com/repos/AY1920S1-CS2113T-W12-3/main | closed | As a hall resident, I can cancel booking of the facility | priority.High type.Story | So that I can free up the room for others | 1.0 | As a hall resident, I can cancel booking of the facility - So that I can free up the room for others | non_defect | as a hall resident i can cancel booking of the facility so that i can free up the room for others | 0 |

4,825 | 5,314,258,134 | IssuesEvent | 2017-02-13 14:37:55 | NixOS/nixpkgs | https://api.github.com/repos/NixOS/nixpkgs | closed | 2nd factor authentication turns off 1st factor authentication | 1.severity: blocker 1.severity: security 6.topic: nixos | When I did "security.pam.services.sshd.oathAuth = true", the resulting pam file was:

auth sufficient pam_unix.so likeauth try_first_pass

auth sufficient ${pkgs.oathToolkit}/lib/security/pam_oath.so window=5 usersfile=/etc/users.oath digits=6

which allows me to type a wrong password and make up for it by a correct OT... | True | 2nd factor authentication turns off 1st factor authentication - When I did "security.pam.services.sshd.oathAuth = true", the resulting pam file was:

auth sufficient pam_unix.so likeauth try_first_pass

auth sufficient ${pkgs.oathToolkit}/lib/security/pam_oath.so window=5 usersfile=/etc/users.oath digits=6

which allow... | non_defect | factor authentication turns off factor authentication when i did security pam services sshd oathauth true the resulting pam file was auth sufficient pam unix so likeauth try first pass auth sufficient pkgs oathtoolkit lib security pam oath so window usersfile etc users oath digits which allows me... | 0 |

239,717 | 26,232,057,227 | IssuesEvent | 2023-01-05 01:43:00 | kapseliboi/DoIt | https://api.github.com/repos/kapseliboi/DoIt | opened | CVE-2021-44906 (High) detected in minimist-1.2.5.tgz | security vulnerability | ## CVE-2021-44906 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimist-1.2.5.tgz</b></p></summary>

<p>parse argument options</p>

<p>Library home page: <a href="https://registry.npm... | True | CVE-2021-44906 (High) detected in minimist-1.2.5.tgz - ## CVE-2021-44906 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>minimist-1.2.5.tgz</b></p></summary>

<p>parse argument options<... | non_defect | cve high detected in minimist tgz cve high severity vulnerability vulnerable library minimist tgz parse argument options library home page a href path to dependency file package json path to vulnerable library node modules minimist package json dependency hiera... | 0 |

135,346 | 5,246,947,368 | IssuesEvent | 2017-02-01 11:17:21 | pmem/issues | https://api.github.com/repos/pmem/issues | closed | unit tests: obj_list_recovery/TEST0, TEST1, TEST2 (all/pmem/debug/memcheck) fails | Exposure: Low OS: Linux Priority: 4 low Type: Bug | Found on revision: 27ac7ed4ef539adf64cd712aefe578ec61ec6c70

> obj_list_recovery/TEST0: SETUP (all/pmem/debug/memcheck)

> obj_list_recovery/TEST0: START: obj_list

> obj_list_recovery/TEST0: START: obj_list

> obj_list_recovery/TEST0 failed with Valgrind. See memcheck0.log. First 20 lines below.

> obj_list_recovery... | 1.0 | unit tests: obj_list_recovery/TEST0, TEST1, TEST2 (all/pmem/debug/memcheck) fails - Found on revision: 27ac7ed4ef539adf64cd712aefe578ec61ec6c70

> obj_list_recovery/TEST0: SETUP (all/pmem/debug/memcheck)

> obj_list_recovery/TEST0: START: obj_list

> obj_list_recovery/TEST0: START: obj_list

> obj_list_recovery/TEST0... | non_defect | unit tests obj list recovery all pmem debug memcheck fails found on revision obj list recovery setup all pmem debug memcheck obj list recovery start obj list obj list recovery start obj list obj list recovery failed with valgrind see log first lines below obj list ... | 0 |

24,203 | 3,924,306,331 | IssuesEvent | 2016-04-22 14:47:44 | opencaching/opencaching-pl | https://api.github.com/repos/opencaching/opencaching-pl | closed | PHP notices (main website) | Priority_Medium Server_Administration Type_Defect x_Maintainability | ```

Undefined variable: cryptedhints in viewcache.php on line 1454

Undefined index: deleted in viewcache.php on line 1659

Use of undefined constant name - assumed 'name' in lib/common.inc.php(616) : eval()'d code on line 302

Use of undefined constant name - assumed 'code' in lib/common.inc.php(616) : eval()'d cod... | 1.0 | PHP notices (main website) - ```

Undefined variable: cryptedhints in viewcache.php on line 1454

Undefined index: deleted in viewcache.php on line 1659

Use of undefined constant name - assumed 'name' in lib/common.inc.php(616) : eval()'d code on line 302

Use of undefined constant name - assumed 'code' in lib/commo... | defect | php notices main website undefined variable cryptedhints in viewcache php on line undefined index deleted in viewcache php on line use of undefined constant name assumed name in lib common inc php eval d code on line use of undefined constant name assumed code in lib common inc php ... | 1 |

15,212 | 2,850,318,310 | IssuesEvent | 2015-05-31 13:34:36 | damonkohler/sl4a | https://api.github.com/repos/damonkohler/sl4a | opened | sl4a not working | auto-migrated Priority-Medium Type-Defect | _From @GoogleCodeExporter on May 31, 2015 11:31_

```

What device(s) are you experiencing the problem on?

eeepc

What firmware version are you running on the device?

android x86 4.04 eeepc

What steps will reproduce the problem?

1.installing sl4a

2.installing Python for android

3.running the hello_world.py script

Wh... | 1.0 | sl4a not working - _From @GoogleCodeExporter on May 31, 2015 11:31_

```

What device(s) are you experiencing the problem on?

eeepc

What firmware version are you running on the device?

android x86 4.04 eeepc

What steps will reproduce the problem?

1.installing sl4a

2.installing Python for android

3.running the hello_... | defect | not working from googlecodeexporter on may what device s are you experiencing the problem on eeepc what firmware version are you running on the device android eeepc what steps will reproduce the problem installing installing python for android running the hello world py script... | 1 |

58,203 | 8,233,774,387 | IssuesEvent | 2018-09-08 05:33:59 | gatsbyjs/gatsby | https://api.github.com/repos/gatsbyjs/gatsby | closed | TO DO after v2 is done: separate search for v1 and v2 docs | type: documentation | ## Summary

## Relevant information