Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 757) | labels (stringlengths, 4 to 664) | body (stringlengths, 3 to 261k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

22,204 | 11,700,339,712 | IssuesEvent | 2020-03-06 17:17:25 | Azure/azure-sdk-for-net | https://api.github.com/repos/Azure/azure-sdk-for-net | opened | Set default value of "" on TrainingFileFilter.Path | Client Cognitive Services FormRecognizer | In CustomFormClient.StartTraining(), we check whether filter has been passed in, and if so, we add it to the trainRequest.

Once we are able to set a default value on TrainingFileFilter with code gen (https://github.com/Azure/autorest.csharp/issues/467), we can send this always.

We will want to decide whether it's... | 1.0 | Set default value of "" on TrainingFileFilter.Path - In CustomFormClient.StartTraining(), we check whether filter has been passed in, and if so, we add it to the trainRequest.

Once we are able to set a default value on TrainingFileFilter with code gen (https://github.com/Azure/autorest.csharp/issues/467), we can sen... | non_defect | set default value of on trainingfilefilter path in customformclient starttraining we check whether filter has been passed in and if so we add it to the trainrequest once we are able to set a default value on trainingfilefilter with code gen we can send this always we will want to decide whether it ... | 0 |

42,551 | 11,017,047,735 | IssuesEvent | 2019-12-05 07:22:41 | microsoft/WindowsTemplateStudio | https://api.github.com/repos/microsoft/WindowsTemplateStudio | closed | Build dev.version_0.20.19339.01 failed | bug vsts-build | ## Build dev.version_0.20.19339.01

- **Build result:** `failed`

- **Build queued:** 12/5/2019 3:00:02 AM

- **Build duration:** 1.64 minutes

### Details

Build [dev.version_0.20.19339.01](https://winappstudio.visualstudio.com/web/build.aspx?pcguid=a4ef43be-68ce-4195-a619-079b4d9834c2&builduri=vstfs%3a%2f%2f%2fBuild%... | 1.0 | Build dev.version_0.20.19339.01 failed - ## Build dev.version_0.20.19339.01

- **Build result:** `failed`

- **Build queued:** 12/5/2019 3:00:02 AM

- **Build duration:** 1.64 minutes

### Details

Build [dev.version_0.20.19339.01](https://winappstudio.visualstudio.com/web/build.aspx?pcguid=a4ef43be-68ce-4195-a619-079b... | non_defect | build dev version failed build dev version build result failed build queued am build duration minutes details build failed rightclickactions cs code src ui visualstudio rightclickactions cs error the name assembly does not exi... | 0 |

71,150 | 30,823,638,277 | IssuesEvent | 2023-08-01 18:14:09 | hashicorp/terraform-provider-azurerm | https://api.github.com/repos/hashicorp/terraform-provider-azurerm | closed | Unable to import azurerm_pim_eligible_role_assignment resources | bug service/authorization v/3.x | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Community Note

<!--- Please keep this note for the community --->

* Please vote on this issue by adding a :thumbsup: [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the orig... | 1.0 | Unable to import azurerm_pim_eligible_role_assignment resources - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Community Note

<!--- Please keep this note for the community --->

* Please vote on this issue by adding a :thumbsup: [reaction](https://blog.github.com/2016-03-1... | non_defect | unable to import azurerm pim eligible role assignment resources is there an existing issue for this i have searched the existing issues community note please vote on this issue by adding a thumbsup to the original issue to help the community and maintainers prioritize this request plea... | 0 |

19,583 | 3,227,224,116 | IssuesEvent | 2015-10-11 00:39:58 | jsr107/jsr107spec | https://api.github.com/repos/jsr107/jsr107spec | closed | Wrong reference to put in CacheRemove/CacheRemoveAll | Defect | The Javadoc of `@CacheRemove#afterInvocation` refers to the put operation while it should probably be the remove operation. Excerpt:

_If true and the annotated method throws an exception the put will not be executed._

`CacheRemoveAll` is affected as well.

Besides, this statement does not take the `evictFor` and ... | 1.0 | Wrong reference to put in CacheRemove/CacheRemoveAll - The Javadoc of `@CacheRemove#afterInvocation` refers to the put operation while it should probably be the remove operation. Excerpt:

_If true and the annotated method throws an exception the put will not be executed._

`CacheRemoveAll` is affected as well.

Be... | defect | wrong reference to put in cacheremove cacheremoveall the javadoc of cacheremove afterinvocation refers to the put operation while it should probably be the remove operation excerpt if true and the annotated method throws an exception the put will not be executed cacheremoveall is affected as well be... | 1 |

9,828 | 2,615,175,556 | IssuesEvent | 2015-03-01 06:58:56 | chrsmith/reaver-wps | https://api.github.com/repos/chrsmith/reaver-wps | opened | Reaver Alice | auto-migrated Priority-Triage Type-Defect | ```

Salve a tutti, è da tanto che sto cercando di provare a trovare la password

del router di un mio amico, ma non riesco. Quando do il comando:

reaver -i mon0 -b mac -p pin -vv alla fine mi esce questo e praticamente va

all'infinito cosi:

Sending EAPOL START request

[+] Received identity request

[+] Sending identit... | 1.0 | Reaver Alice - ```

Salve a tutti, è da tanto che sto cercando di provare a trovare la password

del router di un mio amico, ma non riesco. Quando do il comando:

reaver -i mon0 -b mac -p pin -vv alla fine mi esce questo e praticamente va

all'infinito cosi:

Sending EAPOL START request

[+] Received identity request

[+] ... | defect | reaver alice salve a tutti è da tanto che sto cercando di provare a trovare la password del router di un mio amico ma non riesco quando do il comando reaver i b mac p pin vv alla fine mi esce questo e praticamente va all infinito cosi sending eapol start request received identity request sending... | 1 |

10,812 | 2,622,191,249 | IssuesEvent | 2015-03-04 00:23:10 | byzhang/cudpp | https://api.github.com/repos/byzhang/cudpp | closed | link error with CUDA 3.1 | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Update to cuda 3.1

2. Compile with cuda 3.1 nvcc

3.

What is the expected output? What do you see instead?

The linking should produce a shared lib. Instead get a linking error:

ld: duplicate symbol OperatorMin<double>::identity() constin

./cudpp_generated_scan_app.cu.o and... | 1.0 | link error with CUDA 3.1 - ```

What steps will reproduce the problem?

1. Update to cuda 3.1

2. Compile with cuda 3.1 nvcc

3.

What is the expected output? What do you see instead?

The linking should produce a shared lib. Instead get a linking error:

ld: duplicate symbol OperatorMin<double>::identity() constin

./cudpp_... | defect | link error with cuda what steps will reproduce the problem update to cuda compile with cuda nvcc what is the expected output what do you see instead the linking should produce a shared lib instead get a linking error ld duplicate symbol operatormin identity constin cudpp generat... | 1 |

80,595 | 30,347,561,921 | IssuesEvent | 2023-07-11 16:26:11 | vector-im/element-integration-manager | https://api.github.com/repos/vector-im/element-integration-manager | closed | We need consistent widget settings UX | T-Defect | - Some URL inputs allow typing, some only allow pasting

- Some have static URLs pre-generated even before clicking "Save", which is odd because why do I need to save something that's already known? This might be as simple as not using the word "Save".

Maybe these issues will go away when we have a generic "Here are... | 1.0 | We need consistent widget settings UX - - Some URL inputs allow typing, some only allow pasting

- Some have static URLs pre-generated even before clicking "Save", which is odd because why do I need to save something that's already known? This might be as simple as not using the word "Save".

Maybe these issues will ... | defect | we need consistent widget settings ux some url inputs allow typing some only allow pasting some have static urls pre generated even before clicking save which is odd because why do i need to save something that s already known this might be as simple as not using the word save maybe these issues will ... | 1 |

14,674 | 8,664,957,570 | IssuesEvent | 2018-11-28 21:45:17 | keras-team/keras | https://api.github.com/repos/keras-team/keras | closed | TensorBoard Callback write_images | type:bug/performance type:tensorFlow | I want to use the TensorBoard callback to visualize my conv layer kernels. But i can only see the first conv layer kernel in TensorBoard and my Dense layers at the end. For the other conv layers i can just see the bias values and not the kernels.

Here is my sample code for the Keras model.

```

# Imports

import te... | True | TensorBoard Callback write_images - I want to use the TensorBoard callback to visualize my conv layer kernels. But i can only see the first conv layer kernel in TensorBoard and my Dense layers at the end. For the other conv layers i can just see the bias values and not the kernels.

Here is my sample code for the Ker... | non_defect | tensorboard callback write images i want to use the tensorboard callback to visualize my conv layer kernels but i can only see the first conv layer kernel in tensorboard and my dense layers at the end for the other conv layers i can just see the bias values and not the kernels here is my sample code for the ker... | 0 |

212,399 | 7,236,712,414 | IssuesEvent | 2018-02-13 08:25:13 | bmintz/emoji-connoisseur | https://api.github.com/repos/bmintz/emoji-connoisseur | closed | Can't test the bot without affecting prod | bug high priority | Unfortunately, the nature of the bot requires several backend servers that are owned by a specific person, and no other guilds that are owned by the bot owner. This means that in order to test the bot without affecting prod, I need a separate set of backend guilds, which requires another account.

I tried to create a n... | 1.0 | Can't test the bot without affecting prod - Unfortunately, the nature of the bot requires several backend servers that are owned by a specific person, and no other guilds that are owned by the bot owner. This means that in order to test the bot without affecting prod, I need a separate set of backend guilds, which requ... | non_defect | can t test the bot without affecting prod unfortunately the nature of the bot requires several backend servers that are owned by a specific person and no other guilds that are owned by the bot owner this means that in order to test the bot without affecting prod i need a separate set of backend guilds which requ... | 0 |

4,986 | 2,610,163,421 | IssuesEvent | 2015-02-26 18:51:49 | chrsmith/republic-at-war | https://api.github.com/repos/chrsmith/republic-at-war | closed | Text | auto-migrated Priority-Medium Type-Defect | ```

BARC speeder individual unit description is missing

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 3 May 2011 at 6:42 | 1.0 | Text - ```

BARC speeder individual unit description is missing

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 3 May 2011 at 6:42 | defect | text barc speeder individual unit description is missing original issue reported on code google com by gmail com on may at | 1 |

198,151 | 15,702,014,730 | IssuesEvent | 2021-03-26 12:02:07 | devflask/RoboFlask | https://api.github.com/repos/devflask/RoboFlask | closed | Documentation | Documentation | Initial commit for documentation:

Create a documenation,

Add license

Add README | 1.0 | Documentation - Initial commit for documentation:

Create a documenation,

Add license

Add README | non_defect | documentation initial commit for documentation create a documenation add license add readme | 0 |

59,567 | 17,023,164,082 | IssuesEvent | 2021-07-03 00:39:43 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | ways created in reverse direction | Component: potlatch (flash editor) Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 1.45pm, Saturday, 19th May 2007]**

Potlatch seems to create ways in the reverse direction to the way in which they were drawn, so the last segment drawn is segment number 1 | 1.0 | ways created in reverse direction - **[Submitted to the original trac issue database at 1.45pm, Saturday, 19th May 2007]**

Potlatch seems to create ways in the reverse direction to the way in which they were drawn, so the last segment drawn is segment number 1 | defect | ways created in reverse direction potlatch seems to create ways in the reverse direction to the way in which they were drawn so the last segment drawn is segment number | 1 |

210,547 | 7,190,798,454 | IssuesEvent | 2018-02-02 18:33:46 | openshift/origin | https://api.github.com/repos/openshift/origin | closed | Make the Source secret key name consistent with the filename | kind/bug priority/P3 | Currently, the 'key' name for the Source secret has to be `ssh-privatekey`. However, the file it contains is `id_rsa` or `id_dsa` and it actually can be whatever (I personally have >4 SSH private keys for different systems). So:

- We should make the 'key' name match the filename

- We should have a 'SSHKey' type secret

| 1.0 | Make the Source secret key name consistent with the filename - Currently, the 'key' name for the Source secret has to be `ssh-privatekey`. However, the file it contains is `id_rsa` or `id_dsa` and it actually can be whatever (I personally have >4 SSH private keys for different systems). So:

- We should make the 'key' n... | non_defect | make the source secret key name consistent with the filename currently the key name for the source secret has to be ssh privatekey however the file it contains is id rsa or id dsa and it actually can be whatever i personally have ssh private keys for different systems so we should make the key n... | 0 |

109,445 | 23,766,364,985 | IssuesEvent | 2022-09-01 13:07:01 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Editor: Refactor currentPost state to use core-data package | [Type] Code Quality [Package] Core data [Package] Editor | Previously: https://github.com/WordPress/gutenberg/pull/16402#discussion_r301216604

The editor maintains a copy of the original post being edited in its state, as `currentPost`. This value is always representative of the last saved value of the post; edits are maintained separately. There should be effectively no di... | 1.0 | Editor: Refactor currentPost state to use core-data package - Previously: https://github.com/WordPress/gutenberg/pull/16402#discussion_r301216604

The editor maintains a copy of the original post being edited in its state, as `currentPost`. This value is always representative of the last saved value of the post; edit... | non_defect | editor refactor currentpost state to use core data package previously the editor maintains a copy of the original post being edited in its state as currentpost this value is always representative of the last saved value of the post edits are maintained separately there should be effectively no difference... | 0 |

246,530 | 18,846,857,902 | IssuesEvent | 2021-11-11 15:50:55 | game-sales-analytics/userssrv | https://api.github.com/repos/game-sales-analytics/userssrv | opened | Add Protocol Buffer definitions | documentation enhancement | Currently, the following RPCs are required from the service:

- [ ] Register User:

Given user registration information along with their email and password, service must persist the user information, and return persisted user information as success response.

- [ ] Login User:

Given user email, password, and oth... | 1.0 | Add Protocol Buffer definitions - Currently, the following RPCs are required from the service:

- [ ] Register User:

Given user registration information along with their email and password, service must persist the user information, and return persisted user information as success response.

- [ ] Login User:

G... | non_defect | add protocol buffer definitions currently the following rpcs are required from the service register user given user registration information along with their email and password service must persist the user information and return persisted user information as success response login user given... | 0 |

19,131 | 3,144,822,978 | IssuesEvent | 2015-09-14 15:09:31 | ox-it/ords | https://api.github.com/repos/ox-it/ords | closed | Cannot save changes to records with Boolean data type if table also contains a Time field | auto-migrated Priority-Critical Type-Defect | ```

What steps will reproduce the problem?

1. Open a table which contains a field with the Boolean data type, plus one

with the Time data type

2. Try to edit a field with the Boolean data type, and save the changes

3.

What is the expected output? What do you see instead?

For some reason, ORDS won't let changes to a B... | 1.0 | Cannot save changes to records with Boolean data type if table also contains a Time field - ```

What steps will reproduce the problem?

1. Open a table which contains a field with the Boolean data type, plus one

with the Time data type

2. Try to edit a field with the Boolean data type, and save the changes

3.

What is ... | defect | cannot save changes to records with boolean data type if table also contains a time field what steps will reproduce the problem open a table which contains a field with the boolean data type plus one with the time data type try to edit a field with the boolean data type and save the changes what is ... | 1 |

30,375 | 6,123,330,137 | IssuesEvent | 2017-06-23 04:09:31 | Advanced-Post-List/advanced-post-list | https://api.github.com/repos/Advanced-Post-List/advanced-post-list | closed | Change Admin Page for 0.4 | P3 - Major T-Possible Defect | Redesign the admin page top-down following many of the standards and styles already set in place by WordPress. Eliminate any outside code ( jQuery UI & MultiSelect ).

Design UI/UX as Follows

- Hide **Post Type & Taxonomy** until clicked/check.

- Hide **Empty Message** Design.

- Collapse **Before** and **After**.

... | 1.0 | Change Admin Page for 0.4 - Redesign the admin page top-down following many of the standards and styles already set in place by WordPress. Eliminate any outside code ( jQuery UI & MultiSelect ).

Design UI/UX as Follows

- Hide **Post Type & Taxonomy** until clicked/check.

- Hide **Empty Message** Design.

- Collaps... | defect | change admin page for redesign the admin page top down following many of the standards and styles already set in place by wordpress eliminate any outside code jquery ui multiselect design ui ux as follows hide post type taxonomy until clicked check hide empty message design collaps... | 1 |

51,212 | 13,207,395,224 | IssuesEvent | 2020-08-14 22:56:32 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | Request for I3RecoPulseMap typedef (Trac #48) | Incomplete Migration Migrated from Trac defect offline-software | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/48">https://code.icecube.wisc.edu/projects/icecube/ticket/48</a>, reported by blaufuss</summary>

<p>

```json

{

"status": "closed",

"changetime": "2007-11-11T03:51:18",

"_ts": "1194753078000000",

"descri... | 1.0 | Request for I3RecoPulseMap typedef (Trac #48) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/48">https://code.icecube.wisc.edu/projects/icecube/ticket/48</a>, reported by blaufuss</summary>

<p>

```json

{

"status": "closed",

"changetime": "2007-11-11T03:51... | defect | request for typedef trac migrated from json status closed changetime ts description an old request from tilo n nhi erik n nat the moment we are cleaning up and restructuring the icetop reconstruction nmodules and we would like to add the n nt... | 1 |

641,670 | 20,831,870,933 | IssuesEvent | 2022-03-19 15:26:29 | internet4refugees/beherbergung | https://api.github.com/repos/internet4refugees/beherbergung | opened | [Feature] Links between Rows in Table and Markers in Map | enhancement frontend medium priority | **Problem**

Easy jumping from one to another

**Suggested solution**

- [ ] Link from markers popup to anchor in row

- [ ] Onclick-event from row (or link from address-cells) to marker

- [ ] Update center map at this location | 1.0 | [Feature] Links between Rows in Table and Markers in Map - **Problem**

Easy jumping from one to another

**Suggested solution**

- [ ] Link from markers popup to anchor in row

- [ ] Onclick-event from row (or link from address-cells) to marker

- [ ] Update center map at this location | non_defect | links between rows in table and markers in map problem easy jumping from one to another suggested solution link from markers popup to anchor in row onclick event from row or link from address cells to marker update center map at this location | 0 |

76,878 | 26,653,314,218 | IssuesEvent | 2023-01-25 15:09:25 | hyperledger/iroha | https://api.github.com/repos/hyperledger/iroha | opened | [BUG] Failed tolerance doesn't work on the 7 peers. | Bug iroha2 Dev defect Pre-alpha defect QA-confirmed | ### OS and Environment

MacOS, Docker Hub

### GIT commit hash

ac35bda6

### Minimum working example / Steps to reproduce

1. Run the `docker-compose.yml` with 7 peers below.

```yml

version: "3.8"

services:

iroha0:

image: hyperledger/iroha2:dev

environment:

TORII_P2P_ADDR: iroha0:1337

TOR... | 2.0 | [BUG] Failed tolerance doesn't work on the 7 peers. - ### OS and Environment

MacOS, Docker Hub

### GIT commit hash

ac35bda6

### Minimum working example / Steps to reproduce

1. Run the `docker-compose.yml` with 7 peers below.

```yml

version: "3.8"

services:

iroha0:

image: hyperledger/iroha2:dev

envi... | defect | failed tolerance doesn t work on the peers os and environment macos docker hub git commit hash minimum working example steps to reproduce run the docker compose yml with peers below yml version services image hyperledger dev environment torii... | 1 |

446,924 | 12,879,733,909 | IssuesEvent | 2020-07-12 00:22:51 | krsiakdaniel/movies | https://api.github.com/repos/krsiakdaniel/movies | closed | Convert JS => TypeScript | enhancement no-issue-activity priority | Convert `.js` => `.tsx` or `.ts`

- [x] model + page: HOME = https://github.com/krsiakdaniel/movies/pull/98

- [ ] model + page: MOVIE

- [ ] remove `PropTypes` + uninstall | 1.0 | Convert JS => TypeScript - Convert `.js` => `.tsx` or `.ts`

- [x] model + page: HOME = https://github.com/krsiakdaniel/movies/pull/98

- [ ] model + page: MOVIE

- [ ] remove `PropTypes` + uninstall | non_defect | convert js typescript convert js tsx or ts model page home model page movie remove proptypes uninstall | 0 |

66,918 | 7,028,252,214 | IssuesEvent | 2017-12-25 08:14:27 | renderforest/notification-hooks | https://api.github.com/repos/renderforest/notification-hooks | closed | add test (full coverage) | test | * test setup is done, remains write tests

Currently we have only slack hook (not too much to write) | 1.0 | add test (full coverage) - * test setup is done, remains write tests

Currently we have only slack hook (not too much to write) | non_defect | add test full coverage test setup is done remains write tests currently we have only slack hook not too much to write | 0 |

360,550 | 10,694,188,216 | IssuesEvent | 2019-10-23 10:18:53 | OpenSourceEconomics/soepy | https://api.github.com/repos/OpenSourceEconomics/soepy | closed | remove nuisance files | enhancement pb package priority low size small | There appear to be some files that are leftover from our attempts at the packaging. Please check whether they can be removed. For example, build.sh setup.cfg ... | 1.0 | remove nuisance files - There appear to be some files that are leftover from our attempts at the packaging. Please check whether they can be removed. For example, build.sh setup.cfg ... | non_defect | remove nuisance files there appear to be some files that are leftover from our attempts at the packaging please check whether they can be removed for example build sh setup cfg | 0 |

240,049 | 26,254,316,772 | IssuesEvent | 2023-01-05 22:32:23 | tamirverthim/gutenberg | https://api.github.com/repos/tamirverthim/gutenberg | opened | CVE-2021-3807 (High) detected in ansi-regex-3.0.0.tgz | security vulnerability | ## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansi-regex-3.0.0.tgz</b></p></summary>

<p>Regular expression for matching ANSI escape codes</p>

<p>Library home page: <... | True | CVE-2021-3807 (High) detected in ansi-regex-3.0.0.tgz - ## CVE-2021-3807 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansi-regex-3.0.0.tgz</b></p></summary>

<p>Regular expression fo... | non_defect | cve high detected in ansi regex tgz cve high severity vulnerability vulnerable library ansi regex tgz regular expression for matching ansi escape codes library home page a href dependency hierarchy wordpress blocks file packages blocks tgz root library sh... | 0 |

49,457 | 13,186,741,137 | IssuesEvent | 2020-08-13 01:10:06 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | [steamshovel] QSpinBox of ShovelSlider is unreadable on Mac (Trac #1436) | Incomplete Migration Migrated from Trac combo core defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1436">https://code.icecube.wisc.edu/ticket/1436</a>, reported by hdembinski and owned by hdembinski</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-12-16T17:35:34",

"description": "The text inside the... | 1.0 | [steamshovel] QSpinBox of ShovelSlider is unreadable on Mac (Trac #1436) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/1436">https://code.icecube.wisc.edu/ticket/1436</a>, reported by hdembinski and owned by hdembinski</em></summary>

<p>

```json

{

"status": "closed",

"c... | defect | qspinbox of shovelslider is unreadable on mac trac migrated from json status closed changetime description the text inside the spinbox is cut off at the top and the bottom seems to be only a mac issue works on ubuntu reporter hdembinski... | 1 |

72,368 | 24,081,084,431 | IssuesEvent | 2022-09-19 06:38:56 | martinrotter/rssguard | https://api.github.com/repos/martinrotter/rssguard | closed | [BUG]: RSS Guard misses new entries in specific feed | Type-Defect | ### Brief description of the issue

I have https://www.thecodedmessage.com/index.xml as a feed, and it longer grabs new articles ("Programming Portfolio" from 2022-06-23 is the latest one). "Fetch metadata" still works (and selects the ATOM 1.0 type), and both my browser and curl are still able to fetch it, showing it ... | 1.0 | [BUG]: RSS Guard misses new entries in specific feed - ### Brief description of the issue

I have https://www.thecodedmessage.com/index.xml as a feed, and it longer grabs new articles ("Programming Portfolio" from 2022-06-23 is the latest one). "Fetch metadata" still works (and selects the ATOM 1.0 type), and both my b... | defect | rss guard misses new entries in specific feed brief description of the issue i have as a feed and it longer grabs new articles programming portfolio from is the latest one fetch metadata still works and selects the atom type and both my browser and curl are still able to fetch it showi... | 1 |

241,370 | 18,448,056,615 | IssuesEvent | 2021-10-15 06:43:03 | VincentChtln/NotaComp | https://api.github.com/repos/VincentChtln/NotaComp | opened | Ecrire la documentation | documentation | ### Description

Ajouter des fichiers de documentation pour expliquer le fonctionnement du code. | 1.0 | Ecrire la documentation - ### Description

Ajouter des fichiers de documentation pour expliquer le fonctionnement du code. | non_defect | ecrire la documentation description ajouter des fichiers de documentation pour expliquer le fonctionnement du code | 0 |

276,970 | 30,581,327,619 | IssuesEvent | 2023-07-21 09:54:38 | ministryofjustice/hmpps-probation-integration-services | https://api.github.com/repos/ministryofjustice/hmpps-probation-integration-services | closed | CVE-2023-34035 (sentence-plan-and-delius) | dependencies security | Spring Security's authorization rules can be misconfigured when using multiple servlets

* Project: sentence-plan-and-delius

* Package: `org.springframework.security:spring-security-config:6.1.1`

* Location: `app/libs/spring-security-config-6.1.1.jar`

>Spring Security versions 5.8 prior to 5.8.5, 6.0 prior to 6.0.5, an... | True | CVE-2023-34035 (sentence-plan-and-delius) - Spring Security's authorization rules can be misconfigured when using multiple servlets

* Project: sentence-plan-and-delius

* Package: `org.springframework.security:spring-security-config:6.1.1`

* Location: `app/libs/spring-security-config-6.1.1.jar`

>Spring Security version... | non_defect | cve sentence plan and delius spring security s authorization rules can be misconfigured when using multiple servlets project sentence plan and delius package org springframework security spring security config location app libs spring security config jar spring security versions p... | 0 |

220 | 2,520,319,225 | IssuesEvent | 2015-01-19 00:07:01 | GarageGames/Torque3D | https://api.github.com/repos/GarageGames/Torque3D | opened | Reduce duplication in script templates | Defect / improvement New feature | As per [this forum thread](http://www.garagegames.com/community/forums/viewthread/140846), we will introduce a Packages folder to split duplicated script modules into single locations. For example, where we now have something like:

Templates/

Full/

tools/

Empty/

tools/... | 1.0 | Reduce duplication in script templates - As per [this forum thread](http://www.garagegames.com/community/forums/viewthread/140846), we will introduce a Packages folder to split duplicated script modules into single locations. For example, where we now have something like:

Templates/

Full/

t... | defect | reduce duplication in script templates as per we will introduce a packages folder to split duplicated script modules into single locations for example where we now have something like templates full tools empty tools this change will result in ... | 1 |

30,014 | 5,971,372,274 | IssuesEvent | 2017-05-31 02:13:52 | kaneless/mybatisnet | https://api.github.com/repos/kaneless/mybatisnet | closed | Work with SqlDependency | auto-migrated Priority-Low Type-Defect | ```

Running MyBatis.NET v1.6.1

Hi,

Is there a way to use SqlDependency in iBatis? rather than working with

ADP.NET ?

Thanks,

Aviad

```

Original issue reported on code.google.com by `Aviad...@gmail.com` on 19 Jan 2011 at 11:33

| 1.0 | Work with SqlDependency - ```

Running MyBatis.NET v1.6.1

Hi,

Is there a way to use SqlDependency in iBatis? rather than working with

ADP.NET ?

Thanks,

Aviad

```

Original issue reported on code.google.com by `Aviad...@gmail.com` on 19 Jan 2011 at 11:33

| defect | work with sqldependency running mybatis net hi is there a way to use sqldependency in ibatis rather than working with adp net thanks aviad original issue reported on code google com by aviad gmail com on jan at | 1 |

26,349 | 4,682,383,119 | IssuesEvent | 2016-10-09 08:00:03 | luigirizzo/netmap | https://api.github.com/repos/luigirizzo/netmap | closed | kring error: hwcur 17 rcur 17 hwtail 18 head 18 cur 17 tail 18 | auto-migrated Priority-Medium Type-Defect | ```

Hello again!

I run netmap in my linux box with Intel 82599 but hit very strange issue:

/usr/src/netmap/examples/pkt-gen -i eth3 -f rx -p 8

068.342571 main [1624] interface is eth3

068.342807 extract_ip_range [275] range is 10.0.0.1:0 to 10.0.0.1:0

068.342812 extract_ip_range [275] range is 10.1.0.1:0 to 10.1.0.1:0... | 1.0 | kring error: hwcur 17 rcur 17 hwtail 18 head 18 cur 17 tail 18 - ```

Hello again!

I run netmap in my linux box with Intel 82599 but hit very strange issue:

/usr/src/netmap/examples/pkt-gen -i eth3 -f rx -p 8

068.342571 main [1624] interface is eth3

068.342807 extract_ip_range [275] range is 10.0.0.1:0 to 10.0.0.1:0

06... | defect | kring error hwcur rcur hwtail head cur tail hello again i run netmap in my linux box with intel but hit very strange issue usr src netmap examples pkt gen i f rx p main interface is extract ip range range is to extract ip range range is t... | 1 |

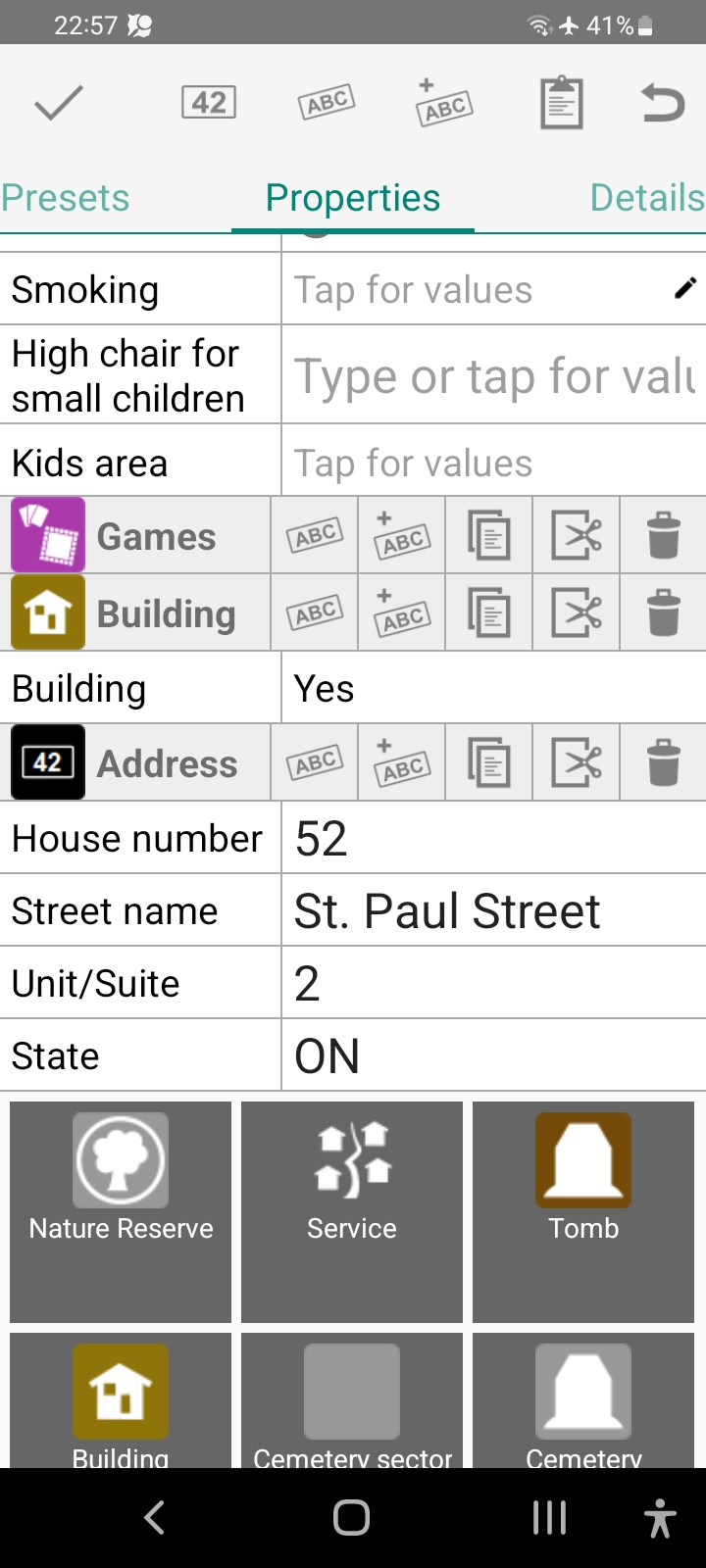

72,671 | 24,228,390,840 | IssuesEvent | 2022-09-26 16:04:28 | MarcusWolschon/osmeditor4android | https://api.github.com/repos/MarcusWolschon/osmeditor4android | closed | Screen flies up after each property deletion | Defect Minor UI | Let's say we want to delete the _Games_ item. We tap its trash can:

| Incomplete Migration Migrated from Trac dataio defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/656">https://code.icecube.wisc.edu/projects/icecube/ticket/656</a>, reported by jvansanten</summary>

<p>

```json

{

"status": "closed",

"changetime": "2011-10-25T14:30:56",

"_ts": "1319553056000000",

"de... | 1.0 | Python I3File should mix in non-native keys (Trac #656) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/656">https://code.icecube.wisc.edu/projects/icecube/ticket/656</a>, reported by jvansanten</summary>

<p>

```json

{

"status": "closed",

"changetime": "20... | defect | python should mix in non native keys trac migrated from json status closed changetime ts description emitted by contain the non native keys e g gcdq found in all previous frames it would be convenient if the python had the same functio... | 1 |

45,795 | 13,055,750,074 | IssuesEvent | 2020-07-30 02:37:29 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | changing I3_WORK to I3_SRC and I3_BUILD (Trac #65) | Incomplete Migration Migrated from Trac cmake defect | Migrated from https://code.icecube.wisc.edu/ticket/65

```json

{

"status": "closed",

"changetime": "2007-06-14T19:38:14",

"description": "With the current cmake compiling tool, we no longer have just the one main work directory (I3_WORK), but two relevant directories: source code dir (I3_SRC) and build dir... | 1.0 | changing I3_WORK to I3_SRC and I3_BUILD (Trac #65) - Migrated from https://code.icecube.wisc.edu/ticket/65

```json

{

"status": "closed",

"changetime": "2007-06-14T19:38:14",

"description": "With the current cmake compiling tool, we no longer have just the one main work directory (I3_WORK), but two relevan... | defect | changing work to src and build trac migrated from json status closed changetime description with the current cmake compiling tool we no longer have just the one main work directory work but two relevant directories source code dir src and build dir ... | 1 |

255,285 | 27,484,892,717 | IssuesEvent | 2023-03-04 01:31:06 | panasalap/linux-4.1.15 | https://api.github.com/repos/panasalap/linux-4.1.15 | closed | CVE-2017-18202 (High) detected in linux-yocto-devv4.2.8 - autoclosed | security vulnerability | ## CVE-2017-18202 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yocto-devv4.2.8</b></p></summary>

<p>

<p>Linux Embedded Kernel - tracks the next mainline release</p>

<p>Library... | True | CVE-2017-18202 (High) detected in linux-yocto-devv4.2.8 - autoclosed - ## CVE-2017-18202 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linux-yocto-devv4.2.8</b></p></summary>

<p>

<p>... | non_defect | cve high detected in linux yocto autoclosed cve high severity vulnerability vulnerable library linux yocto linux embedded kernel tracks the next mainline release library home page a href found in head commit a href found in base branch master vu... | 0 |

7,081 | 2,597,119,905 | IssuesEvent | 2015-02-21 03:14:55 | phetsims/tasks | https://api.github.com/repos/phetsims/tasks | closed | Dev Test Least-Squares Regression 1.0.0-dev.6 | High Priority QA | Please dev test the latest version of Least-Squares Regression (http://www.colorado.edu/physics/phet/dev/html/least-squares-regression/1.0.0-dev.6/)

- [x] Windows + Chrome

- [x] OS X + Safari 7.1+

- [x] iPad iOS 8 + Safari

I know this testing has already begun, just creating an issue to track progress. | 1.0 | Dev Test Least-Squares Regression 1.0.0-dev.6 - Please dev test the latest version of Least-Squares Regression (http://www.colorado.edu/physics/phet/dev/html/least-squares-regression/1.0.0-dev.6/)

- [x] Windows + Chrome

- [x] OS X + Safari 7.1+

- [x] iPad iOS 8 + Safari

I know this testing has already begun,... | non_defect | dev test least squares regression dev please dev test the latest version of least squares regression windows chrome os x safari ipad ios safari i know this testing has already begun just creating an issue to track progress | 0 |

40,716 | 2,868,938,048 | IssuesEvent | 2015-06-05 22:04:25 | dart-lang/pub | https://api.github.com/repos/dart-lang/pub | closed | Pub validator test is failing on mac | bug Fixed Priority-Medium | <a href="https://github.com/nex3"><img src="https://avatars.githubusercontent.com/u/188?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [nex3](https://github.com/nex3)**

_Originally opened as dart-lang/sdk#7330_

----

http://build.chromium.org/p/client.dart/builders/pub-mac/builds/1441/steps/... | 1.0 | Pub validator test is failing on mac - <a href="https://github.com/nex3"><img src="https://avatars.githubusercontent.com/u/188?v=3" align="left" width="96" height="96"hspace="10"></img></a> **Issue by [nex3](https://github.com/nex3)**

_Originally opened as dart-lang/sdk#7330_

----

http://build.chromium.org/p/client.d... | non_defect | pub validator test is failing on mac issue by originally opened as dart lang sdk for some reason it doesn t seem to be recognizing a quot src quot directory beneath quot lib quot as quot src quot | 0 |

56,500 | 15,114,037,759 | IssuesEvent | 2021-02-09 00:58:56 | playframework/playframework | https://api.github.com/repos/playframework/playframework | closed | QueryStringBindable.bindableString.unbind() produces URL-encoded keys, other binders - don't | type:defect | ### Play Version

play-`2.8.2`

### API

Scala

### Operating System

`Linux pc 5.7.6-arch1-1 #1 SMP PREEMPT Thu, 25 Jun 2020 00:14:47 +0000 x86_64 GNU/Linux`

### JDK

```

openjdk version "1.8.0_252"

OpenJDK Runtime Environment (build 1.8.0_252-b09)

OpenJDK 64-Bit Server VM (build 25.252-b09, mixed mode... | 1.0 | QueryStringBindable.bindableString.unbind() produces URL-encoded keys, other binders - don't - ### Play Version

play-`2.8.2`

### API

Scala

### Operating System

`Linux pc 5.7.6-arch1-1 #1 SMP PREEMPT Thu, 25 Jun 2020 00:14:47 +0000 x86_64 GNU/Linux`

### JDK

```

openjdk version "1.8.0_252"

OpenJDK Ru... | defect | querystringbindable bindablestring unbind produces url encoded keys other binders don t play version play api scala operating system linux pc smp preempt thu jun gnu linux jdk openjdk version openjdk runtime environment ... | 1 |

9,799 | 2,615,175,127 | IssuesEvent | 2015-03-01 06:58:21 | chrsmith/reaver-wps | https://api.github.com/repos/chrsmith/reaver-wps | opened | d | auto-migrated Priority-Triage Type-Defect | ```

A few things to consider before submitting an issue:

0. We write documentation for a reason, if you have not read it and are

having problems with Reaver these pages are required reading before

submitting an issue:

http://code.google.com/p/reaver-wps/wiki/HintsAndTips

http://code.google.com/p/reaver-wps/wiki/README... | 1.0 | d - ```

A few things to consider before submitting an issue:

0. We write documentation for a reason, if you have not read it and are

having problems with Reaver these pages are required reading before

submitting an issue:

http://code.google.com/p/reaver-wps/wiki/HintsAndTips

http://code.google.com/p/reaver-wps/wiki/RE... | defect | d a few things to consider before submitting an issue we write documentation for a reason if you have not read it and are having problems with reaver these pages are required reading before submitting an issue reaver will only work if your card is in monitor mode if you do not know what monito... | 1 |

418,946 | 12,215,788,147 | IssuesEvent | 2020-05-01 13:46:00 | onaio/rdt-standard | https://api.github.com/repos/onaio/rdt-standard | closed | Xapiens and ZebraX requesting that they need access to the most recent OpenSRP Repo | Priority - high covid response | On-call Xapiens and ZebraX request that they have the most recent OpenSRP repo and have admin access to the OpenSRP staging server and OpenMRS.

| 1.0 | Xapiens and ZebraX requesting that they need access to the most recent OpenSRP Repo - On-call Xapiens and ZebraX request that they have the most recent OpenSRP repo and have admin access to the OpenSRP staging server and OpenMRS.

| non_defect | xapiens and zebrax requesting that they need access to the most recent opensrp repo on call xapeins and zebrax request that they have most recent opensrp repo and have admin access to opensrp staging server and openmrs | 0 |

379,598 | 11,223,918,179 | IssuesEvent | 2020-01-08 00:18:30 | kubeflow/pipelines | https://api.github.com/repos/kubeflow/pipelines | closed | Is there a way to specify "privileged" for a containerOp? | priority/p2 | cc @sethjuarez

--------------

Afaict the relevant permissions are svc-account roles and instance scopes. The latter can't be changed on running nodes.

I tried updating and re-applying the cluster `.yaml` to grant the new permissions.

The svc-accts _may_ have picked up the new roles on the next pipeline run ... | 1.0 | Is there a way to specify "privileged" for a containerOp? - cc @sethjuarez

--------------

Afaict the relevant permissions are svc-account roles and instance scopes. The latter can't be changed on running nodes.

I tried updating and re-applying the cluster `.yaml` to grant the new permissions.

The svc-accts ... | non_defect | is there a way to specify privileged for a containerop cc sethjuarez afaict the relevant permissions are svc account roles and instance scopes the latter can t be change on running nodes i tried updating and re applying the cluster yaml to grant the new permissions the svc accts ... | 0 |

156,332 | 12,305,680,694 | IssuesEvent | 2020-05-11 23:10:45 | hkdobrev/notetaker | https://api.github.com/repos/hkdobrev/notetaker | opened | Add integration tests | tests | It would be great to have integration tests in the repo running in CI with example notes files and expected results. | 1.0 | Add integration tests - It would be great to have integration tests in the repo running in CI with example notes files and expected results. | non_defect | add integration tests it would be great to have integration tests in the repo running in ci with example notes files and expected results | 0 |

78,005 | 27,273,654,037 | IssuesEvent | 2023-02-23 01:44:04 | zed-industries/community | https://api.github.com/repos/zed-industries/community | closed | Vim mode `r` replaces with the text "enter" or "escape" | defect vim | ### Check for existing issues

- [X] Completed

### Describe the bug / provide steps to reproduce it

Similar to #881, same fix may resolve both of these issues

When using replace in vim mode (by hitting <kbd>r</kbd>), if you hit either <kbd>return</kbd> to insert a newline or <kbd>esc</kbd> to cancel the action, in... | 1.0 | Vim mode `r` replaces with the text "enter" or "escape" - ### Check for existing issues

- [X] Completed

### Describe the bug / provide steps to reproduce it

Similar to #881, same fix may resolve both of these issues

When using replace in vim mode (by hitting <kbd>r</kbd>), if you hit either <kbd>return</kbd> to i... | defect | vim mode r replaces with the text enter or escape check for existing issues completed describe the bug provide steps to reproduce it similar to same fix may resolve both of these issues when using replace in vim mode by hitting r if you hit either return to insert a newline or es... | 1 |

48,080 | 13,067,431,198 | IssuesEvent | 2020-07-31 00:25:56 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | I3Particle documentation incomplete (Trac #1746) | Migrated from Trac combo core defect |

Per Alex Olivas ... there is missing documentation on the cascade

Migrated from https://code.icecube.wisc.edu/ticket/1746

```json

{

"status": "closed",

"changetime": "2019-02-13T14:12:47",

"description": "\nPer Alex Olivas ... there is missing documentation on the cascade\n",

"reporter": "pmeade",... | 1.0 | I3Particle documentation incomplete (Trac #1746) -

Per Alex Olivas ... there is missing documentation on the cascade

Migrated from https://code.icecube.wisc.edu/ticket/1746

```json

{

"status": "closed",

"changetime": "2019-02-13T14:12:47",

"description": "\nPer Alex Olivas ... there is missing document... | defect | documentation incomplete trac per alex olivas there is missing documentation on the cascade migrated from json status closed changetime description nper alex olivas there is missing documentation on the cascade n reporter pmeade cc ... | 1 |

77,723 | 27,131,754,923 | IssuesEvent | 2023-02-16 10:16:26 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | When sorting invites by activity, newest are now at the bottom which isn't helpful when you stack them up because ignoring invites still doesn't exist.... | T-Defect | ### Steps to reproduce

See rageshake: https://github.com/matrix-org/element-web-rageshakes/issues/20150

When sorting invites by activity, newest are now at the bottom which isn't helpful when you stack them up because ignoring invites still doesn't exist....

### Outcome

.

### Operating system

.

### Browser inf... | 1.0 | When sorting invites by activity, newest are now at the bottom which isn't helpful when you stack them up because ignoring invites still doesn't exist.... - ### Steps to reproduce

See rageshake: https://github.com/matrix-org/element-web-rageshakes/issues/20150

When sorting invites by activity, newest are now at the... | defect | when sorting invites by activity newest are now at the bottom which isn t helpful when you stack them up because ignoring invites still doesn t exist steps to reproduce see rageshake when sorting invites by activity newest are now at the bottom which isn t helpful when you stack them up because ignor... | 1 |

59,865 | 17,023,270,340 | IssuesEvent | 2021-07-03 01:09:10 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Umlaut problem while typing | Component: potlatch (flash editor) Priority: major Resolution: wontfix Type: defect | **[Submitted to the original trac issue database at 4.35pm, Wednesday, 9th July 2008]**

As i want to give a street a name, i want to write "Höhenstraße" but in the field i read "Hhenstrae".

I found out, that if i write "Höhenstraße" into a file and copy & paste it to the name-tag, it works Oo. | 1.0 | Umlaut problem while typing - **[Submitted to the original trac issue database at 4.35pm, Wednesday, 9th July 2008]**

As i want to give a street a name, i want to write "Höhenstraße" but in the field i read "Hhenstrae".

I found out, that if i write "Höhenstraße" into a file and copy & paste it to the name-tag, it work... | defect | umlaut problem while typing as i want to give a street a name i want to write hhenstrae but in the field i read hhenstrae i found out that if i write hhentstrae into a file and copy paste it to the name tag it works oo | 1 |

387,400 | 26,722,289,663 | IssuesEvent | 2023-01-29 09:28:41 | curl/curl | https://api.github.com/repos/curl/curl | closed | CURLOPT_READFUNCTION documentation return value confuses bytes with objects | documentation | ### I did this

I read the documentation at https://curl.se/libcurl/c/CURLOPT_READFUNCTION.html.

### I expected the following

I expected to understand if the return value should be the number of _bytes_ read, like the return value of [read](https://pubs.opengroup.org/onlinepubs/9699919799/functions/read.html), ... | 1.0 | CURLOPT_READFUNCTION documentation return value confuses bytes with objects - ### I did this

I read the documentation at https://curl.se/libcurl/c/CURLOPT_READFUNCTION.html.

### I expected the following

I expected to understand if the return value should be the number of _bytes_ read, like the return value of ... | non_defect | curlopt readfunction documentation return value confuses bytes with objects i did this i read the documentation at i expected the following i expected to understand if the return value should be the number of bytes read like the return value of or the number of objects read like the retur... | 0 |
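The distinction the report asks about can be sketched outside libcurl. The block below is a hypothetical TypeScript simulation, not libcurl's API: `UploadSource`, `readCallback`, and the parameter names are illustrative. It assumes the convention that the callback may store at most `size * nitems` bytes in the buffer and returns the number of bytes it actually stored, with 0 signalling that no data is left.

```typescript
// Hypothetical upload source: the bytes still to send and how far we have read.
interface UploadSource {
  data: Uint8Array;
  offset: number;
}

// Simulated read callback. The caller offers room for `size * nitems` bytes.
// The return value is the number of BYTES copied into `buffer`, not the
// number of `size`-sized objects. Returning 0 signals that no data is left.
function readCallback(
  buffer: Uint8Array,
  size: number,
  nitems: number,
  source: UploadSource
): number {
  // Never write past the buffer, even if size * nitems claims more room.
  const capacity = Math.min(buffer.length, size * nitems);
  const remaining = source.data.length - source.offset;
  const count = Math.min(capacity, remaining);
  // Copy the next `count` bytes to the start of the buffer.
  buffer.set(source.data.subarray(source.offset, source.offset + count));
  source.offset += count;
  return count;
}
```

Under this assumption, a byte count and an object count only coincide when `size` is 1, which is why the wording of the return value matters.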

122,968 | 12,179,792,841 | IssuesEvent | 2020-04-28 11:18:49 | RPR-2019/nrs_projekat_tim3 | https://api.github.com/repos/RPR-2019/nrs_projekat_tim3 | closed | Create the system design document | Prioritet 1 documentation | Create a "System Design" document that will give a detailed description of the design of the future application. At a minimum, the document must contain:

* an entity-relationship diagram

* a class diagram

* a deployment diagram

* a component diagram

In addition, supplement the document with any other diagrams you consider important. | 1.0 | Create the system design document - Create a "System Design" document that will give a detailed description of the design of the future application. At a minimum, the document must contain:

* an entity-relationship diagram

* a class diagram

* a deployment diagram

* a component diagram

In addition, supplement the document with any other diagram... | non_defect | create the system design document create a system design document that will give a detailed description of the design of the future application at a minimum the document must contain an entity relationship diagram a class diagram a deployment diagram a component diagram in addition supplement the document with any other diagram... | 0 |

125,129 | 12,247,887,773 | IssuesEvent | 2020-05-05 16:34:58 | iml-wg/HEPML-LivingReview | https://api.github.com/repos/iml-wg/HEPML-LivingReview | opened | Add Zenodo support | documentation | In the same manner that the HEP-ML-Resources has a Zenodo DOI [](https://doi.org/10.5281/zenodo.3626294) the HEMPL-LivingReview should have one as well. Similar to HEP-ML-Resources, creating annual (or maybe now quarterly) tags should be sufficient. | 1.0 | Add Zenodo support - In the same manner that the HEP-ML-Resources has a Zenodo DOI [](https://doi.org/10.5281/zenodo.3626294) the HEMPL-LivingReview should have one as well. Similar to HEP-ML-Resources, creating annual (or maybe now quarterly) tags should b... | non_defect | add zenodo support in the same manner that the hep ml resources has a zenodo doi the hempl livingreview should have one as well similar to hep ml resources creating annual or maybe now quarterly tags should be sufficient | 0 |

38,550 | 8,887,576,192 | IssuesEvent | 2019-01-15 06:31:43 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | closed | ClearState method is cleaning all states. It should clears only stateKey state. | defect | Reported by a PRO user;

> "clearState()" method in the "table" component is supposed to clear the saved state of the table by using the "stateKey" given in the template. But instead, it deleting everything. | 1.0 | ClearState method is cleaning all states. It should clears only stateKey state. - Reported by a PRO user;

> "clearState()" method in the "table" component is supposed to clear the saved state of the table by using the "stateKey" given in the template. But instead, it deleting everything. | defect | clearstate method is cleaning all states it should clears only statekey state reported by a pro user clearstate method in the table component is supposed to clear the saved state of the table by using the statekey given in the template but instead it deleting everything | 1 |

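A minimal sketch of the difference between the reported and the expected behaviour in the PrimeNG `clearState()` report above, assuming the table state lives in a key-value store under `stateKey`. `KeyValueStorage`, `MemoryStorage`, `clearStateReported`, and `clearStateExpected` are hypothetical names used for illustration, not PrimeNG code, and modelling "deleting everything" with `clear()` is an assumption.

```typescript
// Hypothetical stand-in for a browser storage object so the sketch runs anywhere.
interface KeyValueStorage {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
  removeItem(key: string): void;
  clear(): void;
}

class MemoryStorage implements KeyValueStorage {
  // Prototype-free object, so keys such as "toString" are not inherited.
  private items: { [key: string]: string } = Object.create(null);

  getItem(key: string): string | null {
    const value = this.items[key];
    return value === undefined ? null : value;
  }

  setItem(key: string, value: string): void {
    this.items[key] = value;
  }

  removeItem(key: string): void {
    delete this.items[key];
  }

  clear(): void {
    this.items = Object.create(null);
  }
}

// Reported behaviour (assumed): every stored entry disappears,
// including entries that have nothing to do with the table.
function clearStateReported(storage: KeyValueStorage): void {
  storage.clear();
}

// Expected behaviour: only the entry saved under the table's stateKey is removed.
function clearStateExpected(storage: KeyValueStorage, stateKey: string): void {
  storage.removeItem(stateKey);
}
```

With two entries stored, say a table state and an unrelated token, `clearStateExpected` leaves the token in place while `clearStateReported` removes both.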
10,869 | 2,622,337,198 | IssuesEvent | 2015-03-04 01:40:39 | 0xtob/nitrotracker | https://api.github.com/repos/0xtob/nitrotracker | opened | Folders having a special character as a first character, appear before [..] link | auto-migrated Priority-Medium Type-Defect | ```

If the first character in a folder name is a special character, like an

exclamation mark (which I often use to keep certain folders at the top of

alphabetized lists), it will appear above the [..] link.

```

Original issue reported on code.google.com by `zoik...@yahoo.com` on 7 Apr 2010 at 12:41 | 1.0 | Folders having a special character as a first character, appear before [..] link - ```

If the first character in a folder name is a special character, like an

exclamation mark (which I often use to keep certain folders at the top of

alphabetized lists), it will appear above the [..] link.

```

Original issue reported o... | defect | folders having a special character as a first character appear before link if the first character in a folder name is a special character like an exclamation mark which i often use to keep certain folders at the top of alphabetized lists it will appear above the link original issue reported on code... | 1 |

67,924 | 21,317,787,167 | IssuesEvent | 2022-04-16 15:36:42 | cakephp/bake | https://api.github.com/repos/cakephp/bake | closed | If you create an entity with `bin/cake bake model`, the Value of `$_accessible` is generated with "1". I want boolean. | Defect | ### Description

ex

```sh

$ bin/cake bake model users

```

```php

class User extends Entity

{

// ...

protected $_accessible = [

'id' => 1,

'email' => 1,

'password' => 1,

];

// ...

}

```

Here's what I want.

```

class User extends Entity

{

// ...

... | 1.0 | If you create an entity with `bin/cake bake model`, the Value of `$_accessible` is generated with "1". I want boolean. - ### Description

ex

```sh

$ bin/cake bake model users

```

```php

class User extends Entity

{

// ...

protected $_accessible = [

'id' => 1,

'email' => 1,

... | defect | if you create an entity with bin cake bake model the value of accessible is generated with i want boolean description ex sh bin cake bake model users php class user extends entity protected accessible id email ... | 1 |

76,114 | 26,247,949,602 | IssuesEvent | 2023-01-05 16:42:11 | vector-im/element-android | https://api.github.com/repos/vector-im/element-android | closed | [Session manager] Missing info when a session does not support encryption | T-Defect S-Minor O-Uncommon A-Settings | ### Steps to reproduce

- Create a session that does not support encryption using the following command line:

`curl -d '{ "type": "m.login.password", "identifier": { "type": "m.id.user", "user": "..." }, "password": "..." }' -H "Content-Type: application/json" -X POST https://matrix-client.matrix.org/_matrix/client/... | 1.0 | [Session manager] Missing info when a session does not support encryption - ### Steps to reproduce

- Create a session that does not support encryption using the following command line:

`curl -d '{ "type": "m.login.password", "identifier": { "type": "m.id.user", "user": "..." }, "password": "..." }' -H "Content-Type... | defect | missing info when a session does not support encryption steps to reproduce create a session that does not support encryption using the following command line curl d type m login password identifier type m id user user password h content type application js... | 1 |

206,745 | 15,772,211,196 | IssuesEvent | 2021-03-31 21:29:52 | pathfinder-for-autonomous-navigation/FlightSoftware | https://api.github.com/repos/pathfinder-for-autonomous-navigation/FlightSoftware | closed | Update QuakeFaultHandler behavior to modify QuakeManager | enhancement functional testing | The current implementation is based on the assumption that the QuakeFaultHandler can only power cycle when the radio is in the Wait state.

After more examination, we determined that this underlying behavior needed to be changed. When the QuakeFaultHandler is about to power cycle, it should instead be setting the rad... | 1.0 | Update QuakeFaultHandler behavior to modify QuakeManager - The current implementation is based on the assumption that the QuakeFaultHandler can only power cycle when the radio is in the Wait state.

After more examination, we determined that this underlying behavior needed to be changed. When the QuakeFaultHandler is... | non_defect | update quakefaulthandler behavior to modify quakemanager the current implementation is based on the assumption that the quakefaulthandler can only power cycle when the radio is in the wait state after more examination we determined that this underlying behavior needed to be changed when the quakefaulthandler is... | 0 |

196,460 | 14,860,830,548 | IssuesEvent | 2021-01-18 21:22:10 | ESMValGroup/ESMValTool | https://api.github.com/repos/ESMValGroup/ESMValTool | closed | Tests are failing on Circle because the cached test code uses an ancient fiona | test | https://app.circleci.com/pipelines/github/ESMValGroup/ESMValTool/4012/workflows/d9967e41-789d-4a98-9493-e1d113a129ea/jobs/41067/steps

Keep calm and update fiona! Don't worry about the Circle test failures: the `fiona` that gets installed on Circle is `1.8.13`, which is ancient; the testing suite works perfectly fine... | 1.0 | Tests are failing on Circle because the cached test code uses an ancient fiona - https://app.circleci.com/pipelines/github/ESMValGroup/ESMValTool/4012/workflows/d9967e41-789d-4a98-9493-e1d113a129ea/jobs/41067/steps

Keep calm and update fiona! Don't worry about the Circle test failures: the `fiona` that gets install... | non_defect | tests are failing on circle because the cached test code uses an ancient fiona keep calm and update fiona don t worry about the the circle test fails the fiona that gets installed on circle is which is ancient the testing suite works perfectly fine with in fact in i have added a note abo... | 0 |

119,121 | 15,415,482,806 | IssuesEvent | 2021-03-05 02:42:51 | SasanLabs/VulnerableApp | https://api.github.com/repos/SasanLabs/VulnerableApp | closed | In future this project might become heavy weight so we need to think on making it light weight. | Analysis Future Goal design-document documentation | Going further in future this project might become very heavy weight so we need to think on making it in such a way that it will be light weight. | 1.0 | In future this project might become heavy weight so we need to think on making it light weight. - Going further in future this project might become very heavy weight so we need to think on making it in such a way that it will be light weight. | non_defect | in future this project might become heavy weight so we need to think on making it light weight going further in future this project might become very heavy weight so we need to think on making it in such a way that it will be light weight | 0 |

71,735 | 23,778,397,119 | IssuesEvent | 2022-09-02 00:04:59 | CorfuDB/CorfuDB | https://api.github.com/repos/CorfuDB/CorfuDB | closed | Propose in Paxos produces a false negative OutrankedException in certain cases | defect | ## Overview

```

CFUtils.getUninterruptibly(CompletableFuture.anyOf(proposeList),

OutrankedException.class, TimeoutException.class, NetworkException.class,

WrongEpochException.class);

```

In the propose code of the LayoutView wrongfully throws OutRankedException ... | 1.0 | Propose in Paxos produces a false negative OutrankedException in certain cases - ## Overview

```

CFUtils.getUninterruptibly(CompletableFuture.anyOf(proposeList),

OutrankedException.class, TimeoutException.class, NetworkException.class,

WrongEpochException.class);

`... | defect | propose in paxos produces a false negative outrankedexception is certain cases overview cfutils getuninterruptibly completablefuture anyof proposelist outrankedexception class timeoutexception class networkexception class wrongepochexception class ... | 1 |

199,900 | 22,715,374,377 | IssuesEvent | 2022-07-06 01:09:37 | RG4421/openedr | https://api.github.com/repos/RG4421/openedr | opened | CVE-2021-22924 (Low) detected in multiple libraries | security vulnerability | ## CVE-2021-22924 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>curlcurl-7_63_0</b>, <b>curlcurl-7_63_0</b>, <b>curlcurl-7_63_0</b>, <b>curlcurl-7_63_0</b>, <b>curlcurl-7_63_0</b>, <... | True | CVE-2021-22924 (Low) detected in multiple libraries - ## CVE-2021-22924 - Low Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>curlcurl-7_63_0</b>, <b>curlcurl-7_63_0</b>, <b>curlcurl-7_63_0<... | non_defect | cve low detected in multiple libraries cve low severity vulnerability vulnerable libraries curlcurl curlcurl curlcurl curlcurl curlcurl curlcurl vulnerability details libcurl keeps previously used connections in a conne... | 0 |

57,813 | 16,085,899,610 | IssuesEvent | 2021-04-26 11:09:25 | primefaces/primereact | https://api.github.com/repos/primefaces/primereact | closed | Tooltip: Fixed tooltip doesnt work with elements inside Tooltip children ( autoHide = false ) | defect | **I'm submitting a ...** (check one with "x")

```

[x] bug report

[ ] feature request

[ ] support request => Please do not submit support request here, instead see https://forum.primefaces.org/viewforum.php?f=57

```

**Codesandbox Case (Bug Reports)**

https://codesandbox.io/s/gifted-chandrasekhar-nepgn?file=/... | 1.0 | Tooltip: Fixed tooltip doesnt work with elements inside Tooltip children ( autoHide = false ) - **I'm submitting a ...** (check one with "x")

```

[x] bug report

[ ] feature request

[ ] support request => Please do not submit support request here, instead see https://forum.primefaces.org/viewforum.php?f=57

```

... | defect | tooltip fixed tooltip doesnt work with elements inside tooltip children autohide false i m submitting a check one with x bug report feature request support request please do not submit support request here instead see codesandbox case bug reports current... | 1 |

32,684 | 6,892,617,653 | IssuesEvent | 2017-11-22 21:54:06 | jquery/esprima | https://api.github.com/repos/jquery/esprima | closed | `a <!-- b` in modules are actually equivalent to `a < !(--b)` | defect | See: https://github.com/tc39/ecma262/issues/949

An error is generated for `a <!-- b` with `scriptType: "module"`, when it should parse like `a < !(--b)`. | 1.0 | `a <!-- b` in modules are actually equivalent to `a < !(--b)` - See: https://github.com/tc39/ecma262/issues/949

An error is generated for `a <!-- b` with `scriptType: "module"`, when it should parse like `a < !(--b)`. | defect | a b in modules are actually equivalent to a b see an error is generated for a b with scripttype module when it should parse like a b | 1 |
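To make the intended grouping concrete: in module code `<!--` does not open an HTML-like comment, so the tokens are `a`, `<`, `!`, `--`, `b`. The sketch below evaluates that grouping step by step in TypeScript. `evaluateAsModule` is a hypothetical helper, not part of Esprima, and the explicit `Number(...)` stands in for the coercion JavaScript applies when `<` compares a number with a boolean.

```typescript
// Evaluates `a < !(--b)` step by step, following the module-goal grouping
// of the source text `a <!-- b`.
function evaluateAsModule(a: number, b: number): boolean {
  // `--b`: the prefix decrement yields the decremented value.
  const decremented = b - 1;
  // `!(--b)`: true only when the decremented value is falsy (0 or NaN).
  const negated = !decremented;
  // JavaScript coerces the boolean to 0 or 1 for `<`; TypeScript needs it spelled out.
  return a < Number(negated);
}
```

For instance, with `a = 0` and `b = 1` the decrement yields 0, the negation yields `true`, and the comparison becomes `0 < 1`.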

16,768 | 2,942,244,513 | IssuesEvent | 2015-07-02 13:19:33 | icatproject/ijp.torque | https://api.github.com/repos/icatproject/ijp.torque | closed | Clean up mechanism for interactive users inadequate | Type-Defect | If an interactive user fails to log off properly, the system will not notice and will not put the machine back online.

This can be seen by tailing portal.log

Workaround:

Log onto the machine and kill all processes belonging to that user | 1.0 | Clean up mechanism for interactive users inadequate - If an interactive user fails to log off properly, the system will not notice and will not put the machine back online.

This can be seen by tailing portal.log

Workaround:

Log onto the machine and kill all processes belonging to that user | defect | clean up mechanism for interactive users inadequate if an interactive user fails to log off properly the system will not notice and will not put the machine back on line this can be seen by tailing portal log workaround log onto the machine and kill all processes belonging to that user | 1 |

508,850 | 14,707,011,544 | IssuesEvent | 2021-01-04 20:51:35 | internetarchive/openlibrary | https://api.github.com/repos/internetarchive/openlibrary | opened | Give Nick full access to blog.openlibrary.org | 1-off tasks Lead: @mekarpeles Priority: 1 | This is blocking Nick from contributing blog posts efficiently. The process was weird; he has an account on blog.archive.org, but can't log in to blog.openlibrary.org, I believe. | 1.0 | Give Nick full access to blog.openlibrary.org - This is blocking Nick from contributing blog posts efficiently. The process was weird; he has an account on blog.archive.org, but can't log in to blog.openlibrary.org, I believe. | non_defect | give nick full access to blog openlibrary org this is blocking nick from contributing blog posts efficiently the process was weird he has an account on blog archive org but can t log in to blog openlibrary org i believe | 0 |

6,627 | 7,714,719,499 | IssuesEvent | 2018-05-23 03:48:32 | aws/aws-sdk-go | https://api.github.com/repos/aws/aws-sdk-go | closed | SQS TLS handshake timeout | Service API | ### Version of AWS SDK for Go?

v1.13.32

### Version of Go (`go version`)?

1.9.2

### What issue did you see?

Getting a handshake timeout when trying to connect to SQS:

RequestError: send request failed

caused by: Post https://sqs.ap-southeast-2.amazonaws.com/: net/http: TLS handshake timeout

### Steps to... | 1.0 | SQS TLS handshake timeout - ### Version of AWS SDK for Go?

v1.13.32

### Version of Go (`go version`)?

1.9.2

### What issue did you see?

Getting a handshake timeout when trying to connect to SQS:

RequestError: send request failed

caused by: Post https://sqs.ap-southeast-2.amazonaws.com/: net/http: TLS hands... | non_defect | sqs tls handshake timeout version of aws sdk for go version of go go version what issue did you see getting a handshake timeout when trying to connect to sqs requesterror send request failed caused by post net http tls handshake timeout steps to reproduce usin... | 0 |

50,590 | 6,103,284,066 | IssuesEvent | 2017-06-20 18:22:28 | kubernetes/kubernetes | https://api.github.com/repos/kubernetes/kubernetes | closed | What's the specification of the slave that runs "bazel build" in your Jenkins CI? | sig/testing | I find it takes a long time to build Kubernetes. For certain reasons, I want to know the specification of the slave that runs bazel-build in your CI. Also, why does your Jenkins run "bazel clean" before "bazel build" every time? | 1.0 | What's the specification of the slave that runs "bazel build" in your Jenkins CI? - I find it takes a long time to build Kubernetes. For certain reasons, I want to know the specification of the slave that runs bazel-build in your CI. Also, why does your Jenkins run "bazel clean" before "bazel build" every time? | non_defect | what s the specification of the slave that runs bazel build in your jenkins ci i find it takes a long time to build kubernetes for certain reasons i want to know the specification of the slave that runs bazel build in your ci also why does your jenkins run bazel clean before bazel build every time | 0 |

177,041 | 6,573,491,077 | IssuesEvent | 2017-09-11 08:59:51 | gdgphilippines/devfest | https://api.github.com/repos/gdgphilippines/devfest | closed | Different sizes of pictures in different browsers | bug Priority Medium review | <!-- Instructions: https://github.com/gdgphilippines/devfest/blob/master/CONTRIBUTING.md#using-the-issue-tracker -->

<!-- Copied from Firebase Polyfire template -->

### Description

Pictures have different sizes, and Google Chrome doesn't show a hamburger menu.

### Expected outcome

The same sizes

### Actual outcome

... | 1.0 | Different sizes of pictures in different browsers - <!-- Instructions: https://github.com/gdgphilippines/devfest/blob/master/CONTRIBUTING.md#using-the-issue-tracker -->

<!-- Copied from Firebase Polyfire template -->

### Description

Pictures have different sizes, and Google Chrome doesn't show a hamburger menu.

### Expecte... | non_defect | different sizes of pictures in different browsers description different sizes of pictures and google chrome don t have a hamburger expected outcome the same sizes actual outcome different sizes live demo steps to reproduce example put a paper foo element ... | 0 |

12,467 | 2,700,606,630 | IssuesEvent | 2015-04-04 10:40:14 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | Inline comments are broken to next line in generated JavaScript file | defect | The C# code:

```
namespace InLineCMT
{
    public class Commenter
    {
        private static void Main()
        {
            var a = 1; // inits a with one
        }
    }
}
```

Results in:

```

Bridge.define('InLineCMT.Commenter', {

statics: {

main: function () {

var a = ... | 1.0 | Inline comments are broken to next line in generated JavaScript file - The C# code:

```
namespace InLineCMT
{
    public class Commenter
    {
        private static void Main()
        {
            var a = 1; // inits a with one
        }
    }
}
```

Results in:

```

Bridge.define('InLineCMT.Commenter... | defect | inline comments are broken to next line in generated javascript file the c code namespace inlinecmt public class commenter private static void main var a inits a with one results in bridge define inlinecmt commenter... | 1 |

57,423 | 15,777,933,771 | IssuesEvent | 2021-04-01 07:02:24 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | closed | Pick list events emit inconsistent types | LTS-PORTABLE defect | **I'm submitting a ...**

```

[x] bug report => Search github for a similar issue or PR before submitting

[ ] feature request => Please check if request is not on the roadmap already https://github.com/primefaces/primeng/wiki/Roadmap

[ ] support request => Please do not submit support request here, instead see http:... | 1.0 | Pick list events emit inconsistent types - **I'm submitting a ...**

```

[x] bug report => Search github for a similar issue or PR before submitting

[ ] feature request => Please check if request is not on the roadmap already https://github.com/primefaces/primeng/wiki/Roadmap

[ ] support request => Please do not sub... | defect | pick list events emit inconsistent types i m submitting a bug report search github for a similar issue or pr before submitting feature request please check if request is not on the roadmap already support request please do not submit support request here instead see plunk... | 1 |

32,282 | 6,758,304,834 | IssuesEvent | 2017-10-24 13:50:05 | BOINC/boinc | https://api.github.com/repos/BOINC/boinc | closed | Commas in usernames break private messages | C: Web - Private Messages E: 1 day P: Minor T: Defect | **Reported by ToeBee on 8 Dec 38260853 16:00 UTC**

Commas are used on the "Send Private Message" page (pm.php?action=new) to allow you to send messages to multiple recipients. However this causes a problem if the user you are trying to send a message to has a comma in their username. It tries to find two users to send ... | 1.0 | Commas in usernames break private messages - **Reported by ToeBee on 8 Dec 38260853 16:00 UTC**

Commas are used on the "Send Private Message" page (pm.php?action=new) to allow you to send messages to multiple recipients. However this causes a problem if the user you are trying to send a message to has a comma in their ... | defect | commas in usernames break private messages reported by toebee on dec utc commas are used on the send private message page pm php action new to allow you to send messages to multiple recipients however this causes a problem if the user you are trying to send a message to has a comma in their username ... | 1 |
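The recipient-parsing problem described in this report can be illustrated with a minimal sketch. BOINC's private-message page is written in PHP; the Python below is only an illustration, and the function names, the sample usernames, and the "try the whole field as one username first" workaround are all assumptions rather than the project's actual code or fix.

```python
def split_recipients(raw):
    """Naive parsing: treat every comma as a separator between recipients."""
    return [name.strip() for name in raw.split(",") if name.strip()]


def resolve_recipients(raw, known_users):
    """Hypothetical workaround: accept the whole field as one username if it exists."""
    whole = raw.strip()
    if whole in known_users:
        return [whole]
    return split_recipients(raw)


# Hypothetical user table containing one username with a comma in it.
known_users = {"Doe, Jane", "ToeBee"}

naive = split_recipients("Doe, Jane")                    # split into two lookups
resolved = resolve_recipients("Doe, Jane", known_users)  # kept as one username
multi = resolve_recipients("ToeBee, Doe", known_users)   # still a recipient list
```

With naive splitting, the single username "Doe, Jane" turns into two separate lookups, which matches the failure the report describes. The workaround is ambiguous when both the combined name and its parts exist as users, so it is a sketch of the trade-off, not a recommendation.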

80,354 | 30,246,123,695 | IssuesEvent | 2023-07-06 16:36:27 | gperftools/gperftools | https://api.github.com/repos/gperftools/gperftools | closed | Failed to build with lib musl | Type-Defect Priority-Medium Status-New | Originally reported on Google Code with ID 690

```

What steps will reproduce the problem?

1. unpack source onto x86_64, musl based system

2. apply patch to define __off64_t

3. try to build

What is the expected output? What do you see instead?

Build fails instead of normal completion:

src/malloc_hook_mmap_linux.h: I... | 1.0 | Failed to build with lib musl - Originally reported on Google Code with ID 690

```

What steps will reproduce the problem?

1. unpack source onto x86_64, musl based system

2. apply patch to define __off64_t

3. try to build

What is the expected output? What do you see instead?

Build fails instead of normal completion:... | defect | failed to build with lib musl originally reported on google code with id what steps will reproduce the problem unpack source onto musl based system apply patch to define t try to build what is the expected output what do you see instead build fails instead of normal completion src mall... | 1 |

35,603 | 7,787,729,923 | IssuesEvent | 2018-06-07 00:05:00 | jccastillo0007/eFacturaT | https://api.github.com/repos/jccastillo0007/eFacturaT | opened | OPTIBELT - THE VAT BASE REPORTED IN THE PDF IS INCORRECT | bug defect | The PDF generated on the platform is incorrect (I am not sure whether the error comes from the connector).

Instead of sending the correct VAT base, it sends the base multiplied by the quantity.

I don't know what the XML looks like, but I assume it is fine; otherwise an error would already have been flagged.

I will send you a... | 1.0 | OPTIBELT - THE VAT BASE REPORTED IN THE PDF IS INCORRECT - The PDF generated on the platform is incorrect (I am not sure whether the error comes from the connector).

Instead of sending the correct VAT base, it sends the base multiplied by the quantity.

I don't know what the XML looks like, but I assume it is fine, ... | defect | optibelt the vat base reported in the pdf is incorrect the pdf generated on the platform is incorrect i am not sure whether the error comes from the connector instead of sending the correct vat base it sends the base multiplied by the quantity i don t know what the xml looks like but i assume it is fine ... | 1 |
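The discrepancy reported here (a VAT base multiplied by the quantity a second time) can be shown with a small arithmetic sketch. It assumes that the VAT base of an invoice line equals the line amount, that is, unit price times quantity. The function names and sample figures are hypothetical and are not taken from eFacturaT.

```python
from decimal import Decimal


def expected_vat_base(unit_price, quantity):
    """Assumed correct base: the line amount (unit price x quantity)."""
    return unit_price * quantity


def reported_vat_base(unit_price, quantity):
    """Behaviour described in the report: the base multiplied by the quantity again."""
    return expected_vat_base(unit_price, quantity) * quantity


# Hypothetical invoice line: 3 units at 100.00 each.
unit_price = Decimal("100.00")
quantity = Decimal("3")

correct = expected_vat_base(unit_price, quantity)
wrong = reported_vat_base(unit_price, quantity)
```

Under these assumptions the two values coincide only when the quantity is 1, which may explain why such an error can go unnoticed on single-unit lines.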

107,619 | 16,761,611,994 | IssuesEvent | 2021-06-13 22:31:19 | gms-ws-demo/nibrs | https://api.github.com/repos/gms-ws-demo/nibrs | closed | CVE-2020-36179 (High) detected in multiple libraries - autoclosed | security vulnerability | ## CVE-2020-36179 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.9.8.jar</b>, <b>jackson-databind-2.9.5.jar</b>, <b>jackson-databind-2.9.6.jar</b>, <b>jackson-data... | True | CVE-2020-36179 (High) detected in multiple libraries - autoclosed - ## CVE-2020-36179 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.9.8.jar</b>, <b>jackson-databi... | non_defect | cve high detected in multiple libraries autoclosed cve high severity vulnerability vulnerable libraries jackson databind jar jackson databind jar jackson databind jar jackson databind jar jackson databind jar jackson databind jar ge... | 0 |

459,384 | 13,192,288,525 | IssuesEvent | 2020-08-13 13:33:39 | yalla-coop/presspad | https://api.github.com/repos/yalla-coop/presspad | closed | Create Intern Settings (FRONT END) | 5-points Frontend backlog priority-3 | - [ ] Set up Settings route

- [ ] Settings page in line with wireframes: https://www.figma.com/file/CMkMSsbTLjpitcetLUunz9/PressPad?node-id=2878%3A52971

**Across all**