| Unnamed: 0 (int64) | id (float64) | type (string) | created_at (string) | repo (string) | repo_url (string) | action (string) | title (string) | labels (string) | body (string) | index (string) | text_combine (string) | label (string) | text (string) | binary_label (int64) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

79,912 | 29,517,214,134 | IssuesEvent | 2023-06-04 16:25:43 | scipy/scipy | https://api.github.com/repos/scipy/scipy | opened | BUG: scipy.stats.Pearson3 ppf function uses right tail probability instead of left | defect | ### Describe your issue.

I had been using the Percent Point Function (ppf) in my optimization code to return a correct value. One of my distributions ended up being a pearson3, when I ended up comparing it to the CDF the values had a large discrepancy to the ppf function. I proceeded to check the documentation and fo... | 1.0 | BUG: scipy.stats.Pearson3 ppf function uses right tail probability instead of left - ### Describe your issue.

I had been using the Percent Point Function (ppf) in my optimization code to return a correct value. One of my distributions ended up being a pearson3, when I ended up comparing it to the CDF the values had a... | defect | bug scipy stats ppf function uses right tail probability instead of left describe your issue i had been using the percent point function ppf in my optimization code to return a correct value one of my distributions ended up being a when i ended up comparing it to the cdf the values had a large discrep... | 1 |

53,846 | 13,262,371,571 | IssuesEvent | 2020-08-20 21:41:20 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | matching trigger config in trigger-sim (Trac #2175) | Migrated from Trac analysis defect | First, I am Elim... I know I am terrible for signing in as icecube..

but I lost my password and wasn't able to reset it.. please don't hate me...

I am trying to run

$ python /data/user/elims/strike/clsim_jessie/applytoL2.py --outfile /data/user/elims/strike/clsim_jessie/outputs/events10_toL2_test.i3.gz --infile /data/... | 1.0 | matching trigger config in trigger-sim (Trac #2175) - First, I am Elim... I know I am terrible for signing in as icecube..

but I lost my password and wasn't able to reset it.. please don't hate me...

I am trying to run

$ python /data/user/elims/strike/clsim_jessie/applytoL2.py --outfile /data/user/elims/strike/clsim_j... | defect | matching trigger config in trigger sim trac first i am elim i know i am terrible for signing in as icecube but i lost my password and wasn t able to reset it please don t hate me i am trying to run python data user elims strike clsim jessie py outfile data user elims strike clsim jessie outpu... | 1 |

227,838 | 7,543,521,029 | IssuesEvent | 2018-04-17 15:43:36 | AZMAG/map-ATP | https://api.github.com/repos/AZMAG/map-ATP | opened | Prevent submittal of form when new location button is clicked. | Issue: Bug Priority: High |

Hitting the “Click here to select a new location” button after point is initially placed completely clears the form and re-loads the application.

Correct behavior is to keep the form filled and not relo... | 1.0 | Prevent submittal of form when new location button is clicked. -

Hitting the “Click here to select a new location” button after point is initially placed completely clears the form and re-loads the applicati... | non_defect | prevent submittal of form when new location button is clicked hitting the “click here to select a new location” button after point is initially placed completely clears the form and re loads the application correct behavior is to keep the form filled and not reload the application but allow the user t... | 0 |

290,260 | 25,045,931,561 | IssuesEvent | 2022-11-05 08:35:00 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | opened | roachtest: jepsen/bank-multitable/majority-ring failed | C-test-failure O-robot O-roachtest release-blocker branch-release-22.1 | roachtest.jepsen/bank-multitable/majority-ring [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=7330122&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=7330122&tab=artifacts#/jepsen/bank-multitable/majority-ring) on release-22.1 @ [6fdb8f55c6e3224d1d4b8bb2b5f7e757d57d2... | 2.0 | roachtest: jepsen/bank-multitable/majority-ring failed - roachtest.jepsen/bank-multitable/majority-ring [failed](https://teamcity.cockroachdb.com/viewLog.html?buildId=7330122&tab=buildLog) with [artifacts](https://teamcity.cockroachdb.com/viewLog.html?buildId=7330122&tab=artifacts#/jepsen/bank-multitable/majority-ring)... | non_defect | roachtest jepsen bank multitable majority ring failed roachtest jepsen bank multitable majority ring with on release attached stack trace stack trace main clusterimpl rune main pkg cmd roachtest cluster go github com cockroachdb cockroach pkg cmd roachtes... | 0 |

55,412 | 14,442,942,086 | IssuesEvent | 2020-12-07 18:53:46 | department-of-veterans-affairs/va.gov-team | https://api.github.com/repos/department-of-veterans-affairs/va.gov-team | opened | 508-defect-2 [FOCUS MANAGEMENT, SCREENREADER]: Focus on page load SHOULD be consistent | 508-defect-3 508-issue-focus-mgmt 508/Accessibility staging-review vsa vsa-ebenefits | # [508-defect-2](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-3)

## Feedback framework

- **❗️ Must** for if the feedback must be applied

- **⚠️ Should** if the feedback is best practice

- **✔️ Consider** for su... | 1.0 | 508-defect-2 [FOCUS MANAGEMENT, SCREENREADER]: Focus on page load SHOULD be consistent - # [508-defect-2](https://github.com/department-of-veterans-affairs/va.gov-team/blob/master/platform/accessibility/guidance/defect-severity-rubric.md#508-defect-3)

## Feedback framework

- **❗️ Must** for if the feedback must... | defect | defect focus on page load should be consistent feedback framework ❗️ must for if the feedback must be applied ⚠️ should if the feedback is best practice ✔️ consider for suggestions enhancements definition of done review and acknowledge feedback fix and... | 1 |

53,054 | 13,260,851,432 | IssuesEvent | 2020-08-20 18:52:11 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | icetray/trunk/resources/docs/i3frame.rst clean up (Trac #636) | IceTray Migrated from Trac defect | needs some serious help

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/636">https://code.icecube.wisc.edu/projects/icecube/ticket/636</a>, reported by anonymousand owned by troy</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2014-11-23T03:... | 1.0 | icetray/trunk/resources/docs/i3frame.rst clean up (Trac #636) - needs some serious help

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/636">https://code.icecube.wisc.edu/projects/icecube/ticket/636</a>, reported by anonymousand owned by troy</em></summary>

<p>

```... | defect | icetray trunk resources docs rst clean up trac needs some serious help migrated from json status closed changetime ts description needs some serious help reporter anonymous cc resolution fixed time ... | 1 |

60,314 | 17,023,394,477 | IssuesEvent | 2021-07-03 01:48:08 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Specific exception for "the server failed to allocate memory" | Component: api Priority: major Resolution: wontfix Type: defect | **[Submitted to the original trac issue database at 11.02am, Thursday, 30th April 2009]**

It would be good if there were a specific exception for "failed to allocate memory", so that whichways could trap it. At present any failure in whichways tells the user to e-mail me, which is good for Potlatch bugs, but not so go... | 1.0 | Specific exception for "the server failed to allocate memory" - **[Submitted to the original trac issue database at 11.02am, Thursday, 30th April 2009]**

It would be good if there were a specific exception for "failed to allocate memory", so that whichways could trap it. At present any failure in whichways tells the u... | defect | specific exception for the server failed to allocate memory it would be good if there were a specific exception for failed to allocate memory so that whichways could trap it at present any failure in whichways tells the user to e mail me which is good for potlatch bugs but not so good when it s a gener... | 1 |

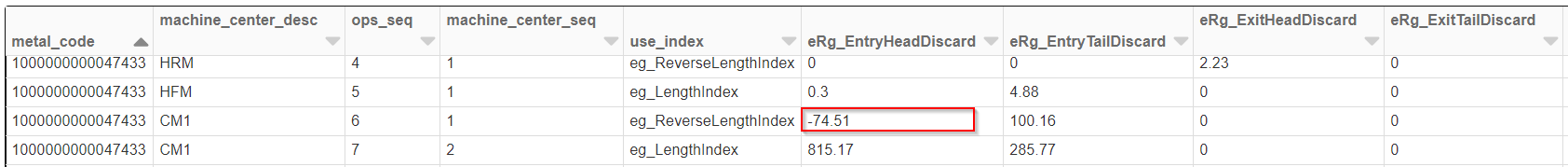

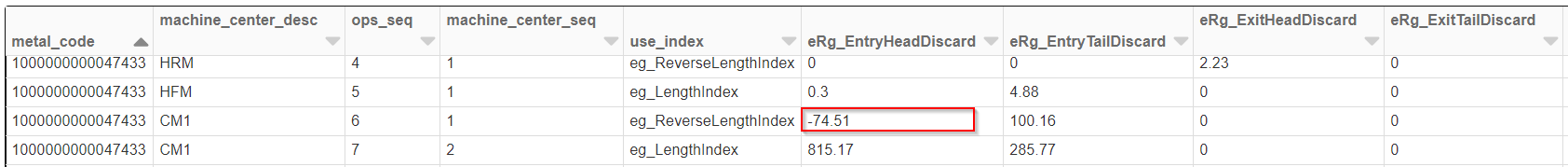

460,031 | 13,203,479,465 | IssuesEvent | 2020-08-14 14:14:38 | novelis-prod/Digital-CoE-Operations-Data---Public | https://api.github.com/repos/novelis-prod/Digital-CoE-Operations-Data---Public | closed | Entry-Head-Discard values are negative in coil lineage table | Plant: Pinda Priority #2 bug help wanted | Entry-Head-Discard values are negative in coil lineage table

| 1.0 | Entry-Head-Discard values are negative in coil lineage table - Entry-Head-Discard values are negative in coil lineage table

| non_defect | entry head discard values are negative in coil lineage table entry head discard values are negative in coil lineage table | 0 |

67,858 | 14,891,992,998 | IssuesEvent | 2021-01-21 01:46:14 | Nehamaefi/fitbit-api-example-java | https://api.github.com/repos/Nehamaefi/fitbit-api-example-java | opened | CVE-2020-36189 (Medium) detected in jackson-databind-2.8.1.jar | security vulnerability | ## CVE-2020-36189 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.1.jar</b></p></summary>

<p>General data-binding functionality for Jackson: works on core stream... | True | CVE-2020-36189 (Medium) detected in jackson-databind-2.8.1.jar - ## CVE-2020-36189 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.8.1.jar</b></p></summary>

<p>Gen... | non_defect | cve medium detected in jackson databind jar cve medium severity vulnerability vulnerable library jackson databind jar general data binding functionality for jackson works on core streaming api library home page a href path to dependency file fitbit api example java pom... | 0 |

6,459 | 2,610,243,583 | IssuesEvent | 2015-02-26 19:17:28 | chrsmith/jsjsj122 | https://api.github.com/repos/chrsmith/jsjsj122 | opened | 台州割包皮过长费用 | auto-migrated Priority-Medium Type-Defect | ```

台州割包皮过长费用【台州五洲生殖医院】24小时健康咨询热

线:0576-88066933-(扣扣800080609)-(微信号tzwzszyy)医院地址:台州市椒

江区枫南路229号(枫南大转盘旁)乘车线路:乘坐104、108、118�

��198及椒江一金清公交车直达枫南小区,乘坐107、105、109、112

、901、 902公交车到星星广场下车,步行即可到院。

诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,��

�精,无精。包皮包茎,精索静脉曲张,淋病等。

台州五洲生殖医院是台州最大的男科医院,权威专家在线免��

�咨询,拥有专业完善的男科检查治疗设备,严格按照国家标�

��收费。尖端医疗设备,与世界同步。权威... | 1.0 | 台州割包皮过长费用 - ```

台州割包皮过长费用【台州五洲生殖医院】24小时健康咨询热

线:0576-88066933-(扣扣800080609)-(微信号tzwzszyy)医院地址:台州市椒

江区枫南路229号(枫南大转盘旁)乘车线路:乘坐104、108、118�

��198及椒江一金清公交车直达枫南小区,乘坐107、105、109、112

、901、 902公交车到星星广场下车,步行即可到院。

诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,��

�精,无精。包皮包茎,精索静脉曲张,淋病等。

台州五洲生殖医院是台州最大的男科医院,权威专家在线免��

�咨询,拥有专业完善的男科检查治疗设备,严格按照国家标�

��收费。尖端医... | defect | 台州割包皮过长费用 台州割包皮过长费用【台州五洲生殖医院】 线 微信号tzwzszyy 医院地址 台州市椒 (枫南大转盘旁)乘车线路 、 、 � �� , 、 、 、 、 、 ,步行即可到院。 诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,�� �精,无精。包皮包茎,精索静脉曲张,淋病等。 台州五洲生殖医院是台州最大的男科医院,权威专家在线免�� �咨询,拥有专业完善的男科检查治疗设备,严格按照国家标� ��收费。尖端医疗设备,与世界同步。权威专家,成就专业典 范。人性化服务,一切以患者为中心。 看男科就选台州五洲生殖医院,专业男科为男人。 original is... | 1 |

52,134 | 13,211,392,047 | IssuesEvent | 2020-08-15 22:48:38 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | [tableio] named argument in class declaration (Trac #1733) | Incomplete Migration Migrated from Trac cmake defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1733">https://code.icecube.wisc.edu/projects/icecube/ticket/1733</a>, reported by kjmeagherand owned by jvansanten</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2016-06-10T16:08:49",

"_ts"... | 1.0 | [tableio] named argument in class declaration (Trac #1733) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1733">https://code.icecube.wisc.edu/projects/icecube/ticket/1733</a>, reported by kjmeagherand owned by jvansanten</em></summary>

<p>

```json

{

"status":... | defect | named argument in class declaration trac migrated from json status closed changetime ts description the sphinx build gives the following error n ntraceback most recent call last n file private var folders rc g t pip build sphin... | 1 |

16,179 | 9,303,083,187 | IssuesEvent | 2019-03-24 14:57:08 | scala/bug | https://api.github.com/repos/scala/bug | closed | HashMap.merged for new CHAMP-based HashMap | has PR library:collections performance | This method needs to be optimized in the same way as it was in the old HashMap implementation. | True | HashMap.merged for new CHAMP-based HashMap - This method needs to be optimized in the same way as it was in the old HashMap implementation. | non_defect | hashmap merged for new champ based hashmap this method needs to be optimized in the same way as it was in the old hashmap implementation | 0 |

65,190 | 19,253,871,294 | IssuesEvent | 2021-12-09 09:13:04 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Poll Create dialog in high contrast theme: delete answer button is a circle | T-Defect S-Minor A-Appearance A-Themes-Official O-Occasional A-Polls Z-Labs | ### Steps to reproduce

1. Turn on Polls in Labs settings

2. Choose the high contrast theme

3. Create a poll

### Outcome

#### What did you expect?

The delete answer buttons should look like "X".

#### What happened instead?

Instead they are filled circles:

| auto-migrated Component-UI Priority-Low Type-Defect | ```

Пример: Москва, Казань

```

Original issue reported on code.google.com by `G.Glaur...@gmail.com` on 30 Jan 2010 at 9:13 | 1.0 | Отображать на карте остальные векторные объекты (реки, кварталы и т.д.) - ```

Пример: Москва, Казань

```

Original issue reported on code.google.com by `G.Glaur...@gmail.com` on 30 Jan 2010 at 9:13 | defect | отображать на карте остальные векторные объекты реки кварталы и т д пример москва казань original issue reported on code google com by g glaur gmail com on jan at | 1 |

78,389 | 27,492,835,486 | IssuesEvent | 2023-03-04 20:49:08 | DependencyTrack/dependency-track | https://api.github.com/repos/DependencyTrack/dependency-track | opened | javax.jdo.JDOUserException: Field org.dependencytrack.model.Component.repositoryMeta is not marked as persistent so cannot be queried | defect in triage | ### Current Behavior

When opening the Policy Violations tab, the PolicyViolationResource throws the following error and no violations are shown. I think this bug was introduced somewhere last week...

``

javax.jdo.JDOUserException: Field org.dependencytrack.model.Component.repositoryMeta is not marked as persistent... | 1.0 | javax.jdo.JDOUserException: Field org.dependencytrack.model.Component.repositoryMeta is not marked as persistent so cannot be queried - ### Current Behavior

When opening the Policy Violations tab, the PolicyViolationResource throws the following error and no violations are shown. I think this bug was introduced somewh... | defect | javax jdo jdouserexception field org dependencytrack model component repositorymeta is not marked as persistent so cannot be queried current behavior when opening the policy violations tab the policyviolationresource throws the following error and no violations are shown i think this bug was introduced somewh... | 1 |

37,524 | 8,414,740,450 | IssuesEvent | 2018-10-13 06:32:04 | purebred-mua/purebred | https://api.github.com/repos/purebred-mua/purebred | opened | Test timeout before purebred started | defect | One test failed today with the following output:

```

use file browser to add attachments: FAIL (5.05s)

Wait time exceeded. Condition not met: 'Literal "Purebred: Item"' last screen shot:

export TERM=ansi

export GHC=stack

export GHC_ARGS="$STACK_ARGS ghc --"

... | 1.0 | Test timeout before purebred started - One test failed today with the following output:

```

use file browser to add attachments: FAIL (5.05s)

Wait time exceeded. Condition not met: 'Literal "Purebred: Item"' last screen shot:

export TERM=ansi

export GHC=stack

... | defect | test timeout before purebred started one test failed today with the following output use file browser to add attachments fail wait time exceeded condition not met literal purebred item last screen shot export term ansi export ghc stack ex... | 1 |

51,768 | 13,211,304,154 | IssuesEvent | 2020-08-15 22:10:30 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | steamshovel results.i3 (preloaded for UWRF) Segmentation fault (Trac #1014) | Incomplete Migration Migrated from Trac combo core defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1014">https://code.icecube.wisc.edu/projects/icecube/ticket/1014</a>, reported by jdiercksand owned by hdembinski</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2016-03-18T21:14:10",

"_ts":... | 1.0 | steamshovel results.i3 (preloaded for UWRF) Segmentation fault (Trac #1014) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1014">https://code.icecube.wisc.edu/projects/icecube/ticket/1014</a>, reported by jdiercksand owned by hdembinski</em></summary>

<p>

```json... | defect | steamshovel results preloaded for uwrf segmentation fault trac migrated from json status closed changetime ts description students at uwrf had preloaded a results for bootcamp and i m running into a repeatable segfault moving into count von... | 1 |

165,610 | 26,199,710,155 | IssuesEvent | 2023-01-03 16:22:00 | coder/coder | https://api.github.com/repos/coder/coder | closed | users: table looks kind of squished | site design | If I am not an `Owner` or `Template Admin` the roles look squished

<img width="1093" alt="Screen Shot 2022-10-10 at 4 25 35 PM" src="https://user-images.githubusercontent.com/22407953/194954805-097f271f-50e8-4cf0-af2e-fe4b88f39392.png">

| 1.0 | users: table looks kind of squished - If I am not an `Owner` or `Template Admin` the roles look squished

<img width="1093" alt="Screen Shot 2022-10-10 at 4 25 35 PM" src="https://user-images.githubusercontent.com/22407953/194954805-097f271f-50e8-4cf0-af2e-fe4b88f39392.png">

| non_defect | users table looks kind of squished if i am not an owner or template admin the roles look squished img width alt screen shot at pm src | 0 |

183,489 | 14,234,014,840 | IssuesEvent | 2020-11-18 13:00:53 | eclipse/che | https://api.github.com/repos/eclipse/che | closed | .NET Core devfile E2E test is flaky on "Language server autocomplete validation" step | area/qe e2e-test/failure kind/bug severity/P2 | ### Describe the bug

**.NET Core** devfile E2E test is flaky on _Language serve autocomplete validation_ step https://codeready-workspaces-jenkins.rhev-ci-vms.eng.rdu2.redhat.com/job/basic-MultiUser-Che-check-e2e-tests-against-k8s/2160/console

```

92 passing (30m)

3 pending

3 failing

1) .NET Core test

... | 1.0 | .NET Core devfile E2E test is flaky on "Language server autocomplete validation" step - ### Describe the bug

**.NET Core** devfile E2E test is flaky on _Language serve autocomplete validation_ step https://codeready-workspaces-jenkins.rhev-ci-vms.eng.rdu2.redhat.com/job/basic-MultiUser-Che-check-e2e-tests-against-k8s/... | non_defect | net core devfile test is flaky on language server autocomplete validation step describe the bug net core devfile test is flaky on language serve autocomplete validation step passing pending failing net core test language server validation suggestio... | 0 |

42,097 | 10,818,016,841 | IssuesEvent | 2019-11-08 11:01:10 | netty/netty | https://api.github.com/repos/netty/netty | closed | deregister and re-register, ClosedChannelException is thrown | defect | Hi,

I'm doing a project on middle-ware for distributed database, and I want to use Netty as client connecting to MySQL reading data. But a problem gets me stuck for such a long time so I have to ask for help. The scenario is as below:

1. Netty dispatches SQL query to MySQL;

1. MySQL returns a bunch of resultset w... | 1.0 | deregister and re-register, ClosedChannelException is thrown - Hi,

I'm doing a project on middle-ware for distributed database, and I want to use Netty as client connecting to MySQL reading data. But a problem gets me stuck for such a long time so I have to ask for help. The scenario is as below:

1. Netty dispatches... | defect | deregister and re register closedchannelexception is thrown hi i m doing a project on middle ware for distributed database and i want to use netty as client connecting to mysql reading data but a problem gets me stuck for such a long time so i have to ask for help the scenario is as below netty dispatches... | 1 |

49,145 | 13,185,256,608 | IssuesEvent | 2020-08-12 21:02:00 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | copy constructor of I3Particle copies I3ParticleID (Trac #828) | Incomplete Migration Migrated from Trac combo core defect | <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/828

, reported by chaack and owned by dschultz</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-01-27T20:32:47",

"description": "Added particle to the new I3MCTree is difficult, if you use the copy constructors... | 1.0 | copy constructor of I3Particle copies I3ParticleID (Trac #828) - <details>

<summary><em>Migrated from https://code.icecube.wisc.edu/ticket/828

, reported by chaack and owned by dschultz</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2015-01-27T20:32:47",

"description": "Added particle t... | defect | copy constructor of copies trac migrated from reported by chaack and owned by dschultz json status closed changetime description added particle to the new is difficult if you use the copy constructors of as these also copy the n ndoing somet... | 1 |

73,070 | 24,440,618,700 | IssuesEvent | 2022-10-06 14:22:24 | snowplow/snowplow-python-tracker | https://api.github.com/repos/snowplow/snowplow-python-tracker | closed | Fix failing build in Dockerfile | type:defect | The docker build fails with error "Failed to download metadata for repo 'appstream': Cannot prepare internal mirrorlist: No URLs in mirrorlist". | 1.0 | Fix failing build in Dockerfile - The docker build fails with error "Failed to download metadata for repo 'appstream': Cannot prepare internal mirrorlist: No URLs in mirrorlist". | defect | fix failing build in dockerfile the docker build fails with error failed to download metadata for repo appstream cannot prepare internal mirrorlist no urls in mirrorlist | 1 |

31,298 | 6,493,221,051 | IssuesEvent | 2017-08-21 16:07:59 | sukona/Grapevine | https://api.github.com/repos/sukona/Grapevine | closed | consider change default regex pattern from (.+) to ([^/]+), in order to avoid including sub path | defect | consider change default regex pattern from (.+) to ([^/]+), in order to avoid including sub path.

line is here:

https://github.com/sukona/Grapevine/blob/54b8299e571e8005e172755090560eb5b7e4be16/src/Grapevine/Shared/ParamParser.cs#L48

**example code:**

```

[RestRoute(HttpMethod = HttpMethod.POST, PathInfo = "/... | 1.0 | consider change default regex pattern from (.+) to ([^/]+), in order to avoid including sub path - consider change default regex pattern from (.+) to ([^/]+), in order to avoid including sub path.

line is here:

https://github.com/sukona/Grapevine/blob/54b8299e571e8005e172755090560eb5b7e4be16/src/Grapevine/Shared/P... | defect | consider change default regex pattern from to in order to avoid including sub path consider change default regex pattern from to in order to avoid including sub path line is here example code example input controller requests example output... | 1 |

341,557 | 24,703,634,673 | IssuesEvent | 2022-10-19 17:09:52 | PyThaiNLP/pythainlp | https://api.github.com/repos/PyThaiNLP/pythainlp | closed | Use PyThaiNLP 4.0 instead 3.2 | documentation | I think we will have many changes, so we should release the next version as 4.0 instead 3.2. | 1.0 | Use PyThaiNLP 4.0 instead 3.2 - I think we will have many changes, so we should release the next version as 4.0 instead 3.2. | non_defect | use pythainlp instead i think we will have many changes so we should release the next version as instead | 0 |

54,195 | 13,458,333,075 | IssuesEvent | 2020-09-09 10:28:24 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | closed | Jackson versions and vulnerabilities | Source: Community Team: Core Type: Defect | Hey folks,

Hazelcast shades [Jackson 2.9.7](https://github.com/hazelcast/hazelcast/blob/master/pom.xml#L91), which currently is [about 2 years old](https://github.com/FasterXML/jackson/wiki/Jackson-Release-2.9.7). There's a lot of Jackson CVEs out there (https://github.com/FasterXML/jackson-databind/issues/2814 is t... | 1.0 | Jackson versions and vulnerabilities - Hey folks,

Hazelcast shades [Jackson 2.9.7](https://github.com/hazelcast/hazelcast/blob/master/pom.xml#L91), which currently is [about 2 years old](https://github.com/FasterXML/jackson/wiki/Jackson-Release-2.9.7). There's a lot of Jackson CVEs out there (https://github.com/Fast... | defect | jackson versions and vulnerabilities hey folks hazelcast shades which currently is there s a lot of jackson cves out there is the latest that popped up for us often centered around gadgets as explained by cowtowncoder primary jackson maintainer himself in it is possible that hazelcast is af... | 1 |

67,891 | 21,264,118,125 | IssuesEvent | 2022-04-13 08:16:11 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Wrong alignment for thread panel footer contents in Safari | T-Defect Z-Platform-Specific S-Tolerable O-Occasional A-Threads | ### Steps to reproduce

Open the thread panel on Safari.

### Outcome

#### What did you expect?

The footer contents for the Beta label and feedback button to be aligned to the right.

#### What happened instead?

It's aligned to the left.

We just need to change the value for the `justify-content: end;` decl... | 1.0 | Wrong alignment for thread panel footer contents in Safari - ### Steps to reproduce

Open the thread panel on Safari.

### Outcome

#### What did you expect?

The footer contents for the Beta label and feedback button to be aligned to the right.

#### What happened instead?

It's aligned to the left.

We just ... | defect | wrong alignment for thread panel footer contents in safari steps to reproduce open the thread panel on safari outcome what did you expect the footer contents for the beta label and feedback button to be aligned to the right what happened instead it s aligned to the left we just ... | 1 |

114,735 | 11,855,215,017 | IssuesEvent | 2020-03-25 03:28:59 | WordPress/auto-updates | https://api.github.com/repos/WordPress/auto-updates | opened | Documentation | documentation task | _Migrated from: https://github.com/audrasjb/wp-autoupdates/issues/71

Previously opened by: @jeffpaul

Original description:_

>We'll want to ensure that ahead of any submission to merge this plugin into WordPress core that we've sufficiently documented within the plugin code and within the markdown/text files in this ... | 1.0 | Documentation - _Migrated from: https://github.com/audrasjb/wp-autoupdates/issues/71

Previously opened by: @jeffpaul

Original description:_

>We'll want to ensure that ahead of any submission to merge this plugin into WordPress core that we've sufficiently documented within the plugin code and within the markdown/tex... | non_defect | documentation migrated from previously opened by jeffpaul original description we ll want to ensure that ahead of any submission to merge this plugin into wordpress core that we ve sufficiently documented within the plugin code and within the markdown text files in this plugin assuming there is a feature... | 0 |

6,245 | 2,610,224,021 | IssuesEvent | 2015-02-26 19:11:00 | chrsmith/somefinders | https://api.github.com/repos/chrsmith/somefinders | opened | шоу уродов господина араси | auto-migrated Priority-Medium Type-Defect | ```

'''Бертольд Голубев'''

День добрый никак не могу найти .шоу уродов

господина араси. как то выкладывали уже

'''Варлам Денисов'''

Вот хороший сайт где можно скачать

http://bit.ly/17ZRkLm

'''Болеслав Кабанов'''

Спасибо вроде то но просит телефон вводить

'''Аскольд Денисов'''

Не это не влияет на баланс

'''Абрам С... | 1.0 | шоу уродов господина араси - ```

'''Бертольд Голубев'''

День добрый никак не могу найти .шоу уродов

господина араси. как то выкладывали уже

'''Варлам Денисов'''

Вот хороший сайт где можно скачать

http://bit.ly/17ZRkLm

'''Болеслав Кабанов'''

Спасибо вроде то но просит телефон вводить

'''Аскольд Денисов'''

Не это не... | defect | шоу уродов господина араси бертольд голубев день добрый никак не могу найти шоу уродов господина араси как то выкладывали уже варлам денисов вот хороший сайт где можно скачать болеслав кабанов спасибо вроде то но просит телефон вводить аскольд денисов не это не влияет на баланс ... | 1 |

291,751 | 25,172,324,346 | IssuesEvent | 2022-11-11 05:18:24 | crispindeity/issue-tracker | https://api.github.com/repos/crispindeity/issue-tracker | closed | Add Milestone Controller Integration Test | 📬 API ✅ Test | # Description

- Milestone Controller 통합 테스트 작성

# Progress

- [x] 302 Redirect Response

- [x] Long save()

- [x] ResponsewReadAllMilestone read()

- [x] ResponseReadAllMilestonesDto readOpenAndMilestones()

- [x] ResponseMilestoneDto detail()

- [x] void delete()

- [x] Long update()

| 1.0 | Add Milestone Controller Integration Test - # Description

- Milestone Controller 통합 테스트 작성

# Progress

- [x] 302 Redirect Response

- [x] Long save()

- [x] ResponsewReadAllMilestone read()

- [x] ResponseReadAllMilestonesDto readOpenAndMilestones()

- [x] ResponseMilestoneDto detail()

- [x] void delete()

- [x] ... | non_defect | add milestone controller integration test description milestone controller 통합 테스트 작성 progress redirect response long save responsewreadallmilestone read responsereadallmilestonesdto readopenandmilestones responsemilestonedto detail void delete long update | 0 |

31,910 | 8,774,196,993 | IssuesEvent | 2018-12-18 19:05:10 | tensorflow/tensorflow | https://api.github.com/repos/tensorflow/tensorflow | closed | TensorFlow docker image JupyterNotebook does not start | stat:awaiting response type:build/install | 1. I built the docker image using Dockerfile present in `tensorflow/tensorflow/tools/docker/Dockerfile`, When I run the image it does gives me url for notebook

```

Copy/paste this URL into your browser when you connect for the first time,

to login with a token:

http://(e01f14b69e8e or 127.0.0.1):8888/... | 1.0 | TensorFlow docker image JupyterNotebook does not start - 1. I built the docker image using Dockerfile present in `tensorflow/tensorflow/tools/docker/Dockerfile`, When I run the image it does gives me url for notebook

```

Copy/paste this URL into your browser when you connect for the first time,

to login with a... | non_defect | tensorflow docker image jupyternotebook does not start i built the docker image using dockerfile present in tensorflow tensorflow tools docker dockerfile when i run the image it does gives me url for notebook copy paste this url into your browser when you connect for the first time to login with a... | 0 |

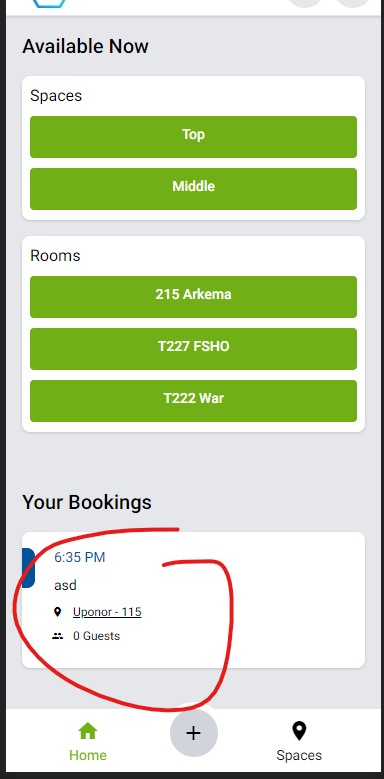

606,156 | 18,756,041,032 | IssuesEvent | 2021-11-05 10:52:40 | PlaceOS/user-interfaces | https://api.github.com/repos/PlaceOS/user-interfaces | closed | workplace/dashboard > Your Bookings: Clicking on a booking tile does nothing, but should show the booking details (/schedule/view) | Type: Enhancement Priority: Medium | workplace/dashboard > Your Bookings: Clicking on a booking tile does nothing, but should show the booking details (/schedule/view)

| 1.0 | workplace/dashboard > Your Bookings: Clicking on a booking tile does nothing, but should show the booking details (/schedule/view) - workplace/dashboard > Your Bookings: Clicking on a booking tile does nothing, but should show the booking details (/schedule/view)

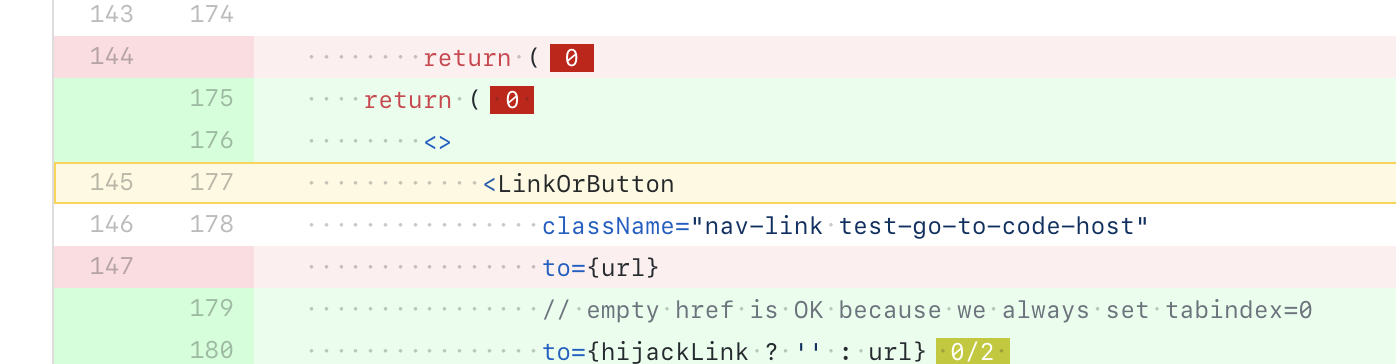

Go to definition on `LinkOrButton`, it just jumps to the import in the same file, instead of to https... | 1.0 | TypeScript go to definition just goes to import - https://github.com/sourcegraph/sourcegraph/pull/14256/files#diff-389012daf6ffbfb95a148bde9e6800bbL145

Go to definition on `LinkOrButton`, it just jumps t... | non_defect | typescript go to definition just goes to import go to definition on linkorbutton it just jumps to the import in the same file instead of to | 0 |

128,819 | 10,552,552,175 | IssuesEvent | 2019-10-03 15:22:29 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | flannel --iface is broken on k8s 1.16 | [zube]: To Test kind/bug team/ca | due to tabs being at:

https://github.com/rancher/kontainer-driver-metadata/blob/e6d8f524e4456635d1d37a05f2e454a468ed11b6/rke/templates/flannel.go#L642-L644

the `flannel_iface` configuration and flannel is broken when the rke cluster.yaml is:

```yaml

cluster_name: example

kubernetes_version: v1.16.0-beta.1-ra... | 1.0 | flannel --iface is broken on k8s 1.16 - due to tabs being at:

https://github.com/rancher/kontainer-driver-metadata/blob/e6d8f524e4456635d1d37a05f2e454a468ed11b6/rke/templates/flannel.go#L642-L644

the `flannel_iface` configuration and flannel is broken when the rke cluster.yaml is:

```yaml

cluster_name: exampl... | non_defect | flannel iface is broken on due to tabs being at the flannel iface configuration and flannel is broken when the rke cluster yaml is yaml cluster name example kubernetes version beta network plugin flannel options flannel iface the bug was introduced a... | 0 |

55,977 | 13,728,320,069 | IssuesEvent | 2020-10-04 11:05:05 | jenkins-x/terraform-aws-eks-jx | https://api.github.com/repos/jenkins-x/terraform-aws-eks-jx | closed | Quota exceeding for PoliciesPerUser for CI builds | area/build kind/bug lifecycle/rotten | We keep seeing the following error after some time running pull request builds:

```

Error: Error attaching policy arn:aws:iam::296178596335:policy/vault_us-east-1-2020050616512586470000000c to IAM User **-bdd-test: LimitExceeded: Cannot exceed quota for PoliciesPerUser: 10

status code: 409, request id: 53cf51d8-3... | 1.0 | Quota exceeding for PoliciesPerUser for CI builds - We keep seeing the following error after some time running pull request builds:

```

Error: Error attaching policy arn:aws:iam::296178596335:policy/vault_us-east-1-2020050616512586470000000c to IAM User **-bdd-test: LimitExceeded: Cannot exceed quota for PoliciesPe... | non_defect | quota exceeding for policiesperuser for ci builds we keep seeing the following error after some time running pull request builds error error attaching policy arn aws iam policy vault us east to iam user bdd test limitexceeded cannot exceed quota for policiesperuser status code request i... | 0 |

57,804 | 16,076,133,645 | IssuesEvent | 2021-04-25 11:43:05 | TykTechnologies/tyk-operator | https://api.github.com/repos/TykTechnologies/tyk-operator | reopened | Security Policy "configured" even without changes | defect | Applying a SecurityPolicy resource, even without changes, results in a reconcile loop each time.

Version Operator :`v0.4.1`

```

➜ tyk-operator git:(f54cfb5) ✗ k apply -f ./config/samples/httpbin_protected.yaml

apidefinition.tyk.tyk.io/httpbin created

➜ tyk-operator git:(f54cfb5) ✗ k apply -f ./config/samples... | 1.0 | Security Policy "configured" even without changes - Applying a SecurityPolicy resource, even without changes, results in a reconcile loop each time.

Version Operator :`v0.4.1`

```

➜ tyk-operator git:(f54cfb5) ✗ k apply -f ./config/samples/httpbin_protected.yaml

apidefinition.tyk.tyk.io/httpbin created

➜ tyk-... | defect | security policy configured even without changes applying a securitypolicy resource even without changes results in a reconcile loop each time version operator ➜ tyk operator git ✗ k apply f config samples httpbin protected yaml apidefinition tyk tyk io httpbin created ➜ tyk operato... | 1 |

52,249 | 13,211,411,998 | IssuesEvent | 2020-08-15 22:57:35 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | opened | [simprod] Missing Time Range for SplitInIcePulses in 2013 simulation (Trac #1916) | Incomplete Migration Migrated from Trac combo simulation defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1916">https://code.icecube.wisc.edu/projects/icecube/ticket/1916</a>, reported by yiqian.xuand owned by juancarlos</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-12T20:28:04",

"_ts"... | 1.0 | [simprod] Missing Time Range for SplitInIcePulses in 2013 simulation (Trac #1916) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1916">https://code.icecube.wisc.edu/projects/icecube/ticket/1916</a>, reported by yiqian.xuand owned by juancarlos</em></summary>

<p>

... | defect | missing time range for splitinicepulses in simulation trac migrated from json status closed changetime ts description data has splitinicepulsestimerange in the frame but simulation doesn t reporter yiqian xu cc davi... | 1 |

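5,200 | 2,610,183,058 | IssuesEvent | 2015-02-26 18:58:18 | chrsmith/quchuseban | https://api.github.com/repos/chrsmith/quchuseban | opened | Detailed explanation: the best way to remove pigmentation spots | auto-migrated Priority-Medium Type-Defect | ```

[Summary]

Don't think too much. As we grow up we often lose our way on the road of life and go chasing some kind of faith. Ordinary or otherwise, everyone has their own views, each different from the next. Once you have achieved what you set out to do, you can loosen your schedule a little, for example by resting. Every day, what you see and hear is different. Whether disappointed or happy, adjust your mindset, and even a bad thing can become a good thing. If it weren't for the freckles on my face, I would never have met him, the love of my life! The best way to remove pigmentation spots:

[Customer case]

Ms. Zhang, 26<br>

My melasma is hereditary. It is mainly on my cheekbones, with a bit on my nose as well. Because my complexion is fair overall, the spots are all the more visible. Many of you have probably seen [...] films where lots of children have heavy melasma; that was me. It even shows in photos, and every photo of me has to be retouched in PS.<br... | 1.0 | Detailed explanation: the best way to remove pigmentation spots - ```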

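[Summary]

Don't think too much. As we grow up we often lose our way on the road of life and go chasing some kind of faith. Ordinary or otherwise, everyone has their own views, each different from the next. Once you have achieved what you set out to do, you can loosen your schedule a little, for example by resting. Every day, what you see and hear is different. Whether disappointed or happy, adjust your mindset, and even a bad thing can become a good thing. If it weren't for the freckles on my face, I would never have met him, the love of my life! The best way to remove pigmentation spots:

[Customer case]

Ms. Zhang, 26<br>

My melasma is hereditary. It is mainly on my cheekbones, with a bit on my nose as well. Because my complexion is fair overall, the spots are all the more visible. Many of you have probably seen [...] films where lots of children have heavy melasma; that was me. It even shows in photos, and every photo of me has to be... | defect | detailed explanation the best way to remove pigmentation spots summary don t think too much as we grow up we often lose our way on the road of life and go chasing some kind of faith ordinary or otherwise everyone has their own views each different from the next once you have achieved what you set out to do you can loosen your schedule a little for example by resting every day what you see and hear is different whether disappointed or happy adjust your mindset and even a bad thing can become a good thing if it weren t for the freckles on my face i would never have met him the love of my life the best way to remove pigmentation spots customer case ms zhang my melasma is hereditary it is mainly on my cheekbones with a bit on my nose as well because my complexion is fair overall the spots are all the more visible many of you have probably seen films where lots of children have heavy melasma that was me it even shows in photos and every photo of me has to be retouched in ps... | 1 |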

168,185 | 13,065,228,692 | IssuesEvent | 2020-07-30 19:26:57 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | "failed to run channelserver" error seen in rancher logs | [zube]: To Test alpha-priority/0 area/import-rke2 kind/bug-qa | **What kind of request is this (question/bug/enhancement/feature request):** bug

**Steps to reproduce (least amount of steps as possible):**

On master-head - commit id: `e20f472d4`

following error is seen in the rancher logs:

```

2020/07/28 18:19:50 [INFO] kontainerdriver amazonelasticcontainerservice stopped

... | 1.0 | "failed to run channelserver" error seen in rancher logs - **What kind of request is this (question/bug/enhancement/feature request):** bug

**Steps to reproduce (least amount of steps as possible):**

On master-head - commit id: `e20f472d4`

following error is seen in the rancher logs:

```

2020/07/28 18:19:50 [I... | non_defect | failed to run channelserver error seen in rancher logs what kind of request is this question bug enhancement feature request bug steps to reproduce least amount of steps as possible on master head commit id following error is seen in the rancher logs kontainerdriver ... | 0 |

78,703 | 27,722,449,776 | IssuesEvent | 2023-03-14 21:55:27 | idaholab/moose | https://api.github.com/repos/idaholab/moose | closed | PolygonConcentricCircleMeshGenerator | T: defect P: normal | ## Bug Description

In the PolygonConcentricMeshGenerator when the user defines: outward_interface_boundary_names, the naming of the interfaces is not done properly. (I would assume similar behavior with inward_interface_boundary_names)

## Steps to Reproduce

For example, run this file :

```

# a Pronghorn mesh for... | 1.0 | PolygonConcentricCircleMeshGenerator - ## Bug Description

In the PolygonConcentricMeshGenerator when the user defines: outward_interface_boundary_names, the naming of the interfaces is not done properly. (I would assume similar behavior with inward_interface_boundary_names)

## Steps to Reproduce

For example, run t... | defect | polygonconcentriccirclemeshgenerator bug description in the polygonconcentricmeshgenerator when the user defines outward interface boundary names the naming of the interfaces is not done properly i would assume similar behavior with inward interface boundary names steps to reproduce for example run t... | 1 |

69,101 | 22,156,452,471 | IssuesEvent | 2022-06-03 23:38:26 | jezzsantos/automate | https://api.github.com/repos/jezzsantos/automate | closed | Esoteric language for core concepts | defect-design | It is pretty clear from having to describe to various potential users that there are some esoteric terms that need more than the average amount of explaining. This is a usability issue.

Words like:

- [x] Pattern -> ??? Template?

- [x] Solution -> ??? Document?

- [x] LaunchPoint -> ??? Runner?, Executor?, Trigg... | 1.0 | Esoteric language for core concepts - It is pretty clear from having to describe to various potential users that there are some esoteric terms that need more than the average amount of explaining. This is a usability issue.

Words like:

- [x] Pattern -> ??? Template?

- [x] Solution -> ??? Document?

- [x] Launch... | defect | esoteric language for core concepts it is pretty clear from having to describe to various potential users that there are some esoteric terms that need more than the average amount of explaining this is a usability issue words like pattern template solution document launchpoint ... | 1 |

436,806 | 12,554,031,120 | IssuesEvent | 2020-06-07 00:22:59 | eclipse-ee4j/glassfish | https://api.github.com/repos/eclipse-ee4j/glassfish | closed | Allow commands that take properties to accept properties file as an option | Component: admin ERR: Assignee Priority: Major Stale Type: Improvement | For a project I am working on, we're doing a lot of configuration through system

properties. All of the properties I need to load are already in a properties

file. To load them into Glassfish, I'm using an asant script with separate calls

to "create-system-properties". It would be nice to make one call to

"create-syste... | 1.0 | Allow commands that take properties to accept properties file as an option - For a project I am working on, we're doing a lot of configuration through system

properties. All of the properties I need to load are already in a properties

file. To load them into Glassfish, I'm using an asant script with separate calls

to "... | non_defect | allow commands that take properties to accept properties file as an option for a project i am working on we re doing a lot of configuration through system properties all of the properties i need to load are already in a properties file to load them into glassfish i m using an asant script with separate calls to ... | 0 |

8,807 | 2,612,899,075 | IssuesEvent | 2015-02-27 17:23:28 | chrsmith/windows-package-manager | https://api.github.com/repos/chrsmith/windows-package-manager | closed | PDF-XChange Viewer on 64bit Windows | auto-migrated Milestone-1.15 Type-Defect | ```

The version of the PDF-XChange Viewer currently in the repository cannot be

installed on a 64bit Windows.

From the install log:

---------------------------

MSI (s) (48:D4) [13:11:39:558]: Product: PDF-XChange Viewer -- This

installation can be installed only on 32-bit Windows.

---------------------------

```

... | 1.0 | PDF-XChange Viewer on 64bit Windows - ```

The version of the PDF-XChange Viewer currently in the repository cannot be

installed on a 64bit Windows.

From the install log:

---------------------------

MSI (s) (48:D4) [13:11:39:558]: Product: PDF-XChange Viewer -- This

installation can be installed only on 32-bit Window... | defect | pdf xchange viewer on windows the version of the pdf xchange viewer currently in the repository cannot be installed on a windows from the install log msi s product pdf xchange viewer this installation can be installed only on bit windows ... | 1 |

36,359 | 7,915,819,018 | IssuesEvent | 2018-07-04 01:56:26 | Microsoft/spring-data-gremlin | https://api.github.com/repos/Microsoft/spring-data-gremlin | closed | Refine the database AbstractConfiguration. | Defect enhancement | **Your issue may already be reported! Please search before creating a new one.**

## Expected Behavior

* placeholder

## Current Behavior

* placeholder

## Possible Solution

* placeholder

## Steps to Reproduce (for bugs)

* step-1

* step-2

* ...

## Snapshot Code for Reproduce

```java

@SpringBootAppl... | 1.0 | Refine the database AbstractConfiguration. - **Your issue may already be reported! Please search before creating a new one.**

## Expected Behavior

* placeholder

## Current Behavior

* placeholder

## Possible Solution

* placeholder

## Steps to Reproduce (for bugs)

* step-1

* step-2

* ...

## Snapshot ... | defect | refine the database abstractconfiguration your issue may already be reported please search before creating a new one expected behavior placeholder current behavior placeholder possible solution placeholder steps to reproduce for bugs step step snapshot ... | 1 |

633,561 | 20,258,538,225 | IssuesEvent | 2022-02-15 03:29:55 | apcountryman/picolibrary-microchip-megaavr | https://api.github.com/repos/apcountryman/picolibrary-microchip-megaavr | opened | Add Microchip megaAVR asynchronous serial basic transmitter | priority-normal status-awaiting_development type-feature | Add Microchip megaAVR asynchronous serial basic transmitter (`::picolibrary::Microchip::megaAVR::Asynchronous_Serial::Basic_Transmitter`).

- [ ] The `Basic_Transmitter` class should be defined in the `include/picolibrary/microchip/megaavr/asynchronous_serial.h`/`source/picolibrary/microchip/megaavr/asynchronous_serial... | 1.0 | Add Microchip megaAVR asynchronous serial basic transmitter - Add Microchip megaAVR asynchronous serial basic transmitter (`::picolibrary::Microchip::megaAVR::Asynchronous_Serial::Basic_Transmitter`).

- [ ] The `Basic_Transmitter` class should be defined in the `include/picolibrary/microchip/megaavr/asynchronous_seria... | non_defect | add microchip megaavr asynchronous serial basic transmitter add microchip megaavr asynchronous serial basic transmitter picolibrary microchip megaavr asynchronous serial basic transmitter the basic transmitter class should be defined in the include picolibrary microchip megaavr asynchronous serial ... | 0 |

29,537 | 5,715,697,896 | IssuesEvent | 2017-04-19 13:41:43 | contao/core-bundle | https://api.github.com/repos/contao/core-bundle | closed | Special characters no longer removed in the file manager? | defect | <a href="https://github.com/NinaG"><img src="https://avatars1.githubusercontent.com/u/1219952?v=3" align="left" width="42" height="42"></img></a> [Issue](https://github.com/contao/core/issues/8688) by @NinaG

March 30th, 2017, 09:50 GMT

In C3, IMHO, Contao made sure when creating folders or files that... | 1.0 | Special characters no longer removed in the file manager? - <a href="https://github.com/NinaG"><img src="https://avatars1.githubusercontent.com/u/1219952?v=3" align="left" width="42" height="42"></img></a> [Issue](https://github.com/contao/core/issues/8688) by @NinaG

March 30th, 2017, 09:50 GMT

In C3, IMHO,... | defect | special characters no longer removed in the file manager by ninag march gmt in it was imho the case that contao when creating folders or files made sure that there were no special characters and umlauts in the folder file names if the editor had created them that way contao then ... | 1 |

11,667 | 2,660,030,677 | IssuesEvent | 2015-03-19 01:46:42 | perfsonar/project | https://api.github.com/repos/perfsonar/project | closed | owamp doesn't work with link-local addresses | Priority-Medium Type-Defect | Original [issue 1070](https://code.google.com/p/perfsonar-ps/issues/detail?id=1070) created by arlake228 on 2015-01-31T10:56:52.000Z:

<b>What steps will reproduce the problem?</b>

1. owping <IPv6LLAddrOfOwampd>

2. When a client requests an IPv6 link-local address to be used for a test session endpoint, the b... | 1.0 | owamp doesn't work with link-local addresses - Original [issue 1070](https://code.google.com/p/perfsonar-ps/issues/detail?id=1070) created by arlake228 on 2015-01-31T10:56:52.000Z:

<b>What steps will reproduce the problem?</b>

1. owping <IPv6LLAddrOfOwampd>

2. When a client requests an IPv6 link-local addres... | defect | owamp doesn t work with link local addresses original created by on what steps will reproduce the problem owping lt gt when a client requests an link local address to be used for a test session endpoint the bind to the address fails since a scope isn t provided and a link local... | 1 |

50,815 | 13,187,766,679 | IssuesEvent | 2020-08-13 04:30:57 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | [PROPOSAL] should not build tables in I3_SRC (Trac #1430) | Migrated from Trac combo simulation defect | One of the targets for PROPOSAL to build tables to is `$I3_BUILD/PROPOSAL/resources/tables`. This is a symlink into `$I3_SRC` almost all the time. Two problems with this:

1. Philosophically, if I specify a separate build directory that means I don't want you touching the source.

2. The source could potentially be r... | 1.0 | [PROPOSAL] should not build tables in I3_SRC (Trac #1430) - One of the targets for PROPOSAL to build tables to is `$I3_BUILD/PROPOSAL/resources/tables`. This is a symlink into `$I3_SRC` almost all the time. Two problems with this:

1. Philosophically, if I specify a separate build directory that means I don't want yo... | defect | should not build tables in src trac one of the targets for proposal to build tables to is build proposal resources tables this is a symlink into src almost all the time two problems with this philosophically if i specify a separate build directory that means i don t want you touching the ... | 1 |

72,513 | 24,160,217,206 | IssuesEvent | 2022-09-22 11:00:15 | matrix-org/synapse | https://api.github.com/repos/matrix-org/synapse | closed | synapse 1.68.0rc1 fails to build: environment variable `SYNAPSE_RUST_DIGEST` not defined | S-Major T-Defect X-Release-Blocker O-Frequent | ### Description

Try to build synapse 1.68.0rc1 now forcing an included rust component build.

### Steps to reproduce

Trying to build synapse 1.68.0rc1 I run into the following error:

```

Compiling synapse v0.1.0 (/var/tmp/paludis/build/net-synapse-1.68.0rc1/work/matrix-synapse-1.68.0rc1/rust)

Running `ru... | 1.0 | synapse 1.68.0rc1 fails to build: environment variable `SYNAPSE_RUST_DIGEST` not defined - ### Description

Try to build synapse 1.68.0rc1 now forcing an included rust component build.

### Steps to reproduce

Trying to build synapse 1.68.0rc1 I run into the following error:

```

Compiling synapse v0.1.0 (/var/tm... | defect | synapse fails to build environment variable synapse rust digest not defined description try to build synapse now forcing an included rust component build steps to reproduce trying to build synapse i run into the following error compiling synapse var tmp paludis bui... | 1 |

569 | 2,571,490,375 | IssuesEvent | 2015-02-10 16:48:00 | hazelcast/hazelcast | https://api.github.com/repos/hazelcast/hazelcast | opened | NPE While Getting CacheOperationProvider | Team: Core Type: Defect | Occured while running stabilizer tests on X-Large cluster.

The problem is that `CacheConfig` may not be created yet before any cache operation request is received from node.

Here are the error logs of failed test from @Danny-Hazelcast.

```

message='Worked ran into an unhandled exception'

type='Worker exce... | 1.0 | NPE While Getting CacheOperationProvider - Occured while running stabilizer tests on X-Large cluster.

The problem is that `CacheConfig` may not be created yet before any cache operation request is received from node.

Here are the error logs of failed test from @Danny-Hazelcast.

```

message='Worked ran into an... | defect | npe while getting cacheoperationprovider occured while running stabilizer tests on x large cluster the problem is that cacheconfig may not be created yet before any cache operation request is received from node here are the error logs of failed test from danny hazelcast message worked ran into an... | 1 |

19,023 | 2,616,017,747 | IssuesEvent | 2015-03-02 00:59:38 | jasonhall/bwapi | https://api.github.com/repos/jasonhall/bwapi | closed | MinGW Support (ones more) | auto-migrated NewFeature Priority-Medium Type-Enhancement | ```

Dear BWAPI developer,

ones more I would like to mention, that it would be nice, if BWAPI clients

would also compile with mingw.

I prepared a patch, which demonstrates, that there are only little changes

needed to make the code compile with mingw. With these modifications the

ExampleAIClient runs fine.

Till no... | 1.0 | MinGW Support (ones more) - ```

Dear BWAPI developer,

ones more I would like to mention, that it would be nice, if BWAPI clients

would also compile with mingw.

I prepared a patch, which demonstrates, that there are only little changes

needed to make the code compile with mingw. With these modifications the

Example... | non_defect | mingw support ones more dear bwapi developer ones more i would like to mention that it would be nice if bwapi clients would also compile with mingw i prepared a patch which demonstrates that there are only little changes needed to make the code compile with mingw with these modifications the example... | 0 |

333,133 | 29,510,541,455 | IssuesEvent | 2023-06-03 21:51:17 | sandialabs/pyttb | https://api.github.com/repos/sandialabs/pyttb | closed | Testing: implement tests for full coverage | testing doing | If possible, implement tests to provide full coverage of TensorToolbox code:

```

pytest --cov=pyttb tests/ --cov-report=term-missing

```

```

Name Stmts Miss Cover Missing

----------------------------------------------------

pyttb\__init__.py 23 1 96% 31

pyttb\cp_als... | 1.0 | Testing: implement tests for full coverage - If possible, implement tests to provide full coverage of TensorToolbox code:

```

pytest --cov=pyttb tests/ --cov-report=term-missing

```

```

Name Stmts Miss Cover Missing

----------------------------------------------------

pyttb\__init__.p... | non_defect | testing implement tests for full coverage if possible implement tests to provide full coverage of tensortoolbox code pytest cov pyttb tests cov report term missing name stmts miss cover missing pyttb init p... | 0 |

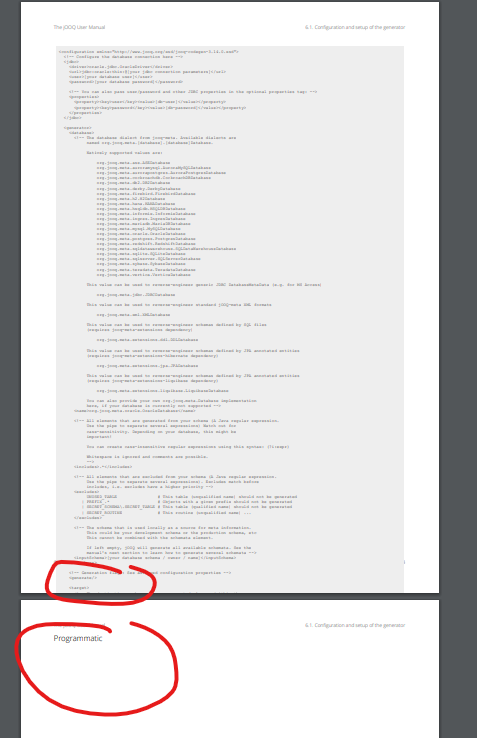

55,165 | 14,247,119,724 | IssuesEvent | 2020-11-19 10:59:53 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | PDF manual doesn't correctly wrap large code blocks | C: Documentation E: All Editions P: Medium T: Defect | The PDF manual doesn't correctly wrap large (as in lines of code) code blocks, see for example:

- The first highlight shows that the block reaches the end of the page rather than wrapping to the next page

... | 1.0 | PDF manual doesn't correctly wrap large code blocks - The PDF manual doesn't correctly wrap large (as in lines of code) code blocks, see for example:

- The first highlight shows that the block reaches the ... | defect | pdf manual doesn t correctly wrap large code blocks the pdf manual doesn t correctly wrap large as in lines of code code blocks see for example the first highlight shows that the block reaches the end of the page rather than wrapping to the next page the second highlight shows that the next page i... | 1 |

47,826 | 13,066,259,458 | IssuesEvent | 2020-07-30 21:19:19 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | [NoiseEngine] example script and python module are broken (Trac #1230) | Migrated from Trac combo reconstruction defect | Example script `../resources/scripts/example.py` is broken because `I3IsolatedHitsCutModule` does not exist anymore (should probably be replaced by STTools). The same is true for `../python/NoiseEngine.py`. Moreover, the import statements should be checked because some modules are missing and other are not needed. In g... | 1.0 | [NoiseEngine] example script and python module are broken (Trac #1230) - Example script `../resources/scripts/example.py` is broken because `I3IsolatedHitsCutModule` does not exist anymore (should probably be replaced by STTools). The same is true for `../python/NoiseEngine.py`. Moreover, the import statements should b... | defect | example script and python module are broken trac example script resources scripts example py is broken because does not exist anymore should probably be replaced by sttools the same is true for python noiseengine py moreover the import statements should be checked because some modules are mi... | 1 |

596,575 | 18,106,989,934 | IssuesEvent | 2021-09-22 20:17:57 | solo-io/gloo | https://api.github.com/repos/solo-io/gloo | closed | rabbitmq operator + gloo edge fails to create upstreams | Type: Bug Impact: M Priority: High | **Describe the bug**

deploying the rabbitmq operator either before or after gloo edge is installed fails to fully create upstreams. The upstreams appear as pending and then are removed.

**To Reproduce**

Steps to reproduce the behavior:

# deploy rabbitmq CRDs and cluster operator

```

kubectl apply -f https://g... | 1.0 | rabbitmq operator + gloo edge fails to create upstreams - **Describe the bug**

deploying the rabbitmq operator either before or after gloo edge is installed fails to fully create upstreams. The upstreams appear as pending and then are removed.

**To Reproduce**

Steps to reproduce the behavior:

# deploy rabbitmq ... | non_defect | rabbitmq operator gloo edge fails to create upstreams describe the bug deploying the rabbitmq operator either before or after gloo edge is installed fails to fully create upstreams the upstreams appear as pending and then are removed to reproduce steps to reproduce the behavior deploy rabbitmq ... | 0 |

474,124 | 13,653,108,622 | IssuesEvent | 2020-09-27 11:03:01 | STAMACODING/RSA-App | https://api.github.com/repos/STAMACODING/RSA-App | closed | Add a new log level, or maybe even more? | enhancement / feature high priority log | At the moment we have the following log levels:

1. Error

2. Warning

3. Debug

4. Test

I'd like one more log level, with a higher priority than Debug but lower than Warning. Like this:

1. Error

2. Warning

3. _Info_

4. Debug

5. Test

When I was looking for a name for the new log level... | 1.0 | Add a new log level, or maybe even more? - At the moment we have the following log levels:

1. Error

2. Warning

3. Debug

4. Test

I'd like one more log level, with a higher priority than Debug but lower than Warning. Like this:

1. Error

2. Warning

3. _Info_

4. Debug

5. Test

... | non_defect | add a new log level or maybe even more at the moment we have the following log levels error warning debug test i d like one more log level with a higher priority than debug but lower than warning like this error warning info debug test ... | 0 |

183,328 | 14,938,617,861 | IssuesEvent | 2021-01-25 15:58:52 | skypyproject/skypy | https://api.github.com/repos/skypyproject/skypy | closed | Contributor guidelines to documentation | documentation enhancement | ## Description

Move contributor guidelines to documentation under Developer section.

| 1.0 | Contributor guidelines to documentation - ## Description

Move contributor guidelines to documentation under Developer section.

| non_defect | contributor guidelines to documentation description move contributor guidelines to documentation under developer section | 0 |

16,608 | 11,136,498,562 | IssuesEvent | 2019-12-20 16:44:31 | RHEAGROUP/CDP4-IME-Community-Edition | https://api.github.com/repos/RHEAGROUP/CDP4-IME-Community-Edition | closed | The scale of the simpleParameterType should be displayed in the requirements browser, in brackets next to the shortName. | minor trivial usability | The scale is currently not displayed in the browser. | True | The scale of the simpleParameterType should be displayed in the requirements browser, in brackets next to the shortName. - The scale is currently not displayed in the browser. | non_defect | the scale of the simpleparametertype should be displayed in the requirements browser in brackets next to the shortname the scale is currently not displayed in the browser | 0 |

754,249 | 26,378,543,262 | IssuesEvent | 2023-01-12 06:12:11 | idom-team/idom | https://api.github.com/repos/idom-team/idom | closed | Document `should_render` | type: docs priority: 3 (low) | ### Current Situation

Currently we do not explain how the user can utilize `should_render` to conditionally render a component.

Related issue: #738

### Proposed Actions

Either document the usage of `should_render`, or include a `ConditionalRender` helper utility that automatically does this. | 1.0 | Document `should_render` - ### Current Situation

Currently we do not explain how the user can utilize `should_render` to conditionally render a component.

Related issue: #738

### Proposed Actions

Either document the usage of `should_render`, or include a `ConditionalRender` helper utility that automatically... | non_defect | document should render current situation currently we do not explain how the user can utilize should render to conditionally render a component related issue proposed actions either document the usage of should render or include a conditionalrender helper utility that automatically d... | 0 |

121,776 | 26,031,490,533 | IssuesEvent | 2022-12-21 21:50:05 | Clueless-Community/seamless-ui | https://api.github.com/repos/Clueless-Community/seamless-ui | closed | Create a contact-us-map.html | codepeak 22 issue:3 | One need to make this component using `HTML` and `Tailwind CSS`. I would suggest to use [Tailwind Playgrounds](https://play.tailwindcss.com/) to make things faster and quicker.

Here is a reference to the component.

to make things faster and quicker.

Here is a reference to the component.

in the AUR

https://aur.archlinux.org/packages/micropad

### Utility this package has for you

Quite a unique notes taking app. You can even paste videos, audio files and such into it.

### Do you consider the package(s) to be useful for every Chaotic-AUR user?

No, but for a great amount.

#... | 1.0 | [Request] micropad - ### Link to the package(s) in the AUR

https://aur.archlinux.org/packages/micropad

### Utility this package has for you

Quite a unique notes taking app. You can even paste videos, audio files and such into it.

### Do you consider the package(s) to be useful for every Chaotic-AUR user?

No, but f... | non_defect | micropad link to the package s in the aur utility this package has for you quite a unique notes taking app you can even paste videos audio files and such into it do you consider the package s to be useful for every chaotic aur user no but for a great amount do you consider the packag... | 0 |

22,136 | 3,602,976,379 | IssuesEvent | 2016-02-03 17:22:33 | primefaces/primefaces | https://api.github.com/repos/primefaces/primefaces | closed | RequestContext#update validation should skip unrendered components | defect enhancement | If you update a component, it must be rendered. So skipping unrendered prevents error and improves performance. | 1.0 | RequestContext#update validation should skip unrendered components - If you update a component, it must be rendered. So skipping unrendered prevents error and improves performance. | defect | requestcontext update validation should skip unrendered components if you update a component it must be rendered so skipping unrendered prevents error and improves performance | 1 |

113,473 | 9,647,843,373 | IssuesEvent | 2019-05-17 14:50:50 | magnumripper/JohnTheRipper | https://api.github.com/repos/magnumripper/JohnTheRipper | opened | More CI tests | RFC / discussion testing | Brainstorming. We now have a free container for the "more-tests".

We could add an MPI build (although only testing locally much like --fork).

Do we currently test a legacy build anywhere?

Anything else we should test? Perhaps running the Test Suite in `-internal` mode (disabling the few formats that give false pos... | 1.0 | More CI tests - Brainstorming. We now have a free container for the "more-tests".

We could add an MPI build (although only testing locally much like --fork).

Do we currently test a legacy build anywhere?

Anything else we should test? Perhaps running the Test Suite in `-internal` mode (disabling the few formats tha... | non_defect | more ci tests brainstorming we now have a free container for the more tests we could add an mpi build although only testing locally much like fork do we currently test a legacy build anywhere anything else we should test perhaps running the test suite in internal mode disabling the few formats tha... | 0 |

19,013 | 3,122,792,651 | IssuesEvent | 2015-09-06 21:24:17 | pemsley/coot | https://api.github.com/repos/pemsley/coot | closed | the configure script do not fail if swig is not available | auto-migrated Priority-Medium Type-Defect | ```

Hello,

without swig the configure process run without error

but make gives this error

make[1]: entrant dans le répertoire « /home/picca/Projets/coot/src »

swig -o coot_wrap_guile_pre_gtk2.cc -DCOOT_USE_GTK2_INTERFACE

-DHAVE_SYS_STDTYPES_H=0 -DUSE_LIBCURL -I../src -guile -c++ ../src/coot.i

/bin/bash: swig : c... | 1.0 | the configure script do not fail if swig is not available - ```

Hello,

without swig the configure process run without error

but make gives this error

make[1]: entrant dans le répertoire « /home/picca/Projets/coot/src »

swig -o coot_wrap_guile_pre_gtk2.cc -DCOOT_USE_GTK2_INTERFACE

-DHAVE_SYS_STDTYPES_H=0 -DUSE_LIB... | defect | the configure script do not fail if swig is not available hello without swig the configure process run without error but make gives this error make entrant dans le répertoire « home picca projets coot src » swig o coot wrap guile pre cc dcoot use interface dhave sys stdtypes h duse libcurl i ... | 1 |

293,900 | 22,097,856,545 | IssuesEvent | 2022-06-01 11:36:12 | dev-heeseok/web-react | https://api.github.com/repos/dev-heeseok/web-react | closed | feat: Add React-Bootstrap Navbar Tutorial (Bootstrap follow-along sample) | documentation enhancement | I want to share how to add samples for using React-Bootstrap.

- [ ] Write up how to use the Bootstrap examples

- [ ] Switch between web pages using the Navbar | 1.0 | feat: Add React-Bootstrap Navbar Tutorial (Bootstrap follow-along sample) - I want to share how to add samples for using React-Bootstrap.

- [ ] Write up how to use the Bootstrap examples

- [ ] Switch between web pages using the Navbar | non_defect | feat add react bootstrap navbar tutorial bootstrap follow along sample i want to share how to add samples for using react bootstrap write up how to use the bootstrap examples switch between web pages using the navbar | 0 |

27,169 | 4,894,105,847 | IssuesEvent | 2016-11-19 03:55:10 | prettydiff/prettydiff | https://api.github.com/repos/prettydiff/prettydiff | opened | Breaking on JavaScript argument list | Beautification Defect Underway | fs

.writeFile(dataA.finalpath + ending, "", function pdNodeLocal__fileWrite_writing_writeFileEmpty(err) {

if (err !== null) {

console.log(lf + "Error writing empty output." + lf);

console.log(err);

} else if (method === "file" && options.endquietly !== "quiet") {

... | 1.0 | Breaking on JavaScript argument list - fs

.writeFile(dataA.finalpath + ending, "", function pdNodeLocal__fileWrite_writing_writeFileEmpty(err) {

if (err !== null) {

console.log(lf + "Error writing empty output." + lf);

console.log(err);

} else if (method === "file" &... | defect | breaking on javascript argument list fs writefile dataa finalpath ending function pdnodelocal filewrite writing writefileempty err if err null console log lf error writing empty output lf console log err else if method file ... | 1 |

489,714 | 14,111,606,999 | IssuesEvent | 2020-11-07 00:53:23 | chingu-voyages/v25-geckos-team-01 | https://api.github.com/repos/chingu-voyages/v25-geckos-team-01 | opened | Delete an existing Nonprofit task | UserStory priority:must_have | **User Story Description**

As a Nonprofit user

I want to delete an existing task previously created by my organization

So myself and potential volunteers won't waste time on it

**Steps to Follow (optional)**

- [ ] TBD

- [ ] Additional steps as necessary

**Additional Considerations**

Any supplemental informa... | 1.0 | Delete an existing Nonprofit task - **User Story Description**

As a Nonprofit user

I want to delete an existing task previously created by my organization

So myself and potential volunteers won't waste time on it

**Steps to Follow (optional)**

- [ ] TBD

- [ ] Additional steps as necessary

**Additional Consid... | non_defect | delete an existing nonprofit task user story description as a nonprofit user i want to delete an existing task previously created by my organization so myself and potential volunteers won t waste time on it steps to follow optional tbd additional steps as necessary additional considerat... | 0 |

76,337 | 21,367,725,677 | IssuesEvent | 2022-04-20 04:52:11 | PDP-10/its | https://api.github.com/repos/PDP-10/its | opened | Update SIMH configuration | build process | - Replace `at tty` with `attach dz` for simh, see #2099.

- `set mta enabled` for pdp10-kl. | 1.0 | Update SIMH configuration - - Replace `at tty` with `attach dz` for simh, see #2099.

- `set mta enabled` for pdp10-kl. | non_defect | update simh configuration replace at tty with attach dz for simh see set mta enabled for kl | 0 |

27,216 | 4,932,559,551 | IssuesEvent | 2016-11-28 14:04:12 | Guake/guake | https://api.github.com/repos/Guake/guake | closed | Custom tab names lost after opening new tab | Priority:High Type: Defect | If I have some tabs, and then I rename them (double click on the tab name, in the bottom, and then type new name), and then I open a new tab (CTRL+SHIFT+T) all tab names get reset to the default name.

The correct behavior should be: the tab names are always kept unchanged if they have been customized | 1.0 | Custom tab names lost after opening new tab - If I have some tabs, and then I rename them (double click on the tab name, in the bottom, and then type new name), and then I open a new tab (CTRL+SHIFT+T) all tab names get reset to the default name.

The correct behavior should be: the tab names are always kept unchange... | defect | custom tab names lost after opening new tab if i have some tabs and then i rename them double click on the tab name in the bottom and then type new name and then i open a new tab ctrl shift t all tab names get reset to the default name the correct behavior should be the tab names are always kept unchange... | 1 |

532,242 | 15,531,925,997 | IssuesEvent | 2021-03-14 02:28:19 | eidan66/Saikai | https://api.github.com/repos/eidan66/Saikai | closed | Feature - add date to position | enhancement priority 2 | When i add position i want to see the date i added it (or initial with custom date). | 1.0 | Feature - add date to position - When i add position i want to see the date i added it (or initial with custom date). | non_defect | feature add date to position when i add position i want to see the date i added it or initial with custom date | 0 |

134,558 | 19,270,577,520 | IssuesEvent | 2021-12-10 04:28:53 | appsmithorg/appsmith | https://api.github.com/repos/appsmithorg/appsmith | closed | [Bug]: Visible toggle on page properties animates from off to on when pages are set to visible | Bug Design System UX Improvement Low Release Platform Pod New Developers Pod | ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

Visible toggle animates from off to on position when settings button is clicked. Ideally, it should be in the on position when the page is set to visible and not toggle when user navigates to page settings.

[![LO... | 1.0 | [Bug]: Visible toggle on page properties animates from off to on when pages are set to visible - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current Behavior

Visible toggle animates from off to on position when settings button is clicked. Ideally, it should be in the on pos... | non_defect | visible toggle on page properties animates from off to on when pages are set to visible is there an existing issue for this i have searched the existing issues current behavior visible toggle animates from off to on position when settings button is clicked ideally it should be in the on position ... | 0 |

13,020 | 2,732,875,394 | IssuesEvent | 2015-04-17 09:54:47 | tiku01/oryx-editor | https://api.github.com/repos/tiku01/oryx-editor | closed | shape menu disabled for elements having no morphing rules | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Create a stencilset with connection rules

2. add morphing rules for some (not all) the elements having connection rules

What is the expected output?

All elements having the connection rules should show the shape menu

What do you see instead?

The elements having connectio... | 1.0 | shape menu disabled for elements having no morphing rules - ```

What steps will reproduce the problem?

1. Create a stencilset with connection rules

2. add morphing rules for some (not all) the elements having connection rules

What is the expected output?

All elements having the connection rules should show the shape ... | defect | shape menu disabled for elements having no morphing rules what steps will reproduce the problem create a stencilset with connection rules add morphing rules for some not all the elements having connection rules what is the expected output all elements having the connection rules should show the shape ... | 1 |

120,016 | 15,690,181,962 | IssuesEvent | 2021-03-25 16:26:47 | carbon-design-system/carbon-for-ibm-dotcom-website | https://api.github.com/repos/carbon-design-system/carbon-for-ibm-dotcom-website | closed | ⭐️ Template // Website content crafting and publishing: (Guidelines/ Name of the new guideline name) | content design icebox website: Carbon for ibm.com | ## Objective

Provide Carbon for IBM.com adopter the best practices and guidance they need to adopt Carbon for IBM.com.

## General publishing process

Please make sure you always start the content crafting process by viewing the **[2020 website content publishing process box document](https://ibm.box.com/s/6oiqsvhxtchi... | 1.0 | ⭐️ Template // Website content crafting and publishing: (Guidelines/ Name of the new guideline name) - ## Objective

Provide Carbon for IBM.com adopter the best practices and guidance they need to adopt Carbon for IBM.com.

## General publishing process

Please make sure you always start the content crafting process by ... | non_defect | ⭐️ template website content crafting and publishing guidelines name of the new guideline name objective provide carbon for ibm com adopter the best practices and guidance they need to adopt carbon for ibm com general publishing process please make sure you always start the content crafting process by ... | 0 |

5,717 | 2,610,214,001 | IssuesEvent | 2015-02-26 19:08:19 | chrsmith/somefinders | https://api.github.com/repos/chrsmith/somefinders | opened | хлебопечка clatronic 3365 bba инструкция.rar | auto-migrated Priority-Medium Type-Defect | ```

'''Борислав Борисов'''

Good day, I just can't find .хлебопечка

clatronic 3365 bba инструкция.rar (the Clatronic 3365 BBA bread maker manual). Someone posted it

before.

'''Адонис Матвеев'''