Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 757) | labels (string, length 4 to 664) | body (string, length 3 to 261k) | index (string, 10 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 232k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

18,479 | 24,550,723,765 | IssuesEvent | 2022-10-12 12:24:40 | GoogleCloudPlatform/fda-mystudies | https://api.github.com/repos/GoogleCloudPlatform/fda-mystudies | closed | [iOS] Eligibility test > The following changes needs to be done on the Eligibility questions screen | Bug P1 iOS Process: Fixed Process: Tested QA Process: Tested dev | 1. Remove the arrow marks present which are present next to the Data sharing options

2. AR: A participant is automatically navigating to the next screen after selecting any one of the options

ER: After selecting the option, the participant should stay on the same screen and participant should click on

... | 3.0 | [iOS] Eligibility test > The following changes needs to be done on the Eligibility questions screen - 1. Remove the arrow marks present which are present next to the Data sharing options

2. AR: A participant is automatically navigating to the next screen after selecting any one of the options

ER: After selecting ... | non_defect | eligibility test the following changes needs to be done on the eligibility questions screen remove the arrow marks present which are present next to the data sharing options ar a participant is automatically navigating to the next screen after selecting any one of the options er after selecting the ... | 0 |

53,413 | 13,261,548,649 | IssuesEvent | 2020-08-20 20:06:00 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | [steamshovel] Save frame is broken if filters are on (Trac #1347) | Migrated from Trac combo core defect | Save frame does not work as intended when the frame stream is filtered.

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1347">https://code.icecube.wisc.edu/projects/icecube/ticket/1347</a>, reported by hdembinskiand owned by hdembinski</em></summary>

<p>

```json

{

... | 1.0 | [steamshovel] Save frame is broken if filters are on (Trac #1347) - Save frame does not work as intended when the frame stream is filtered.

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1347">https://code.icecube.wisc.edu/projects/icecube/ticket/1347</a>, reported... | defect | save frame is broken if filters are on trac save frame does not work as intended when the frame stream is filtered migrated from json status closed changetime ts description save frame does not work as intended when the frame stream is filter... | 1 |

429,844 | 30,106,159,582 | IssuesEvent | 2023-06-30 01:36:11 | romkey/give-me-a-sign | https://api.github.com/repos/romkey/give-me-a-sign | opened | Store DEBUG setting in Data | documentation | Currently DEBUG is hardcoded in the software; it should be stored in Data so that it can be remotely updated | 1.0 | Store DEBUG setting in Data - Currently DEBUG is hardcoded in the software; it should be stored in Data so that it can be remotely updated | non_defect | store debug setting in data currently debug is hardcoded in the software it should be stored in data so that it can be remotely updated | 0 |

67,894 | 21,301,422,886 | IssuesEvent | 2022-04-15 04:03:24 | klubcoin/lcn-mobile | https://api.github.com/repos/klubcoin/lcn-mobile | closed | [Klubcoin Partners] Fix fit Partner Images on image container at Klubcoin Partner Details Screen. | Onboarding and Authentication Services Defect Could Have Trivial Navigation / Drawer Services | ### **Description:**

Fit Partner Images on image container at Klubcoin Partner Details Screen.

**Build Environment:** Prod Candidate Environment

**Affects Version:** 1.0.0.prod.1

**Device Platform:** Android

**Device OS:** 11

**Test Device:** OnePlus 7T Pro

### **Pre-condition:**

1. User successfully instal... | 1.0 | [Klubcoin Partners] Fix fit Partner Images on image container at Klubcoin Partner Details Screen. - ### **Description:**

Fit Partner Images on image container at Klubcoin Partner Details Screen.

**Build Environment:** Prod Candidate Environment

**Affects Version:** 1.0.0.prod.1

**Device Platform:** Android

**Dev... | defect | fix fit partner images on image container at klubcoin partner details screen description fit partner images on image container at klubcoin partner details screen build environment prod candidate environment affects version prod device platform android device os tes... | 1 |

27,574 | 13,306,170,307 | IssuesEvent | 2020-08-25 19:47:08 | yalelibrary/YUL-DC | https://api.github.com/repos/yalelibrary/YUL-DC | closed | SPIKE: convert persistence and load balancer scripts to CloudFormation | performance team | **ACCEPTANCE**

- [x] Figure out level of effort to convert the current build scripts to CloudFormation templates

- [x] Do a 1-2 hour review of other potential technologies (e.g. TerraForm) for managing infrastructure configurations

- [x] Recommend a direction for going forward (CloudFormation, TerraForm, Bash, other?). | True | SPIKE: convert persistence and load balancer scripts to CloudFormation - **ACCEPTANCE**

- [x] Figure out level of effort to convert the current build scripts to CloudFormation templates

- [x] Do a 1-2 hour review of other potential technologies (e.g. TerraForm) for managing infrastructure configurations

- [x] Recommend... | non_defect | spike convert persistence and load balancer scripts to cloudformation acceptance figure out level of effort to convert the current build scripts to cloudformation templates do a hour review of other potential technologies e g terraform for managing infrastructure configurations recommend a dir... | 0 |

29,920 | 11,786,082,989 | IssuesEvent | 2020-03-17 11:33:24 | scriptex/atanas.info | https://api.github.com/repos/scriptex/atanas.info | closed | CVE-2020-7598 (High) detected in multiple libraries | security vulnerability | ## CVE-2020-7598 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>minimist-0.0.8.tgz</b>, <b>minimist-0.0.10.tgz</b>, <b>minimist-1.2.0.tgz</b></p></summary>

<p>

<details><summary><b>... | True | CVE-2020-7598 (High) detected in multiple libraries - ## CVE-2020-7598 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>minimist-0.0.8.tgz</b>, <b>minimist-0.0.10.tgz</b>, <b>minimist-... | non_defect | cve high detected in multiple libraries cve high severity vulnerability vulnerable libraries minimist tgz minimist tgz minimist tgz minimist tgz parse argument options library home page a href path to dependency file tmp ws scm atanas info pa... | 0 |

399,073 | 11,742,672,277 | IssuesEvent | 2020-03-12 01:39:51 | thaliawww/concrexit | https://api.github.com/repos/thaliawww/concrexit | closed | Use aliases instead of aliasses | mailinglists priority: low technical change | In GitLab by @joren485 on May 27, 2019, 20:47

<!--

This template is for changes that do not affect the behaviour of the website.

** If you are not in the Technicie, there is a very high chance that you

should not use this template

Examples:

* Changes in CI

* Refactoring of code

* T... | 1.0 | Use aliases instead of aliasses - In GitLab by @joren485 on May 27, 2019, 20:47

<!--

This template is for changes that do not affect the behaviour of the website.

** If you are not in the Technicie, there is a very high chance that you

should not use this template

Examples:

* Changes in CI

... | non_defect | use aliases instead of aliasses in gitlab by on may this template is for changes that do not affect the behaviour of the website if you are not in the technicie there is a very high chance that you should not use this template examples changes in ci refacto... | 0 |

429,148 | 12,421,474,619 | IssuesEvent | 2020-05-23 17:02:25 | roed314/seminars | https://api.github.com/repos/roed314/seminars | closed | Spacing above buttons on Account page | low priority | This is a very minor suggestion, but I think it might look slightly better if the vertical spacing above each of the buttons "Update details" and "Change password" was half as much. | 1.0 | Spacing above buttons on Account page - This is a very minor suggestion, but I think it might look slightly better if the vertical spacing above each of the buttons "Update details" and "Change password" was half as much. | non_defect | spacing above buttons on account page this is a very minor suggestion but i think it might look slightly better if the vertical spacing above each of the buttons update details and change password was half as much | 0 |

239,873 | 19,975,123,279 | IssuesEvent | 2022-01-29 01:25:46 | kevin-ghannoum/soen490 | https://api.github.com/repos/kevin-ghannoum/soen490 | closed | [Acceptance test] As a business owner, I want to view the logged hours/pay | acceptance test | Acceptance referring to this user story: #80 | 1.0 | [Acceptance test] As a business owner, I want to view the logged hours/pay - Acceptance referring to this user story: #80 | non_defect | as a business owner i want to view the logged hours pay acceptance referring to this user story | 0 |

87,253 | 25,080,334,049 | IssuesEvent | 2022-11-07 18:43:58 | xamarin/xamarin-android | https://api.github.com/repos/xamarin/xamarin-android | closed | Signing a release app with the same keystore differs in Visual Studio vs Android Studio | Area: App+Library Build needs-triage | ### Android application type

Classic Xamarin.Android (MonoAndroid12.0, etc.)

### Affected platform version

VS 8.10.20 (build 0)

### Description

Trying to migrate to Android Studio native development of my app, and using the same keystore details as used in Visual Studio, how can the app signature be diff... | 1.0 | Signing a release app with the same keystore differs in Visual Studio vs Android Studio - ### Android application type

Classic Xamarin.Android (MonoAndroid12.0, etc.)

### Affected platform version

VS 8.10.20 (build 0)

### Description

Trying to migrate to Android Studio native development of my app, and u... | non_defect | signing a release app with the same keystore differs in visual studio vs android studio android application type classic xamarin android etc affected platform version vs build description trying to migrate to android studio native development of my app and using the same ... | 0 |

23,188 | 3,775,191,372 | IssuesEvent | 2016-03-17 12:35:00 | igagis/aumiks | https://api.github.com/repos/igagis/aumiks | closed | android | auto-migrated Priority-Medium Type-Defect | ```

the project doesnt compile at all on android.

Include to ting are incomplete i believe.

regards

david

```

Original issue reported on code.google.com by `Davidgui...@gmail.com` on 12 Jun 2013 at 5:31 | 1.0 | android - ```

the project doesnt compile at all on android.

Include to ting are incomplete i believe.

regards

david

```

Original issue reported on code.google.com by `Davidgui...@gmail.com` on 12 Jun 2013 at 5:31 | defect | android the project doesnt compile at all on android include to ting are incomplete i believe regards david original issue reported on code google com by davidgui gmail com on jun at | 1 |

101,867 | 8,806,555,194 | IssuesEvent | 2018-12-27 04:51:36 | drussell1974/schemeofwork_web2py_app | https://api.github.com/repos/drussell1974/schemeofwork_web2py_app | closed | Selenium - edit existing Learning Episode | test | _check page elements_

- [x] title and headings

- [x] navigation

_edit_

- [x] create new

- [x] edit existing

- [x] submit invalid

- [x] submit valid | 1.0 | Selenium - edit existing Learning Episode - _check page elements_

- [x] title and headings

- [x] navigation

_edit_

- [x] create new

- [x] edit existing

- [x] submit invalid

- [x] submit valid | non_defect | selenium edit existing learning episode check page elements title and headings navigation edit create new edit existing submit invalid submit valid | 0 |

23,953 | 3,874,868,800 | IssuesEvent | 2016-04-11 22:03:34 | ariya/phantomjs | https://api.github.com/repos/ariya/phantomjs | closed | page.cookies not working in 1.6.0 | old.Priority-Medium old.Status-New old.Type-Defect | _**[hel...@gmail.com](http://code.google.com/u/107647427892722145766/) commented:**_

> Running the following code (modified from tweet.js):

>

> page.open(encodeURI("http://mobile.twitter.com/baudehlo"), function (status) {

> // Check for page load success

> if (status !== "success") {

... | 1.0 | page.cookies not working in 1.6.0 - _**[hel...@gmail.com](http://code.google.com/u/107647427892722145766/) commented:**_

> Running the following code (modified from tweet.js):

>

> page.open(encodeURI("http://mobile.twitter.com/baudehlo"), function (status) {

> // Check for page load success

> if... | defect | page cookies not working in commented running the following code modified from tweet js page open encodeuri quot function status check for page load success if status quot success quot console log quot unable to access network quot ... | 1 |

24,268 | 3,946,755,510 | IssuesEvent | 2016-04-28 06:47:14 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | UserWarning: indices array has non-integer dtype (uint64) | defect scipy.sparse | I'd guess, that unsigned types are integers to ;-)

...\scipy-0.13.2.win-amd64-py2.7.egg\scipy\sparse\compressed.py:119: UserWarning: indptr array has non-integer dtype (uint64)

...\scipy-0.13.2.win-amd64-py2.7.egg\scipy\sparse\compressed.py:122: UserWarning: indices array has non-integer dtype (uint64)

O... | 1.0 | UserWarning: indices array has non-integer dtype (uint64) - I'd guess, that unsigned types are integers to ;-)

...\scipy-0.13.2.win-amd64-py2.7.egg\scipy\sparse\compressed.py:119: UserWarning: indptr array has non-integer dtype (uint64)

...\scipy-0.13.2.win-amd64-py2.7.egg\scipy\sparse\compressed.py:122: Us... | defect | userwarning indices array has non integer dtype i d guess that unsigned types are integers to scipy win egg scipy sparse compressed py userwarning indptr array has non integer dtype scipy win egg scipy sparse compressed py userwarning indices array has... | 1 |

186,570 | 14,399,231,720 | IssuesEvent | 2020-12-03 10:38:34 | ethereum/solidity | https://api.github.com/repos/ethereum/solidity | closed | yulInterpreter crashes on infinite recursion | bug :bug: should compile without error testing :hammer: | Found by ossfuzz (13647 and 13811)

```

{

function f() {

f()

}

f()

}

```

The problem manifests in `Interpreter::openScope` in `test/tools/yulInterpreter/Interpreter.h`. | 1.0 | yulInterpreter crashes on infinite recursion - Found by ossfuzz (13647 and 13811)

```

{

function f() {

f()

}

f()

}

```

The problem manifests in `Interpreter::openScope` in `test/tools/yulInterpreter/Interpreter.h`. | non_defect | yulinterpreter crashes on infinite recursion found by ossfuzz and function f f f the problem manifests in interpreter openscope in test tools yulinterpreter interpreter h | 0 |

1,591 | 2,649,223,792 | IssuesEvent | 2015-03-14 18:08:38 | jquery/jquery-mobile | https://api.github.com/repos/jquery/jquery-mobile | closed | hoverDelay setting doesn't do anything | Remove deprecated code | ```buttonMarkup.hoverDelay``` is documented at http://api.jquerymobile.com/global-config/ and it used to perform as documented in jQuery Mobile 1.3. However, in 1.4 it has no effect. The only place ```hoverDelay``` appears in the 1.4 code is:

buttonMarkup: {

hoverDelay: 200

},

So I guess either the code ... | 1.0 | hoverDelay setting doesn't do anything - ```buttonMarkup.hoverDelay``` is documented at http://api.jquerymobile.com/global-config/ and it used to perform as documented in jQuery Mobile 1.3. However, in 1.4 it has no effect. The only place ```hoverDelay``` appears in the 1.4 code is:

buttonMarkup: {

hoverDelay:... | non_defect | hoverdelay setting doesn t do anything buttonmarkup hoverdelay is documented at and it used to perform as documented in jquery mobile however in it has no effect the only place hoverdelay appears in the code is buttonmarkup hoverdelay so i guess either the code th... | 0 |

7,451 | 3,975,917,891 | IssuesEvent | 2016-05-05 08:49:26 | FakeItEasy/FakeItEasy | https://api.github.com/repos/FakeItEasy/FakeItEasy | opened | Add an API approval test | build in-progress P2 | Using https://github.com/approvals/ApprovalTests.Net and https://www.nuget.org/packages/PublicApiGenerator/

This will allow us to see the effect of any change to the public API.

Initially, at least, I don't think we should include this in the default rake tasks, since the management of this test will be a little ... | 1.0 | Add an API approval test - Using https://github.com/approvals/ApprovalTests.Net and https://www.nuget.org/packages/PublicApiGenerator/

This will allow us to see the effect of any change to the public API.

Initially, at least, I don't think we should include this in the default rake tasks, since the management of ... | non_defect | add an api approval test using and this will allow us to see the effect of any change to the public api initially at least i don t think we should include this in the default rake tasks since the management of this test will be a little different to usual and i think it will add unwanted friction for co... | 0 |

54,780 | 13,925,989,654 | IssuesEvent | 2020-10-21 17:38:07 | AlfrescoLabs/alfresco-environment-validation | https://api.github.com/repos/AlfrescoLabs/alfresco-environment-validation | reopened | EVT should validate that the database can accept at least 300 connections | Priority-High Type-Defect auto-migrated | ```

A single Alfresco cluster node is capable of concurrently requiring at least

275 database connections, and therefore the EVT should validate that the

database supports at least that many concurrent connections.

Note: relates to https://issues.alfresco.com/jira/browse/MNT-9899

```

Original issue reported on code... | 1.0 | EVT should validate that the database can accept at least 300 connections - ```

A single Alfresco cluster node is capable of concurrently requiring at least

275 database connections, and therefore the EVT should validate that the

database supports at least that many concurrent connections.

Note: relates to https://i... | defect | evt should validate that the database can accept at least connections a single alfresco cluster node is capable of concurrently requiring at least database connections and therefore the evt should validate that the database supports at least that many concurrent connections note relates to origin... | 1 |

19,379 | 6,718,370,829 | IssuesEvent | 2017-10-15 12:01:34 | azerothcore/azerothcore-wotlk | https://api.github.com/repos/azerothcore/azerothcore-wotlk | closed | Cmake Issuse | type: build type: enhancement type: question | Hello,

I did everything according to the instructions and gives me a problem when cmake.

>

> "CMake Error at C:/Program Files/CMake/share/cmake-3.7/Modules/FindPackageHandleStandardArgs.cmake:138 (message):

> Could NOT find OpenSSL (missing: OPENSSL_LIBRARIES OPENSSL_INCLUDE_DIR)

> Call Stack (most recent c... | 1.0 | Cmake Issuse - Hello,

I did everything according to the instructions and gives me a problem when cmake.

>

> "CMake Error at C:/Program Files/CMake/share/cmake-3.7/Modules/FindPackageHandleStandardArgs.cmake:138 (message):

> Could NOT find OpenSSL (missing: OPENSSL_LIBRARIES OPENSSL_INCLUDE_DIR)

> Call Stack... | non_defect | cmake issuse hello i did everything according to the instructions and gives me a problem when cmake cmake error at c program files cmake share cmake modules findpackagehandlestandardargs cmake message could not find openssl missing openssl libraries openssl include dir call stack ... | 0 |

11,898 | 9,488,990,476 | IssuesEvent | 2019-04-22 21:05:55 | internetarchive/fatcat | https://api.github.com/repos/internetarchive/fatcat | closed | Example of a (trivial) editgroup review bot | infrastructure | Something in python that looks at submitted editgroups and annotates them (pass/fail) if appropriate.

API support should already exist; part of this task is to flush out any missing features. | 1.0 | Example of a (trivial) editgroup review bot - Something in python that looks at submitted editgroups and annotates them (pass/fail) if appropriate.

API support should already exist; part of this task is to flush out any missing features. | non_defect | example of a trivial editgroup review bot something in python that looks at submitted editgroups and annotates them pass fail if appropriate api support should already exist part of this task is to flush out any missing features | 0 |

185,527 | 15,024,601,277 | IssuesEvent | 2021-02-01 19:52:27 | fergiemcdowall/search-index | https://api.github.com/repos/fergiemcdowall/search-index | closed | Fix `docs/examples` | documentation | Fix all the code examples to work with the newest version of search-index (v2.1.0). Valuable to get people going I think. | 1.0 | Fix `docs/examples` - Fix all the code examples to work with the newest version of search-index (v2.1.0). Valuable to get people going I think. | non_defect | fix docs examples fix all the code examples to work with the newest version of search index valuable to get people going i think | 0 |

532,169 | 15,530,942,056 | IssuesEvent | 2021-03-13 21:12:58 | eclipse-ee4j/cargotracker | https://api.github.com/repos/eclipse-ee4j/cargotracker | closed | Provide more details when 'registration failed' | Priority: Minor enhancement good first issue help wanted | When logging an event failed, the only message sent to the user is "registration failed". Is this failure due a domain issue or to a technical issue?

User need to get more useful feedback. | 1.0 | Provide more details when 'registration failed' - When logging an event failed, the only message sent to the user is "registration failed". Is this failure due a domain issue or to a technical issue?

User need to get more useful feedback. | non_defect | provide more details when registration failed when logging an event failed the only message sent to the user is registration failed is this failure due a domain issue or to a technical issue user need to get more useful feedback | 0 |

42,388 | 11,011,221,684 | IssuesEvent | 2019-12-04 15:55:44 | contao/contao | https://api.github.com/repos/contao/contao | closed | Distinguishing visitor and member in the page type help wizard | defect | The help wizard for the page type field currently uses the terms Besucher (visitor) and Benutzer (user).

However, the term Benutzer (user) is on the one hand also used in cases where visitors in general are actually meant, and in the other cases it would make sense for it to be, by the specific... | 1.0 | Distinguishing visitor and member in the page type help wizard - The help wizard for the page type field currently uses the terms Besucher (visitor) and Benutzer (user).

However, the term Benutzer (user) is on the one hand also used in cases where visitors in general are actually meant, an... | defect | distinguishing visitor and member in the page type help wizard the help wizard for the page type field currently uses the terms besucher visitor and benutzer user however the term benutzer user is on the one hand also used in cases where visitors in general are actually meant an... | 1 |

711,613 | 24,469,647,292 | IssuesEvent | 2022-10-07 18:26:51 | containrrr/watchtower | https://api.github.com/repos/containrrr/watchtower | opened | Watchtower updates even though a monitor is set | Type: Bug Priority: Medium Status: Available | ### Describe the bug

I set in the container under labels:

- com.centurylinklabs.watchtower.monitor-only="true"

but Watchtower continues to update.

### Steps to reproduce

1.Definition under container: - com.centurylinklabs.watchtower.monitor-only="true"

### Expected behavior

Just a notification and not an update... | 1.0 | Watchtower updates even though a monitor is set - ### Describe the bug

I set in the container under labels:

- com.centurylinklabs.watchtower.monitor-only="true"

but Watchtower continues to update.

### Steps to reproduce

1.Definition under container: - com.centurylinklabs.watchtower.monitor-only="true"

### Expect... | non_defect | watchtower updates even though a monitor is set describe the bug i set in the container under labels com centurylinklabs watchtower monitor only true but watchtower continues to update steps to reproduce definition under container com centurylinklabs watchtower monitor only true expect... | 0 |

23,690 | 3,851,865,585 | IssuesEvent | 2016-04-06 05:27:56 | GPF/imame4all | https://api.github.com/repos/GPF/imame4all | closed | ipad 4 with mame4all | auto-migrated Priority-Medium Type-Defect | ```

mk games runs full speed on ipad 4 but not full speed on android way android

hes beter hardware

```

Original issue reported on code.google.com by `markocur...@gmail.com` on 18 Nov 2012 at 1:30 | 1.0 | ipad 4 with mame4all - ```

mk games runs full speed on ipad 4 but not full speed on android way android

hes beter hardware

```

Original issue reported on code.google.com by `markocur...@gmail.com` on 18 Nov 2012 at 1:30 | defect | ipad with mk games runs full speed on ipad but not full speed on android way android hes beter hardware original issue reported on code google com by markocur gmail com on nov at | 1 |

38,853 | 8,972,831,098 | IssuesEvent | 2019-01-29 19:20:47 | Automattic/wp-calypso | https://api.github.com/repos/Automattic/wp-calypso | opened | Ribbon: shadow using unexpected color | Color Schemes Components [Type] Defect | <!-- Thanks for contributing to Calypso! Pick a clear title ("Editor: add spell check") and proceed. -->

#### Steps to reproduce

1. Starting at URL:https://wpcalypso.wordpress.com/devdocs/design/ribbon

2. Notice that the shadow of the ribbon is blue

It should be using the accent color for the shadow, probably -... | 1.0 | Ribbon: shadow using unexpected color - <!-- Thanks for contributing to Calypso! Pick a clear title ("Editor: add spell check") and proceed. -->

#### Steps to reproduce

1. Starting at URL:https://wpcalypso.wordpress.com/devdocs/design/ribbon

2. Notice that the shadow of the ribbon is blue

It should be using th... | defect | ribbon shadow using unexpected color steps to reproduce starting at url notice that the shadow of the ribbon is blue it should be using the accent color for the shadow probably color accent dark screenshot video | 1 |

319,362 | 9,742,787,018 | IssuesEvent | 2019-06-02 20:05:06 | semperfiwebdesign/all-in-one-seo-pack | https://api.github.com/repos/semperfiwebdesign/all-in-one-seo-pack | opened | PHP Warning: count(): Parameter must be an array or an object that implements Countable in wp-includes\post-template.php on line 293 | Needs Reproducing Priority | High | https://pastebin.com/fEg8pu5R | 1.0 | PHP Warning: count(): Parameter must be an array or an object that implements Countable in wp-includes\post-template.php on line 293 - https://pastebin.com/fEg8pu5R | non_defect | php warning count parameter must be an array or an object that implements countable in wp includes post template php on line | 0 |

308,283 | 9,437,336,594 | IssuesEvent | 2019-04-13 14:32:33 | cs2103-ay1819s2-w13-2/main | https://api.github.com/repos/cs2103-ay1819s2-w13-2/main | closed | As a CCA main committee member, I want to list the activities | priority.High type.Story | so that I can see what are the activities | 1.0 | As a CCA main committee member, I want to list the activities - so that I can see what are the activities | non_defect | as a cca main committee member i want to list the activities so that i can see what are the activities | 0 |

60,442 | 17,023,426,146 | IssuesEvent | 2021-07-03 01:58:18 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Language of blog comment notification | Component: website Priority: minor Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 1.38pm, Wednesday, 17th June 2009]**

The subject of diary comment notifications should be in the language of the blog post, not the language of the commentor's default language. Steps to reproduce:

1) Post a diary entry in English

2) A second user (with default ... | 1.0 | Language of blog comment notification - **[Submitted to the original trac issue database at 1.38pm, Wednesday, 17th June 2009]**

The subject of diary comment notifications should be in the language of the blog post, not the language of the commentor's default language. Steps to reproduce:

1) Post a diary entry in ... | defect | language of blog comment notification the subject of diary comment notifications should be in the language of the blog post not the language of the commentor s default language steps to reproduce post a diary entry in english a second user with default language spanish posts a comment on the di... | 1 |

216,886 | 16,673,060,452 | IssuesEvent | 2021-06-07 13:18:02 | brotkrueml/schema | https://api.github.com/repos/brotkrueml/schema | closed | Add node identifier view helpers | documentation feature | With the implementation of node identifiers (#65) nodes consisting only of the id keyword can be constructed programmatically. This should also be possible within a template using the view helpers.

- [x] A NodeIdentifierViewHelper is available.

- [x] A BlankNodeIdentifierViewHelper is available.

- [x] The view hel... | 1.0 | Add node identifier view helpers - With the implementation of node identifiers (#65) nodes consisting only of the id keyword can be constructed programmatically. This should also be possible within a template using the view helpers.

- [x] A NodeIdentifierViewHelper is available.

- [x] A BlankNodeIdentifierViewHelpe... | non_defect | add node identifier view helpers with the implementation of node identifiers nodes consisting only of the id keyword can be constructed programmatically this should also be possible within a template using the view helpers a nodeidentifierviewhelper is available a blanknodeidentifierviewhelper is ... | 0 |

278,364 | 30,702,288,617 | IssuesEvent | 2023-07-27 01:17:46 | Trinadh465/linux-4.1.15_CVE-2023-28772 | https://api.github.com/repos/Trinadh465/linux-4.1.15_CVE-2023-28772 | closed | CVE-2020-12770 (Medium) detected in linuxlinux-4.6 - autoclosed | Mend: dependency security vulnerability | ## CVE-2020-12770 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kern... | True | CVE-2020-12770 (Medium) detected in linuxlinux-4.6 - autoclosed - ## CVE-2020-12770 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.6</b></p></summary>

<p>

<p>The Linux ... | non_defect | cve medium detected in linuxlinux autoclosed cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files dri... | 0 |

25,239 | 12,229,009,778 | IssuesEvent | 2020-05-03 21:59:19 | Azure/azure-sdk-for-net | https://api.github.com/repos/Azure/azure-sdk-for-net | closed | Improve subscription creation methods | Client Service Bus | Currently, we can create a sender from one method that has a queueOrTopicName parameter.

CreateSender(string queueOrTopicName).

We then have CreateReceiver/Processor methods that expect either a queue, or a combination of topic and subscription names. In UX studies, most users ended up supplying a topicName in the ... | 1.0 | Improve subscription creation methods - Currently, we can create a sender from one method that has a queueOrTopicName parameter.

CreateSender(string queueOrTopicName).

We then have CreateReceiver/Processor methods that expect either a queue, or a combination of topic and subscription names. In UX studies, most user... | non_defect | improve subscription creation methods currently we can create a sender from one method that has a queueortopicname parameter createsender string queueortopicname we then have createreceiver processor methods that expect either a queue or a combination of topic and subscription names in ux studies most user... | 0 |

29,746 | 5,868,042,535 | IssuesEvent | 2017-05-14 08:37:30 | bridgedotnet/Bridge | https://api.github.com/repos/bridgedotnet/Bridge | closed | Bridge 16-beta fails to load in Internet Explorer 11 | defect in progress | In IE11, Bridge 16 beta crashes at startup (I've not tried any other IE versions, IE11 is the only version we're interested in).

### Steps To Reproduce

- Load https://deck.net/ in Internet Explorer

The error is:

```text

Cannot modify non-writable property 'name'

```

It occurs on this code:

```js

if... | 1.0 | Bridge 16-beta fails to load in Internet Explorer 11 - In IE11, Bridge 16 beta crashes at startup (I've not tried any other IE versions, IE11 is the only version we're interested in).

### Steps To Reproduce

- Load https://deck.net/ in Internet Explorer

The error is:

```text

Cannot modify non-writable prope... | defect | bridge beta fails to load in internet explorer in bridge beta crashes at startup i ve not tried any other ie versions is the only version we re interested in steps to reproduce load in internet explorer the error is text cannot modify non writable property name it occ... | 1 |

79,704 | 28,497,719,345 | IssuesEvent | 2023-04-18 15:13:00 | vector-im/element-desktop | https://api.github.com/repos/vector-im/element-desktop | closed | Unable to launch Element in a macOS virtual machine | T-Defect | ### Steps to reproduce

1. Set up a macOS Monterey or Ventura guest virtual machine on a macOS host with an M1 processor

2. Download Element.dmg from element.io and install

3. Run Element.app

### Outcome

#### What did you expect?

Expected the Element.app to start

#### What happened instead?

The applicati... | 1.0 | Unable to launch Element in a macOS virtual machine - ### Steps to reproduce

1. Set up a macOS Monterey or Ventura guest virtual machine on a macOS host with an M1 processor

2. Download Element.dmg from element.io and install

3. Run Element.app

### Outcome

#### What did you expect?

Expected the Element.app ... | defect | unable to launch element in a macos virtual machine steps to reproduce set up a macos monterey or ventura guest virtual machine on a macos host with an processor download element dmg from element io and install run element app outcome what did you expect expected the element app t... | 1 |

113,796 | 9,663,557,979 | IssuesEvent | 2019-05-21 01:15:29 | rancher/rancher | https://api.github.com/repos/rancher/rancher | closed | monitoring - the app monitoring-operator fails to upgrade manually | [zube]: To Test area/monitoring kind/bug-qa status/ready-for-review status/reopened status/resolved status/to-test team/cn | <!--

Please search for existing issues first, then read https://rancher.com/docs/rancher/v2.x/en/contributing/#bugs-issues-or-questions to see what we expect in an issue

For security issues, please email security@rancher.com instead of posting a public issue in GitHub. You may (but are not required to) use the GPG ke... | 2.0 | monitoring - the app monitoring-operator fails to upgrade manually - <!--

Please search for existing issues first, then read https://rancher.com/docs/rancher/v2.x/en/contributing/#bugs-issues-or-questions to see what we expect in an issue

For security issues, please email security@rancher.com instead of posting a pu... | non_defect | monitoring the app monitoring operator fails to upgrade manually please search for existing issues first then read to see what we expect in an issue for security issues please email security rancher com instead of posting a public issue in github you may but are not required to use the gpg key locate... | 0 |

73,516 | 24,667,113,901 | IssuesEvent | 2022-10-18 11:09:31 | scoutplan/scoutplan | https://api.github.com/repos/scoutplan/scoutplan | closed | [Scoutplan Production/production] NoMethodError: undefined method `event_organizer?' for nil:NilClass | defect | ## Backtrace

line 32 of [PROJECT_ROOT]/app/policies/event_policy.rb: rsvps?

line 36 of [PROJECT_ROOT]/app/policies/event_policy.rb: organize?

line 1 of [PROJECT_ROOT]/app/views/events/partials/event_row/_organize.slim: _app_views_events_partials_event_row__organize_slim___4066292251475156700_202500

[View ... | 1.0 | [Scoutplan Production/production] NoMethodError: undefined method `event_organizer?' for nil:NilClass - ## Backtrace

line 32 of [PROJECT_ROOT]/app/policies/event_policy.rb: rsvps?

line 36 of [PROJECT_ROOT]/app/policies/event_policy.rb: organize?

line 1 of [PROJECT_ROOT]/app/views/events/partials/event_row/... | defect | nomethoderror undefined method event organizer for nil nilclass backtrace line of app policies event policy rb rsvps line of app policies event policy rb organize line of app views events partials event row organize slim app views events partials event row organize slim ... | 1 |

161,243 | 6,111,431,454 | IssuesEvent | 2017-06-21 17:01:20 | TerraFusion/basicFusion | https://api.github.com/repos/TerraFusion/basicFusion | opened | Change COMPUTE_TERRA variables | enhancement Medium Priority | The variables need to be changed so that they are initially empty, then users will set the variables to what they need. | 1.0 | Change COMPUTE_TERRA variables - The variables need to be changed so that they are initially empty, then users will set the variables to what they need. | non_defect | change compute terra variables the variables need to be changed so that they are initially empty then users will set the variables to what they need | 0 |

15,599 | 10,325,230,410 | IssuesEvent | 2019-09-01 15:43:38 | terraform-providers/terraform-provider-azurerm | https://api.github.com/repos/terraform-providers/terraform-provider-azurerm | closed | Duplicate subnet names results in only one subnet created but terraform reporting success | bug service/subnets | <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the original issue to help the community and maintainers prioritize this request

* Please do not l... | 1.0 | Duplicate subnet names results in only one subnet created but terraform reporting success - <!--- Please keep this note for the community --->

### Community Note

* Please vote on this issue by adding a 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to the ori... | non_defect | duplicate subnet names results in only one subnet created but terraform reporting success community note please vote on this issue by adding a 👍 to the original issue to help the community and maintainers prioritize this request please do not leave or me too comments they generate extra ... | 0 |

123,275 | 10,261,643,859 | IssuesEvent | 2019-08-22 10:29:45 | chainer/chainer | https://api.github.com/repos/chainer/chainer | closed | flaky test: `tests/chainer_tests/functions_tests/loss_tests/test_negative_sampling.py::TestNegativeSamplingFunction` | cat:test pr-ongoing prio:high | Occurred in #7955

https://jenkins.preferred.jp/job/chainer/job/chainer_pr/1846/TEST=CHAINERX_chainer-py3,label=mn1-p100/console

>`FAIL ../../repo/tests/chainer_tests/functions_tests/loss_tests/test_negative_sampling.py::TestNegativeSamplingFunction_use_chainerx_true__chainerx_device_native:0__use_cuda_false__cuda... | 1.0 | flaky test: `tests/chainer_tests/functions_tests/loss_tests/test_negative_sampling.py::TestNegativeSamplingFunction` - Occurred in #7955

https://jenkins.preferred.jp/job/chainer/job/chainer_pr/1846/TEST=CHAINERX_chainer-py3,label=mn1-p100/console

>`FAIL ../../repo/tests/chainer_tests/functions_tests/loss_tests/te... | non_defect | flaky test tests chainer tests functions tests loss tests test negative sampling py testnegativesamplingfunction occurred in fail repo tests chainer tests functions tests loss tests test negative sampling py testnegativesamplingfunction use chainerx true chainerx device native use cuda fal... | 0 |

61,607 | 17,023,737,595 | IssuesEvent | 2021-07-03 03:34:32 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Forskningsavdelningen nominatim record change | Component: nominatim Priority: trivial Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 8.18am, Wednesday, 3rd August 2011]**

Our hackerspace has moved, and we would like the old location stricken from the records.

The old place is no longer ours, and any visitor would have to turn away, confused, as there is no clue left there that we have been ther... | 1.0 | Forskningsavdelningen nominatim record change - **[Submitted to the original trac issue database at 8.18am, Wednesday, 3rd August 2011]**

Our hackerspace has moved, and we would like the old location stricken from the records.

The old place is no longer ours, and any visitor would have to turn away, confused, as th... | defect | forskningsavdelningen nominatim record change our hackerspace has moved and we would like the old location stricken from the records the old place is no longer ours and any visitor would have to turn away confused as there is no clue left there that we have been there the current place is... | 1 |

24,744 | 4,088,026,334 | IssuesEvent | 2016-06-01 12:27:45 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | opened | Wrong precision generated in automatic CAST for DB2 and other databases | C: Functionality P: Medium T: Defect | The current logic is wrong and produces precisions that are too big:

```java

int scale = ((BigDecimal) converted).scale();

int precision = scale + ((BigDecimal) converted).precision();

```

This is usually not an issue, unless the database's maximum supported precision is reached | 1.0 | Wrong precision generated in automatic CAST for DB2 and other databases - The current logic is wrong and produces precisions that are too big:

```java

int scale = ((BigDecimal) converted).scale();

int precision = scale + ((BigDecimal) converted).precision();

```

This is usually not an i... | defect | wrong precision generated in automatic cast for and other databases the current logic is wrong and produces precisions that are too big java int scale bigdecimal converted scale int precision scale bigdecimal converted precision this is usually not an iss... | 1 |
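
The over-counting described in this issue can be illustrated with a small sketch. It is written in Python, with `decimal.Decimal` standing in for `java.math.BigDecimal`. The helper names (`precision_and_scale`, `reported_cast_precision`, `digits_needed`) are hypothetical and not part of jOOQ, and the `max(precision, scale)` alternative is an assumption made for illustration, not necessarily the fix jOOQ adopted.

```python
from decimal import Decimal


def precision_and_scale(value):
    """Return (precision, scale) as java.math.BigDecimal defines them."""
    _sign, digits, exponent = value.as_tuple()
    precision = len(digits)  # number of digits in the unscaled value
    scale = -exponent        # number of digits right of the decimal point
    return precision, scale


def reported_cast_precision(value):
    """Mirror the logic quoted in the issue: scale + precision."""
    precision, scale = precision_and_scale(value)
    return scale + precision


def digits_needed(value):
    """Assumed alternative: total digits a DECIMAL(p, s) column must hold."""
    precision, scale = precision_and_scale(value)
    return max(precision, scale)


value = Decimal("123.45")
print(precision_and_scale(value))      # precision already counts the fraction digits
print(reported_cast_precision(value))  # adds the scale a second time
print(digits_needed(value))
```

Because the precision already includes the fractional digits, adding the scale again inflates the result, which only becomes visible once the database's maximum precision is hit.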

283,413 | 24,546,155,747 | IssuesEvent | 2022-10-12 08:57:23 | saucelabs/forwarder | https://api.github.com/repos/saucelabs/forwarder | closed | tests: fix waiting for server start | testing | At the moment we use `1s` sleep to wait for servers to start this should be changed to active waiting. | 1.0 | tests: fix waiting for server start - At the moment we use `1s` sleep to wait for servers to start this should be changed to active waiting. | non_defect | tests fix waiting for server start at the moment we use sleep to wait for servers to start this should be changed to active waiting | 0 |
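
Active waiting, as requested in this issue, could look roughly like the sketch below. It is written in Python for illustration only (the forwarder project itself is Go), and `wait_for_port` with its parameters is a hypothetical helper, not code from the repository. It polls a TCP port until a connection succeeds or a deadline passes, instead of sleeping for a fixed `1s`.

```python
import socket
import time


def wait_for_port(host, port, timeout=5.0, interval=0.05):
    """Poll until host:port accepts a TCP connection or the timeout expires."""
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            # A successful connect means the server is listening.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            # Not listening yet (refused or timed out): back off briefly and retry.
            time.sleep(interval)
    return False
```

A test would call `wait_for_port` right after starting the server and fail fast if it returns `False`, so the wait is only as long as the server actually needs.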

136,482 | 12,716,684,980 | IssuesEvent | 2020-06-24 02:43:02 | mikeyjwilliams/sassy-util-css | https://api.github.com/repos/mikeyjwilliams/sassy-util-css | opened | as a dev I want margin utility classes in media query lg | QA documentation enhancement | # as a dev I want margin utility classes in media query lg

- [ ] build out margin classes in lg media query

- [ ] test each class out

- [ ] document in margin page | 1.0 | as a dev I want margin utility classes in media query lg - # as a dev I want margin utility classes in media query lg

- [ ] build out margin classes in lg media query

- [ ] test each class out

- [ ] document in margin page | non_defect | as a dev i want margin utility classes in media query lg as a dev i want margin utility classes in media query lg build out margin classes in lg media query test each class out document in margin page | 0 |

65,418 | 7,878,211,355 | IssuesEvent | 2018-06-26 09:33:10 | mysociety/foi-for-councils | https://api.github.com/repos/mysociety/foi-for-councils | opened | Full Name field should include an aria-describedby attribute | f:foi has-blockers t:design | Found in https://github.com/mysociety/foi-for-councils/issues/22#issuecomment-396287291

> Can't do this as the fields are provided by https://github.com/ministryofjustice/govuk_elements_form_builder, I have opened an issue ministryofjustice/govuk_elements_form_builder#101 | 1.0 | Full Name field should include an aria-describedby attribute - Found in https://github.com/mysociety/foi-for-councils/issues/22#issuecomment-396287291

> Can't do this as the fields are provided by https://github.com/ministryofjustice/govuk_elements_form_builder, I have opened an issue ministryofjustice/govuk_element... | non_defect | full name field should include an aria describedby attribute found in can t do this as the fields are provided by i have opened an issue ministryofjustice govuk elements form builder | 0 |

236,838 | 18,110,648,818 | IssuesEvent | 2021-09-23 03:07:56 | Rutulpatel7077/adventofcode-go | https://api.github.com/repos/Rutulpatel7077/adventofcode-go | opened | ExponentPushToken[v1eiDiMpBnl8Cvbebf-MOS] | bug - updated documentation |

### ExponentPushToken[v1eiDiMpBnl8Cvbebf-MOS]

**Description**:

```

Patel

Rutul

Jayeshbhai

```

***

**Device Details:**

- Hello: world

***

**Page Details:**

- Hello: world

***

**Issue created with pointout widget** https://pointout.ca

| 1.0 | ExponentPushToken[v1eiDiMpBnl8Cvbebf-MOS] -

### ExponentPushToken[v1eiDiMpBnl8Cvbebf-MOS]

**Description**:

```

Patel

Rutul

Jayeshbhai

```

***

**Device Details:**

- Hello: world

***

**Page Details:**

- Hello: world

***

**Issue created with pointout widget** https://pointout.ca

| non_defect | exponentpushtoken exponentpushtoken description patel rutul jayeshbhai device details hello world page details hello world issue created with pointout widget | 0 |

9,101 | 8,516,884,457 | IssuesEvent | 2018-11-01 05:29:18 | Microsoft/vscode-cpptools | https://api.github.com/repos/Microsoft/vscode-cpptools | closed | Support for custom C/C++ formatting | Feature Request Language Service | Is it possible to support custom settings for C/C++ formatting (such as indentation, new lines, spacing, wrapping), similar to Visual Studio? See https://docs.microsoft.com/en-us/visualstudio/ide/reference/options-text-editor-c-cpp-formatting?view=vs-2017

| 1.0 | Support for custom C/C++ formatting - Is it possible to support custom settings for C/C++ formatting (such as indentation, new lines, spacing, wrapping), similar to Visual Studio? See https://docs.microsoft.com/en-us/visualstudio/ide/reference/options-text-editor-c-cpp-formatting?view=vs-2017

| non_defect | support for custom c c formatting is it possible to support custom settings for c c formatting such as indentation new lines spacing wrapping similar to visual studio see | 0 |

217,029 | 24,312,739,890 | IssuesEvent | 2022-09-30 01:14:14 | jiw065/Springboot-demo | https://api.github.com/repos/jiw065/Springboot-demo | opened | CVE-2022-38751 (Medium) detected in snakeyaml-1.23.jar | security vulnerability | ## CVE-2022-38751 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>snakeyaml-1.23.jar</b></p></summary>

<p>YAML 1.1 parser and emitter for Java</p>

<p>Library home page: <a href="http... | True | CVE-2022-38751 (Medium) detected in snakeyaml-1.23.jar - ## CVE-2022-38751 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>snakeyaml-1.23.jar</b></p></summary>

<p>YAML 1.1 parser and... | non_defect | cve medium detected in snakeyaml jar cve medium severity vulnerability vulnerable library snakeyaml jar yaml parser and emitter for java library home page a href path to dependency file springboot demo spring boot demo pom xml path to vulnerable library root rep... | 0 |

47,969 | 13,067,343,320 | IssuesEvent | 2020-07-31 00:09:32 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | closed | [steamshovel] memory leak (Trac #1545) | Migrated from Trac combo core defect | http://software.icecube.wisc.edu/static_analysis/2016-02-10-030213-84904-1/report-2a4604.html#EndPath

http://software.icecube.wisc.edu/static_analysis/2016-02-10-030213-84904-1/report-59eb46.html#EndPath

Migrated from https://code.icecube.wisc.edu/ticket/1545

```json

{

"status": "closed",

"changetime": "2016-... | 1.0 | [steamshovel] memory leak (Trac #1545) - http://software.icecube.wisc.edu/static_analysis/2016-02-10-030213-84904-1/report-2a4604.html#EndPath

http://software.icecube.wisc.edu/static_analysis/2016-02-10-030213-84904-1/report-59eb46.html#EndPath

Migrated from https://code.icecube.wisc.edu/ticket/1545

```json

{

"sta... | defect | memory leak trac migrated from json status closed changetime description reporter david schultz cc resolution invalid ts component combo core summary memory leak priority major... | 1 |

658,423 | 21,892,271,504 | IssuesEvent | 2022-05-20 03:56:44 | space-wizards/space-station-14 | https://api.github.com/repos/space-wizards/space-station-14 | closed | Clicking an item in storage with full hands drops the item on the ground | Issue: Bug Priority: 2-Before Release Difficulty: 1-Easy Bug: Replicated | ## Description

<!-- Explain your issue in detail, including the steps to reproduce it if applicable. Issues without proper explanation are liable to be closed by maintainers.-->

Taking things from a storage UI while your hands are full puts the selected item on the ground. It should instead do nothing or use the he... | 1.0 | Clicking an item in storage with full hands drops the item on the ground - ## Description

<!-- Explain your issue in detail, including the steps to reproduce it if applicable. Issues without proper explanation are liable to be closed by maintainers.-->

Taking things from a storage UI while your hands are full puts ... | non_defect | clicking an item in storage with full hands drops the item on the ground description taking things from a storage ui while your hands are full puts the selected item on the ground it should instead do nothing or use the held item on the one in inventory | 0 |

184,601 | 21,784,915,380 | IssuesEvent | 2022-05-14 01:47:30 | n-devs/supper-bin | https://api.github.com/repos/n-devs/supper-bin | closed | WS-2019-0318 (High) detected in handlebars-4.1.1.tgz - autoclosed | security vulnerability | ## WS-2019-0318 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.1.tgz</b></p></summary>

<p>Handlebars provides the power necessary to let you build semantic templates ef... | True | WS-2019-0318 (High) detected in handlebars-4.1.1.tgz - autoclosed - ## WS-2019-0318 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>handlebars-4.1.1.tgz</b></p></summary>

<p>Handlebars... | non_defect | ws high detected in handlebars tgz autoclosed ws high severity vulnerability vulnerable library handlebars tgz handlebars provides the power necessary to let you build semantic templates effectively with no frustration library home page a href path to dependency file ... | 0 |

73,632 | 24,727,525,958 | IssuesEvent | 2022-10-20 15:01:16 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | Cannot pass null values as arguments for PL/SQL TABLE types | T: Defect C: Functionality C: DB: Oracle P: Medium R: Worksforme E: Professional Edition E: Enterprise Edition | Another spin-off from https://github.com/jOOQ/jOOQ/issues/14097, with details to follow | 1.0 | Cannot pass null values as arguments for PL/SQL TABLE types - Another spin-off from https://github.com/jOOQ/jOOQ/issues/14097, with details to follow | defect | cannot pass null values as arguments for pl sql table types another spin off from with details to follow | 1 |

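5,660 | 2,610,192,837 | IssuesEvent | 2015-02-26 19:00:54 | chrsmith/quchuseban | https://api.github.com/repos/chrsmith/quchuseban | opened | Recommendation: the most effective way to remove dark spots | auto-migrated Priority-Medium Type-Defect | ```

[Abstract]

It seems I have been away for too long; I have forgotten what I used to look like. A weathered face looks back

through the mirror's reflection, and I remain wary of him. The season has turned to autumn, and the cicadas

still sing crisply, telling us from the branches, over and over, that we will all grow old. And so

waves of weeping can be heard in the mountain forest. The most effective way to remove dark spots

[Customer case]

The best treatment for melasma,

I am forty-three. My child has grown up and no longer needs looking after, but my own affairs

are still mine to handle. Mainly, the melasma on my face has been there for several years. In the past

there was too much going on at home and no time to deal with it. Now that I finally have time for myself, I went

to a beauty salon to have my face treated. Although the attitude and service there were quite good, the spot-removal

results were not very noticeable, so I decided to look up spot-removal methods online, since the information

online is more comprehensive. I did not expect so many spot-removal products online, looking at... | 1.0 | Recommendation: the most effective way to remove dark spots - ```

[Abstract]

It seems I have been away for too long; I have forgotten what I used to look like. A weathered face looks back

through the mirror's reflection, and I remain wary of him. The season has turned to autumn, and the cicadas

still sing crisply, telling us from the branches, over and over, that we will all grow old. And so

waves of weeping can be heard in the mountain forest. The most effective way to remove dark spots

[Customer case]

The best treatment for melasma,

I am forty-three. My child has grown up and no longer needs looking after, but my own affairs

are still mine to handle. Mainly, the melasma on my face has been there for several years. In the past

there was too much going on at home and no time to deal with it. Now that I finally have time for myself, I went

to a beauty salon to have my face treated. Although the attitude and service there were quite good, the spot-removal

results were not very noticeable, so I decided to look up spot-removal methods online, since the information

online is more comprehensive. I did not... | defect | recommendation the most effective way to remove dark spots abstract it seems i have been away for too long i have forgotten what i used to look like a weathered face looks back through the mirror s reflection and i remain wary of him the season has turned to autumn and the cicadas still sing crisply telling us from the branches over and over that we will all grow old and so waves of weeping can be heard in the mountain forest the most effective way to remove dark spots customer case the best treatment for melasma i am forty three my child has grown up and no longer needs looking after but my own affairs are still mine to handle mainly the melasma on my face has been there for several years in the past there was too much going on at home and no time to deal with it now that i finally have time for myself i went to a beauty salon to have my face treated although the attitude and service there were quite good the spot removal results were not very noticeable so i decided to look up spot removal methods online since the information online is more comprehensive i did not... | 1 |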

14,595 | 2,829,610,096 | IssuesEvent | 2015-05-23 02:06:28 | awesomebing1/fuzzdb | https://api.github.com/repos/awesomebing1/fuzzdb | closed | http://www.rf-dimension.com/forum/entry.php?72445-NFL-FOX-CBS-!!-Baltimore-Ravens-vs-Tennessee-Titans-live-2014-Stream | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1.

2.

3.

http://www.rf-dimension.com/forum/entry.php?72445-NFL-FOX-CBS-!!-Baltimore-Raven

s-vs-Tennessee-Titans-live-2014-Stream

http://www.rf-dimension.com/forum/entry.php?72445-NFL-FOX-CBS-!!-Baltimore-Raven

s-vs-Tennessee-Titans-live-2014-Stream

What is the expected outp... | 1.0 | http://www.rf-dimension.com/forum/entry.php?72445-NFL-FOX-CBS-!!-Baltimore-Ravens-vs-Tennessee-Titans-live-2014-Stream - ```

What steps will reproduce the problem?

1.

2.

3.

http://www.rf-dimension.com/forum/entry.php?72445-NFL-FOX-CBS-!!-Baltimore-Raven

s-vs-Tennessee-Titans-live-2014-Stream

http://www.rf-dimension.c... | defect | what steps will reproduce the problem s vs tennessee titans live stream s vs tennessee titans live stream what is the expected output what do you see instead what version of the product are you using on what operating system please provide any additional information below or... | 1 |

77,386 | 26,959,327,362 | IssuesEvent | 2023-02-08 16:59:40 | AutomatedProcessImprovement/Simod | https://api.github.com/repos/AutomatedProcessImprovement/Simod | opened | Don't mine all simulation parameters in each calendar optimization iteration | defect performance | The simulation parameters (`json_parameters`) don't have to be discovered in each optimization iteration.

I think that currently, at the beginning of each "calendar optimization" iteration, the arrivals, gateway probabilities, etc. are discovered. There is no need to do all of them each time.

JSON Parameters:

- ... | 1.0 | Don't mine all simulation parameters in each calendar optimization iteration - The simulation parameters (`json_parameters`) don't have to be discovered in each optimization iteration.

I think that currently, at the beginning of each "calendar optimization" iteration, the arrivals, gateway probabilities, etc. are di... | defect | don t mine all simulation parameters in each calendar optimization iteration the simulation parameters json parameters don t have to be discovered in each optimization iteration i think that currently at the beginning of each calendar optimization iteration the arrivals gateway probabilities etc are di... | 1 |

305,749 | 23,129,388,746 | IssuesEvent | 2022-07-28 09:00:47 | SeleniumHQ/seleniumhq.github.io | https://api.github.com/repos/SeleniumHQ/seleniumhq.github.io | closed | [🐛 Bug]: Instructions for Install Browser Driver missing steps | bug documentation | ### What happened?

Summary: When following 'Install Browser Driver' instructions, user is linked to a 'Downloads' page with no information about what to download or how.

Steps to reproduce:

1. As a new Selenium Webdriver user, start at the beginning of the 'Getting Started' guide at https://www.selenium.dev/do... | 1.0 | [🐛 Bug]: Instructions for Install Browser Driver missing steps - ### What happened?

Summary: When following 'Install Browser Driver' instructions, user is linked to a 'Downloads' page with no information about what to download or how.

Steps to reproduce:

1. As a new Selenium Webdriver user, start at the beginning... | non_defect | instructions for install browser driver missing steps what happened summary when following install browser driver instructions user is linked to a downloads page with no information about what to download or how steps to reproduce as a new selenium webdriver user start at the beginning of the... | 0 |

237,383 | 19,621,044,864 | IssuesEvent | 2022-01-07 06:35:24 | MohistMC/Mohist | https://api.github.com/repos/MohistMC/Mohist | closed | NetherPortalFix problem | 1.12.2 More Info Needed Needs Testing Needs User Answer | NetherPortalFix is not working. It just uses the default Minecraft radius search algorithm instead of saving player portal position. Working fine on Forge ModLoader server. | 1.0 | NetherPortalFix problem - NetherPortalFix is not working. It just uses the default Minecraft radius search algorithm instead of saving player portal position. Working fine on Forge ModLoader server. | non_defect | netherportalfix problem netherportalfix is not working it just uses the default minecraft radius search algorithm instead of saving player portal position working fine on forge modloader server | 0 |

58,336 | 16,488,280,188 | IssuesEvent | 2021-05-24 21:36:45 | galasa-dev/projectmanagement | https://api.github.com/repos/galasa-dev/projectmanagement | closed | broken documentation links | Manager: zOS Batch defect documentation | looking at https://galasa.dev/docs/managers/zos-manager

In particular the links within zosBatchManager.

`

Notes: | The IZosBatch interface has a single method, {@link IZosBatch#submitJob(String, IZosBatchJobname)} to submit a JCL as a String and returns a IZosBatchJob instance.... | 1.0 | broken documentation links - looking at https://galasa.dev/docs/managers/zos-manager

In particular the links within zosBatchManager.

`

Notes: | The IZosBatch interface has a single method, {@link IZosBatch#submitJob(String, IZosBatchJobname)} to submit a JCL as a String and returns a&nbs... | defect | broken documentation links looking at in particular the links within zosbatchmanager notes the nbsp izosbatch nbsp interface has a single method link izosbatch submitjob string izosbatchjobname to submit a jcl as a nbsp string nbsp and returns a nbsp izosbatchjob nbsp instance see nbsp zosba... | 1 |

20,432 | 3,355,888,059 | IssuesEvent | 2015-11-18 18:11:55 | jarz/slimtune | https://api.github.com/repos/jarz/slimtune | closed | Slimtune crashes when running XNA applications which use the content pipeline | auto-migrated Priority-Medium Type-Defect | ```

>What steps will reproduce the problem?

1. Download the XNA winforms content pipeline sample

(http://creators.xna.com/en-GB/sample/winforms_series2). Note this

requires installation of XNA game studio.

2. Run the sample under SlimTune 0.1.5, open cats.fbx. The application

will then crash if running in the profil... | 1.0 | Slimtune crashes when running XNA applications which use the content pipeline - ```

>What steps will reproduce the problem?

1. Download the XNA winforms content pipeline sample

(http://creators.xna.com/en-GB/sample/winforms_series2). Note this

requires installation of XNA game studio.

2. Run the sample under SlimTune... | defect | slimtune crashes when running xna applications which use the content pipeline what steps will reproduce the problem download the xna winforms content pipeline sample note this requires installation of xna game studio run the sample under slimtune open cats fbx the application will then cras... | 1 |

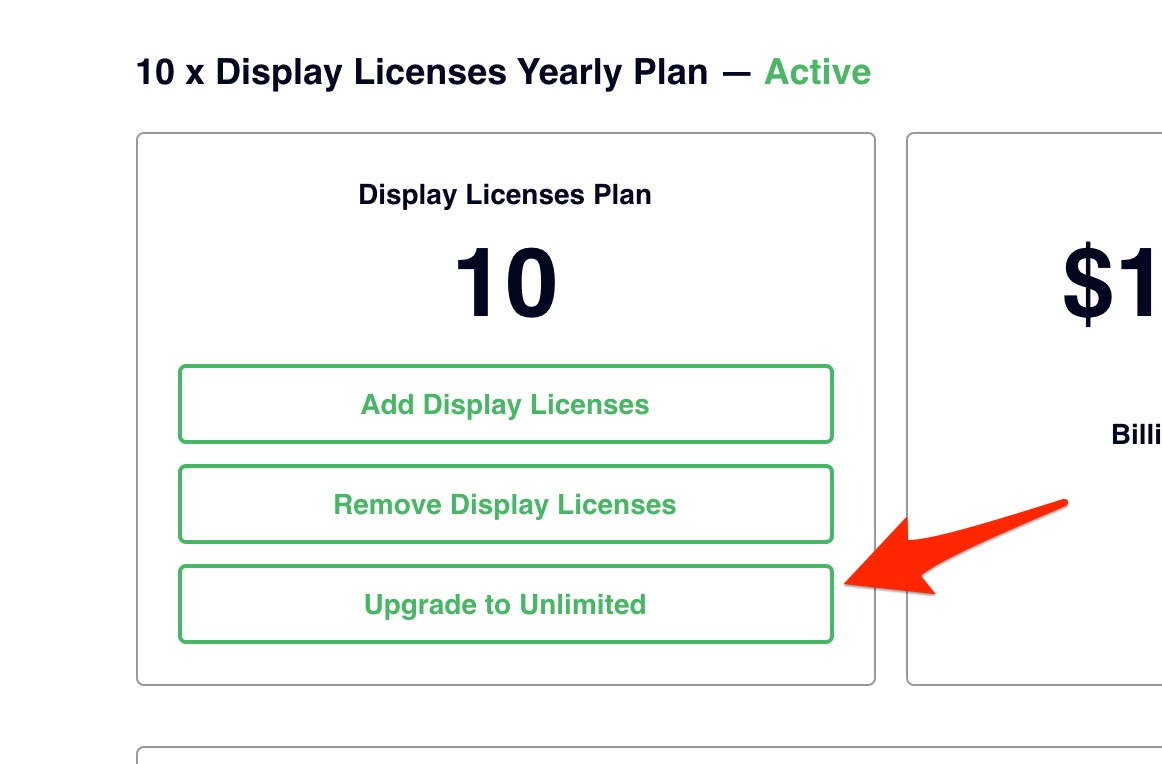

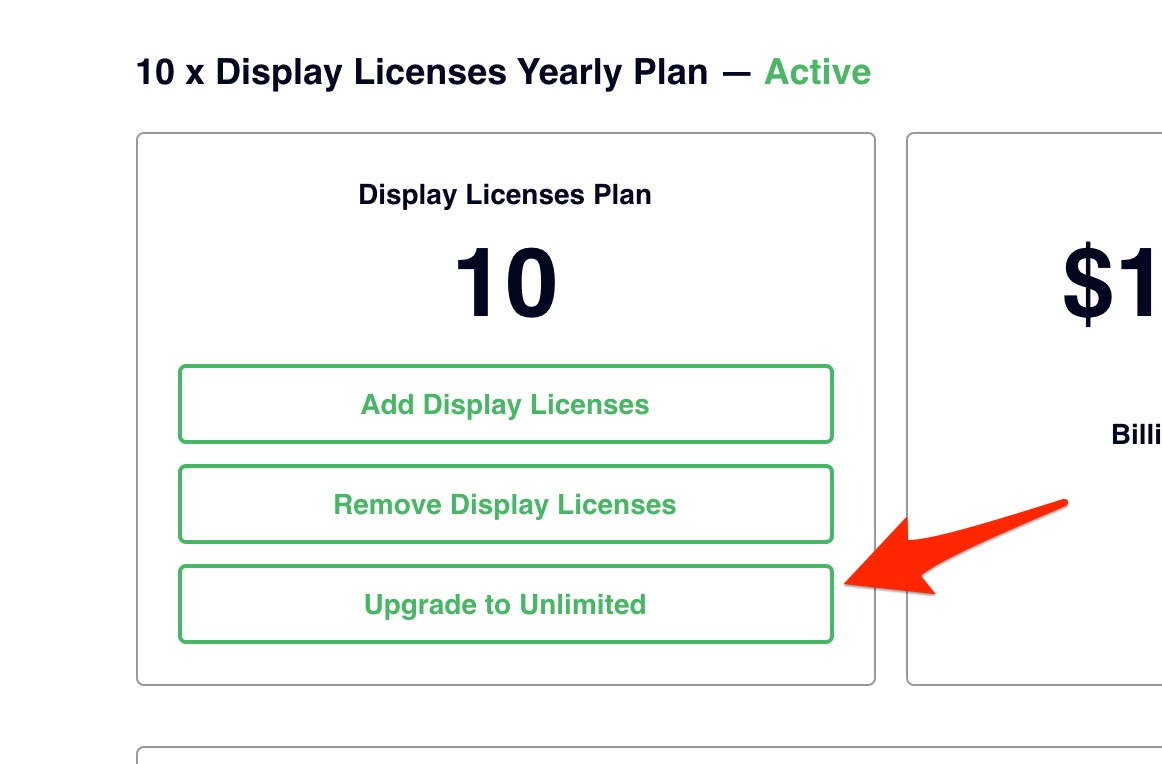

58,707 | 16,717,744,516 | IssuesEvent | 2021-06-10 00:40:51 | Rise-Vision/rise-vision-apps | https://api.github.com/repos/Rise-Vision/rise-vision-apps | opened | [Subscription Details] Copy Change | visual defect | Issue: "Upgrade to Unlimited" button needs to read: "Upgrade To Unlimited".

| 1.0 | [Subscription Details] Copy Change - Issue: "Upgrade to Unlimited" button needs to read: "Upgrade To Unlimited".

| defect | copy change issue upgrade to unlimited button needs to read upgrade to unlimited | 1 |

550,815 | 16,132,881,136 | IssuesEvent | 2021-04-29 08:03:37 | exeGesIS-SDM/CAMS-Mobile | https://api.github.com/repos/exeGesIS-SDM/CAMS-Mobile | closed | Synced existing photos don't display on Land | Priority 1 v4.4.4 v4.4.5 | Regarding the downloading of existing photos to the device (https://github.com/exeGesIS-SDM/CAMS-Mobile/issues/20), Land records aren't currently showing the downloaded photos (they need to) | 1.0 | Synced existing photos don't display on Land - Regarding the downloading of existing photos to the device (https://github.com/exeGesIS-SDM/CAMS-Mobile/issues/20), Land records aren't currently showing the downloaded photos (they need to) | non_defect | synced existing photos don t display on land regarding the downloading of existing photos to the device land records aren t currently showing the downloaded photos they need to | 0 |

46,055 | 13,055,845,823 | IssuesEvent | 2020-07-30 02:54:35 | icecube-trac/tix2 | https://api.github.com/repos/icecube-trac/tix2 | opened | DeleteUnregistered can't delete old versions of I3TrayInfo objects (Trac #518) | IceTray Incomplete Migration Migrated from Trac defect | Migrated from https://code.icecube.wisc.edu/ticket/518

```json

{

"status": "closed",

"changetime": "2009-01-16T15:40:48",

"description": "",

"reporter": "troy",

"cc": "",

"resolution": "fixed",

"_ts": "1232120448000000",

"component": "IceTray",

"summary": "DeleteUnregistered ... | 1.0 | DeleteUnregistered can't delete old versions of I3TrayInfo objects (Trac #518) - Migrated from https://code.icecube.wisc.edu/ticket/518

```json

{

"status": "closed",

"changetime": "2009-01-16T15:40:48",

"description": "",

"reporter": "troy",

"cc": "",

"resolution": "fixed",

"_ts": "123... | defect | deleteunregistered can t delete old versions of objects trac migrated from json status closed changetime description reporter troy cc resolution fixed ts component icetray summary deleteunregis... | 1 |

74,203 | 25,007,925,186 | IssuesEvent | 2022-11-03 13:19:15 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | opened | Text wobbles around as I type | T-Defect | ### Steps to reproduce

1. In a room, with my cursor in either the existing composer or the new "rich" composer

2. Type a bit and notice the fonts wobble around a bit sometimes

3. Specific example - if I type a capital letter after ` - `, the dash moves upwards a bit, but if I type a lower case followed by a capital,... | 1.0 | Text wobbles around as I type - ### Steps to reproduce

1. In a room, with my cursor in either the existing composer or the new "rich" composer

2. Type a bit and notice the fonts wobble around a bit sometimes

3. Specific example - if I type a capital letter after ` - `, the dash moves upwards a bit, but if I type a l... | defect | text wobbles around as i type steps to reproduce in a room with my cursor in either the existing composer or the new rich composer type a bit and notice the fonts wobble around a bit sometimes specific example if i type a capital letter after the dash moves upwards a bit but if i type a l... | 1 |

45,259 | 12,687,555,590 | IssuesEvent | 2020-06-20 17:06:20 | hikaya-io/dots-frontend | https://api.github.com/repos/hikaya-io/dots-frontend | opened | Forgot password: add link to go back to login page | FE General app defect | **Is your feature request related to a problem? Please describe.**

On forgot password page, add a link to go back to login page

**Acceptance Criteria**

```

GIVEN I am on the Forgot password page

AND I realize I came to the page by mistake and want to go back to Log in page

AND I see a link to go back to Login p... | 1.0 | Forgot password: add link to go back to login page - **Is your feature request related to a problem? Please describe.**

On forgot password page, add a link to go back to login page

**Acceptance Criteria**

```

GIVEN I am on the Forgot password page

AND I realize I came to the page by mistake and want to go back t... | defect | forgot password add link to go back to login page is your feature request related to a problem please describe on forgot password page add a link to go back to login page acceptance criteria given i am on the forgot password page and i realize i came to the page by mistake and want to go back t... | 1 |

158,322 | 13,728,607,954 | IssuesEvent | 2020-10-04 12:28:11 | stLmpp/st-store | https://api.github.com/repos/stLmpp/st-store | opened | Add documentation | documentation | > Store

- [ ] EntityStore

- [ ] EntityQuery

- [ ] Store

- [ ] Query

- [ ] StMap

- [ ] RxJS Operators

- [ ] StStoreModule

> Utils

- [ ] Array Helpers

- [ ] DefaultPipe

- [ ] GetDeepPipe

- [ ] GroupByPipe

- [ ] OrderByPipe

- [ ] SumPipe

- [ ] SumByPipe

- [ ] TrackByFactories

> Router

- [ ] RouterQue... | 1.0 | Add documentation - > Store

- [ ] EntityStore

- [ ] EntityQuery

- [ ] Store

- [ ] Query

- [ ] StMap

- [ ] RxJS Operators

- [ ] StStoreModule

> Utils

- [ ] Array Helpers

- [ ] DefaultPipe

- [ ] GetDeepPipe

- [ ] GroupByPipe

- [ ] OrderByPipe

- [ ] SumPipe

- [ ] SumByPipe

- [ ] TrackByFactories

> Rou... | non_defect | add documentation store entitystore entityquery store query stmap rxjs operators ststoremodule utils array helpers defaultpipe getdeeppipe groupbypipe orderbypipe sumpipe sumbypipe trackbyfactories router routerquery | 0 |

56,552 | 15,173,011,192 | IssuesEvent | 2021-02-13 12:07:40 | STEllAR-GROUP/hpx | https://api.github.com/repos/STEllAR-GROUP/hpx | closed | Possible uncaught exception causing hpx::parallel::for_loop lockup | category: algorithms tag: wontfix type: defect | ## Expected Behavior

The exception to be reported / caught / propagated

## Actual Behavior

hpx::parallel::for_loop locks up and never returns

## Steps to Reproduce the Problem

throw at this location:

https://github.com/STEllAR-GROUP/hpx/blob/master/libs/algorithms/include/hpx/parallel/util/detail/handle... | 1.0 | Possible uncaught exception causing hpx::parallel::for_loop lockup - ## Expected Behavior

The exception to be reported / caught / propagated

## Actual Behavior

hpx::parallel::for_loop locks up and never returns

## Steps to Reproduce the Problem

throw at this location:

https://github.com/STEllAR-GROUP/hp... | defect | possible uncaught exception causing hpx parallel for loop lockup expected behavior the exception to be reported caught propagated actual behavior hpx parallel for loop locks up and never returns steps to reproduce the problem throw at this location specifications hpx v... | 1 |

170,124 | 26,905,676,110 | IssuesEvent | 2023-02-06 18:52:37 | webb-tools/webb-experiences | https://api.github.com/repos/webb-tools/webb-experiences | closed | Transfer Component | design 🎨 | ## Product Design Goals

- Enable users to easily transfer shielded funds from one registered address to another

## Deliverables

- Create wireframes that represent and organize the user actions for making a transfer to another user

- Design a dedicated transfer component that displays required input boxes, suc... | 1.0 | Transfer Component - ## Product Design Goals

- Enable users to easily transfer shielded funds from one registered address to another

## Deliverables

- Create wireframes that represent and organize the user actions for making a transfer to another user

- Design a dedicated transfer component that displays requ... | non_defect | transfer component product design goals enable users to easily transfer shielded funds from one registered address to another deliverables create wireframes that represent and organize the user actions for making a transfer to another user design a dedicated transfer component that displays requ... | 0 |

79,753 | 28,807,890,633 | IssuesEvent | 2023-05-03 00:19:34 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | closed | [🐛 Bug]: Selenium Manager does not get the version from Chrome Beta binary | I-defect C-rust | ### What happened?

Was trying to do a demo showing how Selenium Manger gets the correct ChromeDriver for Chrome beta, but it seems it was not able to parse the version returned by Chrome beta. Check below.

### How can we reproduce the issue?

```shell

> /Applications/Google\ Chrome\ Beta.app/Contents/MacOS/Google\ Ch... | 1.0 | [🐛 Bug]: Selenium Manager does not get the version from Chrome Beta binary - ### What happened?

Was trying to do a demo showing how Selenium Manger gets the correct ChromeDriver for Chrome beta, but it seems it was not able to parse the version returned by Chrome beta. Check below.

### How can we reproduce the issue... | defect | selenium manager does not get the version from chrome beta binary what happened was trying to do a demo showing how selenium manger gets the correct chromedriver for chrome beta but it seems it was not able to parse the version returned by chrome beta check below how can we reproduce the issue s... | 1 |

46,731 | 11,880,330,515 | IssuesEvent | 2020-03-27 10:29:01 | BatchDrake/SigDigger | https://api.github.com/repos/BatchDrake/SigDigger | closed | compile error in macOS | build-issue | There's a new mismatch between two operands in the recent changes in develop:

`CarrierDetector.cpp` line 133:

`acc += psd * SU_C_EXP(I * M_PI * nFreq);`

`I` is std::complex. Changing it to something like

`acc += psd * SU_C_EXP(SU_C_REAL(I) * M_PI * nFreq);`

fixes | 1.0 | compile error in macOS - There's a new mismatch between two operands in the recent changes in develop:

`CarrierDetector.cpp` line 133:

`acc += psd * SU_C_EXP(I * M_PI * nFreq);`

`I` is std::complex. Changing it to something like

`acc += psd * SU_C_EXP(SU_C_REAL(I) * M_PI * nFreq);`

fixes | non_defect | compile error in macos there s a new mismatch between two operands in the recent changes in develop carrierdetector cpp line acc psd su c exp i m pi nfreq i is std complex changing it to something like acc psd su c exp su c real i m pi nfreq fixes | 0 |

26,707 | 4,777,616,034 | IssuesEvent | 2016-10-27 16:47:20 | wheeler-microfluidics/microdrop | https://api.github.com/repos/wheeler-microfluidics/microdrop | closed | Maximum recursion limit exceeded when running protocol (Trac #38) | defect microdrop Migrated from Trac | Running a protocol with 16 steps, repeated 100 times results the following:

RuntimeError: maximum recursion depth exceeded

Migrated from http://microfluidics.utoronto.ca/ticket/38

```json

{

"status": "closed",

"changetime": "2014-04-17T19:39:01",

"description": "Running a protocol with 16 steps, repeate... | 1.0 | Maximum recursion limit exceeded when running protocol (Trac #38) - Running a protocol with 16 steps, repeated 100 times results the following:

RuntimeError: maximum recursion depth exceeded

Migrated from http://microfluidics.utoronto.ca/ticket/38

```json

{

"status": "closed",

"changetime": "2014-04-17T19:39... | defect | maximum recursion limit exceeded when running protocol trac running a protocol with steps repeated times results the following runtimeerror maximum recursion depth exceeded migrated from json status closed changetime description running a protocol with st... | 1 |

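Note on the record above (MicroDrop, Trac #38): 16 steps repeated 100 times is 1,600 step executions, which is above CPython's default recursion limit of 1,000. The sketch below is only an illustration of how that error can arise; it assumes the protocol runner advanced from one step to the next through nested calls, which the ticket does not state. The names `run_recursive`, `run_iterative`, and `hits_recursion_limit` are hypothetical and are not part of MicroDrop.

```python
import sys


def run_recursive(step, total):
    """Advance one protocol step per nested call (hypothetical runner)."""
    if step == total:
        return step
    return run_recursive(step + 1, total)


def run_iterative(total):
    """Advance through the same steps with a loop, using constant stack depth."""
    step = 0
    while step < total:
        step += 1
    return step


def hits_recursion_limit(total, limit=1000):
    """Return True if the recursive runner overflows the given recursion limit."""
    old_limit = sys.getrecursionlimit()
    sys.setrecursionlimit(limit)  # pin the limit so the result is reproducible
    try:
        run_recursive(0, total)
        return False
    except RecursionError:  # a subclass of RuntimeError in Python 3
        return True
    finally:
        sys.setrecursionlimit(old_limit)
```

Under these assumptions, `hits_recursion_limit(16 * 100)` reports an overflow while `run_iterative(16 * 100)` completes, which is why replacing per-step recursion with a loop is a common remedy for this class of error.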
49,415 | 7,498,643,945 | IssuesEvent | 2018-04-09 06:01:07 | raquo/scala-dom-types | https://api.github.com/repos/raquo/scala-dom-types | opened | Docs: Add MDN docs to SVG attributes | documentation | Copy over MDN docs from ScalaTags to SVG attributes that don't have them, similar to other attributes.

@doofin please let me know if you will be working on this. If not, I will probably release v0.6 without this. I got SDB and Laminar parts ready. | 1.0 | Docs: Add MDN docs to SVG attributes - Copy over MDN docs from ScalaTags to SVG attributes that don't have them, similar to other attributes.

@doofin please let me know if you will be working on this. If not, I will probably release v0.6 without this. I got SDB and Laminar parts ready. | non_defect | docs add mdn docs to svg attributes copy over mdn docs from scalatags to svg attributes that don t have them similar to other attributes doofin please let me know if you will be working on this if not i will probably release without this i got sdb and laminar parts ready | 0 |

58,616 | 16,653,358,978 | IssuesEvent | 2021-06-05 04:01:40 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Can't p2p 1:1 call if turnserver is disabled | A-VoIP T-Defect X-Needs-Info | Not sure if this is intended or not, but with the

```

Allow Peer-to-Peer for 1:1 calls (if you enable this, the other party might be able to see your IP address)

```

option enabled, it still requires the server's turnserver to be enabled. I thought that if both parties have this enabled, it would be a p2p e2ee cal... | 1.0 | Can't p2p 1:1 call if turnserver is disabled - Not sure if this is intended or not, but with the

```

Allow Peer-to-Peer for 1:1 calls (if you enable this, the other party might be able to see your IP address)

```

option enabled, it still requires the server's turnserver to be enabled. I thought that if both partie... | defect | can t call if turnserver is disabled not sure if this is intended or not but with the allow peer to peer for calls if you enable this the other party might be able to see your ip address option enabled it still requires the server s turnserver to be enabled i thought that if both parties ... | 1 |

7,040 | 2,610,323,493 | IssuesEvent | 2015-02-26 19:44:17 | chrsmith/republic-at-war | https://api.github.com/repos/chrsmith/republic-at-war | closed | Text | auto-migrated Priority-Medium Type-Defect | ```

ARC Gunship

Its "Deploy ARC" Ability shows MISSING both for name and description!

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 14 May 2011 at 2:07 | 1.0 | Text - ```

ARC Gunship

Its "Deploy ARC" Ability shows MISSING both for name and description!

```

-----

Original issue reported on code.google.com by `z3r0...@gmail.com` on 14 May 2011 at 2:07 | defect | text arc gunship its deploy arc ability shows missing both for name and description original issue reported on code google com by gmail com on may at | 1 |

333,605 | 29,796,821,284 | IssuesEvent | 2023-06-16 03:39:31 | pytorch/pytorch | https://api.github.com/repos/pytorch/pytorch | reopened | DISABLED test_operator_linalg_lu_factor_cuda_float32 (__main__.TestCompositeComplianceCUDA) | triaged module: flaky-tests skipped module: unknown | Platforms: linux

This test was disabled because it is failing in CI. See [recent examples](https://hud.pytorch.org/flakytest?name=test_operator_linalg_lu_factor_cuda_float32&suite=TestCompositeComplianceCUDA) and the most recent trunk [workflow logs](https://github.com/pytorch/pytorch/runs/10425574725).

Over the past... | 1.0 | DISABLED test_operator_linalg_lu_factor_cuda_float32 (__main__.TestCompositeComplianceCUDA) - Platforms: linux