| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 757) | labels (string, length 4 to 664) | body (string, length 3 to 261k) | index (string, 10 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

226,790 | 18,044,168,614 | IssuesEvent | 2021-09-18 15:41:18 | logicmoo/logicmoo_workspace | https://api.github.com/repos/logicmoo/logicmoo_workspace | opened | logicmoo.base.fol.fiveof.NONMONOTONIC_TYPE_01 JUnit | logicmoo.base.fol.fiveof Test_9999 unit_test NONMONOTONIC_TYPE_01 | (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/logicmoo_base/t/examples/fol/fiveof ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s lmoo-clif nonmonotonic_type_01.pl)

GH_MASTER_ISSUE_FINFO=

ISSUE_SEARCH: https://github.com/logicmoo/logicmoo_workspace/issues?q=is%3Aissue+label%3ANONMONOTONI... | 2.0 | logicmoo.base.fol.fiveof.NONMONOTONIC_TYPE_01 JUnit - (cd /var/lib/jenkins/workspace/logicmoo_workspace/packs_sys/logicmoo_base/t/examples/fol/fiveof ; timeout --foreground --preserve-status -s SIGKILL -k 10s 10s lmoo-clif nonmonotonic_type_01.pl)

GH_MASTER_ISSUE_FINFO=

ISSUE_SEARCH: https://github.com/logicmoo/logi... | non_defect | logicmoo base fol fiveof nonmonotonic type junit cd var lib jenkins workspace logicmoo workspace packs sys logicmoo base t examples fol fiveof timeout foreground preserve status s sigkill k lmoo clif nonmonotonic type pl gh master issue finfo issue search gitlab latest this buil... | 0 |

53,093 | 13,260,883,566 | IssuesEvent | 2020-08-20 18:55:40 | icecube-trac/tix4 | https://api.github.com/repos/icecube-trac/tix4 | closed | port photonics_1.73 does not build on SL5 64 bit (Trac #686) | Migrated from Trac booking defect | Scientific Linux release 5.8 (Boron)

```text

$ gcc --version

gcc (GCC) 4.1.2 20080704 (Red Hat 4.1.2-52)

Copyright (C) 2006 Free Software Foundation, Inc.

This is free software; see the source for copying conditions. There is NO warranty; not even for MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE.

```

```tex... | 1.0 | port photonics_1.73 does not build on SL5 64 bit (Trac #686) - Scientific Linux release 5.8 (Boron)

```text

$ gcc --version

gcc (GCC) 4.1.2 20080704 (Red Hat 4.1.2-52)

Copyright (C) 2006 Free Software Foundation, Inc.

This is free software; see the source for copying conditions. There is NO warranty; not even for ME... | defect | port photonics does not build on bit trac scientific linux release boron text gcc version gcc gcc red hat copyright c free software foundation inc this is free software see the source for copying conditions there is no warranty not even for merchantability or ... | 1 |

2,958 | 2,607,967,648 | IssuesEvent | 2015-02-26 00:43:06 | chrsmithdemos/leveldb | https://api.github.com/repos/chrsmithdemos/leveldb | closed | build_detect_platform line ending error | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. git clone https://code.google.com/p/leveldb/

2. cd leveldb

3. make

What is the expected output? What do you see instead?

It should compile. I get an error:

$ make

/bin/sh: 1: ./build_detect_platform: not found

Makefile:18: build_config.mk: No such file or directory

make: *... | 1.0 | build_detect_platform line ending error - ```

What steps will reproduce the problem?

1. git clone https://code.google.com/p/leveldb/

2. cd leveldb

3. make

What is the expected output? What do you see instead?

It should compile. I get an error:

$ make

/bin/sh: 1: ./build_detect_platform: not found

Makefile:18: build_co... | defect | build detect platform line ending error what steps will reproduce the problem git clone cd leveldb make what is the expected output what do you see instead it should compile i get an error make bin sh build detect platform not found makefile build config mk no such file or directory... | 1 |

81,556 | 31,018,920,406 | IssuesEvent | 2023-08-10 02:29:59 | openzfs/zfs | https://api.github.com/repos/openzfs/zfs | closed | Data corruption since generic_file_splice_read -> filemap_splice_read change (6.5 compat, but occurs on 6.4 too) | Type: Defect | ### System information

Type | Version/Name

--- | ---

Distribution Name | Arch

Distribution Version | Rolling release

Kernel Version | 6.4.8, 6.5rc1/2/3/4

Architecture | x86-64

OpenZFS Version | [commit 36261c8](https://github.com/openzfs/zfs/commit/36261c8238df462b214854ccea1df4f060cf0995)

### Describe the p... | 1.0 | Data corruption since generic_file_splice_read -> filemap_splice_read change (6.5 compat, but occurs on 6.4 too) - ### System information

Type | Version/Name

--- | ---

Distribution Name | Arch

Distribution Version | Rolling release

Kernel Version | 6.4.8, 6.5rc1/2/3/4

Architecture | x86-64

OpenZFS Version | [co... | defect | data corruption since generic file splice read filemap splice read change compat but occurs on too system information type version name distribution name arch distribution version rolling release kernel version architecture openzfs version ... | 1 |

2,658 | 4,877,466,440 | IssuesEvent | 2016-11-16 15:46:15 | CartoDB/cartodb | https://api.github.com/repos/CartoDB/cartodb | closed | Error in dropping old table in a sync process leaves stale tables | Data-services | Related: https://github.com/CartoDB/cartodb/issues/6640

(Copied from the issue above):

This [piece of code](https://github.com/CartoDB/cartodb/blob/9b496fee7beac79c8d5f47570da8e505902a5b64/app/models/synchronization/adapter.rb#L143-L147) is silently failing, so what happens here is:

- A table gets downloaded and impo... | 1.0 | Error in dropping old table in a sync process leaves stale tables - Related: https://github.com/CartoDB/cartodb/issues/6640

(Copied from the issue above):

This [piece of code](https://github.com/CartoDB/cartodb/blob/9b496fee7beac79c8d5f47570da8e505902a5b64/app/models/synchronization/adapter.rb#L143-L147) is silently ... | non_defect | error in dropping old table in a sync process leaves stale tables related copied from the issue above this is silently failing so what happens here is a table gets downloaded and imported from the source the old table is renamed to be replaced by the new one inside a transaction the old table is... | 0 |

21,925 | 3,587,215,053 | IssuesEvent | 2016-01-30 05:06:04 | mash99/crypto-js | https://api.github.com/repos/mash99/crypto-js | closed | Error: Unable to get property 'createEncryptor' of undefined or null reference | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1.Just run the html file

2.I am trying to encypt using tripleDes

3.

What is the expected output? What do you see instead?

What version of the product are you using? On what operating system?

Please provide any additional information below.

```

Original issue reported on... | 1.0 | Error: Unable to get property 'createEncryptor' of undefined or null reference - ```

What steps will reproduce the problem?

1.Just run the html file

2.I am trying to encypt using tripleDes

3.

What is the expected output? What do you see instead?

What version of the product are you using? On what operating system?

... | defect | error unable to get property createencryptor of undefined or null reference what steps will reproduce the problem just run the html file i am trying to encypt using tripledes what is the expected output what do you see instead what version of the product are you using on what operating system ... | 1 |

147,984 | 5,656,821,671 | IssuesEvent | 2017-04-10 03:50:26 | elementary/switchboard-plug-pantheon-shell | https://api.github.com/repos/elementary/switchboard-plug-pantheon-shell | closed | wallpaper plug should have basic browsing options | Priority: Wishlist | "wallpaper folder" and "custom folder" are fine now, but "picture folders" could be more detailed. I think the user should be able to dig in successive folders within the picture folder and go back the "picture folder root". I believe most people use nested folders to sort their pictures and this renders the selection ... | 1.0 | wallpaper plug should have basic browsing options - "wallpaper folder" and "custom folder" are fine now, but "picture folders" could be more detailed. I think the user should be able to dig in successive folders within the picture folder and go back the "picture folder root". I believe most people use nested folders to... | non_defect | wallpaper plug should have basic browsing options wallpaper folder and custom folder are fine now but picture folders could be more detailed i think the user should be able to dig in successive folders within the picture folder and go back the picture folder root i believe most people use nested folders to... | 0 |

40,846 | 10,186,424,330 | IssuesEvent | 2019-08-10 13:21:12 | tulir/mautrix-telegram | https://api.github.com/repos/tulir/mautrix-telegram | closed | Error preventing startup: readexactly() called while another coroutine is already waiting for incoming data | bug: defect | Happens to a random puppet during startup, preventing the bridge from running.

```

Jun 3 15:12:37 integrations python[863]: [2019-06-03 15:12:37,269] [ERROR@telethon.582redacted.network.connection.connection] Unexpected exception in the receive loop

Jun 3 15:12:37 integrations python[863]: Traceback (most recen... | 1.0 | Error preventing startup: readexactly() called while another coroutine is already waiting for incoming data - Happens to a random puppet during startup, preventing the bridge from running.

```

Jun 3 15:12:37 integrations python[863]: [2019-06-03 15:12:37,269] [ERROR@telethon.582redacted.network.connection.connect... | defect | error preventing startup readexactly called while another coroutine is already waiting for incoming data happens to a random puppet during startup preventing the bridge from running jun integrations python unexpected exception in the receive loop jun integrations python traceb... | 1 |

178,463 | 6,609,072,164 | IssuesEvent | 2017-09-19 13:25:56 | eMoflon/emoflon-tool | https://api.github.com/repos/eMoflon/emoflon-tool | closed | Make /injection a source folder | feature-request low-priority | If /injection is configured as a source folder in Eclipse, its subfolder structure is nicely presented as package. | 1.0 | Make /injection a source folder - If /injection is configured as a source folder in Eclipse, its subfolder structure is nicely presented as package. | non_defect | make injection a source folder if injection is configured as a source folder in eclipse its subfolder structure is nicely presented as package | 0 |

427,773 | 12,399,006,664 | IssuesEvent | 2020-05-21 03:43:10 | orbeon/orbeon-forms | https://api.github.com/repos/orbeon/orbeon-forms | closed | Dynamic dropdown with search to evaluate `resource` in outer scope | Area: XBL Components Priority: Regression | This used to be the case with the autocomplete, so this is, in a way, a regression, plus, since the value of `resource` is provided by users and is taken as a VT, it just makes sense for XPath to be evaluated in the outer rather than the inner scope.

[+1 from customer](https://3.basecamp.com/3600924/buckets/2016476/... | 1.0 | Dynamic dropdown with search to evaluate `resource` in outer scope - This used to be the case with the autocomplete, so this is, in a way, a regression, plus, since the value of `resource` is provided by users and is taken as a VT, it just makes sense for XPath to be evaluated in the outer rather than the inner scope.

... | non_defect | dynamic dropdown with search to evaluate resource in outer scope this used to be the case with the autocomplete so this is in a way a regression plus since the value of resource is provided by users and is taken as a vt it just makes sense for xpath to be evaluated in the outer rather than the inner scope ... | 0 |

5,630 | 2,610,192,192 | IssuesEvent | 2015-02-26 19:00:43 | chrsmith/quchuseban | https://api.github.com/repos/chrsmith/quchuseban | opened | 咨询去除面部色斑的小妙招 | auto-migrated Priority-Medium Type-Defect | ```

《摘要》

愉快跟不愉快的回忆,比如一个硬币的两面,存在于我们的��

�一段情感里。就像那个著名的“蝴蝶效应”,如果你经常记�

��不愉快的人、不愉快的事,生活就跟着变得不愉快起来。相

反,有些女人却能在跟老公吵架的时候及其她求婚时的表情��

�他怀抱的温暖。这里的“吵”是一种乐观、积极的沟通方式�

��这样的女人即便是面临命运得不测风云,也不会唉声叹气,

而当它是动力。面带微笑、坦然自处,男人有乐观女人的相��

�,一生都将阳光灿烂。去除面部色斑的小妙招,

《客户案例》

我想知道一起拥有的回忆是浓了你还是醉了我,在这个��

�花飘落的季节里,我又想起了你,想起了那个雪地里雨伞下�

��长而又安静的拥抱,回想起你的眼泪曾伴... | 1.0 | 咨询去除面部色斑的小妙招 - ```

《摘要》

愉快跟不愉快的回忆,比如一个硬币的两面,存在于我们的��

�一段情感里。就像那个著名的“蝴蝶效应”,如果你经常记�

��不愉快的人、不愉快的事,生活就跟着变得不愉快起来。相

反,有些女人却能在跟老公吵架的时候及其她求婚时的表情��

�他怀抱的温暖。这里的“吵”是一种乐观、积极的沟通方式�

��这样的女人即便是面临命运得不测风云,也不会唉声叹气,

而当它是动力。面带微笑、坦然自处,男人有乐观女人的相��

�,一生都将阳光灿烂。去除面部色斑的小妙招,

《客户案例》

我想知道一起拥有的回忆是浓了你还是醉了我,在这个��

�花飘落的季节里,我又想起了你,想起了那个雪地里雨伞下�

��长而... | defect | 咨询去除面部色斑的小妙招 《摘要》 愉快跟不愉快的回忆,比如一个硬币的两面,存在于我们的�� �一段情感里。就像那个著名的“蝴蝶效应”,如果你经常记� ��不愉快的人、不愉快的事,生活就跟着变得不愉快起来。相 反,有些女人却能在跟老公吵架的时候及其她求婚时的表情�� �他怀抱的温暖。这里的“吵”是一种乐观、积极的沟通方式� ��这样的女人即便是面临命运得不测风云,也不会唉声叹气, 而当它是动力。面带微笑、坦然自处,男人有乐观女人的相�� �,一生都将阳光灿烂。去除面部色斑的小妙招, 《客户案例》 我想知道一起拥有的回忆是浓了你还是醉了我,在这个�� �花飘落的季节里,我又想起了你,想起了那个雪地里雨伞下� ��长而... | 1 |

34,205 | 7,395,775,651 | IssuesEvent | 2018-03-18 02:42:54 | pyscripter/MustangpeakCommonLib | https://api.github.com/repos/pyscripter/MustangpeakCommonLib | closed | how to install to Delphi XE3? | Priority-Medium Type-Defect auto-migrated | ```

What steps will reproduce the problem?

1. download with svn

2. open is file with InnoSetup

3. error "IDE install.txt not found"

What is the expected output? What do you see instead?

require other application or package?

What version of the product are you using? On what operating system?

DelphiXE3 on Win7

```

... | 1.0 | how to install to Delphi XE3? - ```

What steps will reproduce the problem?

1. download with svn

2. open is file with InnoSetup

3. error "IDE install.txt not found"

What is the expected output? What do you see instead?

require other application or package?

What version of the product are you using? On what operating ... | defect | how to install to delphi what steps will reproduce the problem download with svn open is file with innosetup error ide install txt not found what is the expected output what do you see instead require other application or package what version of the product are you using on what operating sy... | 1 |

1,771 | 3,369,260,855 | IssuesEvent | 2015-11-23 09:10:44 | rhiot/rhiot | https://api.github.com/repos/rhiot/rhiot | closed | create camel-labs.github.io website via Github Pages | infrastructure | creation of camel-labs.github.io repository needed | 1.0 | create camel-labs.github.io website via Github Pages - creation of camel-labs.github.io repository needed | non_defect | create camel labs github io website via github pages creation of camel labs github io repository needed | 0 |

398,623 | 11,742,044,091 | IssuesEvent | 2020-03-11 23:25:17 | thaliawww/concrexit | https://api.github.com/repos/thaliawww/concrexit | closed | Newsletter ordering only possible for info or events seperately | bug newsletter priority: medium | In GitLab by @lscholten on Mar 29, 2017, 20:36

### One-sentence description

Newsletter ordering only possible for info or events seperately

### Current behaviour / Reproducing the bug

1. Add a newsletter

2. Add content and events

3. Try to mix them in the order

4. No mixing in the result

### Expected behaviour

1.... | 1.0 | Newsletter ordering only possible for info or events seperately - In GitLab by @lscholten on Mar 29, 2017, 20:36

### One-sentence description

Newsletter ordering only possible for info or events seperately

### Current behaviour / Reproducing the bug

1. Add a newsletter

2. Add content and events

3. Try to mix them i... | non_defect | newsletter ordering only possible for info or events seperately in gitlab by lscholten on mar one sentence description newsletter ordering only possible for info or events seperately current behaviour reproducing the bug add a newsletter add content and events try to mix them in the ... | 0 |

12,325 | 2,691,591,655 | IssuesEvent | 2015-03-31 22:33:34 | idaholab/moose | https://api.github.com/repos/idaholab/moose | closed | Peacock temp input generation bug | C: Peacock P: normal T: defect | When running the input file

```

moose/modules/phase_field/examples/multiphase/DerivativeMultiPhaseMaterial.i

```

from peacock, MOOSE throws the following error:

```

Error: the following unidentified entries were found in your input file:

Variables/eta3/InitialCondition/sqrt(x^2+y^2);if(r>7,1,0)

```

The... | 1.0 | Peacock temp input generation bug - When running the input file

```

moose/modules/phase_field/examples/multiphase/DerivativeMultiPhaseMaterial.i

```

from peacock, MOOSE throws the following error:

```

Error: the following unidentified entries were found in your input file:

Variables/eta3/InitialCondition/s... | defect | peacock temp input generation bug when running the input file moose modules phase field examples multiphase derivativemultiphasematerial i from peacock moose throws the following error error the following unidentified entries were found in your input file variables initialcondition sqrt... | 1 |

62,415 | 17,023,918,819 | IssuesEvent | 2021-07-03 04:33:16 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Login using openid does not function after signup | Component: website Priority: minor Resolution: invalid Type: defect | **[Submitted to the original trac issue database at 1.36pm, Saturday, 14th March 2015]**

Problem:

I signed up/registered on the website at[https://www.openstreetmap.org/user/new] using google as the third party. After that whenever I Tried to use google as the third party for login, it says "Your ID is not associa... | 1.0 | Login using openid does not function after signup - **[Submitted to the original trac issue database at 1.36pm, Saturday, 14th March 2015]**

Problem:

I signed up/registered on the website at[https://www.openstreetmap.org/user/new] using google as the third party. After that whenever I Tried to use google as the th... | defect | login using openid does not function after signup problem i signed up registered on the website at using google as the third party after that whenever i tried to use google as the third party for login it says your id is not associated with a openstreetmap account yet and redirects me to the signup ... | 1 |

507,955 | 14,685,303,149 | IssuesEvent | 2021-01-01 08:18:45 | sujith-bhatt/InfectiousDiseaseModelling | https://api.github.com/repos/sujith-bhatt/InfectiousDiseaseModelling | opened | Obtain Data | high priority | Use the JHU dataset and graph the cumulative incidence of cases confirmed and recovered. | 1.0 | Obtain Data - Use the JHU dataset and graph the cumulative incidence of cases confirmed and recovered. | non_defect | obtain data use the jhu dataset and graph the cumulative incidence of cases confirmed and recovered | 0 |

392,950 | 11,598,025,573 | IssuesEvent | 2020-02-24 22:07:42 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | www.wunderlist.com - Page is not loaded | browser-firefox engine-gecko form-v2-experiment priority-normal severity-critical | <!-- @browser: Firefox 74.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:74.0) Gecko/20100101 Firefox/74.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @extra_labels: form-v2-experiment -->

**URL**: https://www.wunderlist.com/webapp#/lists/inbox

**Browser / Version**: Firefox 74.0

**Operati... | 1.0 | www.wunderlist.com - Page is not loaded - <!-- @browser: Firefox 74.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:74.0) Gecko/20100101 Firefox/74.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @extra_labels: form-v2-experiment -->

**URL**: https://www.wunderlist.com/webapp#/lists/inbox

**B... | non_defect | page is not loaded url browser version firefox operating system windows tested another browser yes edge problem type site is not usable description page not loading correctly steps to reproduce firefox developer suddenly starting to give just a blank page ... | 0 |

6,386 | 2,610,241,953 | IssuesEvent | 2015-02-26 19:17:02 | chrsmith/jsjsj122 | https://api.github.com/repos/chrsmith/jsjsj122 | opened | 台州割包皮包茎哪家男科医院好 | auto-migrated Priority-Medium Type-Defect | ```

台州割包皮包茎哪家男科医院好【台州五洲生殖医院】24小时

健康咨询热线:0576-88066933-(扣扣800080609)-(微信号tzwzszyy)医院地�

��:台州市椒江区枫南路229号(枫南大转盘旁)乘车线路:乘坐1

04、108、118、198及椒江一金清公交车直达枫南小区,乘坐107、

105、109、112、901、

902公交车到星星广场下车,步行即可到院。

诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,��

�精,无精。包皮包茎,精索静脉曲张,淋病等。

台州五洲生殖医院是台州最大的男科医院,权威专家在线免��

�咨询,拥有专业完善的男科检查治疗设备,严格按照国家标�

��收费。尖端医疗设备,与世... | 1.0 | 台州割包皮包茎哪家男科医院好 - ```

台州割包皮包茎哪家男科医院好【台州五洲生殖医院】24小时

健康咨询热线:0576-88066933-(扣扣800080609)-(微信号tzwzszyy)医院地�

��:台州市椒江区枫南路229号(枫南大转盘旁)乘车线路:乘坐1

04、108、118、198及椒江一金清公交车直达枫南小区,乘坐107、

105、109、112、901、

902公交车到星星广场下车,步行即可到院。

诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,��

�精,无精。包皮包茎,精索静脉曲张,淋病等。

台州五洲生殖医院是台州最大的男科医院,权威专家在线免��

�咨询,拥有专业完善的男科检查治疗设备,严格按照国家... | defect | 台州割包皮包茎哪家男科医院好 台州割包皮包茎哪家男科医院好【台州五洲生殖医院】 健康咨询热线 微信号tzwzszyy 医院地� �� (枫南大转盘旁)乘车线路 、 、 、 , 、 、 、 、 、 ,步行即可到院。 诊疗项目:阳痿,早泄,前列腺炎,前列腺增生,龟头炎,�� �精,无精。包皮包茎,精索静脉曲张,淋病等。 台州五洲生殖医院是台州最大的男科医院,权威专家在线免�� �咨询,拥有专业完善的男科检查治疗设备,严格按照国家标� ��收费。尖端医疗设备,与世界同步。权威专家,成就专业典 范。人性化服务,一切以患者为中心。 看男科就选台州五洲生殖医院,专业男科为男人。 ... | 1 |

4,590 | 2,610,120,718 | IssuesEvent | 2015-02-26 18:37:28 | chrsmith/scribefire-chrome | https://api.github.com/repos/chrsmith/scribefire-chrome | closed | Can't add blog on livejournal | auto-migrated Priority-Medium Type-Defect | ```

What's the problem?

When I'm trying to add my Livejournal's blog I fill lines with login and

password but browser with a small new window tells me that i've entered wrong

login or password for area "lj" at www.livejournal.com:80.

What browser are you using?

Safari, the same problem in Firefox

What version... | 1.0 | Can't add blog on livejournal - ```

What's the problem?

When I'm trying to add my Livejournal's blog I fill lines with login and

password but browser with a small new window tells me that i've entered wrong

login or password for area "lj" at www.livejournal.com:80.

What browser are you using?

Safari, the same pro... | defect | can t add blog on livejournal what s the problem when i m trying to add my livejournal s blog i fill lines with login and password but browser with a small new window tells me that i ve entered wrong login or password for area lj at what browser are you using safari the same problem in firefox wh... | 1 |

70,374 | 23,145,049,111 | IssuesEvent | 2022-07-28 23:11:00 | NREL/EnergyPlus | https://api.github.com/repos/NREL/EnergyPlus | closed | CheckConvexity is not removing collinear points consistently, causing fatal error | Defect | Issue overview

--------------

In the [attached example file](https://github.com/TiejunWu/EnergyPlus-IDF-Files/blob/master/2Zones_Uncontrolled.idf) , there are 3 surfaces with 8 vertices each, 2 of the surfaces are boundary condition to each other. 4 of the 8 vertices are collinear points.

```

BuildingSurface:Detai... | 1.0 | CheckConvexity is not removing collinear points consistently, causing fatal error - Issue overview

--------------

In the [attached example file](https://github.com/TiejunWu/EnergyPlus-IDF-Files/blob/master/2Zones_Uncontrolled.idf) , there are 3 surfaces with 8 vertices each, 2 of the surfaces are boundary condition t... | defect | checkconvexity is not removing collinear points consistently causing fatal error issue overview in the there are surfaces with vertices each of the surfaces are boundary condition to each other of the vertices are collinear points buildingsurface detailed ... | 1 |

15,368 | 2,850,673,473 | IssuesEvent | 2015-05-31 19:33:09 | damonkohler/android-scripting | https://api.github.com/repos/damonkohler/android-scripting | closed | sl4a + no wireless = sudden death | auto-migrated Priority-Medium Type-Defect | ```

What device(s) are you experiencing the problem on?

HTC Dream

What firmware version are you running on the device?

cyanogenmod 6.1.0

What steps will reproduce the problem?

1. start sl4a

2. turn off/lose wireless connection

3. launch any 'hello world' script

What is the expected output? What do you see instead?

s... | 1.0 | sl4a + no wireless = sudden death - ```

What device(s) are you experiencing the problem on?

HTC Dream

What firmware version are you running on the device?

cyanogenmod 6.1.0

What steps will reproduce the problem?

1. start sl4a

2. turn off/lose wireless connection

3. launch any 'hello world' script

What is the expecte... | defect | no wireless sudden death what device s are you experiencing the problem on htc dream what firmware version are you running on the device cyanogenmod what steps will reproduce the problem start turn off lose wireless connection launch any hello world script what is the expected outp... | 1 |

18,910 | 24,848,829,807 | IssuesEvent | 2022-10-26 18:10:21 | apache/arrow-rs | https://api.github.com/repos/apache/arrow-rs | closed | failed to pass test cases while enabling feature chrono-tz | bug development-process | ```bash

cargo test --features chrono-tz

```

```bash

failures:

---- compute::kernels::cast::tests::test_cast_timestamp_to_string stdout ----

[arrow/src/compute/kernels/cast.rs:3852] &array = PrimitiveArray<Timestamp(Millisecond, None)>

[

1997-05-19T00:00:00.005,

2018-12-25T00:00:00.001,

null,

]

thr... | 1.0 | failed to pass test cases while enabling feature chrono-tz - ```bash

cargo test --features chrono-tz

```

```bash

failures:

---- compute::kernels::cast::tests::test_cast_timestamp_to_string stdout ----

[arrow/src/compute/kernels/cast.rs:3852] &array = PrimitiveArray<Timestamp(Millisecond, None)>

[

1997-05... | non_defect | failed to pass test cases while enabling feature chrono tz bash cargo test features chrono tz bash failures compute kernels cast tests test cast timestamp to string stdout array primitivearray null thread compute kernels cast t... | 0 |

7,491 | 2,610,390,384 | IssuesEvent | 2015-02-26 20:06:18 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | closed | Hedgewars bug in graph | auto-migrated Priority-Low Type-Defect | ```

What steps will reproduce the problem?

1. Create a new game

2. Win the match in a single play

What is the expected output? What do you see instead?

The graph is not displayed well

What version of the product are you using? On what operating system?

0.9.17

```

-----

Original issue reported on code.google.com ... | 1.0 | Hedgewars bug in graph - ```

What steps will reproduce the problem?

1. Create a new game

2. Win the match in a single play

What is the expected output? What do you see instead?

The graph is not displayed well

What version of the product are you using? On what operating system?

0.9.17

```

-----

Original issue rep... | defect | hedgewars bug in graph what steps will reproduce the problem create a new game win the match in a single play what is the expected output what do you see instead the graph is not displayed well what version of the product are you using on what operating system original issue repo... | 1 |

31,063 | 6,420,781,895 | IssuesEvent | 2017-08-09 01:36:15 | junichi11/netbeans-github-issues-plugin | https://api.github.com/repos/junichi11/netbeans-github-issues-plugin | closed | Can't add a Milestone from within Netbeans | defect | While viewing an issue in Netbeans (v 8.2), I try to add a Milestone from within the issue. When I click OK from within the New Milestone dialog I get "Can't add a milestone". I'm also not seeing Milestones that I've created online in my GitHub repository being available in the Milestone dropdown in an issue's edit pag... | 1.0 | Can't add a Milestone from within Netbeans - While viewing an issue in Netbeans (v 8.2), I try to add a Milestone from within the issue. When I click OK from within the New Milestone dialog I get "Can't add a milestone". I'm also not seeing Milestones that I've created online in my GitHub repository being available in ... | defect | can t add a milestone from within netbeans while viewing an issue in netbeans v i try to add a milestone from within the issue when i click ok from within the new milestone dialog i get can t add a milestone i m also not seeing milestones that i ve created online in my github repository being available in ... | 1 |

43,045 | 23,092,251,778 | IssuesEvent | 2022-07-26 16:07:29 | rapidsai/cudf | https://api.github.com/repos/rapidsai/cudf | closed | [FEA] Replace `cuco::static_multimap` by `cuco::static_map` in semi-anti-join | feature request 0 - Backlog libcudf Performance helps: Spark non-breaking | The implementation of semi-anti-join was refactored in #11100. One of the changes was to use `cuco::static_multimap`, which was later discovered that it has performance issue when the input tables have too many duplicate rows (https://github.com/rapidsai/cudf/issues/11299).

We should use `cuco::static_map` to avoid ... | True | [FEA] Replace `cuco::static_multimap` by `cuco::static_map` in semi-anti-join - The implementation of semi-anti-join was refactored in #11100. One of the changes was to use `cuco::static_multimap`, which was later discovered that it has performance issue when the input tables have too many duplicate rows (https://githu... | non_defect | replace cuco static multimap by cuco static map in semi anti join the implementation of semi anti join was refactored in one of the changes was to use cuco static multimap which was later discovered that it has performance issue when the input tables have too many duplicate rows we should use c... | 0 |

21,475 | 3,511,949,080 | IssuesEvent | 2016-01-10 17:32:48 | jOOQ/jOOQ | https://api.github.com/repos/jOOQ/jOOQ | closed | A significant amount of CPU is spent in String.indexOf() because of identifier escaping | C: Functionality P: Medium T: Defect | When running benchmarks with JMH, a significant amount of time is spent in `String.indexOf()`:

```

15.7% 30.9% java.lang.String.indexOf

4.9% 9.6% org.apache.log4j.Category.getEffectiveLevel

4.8% 9.5% org.jooq.impl.AbstractBindContext.bindInternal

2.7% 5.2% org.jooq.impl.AbstractContext.visit0

2.... | 1.0 | A significant amount of CPU is spent in String.indexOf() because of identifier escaping - When running benchmarks with JMH, a significant amount of time is spent in `String.indexOf()`:

```

15.7% 30.9% java.lang.String.indexOf

4.9% 9.6% org.apache.log4j.Category.getEffectiveLevel

4.8% 9.5% org.jooq.impl.... | defect | a significant amount of cpu is spent in string indexof because of identifier escaping when running benchmarks with jmh a significant amount of time is spent in string indexof java lang string indexof org apache category geteffectivelevel org jooq impl abstra... | 1 |

82,655 | 15,679,655,369 | IssuesEvent | 2021-03-25 01:01:47 | snowdensb/Leo | https://api.github.com/repos/snowdensb/Leo | opened | CVE-2021-21349 (Medium) detected in xstream-1.4.8.jar | security vulnerability | ## CVE-2021-21349 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.8.jar</b></p></summary>

<p>XStream is a serialization library from Java objects to XML and back.</p>

<p>... | True | CVE-2021-21349 (Medium) detected in xstream-1.4.8.jar - ## CVE-2021-21349 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.8.jar</b></p></summary>

<p>XStream is a serializ... | non_defect | cve medium detected in xstream jar cve medium severity vulnerability vulnerable library xstream jar xstream is a serialization library from java objects to xml and back path to dependency file leo core pom xml path to vulnerable library home wss scanner repository com... | 0 |

276,943 | 21,006,439,558 | IssuesEvent | 2022-03-29 23:15:44 | NASA-IMPACT/sddo | https://api.github.com/repos/NASA-IMPACT/sddo | reopened | Complete definitions for all SDDO terms | documentation | Many of the classes and properties in SDDO have yet to have definitions.... | 1.0 | Complete definitions for all SDDO terms - Many of the classes and properties in SDDO have yet to have definitions.... | non_defect | complete definitions for all sddo terms many of the classes and properties in sddo have yet to have definitions | 0 |

257,815 | 19,532,334,288 | IssuesEvent | 2021-12-30 19:34:37 | InfiniTimeOrg/InfiniTime | https://api.github.com/repos/InfiniTimeOrg/InfiniTime | closed | My beginner experience / improving onboarding process | documentation enhancement help wanted question/discussion | ###

- [X] I searched for similar feature request and found none was relevant.

### Pitch us your idea!

Improving documentation to be more user-centric

### Description

Hi,

I love this watch and this is my way of documenting a typical first time user experience.

I'm sharing this because once you get into the pro... | 1.0 | My beginner experience / improving onboarding process - ###

- [X] I searched for similar feature request and found none was relevant.

### Pitch us your idea!

Improving documentation to be more user-centric

### Description

Hi,

I love this watch and this is my way of documenting a typical first time user experie... | non_defect | my beginner experience improving onboarding process i searched for similar feature request and found none was relevant pitch us your idea improving documentation to be more user centric description hi i love this watch and this is my way of documenting a typical first time user experienc... | 0 |

346,763 | 10,419,566,664 | IssuesEvent | 2019-09-15 17:30:11 | HW-PlayersPatch/Development | https://api.github.com/repos/HW-PlayersPatch/Development | opened | EMP Friendly Fire and vs Frigs | MP priority: medium status: in discussion | ## hw2c

EMP Damage: 35 / 105 (per scout/squad)

### EMP Shields:

Scouts 0

Fighters/Corvs 75

Frigs 310

Friendly Fire 0%

## Current State

EMP Damage: 20 / 60 (per scout/squad)

### EMP Shields:

Scouts 100

hw2 Fighters/Corvs 75

hw1 Fighters 30

hw1 Corvs 45

Frigs 310

Friendly Fire 50%

When 4 scouts try to e... | 1.0 | EMP Friendly Fire and vs Frigs - ## hw2c

EMP Damage: 35 / 105 (per scout/squad)

### EMP Shields:

Scouts 0

Fighters/Corvs 75

Frigs 310

Friendly Fire 0%

## Current State

EMP Damage: 20 / 60 (per scout/squad)

### EMP Shields:

Scouts 100

hw2 Fighters/Corvs 75

hw1 Fighters 30

hw1 Corvs 45

Frigs 310

Friendly... | non_defect | emp friendly fire and vs frigs emp damage per scout squad emp shields scouts fighters corvs frigs friendly fire current state emp damage per scout squad emp shields scouts fighters corvs fighters corvs frigs friendly fire when scouts ... | 0 |

94,594 | 19,562,842,303 | IssuesEvent | 2022-01-03 18:46:09 | Onelinerhub/onelinerhub | https://api.github.com/repos/Onelinerhub/onelinerhub | closed | Short solution needed: "What's Redis default port" (redis) | help wanted good first issue code redis | Please help us write most modern and shortest code solution for this issue:

**What's Redis default port** (technology: [redis](https://onelinerhub.com/redis))

### Fast way

Just write the code solution in the comments.

### Prefered way

1. Create pull request with a new code file inside [inbox folder](https://github.co... | 1.0 | Short solution needed: "What's Redis default port" (redis) - Please help us write most modern and shortest code solution for this issue:

**What's Redis default port** (technology: [redis](https://onelinerhub.com/redis))

### Fast way

Just write the code solution in the comments.

### Prefered way

1. Create pull request... | non_defect | short solution needed what s redis default port redis please help us write most modern and shortest code solution for this issue what s redis default port technology fast way just write the code solution in the comments prefered way create pull request with a new code file inside ... | 0 |

261,133 | 27,785,299,954 | IssuesEvent | 2023-03-17 02:18:15 | SebastianDarie/portfolio | https://api.github.com/repos/SebastianDarie/portfolio | opened | CVE-2023-28155 (Medium) detected in request-2.88.2.tgz | Mend: dependency security vulnerability | ## CVE-2023-28155 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>request-2.88.2.tgz</b></p></summary>

<p>Simplified HTTP request client.</p>

<p>Library home page: <a href="https://r... | True | CVE-2023-28155 (Medium) detected in request-2.88.2.tgz - ## CVE-2023-28155 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>request-2.88.2.tgz</b></p></summary>

<p>Simplified HTTP req... | non_defect | cve medium detected in request tgz cve medium severity vulnerability vulnerable library request tgz simplified http request client library home page a href path to dependency file package json path to vulnerable library node modules request package json depend... | 0 |

1,327 | 2,539,163,788 | IssuesEvent | 2015-01-27 13:30:40 | richtermondt/inithub-web | https://api.github.com/repos/richtermondt/inithub-web | closed | Clean project checkout, configuration and startup | test | take detailed notes and update installation notes when complete | 1.0 | Clean project checkout, configuration and startup - take detailed notes and update installation notes when complete | non_defect | clean project checkout configuration and startup take detailed notes and update installation notes when complete | 0 |

90,315 | 10,678,340,727 | IssuesEvent | 2019-10-21 17:04:51 | Ishan-Gunaratne/advance-javascript | https://api.github.com/repos/Ishan-Gunaratne/advance-javascript | closed | Update README.md | documentation good first issue | Update the READEME.md to show all the sections we have covered so far.

There is only one section now. (**variables**) | 1.0 | Update README.md - Update the READEME.md to show all the sections we have covered so far.

There is only one section now. (**variables**) | non_defect | update readme md update the reademe md to show all the sections we have covered so far there is only one section now variables | 0 |

49,821 | 13,187,277,159 | IssuesEvent | 2020-08-13 02:54:17 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | opened | dataio-pyshovel crashes upon missing key (Trac #2138) | Incomplete Migration Migrated from Trac combo core defect | <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2138">https://code.icecube.wisc.edu/ticket/2138</a>, reported by peller and owned by </em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2018-02-16T20:51:30",

"description": "when skipping through frames in a... | 1.0 | dataio-pyshovel crashes upon missing key (Trac #2138) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/ticket/2138">https://code.icecube.wisc.edu/ticket/2138</a>, reported by peller and owned by </em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2018-02-16T20:51:30"... | defect | dataio pyshovel crashes upon missing key trac migrated from json status closed changetime description when skipping through frames in an file while having a key opened and the key doesn t exist in the next frame it crashes exception should instead rathe... | 1 |

179,998 | 30,343,612,381 | IssuesEvent | 2023-07-11 14:09:59 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | Suggestion: Remove "nameof()" from references list | Area-IDE Concept-Continuous Improvement Need Design Review IDE-Navigation | _This issue has been moved from [a ticket on Developer Community](https://developercommunity2.visualstudio.com/t/Suggestion:-Remove-nameof-from-refer/1337827)._

---

When a references list of a meth... | 1.0 | Suggestion: Remove "nameof()" from references list - _This issue has been moved from [a ticket on Developer Community](https://developercommunity2.visualstudio.com/t/Suggestion:-Remove-nameof-from-refer/1337827)._

---

name and email

Alyssa Gallion alyssa.gallion@oddball.io

### Product Owner (PO) name and email

Ray Wang gang.wang@va.gov

### Access Type Requesting

viewers

##... | True | SOCKS access for Gary Fallon - ### Your Name

Gary Fallon

### Your Email

gary.fallon@oddball.io

### Your Role and Team

Security Engineer on Platform Security

### Product Manager (PM) name and email

Alyssa Gallion alyssa.gallion@oddball.io

### Product Owner (PO) name and email

Ray Wang gang.wang@va.gov

### Acce... | non_defect | socks access for gary fallon your name gary fallon your email gary fallon oddball io your role and team security engineer on platform security product manager pm name and email alyssa gallion alyssa gallion oddball io product owner po name and email ray wang gang wang va gov acce... | 0 |

22,504 | 3,655,281,093 | IssuesEvent | 2016-02-17 15:48:40 | contao/core-bundle | https://api.github.com/repos/contao/core-bundle | reopened | Image::setTargetPath problem with /web/ folder | defect | Yet another problematic folder. When setting a target folder for an image, the path is relative to `TL_ROOT`. Which might not be available from the web directory. | 1.0 | Image::setTargetPath problem with /web/ folder - Yet another problematic folder. When setting a target folder for an image, the path is relative to `TL_ROOT`. Which might not be available from the web directory. | defect | image settargetpath problem with web folder yet another problematic folder when setting a target folder for an image the path is relative to tl root which might not be available from the web directory | 1 |

50,232 | 13,187,390,702 | IssuesEvent | 2020-08-13 03:15:55 | icecube-trac/tix3 | https://api.github.com/repos/icecube-trac/tix3 | closed | TypeError: expecting datetime, int, long, float, or None; got <class 'genshi.template.eval.Undefined'> (Trac #348) | Migrated from Trac combo simulation defect | I can't see the revision log for romeo trunk

==== How to Reproduce ====

While doing a GET operation on `/log/projects/romeo/trunk`, Trac issued an internal error.

''(please provide additional details here)''

Request parameters:

```text

{'path': u'/projects/romeo/trunk'}

```

User Agent was: `Mozilla/5.0 (X11; U... | 1.0 | TypeError: expecting datetime, int, long, float, or None; got <class 'genshi.template.eval.Undefined'> (Trac #348) - I can't see the revision log for romeo trunk

==== How to Reproduce ====

While doing a GET operation on `/log/projects/romeo/trunk`, Trac issued an internal error.

''(please provide additional details... | defect | typeerror expecting datetime int long float or none got trac i can t see the revision log for romeo trunk how to reproduce while doing a get operation on log projects romeo trunk trac issued an internal error please provide additional details here request parameters text ... | 1 |

439,761 | 30,713,322,778 | IssuesEvent | 2023-07-27 11:21:14 | Stephen-Hamilton-C/timecard-lib | https://api.github.com/repos/Stephen-Hamilton-C/timecard-lib | closed | Generate API Documentation with Dokka | documentation enhancement | See https://kotlinlang.org/docs/dokka-introduction.html

Look into placing this generated documentation into the Github Wiki for this repo | 1.0 | Generate API Documentation with Dokka - See https://kotlinlang.org/docs/dokka-introduction.html

Look into placing this generated documentation into the Github Wiki for this repo | non_defect | generate api documentation with dokka see look into placing this generated documentation into the github wiki for this repo | 0 |

41,979 | 10,732,134,888 | IssuesEvent | 2019-10-28 21:07:38 | jccastillo0007/eFacturaT | https://api.github.com/repos/jccastillo0007/eFacturaT | opened | Condominios - Incluir en el nombre de la SP el id CASA | defect | Hoy aparece null, supongo que porque no tienen RFC asociado.

Y por el simple nombre no se conoce de que casa es. | 1.0 | Condominios - Incluir en el nombre de la SP el id CASA - Hoy aparece null, supongo que porque no tienen RFC asociado.

Y por el simple nombre no se conoce de que casa es. | defect | condominios incluir en el nombre de la sp el id casa hoy aparece null supongo que porque no tienen rfc asociado y por el simple nombre no se conoce de que casa es | 1 |

8,247 | 2,611,473,326 | IssuesEvent | 2015-02-27 05:17:29 | chrsmith/hedgewars | https://api.github.com/repos/chrsmith/hedgewars | opened | Hog swap too fast, plus "hotseat" issues. | auto-migrated Priority-Medium Type-Defect | ```

What steps will reproduce the problem?

1. Start any game with any number of players (2~8), preferentially with at

least 2 sharing the same computer to be able to reproduce both problems.

2. Try to play normally

3. ???

4. Profit

What is the expected output? What do you see instead?

During the game, the next hog ch... | 1.0 | Hog swap too fast, plus "hotseat" issues. - ```

What steps will reproduce the problem?

1. Start any game with any number of players (2~8), preferentially with at

least 2 sharing the same computer to be able to reproduce both problems.

2. Try to play normally

3. ???

4. Profit

What is the expected output? What do you s... | defect | hog swap too fast plus hotseat issues what steps will reproduce the problem start any game with any number of players preferentially with at least sharing the same computer to be able to reproduce both problems try to play normally profit what is the expected output what do you s... | 1 |

177,978 | 29,368,357,978 | IssuesEvent | 2023-05-29 00:01:41 | ManageIQ/manageiq | https://api.github.com/repos/ManageIQ/manageiq | closed | Features dependency for an API-driven UI | enhancement stale redesign size/xl | We're moving the classic UI to be much more an API-driven and this is already causing problems for roles with limited access to certain resources. For [example](https://bugzilla.redhat.com/show_bug.cgi?id=1703967) the about modal would need at least read-only access to the information about the current Zone and Region... | 1.0 | Features dependency for an API-driven UI - We're moving the classic UI to be much more an API-driven and this is already causing problems for roles with limited access to certain resources. For [example](https://bugzilla.redhat.com/show_bug.cgi?id=1703967) the about modal would need at least read-only access to the in... | non_defect | features dependency for an api driven ui we re moving the classic ui to be much more an api driven and this is already causing problems for roles with limited access to certain resources for the about modal would need at least read only access to the information about the current zone and region however expos... | 0 |

39,766 | 9,651,735,188 | IssuesEvent | 2019-05-18 10:45:06 | boxbackup/boxbackup | https://api.github.com/repos/boxbackup/boxbackup | closed | Windows client 1662 has 3 extra files? (Trac #29) | Migrated from Trac bbackupd ben defect | Found in boxbackup-chris_general_1662-backup-client-mingw32.zip

I don't think are of any use, are they?:

```text

bbackupd-config

install-backup-client

installer.iss

```

Migrated from https://www.boxbackup.org/ticket/29

```json

{

"status": "closed",

"changetime": "2007-09-19T22:11:07",

"description": ... | 1.0 | Windows client 1662 has 3 extra files? (Trac #29) - Found in boxbackup-chris_general_1662-backup-client-mingw32.zip

I don't think are of any use, are they?:

```text

bbackupd-config

install-backup-client

installer.iss

```

Migrated from https://www.boxbackup.org/ticket/29

```json

{

"status": "closed",

"chan... | defect | windows client has extra files trac found in boxbackup chris general backup client zip i don t think are of any use are they text bbackupd config install backup client installer iss migrated from json status closed changetime description foun... | 1 |

11,233 | 2,641,947,100 | IssuesEvent | 2015-03-11 20:40:47 | chrsmith/html5rocks | https://api.github.com/repos/chrsmith/html5rocks | closed | Does't work with SeaMonkey | Priority-Medium Type-Defect | Original [issue 148](https://code.google.com/p/html5rocks/issues/detail?id=148) created by chrsmith on 2010-08-14T01:23:27.000Z:

<b>Please describe the issue:</b>

I'm using SeaMonkey, build Mozilla/5.0 (X11; Linux i686; rv:2.0b4pre) Gecko/20100811

which sould work on any page Firefox beta 3 works. But clicking on a... | 1.0 | Does't work with SeaMonkey - Original [issue 148](https://code.google.com/p/html5rocks/issues/detail?id=148) created by chrsmith on 2010-08-14T01:23:27.000Z:

<b>Please describe the issue:</b>

I'm using SeaMonkey, build Mozilla/5.0 (X11; Linux i686; rv:2.0b4pre) Gecko/20100811

which sould work on any page Firefox be... | defect | does t work with seamonkey original created by chrsmith on please describe the issue i m using seamonkey build mozilla linux rv gecko which sould work on any page firefox beta works but clicking on any link doesn t work please provide any additional information below... | 1 |

21,636 | 11,660,461,003 | IssuesEvent | 2020-03-03 03:25:13 | cityofaustin/atd-data-tech | https://api.github.com/repos/cityofaustin/atd-data-tech | opened | VZD | Update Units Table to include `atd_mode_category` | Need: 1-Must Have Product: Vision Zero Crash Data System Project: Vision Zero Crash Data System Service: Dev Type: Enhancement Workgroup: VZ migrated | - [x] Add new column `atd_mode_category` to Unit Table

- [x] On Unit record creation, assign an `atd_mode_category` from the Mode Categories Lookup table (https://github.com/cityofaustin/atd-vz-data/issues/615) based on the record's `unit_desc_id` & `veh_body_styl_id`

*Migrated from [atd-vz-data #656](https://github.... | 1.0 | VZD | Update Units Table to include `atd_mode_category` - - [x] Add new column `atd_mode_category` to Unit Table

- [x] On Unit record creation, assign an `atd_mode_category` from the Mode Categories Lookup table (https://github.com/cityofaustin/atd-vz-data/issues/615) based on the record's `unit_desc_id` & `veh_body_s... | non_defect | vzd update units table to include atd mode category add new column atd mode category to unit table on unit record creation assign an atd mode category from the mode categories lookup table based on the record s unit desc id veh body styl id migrated from | 0 |

75,142 | 25,551,646,207 | IssuesEvent | 2022-11-30 00:35:53 | SeleniumHQ/selenium | https://api.github.com/repos/SeleniumHQ/selenium | closed | [🐛 Bug]: RemoteWebDriver.findElement works weirdly | R-awaiting answer I-defect needs-triaging | ### What happened?

When I tried to find an element by xpath, it works.

The current call stack of method was done and then jvm went into other method call stack.

I tried to do that again at there, but it doesn't work.

There is no action for ChromeDriver. The changes are only call stack.

I don't understand why.

... | 1.0 | [🐛 Bug]: RemoteWebDriver.findElement works weirdly - ### What happened?

When I tried to find an element by xpath, it works.

The current call stack of method was done and then jvm went into other method call stack.

I tried to do that again at there, but it doesn't work.

There is no action for ChromeDriver. The ... | defect | remotewebdriver findelement works weirdly what happened when i tried to find an element by xpath it works the current call stack of method was done and then jvm went into other method call stack i tried to do that again at there but it doesn t work there is no action for chromedriver the changes... | 1 |

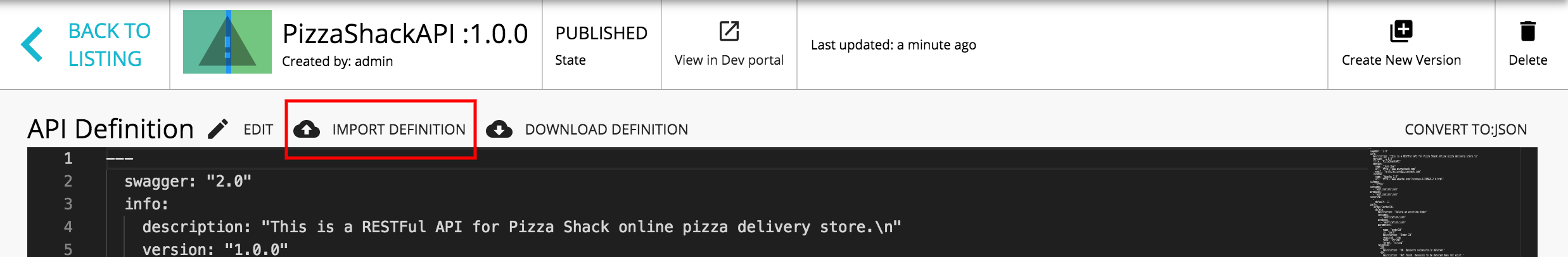

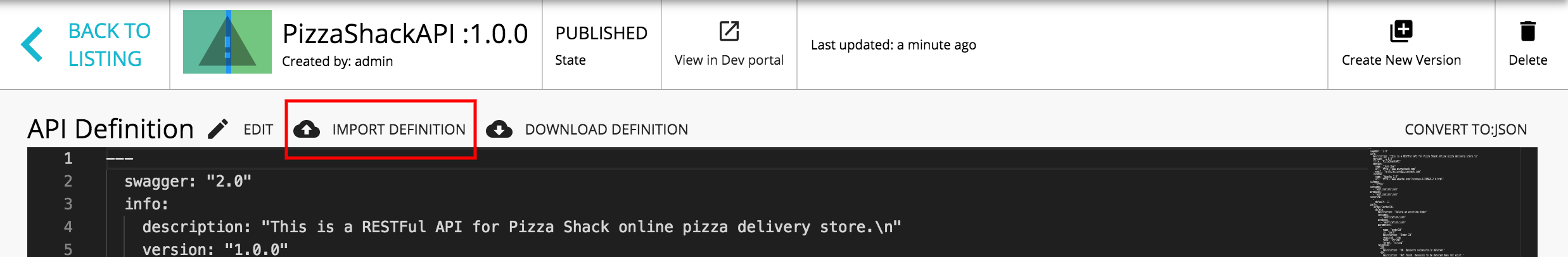

350,196 | 10,479,997,577 | IssuesEvent | 2019-09-24 06:22:11 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | opened | [3.0.0] URL based swagger import is missing in API definitions page | 3.0.0-alpha Priority/Highest Publisher Severity/Critical | URL and File-based swagger import option should be provided in API definitions page

| 1.0 | [3.0.0] URL based swagger import is missing in API definitions page - URL and File-based swagger import option should be provided in API definitions page

| non_defect | url based swagger import is missing in api definitions page url and file based swagger import option should be provided in api definitions page | 0 |

13,523 | 3,737,267,144 | IssuesEvent | 2016-03-08 18:41:07 | grpc/grpc | https://api.github.com/repos/grpc/grpc | closed | Doc Fixit: node.js update website doc reference | documentation node | http://www.grpc.io/grpc/node/ still says 0.12.0

Navigation: grpc.io -> Documentation -> References -> Node.js API | 1.0 | Doc Fixit: node.js update website doc reference - http://www.grpc.io/grpc/node/ still says 0.12.0

Navigation: grpc.io -> Documentation -> References -> Node.js API | non_defect | doc fixit node js update website doc reference still says navigation grpc io documentation references node js api | 0 |

422,301 | 28,433,801,489 | IssuesEvent | 2023-04-15 04:06:51 | erritis/mongodb-macro | https://api.github.com/repos/erritis/mongodb-macro | opened | Add multiple factory examples to resources | documentation | Add examples of creating multiple factories to different servers, databases and collections to better demonstrate the purpose of creating this crate. | 1.0 | Add multiple factory examples to resources - Add examples of creating multiple factories to different servers, databases and collections to better demonstrate the purpose of creating this crate. | non_defect | add multiple factory examples to resources add examples of creating multiple factories to different servers databases and collections to better demonstrate the purpose of creating this crate | 0 |

663,139 | 22,162,783,683 | IssuesEvent | 2022-06-04 19:05:24 | TuSimple/naive-ui | https://api.github.com/repos/TuSimple/naive-ui | closed | Suggestions for improving Input Number component | feature request priority: low | <!-- generated by issue-helper DO NOT REMOVE __FEATURE_REQUEST__ -->

### This function solves the problem (这个功能解决的问题)

Thanks a lot for developing this fantastic UI library :)

Currently the Input Number component is a bit limited in its feature range imho.

It does not accept decimal separators for other locales ... | 1.0 | Suggestions for improving Input Number component - <!-- generated by issue-helper DO NOT REMOVE __FEATURE_REQUEST__ -->

### This function solves the problem (这个功能解决的问题)

Thanks a lot for developing this fantastic UI library :)

Currently the Input Number component is a bit limited in its feature range imho.

It do... | non_defect | suggestions for improving input number component this function solves the problem 这个功能解决的问题 thanks a lot for developing this fantastic ui library currently the input number component is a bit limited in its feature range imho it does not accept decimal separators for other locales e g in germany... | 0 |

36,651 | 8,048,825,374 | IssuesEvent | 2018-08-01 08:11:25 | primefaces/primeng | https://api.github.com/repos/primefaces/primeng | closed | Dialog/OverlayPanel error when using appendTo | defect | **I'm submitting a ...** (check one with "x")

```

[x] bug report => Search github for a similar issue or PR before submitting

```

**Plunkr Case (Bug Reports)**

https://github-df7qmz.stackblitz.io/

**Current behavior**

When the dialog is not visible, hiding its containing element via ngIf (causing dialog OnD... | 1.0 | Dialog/OverlayPanel error when using appendTo - **I'm submitting a ...** (check one with "x")

```

[x] bug report => Search github for a similar issue or PR before submitting

```

**Plunkr Case (Bug Reports)**

https://github-df7qmz.stackblitz.io/

**Current behavior**

When the dialog is not visible, hiding its... | defect | dialog overlaypanel error when using appendto i m submitting a check one with x bug report search github for a similar issue or pr before submitting plunkr case bug reports current behavior when the dialog is not visible hiding its containing element via ngif causing... | 1 |

269,012 | 28,959,965,143 | IssuesEvent | 2023-05-10 01:04:08 | dpteam/RK3188_TABLET | https://api.github.com/repos/dpteam/RK3188_TABLET | reopened | CVE-2013-7264 (Medium) detected in linuxv3.0 | Mend: dependency security vulnerability | ## CVE-2013-7264 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/very... | True | CVE-2013-7264 (Medium) detected in linuxv3.0 - ## CVE-2013-7264 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Lib... | non_defect | cve medium detected in cve medium severity vulnerability vulnerable library linux kernel source tree library home page a href found in head commit a href found in base branch master vulnerable source files net phonet datagram c n... | 0 |

42,161 | 12,879,018,176 | IssuesEvent | 2020-07-11 19:41:35 | loftwah/loftwah-dev-2020 | https://api.github.com/repos/loftwah/loftwah-dev-2020 | opened | CVE-2018-20821 (Medium) detected in node-sass-4.14.1.tgz, node-sass-v4.13.1 | security vulnerability | ## CVE-2018-20821 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sass-4.14.1.tgz</b></p></summary>

<p>

<details><summary><b>node-sass-4.14.1.tgz</b></p></summary>

<p>Wrapper... | True | CVE-2018-20821 (Medium) detected in node-sass-4.14.1.tgz, node-sass-v4.13.1 - ## CVE-2018-20821 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>node-sass-4.14.1.tgz</b></p></summary... | non_defect | cve medium detected in node sass tgz node sass cve medium severity vulnerability vulnerable libraries node sass tgz node sass tgz wrapper around libsass library home page a href path to dependency file tmp ws scm loftwah dev wordpress app wp con... | 0 |

59,542 | 17,023,157,151 | IssuesEvent | 2021-07-03 00:37:51 | tomhughes/trac-tickets | https://api.github.com/repos/tomhughes/trac-tickets | closed | Slippy map doesn't work in IE6 | Component: rails_port Priority: major Resolution: fixed Type: defect | **[Submitted to the original trac issue database at 4.52pm, Monday, 7th May 2007]**

IE6 doesn't work with slippy map - there's no layer selector visible in

top right, and no tiles appear to load, leaving a plain white screen.

The map boundary box and the pan/zoom controls at top left do show up, however, so that's ... | 1.0 | Slippy map doesn't work in IE6 - **[Submitted to the original trac issue database at 4.52pm, Monday, 7th May 2007]**

IE6 doesn't work with slippy map - there's no layer selector visible in

top right, and no tiles appear to load, leaving a plain white screen.

The map boundary box and the pan/zoom controls at top lef... | defect | slippy map doesn t work in doesn t work with slippy map there s no layer selector visible in top right and no tiles appear to load leaving a plain white screen the map boundary box and the pan zoom controls at top left do show up however so that s progress as they didn t before | 1 |

433,804 | 12,510,915,458 | IssuesEvent | 2020-06-02 19:33:17 | CDH-Studio/UpSkill | https://api.github.com/repos/CDH-Studio/UpSkill | closed | User name overlaps avatar in my profile | Low Priority UI bug | **Describe the bug**

User name in profile view's BasicInfoView overlaps avatar

**To Reproduce**

Steps to reproduce the behavior:

1. Go to 'My profile'

**Expected behavior**

The name should not overlap the avatar

**Screenshots**

As you can see, Ben is over the avatar


320,505 | 27,438,765,756 | IssuesEvent | 2023-03-02 09:31:35 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | [Flaky Test] can be converted when nested to paragraphs | [Type] Flaky Test | <!-- __META_DATA__:{} -->

**Flaky test detected. This is an auto-generated issue by GitHub Actions. Please do NOT edit this manually.**

## Test title

can be converted when nested to paragraphs

## Test path

`/test/e2e/specs/editor/blocks/list.spec.js`

## Errors

<!-- __TEST_RESULTS_LIST__ -->

<!-- __TEST_RESULT__ --><... | 1.0 | [Flaky Test] can be converted when nested to paragraphs - <!-- __META_DATA__:{} -->

**Flaky test detected. This is an auto-generated issue by GitHub Actions. Please do NOT edit this manually.**

## Test title

can be converted when nested to paragraphs

## Test path

`/test/e2e/specs/editor/blocks/list.spec.js`

## Error... | non_defect | can be converted when nested to paragraphs flaky test detected this is an auto generated issue by github actions please do not edit this manually test title can be converted when nested to paragraphs test path test specs editor blocks list spec js errors test passed after f... | 0 |

16,000 | 2,870,250,565 | IssuesEvent | 2015-06-07 00:34:38 | pdelia/away3d | https://api.github.com/repos/pdelia/away3d | closed | TextField3D render problems, text overwrite problem, and crashes | auto-migrated Priority-Medium Type-Defect | #52 Issue by __GoogleCodeExporter__, created on: 2015-04-24T07:51:25Z

```

What steps will reproduce the problem?

1. Create TextField3D object and apply ColorMaterial

2. Create Trident

3. Add both to scene

4. Every frame, change the text property of TextField3D object

What is the expected output? What do you see inste... | 1.0 | TextField3D render problems, text overwrite problem, and crashes - #52 Issue by __GoogleCodeExporter__, created on: 2015-04-24T07:51:25Z

```

What steps will reproduce the problem?

1. Create TextField3D object and apply ColorMaterial

2. Create Trident

3. Add both to scene

4. Every frame, change the text property of Tex... | defect | render problems text overwrite problem and crashes issue by googlecodeexporter created on what steps will reproduce the problem create object and apply colormaterial create trident add both to scene every frame change the text property of object what is the expected outp... | 1 |

54,692 | 13,884,978,541 | IssuesEvent | 2020-10-18 18:12:07 | MDAnalysis/mdanalysis | https://api.github.com/repos/MDAnalysis/mdanalysis | closed | MultiPDBWriter is returned even if multiframe is False | Component-Writers Format-PDB defect | ### Expected behaviour

When asking for a not multiframe PDB writer, the PDB writer should be a single frame writer.

### Actual behaviour

The writer is `MultiPDBWriter`.

The issue was uncovered by @abhinavgupta94 in #764.

### Code to reproduce the behaviour

``` python

import MDAnalysis as mda

writer = mda.Writer('te... | 1.0 | MultiPDBWriter is returned even if multiframe is False - ### Expected behaviour

When asking for a not multiframe PDB writer, the PDB writer should be a single frame writer.

### Actual behaviour

The writer is `MultiPDBWriter`.

The issue was uncovered by @abhinavgupta94 in #764.

### Code to reproduce the behaviour

``... | defect | multipdbwriter is returned even if multiframe is false expected behaviour when asking for a not multiframe pdb writer the pdb writer should be a single frame writer actual behaviour the writer is multipdbwriter the issue was uncovered by in code to reproduce the behaviour python import... | 1 |

321,285 | 27,520,241,665 | IssuesEvent | 2023-03-06 14:36:33 | unifyai/ivy | https://api.github.com/repos/unifyai/ivy | reopened | Fix tensor.test_torch_instance_sort | PyTorch Frontend Sub Task Failing Test | | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/... | 1.0 | Fix tensor.test_torch_instance_sort - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/" rel="noopener noreferrer" target="_blank">... | non_defect | fix tensor test torch instance sort tensorflow img src torch img src numpy img src jax img src | 0 |

71,188 | 30,825,531,940 | IssuesEvent | 2023-08-01 19:44:35 | hashicorp/terraform-provider-azurerm | https://api.github.com/repos/hashicorp/terraform-provider-azurerm | closed | azurerm_mysql_server if autogrowth happened a redeploy triggers unsupported shrink | bug service/mysql | Hi Community,

we are using azurerm_mysql_server to create MySQL managed instances in Azure.

We configured them with a starting size of 250GB and the nice feature "auto_grow_enabled = true".

When our PROD environment exceeded this size and Azure increased the max available storage, then every following plan will sa... | 1.0 | azurerm_mysql_server if autogrowth happened a redeploy triggers unsupported shrink - Hi Community,

we are using azurerm_mysql_server to create MySQL managed instances in Azure.

We configured them with a starting size of 250GB and the nice feature "auto_grow_enabled = true".

When our PROD environment exceeded this ... | non_defect | azurerm mysql server if autogrowth happened a redeploy triggers unsupported shrink hi community we are using azurerm mysql server to create mysql managed instances in azure we configured them with a starting size of and the nice feature auto grow enabled true when our prod environment exceeded this size... | 0 |

23,289 | 3,786,355,438 | IssuesEvent | 2016-03-21 01:48:12 | DemNTor/varma_android_samples | https://api.github.com/repos/DemNTor/varma_android_samples | closed | WebServer cannot provide busy traffic(such as 1080p Video ) | auto-migrated Priority-Medium Type-Defect | ```

I take aws project as a example, build my WebServer. It works good for most

videos ,but cannot afford HD videos.

How can I speed up my webserver?

and what's more, my H.263 video cannot be played, can you give me some suggest?

My Video is 1920x1080,framerate 24, and another 1080x720 with framerate 24

works fin... | 1.0 | WebServer cannot provide busy traffic(such as 1080p Video ) - ```

I take aws project as a example, build my WebServer. It works good for most

videos ,but cannot afford HD videos.

How can I speed up my webserver?

and what's more, my H.263 video cannot be played, can you give me some suggest?

My Video is 1920x1080,f... | defect | webserver cannot provide busy traffic such as video i take aws project as a example build my webserver it works good for most videos but cannot afford hd videos how can i speed up my webserver and what s more my h video cannot be played can you give me some suggest my video is framerate an... | 1 |

562,473 | 16,661,686,697 | IssuesEvent | 2021-06-06 12:46:40 | bounswe/2021SpringGroup4 | https://api.github.com/repos/bounswe/2021SpringGroup4 | opened | Implementation Assignment: Create an API for creating an event , Create a frontend for API and write unit tests | Frontend Priority: High Status: In Progress Type: Development individual | Create an API for creating event and and integrate it into the our practice-app.

Write Unit Tests. Use pydate, ensure that the event created can only be created for the future time.

| 1.0 | Implementation Assignment: Create an API for creating an event , Create a frontend for API and write unit tests - Create an API for creating event and and integrate it into the our practice-app.

Write Unit Tests. Use pydate, ensure that the event created can only be created for the future time.

| non_defect | implementation assignment create an api for creating an event create a frontend for api and write unit tests create an api for creating event and and integrate it into the our practice app write unit tests use pydate ensure that the event created can only be created for the future time | 0 |

18,255 | 4,241,350,753 | IssuesEvent | 2016-07-06 16:03:32 | projectcalico/calico-containers | https://api.github.com/repos/projectcalico/calico-containers | opened | Dockerless Calico is Outdated | documentation enhancement | Our [Dockerless Calico](https://github.com/projectcalico/calico-containers/blob/master/docs/DockerlessCalicoManual.md) guide has fallen out of date, namely with the introduction of `setup.py`. | 1.0 | Dockerless Calico is Outdated - Our [Dockerless Calico](https://github.com/projectcalico/calico-containers/blob/master/docs/DockerlessCalicoManual.md) guide has fallen out of date, namely with the introduction of `setup.py`. | non_defect | dockerless calico is outdated our guide has fallen out of date namely with the introduction of setup py | 0 |

36,568 | 7,992,862,527 | IssuesEvent | 2018-07-20 04:20:19 | lagom/lagom | https://api.github.com/repos/lagom/lagom | closed | Creating a LagomClientApplication fails if no application.conf found | help wanted topic:service-client type:defect | A Lagom Client application will fail to run if an `application.conf` file is missing. Adding an empty `application.conf` file in `src/main/resources` solved the issue.

To reproduce, clone the [repo](https://github.com/ignasi35/lagom-client-demo), rename `application.conf` and start the `Main`.

| 1.0 | Creating a LagomClientApplication fails if no application.conf found - A Lagom Client application will fail to run if an `application.conf` file is missing. Adding an empty `application.conf` file in `src/main/resources` solved the issue.

To reproduce, clone the [repo](https://github.com/ignasi35/lagom-client-demo),... | defect | creating a lagomclientapplication fails if no application conf found a lagom client application will fail to run if an application conf file is missing adding an empty application conf file in src main resources solved the issue to reproduce clone the rename application conf and start the main ... | 1 |

113,570 | 4,562,003,909 | IssuesEvent | 2016-09-14 13:40:13 | cul-2016/quiz | https://api.github.com/repos/cul-2016/quiz | closed | Create `StrengthsWeaknesses` component | priority-2 | #121

## Messages to display

### If total number quizzes < 3

As you take more quizzes we will identify those that you are doing particularly well on relative to other people, and those that you are doing less well on.

Otherwise display:

* Looking across all the quizzes you’ve taken, your top quiz was `[Name ... | 1.0 | Create `StrengthsWeaknesses` component - #121

## Messages to display

### If total number quizzes < 3

As you take more quizzes we will identify those that you are doing particularly well on relative to other people, and those that you are doing less well on.

Otherwise display:

* Looking across all the quizze... | non_defect | create strengthsweaknesses component messages to display if total number quizzes as you take more quizzes we will identify those that you are doing particularly well on relative to other people and those that you are doing less well on otherwise display looking across all the quizzes ... | 0 |

46,149 | 5,790,695,838 | IssuesEvent | 2017-05-02 01:48:41 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | teamcity: failed tests on master: test (proposer evaluated kv)/TestPushTxnHeartbeatTimeout | Robot test-failure | The following tests appear to have failed:

[#193266](https://teamcity.cockroachdb.com/viewLog.html?buildId=193266):

```

--- FAIL: test (proposer evaluated kv)/TestPushTxnHeartbeatTimeout (0.010s)

replica_test.go:4387: 3: expSuccess=false; got pErr <nil>, reply header:<num_keys:0 > pushee_txn:<meta:<id:<f6bd9663-cd89-... | 1.0 | teamcity: failed tests on master: test (proposer evaluated kv)/TestPushTxnHeartbeatTimeout - The following tests appear to have failed:

[#193266](https://teamcity.cockroachdb.com/viewLog.html?buildId=193266):

```

--- FAIL: test (proposer evaluated kv)/TestPushTxnHeartbeatTimeout (0.010s)

replica_test.go:4387: 3: expS... | non_defect | teamcity failed tests on master test proposer evaluated kv testpushtxnheartbeattimeout the following tests appear to have failed fail test proposer evaluated kv testpushtxnheartbeattimeout replica test go expsuccess false got perr reply header pushee txn isolation serializabl... | 0 |

278,803 | 24,177,552,630 | IssuesEvent | 2022-09-23 04:45:23 | TauCetiStation/TauCetiClassic | https://api.github.com/repos/TauCetiStation/TauCetiClassic | closed | Blob does not break gas canisters | Bug Test Feedback | <!--

IMPORTANT: If your issue is not a bug report but a proposal for something, you MUST add the [Proposal] tag to the title

1. PUT YOUR ANSWERS UNDER THE CORRESPONDING HEADINGS

(they are at the very bottom, after all the rules)

2. EACH REPORT MUST DESCRIBE ONLY ONE PROBLEM

3. CORRECT TITLE OF THE REP... | 1.0 | Blob does not break gas canisters - <!--

IMPORTANT: If your issue is not a bug report but a proposal for something, you MUST add the [Proposal] tag to the title

1. PUT YOUR ANSWERS UNDER THE CORRESPONDING HEADINGS

(they are at the very bottom, after all the rules)

2. EACH REPORT MUST DESCRIBE ONLY ONE PR... | non_defect | blob does not break gas canisters important if your issue is not a bug report but a proposal for something you must add the tag to the title put answers under the corresponding headings they are at the very bottom after all the rules each report must describe only one problem ... | 0 |

26,090 | 4,581,177,083 | IssuesEvent | 2016-09-19 03:01:44 | zealdocs/zeal | https://api.github.com/repos/zealdocs/zeal | closed | Meteor Js Docs | Component: Docset Registry Platform: Linux Resolution: Unable To Reproduce Type: Defect | Meteor Js docs always shutdown my pc when i open it. please help we have tried it on several systems but result. | 1.0 | Meteor Js Docs - Meteor Js docs always shutdown my pc when i open it. please help we have tried it on several systems but result. | defect | meteor js docs meteor js docs always shutdown my pc when i open it please help we have tried it on several systems but result | 1 |

55,193 | 6,445,306,003 | IssuesEvent | 2017-08-13 02:08:35 | cockroachdb/cockroach | https://api.github.com/repos/cockroachdb/cockroach | closed | teamcity: failed tests on master: acceptance/TestDockerC/Success, acceptance/TestDockerC | Robot test-failure | The following tests appear to have failed:

[#318821](https://teamcity.cockroachdb.com/viewLog.html?buildId=318821):

```

--- FAIL: acceptance/TestDockerC/Success (0.000s)

Test ended in panic.

------- Stdout: -------

xenial-20170214: Pulling from library/ubuntu