Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

56,344

| 15,025,866,026

|

IssuesEvent

|

2021-02-01 21:44:36

|

unascribed/Fabrication

|

https://api.github.com/repos/unascribed/Fabrication

|

closed

|

Billboard drops causes a crash with a compass

|

k: Defect n: Fabric

|

Just give yourself a compass or drop one and it will crash when billboard drops is enabled.

https://gist.github.com/Snowiez/5394f948a3ade0120e5c7729178bc263

|

1.0

|

Billboard drops causes a crash with a compass - Just give yourself a compass or drop one and it will crash when billboard drops is enabled.

https://gist.github.com/Snowiez/5394f948a3ade0120e5c7729178bc263

|

defect

|

billboard drops causes a crash with a compass just give yourself a compass or drop one and it will crash when billboard drops is enabled

| 1

|

391,347

| 26,887,608,690

|

IssuesEvent

|

2023-02-06 05:34:37

|

Seakimhour/pro-translate

|

https://api.github.com/repos/Seakimhour/pro-translate

|

closed

|

Data Storage Method

|

documentation

|

Each website has its own "local storage", and sites cannot read each other's data, so local storage cannot be used.

Chrome provides the chrome.storage API for saving, retrieving, and tracking changes to user data.

This project uses [WebExtension Polyfill](https://github.com/mozilla/webextension-polyfill), which lets the extension support more browsers.

#### User settings data

```js

settings: {

targetLanguage: { code: "en", country: "English" },

secondTargetLanguage: { code: "ja", country: "Japanese" },

autoSwitch: true,

targetFormat: "camel",

autoSetFormat: true,

showIcon: true,

cases: ["snake", "param", "camel", "pascal", "path", "constant", "dot"]

}

```

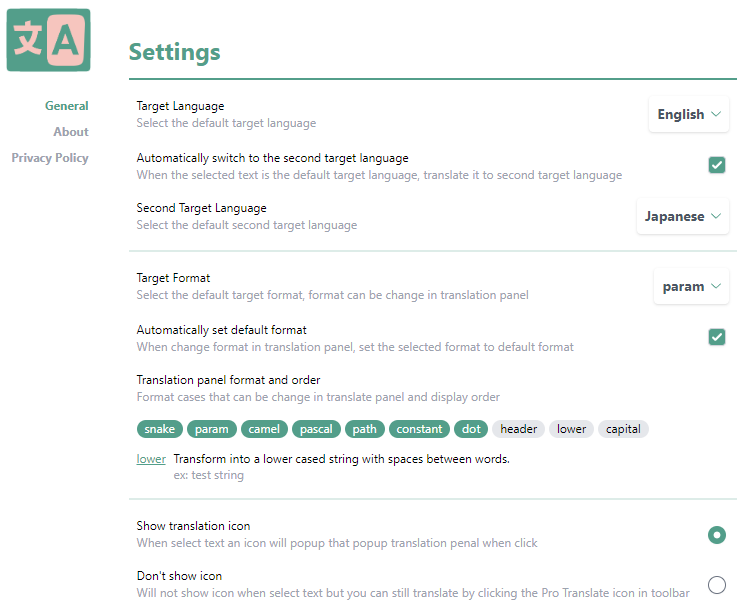

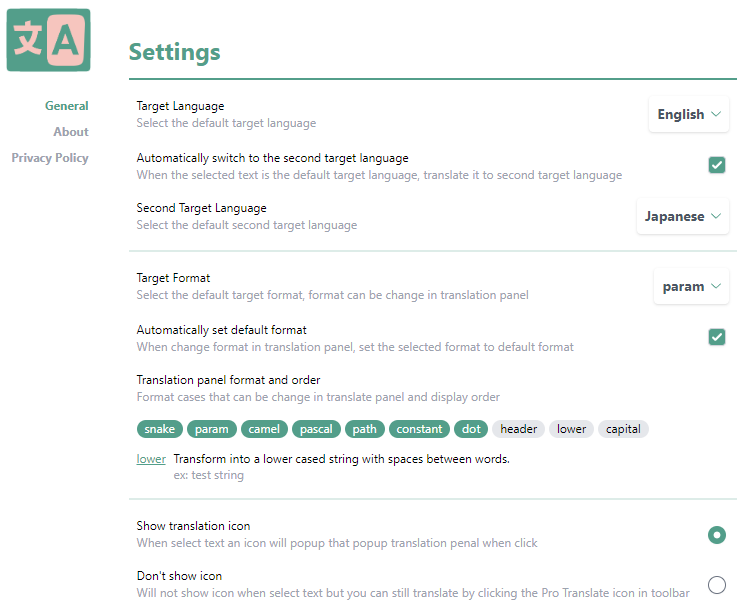

#### Screenshots of the settings page.

#### The data used by this extension is stored as JS files.

[format-cases.js](https://github.com/Seakimhour/pro-translate/blob/master/src/assets/format-cases.js)

[languages.js](https://github.com/Seakimhour/pro-translate/blob/master/src/assets/languages.js)

To use them, load them with `import`:

```js

import { formatCases } from "../../assets/format-cases.js";

```

|

1.0

|

Data Storage Method - Each website has its own "local storage", and sites cannot read each other's data, so local storage cannot be used.

Chrome provides the chrome.storage API for saving, retrieving, and tracking changes to user data.

This project uses [WebExtension Polyfill](https://github.com/mozilla/webextension-polyfill), which lets the extension support more browsers.

#### User settings data

```js

settings: {

targetLanguage: { code: "en", country: "English" },

secondTargetLanguage: { code: "ja", country: "Japanese" },

autoSwitch: true,

targetFormat: "camel",

autoSetFormat: true,

showIcon: true,

cases: ["snake", "param", "camel", "pascal", "path", "constant", "dot"]

}

```

#### Screenshots of the settings page.

#### The data used by this extension is stored as JS files.

[format-cases.js](https://github.com/Seakimhour/pro-translate/blob/master/src/assets/format-cases.js)

[languages.js](https://github.com/Seakimhour/pro-translate/blob/master/src/assets/languages.js)

To use them, load them with `import`:

```js

import { formatCases } from "../../assets/format-cases.js";

```

|

non_defect

|

data storage method each web site has its own local storage and sites cannot read each other s data so local storage cannot be used chrome provides the chrome storage api for saving retrieving and tracking changes to user data this project uses which lets the extension support more browsers user settings data js settings targetlanguage code en country english secondtargetlanguage code ja country japanese autoswitch true targetformat camel autosetformat true showicon true cases screenshots of the settings page the data used by this extension is stored as js files to use them load them with import js import formatcases from assets format cases js

| 0

|

30,227

| 6,046,547,780

|

IssuesEvent

|

2017-06-12 12:25:51

|

autovpn4openwrt/autovpn-for-openwrt

|

https://api.github.com/repos/autovpn4openwrt/autovpn-for-openwrt

|

closed

|

I also found a problem: dnsmasq responds slowly when there are many connections and heavy traffic

|

auto-migrated Priority-Medium Type-Defect

|

```

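Intel D525, dual-core 1.8 GHz, with dual-core processing enabled in OpenWrt.

When the number of connections reaches about 10,000, dnsmasq responds slowly and takes several seconds to answer DNS requests.

I read on the dnsmasq website that it is a small DNS server. Is there a better-performing DNS solution that could replace dnsmasq?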

```

Original issue reported on code.google.com by `jiekec...@gmail.com` on 20 Nov 2014 at 3:14

|

1.0

|

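I also found a problem: dnsmasq responds slowly when there are many connections and heavy traffic - ```

Intel D525, dual-core 1.8 GHz, with dual-core processing enabled in OpenWrt.

When the number of connections reaches about 10,000, dnsmasq responds slowly and takes several seconds to answer DNS requests.

I read on the dnsmasq website that it is a small DNS server. Is there a better-performing DNS solution that could replace dnsmasq?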

```

Original issue reported on code.google.com by `jiekec...@gmail.com` on 20 Nov 2014 at 3:14

|

defect

|

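i also found a problem dnsmasq responds slowly when there are many connections and heavy traffic intel with dual core processing enabled in openwrt when the number of connections reaches about dnsmasq responds slowly and takes several seconds to answer dns requests i read on the dnsmasq website that it is a small dns server is there a better performing dns solution that could replace dnsmasq original issue reported on code google com by jiekec gmail com on nov at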

| 1

|

57,291

| 15,729,588,745

|

IssuesEvent

|

2021-03-29 15:00:46

|

danmar/testissues

|

https://api.github.com/repos/danmar/testissues

|

opened

|

Incorrect variable id, when delete is used. (Trac #269)

|

Incomplete Migration Migrated from Trac Other aggro80 defect

|

Migrated from https://trac.cppcheck.net/ticket/269

```json

{

"status": "closed",

"changetime": "2009-04-29T19:46:55",

"description": "{{{\nvoid f()\n{\n int *a;\n delete a;\n}\n}}}\n\nVariable id should be 1, not 2. \n{{{\n##file 0\n1: void f ( )\n2: {\n3: int * a@1 ;\n4: delete a@2 ;\n5: }\n}}}\n",

"reporter": "aggro80",

"cc": "",

"resolution": "fixed",

"_ts": "1241034415000000",

"component": "Other",

"summary": "Incorrect variable id, when delete is used.",

"priority": "",

"keywords": "",

"time": "2009-04-29T19:17:36",

"milestone": "1.32",

"owner": "aggro80",

"type": "defect"

}

```

|

1.0

|

Incorrect variable id, when delete is used. (Trac #269) - Migrated from https://trac.cppcheck.net/ticket/269

```json

{

"status": "closed",

"changetime": "2009-04-29T19:46:55",

"description": "{{{\nvoid f()\n{\n int *a;\n delete a;\n}\n}}}\n\nVariable id should be 1, not 2. \n{{{\n##file 0\n1: void f ( )\n2: {\n3: int * a@1 ;\n4: delete a@2 ;\n5: }\n}}}\n",

"reporter": "aggro80",

"cc": "",

"resolution": "fixed",

"_ts": "1241034415000000",

"component": "Other",

"summary": "Incorrect variable id, when delete is used.",

"priority": "",

"keywords": "",

"time": "2009-04-29T19:17:36",

"milestone": "1.32",

"owner": "aggro80",

"type": "defect"

}

```

|

defect

|

incorrect variable id when delete is used trac migrated from json status closed changetime description nvoid f n n int a n delete a n n n nvariable id should be not n n file void f int a delete a n n reporter cc resolution fixed ts component other summary incorrect variable id when delete is used priority keywords time milestone owner type defect

| 1

|

243,461

| 20,388,708,895

|

IssuesEvent

|

2022-02-22 09:48:01

|

ZcashFoundation/zebra

|

https://api.github.com/repos/ZcashFoundation/zebra

|

closed

|

Coverage changes are inaccurate, because the job doesn't run on `main`

|

C-bug A-devops P-Medium :zap: C-testing

|

## Motivation

> By the way, why does this PR change test coverage when it only edits comments? The bot says there will be 854 new hits.

## Suggested Solution

Our `main` branch coverage seems to be out of date, maybe we need to start running our coverage job on `main` again.

_Originally posted by @teor2345 in https://github.com/ZcashFoundation/zebra/issues/3521#issuecomment-1039479864_

|

1.0

|

Coverage changes are inaccurate, because the job doesn't run on `main` - ## Motivation

> By the way, why does this PR change test coverage when it only edits comments? The bot says there will be 854 new hits.

## Suggested Solution

Our `main` branch coverage seems to be out of date, maybe we need to start running our coverage job on `main` again.

_Originally posted by @teor2345 in https://github.com/ZcashFoundation/zebra/issues/3521#issuecomment-1039479864_

|

non_defect

|

coverage changes are inaccurate because the job doesn t run on main motivation by the way why does this pr change test coverage when it only edits comments the bot says there will be new hits suggested solution our main branch coverage seems to be out of date maybe we need to start running our coverage job on main again originally posted by in

| 0

|

24,242

| 5,040,053,789

|

IssuesEvent

|

2016-12-19 02:33:00

|

coreos/bugs

|

https://api.github.com/repos/coreos/bugs

|

closed

|

document or change journald rate limit defaults

|

area/usability component/systemd kind/documentation team/os

|

### Desired Feature ###

Lots of people complain about journald locking up on them. As a solution perhaps we set an even lower limit on number of logs per second per service in journald.conf.

Docs: https://www.freedesktop.org/software/systemd/man/journald.conf.html#RateLimitIntervalSec=

I believe this is what other distros do.

|

1.0

|

document or change journald rate limit defaults - ### Desired Feature ###

Lots of people complain about journald locking up on them. As a solution perhaps we set an even lower limit on number of logs per second per service in journald.conf.

Docs: https://www.freedesktop.org/software/systemd/man/journald.conf.html#RateLimitIntervalSec=

I believe this is what other distros do.

|

non_defect

|

document or change journald rate limit defaults desired feature lots of people complain about journald locking up on them as a solution perhaps we set an even lower limit on number of logs per second per service in journald conf docs i believe this is what other distros do

| 0

|

343,837

| 30,695,175,852

|

IssuesEvent

|

2023-07-26 18:00:41

|

microsoft/AzureStorageExplorer

|

https://api.github.com/repos/microsoft/AzureStorageExplorer

|

closed

|

'View Options' panel doesn't disappear after switching to 'Snapshots/Versions' view

|

🧪 testing :gear: blobs :beetle: regression

|

**Storage Explorer Version**: 1.31.0-dev

**Build Number**: 20230726.3

**Branch**: main

**Platform/OS**: Windows 10/Linux Ubuntu 20.04/MacOS Ventura 13.4.1 (Apple M1 Pro)

**Architecture**: x64/x64/arm64

**How Found**: From running test cases

**Regression From**: Previous release (1.30.2)

## Steps to Reproduce ##

1. Expand one storage account -> Blob Containers.

2. Create a blob container -> Right click the blob container -> Click 'Open in React'.

3. Upload a blob -> Open 'View Options' panel.

4. Right click the blob -> Click 'Manage History -> Manage Versions'.

5. Check whether 'View Options' panel disappears.

## Expected Experience ##

'View Options' panel disappears.

## Actual Experience ##

'View Options' panel doesn't disappear.

|

1.0

|

'View Options' panel doesn't disappear after switching to 'Snapshots/Versions' view - **Storage Explorer Version**: 1.31.0-dev

**Build Number**: 20230726.3

**Branch**: main

**Platform/OS**: Windows 10/Linux Ubuntu 20.04/MacOS Ventura 13.4.1 (Apple M1 Pro)

**Architecture**: x64/x64/arm64

**How Found**: From running test cases

**Regression From**: Previous release (1.30.2)

## Steps to Reproduce ##

1. Expand one storage account -> Blob Containers.

2. Create a blob container -> Right click the blob container -> Click 'Open in React'.

3. Upload a blob -> Open 'View Options' panel.

4. Right click the blob -> Click 'Manage History -> Manage Versions'.

5. Check whether 'View Options' panel disappears.

## Expected Experience ##

'View Options' panel disappears.

## Actual Experience ##

'View Options' panel doesn't disappear.

|

non_defect

|

view options panel doesn t disappear after switching to snapshots versions view storage explorer version dev build number branch main platform os windows linux ubuntu macos ventura apple pro architecture how found from running test cases regression from previous release steps to reproduce expand one storage account blob containers create a blob container right click the blob container click open in react upload a blob open view options panel right click the blob click manage history manage versions check whether view options panel disappears expected experience view options panel disappears actual experience view options panel doesn t disappear

| 0

|

10,547

| 2,622,171,862

|

IssuesEvent

|

2015-03-04 00:14:51

|

byzhang/rapidjson

|

https://api.github.com/repos/byzhang/rapidjson

|

closed

|

Memory access error due to 'memcmp'

|

auto-migrated Priority-Medium Type-Defect

|

```

I tried to use rapidjson on the large json, and valgrind/memcheck finds errors,

see below. The offending line is this:

if (name[member->name.data_.s.length] == '\0' &&

memcmp(member->name.data_.s.str, name, member->name.data_.s.length *

sizeof(Ch)) == 0)

This happens during map value lookup. 'memcmp' can't be used in this place,

because some keys can be longer than the supplied value, and it is illegal to

read a string past its terminating zero character.

In fact, this bug can cause segmentation fault if the end of the string

supplied by the caller would happen to align with the end of the memory segment.

---error log---

==81117== Invalid read of size 1

==81117== at 0x110A543: memcmp (mc_replace_strmem.c:1001)

==81117== by 0x49F01C: rapidjson::GenericValue<rapidjson::UTF8<char>,

rapidjson::MemoryPoolAllocator<rapidjson::CrtAllocator> >::FindMember(char

const*) (document.h:271)

==81117== by 0x49EECC: rapidjson::GenericValue<rapidjson::UTF8<char>,

rapidjson::MemoryPoolAllocator<rapidjson::CrtAllocator> >::operator[](char

const*) (document.h:239)

==81117== by 0x49EE9C: rapidjson::GenericValue<rapidjson::UTF8<char>,

rapidjson::MemoryPoolAllocator<rapidjson::CrtAllocator> >::operator[](char

const*) const (document.h:247)

```

Original issue reported on code.google.com by `yuriv...@gmail.com` on 28 Apr 2014 at 11:42

* Merged into: #108

|

1.0

|

Memory access error due to 'memcmp' - ```

I tried to use rapidjson on the large json, and valgrind/memcheck finds errors,

see below. The offending line is this:

if (name[member->name.data_.s.length] == '\0' &&

memcmp(member->name.data_.s.str, name, member->name.data_.s.length *

sizeof(Ch)) == 0)

This happens during map value lookup. 'memcmp' can't be used in this place,

because some keys can be longer than the supplied value, and it is illegal to

read a string past its terminating zero character.

In fact, this bug can cause segmentation fault if the end of the string

supplied by the caller would happen to align with the end of the memory segment.

---error log---

==81117== Invalid read of size 1

==81117== at 0x110A543: memcmp (mc_replace_strmem.c:1001)

==81117== by 0x49F01C: rapidjson::GenericValue<rapidjson::UTF8<char>,

rapidjson::MemoryPoolAllocator<rapidjson::CrtAllocator> >::FindMember(char

const*) (document.h:271)

==81117== by 0x49EECC: rapidjson::GenericValue<rapidjson::UTF8<char>,

rapidjson::MemoryPoolAllocator<rapidjson::CrtAllocator> >::operator[](char

const*) (document.h:239)

==81117== by 0x49EE9C: rapidjson::GenericValue<rapidjson::UTF8<char>,

rapidjson::MemoryPoolAllocator<rapidjson::CrtAllocator> >::operator[](char

const*) const (document.h:247)

```

Original issue reported on code.google.com by `yuriv...@gmail.com` on 28 Apr 2014 at 11:42

* Merged into: #108

|

defect

|

memory access error due to memcmp i tried to use rapidjson on the large json and valgrind memcheck finds errors see below the offending line is this if name memcmp member name data s str name member name data s length sizeof ch this happens during map value lookup memcmp can t be used in this place because some keys can be longer than the supplied value and it is illegal to read a string past its terminating zero character in fact this bug can cause segmentation fault if the end of the string supplied by the caller would happen to align with the end of the memory segment error log invalid read of size at memcmp mc replace strmem c by rapidjson genericvalue rapidjson memorypoolallocator findmember char const document h by rapidjson genericvalue rapidjson memorypoolallocator operator char const document h by rapidjson genericvalue rapidjson memorypoolallocator operator char const const document h original issue reported on code google com by yuriv gmail com on apr at merged into

| 1

|

536,861

| 15,715,930,945

|

IssuesEvent

|

2021-03-28 04:16:52

|

AY2021S2-CS2103-T16-3/tp

|

https://api.github.com/repos/AY2021S2-CS2103-T16-3/tp

|

opened

|

Ui display

|

priority.Low

|

currently if a lot of bookings are added the size of the residence row keeps increasing. might be better to make it fixed size with a scroll pane?

|

1.0

|

Ui display - currently if a lot of bookings are added the size of the residence row keeps increasing. might be better to make it fixed size with a scroll pane?

|

non_defect

|

ui display currently if a lot of bookings are added the size of the residence row keeps increasing might be better to make it fixed size with a scroll pane

| 0

|

368,103

| 25,776,666,639

|

IssuesEvent

|

2022-12-09 12:35:37

|

bounswe/bounswe2022group9

|

https://api.github.com/repos/bounswe/bounswe2022group9

|

opened

|

[Documentation] Create Executive part for backend

|

Documentation Backend

|

For milestone 2, the executive part should be created for the backend.

|

1.0

|

[Documentation] Create Executive part for backend - For milestone 2, the executive part should be created for the backend.

|

non_defect

|

create executive part for backend for the milestone executive part should be created for the backend

| 0

|

18,392

| 3,054,473,318

|

IssuesEvent

|

2015-08-13 02:58:57

|

eczarny/spectacle

|

https://api.github.com/repos/eczarny/spectacle

|

closed

|

Cancel "edit hot key" process

|

defect ★★

|

I can't abort the "edit hot key" process, once entered. It doesn't help to close the preferences panel.

--

Cheers and congratulations on creating the best program for this purpose :)

|

1.0

|

Cancel "edit hot key" process - I can't abort the "edit hot key" process, once entered. It doesn't help to close the preferences panel.

--

Cheers and congratulations on creating the best program for this purpose :)

|

defect

|

cancel edit hot key process i can t abort the edit hot key process once entered it doesn t help to close the preferences panel cheers and congratulations on creating the best program for this purpose

| 1

|

47,169

| 5,867,701,568

|

IssuesEvent

|

2017-05-14 04:08:00

|

nix-rust/nix

|

https://api.github.com/repos/nix-rust/nix

|

opened

|

Continuous testing for FreeBSD

|

A-testing O-freebsd

|

I'm working on a buildbot cluster that could support continuous testing on FreeBSD for several projects. I've got a prototype running, and you can see a PR in action at https://github.com/asomers/mio-aio/pull/2 . The hardest question is how to secure it. Since anybody can open a PR, that means that anybody can run arbitrary code on the buildslaves. The potential damage is limited; each project gets its own worker, each worker runs in its own jail, and there's a timeout on each build. I could use the firewall to prevent workers from sending email and stuff, but I can't completely isolate workers from the internet without breaking a lot of builds. There are a few options to improve the security situation.

1. Don't automatically build a PR until a maintainer posts a specific comment. This is what open-zfs does. It completely eliminates unreviewed code from running on the workers. However, it's inconvenient for people who are accustomed to Travis building stuff without needing to be asked.

2. Destroy and reclone the worker for each build. This is what Travis does. The worker still runs untrusted code, but not for long. The untrusted code can't modify the filesystem in any persistent way.

3. A hybrid of the previous two. Do a build when a maintainer gives the magic comment, but also do builds automatically whenever a PR is posted by a known-good contributor. The list of contributors would probably have to be maintained by hand, but it could be much larger than the list of maintainers.

Does anybody have any better ideas?

|

1.0

|

Continuous testing for FreeBSD - I'm working on a buildbot cluster that could support continuous testing on FreeBSD for several projects. I've got a prototype running, and you can see a PR in action at https://github.com/asomers/mio-aio/pull/2 . The hardest question is how to secure it. Since anybody can open a PR, that means that anybody can run arbitrary code on the buildslaves. The potential damage is limited; each project gets its own worker, each worker runs in its own jail, and there's a timeout on each build. I could use the firewall to prevent workers from sending email and stuff, but I can't completely isolate workers from the internet without breaking a lot of builds. There are a few options to improve the security situation.

1. Don't automatically build a PR until a maintainer posts a specific comment. This is what open-zfs does. It completely eliminates unreviewed code from running on the workers. However, it's inconvenient for people who are accustomed to Travis building stuff without needing to be asked.

2. Destroy and reclone the worker for each build. This is what Travis does. The worker still runs untrusted code, but not for long. The untrusted code can't modify the filesystem in any persistent way.

3. A hybrid of the previous two. Do a build when a maintainer gives the magic comment, but also do builds automatically whenever a PR is posted by a known-good contributor. The list of contributors would probably have to be maintained by hand, but it could be much larger than the list of maintainers.

Does anybody have any better ideas?

|

non_defect

|

continuous testing for freebsd i m working on a buildbot cluster that could support continuous testing on freebsd for several projects i ve got a prototype running and you can see a pr in action at the hardest question is how to secure it since anybody can open a pr that means that anybody can run arbitrary code on the buildslaves the potential damage is limited each project gets its own worker each worker runs in its own jail and there s a timeout on each build i could use the firewall to prevent workers from sending email and stuff but i can t completely isolate workers from the internet without breaking a lot of builds there are a few options to improve the security situation don t automatically build a pr until a maintainer posts a specific comment this is what open zfs does it completely eliminates unreviewed code from running on the workers however it s inconvenient for people who are accustomed to travis building stuff without needing to be asked destroy and reclone the worker for each build this is what travis does the worker still runs untrusted code but not for long the untrusted code can t modify the filesystem in any persistent way a hybrid of the previous two do a build when a maintainer give the magic comment but also do builds automatically whenever a pr is posted by a known good contributor the list of contributors would probably have to be maintained by hand but it could be much larger than the list of maintainers does anybody have any better ideas

| 0

|

44,241

| 12,066,854,656

|

IssuesEvent

|

2020-04-16 12:29:34

|

primefaces/primefaces

|

https://api.github.com/repos/primefaces/primefaces

|

closed

|

DataTable rowsPerPageTemplate-ShowAll+ filtering throws NumberFormatException

|

defect

|

## 1) Environment

- PrimeFaces version: PrimeFaces 8.0

- Application server + version: Wildfly 16

- Affected browsers: all

## 2) Expected behavior

When I select "ShowAll" in DataTable's rows per page drop-down list, all the rows should be filtered.

## 3) Actual behavior

When the "ShowAll" option is selected, a NumberFormatException is thrown during filtering:

java.lang.NumberFormatException: For input string: "*"

at java.base/java.lang.NumberFormatException.forInputString(NumberFormatException.java:65)

at java.base/java.lang.Integer.parseInt(Integer.java:638)

at java.base/java.lang.Integer.parseInt(Integer.java:770)

at deployment.test.war//org.primefaces.component.datatable.feature.FilterFeature.encode(FilterFeature.java:106)

at deployment.test.war//org.primefaces.component.datatable.DataTableRenderer.encodeEnd(DataTableRenderer.java:88)

at javax.faces.api@2.3.9.SP01//javax.faces.component.UIComponentBase.encodeEnd(UIComponentBase.java:595)

## 4) Steps to reproduce

Set the "records per page" to "all" and make some filtering

## 5) Sample XHTML

<h:form>

<p:dataTable value="#{dataTableController.rows}" var="row" paginator="true"

rowsPerPageTemplate="5,10,{ShowAll|'All'}" rows="5">

<p:column filterBy="#{row}" filterMatchMode="contains">

<h:outputText value="#{row}"/>

</p:column>

</p:dataTable>

</h:form>

## 6) Sample bean

@Named

@ApplicationScoped

public class DataTableController implements Serializable {

private static final int ROW_COUNT = 15;

private static final List<String> ROWS = new ArrayList<>(ROW_COUNT);

static {

for (int i = 0; i < ROW_COUNT; i++) {

ROWS.add("Row " + i);

}

}

public List<String> getRows() {

return ROWS;

}

}
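
The failure mode in the stack trace above can be sketched in isolation. This is a minimal illustration, not PrimeFaces code: the class `RowsPerPageSketch`, the method `resolveRows`, and the constant `SHOW_ALL` are hypothetical names, and the assumption that `"*"` is the value submitted for the ShowAll option is taken only from the exception message in this report. It shows one way a "show all" marker could be checked before the value reaches `Integer.parseInt`.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper, not part of PrimeFaces: resolves a submitted
// rows-per-page value without passing a "show all" marker to parseInt.
final class RowsPerPageSketch {

    // Assumption: "*" is what the paginator submits for ShowAll,
    // inferred from 'For input string: "*"' in the report.
    static final String SHOW_ALL = "*";

    // Returns the number of rows to display for the submitted value.
    static int resolveRows(String submitted, int totalRows) {
        if (SHOW_ALL.equals(submitted)) {
            // ShowAll: display every row instead of parsing the marker.
            return totalRows;
        }
        // Any other value is expected to be a plain integer such as "5".
        return Integer.parseInt(submitted.trim());
    }

    public static void main(String[] args) {
        // Same data shape as the sample bean: 15 rows.
        List<String> rows = new ArrayList<>();
        for (int i = 0; i < 15; i++) {
            rows.add("Row " + i);
        }
        System.out.println(resolveRows("5", rows.size()));
        System.out.println(resolveRows("*", rows.size()));
    }
}
```

Calling `Integer.parseInt("*")` directly reproduces the `NumberFormatException` shown in section 3; whether the real fix belongs in `FilterFeature` or elsewhere is not something this sketch can establish.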

|

1.0

|

DataTable rowsPerPageTemplate-ShowAll+ filtering throws NumberFormatException -

## 1) Environment

- PrimeFaces version: PrimeFaces 8.0

- Application server + version: Wildfly 16

- Affected browsers: all

## 2) Expected behavior

When I select "ShowAll" in DataTable's rows per page drop-down list, all the rows should be filtered.

## 3) Actual behavior

When the "ShowAll" option is selected, a NumberFormatException is thrown during filtering:

java.lang.NumberFormatException: For input string: "*"

at java.base/java.lang.NumberFormatException.forInputString(NumberFormatException.java:65)

at java.base/java.lang.Integer.parseInt(Integer.java:638)

at java.base/java.lang.Integer.parseInt(Integer.java:770)

at deployment.test.war//org.primefaces.component.datatable.feature.FilterFeature.encode(FilterFeature.java:106)

at deployment.test.war//org.primefaces.component.datatable.DataTableRenderer.encodeEnd(DataTableRenderer.java:88)

at javax.faces.api@2.3.9.SP01//javax.faces.component.UIComponentBase.encodeEnd(UIComponentBase.java:595)

## 4) Steps to reproduce

Set the "records per page" to "all" and make some filtering

## 5) Sample XHTML

<h:form>

<p:dataTable value="#{dataTableController.rows}" var="row" paginator="true"

rowsPerPageTemplate="5,10,{ShowAll|'All'}" rows="5">

<p:column filterBy="#{row}" filterMatchMode="contains">

<h:outputText value="#{row}"/>

</p:column>

</p:dataTable>

</h:form>

## 6) Sample bean

@Named

@ApplicationScoped

public class DataTableController implements Serializable {

private static final int ROW_COUNT = 15;

private static final List<String> ROWS = new ArrayList<>(ROW_COUNT);

static {

for (int i = 0; i < ROW_COUNT; i++) {

ROWS.add("Row " + i);

}

}

public List<String> getRows() {

return ROWS;

}

}

|

defect

|

datatable rowsperpagetemplate showall filtering throws numberformatexception environment primefaces version primesfaces application server version wildfly affected browsers all expected behavior when i select showall in datatable s rows per page drop down list all the rows should be filtered actual behavior when the showall option is selected an numberformatexception is thrown during filtering java lang numberformatexception for input string at java base java lang numberformatexception forinputstring numberformatexception java at java base java lang integer parseint integer java at java base java lang integer parseint integer java at deployment test war org primefaces component datatable feature filterfeature encode filterfeature java at deployment test war org primefaces component datatable datatablerenderer encodeend datatablerenderer java at javax faces api javax faces component uicomponentbase encodeend uicomponentbase java steps to reproduce set the records per page to all and make some filtering sample xhtml p datatable value datatablecontroller rows var row paginator true rowsperpagetemplate showall all rows sample bean named applicationscoped public class datatablecontroller implements serializable private static final int row count private static final list rows new arraylist row count static for int i i row count i rows add row i public list getrows return rows

| 1

|

4,632

| 2,610,135,411

|

IssuesEvent

|

2015-02-26 18:42:41

|

chrsmith/hedgewars

|

https://api.github.com/repos/chrsmith/hedgewars

|

closed

|

The Rope shot into a crate remains in the air after collecting

|

auto-migrated Priority-Medium Type-Defect

|

```

What steps will reproduce the problem?

1. Shooting a rope into a crate

2. Collecting it

What is the expected output? What do you see instead?

The rope tears/disappears, and the hog falls

What version of the product are you using? On what operating system?

0.9.13, Win7

Please provide any additional information below.

http://www.youtube.com/watch?v=3-fhUBxKMW8

```

-----

Original issue reported on code.google.com by `joship...@gmail.com` on 15 Sep 2010 at 10:12

* Merged into: #40

|

1.0

|

The Rope shot into a crate remains in the air after collecting - ```

What steps will reproduce the problem?

1. Shooting a rope into a crate

2. Collecting it

What is the expected output? What do you see instead?

The rope tears/disappears, and the hog falls

What version of the product are you using? On what operating system?

0.9.13, Win7

Please provide any additional information below.

http://www.youtube.com/watch?v=3-fhUBxKMW8

```

-----

Original issue reported on code.google.com by `joship...@gmail.com` on 15 Sep 2010 at 10:12

* Merged into: #40

|

defect

|

the rope shot into a crate remains in the air after collecting what steps will reproduce the problem shooting a rope into a crate collecting it what is the expected output what do you see instead the rope tears disappears and the hog falls what version of the product are you using on what operating system please provide any additional information below original issue reported on code google com by joship gmail com on sep at merged into

| 1

|

63,693

| 12,368,303,475

|

IssuesEvent

|

2020-05-18 13:38:35

|

sourcegraph/sourcegraph

|

https://api.github.com/repos/sourcegraph/sourcegraph

|

closed

|

Make code insights user-resizable and reorderable

|

code insights stretch-goal webapp

|

The user should have freedom to reorder and resize code insights to create a dashboard.

This should be persistable to user, org or global settings.

This also allows us to give fixed default width and height to views, which makes the UI stable (in combination with #10375).

First idea is to use CSS grid with native CSS `resize`, then a `ResizeObserver` to persist it. Could snap to grids with the CSS grid `span` keyword.

|

1.0

|

Make code insights user-resizable and reorderable - The user should have freedom to reorder and resize code insights to create a dashboard.

This should be persistable to user, org or global settings.

This also allows us to give fixed default width and height to views, which makes the UI stable (in combination with #10375).

First idea is to use CSS grid with native CSS `resize`, then a `ResizeObserver` to persist it. Could snap to grids with the CSS grid `span` keyword.

|

non_defect

|

make code insights user resizable and reorderable the user should have freedom to reorder and resize code insights to create a dashboard this should be persistable to user org or global settings this also allows us to give fixed default width and height to views which makes the ui stable in combination with first idea is to use css grid with native css resize then a resizeobserver to persist it could snap to grids with the css grid span keyword

| 0

|

49,872

| 13,466,604,668

|

IssuesEvent

|

2020-09-09 23:21:23

|

wrbejar/JavaVulnerableLab

|

https://api.github.com/repos/wrbejar/JavaVulnerableLab

|

opened

|

CVE-2018-1000632 (High) detected in dom4j-1.6.1.jar

|

security vulnerability

|

## CVE-2018-1000632 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dom4j-1.6.1.jar</b></p></summary>

<p>dom4j: the flexible XML framework for Java</p>

<p>Library home page: <a href="http://dom4j.org">http://dom4j.org</a></p>

<p>Path to vulnerable library: _depth_0/JavaVulnerableLab/target/JavaVulnerableLab/META-INF/maven/org.cysecurity/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/dom4j-1.6.1.jar,/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/dom4j-1.6.1.jar,_depth_0/JavaVulnerableLab/bin/target/JavaVulnerableLab/WEB-INF/lib/dom4j-1.6.1.jar,/home/wss-scanner/.m2/repository/dom4j/dom4j/1.6.1/dom4j-1.6.1.jar,/home/wss-scanner/.m2/repository/dom4j/dom4j/1.6.1/dom4j-1.6.1.jar,_depth_0/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/dom4j-1.6.1.jar,/home/wss-scanner/.m2/repository/dom4j/dom4j/1.6.1/dom4j-1.6.1.jar,_depth_0/JavaVulnerableLab/bin/target/JavaVulnerableLab/META-INF/maven/org.cysecurity/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/dom4j-1.6.1.jar,/JavaVulnerableLab/bin/target/JavaVulnerableLab/WEB-INF/lib/dom4j-1.6.1.jar</p>

<p>

Dependency Hierarchy:

- :x: **dom4j-1.6.1.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/wrbejar/JavaVulnerableLab/commit/29032cb446233dde79d67459af426f67b9224d28">29032cb446233dde79d67459af426f67b9224d28</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

dom4j versions prior to 2.1.1 contain a CWE-91: XML Injection vulnerability in Class: Element. Methods: addElement, addAttribute that can result in an attacker tampering with XML documents through XML injection. This attack appears to be exploitable via an attacker specifying attributes or elements in the XML document. This vulnerability appears to have been fixed in 2.1.1 or later.

<p>Publish Date: 2018-08-20

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-1000632>CVE-2018-1000632</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-1000632">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-1000632</a></p>

<p>Release Date: 2018-08-20</p>

<p>Fix Resolution: org.dom4j:dom4j:2.0.3</p>

</p>

</details>

<p></p>

***

:rescue_worker_helmet: Automatic Remediation is available for this issue

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"dom4j","packageName":"dom4j","packageVersion":"1.6.1","isTransitiveDependency":false,"dependencyTree":"dom4j:dom4j:1.6.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"org.dom4j:dom4j:2.0.3"}],"vulnerabilityIdentifier":"CVE-2018-1000632","vulnerabilityDetails":"dom4j version prior to version 2.1.1 contains a CWE-91: XML Injection vulnerability in Class: Element. Methods: addElement, addAttribute that can result in an attacker tampering with XML documents through XML injection. This attack appear to be exploitable via an attacker specifying attributes or elements in the XML document. This vulnerability appears to have been fixed in 2.1.1 or later.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-1000632","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"None","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> -->

|

True

|

CVE-2018-1000632 (High) detected in dom4j-1.6.1.jar - ## CVE-2018-1000632 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dom4j-1.6.1.jar</b></p></summary>

<p>dom4j: the flexible XML framework for Java</p>

<p>Library home page: <a href="http://dom4j.org">http://dom4j.org</a></p>

<p>Path to vulnerable library: _depth_0/JavaVulnerableLab/target/JavaVulnerableLab/META-INF/maven/org.cysecurity/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/dom4j-1.6.1.jar,/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/dom4j-1.6.1.jar,_depth_0/JavaVulnerableLab/bin/target/JavaVulnerableLab/WEB-INF/lib/dom4j-1.6.1.jar,/home/wss-scanner/.m2/repository/dom4j/dom4j/1.6.1/dom4j-1.6.1.jar,/home/wss-scanner/.m2/repository/dom4j/dom4j/1.6.1/dom4j-1.6.1.jar,_depth_0/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/dom4j-1.6.1.jar,/home/wss-scanner/.m2/repository/dom4j/dom4j/1.6.1/dom4j-1.6.1.jar,_depth_0/JavaVulnerableLab/bin/target/JavaVulnerableLab/META-INF/maven/org.cysecurity/JavaVulnerableLab/target/JavaVulnerableLab/WEB-INF/lib/dom4j-1.6.1.jar,/JavaVulnerableLab/bin/target/JavaVulnerableLab/WEB-INF/lib/dom4j-1.6.1.jar</p>

<p>

Dependency Hierarchy:

- :x: **dom4j-1.6.1.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/wrbejar/JavaVulnerableLab/commit/29032cb446233dde79d67459af426f67b9224d28">29032cb446233dde79d67459af426f67b9224d28</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

dom4j versions prior to 2.1.1 contain a CWE-91: XML Injection vulnerability in Class: Element. Methods: addElement, addAttribute that can result in an attacker tampering with XML documents through XML injection. This attack appears to be exploitable via an attacker specifying attributes or elements in the XML document. This vulnerability appears to have been fixed in 2.1.1 or later.

<p>Publish Date: 2018-08-20

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-1000632>CVE-2018-1000632</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-1000632">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2018-1000632</a></p>

<p>Release Date: 2018-08-20</p>

<p>Fix Resolution: org.dom4j:dom4j:2.0.3</p>

</p>

</details>

<p></p>

***

:rescue_worker_helmet: Automatic Remediation is available for this issue

<!-- <REMEDIATE>{"isOpenPROnVulnerability":true,"isPackageBased":true,"isDefaultBranch":true,"packages":[{"packageType":"Java","groupId":"dom4j","packageName":"dom4j","packageVersion":"1.6.1","isTransitiveDependency":false,"dependencyTree":"dom4j:dom4j:1.6.1","isMinimumFixVersionAvailable":true,"minimumFixVersion":"org.dom4j:dom4j:2.0.3"}],"vulnerabilityIdentifier":"CVE-2018-1000632","vulnerabilityDetails":"dom4j version prior to version 2.1.1 contains a CWE-91: XML Injection vulnerability in Class: Element. Methods: addElement, addAttribute that can result in an attacker tampering with XML documents through XML injection. This attack appear to be exploitable via an attacker specifying attributes or elements in the XML document. This vulnerability appears to have been fixed in 2.1.1 or later.","vulnerabilityUrl":"https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-1000632","cvss3Severity":"high","cvss3Score":"7.5","cvss3Metrics":{"A":"None","AC":"Low","PR":"None","S":"Unchanged","C":"None","UI":"None","AV":"Network","I":"High"},"extraData":{}}</REMEDIATE> -->

|

non_defect

|

cve high detected in jar cve high severity vulnerability vulnerable library jar the flexible xml framework for java library home page a href path to vulnerable library depth javavulnerablelab target javavulnerablelab meta inf maven org cysecurity javavulnerablelab target javavulnerablelab web inf lib jar javavulnerablelab target javavulnerablelab web inf lib jar depth javavulnerablelab bin target javavulnerablelab web inf lib jar home wss scanner repository jar home wss scanner repository jar depth javavulnerablelab target javavulnerablelab web inf lib jar home wss scanner repository jar depth javavulnerablelab bin target javavulnerablelab meta inf maven org cysecurity javavulnerablelab target javavulnerablelab web inf lib jar javavulnerablelab bin target javavulnerablelab web inf lib jar dependency hierarchy x jar vulnerable library found in head commit a href vulnerability details version prior to version contains a cwe xml injection vulnerability in class element methods addelement addattribute that can result in an attacker tampering with xml documents through xml injection this attack appear to be exploitable via an attacker specifying attributes or elements in the xml document this vulnerability appears to have been fixed in or later publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution org rescue worker helmet automatic remediation is available for this issue isopenpronvulnerability true ispackagebased true isdefaultbranch true packages vulnerabilityidentifier cve vulnerabilitydetails version prior to version contains a cwe xml injection vulnerability in class element methods addelement addattribute that can result 
in an attacker tampering with xml documents through xml injection this attack appear to be exploitable via an attacker specifying attributes or elements in the xml document this vulnerability appears to have been fixed in or later vulnerabilityurl

| 0

|

2,234

| 3,736,653,006

|

IssuesEvent

|

2016-03-08 16:36:56

|

pgharts/trusty-clipped-extension

|

https://api.github.com/repos/pgharts/trusty-clipped-extension

|

opened

|

Brakeman: Possible SQL injection

|

security

|

Possible SQL injection

where(["asset_content_type IN (#{mimes.map{'?'}.join(',')})", *mimes])

Found in app/models/asset.rb by brakeman
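
In the flagged line, the interpolated expression only produces a run of `?` placeholders, one per element of `mimes`, while the values themselves are passed separately as bind parameters. The sketch below shows the same pattern in Python with the standard-library `sqlite3` module instead of Ruby and ActiveRecord. The `assets` table layout and the helper names are assumptions for illustration. It does not settle whether the Brakeman warning is a false positive in this codebase.

```python
import sqlite3


def in_clause_placeholders(values):
    """Build "?,?,?" with one placeholder per value; no value text enters the SQL."""
    if not values:
        raise ValueError("an IN (...) clause needs at least one value")
    return ",".join("?" for _ in values)


def find_by_content_types(conn, mimes):
    """Return asset ids whose content type is in `mimes`, using bound parameters."""
    sql = (
        "SELECT id FROM assets "
        f"WHERE asset_content_type IN ({in_clause_placeholders(mimes)}) "
        "ORDER BY id"
    )
    # The values are bound by the driver, never spliced into the SQL string.
    return [row[0] for row in conn.execute(sql, list(mimes))]


def make_demo_db():
    """Create an in-memory database with a hypothetical `assets` table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE assets (id INTEGER PRIMARY KEY, asset_content_type TEXT)"
    )
    conn.executemany(
        "INSERT INTO assets (asset_content_type) VALUES (?)",
        [("image/png",), ("image/jpeg",), ("text/plain",)],
    )
    return conn
```

With this shape, a hostile value such as `x') OR 1=1 --` is compared as an ordinary string and matches no rows.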

|

True

|

Brakeman: Possible SQL injection - Possible SQL injection

where(["asset_content_type IN (#{mimes.map{'?'}.join(',')})", *mimes])

Found in app/models/asset.rb by brakeman

|

non_defect

|

brakeman possible sql injection possible sql injection where found in app models asset rb by brakeman

| 0

|

138,583

| 5,344,628,530

|

IssuesEvent

|

2017-02-17 15:01:22

|

pytorch/pytorch

|

https://api.github.com/repos/pytorch/pytorch

|

closed

|

Feature Request: torch 'module' object has no attribute '__version__'

|

enhancement high priority

|

could you please add `__version__` to the `torch` module! all the cool modules have it! ;)
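
For context, `__version__` is a widely used convention rather than a language requirement, so callers often read it defensively. The sketch below uses stand-in modules built with `types.ModuleType`. The module names and the `get_version` helper are hypothetical and say nothing about how `torch` itself exposes its version.

```python
import types


def get_version(module, default="unknown"):
    """Return a module's `__version__` string, or `default` if it is not defined."""
    return getattr(module, "__version__", default)


# Stand-in modules for illustration only.
with_version = types.ModuleType("example_pkg")
with_version.__version__ = "0.1.0"

without_version = types.ModuleType("legacy_pkg")
```

Calling `get_version(without_version)` falls back to the default instead of raising the `AttributeError` quoted in the issue title.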

|

1.0

|

Feature Request: torch 'module' object has no attribute '__version__' - could you please add `__version__` to the `torch` module! all the cool modules have it! ;)

|

non_defect

|

feature request torch module object has no attribute version could you please add version to the torch module all the cool modules have it

| 0

|

249,305

| 26,910,069,546

|

IssuesEvent

|

2023-02-06 22:39:35

|

OpenLiberty/open-liberty

|

https://api.github.com/repos/OpenLiberty/open-liberty

|

opened

|

403 status code with securityContext.authenticate(request, response, AuthenticationParameters.withParams().newAuthentication(true));

|

bug team:Security SSO jakartaEE10

|

securityContext.authenticate(request, response, AuthenticationParameters.withParams().newAuthentication(true));

This call tries to reuse the existing context instead of forcing a new login. I'm also getting a status code of 403.

[Uploading securityContext.auth.zip…]()

|

True

|

403 status code with securityContext.authenticate(request, response, AuthenticationParameters.withParams().newAuthentication(true)); - securityContext.authenticate(request, response, AuthenticationParameters.withParams().newAuthentication(true));

This call tries to reuse the existing context instead of forcing a new login. I'm also getting a status code of 403.

[Uploading securityContext.auth.zip…]()

|

non_defect

|

status code with securitycontext authenticate request response authenticationparameters withparams newauthentication true securitycontext authenticate request response authenticationparameters withparams newauthentication true is trying to reuse the context instead of forcing a new login i m also getting a status code of

| 0

|

67,268

| 20,961,597,600

|

IssuesEvent

|

2022-03-27 21:46:36

|

abedmaatalla/imsdroid

|

https://api.github.com/repos/abedmaatalla/imsdroid

|

closed

|

it isn't possible to start the sip stack without registering to a sip server

|

Priority-Medium Type-Defect auto-migrated

|

```

There is no way to receive/do a p2p call without registering to a sip server

(the stack isn't initialized before this action?)

```

Original issue reported on code.google.com by `sylar1...@gmail.com` on 11 Jan 2011 at 8:52

|

1.0

|

it isn't possible to start the sip stack without registering to a sip server - ```

There is no way to receive/do a p2p call without registering to a sip server

(the stack isn't initialized before this action?)

```

Original issue reported on code.google.com by `sylar1...@gmail.com` on 11 Jan 2011 at 8:52

|

defect

|

it isn t possible to start the sip stack without registering to a sip server there is no way to receive do a call without registering to a sip server the stack isn t initialized before this action original issue reported on code google com by gmail com on jan at

| 1

|

18,012

| 3,016,271,497

|

IssuesEvent

|

2015-07-30 00:54:31

|

googlei18n/noto-fonts

|

https://api.github.com/repos/googlei18n/noto-fonts

|

closed

|

Sans and Serif: Modifier arrowheads are oversized and vertically misplaced

|

Script-LatinGreekCyrillic Status-Unreproducible Type-Defect

|

Moved from googlei18n/noto-alpha#156. Filed by @roozbehp

The modifier arrowheads (U+02C2..02C5), used in phonetics to note place of articulation, are oversized and sit too low vertically in NotoSans and NotoSerif. They are almost indistinguishable from the greater-than and less-than signs.

They should be smaller and sit at a superscript-like position.

Compare, for example, with SIL fonts Andika, Charis, Doulos, Gentium.

|

1.0

|

Sans and Serif: Modifier arrowheads are oversized and vertically misplaced - Moved from googlei18n/noto-alpha#156. Filed by @roozbehp

The modifier arrowheads (U+02C2..02C5), used in phonetics to note place of articulation, are oversized and sit too low vertically in NotoSans and NotoSerif. They are almost indistinguishable from the greater-than and less-than signs.

They should be smaller and sit at a superscript-like position.

Compare, for example, with SIL fonts Andika, Charis, Doulos, Gentium.

|

defect

|

sans and serif modifier arrowheads are oversized and vertically misplaced moved from noto alpha filed by roozbehp the modifier arrowheads u used in phonetics to note place of articulation are oversized and vertically too low in notosans and notoserif they are almost indistinguishable from greater than and less than sign they should be at a superscript like position and smaller compare for example with sil fonts andika charis doulos gentium

| 1

|

269,149

| 28,960,015,420

|

IssuesEvent

|

2023-05-10 01:08:24

|

dpteam/RK3188_TABLET

|

https://api.github.com/repos/dpteam/RK3188_TABLET

|

reopened

|

CVE-2019-19768 (High) detected in linuxv3.0

|

Mend: dependency security vulnerability

|

## CVE-2019-19768 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/verygreen/linux.git>https://github.com/verygreen/linux.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/dpteam/RK3188_TABLET/commit/0c501f5a0fd72c7b2ac82904235363bd44fd8f9e">0c501f5a0fd72c7b2ac82904235363bd44fd8f9e</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/kernel/trace/blktrace.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

In the Linux kernel 5.4.0-rc2, there is a use-after-free (read) in the __blk_add_trace function in kernel/trace/blktrace.c (which is used to fill out a blk_io_trace structure and place it in a per-cpu sub-buffer).

<p>Publish Date: 2019-12-12

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2019-19768>CVE-2019-19768</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2019-19768">https://nvd.nist.gov/vuln/detail/CVE-2019-19768</a></p>

<p>Release Date: 2020-06-10</p>

<p>Fix Resolution: kernel-doc - 3.10.0-514.76.1,3.10.0-957.54.1,4.18.0-147.13.2,3.10.0-1127.8.2,3.10.0-327.88.1,4.18.0-80.18.1,4.18.0-193,3.10.0-1062.26.1,3.10.0-693.67.1;kernel-rt-core - 4.18.0-193.rt13.51;kernel-rt-debug-debuginfo - 4.18.0-193.rt13.51;kernel-abi-whitelists - 3.10.0-327.88.1,3.10.0-1062.26.1,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-514.76.1,3.10.0-1127.8.2,3.10.0-957.54.1,4.18.0-193,3.10.0-693.67.1;kernel-zfcpdump-modules - 4.18.0-193,4.18.0-147.13.2;kernel-rt-trace-devel - 3.10.0-1127.8.2.rt56.1103;kernel-debug-modules-extra - 4.18.0-147.13.2,4.18.0-193,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-193,4.18.0-193,4.18.0-147.13.2;kernel-rt-debug-kvm - 4.18.0-193.rt13.51,3.10.0-1127.8.2.rt56.1103;kernel-bootwrapper - 3.10.0-1062.26.1,3.10.0-1127.8.2,3.10.0-693.67.1,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-1127.8.2,3.10.0-514.76.1,3.10.0-957.54.1;kernel-rt-debuginfo - 4.18.0-193.rt13.51;kernel-rt-debug-modules - 4.18.0-193.rt13.51;kernel-zfcpdump-devel - 4.18.0-193,4.18.0-147.13.2;perf - 3.10.0-514.76.1,3.10.0-957.54.1,3.10.0-1062.26.1,4.18.0-147.13.2,3.10.0-957.54.1,4.18.0-80.18.1,4.18.0-193,4.18.0-193,3.10.0-327.88.1,4.18.0-147.13.2,3.10.0-1062.26.1,3.10.0-1127.8.2,4.18.0-193,4.18.0-80.18.1,4.18.0-193,3.10.0-1127.8.2,4.18.0-147.13.2,3.10.0-1062.26.1,3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-514.76.1,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-957.54.1;kernel-zfcpdump-modules-extra - 4.18.0-193,4.18.0-147.13.2;kernel-debuginfo - 3.10.0-514.76.1,4.18.0-80.18.1,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-1127.8.2,3.10.0-1127.8.2,3.10.0-693.67.1,4.18.0-193,3.10.0-957.54.1,4.18.0-147.13.2,3.10.0-327.88.1,3.10.0-1062.26.1;kernel-debug-devel - 
3.10.0-514.76.1,4.18.0-147.13.2,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-957.54.1,4.18.0-193,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-1127.8.2,4.18.0-147.13.2,3.10.0-327.88.1,4.18.0-193,4.18.0-80.18.1,3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-1062.26.1,4.18.0-193,3.10.0-957.54.1,3.10.0-1127.8.2,3.10.0-514.76.1,4.18.0-147.13.2,4.18.0-193,3.10.0-1062.26.1,4.18.0-80.18.1;bpftool - 3.10.0-1127.8.2,3.10.0-1062.26.1,4.18.0-147.13.2,4.18.0-193,3.10.0-1062.26.1,4.18.0-147.13.2,3.10.0-1062.26.1,3.10.0-1127.8.2,4.18.0-193,4.18.0-147.13.2,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-193,3.10.0-957.54.1,4.18.0-80.18.1,4.18.0-193,3.10.0-1127.8.2;kernel-rt-debug-core - 4.18.0-193.rt13.51;kernel-tools-libs - 3.10.0-1062.26.1,3.10.0-1062.26.1,3.10.0-327.88.1,3.10.0-1127.8.2,4.18.0-193,3.10.0-693.67.1,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-1127.8.2,3.10.0-957.54.1,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2,3.10.0-957.54.1,3.10.0-1062.26.1,4.18.0-193,3.10.0-514.76.1,3.10.0-957.54.1,3.10.0-514.76.1,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-1127.8.2;perf-debuginfo - 3.10.0-957.54.1,3.10.0-957.54.1,3.10.0-1127.8.2,3.10.0-514.76.1,3.10.0-1062.26.1,3.10.0-1062.26.1,4.18.0-193,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-327.88.1;kernel-cross-headers - 4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-193,4.18.0-193,4.18.0-193,4.18.0-147.13.2;kernel-debug-debuginfo - 3.10.0-1127.8.2,3.10.0-1062.26.1,3.10.0-693.67.1,4.18.0-193,3.10.0-514.76.1,3.10.0-327.88.1,3.10.0-957.54.1,3.10.0-1062.26.1,3.10.0-957.54.1,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-1127.8.2;kernel-debug - 3.10.0-514.76.1,3.10.0-327.88.1,4.18.0-193,3.10.0-1127.8.2,3.10.0-693.67.1,3.10.0-957.54.1,4.18.0-193,4.18.0-193,3.10.0-1062.26.1,3.10.0-1062.26.1,4.18.0-80.18.1,3.10.0-957.54.1,4.18.0-147.13.2,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-1127.8.2,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-1127.8.2,3.10.0-514.76.1,3.10.0-1062.26.1,4.18.0-193,3.10.0-957.54.1,4.18.0-147.13.2;kernel-devel 
- 4.18.0-193,3.10.0-957.54.1,4.18.0-147.13.2,3.10.0-1127.8.2,3.10.0-514.76.1,3.10.0-1127.8.2,3.10.0-957.54.1,4.18.0-147.13.2,3.10.0-514.76.1,4.18.0-193,4.18.0-80.18.1,3.10.0-1062.26.1,4.18.0-193,3.10.0-957.54.1,4.18.0-80.18.1,3.10.0-1062.26.1,4.18.0-147.13.2,3.10.0-327.88.1,3.10.0-1062.26.1,4.18.0-193,3.10.0-693.67.1,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-1127.8.2;kernel - 3.10.0-1062.26.1,3.10.0-1062.26.1,3.10.0-693.67.1,3.10.0-327.88.1,3.10.0-327.88.1,4.18.0-147.13.2,4.18.0-147.13.2,3.10.0-957.54.1,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-514.76.1,3.10.0-1127.8.2,3.10.0-693.67.1,4.18.0-193,4.18.0-193,3.10.0-1127.8.2,4.18.0-147.13.2,3.10.0-1062.26.1,4.18.0-80.18.1,3.10.0-957.54.1,3.10.0-957.54.1,3.10.0-514.76.1,4.18.0-147.13.2,4.18.0-193,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-1127.8.2,4.18.0-193,3.10.0-514.76.1,3.10.0-693.67.1,4.18.0-193,3.10.0-1127.8.2;bpftool-debuginfo - 4.18.0-193,4.18.0-147.13.2,3.10.0-1127.8.2,3.10.0-1062.26.1,4.18.0-80.18.1;kpatch-patch-3_10_0-1062_12_1 - 1-2,1-2;kernel-zfcpdump-core - 4.18.0-147.13.2,4.18.0-193;kernel-debug-core - 4.18.0-80.18.1,4.18.0-193,4.18.0-147.13.2,4.18.0-147.13.2,4.18.0-193,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-193;kernel-modules-extra - 4.18.0-147.13.2,4.18.0-193,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-193,4.18.0-147.13.2;kernel-rt-debug-devel - 3.10.0-1127.8.2.rt56.1103,4.18.0-193.rt13.51;python-perf - 3.10.0-514.76.1,3.10.0-1127.8.2,3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-957.54.1,3.10.0-327.88.1,3.10.0-1062.26.1,3.10.0-693.67.1,3.10.0-514.76.1,3.10.0-1127.8.2,3.10.0-1062.26.1,3.10.0-957.54.1;kernel-core - 4.18.0-147.13.2,4.18.0-193,4.18.0-147.13.2,4.18.0-193,4.18.0-193,4.18.0-147.13.2,4.18.0-80.18.1,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2;kernel-rt-debug - 3.10.0-1127.8.2.rt56.1103,4.18.0-193.rt13.51;kernel-rt-devel - 
3.10.0-1127.8.2.rt56.1103,4.18.0-193.rt13.51;kernel-debuginfo-common-ppc64 - 3.10.0-957.54.1,3.10.0-1127.8.2,3.10.0-1062.26.1;python3-perf - 4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-193,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-193,4.18.0-147.13.2,4.18.0-147.13.2;kernel-tools - 3.10.0-514.76.1,3.10.0-957.54.1,3.10.0-957.54.1,4.18.0-193,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-1127.8.2,3.10.0-514.76.1,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,3.10.0-1062.26.1,3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-1062.26.1,3.10.0-327.88.1,3.10.0-1062.26.1,4.18.0-193,3.10.0-957.54.1,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-1127.8.2;kernel-debug-modules - 4.18.0-147.13.2,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-193,4.18.0-193,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2;kernel-rt-trace-kvm - 3.10.0-1127.8.2.rt56.1103;kernel-rt-debuginfo-common-x86_64 - 4.18.0-193.rt13.51;kernel-tools-libs-devel - 3.10.0-514.76.1,3.10.0-327.88.1,3.10.0-693.67.1,3.10.0-1062.26.1,3.10.0-1062.26.1,3.10.0-1127.8.2,3.10.0-957.54.1,3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-1127.8.2,3.10.0-514.76.1,3.10.0-957.54.1,3.10.0-1062.26.1,3.10.0-957.54.1;kernel-modules - 4.18.0-147.13.2,4.18.0-80.18.1,4.18.0-193,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-193,4.18.0-147.13.2,4.18.0-147.13.2,4.18.0-193;kernel-tools-debuginfo - 3.10.0-1062.26.1,4.18.0-193,3.10.0-1127.8.2,4.18.0-80.18.1,3.10.0-327.88.1,4.18.0-147.13.2,3.10.0-1127.8.2,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-957.54.1,3.10.0-514.76.1,3.10.0-693.67.1;kernel-rt-modules - 4.18.0-193.rt13.51;kernel-rt-doc - 3.10.0-1127.8.2.rt56.1103;kernel-rt-kvm - 4.18.0-193.rt13.51,3.10.0-1127.8.2.rt56.1103;python-perf-debuginfo - 3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-957.54.1,3.10.0-1127.8.2,3.10.0-327.88.1,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-514.76.1,3.10.0-1062.26.1;kernel-headers - 
3.10.0-1062.26.1,4.18.0-147.13.2,3.10.0-957.54.1,3.10.0-514.76.1,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2,3.10.0-327.88.1,3.10.0-1127.8.2,4.18.0-147.13.2,4.18.0-193,3.10.0-1062.26.1,3.10.0-693.67.1,4.18.0-193,3.10.0-1127.8.2,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-957.54.1,3.10.0-514.76.1,3.10.0-1062.26.1,4.18.0-80.18.1,3.10.0-957.54.1,4.18.0-193,3.10.0-1127.8.2;kernel-rt-trace - 3.10.0-1127.8.2.rt56.1103;kernel-debuginfo-common-x86_64 - 3.10.0-1127.8.2,3.10.0-693.67.1,3.10.0-327.88.1,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-1062.26.1,3.10.0-514.76.1,4.18.0-193,3.10.0-957.54.1;kernel-rt - 3.10.0-1127.8.2.rt56.1103,4.18.0-193.rt13.51,3.10.0-1127.8.2.rt56.1103,4.18.0-193.rt13.51;kernel-zfcpdump - 4.18.0-147.13.2,4.18.0-193;kernel-rt-debug-modules-extra - 4.18.0-193.rt13.51;python3-perf-debuginfo - 4.18.0-147.13.2,4.18.0-80.18.1,4.18.0-193;kernel-rt-modules-extra - 4.18.0-193.rt13.51</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2019-19768 (High) detected in linuxv3.0 - ## CVE-2019-19768 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxv3.0</b></p></summary>

<p>

<p>Linux kernel source tree</p>

<p>Library home page: <a href=https://github.com/verygreen/linux.git>https://github.com/verygreen/linux.git</a></p>

<p>Found in HEAD commit: <a href="https://github.com/dpteam/RK3188_TABLET/commit/0c501f5a0fd72c7b2ac82904235363bd44fd8f9e">0c501f5a0fd72c7b2ac82904235363bd44fd8f9e</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (1)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/kernel/trace/blktrace.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

In the Linux kernel 5.4.0-rc2, there is a use-after-free (read) in the __blk_add_trace function in kernel/trace/blktrace.c (which is used to fill out a blk_io_trace structure and place it in a per-cpu sub-buffer).

<p>Publish Date: 2019-12-12

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2019-19768>CVE-2019-19768</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2019-19768">https://nvd.nist.gov/vuln/detail/CVE-2019-19768</a></p>

<p>Release Date: 2020-06-10</p>

<p>Fix Resolution: kernel-doc - 3.10.0-514.76.1,3.10.0-957.54.1,4.18.0-147.13.2,3.10.0-1127.8.2,3.10.0-327.88.1,4.18.0-80.18.1,4.18.0-193,3.10.0-1062.26.1,3.10.0-693.67.1;kernel-rt-core - 4.18.0-193.rt13.51;kernel-rt-debug-debuginfo - 4.18.0-193.rt13.51;kernel-abi-whitelists - 3.10.0-327.88.1,3.10.0-1062.26.1,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-514.76.1,3.10.0-1127.8.2,3.10.0-957.54.1,4.18.0-193,3.10.0-693.67.1;kernel-zfcpdump-modules - 4.18.0-193,4.18.0-147.13.2;kernel-rt-trace-devel - 3.10.0-1127.8.2.rt56.1103;kernel-debug-modules-extra - 4.18.0-147.13.2,4.18.0-193,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-193,4.18.0-193,4.18.0-147.13.2;kernel-rt-debug-kvm - 4.18.0-193.rt13.51,3.10.0-1127.8.2.rt56.1103;kernel-bootwrapper - 3.10.0-1062.26.1,3.10.0-1127.8.2,3.10.0-693.67.1,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-1127.8.2,3.10.0-514.76.1,3.10.0-957.54.1;kernel-rt-debuginfo - 4.18.0-193.rt13.51;kernel-rt-debug-modules - 4.18.0-193.rt13.51;kernel-zfcpdump-devel - 4.18.0-193,4.18.0-147.13.2;perf - 3.10.0-514.76.1,3.10.0-957.54.1,3.10.0-1062.26.1,4.18.0-147.13.2,3.10.0-957.54.1,4.18.0-80.18.1,4.18.0-193,4.18.0-193,3.10.0-327.88.1,4.18.0-147.13.2,3.10.0-1062.26.1,3.10.0-1127.8.2,4.18.0-193,4.18.0-80.18.1,4.18.0-193,3.10.0-1127.8.2,4.18.0-147.13.2,3.10.0-1062.26.1,3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-514.76.1,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-957.54.1;kernel-zfcpdump-modules-extra - 4.18.0-193,4.18.0-147.13.2;kernel-debuginfo - 3.10.0-514.76.1,4.18.0-80.18.1,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-1127.8.2,3.10.0-1127.8.2,3.10.0-693.67.1,4.18.0-193,3.10.0-957.54.1,4.18.0-147.13.2,3.10.0-327.88.1,3.10.0-1062.26.1;kernel-debug-devel - 
3.10.0-514.76.1,4.18.0-147.13.2,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-957.54.1,4.18.0-193,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-1127.8.2,4.18.0-147.13.2,3.10.0-327.88.1,4.18.0-193,4.18.0-80.18.1,3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-1062.26.1,4.18.0-193,3.10.0-957.54.1,3.10.0-1127.8.2,3.10.0-514.76.1,4.18.0-147.13.2,4.18.0-193,3.10.0-1062.26.1,4.18.0-80.18.1;bpftool - 3.10.0-1127.8.2,3.10.0-1062.26.1,4.18.0-147.13.2,4.18.0-193,3.10.0-1062.26.1,4.18.0-147.13.2,3.10.0-1062.26.1,3.10.0-1127.8.2,4.18.0-193,4.18.0-147.13.2,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-193,3.10.0-957.54.1,4.18.0-80.18.1,4.18.0-193,3.10.0-1127.8.2;kernel-rt-debug-core - 4.18.0-193.rt13.51;kernel-tools-libs - 3.10.0-1062.26.1,3.10.0-1062.26.1,3.10.0-327.88.1,3.10.0-1127.8.2,4.18.0-193,3.10.0-693.67.1,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-1127.8.2,3.10.0-957.54.1,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2,3.10.0-957.54.1,3.10.0-1062.26.1,4.18.0-193,3.10.0-514.76.1,3.10.0-957.54.1,3.10.0-514.76.1,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-1127.8.2;perf-debuginfo - 3.10.0-957.54.1,3.10.0-957.54.1,3.10.0-1127.8.2,3.10.0-514.76.1,3.10.0-1062.26.1,3.10.0-1062.26.1,4.18.0-193,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-327.88.1;kernel-cross-headers - 4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-193,4.18.0-193,4.18.0-193,4.18.0-147.13.2;kernel-debug-debuginfo - 3.10.0-1127.8.2,3.10.0-1062.26.1,3.10.0-693.67.1,4.18.0-193,3.10.0-514.76.1,3.10.0-327.88.1,3.10.0-957.54.1,3.10.0-1062.26.1,3.10.0-957.54.1,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-1127.8.2;kernel-debug - 3.10.0-514.76.1,3.10.0-327.88.1,4.18.0-193,3.10.0-1127.8.2,3.10.0-693.67.1,3.10.0-957.54.1,4.18.0-193,4.18.0-193,3.10.0-1062.26.1,3.10.0-1062.26.1,4.18.0-80.18.1,3.10.0-957.54.1,4.18.0-147.13.2,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-1127.8.2,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-1127.8.2,3.10.0-514.76.1,3.10.0-1062.26.1,4.18.0-193,3.10.0-957.54.1,4.18.0-147.13.2;kernel-devel 
- 4.18.0-193,3.10.0-957.54.1,4.18.0-147.13.2,3.10.0-1127.8.2,3.10.0-514.76.1,3.10.0-1127.8.2,3.10.0-957.54.1,4.18.0-147.13.2,3.10.0-514.76.1,4.18.0-193,4.18.0-80.18.1,3.10.0-1062.26.1,4.18.0-193,3.10.0-957.54.1,4.18.0-80.18.1,3.10.0-1062.26.1,4.18.0-147.13.2,3.10.0-327.88.1,3.10.0-1062.26.1,4.18.0-193,3.10.0-693.67.1,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-1127.8.2;kernel - 3.10.0-1062.26.1,3.10.0-1062.26.1,3.10.0-693.67.1,3.10.0-327.88.1,3.10.0-327.88.1,4.18.0-147.13.2,4.18.0-147.13.2,3.10.0-957.54.1,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-514.76.1,3.10.0-1127.8.2,3.10.0-693.67.1,4.18.0-193,4.18.0-193,3.10.0-1127.8.2,4.18.0-147.13.2,3.10.0-1062.26.1,4.18.0-80.18.1,3.10.0-957.54.1,3.10.0-957.54.1,3.10.0-514.76.1,4.18.0-147.13.2,4.18.0-193,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-1127.8.2,4.18.0-193,3.10.0-514.76.1,3.10.0-693.67.1,4.18.0-193,3.10.0-1127.8.2;bpftool-debuginfo - 4.18.0-193,4.18.0-147.13.2,3.10.0-1127.8.2,3.10.0-1062.26.1,4.18.0-80.18.1;kpatch-patch-3_10_0-1062_12_1 - 1-2,1-2;kernel-zfcpdump-core - 4.18.0-147.13.2,4.18.0-193;kernel-debug-core - 4.18.0-80.18.1,4.18.0-193,4.18.0-147.13.2,4.18.0-147.13.2,4.18.0-193,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-193;kernel-modules-extra - 4.18.0-147.13.2,4.18.0-193,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-193,4.18.0-147.13.2;kernel-rt-debug-devel - 3.10.0-1127.8.2.rt56.1103,4.18.0-193.rt13.51;python-perf - 3.10.0-514.76.1,3.10.0-1127.8.2,3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-957.54.1,3.10.0-327.88.1,3.10.0-1062.26.1,3.10.0-693.67.1,3.10.0-514.76.1,3.10.0-1127.8.2,3.10.0-1062.26.1,3.10.0-957.54.1;kernel-core - 4.18.0-147.13.2,4.18.0-193,4.18.0-147.13.2,4.18.0-193,4.18.0-193,4.18.0-147.13.2,4.18.0-80.18.1,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2;kernel-rt-debug - 3.10.0-1127.8.2.rt56.1103,4.18.0-193.rt13.51;kernel-rt-devel - 
3.10.0-1127.8.2.rt56.1103,4.18.0-193.rt13.51;kernel-debuginfo-common-ppc64 - 3.10.0-957.54.1,3.10.0-1127.8.2,3.10.0-1062.26.1;python3-perf - 4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2,4.18.0-193,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-193,4.18.0-147.13.2,4.18.0-147.13.2;kernel-tools - 3.10.0-514.76.1,3.10.0-957.54.1,3.10.0-957.54.1,4.18.0-193,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-1127.8.2,3.10.0-514.76.1,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,3.10.0-1062.26.1,3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-1062.26.1,3.10.0-327.88.1,3.10.0-1062.26.1,4.18.0-193,3.10.0-957.54.1,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-1127.8.2;kernel-debug-modules - 4.18.0-147.13.2,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-193,4.18.0-193,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2;kernel-rt-trace-kvm - 3.10.0-1127.8.2.rt56.1103;kernel-rt-debuginfo-common-x86_64 - 4.18.0-193.rt13.51;kernel-tools-libs-devel - 3.10.0-514.76.1,3.10.0-327.88.1,3.10.0-693.67.1,3.10.0-1062.26.1,3.10.0-1062.26.1,3.10.0-1127.8.2,3.10.0-957.54.1,3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-1127.8.2,3.10.0-514.76.1,3.10.0-957.54.1,3.10.0-1062.26.1,3.10.0-957.54.1;kernel-modules - 4.18.0-147.13.2,4.18.0-80.18.1,4.18.0-193,4.18.0-147.13.2,4.18.0-193,4.18.0-80.18.1,4.18.0-193,4.18.0-147.13.2,4.18.0-147.13.2,4.18.0-193;kernel-tools-debuginfo - 3.10.0-1062.26.1,4.18.0-193,3.10.0-1127.8.2,4.18.0-80.18.1,3.10.0-327.88.1,4.18.0-147.13.2,3.10.0-1127.8.2,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-957.54.1,3.10.0-514.76.1,3.10.0-693.67.1;kernel-rt-modules - 4.18.0-193.rt13.51;kernel-rt-doc - 3.10.0-1127.8.2.rt56.1103;kernel-rt-kvm - 4.18.0-193.rt13.51,3.10.0-1127.8.2.rt56.1103;python-perf-debuginfo - 3.10.0-693.67.1,3.10.0-1127.8.2,3.10.0-957.54.1,3.10.0-1127.8.2,3.10.0-327.88.1,3.10.0-1062.26.1,3.10.0-957.54.1,3.10.0-514.76.1,3.10.0-1062.26.1;kernel-headers - 
3.10.0-1062.26.1,4.18.0-147.13.2,3.10.0-957.54.1,3.10.0-514.76.1,4.18.0-193,4.18.0-80.18.1,4.18.0-147.13.2,3.10.0-327.88.1,3.10.0-1127.8.2,4.18.0-147.13.2,4.18.0-193,3.10.0-1062.26.1,3.10.0-693.67.1,4.18.0-193,3.10.0-1127.8.2,3.10.0-693.67.1,4.18.0-147.13.2,3.10.0-957.54.1,3.10.0-514.76.1,3.10.0-1062.26.1,4.18.0-80.18.1,3.10.0-957.54.1,4.18.0-193,3.10.0-1127.8.2;kernel-rt-trace - 3.10.0-1127.8.2.rt56.1103;kernel-debuginfo-common-x86_64 - 3.10.0-1127.8.2,3.10.0-693.67.1,3.10.0-327.88.1,4.18.0-147.13.2,4.18.0-80.18.1,3.10.0-1062.26.1,3.10.0-514.76.1,4.18.0-193,3.10.0-957.54.1;kernel-rt - 3.10.0-1127.8.2.rt56.1103,4.18.0-193.rt13.51,3.10.0-1127.8.2.rt56.1103,4.18.0-193.rt13.51;kernel-zfcpdump - 4.18.0-147.13.2,4.18.0-193;kernel-rt-debug-modules-extra - 4.18.0-193.rt13.51;python3-perf-debuginfo - 4.18.0-147.13.2,4.18.0-80.18.1,4.18.0-193;kernel-rt-modules-extra - 4.18.0-193.rt13.51</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

cve high detected in cve high severity vulnerability vulnerable library linux kernel source tree library home page a href found in head commit a href found in base branch master vulnerable source files kernel trace blktrace c vulnerability details in the linux kernel there is a use after free read in the blk add trace function in kernel trace blktrace c which is used to fill out a blk io trace structure and place it in a per cpu sub buffer publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution kernel doc kernel rt core kernel rt debug debuginfo kernel abi whitelists kernel zfcpdump modules kernel rt trace devel kernel debug modules extra kernel rt debug kvm kernel bootwrapper kernel rt debuginfo kernel rt debug modules kernel zfcpdump devel perf kernel zfcpdump modules extra kernel debuginfo kernel debug devel bpftool kernel rt debug core kernel tools libs perf debuginfo kernel cross headers kernel debug debuginfo kernel debug kernel devel kernel bpftool debuginfo kpatch patch kernel zfcpdump core kernel debug core kernel modules extra kernel rt debug devel python perf kernel core kernel rt debug kernel rt devel kernel debuginfo common perf kernel tools kernel debug modules kernel rt trace kvm kernel rt debuginfo common kernel tools libs devel kernel modules kernel tools debuginfo kernel rt modules kernel rt doc kernel rt kvm python perf debuginfo kernel headers kernel rt trace kernel debuginfo common kernel rt kernel zfcpdump kernel rt debug modules extra perf debuginfo kernel rt modules extra step up your open source security game with mend

| 0

|

47,789

| 13,066,230,044

|

IssuesEvent

|

2020-07-30 21:15:39

|

icecube-trac/tix2

|

https://api.github.com/repos/icecube-trac/tix2

|

closed

|

Improved line fit resources directory cleanup (Trac #1181)

|

Migrated from Trac combo reconstruction defect

|

There are no links to the improved linefit documentation in the meta-project documentation. There is a decent index.rst, but it is in resources/ rather than resources/docs/. It needs the name of the maintainer and links to the CHANGELOG and the doxygen documentation.