Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 757) | labels (stringlengths, 4 to 664) | body (stringlengths, 3 to 261k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 232k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

125,618

| 12,264,252,860

|

IssuesEvent

|

2020-05-07 03:39:19

|

geesoon/github-slideshow

|

https://api.github.com/repos/geesoon/github-slideshow

|

closed

|

Issue Testing 1

|

documentation

|

### Testing 1

- [ ] activity 1

@githubteacher Hello, what issue do you have?

> dasds

> dsads

> **dsadas**

### `include`

|

1.0

|

Issue Testing 1 - ### Testing 1

- [ ] activity 1

@githubteacher Hello, what issue do you have?

> dasds

> dsads

> **dsadas**

### `include`

|

non_defect

|

issue testing testing activity githubteacher hello what issue do you have dasds dsads dsadas include

| 0

|

312,628

| 23,436,361,348

|

IssuesEvent

|

2022-08-15 10:17:04

|

yorkie-team/yorkie-js-sdk

|

https://api.github.com/repos/yorkie-team/yorkie-js-sdk

|

opened

|

Explain how to store documents in local storage

|

documentation 📔

|

<!-- Please only use this template for submitting common issues -->

**Description**:

Explain how to store documents in local storage.

Users can edit the document even while offline. However, because the document is stored in memory, all changes are lost when the users close the browser.

Let's explain how to store the document's snapshot and local changes in local storage so that the edits remain even when the users close the browser.

Related to https://github.com/yorkie-team/yorkie-js-sdk/pull/364.

**Why**:

- Help developers implement storing documents in local storage.

|

1.0

|

Explain how to store documents in local storage - <!-- Please only use this template for submitting common issues -->

**Description**:

Explain how to store documents in local storage.

Users can edit the document even while offline. However, because the document is stored in memory, all changes are lost when the users close the browser.

Let's explain how to store the document's snapshot and local changes in local storage so that the edits remain even when the users close the browser.

Related to https://github.com/yorkie-team/yorkie-js-sdk/pull/364.

**Why**:

- Help developers implement storing documents in local storage.

|

non_defect

|

explain how to store documents in local storage description explain how to store documents in local storage users can edit the document even while offline however because the document is stored in memory all changes are lost when the users close the browser let s explain how to store the document s snapshot and local changes in local storage so that the edits remain even when the users close the browser related to why help developers implement storing documents in local storage

| 0

|

5,813

| 30,790,981,731

|

IssuesEvent

|

2023-07-31 16:08:37

|

obi1kenobi/trustfall

|

https://api.github.com/repos/obi1kenobi/trustfall

|

closed

|

Test-drive adapters to ensure common edge cases are handled correctly

|

A-adapter A-errors C-enhancement C-maintainability E-help-wanted E-mentor E-medium

|

Before using a new adapter, Trustfall could "test drive" it to make sure it adequately handles edge cases:

- call `resolve_property` with a `None` active vertex for some property and assert that it got a `FieldValue::Null` property value

- call `resolve_neighbors` with a `None` active vertex for some edge and assert that it got an empty iterable of neighbors

- call `resolve_coercion` with a `None` active vertex for some plausible type coercion (if any) and assert that it got a `false` result

- perhaps even assert that sending multiple contexts into these functions means the contexts are returned in the same order.

This will require some schema introspection (to generate valid type / property / edge / coercion values) but should be cheap perf-wise.

It could be implemented transparently in the `trustfall` crate with an optional default-enabled feature. Particularly perf-sensitive applications could opt out of the feature.

|

True

|

Test-drive adapters to ensure common edge cases are handled correctly - Before using a new adapter, Trustfall could "test drive" it to make sure it adequately handles edge cases:

- call `resolve_property` with a `None` active vertex for some property and assert that it got a `FieldValue::Null` property value

- call `resolve_neighbors` with a `None` active vertex for some edge and assert that it got an empty iterable of neighbors

- call `resolve_coercion` with a `None` active vertex for some plausible type coercion (if any) and assert that it got a `false` result

- perhaps even assert that sending multiple contexts into these functions means the contexts are returned in the same order.

This will require some schema introspection (to generate valid type / property / edge / coercion values) but should be cheap perf-wise.

It could be implemented transparently in the `trustfall` crate with an optional default-enabled feature. Particularly perf-sensitive applications could opt out of the feature.

|

non_defect

|

test drive adapters to ensure common edge cases are handled correctly before using a new adapter trustfall could test drive it to make sure it adequately handles edge cases call resolve property with a none active vertex for some property and assert that it got a fieldvalue null property value call resolve neighbors with a none active vertex for some edge and assert that it got an empty iterable of neighbors call resolve coercion with a none active vertex for some plausible type coercion if any and assert that it got a false result perhaps even assert that sending multiple contexts into these functions means the contexts are returned in the same order this will require some schema introspection to generate valid type property edge coercion values but should be cheap perf wise it could be implemented transparently in the trustfall crate with an optional default enabled feature particularly perf sensitive applications could opt out of the feature

| 0

|

55,074

| 14,174,018,740

|

IssuesEvent

|

2020-11-12 19:14:31

|

SAP/fundamental-ngx

|

https://api.github.com/repos/SAP/fundamental-ngx

|

closed

|

Panel: RTL mode down arrow is showing wrong

|

Defect Hunting Medium RTL bug platform

|

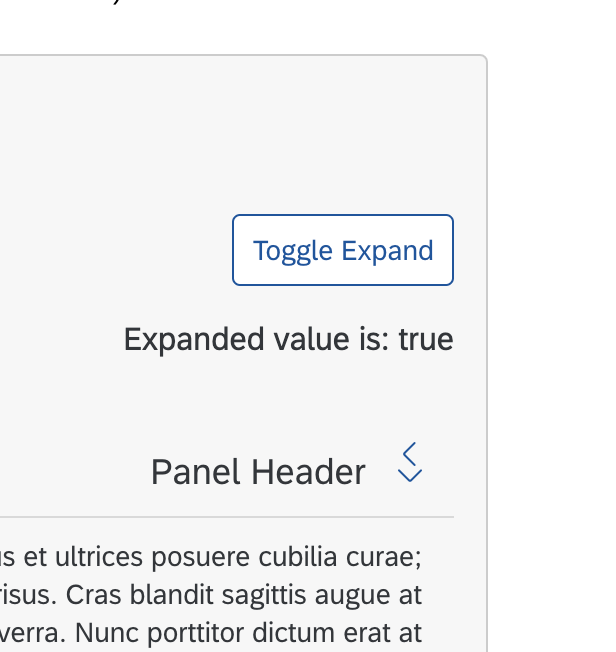

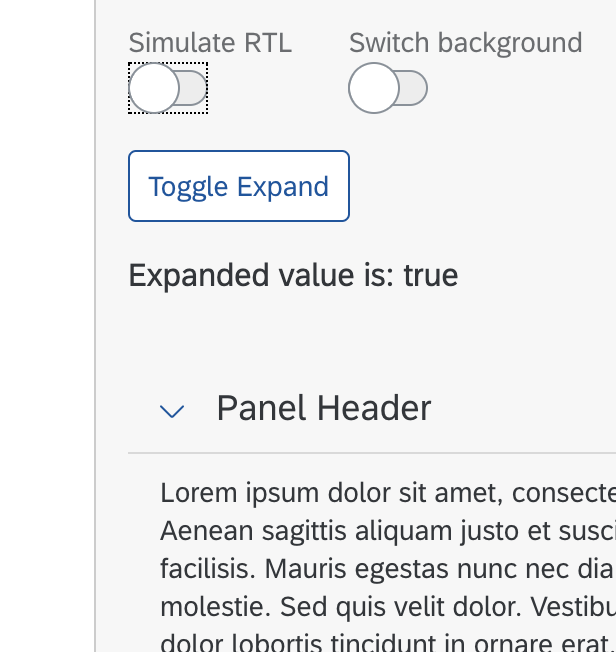

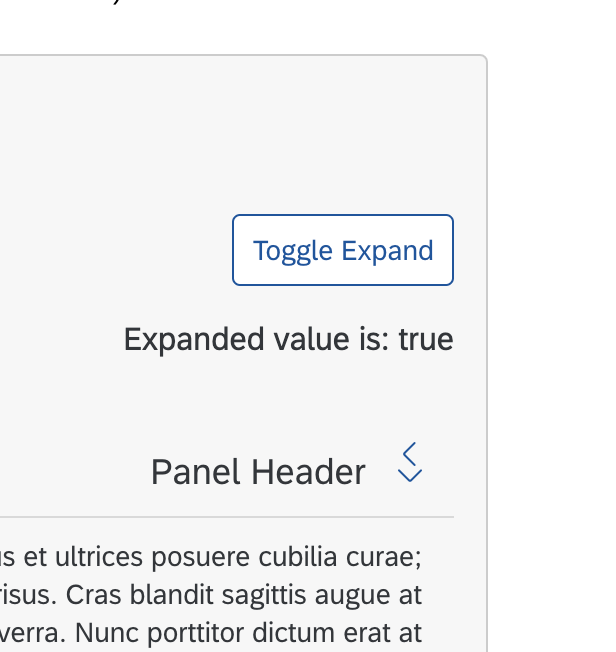

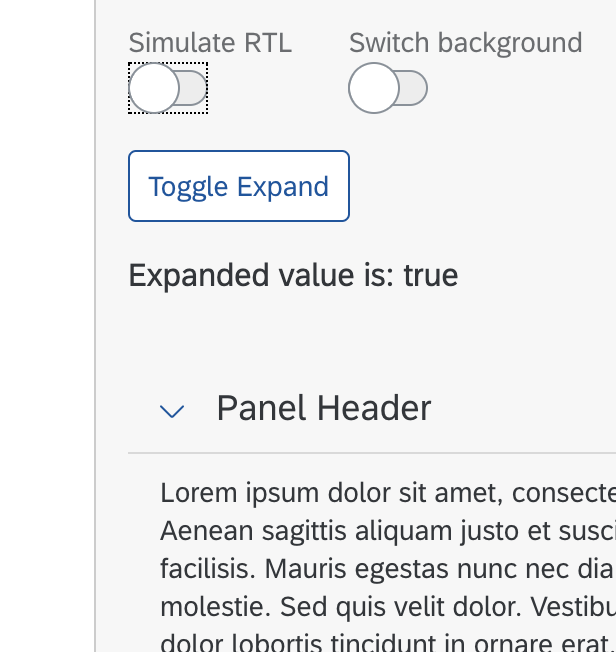

Description: RTL mode down arrow is showing wrong

Expected: RTL mode down arrow should show properly

Screen shot:

Expected:

|

1.0

|

Panel: RTL mode down arrow is showing wrong - Description: RTL mode down arrow is showing wrong

Expected: RTL mode down arrow should show properly

Screen shot:

Expected:

|

defect

|

panel rtl mode down arrow is showing wrong description rtl mode down arrow is showing wrong expected rtl mode down arrow should show properly screen shot expected

| 1

|

309,676

| 23,302,742,514

|

IssuesEvent

|

2022-08-07 15:13:50

|

cython/cython

|

https://api.github.com/repos/cython/cython

|

closed

|

Switch readthedocs to show stable branch by default

|

Documentation

|

Right now https://cython.readthedocs.io shows documentation for the "latest" version of the code, which is apparently an alpha? You can configure the project to show "stable" docs by default, and that way users will see the documentation for the latest stable release automatically. It's in the settings somewhere: you can set which branch or identifier to show by default, and "stable" is one of the options.

|

1.0

|

Switch readthedocs to show stable branch by default - Right now https://cython.readthedocs.io shows documentation for the "latest" version of the code, which is apparently an alpha? You can configure the project to show "stable" docs by default, and that way users will see the documentation for the latest stable release automatically. It's in the settings somewhere: you can set which branch or identifier to show by default, and "stable" is one of the options.

|

non_defect

|

switch readthedocs to show stable branch by default right now shows documentation for the latest version of the code which is apparently an alpha you can configure the project to show stable docs by default and that way users will see the documentation for latest stable release automatically it s in the settings somewhere you can set what branch to identifier to show by default and stable is one of the options

| 0

|

105,331

| 23,033,148,440

|

IssuesEvent

|

2022-07-22 15:44:14

|

Azure/azure-dev

|

https://api.github.com/repos/Azure/azure-dev

|

closed

|

Clean up extra .ps1 files included in .vsix release

|

engsys vscode

|

On release we noticed a couple of extra PowerShell files in the .vsix files.

|

1.0

|

Clean up extra .ps1 files included in .vsix release - On release we noticed a couple of extra PowerShell files in the .vsix files.

|

non_defect

|

clean up extra files included in vsix release on release we noticed a couple extra powershell files in the vsix files

| 0

|

80,949

| 23,343,120,645

|

IssuesEvent

|

2022-08-09 15:30:31

|

xamarin/xamarin-android

|

https://api.github.com/repos/xamarin/xamarin-android

|

closed

|

Xamarin.Android adds automatically WRITE_EXTERNAL_STORAGE permissions to manifest

|

Area: App+Library Build

|

### Steps to Reproduce

1. Create a new Xamarin.Forms project with Android and iOS app.

2. Build release android.

3. Check manifest in /obj/Release/android/AndroidManifest.xml

### Expected Behavior

Manifest in `obj` should not contain `WRITE_EXTERNAL_STORAGE` if it is not declared in the project.

This permission should not be included in the manifest in APK either (after running Archive for Publishing).
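
The expectation above can be checked with a short script. The following is a minimal Python sketch and not part of the original report: the helper name `declared_permissions` and the sample manifest string are hypothetical, while the `android` XML namespace URI and the `uses-permission` element follow the standard Android manifest format.

```python
import xml.etree.ElementTree as ET

# Standard XML namespace for android:* attributes in AndroidManifest.xml.
ANDROID_NS = "http://schemas.android.com/apk/res/android"
NAME_ATTR = f"{{{ANDROID_NS}}}name"


def declared_permissions(manifest_xml: str) -> set:
    """Return the android:name of every <uses-permission> element."""
    root = ET.fromstring(manifest_xml)
    return {
        elem.attrib[NAME_ATTR]
        for elem in root.iter("uses-permission")
        if NAME_ATTR in elem.attrib
    }


# Hypothetical sample standing in for obj/Release/android/AndroidManifest.xml.
SAMPLE = """<manifest xmlns:android="http://schemas.android.com/apk/res/android" package="com.example.app">
  <uses-permission android:name="android.permission.INTERNET" />
  <uses-permission android:name="android.permission.WRITE_EXTERNAL_STORAGE" />
</manifest>"""

perms = declared_permissions(SAMPLE)
if "android.permission.WRITE_EXTERNAL_STORAGE" in perms:
    print("WRITE_EXTERNAL_STORAGE is present in the manifest")
```

To check a real build, the generated manifest file's contents would be read and passed to `declared_permissions` in place of `SAMPLE`.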

### Actual Behavior

Xamarin.Android adds `WRITE_EXTERNAL_STORAGE` to permissions even though it is not selected in the manifest.

### Version Information

Xamarin.Android should not add `WRITE_EXTERNAL_STORAGE` permission if not checked in the manifest.

```

=== Visual Studio Community 2019 for Mac ===

Version 8.7.8 (build 4)

Installation UUID: 0be7cb3e-0171-43b8-99c3-b7ba76999bd0

GTK+ 2.24.23 (Raleigh theme)

Xamarin.Mac 6.18.0.23 (d16-6 / 088c73638)

Package version: 612000093

=== Mono Framework MDK ===

Runtime:

Mono 6.12.0.93 (2020-02/620cf538206) (64-bit)

Package version: 612000093

=== Roslyn (Language Service) ===

3.7.0-6.20427.1+18ede13943b0bfae1b44ef078b2f3923159bcd32

=== NuGet ===

Version: 5.7.0.6702

=== .NET Core SDK ===

SDK: /usr/local/share/dotnet/sdk/3.1.402/Sdks

SDK Versions:

3.1.402

3.1.200

3.1.102

3.1.101

MSBuild SDKs: /Library/Frameworks/Mono.framework/Versions/6.12.0/lib/mono/msbuild/Current/bin/Sdks

=== .NET Core Runtime ===

Runtime: /usr/local/share/dotnet/dotnet

Runtime Versions:

3.1.8

3.1.2

3.1.1

2.1.22

2.1.16

2.1.15

=== Xamarin.Profiler ===

Version: 1.6.13.11

Location: /Applications/Xamarin Profiler.app/Contents/MacOS/Xamarin Profiler

=== Updater ===

Version: 11

=== Xamarin.Android ===

Version: 11.0.2.0 (Visual Studio Community)

Commit: xamarin-android/d16-7/025fde9

Android SDK: /Users/wkulik/Library/Android/sdk

Supported Android versions:

None installed

SDK Tools Version: 26.1.1

SDK Platform Tools Version: 30.0.4

SDK Build Tools Version: 29.0.3

Build Information:

Mono: 83105ba

Java.Interop: xamarin/java.interop/d16-7@1f3388a

ProGuard: Guardsquare/proguard/proguard6.2.2@ebe9000

SQLite: xamarin/sqlite/3.32.1@1a3276b

Xamarin.Android Tools: xamarin/xamarin-android-tools/d16-7@017078f

=== Microsoft OpenJDK for Mobile ===

Java SDK: /Users/wkulik/Library/Developer/Xamarin/jdk/microsoft_dist_openjdk_1.8.0.25

1.8.0-25

Android Designer EPL code available here:

https://github.com/xamarin/AndroidDesigner.EPL

=== Android SDK Manager ===

Version: 16.7.0.13

Hash: 8380518

Branch: remotes/origin/d16-7~2

Build date: 2020-09-16 05:12:24 UTC

=== Android Device Manager ===

Version: 16.7.0.24

Hash: bb090a3

Branch: remotes/origin/d16-7

Build date: 2020-09-16 05:12:46 UTC

=== Xamarin Designer ===

Version: 16.7.0.495

Hash: 03d50a221

Branch: remotes/origin/d16-7-vsmac

Build date: 2020-08-28 13:12:52 UTC

=== Apple Developer Tools ===

Xcode 12.1 (17222)

Build 12A7403

=== Xamarin.Mac ===

Xamarin.Mac not installed. Can't find /Library/Frameworks/Xamarin.Mac.framework/Versions/Current/Version.

=== Xamarin.iOS ===

Version: 14.0.0.0 (Visual Studio Community)

Hash: 7ec3751a1

Branch: xcode12

Build date: 2020-09-16 11:33:15-0400

=== Build Information ===

Release ID: 807080004

Git revision: 9ea7bef96d65cdc3f4288014a799026ccb1993bc

Build date: 2020-09-16 17:22:54-04

Build branch: release-8.7

Xamarin extensions: 9ea7bef96d65cdc3f4288014a799026ccb1993bc

=== Operating System ===

Mac OS X 10.15.7

Darwin 19.6.0 Darwin Kernel Version 19.6.0

Mon Aug 31 22:12:52 PDT 2020

root:xnu-6153.141.2~1/RELEASE_X86_64 x86_64

```

|

1.0

|

Xamarin.Android adds automatically WRITE_EXTERNAL_STORAGE permissions to manifest - ### Steps to Reproduce

1. Create a new Xamarin.Forms project with Android and iOS app.

2. Build release android.

3. Check manifest in /obj/Release/android/AndroidManifest.xml

### Expected Behavior

Manifest in `obj` should not contain `WRITE_EXTERNAL_STORAGE` if it is not declared in the project.

This permission should not be included in the manifest in APK either (after running Archive for Publishing).

### Actual Behavior

Xamarin.Android adds `WRITE_EXTERNAL_STORAGE` to permissions even though it is not selected in the manifest.

### Version Information

Xamarin.Android should not add `WRITE_EXTERNAL_STORAGE` permission if not checked in the manifest.

```

=== Visual Studio Community 2019 for Mac ===

Version 8.7.8 (build 4)

Installation UUID: 0be7cb3e-0171-43b8-99c3-b7ba76999bd0

GTK+ 2.24.23 (Raleigh theme)

Xamarin.Mac 6.18.0.23 (d16-6 / 088c73638)

Package version: 612000093

=== Mono Framework MDK ===

Runtime:

Mono 6.12.0.93 (2020-02/620cf538206) (64-bit)

Package version: 612000093

=== Roslyn (Language Service) ===

3.7.0-6.20427.1+18ede13943b0bfae1b44ef078b2f3923159bcd32

=== NuGet ===

Version: 5.7.0.6702

=== .NET Core SDK ===

SDK: /usr/local/share/dotnet/sdk/3.1.402/Sdks

SDK Versions:

3.1.402

3.1.200

3.1.102

3.1.101

MSBuild SDKs: /Library/Frameworks/Mono.framework/Versions/6.12.0/lib/mono/msbuild/Current/bin/Sdks

=== .NET Core Runtime ===

Runtime: /usr/local/share/dotnet/dotnet

Runtime Versions:

3.1.8

3.1.2

3.1.1

2.1.22

2.1.16

2.1.15

=== Xamarin.Profiler ===

Version: 1.6.13.11

Location: /Applications/Xamarin Profiler.app/Contents/MacOS/Xamarin Profiler

=== Updater ===

Version: 11

=== Xamarin.Android ===

Version: 11.0.2.0 (Visual Studio Community)

Commit: xamarin-android/d16-7/025fde9

Android SDK: /Users/wkulik/Library/Android/sdk

Supported Android versions:

None installed

SDK Tools Version: 26.1.1

SDK Platform Tools Version: 30.0.4

SDK Build Tools Version: 29.0.3

Build Information:

Mono: 83105ba

Java.Interop: xamarin/java.interop/d16-7@1f3388a

ProGuard: Guardsquare/proguard/proguard6.2.2@ebe9000

SQLite: xamarin/sqlite/3.32.1@1a3276b

Xamarin.Android Tools: xamarin/xamarin-android-tools/d16-7@017078f

=== Microsoft OpenJDK for Mobile ===

Java SDK: /Users/wkulik/Library/Developer/Xamarin/jdk/microsoft_dist_openjdk_1.8.0.25

1.8.0-25

Android Designer EPL code available here:

https://github.com/xamarin/AndroidDesigner.EPL

=== Android SDK Manager ===

Version: 16.7.0.13

Hash: 8380518

Branch: remotes/origin/d16-7~2

Build date: 2020-09-16 05:12:24 UTC

=== Android Device Manager ===

Version: 16.7.0.24

Hash: bb090a3

Branch: remotes/origin/d16-7

Build date: 2020-09-16 05:12:46 UTC

=== Xamarin Designer ===

Version: 16.7.0.495

Hash: 03d50a221

Branch: remotes/origin/d16-7-vsmac

Build date: 2020-08-28 13:12:52 UTC

=== Apple Developer Tools ===

Xcode 12.1 (17222)

Build 12A7403

=== Xamarin.Mac ===

Xamarin.Mac not installed. Can't find /Library/Frameworks/Xamarin.Mac.framework/Versions/Current/Version.

=== Xamarin.iOS ===

Version: 14.0.0.0 (Visual Studio Community)

Hash: 7ec3751a1

Branch: xcode12

Build date: 2020-09-16 11:33:15-0400

=== Build Information ===

Release ID: 807080004

Git revision: 9ea7bef96d65cdc3f4288014a799026ccb1993bc

Build date: 2020-09-16 17:22:54-04

Build branch: release-8.7

Xamarin extensions: 9ea7bef96d65cdc3f4288014a799026ccb1993bc

=== Operating System ===

Mac OS X 10.15.7

Darwin 19.6.0 Darwin Kernel Version 19.6.0

Mon Aug 31 22:12:52 PDT 2020

root:xnu-6153.141.2~1/RELEASE_X86_64 x86_64

```

|

non_defect

|

xamarin android adds automatically write external storage permissions to manifest steps to reproduce create a new xamarin forms project with android and ios app build release android check manifest in obj release android androidmanifest xml expected behavior manifest in obj should not contain write external storage if it is not declared in the project this permission should not be included in the manifest in apk either after running archive for publishing actual behavior xamarin android adds write external storage to permissions even though it is not selected in the manifest version information xamarin android should not add write external storage permission if not checked in the manifest visual studio community for mac version build installation uuid gtk raleigh theme xamarin mac package version mono framework mdk runtime mono bit package version roslyn language service nuget version net core sdk sdk usr local share dotnet sdk sdks sdk versions msbuild sdks library frameworks mono framework versions lib mono msbuild current bin sdks net core runtime runtime usr local share dotnet dotnet runtime versions xamarin profiler version location applications xamarin profiler app contents macos xamarin profiler updater version xamarin android version visual studio community commit xamarin android android sdk users wkulik library android sdk supported android versions none installed sdk tools version sdk platform tools version sdk build tools version build information mono java interop xamarin java interop proguard guardsquare proguard sqlite xamarin sqlite xamarin android tools xamarin xamarin android tools microsoft openjdk for mobile java sdk users wkulik library developer xamarin jdk microsoft dist openjdk android designer epl code available here android sdk manager version hash branch remotes origin build date utc android device manager version hash branch remotes origin build date utc xamarin designer version hash branch remotes origin vsmac build date utc apple 
developer tools xcode build xamarin mac xamarin mac not installed can t find library frameworks xamarin mac framework versions current version xamarin ios version visual studio community hash branch build date build information release id git revision build date build branch release xamarin extensions operating system mac os x darwin darwin kernel version mon aug pdt root xnu release

| 0

|

60,159

| 17,023,352,895

|

IssuesEvent

|

2021-07-03 01:34:53

|

tomhughes/trac-tickets

|

https://api.github.com/repos/tomhughes/trac-tickets

|

closed

|

Mapnik renders names for some items that otherwise aren't rendered

|

Component: mapnik Priority: minor Resolution: wontfix Type: defect

|

**[Submitted to the original trac issue database at 10.50pm, Tuesday, 20th January 2009]**

An example is the dismantled railway here:

http://www.openstreetmap.org/?lat=53.1788&lon=-1.3573&zoom=17&layers=B000FTF

This is a stretch of former railway where there's no trace left on the ground. It's set as "railway=dismantled" (I changed this stretch some time ago from "railway=abandoned" precisely because there is no trace left on the ground). The railway doesn't show, but the name does. Would it be possible for Mapnik to only render the name of a way if there is a renderable tag on the way?

|

1.0

|

Mapnik renders names for some items that otherwise aren't rendered - **[Submitted to the original trac issue database at 10.50pm, Tuesday, 20th January 2009]**

An example is the dismantled railway here:

http://www.openstreetmap.org/?lat=53.1788&lon=-1.3573&zoom=17&layers=B000FTF

This is a stretch of former railway where there's no trace left on the ground. It's set as "railway=dismantled" (I changed this stretch some time ago from "railway=abandoned" precisely because there is no trace left on the ground). The railway doesn't show, but the name does. Would it be possible for Mapnik to only render the name of a way if there is a renderable tag on the way?

|

defect

|

mapnik renders names for some items that otherwise aren t rendered an example is the dismantled railway here this is a stretch of former railway where there s no trace left on the ground it s set as railway dismantled i changed this stretch some time ago from railway abandoned precisely because there is no trace left on the ground the railway doesn t show but the name does would it be possible for mapnik to only render the name of a way if there is a renderable tag on the way

| 1

|

77,226

| 26,862,496,520

|

IssuesEvent

|

2023-02-03 19:47:07

|

dotCMS/core

|

https://api.github.com/repos/dotCMS/core

|

opened

|

Adjusting action names: Copy vs Mark for Copy

|

Type : Defect OKR : User Experience Triage Type : UX Design

|

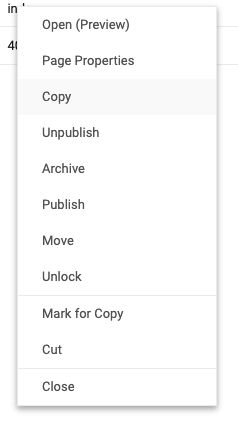

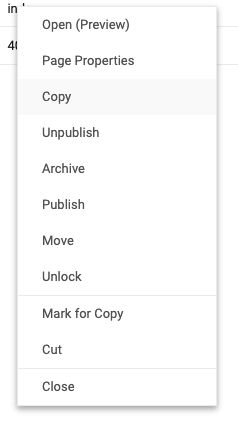

### Problem Statement

The current Site Browser "copy" options are a little confusing.

| Action | Behavior |

|-------|--------|

| `Copy` | Make an immediate duplicate of the item |

| `Mark for Copy` | Place item into dotCMS's clipboard for pasting |

The `Mark for Copy` pattern is the one typically associated with most Copy behaviors since time immemorial, while the `Copy` pattern is what, e.g., Apple calls `Duplicate`.

### Steps to Reproduce

Right-click an item in the Site Browser on Auth or on a local container (note that at the time of writing, someone appears to have renamed Copy to Duplicate on the live Demo).

It's not really a bug, also, but I think it's counterintuitive enough to warrant the Defect label.

### Acceptance Criteria

I think we should consider the following changes:

- `Copy` -> `Duplicate`

- `Mark for Copy` -> `Copy`

The requirements are slightly different for each, as the first is a Workflow Action and the other is a System Action (but hopefully both are fairly simple).

### dotCMS Version

Seen on versions from 22.09 to 23.01, probably goes back much further.

### Proposed Objective

User Experience

### Proposed Priority

Priority 4 - Trivial

### External Links... Slack Conversations, Support Tickets, Figma Designs, etc.

_No response_

### Assumptions & Initiation Needs

If anyone feels strongly about the current setup, may call for a Debate Club session or something?

### Sub-Tasks & Estimates

_No response_

|

1.0

|

Adjusting action names: Copy vs Mark for Copy - ### Problem Statement

The current Site Browser "copy" options are a little confusing.

| Action | Behavior |

|-------|--------|

| `Copy` | Make an immediate duplicate of the item |

| `Mark for Copy` | Place item into dotCMS's clipboard for pasting |

The `Mark for Copy` pattern is the one typically associated with most Copy behaviors since time immemorial, while the `Copy` pattern is what, e.g., Apple calls `Duplicate`.

### Steps to Reproduce

Right-click an item in the Site Browser on Auth or on a local container (note that at the time of writing, someone appears to have renamed Copy to Duplicate on the live Demo).

It's not really a bug, also, but I think it's counterintuitive enough to warrant the Defect label.

### Acceptance Criteria

I think we should consider the following changes:

- `Copy` -> `Duplicate`

- `Mark for Copy` -> `Copy`

The requirements are slightly different for each, as the first is a Workflow Action and the other is a System Action (but hopefully both are fairly simple).

### dotCMS Version

Seen on versions from 22.09 to 23.01, probably goes back much further.

### Proposed Objective

User Experience

### Proposed Priority

Priority 4 - Trivial

### External Links... Slack Conversations, Support Tickets, Figma Designs, etc.

_No response_

### Assumptions & Initiation Needs

If anyone feels strongly about the current setup, may call for a Debate Club session or something?

### Sub-Tasks & Estimates

_No response_

|

defect

|

adjusting action names copy vs mark for copy problem statement the current site browser copy options are a little confusing action behavior copy make an immediate duplicate of the item mark for copy place item into dotcms s clipboard for pasting the mark for copy pattern is the one typically associated most copy behaviors since time immemorial while the copy pattern is what e g apple calls duplicate steps to reproduce right click an item in the site browser on auth or on a local container note that at the time of writing someone appears to have renamed copy to duplicate on the live demo it s not really a bug also but i think it s counterintuitive enough to warrant the defect label acceptance criteria i think we should consider the following changes copy duplicate mark for copy copy the requirements are slightly different for each as the first is a workflow action and the other is a system action but hopefully both are fairly simple dotcms version seen on versions from to probably goes back much further proposed objective user experience proposed priority priority trivial external links slack conversations support tickets figma designs etc no response assumptions initiation needs if anyone feels strongly about the current setup may call for a debate club session or something sub tasks estimates no response

| 1

|

407,336

| 11,912,199,634

|

IssuesEvent

|

2020-03-31 09:52:17

|

JEvents/JEvents

|

https://api.github.com/repos/JEvents/JEvents

|

closed

|

uikit: No save when creating a new calendar

|

Priority - High

|

As stated, however, if you edit an existing calendar there is a save & close button along with the cancel.

|

1.0

|

uikit: No save when creating a new calendar - As stated, however, if you edit an existing calendar there is a save & close button along with the cancel.

|

non_defect

|

uikit no save when creating a new calendar as stated however if you edit an existing calendar there is a save close button along with the cancel

| 0

|

79,362

| 28,128,914,130

|

IssuesEvent

|

2023-03-31 20:27:42

|

NREL/EnergyPlus

|

https://api.github.com/repos/NREL/EnergyPlus

|

opened

|

Move ZeroSourceSumHATsurf in DataHeatBalance to ChilledCeilingPanelSimple

|

Defect

|

Issue overview

--------------

Followup to #9921. The variable ZeroSourceSumHATsurf stores the sum of zone (or space?) surfaces radiant heat. This methodology is used for all radiant heaters. The discussion is in regard to more than 1 radiant heater in the same zone and how this zone surface data summation is used within each radiant model. The ElectricBaseBoard branch #9921 moved this variable to be a member of the electric baseboard instead of being indexed to the zone where the baseboard was installed. There is uncertainty of how this information should be used within a radiant heat transfer model.

### Details

Some additional details for this issue (if relevant):

- Platform (Operating system, version)

- Version of EnergyPlus (if using an intermediate build, include SHA)

- Unmethours link or helpdesk ticket number

### Checklist

Add to this list or remove from it as applicable. This is a simple templated set of guidelines.

- [ ] Defect file added (list location of defect file here)

- [ ] Ticket added to Pivotal for defect (development team task)

- [ ] Pull request created (the pull request will have additional tasks related to reviewing changes that fix this defect)

|

1.0

|

Move ZeroSourceSumHATsurf in DataHeatBalance to ChilledCeilingPanelSimple - Issue overview

--------------

Followup to #9921. The variable ZeroSourceSumHATsurf stores the sum of zone (or space?) surfaces radiant heat. This methodology is used for all radiant heaters. The discussion is in regard to more than 1 radiant heater in the same zone and how this zone surface data summation is used within each radiant model. The ElectricBaseBoard branch #9921 moved this variable to be a member of the electric baseboard instead of being indexed to the zone where the baseboard was installed. There is uncertainty of how this information should be used within a radiant heat transfer model.

### Details

Some additional details for this issue (if relevant):

- Platform (Operating system, version)

- Version of EnergyPlus (if using an intermediate build, include SHA)

- Unmethours link or helpdesk ticket number

### Checklist

Add to this list or remove from it as applicable. This is a simple templated set of guidelines.

- [ ] Defect file added (list location of defect file here)

- [ ] Ticket added to Pivotal for defect (development team task)

- [ ] Pull request created (the pull request will have additional tasks related to reviewing changes that fix this defect)

|

defect

|

move zerosourcesumhatsurf in dataheatbalance to chilledceilingpanelsimple issue overview followup to the variable zerosourcesumhatsurf stores the sum of zone or space surfaces radiant heat this methodology is used for all radiant heaters the discussion is in regard to more than radiant heater in the same zone and how this zone surface data summation is used within each radiant model the electricbaseboard branch moved this variable to be a member of the electric baseboard instead of being indexed to the zone where the baseboard was installed there is uncertainty of how this information should be used within a radiant heat transfer model details some additional details for this issue if relevant platform operating system version version of energyplus if using an intermediate build include sha unmethours link or helpdesk ticket number checklist add to this list or remove from it as applicable this is a simple templated set of guidelines defect file added list location of defect file here ticket added to pivotal for defect development team task pull request created the pull request will have additional tasks related to reviewing changes that fix this defect

| 1

|

5,923

| 2,610,217,999

|

IssuesEvent

|

2015-02-26 19:09:21

|

chrsmith/somefinders

|

https://api.github.com/repos/chrsmith/somefinders

|

opened

|

x3daudio dll

|

auto-migrated Priority-Medium Type-Defect

|

```

'''Арефий Тетерин'''

Good day, I just can't find .x3daudio dll. I've seen it somewhere before

'''Алан Яковлев'''

Here, take this link http://bit.ly/16svV0a

'''Арвид Кононов'''

Thanks, looks like the right one, but it asks me to enter a phone number

'''Адриан Фомичёв'''

No, that doesn't affect your balance

'''Велислав Буров'''

Nope, all OK, nothing was charged for me

File information: x3daudio dll

Uploaded: This month

Times downloaded: 1172

Rating: 206

Average download speed: 1105

Similar files: 29

```

-----

Original issue reported on code.google.com by `kondense...@gmail.com` on 17 Dec 2013 at 12:34

|

1.0

|

x3daudio dll - ```

'''Арефий Тетерин'''

Good day, I just can't find .x3daudio dll. I've seen it somewhere before

'''Алан Яковлев'''

Here, take this link http://bit.ly/16svV0a

'''Арвид Кононов'''

Thanks, looks like the right one, but it asks me to enter a phone number

'''Адриан Фомичёв'''

No, that doesn't affect your balance

'''Велислав Буров'''

Nope, all OK, nothing was charged for me

File information: x3daudio dll

Uploaded: This month

Times downloaded: 1172

Rating: 206

Average download speed: 1105

Similar files: 29

```

-----

Original issue reported on code.google.com by `kondense...@gmail.com` on 17 Dec 2013 at 12:34

|

defect

|

dll арефий тетерин good day i just can t find dll i ve seen it somewhere before алан яковлев here s a link арвид кононов thanks that seems to be it but it asks me to enter a phone number адриан фомичёв no that doesn t affect your balance велислав буров nope all ok nothing was charged for me file information dll uploaded this month downloads rating average download speed similar files original issue reported on code google com by kondense gmail com on dec at

| 1

|

139,025

| 20,758,730,744

|

IssuesEvent

|

2022-03-15 14:28:40

|

raft-tech/TANF-app

|

https://api.github.com/repos/raft-tech/TANF-app

|

opened

|

As a grantee pilot user I want to be able to access TDP help/support content in one place

|

Research & Design

|

**Description**

Blocked by other onboarding content tickets as it requires insight into the full set of content. Delivers a design for an in-app knowledge base which aggregates all onboarding/support content. May also contain existing materials like data coding instructions.

**AC:**

- [ ] Email has a clear sequence of next steps for the user to follow

- [ ] Each step is complete with a link to the place that step will be carried out

- [ ] The design is consistent with the team’s past decisions, or a change is clearly documented

- [ ] The design is usable, meaning...

- [ ] It uses [USWDS components and follows its UX guidance](https://designsystem.digital.gov/components/), or a deviation is clearly documented

- [ ] Language is intentional and [plain](https://plainlanguage.gov/guidelines/); placeholders are clearly documented

- [ ] It follows [accessibility guidelines](https://accessibility.digital.gov/) (e.g. clear information hierarchy, color is not the only way meaning is communicated, etc.)

- [ ] If feedback identifies bigger questions or unknowns, create additional issues to investigate

- [ ] Relevant user stories are documented.

- [ ] Relevant accessibility implementation notes are documented

- [ ] Recommended pa11y checks are documented.

- [ ] User flow is included/updated.

- [ ] Design includes implementation notes for accessibility for instances where standard best practices won't deliver the desired experience.

- [ ] Dev/Design handoff has occurred

**Notes**

Likely knowledge base areas:

- Creating & managing a login.gov account

- Logging into TDP, Getting Access

- Framing TDP functionality (Data Submission/Resubmission, Requirements for Data and How to Submit Complete Resubmissions)

- TDP Release Notes (Potential way to frame feature overview from homepage & Getting Started email)

- How To: Ask Questions, Get Support, Give Feedback

May also be a good place to host some content from https://tanfdata.org/

Design will likely be modelled after informational USWDS page templates, e.g. USWDS' own getting started guide https://designsystem.digital.gov/documentation/getting-started/developers/phase-one-install/

**Tasks**

- [ ] Draft information architecture (page hierarchy, order, labeling)

- [ ] Draft knowledge base design in [figma](url)

- [ ] Add links to all content to be hosted in knowledge base

- [ ] Review / critique opportunity w/ DIGIT team (and/or regional staff?)

- [ ] Revision as needed

- [ ] Dev/Design handoff sync

**Documentation**

- Figma

- Content links hack.md

**DD**

- [ ] @lfrohlich has reviewed and signed off

|

1.0

|

As a grantee pilot user I want to be able to access TDP help/support content in one place - **Description**

Blocked by other onboarding content tickets as it requires insight into the full set of content. Delivers a design for an in-app knowledge base which aggregates all onboarding/support content. May also contain existing materials like data coding instructions.

**AC:**

- [ ] Email has a clear sequence of next steps for the user to follow

- [ ] Each step is complete with a link to the place that step will be carried out

- [ ] The design is consistent with the team’s past decisions, or a change is clearly documented

- [ ] The design is usable, meaning...

- [ ] It uses [USWDS components and follows its UX guidance](https://designsystem.digital.gov/components/), or a deviation is clearly documented

- [ ] Language is intentional and [plain](https://plainlanguage.gov/guidelines/); placeholders are clearly documented

- [ ] It follows [accessibility guidelines](https://accessibility.digital.gov/) (e.g. clear information hierarchy, color is not the only way meaning is communicated, etc.)

- [ ] If feedback identifies bigger questions or unknowns, create additional issues to investigate

- [ ] Relevant user stories are documented.

- [ ] Relevant accessibility implementation notes are documented

- [ ] Recommended pa11y checks are documented.

- [ ] User flow is included/updated.

- [ ] Design includes implementation notes for accessibility for instances where standard best practices won't deliver the desired experience.

- [ ] Dev/Design handoff has occurred

**Notes**

Likely knowledge base areas:

- Creating & managing a login.gov account

- Logging into TDP, Getting Access

- Framing TDP functionality (Data Submission/Resubmission, Requirements for Data and How to Submit Complete Resubmissions)

- TDP Release Notes (Potential way to frame feature overview from homepage & Getting Started email)

- How To: Ask Questions, Get Support, Give Feedback

May also be a good place to host some content from https://tanfdata.org/

Design will likely be modelled after informational USWDS page templates, e.g. USWDS' own getting started guide https://designsystem.digital.gov/documentation/getting-started/developers/phase-one-install/

**Tasks**

- [ ] Draft information architecture (page hierarchy, order, labeling)

- [ ] Draft knowledge base design in [figma](url)

- [ ] Add links to all content to be hosted in knowledge base

- [ ] Review / critique opportunity w/ DIGIT team (and/or regional staff?)

- [ ] Revision as needed

- [ ] Dev/Design handoff sync

**Documentation**

- Figma

- Content links hack.md

**DD**

- [ ] @lfrohlich has reviewed and signed off

|

non_defect

|

as a grantee pilot user i want to be able to access tdp help support content in one place description blocked by other onboarding content tickets as it requires insight into the full set of content delivers a design for an in app knowledge base which aggregates all onboarding support content may also contain existing materials like data coding instructions ac email has a clear sequence of next steps for the user to follow each step is complete with a link to the place that step will be carried out the design is consistent with the team’s past decisions or a change is clearly documented the design is usable meaning it uses or a deviation is clearly documented language is intentional and placeholders are clearly documented it follows e g clear information hierarchy color is not the only way meaning is communicated etc if feedback identifies bigger questions or unknowns create additional issues to investigate relevant user stories are documented relevant accessibility implementation notes are documented recommended checks are documented user flow is included updated design includes implementation notes for accessibility for instances where standard best practices won t deliver the desired experience dev design hanodff has occurred notes likely knowledge base areas creating managing a login gov account logging into tdp getting access framing tdp functionality data submission resubmission requirements for data and how to submit complete resubmissions tdp release notes potential way to frame feature overview from homepage getting started email how to ask questions get support give feedback may also be a good place to host some content from design will likely be modelled after informational uswds page templates e g uswds own getting started guide tasks draft information architecture page hierarchy order labeling draft knowledge base design in url add links to all content to be hosted in knowledge base review critique opportunity w digit team and or regional staff revision 
as needed dev design handoff sync documentation figma content links hack md dd lfrohlich has reviewed and signed off

| 0

|

59,195

| 17,016,412,272

|

IssuesEvent

|

2021-07-02 12:42:14

|

tomhughes/trac-tickets

|

https://api.github.com/repos/tomhughes/trac-tickets

|

opened

|

Russian geocoding support in Nominatim

|

Component: nominatim Priority: major Type: defect

|

**[Submitted to the original trac issue database at 8.14pm, Saturday, 19th October 2013]**

As of now, Russian geocoding support in Nominatim is totally broken. I'm filing this meta-ticket to track progress on individual tickets and to gather relevant information.

The background is that I tried to conduct a sociological study that involved computing coordinates for hundreds of thousands of addresses. For that, I planned to deploy a local Nominatim instance, but it turned out that for most addresses it simply doesn't work. For now, I resort to the geocoding API of Yandex (Russia's #1 search engine), which works like a charm but is not suitable for bulk queries. Another point is that desktop applications are being developed (GNOME Maps, for example) that use the geocode-glib library, which in turn uses the Nominatim API internally.

The problem is that Russian address nomenclature is very diverse and informal. Here is a brief summary; if needed, I can create a wiki article on that.

1) The "street" term includes not only "" (a street proper), but also "" (side-street), "" (passage), "" (avenue), "" (highway), "" (cul-de-sac), "" (bridge), "" (square) and some others. These are used in full or abbreviated form ("" -> ".", "" -> "-"), and can be both appended or prepended to the name. Sometimes, "" (major) or "" (minor) are the part of the name, and the word order is arbitrary. Thus, " ." and " " refer to the same. #4703

Examples: ". ", " ", " .", " "

2) The building number nomenclature is also very diverse. Usually, there is a top-level prefix: "" (house) or "" (property), followed by the main number. These prefixes can be abbreviated as "." or "." or even omitted. #4647

Besides the main number, there can be also letter indexes, different sub-numbers and combinations of those:

- letter index is a letter (usually "", "", "") appended to the building number without a space;

- sub-building is either a "" or "". These are similar, but not interchangeable. These can be spelled in full (" 1 2") or abbreviated in different ways: ". 1 . 2", ". 12", "3 . 1", "31". As you see, there are a short form (". 3") and a one-letter form ("3"); both the period and the space can be omitted when appending it to the main number. Moreover, a sub-building number can have a letter index itself;

- finally, the slash syntax is used when the building has dual address. For example, a building on the corner of two streets can be addressed as both ". 30" and " ., 61", while full address is " 30/61".

3) Rarely, but there can be ranges used as building numbers. For example, there is one single building with an address " ., 10-16". This means that this building should be a hit for requests like ", 12" or ", 14" (but not ", 11" - there are even and odd sides of the street usually).

4) The "" (ie) and "" (yo) letters should be treated as identical; the queries should be case insensitive. #2467 #4819 #2758

As a solution, I can imagine some code that canonicalizes the requested address. For this to work, all the Russian addresses in OSM will need to be canonicalized, too (probably, with the help of the same code).
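
A minimal sketch of what such canonicalization could look like for points 3 and 4 only, in Python. The function names `canonicalize` and `house_number_matches` are hypothetical and not part of Nominatim; the parity rule simply assumes that a range such as "10-16" covers one side of the street, as described above.

```python
import re
import unicodedata


def canonicalize(text: str) -> str:
    """Fold case and treat the letters 'ё' and 'е' as identical (point 4)."""
    # NFC first, so a decomposed 'е' + combining diaeresis becomes a single 'ё'.
    composed = unicodedata.normalize("NFC", text)
    return composed.casefold().replace("ё", "е")


def house_number_matches(tagged: str, requested: int) -> bool:
    """Check a requested number against a tagged number or range (point 3).

    A range like '10-16' matches only numbers with the same parity as its
    start, assuming even and odd numbers are on opposite sides of the street.
    """
    tagged = tagged.strip()
    m = re.fullmatch(r"(\d+)-(\d+)", tagged)
    if m is None:
        # Not a range: fall back to an exact comparison.
        return tagged == str(requested)
    low, high = int(m.group(1)), int(m.group(2))
    return low <= requested <= high and requested % 2 == low % 2
```

Street-type abbreviations, word order, and sub-building syntax (points 1 and 2) would need their own rules and are not covered here.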

|

1.0

|

Russian geocoding support in Nominatim - **[Submitted to the original trac issue database at 8.14pm, Saturday, 19th October 2013]**

As of now, Russian geocoding support in Nominatim is totally broken. I'm filing this meta-ticket to track progress on individual tickets and to gather relevant information.

The background is that I tried to conduct a sociological study that involved computing coordinates for hundreds of thousands of addresses. For that, I planned to deploy a local Nominatim instance, but it turned out that for most addresses it simply doesn't work. For now, I resort to the geocoding API of Yandex (Russia's #1 search engine), which works like a charm but is not suitable for bulk queries. Another point is that desktop applications are being developed (GNOME Maps, for example) that use the geocode-glib library, which in turn uses the Nominatim API internally.

The problem is that Russian address nomenclature is very diverse and informal. Here is a brief summary; if needed, I can create a wiki article on that.

1) The "street" term includes not only "" (a street proper), but also "" (side-street), "" (passage), "" (avenue), "" (highway), "" (cul-de-sac), "" (bridge), "" (square) and some others. These are used in full or abbreviated form ("" -> ".", "" -> "-"), and can be both appended or prepended to the name. Sometimes, "" (major) or "" (minor) are the part of the name, and the word order is arbitrary. Thus, " ." and " " refer to the same. #4703

Examples: ". ", " ", " .", " "

2) The building number nomenclature is also very diverse. Usually, there is a top-level prefix: "" (house) or "" (property), followed by the main number. These prefixes can be abbreviated as "." or "." or even omitted. #4647

Besides the main number, there can be also letter indexes, different sub-numbers and combinations of those:

- letter index is a letter (usually "", "", "") appended to the building number without a space;

- sub-building is either a "" or "". These are similar, but not interchangeable. These can be spelled in full (" 1 2") or abbreviated in different ways: ". 1 . 2", ". 12", "3 . 1", "31". As you see, there are a short form (". 3") and a one-letter form ("3"); both the period and the space can be omitted when appending it to the main number. Moreover, a sub-building number can have a letter index itself;

- finally, the slash syntax is used when the building has dual address. For example, a building on the corner of two streets can be addressed as both ". 30" and " ., 61", while full address is " 30/61".

3) Rarely, but there can be ranges used as building numbers. For example, there is one single building with an address " ., 10-16". This means that this building should be a hit for requests like ", 12" or ", 14" (but not ", 11" - there are even and odd sides of the street usually).

4) The "" (ie) and "" (yo) letters should be treated as identical; the queries should be case insensitive. #2467 #4819 #2758

As a solution, I can imagine some code that canonicalizes the requested address. For this to work, all the Russian addresses in OSM will need to be canonicalized, too (probably, with the help of the same code).

|

defect

|

russian geocoding support in nominatim as of now russian geocoding support in nominatim is totally broken i m filing this meta ticket to track progress on individual tickets and to gather relevant information the background is that i ve tried to conduct a sociological study that involved computing coordinates for hundreds of thousands of addresses for that i planned to deploy a local nominatim instance but it turned out that for most of addresses it simply doesn t work for now i resort to using yandex russia s search engine geocoding api that works like a charm but is not suitable for bulk queries another point is that there are desktop applications being developed that use geocode glib library gnome maps for example that in turn uses nominatim api inside the problem is that russian addresses nomenclature is very diverse and informal here is a brief summary if needed i can create a wiki article on that the street term includes not only a street proper but also side street passage avenue highway cul de sac bridge square and some others these are used in full or abbreviated form and can be both appended or prepended to the name sometimes major or minor are the part of the name and the word order is arbitrary thus and refer to the same examples the building number nomenclature is also very diverse usually there is a top level prefix house or property followed by the main number these prefixes can be abbreviated as r or even omitted besides the main number there can be also letter indexes different sub numbers and combinations of those letter index is a letter usually appended to the building number without a space sub building is either a or these are similar but not interchangeable these can be spelled full form or abbreviated in different ways as you see are short form and one letter form both period and space can be omitted when appending it to the main number moreover a sub building number can have a letter index itself finally the slash syntax is used when the 
building has dual address for example a building on the corner of two streets can be addressed as both and while full address is rarely but there can be ranges used as building numbers for example there is one single building with an address this means that this building should be a hit for requests like or but not there are even and odd sides of the street usually the ie and yo letters should be treated as identical the queries should be case insensitive as a solution i can imagine some code that canonicalizes the requested address for this to work all the russian addresses in osm will need to be canonicalized too probably with the help of the same code

| 1

|

2,621

| 2,607,932,514

|

IssuesEvent

|

2015-02-26 00:27:22

|

chrsmithdemos/minify

|

https://api.github.com/repos/chrsmithdemos/minify

|

closed

|

Re-arranging CSS to minimize the gzip output

|

auto-migrated Priority-Medium Type-Defect

|

```

I've found an interesting article about CSS compression and gzip.

It shows techniques to improve data compression by simply reordering CSS

properties.

I think it would be interesting to improve minify :)

Here is the link

http://www.barryvan.com.au/2009/08/css-minifier-and-alphabetiser/

Thanks for your tool !

(PS : Sorry, I've no time to implement that to minify myself, so I just

give you the idea :) )

```

-----

Original issue reported on code.google.com by `bist...@gmail.com` on 10 Sep 2009 at 1:54

|

1.0

|

Re-arranging CSS to minimize the gzip output - ```

I've found an interesting article about CSS compression and gzip.

It shows techniques to improve data compression by simply reordering CSS

properties.

I think it would be interesting to improve minify :)

Here is the link

http://www.barryvan.com.au/2009/08/css-minifier-and-alphabetiser/

Thanks for your tool !

(PS : Sorry, I've no time to implement that to minify myself, so I just

give you the idea :) )

```

-----

Original issue reported on code.google.com by `bist...@gmail.com` on 10 Sep 2009 at 1:54

|

defect

|

re arranging css to minimize the gzip output i ve found an interesting article about css compression and gzip it shows techniques to improve data compression by simply reordering css properties i think it would be interesting to improve minify here is the link thanks for your tool ps sorry i ve no time to implement that to minify myself so i just give you the idea original issue reported on code google com by bist gmail com on sep at

| 1

|

62,743

| 17,187,480,063

|

IssuesEvent

|

2021-07-16 05:46:18

|

Questie/Questie

|

https://api.github.com/repos/Questie/Questie

|

closed

|

Gathering Leather (768)

|

Questie - Journey Type - Defect

|

**Misplaced in the Journey feature.**

**Gathering Leather** (768)

This is a "Skinning" quest,

but appears for everyone.

Placed in Thunder Bluff section.

Should be placed in Profession section.

[Wowhead Link](https://classic.wowhead.com/quest=768/gathering-leather)

|

1.0

|

Gathering Leather (768) - **Misplaced in the Journey feature.**

**Gathering Leather** (768)

This is a "Skinning" quest,

but appears for everyone.

Placed in Thunder Bluff section.

Should be placed in Profession section.

[Wowhead Link](https://classic.wowhead.com/quest=768/gathering-leather)

|

defect

|

gathering leather misplaced in the journey feature gathering leather this is an skinning quest but appears for everyone placed in thunder bluff section should be placed in profession section

| 1

|

77,602

| 27,070,086,768

|

IssuesEvent

|

2023-02-14 05:49:35

|

vector-im/element-web

|

https://api.github.com/repos/vector-im/element-web

|

opened

|

gitter: shows "active" notification, but doesn't seem to go away no matter what I look at

|

T-Defect

|

### Steps to reproduce

1. Go to app.gitter.im

2. See the * on the title bar

3. Look at lots of things to try to make it go away

4. Cry

### Outcome

#### What did you expect?

No asterisk.

#### What happened instead?

Tears.

### Operating system

macOS

### Browser information

Chrome

### URL for webapp

app.gitter.im

### Application version

_No response_

### Homeserver

_No response_

### Will you send logs?

Yes

|

1.0

|

gitter: shows "active" notification, but doesn't seem to go away no matter what I look at - ### Steps to reproduce

1. Go to app.gitter.im

2. See the * on the title bar

3. Look at lots of things to try to make it go away

4. Cry

### Outcome

#### What did you expect?

No asterisk.

#### What happened instead?

Tears.

### Operating system

macOS

### Browser information

Chrome

### URL for webapp

app.gitter.im

### Application version

_No response_

### Homeserver

_No response_

### Will you send logs?

Yes

|

defect

|

gitter shows active notification but doesn t seem to go away no matter what i look at steps to reproduce go to app gitter im see the on the title bar look at lots of things to try to make it go away cry outcome what did you expect no asterisk what happened instead tears operating system macos browser information chrome url for webapp app gitter im application version no response homeserver no response will you send logs yes

| 1

|

57,046

| 14,101,821,590

|

IssuesEvent

|

2020-11-06 07:38:32

|

siyam4u/pingon

|

https://api.github.com/repos/siyam4u/pingon

|

opened

|

CVE-2018-19838 (Medium) detected in opennmsopennms-source-25.1.0-1, node-sass-4.14.1.tgz

|

security vulnerability

|

## CVE-2018-19838 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>opennmsopennms-source-25.1.0-1</b>, <b>node-sass-4.14.1.tgz</b></p></summary>

<p>

<details><summary><b>node-sass-4.14.1.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-sass/-/node-sass-4.14.1.tgz">https://registry.npmjs.org/node-sass/-/node-sass-4.14.1.tgz</a></p>

<p>Path to dependency file: pingon/package.json</p>

<p>Path to vulnerable library: pingon/node_modules/node-sass/package.json</p>

<p>

Dependency Hierarchy:

- laravel-mix-1.7.2.tgz (Root Library)

- :x: **node-sass-4.14.1.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/siyam4u/pingon/commit/4d774e9827008423397c5c157b52249afe1da317">4d774e9827008423397c5c157b52249afe1da317</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In LibSass prior to 3.5.5, functions inside ast.cpp for IMPLEMENT_AST_OPERATORS expansion allow attackers to cause a denial-of-service resulting from stack consumption via a crafted sass file, as demonstrated by recursive calls involving clone(), cloneChildren(), and copy().

<p>Publish Date: 2018-12-04

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-19838>CVE-2018-19838</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/sass/libsass/blob/3.6.0/src/ast.cpp">https://github.com/sass/libsass/blob/3.6.0/src/ast.cpp</a></p>

<p>Release Date: 2019-07-01</p>

<p>Fix Resolution: LibSass - 3.6.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2018-19838 (Medium) detected in opennmsopennms-source-25.1.0-1, node-sass-4.14.1.tgz - ## CVE-2018-19838 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>opennmsopennms-source-25.1.0-1</b>, <b>node-sass-4.14.1.tgz</b></p></summary>

<p>

<details><summary><b>node-sass-4.14.1.tgz</b></p></summary>

<p>Wrapper around libsass</p>

<p>Library home page: <a href="https://registry.npmjs.org/node-sass/-/node-sass-4.14.1.tgz">https://registry.npmjs.org/node-sass/-/node-sass-4.14.1.tgz</a></p>

<p>Path to dependency file: pingon/package.json</p>

<p>Path to vulnerable library: pingon/node_modules/node-sass/package.json</p>

<p>

Dependency Hierarchy:

- laravel-mix-1.7.2.tgz (Root Library)

- :x: **node-sass-4.14.1.tgz** (Vulnerable Library)

</details>

<p>Found in HEAD commit: <a href="https://github.com/siyam4u/pingon/commit/4d774e9827008423397c5c157b52249afe1da317">4d774e9827008423397c5c157b52249afe1da317</a></p>

<p>Found in base branch: <b>master</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

In LibSass prior to 3.5.5, functions inside ast.cpp for IMPLEMENT_AST_OPERATORS expansion allow attackers to cause a denial-of-service resulting from stack consumption via a crafted sass file, as demonstrated by recursive calls involving clone(), cloneChildren(), and copy().

<p>Publish Date: 2018-12-04

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2018-19838>CVE-2018-19838</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>6.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: Required

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/sass/libsass/blob/3.6.0/src/ast.cpp">https://github.com/sass/libsass/blob/3.6.0/src/ast.cpp</a></p>

<p>Release Date: 2019-07-01</p>

<p>Fix Resolution: LibSass - 3.6.0</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

cve medium detected in opennmsopennms source node sass tgz cve medium severity vulnerability vulnerable libraries opennmsopennms source node sass tgz node sass tgz wrapper around libsass library home page a href path to dependency file pingon package json path to vulnerable library pingon node modules node sass package json dependency hierarchy laravel mix tgz root library x node sass tgz vulnerable library found in head commit a href found in base branch master vulnerability details in libsass prior to functions inside ast cpp for implement ast operators expansion allow attackers to cause a denial of service resulting from stack consumption via a crafted sass file as demonstrated by recursive calls involving clone clonechildren and copy publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction required scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution libsass step up your open source security game with whitesource

| 0

|

61,675

| 17,023,754,837

|

IssuesEvent

|

2021-07-03 03:40:14

|

tomhughes/trac-tickets

|

https://api.github.com/repos/tomhughes/trac-tickets

|

closed

|

Tagging a way freezes tagging menu

|

Component: potlatch2 Priority: major Resolution: fixed Type: defect

|

**[Submitted to the original trac issue database at 2.48am, Sunday, 30th October 2011]**

I can add as many ways as I like without problems, but once I add tags to a way, the menu on the left freezes.

If I am using 'simple': once I select a road type, the drop-down menu remains down. I can move it back up by clicking on the tab, but if I click anywhere previously covered by the drop-down menu, the menu reappears. I can add new ways, and categorise them differently as long as it appears in the same section (e.g. if the first way is highway=trunk, I can select other types of road, but not switch to (e.g.) rail). The advanced tab no longer works, so I can't add other tags.

If I am using 'advanced': again I can add as many ways as I like, but once I create some tags, I am stuck using just this way. Any click on the map adds a new node on the same way, and double-clicking or deleting doesn't work. I can finish the way by pressing enter, but I can't then create any more ways or select the original way. (I can still drag nodes onto the map.)

I initially was using Bing imagery, I've turned this off to no effect.

I first noticed this on my 6-year old Mac at home. I initially thought it was because of my dial-up connection (slow, maybe not completely loading), but it is also happening on my new Windows 7 computer at work, which has a broadband connection.

|

1.0

|

Tagging a way freezes tagging menu - **[Submitted to the original trac issue database at 2.48am, Sunday, 30th October 2011]**

I can add as many ways as I like without problems, but once I add tags to a way, the menu on the left freezes.

If I am using 'simple': once I select a road type, the drop-down menu remains down. I can move it back up by clicking on the tab, but if I click anywhere previously covered by the drop-down menu, the menu reappears. I can add new ways, and categorise them differently as long as it appears in the same section (e.g. if the first way is highway=trunk, I can select other types of road, but not switch to (e.g.) rail). The advanced tab no longer works, so I can't add other tags.

If I am using 'advanced': again I can add as many ways as I like, but once I create some tags, I am stuck using just this way. Any click on the map adds a new node on the same way, and double-clicking or deleting doesn't work. I can finish the way by pressing enter, but I can't then create any more ways or select the original way. (I can still drag nodes onto the map.)

I initially was using Bing imagery, I've turned this off to no effect.

I first noticed this on my 6-year old Mac at home. I initially thought it was because of my dial-up connection (slow, maybe not completely loading), but it is also happening on my new Windows 7 computer at work, which has a broadband connection.

|

defect

|

tagging a way freezes tagging menu i can add as many ways as i like without problems but once i add tags to a way the menu on the left freezes if i am using simple once i select a road type the drop down menu remains down i can move it back up by clicking on the tab but if i click anywhere previously covered by the drop down menu the menu reappears i can add new ways and categorise them differently as long as it appears in the same section e g if the first way is highway trunk i can select other types of road but not switch to e g rail the advanced tab no longer works so i can t add other tags if i am using advanced again i can add as many ways as i like but once i create some tags i am stuck using just this way any clicks on the map adds a new node on the same way double clicking or deleting don t work i can finish the way by pressing enter but i can t then create any more ways or select the original way i can still drag nodes onto the map i initially was using bing imagery i ve turned this off to no effect i first noticed this on my year old mac at home i initially thought it was because of my dial up connection slow maybe not completely loading but it is also happening on my new windows computer at work broadband connection

| 1

|

38,358

| 8,786,462,134

|

IssuesEvent

|

2018-12-20 15:48:18

|

techo/voluntariado-eventual

|

https://api.github.com/repos/techo/voluntariado-eventual

|

closed

|

When I run a search, changing present, attendance, or payment (individual) for one user changes it for another

|

Defecto

|

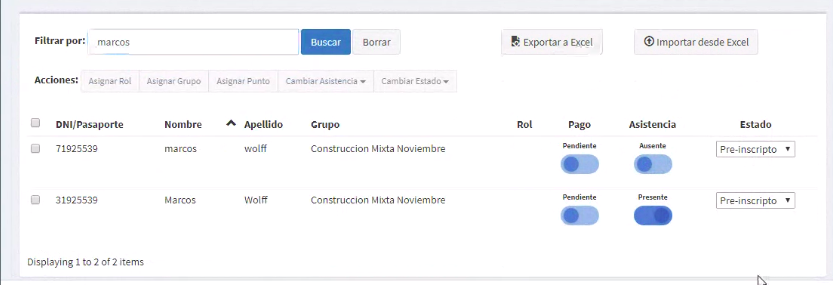

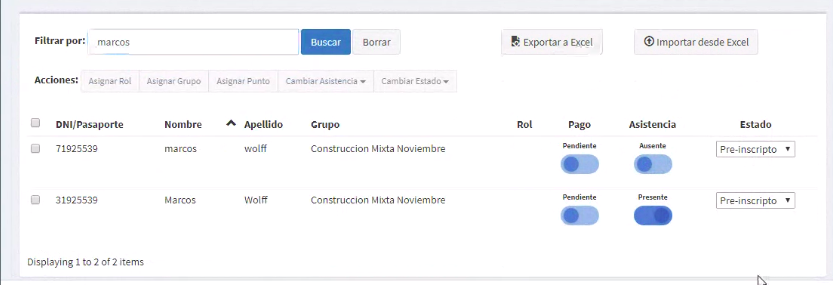

**Describe the bug**

When I search for a user and change their attendance, then search for another user and do the same, both are modified.

**To reproduce**

Steps to reproduce the behavior:

1. Go to Inscripciones (Registrations)

**2. Click on search and type a query**

3. Change the status, attendance, or payment (individual)

4. Clear the search.

5. See that the user who was modified does not stay modified, and a different, random user was modified instead.

**Expected behavior**

5. The user is modified correctly

**Screenshots**

**Additional information**

Test the case where there are multiple pages

|

1.0

|

When I run a search, changing present, attendance, or payment (individual) for one user changes it for another - **Describe the bug**

When I search for a user and change their attendance, then search for another user and do the same, both are modified.

**To reproduce**

Steps to reproduce the behavior:

1. Go to Inscripciones (Registrations)

**2. Click on search and type a query**

3. Change the status, attendance, or payment (individual)

4. Clear the search.

5. See that the user who was modified does not stay modified, and a different, random user was modified instead.

**Expected behavior**

5. The user is modified correctly

**Screenshots**

**Additional information**

Test the case where there are multiple pages

|

defect

|

when i run a search changing present attendance or payment individual for one user changes it for another describe the bug when i search for a user and change their attendance then search for another user and do the same both are modified to reproduce steps to reproduce the behavior go to inscripciones registrations click on search and type a query change the status attendance or payment individual clear the search see that the user who was modified does not stay modified and a different random user was modified instead expected behavior the user is modified correctly screenshots additional information test the case where there are multiple pages

| 1

|

30,918

| 2,729,473,202

|

IssuesEvent

|

2015-04-16 08:48:31

|

calblueprint/foodshift

|

https://api.github.com/repos/calblueprint/foodshift

|

closed

|

Add logo upload utility

|

high priority in progress

|

We want recipients and donors to upload their logos to their profiles so that these can show up on the "About" page.

|

1.0

|

Add logo upload utility - We want recipients and donors to upload their logos to their profiles so that these can show up on the "About" page.

|

non_defect

|

add logo upload utility we want recipients and donors to upload their logos to their profiles so that these can show up on the about page

| 0

|

76,782

| 9,963,494,054

|

IssuesEvent

|

2019-07-08 00:12:13

|

bongnv/kitgen

|

https://api.github.com/repos/bongnv/kitgen

|

opened

|

Add overview and introduction to the project

|

documentation

|

It would be more helpful if there is an overview and introduction to the project.

|

1.0

|

Add overview and introduction to the project - It would be more helpful if there is an overview and introduction to the project.

|

non_defect

|

add overview and introduction to the project it would be more helpful if there is an overview and introduction to the project

| 0

|

68,910

| 21,953,060,573

|

IssuesEvent

|

2022-05-24 09:35:53

|

primefaces/primeng

|

https://api.github.com/repos/primefaces/primeng

|

closed

|

ng-template won't load, missing internal SharedModule export inside p-menubar component

|

defect

|

**I'm submitting a bug report** (check one with "x")

```

[x] bug report => Search github for a similar issue or PR before submitting

[ ] feature request => Please check if request is not on the roadmap already https://github.com/primefaces/primeng/wiki/Roadmap

[ ] support request => Please do not submit support request here, instead see http://forum.primefaces.org/viewforum.php?f=35

```

**Current behavior**

<!-- Describe how the bug manifests. -->

`<ng-template pTemplate="start">...</ng-template>` and `<ng-template pTemplate="end">...</ng-template>` are not rendered into the DOM when p-menubar is used inside a component of a lazy-loaded module. If I import the undocumented SharedModule into the lazy-loaded module, it works fine.

**Expected behavior**

<!-- Describe what the behavior would be without the bug. -->

`<ng-template pTemplate="start">...</ng-template>` and `<ng-template pTemplate="end">...</ng-template>` should be rendered by p-menubar when it is used inside a component of a lazy-loaded Angular module, without having to import SharedModule (the primeng/api SharedModule), as other components do (e.g. p-dropdown, which exports SharedModule internally; see https://github.com/primefaces/primeng/blob/master/src/app/components/dropdown/dropdown.ts).

**Minimal reproduction of the problem with instructions**

<!--

If the current behavior is a bug or you can illustrate your feature request better with an example,

please provide the *STEPS TO REPRODUCE* and if possible a *MINIMAL DEMO* of the problem via

https://plnkr.co or similar (you can use this template as a starting point: http://plnkr.co/edit/tpl:AvJOMERrnz94ekVua0u5).

-->

Example of the bug (with comments showing the solution and the problem in contact-base.component.html and contact.module.ts):

https://stackblitz.com/edit/primeng-menubar-demo-29wkyw

**What is the motivation / use case for changing the behavior?**

<!-- Describe the motivation or the concrete use case -->

It's undocumented behaviour.

The motivation is to improve consistency between PrimeNG components (some export SharedModule, but p-menubar doesn't). It will also fix a bug.

**Please tell us about your environment:**

<!-- Operating system, IDE, package manager, HTTP server, ... -->

* **Angular version:** 13.x (bug is not related to Angular)

<!-- Check whether this is still an issue in the most recent Angular version -->

* **PrimeNG version:** 13.4.0

<!-- Check whether this is still an issue in the most recent Angular version -->

* **Browser:** all

<!-- All browsers where this could be reproduced -->

Affects all browsers, as this bug is not browser related.

* **Language:** TypeScript ~4.6.2 (the version provided with the latest Angular version when generating a new Angular project.)

* **Node (for AoT issues):** `node --version` = 16.14.2

On Windows with npm 8.8.0

Issue is not environment related.

Note: if you want, I can make a PR to solve the problem by exporting the PrimeNG internal SharedModule from the p-menubar component (as PrimeNG already does in p-dropdown). If you accept PRs, I will modify this file to export SharedModule: https://github.com/primefaces/primeng/blob/master/src/app/components/menubar/menubar.ts.

Thank you for reading

|

1.0

|

ng-template won't load, missing internal SharedModule export inside p-menubar component - **I'm submitting a bug report** (check one with "x")

```

[x] bug report => Search github for a similar issue or PR before submitting