| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 757) | labels (string, length 4 to 664) | body (string, length 3 to 261k) | index (string, 10 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

94,767

| 3,932,136,687

|

IssuesEvent

|

2016-04-25 14:50:55

|

musiqpad/mqp-server

|

https://api.github.com/repos/musiqpad/mqp-server

|

closed

|

Ask for notification permission after toggled, not on event

|

Approved Bug High priority

|

The current way that mqp works is this: after you toggle one of the desktop notifications on musiqpad, it then asks you for permission to send a notification after the event in question has been fired. An alternative to this would be, on toggle of a setting, first checking if that permission is already granted, if not, prompt the user for permission.

It doesn't seem the best to ask after the event has been fired, which is what seems to be the case.

|

1.0

|

Ask for notification permission after toggled, not on event - The current way that mqp works is this: after you toggle one of the desktop notifications on musiqpad, it then asks you for permission to send a notification after the event in question has been fired. An alternative to this would be, on toggle of a setting, first checking if that permission is already granted, if not, prompt the user for permission.

It doesn't seem the best to ask after the event has been fired, which is what seems to be the case.

|

non_defect

|

ask for notification permission after toggled not on event the current way that mqp works is this after you toggle one of the desktop notifications on musiqpad it then asks you for permission to send a notification after the event in question has been fired an alternative to this would be on toggle of a setting first checking if that permission is already granted if not prompt the user for permission it doesn t seem the best to ask after the event has been fired which is what seems to be the case

| 0

|

211,984

| 23,856,907,272

|

IssuesEvent

|

2022-09-07 01:15:31

|

artkamote/wdio-test

|

https://api.github.com/repos/artkamote/wdio-test

|

opened

|

WS-2021-0638 (High) detected in mocha-9.1.3.tgz

|

security vulnerability

|

## WS-2021-0638 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mocha-9.1.3.tgz</b></p></summary>

<p>simple, flexible, fun test framework</p>

<p>Library home page: <a href="https://registry.npmjs.org/mocha/-/mocha-9.1.3.tgz">https://registry.npmjs.org/mocha/-/mocha-9.1.3.tgz</a></p>

<p>Path to dependency file: /qa-tech-challenge/package.json</p>

<p>Path to vulnerable library: /qa-tech-challenge/node_modules/mocha/package.json</p>

<p>

Dependency Hierarchy:

- mocha-framework-7.16.1.tgz (Root Library)

- :x: **mocha-9.1.3.tgz** (Vulnerable Library)

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

There is regular Expression Denial of Service (ReDoS) vulnerability in mocha.

It allows cause a denial of service when stripping crafted invalid function definition from strs.

<p>Publish Date: 2021-09-18

<p>URL: <a href=https://github.com/mochajs/mocha/commit/61b4b9209c2c64b32c8d48b1761c3b9384d411ea>WS-2021-0638</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://huntr.dev/bounties/1d8a3d95-d199-4129-a6ad-8eafe5e77b9e/">https://huntr.dev/bounties/1d8a3d95-d199-4129-a6ad-8eafe5e77b9e/</a></p>

<p>Release Date: 2021-09-18</p>

<p>Fix Resolution: https://github.com/mochajs/mocha/commit/61b4b9209c2c64b32c8d48b1761c3b9384d411ea</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

WS-2021-0638 (High) detected in mocha-9.1.3.tgz - ## WS-2021-0638 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>mocha-9.1.3.tgz</b></p></summary>

<p>simple, flexible, fun test framework</p>

<p>Library home page: <a href="https://registry.npmjs.org/mocha/-/mocha-9.1.3.tgz">https://registry.npmjs.org/mocha/-/mocha-9.1.3.tgz</a></p>

<p>Path to dependency file: /qa-tech-challenge/package.json</p>

<p>Path to vulnerable library: /qa-tech-challenge/node_modules/mocha/package.json</p>

<p>

Dependency Hierarchy:

- mocha-framework-7.16.1.tgz (Root Library)

- :x: **mocha-9.1.3.tgz** (Vulnerable Library)

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

There is regular Expression Denial of Service (ReDoS) vulnerability in mocha.

It allows cause a denial of service when stripping crafted invalid function definition from strs.

<p>Publish Date: 2021-09-18

<p>URL: <a href=https://github.com/mochajs/mocha/commit/61b4b9209c2c64b32c8d48b1761c3b9384d411ea>WS-2021-0638</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://huntr.dev/bounties/1d8a3d95-d199-4129-a6ad-8eafe5e77b9e/">https://huntr.dev/bounties/1d8a3d95-d199-4129-a6ad-8eafe5e77b9e/</a></p>

<p>Release Date: 2021-09-18</p>

<p>Fix Resolution: https://github.com/mochajs/mocha/commit/61b4b9209c2c64b32c8d48b1761c3b9384d411ea</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

ws high detected in mocha tgz ws high severity vulnerability vulnerable library mocha tgz simple flexible fun test framework library home page a href path to dependency file qa tech challenge package json path to vulnerable library qa tech challenge node modules mocha package json dependency hierarchy mocha framework tgz root library x mocha tgz vulnerable library found in base branch main vulnerability details there is regular expression denial of service redos vulnerability in mocha it allows cause a denial of service when stripping crafted invalid function definition from strs publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend

| 0

|

735,437

| 25,397,573,433

|

IssuesEvent

|

2022-11-22 09:46:09

|

kubernetes/website

|

https://api.github.com/repos/kubernetes/website

|

closed

|

Add examples for creating an object to new-style API reference

|

kind/feature priority/backlog lifecycle/rotten language/en triage/accepted

|

A few examples to use API methods will be helpful. Documentation is missing the examples.

Example page: https://k8s.io/docs/reference/kubernetes-api/cluster-resources/node-v1/

|

1.0

|

Add examples for creating an object to new-style API reference - A few examples to use API methods will be helpful. Documentation is missing the examples.

Example page: https://k8s.io/docs/reference/kubernetes-api/cluster-resources/node-v1/

|

non_defect

|

add examples for creating an object to new style api reference a few examples to use api methods will be helpful documentation is missing the examples example page

| 0

|

501,905

| 14,536,325,067

|

IssuesEvent

|

2020-12-15 07:25:33

|

magento/magento2

|

https://api.github.com/repos/magento/magento2

|

closed

|

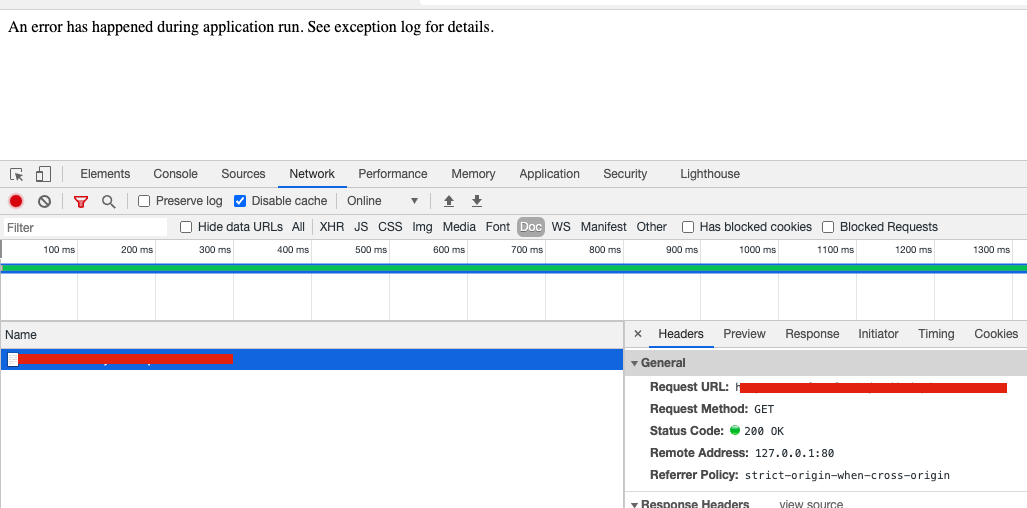

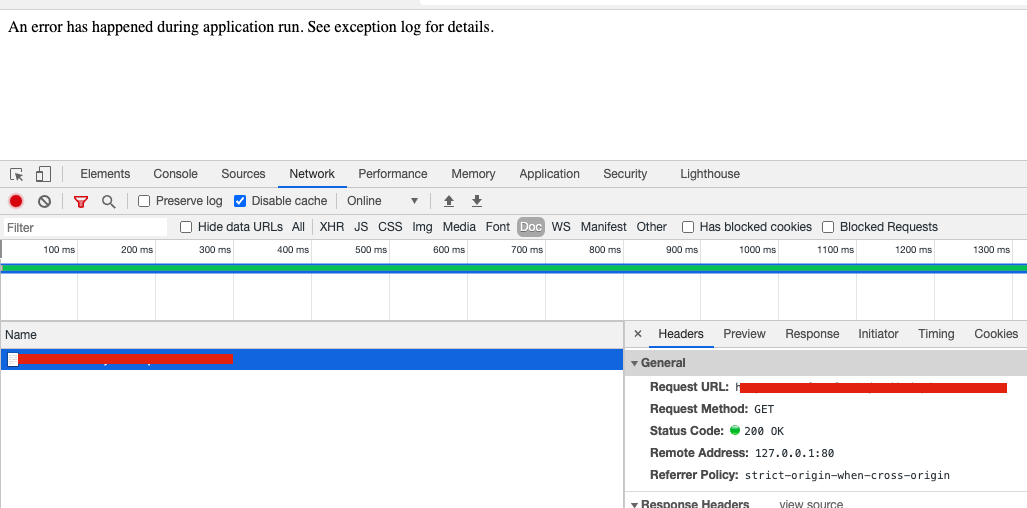

200 Response Code When Exception is Thrown During Bootstrap

|

Component: DB Fixed in 2.4.x Issue: Confirmed Priority: P3 Progress: PR in progress Reproduced on 2.4.x Severity: S3

|

<!---

Please review our guidelines before adding a new issue: https://github.com/magento/magento2/wiki/Issue-reporting-guidelines

Fields marked with (*) are required. Please don't remove the template.

-->

### Preconditions (*)

<!---

Provide the exact Magento version (example: 2.4.0) and any important information on the environment where bug is reproducible.

-->

1. Version 2.4.1

2. PHP 7.4

### Steps to reproduce (*)

<!---

Important: Provide a set of clear steps to reproduce this bug. We can not provide support without clear instructions on how to reproduce.

-->

1. Change your DB host to something wrong.

### Expected result (*)

<!--- Tell us what do you expect to happen. -->

1. An error message is displayed and a 500 response code is returned.

### Actual result (*)

<!--- Tell us what happened instead. Include error messages and issues. -->

1. An error message is displayed and the http response code is 200 OK.

Very annoying for probes who think the pod is OK & Running...

### Additiona; info from Engcom

full env.php (Removed crypt key) :

```

<?php

return [

'backend' => [

'frontName' => 'admin',

],

'queue' => [

'consumers_wait_for_messages' => 1,

],

'crypt' => [

'key' => '****',

],

'db' => [

'table_prefix' => '',

'connection' => [

'default' => [

'host' => 'nonexisting',

'dbname' => 'whatever',

'username' => 'root',

'password' => 'root',

'model' => 'mysql4',

'engine' => 'innodb',

'initStatements' => 'SET NAMES utf8;',

'active' => '1',

'driver_options' => [

1014 => false,

],

],

],

],

'resource' => [

'default_setup' => [

'connection' => 'default',

],

],

'x-frame-options' => 'SAMEORIGIN',

'MAGE_MODE' => 'production',

'session' => [

'save' => 'files',

],

'cache' => [

'frontend' => [

'default' => [

'id_prefix' => '707_',

],

'page_cache' => [

'id_prefix' => '707_',

],

],

'allow_parallel_generation' => false,

],

'lock' => [

'provider' => 'db',

'config' => [

'prefix' => '',

],

],

'cache_types' => [

'config' => 0,

'layout' => 0,

'block_html' => 0,

'collections' => 0,

'reflection' => 0,

'db_ddl' => 0,

'compiled_config' => 1,

'eav' => 0,

'customer_notification' => 0,

'config_integration' => 0,

'config_integration_api' => 0,

'full_page' => 0,

'config_webservice' => 0,

'translate' => 0,

'vertex' => 0,

],

'downloadable_domains' => [

],

'install' => [

'date' => 'Fri, 09 Oct 2020 12:13:31 +0000',

],

];

```

I havn't any extra module enabled.

|

1.0

|

200 Response Code When Exception is Thrown During Bootstrap - <!---

Please review our guidelines before adding a new issue: https://github.com/magento/magento2/wiki/Issue-reporting-guidelines

Fields marked with (*) are required. Please don't remove the template.

-->

### Preconditions (*)

<!---

Provide the exact Magento version (example: 2.4.0) and any important information on the environment where bug is reproducible.

-->

1. Version 2.4.1

2. PHP 7.4

### Steps to reproduce (*)

<!---

Important: Provide a set of clear steps to reproduce this bug. We can not provide support without clear instructions on how to reproduce.

-->

1. Change your DB host to something wrong.

### Expected result (*)

<!--- Tell us what do you expect to happen. -->

1. An error message is displayed and a 500 response code is returned.

### Actual result (*)

<!--- Tell us what happened instead. Include error messages and issues. -->

1. An error message is displayed and the http response code is 200 OK.

Very annoying for probes who think the pod is OK & Running...

### Additiona; info from Engcom

full env.php (Removed crypt key) :

```

<?php

return [

'backend' => [

'frontName' => 'admin',

],

'queue' => [

'consumers_wait_for_messages' => 1,

],

'crypt' => [

'key' => '****',

],

'db' => [

'table_prefix' => '',

'connection' => [

'default' => [

'host' => 'nonexisting',

'dbname' => 'whatever',

'username' => 'root',

'password' => 'root',

'model' => 'mysql4',

'engine' => 'innodb',

'initStatements' => 'SET NAMES utf8;',

'active' => '1',

'driver_options' => [

1014 => false,

],

],

],

],

'resource' => [

'default_setup' => [

'connection' => 'default',

],

],

'x-frame-options' => 'SAMEORIGIN',

'MAGE_MODE' => 'production',

'session' => [

'save' => 'files',

],

'cache' => [

'frontend' => [

'default' => [

'id_prefix' => '707_',

],

'page_cache' => [

'id_prefix' => '707_',

],

],

'allow_parallel_generation' => false,

],

'lock' => [

'provider' => 'db',

'config' => [

'prefix' => '',

],

],

'cache_types' => [

'config' => 0,

'layout' => 0,

'block_html' => 0,

'collections' => 0,

'reflection' => 0,

'db_ddl' => 0,

'compiled_config' => 1,

'eav' => 0,

'customer_notification' => 0,

'config_integration' => 0,

'config_integration_api' => 0,

'full_page' => 0,

'config_webservice' => 0,

'translate' => 0,

'vertex' => 0,

],

'downloadable_domains' => [

],

'install' => [

'date' => 'Fri, 09 Oct 2020 12:13:31 +0000',

],

];

```

I havn't any extra module enabled.

|

non_defect

|

response code when exception is thrown during bootstrap please review our guidelines before adding a new issue fields marked with are required please don t remove the template preconditions provide the exact magento version example and any important information on the environment where bug is reproducible version php steps to reproduce important provide a set of clear steps to reproduce this bug we can not provide support without clear instructions on how to reproduce change your db host to something wrong expected result an error message is displayed and a response code is returned actual result an error message is displayed and the http response code is ok very annoying for probes who think the pod is ok running additiona info from engcom full env php removed crypt key php return backend frontname admin queue consumers wait for messages crypt key db table prefix connection default host nonexisting dbname whatever username root password root model engine innodb initstatements set names active driver options false resource default setup connection default x frame options sameorigin mage mode production session save files cache frontend default id prefix page cache id prefix allow parallel generation false lock provider db config prefix cache types config layout block html collections reflection db ddl compiled config eav customer notification config integration config integration api full page config webservice translate vertex downloadable domains install date fri oct i havn t any extra module enabled

| 0

|

616,658

| 19,309,189,620

|

IssuesEvent

|

2021-12-13 14:39:53

|

oncokb/oncokb

|

https://api.github.com/repos/oncokb/oncokb

|

opened

|

null consequence on variant 17:g.59926628T>C

|

bug high priority

|

The variant is supposed to have `intro_variant` consequence.

|

1.0

|

null consequence on variant 17:g.59926628T>C - The variant is supposed to have `intro_variant` consequence.

|

non_defect

|

null consequence on variant g c the variant is supposed to have intro variant consequence

| 0

|

72,376

| 24,085,070,806

|

IssuesEvent

|

2022-09-19 10:10:09

|

SeleniumHQ/selenium

|

https://api.github.com/repos/SeleniumHQ/selenium

|

opened

|

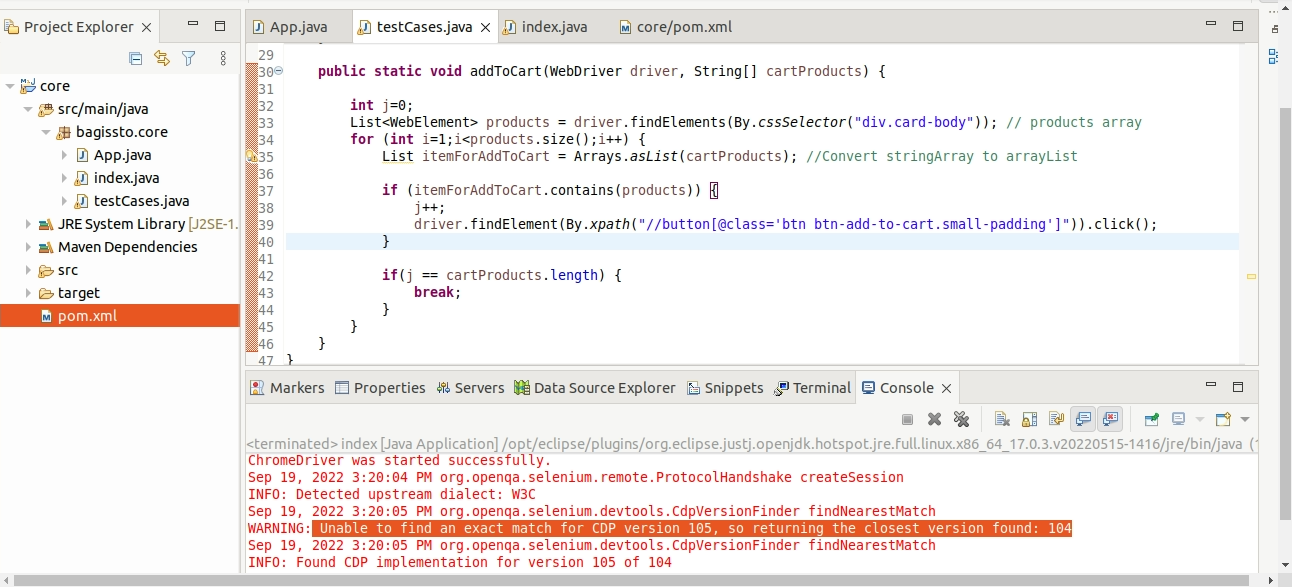

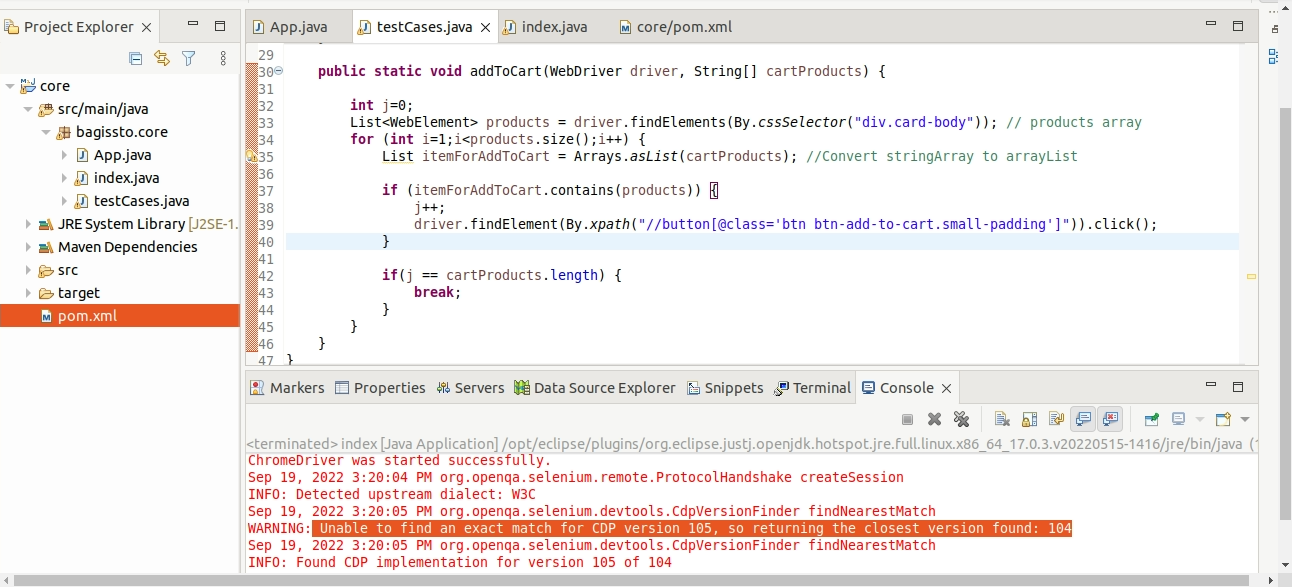

[🐛 Bug]: Unable to find an exact match for CDP version 105, so returning the closest version found: 104

|

I-defect needs-triaging

|

### What happened?

**Selenium version:** 4.4.0

**Actual result:** Unable to find an exact match for CDP version 105, so returning the closest version found: 104

### How can we reproduce the issue?

```shell

WebDriver driver = new ChromeDriver();

openBrowser(driver,"http://192.168.15.237/bagisto-demo/public/");

String[] cartProducts = {"Men's Polo T-shirt","Sunglasses"}; // products for add to cart.

addToCart(driver,cartProducts); // add-Product-to-cart

public static void addToCart(WebDriver driver, String[] cartProducts) {

int j=0;

List<WebElement> products = driver.findElements(By.cssSelector("div.card-body"));

for (int i=1;i<products.size();i++) {

List itemForAddToCart = Arrays.asList(cartProducts);

if (itemForAddToCart.contains(products)) {

j++;

driver.findElement(By.xpath("//button[@class='btn btn-add-to-cart.small-padding']")).click();

}

if(j == cartProducts.length) {

break;

}

}

}

```

### Relevant log output

```shell

Sep 19, 2022 3:20:04 PM org.openqa.selenium.remote.ProtocolHandshake createSession

INFO: Detected upstream dialect: W3C

Sep 19, 2022 3:20:05 PM org.openqa.selenium.devtools.CdpVersionFinder findNearestMatch

WARNING: Unable to find an exact match for CDP version 105, so returning the closest version found: 104

Sep 19, 2022 3:20:05 PM org.openqa.selenium.devtools.CdpVersionFinder findNearestMatch

INFO: Found CDP implementation for version 105 of 104

```

### Operating System

ubunt 64bit

### Selenium version

java: 18.0.2.1, selenium: 4.4.0

### What are the browser(s) and version(s) where you see this issue?

ChromeDriver, v.105.0.5195.125

### What are the browser driver(s) and version(s) where you see this issue?

ChromeDriver, v.105.0.5195.125

### Are you using Selenium Grid?

no

|

1.0

|

[🐛 Bug]: Unable to find an exact match for CDP version 105, so returning the closest version found: 104 - ### What happened?

**Selenium version:** 4.4.0

**Actual result:** Unable to find an exact match for CDP version 105, so returning the closest version found: 104

### How can we reproduce the issue?

```shell

WebDriver driver = new ChromeDriver();

openBrowser(driver,"http://192.168.15.237/bagisto-demo/public/");

String[] cartProducts = {"Men's Polo T-shirt","Sunglasses"}; // products for add to cart.

addToCart(driver,cartProducts); // add-Product-to-cart

public static void addToCart(WebDriver driver, String[] cartProducts) {

int j=0;

List<WebElement> products = driver.findElements(By.cssSelector("div.card-body"));

for (int i=1;i<products.size();i++) {

List itemForAddToCart = Arrays.asList(cartProducts);

if (itemForAddToCart.contains(products)) {

j++;

driver.findElement(By.xpath("//button[@class='btn btn-add-to-cart.small-padding']")).click();

}

if(j == cartProducts.length) {

break;

}

}

}

```

### Relevant log output

```shell

Sep 19, 2022 3:20:04 PM org.openqa.selenium.remote.ProtocolHandshake createSession

INFO: Detected upstream dialect: W3C

Sep 19, 2022 3:20:05 PM org.openqa.selenium.devtools.CdpVersionFinder findNearestMatch

WARNING: Unable to find an exact match for CDP version 105, so returning the closest version found: 104

Sep 19, 2022 3:20:05 PM org.openqa.selenium.devtools.CdpVersionFinder findNearestMatch

INFO: Found CDP implementation for version 105 of 104

```

### Operating System

ubunt 64bit

### Selenium version

java: 18.0.2.1, selenium: 4.4.0

### What are the browser(s) and version(s) where you see this issue?

ChromeDriver, v.105.0.5195.125

### What are the browser driver(s) and version(s) where you see this issue?

ChromeDriver, v.105.0.5195.125

### Are you using Selenium Grid?

no

|

defect

|

unable to find an exact match for cdp version so returning the closest version found what happened selenium version actual result unable to find an exact match for cdp version so returning the closest version found how can we reproduce the issue shell webdriver driver new chromedriver openbrowser driver string cartproducts men s polo t shirt sunglasses products for add to cart addtocart driver cartproducts add product to cart public static void addtocart webdriver driver string cartproducts int j list products driver findelements by cssselector div card body for int i i products size i list itemforaddtocart arrays aslist cartproducts if itemforaddtocart contains products j driver findelement by xpath button click if j cartproducts length break relevant log output shell sep pm org openqa selenium remote protocolhandshake createsession info detected upstream dialect sep pm org openqa selenium devtools cdpversionfinder findnearestmatch warning unable to find an exact match for cdp version so returning the closest version found sep pm org openqa selenium devtools cdpversionfinder findnearestmatch info found cdp implementation for version of operating system ubunt selenium version java selenium what are the browser s and version s where you see this issue chromedriver v what are the browser driver s and version s where you see this issue chromedriver v are you using selenium grid no

| 1

|

352,802

| 25,082,880,040

|

IssuesEvent

|

2022-11-07 20:56:45

|

hashicorp/terraform-provider-aws

|

https://api.github.com/repos/hashicorp/terraform-provider-aws

|

closed

|

[Docs]: Fix Broken/Cluttered RDS Tutorial Link

|

documentation needs-triage

|

### Documentation Link

https://registry.terraform.io/providers/hashicorp/aws/latest/docs/resources/db_instance

### Description

The documentation has the following paragraph referring users to the tutorial section:

> Hands-on: Try the [Manage AWS RDS Instances](https://learn.hashicorp.com/tutorials/terraform/aws-rds?in=terraform/modules&utm_source=WEBSITE&utm_medium=WEB_IO&utm_offer=ARTICLE_PAGE&utm_content=DOCS) tutorial on HashiCorp Learn.

For me this results in a dead/broken link and the page telling me "We couldn't find the page you're looking for.", but if I remove all the clutter from the URL and enter just the base `https://learn.hashicorp.com/tutorials/terraform/aws-rds` I successfully land at the desired tutorial page.

I suggest you remove the following part from the URL:

`?in=terraform/modules&utm_source=WEBSITE&utm_medium=WEB_IO&utm_offer=ARTICLE_PAGE&utm_content=DOCS`

### References

_No response_

### Would you like to implement a fix?

_No response_

|

1.0

|

[Docs]: Fix Broken/Cluttered RDS Tutorial Link - ### Documentation Link

https://registry.terraform.io/providers/hashicorp/aws/latest/docs/resources/db_instance

### Description

The documentation has the following paragraph referring users to the tutorial section:

> Hands-on: Try the [Manage AWS RDS Instances](https://learn.hashicorp.com/tutorials/terraform/aws-rds?in=terraform/modules&utm_source=WEBSITE&utm_medium=WEB_IO&utm_offer=ARTICLE_PAGE&utm_content=DOCS) tutorial on HashiCorp Learn.

For me this results in a dead/broken link and the page telling me "We couldn't find the page you're looking for.", but if I remove all the clutter from the URL and enter just the base `https://learn.hashicorp.com/tutorials/terraform/aws-rds` I successfully land at the desired tutorial page.

I suggest you remove the following part from the URL:

`?in=terraform/modules&utm_source=WEBSITE&utm_medium=WEB_IO&utm_offer=ARTICLE_PAGE&utm_content=DOCS`

### References

_No response_

### Would you like to implement a fix?

_No response_

|

non_defect

|

fix broken cluttered rds tutorial link documentation link description the documentation has the following paragraph referring users to the tutorial section hands on try the tutorial on hashicorp learn for me this results in a dead broken link and the page telling me we couldn t find the page you re looking for but if i remove all the clutter from the url and enter just the base i successfully land at the desired tutorial page i suggest you remove the following part from the url in terraform modules utm source website utm medium web io utm offer article page utm content docs references no response would you like to implement a fix no response

| 0

|

168,435

| 6,375,307,568

|

IssuesEvent

|

2017-08-02 02:23:10

|

minio/minio-go

|

https://api.github.com/repos/minio/minio-go

|

closed

|

S3 server at wasabi.com doesn't support streaming uploads from minio

|

priority: medium triage working as intended

|

Hi,

The S3 server at wasabi.com seems to not support streaming uploads from minio.

The upload succeeds, but, when downloading the file, it has an actually downloaded content like

```

1df;chunk-signature=0fe0ec35__________________(redacted)____________________b4bd5f30

my content here...

0;chunk-signature=eac700d9__________________(redacted)____________________b6091939

```

It probably would work fine if i used minio's non-streaming API instead.

But, is there anything minio can do? Somehow detect whether streaming is supported by this server, or, throw an error if the data is not streamed successfully?

Regards

mappu

CC @wasabi-tech @jcflowers

|

1.0

|

S3 server at wasabi.com doesn't support streaming uploads from minio - Hi,

The S3 server at wasabi.com seems to not support streaming uploads from minio.

The upload succeeds, but, when downloading the file, it has an actually downloaded content like

```

1df;chunk-signature=0fe0ec35__________________(redacted)____________________b4bd5f30

my content here...

0;chunk-signature=eac700d9__________________(redacted)____________________b6091939

```

It probably would work fine if i used minio's non-streaming API instead.

But, is there anything minio can do? Somehow detect whether streaming is supported by this server, or, throw an error if the data is not streamed successfully?

Regards

mappu

CC @wasabi-tech @jcflowers

|

non_defect

|

server at wasabi com doesn t support streaming uploads from minio hi the server at wasabi com seems to not support streaming uploads from minio the upload succeeds but when downloading the file it has an actually downloaded content like chunk signature redacted my content here chunk signature redacted it probably would work fine if i used minio s non streaming api instead but is there anything minio can do somehow detect whether streaming is supported by this server or throw an error if the data is not streamed successfully regards mappu cc wasabi tech jcflowers

| 0

|

250,775

| 21,335,646,687

|

IssuesEvent

|

2022-04-18 14:16:51

|

hoppscotch/hoppscotch

|

https://api.github.com/repos/hoppscotch/hoppscotch

|

reopened

|

[bug]: synchronize history message keeps showing up

|

bug need testing

|

### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current behavior

Every time I log in to hop and use the REST tool, the sync history message keeps popping up. In the video I show the case.

https://user-images.githubusercontent.com/56084970/162647659-9a2995da-34aa-418c-ba9b-83c4207ca991.mp4

### Steps to reproduce

1. Open hoppscotch

2. Go to tool REST

3. See error

### Environment

Production

### Version

Cloud

|

1.0

|

[bug]: synchronize history message keeps showing up - ### Is there an existing issue for this?

- [X] I have searched the existing issues

### Current behavior

Every time I log in to hop and use the REST tool, the sync history message keeps popping up. In the video I show the case.

https://user-images.githubusercontent.com/56084970/162647659-9a2995da-34aa-418c-ba9b-83c4207ca991.mp4

### Steps to reproduce

1. Open hoppscotch

2. Go to tool REST

3. See error

### Environment

Production

### Version

Cloud

|

non_defect

|

synchronize history message keeps showing up is there an existing issue for this i have searched the existing issues current behavior every time i log in to hop and use the rest tool the sync history message keeps popping up in the video i show the case steps to reproduce open hoppscotch go to tool rest see error environment production version cloud

| 0

|

55,715

| 14,654,132,422

|

IssuesEvent

|

2020-12-28 07:55:51

|

SAP/fundamental-ngx

|

https://api.github.com/repos/SAP/fundamental-ngx

|

closed

|

Bug: (Core) Toolbar – Doesn't work correct with a lot of items and shouldOverflow option

|

Defect Hunting bug core denoland

|

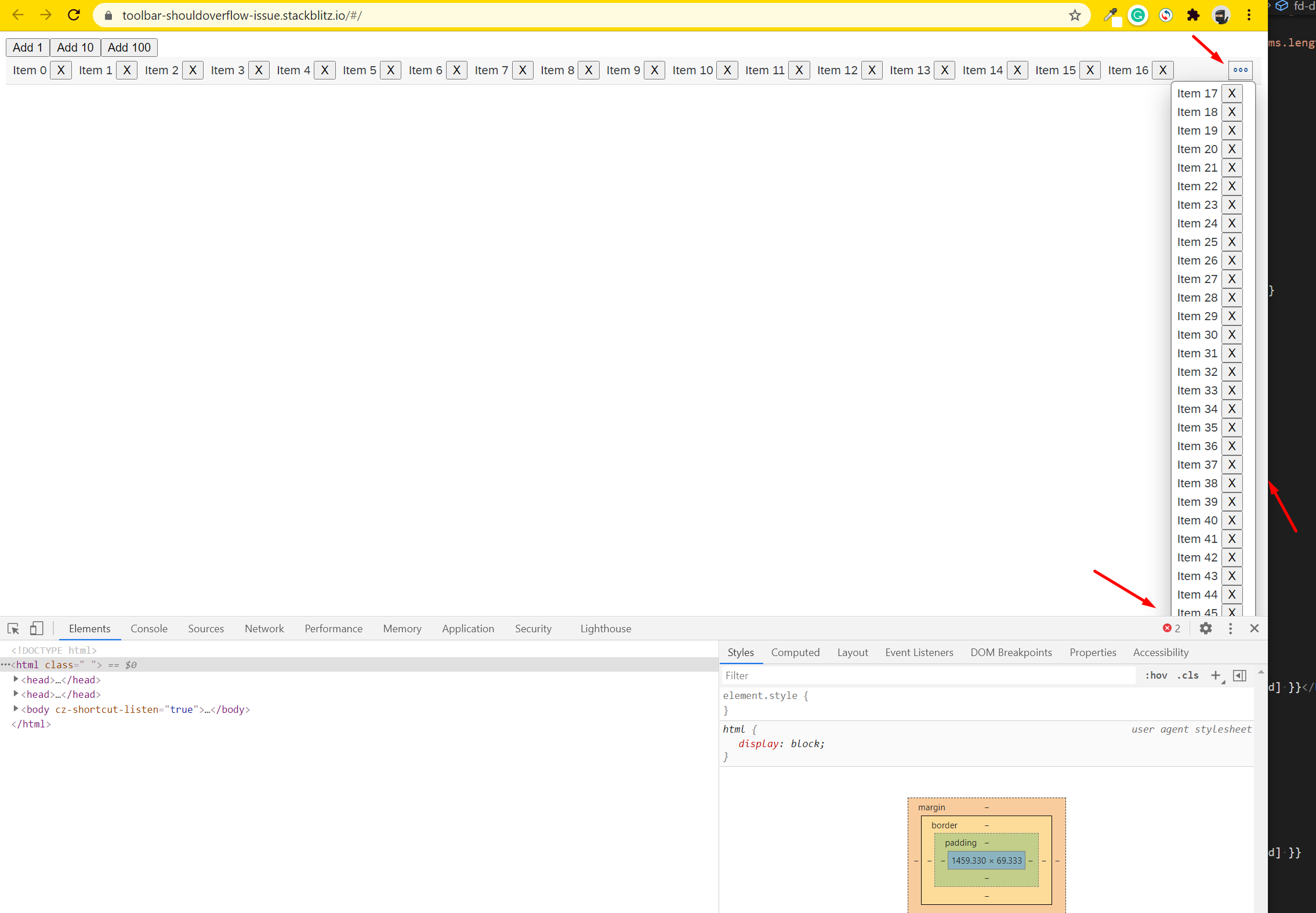

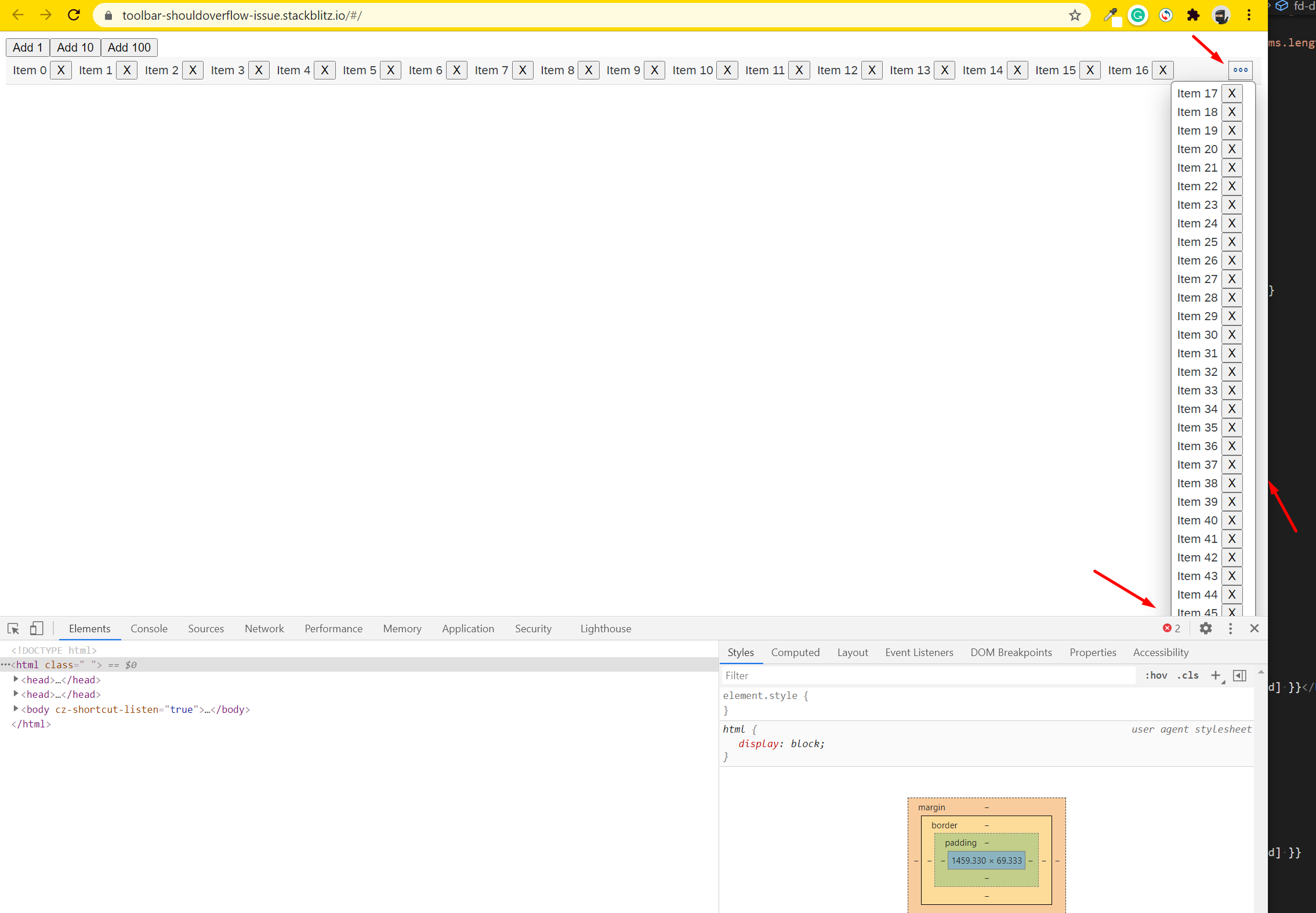

#### Is this a bug, enhancement, or feature request?

Bug

#### Briefly describe your proposal.

Toolbar with `shouldOverflow` doesn't refresh view after changes `toolbar-item`.

Only resize window trigged.

Also when we have a lot of clamped items, we don't have scroll in popover or on the page in general.

Please see screenshot

#### If this is a bug, please provide steps for reproducing it.

Add/remove items.

Please see example on [stackblitz](https://stackblitz.com/edit/toolbar-shouldoverflow-issue).

#### Please provide relevant source code if applicable.

https://stackblitz.com/edit/toolbar-shouldoverflow-issue

#### Is there anything else we should know?

Also, It would be great if developer have access to refresh toolbar state.

|

1.0

|

Bug: (Core) Toolbar – Doesn't work correct with a lot of items and shouldOverflow option - #### Is this a bug, enhancement, or feature request?

Bug

#### Briefly describe your proposal.

Toolbar with `shouldOverflow` doesn't refresh view after changes `toolbar-item`.

Only resize window trigged.

Also when we have a lot of clamped items, we don't have scroll in popover or on the page in general.

Please see screenshot

#### If this is a bug, please provide steps for reproducing it.

Add/remove items.

Please see example on [stackblitz](https://stackblitz.com/edit/toolbar-shouldoverflow-issue).

#### Please provide relevant source code if applicable.

https://stackblitz.com/edit/toolbar-shouldoverflow-issue

#### Is there anything else we should know?

Also, It would be great if developer have access to refresh toolbar state.

|

defect

|

bug core toolbar – doesn t work correct with a lot of items and shouldoverflow option is this a bug enhancement or feature request bug briefly describe your proposal toolbar with shouldoverflow doesn t refresh view after changes toolbar item only resize window trigged also when we have a lot of clamped items we don t have scroll in popover or on the page in general please see screenshot if this is a bug please provide steps for reproducing it add remove items please see example on please provide relevant source code if applicable is there anything else we should know also it would be great if developer have access to refresh toolbar state

| 1

|

68,630

| 21,770,097,537

|

IssuesEvent

|

2022-05-13 08:13:34

|

vector-im/element-web

|

https://api.github.com/repos/vector-im/element-web

|

opened

|

Emoji is clipped at the top in the composer

|

T-Defect X-Regression S-Minor A-Composer O-Frequent

|

### Steps to reproduce

<img width="289" alt="Screen Shot 2022-05-13 at 10 12 05" src="https://user-images.githubusercontent.com/769871/168240568-1386b0fc-8593-4306-bead-c543f82d7b06.png">

### Outcome

#### What did you expect?

For the emoji not to be clipped

#### What happened instead?

See the screenshot

### Operating system

_No response_

### Browser information

_No response_

### URL for webapp

_No response_

### Application version

_No response_

### Homeserver

_No response_

### Will you send logs?

No

|

1.0

|

Emoji is clipped at the top in the composer - ### Steps to reproduce

<img width="289" alt="Screen Shot 2022-05-13 at 10 12 05" src="https://user-images.githubusercontent.com/769871/168240568-1386b0fc-8593-4306-bead-c543f82d7b06.png">

### Outcome

#### What did you expect?

For the emoji not to be clipped

#### What happened instead?

See the screenshot

### Operating system

_No response_

### Browser information

_No response_

### URL for webapp

_No response_

### Application version

_No response_

### Homeserver

_No response_

### Will you send logs?

No

|

defect

|

emoji is clipped at the top in the composer steps to reproduce img width alt screen shot at src outcome what did you expect for the emoji not to be clipped what happened instead see the screenshot operating system no response browser information no response url for webapp no response application version no response homeserver no response will you send logs no

| 1

|

18,442

| 3,061,294,903

|

IssuesEvent

|

2015-08-15 11:33:41

|

jOOQ/jOOQ

|

https://api.github.com/repos/jOOQ/jOOQ

|

closed

|

Record.getValue(Field) returns wrong value if ambiguous column names are contained in the record, and the schema name is not present in the argument

|

C: Functionality P: Medium R: Fixed T: Defect

|

When fetching data from the database that contains ambiguous column names, e.g.

```sql

select b.id, a.id

from t_author a

join t_book b

on a.id = b.author_id

order by b.id, a.id

```

... resulting in

```

+----+----+

| id| id|

+----+----+

| 1| 1|

| 2| 1|

| 3| 2|

| 4| 2|

+----+----+

```

Then the `Record.getValue(Field)` or `Result.getValue(Field)` method doesn't work correctly, if the schema name isn't present in both:

- The record's field

- The argument's field

E.g., since #4283, the schema name is fetched from those JDBC drivers that support it, but not from PostgreSQL. When getting the value via `record.getValue(field(name("public", "t_author", "id")))` in PostgreSQL, the value is wrong (that of `t_book.id`).

Conversely, when using generated tables / columns, the schema is always present. But when getting the value via `record.getValue(field(name("t_author", "id")))`, the value is again wrong.
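The two cases above can be modeled with a small sketch. This is not jOOQ's implementation; `QualifiedName`, `naive_index_of`, and `tolerant_index_of` are hypothetical names used only to illustrate how a lookup that falls back to the bare column name picks the first `id`, and how a comparison that ignores the schema when either side lacks it would pick the intended column.

```python
from typing import NamedTuple, Optional, Sequence


class QualifiedName(NamedTuple):
    """Hypothetical stand-in for a (schema, table, column) field name."""
    schema: Optional[str]
    table: str
    column: str


def naive_index_of(fields: Sequence[QualifiedName], wanted: QualifiedName) -> int:
    """Exact match first, then fall back to the bare column name (assumed behavior)."""
    for i, f in enumerate(fields):
        if f == wanted:
            return i
    for i, f in enumerate(fields):
        if f.column == wanted.column:
            return i  # first "id" wins, regardless of table
    return -1


def tolerant_index_of(fields: Sequence[QualifiedName], wanted: QualifiedName) -> int:
    """Compare table and column; compare schema only when both sides provide one."""
    for i, f in enumerate(fields):
        schema_ok = f.schema is None or wanted.schema is None or f.schema == wanted.schema
        if schema_ok and f.table == wanted.table and f.column == wanted.column:
            return i
    return -1


# One row of the result above, in select order: b.id, a.id
row = (2, 1)

# Case 1: the driver reports no schema, the argument has one.
driver_fields = [QualifiedName(None, "t_book", "id"), QualifiedName(None, "t_author", "id")]
with_schema = QualifiedName("public", "t_author", "id")

# Case 2: generated fields carry the schema, the argument does not.
generated_fields = [QualifiedName("public", "t_book", "id"), QualifiedName("public", "t_author", "id")]
without_schema = QualifiedName(None, "t_author", "id")

naive_case1 = row[naive_index_of(driver_fields, with_schema)]
naive_case2 = row[naive_index_of(generated_fields, without_schema)]
tolerant_case1 = row[tolerant_index_of(driver_fields, with_schema)]
tolerant_case2 = row[tolerant_index_of(generated_fields, without_schema)]
```

In this model the naive lookup returns the `t_book.id` value in both cases, while the tolerant lookup returns the `t_author.id` value.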

----

See also: #4283

|

1.0

|

Record.getValue(Field) returns wrong value if ambiguous column names are contained in the record, and the schema name is not present in the argument - When fetching data from the database that contains ambiguous column names, e.g.

```sql

select b.id, a.id

from t_author a

join t_book b

on a.id = b.author_id

order by b.id, a.id

```

... resulting in

```

+----+----+

| id| id|

+----+----+

| 1| 1|

| 2| 1|

| 3| 2|

| 4| 2|

+----+----+

```

Then the `Record.getValue(Field)` or `Result.getValue(Field)` method doesn't work correctly, if the schema name isn't present in both:

- The record's field

- The argument's field

E.g., since #4283, the schema name is fetched from those JDBC drivers that support it, but not from PostgreSQL. When getting the value via `record.getValue(field(name("public", "t_author", "id")))` in PostgreSQL, the value is wrong (that of `t_book.id`).

Conversely, when using generated tables / columns, the schema is always present. But when getting the value via `record.getValue(field(name("t_author", "id")))`, the value is again wrong.

----

See also: #4283

|

defect

|

record getvalue field returns wrong value if ambiguous column names are contained in the record and the schema name is not present in the argument when fetching data from the database that contains ambiguous column names e g sql select b id a id from t author a join t book b on a id b author id order by b id a id resulting in id id then the record getvalue field or result getvalue field method doesn t work correctly if the schema name isn t present in both the record s field the argument s field e g since the schema name is fetched from those jdbc drivers that support it but not from postgresql when getting the value via record getvalue field name public t author id in postgresql the value is wrong that of t book id conversely when using generated tables columns the schema is always present but when getting the value via record getvalue field name t author id the value is again wrong see also

| 1

|

103,570

| 8,921,998,208

|

IssuesEvent

|

2019-01-21 11:40:30

|

pando-project/jerryscript

|

https://api.github.com/repos/pando-project/jerryscript

|

closed

|

test-api.c has an out-of-bounds write (buffer overflow)

|

bug test

|

Reproducing steps:

1. I use my Stensal SDK (https://stensal.com)

2. build jerryscript with stensal-c

3. Run ./build/tests/unit-test-api

This is what I got:

ok 148343051 148341491 0xfff0e4a8 2

ok construct 148343083 148343163 0xfff11bec 1

ok 148343251 148341491 0xfff0e4b4 0

ok object free callback

DTS_MSG: Stensal DTS detected a fatal program error!

DTS_MSG: Continuing the execution will cause unexpected behaviors, abort!

DTS_MSG: OOB Write:writing 1 bytes at 0xfff11570 will corrupt the adjacent data.

DTS_MSG: Diagnostic information:

-

- The object to-be-written (start:0xfff1156c, size:4 bytes) is allocated at

- file:/home/sbuilder/workspace/jerryscript/tests/unit-core/test-api.c::881, 10

- 0xfff1156c 0xfff1156f

- +------------------------+

- |the object to-be-written|......

- +------------------------+

- ^~~~~~~~~~

- the write starts at 0xfff11570 that is right after the object end.

- Stack trace (most recent call first):

-[1] file:/home/sbuilder/workspace/jerryscript/tests/unit-core/test-api.c::884, 5

-[2] file:/home/nwang/acore/musl/src/env/__libc_start_main.c::180, 11
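
The addresses in the diagnostic can be checked with a few lines of arithmetic. This is only a sketch of the bounds check implied by the message, not Stensal's implementation; `is_out_of_bounds` is a hypothetical helper.

```python
def is_out_of_bounds(obj_start: int, obj_size: int, write_addr: int, write_len: int) -> bool:
    """Return True if any written byte falls outside [obj_start, obj_start + obj_size)."""
    return write_addr < obj_start or write_addr + write_len > obj_start + obj_size


obj_start = 0xFFF1156C   # start of the object, from the report
obj_size = 4             # size in bytes, from the report
write_addr = 0xFFF11570  # address of the 1-byte write, from the report

last_valid_byte = obj_start + obj_size - 1  # 0xfff1156f, as shown in the diagram
offset = write_addr - obj_start             # 4, i.e. one past the last valid index
overflow = is_out_of_bounds(obj_start, obj_size, write_addr, 1)
```

The write lands at offset 4 of a 4-byte object, which is consistent with the "right after the object end" note.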

|

1.0

|

test-api.c has an out-of-bounds write (buffer overflow) - Reproducing steps:

1. I use my Stensal SDK (https://stensal.com)

2. build jerryscript with stensal-c

3. Run ./build/tests/unit-test-api

This is what I got:

ok 148343051 148341491 0xfff0e4a8 2

ok construct 148343083 148343163 0xfff11bec 1

ok 148343251 148341491 0xfff0e4b4 0

ok object free callback

DTS_MSG: Stensal DTS detected a fatal program error!

DTS_MSG: Continuing the execution will cause unexpected behaviors, abort!

DTS_MSG: OOB Write:writing 1 bytes at 0xfff11570 will corrupt the adjacent data.

DTS_MSG: Diagnostic information:

-

- The object to-be-written (start:0xfff1156c, size:4 bytes) is allocated at

- file:/home/sbuilder/workspace/jerryscript/tests/unit-core/test-api.c::881, 10

- 0xfff1156c 0xfff1156f

- +------------------------+

- |the object to-be-written|......

- +------------------------+

- ^~~~~~~~~~

- the write starts at 0xfff11570 that is right after the object end.

- Stack trace (most recent call first):

-[1] file:/home/sbuilder/workspace/jerryscript/tests/unit-core/test-api.c::884, 5

-[2] file:/home/nwang/acore/musl/src/env/__libc_start_main.c::180, 11

|

non_defect

|

test api c has an out of bounds write buffer overflow reproducing steps i use my stensal sdk build jerryscript with stensal c run build tests unit test api this is what i got ok ok construct ok ok object free callback dts msg stensal dts detected a fatal program error dts msg continuing the execution will cause unexpected behaviors abort dts msg oob write writing bytes at will corrupt the adjacent data dts msg diagnostic information the object to be written start size bytes is allocated at file home sbuilder workspace jerryscript tests unit core test api c the object to be written the write starts at that is right after the object end stack trace most recent call first file home sbuilder workspace jerryscript tests unit core test api c file home nwang acore musl src env libc start main c

| 0

|

418,011

| 28,113,261,643

|

IssuesEvent

|

2023-03-31 08:51:28

|

jaredoong/ped

|

https://api.github.com/repos/jaredoong/ped

|

opened

|

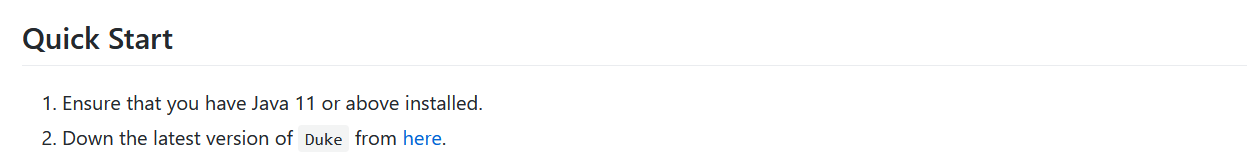

Incorrect link and description in Quick start section of user guide

|

severity.Low type.DocumentationBug

|

Would be good to update the link and name of the program to ensure that a totally new user would be able to find the program.

<!--session: 1680252471869-a4ff2ce6-21c8-4fa9-ad69-6bc92f657b80-->

<!--Version: Web v3.4.7-->

|

1.0

|

Incorrect link and description in Quick start section of user guide -

Would be good to update the link and name of the program to ensure that a totally new user would be able to find the program.

<!--session: 1680252471869-a4ff2ce6-21c8-4fa9-ad69-6bc92f657b80-->

<!--Version: Web v3.4.7-->

|

non_defect

|

incorrect link and description in quick start section of user guide would be good to update the link and name of the program to ensure that a totally new user would be able to find the program

| 0

|

55,549

| 14,538,872,908

|

IssuesEvent

|

2020-12-15 11:04:13

|

openzfs/zfs

|

https://api.github.com/repos/openzfs/zfs

|

opened

|

Intel QAT compression fails with ZFS 2.0.0

|

Status: Triage Needed Type: Defect

|

System information:

Distribution Centos 8.2

Stock kernel:

```

[root@dellqat zfs_latest]# uname -a

Linux dellqat 4.18.0-193.19.1.el8_2.x86_64 #1 SMP Mon Sep 14 14:37:00 UTC 2020 x86_64 x86_64 x86_64 GNU/Linux

[root@dellqat zfs_latest]# modinfo zfs | grep -iw version

version: 2.0.0-1

[root@dellqat zfs_latest]# modinfo spl | grep -iw version

version: 2.0.0-1

```

ZFS 2.0.0 fails to use Intel QAT compression.

QAT and ZFS compiled from sources:

QAT:

qat1.7.l.4.11.0-00001 (latest from intel)

```

./configure --enable-icp-trace --enable-icp-debug --enable-icp-log-syslog --enable-kapi

make

make install

```

ZFS:

[root@dellqat zfs_latest]# git status

On branch zfs-2.0-release

Your branch is up to date with 'origin/zfs-2.0-release'.

nothing to commit, working tree clean

```

export ICP_ROOT=/opt/A3C/qat1.7.l.4.11.0-00001

./configure --with-qat=/opt/A3C/qat1.7.l.4.11.0-00001

make

make install

ldconfig

```

(tried also various reboots...)

```

[root@dellqat ~]# lsmod | grep qat

qat_api 634880 2 zfs

qat_dh895xcc 20480 0

intel_qat 249856 3 qat_api,usdm_drv,qat_dh895xcc

uio 20480 1 intel_qat

[root@dellqat ~]# lsmod | grep zfs

zfs 4481024 1

zunicode 335872 1 zfs

zzstd 507904 1 zfs

qat_api 634880 2 zfs

zlua 176128 1 zfs

zcommon 94208 1 zfs

znvpair 90112 2 zfs,zcommon

zavl 16384 1 zfs

icp 323584 1 zfs

spl 110592 6 zfs,icp,zzstd,znvpair,zcommon,zavl

```

`zpool create -f -m /mnt/test test /dev/sdb /dev/sdc /dev/sdd`

```

[root@dellqat ~]# zfs set compression=gzip test

[root@dellqat ~]# zfs get all test

NAME PROPERTY VALUE SOURCE

test type filesystem -

test creation mar dic 15 10:58 2020 -

test used 333K -

test available 2.63T -

test referenced 24K -

test compressratio 1.00x -

test mounted yes -

test quota none default

test reservation none default

test recordsize 128K default

test mountpoint /mnt/test local

test sharenfs off default

test checksum on default

test compression gzip local

test atime on default

test devices on default

test exec on default

test setuid on default

test readonly off default

test zoned off default

test snapdir hidden default

test aclmode discard default

test aclinherit restricted default

test createtxg 1 -

test canmount on default

test xattr on default

test copies 1 default

test version 5 -

test utf8only off -

test normalization none -

test casesensitivity sensitive -

test vscan off default

test nbmand off default

test sharesmb off default

test refquota none default

test refreservation none default

test guid 3091558646933016362 -

test primarycache all default

test secondarycache all default

test usedbysnapshots 0B -

test usedbydataset 24K -

test usedbychildren 309K -

test usedbyrefreservation 0B -

test logbias latency default

test objsetid 54 -

test dedup off default

test mlslabel none default

test sync standard default

test dnodesize legacy default

test refcompressratio 1.00x -

test written 24K -

test logicalused 115K -

test logicalreferenced 12K -

test volmode default default

test filesystem_limit none default

test snapshot_limit none default

test filesystem_count none default

test snapshot_count none default

test snapdev hidden default

test acltype off default

test context none default

test fscontext none default

test defcontext none default

test rootcontext none default

test relatime off default

test redundant_metadata all default

test overlay on default

test encryption off default

test keylocation none default

test keyformat none default

test pbkdf2iters 0 default

test special_small_blocks 0 default

[root@dellqat /]# cat /proc/spl/kstat/zfs/qat

20 1 0x01 17 4624 14088835193 61490940492455

name type data

comp_requests 4 0

comp_total_in_bytes 4 0

comp_total_out_bytes 4 0

decomp_requests 4 0

decomp_total_in_bytes 4 0

decomp_total_out_bytes 4 0

dc_fails 4 0

encrypt_requests 4 0

encrypt_total_in_bytes 4 0

encrypt_total_out_bytes 4 0

decrypt_requests 4 0

decrypt_total_in_bytes 4 0

decrypt_total_out_bytes 4 0

crypt_fails 4 0

cksum_requests 4 0

cksum_total_in_bytes 4 0

cksum_fails 4 0

```

Tested with various methods: `dd`, standard `cp` and `mv`, and `iozone`.

Note the unexpected value in the `zfs get` output:

test compressratio 1.00x -

It seems no compression is taking place at all.
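
To confirm that QAT is never invoked, the kstat counters can be parsed and checked for non-zero values. The following is a minimal sketch, assuming the three-column `name type data` layout shown above; `parse_kstat` is a hypothetical helper, and the sample text is a shortened copy of the output in this report. On a live system the text would come from `/proc/spl/kstat/zfs/qat`.

```python
SAMPLE = """\
20 1 0x01 17 4624 14088835193 61490940492455
name type data
comp_requests 4 0
comp_total_in_bytes 4 0
decomp_requests 4 0
dc_fails 4 0
"""


def parse_kstat(text: str) -> dict:
    """Collect 'name type data' rows into {name: data}, skipping the header lines."""
    counters = {}
    for line in text.splitlines():
        parts = line.split()
        # Data rows have exactly three fields, the last two numeric.
        if len(parts) == 3 and parts[1].isdigit() and parts[2].isdigit():
            counters[parts[0]] = int(parts[2])
    return counters


counters = parse_kstat(SAMPLE)
# True when every counter is zero, i.e. no request ever reached the accelerator.
qat_idle = all(value == 0 for value in counters.values())
```

With the counters from this report, `qat_idle` is true: neither compression requests nor failures were recorded.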

|

1.0

|

Intel QAT compression fails with ZFS 2.0.0 - System information:

Distribution Centos 8.2

Stock kernel:

```

[root@dellqat zfs_latest]# uname -a

Linux dellqat 4.18.0-193.19.1.el8_2.x86_64 #1 SMP Mon Sep 14 14:37:00 UTC 2020 x86_64 x86_64 x86_64 GNU/Linux

[root@dellqat zfs_latest]# modinfo zfs | grep -iw version

version: 2.0.0-1

[root@dellqat zfs_latest]# modinfo spl | grep -iw version

version: 2.0.0-1

```

ZFS 2.0.0 fails to use Intel QAT compression.

QAT and ZFS compiled from sources:

QAT:

qat1.7.l.4.11.0-00001 (latest from intel)

```

./configure --enable-icp-trace --enable-icp-debug --enable-icp-log-syslog --enable-kapi

make

make install

```

ZFS:

[root@dellqat zfs_latest]# git status

On branch zfs-2.0-release

Your branch is up to date with 'origin/zfs-2.0-release'.

nothing to commit, working tree clean

```

export ICP_ROOT=/opt/A3C/qat1.7.l.4.11.0-00001

./configure --with-qat=/opt/A3C/qat1.7.l.4.11.0-00001

make

make install

ldconfig

```

(tried also various reboots...)

```

[root@dellqat ~]# lsmod | grep qat

qat_api 634880 2 zfs

qat_dh895xcc 20480 0

intel_qat 249856 3 qat_api,usdm_drv,qat_dh895xcc

uio 20480 1 intel_qat

[root@dellqat ~]# lsmod | grep zfs

zfs 4481024 1

zunicode 335872 1 zfs

zzstd 507904 1 zfs

qat_api 634880 2 zfs

zlua 176128 1 zfs

zcommon 94208 1 zfs

znvpair 90112 2 zfs,zcommon

zavl 16384 1 zfs

icp 323584 1 zfs

spl 110592 6 zfs,icp,zzstd,znvpair,zcommon,zavl

```

`zpool create -f -m /mnt/test test /dev/sdb /dev/sdc /dev/sdd`

```

[root@dellqat ~]# zfs set compression=gzip test

[root@dellqat ~]# zfs get all test

NAME PROPERTY VALUE SOURCE

test type filesystem -

test creation mar dic 15 10:58 2020 -

test used 333K -

test available 2.63T -

test referenced 24K -

test compressratio 1.00x -

test mounted yes -

test quota none default

test reservation none default

test recordsize 128K default

test mountpoint /mnt/test local

test sharenfs off default

test checksum on default

test compression gzip local

test atime on default

test devices on default

test exec on default

test setuid on default

test readonly off default

test zoned off default

test snapdir hidden default

test aclmode discard default

test aclinherit restricted default

test createtxg 1 -

test canmount on default

test xattr on default

test copies 1 default

test version 5 -

test utf8only off -

test normalization none -

test casesensitivity sensitive -

test vscan off default

test nbmand off default

test sharesmb off default

test refquota none default

test refreservation none default

test guid 3091558646933016362 -

test primarycache all default

test secondarycache all default

test usedbysnapshots 0B -

test usedbydataset 24K -

test usedbychildren 309K -

test usedbyrefreservation 0B -

test logbias latency default

test objsetid 54 -

test dedup off default

test mlslabel none default

test sync standard default

test dnodesize legacy default

test refcompressratio 1.00x -

test written 24K -

test logicalused 115K -

test logicalreferenced 12K -

test volmode default default

test filesystem_limit none default

test snapshot_limit none default

test filesystem_count none default

test snapshot_count none default

test snapdev hidden default

test acltype off default

test context none default

test fscontext none default

test defcontext none default

test rootcontext none default

test relatime off default

test redundant_metadata all default

test overlay on default

test encryption off default

test keylocation none default

test keyformat none default

test pbkdf2iters 0 default

test special_small_blocks 0 default

[root@dellqat /]# cat /proc/spl/kstat/zfs/qat

20 1 0x01 17 4624 14088835193 61490940492455

name type data

comp_requests 4 0

comp_total_in_bytes 4 0

comp_total_out_bytes 4 0

decomp_requests 4 0

decomp_total_in_bytes 4 0

decomp_total_out_bytes 4 0

dc_fails 4 0

encrypt_requests 4 0

encrypt_total_in_bytes 4 0

encrypt_total_out_bytes 4 0

decrypt_requests 4 0

decrypt_total_in_bytes 4 0

decrypt_total_out_bytes 4 0

crypt_fails 4 0

cksum_requests 4 0

cksum_total_in_bytes 4 0

cksum_fails 4 0

```

Tested with various methods: `dd`, standard `cp` and `mv`, and `iozone`.

Note the unexpected value in the `zfs get` output:

test compressratio 1.00x -

It seems no compression is taking place at all.

|

defect

|

intel qat compression fails with zfs system information distribution centos stock kernel uname a linux dellqat smp mon sep utc gnu linux modinfo zfs grep iw version version modinfo spl grep iw version version zfs fails to use intel qat compression qat and zfs compiled from sources qat l latest from intel configure enable icp trace enable icp debug enable icp log syslog enable kapi make make install zfs git status on branch zfs release your branch is up to date with origin zfs release nothing to commit working tree clean export icp root opt l configure with qat opt l make make install ldconfig tried also various reboots lsmod grep qat qat api zfs qat intel qat qat api usdm drv qat uio intel qat lsmod grep zfs zfs zunicode zfs zzstd zfs qat api zfs zlua zfs zcommon zfs znvpair zfs zcommon zavl zfs icp zfs spl zfs icp zzstd znvpair zcommon zavl zpool create f m mnt test test dev sdb dev sdc dev sdd zfs set compression gzip test zfs get all test name property value source test type filesystem test creation mar dic test used test available test referenced test compressratio test mounted yes test quota none default test reservation none default test recordsize default test mountpoint mnt test local test sharenfs off default test checksum on default test compression gzip local test atime on default test devices on default test exec on default test setuid on default test readonly off default test zoned off default test snapdir hidden default test aclmode discard default test aclinherit restricted default test createtxg test canmount on default test xattr on default test copies default test version test off test normalization none test casesensitivity sensitive test vscan off default test nbmand off default test sharesmb off default test refquota none default test refreservation none default test guid test primarycache all default test secondarycache all default test usedbysnapshots test usedbydataset test usedbychildren test usedbyrefreservation test logbias latency 
default test objsetid test dedup off default test mlslabel none default test sync standard default test dnodesize legacy default test refcompressratio test written test logicalused test logicalreferenced test volmode default default test filesystem limit none default test snapshot limit none default test filesystem count none default test snapshot count none default test snapdev hidden default test acltype off default test context none default test fscontext none default test defcontext none default test rootcontext none default test relatime off default test redundant metadata all default test overlay on default test encryption off default test keylocation none default test keyformat none default test default test special small blocks default cat proc spl kstat zfs qat name type data comp requests comp total in bytes comp total out bytes decomp requests decomp total in bytes decomp total out bytes dc fails encrypt requests encrypt total in bytes encrypt total out bytes decrypt requests decrypt total in bytes decrypt total out bytes crypt fails cksum requests cksum total in bytes cksum fails tested with various methods dd standard cp mv iozone please note the strange test compressratio seems compression is not there at all

| 1

|

25,803

| 4,461,179,900

|

IssuesEvent

|

2016-08-24 03:46:49

|

vug/freqazoid

|

https://api.github.com/repos/vug/freqazoid

|

closed

|

DFT function optimization

|

auto-migrated Priority-Medium Type-Defect

|

```

I used your DFT code in my stud project as a starting point and I optimized it

a bit for execution speed. I attached a patch for the DFT.java file in case you

are interested in using it.

```

Original issue reported on code.google.com by `kirm...@gmail.com` on 14 Jan 2012 at 4:04

Attachments:

* [dft_enhancement.patch](https://storage.googleapis.com/google-code-attachments/freqazoid/issue-13/comment-0/dft_enhancement.patch)

|

1.0

|

DFT function optimization - ```

I used your DFT code in my stud project as a starting point and I optimized it

a bit for execution speed. I attached a patch for the DFT.java file in case you

are interested in using it.

```

Original issue reported on code.google.com by `kirm...@gmail.com` on 14 Jan 2012 at 4:04

Attachments:

* [dft_enhancement.patch](https://storage.googleapis.com/google-code-attachments/freqazoid/issue-13/comment-0/dft_enhancement.patch)

|

defect

|

dft function optimization i used your dft code in my stud project as a starting point and i optimized it a bit for execution speed i attached a patch for the dft java file in case you are interested in using it original issue reported on code google com by kirm gmail com on jan at attachments

| 1

|

63,369

| 17,616,296,544

|

IssuesEvent

|

2021-08-18 10:07:04

|

hazelcast/hazelcast-python-client

|

https://api.github.com/repos/hazelcast/hazelcast-python-client

|

closed

|

Scaling down Hazelcast cluster causes Python client to lose connection

|

Type: Defect Priority: High

|

## Description

Scaling down Hazelcast cluster causes Python client to lose connection even with `redo_operation` set to `True`.

## Steps to reproduce

Create Hazelcast cluster with 2 members.

Run client program that connects and issues periodical requests, such as:

```

import hazelcast
import logging
import random

if __name__ == "__main__":
    logging.basicConfig()
    logging.getLogger().setLevel(logging.INFO)

    # Ask the client to retry operations that fail because of connection problems.
    config = hazelcast.ClientConfig()
    config.network_config.redo_operation = True
    client = hazelcast.HazelcastClient(config)

    my_map = client.get_map("map").blocking()
    my_map.put("key", "value")

    if my_map.get("key") == "value":
        print("Connection Successful!")
        print("Now, `map` will be filled with random entries.")
        # Issue puts and gets forever so that a topology change hits a request in flight.
        while True:
            random_key = random.randint(1, 100000)
            my_map.put("key" + str(random_key), "value" + str(random_key))
            my_map.get("key" + str(random.randint(1, 100000)))
            if random_key % 10 == 0:
                print("Map size:" + str(my_map.size()))
    else:
        raise Exception("Connection failed, check your configuration.")

    client.shutdown()

```

Now scale the cluster up to 4 members; the connection is not lost. Then scale back down to 2 members: an error occurs and the program exits with the following output.

```

WARNING: [3.12.1] [guglielmo-net-04] [hz.client_0] Connection closed by server

WARNING:HazelcastClient.Connection[0](33.11.106.108:30023):[guglielmo-net-04] [hz.client_0] Connection closed by server

Nov 13, 2019 10:40:18 AM HazelcastClient.LifecycleService

INFO: [3.12.1] [guglielmo-net-04] [hz.client_0] (20190319 - 3b38a46) HazelcastClient is DISCONNECTED

INFO:HazelcastClient.LifecycleService:[guglielmo-net-04] [hz.client_0] (20190319 - 3b38a46) HazelcastClient is DISCONNECTED

Nov 13, 2019 10:40:18 AM HazelcastClient.Connection[1](33.11.118.111:30023)

WARNING: [3.12.1] [guglielmo-net-04] [hz.client_0] Connection closed by server

WARNING:HazelcastClient.Connection[1](33.11.118.111:30023):[guglielmo-net-04] [hz.client_0] Connection closed by server

Nov 13, 2019 10:40:18 AM HazelcastClient.ClusterService

WARNING: [3.12.1] [guglielmo-net-04] [hz.client_0] Connection closed to owner node. Trying to reconnect.

WARNING:HazelcastClient.ClusterService:[guglielmo-net-04] [hz.client_0] Connection closed to owner node. Trying to reconnect.

Nov 13, 2019 10:40:19 AM HazelcastClient.ClusterService

INFO: [3.12.1] [guglielmo-net-04] [hz.client_0] Connecting to Address(host=100.96.6.2, port=30023)

INFO:HazelcastClient.ClusterService:[guglielmo-net-04] [hz.client_0] Connecting to Address(host=100.96.6.2, port=30023)

Nov 13, 2019 10:41:39 AM HazelcastClient.Connection[2](33.11.101.6:30023)

WARNING: [3.12.1] [guglielmo-net-04] [hz.client_0] Connection closed by server

WARNING:HazelcastClient.Connection[2](33.11.101.6:30023):[guglielmo-net-04] [hz.client_0] Connection closed by server

Nov 13, 2019 10:41:39 AM HazelcastClient.Connection[3](33.11.120.0:30023)

WARNING: [3.12.1] [guglielmo-net-04] [hz.client_0] Connection closed by server

WARNING:HazelcastClient.Connection[3](33.11.120.0:30023):[guglielmo-net-04] [hz.client_0] Connection closed by server

Traceback (most recent call last):

File "client.py", line 38, in <module>

my_map.put("key" + str(random_key), "value" + str(random_key))

File "/home/ubuntu/.local/lib/python2.7/site-packages/hazelcast/future.py", line 274, in f

return result.result()

File "/home/ubuntu/.local/lib/python2.7/site-packages/hazelcast/future.py", line 61, in result

six.reraise(self._exception.__class__, self._exception, self._traceback)

File "/home/ubuntu/.local/lib/python2.7/site-packages/hazelcast/future.py", line 145, in callback

future.set_result(continuation_func(f, *args))

File "/home/ubuntu/.local/lib/python2.7/site-packages/hazelcast/proxy/base.py", line 12, in default_response_handler

response = future.result()

File "/home/ubuntu/.local/lib/python2.7/site-packages/hazelcast/future.py", line 61, in result

six.reraise(self._exception.__class__, self._exception, self._traceback)

File "<string>", line 3, in reraise

hazelcast.exception.TimeoutError: Request timed out after 120 seconds.

Exception in thread hazelcast-reactor (most likely raised during interpreter shutdown):

Traceback (most recent call last):

File "/usr/lib/python2.7/threading.py", line 801, in __bootstrap_inner

File "/usr/lib/python2.7/threading.py", line 754, in run

File "/home/ubuntu/.local/lib/python2.7/site-packages/hazelcast/reactor.py", line 47, in _loop

<type 'exceptions.AttributeError'>: 'NoneType' object has no attribute 'error'

```
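
Until the client handles this case itself, a caller could tolerate a transient timeout by retrying the operation. The sketch below is a generic workaround, not part of the Hazelcast API; `call_with_retry` and its parameters are hypothetical, and the flaky operation only simulates a request that fails twice before succeeding.

```python
import time


def call_with_retry(operation, retries=3, base_delay=0.0, retry_on=(Exception,)):
    """Call operation(); on a listed exception, wait with exponential backoff and retry."""
    last_error = None
    for attempt in range(retries):
        try:
            return operation()
        except retry_on as err:
            last_error = err
            time.sleep(base_delay * (2 ** attempt))
    # All attempts failed: surface the last error to the caller.
    raise last_error


attempts = {"count": 0}


def flaky_put():
    """Simulated map operation that times out twice, then succeeds."""
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise TimeoutError("Request timed out")
    return "ok"


result = call_with_retry(flaky_put, retries=5, retry_on=(TimeoutError,))
```

In the reproduction program, the lambda passed as `operation` would wrap a call such as `my_map.put(...)`, and `retry_on` would name the client's timeout exception.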

|

1.0

|

Scaling down Hazelcast cluster causes Python client to lose connection - ## Description

Scaling down Hazelcast cluster causes Python client to lose connection even with `redo_operation` set to `True`.

## Steps to reproduce

Create Hazelcast cluster with 2 members.

Run client program that connects and issues periodical requests, such as:

```

import hazelcast
import logging
import random

if __name__ == "__main__":
    logging.basicConfig()
    logging.getLogger().setLevel(logging.INFO)

    # Ask the client to retry operations that fail because of connection problems.
    config = hazelcast.ClientConfig()
    config.network_config.redo_operation = True
    client = hazelcast.HazelcastClient(config)

    my_map = client.get_map("map").blocking()
    my_map.put("key", "value")

    if my_map.get("key") == "value":
        print("Connection Successful!")
        print("Now, `map` will be filled with random entries.")
        # Issue puts and gets forever so that a topology change hits a request in flight.
        while True:
            random_key = random.randint(1, 100000)
            my_map.put("key" + str(random_key), "value" + str(random_key))
            my_map.get("key" + str(random.randint(1, 100000)))
            if random_key % 10 == 0:
                print("Map size:" + str(my_map.size()))
    else:
        raise Exception("Connection failed, check your configuration.")

    client.shutdown()

```

Now scale the cluster up to 4 members; the connection is not lost. Then scale back down to 2 members: an error occurs and the program exits with the following output.

```

WARNING: [3.12.1] [guglielmo-net-04] [hz.client_0] Connection closed by server

WARNING:HazelcastClient.Connection[0](33.11.106.108:30023):[guglielmo-net-04] [hz.client_0] Connection closed by server

Nov 13, 2019 10:40:18 AM HazelcastClient.LifecycleService

INFO: [3.12.1] [guglielmo-net-04] [hz.client_0] (20190319 - 3b38a46) HazelcastClient is DISCONNECTED

INFO:HazelcastClient.LifecycleService:[guglielmo-net-04] [hz.client_0] (20190319 - 3b38a46) HazelcastClient is DISCONNECTED

Nov 13, 2019 10:40:18 AM HazelcastClient.Connection[1](33.11.118.111:30023)

WARNING: [3.12.1] [guglielmo-net-04] [hz.client_0] Connection closed by server

WARNING:HazelcastClient.Connection[1](33.11.118.111:30023):[guglielmo-net-04] [hz.client_0] Connection closed by server

Nov 13, 2019 10:40:18 AM HazelcastClient.ClusterService

WARNING: [3.12.1] [guglielmo-net-04] [hz.client_0] Connection closed to owner node. Trying to reconnect.

WARNING:HazelcastClient.ClusterService:[guglielmo-net-04] [hz.client_0] Connection closed to owner node. Trying to reconnect.

Nov 13, 2019 10:40:19 AM HazelcastClient.ClusterService

INFO: [3.12.1] [guglielmo-net-04] [hz.client_0] Connecting to Address(host=100.96.6.2, port=30023)

INFO:HazelcastClient.ClusterService:[guglielmo-net-04] [hz.client_0] Connecting to Address(host=100.96.6.2, port=30023)

Nov 13, 2019 10:41:39 AM HazelcastClient.Connection[2](33.11.101.6:30023)

WARNING: [3.12.1] [guglielmo-net-04] [hz.client_0] Connection closed by server

WARNING:HazelcastClient.Connection[2](33.11.101.6:30023):[guglielmo-net-04] [hz.client_0] Connection closed by server

Nov 13, 2019 10:41:39 AM HazelcastClient.Connection[3](33.11.120.0:30023)

WARNING: [3.12.1] [guglielmo-net-04] [hz.client_0] Connection closed by server

WARNING:HazelcastClient.Connection[3](33.11.120.0:30023):[guglielmo-net-04] [hz.client_0] Connection closed by server

Traceback (most recent call last):

File "client.py", line 38, in <module>

my_map.put("key" + str(random_key), "value" + str(random_key))

File "/home/ubuntu/.local/lib/python2.7/site-packages/hazelcast/future.py", line 274, in f

return result.result()

File "/home/ubuntu/.local/lib/python2.7/site-packages/hazelcast/future.py", line 61, in result

six.reraise(self._exception.__class__, self._exception, self._traceback)

File "/home/ubuntu/.local/lib/python2.7/site-packages/hazelcast/future.py", line 145, in callback

future.set_result(continuation_func(f, *args))

File "/home/ubuntu/.local/lib/python2.7/site-packages/hazelcast/proxy/base.py", line 12, in default_response_handler

response = future.result()

File "/home/ubuntu/.local/lib/python2.7/site-packages/hazelcast/future.py", line 61, in result

six.reraise(self._exception.__class__, self._exception, self._traceback)

File "<string>", line 3, in reraise

hazelcast.exception.TimeoutError: Request timed out after 120 seconds.

Exception in thread hazelcast-reactor (most likely raised during interpreter shutdown):

Traceback (most recent call last):

File "/usr/lib/python2.7/threading.py", line 801, in __bootstrap_inner

File "/usr/lib/python2.7/threading.py", line 754, in run

File "/home/ubuntu/.local/lib/python2.7/site-packages/hazelcast/reactor.py", line 47, in _loop

<type 'exceptions.AttributeError'>: 'NoneType' object has no attribute 'error'

```
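As a possible client-side mitigation while the underlying problem is investigated, the blocking call that raised `hazelcast.exception.TimeoutError` in the traceback above could be wrapped in a small retry helper. This is only a sketch, not a fix for the reconnect behaviour: `call_with_retry` and its parameters are hypothetical names introduced here and are not part of the Hazelcast client, and each attempt may still block for the full request timeout before failing.

```python
import time


def call_with_retry(operation, retry_on, attempts=3, delay_seconds=1.0):
    """Call operation() and retry when it raises retry_on.

    Hypothetical helper (not part of the Hazelcast client API).
    The last failure is re-raised once all attempts are used up.
    """
    for attempt in range(1, attempts + 1):
        try:
            return operation()
        except retry_on:
            if attempt == attempts:
                # Out of attempts: let the caller see the original error.
                raise
            # Give the client some time to reconnect before retrying.
            time.sleep(delay_seconds)
```

In the reproduction script this would be used roughly as `call_with_retry(lambda: my_map.put(key, value), hazelcast.exception.TimeoutError)`, where `key` and `value` stand for the strings built from `random_key`.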

|

defect

|

scaling down hazelcast cluster causes python client to lose connection description scaling down hazelcast cluster causes python client to lose connection even with redo operation set to true steps to reproduce create hazelcast cluster with members run client program that connects and issues periodical requests such as import hazelcast import logging import random if name main logging basicconfig logging getlogger setlevel logging info config hazelcast clientconfig config network config redo operation true client hazelcast hazelcastclient config my map client get map map blocking my map put key value if my map get key value print connection successful print now map will be filled with random entries while true random key random randint my map put key str random key value str random key my map get key str random randint if random key print map size str my map size else raise exception connection failed check your configuration client shutdown now scale the cluster up to members connection will not be lost scale down to members again an error will occur and the program will exit warning connection closed by server warning hazelcastclient connection connection closed by server nov am hazelcastclient lifecycleservice info hazelcastclient is disconnected info hazelcastclient lifecycleservice hazelcastclient is disconnected nov am hazelcastclient connection warning connection closed by server warning hazelcastclient connection connection closed by server nov am hazelcastclient clusterservice warning connection closed to owner node trying to reconnect warning hazelcastclient clusterservice connection closed to owner node trying to reconnect nov am hazelcastclient clusterservice info connecting to address host port info hazelcastclient clusterservice connecting to address host port nov am hazelcastclient connection warning connection closed by server warning hazelcastclient connection connection closed by server nov am hazelcastclient connection warning connection closed by 
server warning hazelcastclient connection connection closed by server traceback most recent call last file client py line in my map put key str random key value str random key file home ubuntu local lib site packages hazelcast future py line in f return result result file home ubuntu local lib site packages hazelcast future py line in result six reraise self exception class self exception self traceback file home ubuntu local lib site packages hazelcast future py line in callback future set result continuation func f args file home ubuntu local lib site packages hazelcast proxy base py line in default response handler response future result file home ubuntu local lib site packages hazelcast future py line in result six reraise self exception class self exception self traceback file line in reraise hazelcast exception timeouterror request timed out after seconds exception in thread hazelcast reactor most likely raised during interpreter shutdown traceback most recent call last file usr lib threading py line in bootstrap inner file usr lib threading py line in run file home ubuntu local lib site packages hazelcast reactor py line in loop nonetype object has no attribute error

| 1

|

40,873

| 10,208,719,902

|

IssuesEvent

|

2019-08-14 10:50:32

|

jOOQ/jOOQ

|

https://api.github.com/repos/jOOQ/jOOQ

|

opened

|

`com.mysql.jdbc.Driver'. This is deprecated.

|

T: Defect

|

### Expected behavior and actual behavior:

[INFO] --- jooq-codegen-maven:3.11.11:generate (jooq) @ sw-jooq ---

[INFO] Database : Inferring driver com.mysql.jdbc.Driver from URL jdbc:mysql://114.11.......

Loading class **`com.mysql.jdbc.Driver'. This is deprecated.** The new driver class is `com.mysql.cj.jdbc.Driver'. The driver is automatically registered via the SPI and manual loading of the driver class is generally unnecessary.

### Steps to reproduce the problem (if possible, create an MCVE: https://github.com/jOOQ/jOOQ-mcve):

### Versions:

- jOOQ:3.11.11

- Java:8.0

- Database (include vendor):mysql

- OS:window

- JDBC Driver (include name if inofficial driver):

|

1.0

|

`com.mysql.jdbc.Driver'. This is deprecated. - ### Expected behavior and actual behavior:

[INFO] --- jooq-codegen-maven:3.11.11:generate (jooq) @ sw-jooq ---

[INFO] Database : Inferring driver com.mysql.jdbc.Driver from URL jdbc:mysql://114.11.......

Loading class **`com.mysql.jdbc.Driver'. This is deprecated.** The new driver class is `com.mysql.cj.jdbc.Driver'. The driver is automatically registered via the SPI and manual loading of the driver class is generally unnecessary.

### Steps to reproduce the problem (if possible, create an MCVE: https://github.com/jOOQ/jOOQ-mcve):

### Versions:

- jOOQ:3.11.11

- Java:8.0

- Database (include vendor):mysql

- OS:window

- JDBC Driver (include name if inofficial driver):

|

defect

|

com mysql jdbc driver this is deprecated expected behavior and actual behavior jooq codegen maven generate jooq sw jooq database inferring driver com mysql jdbc driver from url jdbc mysql loading class com mysql jdbc driver this is deprecated the new driver class is com mysql cj jdbc driver the driver is automatically registered via the spi and manual loading of the driver class is generally unnecessary steps to reproduce the problem if possible create an mcve versions jooq java database include vendor mysql os window jdbc driver include name if inofficial driver

| 1

|

68,079

| 21,472,940,631

|

IssuesEvent

|

2022-04-26 11:11:53

|

matrix-org/synapse

|

https://api.github.com/repos/matrix-org/synapse

|

closed

|

`/_synapse/admin/v1/delete_group` admin api fails with 500 error

|

A-Communities S-Tolerable T-Defect

|

<!--

**THIS IS NOT A SUPPORT CHANNEL!**

**IF YOU HAVE SUPPORT QUESTIONS ABOUT RUNNING OR CONFIGURING YOUR OWN HOME SERVER**,

please ask in **#synapse:matrix.org** (using a matrix.org account if necessary)

If you want to report a security issue, please see https://matrix.org/security-disclosure-policy/

This is a bug report template. By following the instructions below and

filling out the sections with your information, you will help us to get all

the necessary data to fix your issue.

You can also preview your report before submitting it. You may remove sections

that aren't relevant to your particular case.

Text between <!-- and --> marks will be invisible in the report.

-->

### Description

Removing a user from a group sometimes fails. The admin API described at https://github.com/matrix-org/synapse/blob/master/docs/admin_api/delete_group.md fails too, apparently because of the same root cause. The troublesome users seem to be ones that exist on the matrix.org server rather than on our own hackab.fi one.