Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

73,684

| 19,761,170,496

|

IssuesEvent

|

2022-01-16 12:47:06

|

chaotic-aur/packages

|

https://api.github.com/repos/chaotic-aur/packages

|

closed

|

[Outdated] python-manimpango

|

request:rebuild-pkg priority:high

|

### If available, link to the latest build

Build log site timed out multiple times.

### Package name

`python-manimpango`

### Latest build

`0.3.1-1`

### Latest version available

`0.4.0.post0-1`

### Have you tested if the package builds in a clean chroot?

- [ ] Yes

### More information

In my case, it's being used as a dependency for `emote`.

|

1.0

|

[Outdated] python-manimpango - ### If available, link to the latest build

Build log site timed out multiple times.

### Package name

`python-manimpango`

### Latest build

`0.3.1-1`

### Latest version available

`0.4.0.post0-1`

### Have you tested if the package builds in a clean chroot?

- [ ] Yes

### More information

In my case, it's being used as a dependency for `emote`.

|

non_defect

|

python manimpango if available link to the latest build build log site timed out multiple times package name python manimpango latest build latest version available have you tested if the package builds in a clean chroot yes more information in my case it s being used as a dependency for emote

| 0

|

20,307

| 3,332,446,509

|

IssuesEvent

|

2015-11-11 20:08:51

|

lixun910/geoda

|

https://api.github.com/repos/lixun910/geoda

|

closed

|

Average Chart: enable right click menu

|

fixed: verify! Milestone-Release1.8 Module-AveragesTool Priority-Medium Type-Defect

|

```

In versions 1.7.24 ~ 1.7.33:

To init an average chart, there is a prompt "Please use Options->Add/Remove

Variable..".

Here the right click doesn't work, and this message seems duplicated since the

"Add/Remove Variable" dialog is shown by default.

```

Original issue reported on code.google.com by `lixun...@gmail.com` on 1 Jul 2015 at 6:38

|

1.0

|

Average Chart: enable right click menu - ```

In versions 1.7.24 ~ 1.7.33:

To init an average chart, there is a prompt "Please use Options->Add/Remove

Variable..".

Here the right click doesn't work, and this message seems duplicated since the

"Add/Remove Variable" dialog is shown by default.

```

Original issue reported on code.google.com by `lixun...@gmail.com` on 1 Jul 2015 at 6:38

|

defect

|

average chart enable right click menu in versions to init an average chart there is a prompt please use options add remove variable here the right click doesn t work and this message seems duplicated since the add remove variable dialog is by default showed up original issue reported on code google com by lixun gmail com on jul at

| 1

|

62,113

| 17,023,854,024

|

IssuesEvent

|

2021-07-03 04:11:33

|

tomhughes/trac-tickets

|

https://api.github.com/repos/tomhughes/trac-tickets

|

closed

|

user menu on user page includes empty changesets in count

|

Component: website Priority: trivial Resolution: wontfix Type: defect

|

**[Submitted to the original trac issue database at 4.22pm, Sunday, 17th February 2013]**

The user menu on http://www.openstreetmap.org/user/aseerel4c26/ does count empty changesets (the counter in "Eigene Bearbeitungen 786" increases for each empty changeset) but http://www.openstreetmap.org/user/aseerel4c26/edits does not list empty changesets.

The counting of empty changesets in the website GUI should be consistent - similar bug: https://trac.openstreetmap.org/ticket/2114

|

1.0

|

user menu on user page includes empty changesets in count - **[Submitted to the original trac issue database at 4.22pm, Sunday, 17th February 2013]**

The user menu on http://www.openstreetmap.org/user/aseerel4c26/ does count empty changesets (the counter in "Eigene Bearbeitungen 786" increases for each empty changeset) but http://www.openstreetmap.org/user/aseerel4c26/edits does not list empty changesets.

The counting of empty changesets in the website GUI should be consistent - similar bug: https://trac.openstreetmap.org/ticket/2114

|

defect

|

user menu on user page includes empty changesets in count the user menu on does count empty changesets the counter in eigene bearbeitungen increases for each empty changeset but does not list empty changesets the counting of empty changesets in the website gui should be consistent similar bug

| 1

|

143,272

| 11,540,545,276

|

IssuesEvent

|

2020-02-18 00:29:57

|

davidskalinder/mpeds-coder

|

https://api.github.com/repos/davidskalinder/mpeds-coder

|

reopened

|

Handle uncertainty for coding fields

|

user testing

|

Could be for every item, for particular items, or for groups of items. Probably could be implemented in current DB structure (as separate and arbitrary "variable" types), linked to corresponding items elsewhere (or by name, though that's dubious). As to whether it *should* be stored like that... needs further thought.

|

1.0

|

Handle uncertainty for coding fields - Could be for every item, for particular items, or for groups of items. Probably could be implemented in current DB structure (as separate and arbitrary "variable" types), linked to corresponding items elsewhere (or by name, though that's dubious). As to whether it *should* be stored like that... needs further thought.

|

non_defect

|

handle uncertainty for coding fields could be for every item for particular items or for groups of items probably could be implemented in current db structure as separate and arbitrary variable types linked to corresponding items elsewhere or by name though that s dubious as to whether it should be stored like that needs further thought

| 0

|

77,838

| 27,190,002,041

|

IssuesEvent

|

2023-02-19 17:31:56

|

openzfs/zfs

|

https://api.github.com/repos/openzfs/zfs

|

opened

|

Data corruption after TRIM

|

Type: Defect

|

### System information

<!-- add version after "|" character -->

Type | Version/Name

--- | ---

Distribution Name | Void Linux

Distribution Version | musl, up to date (rolling)

Kernel Version | 6.1.8_1

Architecture | x86_64

OpenZFS Version | 2.1.7-1

### Describe the problem you're observing

Data corruption in some files and a lot of checksum errors.

### Describe how to reproduce the problem

I ran `zpool scrub` on a simple zpool consisting of a single partition on a 120 GiB SSD. It finished without any errors (neither data nor checksum).

Then I ran `zpool trim` on the pool. A few minutes later I saw 2 checksum errors in the report, then a few more.

(I'm not sure if the first error appeared before or after the TRIM.)

I ran `zfs trim` again, because the first time it finished quite fast even though there was a lot of space to trim. A few more errors appeared. (Probably not related to running TRIM again, because the errors have been constantly increasing since then.)

Then I thought that maybe the first scrub before the trim somehow did not notice the errors, so I ran `zfs scrub` again. The errors grew to 100-300 in the first few seconds, so I stopped it.

At this point I couldn't use `zfs send` to save a snapshot, so I used `rsync` to back up the data. It reported I/O errors for a few files.

It's an SSD that reports a 512-byte block size, so I used `ashift=9`. Later I learned that most SSDs actually use larger blocks but report 512. I don't know if that affected the trim.

The `sda1` BTRFS partition contains ~2 GiB of data (kernels, initramfs, and boot-related stuff). I ran a TRIM and a `btrfs scrub` on it. No errors were found.

A SMART extended self-test was running when I first ran the `zfs scrub` (and possibly when I ran `zfs trim` the first time, but it could have finished by then).

Partition scheme:

```

NAME FSTYPE FSVER LABEL

sda

├─sda1 btrfs VoidBoot

├─sda2 zfs_member 5000 rpool

└─sda3 swap 1 VoidSwap

```

Output of `zpool status` before submitting issue:

```

pool: rpool

state: DEGRADED

status: One or more devices has experienced an error resulting in data

corruption. Applications may be affected.

action: Restore the file in question if possible. Otherwise restore the

entire pool from backup.

see: https://openzfs.github.io/openzfs-docs/msg/ZFS-8000-8A

scan: scrub canceled on Sun Feb 19 16:29:42 2023

config:

NAME STATE READ WRITE CKSUM

rpool DEGRADED 0 0 0

sda2 DEGRADED 0 0 4.28K too many errors

errors: 481 data errors, use '-v' for a list

```

### Include any warning/errors/backtraces from the system logs

No errors in dmesg.

|

1.0

|

Data corruption after TRIM - ### System information

<!-- add version after "|" character -->

Type | Version/Name

--- | ---

Distribution Name | Void Linux

Distribution Version | musl, up to date (rolling)

Kernel Version | 6.1.8_1

Architecture | x86_64

OpenZFS Version | 2.1.7-1

### Describe the problem you're observing

Data corruption in some files and a lot of checksum errors.

### Describe how to reproduce the problem

I ran `zpool scrub` on a simple zpool consisting of a single partition on a 120 GiB SSD. It finished without any errors (neither data nor checksum).

Then I ran `zpool trim` on the pool. A few minutes later I saw 2 checksum errors in the report, then a few more.

(I'm not sure if the first error appeared before or after the TRIM.)

I ran `zfs trim` again, because the first time it finished quite fast even though there was a lot of space to trim. A few more errors appeared. (Probably not related to running TRIM again, because the errors have been constantly increasing since then.)

Then I thought that maybe the first scrub before the trim somehow did not notice the errors, so I ran `zfs scrub` again. The errors grew to 100-300 in the first few seconds, so I stopped it.

At this point I couldn't use `zfs send` to save a snapshot, so I used `rsync` to back up the data. It reported I/O errors for a few files.

It's an SSD that reports a 512-byte block size, so I used `ashift=9`. Later I learned that most SSDs actually use larger blocks but report 512. I don't know if that affected the trim.

The `sda1` BTRFS partition contains ~2 GiB of data (kernels, initramfs, and boot-related stuff). I ran a TRIM and a `btrfs scrub` on it. No errors were found.

A SMART extended self-test was running when I first ran the `zfs scrub` (and possibly when I ran `zfs trim` the first time, but it could have finished by then).

Partition scheme:

```

NAME FSTYPE FSVER LABEL

sda

├─sda1 btrfs VoidBoot

├─sda2 zfs_member 5000 rpool

└─sda3 swap 1 VoidSwap

```

Output of `zpool status` before submitting issue:

```

pool: rpool

state: DEGRADED

status: One or more devices has experienced an error resulting in data

corruption. Applications may be affected.

action: Restore the file in question if possible. Otherwise restore the

entire pool from backup.

see: https://openzfs.github.io/openzfs-docs/msg/ZFS-8000-8A

scan: scrub canceled on Sun Feb 19 16:29:42 2023

config:

NAME STATE READ WRITE CKSUM

rpool DEGRADED 0 0 0

sda2 DEGRADED 0 0 4.28K too many errors

errors: 481 data errors, use '-v' for a list

```

### Include any warning/errors/backtraces from the system logs

No errors in dmesg.

|

defect

|

data corruption after trim system information type version name distribution name void linux distribution version musl up to date rolling kernel version architecture openzfs version describe the problem you re observing data corruption in some files and lot of checksum errors describe how to reproduce the problem i ran zpool scrub on a simple zpool consisting of a single partition on a gib ssd it finished without any errors neither data or checksum the i ran zpool trim on the pool then a few minutes later i saw checksum errors by the report then a few more i m not sure if the first error appeared before or after the trim i ran zfs trim again because the first time it finished quite fast despite there were much space to trim few more errors probably not related to running trim again because the errors has been constantly increasing since then then i was thinking maybe the first scrub before the trim somehow did not notice the errors so i ran zfs scrub again the errors grown to in the first few seconds so i stopped it at this point i couldn t use zfs send to save snapshot so i used rsync to backup the data it reported i o error for a few files it s an ssd which report blocks size so i used ashift later i learned that most ssds actually use larger blocks but report i don t know if it affected the trim the btrfs partition contains gib data kernels initramfs and boot related stuff i run a trim and a btrfs scrub on it no errors were found a smart extended self test was running when i first ran the zfs scrub and possibly when i run zfs trim the first time but it could have finished then partition scheme name fstype fsver label sda ├─ btrfs voidboot ├─ zfs member rpool └─ swap voidswap output of zpool status before submitting issue pool rpool state degraded status one or more devices has experienced an error resulting in data corruption applications may be affected action restore the file in question if possible otherwise restore the entire pool from backup see scan scrub 
canceled on sun feb config name state read write cksum rpool degraded degraded too many errors errors data errors use v for a list include any warning errors backtraces from the system logs no errors in dmesg

| 1

|

46,422

| 13,055,910,490

|

IssuesEvent

|

2020-07-30 03:05:40

|

icecube-trac/tix2

|

https://api.github.com/repos/icecube-trac/tix2

|

opened

|

Improved line fit resources directory cleanup (Trac #1181)

|

Incomplete Migration Migrated from Trac combo reconstruction defect

|

Migrated from https://code.icecube.wisc.edu/ticket/1181

```json

{

"status": "closed",

"changetime": "2019-02-13T14:11:57",

"description": "There are no links to improved linefit documentation in the meta-project documentation. there is a decent index.rst, but it is in resources/ not resources/docs/ it needs the name the maintainer and have links to the CHANGELOG and the doxygen documentation.\n\nIn addition the example script example.py needs to be updated, it tries to load something called libNFE. and moved to resources/examples directory",

"reporter": "kjmeagher",

"cc": "",

"resolution": "wontfix",

"_ts": "1550067117911749",

"component": "combo reconstruction",

"summary": "Improved line fit resources directory cleanup",

"priority": "blocker",

"keywords": "",

"time": "2015-08-19T11:40:01",

"milestone": "",

"owner": "gmaggi",

"type": "defect"

}

```

|

1.0

|

Improved line fit resources directory cleanup (Trac #1181) - Migrated from https://code.icecube.wisc.edu/ticket/1181

```json

{

"status": "closed",

"changetime": "2019-02-13T14:11:57",

"description": "There are no links to improved linefit documentation in the meta-project documentation. there is a decent index.rst, but it is in resources/ not resources/docs/ it needs the name the maintainer and have links to the CHANGELOG and the doxygen documentation.\n\nIn addition the example script example.py needs to be updated, it tries to load something called libNFE. and moved to resources/examples directory",

"reporter": "kjmeagher",

"cc": "",

"resolution": "wontfix",

"_ts": "1550067117911749",

"component": "combo reconstruction",

"summary": "Improved line fit resources directory cleanup",

"priority": "blocker",

"keywords": "",

"time": "2015-08-19T11:40:01",

"milestone": "",

"owner": "gmaggi",

"type": "defect"

}

```

|

defect

|

improved line fit resources directory cleanup trac migrated from json status closed changetime description there are no links to improved linefit documentation in the meta project documentation there is a decent index rst but it is in resources not resources docs it needs the name the maintainer and have links to the changelog and the doxygen documentation n nin addition the example script example py needs to be updated it tries to load something called libnfe and moved to resources examples directory reporter kjmeagher cc resolution wontfix ts component combo reconstruction summary improved line fit resources directory cleanup priority blocker keywords time milestone owner gmaggi type defect

| 1

|

513,072

| 14,915,550,648

|

IssuesEvent

|

2021-01-22 16:53:01

|

brave/brave-browser

|

https://api.github.com/repos/brave/brave-browser

|

closed

|

Hulu.com unable to get location for live tv.

|

OS/Desktop priority/P3 webcompat

|

<!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROVIDED-->

## Description

Hulu.com (live TV) is unable to get the location when requested.

## Steps to Reproduce

<!--Please add a series of steps to reproduce the issue-->

1. Log in to `Hulu.com` with a livetv subscription and open the livetv option.

2. Hulu asks for me to share my location, and after I select “allow” it cannot load. Instead it chews on the request either indefinitely, or errors out with “unable to find your location - get help.”

## Actual result:

Unable to get location data for hulu.com livetv.

## Expected result:

Expect `hulu.com` to get location details for livetv option.

## Reproduces how often:

Need a US hulu account, with livetv enabled.

## Brave version (brave://version info)

`Version 1.15.72 Chromium: 86.0.4240.75 (Official Build) (64-bit)`

## Version/Channel Information:

<!--Does this issue happen on any other channels? Or is it specific to a certain channel?-->

- Can you reproduce this issue with the current release? Yes

- Can you reproduce this issue with the beta channel? Unsure

- Can you reproduce this issue with the nightly channel? Unsure.

## Other Additional Information:

- Does the issue resolve itself when disabling Brave Shields?

- Does the issue resolve itself when disabling Brave Rewards?

- Is the issue reproducible on the latest version of Chrome?

## Miscellaneous Information:

Reported here: https://community.brave.com/t/hulu-unable-to-get-location-and-load/165895/6

I have tested `hulu.com`, where playback worked fine. I was just unable to test the livetv option.

|

1.0

|

Hulu.com unable to get location for live tv. - <!-- Have you searched for similar issues? Before submitting this issue, please check the open issues and add a note before logging a new issue.

PLEASE USE THE TEMPLATE BELOW TO PROVIDE INFORMATION ABOUT THE ISSUE.

INSUFFICIENT INFO WILL GET THE ISSUE CLOSED. IT WILL ONLY BE REOPENED AFTER SUFFICIENT INFO IS PROVIDED-->

## Description

Hulu.com (live TV) is unable to get the location when requested.

## Steps to Reproduce

<!--Please add a series of steps to reproduce the issue-->

1. Log in to `Hulu.com` with a livetv subscription and open the livetv option.

2. Hulu asks for me to share my location, and after I select “allow” it cannot load. Instead it chews on the request either indefinitely, or errors out with “unable to find your location - get help.”

## Actual result:

Unable to get location data for hulu.com livetv.

## Expected result:

Expect `hulu.com` to get location details for livetv option.

## Reproduces how often:

Need a US hulu account, with livetv enabled.

## Brave version (brave://version info)

`Version 1.15.72 Chromium: 86.0.4240.75 (Official Build) (64-bit)`

## Version/Channel Information:

<!--Does this issue happen on any other channels? Or is it specific to a certain channel?-->

- Can you reproduce this issue with the current release? Yes

- Can you reproduce this issue with the beta channel? Unsure

- Can you reproduce this issue with the nightly channel? Unsure.

## Other Additional Information:

- Does the issue resolve itself when disabling Brave Shields?

- Does the issue resolve itself when disabling Brave Rewards?

- Is the issue reproducible on the latest version of Chrome?

## Miscellaneous Information:

Reported here: https://community.brave.com/t/hulu-unable-to-get-location-and-load/165895/6

I have tested `hulu.com`, where playback worked fine. I was just unable to test the livetv option.

|

non_defect

|

hulu com unable to get location for live tv have you searched for similar issues before submitting this issue please check the open issues and add a note before logging a new issue please use the template below to provide information about the issue insufficient info will get the issue closed it will only be reopened after sufficient info is provided description hulu com live tv unable to get location when requested steps to reproduce login to hulu com and livetv subscription and open livetv option hulu asks for me to share my location and after i select “allow” it cannot load instead it chews on the request either indefinitely or errors out with “unable to find your location get help ” actual result unable to get location data for hulu com livetv expected result expect hulu com to get location details for livetv option reproduces how often need a us hulu account with livetv enabled brave version brave version info version chromium official build bit version channel information can you reproduce this issue with the current release yes can you reproduce this issue with the beta channel unsure can you reproduce this issue with the nightly channel unsure other additional information does the issue resolve itself when disabling brave shields does the issue resolve itself when disabling brave rewards is the issue reproducible on the latest version of chrome miscellaneous information reported here have tested hulu com which playback worked fine just unable to test the livetv option

| 0

|

74,848

| 25,358,920,984

|

IssuesEvent

|

2022-11-20 17:15:23

|

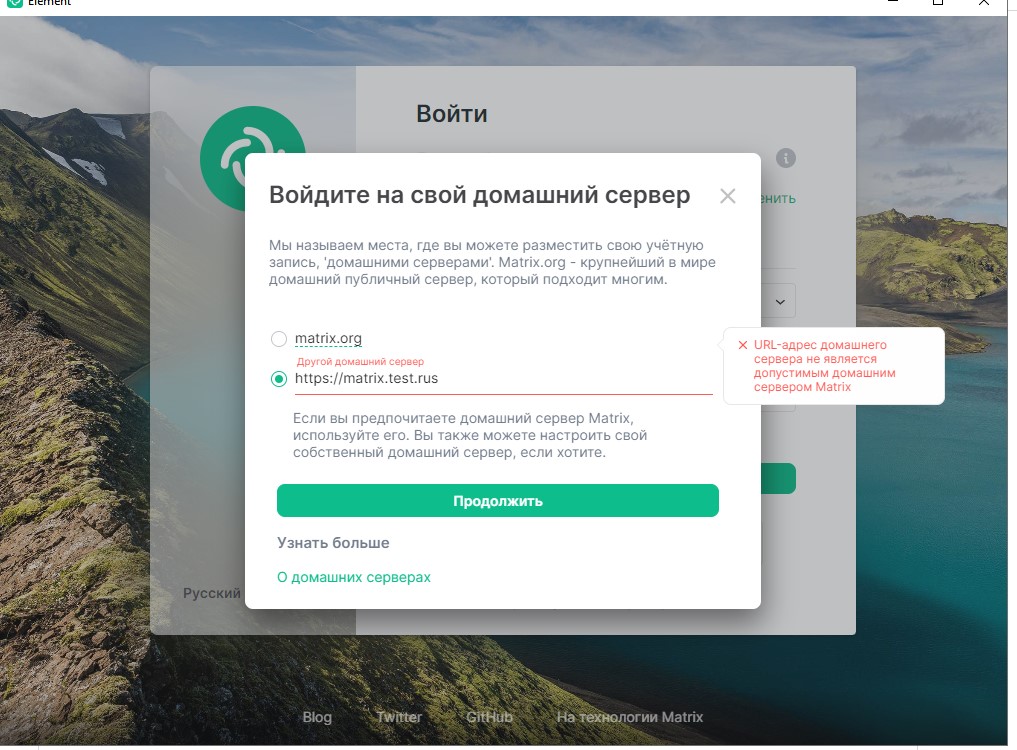

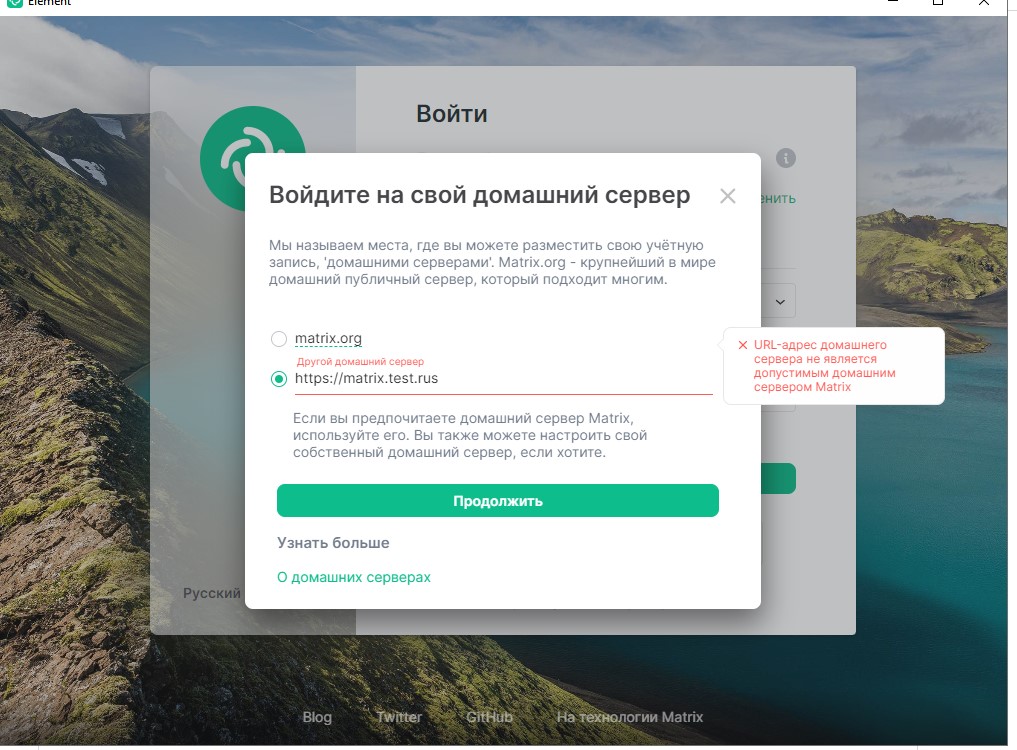

vector-im/element-web

|

https://api.github.com/repos/vector-im/element-web

|

opened

|

Video calls freeze after a few seconds at __strlen_avx2_rtm

|

T-Defect

|

### Steps to reproduce

1. Where are you starting? What can you see?

I ran element-desktop 1.11.14 (from NixOS unstable, but it crashes with 1.11.10 from the stable branch too).

(It worked reliably for months when I was using nixos-22.05, but fails since switching to nixos-unstable.)

On my tablet, I run Element in Chrome with a test account. However, it also happens from a laptop running Ubuntu; the tablet is just for reproducing it locally.

2. What do you click?

On the tablet, I click to start a video call, and then accept it on element-desktop.

For testing, I turn off the mic, but it also happens without that.

### Outcome

#### What did you expect?

Video to continue working.

#### What happened instead?

After around 10 seconds, some of the video streams freeze.

e.g. the video in element-desktop freezes (but it is still sending to the tablet, which doesn't show anything wrong).

Turning off the video on the tablet and then turning it on again got it going again.

Sometimes it fails differently. e.g. both sides can see the other side's camera, but their local previews both freeze.

This happens whether using Wayland or X11 (via `NIXOS_OZONE_WL`).

The journal contains e.g.

```

[🡕] Process 513053 (.electron-wrapp) of user 1000 dumped core.

Module /nix/store/4nlgxhb09sdr51nc9hdm8az5b08vzkgx-glibc-2.35-163/lib/ld-linux-x86-64.so.2 with build-id db50353a26600bb848b9a5541b1506e0a24cb34b

Module linux-vdso.so.1 with build-id 97640497af8bdd9493208b8ce8243c9f775e9fc6

Module libspa-audioconvert.so without build-id.

Module libpipewire-module-session-manager.so without build-id.

Module libpipewire-module-metadata.so without build-id.

Module libpipewire-module-adapter.so without build-id.

Module libpipewire-module-client-device.so without build-id.

Module libpipewire-module-client-node.so without build-id.

Module libpipewire-module-protocol-native.so without build-id.

Module libpipewire-module-rt.so without build-id.

Module libspa-dbus.so without build-id.

Module libspa-journal.so without build-id.

Module libspa-support.so without build-id.

Module libpipewire-0.3.so.0 without build-id.

Module libasound_module_pcm_pipewire.so without build-id.

Module libstdc++.so.6 without build-id.

Module libicudata.so.72 without build-id.

Module libGLX.so.0 without build-id.

Module libGLdispatch.so.0 without build-id.

Module libdatrie.so.1 without build-id.

Module libsqlite3.so.0 with build-id 377f9d7f0fb8f5896be673d87eb739eb7866db92

Module libxml2.so.2 without build-id.

Module libjson-glib-1.0.so.0 without build-id.

Module libicui18n.so.72 without build-id.

Module libicuuc.so.72 without build-id.

Module libjpeg.so.62 without build-id.

Module libbz2.so.1 without build-id.

Module libgraphite2.so.3 without build-id.

Module libXinerama.so.1 without build-id.

Module libXcursor.so.1 without build-id.

Module libwayland-egl.so.1 with build-id d6b466ff99696870068c564a8ebded6a81cae225

Module libwayland-cursor.so.0 with build-id 5552be47a749c6825465c9a93d43f69f9701c9de

Module libwayland-client.so.0 with build-id c3616c06165ba2231464dfdde480b9ae48f92ec7

Module libcap.so.2 without build-id.

Module libgmp.so.10 without build-id.

Module libhogweed.so.6 without build-id.

Module libnettle.so.8 without build-id.

Module libtasn1.so.6 without build-id.

Module libunistring.so.2 without build-id.

Module libidn2.so.0 without build-id.

Module libp11-kit.so.0 without build-id.

Module libssp.so.0 without build-id.

Module libpcre.so.1 without build-id.

Module libblkid.so.1 with build-id f4f9ebcbcca3e44b7094b20e2ee71709825f36bb

Module libXdmcp.so.6 without build-id.

Module libXau.so.6 without build-id.

Module libwayland-server.so.0 with build-id 91f99799f5cca19715660617ace0a8444d822efe

Module libGL.so.1 without build-id.

Module libXrender.so.1 without build-id.

Module libxcb-render.so.0 without build-id.

Module libxcb-shm.so.0 without build-id.

Module libpng16.so.16 without build-id.

Module libEGL.so.1 without build-id.

Module libfreetype.so.6 without build-id.

Module libpixman-1.so.0 with build-id a4a8d46c6b2f698ceaf6661c79d06700493d31ab

Module libthai.so.0 without build-id.

Module libtracker-sparql-3.0.so.0 without build-id.

Module libXi.so.6 without build-id.

Module libepoxy.so.0 without build-id.

Module libgdk_pixbuf-2.0.so.0 with build-id 8f15f562c170a916afff71fb3e34401dd4e20d9e

Module libcairo-gobject.so.2 with build-id df83675e5c22873ce86626535519d51ff3cc513a

Module libfribidi.so.0 without build-id.

Module libfontconfig.so.1 without build-id.

Module libpangoft2-1.0.so.0 without build-id.

Module libharfbuzz.so.0 without build-id.

Module libpangocairo-1.0.so.0 without build-id.

Module libgdk-3.so.0 with build-id 2cfb8d0f3702bc5c753dbfac0002a0b9c18b5d83

Module libsystemd.so.0 without build-id.

Module libgnutls.so.30 without build-id.

Module libavahi-client.so.3 without build-id.

Module libavahi-common.so.3 without build-id.

Module librt.so.1 with build-id 7c9aae26f0646a27bf0f7c49c914b3258c5fa43e

Module libplc4.so without build-id.

Module libplds4.so without build-id.

Module libselinux.so.1 without build-id.

Module libmount.so.1 with build-id ed8fa2ae9881fc31bd8f5963397b42c8644f162d

Module libgmodule-2.0.so.0 with build-id 5ea22aa96ea6851566bb6ab070a1621683ae4e88

Module libpcre2-8.so.0 without build-id.

Module libffi.so.8 without build-id.

Module libcrypto.so.3 with build-id 5ba9c3862d2fed33339255247444fc34d53cb4cc

Module libz.so.1 without build-id.

Module libc.so.6 with build-id 2bb226bc600b443958c7566207d0d02f8345e6ea

Module libgcc_s.so.1 without build-id.

Module libatspi.so.0 without build-id.

Module libasound.so.2 without build-id.

Module libxkbcommon.so.0 without build-id.

Module libxcb.so.1 without build-id.

Module libexpat.so.1 without build-id.

Module libgbm.so.1 without build-id.

Module libXrandr.so.2 without build-id.

Module libXfixes.so.3 without build-id.

Module libXext.so.6 without build-id.

Module libXdamage.so.1 without build-id.

Module libXcomposite.so.1 without build-id.

Module libX11.so.6 without build-id.

Module libm.so.6 with build-id b8454b40db819599169f3a948939aed4b3fc7f82

Module libcairo.so.2 with build-id 21e308ba73f784934d4eb8cb2efd507151a8d65e

Module libpango-1.0.so.0 without build-id.

Module libgtk-3.so.0 with build-id 43ad91b494d9bf2e052ade1c14ed724c2c3030b2

Module libdrm.so.2 without build-id.

Module libdbus-1.so.3 without build-id.

Module libcups.so.2 without build-id.

Module libatk-bridge-2.0.so.0 without build-id.

Module libatk-1.0.so.0 without build-id.

Module libnspr4.so without build-id.

Module libsmime3.so without build-id.

Module libnssutil3.so without build-id.

Module libnss3.so without build-id.

Module libgio-2.0.so.0 with build-id 9c3d32e1d5dbf7d39ea67d2bb7045fbf51126a85

Module libglib-2.0.so.0 with build-id b13cd968ce6f5320e45dde1446f3066371403d7c

Module libgobject-2.0.so.0 with build-id cc0205109407a5b4ace0874f64aee611878a482d

Module libpthread.so.0 with build-id 85431f01160c3de171d3baeb3f8cf1c9578dc441

Module libdl.so.2 with build-id 67c430223def0be24c4ae1a4c3985f26566b8831

Module libffmpeg.so with build-id da7bfd439eb2866765067ecab210ebcb6184bb50

Module libsqlcipher.so without build-id.

Module .electron-wrapped with build-id be7e0a8182dc5bdd72ab8f92cc743fb0cf4ff95f

Stack trace of thread 513053:

#0 0x00007fd12aa534bd __strlen_avx2_rtm (libc.so.6 + 0x1664bd)

#1 0x000056046ff1ab86 n/a (.electron-wrapped + 0x2c2bb86)

#2 0x000056046ff19b11 n/a (.electron-wrapped + 0x2c2ab11)

#3 0x000056046ff11798 n/a (.electron-wrapped + 0x2c22798)

#4 0x000056046ff11722 n/a (.electron-wrapped + 0x2c22722)

#5 0x000056046ff130d1 n/a (.electron-wrapped + 0x2c240d1)

#6 0x000056046ff12664 n/a (.electron-wrapped + 0x2c23664)

#7 0x0000560471be23a3 n/a (.electron-wrapped + 0x48f33a3)

#8 0x0000560471bef4df n/a (.electron-wrapped + 0x49004df)

#9 0x0000560471bf10fd n/a (.electron-wrapped + 0x49020fd)

#10 0x0000560471bf6e18 n/a (.electron-wrapped + 0x4907e18)

#11 0x0000560471bf4fcd n/a (.electron-wrapped + 0x4905fcd)

#12 0x0000560471bf1bcf n/a (.electron-wrapped + 0x4902bcf)

#13 0x000056047234b616 n/a (.electron-wrapped + 0x505c616)

#14 0x0000560472368b85 n/a (.electron-wrapped + 0x5079b85)

#15 0x0000560472311d8e n/a (.electron-wrapped + 0x5022d8e)

#16 0x00005604723694e1 n/a (.electron-wrapped + 0x507a4e1)

#17 0x0000560472330fb2 n/a (.electron-wrapped + 0x5041fb2)

#18 0x0000560471cd79d1 n/a (.electron-wrapped + 0x49e89d1)

#19 0x000056046f71efa9 n/a (.electron-wrapped + 0x242ffa9)

#20 0x000056046f71fc4b n/a (.electron-wrapped + 0x2430c4b)

#21 0x000056046f71d18d n/a (.electron-wrapped + 0x242e18d)

#22 0x000056046f71d974 n/a (.electron-wrapped + 0x242e974)

#23 0x000056046f48491b n/a (.electron-wrapped + 0x219591b)

#24 0x00007fd12a91624e __libc_start_call_main (libc.so.6 + 0x2924e)

#25 0x00007fd12a916309 __libc_start_main@@GLIBC_2.34 (libc.so.6 + 0x29309)

#26 0x000056046f0fe02a _start (.electron-wrapped + 0x1e0f02a)

Stack trace of thread 513057:

#0 0x00007fd12a9727d5 __futex_abstimed_wait_common (libc.so.6 + 0x857d5)

#1 0x00007fd12a975524 pthread_cond_timedwait@@GLIBC_2.3.2 (libc.so.6 + 0x88524)

#2 0x00005604723a3206 n/a (.electron-wrapped + 0x50b4206)

#3 0x00005604723a3850 n/a (.electron-wrapped + 0x50b4850)

#4 0x000056047237cc98 n/a (.electron-wrapped + 0x508dc98)

#5 0x000056047237d526 n/a (.electron-wrapped + 0x508e526)

#6 0x000056047237d39d n/a (.electron-wrapped + 0x508e39d)

#7 0x000056047237d2b1 n/a (.electron-wrapped + 0x508e2b1)

#8 0x00005604723a70bf n/a (.electron-wrapped + 0x50b80bf)

#9 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#10 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513072:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116100df4 do_loop (libpipewire-0.3.so.0 + 0x46df4)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513061:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116155822 do_loop (libpipewire-0.3.so.0 + 0x9b822)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513067:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116100df4 do_loop (libpipewire-0.3.so.0 + 0x46df4)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513077:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116100df4 do_loop (libpipewire-0.3.so.0 + 0x46df4)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513059:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116100df4 do_loop (libpipewire-0.3.so.0 + 0x46df4)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513069:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116155822 do_loop (libpipewire-0.3.so.0 + 0x9b822)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513079:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116155822 do_loop (libpipewire-0.3.so.0 + 0x9b822)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513074:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116155822 do_loop (libpipewire-0.3.so.0 + 0x9b822)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513055:

#0 0x00007fd12a9727d5 __futex_abstimed_wait_common (libc.so.6 + 0x857d5)

#1 0x00007fd12a975524 pthread_cond_timedwait@@GLIBC_2.3.2 (libc.so.6 + 0x88524)

#2 0x00005604723a3206 n/a (.electron-wrapped + 0x50b4206)

#3 0x00005604723a3850 n/a (.electron-wrapped + 0x50b4850)

#4 0x000056047237cc98 n/a (.electron-wrapped + 0x508dc98)

#5 0x000056047237d752 n/a (.electron-wrapped + 0x508e752)

#6 0x000056047237d39d n/a (.electron-wrapped + 0x508e39d)

#7 0x000056047237d2b1 n/a (.electron-wrapped + 0x508e2b1)

#8 0x00005604723a70bf n/a (.electron-wrapped + 0x50b80bf)

#9 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#10 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513054:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00005604724bcf2a n/a (.electron-wrapped + 0x51cdf2a)

#2 0x00005604724baa6b n/a (.electron-wrapped + 0x51cba6b)

#3 0x00005604723b46d2 n/a (.electron-wrapped + 0x50c56d2)

#4 0x00005604723694e1 n/a (.electron-wrapped + 0x507a4e1)

#5 0x0000560472330fb2 n/a (.electron-wrapped + 0x5041fb2)

#6 0x0000560472383818 n/a (.electron-wrapped + 0x5094818)

#7 0x000056047237043d n/a (.electron-wrapped + 0x508143d)

#8 0x00005604723839a7 n/a (.electron-wrapped + 0x50949a7)

#9 0x00005604723a70bf n/a (.electron-wrapped + 0x50b80bf)

#10 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#11 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513064:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116100df4 do_loop (libpipewire-0.3.so.0 + 0x46df4)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513066:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116155822 do_loop (libpipewire-0.3.so.0 + 0x9b822)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513056:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00005604724bcf2a n/a (.electron-wrapped + 0x51cdf2a)

#2 0x00005604724baa6b n/a (.electron-wrapped + 0x51cba6b)

#3 0x00005604723b4634 n/a (.electron-wrapped + 0x50c5634)

#4 0x00005604723694e1 n/a (.electron-wrapped + 0x507a4e1)

#5 0x0000560472330fb2 n/a (.electron-wrapped + 0x5041fb2)

#6 0x0000560472383818 n/a (.electron-wrapped + 0x5094818)

#7 0x0000560473b807df n/a (.electron-wrapped + 0x68917df)

#8 0x00005604723839a7 n/a (.electron-wrapped + 0x50949a7)

#9 0x00005604723a70bf n/a (.electron-wrapped + 0x50b80bf)

#10 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#11 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

ELF object binary architecture: AMD x86-64

```
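The per-thread stack traces in the journal excerpt above are easier to compare when reduced to each thread's top frame. The following is a minimal sketch, assuming the log keeps the `Stack trace of thread N:` / `#k 0xADDR symbol (module + 0xOFFSET)` layout shown above; the function name `top_frames` and the shortened `sample` text are illustrative and not part of the original report.

```python
import re
from collections import Counter

# Patterns match the coredump layout quoted in the report.
THREAD_RE = re.compile(r"^Stack trace of thread (\d+):")
FRAME_RE = re.compile(
    r"^#(\d+)\s+0x[0-9a-f]+\s+(\S+)\s+\((\S+) \+ 0x[0-9a-f]+\)"
)


def top_frames(journal_text):
    """Map each thread id to the (symbol, module) of its frame #0."""
    result = {}
    current = None
    for raw in journal_text.splitlines():
        line = raw.strip()
        thread = THREAD_RE.match(line)
        if thread:
            current = int(thread.group(1))
            continue
        frame = FRAME_RE.match(line)
        if frame and current is not None and frame.group(1) == "0":
            result[current] = (frame.group(2), frame.group(3))
    return result


# Shortened excerpt of the log above, used only for illustration.
sample = """
Stack trace of thread 513053:
#0 0x00007fd12aa534bd __strlen_avx2_rtm (libc.so.6 + 0x1664bd)
#1 0x000056046ff1ab86 n/a (.electron-wrapped + 0x2c2bb86)
Stack trace of thread 513072:
#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)
#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)
"""

frames = top_frames(sample)
# Count how many threads are parked in each top-level symbol.
counts = Counter(symbol for symbol, _module in frames.values())
```

Run against the full log, a summary like this would separate the one thread stopped in `__strlen_avx2_rtm` from the many idle threads waiting in `epoll_wait` or on a futex.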

### Operating system

nixos-unstable (nixpkgs rev 52b2ac8ae1)

### Application version

Element version: 1.11.14, Olm version: 3.2.12

### How did you install the app?

https://github.com/NixOS/nixpkgs/commit/52b2ac8ae18bbad4374ff0dd5aeee0fdf1aea739

### Homeserver

Unsure. I have a matrix_synapse-1.68.0.dist-info file.

### Will you send logs?

No

|

1.0

|

Video calls freeze after a few seconds at __strlen_avx2_rtm - ### Steps to reproduce

1. Where are you starting? What can you see?

I ran element-desktop 1.11.14 (from NixOS unstable, but it crashes with 1.11.10 from the stable branch too).

(It worked reliably for months when I was using nixos-22.05, but fails since switching to nixos-unstable.)

On my tablet, I run Element in Chrome with a test account. However, it also happens when calling from a laptop running Ubuntu; the tablet is just for reproducing it locally.

2. What do you click?

On the tablet, I click to start a video call, and then accept it on element-desktop.

For testing, I turn off the mic, but it also happens without that.

### Outcome

#### What did you expect?

Video to continue working.

#### What happened instead?

After around 10 seconds, some of the video streams freeze.

e.g. the video in element-desktop freezes (but it is still sending to the tablet, which doesn't show anything wrong).

Turning off the video on the tablet and then turning it on again got it going again.

Sometimes it fails differently. e.g. both sides can see the other side's camera, but their local previews both freeze.

This happens under both X11 and Wayland (the latter enabled via `NIXOS_OZONE_WL`).

The journal contains e.g.

```

[🡕] Process 513053 (.electron-wrapp) of user 1000 dumped core.

Module /nix/store/4nlgxhb09sdr51nc9hdm8az5b08vzkgx-glibc-2.35-163/lib/ld-linux-x86-64.so.2 with build-id db50353a26600bb848b9a5541b1506e0a24cb34b

Module linux-vdso.so.1 with build-id 97640497af8bdd9493208b8ce8243c9f775e9fc6

Module libspa-audioconvert.so without build-id.

Module libpipewire-module-session-manager.so without build-id.

Module libpipewire-module-metadata.so without build-id.

Module libpipewire-module-adapter.so without build-id.

Module libpipewire-module-client-device.so without build-id.

Module libpipewire-module-client-node.so without build-id.

Module libpipewire-module-protocol-native.so without build-id.

Module libpipewire-module-rt.so without build-id.

Module libspa-dbus.so without build-id.

Module libspa-journal.so without build-id.

Module libspa-support.so without build-id.

Module libpipewire-0.3.so.0 without build-id.

Module libasound_module_pcm_pipewire.so without build-id.

Module libstdc++.so.6 without build-id.

Module libicudata.so.72 without build-id.

Module libGLX.so.0 without build-id.

Module libGLdispatch.so.0 without build-id.

Module libdatrie.so.1 without build-id.

Module libsqlite3.so.0 with build-id 377f9d7f0fb8f5896be673d87eb739eb7866db92

Module libxml2.so.2 without build-id.

Module libjson-glib-1.0.so.0 without build-id.

Module libicui18n.so.72 without build-id.

Module libicuuc.so.72 without build-id.

Module libjpeg.so.62 without build-id.

Module libbz2.so.1 without build-id.

Module libgraphite2.so.3 without build-id.

Module libXinerama.so.1 without build-id.

Module libXcursor.so.1 without build-id.

Module libwayland-egl.so.1 with build-id d6b466ff99696870068c564a8ebded6a81cae225

Module libwayland-cursor.so.0 with build-id 5552be47a749c6825465c9a93d43f69f9701c9de

Module libwayland-client.so.0 with build-id c3616c06165ba2231464dfdde480b9ae48f92ec7

Module libcap.so.2 without build-id.

Module libgmp.so.10 without build-id.

Module libhogweed.so.6 without build-id.

Module libnettle.so.8 without build-id.

Module libtasn1.so.6 without build-id.

Module libunistring.so.2 without build-id.

Module libidn2.so.0 without build-id.

Module libp11-kit.so.0 without build-id.

Module libssp.so.0 without build-id.

Module libpcre.so.1 without build-id.

Module libblkid.so.1 with build-id f4f9ebcbcca3e44b7094b20e2ee71709825f36bb

Module libXdmcp.so.6 without build-id.

Module libXau.so.6 without build-id.

Module libwayland-server.so.0 with build-id 91f99799f5cca19715660617ace0a8444d822efe

Module libGL.so.1 without build-id.

Module libXrender.so.1 without build-id.

Module libxcb-render.so.0 without build-id.

Module libxcb-shm.so.0 without build-id.

Module libpng16.so.16 without build-id.

Module libEGL.so.1 without build-id.

Module libfreetype.so.6 without build-id.

Module libpixman-1.so.0 with build-id a4a8d46c6b2f698ceaf6661c79d06700493d31ab

Module libthai.so.0 without build-id.

Module libtracker-sparql-3.0.so.0 without build-id.

Module libXi.so.6 without build-id.

Module libepoxy.so.0 without build-id.

Module libgdk_pixbuf-2.0.so.0 with build-id 8f15f562c170a916afff71fb3e34401dd4e20d9e

Module libcairo-gobject.so.2 with build-id df83675e5c22873ce86626535519d51ff3cc513a

Module libfribidi.so.0 without build-id.

Module libfontconfig.so.1 without build-id.

Module libpangoft2-1.0.so.0 without build-id.

Module libharfbuzz.so.0 without build-id.

Module libpangocairo-1.0.so.0 without build-id.

Module libgdk-3.so.0 with build-id 2cfb8d0f3702bc5c753dbfac0002a0b9c18b5d83

Module libsystemd.so.0 without build-id.

Module libgnutls.so.30 without build-id.

Module libavahi-client.so.3 without build-id.

Module libavahi-common.so.3 without build-id.

Module librt.so.1 with build-id 7c9aae26f0646a27bf0f7c49c914b3258c5fa43e

Module libplc4.so without build-id.

Module libplds4.so without build-id.

Module libselinux.so.1 without build-id.

Module libmount.so.1 with build-id ed8fa2ae9881fc31bd8f5963397b42c8644f162d

Module libgmodule-2.0.so.0 with build-id 5ea22aa96ea6851566bb6ab070a1621683ae4e88

Module libpcre2-8.so.0 without build-id.

Module libffi.so.8 without build-id.

Module libcrypto.so.3 with build-id 5ba9c3862d2fed33339255247444fc34d53cb4cc

Module libz.so.1 without build-id.

Module libc.so.6 with build-id 2bb226bc600b443958c7566207d0d02f8345e6ea

Module libgcc_s.so.1 without build-id.

Module libatspi.so.0 without build-id.

Module libasound.so.2 without build-id.

Module libxkbcommon.so.0 without build-id.

Module libxcb.so.1 without build-id.

Module libexpat.so.1 without build-id.

Module libgbm.so.1 without build-id.

Module libXrandr.so.2 without build-id.

Module libXfixes.so.3 without build-id.

Module libXext.so.6 without build-id.

Module libXdamage.so.1 without build-id.

Module libXcomposite.so.1 without build-id.

Module libX11.so.6 without build-id.

Module libm.so.6 with build-id b8454b40db819599169f3a948939aed4b3fc7f82

Module libcairo.so.2 with build-id 21e308ba73f784934d4eb8cb2efd507151a8d65e

Module libpango-1.0.so.0 without build-id.

Module libgtk-3.so.0 with build-id 43ad91b494d9bf2e052ade1c14ed724c2c3030b2

Module libdrm.so.2 without build-id.

Module libdbus-1.so.3 without build-id.

Module libcups.so.2 without build-id.

Module libatk-bridge-2.0.so.0 without build-id.

Module libatk-1.0.so.0 without build-id.

Module libnspr4.so without build-id.

Module libsmime3.so without build-id.

Module libnssutil3.so without build-id.

Module libnss3.so without build-id.

Module libgio-2.0.so.0 with build-id 9c3d32e1d5dbf7d39ea67d2bb7045fbf51126a85

Module libglib-2.0.so.0 with build-id b13cd968ce6f5320e45dde1446f3066371403d7c

Module libgobject-2.0.so.0 with build-id cc0205109407a5b4ace0874f64aee611878a482d

Module libpthread.so.0 with build-id 85431f01160c3de171d3baeb3f8cf1c9578dc441

Module libdl.so.2 with build-id 67c430223def0be24c4ae1a4c3985f26566b8831

Module libffmpeg.so with build-id da7bfd439eb2866765067ecab210ebcb6184bb50

Module libsqlcipher.so without build-id.

Module .electron-wrapped with build-id be7e0a8182dc5bdd72ab8f92cc743fb0cf4ff95f

Stack trace of thread 513053:

#0 0x00007fd12aa534bd __strlen_avx2_rtm (libc.so.6 + 0x1664bd)

#1 0x000056046ff1ab86 n/a (.electron-wrapped + 0x2c2bb86)

#2 0x000056046ff19b11 n/a (.electron-wrapped + 0x2c2ab11)

#3 0x000056046ff11798 n/a (.electron-wrapped + 0x2c22798)

#4 0x000056046ff11722 n/a (.electron-wrapped + 0x2c22722)

#5 0x000056046ff130d1 n/a (.electron-wrapped + 0x2c240d1)

#6 0x000056046ff12664 n/a (.electron-wrapped + 0x2c23664)

#7 0x0000560471be23a3 n/a (.electron-wrapped + 0x48f33a3)

#8 0x0000560471bef4df n/a (.electron-wrapped + 0x49004df)

#9 0x0000560471bf10fd n/a (.electron-wrapped + 0x49020fd)

#10 0x0000560471bf6e18 n/a (.electron-wrapped + 0x4907e18)

#11 0x0000560471bf4fcd n/a (.electron-wrapped + 0x4905fcd)

#12 0x0000560471bf1bcf n/a (.electron-wrapped + 0x4902bcf)

#13 0x000056047234b616 n/a (.electron-wrapped + 0x505c616)

#14 0x0000560472368b85 n/a (.electron-wrapped + 0x5079b85)

#15 0x0000560472311d8e n/a (.electron-wrapped + 0x5022d8e)

#16 0x00005604723694e1 n/a (.electron-wrapped + 0x507a4e1)

#17 0x0000560472330fb2 n/a (.electron-wrapped + 0x5041fb2)

#18 0x0000560471cd79d1 n/a (.electron-wrapped + 0x49e89d1)

#19 0x000056046f71efa9 n/a (.electron-wrapped + 0x242ffa9)

#20 0x000056046f71fc4b n/a (.electron-wrapped + 0x2430c4b)

#21 0x000056046f71d18d n/a (.electron-wrapped + 0x242e18d)

#22 0x000056046f71d974 n/a (.electron-wrapped + 0x242e974)

#23 0x000056046f48491b n/a (.electron-wrapped + 0x219591b)

#24 0x00007fd12a91624e __libc_start_call_main (libc.so.6 + 0x2924e)

#25 0x00007fd12a916309 __libc_start_main@@GLIBC_2.34 (libc.so.6 + 0x29309)

#26 0x000056046f0fe02a _start (.electron-wrapped + 0x1e0f02a)

Stack trace of thread 513057:

#0 0x00007fd12a9727d5 __futex_abstimed_wait_common (libc.so.6 + 0x857d5)

#1 0x00007fd12a975524 pthread_cond_timedwait@@GLIBC_2.3.2 (libc.so.6 + 0x88524)

#2 0x00005604723a3206 n/a (.electron-wrapped + 0x50b4206)

#3 0x00005604723a3850 n/a (.electron-wrapped + 0x50b4850)

#4 0x000056047237cc98 n/a (.electron-wrapped + 0x508dc98)

#5 0x000056047237d526 n/a (.electron-wrapped + 0x508e526)

#6 0x000056047237d39d n/a (.electron-wrapped + 0x508e39d)

#7 0x000056047237d2b1 n/a (.electron-wrapped + 0x508e2b1)

#8 0x00005604723a70bf n/a (.electron-wrapped + 0x50b80bf)

#9 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#10 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513072:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116100df4 do_loop (libpipewire-0.3.so.0 + 0x46df4)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513061:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116155822 do_loop (libpipewire-0.3.so.0 + 0x9b822)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513067:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116100df4 do_loop (libpipewire-0.3.so.0 + 0x46df4)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513077:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116100df4 do_loop (libpipewire-0.3.so.0 + 0x46df4)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513059:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116100df4 do_loop (libpipewire-0.3.so.0 + 0x46df4)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513069:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116155822 do_loop (libpipewire-0.3.so.0 + 0x9b822)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513079:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116155822 do_loop (libpipewire-0.3.so.0 + 0x9b822)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513074:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116155822 do_loop (libpipewire-0.3.so.0 + 0x9b822)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513055:

#0 0x00007fd12a9727d5 __futex_abstimed_wait_common (libc.so.6 + 0x857d5)

#1 0x00007fd12a975524 pthread_cond_timedwait@@GLIBC_2.3.2 (libc.so.6 + 0x88524)

#2 0x00005604723a3206 n/a (.electron-wrapped + 0x50b4206)

#3 0x00005604723a3850 n/a (.electron-wrapped + 0x50b4850)

#4 0x000056047237cc98 n/a (.electron-wrapped + 0x508dc98)

#5 0x000056047237d752 n/a (.electron-wrapped + 0x508e752)

#6 0x000056047237d39d n/a (.electron-wrapped + 0x508e39d)

#7 0x000056047237d2b1 n/a (.electron-wrapped + 0x508e2b1)

#8 0x00005604723a70bf n/a (.electron-wrapped + 0x50b80bf)

#9 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#10 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513054:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00005604724bcf2a n/a (.electron-wrapped + 0x51cdf2a)

#2 0x00005604724baa6b n/a (.electron-wrapped + 0x51cba6b)

#3 0x00005604723b46d2 n/a (.electron-wrapped + 0x50c56d2)

#4 0x00005604723694e1 n/a (.electron-wrapped + 0x507a4e1)

#5 0x0000560472330fb2 n/a (.electron-wrapped + 0x5041fb2)

#6 0x0000560472383818 n/a (.electron-wrapped + 0x5094818)

#7 0x000056047237043d n/a (.electron-wrapped + 0x508143d)

#8 0x00005604723839a7 n/a (.electron-wrapped + 0x50949a7)

#9 0x00005604723a70bf n/a (.electron-wrapped + 0x50b80bf)

#10 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#11 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513064:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116100df4 do_loop (libpipewire-0.3.so.0 + 0x46df4)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513066:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00007fd1160b0810 impl_pollfd_wait (libspa-support.so + 0x15810)

#2 0x00007fd1160a3cbb loop_iterate (libspa-support.so + 0x8cbb)

#3 0x00007fd116155822 do_loop (libpipewire-0.3.so.0 + 0x9b822)

#4 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#5 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

Stack trace of thread 513056:

#0 0x00007fd12a9fc237 epoll_wait (libc.so.6 + 0x10f237)

#1 0x00005604724bcf2a n/a (.electron-wrapped + 0x51cdf2a)

#2 0x00005604724baa6b n/a (.electron-wrapped + 0x51cba6b)

#3 0x00005604723b4634 n/a (.electron-wrapped + 0x50c5634)

#4 0x00005604723694e1 n/a (.electron-wrapped + 0x507a4e1)

#5 0x0000560472330fb2 n/a (.electron-wrapped + 0x5041fb2)

#6 0x0000560472383818 n/a (.electron-wrapped + 0x5094818)

#7 0x0000560473b807df n/a (.electron-wrapped + 0x68917df)

#8 0x00005604723839a7 n/a (.electron-wrapped + 0x50949a7)

#9 0x00005604723a70bf n/a (.electron-wrapped + 0x50b80bf)

#10 0x00007fd12a975e86 start_thread (libc.so.6 + 0x88e86)

#11 0x00007fd12a9fcc60 __clone3 (libc.so.6 + 0x10fc60)

ELF object binary architecture: AMD x86-64

```

### Operating system

nixos-unstable (nixpkgs rev 52b2ac8ae1)

### Application version

Element version: 1.11.14, Olm version: 3.2.12

### How did you install the app?

https://github.com/NixOS/nixpkgs/commit/52b2ac8ae18bbad4374ff0dd5aeee0fdf1aea739

### Homeserver

Unsure. I have a matrix_synapse-1.68.0.dist-info file.

### Will you send logs?

No

|

defect

|

video calls freeze after a few seconds at strlen rtm steps to reproduce where are you starting what can you see i ran element desktop from nixos unstable but it crashes with from the stable branch too it worked reliably for months when i was using nixos but fails since switching to nixos unstable on my tablet i run element in the chrome with a test account however it also happens from a laptop running ubuntu the tablet is just for reproducing it locally what do you click on the tablet i click to start a video call and then accept it on element desktop for testing i turn off the mic but it also happens without that outcome what did you expect video to continue working what happened instead after around seconds some of the video streams freeze e g the video in element desktop freezes but it is still sending to the tablet which doesn t show anything wrong turning off the video on the tablet and then turning it on again got it going again sometimes it fails differently e g both sides can see the other side s camera but their local previews both freeze this happens whether using wayland or via nixos ozone wl the journal contains e g process electron wrapp of user dumped core module nix store glibc lib ld linux so with build id module linux vdso so with build id module libspa audioconvert so without build id module libpipewire module session manager so without build id module libpipewire module metadata so without build id module libpipewire module adapter so without build id module libpipewire module client device so without build id module libpipewire module client node so without build id module libpipewire module protocol native so without build id module libpipewire module rt so without build id module libspa dbus so without build id module libspa journal so without build id module libspa support so without build id module libpipewire so without build id module libasound module pcm pipewire so without build id module libstdc so without build id module libicudata so 
without build id module libglx so without build id module libgldispatch so without build id module libdatrie so without build id module so with build id module so without build id module libjson glib so without build id module so without build id module libicuuc so without build id module libjpeg so without build id module so without build id module so without build id module libxinerama so without build id module libxcursor so without build id module libwayland egl so with build id module libwayland cursor so with build id module libwayland client so with build id module libcap so without build id module libgmp so without build id module libhogweed so without build id module libnettle so without build id module so without build id module libunistring so without build id module so without build id module kit so without build id module libssp so without build id module libpcre so without build id module libblkid so with build id module libxdmcp so without build id module libxau so without build id module libwayland server so with build id module libgl so without build id module libxrender so without build id module libxcb render so without build id module libxcb shm so without build id module so without build id module libegl so without build id module libfreetype so without build id module libpixman so with build id module libthai so without build id module libtracker sparql so without build id module libxi so without build id module libepoxy so without build id module libgdk pixbuf so with build id module libcairo gobject so with build id module libfribidi so without build id module libfontconfig so without build id module so without build id module libharfbuzz so without build id module libpangocairo so without build id module libgdk so with build id module libsystemd so without build id module libgnutls so without build id module libavahi client so without build id module libavahi common so without build id module librt so with build id module so without build 
id module so without build id module libselinux so without build id module libmount so with build id module libgmodule so with build id module so without build id module libffi so without build id module libcrypto so with build id module libz so without build id module libc so with build id module libgcc s so without build id module libatspi so without build id module libasound so without build id module libxkbcommon so without build id module libxcb so without build id module libexpat so without build id module libgbm so without build id module libxrandr so without build id module libxfixes so without build id module libxext so without build id module libxdamage so without build id module libxcomposite so without build id module so without build id module libm so with build id module libcairo so with build id module libpango so without build id module libgtk so with build id module libdrm so without build id module libdbus so without build id module libcups so without build id module libatk bridge so without build id module libatk so without build id module so without build id module so without build id module so without build id module so without build id module libgio so with build id module libglib so with build id module libgobject so with build id module libpthread so with build id module libdl so with build id module libffmpeg so with build id module libsqlcipher so without build id module electron wrapped with build id stack trace of thread strlen rtm libc so n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped libc start call main 
libc so libc start main glibc libc so start electron wrapped stack trace of thread futex abstimed wait common libc so pthread cond timedwait glibc libc so n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped start thread libc so libc so stack trace of thread epoll wait libc so impl pollfd wait libspa support so loop iterate libspa support so do loop libpipewire so start thread libc so libc so stack trace of thread epoll wait libc so impl pollfd wait libspa support so loop iterate libspa support so do loop libpipewire so start thread libc so libc so stack trace of thread epoll wait libc so impl pollfd wait libspa support so loop iterate libspa support so do loop libpipewire so start thread libc so libc so stack trace of thread epoll wait libc so impl pollfd wait libspa support so loop iterate libspa support so do loop libpipewire so start thread libc so libc so stack trace of thread epoll wait libc so impl pollfd wait libspa support so loop iterate libspa support so do loop libpipewire so start thread libc so libc so stack trace of thread epoll wait libc so impl pollfd wait libspa support so loop iterate libspa support so do loop libpipewire so start thread libc so libc so stack trace of thread epoll wait libc so impl pollfd wait libspa support so loop iterate libspa support so do loop libpipewire so start thread libc so libc so stack trace of thread epoll wait libc so impl pollfd wait libspa support so loop iterate libspa support so do loop libpipewire so start thread libc so libc so stack trace of thread futex abstimed wait common libc so pthread cond timedwait glibc libc so n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped start thread libc so libc so stack trace of thread epoll wait libc so n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n 
a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped start thread libc so libc so stack trace of thread epoll wait libc so impl pollfd wait libspa support so loop iterate libspa support so do loop libpipewire so start thread libc so libc so stack trace of thread epoll wait libc so impl pollfd wait libspa support so loop iterate libspa support so do loop libpipewire so start thread libc so libc so stack trace of thread epoll wait libc so n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped n a electron wrapped start thread libc so libc so elf object binary architecture amd operating system nixos unstable nixpkgs rev application version element version olm version how did you install the app homeserver unsure i have a matrix synapse dist info file will you send logs no

| 1

|

51,671

| 13,211,279,425

|

IssuesEvent

|

2020-08-15 22:00:46

|

icecube-trac/tix4

|

https://api.github.com/repos/icecube-trac/tix4

|

opened

|

[photoflash] Add back to the trunk (Trac #808)

|

Incomplete Migration Migrated from Trac combo simulation defect

|

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/808">https://code.icecube.wisc.edu/projects/icecube/ticket/808</a>, reported by olivas and owned by olivas</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2016-03-18T21:14:03",

"_ts": "1458335643235016",

"description": "This was removed at some point for reasons that aren't clear. Start including it again.",

"reporter": "olivas",

"cc": "",

"resolution": "wontfix",

"time": "2014-11-12T03:10:19",

"component": "combo simulation",

"summary": "[photoflash] Add back to the trunk",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "olivas",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

[photoflash] Add back to the trunk (Trac #808) - <details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/808">https://code.icecube.wisc.edu/projects/icecube/ticket/808</a>, reported by olivas and owned by olivas</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2016-03-18T21:14:03",

"_ts": "1458335643235016",

"description": "This was removed at some point for reasons that aren't clear. Start including it again.",

"reporter": "olivas",

"cc": "",

"resolution": "wontfix",

"time": "2014-11-12T03:10:19",

"component": "combo simulation",

"summary": "[photoflash] Add back to the trunk",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "olivas",

"type": "defect"

}

```

</p>

</details>

|

defect

|

add back to the trunk trac migrated from json status closed changetime ts description this was removed at some point for reasons that aren t clear start including it again reporter olivas cc resolution wontfix time component combo simulation summary add back to the trunk priority normal keywords milestone owner olivas type defect

| 1

|

25,033

| 4,178,504,161

|

IssuesEvent

|

2016-06-22 07:08:08

|

netty/netty

|

https://api.github.com/repos/netty/netty

|

closed

|

io.netty.resolver.dns.DnsNameResolver does not resolve localhost on Windows

|

defect

|

On Windows, localhost is not in the hosts file and the DNS server does not resolve this address either, i.e. it is handled by the Windows API. So using a `Bootstrap` (among others) with a resolver based on `DnsNameResolver` will not resolve localhost.

http://serverfault.com/questions/4689/windows-7-localhost-name-resolution-is-handled-within-dns-itself-why
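One possible workaround is to special-case the name before any DNS query is sent. The sketch below is Python rather than Java and is not netty's API: `resolve`, `dns_lookup`, and `hosts` are hypothetical names used only to illustrate the lookup order (hosts entries first, then a built-in loopback answer for localhost, then DNS).

```python
import ipaddress

# Loopback address returned for "localhost" when nothing else answers.
LOOPBACK = ipaddress.ip_address("127.0.0.1")


def resolve(hostname, dns_lookup, hosts=None):
    """Hypothetical resolver wrapper (not part of netty).

    hosts      -- optional dict of names parsed from a hosts file
    dns_lookup -- callable that performs the real DNS query
    """
    # Normalize: DNS names are case-insensitive and may end with a dot.
    name = hostname.rstrip(".").lower()
    if hosts and name in hosts:
        return hosts[name]
    if name == "localhost":
        # Never ask the DNS server for localhost; answer locally.
        return LOOPBACK
    return dns_lookup(name)
```

With this ordering, a platform whose hosts file has no localhost entry still gets a loopback address, and an explicit hosts entry still wins.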

|

1.0

|

io.netty.resolver.dns.DnsNameResolver does not resolve localhost on Windows - On Windows, localhost is not in the hosts file and the DNS server does not resolve this address either, i.e. it is handled by the Windows API. So using a `Bootstrap` (among others) with a resolver based on `DnsNameResolver` will not resolve localhost.

http://serverfault.com/questions/4689/windows-7-localhost-name-resolution-is-handled-within-dns-itself-why

|

defect

|

io netty resolver dns dnsnameresolver does not resolve localhost on windows on windows localhost is not in hosts file and the dns server does not resolve this address either i e it is handled by the windows api so using a bootstrap among others with the resolver based on dnsnameresolver will not resolve localhost

| 1

|

97,286

| 12,224,717,592

|

IssuesEvent

|

2020-05-03 00:20:45

|

CERT-Polska/mquery

|

https://api.github.com/repos/CERT-Polska/mquery

|

closed

|

Support case insensitive strings in yara rules

|

level:hard priority:medium status:needs more design zone:flask backend zone:ursadb

|

Right now, we just ignore strings with the nocase flag:

```

rule CaseInsensitiveTextExample

{

strings:

$text_string = "foobar" nocase

condition:

$text_string

}

```

Supporting them correctly is... harder than it looks. This can match:

```

foobar

foobaR

foobAr

foobAR

fooBar

... and 59 strings more

```

and the ursadb query language is not expressive enough to support this.

We can't hack around this by chopping the query in the backend to something like:

```

( "foo" AND (

"oob" AND (

"oba" AND (

...

) OR

"obA" AND (

...

)

) OR

"ooB" AND (

"oBa" AND (

...

) OR

"oBA" AND (

...

)

)

) OR "foO" AND (

...

) OR "fOo" AND (

...

) OR "fOO" AND (

...

) ...

```

Because of exponential growth.

OTOH, I feel like this can be solved with a C++ method (needs investigation). In this case we need to introduce `nocase` strings to ursadb.

Needs investigation (if this results in too many false positives, we may as well give up).
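To make the growth concrete, here is a minimal Python sketch that enumerates the case variants of a string and of each 3-character window. It assumes ASCII input, and `case_variants` and `trigram_variants` are hypothetical helpers for illustration only; they are not mquery or ursadb code and do not emit ursadb query syntax.

```python
from itertools import product


def case_variants(s):
    """Yield every upper/lower-case spelling of s (ASCII assumed)."""
    # Letters contribute two choices, everything else only one.
    choices = [
        (c.lower(), c.upper()) if c.lower() != c.upper() else (c,)
        for c in s
    ]
    for combo in product(*choices):
        yield "".join(combo)


def trigram_variants(s):
    """For each 3-character window of s, list its case variants."""
    return [
        sorted(set(case_variants(s[i:i + 3])))
        for i in range(len(s) - 2)
    ]


variants = list(case_variants("foobar"))
print(len(variants))   # 64 full-string spellings (2 ** 6)
print(variants[:5])    # ['foobar', 'foobaR', 'foobAr', 'foobAR', 'fooBar']

windows = trigram_variants("foobar")
print(len(windows), [len(w) for w in windows])  # 4 [8, 8, 8, 8]
```

The full-string count doubles with every letter, while the per-window count stays at 8 for each of the `len - 2` windows. Matching each window independently would keep the query linear in size, but it accepts mixed spellings that the nested form rules out, so it can only add false positives; whether that trade-off is acceptable is the part that needs investigation.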

|

1.0

|

Support case insensitive strings in yara rules - Right now, we just ignore strings with the nocase flag:

```

rule CaseInsensitiveTextExample

{

strings:

$text_string = "foobar" nocase

condition:

$text_string

}

```

Supporting them correctly is... harder than it looks. This can match:

```

foobar

foobaR

foobAr

foobAR

fooBar

... and 59 strings more

```

and the ursadb query language is not expressive enough to support this.

We can't hack around this by chopping the query in the backend to something like:

```

( "foo" AND (

"oob" AND (

"oba" AND (

...

) OR

"obA" AND (

...

)

) OR

"ooB" AND (

"oBa" AND (

...

) OR

"oBA" AND (

...

)

)

) OR "foO" AND (

...

) OR "fOo" AND (

...

) OR "fOO" AND (

...

) ...

```

Because of exponential growth.

OTOH, I feel like this can be solved with a C++ method (needs investigation). In this case we need to introduce `nocase` strings to ursadb.

Needs investigation (if this results in too many false positives, we may as well give up).

|

non_defect

|

support case insenstive strings in yara rules right now we just ignore strings with the nocase flag rule caseinsensitivetextexample strings text string foobar nocase condition text string supporting them correctly is harder than it looks like this can match foobar foobar foobar foobar foobar and strings more and ursadb query language is not expressive enough to support this we can t hack around this by chopping the query in the backend to something like foo and oob and oba and or oba and or oob and oba and or oba and or foo and or foo and or foo and because of exponential growth otoh i feel like like this can solved with a c method needs investigation in this case we need to introduce nocase strings to ursadb needs investigation if this results in too many false positives we may as well give up

| 0

|

732,080

| 25,244,021,778

|

IssuesEvent

|

2022-11-15 09:46:13

|

marcusolsson/obsidian-projects

|

https://api.github.com/repos/marcusolsson/obsidian-projects

|

closed

|

Duplicate a view

|

kind/feature triage/accepted priority/backlog area/core

|

### What would you like to be added?

The ability to duplicate a view and keep all the configuration in the newly created view.

### Why is this needed?

It would make it much easier to add new views if you want to change only something minor and don't want to configure the view all over again. This will be especially helpful once filtering is available.

|

1.0

|

Duplicate a view - ### What would you like to be added?

The ability to duplicate a view and keep all the configuration in the newly created view.

### Why is this needed?

It would make it much easier to add new views if you want to change only something minor and don't want to configure the view all over again. This will be especially helpful once filtering is available.

|

non_defect

|

duplicate a view what would you like to be added the ability to duplicate a view and keep all the configuration in the newly created view why is this needed it would make it much easier to add new views if you want to change only something minor and don t want to configure the view all over again this will be especially helpful when filtering will be available

| 0

|

11,050

| 2,622,956,292

|

IssuesEvent

|

2015-03-04 09:03:40

|

folded/carve

|

https://api.github.com/repos/folded/carve

|

opened

|

Another case with error message 'UNEXPECTED face loop with size!=1'

|

auto-migrated Priority-Medium Type-Defect

|

```

What steps will reproduce the problem?

1. load obj1 and obj2

2. run obj = csg.compute(obj1,obj2,csg.INTERSECTION);

3. get the error message and the output obj is not correct

What is the expected output? What do you see instead?

get a correct intersection.

What version of the product are you using? On what operating system?

latest

Please provide any additional information below.

Please see attachments obj1 and obj2.

```