| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 757) | labels (string, length 4 to 664) | body (string, length 3 to 261k) | index (string, 10 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 232k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

28,056

| 5,168,065,213

|

IssuesEvent

|

2017-01-17 20:32:06

|

idaholab/moose

|

https://api.github.com/repos/idaholab/moose

|

closed

|

Doc string for MooseMesh/dim is not accurate

|

C: MOOSE P: normal T: defect

|

### Description of the enhancement or error report

It says that `This is completely ignored for ExodusII meshes!`, but actually not.

https://github.com/idaholab/moose/blob/devel/framework/src/mesh/MooseMesh.C#L191 causes the default spatial dimension of the mesh is 3 regardless of the actual dimension of the mesh. I discovered this with our custom mesh extruder with a 2D exodus mesh file where we check if the mesh dimension and the mesh spatial dimension are the same. Once we specify `Mesh/dim=2 or 1` in the input, the extruder will work fine.

### Rationale for the enhancement or information for reproducing the error

Something is not very right. I suspect we should use dim=1 to construct the default empty mesh.

### Identified impact

(i.e. Internal object changes, limited interface changes, public API change, or a list of specific applications impacted)

No impact except my particular mesh extruder.

|

1.0

|

Doc string for MooseMesh/dim is not accurate - ### Description of the enhancement or error report

It says that `This is completely ignored for ExodusII meshes!`, but actually not.

https://github.com/idaholab/moose/blob/devel/framework/src/mesh/MooseMesh.C#L191 causes the default spatial dimension of the mesh is 3 regardless of the actual dimension of the mesh. I discovered this with our custom mesh extruder with a 2D exodus mesh file where we check if the mesh dimension and the mesh spatial dimension are the same. Once we specify `Mesh/dim=2 or 1` in the input, the extruder will work fine.

### Rationale for the enhancement or information for reproducing the error

Something is not very right. I suspect we should use dim=1 to construct the default empty mesh.

### Identified impact

(i.e. Internal object changes, limited interface changes, public API change, or a list of specific applications impacted)

No impact except my particular mesh extruder.

|

defect

|

doc string for moosemesh dim is not accurate description of the enhancement or error report it says that this is completely ignored for exodusii meshes but actually not causes the default spatial dimension of the mesh is regardless of the actual dimension of the mesh i discovered this with our custom mesh extruder with a exodus mesh file where we check if the mesh dimension and the mesh spatial dimension are the same once we specify mesh dim or in the input the extruder will work fine rationale for the enhancement or information for reproducing the error something is not very right i suspect we should use dim to construct the default empty mesh identified impact i e internal object changes limited interface changes public api change or a list of specific applications impacted no impact except my particular mesh extruder

| 1

|

255,496

| 21,929,659,253

|

IssuesEvent

|

2022-05-23 08:37:51

|

enonic/app-contentstudio

|

https://api.github.com/repos/enonic/app-contentstudio

|

closed

|

Shortcut- selected options are not refreshed after reverting versions

|

Bug Test is Failing

|

1. Open wizard for new shortcut, fill in the name input and select an option in target selector, save

2. Open Versions Panel and revert the previous version.

3. Revert the verdion with selected target.

BUG - selected options not refreshed after revertiong versions

https://user-images.githubusercontent.com/3728712/169778088-bd261c7d-c469-4d23-a3fe-50c379d66534.mp4

|

1.0

|

Shortcut- selected options are not refreshed after reverting versions - 1. Open wizard for new shortcut, fill in the name input and select an option in target selector, save

2. Open Versions Panel and revert the previous version.

3. Revert the verdion with selected target.

BUG - selected options not refreshed after revertiong versions

https://user-images.githubusercontent.com/3728712/169778088-bd261c7d-c469-4d23-a3fe-50c379d66534.mp4

|

non_defect

|

shortcut selected options are not refreshed after reverting versions open wizard for new shortcut fill in the name input and select an option in target selector save open versions panel and revert the previous version revert the verdion with selected target bug selected options not refreshed after revertiong versions

| 0

|

31,496

| 6,541,485,678

|

IssuesEvent

|

2017-09-01 20:12:29

|

ironjan/metal-only

|

https://api.github.com/repos/ironjan/metal-only

|

closed

|

KotlinNullPointerException reported via Play Store

|

defect

|

Reported on 2 devices for 0.6.7.

```

kotlin.KotlinNullPointerException:

at com.github.ironjan.metalonly.client_library.MetalOnlyAPIWrapper.getStats (MetalOnlyAPIWrapper.kt:55)

at com.codingspezis.android.metalonly.player.StreamControlActivity$2.run (StreamControlActivity.java:195)

at java.lang.Thread.run (Thread.java:856)

```

|

1.0

|

KotlinNullPointerException reported via Play Store - Reported on 2 devices for 0.6.7.

```

kotlin.KotlinNullPointerException:

at com.github.ironjan.metalonly.client_library.MetalOnlyAPIWrapper.getStats (MetalOnlyAPIWrapper.kt:55)

at com.codingspezis.android.metalonly.player.StreamControlActivity$2.run (StreamControlActivity.java:195)

at java.lang.Thread.run (Thread.java:856)

```

|

defect

|

kotlinnullpointerexception reported via play store reported on devices for kotlin kotlinnullpointerexception at com github ironjan metalonly client library metalonlyapiwrapper getstats metalonlyapiwrapper kt at com codingspezis android metalonly player streamcontrolactivity run streamcontrolactivity java at java lang thread run thread java

| 1

|

48,768

| 13,184,733,450

|

IssuesEvent

|

2020-08-12 19:59:46

|

icecube-trac/tix3

|

https://api.github.com/repos/icecube-trac/tix3

|

opened

|

amanda-core giving nasty segfault with release build on gorgon (ubu 8.10 x64) (Trac #163)

|

Incomplete Migration Migrated from Trac combo core defect

|

<details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/163

, reported by blaufuss and owned by blaufuss_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2009-10-21T15:56:26",

"description": "nasty tracebacks:\n*** buffer overflow detected ***: python terminated\n======= Backtrace: =========\n/lib/libc.so.6(__fortify_fail+0x37)[0x7f4eec560887]\n/lib/libc.so.6[0x7f4eec55e750]\n/lib/libc.so.6[0x7f4eec55dae9]\n/lib/libc.so.6(_IO_default_xsputn+0x96)[0x7f4eec4d9116]\n/lib/libc.so.6(_IO_vfprintf+0x1c1c)[0x7f4eec4aa29c]\n/lib/libc.so.6(__vsprintf_chk+0x9d)[0x7f4eec55db8d]\n/lib/libc.so.6(__sprintf_chk+0x80)[0x7f4eec55dad0]\n/opt/slave_build/manual/offline-software/build_release/lib/libamanda-core.so(_ZN9F2kReader20FillTrigger_Muon_DAQERK4mhitR6I3TreeI9I3TriggerE+0x40)[0x7f4ee1a53140]\n",

"reporter": "blaufuss",

"cc": "fabian.kislat@desy.de",

"resolution": "fixed",

"_ts": "1256140586000000",

"component": "combo core",

"summary": "amanda-core giving nasty segfault with release build on gorgon (ubu 8.10 x64)",

"priority": "normal",

"keywords": "",

"time": "2009-06-12T20:59:39",

"milestone": "",

"owner": "blaufuss",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

amanda-core giving nasty segfault with release build on gorgon (ubu 8.10 x64) (Trac #163) - <details>

<summary>_Migrated from https://code.icecube.wisc.edu/ticket/163

, reported by blaufuss and owned by blaufuss_</summary>

<p>

```json

{

"status": "closed",

"changetime": "2009-10-21T15:56:26",

"description": "nasty tracebacks:\n*** buffer overflow detected ***: python terminated\n======= Backtrace: =========\n/lib/libc.so.6(__fortify_fail+0x37)[0x7f4eec560887]\n/lib/libc.so.6[0x7f4eec55e750]\n/lib/libc.so.6[0x7f4eec55dae9]\n/lib/libc.so.6(_IO_default_xsputn+0x96)[0x7f4eec4d9116]\n/lib/libc.so.6(_IO_vfprintf+0x1c1c)[0x7f4eec4aa29c]\n/lib/libc.so.6(__vsprintf_chk+0x9d)[0x7f4eec55db8d]\n/lib/libc.so.6(__sprintf_chk+0x80)[0x7f4eec55dad0]\n/opt/slave_build/manual/offline-software/build_release/lib/libamanda-core.so(_ZN9F2kReader20FillTrigger_Muon_DAQERK4mhitR6I3TreeI9I3TriggerE+0x40)[0x7f4ee1a53140]\n",

"reporter": "blaufuss",

"cc": "fabian.kislat@desy.de",

"resolution": "fixed",

"_ts": "1256140586000000",

"component": "combo core",

"summary": "amanda-core giving nasty segfault with release build on gorgon (ubu 8.10 x64)",

"priority": "normal",

"keywords": "",

"time": "2009-06-12T20:59:39",

"milestone": "",

"owner": "blaufuss",

"type": "defect"

}

```

</p>

</details>

|

defect

|

amanda core giving nasty segfault with release build on gorgon ubu trac migrated from reported by blaufuss and owned by blaufuss json status closed changetime description nasty tracebacks n buffer overflow detected python terminated n backtrace n lib libc so fortify fail n lib libc so n lib libc so n lib libc so io default xsputn n lib libc so io vfprintf n lib libc so vsprintf chk n lib libc so sprintf chk n opt slave build manual offline software build release lib libamanda core so muon n reporter blaufuss cc fabian kislat desy de resolution fixed ts component combo core summary amanda core giving nasty segfault with release build on gorgon ubu priority normal keywords time milestone owner blaufuss type defect

| 1

|

77,410

| 7,573,865,422

|

IssuesEvent

|

2018-04-23 19:06:45

|

metafizzy/flickity

|

https://api.github.com/repos/metafizzy/flickity

|

closed

|

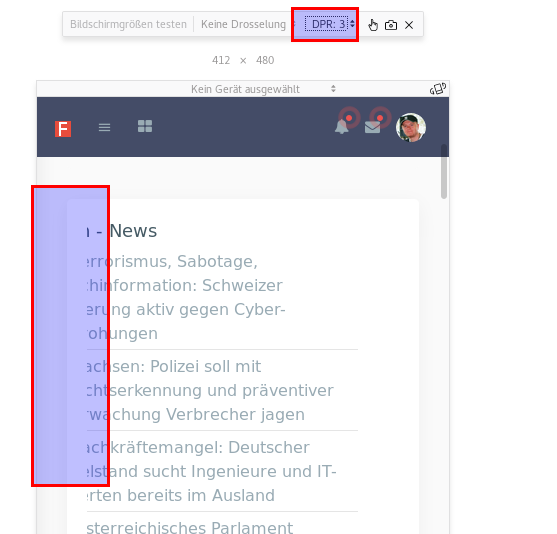

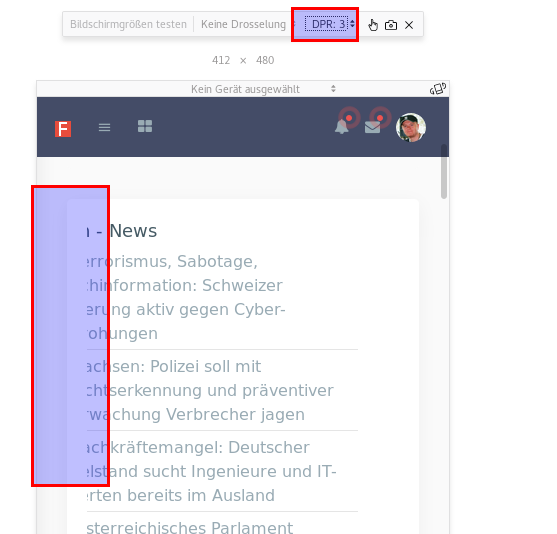

padding on caroucel-cell needed when dpr > 1

|

test case required

|

on my google pixel 2 (device pixel ratio about 2.5) all of my carousel-cell content is cropped from the left side. i need to set a left padding to see the content, which is hard to find a correct value that works for all pixel ratios (1 for desktop, and 2-3 for mobile phones)

the easiest way to test it is with firefox developer tools and the responsive size tools

|

1.0

|

padding on caroucel-cell needed when dpr > 1 - on my google pixel 2 (device pixel ratio about 2.5) all of my carousel-cell content is cropped from the left side. i need to set a left padding to see the content, which is hard to find a correct value that works for all pixel ratios (1 for desktop, and 2-3 for mobile phones)

the easiest way to test it is with firefox developer tools and the responsive size tools

|

non_defect

|

padding on caroucel cell needed when dpr on my google pixel device pixel ratio about all of my carousel cell content is cropped from the left side i need to set a left padding to see the content which is hard to find a correct value that works for all pixel ratios for desktop and for mobile phones the easiest way to test it is with firefox developer tools and the responsive size tools

| 0

|

208,793

| 23,654,450,677

|

IssuesEvent

|

2022-08-26 09:49:43

|

finos/FDC3-conformance-framework

|

https://api.github.com/repos/finos/FDC3-conformance-framework

|

closed

|

CVE-2021-23424 (High) detected in ansi-html-0.0.7.tgz

|

security vulnerability

|

## CVE-2021-23424 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansi-html-0.0.7.tgz</b></p></summary>

<p>An elegant lib that converts the chalked (ANSI) text to HTML.</p>

<p>Library home page: <a href="https://registry.npmjs.org/ansi-html/-/ansi-html-0.0.7.tgz">https://registry.npmjs.org/ansi-html/-/ansi-html-0.0.7.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/ansi-html/package.json</p>

<p>

Dependency Hierarchy:

- @fdc3-conformance-framework/app-1.0.0.tgz (Root Library)

- react-scripts-4.0.3.tgz

- webpack-dev-server-3.11.1.tgz

- :x: **ansi-html-0.0.7.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/finos/FDC3-conformance-framework/commit/464478c8d773c9f1db106df334cccbe96b76f1e7">464478c8d773c9f1db106df334cccbe96b76f1e7</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

This affects all versions of package ansi-html. If an attacker provides a malicious string, it will get stuck processing the input for an extremely long time.

<p>Publish Date: 2021-08-18

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23424>CVE-2021-23424</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2021-23424">https://nvd.nist.gov/vuln/detail/CVE-2021-23424</a></p>

<p>Release Date: 2021-08-18</p>

<p>Fix Resolution: VueJS.NetCore - 1.1.1;Indianadavy.VueJsWebAPITemplate.CSharp - 1.0.1;NorDroN.AngularTemplate - 0.1.6;CoreVueWebTest - 3.0.101;dotnetng.template - 1.0.0.4;Fable.Template.Elmish.React - 0.1.6;SAFE.Template - 3.0.1;GR.PageRender.Razor - 1.8.0;Envisia.DotNet.Templates - 3.0.1</p>

</p>

</details>

<p></p>

|

True

|

CVE-2021-23424 (High) detected in ansi-html-0.0.7.tgz - ## CVE-2021-23424 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>ansi-html-0.0.7.tgz</b></p></summary>

<p>An elegant lib that converts the chalked (ANSI) text to HTML.</p>

<p>Library home page: <a href="https://registry.npmjs.org/ansi-html/-/ansi-html-0.0.7.tgz">https://registry.npmjs.org/ansi-html/-/ansi-html-0.0.7.tgz</a></p>

<p>Path to dependency file: /package.json</p>

<p>Path to vulnerable library: /node_modules/ansi-html/package.json</p>

<p>

Dependency Hierarchy:

- @fdc3-conformance-framework/app-1.0.0.tgz (Root Library)

- react-scripts-4.0.3.tgz

- webpack-dev-server-3.11.1.tgz

- :x: **ansi-html-0.0.7.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/finos/FDC3-conformance-framework/commit/464478c8d773c9f1db106df334cccbe96b76f1e7">464478c8d773c9f1db106df334cccbe96b76f1e7</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

This affects all versions of package ansi-html. If an attacker provides a malicious string, it will get stuck processing the input for an extremely long time.

<p>Publish Date: 2021-08-18

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-23424>CVE-2021-23424</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2021-23424">https://nvd.nist.gov/vuln/detail/CVE-2021-23424</a></p>

<p>Release Date: 2021-08-18</p>

<p>Fix Resolution: VueJS.NetCore - 1.1.1;Indianadavy.VueJsWebAPITemplate.CSharp - 1.0.1;NorDroN.AngularTemplate - 0.1.6;CoreVueWebTest - 3.0.101;dotnetng.template - 1.0.0.4;Fable.Template.Elmish.React - 0.1.6;SAFE.Template - 3.0.1;GR.PageRender.Razor - 1.8.0;Envisia.DotNet.Templates - 3.0.1</p>

</p>

</details>

<p></p>

|

non_defect

|

cve high detected in ansi html tgz cve high severity vulnerability vulnerable library ansi html tgz an elegant lib that converts the chalked ansi text to html library home page a href path to dependency file package json path to vulnerable library node modules ansi html package json dependency hierarchy conformance framework app tgz root library react scripts tgz webpack dev server tgz x ansi html tgz vulnerable library found in head commit a href found in base branch main vulnerability details this affects all versions of package ansi html if an attacker provides a malicious string it will get stuck processing the input for an extremely long time publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution vuejs netcore indianadavy vuejswebapitemplate csharp nordron angulartemplate corevuewebtest dotnetng template fable template elmish react safe template gr pagerender razor envisia dotnet templates

| 0

|

53,429

| 13,261,597,960

|

IssuesEvent

|

2020-08-20 20:11:25

|

icecube-trac/tix4

|

https://api.github.com/repos/icecube-trac/tix4

|

closed

|

muongun - UNIX api violation (malloc) (Trac #1374)

|

Migrated from Trac combo simulation defect

|

http://software.icecube.wisc.edu/static_analysis/00_LATEST/report-c5454a.html#EndPath

The behavior of `malloc()` when allocating zero bytes is implementation defined. This could result in this section of code **always** failing. This code should be refactored to "do what I want" from "do what I mean".

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1374">https://code.icecube.wisc.edu/projects/icecube/ticket/1374</a>, reported by negaand owned by jvansanten</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2016-03-18T21:14:07",

"_ts": "1458335647931556",

"description": "http://software.icecube.wisc.edu/static_analysis/00_LATEST/report-c5454a.html#EndPath\n\nThe behavior of `malloc()` when allocating zero bytes is implementation defined. This could result in this section of code **always** failing. This code should be refactored to \"do what I want\" from \"do what I mean\".",

"reporter": "nega",

"cc": "",

"resolution": "fixed",

"time": "2015-10-01T13:59:52",

"component": "combo simulation",

"summary": "muongun - UNIX api violation (malloc)",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "jvansanten",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

muongun - UNIX api violation (malloc) (Trac #1374) - http://software.icecube.wisc.edu/static_analysis/00_LATEST/report-c5454a.html#EndPath

The behavior of `malloc()` when allocating zero bytes is implementation defined. This could result in this section of code **always** failing. This code should be refactored to "do what I want" from "do what I mean".

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1374">https://code.icecube.wisc.edu/projects/icecube/ticket/1374</a>, reported by negaand owned by jvansanten</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2016-03-18T21:14:07",

"_ts": "1458335647931556",

"description": "http://software.icecube.wisc.edu/static_analysis/00_LATEST/report-c5454a.html#EndPath\n\nThe behavior of `malloc()` when allocating zero bytes is implementation defined. This could result in this section of code **always** failing. This code should be refactored to \"do what I want\" from \"do what I mean\".",

"reporter": "nega",

"cc": "",

"resolution": "fixed",

"time": "2015-10-01T13:59:52",

"component": "combo simulation",

"summary": "muongun - UNIX api violation (malloc)",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "jvansanten",

"type": "defect"

}

```

</p>

</details>

|

defect

|

muongun unix api violation malloc trac the behavior of malloc when allocating zero bytes is implementation defined this could result in this section of code always failing this code should be refactored to do what i want from do what i mean migrated from json status closed changetime ts description behavior of malloc when allocating zero bytes is implementation defined this could result in this section of code always failing this code should be refactored to do what i want from do what i mean reporter nega cc resolution fixed time component combo simulation summary muongun unix api violation malloc priority normal keywords milestone owner jvansanten type defect

| 1

|

87,507

| 15,779,916,193

|

IssuesEvent

|

2021-04-01 09:17:51

|

AlexRogalskiy/gradle-java-sample

|

https://api.github.com/repos/AlexRogalskiy/gradle-java-sample

|

opened

|

CVE-2021-21343 (High) detected in xstream-1.4.10.jar

|

security vulnerability

|

## CVE-2021-21343 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.10.jar</b></p></summary>

<p>XStream is a serialization library from Java objects to XML and back.</p>

<p>Library home page: <a href="http://x-stream.github.io">http://x-stream.github.io</a></p>

<p>Path to dependency file: gradle-java-sample/buildSrc/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.thoughtworks.xstream/xstream/1.4.10/dfecae23647abc9d9fd0416629a4213a3882b101/xstream-1.4.10.jar</p>

<p>

Dependency Hierarchy:

- gradle-versions-plugin-0.28.0.jar (Root Library)

- :x: **xstream-1.4.10.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/AlexRogalskiy/gradle-java-sample/commit/faab29c6da2c042014b345fb42b18ed6d5648688">faab29c6da2c042014b345fb42b18ed6d5648688</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

XStream is a Java library to serialize objects to XML and back again. In XStream before version 1.4.16, there is a vulnerability where the processed stream at unmarshalling time contains type information to recreate the formerly written objects. XStream creates therefore new instances based on these type information. An attacker can manipulate the processed input stream and replace or inject objects, that result in the deletion of a file on the local host. No user is affected, who followed the recommendation to setup XStream's security framework with a whitelist limited to the minimal required types. If you rely on XStream's default blacklist of the Security Framework, you will have to use at least version 1.4.16.

<p>Publish Date: 2021-03-23

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-21343>CVE-2021-21343</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/x-stream/xstream/security/advisories/GHSA-74cv-f58x-f9wf">https://github.com/x-stream/xstream/security/advisories/GHSA-74cv-f58x-f9wf</a></p>

<p>Release Date: 2021-03-23</p>

<p>Fix Resolution: com.thoughtworks.xstream:xstream:1.4.16</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2021-21343 (High) detected in xstream-1.4.10.jar - ## CVE-2021-21343 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>xstream-1.4.10.jar</b></p></summary>

<p>XStream is a serialization library from Java objects to XML and back.</p>

<p>Library home page: <a href="http://x-stream.github.io">http://x-stream.github.io</a></p>

<p>Path to dependency file: gradle-java-sample/buildSrc/build.gradle</p>

<p>Path to vulnerable library: /home/wss-scanner/.gradle/caches/modules-2/files-2.1/com.thoughtworks.xstream/xstream/1.4.10/dfecae23647abc9d9fd0416629a4213a3882b101/xstream-1.4.10.jar</p>

<p>

Dependency Hierarchy:

- gradle-versions-plugin-0.28.0.jar (Root Library)

- :x: **xstream-1.4.10.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/AlexRogalskiy/gradle-java-sample/commit/faab29c6da2c042014b345fb42b18ed6d5648688">faab29c6da2c042014b345fb42b18ed6d5648688</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

XStream is a Java library to serialize objects to XML and back again. In XStream before version 1.4.16, there is a vulnerability where the processed stream at unmarshalling time contains type information to recreate the formerly written objects. XStream creates therefore new instances based on these type information. An attacker can manipulate the processed input stream and replace or inject objects, that result in the deletion of a file on the local host. No user is affected, who followed the recommendation to setup XStream's security framework with a whitelist limited to the minimal required types. If you rely on XStream's default blacklist of the Security Framework, you will have to use at least version 1.4.16.

<p>Publish Date: 2021-03-23

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-21343>CVE-2021-21343</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: High

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://github.com/x-stream/xstream/security/advisories/GHSA-74cv-f58x-f9wf">https://github.com/x-stream/xstream/security/advisories/GHSA-74cv-f58x-f9wf</a></p>

<p>Release Date: 2021-03-23</p>

<p>Fix Resolution: com.thoughtworks.xstream:xstream:1.4.16</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

cve high detected in xstream jar cve high severity vulnerability vulnerable library xstream jar xstream is a serialization library from java objects to xml and back library home page a href path to dependency file gradle java sample buildsrc build gradle path to vulnerable library home wss scanner gradle caches modules files com thoughtworks xstream xstream xstream jar dependency hierarchy gradle versions plugin jar root library x xstream jar vulnerable library found in head commit a href vulnerability details xstream is a java library to serialize objects to xml and back again in xstream before version there is a vulnerability where the processed stream at unmarshalling time contains type information to recreate the formerly written objects xstream creates therefore new instances based on these type information an attacker can manipulate the processed input stream and replace or inject objects that result in the deletion of a file on the local host no user is affected who followed the recommendation to setup xstream s security framework with a whitelist limited to the minimal required types if you rely on xstream s default blacklist of the security framework you will have to use at least version publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact high availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution com thoughtworks xstream xstream step up your open source security game with whitesource

| 0

|

66,210

| 20,052,363,116

|

IssuesEvent

|

2022-02-03 08:22:14

|

martinrotter/rssguard

|

https://api.github.com/repos/martinrotter/rssguard

|

closed

|

[BUG]: "Has new articles" - but actually doesn't

|

Type-Defect

|

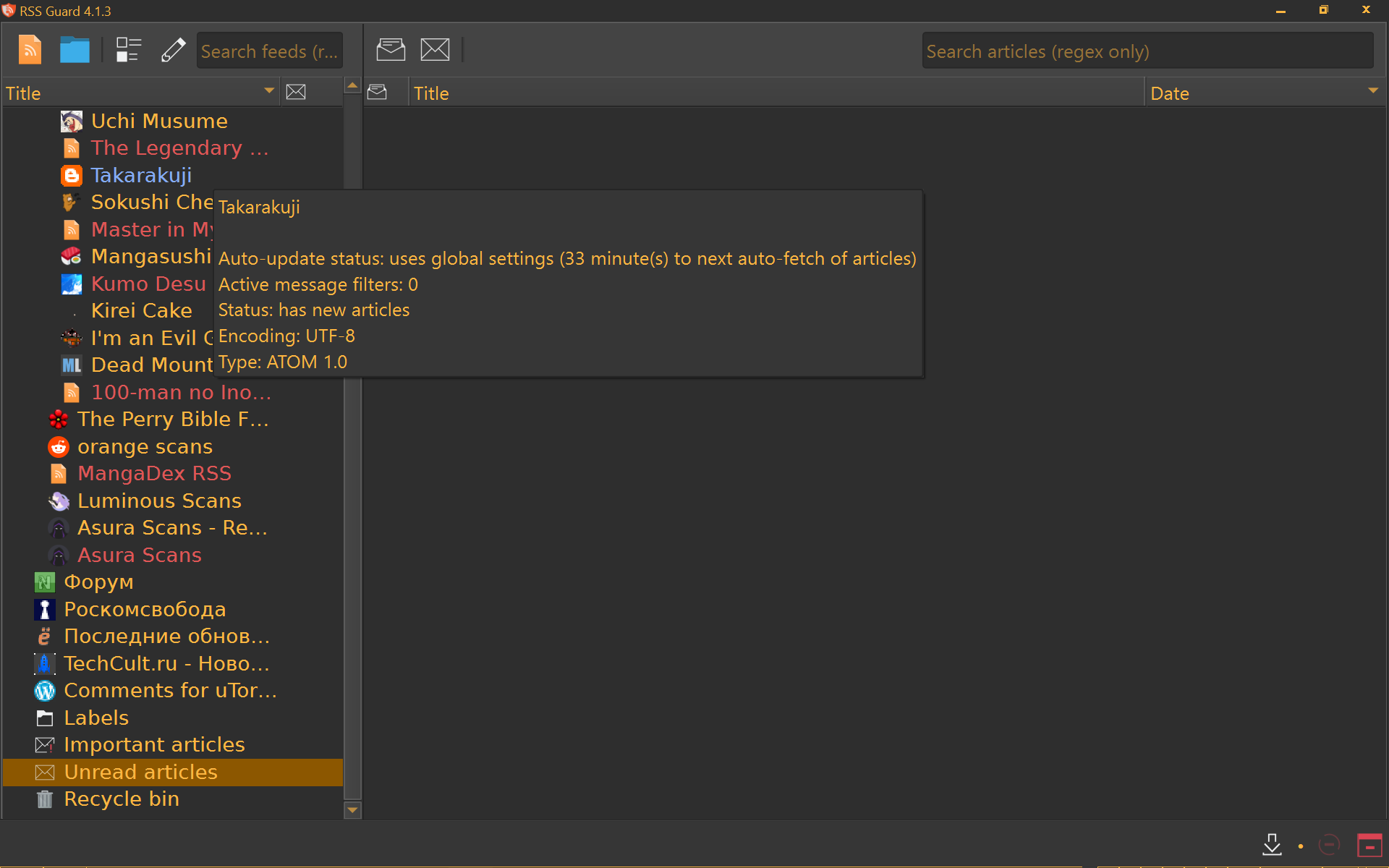

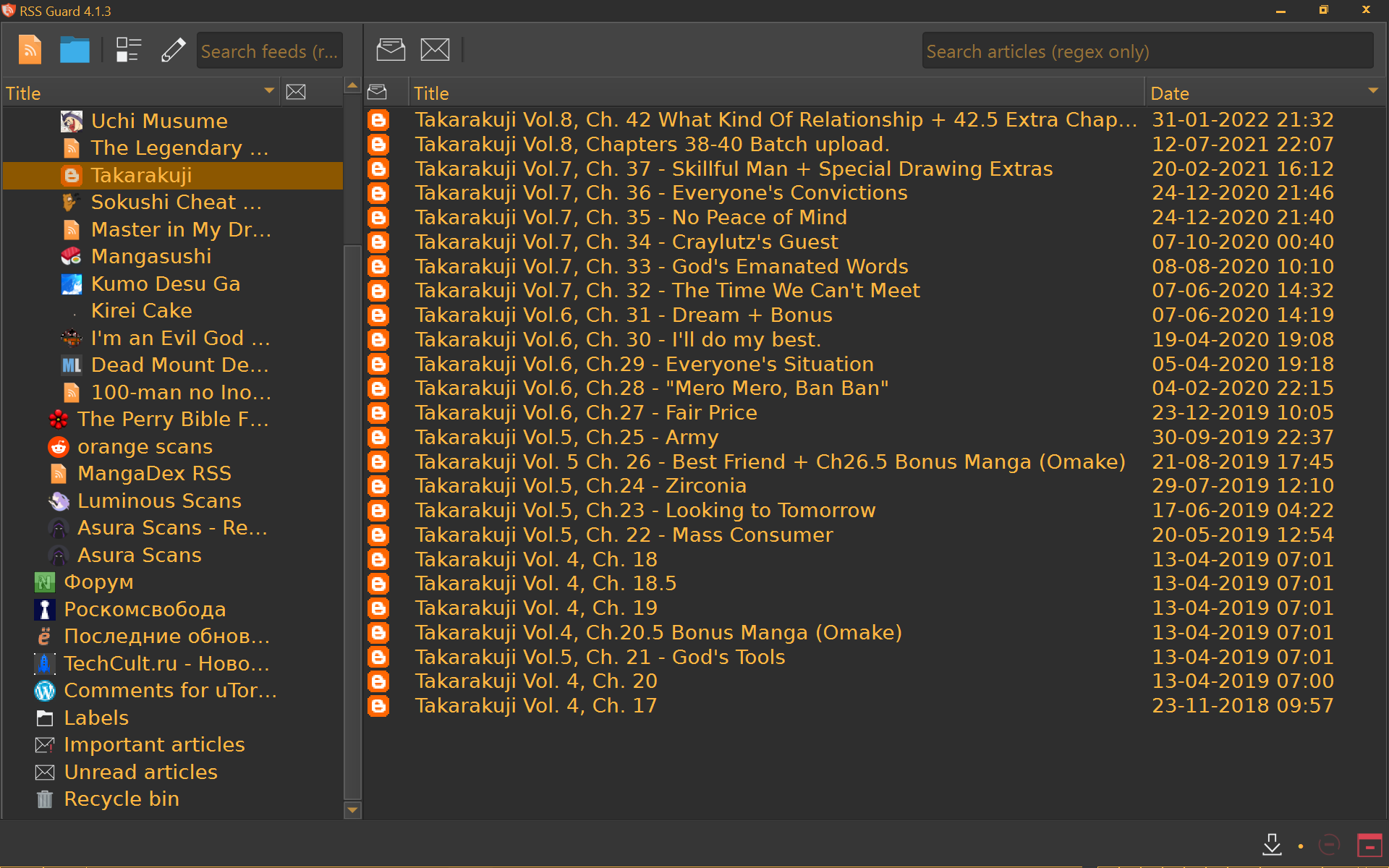

### Brief description of the issue

(all articles already read long ago and no new ones are there as you can see)

### How to reproduce the bug?

This feed: `https://hairywizardztranslations.blogspot.com/feeds/posts/default`

### What was the expected result?

says "new articles" only when there are new articles

### What actually happened?

the opposite

### Other information

If I click "mark selected as read" on the fead - it is still shown as unread,

but if I click "mark unread" on it first and then mark as read - then it becomes "read", but only before next feed update

[rssguard.log](https://github.com/martinrotter/rssguard/files/7988622/rssguard.log)

### Operating system and version

* OS: Win10 x64

* RSS Guard version:

````

RSS Guard

Version: 4.1.3 (built on Windows/x86_64)

Revision: 03d56b30-nowebengine

Build date: 1/19/22 11:57 AM

Qt: 6.2.2 (compiled against 6.2.2)

````

|

1.0

|

[BUG]: "Has new articles" - but actually doesn't - ### Brief description of the issue

(all articles already read long ago and no new ones are there as you can see)

### How to reproduce the bug?

This feed: `https://hairywizardztranslations.blogspot.com/feeds/posts/default`

### What was the expected result?

says "new articles" only when there are new articles

### What actually happened?

the opposite

### Other information

If I click "mark selected as read" on the feed - it is still shown as unread,

but if I click "mark unread" on it first and then mark as read - then it becomes "read", but only before next feed update

[rssguard.log](https://github.com/martinrotter/rssguard/files/7988622/rssguard.log)

### Operating system and version

* OS: Win10 x64

* RSS Guard version:

````

RSS Guard

Version: 4.1.3 (built on Windows/x86_64)

Revision: 03d56b30-nowebengine

Build date: 1/19/22 11:57 AM

Qt: 6.2.2 (compiled against 6.2.2)

````

|

defect

|

has new articles but actually doesn t brief description of the issue all articles already read long ago and no new ones are there as you can see how to reproduce the bug this feed what was the expected result says new articles only when there are new articles what actually happened the opposite other information if i click mark selected as read on the fead it is still shown as unread but if i click mark unread on it first and then mark as read then it becomes read but only before next feed update operating system and version os rss guard version rss guard version built on windows revision nowebengine build date am qt compiled against

| 1

|

70,758

| 18,269,011,620

|

IssuesEvent

|

2021-10-04 11:54:04

|

tailscale/tailscale

|

https://api.github.com/repos/tailscale/tailscale

|

closed

|

F-Droid build failed

|

OS-android L1 Very few P6 Blocks build T7 Build/test failure

|

```

+ make -C .. release_aar

make: Entering directory '/home/vagrant/build/com.tailscale.ipn'

find: ‘/home/vagrant/.cache/tailscale-android-go-6fa85e8201f1f75fb7323eb48a0b24274a6e33b2’: No such file or directory

find: ‘/home/vagrant/.cache/tailscale-android-go-6fa85e8201f1f75fb7323eb48a0b24274a6e33b2’: No such file or directory

want: e336267ea2a637426c0302945d0c1f8fa2f2f627

got: 5cd337198ead0768975610a135e26257153198c7

rm -rf /home/vagrant/.cache/tailscale-android-go-*

wget https://github.com/tailscale/go/releases/download/build-6fa85e8201f1f75fb7323eb48a0b24274a6e33b2/linux.tar.gz -O "/tmp/tmp.cIl4qYDSFH.tgz"

--2021-08-06 05:49:53-- https://github.com/tailscale/go/releases/download/build-6fa85e8201f1f75fb7323eb48a0b24274a6e33b2/linux.tar.gz

Resolving github.com (github.com)... 140.82.121.4

Connecting to github.com (github.com)|140.82.121.4|:443... connected.

HTTP request sent, awaiting response... 404 Not Found

2021-08-06 05:49:54 ERROR 404: Not Found.

Makefile:42: recipe for target 'toolchain' failed

make: *** [toolchain] Error 8

make: Leaving directory '/home/vagrant/build/com.tailscale.ipn'

```

It seems that since 1.13.42-tfd7b738e5-ga68462ec65f, tailscale-android uses your own Go fork, but the Go binary hosted on GitHub appears to have been removed. Does it also build with the upstream Go?

|

2.0

|

F-Droid build failed - ```

+ make -C .. release_aar

make: Entering directory '/home/vagrant/build/com.tailscale.ipn'

find: ‘/home/vagrant/.cache/tailscale-android-go-6fa85e8201f1f75fb7323eb48a0b24274a6e33b2’: No such file or directory

find: ‘/home/vagrant/.cache/tailscale-android-go-6fa85e8201f1f75fb7323eb48a0b24274a6e33b2’: No such file or directory

want: e336267ea2a637426c0302945d0c1f8fa2f2f627

got: 5cd337198ead0768975610a135e26257153198c7

rm -rf /home/vagrant/.cache/tailscale-android-go-*

wget https://github.com/tailscale/go/releases/download/build-6fa85e8201f1f75fb7323eb48a0b24274a6e33b2/linux.tar.gz -O "/tmp/tmp.cIl4qYDSFH.tgz"

--2021-08-06 05:49:53-- https://github.com/tailscale/go/releases/download/build-6fa85e8201f1f75fb7323eb48a0b24274a6e33b2/linux.tar.gz

Resolving github.com (github.com)... 140.82.121.4

Connecting to github.com (github.com)|140.82.121.4|:443... connected.

HTTP request sent, awaiting response... 404 Not Found

2021-08-06 05:49:54 ERROR 404: Not Found.

Makefile:42: recipe for target 'toolchain' failed

make: *** [toolchain] Error 8

make: Leaving directory '/home/vagrant/build/com.tailscale.ipn'

```

It seems that since 1.13.42-tfd7b738e5-ga68462ec65f, tailscale-android uses your own Go fork, but the Go binary hosted on GitHub appears to have been removed. Does it also build with the upstream Go?

|

non_defect

|

f droid build failed make c release aar make entering directory home vagrant build com tailscale ipn find ‘ home vagrant cache tailscale android go ’ no such file or directory find ‘ home vagrant cache tailscale android go ’ no such file or directory want got rm rf home vagrant cache tailscale android go wget o tmp tmp tgz resolving github com github com connecting to github com github com connected http request sent awaiting response not found error not found makefile recipe for target toolchain failed make error make leaving directory home vagrant build com tailscale ipn it seems since tailscale android uses your own go fork but it seems the go binary hosted on github is removed and if it works with the origin go

| 0

|

691,406

| 23,696,024,400

|

IssuesEvent

|

2022-08-29 14:43:04

|

celo-org/celo-monorepo

|

https://api.github.com/repos/celo-org/celo-monorepo

|

closed

|

New contract instances of existing versioned implementations should have appropriate semantic versioning

|

Priority: P3 Component: Contracts CAP stale

|

### Expected Behavior

Formally specified versioning of new contracts (`StableTokenEUR is StableToken`) inheriting from existing implementations

### Current Behavior

Independent versioning in `getVersionNumber` and as understood by contract release/verification tooling

|

1.0

|

New contract instances of existing versioned implementations should have appropriate semantic versioning - ### Expected Behavior

Formally specified versioning of new contracts (`StableTokenEUR is StableToken`) inheriting from existing implementations

### Current Behavior

Independent versioning in `getVersionNumber` and as understood by contract release/verification tooling

|

non_defect

|

new contract instances of existing versioned implementations should have appropriate semantic versioning expected behavior formally specified versioning of new contracts stabletokeneur is stabletoken inheriting from existing implementations current behavior independent versioning in getversionnumber and as understood by contract release verification tooling

| 0

|

139,301

| 20,823,065,545

|

IssuesEvent

|

2022-03-18 17:22:01

|

nextcloud/desktop

|

https://api.github.com/repos/nextcloud/desktop

|

closed

|

Main dialog should respect system theme

|

bug design approved

|

<!--

Thanks for reporting issues back to Nextcloud!

This is the **issue tracker of Nextcloud**, please do NOT use this to get answers to your questions or get help for fixing your installation. You can find help debugging your system on our home user forums: https://help.nextcloud.com or, if you use Nextcloud in a large organization, ask our engineers on https://portal.nextcloud.com. See also https://nextcloud.com/support for support options.

Guidelines for submitting issues:

* Please search the existing issues first, it's likely that your issue was already reported or even fixed.

- Go to https://github.com/nextcloud and type any word in the top search/command bar. You probably see something like "We couldn’t find any repositories matching ..." then click "Issues" in the left navigation.

- You can also filter by appending e. g. "state:open" to the search string.

- More info on search syntax within github: https://help.github.com/articles/searching-issues

* Please fill in as much of the template below as possible. The logs are absolutely crucial for the developers to be able to help you. Expect us to quickly close issues without logs or other information we need.

* Also note that we have a https://nextcloud.com/contribute/code-of-conduct/ that applies on Github. To summarize it: be kind. We try our best to be nice, too. If you can't be bothered to be polite, please just don't bother to report issues as we won't feel motivated to help you.

-->

<!--- Please keep the note below for others who read your bug report -->

### How to use GitHub

* Please use the 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to show that you are affected by the same issue.

* Please don't comment if you have no relevant information to add. It's just extra noise for everyone subscribed to this issue.

* Subscribe to receive notifications on status change and new comments.

### Expected behaviour

The main dialog should respect the system theme. For example, if a dark color theme is chosen, the main dialog's background should not be white. Since the rest of the client respects the system theme, this looks off in my opinion.

### Actual behaviour

The main dialog's background always has the same color, regardless of which theme is chosen.

### Steps to reproduce

1. Set a dark theme

2. Open the main dialog

### Client configuration

Client version: 3.1.81

As this involves design questions, a comment from you, @jancborchardt, would be valuable :)

|

1.0

|

Main dialog should respect system theme - <!--

Thanks for reporting issues back to Nextcloud!

This is the **issue tracker of Nextcloud**, please do NOT use this to get answers to your questions or get help for fixing your installation. You can find help debugging your system on our home user forums: https://help.nextcloud.com or, if you use Nextcloud in a large organization, ask our engineers on https://portal.nextcloud.com. See also https://nextcloud.com/support for support options.

Guidelines for submitting issues:

* Please search the existing issues first, it's likely that your issue was already reported or even fixed.

- Go to https://github.com/nextcloud and type any word in the top search/command bar. You probably see something like "We couldn’t find any repositories matching ..." then click "Issues" in the left navigation.

- You can also filter by appending e. g. "state:open" to the search string.

- More info on search syntax within github: https://help.github.com/articles/searching-issues

* Please fill in as much of the template below as possible. The logs are absolutely crucial for the developers to be able to help you. Expect us to quickly close issues without logs or other information we need.

* Also note that we have a https://nextcloud.com/contribute/code-of-conduct/ that applies on Github. To summarize it: be kind. We try our best to be nice, too. If you can't be bothered to be polite, please just don't bother to report issues as we won't feel motivated to help you.

-->

<!--- Please keep the note below for others who read your bug report -->

### How to use GitHub

* Please use the 👍 [reaction](https://blog.github.com/2016-03-10-add-reactions-to-pull-requests-issues-and-comments/) to show that you are affected by the same issue.

* Please don't comment if you have no relevant information to add. It's just extra noise for everyone subscribed to this issue.

* Subscribe to receive notifications on status change and new comments.

### Expected behaviour

The main dialog should respect the system theme. For example, if a dark color theme is chosen, the main dialog's background should not be white. Since the rest of the client respects the system theme, this looks off in my opinion.

### Actual behaviour

The main dialog's background always has the same color, regardless of which theme is chosen.

### Steps to reproduce

1. Set a dark theme

2. Open the main dialog

### Client configuration

Client version: 3.1.81

As this involves design questions, a comment from you, @jancborchardt, would be valuable :)

|

non_defect

|

main dialog should respect system theme thanks for reporting issues back to nextcloud this is the issue tracker of nextcloud please do not use this to get answers to your questions or get help for fixing your installation you can find help debugging your system on our home user forums or if you use nextcloud in a large organization ask our engineers on see also for support options guidelines for submitting issues please search the existing issues first it s likely that your issue was already reported or even fixed go to and type any word in the top search command bar you probably see something like we couldn’t find any repositories matching then click issues in the left navigation you can also filter by appending e g state open to the search string more info on search syntax within github please fill in as much of the template below as possible the logs are absolutely crucial for the developers to be able to help you expect us to quickly close issues without logs or other information we need also note that we have a that applies on github to summarize it be kind we try our best to be nice too if you can t be bothered to be polite please just don t bother to report issues as we won t feel motivated to help you how to use github please use the 👍 to show that you are affected by the same issue please don t comment if you have no relevant information to add it s just extra noise for everyone subscribed to this issue subscribe to receive notifications on status change and new comments expected behaviour the main dialog should respect the system theme like for example if a dark color theme is chosen the main dialogs background should not be white since the rest of the client respects the system theme this looks off in my opinion actual behaviour the main dialogs background has always the same color regardless which theme was chosen steps to reproduce set a dark theme open the main dialog client configuration client version as this involves design questions a comment from 
jancborchardt you would be valuable

| 0

|

512,905

| 14,911,861,256

|

IssuesEvent

|

2021-01-22 11:44:43

|

conan-io/conan

|

https://api.github.com/repos/conan-io/conan

|

closed

|

[feature] Expose scm data to ``conan info``

|

complex: low priority: medium stage: queue type: feature

|

It would be very useful to have information about the commits, especially for scm=auto, to allow checking out dependencies at the exact commits used in a dependency graph.

|

1.0

|

[feature] Expose scm data to ``conan info`` - It would be very useful to have information about the commits, especially for scm=auto, to allow checking out dependencies at the exact commits used in a dependency graph.

|

non_defect

|

expose scm data to conan info would be very useful to have information about the commits for scm auto specially to allow checking out dependencies at the exact commits used in a dependency graph

| 0

|

23,344

| 3,796,387,369

|

IssuesEvent

|

2016-03-23 00:10:42

|

extnet/Ext.NET

|

https://api.github.com/repos/extnet/Ext.NET

|

opened

|

pt_BR locale wrong for Ext.locale.pt_BR.grid.feature.Grouping.showGroupsText

|

3.x 4.x defect sencha

|

The translated text reads _"Mostrar agrupad"_ while it should show _"Mostrar agrupado"_.

Reported on this forum thread: [Ext.locale.pt_BR.grid.feature.Grouping](http://forums.ext.net/showthread.php?60750).

|

1.0

|

pt_BR locale wrong for Ext.locale.pt_BR.grid.feature.Grouping.showGroupsText - The translated text reads _"Mostrar agrupad"_ while it should show _"Mostrar agrupado"_.

Reported on this forum thread: [Ext.locale.pt_BR.grid.feature.Grouping](http://forums.ext.net/showthread.php?60750).

|

defect

|

pt br locale wrong for ext locale pt br grid feature grouping showgroupstext the translated text reads mostrar agrupad while it should show mostrar agrupado reported on this forum thread

| 1

|

338,165

| 10,225,164,344

|

IssuesEvent

|

2019-08-16 14:32:11

|

linkerd/website

|

https://api.github.com/repos/linkerd/website

|

closed

|

Update Tap RBAC docs with linkerd dashboard info

|

docs priority/P0

|

https://linkerd.io/tap-rbac provides info on managing Tap RBAC access. This becomes more relevant to `linkerd dashboard` once linkerd/linkerd2#3203 and linkerd/linkerd2#3208 ship. Update that page with information around managing Tap RBAC for the `linkerd-web` service account.

Also document how to create a binding using the new `linkerd-linkerd-tap-admin` ClusterRole.

|

1.0

|

Update Tap RBAC docs with linkerd dashboard info - https://linkerd.io/tap-rbac provides info on managing Tap RBAC access. This becomes more relevant to `linkerd dashboard` once linkerd/linkerd2#3203 and linkerd/linkerd2#3208 ship. Update that page with information around managing Tap RBAC for the `linkerd-web` service account.

Also document how to create a binding using the new `linkerd-linkerd-tap-admin` ClusterRole.

|

non_defect

|

update tap rbac docs with linkerd dashboard info provides info on managing tap rbac access this becomes more relevant to linkerd dashboard once linkerd and linkerd ship update that page with information around managing tap rbac for the linkerd web service account also document how to create a binding using the new linkerd linkerd tap admin clusterrole

| 0

|

68,945

| 21,995,097,857

|

IssuesEvent

|

2022-05-26 05:02:04

|

vector-im/element-ios

|

https://api.github.com/repos/vector-im/element-ios

|

opened

|

Showing 'Joined' button instead of 'Join' while user trying to rejoin into a room

|

T-Defect

|

### Steps to reproduce

1. Leave any room

2. Search same room to rejoin

3. It shows 'Joined' button instead of 'Join'

4. But inside this room it shows option for 'Join'

### Outcome

#### What did you expect?

When rejoining the room, the button should read 'Join' instead of 'Joined'.

#### What happened instead?

When rejoining the room, the button reads 'Joined' instead of 'Join'.

### Your phone model

iPad mini

### Operating system version

iOS 15.1

### Application version

Element 1.8.16

### Homeserver

matrix.org

### Will you send logs?

No

|

1.0

|

Showing 'Joined' button instead of 'Join' while user trying to rejoin into a room - ### Steps to reproduce

1. Leave any room

2. Search same room to rejoin

3. It shows 'Joined' button instead of 'Join'

4. But inside this room it shows option for 'Join'

### Outcome

#### What did you expect?

When rejoining the room, the button should read 'Join' instead of 'Joined'.

#### What happened instead?

When rejoining the room, the button reads 'Joined' instead of 'Join'.

### Your phone model

iPad mini

### Operating system version

iOS 15.1

### Application version

Element 1.8.16

### Homeserver

matrix.org

### Will you send logs?

No

|

defect

|

showing joined button instead of join while user trying to rejoin into a room steps to reproduce leave any room search same room to rejoin it shows joined button instead of join but inside this room it shows option for join outcome what did you expect while trying to rejoin the room button status should be join instead of joined what happened instead now while trying to rejoin the room it shows joined button instead of join your phone model ipad mini operating system version ios application version element homeserver matrix org will you send logs no

| 1

|

590,895

| 17,790,523,350

|

IssuesEvent

|

2021-08-31 15:40:40

|

cdklabs/construct-hub-webapp

|

https://api.github.com/repos/cdklabs/construct-hub-webapp

|

closed

|

Small fixes to the site terms

|

risk/low priority/p3 effort/half-day

|

Remove the external link warning for the AWS site terms link.

In addition, there is an orphan "." which should be removed.

|

1.0

|

Small fixes to the site terms - Remove the external link warning for the AWS site terms link.

In addition, there is an orphan "." which should be removed.

|

non_defect

|

small fixes to the site terms remove the the external link warning for the aws site terms link in addition there is an orphan which should be removed

| 0

|

104,845

| 9,011,420,614

|

IssuesEvent

|

2019-02-05 14:39:38

|

cockroachdb/cockroach

|

https://api.github.com/repos/cockroachdb/cockroach

|

closed

|

teamcity: failed test: 05:06.789zulu

|

C-test-failure O-robot

|

The following tests appear to have failed on master (testrace): 05:06.789zulu/ParseTime

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+05:06.789zulu).

[#1124489](https://teamcity.cockroachdb.com/viewLog.html?buildId=1124489):

```

05:06.789zulu/ParseTime

--- FAIL: testrace/TestParse/ParseModeMDY/January_8,_99_BC/04:05:06.789zulu/ParseTime (0.000s)

Test ended in panic.

```

Please assign, take a look and update the issue accordingly.

|

1.0

|

teamcity: failed test: 05:06.789zulu - The following tests appear to have failed on master (testrace): 05:06.789zulu/ParseTime

You may want to check [for open issues](https://github.com/cockroachdb/cockroach/issues?q=is%3Aissue+is%3Aopen+05:06.789zulu).

[#1124489](https://teamcity.cockroachdb.com/viewLog.html?buildId=1124489):

```

05:06.789zulu/ParseTime

--- FAIL: testrace/TestParse/ParseModeMDY/January_8,_99_BC/04:05:06.789zulu/ParseTime (0.000s)

Test ended in panic.

```

Please assign, take a look and update the issue accordingly.

|

non_defect

|

teamcity failed test the following tests appear to have failed on master testrace parsetime you may want to check parsetime fail testrace testparse parsemodemdy january bc parsetime test ended in panic please assign take a look and update the issue accordingly

| 0

|

53,670

| 13,262,079,507

|

IssuesEvent

|

2020-08-20 21:03:57

|

icecube-trac/tix4

|

https://api.github.com/repos/icecube-trac/tix4

|

closed

|

ice-models project cannot be listed as a dependency (Trac #1852)

|

Migrated from Trac cmake defect

|

Since we now have the ice-models project, we would like to transition CLSim to fetching more (eventually all) of its parameterizations from there. To prevent mistakes it seems like a good idea to list ice-models as a dependency of clsim, so that if it isn't present the user will be warned/stopped by cmake. However, simply adding ice-models to clsim's `USE_PROJECTS` list actually prevents clsim from building, since `i3_project` currently assumes that a used project includes a library which must be linked against, and ice-models doesn't actually contain any code at all. It should be possible to instead manually add a check in clsim's CMakeLists that the ice-models directory is present, without going through `USE_PROJECTS`, but I'm not sure if that's a good long-term solution or not.
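
The manual check mentioned above could look roughly like the following. This is only a sketch, not the project's actual build code: it assumes ice-models is checked out as a directory directly under the top-level source tree (`${CMAKE_SOURCE_DIR}/ice-models`), which may not match every layout.

```cmake
# Hypothetical check for clsim's CMakeLists.txt.
# Assumption: ice-models lives at ${CMAKE_SOURCE_DIR}/ice-models.
if(NOT IS_DIRECTORY "${CMAKE_SOURCE_DIR}/ice-models")
  # Stop configuration early so the user sees the missing project.
  message(FATAL_ERROR
    "clsim requires the ice-models project, but no ice-models directory "
    "was found under ${CMAKE_SOURCE_DIR}.")
endif()
```

Because this only tests for the directory, it sidesteps the library-linking assumption in `i3_project`, but it would not take part in any other handling that `USE_PROJECTS` provides.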

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1852">https://code.icecube.wisc.edu/projects/icecube/ticket/1852</a>, reported by cweaver and owned by olivas</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:08:39",

"_ts": "1550066919084021",

"description": "Since we now have the ice-models project, we would like to transition CLSim to fetching more (eventually all) of its parameterizations from there. To prevent mistakes it seems like a good idea to list ice-models as a dependency of clsim, so that if it isn't present the user will be warned/stopped by cmake. However, simply adding ice-models to clsim's `USE_PROJECTS` list actually prevents clsim from building, since `i3_project` currently assumes that a used project includes a library which must be linked against, and ice-models doesn't actually contain any code at all. It should be possible to instead manually add a check in clsim's CMakeLists that the ice-models directory is present, without going through `USE_PROJECTS`, but I'm not sure if that's a good long-term solution or not. ",

"reporter": "cweaver",

"cc": "",

"resolution": "insufficient resources",

"time": "2016-09-07T17:16:08",

"component": "cmake",

"summary": "ice-models project cannot be listed as a dependency",

"priority": "normal",

"keywords": "",

"milestone": "Long-Term Future",

"owner": "olivas",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

ice-models project cannot be listed as a dependency (Trac #1852) - Since we now have the ice-models project, we would like to transition CLSim to fetching more (eventually all) of its parameterizations from there. To prevent mistakes it seems like a good idea to list ice-models as a dependency of clsim, so that if it isn't present the user will be warned/stopped by cmake. However, simply adding ice-models to clsim's `USE_PROJECTS` list actually prevents clsim from building, since `i3_project` currently assumes that a used project includes a library which must be linked against, and ice-models doesn't actually contain any code at all. It should be possible to instead manually add a check in clsim's CMakeLists that the ice-models directory is present, without going through `USE_PROJECTS`, but I'm not sure if that's a good long-term solution or not.

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1852">https://code.icecube.wisc.edu/projects/icecube/ticket/1852</a>, reported by cweaver and owned by olivas</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:08:39",

"_ts": "1550066919084021",

"description": "Since we now have the ice-models project, we would like to transition CLSim to fetching more (eventually all) of its parameterizations from there. To prevent mistakes it seems like a good idea to list ice-models as a dependency of clsim, so that if it isn't present the user will be warned/stopped by cmake. However, simply adding ice-models to clsim's `USE_PROJECTS` list actually prevents clsim from building, since `i3_project` currently assumes that a used project includes a library which must be linked against, and ice-models doesn't actually contain any code at all. It should be possible to instead manually add a check in clsim's CMakeLists that the ice-models directory is present, without going through `USE_PROJECTS`, but I'm not sure if that's a good long-term solution or not. ",

"reporter": "cweaver",

"cc": "",

"resolution": "insufficient resources",

"time": "2016-09-07T17:16:08",

"component": "cmake",

"summary": "ice-models project cannot be listed as a dependency",

"priority": "normal",

"keywords": "",

"milestone": "Long-Term Future",

"owner": "olivas",

"type": "defect"

}

```

</p>

</details>

|

defect

|

ice models project cannot be listed as a dependency trac since we now have the ice models project we would like to transition clsim to fetching more eventually all of its parameterizations from there to prevent mistakes it seems like a good idea to list ice models as a dependency of clsim so that if it isn t present the user will be warned stopped by cmake however simply adding ice models to clsim s use projects list actually prevents clsim from building since project currently assumes that a used project includes a library which must be linked against and ice models doesn t actually contain any code at all it should be possible to instead manually add a check in clsim s cmakelists that the ice models directory is present without going through use projects but i m not sure if that s a good long term solution or not migrated from json status closed changetime ts description since we now have the ice models project we would like to transition clsim to fetching more eventually all of its parameterizations from there to prevent mistakes it seems like a good idea to list ice models as a dependency of clsim so that if it isn t present the user will be warned stopped by cmake however simply adding ice models to clsim s use projects list actually prevents clsim from building since project currently assumes that a used project includes a library which must be linked against and ice models doesn t actually contain any code at all it should be possible to instead manually add a check in clsim s cmakelists that the ice models directory is present without going through use projects but i m not sure if that s a good long term solution or not reporter cweaver cc resolution insufficient resources time component cmake summary ice models project cannot be listed as a dependency priority normal keywords milestone long term future owner olivas type defect

| 1

|

28,649

| 7,010,369,439

|

IssuesEvent

|

2017-12-19 22:55:41

|

PyvesB/AdvancedAchievements

|

https://api.github.com/repos/PyvesB/AdvancedAchievements

|

closed

|

SQL error while retrieving playedtime stats

|

code

|

Hello, I found this error when the first player joined the server.

`[12:02:23] [Server thread/ERROR]: [AdvancedAchievements] SQL error while retrieving playedtime stats:

com.mysql.jdbc.exceptions.jdbc4.CommunicationsException: Communications link failure

The last packet successfully received from the server was 152,494 milliseconds ago. The last packet sent successfully to the server was 0 milliseconds ago.

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method) ~[?:1.8.0_144]

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62) ~[?:1.8.0_144]

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45) ~[?:1.8.0_144]

at java.lang.reflect.Constructor.newInstance(Constructor.java:423) ~[?:1.8.0_144]

at com.mysql.jdbc.Util.handleNewInstance(Util.java:425) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.SQLError.createCommunicationsException(SQLError.java:989) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.MysqlIO.reuseAndReadPacket(MysqlIO.java:3559) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.MysqlIO.reuseAndReadPacket(MysqlIO.java:3459) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.MysqlIO.checkErrorPacket(MysqlIO.java:3900) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.MysqlIO.sendCommand(MysqlIO.java:2527) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.MysqlIO.sqlQueryDirect(MysqlIO.java:2680) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.ConnectionImpl.execSQL(ConnectionImpl.java:2483) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.ConnectionImpl.execSQL(ConnectionImpl.java:2441) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.StatementImpl.executeQuery(StatementImpl.java:1381) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.hm.achievement.db.AbstractSQLDatabaseManager.getNormalAchievementAmount(AbstractSQLDatabaseManager.java:478) ~[?:?]

at com.hm.achievement.db.DatabaseCacheManager.getAndIncrementStatisticAmount(DatabaseCacheManager.java:116) ~[?:?]

at com.hm.achievement.runnable.AchievePlayTimeRunnable.updateTime(AchievePlayTimeRunnable.java:77) ~[?:?]

at java.util.Iterator.forEachRemaining(Iterator.java:116) [?:1.8.0_144]

at java.util.Spliterators$IteratorSpliterator.forEachRemaining(Spliterators.java:1801) [?:1.8.0_144]

at java.util.stream.ReferencePipeline$Head.forEach(ReferencePipeline.java:580) [?:1.8.0_144]

at com.hm.achievement.runnable.AchievePlayTimeRunnable.run(AchievePlayTimeRunnable.java:48) [AdvancedAchievements%20(2).jar:?]

at org.bukkit.craftbukkit.v1_12_R1.scheduler.CraftTask.run(CraftTask.java:71) [spigot.jar:git-Spigot-3d850ec-809c399]

at org.bukkit.craftbukkit.v1_12_R1.scheduler.CraftScheduler.mainThreadHeartbeat(CraftScheduler.java:353) [spigot.jar:git-Spigot-3d850ec-809c399]

at net.minecraft.server.v1_12_R1.MinecraftServer.D(MinecraftServer.java:739) [spigot.jar:git-Spigot-3d850ec-809c399]

at net.minecraft.server.v1_12_R1.DedicatedServer.D(DedicatedServer.java:406) [spigot.jar:git-Spigot-3d850ec-809c399]

at net.minecraft.server.v1_12_R1.MinecraftServer.C(MinecraftServer.java:679) [spigot.jar:git-Spigot-3d850ec-809c399]

at net.minecraft.server.v1_12_R1.MinecraftServer.run(MinecraftServer.java:577) [spigot.jar:git-Spigot-3d850ec-809c399]

at java.lang.Thread.run(Thread.java:748) [?:1.8.0_144]

Caused by: java.io.EOFException: Can not read response from server. Expected to read 4 bytes, read 0 bytes before connection was unexpectedly lost.

at com.mysql.jdbc.MysqlIO.readFully(MysqlIO.java:3011) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.MysqlIO.reuseAndReadPacket(MysqlIO.java:3469) ~[spigot.jar:git-Spigot-3d850ec-809c399]

... 21 more`

|

1.0

|

SQL error while retrieving playedtime stats - Hello, I found this error when the first player joined the server.

`[12:02:23] [Server thread/ERROR]: [AdvancedAchievements] SQL error while retrieving playedtime stats:

com.mysql.jdbc.exceptions.jdbc4.CommunicationsException: Communications link failure

The last packet successfully received from the server was 152,494 milliseconds ago. The last packet sent successfully to the server was 0 milliseconds ago.

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method) ~[?:1.8.0_144]

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62) ~[?:1.8.0_144]

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45) ~[?:1.8.0_144]

at java.lang.reflect.Constructor.newInstance(Constructor.java:423) ~[?:1.8.0_144]

at com.mysql.jdbc.Util.handleNewInstance(Util.java:425) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.SQLError.createCommunicationsException(SQLError.java:989) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.MysqlIO.reuseAndReadPacket(MysqlIO.java:3559) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.MysqlIO.reuseAndReadPacket(MysqlIO.java:3459) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.MysqlIO.checkErrorPacket(MysqlIO.java:3900) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.MysqlIO.sendCommand(MysqlIO.java:2527) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.MysqlIO.sqlQueryDirect(MysqlIO.java:2680) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.ConnectionImpl.execSQL(ConnectionImpl.java:2483) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.ConnectionImpl.execSQL(ConnectionImpl.java:2441) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.StatementImpl.executeQuery(StatementImpl.java:1381) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.hm.achievement.db.AbstractSQLDatabaseManager.getNormalAchievementAmount(AbstractSQLDatabaseManager.java:478) ~[?:?]

at com.hm.achievement.db.DatabaseCacheManager.getAndIncrementStatisticAmount(DatabaseCacheManager.java:116) ~[?:?]

at com.hm.achievement.runnable.AchievePlayTimeRunnable.updateTime(AchievePlayTimeRunnable.java:77) ~[?:?]

at java.util.Iterator.forEachRemaining(Iterator.java:116) [?:1.8.0_144]

at java.util.Spliterators$IteratorSpliterator.forEachRemaining(Spliterators.java:1801) [?:1.8.0_144]

at java.util.stream.ReferencePipeline$Head.forEach(ReferencePipeline.java:580) [?:1.8.0_144]

at com.hm.achievement.runnable.AchievePlayTimeRunnable.run(AchievePlayTimeRunnable.java:48) [AdvancedAchievements%20(2).jar:?]

at org.bukkit.craftbukkit.v1_12_R1.scheduler.CraftTask.run(CraftTask.java:71) [spigot.jar:git-Spigot-3d850ec-809c399]

at org.bukkit.craftbukkit.v1_12_R1.scheduler.CraftScheduler.mainThreadHeartbeat(CraftScheduler.java:353) [spigot.jar:git-Spigot-3d850ec-809c399]

at net.minecraft.server.v1_12_R1.MinecraftServer.D(MinecraftServer.java:739) [spigot.jar:git-Spigot-3d850ec-809c399]

at net.minecraft.server.v1_12_R1.DedicatedServer.D(DedicatedServer.java:406) [spigot.jar:git-Spigot-3d850ec-809c399]

at net.minecraft.server.v1_12_R1.MinecraftServer.C(MinecraftServer.java:679) [spigot.jar:git-Spigot-3d850ec-809c399]

at net.minecraft.server.v1_12_R1.MinecraftServer.run(MinecraftServer.java:577) [spigot.jar:git-Spigot-3d850ec-809c399]

at java.lang.Thread.run(Thread.java:748) [?:1.8.0_144]

Caused by: java.io.EOFException: Can not read response from server. Expected to read 4 bytes, read 0 bytes before connection was unexpectedly lost.

at com.mysql.jdbc.MysqlIO.readFully(MysqlIO.java:3011) ~[spigot.jar:git-Spigot-3d850ec-809c399]

at com.mysql.jdbc.MysqlIO.reuseAndReadPacket(MysqlIO.java:3469) ~[spigot.jar:git-Spigot-3d850ec-809c399]

... 21 more`

|

non_defect

|

sql error while retrieving playedtime stats hello this error i found when first player join on server sql error while retrieving playedtime stats com mysql jdbc exceptions communicationsexception communications link failure the last packet successfully received from the server was milliseconds ago the last packet sent successfully to the server was milliseconds ago at sun reflect nativeconstructoraccessorimpl native method at sun reflect nativeconstructoraccessorimpl newinstance nativeconstructoraccessorimpl java at sun reflect delegatingconstructoraccessorimpl newinstance delegatingconstructoraccessorimpl java at java lang reflect constructor newinstance constructor java at com mysql jdbc util handlenewinstance util java at com mysql jdbc sqlerror createcommunicationsexception sqlerror java at com mysql jdbc mysqlio reuseandreadpacket mysqlio java at com mysql jdbc mysqlio reuseandreadpacket mysqlio java at com mysql jdbc mysqlio checkerrorpacket mysqlio java at com mysql jdbc mysqlio sendcommand mysqlio java at com mysql jdbc mysqlio sqlquerydirect mysqlio java at com mysql jdbc connectionimpl execsql connectionimpl java at com mysql jdbc connectionimpl execsql connectionimpl java at com mysql jdbc statementimpl executequery statementimpl java at com hm achievement db abstractsqldatabasemanager getnormalachievementamount abstractsqldatabasemanager java at com hm achievement db databasecachemanager getandincrementstatisticamount databasecachemanager java at com hm achievement runnable achieveplaytimerunnable updatetime achieveplaytimerunnable java at java util iterator foreachremaining iterator java at java util spliterators iteratorspliterator foreachremaining spliterators java at java util stream referencepipeline head foreach referencepipeline java at com hm achievement runnable achieveplaytimerunnable run achieveplaytimerunnable java at org bukkit craftbukkit scheduler crafttask run crafttask java at org bukkit craftbukkit scheduler craftscheduler 
mainthreadheartbeat craftscheduler java at net minecraft server minecraftserver d minecraftserver java at net minecraft server dedicatedserver d dedicatedserver java at net minecraft server minecraftserver c minecraftserver java at net minecraft server minecraftserver run minecraftserver java at java lang thread run thread java caused by java io eofexception can not read response from server expected to read bytes read bytes before connection was unexpectedly lost at com mysql jdbc mysqlio readfully mysqlio java at com mysql jdbc mysqlio reuseandreadpacket mysqlio java more

| 0

|

17,286

| 2,997,349,603

|

IssuesEvent

|

2015-07-23 06:50:36

|

contao/core

|

https://api.github.com/repos/contao/core

|

closed

|

tinymce_legacy does not work 100%

|

defect

|

It would be nice if TinyMCE 3.5 also worked with Contao 3.5.

https://contao.org/de/erweiterungsliste/view/tinymce_legacy.10000009.de.html

In principle it does, but links cannot be inserted, neither a link to a page nor to a file - the "Apply" button ("Anwenden") does not work!

BugBuster's guess:

The CSS classes have changed, which is why the JavaScript no longer works.

|

1.0

|

tinymce_legacy does not work 100% - It would be nice if TinyMCE 3.5 also worked with Contao 3.5.

https://contao.org/de/erweiterungsliste/view/tinymce_legacy.10000009.de.html

In principle it does, but links cannot be inserted, neither a link to a page nor to a file - the "Apply" button ("Anwenden") does not work!

BugBuster's guess:

The CSS classes have changed, which is why the JavaScript no longer works.

|

defect

|

tinymce legacy does not work it would be nice if tinymce also worked with contao in principle it does but links cannot be inserted neither a link to a page nor to a file the apply button anwenden does not work guess by bugbuster the css classes have changed which is why the javascript no longer works

| 1

|

39,705

| 12,698,859,343

|

IssuesEvent

|

2020-06-22 14:03:10

|

mahonec/WebGoat-Legacy

|

https://api.github.com/repos/mahonec/WebGoat-Legacy

|

opened

|

CVE-2020-11620 (High) detected in jackson-databind-2.0.4.jar

|

security vulnerability

|

## CVE-2020-11620 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>jackson-databind-2.0.4.jar</b></summary>

<p>General data-binding functionality for Jackson: works on core streaming API</p>

<p>Path to dependency file: /tmp/ws-scm/WebGoat-Legacy/pom.xml</p>

<p>Path to vulnerable library: canner/.m2/repository/com/fasterxml/jackson/core/jackson-databind/2.0.4/jackson-databind-2.0.4.jar,/WebGoat-Legacy/target/WebGoat-6.0.1/WEB-INF/lib/jackson-databind-2.0.4.jar</p>

<p>

Dependency Hierarchy:

- :x: **jackson-databind-2.0.4.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/mahonec/WebGoat-Legacy/commit/9b9155ac6645ae2fcb5f2195a346a9a39d3137e7">9b9155ac6645ae2fcb5f2195a346a9a39d3137e7</a></p>