| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 757) | labels (string, length 4 to 664) | body (string, length 3 to 261k) | index (string, 10 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 232k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

68,155

| 21,523,148,303

|

IssuesEvent

|

2022-04-28 15:49:22

|

vector-im/element-web

|

https://api.github.com/repos/vector-im/element-web

|

closed

|

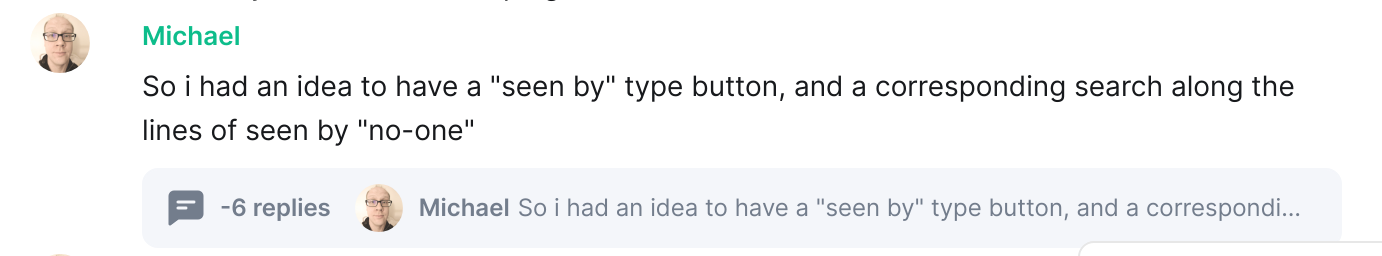

-6 Replies in thread

|

T-Defect

|

### Steps to reproduce

Started a conversation in a room normally.

Didn't appear to do anything special - possibly another user replied to and deleted a message in another thread.

Ended up seeing this:

### Outcome

#### What did you expect?

I don't think this should have been a thread

#### What happened instead?

It was a -6 message thread :)

### Operating system

Ubuntu

### Application version

Version: 1.10.10

### How did you install the app?

_No response_

### Homeserver

EMS

### Will you send logs?

Yes

|

1.0

|

-6 Replies in thread - ### Steps to reproduce

Started a conversation in a room normally.

Didn't appear to do anything special - possibly another user replied to and deleted a message in another thread.

Ended up seeing this:

### Outcome

#### What did you expect?

I don't think this should have been a thread

#### What happened instead?

It was a -6 message thread :)

### Operating system

Ubuntu

### Application version

Version: 1.10.10

### How did you install the app?

_No response_

### Homeserver

EMS

### Will you send logs?

Yes

|

defect

|

replies in thread steps to reproduce started a conversation in a room normally didn t appear to do anything specia possibly another user replied to and deleted a message in another thread ended up seeing this outcome what did you expect i don t think this should have been a thread what happened instead it was a message thread operating system ubuntu application version version how did you install the app no response homeserver ems will you send logs yes

| 1

|

102,680

| 16,578,081,156

|

IssuesEvent

|

2021-05-31 08:04:31

|

AlexRogalskiy/weather-time

|

https://api.github.com/repos/AlexRogalskiy/weather-time

|

opened

|

CVE-2021-33502 (High) detected in normalize-url-5.3.0.tgz

|

security vulnerability

|

## CVE-2021-33502 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>normalize-url-5.3.0.tgz</b></p></summary>

<p>Normalize a URL</p>

<p>Library home page: <a href="https://registry.npmjs.org/normalize-url/-/normalize-url-5.3.0.tgz">https://registry.npmjs.org/normalize-url/-/normalize-url-5.3.0.tgz</a></p>

<p>Path to dependency file: weather-time/package.json</p>

<p>Path to vulnerable library: weather-time/node_modules/normalize-url/package.json</p>

<p>

Dependency Hierarchy:

- npm-7.0.10.tgz (Root Library)

- :x: **normalize-url-5.3.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/AlexRogalskiy/weather-time/commit/8ff5450ff6ee1f7920e55168365618ced6a9a732">8ff5450ff6ee1f7920e55168365618ced6a9a732</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The normalize-url package before 4.5.1, 5.x before 5.3.1, and 6.x before 6.0.1 for Node.js has a ReDoS (regular expression denial of service) issue because it has exponential performance for data: URLs.

<p>Publish Date: 2021-05-24

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-33502>CVE-2021-33502</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33502">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33502</a></p>

<p>Release Date: 2021-05-24</p>

<p>Fix Resolution: normalize-url - 4.5.1, 5.3.1, 6.0.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2021-33502 (High) detected in normalize-url-5.3.0.tgz - ## CVE-2021-33502 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>normalize-url-5.3.0.tgz</b></p></summary>

<p>Normalize a URL</p>

<p>Library home page: <a href="https://registry.npmjs.org/normalize-url/-/normalize-url-5.3.0.tgz">https://registry.npmjs.org/normalize-url/-/normalize-url-5.3.0.tgz</a></p>

<p>Path to dependency file: weather-time/package.json</p>

<p>Path to vulnerable library: weather-time/node_modules/normalize-url/package.json</p>

<p>

Dependency Hierarchy:

- npm-7.0.10.tgz (Root Library)

- :x: **normalize-url-5.3.0.tgz** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/AlexRogalskiy/weather-time/commit/8ff5450ff6ee1f7920e55168365618ced6a9a732">8ff5450ff6ee1f7920e55168365618ced6a9a732</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The normalize-url package before 4.5.1, 5.x before 5.3.1, and 6.x before 6.0.1 for Node.js has a ReDoS (regular expression denial of service) issue because it has exponential performance for data: URLs.

<p>Publish Date: 2021-05-24

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2021-33502>CVE-2021-33502</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: None

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33502">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2021-33502</a></p>

<p>Release Date: 2021-05-24</p>

<p>Fix Resolution: normalize-url - 4.5.1, 5.3.1, 6.0.1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

cve high detected in normalize url tgz cve high severity vulnerability vulnerable library normalize url tgz normalize a url library home page a href path to dependency file weather time package json path to vulnerable library weather time node modules normalize url package json dependency hierarchy npm tgz root library x normalize url tgz vulnerable library found in head commit a href vulnerability details the normalize url package before x before and x before for node js has a redos regular expression denial of service issue because it has exponential performance for data urls publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required none user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution normalize url step up your open source security game with whitesource

| 0

|

16,616

| 2,920,435,335

|

IssuesEvent

|

2015-06-24 18:55:24

|

ashanbh/chrome-rest-client

|

https://api.github.com/repos/ashanbh/chrome-rest-client

|

closed

|

Payload sometimes doesn't update correctly

|

auto-migrated Priority-Medium Type-Defect

|

```

I'm using your REST client to test an API for my code. I had a strange

situation where I entered a valid payload and the request failed. I then

entered an invalid payload and the request succeeded. I tried this several

times, and each time the result was opposite to what I expected. When I

examined my API log I could see that the client was sending the opposite of

what it appeared to be sending i.e when I edited the payload to be invalid the

payload that was actually sent was valid, and vice versa. I solved the problem

by changing the payload and then clicking through the payload editor tabs.

That seemed to clear the error. It was as if the client was getting stuck.

```

Original issue reported on code.google.com by `Paul.Ne...@gmail.com` on 6 Dec 2012 at 4:19

|

1.0

|

Payload sometimes doesn't update correctly - ```

I'm using your REST client to test an API for my code. I had a strange

situation where I entered a valid payload and the request failed. I then

entered an invalid payload and the request succeeded. I tried this several

times, and each time the result was opposite to what I expected. When I

examined my API log I could see that the client was sending the opposite of

what it appeared to be sending i.e when I edited the payload to be invalid the

payload that was actually sent was valid, and vice versa. I solved the problem

by changing the payload and then clicking through the payload editor tabs.

That seemed to clear the error. It was as if the client was getting stuck.

```

Original issue reported on code.google.com by `Paul.Ne...@gmail.com` on 6 Dec 2012 at 4:19

|

defect

|

payload sometimes doesn t update correctly i m using your rest client to test an api for my code i had a strange situation where i entered a valid payload and the request failed i then entered an invalid payload and the request succeeded i tried this several times and each time the result was opposite to what i expected when i examined my api log i could see that the client was sending the opposite of what it appeared to be sending i e when i edited the payload to be invalid the payload that was actually sent was valid and vice versa i solved the problem by changing the payload and then clicking through the payload editor tabs that seemed to clear the error it was as if the client was getting stuck original issue reported on code google com by paul ne gmail com on dec at

| 1

|

288,134

| 31,857,032,880

|

IssuesEvent

|

2023-09-15 08:14:02

|

nidhi7598/linux-4.19.72_CVE-2022-3564

|

https://api.github.com/repos/nidhi7598/linux-4.19.72_CVE-2022-3564

|

closed

|

CVE-2019-19462 (Medium) detected in linuxlinux-4.19.294 - autoclosed

|

Mend: dependency security vulnerability

|

## CVE-2019-19462 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.294</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/nidhi7598/linux-4.19.72_CVE-2022-3564/commit/454c7dacf6fa9a6de86d4067f5a08f25cffa519b">454c7dacf6fa9a6de86d4067f5a08f25cffa519b</a></p>

<p>Found in base branch: <b>main</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/kernel/relay.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/kernel/relay.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

relay_open in kernel/relay.c in the Linux kernel through 5.4.1 allows local users to cause a denial of service (such as relay blockage) by triggering a NULL alloc_percpu result.

<p>Publish Date: 2019-11-30

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2019-19462>CVE-2019-19462</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-19462">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-19462</a></p>

<p>Release Date: 2019-11-30</p>

<p>Fix Resolution: v5.8-rc1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2019-19462 (Medium) detected in linuxlinux-4.19.294 - autoclosed - ## CVE-2019-19462 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.294</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/nidhi7598/linux-4.19.72_CVE-2022-3564/commit/454c7dacf6fa9a6de86d4067f5a08f25cffa519b">454c7dacf6fa9a6de86d4067f5a08f25cffa519b</a></p>

<p>Found in base branch: <b>main</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (2)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/kernel/relay.c</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/kernel/relay.c</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png?' width=19 height=20> Vulnerability Details</summary>

<p>

relay_open in kernel/relay.c in the Linux kernel through 5.4.1 allows local users to cause a denial of service (such as relay blockage) by triggering a NULL alloc_percpu result.

<p>Publish Date: 2019-11-30

<p>URL: <a href=https://www.mend.io/vulnerability-database/CVE-2019-19462>CVE-2019-19462</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: None

- Integrity Impact: None

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-19462">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2019-19462</a></p>

<p>Release Date: 2019-11-30</p>

<p>Fix Resolution: v5.8-rc1</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with Mend [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

cve medium detected in linuxlinux autoclosed cve medium severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch main vulnerable source files kernel relay c kernel relay c vulnerability details relay open in kernel relay c in the linux kernel through allows local users to cause a denial of service such as relay blockage by triggering a null alloc percpu result publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact none integrity impact none availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with mend

| 0

|

329,572

| 28,290,794,481

|

IssuesEvent

|

2023-04-09 07:01:17

|

unifyai/ivy

|

https://api.github.com/repos/unifyai/ivy

|

closed

|

Fix linalg.test_tensorflow_slogdet

|

TensorFlow Frontend Sub Task Failing Test

|

| | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4614073491/jobs/8156699920" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4614073491/jobs/8156699920" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/4614073491/jobs/8156699920" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/4614073491/jobs/8156699920" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_tensorflow/test_linalg.py::test_tensorflow_slogdet[cpu-ivy.functional.backends.numpy-False-False]</summary>

2023-04-05T02:10:21.0056938Z E AssertionError: the results from backend numpy and ground truth framework tensorflow do not match

2023-04-05T02:10:21.0057706Z E -80.40653991699219!=-80.40507507324219

2023-04-05T02:10:21.0057957Z E

2023-04-05T02:10:21.0058172Z E

2023-04-05T02:10:21.0058585Z E Falsifying example: test_tensorflow_slogdet(

2023-04-05T02:10:21.0059730Z E dtype_and_x=(['float32'], [array([[-1.7632415e-38, -6.0185311e-36],

2023-04-05T02:10:21.0060242Z E [-2.0000000e+00, -1.0000000e+00]], dtype=float32)]),

2023-04-05T02:10:21.0060602Z E test_flags=FrontendFunctionTestFlags(

2023-04-05T02:10:21.0060930Z E num_positional_args=0,

2023-04-05T02:10:21.0061211Z E with_out=False,

2023-04-05T02:10:21.0061481Z E inplace=False,

2023-04-05T02:10:21.0061760Z E as_variable=[False],

2023-04-05T02:10:21.0062046Z E native_arrays=[False],

2023-04-05T02:10:21.0062605Z E generate_frontend_arrays=False,

2023-04-05T02:10:21.0062889Z E ),

2023-04-05T02:10:21.0063626Z E fn_tree='ivy.functional.frontends.tensorflow.linalg.slogdet',

2023-04-05T02:10:21.0064074Z E frontend='tensorflow',

2023-04-05T02:10:21.0064387Z E on_device='cpu',

2023-04-05T02:10:21.0064622Z E )

2023-04-05T02:10:21.0065321Z E

2023-04-05T02:10:21.0066111Z E You can reproduce this example by temporarily adding @reproduce_failure('6.70.2', b'AXicY2BkAALG4xcYwDSY7cAAA4xQmgmJDaYBcXUCqA==') as a decorator on your test case

</details>

|

1.0

|

Fix linalg.test_tensorflow_slogdet - | | |

|---|---|

|tensorflow|<a href="https://github.com/unifyai/ivy/actions/runs/4614073491/jobs/8156699920" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|torch|<a href="https://github.com/unifyai/ivy/actions/runs/4614073491/jobs/8156699920" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

|numpy|<a href="https://github.com/unifyai/ivy/actions/runs/4614073491/jobs/8156699920" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-failure-red></a>

|jax|<a href="https://github.com/unifyai/ivy/actions/runs/4614073491/jobs/8156699920" rel="noopener noreferrer" target="_blank"><img src=https://img.shields.io/badge/-success-success></a>

<details>

<summary>FAILED ivy_tests/test_ivy/test_frontends/test_tensorflow/test_linalg.py::test_tensorflow_slogdet[cpu-ivy.functional.backends.numpy-False-False]</summary>

2023-04-05T02:10:21.0056938Z E AssertionError: the results from backend numpy and ground truth framework tensorflow do not match

2023-04-05T02:10:21.0057706Z E -80.40653991699219!=-80.40507507324219

2023-04-05T02:10:21.0057957Z E

2023-04-05T02:10:21.0058172Z E

2023-04-05T02:10:21.0058585Z E Falsifying example: test_tensorflow_slogdet(

2023-04-05T02:10:21.0059730Z E dtype_and_x=(['float32'], [array([[-1.7632415e-38, -6.0185311e-36],

2023-04-05T02:10:21.0060242Z E [-2.0000000e+00, -1.0000000e+00]], dtype=float32)]),

2023-04-05T02:10:21.0060602Z E test_flags=FrontendFunctionTestFlags(

2023-04-05T02:10:21.0060930Z E num_positional_args=0,

2023-04-05T02:10:21.0061211Z E with_out=False,

2023-04-05T02:10:21.0061481Z E inplace=False,

2023-04-05T02:10:21.0061760Z E as_variable=[False],

2023-04-05T02:10:21.0062046Z E native_arrays=[False],

2023-04-05T02:10:21.0062605Z E generate_frontend_arrays=False,

2023-04-05T02:10:21.0062889Z E ),

2023-04-05T02:10:21.0063626Z E fn_tree='ivy.functional.frontends.tensorflow.linalg.slogdet',

2023-04-05T02:10:21.0064074Z E frontend='tensorflow',

2023-04-05T02:10:21.0064387Z E on_device='cpu',

2023-04-05T02:10:21.0064622Z E )

2023-04-05T02:10:21.0065321Z E

2023-04-05T02:10:21.0066111Z E You can reproduce this example by temporarily adding @reproduce_failure('6.70.2', b'AXicY2BkAALG4xcYwDSY7cAAA4xQmgmJDaYBcXUCqA==') as a decorator on your test case

</details>

|

non_defect

|

fix linalg test tensorflow slogdet tensorflow img src torch img src numpy img src jax img src failed ivy tests test ivy test frontends test tensorflow test linalg py test tensorflow slogdet e assertionerror the results from backend numpy and ground truth framework tensorflow do not match e e e e falsifying example test tensorflow slogdet e dtype and x e dtype e test flags frontendfunctiontestflags e num positional args e with out false e inplace false e as variable e native arrays e generate frontend arrays false e e fn tree ivy functional frontends tensorflow linalg slogdet e frontend tensorflow e on device cpu e e e you can reproduce this example by temporarily adding reproduce failure b as a decorator on your test case

| 0

|

71,025

| 23,415,122,474

|

IssuesEvent

|

2022-08-12 23:06:09

|

openzfs/zfs

|

https://api.github.com/repos/openzfs/zfs

|

closed

|

zfs is reporting dataset as mounted even though mountpoint is set to none

|

Type: Defect Status: Stale

|

### System information

$ cat /etc/lsb-release

DISTRIB_ID=Ubuntu

DISTRIB_RELEASE=18.04

DISTRIB_CODENAME=bionic

DISTRIB_DESCRIPTION="Ubuntu 18.04.3 LTS"

Commands to find ZFS/SPL versions:

$ modinfo zfs | grep -iw version

version: 0.7.12-1ubuntu5

k8s@k8s-node2:~$ modinfo spl | grep -iw version

version: 0.7.12-1ubuntu3

### Describe the problem you're observing

zfs is reporting dataset as mounted even though mountpoint is set to none

### Describe how to reproduce the problem

I am the developer and maintainer of the zfs-localpv project (https://github.com/openebs/zfs-localpv). It is a k8s CSI driver which provisions volumes on ZFS storage. While doing scale testing, I restarted around 200 pods and, out of all of them, one pod had an issue.

```

$ sudo zfs get all zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 | grep mount

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 mounted yes -

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 mountpoint none local

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 canmount on default

```

Here I see that mounted is yes but mountpoint is set to none. How is that possible? The system also reports that it is mounted:

```

$ sudo mount | grep pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 on /var/lib/kubelet/pods/3dbd0a03-c7e5-44f1-9f94-407f6ac96316/volumes/kubernetes.io~csi/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765/mount type zfs (rw,xattr,noacl)

```

The ZFS_LocalPV uses `zfs set mountpoint=none` to umount the ZFS dataset. But in the above case, the mountpoint was set to none but still dataset was mounted? When I set the mountpoint to none again for the same volume, the dataset is getting umounted and everything is normal.

```

$ sudo zfs set mountpoint=none zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765

k8s@k8s-node2:~$ sudo zfs get all zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 | grep mount

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 mounted no -

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 mountpoint none local

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 canmount on default

```

Please note that I have ~200 volumes and pods are using them. I deleted all the pods at the same time and only one pod shows this issue; all other pods are fine. As part of the termination of the pod, the driver unmounts the dataset via zfs set mountpoint=none.

|

1.0

|

zfs is reporting dataset as mounted even though mountpoint is set to none - ### System information

$ cat /etc/lsb-release

DISTRIB_ID=Ubuntu

DISTRIB_RELEASE=18.04

DISTRIB_CODENAME=bionic

DISTRIB_DESCRIPTION="Ubuntu 18.04.3 LTS"

Commands to find ZFS/SPL versions:

$ modinfo zfs | grep -iw version

version: 0.7.12-1ubuntu5

k8s@k8s-node2:~$ modinfo spl | grep -iw version

version: 0.7.12-1ubuntu3

### Describe the problem you're observing

zfs is reporting dataset as mounted even though mountpoint is set to none

### Describe how to reproduce the problem

I am the developer and maintainer of the zfs-localpv project (https://github.com/openebs/zfs-localpv). It is a k8s CSI driver which provisions volumes on ZFS storage. While doing scale testing, I restarted around 200 pods and, out of all of them, one pod had an issue.

```

$ sudo zfs get all zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 | grep mount

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 mounted yes -

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 mountpoint none local

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 canmount on default

```

Here I see that mounted is yes but mountpoint is set to none. How is that possible? The system also reports that it is mounted:

```

$ sudo mount | grep pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 on /var/lib/kubelet/pods/3dbd0a03-c7e5-44f1-9f94-407f6ac96316/volumes/kubernetes.io~csi/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765/mount type zfs (rw,xattr,noacl)

```

The ZFS_LocalPV uses `zfs set mountpoint=none` to umount the ZFS dataset. But in the above case, the mountpoint was set to none but still dataset was mounted? When I set the mountpoint to none again for the same volume, the dataset is getting umounted and everything is normal.

```

$ sudo zfs set mountpoint=none zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765

k8s@k8s-node2:~$ sudo zfs get all zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 | grep mount

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 mounted no -

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 mountpoint none local

zfspv-pool/pvc-3fe69b0e-9f91-4c6e-8e5c-eb4218468765 canmount on default

```

Please note that I have ~200 volumes and pods are using them. I deleted all the pods at the same time and only one pod shows this issue; all other pods are fine. As part of the termination of the pod, the driver unmounts the dataset via zfs set mountpoint=none.

|

defect

|

zfs is reporting dataset as mounted even though mountpoint is set to none system information cat etc lsb release distrib id ubuntu distrib release distrib codename bionic distrib description ubuntu lts commands to find zfs spl versions modinfo zfs grep iw version version modinfo spl grep iw version version describe the problem you re observing zfs is reporting dataset as mounted even though mountpoint is set to none describe how to reproduce the problem i am the developer and maintainer of the zfs loalpv project it is a csi driver which provisions the volumes on a zfs storage while doing scale testing i restarted around pods and out of all one pod was having issue sudo zfs get all zfspv pool pvc grep mount zfspv pool pvc mounted yes zfspv pool pvc mountpoint none local zfspv pool pvc canmount on default here i see that mounted as yes but mountpoint is set to none how is that possible the system is reporting that it is mounted sudo mount grep pvc zfspv pool pvc on var lib kubelet pods volumes kubernetes io csi pvc mount type zfs rw xattr noacl the zfs localpv uses zfs set mountpoint none to umount the zfs dataset but in the above case the mountpoint was set to none but still dataset was mounted when i set the mountpoint to none again for the same volume the dataset is getting umounted and everything is normal sudo zfs set mountpoint none zfspv pool pvc sudo zfs get all zfspv pool pvc grep mount zfspv pool pvc mounted no zfspv pool pvc mountpoint none local zfspv pool pvc canmount on default please note that i have volumes and pods are using them i deleted all the pods at the same time and only one pod is showing this issue all other pods are fine as a part the tremination of the pod the driver will unmount the dataset via zfs set mountpoint none

| 1

|

342

| 2,533,092,322

|

IssuesEvent

|

2015-01-23 20:38:55

|

scipy/scipy

|

https://api.github.com/repos/scipy/scipy

|

closed

|

improve accuracy of scipy.stats.rayleigh distribution for large x

|

defect easy-fix scipy.stats

|

Currently the sf-isf round trip for large x returns wrong values, as shown here:

In [85]: rayleigh.isf(rayleigh.sf(9,1),1)

Out[85]: 9.0000752648062754

By replacing exp(x)-1 with expm1(x) and log(1+x) with log1p(x) one can improve the accuracy of the rayleigh distribution (_cdf, _sf, and _ppf) to machine precision for large x.

Replacing _cdf and _ppf with

def _cdf(self, r):
    return -expm1(-r * r / 2.0)

def _sf(self, r):
    return exp(-r * r / 2.0)

def _isf(self, q):
    return sqrt(-2 * log(q))

def _ppf(self, q):
    return sqrt(-2 * log1p(-q))

one achieves

In [86]: rayleigh.isf(rayleigh.sf(9,1),1)

Out[86]: 9.0
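
The effect can be reproduced without SciPy. The following is a minimal sketch using only Python's `math` module, for a Rayleigh distribution with unit scale and no shift (so `r = 8.0` corresponds to `x = 9` with `loc = 1` above). The function names are illustrative, and `rayleigh_isf_naive` is only an assumption about how the inaccurate path behaves (inverting through `1 - q`); it is not taken from the SciPy source.

```python
import math


def rayleigh_sf(r):
    # Survival function: exp(-r^2 / 2)
    return math.exp(-r * r / 2.0)


def rayleigh_isf_naive(q):
    # Assumed inaccurate path: form p = 1 - q, then invert the CDF
    # with log(1 - p). For tiny q, 1 - q discards most digits of q.
    p = 1.0 - q
    return math.sqrt(-2.0 * math.log(1.0 - p))


def rayleigh_isf_stable(q):
    # Direct inverse of the survival function; q is never subtracted from 1.
    return math.sqrt(-2.0 * math.log(q))


def rayleigh_cdf_stable(r):
    # -expm1(x) computes 1 - exp(x) without cancellation for small |x|.
    return -math.expm1(-r * r / 2.0)


def rayleigh_ppf_stable(p):
    # log1p(-p) computes log(1 - p) accurately for small p.
    return math.sqrt(-2.0 * math.log1p(-p))


r = 8.0
q = rayleigh_sf(r)
naive_roundtrip = rayleigh_isf_naive(q)
stable_roundtrip = rayleigh_isf_stable(q)
small_r_roundtrip = rayleigh_ppf_stable(rayleigh_cdf_stable(1e-8))

print("naive  sf -> isf :", repr(naive_roundtrip))
print("stable sf -> isf :", repr(stable_roundtrip))
print("stable cdf -> ppf:", repr(small_r_roundtrip))
```

The naive round trip drifts in the fifth decimal place because `1 - q` can only keep a few significant digits of `q` when `q` is around 1e-14, while the direct forms stay at rounding-error level.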

|

1.0

|

improve accuracy of scipy.stats.rayleigh distribution for large x - Currently the sf-isf round trip for large x returns wrong values, as shown here:

In [85]: rayleigh.isf(rayleigh.sf(9,1),1)

Out[85]: 9.0000752648062754

By replacing exp(x)-1 with expm1(x) and log(1+x) with log1p(x) one can improve the accuracy of the rayleigh distribution (_cdf, _sf, and _ppf) to machine precision for large x.

Replacing _cdf and _ppf with

def _cdf(self, r):
    return -expm1(-r * r / 2.0)

def _sf(self, r):
    return exp(-r * r / 2.0)

def _isf(self, q):
    return sqrt(-2 * log(q))

def _ppf(self, q):
    return sqrt(-2 * log1p(-q))

one achieves

In [86]: rayleigh.isf(rayleigh.sf(9,1),1)

Out[86]: 9.0

|

defect

|

improve accuracy of scipy stats rayleigh distribution for large x currently the sf isf round trip for large x return wrong values as shown here in rayleigh isf rayleigh sf out by replacing exp x with x and log x with x one can improve the accuracy of the rayleigh distribution cdf sf and ppf to machine precision for large x replacing cdf and ppf with def cdf self r return r r def sf self r return exp r r def isf self q return sqrt log q def ppf self q return sqrt q one acheives in rayleigh isf rayleigh sf out

| 1

|

76,747

| 26,575,799,878

|

IssuesEvent

|

2023-01-21 20:02:33

|

dkfans/keeperfx

|

https://api.github.com/repos/dkfans/keeperfx

|

closed

|

quick_objective in multiplayer shows up just for red

|

Type-Defect

|

If you add a QUICK_OBJECTIVE to a multiplayer map, only red gets the ?-button. All players should see it.

|

1.0

|

quick_objective in multiplayer shows up just for red - If you add a QUICK_OBJECTIVE to a multiplayer map, only red gets the ?-button. All players should see it.

|

defect

|

quick objective in multiplayer shows up just for red if you add a quick objective to a multiplayer map only red gets the button all players should see it

| 1

|

538,776

| 15,778,132,286

|

IssuesEvent

|

2021-04-01 07:17:52

|

ubuntu/yaru

|

https://api.github.com/repos/ubuntu/yaru

|

closed

|

Keep track of upstream adwaita-icon-theme

|

Area: GitHub Actions Priority: Enhancement

|

Since Yaru is now syncing with upstream adwaita-gtk and gnome-shell theme, I think it would be a good idea to also keep an eye on upstream adwaita-icon-theme.

adwaita-icon-theme mostly contains symbolic and action icons that correspond to code changes in gnome-shell, gnome-control-center and the rest of gnome apps. In every gnome development cycle, adwaita-icon-theme adds, fixes, or enhances its symbolic icons in order to reflect the code changes in the gnome stack. adwaita-icon-theme does not contain app icons since gnome apps ship their own icons.

**An example to elaborate:**

In the 3.33 development cycle, gnome added new battery icons in order to better represent the battery charge states: https://gitlab.gnome.org/GNOME/adwaita-icon-theme/issues/6

That has resulted in:

- 21 new battery icons and moving the legacy battery icons to a legacy folder: https://gitlab.gnome.org/GNOME/adwaita-icon-theme/commit/377044f755c3ec994f661d787f50e633487b929d

- Code changes in gnome-shell to use the new icons, if available, and fall back to the existing icon names for compatibility with older icon themes: https://gitlab.gnome.org/GNOME/gnome-shell/commit/bd18313d125aa1873c21b9cce9bbf81a335e48b0

Without reflecting the new change in Yaru¹, gnome-shell would still be falling back to the legacy icons, and not showing a better charge state as a result.

¹ https://github.com/ubuntu/yaru/issues/1482

**Also, a question:**

Does an app (or Yaru itself) fall back to adwaita-icon-theme if a requested icon is not found in Yaru?

**Here is the gnome-stencils.svg as a reference:**

https://gitlab.gnome.org/GNOME/adwaita-icon-theme/blob/master/src/symbolic/gnome-stencils.svg

|

1.0

|

Keep track of upstream adwaita-icon-theme - Since Yaru is now syncing with upstream adwaita-gtk and gnome-shell theme, I think it would be a good idea to also keep an eye on upstream adwaita-icon-theme.

adwaita-icon-theme mostly contains symbolic and action icons that correspond to code changes in gnome-shell, gnome-control-center and the rest of gnome apps. In every gnome development cycle, adwaita-icon-theme adds, fixes, or enhances its symbolic icons in order to reflect the code changes in the gnome stack. adwaita-icon-theme does not contain app icons since gnome apps ship their own icons.

**An example to elaborate:**

In the 3.33 development cycle, gnome added new battery icons in order to better represent the battery charge states: https://gitlab.gnome.org/GNOME/adwaita-icon-theme/issues/6

That has resulted in:

- 21 new battery icons and moving the legacy battery icons to a legacy folder: https://gitlab.gnome.org/GNOME/adwaita-icon-theme/commit/377044f755c3ec994f661d787f50e633487b929d

- Code changes in gnome-shell to use the new icons, if available, and fall back to the existing icon names for compatibility with older icon themes: https://gitlab.gnome.org/GNOME/gnome-shell/commit/bd18313d125aa1873c21b9cce9bbf81a335e48b0

Without reflecting the new change in Yaru¹, gnome-shell would still be falling back to the legacy icons, and not showing a better charge state as a result.

¹ https://github.com/ubuntu/yaru/issues/1482

**Also, a question:**

Does an app (or Yaru itself) fall back to adwaita-icon-theme if a requested icon is not found in Yaru?

**Here is the gnome-stencils.svg as a reference:**

https://gitlab.gnome.org/GNOME/adwaita-icon-theme/blob/master/src/symbolic/gnome-stencils.svg

|

non_defect

|

keep track of upstream adwaita icon theme since yaru is now syncing with upstream adwaita gtk and gnome shell theme i think it would be a good idea to also keep an eye on upstream adwaita icon theme adwaita icon theme mostly contains symbolic and action icons that correspond to code changes in gnome shell gnome control center and the rest of gnome apps in every gnome development cycle adwaita icon theme adds fixes or enhances its symbolic icons in order to reflect the code changes in the gnome stack adwaita icon theme does not contain app icons since gnome apps ship their own icons an example to elaborate in the development cycle gnome added new battery icons in order to better represent the battery charge states that has resulted in new battery icons and moving the legacy battery icons to a legacy folder code changes in gnome shell to use the new icons if available and fall back to the existing icon names for compatibility with older icon themes without reflecting the new change in yaru¹ gnome shell would still be falling back to the legacy icons and not showing a better charge state as a result ¹ also a question does an app or yaru itself fall back to adwaita icon theme if a requested icon is not found in yaru here is the gnome stencils svg as a reference

| 0

|

28,750

| 5,348,389,292

|

IssuesEvent

|

2017-02-18 04:23:27

|

amitdholiya/vqmod

|

https://api.github.com/repos/amitdholiya/vqmod

|

reopened

|

error

|

auto-migrated Priority-Medium Type-Defect

|

```

Hi

I have added an add-on on opencart. it shows following error:

Fatal error: Uncaught exception 'ErrorException' with message 'Error: Duplicate

column name 'global_group_discount'<br />Error No: 1060<br />ALTER TABLE

`product` ADD global_group_discount INT(1) NOT NULL DEFAULT '1'' in

/home/foxyeve/public_html/system/database/mysqli.php:41 Stack trace: #0

/home/foxyeve/public_html/vqmod/vqcache/vq2-system_library_db.php(20):

DBMySQLi->query('ALTER TABLE `pr...') #1

/home/foxyeve/public_html/vqmod/vqcache/vq2-admin_view_template_common_home.tpl(

38): DB->query('ALTER TABLE `pr...') #2

/home/foxyeve/public_html/vqmod/vqcache/vq2-system_engine_controller.php(82):

require('/home/foxyeve/p...') #3

/home/foxyeve/public_html/admin/controller/common/home.php(202):

Controller->render() #4 [internal function]: ControllerCommonHome->index() #5

/home/foxyeve/public_html/vqmod/vqcache/vq2-system_engine_front.php(42):

call_user_func_array(Array, Array) #6

/home/foxyeve/public_html/vqmod/vqcache/vq2-system_engine_front.php(29):

Front->execute(Object(Action)) #7 /home/foxyeve/public_html/admin/index.ph in

/home/foxyeve/public_html/system/database/mysqli.php on line 41

The developer said this issue occurs because vQmod is not installed properly.

Is that so? I have added many other add-ons and they are working fine.

Kindly tell me so that I can contact the developer regarding this issue.

```

Original issue reported on code.google.com by `nitashan...@gmail.com` on 31 Jul 2014 at 6:54

|

1.0

|

error - ```

Hi

I have added an add-on on opencart. it shows following error:

Fatal error: Uncaught exception 'ErrorException' with message 'Error: Duplicate

column name 'global_group_discount'<br />Error No: 1060<br />ALTER TABLE

`product` ADD global_group_discount INT(1) NOT NULL DEFAULT '1'' in

/home/foxyeve/public_html/system/database/mysqli.php:41 Stack trace: #0

/home/foxyeve/public_html/vqmod/vqcache/vq2-system_library_db.php(20):

DBMySQLi->query('ALTER TABLE `pr...') #1

/home/foxyeve/public_html/vqmod/vqcache/vq2-admin_view_template_common_home.tpl(

38): DB->query('ALTER TABLE `pr...') #2

/home/foxyeve/public_html/vqmod/vqcache/vq2-system_engine_controller.php(82):

require('/home/foxyeve/p...') #3

/home/foxyeve/public_html/admin/controller/common/home.php(202):

Controller->render() #4 [internal function]: ControllerCommonHome->index() #5

/home/foxyeve/public_html/vqmod/vqcache/vq2-system_engine_front.php(42):

call_user_func_array(Array, Array) #6

/home/foxyeve/public_html/vqmod/vqcache/vq2-system_engine_front.php(29):

Front->execute(Object(Action)) #7 /home/foxyeve/public_html/admin/index.ph in

/home/foxyeve/public_html/system/database/mysqli.php on line 41

The developer said this issue occurs because vQmod is not installed properly.

Is that so? I have added many other add-ons and they are working fine.

Kindly tell me so that I can contact the developer regarding this issue.

```

Original issue reported on code.google.com by `nitashan...@gmail.com` on 31 Jul 2014 at 6:54

|

defect

|

error hi i have added an add on on opencart it shows following error fatal error uncaught exception errorexception with message error duplicate column name global group discount error no alter table product add global group discount int not null default in home foxyeve public html system database mysqli php stack trace home foxyeve public html vqmod vqcache system library db php dbmysqli query alter table pr home foxyeve public html vqmod vqcache admin view template common home tpl db query alter table pr home foxyeve public html vqmod vqcache system engine controller php require home foxyeve p home foxyeve public html admin controller common home php controller render controllercommonhome index home foxyeve public html vqmod vqcache system engine front php call user func array array array home foxyeve public html vqmod vqcache system engine front php front execute object action home foxyeve public html admin index ph in home foxyeve public html system database mysqli php on line developer said this issue is because of vqmod is not installed properly is it even i have added so many add ons and they are working fine on this kindly tell me so that i can connect to developer regarding this issue original issue reported on code google com by nitashan gmail com on jul at

| 1

|

317

| 2,525,206,251

|

IssuesEvent

|

2015-01-20 22:54:10

|

idaholab/moose

|

https://api.github.com/repos/idaholab/moose

|

closed

|

NearestNodeTransfer Bug

|

C: MOOSE P: normal T: defect

|

There's a small logic bug in MultiAppNearestNodeTransfer when transferring "from" a multiapp.

|

1.0

|

NearestNodeTransfer Bug - There's a small logic bug in MultiAppNearestNodeTransfer when transferring "from" a multiapp.

|

defect

|

nearestnodetransfer bug there s a small logic bug in multiappnearestnodetransfer when transferring from a multiapp

| 1

|

57,242

| 15,727,387,676

|

IssuesEvent

|

2021-03-29 12:37:10

|

danmar/testissues

|

https://api.github.com/repos/danmar/testissues

|

opened

|

1.29 reports 1.28 in help (Trac #146)

|

Incomplete Migration Migrated from Trac Other defect noone

|

Migrated from https://trac.cppcheck.net/ticket/146

```json

{

"status": "closed",

"changetime": "2009-03-08T15:30:27",

"description": "\"cppcheck -h\" yields:\n\nCppcheck 1.28 \n \nA tool for static C/C++ code analysis ",

"reporter": "boogachamp",

"cc": "",

"resolution": "fixed",

"_ts": "1236526227000000",

"component": "Other",

"summary": "1.29 reports 1.28 in help",

"priority": "",

"keywords": "",

"time": "2009-03-08T12:41:49",

"milestone": "1.30",

"owner": "noone",

"type": "defect"

}

```

|

1.0

|

1.29 reports 1.28 in help (Trac #146) - Migrated from https://trac.cppcheck.net/ticket/146

```json

{

"status": "closed",

"changetime": "2009-03-08T15:30:27",

"description": "\"cppcheck -h\" yields:\n\nCppcheck 1.28 \n \nA tool for static C/C++ code analysis ",

"reporter": "boogachamp",

"cc": "",

"resolution": "fixed",

"_ts": "1236526227000000",

"component": "Other",

"summary": "1.29 reports 1.28 in help",

"priority": "",

"keywords": "",

"time": "2009-03-08T12:41:49",

"milestone": "1.30",

"owner": "noone",

"type": "defect"

}

```

|

defect

|

reports in help trac migrated from json status closed changetime description cppcheck h yields n ncppcheck n na tool for static c c code analysis reporter boogachamp cc resolution fixed ts component other summary reports in help priority keywords time milestone owner noone type defect

| 1

|

788,683

| 27,761,646,848

|

IssuesEvent

|

2023-03-16 08:43:58

|

rancher/dashboard

|

https://api.github.com/repos/rancher/dashboard

|

closed

|

Performance: Make API requests from within the web worker (step 3)

|

[zube]: Review priority/0 status/dev-validate kind/enhancement area/performance QA/None

|

Placeholder, to fill out

**Is your feature request related to a problem? Please describe.**

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

**Describe the solution you'd like**

- Make requests to the cluster API from within the web worker

**Describe alternatives you've considered**

<!-- A clear and concise description of any alternative solutions or features you've considered. -->

**Additional context**

- This is change number 3 of 4 ending in https://github.com/rancher/dashboard/issues/6541

- Step 1 is https://github.com/rancher/dashboard/issues/7894

- Step 2 is https://github.com/rancher/dashboard/issues/7895

|

1.0

|

Performance: Make API requests from within the web worker (step 3) - Placeholder, to fill out

**Is your feature request related to a problem? Please describe.**

<!-- A clear and concise description of what the problem is. Ex. I'm always frustrated when [...] -->

**Describe the solution you'd like**

- Make requests to the cluster API from within the web worker

**Describe alternatives you've considered**

<!-- A clear and concise description of any alternative solutions or features you've considered. -->

**Additional context**

- This is change number 3 of 4 ending in https://github.com/rancher/dashboard/issues/6541

- Step 1 is https://github.com/rancher/dashboard/issues/7894

- Step 2 is https://github.com/rancher/dashboard/issues/7895

|

non_defect

|

performance make api requests from within the web worker step placeholder to fill out is your feature request related to a problem please describe describe the solution you d like make requests to the cluster api from with the web worker describe alternatives you ve considered additional context this is change number of ending in step is step is

| 0

|

11,547

| 2,657,950,676

|

IssuesEvent

|

2015-03-18 12:57:14

|

mondain/red5

|

https://api.github.com/repos/mondain/red5

|

closed

|

netstream.send('@setDataFrame','onCuePoint', param) failed to write CuePoint

|

auto-migrated Priority-Medium Type-Defect

|

```

What steps will reproduce the problem?

1.netstream.send('@setDataFrame','onCuePoint', param)

What is the expected output? What do you see instead?

write CuePoint to flv file.

failed to write CuePoint

What version of the product are you using? On what operating system?

0.9.1 final and 1.0 final

windows

Please provide any additional information below.

```

Original issue reported on code.google.com by `yf_r...@live.cn` on 13 Jan 2013 at 10:11

|

1.0

|

netstream.send('@setDataFrame','onCuePoint', param) failed to write CuePoint - ```

What steps will reproduce the problem?

1.netstream.send('@setDataFrame','onCuePoint', param)

What is the expected output? What do you see instead?

write CuePoint to flv file.

failed to write CuePoint

What version of the product are you using? On what operating system?

0.9.1 final and 1.0 final

windows

Please provide any additional information below.

```

Original issue reported on code.google.com by `yf_r...@live.cn` on 13 Jan 2013 at 10:11

|

defect

|

netstream send setdataframe oncuepoint param failed to write cuepoint what steps will reproduce the problem netstream send setdataframe oncuepoint param what is the expected output what do you see instead write cuepoint to flv file failed to write cuepoint what version of the product are you using on what operating system final and final windows please provide any additional information below original issue reported on code google com by yf r live cn on jan at

| 1

|

31,299

| 6,493,308,594

|

IssuesEvent

|

2017-08-21 16:29:05

|

buildo/react-components

|

https://api.github.com/repos/buildo/react-components

|

closed

|

Icon: should pass fontSize directly to paths

|

defect waiting for merge

|

## description

The `fontSize` style is passed to the `<i />` but not to its `.path` children, so it can easily be overridden by some extraneous CSS selector (as is happening on AlinityPRO)

## how to reproduce

- add this CSS: `span { font-size: 10px }`

- pass `style: { fontSize: 15 }`

- the icon is 10px 💥

## specs

add explicit `font-size: inherit` to paths in the SASS

## misc

{optional: other useful info}

|

1.0

|

Icon: should pass fontSize directly to paths - ## description

The `fontSize` style is passed to the `<i />` but not to its `.path` children, so it can easily be overridden by some extraneous CSS selector (as is happening on AlinityPRO)

## how to reproduce

- add this CSS: `span { font-size: 10px }`

- pass `style: { fontSize: 15 }`

- the icon is 10px 💥

## specs

add explicit `font-size: inherit` to paths in the SASS

## misc

{optional: other useful info}

|

defect

|

icon should pass fontsize directly to paths description fontsize style is passed to the the but not to its path children it can easily be overwritten by some extraneous css selector as it s happening on alinitypro how to reproduce add this css span font size pass style fontsize the icon is 💥 specs add explicit font size inherit to paths in the sass misc optional other useful info

| 1

|

75,755

| 26,029,654,889

|

IssuesEvent

|

2022-12-21 19:47:15

|

ontop/ontop

|

https://api.github.com/repos/ontop/ontop

|

closed

|

Rdb2RdfTest Problem with Querying Postgresql with many-to-many relations (INT treated as TEXT)

|

type: defect topic: bootstrapping status: fixed

|

<!--

Do you want to ask a question? Are you looking for support? We have also a mailing list https://groups.google.com/d/forum/ontop4obda

Have a look at our guidelines on how to submit a bug report https://ontop-vkg.org/community/contributing/bug-report

-->

### Description

I am running the [RDB2RDFTest.java](https://github.com/ontop/ontop/blob/c3baf4b1ff34b52d2423c86ddc48c0f3569a1c3f/test/rdb2rdf-compliance/src/test/java/RDB2RDFTest.java) tests on different DBMSs to validate functionality. In this particular case, I'm running against Docker `postgres:13` (it is also reproducible with Ontop's `ontop/ontop-pgsql` Docker image, based on `postgres:9`).

I've revised the H2-based test case to use Postgresql:

[RDB2RDFTestPostgres.java.txt](https://github.com/ontop/ontop/files/9823009/RDB2RDFTestPostgres.java.txt)

https://github.com/thomasjtaylor/ontop/commit/259ba1d258b4436ea01c49356bc0a5e13a60382a

Several of the Rdb2Rdf tests fail for various reasons (e.g., problems comparing XSD.DOUBLE, timezones, bnodes). For reference, `dg` prefixes refer to rdb2rdf direct mapping tests, while `tc` prefixes refer to custom r2rml mappings.

```

FAILED 29 ["tc0003a", "dg0005", "dg0005-modified", "tc0005a", "tc0005a-modified",

"tc0005b", "tc0005b-modified", "dg0011", "dg0012", "dg0012-modified", "tc0012a",

"tc0012a-modified", "tc0012e", "tc0012e-modified", "dg0014", "dg0016", "tc0016a",

"tc0016b", "tc0016b-modified", "tc0016c", "tc0016d", "tc0016e", "dg0018", "tc0019a",

"dg0021", "dg0022", "dg0023", "dg0024", "dg0025"]

```

**JUnit Test Results:** [RDB2RDFTestPostgres-all-tests-20221019-140353.xml.txt](https://github.com/ontop/ontop/files/9823121/RDB2RDFTestPostgres-all-tests-20221019-140353.xml.txt)

For this issue, there is a problem with `INTEGER` columns in complex foreign keys.

`"dg0011","dg0014","dg0021","dg0022","dg0023","dg0024","dg0025"`

**JUnit Test Results:** [RDB2RDFTestPostgres-foreignkeys-20221019-141052.xml.txt](https://github.com/ontop/ontop/files/9823124/RDB2RDFTestPostgres-foreignkeys-20221019-141052.xml.txt)

The simplest test appears to be `D0011: Database with many to many relations`:

[D0011/create.sql](https://github.com/ontop/ontop/blob/c3baf4b1ff34b52d2423c86ddc48c0f3569a1c3f/test/rdb2rdf-compliance/src/test/resources/D011/create.sql)

```

CREATE TABLE "Student" (

"ID" integer PRIMARY KEY,

"FirstName" varchar(50),

"LastName" varchar(50)

);

CREATE TABLE "Sport" (

"ID" integer PRIMARY KEY,

"Description" varchar(50)

);

CREATE TABLE "Student_Sport" (

"ID_Student" integer,

"ID_Sport" integer,

PRIMARY KEY ("ID_Student","ID_Sport"),

FOREIGN KEY ("ID_Student") REFERENCES "Student"("ID"),

FOREIGN KEY ("ID_Sport") REFERENCES "Sport"("ID")

);

INSERT INTO "Student" ("ID","FirstName","LastName") VALUES (10,'Venus', 'Williams');

INSERT INTO "Student" ("ID","FirstName","LastName") VALUES (11,'Fernando', 'Alonso');

INSERT INTO "Student" ("ID","FirstName","LastName") VALUES (12,'David', 'Villa');

INSERT INTO "Sport" ("ID", "Description") VALUES (110,'Tennis');

INSERT INTO "Sport" ("ID", "Description") VALUES (111,'Football');

INSERT INTO "Sport" ("ID", "Description") VALUES (112,'Formula1');

INSERT INTO "Student_Sport" ("ID_Student", "ID_Sport") VALUES (10,110);

INSERT INTO "Student_Sport" ("ID_Student", "ID_Sport") VALUES (11,111);

INSERT INTO "Student_Sport" ("ID_Student", "ID_Sport") VALUES (11,112);

INSERT INTO "Student_Sport" ("ID_Student", "ID_Sport") VALUES (12,111);

```

Running the JUnit Test results in the following stack trace:

```

4:11:07.301 [Thread-7] ERROR i.u.i.o.a.c.impl.QuestStatement - ERROR: function replace(integer, unknown, unknown) does not exist

Hint: No function matches the given name and argument types. You might need to add explicit type casts.

Position: 475

it.unibz.inf.ontop.exception.OntopQueryEvaluationException: ERROR: function replace(integer, unknown, unknown) does not exist

Hint: No function matches the given name and argument types. You might need to add explicit type casts.

Position: 475

at it.unibz.inf.ontop.answering.connection.impl.SQLQuestStatement.executeConstructQuery(SQLQuestStatement.java:234)

at it.unibz.inf.ontop.answering.connection.impl.QuestStatement.executeConstructQuery(QuestStatement.java:152)

at it.unibz.inf.ontop.answering.connection.impl.QuestStatement.executeConstructQuery(QuestStatement.java:144)

at it.unibz.inf.ontop.answering.connection.impl.QuestStatement$QueryExecutionThread.run(QuestStatement.java:97)

```

Turning on debug logging shows that the `INTEGER` column `Student.ID` is ultimately assumed to be `TEXT`:

[RDB2RDFTestPostgres-D0011-debug.txt](https://github.com/ontop/ontop/files/9823211/RDB2RDFTestPostgres-D0011-debug.txt)

```

SELECT ('http://example.com/base/Student/ID=' || REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(v1."ID", '%', '%25'), ' ', '%20'), '!', '%21'), '"', '%22'), '#', '%23'), '$', '%24'), '&', '%26'), '''', '%27'), '(', '%28'), ')', '%29'), '*', '%2A'), '+', '%2B'), ',', '%2C'), '/', '%2F'), ':', '%3A'), ';', '%3B'), '<', '%3C'), '=', '%3D'), '>', '%3E'), '?', '%3F'), '@', '%40'), '[', '%5B'), '\', '%5C'), ']', '%5D'), '^', '%5E'), '`', '%60'), '{', '%7B'), '|', '%7C'), '}', '%7D')) AS "v10", 'http://www.w3.org/1999/02/22-rdf-syntax-ns#type' AS "v19", 'http://example.com/base/Student' AS "v38", 0 AS "v8"

FROM "Student" v1

```

If I try to re-run this query fragment directly in `psql`, I get the same error.

### Potential Workarounds

However, I can get this to work in any one of the following ways:

1. Use Postgres `::text` conversion `REPLACE(v1."ID"::text, '%', '%25')`

2. Use `CAST` `REPLACE(CAST(v1."ID" AS TEXT), '%', '%25')`

3. Remove the `REPLACE` operators. `v1."ID"`

```

SELECT ('http://example.com/base/Student/ID=' || v1."ID") AS "v10", 'http://www.w3.org/1999/02/22-rdf-syntax-ns#type' AS "v19", 'http://example.com/base/Student' AS "v38", 0 AS "v8"

FROM "Student" v1

```

4. Remove the `FOREIGN KEY` statements from the `Student_Sport` table.

```

CREATE TABLE "Student_Sport" (

"ID_Student" integer,

"ID_Sport" integer,

PRIMARY KEY ("ID_Student","ID_Sport")

--FOREIGN KEY ("ID_Student") REFERENCES "Student"("ID"),

--FOREIGN KEY ("ID_Sport") REFERENCES "Sport"("ID")

);

```

Perhaps the `INTEGERToTEXT` operation could be updated to fix this issue?
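
To make workaround 2 concrete, here is a minimal Python sketch that builds the same nested `REPLACE` chain seen in the debug SQL, but wraps the column in an explicit `CAST(... AS TEXT)` first. This is not Ontop code: `encoded_column`, `sql_literal`, and `ENCODED_CHARS` are hypothetical names used only for illustration, and the character list is copied from the generated query above.

```python
# Characters percent-encoded by the generated query, in the same order.
# '%' must come first, because every later replacement introduces a '%'.
ENCODED_CHARS = "% !\"#$&'()*+,/:;<=>?@[\\]^`{|}"


def sql_literal(text):
    # Quote a string as a SQL literal, doubling embedded single quotes.
    return "'" + text.replace("'", "''") + "'"


def encoded_column(column_sql, cast_to_text=True):
    # Start from the column, optionally cast to TEXT so that
    # REPLACE(text, text, text) resolves even for INTEGER columns.
    expr = f"CAST({column_sql} AS TEXT)" if cast_to_text else column_sql
    for char in ENCODED_CHARS:
        encoded = f"%{ord(char):02X}"
        expr = f"REPLACE({expr}, {sql_literal(char)}, {sql_literal(encoded)})"
    return expr


student_iri_sql = (
    "SELECT ('http://example.com/base/Student/ID=' || "
    + encoded_column('v1."ID"')
    + ') AS "v10" FROM "Student" v1'
)
print(student_iri_sql)
```

With `cast_to_text=False` the sketch produces the uncast form that fails on an `INTEGER` column; with the cast, the innermost argument is `CAST(v1."ID" AS TEXT)`, matching workaround 2.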

### Steps to Reproduce

1. Start Postgres Database: `docker run --name ontop_postgres_running -p 7777:5432 -e POSTGRES_PASSWORD=postgres2 -d ontop/ontop-pgsql` (Note: the ontop-pgsql database takes a long time to start/initialize, prefer postgres:13)

2. Make sure Postgres JDBC Driver is on classpath (default RDB2RDFTest only includes H2)

3. Run the `RDB2RDFTestPostgres.java` Unit Test

**Expected behavior:**

Rdb2Rdf Test Succeeds

[D011/directGraph.ttl](https://github.com/ontop/ontop/blob/c3baf4b1ff34b52d2423c86ddc48c0f3569a1c3f/test/rdb2rdf-compliance/src/test/resources/D011/directGraph.ttl)

**Actual behavior:**

Rdb2Rdf Test Fails

**Reproduces how often:**

Always

### Versions

Ontop: `4.3.0-SNAPSHOT` (source); also occurs with Ontop `4.2.1` (maven central)

Postgresql: docker `postgres:13`; also occurs with `ontop/ontop-pgsql`

Postgres Driver: `42.5.0`; (also occurs with earlier drivers)

### Additional Information

I've committed this test case to my fork:

https://github.com/thomasjtaylor/ontop/commit/259ba1d258b4436ea01c49356bc0a5e13a60382a

|

1.0

|

Rdb2RdfTest Problem with Querying Postgresql with many-to-many relations (INT treated as TEXT) - <!--

Do you want to ask a question? Are you looking for support? We have also a mailing list https://groups.google.com/d/forum/ontop4obda

Have a look at our guidelines on how to submit a bug report https://ontop-vkg.org/community/contributing/bug-report

-->

### Description

I am running the [RDB2RDFTest.java](https://github.com/ontop/ontop/blob/c3baf4b1ff34b52d2423c86ddc48c0f3569a1c3f/test/rdb2rdf-compliance/src/test/java/RDB2RDFTest.java) tests on different DBMSs to validate functionality. In this particular case, I'm running against Docker `postgres:13` (it is also reproducible with Ontop's `ontop/ontop-pgsql` Docker image, based on `postgres:9`).

I've revised the H2-based test case to use Postgresql:

[RDB2RDFTestPostgres.java.txt](https://github.com/ontop/ontop/files/9823009/RDB2RDFTestPostgres.java.txt)

https://github.com/thomasjtaylor/ontop/commit/259ba1d258b4436ea01c49356bc0a5e13a60382a

Several of the Rdb2Rdf tests fail for various reasons (e.g., problems comparing XSD.DOUBLE, timezones, bnodes). For reference, `dg` prefixes refer to rdb2rdf direct mapping tests, while `tc` prefixes refer to custom r2rml mappings.

```

FAILED 29 ["tc0003a", "dg0005", "dg0005-modified", "tc0005a", "tc0005a-modified",

"tc0005b", "tc0005b-modified", "dg0011", "dg0012", "dg0012-modified", "tc0012a",

"tc0012a-modified", "tc0012e", "tc0012e-modified", "dg0014", "dg0016", "tc0016a",

"tc0016b", "tc0016b-modified", "tc0016c", "tc0016d", "tc0016e", "dg0018", "tc0019a",

"dg0021", "dg0022", "dg0023", "dg0024", "dg0025"]

```

**JUnit Test Results:** [RDB2RDFTestPostgres-all-tests-20221019-140353.xml.txt](https://github.com/ontop/ontop/files/9823121/RDB2RDFTestPostgres-all-tests-20221019-140353.xml.txt)

For this issue, there is a problem with `INTEGER` columns in complex foreign keys.

`"dg0011","dg0014","dg0021","dg022","dg0023","dg0024","dg0025"`

**JUnit Test Results:** [RDB2RDFTestPostgres-foreignkeys-20221019-141052.xml.txt](https://github.com/ontop/ontop/files/9823124/RDB2RDFTestPostgres-foreignkeys-20221019-141052.xml.txt)

The simplest test appears to be `D0011: Database with many to many relations`:

[D0011/create.sql](https://github.com/ontop/ontop/blob/c3baf4b1ff34b52d2423c86ddc48c0f3569a1c3f/test/rdb2rdf-compliance/src/test/resources/D011/create.sql)

```

CREATE TABLE "Student" (

"ID" integer PRIMARY KEY,

"FirstName" varchar(50),

"LastName" varchar(50)

);

CREATE TABLE "Sport" (

"ID" integer PRIMARY KEY,

"Description" varchar(50)

);

CREATE TABLE "Student_Sport" (

"ID_Student" integer,

"ID_Sport" integer,

PRIMARY KEY ("ID_Student","ID_Sport"),

FOREIGN KEY ("ID_Student") REFERENCES "Student"("ID"),

FOREIGN KEY ("ID_Sport") REFERENCES "Sport"("ID")

);

INSERT INTO "Student" ("ID","FirstName","LastName") VALUES (10,'Venus', 'Williams');

INSERT INTO "Student" ("ID","FirstName","LastName") VALUES (11,'Fernando', 'Alonso');

INSERT INTO "Student" ("ID","FirstName","LastName") VALUES (12,'David', 'Villa');

INSERT INTO "Sport" ("ID", "Description") VALUES (110,'Tennis');

INSERT INTO "Sport" ("ID", "Description") VALUES (111,'Football');

INSERT INTO "Sport" ("ID", "Description") VALUES (112,'Formula1');

INSERT INTO "Student_Sport" ("ID_Student", "ID_Sport") VALUES (10,110);

INSERT INTO "Student_Sport" ("ID_Student", "ID_Sport") VALUES (11,111);

INSERT INTO "Student_Sport" ("ID_Student", "ID_Sport") VALUES (11,112);

INSERT INTO "Student_Sport" ("ID_Student", "ID_Sport") VALUES (12,111);

```

Running the JUnit Test results in the following stack trace:

```

4:11:07.301 [Thread-7] ERROR i.u.i.o.a.c.impl.QuestStatement - ERROR: function replace(integer, unknown, unknown) does not exist

Hint: No function matches the given name and argument types. You might need to add explicit type casts.

Position: 475

it.unibz.inf.ontop.exception.OntopQueryEvaluationException: ERROR: function replace(integer, unknown, unknown) does not exist

Hint: No function matches the given name and argument types. You might need to add explicit type casts.

Position: 475

at it.unibz.inf.ontop.answering.connection.impl.SQLQuestStatement.executeConstructQuery(SQLQuestStatement.java:234)

at it.unibz.inf.ontop.answering.connection.impl.QuestStatement.executeConstructQuery(QuestStatement.java:152)

at it.unibz.inf.ontop.answering.connection.impl.QuestStatement.executeConstructQuery(QuestStatement.java:144)

at it.unibz.inf.ontop.answering.connection.impl.QuestStatement$QueryExecutionThread.run(QuestStatement.java:97)

```

Turning on debug logging shows that the `INTEGER` column `Student.ID` is ultimately assumed to be `TEXT`:

[RDB2RDFTestPostgres-D0011-debug.txt](https://github.com/ontop/ontop/files/9823211/RDB2RDFTestPostgres-D0011-debug.txt)

```

SELECT ('http://example.com/base/Student/ID=' || REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(REPLACE(v1."ID", '%', '%25'), ' ', '%20'), '!', '%21'), '"', '%22'), '#', '%23'), '$', '%24'), '&', '%26'), '''', '%27'), '(', '%28'), ')', '%29'), '*', '%2A'), '+', '%2B'), ',', '%2C'), '/', '%2F'), ':', '%3A'), ';', '%3B'), '<', '%3C'), '=', '%3D'), '>', '%3E'), '?', '%3F'), '@', '%40'), '[', '%5B'), '\', '%5C'), ']', '%5D'), '^', '%5E'), '`', '%60'), '{', '%7B'), '|', '%7C'), '}', '%7D')) AS "v10", 'http://www.w3.org/1999/02/22-rdf-syntax-ns#type' AS "v19", 'http://example.com/base/Student' AS "v38", 0 AS "v8"

FROM "Student" v1

```

If I try to re-run this query fragment directly in `psql`, I get the same error.

### Potential Workarounds

However, I can get this to work in any one of the following ways:

1. Use the Postgres `::text` cast: `REPLACE(v1."ID"::text, '%', '%25')`

2. Use `CAST`: `REPLACE(CAST(v1."ID" AS TEXT), '%', '%25')`

3. Remove the `REPLACE` calls and use `v1."ID"` directly:

```

SELECT ('http://example.com/base/Student/ID=' || v1."ID") AS "v10", 'http://www.w3.org/1999/02/22-rdf-syntax-ns#type' AS "v19", 'http://example.com/base/Student' AS "v38", 0 AS "v8"

FROM "Student" v1

```

4. Remove the `FOREIGN KEY` statements from the `Student_Sport` table.

```

CREATE TABLE "Student_Sport" (

"ID_Student" integer,

"ID_Sport" integer,

PRIMARY KEY ("ID_Student","ID_Sport") -- no trailing comma now that the FOREIGN KEY lines are commented out

--FOREIGN KEY ("ID_Student") REFERENCES "Student"("ID"),

--FOREIGN KEY ("ID_Sport") REFERENCES "Sport"("ID")

);

```

Perhaps the `INTEGERToTEXT` operation could be updated to fix this issue?
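
To illustrate workaround 2, here is a minimal Java sketch of building the nested `REPLACE` expression around a column that is cast to `TEXT` first. It is not Ontop's actual code: the class `CastBeforeReplaceSketch`, the method `encodeColumn`, and the shortened three-entry escape table are all hypothetical names and assumptions, used only to show the shape of the SQL that Postgres accepts.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical sketch, NOT Ontop's implementation. It only shows the shape of
// an expression in which the column is cast to TEXT before REPLACE is applied.
final class CastBeforeReplaceSketch {

    // A few of the character/escape pairs from the generated query above.
    // '%' is applied first (innermost) so that later escapes are not re-encoded.
    private static final Map<String, String> ESCAPES = new LinkedHashMap<>();
    static {
        ESCAPES.put("%", "%25");
        ESCAPES.put(" ", "%20");
        ESCAPES.put("!", "%21");
    }

    // Wraps the column in CAST(... AS TEXT), then nests one REPLACE per escape.
    static String encodeColumn(String quotedColumn) {
        String expr = "CAST(" + quotedColumn + " AS TEXT)";
        for (Map.Entry<String, String> e : ESCAPES.entrySet()) {
            expr = "REPLACE(" + expr + ", '" + e.getKey() + "', '" + e.getValue() + "')";
        }
        return expr;
    }

    public static void main(String[] args) {
        // Prints a query fragment that can be pasted into psql for comparison.
        System.out.println("SELECT ('http://example.com/base/Student/ID=' || "
                + encodeColumn("v1.\"ID\"") + ") AS \"v10\" FROM \"Student\" v1");
    }
}
```

Because the cast sits innermost, every `REPLACE` call receives a `TEXT` argument regardless of the column's declared type, which is the property the failing query lacks.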

### Steps to Reproduce

1. Start the Postgres database: `docker run --name ontop_postgres_running -p 7777:5432 -e POSTGRES_PASSWORD=postgres2 -d ontop/ontop-pgsql` (Note: the `ontop-pgsql` database takes a long time to start and initialize; prefer `postgres:13`.)

2. Make sure the Postgres JDBC driver is on the classpath (the default RDB2RDFTest only includes H2)

3. Run the `RDB2RDFTestPostgres.java` unit test

**Expected behavior:**

Rdb2Rdf Test Succeeds

[D011/directGraph.ttl](https://github.com/ontop/ontop/blob/c3baf4b1ff34b52d2423c86ddc48c0f3569a1c3f/test/rdb2rdf-compliance/src/test/resources/D011/directGraph.ttl)

**Actual behavior:**

Rdb2Rdf Test Fails

**Reproduces how often:**

Always

### Versions

Ontop: `4.3.0-SNAPSHOT` (source); also occurs with Ontop `4.2.1` (maven central)

Postgresql: docker `postgres:13`; also occurs with `ontop/ontop-pgsql`

Postgres Driver: `42.5.0`; (also occurs with earlier drivers)

### Additional Information

I've committed this test case to my fork:

https://github.com/thomasjtaylor/ontop/commit/259ba1d258b4436ea01c49356bc0a5e13a60382a

|

defect

|

problem with querying postgresql with many to many relations int treated as text do you want to ask a question are you looking for support we have also a mailing list have a look at our guidelines on how to submit a bug report description i am running the tests on different dbms to validate functionality in this particular case i m running against docker postgresql it also is reproducible with ontop s ontop ontop pgsql docker image based on postgres i ve revised the based test case to use postgresql several of the tests fail for various reasons e g problems comparing xsd double timezones bnodes etc for reference dg prefixes refer to direct mapping tests while tc prefixes refer to custom mappings failed modified modified modified modified modified modified modified junit test results for this issue there is a problem with integer columns in complex foreign keys junit test results the simplest test appears to be database with many to many relations create table student id integer primary key firstname varchar lastname varchar create table sport id integer primary key description varchar create table student sport id student integer id sport integer primary key id student id sport foreign key id student references student id foreign key id sport references sport id insert into student id firstname lastname values venus williams insert into student id firstname lastname values fernando alonso insert into student id firstname lastname values david villa insert into sport id description values tennis insert into sport id description values football insert into sport id description values insert into student sport id student id sport values insert into student sport id student id sport values insert into student sport id student id sport values insert into student sport id student id sport values running the junit test results in the following stack trace error i u i o a c impl queststatement error function replace integer unknown unknown does not exist hint no function 
matches the given name and argument types you might need to add explicit type casts position it unibz inf ontop exception ontopqueryevaluationexception error function replace integer unknown unknown does not exist hint no function matches the given name and argument types you might need to add explicit type casts position at it unibz inf ontop answering connection impl sqlqueststatement executeconstructquery sqlqueststatement java at it unibz inf ontop answering connection impl queststatement executeconstructquery queststatement java at it unibz inf ontop answering connection impl queststatement executeconstructquery queststatement java at it unibz inf ontop answering connection impl queststatement queryexecutionthread run queststatement java turning on debug logging shows that ultimately the integer student id is being assumed to be text select replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace replace id as as as as from student if i try to re run this query fragment directly in psql i get the same error potential workarounds however i can get this to work in any one of the following ways use postgres text conversion replace id text use cast replace cast id as text remove the replace operators id select id as as as as from student remove the foreign key statements from the student sport table create table student sport id student integer id sport integer primary key id student id sport foreign key id student references student id foreign key id sport references sport id perhaps the integertotext operation could be updated to fix this issue steps to reproduce start postgres database docker run name ontop postgres running p e postgres password d ontop ontop pgsql note the ontop pgsql database takes a long time to start initialize prefer postgres make sure postgres jdbc driver is on classpath 
default only includes run the java unit test expected behavior test succeeds actual behavior test fails reproduces how often always versions ontop snapshot source also occurs with ontop maven central postgresql docker postgres also occurs with ontop ontop pgsql postgres driver also occurs with earlier drivers additional information i ve committed this test case to my fork

| 1

|

342,462

| 30,623,419,603

|

IssuesEvent

|

2023-07-24 09:48:13

|

giantswarm/roadmap

|

https://api.github.com/repos/giantswarm/roadmap

|

closed

|

Regularly validate CNCF conformance of CAPI clusters

|

topic/testing team/tinkerers

|

Acceptance criteria:

- [ ] CNCF tests are run regularly for all providers against the latest state of cluster and default apps app

- [ ] Teams can easily find and access results

- [ ] There is a notification mechanism for when tests fail

|

1.0

|

Regularly validate CNCF conformance of CAPI clusters - Acceptance criteria:

- [ ] CNCF tests are run regularly for all providers against the latest state of cluster and default apps app

- [ ] Teams can easily find and access results

- [ ] There is a notification mechanism for when tests fail

|

non_defect

|

regularly validate cncf conformance of capi clusters acceptance criteria cncf tests are being regularly ran for all providers against latest state of cluster and default apps app teams can easily find and access results there is a notification mechanism for when tests fail

| 0

|

31,708

| 4,286,986,308

|

IssuesEvent

|

2016-07-16 13:03:29

|

StockSharp/StockSharp

|

https://api.github.com/repos/StockSharp/StockSharp

|

closed

|

Missing projects from solution

|

by design

|

Hi,

I am trying to use the Samples/Testing/SampleHistoryTesting sample from 4.3.14.1, but the StockSharp solution is incomplete: projects such as Algo.HistoryPublic, Xaml, and Xaml.ChartingPublic are missing.

I noticed that all the missing projects are present in the 'Terminal' branch of stocksharp.

Which is the correct way to go?