Unnamed: 0

int64 0

832k

| id

float64 2.49B

32.1B

| type

stringclasses 1

value | created_at

stringlengths 19

19

| repo

stringlengths 5

112

| repo_url

stringlengths 34

141

| action

stringclasses 3

values | title

stringlengths 1

757

| labels

stringlengths 4

664

| body

stringlengths 3

261k

| index

stringclasses 10

values | text_combine

stringlengths 96

261k

| label

stringclasses 2

values | text

stringlengths 96

232k

| binary_label

int64 0

1

|

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

469,492

| 13,518,940,780

|

IssuesEvent

|

2020-09-15 00:33:08

|

grpc/grpc

|

https://api.github.com/repos/grpc/grpc

|

closed

|

iOS if .proto has same object , like 2 .proto also has 'base' element, that will be errors, duplicate symbols for architecture x86_64

|

disposition/stale kind/question lang/ObjC platform/iOS priority/P3

|

iOS if .proto has same object , like 2 .proto also has 'base' , that will be errors, duplicate symbols for architecture x86_64

|

1.0

|

iOS if .proto has same object , like 2 .proto also has 'base' element, that will be errors, duplicate symbols for architecture x86_64 - iOS if .proto has same object , like 2 .proto also has 'base' , that will be errors, duplicate symbols for architecture x86_64

|

non_defect

|

ios if proto has same object like proto also has base element that will be errors duplicate symbols for architecture ios if proto has same object like proto also has base that will be errors duplicate symbols for architecture

| 0

|

189,649

| 22,047,083,817

|

IssuesEvent

|

2022-05-30 03:51:38

|

Trinadh465/device_renesas_kernel_AOSP10_r33_CVE-2022-0492

|

https://api.github.com/repos/Trinadh465/device_renesas_kernel_AOSP10_r33_CVE-2022-0492

|

closed

|

CVE-2020-27815 (High) detected in linuxlinux-4.19.88 - autoclosed

|

security vulnerability

|

## CVE-2020-27815 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.88</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/Trinadh465/device_renesas_kernel_AOSP10_r33_CVE-2022-0492/commit/8d2169763c8858bce8d07fbb569f01ef9b30383b">8d2169763c8858bce8d07fbb569f01ef9b30383b</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (3)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/linux-4.19.72/fs/jfs/jfs_dmap.h</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/linux-4.19.72/fs/jfs/jfs_dmap.h</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/linux-4.19.72/fs/jfs/jfs_dmap.h</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A flaw was found in the JFS filesystem code in the Linux Kernel which allows a local attacker with the ability to set extended attributes to panic the system, causing memory corruption or escalating privileges. The highest threat from this vulnerability is to confidentiality, integrity, as well as system availability.

<p>Publish Date: 2021-05-26

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-27815>CVE-2020-27815</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2020-27815">https://nvd.nist.gov/vuln/detail/CVE-2020-27815</a></p>

<p>Release Date: 2021-05-26</p>

<p>Fix Resolution: linux-libc-headers - 5.13;linux-yocto - 5.4.20+gitAUTOINC+c11911d4d1_f4d7dbafb1,4.8.26+gitAUTOINC+1c60e003c7_27efc3ba68</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2020-27815 (High) detected in linuxlinux-4.19.88 - autoclosed - ## CVE-2020-27815 - High Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>linuxlinux-4.19.88</b></p></summary>

<p>

<p>The Linux Kernel</p>

<p>Library home page: <a href=https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux>https://mirrors.edge.kernel.org/pub/linux/kernel/v4.x/?wsslib=linux</a></p>

<p>Found in HEAD commit: <a href="https://github.com/Trinadh465/device_renesas_kernel_AOSP10_r33_CVE-2022-0492/commit/8d2169763c8858bce8d07fbb569f01ef9b30383b">8d2169763c8858bce8d07fbb569f01ef9b30383b</a></p>

<p>Found in base branch: <b>master</b></p></p>

</details>

</p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Source Files (3)</summary>

<p></p>

<p>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/linux-4.19.72/fs/jfs/jfs_dmap.h</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/linux-4.19.72/fs/jfs/jfs_dmap.h</b>

<img src='https://s3.amazonaws.com/wss-public/bitbucketImages/xRedImage.png' width=19 height=20> <b>/linux-4.19.72/fs/jfs/jfs_dmap.h</b>

</p>

</details>

<p></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/high_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

A flaw was found in the JFS filesystem code in the Linux Kernel which allows a local attacker with the ability to set extended attributes to panic the system, causing memory corruption or escalating privileges. The highest threat from this vulnerability is to confidentiality, integrity, as well as system availability.

<p>Publish Date: 2021-05-26

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-27815>CVE-2020-27815</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>7.8</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Local

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: High

- Integrity Impact: High

- Availability Impact: High

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://nvd.nist.gov/vuln/detail/CVE-2020-27815">https://nvd.nist.gov/vuln/detail/CVE-2020-27815</a></p>

<p>Release Date: 2021-05-26</p>

<p>Fix Resolution: linux-libc-headers - 5.13;linux-yocto - 5.4.20+gitAUTOINC+c11911d4d1_f4d7dbafb1,4.8.26+gitAUTOINC+1c60e003c7_27efc3ba68</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

cve high detected in linuxlinux autoclosed cve high severity vulnerability vulnerable library linuxlinux the linux kernel library home page a href found in head commit a href found in base branch master vulnerable source files linux fs jfs jfs dmap h linux fs jfs jfs dmap h linux fs jfs jfs dmap h vulnerability details a flaw was found in the jfs filesystem code in the linux kernel which allows a local attacker with the ability to set extended attributes to panic the system causing memory corruption or escalating privileges the highest threat from this vulnerability is to confidentiality integrity as well as system availability publish date url a href cvss score details base score metrics exploitability metrics attack vector local attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact high integrity impact high availability impact high for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution linux libc headers linux yocto gitautoinc gitautoinc step up your open source security game with whitesource

| 0

|

17,576

| 4,173,502,203

|

IssuesEvent

|

2016-06-21 10:46:39

|

ubiquits/toolchain

|

https://api.github.com/repos/ubiquits/toolchain

|

opened

|

Generate server side certificates on initialization for usage by the Auth service

|

comp: cli comp: services effort2: medium (day) needs: documentation priority3: required type: feature

|

On quickstart initialization, certificates should be generated.

Consider https://www.npmjs.com/package/ursa

or https://www.npmjs.com/package/node-rsa

or https://www.npmjs.com/package/node-forge (can generate both rsa and crt keys)

When initialized, the private key MUST NOT be committed (`.gitignore` it) but it should be echoed to the console so that the developer can store it somewhere.

An ssl cert should be generated from the key pair, and the server startup should support https on port 8443 (localhost). Note that when in production, it should actually be http only, as ssl termination should be at the load balancer for performance, as once in the docker weave network, ssl security is superfluous. Consider leaving getting https working for a later release unless there is demand.

As teams will be starting projects at separate times, they should generate their own keys, so the key generation should be checked on startup, just with a confirm if not in production. If in production, refuse to start the server.

|

1.0

|

Generate server side certificates on initialization for usage by the Auth service - On quickstart initialization, certificates should be generated.

Consider https://www.npmjs.com/package/ursa

or https://www.npmjs.com/package/node-rsa

or https://www.npmjs.com/package/node-forge (can generate both rsa and crt keys)

When initialized, the private key MUST NOT be committed (`.gitignore` it) but it should be echoed to the console so that the developer can store it somewhere.

An ssl cert should be generated from the key pair, and the server startup should support https on port 8443 (localhost). Note that when in production, it should actually be http only, as ssl termination should be at the load balancer for performance, as once in the docker weave network, ssl security is superfluous. Consider leaving getting https working for a later release unless there is demand.

As teams will be starting projects at separate times, they should generate their own keys, so the key generation should be checked on startup, just with a confirm if not in production. If in production, refuse to start the server.

|

non_defect

|

generate server side certificates on initialization for usage by the auth service on quickstart initialization certificates should be generated consider or or can generate both rsa and crt keys when initialized the private key must not be committed gitignore it but it should be echoed to the console so that the developer can store it somewhere an ssl cert should be generated from the key pair and the server startup should support https on port localhost note that when in production it should actually be http only as ssl termination should be at the load balancer for performance as once in the docker weave network ssl security is superfluous consider leaving getting https working for a later release unless there is demand as teams will be starting projects at separate times they should generate their own keys so the key generation should be checked on startup just with a confirm if not in production if in production refuse to start the server

| 0

|

71,597

| 23,714,562,859

|

IssuesEvent

|

2022-08-30 10:38:23

|

vector-im/element-web

|

https://api.github.com/repos/vector-im/element-web

|

opened

|

Unable to set up secret storage

|

T-Defect

|

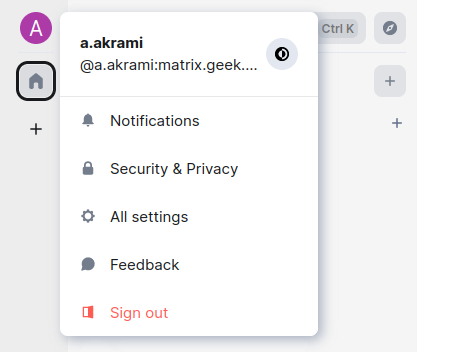

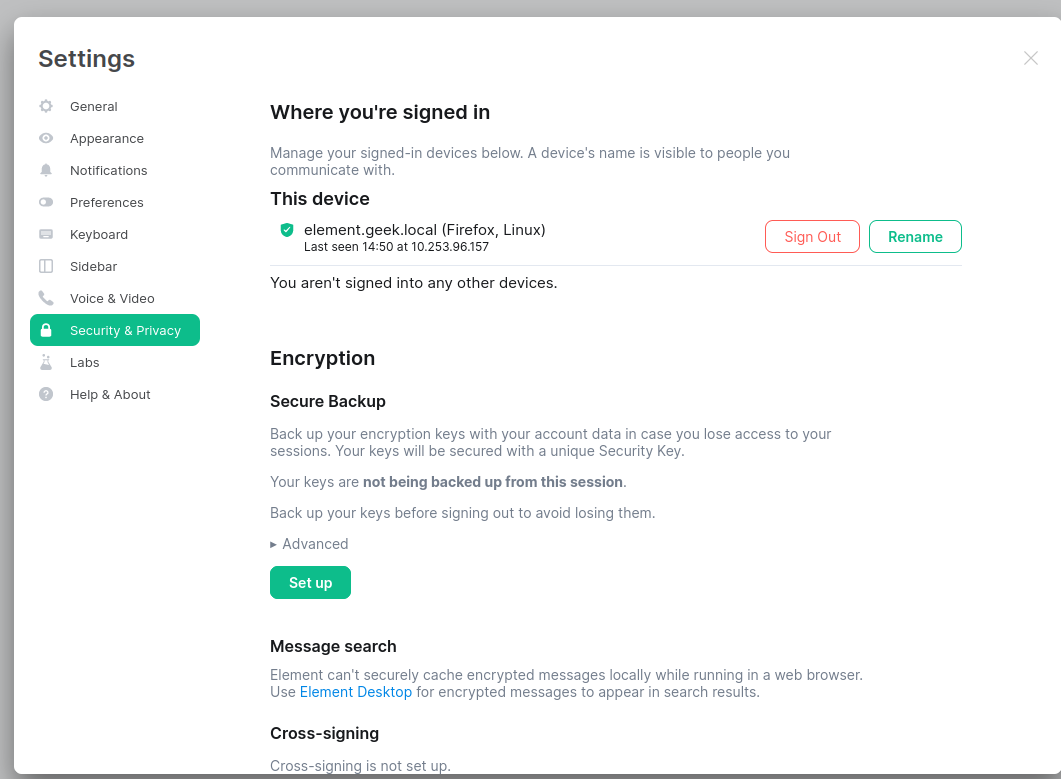

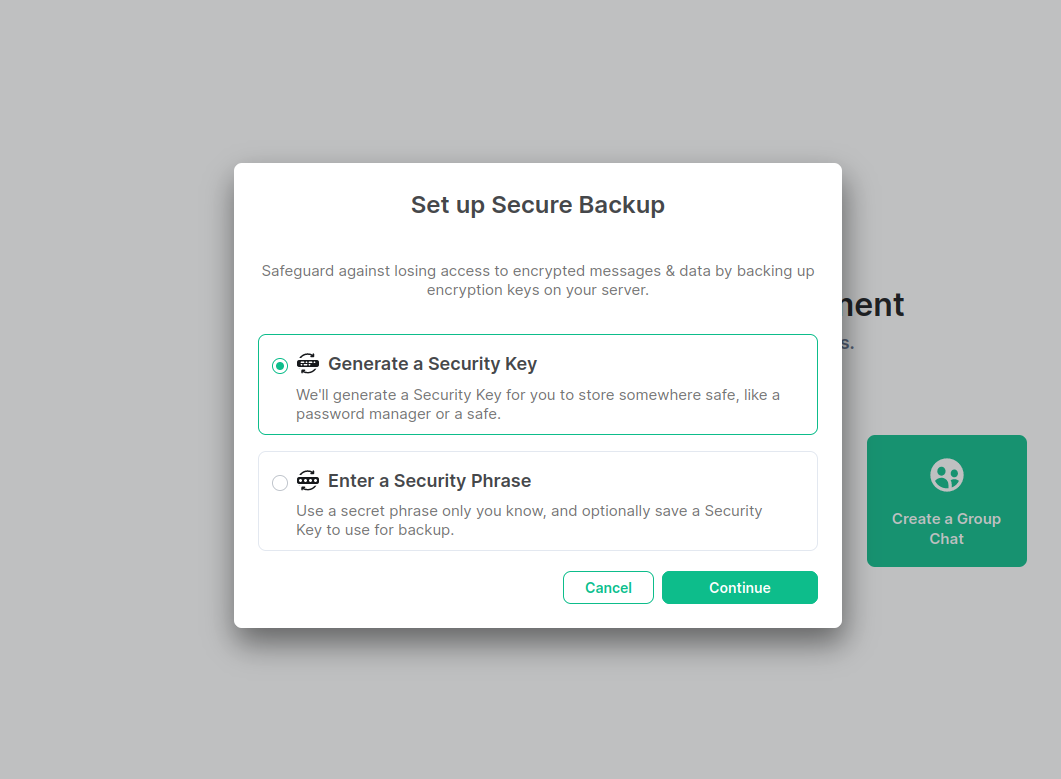

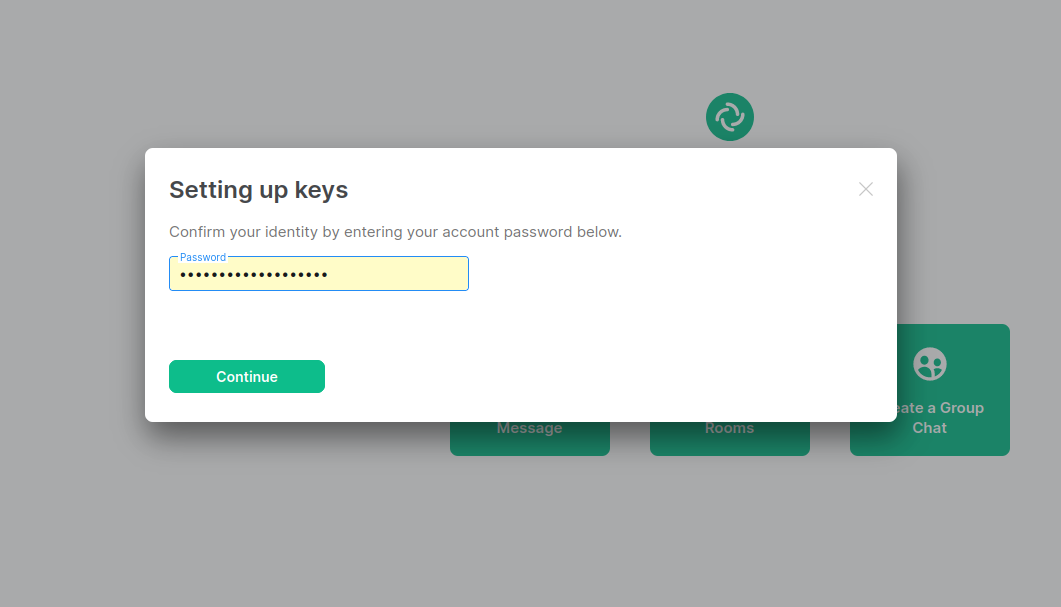

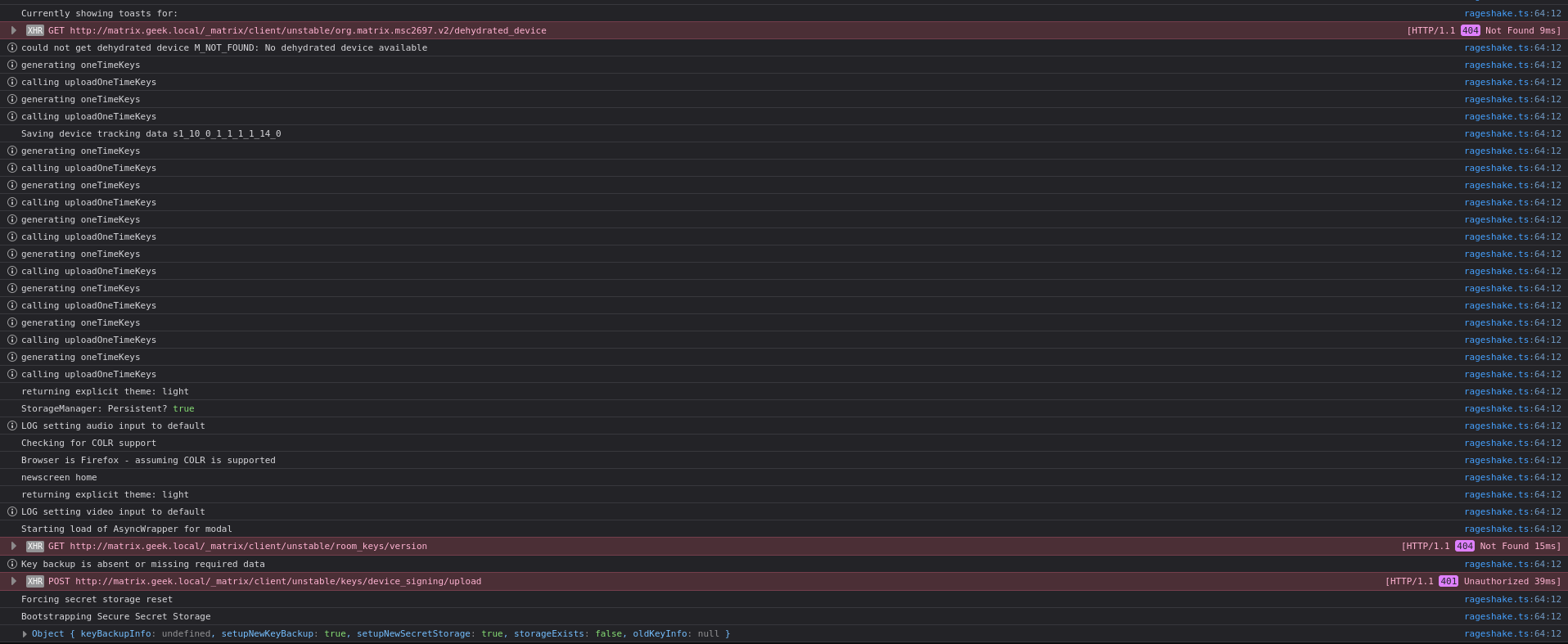

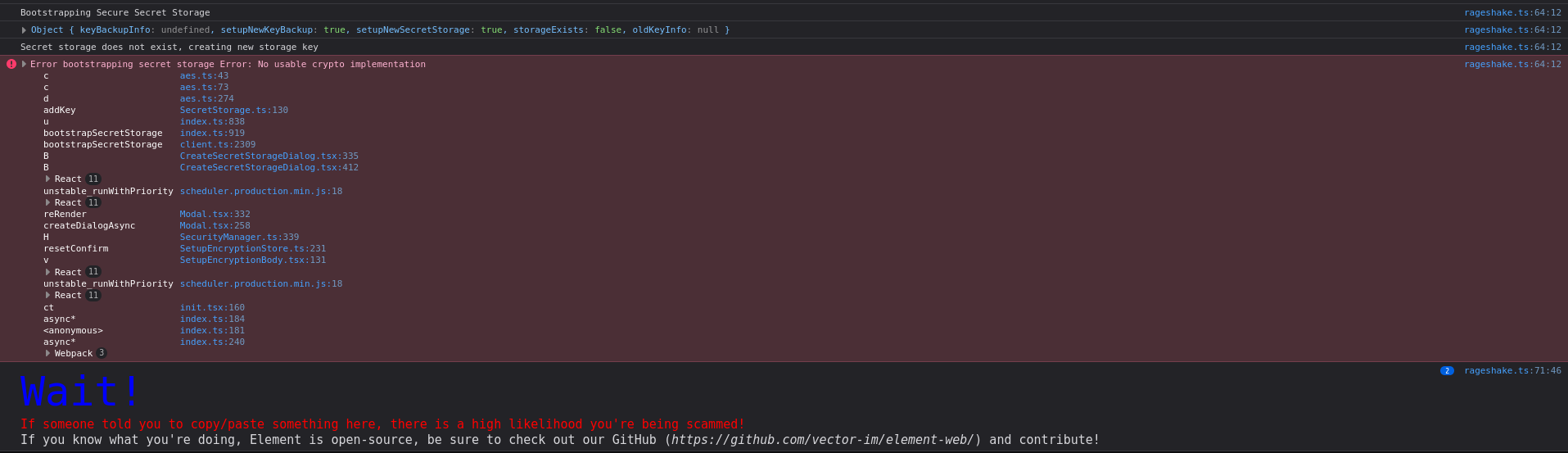

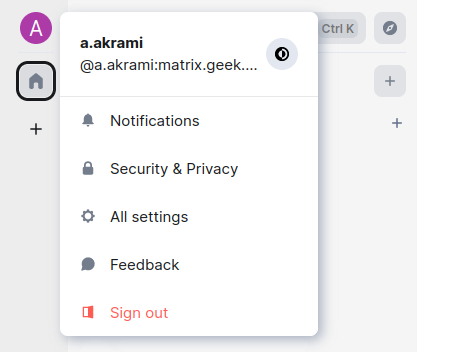

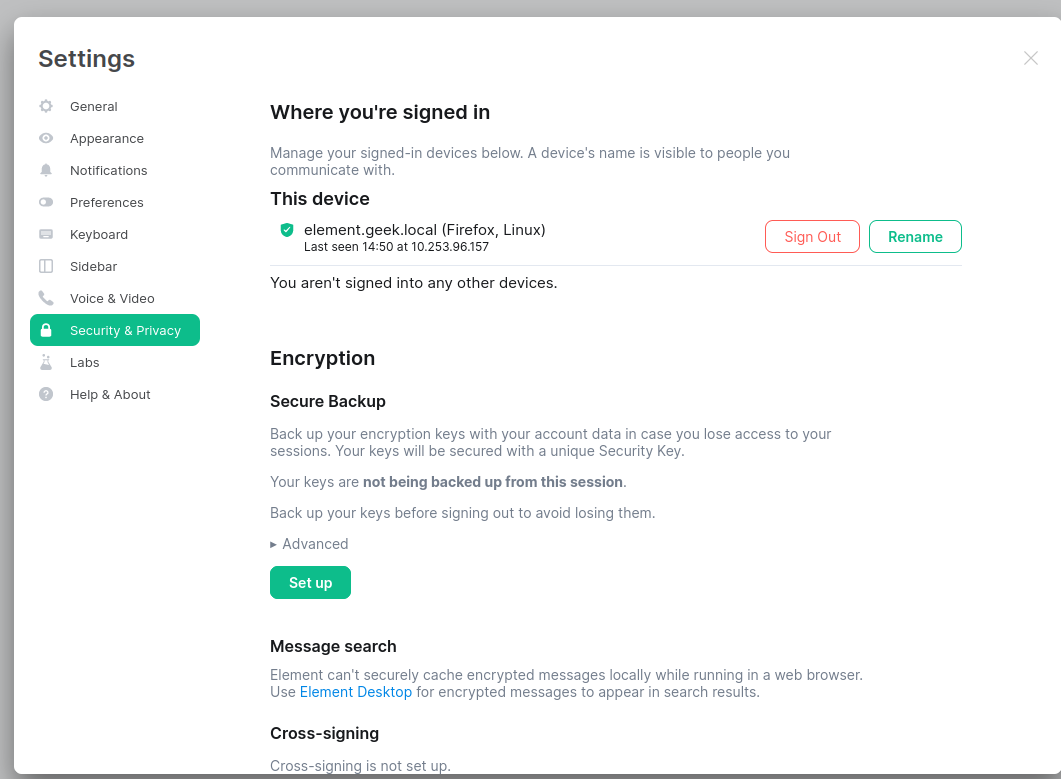

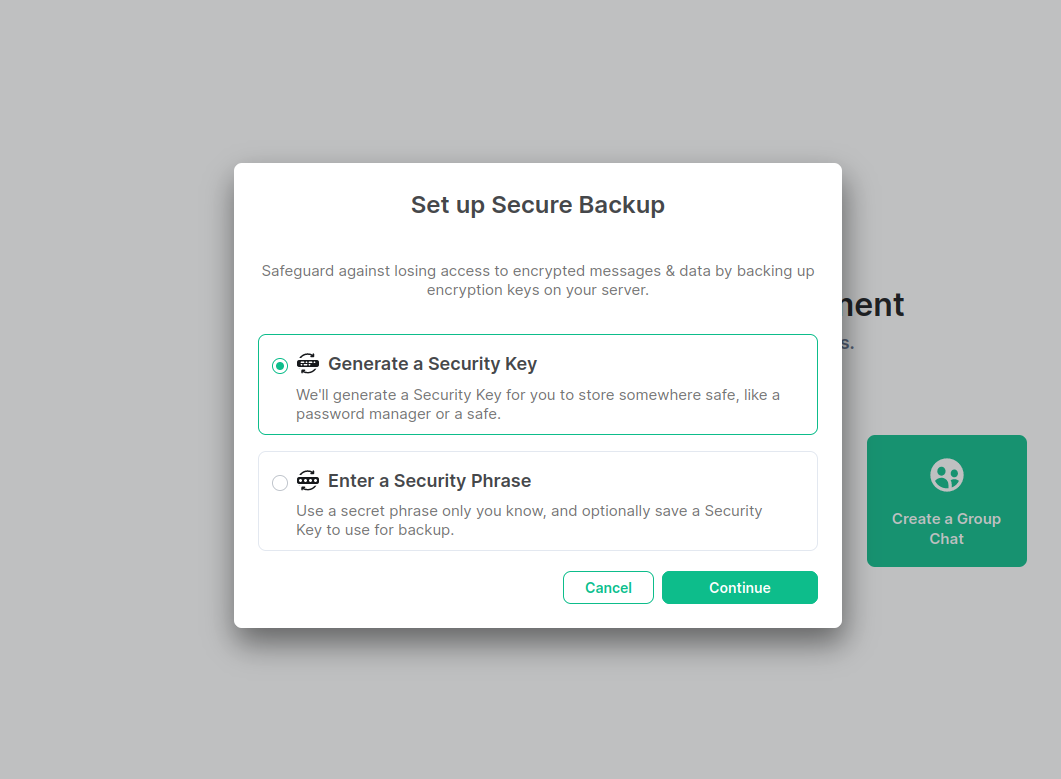

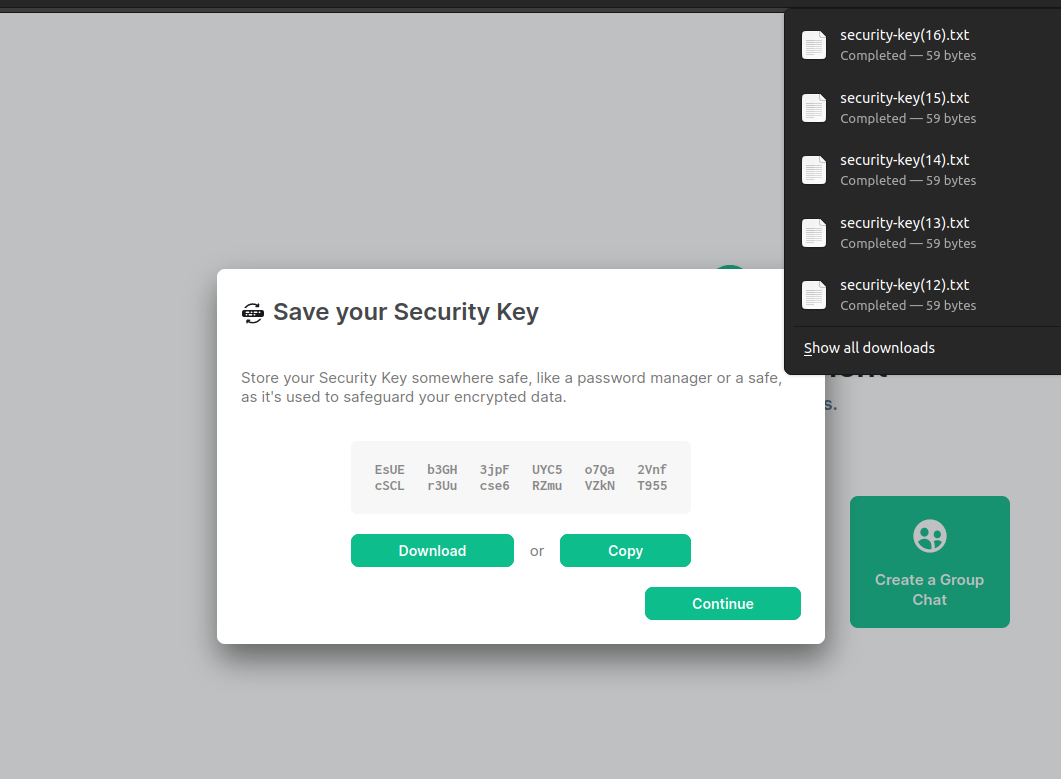

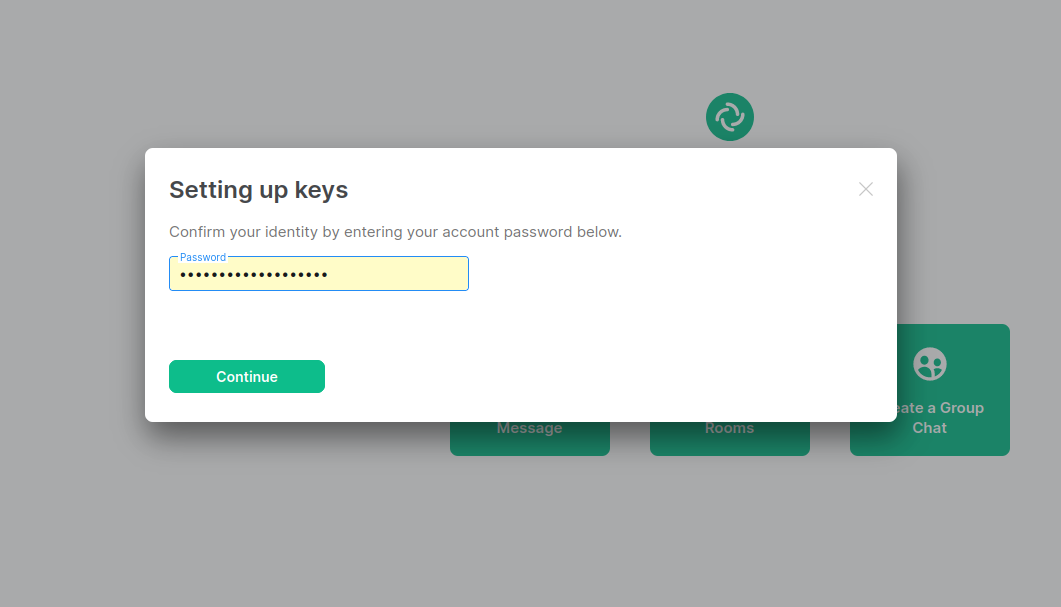

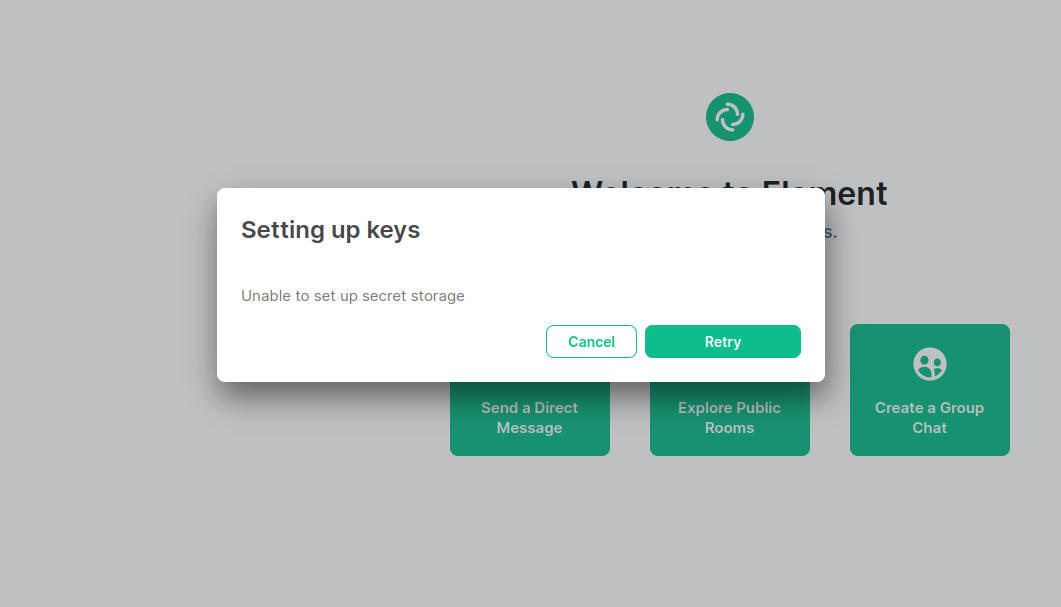

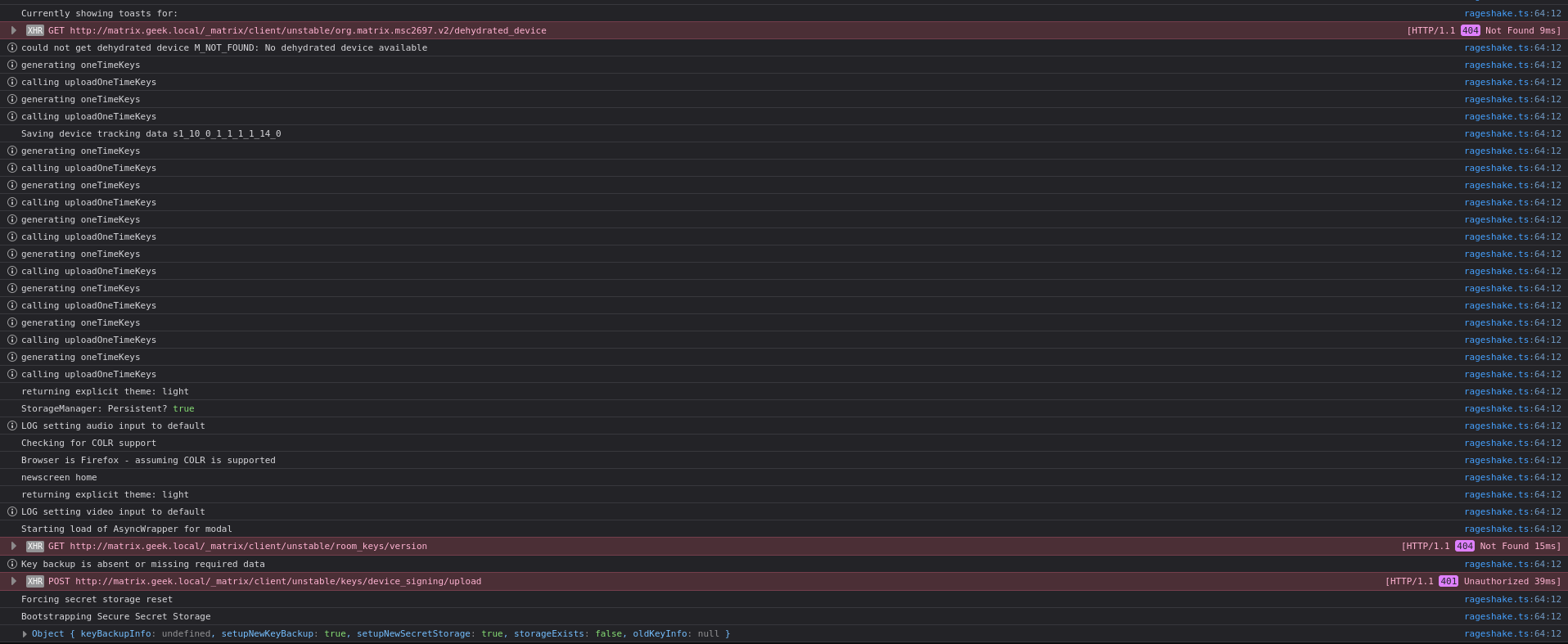

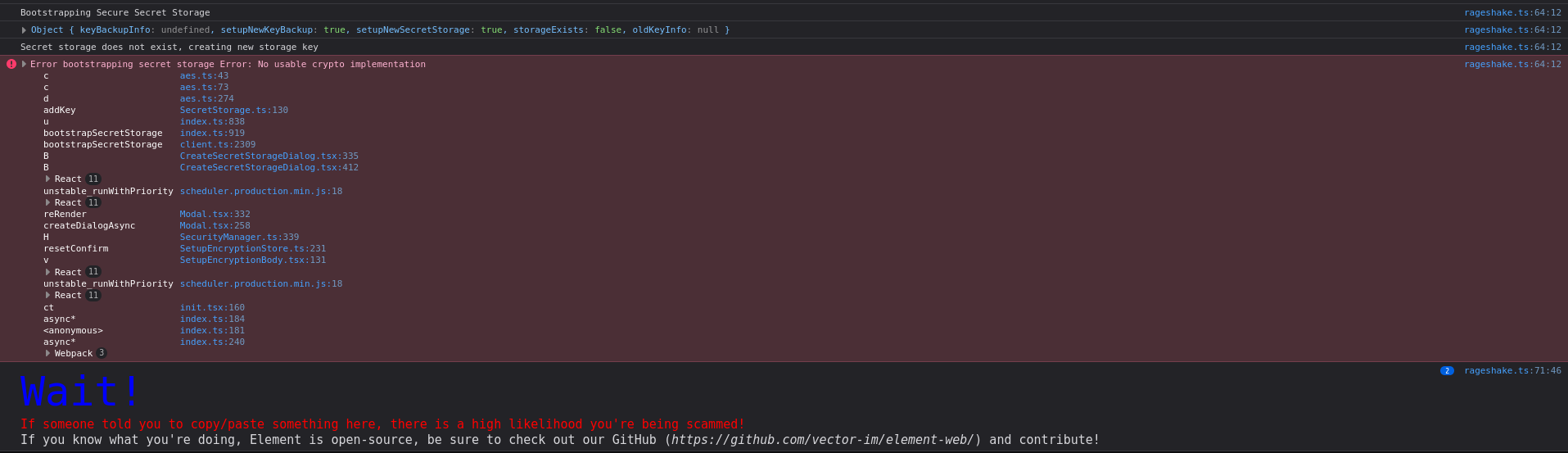

### Steps to reproduce

First I have ran matrix synapse on my local server , there is no valid domain, SSL/TLS

Every thing is fine but when a user wants to register or sign in with web base app (V1.11.2) , user wants to generate or import a key file , there is this error (Unable to set up secret storage)

path: all settings --> security & privacy --> Encryption --> Icon "Setup" --> generate new key --> Download / Copy --> asks user password to verify the user --> Error "Unable to set up secret storage" . Tested in Chrome, Firefox, .etc

This Problem is only on web base app, the desktop apps are OK.

### Outcome

#### What did you expect?

I expected to say every thing fine or any thing like this , exept Unable to set up secret storage

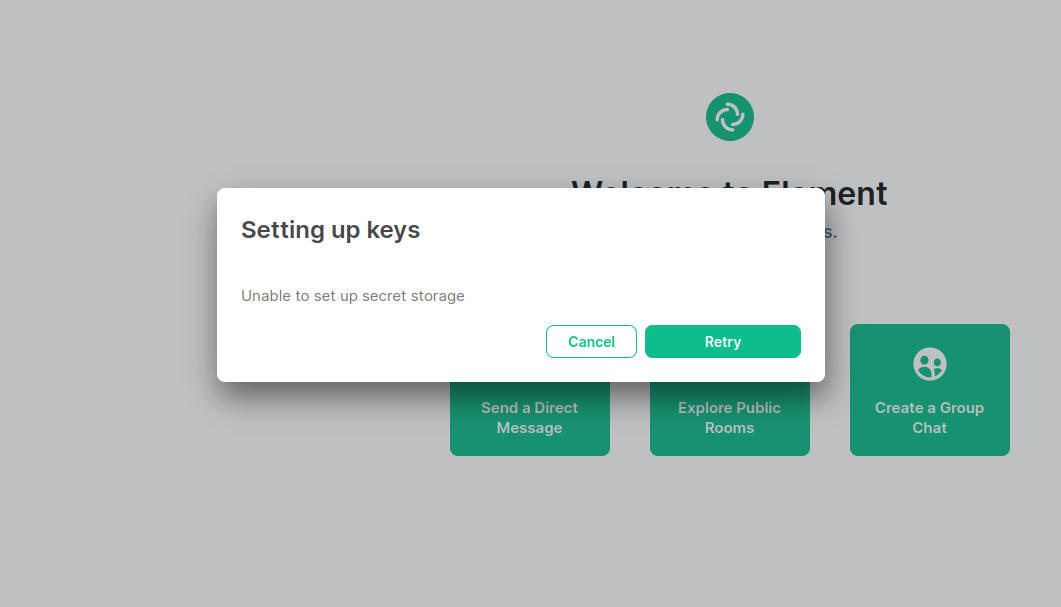

#### What happened instead?

It just says Unable to set up secret storage with two icons, Try again & cancel

my home server conf

```

erver_name: "matrix.geek.local"

pid_file: "/var/run/matrix-synapse.pid"

enable_registration: true

session_lifetime: 24h

registration_shared_secret: XXXX

#enable_registration_without_verification: true

public_baseurl: http://matrix.geek.local/

web_client_location: http://element.geek.local/

listeners:

- port: 8008

tls: false

type: http

x_forwarded: true

bind_addresses: ['127.0.0.1']

resources:

- names: [client, federation]

compress: true

database:

name: sqlite3

args:

database: /var/lib/matrix-synapse/homeserver.db

macaroon_secret_key: "XXXX"

form_secret: "XXXX"

log_config: "/etc/matrix-synapse/log.yaml"

report_stats: true

media_store_path: /var/lib/matrix-synapse/media

signing_key_path: "/etc/matrix-synapse/homeserver.signing.key"

trusted_key_servers:

- server_name: "http://localhost:8090"

registrations_require_3pid:

- email

enable_3pid_lookup: true

registration_requires_token: false

allowed_local_3pids:

- medium: email

pattern: '^[^@]+@example\.local$'

email:

smtp_host: mail.example.local

smtp_port: 25

force_tls: false

require_transport_security: false

enable_tls: false

notif_from: "Your Friendly %(app)s homeserver <element-noreply@pep.co.ir>"

app_name: pep_matrix

enable_notifs: true

notif_for_new_users: true

client_base_url: "http://element.geek.local"

validation_token_lifetime: 15m

invite_client_location: http://element.geek.local

subjects:

...

password_config:

enabled: true

localdb_enabled: false

policy:

enabled: true

...

modules:

- module: "ldap_auth_provider.LdapAuthProviderModule"

config:

enabled: true

...

password_providers:

- module: "rest_auth_provider.RestAuthProvider"

config:

endpoint: "http://localhost:8090"

```

my ma1sd conf

```

matrix:

domain: 'matrix.geek.local'

v1: false

v2: true

key:

path: '/var/lib/ma1sd/keys'

storage:

provider:

sqlite:

database: '/etc/ma1sd/ma1sd.db'

synapseSql:

enabled: true

connection: '/var/lib/matrix-synapse/homeserver.db'

threepid:

medium:

email:

domain:

whitelist:

- '*@example.local'

identity:

from: 'test@example.local'

connectors:

smtp:

host: 'mail.example.local'

tls: 0

port: 25

ldap:

enabled: true

lookup: true # hash lookup

activeDirectory: true

mode: "search"

defaultDomain: 'example.local'

connection:

host: 'XXXX'

port: 389

bindDn: 'test@example.local'

bindPassword: 'XXXX'

baseDNs:

- 'OU=test,DC=example,DC=local'

attribute:

mail: "email"

name: "DisplayName"

dns:

overwrite:

homeserver:

client:

- name: 'matrix.geek.local'

value: 'http://localhost:8008'

config:

policy:

registration:

username:

enforceLowercase: false

logging:

root: info

app: info

requests: false

```

log file:

[rageshake(2).zip](https://github.com/vector-im/element-web/files/9452095/rageshake.2.zip)

### Operating system

Ubuntu 20.04, Ubuntu 18.04, Windows 10

### Browser information

Version 103.0.5060.134 (Official Build) (64-bit), Firefox 104.0 (64-bit)

### URL for webapp

(http://element.geek.local) Local --> Unable to be published on internet

### Application version

V1.11.2

### Homeserver

(http://matrix.geek.local) Local --> Unable to be published on internet

### Will you send logs?

No

|

1.0

|

Unable to set up secret storage - ### Steps to reproduce

First I have ran matrix synapse on my local server , there is no valid domain, SSL/TLS

Every thing is fine but when a user wants to register or sign in with web base app (V1.11.2) , user wants to generate or import a key file , there is this error (Unable to set up secret storage)

path: all settings --> security & privacy --> Encryption --> Icon "Setup" --> generate new key --> Download / Copy --> asks user password to verify the user --> Error "Unable to set up secret storage" . Tested in Chrome, Firefox, .etc

This Problem is only on web base app, the desktop apps are OK.

### Outcome

#### What did you expect?

I expected to say every thing fine or any thing like this , exept Unable to set up secret storage

#### What happened instead?

It just says Unable to set up secret storage with two icons, Try again & cancel

my home server conf

```

erver_name: "matrix.geek.local"

pid_file: "/var/run/matrix-synapse.pid"

enable_registration: true

session_lifetime: 24h

registration_shared_secret: XXXX

#enable_registration_without_verification: true

public_baseurl: http://matrix.geek.local/

web_client_location: http://element.geek.local/

listeners:

- port: 8008

tls: false

type: http

x_forwarded: true

bind_addresses: ['127.0.0.1']

resources:

- names: [client, federation]

compress: true

database:

name: sqlite3

args:

database: /var/lib/matrix-synapse/homeserver.db

macaroon_secret_key: "XXXX"

form_secret: "XXXX"

log_config: "/etc/matrix-synapse/log.yaml"

report_stats: true

media_store_path: /var/lib/matrix-synapse/media

signing_key_path: "/etc/matrix-synapse/homeserver.signing.key"

trusted_key_servers:

- server_name: "http://localhost:8090"

registrations_require_3pid:

- email

enable_3pid_lookup: true

registration_requires_token: false

allowed_local_3pids:

- medium: email

pattern: '^[^@]+@example\.local$'

email:

smtp_host: mail.example.local

smtp_port: 25

force_tls: false

require_transport_security: false

enable_tls: false

notif_from: "Your Friendly %(app)s homeserver <element-noreply@pep.co.ir>"

app_name: pep_matrix

enable_notifs: true

notif_for_new_users: true

client_base_url: "http://element.geek.local"

validation_token_lifetime: 15m

invite_client_location: http://element.geek.local

subjects:

...

password_config:

enabled: true

localdb_enabled: false

policy:

enabled: true

...

modules:

- module: "ldap_auth_provider.LdapAuthProviderModule"

config:

enabled: true

...

password_providers:

- module: "rest_auth_provider.RestAuthProvider"

config:

endpoint: "http://localhost:8090"

```

my ma1sd conf

```

matrix:

domain: 'matrix.geek.local'

v1: false

v2: true

key:

path: '/var/lib/ma1sd/keys'

storage:

provider:

sqlite:

database: '/etc/ma1sd/ma1sd.db'

synapseSql:

enabled: true

connection: '/var/lib/matrix-synapse/homeserver.db'

threepid:

medium:

email:

domain:

whitelist:

- '*@example.local'

identity:

from: 'test@example.local'

connectors:

smtp:

host: 'mail.example.local'

tls: 0

port: 25

ldap:

enabled: true

lookup: true # hash lookup

activeDirectory: true

mode: "search"

defaultDomain: 'example.local'

connection:

host: 'XXXX'

port: 389

bindDn: 'test@example.local'

bindPassword: 'XXXX'

baseDNs:

- 'OU=test,DC=example,DC=local'

attribute:

mail: "email"

name: "DisplayName"

dns:

overwrite:

homeserver:

client:

- name: 'matrix.geek.local'

value: 'http://localhost:8008'

config:

policy:

registration:

username:

enforceLowercase: false

logging:

root: info

app: info

requests: false

```

log file:

[rageshake(2).zip](https://github.com/vector-im/element-web/files/9452095/rageshake.2.zip)

### Operating system

Ubuntu 20.04, Ubuntu 18.04, Windows 10

### Browser information

Version 103.0.5060.134 (Official Build) (64-bit), Firefox 104.0 (64-bit)

### URL for webapp

(http://element.geek.local) Local --> Unable to be published on internet

### Application version

V1.11.2

### Homeserver

(http://matrix.geek.local) Local --> Unable to be published on internet

### Will you send logs?

No

|

defect

|

unable to set up secret storage steps to reproduce first i have ran matrix synapse on my local server there is no valid domain ssl tls every thing is fine but when a user wants to register or sign in with web base app user wants to generate or import a key file there is this error unable to set up secret storage path all settings security privacy encryption icon setup generate new key download copy asks user password to verify the user error unable to set up secret storage tested in chrome firefox etc this problem is only on web base app the desktop apps are ok outcome what did you expect i expected to say every thing fine or any thing like this exept unable to set up secret storage what happened instead it just says unable to set up secret storage with two icons try again cancel my home server conf erver name matrix geek local pid file var run matrix synapse pid enable registration true session lifetime registration shared secret xxxx enable registration without verification true public baseurl web client location listeners port tls false type http x forwarded true bind addresses resources names compress true database name args database var lib matrix synapse homeserver db macaroon secret key xxxx form secret xxxx log config etc matrix synapse log yaml report stats true media store path var lib matrix synapse media signing key path etc matrix synapse homeserver signing key trusted key servers server name registrations require email enable lookup true registration requires token false allowed local medium email pattern example local email smtp host mail example local smtp port force tls false require transport security false enable tls false notif from your friendly app s homeserver app name pep matrix enable notifs true notif for new users true client base url validation token lifetime invite client location subjects password config enabled true localdb enabled false policy enabled true modules module ldap auth provider ldapauthprovidermodule config enabled true password providers module rest auth provider restauthprovider config endpoint my conf matrix domain matrix geek local false true key path var lib keys storage provider sqlite database etc db synapsesql enabled true connection var lib matrix synapse homeserver db threepid medium email domain whitelist example local identity from test example local connectors smtp host mail example local tls port ldap enabled true lookup true hash lookup activedirectory true mode search defaultdomain example local connection host xxxx port binddn test example local bindpassword xxxx basedns ou test dc example dc local attribute mail email name displayname dns overwrite homeserver client name matrix geek local value config policy registration username enforcelowercase false logging root info app info requests false log file operating system ubuntu ubuntu windows browser information version official build bit firefox bit url for webapp local unable to be published on internet application version homeserver local unable to be published on internet will you send logs no

| 1

|

63,341

| 26,358,431,877

|

IssuesEvent

|

2023-01-11 11:30:45

|

GovernIB/ripea

|

https://api.github.com/repos/GovernIB/ripea

|

closed

|

Afegir el servei de consulta d'estar al corrent de pagament amb la Seguretat Social de PINBAL

|

Tipus:Nova_Funcionalitat Prioritat:Normal Lloc:WebServices

|

Afegir el servei "Q2827003ATGSS001 Estar al corriente de pago con la Seguridad Social" al llistat de serveis SCSP que es poden consultar des de RIPEA a través de la llibreria que facilita les consultes SCSP. Depen de GovernIB/pinbal#155

|

1.0

|

Afegir el servei de consulta d'estar al corrent de pagament amb la Seguretat Social de PINBAL - Afegir el servei "Q2827003ATGSS001 Estar al corriente de pago con la Seguridad Social" al llistat de serveis SCSP que es poden consultar des de RIPEA a través de la llibreria que facilita les consultes SCSP. Depen de GovernIB/pinbal#155

|

non_defect

|

afegir el servei de consulta d estar al corrent de pagament amb la seguretat social de pinbal afegir el servei estar al corriente de pago con la seguridad social al llistat de serveis scsp que es poden consultar des de ripea a través de la llibreria que facilita les consultes scsp depen de governib pinbal

| 0

|

42,030

| 10,755,318,490

|

IssuesEvent

|

2019-10-31 08:53:15

|

jOOQ/jOOQ

|

https://api.github.com/repos/jOOQ/jOOQ

|

closed

|

Creating tables using DSLContext.ddl() converts VARBINARY columns to TEXT in MySQL

|

C: DB: Aurora MySQL C: DB: MariaDB C: DB: MemSQL C: DB: MySQL C: Functionality E: All Editions P: High T: Defect

|

### Behavior:

When creating tables with DSLContext.ddl(), columns with type SQLDataType.VARBINARY are instead created with type TEXT in the database. I expect the VARBINARY type to be preserved.

### Repro:

See https://github.com/trdesilva/jOOQ-mcve

@Test

public void mcveTest() {

ctx.ddl(Testtable.TESTTABLE, new DDLExportConfiguration().createTableIfNotExists(true)).executeBatch();

byte[] bytes = new byte[256];

for (int i = 0; i < bytes.length; i++) {

bytes[i] = (byte)(i - Byte.MAX_VALUE);

}

// this throws with the following on MySQL 5.6.43, but not 5.6.39:

// org.jooq.exception.DataAccessException: SQL [insert into `TestTable` (`Foo`, `Bar`) values (?, ?)]; Incorrect string value: '\x81\x82\x83\x84\x85\x86...' for column 'Foo' at row 1

ctx.insertInto(Testtable.TESTTABLE).values(bytes, bytes).execute();

}

### Versions:

- jOOQ: 3.12.2

- Java: OpenJDK 11.0.4+11

- Database (include vendor): Oracle MySQL 5.6.39/5.6.43 Community Server

- OS: Ubuntu 16.04/AWS RDS

- JDBC Driver (include name if inofficial driver): mysql-connector-java 5.1.46

|

1.0

|

Creating tables using DSLContext.ddl() converts VARBINARY columns to TEXT in MySQL - ### Behavior:

When creating tables with DSLContext.ddl(), columns with type SQLDataType.VARBINARY are instead created with type TEXT in the database. I expect the VARBINARY type to be preserved.

### Repro:

See https://github.com/trdesilva/jOOQ-mcve

@Test

public void mcveTest() {

ctx.ddl(Testtable.TESTTABLE, new DDLExportConfiguration().createTableIfNotExists(true)).executeBatch();

byte[] bytes = new byte[256];

for (int i = 0; i < bytes.length; i++) {

bytes[i] = (byte)(i - Byte.MAX_VALUE);

}

// this throws with the following on MySQL 5.6.43, but not 5.6.39:

// org.jooq.exception.DataAccessException: SQL [insert into `TestTable` (`Foo`, `Bar`) values (?, ?)]; Incorrect string value: '\x81\x82\x83\x84\x85\x86...' for column 'Foo' at row 1

ctx.insertInto(Testtable.TESTTABLE).values(bytes, bytes).execute();

}

### Versions:

- jOOQ: 3.12.2

- Java: OpenJDK 11.0.4+11

- Database (include vendor): Oracle MySQL 5.6.39/5.6.43 Community Server

- OS: Ubuntu 16.04/AWS RDS

- JDBC Driver (include name if inofficial driver): mysql-connector-java 5.1.46

|

defect

|

creating tables using dslcontext ddl converts varbinary columns to text in mysql behavior when creating tables with dslcontext ddl columns with type sqldatatype varbinary are instead created with type text in the database i expect the varbinary type to be preserved repro see test public void mcvetest ctx ddl testtable testtable new ddlexportconfiguration createtableifnotexists true executebatch byte bytes new byte for int i i bytes length i bytes byte i byte max value this throws with the following on mysql but not org jooq exception dataaccessexception sql incorrect string value for column foo at row ctx insertinto testtable testtable values bytes bytes execute versions jooq java openjdk database include vendor oracle mysql community server os ubuntu aws rds jdbc driver include name if inofficial driver mysql connector java

| 1

|

164,043

| 12,758,334,969

|

IssuesEvent

|

2020-06-29 01:53:46

|

elastic/kibana

|

https://api.github.com/repos/elastic/kibana

|

opened

|

Failing test: X-Pack Jest Tests.x-pack/plugins/security_solution/public/management/pages/endpoint_hosts/view - when on the hosts page when there is no selected host in the url should not show the flyout

|

failed-test

|

A test failed on a tracked branch

```

Error: thrown: "Exceeded timeout of 5000ms for a test.

Use jest.setTimeout(newTimeout) to increase the timeout value, if this is a long-running test."

at describe (/dev/shm/workspace/kibana/x-pack/plugins/security_solution/public/management/pages/endpoint_hosts/view/index.test.tsx:50:5)

at _dispatchDescribe (/dev/shm/workspace/kibana/node_modules/jest-circus/build/index.js:67:26)

at describe (/dev/shm/workspace/kibana/node_modules/jest-circus/build/index.js:30:5)

at Object.<anonymous>.describe (/dev/shm/workspace/kibana/x-pack/plugins/security_solution/public/management/pages/endpoint_hosts/view/index.test.tsx:49:3)

at _dispatchDescribe (/dev/shm/workspace/kibana/node_modules/jest-circus/build/index.js:67:26)

at describe (/dev/shm/workspace/kibana/node_modules/jest-circus/build/index.js:30:5)

at Object.<anonymous> (/dev/shm/workspace/kibana/x-pack/plugins/security_solution/public/management/pages/endpoint_hosts/view/index.test.tsx:23:1)

at Runtime._execModule (/dev/shm/workspace/kibana/node_modules/jest-runtime/build/index.js:1205:24)

at Runtime._loadModule (/dev/shm/workspace/kibana/node_modules/jest-runtime/build/index.js:805:12)

at Runtime.requireModule (/dev/shm/workspace/kibana/node_modules/jest-runtime/build/index.js:662:10)

at jestAdapter (/dev/shm/workspace/kibana/node_modules/jest-circus/build/legacy-code-todo-rewrite/jestAdapter.js:145:13)

at process._tickCallback (internal/process/next_tick.js:68:7)

```

First failure: [Jenkins Build](https://kibana-ci.elastic.co/job/elastic+kibana+7.x/6201/)

<!-- kibanaCiData = {"failed-test":{"test.class":"X-Pack Jest Tests.x-pack/plugins/security_solution/public/management/pages/endpoint_hosts/view","test.name":"when on the hosts page when there is no selected host in the url should not show the flyout","test.failCount":1}} -->

|

1.0

|

Failing test: X-Pack Jest Tests.x-pack/plugins/security_solution/public/management/pages/endpoint_hosts/view - when on the hosts page when there is no selected host in the url should not show the flyout - A test failed on a tracked branch

```

Error: thrown: "Exceeded timeout of 5000ms for a test.

Use jest.setTimeout(newTimeout) to increase the timeout value, if this is a long-running test."

at describe (/dev/shm/workspace/kibana/x-pack/plugins/security_solution/public/management/pages/endpoint_hosts/view/index.test.tsx:50:5)

at _dispatchDescribe (/dev/shm/workspace/kibana/node_modules/jest-circus/build/index.js:67:26)

at describe (/dev/shm/workspace/kibana/node_modules/jest-circus/build/index.js:30:5)

at Object.<anonymous>.describe (/dev/shm/workspace/kibana/x-pack/plugins/security_solution/public/management/pages/endpoint_hosts/view/index.test.tsx:49:3)

at _dispatchDescribe (/dev/shm/workspace/kibana/node_modules/jest-circus/build/index.js:67:26)

at describe (/dev/shm/workspace/kibana/node_modules/jest-circus/build/index.js:30:5)

at Object.<anonymous> (/dev/shm/workspace/kibana/x-pack/plugins/security_solution/public/management/pages/endpoint_hosts/view/index.test.tsx:23:1)

at Runtime._execModule (/dev/shm/workspace/kibana/node_modules/jest-runtime/build/index.js:1205:24)

at Runtime._loadModule (/dev/shm/workspace/kibana/node_modules/jest-runtime/build/index.js:805:12)

at Runtime.requireModule (/dev/shm/workspace/kibana/node_modules/jest-runtime/build/index.js:662:10)

at jestAdapter (/dev/shm/workspace/kibana/node_modules/jest-circus/build/legacy-code-todo-rewrite/jestAdapter.js:145:13)

at process._tickCallback (internal/process/next_tick.js:68:7)

```

First failure: [Jenkins Build](https://kibana-ci.elastic.co/job/elastic+kibana+7.x/6201/)

<!-- kibanaCiData = {"failed-test":{"test.class":"X-Pack Jest Tests.x-pack/plugins/security_solution/public/management/pages/endpoint_hosts/view","test.name":"when on the hosts page when there is no selected host in the url should not show the flyout","test.failCount":1}} -->

|

non_defect

|

failing test x pack jest tests x pack plugins security solution public management pages endpoint hosts view when on the hosts page when there is no selected host in the url should not show the flyout a test failed on a tracked branch error thrown exceeded timeout of for a test use jest settimeout newtimeout to increase the timeout value if this is a long running test at describe dev shm workspace kibana x pack plugins security solution public management pages endpoint hosts view index test tsx at dispatchdescribe dev shm workspace kibana node modules jest circus build index js at describe dev shm workspace kibana node modules jest circus build index js at object describe dev shm workspace kibana x pack plugins security solution public management pages endpoint hosts view index test tsx at dispatchdescribe dev shm workspace kibana node modules jest circus build index js at describe dev shm workspace kibana node modules jest circus build index js at object dev shm workspace kibana x pack plugins security solution public management pages endpoint hosts view index test tsx at runtime execmodule dev shm workspace kibana node modules jest runtime build index js at runtime loadmodule dev shm workspace kibana node modules jest runtime build index js at runtime requiremodule dev shm workspace kibana node modules jest runtime build index js at jestadapter dev shm workspace kibana node modules jest circus build legacy code todo rewrite jestadapter js at process tickcallback internal process next tick js first failure

| 0

|

747,464

| 26,084,925,588

|

IssuesEvent

|

2022-12-26 00:36:02

|

Lincoln-LM/sv-live-map

|

https://api.github.com/repos/Lincoln-LM/sv-live-map

|

opened

|

Auto host (discord integration!) and further automation

|

enhancement low priority

|

Finding shiny dens goes hand-in-hand with actually hosting said dens, supporting auto-host functionality would be a nice enhancement for those using sv-live-map for the purpose of hosting for others. In addition, this may pave way for an automation framework that could be used for the likes of auto-shiny hunting w/overworld scan, and auto outbreak resetting.

|

1.0

|

Auto host (discord integration!) and further automation - Finding shiny dens goes hand-in-hand with actually hosting said dens, supporting auto-host functionality would be a nice enhancement for those using sv-live-map for the purpose of hosting for others. In addition, this may pave way for an automation framework that could be used for the likes of auto-shiny hunting w/overworld scan, and auto outbreak resetting.

|

non_defect

|

auto host discord integration and further automation finding shiny dens goes hand in hand with actually hosting said dens supporting auto host functionality would be a nice enhancement for those using sv live map for the purpose of hosting for others in addition this may pave way for an automation framework that could be used for the likes of auto shiny hunting w overworld scan and auto outbreak resetting

| 0

|

18,780

| 3,086,962,729

|

IssuesEvent

|

2015-08-25 08:28:54

|

jserranohidalgo/test-trac

|

https://api.github.com/repos/jserranohidalgo/test-trac

|

opened

|

Contextos de declaración huérfanos

|

P: trivial T: defect

|

**Reported by jserrano on 7 May 2014 11:09 UTC**

Se da un alta desde la interfaz, y primero se hace un setup de la declaracin (de alta, por ejemplo). Si despus falla el alta (21), el contexto no se cierra. Opciones:

* Se hace todo en el mismo attempt

** attempt(for{ setup <- Say(SetUp(..),...); NewEntity(interaccion,ag1,...) <- react; _ <- Say(Alta(...),interaccion,ag1)})

** attempt(Say(SetUpAndAlta(..))

* Se sigue haciendo en dos attempts

** La interfaz hace un close

** No se hace nada, y cuando se consolide el proceso, se cierra

|

1.0

|

Contextos de declaración huérfanos - **Reported by jserrano on 7 May 2014 11:09 UTC**

Se da un alta desde la interfaz, y primero se hace un setup de la declaracin (de alta, por ejemplo). Si despus falla el alta (21), el contexto no se cierra. Opciones:

* Se hace todo en el mismo attempt

** attempt(for{ setup <- Say(SetUp(..),...); NewEntity(interaccion,ag1,...) <- react; _ <- Say(Alta(...),interaccion,ag1)})

** attempt(Say(SetUpAndAlta(..))

* Se sigue haciendo en dos attempts

** La interfaz hace un close

** No se hace nada, y cuando se consolide el proceso, se cierra

|

defect

|

contextos de declaración huérfanos reported by jserrano on may utc se da un alta desde la interfaz y primero se hace un setup de la declaracin de alta por ejemplo si despus falla el alta el contexto no se cierra opciones se hace todo en el mismo attempt attempt for setup say setup newentity interaccion react say alta interaccion attempt say setupandalta se sigue haciendo en dos attempts la interfaz hace un close no se hace nada y cuando se consolide el proceso se cierra

| 1

|

39,241

| 9,334,675,642

|

IssuesEvent

|

2019-03-28 16:49:33

|

PowerDNS/pdns

|

https://api.github.com/repos/PowerDNS/pdns

|

opened

|

rec: protobuf messages fields are not updated after being cached

|

defect rec

|

<!-- Hi! Thanks for filing an issue. It will be read with care by human beings. Can we ask you to please fill out this template and not simply demand new features or send in complaints? Thanks! -->

<!-- Also please search the existing issues (both open and closed) to see if your report might be duplicate -->

<!-- Please don't file an issue when you have a support question, send support questions to the mailinglist or ask them on IRC (https://www.powerdns.com/opensource.html) -->

<!-- Tell us what is issue is about -->

- Program: Recursor <!-- delete the ones that do not apply -->

- Issue type: Bug report <!-- delete the one that does not apply -->

### Short description

<!-- Explain in a few sentences what the issue/request is -->

It looks like some fields are not properly updated when the response is taken from the packet cache.

### Description

<!-- Describe as extensively as possible what you want the software to do -->

When a response is read from the cache, and protobuf logging is enabled, it seems that the following protobuf message's fields are not properly updated for new responses : `appliedPolicy` and `tags`.

|

1.0

|

rec: protobuf messages fields are not updated after being cached - <!-- Hi! Thanks for filing an issue. It will be read with care by human beings. Can we ask you to please fill out this template and not simply demand new features or send in complaints? Thanks! -->

<!-- Also please search the existing issues (both open and closed) to see if your report might be duplicate -->

<!-- Please don't file an issue when you have a support question, send support questions to the mailinglist or ask them on IRC (https://www.powerdns.com/opensource.html) -->

<!-- Tell us what is issue is about -->

- Program: Recursor <!-- delete the ones that do not apply -->

- Issue type: Bug report <!-- delete the one that does not apply -->

### Short description

<!-- Explain in a few sentences what the issue/request is -->

It looks like some fields are not properly updated when the response is taken from the packet cache.

### Description

<!-- Describe as extensively as possible what you want the software to do -->

When a response is read from the cache, and protobuf logging is enabled, it seems that the following protobuf message's fields are not properly updated for new responses : `appliedPolicy` and `tags`.

|

defect

|

rec protobuf messages fields are not updated after being cached program recursor issue type bug report short description it looks like some fields are not properly updated when the response is taken from the packet cache description when a response is read from the cache and protobuf logging is enabled it seems that the following protobuf message s fields are not properly updated for new responses appliedpolicy and tags

| 1

|

69,522

| 7,137,474,696

|

IssuesEvent

|

2018-01-23 11:07:47

|

emfoundation/asset-manager

|

https://api.github.com/repos/emfoundation/asset-manager

|

closed

|

On folder list view, the total number of folders is displayed incorrectly in two places

|

bug please test priority-3

|

This is probably because hidden folders are also counted.

|

1.0

|

On folder list view, the total number of folders is displayed incorrectly in two places - This is probably because hidden folders are also counted.

|

non_defect

|

on folder list view the total number of folders is displayed incorrectly in two places this is probably because hidden folders are also counted

| 0

|

144,533

| 11,623,169,764

|

IssuesEvent

|

2020-02-27 08:23:27

|

dasch-swiss/knora-api

|

https://api.github.com/repos/dasch-swiss/knora-api

|

opened

|

Run tests against a single running knora-stack

|

testing

|

Any tests that currently start `knora-api` should run against an externally started knora-stack.

Value proposition:

- allow using these tests as we use them now, but also to point them to any kind of knora-stack installation, and have it thoroughly checked (which we need but are currently missing for testing our "Infrastructure as Code")

- solve the intermittent BindException problem

- run tests a bit faster

|

1.0

|

Run tests against a single running knora-stack - Any tests that currently start `knora-api` should run against an externally started knora-stack.

Value proposition:

- allow using these tests as we use them now, but also to point them to any kind of knora-stack installation, and have it thoroughly checked (which we need but are currently missing for testing our "Infrastructure as Code")

- solve the intermittent BindException problem

- run tests a bit faster

|

non_defect

|

run tests against a single running knora stack any tests that currently start knora api should run against an externally started knora stack value proposition allow using these tests as we use them now but also to point them to any kind of knora stack installation and have it thoroughly checked which we need but are currently missing for testing our infrastructure as code solve the intermittent bindexception problem run tests a bit faster

| 0

|

66,282

| 20,112,902,455

|

IssuesEvent

|

2022-02-07 16:35:51

|

vector-im/element-web

|

https://api.github.com/repos/vector-im/element-web

|

closed

|

PIP window closes when you switch to another room

|

T-Defect S-Minor A-Widgets O-Uncommon Z-FOSDEM

|

### Steps to reproduce

(unfortunately doesn't reproduce reliably, however when the underlying problem seems active, it works all the time )

A new day, opening chat.fosdem.org: no problems;

After closing the tab with an active chat.fosdem.org session (verified) after a few minutes and reopening it:

1. Switch to "Home" selection on the left and then click "Home" to see the fosdem overview page

2. Open FOSDEM 2022 Space on the left

3. Open the "Test Track 1" by clicking the already joined room in the list on the left

4. make sure the "Livestream" widget is pinned

5. Open the stream in PIP mode by using the in-widget controls

6. Click on "Matrix Stand" room

### Outcome

#### What did you expect?

PIP window stays visible

#### What happened instead?

PIP window vanishes

### Operating system

Windows 10 Pro 19044.1469

### Browser information

Edge Version 97.0.1072.76 (Official build) (64-bit)

### URL for webapp

https://chat.fosdem.org/

### Application version

FOSDEM 2022 version: 1.10.1 Olm version: 3.2.8

### Homeserver

matrix.org

### Will you send logs?

Yes -> https://github.com/matrix-org/element-web-rageshakes/issues/10382

|

1.0

|

PIP window closes when you switch to another room - ### Steps to reproduce

(unfortunately doesn't reproduce reliably, however when the underlying problem seems active, it works all the time )

A new day, opening chat.fosdem.org: no problems;

After closing the tab with an active chat.fosdem.org session (verified) after a few minutes and reopening it:

1. Switch to "Home" selection on the left and then click "Home" to see the fosdem overview page

2. Open FOSDEM 2022 Space on the left

3. Open the "Test Track 1" by clicking the already joined room in the list on the left

4. make sure the "Livestream" widget is pinned

5. Open the stream in PIP mode by using the in-widget controls

6. Click on "Matrix Stand" room

### Outcome

#### What did you expect?

PIP window stays visible

#### What happened instead?

PIP window vanishes

### Operating system

Windows 10 Pro 19044.1469

### Browser information

Edge Version 97.0.1072.76 (Official build) (64-bit)

### URL for webapp

https://chat.fosdem.org/

### Application version

FOSDEM 2022 version: 1.10.1 Olm version: 3.2.8

### Homeserver

matrix.org

### Will you send logs?

Yes -> https://github.com/matrix-org/element-web-rageshakes/issues/10382

|

defect

|

pip window closes when you switch to another room steps to reproduce unfortunately doesn t reproduce reliably however when the underlying problem seems active it works all the time a new day opening chat fosdem org no problems after closing the tab with an active chat fosdem org session verified after a few minutes and reopening it switch to home selection on the left and then click home to see the fosdem overview page open fosdem space on the left open the test track by clicking the already joined room in the list on the left make sure the livestream widget is pinned open the stream in pip mode by using the in widget controls click on matrix stand room outcome what did you expect pip window stays visible what happened instead pip window vanishes operating system windows pro browser information edge version official build bit url for webapp application version fosdem version olm version homeserver matrix org will you send logs yes

| 1

|

289,175

| 24,965,861,837

|

IssuesEvent

|

2022-11-01 19:18:58

|

ibm-openbmc/dev

|

https://api.github.com/repos/ibm-openbmc/dev

|

closed

|

Redfish Maintenance Logs

|

Epic prio_low Test on WSP-TAC

|

The request is:

Provides logs for when the hardware or firmware change in the system

Firmware changes are recorded today on FSP

CPU changed from SN xxxx to SN yyyy

Firmware changed from version xxxx to yyyy

Need to flag VPD changes up the stack.

|

1.0

|

Redfish Maintenance Logs - The request is:

Provides logs for when the hardware or firmware change in the system

Firmware changes are recorded today on FSP

CPU changed from SN xxxx to SN yyyy

Firmware changed from version xxxx to yyyy

Need to flag VPD changes up the stack.

|

non_defect

|

redfish maintenance logs the request is provides logs for when the hardware or firmware change in the system firmware changes are recorded today on fsp cpu changed from sn xxxx to sn yyyy firmware changed from version xxxx to yyyy need to flag vpd changes up the stack

| 0

|

57,077

| 15,650,045,256

|

IssuesEvent

|

2021-03-23 08:28:09

|

hazelcast/hazelcast-jet

|

https://api.github.com/repos/hazelcast/hazelcast-jet

|

closed

|

Snapshot Phase 2 may get stuck

|

defect

|

While solving a test problem in #2454, we realized the underlying cause was a snapshot stuck in phase 2. System load seems to have been light immediately before and after the getting stuck event.

|

1.0

|

Snapshot Phase 2 may get stuck - While solving a test problem in #2454, we realized the underlying cause was a snapshot stuck in phase 2. System load seems to have been light immediately before and after the getting stuck event.

|

defect

|

snapshot phase may get stuck while solving a test problem in we realized the underlying cause was a snapshot stuck in phase system load seems to have been light immediately before and after the getting stuck event

| 1

|

330,259

| 10,037,616,403

|

IssuesEvent

|

2019-07-18 13:33:36

|

wrattler/wrattler

|

https://api.github.com/repos/wrattler/wrattler

|

closed

|

[jupyter] Refresh causes code changes to disappear

|

status-priority type-bug

|

JupyterLab refreshes the page when you switch tabs, which makes code changes disappear.

|

1.0

|

[jupyter] Refresh causes code changes to disappear - JupyterLab refreshes the page when you switch tabs, which makes code changes disappear.

|

non_defect

|

refresh causes code changes to disappear jupyterlab refreshes the page when you switch tabs which makes code changes disappear

| 0

|

228,508

| 18,239,343,811

|

IssuesEvent

|

2021-10-01 10:57:18

|

HyphaApp/hypha

|

https://api.github.com/repos/HyphaApp/hypha

|

closed

|

Make 'View Message Log' tab only available to 'Staff Admin' role

|

Type: Enhancement Status: Tested - approved for live ✅ Partner: OTF Priority: Low

|

## User story

It is not obvious to staff what the 'View Message Log' tab is.

## Describe the solution you'd like in Hypha

Make 'View Message Log' tab only available to 'Staff Admin' role.

**Priority**

- Low priority (annoying, would be nice to not see)

**Affected roles**

- Staff

**Ideal deadline**

December 2021

|

1.0

|

Make 'View Message Log' tab only available to 'Staff Admin' role - ## User story

It is not obvious to staff what the 'View Message Log' tab is.

## Describe the solution you'd like in Hypha

Make 'View Message Log' tab only available to 'Staff Admin' role.

**Priority**

- Low priority (annoying, would be nice to not see)

**Affected roles**

- Staff

**Ideal deadline**

December 2021

|

non_defect

|

make view message log tab only available to staff admin role user story it is not obvious to staff what the view message log tab is describe the solution you d like in hypha make view message log tab only available to staff admin role priority low priority annoying would be nice to not see affected roles staff ideal deadline december

| 0

|

12,579

| 2,711,483,019

|

IssuesEvent

|

2015-04-09 06:42:17

|

google/google-api-go-client

|

https://api.github.com/repos/google/google-api-go-client

|

closed

|

YouTube v3 video/upload, setting the snippet fails when non-alphanumeric characters are used

|

new priority-medium type-defect

|

**janbirsacom** on 9 Jun 2014 at 8:58:

```

What steps will reproduce the problem?

1. Use YouTube video upload sample code from:

https://developers.google.com/youtube/v3/docs/videos/insert

2. In video description field, put non-alphanumeric characters like '<3'.

3. Upload a sample video.

What is the expected output? What do you see instead?

Expected: 200, successful upload

Got: 400 Bad Request

What version of the product are you using? On what operating system?

Latest (2ba9f0995cf0215c20ebd6de43a14d70af30fea6)

Please provide any additional information below.

http://stackoverflow.com/questions/24075229/youtube-upload-v3-400-bad-request

```

|

1.0

|

YouTube v3 video/upload, setting the snippet fails when non-alphanumeric characters are used -

**janbirsacom** on 9 Jun 2014 at 8:58:

```

What steps will reproduce the problem?

1. Use YouTube video upload sample code from:

https://developers.google.com/youtube/v3/docs/videos/insert

2. In video description field, put non-alphanumeric characters like '<3'.

3. Upload a sample video.

What is the expected output? What do you see instead?

Expected: 200, successful upload

Got: 400 Bad Request

What version of the product are you using? On what operating system?

Latest (2ba9f0995cf0215c20ebd6de43a14d70af30fea6)

Please provide any additional information below.

http://stackoverflow.com/questions/24075229/youtube-upload-v3-400-bad-request

```

|

defect

|

youtube video upload setting the snippet fails when non alphanumeric characters are used janbirsacom on jun at what steps will reproduce the problem use youtube video upload sample code from in video description field put non alphanumeric characters like upload a sample video what is the expected output what do you see instead expected successful upload got bad request what version of the product are you using on what operating system latest please provide any additional information below

| 1

|

12,475

| 2,700,770,082

|

IssuesEvent

|

2015-04-04 15:06:21

|

cakephp/cakephp

|

https://api.github.com/repos/cakephp/cakephp

|

reopened

|

3.0 - Cookies unable to contain brackets

|

component Defect

|

It's possible this is one of those things that are impossible to fix, but right now I can't use `CookieComponent` to write cookies that have brackets in the name. In my 2.x app there are cookies that are set with the array-style, `CakeCookie[ThisThing]`, but in 3.0 I can't just do this:

```

$this->Cookie->write('CakeCookie[ThisThing]', 10);

```

Trying to use dots, `Cookie->write('CakeCookie.ThisThing', 10)` results in the cookie name being `CakeCookie`, which isn't usable either. (Edit - I'm pretty sure that's as intended, though. Just trying to see how I can get an array-style cookie name.) It seems that it's the `Hash` class:

```

#1

Hash::insert([], 'CakeCookie[ThisThing]', 'hello'); # -> Array ()

#2

Hash::insert([], 'what', 'hello'); # -> Array ( [what] => hello )

```

Should `#1` above be an empty array? I don't know what the special conditions `Hash::insert` are doing with brackets, but `noTokens` in that method seems to be messing this up.

Thanks

|

1.0

|

3.0 - Cookies unable to contain brackets - It's possible this is one of those things that are impossible to fix, but right now I can't use `CookieComponent` to write cookies that have brackets in the name. In my 2.x app there are cookies that are set with the array-style, `CakeCookie[ThisThing]`, but in 3.0 I can't just do this:

```

$this->Cookie->write('CakeCookie[ThisThing]', 10);

```

Trying to use dots, `Cookie->write('CakeCookie.ThisThing', 10)` results in the cookie name being `CakeCookie`, which isn't usable either. (Edit - I'm pretty sure that's as intended, though. Just trying to see how I can get an array-style cookie name.) It seems that it's the `Hash` class:

```

#1

Hash::insert([], 'CakeCookie[ThisThing]', 'hello'); # -> Array ()

#2

Hash::insert([], 'what', 'hello'); # -> Array ( [what] => hello )

```

Should `#1` above be an empty array? I don't know what the special conditions `Hash::insert` are doing with brackets, but `noTokens` in that method seems to be messing this up.

Thanks

|

defect

|

cookies unable to contain brackets it s possible this is one of those things that are impossible to fix but right now i can t use cookiecomponent to write cookies that have brackets in the name in my x app there are cookies that are set with the array style cakecookie but in i can t just do this this cookie write cakecookie trying to use dots cookie write cakecookie thisthing results in the cookie name being cakecookie which isn t usable either edit i m pretty sure that s as intended though just trying to see how i can get an array style cookie name it seems that it s the hash class hash insert cakecookie hello array hash insert what hello array hello should above be an empty array i don t know what the special conditions hash insert are doing with brackets but notokens in that method seems to be messing this up thanks

| 1

|

7,731

| 2,610,434,760

|

IssuesEvent

|

2015-02-26 20:22:25

|

chrsmith/scribefire-chrome

|

https://api.github.com/repos/chrsmith/scribefire-chrome

|

opened

|

Cannot connect to Wordpress hosted blog

|

auto-migrated Priority-Medium Type-Defect

|

```

What's the problem?

I cannot get Scribefire to connect to a Wordpress hosted blog

http://neutronbytes.com

However, when I go to a commercially hosted blog http://blog.cdpug.org/ it

works perfectly.

What browser are you using?

Chrome

What Operating system are you using

Windows 7

What version of ScribeFire are you running?

Latest - installed from Google Chrome store 2/8/15

What Blog Type are you having this problem with? Please include version #

Wordpress hosted

if known or applicable

```

-----

Original issue reported on code.google.com by `djy...@gmail.com` on 9 Feb 2015 at 5:17

|

1.0

|

Cannot connect to Wordpress hosted blog - ```

What's the problem?

I cannot get Scribefire to connect to a Wordpress hosted blog

http://neutronbytes.com

However, when I go to a commercially hosted blog http://blog.cdpug.org/ it

works perfectly.

What browser are you using?

Chrome

What Operating system are you using

Windows 7

What version of ScribeFire are you running?

Latest - installed from Google Chrome store 2/8/15

What Blog Type are you having this problem with? Please include version #

Wordpress hosted

if known or applicable

```

-----

Original issue reported on code.google.com by `djy...@gmail.com` on 9 Feb 2015 at 5:17

|

defect

|

cannot connect to wordpress hosted blog what s the problem i cannot get scribefire to connect to a wordpress hosted blog however when i go to a commercially hosted blog it works perfectly what browser are you using chrome what operating system are you using windows what version of scribefire are you running latest installed from google chrome store what blog type are you having this problem with please include version wordpress hosted if known or applicable original issue reported on code google com by djy gmail com on feb at

| 1

|

652,065

| 21,520,489,284

|

IssuesEvent

|

2022-04-28 13:50:01

|

wp-media/wp-rocket

|

https://api.github.com/repos/wp-media/wp-rocket

|

closed

|

CDN exclusions are not reflected in Used CSS

|

type: bug module: CDN priority: medium effort: [XS] severity: major module: remove unused css

|

**Before submitting an issue please check that you’ve completed the following steps:**

- Made sure you’re on the latest version

- Used the search feature to ensure that the bug hasn’t been reported before

**Describe the bug**

When using CDN and RUCSS, CDN exclusions are not taken into the consideration.

**To Reproduce**

1. Enable CDN for all files

2. Enable RUCSS

3. Exclude URL from CDN (URL existing in used CSS) i.e /test.svg

4. visit the page and check used CSS

**Expected behavior**

Excluded URL not rewritten to the CNAME

**Additional**

Moving it from private repo:

https://github.com/wp-media/nodejs-treeshaker/issues/46

**Backlog Grooming (for WP Media dev team use only)**

- [ ] Reproduce the problem

- [ ] Identify the root cause

- [ ] Scope a solution

- [ ] Estimate the effort

|

1.0

|

CDN exclusions are not reflected in Used CSS - **Before submitting an issue please check that you’ve completed the following steps:**

- Made sure you’re on the latest version

- Used the search feature to ensure that the bug hasn’t been reported before

**Describe the bug**

When using CDN and RUCSS, CDN exclusions are not taken into the consideration.

**To Reproduce**

1. Enable CDN for all files

2. Enable RUCSS

3. Exclude URL from CDN (URL existing in used CSS) i.e /test.svg

4. visit the page and check used CSS

**Expected behavior**

Excluded URL not rewritten to the CNAME

**Additional**

Moving it from private repo:

https://github.com/wp-media/nodejs-treeshaker/issues/46

**Backlog Grooming (for WP Media dev team use only)**

- [ ] Reproduce the problem

- [ ] Identify the root cause

- [ ] Scope a solution

- [ ] Estimate the effort

|

non_defect

|

cdn exclusions are not reflected in used css before submitting an issue please check that you’ve completed the following steps made sure you’re on the latest version used the search feature to ensure that the bug hasn’t been reported before describe the bug when using cdn and rucss cdn exclusions are not taken into the consideration to reproduce enable cdn for all files enable rucss exclude url from cdn url existing in used css i e test svg visit the page and check used css expected behavior excluded url not rewritten to the cname additional moving it from private repo backlog grooming for wp media dev team use only reproduce the problem identify the root cause scope a solution estimate the effort

| 0

|

150

| 2,516,037,729

|

IssuesEvent

|

2015-01-15 22:47:42

|

cakephp/cakephp

|

https://api.github.com/repos/cakephp/cakephp

|

closed

|

Maximum nesting level when ExceptionRenderer throws exception

|

Defect

|

First, the simple test:

```php

class FaultyExceptionRenderer extends ExceptionRenderer {

public function render() {

throw new Exception('Error from renderer.');

}

}

/**

* Add to ErrorHandlerTest case.

* testExceptionRendererException method

*

* @return void

*/

public function testExceptionRendererException() {

if (file_exists(LOGS . 'error.log')) {

unlink(LOGS . 'error.log');

}

Configure::write('Exception.renderer', 'FaultyExceptionRenderer');

ErrorHandler::handleFatalError(E_USER_ERROR, 'Initial error', __FILE__ ,__LINE__);

}

```

Error: `Fatal Error Error: Maximum function nesting level of '100' reached, aborting!`.

I have not suggested a fix yet, because there are several ways of handling this. The goal is that the exception thrown from the ExceptionRenderer should be caught *once* and handled *once*.

Context:

```php

// ErrorHandler::handleException

try {

$error = new $renderer($exception);

$error->render();

} catch (Exception $e) {

set_error_handler(Configure::read('Error.handler')); // Should be using configured ErrorHandler

Configure::write('Error.trace', false); // trace is useless here since it's internal

$message = sprintf("[%s] %s\n%s", // Keeping same message format

get_class($e),

$e->getMessage(),

$e->getTraceAsString()

);

trigger_error($message, E_USER_ERROR);

```

The `trigger_error()` triggers `ErrorHandler::handleError`, which leads to `Error::handleFatalError`, and then back again to `ErrorHandler::handleException`, and the cycle continues.

|

1.0

|

Maximum nesting level when ExceptionRenderer throws exception - First, the simple test:

```php

class FaultyExceptionRenderer extends ExceptionRenderer {

public function render() {

throw new Exception('Error from renderer.');

}

}

/**

* Add to ErrorHandlerTest case.

* testExceptionRendererException method

*

* @return void

*/

public function testExceptionRendererException() {

if (file_exists(LOGS . 'error.log')) {

unlink(LOGS . 'error.log');

}

Configure::write('Exception.renderer', 'FaultyExceptionRenderer');

ErrorHandler::handleFatalError(E_USER_ERROR, 'Initial error', __FILE__ ,__LINE__);

}

```

Error: `Fatal Error Error: Maximum function nesting level of '100' reached, aborting!`.

I have not suggested a fix yet, because there are several ways of handling this. The goal is that the exception thrown from the ExceptionRenderer should be caught *once* and handled *once*.

Context:

```php

// ErrorHandler::handleException

try {

$error = new $renderer($exception);

$error->render();

} catch (Exception $e) {

set_error_handler(Configure::read('Error.handler')); // Should be using configured ErrorHandler

Configure::write('Error.trace', false); // trace is useless here since it's internal

$message = sprintf("[%s] %s\n%s", // Keeping same message format

get_class($e),

$e->getMessage(),

$e->getTraceAsString()

);

trigger_error($message, E_USER_ERROR);

```

The `trigger_error()` triggers `ErrorHandler::handleError`, which leads to `Error::handleFatalError`, and then back again to `ErrorHandler::handleException`, and the cycle continues.

|

defect

|

maximum nesting level when exceptionrenderer throws exception first the simple test php class faultyexceptionrenderer extends exceptionrenderer public function render throw new exception error from renderer add to errorhandlertest case testexceptionrendererexception method return void public function testexceptionrendererexception if file exists logs error log unlink logs error log configure write exception renderer faultyexceptionrenderer errorhandler handlefatalerror e user error initial error file line error fatal error error maximum function nesting level of reached aborting i have not suggested a fix yet because there are several ways of handling this the goal is that the exception thrown from the exceptionrenderer should be caught once and handled once context php errorhandler handleexception try error new renderer exception error render catch exception e set error handler configure read error handler should be using configured errorhandler configure write error trace false trace is useless here since it s internal message sprintf s n s keeping same message format get class e e getmessage e gettraceasstring trigger error message e user error the trigger error triggers errorhandler handleerror which leads to error handlefatalerror and then back again to errorhandler handleexception and the cycle continues

| 1

|

25,879

| 4,487,509,757

|

IssuesEvent

|

2016-08-30 01:27:04

|

schuel/hmmm

|

https://api.github.com/repos/schuel/hmmm

|

closed

|

show loading-icon for sub-templates

|

defect enhancement Layout

|

on multiple places it shows wrong message before loading content. This is quite confusing, specially with slow Internet connection when site changes again after 5-10 seconds.

### appearance

- [x] "There are no courses on this day" (/calendar)

- [x] "Relax, nothing happening today." (/frames/calendar)

- [x] events in a course are loaded later

- [x] groupnames are loaded with delay in coursList showing something like "removedGroup" first

- ...

can we implement a global loading icon?

|

1.0

|

show loading-icon for sub-templates - on multiple places it shows wrong message before loading content. This is quite confusing, specially with slow Internet connection when site changes again after 5-10 seconds.

### appearance

- [x] "There are no courses on this day" (/calendar)

- [x] "Relax, nothing happening today." (/frames/calendar)

- [x] events in a course are loaded later

- [x] groupnames are loaded with delay in coursList showing something like "removedGroup" first

- ...

can we implement a global loading icon?

|

defect

|

show loading icon for sub templates on multiple places it shows wrong message before loading content this is quite confusing specially with slow internet connection when site changes again after seconds appearance there are no courses on this day calendar relax nothing happening today frames calendar events in a course are loaded later groupnames are loaded with delay in courslist showing something like removedgroup first can we implement a global loading icon

| 1

|

281,871

| 21,315,444,540

|

IssuesEvent

|

2022-04-16 07:29:02

|

Kidsnd274/pe

|

https://api.github.com/repos/Kidsnd274/pe

|

opened

|

diagrams folder link incorrectly points to the old AB3 project in the developer guide

|

severity.Low type.DocumentationBug

|

diagrams folder link incorrectly points to the old AB3 project in the developer guide

The image below shows the incorrect link:

<!--session: 1650088649060-79fd5dc5-68c0-4a9a-8cfa-a788c21533ff-->

<!--Version: Web v3.4.2-->

|

1.0

|

diagrams folder link incorrectly points to the old AB3 project in the developer guide - diagrams folder link incorrectly points to the old AB3 project in the developer guide

The image below shows the incorrect link:

<!--session: 1650088649060-79fd5dc5-68c0-4a9a-8cfa-a788c21533ff-->

<!--Version: Web v3.4.2-->

|

non_defect

|

diagrams folder link incorrectly points to the old project in the developer guide diagrams folder link incorrectly points to the old project in the developer guide the image below shows the incorrect link

| 0

|

244,168

| 26,368,982,386

|

IssuesEvent

|

2023-01-11 18:56:53

|

dotnet/docs

|

https://api.github.com/repos/dotnet/docs

|

closed

|

Error "The parameter is incorrect" when decrypt file

|

support-request docs-experience Pri3 dotnet/prod dotnet-security/tech okr-health :pushpin: seQUESTered

|

I have included in my VB.NET project the System.Security.Cryptography to decript files, in my computer the functionality works OK but when I send the project to someone they are presented with the ERROR "The parameter is incorrect" and it shows the following Exception: 'System.Security.Cryptography.CryptographicException' in mscordlib.net

When the application try to decript the file in other computers it corrupted the file and it's unreadeble.

I don't know this is a error of my code or if this is a bug.

So I'm looking for some guidance to solve this problem

` Private Sub DecryptFile(ByVal inFile As String)

' Create instance of Aes for symmetric decryption of the data.

Dim aes As Aes = Aes.Create()

' Create byte arrays to get the length of the encrypted key and IV.

' These values were stored as 4 bytes each at the beginning of the encrypted package.

Dim LenK As Byte() = New Byte(4 - 1) {}

Dim LenIV As Byte() = New Byte(4 - 1) {}

' Construct the file name for the decrypted file.

Dim outFile As String = DecrFolder & (inFile.Substring(0, inFile.LastIndexOf(".")) & ".xlsx")

' Use FileStream objects to read the encrypted

' file (inFs) and save the decrypted file (outFs).

Using inFs As New FileStream((EncrFile & inFile), FileMode.Open)

inFs.Seek(0, SeekOrigin.Begin)

inFs.Read(LenK, 0, 3)

inFs.Seek(4, SeekOrigin.Begin)

inFs.Read(LenIV, 0, 3)

Dim lengthK As Integer = BitConverter.ToInt32(LenK, 0)

Dim lengthIV As Integer = BitConverter.ToInt32(LenIV, 0)

Dim startC As Integer = (lengthK + lengthIV + 8)

Dim lenC As Integer = (CType(inFs.Length, Integer) - startC)

Dim KeyEncrypted As Byte() = New Byte(lengthK - 1) {}

Dim IV As Byte() = New Byte(lengthIV - 1) {}

' Extract the key and IV starting from index 8

' after the length values.

inFs.Seek(8, SeekOrigin.Begin)

inFs.Read(KeyEncrypted, 0, lengthK)

inFs.Seek(8 + lengthK, SeekOrigin.Begin)

inFs.Read(IV, 0, lengthIV)

Directory.CreateDirectory(DecrFolder)

' User RSACryptoServiceProvider to decrypt the AES key

Dim KeyDecrypted As Byte() = _rsa.Decrypt(KeyEncrypted, False)

' Decrypt the key.