| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 757) | labels (string, length 4 to 664) | body (string, length 3 to 261k) | index (string, 10 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 232k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

29,933

| 5,959,855,772

|

IssuesEvent

|

2017-05-29 12:26:40

|

contao/core-bundle

|

https://api.github.com/repos/contao/core-bundle

|

closed

|

[4.4.0-dev] Commit #8ecb142 results in bad font rendering on Chrome (Windows)

|

defect

|

At least on my Desktop the font renders almost unreadable.

Especially in light font width some chars are to thin and distorted (e.g. the letter "e").

Can someone else confirm this?

|

1.0

|

[4.4.0-dev] Commit #8ecb142 results in bad font rendering on Chrome (Windows) - At least on my Desktop the font renders almost unreadable.

Especially in light font width some chars are to thin and distorted (e.g. the letter "e").

Can someone else confirm this?

|

defect

|

commit results in bad font rendering on chrome windows at least on my desktop the font renders almost unreadable especially in light font width some chars are to thin and distorted e g the letter e can someone else confirm this

| 1

|

103,673

| 16,603,670,776

|

IssuesEvent

|

2021-06-01 23:36:09

|

hygieia/hygieia-common

|

https://api.github.com/repos/hygieia/hygieia-common

|

opened

|

CVE-2020-13943 (Medium) detected in tomcat-embed-core-9.0.14.jar

|

security vulnerability

|

## CVE-2020-13943 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-9.0.14.jar</b></p></summary>

<p>Core Tomcat implementation</p>

<p>Path to dependency file: hygieia-common/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/apache/tomcat/embed/tomcat-embed-core/9.0.14/tomcat-embed-core-9.0.14.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-web-2.1.2.RELEASE.jar (Root Library)

- spring-boot-starter-tomcat-2.1.2.RELEASE.jar

- :x: **tomcat-embed-core-9.0.14.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://api.github.com/repos/hygieia/hygieia-common/commits/b8fbfc18552132520e52029d9b0fc0a1db09f115">b8fbfc18552132520e52029d9b0fc0a1db09f115</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

If an HTTP/2 client connecting to Apache Tomcat 10.0.0-M1 to 10.0.0-M7, 9.0.0.M1 to 9.0.37 or 8.5.0 to 8.5.57 exceeded the agreed maximum number of concurrent streams for a connection (in violation of the HTTP/2 protocol), it was possible that a subsequent request made on that connection could contain HTTP headers - including HTTP/2 pseudo headers - from a previous request rather than the intended headers. This could lead to users seeing responses for unexpected resources.

<p>Publish Date: 2020-10-12

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-13943>CVE-2020-13943</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://lists.apache.org/thread.html/r4a390027eb27e4550142fac6c8317cc684b157ae314d31514747f307%40%3Cannounce.tomcat.apache.org%3E">https://lists.apache.org/thread.html/r4a390027eb27e4550142fac6c8317cc684b157ae314d31514747f307%40%3Cannounce.tomcat.apache.org%3E</a></p>

<p>Release Date: 2020-10-12</p>

<p>Fix Resolution: org.apache.tomcat:tomcat-coyote:8.5.58,9.0.38,10.0.0-M8;org.apache.tomcat.embed:tomcat-embed-core:8.5.58,9.0.38,10.0.0-M8</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2020-13943 (Medium) detected in tomcat-embed-core-9.0.14.jar - ## CVE-2020-13943 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>tomcat-embed-core-9.0.14.jar</b></p></summary>

<p>Core Tomcat implementation</p>

<p>Path to dependency file: hygieia-common/pom.xml</p>

<p>Path to vulnerable library: /home/wss-scanner/.m2/repository/org/apache/tomcat/embed/tomcat-embed-core/9.0.14/tomcat-embed-core-9.0.14.jar</p>

<p>

Dependency Hierarchy:

- spring-boot-starter-web-2.1.2.RELEASE.jar (Root Library)

- spring-boot-starter-tomcat-2.1.2.RELEASE.jar

- :x: **tomcat-embed-core-9.0.14.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://api.github.com/repos/hygieia/hygieia-common/commits/b8fbfc18552132520e52029d9b0fc0a1db09f115">b8fbfc18552132520e52029d9b0fc0a1db09f115</a></p>

<p>Found in base branch: <b>main</b></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

If an HTTP/2 client connecting to Apache Tomcat 10.0.0-M1 to 10.0.0-M7, 9.0.0.M1 to 9.0.37 or 8.5.0 to 8.5.57 exceeded the agreed maximum number of concurrent streams for a connection (in violation of the HTTP/2 protocol), it was possible that a subsequent request made on that connection could contain HTTP headers - including HTTP/2 pseudo headers - from a previous request rather than the intended headers. This could lead to users seeing responses for unexpected resources.

<p>Publish Date: 2020-10-12

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2020-13943>CVE-2020-13943</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>4.3</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: Network

- Attack Complexity: Low

- Privileges Required: Low

- User Interaction: None

- Scope: Unchanged

- Impact Metrics:

- Confidentiality Impact: Low

- Integrity Impact: None

- Availability Impact: None

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://lists.apache.org/thread.html/r4a390027eb27e4550142fac6c8317cc684b157ae314d31514747f307%40%3Cannounce.tomcat.apache.org%3E">https://lists.apache.org/thread.html/r4a390027eb27e4550142fac6c8317cc684b157ae314d31514747f307%40%3Cannounce.tomcat.apache.org%3E</a></p>

<p>Release Date: 2020-10-12</p>

<p>Fix Resolution: org.apache.tomcat:tomcat-coyote:8.5.58,9.0.38,10.0.0-M8;org.apache.tomcat.embed:tomcat-embed-core:8.5.58,9.0.38,10.0.0-M8</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

cve medium detected in tomcat embed core jar cve medium severity vulnerability vulnerable library tomcat embed core jar core tomcat implementation path to dependency file hygieia common pom xml path to vulnerable library home wss scanner repository org apache tomcat embed tomcat embed core tomcat embed core jar dependency hierarchy spring boot starter web release jar root library spring boot starter tomcat release jar x tomcat embed core jar vulnerable library found in head commit a href found in base branch main vulnerability details if an http client connecting to apache tomcat to to or to exceeded the agreed maximum number of concurrent streams for a connection in violation of the http protocol it was possible that a subsequent request made on that connection could contain http headers including http pseudo headers from a previous request rather than the intended headers this could lead to users seeing responses for unexpected resources publish date url a href cvss score details base score metrics exploitability metrics attack vector network attack complexity low privileges required low user interaction none scope unchanged impact metrics confidentiality impact low integrity impact none availability impact none for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution org apache tomcat tomcat coyote org apache tomcat embed tomcat embed core step up your open source security game with whitesource

| 0

|

133,694

| 18,299,043,932

|

IssuesEvent

|

2021-10-05 23:55:46

|

bsbtd/Teste

|

https://api.github.com/repos/bsbtd/Teste

|

opened

|

CVE-2013-0248 (Medium) detected in commons-fileupload-1.2.jar

|

security vulnerability

|

## CVE-2013-0248 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-fileupload-1.2.jar</b></p></summary>

<p>The FileUpload component provides a simple yet flexible means of adding support for multipart

file upload functionality to servlets and web applications.</p>

<p>Library home page: <a href="http://jakarta.apache.org/commons/fileupload/">http://jakarta.apache.org/commons/fileupload/</a></p>

<p>Path to vulnerable library: upload-1.2.jar</p>

<p>

Dependency Hierarchy:

- :x: **commons-fileupload-1.2.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/bsbtd/Teste/commit/64dde89c50c07496423c4d4a865f2e16b92399ad">64dde89c50c07496423c4d4a865f2e16b92399ad</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The default configuration of javax.servlet.context.tempdir in Apache Commons FileUpload 1.0 through 1.2.2 uses the /tmp directory for uploaded files, which allows local users to overwrite arbitrary files via an unspecified symlink attack.

<p>Publish Date: 2013-03-15

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2013-0248>CVE-2013-0248</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: N/A

- Attack Complexity: N/A

- Privileges Required: N/A

- User Interaction: N/A

- Scope: N/A

- Impact Metrics:

- Confidentiality Impact: N/A

- Integrity Impact: N/A

- Availability Impact: N/A

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2013-0248">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2013-0248</a></p>

<p>Release Date: 2013-03-15</p>

<p>Fix Resolution: 1.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

True

|

CVE-2013-0248 (Medium) detected in commons-fileupload-1.2.jar - ## CVE-2013-0248 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>commons-fileupload-1.2.jar</b></p></summary>

<p>The FileUpload component provides a simple yet flexible means of adding support for multipart

file upload functionality to servlets and web applications.</p>

<p>Library home page: <a href="http://jakarta.apache.org/commons/fileupload/">http://jakarta.apache.org/commons/fileupload/</a></p>

<p>Path to vulnerable library: upload-1.2.jar</p>

<p>

Dependency Hierarchy:

- :x: **commons-fileupload-1.2.jar** (Vulnerable Library)

<p>Found in HEAD commit: <a href="https://github.com/bsbtd/Teste/commit/64dde89c50c07496423c4d4a865f2e16b92399ad">64dde89c50c07496423c4d4a865f2e16b92399ad</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/medium_vul.png' width=19 height=20> Vulnerability Details</summary>

<p>

The default configuration of javax.servlet.context.tempdir in Apache Commons FileUpload 1.0 through 1.2.2 uses the /tmp directory for uploaded files, which allows local users to overwrite arbitrary files via an unspecified symlink attack.

<p>Publish Date: 2013-03-15

<p>URL: <a href=https://vuln.whitesourcesoftware.com/vulnerability/CVE-2013-0248>CVE-2013-0248</a></p>

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/cvss3.png' width=19 height=20> CVSS 3 Score Details (<b>5.5</b>)</summary>

<p>

Base Score Metrics:

- Exploitability Metrics:

- Attack Vector: N/A

- Attack Complexity: N/A

- Privileges Required: N/A

- User Interaction: N/A

- Scope: N/A

- Impact Metrics:

- Confidentiality Impact: N/A

- Integrity Impact: N/A

- Availability Impact: N/A

</p>

For more information on CVSS3 Scores, click <a href="https://www.first.org/cvss/calculator/3.0">here</a>.

</p>

</details>

<p></p>

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/suggested_fix.png' width=19 height=20> Suggested Fix</summary>

<p>

<p>Type: Upgrade version</p>

<p>Origin: <a href="https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2013-0248">https://cve.mitre.org/cgi-bin/cvename.cgi?name=CVE-2013-0248</a></p>

<p>Release Date: 2013-03-15</p>

<p>Fix Resolution: 1.3</p>

</p>

</details>

<p></p>

***

Step up your Open Source Security Game with WhiteSource [here](https://www.whitesourcesoftware.com/full_solution_bolt_github)

|

non_defect

|

cve medium detected in commons fileupload jar cve medium severity vulnerability vulnerable library commons fileupload jar the fileupload component provides a simple yet flexible means of adding support for multipart file upload functionality to servlets and web applications library home page a href path to vulnerable library upload jar dependency hierarchy x commons fileupload jar vulnerable library found in head commit a href vulnerability details the default configuration of javax servlet context tempdir in apache commons fileupload through uses the tmp directory for uploaded files which allows local users to overwrite arbitrary files via an unspecified symlink attack publish date url a href cvss score details base score metrics exploitability metrics attack vector n a attack complexity n a privileges required n a user interaction n a scope n a impact metrics confidentiality impact n a integrity impact n a availability impact n a for more information on scores click a href suggested fix type upgrade version origin a href release date fix resolution step up your open source security game with whitesource

| 0

|

167,331

| 14,108,336,983

|

IssuesEvent

|

2020-11-06 17:38:58

|

imsanjoykb/Chatbot-Application

|

https://api.github.com/repos/imsanjoykb/Chatbot-Application

|

opened

|

code sets up your registering in your firebase database.

|

documentation

|

As you might wonder, the Firebase database is a NoSQL which essentially means, you don’t need to query stuff using SQL.

Firebase uses reference method to retrieve and put the values.

So we have to decide on what and how to make our database structure. Here we also are going to make user sign up and sign in using a custom username and password, so we’ve to keep this in mind too.

The database structure that I’ve come up for this app is based on simplicity. We keep authentication details in a parent node named “users” and messages in another parent node named “messages”.

Inside messages we also keep track of who messaged whom by the order of the usernames.

|

1.0

|

code sets up your registering in your firebase database. - As you might wonder, the Firebase database is a NoSQL which essentially means, you don’t need to query stuff using SQL.

Firebase uses reference method to retrieve and put the values.

So we have to decide on what and how to make our database structure. Here we also are going to make user sign up and sign in using a custom username and password, so we’ve to keep this in mind too.

The database structure that I’ve come up for this app is based on simplicity. We keep authentication details in a parent node named “users” and messages in another parent node named “messages”.

Inside messages we also keep track of who messaged whom by the order of the usernames.

|

non_defect

|

code sets up your registering in your firebase database as you might wonder the firebase database is a nosql which essentially means you don’t need to query stuff using sql firebase uses reference method to retrieve and put the values so we have to decide on what and how to make our database structure here we also are going to make user sign up and sign in using a custom username and password so we’ve to keep this in mind too the database structure that i’ve come up for this app is based on simplicity we keep authentication details in a parent node named “users” and messages in another parent node named “messages” inside messages we also keep track of who messaged whom by the order of the usernames

| 0

|

559

| 2,570,824,562

|

IssuesEvent

|

2015-02-10 12:38:39

|

itm/testbed-runtime

|

https://api.github.com/repos/itm/testbed-runtime

|

closed

|

JSON representation of available devices empty

|

Defect

|

Link "Get JSON representation" in WiseGui returns empty array.

|

1.0

|

JSON representation of available devices empty - Link "Get JSON representation" in WiseGui returns empty array.

|

defect

|

json representation of available devices empty link get json representation in wisegui returns empty array

| 1

|

38,832

| 8,967,397,515

|

IssuesEvent

|

2019-01-29 03:10:45

|

svigerske/Ipopt

|

https://api.github.com/repos/svigerske/Ipopt

|

closed

|

Error installing / configuring ASL

|

Ipopt defect

|

Issue created by migration from Trac.

Original creator: rajhanschinmay

Original creation time: 2017-09-15 14:29:35

Assignee: ipopt-team

Version: 3.12

When I am running ./get.ASL command in Linux terminal, it says:

Applying path for MinGW

/home/chinmay/CoinIpopt/ThirdParty/ASL/get.ASL: 57: /home/chinmay/CoinIpopt/ThirdParty/ASL/get.ASL: cannot open mingw.patch: No such file

How to solve this?

|

1.0

|

Error installing / configuring ASL - Issue created by migration from Trac.

Original creator: rajhanschinmay

Original creation time: 2017-09-15 14:29:35

Assignee: ipopt-team

Version: 3.12

When I am running ./get.ASL command in Linux terminal, it says:

Applying path for MinGW

/home/chinmay/CoinIpopt/ThirdParty/ASL/get.ASL: 57: /home/chinmay/CoinIpopt/ThirdParty/ASL/get.ASL: cannot open mingw.patch: No such file

How to solve this?

|

defect

|

error installing configuring asl issue created by migration from trac original creator rajhanschinmay original creation time assignee ipopt team version when i am running get asl command in linux terminal it says applying path for mingw home chinmay coinipopt thirdparty asl get asl home chinmay coinipopt thirdparty asl get asl cannot open mingw patch no such file how to solve this

| 1

|

67,267

| 20,961,597,574

|

IssuesEvent

|

2022-03-27 21:46:35

|

abedmaatalla/imsdroid

|

https://api.github.com/repos/abedmaatalla/imsdroid

|

closed

|

Can imsdroid support LAN? neither WLAN nor 3G.

|

Priority-Medium Type-Defect auto-migrated

|

```

What steps will reproduce the problem?

1.Install imsdroid in Android system by LAN accessing Network

2.Set Identity and Network

3.Imsdroid show "NO active network"

Now does imsdroid not support LAN? How can I solve this problem?

```

Original issue reported on code.google.com by `ldf198...@163.com` on 4 Jan 2011 at 2:41

|

1.0

|

Can imsdroid support LAN? neither WLAN nor 3G. - ```

What steps will reproduce the problem?

1.Install imsdroid in Android system by LAN accessing Network

2.Set Identity and Network

3.Imsdroid show "NO active network"

Now does imsdroid not support LAN? How can I solve this problem?

```

Original issue reported on code.google.com by `ldf198...@163.com` on 4 Jan 2011 at 2:41

|

defect

|

can imsdroid support lan neither wlan nor what steps will reproduce the problem install imsdroid in android system by lan accessing network set identity and network imsdroid show no active network now does imsdroid not support lan how can i solve this problem original issue reported on code google com by com on jan at

| 1

|

64,080

| 18,165,646,381

|

IssuesEvent

|

2021-09-27 14:22:01

|

vector-im/element-web

|

https://api.github.com/repos/vector-im/element-web

|

opened

|

Can't enable message search

|

T-Defect

|

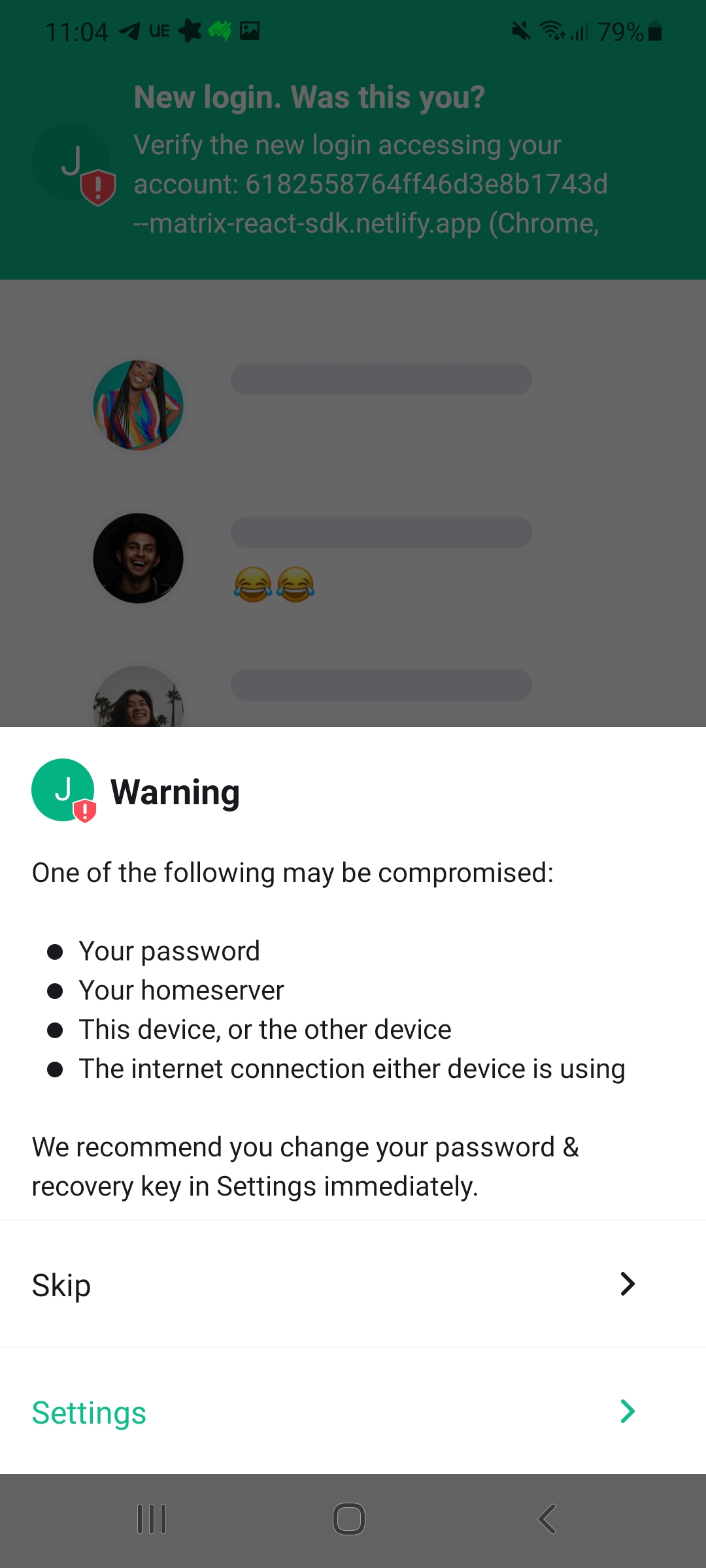

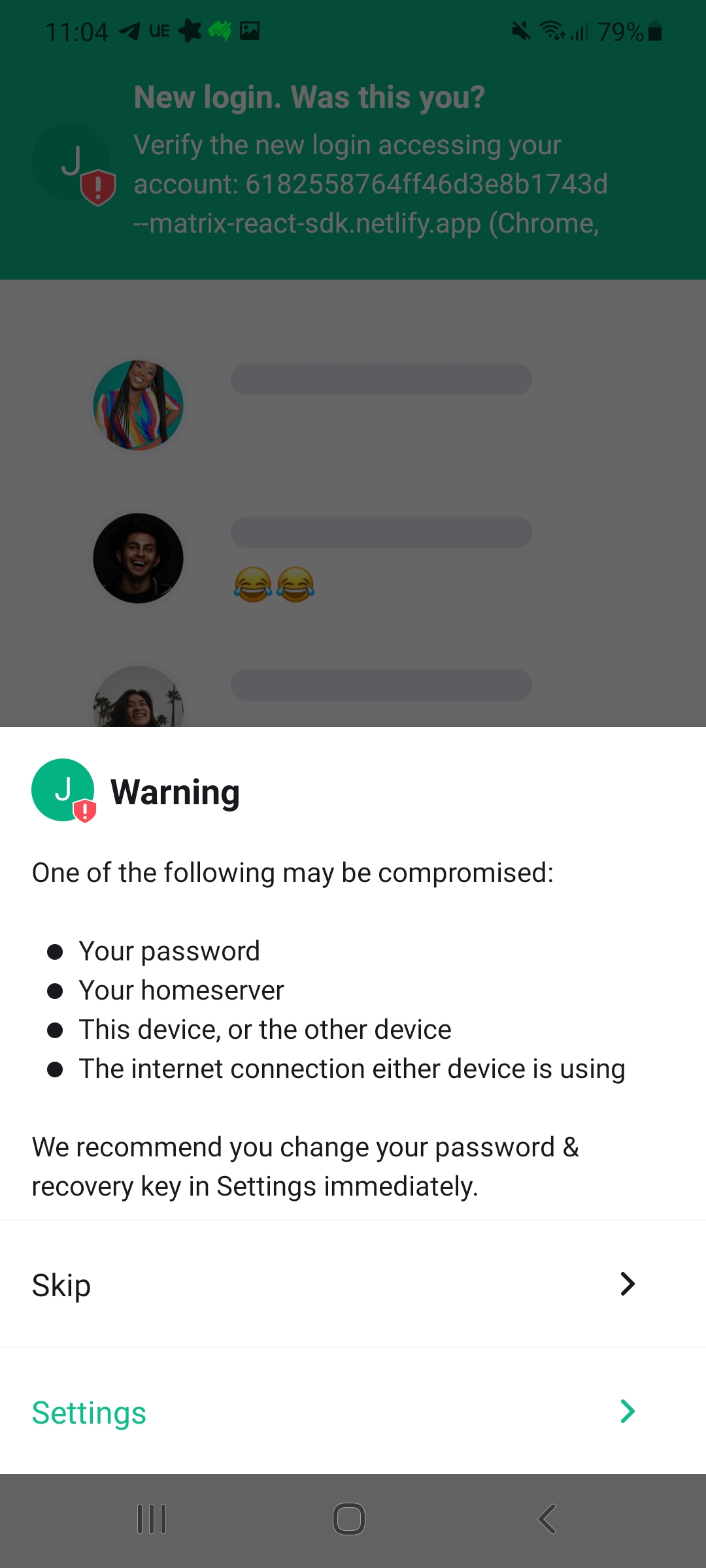

### Steps to reproduce

1. I used to have seshat-search enabled and working on sway

2. I logged out of sway and into gnome and use element in gnome

3. I log back into sway

4. search not available: sqlcypher error

5. reset matrix-org/matrix-react-sdk#5806

6. enable

### What happened?

### What did you expect?

start indexing, search becomes available

### What happened?

stuck spinning here

### Related

#14229

### Operating system

arch

### Application version

Element Nightly version: 2021092701 Olm version: 3.2.3

### How did you install the app?

aur/nightly-bin

### Homeserver

private

### Have you submitted a rageshake?

Yes

|

1.0

|

Can't enable message search - ### Steps to reproduce

1. I used to have seshat-search enabled and working on sway

2. I logged out of sway and into gnome and use element in gnome

3. I log back into sway

4. search not available: sqlcypher error

5. reset matrix-org/matrix-react-sdk#5806

6. enable

### What happened?

### What did you expect?

start indexing, search becomes available

### What happened?

stuck spinning here

### Related

#14229

### Operating system

arch

### Application version

Element Nightly version: 2021092701 Olm version: 3.2.3

### How did you install the app?

aur/nightly-bin

### Homeserver

private

### Have you submitted a rageshake?

Yes

|

defect

|

can t enable message search steps to reproduce i used to have seshat search enabled and working on sway i logged out of sway and into gnome and use element in gnome i log back into sway search not available sqlcypher error reset matrix org matrix react sdk enable what happened what did you expect start indexing search becomes available what happened stuck spinning here related operating system arch application version element nightly version olm version how did you install the app aur nightly bin homeserver private have you submitted a rageshake yes

| 1

|

29,733

| 5,846,049,814

|

IssuesEvent

|

2017-05-10 15:24:41

|

jOOQ/jOOQ

|

https://api.github.com/repos/jOOQ/jOOQ

|

closed

|

INSERT INTO .. SET Record statement should not take defaulted null values into consideration

|

C: Functionality P: Medium R: Invalid T: Defect

|

Similar to other API elements, the `INSERT INTO .. SET record` API should not explicitly set values that are:

- `null` (in Java)

- `NOT NULL` (in the database)

---

See also

- #2700

- #4161

- https://groups.google.com/forum/#!topic/jooq-user/8hwhDanETYs

|

1.0

|

INSERT INTO .. SET Record statement should not take defaulted null values into consideration - Similar to other API elements, the `INSERT INTO .. SET record` API should not explicitly set values that are:

- `null` (in Java)

- `NOT NULL` (in the database)

---

See also

- #2700

- #4161

- https://groups.google.com/forum/#!topic/jooq-user/8hwhDanETYs

|

defect

|

insert into set record statement should not take defaulted null values into consideration similar to other api elements the insert into set record api should not explicitly set values that are null in java not null in the database see also

| 1

|

53,739

| 13,262,213,922

|

IssuesEvent

|

2020-08-20 21:19:27

|

icecube-trac/tix4

|

https://api.github.com/repos/icecube-trac/tix4

|

closed

|

clsim tablemaker deadlock (Trac #1984)

|

Migrated from Trac combo simulation defect

|

When running the clsim tablemaker, once in a while (maybe 5-10% of my jobs) get stuck at a random time and wait forever at 0% CPU.

Running on Ubuntu16.04 w/ Intel openCL runtime and Intel(R) Xeon(R) CPU E5-2630 v3 @ 2.40GHz

GDB revealed that it gets stuck at:

```text

pthread_cond_wait@@GLIBC_2.3.2 () at ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S:185

185 ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S: No such file or directory.

```

backtrace:

```text

(gdb) backtrace

#0 pthread_cond_wait@@GLIBC_2.3.2 () at ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S:185

#1 0x00007fffe1d9a50f in I3CLSimTabulatorModule::DAQ(boost::shared_ptr<I3Frame>) () from /home/peller/build/simulation/trunk/lib/libclsim.so

#2 0x00007ffff583768e in I3Module::Process() () from /home/peller/build/simulation/trunk/lib/libicetray.so

#3 0x00007ffff583a689 in I3Module::Process_() () from /home/peller/build/simulation/trunk/lib/libicetray.so

#4 0x00007ffff58351ad in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#5 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#6 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#7 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#8 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#9 0x00007ffff57d79a9 in I3Tray::Execute(unsigned int) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#10 0x00007ffff592d56e in boost::python::objects::caller_py_function_impl<boost::python::detail::caller<void (I3Tray::*)(), boost::python::default_call_policies, boost::mpl::vector2<void, I3Tray&> > >::operator()(_object*, _object*) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#11 0x00007ffff5248c8d in boost::python::objects::function::call(_object*, _object*) const ()

from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

#12 0x00007ffff5248e88 in boost::detail::function::void_function_ref_invoker0<boost::python::objects::(anonymous namespace)::bind_return, void>::invoke(boost::detail::function::function_buffer&) () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

#13 0x00007ffff5250ee3 in boost::python::detail::exception_handler::operator()(boost::function0<void> const&) const ()

from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

#14 0x00007fffed72e263 in boost::detail::function::function_obj_invoker2<boost::_bi::bind_t<bool, boost::python::detail::translate_exception<not_found_exception, void (*)(not_found_exception const&)>, boost::_bi::list3<boost::arg<1>, boost::arg<2>, boost::_bi::value<void (*)(not_found_exception const&)> > >, bool, boost::python::detail::exception_handler const&, boost::function0<void> const&>::invoke(boost::detail::function::function_buffer&, boost::python::detail::exception_handler const&, boost::function0<void> const&) () from /home/peller/build/simulation/trunk/lib/icecube/dataclasses.so

#15 0x00007ffff5250c9d in boost::python::handle_exception_impl(boost::function0<void>) ()

from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

#16 0x00007ffff5246059 in function_call () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

#17 0x00007ffff7a27333 in PyObject_Call (func=func@entry=0x74c350, arg=arg@entry=0x7fffcfdb62d0, kw=kw@entry=0x0) at Objects/abstract.c:2529

#18 0x00007ffff7add212 in do_call (nk=<optimized out>, na=1, pp_stack=0x7fffffffc140, func=0x74c350) at Python/ceval.c:4253

#19 call_function (oparg=<optimized out>, pp_stack=0x7fffffffc140) at Python/ceval.c:4058

#20 PyEval_EvalFrameEx (f=f@entry=0x781ff0, throwflag=throwflag@entry=0) at Python/ceval.c:2681

#21 0x00007ffff7ae026c in PyEval_EvalCodeEx (co=<optimized out>, globals=<optimized out>, locals=locals@entry=0x0, args=<optimized out>, argcount=argcount@entry=1,

kws=<optimized out>, kwcount=0, defs=0x0, defcount=0, closure=0x0) at Python/ceval.c:3267

#22 0x00007ffff7adf05a in fast_function (nk=0, na=1, n=<optimized out>, pp_stack=0x7fffffffc330, func=0x7fffdad056e0) at Python/ceval.c:4131

#23 call_function (oparg=<optimized out>, pp_stack=0x7fffffffc330) at Python/ceval.c:4056

#24 PyEval_EvalFrameEx (f=f@entry=0x685720, throwflag=throwflag@entry=0) at Python/ceval.c:2681

#25 0x00007ffff7ae026c in PyEval_EvalCodeEx (co=co@entry=0x7ffff7ece8b0, globals=globals@entry=0x7ffff7f59168, locals=locals@entry=0x7ffff7f59168,

args=args@entry=0x0, argcount=argcount@entry=0, kws=kws@entry=0x0, kwcount=0, defs=0x0, defcount=0, closure=0x0) at Python/ceval.c:3267

#26 0x00007ffff7ae0389 in PyEval_EvalCode (co=co@entry=0x7ffff7ece8b0, globals=globals@entry=0x7ffff7f59168, locals=locals@entry=0x7ffff7f59168) at Python/ceval.c:669

#27 0x00007ffff7b0429a in run_mod (arena=0x673c60, flags=0x7fffffffc550, locals=0x7ffff7f59168, globals=0x7ffff7f59168,

filename=0x63fb30 "/home/peller/build/simulation/trunk/lib/libdataio.so", mod=<optimized out>) at Python/pythonrun.c:1371

#28 PyRun_FileExFlags (fp=fp@entry=0x63fb30, filename=filename@entry=0x7fffffffcb47 "generate_table.py", start=start@entry=257, globals=globals@entry=0x7ffff7f59168,

locals=locals@entry=0x7ffff7f59168, closeit=closeit@entry=1, flags=0x7fffffffc550) at Python/pythonrun.c:1357

#29 0x00007ffff7b05797 in PyRun_SimpleFileExFlags (fp=fp@entry=0x63fb30, filename=0x7fffffffcb47 "generate_table.py", closeit=1, flags=flags@entry=0x7fffffffc550)

at Python/pythonrun.c:949

#30 0x00007ffff7b05e53 in PyRun_AnyFileExFlags (fp=fp@entry=0x63fb30, filename=<optimized out>, closeit=<optimized out>, flags=flags@entry=0x7fffffffc550)

at Python/pythonrun.c:753

#31 0x00007ffff7b1c041 in Py_Main (argc=<optimized out>, argv=<optimized out>) at Modules/main.c:640

#32 0x00007ffff740e830 in __libc_start_main (main=0x4006b0 <main>, argc=8, argv=0x7fffffffc718, init=<optimized out>, fini=<optimized out>,

rtld_fini=<optimized out>, stack_end=0x7fffffffc708) at ../csu/libc-start.c:291

#33 0x00000000004006e9 in _start ()

```

full backtrace:

```text

(gdb) backtrace full

#0 pthread_cond_wait@@GLIBC_2.3.2 () at ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S:185

No locals.

#1 0x00007fffe1d9a50f in I3CLSimTabulatorModule::DAQ(boost::shared_ptr<I3Frame>) () from /home/peller/build/simulation/trunk/lib/libclsim.so

No symbol table info available.

#2 0x00007ffff583768e in I3Module::Process() () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#3 0x00007ffff583a689 in I3Module::Process_() () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#4 0x00007ffff58351ad in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#5 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#6 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#7 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#8 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#9 0x00007ffff57d79a9 in I3Tray::Execute(unsigned int) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#10 0x00007ffff592d56e in boost::python::objects::caller_py_function_impl<boost::python::detail::caller<void (I3Tray::*)(), boost::python::default_call_policies, boost::mpl::vector2<void, I3Tray&> > >::operator()(_object*, _object*) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#11 0x00007ffff5248c8d in boost::python::objects::function::call(_object*, _object*) const ()

from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

No symbol table info available.

#12 0x00007ffff5248e88 in boost::detail::function::void_function_ref_invoker0<boost::python::objects::(anonymous namespace)::bind_return, void>::invoke(boost::detail::function::function_buffer&) () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

No symbol table info available.

#13 0x00007ffff5250ee3 in boost::python::detail::exception_handler::operator()(boost::function0<void> const&) const ()

from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

No symbol table info available.

#14 0x00007fffed72e263 in boost::detail::function::function_obj_invoker2<boost::_bi::bind_t<bool, boost::python::detail::translate_exception<not_found_exception, void (*)(not_found_exception const&)>, boost::_bi::list3<boost::arg<1>, boost::arg<2>, boost::_bi::value<void (*)(not_found_exception const&)> > >, bool, boost::python::detail::exception_handler const&, boost::function0<void> const&>::invoke(boost::detail::function::function_buffer&, boost::python::detail::exception_handler const&, boost::function0<void> const&) ()

from /home/peller/build/simulation/trunk/lib/icecube/dataclasses.so

No symbol table info available.

#15 0x00007ffff5250c9d in boost::python::handle_exception_impl(boost::function0<void>) () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

No symbol table info available.

#16 0x00007ffff5246059 in function_call () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

No symbol table info available.

#17 0x00007ffff7a27333 in PyObject_Call (func=func@entry=0x74c350, arg=arg@entry=0x7fffcfdb62d0, kw=kw@entry=0x0) at Objects/abstract.c:2529

result = <optimized out>

call = 0x7ffff5245ff0 <function_call>

#18 0x00007ffff7add212 in do_call (nk=<optimized out>, na=1, pp_stack=0x7fffffffc140, func=0x74c350) at Python/ceval.c:4253

callargs = <optimized out>

kwdict = <optimized out>

result = 0x0

#19 call_function (oparg=<optimized out>, pp_stack=0x7fffffffc140) at Python/ceval.c:4058

func = 0x74c350

w = <optimized out>

na = 1

nk = <optimized out>

n = <optimized out>

pfunc = 0x782178

x = <optimized out>

#20 PyEval_EvalFrameEx (f=f@entry=0x781ff0, throwflag=throwflag@entry=0) at Python/ceval.c:2681

sp = 0x782178

stack_pointer = <optimized out>

next_instr = <optimized out>

opcode = <optimized out>

oparg = <optimized out>

why = WHY_NOT

err = 0

x = <optimized out>

v = <optimized out>

w = <optimized out>

u = <optimized out>

t = <optimized out>

stream = 0x0

fastlocals = 0x782168

freevars = <optimized out>

retval = <optimized out>

tstate = <optimized out>

co = <optimized out>

instr_ub = -1

instr_lb = 0

instr_prev = -1

first_instr = <optimized out>

names = <optimized out>

consts = <optimized out>

#21 0x00007ffff7ae026c in PyEval_EvalCodeEx (co=<optimized out>, globals=<optimized out>, locals=locals@entry=0x0, args=<optimized out>, argcount=argcount@entry=1,

kws=<optimized out>, kwcount=0, defs=0x0, defcount=0, closure=0x0) at Python/ceval.c:3267

f = 0x781ff0

retval = 0x0

fastlocals = 0x782168

freevars = 0x782178

tstate = 0x6020a0

x = <optimized out>

u = <optimized out>

#22 0x00007ffff7adf05a in fast_function (nk=0, na=1, n=<optimized out>, pp_stack=0x7fffffffc330, func=0x7fffdad056e0) at Python/ceval.c:4131

co = <optimized out>

nd = <optimized out>

globals = <optimized out>

argdefs = <optimized out>

d = <optimized out>

#23 call_function (oparg=<optimized out>, pp_stack=0x7fffffffc330) at Python/ceval.c:4056

func = 0x7fffdad056e0

w = <optimized out>

na = 1

nk = 0

n = <optimized out>

pfunc = 0x685898

x = <optimized out>

#24 PyEval_EvalFrameEx (f=f@entry=0x685720, throwflag=throwflag@entry=0) at Python/ceval.c:2681

sp = 0x6858a0

stack_pointer = <optimized out>

next_instr = <optimized out>

opcode = <optimized out>

oparg = <optimized out>

why = WHY_NOT

err = 0

x = <optimized out>

v = <optimized out>

w = <optimized out>

u = <optimized out>

t = <optimized out>

stream = 0x0

fastlocals = 0x685898

freevars = <optimized out>

retval = <optimized out>

tstate = <optimized out>

co = <optimized out>

instr_ub = -1

instr_lb = 0

instr_prev = -1

first_instr = <optimized out>

names = <optimized out>

consts = <optimized out>

#25 0x00007ffff7ae026c in PyEval_EvalCodeEx (co=co@entry=0x7ffff7ece8b0, globals=globals@entry=0x7ffff7f59168, locals=locals@entry=0x7ffff7f59168, args=args@entry=0x0,

argcount=argcount@entry=0, kws=kws@entry=0x0, kwcount=0, defs=0x0, defcount=0, closure=0x0) at Python/ceval.c:3267

f = 0x685720

retval = 0x0

fastlocals = 0x685898

freevars = 0x685898

tstate = 0x6020a0

x = <optimized out>

u = <optimized out>

#26 0x00007ffff7ae0389 in PyEval_EvalCode (co=co@entry=0x7ffff7ece8b0, globals=globals@entry=0x7ffff7f59168, locals=locals@entry=0x7ffff7f59168) at Python/ceval.c:669

No locals.

#27 0x00007ffff7b0429a in run_mod (arena=0x673c60, flags=0x7fffffffc550, locals=0x7ffff7f59168, globals=0x7ffff7f59168,

filename=0x63fb30 "/home/peller/build/simulation/trunk/lib/libdataio.so", mod=<optimized out>) at Python/pythonrun.c:1371

co = 0x7ffff7ece8b0

v = <optimized out>

#28 PyRun_FileExFlags (fp=fp@entry=0x63fb30, filename=filename@entry=0x7fffffffcb47 "generate_table.py", start=start@entry=257, globals=globals@entry=0x7ffff7f59168,

locals=locals@entry=0x7ffff7f59168, closeit=closeit@entry=1, flags=0x7fffffffc550) at Python/pythonrun.c:1357

mod = <optimized out>

arena = 0x673c60

#29 0x00007ffff7b05797 in PyRun_SimpleFileExFlags (fp=fp@entry=0x63fb30, filename=0x7fffffffcb47 "generate_table.py", closeit=1, flags=flags@entry=0x7fffffffc550)

at Python/pythonrun.c:949

m = 0x7ffff7f45be8

d = 0x7ffff7f59168

v = <optimized out>

ext = 0x7fffffffcb54 "e.py"

set_file_name = 1

len = <optimized out>

ret = -1

#30 0x00007ffff7b05e53 in PyRun_AnyFileExFlags (fp=fp@entry=0x63fb30, filename=<optimized out>, closeit=<optimized out>, flags=flags@entry=0x7fffffffc550) at Python/pythonrun.c:753

No locals.

#31 0x00007ffff7b1c041 in Py_Main (argc=<optimized out>, argv=<optimized out>) at Modules/main.c:640

c = <optimized out>

sts = <optimized out>

command = 0x0

filename = 0x7fffffffcb47 "generate_table.py"

module = 0x0

fp = 0x63fb30

p = <optimized out>

unbuffered = 0

skipfirstline = 0

stdin_is_interactive = 1

help = <optimized out>

version = <optimized out>

saw_unbuffered_flag = <optimized out>

cf = {cf_flags = 0}

#32 0x00007ffff740e830 in __libc_start_main (main=0x4006b0 <main>, argc=8, argv=0x7fffffffc718, init=<optimized out>, fini=<optimized out>, rtld_fini=<optimized out>,

stack_end=0x7fffffffc708) at ../csu/libc-start.c:291

result = <optimized out>

unwind_buf = {cancel_jmp_buf = {{jmp_buf = {0, -52366050721878219, 4196032, 140737488340752, 0, 0, 52365500628250421, 52384425274421045}, mask_was_saved = 0}}, priv = {pad = {

0x0, 0x0, 0x8, 0x4006b0 <main>}, data = {prev = 0x0, cleanup = 0x0, canceltype = 8}}}

not_first_call = <optimized out>

#33 0x00000000004006e9 in _start ()

No symbol table info available.

```
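
Frame #1 of the backtrace shows `I3CLSimTabulatorModule::DAQ` blocked in `pthread_cond_wait`, which has no timeout. The ticket does not state the root cause, so the following is only a generic illustration, not clsim code: a minimal Python sketch of a condition-variable handoff that waits on a predicate with a timeout, so that a notification that never arrives surfaces as an error instead of an indefinite hang at 0% CPU. The class `ResultQueue` and its methods are hypothetical names chosen for this example.

```python
import threading


class ResultQueue:
    """Hypothetical worker-to-consumer handoff (illustration only, not clsim code)."""

    def __init__(self):
        self._cond = threading.Condition()
        self._items = []

    def put(self, item):
        # Publish and notify while holding the lock, so a waiter cannot
        # miss the update between checking the predicate and sleeping.
        with self._cond:
            self._items.append(item)
            self._cond.notify()

    def get(self, timeout):
        # wait_for re-checks the predicate after every wakeup and returns
        # a falsy value if the timeout expires before it becomes true.
        with self._cond:
            if not self._cond.wait_for(lambda: self._items, timeout=timeout):
                raise RuntimeError("no result within %.1f s" % timeout)
            return self._items.pop(0)
```

With a bounded wait like this, a stuck job fails with a visible error that can be logged or retried, rather than idling until someone attaches a debugger.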

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1984">https://code.icecube.wisc.edu/projects/icecube/ticket/1984</a>, reported by peller</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:13:35",

"_ts": "1550067215093672",

"description": "When running the clsim tablemaker, once in a while (maybe 5-10% of my jobs) get stuck at a random time and wait forever at 0% CPU.\n\nRunning on Ubuntu16.04 w/ Intel openCL runtime and Intel(R) Xeon(R) CPU E5-2630 v3 @ 2.40GHz\n\nGDB revealed that it gets stuck at:\n\n{{{\npthread_cond_wait@@GLIBC_2.3.2 () at ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S:185\n185 ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S: No such file or directory.\n}}}\n\n\nbacktrace:\n\n{{{\n(gdb) backtrace\n#0 pthread_cond_wait@@GLIBC_2.3.2 () at ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S:185\n#1 0x00007fffe1d9a50f in I3CLSimTabulatorModule::DAQ(boost::shared_ptr<I3Frame>) () from /home/peller/build/simulation/trunk/lib/libclsim.so\n#2 0x00007ffff583768e in I3Module::Process() () from /home/peller/build/simulation/trunk/lib/libicetray.so\n#3 0x00007ffff583a689 in I3Module::Process_() () from /home/peller/build/simulation/trunk/lib/libicetray.so\n#4 0x00007ffff58351ad in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so\n#5 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so\n#6 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so\n#7 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so\n#8 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so\n#9 0x00007ffff57d79a9 in I3Tray::Execute(unsigned int) () from /home/peller/build/simulation/trunk/lib/libicetray.so\n#10 0x00007ffff592d56e in boost::python::objects::caller_py_function_impl<boost::python::detail::caller<void (I3Tray::*)(), boost::python::default_call_policies, boost::mpl::vector2<void, I3Tray&> > >::operator()(_object*, _object*) () from 
/home/peller/build/simulation/trunk/lib/libicetray.so\n#11 0x00007ffff5248c8d in boost::python::objects::function::call(_object*, _object*) const ()\n from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0\n#12 0x00007ffff5248e88 in boost::detail::function::void_function_ref_invoker0<boost::python::objects::(anonymous namespace)::bind_return, void>::invoke(boost::detail::function::function_buffer&) () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0\n#13 0x00007ffff5250ee3 in boost::python::detail::exception_handler::operator()(boost::function0<void> const&) const ()\n from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0\n#14 0x00007fffed72e263 in boost::detail::function::function_obj_invoker2<boost::_bi::bind_t<bool, boost::python::detail::translate_exception<not_found_exception, void (*)(not_found_exception const&)>, boost::_bi::list3<boost::arg<1>, boost::arg<2>, boost::_bi::value<void (*)(not_found_exception const&)> > >, bool, boost::python::detail::exception_handler const&, boost::function0<void> const&>::invoke(boost::detail::function::function_buffer&, boost::python::detail::exception_handler const&, boost::function0<void> const&) () from /home/peller/build/simulation/trunk/lib/icecube/dataclasses.so\n#15 0x00007ffff5250c9d in boost::python::handle_exception_impl(boost::function0<void>) ()\n from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0\n#16 0x00007ffff5246059 in function_call () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0\n#17 0x00007ffff7a27333 in PyObject_Call (func=func@entry=0x74c350, arg=arg@entry=0x7fffcfdb62d0, kw=kw@entry=0x0) at Objects/abstract.c:2529\n#18 0x00007ffff7add212 in do_call (nk=<optimized out>, na=1, pp_stack=0x7fffffffc140, func=0x74c350) at Python/ceval.c:4253\n#19 call_function (oparg=<optimized out>, 
pp_stack=0x7fffffffc140) at Python/ceval.c:4058\n#20 PyEval_EvalFrameEx (f=f@entry=0x781ff0, throwflag=throwflag@entry=0) at Python/ceval.c:2681\n#21 0x00007ffff7ae026c in PyEval_EvalCodeEx (co=<optimized out>, globals=<optimized out>, locals=locals@entry=0x0, args=<optimized out>, argcount=argcount@entry=1,\n kws=<optimized out>, kwcount=0, defs=0x0, defcount=0, closure=0x0) at Python/ceval.c:3267\n#22 0x00007ffff7adf05a in fast_function (nk=0, na=1, n=<optimized out>, pp_stack=0x7fffffffc330, func=0x7fffdad056e0) at Python/ceval.c:4131\n#23 call_function (oparg=<optimized out>, pp_stack=0x7fffffffc330) at Python/ceval.c:4056\n#24 PyEval_EvalFrameEx (f=f@entry=0x685720, throwflag=throwflag@entry=0) at Python/ceval.c:2681\n#25 0x00007ffff7ae026c in PyEval_EvalCodeEx (co=co@entry=0x7ffff7ece8b0, globals=globals@entry=0x7ffff7f59168, locals=locals@entry=0x7ffff7f59168,\n args=args@entry=0x0, argcount=argcount@entry=0, kws=kws@entry=0x0, kwcount=0, defs=0x0, defcount=0, closure=0x0) at Python/ceval.c:3267\n#26 0x00007ffff7ae0389 in PyEval_EvalCode (co=co@entry=0x7ffff7ece8b0, globals=globals@entry=0x7ffff7f59168, locals=locals@entry=0x7ffff7f59168) at Python/ceval.c:669\n#27 0x00007ffff7b0429a in run_mod (arena=0x673c60, flags=0x7fffffffc550, locals=0x7ffff7f59168, globals=0x7ffff7f59168,\n filename=0x63fb30 \"/home/peller/build/simulation/trunk/lib/libdataio.so\", mod=<optimized out>) at Python/pythonrun.c:1371\n#28 PyRun_FileExFlags (fp=fp@entry=0x63fb30, filename=filename@entry=0x7fffffffcb47 \"generate_table.py\", start=start@entry=257, globals=globals@entry=0x7ffff7f59168,\n locals=locals@entry=0x7ffff7f59168, closeit=closeit@entry=1, flags=0x7fffffffc550) at Python/pythonrun.c:1357\n#29 0x00007ffff7b05797 in PyRun_SimpleFileExFlags (fp=fp@entry=0x63fb30, filename=0x7fffffffcb47 \"generate_table.py\", closeit=1, flags=flags@entry=0x7fffffffc550)\n at Python/pythonrun.c:949\n#30 0x00007ffff7b05e53 in PyRun_AnyFileExFlags (fp=fp@entry=0x63fb30, filename=<optimized 
out>, closeit=<optimized out>, flags=flags@entry=0x7fffffffc550)\n at Python/pythonrun.c:753\n#31 0x00007ffff7b1c041 in Py_Main (argc=<optimized out>, argv=<optimized out>) at Modules/main.c:640\n#32 0x00007ffff740e830 in __libc_start_main (main=0x4006b0 <main>, argc=8, argv=0x7fffffffc718, init=<optimized out>, fini=<optimized out>,\n rtld_fini=<optimized out>, stack_end=0x7fffffffc708) at ../csu/libc-start.c:291\n#33 0x00000000004006e9 in _start ()\n}}}\n\nfull backtrace:\n{{{\n(gdb) backtrace full\n#0 pthread_cond_wait@@GLIBC_2.3.2 () at ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S:185\nNo locals.\n#1 0x00007fffe1d9a50f in I3CLSimTabulatorModule::DAQ(boost::shared_ptr<I3Frame>) () from /home/peller/build/simulation/trunk/lib/libclsim.so\nNo symbol table info available.\n#2 0x00007ffff583768e in I3Module::Process() () from /home/peller/build/simulation/trunk/lib/libicetray.so\nNo symbol table info available.\n#3 0x00007ffff583a689 in I3Module::Process_() () from /home/peller/build/simulation/trunk/lib/libicetray.so\nNo symbol table info available.\n#4 0x00007ffff58351ad in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so\nNo symbol table info available.\n#5 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so\nNo symbol table info available.\n#6 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so\nNo symbol table info available.\n#7 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so\nNo symbol table info available.\n#8 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so\nNo symbol table info available.\n#9 0x00007ffff57d79a9 in I3Tray::Execute(unsigned int) () from /home/peller/build/simulation/trunk/lib/libicetray.so\nNo symbol table info 
available.\n#10 0x00007ffff592d56e in boost::python::objects::caller_py_function_impl<boost::python::detail::caller<void (I3Tray::*)(), boost::python::default_call_policies, boost::mpl::vector2<void, I3Tray&> > >::operator()(_object*, _object*) () from /home/peller/build/simulation/trunk/lib/libicetray.so\nNo symbol table info available.\n#11 0x00007ffff5248c8d in boost::python::objects::function::call(_object*, _object*) const ()\n from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0\nNo symbol table info available.\n#12 0x00007ffff5248e88 in boost::detail::function::void_function_ref_invoker0<boost::python::objects::(anonymous namespace)::bind_return, void>::invoke(boost::detail::function::function_buffer&) () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0\nNo symbol table info available.\n#13 0x00007ffff5250ee3 in boost::python::detail::exception_handler::operator()(boost::function0<void> const&) const ()\n from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0\nNo symbol table info available.\n#14 0x00007fffed72e263 in boost::detail::function::function_obj_invoker2<boost::_bi::bind_t<bool, boost::python::detail::translate_exception<not_found_exception, void (*)(not_found_exception const&)>, boost::_bi::list3<boost::arg<1>, boost::arg<2>, boost::_bi::value<void (*)(not_found_exception const&)> > >, bool, boost::python::detail::exception_handler const&, boost::function0<void> const&>::invoke(boost::detail::function::function_buffer&, boost::python::detail::exception_handler const&, boost::function0<void> const&) ()\n from /home/peller/build/simulation/trunk/lib/icecube/dataclasses.so\nNo symbol table info available.\n#15 0x00007ffff5250c9d in boost::python::handle_exception_impl(boost::function0<void>) () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0\nNo symbol table info available.\n#16 
0x00007ffff5246059 in function_call () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0\nNo symbol table info available.\n#17 0x00007ffff7a27333 in PyObject_Call (func=func@entry=0x74c350, arg=arg@entry=0x7fffcfdb62d0, kw=kw@entry=0x0) at Objects/abstract.c:2529\n result = <optimized out>\n call = 0x7ffff5245ff0 <function_call>\n#18 0x00007ffff7add212 in do_call (nk=<optimized out>, na=1, pp_stack=0x7fffffffc140, func=0x74c350) at Python/ceval.c:4253\n callargs = <optimized out>\n kwdict = <optimized out>\n result = 0x0\n#19 call_function (oparg=<optimized out>, pp_stack=0x7fffffffc140) at Python/ceval.c:4058\n func = 0x74c350\n w = <optimized out>\n na = 1\n nk = <optimized out>\n n = <optimized out>\n pfunc = 0x782178\n x = <optimized out>\n#20 PyEval_EvalFrameEx (f=f@entry=0x781ff0, throwflag=throwflag@entry=0) at Python/ceval.c:2681\n sp = 0x782178\n stack_pointer = <optimized out>\n next_instr = <optimized out>\n opcode = <optimized out>\n oparg = <optimized out>\n why = WHY_NOT\n err = 0\n x = <optimized out>\n v = <optimized out>\n w = <optimized out>\n u = <optimized out>\n t = <optimized out>\n stream = 0x0\n fastlocals = 0x782168\n freevars = <optimized out>\n retval = <optimized out>\n tstate = <optimized out>\n co = <optimized out>\n instr_ub = -1\n instr_lb = 0\n instr_prev = -1\n first_instr = <optimized out>\n names = <optimized out>\n consts = <optimized out>\n#21 0x00007ffff7ae026c in PyEval_EvalCodeEx (co=<optimized out>, globals=<optimized out>, locals=locals@entry=0x0, args=<optimized out>, argcount=argcount@entry=1,\n kws=<optimized out>, kwcount=0, defs=0x0, defcount=0, closure=0x0) at Python/ceval.c:3267\n f = 0x781ff0\n retval = 0x0\n fastlocals = 0x782168\n freevars = 0x782178\n tstate = 0x6020a0\n x = <optimized out>\n u = <optimized out>\n#22 0x00007ffff7adf05a in fast_function (nk=0, na=1, n=<optimized out>, pp_stack=0x7fffffffc330, func=0x7fffdad056e0) at Python/ceval.c:4131\n co = <optimized 
out>\n nd = <optimized out>\n globals = <optimized out>\n argdefs = <optimized out>\n d = <optimized out>\n#23 call_function (oparg=<optimized out>, pp_stack=0x7fffffffc330) at Python/ceval.c:4056\n func = 0x7fffdad056e0\n w = <optimized out>\n na = 1\n nk = 0\n n = <optimized out>\n pfunc = 0x685898\n x = <optimized out>\n#24 PyEval_EvalFrameEx (f=f@entry=0x685720, throwflag=throwflag@entry=0) at Python/ceval.c:2681\n sp = 0x6858a0\n stack_pointer = <optimized out>\n next_instr = <optimized out>\n opcode = <optimized out>\n oparg = <optimized out>\n why = WHY_NOT\n err = 0\n x = <optimized out>\n v = <optimized out>\n w = <optimized out>\n u = <optimized out>\n t = <optimized out>\n stream = 0x0\n fastlocals = 0x685898\n freevars = <optimized out>\n retval = <optimized out>\n tstate = <optimized out>\n co = <optimized out>\n instr_ub = -1\n instr_lb = 0\n instr_prev = -1\n first_instr = <optimized out>\n names = <optimized out>\n consts = <optimized out>\n#25 0x00007ffff7ae026c in PyEval_EvalCodeEx (co=co@entry=0x7ffff7ece8b0, globals=globals@entry=0x7ffff7f59168, locals=locals@entry=0x7ffff7f59168, args=args@entry=0x0,\n argcount=argcount@entry=0, kws=kws@entry=0x0, kwcount=0, defs=0x0, defcount=0, closure=0x0) at Python/ceval.c:3267\n f = 0x685720\n retval = 0x0\n fastlocals = 0x685898\n freevars = 0x685898\n tstate = 0x6020a0\n x = <optimized out>\n u = <optimized out>\n#26 0x00007ffff7ae0389 in PyEval_EvalCode (co=co@entry=0x7ffff7ece8b0, globals=globals@entry=0x7ffff7f59168, locals=locals@entry=0x7ffff7f59168) at Python/ceval.c:669\nNo locals.\n#27 0x00007ffff7b0429a in run_mod (arena=0x673c60, flags=0x7fffffffc550, locals=0x7ffff7f59168, globals=0x7ffff7f59168,\n filename=0x63fb30 \"/home/peller/build/simulation/trunk/lib/libdataio.so\", mod=<optimized out>) at Python/pythonrun.c:1371\n co = 0x7ffff7ece8b0\n v = <optimized out>\n#28 PyRun_FileExFlags (fp=fp@entry=0x63fb30, filename=filename@entry=0x7fffffffcb47 \"generate_table.py\", start=start@entry=257, 
globals=globals@entry=0x7ffff7f59168,\n locals=locals@entry=0x7ffff7f59168, closeit=closeit@entry=1, flags=0x7fffffffc550) at Python/pythonrun.c:1357\n mod = <optimized out>\n arena = 0x673c60\n#29 0x00007ffff7b05797 in PyRun_SimpleFileExFlags (fp=fp@entry=0x63fb30, filename=0x7fffffffcb47 \"generate_table.py\", closeit=1, flags=flags@entry=0x7fffffffc550)\n---Type <return> to continue, or q <return> to quit---\n at Python/pythonrun.c:949\n m = 0x7ffff7f45be8\n d = 0x7ffff7f59168\n v = <optimized out>\n ext = 0x7fffffffcb54 \"e.py\"\n set_file_name = 1\n len = <optimized out>\n ret = -1\n#30 0x00007ffff7b05e53 in PyRun_AnyFileExFlags (fp=fp@entry=0x63fb30, filename=<optimized out>, closeit=<optimized out>, flags=flags@entry=0x7fffffffc550) at Python/pythonrun.c:753\nNo locals.\n#31 0x00007ffff7b1c041 in Py_Main (argc=<optimized out>, argv=<optimized out>) at Modules/main.c:640\n c = <optimized out>\n sts = <optimized out>\n command = 0x0\n filename = 0x7fffffffcb47 \"generate_table.py\"\n module = 0x0\n fp = 0x63fb30\n p = <optimized out>\n unbuffered = 0\n skipfirstline = 0\n stdin_is_interactive = 1\n help = <optimized out>\n version = <optimized out>\n saw_unbuffered_flag = <optimized out>\n cf = {cf_flags = 0}\n#32 0x00007ffff740e830 in __libc_start_main (main=0x4006b0 <main>, argc=8, argv=0x7fffffffc718, init=<optimized out>, fini=<optimized out>, rtld_fini=<optimized out>,\n stack_end=0x7fffffffc708) at ../csu/libc-start.c:291\n result = <optimized out>\n unwind_buf = {cancel_jmp_buf = {{jmp_buf = {0, -52366050721878219, 4196032, 140737488340752, 0, 0, 52365500628250421, 52384425274421045}, mask_was_saved = 0}}, priv = {pad = {\n 0x0, 0x0, 0x8, 0x4006b0 <main>}, data = {prev = 0x0, cleanup = 0x0, canceltype = 8}}}\n not_first_call = <optimized out>\n#33 0x00000000004006e9 in _start ()\nNo symbol table info available.\n}}}",

"reporter": "peller",

"cc": "",

"resolution": "fixed",

"time": "2017-04-14T16:28:03",

"component": "combo simulation",

"summary": "clsim tablemaker deadlock",

"priority": "normal",

"keywords": "",

"milestone": "",

"owner": "",

"type": "defect"

}

```

</p>

</details>

|

1.0

|

clsim tablemaker deadlock (Trac #1984) - When running the clsim tablemaker, once in a while (maybe 5-10% of my jobs) a job gets stuck at a random time and waits forever at 0% CPU.

Running on Ubuntu 16.04 with the Intel OpenCL runtime and an Intel(R) Xeon(R) CPU E5-2630 v3 @ 2.40GHz

GDB revealed that it gets stuck at:

```text

pthread_cond_wait@@GLIBC_2.3.2 () at ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S:185

185 ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S: No such file or directory.

```

backtrace:

```text

(gdb) backtrace

#0 pthread_cond_wait@@GLIBC_2.3.2 () at ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S:185

#1 0x00007fffe1d9a50f in I3CLSimTabulatorModule::DAQ(boost::shared_ptr<I3Frame>) () from /home/peller/build/simulation/trunk/lib/libclsim.so

#2 0x00007ffff583768e in I3Module::Process() () from /home/peller/build/simulation/trunk/lib/libicetray.so

#3 0x00007ffff583a689 in I3Module::Process_() () from /home/peller/build/simulation/trunk/lib/libicetray.so

#4 0x00007ffff58351ad in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#5 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#6 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#7 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#8 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#9 0x00007ffff57d79a9 in I3Tray::Execute(unsigned int) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#10 0x00007ffff592d56e in boost::python::objects::caller_py_function_impl<boost::python::detail::caller<void (I3Tray::*)(), boost::python::default_call_policies, boost::mpl::vector2<void, I3Tray&> > >::operator()(_object*, _object*) () from /home/peller/build/simulation/trunk/lib/libicetray.so

#11 0x00007ffff5248c8d in boost::python::objects::function::call(_object*, _object*) const ()

from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

#12 0x00007ffff5248e88 in boost::detail::function::void_function_ref_invoker0<boost::python::objects::(anonymous namespace)::bind_return, void>::invoke(boost::detail::function::function_buffer&) () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

#13 0x00007ffff5250ee3 in boost::python::detail::exception_handler::operator()(boost::function0<void> const&) const ()

from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

#14 0x00007fffed72e263 in boost::detail::function::function_obj_invoker2<boost::_bi::bind_t<bool, boost::python::detail::translate_exception<not_found_exception, void (*)(not_found_exception const&)>, boost::_bi::list3<boost::arg<1>, boost::arg<2>, boost::_bi::value<void (*)(not_found_exception const&)> > >, bool, boost::python::detail::exception_handler const&, boost::function0<void> const&>::invoke(boost::detail::function::function_buffer&, boost::python::detail::exception_handler const&, boost::function0<void> const&) () from /home/peller/build/simulation/trunk/lib/icecube/dataclasses.so

#15 0x00007ffff5250c9d in boost::python::handle_exception_impl(boost::function0<void>) ()

from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

#16 0x00007ffff5246059 in function_call () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

#17 0x00007ffff7a27333 in PyObject_Call (func=func@entry=0x74c350, arg=arg@entry=0x7fffcfdb62d0, kw=kw@entry=0x0) at Objects/abstract.c:2529

#18 0x00007ffff7add212 in do_call (nk=<optimized out>, na=1, pp_stack=0x7fffffffc140, func=0x74c350) at Python/ceval.c:4253

#19 call_function (oparg=<optimized out>, pp_stack=0x7fffffffc140) at Python/ceval.c:4058

#20 PyEval_EvalFrameEx (f=f@entry=0x781ff0, throwflag=throwflag@entry=0) at Python/ceval.c:2681

#21 0x00007ffff7ae026c in PyEval_EvalCodeEx (co=<optimized out>, globals=<optimized out>, locals=locals@entry=0x0, args=<optimized out>, argcount=argcount@entry=1,

kws=<optimized out>, kwcount=0, defs=0x0, defcount=0, closure=0x0) at Python/ceval.c:3267

#22 0x00007ffff7adf05a in fast_function (nk=0, na=1, n=<optimized out>, pp_stack=0x7fffffffc330, func=0x7fffdad056e0) at Python/ceval.c:4131

#23 call_function (oparg=<optimized out>, pp_stack=0x7fffffffc330) at Python/ceval.c:4056

#24 PyEval_EvalFrameEx (f=f@entry=0x685720, throwflag=throwflag@entry=0) at Python/ceval.c:2681

#25 0x00007ffff7ae026c in PyEval_EvalCodeEx (co=co@entry=0x7ffff7ece8b0, globals=globals@entry=0x7ffff7f59168, locals=locals@entry=0x7ffff7f59168,

args=args@entry=0x0, argcount=argcount@entry=0, kws=kws@entry=0x0, kwcount=0, defs=0x0, defcount=0, closure=0x0) at Python/ceval.c:3267

#26 0x00007ffff7ae0389 in PyEval_EvalCode (co=co@entry=0x7ffff7ece8b0, globals=globals@entry=0x7ffff7f59168, locals=locals@entry=0x7ffff7f59168) at Python/ceval.c:669

#27 0x00007ffff7b0429a in run_mod (arena=0x673c60, flags=0x7fffffffc550, locals=0x7ffff7f59168, globals=0x7ffff7f59168,

filename=0x63fb30 "/home/peller/build/simulation/trunk/lib/libdataio.so", mod=<optimized out>) at Python/pythonrun.c:1371

#28 PyRun_FileExFlags (fp=fp@entry=0x63fb30, filename=filename@entry=0x7fffffffcb47 "generate_table.py", start=start@entry=257, globals=globals@entry=0x7ffff7f59168,

locals=locals@entry=0x7ffff7f59168, closeit=closeit@entry=1, flags=0x7fffffffc550) at Python/pythonrun.c:1357

#29 0x00007ffff7b05797 in PyRun_SimpleFileExFlags (fp=fp@entry=0x63fb30, filename=0x7fffffffcb47 "generate_table.py", closeit=1, flags=flags@entry=0x7fffffffc550)

at Python/pythonrun.c:949

#30 0x00007ffff7b05e53 in PyRun_AnyFileExFlags (fp=fp@entry=0x63fb30, filename=<optimized out>, closeit=<optimized out>, flags=flags@entry=0x7fffffffc550)

at Python/pythonrun.c:753

#31 0x00007ffff7b1c041 in Py_Main (argc=<optimized out>, argv=<optimized out>) at Modules/main.c:640

#32 0x00007ffff740e830 in __libc_start_main (main=0x4006b0 <main>, argc=8, argv=0x7fffffffc718, init=<optimized out>, fini=<optimized out>,

rtld_fini=<optimized out>, stack_end=0x7fffffffc708) at ../csu/libc-start.c:291

#33 0x00000000004006e9 in _start ()

```

full backtrace:

```text

(gdb) backtrace full

#0 pthread_cond_wait@@GLIBC_2.3.2 () at ../sysdeps/unix/sysv/linux/x86_64/pthread_cond_wait.S:185

No locals.

#1 0x00007fffe1d9a50f in I3CLSimTabulatorModule::DAQ(boost::shared_ptr<I3Frame>) () from /home/peller/build/simulation/trunk/lib/libclsim.so

No symbol table info available.

#2 0x00007ffff583768e in I3Module::Process() () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#3 0x00007ffff583a689 in I3Module::Process_() () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#4 0x00007ffff58351ad in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#5 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#6 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#7 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#8 0x00007ffff5835269 in I3Module::Do(void (I3Module::*)()) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#9 0x00007ffff57d79a9 in I3Tray::Execute(unsigned int) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#10 0x00007ffff592d56e in boost::python::objects::caller_py_function_impl<boost::python::detail::caller<void (I3Tray::*)(), boost::python::default_call_policies, boost::mpl::vector2<void, I3Tray&> > >::operator()(_object*, _object*) () from /home/peller/build/simulation/trunk/lib/libicetray.so

No symbol table info available.

#11 0x00007ffff5248c8d in boost::python::objects::function::call(_object*, _object*) const ()

from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

No symbol table info available.

#12 0x00007ffff5248e88 in boost::detail::function::void_function_ref_invoker0<boost::python::objects::(anonymous namespace)::bind_return, void>::invoke(boost::detail::function::function_buffer&) () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

No symbol table info available.

#13 0x00007ffff5250ee3 in boost::python::detail::exception_handler::operator()(boost::function0<void> const&) const ()

from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

No symbol table info available.

#14 0x00007fffed72e263 in boost::detail::function::function_obj_invoker2<boost::_bi::bind_t<bool, boost::python::detail::translate_exception<not_found_exception, void (*)(not_found_exception const&)>, boost::_bi::list3<boost::arg<1>, boost::arg<2>, boost::_bi::value<void (*)(not_found_exception const&)> > >, bool, boost::python::detail::exception_handler const&, boost::function0<void> const&>::invoke(boost::detail::function::function_buffer&, boost::python::detail::exception_handler const&, boost::function0<void> const&) ()

from /home/peller/build/simulation/trunk/lib/icecube/dataclasses.so

No symbol table info available.

#15 0x00007ffff5250c9d in boost::python::handle_exception_impl(boost::function0<void>) () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

No symbol table info available.

#16 0x00007ffff5246059 in function_call () from /cvmfs/icecube.opensciencegrid.org/py2-v2/Ubuntu_16_x86_64/lib/libboost_python.so.1.57.0

No symbol table info available.

#17 0x00007ffff7a27333 in PyObject_Call (func=func@entry=0x74c350, arg=arg@entry=0x7fffcfdb62d0, kw=kw@entry=0x0) at Objects/abstract.c:2529

result = <optimized out>

call = 0x7ffff5245ff0 <function_call>

#18 0x00007ffff7add212 in do_call (nk=<optimized out>, na=1, pp_stack=0x7fffffffc140, func=0x74c350) at Python/ceval.c:4253

callargs = <optimized out>

kwdict = <optimized out>

result = 0x0

#19 call_function (oparg=<optimized out>, pp_stack=0x7fffffffc140) at Python/ceval.c:4058

func = 0x74c350

w = <optimized out>

na = 1

nk = <optimized out>

n = <optimized out>

pfunc = 0x782178

x = <optimized out>

#20 PyEval_EvalFrameEx (f=f@entry=0x781ff0, throwflag=throwflag@entry=0) at Python/ceval.c:2681

sp = 0x782178

stack_pointer = <optimized out>

next_instr = <optimized out>

opcode = <optimized out>

oparg = <optimized out>

why = WHY_NOT

err = 0

x = <optimized out>

v = <optimized out>

w = <optimized out>

u = <optimized out>

t = <optimized out>

stream = 0x0

fastlocals = 0x782168

freevars = <optimized out>

retval = <optimized out>

tstate = <optimized out>

co = <optimized out>

instr_ub = -1

instr_lb = 0

instr_prev = -1

first_instr = <optimized out>

names = <optimized out>

consts = <optimized out>

#21 0x00007ffff7ae026c in PyEval_EvalCodeEx (co=<optimized out>, globals=<optimized out>, locals=locals@entry=0x0, args=<optimized out>, argcount=argcount@entry=1,

kws=<optimized out>, kwcount=0, defs=0x0, defcount=0, closure=0x0) at Python/ceval.c:3267

f = 0x781ff0

retval = 0x0

fastlocals = 0x782168

freevars = 0x782178

tstate = 0x6020a0

x = <optimized out>

u = <optimized out>

#22 0x00007ffff7adf05a in fast_function (nk=0, na=1, n=<optimized out>, pp_stack=0x7fffffffc330, func=0x7fffdad056e0) at Python/ceval.c:4131

co = <optimized out>

nd = <optimized out>

globals = <optimized out>

argdefs = <optimized out>

d = <optimized out>

#23 call_function (oparg=<optimized out>, pp_stack=0x7fffffffc330) at Python/ceval.c:4056

func = 0x7fffdad056e0

w = <optimized out>

na = 1

nk = 0

n = <optimized out>

pfunc = 0x685898

x = <optimized out>

#24 PyEval_EvalFrameEx (f=f@entry=0x685720, throwflag=throwflag@entry=0) at Python/ceval.c:2681

sp = 0x6858a0

stack_pointer = <optimized out>

next_instr = <optimized out>

opcode = <optimized out>

oparg = <optimized out>

why = WHY_NOT

err = 0

x = <optimized out>

v = <optimized out>

w = <optimized out>

u = <optimized out>

t = <optimized out>

stream = 0x0

fastlocals = 0x685898

freevars = <optimized out>

retval = <optimized out>

tstate = <optimized out>

co = <optimized out>

instr_ub = -1

instr_lb = 0

instr_prev = -1

first_instr = <optimized out>

names = <optimized out>

consts = <optimized out>

#25 0x00007ffff7ae026c in PyEval_EvalCodeEx (co=co@entry=0x7ffff7ece8b0, globals=globals@entry=0x7ffff7f59168, locals=locals@entry=0x7ffff7f59168, args=args@entry=0x0,

argcount=argcount@entry=0, kws=kws@entry=0x0, kwcount=0, defs=0x0, defcount=0, closure=0x0) at Python/ceval.c:3267

f = 0x685720

retval = 0x0

fastlocals = 0x685898

freevars = 0x685898

tstate = 0x6020a0

x = <optimized out>

u = <optimized out>

#26 0x00007ffff7ae0389 in PyEval_EvalCode (co=co@entry=0x7ffff7ece8b0, globals=globals@entry=0x7ffff7f59168, locals=locals@entry=0x7ffff7f59168) at Python/ceval.c:669

No locals.

#27 0x00007ffff7b0429a in run_mod (arena=0x673c60, flags=0x7fffffffc550, locals=0x7ffff7f59168, globals=0x7ffff7f59168,

filename=0x63fb30 "/home/peller/build/simulation/trunk/lib/libdataio.so", mod=<optimized out>) at Python/pythonrun.c:1371

co = 0x7ffff7ece8b0

v = <optimized out>

#28 PyRun_FileExFlags (fp=fp@entry=0x63fb30, filename=filename@entry=0x7fffffffcb47 "generate_table.py", start=start@entry=257, globals=globals@entry=0x7ffff7f59168,

locals=locals@entry=0x7ffff7f59168, closeit=closeit@entry=1, flags=0x7fffffffc550) at Python/pythonrun.c:1357

mod = <optimized out>

arena = 0x673c60

#29 0x00007ffff7b05797 in PyRun_SimpleFileExFlags (fp=fp@entry=0x63fb30, filename=0x7fffffffcb47 "generate_table.py", closeit=1, flags=flags@entry=0x7fffffffc550)

at Python/pythonrun.c:949

m = 0x7ffff7f45be8

d = 0x7ffff7f59168

v = <optimized out>

ext = 0x7fffffffcb54 "e.py"

set_file_name = 1

len = <optimized out>

ret = -1

#30 0x00007ffff7b05e53 in PyRun_AnyFileExFlags (fp=fp@entry=0x63fb30, filename=<optimized out>, closeit=<optimized out>, flags=flags@entry=0x7fffffffc550) at Python/pythonrun.c:753

No locals.

#31 0x00007ffff7b1c041 in Py_Main (argc=<optimized out>, argv=<optimized out>) at Modules/main.c:640

c = <optimized out>

sts = <optimized out>

command = 0x0

filename = 0x7fffffffcb47 "generate_table.py"

module = 0x0

fp = 0x63fb30

p = <optimized out>

unbuffered = 0

skipfirstline = 0

stdin_is_interactive = 1

help = <optimized out>

version = <optimized out>

saw_unbuffered_flag = <optimized out>

cf = {cf_flags = 0}

#32 0x00007ffff740e830 in __libc_start_main (main=0x4006b0 <main>, argc=8, argv=0x7fffffffc718, init=<optimized out>, fini=<optimized out>, rtld_fini=<optimized out>,

stack_end=0x7fffffffc708) at ../csu/libc-start.c:291

result = <optimized out>

unwind_buf = {cancel_jmp_buf = {{jmp_buf = {0, -52366050721878219, 4196032, 140737488340752, 0, 0, 52365500628250421, 52384425274421045}, mask_was_saved = 0}}, priv = {pad = {

0x0, 0x0, 0x8, 0x4006b0 <main>}, data = {prev = 0x0, cleanup = 0x0, canceltype = 8}}}

not_first_call = <optimized out>

#33 0x00000000004006e9 in _start ()

No symbol table info available.

```

<details>

<summary><em>Migrated from <a href="https://code.icecube.wisc.edu/projects/icecube/ticket/1984">https://code.icecube.wisc.edu/projects/icecube/ticket/1984</a>, reported by peller</em></summary>

<p>

```json

{

"status": "closed",

"changetime": "2019-02-13T14:13:35",

"_ts": "1550067215093672",