Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (stringclasses, 1 value) | created_at (stringlengths, 19 to 19) | repo (stringlengths, 5 to 112) | repo_url (stringlengths, 34 to 141) | action (stringclasses, 3 values) | title (stringlengths, 1 to 757) | labels (stringlengths, 4 to 664) | body (stringlengths, 3 to 261k) | index (stringclasses, 10 values) | text_combine (stringlengths, 96 to 261k) | label (stringclasses, 2 values) | text (stringlengths, 96 to 232k) | binary_label (int64, 0 to 1) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

278,641

| 8,648,502,585

|

IssuesEvent

|

2018-11-26 16:44:37

|

robot-lab/judyst-main-web-service

|

https://api.github.com/repos/robot-lab/judyst-main-web-service

|

closed

|

Static file delivery for the site

|

area/front-end help wanted priority/normal type/feature

|

# Feature request

## Why you are interested in this functionality

We need some way to load the site on the client side.

## The functionality you want

Transferring the site files to the user's browser.

## How you will use this functionality

The user will use our system.

## Who will be interested in this functionality

The user

## Additional context or links to issues related to this task

|

1.0

|

Static file delivery for the site - # Feature request

## Why you are interested in this functionality

We need some way to load the site on the client side.

## The functionality you want

Transferring the site files to the user's browser.

## How you will use this functionality

The user will use our system.

## Who will be interested in this functionality

The user

## Additional context or links to issues related to this task

|

non_defect

|

static file delivery for the site feature request why you are interested in this functionality we need some way to load the site on the client side the functionality you want transferring the site files to the user s browser how you will use this functionality the user will use our system who will be interested in this functionality the user additional context or links to issues related to this task

| 0

|

525,464

| 15,254,133,937

|

IssuesEvent

|

2021-02-20 10:41:32

|

staxrip/staxrip

|

https://api.github.com/repos/staxrip/staxrip

|

closed

|

Timestamps are unset in a packet for stream 0

|

added/fixed/done priority low tool issue

|

**Describe the bug**

When StaxRip exports a video to the FLV container, FFmpeg fails with this error:

```

[flv @ 000001e5315265c0] Timestamps are unset in a packet for stream 0. This is deprecated and will stop working in the future. Fix your code to set the timestamps properly

[flv @ 000001e5315265c0] Packet is missing PTS

av_interleaved_write_frame(): Invalid argument

video:3kB audio:0kB subtitle:0kB other streams:0kB global headers:0kB muxing overhead: unknown

Conversion failed!

```

Full log:

```

------------------------- System Environment -------------------------

StaxRip : 2.1.8.0

Windows : Windows 10 Pro 2009

Language : Chinese (Simplified, China)

CPU : Intel(R) Core(TM) i5-7500 CPU @ 3.40GHz

GPU : Intel(R) HD Graphics 630

Resolution : 1920 x 1080

DPI : 96

Code Page : 936

----------------------- Media Info Source File -----------------------

E:\render\0001-0180.mp4

General

Complete name : E:\render\0001-0180.mp4

Format : MPEG-4

Format profile : Base Media

Codec ID : isom (isom/avc1)

File size : 223 KiB

Duration : 7 s 500 ms

Overall bit rate mode : Variable

Overall bit rate : 243 kb/s

Encoded date : UTC 2021-02-19 08:18:32

Tagged date : UTC 2021-02-19 08:18:32

Video

ID : 1

Format : AVC

Format/Info : Advanced Video Codec

Format profile : High@L4

Format settings : CABAC / 3 Ref Frames

Format, CABAC : Yes

Format, Reference frames : 3 frames

Codec ID : avc1

Codec ID/Info : Advanced Video Coding

Duration : 7 s 500 ms

Bit rate : 53.7 kb/s

Maximum bit rate : 118 kb/s

Width : 1 920 pixels

Height : 1 080 pixels

Display aspect ratio : 16:9

Frame rate mode : Constant

Frame rate : 24.000 FPS

Color space : YUV

Chroma subsampling : 4:2:0

Bit depth : 8 bits

Scan type : Progressive

Bits/(Pixel*Frame) : 0.001

Stream size : 49.2 KiB (22%)

Encoded date : UTC 2021-02-19 08:18:32

Tagged date : UTC 2021-02-19 08:18:32

Codec configuration box : avcC

Audio

ID : 2

Format : AAC LC

Format/Info : Advanced Audio Codec Low Complexity

Codec ID : mp4a-40-2

Duration : 7 s 445 ms

Bit rate mode : Variable

Bit rate : 185 kb/s

Maximum bit rate : 196 kb/s

Channel(s) : 2 channels

Channel layout : L R

Sampling rate : 48.0 kHz

Frame rate : 46.875 FPS (1024 SPF)

Compression mode : Lossy

Stream size : 169 KiB (76%)

Encoded date : UTC 2021-02-19 08:18:32

Tagged date : UTC 2021-02-19 08:18:32

----------------------------- Demux audio -----------------------------

MP4Box 1.1.0-rev447-g8c190b551-gcc10.2.0 Patman

D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\Support\MP4Box\MP4Box.exe -single 2 -out E:\render\0001-0180_temp\ID1.m4a E:\render\0001-0180.mp4

Start: 下午 4:19:31

End: 下午 4:19:31

Duration: 00:00:00

General

Complete name : E:\render\0001-0180_temp\ID1.m4a

Format : MPEG-4

Format profile : Base Media

Codec ID : isom (isom)

File size : 171 KiB

Duration : 7 s 445 ms

Overall bit rate mode : Variable

Overall bit rate : 188 kb/s

Encoded date : UTC 2021-02-19 08:19:31

Tagged date : UTC 2021-02-19 08:19:31

Audio

ID : 2

Format : AAC LC

Format/Info : Advanced Audio Codec Low Complexity

Format profile : AAC@L2

Codec ID : mp4a-40-2

Duration : 7 s 445 ms

Bit rate mode : Variable

Bit rate : 185 kb/s

Nominal bit rate : 5 148 b/s

Maximum bit rate : 143 kb/s

Channel(s) : 2 channels

Channel layout : L R

Sampling rate : 48.0 kHz

Frame rate : 46.875 FPS (1024 SPF)

Compression mode : Lossy

Stream size : 169 KiB (99%)

Encoded date : UTC 2021-02-19 08:19:31

Tagged date : UTC 2021-02-19 08:19:31

---------------------------- Configuration ----------------------------

Template : Automatic Workflow

Video Encoder Profile : Intel | H.264

Container/Muxer Profile : ffmpeg | FLV

--------------------------- AviSynth Script ---------------------------

AddAutoloadDir("D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\FrameServer\AviSynth\plugins")

LoadPlugin("D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\Plugins\Dual\L-SMASH-Works\LSMASHSource.dll")

LSMASHVideoSource("E:\render\0001-0180.mp4")

------------------------- Source Script Info -------------------------

Width : 1920

Height : 1080

Frames : 180

Time : 00:07.500

Framerate : 24 (24/1)

Format : YUV420P8

------------------------- Target Script Info -------------------------

Width : 1920

Height : 1080

Frames : 180

Time : 00:07.500

Framerate : 24 (24/1)

Format : YUV420P8

--------------------------- Video encoding ---------------------------

QSVEnc 4.12

D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\Encoders\QSVEnc\QSVEncC64.exe --avsdll D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\FrameServer\AviSynth\AviSynth.dll --fallback-rc --cqp 24:26:27 -i E:\render\0001-0180_temp\0001-0180_h264.avs -o E:\render\0001-0180_temp\0001-0180_h264_out.h264

--------------------------------------------------------------------------------

E:\render\0001-0180_temp\0001-0180_h264_out.h264

--------------------------------------------------------------------------------

QSVEncC (x64) 4.12 (r1979) by rigaya, Nov 23 2020 10:32:05 (VC 1928/Win/avx2)

OS Windows 10 x64 (19042)

CPU Info Intel Core i5-7500 @ 3.40GHz [TB: 3.59GHz] (4C/4T) <Kabylake>

GPU Info Intel HD Graphics 630 (24EU) 350-1100MHz [65W] (27.20.100.8853)

Media SDK QuickSyncVideo (hardware encoder) PG, 1st GPU, API v1.33

Async Depth 4 frames

Buffer Memory d3d9, 3 input buffer, 15 work buffer

Input Info AviSynth+ 3.7.0 r3382(yv12)->nv12 [AVX2], 1920x1080, 24/1 fps

AVSync cfr

Output H.264/AVC High @ Level 4

1920x1080p 1:1 24.000fps (24/1fps)

Target usage 4 - balanced

Encode Mode Constant QP (CQP)

CQP Value I:24 P:26 B:27

QP Limit min: none, max: none

Trellis Auto

Ref frames 3 frames

Bframes 3 frames, B-pyramid: on

Max GOP Length 240 frames

Ext. Features QPOffset

encoded 180 frames, 121.29 fps, 29.12 kbps, 0.03 MB

encode time 0:00:01, CPULoad: 55.2

frame type IDR 1

frame type I 2, total size 0.01 MB

frame type P 45, total size 0.01 MB

frame type B 134, total size 0.01 MB

Start: 下午 4:21:35

End: 下午 4:21:39

Duration: 00:00:03

General

Complete name : E:\render\0001-0180_temp\0001-0180_h264_out.h264

Format : AVC

Format/Info : Advanced Video Codec

File size : 26.7 KiB

Duration : 7 s 500 ms

Overall bit rate : 29.1 kb/s

Video

Format : AVC

Format/Info : Advanced Video Codec

Format profile : High@L4

Format settings : CABAC / 3 Ref Frames

Format, CABAC : Yes

Format, Reference frames : 3 frames

Duration : 7 s 500 ms

Bit rate : 29.1 kb/s

Width : 1 920 pixels

Height : 1 080 pixels

Display aspect ratio : 16:9

Frame rate : 24.000 FPS

Color space : YUV

Chroma subsampling : 4:2:0

Bit depth : 8 bits

Scan type : Progressive

Bits/(Pixel*Frame) : 0.001

Stream size : 26.7 KiB (100%)

------------------------- Error Muxing to FLV -------------------------

Muxing to FLV returned error exit code: 1 (0x1)

It's unclear what the exit code means, in case it's a Windows system error then it possibly means:

函数不正确。

---------------------------- Muxing to FLV ----------------------------

ffmpeg N-100448-gab6a56773f-x64-gcc10.2.0 Patman

D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\FrameServer\AviSynth\ffmpeg.exe -i E:\render\0001-0180_temp\0001-0180_h264_out.h264 -i E:\render\0001-0180.mp4 -map 0:v -map 1:1 -c:v copy -c:a copy -y -hide_banner -strict -2 E:\render\0001-0180_h264.flv

Input #0, h264, from 'E:\render\0001-0180_temp\0001-0180_h264_out.h264':

Duration: N/A, bitrate: N/A

Stream #0:0: Video: h264 (High), yuv420p(progressive), 1920x1080 [SAR 1:1 DAR 16:9], 24.08 fps, 24 tbr, 1200k tbn, 48 tbc

Input #1, mov,mp4,m4a,3gp,3g2,mj2, from 'E:\render\0001-0180.mp4':

Metadata:

major_brand : isom

minor_version : 1

compatible_brands: isomavc1

creation_time : 2021-02-19T08:18:32.000000Z

Duration: 00:00:07.50, start: 0.000000, bitrate: 243 kb/s

Stream #1:0(und): Video: h264 (High) (avc1 / 0x31637661), yuv420p, 1920x1080 [SAR 1:1 DAR 16:9], 53 kb/s, 24 fps, 24 tbr, 96 tbn, 48 tbc (default)

Metadata:

creation_time : 2021-02-19T08:18:32.000000Z

vendor_id : [0][0][0][0]

Stream #1:1(und): Audio: aac (LC) (mp4a / 0x6134706D), 48000 Hz, stereo, fltp, 185 kb/s (default)

Metadata:

creation_time : 2021-02-19T08:18:32.000000Z

vendor_id : [0][0][0][0]

Output #0, flv, to 'E:\render\0001-0180_h264.flv':

Metadata:

encoder : Lavf58.65.100

Stream #0:0: Video: h264 (High) ([7][0][0][0] / 0x0007), yuv420p(progressive), 1920x1080 [SAR 1:1 DAR 16:9], q=2-31, 24.08 fps, 24 tbr, 1k tbn, 1200k tbc

Stream #0:1(und): Audio: aac (LC) ([10][0][0][0] / 0x000A), 48000 Hz, stereo, fltp, 185 kb/s (default)

Metadata:

creation_time : 2021-02-19T08:18:32.000000Z

vendor_id : [0][0][0][0]

Stream mapping:

Stream #0:0 -> #0:0 (copy)

Stream #1:1 -> #0:1 (copy)

Press [q] to stop, [?] for help

[flv @ 000001e5315265c0] Timestamps are unset in a packet for stream 0. This is deprecated and will stop working in the future. Fix your code to set the timestamps properly

[flv @ 000001e5315265c0] Packet is missing PTS

av_interleaved_write_frame(): Invalid argument

video:3kB audio:0kB subtitle:0kB other streams:0kB global headers:0kB muxing overhead: unknown

Conversion failed!

---------------------------- Muxing to FLV ----------------------------

ffmpeg N-100448-gab6a56773f-x64-gcc10.2.0 Patman

D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\FrameServer\AviSynth\ffmpeg.exe -i E:\render\0001-0180_temp\0001-0180_h264_out.h264 -i E:\render\0001-0180.mp4 -map 0:v -map 1:1 -c:v copy -c:a copy -y -hide_banner -strict -2 E:\render\0001-0180_h264.flv

Input #0, h264, from 'E:\render\0001-0180_temp\0001-0180_h264_out.h264':

Duration: N/A, bitrate: N/A

Stream #0:0: Video: h264 (High), yuv420p(progressive), 1920x1080 [SAR 1:1 DAR 16:9], 24.08 fps, 24 tbr, 1200k tbn, 48 tbc

Input #1, mov,mp4,m4a,3gp,3g2,mj2, from 'E:\render\0001-0180.mp4':

Metadata:

major_brand : isom

minor_version : 1

compatible_brands: isomavc1

creation_time : 2021-02-19T08:18:32.000000Z

Duration: 00:00:07.50, start: 0.000000, bitrate: 243 kb/s

Stream #1:0(und): Video: h264 (High) (avc1 / 0x31637661), yuv420p, 1920x1080 [SAR 1:1 DAR 16:9], 53 kb/s, 24 fps, 24 tbr, 96 tbn, 48 tbc (default)

Metadata:

creation_time : 2021-02-19T08:18:32.000000Z

vendor_id : [0][0][0][0]

Stream #1:1(und): Audio: aac (LC) (mp4a / 0x6134706D), 48000 Hz, stereo, fltp, 185 kb/s (default)

Metadata:

creation_time : 2021-02-19T08:18:32.000000Z

vendor_id : [0][0][0][0]

Output #0, flv, to 'E:\render\0001-0180_h264.flv':

Metadata:

encoder : Lavf58.65.100

Stream #0:0: Video: h264 (High) ([7][0][0][0] / 0x0007), yuv420p(progressive), 1920x1080 [SAR 1:1 DAR 16:9], q=2-31, 24.08 fps, 24 tbr, 1k tbn, 1200k tbc

Stream #0:1(und): Audio: aac (LC) ([10][0][0][0] / 0x000A), 48000 Hz, stereo, fltp, 185 kb/s (default)

Metadata:

creation_time : 2021-02-19T08:18:32.000000Z

vendor_id : [0][0][0][0]

Stream mapping:

Stream #0:0 -> #0:0 (copy)

Stream #1:1 -> #0:1 (copy)

Press [q] to stop, [?] for help

[flv @ 000001e5315265c0] Timestamps are unset in a packet for stream 0. This is deprecated and will stop working in the future. Fix your code to set the timestamps properly

[flv @ 000001e5315265c0] Packet is missing PTS

av_interleaved_write_frame(): Invalid argument

video:3kB audio:0kB subtitle:0kB other streams:0kB global headers:0kB muxing overhead: unknown

Conversion failed!

Start: 下午 4:21:39

End: 下午 4:21:40

Duration: 00:00:00

```

**How to reproduce the issue**

1. Use the 'Intel-h.264' encoder. (Other encoders reproduce the issue too.)

2. Use either AviSynth or VapourSynth.

3. Set container to FLV

4. Set audio to "#1" Copy/Mux

5. Start the job

**Provide information**

- Used StaxRip version: 2.1.8.0

**Notes before posting**

**Additional context**

The error occurs with FLV and possibly with every other ffmpeg container, regardless of the source video format or the encoder/decoder used.

**Please be as clear and as detailed as possible**

|

1.0

|

Timestamps are unset in a packet for stream 0 - **Describe the bug**

When StaxRip exports a video to the FLV container, FFmpeg fails with this error:

```

[flv @ 000001e5315265c0] Timestamps are unset in a packet for stream 0. This is deprecated and will stop working in the future. Fix your code to set the timestamps properly

[flv @ 000001e5315265c0] Packet is missing PTS

av_interleaved_write_frame(): Invalid argument

video:3kB audio:0kB subtitle:0kB other streams:0kB global headers:0kB muxing overhead: unknown

Conversion failed!

```

Full log:

```

------------------------- System Environment -------------------------

StaxRip : 2.1.8.0

Windows : Windows 10 Pro 2009

Language : Chinese (Simplified, China)

CPU : Intel(R) Core(TM) i5-7500 CPU @ 3.40GHz

GPU : Intel(R) HD Graphics 630

Resolution : 1920 x 1080

DPI : 96

Code Page : 936

----------------------- Media Info Source File -----------------------

E:\render\0001-0180.mp4

General

Complete name : E:\render\0001-0180.mp4

Format : MPEG-4

Format profile : Base Media

Codec ID : isom (isom/avc1)

File size : 223 KiB

Duration : 7 s 500 ms

Overall bit rate mode : Variable

Overall bit rate : 243 kb/s

Encoded date : UTC 2021-02-19 08:18:32

Tagged date : UTC 2021-02-19 08:18:32

Video

ID : 1

Format : AVC

Format/Info : Advanced Video Codec

Format profile : High@L4

Format settings : CABAC / 3 Ref Frames

Format, CABAC : Yes

Format, Reference frames : 3 frames

Codec ID : avc1

Codec ID/Info : Advanced Video Coding

Duration : 7 s 500 ms

Bit rate : 53.7 kb/s

Maximum bit rate : 118 kb/s

Width : 1 920 pixels

Height : 1 080 pixels

Display aspect ratio : 16:9

Frame rate mode : Constant

Frame rate : 24.000 FPS

Color space : YUV

Chroma subsampling : 4:2:0

Bit depth : 8 bits

Scan type : Progressive

Bits/(Pixel*Frame) : 0.001

Stream size : 49.2 KiB (22%)

Encoded date : UTC 2021-02-19 08:18:32

Tagged date : UTC 2021-02-19 08:18:32

Codec configuration box : avcC

Audio

ID : 2

Format : AAC LC

Format/Info : Advanced Audio Codec Low Complexity

Codec ID : mp4a-40-2

Duration : 7 s 445 ms

Bit rate mode : Variable

Bit rate : 185 kb/s

Maximum bit rate : 196 kb/s

Channel(s) : 2 channels

Channel layout : L R

Sampling rate : 48.0 kHz

Frame rate : 46.875 FPS (1024 SPF)

Compression mode : Lossy

Stream size : 169 KiB (76%)

Encoded date : UTC 2021-02-19 08:18:32

Tagged date : UTC 2021-02-19 08:18:32

----------------------------- Demux audio -----------------------------

MP4Box 1.1.0-rev447-g8c190b551-gcc10.2.0 Patman

D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\Support\MP4Box\MP4Box.exe -single 2 -out E:\render\0001-0180_temp\ID1.m4a E:\render\0001-0180.mp4

Start: 下午 4:19:31

End: 下午 4:19:31

Duration: 00:00:00

General

Complete name : E:\render\0001-0180_temp\ID1.m4a

Format : MPEG-4

Format profile : Base Media

Codec ID : isom (isom)

File size : 171 KiB

Duration : 7 s 445 ms

Overall bit rate mode : Variable

Overall bit rate : 188 kb/s

Encoded date : UTC 2021-02-19 08:19:31

Tagged date : UTC 2021-02-19 08:19:31

Audio

ID : 2

Format : AAC LC

Format/Info : Advanced Audio Codec Low Complexity

Format profile : AAC@L2

Codec ID : mp4a-40-2

Duration : 7 s 445 ms

Bit rate mode : Variable

Bit rate : 185 kb/s

Nominal bit rate : 5 148 b/s

Maximum bit rate : 143 kb/s

Channel(s) : 2 channels

Channel layout : L R

Sampling rate : 48.0 kHz

Frame rate : 46.875 FPS (1024 SPF)

Compression mode : Lossy

Stream size : 169 KiB (99%)

Encoded date : UTC 2021-02-19 08:19:31

Tagged date : UTC 2021-02-19 08:19:31

---------------------------- Configuration ----------------------------

Template : Automatic Workflow

Video Encoder Profile : Intel | H.264

Container/Muxer Profile : ffmpeg | FLV

--------------------------- AviSynth Script ---------------------------

AddAutoloadDir("D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\FrameServer\AviSynth\plugins")

LoadPlugin("D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\Plugins\Dual\L-SMASH-Works\LSMASHSource.dll")

LSMASHVideoSource("E:\render\0001-0180.mp4")

------------------------- Source Script Info -------------------------

Width : 1920

Height : 1080

Frames : 180

Time : 00:07.500

Framerate : 24 (24/1)

Format : YUV420P8

------------------------- Target Script Info -------------------------

Width : 1920

Height : 1080

Frames : 180

Time : 00:07.500

Framerate : 24 (24/1)

Format : YUV420P8

--------------------------- Video encoding ---------------------------

QSVEnc 4.12

D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\Encoders\QSVEnc\QSVEncC64.exe --avsdll D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\FrameServer\AviSynth\AviSynth.dll --fallback-rc --cqp 24:26:27 -i E:\render\0001-0180_temp\0001-0180_h264.avs -o E:\render\0001-0180_temp\0001-0180_h264_out.h264

--------------------------------------------------------------------------------

E:\render\0001-0180_temp\0001-0180_h264_out.h264

--------------------------------------------------------------------------------

QSVEncC (x64) 4.12 (r1979) by rigaya, Nov 23 2020 10:32:05 (VC 1928/Win/avx2)

OS Windows 10 x64 (19042)

CPU Info Intel Core i5-7500 @ 3.40GHz [TB: 3.59GHz] (4C/4T) <Kabylake>

GPU Info Intel HD Graphics 630 (24EU) 350-1100MHz [65W] (27.20.100.8853)

Media SDK QuickSyncVideo (hardware encoder) PG, 1st GPU, API v1.33

Async Depth 4 frames

Buffer Memory d3d9, 3 input buffer, 15 work buffer

Input Info AviSynth+ 3.7.0 r3382(yv12)->nv12 [AVX2], 1920x1080, 24/1 fps

AVSync cfr

Output H.264/AVC High @ Level 4

1920x1080p 1:1 24.000fps (24/1fps)

Target usage 4 - balanced

Encode Mode Constant QP (CQP)

CQP Value I:24 P:26 B:27

QP Limit min: none, max: none

Trellis Auto

Ref frames 3 frames

Bframes 3 frames, B-pyramid: on

Max GOP Length 240 frames

Ext. Features QPOffset

encoded 180 frames, 121.29 fps, 29.12 kbps, 0.03 MB

encode time 0:00:01, CPULoad: 55.2

frame type IDR 1

frame type I 2, total size 0.01 MB

frame type P 45, total size 0.01 MB

frame type B 134, total size 0.01 MB

Start: 下午 4:21:35

End: 下午 4:21:39

Duration: 00:00:03

General

Complete name : E:\render\0001-0180_temp\0001-0180_h264_out.h264

Format : AVC

Format/Info : Advanced Video Codec

File size : 26.7 KiB

Duration : 7 s 500 ms

Overall bit rate : 29.1 kb/s

Video

Format : AVC

Format/Info : Advanced Video Codec

Format profile : High@L4

Format settings : CABAC / 3 Ref Frames

Format, CABAC : Yes

Format, Reference frames : 3 frames

Duration : 7 s 500 ms

Bit rate : 29.1 kb/s

Width : 1 920 pixels

Height : 1 080 pixels

Display aspect ratio : 16:9

Frame rate : 24.000 FPS

Color space : YUV

Chroma subsampling : 4:2:0

Bit depth : 8 bits

Scan type : Progressive

Bits/(Pixel*Frame) : 0.001

Stream size : 26.7 KiB (100%)

------------------------- Error Muxing to FLV -------------------------

Muxing to FLV returned error exit code: 1 (0x1)

It's unclear what the exit code means, in case it's a Windows system error then it possibly means:

函数不正确。

---------------------------- Muxing to FLV ----------------------------

ffmpeg N-100448-gab6a56773f-x64-gcc10.2.0 Patman

D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\FrameServer\AviSynth\ffmpeg.exe -i E:\render\0001-0180_temp\0001-0180_h264_out.h264 -i E:\render\0001-0180.mp4 -map 0:v -map 1:1 -c:v copy -c:a copy -y -hide_banner -strict -2 E:\render\0001-0180_h264.flv

Input #0, h264, from 'E:\render\0001-0180_temp\0001-0180_h264_out.h264':

Duration: N/A, bitrate: N/A

Stream #0:0: Video: h264 (High), yuv420p(progressive), 1920x1080 [SAR 1:1 DAR 16:9], 24.08 fps, 24 tbr, 1200k tbn, 48 tbc

Input #1, mov,mp4,m4a,3gp,3g2,mj2, from 'E:\render\0001-0180.mp4':

Metadata:

major_brand : isom

minor_version : 1

compatible_brands: isomavc1

creation_time : 2021-02-19T08:18:32.000000Z

Duration: 00:00:07.50, start: 0.000000, bitrate: 243 kb/s

Stream #1:0(und): Video: h264 (High) (avc1 / 0x31637661), yuv420p, 1920x1080 [SAR 1:1 DAR 16:9], 53 kb/s, 24 fps, 24 tbr, 96 tbn, 48 tbc (default)

Metadata:

creation_time : 2021-02-19T08:18:32.000000Z

vendor_id : [0][0][0][0]

Stream #1:1(und): Audio: aac (LC) (mp4a / 0x6134706D), 48000 Hz, stereo, fltp, 185 kb/s (default)

Metadata:

creation_time : 2021-02-19T08:18:32.000000Z

vendor_id : [0][0][0][0]

Output #0, flv, to 'E:\render\0001-0180_h264.flv':

Metadata:

encoder : Lavf58.65.100

Stream #0:0: Video: h264 (High) ([7][0][0][0] / 0x0007), yuv420p(progressive), 1920x1080 [SAR 1:1 DAR 16:9], q=2-31, 24.08 fps, 24 tbr, 1k tbn, 1200k tbc

Stream #0:1(und): Audio: aac (LC) ([10][0][0][0] / 0x000A), 48000 Hz, stereo, fltp, 185 kb/s (default)

Metadata:

creation_time : 2021-02-19T08:18:32.000000Z

vendor_id : [0][0][0][0]

Stream mapping:

Stream #0:0 -> #0:0 (copy)

Stream #1:1 -> #0:1 (copy)

Press [q] to stop, [?] for help

[flv @ 000001e5315265c0] Timestamps are unset in a packet for stream 0. This is deprecated and will stop working in the future. Fix your code to set the timestamps properly

[flv @ 000001e5315265c0] Packet is missing PTS

av_interleaved_write_frame(): Invalid argument

video:3kB audio:0kB subtitle:0kB other streams:0kB global headers:0kB muxing overhead: unknown

Conversion failed!

---------------------------- Muxing to FLV ----------------------------

ffmpeg N-100448-gab6a56773f-x64-gcc10.2.0 Patman

D:\download\StaxRip-x64-2.1.8.0-Stable\Apps\FrameServer\AviSynth\ffmpeg.exe -i E:\render\0001-0180_temp\0001-0180_h264_out.h264 -i E:\render\0001-0180.mp4 -map 0:v -map 1:1 -c:v copy -c:a copy -y -hide_banner -strict -2 E:\render\0001-0180_h264.flv

Input #0, h264, from 'E:\render\0001-0180_temp\0001-0180_h264_out.h264':

Duration: N/A, bitrate: N/A

Stream #0:0: Video: h264 (High), yuv420p(progressive), 1920x1080 [SAR 1:1 DAR 16:9], 24.08 fps, 24 tbr, 1200k tbn, 48 tbc

Input #1, mov,mp4,m4a,3gp,3g2,mj2, from 'E:\render\0001-0180.mp4':

Metadata:

major_brand : isom

minor_version : 1

compatible_brands: isomavc1

creation_time : 2021-02-19T08:18:32.000000Z

Duration: 00:00:07.50, start: 0.000000, bitrate: 243 kb/s

Stream #1:0(und): Video: h264 (High) (avc1 / 0x31637661), yuv420p, 1920x1080 [SAR 1:1 DAR 16:9], 53 kb/s, 24 fps, 24 tbr, 96 tbn, 48 tbc (default)

Metadata:

creation_time : 2021-02-19T08:18:32.000000Z

vendor_id : [0][0][0][0]

Stream #1:1(und): Audio: aac (LC) (mp4a / 0x6134706D), 48000 Hz, stereo, fltp, 185 kb/s (default)

Metadata:

creation_time : 2021-02-19T08:18:32.000000Z

vendor_id : [0][0][0][0]

Output #0, flv, to 'E:\render\0001-0180_h264.flv':

Metadata:

encoder : Lavf58.65.100

Stream #0:0: Video: h264 (High) ([7][0][0][0] / 0x0007), yuv420p(progressive), 1920x1080 [SAR 1:1 DAR 16:9], q=2-31, 24.08 fps, 24 tbr, 1k tbn, 1200k tbc

Stream #0:1(und): Audio: aac (LC) ([10][0][0][0] / 0x000A), 48000 Hz, stereo, fltp, 185 kb/s (default)

Metadata:

creation_time : 2021-02-19T08:18:32.000000Z

vendor_id : [0][0][0][0]

Stream mapping:

Stream #0:0 -> #0:0 (copy)

Stream #1:1 -> #0:1 (copy)

Press [q] to stop, [?] for help

[flv @ 000001e5315265c0] Timestamps are unset in a packet for stream 0. This is deprecated and will stop working in the future. Fix your code to set the timestamps properly

[flv @ 000001e5315265c0] Packet is missing PTS

av_interleaved_write_frame(): Invalid argument

video:3kB audio:0kB subtitle:0kB other streams:0kB global headers:0kB muxing overhead: unknown

Conversion failed!

Start: 下午 4:21:39

End: 下午 4:21:40

Duration: 00:00:00

```

**How to reproduce the issue**

1. Use the 'Intel-h.264' encoder. (Other encoders reproduce the issue too.)

2. Use either AviSynth or VapourSynth.

3. Set container to FLV

4. Set audio to "#1" Copy/Mux

5. Start the job

**Provide information**

- Used StaxRip version: 2.1.8.0

**Notes before posting**

**Additional context**

The error occurs with FLV and possibly with every other ffmpeg container, regardless of the source video format or the encoder/decoder used.

**Please be as clear and as detailed as possible**

|

non_defect

|

timestamps are unset in a packet for stream describe the bug when staxrip export a video using flv ffmpeg will error timestamps are unset in a packet for stream this is deprecated and will stop working in the future fix your code to set the timestamps properly packet is missing pts av interleaved write frame invalid argument video audio subtitle other streams global headers muxing overhead unknown conversion failed full log system environment staxrip windows windows pro language chinese simplified china cpu intel r core tm cpu gpu intel r hd graphics resolution x dpi code page media info source file e render general complete name e render format mpeg format profile base media codec id isom isom file size kib duration s ms overall bit rate mode variable overall bit rate kb s encoded date utc tagged date utc video id format avc format info advanced video codec format profile high format settings cabac ref frames format cabac yes format reference frames frames codec id codec id info advanced video coding duration s ms bit rate kb s maximum bit rate kb s width pixels height pixels display aspect ratio frame rate mode constant frame rate fps color space yuv chroma subsampling bit depth bits scan type progressive bits pixel frame stream size kib encoded date utc tagged date utc codec configuration box avcc audio id format aac lc format info advanced audio codec low complexity codec id duration s ms bit rate mode variable bit rate kb s maximum bit rate kb s channel s channels channel layout l r sampling rate khz frame rate fps spf compression mode lossy stream size kib encoded date utc tagged date utc demux audio patman d download staxrip stable apps support exe single out e render temp e render start 下午 end 下午 duration general complete name e render temp format mpeg format profile base media codec id isom isom file size kib duration s ms overall bit rate mode variable overall bit rate kb s encoded date utc tagged date utc audio id format aac lc format info advanced audio 
codec low complexity format profile aac codec id duration s ms bit rate mode variable bit rate kb s nominal bit rate b s maximum bit rate kb s channel s channels channel layout l r sampling rate khz frame rate fps spf compression mode lossy stream size kib encoded date utc tagged date utc configuration template automatic workflow video encoder profile intel h container muxer profile ffmpeg flv avisynth script addautoloaddir d download staxrip stable apps frameserver avisynth plugins loadplugin d download staxrip stable apps plugins dual l smash works lsmashsource dll lsmashvideosource e render source script info width height frames time framerate format target script info width height frames time framerate format video encoding qsvenc d download staxrip stable apps encoders qsvenc exe avsdll d download staxrip stable apps frameserver avisynth avisynth dll fallback rc cqp i e render temp avs o e render temp out e render temp out qsvencc by rigaya nov vc win os windows cpu info intel core gpu info intel hd graphics media sdk quicksyncvideo hardware encoder pg gpu api async depth frames buffer memory input buffer work buffer input info avisynth fps avsync cfr output h avc high level target usage balanced encode mode constant qp cqp cqp value i p b qp limit min none max none trellis auto ref frames frames bframes frames b pyramid on max gop length frames ext features qpoffset encoded frames fps kbps mb encode time cpuload frame type idr frame type i total size mb frame type p total size mb frame type b total size mb start 下午 end 下午 duration general complete name e render temp out format avc format info advanced video codec file size kib duration s ms overall bit rate kb s video format avc format info advanced video codec format profile high format settings cabac ref frames format cabac yes format reference frames frames duration s ms bit rate kb s width pixels height pixels display aspect ratio frame rate fps color space yuv chroma subsampling bit depth bits scan type 
progressive bits pixel frame stream size kib error muxing to flv muxing to flv returned error exit code it s unclear what the exit code means in case it s a windows system error then it possibly means 函数不正确。 muxing to flv ffmpeg n patman d download staxrip stable apps frameserver avisynth ffmpeg exe i e render temp out i e render map v map c v copy c a copy y hide banner strict e render flv input from e render temp out duration n a bitrate n a stream video high progressive fps tbr tbn tbc input mov from e render metadata major brand isom minor version compatible brands creation time duration start bitrate kb s stream und video high kb s fps tbr tbn tbc default metadata creation time vendor id stream und audio aac lc hz stereo fltp kb s default metadata creation time vendor id output flv to e render flv metadata encoder stream video high progressive q fps tbr tbn tbc stream und audio aac lc hz stereo fltp kb s default metadata creation time vendor id stream mapping stream copy stream copy press to stop for help timestamps are unset in a packet for stream this is deprecated and will stop working in the future fix your code to set the timestamps properly packet is missing pts av interleaved write frame invalid argument video audio subtitle other streams global headers muxing overhead unknown conversion failed muxing to flv ffmpeg n patman d download staxrip stable apps frameserver avisynth ffmpeg exe i e render temp out i e render map v map c v copy c a copy y hide banner strict e render flv input from e render temp out duration n a bitrate n a stream video high progressive fps tbr tbn tbc input mov from e render metadata major brand isom minor version compatible brands creation time duration start bitrate kb s stream und video high kb s fps tbr tbn tbc default metadata creation time vendor id stream und audio aac lc hz stereo fltp kb s default metadata creation time vendor id output flv to e render flv metadata encoder stream video high progressive q fps tbr tbn tbc 
stream und audio aac lc hz stereo fltp kb s default metadata creation time vendor id stream mapping stream copy stream copy press to stop for help timestamps are unset in a packet for stream this is deprecated and will stop working in the future fix your code to set the timestamps properly packet is missing pts av interleaved write frame invalid argument video audio subtitle other streams global headers muxing overhead unknown conversion failed start 下午 end 下午 duration how to reproduce the issue using intel h encoder all encoder can reproduce too avisynth or vapoursynth being used set container to flv set audio to copy mux start the job provide information used staxrip version notes before posting additional context this error will be raised when use all ffmpeg container maybe but flv of course all source video file format all encoder decoder please be as clear and as detailed as possible

| 0

|

60,739

| 17,023,508,142

|

IssuesEvent

|

2021-07-03 02:23:13

|

tomhughes/trac-tickets

|

https://api.github.com/repos/tomhughes/trac-tickets

|

closed

|

Execution aborts when run from a script with HOME not set

|

Component: gosmore Priority: major Resolution: fixed Type: defect

|

**[Submitted to the original trac issue database at 5.20pm, Saturday, 14th November 2009]**

with the following error

terminate called after throwing an instance of 'std::logic_error'

what(): basic_string::_S_construct NULL not valid

Aborted

fix is attached
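
The attached fix is not reproduced here. As a minimal sketch of the likely cause, assuming the program builds a `std::string` directly from `getenv("HOME")`: `getenv` returns a null pointer when the variable is unset, and libstdc++ rejects a `std::string` constructed from a null pointer with this kind of `std::logic_error`. The helper name `env_or` and the fallback value are hypothetical and are not taken from the gosmore source.

```cpp
#include <cstdlib>
#include <string>

// Hypothetical helper, not from the gosmore source: read an environment
// variable without ever passing a null pointer to std::string.
std::string env_or(const char* name, const std::string& fallback) {
    const char* value = std::getenv(name);  // null pointer when the variable is unset
    return value != nullptr ? std::string(value) : fallback;
}
```

A caller could then use something like `env_or("HOME", ".")`, so that a script started without HOME falls back to the current directory instead of aborting. Which fallback is appropriate is an assumption here.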

|

1.0

|

Execution aborts when run from a script with HOME not set - **[Submitted to the original trac issue database at 5.20pm, Saturday, 14th November 2009]**

with the following error

terminate called after throwing an instance of 'std::logic_error'

what(): basic_string::_S_construct NULL not valid

Aborted

fix is attached

|

defect

|

execution abort when run from script with home no set with following error terminate called after throwing an instance of std logic error what basic string s construct null not valid aborted fix is attached

| 1

|

69,051

| 22,096,110,477

|

IssuesEvent

|

2022-06-01 10:16:01

|

vector-im/element-android

|

https://api.github.com/repos/vector-im/element-android

|

opened

|

not possible to verify Android Version

|

T-Defect

|

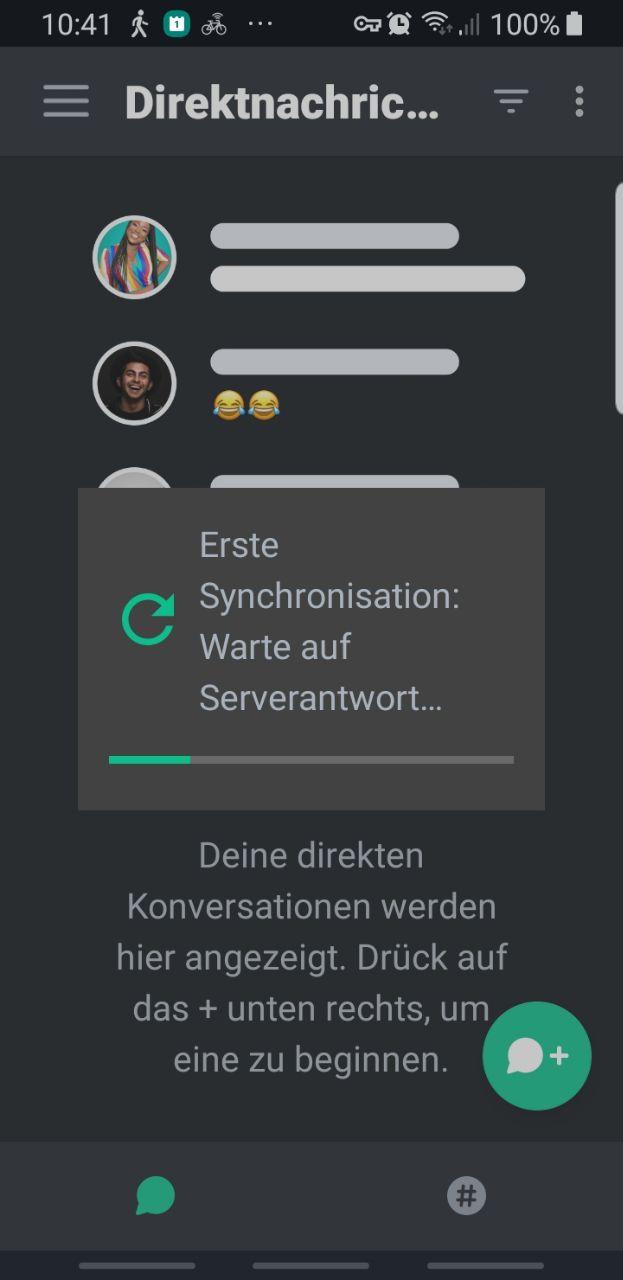

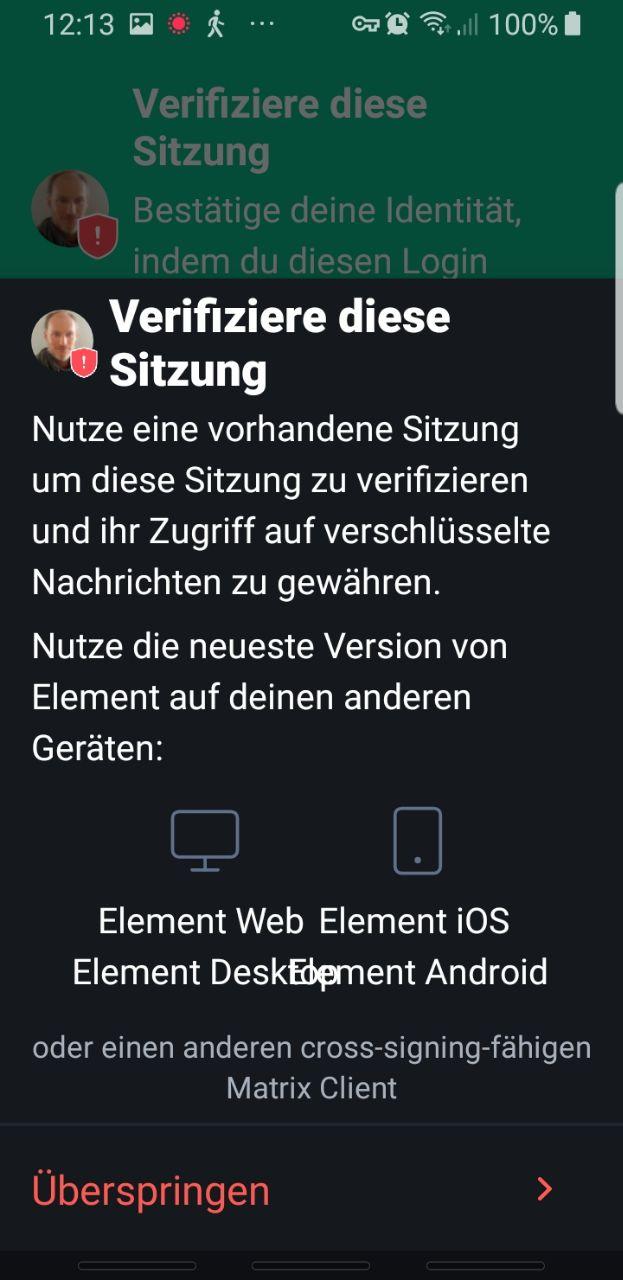

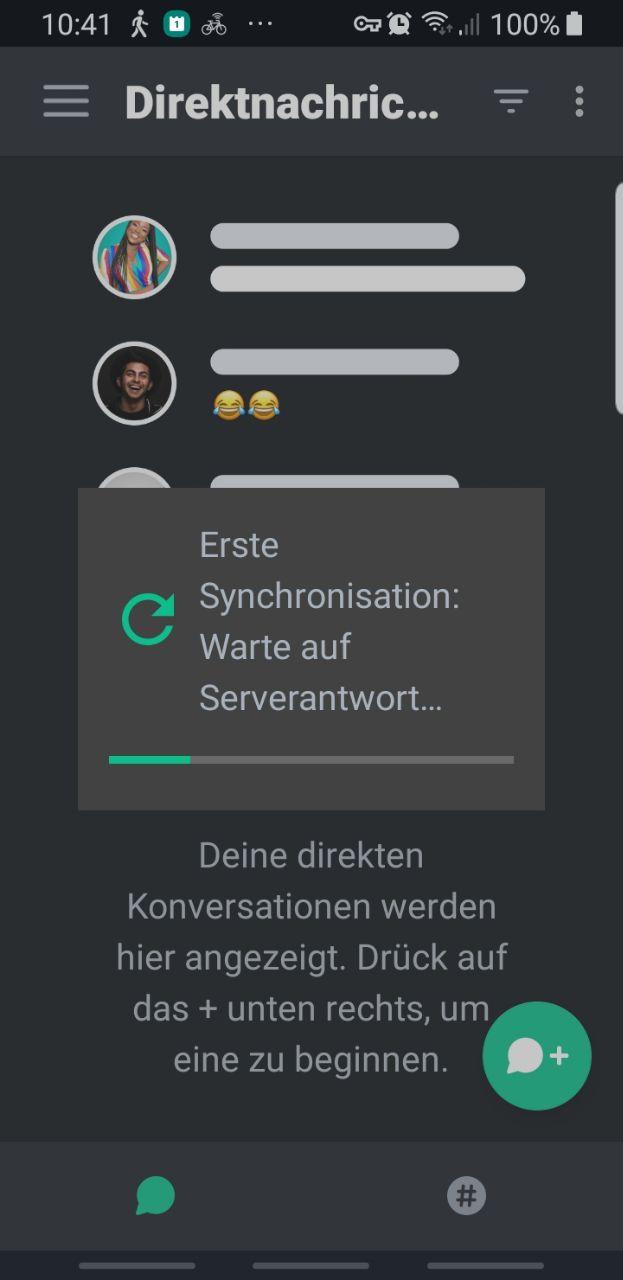

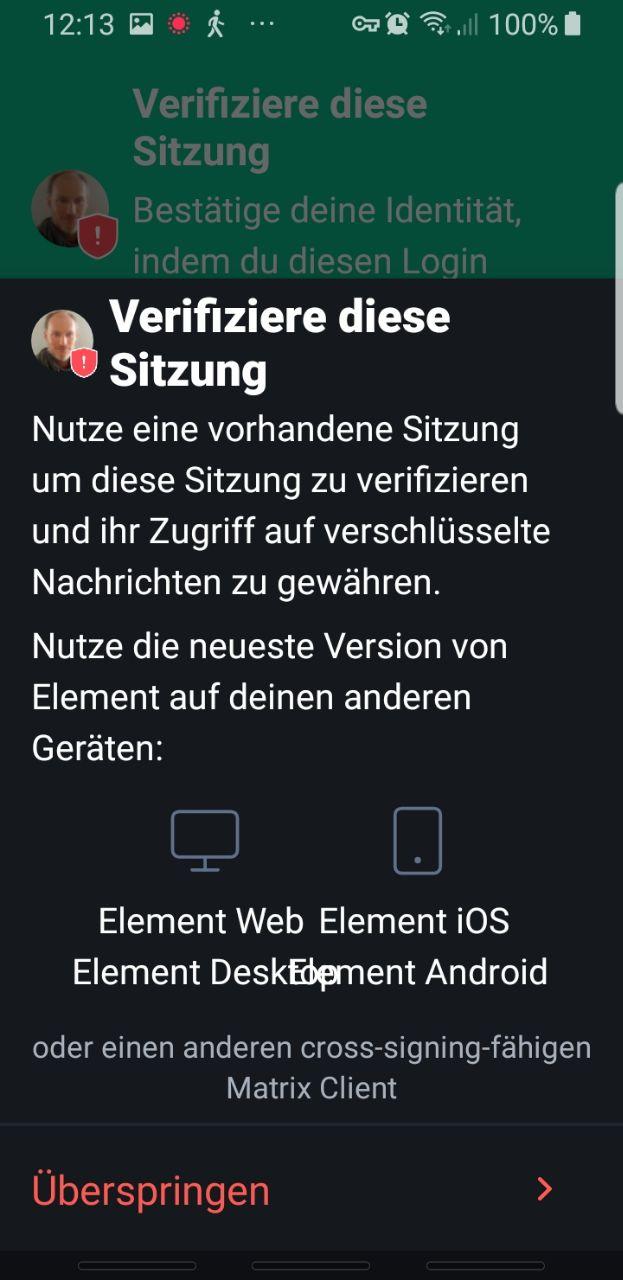

### Steps to reproduce

1. Weeks ago I enabled another beta feature in the Element Linux client. It works very well.

2. Then I enabled this beta feature in the Element Android client. It does not work: it has been waiting for a server response for days and is still waiting now.

3. I disabled the beta on the Linux desktop (no effect).

4. I reinstalled the Android version (no effect); it still waits for a server response.

5. At the same time it attempts verification (the desktop asks for QR code or emoji verification), but Android is deadlocked. It seems unable to resolve the two problems at the same time, so I am stuck with both and can no longer use Element on Android.

BTW, the German community also knows about it. I am a long-time user in this room: #german:matrix.org

### Outcome

Verification of the Android Element version.

It is blocked (deadlock) because Android could not handle these two problems at the same time (see the description above).

### Operating system

Ubuntu and Android

### Application version

Newest Element version on both devices

Desktop version:

Element version: 1.10.13

Olm version: 3.2.8

### How did you install the app?

The Android app is from the Google Play Store.

### Homeserver

tchncs.de

### Will you send logs?

Yes, but the Android app is blocked, so I can't send the Android logs.

I have sent the Linux desktop logs here: https://github.com/vector-im/element-web/issues/22387

### Outcome

#### What did you expect?

- Waiting for the server response would end at some point, but it has already lasted many days.

- The app would let me verify my desktop, but that is blocked by the other message (deadlock).

#### What happened instead?

Everything is blocked by the other message, waiting for the server response (deadlock).

Please also read: https://github.com/vector-im/element-web/issues/22387

### Your phone model

Samsung S8

### Operating system version

Ubuntu and Android version 9

### Application version and app store

Newest Element version on both devices

### Homeserver

tchncs.de

### Will you send logs?

Yes

### Are you willing to provide a PR?

Yes

|

1.0

|

not possible to verify Android Version - ### Steps to reproduce

1. Weeks ago I enabled another beta feature in the Element Linux client. It works very well.

2. Then I enabled this beta feature in the Element Android client. It does not work: it has been waiting for a server response for days and is still waiting now.

3. I disabled the beta on the Linux desktop (no effect).

4. I reinstalled the Android version (no effect); it still waits for a server response.

5. At the same time it attempts verification (the desktop asks for QR code or emoji verification), but Android is deadlocked. It seems unable to resolve the two problems at the same time, so I am stuck with both and can no longer use Element on Android.

BTW, the German community also knows about it. I am a long-time user in this room: #german:matrix.org

### Outcome

Verification of the Android Element version.

It is blocked (deadlock) because Android could not handle these two problems at the same time (see the description above).

### Operating system

Ubuntu and Android

### Application version

Newest Element version on both devices

Desktop version:

Element version: 1.10.13

Olm version: 3.2.8

### How did you install the app?

The Android app is from the Google Play Store.

### Homeserver

tchncs.de

### Will you send logs?

Yes, but the Android app is blocked, so I can't send the Android logs.

I have sent the Linux desktop logs here: https://github.com/vector-im/element-web/issues/22387

### Outcome

#### What did you expect?

- Waiting for the server response would end at some point, but it has already lasted many days.

- The app would let me verify my desktop, but that is blocked by the other message (deadlock).

#### What happened instead?

Everything is blocked by the other message, waiting for the server response (deadlock).

Please also read: https://github.com/vector-im/element-web/issues/22387

### Your phone model

Samsung S8

### Operating system version

Ubuntu and Android version 9

### Application version and app store

Newest Element version on both devices

### Homeserver

tchncs.de

### Will you send logs?

Yes

### Are you willing to provide a PR?

Yes

|

defect

|

not possible to verify android version steps to reproduce steps to reproduce for weeks i enabled a other beta feature at linux client element works very well then i enabled this other beta feature at android client element not works it waits for server antword for days and it still wait at the moment i disabled the beta at linux desktiop no effect i reinstalled the andoid version no effect it still waits for sever response and at same time it tries verification desktop as for qr code or emoji verification but android is in deadlock to problems at same times seems to be not possible to solve for it so i stack with to problems and i cant use android element not any more btw the german cumunity also now it i long time user in this room german matrix org outcome verification the android element version its blocked dedlock because android could not handle this two problems as same time see description above operating system ubuntu and andoid application version element newest version at both devices desktop version element version olm version how did you install the app the andoid is from google play store homeserver tchncs de will you send logs yes but the android is blocked so i cant send the anroid logs but i have sended the linux desktop logs here outcome what did you expect waiting for server antword will end a day but it stays already for many days allows me to identify my desktop but its blocked by the other message deadlock what happened instead all is blocked by the other message waiting for server answer deadlock please read also here your phone model samsung operating system version ubuntu and andoid version application version and app store element newest verson at both devices homeserver tchncs de will you send logs yes are you willing to provide a pr yes

| 1

|

823,414

| 31,019,151,472

|

IssuesEvent

|

2023-08-10 02:48:55

|

markgravity/golang-ic

|

https://api.github.com/repos/markgravity/golang-ic

|

closed

|

[Integrate] As a user, I can upload a keyword file.

|

type: feature priority: medium

|

## Acceptance Criteria

- Disable the Upload button when the file input is empty

- After the Upload button is clicked, use #7 to upload the keywords and update the table when it is done

## Design

|

1.0

|

[Integrate] As a user, I can upload a keyword file. - ## Acceptance Criteria

- Disable the Upload button when the file input is empty

- After the Upload button is clicked, use #7 to upload the keywords and update the table when it is done

## Design

|

non_defect

|

as a user i can upload a keyword file acceptance criteria disable upload button when the file input is emptied after clicking on the upload button use this to get upload the keyword and update the table when it s done design

| 0

|

184,144

| 14,273,087,770

|

IssuesEvent

|

2020-11-21 19:56:43

|

OllisGit/OctoPrint-SpoolManager

|

https://api.github.com/repos/OllisGit/OctoPrint-SpoolManager

|

closed

|

Bed temperature?

|

status: markedForAutoClose status: waitingForTestFeedback type: enhancement

|

Hey there, I just saw that you are working on your own spool manager.

I opened a request for Filament Manager, so I am opening one here as well :)

I am thinking of starting to work with temperature offsets, so I don't need to change the temperature in the slicer and risk reprinting with a wrong temperature.

So it would be nice to implement temperature offsets for the hotend(s) and bed.

Thanks in advance, it looks interesting :) Do you plan to migrate the collection from Filament Manager?

|

1.0

|

Bed temperature? - Hey there, I just saw that you are working on your own spool manager.

I opened a request for Filament Manager, so I am opening one here as well :)

I am thinking of starting to work with temperature offsets, so I don't need to change the temperature in the slicer and risk reprinting with a wrong temperature.

So it would be nice to implement temperature offsets for the hotend(s) and bed.

Thanks in advance, it looks interesting :) Do you plan to migrate the collection from Filament Manager?

|

non_defect

|

bed temperature hey there just saw you are working on a own spool manager i did open a request for filament manager so i do here also i think of starting working with offsets for temp so i don t need to chance the temp in the slicer and maybe reprint with a wrong temp so it would be nice to implement temp offsets for hotend s and bed thanks in advance and it looks interesting do you plan to migrate the collection from filament manager

| 0

|

70,401

| 23,154,055,428

|

IssuesEvent

|

2022-07-29 11:11:59

|

hazelcast/hazelcast

|

https://api.github.com/repos/hazelcast/hazelcast

|

closed

|

Missing field in addScheduledExecutorConfig protocol definition

|

Type: Defect Team: Client Team: Core Source: Internal Module: IScheduledExecutor Module: Config

|

**CapacityPolicy** field is missing in addScheduledExecutorConfig protocol definition

(https://github.com/hazelcast/hazelcast-client-protocol/blob/master/protocol-definitions/DynamicConfig.yaml#L550-L610)

The following configuration is taken from [hazelcast-full-example.yaml](https://github.com/hazelcast/hazelcast/blob/master/hazelcast/src/main/resources/hazelcast-full-example.yaml#L881-L890)

Except **capacity-policy**, all other fields are available in the protocol definition.

```
scheduled-executor-service:
  default:
    pool-size: 16
    durability: 1
    capacity: 100
    capacity-policy: PER_NODE
    split-brain-protection-ref: splitBrainProtectionRuleWithThreeNodes
    merge-policy:
      batch-size: 100
      class-name: PutIfAbsentMergePolicy
```

|

1.0

|

Missing field in addScheduledExecutorConfig protocol definition - **CapacityPolicy** field is missing in addScheduledExecutorConfig protocol definition

(https://github.com/hazelcast/hazelcast-client-protocol/blob/master/protocol-definitions/DynamicConfig.yaml#L550-L610)

The following configuration is taken from [hazelcast-full-example.yaml](https://github.com/hazelcast/hazelcast/blob/master/hazelcast/src/main/resources/hazelcast-full-example.yaml#L881-L890)

Except **capacity-policy**, all other fields are available in the protocol definition.

```
scheduled-executor-service:
  default:
    pool-size: 16
    durability: 1
    capacity: 100
    capacity-policy: PER_NODE
    split-brain-protection-ref: splitBrainProtectionRuleWithThreeNodes
    merge-policy:
      batch-size: 100
      class-name: PutIfAbsentMergePolicy
```

|

defect

|

missing field in addscheduledexecutorconfig protocol definition capacitypolicy field is missing in addscheduledexecutorconfig protocol definition the following configuration is taken from except capacity policy all other fields are available in the protocol definition scheduled executor service default pool size durability capacity capacity policy per node split brain protection ref splitbrainprotectionrulewiththreenodes merge policy batch size class name putifabsentmergepolicy

| 1

|

39,894

| 9,740,966,946

|

IssuesEvent

|

2019-06-02 02:57:51

|

WildBamaBoy/minecraft-comes-alive

|

https://api.github.com/repos/WildBamaBoy/minecraft-comes-alive

|

closed

|

Villagers' babies won't grow!

|

1.12 defect

|

I have a kid; he grew up and I got him married. I gave him cake and his wife got a baby. But the baby never grows, it just stays in her hand all day. I've been playing Minecraft Comes Alive for about 3 days and nothing has happened. I can't create a "generation" if I can't get the cycle to repeat indefinitely. This also happens with unrelated villagers: babies stay in their hands all day.

|

1.0

|

Villagers' babies won't grow! - I have a kid; he grew up and I got him married. I gave him cake and his wife got a baby. But the baby never grows, it just stays in her hand all day. I've been playing Minecraft Comes Alive for about 3 days and nothing has happened. I can't create a "generation" if I can't get the cycle to repeat indefinitely. This also happens with unrelated villagers: babies stay in their hands all day.

|

defect

|

villagers babies wont grow i have a kid he grew up and i got him married i gave him cake and his wife got a baby but the baby never grows it just stays in her hand all day i ve been playing minecraft comes alive for about days and nothing happened i can t create a generation if i can t get the cycle to repeat indefinitely this also happens with unrelated villagers babies stay in hands all day

| 1

|

57,427

| 15,780,340,816

|

IssuesEvent

|

2021-04-01 09:50:11

|

hazelcast/hazelcast

|

https://api.github.com/repos/hazelcast/hazelcast

|

opened

|

ClassCastException in JetService when starting with no client port

|

Source: Internal Team: Core Type: Defect

|

When starting hazelcast with advanced networking config and no client listening port, [the `ClientEngine` service implementation is a `NoOpClientEngine`](https://github.com/hazelcast/hazelcast/blob/9e754ba44e20555f538ca49a34f8ad3b1ccf5c99/hazelcast/src/main/java/com/hazelcast/instance/impl/Node.java#L263). However `JetService` [expects the implementation](https://github.com/hazelcast/hazelcast/blob/2810a5e9d4bf5b8ebf19e4f486c864d103fd8866/hazelcast/src/main/java/com/hazelcast/jet/impl/JetService.java#L123) to be a `ClientEngineImpl` -> `ClassCastException` is thrown. This issue does not fail node startup because service initialization errors are [caught and logged in `SEVERE` level](https://github.com/hazelcast/hazelcast/blob/40d555dc1110da9f63fd66f504c83d4382d0384b/hazelcast/src/main/java/com/hazelcast/spi/impl/servicemanager/impl/ServiceManagerImpl.java#L234-L236).

|

1.0

|

ClassCastException in JetService when starting with no client port - When starting hazelcast with advanced networking config and no client listening port, [the `ClientEngine` service implementation is a `NoOpClientEngine`](https://github.com/hazelcast/hazelcast/blob/9e754ba44e20555f538ca49a34f8ad3b1ccf5c99/hazelcast/src/main/java/com/hazelcast/instance/impl/Node.java#L263). However `JetService` [expects the implementation](https://github.com/hazelcast/hazelcast/blob/2810a5e9d4bf5b8ebf19e4f486c864d103fd8866/hazelcast/src/main/java/com/hazelcast/jet/impl/JetService.java#L123) to be a `ClientEngineImpl` -> `ClassCastException` is thrown. This issue does not fail node startup because service initialization errors are [caught and logged in `SEVERE` level](https://github.com/hazelcast/hazelcast/blob/40d555dc1110da9f63fd66f504c83d4382d0384b/hazelcast/src/main/java/com/hazelcast/spi/impl/servicemanager/impl/ServiceManagerImpl.java#L234-L236).

|

defect

|

classcastexception in jetservice when starting with no client port when starting hazelcast with advanced networking config and no client listening port however jetservice to be a clientengineimpl classcastexception is thrown this issue does not fail node startup because service initialization errors are

| 1

|

14,447

| 2,812,163,567

|

IssuesEvent

|

2015-05-18 06:27:13

|

minux/go-tour

|

https://api.github.com/repos/minux/go-tour

|

closed

|

Go Tour shows blank white page

|

auto-migrated Priority-Medium Type-Defect

|

```

What steps will reproduce the problem?

1. Open Go Tour page in Firefox http://tour.golang.org/list

2. I may have some extensions blocking ...something?

What is the expected output? What do you see instead?

Expected output: The go tour

What I see: blank white page

What version of the product are you using? On what operating system?

Using Firefox 33.1 on Mac OS 10.10

Please provide any additional information below.

I whitelist cookies

I have AdBlocking extensions installed

```

Original issue reported on code.google.com by `josh.lub...@gmail.com` on 5 Dec 2014 at 7:32

Attachments:

* [Screen Shot 2014-12-04 at 11.01.27 PM.png](https://storage.googleapis.com/google-code-attachments/go-tour/issue-186/comment-0/Screen Shot 2014-12-04 at 11.01.27 PM.png)

|

1.0

|

Go Tour shows blank white page - ```

What steps will reproduce the problem?

1. Open Go Tour page in Firefox http://tour.golang.org/list

2. I may have some extensions blocking ...something?

What is the expected output? What do you see instead?

Expected output: The go tour

What I see: blank white page

What version of the product are you using? On what operating system?

Using Firefox 33.1 on Mac OS 10.10

Please provide any additional information below.

I whitelist cookies

I have AdBlocking extensions installed

```

Original issue reported on code.google.com by `josh.lub...@gmail.com` on 5 Dec 2014 at 7:32

Attachments:

* [Screen Shot 2014-12-04 at 11.01.27 PM.png](https://storage.googleapis.com/google-code-attachments/go-tour/issue-186/comment-0/Screen Shot 2014-12-04 at 11.01.27 PM.png)

|

defect

|

go tour shows blank white page what steps will reproduce the problem open go tour page in firefox i may have some extensions blocking something what is the expected output what do you see instead expected output the go tour what i see blank white page what version of the product are you using on what operating system using firefox on mac os please provide any additional information below i whitelist cookies i have adblocking extensions installed original issue reported on code google com by josh lub gmail com on dec at attachments shot at pm png

| 1

|

55,710

| 14,643,960,843

|

IssuesEvent

|

2020-12-25 19:56:24

|

primefaces/primefaces

|

https://api.github.com/repos/primefaces/primefaces

|

closed

|

AutoComplete: change event not triggered

|

defect needs investigation

|

**Describe the defect**

AutoComplete no longer triggers the change ajax event, although in the same setup inputText does trigger it. With PrimeFaces 8.0, AutoComplete triggers the change ajax event.

AutoComplete still triggers itemSelect when a completeMethod is used, and it also triggers the blur event. Only the change event is affected. I guess the problem is caused by https://github.com/primefaces/primefaces/commit/6876cfc31ac18229a228f6f61924d9b852fc4224

**Environment:**

- PF Version: _8.0.5_

- JSF + version : _Jakarta Faces 2.3.14_

- Affected browsers: _ALL_

**To Reproduce**

Steps to reproduce the behavior:

1. Change value in inputText and tab, label is updated

2. Change value in autoComplete and tab, label is not updated

**Expected behavior**

Typing text in an AutoComplete and then focusing another component should trigger a change event.

**Example XHTML**

```html
<h:form>
    <p:inputText value="#{autocompleteTestBean.value}">
        <p:ajax event="change" update="val"/>
    </p:inputText>
    <p:autoComplete value="#{autocompleteTestBean.value}">
        <p:ajax event="change" update="val"/>
    </p:autoComplete>
    <h:outputLabel id="val" value="#{autocompleteTestBean.value}"/>
</h:form>
```

**Example Bean**

```java
@Component
@Scope(value = "view")
public class AutocompleteTestBean extends ControllerBean implements Serializable {

    @Getter
    @Setter
    private String value;
}
```

|

1.0

|

AutoComplete: change event not triggered - **Describe the defect**

AutoComplete no longer triggers the change ajax event, although in the same setup inputText does trigger it. With PrimeFaces 8.0, AutoComplete triggers the change ajax event.

AutoComplete still triggers itemSelect when a completeMethod is used, and it also triggers the blur event. Only the change event is affected. I guess the problem is caused by https://github.com/primefaces/primefaces/commit/6876cfc31ac18229a228f6f61924d9b852fc4224

**Environment:**

- PF Version: _8.0.5_

- JSF + version : _Jakarta Faces 2.3.14_

- Affected browsers: _ALL_

**To Reproduce**

Steps to reproduce the behavior:

1. Change value in inputText and tab, label is updated

2. Change value in autoComplete and tab, label is not updated

**Expected behavior**

Typing text in an AutoComplete and then focusing another component should trigger a change event.

**Example XHTML**

```html
<h:form>
    <p:inputText value="#{autocompleteTestBean.value}">
        <p:ajax event="change" update="val"/>
    </p:inputText>
    <p:autoComplete value="#{autocompleteTestBean.value}">
        <p:ajax event="change" update="val"/>
    </p:autoComplete>
    <h:outputLabel id="val" value="#{autocompleteTestBean.value}"/>
</h:form>
```

**Example Bean**

```java
@Component
@Scope(value = "view")
public class AutocompleteTestBean extends ControllerBean implements Serializable {

    @Getter
    @Setter
    private String value;
}
```

|

defect

|

autocomplete change event not triggered describe the defect autocomplete does no longer trigger the change ajax event though in the same setup inputtext does trigger change ajax event using primefaces autocomplete triggers change ajax event autocomplete still triggers itemselect if using a completemethod and also triggers blur event it s just change event that is effected i guess the problem is caused by environment pf version jsf version jakarta faces affected browsers all to reproduce steps to reproduce the behavior change value in inputtext and tab label is updated change value in autocomplete and tab label is not updated expected behavior typing text in an autocomplete and focusing other component should trigger a change event example xhtml html example bean java component scope value view public class autocompletetestbean extends controllerbean implements serializable getter setter private string value

| 1

|

10,696

| 2,622,180,755

|

IssuesEvent

|

2015-03-04 00:18:43

|

byzhang/leveldb

|

https://api.github.com/repos/byzhang/leveldb

|

opened

|

Leveldb keeps generating small sst file

|

auto-migrated Priority-Medium Type-Defect

|

```
Here is the leveldb.stats output:

                               Compactions
Level  Files Size(MB) Time(sec) Read(MB) Write(MB)
--------------------------------------------------
  0        0        0         0        0        36
  2        0        0         9        0       519
  3       30        4        12      594       580
  4      530    10070      1187   101893    101892
  5     1750    52946      7101   534959    534716

Level-3 has 30 files, but they total only 4 MB. These 30 files will then be
merged into level-4, but the newly created level-4 sst files are small too; I can
see that with the ls command.

This leads to frequent compaction after writing 4 MB of data.

What is the expected output? What do you see instead?

Small sst files should be merged.

What version of the product are you using? On what operating system?

Linux

Please provide any additional information below.

kTargetFileSize = 32 * 1048576
```

Original issue reported on code.google.com by `wuzuy...@gmail.com` on 15 May 2013 at 2:08
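
To make the numbers in the stats table concrete, here is a small sketch that computes the average file size per level and compares it with the reported `kTargetFileSize` of 32 MB. The struct and function names are made up for illustration and are not part of the LevelDB API; the 0.5 threshold in the usage note is likewise an arbitrary assumption.

```cpp
#include <vector>

// Illustrative type, not part of LevelDB: one row of the leveldb.stats table.
struct LevelRow {
    int level;
    int files;
    double size_mb;
};

// Average sst file size for a level in MB; 0 when the level holds no files.
double average_file_mb(const LevelRow& row) {
    return row.files > 0 ? row.size_mb / row.files : 0.0;
}

// Levels whose average file size is below `fraction` of the target file size.
std::vector<int> undersized_levels(const std::vector<LevelRow>& rows,
                                   double target_mb, double fraction) {
    std::vector<int> result;
    for (const LevelRow& row : rows) {
        if (row.files > 0 && average_file_mb(row) < target_mb * fraction) {
            result.push_back(row.level);
        }
    }
    return result;
}
```

Fed with the rows for levels 3, 4 and 5 from the table, a 32 MB target and a fraction of 0.5, only level 3 is flagged: it averages roughly 0.13 MB per file, while levels 4 and 5 average about 19 MB and 30 MB.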

|

1.0

|

Leveldb keeps generating small sst file - ```
Here is the leveldb.stats output:

                               Compactions
Level  Files Size(MB) Time(sec) Read(MB) Write(MB)
--------------------------------------------------
  0        0        0         0        0        36
  2        0        0         9        0       519
  3       30        4        12      594       580
  4      530    10070      1187   101893    101892
  5     1750    52946      7101   534959    534716

Level-3 has 30 files, but they total only 4 MB. These 30 files will then be
merged into level-4, but the newly created level-4 sst files are small too; I can
see that with the ls command.

This leads to frequent compaction after writing 4 MB of data.

What is the expected output? What do you see instead?

Small sst files should be merged.

What version of the product are you using? On what operating system?

Linux

Please provide any additional information below.

kTargetFileSize = 32 * 1048576
```

Original issue reported on code.google.com by `wuzuy...@gmail.com` on 15 May 2013 at 2:08

|

defect

|

leveldb keeps generating small sst file here is leveldb stats ouputs compactions level files size mb time sec read mb write mb level has files but it only has size then these files will be merged to level but the newly created level sst files is small too i can see that with ls command this leads to frequently compaction after written data what is the expected output what do you see instead small sst file should be merged what version of the product are you using on what operating system linux please provide any additional information below ktargetfilesize original issue reported on code google com by wuzuy gmail com on may at

| 1

|

127,661

| 10,477,396,946

|

IssuesEvent

|

2019-09-23 20:48:08

|

cockroachdb/cockroach

|

https://api.github.com/repos/cockroachdb/cockroach

|

closed

|

teamcity: failed tests on release-2.0: acceptance/TestJSONBUpgrade, acceptance/TestNodeRestart

|

C-test-failure O-robot

|

The following tests appear to have failed:

[#1498565](https://teamcity.cockroachdb.com/viewLog.html?buildId=1498565):

```

--- FAIL: acceptance/TestJSONBUpgrade (1.590s)

test_log_scope.go:81: test logs captured to: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/logTestJSONBUpgrade043538790

test_log_scope.go:62: use -show-logs to present logs inline

--- FAIL: acceptance/TestJSONBUpgrade: TestJSONBUpgrade/runMode=local (1.590s)

util_cluster.go:214: dial tcp 127.0.0.1:5432: connect: connection refused

------- Stdout: -------

CockroachDB node starting at 2019-09-19 19:20:17.868747737 +0000 UTC (took 0.5s)

build: CCL v1.1.8 @ 2018/04/23 17:25:48 (go1.8.3)

admin: http://127.0.0.1:38061

sql: postgresql://root@127.0.0.1:36833?application_name=cockroach&sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestJSONBUpgrade/runMode=local/1

store[0]: path=/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster435143565/1

status: initialized new cluster

clusterID: 8218cad3-ff4c-4b93-9d60-67df75ee3306

nodeID: 1

test logs left over in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/logTestJSONBUpgrade043538790

--- FAIL: acceptance/TestNodeRestart (10.620s)

test_log_scope.go:81: test logs captured to: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/logTestNodeRestart931804666

test_log_scope.go:62: use -show-logs to present logs inline

--- FAIL: acceptance/TestNodeRestart: TestNodeRestart/runMode=local (10.620s)

zchaos_test.go:152: pq: initial connection heartbeat failed: rpc error: code = Unavailable desc = all SubConns are in TransientFailure

------- Stdout: -------

CockroachDB node starting at 2019-09-19 19:20:29.298613072 +0000 UTC (took 0.7s)

build: CCL v2.0.7-36-g5987a85 @ 2019/09/19 19:03:20 (go1.10)

admin: http://127.0.0.1:44025

sql: postgresql://root@127.0.0.1:42979?sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestNodeRestart/runMode=local/1

temp dir: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/1/cockroach-temp526101900

external I/O path: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/1/extern

store[0]: path=/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/1

status: initialized new cluster

clusterID: f4603b0e-0281-4606-917b-b8d7208fda3f

nodeID: 1

CockroachDB node starting at 2019-09-19 19:20:29.843191891 +0000 UTC (took 0.2s)

build: CCL v2.0.7-36-g5987a85 @ 2019/09/19 19:03:20 (go1.10)

admin: http://127.0.0.1:32899

sql: postgresql://root@127.0.0.1:40813?sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestNodeRestart/runMode=local/2

temp dir: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/2/cockroach-temp909689111

external I/O path: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/2/extern

store[0]: path=/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/2

status: initialized new node, joined pre-existing cluster

clusterID: f4603b0e-0281-4606-917b-b8d7208fda3f

nodeID: 2

CockroachDB node starting at 2019-09-19 19:20:29.847791231 +0000 UTC (took 0.2s)

build: CCL v2.0.7-36-g5987a85 @ 2019/09/19 19:03:20 (go1.10)

admin: http://127.0.0.1:34469

sql: postgresql://root@127.0.0.1:36213?sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestNodeRestart/runMode=local/4

temp dir: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/4/cockroach-temp045723818

external I/O path: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/4/extern

store[0]: path=/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/4

status: initialized new node, joined pre-existing cluster

clusterID: f4603b0e-0281-4606-917b-b8d7208fda3f

nodeID: 3

CockroachDB node starting at 2019-09-19 19:20:29.869450288 +0000 UTC (took 0.2s)

build: CCL v2.0.7-36-g5987a85 @ 2019/09/19 19:03:20 (go1.10)

admin: http://127.0.0.1:39341

sql: postgresql://root@127.0.0.1:35437?sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestNodeRestart/runMode=local/3

temp dir: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/3/cockroach-temp006856657

external I/O path: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/3/extern

store[0]: path=/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/3

status: initialized new node, joined pre-existing cluster

clusterID: f4603b0e-0281-4606-917b-b8d7208fda3f

nodeID: 4

CockroachDB node starting at 2019-09-19 19:20:32.738105856 +0000 UTC (took 0.7s)

build: CCL v2.0.7-36-g5987a85 @ 2019/09/19 19:03:20 (go1.10)

admin: http://127.0.0.1:46771

sql: postgresql://root@127.0.0.1:38469?sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestNodeRestart/runMode=local/2

temp dir: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/2/cockroach-temp215380656

external I/O path: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/2/extern

store[0]: path=/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/2

status: restarted pre-existing node

clusterID: f4603b0e-0281-4606-917b-b8d7208fda3f

nodeID: 2

E190919 19:20:34.025301 4251 acceptance/zchaos_test.go:272 round 1: failed to do consistency check against node 3: Consistency checking is unimplmented and should be re-implemented using SQL

CockroachDB node starting at 2019-09-19 19:20:34.766059624 +0000 UTC (took 0.7s)

build: CCL v2.0.7-36-g5987a85 @ 2019/09/19 19:03:20 (go1.10)

admin: http://127.0.0.1:37215

sql: postgresql://root@127.0.0.1:39407?sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestNodeRestart/runMode=local/1

temp dir: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/1/cockroach-temp416819515

external I/O path: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/1/extern

store[0]: path=/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/1

status: restarted pre-existing node

clusterID: f4603b0e-0281-4606-917b-b8d7208fda3f

nodeID: 1

test logs left over in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/logTestNodeRestart931804666

--- FAIL: acceptance/TestJSONBUpgrade (1.590s)

test_log_scope.go:81: test logs captured to: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/logTestJSONBUpgrade043538790

test_log_scope.go:62: use -show-logs to present logs inline

--- FAIL: acceptance/TestJSONBUpgrade: TestJSONBUpgrade/runMode=local (1.590s)

util_cluster.go:214: dial tcp 127.0.0.1:5432: connect: connection refused

------- Stdout: -------

CockroachDB node starting at 2019-09-19 19:20:17.868747737 +0000 UTC (took 0.5s)

build: CCL v1.1.8 @ 2018/04/23 17:25:48 (go1.8.3)

admin: http://127.0.0.1:38061

sql: postgresql://root@127.0.0.1:36833?application_name=cockroach&sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestJSONBUpgrade/runMode=local/1

store[0]: path=/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster435143565/1

status: initialized new cluster

clusterID: 8218cad3-ff4c-4b93-9d60-67df75ee3306

nodeID: 1

test logs left over in: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/logTestJSONBUpgrade043538790

--- FAIL: acceptance/TestNodeRestart (10.620s)

test_log_scope.go:81: test logs captured to: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/logTestNodeRestart931804666

test_log_scope.go:62: use -show-logs to present logs inline

--- FAIL: acceptance/TestNodeRestart: TestNodeRestart/runMode=local (10.620s)

zchaos_test.go:152: pq: initial connection heartbeat failed: rpc error: code = Unavailable desc = all SubConns are in TransientFailure

------- Stdout: -------

CockroachDB node starting at 2019-09-19 19:20:29.298613072 +0000 UTC (took 0.7s)

build: CCL v2.0.7-36-g5987a85 @ 2019/09/19 19:03:20 (go1.10)

admin: http://127.0.0.1:44025

sql: postgresql://root@127.0.0.1:42979?sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestNodeRestart/runMode=local/1

temp dir: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/1/cockroach-temp526101900

external I/O path: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/1/extern

store[0]: path=/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/1

status: initialized new cluster

clusterID: f4603b0e-0281-4606-917b-b8d7208fda3f

nodeID: 1

CockroachDB node starting at 2019-09-19 19:20:29.843191891 +0000 UTC (took 0.2s)

build: CCL v2.0.7-36-g5987a85 @ 2019/09/19 19:03:20 (go1.10)

admin: http://127.0.0.1:32899

sql: postgresql://root@127.0.0.1:40813?sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestNodeRestart/runMode=local/2

temp dir: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/2/cockroach-temp909689111

external I/O path: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/2/extern

store[0]: path=/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/2

status: initialized new node, joined pre-existing cluster

clusterID: f4603b0e-0281-4606-917b-b8d7208fda3f

nodeID: 2

CockroachDB node starting at 2019-09-19 19:20:29.847791231 +0000 UTC (took 0.2s)

build: CCL v2.0.7-36-g5987a85 @ 2019/09/19 19:03:20 (go1.10)

admin: http://127.0.0.1:34469

sql: postgresql://root@127.0.0.1:36213?sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestNodeRestart/runMode=local/4

temp dir: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/4/cockroach-temp045723818

external I/O path: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/4/extern

store[0]: path=/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/4

status: initialized new node, joined pre-existing cluster

clusterID: f4603b0e-0281-4606-917b-b8d7208fda3f

nodeID: 3

CockroachDB node starting at 2019-09-19 19:20:29.869450288 +0000 UTC (took 0.2s)

build: CCL v2.0.7-36-g5987a85 @ 2019/09/19 19:03:20 (go1.10)

admin: http://127.0.0.1:39341

sql: postgresql://root@127.0.0.1:35437?sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestNodeRestart/runMode=local/3

temp dir: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/3/cockroach-temp006856657

external I/O path: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/3/extern

store[0]: path=/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/3

status: initialized new node, joined pre-existing cluster

clusterID: f4603b0e-0281-4606-917b-b8d7208fda3f

nodeID: 4

CockroachDB node starting at 2019-09-19 19:20:32.738105856 +0000 UTC (took 0.7s)

build: CCL v2.0.7-36-g5987a85 @ 2019/09/19 19:03:20 (go1.10)

admin: http://127.0.0.1:46771

sql: postgresql://root@127.0.0.1:38469?sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestNodeRestart/runMode=local/2

temp dir: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/2/cockroach-temp215380656

external I/O path: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/2/extern

store[0]: path=/home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/2

status: restarted pre-existing node

clusterID: f4603b0e-0281-4606-917b-b8d7208fda3f

nodeID: 2

E190919 19:20:34.025301 4251 acceptance/zchaos_test.go:272 round 1: failed to do consistency check against node 3: Consistency checking is unimplmented and should be re-implemented using SQL

CockroachDB node starting at 2019-09-19 19:20:34.766059624 +0000 UTC (took 0.7s)

build: CCL v2.0.7-36-g5987a85 @ 2019/09/19 19:03:20 (go1.10)

admin: http://127.0.0.1:37215

sql: postgresql://root@127.0.0.1:39407?sslmode=disable

logs: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/artifacts/acceptance/TestNodeRestart/runMode=local/1

temp dir: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/1/cockroach-temp416819515

external I/O path: /home/agent/work/.go/src/github.com/cockroachdb/cockroach/pkg/acceptance/.localcluster535245073/1/extern