Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

122,791 | 16,330,079,915 | IssuesEvent | 2021-05-12 08:08:48 | dotnet/roslyn | https://api.github.com/repos/dotnet/roslyn | closed | Naming of naming styles should reflect their casing | Area-IDE Feature Request Need Design Review | **Version Used**:

Microsoft Visual Studio Enterprise 2019 Int Preview

Version 16.1.0 Preview 3.0 [28823.117.d16.1]

VisualStudio.16.IntPreview/16.1.0-pre.3.0+28823.117.d16.1

Microsoft .NET Framework

Version 4.8.03752

**Steps to Reproduce**:

1. Ctrl Q

2. Type 'C# naming'

3. Enter

4. Open the drop down "R... | 1.0 | Naming of naming styles should reflect their casing - **Version Used**:

Microsoft Visual Studio Enterprise 2019 Int Preview

Version 16.1.0 Preview 3.0 [28823.117.d16.1]

VisualStudio.16.IntPreview/16.1.0-pre.3.0+28823.117.d16.1

Microsoft .NET Framework

Version 4.8.03752

**Steps to Reproduce**:

1. Ctrl Q

2... | design | naming of naming styles should reflect their casing version used microsoft visual studio enterprise int preview version preview visualstudio intpreview pre microsoft net framework version steps to reproduce ctrl q type c naming enter o... | 1 |

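113,414 | 14,434,456,750 | IssuesEvent | 2020-12-07 07:06:51 | h-yoshikawa0724/ooui-memo | https://api.github.com/repos/h-yoshikawa0724/ooui-memo | opened | [New] Create design documents | basic design | ## Purpose

Draw up the design documents needed to build the application.

## Tasks

Turn the information from OOUI and the UI prototype into design documents

- ER diagram

- Feature list

- Architecture diagram | 1.0 | [New] Create design documents - ## Purpose

Draw up the design documents needed to build the application.

## Tasks

Turn the information from OOUI and the UI prototype into design documents

- ER diagram

- Feature list

- Architecture diagram | design | create design documents purpose draw up the design documents needed to build the application tasks turn the information from ooui and the ui prototype into design documents er diagram feature list architecture diagram | 1 |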

58,535 | 7,160,619,982 | IssuesEvent | 2018-01-28 03:06:24 | CCBlueX/LiquidBounce1.8-Issues | https://api.github.com/repos/CCBlueX/LiquidBounce1.8-Issues | closed | Keybinds GUI | GUI (Design etc) Request | I would like the GUI to have some kind of "button" that lets you set keybinds directly.

Or perhaps middle-click on a module and a virtual keyboard appears, where you only have to click the keys. (As in Null). | 1.0 | Keybinds GUI - I would like the GUI to have some kind of "button" that lets you set keybinds directly.

Or perhaps middle-click on a module and a virtual keyboard appears, where you only have to click the keys. (As in Null). | design | keybinds gui i would like the gui to have some kind of button that lets you set keybinds directly or perhaps middle click on a module and a virtual keyboard appears where you only have to click the keys as in null | 1 |

20,031 | 13,633,717,237 | IssuesEvent | 2020-09-24 22:00:59 | dotnet/aspnetcore | https://api.github.com/repos/dotnet/aspnetcore | closed | cannot build using restore.cmd | area-infrastructure | ### If you believe you have an issue that affects the security of the platform please do NOT create an issue and instead email your issue details to secure@microsoft.com. Your report may be eligible for our [bug bounty](https://technet.microsoft.com/en-us/mt764065.aspx) but ONLY if it is reported through email.

### ... | 1.0 | cannot build using restore.cmd - ### If you believe you have an issue that affects the security of the platform please do NOT create an issue and instead email your issue details to secure@microsoft.com. Your report may be eligible for our [bug bounty](https://technet.microsoft.com/en-us/mt764065.aspx) but ONLY if it i... | non_design | cannot build using restore cmd if you believe you have an issue that affects the security of the platform please do not create an issue and instead email your issue details to secure microsoft com your report may be eligible for our but only if it is reported through email describe the bug tried to ... | 0 |

119,614 | 15,586,378,565 | IssuesEvent | 2021-03-18 01:49:27 | UOA-SE701-Group3-2021/3Lancers | https://api.github.com/repos/UOA-SE701-Group3-2021/3Lancers | opened | Hi-fi design of Habit Tracker User Input Menu | design | **Is your feature request related to a problem? Please describe.**

As a frontend developer, I want a detailed prototype of the Habit Tracker User Input Menu I'm implementing, so that I can finalise the styling and logic.

**Describe the solution you'd like**

A hi-fi prototype of the Habit Tracker User Input Menu

... | 1.0 | Hi-fi design of Habit Tracker User Input Menu - **Is your feature request related to a problem? Please describe.**

As a frontend developer, I want a detailed prototype of the Habit Tracker User Input Menu I'm implementing, so that I can finalise the styling and logic.

**Describe the solution you'd like**

A hi-fi p... | design | hi fi design of habit tracker user input menu is your feature request related to a problem please describe as a frontend developer i want a detailed prototype of the habit tracker user input menu i m implementing so that i can finalise the styling and logic describe the solution you d like a hi fi p... | 1 |

41,587 | 5,344,632,965 | IssuesEvent | 2017-02-17 15:02:01 | JDTeamAcetabulum/qna | https://api.github.com/repos/JDTeamAcetabulum/qna | closed | As a user, I want the product to have a consistent brand so that I can easily identify it | design question | ## Story/task details

I @strburst can get started on the logo/color scheme after we pick a name if no one feels strongly.

- [x] Choose a name for the product (`qna`)

- [ ] Create a logo (an `svg`?)

- [ ] Choose a decent color scheme

## Acceptance scenarios <!-- Under what conditions is this story applicable?... | 1.0 | As a user, I want the product to have a consistent brand so that I can easily identify it - ## Story/task details

I @strburst can get started on the logo/color scheme after we pick a name if no one feels strongly.

- [x] Choose a name for the product (`qna`)

- [ ] Create a logo (an `svg`?)

- [ ] Choose a decent ... | design | as a user i want the product to have a consistent brand so that i can easily identify it story task details i strburst can get started on the logo color scheme after we pick a name if no one feels strongly choose a name for the product qna create a logo an svg choose a decent color ... | 1 |

443,560 | 30,923,656,412 | IssuesEvent | 2023-08-06 08:01:23 | kubecub/go-project-layout | https://api.github.com/repos/kubecub/go-project-layout | closed | Bug reports for links in kubecub docs | kind/documentation triage/unresolved report lifecycle/stale | ## Summary

| Status | Count |

|---------------|-------|

| 🔍 Total | 171 |

| ✅ Successful | 164 |

| ⏳ Timeouts | 1 |

| 🔀 Redirected | 0 |

| 👻 Excluded | 0 |

| ❓ Unknown | 0 |

| 🚫 Errors | 6 |

## Errors per input

### Errors in CONTRIBUTING.md

* [TIMEOUT] [https://twi... | 1.0 | Bug reports for links in kubecub docs - ## Summary

| Status | Count |

|---------------|-------|

| 🔍 Total | 171 |

| ✅ Successful | 164 |

| ⏳ Timeouts | 1 |

| 🔀 Redirected | 0 |

| 👻 Excluded | 0 |

| ❓ Unknown | 0 |

| 🚫 Errors | 6 |

## Errors per input

### Errors in C... | non_design | bug reports for links in kubecub docs summary status count 🔍 total ✅ successful ⏳ timeouts 🔀 redirected 👻 excluded ❓ unknown 🚫 errors errors per input errors in contr... | 0 |

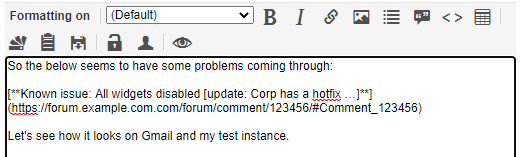

18,583 | 13,055,589,413 | IssuesEvent | 2020-07-30 02:08:45 | jstanden/cerb | https://api.github.com/repos/jstanden/cerb | closed | Nested square brackets in outgoing HTML email cause problems in plain-text copy | bug usability | Using nested square brackets in an outgoing HTML email causes that line to be missing from the plaintext copy.

Source is:

received HTML is:

r... | non_design | nested square brackets in outgoing html email cause problems in plain text copy using nested square brackets in an outgoing html email causes that line to be missing from the plaintext copy source is received html is received raw plain text is | 0 |

23,668 | 6,469,771,645 | IssuesEvent | 2017-08-17 07:11:33 | dotnet/coreclr | https://api.github.com/repos/dotnet/coreclr | closed | [RyuJIT/armel] HFA tests fail | arch-arm32 area-CodeGen bug | With #13284, most regressions caused by #13023 were fixed. But there is still an HFA test regression.

All of these run, but they fail with Unexpected Results (wrong result value).

- JIT/jit64/hfa/main/testB/hfa_sf2B_d/hfa_sf2B_d.exe

- JIT/jit64/hfa/main/testB/hfa_sf0B_r/hfa_sf0B_r.exe

- JIT/jit64/hfa/... | 1.0 | [RyuJIT/armel] HFA tests fail - With #13284, most regressions caused by #13023 were fixed. But there is still an HFA test regression.

All of these run, but they fail with Unexpected Results (wrong result value).

- JIT/jit64/hfa/main/testB/hfa_sf2B_d/hfa_sf2B_d.exe

- JIT/jit64/hfa/main/testB/hfa_sf0B_r/... | non_design | hfa tests fail with most regressions caused by were fixed but there is still an hfa test regression all of these run but they fail with unexpected results wrong result value jit hfa main testb hfa d hfa d exe jit hfa main testb hfa r hfa r exe jit hfa main testb hf... | 0 |

77,615 | 9,602,792,189 | IssuesEvent | 2019-05-10 15:22:27 | AugurProject/augur | https://api.github.com/repos/AugurProject/augur | closed | Post cutoff: Global banner across the app with link to post | Design Review | - Display a global banner across the app with link to post

https://www.figma.com/file/csVtbWhGCAYe3nVe2LI1UEPM/v1-updates-to-the-UI?node-id=301%3A2551

(scroll right for more)

- have this show up only if the date is after the cutoff date so it is triggered by the time

- change forking banner to new color

- m... | 1.0 | Post cutoff: Global banner across the app with link to post - - Display a global banner across the app with link to post

https://www.figma.com/file/csVtbWhGCAYe3nVe2LI1UEPM/v1-updates-to-the-UI?node-id=301%3A2551

(scroll right for more)

- have this show up only if the date is after the cutoff date so it is trigge... | design | post cutoff global banner across the app with link to post display a global banner across the app with link to post scroll right for more have this show up only if the date is after the cutoff date so it is triggered by the time change forking banner to new color move forking banner to top of... | 1 |

211,542 | 23,833,143,205 | IssuesEvent | 2022-09-06 01:07:26 | RG4421/java-slack-sdk | https://api.github.com/repos/RG4421/java-slack-sdk | opened | CVE-2022-38751 (Medium) detected in snakeyaml-1.25.jar, snakeyaml-1.24.jar | security vulnerability | ## CVE-2022-38751 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>snakeyaml-1.25.jar</b>, <b>snakeyaml-1.24.jar</b></p></summary>

<p>

<details><summary><b>snakeyaml-1.25.jar</b></p... | True | CVE-2022-38751 (Medium) detected in snakeyaml-1.25.jar, snakeyaml-1.24.jar - ## CVE-2022-38751 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>snakeyaml-1.25.jar</b>, <b>snakeyaml-1... | non_design | cve medium detected in snakeyaml jar snakeyaml jar cve medium severity vulnerability vulnerable libraries snakeyaml jar snakeyaml jar snakeyaml jar yaml parser and emitter for java library home page a href path to dependency file bolt spring bo... | 0 |

174,189 | 27,591,237,883 | IssuesEvent | 2023-03-09 00:39:16 | devssa/onde-codar-em-salvador | https://api.github.com/repos/devssa/onde-codar-em-salvador | closed | [REMOTE] [ALSO PWD] [PHP] [SENIOR] PHP Developer - Senior | Personal Accounts (Conta PF) at [PICPAY] | HOME OFFICE PHP MYSQL MONGODB LARAVEL SENIOR GIT DOCKER REMOTO KAFKA RABBITMQ LUMEN DESIGN PATTERNS RESTFUL METODOLOGIAS ÁGEIS HELP WANTED VAGA PARA PCD TAMBÉM OWASP TOP 10 Stale | <!--

==================================================

PLEASE ONLY POST IF THE JOB IS FOR SALVADOR AND NEIGHBORING CITIES!

Use: "Desenvolvedor Front-end" instead of

"Front-End Developer" \o/

Example: `[JAVASCRIPT] [MYSQL] [NODE.JS] Desenvolvedor Front-End na [NOME DA EMPRESA]`

========================... | 1.0 | [REMOTE] [ALSO PWD] [PHP] [SENIOR] PHP Developer - Senior | Personal Accounts (Conta PF) at [PICPAY] - <!--

==================================================

PLEASE ONLY POST IF THE JOB IS FOR SALVADOR AND NEIGHBORING CITIES!

Use: "Desenvolvedor Front-end" instead of

"Front-End Developer" \o/

Example: `[JAVA... | design | php developer senior personal accounts conta pf at please only post if the job is for salvador and neighboring cities use desenvolvedor front end instead of front end developer o example desenvolvedor front end na ... | 1 |

138,058 | 20,322,203,854 | IssuesEvent | 2022-02-18 00:13:06 | microsoft/pyright | https://api.github.com/repos/microsoft/pyright | closed | Pyright generates error "unpacking not allowed" in context of __get_item__calls for numpy, tensorflow tensors | as designed | Note: if you are reporting a wrong signature of a function or a class in the standard library, then the typeshed tracker is better suited for this report: https://github.com/python/typeshed/issues.

**Describe the bug**

"unpack operation is not allowed in this context" is generated. However, in python 3.8 this execu... | 1.0 | Pyright generates error "unpacking not allowed" in context of __get_item__calls for numpy, tensorflow tensors - Note: if you are reporting a wrong signature of a function or a class in the standard library, then the typeshed tracker is better suited for this report: https://github.com/python/typeshed/issues.

**Descr... | design | pyright generates error unpacking not allowed in context of get item calls for numpy tensorflow tensors note if you are reporting a wrong signature of a function or a class in the standard library then the typeshed tracker is better suited for this report describe the bug unpack operation is not a... | 1 |

51,917 | 3,015,572,706 | IssuesEvent | 2015-07-29 20:19:56 | WikiEducationFoundation/WikiEduDashboard | https://api.github.com/repos/WikiEducationFoundation/WikiEduDashboard | closed | Course announcements are being made multiple times for the same course | bug top priority | Looks like this is still an issue: https://en.wikipedia.org/wiki/User:Cassell04 | 1.0 | Course announcements are being made multiple times for the same course - Looks like this is still an issue: https://en.wikipedia.org/wiki/User:Cassell04 | non_design | course announcements are being made multiple times for the same course looks like this is still an issue | 0 |

77,004 | 21,646,226,166 | IssuesEvent | 2022-05-06 02:28:18 | PyAV-Org/PyAV | https://api.github.com/repos/PyAV-Org/PyAV | opened | Install fails on Ubuntu 18 | build | **IMPORTANT:** Be sure to replace all template sections {{ like this }} or your issue may be discarded.

## Overview

The bug is the following error when trying to run `pip install av` on ubuntu 18:

```

Defaulting to user installation because normal site-packages is not writeable

Collecting av

Using cached ... | 1.0 | Install fails on Ubuntu 18 - **IMPORTANT:** Be sure to replace all template sections {{ like this }} or your issue may be discarded.

## Overview

The bug is the following error when trying to run `pip install av` on ubuntu 18:

```

Defaulting to user installation because normal site-packages is not writeable

C... | non_design | install fails on ubuntu important be sure to replace all template sections like this or your issue may be discarded overview the bug is the following error when trying to run pip install av on ubuntu defaulting to user installation because normal site packages is not writeable col... | 0 |

50,688 | 10,546,987,413 | IssuesEvent | 2019-10-02 23:12:36 | MicrosoftDocs/visualstudio-docs | https://api.github.com/repos/MicrosoftDocs/visualstudio-docs | closed | unclear documentation | Pri2 area - C++ doc-bug visual-studio-windows/prod vs-ide-code-analysis/tech | Please define what slicing is.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: be6a0e19-d61f-fefb-4a83-afa87219e168

* Version Independent ID: 206fffd7-3651-5783-49e0-c586a9b8b992

* Content: [C26437 - Visual Studio](https://docs.microsoft.com... | 1.0 | unclear documentation - Please define what slicing is.

---

#### Document Details

⚠ *Do not edit this section. It is required for docs.microsoft.com ➟ GitHub issue linking.*

* ID: be6a0e19-d61f-fefb-4a83-afa87219e168

* Version Independent ID: 206fffd7-3651-5783-49e0-c586a9b8b992

* Content: [C26437 - Visual Studio](ht... | non_design | unclear documentation please define what slicing is document details ⚠ do not edit this section it is required for docs microsoft com ➟ github issue linking id fefb version independent id content content source product visual studio windows technology ... | 0 |

568,055 | 16,945,845,927 | IssuesEvent | 2021-06-28 06:38:22 | PlaceOS/drivers | https://api.github.com/repos/PlaceOS/drivers | closed | Gallagher Crystal Driver | bhq priority: high type: driver type: migrate driver | Convert/Rewrite existing Ruby Gallagher Driver to Crystal for Suncorp | 1.0 | Gallagher Crystal Driver - Convert/Rewrite existing Ruby Gallagher Driver to Crystal for Suncorp | non_design | gallagher crystal driver convert rewrite existing ruby gallagher driver to crystal for suncorp | 0 |

128,237 | 17,466,121,817 | IssuesEvent | 2021-08-06 17:05:58 | cagov/ui-claim-tracker | https://api.github.com/repos/cagov/ui-claim-tracker | closed | Run rapid usability testing | Design | ### Description

Facilitate content testing for:

- Test copy for scenario 1 (depends on #154)

- Test copy for baseline state for alpha release (depends on #158)

### Acceptance Criteria

- [x] Lead interviews

- [ ] #273

- [ ] #356

<!--

_Note_ When you create this issue, remember to add:

-... | 1.0 | Run rapid usability testing - ### Description

Facilitate content testing for:

- Test copy for scenario 1 (depends on #154)

- Test copy for baseline state for alpha release (depends on #158)

### Acceptance Criteria

- [x] Lead interviews

- [ ] #273

- [ ] #356

<!--

_Note_ When you create thi... | design | run rapid usability testing description facilitate content testing for test copy for scenario depends on test copy for baseline state for alpha release depends on acceptance criteria lead interviews note when you create this issue remem... | 1 |

20,042 | 26,529,381,488 | IssuesEvent | 2023-01-19 11:15:17 | prisma/prisma | https://api.github.com/repos/prisma/prisma | opened | Introspection of MySQL views | process/candidate topic: introspection topic: re-introspection tech/engines/introspection engine team/schema topic: view kind/subtask | We should fit this beauty to the SQL describer:

```sql

select col.table_schema as database_name,

col.table_name as view_name,

col.ordinal_position,

col.column_name,

col.data_type,

case when col.character_maximum_length is not null

then col.character_maximum_lengt... | 1.0 | Introspection of MySQL views - We should fit this beauty to the SQL describer:

```sql

select col.table_schema as database_name,

col.table_name as view_name,

col.ordinal_position,

col.column_name,

col.data_type,

case when col.character_maximum_length is not null

t... | non_design | introspection of mysql views we should fit this beauty to the sql describer sql select col table schema as database name col table name as view name col ordinal position col column name col data type case when col character maximum length is not null t... | 0 |

64,215 | 6,896,120,404 | IssuesEvent | 2017-11-23 16:20:33 | medic/medic-webapp | https://api.github.com/repos/medic/medic-webapp | closed | Migration extract-person-contacts broken | Priority: 1 - High Status: 4 - Acceptance testing Type: Bug | The server details are shared on slack if further investigation is required.

Migration from Version 0.4 to Version 2.13

Error:

```

2017-11-10T12:18:21.578Z - error: Failed to restore contact on facility 42fbf87e-9857-8ad1-071fc2f9e15ac6b9, contact: {"name":"Redacted ","phone":"+251920---","type":"person","reported_... | 1.0 | Migration extract-person-contacts broken - The server details are shared on slack if further investigation is required.

Migration from Version 0.4 to Version 2.13

Error:

```

2017-11-10T12:18:21.578Z - error: Failed to restore contact on facility 42fbf87e-9857-8ad1-071fc2f9e15ac6b9, contact: {"name":"Redacted ","pho... | non_design | migration extract person contacts broken the server details are shared on slack if further investigation is required migration from version to version error error failed to restore contact on facility contact name redacted phone type person reported date... | 0 |

126,921 | 17,142,185,581 | IssuesEvent | 2021-07-13 10:51:43 | WordPress/gutenberg | https://api.github.com/repos/WordPress/gutenberg | closed | Color panel should be hidden when the block's color are disabled | [Feature] Design Tools [Status] In Progress [Type] Bug | In working at https://github.com/WordPress/gutenberg/pull/33280/ and preparing https://github.com/WordPress/gutenberg/pull/33295 I've realized the color panel doesn't work as expected.

If a theme passes the following data via `theme.json`:

```json

{

"version": 1,

"settings": {

"color": {

"cust... | 1.0 | Color panel should be hidden when the block's color are disabled - In working at https://github.com/WordPress/gutenberg/pull/33280/ and preparing https://github.com/WordPress/gutenberg/pull/33295 I've realized the color panel doesn't work as expected.

If a theme passes the following data via `theme.json`:

```jso... | design | color panel should be hidden when the block s color are disabled in working at and preparing i ve realized the color panel doesn t work as expected if a theme passes the following data via theme json json version settings color custom false customgrad... | 1 |

94,730 | 19,577,671,159 | IssuesEvent | 2022-01-04 17:03:14 | Regalis11/Barotrauma | https://api.github.com/repos/Regalis11/Barotrauma | closed | extreme number of draw calls per frame | Code Performance | - [X] I have searched the issue tracker to check if the issue has already been reported.

**Description**

Game uses a very large number of draw calls (5200-6500) in new-old saves, many more used when drawing ui.

**Steps To Reproduce**

```

$ apitrace Barotrauma

<open up save or create a new game>

$ qapitrace B... | 1.0 | extreme number of draw calls per frame - - [X] I have searched the issue tracker to check if the issue has already been reported.

**Description**

Game uses a very large number of draw calls (5200-6500) in new-old saves, many more used when drawing ui.

**Steps To Reproduce**

```

$ apitrace Barotrauma

<open up ... | non_design | extreme number of draw calls per frame i have searched the issue tracker to check if the issue has already been reported description game uses a very large number of draw calls in new old saves many more used when drawing ui steps to reproduce apitrace barotrauma qapitrace ba... | 0 |

69,014 | 8,367,933,114 | IssuesEvent | 2018-10-04 13:36:20 | gctools-outilsgc/design-system | https://api.github.com/repos/gctools-outilsgc/design-system | closed | navigation templates and layouts | Project: Design System [zube]: Backlog layout systems phase II | Guidelines and wireframes for application and site navigation | 1.0 | navigation templates and layouts - Guidelines and wireframes for application and site navigation | design | navigation templates and layouts guidelines and wireframes for application and site navigation | 1 |

332,960 | 24,356,799,292 | IssuesEvent | 2022-10-03 08:13:00 | pravega/pravega | https://api.github.com/repos/pravega/pravega | closed | Create document to recover container exhibiting Table Segment credits exhausted | area/segmentstore area/documentation priority/P2 area/data-recovery version/0.13.0 | **Description of the task**

We need to create a document under the "recovery_procedures" section that describes how to recover a Segment Container for which internal metadata Table Segments cannot be initialized due to exhaustion of credits (i.e., too much data has been accumulated in Tier-1 that has not been moved to... | 1.0 | Create document to recover container exhibiting Table Segment credits exhausted - **Description of the task**

We need to create a document under the "recovery_procedures" section that describes how to recover a Segment Container for which internal metadata Table Segments cannot be initialized due to exhaustion of cred... | non_design | create document to recover container exhibiting table segment credits exhausted description of the task we need to create a document under the recovery procedures section that describes how to recover a segment container for which internal metadata table segments cannot be initialized due to exhaustion of cred... | 0 |

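169,047 | 26,738,209,531 | IssuesEvent | 2023-01-30 11:00:57 | BulletBoxProject/BulletBox-project-FE- | https://api.github.com/repos/BulletBoxProject/BulletBox-project-FE- | closed | Daily log date selection feature | feat design | ## Description

This task implements the date selection feature for the daily log.

## Todo

- [x] Create the calendar dropdown

- [x] Navigate to the selected date

## Etc

Other notes

| 1.0 | Daily log date selection feature - ## Description

This task implements the date selection feature for the daily log.

## Todo

- [x] Create the calendar dropdown

- [x] Navigate to the selected date

## Etc

Other notes

| design | daily log date selection feature description this task implements the date selection feature for the daily log todo create the calendar dropdown navigate to the selected date etc other notes | 1 |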

34 | 2,492,581,956 | IssuesEvent | 2015-01-05 01:50:29 | lakkatv/Lakka | https://api.github.com/repos/lakkatv/Lakka | closed | Handle blank config | bug design enhancement menu | For our menu to work, you have to configure paths in the retroarch config. If the right paths are not set, it is not able to do anything and even segfaults. This is a bug because most users have an empty config file when launching retroarch for the first time.

The right way to fix it I think, is to:

- display a hel... | 1.0 | Handle blank config - For our menu to work, you have to configure paths in the retroarch config. If the right paths are not set, it is not able to do anything and even segfaults. This is a bug because most users have an empty config file when launching retroarch for the first time.

The right way to fix it I think, i... | design | handle blank config for our menu to work you have to configure paths in the retroarch config if the right paths are not set it is not able to do anything and even segfaults this is a bug because most users have an empty config file when launching retroarch for the first time the right way to fix it i think i... | 1 |

609,840 | 18,888,810,406 | IssuesEvent | 2021-11-15 10:53:15 | CatalogueOfLife/backend | https://api.github.com/repos/CatalogueOfLife/backend | closed | Search exceptions for large offsets | bug high priority search | http://api.catalogueoflife.org/dataset/2349/nameusage/search?facet=rank&facet=issue&facet=status&facet=nomStatus&facet=nameType&facet=field&facet=authorship&facet=extinct&facet=environment&limit=50&offset=4418350&reverse=false&sortBy=taxonomic&status=_NOT_NULL

```

{"type": "server", "timestamp": "2021-11-15T10:32:1... | 1.0 | Search exceptions for large offsets - http://api.catalogueoflife.org/dataset/2349/nameusage/search?facet=rank&facet=issue&facet=status&facet=nomStatus&facet=nameType&facet=field&facet=authorship&facet=extinct&facet=environment&limit=50&offset=4418350&reverse=false&sortBy=taxonomic&status=_NOT_NULL

```

{"type": "ser... | non_design | search exceptions for large offsets type server timestamp level debug component o e a s transportsearchaction cluster name col node name node col message failed to execute indicesoptions indicesoptions types routing null preference null ... | 0 |

677,612 | 23,167,734,880 | IssuesEvent | 2022-07-30 07:50:12 | ObsidianMC/Obsidian | https://api.github.com/repos/ObsidianMC/Obsidian | closed | Implement new chat message/command changes (1.19 branch) | enhancement help wanted good first issue priority: high networking | As the title suggests I'm opening up this issue for someone who wants to do this before I get to it myself.

Helpful Links:

https://wiki.vg/images/f/f4/MinecraftChat.drawio4.png

https://wiki.vg/Chat#Processing_chat

Packets that should be looked at (Classes for these packets are created already)

https://wiki.vg/... | 1.0 | Implement new chat message/command changes (1.19 branch) - As the title suggests I'm opening up this issue for someone who wants to do this before I get to it myself.

Helpful Links:

https://wiki.vg/images/f/f4/MinecraftChat.drawio4.png

https://wiki.vg/Chat#Processing_chat

Packets that should be looked at (Class... | non_design | implement new chat message command changes branch as the title suggests i m opening up this issue for someone who wants to do this before i get to it myself helpful links packets that should be looked at classes for these packets are created already this should work reliably with onl... | 0 |

257,957 | 22,265,919,078 | IssuesEvent | 2022-06-10 07:25:35 | gravitee-io/issues | https://api.github.com/repos/gravitee-io/issues | closed | [gateway] conditional logging on date/Duration prevents to display API logs | type: bug project: APIM Support 2 p2 loop quantum status: in test | ## :collision: Describe the bug

When enabling logging on an API with a Condition on a date/Duration, the detailed logs are not displayed and the following warning is raised in the gateway logs:

```

gio_apim_gateway-3.16.1 | 10:17:52.956 [vert.x-eventloop-thread-4] [] WARN i.g.g.c.l.p.LoggableRequestProcessor - Une... | 1.0 | [gateway] conditional logging on date/Duration prevents to display API logs - ## :collision: Describe the bug

When enabling logging on an API with a Condition on a date/Duration, the detailed logs are not displayed and the following warning is raised in the gateway logs:

```

gio_apim_gateway-3.16.1 | 10:17:52.956 [... | non_design | conditional logging on date duration prevents to display api logs collision describe the bug when enabling logging on an api with a condition on a date duration the detailed logs are not displayed and the following warning is raised in the gateway logs gio apim gateway warn i g g... | 0 |

100,558 | 4,098,272,332 | IssuesEvent | 2016-06-03 07:34:43 | agda/agda | https://api.github.com/repos/agda/agda | closed | Copattern matching with catch-all is inconsistent | copatterns pattern-matching priority-high | Consider the following code:

```agda

data Bool : Set where

true false : Bool

f : Bool → Set₁

f true = Set

f = λ _ → Set

```

Agda fails to see that the pattern matching in `f` is complete:

```

Incomplete pattern matching for f. Missing cases:

f false

when checking the definition of f

```

Is this... | 1.0 | Copattern matching with catch-all is inconsistent - Consider the following code:

```agda

data Bool : Set where

true false : Bool

f : Bool → Set₁

f true = Set

f = λ _ → Set

```

Agda fails to see that the pattern matching in `f` is complete:

```

Incomplete pattern matching for f. Missing cases:

f fa... | non_design | copattern matching with catch all is inconsistent consider the following code agda data bool set where true false bool f bool → set₁ f true set f λ → set agda fails to see that the pattern matching in f is complete incomplete pattern matching for f missing cases f fa... | 0 |

63,070 | 17,366,059,390 | IssuesEvent | 2021-07-30 07:27:30 | vector-im/element-web | https://api.github.com/repos/vector-im/element-web | closed | Riot is not respecting audible and call settings | A-Notifications A-VoIP P1 S-Major T-Defect | Hi

The Linux version of Riot desktop is not respecting the audible notifications and the p2p call settings.

I disabled audible notifications and p2p calls, but I still get loud notifications for messages and calls, and I still receive p2p calls. It seems that these settings are not being respected.

... | 1.0 | Riot is not respecting audible and call settings - Hi

The Linux version of Riot desktop is not respecting the audible notifications and the p2p call settings.

I disabled audible notifications and p2p calls and I still get loud notifications with messages and calls, and I still get p2p calls. It seems to me that ... | non_design | riot is not respecting audible and call settings hi the linux version of riot desktop is not respecting the audible notifications and the call settings i disabled audible notifications and calls and i still get loud notifications with messages and calls and i still get calls it seems to me that the se... | 0 |

109,971 | 16,946,278,494 | IssuesEvent | 2021-06-28 07:16:53 | k8-proxy/go-k8s-infra | https://api.github.com/repos/k8-proxy/go-k8s-infra | reopened | Securely handle Secrets across multiple clusters using industry standard practices | Epic P1 Security | As an InfoSec manager or IT Administrator I expect Glasswall to implement recognised secrets management patterns for the handling and propagation of secrets in an open architecture.

<br/>

Clusters should be able to validate secrets from a central location so that administrators are not expected to manually change valu... | True | Securely handle Secrets across multiple clusters using industry standard practices - As an InfoSec manager or IT Administrator I expect Glasswall to implement recognised secrets management patterns for the handling and propagation of secrets in an open architecture.

<br/>

Clusters should be able to validate secrets fr... | non_design | securely handle secrets across multiple clusters using industry standard practices as an infosec manageer or it administrator i expect glasswall to implment recognised secrets management patterns for the handling and propogation of secrets in a open architecture clusters should be able to validate secrets from a... | 0 |

660,761 | 21,997,105,264 | IssuesEvent | 2022-05-26 07:42:22 | googleapis/python-appengine-admin | https://api.github.com/repos/googleapis/python-appengine-admin | closed | tests.unit.gapic.appengine_admin_v1.test_authorized_domains: test_list_authorized_domains_async_pager failed | type: bug priority: p1 flakybot: issue api: appengine | This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add the `flakybot: quiet` label and

I will stop commenting.

---

commit: 02284e0a99978cbfd3608d0204e210ee9944475f

b... | 1.0 | tests.unit.gapic.appengine_admin_v1.test_authorized_domains: test_list_authorized_domains_async_pager failed - This test failed!

To configure my behavior, see [the Flaky Bot documentation](https://github.com/googleapis/repo-automation-bots/tree/main/packages/flakybot).

If I'm commenting on this issue too often, add t... | non_design | tests unit gapic appengine admin test authorized domains test list authorized domains async pager failed this test failed to configure my behavior see if i m commenting on this issue too often add the flakybot quiet label and i will stop commenting commit buildurl status failed te... | 0 |

108,134 | 23,538,177,291 | IssuesEvent | 2022-08-20 01:26:46 | microsoft/vscode-cpptools | https://api.github.com/repos/microsoft/vscode-cpptools | opened | With 1.12.1, infinite memory gets used after doing a readability-else-after-return clang-tidy fix with vcFormat on Windows with a file with LF (\n) line endings | bug Language Service regression Feature: Code Formatting Feature: Code Analysis | Use a file with LF line endings on Windows with...

```cpp
void CheckModified(int ii) {
    if (ii == 0) {
        return;
    }
    else {
        //aaa
    }
}
```

```json

"C_Cpp.codeAnalysis.clangTidy.checks.enabled": [

"readability-else-after-return"

],

"C_Cpp.formatting": "vcF... | 2.0 | With 1.12.1, infinite memory gets used after doing a readability-else-after-return clang-tidy fix with vcFormat on Windows with a file with LF (\n) line endings - Use a file with LF line endings on Windows with...

```cpp

void CheckModified(int ii) {

if (ii == 0) {

return;

}

else {

/... | non_design | with infinite memory gets used after doing a readability else after return clang tidy fix with vcformat on windows with a file with lf n line endings use a file with lf line endings on windows with cpp void checkmodified int ii if ii return else ... | 0 |

408,921 | 11,954,462,485 | IssuesEvent | 2020-04-03 23:44:35 | getting-things-gnome/gtg | https://api.github.com/repos/getting-things-gnome/gtg | closed | Task editor windows do not have the proper children window relationship to the main window | bug low-hanging-fruit priority:medium reproducible-in-git | The task editor instances need to:

* have the "attached-to" and/or "parent" and/or "transient-for" property set correctly to be attached to the main window

* possibly have a type-hint set to be a utility (or dialog) window?

* in both cases it would also avoid having minimize/maximize buttons added by Ubuntu onto ... | 1.0 | Task editor windows do not have the proper children window relationship to the main window - The task editor instances need to:

* have the "attached-to" and/or "parent" and/or "transient-for" property set correctly to be attached to the main window

* possibly have a type-hint set to be a utility (or dialog) window?

... | non_design | task editor windows do not have the proper children window relationship to the main window the task editor instances need to have the attached to and or parent and or transient for property set correctly to be attached to the main window possibly have a type hint set to be a utility or dialog window ... | 0 |

239,996 | 26,254,310,517 | IssuesEvent | 2023-01-05 22:31:59 | mpulsemobile/doccano | https://api.github.com/repos/mpulsemobile/doccano | opened | CVE-2021-23386 (Medium) detected in dns-packet-1.3.1.tgz | security vulnerability | ## CVE-2021-23386 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dns-packet-1.3.1.tgz</b></p></summary>

<p>An abstract-encoding compliant module for encoding / decoding DNS packets<... | True | CVE-2021-23386 (Medium) detected in dns-packet-1.3.1.tgz - ## CVE-2021-23386 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Library - <b>dns-packet-1.3.1.tgz</b></p></summary>

<p>An abstract-enc... | non_design | cve medium detected in dns packet tgz cve medium severity vulnerability vulnerable library dns packet tgz an abstract encoding compliant module for encoding decoding dns packets library home page a href path to dependency file app server static package json path to... | 0 |

590,240 | 17,774,509,479 | IssuesEvent | 2021-08-30 17:24:21 | anegostudios/VintageStory-Issues | https://api.github.com/repos/anegostudios/VintageStory-Issues | opened | Request World Download from Server | status: new priority: high | **Game Version:** 1.15.5

**Platform:** Windows

**Modded:** Unknown

### Description

I'm having trouble downloading the world from my server.

My game says "World Download Requested, copying in progress."

After that, the game keeps crashing when trying to load "Your Game Server". Here is the log:

### How... | 1.0 | Request World Download from Server - **Game Version:** 1.15.5

**Platform:** Windows

**Modded:** Unknown

### Description

I'm having trouble downloading the world from my server.

My game says "World Download Requested, copying in progress."

To continue, the game keepings crashing when trying to load "Your Gam... | non_design | request world download from server game version platform windows modded unknown description im having trouble with downloading the world from my server my game says world download requested copying in progress to continue the game keepings crashing when trying to load your game... | 0 |

1,811 | 2,572,415,302 | IssuesEvent | 2015-02-10 22:23:08 | kmcurry/3Scape | https://api.github.com/repos/kmcurry/3Scape | closed | Design Profile Page | Design In Progress UX | - [x] Sketch several concepts for profile pages

- [x] Review concepts and down-select top one or two

- [x] Render top choice

- [x] Review and iterate as needed

- [ ] Get internal feedback

- [ ] Get external feedback and UX review if practical

- [ ] Write CSS | 1.0 | Design Profile Page - - [x] Sketch several concepts for profile pages

- [x] Review concepts and down-select top one or two

- [x] Render top choice

- [x] Review and iterate as needed

- [ ] Get internal feedback

- [ ] Get external feedback and UX review if practical

- [ ] Write CSS | design | design profile page sketch several concepts for profile pages review concepts and down select top one or two render top choice review and iterate as needed get internal feedback get external feedback and ux review if practical write css | 1 |

163,320 | 25,789,588,863 | IssuesEvent | 2022-12-10 01:25:07 | MetaMask/metamask-extension | https://api.github.com/repos/MetaMask/metamask-extension | opened | Consolidate all component import paths | area-UI design-system IA/NAV | ### Description

We currently have inconsistent import paths in our component documentation. We should be consistent with our import paths for components.

### Technical De... | 1.0 | Consolidate all component import paths - ### Description

We currently have inconsistent import paths in our component documentation. We should be consistent with our import paths for components.

to the system; each logger would help to log down messages in a particular scenario (e.g. rolling-file logging, rdbms logging, console l... | 1.0 | add logging utility features - **logging** is essential to all kinds of systems, a simple yet flexible logging architecture could solve things out in an efficient way.

Features involved:

* able to add logger(s) to the system; each logger would help to log down messages in a particular scenario (e.g. rolling-file lo... | design | add logging utility features logging is essential to all kinds of systems a simple yet flexible logging architecture could solve things out in an efficient way features involved able to add logger s to the system each logger would help to log down messages in a particular scenario e g rolling file lo... | 1 |

391,389 | 26,890,849,922 | IssuesEvent | 2023-02-06 08:48:46 | TypeCobolTeam/TypeCobol | https://api.github.com/repos/TypeCobolTeam/TypeCobol | closed | Create better functional documentation for TypeCobol (FR + EN) | Documentation | We already have some docs on the Wiki + one private Tutorial.

We need to improve the documentation.

For our enterprise we need a doc in English and French. | 1.0 | Create better functional documentation for TypeCobol (FR + EN) - We already have some docs on the Wiki + one private Tutorial.

We need to improve the documentation.

For our enterprise we need a doc in English and French. | non_design | create better functional documentation for typecobol fr en we already have some docs on the wiki one private tutorial we need to improve the documentation for our enterprise we need a doc in english and french | 0 |

6,578 | 7,693,392,766 | IssuesEvent | 2018-05-18 03:20:08 | terraform-providers/terraform-provider-azurerm | https://api.github.com/repos/terraform-providers/terraform-provider-azurerm | closed | VM Extensions Example gives error on Network Interface | bug service/virtual-machine-extensions | ### Terraform Version

Terraform v0.10.8

### Affected Resource(s)

N/A

### Terraform Configuration Files

```hcl

resource "random_id" "server" {

keepers = {

azi_id = 1

}

byte_length = 8

}

resource "azurerm_resource_group" "test" {

name = "acctestrg"

location = "West US 2"

}

reso... | 1.0 | VM Extensions Example gives error on Network Interface - ### Terraform Version

Terraform v0.10.8

### Affected Resource(s)

N/A

### Terraform Configuration Files

```hcl

resource "random_id" "server" {

keepers = {

azi_id = 1

}

byte_length = 8

}

resource "azurerm_resource_group" "test" {

na... | non_design | vm extensions example gives error on network interface terraform version terraform affected resource s n a terraform configuration files hcl resource random id server keepers azi id byte length resource azurerm resource group test name... | 0 |

94,002 | 11,841,120,550 | IssuesEvent | 2020-03-23 20:10:30 | patternfly/patternfly-design | https://api.github.com/repos/patternfly/patternfly-design | closed | Accordion box-shadow | Enhancement Visual Design | From https://github.com/patternfly/patternfly-next/issues/2375, we added a variation to the accordion component that removes the CSS `box-shadow`. After chatting with @mceledonia about it, we came to the conclusion that the shadow is not necessary and could be removed.

In this issue, I would like to determine if we ... | 1.0 | Accordion box-shadow - From https://github.com/patternfly/patternfly-next/issues/2375, we added a variation to the accordion component that removes the CSS `box-shadow`. After chatting with @mceledonia about it, we came to the conclusion that the shadow is not necessary and could be removed.

In this issue, I would l... | design | accordion box shadow from we added a variation to the accordion component that removes the css box shadow after chatting with mceledonia about it we came to the conclusion that the shadow is not necessary and could be removed in this issue i would like to determine if we should leave the component ... | 1 |

1,172 | 2,532,695,066 | IssuesEvent | 2015-01-23 17:49:01 | ThibaultLatrille/ControverSciences | https://api.github.com/repos/ThibaultLatrille/ControverSciences | closed | Icon | **** urgent design | Add an icon for the pages

On the page http://www.controversciences.org/

By: T. Latrille

Browser: chrome modern linux webkit | 1.0 | Icon - Add an icon for the pages

On the page http://www.controversciences.org/

By: T. Latrille

Browser: chrome modern linux webkit | design | icon add an icon for the pages on the page by t latrille browser chrome modern linux webkit | 1 |

218,066 | 16,748,612,618 | IssuesEvent | 2021-06-11 19:05:02 | ESV-20/CAHSI-Bayamon | https://api.github.com/repos/ESV-20/CAHSI-Bayamon | opened | Deliver Database E-R Model Diagram | documentation | _Design Entity Relation Model Diagram to fully understand the purpose for a database to the project._ | 1.0 | Deliver Database E-R Model Diagram - _Design Entity Relation Model Diagram to fully understand the purpose for a database to the project._ | non_design | deliver database e r model diagram design entity relation model diagram to fully understand the purpose for a database to the project | 0 |

20,869 | 2,631,873,583 | IssuesEvent | 2015-03-07 15:04:35 | GrannyCookies/scratchext2 | https://api.github.com/repos/GrannyCookies/scratchext2 | opened | 2.0 Online Editor Glitch | bug low priority | Just found a glitch in the 2.0 Editor. I am running both the Chrome Extension and the Tampermonkey Userscript.

Oh goodness it annoys me!

Can someone fix? | 1.0 | 2.0 Online Editor Glitch - Just found a glitch in the 2.0 Editor. I am running both the Chrome Extension and the Tampermonkey Userscript.

Oh goodness it annoys me!

Can someone fix? | non_design | online editor glitch just found a glitch in the editor i am running both the chrome extension and the tampermonkey userscript oh goodness it annoys me can someone fix | 0 |

125,854 | 16,845,458,251 | IssuesEvent | 2021-06-19 11:35:34 | lukihd/scanReader | https://api.github.com/repos/lukihd/scanReader | opened | Sketch of the application | design | # Sketch design of all components

- [ ] Home

- [ ] Manga

- [ ] Reader

- [ ] Submit

- [ ] Settings | 1.0 | Sketch of the application - # Sketch design of all components

- [ ] Home

- [ ] Manga

- [ ] Reader

- [ ] Submit

- [ ] Settings | design | sketch of the application sketch design of all components home manga reader submit settings | 1 |

157,206 | 24,632,869,982 | IssuesEvent | 2022-10-17 04:48:14 | zachyuen/fa22-cse110-lab3 | https://api.github.com/repos/zachyuen/fa22-cse110-lab3 | closed | Create meeting minutes template | design/template | **Lab #:**3

**Describe problem**

I need to create a meeting minutes template for the lab. | 1.0 | Create meeting minutes template - **Lab #:**3

**Describe problem**

I need to create a meeting minutes template for the lab. | design | create meeting minutes template lab describe problem i need to create a meeting minutes template for the lab | 1 |

9,202 | 4,442,141,227 | IssuesEvent | 2016-08-19 12:20:20 | aria2/aria2 | https://api.github.com/repos/aria2/aria2 | closed | Link failure with ld.gold, -Wl,--as-needed and --enable-libaria2 | bug build | When aria2 is built with `--enable-libaria2` most of the relevant code is built into the shared library. However, `src/Makefile.am` puts almost all linked dependency libraries into `LDADD` which applies to executables only. Therefore, most of the NEEDED entries land in the executable rather than the library.

This ha... | 1.0 | Link failure with ld.gold, -Wl,--as-needed and --enable-libaria2 - When aria2 is built with `--enable-libaria2` most of the relevant code is built into the shared library. However, `src/Makefile.am` puts almost all linked dependency libraries into `LDADD` which applies to executables only. Therefore, most of the NEEDED... | non_design | link failure with ld gold wl as needed and enable when is built with enable most of the relevant code is built into the shared library however src makefile am puts almost all linked dependency libraries into ldadd which applies to executables only therefore most of the needed entries land in t... | 0 |

171,730 | 27,169,549,150 | IssuesEvent | 2023-02-17 18:05:22 | briangormanly/agora | https://api.github.com/repos/briangormanly/agora | closed | Investigate / Integrate Google Identity services for web | help wanted good first issue Software Archecture Planning / Design Infrastructure SSO | Use Google's new API (the current one is scheduled for deprecation in 23)

https://developers.google.com/identity/gsi/web/guides/overview | 1.0 | Investigate / Integrate Google Identity services for web - Use Google's new API (the current one is scheduled for deprecation in 23)

https://developers.google.com/identity/gsi/web/guides/overview | design | investigate integrate google identity services for web use googles new api current one is scheduled for deprecation in | 1 |

153,635 | 24,166,328,977 | IssuesEvent | 2022-09-22 15:18:23 | hypha-dao/dho-web-client | https://api.github.com/repos/hypha-dao/dho-web-client | closed | Remove decimals from numbers | Design | Summary:

As a DAO user I don't want to see decimals in the DAO because the numbers are too long and confusing:

AC:

Remove decimals behind the dot on following numbers:

- [x] Proposal detail page (token for Utility, Cash & Voice)

- [x] Proposal creation wizard (token for Utility, Cash & Voice)

- [x] Wallet wid... | 1.0 | Remove decimals from numbers - Summary:

As a DAO user I don't want to see decimals in the DAO because the numbers are too long and confusing:

AC:

Remove decimals behind the dot on following numbers:

- [x] Proposal detail page (token for Utility, Cash & Voice)

- [x] Proposal creation wizard (token for Utility, ... | design | remove decimals from numbers summary as a dao user i don t want to see decimals in the dao because the numbers are too long and confusing ac remove decimals behind the dot on following numbers proposal detail page token for utility cash voice proposal creation wizard token for utility cash... | 1 |

24,260 | 5,041,344,980 | IssuesEvent | 2016-12-19 10:00:54 | salesforce-ux/design-system | https://api.github.com/repos/salesforce-ux/design-system | closed | SLDS.com / Grid / Order | bug documentation | https://www.lightningdesignsystem.com/components/utilities/grid/#flavor-order

There's a bug with the example:

- large: orders correctly (reversed: 3, 2, 1)

- medium: orders correctly (1, 2, 3)

- small: orders the same as medium instead of (3, 1, 2)...

(Using Chrome 53.0.2785.143, Firefox 45.0.1, Opera 40.0.2308.81)

... | 1.0 | SLDS.com / Grid / Order - https://www.lightningdesignsystem.com/components/utilities/grid/#flavor-order

There's a bug with the example:

- large: orders correctly (reversed: 3, 2, 1)

- medium: orders correctly (1, 2, 3)

- small: orders the same as medium instead of (3, 1, 2)...

(Using Chrome 53.0.2785.143, Firefox 45.... | non_design | slds com grid order there s a bug with the example large orders correctly reversed medium orders correctly small orders the same as medium instead of using chrome firefox opera i have no idea how to add labels or anything but it should b... | 0 |

115,196 | 14,702,535,080 | IssuesEvent | 2021-01-04 13:45:21 | EmbarkStudios/opensource-website | https://api.github.com/repos/EmbarkStudios/opensource-website | closed | Create a nice view for newsletter archives and signup | design enhancement good first issue help wanted | **Describe the solution you'd like**

Currently, the newsletter is displayed as a basic HTML list on the homepage. We should have a separate page (embark.dev/newsletter) that shows recent newsletters in a nice way, and allows users to sign up. (this is currently done through the basic mailchimp page)

| 1.0 | Create a nice view for newsletter archives and signup - **Describe the solution you'd like**

Currently, the newsletter is displayed as a basic HTML list on the homepage. We should have a separate page (embark.dev/newsletter) that shows recent newsletters in a nice way, and allows users to sign up. (this is currently d... | design | create a nice view for newsletter archives and signup describe the solution you d like currently the newsletter is displayed as a basic html list on the homepage we should have a separate page embark dev newsletter that shows recent newsletters in a nice way and allows users to sign up this is currently d... | 1 |

56,729 | 15,343,044,901 | IssuesEvent | 2021-02-27 18:38:27 | scipy/scipy | https://api.github.com/repos/scipy/scipy | closed | The crystallball distribution entropy is sometimes minus infinity | defect scipy.stats | <!--

Thank you for taking the time to file a bug report.

Please fill in the fields below, deleting the sections that

don't apply to your issue. You can view the final output

by clicking the preview button above.

Note: This is a comment, and won't appear in the output.

-->

The crystalball entropy function re... | 1.0 | The crystallball distribution entropy is sometimes minus infinity - <!--

Thank you for taking the time to file a bug report.

Please fill in the fields below, deleting the sections that

don't apply to your issue. You can view the final output

by clicking the preview button above.

Note: This is a comment, and won... | non_design | the crystallball distribution entropy is sometimes minus infinity thank you for taking the time to file a bug report please fill in the fields below deleting the sections that don t apply to your issue you can view the final output by clicking the preview button above note this is a comment and won... | 0 |

11,769 | 7,447,011,814 | IssuesEvent | 2018-03-28 11:01:21 | nerdalize/nerd | https://api.github.com/repos/nerdalize/nerd | opened | Unhelpful error when specifying invalid kubeconfig path | usability | ## Expected Behavior

When specifying an invalid kubeconfig path, it should return a proper error and not a "not logged in" one

## Actual Behavior

| True | Unhelpful error when specifying invalid kubeconfig path - ## Expected Behavior

When specifying an invalid kubeconfig path, it should return a proper error and not a "not logged in" one

## Actual Behavior

**For å reprodusere**

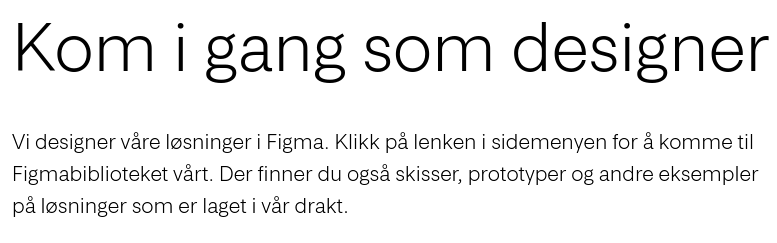

Fortell oss hvordan vi kan gjenskape feilen (det hjelper os... | 1.0 | Feil: For Designere peker til en lenke som ikke finnes lengre. - **Feilbeskrivelse**

"Klikk på lenken i sidemenyen", det er ikke lengre noen lenke i sidemenyen som kan ta deg til figma

**For å reproduse... | design | feil for designere peker til en lenke som ikke finnes lengre feilbeskrivelse klikk på lenken i sidemenyen det er ikke lengre noen lenke i sidemenyen som kan ta deg til figma for å reprodusere fortell oss hvordan vi kan gjenskape feilen det hjelper oss med å fikse den gå til f... | 1 |

137,323 | 20,115,759,472 | IssuesEvent | 2022-02-07 19:20:21 | influxdata/ui | https://api.github.com/repos/influxdata/ui | opened | Take Inventory of Honeybadger Calls: Determine Appropriate Amplitude Events | team/design | Take Inventory of Honeybadger Calls: Determine Appropriate Amplitude Events

| 1.0 | Take Inventory of Honeybadger Calls: Determine Appropriate Amplitude Events - Take Inventory of Honeybadger Calls: Determine Appropriate Amplitude Events

| design | take inventory of honeybadger calls determine appropriate amplitude events take inventory of honeybadger calls determine appropriate amplitude events | 1 |

40,045 | 5,169,836,730 | IssuesEvent | 2017-01-18 02:44:04 | c4gnv/c4gnv.github.io | https://api.github.com/repos/c4gnv/c4gnv.github.io | closed | Review admin site designs | design | Hey there, team! Please take a look at the visual designs I've put together for our c4gnv.com site and leave your feedback here.

Home: https://www.dropbox.com/s/lccvsow6gdfew1m/home.png?dl=0

Get Started: https://www.dropbox.com/s/twa0av2v33fcqjc/get-started.png?dl=0 | 1.0 | Review admin site designs - Hey there, team! Please take a look at the visual designs I've put together for our c4gnv.com site and leave your feedback here.

Home: https://www.dropbox.com/s/lccvsow6gdfew1m/home.png?dl=0

Get Started: https://www.dropbox.com/s/twa0av2v33fcqjc/get-started.png?dl=0 | design | review admin site designs hey there team please take a look at the visual designs i ve put together for our com site and leave your feedback here home get started | 1 |

438,475 | 12,639,481,283 | IssuesEvent | 2020-06-16 00:02:04 | seccomp/libseccomp-golang | https://api.github.com/repos/seccomp/libseccomp-golang | opened | BUG: rename the "master" branch to "main" #255 | bug priority/medium | Similar to [PR #246](https://github.com/seccomp/libseccomp/pull/246) we need to change the name of the "master" branch to "main", or similar. This is quite easy, and via a branch rename, it can be done without losing any of the git history; there is no good reason to *not* do this.

The steps are simple:

1. Rename ... | 1.0 | BUG: rename the "master" branch to "main" #255 - Similar to [PR #246](https://github.com/seccomp/libseccomp/pull/246) we need to change the name of the "master" branch to "main", or similar. This is quite easy, and via a branch rename, it can be done without losing any of the git history; there is no good reason to *n... | non_design | bug rename the master branch to main similar to we need to change the name of the master branch to main or similar this is quite easy and via a branch rename it can be done without losing any of the git history there is no good reason to not do this the steps are simple rename the loc... | 0 |

517,288 | 15,001,435,536 | IssuesEvent | 2021-01-30 00:08:47 | IDAES/idaes-pse | https://api.github.com/repos/IDAES/idaes-pse | closed | Units problem with drum.py model when updating to Pyomo master | Priority:High bug | @jsiirola and I are testing idaes tests with the Pyomo master before we bump the IDAES Pyomo version. We encountered the following error with the test_drum.py in the power generation unit model library.

> Units problem with expression fs.unit.control_volume.volume[0.0] - (((asin((fs.unit.drum_level[0.0] - (0.5*fs.u... | 1.0 | Units problem with drum.py model when updating to Pyomo master - @jsiirola and I are testing idaes tests with the Pyomo master before we bump the IDAES Pyomo version. We encountered the following error with the test_drum.py in the power generation unit model library.

> Units problem with expression fs.unit.control_... | non_design | units problem with drum py model when updating to pyomo master jsiirola and i are testing idaes tests with the pyomo master before we bump the idaes pyomo version we encountered the following error with the test drum py in the power generation unit model library units problem with expression fs unit control ... | 0 |

42,613 | 5,502,783,498 | IssuesEvent | 2017-03-16 01:01:59 | kubernetes-incubator/bootkube | https://api.github.com/repos/kubernetes-incubator/bootkube | closed | self hosted etcd: checkpoint iptables on master nodes | kind/design kind/enhancement priority/P1 | Self-hosted etcd relies on the service IP to work correctly. The Kubernetes API server contacts the etcd pod via the service IP (for load balancing, and to hide the actual etcd pod IP, which is subject to change).

The service IP relies on the API server to be restored after a machine reboot. If we restart all API servers at the same time, service IP is ... | 1.0 | self hosted etcd: checkpoint iptables on master nodes - Self-hosted etcd relies on the service IP to work correctly. The Kubernetes API server contacts the etcd pod via the service IP (for load balancing, and to hide the actual etcd pod IP, which is subject to change).

Service IP relies on API server to be restored after a machine reboot. If we ... | design | self hosted etcd checkpoint iptables on master nodes self hosted etcd relies on service ip to work correctly kuberetes api server contact etcd pod by service ip load balancing hide the actual etcd pod ip which is subject to change service ip relies on api server to be restored after a machine reboot if we ... | 1 |

114,766 | 14,633,060,870 | IssuesEvent | 2020-12-24 00:31:17 | keepid/keepid_client | https://api.github.com/repos/keepid/keepid_client | opened | Create breakpoint sizing on XL/Lg/Md/Sm/Xs screens | Design Component | Make breakpoints for these modals. Remember for small screens (sm and xs) the modal will resize to the whole screen | 1.0 | Create breakpoint sizing on XL/Lg/Md/Sm/Xs screens - Make breakpoints for these modals. Remember for small screens (sm and xs) the modal will resize to the whole screen | design | create breakpoint sizing on xl lg md sm xs screens make breakpoints for these modals remember for small screens sm and xs the modal will resize to the whole screen | 1 |

104,848 | 4,226,100,885 | IssuesEvent | 2016-07-02 07:42:27 | The-Compiler/qutebrowser | https://api.github.com/repos/The-Compiler/qutebrowser | closed | Don't reload page when url didn't change with `:edit-url` | easy priority: 2 - low | Everything is in the title, since I bound `e` to `:edit-url` and sometimes press it by mistake, I would like to be able to quickly undo this (so I just hit `ZZ` in vim), but this will then reload the page, which I don't want since the URL hasn't changed.

Do you agree ? | 1.0 | Don't reload page when url didn't change with `:edit-url` - Everything is in the title, since I bound `e` to `:edit-url` and sometimes press it by mistake, I would like to be able to quickly undo this (so I just hit `ZZ` in vim), but this will then reload the page, which I don't want since the URL hasn't changed.

Do... | non_design | don t reload page when url didn t change with edit url everything is in the title since i bound e to edit url and sometimes press it by mistake i would like to be able to quickly undo this so i just hit zz in vim but this will then reload the page which i don t want since the url hasn t changed do... | 0 |

789 | 2,905,112,497 | IssuesEvent | 2015-06-18 21:41:55 | rust-lang/rust | https://api.github.com/repos/rust-lang/rust | closed | Publish signing key and security team key to keybase.io | A-infrastructure | keybase.io is a seemingly-popular new service for validating GPG keys. I'd like to publish our keys there but haven't gotten around to it. | 1.0 | Publish signing key and security team key to keybase.io - keybase.io is a seemingly-popular new service for validating GPG keys. I'd like to publish our keys there but haven't gotten around to it. | non_design | publish signing key and security team key to keybase io keybase io is a seemingly popular new service for validating gpg keys i d like to publish our keys there but haven t gotten around to it | 0 |

182,025 | 30,779,321,220 | IssuesEvent | 2023-07-31 08:55:47 | opencollective/opencollective | https://api.github.com/repos/opencollective/opencollective | opened | OCR: Handle currency mismatches | frontend enhancement needs design | Part of https://github.com/opencollective/opencollective/issues/6865

Require https://github.com/opencollective/opencollective/issues/6903

In https://github.com/opencollective/opencollective/issues/6903, we'll start with not prefilling amounts in case they're expressed with a currency that is different from the expe... | 1.0 | OCR: Handle currency mismatches - Part of https://github.com/opencollective/opencollective/issues/6865

Require https://github.com/opencollective/opencollective/issues/6903

In https://github.com/opencollective/opencollective/issues/6903, we'll start with not prefilling amounts in case they're expressed with a curren... | design | ocr handle currency mismatches part of require in we ll start with not prefilling amounts in case they re expressed with a currency that is different from the expense following up on that we would like to be smart and try to convert the amount to the desired currency but we need the ux to be really c... | 1 |

177,931 | 29,193,313,400 | IssuesEvent | 2023-05-19 22:55:21 | prettierlichess/prettierlichess | https://api.github.com/repos/prettierlichess/prettierlichess | closed | Board is oversized | design request |

I downloaded the latest ver of the extension, it fixed the player window discrepancy but now the board is oversized. If you can fix this would be appreciated, thanks in advance! | 1.0 | Board is oversized -

I downloaded the latest ver of the extension, it fixed the player window discrepancy but now the board is oversized. If you can fix this would be appreciated... | design | board is oversized i downloaded the latest ver of the extension it fixed the player window discrepancy but now the board is oversized if you can fix this would be appreciated thanks in advance | 1 |

174,706 | 27,712,188,144 | IssuesEvent | 2023-03-14 14:52:44 | hpi-swa-lab/BP2021RH1 | https://api.github.com/repos/hpi-swa-lab/BP2021RH1 | opened | Guide | U-low C-design-prototype | There should be a guide through the site. This can also take the form of tooltips shown during use. | 1.0 | Guide - There should be a guide through the site. This can also take the form of tooltips shown during use. | design | guide there should be a guide through the site this can also take the form of tooltips shown during use | 1 |

155,079 | 24,397,940,565 | IssuesEvent | 2022-10-04 21:10:42 | dotnet/efcore | https://api.github.com/repos/dotnet/efcore | closed | Migration is not smart enough to combine mutually exclusive settings | closed-by-design customer-reported | I am not sure whether it is a bug or an already-known issue that is left as it is (because it is not harmful).

The following

```csharp

class Person

{

public int Id { get; set; }

public string? FullName { get; set; }

public string? Biography { get; set; }

}

```

```csharp

class MyContext : DbCon... | 1.0 | Migration is not smart enough to combine mutually exclusive settings - I am not sure whether it is a bug or an already-known issue that is left as it is (because it is not harmful).

The following

```csharp

class Person

{

public int Id { get; set; }

public string? FullName { get; set; }

public stri... | design | migration is not smart enough to combine mutually exclusive settings i am not sure whether it is a bug or an already known issue that is left as it is because it is not harmful the following csharp class person public int id get set public string fullname get set public stri... | 1 |

143,276 | 21,993,500,196 | IssuesEvent | 2022-05-26 02:11:42 | harryodubhghaill/CI-Portforlio-4-blogsocial | https://api.github.com/repos/harryodubhghaill/CI-Portforlio-4-blogsocial | closed | Design database structure | SysAdmin Design | Design a relational database structure to allow for the full functionality of the site.

- [ ] User

- [ ] Post

- [ ] Comment

- [ ] Group | 1.0 | Design database structure - Design a relational database structure to allow for the full functionality of the site.

- [ ] User

- [ ] Post

- [ ] Comment

- [ ] Group | design | design database structure design a relational database structure to allow for the full functionality of the site user post comment group | 1 |

90,650 | 11,424,360,830 | IssuesEvent | 2020-02-03 17:35:14 | are-you-still-watching/web-app | https://api.github.com/repos/are-you-still-watching/web-app | opened | Create profile page buttons | design | Create profile page buttons, including:

- [ ] netflix

- [ ] crave

- [ ] disney +

- [ ] prime video

| 1.0 | Create profile page buttons - Create profile page buttons, including:

- [ ] netflix

- [ ] crave

- [ ] disney +

- [ ] prime video

| design | create profile page buttons create profile page buttons including netflix crave disney prime video | 1 |

173,643 | 27,503,716,541 | IssuesEvent | 2023-03-05 23:53:35 | penumbra-zone/penumbra | https://api.github.com/repos/penumbra-zone/penumbra | closed | Specify note contents | A-shielded-crypto E-medium C-design | Fill in this section of the protocol spec; settle on (and write up) a choice of `leadByte` method. | 1.0 | Specify note contents - Fill in this section of the protocol spec; settle on (and write up) a choice of `leadByte` method. | design | specify note contents fill in this section of the protocol spec settle on and write up a choice of leadbyte method | 1 |

63,731 | 7,740,452,286 | IssuesEvent | 2018-05-28 21:49:53 | vtex/styleguide | https://api.github.com/repos/vtex/styleguide | closed | Empty state | Design Done | Here's the Empty State pattern we use in the Credit Control module.

(It's already implemented, so it's just a matter of extracting it :)

What problem it solves

===

Shows _something_ instead of _nothing_... | 1.0 | Empty state - Here's the Empty State pattern we use in the Credit Control module.

(It's already implemented, so it's just a matter of extracting it :)

What problem it solves

===

Shows _something_ instea... | design | empty state here s the empty state pattern we use in the credit control module it s already implemented so it s just a matter of extracting it what problem it solves shows something instead of nothing explains what should be in that particular space and encourages the designer developer... | 1 |

103,966 | 22,534,119,974 | IssuesEvent | 2022-06-25 01:19:36 | macder/medusa-fulfillment-shippo | https://api.github.com/repos/macder/medusa-fulfillment-shippo | closed | Dev - automate config source references | code improvement chore | eliminate the manual step of changing the config reference (standalone localhost vs medusa package) | 1.0 | Dev - automate config source references - eliminate the manual step of changing the config reference (standalone localhost vs medusa package) | non_design | dev automate config source references eliminate the manual step of changing the config reference standalone localhost vs medusa package | 0 |

91,381 | 11,498,792,194 | IssuesEvent | 2020-02-12 12:44:34 | liqd/adhocracy-plus | https://api.github.com/repos/liqd/adhocracy-plus | closed | changes in organisation page | Type: UX/UI or design | URL: https://aplus-dev.liqd.net/teststadt/

Comment/Question:

If possible, I would increase the image height cause most of the pictures aren't that stretched. The layout had 345px height.

I would also leave the project description text using the same paragraph style as the about text on top.

I know I alre... | 1.0 | changes in organisation page - URL: https://aplus-dev.liqd.net/teststadt/

Comment/Question:

If possible, I would increase the image height cause most of the pictures aren't that stretched. The layout had 345px height.

I would also leave the project description text using the same paragraph style as the about... | design | changes in organisation page url comment question if possible i would increase the image height cause most of the pictures aren t that stretched the layout had height i would also leave the project description text using the same paragraph style as the about text on top i know i already menti... | 1 |

84,928 | 10,573,271,105 | IssuesEvent | 2019-10-07 11:34:36 | fac-17/Generation-Change | https://api.github.com/repos/fac-17/Generation-Change | closed | prepare presentation on design week | design important 1 | - [ ] prepare demo for design week figmas

- [ ] talk about what went well and what could have gone better

- [ ] show things that we've learnt

- [ ] where we go from here | 1.0 | prepare presentation on design week - - [ ] prepare demo for design week figmas

- [ ] talk about what went well and what could have gone better

- [ ] show things that we've learnt

- [ ] where we go from here | design | prepare presentation on design week prepare demo for design week figmas talk about what went well and what could have gone better show things that we ve learnt where we go from here | 1 |

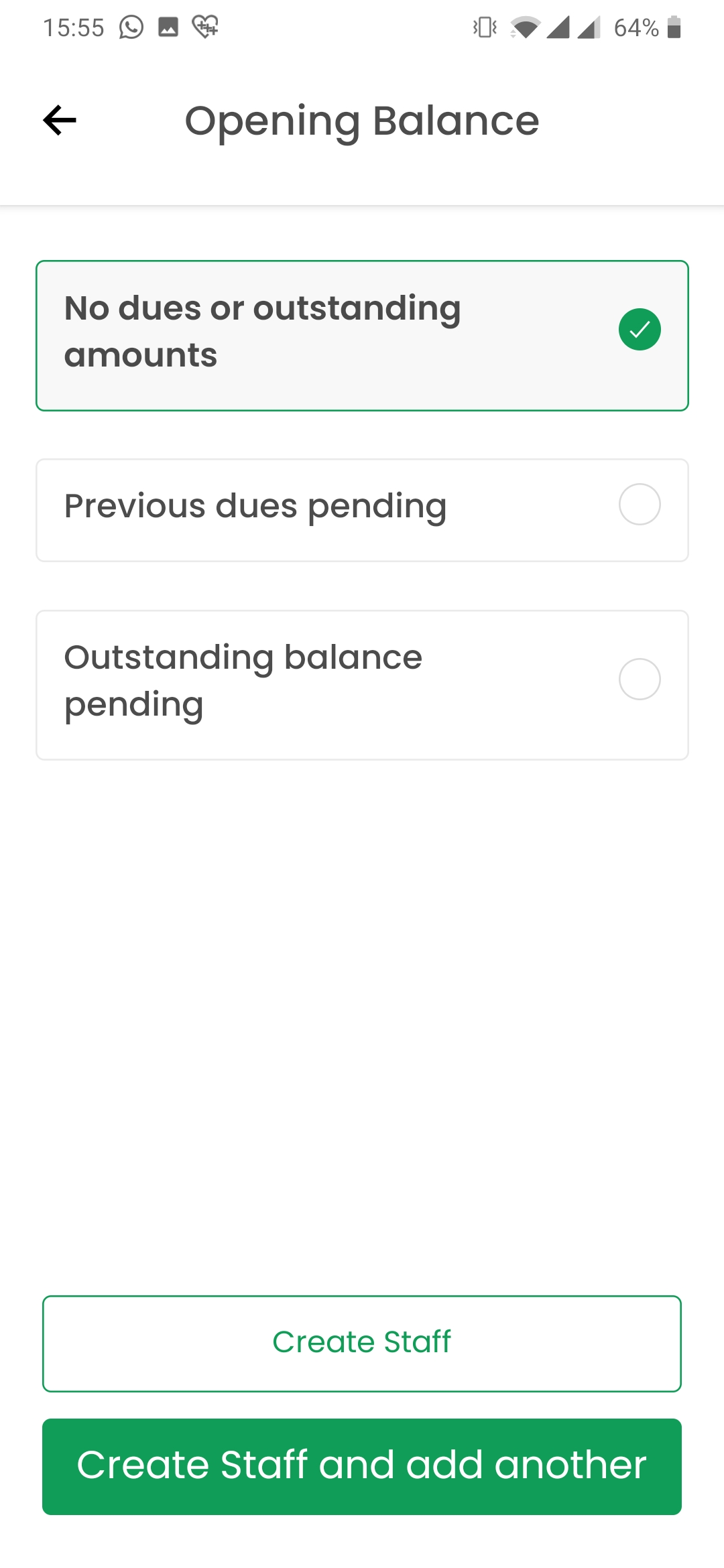

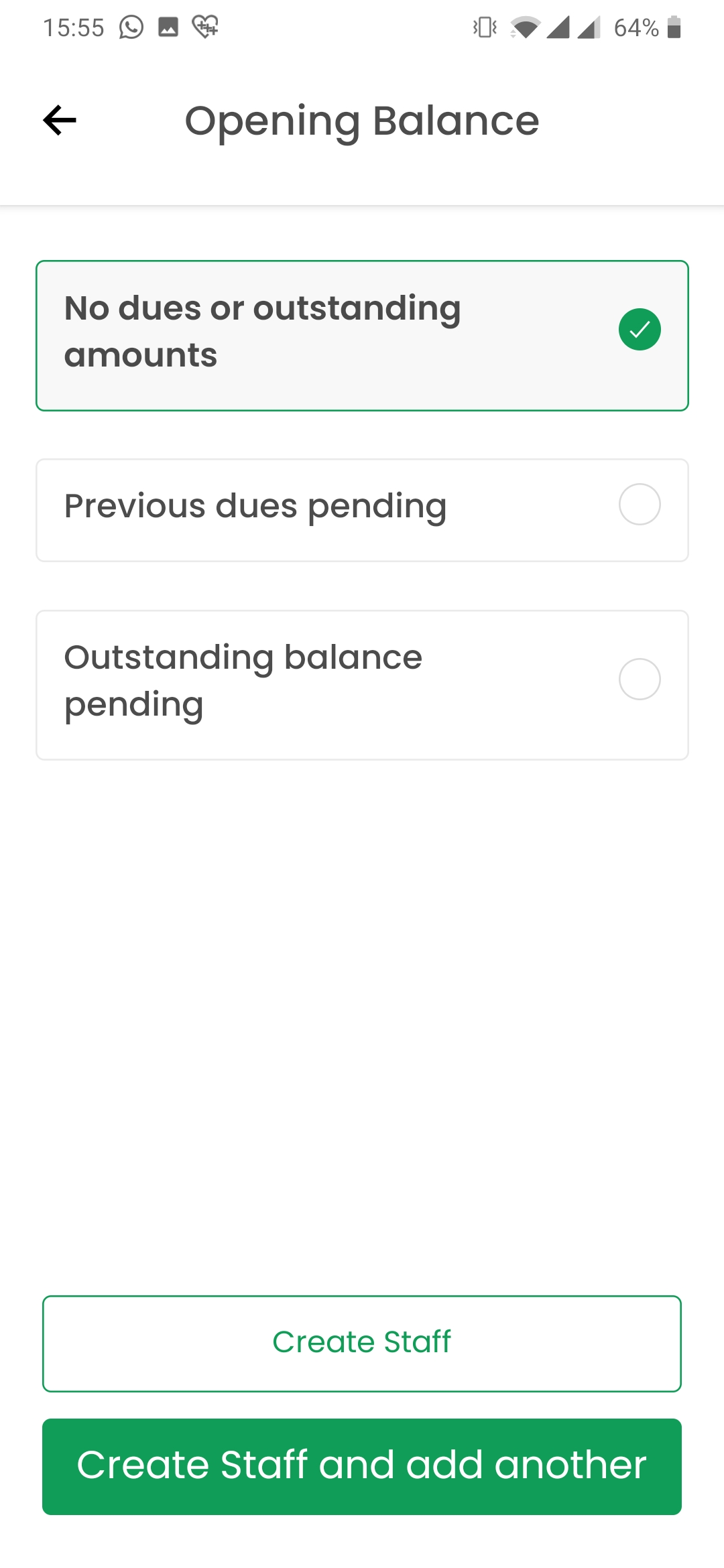

111,893 | 14,169,463,610 | IssuesEvent | 2020-11-12 13:16:16 | ajency/Dhanda-App | https://api.github.com/repos/ajency/Dhanda-App | closed | Opening Balance Page: The font size on the second button is too big. Make it the same size as " Create staff " | Assigned to QA Priority: High UI/ design bug |

| 1.0 | Opening Balance Page: The font size on the second button is too big. Make it the same size as " Create staff " -

| design | opening balance page the font size on the second button is too big make it the same size as create staff | 1 |

149,159 | 11,884,393,376 | IssuesEvent | 2020-03-27 17:34:02 | NuGet/Home | https://api.github.com/repos/NuGet/Home | closed | PortResever doesn't work correctly for netcoreapp5.0 | Area:Test | PortReserver has the following code, setting TimeSpan.Zero for a CancellationTokenSource:

[` var tryOnceCts = new CancellationTokenSource(TimeSpan.Zero);`](https://github.com/NuGet/NuGet.Client/blob/dev/test/TestUtilities/Test.Utility/TestServer/PortReserver.cs#L60)

In netcore5.0, the task will get cancelled, so it... | 1.0 | PortResever doesn't work correctly for netcoreapp5.0 - PortReserver has the following code, setting TimeSpan.Zero for a CancellationTokenSource:

[` var tryOnceCts = new CancellationTokenSource(TimeSpan.Zero);`](https://github.com/NuGet/NuGet.Client/blob/dev/test/TestUtilities/Test.Utility/TestServer/PortReserver.cs#L6... | non_design | portresever doesn t work correctly for portreserver has the following code setting timespan zero for a cancellationtokensource in the task will get cancelled so it will never get a chance to run task on this port then it will keep trying to increase the port until it exceeds the max value t... | 0 |

218,527 | 24,376,033,267 | IssuesEvent | 2022-10-04 01:02:03 | TIBCOSoftware/jasperreports-server-ce | https://api.github.com/repos/TIBCOSoftware/jasperreports-server-ce | opened | CVE-2022-42004 (Medium) detected in jackson-databind-2.13.2.2.jar, jackson-databind-2.13.2.jar | security vulnerability | ## CVE-2022-42004 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2.13.2.2.jar</b>, <b>jackson-databind-2.13.2.jar</b></p></summary>

<p>

<details><summary><b>jacks... | True | CVE-2022-42004 (Medium) detected in jackson-databind-2.13.2.2.jar, jackson-databind-2.13.2.jar - ## CVE-2022-42004 - Medium Severity Vulnerability

<details><summary><img src='https://whitesource-resources.whitesourcesoftware.com/vulnerability_details.png' width=19 height=20> Vulnerable Libraries - <b>jackson-databind-2... | non_design | cve medium detected in jackson databind jar jackson databind jar cve medium severity vulnerability vulnerable libraries jackson databind jar jackson databind jar jackson databind jar general data binding functionality for jackson works on co... | 0 |