Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

622,324 | 19,621,403,909 | IssuesEvent | 2022-01-07 07:14:26 | tgpethan/EUS | https://api.github.com/repos/tgpethan/EUS | closed | Migrate to using database. | enhancement High priority | As it stands, EUS stores every single image in a JSON file in its module folder. This is pretty bad: ***JSON is not a database*** and it shouldn't be used as such. Support for MySQL and SQLite should therefore be implemented, and a migration path for old installs should be provided. | 1.0 | Migrate to using database. - As it stands, EUS stores every single image in a JSON file in its module folder. This is pretty bad: ***JSON is not a database*** and it shouldn't be used as such. Support for MySQL and SQLite should therefore be implemented, and a migration path for old installs should be provided. | priority | migrate to using database as it stands eus stores every single image in a json file in its module folder this is pretty bad json is not a database and it shouldn t be used as such support for mysql and sqlite should therefore be implemented and a migration path for old installs should be provided | 1 |

652,196 | 21,525,055,478 | IssuesEvent | 2022-04-28 17:32:56 | ooni/explorer | https://api.github.com/repos/ooni/explorer | closed | Help text for MAT | priority/high MAT | To enable use of the MAT, we can include the following copy under the "Help" section of the MAT:

```

# What is the MAT?

OONI's Measurement Aggregation Toolkit (MAT) is a tool that enables you to generate your own custom charts based on **aggregate views of real-time OONI data** collected from around the world.

OONI data consists of network measurements collected by [OONI Probe](https://ooni.org/install/) users around the world.

These measurements contain information about various types of **internet censorship**, such as the [blocking of websites and apps](https://ooni.org/nettest/) around the world.

# Who is the MAT for?

The MAT was built for researchers, journalists, and human rights defenders interested in examining internet censorship around the world.

# Why use the MAT?

When examining cases of internet censorship, it's important to **look at many measurements at once** ("in aggregate") in order to answer key questions like the following:

* Does the testing of a service (e.g. Facebook) present **signs of blocking every time that it is tested** in a country? This can be helpful for ruling out [false positives](https://ooni.org/support/faq/#what-are-false-positives).

* What types of websites (e.g. human rights websites) are blocked in each country?

* In which countries is a specific website (e.g. `bbc.com`) blocked?

* How does the blocking of different apps (e.g. WhatsApp or Telegram) vary across countries?

* How does the blocking of a service vary across countries and [ASNs](https://ooni.org/support/glossary/#asn)?

* How does the blocking of a service change over time?

When trying to answer questions like the above, we normally perform relevant data analysis (instead of inspecting measurements one by one).

The MAT incorporates our data analysis techniques, enabling you to answer such questions without any data analysis skills, and with the click of a button!

# How to use the MAT?

Through the filters at the start of the page, select the parameters you care about in order to plot charts based on aggregate views of OONI data.

The MAT includes the following filters:

* **Countries:** Select a country through the drop-down menu (the "All Countries" option will show global coverage)

* **Test Name:** Select an [OONI Probe test](https://ooni.org/nettest/) based on which you would like to get measurements (for example, select `Web Connectivity` to view the testing of websites)

* **Domain:** Type the domain of the website for which you would like to get measurements (e.g. `twitter.com`)

* **Website categories:** Select the [website category](https://github.com/citizenlab/test-lists/blob/master/lists/00-LEGEND-new_category_codes.csv) for which you would like to get measurements (e.g. `News Media` for news media websites)

* **ASN:** Type the [ASN](https://ooni.org/support/glossary/#asn) of the network for which you would like to get measurements (e.g. `AS30722` for Vodafone Italia)

* **Date range:** Select the date range of the measurements by adjusting the `Since` and `Until` filters

* **X axis:** Select the values that you would like to appear on the horizontal axis of your chart

* **Y axis:** Select the values that you would like to appear on the vertical axis of your chart

Depending on what you would like to explore, adjust the MAT filters accordingly and click `Submit`.

For example, if you would like to check the testing of BBC in all countries around the world:

* Type `www.bbc.com` under `Domain`

* Select `Countries` under the `Y axis`

* Click `Submit`

This will plot numerous charts based on the OONI Probe testing of `www.bbc.com` worldwide.

# Interpreting MAT charts

The MAT charts (and associated tables) include the following values:

* **OK count:** Successful measurements (i.e. NO sign of internet censorship)

* **Confirmed count:** Measurements from automatically **confirmed blocked websites** (e.g. a [block page](https://ooni.org/support/glossary/#block-page) was served)

* **Anomaly count:** Measurements that provided **signs of potential blocking** (however, [false positives](https://ooni.org/support/faq/#what-are-false-positives) can occur)

* **Failure count:** Failed experiments that should be discarded

* **Measurement count:** Total volume of OONI measurements (pertaining to the selected country, resource, etc.)

When trying to identify the blocking of a service (e.g. `twitter.com`), it's useful to check whether:

* Measurements are annotated as `confirmed`, automatically confirming the blocking of websites

* A large volume of measurements (in comparison to the overall measurement count) present `anomalies` (i.e. signs of potential censorship)

You can access the raw data by clicking on the bars of charts, and subsequently clicking on the relevant measurement links.

# Website categories

[OONI Probe](https://ooni.org/install/) users test a wide range of [websites](https://ooni.org/support/faq/#which-websites-will-i-test-for-censorship-with-ooni-probe) that fall under the following [30 standardized categories](https://github.com/citizenlab/test-lists/blob/master/lists/00-LEGEND-new_category_codes.csv).

``` | 1.0 | Help text for MAT - To enable use of the MAT, we can include the following copy under the "Help" section of the MAT:

```

# What is the MAT?

OONI's Measurement Aggregation Toolkit (MAT) is a tool that enables you to generate your own custom charts based on **aggregate views of real-time OONI data** collected from around the world.

OONI data consists of network measurements collected by [OONI Probe](https://ooni.org/install/) users around the world.

These measurements contain information about various types of **internet censorship**, such as the [blocking of websites and apps](https://ooni.org/nettest/) around the world.

# Who is the MAT for?

The MAT was built for researchers, journalists, and human rights defenders interested in examining internet censorship around the world.

# Why use the MAT?

When examining cases of internet censorship, it's important to **look at many measurements at once** ("in aggregate") in order to answer key questions like the following:

* Does the testing of a service (e.g. Facebook) present **signs of blocking every time that it is tested** in a country? This can be helpful for ruling out [false positives](https://ooni.org/support/faq/#what-are-false-positives).

* What types of websites (e.g. human rights websites) are blocked in each country?

* In which countries is a specific website (e.g. `bbc.com`) blocked?

* How does the blocking of different apps (e.g. WhatsApp or Telegram) vary across countries?

* How does the blocking of a service vary across countries and [ASNs](https://ooni.org/support/glossary/#asn)?

* How does the blocking of a service change over time?

When trying to answer questions like the above, we normally perform relevant data analysis (instead of inspecting measurements one by one).

The MAT incorporates our data analysis techniques, enabling you to answer such questions without any data analysis skills, and with the click of a button!

# How to use the MAT?

Through the filters at the start of the page, select the parameters you care about in order to plot charts based on aggregate views of OONI data.

The MAT includes the following filters:

* **Countries:** Select a country through the drop-down menu (the "All Countries" option will show global coverage)

* **Test Name:** Select an [OONI Probe test](https://ooni.org/nettest/) based on which you would like to get measurements (for example, select `Web Connectivity` to view the testing of websites)

* **Domain:** Type the domain of the website for which you would like to get measurements (e.g. `twitter.com`)

* **Website categories:** Select the [website category](https://github.com/citizenlab/test-lists/blob/master/lists/00-LEGEND-new_category_codes.csv) for which you would like to get measurements (e.g. `News Media` for news media websites)

* **ASN:** Type the [ASN](https://ooni.org/support/glossary/#asn) of the network for which you would like to get measurements (e.g. `AS30722` for Vodafone Italia)

* **Date range:** Select the date range of the measurements by adjusting the `Since` and `Until` filters

* **X axis:** Select the values that you would like to appear on the horizontal axis of your chart

* **Y axis:** Select the values that you would like to appear on the vertical axis of your chart

Depending on what you would like to explore, adjust the MAT filters accordingly and click `Submit`.

For example, if you would like to check the testing of BBC in all countries around the world:

* Type `www.bbc.com` under `Domain`

* Select `Countries` under the `Y axis`

* Click `Submit`

This will plot numerous charts based on the OONI Probe testing of `www.bbc.com` worldwide.

# Interpreting MAT charts

The MAT charts (and associated tables) include the following values:

* **OK count:** Successful measurements (i.e. NO sign of internet censorship)

* **Confirmed count:** Measurements from automatically **confirmed blocked websites** (e.g. a [block page](https://ooni.org/support/glossary/#block-page) was served)

* **Anomaly count:** Measurements that provided **signs of potential blocking** (however, [false positives](https://ooni.org/support/faq/#what-are-false-positives) can occur)

* **Failure count:** Failed experiments that should be discarded

* **Measurement count:** Total volume of OONI measurements (pertaining to the selected country, resource, etc.)

When trying to identify the blocking of a service (e.g. `twitter.com`), it's useful to check whether:

* Measurements are annotated as `confirmed`, automatically confirming the blocking of websites

* A large volume of measurements (in comparison to the overall measurement count) present `anomalies` (i.e. signs of potential censorship)

You can access the raw data by clicking on the bars of charts, and subsequently clicking on the relevant measurement links.

# Website categories

[OONI Probe](https://ooni.org/install/) users test a wide range of [websites](https://ooni.org/support/faq/#which-websites-will-i-test-for-censorship-with-ooni-probe) that fall under the following [30 standardized categories](https://github.com/citizenlab/test-lists/blob/master/lists/00-LEGEND-new_category_codes.csv).

``` | priority | help text for mat to enable use of the mat we can include the following copy under the help section of the mat what is the mat ooni s measurement aggregation toolkit mat is a tool that enables you to generate your own custom charts based on aggregate views of real time ooni data collected from around the world ooni data consists of network measurements collected by users around the world these measurements contain information about various types of internet censorship such as the around the world who is the mat for the mat was built for researchers journalists and human rights defenders interested in examining internet censorship around the world why use the mat when examining cases of internet censorship it s important to look at many measurements at once in aggregate in order to answer key questions like the following does the testing of a service e g facebook present signs of blocking every time that it is tested in a country this can be helpful for ruling out what types of websites e g human rights websites are blocked in each country in which countries is a specific website e g bbc com blocked how does the blocking of different apps e g whatsapp or telegram vary across countries how does the blocking of a service vary across countries and how does the blocking of a service change over time when trying to answer questions like the above we normally perform relevant data analysis instead of inspecting measurements one by one the mat incorporates our data analysis techniques enabling you to answer such questions without any data analysis skills and with the click of a button how to use the mat through the filters at the start of the page select the parameters you care about in order to plot charts based on aggregate views of ooni data the mat includes the following filters countries select a country through the drop down menu the all countries option will show global coverage test name select an based on which you would like to get measurements 
for example select web connectivity to view the testing of websites domain type the domain for the website you would like to get measurements e g twitter com website categories select the for which you would like to get measurements e g news media for news media websites asn type the of the network for which you would like to get measurements e g for vodafone italia date range select the date range of the measurements by adjusting the since and until filters x axis select the values that you would like to appear on the horizontal axis of your chart y axis select the values that you would like to appear on the vertical axis of your chart depending on what you would like to explore adjust the mat filters accordingly and click submit for example if you would like to check the testing of bbc in all countries around the world type under domain select countries under the y axis click submit this will plot numerous charts based on the ooni probe testing of worldwide interpreting mat charts the mat charts and associated tables include the following values ok count successful measurements i e no sign of internet censorship confirmed count measurements from automatically confirmed blocked websites e g a was served anomaly count measurements that provided signs of potential blocking however can occur failure count failed experiments that should be discarded measurement count total volume of ooni measurements pertaining to the selected country resource etc when trying to identify the blocking of a service e g twitter com it s useful to check whether measurements are annotated as confirmed automatically confirming the blocking of websites a large volume of measurements in comparison to the overall measurement count present anomalies i e signs of potential censorship you can access the raw data by clicking on the bars of charts and subsequently clicking on the relevant measurement links website categories users test a wide range of that fall under the following | 1 |

518,736 | 15,033,774,767 | IssuesEvent | 2021-02-02 11:58:10 | opentargets/platform | https://api.github.com/repos/opentargets/platform | closed | I would like to have an example for Reactome for the new JSON schema | Kind: Data Priority: High | Provide an example of Reactome evidence showing how it looks with the current JSON schema and how it should look when they use the new schema | 1.0 | I would like to have an example for Reactome for the new JSON schema - Provide an example of Reactome evidence showing how it looks with the current JSON schema and how it should look when they use the new schema | priority | i would like to have an example for reactome for the new json schema provide an example of reactome evidence showing how it looks with the current json schema and how it should look when they use the new schema | 1 |

146,910 | 5,630,412,514 | IssuesEvent | 2017-04-05 12:12:48 | CS2103JAN2017-T11-B2/main | https://api.github.com/repos/CS2103JAN2017-T11-B2/main | closed | V0.5rc Documentation | priority.high type.task | All .md files need to be updated, including UserGuide, DeveloperGuide, AboutUs, and README | 1.0 | V0.5rc Documentation - All .md files need to be updated, including UserGuide, DeveloperGuide, AboutUs, and README | priority | documentation all md files need to be updated including userguide developerguide aboutus and readme | 1 |

470,009 | 13,529,607,776 | IssuesEvent | 2020-09-15 18:34:12 | Kedyn/fusliez-notes | https://api.github.com/repos/Kedyn/fusliez-notes | closed | Add title attribute to h1 input so the user knows its editable | Priority: High Status: Pending Type: Maintenance | In my fork I had a title attribute on the h1 input that read "Click to edit". It's not obvious that it can be edited. | 1.0 | Add title attribute to h1 input so the user knows its editable - In my fork I had a title attribute on the h1 input that read "Click to edit". It's not obvious that it can be edited. | priority | add title attribute to input so the user knows its editable in my fork i had a title attribute on the input that read click to edit it s not obvious that it can be edited | 1 |

803,779 | 29,189,021,261 | IssuesEvent | 2023-05-19 18:03:43 | minio/docs | https://api.github.com/repos/minio/docs | opened | [RELEASE] MinIO RELEASE.2023-05-18T00-05-36Z doc changes | priority: high | **Summary**

See https://github.com/minio/minio/releases/tag/RELEASE.2023-05-18T00-05-36Z for full changelog

**ToDo**

- [ ] Persistent Queue Store for system/audit logs - [PR 17121](https://github.com/minio/minio/pull/17121)

- [ ] Max policy size of 2KiB for Service Account / STS policies (clarify w/ engineer) - [PR 17161](https://github.com/minio/minio/pull/17167)

- [ ] Webhook usage metrics [PR 17179](https://github.com/minio/minio/pull/17179)

- [ ] healing updates parity based on current Storage Class - clarify w/ engineer [PR 17187](https://github.com/minio/minio/pull/17187)

- [ ] Improved support for topology changes during decomm - clarify w/ engineer [PR 17221](https://github.com/minio/minio/pull/17221)

**Additional context**

Add any other context or screenshots about the feature request here.

| 1.0 | [RELEASE] MinIO RELEASE.2023-05-18T00-05-36Z doc changes - **Summary**

See https://github.com/minio/minio/releases/tag/RELEASE.2023-05-18T00-05-36Z for full changelog

**ToDo**

- [ ] Persistent Queue Store for system/audit logs - [PR 17121](https://github.com/minio/minio/pull/17121)

- [ ] Max policy size of 2KiB for Service Account / STS policies (clarify w/ engineer) - [PR 17161](https://github.com/minio/minio/pull/17167)

- [ ] Webhook usage metrics [PR 17179](https://github.com/minio/minio/pull/17179)

- [ ] healing updates parity based on current Storage Class - clarify w/ engineer [PR 17187](https://github.com/minio/minio/pull/17187)

- [ ] Improved support for topology changes during decomm - clarify w/ engineer [PR 17221](https://github.com/minio/minio/pull/17221)

**Additional context**

Add any other context or screenshots about the feature request here.

| priority | minio release doc changes summary see for full changelog todo persistent queue store for system audit logs max policy size of for service account sts policies clarify w engineer webhook usage metrics pr healing updates parity based on current storage class clarify w engineer improved support for topology changes during decomm clarify w engineer additional context add any other context or screenshots about the feature request here | 1 |

751,708 | 26,254,527,449 | IssuesEvent | 2023-01-05 22:47:30 | lambdaclass/cairo-rs | https://api.github.com/repos/lambdaclass/cairo-rs | closed | Abstract the representation of field elements | high-priority | We're currently using `BigInt` explicitly, which forces us to compute the `.mod_floor` of results after every operation, use costly divisions, etc.

The first step to fix arithmetic performance issues is to isolate the field properties into a new type and let the rest of the code operate on fields in a more abstract way. | 1.0 | Abstract the representation of field elements - We're currently using `BigInt` explicitly, which forces us to compute the `.mod_floor` of results after every operation, use costly divisions, etc.

The first step to fix arithmetic performance issues is to isolate the field properties into a new type and let the rest of the code operate on fields in a more abstract way. | priority | abstract the representation of field elements we re currently using bigint explicitly which forces us to compute the mod floor of results after every operation use costly divisions etc the first step to fix arithmetic performance issues is to isolate the field properties into a new type and let the rest of the code operate on fields in a more abstract way | 1 |

479,386 | 13,795,650,187 | IssuesEvent | 2020-10-09 18:23:48 | vanjarosoftware/Vanjaro.Platform | https://api.github.com/repos/vanjarosoftware/Vanjaro.Platform | closed | Add resource file in Authentication package | Area: Backend Priority: High Release: Minor | extract in file in below path

DesktopModules\AuthenticationServices\Vanjaro\App_LocalResources | 1.0 | Add resource file in Authentication package - extract in file in below path

DesktopModules\AuthenticationServices\Vanjaro\App_LocalResources | priority | add resource file in authentication package extract in file in below path desktopmodules authenticationservices vanjaro app localresources | 1 |

565,991 | 16,777,734,309 | IssuesEvent | 2021-06-15 00:52:57 | myConsciousness/twitter-bot-j | https://api.github.com/repos/myConsciousness/twitter-bot-j | opened | Unnecessary difference symbol is added | Priority: high Problem: bug | # High Priority Bug Report

## 1. Bug Details

When a number is negative, converting it to a string already yields a value prefixed with the minus sign "-".

In the current processing flow, however, the negative-number symbol is added to this negative value as well, so the value is output with two "-" signs.

```java

private String toReportCount(@NonNull final Difference difference) {

return switch (difference.getDifferenceType()) {

case NONE -> DifferenceSymbolUtils.toNoneString(difference.getValue());

case INCREASE -> DifferenceSymbolUtils.toIncreaseString(difference.getValue());

case DECREASE -> DifferenceSymbolUtils.toDecreaseString(difference.getValue());

};

}

```

## 2. What you did caused that bug

Confirmed in the output produced in production.

## 3. How it should be

In the processing above, a negative value should be converted to a string as-is, without adding a symbol.
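
The fix described above could be sketched roughly as follows. This is only an illustration under stated assumptions: `SignedCountFormatter` is a hypothetical stand-in, not the project's `DifferenceSymbolUtils`, and the choice to print increases with a leading "+" and zero with no symbol is assumed rather than taken from the repository.

```java
// Hypothetical helper for illustration only; not part of twitter-bot-j.
final class SignedCountFormatter {

    private SignedCountFormatter() {
    }

    /**
     * Formats a difference value for a report.
     * Assumption: increases are shown with "+", zero is shown without a symbol.
     */
    static String toReportCount(final long value) {
        if (value > 0) {
            return "+" + value;
        }

        // Long.toString already prefixes negative values with "-",
        // so no extra symbol is added for decreases.
        return Long.toString(value);
    }
}
```

With this shape, a decrease of three is rendered as `-3` rather than `--3`, because the sign comes only from the number-to-string conversion.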

## 4. References

| 1.0 | Unnecessary difference symbol is added - # High Priority Bug Report

## 1. Bug Details

When a number is negative, converting it to a string already yields a value prefixed with the minus sign "-".

In the current processing flow, however, the negative-number symbol is added to this negative value as well, so the value is output with two "-" signs.

```java

private String toReportCount(@NonNull final Difference difference) {

return switch (difference.getDifferenceType()) {

case NONE -> DifferenceSymbolUtils.toNoneString(difference.getValue());

case INCREASE -> DifferenceSymbolUtils.toIncreaseString(difference.getValue());

case DECREASE -> DifferenceSymbolUtils.toDecreaseString(difference.getValue());

};

}

```

## 2. What you did caused that bug

Confirmed in the output produced in production.

## 3. How it should be

In the processing above, a negative value should be converted to a string as-is, without adding a symbol.

## 4. References

| priority | unnecessary difference symbol is added high priority bug report bug details when a number is negative converting it to a string already yields a value prefixed with the minus sign in the current processing flow however the negative number symbol is added to this negative value as well so the value is output with two signs java private string toreportcount nonnull final difference difference return switch difference getdifferencetype case none differencesymbolutils tononestring difference getvalue case increase differencesymbolutils toincreasestring difference getvalue case decrease differencesymbolutils todecreasestring difference getvalue what you did caused that bug confirmed in the output produced in production how it should be in the processing above a negative value should be converted to a string as is without adding a symbol references | 1 |

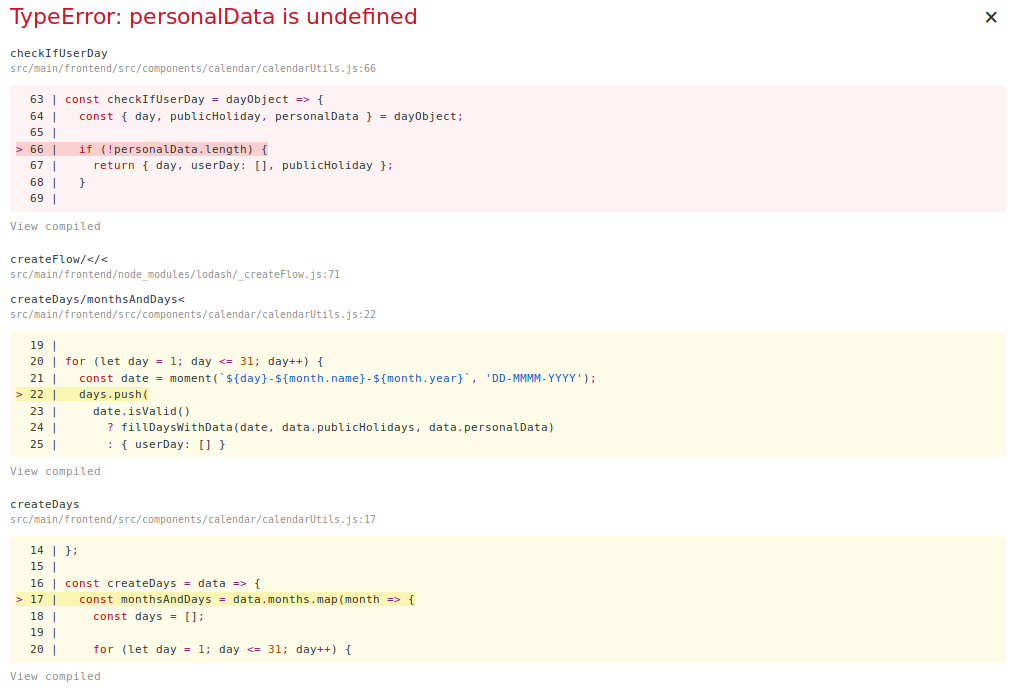

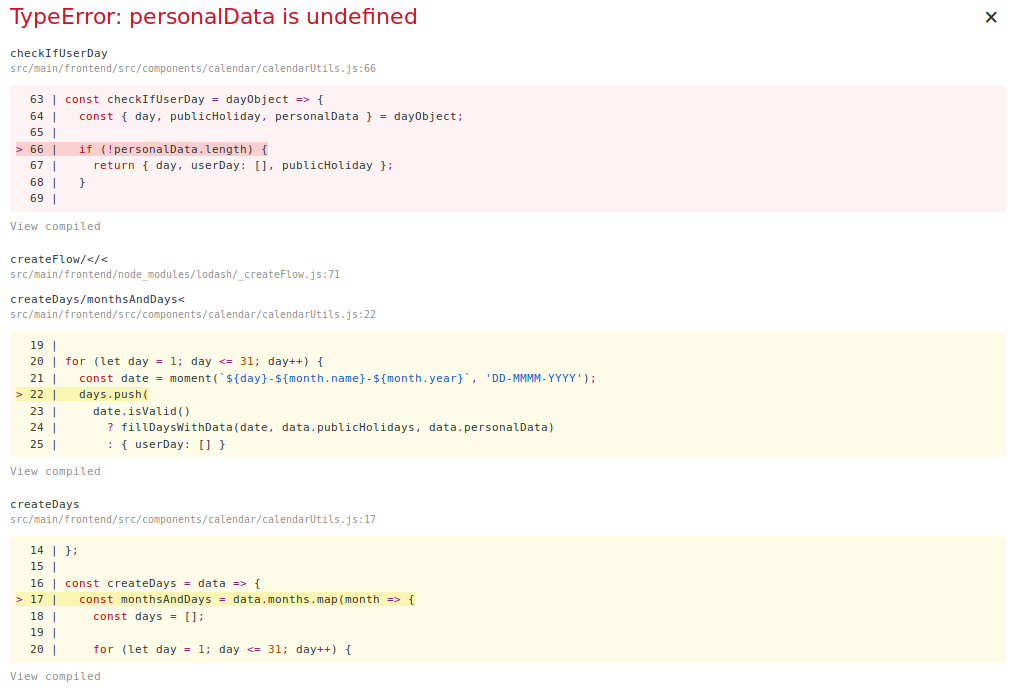

363,605 | 10,745,079,350 | IssuesEvent | 2019-10-30 08:11:59 | execom-eu/hawaii | https://api.github.com/repos/execom-eu/hawaii | closed | Manually changing leave profile for employee crashes the application | fix frontend high priority | Steps to reproduce (must have HR_MANAGER role):

- Go to administration and select Employees tab

- Find your profile information and change leave profile

- Save the change, and go to the dashboard

Expected result:

- New leave profile is set and the corresponding allowance values are set

Actual result:

- Application crashes with an error message (image below)

| 1.0 | Manually changing leave profile for employee crashes the application - Steps to reproduce (must have HR_MANAGER role):

- Go to administration and select Employees tab

- Find your profile information and change leave profile

- Save the change, and go to the dashboard

Expected result:

- New leave profile is set and the corresponding allowance values are set

Actual result:

- Application crashes with an error message (image below)

| priority | manually changing leave profile for employee crashes the application steps to reproduce must have hr manager role go to administration and select employees tab find your profile information and change leave profile save the change and go to the dashboard expected result new leave profile is set and the corresponding allowance values are set actual result application crashes with an error message image below | 1 |

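811,732 | 30,297,940,309 | IssuesEvent | 2023-07-10 01:48:30 | steedos/steedos-platform | https://api.github.com/repos/steedos/steedos-platform | closed | [Bug]: Data import: the "save key when association fails" option on lookup fields has no effect | bug done priority: High | ### Description

This is a problem in the underlying code. When the association fails, the place that checks the number of records has already converted the result to a number via .length, and the subsequent if applies .length again, which makes the check ineffective, so every case falls through to the final else.

### Steps To Reproduce

1. Configure a data import

2. Configure the import of a lookup (related table) field

3. Check the corresponding "save key when association fails" option

4. Save

5. Import data on the corresponding object

### Version

All versions | 1.0 | [Bug]: Data import: the "save key when association fails" option on lookup fields has no effect - ### Description

This is a problem in the underlying code. When the association fails, the place that checks the number of records has already converted the result to a number via .length, and the subsequent if applies .length again, which makes the check ineffective, so every case falls through to the final else.

### Steps To Reproduce

1. Configure a data import

2. Configure the import of a lookup (related table) field

3. Check the corresponding "save key when association fails" option

4. Save

5. Import data on the corresponding object

### Version

All versions | priority | data import the save key when association fails option on lookup fields has no effect description this is a problem in the underlying code when the association fails the place that checks the number of records has already converted the result to a number via length and the subsequent if applies length again which makes the check ineffective so every case falls through to the final else steps to reproduce configure a data import configure the import of a lookup related table field check the corresponding save key when association fails option save import data on the corresponding object version all versions | 1 |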

340,390 | 10,271,858,700 | IssuesEvent | 2019-08-23 15:02:23 | storybookjs/storybook | https://api.github.com/repos/storybookjs/storybook | closed | Addon-docs: User #root styles breaks Docs tab | addon: docs bug high priority ui | ### Problem

User applied the following global style to the preview iframe:

```css

#root {

height: 100vh;

display: flex;

flex-direction: column;

}

```

This breaks docs in the following way. When the user clicks on the `Docs` tab, Storybook applies the `hidden` attribute to `#root`, which triggers the following browser CSS:

```css

[hidden] {

display: none;

}

```

However, the `#root` CSS is more specific, so the Story renders on top of the docs.

### Solution

When we added the following CSS to the user's code, it fixed it.

```css

#root[hidden] {

display: none;

}

```

We can add this to Storybook itself to avoid this issue for users that style `#root` (which is a completely reasonable thing to do).

However, I'm not sure this is enough...

| 1.0 | Addon-docs: User #root styles breaks Docs tab - ### Problem

User applied the following global style to the preview iframe:

```css

#root {

height: 100vh;

display: flex;

flex-direction: column;

}

```

This breaks docs in the following way. When the user clicks on the `Docs` tab, Storybook applies the `hidden` attribute to `#root`, which triggers the following browser CSS:

```css

[hidden] {

display: none;

}

```

However, the `#root` CSS is more specific, so the Story renders on top of the docs.

### Solution

When we added the following CSS to the user's code, it fixed it.

```css

#root[hidden] {

display: none;

}

```

We can add this to Storybook itself to avoid this issue for users that style `#root` (which is a completely reasonable thing to do).

However, I'm not sure this is enough...

| priority | addon docs user root styles breaks docs tab problem user applied the following global style to the preview iframe css root height display flex flex direction column this breaks docs in the following way when the user click on the docs tab storybook applies the hidden attribute to root which triggers the following browser css css display none however the root css is more specific so the story renders on top of the docs solution when we added the following css to the user s code it fixed it css root display none we can add this to storybook itself to avoid this issue for users that style root which is a completely reasonable thing to do however i m not sure this is enough | 1 |

430,587 | 12,463,497,772 | IssuesEvent | 2020-05-28 10:43:25 | UTRS2/utrs | https://api.github.com/repos/UTRS2/utrs | closed | Unable to view appeals | Priority: High bug | Users w/ the global flag in users.wikis or maybe with multiple wikis are not seeing appeals at all.

Current workaround: Manual override of DB field to "enwiki" until fixed as there are no global appeals yet.

Done for these users so far, replace them when done.

Xoasflux

JJMC89

ST47

TonyBallioni

TheSandDoctor | 1.0 | Unable to view appeals - Users w/ the global flag in users.wikis or maybe with multiple wikis are not seeing appeals at all.

Current workaround: Manual override of DB field to "enwiki" until fixed as there are no global appeals yet.

Done for these users so far, replace them when done.

Xoasflux

JJMC89

ST47

TonyBallioni

TheSandDoctor | priority | unable to view appeals users w the global flag in users wikis or maybe with multiple wikis are not seeing appeals at all current workaround manual override of db field to enwiki until fixed as there are no global appeals yet done for these users so far replace them when done xoasflux tonyballioni thesanddoctor | 1 |

745,862 | 26,004,398,832 | IssuesEvent | 2022-12-20 17:55:57 | vyper-protocol/vyper-otc-ui | https://api.github.com/repos/vyper-protocol/vyper-otc-ui | closed | add support for featured | high priority | add a menu in the topbar with a dropdown with featured products. These can be different depending on the cluster. #364 also relevant for this | 1.0 | add support for featured - add a menu in the topbar with a dropdown with featured products. These can be different depending on the cluster. #364 also relevant for this | priority | add support for featured add a menu in the topbar with a dropdown with featured products these can be different depending on the cluster also relevant for this | 1 |

517,500 | 15,014,950,055 | IssuesEvent | 2021-02-01 07:32:55 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | connect.garmin.com - site is not usable | browser-firefox engine-gecko ml-needsdiagnosis-false ml-probability-high priority-normal | <!-- @browser: Firefox 86.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:86.0) Gecko/20100101 Firefox/86.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/66526 -->

**URL**: https://connect.garmin.com/modern/

**Browser / Version**: Firefox 86.0

**Operating System**: Windows 10

**Tested Another Browser**: No

**Problem type**: Site is not usable

**Description**: Page not loading correctly

**Steps to Reproduce**:

Tab loads with the page but page remains blank

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2021/1/9f3fc4ee-7863-46dd-98e4-136ef8be4ac3.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20210128185743</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2021/1/4457943e-bc80-48c5-a650-bcb7d7736133)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | 1.0 | connect.garmin.com - site is not usable - <!-- @browser: Firefox 86.0 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:86.0) Gecko/20100101 Firefox/86.0 -->

<!-- @reported_with: desktop-reporter -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/66526 -->

**URL**: https://connect.garmin.com/modern/

**Browser / Version**: Firefox 86.0

**Operating System**: Windows 10

**Tested Another Browser**: No

**Problem type**: Site is not usable

**Description**: Page not loading correctly

**Steps to Reproduce**:

Tab loads with the page but page remains blank

<details>

<summary>View the screenshot</summary>

<img alt="Screenshot" src="https://webcompat.com/uploads/2021/1/9f3fc4ee-7863-46dd-98e4-136ef8be4ac3.jpeg">

</details>

<details>

<summary>Browser Configuration</summary>

<ul>

<li>gfx.webrender.all: false</li><li>gfx.webrender.blob-images: true</li><li>gfx.webrender.enabled: false</li><li>image.mem.shared: true</li><li>buildID: 20210128185743</li><li>channel: beta</li><li>hasTouchScreen: false</li><li>mixed active content blocked: false</li><li>mixed passive content blocked: false</li><li>tracking content blocked: false</li>

</ul>

</details>

[View console log messages](https://webcompat.com/console_logs/2021/1/4457943e-bc80-48c5-a650-bcb7d7736133)

_From [webcompat.com](https://webcompat.com/) with ❤️_ | priority | connect garmin com site is not usable url browser version firefox operating system windows tested another browser no problem type site is not usable description page not loading correctly steps to reproduce tab loads with the page but page remains blank view the screenshot img alt screenshot src browser configuration gfx webrender all false gfx webrender blob images true gfx webrender enabled false image mem shared true buildid channel beta hastouchscreen false mixed active content blocked false mixed passive content blocked false tracking content blocked false from with ❤️ | 1 |

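805,896 | 29,736,130,298 | IssuesEvent | 2023-06-14 01:10:08 | GSM-MSG/SMS-BackEnd | https://api.github.com/repos/GSM-MSG/SMS-BackEnd | closed | Exception handling in Spring Security is reversed | 1️⃣ Priority: High | ### Describe

Authentication failures return 403 and authorization failures return 401. This is handled the wrong way around, so I will fix it.

### Additional

_No response_ | 1.0 | Exception handling in Spring Security is reversed - ### Describe

Authentication failures return 403 and authorization failures return 401. This is handled the wrong way around, so I will fix it.

### Additional

_No response_ | priority | exception handling in spring security is reversed describe authentication failures return and authorization failures return this is handled the wrong way around so i will fix it additional no response | 1 |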
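The status-code convention this issue refers to can be sketched in a few lines. This is a language-neutral illustration in Python, not the project's Spring Security configuration; the helper name `status_for` and its two boolean parameters are hypothetical. It assumes the usual HTTP semantics: a request that fails authentication gets 401, and an authenticated request that lacks permission gets 403.

```python
def status_for(authenticated: bool, authorized: bool) -> int:
    """Return the HTTP status for a request (hypothetical helper)."""
    if not authenticated:
        # 401 Unauthorized: the caller's identity is missing or invalid.
        return 401
    if not authorized:
        # 403 Forbidden: the identity is known but lacks permission.
        return 403
    return 200
```

The reversed behavior described in the issue would correspond to swapping the two return values above.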

387,720 | 11,467,130,334 | IssuesEvent | 2020-02-08 02:20:38 | allenai/scholar-reader | https://api.github.com/repos/allenai/scholar-reader | closed | Fault-tolerant processing of one entity at a time | high-priority pipeline | One challenge with colorizing multiple entities at a time is cascading failures---an error in colorizing one entity may change the location of all after it, or cause an entire batch of entities not to be colorized.

This task includes:

- [x] Add option to colorizing commands to process entities one at a time

- [x] Add option to full pipeline for processing entities one at a time

- [x] Add visual validation to check for any black pixels in image diffs. If there are black pixels, then do not attempt to detect bounding boxes

- [x] Documentation that conveys that running one entity at a time will result in much greater usage of storage

Follow-up analysis includes (put this in a separate issue later):

- [ ] Characterize the time it takes to process the 'typical' paper one entity at a time

- [ ] Compare to the relative costs and benefits of reworking the TeX engine to handle colorizing without affecting paper layout

- [ ] Characterize the number of entities that will be left out during one-at-a-time entity colorizing | 1.0 | Fault-tolerant processing of one entity at a time - One challenge with colorizing multiple entities at a time is cascading failures---an error in colorizing one entity may change the location of all after it, or cause an entire batch of entities not to be colorized.

This task includes:

- [x] Add option to colorizing commands to process entities one at a time

- [x] Add option to full pipeline for processing entities one at a time

- [x] Add visual validation to check for any black pixels in image diffs. If there are black pixels, then do not attempt to detect bounding boxes

- [x] Documentation that conveys that running one entity at a time will result in much greater usage of storage

Follow-up analysis includes (put this in a separate issue later):

- [ ] Characterize the time it takes to process the 'typical' paper one entity at a time

- [ ] Compare to the relative costs and benefits of reworking the TeX engine to handle colorizing without affecting paper layout

- [ ] Characterize the number of entities that will be left out during one-at-a-time entity colorizing | priority | fault tolerant processing of one entity at a time one challenge with colorizing multiple entities at a time is cascading failures an error in colorizing one entity may change the location of all after it or cause an entire batch of entities not to be colorized this task includes add option to colorizing commands to process entities one at a time add option to full pipeline for processing entities one at a time add visual validation to check for any black pixels in image diffs if there are black pixels then do not attempt to detect bounding boxes documentation that conveys that running one entity at a time will result in much greater usage of storage follow up analysis includes put this in a separate issue later characterize the time it takes to process the typical paper one entity at a time compare to the relative costs and benefits of reworking the tex engine to handle colorizing without affecting paper layout characterize the number of entities that will be left out during one at a time entity colorizing | 1 |
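The visual validation step in the task list above could look roughly like the following. This is a minimal sketch under stated assumptions, not the project's implementation: the image diff is assumed to be a grid of RGB tuples, "black" is assumed to mean exactly `(0, 0, 0)`, and the function names `has_black_pixels` and `should_detect_boxes` are hypothetical.

```python
from typing import Sequence, Tuple

Pixel = Tuple[int, int, int]


def has_black_pixels(diff: Sequence[Sequence[Pixel]]) -> bool:
    """Return True if any pixel in the diff grid is pure black."""
    return any(tuple(pixel) == (0, 0, 0) for row in diff for pixel in row)


def should_detect_boxes(diff: Sequence[Sequence[Pixel]]) -> bool:
    """Skip bounding-box detection when the diff contains black pixels."""
    return not has_black_pixels(diff)
```

A real pipeline would likely read the diff from an image library and might tolerate near-black values; both choices are left open here.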

355,374 | 10,579,943,329 | IssuesEvent | 2019-10-08 04:50:44 | CalNourish/ucbfpa-webapp | https://api.github.com/repos/CalNourish/ucbfpa-webapp | opened | Update Add/Edit UI | high priority 🔥 | - [ ] Remove points

- [ ] Remove images

- [ ] Introduce some UI for easily adding and subtracting counts without having to math (and be able to edit the total amount directly somehow) | 1.0 | Update Add/Edit UI - - [ ] Remove points

- [ ] Remove images

- [ ] Introduce some UI for easily adding and subtracting counts without having to math (and be able to edit the total amount directly somehow) | priority | update add edit ui remove points remove images introduce some ui for easily adding and subtracting counts without having to math and be able to edit the total amount directly somehow | 1 |

166,221 | 6,300,163,869 | IssuesEvent | 2017-07-21 02:21:05 | minio/minio | https://api.github.com/repos/minio/minio | closed | [mint] Tests fail in Azure/GCS gateway mode | priority: high working as intended | ## Expected Behavior

Mint test cases should pass.

## Current Behavior

When Mint is run against the `minio gateway azure` or `minio gateway gcs`, requests fail with server log as below

```

$ ./minio gateway azure

Endpoint: http://192.168.86.129:9000 http://172.17.0.1:9000 http://172.18.0.1:9000 http://127.0.0.1:9000

ERRO[0007] {"method":"PUT","reqURI":"/aws-sdk-php-bucket-10234","header":{"Authorization":["AWS4-HMAC-SHA256 Credential=minio/20170720/us-east-1/s3/aws4_request, SignedHeaders=aws-sdk-invocation-id;aws-sdk-retry;host;x-amz-content-sha256;x-amz-date, Signature=ac91ba1d601e7b2d5136e531a3111770db0603705d9f388aee05c36f53fc6f65"],"Aws-Sdk-Invocation-Id":["8ccba5715ed1842e5862c4ec36a94c8c"],"Aws-Sdk-Retry":["0/0"],"Content-Length":["0"],"Host":["127.0.0.1:9000"],"User-Agent":["aws-sdk-php/3.31.7 GuzzleHttp/6.2.1 curl/7.47.0 PHP/7.0.18-0ubuntu0.16.04.1"],"X-Amz-Content-Sha256":["e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855"],"X-Amz-Date":["20170720T094407Z"]}} cause=Signature does not match source=[auth-handler.go:122:checkRequestAuthType()]

ERRO[0007] {"method":"DELETE","reqURI":"/aws-sdk-php-bucket-10234/obj1","header":{"Authorization":["AWS4-HMAC-SHA256 Credential=minio/20170720/us-east-1/s3/aws4_request, SignedHeaders=aws-sdk-invocation-id;aws-sdk-retry;host;x-amz-content-sha256;x-amz-date, Signature=7f448c75c4f825a397624b1fc1836841dffd8ecbf402a474c1a28486c026b9ae"],"Aws-Sdk-Invocation-Id":["908b9e424ad67ce48c2d5cde6c2659ec"],"Aws-Sdk-Retry":["0/0"],"Host":["127.0.0.1:9000"],"User-Agent":["aws-sdk-php/3.31.7 GuzzleHttp/6.2.1 curl/7.47.0 PHP/7.0.18-0ubuntu0.16.04.1"],"X-Amz-Content-Sha256":["e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855"],"X-Amz-Date":["20170720T094407Z"]}} cause=Signature does not match source=[auth-handler.go:122:checkRequestAuthType()]

```

```

$ ./minio gateway gcs peak-essence-171622

*** Warning: Not Ready for Production ***

ERRO[0025] {"method":"PUT","reqURI":"/aws-sdk-php-bucket-43021","header":{"Authorization":["AWS4-HMAC-SHA256 Credential=minio/20170720/us-east-1/s3/aws4_request, SignedHeaders=aws-sdk-invocation-id;aws-sdk-retry;host;x-amz-content-sha256;x-amz-date, Signature=2297da513a5dabc3230d17adc6713c54a5af97f4f0573ee86c3486add17d36c9"],"Aws-Sdk-Invocation-Id":["3ac3bd8d67bb14aedf64ee263ae42d6d"],"Aws-Sdk-Retry":["0/0"],"Content-Length":["0"],"Host":["127.0.0.1:9000"],"User-Agent":["aws-sdk-php/3.31.7 GuzzleHttp/6.2.1 curl/7.47.0 PHP/7.0.18-0ubuntu0.16.04.1"],"X-Amz-Content-Sha256":["e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855"],"X-Amz-Date":["20170720T102637Z"]}} cause=Signature does not match source=[auth-handler.go:122:checkRequestAuthType()]

```

Note that region is set using `export MINIO_REGION="us-east-1"`

## Steps to Reproduce (for bugs)

1. Start Minio server in azure or gcs gateway mode.

2. Run Mint against the Minio server instance. | 1.0 | [mint] Tests fail in Azure/GCS gateway mode - ## Expected Behavior

Mint test cases should pass.

## Current Behavior

When Mint is run against the `minio gateway azure` or `minio gateway gcs`, requests fail with server log as below

```

$ ./minio gateway azure

Endpoint: http://192.168.86.129:9000 http://172.17.0.1:9000 http://172.18.0.1:9000 http://127.0.0.1:9000

ERRO[0007] {"method":"PUT","reqURI":"/aws-sdk-php-bucket-10234","header":{"Authorization":["AWS4-HMAC-SHA256 Credential=minio/20170720/us-east-1/s3/aws4_request, SignedHeaders=aws-sdk-invocation-id;aws-sdk-retry;host;x-amz-content-sha256;x-amz-date, Signature=ac91ba1d601e7b2d5136e531a3111770db0603705d9f388aee05c36f53fc6f65"],"Aws-Sdk-Invocation-Id":["8ccba5715ed1842e5862c4ec36a94c8c"],"Aws-Sdk-Retry":["0/0"],"Content-Length":["0"],"Host":["127.0.0.1:9000"],"User-Agent":["aws-sdk-php/3.31.7 GuzzleHttp/6.2.1 curl/7.47.0 PHP/7.0.18-0ubuntu0.16.04.1"],"X-Amz-Content-Sha256":["e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855"],"X-Amz-Date":["20170720T094407Z"]}} cause=Signature does not match source=[auth-handler.go:122:checkRequestAuthType()]

ERRO[0007] {"method":"DELETE","reqURI":"/aws-sdk-php-bucket-10234/obj1","header":{"Authorization":["AWS4-HMAC-SHA256 Credential=minio/20170720/us-east-1/s3/aws4_request, SignedHeaders=aws-sdk-invocation-id;aws-sdk-retry;host;x-amz-content-sha256;x-amz-date, Signature=7f448c75c4f825a397624b1fc1836841dffd8ecbf402a474c1a28486c026b9ae"],"Aws-Sdk-Invocation-Id":["908b9e424ad67ce48c2d5cde6c2659ec"],"Aws-Sdk-Retry":["0/0"],"Host":["127.0.0.1:9000"],"User-Agent":["aws-sdk-php/3.31.7 GuzzleHttp/6.2.1 curl/7.47.0 PHP/7.0.18-0ubuntu0.16.04.1"],"X-Amz-Content-Sha256":["e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855"],"X-Amz-Date":["20170720T094407Z"]}} cause=Signature does not match source=[auth-handler.go:122:checkRequestAuthType()]

```

```

$ ./minio gateway gcs peak-essence-171622

*** Warning: Not Ready for Production ***

ERRO[0025] {"method":"PUT","reqURI":"/aws-sdk-php-bucket-43021","header":{"Authorization":["AWS4-HMAC-SHA256 Credential=minio/20170720/us-east-1/s3/aws4_request, SignedHeaders=aws-sdk-invocation-id;aws-sdk-retry;host;x-amz-content-sha256;x-amz-date, Signature=2297da513a5dabc3230d17adc6713c54a5af97f4f0573ee86c3486add17d36c9"],"Aws-Sdk-Invocation-Id":["3ac3bd8d67bb14aedf64ee263ae42d6d"],"Aws-Sdk-Retry":["0/0"],"Content-Length":["0"],"Host":["127.0.0.1:9000"],"User-Agent":["aws-sdk-php/3.31.7 GuzzleHttp/6.2.1 curl/7.47.0 PHP/7.0.18-0ubuntu0.16.04.1"],"X-Amz-Content-Sha256":["e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855"],"X-Amz-Date":["20170720T102637Z"]}} cause=Signature does not match source=[auth-handler.go:122:checkRequestAuthType()]

```

Note that region is set using `export MINIO_REGION="us-east-1"`

## Steps to Reproduce (for bugs)

1. Start Minio server in azure or gcs gateway mode.

2. Run Mint against the Minio server instance. | priority | tests fail in azure gcs gateway mode expected behavior mint test cases should pass current behavior when mint is run against the minio gateway azure or minio gateway gcs requests fail with server log as below minio gateway azure endpoint erro method put requri aws sdk php bucket header authorization aws sdk invocation id aws sdk retry content length host user agent x amz content x amz date cause signature does not match source erro method delete requri aws sdk php bucket header authorization aws sdk invocation id aws sdk retry host user agent x amz content x amz date cause signature does not match source minio gateway gcs peak essence warning not ready for production erro method put requri aws sdk php bucket header authorization aws sdk invocation id aws sdk retry content length host user agent x amz content x amz date cause signature does not match source note that region is set using export minio region us east steps to reproduce for bugs start minio server in azure or gcs gateway mode run mint against the minio server instance | 1 |
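One detail in the logged requests can be checked independently: the `X-Amz-Content-Sha256` header value is the SHA-256 digest of an empty body, which is consistent with requests that carry no payload (the `PUT` entries report `Content-Length` 0). The sketch below only verifies that value. It does not reproduce the full signature calculation, and the names `LOGGED_HASH` and `is_empty_payload_hash` are illustrative.

```python
import hashlib

# Value copied from the X-Amz-Content-Sha256 header in the log above.
LOGGED_HASH = "e3b0c44298fc1c149afbf4c8996fb92427ae41e4649b934ca495991b7852b855"


def is_empty_payload_hash(value: str) -> bool:
    """Return True if value is the hex SHA-256 digest of an empty body."""
    return value.lower() == hashlib.sha256(b"").hexdigest()
```

Since the payload hash is as expected, a "Signature does not match" error would more plausibly come from another signing input, though the log alone does not say which one.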

520,042 | 15,077,759,202 | IssuesEvent | 2021-02-05 07:34:09 | wso2/cellery | https://api.github.com/repos/wso2/cellery | closed | Dry run mode should be implemented for running cellery component tests | Priority/High Resolution/Won’t Fix Type/Improvement | **Description:**

After the CLI refactoring, the kubectl commands are run in a Ballerina native function. The component tests therefore need to run in dry-run mode, so that the `cellery run` command performs a kubectl dry run and does not communicate with the API server. As a workaround for the moment, the exceptions thrown by the kubectl apply command are skipped after checking. | 1.0 | Dry run mode should be implemented for running cellery component tests - **Description:**

After the CLI refactoring, the kubectl commands are run in a Ballerina native function. The component tests therefore need to run in dry-run mode, so that the `cellery run` command performs a kubectl dry run and does not communicate with the API server. As a workaround for the moment, the exceptions thrown by the kubectl apply command are skipped after checking. | priority | dry run mode should be implemented for running cellery component tests description after the cli refactoring the kubectl commands are run in a ballerina native function the component tests therefore need to run in dry run mode so that the cellery run command performs a kubectl dry run and does not communicate with the api server as a workaround for the moment the exceptions thrown by the kubectl apply command are skipped after checking | 1 |

362,260 | 10,724,513,707 | IssuesEvent | 2019-10-28 02:02:55 | LuanKovacs/LittleMatchGirlGame | https://api.github.com/repos/LuanKovacs/LittleMatchGirlGame | closed | [UPDATE, NOW WITH CAMERA PROBLEMS] I've fallen over and can get back up | Priority: High bug | **Describe the bug**

you can fall over if you die near the end of the game

**To Reproduce**

Steps to reproduce the behavior:

1. Go to the final area

2. die

3. if the spawn in falling stutters a little then the player can now fall over when walking

**Expected behavior**

you shouldn't be able to fall over like that, the player while running should stay upright

**Additional context**

Add any other context about the problem here.

| 1.0 | [UPDATE, NOW WITH CAMERA PROBLEMS] I've fallen over and can get back up - **Describe the bug**

you can fall over if you die near the end of the game

**To Reproduce**

Steps to reproduce the behavior:

1. Go to the final area

2. die

3. if the spawn in falling stutters a little then the player can now fall over when walking

**Expected behavior**

you shouldn't be able to fall over like that, the player while running should stay upright

**Additional context**

Add any other context about the problem here.

| priority | i ve fallen over and can get back up describe the bug you can fall over if you die near the end of the game to reproduce steps to reproduce the behavior go to the final area die if the spawn in falling stutters a little then the player can now fall over when walking expected behavior you shouldn t be able to fall over like that the player while running should stay upright additional context add any other context about the problem here | 1 |

192,217 | 6,847,705,995 | IssuesEvent | 2017-11-13 16:11:06 | cceh/capitularia | https://api.github.com/repos/cceh/capitularia | closed | Implementation of the place index in the manuscript filter | High Priority | The place index should be implemented in the manuscript filter soon. | 1.0 | Implementation of the place index in the manuscript filter - The place index should be implemented in the manuscript filter soon. | priority | implementation of the place index in the manuscript filter the place index should be implemented in the manuscript filter soon | 1 |

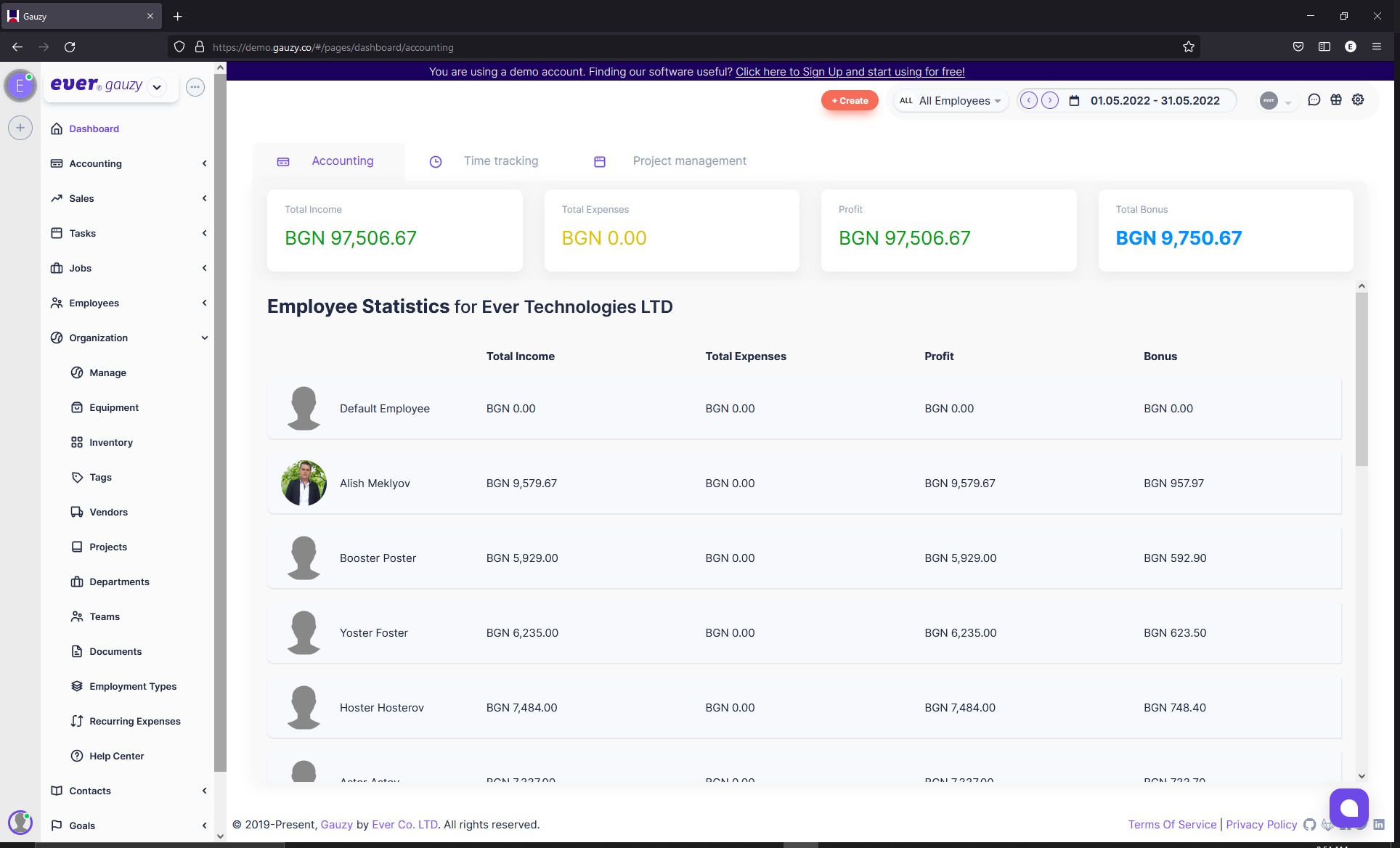

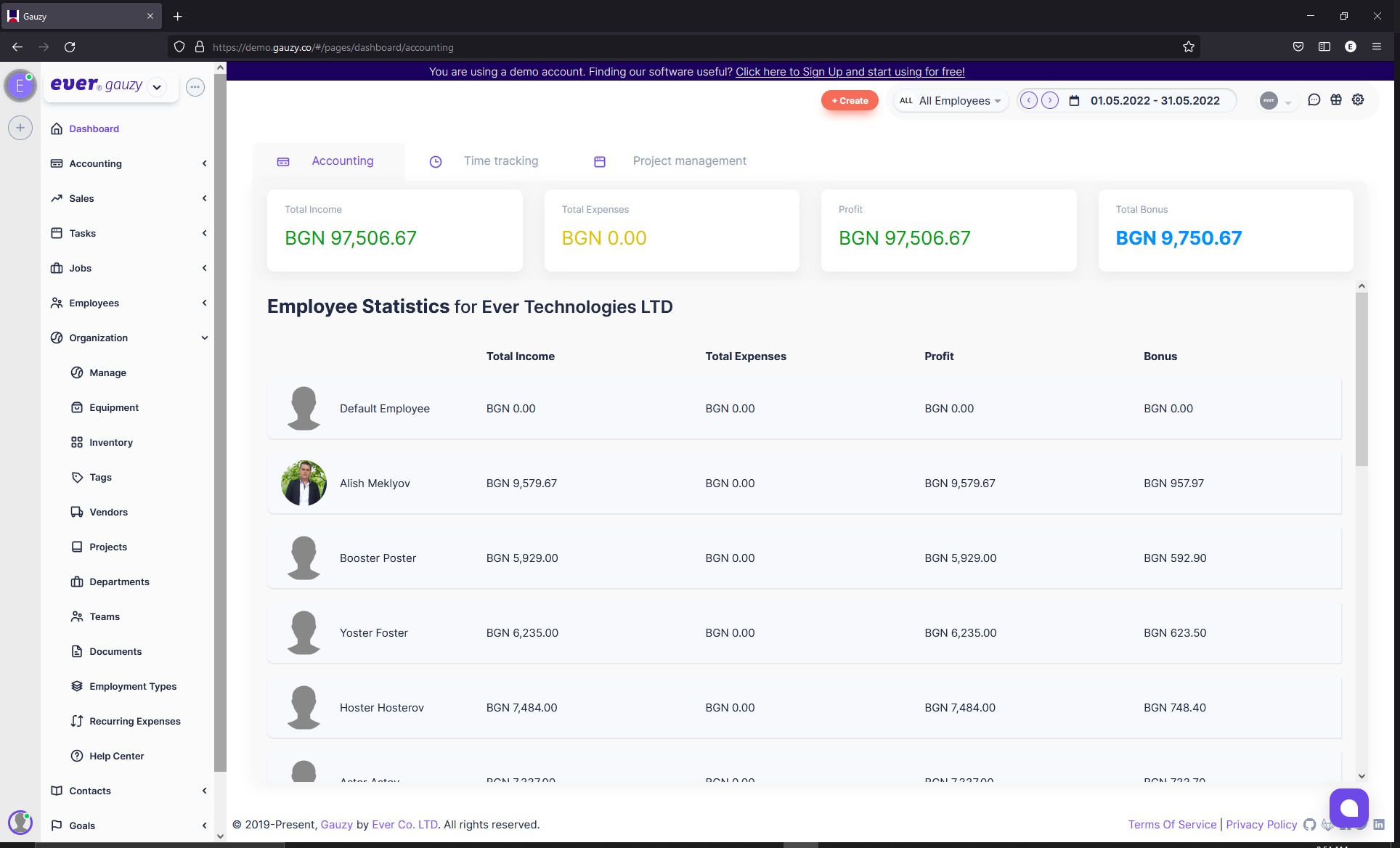

656,227 | 21,724,004,263 | IssuesEvent | 2022-05-11 05:21:29 | ever-co/ever-gauzy | https://api.github.com/repos/ever-co/ever-gauzy | closed | Fix: Theme Card Scrollbars (Firefox) | priority: highest Firefox Chorme Safari | Fix the theme card scrollbars in Firefox so that they are thin, as in Chrome.

 | 1.0 | Fix: Theme Card Scrollbars (Firefox) - Fix the theme card scrollbars in Firefox so that they are thin, as in Chrome.

 | priority | fix theme card scrollbars firefox fix the theme card scrollbars in firefox so that they are thin as in chrome | 1 |

151,026 | 5,795,538,621 | IssuesEvent | 2017-05-02 17:20:06 | JiscRDSS/rdss-canonical-data-model | https://api.github.com/repos/JiscRDSS/rdss-canonical-data-model | closed | UC87 Metadata fields | alpha priority:High use case | UC no.: 87

Theme: Metadata fields

As a Data creator

I want Widespread use of unique IDs for people and organisations

So that I don't have to keep up with correct/canonical names changing all the time, particularly for organisations and individuals' email addresses

Comments

Identifiers are a key component of the MVP (and data model) | 1.0 | UC87 Metadata fields - UC no.: 87

Theme: Metadata fields

As a Data creator

I want Widespread use of unique IDs for people and organisations

So that I don't have to keep up with correct/canonical names changing all the time, particularly for organisations and individuals' email addresses

Comments

Identifiers are a key component of the MVP (and data model) | priority | metadata fields uc no theme metadata fields as a data creator i want widespread use of unique ids for people and organisations so that i don t have to keep up with correct canonical names changing all the time particularly for organisations and individuals email addresses comments identifiers are a key component of the mvp and data model | 1 |

432,023 | 12,488,173,186 | IssuesEvent | 2020-05-31 13:01:57 | STAMACODING/RSA-App | https://api.github.com/repos/STAMACODING/RSA-App | opened | Switch to OpenJDK | high priority meeting relevant organization | Unfortunately, my Raspberry Pi 4B has only poor support for the commercial Oracle JDK, and none at all for the newest version (14). The standard there is [OpenJDK](https://openjdk.java.net/), an open-source variant of the JDK. For programming this would make practically no difference. Everyone would just have to set it up on their own machine. I could also write a tutorial for that. It would be best to discuss all of this in a **meeting**. | 1.0 | Switch to OpenJDK - Unfortunately, my Raspberry Pi 4B has only poor support for the commercial Oracle JDK, and none at all for the newest version (14). The standard there is [OpenJDK](https://openjdk.java.net/), an open-source variant of the JDK. For programming this would make practically no difference. Everyone would just have to set it up on their own machine. I could also write a tutorial for that. It would be best to discuss all of this in a **meeting**. | priority | switch to openjdk unfortunately my raspberry pi has only poor support for the commercial oracle jdk and none at all for the newest version the standard there is an open source variant of the jdk for programming this would make practically no difference everyone would just have to set it up on their own machine i could also write a tutorial for that it would be best to discuss all of this in a meeting | 1 |

413,005 | 12,059,178,805 | IssuesEvent | 2020-04-15 18:48:16 | tern-tools/tern | https://api.github.com/repos/tern-tools/tern | closed | Update SPDX format to include file level analysis | high-priority | **Description**

Update the SPDX report format to include situations where there is file level data. Use the http://13.57.134.254/app/validate/ online tool to validate the generated SPDX document for various container images.

**Background**

This depends on https://github.com/vmware/tern/pull/582 to be merged.

**Super Issues**

#583

| 1.0 | Update SPDX format to include file level analysis - **Description**

Update the SPDX report format to include situations where there is file level data. Use the http://13.57.134.254/app/validate/ online tool to validate the generated SPDX document for various container images.

**Background**

This depends on https://github.com/vmware/tern/pull/582 to be merged.

**Super Issues**

#583

| priority | update spdx format to include file level analysis description update the spdx report format to include situations where there is file level data use the online tool to validate the generated spdx document for various container images background this depends on to be merged super issues | 1 |

296,020 | 9,103,469,990 | IssuesEvent | 2019-02-20 15:57:57 | infor-design/website | https://api.github.com/repos/infor-design/website | closed | Source Sans fonts aren't included in ng7 build | for: dev priority: high | **Describe the bug**

Oops.

**To Reproduce**

Navigate to site on a device that doesn't have Source Sans installed.

**Expected behavior**

Include using Google fonts as previously or you could include and serve the fonts from `ids-identity`. | 1.0 | Source Sans fonts aren't included in ng7 build - **Describe the bug**

Oops.

**To Reproduce**

Navigate to site on a device that doesn't have Source Sans installed.

**Expected behavior**

Include using Google fonts as previously or you could include and serve the fonts from `ids-identity`. | priority | source sans fonts aren t included in build describe the bug oops to reproduce navigate to site on a device that doesn t have source sans installed expected behavior include using google fonts as previously or you could include and serve the fonts from ids identity | 1 |

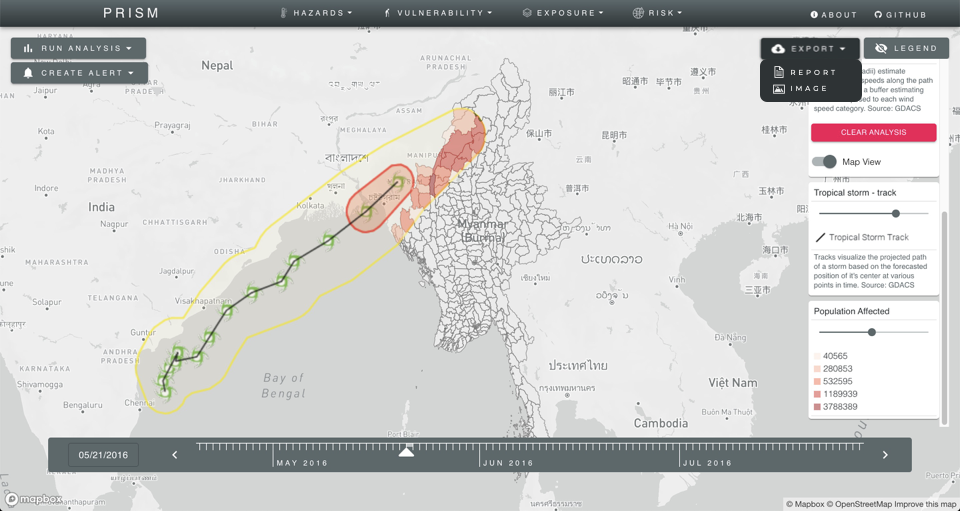

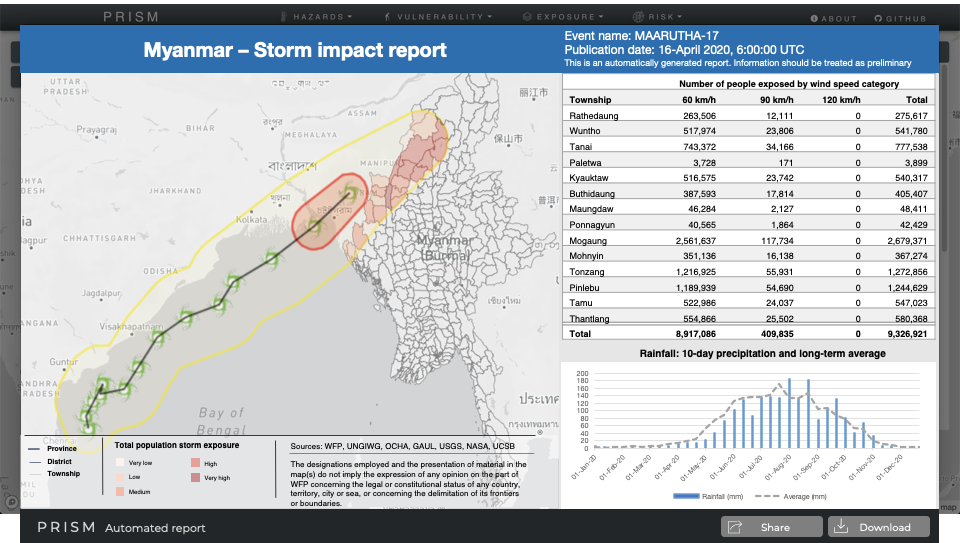

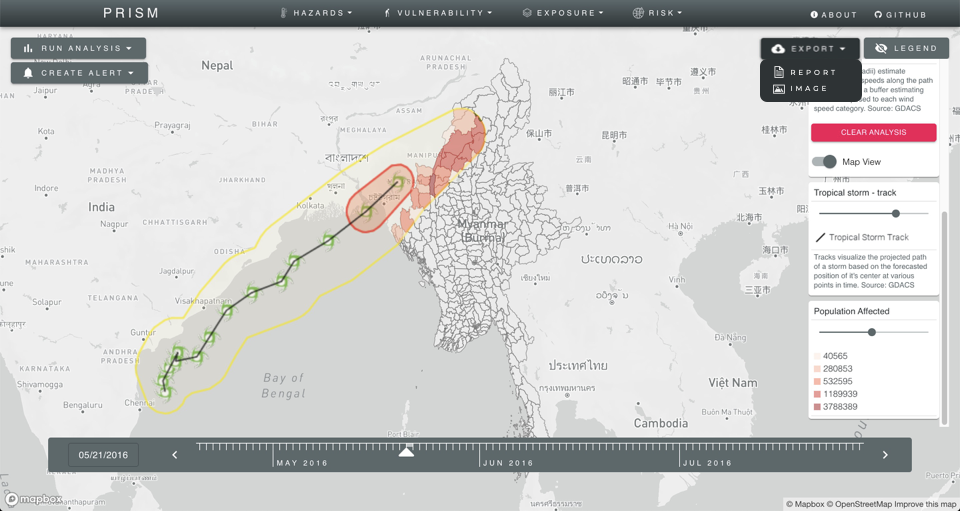

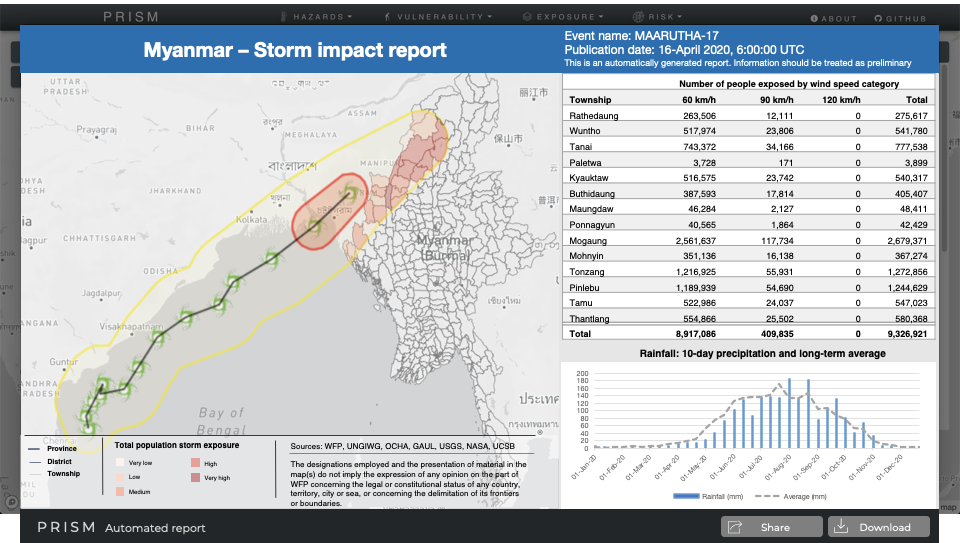

727,707 | 25,044,734,986 | IssuesEvent | 2022-11-05 04:50:12 | WFP-VAM/prism-app | https://api.github.com/repos/WFP-VAM/prism-app | closed | PRISM reports module | enhancement help wanted idea priority:high | Most users of information accessible through PRISM will not frequently visit the application. In addition, more advanced users such as analysts and GIS officers produce outputs that are then shared to a wider audience. Recognizing this, PRISM requires the ability to 1) condense various data inputs into a single output for a single snapshot view of key risk and impact factors; 2) reduce the steps involved for a user to generate a shareable output to a wide audience. A reports feature in PRISM will produce a static view of the dashboard exposing multiple datasets through a map view complemented with tabular data and a chart. The report could be triggered through the ‘Export’ button, and a direct link will be accessible via a URL such as https://prism.org?view=report&date=2021-05-17&hazard=tropical-storm

Google doc to comment on here: https://docs.google.com/document/d/1FaV2jp526Xa134j32iXPZ_U4lhxFanlr/edit?usp=sharing&ouid=105953411765103382631&rtpof=true&sd=true

Mockups:

| 1.0 | PRISM reports module - Most users of information accessible through PRISM will not frequently visit the application. In addition, more advanced users such as analysts and GIS officers produce outputs that are then shared to a wider audience. Recognizing this, PRISM requires the ability to 1) condense various data inputs into a single output for a single snapshot view of key risk and impact factors; 2) reduce the steps involved for a user to generate a shareable output to a wide audience. A reports feature in PRISM will produce a static view of the dashboard exposing multiple datasets through a map view complemented with tabular data and a chart. The report could be triggered through the ‘Export’ button, and a direct link will be accessible via a URL such as https://prism.org?view=report&date=2021-05-17&hazard=tropical-storm

Google doc to comment on here: https://docs.google.com/document/d/1FaV2jp526Xa134j32iXPZ_U4lhxFanlr/edit?usp=sharing&ouid=105953411765103382631&rtpof=true&sd=true

Mockups:

| priority | prism reports module most users of information accessible through prism will not frequently visit the application in addition more advanced users such as analysts and gis officers produce outputs that are then shared to a wider audience recognizing this prism requires the ability to condense various data inputs into a single output for a single snapshot view of key risk and impact factors reduce the steps involved for a user to generate a shareable output to a wide audience a reports feature in prism will produce a static view of the dashboard exposing multiple datasets through a map view complemented with tabular data and a chart the report could be triggered through the ‘export’ button and a direct link will be accessible via a url such as google doc to comment on here mockups | 1 |

527,434 | 15,342,640,821 | IssuesEvent | 2021-02-27 17:02:21 | getting-things-gnome/gtg | https://api.github.com/repos/getting-things-gnome/gtg | opened | Tags picker popover is empty after the v2 file format branch merge | bug priority:high reproducible-in-git | With current master, with the "screenshots" dataset, if you create a new task, in the task editor, clicking the tags button will give you an empty popover rather than showing existing tags. | 1.0 | Tags picker popover is empty after the v2 file format branch merge - With current master, with the "screenshots" dataset, if you create a new task, in the task editor, clicking the tags button will give you an empty popover rather than showing existing tags. | priority | tags picker popover is empty after the file format branch merge with current master with the screenshots dataset if you create a new task in the task editor clicking the tags button will give you an empty popover rather than showing existing tags | 1 |

135,793 | 5,258,857,791 | IssuesEvent | 2017-02-03 00:57:57 | ucdavis/ipa-client-angular | https://api.github.com/repos/ucdavis/ipa-client-angular | closed | TeachingCallResponse report: suggested courses not displayed correctly | bug high priority | From email:

If you look at faculty member "Hanti Kao Lin" in Philosophy - his interested courses are all listed as sabbaticals in the report.

| 1.0 | TeachingCallResponse report: suggested courses not displayed correctly - From email:

If you look at faculty member "Hanti Kao Lin" in Philosophy - his interested courses are all listed as sabbaticals in the report.

| priority | teachingcallresponse report suggested courses not displayed correctly from email if you look at faculty member hanti kao lin in philosophy his interested courses are all listed as sabbaticals in the report | 1 |

274,845 | 8,568,542,085 | IssuesEvent | 2018-11-10 22:37:32 | giftdibs/giftdibs-browser | https://api.github.com/repos/giftdibs/giftdibs-browser | closed | Gift Detail > Gift delivered message | priority: high | Show message on gift detail (and `gift_delivered` notification) when someone delivers a gift, to let the owner of the gift mark it as received.

Clean up the "Delivered by" section on the gift detail. | 1.0 | Gift Detail > Gift delivered message - Show message on gift detail (and `gift_delivered` notification) when someone delivers a gift, to let the owner of the gift mark it as received.

Clean up the "Delivered by" section on the gift detail. | priority | gift detail gift delivered message show message on gift detail and gift delivered notification when someone delivers a gift to let the owner of the gift mark it as received clean up the delivered by section on the gift detail | 1 |

597,806 | 18,172,502,063 | IssuesEvent | 2021-09-27 21:45:22 | StatisticsNZ/simplevis | https://api.github.com/repos/StatisticsNZ/simplevis | closed | bar: x_var date labels are not working correctly | high priority | ```

library(tidyverse)

library(er.helpers)

library(simplevis)

setup_datalake_access()

no2_nzta <- er.helpers::read_from_datalake( "air/2021/tidy/no2_nzta.RDS")

sitecheck_data <- no2_nzta %>%

select(site, "value" = concentration, month, year) %>%

mutate(len = str_length(site)) %>%

mutate(temp_id = as.character(substring(site, 1,6))) %>%

group_by(temp_id) %>%

filter(any(str_length(site) > 6)) %>%

mutate(measurement_date = lubridate::my(paste0(month, year)) %>% lubridate::as_date()) %>%

mutate(site = as.character(site))

p <- sitecheck_data %>%

filter(temp_id == "AUC004") %>%

simplevis::gg_bar_col(x_var = measurement_date,

y_var = value,

col_var = site,

x_pretty_n = 10,

x_labels = scales::date_format("%y"))

p

sitecheck_data %>%

filter(temp_id == "AUC004") %>%

ggplot(aes(x = measurement_date, y = value, fill = site)) +

geom_col()

plotly::ggplotly(p)

```

| 1.0 | bar: x_var date labels are not working correctly - ```

library(tidyverse)
library(er.helpers)
library(simplevis)

setup_datalake_access()

no2_nzta <- er.helpers::read_from_datalake("air/2021/tidy/no2_nzta.RDS")

sitecheck_data <- no2_nzta %>%
  select(site, "value" = concentration, month, year) %>%
  mutate(len = str_length(site)) %>%
  mutate(temp_id = as.character(substring(site, 1, 6))) %>%
  group_by(temp_id) %>%
  filter(any(str_length(site) > 6)) %>%
  mutate(measurement_date = lubridate::my(paste0(month, year)) %>% lubridate::as_date()) %>%
  mutate(site = as.character(site))

p <- sitecheck_data %>%
  filter(temp_id == "AUC004") %>%
  simplevis::gg_bar_col(x_var = measurement_date,
                        y_var = value,
                        col_var = site,
                        x_pretty_n = 10,
                        x_labels = scales::date_format("%y"))

p

sitecheck_data %>%
  filter(temp_id == "AUC004") %>%
  ggplot(aes(x = measurement_date, y = value, fill = site)) +
  geom_col()

plotly::ggplotly(p)

```

| priority | bar x var date labels are not working correctly library tidyverse library er helpers library simplevis setup datalake access nzta er helpers read from datalake air tidy nzta rds sitecheck data select site value concentration month year mutate len str length site mutate temp id as character substring site group by temp id filter any str length site mutate measurement date lubridate my month year lubridate as date mutate site as character site p filter temp id simplevis gg bar col x var measurement date y var value col var site x pretty n x labels scales date format y p sitecheck data filter temp id ggplot aes x measurement date y value fill site geom col plotly ggplotly p | 1 |

478,897 | 13,787,839,858 | IssuesEvent | 2020-10-09 05:58:08 | wso2/streaming-integrator | https://api.github.com/repos/wso2/streaming-integrator | opened | Improvement for Siddhi Aggregation process | Priority/High Severity/Major Type/Improvement | **Description:**

We need to have a check before purging tables.

For example, when purging the "days" table, we need to check whether the "months" table has already aggregated the data that is about to be purged. If it has not, we need to log a warning or an error.

That way, if an error occurs, the data stays in the tables unpurged, so once a fix is provided, aggregation can resume from where it left off. This will result in no data loss.
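
The check described above could look roughly like the following. This is a minimal Python sketch of the idea only, not Siddhi's implementation: the in-memory "tables", the `(year, month)` bucket representation, and the names `month_bucket`, `unaggregated_days` and `purge_days` are all hypothetical and chosen for illustration.

```python
import logging
from datetime import date

logger = logging.getLogger("aggregation_purge")  # hypothetical logger name


def month_bucket(day):
    """Map a day to the (year, month) bucket it would be rolled up into."""
    return (day.year, day.month)


def unaggregated_days(days_to_purge, aggregated_months):
    """Return the days whose month is not yet present in the coarser table."""
    months = set(aggregated_months)
    return sorted(d for d in set(days_to_purge) if month_bucket(d) not in months)


def purge_days(days_table, cutoff, aggregated_months):
    """Purge rows older than `cutoff`, but only if all of them were rolled up.

    Returns the rows that remain in the "days" table. If any candidate row
    has not been aggregated into the "months" table, nothing is purged and
    a warning is logged, so no data is lost.
    """
    candidates = [d for d in days_table if d < cutoff]
    missing = unaggregated_days(candidates, aggregated_months)
    if missing:
        logger.warning("Skipping purge: %d day(s) not aggregated yet, first is %s",
                       len(missing), missing[0])
        return list(days_table)
    return [d for d in days_table if d >= cutoff]
```

Skipping the whole purge when any row is missing from the coarser table is the conservative choice assumed here; purging only the rows that are already covered would be an alternative.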

**Affected Product Version:**

SI-1.1.0 | 1.0 | Improvement for Siddhi Aggregation process - **Description:**

We need to have a check before purging tables.

For example, when purging the "days" table, we need to check whether the "months" table has already aggregated the data that is about to be purged. If it has not, we need to log a warning or an error.

That way, if an error occurs, the data stays in the tables unpurged, so once a fix is provided, aggregation can resume from where it left off. This will result in no data loss.

**Affected Product Version:**

SI-1.1.0 | priority | improvement for siddhi aggregation process description we need to have a check before purging tables ex when purging the days table we need to check whether the months table aggregations have happened with the relevant data which is going to be purged and if not we need to log a warning or an error so that if any error happens there will be data in the tables without purging so after providing a fix it will resume aggregate data from where it left off this will result in no data loss affected product version si | 1 |

45,343 | 2,928,232,999 | IssuesEvent | 2015-06-27 00:55:54 | EFForg/privacybadgerchrome | https://api.github.com/repos/EFForg/privacybadgerchrome | closed | One click whitelist is broken for youtube.com | bug High priority | It should pop up when a user tries to comment on youtube but it doesn't. We should check disqus as well. | 1.0 | One click whitelist is broken for youtube.com - It should pop up when a user tries to comment on youtube but it doesn't. We should check disqus as well. | priority | one click whitelist is broken for youtube com it should pop up when a user tries to comment on youtube but it doesn t we should check disqus as well | 1 |

106,641 | 4,281,570,843 | IssuesEvent | 2016-07-15 03:57:55 | fflewddur/archivo | https://api.github.com/repos/fflewddur/archivo | closed | Pixelization/macroblocking in archived videos | bug high priority | Using Windows 10 and PrivateInternetAccess VPN, PC is unable to find TiVo device. They are on the same network - disabling firewall for private connections didn't help. If I disable the VPN it works fine. Any ideas on how to use without disabling the VPN? | 1.0 | Pixelization/macroblocking in archived videos - Using Windows 10 and PrivateInternetAccess VPN, PC is unable to find TiVo device. They are on the same network - disabling firewall for private connections didn't help. If I disable the VPN it works fine. Any ideas on how to use without disabling the VPN? | priority | pixelization macroblocking in archived videos using windows and privateinternetaccess vpn pc is unable to find tivo device they are on the same network disabling firewall for private connections didn t help if i disable the vpn it works fine any ideas on how to use without disabling the vpn | 1 |

624,964 | 19,714,774,121 | IssuesEvent | 2022-01-13 09:55:10 | hermeznetwork/wallet-ui | https://api.github.com/repos/hermeznetwork/wallet-ui | closed | Token Swap/Implement Design Token selector | type: enhancement priority: high | - Needs API to query tokens

- Needs to check valid swaps for second token

- Needs way to get images for token

| 1.0 | Token Swap/Implement Design Token selector - - Needs API to query tokens

- Needs to check valid swaps for second token

- Needs way to get images for token

| priority | token swap implement design token selector needs api to query tokens needs to check valid swaps for second token needs way to get images for token | 1 |

454,140 | 13,095,491,648 | IssuesEvent | 2020-08-03 14:12:24 | firecracker-microvm/firecracker | https://api.github.com/repos/firecracker-microvm/firecracker | closed | InvalidOffset (virtio-block) error when resuming after loading a snapshot (with rootfs from firecracker-containerd) | Feature: Snapshotting Priority: High Quality: Bug | Hi, we started developing support for snapshot `pause/resume/create/load` inside our fork of firecracker-containerd (we can make the code public). While we had no problem with supporting `pause/resume/create-snapshot` methods we ran into an error inside Firecracker's virtio-block module with `load-snapshot->resume`.

The workflow is startVM(boot) -> Pause -> Create-Snap -> Offload (kill the VM with SIGTERM) -> SnapshotLoad -> Resume

The problem we face is at the Resume point where we get the following error from firecracker's log:

```

2020-06-25T07:21:10.652265445 [anonymous-instance:INFO:src/api_server/src/parsed_request.rs:124] The API server received a Put request on "/snapshot/load" with body "{\"mem_file_path\":\"/tmp/mem_file\",\"snapshot_path\":\"/tmp/snapshot_file\"}".

2020-06-25T07:21:10.664695532 [anonymous-instance:INFO:src/api_server/src/parsed_request.rs:89] The request was executed successfully. Status code: 204 No Content.

2020-06-25T07:21:10.665787599 [anonymous-instance:INFO:src/api_server/src/parsed_request.rs:124] The API server received a Patch request on "/vm" with body "{\"state\":\"Resumed\"}".

2020-06-25T07:21:10.665908820 [anonymous-instance:INFO:src/api_server/src/parsed_request.rs:89] The request was executed successfully. Status code: 204 No Content.

2020-06-25T07:21:14.799994834 [anonymous-instance:ERROR:src/devices/src/virtio/block/device.rs:226] Failed to execute request: BadRequest(InvalidOffset)

2020-06-25T07:21:14.802628426 [anonymous-instance:ERROR:src/devices/src/virtio/block/device.rs:226] Failed to execute request: BadRequest(InvalidOffset)

```
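
For reference, the load-then-resume part of the workflow corresponds to the two API requests visible in the log above. The following is a minimal Python sketch that only builds those requests as `(method, path, body)` tuples and does not send them. The paths and body fields are copied from the log lines; the helper names are hypothetical, and the snapshot API may differ between Firecracker versions.

```python
import json


def snapshot_load_request(mem_file_path, snapshot_path):
    """Build the PUT /snapshot/load request seen in the log."""
    body = {"mem_file_path": mem_file_path, "snapshot_path": snapshot_path}
    return ("PUT", "/snapshot/load", json.dumps(body))


def vm_state_request(state):
    """Build the PATCH /vm request; the log shows "Paused" and "Resumed"."""
    if state not in ("Paused", "Resumed"):
        raise ValueError(f"unexpected VM state: {state!r}")
    return ("PATCH", "/vm", json.dumps({"state": state}))


def load_and_resume(mem_file_path, snapshot_path):
    """Return the request sequence for SnapshotLoad followed by Resume."""
    return [
        snapshot_load_request(mem_file_path, snapshot_path),
        vm_state_request("Resumed"),
    ]
```

Calling `load_and_resume("/tmp/mem_file", "/tmp/snapshot_file")` reproduces the two requests that precede the `InvalidOffset` errors.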

We tend to think that this can be related to the way firecracker-containerd manages block devices. The VM mounts block devices in 2 phases. First, the VM boots from a generic rootfs (with the containerd agent):

```

The API server received a Put request on "/drives/root_drive" with body "{\"drive_id\":\"root_drive\",\"is_read_only\":true,\"is_root_device\":true,\"path_on_host\":\"/var/lib/firecracker-containerd/runtime/default-rootfs.img\"}\n".

```

Then the agent needs to mount a second block device that contains the container-specific data. To do so, containerd attaches another drive and patches the path to the drive twice:

```

The API server received a Put request on "/drives/MN2HE43UOVRDA" with body "{\"drive_id\":\"MN2HE43UOVRDA\",\"is_read_only\":false,\"is_root_device\":false,\"path_on_host\":\"/var/lib/firecracker-containerd/shim-base/firecracker-containerd/505/ctrstub0\"}\n".

<<..>>

The API server received a Put request on "/actions" with body "{\"action_type\":\"InstanceStart\"}\n".

The request was executed successfully. Status code: 204 No Content.

The API server received a Patch request on "/drives/MN2HE43UOVRDA" with body "{\"drive_id\":\"MN2HE43UOVRDA\",\"path_on_host\":\"/dev/mapper/fc-dev-thinpool-snap-9\"}\n".

```

Can the issue be connected to the way [PATCH drive works](https://github.com/firecracker-microvm/firecracker/blob/master/docs/api_requests/patch-block.md)? This drive is supposed to remain mounted into the restored guest.

We would greatly appreciate comments and ideas on what could be the root cause from Firecracker and firecracker-containerd maintainers: for example, @acatangiu @kzys . Once we fix the issue, we would be happy to contribute our changes to firecracker-containerd upstream.

Full workflow log (Firecracker's log):

```

Running Firecracker v0.21.0

2020-06-25T07:20:17.287742839 [anonymous-instance:INFO] The request was executed successfully. Status code: 204 No Content.

2020-06-25T07:20:17.288144594 [anonymous-instance:INFO] The API server received a Put request on "/machine-config" with body "{\"cpu_template\":\"T2\",\"ht_enabled\":false,\"mem_size_mib\":512,\"vcpu_count\":1}\n".

2020-06-25T07:20:17.288291418 [anonymous-instance:INFO] The request was executed successfully. Status code: 204 No Content.

2020-06-25T07:20:17.288521865 [anonymous-instance:INFO] The API server received a Get request on "/machine-config".

2020-06-25T07:20:17.288625819 [anonymous-instance:INFO] The request was executed successfully. Status code: 200 OK.

2020-06-25T07:20:17.288971127 [anonymous-instance:INFO] The API server received a Put request on "/boot-source" with body "{\"boot_args\":\"8250.nr_uarts=0 ip=190.128.0.2::190.128.0.1:255.192.0.0:::off::: systemd.log_color=false init=/sbin/overlay-init systemd.unit=firecracker.target quiet noapic nomodules ipv6.disable=1 ro panic=1 tsc=reliable reboot=k pci=off\",\"kernel_image_path\":\"/var/lib/firecracker-containerd/runtime/hello-vmlinux.bin\"}\n".

2020-06-25T07:20:17.289115444 [anonymous-instance:INFO] The request was executed successfully. Status code: 204 No Content.

2020-06-25T07:20:17.289571124 [anonymous-instance:INFO] The API server received a Put request on "/drives/root_drive" with body "{\"drive_id\":\"root_drive\",\"is_read_only\":true,\"is_root_device\":true,\"path_on_host\":\"/var/lib/firecracker-containerd/runtime/default-rootfs.img\"}\n".

2020-06-25T07:20:17.289732138 [anonymous-instance:INFO] The request was executed successfully. Status code: 204 No Content.

2020-06-25T07:20:17.290046499 [anonymous-instance:INFO] The API server received a Put request on "/drives/MN2HE43UOVRDA" with body "{\"drive_id\":\"MN2HE43UOVRDA\",\"is_read_only\":false,\"is_root_device\":false,\"path_on_host\":\"/var/lib/firecracker-containerd/shim-base/firecracker-containerd/505/ctrstub0\"}\n".

2020-06-25T07:20:17.290151860 [anonymous-instance:INFO] The request was executed successfully. Status code: 204 No Content.

2020-06-25T07:20:17.290522553 [anonymous-instance:INFO] The API server received a Put request on "/network-interfaces/1" with body "{\"guest_mac\":\"02:FC:00:00:00:00\",\"host_dev_name\":\"fc-0-tap0\",\"iface_id\":\"1\"}\n".

2020-06-25T07:20:17.292898333 [anonymous-instance:INFO] The request was executed successfully. Status code: 204 No Content.

2020-06-25T07:20:17.293236487 [anonymous-instance:INFO] The API server received a Put request on "/vsock" with body "{\"guest_cid\":0,\"uds_path\":\"firecracker.vsock\",\"vsock_id\":\"agent_api\"}\n".

2020-06-25T07:20:17.293472939 [anonymous-instance:INFO] The request was executed successfully. Status code: 204 No Content.

2020-06-25T07:20:17.293754191 [anonymous-instance:INFO] The API server received a Put request on "/actions" with body "{\"action_type\":\"InstanceStart\"}\n".

2020-06-25T07:20:17.305625745 [anonymous-instance:WARN] Could not add serial input event to epoll: Error during epoll call: Operation not permitted (os error 1)

2020-06-25T07:20:17.306309907 [anonymous-instance:INFO] The request was executed successfully. Status code: 204 No Content.

2020-06-25T07:20:19.177877690 [anonymous-instance:INFO] The API server received a Patch request on "/drives/MN2HE43UOVRDA" with body "{\"drive_id\":\"MN2HE43UOVRDA\",\"path_on_host\":\"/dev/mapper/fc-dev-thinpool-snap-9\"}\n".

2020-06-25T07:20:19.178152684 [anonymous-instance:INFO] The request was executed successfully. Status code: 204 No Content.

2020-06-25T07:20:20.104482947 [anonymous-instance:INFO] The API server received a Patch request on "/vm" with body "{\"state\":\"Paused\"}".

2020-06-25T07:20:20.104702379 [anonymous-instance:INFO] The request was executed successfully. Status code: 204 No Content.