| Unnamed: 0 | id | type | created_at | repo | repo_url | action | title | labels | body | index | text_combine | label | text | binary_label |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

359,258 | 10,667,397,475 | IssuesEvent | 2019-10-19 12:07:52 | AY1920S1-CS2113-T14-1/main | https://api.github.com/repos/AY1920S1-CS2113-T14-1/main | opened | Create various UI contexts | component.UI priority.High status.Ongoing type.Task | Contexts as documented in the [https://github.com/AY1920S1-CS2113-T14-1/main/blob/master/docs/UserGuide.adoc](User Guide)

1. Home

2. Patients

3. Treatment

4. Evidence

5. Investigation | 1.0 | Create various UI contexts - Contexts as documented in the [https://github.com/AY1920S1-CS2113-T14-1/main/blob/master/docs/UserGuide.adoc](User Guide)

1. Home

2. Patients

3. Treatment

4. Evidence

5. Investigation | priority | create various ui contexts contexts as documented in the user guide home patients treatment evidence investigation | 1 |

678,506 | 23,200,276,734 | IssuesEvent | 2022-08-01 20:40:40 | stormk539/CavemanCooking | https://api.github.com/repos/stormk539/CavemanCooking | opened | Start of Building System | Priority: Highest 8 | As the systems designer, I need to begin the building system so that the level designer can use my blueprints to lay out where the player is able to buy furniture for the store.

CoS:

- [ ] Chairs/Tables/Decor player's can buy are shown as transparent before they buy them

- [ ] Player can press e to buy them within range

- [ ] Once bought, the material switches to non-transparent | 1.0 | Start of Building System - As the systems designer, I need to begin the building system so that the level designer can use my blueprints to lay out where the player is able to buy furniture for the store.

CoS:

- [ ] Chairs/Tables/Decor player's can buy are shown as transparent before they buy them

- [ ] Player can press e to buy them within range

- [ ] Once bought, the material switches to non-transparent | priority | start of building system as the systems designer i need to begin the building system so that the level designer can use my blueprints to lay out where the player is able to buy furniture for the store cos chairs tables decor player s can buy are shown as transparent before they buy them player can press e to buy them within range once bought the material switches to non transparent | 1 |

600,867 | 18,360,525,822 | IssuesEvent | 2021-10-09 05:54:17 | AY2122S1-CS2103T-F11-1/tp | https://api.github.com/repos/AY2122S1-CS2103T-F11-1/tp | closed | Add rates field to student class | type.Task priority.High | As per title. Furthermore, need to integrate rates with

* add command

* edit command

* storage | 1.0 | Add rates field to student class - As per title. Furthermore, need to integrate rates with

* add command

* edit command

* storage | priority | add rates field to student class as per title furthermore need to integrate rates with add command edit command storage | 1 |

709,022 | 24,365,195,305 | IssuesEvent | 2022-10-03 14:40:53 | status-im/status-desktop | https://api.github.com/repos/status-im/status-desktop | reopened | overlapped messages | bug ui priority 1: high pixel-perfect-issues | # Bug Report

## Description

<img width="1010" alt="Screen Shot 2022-10-02 at 7 30 26 PM" src="https://user-images.githubusercontent.com/176720/193481135-cbffd304-fd76-4c95-a6d6-52c859b2802b.png">

| 1.0 | overlapped messages - # Bug Report

## Description

<img width="1010" alt="Screen Shot 2022-10-02 at 7 30 26 PM" src="https://user-images.githubusercontent.com/176720/193481135-cbffd304-fd76-4c95-a6d6-52c859b2802b.png">

| priority | overlapped messages bug report description img width alt screen shot at pm src | 1 |

376,515 | 11,148,043,871 | IssuesEvent | 2019-12-23 14:25:59 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | Need to add a Filter in amp tree shaking for adding custom CSS. | NEXT UPDATE [Priority: HIGH] enhancement | Desc: Need to add a Filter in amp tree shaking for adding custom CSS so that we can add CSS for amp-bind classes. | 1.0 | Need to add a Filter in amp tree shaking for adding custom CSS. - Desc: Need to add a Filter in amp tree shaking for adding custom CSS so that we can add CSS for amp-bind classes. | priority | need to add a filter in amp tree shaking for adding custom css desc need to add a filter in amp tree shaking for adding custom css so that we can add css for amp bind classes | 1 |

414,385 | 12,102,756,506 | IssuesEvent | 2020-04-20 17:13:18 | iglance/iGlance | https://api.github.com/repos/iglance/iGlance | closed | Menubar items stop refreshing after sleeping | bug fixed with next update high priority | **Describe the bug🐛**

Netowork module displays 0KB/s up and down despite being online and browsing/watching videos/Zooming

**To Reproduce**

Not entirely sure - seems to have happened when i woke my computer from sleep or switched to VPN

**Expected behavior**

Shows traffic numbers

**Screenshots (Optional)**

<img width="96" alt="Screen Shot 2020-04-05 at 23 13 25" src="https://user-images.githubusercontent.com/23653752/78520292-0cb0cd00-7794-11ea-8725-360bfdd2f3c5.png">

**Desktop (please complete the following information):**

- MacOS Version: 10.15.4

- Version of the app: 2.0.4

**Log**

Couldn't log it, had logging off when it happened sadly, will try to replicate

| 1.0 | Menubar items stop refreshing after sleeping - **Describe the bug🐛**

Netowork module displays 0KB/s up and down despite being online and browsing/watching videos/Zooming

**To Reproduce**

Not entirely sure - seems to have happened when i woke my computer from sleep or switched to VPN

**Expected behavior**

Shows traffic numbers

**Screenshots (Optional)**

<img width="96" alt="Screen Shot 2020-04-05 at 23 13 25" src="https://user-images.githubusercontent.com/23653752/78520292-0cb0cd00-7794-11ea-8725-360bfdd2f3c5.png">

**Desktop (please complete the following information):**

- MacOS Version: 10.15.4

- Version of the app: 2.0.4

**Log**

Couldn't log it, had logging off when it happened sadly, will try to replicate

| priority | menubar items stop refreshing after sleeping describe the bug🐛 netowork module displays s up and down despite being online and browsing watching videos zooming to reproduce not entirely sure seems to have happened when i woke my computer from sleep or switched to vpn expected behavior shows traffic numbers screenshots optional img width alt screen shot at src desktop please complete the following information macos version version of the app log couldn t log it had logging off when it happened sadly will try to replicate | 1 |

749,740 | 26,177,492,052 | IssuesEvent | 2023-01-02 11:36:31 | wp-media/wp-rocket | https://api.github.com/repos/wp-media/wp-rocket | closed | Update banners in the plugin | type: enhancement priority: high module: user interface | **Is your feature request related to a problem? Please describe.**

Based on the upcoming change we'll need to update our banners.

1. Expired banners: https://docs.google.com/spreadsheets/d/1Zu1MbsVNkOWCsW0CmVRuY69I0t3vtTLG4ykhuOI3YVI/edit#gid=110497832

2. Expiring banners:

https://docs.google.com/spreadsheets/d/1Zu1MbsVNkOWCsW0CmVRuY69I0t3vtTLG4ykhuOI3YVI/edit#gid=675951386

3. Upgrade banners:

https://docs.google.com/spreadsheets/d/1Zu1MbsVNkOWCsW0CmVRuY69I0t3vtTLG4ykhuOI3YVI/edit#gid=379622301

| 1.0 | Update banners in the plugin - **Is your feature request related to a problem? Please describe.**

Based on the upcoming change we'll need to update our banners.

1. Expired banners: https://docs.google.com/spreadsheets/d/1Zu1MbsVNkOWCsW0CmVRuY69I0t3vtTLG4ykhuOI3YVI/edit#gid=110497832

2. Expiring banners:

https://docs.google.com/spreadsheets/d/1Zu1MbsVNkOWCsW0CmVRuY69I0t3vtTLG4ykhuOI3YVI/edit#gid=675951386

3. Upgrade banners:

https://docs.google.com/spreadsheets/d/1Zu1MbsVNkOWCsW0CmVRuY69I0t3vtTLG4ykhuOI3YVI/edit#gid=379622301

| priority | update banners in the plugin is your feature request related to a problem please describe based on the upcoming change we ll need to update our banners expired banners expiring banners upgrade banners | 1 |

500,204 | 14,492,861,955 | IssuesEvent | 2020-12-11 07:41:57 | ahmedkaludi/accelerated-mobile-pages | https://api.github.com/repos/ahmedkaludi/accelerated-mobile-pages | closed | Validation error in author bio alt attribute | NEXT UPDATE [Priority: HIGH] bug | Users are getting Validation error in author bio alt attribute after plugin update version 1.0.70

WordPress ref: https://wordpress.org/support/topic/amp-error-24/ | 1.0 | Validation error in author bio alt attribute - Users are getting Validation error in author bio alt attribute after plugin update version 1.0.70

WordPress ref: https://wordpress.org/support/topic/amp-error-24/ | priority | validation error in author bio alt attribute users are getting validation error in author bio alt attribute after plugin update version wordpress ref | 1 |

796,641 | 28,121,422,753 | IssuesEvent | 2023-03-31 14:28:57 | NCC-CNC/wheretowork | https://api.github.com/repos/NCC-CNC/wheretowork | opened | renv::restore() on newer versions of R fails to install certain packages | bug high priority | WTW was built on R version `4.1.2 (2021-11-01) -- "Bird Hippie"`

New users have updated versions of R and when they go to clone WTW and `renv::restore()` a bunch of packages fail to install.

So far the list of failed package installs are: `gert`, `httpuv` , `igraph`, `rgdal`, `sf`, `xml2`, `curl`

| 1.0 | renv::restore() on newer versions of R fails to install certain packages - WTW was built on R version `4.1.2 (2021-11-01) -- "Bird Hippie"`

New users have updated versions of R and when they go to clone WTW and `renv::restore()` a bunch of packages fail to install.

So far the list of failed package installs are: `gert`, `httpuv` , `igraph`, `rgdal`, `sf`, `xml2`, `curl`

| priority | renv restore on newer versions of r fails to install certain packages wtw was built on r version bird hippie new users have updated versions of r and when they go to clone wtw and renv restore a bunch of packages fail to install so far the list of failed package installs are gert httpuv igraph rgdal sf curl | 1 |

655,519 | 21,693,642,098 | IssuesEvent | 2022-05-09 17:46:15 | rich-iannone/pointblank | https://api.github.com/repos/rich-iannone/pointblank | closed | BigQuery support | Difficulty: [3] Advanced Effort: [3] High Type: ★ Enhancement Priority: [3] High | ### Discussed in https://github.com/rich-iannone/pointblank/discussions/404

Thanks for your comment. I'd be happy to help test this, and thanks for the link. I'll if I can get it running with one of the public big query datasets, and report back to you how I fare.

---

<div type='discussions-op-text'>

<sup>Originally posted by **good-marketing** April 25, 2022</sup>

Hi,

Thanks for creating pointblank, it is a next level R package, in terms of documentation, approach and execution. [ Hat tip].

I work in GCP environment, and was wondering if support for BigQuery (https://bigrquery.r-dbi.org/) is something you might consider.

Cheers,

Bob</div> | 1.0 | BigQuery support - ### Discussed in https://github.com/rich-iannone/pointblank/discussions/404

Thanks for your comment. I'd be happy to help test this, and thanks for the link. I'll if I can get it running with one of the public big query datasets, and report back to you how I fare.

---

<div type='discussions-op-text'>

<sup>Originally posted by **good-marketing** April 25, 2022</sup>

Hi,

Thanks for creating pointblank, it is a next level R package, in terms of documentation, approach and execution. [ Hat tip].

I work in GCP environment, and was wondering if support for BigQuery (https://bigrquery.r-dbi.org/) is something you might consider.

Cheers,

Bob</div> | priority | bigquery support discussed in thanks for your comment i d be happy to help test this and thanks for the link i ll if i can get it running with one of the public big query datasets and report back to you how i fare originally posted by good marketing april hi thanks for creating pointblank it is a next level r package in terms of documentation approach and execution i work in gcp environment and was wondering if support for bigquery is something you might consider cheers bob | 1 |

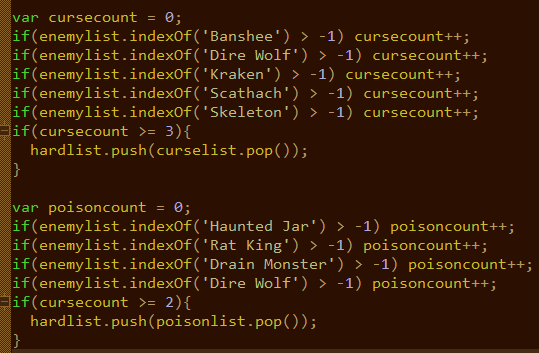

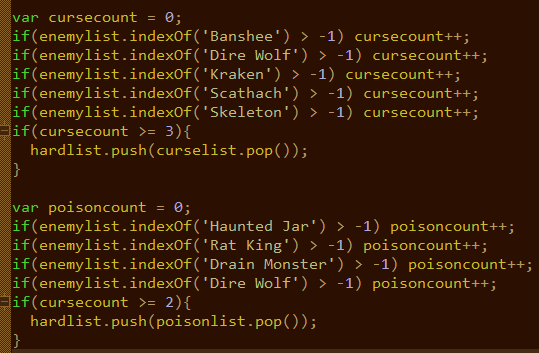

338,502 | 10,230,166,315 | IssuesEvent | 2019-08-17 18:59:57 | TerryCavanagh/diceydungeons.com | https://api.github.com/repos/TerryCavanagh/diceydungeons.com | closed | In Bonus Round, the Poison rules are only considered if there's enough Curse-based enemies | High Priority reported in launch v1.0 |

From `remixrules.txt`. Should be a simple fix - change `if(cursecount >=2)` to `if(poisoncount >=2)`. | 1.0 | In Bonus Round, the Poison rules are only considered if there's enough Curse-based enemies -

From `remixrules.txt`. Should be a simple fix - change `if(cursecount >=2)` to `if(poisoncount >=2)`. | priority | in bonus round the poison rules are only considered if there s enough curse based enemies from remixrules txt should be a simple fix change if cursecount to if poisoncount | 1 |

104,648 | 4,216,646,605 | IssuesEvent | 2016-06-30 10:00:07 | CloudOpting/cloudopting-manager | https://api.github.com/repos/CloudOpting/cloudopting-manager | closed | Platform independence | bug high priority | The software should be able to run on all platforms and have no dependency on OS or J2EE environment. | 1.0 | Platform independence - The software should be able to run on all platforms and have no dependency on OS or J2EE environment. | priority | platform independence the software should be able to run on all platforms and have no dependency on os or environment | 1 |

368,055 | 10,865,428,173 | IssuesEvent | 2019-11-14 18:58:47 | pytorch/xla | https://api.github.com/repos/pytorch/xla | closed | Remove extra overrides for non-leaf nodes. | enhancement high priority | Note that when removing extra overrides, we need to make sure their leaf part is added if not present. For example, `arange` should be removed but we should add `arange_out`.

For reference here's source of truth of all leaf nodes as of 10/21/2019:

https://gist.github.com/ailzhang/905aa41a5be8c5315ba0e3d8f0d0e326

- [x] (Ailing)Tensor bitwise_not(const Tensor& self); // bitwise_not(Tensor)->Tensor

- [x] (Ailing)Tensor& bitwise_not_(Tensor& self); // bitwise_not_(Tensor)->Tensor

- [x] (Ailing) Tensor _cast_Byte(const Tensor& self, bool non_blocking); // _cast_Byte(Tensor,bool)->Tensor

- [x] (Ailing) Tensor _cast_Char(const Tensor& self, bool non_blocking); // _cast_Char(Tensor,bool)->Tensor

- [x] (Ailing) Tensor _cast_Float(const Tensor& self, bool non_blocking); // _cast_Float(Tensor,bool)->Tensor

- [x] (Ailing) Tensor _cast_Int(const Tensor& self, bool non_blocking); // _cast_Int(Tensor,bool)->Tensor

- [x] (Ailing) Tensor _cast_Long(const Tensor& self, bool non_blocking); // _cast_Long(Tensor,bool)->Tensor

- [x] (Ailing) Tensor _cast_Short(const Tensor& self, bool non_blocking); // _cast_Short(Tensor,bool)->Tensor

- [x] (Ailing)Tensor _dim_arange(const Tensor& like, int64_t dim); // _dim_arange(Tensor,int64_t)->Tensor

- [x] (Ailing) Tensor argmax(const Tensor& self, c10::optional<int64_t> dim, bool keepdim); // argmax(Tensor,c10::optional<int64_t>,bool)->Tensor

- [x] (Ailing) Tensor argmin(const Tensor& self, c10::optional<int64_t> dim, bool keepdim); // argmin(Tensor,c10::optional<int64_t>,bool)->Tensor

- [x] (Ailing) Tensor argsort(const Tensor& self, int64_t dim, bool descending); // argsort(Tensor,int64_t,bool)->Tensor

- [x] (Ailing) Tensor avg_pool1d(const Tensor& self, IntArrayRef kernel_size, IntArrayRef stride, IntArrayRef padding, bool ceil_mode, bool count_include_pad); // avg_pool1d(Tensor,IntArrayRef,IntArrayRef,IntArrayRef,bool,bool)->Tensor

- [x] (Ailing) Tensor batch_norm(const Tensor& input, const Tensor& weight, const Tensor& bias, const Tensor& running_mean, const Tensor& running_var, bool training, double momentum, double eps, bool cudnn_enabled); // batch_norm(Tensor,Tensor,Tensor,Tensor,Tensor,bool,double,double,bool)->Tensor

- [x] (Ailing) Tensor bernoulli(const Tensor& self, double p, Generator* generator); // bernoulli(Tensor,double,Generator)->Tensor

- [x] (Ailing) Tensor bilinear(const Tensor& input1, const Tensor& input2, const Tensor& weight, const Tensor& bias); // bilinear(Tensor,Tensor,Tensor,Tensor)->Tensor

- [x] (Ailing) Tensor binary_cross_entropy_with_logits_backward( const Tensor& grad_output, const Tensor& self, const Tensor& target, const Tensor& weight, const Tensor& pos_weight, int64_t reduction); // binary_cross_entropy_with_logits_backward(Tensor,Tensor,Tensor,Tensor,Tensor,int64_t)->Tensor

- [x] (Ailing) std::vector<Tensor> broadcast_tensors(TensorList tensors); // broadcast_tensors(TensorList)->std::vector<Tensor>

- [x] (Ailing) Tensor celu(const Tensor& self, Scalar alpha); // celu(Tensor,Scalar)->Tensor

- [x] (Ailing) Tensor& celu_(Tensor& self, Scalar alpha); // celu_(Tensor,Scalar)->Tensor

- [x] (Ailing) Tensor chain_matmul(TensorList matrices); // chain_matmul(TensorList)->Tensor

- [x] (Ailing) Tensor contiguous(const Tensor& self, MemoryFormat memory_format); // contiguous(Tensor,MemoryFormat)->Tensor

- [ ] (Ailing) Tensor& copy_(Tensor& self, const Tensor& src, bool non_blocking); // copy_(Tensor,Tensor,bool)->Tensor

- [x] (Ailing) Tensor cosine_embedding_loss(const Tensor& input1, const Tensor& input2, const Tensor& target, double margin, int64_t reduction); // cosine_embedding_loss(Tensor,Tensor,Tensor,double,int64_t)->Tensor

- [x] (Ailing) Tensor cosine_similarity(const Tensor& x1, const Tensor& x2, int64_t dim, double eps); // cosine_similarity(Tensor,Tensor,int64_t,double)->Tensor

- [x] (Ailing) Tensor diagflat(const Tensor& self, int64_t offset); // diagflat(Tensor,int64_t)->Tensor

- [x] (Ailing) Tensor dropout(const Tensor& input, double p, bool train); // dropout(Tensor,double,bool)->Tensor

- [x] (Ailing) Tensor& dropout_(Tensor& self, double p, bool train); // dropout_(Tensor,double,bool)->Tensor

- [ ] Tensor einsum(std::string equation, TensorList tensors); // einsum(std::string,TensorList)->Tensor

- [x] Tensor empty_like(const Tensor& self); // empty_like(Tensor)->Tensor

- [x] Tensor expand_as(const Tensor& self, const Tensor& other); // expand_as(Tensor,Tensor)->Tensor

- [x] Tensor flatten(const Tensor& self, int64_t start_dim, int64_t end_dim); // flatten(Tensor,int64_t,int64_t)->Tensor

- [x] Tensor frobenius_norm(const Tensor& self); // frobenius_norm(Tensor)->Tensor

- [x] Tensor frobenius_norm(const Tensor& self, IntArrayRef dim, bool keepdim); // frobenius_norm(Tensor,IntArrayRef,bool)->Tensor

- [x] Tensor full_like(const Tensor& self, Scalar fill_value); // full_like(Tensor,Scalar)->Tensor

- [x] Tensor group_norm(const Tensor& input, int64_t num_groups, const Tensor& weight, const Tensor& bias, double eps, bool cudnn_enabled); // group_norm(Tensor,int64_t,Tensor,Tensor,double,bool)->Tensor

- [x] Tensor hinge_embedding_loss(const Tensor& self, const Tensor& target, double margin, int64_t reduction); // hinge_embedding_loss(Tensor,Tensor,double,int64_t)->Tensor

- [x] Tensor index_add(const Tensor& self, int64_t dim, const Tensor& index, const Tensor& source); // index_add(Tensor,int64_t,Tensor,Tensor)->Tensor

- [x] Tensor index_copy(const Tensor& self, int64_t dim, const Tensor& index, const Tensor& source); // index_copy(Tensor,int64_t,Tensor,Tensor)->Tensor

- [x] Tensor index_fill(const Tensor& self, int64_t dim, const Tensor& index, Scalar value); // index_fill(Tensor,int64_t,Tensor,Scalar)->Tensor

- [x] Tensor index_fill(const Tensor& self, int64_t dim, const Tensor& index, const Tensor& value); // index_fill(Tensor,int64_t,Tensor,Tensor)->Tensor

- [x] Tensor index_put(const Tensor& self, TensorList indices, const Tensor& values, bool accumulate); // index_put(Tensor,TensorList,Tensor,bool)->Tensor

- [x] Tensor instance_norm( const Tensor& input, const Tensor& weight, const Tensor& bias, const Tensor& running_mean, const Tensor& running_var, bool use_input_stats, double momentum, double eps, bool cudnn_enabled); // instance_norm(Tensor,Tensor,Tensor,Tensor,Tensor,bool,double,double,bool)->Tensor

- [x] bool is_floating_point(const Tensor& self); // is_floating_point(Tensor)->bool

- [x] bool is_signed(const Tensor& self); // is_signed(Tensor)->bool

- [x] Tensor layer_norm(const Tensor& input, IntArrayRef normalized_shape, const Tensor& weight, const Tensor& bias, double eps, bool cudnn_enable); // layer_norm(Tensor,IntArrayRef,Tensor,Tensor,double,bool)->Tensor

- [x] Tensor linear(const Tensor& input, const Tensor& weight, const Tensor& bias); // linear(Tensor,Tensor,Tensor)->Tensor

- [x] Tensor log_sigmoid(const Tensor& self); // log_sigmoid(Tensor)->Tensor

- [x] Tensor log_softmax(const Tensor& self, int64_t dim, c10::optional<ScalarType> dtype); // log_softmax(Tensor,int64_t,c10::optional<ScalarType>)->Tensor

- [x] Tensor margin_ranking_loss(const Tensor& input1, const Tensor& input2, const Tensor& target, double margin, int64_t reduction); // margin_ranking_loss(Tensor,Tensor,Tensor,double,int64_t)->Tensor

- [x] Tensor masked_fill(const Tensor& self, const Tensor& mask, Scalar value); // masked_fill(Tensor,Tensor,Scalar)->Tensor

- [x] Tensor masked_fill(const Tensor& self, const Tensor& mask, const Tensor& value); // masked_fill(Tensor,Tensor,Tensor)->Tensor

- [x] Tensor matmul(const Tensor& self, const Tensor& other); // matmul(Tensor,Tensor)->Tensor

- [x] Tensor max_pool1d(const Tensor& self, IntArrayRef kernel_size, IntArrayRef stride, IntArrayRef padding, IntArrayRef dilation, bool ceil_mode); // max_pool1d(Tensor,IntArrayRef,IntArrayRef,IntArrayRef,IntArrayRef,bool)->Tensor

- [x] Tensor max_pool2d(const Tensor& self, IntArrayRef kernel_size, IntArrayRef stride, IntArrayRef padding, IntArrayRef dilation, bool ceil_mode); // max_pool2d(Tensor,IntArrayRef,IntArrayRef,IntArrayRef,IntArrayRef,bool)->Tensor

- [x] Tensor max_pool3d(const Tensor& self, IntArrayRef kernel_size, IntArrayRef stride, IntArrayRef padding, IntArrayRef dilation, bool ceil_mode); // max_pool3d(Tensor,IntArrayRef,IntArrayRef,IntArrayRef,IntArrayRef,bool)->Tensor

- [x] std::vector<Tensor> meshgrid(TensorList tensors); // meshgrid(TensorList)->std::vector<Tensor>

- [x] Tensor narrow(const Tensor& self, int64_t dim, int64_t start, int64_t length); // narrow(Tensor,int64_t,int64_t,int64_t)->Tensor

- [x] Tensor nll_loss(const Tensor& self, const Tensor& target, const Tensor& weight, int64_t reduction, int64_t ignore_index); // nll_loss(Tensor,Tensor,Tensor,int64_t,int64_t)->Tensor

- [x] Tensor nuclear_norm(const Tensor& self, bool keepdim); // nuclear_norm(Tensor,bool)->Tensor

- [x] Tensor one_hot(const Tensor& self, int64_t num_classes); // one_hot(Tensor,int64_t)->Tensor

- [x] Tensor ones_like(const Tensor& self); // ones_like(Tensor)->Tensor

- [x] Tensor pairwise_distance(const Tensor& x1, const Tensor& x2, double p, double eps, bool keepdim); // pairwise_distance(Tensor,Tensor,double,double,bool)->Tensor

- [x] Tensor pixel_shuffle(const Tensor& self, int64_t upscale_factor); // pixel_shuffle(Tensor,int64_t)->Tensor

- [x] Tensor pinverse(const Tensor& self, double rcond); // pinverse(Tensor,double)->Tensor

- [x] Tensor reshape(const Tensor& self, IntArrayRef shape); // reshape(Tensor,IntArrayRef)->Tensor

- [x] Tensor scatter(const Tensor& self, int64_t dim, const Tensor& index, const Tensor& src); // scatter(Tensor,int64_t,Tensor,Tensor)->Tensor

- [x] Tensor scatter(const Tensor& self, int64_t dim, const Tensor& index, Scalar value); // scatter(Tensor,int64_t,Tensor,Scalar)->Tensor

- [x] Tensor scatter_add(const Tensor& self, int64_t dim, const Tensor& index, const Tensor& src); // scatter_add(Tensor,int64_t,Tensor,Tensor)->Tensor

- [x] Tensor selu(const Tensor& self); // selu(Tensor)->Tensor

- [x] Tensor& selu_(Tensor& self); // selu_(Tensor)->Tensor

- [x] int64_t size(const Tensor& self, int64_t dim); // size(Tensor,int64_t)->int64_t

- [x] Tensor softmax(const Tensor& self, int64_t dim, c10::optional<ScalarType> dtype); // softmax(Tensor,int64_t,c10::optional<ScalarType>)->Tensor

- [x] Tensor sum_to_size(const Tensor& self, IntArrayRef size); // sum_to_size(Tensor,IntArrayRef)->Tensor

- [x] Tensor tensordot(const Tensor& self, const Tensor& other, IntArrayRef dims_self, IntArrayRef dims_other); // tensordot(Tensor,Tensor,IntArrayRef,IntArrayRef)->Tensor

- [x] Tensor to(const Tensor& self, const TensorOptions& options, bool non_blocking, bool copy, c10::optional<MemoryFormat> memory_format); // to(Tensor,TensorOptions,bool,bool,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor to(const Tensor& self, Device device, ScalarType dtype, bool non_blocking, bool copy, c10::optional<MemoryFormat> memory_format); // to(Tensor,Device,ScalarType,bool,bool,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor to(const Tensor& self, ScalarType dtype, bool non_blocking, bool copy, c10::optional<MemoryFormat> memory_format); // to(Tensor,ScalarType,bool,bool,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor to(const Tensor& self, const Tensor& other, bool non_blocking, bool copy, c10::optional<MemoryFormat> memory_format); // to(Tensor,Tensor,bool,bool,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor triplet_margin_loss(const Tensor& anchor, const Tensor& positive, const Tensor& negative, double margin, double p, double eps, bool swap, int64_t reduction); // triplet_margin_loss(Tensor,Tensor,Tensor,double,double,double,bool,int64_t)->Tensor

- [x] Tensor view_as(const Tensor& self, const Tensor& other); // view_as(Tensor,Tensor)->Tensor

- [x] Tensor where(const Tensor& condition, const Tensor& self, const Tensor& other); // where(Tensor,Tensor,Tensor)->Tensor

- [x] Tensor zeros_like(const Tensor& self); // zeros_like(Tensor)->Tensor

And a few more (non-leaf factory functions)

- [x] Tensor arange(Scalar end, const TensorOptions& options); // arange(Scalar,TensorOptions)->Tensor

- [x] Tensor arange(Scalar start, Scalar end, const TensorOptions& options); // arange(Scalar,Scalar,TensorOptions)->Tensor

- [x] Tensor arange(Scalar start, Scalar end, Scalar step, const TensorOptions& options); // arange(Scalar,Scalar,Scalar,TensorOptions)->Tensor

- [x] Tensor bartlett_window(int64_t window_length, const TensorOptions& options); // bartlett_window(int64_t,TensorOptions)->Tensor

- [x] Tensor bartlett_window(int64_t window_length, bool periodic, const TensorOptions& options); // bartlett_window(int64_t,bool,TensorOptions)->Tensor

- [x] Tensor blackman_window(int64_t window_length, const TensorOptions& options); // blackman_window(int64_t,TensorOptions)->Tensor

- [x] Tensor blackman_window(int64_t window_length, bool periodic, const TensorOptions& options); // blackman_window(int64_t,bool,TensorOptions)->Tensor

- [x] Tensor empty_like(const Tensor& self, const TensorOptions& options, c10::optional<MemoryFormat> memory_format); // empty_like(Tensor,TensorOptions,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor eye(int64_t n, const TensorOptions& options); // eye(int64_t,TensorOptions)->Tensor

- [x] Tensor eye(int64_t n, int64_t m, const TensorOptions& options); // eye(int64_t,int64_t,TensorOptions)->Tensor

- [x] Tensor full(IntArrayRef size, Scalar fill_value, const TensorOptions& options); // full(IntArrayRef,Scalar,TensorOptions)->Tensor

- [x] Tensor full_like(const Tensor& self, Scalar fill_value, const TensorOptions& options, c10::optional<MemoryFormat> memory_format); // full_like(Tensor,Scalar,TensorOptions,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor hamming_window(int64_t window_length, const TensorOptions& options); // hamming_window(int64_t,TensorOptions)->Tensor

- [x] Tensor hamming_window(int64_t window_length, bool periodic, const TensorOptions& options); // hamming_window(int64_t,bool,TensorOptions)->Tensor

- [x] Tensor hamming_window(int64_t window_length, bool periodic, double alpha, const TensorOptions& options); // hamming_window(int64_t,bool,double,TensorOptions)->Tensor

- [x] Tensor hamming_window(int64_t window_length, bool periodic, double alpha, double beta, const TensorOptions& options); // hamming_window(int64_t,bool,double,double,TensorOptions)->Tensor

- [x] Tensor hann_window(int64_t window_length, const TensorOptions& options); // hann_window(int64_t,TensorOptions)->Tensor

- [x] Tensor hann_window(int64_t window_length, bool periodic, const TensorOptions& options); // hann_window(int64_t,bool,TensorOptions)->Tensor

- [x] Tensor ones(IntArrayRef size, const TensorOptions& options); // ones(IntArrayRef,TensorOptions)->Tensor

- [x] Tensor ones_like(const Tensor& self, const TensorOptions& options, c10::optional<MemoryFormat> memory_format); // ones_like(Tensor,TensorOptions,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor randperm(int64_t n, const TensorOptions& options); // randperm(int64_t,TensorOptions)->Tensor

- [x] Tensor randperm(int64_t n, Generator* generator, const TensorOptions& options); // randperm(int64_t,Generator,TensorOptions)->Tensor

- [x] Tensor zeros_like(const Tensor& self, c10::optional<MemoryFormat> memory_format); // zeros_like(Tensor,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor zeros_like(const Tensor& self, const TensorOptions& options, c10::optional<MemoryFormat> memory_format); // zeros_like(Tensor,TensorOptions,c10::optional<MemoryFormat>)->Tensor

| 1.0 | Remove extra overrides for non-leaf nodes. - Note that when removing extra overrides, we need to make sure their leaf part is added if not present. For example, `arange` should be removed but we should add `arange_out`.

For reference here's source of truth of all leaf nodes as of 10/21/2019:

https://gist.github.com/ailzhang/905aa41a5be8c5315ba0e3d8f0d0e326

- [x] (Ailing)Tensor bitwise_not(const Tensor& self); // bitwise_not(Tensor)->Tensor

- [x] (Ailing)Tensor& bitwise_not_(Tensor& self); // bitwise_not_(Tensor)->Tensor

- [x] (Ailing) Tensor _cast_Byte(const Tensor& self, bool non_blocking); // _cast_Byte(Tensor,bool)->Tensor

- [x] (Ailing) Tensor _cast_Char(const Tensor& self, bool non_blocking); // _cast_Char(Tensor,bool)->Tensor

- [x] (Ailing) Tensor _cast_Float(const Tensor& self, bool non_blocking); // _cast_Float(Tensor,bool)->Tensor

- [x] (Ailing) Tensor _cast_Int(const Tensor& self, bool non_blocking); // _cast_Int(Tensor,bool)->Tensor

- [x] (Ailing) Tensor _cast_Long(const Tensor& self, bool non_blocking); // _cast_Long(Tensor,bool)->Tensor

- [x] (Ailing) Tensor _cast_Short(const Tensor& self, bool non_blocking); // _cast_Short(Tensor,bool)->Tensor

- [x] (Ailing)Tensor _dim_arange(const Tensor& like, int64_t dim); // _dim_arange(Tensor,int64_t)->Tensor

- [x] (Ailing) Tensor argmax(const Tensor& self, c10::optional<int64_t> dim, bool keepdim); // argmax(Tensor,c10::optional<int64_t>,bool)->Tensor

- [x] (Ailing) Tensor argmin(const Tensor& self, c10::optional<int64_t> dim, bool keepdim); // argmin(Tensor,c10::optional<int64_t>,bool)->Tensor

- [x] (Ailing) Tensor argsort(const Tensor& self, int64_t dim, bool descending); // argsort(Tensor,int64_t,bool)->Tensor

- [x] (Ailing) Tensor avg_pool1d(const Tensor& self, IntArrayRef kernel_size, IntArrayRef stride, IntArrayRef padding, bool ceil_mode, bool count_include_pad); // avg_pool1d(Tensor,IntArrayRef,IntArrayRef,IntArrayRef,bool,bool)->Tensor

- [x] (Ailing) Tensor batch_norm(const Tensor& input, const Tensor& weight, const Tensor& bias, const Tensor& running_mean, const Tensor& running_var, bool training, double momentum, double eps, bool cudnn_enabled); // batch_norm(Tensor,Tensor,Tensor,Tensor,Tensor,bool,double,double,bool)->Tensor

- [x] (Ailing) Tensor bernoulli(const Tensor& self, double p, Generator* generator); // bernoulli(Tensor,double,Generator)->Tensor

- [x] (Ailing) Tensor bilinear(const Tensor& input1, const Tensor& input2, const Tensor& weight, const Tensor& bias); // bilinear(Tensor,Tensor,Tensor,Tensor)->Tensor

- [x] (Ailing) Tensor binary_cross_entropy_with_logits_backward( const Tensor& grad_output, const Tensor& self, const Tensor& target, const Tensor& weight, const Tensor& pos_weight, int64_t reduction); // binary_cross_entropy_with_logits_backward(Tensor,Tensor,Tensor,Tensor,Tensor,int64_t)->Tensor

- [x] (Ailing) std::vector<Tensor> broadcast_tensors(TensorList tensors); // broadcast_tensors(TensorList)->std::vector<Tensor>

- [x] (Ailing) Tensor celu(const Tensor& self, Scalar alpha); // celu(Tensor,Scalar)->Tensor

- [x] (Ailing) Tensor& celu_(Tensor& self, Scalar alpha); // celu_(Tensor,Scalar)->Tensor

- [x] (Ailing) Tensor chain_matmul(TensorList matrices); // chain_matmul(TensorList)->Tensor

- [x] (Ailing) Tensor contiguous(const Tensor& self, MemoryFormat memory_format); // contiguous(Tensor,MemoryFormat)->Tensor

- [ ] (Ailing) Tensor& copy_(Tensor& self, const Tensor& src, bool non_blocking); // copy_(Tensor,Tensor,bool)->Tensor

- [x] (Ailing) Tensor cosine_embedding_loss(const Tensor& input1, const Tensor& input2, const Tensor& target, double margin, int64_t reduction); // cosine_embedding_loss(Tensor,Tensor,Tensor,double,int64_t)->Tensor

- [x] (Ailing) Tensor cosine_similarity(const Tensor& x1, const Tensor& x2, int64_t dim, double eps); // cosine_similarity(Tensor,Tensor,int64_t,double)->Tensor

- [x] (Ailing) Tensor diagflat(const Tensor& self, int64_t offset); // diagflat(Tensor,int64_t)->Tensor

- [x] (Ailing) Tensor dropout(const Tensor& input, double p, bool train); // dropout(Tensor,double,bool)->Tensor

- [x] (Ailing) Tensor& dropout_(Tensor& self, double p, bool train); // dropout_(Tensor,double,bool)->Tensor

- [ ] Tensor einsum(std::string equation, TensorList tensors); // einsum(std::string,TensorList)->Tensor

- [x] Tensor empty_like(const Tensor& self); // empty_like(Tensor)->Tensor

- [x] Tensor expand_as(const Tensor& self, const Tensor& other); // expand_as(Tensor,Tensor)->Tensor

- [x] Tensor flatten(const Tensor& self, int64_t start_dim, int64_t end_dim); // flatten(Tensor,int64_t,int64_t)->Tensor

- [x] Tensor frobenius_norm(const Tensor& self); // frobenius_norm(Tensor)->Tensor

- [x] Tensor frobenius_norm(const Tensor& self, IntArrayRef dim, bool keepdim); // frobenius_norm(Tensor,IntArrayRef,bool)->Tensor

- [x] Tensor full_like(const Tensor& self, Scalar fill_value); // full_like(Tensor,Scalar)->Tensor

- [x] Tensor group_norm(const Tensor& input, int64_t num_groups, const Tensor& weight, const Tensor& bias, double eps, bool cudnn_enabled); // group_norm(Tensor,int64_t,Tensor,Tensor,double,bool)->Tensor

- [x] Tensor hinge_embedding_loss(const Tensor& self, const Tensor& target, double margin, int64_t reduction); // hinge_embedding_loss(Tensor,Tensor,double,int64_t)->Tensor

- [x] Tensor index_add(const Tensor& self, int64_t dim, const Tensor& index, const Tensor& source); // index_add(Tensor,int64_t,Tensor,Tensor)->Tensor

- [x] Tensor index_copy(const Tensor& self, int64_t dim, const Tensor& index, const Tensor& source); // index_copy(Tensor,int64_t,Tensor,Tensor)->Tensor

- [x] Tensor index_fill(const Tensor& self, int64_t dim, const Tensor& index, Scalar value); // index_fill(Tensor,int64_t,Tensor,Scalar)->Tensor

- [x] Tensor index_fill(const Tensor& self, int64_t dim, const Tensor& index, const Tensor& value); // index_fill(Tensor,int64_t,Tensor,Tensor)->Tensor

- [x] Tensor index_put(const Tensor& self, TensorList indices, const Tensor& values, bool accumulate); // index_put(Tensor,TensorList,Tensor,bool)->Tensor

- [x] Tensor instance_norm( const Tensor& input, const Tensor& weight, const Tensor& bias, const Tensor& running_mean, const Tensor& running_var, bool use_input_stats, double momentum, double eps, bool cudnn_enabled); // instance_norm(Tensor,Tensor,Tensor,Tensor,Tensor,bool,double,double,bool)->Tensor

- [x] bool is_floating_point(const Tensor& self); // is_floating_point(Tensor)->bool

- [x] bool is_signed(const Tensor& self); // is_signed(Tensor)->bool

- [x] Tensor layer_norm(const Tensor& input, IntArrayRef normalized_shape, const Tensor& weight, const Tensor& bias, double eps, bool cudnn_enable); // layer_norm(Tensor,IntArrayRef,Tensor,Tensor,double,bool)->Tensor

- [x] Tensor linear(const Tensor& input, const Tensor& weight, const Tensor& bias); // linear(Tensor,Tensor,Tensor)->Tensor

- [x] Tensor log_sigmoid(const Tensor& self); // log_sigmoid(Tensor)->Tensor

- [x] Tensor log_softmax(const Tensor& self, int64_t dim, c10::optional<ScalarType> dtype); // log_softmax(Tensor,int64_t,c10::optional<ScalarType>)->Tensor

- [x] Tensor margin_ranking_loss(const Tensor& input1, const Tensor& input2, const Tensor& target, double margin, int64_t reduction); // margin_ranking_loss(Tensor,Tensor,Tensor,double,int64_t)->Tensor

- [x] Tensor masked_fill(const Tensor& self, const Tensor& mask, Scalar value); // masked_fill(Tensor,Tensor,Scalar)->Tensor

- [x] Tensor masked_fill(const Tensor& self, const Tensor& mask, const Tensor& value); // masked_fill(Tensor,Tensor,Tensor)->Tensor

- [x] Tensor matmul(const Tensor& self, const Tensor& other); // matmul(Tensor,Tensor)->Tensor

- [x] Tensor max_pool1d(const Tensor& self, IntArrayRef kernel_size, IntArrayRef stride, IntArrayRef padding, IntArrayRef dilation, bool ceil_mode); // max_pool1d(Tensor,IntArrayRef,IntArrayRef,IntArrayRef,IntArrayRef,bool)->Tensor

- [x] Tensor max_pool2d(const Tensor& self, IntArrayRef kernel_size, IntArrayRef stride, IntArrayRef padding, IntArrayRef dilation, bool ceil_mode); // max_pool2d(Tensor,IntArrayRef,IntArrayRef,IntArrayRef,IntArrayRef,bool)->Tensor

- [x] Tensor max_pool3d(const Tensor& self, IntArrayRef kernel_size, IntArrayRef stride, IntArrayRef padding, IntArrayRef dilation, bool ceil_mode); // max_pool3d(Tensor,IntArrayRef,IntArrayRef,IntArrayRef,IntArrayRef,bool)->Tensor

- [x] std::vector<Tensor> meshgrid(TensorList tensors); // meshgrid(TensorList)->std::vector<Tensor>

- [x] Tensor narrow(const Tensor& self, int64_t dim, int64_t start, int64_t length); // narrow(Tensor,int64_t,int64_t,int64_t)->Tensor

- [x] Tensor nll_loss(const Tensor& self, const Tensor& target, const Tensor& weight, int64_t reduction, int64_t ignore_index); // nll_loss(Tensor,Tensor,Tensor,int64_t,int64_t)->Tensor

- [x] Tensor nuclear_norm(const Tensor& self, bool keepdim); // nuclear_norm(Tensor,bool)->Tensor

- [x] Tensor one_hot(const Tensor& self, int64_t num_classes); // one_hot(Tensor,int64_t)->Tensor

- [x] Tensor ones_like(const Tensor& self); // ones_like(Tensor)->Tensor

- [x] Tensor pairwise_distance(const Tensor& x1, const Tensor& x2, double p, double eps, bool keepdim); // pairwise_distance(Tensor,Tensor,double,double,bool)->Tensor

- [x] Tensor pixel_shuffle(const Tensor& self, int64_t upscale_factor); // pixel_shuffle(Tensor,int64_t)->Tensor

- [x] Tensor pinverse(const Tensor& self, double rcond); // pinverse(Tensor,double)->Tensor

- [x] Tensor reshape(const Tensor& self, IntArrayRef shape); // reshape(Tensor,IntArrayRef)->Tensor

- [x] Tensor scatter(const Tensor& self, int64_t dim, const Tensor& index, const Tensor& src); // scatter(Tensor,int64_t,Tensor,Tensor)->Tensor

- [x] Tensor scatter(const Tensor& self, int64_t dim, const Tensor& index, Scalar value); // scatter(Tensor,int64_t,Tensor,Scalar)->Tensor

- [x] Tensor scatter_add(const Tensor& self, int64_t dim, const Tensor& index, const Tensor& src); // scatter_add(Tensor,int64_t,Tensor,Tensor)->Tensor

- [x] Tensor selu(const Tensor& self); // selu(Tensor)->Tensor

- [x] Tensor& selu_(Tensor& self); // selu_(Tensor)->Tensor

- [x] int64_t size(const Tensor& self, int64_t dim); // size(Tensor,int64_t)->int64_t

- [x] Tensor softmax(const Tensor& self, int64_t dim, c10::optional<ScalarType> dtype); // softmax(Tensor,int64_t,c10::optional<ScalarType>)->Tensor

- [x] Tensor sum_to_size(const Tensor& self, IntArrayRef size); // sum_to_size(Tensor,IntArrayRef)->Tensor

- [x] Tensor tensordot(const Tensor& self, const Tensor& other, IntArrayRef dims_self, IntArrayRef dims_other); // tensordot(Tensor,Tensor,IntArrayRef,IntArrayRef)->Tensor

- [x] Tensor to(const Tensor& self, const TensorOptions& options, bool non_blocking, bool copy, c10::optional<MemoryFormat> memory_format); // to(Tensor,TensorOptions,bool,bool,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor to(const Tensor& self, Device device, ScalarType dtype, bool non_blocking, bool copy, c10::optional<MemoryFormat> memory_format); // to(Tensor,Device,ScalarType,bool,bool,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor to(const Tensor& self, ScalarType dtype, bool non_blocking, bool copy, c10::optional<MemoryFormat> memory_format); // to(Tensor,ScalarType,bool,bool,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor to(const Tensor& self, const Tensor& other, bool non_blocking, bool copy, c10::optional<MemoryFormat> memory_format); // to(Tensor,Tensor,bool,bool,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor triplet_margin_loss(const Tensor& anchor, const Tensor& positive, const Tensor& negative, double margin, double p, double eps, bool swap, int64_t reduction); // triplet_margin_loss(Tensor,Tensor,Tensor,double,double,double,bool,int64_t)->Tensor

- [x] Tensor view_as(const Tensor& self, const Tensor& other); // view_as(Tensor,Tensor)->Tensor

- [x] Tensor where(const Tensor& condition, const Tensor& self, const Tensor& other); // where(Tensor,Tensor,Tensor)->Tensor

- [x] Tensor zeros_like(const Tensor& self); // zeros_like(Tensor)->Tensor

And a few more (non-leaf factory functions)

- [x] Tensor arange(Scalar end, const TensorOptions& options); // arange(Scalar,TensorOptions)->Tensor

- [x] Tensor arange(Scalar start, Scalar end, const TensorOptions& options); // arange(Scalar,Scalar,TensorOptions)->Tensor

- [x] Tensor arange(Scalar start, Scalar end, Scalar step, const TensorOptions& options); // arange(Scalar,Scalar,Scalar,TensorOptions)->Tensor

- [x] Tensor bartlett_window(int64_t window_length, const TensorOptions& options); // bartlett_window(int64_t,TensorOptions)->Tensor

- [x] Tensor bartlett_window(int64_t window_length, bool periodic, const TensorOptions& options); // bartlett_window(int64_t,bool,TensorOptions)->Tensor

- [x] Tensor blackman_window(int64_t window_length, const TensorOptions& options); // blackman_window(int64_t,TensorOptions)->Tensor

- [x] Tensor blackman_window(int64_t window_length, bool periodic, const TensorOptions& options); // blackman_window(int64_t,bool,TensorOptions)->Tensor

- [x] Tensor empty_like(const Tensor& self, const TensorOptions& options, c10::optional<MemoryFormat> memory_format); // empty_like(Tensor,TensorOptions,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor eye(int64_t n, const TensorOptions& options); // eye(int64_t,TensorOptions)->Tensor

- [x] Tensor eye(int64_t n, int64_t m, const TensorOptions& options); // eye(int64_t,int64_t,TensorOptions)->Tensor

- [x] Tensor full(IntArrayRef size, Scalar fill_value, const TensorOptions& options); // full(IntArrayRef,Scalar,TensorOptions)->Tensor

- [x] Tensor full_like(const Tensor& self, Scalar fill_value, const TensorOptions& options, c10::optional<MemoryFormat> memory_format); // full_like(Tensor,Scalar,TensorOptions,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor hamming_window(int64_t window_length, const TensorOptions& options); // hamming_window(int64_t,TensorOptions)->Tensor

- [x] Tensor hamming_window(int64_t window_length, bool periodic, const TensorOptions& options); // hamming_window(int64_t,bool,TensorOptions)->Tensor

- [x] Tensor hamming_window(int64_t window_length, bool periodic, double alpha, const TensorOptions& options); // hamming_window(int64_t,bool,double,TensorOptions)->Tensor

- [x] Tensor hamming_window(int64_t window_length, bool periodic, double alpha, double beta, const TensorOptions& options); // hamming_window(int64_t,bool,double,double,TensorOptions)->Tensor

- [x] Tensor hann_window(int64_t window_length, const TensorOptions& options); // hann_window(int64_t,TensorOptions)->Tensor

- [x] Tensor hann_window(int64_t window_length, bool periodic, const TensorOptions& options); // hann_window(int64_t,bool,TensorOptions)->Tensor

- [x] Tensor ones(IntArrayRef size, const TensorOptions& options); // ones(IntArrayRef,TensorOptions)->Tensor

- [x] Tensor ones_like(const Tensor& self, const TensorOptions& options, c10::optional<MemoryFormat> memory_format); // ones_like(Tensor,TensorOptions,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor randperm(int64_t n, const TensorOptions& options); // randperm(int64_t,TensorOptions)->Tensor

- [x] Tensor randperm(int64_t n, Generator* generator, const TensorOptions& options); // randperm(int64_t,Generator,TensorOptions)->Tensor

- [x] Tensor zeros_like(const Tensor& self, c10::optional<MemoryFormat> memory_format); // zeros_like(Tensor,c10::optional<MemoryFormat>)->Tensor

- [x] Tensor zeros_like(const Tensor& self, const TensorOptions& options, c10::optional<MemoryFormat> memory_format); // zeros_like(Tensor,TensorOptions,c10::optional<MemoryFormat>)->Tensor

| priority | remove extra overrides for non leaf nodes note that when removing extra overrides we need to make sure their leaf part is added if not present for example arange should be removed but we should add arange out for reference here s source of truth of all leaf nodes as of ailing tensor bitwise not const tensor self bitwise not tensor tensor ailing tensor bitwise not tensor self bitwise not tensor tensor ailing tensor cast byte const tensor self bool non blocking cast byte tensor bool tensor ailing tensor cast char const tensor self bool non blocking cast char tensor bool tensor ailing tensor cast float const tensor self bool non blocking cast float tensor bool tensor ailing tensor cast int const tensor self bool non blocking cast int tensor bool tensor ailing tensor cast long const tensor self bool non blocking cast long tensor bool tensor ailing tensor cast short const tensor self bool non blocking cast short tensor bool tensor ailing tensor dim arange const tensor like t dim dim arange tensor t tensor ailing tensor argmax const tensor self optional dim bool keepdim argmax tensor optional bool tensor ailing tensor argmin const tensor self optional dim bool keepdim argmin tensor optional bool tensor ailing tensor argsort const tensor self t dim bool descending argsort tensor t bool tensor ailing tensor avg const tensor self intarrayref kernel size intarrayref stride intarrayref padding bool ceil mode bool count include pad avg tensor intarrayref intarrayref intarrayref bool bool tensor ailing tensor batch norm const tensor input const tensor weight const tensor bias const tensor running mean const tensor running var bool training double momentum double eps bool cudnn enabled batch norm tensor tensor tensor tensor tensor bool double double bool tensor ailing tensor bernoulli const tensor self double p generator generator bernoulli tensor double generator tensor ailing tensor bilinear const tensor const tensor const tensor weight const tensor bias bilinear 
tensor tensor tensor tensor tensor ailing tensor binary cross entropy with logits backward const tensor grad output const tensor self const tensor target const tensor weight const tensor pos weight t reduction binary cross entropy with logits backward tensor tensor tensor tensor tensor t tensor ailing std vector broadcast tensors tensorlist tensors broadcast tensors tensorlist std vector ailing tensor celu const tensor self scalar alpha celu tensor scalar tensor ailing tensor celu tensor self scalar alpha celu tensor scalar tensor ailing tensor chain matmul tensorlist matrices chain matmul tensorlist tensor ailing tensor contiguous const tensor self memoryformat memory format contiguous tensor memoryformat tensor ailing tensor copy tensor self const tensor src bool non blocking copy tensor tensor bool tensor ailing tensor cosine embedding loss const tensor const tensor const tensor target double margin t reduction cosine embedding loss tensor tensor tensor double t tensor ailing tensor cosine similarity const tensor const tensor t dim double eps cosine similarity tensor tensor t double tensor ailing tensor diagflat const tensor self t offset diagflat tensor t tensor ailing tensor dropout const tensor input double p bool train dropout tensor double bool tensor ailing tensor dropout tensor self double p bool train dropout tensor double bool tensor tensor einsum std string equation tensorlist tensors einsum std string tensorlist tensor tensor empty like const tensor self empty like tensor tensor tensor expand as const tensor self const tensor other expand as tensor tensor tensor tensor flatten const tensor self t start dim t end dim flatten tensor t t tensor tensor frobenius norm const tensor self frobenius norm tensor tensor tensor frobenius norm const tensor self intarrayref dim bool keepdim frobenius norm tensor intarrayref bool tensor tensor full like const tensor self scalar fill value full like tensor scalar tensor tensor group norm const tensor input t num 
groups const tensor weight const tensor bias double eps bool cudnn enabled group norm tensor t tensor tensor double bool tensor tensor hinge embedding loss const tensor self const tensor target double margin t reduction hinge embedding loss tensor tensor double t tensor tensor index add const tensor self t dim const tensor index const tensor source index add tensor t tensor tensor tensor tensor index copy const tensor self t dim const tensor index const tensor source index copy tensor t tensor tensor tensor tensor index fill const tensor self t dim const tensor index scalar value index fill tensor t tensor scalar tensor tensor index fill const tensor self t dim const tensor index const tensor value index fill tensor t tensor tensor tensor tensor index put const tensor self tensorlist indices const tensor values bool accumulate index put tensor tensorlist tensor bool tensor tensor instance norm const tensor input const tensor weight const tensor bias const tensor running mean const tensor running var bool use input stats double momentum double eps bool cudnn enabled instance norm tensor tensor tensor tensor tensor bool double double bool tensor bool is floating point const tensor self is floating point tensor bool bool is signed const tensor self is signed tensor bool tensor layer norm const tensor input intarrayref normalized shape const tensor weight const tensor bias double eps bool cudnn enable layer norm tensor intarrayref tensor tensor double bool tensor tensor linear const tensor input const tensor weight const tensor bias linear tensor tensor tensor tensor tensor log sigmoid const tensor self log sigmoid tensor tensor tensor log softmax const tensor self t dim optional dtype log softmax tensor t optional tensor tensor margin ranking loss const tensor const tensor const tensor target double margin t reduction margin ranking loss tensor tensor tensor double t tensor tensor masked fill const tensor self const tensor mask scalar value masked fill tensor tensor 
scalar tensor tensor masked fill const tensor self const tensor mask const tensor value masked fill tensor tensor tensor tensor tensor matmul const tensor self const tensor other matmul tensor tensor tensor tensor max const tensor self intarrayref kernel size intarrayref stride intarrayref padding intarrayref dilation bool ceil mode max tensor intarrayref intarrayref intarrayref intarrayref bool tensor tensor max const tensor self intarrayref kernel size intarrayref stride intarrayref padding intarrayref dilation bool ceil mode max tensor intarrayref intarrayref intarrayref intarrayref bool tensor tensor max const tensor self intarrayref kernel size intarrayref stride intarrayref padding intarrayref dilation bool ceil mode max tensor intarrayref intarrayref intarrayref intarrayref bool tensor std vector meshgrid tensorlist tensors meshgrid tensorlist std vector tensor narrow const tensor self t dim t start t length narrow tensor t t t tensor tensor nll loss const tensor self const tensor target const tensor weight t reduction t ignore index nll loss tensor tensor tensor t t tensor tensor nuclear norm const tensor self bool keepdim nuclear norm tensor bool tensor tensor one hot const tensor self t num classes one hot tensor t tensor tensor ones like const tensor self ones like tensor tensor tensor pairwise distance const tensor const tensor double p double eps bool keepdim pairwise distance tensor tensor double double bool tensor tensor pixel shuffle const tensor self t upscale factor pixel shuffle tensor t tensor tensor pinverse const tensor self double rcond pinverse tensor double tensor tensor reshape const tensor self intarrayref shape reshape tensor intarrayref tensor tensor scatter const tensor self t dim const tensor index const tensor src scatter tensor t tensor tensor tensor tensor scatter const tensor self t dim const tensor index scalar value scatter tensor t tensor scalar tensor tensor scatter add const tensor self t dim const tensor index const tensor 
src scatter add tensor t tensor tensor tensor tensor selu const tensor self selu tensor tensor tensor selu tensor self selu tensor tensor t size const tensor self t dim size tensor t t tensor softmax const tensor self t dim optional dtype softmax tensor t optional tensor tensor sum to size const tensor self intarrayref size sum to size tensor intarrayref tensor tensor tensordot const tensor self const tensor other intarrayref dims self intarrayref dims other tensordot tensor tensor intarrayref intarrayref tensor tensor to const tensor self const tensoroptions options bool non blocking bool copy optional memory format to tensor tensoroptions bool bool optional tensor tensor to const tensor self device device scalartype dtype bool non blocking bool copy optional memory format to tensor device scalartype bool bool optional tensor tensor to const tensor self scalartype dtype bool non blocking bool copy optional memory format to tensor scalartype bool bool optional tensor tensor to const tensor self const tensor other bool non blocking bool copy optional memory format to tensor tensor bool bool optional tensor tensor triplet margin loss const tensor anchor const tensor positive const tensor negative double margin double p double eps bool swap t reduction triplet margin loss tensor tensor tensor double double double bool t tensor tensor view as const tensor self const tensor other view as tensor tensor tensor tensor where const tensor condition const tensor self const tensor other where tensor tensor tensor tensor tensor zeros like const tensor self zeros like tensor tensor and a few more non leaf factory functions tensor arange scalar end const tensoroptions options arange scalar tensoroptions tensor tensor arange scalar start scalar end const tensoroptions options arange scalar scalar tensoroptions tensor tensor arange scalar start scalar end scalar step const tensoroptions options arange scalar scalar scalar tensoroptions tensor tensor bartlett window t window length 
const tensoroptions options bartlett window t tensoroptions tensor tensor bartlett window t window length bool periodic const tensoroptions options bartlett window t bool tensoroptions tensor tensor blackman window t window length const tensoroptions options blackman window t tensoroptions tensor tensor blackman window t window length bool periodic const tensoroptions options blackman window t bool tensoroptions tensor tensor empty like const tensor self const tensoroptions options optional memory format empty like tensor tensoroptions optional tensor tensor eye t n const tensoroptions options eye t tensoroptions tensor tensor eye t n t m const tensoroptions options eye t t tensoroptions tensor tensor full intarrayref size scalar fill value const tensoroptions options full intarrayref scalar tensoroptions tensor tensor full like const tensor self scalar fill value const tensoroptions options optional memory format full like tensor scalar tensoroptions optional tensor tensor hamming window t window length const tensoroptions options hamming window t tensoroptions tensor tensor hamming window t window length bool periodic const tensoroptions options hamming window t bool tensoroptions tensor tensor hamming window t window length bool periodic double alpha const tensoroptions options hamming window t bool double tensoroptions tensor tensor hamming window t window length bool periodic double alpha double beta const tensoroptions options hamming window t bool double double tensoroptions tensor tensor hann window t window length const tensoroptions options hann window t tensoroptions tensor tensor hann window t window length bool periodic const tensoroptions options hann window t bool tensoroptions tensor tensor ones intarrayref size const tensoroptions options ones intarrayref tensoroptions tensor tensor ones like const tensor self const tensoroptions options optional memory format ones like tensor tensoroptions optional tensor tensor randperm t n const tensoroptions 
options randperm t tensoroptions tensor tensor randperm t n generator generator const tensoroptions options randperm t generator tensoroptions tensor tensor zeros like const tensor self optional memory format zeros like tensor optional tensor tensor zeros like const tensor self const tensoroptions options optional memory format zeros like tensor tensoroptions optional tensor | 1 |

306,022 | 9,379,678,519 | IssuesEvent | 2019-04-04 15:24:09 | VeraPrinsen/isomorphisms | https://api.github.com/repos/VeraPrinsen/isomorphisms | closed | Improve algorithm | High Priority | This is an example of torus144, graphs 0 and 6.

<img width="1268" alt="Screenshot 2019-03-29 at 13 46 24" src="https://user-images.githubusercontent.com/33416829/55233516-2c302400-5229-11e9-8bbd-d47d54ea5d04.png">

The tottime is the time the algorithm spends in that part of the code itself; the cumtime is the total time from the start to the end of a call to the method, including the time spent in other methods called from within it.

That is why I think get_colors() is now a bottleneck: roughly a third of the time is spent there. I also see 4 seconds spent in add_edge, which comes via the copy() method.

| 1.0 | Improve algorithm - This is an example of torus144, graphs 0 and 6.

<img width="1268" alt="Screenshot 2019-03-29 at 13 46 24" src="https://user-images.githubusercontent.com/33416829/55233516-2c302400-5229-11e9-8bbd-d47d54ea5d04.png">

The tottime is the time the algorithm spends in that part of the code itself; the cumtime is the total time from the start to the end of a call to the method, including the time spent in other methods called from within it.

That is why I think get_colors() is now a bottleneck: roughly a third of the time is spent there. I also see 4 seconds spent in add_edge, which comes via the copy() method.

| priority | improve algorithm this is an example of graph and img width alt screenshot at src the tottime is the time the algorithm spends in that part of the code the cumtime is the total time from start to end of a call to the method including the time of other methods called within it that is why i think get colors is now a bottleneck because about a third of the time is spent there i also see seconds for add edge which comes via the copy method | 1 |

782,783 | 27,506,923,186 | IssuesEvent | 2023-03-06 04:53:47 | xKDR/Survey.jl | https://api.github.com/repos/xKDR/Survey.jl | closed | Constructor to directly read replicate weights data | enhancement help wanted high priority logic heavy | Currently, you need a `SurveyDesign` to make a `ReplicateDesign`; however, you should be able to make it directly. For privacy reason, in many datasets, only the replicate weights are given and not the actual weights. | 1.0 | Constructor to directly read replicate weights data - Currently, you need a `SurveyDesign` to make a `ReplicateDesign`; however, you should be able to make it directly. For privacy reason, in many datasets, only the replicate weights are given and not the actual weights. | priority | constructor to directly read replicate weights data currently you need a surveydesign to make a replicatedesign however you should be able to make it directly for privacy reason in many datasets only the replicate weights are given and not the actual weights | 1 |

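782,783 | 27,506,923,186 | IssuesEvent | 2023-03-06 04:53:47 | xKDR/Survey.jl | https://api.github.com/repos/xKDR/Survey.jl | closed | Constructor to directly read replicate weights data | enhancement help wanted high priority logic heavy | Currently, you need a `SurveyDesign` to make a `ReplicateDesign`; however, you should be able to make it directly. For privacy reasons, in many datasets, only the replicate weights are given and not the actual weights. | 1.0 | Constructor to directly read replicate weights data - Currently, you need a `SurveyDesign` to make a `ReplicateDesign`; however, you should be able to make it directly. For privacy reasons, in many datasets, only the replicate weights are given and not the actual weights. | priority | constructor to directly read replicate weights data currently you need a surveydesign to make a replicatedesign however you should be able to make it directly for privacy reason in many datasets only the replicate weights are given and not the actual weights | 1 |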

* Login #5363

* Reset password #5364

* Security question #5365 | 1.0 | Barriers for login/reset password/security question - Currently the "maximum amount of tries" barrier for the 3 features (login, reset password and security question) is written in JavaScript. This is not secure and should probably be done in php instead.

* Login #5363

* Reset password #5364

* Security question #5365 | priority | barriers for login reset password security question currently the maximum amount of tries barrier for the features login reset password and security question is written in javascript this is not secure and should probably be done in php instead login reset password security question | 1 |

244,576 | 7,876,797,213 | IssuesEvent | 2018-06-26 03:16:42 | heptio/sonobuoy | https://api.github.com/repos/heptio/sonobuoy | closed | conformance test fail | p0 - Higher Priority | **Is this a BUG REPORT or FEATURE REQUEST?**:

bug.

**What happened**:

My environment: Kubernetes v1.10.2, flannel 0.10.

I used kubeadm to install the Kubernetes cluster, with no additional add-ons or apps.

Command:

`curl -L https://raw.githubusercontent.com/cncf/k8s-conformance/master/sonobuoy-conformance.yaml | kubectl apply -f - `

When the test finished, the sonobuoy log showed "error running plugins: timed out waiting for plugins, shutting down HTTP server". I also found that a pod named e2e-XXXX was still running, and the results tarball did not include the plugins.

After that, I followed https://scanner.heptio.com/ and the run still failed.

Output of `kubectl -n heptio-sonobuoy logs -f sonobuoy -c forwarder`:

```

time="2018-05-18T06:00:56Z" level=info msg="forwarder information" Scanner ID=04b9547158ca910b5f91453e80df1ae1 Scanner URL="https://scanner.heptio.com"

2018/05/18 06:01:26 http: proxy error: dial tcp: i/o timeout

time="2018-05-18T06:01:26Z" level=info msg="failed to send init message" error="non success response code: 502"

time="2018-05-18T06:01:26Z" level=info msg="waiting for a done file to appear..." looking for=/tmp/sonobuoy/done

time="2018-05-18T07:30:57Z" level=info msg="done file detected"

2018/05/18 07:31:27 http: proxy error: dial tcp: i/o timeout

time="2018-05-18T07:31:27Z" level=info msg="failed to delete init file" error="non success response code: 502"

time="2018-05-18T07:31:27Z" level=info msg="processing the done file contents"

time="2018-05-18T07:31:27Z" level=info msg="processing result file" result file=/tmp/sonobuoy/201805180600_sonobuoy_a99b6b82-3ad5-4033-a01c-011cf20896ab.tar.gz

2018/05/18 07:31:57 http: proxy error: dial tcp: i/o timeout

time="2018-05-18T07:31:57Z" level=info msg="failed to POST data" endpoint="http://127.0.0.1:9898/ingest/" error="non success response code: 502" result file=/tmp/sonobuoy/201805180600_sonobuoy_a99b6b82-3ad5-4033-a01c-011cf20896ab.tar.gz

```

Output of `kubectl -n heptio-sonobuoy logs -f sonobuoy -c kube-sonobuoy`:

```

time="2018-05-18T06:00:54Z" level=info msg="Scanning plugins in ./plugins.d (pwd: /)"

time="2018-05-18T06:00:54Z" level=info msg="unknown template type" filename=..2018_05_18_06_00_43.406360815

time="2018-05-18T06:00:54Z" level=info msg="unknown template type" filename=..data

time="2018-05-18T06:00:54Z" level=info msg="Scanning plugins in /etc/sonobuoy/plugins.d (pwd: /)"

time="2018-05-18T06:00:54Z" level=info msg="Directory (/etc/sonobuoy/plugins.d) does not exist"

time="2018-05-18T06:00:54Z" level=info msg="Scanning plugins in ~/sonobuoy/plugins.d (pwd: /)"

time="2018-05-18T06:00:54Z" level=info msg="Directory (~/sonobuoy/plugins.d) does not exist"

time="2018-05-18T06:00:54Z" level=info msg="Loading plugin driver Job"

time="2018-05-18T06:00:54Z" level=info msg="Filtering namespaces based on the following regex:.*|heptio-sonobuoy"

time="2018-05-18T06:00:54Z" level=info msg="Namespace default Matched=true"

time="2018-05-18T06:00:54Z" level=info msg="Namespace e2e-tests-daemonsets-m52jl Matched=true"

time="2018-05-18T06:00:54Z" level=info msg="Namespace e2e-tests-prestop-mkpjt Matched=true"

time="2018-05-18T06:00:54Z" level=info msg="Namespace e2e-tests-proxy-wzqg6 Matched=true"

time="2018-05-18T06:00:54Z" level=info msg="Namespace e2e-tests-replication-controller-jwbck Matched=true"

time="2018-05-18T06:00:54Z" level=info msg="Namespace heptio-sonobuoy Matched=true"

time="2018-05-18T06:00:54Z" level=info msg="Namespace kube-public Matched=true"

time="2018-05-18T06:00:54Z" level=info msg="Namespace kube-system Matched=true"

time="2018-05-18T06:00:54Z" level=info msg="Starting server Expected Results: [{ e2e}]"

time="2018-05-18T06:00:54Z" level=info msg="Running (e2e) plugin"

time="2018-05-18T06:00:54Z" level=info msg="Listening for incoming results on 0.0.0.0:8080\n"

time="2018-05-18T07:30:54Z" level=error msg="error running plugins: timed out waiting for plugins, shutting down HTTP server"

time="2018-05-18T07:30:54Z" level=info msg="Running non-ns query"

time="2018-05-18T07:30:54Z" level=info msg="Collecting Node Configuration and Health..."

time="2018-05-18T07:30:54Z" level=info msg="Creating host results for ip-10-27-185-24.eu-central-1.compute.internal under /tmp/sonobuoy/a99b6b82-3ad5-4033-a01c-011cf20896ab/hosts/ip-10-27-185-24.eu-central-1.compute.internal\n"

time="2018-05-18T07:30:54Z" level=warning msg="Could not get configz endpoint for node ip-10-27-185-24.eu-central-1.compute.internal: the server could not find the requested resource"

time="2018-05-18T07:30:54Z" level=warning msg="Could not get healthz endpoint for node ip-10-27-185-24.eu-central-1.compute.internal: the server could not find the requested resource"

time="2018-05-18T07:30:54Z" level=info msg="Creating host results for ip-10-27-185-48.eu-central-1.compute.internal under /tmp/sonobuoy/a99b6b82-3ad5-4033-a01c-011cf20896ab/hosts/ip-10-27-185-48.eu-central-1.compute.internal\n"

time="2018-05-18T07:30:54Z" level=warning msg="Could not get configz endpoint for node ip-10-27-185-48.eu-central-1.compute.internal: the server could not find the requested resource"

time="2018-05-18T07:30:54Z" level=warning msg="Could not get healthz endpoint for node ip-10-27-185-48.eu-central-1.compute.internal: the server could not find the requested resource"

time="2018-05-18T07:30:54Z" level=info msg="Running ns query (default)"

time="2018-05-18T07:30:54Z" level=info msg="Running ns query (e2e-tests-daemonsets-m52jl)"

o msg="Results available at /tmp/sonobuoy/201805180600_sonobuoy_a99b6b82-3ad5-4033-a01c-011cf20896ab.tar.gz"time="2018-05-18T07:30:54Z" level=info msg="Running ns query (e2e-tests-prestop-mkpjt)"

time="2018-05-18T07:30:54Z" level=info msg="Running ns query (e2e-tests-proxy-wzqg6)"

time="2018-05-18T07:30:54Z" level=info msg="Running ns query (e2e-tests-replication-controller-jwbck)"

time="2018-05-18T07:30:54Z" level=info msg="Running ns query (heptio-sonobuoy)"

time="2018-05-18T07:30:54Z" level=info msg="Collecting Pod Logs..."

time="2018-05-18T07:30:55Z" level=error msg="error querying PodPresets: the server could not find the requested resource (get podpresets.settings.k8s.io)"

time="2018-05-18T07:30:57Z" level=info msg="Running ns query (kube-public)"

time="2018-05-18T07:30:57Z" level=info msg="Running ns query (kube-system)"

time="2018-05-18T07:30:57Z" level=inf

```
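The timeout error fires exactly 90 minutes after the aggregator starts the e2e plugin, and the e2e pod was still running afterwards. That pattern looks like the aggregator hitting a fixed wait limit before the tests finished, rather than the plugin crashing. I have not confirmed which timeout value this Sonobuoy version uses, so treat the 90-minute figure as an observation from the timestamps only. A minimal Python sketch that measures the gap from the two relevant log lines (the `seconds_until_timeout` helper is hypothetical):

```python
import re
from datetime import datetime, timezone

# The two relevant kube-sonobuoy lines, copied verbatim from the log above.
LOG = '''\
time="2018-05-18T06:00:54Z" level=info msg="Running (e2e) plugin"
time="2018-05-18T07:30:54Z" level=error msg="error running plugins: timed out waiting for plugins, shutting down HTTP server"
'''

TS_RE = re.compile(r'time="([^"]+)"')


def timestamp(line):
    """Parse the UTC timestamp (trailing 'Z') from a logrus time="..." field."""
    raw = TS_RE.search(line).group(1)
    return datetime.strptime(raw, "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)


def seconds_until_timeout(log_text):
    """Seconds between the e2e plugin starting and the aggregator giving up."""
    start = end = None
    for line in log_text.splitlines():
        if 'msg="Running (e2e) plugin"' in line:
            start = timestamp(line)
        elif "timed out waiting for plugins" in line:
            end = timestamp(line)
    if start is None or end is None:
        raise ValueError("start or timeout line not found")
    return int((end - start).total_seconds())


print(seconds_until_timeout(LOG))  # 5400, i.e. 90 minutes
```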

**What you expected to happen**:

The conformance tests pass.

**How to reproduce it (as minimally and precisely as possible)**:

On a kubeadm-installed Kubernetes v1.10.2 cluster with flannel 0.10, run:

`curl -L https://raw.githubusercontent.com/cncf/k8s-conformance/master/sonobuoy-conformance.yaml | kubectl apply -f -`

**Anything else we need to know?**:

**Environment**:

- Sonobuoy tarball (which contains * below)

- Kubernetes version (use `kubectl version`): v1.10.2

- Cloud provider or hardware configuration: AWS

- OS (e.g. from /etc/os-release): CentOS 7.4

- Kernel (e.g. `uname -a`): `Linux ip-10-27-185-48.eu-central-1.compute.internal 3.10.0-693.11.6.el7.x86_64 #1 SMP Thu Jan 4 01:06:37 UTC 2018 x86_64 x86_64 x86_64 GNU/Linux`

| 1.0 |