Unnamed: 0 int64 0 832k | id float64 2.49B 32.1B | type stringclasses 1 value | created_at stringlengths 19 19 | repo stringlengths 5 112 | repo_url stringlengths 34 141 | action stringclasses 3 values | title stringlengths 1 855 | labels stringlengths 4 721 | body stringlengths 1 261k | index stringclasses 13 values | text_combine stringlengths 96 261k | label stringclasses 2 values | text stringlengths 96 240k | binary_label int64 0 1 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

119,964 | 4,778,277,125 | IssuesEvent | 2016-10-27 18:48:35 | IQSS/dataverse | https://api.github.com/repos/IQSS/dataverse | closed | Search: search box returns 0 results when searching for existing/duplicate MD5 | Component: Search/Browse Priority 3: Serious Priority: High Status: QA Type: Bug Type: Feature | I was trying to locate a duplicate md5 in dataset with a large number of files but the search feature returns 0 results. MD5 i tested was tagged as a duplicate during file upload. I put the md5 into the search for the entire dataset, with 0 results. | 2.0 | Search: search box returns 0 results when searching for existing/duplicate MD5 - I was trying to locate a duplicate md5 in dataset with a large number of files but the search feature returns 0 results. MD5 i tested was tagged as a duplicate during file upload. I put the md5 into the search for the entire dataset, with ... | priority | search search box returns results when searching for existing duplicate i was trying to locate a duplicate in dataset with a large number of files but the search feature returns results i tested was tagged as a duplicate during file upload i put the into the search for the entire dataset with result... | 1 |

310,334 | 9,488,803,616 | IssuesEvent | 2019-04-22 20:34:56 | AugurProject/augur | https://api.github.com/repos/AugurProject/augur | closed | Mobile trading pg positions fields are scrunched together | Priority: High | Fields under the positions section of the trading pg on Mobil are all jumbled together....

see screenshot

https://cdn.discordapp.com/attachments/464067340015763466/568586637345423360/Screenshot_20190418-195855.jpg | 1.0 | Mobile trading pg positions fields are scrunched together - Fields under the positions section of the trading pg on Mobil are all jumbled together....

see screenshot

https://cdn.discordapp.com/attachments/464067340015763466/568586637345423360/Screenshot_20190418-195855.jpg | priority | mobile trading pg positions fields are scrunched together fields under the positions section of the trading pg on mobil are all jumbled together see screenshot | 1 |

290,566 | 8,896,698,760 | IssuesEvent | 2019-01-16 12:15:39 | FundacionParaguaya/MentorApp | https://api.github.com/repos/FundacionParaguaya/MentorApp | closed | When logging out and logging back in survey images disappear | bug high priority | **Describe the bug**

When I log in as a user, then I log out, then I login as a different user, create a draft and go to the questions screen, I no longer see images.

When I logout and then I login back in as the same user - I no longer see the images - The images ONLY appear on emptying the data and the cache

**Expe... | 1.0 | When logging out and logging back in survey images disappear - **Describe the bug**

When I log in as a user, then I log out, then I login as a different user, create a draft and go to the questions screen, I no longer see images.

When I logout and then I login back in as the same user - I no longer see the images - Th... | priority | when logging out and logging back in survey images disappear describe the bug when i log in as a user then i log out then i login as a different user create a draft and go to the questions screen i no longer see images when i logout and then i login back in as the same user i no longer see the images th... | 1 |

99,215 | 4,049,397,627 | IssuesEvent | 2016-05-23 14:03:31 | GPUOpen-Effects/TressFX | https://api.github.com/repos/GPUOpen-Effects/TressFX | closed | Weird glitchy render with the preview | priority: high type: bug | TressFX 2.2 render : http://puu.sh/mKo82/21b2cdd9f6.jpg

TressFX 3.0 render : http://puu.sh/mKo9U/11de724e15.jpg

The hair on the 3.0 is glitchy, i had to zoom so it's easier to see.

Built at the same hour, on the same computer. | 1.0 | Weird glitchy render with the preview - TressFX 2.2 render : http://puu.sh/mKo82/21b2cdd9f6.jpg

TressFX 3.0 render : http://puu.sh/mKo9U/11de724e15.jpg

The hair on the 3.0 is glitchy, i had to zoom so it's easier to see.

Built at the same hour, on the same computer. | priority | weird glitchy render with the preview tressfx render tressfx render the hair on the is glitchy i had to zoom so it s easier to see built at the same hour on the same computer | 1 |

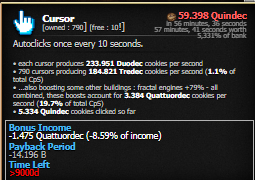

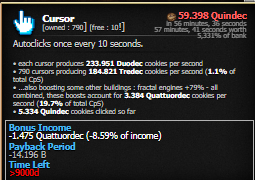

498,923 | 14,435,693,834 | IssuesEvent | 2020-12-07 09:06:31 | Aktanusa/CookieMonster | https://api.github.com/repos/Aktanusa/CookieMonster | closed | Negative Bonus Income when golden cookie is on the screen | Bug HIGH priority |

My best guess is that Dragon's Fortune is not considered in the calculations | 1.0 | Negative Bonus Income when golden cookie is on the screen -

My best guess is that Dragon's Fortune is not considered in the calculations | priority | negative bonus income when golden cookie is on the screen my best guess is that dragon s fortune is not considered in the calculations | 1 |

186,242 | 6,734,621,643 | IssuesEvent | 2017-10-18 18:41:58 | geosolutions-it/eumetsat-EOWS | https://api.github.com/repos/geosolutions-it/eumetsat-EOWS | opened | Improve Resource Limits | geoserver Priority: High user story | - [ ] #45 Ability to kill raster rendering/processing requests in JAI

- [ ] #46 Ability to kill long running DBMS queries | 1.0 | Improve Resource Limits - - [ ] #45 Ability to kill raster rendering/processing requests in JAI

- [ ] #46 Ability to kill long running DBMS queries | priority | improve resource limits ability to kill raster rendering processing requests in jai ability to kill long running dbms queries | 1 |

86,335 | 3,710,741,713 | IssuesEvent | 2016-03-02 06:40:59 | damlaren/ogle | https://api.github.com/repos/damlaren/ogle | closed | rejigger Mesh class | priority:very high | Existing class is only appropriate for rendering, closely tied to MeshRenderer, and makes assumptions about what hardware & graphics API is being used. | 1.0 | rejigger Mesh class - Existing class is only appropriate for rendering, closely tied to MeshRenderer, and makes assumptions about what hardware & graphics API is being used. | priority | rejigger mesh class existing class is only appropriate for rendering closely tied to meshrenderer and makes assumptions about what hardware graphics api is being used | 1 |

418,840 | 12,214,136,357 | IssuesEvent | 2020-05-01 09:05:38 | JuezUN/INGInious | https://api.github.com/repos/JuezUN/INGInious | opened | Modify the way input files are downloaded | Change request Frontend High Priority Plugins Task | Currently, input files in multilang are downloaded sending the text from container.

Though, this uses more memory in the container that may cause memory leaks and in case the input is long, the frontend will be heavier.

A better way set the files that can be downloaded in the public/ folder in the task file system. | 1.0 | Modify the way input files are downloaded - Currently, input files in multilang are downloaded sending the text from container.

Though, this uses more memory in the container that may cause memory leaks and in case the input is long, the frontend will be heavier.

A better way set the files that can be downloaded in... | priority | modify the way input files are downloaded currently input files in multilang are downloaded sending the text from container though this uses more memory in the container that may cause memory leaks and in case the input is long the frontend will be heavier a better way set the files that can be downloaded in... | 1 |

401,322 | 11,788,697,465 | IssuesEvent | 2020-03-17 15:57:49 | AY1920S2-CS2103T-W17-2/main | https://api.github.com/repos/AY1920S2-CS2103T-W17-2/main | closed | Create SuggestionModelImpl | priority.High status.Ongoing type.Enhancement | To create `SuggestionModelImpl` class that implements the `SuggestionModel` interface.

`model --> suggestion --> SuggestionModelImpl` | 1.0 | Create SuggestionModelImpl - To create `SuggestionModelImpl` class that implements the `SuggestionModel` interface.

`model --> suggestion --> SuggestionModelImpl` | priority | create suggestionmodelimpl to create suggestionmodelimpl class that implements the suggestionmodel interface model suggestion suggestionmodelimpl | 1 |

145,185 | 5,560,059,710 | IssuesEvent | 2017-03-24 18:27:29 | Esteemed-Innovation/Esteemed-Innovation | https://api.github.com/repos/Esteemed-Innovation/Esteemed-Innovation | closed | several blocks don't function correctly after having their chunks reloaded | Content: SteamNet and Technology Priority: High Status: Cannot Reproduce Type: Bug | seems to be sporadic but the flash boiler will sometimes appear to be completely empty of steam and water and you will not be able to interact with it (feed fuel/water) until you break and replace a block to reinitialize it.

Vacuums will not suck up items until they are broken and replaced in a similar way to the abov... | 1.0 | several blocks don't function correctly after having their chunks reloaded - seems to be sporadic but the flash boiler will sometimes appear to be completely empty of steam and water and you will not be able to interact with it (feed fuel/water) until you break and replace a block to reinitialize it.

Vacuums will not ... | priority | several blocks don t function correctly after having their chunks reloaded seems to be sporadic but the flash boiler will sometimes appear to be completely empty of steam and water and you will not be able to interact with it feed fuel water until you break and replace a block to reinitialize it vacuums will not ... | 1 |

535,824 | 15,699,411,492 | IssuesEvent | 2021-03-26 08:26:57 | wso2/product-apim | https://api.github.com/repos/wso2/product-apim | closed | API resources required to create API Product are not displayed | API-M 4.0.0 Feature/APIProducts Priority/Highest Resolution/Duplicate Type/Bug Type/React-UI Type/UX | ### Description:

When attempting to create an API Product via the Publisher UI, when navigating to the API Selection window in order to select the resources that need to be added to the API Product, no resources are displayed when selecting a respective API as shown below

- [x] Put the package on RRAN

- [x] Create a Github release for the package version installed... | 1.0 | Prepare package for RRAN release - - [x] Make sure there are no errors in R CMD check on local OS

- [x] Make sure R CMD check passes with R version 4.1 on Windows 10 and R version 4.2 on macOS

- [x] Assign a version number (update DESCRIPTION and NEWS.md)

- [x] Put the package on RRAN

- [x] Create a Github releas... | priority | prepare package for rran release make sure there are no errors in r cmd check on local os make sure r cmd check passes with r version on windows and r version on macos assign a version number update description and news md put the package on rran create a github release for the p... | 1 |

178,527 | 6,609,789,479 | IssuesEvent | 2017-09-19 15:33:45 | dschoenbauer/orm | https://api.github.com/repos/dschoenbauer/orm | opened | Add event to allow for Update but not allow it to display | enhancement Priority: High v1.0 | #### Overview description

A field should be allowed to be updated but not allowed to be viewed. This is specific to a password field. It should never be displayed.

#### Steps to reproduce

1.Pull any field from the database

#### Actual Results

Field is returned

#### Expected Results

Fields identified as h... | 1.0 | Add event to allow for Update but not allow it to display - #### Overview description

A field should be allowed to be updated but not allowed to be viewed. This is specific to a password field. It should never be displayed.

#### Steps to reproduce

1.Pull any field from the database

#### Actual Results

Field ... | priority | add event to allow for update but not allow it to display overview description a field should be allowed to be updated but not allowed to be viewed this is specific to a password field it should never be displayed steps to reproduce pull any field from the database actual results field ... | 1 |
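The request in the row above (a field that can be updated but is never returned for display, such as a password) can be sketched as follows. This is a minimal illustration in Python rather than the library's own language, and `WRITE_ONLY_FIELDS`, `apply_update`, and `to_display` are hypothetical names chosen for the example, not part of dschoenbauer/orm.

```python
# Hypothetical field registry; names are illustrative, not the library's API.
WRITE_ONLY_FIELDS = {"password"}


def apply_update(record, changes):
    """Return a copy of `record` with `changes` applied (write-only fields included)."""
    updated = dict(record)
    updated.update(changes)
    return updated


def to_display(record):
    """Return a copy of `record` with write-only fields removed."""
    return {key: value for key, value in record.items() if key not in WRITE_ONLY_FIELDS}


stored = {"id": 7, "name": "ada", "password": "old-hash"}
stored = apply_update(stored, {"password": "new-hash"})  # the update is accepted
visible = to_display(stored)                             # the field is never shown
print(visible)
```

Filtering at the output step keeps the update path unchanged, which is one plausible reading of "allow update but not display"; an event-based design, as the issue title suggests, would hook the same filter into the read path.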

281,341 | 8,694,064,886 | IssuesEvent | 2018-12-04 11:31:08 | NJACKWinterOfCode/Alphynite | https://api.github.com/repos/NJACKWinterOfCode/Alphynite | closed | Make project Live - Priority Extremely High | Beginner Priority:HIGH good first issue | **I'm submitting a ...**

- [x] feature request

**Current behavior:**

<!-- How the bug manifests. -->

No Deployments.

**Expected behavior:**

<!-- Behavior would be without the bug. -->

Deploy the project to Heroku or any other free hosting provider.

Here is the resource for Heroku .

https://medium.com/@hello... | 1.0 | Make project Live - Priority Extremely High - **I'm submitting a ...**

- [x] feature request

**Current behavior:**

<!-- How the bug manifests. -->

No Deployments.

**Expected behavior:**

<!-- Behavior would be without the bug. -->

Deploy the project to Heroku or any other free hosting provider.

Here is the ... | priority | make project live priority extremely high i m submitting a feature request current behavior no deployments expected behavior deploy the project to heroku or any other free hosting provider here is the resource for heroku would you like to work on the issue n... | 1 |

517,851 | 15,020,452,925 | IssuesEvent | 2021-02-01 14:43:59 | ansible/galaxy_ng | https://api.github.com/repos/ansible/galaxy_ng | closed | Remove instances where we still proxy api requests to pulp | area/api priority/high status/new type/enhancement | There are still a couple of views that use the old pulp_ansible client to proxy requests to pulp_ansible. These should be replaced with subclassed viewsets sometime before GA. | 1.0 | Remove instances where we still proxy api requests to pulp - There are still a couple of views that use the old pulp_ansible client to proxy requests to pulp_ansible. These should be replaced with subclassed viewsets sometime before GA. | priority | remove instances where we still proxy api requests to pulp there are still a couple of views that use the old pulp ansible client to proxy requests to pulp ansible these should be replaced with subclassed viewsets sometime before ga | 1 |

137,494 | 5,310,366,097 | IssuesEvent | 2017-02-12 19:28:18 | alonshmilo/MedicalData_jce | https://api.github.com/repos/alonshmilo/MedicalData_jce | closed | DeepMedic | 2 - Working <= 5 Points: 8 Priority: Very High | # Issue: DeepMedic

### Explanation:

Efficient Multi-Scale 3D Convolutional Neural Network for Brain Lesion Segmentation. Understanding the network, making it work on our macs, and start to think about the rib cage bone orientation.

### Checklist:

- [x] Code

- [x] Reading

- [x] Documenting reading

- [x] Proje... | 1.0 | DeepMedic - # Issue: DeepMedic

### Explanation:

Efficient Multi-Scale 3D Convolutional Neural Network for Brain Lesion Segmentation. Understanding the network, making it work on our macs, and start to think about the rib cage bone orientation.

### Checklist:

- [x] Code

- [x] Reading

- [x] Documenting reading

... | priority | deepmedic issue deepmedic explanation efficient multi scale convolutional neural network for brain lesion segmentation understanding the network making it work on our macs and start to think about the rib cage bone orientation checklist code reading documenting reading pr... | 1 |

171,700 | 6,493,500,940 | IssuesEvent | 2017-08-21 17:22:48 | qlicker/qlicker | https://api.github.com/repos/qlicker/qlicker | closed | Prof session run panel should show the correct answer! | bug enhancement High priority | The preview panel in the prof session run view should show which is the correct answer! The prof might not remember off the top of their head the correct answer! | 1.0 | Prof session run panel should show the correct answer! - The preview panel in the prof session run view should show which is the correct answer! The prof might not remember off the top of their head the correct answer! | priority | prof session run panel should show the correct answer the preview panel in the prof session run view should show which is the correct answer the prof might not remember off the top of their head the correct answer | 1 |

123,786 | 4,876,005,005 | IssuesEvent | 2016-11-16 11:22:21 | BinPar/PPD | https://api.github.com/repos/BinPar/PPD | opened | PRICE INFORMATION FOR TRAINING PRODUCTS | Priority: High | We request a change to the price calculation for the following products:

EXPERT

MASTER

CONTINUING EDUCATION PROGRAM

REFRESHER PROGRAM

ONLINE COURSE

The fields:

ESTIMATED LOCAL PRICE: The value is entered manually

ESTIMATED INTERCOMPANY PRICE: NO DATA

ESTIMATED PRICE ... | 1.0 | PRICE INFORMATION FOR TRAINING PRODUCTS - We request a change to the price calculation for the following products:

EXPERT

MASTER

CONTINUING EDUCATION PROGRAM

REFRESHER PROGRAM

ONLINE COURSE

The fields:

ESTIMATED LOCAL PRICE: The value is entered manually

ESTIMATED PRICE ... | priority | price information for training products we request a change to the price calculation for the following products expert master continuing education program refresher program online course the fields estimated local price the value is entered manually estimated price ... | 1 |

407,646 | 11,935,122,742 | IssuesEvent | 2020-04-02 08:00:17 | wso2/docker-open-banking | https://api.github.com/repos/wso2/docker-open-banking | closed | Create Docker resources for WSO2 Open Banking version 1.5.0 | Priority/High Type/Task | **Description:**

Dockerfile for Open Banking API Manager(OBAM) should be created to build the OBAM based on adoptopenjdk:11.0.6_10-jdk-hotspot-bionic image and run the server

**Affected Product Version:**

OB 1.5.0

**OS, DB, other environment details and versions:**

ubuntu, alpine

| 1.0 | Create Docker resources for WSO2 Open Banking version 1.5.0 - **Description:**

Dockerfile for Open Banking API Manager(OBAM) should be created to build the OBAM based on adoptopenjdk:11.0.6_10-jdk-hotspot-bionic image and run the server

**Affected Product Version:**

OB 1.5.0

**OS, DB, other environment details ... | priority | create docker resources for open banking version description dockerfile for open banking api manager obam should be created to build the obam based on adoptopenjdk jdk hotspot bionic image and run the server affected product version ob os db other environment details and v... | 1 |

324,305 | 9,887,396,726 | IssuesEvent | 2019-06-25 09:07:00 | lwjohnst86/prodigenr | https://api.github.com/repos/lwjohnst86/prodigenr | closed | Add drake build instead of using devtools load_all | enhancement hard high priority | And have the project setup not be a package. | 1.0 | Add drake build instead of using devtools load_all - And have the project setup not be a package. | priority | add drake build instead of using devtools load all and have the project setup not be a package | 1 |

826,387 | 31,593,488,883 | IssuesEvent | 2023-09-05 02:11:18 | lfortran/lfortran | https://api.github.com/repos/lfortran/lfortran | closed | Print ASR after every pass | high priority | It must print it after each pass and the very first ASR, *before* ASR verify is called, since it can fail.

Both in LPython and LFortran. This will greatly simplify debugging. This is quite urgent now, since I now hit this almost every day and so is everyone else. | 1.0 | Print ASR after every pass - It must print it after each pass and the very first ASR, *before* ASR verify is called, since it can fail.

Both in LPython and LFortran. This will greatly simplify debugging. This is quite urgent now, since I now hit this almost every day and so is everyone else. | priority | print asr after every pass it must print it after each pass and the very first asr before asr verify is called since it can fail both in lpython and lfortran this will greatly simplify debugging this is quite urgent now since i now hit this almost every day and so is everyone else | 1 |

54,890 | 3,071,567,939 | IssuesEvent | 2015-08-19 12:56:17 | INN/Largo | https://api.github.com/repos/INN/Largo | closed | when 1-col footer option is selected, footer 2 & 3 widget areas are still registered | priority: high type: bug | Observed on MWEN. They should be deactivated to avoid confusion. | 1.0 | when 1-col footer option is selected, footer 2 & 3 widget areas are still registered - Observed on MWEN. They should be deactivated to avoid confusion. | priority | when col footer option is selected footer widget areas are still registered observed on mwen they should be deactivated to avoid confusion | 1 |

686,063 | 23,475,443,014 | IssuesEvent | 2022-08-17 05:16:23 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | Document issues in Configuring OIDC Federated IdP Initiated Logout | Priority/Highest docs Severity/Critical Affected-6.0.0 QA-Reported federated-oidc-back-channel-logout | **Is your suggestion related to a missing or misleading document? Please describe.**

Document [1] required following updates,

[1]https://is.docs.wso2.com/en/5.12.0/learn/configuring-oidc-federated-idp-initiated-logout/

- [ ] Add following missing section on document, [Configure Pickup Dispatch application in the ... | 1.0 | Document issues in Configuring OIDC Federated IdP Initiated Logout - **Is your suggestion related to a missing or misleading document? Please describe.**

Document [1] required following updates,

[1]https://is.docs.wso2.com/en/5.12.0/learn/configuring-oidc-federated-idp-initiated-logout/

- [ ] Add following missin... | priority | document issues in configuring oidc federated idp initiated logout is your suggestion related to a missing or misleading document please describe document required following updates add following missing section on document enable oidc back channel logout and add back channel logout url ... | 1 |

604,623 | 18,715,696,892 | IssuesEvent | 2021-11-03 04:07:53 | AyeCode/geodirectory | https://api.github.com/repos/AyeCode/geodirectory | closed | Add preview option to attachments icon | Priority: High Type: Enhancement | Currently, there is no way to view an attachment such as a PDF if a user uploads it without previewing or publishing the listing.

We should add a font awesome eye icon so attachments can be previewed.

I think the best solution might be to view this in a modal iframe? If the browser supports viewing PDFs in the browse... | 1.0 | Add preview option to attachments icon - Currently, there is no way to view an attachment such as a PDF if a user uploads it without previewing or publishing the listing.

We should add a font awesome eye icon so attachments can be previewed.

I think the best solution might be to view this in a modal iframe? If the br... | priority | add preview option to attachments icon currently there is no way to view an attachment such as a pdf if a user uploads it without previewing or publishing the listing we should add a font awesome eye icon so attachments can be previewed i think the best solution might be to view this in a modal iframe if the br... | 1 |

563,084 | 16,675,804,462 | IssuesEvent | 2021-06-07 16:01:52 | phetsims/tandem | https://api.github.com/repos/phetsims/tandem | closed | ReferenceIO instances should be phetioState: false | dev:phet-io priority:2-high status:blocks-publication status:ready-for-review | I found an unimportant bug where ReferenceIO instances are often stateful, in addition to being used for Data-Type serialization. Basically, there are many ReferenceIO instances that look like this in the state. For example in Natural Selection:

```

"naturalSelection.global.model.alleles.whiteFurAllele": {

... | 1.0 | ReferenceIO instances should be phetioState: false - I found an unimportant bug where ReferenceIO instances are often stateful, in addition to being used for Data-Type serialization. Basically, there are many ReferenceIO instances that look like this in the state. For example in Natural Selection:

```

"naturalS... | priority | referenceio instances should be phetiostate false i found an unimportant bug where referenceio instances are often stateful in addition to being used for data type serialization basically there are many referenceio instances that look like this in the state for example in natural selection naturals... | 1 |

103,510 | 4,174,410,264 | IssuesEvent | 2016-06-21 13:59:50 | ngageoint/hootenanny | https://api.github.com/repos/ngageoint/hootenanny | closed | Right angle turn not conflating | Category: Algorithms Priority: High Type: Bug | Example case is in the associated branch. (`test-files/cases/unifying/highway-662/`)

Small changes to the geometries will make the lines conflate, but there is clearly a bug in there. Please investigate and fix. | 1.0 | Right angle turn not conflating - Example case is in the associated branch. (`test-files/cases/unifying/highway-662/`)

Small changes to the geometries will make the lines conflate, but there is clearly a bug in there. Please investigate and fix. | priority | right angle turn not conflating example case is in the associated branch test files cases unifying highway small changes to the geometries will make the lines conflate but there is clearly a bug in there please investigate and fix | 1 |

136,295 | 5,279,548,929 | IssuesEvent | 2017-02-07 11:37:24 | bbcarchdev/spindle | https://api.github.com/repos/bbcarchdev/spindle | reopened | Loops in triggers | bug high priority triaged Twine | When ingesting a data using a resource as the named graph a loop is created in the triggers.

Example:

the following file

[sample.txt](https://github.com/bbcarchdev/spindle/files/529654/sample.txt)

generates:

<img width="938" alt="screen shot 2016-10-14 at 10 07 51" src="https://cloud.githubusercontent.com/assets/196436... | 1.0 | Loops in triggers - When ingesting a data using a resource as the named graph a loop is created in the triggers.

Example:

the following file

[sample.txt](https://github.com/bbcarchdev/spindle/files/529654/sample.txt)

generates:

<img width="938" alt="screen shot 2016-10-14 at 10 07 51" src="https://cloud.githubuserconte... | priority | loops in triggers when ingesting a data using a resource as the named graph a loop is created in the triggers example the following file generates img width alt screen shot at src | 1 |

712,948 | 24,512,089,484 | IssuesEvent | 2022-10-10 22:58:53 | tbaranoski/Trading_Quant | https://api.github.com/repos/tbaranoski/Trading_Quant | closed | [Possible Bug]: get_bars pulling aftermarket and pre market data intraday | HIGH PRIORITY | We want to make sure we are only pulling market hour data and not pre/post market data for trend computations | 1.0 | [Possible Bug]: get_bars pulling aftermarket and pre market data intraday - We want to make sure we are only pulling market hour data and not pre/post market data for trend computations | priority | get bars pulling aftermarket and pre market data intraday we want to make sure we are only pulling market hour data and not pre post market data for trend computations | 1 |
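The concern in the row above (intraday bars that include pre-market and after-hours data) can be illustrated with a small filter. This is a sketch under stated assumptions, not the project's `get_bars` code: `regular_hours_only` is a hypothetical helper, the bars are assumed to be `(timestamp, close_price)` tuples, and the timestamps are assumed to be naive datetimes already expressed in exchange-local time with a 09:30 to 16:00 regular session.

```python
from datetime import datetime, time

# Assumed regular-session bounds in exchange-local time (US equities).
MARKET_OPEN = time(9, 30)
MARKET_CLOSE = time(16, 0)


def regular_hours_only(bars):
    """Keep bars whose start time falls in [open, close) on a weekday.

    `bars` is a hypothetical list of (timestamp, close_price) tuples whose
    timestamps are naive datetimes already in exchange-local time.
    """
    return [
        (ts, price)
        for ts, price in bars
        if ts.weekday() < 5 and MARKET_OPEN <= ts.time() < MARKET_CLOSE
    ]


sample_bars = [
    (datetime(2022, 10, 10, 8, 0), 100.0),    # pre-market
    (datetime(2022, 10, 10, 9, 30), 101.0),   # first regular bar
    (datetime(2022, 10, 10, 15, 59), 102.0),  # last regular minute
    (datetime(2022, 10, 10, 16, 0), 103.0),   # after-hours
]
filtered = regular_hours_only(sample_bars)
print([price for _, price in filtered])
```

The sketch ignores market holidays and early closes; if the data source returns UTC timestamps, they would need converting to exchange-local time before this filter applies.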

563,834 | 16,706,097,111 | IssuesEvent | 2021-06-09 10:07:48 | yt-dlp/yt-dlp | https://api.github.com/repos/yt-dlp/yt-dlp | closed | [Broken] split-chapters is broken in 2021.06.08 | bug high priority | Just updated yt-dlp to 2021.06.08 and found that split-chapters is broken. I confirm that it is working with 2021.06.01

With 2021.06.08 I get "ERROR: %d format: a number is required, not str"

Debug output:

$ yt-dlp -v --split-chapters RGOj5yH7evk

[debug] Command-line config: ['-v', '--split-chapters... | 1.0 | [Broken] split-chapters is broken in 2021.06.08 - Just updated yt-dlp to 2021.06.08 and found that split-chapters is broken. I confirm that it is working with 2021.06.01

With 2021.06.08 I get "ERROR: %d format: a number is required, not str"

Debug output:

$ yt-dlp -v --split-chapters RGOj5yH7evk

[de... | priority | split chapters is broken in just updated yt dlp to and found that split chapters is broken i confirm that it is working with with i get error d format a number is required not str debug output yt dlp v split chapters command line config encodings l... | 1 |
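The error text quoted in the row above matches the message Python raises when a printf-style `%d` placeholder receives a string instead of a number. The snippet below reproduces that class of failure in isolation; it is not yt-dlp's code, and the template, field names, and `render` helper are hypothetical.

```python
# Hypothetical template and values; not taken from yt-dlp's source.
template = "%(number)03d %(title)s"


def render(template, fields):
    """Apply printf-style formatting and return (ok, text_or_error_message)."""
    try:
        return True, template % fields
    except TypeError as exc:
        return False, str(exc)


# A numeric value formats as expected.
ok_good, text_good = render(template, {"number": 1, "title": "Intro"})
# The same value passed as a string triggers the TypeError.
ok_bad, text_bad = render(template, {"number": "1", "title": "Intro"})

print(ok_good, text_good)
print(ok_bad, text_bad)
```

The exact wording of the message varies slightly between Python versions, so any check against it is safer as a substring match than as an exact comparison.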

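395,527 | 11,687,873,727 | IssuesEvent | 2020-03-05 13:37:14 | opensourceai/vue-front-end | https://api.github.com/repos/opensourceai/vue-front-end | opened | Design the login and registration page UI | priority:high ui | Requirements:

1. Keep the design minimal; `知乎` (Zhihu) can serve as a reference

2. Login form

- Username

- Password

- Verification code

3. Registration form

- Username

- Email

- Password

- Verification code

4. Everything else is up to the designer | 1.0 | Design the login and registration page UI - Requirements:

1. Keep the design minimal; `知乎` (Zhihu) can serve as a reference

2. Login form

- Username

- Password

- Verification code

3. Registration form

- Username

- Email

- Password

- Verification code

4. Everything else is up to the designer | priority | design the login and registration page ui requirements keep the design minimal zhihu can serve as a reference login form username password verification code registration form username email password verification code everything else is up to the designer | 1 |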

134,818 | 5,237,774,158 | IssuesEvent | 2017-01-31 01:07:33 | ankidroid/Anki-Android | https://api.github.com/repos/ankidroid/Anki-Android | closed | Blackberry devices are unable to sync | accepted Priority-High waitingforfeedback | AnkiWeb's SSL certificate was updated on the 7th, and it appears to have broken syncing on Blackberry devices. Users are able to access ankiweb.net in their device's browser, but connections within AnkiDroid time out. There don't appear to be any errors in the server logs, so I'm not sure whether any connections are be... | 1.0 | Blackberry devices are unable to sync - AnkiWeb's SSL certificate was updated on the 7th, and it appears to have broken syncing on Blackberry devices. Users are able to access ankiweb.net in their device's browser, but connections within AnkiDroid time out. There don't appear to be any errors in the server logs, so I'm... | priority | blackberry devices are unable to sync ankiweb s ssl certificate was updated on the and it appears to have broken syncing on blackberry devices users are able to access ankiweb net in their device s browser but connections within ankidroid time out there don t appear to be any errors in the server logs so i m n... | 1 |

212,894 | 7,243,791,520 | IssuesEvent | 2018-02-14 13:05:39 | CodeGra-de/CodeGra.de | https://api.github.com/repos/CodeGra-de/CodeGra.de | opened | LTI launch sometimes gets stuck in infinite loop | LTI bug frontend priority-0-high | It seems that this is caused by localforage not finding any storage adapter. | 1.0 | LTI launch sometimes gets stuck in infinite loop - It seems that this is caused by localforage not finding any storage adapter. | priority | lti launch sometimes gets stuck in infinite loop it seems that this is caused by localforage not finding any storage adapter | 1 |

554,613 | 16,434,758,048 | IssuesEvent | 2021-05-20 07:59:47 | southppp22/Flower-Shop-Seorimhwa | https://api.github.com/repos/southppp22/Flower-Shop-Seorimhwa | closed | [Client] Image Atom Component development | E:3.0 Priority: High Status: To Do Type: Feature/Function | ### ISSUE

- Type: `feature`

- Detail: Image Atom Component development

-

### TODO

1. [ ] Apply style-component and storybook

2. [ ] Define the props interface

### Estimated time

### `3h`

| 1.0 | [Client] Image Atom Component development - ### ISSUE

- Type: `feature`

- Detail: Image Atom Component development

-

### TODO

1. [ ] Apply style-component and storybook

2. [ ] Define the props interface

### Estimated time

### `3h`

| priority | image atom component development issue type feature detail image atom component development todo apply style component and storybook define the props interface estimated time | 1 |

219,801 | 7,346,038,469 | IssuesEvent | 2018-03-07 19:22:16 | AyuntamientoMadrid/consul | https://api.github.com/repos/AyuntamientoMadrid/consul | closed | Proposal document URL returns 404 | Bug High priority | [This proposal's documents](https://decide.madrid.es/proposals/19914-incineradora-de-valdemingomez-no) URLs returns a 404 code. Looks like somehow the internal link is broken or missing, but the `Document` object is correctly stored in ddbb with the right ids and references.

```

ActionController::RoutingError: No r... | 1.0 | Proposal document URL returns 404 - [This proposal's documents](https://decide.madrid.es/proposals/19914-incineradora-de-valdemingomez-no) URLs return a 404 code. It looks like the internal link is somehow broken or missing, but the `Document` object is correctly stored in the database with the right ids and references.

```

... | priority | proposal document url returns urls returns a code looks like somehow the internal link is broken or missing but the document object is correctly stored in ddbb with the right ids and references actioncontroller routingerror no route matches system documents attachments original pdf ... | 1 |

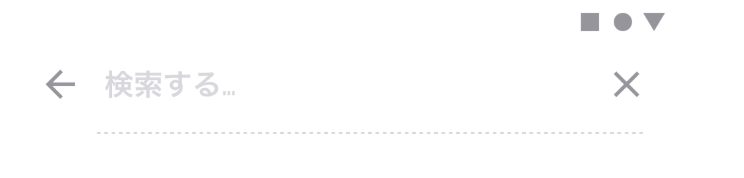

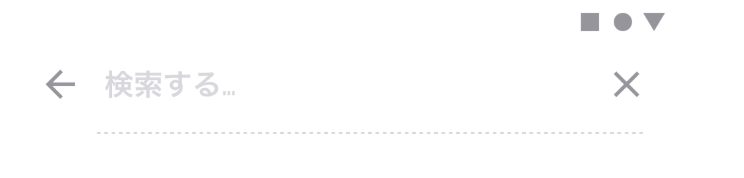

288,633 | 8,849,677,329 | IssuesEvent | 2019-01-08 10:55:34 | DroidKaigi/conference-app-2019 | https://api.github.com/repos/DroidKaigi/conference-app-2019 | closed | Apply SearchView design | assigned help wanted high priority welcome contribute | ## Overview (Required)

- Even if it is not as designed, I want to delete the image here.

design

current

- Even if it is not as designed, I want to delete the image here.

design

current

- claim property and transfer owner to this title:

- claim property and transfer owner to this title:

.click() in idp_mgt_edit.js file. But in the fix for 5.12.387, it has been mistakenly added under jQuery('#roleAd... | 1.0 | idp role mapping is not working - When tried to add the IDP role mapping in wso2 identity server, it's not work as expected. As check on this further in the code base, following line has to added under jQuery('#advancedClaimMappingAddLink').click() in idp_mgt_edit.js file. But in the fix for 5.12.387, it has been mist... | priority | idp role mapping is not working when tried to add the idp role mapping in identity server it s not work as expected as check on this further in the code base following line has to added under jquery advancedclaimmappingaddlink click in idp mgt edit js file but in the fix for it has been mistakenly... | 1 |

488,863 | 14,087,437,214 | IssuesEvent | 2020-11-05 06:25:05 | webcompat/web-bugs | https://api.github.com/repos/webcompat/web-bugs | closed | gmail.com - desktop site instead of mobile site | browser-chrome ml-needsdiagnosis-false ml-probability-high priority-normal | <!-- @browser: Chrome 80.0.3987 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/80.0.3987.132 Safari/537.36 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/61157 -->

**URL**: https://gmail.com

**Browser / ... | 1.0 | gmail.com - desktop site instead of mobile site - <!-- @browser: Chrome 80.0.3987 -->

<!-- @ua_header: Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/80.0.3987.132 Safari/537.36 -->

<!-- @reported_with: unknown -->

<!-- @public_url: https://github.com/webcompat/web-bugs/issues/6... | priority | gmail com desktop site instead of mobile site url browser version chrome operating system windows tested another browser yes chrome problem type desktop site instead of mobile site description desktop site instead of mobile site steps to reproduce in valid u... | 1 |

32,544 | 2,755,767,207 | IssuesEvent | 2015-04-26 22:50:42 | paceuniversity/cs3892015team1 | https://api.github.com/repos/paceuniversity/cs3892015team1 | closed | User Story 5 | Difficulty 7 Functional High Priority Product Backlog User Story | As a parent I want to edit flashcards in order to Change the word associated with a picture. (This is to better clarify User Story 4 and rid User Story 1 of amalgamation issues) | 1.0 | User Story 5 - As a parent I want to edit flashcards in order to Change the word associated with a picture. (This is to better clarify User Story 4 and rid User Story 1 of amalgamation issues) | priority | user story as a parent i want to edit flashcards in order to change the word associated with a picture this is to better clarify user story and rid user story of amalgamation issues | 1 |

27,847 | 2,696,615,308 | IssuesEvent | 2015-04-02 15:06:06 | IQSS/dataverse | https://api.github.com/repos/IQSS/dataverse | opened | Once you are in data "explore" no options to exit and get back into the dataset file page | Component: UX & Upgrade Priority: High Status: Dev Type: Feature | Once you hit "explore" you are taken to "Two ravens" but there are no options to get back to the study page, and two ravens doesn't open in a new window | 1.0 | Once you are in data "explore" no options to exit and get back into the dataset file page - Once you hit "explore" you are taken to "Two ravens" but there are no options to get back to the study page, and two ravens doesn't open in a new window | priority | once you are in data explore no options to exit and get back into the dataset file page once you hit explore you are taken to two ravens but there are no options to get back to the study page and two ravens doesn t open in a new window | 1 |

754,534 | 26,392,491,483 | IssuesEvent | 2023-01-12 16:40:19 | nexB/scancode.io | https://api.github.com/repos/nexB/scancode.io | closed | Improve the value of the "documentDescribes" field in a generated SPDX 2.3 SBOM | bug high priority reporting | At the recent SPDX Docfest, it was pointed out that the value we provide in the SPDX 2.3 SBOM for the "documentDescribes" field is a self-reference to the SPDX Document, rather than the subject of the SBOM: `SPDXRef-DOCUMENT` .

It would be better to provide a filename (possibly even a PURL when available) to identi... | 1.0 | Improve the value of the "documentDescribes" field in a generated SPDX 2.3 SBOM - At the recent SPDX Docfest, it was pointed out that the value we provide in the SPDX 2.3 SBOM for the "documentDescribes" field is a self-reference to the SPDX Document, rather than the subject of the SBOM: `SPDXRef-DOCUMENT` .

It wou... | priority | improve the value of the documentdescribes field in a generated spdx sbom at the recent spdx docfest it was pointed out that the value we provide in the spdx sbom for the documentdescribes field is a self reference to the spdx document rather than the subject of the sbom spdxref document it wou... | 1 |

158,901 | 6,036,902,616 | IssuesEvent | 2017-06-09 17:17:42 | forcedotcom/scmt-server | https://api.github.com/repos/forcedotcom/scmt-server | opened | Implement variable exponential back off and jitter in the backoff | enhancement priority - high | (so if there's multiple migrations running and they all hiccup from the same perf issue they don't all slam us at the same time?) | 1.0 | Implement variable exponential back off and jitter in the backoff - (so if there's multiple migrations running and they all hiccup from the same perf issue they don't all slam us at the same time?) | priority | implement variable exponential back off and jitter in the backoff so if there s multiple migrations running and they all hiccup from the same perf issue they don t all slam us at the same time | 1 |
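The backoff request in the row above can be illustrated with a short Python sketch. This is not code from scmt-server: the names `backoff_delay` and `retry_with_backoff`, and the `base`, `cap`, and `max_attempts` parameters, are assumptions chosen for illustration. It shows one common variant ("full jitter"), where each retry waits a random time between zero and an exponentially growing ceiling, so that several migrations failing at the same moment do not all retry at the same moment.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


def backoff_delay(attempt: int, base: float = 0.5, cap: float = 30.0,
                  rng: Optional[random.Random] = None) -> float:
    """Return a jittered delay in seconds for a zero-based attempt number."""
    # Exponential ceiling: base * 2^attempt, capped so delays stay bounded.
    ceiling = min(cap, base * (2 ** attempt))
    # Full jitter: pick uniformly in [0, ceiling] so concurrent clients spread out.
    uniform = rng.uniform if rng is not None else random.uniform
    return uniform(0.0, ceiling)


def retry_with_backoff(call: Callable[[], T], max_attempts: int = 5,
                       sleep: Callable[[float], None] = time.sleep) -> T:
    """Run `call` until it succeeds or `max_attempts` attempts have failed."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return call()
        except Exception:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(backoff_delay(attempt))
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter is only a convenience for testing; a real client would likely also restrict which exceptions count as retryable.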

292,427 | 8,957,799,320 | IssuesEvent | 2019-01-27 08:12:21 | cstate/cstate | https://api.github.com/repos/cstate/cstate | opened | HEX codes starting with 00 are unusable | bug help wanted priority: high | Golang/Hugo interprets a HEX code starting with 0 as octal

[Reference of quirk](https://scripter.co/golang-quirk-number-strings-starting-with-0-are-octals/)

Contributors appreciated

| 1.0 | HEX codes starting with 00 are unusable - Golang/Hugo interprets a HEX code starting with 0 as octal

[Reference of quirk](https://scripter.co/golang-quirk-number-strings-starting-with-0-are-octals/)

Contributors appreciated

| priority | hex codes starting with are unusable golang hugo interprets a hex code starting with as octal contributors appreciated | 1 |

307,636 | 9,419,642,480 | IssuesEvent | 2019-04-10 22:40:59 | cb-geo/mpm | https://api.github.com/repos/cb-geo/mpm | closed | Create node / particle sets | Priority: High Status: Pending Type: Core feature | In the mesh class, we should be able to create a set of nodes and particles on which loading or restraints can be applied. A code snippet on how to create a node set:

```

//! Assign node sets

//! \brief Assign loads and restrain node sets

//! \return create_status Return success or failure of node set creation

t... | 1.0 | Create node / particle sets - In the mesh class, we should be able to create a set of nodes and particles on which loading or restraints can be applied. A code snippet on how to create a node set:

```

//! Assign node sets

//! \brief Assign loads and restrain node sets

//! \return create_status Return success or f... | priority | create node particle sets in the mesh class we should be able to create a set of nodes and particles on which loading or restraints can be applied a code snippet on how to create a node set assign node sets brief assign loads and restrain node sets return create status return success or f... | 1 |

273,313 | 8,529,111,694 | IssuesEvent | 2018-11-03 08:02:19 | CS2103-AY1819S1-F10-3/main | https://api.github.com/repos/CS2103-AY1819S1-F10-3/main | closed | [P.E. Dry Run] services' cost does not accept 2 d.p. values | priority.High | client#1 addservice s/ring c/2.99

- Executing above command results in command being ignored with no error messages told to user.

- Should accept numbers with 2 d.p. since the User Guide says it accepts SGD, which is a money format that accepts 2 d.p. Can either change the User Guide to specify only integers, or chan... | 1.0 | [P.E. Dry Run] services' cost does not accept 2 d.p. values - client#1 addservice s/ring c/2.99

- Executing above command results in command being ignored with no error messages told to user.

- Should accept numbers with 2 d.p. since the User Guide says it accepts SGD, which is a money format that accepts 2 d.p. Can ... | priority | services cost does not accept d p values client addservice s ring c executing above command results in command being ignored with no error messages told to user should accept numbers with d p since the user guide says it accepts sgd which is a money format that accepts d p can either change ... | 1 |
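The behaviour requested in the row above (accept costs such as `2.99` and report an error otherwise, instead of silently ignoring the command) can be sketched as follows. This is a hypothetical Python illustration, not the project's own code: `COST_PATTERN` and `parse_cost` are assumed names, and the rule "non-negative amount with at most 2 decimal places" is one reading of the report.

```python
import re
from decimal import Decimal

# Hypothetical rule: ASCII digits, optionally followed by a dot and 1-2 decimals.
COST_PATTERN = re.compile(r"[0-9]+(\.[0-9]{1,2})?")


def parse_cost(raw: str) -> Decimal:
    """Parse a cost string such as "2.99", or raise ValueError with a message."""
    text = raw.strip()
    if not COST_PATTERN.fullmatch(text):
        # An explicit error lets the UI tell the user why the command failed.
        raise ValueError(
            f"Invalid cost {raw!r}: expected a non-negative amount "
            "with at most 2 decimal places, e.g. 2.99"
        )
    # Decimal avoids binary floating-point rounding for currency values.
    return Decimal(text)
```

With this sketch, `parse_cost("2.99")` yields `Decimal("2.99")`, while an input like `"2.999"` raises a `ValueError` carrying a readable message.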

335,160 | 10,149,655,104 | IssuesEvent | 2019-08-05 15:40:58 | HUPO-PSI/mzTab | https://api.github.com/repos/HUPO-PSI/mzTab | closed | CV Terms for mzTab-m 2.0.0 | Priority-High enhancement metabolomics-part mzTab-M 2.0.0 | SEP, MS

[sample_processing](https://github.com/HUPO-PSI/mzTab/blob/master/specification_document-developments/1_1-Metabolomics-Draft/mzTab_format_specification_1_1-M_draft.adoc#625-sample_processing1-n)

MS

[instrument_name](https://github.com/HUPO-PSI/mzTab/blob/master/specification_document-developments/1_1-Metab... | 1.0 | CV Terms for mzTab-m 2.0.0 - SEP, MS

[sample_processing](https://github.com/HUPO-PSI/mzTab/blob/master/specification_document-developments/1_1-Metabolomics-Draft/mzTab_format_specification_1_1-M_draft.adoc#625-sample_processing1-n)

MS

[instrument_name](https://github.com/HUPO-PSI/mzTab/blob/master/specification_do... | priority | cv terms for mztab m sep ms ms ms ms ms ms ms any cv ms or other cv ms ms ms or other ms any cv arbitrary these should not be validated newt bto cl doid any cv ... | 1 |

603,913 | 18,673,942,197 | IssuesEvent | 2021-10-31 08:08:34 | xournalpp/xournalpp | https://api.github.com/repos/xournalpp/xournalpp | closed | Objects disappear when deselected or while working | bug priority::high rendering | Hi!

I experienced this issue while using LaTeX. I was many images in when suddenly one or two started disappearing.

I don't know what caused it, but I think it has something to do with the selection mode, because the first object disappeared when I switched to "Select Object" mode.

### 💻 Operating system

Something else

### 👀 Have you spent some time to check if this i... | 1.0 | 🐛 Bug Report: Cancel booking not showing in backoffice. - ### 👟 Reproduction steps

Cancel booking not showing in backoffice.

### 👍 Expected behavior

Cancel booking should be in back office.

### 👎 Actual Behavior

.

### 🎲 App version

Different version (specify in environment)

### 💻 Operating system

Somethi... | priority | 🐛 bug report cancel booking not showing in backoffice 👟 reproduction steps cancel booking not showing in backoffice 👍 expected behavior cancel booking should be in back office 👎 actual behavior 🎲 app version different version specify in environment 💻 operating system somethi... | 1 |

554,101 | 16,389,156,628 | IssuesEvent | 2021-05-17 14:10:39 | micronaut-projects/micronaut-core | https://api.github.com/repos/micronaut-projects/micronaut-core | closed | Regression 2.4.x 2.5.x Unable to deploy to Elastic Beanstalk | priority: high type: bug | # Steps to reproduce

`mn create-app example.micronaut.micronautguide --inplace`

## Health Endpoint

Add `micronaut-management` dependency:

```groovy

dependencies {

...

..

.

implementation("io.micronaut:micronaut-management")

}

```

## Port 5000 EC2 Env

Create a file to run the application in ... | 1.0 | Regression 2.4.x 2.5.x Unable to deploy to Elastic Beanstalk - # Steps to reproduce

`mn create-app example.micronaut.micronautguide --inplace`

## Health Endpoint

Add `micronaut-management` dependency:

```groovy

dependencies {

...

..

.

implementation("io.micronaut:micronaut-management")

}

```

#... | priority | regression x x unable to deploy to elastic beanstalk steps to reproduce mn create app example micronaut micronautguide inplace health endpoint add micronaut management dependency groovy dependencies implementation io micronaut micronaut management ... | 1 |

357,122 | 10,602,235,443 | IssuesEvent | 2019-10-10 13:52:51 | geosolutions-it/MapStore2 | https://api.github.com/repos/geosolutions-it/MapStore2 | closed | Upgrade React to 16.x and also update related libraries | Accepted Priority: High user story | ### Description

Following up investigation done for #3528 we want to upgrade React to the latest 16.x version and the following related libraries:

- Babel (7.2.2)

- Webpack (4.29.3)

- react-redux (6.0.0)

We will also need to upgrade (to be compatible with React 16):

- qrcode.react

- react-color

- rea... | 1.0 | Upgrade React to 16.x and also update related libraries - ### Description

Following up investigation done for #3528 we want to upgrade React to the latest 16.x version and the following related libraries:

- Babel (7.2.2)

- Webpack (4.29.3)

- react-redux (6.0.0)

We will also need to upgrade (to be compatibl... | priority | upgrade react to x and also update related libraries description following up investigation done for we want to upgrade react to the latest x version and the following related libraries babel webpack react redux we will also need to upgrade to be compatible with... | 1 |

537,943 | 15,757,794,395 | IssuesEvent | 2021-03-31 05:51:54 | ballerina-platform/ballerina-lang | https://api.github.com/repos/ballerina-platform/ballerina-lang | closed | Transaction:getInfo() does not return the past transaction information | Lang/Transaction Priority/High Team/CompilerFE Type/Bug | Consider the following code.

```ballerina

transaction {

readonly d = 123;

transactions:setData(d);

transactions:Info transInfo = transactions:info();

transactions:Info? newTransInfo = transactions:getInfo(transInfo.xid);

if(newTransInfo is transactions:Info) {

... | 1.0 | Transaction:getInfo() does not return the past transaction information - Consider the following code.

```ballerina

transaction {

readonly d = 123;

transactions:setData(d);

transactions:Info transInfo = transactions:info();

transactions:Info? newTransInfo = transactions:getInfo(... | priority | transaction getinfo does not return the past transaction information consider the following code ballerina transaction readonly d transactions setdata d transactions info transinfo transactions info transactions info newtransinfo transactions getinfo tr... | 1 |

240,598 | 7,803,353,158 | IssuesEvent | 2018-06-10 22:49:05 | leo-project/leofs | https://api.github.com/repos/leo-project/leofs | closed | [rack aware] doesn't work as expected | Bug Priority-HIGH _leo_manager _leo_redundant_manager _leo_storage survey v1.4 | Now there are some cases that rack awareness doesn't work as expected.

For example, let's say that we try to create a cluster with

- 5 storage nodes (node[1-5])

- 2 physical racks (rack[1-2])

- 2 replicas (N=2) and each replica should belong to a different rack

then set the configurations as following

- rep... | 1.0 | [rack aware] doesn't work as expected - Now there are some cases that rack awareness doesn't work as expected.

For example, let's say that we try to create a cluster with

- 5 storage nodes (node[1-5])

- 2 physical racks (rack[1-2])

- 2 replicas (N=2) and each replica should belong to a different rack

then set ... | priority | doesn t work as expected now there are some cases that rack awareness doesn t work as expected for example let s say that we try to create a cluster with storage nodes node physical racks rack replicas n and each replica should belong to a different rack then set the configurations ... | 1 |

689,390 | 23,618,636,949 | IssuesEvent | 2022-08-24 18:15:05 | nexB/vulnerablecode | https://api.github.com/repos/nexB/vulnerablecode | closed | Odd behavior for certain vulnerability searches | bug Priority: high | I just noticed odd results from a vulnerability search that appear to be related to the number of `aliases` for that vulnerability.

For example, if I search for `cve`, my local DB returns 4,629 records. 1 record is `VULCOID-1`, and the vulnerability search results table shows 351 Affected packages and 2 Fixed packa... | 1.0 | Odd behavior for certain vulnerability searches - I just noticed odd results from a vulnerability search that appear to be related to the number of `aliases` for that vulnerability.

For example, if I search for `cve`, my local DB returns 4,629 records. 1 record is `VULCOID-1`, and the vulnerability search results t... | priority | odd behavior for certain vulnerability searches i just noticed odd results from a vulnerability search that appear to be related to the number of aliases for that vulnerability for example if i search for cve my local db returns records record is vulcoid and the vulnerability search results tab... | 1 |

300,880 | 9,213,202,537 | IssuesEvent | 2019-03-10 09:45:30 | leinardi/FloatingActionButtonSpeedDial | https://api.github.com/repos/leinardi/FloatingActionButtonSpeedDial | closed | Use unique view IDs | Priority: High Status: Review Needed Status: Stale Type: Enhancement | It would be a good idea to prefix view ids used in the library to make them unique.

I'm getting the following error:

```

Wrong state class, expecting View State but received class com.google.android.material.stateful.ExtendableSavedState instead. This usually happens when two views of different type have the sam... | 1.0 | Use unique view IDs - It would be a good idea to prefix view ids used in the library to make them unique.

I'm getting the following error:

```

Wrong state class, expecting View State but received class com.google.android.material.stateful.ExtendableSavedState instead. This usually happens when two views of diffe... | priority | use unique view ids it would be a good idea to prefix view ids used in the library to make them unique i m getting the following error wrong state class expecting view state but received class com google android material stateful extendablesavedstate instead this usually happens when two views of diffe... | 1 |

594,182 | 18,026,014,572 | IssuesEvent | 2021-09-17 04:43:02 | input-output-hk/cardano-graphql | https://api.github.com/repos/input-output-hk/cardano-graphql | closed | Problem with reading rewards after upgrade to Alonzo | BUG PRIORITY:HIGH | After the Alonzo update of the Cardano Node, we are not able to read our rewards.

We are sending the following query:

```

rewards(

where:{

stakePool:{

id:{_eq:"pool13xxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxxx"... | 1.0 | Problem with reading rewards after upgrade to Alonzo - After upgrade the Alonzo update of the Cardano Node we are not able to read our rewards.

We are sending the following query:

```

rewards(

where:{

stakePool:{

id:{_e... | priority | problem with reading rewards after upgrade to alonzo after upgrade the alonzo update of the cardano node we are not able to read our rewards we are sending the following query rewards where stakepool id e... | 1 |

467,407 | 13,447,631,935 | IssuesEvent | 2020-09-08 14:28:51 | onaio/reveal-frontend | https://api.github.com/repos/onaio/reveal-frontend | opened | Dynamic FI Plans Missing Details in the FI Plans for Thailand | Priority: High Reveal-DSME | - [ ] The Dynamic FI plans on the Thailand preview does not show details that were there on the initial FI plans This include: | 1.0 | Dynamic FI Plans Missing Details in the FI Plans for Thailand - - [ ] The Dynamic FI plans on the Thailand preview does not show details that were there on the initial FI plans This include: | priority | dynamic fi plans missing details in the fi plans for thailand the dynamic fi plans on the thailand preview does not show details that were there on the initial fi plans this include | 1 |

803,048 | 29,116,045,607 | IssuesEvent | 2023-05-17 01:07:34 | encorelab/ck-board | https://api.github.com/repos/encorelab/ck-board | closed | Modify Todo List goal types | enhancement high priority | ### Description

Add a new goal type

**Tasks**

- [ ] Add "ATL skills" as a new goal type (between "Classroom engagement" and "Assigned class work")

- [ ] For the info icon beside the goal type selector add "ATL skills (i.e., thinking skills, self-management skills, and research skills)" between the class engagemen... | 1.0 | Modify Todo List goal types - ### Description

Add a new goal type

**Tasks**

- [ ] Add "ATL skills" as a new goal type (between "Classroom engagement" and "Assigned class work")

- [ ] For the info icon beside the goal type selector add "ATL skills (i.e., thinking skills, self-management skills, and research skill... | priority | modify todo list goal types description add a new goal type tasks add atl skills as a new goal type between classroom engagement and assigned class work for the info icon beside the goal type selector add atl skills i e thinking skills self management skills and research skills ... | 1 |

634,506 | 20,363,690,967 | IssuesEvent | 2022-02-21 01:16:35 | NCC-CNC/whattemplatemaker | https://api.github.com/repos/NCC-CNC/whattemplatemaker | closed | Action IDs must be unique - should we provide guidance? | bug high priority | e.g., if they have two separate types of invasive species management? I think people can get around this by manually entering invasive spp management for second instance. Should we make that clearer or is that something for the manual? | 1.0 | Action IDs must be unique - should we provide guidance? - e.g., if they have two separate types of invasive species management? I think people can get around this by manually entering invasive spp management for second instance. Should we make that clearer or is that something for the manual? | priority | action ids must be unique should we provide guidance e g if they have two separate types of invasive species management i think people can get around this by manually entering invasive spp management for second instance should we make that clearer or is that something for the manual | 1 |

191,052 | 6,825,232,145 | IssuesEvent | 2017-11-08 09:47:38 | atlarge-research/opendc-frontend | https://api.github.com/repos/atlarge-research/opendc-frontend | closed | Support editing the tile-topology of a room | in progress Priority: High | In room mode, a button labeled 'Edit room' should be added which allows the user to add and remove tiles to/of that room. Currently, the only way of doing this is deleting the room (and any racks that may belong to it) and re-creating it. | 1.0 | Support editing the tile-topology of a room - In room mode, a button labeled 'Edit room' should be added which allows the user to add and remove tiles to/of that room. Currently, the only way of doing this is deleting the room (and any racks that may belong to it) and re-creating it. | priority | support editing the tile topology of a room in room mode a button labeled edit room should be added which allows the user to add and remove tiles to of that room currently the only way of doing this is deleting the room and any racks that may belong to it and re creating it | 1 |

25,303 | 2,679,046,614 | IssuesEvent | 2015-03-26 14:48:47 | Connexions/webview | https://api.github.com/repos/Connexions/webview | closed | Editor - user needs to be able to edit type attribute | enhancement High Priority | The Biology OSC book uses the type attribute on Notes that are Art Connection features. We need to at least add editing of type to Notes. It should work in the same way that the class attribute editor works.

Tagging Legend for Biology: https://rice.box.com/s/2m8ysm0mg3a5bvzwu55djfqw0myxc4sf

Example of Note usin... | 1.0 | Editor - user needs to be able to edit type attribute - The Biology OSC book uses the type attribute on Notes that are Art Connection features. We need to at least add editing of type to Notes. It should work in the same way that the class attribute editor works.

Tagging Legend for Biology: https://rice.box.com/s/... | priority | editor user needs to be able to edit type attribute the biology osc book uses the type attribute on notes that are art connection features we need to at least add editing of type to notes it should work in the same way that the class attribute editor works tagging legend for biology example of note us... | 1 |

242,763 | 7,846,603,758 | IssuesEvent | 2018-06-19 15:54:58 | department-of-veterans-affairs/caseflow | https://api.github.com/repos/department-of-veterans-affairs/caseflow | closed | RAMP election | Bug: we allow users to create EPs even if there are no issues to close | Triage bug-high-priority caseflow-intake sierra | There was a situation where an NOD was mistakenly dated one day later than the RAMP opt-in. The CA was able to create the EP, but no VACOLS appeal issues were closed. We should tell users when there are no valid issues on the "finish" step. | 1.0 | RAMP election | Bug: we allow users to create EPs even if there are no issues to close - There was a situation where an NOD was mistakenly dated one day later than the RAMP opt-in. The CA was able to create the EP, but no VACOLS appeal issues were closed. We should tell users when there are no valid issues on the "fini... | priority | ramp election bug we allow users to create eps even if there are no issues to close there was a situation where an nod was mistakenly dated one day later than the ramp opt in the ca was able to create the ep but no vacols appeal issues were closed we should tell users when there are no valid issues on the fini... | 1 |

184,094 | 6,705,401,790 | IssuesEvent | 2017-10-12 00:01:17 | Monitorr/Monitorr | https://api.github.com/repos/Monitorr/Monitorr | closed | Change config.php delivery | enhancement Epic Priority: HIGH | Need to change the way we supply the config file so that updates do not overwrite a users config.

- [x] Change the config.php to config.php.sample

- [x] Update README to reflect the new config setup (copy config.in.sample to config.ini) | 1.0 | Change config.php delivery - Need to change the way we supply the config file so that updates do not overwrite a users config.

- [x] Change the config.php to config.php.sample

- [x] Update README to reflect the new config setup (copy config.in.sample to config.ini) | priority | change config php delivery need to change the way we supply the config file so that updates do not overwrite a users config change the config php to config php sample update readme to reflect the new config setup copy config in sample to config ini | 1 |

328,195 | 9,990,732,194 | IssuesEvent | 2019-07-11 09:27:54 | nhn/tui.grid | https://api.github.com/repos/nhn/tui.grid | closed | jquery is required in onClick event code | 3.x Bug Priority: High | Hello tui-grid team, thank you for providing such a great library. While writing code with toast-ui.vue-grid, I found something that looks like a bug, so I am reporting it.

To explain the situation first: to use the Tree Data format, I set things up as follows, and then

```js

//////////// template part /////////////

...

<Grid class="table"

ref="table"

theme="striped"

:options="gridOptions"

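           ... | 1.0 | jquery is required in onClick event code - Hello tui-grid team, thank you for providing such a great library. While writing code with toast-ui.vue-grid, I found something that looks like a bug, so I am reporting it.

To explain the situation first: to use the Tree Data format, I set things up as follows, and then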

```js

//////////// template part /////////////

...

<Grid class="table"

ref="table"

theme="striped"

           ... | priority | jquery is required in onclick event code hello tui grid team thank you for providing such a great library while writing code with toast ui vue grid i found something that looks like a bug so i am reporting it to explain the situation first to use the tree data format i set things up as follows and then js template part grid class table ref table theme striped ... | 1 |

339,575 | 10,256,289,029 | IssuesEvent | 2019-08-21 17:18:38 | openstax/tutor | https://api.github.com/repos/openstax/tutor | closed | Don’t allow "Test Prep for AP® Courses" or "Science Practice Challenge Questions" to be selectable when creating reading/HW assignments | change priority1-high | ### Description

Don’t allow "Test Prep for AP® Courses" or "Science Practice Challenge Questions" to be selectable when creating reading/HW assignments

### Acceptance Criteria

These end-of-chapter questions appear in reference view, but are not available to assign when creating a reading assignment or when selecting... | 1.0 | Don’t allow "Test Prep for AP® Courses" or "Science Practice Challenge Questions" to be selectable when creating reading/HW assignments - ### Description

Don’t allow "Test Prep for AP® Courses" or "Science Practice Challenge Questions" to be selectable when creating reading/HW assignments

### Acceptance Criteria

Th... | priority | don’t allow test prep for ap® courses or science practice challenge questions to be selectable when creating reading hw assignments description don’t allow test prep for ap® courses or science practice challenge questions to be selectable when creating reading hw assignments acceptance criteria th... | 1 |

178,487 | 6,609,307,265 | IssuesEvent | 2017-09-19 14:09:23 | metasfresh/metasfresh-webui-api | https://api.github.com/repos/metasfresh/metasfresh-webui-api | closed | Unable to see list of document's attachments | branch:master branch:release priority:high type:bug | ### Type of issue

Bug

### Steps to reproduce:

1. Open window/123/2156482. This document has many attachments uploaded

2. Click on dropdown menu button (in top-right corner of the page)

3. Then click on tab with paperclip icon

4. Loading indicator will appear, need to wait some 20-30 seconds and then it throw... | 1.0 | Unable to see list of document's attachments - ### Type of issue

Bug

### Steps to reproduce:

1. Open window/123/2156482. This document has many attachments uploaded

2. Click on dropdown menu button (in top-right corner of the page)

3. Then click on tab with paperclip icon

4. Loading indicator will appear, ne... | priority | unable to see list of document s attachments type of issue bug steps to reproduce open window this document has many attachments uploaded click on dropdown menu button in top right corner of the page then click on tab with paperclip icon loading indicator will appear need to wa... | 1 |

553,126 | 16,357,488,553 | IssuesEvent | 2021-05-14 02:08:30 | k8ssandra/k8ssandra | https://api.github.com/repos/k8ssandra/k8ssandra | closed | Token allocations are random when using 4.0 and lead to collisions | bug complexity: high component: cass-operator component: cassandra priority: p1 sprint: 5 | ## Bug Report

<!--

Thanks for filing an issue! Before hitting the button, please answer these questions.

Fill in as much of the template below as you can.

-->

**Describe the bug**

When collocating Cassandra pods on the same worker node using Cassandra 4.0, some nodes will fail to start due to tokens collisio... | 1.0 | Token allocations are random when using 4.0 and lead to collisions - ## Bug Report

<!--

Thanks for filing an issue! Before hitting the button, please answer these questions.

Fill in as much of the template below as you can.

-->

**Describe the bug**

When collocating Cassandra pods on the same worker node usin... | priority | token allocations are random when using and lead to collisions bug report thanks for filing an issue before hitting the button please answer these questions fill in as much of the template below as you can describe the bug when collocating cassandra pods on the same worker node usin... | 1 |

783,759 | 27,544,657,765 | IssuesEvent | 2023-03-07 10:54:02 | frequenz-floss/frequenz-sdk-python | https://api.github.com/repos/frequenz-floss/frequenz-sdk-python | opened | Create a BackgroundService class | priority:high type:enhancement part:core | ### What's needed?

Right now we have no common approach on how to manage classes/objects that spawn tasks. The most core examples are actors, but we also have a lot of other classes that spawn tasks (like the resampler, moving window, etc.).

It should be possible to clearly control the lifespan of these objects, and... | 1.0 | Create a BackgroundService class - ### What's needed?

Right now we have no common approach on how to manage classes/objects that spawn tasks. The most core examples are actors, but we also have a lot of other classes that spawn tasks (like the resampler, moving window, etc.).

It should be possible to clearly control... | priority | create a backgroundservice class what s needed right now we have no common approach on how to manage classes objects that spawn tasks the most core examples are actors but we also have a lot of other classes that spawn tasks like the resampler moving window etc it should be possible to clearly control... | 1 |

254,379 | 8,073,429,849 | IssuesEvent | 2018-08-06 19:13:52 | HealthCatalyst/healthcareai-r | https://api.github.com/repos/HealthCatalyst/healthcareai-r | closed | Limone integration | High Priority model interpretation new features | should be called after/separately from `predict` rather than being a switch to turn on during predict | 1.0 | Limone integration - should be called after/separately from `predict` rather than being a switch to turn on during predict | priority | limone integration should be called after separately from predict rather than being a switch to turn on during predict | 1 |

258,646 | 8,178,606,926 | IssuesEvent | 2018-08-28 14:15:29 | Theophilix/event-table-edit | https://api.github.com/repos/Theophilix/event-table-edit | closed | Frontend: Layout: Normal mode: If user clicks on date, only actual date is shown. | bug high priority | Click was on first date, 24.03.2016! Also, the filter is not working.

| 1.0 | Frontend: Layout: Normal mode: If user clicks on date, only actual date is shown. - Click was on first date, 24.03.2016! Also, the filter is not working.

| priority | frontend layout normal mode if user clicks on date only actual date is shown click was on first date also the filter is not working | 1 |

580,428 | 17,243,820,820 | IssuesEvent | 2021-07-21 05:09:50 | prysmaticlabs/prysm | https://api.github.com/repos/prysmaticlabs/prysm | opened | Nested Subcommands Are Broken on v1.4.1 | Bug Priority: High | # 🐞 Bug Report

### Description

Nested Subcommands are Broken on `v1.4.1` . Any subcommand whose parent was a subcommand too

is affected by this error. #9129 introduced stricter validation of commands and arguments which did end up

breaking this command flow.

### Has this worked before in a previous versi... | 1.0 | Nested Subcommands Are Broken on v1.4.1 - # 🐞 Bug Report

### Description

Nested Subcommands are Broken on `v1.4.1` . Any subcommand whose parent was a subcommand too

is affected by this error. #9129 introduced stricter validation of commands and arguments which did end up

breaking this command flow.

###... | priority | nested subcommands are broken on 🐞 bug report description nested subcommands are broken on any subcommand whose parent was a subcommand too is affected by this error introduced stricter validation of commands and arguments which did end up breaking this command flow has ... | 1 |

605,418 | 18,735,000,024 | IssuesEvent | 2021-11-04 05:44:21 | AY2122S1-CS2103T-F11-2/tp | https://api.github.com/repos/AY2122S1-CS2103T-F11-2/tp | closed | [PE-D] delete 6 6 command has unexpected behaviour | bug priority.High mustfix | `delete 6 6` returns error "The person index provided is invalid", but the applicant at index 6 is actually deleted in the GUI list. In addition, upon immediately exiting the application and opening the application, applicant at index 6 remains and is not deleted.

Expected success message, and applicant at index 6 is ... | 1.0 | [PE-D] delete 6 6 command has unexpected behaviour - `delete 6 6` returns error "The person index provided is invalid", but the applicant at index 6 is actually deleted in the GUI list. In addition, upon immediately exiting the application and opening the application, applicant at index 6 remains and is not deleted.

E... | priority | delete command has unexpected behaviour delete returns error the person index provided is invalid but the applicant at index is actually deleted in the gui list in addition upon immediately exiting the application and opening the application applicant at index remains and is not deleted expect... | 1 |

424,035 | 12,305,215,039 | IssuesEvent | 2020-05-11 22:00:34 | salimkanoun/Orthanc-Tools-JS | https://api.github.com/repos/salimkanoun/Orthanc-Tools-JS | closed | Closing the Overlays | Priority : High enhancement question | Would it be possible to close the overlays automatically when they lose focus, or something like that?

Or when the mouse has left the overlay?

The idea is to avoid having to click the button again to close the overlay.

| 1.0 | Closing the Overlays - Would it be possible to close the overlays automatically when they lose focus, or something like that?

Or when the mouse has left the overlay?

The idea is to avoid having to click the button again to close the overlay.

| priority | closing the overlays would it be possible to close the overlays automatically when they lose focus or something like that or when the mouse has left the overlay the idea is to avoid having to click the button again to close the overlay | 1 |

379,433 | 11,221,660,486 | IssuesEvent | 2020-01-07 18:21:40 | ansible/awx | https://api.github.com/repos/ansible/awx | closed | Incorrect Delete Modal on InventoryGroupDetail | component:ui_next priority:high state:in_progress | ##### ISSUE TYPE

- Bug Report

##### SUMMARY

The InventoryGroupDetails view does not use the correct delete modal.

##### ENVIRONMENT

* AWX version: 9.1

* AWX install method: docker for mac

* Ansible version: 2.10=

* Operating System: Mojave

* Web Browser: Chrome

##### STEPS TO REPRODUCE

Go to Invent... | 1.0 | Incorrect Delete Modal on InventoryGroupDetail - ##### ISSUE TYPE

- Bug Report

##### SUMMARY

The InventoryGroupDetails view does not use the correct delete modal.

##### ENVIRONMENT

* AWX version: 9.1

* AWX install method: docker for mac

* Ansible version: 2.10=

* Operating System: Mojave

* Web Browser: C... | priority | incorrect delete modal on inventorygroupdetail issue type bug report summary the inventorygroupdetails view does not use the correct delete modal environment awx version awx install method docker for mac ansible version operating system mojave web browser ch... | 1 |

399,067 | 11,742,665,065 | IssuesEvent | 2020-03-12 01:38:16 | thaliawww/concrexit | https://api.github.com/repos/thaliawww/concrexit | closed | CSV export does not contain payment values | bug easy and fun events priority: high | In GitLab by @JobDoesburg on May 7, 2019, 09:07

### One-sentence description

The .csv export of event registrations does not contain payment values

### Current behaviour / Reproducing the bug

Every row is exported as unpaid

### Expected behaviour

Contain the payment value visible in the admin | 1.0 | CSV export does not contain payment values - In GitLab by @JobDoesburg on May 7, 2019, 09:07

### One-sentence description

The .csv export of event registrations does not contain payment values

### Current behaviour / Reproducing the bug

Every row is exported as unpaid

### Expected behaviour

Contain the payment va... | priority | csv export does not contain payment values in gitlab by jobdoesburg on may one sentence description the csv export of event registrations does not contain payment values current behaviour reproducing the bug every row is exported as unpaid expected behaviour contain the payment value v... | 1 |

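563,666 | 16,703,358,758 | IssuesEvent | 2021-06-09 07:00:42 | knowease-inc/knowease-inc.github.io | https://api.github.com/repos/knowease-inc/knowease-inc.github.io | opened | Apply Google Analytics | Domain:Infra ETR:1W- Priority:High Task:Enhancement | ## This goal must be achieved

> Briefly describe what goal this issue aims to achieve and what state should result.

* Google Analytics data collection is enabled

## This is the current state

> Briefly describe the problem at the time this issue was created, or the potential for future problems.

* Data about page visitors is not available.

## This person can probably resolve this issue

> Mention **only one** Assignee with @.

* @T-Mook

## The following detailed problems probably need to be solved

... | 1.0 | Apply Google Analytics - ## This goal must be achieved

> Briefly describe what goal this issue aims to achieve and what state should result.

* Google Analytics data collection is enabled

## This is the current state

> Briefly describe the problem at the time this issue was created, or the potential for future problems.

* Data about page visitors is not available.

## This person can probably resolve this issue

> Mention **only one** Assignee with @.

* @T-Mook

## The following de... | priority | apply google analytics this goal must be achieved briefly describe what goal this issue aims to achieve and what state should result google analytics data collection is enabled this is the current state briefly describe the problem at the time this issue was created or the potential for future problems data about page visitors is not available this person can probably resolve this issue mention only one assignee with t mook the following detailed... | 1 |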

527,061 | 15,307,955,372 | IssuesEvent | 2021-02-24 21:41:11 | infiniteautomation/ma-core-public | https://api.github.com/repos/infiniteautomation/ma-core-public | closed | Audit Event Refactor | High Priority Item | Audit events are currently raised via a static method in the AbstractVoDao, they should leverage DaoEvents. The problem that brought this to my attention was that EventInstanceDao is attempting to raise audit events for EventInstanceVOs when they are inserted (which won't generally happen in Mango but does in the test... | 1.0 | Audit Event Refactor - Audit events are currently raised via a static method in the AbstractVoDao, they should leverage DaoEvents. The problem that brought this to my attention was that EventInstanceDao is attempting to raise audit events for EventInstanceVOs when they are inserted (which won't generally happen in Man... | priority | audit event refactor audit events are currently raised via a static method in the abstractvodao they should leverage daoevents the problem that brought this to my attention was that eventinstancedao is attempting to raise audit events for eventinstancevos when they are inserted which won t generally happen in man... | 1 |

688,594 | 23,589,154,368 | IssuesEvent | 2022-08-23 13:58:03 | huridocs/uwazi | https://api.github.com/repos/huridocs/uwazi | closed | Multi date range and multi date ranges are not supported by CSV import | Sprint Priority: High Feature Backend 💾 | The property type date range and multi date range are not supported through csv import. At present, this type of information has to be manually entered into the database as the import leaves out these property types when trying to do an import.

User Story:

As an admin user responsible for the migration of a large... | 1.0 | Multi date range and multi date ranges are not supported by CSV import - The property type date range and multi date range are not supported through csv import. At present, this type of information has to be manually entered into the database as the import leaves out these property types when trying to do an import.

... | priority | multi date range and multi date ranges are not supported by csv import the property type date range and multi date range are not supported through csv import at present this type of information has to be manually entered into the database as the import leaves out these property types when trying to do an import ... | 1 |

645,947 | 21,033,517,539 | IssuesEvent | 2022-03-31 04:47:24 | wso2/product-is | https://api.github.com/repos/wso2/product-is | closed | Getting "Something went wrong" Error when trying to add a attribute for a registered OIDC application | ui Priority/Highest Severity/Blocker bug console UI Component/Application Management UI Component/Attribute Management Affected-5.12.0 QA-Reported | **How to reproduce:**

1. Set up postgres 11.5 as primary db

2. Access Console

3. Register a OIDC application