| Unnamed: 0 (int64, 0 to 832k) | id (float64, 2.49B to 32.1B) | type (string, 1 class) | created_at (string, length 19) | repo (string, length 5 to 112) | repo_url (string, length 34 to 141) | action (string, 3 classes) | title (string, length 1 to 855) | labels (string, length 4 to 721) | body (string, length 1 to 261k) | index (string, 13 classes) | text_combine (string, length 96 to 261k) | label (string, 2 classes) | text (string, length 96 to 240k) | binary_label (int64, 0 to 1) |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

443,322

| 12,792,548,904

|

IssuesEvent

|

2020-07-02 01:40:39

|

lardemua/atom

|

https://api.github.com/repos/lardemua/atom

|

closed

|

Merge general hand eye with calibrate

|

High Priority enhancement help wanted

|

Should do this to advance in the general approach.

Defined with @eupedrosa that the best way is to try to put in calibrate the stuff from hand eye, i.e. to use the calibrate as base.

|

1.0

|

Merge general hand eye with calibrate - Should do this to advance in the general approach.

Defined with @eupedrosa that the best way is to try to put in calibrate the stuff from hand eye, i.e. to use the calibrate as base.

|

priority

|

merge general hand eye with calibrate should do this to advance in the general approach defined with eupedrosa that the best way is to try to put in calibrate the stuff from hand eye i e to use the calibrate as base

| 1

|

141,381

| 5,435,457,609

|

IssuesEvent

|

2017-03-05 17:09:33

|

Templarian/MaterialDesign

|

https://api.github.com/repos/Templarian/MaterialDesign

|

closed

|

Exponent and Root

|

Alias Icon High Priority Icon Request

|

I'm sure this was part of a request at some point but I can't seem to find these icons. An `x²` and `√x` would be really useful. Could also use a decimal point (the dot talked about in #1709 would work), and a degree symbol like `x°`.

|

1.0

|

Exponent and Root - I'm sure this was part of a request at some point but I can't seem to find these icons. An `x²` and `√x` would be really useful. Could also use a decimal point (the dot talked about in #1709 would work), and a degree symbol like `x°`.

|

priority

|

exponent and root i m sure this was part of a request at some point but i can t seem to find these icons an x² and √x would be really useful could also use a decimal point the dot talked about in would work and a degree symbol like x°

| 1

|

131,858

| 5,166,426,990

|

IssuesEvent

|

2017-01-17 16:12:26

|

snaiperskaya96/test-import-repo

|

https://api.github.com/repos/snaiperskaya96/test-import-repo

|

opened

|

Delete old 'auto invoice' module

|

Accepted Enhancement High Priority

|

https://trello.com/c/XWeij4td/487-delete-old-auto-invoice-module

This must be done after all of our Brightpearl accounts are fully upgraded to version 4.90.

|

1.0

|

Delete old 'auto invoice' module - https://trello.com/c/XWeij4td/487-delete-old-auto-invoice-module

This must be done after all of our Brightpearl accounts are fully upgraded to version 4.90.

|

priority

|

delete old auto invoice module this must be done after all of our brightpearl accounts are fully upgraded to version

| 1

|

737,245

| 25,507,848,224

|

IssuesEvent

|

2022-11-28 10:48:37

|

opensquare-network/bounties

|

https://api.github.com/repos/opensquare-network/bounties

|

closed

|

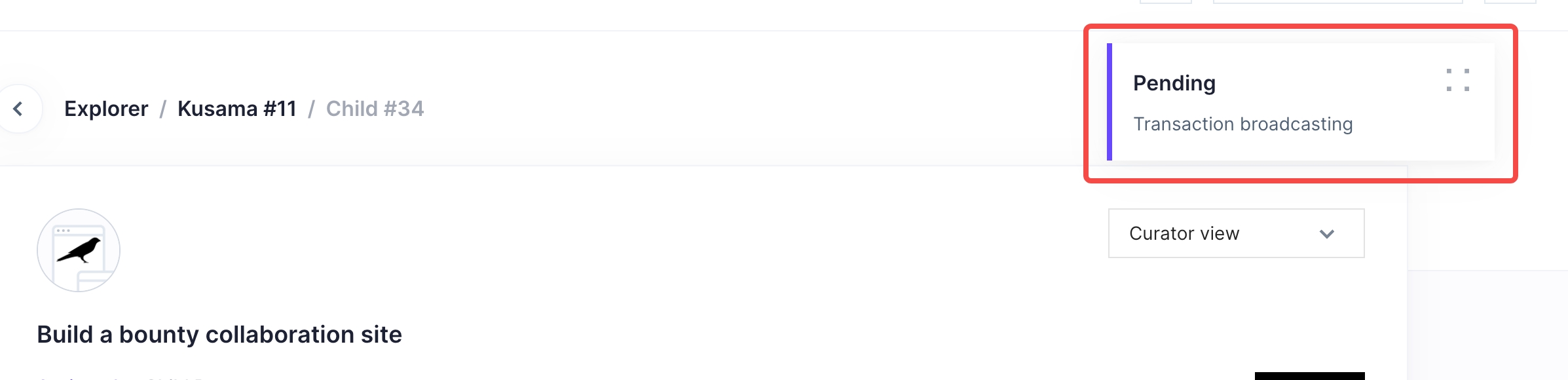

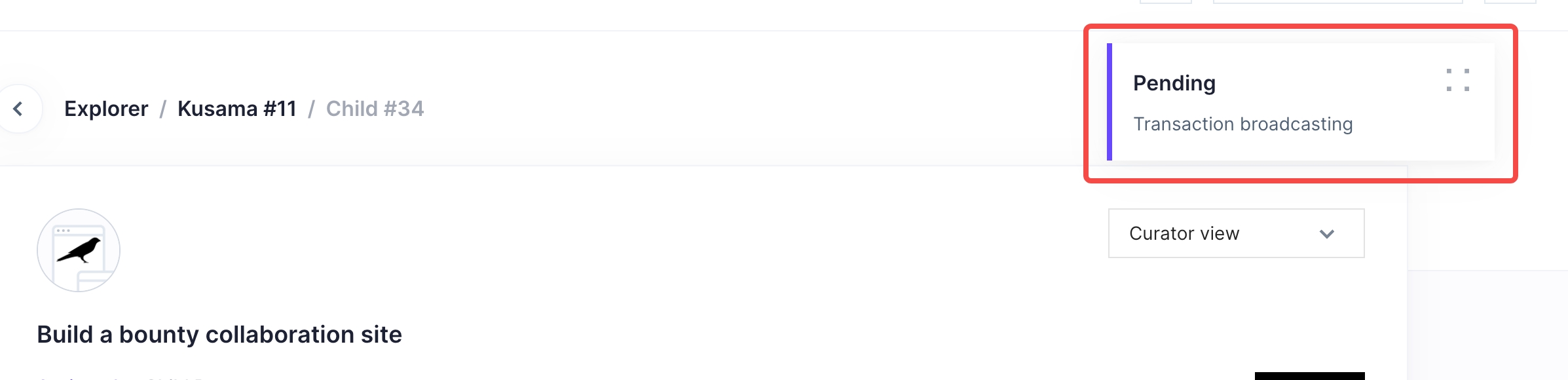

Award extrinsic keep broadcasting

|

bug priority:high

|

1. The award button should be disabled if no work has been submitted

2. The broadcasting toast keeps showing after the award button is clicked when no work has been submitted

|

1.0

|

Award extrinsic keep broadcasting - 1. The award button should be disabled if no work has been submitted

2. The broadcasting toast keeps showing after the award button is clicked when no work has been submitted

|

priority

|

award extrinsic keep broadcasting the award button should be disabled if work not submitted the broadcasting toast keep showing after clicking award button when no work not submitted

| 1

|

32,883

| 2,760,832,254

|

IssuesEvent

|

2015-04-28 14:24:24

|

DLR-SC/tixi

|

https://api.github.com/repos/DLR-SC/tixi

|

closed

|

Function for specifying output stream of error and warning messages

|

auto-migrated Milestone-Release2.1.2 Priority-High Type-Enhancement

|

```

Currently, all error and warnings are printed to stdout. This is sometimes

impractical. E.g. we would like to get TiXI Message inside TiGLViewer.

Therefore we have to pipe the messages into the Logging framework of TiGL.

Adding a new logging framework to Tixi is overkill, but we could provide a

function that allows setting a different output stream.

The function could be

tixiSetStandardOut(FILE * outstream)

```

Original issue reported on code.google.com by `martinsi...@gmail.com` on 14 Nov 2013 at 8:40

|

1.0

|

Function for specifying output stream of error and warning messages - ```

Currently, all error and warnings are printed to stdout. This is sometimes

impractical. E.g. we would like to get TiXI Message inside TiGLViewer.

Therefore we have to pipe the messages into the Logging framework of TiGL.

Adding a new logging framework to Tixi is overkill, but we could provide a

function that allows setting a different output stream.

The function could be

tixiSetStandardOut(FILE * outstream)

```

Original issue reported on code.google.com by `martinsi...@gmail.com` on 14 Nov 2013 at 8:40

|

priority

|

function for specifying output stream of error and warning messages currently all error and warnings are printed to stdout this is sometimes impractical e g we would like to get tixi message inside tiglviewer therefore we have to pipe the messages into the logging framework of tigl adding a new logging framework to tixi is overkill but we could provide a function that allows setting a different output stream the function could be tixisetstandardout file outstream original issue reported on code google com by martinsi gmail com on nov at

| 1

|

780,204

| 27,384,570,300

|

IssuesEvent

|

2023-02-28 12:26:12

|

Azure/mec-app-solution-accelerator

|

https://api.github.com/repos/Azure/mec-app-solution-accelerator

|

closed

|

[doc] Create an .MD explaining how to provision a new camera

|

issue P1 (High priority)

|

Add that as an .MD with a link from README.md explaining how to provision a new camera.

|

1.0

|

[doc] Create an .MD explaining how to provision a new camera - Add that as an .MD with a link from README.md explaining how to provision a new camera.

|

priority

|

create an md explaining how to provision a new camera add that as an md with a link from readme md explaining how to provision a new camera

| 1

|

576,783

| 17,094,640,473

|

IssuesEvent

|

2021-07-08 23:14:36

|

CHOMPStation2/CHOMPStation2

|

https://api.github.com/repos/CHOMPStation2/CHOMPStation2

|

closed

|

Mapping support needed for the change to conveyor belts

|

High Priority Map Edit

|

#### Brief description of the issue

Due to https://github.com/CHOMPStation2/CHOMPStation2/pull/2441, conveyor belts set to diagonal directions are pointing in the wrong directions, commonly problematic at the mining base that relies on it to process materials.

#### What you expected to happen

For conveyor belts to work before the PR merge.

#### What actually happened

Conveyor belts are pointing in the wrong direction if they were set to diagonals.

#### Steps to reproduce

- Step 1 - Go to Mining on Sif

- Step 2 - Check conveyor belts

- Step 3 - Laugh/Cry

#### Code Revision

Server revision: B:-Using TGS- D:-Using TGS-

Commit: 7438d0f486dcc60bafc39dd0e8266db5b55194fd

TGS version: 4.11.1

DMAPI version: 5.3.0

#### Anything else you may wish to add:

- This generally affects all conveyor belts mapped in, just blocks mining from their job.

|

1.0

|

Mapping support needed for the change to conveyor belts - #### Brief description of the issue

Due to https://github.com/CHOMPStation2/CHOMPStation2/pull/2441, conveyor belts set to diagonal directions are pointing in the wrong directions, commonly problematic at the mining base that relies on it to process materials.

#### What you expected to happen

For conveyor belts to work before the PR merge.

#### What actually happened

Conveyor belts are pointing in the wrong direction if they were set to diagonals.

#### Steps to reproduce

- Step 1 - Go to Mining on Sif

- Step 2 - Check conveyor belts

- Step 3 - Laugh/Cry

#### Code Revision

Server revision: B:-Using TGS- D:-Using TGS-

Commit: 7438d0f486dcc60bafc39dd0e8266db5b55194fd

TGS version: 4.11.1

DMAPI version: 5.3.0

#### Anything else you may wish to add:

- This generally affects all conveyor belts mapped in, just blocks mining from their job.

|

priority

|

mapping support needed for the change to conveyor belts brief description of the issue due to conveyor belts set to diagonal directions are pointing in the wrong directions commonly problematic at the mining base that relies on it to process materials what you expected to happen for conveyor belts to work before the pr merge what actually happened conveyor belts are pointing in the wrong direction if they were set to diagonals steps to reproduce step go to mining on sif step check conveyor belts step laugh cry code revision server revision b using tgs d using tgs commit tgs version dmapi version anything else you may wish to add this generally affects all conveyor belts mapped in just blocks mining from their job

| 1

|

614,495

| 19,184,245,408

|

IssuesEvent

|

2021-12-04 23:26:27

|

aaronparker/evergreen

|

https://api.github.com/repos/aaronparker/evergreen

|

closed

|

[Bug]: Microsoft.NET Download URL's changed?

|

bug priority:high

|

### What happened?

Microsoft.NET 'LTS' Channel downloads appear to have moved from https://dotnetcli.azureedge.net/dotnet/Runtime/6.0.0/....

'Current' Channel still seems to be valid for downloads.

If I go to the Microsoft .NET Download site - the new url appears to be:

https://dotnet.microsoft.com/download/dotnet/thank-you/runtime-desktop-6.0.0-windows-x64-installer

### Version

2111.448

### What PowerShell edition/s are you running Evergreen on?

Windows PowerShell

### Which operating system/s are you running Evergreen on?

Windows Server 2016+

### Have you reviewed the documentation?

- [X] Troubleshooting at: https://stealthpuppy.com/evergreen/troubleshoot/

- [X] Known issues at: https://stealthpuppy.com/evergreen/issues/

### Verbose output

```shell

Get-EvergreenApp -Name Microsoft.NET -Verbose

VERBOSE: Get-EvergreenApp: Function exists: C:\Program Files\WindowsPowerShell\Modules\Evergreen\2111.488\Apps\Get-Microsoft.NET.ps1.

VERBOSE: Get-EvergreenApp: Dot sourcing: C:\Program Files\WindowsPowerShell\Modules\Evergreen\2111.488\Apps\Get-Microsoft.NET.ps1.

VERBOSE: Get-FunctionResource: read application resource strings from [C:\Program Files\WindowsPowerShell\Modules\Evergreen\2111.488\Man

ifests\Microsoft.NET.json]

VERBOSE: Get-EvergreenApp: Calling: Get-Microsoft.NET.

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [UserAgent: Mozilla/5.0 (Windows NT; Windows NT 10.0; en-US) AppleWebKit

/534.6 (KHTML, like Gecko) Chrome/7.0.500.0 Safari/534.6].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [Method: Default].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [ErrorAction: Continue].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [UseBasicParsing: True].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [Uri: https://dotnetcli.blob.core.windows.net/dotnet/Runtime/Current/lat

est.version].

VERBOSE: GET https://dotnetcli.blob.core.windows.net/dotnet/Runtime/Current/latest.version with 0-byte payload

VERBOSE: received 6-byte response of content type text/plain

VERBOSE: Invoke-WebRequestWrapper: Response: [200].

VERBOSE: Invoke-WebRequestWrapper: Content type: [text/plain].

VERBOSE: Invoke-WebRequestWrapper: Returning content of length: [6].

VERBOSE: Get-Microsoft.NET: found version: 5.0.12.

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [UserAgent: Mozilla/5.0 (Windows NT; Windows NT 10.0; en-US) AppleWebKit

/534.6 (KHTML, like Gecko) Chrome/7.0.500.0 Safari/534.6].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [Method: Default].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [ErrorAction: Continue].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [UseBasicParsing: True].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [Uri: https://dotnetcli.blob.core.windows.net/dotnet/Runtime/LTS/latest.

version].

VERBOSE: GET https://dotnetcli.blob.core.windows.net/dotnet/Runtime/LTS/latest.version with 0-byte payload

VERBOSE: received 5-byte response of content type text/plain

VERBOSE: Invoke-WebRequestWrapper: Response: [200].

VERBOSE: Invoke-WebRequestWrapper: Content type: [text/plain].

VERBOSE: Invoke-WebRequestWrapper: Returning content of length: [5].

VERBOSE: Get-Microsoft.NET: found version: 6.0.0.

VERBOSE: Get-EvergreenApp: Output result from: C:\Program Files\WindowsPowerShell\Modules\Evergreen\2111.488\Apps\Get-Microsoft.NET.ps1.

Version Architecture Channel URI

------- ------------ ------- ---

6.0.0 x64 LTS https://dotnetcli.azureedge.net/dotnet/Runtime/6.0.0/windowsdesktop-runtime-6.0.0-win-x64.exe

6.0.0 x86 LTS https://dotnetcli.azureedge.net/dotnet/Runtime/6.0.0/windowsdesktop-runtime-6.0.0-win-x86.exe

5.0.12 x64 Current https://dotnetcli.blob.core.windows.net/dotnet/WindowsDesktop/5.0.12/windowsdesktop-runtime-5.0.12-win-...

5.0.12 x86 Current https://dotnetcli.blob.core.windows.net/dotnet/WindowsDesktop/5.0.12/windowsdesktop-runtime-5.0.12-win-...

```

|

1.0

|

[Bug]: Microsoft.NET Download URL's changed? - ### What happened?

Microsoft.NET 'LTS' Channel downloads appear to have moved from https://dotnetcli.azureedge.net/dotnet/Runtime/6.0.0/....

'Current' Channel still seems to be valid for downloads.

If I go to the Microsoft .NET Download site - the new url appears to be:

https://dotnet.microsoft.com/download/dotnet/thank-you/runtime-desktop-6.0.0-windows-x64-installer

### Version

2111.448

### What PowerShell edition/s are you running Evergreen on?

Windows PowerShell

### Which operating system/s are you running Evergreen on?

Windows Server 2016+

### Have you reviewed the documentation?

- [X] Troubleshooting at: https://stealthpuppy.com/evergreen/troubleshoot/

- [X] Known issues at: https://stealthpuppy.com/evergreen/issues/

### Verbose output

```shell

Get-EvergreenApp -Name Microsoft.NET -Verbose

VERBOSE: Get-EvergreenApp: Function exists: C:\Program Files\WindowsPowerShell\Modules\Evergreen\2111.488\Apps\Get-Microsoft.NET.ps1.

VERBOSE: Get-EvergreenApp: Dot sourcing: C:\Program Files\WindowsPowerShell\Modules\Evergreen\2111.488\Apps\Get-Microsoft.NET.ps1.

VERBOSE: Get-FunctionResource: read application resource strings from [C:\Program Files\WindowsPowerShell\Modules\Evergreen\2111.488\Man

ifests\Microsoft.NET.json]

VERBOSE: Get-EvergreenApp: Calling: Get-Microsoft.NET.

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [UserAgent: Mozilla/5.0 (Windows NT; Windows NT 10.0; en-US) AppleWebKit

/534.6 (KHTML, like Gecko) Chrome/7.0.500.0 Safari/534.6].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [Method: Default].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [ErrorAction: Continue].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [UseBasicParsing: True].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [Uri: https://dotnetcli.blob.core.windows.net/dotnet/Runtime/Current/lat

est.version].

VERBOSE: GET https://dotnetcli.blob.core.windows.net/dotnet/Runtime/Current/latest.version with 0-byte payload

VERBOSE: received 6-byte response of content type text/plain

VERBOSE: Invoke-WebRequestWrapper: Response: [200].

VERBOSE: Invoke-WebRequestWrapper: Content type: [text/plain].

VERBOSE: Invoke-WebRequestWrapper: Returning content of length: [6].

VERBOSE: Get-Microsoft.NET: found version: 5.0.12.

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [UserAgent: Mozilla/5.0 (Windows NT; Windows NT 10.0; en-US) AppleWebKit

/534.6 (KHTML, like Gecko) Chrome/7.0.500.0 Safari/534.6].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [Method: Default].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [ErrorAction: Continue].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [UseBasicParsing: True].

VERBOSE: Invoke-WebRequestWrapper: Invoke-WebRequest parameter: [Uri: https://dotnetcli.blob.core.windows.net/dotnet/Runtime/LTS/latest.

version].

VERBOSE: GET https://dotnetcli.blob.core.windows.net/dotnet/Runtime/LTS/latest.version with 0-byte payload

VERBOSE: received 5-byte response of content type text/plain

VERBOSE: Invoke-WebRequestWrapper: Response: [200].

VERBOSE: Invoke-WebRequestWrapper: Content type: [text/plain].

VERBOSE: Invoke-WebRequestWrapper: Returning content of length: [5].

VERBOSE: Get-Microsoft.NET: found version: 6.0.0.

VERBOSE: Get-EvergreenApp: Output result from: C:\Program Files\WindowsPowerShell\Modules\Evergreen\2111.488\Apps\Get-Microsoft.NET.ps1.

Version Architecture Channel URI

------- ------------ ------- ---

6.0.0 x64 LTS https://dotnetcli.azureedge.net/dotnet/Runtime/6.0.0/windowsdesktop-runtime-6.0.0-win-x64.exe

6.0.0 x86 LTS https://dotnetcli.azureedge.net/dotnet/Runtime/6.0.0/windowsdesktop-runtime-6.0.0-win-x86.exe

5.0.12 x64 Current https://dotnetcli.blob.core.windows.net/dotnet/WindowsDesktop/5.0.12/windowsdesktop-runtime-5.0.12-win-...

5.0.12 x86 Current https://dotnetcli.blob.core.windows.net/dotnet/WindowsDesktop/5.0.12/windowsdesktop-runtime-5.0.12-win-...

```

|

priority

|

microsoft net download url s changed what happened microsoft net lts channel downloads appear to have moved from current channel still seems to be valid for downloads if i go to the microsoft net download site the new url appears to be version what powershell edition s are you running evergreen on windows powershell which operating system s are you running evergreen on windows server have you reviewed the documentation troubleshooting at known issues at verbose output shell get evergreenapp name microsoft net verbose verbose get evergreenapp function exists c program files windowspowershell modules evergreen apps get microsoft net verbose get evergreenapp dot sourcing c program files windowspowershell modules evergreen apps get microsoft net verbose get functionresource read application resource strings from c program files windowspowershell modules evergreen man ifests microsoft net json verbose get evergreenapp calling get microsoft net verbose invoke webrequestwrapper invoke webrequest parameter useragent mozilla windows nt windows nt en us applewebkit khtml like gecko chrome safari verbose invoke webrequestwrapper invoke webrequest parameter verbose invoke webrequestwrapper invoke webrequest parameter verbose invoke webrequestwrapper invoke webrequest parameter verbose invoke webrequestwrapper invoke webrequest parameter uri est version verbose get with byte payload verbose received byte response of content type text plain verbose invoke webrequestwrapper response verbose invoke webrequestwrapper content type verbose invoke webrequestwrapper returning content of length verbose get microsoft net found version verbose invoke webrequestwrapper invoke webrequest parameter useragent mozilla windows nt windows nt en us applewebkit khtml like gecko chrome safari verbose invoke webrequestwrapper invoke webrequest parameter verbose invoke webrequestwrapper invoke webrequest parameter verbose invoke webrequestwrapper invoke webrequest parameter verbose invoke 
webrequestwrapper invoke webrequest parameter uri version verbose get with byte payload verbose received byte response of content type text plain verbose invoke webrequestwrapper response verbose invoke webrequestwrapper content type verbose invoke webrequestwrapper returning content of length verbose get microsoft net found version verbose get evergreenapp output result from c program files windowspowershell modules evergreen apps get microsoft net version architecture channel uri lts lts current current

| 1

|

282,606

| 8,708,460,740

|

IssuesEvent

|

2018-12-06 10:58:09

|

pablotabares/decide

|

https://api.github.com/repos/pablotabares/decide

|

closed

|

Add poll creation functionality

|

bot enhancement priority: high

|

Telegram bot must provide commands to create a poll, including questions and answers.

|

1.0

|

Add poll creation functionality - Telegram bot must provide commands to create a poll, including questions and answers.

|

priority

|

add poll creation functionality telegram bot must provide commands to create a poll including questions and answers

| 1

|

635,471

| 20,403,353,866

|

IssuesEvent

|

2022-02-23 00:26:01

|

CoEDL/nyingarn-workspace

|

https://api.github.com/repos/CoEDL/nyingarn-workspace

|

closed

|

Users need to be able to download specific files and delete specific files

|

enhancement priority-high

|

Implement ability to see resource files, download them (e.g. digivol csv) and delete specific files.

|

1.0

|

Users need to be able to download specific files and delete specific files - Implement ability to see resource files, download them (e.g. digivol csv) and delete specific files.

|

priority

|

users need to be able to download specific files and delete specific files implement ability to see resource files download them e g digivol csv and delete specific files

| 1

|

373,325

| 11,042,216,416

|

IssuesEvent

|

2019-12-09 08:40:39

|

ballerina-platform/ballerina-lang

|

https://api.github.com/repos/ballerina-platform/ballerina-lang

|

closed

|

Issue in inferRecordFieldType method

|

Area/Language Component/Compiler Points/1 Priority/High Type/Improvement

|

**Description:**

In the Types class, we can infer the type of a record field using `inferRecordFieldType` method. `anydata` is not considered in this method, `any` will be returned in such cases.

|

1.0

|

Issue in inferRecordFieldType method - **Description:**

In the Types class, we can infer the type of a record field using `inferRecordFieldType` method. `anydata` is not considered in this method, `any` will be returned in such cases.

|

priority

|

issue in inferrecordfieldtype method description in the types class we can infer the type of a record field using inferrecordfieldtype method anydata is not considered in this method any will be returned in such cases

| 1

|

441,258

| 12,710,053,444

|

IssuesEvent

|

2020-06-23 13:20:29

|

RonAsis/Wsep202

|

https://api.github.com/repos/RonAsis/Wsep202

|

opened

|

bug- not responsive enough - can't appoint all users as owner

|

High priority bug

|

and doesn't tell why- there is no error message

|

1.0

|

bug- not responsive enough - can't appoint all users as owner - and doesn't tell why- there is no error message

|

priority

|

bug not responsive enough can t appoint all users as owner and doesn t tell why there is no error message

| 1

|

243,593

| 7,859,496,551

|

IssuesEvent

|

2018-06-21 16:46:50

|

minio/minio-go

|

https://api.github.com/repos/minio/minio-go

|

closed

|

Error while running Azure tests on Mint

|

priority: high

|

When I was running Azure gateway tests on Mint, I got the following error:

```

{

"args": {

"bucketName": "minio-go-test-mj6c6n45dw0bpa4e",

"objectName": "test-object",

"opts": "",

"size": -1

},

"duration": 289,

"function": "PutObject(bucketName, objectName, reader, size, opts)",

"message": "Expected content-language 'en-US' doesn't match with StatObject return value",

"name": "minio-go: testPutObjectWithContentLanguage",

"status": "FAIL"

}

```

|

1.0

|

Error while running Azure tests on Mint - When I was running Azure gateway tests on Mint, I got the following error:

```

{

"args": {

"bucketName": "minio-go-test-mj6c6n45dw0bpa4e",

"objectName": "test-object",

"opts": "",

"size": -1

},

"duration": 289,

"function": "PutObject(bucketName, objectName, reader, size, opts)",

"message": "Expected content-language 'en-US' doesn't match with StatObject return value",

"name": "minio-go: testPutObjectWithContentLanguage",

"status": "FAIL"

}

```

|

priority

|

error while running azure tests on mint when i was running azure gateway tests on mint i got the following error args bucketname minio go test objectname test object opts size duration function putobject bucketname objectname reader size opts message expected content language en us doesn t match with statobject return value name minio go testputobjectwithcontentlanguage status fail

| 1

|

797,763

| 28,154,687,278

|

IssuesEvent

|

2023-04-03 06:18:02

|

AY2223S2-CS2113-T15-4/tp

|

https://api.github.com/repos/AY2223S2-CS2113-T15-4/tp

|

closed

|

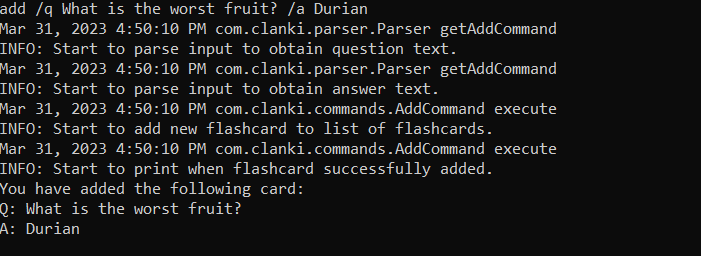

[PE-D][Tester A] There is some logging that is disrupting the user experience when using commands

|

type.Bug priority.High severity.High

|

<!--session: 1680252479707-92304da9-1923-4959-b973-1d1a8a61eabd-->

<!--Version: Web v3.4.7-->

-------------

Labels: `type.FunctionalityBug` `severity.Medium`

original: SSzeWen/ped#1

|

1.0

|

[PE-D][Tester A] There is some logging that is disrupting the user experience when using commands -

<!--session: 1680252479707-92304da9-1923-4959-b973-1d1a8a61eabd-->

<!--Version: Web v3.4.7-->

-------------

Labels: `type.FunctionalityBug` `severity.Medium`

original: SSzeWen/ped#1

|

priority

|

there is some logging that is disrupting the user experience when using commands labels type functionalitybug severity medium original sszewen ped

| 1

|

581,808

| 17,332,278,413

|

IssuesEvent

|

2021-07-28 05:12:54

|

sacloud/terraform-provider-sakuracloud

|

https://api.github.com/repos/sacloud/terraform-provider-sakuracloud

|

closed

|

Tracking ID changes when a plan is changed via an external tool

|

area/resources priority/high v2

|

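### Overview

In SAKURA Cloud, a resource's ID changes when its plan is changed.

Affected resources:

- Server

- Router

- ELB

When the plan change is made from Terraform, handling for the changed ID is already implemented, but

when the plan change is made by an external tool (such as AutoScaler), the resource can no longer be tracked.

This is because each resource's Read looks up the resource through the SAKURA Cloud API using its ID.

(If a lookup by ID returns 404, the resource is considered deleted.)

Even when tracking is lost, the resource can be recovered by importing it, but that is operationally cumbersome.

For this reason, the pre-change ID should be kept on the resource as metadata according to some rule, and when a lookup by ID returns 404, the lookup should fall back to searching with that metadata.

### Implementation proposal

The pre-change ID is represented by a tag such as `@previous-id=123456789012`.

Terraform first tries a lookup by ID; if that returns 404, it searches the resources in the same zone for the tag `@previous-id=<currently held ID>`.

Example API call: `GET /server?{"Filter":{"Tags.Name":"@previous-id=123456789012"}}`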
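
The fallback described above could be sketched roughly as follows. This is a minimal illustration in Python, not the provider's actual Go code: `NotFoundError`, `read_with_fallback`, and the two lookup callables are hypothetical names, and the in-memory dictionary only stands in for the resources of one zone.

```python
from typing import Callable, Dict, List, Optional


class NotFoundError(Exception):
    """Stands in for a 404 response from the API (hypothetical)."""


def read_with_fallback(
    current_id: str,
    find_by_id: Callable[[str], Dict],
    find_by_tag: Callable[[str], List[Dict]],
) -> Optional[Dict]:
    """Look up by ID first; on 404, search for the @previous-id tag."""
    try:
        return find_by_id(current_id)
    except NotFoundError:
        matches = find_by_tag(f"@previous-id={current_id}")
        # Only accept an unambiguous match; otherwise treat as deleted.
        if len(matches) == 1:
            return matches[0]
        return None


# In-memory stand-in: the plan change replaced ID 123456789012 with 999.
resources = {"999": {"ID": "999", "Tags": ["@previous-id=123456789012"]}}


def find_by_id(resource_id: str) -> Dict:
    if resource_id not in resources:
        raise NotFoundError(resource_id)
    return resources[resource_id]


def find_by_tag(tag: str) -> List[Dict]:
    return [r for r in resources.values() if tag in r["Tags"]]


found = read_with_fallback("123456789012", find_by_id, find_by_tag)
```

Requiring exactly one tag match is an assumption of this sketch; the proposal does not say how multiple matches should be handled.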

|

1.0

|

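Tracking ID changes when a plan is changed via an external tool - ### Overview

In SAKURA Cloud, a resource's ID changes when its plan is changed.

Affected resources:

- Server

- Router

- ELB

When the plan change is made from Terraform, handling for the changed ID is already implemented, but

when the plan change is made by an external tool (such as AutoScaler), the resource can no longer be tracked.

This is because each resource's Read looks up the resource through the SAKURA Cloud API using its ID.

(If a lookup by ID returns 404, the resource is considered deleted.)

Even when tracking is lost, the resource can be recovered by importing it, but that is operationally cumbersome.

For this reason, the pre-change ID should be kept on the resource as metadata according to some rule, and when a lookup by ID returns 404, the lookup should fall back to searching with that metadata.

### Implementation proposal

The pre-change ID is represented by a tag such as `@previous-id=123456789012`.

Terraform first tries a lookup by ID; if that returns 404, it searches the resources in the same zone for the tag `@previous-id=<currently held ID>`.

Example API call: `GET /server?{"Filter":{"Tags.Name":"@previous-id=123456789012"}}`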

|

priority

|

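tracking id changes when a plan is changed via an external tool overview in sakura cloud a resource s id changes when its plan is changed affected resources server router elb when the plan change is made from terraform handling for the changed id is already implemented but when the plan change is made by an external tool such as autoscaler the resource can no longer be tracked this is because each resource s read looks up the resource through the sakura cloud api using its id even when tracking is lost the resource can be recovered by importing it but that is operationally cumbersome for this reason the pre change id should be kept on the resource as metadata according to some rule implementation proposal the pre change id is represented by a tag such as previous id terraform first tries a lookup by id and searches on the tag previous id example api call get server filter tags name previous id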

| 1

|

448,881

| 12,959,526,980

|

IssuesEvent

|

2020-07-20 13:08:14

|

wso2/micro-integrator

|

https://api.github.com/repos/wso2/micro-integrator

|

closed

|

Inconsistency in printing logs while using the payloadFactory mediator

|

Priority/High Severity/Major

|

**Description:**

1. Please find the following proxy service.

```

<?xml version="1.0" encoding="UTF-8"?>

<proxy xmlns="http://ws.apache.org/ns/synapse" name="test" startOnLoad="true" statistics="disable" trace="disable" transports="http,https">

<target>

<inSequence>

<payloadFactory media-type="xml">

<format>

<values>Test123</values>

</format>

<args/>

</payloadFactory>

<log level="full"/>

<log>

<property expression="//*[local-name()='values']" name="objects4"/>

</log>

<respond/>

</inSequence>

</target>

<description/>

</proxy>

```

2. Deploy it inside wso2mi-1.1.0 and invoke it.

Expected result

` <values>Test123</values>`

Actual result

`Test123`

**Suggested Labels:**

wso2mi-1.1.0, payloadFactory

**Affected Product Version:**

wso2mi-1.1.0

**OS, DB, other environment details and versions:**

Linux

**Related Issues:**

https://github.com/wso2/product-ei/issues/2092

|

1.0

|

Inconsistency in printing logs while using the payloadFactory mediator - **Description:**

1. Please find the following proxy service.

```

<?xml version="1.0" encoding="UTF-8"?>

<proxy xmlns="http://ws.apache.org/ns/synapse" name="test" startOnLoad="true" statistics="disable" trace="disable" transports="http,https">

<target>

<inSequence>

<payloadFactory media-type="xml">

<format>

<values>Test123</values>

</format>

<args/>

</payloadFactory>

<log level="full"/>

<log>

<property expression="//*[local-name()='values']" name="objects4"/>

</log>

<respond/>

</inSequence>

</target>

<description/>

</proxy>

```

2. Deploy it inside wso2mi-1.1.0 and invoke it.

Expected result

` <values>Test123</values>`

Actual result

`Test123`

**Suggested Labels:**

wso2mi-1.1.0, payloadFactory

**Affected Product Version:**

wso2mi-1.1.0

**OS, DB, other environment details and versions:**

Linux

**Related Issues:**

https://github.com/wso2/product-ei/issues/2092

|

priority

|

inconsistency in printing logs while using the payloadfactory mediator description please find the following proxy service deploy it inside and invoke it expected result actual result suggested labels payloadfactory affected product version os db other environment details and versions linnux related issues

| 1

|

185,292

| 6,720,769,436

|

IssuesEvent

|

2017-10-16 09:07:03

|

kedgeproject/kedge

|

https://api.github.com/repos/kedgeproject/kedge

|

closed

|

replicas set to 0 if not specified

|

kind/bug kind/task priority/high

|

If replicas is not set in Kedge file, the generated output shouldn't have it either.

Now it defaults to 0, which leads to a confusing situation where your containers are not started when the DC is deployed.

```

name: foo

controller: deploymentconfig

containers:

- image: quay.io/tomkral/sleeper

```

```

▶ ./kedge generate -f test.yaml

---

apiVersion: v1

kind: DeploymentConfig

metadata:

creationTimestamp: null

name: foo

spec:

replicas: 0

strategy:

resources: {}

template:

metadata:

creationTimestamp: null

spec:

containers:

- image: quay.io/tomkral/sleeper

name: foo

resources: {}

test: false

triggers: null

status:

availableReplicas: 0

latestVersion: 0

observedGeneration: 0

replicas: 0

unavailableReplicas: 0

updatedReplicas: 0

```

replicas shouldn't be set if it's not set in the Kedge file; the OpenShift default will then be used on the cluster side.
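
A minimal sketch of the requested behaviour, written in Python for brevity rather than taken from Kedge's own code: `build_dc_spec` and its parameters are hypothetical names. The point is only that an unset value is left out of the generated spec, while an explicit 0 is still written.

```python
from typing import Any, Dict, Optional


def build_dc_spec(name: str, image: str, replicas: Optional[int] = None) -> Dict[str, Any]:
    """Build a DeploymentConfig-like spec, omitting replicas when unset."""
    spec: Dict[str, Any] = {
        "template": {"spec": {"containers": [{"name": name, "image": image}]}},
    }
    # Emit the field only when the user set it; an explicit 0 is honoured.
    if replicas is not None:
        spec["replicas"] = replicas
    return spec


unset = build_dc_spec("foo", "quay.io/tomkral/sleeper")
explicit_zero = build_dc_spec("foo", "quay.io/tomkral/sleeper", replicas=0)
```

Distinguishing "not set" from 0 is what lets the cluster-side default apply in the first case.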

|

1.0

|

replicas set to 0 if not specified - If replicas is not set in Kedge file, the generated output shouldn't have it either.

Now it defaults to 0, which leads to a confusing situation where your containers are not started when the DC is deployed.

```

name: foo

controller: deploymentconfig

containers:

- image: quay.io/tomkral/sleeper

```

```

▶ ./kedge generate -f test.yaml
---
apiVersion: v1
kind: DeploymentConfig
metadata:
  creationTimestamp: null
  name: foo
spec:
  replicas: 0
  strategy:
    resources: {}
  template:
    metadata:
      creationTimestamp: null
    spec:
      containers:
      - image: quay.io/tomkral/sleeper
        name: foo
        resources: {}
  test: false
  triggers: null
status:
  availableReplicas: 0
  latestVersion: 0
  observedGeneration: 0
  replicas: 0
  unavailableReplicas: 0
  updatedReplicas: 0

```

`replicas` shouldn't be set if it's not set in the Kedge file; the OpenShift default will then be used on the cluster side.

|

priority

|

replicas set to if not specified if replicas is not set in kedge file the generated output shouldn t have it either now it defaults to which leads to a confusing situation where your containers are not started when dc id deployed name foo controller deploymentconfig containers image quay io tomkral sleeper ▶ kedge generate f test yaml apiversion kind deploymentconfig metadata creationtimestamp null name foo spec replicas strategy resources template metadata creationtimestamp null spec containers image quay io tomkral sleeper name foo resources test false triggers null status availablereplicas latestversion observedgeneration replicas unavailablereplicas updatedreplicas replicas shouldn t be set if it s not set in kedge file openshift default will be used on the cluster side

| 1

|

517,364

| 15,007,623,422

|

IssuesEvent

|

2021-01-31 05:52:59

|

Left-on-Read/app

|

https://api.github.com/repos/Left-on-Read/app

|

closed

|

Implement one filter

|

high priority (p1) setup

|

Implement filtering - we can start with just a single filter, such as by date.

~This should be redux-driven.~

|

1.0

|

Implement one filter - Implement filtering - we can start with just a single filter, such as by date.

~This should be redux-driven.~

|

priority

|

implement one filter implement filtering we can start with just a single filter such as by date this should be redux driven

| 1

|

473,405

| 13,641,942,594

|

IssuesEvent

|

2020-09-25 14:50:47

|

pytorch/pytorch

|

https://api.github.com/repos/pytorch/pytorch

|

closed

|

CPU memory leak when using torch.no_grad()

|

high priority module: autograd topic: memory usage triage review triaged

|

## 🐛 Bug

When a `torch.no_grad()` block is used, CPU memory increases continually until an OOM kill happens.

Once the `no_grad` is removed, everything works fine.

I tried `del loss` and moving the validation step into a function, but the memory leak still happens.

Is my code wrong, or is this a bug?

## To Reproduce

Steps to reproduce the behavior:

1. Validation step using `torch.no_grad()`.

2. Using `CrossEntropyLoss()` as criterion.

3. CPU RAM usage increases continually until the system (Ubuntu 18.04) triggers an OOM kill.

PyTorch 1.6

```

for epoch in range(10):
    net.train()
    # Good training.
    for data in trainloader:
        inputs, labels = data['images'], data['masks']
        for idx in range(0, len(inputs), 7):
            optimizer.zero_grad()
            outputs = net(inputs[idx:idx + 7])
            loss = criterion(outputs, labels[idx:idx + 7])
            loss.backward()
            optimizer.step()

    # Bad validation.
    net.eval()
    test_loss = 0.0
    test_times = 0
    for data in testloader:
        # !!!!!!!!👇
        with torch.no_grad():
            inputs, labels = data['images'], data['masks']
            for idx in range(0, len(inputs), 7):
                # or put no_grad here, leaking still happens.
                outputs = net(inputs[idx:idx + 7])
                loss = criterion(outputs, labels[idx:idx + 7])
                test_loss += loss.item()
                test_times += 1
    test_loss /= test_times

```

## Expected behavior

Validation runs normally without CPU RAM usage increasing.

## Environment

- PyTorch Version (e.g., 1.0): 1.6

- OS (e.g., Linux): Ubuntu 18.04

- How you installed PyTorch (`conda`, `pip`, source): conda

- Build command you used (if compiling from source): NaN

- Python version: 3.8.5

- CUDA/cuDNN version: 10.2

- GPU models and configuration: Tesla V100 16G

- Any other relevant information: with system RAM 128G

## Additional context

Thanks for all your excellent work!

cc @ezyang @gchanan @zou3519 @albanD @gqchen @pearu @nikitaved

|

1.0

|

CPU memory leak when using torch.no_grad() - ## 🐛 Bug

When a `torch.no_grad()` block is used, CPU memory increases continually until an OOM kill happens.

Once the `no_grad` is removed, everything works fine.

I tried `del loss` and moving the validation step into a function, but the memory leak still happens.

Is my code wrong, or is this a bug?

## To Reproduce

Steps to reproduce the behavior:

1. Validation step using `torch.no_grad()`.

2. Using `CrossEntropyLoss()` as criterion.

3. CPU RAM usage increases continually until the system (Ubuntu 18.04) triggers an OOM kill.

PyTorch 1.6

```

for epoch in range(10):
    net.train()
    # Good training.
    for data in trainloader:
        inputs, labels = data['images'], data['masks']
        for idx in range(0, len(inputs), 7):
            optimizer.zero_grad()
            outputs = net(inputs[idx:idx + 7])
            loss = criterion(outputs, labels[idx:idx + 7])
            loss.backward()
            optimizer.step()

    # Bad validation.
    net.eval()
    test_loss = 0.0
    test_times = 0
    for data in testloader:
        # !!!!!!!!👇
        with torch.no_grad():
            inputs, labels = data['images'], data['masks']
            for idx in range(0, len(inputs), 7):
                # or put no_grad here, leaking still happens.
                outputs = net(inputs[idx:idx + 7])
                loss = criterion(outputs, labels[idx:idx + 7])
                test_loss += loss.item()
                test_times += 1
    test_loss /= test_times

```

## Expected behavior

Validation runs normally without CPU RAM usage increasing.

## Environment

- PyTorch Version (e.g., 1.0): 1.6

- OS (e.g., Linux): Ubuntu 18.04

- How you installed PyTorch (`conda`, `pip`, source): conda

- Build command you used (if compiling from source): NaN

- Python version: 3.8.5

- CUDA/cuDNN version: 10.2

- GPU models and configuration: Tesla V100 16G

- Any other relevant information: with system RAM 128G

## Additional context

Thanks for all your excellent work!

cc @ezyang @gchanan @zou3519 @albanD @gqchen @pearu @nikitaved

|

priority

|

cpu memory leak when using torch no grad 🐛 bug if use torch no grad block the cpu memory will continually increase untill oom kill happens but once remove the no grad everything would be all right i tried del loss or put the validation step into a function but the memory leak still happens is my code wrong or a bug to reproduce steps to reproduce the behavior validation step using torch no grad using crossentropyloss as criterion cpu ram continually increasing occurs a oom kill by system ubuntu pytorch for epoch in range net train good training for data in trainloader inputs labels data data for idx in range len inputs optimizer zero grad outputs net inputs loss criterion outputs labels loss backward optimizer step bad validation net eval test loss test times for data in testloader 👇 with torch no grad inputs labels data data for idx in range len inputs or put no grad here leaking still happens outputs net inputs loss criterion outputs labels test loss loss item test times test loss test times expected behavior normally valid without increasing cpu ram environment pytorch version e g os e g linux ubuntu how you installed pytorch conda pip source conda build command you used if compiling from source nan python version cuda cudnn version gpu models and configuration tesla any other relevant information with system ram additional context thanks for all your excellent work cc ezyang gchanan alband gqchen pearu nikitaved

| 1

|

617,097

| 19,342,641,181

|

IssuesEvent

|

2021-12-15 07:18:14

|

ballerina-platform/ballerina-dev-website

|

https://api.github.com/repos/ballerina-platform/ballerina-dev-website

|

closed

|

Add Content on How to Write a Connector in Bio

|

Priority/Highest Area/Docs Type/Task Points/1

|

**Description:**

Need to update [1] according to the latest Swan Lake changes and add the content to Bio.

[1] https://medium.com/ballerina-techblog/how-to-write-a-client-endpoint-in-ballerina-3c24c185ffaf

**Suggested Labels:**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees:**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

**Affected Product Version:**

**OS, Browser, other environment details and versions:**

**Steps to reproduce:**

**Related Issues:**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

|

1.0

|

Add Content on How to Write a Connector in Bio - **Description:**

Need to update [1] according to the latest Swan Lake changes and add the content to Bio.

[1] https://medium.com/ballerina-techblog/how-to-write-a-client-endpoint-in-ballerina-3c24c185ffaf

**Suggested Labels:**

<!-- Optional comma separated list of suggested labels. Non committers can’t assign labels to issues, so this will help issue creators who are not a committer to suggest possible labels-->

**Suggested Assignees:**

<!--Optional comma separated list of suggested team members who should attend the issue. Non committers can’t assign issues to assignees, so this will help issue creators who are not a committer to suggest possible assignees-->

**Affected Product Version:**

**OS, Browser, other environment details and versions:**

**Steps to reproduce:**

**Related Issues:**

<!-- Any related issues such as sub tasks, issues reported in other repositories (e.g component repositories), similar problems, etc. -->

|

priority

|

add content on how to write a connector in bio description need to update according to the latest swan lake changes and add the content to bio suggested labels suggested assignees affected product version os browser other environment details and versions steps to reproduce related issues

| 1

|

711,306

| 24,457,641,549

|

IssuesEvent

|

2022-10-07 08:17:32

|

AY2223S1-CS2113-F11-4/tp

|

https://api.github.com/repos/AY2223S1-CS2113-F11-4/tp

|

closed

|

Adding New Prescription Record: add

|

type.Story priority.High

|

As a doctor / user, I want to be able to add a new prescription for a patient so that I can retrieve it for their future visits and records.

<img width="499" alt="image" src="https://user-images.githubusercontent.com/31297758/193443112-74662d47-2998-405d-9315-1da60dec36d5.png">

|

1.0

|

Adding New Prescription Record: add - As a doctor / user, I want to be able to add a new prescription for a patient so that I can retrieve it for their future visits and records.

<img width="499" alt="image" src="https://user-images.githubusercontent.com/31297758/193443112-74662d47-2998-405d-9315-1da60dec36d5.png">

|

priority

|

adding new prescription record add as a doctor user i want to be able to add a a new prescription for a patient so that i can retrieve it for their future visits and records img width alt image src

| 1

|

312,047

| 9,542,320,880

|

IssuesEvent

|

2019-05-01 03:08:45

|

openmsupply/mobile

|

https://api.github.com/repos/openmsupply/mobile

|

opened

|

Customer requisition finalisation crash

|

Bug Effort small Ivory Coast (phase 1) Priority: High

|

Build Number: 2.3.0-rc0 dev+apk

Description:

Reproducible: yes

Reproduction Steps:

1. receive a customer requisition

2. enter a value

3. finalise it

4. some loading spinner and a RSoD (in dev, crash in apk)

Comments: There were items in my customer requisition that my store didn't actually have visible in their store.

|

1.0

|

Customer requisition finalisation crash - Build Number: 2.3.0-rc0 dev+apk

Description:

Reproducible: yes

Reproduction Steps:

1. receive a customer requisition

2. enter a value

3. finalise it

4. some loading spinner and a RSoD (in dev, crash in apk)

Comments: There were items in my customer requisition that my store didn't actually have visible in their store.

|

priority

|

customer requisition finalisation crash build number dev apk description reproducible yes reproduction steps receive a customer requisition enter a value finalise it some loading spinner and a rsod in dev crash in apk comments there were items in my customer requisition that my store didn t actually have visible in their store

| 1

|

460,117

| 13,205,116,210

|

IssuesEvent

|

2020-08-14 17:15:56

|

googleinterns/bazel-rules-fuzzing

|

https://api.github.com/repos/googleinterns/bazel-rules-fuzzing

|

closed

|

Enable regression support in the launcher script

|

high priority

|

## Expected Behavior

The launcher needs to support running regression tests without continuous fuzzing.

To achieve this, a string_flag `engine` will be added to decide the launcher's behavior. After the launcher is modified, a new rule `regression_launcher` will be needed to start the launcher in the regression mode.

## Actual Behavior

Only continuous fuzzing test mode is supported

|

1.0

|

Enable regression support in the launcher script - ## Expected Behavior

The launcher needs to support running regression tests without continuous fuzzing.

To achieve this, a string_flag `engine` will be added to decide the launcher's behavior. After the launcher is modified, a new rule `regression_launcher` will be needed to start the launcher in the regression mode.

## Actual Behavior

Only continuous fuzzing test mode is supported

|

priority

|

enable regression support in the launcher script expected behavior launcher needs to support running regression test without continuous fuzzing test to achieve this a string flag engine will be added to decide the launcher s behavior after the launcher is modified a new rule regression launcher will be needed to start the launcher in the regression mode actual behavior only continuous fuzzing test mode is supported

| 1

|

36,066

| 2,795,249,985

|

IssuesEvent

|

2015-05-11 20:59:18

|

Arabidopsis-Information-Portal/adama

|

https://api.github.com/repos/Arabidopsis-Information-Portal/adama

|

closed

|

Restrict names of namespaces to current allowed characters

|

bug high priority

|

In Adama 0.3 the namespace together with the service name correspond to a container image. The only characters allowed for a container image are [a-z0-9_.-]. This is an implementation detail and it **should not** leak to the user (see #45 and #3). While we fix those issues, Adama should refuse to create a namespace with forbidden characters.

Right now, Adama accepts namespaces with forbidden characters, and it refuses service names with those characters. However, even if the service name is compliant, being under a non-compliant namespace produces an invalid image name.
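The check described above can be sketched in a few lines. This is not Adama's implementation; `is_valid_namespace` is a hypothetical helper, and the only detail taken from the issue is the allowed character set `[a-z0-9_.-]`. Rejecting the empty string is an added assumption.

```python
import re

# Character set quoted in the issue for container image names.
ALLOWED_NAME = re.compile(r"[a-z0-9_.-]+")


def is_valid_namespace(name: str) -> bool:
    """Return True only if every character of `name` is in the allowed set.

    fullmatch() requires the whole string to match, so a single
    forbidden character (or an empty name) makes the check fail.
    """
    return ALLOWED_NAME.fullmatch(name) is not None
```

Applying the same predicate to both the namespace and the service name would keep the combined image name valid, which is the inconsistency the issue points out.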

|

1.0

|

Restrict names of namespaces to current allowed characters - In Adama 0.3 the namespace together with the service name correspond to a container image. The only characters allowed for a container image are [a-z0-9_.-]. This is an implementation detail and it **should not** leak to the user (see #45 and #3). While we fix those issues, Adama should refuse to create a namespace with forbidden characters.

Right now, Adama accepts namespaces with forbidden characters, and it refuses service names with those characters. However, even if the service name is compliant, being under a non-compliant namespace produces an invalid image name.

|

priority

|

restrict names of namespaces to current allowed characters in adama the namespace together with the service name correspond to a container image the only characters allowed for a container image are this is an implementation detail and it should not leak to the user see and while we fix those issues adama should refuse to create a namespace with forbidden characters right now adama accepts namespaces with forbidden characters and it refuses service names with those characters however even if the service name is compliant being under a non compliant namespace produces an invalid image name

| 1

|

744,886

| 25,959,460,957

|

IssuesEvent

|

2022-12-18 17:54:16

|

zigtools/zls

|

https://api.github.com/repos/zigtools/zls

|

closed

|

Integer overflow when parsing incomplete function

|

bug priority:high fuzzing result

|

### Zig Version

0.11.0-dev.782+0b4461d97

### Zig Language Server Version

3526f5fb84b89b6327fc21c6b836b842bf4db90d

### Steps to Reproduce

Open the following file:

```zig

AtEnd() void {

_ = @import("std");

const expect =

try expect(result[1]);

var buf = [_:0]u8{ 'a', 'b'}, 42.0f};

};

});

if (builtin.zig_backend == .stage2_wasm) return error.SkipZigTest; // TODO

try expect(@as(u32, 0xFE00BE00), structPtr.*);

}

pub fn set_checksum_size = 1000;

v.func_field(0) == 2);

try expect(ctz(@as(u121, 3)),

const tmp = ref.*;

\\#define foo "a string";

.is_var_args);

try testOneCtzVector(u128, 64, @splat(4, @as(u24, 0x6a2c48)));

const X = struct {

\\const std = @import("std");

pub fn main() void {

var bad: f128 = 0.0_;

_ = @Type(@typeInfo(Foo).Union.tag_type == f32);

try expect(length == std.mem.eql(u8, messages here is a VarDecl in scope

}

\\0: first arg

\\ return error.SkipZigTest; // TODO

comptime try expect(G3.Fn.return_trace;

return Foo {.x = 13};

}

var some_struct_param_type() void {

_ = v;

};

try testTruncWithVectors() !void {

var target: [*c]u8 = @ptrCast([*]u8, &l.array);

try expect(a == -1);

try S.entry(true, false };

_ = c.printf("0.000000000FFFFFFFF000000000000p+3

.a = .{

S.declaration of label 'blk'

// :8:12: error: union declared here

// :24:20: error: loop in named 'c' in enum 'tmp.Letter'

// :1:7: error: C import block

// :5:10: error: parameters cannot cast into pointer" {

const X = struct field" {

var a: c_int = @ptrCast(*u32, &bytes);

try expect(result == 1) catch unreachable;

}

// error

// backend=stage2

// target=native

//

// :1:8: error: this is a longer message, "integer overflow = @addWithOverflow(u64, a, b, &res));

\\}

try expectEqual = std.testing.expectEqualStrings = std.fs;

pub const B = enum(u8) {

const x: f32 = 0.0;

var default value stored to trigger the bug

//a second vector initialization syntax

// :2:1: error: expectedBitSize = 80;

comptime {

return error union by return" {

const S = extern struct {

const std = @import("behavior = .Pipe;

\\}

});

const i = g + h; // 100

return 1234;

var array = array[i];

fn foo(a: bool, b: bool = true,

}

}

// error

// backend=stage2

// target=native

//

// :2:5: note: control flow inside runtime isNan(nan_times_zero));

.build_modes = true,

};

_ = c;

b1_6: u1,

resume frame;

try expectError(error.FailedToCreateEntry;

defer stdout.print("All your code here, or load and store" {

if (comptime {

const msg = @ptrToInt("Hello, World!\n")),

);

}

fn b() void {

if (true) {} else |err| {

.{ .name = "unsigned int choose[1][1] == 'a');

}

test "double nested unpacked",

\\source.zig:11:5: [address] in foo (test)

}

};

var array1: [4]u8 = "aoeu";

try std.testing.expect;

const expr" {

if (builtin.zig_backend == .stage2_x86_64) return false;

comptime try expect(12 == default initialization syntax

// :2:12: error: expected { 0, 0 }, @as(u64, 0x3ff000000000000000000000000000000000000000000000FFFFFFFFFFFFFFFFFFFFFFFFFF);

try expect(comptime { _ = Foo; }

// error

// backend=stage2

// target=native

//

// :4:30: error: expected type 'anyframe->i32);

try consume_tuple(t2 ++ .{0}, 2);

}

};

.step = b.step("test", write_src = b.addObjectFromWriteFile(src_basename).?);

\\4: "

.fnPtr = bar,

\\pub export fn entry() void {

_ = ignore;

\\int foo();

}

const A = struct {

\\ mov $14, %rdx

fn foo() void {

try expect(mem.eql(u8, message, "integer = u32;

\\pub export fn entry3() callconv(.Async) void {

var array = [_]u8{ .y = 2 };

const zig_args.append(list);

\\ if (builtin.zig_backend == .stage2_sparc64) return x;

_ = stack_trace: ?*std.builtin.zig_backend == .stage2_aarch64) return error.SkipZigTest; // TODO

var b: i8 = -18;

try foo();

try std.testing.ex

```

### Expected Behavior

The server does not hit an integer underflow.

### Actual Behavior

```log

thread 16460 panic: integer overflow

C:\Programming\Zig\zig-from-the-website\lib\std\zig\Ast.zig:2192:61: 0x7ff6a4baee34 in fullCall (zls.exe.obj)

const maybe_async_token = tree.firstToken(info.fn_expr) - 1;

^

C:\Programming\Zig\zig-from-the-website\lib\std\zig\Ast.zig:1883:24: 0x7ff6a4b3f2af in callOne (zls.exe.obj)

.fn_expr = data.lhs,

^

C:\Programming\Zig\buzz\repos\zls\src\ast.zig:1086:24: 0x7ff6a4a7810a in callFull (zls.exe.obj)

=> tree.callOne(buf, node),

^

C:\Programming\Zig\buzz\repos\zls\src\analysis.zig:2964:38: 0x7ff6a49d7864 in makeScopeInternal (zls.exe.obj)

const call = ast.callFull(tree, node_idx, &buf).?;

^

C:\Programming\Zig\buzz\repos\zls\src\analysis.zig:3025:64: 0x7ff6a49d8166 in makeScopeInternal (zls.exe.obj)

try makeScopeInternal(allocator, context, field.ast.type_expr);

^

C:\Programming\Zig\buzz\repos\zls\src\analysis.zig:2560:30: 0x7ff6a4a76a93 in makeInnerScope (zls.exe.obj)

try makeScopeInternal(allocator, context, decl);

^

C:\Programming\Zig\buzz\repos\zls\src\analysis.zig:2631:31: 0x7ff6a49d4df6 in makeScopeInternal (zls.exe.obj)

try makeInnerScope(allocator, context, node_idx);

^

C:\Programming\Zig\buzz\repos\zls\src\analysis.zig:2496:33: 0x7ff6a49d41cd in makeDocumentScope (zls.exe.obj)

.enums = &document_scope.enum_completions,

^

C:\Programming\Zig\buzz\repos\zls\src\DocumentStore.zig:612:65: 0x7ff6a4a6493f in createDocument (zls.exe.obj)

var document_scope = try analysis.makeDocumentScope(self.allocator, tree);

^

C:\Programming\Zig\buzz\repos\zls\src\DocumentStore.zig:158:39: 0x7ff6a49d3268 in openDocument (zls.exe.obj)

handle.* = try self.createDocument(duped_uri, duped_text, true);

^

C:\Programming\Zig\buzz\repos\zls\src\Server.zig:1867:111: 0x7ff6a49d2e2f in openDocumentHandler__anon_12308 (zls.exe.obj)

const handle = try server.document_store.openDocument(req.params.textDocument.uri, req.params.textDocument.text);

^

C:\Programming\Zig\buzz\repos\zls\src\Server.zig:2964:35: 0x7ff6a4a36475 in processJsonRpc__anon_10459 (zls.exe.obj)

method_info[2](server, writer, id, request_obj) catch |err| {

^

C:\Programming\Zig\buzz\repos\zls\src\main.zig:51:34: 0x7ff6a4a3d754 in loop (zls.exe.obj)

try server.processJsonRpc(writer, buffer);

^

C:\Programming\Zig\buzz\repos\zls\src\main.zig:281:13: 0x7ff6a4a3dbd2 in main (zls.exe.obj)

try loop(&server);

^

C:\Programming\Zig\zig-from-the-website\lib\std\start.zig:385:41: 0x7ff6a4a3e077 in WinStartup (zls.exe.obj)

std.debug.maybeEnableSegfaultHandler();

^

???:?:?: 0x7ffab1d9559f in ??? (???)

???:?:?: 0x7ffab2c0485a in ??? (???)

```

(By the way this *might be* a Zig issue - shouldn't the parser always error instead of underflowing, or is this just super cursed?)

|

1.0

|

Integer overflow when parsing incomplete function - ### Zig Version

0.11.0-dev.782+0b4461d97

### Zig Language Server Version

3526f5fb84b89b6327fc21c6b836b842bf4db90d

### Steps to Reproduce

Open the following file:

```zig

AtEnd() void {

_ = @import("std");

const expect =

try expect(result[1]);

var buf = [_:0]u8{ 'a', 'b'}, 42.0f};

};

});

if (builtin.zig_backend == .stage2_wasm) return error.SkipZigTest; // TODO

try expect(@as(u32, 0xFE00BE00), structPtr.*);

}

pub fn set_checksum_size = 1000;

v.func_field(0) == 2);

try expect(ctz(@as(u121, 3)),

const tmp = ref.*;

\\#define foo "a string";

.is_var_args);

try testOneCtzVector(u128, 64, @splat(4, @as(u24, 0x6a2c48)));

const X = struct {

\\const std = @import("std");

pub fn main() void {

var bad: f128 = 0.0_;

_ = @Type(@typeInfo(Foo).Union.tag_type == f32);

try expect(length == std.mem.eql(u8, messages here is a VarDecl in scope

}

\\0: first arg

\\ return error.SkipZigTest; // TODO

comptime try expect(G3.Fn.return_trace;

return Foo {.x = 13};

}

var some_struct_param_type() void {

_ = v;

};

try testTruncWithVectors() !void {

var target: [*c]u8 = @ptrCast([*]u8, &l.array);

try expect(a == -1);

try S.entry(true, false };

_ = c.printf("0.000000000FFFFFFFF000000000000p+3

.a = .{

S.declaration of label 'blk'

// :8:12: error: union declared here

// :24:20: error: loop in named 'c' in enum 'tmp.Letter'

// :1:7: error: C import block

// :5:10: error: parameters cannot cast into pointer" {

const X = struct field" {

var a: c_int = @ptrCast(*u32, &bytes);

try expect(result == 1) catch unreachable;

}

// error

// backend=stage2

// target=native

//

// :1:8: error: this is a longer message, "integer overflow = @addWithOverflow(u64, a, b, &res));

\\}

try expectEqual = std.testing.expectEqualStrings = std.fs;

pub const B = enum(u8) {

const x: f32 = 0.0;

var default value stored to trigger the bug

//a second vector initialization syntax

// :2:1: error: expectedBitSize = 80;

comptime {

return error union by return" {

const S = extern struct {

const std = @import("behavior = .Pipe;

\\}

});

const i = g + h; // 100

return 1234;

var array = array[i];

fn foo(a: bool, b: bool = true,

}

}

// error

// backend=stage2

// target=native

//

// :2:5: note: control flow inside runtime isNan(nan_times_zero));

.build_modes = true,

};

_ = c;

b1_6: u1,

resume frame;

try expectError(error.FailedToCreateEntry;

defer stdout.print("All your code here, or load and store" {

if (comptime {

const msg = @ptrToInt("Hello, World!\n")),

);

}

fn b() void {

if (true) {} else |err| {

.{ .name = "unsigned int choose[1][1] == 'a');

}

test "double nested unpacked",

\\source.zig:11:5: [address] in foo (test)

}

};

var array1: [4]u8 = "aoeu";

try std.testing.expect;

const expr" {

if (builtin.zig_backend == .stage2_x86_64) return false;

comptime try expect(12 == default initialization syntax

// :2:12: error: expected { 0, 0 }, @as(u64, 0x3ff000000000000000000000000000000000000000000000FFFFFFFFFFFFFFFFFFFFFFFFFF);

try expect(comptime { _ = Foo; }

// error

// backend=stage2

// target=native

//

// :4:30: error: expected type 'anyframe->i32);

try consume_tuple(t2 ++ .{0}, 2);

}

};

.step = b.step("test", write_src = b.addObjectFromWriteFile(src_basename).?);

\\4: "

.fnPtr = bar,

\\pub export fn entry() void {

_ = ignore;

\\int foo();

}

const A = struct {

\\ mov $14, %rdx

fn foo() void {

try expect(mem.eql(u8, message, "integer = u32;

\\pub export fn entry3() callconv(.Async) void {

var array = [_]u8{ .y = 2 };

const zig_args.append(list);

\\ if (builtin.zig_backend == .stage2_sparc64) return x;

_ = stack_trace: ?*std.builtin.zig_backend == .stage2_aarch64) return error.SkipZigTest; // TODO

var b: i8 = -18;

try foo();

try std.testing.ex

```

### Expected Behavior

The server does not hit an integer underflow.

### Actual Behavior

```log

thread 16460 panic: integer overflow

C:\Programming\Zig\zig-from-the-website\lib\std\zig\Ast.zig:2192:61: 0x7ff6a4baee34 in fullCall (zls.exe.obj)

const maybe_async_token = tree.firstToken(info.fn_expr) - 1;

^

C:\Programming\Zig\zig-from-the-website\lib\std\zig\Ast.zig:1883:24: 0x7ff6a4b3f2af in callOne (zls.exe.obj)

.fn_expr = data.lhs,

^

C:\Programming\Zig\buzz\repos\zls\src\ast.zig:1086:24: 0x7ff6a4a7810a in callFull (zls.exe.obj)

=> tree.callOne(buf, node),

^

C:\Programming\Zig\buzz\repos\zls\src\analysis.zig:2964:38: 0x7ff6a49d7864 in makeScopeInternal (zls.exe.obj)

const call = ast.callFull(tree, node_idx, &buf).?;

^

C:\Programming\Zig\buzz\repos\zls\src\analysis.zig:3025:64: 0x7ff6a49d8166 in makeScopeInternal (zls.exe.obj)

try makeScopeInternal(allocator, context, field.ast.type_expr);

^

C:\Programming\Zig\buzz\repos\zls\src\analysis.zig:2560:30: 0x7ff6a4a76a93 in makeInnerScope (zls.exe.obj)

try makeScopeInternal(allocator, context, decl);

^

C:\Programming\Zig\buzz\repos\zls\src\analysis.zig:2631:31: 0x7ff6a49d4df6 in makeScopeInternal (zls.exe.obj)

try makeInnerScope(allocator, context, node_idx);

^

C:\Programming\Zig\buzz\repos\zls\src\analysis.zig:2496:33: 0x7ff6a49d41cd in makeDocumentScope (zls.exe.obj)

.enums = &document_scope.enum_completions,

^

C:\Programming\Zig\buzz\repos\zls\src\DocumentStore.zig:612:65: 0x7ff6a4a6493f in createDocument (zls.exe.obj)

var document_scope = try analysis.makeDocumentScope(self.allocator, tree);

^

C:\Programming\Zig\buzz\repos\zls\src\DocumentStore.zig:158:39: 0x7ff6a49d3268 in openDocument (zls.exe.obj)

handle.* = try self.createDocument(duped_uri, duped_text, true);

^

C:\Programming\Zig\buzz\repos\zls\src\Server.zig:1867:111: 0x7ff6a49d2e2f in openDocumentHandler__anon_12308 (zls.exe.obj)

const handle = try server.document_store.openDocument(req.params.textDocument.uri, req.params.textDocument.text);

^

C:\Programming\Zig\buzz\repos\zls\src\Server.zig:2964:35: 0x7ff6a4a36475 in processJsonRpc__anon_10459 (zls.exe.obj)

method_info[2](server, writer, id, request_obj) catch |err| {

^

C:\Programming\Zig\buzz\repos\zls\src\main.zig:51:34: 0x7ff6a4a3d754 in loop (zls.exe.obj)

try server.processJsonRpc(writer, buffer);

^

C:\Programming\Zig\buzz\repos\zls\src\main.zig:281:13: 0x7ff6a4a3dbd2 in main (zls.exe.obj)

try loop(&server);

^

C:\Programming\Zig\zig-from-the-website\lib\std\start.zig:385:41: 0x7ff6a4a3e077 in WinStartup (zls.exe.obj)

std.debug.maybeEnableSegfaultHandler();

^

???:?:?: 0x7ffab1d9559f in ??? (???)

???:?:?: 0x7ffab2c0485a in ??? (???)

```

(By the way this *might be* a Zig issue - shouldn't the parser always error instead of underflowing, or is this just super cursed?)

|

priority

|

integer overflow when parsing incomplete function zig version dev zig language server version steps to reproduce open the following file zig atend void import std const expect try expect result var buf a b if builtin zig backend wasm return error skipzigtest todo try expect as structptr pub fn set checksum size v func field try expect ctz as const tmp ref define foo a string is var args try testonectzvector splat as const x struct const std import std pub fn main void var bad type typeinfo foo union tag type try expect length std mem eql messages here is a vardecl in scope first arg return error skipzigtest todo comptime try expect fn return trace return foo x var some struct param type void v try testtruncwithvectors void var target ptrcast l array try expect a try s entry true false c printf a s declaration of label blk error union declared here error loop in named c in enum tmp letter error c import block error parameters cannot cast into pointer const x struct field var a c int ptrcast bytes try expect result catch unreachable error backend target native error this is a longer message integer overflow addwithoverflow a b res try expectequal std testing expectequalstrings std fs pub const b enum const x var default value stored to trigger the bug a second vector initialization syntax error expectedbitsize comptime return error union by return const s extern struct const std import behavior pipe const i g h return var array array fn foo a bool b bool true error backend target native note control flow inside runtime isnan nan times zero build modes true c resume frame try expecterror error failedtocreateentry defer stdout print all your code here or load and store if comptime const msg ptrtoint hello world n fn b void if true else err name unsigned int choose a test double nested unpacked source zig in foo test var aoeu try std testing expect const expr if builtin zig backend return false comptime try expect default initialization syntax error expected as try 
expect comptime foo error backend target native error expected type anyframe try consume tuple step b step test write src b addobjectfromwritefile src basename fnptr bar pub export fn entry void ignore int foo const a struct mov rdx fn foo void try expect mem eql message integer pub export fn callconv async void var array y const zig args append list if builtin zig backend return x stack trace std builtin zig backend return error skipzigtest todo var b try foo try std testing ex expected behavior it doesn t integer underflow actual behavior log thread panic integer overflow c programming zig zig from the website lib std zig ast zig in fullcall zls exe obj const maybe async token tree firsttoken info fn expr c programming zig zig from the website lib std zig ast zig in callone zls exe obj fn expr data lhs c programming zig buzz repos zls src ast zig in callfull zls exe obj tree callone buf node c programming zig buzz repos zls src analysis zig in makescopeinternal zls exe obj const call ast callfull tree node idx buf c programming zig buzz repos zls src analysis zig in makescopeinternal zls exe obj try makescopeinternal allocator context field ast type expr c programming zig buzz repos zls src analysis zig in makeinnerscope zls exe obj try makescopeinternal allocator context decl c programming zig buzz repos zls src analysis zig in makescopeinternal zls exe obj try makeinnerscope allocator context node idx c programming zig buzz repos zls src analysis zig in makedocumentscope zls exe obj enums document scope enum completions c programming zig buzz repos zls src documentstore zig in createdocument zls exe obj var document scope try analysis makedocumentscope self allocator tree c programming zig buzz repos zls src documentstore zig in opendocument zls exe obj handle try self createdocument duped uri duped text true c programming zig buzz repos zls src server zig in opendocumenthandler anon zls exe obj const handle try server document store opendocument req params 
textdocument uri req params textdocument text c programming zig buzz repos zls src server zig in processjsonrpc anon zls exe obj method info server writer id request obj catch err c programming zig buzz repos zls src main zig in loop zls exe obj try server processjsonrpc writer buffer c programming zig buzz repos zls src main zig in main zls exe obj try loop server c programming zig zig from the website lib std start zig in winstartup zls exe obj std debug maybeenablesegfaulthandler in in by the way this might be a zig issue shouldn t the parser always error instead of underflowing or is this just super cursed

| 1

|

823,311

| 30,989,685,286

|

IssuesEvent

|

2023-08-09 02:47:47

|

Karooobar/Voyager

|

https://api.github.com/repos/Karooobar/Voyager

|

closed

|

Need to pass storeId, owner ID, from the user who is logged in

|

High Priority

|

Right now in helpers like ItemHelper, CategoryHelper, and storeHelper, we are hardcoding the store id as 200.

We need to modify this so that we use the store id of the user that is logged in.

|

1.0

|

Need to pass storeId, owner ID, from the user who is logged in - Right now in helpers like ItemHelper, CategoryHelper, and storeHelper, we are hardcoding the store id as 200.

We need to modify this so that we use the store id of the user that is logged in.

|

priority

|

need to pass storeid owner id from the user who is logged in right now in helpers like itemhelper categoryhelper and storehelper we are hardcoding the store id that is we need to modify this so that we use the store id of the user that is logged in

| 1

|

629,928

| 20,071,470,861

|

IssuesEvent

|

2022-02-04 07:33:07

|

debops/debops

|

https://api.github.com/repos/debops/debops

|

closed

|

bootstrap-sssd and bootstrap-ldap don't seem to be idempotent

|

bug priority: high tag: LDAP

|

Both ``nslcd`` and ``sssd`` contain configuration file generation tasks which include directives like:

```
- name: Generate nslcd configuration
  ...
  when: nslcd__ldap_base_dn|d()
```

and:

```
- name: Generate sssd configuration
  ...
  when: sssd__ldap_base_dn|d()
```

The ``nslcd__ldap_base_dn`` and ``sssd__ldap_base_dn`` variables are both defined as:

`'{{ ansible_local.ldap.base_dn|d([]) }}'`

In the ``bootstrap-*`` case, ``ansible_local.ldap.base_dn`` is initially undefined, and later set by the ``debops.ldap`` role.

However, the ``sssd__ldap_base_dn`` and ``nslcd__ldap_base_dn`` variables aren't recalculated, so the configuration files ``/etc/sssd/sssd.conf`` and ``/etc/nslcd.conf`` aren't generated (in the latter case, the DPKG package generates a default file, so the fact that the file is present is misleading).

I tried copying the trick from ``debops.ldap``:

```
- name: Take note of the current LDAP configuration
  set_fact:
    # Track the changes in the configuration state
    # between role executions in the same play.
    ldap__fact_configured: '{{ ldap__configured }}'
    # Re-instantiate dependent variables to evaluate variables that use them.
    # Without this, dependent variables may contain outdated configuration.
    ldap__fact_dependent_tasks: '{{ ldap__dependent_tasks }}'
  tags: [ 'role::ldap:tasks', 'skip::ldap:tasks' ]
```

to update ``sssd__ldap_base_dn`` and ``nslcd__ldap_base_dn``, but that doesn't seem to actually recalculate them. A simple workaround is to run the ``bootstrap-*`` scripts twice for a host; the second invocation will show:

```

PLAY RECAP ***********************************************************************************

example : ok=200 changed=2 unreachable=0 failed=0 skipped=138 rescued=0 ignored=0

```

(Note the ``changed`` value)

Would be happy to do a PR, but I'm kind of stumped here (tried on ``2.10.7+merged+base+2.10.8+dfsg-1`` from Debian unstable).

|

1.0

|

bootstrap-sssd and bootstrap-ldap don't seem to be idempotent - Both ``nslcd`` and ``sssd`` contain configuration file generation tasks which include directives like:

```
- name: Generate nslcd configuration
  ...
  when: nslcd__ldap_base_dn|d()
```

and:

```
- name: Generate sssd configuration
  ...
  when: sssd__ldap_base_dn|d()
```

The ``nslcd__ldap_base_dn`` and ``sssd__ldap_base_dn`` variables are both defined as:

`'{{ ansible_local.ldap.base_dn|d([]) }}'`

In the ``bootstrap-*`` case, ``ansible_local.ldap.base_dn`` is initially undefined, and later set by the ``debops.ldap`` role.

However, the ``sssd__ldap_base_dn`` and ``nslcd__ldap_base_dn`` variables aren't recalculated, so the configuration files ``/etc/sssd/sssd.conf`` and ``/etc/nslcd.conf`` aren't generated (in the latter case, the DPKG package generates a default file, so the fact that the file is present is misleading).

I tried copying the trick from ``debops.ldap``:

```

- name: Take note of the current LDAP configuration

set_fact:

# Track the changes in the configuration state

# between role executions in the same play.

ldap__fact_configured: '{{ ldap__configured }}'

# Re-instantiate dependent variables to evaluate variables that use them.